Variational Autoencoders for Molecular Generation: A Comprehensive Guide for Drug Discovery

This article provides a comprehensive exploration of Variational Autoencoders (VAEs) and their transformative role in generative molecular design for drug discovery.

Variational Autoencoders for Molecular Generation: A Comprehensive Guide for Drug Discovery

Abstract

This article provides a comprehensive exploration of Variational Autoencoders (VAEs) and their transformative role in generative molecular design for drug discovery. It covers the foundational principles of VAE architecture, including encoders, decoders, and latent space representation. The content delves into advanced methodological implementations such as graph-based VAEs and transformer-integrated models, alongside their practical applications in de novo drug design. Critical challenges like posterior collapse and molecular representation limitations are addressed with current optimization strategies. The review further examines benchmarking platforms and performance metrics for validating model efficacy, synthesizing key insights to outline future directions for VAE-driven innovation in biomedical research and clinical applications.

Understanding VAEs: From Basic Architecture to Molecular Representation

Variational Autoencoders (VAEs) have emerged as a powerful generative model architecture, finding significant utility in the field of molecular generation research. Since their debut in 2013, VAEs have transformed the landscape of generative modeling by blending deep learning with probabilistic inference [1]. Unlike traditional autoencoders that merely compress and reconstruct data, VAEs learn a continuous, probabilistic latent representation that enables the generation of novel data samples [1] [2]. This capability is particularly valuable in drug discovery, where exploring the vast chemical space of potential drug-like molecules (estimated at 10²³ to 10⁶⁰ compounds) presents a formidable challenge [3]. The core components of a VAE—the encoder, decoder, and latent space—work in concert to enable this generative capability, making them indispensable tools for researchers aiming to design new molecular entities with desired pharmacological properties.

Architectural Fundamentals of VAEs

Core Components and Their Functions

The VAE architecture consists of three primary components working harmoniously: an encoder network, a decoder network, and a structured latent space [1]. Together, these elements form the foundation that enables VAEs to generate new molecular content with remarkable fidelity while ensuring the continuity and completeness of the generated chemical structures.

Table: Core Components of a Variational Autoencoder

| Component | Function | Key Features | Molecular Generation Relevance |

|---|---|---|---|

| Encoder | Maps input data to probabilistic latent representation | Outputs mean (μ) and standard deviation (σ) vectors; uses convolutional or transformer layers | Encodes molecular structures (SMILES, SELFIES, graphs) into continuous latent representations |

| Latent Space | Compressed, probabilistic representation of data | Continuous, structured space following multivariate Gaussian distribution; enables interpolation and sampling | Serves as search space for novel molecules; nearby points decode to structurally similar compounds |

| Decoder | Reconstructs data from latent representations | Uses transposed convolutional or autoregressive layers; outputs reconstructed data samples | Generates novel molecular structures from sampled latent points; ensures syntactic validity |

The Encoder Network

The encoder network in a VAE transforms input data into a latent representation embodying learned attributes [1]. Unlike traditional autoencoders, VAE encoders produce probabilistic representations by outputting both mean and standard deviation vectors for each dimension of the latent space [2]. During this process, input data (x) maps to latent variables (z), commonly written as z|x [1]. A critical sampling layer acts as a constraint point, enabling latent representations that facilitate both reconstruction and generation.

In molecular generation applications, encoder designs have evolved from simple convolutional networks to sophisticated architectures like Transformers and Graph Neural Networks (GNNs) to better handle molecular representations [4] [5]. For instance, the Transformer Graph VAE (TGVAE) employs a molecular graph as input data, capturing complex structural relationships within molecules more effectively than string-based models [4].

The Latent Space Representation

In VAEs, the latent space provides a continuous, probabilistic condensed representation of input data [1]. Each attribute is represented probabilistically, with latent vector values associated with comparable reconstructions from input data [1]. This statistical distribution, determined by mean and variance parameters, ensures minor latent space changes generate consistent new data points [1] [6].

The latent space must exhibit two critical types of regularity: continuity (nearby points yield similar content when decoded) and completeness (any point sampled yields meaningful content) [6]. These properties are enforced by structuring the latent space to follow a known distribution, typically a standard Gaussian, through the Kullback-Leibler (KL) divergence component of the loss function [1] [6]. For molecular generation, this structured latent space enables smooth interpolation between molecular structures and provides a foundation for optimizing molecular properties [3] [5].

The Decoder Network

The decoder network restores the original input using encoded latent variables, essentially reversing the encoding process [1]. Its goal is learning a transformation that takes latent space variables (z) and maps them back into data (x) closely approximating initial inputs [1]. Decoder output dimensions typically match input data dimensions, enabling it to function as a generative model producing new examples similar to training data.

In advanced molecular VAEs, decoder architectures have progressed from recurrent neural networks to autoregressive Transformer decoders, which generate molecular sequences token by token while maintaining chemical validity [5]. For example, STAR-VAE employs an autoregressive Transformer decoder trained on SELFIES representations to guarantee 100% syntactic validity of generated molecules [5].

Mathematical Foundations

VAEs rely on solid mathematical principles for both functionality and efficiency. A well-organized continuous latent space forms their core, critical for enhanced generative capabilities [1]. The key mathematical concepts include variational inference, KL divergence, and the Evidence Lower Bound (ELBO) [1].

The VAE objective function combines reconstruction loss with KL divergence:

Where the first term represents reconstruction error and the second term regularizes the latent space by minimizing the divergence between the learned distribution q(z|x) and the prior distribution p(z) [2]. The full loss function can be written as:

This formulation is known as the Evidence Lower Bound (ELBO), which balances high-quality data reconstruction with appropriate regularization of the latent space [1]. The reparameterization trick enables efficient training by expressing the random latent variable z as a deterministic function of the encoder parameters and an independent random variable: z = μ + σ ⊙ ε, where ε ∼ N(0,1) [1] [7].

Experimental Protocols for Molecular VAE Implementation

Protocol 1: Building a Basic Molecular VAE

Objective: Implement a fundamental VAE for molecular generation using SMILES or SELFIES representations.

Materials and Software:

- Python 3.8+

- Deep learning framework (TensorFlow/Keras or PyTorch)

- RDKit for chemical validation

- MOSES benchmark tools for evaluation

Procedure:

- Data Preprocessing:

- Curate molecular dataset (e.g., from PubChem, ZINC)

- Convert structures to SELFIES representation to guarantee syntactic validity [5]

- Tokenize sequences and create vocabulary

- Split data into training/validation sets (80/20 ratio)

Encoder Implementation:

- Implement embedding layer for token inputs

- Design encoder architecture (e.g., Transformer with 4 layers, 8 attention heads)

- Add output layers for mean (μ) and log-variance (log σ²) of latent distribution

- Implement sampling layer using reparameterization trick

Decoder Implementation:

- Implement autoregressive Transformer decoder with masked self-attention

- Add output projection layer to vocabulary size

- Apply softmax activation for token probability distribution

Training Configuration:

- Initialize optimizer (Adam with learning rate 0.0001)

- Set batch size to 128 and train for 100 epochs

- Use categorical cross-entropy for reconstruction loss

- Weight KL divergence term with annealing schedule (β from 0.01 to 1.0)

Validation:

- Monitor reconstruction accuracy and validity rate

- Evaluate generated molecules using MOSES benchmark metrics [3]

- Assess latent space organization using dimensionality reduction (t-SNE, UMAP)

Protocol 2: Conditional Molecular Generation

Objective: Extend VAE for property-guided molecular generation.

Procedure:

- Property Prediction Module:

- Add property prediction head to encoder output

- Pre-train on labeled molecular property data (e.g., solubility, toxicity)

- Use multi-task learning during VAE training

Conditional Training:

- Concatenate property vectors with latent representations

- Modify decoder to accept conditional inputs

- Implement classifier-guided sampling for generation

Evaluation:

- Assess property optimization capabilities using GuacaMol benchmark [5]

- Evaluate target-specific generation using docking scores

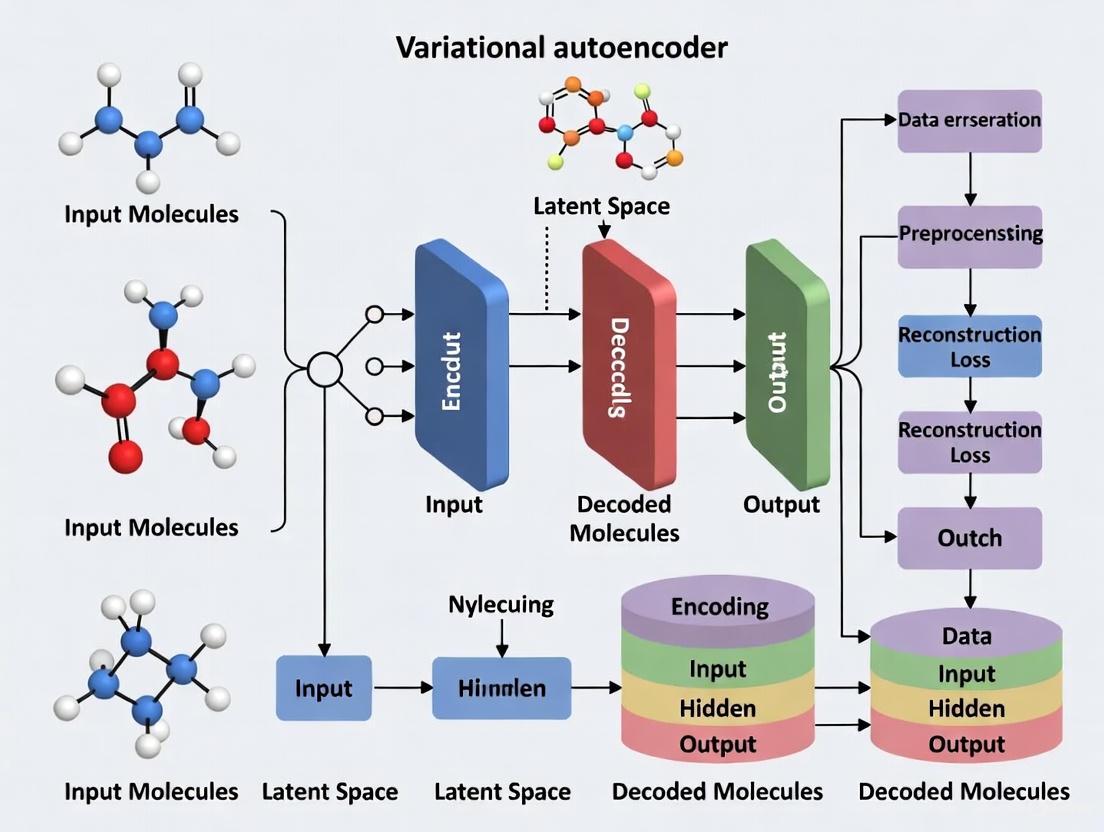

Diagram Title: VAE Architecture for Molecular Generation

Advanced Applications in Molecular Research

Addressing Posterior Collapse in Molecular VAEs

Posterior collapse remains a significant challenge in molecular VAEs, where the model fails to utilize the latent space effectively, limiting the diversity of generated molecules [3]. The PCF-VAE approach addresses this by reparameterizing the loss function and incorporating a diversity layer between the latent space and decoder [3]. This architecture modification, combined with GenSMILES representations that simplify molecular complexity, has demonstrated validity rates of 95-98% across different diversity levels while maintaining 100% uniqueness in generated structures [3].

Table: Performance Comparison of Advanced Molecular VAEs

| Model | Architecture | Representation | Validity Rate | Uniqueness | Novelty | Internal Diversity |

|---|---|---|---|---|---|---|

| PCF-VAE [3] | VAE with diversity layer | GenSMILES | 95.01-98.01% | 100% | 93.77-95.01% | 85.87-89.01% |

| STAR-VAE [5] | Transformer VAE | SELFIES | High (MOSES benchmark) | Competitive | Competitive | Structured latent space |

| TGVAE [4] | Transformer-Graph VAE | Molecular graph | Enhanced vs. string models | Improved diversity | Novel structures | Effective structural capture |

Latent Space Visualization and Analysis

Visualizing the VAE latent space helps understand how the model discerns and assimilates fundamental data structure [8]. By condensing input data into compact latent space, VAEs extract pivotal attributes while neglecting superfluous details [8]. Methods like t-SNE and PCA reduce dimensions, enabling understanding of learned features through visible clusters and patterns [8] [7].

In ensemble visualization applications, VAEs transform spatial features of ensembles into latent spaces following multivariate standard Gaussian distributions, enabling analytical computation of confidence intervals and density estimation [8]. This capability is valuable for understanding uncertainty in molecular property predictions and exploring chemical space neighborhoods around promising candidate molecules.

Diagram Title: Molecular VAE Training and Optimization Workflow

Research Reagent Solutions

Table: Essential Tools for Molecular VAE Research

| Reagent/Tool | Function | Application Example |

|---|---|---|

| SELFIES Representation [5] | Guarantees 100% syntactically valid molecular strings | STAR-VAE uses SELFIES to ensure validity in generated molecules |

| Graph Neural Networks [4] | Processes molecular graph structures directly | TGVAE employs GNNs to capture structural relationships in molecules |

| Low-Rank Adaptation (LoRA) [5] | Enables parameter-efficient finetuning with limited data | STAR-VAE uses LoRA for fast adaptation with property data |

| GenSMILES [3] | Simplified SMILES representation reducing complexity | PCF-VAE uses GenSMILES to enhance robustness and diversity |

| Transformer Architectures [5] | Handles long-range dependencies in molecular sequences | Replaces RNNs in modern VAEs for improved sequence modeling |

| Molecular Property Predictors [5] | Provides conditioning signals for guided generation | Integrated into conditional VAE frameworks for target-oriented design |

Future Directions and Challenges

The field of molecular VAEs continues to evolve with several promising research directions. Hybrid models that combine VAEs with other generative approaches such as GANs or diffusion models show potential for enhancing sample quality and diversity [1] [9]. Addressing the challenge of posterior collapse remains an active area of investigation, with approaches like PCF-VAE demonstrating significant improvements in generating diverse, valid molecules [3].

Future work may focus on better integration of 3D structural information, improved conditioning mechanisms for multi-property optimization, and more efficient training strategies for scaling to larger chemical spaces [9] [5]. As molecular VAEs mature, they are poised to become increasingly valuable tools in the drug discovery pipeline, enabling more efficient exploration of chemical space and acceleration of therapeutic development.

Variational inference provides a scalable framework for approximate probabilistic inference, which has become a cornerstone of modern machine learning applications, including the generation of molecular structures for drug discovery. The fundamental challenge that necessitates variational inference is the intractability of the posterior distribution in complex latent variable models. When working with latent variable models, we often have observed variables (e.g., molecular structures) and latent variables (e.g., hidden representations capturing chemical properties). In a Bayesian framework, we specify a prior over the latent variables 𝑝(𝐳) and a likelihood function 𝑝(𝐱|𝐳) that connects latents to observables. The cornerstone of Bayesian inference is the posterior distribution 𝑝(𝐳|𝐱) = 𝑝(𝐱,𝐳)/𝑝(𝐱), which requires computation of the marginal likelihood or evidence 𝑝(𝐱) = ∫ 𝑝(𝐱,𝐳) 𝑑𝐳. This integral is generally intractable for complex models, as it involves integration over all possible configurations of latent variables, often with exponential computational cost [10].

Key Mathematical Concepts

Kullback-Leibler Divergence

The Kullback-Leibler (KL) divergence measures the similarity between two probability distributions. Given the true posterior 𝑝(𝐳|𝐱) and a variational approximation 𝑞(𝐳), the KL divergence is defined as:

KL ( 𝑞(𝐳) ‖ 𝑝(𝐳|𝐱) ) = ∫ 𝑞(𝐳) log [ 𝑞(𝐳) / 𝑝(𝐳|𝐱) ] 𝑑𝐳 = - ∫ 𝑞(𝐳) log [ 𝑝(𝐳|𝐱) / 𝑞(𝐳) ] 𝑑𝐳

This divergence is non-negative (KL ≥ 0) and zero only when 𝑞(𝐳) equals 𝑝(𝐳|𝐱). However, it is not symmetric (KL(𝑝‖𝑞) ≠ KL(𝑞‖𝑝)) and does not satisfy the triangle inequality, thus not a true distance metric. The forward KL (𝑝‖𝑞) tends to be "mode-covering" (averaging), while the reverse KL (𝑞‖𝑝) tends to be "mode-fitting" [10].

The Evidence Lower Bound (ELBO)

Direct minimization of KL(𝑞(𝐳)‖𝑝(𝐳|𝐱)) is intractable because it requires the very evidence term we cannot compute. The derivation of the Evidence Lower Bound (ELBO) provides a solution through mathematical transformation:

KL ( 𝑞(𝐳) ‖ 𝑝(𝐳|𝐱) ) = 𝔼₍𝐳∼𝑞₎ [ log 𝑞(𝐳) ] - 𝔼₍𝐳∼𝑞₎ [ log 𝑝(𝐳|𝐱) ] = 𝔼₍𝐳∼𝑞₎ [ log 𝑞(𝐳) ] - 𝔼₍𝐳∼𝑞₎ [ log 𝑝(𝐱,𝐳) - log 𝑝(𝐱) ] = 𝔼₍𝐳∼𝑞₎ [ log 𝑞(𝐳) - log 𝑝(𝐱,𝐳) ] + log 𝑝(𝐱)

Since KL divergence is non-negative, we have: log 𝑝(𝐱) ≥ 𝔼₍𝐳∼𝑞₎ [ log 𝑝(𝐱|𝐳) ] - KL( 𝑞(𝐳) ‖ 𝑝(𝐳) )

The right-hand side is the ELBO. Maximizing the ELBO minimizes the KL divergence and provides a lower bound to the log evidence [10].

Visualizing the Core Relationships

The following diagram illustrates the fundamental relationship between evidence, KL divergence, and ELBO:

Application to Molecular Generation

Variational Autoencoders for Molecular Design

In molecular generation, variational autoencoders (VAEs) leverage the variational inference framework to learn continuous latent representations of molecular structures. The encoder network approximates the posterior 𝑞(𝐳|𝐱), while the decoder network parameterizes the likelihood 𝑝(𝐱|𝐳). During training, the ELBO objective is maximized, forcing the model to learn chemically meaningful representations while regularizing the latent space [3].

Current research addresses specific challenges in molecular VAEs, particularly posterior collapse, where the model fails to utilize the latent space effectively, resulting in low diversity of generated molecules. The PCF-VAE approach introduces reparameterization of the loss function and transforms SMILES strings into GenSMILES to reduce complexity and enhance robustness [3].

Advanced Variational Methods

Recent advancements incorporate more expressive latent distributions. The Variational Mean Flow (VMF) framework models the latent space as a mixture of Gaussians rather than a unimodal Gaussian, better capturing the multimodal nature of molecular distributions. This approach enables efficient one-step inference while maintaining generation quality and diversity [11].

Another innovation combines variational inference with causal modeling through Causality-Aware Transformers (CAT), which enforce directional dependencies in molecular assembly through masked attention mechanisms, ensuring causally coherent generation of molecular substructures [11].

Experimental Protocols & Implementation

Protocol: Implementing a Basic Molecular VAE

Purpose: To create a variational autoencoder for generating novel molecular structures with desired properties.

Materials:

- Molecular dataset (e.g., ZINC, QM9)

- Graph neural network libraries (PyTorch Geometric, DGL)

- Chemical validation tools (RDKit, OpenBabel)

Procedure:

- Data Preparation:

- Convert molecular structures to appropriate representation (SMILES, graphs)

- Apply standardization: neutralize charges, remove solvents, normalize tautomers

- Split dataset into training/validation/test sets (80/10/10)

Model Architecture:

- Encoder: Graph isomorphism network (GIN) or graph attention network (GAT)

- Latent space: Multivariate Gaussian (mean and variance vectors)

- Decoder: Recurrent network (for SMILES) or graph generation network

Training Configuration:

- Objective: ELBO = 𝔼[log p(𝑥|𝑧)] - β⋅KL(𝑞(𝑧|𝑥)‖𝑝(𝑧))

- Optimizer: Adam (learning rate: 0.001, β₁: 0.9, β₂: 0.999)

- Batch size: 128-256 depending on memory constraints

- β annealing: Gradually increase β from 0 to 1 over training

Validation:

- Chemical validity: Percentage of valid molecular structures

- Uniqueness: Fraction of duplicate molecules in generated set

- Novelty: Percentage of generated molecules not in training set

- Diversity: Structural diversity metrics (Tanimoto similarity, scaffold diversity)

Protocol: Mitigating Posterior Collapse in Molecular VAEs

Purpose: To address the posterior collapse problem where the model ignores latent codes.

Procedure:

- Architectural Modifications:

- Use weaker decoders (e.g., single-layer LSTM instead of multi-layer)

- Add skip connections from encoder to decoder

- Implement stochastic recurrent connections in decoder

Training Techniques:

- Apply KL annealing: gradually increase weight of KL term in ELBO

- Use free bits: enforce minimum KL per latent dimension

- Add auxiliary losses: property prediction, fragment conservation

Alternative Objectives:

- Implement InfoVAE: Add mutual information term to objective

- Use β-VAE: Weight KL term more heavily (β > 1)

Workflow for Molecular Generation with VAEs

The complete experimental workflow for molecular generation integrates each component of the variational framework:

Research Reagent Solutions

Table 1: Essential computational tools for molecular generation with variational autoencoders

| Reagent/Tool | Function | Application Notes |

|---|---|---|

| RDKit | Cheminformatics toolkit for molecule manipulation and validation | Essential for processing SMILES, calculating molecular properties, and validating generated structures [3] |

| PyTorch Geometric | Graph neural network library | Implements graph encoders for molecular structures; supports message passing and graph pooling [4] |

| TensorFlow Probability | Probabilistic programming | Provides distributions, bijectors, and probabilistic layers for building VAE components |

| MOSES Benchmark | Evaluation framework for molecular generation | Standardized metrics for validity, uniqueness, novelty, and diversity [3] |

| Graphviz | Graph visualization | Creates publication-quality diagrams of molecular structures and model architectures |

Performance Metrics and Comparative Analysis

Table 2: Performance comparison of VAE-based molecular generation methods

| Model | Validity (%) | Uniqueness (%) | Novelty (%) | Internal Diversity | Key Innovation |

|---|---|---|---|---|---|

| PCF-VAE [3] | 95.01-98.01 | 100 | 93.77-95.01 | 85.87-89.01% | Posterior collapse mitigation via loss reparameterization |

| TGVAE [4] | High (exact values not reported) | High | High | High | Transformer + GNN integration for graph-based generation |

| MolSnap [11] | 100 | Not reported | Up to 74.5 | Up to 70.3% | Variational Mean Flow with mixture priors |

| Standard VAE [3] | Typically <90% | Variable | Variable | Often limited | Baseline for comparison |

Advanced Applications and Future Directions

The integration of variational inference with molecular generation continues to evolve. Recent approaches combine VAEs with flow-based methods, where normalizing flows transform simple distributions into complex ones through a series of invertible transformations, providing more flexible posterior approximations [11].

In genome-wide association studies (GWAS), variational inference enables scalable analysis of large biobanks. The Quickdraws method uses stochastic variational inference with spike-and-slab priors to increase association power without sacrificing computational efficiency [12].

For single-cell RNA sequencing data, probabilistic matrix factorization with variational inference (PMF-GRN) infers gene regulatory networks by decomposing gene expression into latent factors representing transcription factor activity and regulatory relationships [13].

These applications demonstrate how the mathematical foundations of variational inference—KL divergence and ELBO—enable scalable, probabilistic modeling across diverse scientific domains, particularly in molecular generation where handling complexity and uncertainty is paramount for effective drug discovery.

The reparameterization trick is a foundational technique in machine learning that enables the training of probabilistic models, most notably Variational Autoencoders (VAEs), through standard gradient-based methods. In the context of molecule generation for drug discovery, this trick is indispensable. It allows researchers to efficiently explore vast chemical spaces by learning smooth, continuous latent representations of molecular structures. By providing a pathway for gradient flow through random sampling nodes, the reparameterization trick facilitates the optimization of complex objective functions that balance molecular validity, diversity, and desired pharmacological properties. This document details the application of this technique and its associated sampling processes within molecular generative models, providing structured protocols and data for research and development professionals.

Technical Foundation of the Reparameterization Trick

The Problem: Non-Differentiable Sampling

In a standard VAE, the encoder neural network does not output a deterministic latent vector z. Instead, it learns the parameters (mean μ and standard deviation σ) of a Gaussian distribution, from which the latent vector z is sampled. This sampling operation is inherently stochastic and non-differentiable, which blocks the flow of gradients during backpropagation, preventing the model from learning the parameters μ and σ [14] [15].

The core objective is to optimize the Evidence Lower Bound (ELBO), which includes a reconstruction loss and a regularization term (Kullback-Leibler divergence). Computing the gradient of the expectation term, ∇φ E_{z∼qφ(z|x)}[f(z)], with respect to the distribution parameters φ is not straightforward due to the sampling step [16].

The Solution: Reparameterization

The reparameterization trick addresses this by decoupling the randomness from the learnable parameters. Instead of sampling z directly from N(μ, σ²), the random variable z is expressed as a deterministic function of the parameters and an independent noise variable. For a Gaussian distribution, this is achieved as follows:

Deterministic Function: z = gφ(ε, x) = μφ(x) + σφ(x) ⊙ ε

Noise Variable: ε ∼ N(0, I)

Here, ε is an auxiliary noise variable sampled from a standard normal distribution, μφ(x) and σφ(x) are the outputs of the encoder network, and ⊙ denotes element-wise multiplication [14] [16]. This reformulation moves all stochasticity to the variable ε, which is independent of the parameters φ. The path from the parameters φ to the latent variable z is now entirely deterministic and differentiable, allowing gradients to flow through the model via z to the encoder, enabling end-to-end training with stochastic gradient descent [15] [16].

Table 1: Reparameterization Formulations for Common Distributions

| Distribution | Reparameterization Function z = gφ(ε) |

Noise Distribution p(ε) |

|---|---|---|

| Gaussian | z = μ + σ ⊙ ε |

ε ∼ N(0, I) |

| Exponential | z = -log(ε) / λ |

ε ∼ Uniform(0, 1) |

Application in Molecular Variational Autoencoders

In molecular generation, VAEs map input molecular representations (e.g., SMILES, SELFIES) into a structured latent space. The reparameterization trick is the engine that makes this learning process feasible.

Molecular VAE Architecture and Workflow

The following diagram illustrates the flow of data and gradients in a molecular VAE that utilizes the reparameterization trick.

Diagram 1: Molecular VAE with Reparameterization. This workflow shows how gradients flow from the reconstruction loss back through the deterministic latent vector z to the encoder's parameters.

Quantitative Performance in Molecular Generation

The effectiveness of VAEs trained with the reparameterization trick is measured by their ability to generate valid, unique, and novel molecules. The following table summarizes key performance metrics from recent state-of-the-art molecular VAE models on standard benchmarks.

Table 2: Performance Metrics of Molecular VAEs on Generation Tasks

| Model | Validity (%) | Uniqueness (%) | Novelty (%) | Internal Diversity (IntDiv %)* | Key Feature |

|---|---|---|---|---|---|

| PCF-VAE [3] | 95.01 - 98.01 | 100 | 93.77 - 95.01 | 85.87 - 89.01 | Mitigates posterior collapse |

| STAR-VAE [5] | High (SELFIES) | - | - | - | Transformer-based, uses SELFIES |

| VAE-CYC [17] | Good | - | - | Good | Cyclical annealing to prevent collapse |

| MolMIM [17] | High | - | - | Good | Alternative architecture |

Internal Diversity (IntDiv) measures the structural variety within a set of generated molecules. A higher value indicates greater diversity [3].

Experimental Protocols

Protocol: Implementing the Reparameterization Trick for a Gaussian VAE

This protocol details the steps to implement the reparameterization trick in a molecular VAE using a Gaussian latent space.

Objective: To enable gradient-based optimization of a VAE for molecular generation by implementing the reparameterization trick.

Materials:

- Hardware: GPU-enabled workstation.

- Software: Python 3.x, PyTorch or TensorFlow, RDKit, molecular dataset (e.g., ZINC, ChEMBL).

Procedure:

- Encoder Forward Pass:

- Pass an input molecule (as a SMILES or SELFIES string, suitably encoded) through the encoder network.

- The encoder outputs two vectors: the mean

μand the logarithm of the variancelog σ². Usinglog σ²ensures the standard deviation is always positive during optimization [15].

Sampling with Reparameterization:

Decoder Forward Pass & Loss Computation:

- Pass the latent vector

zthrough the decoder network to reconstruct the original input molecule. - Compute the total loss

L, which is the negative ELBO:L = L_reconstruction + β * D_KL(q_φ(z|x) || p(z))where:

- Pass the latent vector

Backward Pass & Optimization:

- Compute the gradients of the total loss

Lwith respect to all model parameters (includingφfrom the encoder) using backpropagation. Becausezis a deterministic function ofφ, gradients can flow through it. - Update the model parameters using a stochastic gradient descent optimizer (e.g., Adam).

- Compute the gradients of the total loss

Troubleshooting:

- Posterior Collapse: If the KL divergence term collapses to zero early in training, the encoder ignores the input. Mitigation strategies include KL annealing (gradually increasing

βfrom 0) or using a cyclical annealing schedule [17] [3]. - Low Validity: When using SMILES strings, invalid outputs are common. Consider switching to SELFIES representations, which guarantee 100% syntactic validity [5].

Protocol: Optimizing Molecules in Latent Space with Reinforcement Learning

This protocol describes a method for optimizing generated molecules for specific properties by combining a pre-trained VAE with a latent space optimization algorithm.

Objective: To generate molecules with optimized properties (e.g., high drug-target affinity, specific lipophilicity) by navigating the continuous latent space of a pre-trained VAE.

Materials:

- A pre-trained molecular VAE with a well-structured latent space.

- A reward function

R(m)that scores a moleculembased on the desired properties.

Procedure:

- Latent Space Continuity Check:

- Before optimization, verify the continuity of the VAE's latent space. Encode a set of test molecules to their latent points

z₀. - Perturb

z₀by adding Gaussian noise with varying variancesσto createz' = z₀ + ε, ε ∼ N(0, σI). - Decode the perturbed points and measure the Tanimoto similarity between the original and perturbed molecules. A gradual decrease in similarity with increasing

σindicates a continuous space suitable for optimization [17].

- Before optimization, verify the continuity of the VAE's latent space. Encode a set of test molecules to their latent points

Optimization Loop:

- Initialization: Start from an initial latent point

z(e.g., encoding of a seed molecule or a random point). - Action: At each step

t, the optimization algorithm (e.g., Proximal Policy Optimization - PPO) proposes a stepΔzin the latent space, moving to a new pointz_t = z_{t-1} + Δz. - Evaluation: Decode

z_tto a moleculem_tand compute the rewardR(m_t). - Feedback: The reward signal

R(m_t)is used to update the policy of the optimization algorithm, encouraging it to explore regions of latent space that decode to high-scoring molecules. - Iteration: Repeat steps b-d for a fixed number of iterations or until convergence [17].

- Initialization: Start from an initial latent point

Candidate Selection:

- Decode the final latent points from the optimization trajectory to obtain the optimized candidate molecules.

- Validate the chemical structures and properties using external tools (e.g., RDKit, molecular docking simulations).

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Molecular VAE Research

| Item Name | Function / Purpose | Example Use Case |

|---|---|---|

| ZINC Database | A public repository of commercially available chemical compounds for training and benchmarking. | Serves as the primary dataset for training generative models [17]. |

| ChEMBL Database | A large-scale bioactivity database for drug discovery. | Used for training property prediction models and conditional generation [18]. |

| SELFIES | A 100% robust molecular string representation. | Replaces SMILES in VAEs to guarantee generation of syntactically valid molecules [5]. |

| RDKit | Open-source cheminformatics software. | Used for parsing SMILES/SELFIES, calculating molecular properties, and validating generated structures [17]. |

| PCF-VAE Loss | A modified VAE loss function designed to prevent posterior collapse. | Improves the diversity and validity of generated molecules in de novo drug design [3]. |

| Low-Rank Adapters (LoRA) | A parameter-efficient fine-tuning method. | Adapts a large pre-trained VAE to new property prediction tasks with limited labeled data [5]. |

This application note provides a detailed comparison of SMILES strings and molecular graphs as molecular representations, contextualized within Variational Autoencoder (VAE)-based molecular generation research. We include structured data, experimental protocols, and visualization tools to aid researchers in selecting and implementing these representations for drug discovery applications.

Molecular representation is a foundational step in computational chemistry, bridging the gap between chemical structures and their biological properties [19]. In VAE-based molecular generation, the choice of representation directly influences the model's ability to learn a continuous, meaningful latent space from which valid and novel molecules can be decoded [20] [21]. SMILES and molecular graphs are two dominant representations, each with distinct strengths and limitations for deep learning applications. This note provides a practical framework for their evaluation and use.

Core Representation Formats: A Technical Comparison

SMILES (Simplified Molecular Input Line Entry System) is a line notation that uses short ASCII strings to describe molecular structure [22] [23]. Molecular Graphs represent a molecule as a set of nodes (atoms) and edges (bonds), directly encoding its topological structure [20].

Table 1: Quantitative Comparison of Representation Performance in VAE Models

| Feature | SMILES-Based VAE (e.g., CVAE, GVAE) | Graph-Based VAE (e.g., JT-VAE, NP-VAE) |

|---|---|---|

| Representation Type | Sequential, string-based [19] | Topological, graph-based [20] |

| Inherent Validity of Generated Structures | Low; many outputs are invalid SMILES [20] [21] | High; outputs are inherently valid molecular graphs [20] |

| Handling of Large/Complex Molecules | Limited; struggles with complex structures like large natural products [20] | Excellent; newer models (e.g., NP-VAE) are designed for large compounds [20] |

| Inclusion of Stereochemistry | Supported in isomeric SMILES [22] [24] | Can be incorporated as node/edge parameters [20] |

| Example Reconstruction Accuracy | Lower than graph-based models [20] | NP-VAE demonstrated higher reconstruction accuracy [20] |

Table 2: Qualitative Analysis of Strengths and Weaknesses

| Aspect | SMILES Strings | Molecular Graphs |

|---|---|---|

| Primary Strengths | Compact, human-readable, vast existing support in cheminformatics [22] [24] | Natural representation of structure, high validity rates, flexible feature attachment [20] |

| Key Limitations | Non-uniqueness, sensitivity to small syntax errors, abstract representation [20] [25] | Computational complexity, requires specialized canonicalization, historically limited to smaller molecules [20] |

| Best-Suited VAE Tasks | Initial prototyping, exploration of chemical language models [19] | Generation of syntactically valid, complex molecules, and scaffold hopping [20] [19] |

Experimental Protocols for VAE Modeling

Protocol: Building a SMILES-Based VAE (CVAE)

This protocol outlines the steps for constructing a SMILES-based Conditional VAE (CVAE) for multi-property molecular generation [21].

1. Data Preprocessing and Canonicalization

- Input: Raw molecular dataset (e.g., from ZINC database [21]).

- Procedure:

- Canonicalization: Use a cheminformatics toolkit (e.g., RDKit) to convert all structures into a unique, canonical SMILES string. This ensures consistent representation [21].

- Tokenization: Treat the SMILES string as a sequence of characters. Pad the end of the string with a unique termination character (e.g., 'E').

- Vectorization: Convert each character into a one-hot encoded vector.

- Property Conditioning: Create a condition vector (c) containing normalized values of target molecular properties (e.g., Molecular Weight, LogP, TPSA). For integer properties like HBD/HBA, use one-hot encoding [21].

2. Model Architecture and Training

- Encoder: A Recurrent Neural Network (RNN), typically with LSTM cells, processes the one-hot encoded SMILES sequence and the condition vector to produce a latent vector (z) [21].

- Decoder: Another RNN (the decoder) takes the latent vector (z) and the same condition vector (c) to reconstruct the SMILES string sequence step-by-step [21].

- Training Objective: Minimize the loss function of the CVAE:

E[logP(X|z,c)] - D_KL[Q(z|X,c) || P(z|c)], where the first term is the reconstruction loss and the second is the Kullback-Leibler divergence, which regularizes the latent space [21].

3. Molecular Generation and Validation

- Sampling: Generate molecules by sampling a latent vector (z) from the prior distribution

N(0, I)and concatenating it with a desired condition vector (c). - Decoding: Use a "stochastic write-out" process where each character in the SMILES string is sampled from the probability distribution output by the decoder's softmax layer. Perform multiple decodings (e.g., 100x) per latent vector to maximize valid output [21].

- Validation: Pass the generated SMILES string to RDKit to check for chemical validity and calculate its properties for comparison with the target condition [21].

Protocol: Building a Graph-Based VAE (NP-VAE)

This protocol is based on the NP-VAE model, which is designed to handle large molecular structures with 3D complexity [20].

1. Molecular Graph Decomposition and Featurization

- Input: Large, complex molecular structures (e.g., natural products from DrugBank).

- Procedure:

- Graph Construction: Represent the molecule as a hydrogen-suppressed molecular graph where nodes are atoms and edges are bonds [26] [20].

- Tree Decomposition: Use a graph decomposition algorithm (e.g., Junction Tree algorithm) to break the molecular graph into meaningful chemical substructures or fragments, which are organized into a tree structure [20].

- Feature Extraction: Encode each node (atom) in the graph using features such as chemical element, Morgan vertex degree, and electronic structure (e.g., 1s2, 2p3). For isomeric SMILES, chirality and bond stereochemistry are also encoded [26] [20].

2. Model Architecture and Latent Space Construction

- Encoder: A Graph Neural Network (GNN) or Tree-LSTM processes the molecular graph (or its junction tree) to map it into a latent vector (z) [20].

- Decoder: The decoder network reconstructs the molecular graph from the latent vector, typically by assembling the predicted substructures. This ensures the output is always a valid molecular graph [20].

- Latent Space: The model constructs a continuous, low-dimensional latent space. Exploration of this space allows for the generation of novel compound structures optimized for specific functions [20].

3. Generation and Functional Optimization

- Exploration: Sample points from the latent space or interpolate between known active molecules to generate novel molecular structures.

- Validation: The graph-based decoding process guarantees 100% syntactically valid molecules. The validity of the chemical structure itself is checked with RDKit [20].

- Docking Analysis: Generated molecules with desired properties can be virtually screened using molecular docking simulations to assess their potential as drug candidates [20].

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software and Resources for Molecular VAE Research

| Tool Name | Type | Primary Function in VAE Research |

|---|---|---|

| RDKit [20] [21] | Cheminformatics Library | Checks SMILES validity, calculates molecular properties, handles file format conversion. |

| CORAL [26] | QSAR Software | Calculates optimal descriptors from SMILES and molecular graphs for model building. |

| ZINC Database [21] | Molecular Library | Provides large, publicly available datasets of drug-like molecules for model training. |

| DrugBank [20] | Pharmaceutical Database | Source of approved drug and natural product structures for training complex generative models. |

| NP-VAE [20] | Deep Learning Model | Specialized graph-based VAE for handling large natural product structures with chirality. |

| JT-VAE [20] | Deep Learning Model | A foundational graph-based VAE that uses junction tree decomposition for high reconstruction accuracy. |

The field of molecular representation is dynamically evolving. While graph-based VAEs currently show superior performance in generating valid and complex molecules, SMILES-based models remain relevant for specific applications and as a component of chemical language models [20] [19]. Future directions point toward hybrid models and the increased use of multimodal learning and contrastive learning frameworks to create even more powerful and interpretable chemical latent spaces, further accelerating AI-driven drug discovery [19].

The Role of Latent Space in Capturing Chemical Similarity

In the field of molecular generation research, the latent space of a Variational Autoencoder (VAE) serves as a crucial low-dimensional mathematical representation that captures the essential features of chemical compounds [20]. This continuous, probabilistic space is fundamental for enabling tasks such as molecule generation, optimization, and the meaningful exploration of chemical properties [1] [5]. By learning to project high-dimensional, complex molecular structures into a structured, lower-dimensional manifold, the VAE's latent space provides a powerful framework for navigating the vast chemical universe and identifying novel compounds with desired characteristics [20] [27]. Its ability to implicitly encode chemical similarity—where molecules with similar structures or properties are located near each other in the latent space—makes it an indispensable tool for modern computational drug discovery [5] [28].

Latent Space Fundamentals in Molecular VAEs

The latent space in a VAE is a compressed, probabilistic representation of input data, learned by aligning the distribution of encoded data points with a prior distribution, typically a unit Gaussian [1] [27]. This is achieved through the optimization of the Evidence Lower Bound (ELBO), which balances two objectives: reconstruction loss, ensuring the decoded output closely matches the original input, and the Kullback-Leibler (KL) divergence, which regularizes the structure of the latent space to be continuous and smooth [1]. This structured continuity is what allows for meaningful interpolation and navigation within the latent space, as small changes in the latent vector correspond to coherent and gradual changes in the generated molecular structure [20] [27].

Unlike traditional autoencoders that may learn a non-smooth, disjointed latent manifold, the variational formulation enforces a well-behaved space [27]. This property is critical for molecular optimization, as it allows for the use of efficient continuous optimization techniques, such as Bayesian optimization, to traverse the latent space and discover molecules with optimized properties [28]. The latent space thus acts as a "chemical cartography" tool, mapping discrete molecular structures onto a continuous domain where their relationships can be quantified and exploited for generative design [20].

Quantitative Performance of Molecular VAE Frameworks

Different VAE architectures have been developed to efficiently capture chemical similarity and generate valid molecules. The table below summarizes the performance of several key models on standard benchmarks, highlighting their effectiveness in reconstruction and generation.

Table 1: Performance Comparison of Molecular VAE Frameworks

| Model Name | Key Architecture | Molecular Representation | Reconstruction Accuracy | Validity | Key Innovation |

|---|---|---|---|---|---|

| NP-VAE [20] | Graph-based VAE with Tree-LSTM | Molecular graph | ~90% (on evaluation dataset) | 100% (fragment-based) | Handles large, complex molecules & chirality |

| STAR-VAE [5] | Transformer Encoder-Decoder | SELFIES | Matches/exceeds baselines on GuacaMol & MOSES | High (SELFIES guarantee) | Scalable pretraining & property-guided generation |

| JT-VAE [20] | Junction Tree Graph VAE | Molecular graph | High (for small molecules) | High | Treats molecular graphs as tree structures |

| CLaSMO [28] | Conditional VAE (CVAE) | Molecular graph | N/A (Modification-based) | N/A | Scaffold optimization via latent space Bayesian optimization |

| TGVAE [4] | Transformer & Graph Neural Network | Molecular graph | Outperforms existing approaches | High & Diverse | Combins Transformer, GNN, and VAE |

A critical challenge in molecular VAEs is the choice of representation. Early models like CVAE used SMILES strings, but often suffered from low validity, as many generated strings did not correspond to valid molecules [20] [5]. Subsequent innovations have largely shifted to graph-based representations (e.g., JT-VAE, NP-VAE) or modern string-based representations like SELFIES, which guarantee 100% syntactic validity and thus improve the utility of the latent space for reliable generation [5] [4].

Experimental Protocols for Latent Space Application

Protocol: Constructing a Chemical Latent Space with NP-VAE

This protocol details the procedure for building a latent space for large, complex molecules, such as natural products, using the NP-VAE model [20].

Data Curation and Preparation

- Source: Obtain molecular structures from databases like DrugBank and natural product libraries [20].

- Preprocessing: Standardize structures and compute molecular features/fingerprints (e.g., ECFP). For large molecules, apply the model's decomposition algorithm to break compounds into fragment units and convert them into tree structures [20].

- Split: Divide the dataset into training, validation, and test sets (e.g., 76,000/5,000/5,000 compounds) [20].

Model Training and Latent Space Formation

- Architecture: Configure the NP-VAE encoder and decoder using Tree-LSTM networks to process the molecular tree structures [20].

- Training Loop: Train the model by minimizing the VAE loss function (ELBO), which combines reconstruction loss and KL divergence. Use the validation set for early stopping [20].

- Encoding: Pass the training and test sets through the trained encoder to project each molecule into a point in the low-dimensional latent space, defined by the mean (μ) and standard deviation (σ) vectors [20].

Latent Space Evaluation

- Reconstruction Accuracy: For each test compound, perform multiple stochastic encodings and decodings (e.g., 10x10). Calculate the proportion where the output structure exactly matches the input [20].

- Generalization Assessment: Sample latent vectors from the prior distribution ( N(0, I) ), decode them, and use RDKit to check the validity of the generated structures [20].

Protocol: Property-Guided Molecular Generation with STAR-VAE

This protocol outlines the use of a transformer-based VAE for generating molecules conditioned on specific properties [5].

Large-Scale Pretraining

- Data: Curate a large dataset of drug-like molecules (e.g., ~79 million from PubChem). Apply standard drug-likeness filters (e.g., Molecular Weight ≤ 600) [5].

- Representation: Convert all molecules to SELFIES representations to ensure syntactic validity. Tokenize the SELFIES strings [5].

- Model Pretraining: Train the STAR-VAE model (Transformer encoder and autoregressive Transformer decoder) on the SELFIES corpus using the ELBO objective. This creates a general-purpose chemical latent space [5].

Conditional Finetuning

- Property Predictor: Attach a property prediction head to the pretrained encoder. Finetune it on a smaller dataset labeled with the target property (e.g., docking scores) [5].

- Conditional Generation Signal: The property predictor supplies a conditioning signal that is consistently applied to the latent prior, the inference network, and the decoder during generation [5].

- Parameter-Efficient Adaptation: Employ Low-Rank Adaptation (LoRA) in both the encoder and decoder to adapt the model to the conditional generation task with limited labeled data [5].

Evaluation of Conditional Generation

- Benchmarking: Evaluate the unconditional generation performance on benchmarks like GuacaMol and MOSES for validity, uniqueness, and diversity [5].

- Property-Specific Assessment: For conditional generation (e.g., optimizing docking scores), generate molecules and compare the distribution of their target properties against a baseline VAE to demonstrate a statistically significant shift towards improved values [5].

Protocol: Scaffold-Based Optimization with CLaSMO

This protocol describes a sample-efficient method for optimizing existing molecular scaffolds by performing Bayesian optimization in the latent space of a Conditional VAE [28].

Data Preparation for Scaffold Modification

- Input: Define a set of molecular scaffolds to be optimized.

- Substructure Enumeration: Create a dataset of valid substructures and their corresponding attachment points. For each scaffold, generate examples of how substructures can be bonded to it [28].

- Conditioning Feature Extraction: For each attachment point on a scaffold, compute the atomic environment features that will serve as the conditioning input for the CVAE [28].

Training the Conditional VAE

- Model: Train a CVAE where the encoder learns a latent distribution for a substructure, and the decoder generates a substructure conditioned on the scaffold's atomic environment features [28].

- Objective: The model learns to generate chemically compatible substructures that can be integrated into the scaffold.

Latent Space Bayesian Optimization (LSBO)

- Define Objective: Formulate the target property (e.g., binding affinity, solubility) as a black-box function to be maximized.

- Optimization Loop: a. Select a scaffold and an attachment point to modify. b. Use the CVAE encoder to project known substructures for that context into the latent space. c. Fit a Bayesian optimization surrogate model (e.g., Gaussian Process) to the latent points and their property values. d. Propose the next latent point to evaluate by maximizing an acquisition function (e.g., Expected Improvement). e. Decode the proposed latent point into a new substructure, attach it to the scaffold, and evaluate the property of the new molecule (or use a predictor). f. Update the surrogate model with the new data point and repeat [28].

- Constrained Output: The process maintains a similarity constraint to the original scaffold, ensuring synthesizability.

Essential Research Reagent Solutions

The following table catalogs key computational tools and resources essential for conducting research on latent space and chemical similarity.

Table 2: Key Research Reagents and Tools for Molecular VAE Research

| Reagent / Resource | Type | Function in Research | Example Use Case |

|---|---|---|---|

| RDKit [20] | Cheminformatics Software | Handles molecular I/O, fingerprint calculation, and validity checks. | Evaluating the chemical validity of molecules generated from the latent space. |

| SELFIES [5] | Molecular Representation | String-based representation guaranteeing 100% syntactic validity. | Used in STAR-VAE to prevent generation of invalid molecular strings. |

| ECFP [20] | Molecular Fingerprint | Represents molecular structure as a bit vector; used as input feature. | Providing structural features for the VAE encoder to learn meaningful representations. |

| PubChem [5] | Chemical Database | Large-scale source of drug-like molecules for model training. | Curating a dataset of ~79 million molecules for pretraining STAR-VAE. |

| GuacaMol / MOSES [5] | Benchmarking Framework | Standardized benchmarks for evaluating generative model performance. | Quantifying the validity, uniqueness, and diversity of molecules generated by a trained VAE. |

| Low-Rank Adaptation (LoRA) [5] | Fine-tuning Technique | Efficiently adapts large pre-trained models to new tasks with limited data. | Fine-tuning STAR-VAE for property-guided generation without full retraining. |

| Bayesian Optimization [28] | Optimization Algorithm | Efficiently optimizes expensive black-box functions in continuous spaces. | Navigating the latent space of CLaSMO to find molecules with optimal properties. |

Workflow Visualization

The following diagram illustrates the high-level logical workflow of a VAE for molecular generation, from input to generation and optimization, integrating the components discussed in the protocols.

Diagram 1: Molecular VAE Workflow. This figure outlines the core process of molecular encoding, latent space formation, and molecule generation/optimization, highlighting the pathways for both reconstruction and conditional generation. BO: Bayesian Optimization.

Advanced VAE Architectures and Their Application in De Novo Drug Design

Variational Autoencoders (VAEs) have emerged as a powerful deep learning framework for generative tasks, particularly in domains with complex, structured data like chemistry and drug discovery. Among these, graph-based VAEs represent a significant advancement as they operate directly on molecular graphs, inherently preserving the structural relationships between atoms. This application note details the operational principles, performance metrics, and experimental protocols for two prominent graph-based VAE architectures—the Junction Tree VAE (JT-VAE) and the Natural Product-oriented VAE (NP-VAE)—within the context of molecule generation research. We focus on their enhanced ability to generate chemically valid and structurally accurate molecular structures compared to earlier methods.

Junction Tree VAE (JT-VAE)

The JT-VAE addresses the critical challenge of molecular graph reconstruction by decomposing a molecule into a hierarchical, tree-like structure of chemical substructures, or "junction trees." This decomposition constrains the generation process to chemically plausible steps, dramatically improving the validity of the output.

- Core Principle: The model encodes a molecule using two parallel encoders: one for the original molecular graph and another for its junction tree. The junction tree represents the molecule as a tree where nodes are chemically valid fragments (e.g., rings, functional groups) and edges represent their adjacency. This simplifies the complex graph into a tractable tree structure.

- Architecture and Training: The model uses a Graph Neural Network (GNN) to encode the molecular graph and a Tree-based GNN to encode the junction tree. These two latent representations are concatenated to form the final molecular embedding. The decoder first generates a junction tree and then assembles the final molecular graph by combining the generated fragments, guided by the molecular graph embedding [29]. Training involves an initial deterministic autoencoder phase, followed by fine-tuning with a Kullback-Leibler (KL) divergence penalty to regularize the latent space [29].

Natural Product-Oriented VAE (NP-VAE)

The NP-VAE was developed to handle large, complex molecular structures that are intractable for earlier models, such as natural products with significant 3D complexity and chirality.

- Core Principle: NP-VAE is a graph-based VAE that combines an algorithm for decomposing compound structures into fragment units and converting them into tree structures with Extended Connectivity Fingerprints (ECFP) and a Tree-LSTM network [30].

- Advancements over Predecessors: NP-VAE represents a significant improvement over models like JT-VAE and HierVAE, with 12 million parameters. Its key innovations include the ability to handle chirality (an essential factor for 3D structure and biological activity) and a mechanism to train the chemical latent space by incorporating functional information alongside structural data [30]. This allows for the generation of novel compounds optimized for a target property.

Performance and Comparative Analysis

The performance of graph-based VAEs is quantitatively assessed based on their ability to accurately reconstruct input molecules (reconstruction accuracy) and to generate novel, valid, and unique molecular structures.

Table 1: Comparative Performance of Generative Models on Molecular Tasks

| Model | Reconstruction Accuracy | Validity | Key Strengths and Applicable Scope |

|---|---|---|---|

| JT-VAE [29] | ~76% (on QM9 dataset with HOMO prediction task) | High (by design) | High validity for small molecules; enables property prediction and optimization via latent space. |

| NP-VAE [30] | >80% (outperforms baselines on St. John's dataset) | 100% (generates in substructure units) | Handles large, complex structures & chirality; suited for natural product-like compounds. |

| CVAE (SMILES-based) [30] | Lower than graph-based models | Very Low (majority invalid) | Pioneering application of VAE; now largely superseded by graph-based methods. |

| HierVAE [30] | Lower than NP-VAE | High | Handles larger compounds with repeating structures; cannot consider stereochemistry. |

The data from Table 1 demonstrates the clear superiority of graph-based models over SMILES-based approaches in generating chemically valid structures. NP-VAE shows a marked improvement in reconstruction accuracy, establishing it as a high-performance generative model for complex molecular structures [30].

Table 2: Latent Space Utilization for Molecular Optimization (e.g., HOMO energy)

| Model / Strategy | Property Prediction Performance (e.g., HOMO) | Successful Optimization Capability |

|---|---|---|

| JT-VAE with Regression Model [29] | Achieved state-of-the-art results in HOMO prediction. | Yes: Latent space allows for gradient-based search to find molecules with a predefined HOMO value. |

| NP-VAE with Functional Latent Space [30] | Latent space trained with functional information. | Yes: Enables generation of novel compounds optimized for a target function by exploring the latent space. |

Experimental Protocols

Protocol 1: Training a JT-VAE for Property Prediction and Optimization

This protocol outlines the steps for pre-training a JT-VAE and employing its latent space for molecular property optimization [29].

Model Pre-training

- Dataset: Utilize a large-scale molecular dataset such as ZINC for initial training.

- Two-Phase Training:

- Phase 1 (Deterministic AE): Train the encoder-decoder pair as a standard autoencoder to minimize reconstruction error.

- Phase 2 (VAE Tuning): Introduce the KL divergence penalty between the latent vectors and a standard normal distribution to regularize the latent space.

- Objective: Learn a robust latent space that captures the fundamental distribution of molecular structures.

Regression Model Training

- Dataset: Use a property-specific dataset like QM9, which includes quantum chemical properties such as HOMO energy.

- Procedure: With the pre-trained JT-VAE encoder frozen, train a feedforward neural network (FFNN) regressor. The regressor maps latent vectors (

Z) from the JT-VAE to the target property values (e.g., HOMO). - Architecture: A typical regressor can consist of two hidden layers (e.g., size 1024) with ReLU activation functions [29].

Molecular Optimization (Reverse-QSAR)

- Input: A target property value

v₀(e.g., a specific HOMO energy). - Optimization Loop:

- Initialize a latent vector

Z(e.g., from a known molecule's encoding). - Define the loss

L = |v₀ - f_R(Z)|, wheref_Ris the trained regressor. - Use gradient descent within the latent space to minimize

Lby updatingZ, keeping the weights of the encoder and regressor frozen. - Stop when

f_R(Z)is sufficiently close tov₀.

- Initialize a latent vector

- Decoding: Pass the optimized latent vector

Zthrough the JT-VAE decoder to generate the molecular structureD(Z)with the desired property [29].

- Input: A target property value

Protocol 2: Evaluating Model Generalization Ability

This protocol describes a standard evaluation method for assessing the reconstruction and generative capabilities of a molecular VAE [30].

- Data Splitting: Divide a standardized dataset (e.g., St. John et al.'s dataset with 76,000 training, 5,000 validation, and 5,000 test compounds) into training, validation, and test sets.

- Model Training: Train the VAE model on the training set.

- Reconstruction Accuracy (Generalization Ability):

- For each test compound, perform multiple stochastic encodings and decodings (e.g., 10 encodings, each decoded 10 times, for 100 total outputs per test compound).

- Calculate the proportion of output structures that exactly match the input test compound.

- Validity and Novelty:

- Sample a large number of latent vectors (e.g., 1000) from the prior distribution

N(0, I). - Decode each vector multiple times.

- Use a toolkit like RDKit to determine the proportion of outputs that are chemically valid molecules.

- Check the uniqueness of the generated valid molecules against the training set.

- Sample a large number of latent vectors (e.g., 1000) from the prior distribution

Visualization of Workflows

JT-VAE Encoding and Optimization Pathway

NP-VAE Molecular Decomposition Workflow

The Scientist's Toolkit: Research Reagents and Solutions

Table 3: Essential Computational Tools for Graph-Based Molecular VAEs

| Item / Resource | Function / Description | Example Tools / Libraries |

|---|---|---|

| Molecular Datasets | Provide structured data for training and benchmarking models. | ZINC database (for general molecules); QM9 (with quantum properties); DrugBank & Natural Product libraries [30]. |

| Cheminformatics Toolkit | Handles molecular I/O, validity checks, fingerprint generation, and stereochemistry. | RDKit [30]. |

| Deep Learning Framework | Provides flexible environment for building and training complex neural networks. | PyTorch, TensorFlow. |

| Graph Neural Network Library | Offers pre-built modules for implementing graph convolutions and message passing. | PyTorch Geometric (PyG), Deep Graph Library (DGL). |

| Latent Space Analysis Package | Aids in visualization and interpolation within the learned latent space. | scikit-learn (for PCA, t-SNE). |

The exploration of chemical space for novel drug candidates represents a monumental challenge in pharmaceutical research, necessitating advanced computational approaches for efficient molecular design. Within the framework of variational autoencoder (VAE) research for molecule generation, two prominent strategies have emerged for processing the Simplified Molecular Input Line-Entry System (SMILES) representation: Grammar Variational Autoencoders (GVAEs) and Character-Level Recurrent Neural Networks (Char-RNNs). These approaches fundamentally differ in how they interpret and generate SMILES strings, with GVAEs employing grammatical constraints to ensure syntactic validity and Char-RNNs utilizing statistical sequence modeling at the character level. This article provides detailed application notes and experimental protocols for these methodologies, enabling researchers to effectively implement and evaluate these models for de novo molecular design tasks. The structured comparison and standardized protocols presented herein aim to facilitate reproducibility and advance the field of AI-driven drug discovery.

Theoretical Foundations

SMILES Representation for Molecules

The Simplified Molecular Input Line-Entry System (SMILES) provides a string-based representation that encodes molecular structures as linear sequences of characters, offering a compact and human-readable format for computational processing [31]. This notation utilizes an alphabet of characters where elemental symbols (e.g., 'C' for carbon, 'N' for nitrogen) are combined with special characters representing chemical features: '-' for single bonds, '=' for double bonds, '#' for triple bonds, and numerals to indicate ring closures [32] [33]. For example, benzene is represented in aromatic SMILES notation as "c1ccccc1" [32]. Despite its widespread adoption, standard SMILES notation suffers from limitations including limited token diversity, lack of chemical information within individual tokens, and potential for generating invalid structures due to its context-free nature [31] [20].

Grammar-Based VAEs for Molecular Design

Grammar Variational Autoencoders (GVAEs) represent a significant advancement over character-level models by incorporating formal grammatical constraints to ensure syntactic validity of generated outputs [34]. The fundamental innovation of GVAEs lies in their treatment of structured discrete data whose validity can be characterized by a formal grammar. Rather than processing raw SMILES characters, GVAEs encode molecules as sequences of production rules derived from context-free grammars (CFGs) or molecular hypergraph grammars (MHGs) [34]. This approach guarantees that all decoder outputs comply with the grammatical rules of SMILES syntax, effectively eliminating invalid structure generation.

The GVAE framework employs a standard VAE architecture where the encoder receives a sequence of grammar production rules representing the parse of an input molecule. These production sequences are typically one-hot encoded into binary matrices and processed through deep convolutional neural networks or recursive LSTM architectures to output parameters (μ, σ²) of a Gaussian variational posterior [34]. The decoder maps latent codes to valid production rule sequences using a recurrent neural network (LSTM or GRU) with dynamic masking that ensures only syntactically valid derivations can be produced at each decoding step [34].

For molecular applications specifically, molecular hypergraph grammars (MHGs) have been developed to overcome the limitations of context-free grammars in expressing chemical constraints such as atom valency [34]. MHGs generalize CFGs by representing molecules as hypergraphs, with productions that operate on hyperedges and rigorously adhere to molecular validity constraints including regularity and cardinality [34].

Character-Level RNNs for Molecular Generation

Character-Level Recurrent Neural Networks (Char-RNNs) approach molecular generation as a sequence modeling problem, analogous to statistical language modeling in natural language processing [32] [33]. These models learn the probability distribution of the next character in a SMILES string given a sequence of previous characters, enabling the generation of novel molecules one character at a time [32]. Char-RNNs operate directly on the character-level representation of SMILES strings without explicit grammatical constraints, relying instead on the statistical patterns learned from large datasets of valid molecules.

The architecture typically employs Long Short-Term Memory (LSTM) networks, which are well-suited for capturing long-range dependencies in sequential data [32] [35]. The model processes input sequences through an embedding layer, followed by multiple LSTM layers that maintain hidden states to capture contextual information, and finally a fully-connected output layer that predicts the probability distribution over the next possible character [33]. During training, the model learns to maximize the likelihood of the training sequences, effectively capturing the statistical regularities of valid SMILES strings in its parameters.

Performance Comparison and Quantitative Assessment

Table 1: Comparative Performance Metrics of SMILES-Based Generative Models

| Model | Validity Rate | Uniqueness | Novelty | Reconstruction Accuracy | Internal Diversity (intDiv) |

|---|---|---|---|---|---|

| GVAE | 99% [34] | 100% [34] | 93.77% [3] | 53.7% [34] | 85.87-89.01% [3] |

| MHG-VAE | 100% [34] | 100% [34] | 94.71% [3] | 94.8% [34] | 85.87-86.33% [3] |

| Char-RNN | ~90% [20] | >95% [32] | ~85% [32] | N/A | ~80% [32] |

| PCF-VAE | 95.01-98.01% [3] | 100% [3] | 93.77-95.01% [3] | >90% [3] | 85.87-89.01% [3] |

Table 2: Optimization Performance for Molecular Properties

| Model | Best Penalized logP | Synthesizability Improvement | Binding Affinity Improvement | Drug-likeness (QED) |

|---|---|---|---|---|

| GVAE | -9.57 [34] | +5% [31] | +6% [31] | 0.7 [20] |

| MHG-VAE | 5.24 [34] | +6% [31] | +7% [31] | 0.72 [20] |

| Char-RNN | 2.91 [20] | +3% [31] | +4% [31] | 0.68 [20] |

| PCF-VAE | 4.85 [3] | +5% [3] | +6% [3] | 0.71 [3] |

Experimental Protocols

Protocol 1: Implementing Grammar VAE for Molecular Generation

Objective: To implement and train a Grammar VAE model for generating valid molecular structures with optimized properties.

Materials:

- ZINC or ChEMBL dataset (SMILES representations)

- RDKit or OpenBabel cheminformatics toolkit

- Python 3.7+ with PyTorch/TensorFlow

- GPU-enabled computational environment

Procedure:

Data Preprocessing:

- Curate a dataset of drug-like molecules in SMILES format (e.g., ZINC database containing ~250,000 compounds)

- Apply standardization of SMILES representation using RDKit's CanonicalSMILES

- Implement grammar derivation from SMILES strings using context-free grammar rules

- Split data into training (80%), validation (10%), and test sets (10%)

Model Architecture Configuration:

- Implement encoder network with 3 convolutional layers (filter sizes: 9, 9, 10; stride: 3, 3, 2)

- Design decoder with stacked LSTM layers (2 layers, 512 hidden units)

- Set latent space dimension to 196 continuous variables

- Implement rule masking mechanism during decoding to ensure grammatical validity

Training Protocol:

- Initialize model weights using Xavier initialization

- Set batch size to 128 and initial learning rate to 0.001

- Use Adam optimizer with β1=0.9, β2=0.999

- Implement learning rate reduction on plateau (factor=0.5, patience=5 epochs)

- Train for maximum 100 epochs with early stopping (patience=10 epochs)

Validation and Testing:

- Evaluate reconstruction accuracy on test set

- Assess validity of generated molecules using RDKit's SMILES parser

- Calculate novelty (% of generated molecules not in training set)

- Measure uniqueness (% of unique molecules among generated set)

Diagram 1: GVAE Architecture for Molecular Generation

Protocol 2: Character-Level RNN for SMILES Generation

Objective: To train a character-level RNN model for generating novel molecular structures using SMILES notation.

Materials:

- ChEMBL database (≥2 million bio-active molecules) [32]

- PyTorch deep learning framework

- NVIDIA GPU with ≥8GB memory

- Custom Python scripts for data processing

Procedure:

Data Preparation:

- Extract SMILES strings from ChEMBL database

- Create character vocabulary from all unique characters in SMILES

- Implement character-to-integer mapping dictionary

- Convert all SMILES to one-hot encoded representations

- Generate training sequences with fixed length (100 characters)

Model Architecture:

- Implement character embedding layer (dimensionality: 256)

- Design multi-layer LSTM network (2 layers, 512 hidden units each)

- Add dropout regularization (rate=0.2) between LSTM layers

- Implement fully-connected output layer with softmax activation

Training Configuration:

- Set batch size to 128 and sequence length to 100

- Use cross-entropy loss function and Adam optimizer

- Implement gradient clipping (max norm: 5.0)

- Train for 50 epochs with teacher forcing ratio of 0.5

Sampling and Generation:

- Implement priming with starting characters (e.g., 'C' or 'c')

- Use temperature-based sampling for diversity control

- Generate molecules of varying lengths (50-120 characters)

- Validate generated SMILES using RDKit chemical validation

Diagram 2: Char-RNN Architecture for SMILES Generation

Protocol 3: Latent Space Optimization for Property Enhancement

Objective: To optimize molecular properties through latent space exploration of trained VAEs.

Materials:

- Pre-trained GVAE or Char-RNN model

- Bayesian optimization library (e.g., GPyOpt)

- Molecular property predictors (e.g., for logP, QED, synthesizability)

- Target protein structure for binding affinity calculations

Procedure:

Latent Space Characterization:

- Encode training set molecules to latent representations

- Perform principal component analysis to identify major variation axes

- Train property predictors on latent representations (Random Forest or MLP)

Bayesian Optimization Setup:

- Define objective function combining multiple properties

- Set acquisition function (Expected Improvement)

- Initialize with 100 random points in latent space

- Run optimization for 500 iterations

Multi-objective Optimization:

- Balance conflicting properties (e.g., potency vs. solubility)

- Implement Pareto front identification

- Generate diverse candidate molecules from optimal latent points

Validation:

- Synthesize top candidates (10-20 molecules)

- Experimental testing of key properties

- Iterative model refinement based on experimental results

Advanced Hybridization Techniques

Recent advancements in SMILES representation have led to hybrid approaches that enhance model performance. The SMI+AIS(N) representation method seamlessly integrates standard SMILES tokens with Atom-In-SMILES (AIS) tokens, which incorporate local chemical environment information into a single token [31]. This hybrid approach maintains SMILES simplicity while enriching the representation with critical chemical context, addressing the token frequency imbalance inherent in standard SMILES [31].

The SMI+AIS representation demonstrates significant improvements in binding affinity (7% improvement) and synthesizability (6% increase) compared to standard SMILES in molecular generation tasks [31]. This enhancement stems from the method's ability to differentiate chemical elements based on their chemical context without introducing unnecessary tokens for less frequent elements [31]. The hybridization effectively mitigates the token frequency imbalance by replacing frequently observed SMILES tokens (e.g., 'C') with multiple AIS tokens distinguished by chemical environment (e.g., '[cH;R;CC]', '[c;R;CCC]', and '[CH3;!R;C]') [31].

Table 3: Research Reagents and Computational Tools

| Resource | Type | Application | Access |

|---|---|---|---|

| ZINC Database | Compound Library | Training data for generative models | Public |

| ChEMBL Database | Bioactive Molecules | Training data for drug-like molecules | Public |

| RDKit | Cheminformatics | SMILES validation and manipulation | Open Source |

| PyTorch/TensorFlow | Deep Learning Frameworks | Model implementation | Open Source |

| MOSES Benchmark | Evaluation Platform | Standardized model assessment | Public |

| OpenBabel | Chemical Toolbox | Format conversion and descriptor calculation | Open Source |

Grammar VAEs and Character-Level RNNs represent complementary approaches to SMILES-based molecular generation, each with distinct advantages and limitations. GVAEs provide guaranteed syntactic validity through grammatical constraints and demonstrate superior performance in reconstruction accuracy and latent space organization, making them ideal for targeted molecular optimization [34]. Char-RNNs offer flexibility and have demonstrated remarkable success in generating novel molecular structures with properties correlating well with those of the training molecules [32] [33]. The emerging hybrid approaches, such as SMI+AIS representation, further enhance model performance by incorporating chemical context directly into the molecular representation [31]. As the field advances, the integration of these methodologies with experimental validation cycles will play a crucial role in accelerating drug discovery and development pipelines.