Validating Synthesis Methods in Drug Discovery: From AI Design to Lab Verification


Abstract

This article provides a comprehensive framework for the validation of material synthesis methods, tailored for researchers and professionals in drug development. It explores the critical role of validation in bridging computational design and experimental success, covering foundational principles, modern methodological applications, strategies for troubleshooting and optimization, and robust comparative validation frameworks. With the increasing adoption of AI-driven synthesis planning and automated high-throughput experimentation, establishing rigorous validation practices is more crucial than ever to ensure the synthesizability, scalability, and efficacy of novel drug candidates. The content synthesizes current best practices, highlights common pitfalls, and outlines future directions for integrating validation throughout the drug discovery pipeline.

The Critical Role of Validation in Modern Drug Synthesis

Why Validation is the Bridge Between Computational Design and Real-World Molecules

In the field of computational drug discovery, a significant gap often exists between in silico predictions and tangible, real-world results. Computational models can generate millions of novel molecular structures, but without rigorous experimental validation, these digital designs hold no practical value for drug development [1]. Validation serves as the critical bridge, transforming theoretical designs into confirmed, synthesizable molecules with desired properties and biological activities. This process is essential for demonstrating that a proposed computational method is not only innovative but also practically useful and reliable [2]. This guide objectively examines the experimental data and protocols that underpin this crucial step, providing researchers with a framework for assessing and comparing computational design methodologies.

Comparative Analysis of Computational Design Validation

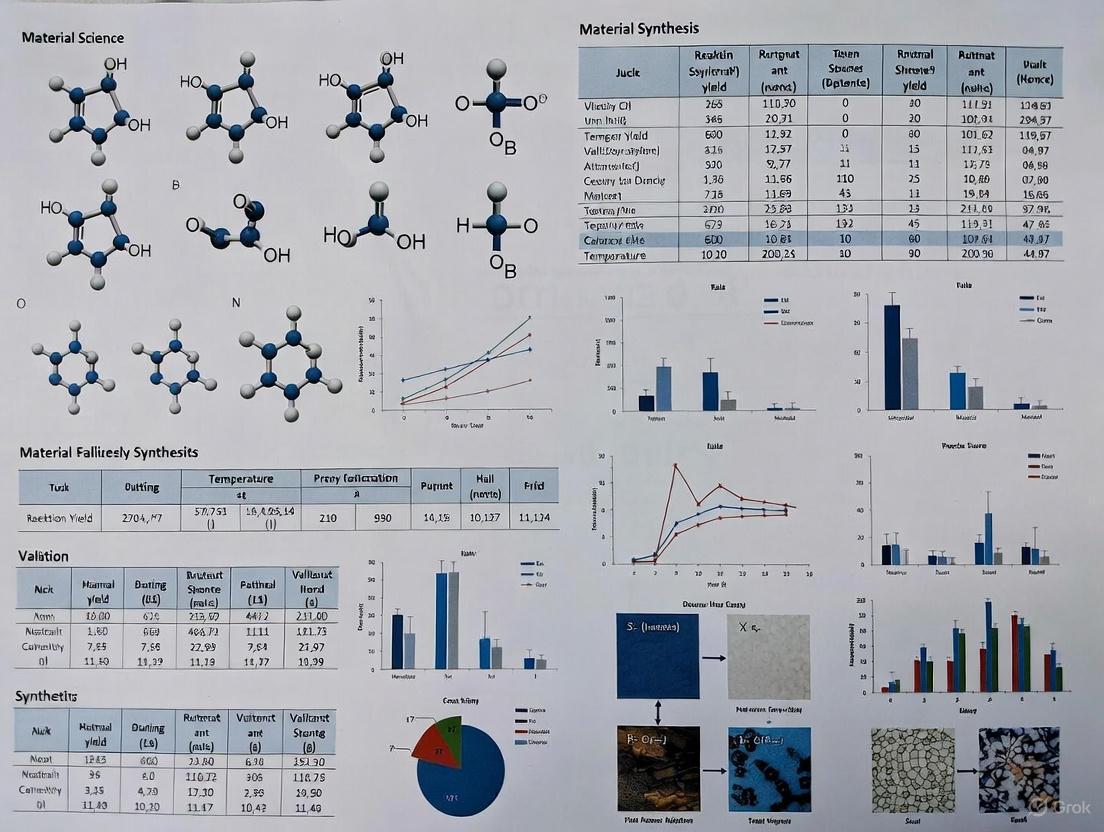

The following table synthesizes key quantitative findings from a validated computational design study, highlighting performance metrics that bridge digital design and physical reality [3].

Table 1: Experimental Validation Metrics for a Computationally Designed Drug Carrier

| Validation Metric | Experimental Result | Significance in Validation |
|---|---|---|
| Drug Loading Capacity | 4.25 wt% (for Doxorubicin) | Confirms computational prediction of enhanced polymer-drug interactions through non-covalent binding. |
| In Vitro Drug Release (pH 5.0) | Faster release compared to pH 7.4 | Validates the computationally informed design for pH-sensitive, targeted drug release (e.g., in tumor microenvironments). |
| In Vitro Drug Release (pH 7.4) | Slower release compared to pH 5.0 | Demonstrates reduced premature leakage, a key limitation of previous carriers that was addressed by the new design. |
| Cytotoxicity in MDA-MB-231 Cells | Confirmed cytotoxicity | Provides functional biological validation of cellular uptake and intended therapeutic effect of the loaded micelles. |
| Key Computational Prediction | PFuCL hydrophobic block has highest polymer-drug interactions | The foundational computational insight that guided the selection of the polymer for synthesis. |

Detailed Experimental Protocols for Validation

To ensure the validity, reproducibility, and meaningful comparison of computational design studies, researchers must adhere to detailed experimental protocols. The following methodologies are central to the validation process.

Protocol for Molecular Dynamics (MD) Simulations

This protocol is used to analyze polymer-drug interactions at the atomic level prior to synthesis [3].

- Objective: To computationally screen and rank different amphiphilic block copolymers based on their potential interaction with a target drug molecule.

- Key Steps:
  - System Setup: Model the micelle system of each candidate polymer in an explicit solvent environment.
  - Simulation Run: Perform all-atom or coarse-grained MD simulations for a sufficient timescale to achieve system equilibrium.
  - Interaction Analysis: Calculate key interaction metrics, including:
    - Linear Interaction Energy (LIE) between the hydrophobic polymer block and the drug.
    - Analysis of non-covalent interactions (e.g., π-π stacking, hydrogen bonding).
    - Solvent-accessible surface area (SASA) and radius of gyration of the micelle.

- Output: A ranked list of candidate polymers based on predicted drug-loading efficiency and stability, guiding which polymer to synthesize.
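The interaction-analysis and ranking steps above can be illustrated with a minimal sketch. It computes a mass-weighted radius of gyration from particle coordinates and sorts candidates by a precomputed interaction energy. The function names, the candidate labels `polymer_A` and `polymer_B`, and all numeric values are hypothetical placeholders, and the convention that a more negative energy means a stronger polymer-drug interaction is an assumption; in a real study these quantities would come from trajectory analysis of the MD runs.

```python
import math


def radius_of_gyration(coords, masses):
    """Mass-weighted radius of gyration of a set of particles (3D coordinates)."""
    total_mass = sum(masses)
    # Centre of mass, computed one axis at a time.
    com = [
        sum(m * c[axis] for c, m in zip(coords, masses)) / total_mass
        for axis in range(3)
    ]
    # Mass-weighted mean squared distance from the centre of mass.
    msd = sum(
        m * sum((c[axis] - com[axis]) ** 2 for axis in range(3))
        for c, m in zip(coords, masses)
    ) / total_mass
    return math.sqrt(msd)


def rank_by_interaction_energy(energies):
    """Sort candidate names from most to least favourable.

    Assumes more negative energy = stronger polymer-drug interaction.
    """
    return sorted(energies, key=energies.get)


if __name__ == "__main__":
    # Two equal-mass beads 2 units apart: each is 1 unit from the centre of mass.
    print(radius_of_gyration([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)], [1.0, 1.0]))

    # Illustrative energies only (arbitrary units), not values from the study.
    example_energies = {"polymer_A": -10.0, "PFuCL": -25.0, "polymer_B": -5.0}
    print(rank_by_interaction_energy(example_energies))
```

The ranking here uses a single metric for clarity; a real screen would likely weigh several of the metrics listed above together.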

Protocol for In Vitro Drug Release and Efficacy

This protocol physically tests the synthesized material's performance against computational predictions [3].

- Objective: To experimentally determine the drug loading capacity, release profile, and biological activity of the synthesized carrier.

- Key Steps:
  - Micelle Synthesis and Drug Loading: Synthesize the top-ranked polymer (e.g., via ring-opening polymerization) and form micelles by self-assembly in aqueous media. Incubate with the drug (e.g., Doxorubicin) to achieve loading.
  - Drug Loading Quantification: Use a method like UV-Vis spectroscopy to determine the amount of drug encapsulated, calculated as (weight of loaded drug / total weight of loaded micelles) × 100%.
  - Drug Release Kinetics: Place the drug-loaded micelles in dialysis bags immersed in buffers at different pH levels (e.g., 7.4 and 5.0). Measure the cumulative drug release in the external medium over time.
  - Cell-Based Viability Assay: Culture relevant cell lines (e.g., MDA-MB-231 breast cancer cells). Treat with blank and drug-loaded micelles. Use a standard assay like MTT to quantify cell viability after a defined period.

- Output: Quantitative data on loading capacity, pH-responsive release behavior, and cytotoxicity, providing a direct link back to the original computational design hypotheses.
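The loading and release calculations in this protocol reduce to simple arithmetic, sketched below. The first function implements the loading formula stated above; the second turns per-timepoint release amounts into a cumulative percentage. The function names and the example masses are assumptions chosen for illustration (the masses are picked only so that the result matches the 4.25 wt% reported in Table 1), and the sketch omits corrections for sampling volume that a real dialysis analysis may need.

```python
def drug_loading_capacity(drug_mass_mg, loaded_micelle_mass_mg):
    """Drug loading in wt%: (loaded drug / total loaded micelles) x 100."""
    if loaded_micelle_mass_mg <= 0:
        raise ValueError("loaded micelle mass must be positive")
    return drug_mass_mg / loaded_micelle_mass_mg * 100.0


def cumulative_release_percent(released_mg_per_timepoint, total_loaded_mg):
    """Running total of released drug, as a percentage of the loaded amount."""
    if total_loaded_mg <= 0:
        raise ValueError("total loaded drug must be positive")
    cumulative = []
    running_total = 0.0
    for released in released_mg_per_timepoint:
        running_total += released
        cumulative.append(running_total / total_loaded_mg * 100.0)
    return cumulative


if __name__ == "__main__":
    # Hypothetical masses: 0.425 mg drug in 10 mg of loaded micelles.
    print(f"{drug_loading_capacity(0.425, 10.0):.2f} wt%")

    # Hypothetical release increments (mg) measured at successive timepoints.
    print(cumulative_release_percent([1.0, 0.5, 0.5], total_loaded_mg=4.0))
```

Running the same cumulative calculation on the pH 5.0 and pH 7.4 series gives the two curves whose comparison supports the pH-responsive release claim.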

The Scientist's Toolkit: Essential Research Reagents and Materials

The validation workflow relies on a specific set of reagents and analytical techniques. The following table details these essential components and their functions [3] [4].

Table 2: Key Research Reagent Solutions for Experimental Validation

| Reagent / Material | Function in Validation |
|---|---|
| Amphiphilic Diblock Copolymer (e.g., PEG-b-PFuCL) | The core structural component of the self-assembled drug delivery system; its design is the output of the computational model. |
| Therapeutic Agent (e.g., Doxorubicin) | The model drug compound used to test the loading and release capabilities of the designed carrier. |
| Ring-Opening Polymerization Catalysts | Chemicals required to synthesize the novel polymer block (e.g., PFuCL) identified as optimal by computational screening. |
| Dynamic Light Scattering (DLS) | An analytical technique used to characterize the size distribution and stability of the formed micelles in solution. |
| Dialysis Membranes (with specific MWCO) | Used in the drug release study to physically separate the micelles from the release medium while allowing free drug molecules to diffuse out. |
| Cell Culture Lines (e.g., MDA-MB-231) | Relevant biological models used to assess the cytotoxicity and therapeutic efficacy of the drug-loaded formulation. |

Visualizing the Validation Workflow

The following diagram maps the integrated computational and experimental workflow, illustrating how validation forms a closed feedback loop.

Diagram 1: Integrated Computational-Experimental Validation Workflow.

The journey from a computational design to a real-world molecule is incomplete without the critical bridge of experimental validation. As demonstrated, this process relies on a multi-faceted approach combining quantitative computational screening with rigorous experimental protocols to assess physicochemical properties and biological efficacy [3]. The iterative feedback loop, where experimental outcomes refine computational models, is what ultimately advances the field [1]. For researchers and drug development professionals, a methodology's credibility is not determined by its computational sophistication alone, but by the strength and transparency of its validation data—the definitive proof that a beautiful digital model can become a functional, real-world solution.

The concept of "synthesizability" has traditionally served as a fundamental gatekeeper in materials science and drug development, determining whether a predicted or designed molecule can be successfully realized in the laboratory. In the age of artificial intelligence, this concept is undergoing a profound transformation. No longer limited to simple thermodynamic stability or synthetic pathway feasibility, synthesizability now encompasses a more comprehensive set of criteria that balance predictive computational modeling with experimental validation across multiple scales.

This evolution is critically important because the primary bottleneck to technological impact remains the transition from lab-scale synthesis to robust, industrial-scale manufacturing—a challenge known as the "valley of death" that most promising materials fail to traverse [5]. AI technologies are now transforming this landscape by enabling automated, parallel, and iterative processes that augment traditional manual, serial, and human-intensive work [6]. This guide examines how these new capabilities are reshaping our understanding and assessment of synthesizability, providing researchers with a framework for validating material synthesis methods in an increasingly AI-driven research environment.

The Evolving Framework of Synthesizability

From Traditional Foundations to AI-Augmented Principles

The classical understanding of synthesizability has centered on three fundamental pillars:

- Thermodynamic Stability: Assessing whether a compound represents a local or global energy minimum

- Kinetic Accessibility: Determining whether viable reaction pathways exist under practical conditions

- Synthetic Tractability: Evaluating the feasibility of assembling molecular components with available reagents and methods

While these principles remain relevant, AI technologies have dramatically expanded the synthesizability framework to include additional dimensions essential for modern discovery workflows. The expansion includes data-driven synthesizability metrics derived from historical synthesis data, multi-fidelity prediction combining computational and experimental results, and transfer learning across material classes and synthesis techniques.

This evolution is particularly evident in evidence synthesis, where AI tools are being integrated into traditionally human-centric workflows. A 2025 study of information specialists found significant interest in automating repetitive and time-consuming tasks, though respondents emphasized the need for "structure, education, training, ethical guidance, and systems to support the responsible use and transparency of AI" [7]. This same balanced approach—embracing automation while maintaining rigorous validation—applies directly to assessing synthesizability in materials science.

The AI-Driven Synthesizability Workflow

The integration of AI has transformed the traditional linear research process into an iterative, closed-loop workflow that continuously refines synthesizability predictions. This paradigm shift amplifies the impact of each discovery stage by creating a feedback cycle between prediction, synthesis, and validation.

This AI-augmented workflow demonstrates how synthesizability assessment has evolved from a one-time gatekeeping function to a continuous evaluation process that informs each stage of materials development. Platforms like IBM DeepSearch exemplify this approach by using natural language processing to extract materials data from unstructured patents, papers, and reports, creating knowledge graphs that support rich queries about previously patented materials and their properties [6].

AI Technologies Reshaping Synthesizability Assessment

Natural Language Processing for Historical Data Extraction

The vast repository of historical synthesis knowledge represents an invaluable resource for predicting synthesizability, but until recently, this information remained largely inaccessible to computational methods. Natural Language Processing (NLP) technologies have transformed this situation by enabling the systematic extraction of synthesis information from scientific literature and patents.

Platforms such as IBM DeepSearch employ multiple AI models working concurrently to convert documents from PDF to structured formats, segment pages into component structures, assign labels to segments, and extract data from embedded tables [6]. The resulting knowledge graphs enable complex queries about previously synthesized materials and their properties, providing critical data for synthesizability predictions. Key capabilities include:

- Document conversion at scale (0.25 pages/sec/core, enabling conversion of the entire arXiv repository in less than 24 hours on 640 cores)

- Named Entity Recognition for materials, properties, material classes, and unit values

- Relationship detection between extracted entities to reconstruct synthesis protocols
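As a quick check on the conversion figures quoted above, the short calculation below works out how many pages the stated rate implies over one day on 640 cores. The function name is an illustrative assumption. The source does not state the page count of the repository, so the sketch only computes the available capacity, which comes to roughly 13.8 million pages per day.

```python
PAGES_PER_SEC_PER_CORE = 0.25   # rate quoted in the text
CORES = 640                     # core count quoted in the text
SECONDS_PER_DAY = 24 * 60 * 60


def pages_converted(rate_per_core, cores, seconds):
    """Total pages converted at a fixed per-core rate, assuming perfect scaling."""
    return rate_per_core * cores * seconds


if __name__ == "__main__":
    capacity = pages_converted(PAGES_PER_SEC_PER_CORE, CORES, SECONDS_PER_DAY)
    print(f"{capacity:,.0f} pages per day")
```

The perfect-scaling assumption is optimistic; real throughput would depend on I/O and on how evenly documents are distributed across cores.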

AI-Augmented Simulation and Bayesian Optimization

While traditional virtual high-throughput screening approaches rely on exhaustive computation of all possible candidates, AI-augmented simulation employs Bayesian optimization to selectively allocate computational resources to the most promising candidates [6]. This approach is particularly valuable for synthesizability assessment because it enables more accurate models to be applied to smaller, better-targeted datasets.

Bayesian optimization balances exploration of unknown regions of chemical space with exploitation of known synthesizability trends, using acquisition functions to estimate the value of acquiring each new data point. Advanced implementations like Parallel Distributed Thompson Sampling and K-means Batch Bayesian optimization enable parallel evaluation of multiple candidates, dramatically accelerating the identification of synthesizable materials [6].
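The exploration-exploitation trade-off can be made concrete with a deliberately simplified sketch. It uses sequential Thompson sampling with a Beta-Bernoulli model over a handful of discrete candidates, where each "experiment" returns only success or failure. This is a stand-in chosen for brevity, not the Parallel Distributed Thompson Sampling or K-means Batch Bayesian optimization cited above, and the `true_success_rates` values are hypothetical numbers that simulate experimental outcomes.

```python
import random


def thompson_sampling(true_success_rates, rounds, seed=0):
    """Sequential Thompson sampling over discrete candidates.

    Returns how many times each candidate was selected for evaluation.
    """
    rng = random.Random(seed)
    n = len(true_success_rates)
    successes = [0] * n
    failures = [0] * n
    pulls = [0] * n

    for _ in range(rounds):
        # Draw one plausible success rate per candidate from its Beta posterior
        # (uniform Beta(1, 1) prior). Uncertain candidates produce wide draws,
        # which is what drives exploration.
        samples = [
            rng.betavariate(successes[i] + 1, failures[i] + 1) for i in range(n)
        ]
        # Evaluate the candidate whose sampled rate is highest (exploitation).
        choice = max(range(n), key=samples.__getitem__)

        # Simulated experiment: succeeds with the candidate's hidden true rate.
        if rng.random() < true_success_rates[choice]:
            successes[choice] += 1
        else:
            failures[choice] += 1
        pulls[choice] += 1

    return pulls


if __name__ == "__main__":
    # Hypothetical hidden success rates for three candidate materials.
    print(thompson_sampling([0.1, 0.3, 0.9], rounds=500))
```

Over many rounds the evaluation budget concentrates on the most promising candidate while the others still receive a few early trials, which is the selective allocation behaviour described above.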

Knowledge Graphs and Unstructured Data Integration

The creation of comprehensive knowledge graphs from diverse data sources addresses one of the fundamental challenges in synthesizability prediction: the diffuse nature of materials specification across multiple modalities in scientific documents. A material sample might be described in text, subdivided and processed according to parameters in a table, with properties graphed using symbolic references that require combining information from both text and tables for accurate identification [6].

Knowledge graphs resolve these entity resolution challenges by creating structured representations that link materials, processing conditions, and resulting properties. This enables progressively more complex synthesizability queries, moving from simple existence checks ("Has this material been made?") through performance assessment ("What's the highest recorded property value?") to hypothesis generation ("Could this material class be useful for a specific application?") [6].
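The three levels of query can be sketched with a toy graph stored as subject-predicate-object triples. Everything here is hypothetical: the material names, the predicates, the property values, and the function names are placeholders for illustration and do not reflect the schema of any real platform.

```python
# Toy knowledge graph as (subject, predicate, object) triples.
TRIPLES = [
    ("material_A", "is_a", "oxide"),
    ("material_A", "synthesized_in", "doc_1"),
    ("material_A", "conductivity", 1.2),
    ("material_B", "is_a", "oxide"),
    ("material_B", "synthesized_in", "doc_2"),
    ("material_B", "conductivity", 3.4),
    ("material_C", "is_a", "sulfide"),
]


def has_been_made(triples, material):
    """Existence check: is there any recorded synthesis of this material?"""
    return any(s == material and p == "synthesized_in" for s, p, _ in triples)


def highest_value(triples, prop):
    """Performance query: (material, value) with the highest recorded value."""
    values = [(o, s) for s, p, o in triples if p == prop]
    if not values:
        return None
    value, material = max(values)
    return material, value


def class_property_values(triples, material_class, prop):
    """Hypothesis support: recorded property values for every class member."""
    members = {s for s, p, o in triples if p == "is_a" and o == material_class}
    return {s: o for s, p, o in triples if p == prop and s in members}


if __name__ == "__main__":
    print(has_been_made(TRIPLES, "material_C"))
    print(highest_value(TRIPLES, "conductivity"))
    print(class_property_values(TRIPLES, "oxide", "conductivity"))
```

A production graph would also need entity resolution so that the same material mentioned in text, tables, and figures maps to one node, which is the hard part the paragraph above describes.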

Experimental Validation of AI Synthesizability Predictions

High-Throughput Screening Methodologies

Experimental validation remains the ultimate arbiter of synthesizability, and high-throughput screening (HTS) methodologies have evolved to provide the rapid experimental feedback needed to train and refine AI models. These systems offer parallel experimentation capabilities with compact reaction volumes that enhance overall throughput, enabling rapid selection and analysis from extensive genetic diversity [8].

Table 1: High-Throughput Screening Platforms for Experimental Validation

| Screening System | Reaction Volume | Technology Foundation | Applications in Synthesizability | Throughput Capacity |
|---|---|---|---|---|
| Microwell-based | 1-100 μL | Microfabricated well plates | Parallel reaction condition screening | 10^3-10^4 reactions |
| Droplet-based | 0.1-10 nL | Microfluidics, emulsion technologies | Ultra-high-throughput biomolecular screening | 10^6-10^7 reactions |
| Single cell-based | <1 nL | Microfluidics, fluorescence-activated sorting | Genetic construct validation, enzyme evolution | 10^7-10^8 cells |

These HTS platforms have become increasingly sophisticated through integration with digital technologies like machine learning and artificial intelligence, enhancing the precision of predictions by rapidly connecting numerous genotypes and phenotypes [8]. This creates a virtuous cycle where AI models predict synthesizability, HTS systems test those predictions, and results feed back to improve model accuracy.

Phase-Appropriate Validation Framework

The validation of synthesizability predictions follows a phase-appropriate framework similar to that used in drug development, where validation stringency increases as materials progress toward commercial application [9]. This approach provides flexibility in initial discovery stages while ensuring rigorous assessment before resource-intensive scale-up.

Table 2: Phase-Appropriate Validation of Synthesizability Predictions

| Development Phase | Primary Synthesizability Focus | Validation Activities | AI Integration Level |
|---|---|---|---|
| Discovery | Structural stability, synthetic pathway existence | Computational screening, literature validation | High - Generative design, pathway prediction |
| Early Development | Reaction yield, impurity profile | Small-scale synthesis, analytical method qualification | Medium - Reaction optimization, condition suggestion |
| Process Optimization | Scalability, reproducibility | Method validation, parameter range identification | High - Bayesian optimization, process control |
| Manufacturing | Cost-effectiveness, robustness | Production-scale validation, quality control | Medium - Real-time monitoring, anomaly detection |

This phased approach ensures efficient resource allocation while maintaining rigorous standards, with AI integration appropriately calibrated to each development stage. The framework recognizes that synthesizability is not a binary property but a continuum that evolves throughout development.

Implementing Responsible AI for Synthesizability Assessment

Validation Frameworks and Best Practices

The rapid adoption of AI methodologies necessitates robust validation frameworks to ensure that synthesizability predictions maintain scientific rigor. Leading evidence synthesis organizations have established the RAISE (Responsible AI in Evidence Synthesis) recommendations and guidance to provide tailored advice for diverse roles in the research ecosystem [10]. While developed for evidence synthesis, these principles apply equally to synthesizability assessment:

- Transparency: Documenting AI tools, versions, and training data used for synthesizability predictions

- Validation: Establishing appropriate performance metrics and benchmarks for AI predictions

- Human Oversight: Maintaining expert review of critical synthesizability decisions

- Continuous Monitoring: Tracking performance and updating models as new data emerges

These principles are being implemented through cross-organizational methods groups that aim to "define best practice and ensure guidance for accepted methods is up to date" while supporting "the implementation of new or amended methods" [10].

Integration with Traditional Experimental Methods

Despite advances in AI prediction, traditional experimental methods remain essential for synthesizability validation. Analytical method development—establishing identity, purity, physical characteristics, and potency of compounds—provides the critical experimental foundation for validating AI predictions [11]. The most common analytical procedures include identification tests, quantitative tests for impurity content, limit tests for impurity control, and quantitative tests for the active moiety in drug substance or product [11].

The lifecycle of an analytical method begins with recognizing the requirement for a new method, followed by development, validation, and continual monitoring for fitness of purpose [11]. This systematic approach to experimental validation provides the ground truth data essential for training and refining AI synthesizability models.

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementation of AI-driven synthesizability assessment requires specific research tools and platforms that bridge computational prediction and experimental validation. The following table details essential solutions currently advancing this field.

Table 3: Essential Research Reagent Solutions for AI-Driven Synthesizability Assessment

| Tool/Category | Primary Function | Key Applications | Implementation Considerations |
|---|---|---|---|
| IBM DeepSearch Platform | Unstructured data extraction from technical documents | Historical synthesizability data mining, knowledge graph creation | Requires document access rights management; handles 100K+ documents in ~6 hours |
| Bayesian Optimization Algorithms | Selective computational resource allocation | Virtual screening prioritization, process optimization | Compatible with existing simulation workflows; reduces computation by 50-90% |
| Microfluidic HTS Platforms | Ultra-high-throughput experimental validation | Reaction condition screening, synthetic route optimization | Requires specialized instrumentation; enables 10^6-10^7 reactions |
| Robotic Laboratory Systems | Automated synthesis and characterization | Reproducible protocol execution, 24/7 experimentation | High capital investment; eliminates manual variability |
| Electronic Lab Notebooks (ELNs) | Structured data capture | Experimental data standardization, metadata preservation | Requires organizational adoption; enables machine-readable data |
| Materials Knowledge Graphs | Relationship mapping between synthesis parameters and outcomes | Synthesizability pattern recognition, hypothesis generation | Dependent on data quality and completeness |

These tools collectively enable the continuous feedback between prediction and validation that defines modern synthesizability assessment. Their integrated implementation creates a workflow where AI models generate synthesizability hypotheses, automated systems test them experimentally, and results feed back to improve model accuracy—progressively refining our understanding of what makes a material synthesizable.

The definition of synthesizability is evolving from a static barrier to a dynamic, multidimensional property that can be progressively optimized throughout the discovery and development process. AI technologies are enabling this transformation by providing the tools to predict, assess, and experimentally validate synthesizability with unprecedented speed and accuracy. The core principles emerging from this integration emphasize responsible implementation, phase-appropriate validation, and continuous feedback between prediction and experimentation.

As these methodologies mature, synthesizability assessment will increasingly focus on manufacturability and economic viability—shifting from simply finding new materials to creating viable, economical, and scalable pathways to produce them [5]. This paradigm shift promises to accelerate materials discovery while ensuring that promising candidates can successfully navigate the "valley of death" between laboratory demonstration and commercial application, ultimately enabling a new era of synthesis-aware materials innovation.

In modern drug discovery, the Design-Make-Test-Analyze (DMTA) cycle is the fundamental iterative process for optimizing novel drug candidates. Despite advances in computational design and high-throughput testing, the "Make" phase—the actual synthesis of target compounds—remains a critical bottleneck. This phase is often the most costly and time-consuming part of the cycle, impeding the rapid iteration needed to bring new medicines to patients [12] [13]. This guide objectively compares emerging solutions designed to overcome these synthesis bottlenecks, framing the analysis within the broader context of validating new material synthesis methods.

Understanding the Synthesis Bottleneck in DMTA

The DMTA cycle is an iterative framework driving drug optimization. The Design phase involves computational proposal of new molecular entities. The Make phase encompasses their physical synthesis, purification, and characterization. The Test phase evaluates these compounds through biological and physicochemical assays, and the Analyze phase interprets data to inform the next design iteration [13].

The synthesis step is particularly problematic because it is inherently labor-intensive and requires specialized expertise. It involves multiple sub-steps: synthesis planning, sourcing starting materials, reaction setup, monitoring, work-up, purification, and final compound characterization [12]. For complex targets, this can necessitate multi-step synthetic routes with numerous variables to optimize. Furthermore, traditional DMTA implementations often run these phases sequentially rather than in parallel, creating significant delays and underutilizing resources [13]. When synthesis fails, the entire cycle grinds to a halt, wasting the resources invested in design and delaying testing, which ultimately increases the cost and timeline of drug discovery programs.

Comparative Analysis of Solutions for Synthesis Bottlenecks

Several strategic approaches are being developed to accelerate the "Make" phase. The table below compares the core methodologies, their underlying technologies, key performance outputs, and validation contexts.

Table 1: Comparison of Strategic Solutions for Synthesis Bottlenecks

| Solution Approach | Core Technology / Methodology | Key Performance / Output | Reported Validation Context |
|---|---|---|---|
| AI-Powered Synthesis Planning [12] | Computer-Assisted Synthesis Planning (CASP) using machine learning (ML) and retrosynthetic analysis. | Generates innovative synthetic routes; identifies most promising pathways from the outset. | Used for complex, multi-step routes; requires enrichment with experimental data for robustness. |
| Agentic AI for Workflow Automation [13] | Multi-agent AI systems (e.g., Tippy) with specialized agents (Molecule, Lab, Analysis). | Autonomous coordination of DMTA workflows; improves decision-making speed and cross-disciplinary coordination. | Production-ready implementation for automating DMTA cycles; demonstrated improved workflow efficiency. |
| Precursor Selection & Robotic Synthesis [14] | Precursor selection based on phase diagrams & pairwise reactions; validated via robotic labs (e.g., ASTRAL). | Higher purity products (32 out of 35 target materials); synthesis of 224 reactions in weeks. | Accelerated discovery of inorganic materials; method tested across 35 oxide materials with 27 elements. |
| Integrated Laboratory Automation [15] | Parallel automated synthesis systems integrating reaction setup, execution, isolation, and purification. | Production of 1-10 mg of final compound for hit-to-lead phase; rapid generation of target compounds. | Showcased by pharmaceutical companies (Novartis, JNJ/Janssen) for efficient parallel synthesis. |

Experimental Protocols for Key Methodologies

Protocol: AI-Guided Retrosynthetic Planning

This protocol outlines the use of Computer-Assisted Synthesis Planning (CASP) tools to design synthetic routes [12].

- Input Target Molecule: The desired small molecule structure is provided to the CASP platform, typically via a chemical drawing interface or SMILES notation.

- Retrosynthetic Analysis: The AI model performs recursive deconstruction of the target molecule into simpler, commercially available precursors using rule-based and data-driven ML models.

- Route Generation & Evaluation: The system employs search algorithms (e.g., Monte Carlo Tree Search) to generate multiple complete synthetic routes. Proposed routes are evaluated for feasibility, step count, and availability of starting materials.

- Condition Prediction: For each synthetic step, the model predicts viable reaction conditions (e.g., solvent, catalyst, temperature). For uncertain transformations, the system may propose screening plate layouts for experimental validation.

- Human-in-the-Loop Review: A synthetic chemist reviews the proposed routes, applying expert intuition and practical knowledge to select the most promising path for laboratory execution.
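The recursive deconstruction at the heart of this protocol can be sketched with a toy example. The version below uses a plain depth-first search over a hand-written rule table rather than the Monte Carlo Tree Search and learned models that CASP tools use, and it stops at the first complete route instead of scoring alternatives. The molecule labels, the `RULES` table, and the `PURCHASABLE` set are hypothetical placeholders.

```python
# Hypothetical disconnection rules: product -> list of alternative precursor sets.
RULES = {
    "target": [["intermediate_1", "block_C"]],
    "intermediate_1": [["block_D"], ["block_A", "block_B"]],
}

# Hypothetical set of commercially available starting materials.
PURCHASABLE = {"block_A", "block_B", "block_C"}


def find_route(molecule, rules, purchasable, max_depth=5):
    """Return synthesis steps in forward order, or None if no route is found.

    Each step is a (product, precursors) pair. An empty list means the
    molecule is already purchasable and needs no synthesis.
    """
    if molecule in purchasable:
        return []
    if max_depth == 0:
        return None
    for precursors in rules.get(molecule, []):
        steps = []
        for precursor in precursors:
            sub_route = find_route(precursor, rules, purchasable, max_depth - 1)
            if sub_route is None:
                break  # this disconnection fails; try the next alternative
            steps.extend(sub_route)
        else:
            # Every precursor resolved: append the step that makes `molecule`.
            return steps + [(molecule, precursors)]
    return None


if __name__ == "__main__":
    for product, precursors in find_route("target", RULES, PURCHASABLE):
        print(f"{' + '.join(precursors)} -> {product}")
```

In the example, the first disconnection of `intermediate_1` leads to a non-purchasable dead end, so the search backtracks to the second alternative. A real planner would return several ranked routes for the chemist to review in the final step.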

Protocol: High-Throughput Robotic Synthesis and Validation

This protocol describes an automated workflow for rapid synthesis and testing, as applied in inorganic materials research [14].

- Precursor Selection: Apply criteria based on phase diagrams and analysis of pairwise precursor reactions to select starting materials that minimize unwanted impurity phases.

- Automated Reaction Setup: A robotic system (e.g., the ASTRAL lab) weighs and mixes precursor powders in the desired stoichiometries for hundreds of separate reactions.

- High-Temperature Reaction: The robotic system places the reaction mixtures into ovens and subjects them to programmed high-temperature reaction cycles.

- Product Characterization: The phase purity and composition of the resulting solid-state materials are analyzed using techniques like X-ray diffraction (XRD).

- Data Analysis: The yield and purity of products synthesized using the new precursor selection method are compared against those obtained from traditional precursors to validate the approach's effectiveness.
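The comparison in the final step can be sketched as a per-target tally. The function below counts the targets for which the new precursor set gave higher phase purity than the traditional one. The function name, the target labels, and the purity values are hypothetical and are not data from the cited study; a real analysis would take the purities from XRD phase quantification.

```python
def count_improved(new_purity, traditional_purity):
    """Compare phase purity per target for two precursor-selection strategies.

    Returns (targets where the new precursors gave strictly higher purity,
    number of targets measured under both strategies).
    """
    shared = new_purity.keys() & traditional_purity.keys()
    improved = sorted(t for t in shared if new_purity[t] > traditional_purity[t])
    return improved, len(shared)


if __name__ == "__main__":
    # Hypothetical phase-purity fractions for three targets.
    new = {"target_1": 0.95, "target_2": 0.80, "target_3": 0.60}
    traditional = {"target_1": 0.70, "target_2": 0.85, "target_3": 0.40}

    improved, total = count_improved(new, traditional)
    print(f"{len(improved)} of {total} targets improved: {improved}")
```

Restricting the comparison to targets measured under both strategies keeps the tally paired, so a target that was only attempted one way does not skew the result.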

Workflow and Solution Pathways

The following diagram illustrates the operational workflow of an agentic AI system automating the DMTA cycle, highlighting how it addresses sequential bottlenecks.

Diagram 1: Agentic AI Automating the DMTA Cycle

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of advanced synthesis strategies relies on key reagents, materials, and software.

Table 2: Key Reagents and Solutions for Accelerated Synthesis

| Item / Solution | Function / Description | Relevance to Synthesis Bottlenecks |
|---|---|---|
| Make-on-Demand Building Blocks [12] | Vast virtual catalogues (e.g., Enamine MADE) of synthesizable compounds not held in physical stock. | Drastically expands accessible chemical space for design, enabling synthesis of complex targets upon request. |
| Pre-validated Synthetic Protocols [12] | Pre-tested reaction procedures associated with make-on-demand building blocks. | Increases first-pass synthesis success rate, reducing time spent on reaction scouting and optimization. |
| Chemical Inventory Management System [12] | Software for real-time tracking, secure storage, and regulatory compliance of chemical inventory. | Streamlines sourcing of starting materials, saving critical time at the beginning of the "Make" phase. |
| DNA-Encoded Libraries (DELs) [16] | Vast libraries of small molecules covalently tagged with DNA barcodes for affinity screening. | Enables ultra-high-throughput screening of billions of compounds, identifying hit matter for synthesis. |
| Specialized AI Agents (e.g., Tippy) [13] | Autonomous AI agents (Molecule, Lab, Analysis) that manage specific DMTA tasks. | Replaces manual, error-prone tasks with automated, coordinated workflows for seamless cycle iteration. |

Synthesis bottlenecks represent a significant cost driver and source of delay in the DMTA cycle, directly impacting the pace and economics of drug discovery. The comparative analysis presented here demonstrates that no single solution exists; rather, a synergistic combination of strategies shows the most promise. AI-powered synthesis planning accelerates route design, robotic automation expedites physical execution, and emerging agentic AI systems integrate these steps into a cohesive, parallel workflow. The validation of these approaches through high-throughput robotic labs and production-level AI implementations marks a significant shift from traditional sequential methods. For researchers, the strategic integration of these tools—from make-on-demand building blocks to specialized AI agents—is becoming essential to overcome the high cost of synthesis failure and realize a more efficient and productive drug discovery pipeline.

FAIR Data as a Foundation for Predictive and Validatable Models

The Findable, Accessible, Interoperable, and Reusable (FAIR) data principles have emerged as a critical framework for enhancing scientific research reproducibility and accelerating discovery. This review examines the implementation and impact of FAIR data principles within materials science and drug development, focusing on their role in creating predictive and validatable computational models. We compare experimental outcomes from various FAIR initiatives, provide detailed protocols for assessing data FAIRness, and visualize the workflow connecting FAIR data to model validation. The analysis demonstrates that FAIR-compliant data management significantly improves model accuracy, reproducibility, and cross-disciplinary interoperability, establishing it as a foundational requirement for next-generation research infrastructure in material synthesis and biomedical applications.

The FAIR data principles were established in 2016 as guiding concepts for scientific data management and stewardship to optimize the reuse of scholarly data [17]. These principles emphasize machine-actionability alongside human understanding, recognizing our increasing reliance on computational systems for data analysis [18]. The acronym FAIR represents four core attributes:

- Findable: Data and metadata should be easily discoverable by humans and computers through persistent identifiers and rich metadata [19].

- Accessible: Data should be retrievable using standardized protocols, with authentication where necessary [20].

- Interoperable: Data should integrate with other datasets and applications through common formats and vocabularies [19].

- Reusable: Data should be sufficiently described with clear licensing and provenance to enable replication and combination [20].

In both materials science and pharmaceutical research, FAIR principles address critical challenges in data-driven innovation. The biopharmaceutical industry has recognized FAIR implementation as fundamental for digital transformation, enabling powerful artificial intelligence and machine learning analytics to access data automatically and at scale [21]. Similarly, materials science initiatives leverage FAIR frameworks to manage complex synthesis and characterization data, facilitating the development of predictive models for material properties and performance [17].

Experimental Comparisons: FAIR vs. Traditional Data Management

Cross-Disciplinary FAIR Initiatives and Outcomes

Multiple government-funded initiatives have implemented FAIR principles with measurable outcomes for predictive modeling and research validation.

Table 1: Major FAIR Data Initiatives and Research Outcomes

| Initiative | Funding Agency | Research Focus | Key Outcomes |
|---|---|---|---|
| FAIR4HEP | DOE (US) | Physics-inspired AI in High Energy Physics | Developed FAIR framework for novel AI approaches; enabled exploration of new ML techniques [17] |
| ENDURABLE | DOE (US) | Benchmark datasets and AI models | Provided robust, scalable tools for aggregating diverse scientific datasets; improved training of state-of-the-art ML models [17] |
| Materials Data Facility (MDF) | NIST | Materials science data | Collected >80 TB across nearly 1,000 datasets; enabled access to ML-ready datasets with minimal code [17] |
| Neurodata Without Borders (NWB) | NIH BRAIN Initiative | Neurophysiology data | Created standard for sharing neurophysiology data; growing software ecosystem for data analysis [17] |
| BioDataCatalyst | NIH | Heart, lung, and blood datasets | Enhanced annotated metadata complying with FAIR principles; improved dataset interoperability [17] |

Quantitative Assessment of FAIR Implementation Benefits

Educational interventions implementing FAIR principles demonstrate measurable improvements in research reproducibility and data quality. A 2022-2023 study with postgraduate biomedical students developed an 11-item questionnaire with strong internal consistency (Cronbach's α and McDonald's ω) to assess FAIRness in master's thesis research [22]. The implementation of Data Management Plans (DMPs) that included system descriptions, data flow, management roles, and methods for back-ups and storage resulted in significant improvements in data reusability and transparency [22].

In industrial contexts, pharmaceutical R&D has reported efficiency improvements through FAIR implementation. By making data machine-readable and accessible to powerful analytical tools, companies have accelerated early drug discovery and target identification processes [20]. The interoperability aspect of FAIR principles has been particularly valuable for integrating diverse datasets—from genomic research to clinical trial results—which is a cornerstone of advancing research and discovery [20].

Methodologies: Implementing and Assessing FAIR Data

Practical Framework for FAIR Data Preparation

Implementing FAIR data practices requires systematic approaches throughout the research lifecycle. Cornell University's Research Data Management Service Group provides a comprehensive checklist for preparing FAIR data [18]:

Dataset/Files Requirements:

- Deposit in open, trusted repositories with registered DOIs

- Use standard, open file formats where possible

- Ensure data and metadata are retrievable via API or discoverable through open search protocols

Metadata Documentation:

- Provide unambiguous descriptions of all data files including file types and software requirements

- Include disciplinary notation and terminology (SI units, domain identifiers)

- Incorporate machine-readable standards (ORCIDs, W3C/ISO 8601 date standards)

- Include clear citation formats and licensing information

- Make metadata exportable in machine-readable formats (XML, JSON)
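The checklist above can be illustrated with a minimal Python sketch that assembles a machine-readable metadata record and exports it as JSON. The field names, the placeholder DOI, and the `build_record` helper are assumptions made for illustration; they are not part of the Cornell checklist or of any repository's schema.

```python
import json
from datetime import date

# Hypothetical minimal field set; real repositories define their own schemas.
REQUIRED = ("identifier", "title", "creator_orcid", "created", "license", "file_formats")

def build_record(identifier, title, creator_orcid, created, license_id, file_formats):
    """Assemble a metadata record and reject it if any required field is empty."""
    record = {
        "identifier": identifier,
        "title": title,
        "creator_orcid": creator_orcid,
        "created": created.isoformat(),  # ISO 8601 calendar date, e.g. 2024-01-15
        "license": license_id,
        "file_formats": list(file_formats),
    }
    missing = [key for key in REQUIRED if not record[key]]
    if missing:
        raise ValueError(f"missing metadata fields: {missing}")
    return record

record = build_record(
    identifier="doi:10.0000/example-dataset",   # placeholder, not a registered DOI
    title="Solubility measurements, batch 1",    # illustrative title
    creator_orcid="0000-0002-1825-0097",         # ORCID's documented example iD
    created=date(2024, 1, 15),
    license_id="CC-BY-4.0",
    file_formats=["csv", "json"],
)
metadata_json = json.dumps(record, indent=2)     # machine-readable export
print(metadata_json)
```

Validating required fields at creation time is one simple way to keep incomplete records out of a repository; richer schemas would add controlled vocabularies and provenance fields.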

The European Commission's guidelines emphasize that FAIR does not necessarily mean "open"—data can remain restricted while still adhering to FAIR principles, particularly when privacy or intellectual property concerns exist [19]. This "as open as possible, as closed as necessary" approach enables compliance with FAIR principles while addressing legitimate data protection requirements.

FAIRness Assessment Protocol

The methodology for assessing FAIR implementation involves structured evaluation tools. The educational study at Universidad Europea de Madrid adapted existing self-assessment tools to create an 11-item questionnaire evaluating all FAIR components [22]:

Findability Assessment (4 items):

- Evaluation of persistent identifier implementation

- Assessment of metadata richness and completeness

- Analysis of search resource registration

Accessibility Assessment (2 items):

- Verification of retrieval protocols

- Confirmation of metadata persistence

Interoperability Assessment (3 items):

- Evaluation of common language knowledge representation

- Assessment of standardized vocabulary implementation

- Analysis of qualified references to other data

Reusability Assessment (2 items):

- Verification of clear usage licenses

- Confirmation of detailed provenance information

- Assessment of domain-relevant community standard compliance

This protocol demonstrated strong internal consistency for measuring FAIR implementation levels, providing researchers with a validated tool for evaluating their data management practices [22].
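As a rough illustration of how such a questionnaire might be scored, the sketch below computes per-principle averages and Cronbach's α using the standard formula α = k/(k-1) · (1 - Σ item variances / variance of total scores). The 0-2 scoring scale and the response data are invented for illustration, and the item grouping simply follows the item counts given in the headings above; none of this reproduces the study's actual instrument or results.

```python
from statistics import variance

# Item counts per FAIR principle as stated in the headings above (11 items total).
ITEMS = {"Findable": 4, "Accessible": 2, "Interoperable": 3, "Reusable": 2}

def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(responses[0])
    item_vars = [variance([row[i] for row in responses]) for i in range(k)]
    total_var = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

def principle_scores(row):
    """Average item score per FAIR principle for one respondent."""
    scores, start = {}, 0
    for name, n_items in ITEMS.items():
        scores[name] = sum(row[start:start + n_items]) / n_items
        start += n_items
    return scores

# Hypothetical responses: four theses, each item scored 0 (no) to 2 (fully met).
responses = [
    [2, 2, 1, 2, 2, 1, 2, 2, 1, 2, 2],
    [1, 1, 1, 0, 1, 1, 1, 0, 1, 1, 1],
    [0, 1, 0, 0, 0, 0, 1, 0, 0, 1, 0],
    [2, 1, 2, 2, 1, 2, 2, 1, 2, 2, 1],
]
print(round(cronbach_alpha(responses), 3))
print(principle_scores(responses[0]))
```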

Visualizing the FAIR Data to Validation Workflow

The relationship between FAIR data principles and model validation can be visualized through a systematic workflow that transforms raw data into predictive, validatable models:

FAIR Data to Validation Workflow: This diagram illustrates the systematic transformation of raw experimental data into validated predictive models through the application of FAIR principles, enabling diverse research applications.

Essential Research Reagents and Solutions

Implementing FAIR-compliant research requires specific tools and platforms that facilitate data management, sharing, and reuse across material synthesis and drug development domains.

Table 2: Essential FAIR Data Management Tools and Solutions

| Tool/Category | Primary Function | Application in Research |
|---|---|---|
| Persistent Identifier Services (DOI, Handle) | Provide unique, permanent identifiers for datasets | Enables reliable citation and locating of datasets over time [19] |
| Domain Repositories (Materials Data Facility, Neurodata Without Borders) | Discipline-specific data storage with specialized curation | Maintains context-specific standards and metadata requirements [17] |
| General Repositories (Zenodo, Harvard Dataverse) | Cross-disciplinary data preservation and sharing | Provides FAIR-compliant storage when domain repositories unavailable [19] |
| Metadata Standards (Dublin Core, domain-specific schemas) | Structured description of data content and context | Enhances discoverability and enables automated integration [19] |
| Controlled Vocabularies/Ontologies (FAIRsharing, OBO Foundry) | Standardized terminology for data annotation | Ensures semantic interoperability across datasets and platforms [19] |
| Data Management Plan Tools | Formalize data collection, storage, and sharing protocols | Documents roles, responsibilities, and preservation strategies [22] |
| FAIR Assessment Tools (ARDC FAIR Self-Assessment, F-UJI) | Evaluate compliance with FAIR principles | Provides metrics for improvement and standardization [22] |

The implementation of FAIR data principles establishes a robust foundation for developing predictive and validatable models in material synthesis and drug development. Experimental evidence from multiple initiatives demonstrates that FAIR-compliant data management enhances model accuracy, accelerates discovery, and improves research reproducibility. The structured methodologies for FAIR implementation and assessment provide researchers with practical frameworks for optimizing their data practices. As research becomes increasingly data-intensive and interdisciplinary, the FAIR principles offer an essential framework for ensuring that scientific data remains valuable, meaningful, and impactful for future discoveries.

Modern Tools and Techniques for Synthesis and Validation

The field of chemical synthesis is undergoing a profound transformation, driven by the integration of artificial intelligence. AI-powered Computer-Aided Synthesis Planning (CASP) represents a fundamental shift from traditional, intuition-dependent approaches to data-driven, predictive science. This shift is occurring across multiple domains, from pharmaceutical development to materials science, where researchers face increasing pressure to accelerate discovery timelines while reducing costs and environmental impact [23] [24]. Market estimates vary by source: the global AI in CASP market, valued at USD 2.13-3.1 billion in 2024-2025, is projected to grow at a compound annual growth rate (CAGR) of 38.8%-41.4% and reach USD 68.06-82.2 billion by 2034-2035, reflecting rapid adoption of these technologies [23] [25].
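The quoted market ranges are easier to read once the compounding is worked through. The short sketch below assumes a ten-year horizon and pairs each starting value with one growth rate; that pairing is an inference from the arithmetic, not something the cited sources state, and the `project` helper is purely illustrative.

```python
def project(value_usd_bn, cagr, years):
    """Compound a starting value forward: value * (1 + CAGR) ** years."""
    return value_usd_bn * (1 + cagr) ** years

# Assumed pairing 1: USD 2.13 bn (2024) growing at 41.4% per year until 2034.
low_start = project(2.13, 0.414, 10)   # about 68.06
# Assumed pairing 2: USD 3.1 bn (2025) growing at 38.8% per year until 2035.
high_start = project(3.1, 0.388, 10)   # about 82.27

print(f"2034 projection: USD {low_start:.2f} bn")
print(f"2035 projection: USD {high_start:.2f} bn")
```

Under these assumptions the two endpoints of the quoted USD 68.06-82.2 billion range are reproduced to within rounding, which suggests the ranges combine two separate forecasts rather than describing a single one.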

The convergence of AI with synthesis planning has enabled capabilities that were previously out of reach. Traditional chemical synthesis relied heavily on manual expertise and trial-and-error experimentation, but AI-driven CASP systems now leverage predictive modeling, data-driven retrosynthesis, and automated route optimization to suggest efficient synthetic pathways [25]. By analyzing vast chemical reaction databases and applying deep learning algorithms, these systems can anticipate potential side reactions and identify cost-effective, sustainable routes for compound development [25]. This matters most in pharmaceuticals, where conventional drug discovery takes 10-15 years and AI capabilities can shorten the preclinical discovery phase by 30-50% [23].

The CASP Technology Landscape: A Comparative Analysis

Core Methodologies and Algorithms

Modern CASP tools employ diverse computational approaches, each with distinct strengths and applications. The foundation of these systems lies in their ability to navigate the complex space of possible synthetic pathways, optimizing for multiple objectives including yield, cost, safety, and environmental impact [26].

A significant algorithmic advancement involves formulating synthesis planning as a combinatorial optimization problem on hypergraphs, where individual synthesis plans are modeled as directed hyperpaths embedded in a hypergraph of reactions (HoR) representing the chemistry of interest [26]. This approach enables polynomial-time algorithms to find the K shortest hyperpaths, corresponding to the K best synthesis plans for a given target molecule. This methodology represents a substantial improvement over greedy retrosynthetic approaches, which may leave out synthesis plans with costly last steps but much better first steps [26].

Table 1: Comparative Analysis of AI Synthesis Planning Approaches

| Approach Type | Core Methodology | Key Advantages | Limitations | Representative Tools |
|---|---|---|---|---|
| Retrosynthetic Analysis | Top-down decomposition using heuristic rules | Mimics chemist's reasoning; intuitive bond disconnection | Greedy approach may miss globally optimal paths; rule-dependent | LHASA, SynGen [26] |
| Hypergraph-based Pathfinding | Models synthesis as hyperpaths in reaction hypergraphs | Finds K best plans efficiently; polynomial time complexity | Requires well-defined reaction network | Custom implementations [26] |
| Machine Learning/Deep Learning | Neural networks trained on reaction databases | Adapts to new data; handles complex pattern recognition | Data quality dependent; black box limitations | IBM RXN, Molecule.one [25] [27] |
| Generative AI | Generates novel synthetic routes using pattern recognition | Creative route discovery; multi-step planning | Limited accuracy with complex molecules | ChatGPT, Bard (with limitations) [28] |

Performance Metrics and Validation

The transition of CASP from theoretical promise to practical tool requires robust validation against experimental outcomes. Performance evaluation encompasses multiple dimensions, including synthetic accessibility, route efficiency, and computational requirements.

Recent research demonstrates that CASP systems can successfully transfer from commercial building block libraries to constrained laboratory environments. One study deployed the open-source synthesis planning toolkit AiZynthFinder with two different building block sets: 5,955 in-house university building blocks versus 17.4 million commercial compounds [29]. The results revealed that the performance difference was surprisingly small despite the 3000-fold reduction in available building blocks. Using the limited in-house building blocks, solvability rates for drug-like molecules were approximately 60%, compared to around 70% with extensive commercial libraries—a decrease of only 12% [29]. The primary trade-off was route length, with in-house building blocks requiring synthesis routes that were, on average, two reaction steps longer [29].

Table 2: Quantitative Performance Comparison of AI Chemistry Tools

| Tool/Platform | Primary Function | Accuracy/Performance Metrics | Experimental Validation | Key Limitations |
|---|---|---|---|---|
| ChatGPT | Text-based chemistry assistance | 38% accuracy converting condensed structures to IUPAC names; 94% accuracy identifying functional groups from condensed structures [28] | Limited laboratory validation; primarily educational assessment | Struggles with InChI (22-17% accuracy) and SMILES (56-44% accuracy) notations [28] |
| Bard | Text-based chemistry assistance | Consistently lower performance than ChatGPT across most tasks [28] | Limited laboratory validation; primarily educational assessment | Significant limitations with structural notations [28] |
| AiZynthFinder | Retrosynthesis planning | ~60-70% solvability rates for drug-like molecules; route length increase of ~2 steps with limited building blocks [29] | Comprehensive validation with 200,000+ molecules; experimental synthesis follow-up [29] | Performance depends on building block inventory [29] |
| IBM RXN | Reaction prediction & retrosynthesis | Industry adoption in pharmaceutical workflows | Published case studies; pharmaceutical industry adoption | Commercial platform with limited free access [27] |

Experimental Validation: Methodologies and Protocols

Robotic Laboratory Validation of Precursor Selection

The integration of AI-powered synthesis planning with automated experimental validation represents the cutting edge of materials research methodology. A landmark study demonstrated a novel approach to precursor selection for inorganic materials synthesis, validated through high-throughput robotic experimentation [14].

Experimental Protocol: Researchers developed new criteria for selecting precursor powders based on careful study of phase diagrams and consideration of pairwise reactions between precursors [14]. To test this approach, they selected 224 reactions spanning 27 elements with 28 unique precursors targeting production of 35 oxide materials [14]. The validation utilized the Samsung ASTRAL robotic laboratory to accelerate experimentation, completing in a few weeks what would typically require months or years of manual effort [14].

Results and Impact: The new precursor selection method obtained higher purity products for 32 of the 35 target materials compared to traditional approaches [14]. This methodology directly addresses the synthesis bottleneck in new technology development by enabling more efficient production of known materials and facilitating the synthesis of computationally predicted materials with improved performance [14]. The combination of AI-guided precursor selection with robotic synthesis represents a powerful framework for accelerating materials discovery.

Diagram 1: Robotic Validation Workflow

In-House Synthesizability Scoring Protocol

Bridging the gap between computational prediction and practical synthesis requires specialized methodologies tailored to resource-constrained environments. A 2025 study established a comprehensive protocol for developing in-house synthesizability scores that reflect actual laboratory capabilities rather than theoretical commercial availability [29].

Experimental Workflow: The methodology involves multiple stages of data collection, model training, and experimental validation:

- Building Block Inventory Assessment: Precisely catalog available in-house building blocks (5,955 compounds in the case study) [29].

- Comparative CASP Performance Benchmarking: Evaluate synthesis planning success rates using both in-house building blocks and extensive commercial libraries (17.4 million compounds) across diverse molecular datasets (200,000 randomly sampled drug-like molecules) [29].

- Synthesizability Model Training: Train machine learning models to predict CASP outcomes using molecular structure features, requiring approximately 10,000 molecules for effective training [29].

- De Novo Molecular Design Integration: Incorporate the synthesizability score as an objective in multi-objective optimization alongside activity predictions (e.g., QSAR models) [29].

- Experimental Validation: Synthesize and biologically evaluate top-ranked candidates using CASP-suggested routes with exclusively in-house building blocks [29].
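The multi-objective step in this workflow can be sketched as a Pareto filter over predicted activity and the in-house synthesizability score. The `Candidate` class, the SMILES strings, and all scores below are hypothetical placeholders; the study's actual optimization procedure may differ, and this sketch only shows one common way to combine two objectives without picking weights.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    smiles: str              # molecule identifier (illustrative)
    activity: float          # e.g. a QSAR-predicted activity in [0, 1]
    synthesizability: float  # e.g. predicted chance CASP finds an in-house route

def pareto_front(candidates):
    """Keep candidates that no other candidate beats on both objectives."""
    front = []
    for c in candidates:
        dominated = any(
            o.activity >= c.activity
            and o.synthesizability >= c.synthesizability
            and (o.activity > c.activity or o.synthesizability > c.synthesizability)
            for o in candidates
        )
        if not dominated:
            front.append(c)
    return front

# Hypothetical scores for four generated molecules.
pool = [
    Candidate("CCO", 0.90, 0.20),
    Candidate("c1ccccc1O", 0.70, 0.80),
    Candidate("CC(=O)N", 0.60, 0.70),   # worse than the phenol on both axes
    Candidate("CCN", 0.30, 0.95),
]
front = pareto_front(pool)
for c in front:
    print(c.smiles, c.activity, c.synthesizability)
```

A Pareto filter avoids committing to a fixed trade-off between activity and synthesizability; a weighted sum would be a simpler alternative when the relative importance is known in advance.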

Key Findings: The research demonstrated that including the in-house synthesizability score in de novo drug design enabled generation of thousands of potentially active and easily synthesizable molecules [29]. Experimental evaluation of three candidates yielded one with evident biological activity, validating the practical utility of the approach [29].

Diagram 2: In-House Synthesizability Workflow

K-Best Synthesis Planning Methodology

Traditional synthesis planning often focuses on identifying a single optimal route, but practical chemistry requires consideration of multiple alternatives. A fundamental algorithmic advancement enables efficient identification of the K best synthesis plans using hypergraph representations [26].

Computational Protocol:

- Hypergraph Construction: Represent the set of chemical reactions as a directed hypergraph (hypergraph of reactions, or HoR), where reactions correspond to hyperedges connecting precursor sets to product sets [26].

- Hyperpath Identification: Model individual synthesis plans as hyperpaths within the HoR, establishing a direct correspondence between synthetic routes and mathematical structures [26].

- K-Shortest Hyperpaths Algorithm: Apply a polynomial-time algorithm to find the K shortest hyperpaths, corresponding to the K best synthesis plans for any given number K [26].

- Multi-objective Optimization: Compute sets of best plans for different quality measures (e.g., yield, cost, environmental impact) and identify intersections to find plans satisfying multiple criteria [26].
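To make the hypergraph representation concrete, the sketch below encodes a toy hypergraph of reactions and finds the single cheapest plan (the K = 1 case) by repeated relaxation. It is a deliberate simplification: it does not implement the K-shortest-hyperpaths algorithm of [26], it assumes an additive cost in which a shared intermediate is paid for each time it is used, and every molecule name, reaction, and cost is invented for illustration.

```python
import math

# Toy hypergraph of reactions: (name, precursor set, product, step cost).
REACTIONS = [
    ("r1", ("A", "B"), "I1", 2.0),
    ("r2", ("C",), "I2", 1.0),
    ("r3", ("I1", "I2"), "T", 3.0),
    ("r4", ("A", "C"), "T", 7.0),
    ("r5", ("D",), "I1", 0.5),      # unusable: D is not in stock
]
STOCK = {"A", "B", "C"}             # starting materials, cost 0

def best_plan(target, reactions, stock):
    """Return (cost, reactions used) for the cheapest plan making `target`."""
    cost = {molecule: 0.0 for molecule in stock}
    choice = {}                      # product -> (reaction name, precursors)
    changed = True
    while changed:                   # relax hyperedges until costs stabilize
        changed = False
        for name, precursors, product, step_cost in reactions:
            if all(p in cost for p in precursors):
                total = step_cost + sum(cost[p] for p in precursors)
                if total < cost.get(product, math.inf):
                    cost[product] = total
                    choice[product] = (name, precursors)
                    changed = True
    if target not in cost:
        return math.inf, []
    plan, stack, seen = [], [target], set()
    while stack:                     # walk back from the target to stock
        molecule = stack.pop()
        if molecule in choice and molecule not in seen:
            seen.add(molecule)
            name, precursors = choice[molecule]
            plan.append(name)
            stack.extend(precursors)
    return cost[target], plan

plan_cost, plan = best_plan("T", REACTIONS, STOCK)
print(plan_cost, plan)
```

In this toy network the one-step route `r4` is rejected in favor of the three-reaction route through `I1` and `I2`, which is the kind of plan a purely greedy last-step choice could overlook.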

Advantages Over Traditional Methods: This approach provides robustness against later-stage feasibility issues, enables optimization across multiple cost functions, and handles imprecise yield estimates through intersection of plan sets for different yield values [26]. The methodology is not restricted to bond-set based approaches and can incorporate any set of known reactions and starting materials [26].

The Research Toolkit: Essential Solutions for AI-Powered Synthesis

Computational Infrastructure and Software Tools

Table 3: Essential Research Reagents and Computational Tools

| Tool/Category | Specific Examples | Function/Role in Research | Implementation Considerations |
|---|---|---|---|
| Retrosynthesis Platforms | IBM RXN, Molecule.one, ChemPlanner (Elsevier), Chematica (Merck KGaA) [23] [27] | Predict synthetic routes for target molecules; retrosynthetic analysis | Varying building block databases; different algorithm approaches (ML, rule-based) |
| Molecular Design Suites | Schrödinger Materials Science Suite, BIOVIA (Dassault Systèmes) [23] [27] | Molecular modeling, simulation, and property prediction | High computational requirements; integration with experimental data |
| Programming Libraries and Toolkits | DeepChem and RDKit (open source); OpenEye toolkits (commercially licensed) [23] [27] | Democratize AI capabilities; enable custom model development | Require programming expertise; flexible but implementation-heavy |
| Building Block Databases | ZINC (17.4M compounds), Led3 (5,955 in-house compounds) [29] | Provide available starting materials for synthesis planning | Critical for practical implementation; requires curation and maintenance |
| Laboratory Automation | Samsung ASTRAL robotic lab [14] | High-throughput experimental validation of predicted syntheses | Significant capital investment; programming and maintenance expertise |
| AI Chatbots | ChatGPT, Bard [28] | Educational assistance; preliminary synthesis ideation | Limited accuracy with complex chemical notations; improving rapidly |

Building Block Management and Curation

The practical implementation of AI-powered synthesis planning requires careful management of building block resources, which serve as the fundamental "alphabet" for constructing target molecules. Research demonstrates that strategic curation of building block collections can maintain high synthetic coverage while dramatically reducing resource requirements [29].

Key Considerations:

- Diversity Over Size: A carefully selected collection of ~6,000 building blocks achieved solvability rates only about 12% lower than those obtained with 17.4 million commercial compounds [29].

- Route Length Trade-offs: Limited building block inventories typically result in synthesis routes that are approximately two steps longer on average, but remain synthetically feasible [29].

- Domain-Specific Optimization: Building block collections should be tailored to specific research domains (e.g., pharmaceuticals, materials science) to maximize relevant chemical space coverage [29].

The integration of AI-powered synthesis planning with experimental automation represents a paradigm shift in chemical research and development. The methodologies and validation protocols detailed in this analysis demonstrate the rapid maturation of CASP from theoretical concept to practical tool. The emergence of chemical chatbots, while currently limited in accuracy, points toward increasingly intuitive interfaces that will democratize access to complex synthesis planning capabilities [28].

The validation framework for AI-powered synthesis continues to evolve, incorporating more sophisticated metrics beyond simple route prediction to include practical considerations such as in-house synthesizability, environmental impact, and scalability [29]. The successful experimental validation of AI-designed synthesis routes for pharmacologically active compounds provides compelling evidence of the technology's readiness for mainstream adoption [29].

As the field advances, the convergence of algorithmic improvements, expanded reaction databases, and automated laboratory systems will further accelerate the discovery and development of novel molecules and materials. Researchers who strategically integrate these AI-powered tools while maintaining rigorous experimental validation will lead the next wave of innovation across pharmaceuticals, materials science, and sustainable chemistry.

High-Throughput Experimentation (HTE) Platforms as Validation Engines

In material synthesis methods research, validation is the critical process of confirming that a proposed synthetic route or condition is reproducible, scalable, and effective across a broad chemical space. High-Throughput Experimentation (HTE) has emerged as a powerful engine for this validation, transforming it from a linear, confirmation-based activity into a parallel, knowledge-generating process. By enabling the rapid empirical testing of hundreds to thousands of hypotheses simultaneously, HTE platforms provide the dense experimental data necessary to rigorously validate the scope, limitations, and optimal parameters of synthetic methodologies [30] [31]. This capability is crucial across diverse fields, from pharmaceutical development to the discovery of functional materials for energy applications [32] [33].

The traditional model of validation, often relying on one-factor-at-a-time (OFAT) experimentation, is inefficient and can miss complex variable interactions. HTE addresses this by integrating automation, miniaturization, and parallelization, allowing researchers to empirically map a reaction's behavior across a wide landscape of conditions in a single, coordinated experimental campaign [32] [34]. The resulting datasets move validation beyond singular success/failure outcomes, instead creating a multivariate understanding of a method's robustness. Furthermore, the rise of machine learning (ML) and active learning (AL) approaches has created a symbiotic relationship with HTE; these algorithms rely on high-quality, high-volume HTE data to build predictive models, and in turn, guide HTE campaigns to explore chemical spaces more efficiently, accelerating the validation feedback loop [32] [33].

A Comparative Analysis of HTE Platform Architectures

HTE platforms can be broadly categorized by their core operational mode—batch or flow—each with distinct advantages, limitations, and suitability for specific validation tasks. The choice of platform dictates the type of variables that can be controlled, the nature of the chemistry that can be performed, and the ease of translating validated conditions to scale.

Batch vs. Flow HTE Platforms

Table 1: Comparison of Batch and Flow HTE Platforms for Method Validation

| Feature | Batch HTE Platforms | Flow HTE Platforms |
|---|---|---|
| Core Principle | Parallel reactions in discrete, closed vessels (e.g., well plates) [32] | Continuous reactions in a stream of fluid pumped through tubing or microchannels [35] |
| Throughput Strength | High parallelization (24 to 1536 reactions per run) [32] | High serial throughput via process intensification; lower inherent parallelization [35] |
| Optimal Validation Use Case | Screening categorical variables (catalysts, ligands, bases) and stoichiometries [32] | Optimizing continuous variables (time, temperature, pressure); hazardous chemistry [35] |
| Parameter Control | Limited independent control of time/temperature per well; challenges with volatile solvents [35] [32] | Precise, dynamic control of residence time, temperature, and pressure [35] |
| Heat/Mass Transfer | Less efficient, can be a scaling liability [35] | Highly efficient due to large surface-area-to-volume ratio [35] |
| Scale-Up Translation | Often requires re-optimization due to changing transfer properties [35] | Easier scale-up by numbering up or prolonged operation [35] |
| Process Windows | Limited by solvent boiling points and safety in miniaturized wells [32] | Access to superheated solvents and extreme conditions via pressurization [35] |
| Example Applications | Suzuki couplings, Buchwald-Hartwig aminations, photoredox catalysis [32] [34] | Photochemical reactions, electrochemical synthesis, reactions with hazardous intermediates [35] |

Specialized and Integrated HTE Systems

Beyond these core categories, specialized platforms address unique validation challenges. For radiochemistry, where the short half-life of isotopes like ¹⁸F (109.8 minutes) is a major constraint, HTE workflows using 96-well blocks and parallel analysis via PET scanners or gamma counters have been developed to validate radiofluorination conditions orders of magnitude faster than manual methods [36]. In materials science, integrated robotic platforms combine sample handling, synthesis, and characterization. For instance, a platform for discovering redox flow battery electrolytes used a robotic arm for powder and liquid dispensing, automated sample preparation for qNMR analysis, and an active learning advisor to guide experiments, validating high-solubility conditions for a target molecule from a library of over 2000 candidates by testing fewer than 10% [33].
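The time pressure imposed by the ¹⁸F half-life follows directly from the exponential decay law, N(t)/N₀ = 0.5^(t / t½). The sketch below evaluates it for a few elapsed times; the chosen time points and the `fraction_remaining` helper are illustrative assumptions, not values from the cited workflow.

```python
HALF_LIFE_F18_MIN = 109.8  # half-life of fluorine-18 in minutes, as quoted above

def fraction_remaining(elapsed_min, half_life_min=HALF_LIFE_F18_MIN):
    """Fraction of the initial activity left after `elapsed_min` minutes."""
    return 0.5 ** (elapsed_min / half_life_min)

# Illustrative elapsed times for synthesis plus analysis.
for minutes in (30, 60, 120, 240):
    print(f"{minutes:>3} min: {fraction_remaining(minutes):.1%} of activity remains")
```

Because activity halves every 109.8 minutes, running conditions in parallel rather than one after another preserves far more usable signal per batch of isotope.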

Experimental Protocols for HTE-Driven Validation

The following case studies exemplify standardizable HTE protocols that yield high-quality, validation-ready data.

Case Study 1: Validating a Photoredox Fluorodecarboxylation in Flow

This protocol details the escalation from initial microtiter plate screening to validated kilogram-scale synthesis in flow, demonstrating how HTE de-risks process development [35].

- Objective: To discover and validate optimal conditions for a flavin-catalyzed photoredox fluorodecarboxylation reaction, and subsequently scale the process.

- Workflow Diagram:

- Initial HTE Screening: A 96-well plate-based photoreactor was used to screen 24 photocatalysts, 13 bases, and 4 fluorinating agents in parallel. This high-level scan identified several promising "hits" outside previously reported optimal conditions [35].

- Hit Validation and DoE: The most promising conditions from the HTE screen were validated in a batch reactor. Subsequently, a Design of Experiments (DoE) approach was employed to model the reaction landscape and fine-tune the interplay of critical variables like temperature and concentration [35].

- Follow-up HTE for Flow: As the optimized batch procedure was heterogeneous, a subsequent small-scale HTE campaign was conducted to identify a homogeneous photocatalyst, mitigating the risk of clogging in a flow reactor [35].

- Flow Translation and Scale-up: The process was first transferred to a lab-scale Vapourtec UV150 photoreactor, achieving 95% conversion on a 2 g scale. After further optimization of flow-specific parameters (light power, residence time), the process was successfully scaled to a kilogram scale, producing 1.23 kg of product at 97% conversion and 92% yield, validating the initial HTE findings at a commercially relevant scale [35].
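To give a sense of the scale of the initial screen, the sketch below enumerates a full-factorial combination of the three categorical factors and lays it out on 96-well plates. This is only an assumption for illustration: the source does not say that every combination was run, and the reagent labels and the `plate_maps` helper are placeholders.

```python
from itertools import product
import string

def plate_maps(factors, rows=8, cols=12):
    """Split the full-factorial design into plates mapping well ID -> condition."""
    wells = [f"{row}{col}" for row in string.ascii_uppercase[:rows]
             for col in range(1, cols + 1)]            # A1 ... H12
    combos = list(product(*factors.values()))          # every factor combination
    plates = []
    for start in range(0, len(combos), len(wells)):
        chunk = combos[start:start + len(wells)]
        plates.append(dict(zip(wells, chunk)))
    return plates

# Factor sizes from the case study; the labels themselves are placeholders.
factors = {
    "photocatalyst": [f"PC{i}" for i in range(1, 25)],      # 24 photocatalysts
    "base": [f"B{i}" for i in range(1, 14)],                # 13 bases
    "fluorinating_agent": [f"F{i}" for i in range(1, 5)],   # 4 fluorinating agents
}
plates = plate_maps(factors)
print(len(plates), "plates for", sum(len(p) for p in plates), "conditions")
print(plates[0]["A1"])
```

A full factorial of these factors gives 24 × 13 × 4 = 1,248 conditions, which is why practical campaigns often screen a designed subset first and reserve DoE for the most promising region.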

Case Study 2: Validating Electrolyte Formulations via Active Learning

This protocol showcases a closed-loop validation system where HTE and machine learning are integrated to efficiently navigate a vast experimental space [33].

- Objective: To rapidly discover and validate binary solvent mixtures that maximize the solubility of a redox-active molecule (2,1,3-benzothiadiazole, BTZ) for redox flow batteries.

- HTE Robotic Platform: An automated platform prepared saturated solutions of BTZ in various solvents. A robotic arm handled powder and liquid dispensing, samples were equilibrated for 8 hours, and then automatically sampled into NMR tubes for analysis. This automated "excess solute" method produced thermodynamic solubility data in about 39 minutes per sample, over 13 times faster than manual processing [33].

- Active Learning Guidance: The workflow was guided by Bayesian optimization (BO), an active learning algorithm. The BO advisor used a surrogate model to predict the solubility of untested solvent combinations, balancing exploration of unknown regions against exploitation of promising ones. It then recommended the next set of solvents for experimental validation by the HTE platform [33].

- Validation Outcome: This closed-loop system identified multiple binary solvent mixtures (notably with 1,4-dioxane) that achieved a solubility exceeding 6.20 M for BTZ. Critically, it required solubility measurements for fewer than 10% of the 2,000+ candidate solvents, demonstrating highly efficient empirical validation of optimal formulations [33].
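
The closed loop described above can be sketched in a few lines. The example below is a minimal illustration, not the implementation used in [33]: it swaps in a plain Gaussian-process surrogate with an upper-confidence-bound (UCB) acquisition rule, a two-dimensional hypothetical descriptor space, and a synthetic `true_solubility` function standing in for the robotic measurement. The batch size, number of rounds, kernel length scale, and `kappa` are all assumed values.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel between two sets of points."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length_scale**2)


def gp_posterior(x_train, y_train, x_query, noise=1e-3):
    """Posterior mean and standard deviation of a zero-mean, unit-variance GP."""
    k = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_s = rbf_kernel(x_train, x_query)
    mean = k_s.T @ np.linalg.solve(k, y_train)
    var = 1.0 - (k_s * np.linalg.solve(k, k_s)).sum(0)
    return mean, np.sqrt(np.clip(var, 0.0, None))


def run_campaign(features, measure, n_init=10, batch=10, rounds=15,
                 kappa=2.0, seed=0):
    """Alternate between surrogate fitting and batched 'experiments'."""
    rng = np.random.default_rng(seed)
    measured = [int(i) for i in
                rng.choice(len(features), size=n_init, replace=False)]
    values = [measure(i) for i in measured]
    for _ in range(rounds):
        y = np.array(values)
        y_scaled = (y - y.mean()) / (y.std() + 1e-9)   # standardize targets
        mean, std = gp_posterior(features[measured], y_scaled, features)
        ucb = mean + kappa * std          # exploitation + exploration
        ucb[measured] = -np.inf           # never re-measure a candidate
        for i in np.argsort(ucb)[-batch:]:
            measured.append(int(i))
            values.append(measure(int(i)))
    return measured, values


# Synthetic stand-in for the candidate library and the HTE measurement.
rng = np.random.default_rng(42)
features = rng.random((2000, 2))                       # hypothetical descriptors
true_solubility = 6.2 * np.exp(
    -((features - np.array([0.7, 0.3])) ** 2).sum(1) / 0.2)

measured, values = run_campaign(features, lambda i: float(true_solubility[i]))
print(f"measured {len(measured) / len(features):.1%} of candidates, "
      f"best = {max(values):.2f}, true best = {true_solubility.max():.2f}")
```

With these settings the loop measures 160 of 2,000 candidates (8%), mirroring the "fewer than 10%" budget reported in the case study. A real campaign would replace the synthetic function with the robotic solubility measurement and would likely use a dedicated BO library rather than this hand-rolled surrogate.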

The Scientist's Toolkit: Essential Reagents & Materials

Successful execution of HTE campaigns relies on a suite of reliable reagents, hardware, and software solutions.

Table 2: Key Research Reagent Solutions for HTE

| Item | Function in HTE Validation | Examples & Notes |
|---|---|---|
| Microtiter Plates (MTP) | Standardized vessels for parallel batch reactions in 24-, 96-, 384-, or 1536-well formats [32]. | Widespread availability enables adoption; material must be chemically compatible with reaction conditions. |
| Liquid Handling Robots | Automation of repetitive pipetting tasks for accurate, rapid dispensing of reagents and solvent across many wells [32] [30]. | Vendors: Tecan, Hamilton, Chemspeed. Critical for reproducibility and throughput. |
| Modular Flow Reactors | Enable continuous flow chemistry for screening and optimization; often specialized (photochemical, electrochemical) [35]. | Vendors: Vapourtec, Corning. Allow precise control of reaction parameters and safe handling of hazardous reagents. |
| Process Analytical Technology (PAT) | Inline or online analysis (e.g., IR, UV) for real-time reaction monitoring, providing immediate data for validation [35]. | Reduces need for manual quenching and offline analysis, accelerating the feedback loop. |
| Electronic Lab Notebooks (ELN) & LIMS | Software for capturing experimental design, raw data, and results in a FAIR (Findable, Accessible, Interoperable, Reusable) manner [30]. | Essential for managing the large data volumes generated by HTE and enabling subsequent analysis. |
| Analysis & Informatics Platforms | Tools for parsing analytical data (e.g., LCMS), statistical analysis, and visualizing results from HTE campaigns [31] [37]. | Examples: HTE OS (open-source), Spotfire, HiTEA (High-Throughput Experimentation Analyser) [37] [31]. |

Performance Data and Validation Metrics

The efficacy of HTE as a validation engine is quantifiable through direct comparisons with traditional methods and key performance indicators from published studies.

Table 3: HTE Performance Metrics in Validation Campaigns

| Validation Context | Traditional Method | HTE Approach | Validated Outcome & Performance Gain |
|---|---|---|---|
| Reaction Optimization [32] | One-factor-at-a-time (OFAT), sequential optimization | Parallel screening of multi-variable experimental spaces using automated batch platforms | Drastically reduced optimization time; enables exploration of complex variable interactions. |
| Solubility Screening [33] | Manual "excess solute" method (~525 min/sample) | Automated HTE robotic platform with qNMR | ~13x faster (39 min/sample); discovered solvents with >6.20 M solubility after testing <10% of search space. |
| Radiochemistry (CMRF) [36] | Manual setup & analysis (1.5–6 h for 10 reactions) | 96-well block with parallel analysis (PET, gamma) | Enabled setup/analysis of 96 reactions within 20 min; optimal conditions translated to 10-fold larger scale. |
| Data-Driven Insight [31] | Literature meta-analysis, prone to success bias | Statistical analysis of large HTE datasets (e.g., HiTEA on 39,000+ reactions) | Identifies statistically significant best/worst-in-class reagents and reveals dataset biases. |
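
The throughput gains in Table 3 can be checked with simple arithmetic. The short sketch below recomputes them from the figures quoted in the table. The per-reaction normalization for the radiochemistry row is an assumption made here for comparison and is not a metric reported in [36].

```python
def per_item_minutes(total_minutes, n_items):
    """Average time per sample or reaction, in minutes."""
    return total_minutes / n_items


# Solubility screening [33]: minutes per sample, manual vs. automated.
solubility_speedup = 525 / 39

# Radiochemistry [36]: manual handling takes 1.5-6 h for 10 reactions,
# while the 96-well workflow handles 96 reactions within 20 min.
manual_fast = per_item_minutes(1.5 * 60, 10)   # best-case manual time
manual_slow = per_item_minutes(6 * 60, 10)     # worst-case manual time
hte = per_item_minutes(20, 96)

radiochem_speedup = (manual_fast / hte, manual_slow / hte)

print(f"solubility: ~{solubility_speedup:.1f}x faster per sample")
print(f"radiochemistry: ~{radiochem_speedup[0]:.0f}x to "
      f"~{radiochem_speedup[1]:.0f}x faster per reaction")
```

On a per-reaction basis the radiochemistry workflow comes out roughly 43 to 173 times faster, while the solubility figure of about 13.5 matches the "~13x" stated in the table.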

The future of HTE as a validation engine is inextricably linked to advances in artificial intelligence and data infrastructure. While the ability to generate data has accelerated, the challenge remains to optimally leverage this data for decision-making [30]. The next evolutionary step is the widespread adoption of closed-loop, self-optimizing systems where HTE platforms operate autonomously under the guidance of active learning algorithms [32] [33]. This will further compress the validation cycle for complex synthetic problems.

Furthermore, the establishment of FAIR (Findable, Accessible, Interoperable, Reusable) data principles and robust open-source software platforms like HTE OS will be crucial for consolidating knowledge and building predictive models that generalize beyond single campaigns [30] [37]. The development of sophisticated statistical frameworks like HiTEA (High-Throughput Experimentation Analyser) allows researchers to deconvolute the "reactome" hidden within large HTE datasets, moving from simple condition optimization to deep chemical understanding [31]. As these technologies mature, HTE will solidify its role as the indispensable engine for validating the synthetic methods that will underpin future innovations in medicine and materials science.

Integrating Retrosynthesis Models Directly into the Optimization Loop

The discovery of new molecules for applications in drug development and functional materials represents a frontier of scientific innovation. However, a significant bottleneck persists between computational design and practical application: synthesizability. A generated molecule holds little value if it cannot be practically synthesized for experimental validation. Traditionally, assessing synthesizability has relied on two principal approaches. The first uses heuristic metrics, such as the Synthetic Accessibility (SA) score or SYnthetic Bayesian Accessibility (SYBA), which estimate complexity from the frequencies of molecular fragments found in known compounds [38]. The second employs post hoc filtering with retrosynthesis models like AiZynthFinder or IBM RXN, which predict viable synthetic pathways after molecules have been generated [38]. Heuristics are computationally inexpensive, but they are often derived from known bio-active molecules and may not generalize well to novel chemical spaces, such as functional materials. Retrosynthesis models provide a more rigorous assessment, but their computational cost has historically been prohibitive for direct use within an optimization loop, limiting them primarily to a final filtering role [38] [39].

A paradigm shift is emerging, moving away from post hoc analysis toward direct integration. This approach directly incorporates retrosynthesis models as oracles within the goal-directed optimization loop itself. By doing so, every generated molecule is evaluated not just for its target properties (e.g., binding affinity, catalytic activity) but also for the feasibility of its synthesis pathway from available starting materials. This article provides a comparative analysis of this nascent methodology against established alternatives, examining its performance, resource demands, and validity within the broader context of material synthesis method validation.

Comparative Analysis of Synthesizability Assessment Methods

The table below objectively compares the three primary strategies for ensuring synthesizability in generative molecular design.

Table 1: Comparison of Synthesizability Assessment Methods in Molecular Optimization

| Methodology | Key Examples | Underlying Principle | Advantages | Limitations |
|---|---|---|---|---|
| Heuristic Metrics | SA Score, SYBA, SC Score [38] | Rule-based or frequency-based scoring of molecular fragments from known compounds. | Computationally inexpensive; fast to compute; well-correlated with retrosynthesis solvability for drug-like molecules [38] | Imperfect proxies for true synthesizability; poor correlation for non-drug-like molecules (e.g., functional materials) [38]; can overlook promising, synthetically accessible chemical space [38] [39] |
| Post Hoc Retrosynthesis Filtering | AiZynthFinder, IBM RXN, ASKCOS, SYNTHIA [38] | Molecules are generated first, then filtered based on a predicted synthetic pathway from commercial building blocks. | Higher confidence in synthesizability assessment; provides an actual synthetic route; independent of pre-defined reaction rules during generation | High computational inference cost; inefficient optimization cycle, with resources wasted generating unsynthesizable molecules [38]; risk of discarding molecules late in the design process |
| Direct Integration into Optimization | Saturn model with AiZynthFinder or other retrosynthesis oracles [38] [39] | Retrosynthesis model is used as an oracle within the active learning loop to directly optimize for synthesizability alongside target properties. | Directly optimizes for the desired outcome (a synthesizable molecule with good properties); high sample efficiency under constrained budgets [38]; superior performance on non-drug-like molecule classes [38] | Computationally demanding per oracle call; requires a highly sample-efficient generative model (e.g., Saturn) to be feasible [38]; increased complexity in reward function design |

Performance and Experimental Data

Recent research demonstrates the tangible impact of directly integrating retrosynthesis models. A key study utilized the Saturn generative model, a sample-efficient language-based model built on the Mamba architecture, to perform Multi-Parameter Optimization (MPO) under a heavily constrained computational budget of only 1,000 property evaluations [38].
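
To make the idea of a retrosynthesis oracle under a fixed evaluation budget concrete, the sketch below wraps a property scorer and a solvability check in a single budgeted reward function. It is an illustration under stated assumptions, not Saturn's actual reward: the class name `BudgetedOracle`, the gating rule (zero reward when no route is found), the caching of repeated molecules, and the toy scoring functions are all hypothetical. In practice `is_solvable` would call a retrosynthesis tool such as AiZynthFinder, and `property_fn` would call a docking or quantum-mechanical simulation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


class BudgetExhausted(RuntimeError):
    """Raised when the oracle is asked for more evaluations than allowed."""


@dataclass
class BudgetedOracle:
    property_fn: Callable[[str], float]   # target-property score in [0, 1]
    is_solvable: Callable[[str], bool]    # stand-in for a retrosynthesis model
    budget: int = 1000                    # evaluation budget, as in [38]
    calls: int = 0
    cache: Dict[str, float] = field(default_factory=dict)

    def __call__(self, smiles: str) -> float:
        # Assumption: repeated molecules are served from cache at no cost.
        if smiles in self.cache:
            return self.cache[smiles]
        if self.calls >= self.budget:
            raise BudgetExhausted(f"budget of {self.budget} calls used up")
        self.calls += 1
        # Assumption: molecules with no predicted route receive zero reward,
        # and the property is only computed for solvable molecules.
        reward = self.property_fn(smiles) if self.is_solvable(smiles) else 0.0
        self.cache[smiles] = reward
        return reward


# Toy placeholders so the sketch runs without chemistry dependencies.
def toy_property(smiles: str) -> float:
    return min(len(smiles) / 20.0, 1.0)


def toy_solvable(smiles: str) -> bool:
    return "#" not in smiles


oracle = BudgetedOracle(toy_property, toy_solvable, budget=1000)
for candidate in ["CCO", "C#N", "CCO", "c1ccccc1O"]:
    print(candidate, oracle(candidate))
print("oracle calls used:", oracle.calls)
```

Gating the reward on solvability is only one possible design. A weighted sum or product of normalized objectives would instead let partially attractive molecules still guide the generator, at the cost of a more complex reward function.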

Quantitative Performance Comparison

The table below summarizes key experimental results that compare the direct integration method against other approaches.

Table 2: Experimental Performance Data for Synthesizability Optimization Methods

| Experiment Context | Metric | Heuristic (SA Score) Optimization | Direct Retrosynthesis Integration (Saturn) | Notes & Experimental Conditions |
|---|---|---|---|---|
| Drug Discovery MPO | Success Rate in finding synthesizable, high-scoring molecules [38] | Competitive | Competitive | Under the tested conditions, both methods performed similarly, reaffirming the correlation between heuristics and retrosynthesis solvability for drug-like molecules. |
| Functional Materials Design | Correlation between heuristic score and retrosynthesis solvability [38] | Diminished correlation | N/A | Highlights the fundamental weakness of heuristics outside their training domain. |
| Functional Materials Design | Advantage in finding synthesizable, high-performing materials [38] | None | Clear benefit | Direct integration proved advantageous where heuristics failed. |
| Formate Fuel Cell Catalyst Discovery | Improvement in Power Density per Dollar [40] | Benchmark not specified | 9.3-fold improvement over pure palladium | CRESt AI platform explored >900 chemistries, conducted 3,500 tests, discovering an 8-element catalyst [40]. |
| Formate Fuel Cell Catalyst Discovery | Precious Metal Loading [40] | Benchmark not specified | Reduced to one-fourth of previous devices | The discovered catalyst achieved record power density with drastically less precious metal. |
| Computational Resource Use | Oracle Budget Required [38] | Low | High, but feasible with sample-efficient models | Earlier methods required budgets of 32,000-256,000 evaluations; direct integration succeeded with only 1,000 [38]. |

Detailed Experimental Protocol: Direct Optimization with Saturn

To validate the direct integration approach, researchers established a rigorous experimental protocol centered on the Saturn model [38]:

- Model Pre-training & Preparation: The Saturn model was initially pre-trained on large molecular datasets (ChEMBL or ZINC). In a key experimental design choice, a model was intentionally pre-trained on data unsuitable for generating synthesizable molecules to demonstrate the optimization recipe's power [38].

- Objective Function Formulation: The goal-directed optimization task was framed as a Multi-Parameter Optimization (MPO). The objective function combined:

- Primary Target Properties: These could include docking scores (predicting binding affinity to a protein target) or results from semi-empirical quantum-mechanical simulations (predicting electronic properties). These computations are themselves expensive, justifying the need for a constrained oracle budget [38].