Validating Structure-Property Relationships in Materials: From AI-Driven Discovery to Experimental Confirmation

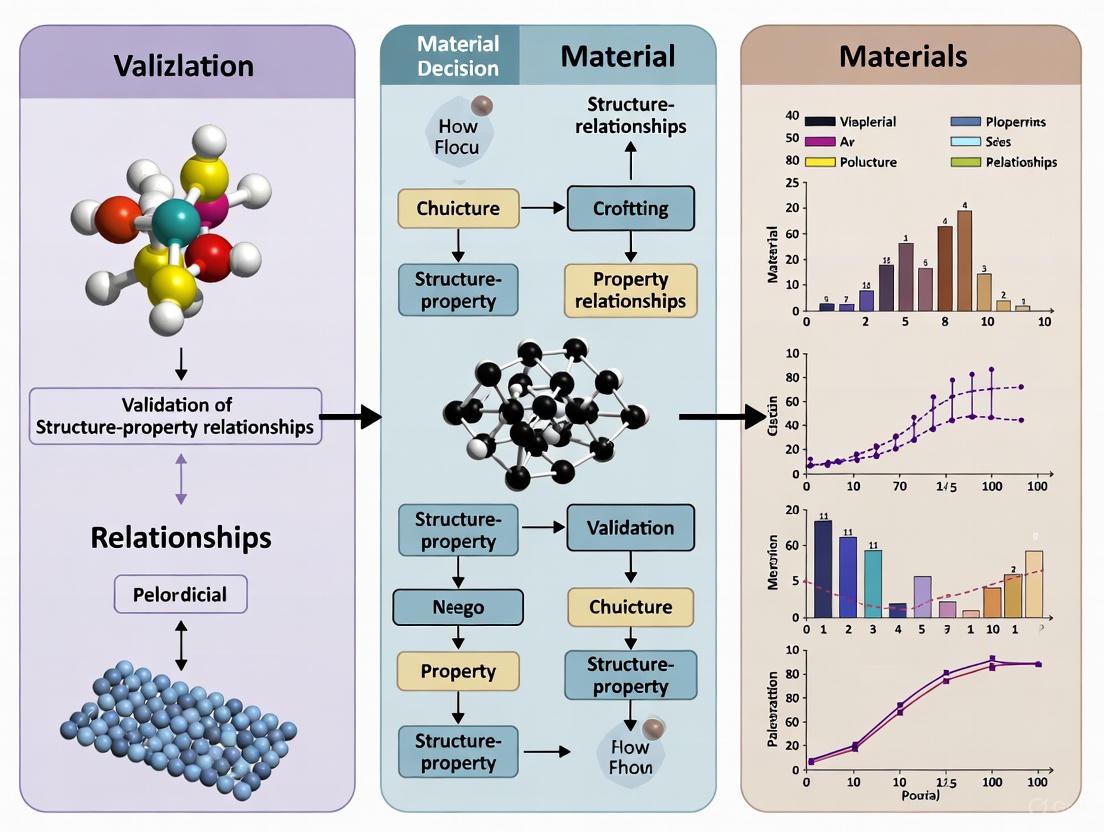


Abstract

This article provides a comprehensive overview of modern strategies for validating structure-property relationships in materials science. It explores the foundational principles connecting atomic structure to macroscopic properties, examines cutting-edge computational and AI-driven methodologies, addresses key challenges in data quality and model interpretability, and highlights rigorous experimental validation techniques. By integrating insights from interpretable deep learning, expert-curated AI frameworks, and high-throughput computational infrastructures, this review serves as a critical resource for researchers and scientists seeking to accelerate the discovery and deployment of novel materials, with significant implications for biomedical and clinical applications.

The Fundamental Link: How Atomic Structure Governs Material Properties

The principle that a material's properties are fundamentally determined by its internal structure—from the atomic scale to the macroscopic level—forms the foundational paradigm of materials science. Establishing quantitative structure-property relationships (SPRs) enables the prediction of material behavior and the rational design of new materials with targeted performance. Traditional experimental approaches to establishing SPRs are often time-consuming and resource-intensive, relying on iterative physical experiments and researcher intuition [1] [2]. The emergence of computational modeling, high-throughput computing (HTC), and advanced machine learning (ML) methods has revolutionized this process, creating new paradigms for accelerating materials discovery and validation [3] [4] [2]. This guide compares the performance and methodologies of contemporary computational frameworks dedicated to establishing and validating SPRs across diverse material classes.

Comparative Analysis of Structure-Property Relationship Methodologies

The following table provides a systematic comparison of major computational frameworks used for establishing SPRs, highlighting their core approaches, applications, and key performance characteristics.

Table 1: Performance Comparison of Structure-Property Relationship Methodologies

| Methodology / Framework | Core Approach | Reported Applications | Key Performance Strengths | Limitations / Challenges |
|---|---|---|---|---|
| Interpretable Deep Learning (SCANN) [3] | Self-consistent attention neural network using local attention layers to learn atomic structure representations. | Prediction of molecular orbital energies, formation energies of crystals [3]. | High predictive accuracy comparable to state-of-the-art models; identifies critical local structural features [3]. | Interpretability is achieved through architecture design but can remain complex. |
| Transductive OOD Prediction (MatEx) [4] | Bilinear transduction predicting properties based on differences in material representation. | Extrapolation for property prediction in solids and molecules [4]. | Improves extrapolative precision (1.8x for materials); boosts recall of high-performing candidates by up to 3x [4]. | Performance dependent on analogical relations in data; different from traditional regression. |
| XAI with LLMs (XpertAI) [5] | Combines XGBoost with XAI methods (SHAP/LIME) and LLMs for natural language explanations. | Toxicity/solubility of molecules, MOF properties [5]. | Generates scientifically accurate, human-interpretable explanations from raw data [5]. | Relies on human-interpretable input features; potential for LLM hallucination without RAG. |
| Graph-Based ML (MatDeepLearn) [6] | Graph neural networks (e.g., MPNN) to represent materials, followed by dimensional reduction for visualization. | Construction of materials maps for thermoelectric properties (zT) [6]. | Effectively captures structural complexity; creates visual maps to guide material discovery [6]. | High computational cost for large graphs; performance does not always translate to prediction accuracy [6]. |
| Active Learning for Experimentation [7] | Machine learning guides automated scanning probe microscopy to discover relationships between domain structure and properties. | Discovering polarization-switching characteristics in ferroelectric materials [7]. | Automates experimental discovery; identifies relationships without pre-defined hypotheses [7]. | Requires integration with specialized, automated experimental hardware. |
| Specialized LLMs (ElaTBot) [8] | Fine-tuned large language models (e.g., Llama2) on text-based material descriptions for property prediction. | Prediction of full elastic constant tensors and generation of new material compositions [8]. | Reduces prediction error by 33.1% vs. other domain-specific LLMs; enables multi-task prediction and design [8]. | Requires fine-tuning on domain-specific data; performance varies with dataset size [8]. |

Experimental Protocols and Workflows

This section details the experimental and computational methodologies employed by the frameworks discussed, providing a roadmap for their implementation and validation.

Interpretable Deep Learning with SCANN

The Self-Consistent Attention Neural Network (SCANN) architecture provides a structured approach to predicting material properties while identifying critical structural features [3].

- Input Representation: Each material structure \( S \) is represented by the atomic numbers and coordinates of its \( M \) atoms. Voronoi tessellation identifies the set of neighboring atoms \( \mathcal{N}_i \) for each atom \( a_i \) [3].

- Geometric and Atomic Embedding: A vector \( \mathbf{g}_{ij}^0 \) is defined to capture the geometrical influence (Euclidean distance, Voronoi solid angle) of a neighboring atom \( a_j \) on a central atom \( a_i \). An embedding layer converts the atomic information of each atom into an initial \( h \)-dimensional vector \( \mathbf{c}_i^0 \) [3].

- Local Attention Layers: The architecture employs \( L \) local attention layers. The representation \( \mathbf{c}_i^{l+1} \) of a local structure at layer \( (l+1) \) is derived via a local attention mechanism that incorporates information from neighboring atomic environments [3]: \[ \mathbf{c}_i^{l+1} = \mathrm{Attention}(\mathbf{q}_i^l, \mathbf{K}_{\mathcal{N}_i}^l) + \mathbf{q}_i^l \]

- Global Attention and Prediction: A final global attention layer aggregates the representations of all local structures to form a unified representation of the material structure, which is used for property prediction. The attention weights explicitly identify which local structures contribute most significantly to the target property [3].
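The residual attention update in the local attention layers can be illustrated with a small sketch. This is not the SCANN implementation: it assumes plain scaled dot-product attention in which the neighbor key vectors also serve as values, and it omits the geometric terms and learned projections described in [3]. The function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def local_attention_update(query, neighbor_keys):
    """One residual update: c_i^{l+1} = Attention(q_i, K_Ni) + q_i.

    Assumption: scaled dot-product scores, and the neighbor keys are
    reused as the values that get averaged.
    """
    scale = math.sqrt(len(query))
    scores = [
        sum(q * k for q, k in zip(query, key)) / scale
        for key in neighbor_keys
    ]
    weights = softmax(scores)
    attended = [
        sum(w * key[d] for w, key in zip(weights, neighbor_keys))
        for d in range(len(query))
    ]
    updated = [a + q for a, q in zip(attended, query)]
    return updated, weights
```

The returned weights show how strongly each neighbor contributed to the updated representation of the central atom, which is the quantity an interpretable model of this kind exposes.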

Transductive Out-of-Distribution (OOD) Prediction

The Bilinear Transduction method (implemented as MatEx) addresses the challenge of predicting property values outside the range of training data, which is crucial for discovering high-performance materials [4].

- Data Splitting: The dataset is split such that the test set contains property values that are strictly higher (or lower) than all values in the training set, ensuring an OOD evaluation scenario [4].

- Model Training and Reparameterization: Rather than learning a direct mapping from a material's representation to its property value, the model is trained to predict how the property value changes as a function of the difference in representation between two materials [4].

- Inference: For a new test material, its property is predicted based on a chosen training example and the representational difference between the training and test materials. This facilitates extrapolation [4].

- Performance Metrics: The model is evaluated on OOD Mean Absolute Error (MAE) and Extrapolative Precision—the fraction of true top-performing OOD candidates correctly identified by the model [4].
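The data split and the ranking metric above can be sketched as follows. This is a minimal illustration rather than the MatEx code: the function names and the `holdout_fraction` parameter are hypothetical, property values are assumed to be distinct (ties at the boundary would break the strict ordering), and the metric follows the definition given here, using the same `k` for the true and predicted top sets.

```python
def ood_split(samples, holdout_fraction=0.2):
    """Split (material_id, value) pairs so the test set holds the highest values.

    Assumes distinct property values, so every test value is strictly
    higher than every training value.
    """
    ranked = sorted(samples, key=lambda s: s[1])
    n_test = max(1, int(len(ranked) * holdout_fraction))
    return ranked[:-n_test], ranked[-n_test:]


def extrapolative_precision(true_values, predicted_values, k):
    """Fraction of the true top-k candidates that the model also ranks in its top-k.

    Both arguments map material_id -> property value.
    """
    def top_k(values):
        ordered = sorted(values, key=values.get, reverse=True)
        return set(ordered[:k])

    return len(top_k(true_values) & top_k(predicted_values)) / k
```

For a property where lower is better, the same sketch applies after negating the values.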

Explainable AI with Literature Integration (XpertAI)

The XpertAI framework bridges the gap between complex ML models and human understanding by generating natural language explanations for SPRs [5].

- Surrogate Model Training: A machine learning model (default: XGBoost) is trained on the raw data, using human-interpretable input features (e.g., molecular descriptors, MACCS keys) to map structures to properties [5].

- Feature Impact Analysis: Explainable AI (XAI) methods, specifically SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations), are applied. Mean SHAP values or LIME Z-scores are computed to identify the molecular features with the strongest global impact on the target property [5].

- Evidence Retrieval: A Retrieval-Augmented Generation (RAG) system is used. The framework queries a vector database of scientific literature (e.g., from arXiv) to find text excerpts that provide scientific context for the relationships identified by the XAI analysis [5].

- Explanation Generation: A large language model (LLM), such as GPT-4, synthesizes the XAI output and the retrieved literature excerpts. Using a chain-of-thought prompt, it generates a final, cited natural language explanation that describes the structure-property relationship in scientifically accurate terms [5].
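The feature impact step can be illustrated with exact Shapley values on a toy model. This sketch is an assumption-laden stand-in: XpertAI uses the SHAP and LIME libraries on an XGBoost surrogate [5], whereas the code below enumerates all feature subsets directly (only feasible for a handful of features) and replaces "absent" features with a fixed baseline. All function names are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley attributions for one sample by subset enumeration.

    Features outside the subset are set to their baseline value.
    """
    n = len(x)

    def value(subset):
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def rank_features(model, rows, baseline, names):
    """Rank features by mean absolute Shapley value across all rows."""
    totals = [0.0] * len(names)
    for row in rows:
        for i, p in enumerate(shapley_values(model, row, baseline)):
            totals[i] += abs(p)
    impacts = [t / len(rows) for t in totals]
    return sorted(zip(names, impacts), key=lambda pair: pair[1], reverse=True)
```

The top-ranked feature names are the kind of output that would then be passed, together with retrieved literature excerpts, to the LLM for explanation generation.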

Diagram 1: XpertAI workflow for interpretable SPRs.

Active Learning for Experimental Discovery

This protocol automates the discovery of SPRs in real experimental settings, such as scanning probe microscopy (SPM) [7].

- Initialization: The process begins with an initial, sparse grid of measurements (e.g., piezoresponse force microscopy hysteresis loops) on the material sample [7].

- Model and Acquisition Function: A Gaussian Process (GP) model is trained on the collected data. The model's prediction and associated uncertainty are combined in an acquisition function (e.g., Expected Improvement) that balances exploration and exploitation [7].

- Automated Experimentation: The SPM system is directed to the next measurement location selected by the acquisition function. The hysteresis loop is measured, and scalar descriptors (nucleation bias, coercive bias, loop area) are extracted [7].

- Iterative Discovery: The model is updated with the new data, and the cycle repeats. The autonomous experiment actively discovers regions of interest based on the evolving understanding of the structure-property relationship, without pre-defined hypotheses [7].
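The acquisition step of this loop can be sketched with the standard closed-form Expected Improvement for a maximization target. The sketch assumes the Gaussian Process has already produced a predictive mean and standard deviation at each candidate location; the GP itself, the instrument control, and the function names are outside the source and purely illustrative.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected Improvement over the best observed value (maximization).

    mu, sigma: surrogate mean and standard deviation at a candidate point.
    xi: optional margin that pushes the search toward exploration.
    """
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)
    z = improvement / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return improvement * cdf + sigma * pdf


def next_measurement(candidates, best):
    """Pick the location with the highest Expected Improvement.

    candidates: list of (location, mu, sigma) tuples from the surrogate.
    """
    return max(
        candidates,
        key=lambda c: expected_improvement(c[1], c[2], best),
    )[0]
```

A location with a slightly lower predicted mean but a large uncertainty can win over a well-characterized one, which is how the acquisition function trades exploitation against exploration.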

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table catalogues key computational tools and data resources that constitute the modern toolkit for data-driven SPR research.

Table 2: Key Research Reagents & Computational Tools for SPR Studies

| Tool / Resource Name | Type | Primary Function in SPR Research | Application Context |
|---|---|---|---|
| MatDeepLearn (MDL) [6] | Software Framework | Provides environment for graph-based material property prediction and materials map construction. | Implements GNNs (CGCNN, MPNN, MEGNet) for deep learning on crystal structures [6]. |
| Materials Project [3] [4] [8] | Computational Database | Repository of computed properties for inorganic compounds; provides training data and benchmarking. | Source of formation energies, band structures, and elastic properties [3] [4] [8]. |
| StarryData2 (SD2) [6] | Experimental Database | Systematically collects and organizes experimental data from published papers. | Provides experimental data for training models that predict real-world material performance [6]. |
| ElaTBot / ElaTBot-DFT [8] | Specialized LLM | Fine-tuned language model for predicting elastic constant tensors and generating new materials. | Case study in using LLMs for predicting complex, multi-component material properties [8]. |
| robocrystallographer [8] | Software Tool | Generates text descriptions of crystal structures from CIF files. | Converts structural data into text for fine-tuning or prompting LLMs [8]. |
| XGBoost [5] | ML Algorithm | A fast and effective gradient-boosting algorithm used as a surrogate model for SPRs. | Used in XpertAI framework for initial property prediction before XAI analysis [5]. |
| SHAP / LIME [5] | XAI Library | Explainable AI methods that quantify the contribution of input features to a model's prediction. | Identifies which structural descriptors most strongly influence a predicted property [5]. |

Diagram 2: Graph-based learning and visualization workflow.

The validation of structure-property relationships is being transformed by a new generation of computational tools. As evidenced by the comparative data, methodologies range from interpretable deep learning models like SCANN that provide atomic-level insights, to transductive frameworks like MatEx that excel at extrapolation, and hybrid systems like XpertAI that leverage LLMs to generate human-readable scientific explanations. The choice of methodology depends critically on the research goal: whether it is high-accuracy interpolation, discovery of out-of-distribution extremes, or fundamental scientific understanding. The integration of these data-driven approaches with high-throughput computing and automated experimentation, as seen in active learning workflows, creates a powerful, closed-loop paradigm for accelerating the design and discovery of next-generation materials.

A central challenge in materials science and drug development lies in transforming tacit knowledge—the subjective, cognitive, and experience-based understanding held by researchers—into robust, quantitative prediction models [9] [3]. This tacit knowledge, gained through repeated application and personal experience, is often intangible and difficult to articulate, yet it is a critical driver of innovation and intuition in the laboratory [10] [11]. The field of materials informatics has emerged to address this very challenge by employing data-driven methods to extract practical knowledge from both experimental and computational data, thereby accelerating the discovery of new materials with desired properties [3] [12].

This guide objectively compares the platforms, artificial intelligence (AI) methods, and experimental data infrastructures that are central to this quantitative transformation. By benchmarking performance and detailing experimental protocols, we provide researchers with a framework for validating structure-property relationships, a cornerstone of reliable materials design [13] [14].

Comparative Analysis of Quantitative Prediction Platforms

Rigorous benchmarking is fundamental to transforming tentative methods into trusted tools. The table below compares key platforms that enable the validation of quantitative prediction methods.

Table 1: Comparison of Platforms for Benchmarking Quantitative Predictions in Materials Science.

| Platform Name | Primary Focus | Key Benchmarking Features | Data Modalities Handled | Notable Contributions/Metrics |
|---|---|---|---|---|
| JARVIS-Leaderboard [13] | Integrated, multi-method benchmarking | Community-driven platform for custom benchmarks; covers AI, Electronic Structure, Force-fields, Quantum Computation, and Experiments. | Atomic structures, atomistic images, spectra, text. | 1281 contributions to 274 benchmarks using 152 methods; over 8 million data points. |
| MatBench [13] | AI/ML for material property prediction | Leaderboard for supervised machine learning tasks on inorganic materials. | Primarily atomic structures. | Provides 13 learning tasks from 10 datasets (e.g., from Materials Project). |
| HTEM-DB [15] | Experimental materials data repository | Database of high-throughput experimental data for inorganic thin films, enabled by a Research Data Infrastructure (RDI). | Material synthesis conditions, chemical composition, structure, properties. | Houses data from over 70 instruments; nearly 4 million files; focuses on experimental data for machine learning. |

The selection of an appropriate platform depends heavily on the research goal. JARVIS-Leaderboard offers the most comprehensive framework for direct method-to-method comparison across a wide spectrum of computational and experimental techniques [13]. In contrast, MatBench provides a more specialized environment for evaluating AI model performance on specific property prediction tasks [13]. For research grounded in experimental data, HTEM-DB provides a curated repository of high-throughput data, essential for training and validating models on real-world measurements [15].

Benchmarking AI Models for Structure-Property Relationships

A critical step in the shift from tacit knowledge to quantitative prediction is the development of AI models that are not only accurate but also interpretable. The following table compares different deep learning (DL) architectures used for predicting material properties from structure.

Table 2: Performance Comparison of Deep Learning Architectures for Structure-Property Prediction.

| Model Architecture | Key Principle | Interpretability Strength | Reported Validation Performance | Notable Limitations |
|---|---|---|---|---|
| SCANN (Self-Consistent Attention Neural Network) [3] | Uses attention mechanisms to represent local atomic structures and their global integration. | High; explicitly identifies crucial atoms/local structures via attention scores. | Strong predictive capabilities on QM9 and Materials Project datasets, comparable to state-of-the-art models. | Requires domain knowledge (e.g., Voronoi tessellation) for defining local environments. |
| Message-Passing Neural Networks (MPNNs) [3] | Passes "messages" (information) between connected atoms in a graph representation. | Moderate; relies on heuristic bonding information but can be a "black box" for long-range interactions. | High accuracy on many molecular and crystalline material properties. | Challenges with long-range interactions, feature interpretability, and global information representation. |
| Graph Convolutional Networks (GCNs) [3] | Applies convolutional operations on graph representations of molecules/crystals. | Low to Moderate; can identify important fingerprint fragments but may lack 3D structural context. | Useful for property prediction where 2D connectivity is primary. | Limited by the absence of full 3D structural information, affecting accuracy. |

The SCANN architecture represents a significant advance by incorporating an attention mechanism that quantitatively measures the degree of attention given to each local atomic structure when determining the representation of the overall material structure [3]. This provides a direct, interpretable link between specific structural features and the target property, moving away from "black box" predictions and towards a model that offers insights akin to an expert's intuition.

Experimental Protocol for Interpretable AI Model Validation

The validation of an interpretable DL model like SCANN involves a structured workflow to ensure both predictive accuracy and physico-chemical relevance.

Diagram: Interpretable AI validation workflow.

Key Steps:

- Dataset Curation: Select a benchmark dataset with known structures and target properties, such as the QM9 dataset for molecular properties or the Materials Project dataset for crystalline materials [3].

- Data Preprocessing and Local Environment Definition: For each material structure, defined by atomic numbers and coordinates, perform Voronoi tessellation to algorithmically determine the set of neighboring atoms for each central atom, forming its local structure [3].

- Model Training: Train the SCANN model, which consists of a series of local attention layers and a global attention layer. The local layers recursively learn and refine the representations of each atomic local environment. The global layer then integrates these local representations into a unified material structure representation, assigning an attention weight to each local structure [3].

- Performance Validation: Conduct a standard train-test-split validation to measure the model's predictive accuracy for the target property, and compare the result against state-of-the-art models [3].

- Interpretation and Physical Validation: Analyze the attention scores produced by the global attention layer. These scores quantitatively indicate which local atomic structures the model deemed most critical for the prediction. This interpretation must be validated against prior tacit knowledge or first-principles calculations to ensure physico-chemical consistency [3].
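The last two steps can be sketched in a few lines: a held-out error metric, and a ranking of local structures by their global attention weight. This is an illustrative sketch only; it assumes the attention weights have already been produced by a trained model, and the function names and atom labels are hypothetical.

```python
def mean_absolute_error(y_true, y_pred):
    """Mean absolute error between reference and predicted property values."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def top_local_structures(atom_labels, attention_weights, k=3):
    """Return the k local structures with the largest global attention weight.

    atom_labels: one label per central atom (e.g. "O1").
    attention_weights: weights from the global attention layer, same order.
    """
    ranked = sorted(
        zip(atom_labels, attention_weights),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return ranked[:k]
```

The ranked list is what a domain expert would then compare against chemical intuition or first-principles results, for example checking whether the highest-weighted atoms coincide with the known reactive site.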

High-Throughput Data Infrastructure for Experimental Validation

The reliability of any predictive model is contingent on the quality and volume of the data it is trained on. High-throughput experimentation (HTE) generates the large-scale, standardized data required for this purpose.

Diagram: High-throughput data pipeline.

Experimental Protocol for HTE Data Generation:

- Sample Library Creation: Utilize combinatorial deposition chambers (e.g., for thin-film materials) to synthesize libraries of samples on a single substrate, such as a 50x50 mm plate with a 4x11 sample mapping grid [15].

- Automated Characterization: Employ spatially resolved characterization instruments to measure properties (e.g., composition, structure, optoelectronic properties) for each sample in the library [15].

- Data Harvesting and Metadata Collection: The Research Data Infrastructure (RDI) automatically collects all digital files generated by instruments via a specialized Research Data Network (RDN). Critical contextual information (metadata), such as synthesis conditions and measurement parameters, is collected using a Laboratory Metadata Collector (LMC) [15].

- Data Processing and Storage: Custom ETL (Extract, Transform, Load) scripts process the raw data and metadata, which are then stored in a centralized Data Warehouse (DW) and subsequently published to the High-Throughput Experimental Materials Database (HTEM-DB) [15].

- Data Utilization: This structured, FAIR (Findable, Accessible, Interoperable, Reusable) data asset serves as a high-quality foundation for training and validating machine learning models, linking processing conditions to final material properties [15].
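The extract-transform-load step can be illustrated with a small sketch that merges per-sample measurements with library-level metadata and loads them into a relational table. This is not the HTEM-DB pipeline: the schema, the field names (`library_id`, `deposition_temp_c`, `thickness_nm`), and the use of SQLite are assumptions chosen to keep the example self-contained.

```python
import sqlite3


def etl_library(raw_measurements, library_metadata, conn):
    """Load one sample library into a 'samples' table.

    raw_measurements: list of dicts with 'row', 'col', 'thickness_nm'.
    library_metadata: dict with 'library_id' and 'deposition_temp_c'.
    Records with a missing measurement are skipped (transform step).
    Returns the number of rows loaded.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS samples ("
        "library_id TEXT, grid_row INTEGER, grid_col INTEGER, "
        "deposition_temp_c REAL, thickness_nm REAL)"
    )
    rows = []
    for m in raw_measurements:
        if m.get("thickness_nm") is None:
            continue  # drop failed or missing measurements
        rows.append((
            library_metadata["library_id"],
            m["row"],
            m["col"],
            library_metadata["deposition_temp_c"],
            float(m["thickness_nm"]),
        ))
    conn.executemany("INSERT INTO samples VALUES (?, ?, ?, ?, ?)", rows)
    conn.commit()
    return len(rows)
```

Attaching the synthesis metadata to every sample row is what later allows a model to link processing conditions to measured properties with a single query.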

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key computational and data resources that constitute the modern toolkit for tackling the central challenge of quantitative prediction.

Table 3: Essential Research Reagent Solutions for Quantitative Materials Informatics.

| Tool Name/Type | Primary Function | Key Application in Research |
|---|---|---|
| JARVIS-Leaderboard [13] | Benchmarking Platform | To compare and validate the performance of different computational methods (AI, DFT, FF) against standardized tasks and datasets. |
| HTEM-DB [15] | Experimental Data Repository | To access high-quality, curated experimental data for model training, validation, and to discover new structure-property relationships. |
| SCANN Model [3] | Interpretable Deep Learning | To predict material properties from atomic structure while providing explanations by identifying critical local structural features. |
| COMBIgor [15] | Data Analysis Software | To load, aggregate, and visualize combinatorial materials science data from high-throughput experiments. |
| Molecular Dynamics (MD) Simulations [16] | Biophysical Feature Extraction | To generate dynamic, biophysical descriptors (e.g., RMSF, SASA) for proteins or materials, used to build predictive QDPR models. |
| Crystal Plasticity Models [14] | Microstructure-Property Simulation | To simulate and quantify the relationship between microstructural features (e.g., phase fraction, grain size) and macroscopic mechanical properties and damage. |

The journey from tacit knowledge to quantitative prediction is complex but essential for accelerating scientific discovery. This guide has demonstrated that the convergence of interpretable AI models like SCANN, rigorous benchmarking platforms like JARVIS-Leaderboard, and robust experimental data infrastructures like HTEM-DB provides a powerful, integrated framework for validating structure-property relationships [13] [3] [15].

The future of this field lies in the wider adoption of these benchmarking practices and the continued development of hybrid models that combine the speed of AI with the transparency of physics-based models [12]. This will ultimately create a new, data-supported scientific intuition, allowing researchers to move beyond reliance on unstructured experience and make more confident, quantitative predictions in materials science and drug development.

In materials research, the fundamental principle that a material's properties are determined by its structure is well-established. The critical challenge lies in quantitatively validating these structure-property relationships (SPRs) through computational methods. Structural descriptors—mathematical representations of material structures—serve as the essential bridge between atomic-scale arrangements and macroscopic properties. Recent advances have produced two complementary classes of descriptors: those encoding local atomic environments (LAEs) and those capturing global material representation. This guide provides a comparative analysis of leading descriptors, their performance metrics, and experimental protocols, offering researchers a framework for selecting appropriate tools for SPR validation.

Comparative Analysis of Local Atomic Environment Descriptors

Local atomic environment descriptors mathematically encode the arrangement of atoms within a defined neighborhood, enabling the identification of crystal structures, defects, and phase transitions in atomistic simulations.

Table 1: Performance Comparison of Local Atomic Environment Descriptors

| Descriptor | Key Principle | Computational Efficiency | Best Applications | Accuracy (Typical R²) | Limitations |
|---|---|---|---|---|---|
| Neighbors Map [17] | Encodes LAEs into 2D images for CNN processing | High | Distorted crystals, phase transitions, vitrification | >0.95 (structure identification) | Limited to pre-defined cutoff radius |
| SOAP [18] | Smooth overlap of atomic positions | Medium | Grain boundary energy prediction | 0.99 (GB energy) [18] | Computationally intensive for large systems |
| Atomic Cluster Expansion (ACE) [18] | Complete body-ordered basis set | Medium-High | General purpose LAE description | Comparable to SOAP [18] | Complex implementation |
| ACSF [18] | Atom-centered symmetry functions | Medium | Molecular dynamics simulations | Intermediate accuracy [18] | Limited angular resolution |
| Common Neighbor Analysis (CNA) [18] | Identification of common neighbor patterns | Very High | Crystal structure identification | Lower for distorted systems [18] | Sensitive to thermal noise |

Table 2: Technical Characteristics of Local Environment Descriptors

| Descriptor | Dimensionality | Invariance | Required Parameters | Software Implementation |
|---|---|---|---|---|
| Neighbors Map [17] | 2D image | Rotational, translational | Cutoff radius, image size | Python, HPC-optimized |
| SOAP [18] | Vector | Rotational, translational | Cutoff radius, basis size | QUIP, DScribe |
| ACE [18] | Vector | Rotational, translational | Cutoff radius, correlation order | ACE, Julia |
| ACSF [18] | Vector | Rotational, translational | Cutoff radius, function parameters | DScribe, AMP |
| CNA [18] | Scalar | Rotational, translational | Cutoff radius | OVITO, LAMMPS |

Neighbors Map Descriptor: Image-Based Encoding

The Neighbors Map descriptor represents a novel approach that encodes local atomic environments into 2D images, enabling the application of standard image processing techniques like Convolutional Neural Networks (CNNs) for structural analysis [17].

Experimental Protocol for Neighbors Map Analysis:

- Input Data Preparation: Atomic coordinates from molecular dynamics simulations or experimental measurements

- Neighbor Identification: For each atom, identify neighboring atoms within a defined cutoff radius using Voronoi tessellation or distance-based criteria

- Image Generation: Create a pixelated representation of the graph-like architecture with weighted edge connections between neighboring atoms

- Descriptor Processing: Feed the resulting 2D images into a CNN classifier for structural identification

- Validation: Compare classification results against traditional analysis methods for benchmark systems

This approach preserves fundamental symmetries and requires relatively small training datasets, making it particularly effective for analyzing distorted crystalline systems, tracking phase transformations up to melting temperature, and studying liquid-to-amorphous transitions in pure metals and alloys [17].
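The neighbor identification and image generation steps can be illustrated with a deliberately simplified encoding. This is not the Neighbors Map construction from [17]: the sketch uses a distance cutoff, orders neighbors by distance to the central atom, and fills a fixed-size matrix with edge weights that decay linearly with interatomic distance. The weighting scheme, the `size` parameter, and the function names are assumptions made for illustration.

```python
import math


def neighbors_within(positions, center_index, cutoff):
    """Indices of atoms within `cutoff` of the central atom, nearest first."""
    center = positions[center_index]
    found = []
    for j, p in enumerate(positions):
        if j == center_index:
            continue
        d = math.dist(center, p)
        if d <= cutoff:
            found.append((d, j))
    return [j for _, j in sorted(found)]


def neighbor_image(positions, center_index, cutoff, size=8):
    """Encode a local environment as a size x size matrix of edge weights.

    Row/column 0 is the central atom, followed by its nearest neighbors.
    Pixel (a, b) is max(0, 1 - d_ab / cutoff); unused slots stay at zero.
    Only interatomic distances enter, so the values do not change under
    rigid translation or rotation of the coordinates.
    """
    atoms = [center_index] + neighbors_within(positions, center_index, cutoff)[: size - 1]
    image = [[0.0] * size for _ in range(size)]
    for a, i in enumerate(atoms):
        for b, j in enumerate(atoms):
            if a != b:
                d = math.dist(positions[i], positions[j])
                image[a][b] = max(0.0, 1.0 - d / cutoff)
    return image
```

The resulting matrix has a fixed shape regardless of coordination number, which is the property that lets a standard CNN classifier consume it directly.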

SOAP and Spectral Descriptors: Mathematical Foundation

The Smooth Overlap of Atomic Positions (SOAP) descriptor and related spectral approaches represent LAEs using a mathematical framework based on spherical harmonics and radial basis functions, providing a comprehensive characterization of atomic neighborhoods [18].
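For reference, the commonly cited single-species form of the SOAP construction can be written as follows. The symbols are not defined in this article and are introduced here as assumptions: \( \sigma \) is the Gaussian smearing width, \( g_n \) are radial basis functions, \( Y_{lm} \) are spherical harmonics, and \( \mathbf{r}_{ij} \) is the position of neighbor \( j \) relative to atom \( i \).

```latex
% Smoothed neighbor density around atom i and its basis expansion
\rho_i(\mathbf{r}) = \sum_{j \in \mathcal{N}_i}
    \exp\!\left(-\frac{\lvert \mathbf{r} - \mathbf{r}_{ij} \rvert^{2}}{2\sigma^{2}}\right)
  = \sum_{n l m} c^{i}_{nlm}\, g_n(r)\, Y_{lm}(\hat{\mathbf{r}})

% Rotationally invariant power spectrum used as the descriptor vector
p^{i}_{n n' l} = \pi \sqrt{\frac{8}{2l+1}} \sum_{m} \left(c^{i}_{nlm}\right)^{*} c^{i}_{n'lm}
```

Summing over \( m \) removes the dependence on orientation, which is why the power spectrum is invariant under rotation while the raw expansion coefficients are not.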

Advanced Methods for Global Material Representation

While local descriptors capture atomic environments, understanding macroscopic material properties requires descriptors that encode global structural characteristics.

Table 3: Global Material Representation Approaches

| Method | Descriptor Type | Representation | Target Properties | Key Innovation |
|---|---|---|---|---|
| Electronic Charge Density [19] | Physical property-based | 3D electron density grid | Multiple properties simultaneously | Direct DFT mapping, universal predictor |
| SCANN [3] | Attention-based | Learned representation | Formation energies, orbital energies | Self-consistent attention mechanism |
| Domain Adaptation [20] | Transfer learning | Adapted feature space | OOD material properties | Improved generalization |
| XpertAI [21] | Explainable AI | Natural language explanations | Chemical properties | Interpretable SPR extraction |

Electronic Charge Density as Universal Descriptor

Electronic charge density represents a groundbreaking approach to global material representation, leveraging the fundamental principle from density functional theory that all ground-state properties are uniquely determined by the electron density [19].

Experimental Protocol for Charge Density Analysis:

- Data Acquisition: Obtain electronic charge density data from DFT calculations (e.g., VASP CHGCAR files)

- Data Standardization: Convert 3D charge density matrices to standardized image representations through interpolation

- Feature Extraction: Process images using Multi-Scale Attention-Based 3D Convolutional Neural Network (MSA-3DCNN)

- Property Prediction: Map extracted features to target material properties through fully connected layers

- Validation: Compare predictions against DFT-calculated or experimental property values

This approach has demonstrated exceptional capability as a universal descriptor, achieving accurate prediction of eight different material properties with R² values up to 0.94 in multi-task learning scenarios [19].
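The data standardization step can be sketched as a resampling of a 3D density grid onto a fixed cubic shape by trilinear interpolation. This is an illustrative assumption about how such standardization might be done, not the procedure from [19]: the sketch takes an already-parsed nested list rather than reading a CHGCAR file, ignores periodic boundary conditions, and uses a hypothetical function name.

```python
def resample_density(grid, target):
    """Resample a 3D grid (nested lists [nx][ny][nz]) to target^3 points.

    Uses trilinear interpolation between the eight surrounding grid
    points; the first and last grid points along each axis are preserved.
    """
    nx, ny, nz = len(grid), len(grid[0]), len(grid[0][0])

    def sample(fx, fy, fz):
        x0, y0, z0 = int(fx), int(fy), int(fz)
        x1, y1, z1 = min(x0 + 1, nx - 1), min(y0 + 1, ny - 1), min(z0 + 1, nz - 1)
        tx, ty, tz = fx - x0, fy - y0, fz - z0
        # Interpolate along x, then y, then z.
        c00 = grid[x0][y0][z0] * (1 - tx) + grid[x1][y0][z0] * tx
        c10 = grid[x0][y1][z0] * (1 - tx) + grid[x1][y1][z0] * tx
        c01 = grid[x0][y0][z1] * (1 - tx) + grid[x1][y0][z1] * tx
        c11 = grid[x0][y1][z1] * (1 - tx) + grid[x1][y1][z1] * tx
        c0 = c00 * (1 - ty) + c10 * ty
        c1 = c01 * (1 - ty) + c11 * ty
        return c0 * (1 - tz) + c1 * tz

    def coord(i, n):
        # Map output index i onto the fractional index range [0, n - 1].
        return i * (n - 1) / (target - 1) if target > 1 else 0.0

    return [
        [
            [sample(coord(i, nx), coord(j, ny), coord(k, nz)) for k in range(target)]
            for j in range(target)
        ]
        for i in range(target)
    ]
```

Bringing every material to the same grid shape is what allows a single 3D convolutional network to process structures whose original DFT grids differ in size.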

Interpretable Deep Learning with SCANN Architecture

The Self-Consistent Attention Neural Network (SCANN) incorporates attention mechanisms to learn representations of local atomic structures while providing interpretable insights into structure-property relationships [3].

Experimental Protocols and Workflow Visualization

Diagram: Generalized workflow for structure-property analysis.

Diagram: Neighbors Map specific workflow.

Diagram: Electronic charge density processing.

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 4: Essential Computational Tools for Descriptor Implementation

| Tool/Software | Function | Compatible Descriptors | Application Context |
|---|---|---|---|
| OVITO [17] | Visualization and analysis | CNA, Neighbors Map | Molecular dynamics simulations |
| DScribe [18] | Descriptor calculation | SOAP, ACSF | Materials informatics |
| VASP [19] | DFT calculations | Charge density | Electronic structure |
| Python [17] | Custom implementation | Neighbors Map, SCANN | Flexible algorithm development |
| XGBoost [21] | Machine learning | Feature importance analysis | Property prediction |
| SHAP/LIME [21] | Explainable AI | Any black-box model | Interpretation of predictions |
| MatDA [20] | Domain adaptation | Structure-based descriptors | Out-of-distribution prediction |

Performance Benchmarking and Validation Metrics

Rigorous validation of structural descriptors requires comprehensive benchmarking across diverse material systems and properties.

Table 5: Quantitative Performance Metrics for Descriptor Evaluation

| Descriptor | Structure Identification Accuracy | Property Prediction R² | Computational Cost | Robustness to Noise |

|---|---|---|---|---|

| Neighbors Map [17] | >95% (distorted crystals) | N/A | Low | High |

| SOAP [18] | N/A | 0.99 (GB energy) | Medium | Medium |

| Electronic Charge Density [19] | N/A | 0.78 avg (multi-task) | High | High |

| SCANN [3] | N/A | >0.9 (formation energy) | Medium-High | Medium |

| CNA [17] | ~70% (distorted crystals) | N/A | Very Low | Low |
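For reference, the two regression metrics used throughout this comparison (R² and mean absolute error) can be computed as in the sketch below. The function names are illustrative and the numbers in the usage lines are made up, not taken from the cited benchmarks.

```python
def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def mean_absolute_error(y_true, y_pred):
    """Average absolute deviation between reference and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical reference values and predictions for three materials.
reference = [1.0, 2.0, 3.0]
predicted = [1.1, 1.9, 3.2]
print(r2_score(reference, predicted), mean_absolute_error(reference, predicted))
```

Note that a model that always predicts the mean of the reference values scores R² = 0, so R² values are only comparable between descriptors when they are evaluated on the same test set.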

Domain Adaptation for Real-World Validation Scenarios

Traditional random train-test splits often overestimate model performance due to redundancy in materials datasets. Domain adaptation techniques address this limitation by improving prediction accuracy for known subsets of out-of-distribution materials, mirroring real research scenarios where scientists predict properties for specific material families [20].

Experimental Protocol for Domain Adaptation:

- Dataset Partitioning: Split data into source and target domains based on material composition or structure

- Feature Alignment: Use domain adaptation algorithms to align feature distributions between source and target domains

- Model Training: Train property prediction models on the aligned feature space

- OOD Evaluation: Evaluate performance on out-of-distribution target materials

- Comparison: Benchmark against standard machine learning approaches

This approach has demonstrated significant improvements in OOD test set prediction performance where standard ML models often deteriorate [20].
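The partitioning and feature-alignment steps can be illustrated with a deliberately simple sketch. It assumes each material is a record with an `elements` set and a feature vector `x` (both hypothetical field names), defines the target domain as every material containing a held-out element, and aligns features by matching per-feature mean and standard deviation. This moment matching is a stand-in chosen for brevity, not the domain adaptation algorithm evaluated in [20].

```python
from statistics import mean, pstdev


def split_by_element(records, held_out_element):
    """Source: materials without the element. Target: materials containing it."""
    source = [r for r in records if held_out_element not in r["elements"]]
    target = [r for r in records if held_out_element in r["elements"]]
    return source, target


def align_to_source(source_x, target_x):
    """Shift and rescale each target feature to the source mean and std."""
    aligned = [list(row) for row in target_x]
    for f in range(len(source_x[0])):
        s_col = [row[f] for row in source_x]
        t_col = [row[f] for row in target_x]
        s_mu, s_sd = mean(s_col), pstdev(s_col)
        t_mu, t_sd = mean(t_col), pstdev(t_col)
        scale = s_sd / t_sd if t_sd > 0 else 1.0
        for row in aligned:
            row[f] = (row[f] - t_mu) * scale + s_mu
    return aligned


# Made-up records; the feature values carry no physical meaning.
records = [
    {"elements": {"Li", "O"}, "x": [1.0]},
    {"elements": {"Fe", "O"}, "x": [3.0]},
    {"elements": {"Li", "Co", "O"}, "x": [10.0]},
    {"elements": {"Na", "Cl"}, "x": [5.0]},
    {"elements": {"Li", "F"}, "x": [14.0]},
]
source, target = split_by_element(records, "Li")
aligned = align_to_source([r["x"] for r in source], [r["x"] for r in target])
```

Splitting by chemistry rather than at random is what makes the evaluation out-of-distribution: no lithium-containing material is seen during training in this toy setup.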

Emerging Frontiers: Interpretability and Universal Frameworks

Explainable AI for Structure-Property Relationships

The XpertAI framework integrates XAI methods with large language models to generate natural language explanations of structure-property relationships from raw chemical data [21].

Experimental Protocol for XAI Analysis:

- Surrogate Model Training: Train ML model (e.g., XGBoost) on structural features and target properties

- Feature Impact Analysis: Apply SHAP or LIME to identify impactful structural features

- Literature Retrieval: Gather relevant scientific literature using retrieval-augmented generation

- Explanation Generation: Integrate feature importance with scientific knowledge to produce natural language explanations

- Validation: Compare generated explanations with domain expert knowledge

This approach combines the specificity of XAI methods with the scientific grounding of literature evidence, producing interpretable structure-property relationships [21].
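The feature impact step relies on Shapley-value attributions, which the SHAP library approximates efficiently. For a handful of features they can be computed exactly by enumerating feature coalitions, as in the sketch below. The surrogate model, the feature values, and the all-zero baseline are hypothetical placeholders and are not taken from XpertAI [21].

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley attributions of model(x) relative to model(baseline)."""
    n = len(x)

    def value(subset):
        # Features in `subset` keep their real value; the rest use the baseline.
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def surrogate(z):
    """Toy surrogate: one additive feature plus an interaction of two others."""
    return 2.0 * z[0] + z[1] * z[2]


phi = shapley_values(surrogate, [1.0, 2.0, 3.0], [0.0, 0.0, 0.0])
```

The attributions sum to the difference between the prediction and the baseline prediction, and the interaction term is shared equally between the two features that produce it. The cost grows exponentially with the number of features, which is why approximate methods are used in practice.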

Towards Universal Property Prediction

No single descriptor currently provides universal prediction of all material properties, but electronic charge density shows exceptional promise as it inherently encodes multiple degrees of freedom governing material behavior [19]. Multi-task learning frameworks that simultaneously predict multiple properties from a unified descriptor representation demonstrate improved accuracy compared to single-property models, suggesting a path toward truly universal material property prediction [19].

The validation of structure-property relationships has long been the cornerstone of materials research and drug development. Traditionally, this process relied heavily on iterative, trial-and-error experimentation guided by researcher intuition and incremental scientific advance. This approach, while effective, often required decades and significant financial investment to bring a new material from discovery to application [22]. The emergence of Materials Informatics (MI) represents a fundamental paradigm shift, applying data-centric approaches and pattern recognition technologies to dramatically accelerate the extraction of meaningful structure-property relationships from complex, high-dimensional data [23].

This transformation is powered by the convergence of large-scale computational power, advanced machine learning (ML) algorithms, and the growing availability of materials data. Where traditional methods struggled with sparse, biased, and noisy data, MI employs sophisticated pattern recognition to identify hidden correlations and predictive patterns that elude human observation [23]. This guide provides an objective comparison of traditional and MI-driven approaches, detailing the experimental protocols and performance data that underscore the quantitative advantages of informatics in validating the structural relationships that underpin material behavior.

Comparative Analysis: Traditional Methods vs. Materials Informatics

The following analysis quantitatively compares the performance of traditional experimental methods against modern materials informatics approaches across key metrics relevant to research and development.

Table 1: Performance Comparison of Traditional Methods vs. Materials Informatics

| Performance Metric | Traditional Methods | Materials Informatics | Comparative Advantage |

|---|---|---|---|

| Development Timeline | Several years to decades [22] | Months to years [22] | 10x reduction in time-to-market reported [24] |

| Experimental Throughput | Low (manual experimentation) | High (AI-driven screening & autonomous labs) | 80% reduction in repetitive characterization tasks [24] |

| Data Utilization | Relies on direct human interpretation of limited data points | Leverages large historical datasets & high-throughput simulations [22] | Explores compositional spaces that are cost-prohibitive traditionally [24] |

| Pattern Recognition Capability | Limited to human-discernible relationships | Identifies complex, non-linear, multi-parameter relationships [23] [2] | Enables the "inverse design" of materials given desired properties [23] |

| Cost Efficiency | High (resource-intensive physical experiments) | Lower (shift towards virtual screening & simulation) | 30-50% cuts in formulation spend reported by early adopters [24] |

The data reveals that MI's primary advantage lies in its ability to reframe the research problem from one of sequential experimentation to one of intelligent pattern extraction. While traditional methods excel at in-depth physical validation, MI accelerates the initial discovery and optimization phases by orders of magnitude, guiding researchers toward the most promising candidates with a higher probability of success.

Experimental Protocols in Materials Informatics

The validated performance advantages of MI are realized through rigorous, data-driven experimental workflows. Below, we detail two key protocols that highlight the application of pattern recognition.

Protocol 1: High-Throughput Virtual Screening for Molecular Discovery

This protocol, derived from NTT DATA's project to accelerate CO2 capture catalyst discovery, outlines a systematic workflow for screening molecular structures [22].

1. Objective: To identify and design novel molecular catalysts that efficiently capture and convert CO2.

2. Data Acquisition & Curation:

- Input Data: Gather existing experimental and simulation data on molecular structures and their properties.

- Feature Representation: Convert molecular structures into computer-readable descriptors (e.g., using graph-based representations that encode atomic connectivity and bond types) [23] [2].

3. Model Training & Pattern Recognition:

- ML Model Selection: Employ machine learning models (e.g., Graph Neural Networks) to learn the structure-property mapping from the training data [2].

- Generative AI Phase: Use Generative Artificial Intelligence to propose new molecular structures with optimized properties, moving beyond the constraints of known chemical space [22].

4. Validation & Synthesis:

- HPC Simulation: Leverage High-Performance Computing (HPC) to run simulations on the most promising candidate molecules identified by the ML and generative models.

- Expert Review: The shortlisted molecules are evaluated by chemistry experts before any physical synthesis is initiated [22].
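To make the feature representation step concrete, the sketch below encodes CO2 as a graph whose nodes are atoms and whose edges carry bond orders, then applies one neighbor-aggregation step of the kind a graph neural network stacks many times. The dictionary layout, the use of atomic numbers as initial features, and the unweighted aggregation rule are illustrative assumptions; a trained GNN would use learned weights, and nothing here reproduces the NTT DATA workflow [22].

```python
ATOMIC_NUMBER = {"H": 1, "C": 6, "N": 7, "O": 8}

# O=C=O: atoms are nodes, bonds are (atom index, atom index, bond order).
co2 = {
    "atoms": ["O", "C", "O"],
    "bonds": [(0, 1, 2), (1, 2, 2)],
}


def adjacency(mol):
    """Build an undirected neighbor list: atom -> [(neighbor, bond order)]."""
    adj = {i: [] for i in range(len(mol["atoms"]))}
    for i, j, order in mol["bonds"]:
        adj[i].append((j, order))
        adj[j].append((i, order))
    return adj


def message_pass(mol, features):
    """One aggregation step: add bond-order-weighted neighbor features."""
    adj = adjacency(mol)
    return [
        features[i] + sum(order * features[j] for j, order in adj[i])
        for i in range(len(features))
    ]


initial = [ATOMIC_NUMBER[symbol] for symbol in co2["atoms"]]
updated = message_pass(co2, initial)
molecule_descriptor = sum(updated)  # order-independent readout over atoms
```

Because the readout is a sum over atoms, the descriptor does not depend on how the atoms are numbered, which is the property that makes graph representations suitable for molecules.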

Protocol 2: A Hybrid Physics-Informed Machine Learning Framework

This protocol, based on recent academic research, integrates physical laws with data-driven learning to enhance the predictive accuracy and generalizability of pattern recognition models [2].

1. Objective: To predict material performance with high accuracy while ensuring physical interpretability.

2. Multi-Modal Data Integration:

- Input Data: Combine data from various sources: historical experimental results, computational simulations (e.g., Density Functional Theory), and existing material databases.

- Physics-Guided Constraint: Embed domain-specific priors and physical laws (e.g., conservation laws, symmetry rules) directly into the model's architecture or loss function [2].

3. Hybrid Model Architecture:

- Graph Embedding: Use a graph-embedded material property prediction model to map complex structure-property relationships.

- Reinforcement Learning: Implement a generative model for structure exploration using reinforcement learning to navigate the material design space effectively.

4. Uncertainty Quantification & Validation:

- Uncertainty Quantification: Incorporate techniques to measure the confidence of predictions, which is crucial for prioritizing experimental validation.

- Experimental Verification: The final, computationally identified materials are synthesized and tested, with results fed back to refine the model [2].
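Two of the steps above lend themselves to a short sketch: embedding a physical prior in the loss function, and quantifying uncertainty to prioritize experiments. The example assumes a non-negativity prior (for instance, a band gap cannot be negative) and uses the spread of an ensemble of models as the uncertainty estimate. Both choices, the penalty weight, and all numbers are illustrative assumptions rather than the specific constraints or techniques used in [2].

```python
from statistics import mean, stdev


def physics_informed_loss(pred, target, penalty_weight=10.0):
    """Mean squared error plus a penalty on physically impossible negatives."""
    n = len(pred)
    data_loss = sum((p - t) ** 2 for p, t in zip(pred, target)) / n
    # Assumed prior: the predicted quantity must be >= 0.
    violation = sum(min(p, 0.0) ** 2 for p in pred) / n
    return data_loss + penalty_weight * violation


def ensemble_uncertainty(predictions_per_model):
    """Per-candidate (mean, sample std) across the ensemble members."""
    per_candidate = zip(*predictions_per_model)
    return [(mean(c), stdev(c)) for c in per_candidate]


loss = physics_informed_loss([1.0, -1.0], [1.0, 0.0])

# Two hypothetical ensemble members predicting two candidates.
stats = ensemble_uncertainty([[1.0, 2.0], [3.0, 2.0]])
```

Candidates with a favorable mean and a small spread are natural first choices for synthesis, while those with a large spread indicate regions where additional training data would be most informative.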

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key computational and data resources that form the modern MI researcher's toolkit, enabling the advanced pattern recognition workflows described above.

Table 2: Key Research Reagent Solutions in Materials Informatics

| Tool Category | Specific Examples | Function in Pattern Recognition |

|---|---|---|

| Software Platforms | Citrine Informatics, Exabyte.io, Dassault Systèmes BIOVIA [25] [24] | Cloud-native hubs that connect data, features, and models; provide the core environment for building and deploying ML workflows. |

| AI/ML Algorithms | Graph Neural Networks (GNNs), Generative Adversarial Networks (GANs), Bayesian Optimization [23] [2] | Core pattern recognition engines; GNNs excel at learning from graph-structured data like molecules, while GANs generate novel material structures. |

| Data Resources | The Materials Project, proprietary corporate databases [2] [24] | Curated sources of historical experimental and simulation data used to train and validate predictive models. |

| Computational Resources | High-Performance Computing (HPC), Cloud Computing (AWS, Google Cloud) [22] [24] | Provide the processing power required for high-throughput virtual screening and training complex deep learning models. |

| Laboratory Automation | Autonomous experimentation platforms, closed-loop robotics [24] | Integrates with MI software to physically execute experiments suggested by AI models, creating a high-throughput discovery loop. |

The integration of materials informatics into the materials research and drug development lifecycle is no longer a speculative future but a present-day reality that delivers quantifiable gains in efficiency and capability. By placing advanced pattern recognition at the center of the discovery process, MI directly addresses the core challenge of validating structure-property relationships in a more systematic, accelerated, and insightful manner. While traditional methods retain their value for final physical validation, the evidence demonstrates that a hybrid approach—leveraging the speed of MI for screening and guidance alongside the rigor of targeted experimentation—represents the most powerful strategy for advancing materials innovation. As AI models evolve and datasets expand, the role of informatics in deciphering the complex patterns of material behavior is poised to become the dominant paradigm in the field.

The central challenge in modern materials research lies in predicting macroscopic, functional properties from fundamental atomic and quantum-level interactions. This "bridging of scales" is essential for the rational design of novel materials, from high-performance polymers to quantum computing components. Structure-property relationships form the conceptual backbone of this endeavor, describing how a material's atomic-scale structure dictates its observable behavior at larger scales. The validation of these relationships requires a convergent approach, integrating advanced computational modeling with precise experimental techniques across multiple length and time scales.

Multiscale modeling has emerged as a transformative framework to address this challenge, enabling researchers to hierarchically connect models across different scales of detail. These approaches bridge quantum mechanics, which describes electronic structure at the angstrom and femtosecond level, with molecular dynamics that simulate atomic motion at the nanometer and nanosecond scale, mesoscale methods that provide coarse-grained representations at micrometer and microsecond resolutions, and finally continuum models that capture macroscopic behavior using partial differential equations at millimeter and second scales [26]. This systematic integration allows researchers to navigate the vast complexity between electron behavior and bulk material performance, ultimately accelerating the discovery and validation of new materials with tailored properties.

Computational Methodologies for Scale Integration

Hierarchical and Concurrent Multiscale Frameworks

Computational strategies for bridging scales generally fall into two categories: hierarchical and concurrent coupling. In hierarchical coupling, information is passed sequentially between models at different scales, where lower-scale simulations inform parameters for higher-scale models. For instance, quantum mechanical calculations might determine force field parameters for molecular dynamics simulations, which in turn provide constitutive relations for continuum models [26]. This approach leverages the strengths of each modeling method while maintaining computational efficiency.
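A toy version of hierarchical coupling is sketched below: energies from a lower-scale calculation are used to fit a force-field parameter, which then drives a higher-scale dynamics simulation. The "quantum" energies here are synthetic values generated from a harmonic model, and the single-spring force field, units, and function names are assumptions made purely for illustration.

```python
def fit_spring_constant(displacements, energies):
    """Least-squares fit of E = 0.5 * k * x**2 to lower-scale energy data."""
    basis = [0.5 * x * x for x in displacements]
    return sum(b * e for b, e in zip(basis, energies)) / sum(b * b for b in basis)


def velocity_verlet(k, mass, x0, v0, dt, steps):
    """Integrate a harmonic oscillator using the fitted force constant."""
    x, v = x0, v0
    a = -k * x / mass
    trajectory = [x]
    for _ in range(steps):
        x += v * dt + 0.5 * a * dt * dt
        a_new = -k * x / mass
        v += 0.5 * (a + a_new) * dt
        a = a_new
        trajectory.append(x)
    return trajectory


# Step 1 (lower scale): synthetic energy-displacement samples.
xs = [-0.2, -0.1, 0.1, 0.2]
es = [0.5 * 4.0 * x * x for x in xs]
k_fit = fit_spring_constant(xs, es)

# Step 2 (higher scale): dynamics driven by the fitted parameter.
traj = velocity_verlet(k_fit, mass=1.0, x0=1.0, v0=0.0, dt=0.001, steps=1571)
```

Information flows one way only, from the fine scale to the coarse scale, which is what distinguishes this sequential scheme from concurrent coupling.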

In contrast, concurrent coupling solves models at different scales simultaneously, with dynamic information exchange during the simulation. The bridging scale method, inspired by the pioneering work of Professor T.J.R. Hughes on the variational multi-scale method, offers a sophisticated implementation of this approach [27]. This technique employs a two-scale decomposition where the coarse scale is simulated using continuum methods like finite elements, while the fine scale is handled with atomistic approaches. The method offers unique advantages: the coarse and fine scales evolve on separate time scales, and high-frequency waves emitted from the fine scale are eliminated using lattice impedance techniques, allowing for efficient and accurate multiscale simulation [27].

Emerging AI-Driven Approaches

Recent advances in artificial intelligence are further enhancing multiscale modeling capabilities. Interpretable deep learning architectures that incorporate attention mechanisms show particular promise for predicting material properties while providing insights into structure-property relationships [3]. The proposed Self-Consistent Attention Neural Network (SCANN) focuses on representing material structures from local atomic environments with learned weights, facilitating both prediction and interpretation of material properties [3].

For complex material systems with incomplete data, multimodal learning frameworks like MatMCL offer robust solutions. This approach jointly analyzes multiscale material information and enables property prediction even with missing modalities, addressing a common challenge in experimental materials science where certain characterizations (e.g., microstructure data from SEM or XRD) are expensive to obtain [28]. Through structure-guided pre-training, MatMCL aligns processing parameters with structural modalities to create fused material representations, uncovering potential correlations between multiscale information even when structural data is absent during inference [28].

Experimental Validation: Case Studies Across Material Classes

Macroscopic Quantum Phenomena

The 2025 Nobel Prize in Physics recognized groundbreaking experiments that demonstrated quantum mechanical effects at macroscopic scales, providing compelling validation for scale-bridging principles. John Clarke, Michel H. Devoret, and John M. Martinis conducted a series of experiments in 1984-1985 showing that macroscopic quantum tunneling could occur in superconducting electrical circuits "big enough to be held in the hand" [29] [30].

Table 1: Experimental Parameters for Macroscopic Quantum Tunneling Validation

| Parameter | Experimental Implementation | Measurement Technique | Key Finding |

|---|---|---|---|

| System Composition | Two superconductors separated by thin insulating layer (Josephson junction) | Material fabrication and characterization | Electronic circuit behaving as single quantum entity |

| Quantum State Detection | Current-fed Josephson junction with voltage measurement | Statistical analysis of zero-voltage state duration | System tunnels from zero-voltage state to voltage state |

| Energy Quantization | Microwave irradiation at varying wavelengths | Absorption spectroscopy | System moved to higher energy levels, demonstrating quantized states |

| Statistical Validation | Multiple measurements of zero-state duration | Graphical plotting of state persistence | Half-life behavior analogous to radioactive decay |

Their experimental system exhibited two distinct modes: one where current was "trapped" in a zero-voltage state and another where it escaped via quantum tunneling to produce a measurable voltage. This clearly demonstrated the quantized nature of the system, where only specific amounts of energy could be emitted or absorbed, exactly as predicted by quantum mechanics but now observable in a macroscopic system [30]. The duration of the zero-voltage state followed statistical patterns analogous to the half-life measurements of atomic nuclei, providing a critical bridge between quantum prediction and macroscopic observation [29].
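The half-life analogy can be made quantitative with a short sketch. It assumes that escapes from the zero-voltage state occur at a constant rate, so that measured lifetimes are exponentially distributed; the lifetime values below are invented for illustration and are not data from the original experiments.

```python
import math


def escape_rate(lifetimes):
    """Maximum-likelihood rate for exponentially distributed lifetimes."""
    return len(lifetimes) / sum(lifetimes)


def half_life(rate):
    """Time after which half of the trials have left the zero-voltage state."""
    return math.log(2) / rate


def survival_probability(rate, t):
    """Probability that the system is still in the zero-voltage state at time t."""
    return math.exp(-rate * t)


# Hypothetical zero-voltage-state durations from repeated trials (arbitrary units).
lifetimes = [1.0, 2.0, 3.0]
rate = escape_rate(lifetimes)
t_half = half_life(rate)
```

Plotting the fraction of trials that survive to time t against t and checking that it follows this exponential form is the statistical test that parallels a radioactive-decay measurement.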

Polymeric Materials for Biomedical Applications

In the biomedical domain, researchers have successfully established quantitative structure-property relationships (QSPRs) for polymer coatings that resist bacterial biofilm formation—a significant challenge for medical devices. Dundas et al. developed a predictive QSAR (Quantitative Structure-Activity Relationship) using calculated molecular descriptors of monomer units to discover novel, biofilm-resistant (meth-)acrylate-based polymers [31].

Table 2: Experimental Protocol for Validating Biofilm-Resistant Polymers

| Experimental Component | Methodology Details | Validation Metrics | Outcome Measures |

|---|---|---|---|

| Polymer Synthesis | (Meth-)acrylate-based monomers polymerized into coatings | Chemical characterization (FTIR, NMR) | Successful polymerization with target structures |

| Pathogen Testing | Six bacterial pathogens: Pseudomonas aeruginosa, Proteus mirabilis, Enterococcus faecalis, Klebsiella pneumoniae, Escherichia coli, Staphylococcus aureus | Standardized microbial culture conditions | Broad-spectrum resistance across pathogen types |

| Biofilm Assessment | Polymer microarrays exposed to bacterial cultures | Biomass quantification, viability staining | Significant reduction in biofilm formation |

| QSPR Validation | Molecular descriptor calculation correlated with biofilm resistance | Statistical correlation analysis | Predictive model successfully guided discovery of effective polymers |

This research demonstrated that computational prediction based on molecular structure could successfully guide the discovery of materials with desired macroscopic biological properties. The synthesized polymers showed significant resistance to biofilm formation across all six tested bacterial pathogens, validating the QSPR approach and demonstrating a direct connection between molecular structure and functional performance in a biomedical context [31].

The Research Toolkit: Essential Methods and Materials

Table 3: Essential Research Toolkit for Multiscale Materials Investigation

| Tool/Technique | Function | Scale of Application |

|---|---|---|

| Josephson Junction | Creates macroscopic quantum system using superconductors separated by thin insulator | Macroscopic quantum phenomena [29] [30] |

| Molecular Dynamics (MD) | Simulates motion of atoms and molecules using classical force fields | Atomic/Nanoscale (nanometers, nanoseconds) [26] |

| Finite Element Analysis (FEA) | Solves continuum-scale partial differential equations for stress, heat transfer, etc. | Macroscopic (millimeters and larger) [27] |

| Scanning Electron Microscopy (SEM) | Characterizes material microstructure and morphology | Microscale (micrometer resolution) [28] |

| Iterative Boltzmann Inversion (IBI) | Derives effective potentials for coarse-grained interactions from atomistic simulations | Mesoscale coarse-graining [26] |

| Self-Consistent Attention Neural Network (SCANN) | Interpretable deep learning for structure-property relationships with attention mechanisms | Atomic to macroscopic prediction [3] |

Workflow Integration

The experimental and computational methodologies described can be integrated into a comprehensive workflow for validating structure-property relationships.

Comparative Analysis: Method Performance Across Applications

Quantitative Assessment of Multiscale Approaches

Table 4: Performance Comparison of Scale-Bridging Methodologies

| Methodology | Accuracy Metrics | Computational Cost | Time/Length Scale Limits | Key Advantages |

|---|---|---|---|---|

| Bridging Scale Method | Exact wave elimination at atomistic/continuum border [27] | High, but enables separate time stepping | Finite-temperature dynamic problems [27] | No need to mesh FE region to atomic scale; natural wave dissipation |

| QM/MM Coupling | Chemical accuracy in reactive regions [26] | Moderate to High | Limited QM region size | Accurate reaction modeling in complex environments |

| Coarse-Graining (e.g., Martini) | Reproduces thermodynamic properties [26] | Low compared to atomistic | Enables μm/μs simulation | 4:1 mapping (heavy atoms:bead) for biomolecular systems |

| AI/ML Approaches (SCANN) | Comparable to state-of-the-art on benchmark datasets [3] | Training: High; Inference: Low | Limited by training data diversity | Interpretable predictions; attention identifies crucial features |

| Multimodal Learning (MatMCL) | Improved prediction without structural info [28] | Moderate | Handles incomplete modalities | Cross-modal generation and retrieval capabilities |

Integration Pathways for Optimal Performance

The most effective strategies for bridging scales often combine multiple approaches. For instance, the bridging scale method has been extended to couple quantum mechanical methods like the tight-binding approach with continuum representations for quasistatic analysis of nanomaterials [27]. Similarly, equation-free multiscale methods use microscopic simulators like molecular dynamics as "black boxes" without explicit governing equations, deriving macroscopic behavior from short bursts of microscopic simulations through techniques like coarse projective integration [26]. This approach is particularly valuable when macroscopic equations are unknown or difficult to derive.
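Coarse projective integration can be illustrated with a minimal sketch. The microscopic simulator is treated as a black box that is only ever run in short bursts; the slope of the coarse variable is estimated from the end of each burst and used to jump forward in time. Here the "microscopic" model is a stand-in (explicit Euler steps of dx/dt = -x), and the step sizes and function names are assumptions chosen for clarity.

```python
import math


def micro_burst(x, dt, n_steps, rate=1.0):
    """Stand-in microscopic simulator: small Euler steps of dx/dt = -rate * x."""
    states = [x]
    for _ in range(n_steps):
        x = x + dt * (-rate * x)
        states.append(x)
    return states


def projective_step(x, dt, n_burst, jump):
    """Run a short burst, estimate the slope from its tail, extrapolate by `jump`."""
    states = micro_burst(x, dt, n_burst)
    slope = (states[-1] - states[-2]) / dt
    return states[-1] + jump * slope


def integrate(x0, dt, n_burst, jump, n_outer):
    """Alternate short bursts and projective jumps; return (time, state)."""
    x, t = x0, 0.0
    for _ in range(n_outer):
        x = projective_step(x, dt, n_burst, jump)
        t += n_burst * dt + jump
    return t, x


t_end, x_end = integrate(x0=1.0, dt=0.01, n_burst=5, jump=0.05, n_outer=10)
exact = math.exp(-t_end)  # known solution of the stand-in model, for comparison
```

In this run only half of the simulated time is covered by the microscopic simulator, and the macroscopic equation is never written down explicitly; it enters only through the slope estimated from each burst.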

For complex hierarchical materials like polymeric metamaterials, a comprehensive multi-scale modeling approach integrates design across micro, meso, and macro scales. At the microscale, molecular dynamics simulations reveal how polymer composition and additives affect thermal and mechanical properties. At the mesoscale, finite element analysis and homogenization techniques simulate deformation of architectural unit cells. Finally, at the macroscale, computational models predict bulk performance for applications like impact protection and energy absorption [32]. This integrated approach enables the rational design of metamaterials with tailored properties before physical fabrication.

The validation of structure-property relationships requires convergent approaches that integrate computational prediction with experimental verification across multiple scales. From macroscopic quantum tunneling in superconducting circuits to biofilm-resistant polymers and engineered metamaterials, successful case studies demonstrate that properties emerging at macroscopic scales can be traced to fundamental interactions at smaller scales through appropriate modeling frameworks. The continuing development of multiscale methods—particularly those incorporating artificial intelligence, interpretable machine learning, and robust handling of experimental data limitations—promises to further accelerate the discovery and design of next-generation materials with tailored functional properties.

As methodologies mature, the integration of uncertainty quantification and sensitivity analysis across scales becomes increasingly important. Techniques like Monte Carlo simulations and Sobol indices help identify key parameters and dominant mechanisms while assessing the reliability of multiscale predictions [26]. This rigorous approach to validation ensures that structure-property relationships derived from multiscale modeling can reliably guide materials design, ultimately bridging the quantum and macroscopic worlds to address pressing challenges in technology and society.
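As a concrete illustration of Monte Carlo sensitivity analysis, the sketch below estimates first-order Sobol indices with a Saltelli-style pick-freeze estimator. It assumes independent inputs uniformly distributed on [0, 1) and uses a toy two-parameter model; the function name, sample size, and model are illustrative and not taken from [26].

```python
import random


def first_order_sobol(f, n_inputs, n_samples=50000, seed=0):
    """Monte Carlo estimate of first-order Sobol indices for inputs in [0, 1)."""
    rng = random.Random(seed)
    A = [[rng.random() for _ in range(n_inputs)] for _ in range(n_samples)]
    B = [[rng.random() for _ in range(n_inputs)] for _ in range(n_samples)]
    fA = [f(a) for a in A]
    fB = [f(b) for b in B]
    outputs = fA + fB
    mean_all = sum(outputs) / len(outputs)
    variance = sum((y - mean_all) ** 2 for y in outputs) / len(outputs)
    indices = []
    for i in range(n_inputs):
        # Matrix A with column i replaced by column i of B.
        fABi = [f(a[:i] + [b[i]] + a[i + 1:]) for a, b in zip(A, B)]
        v_i = sum(yb * (yab - ya) for yb, yab, ya in zip(fB, fABi, fA)) / n_samples
        indices.append(v_i / variance)
    return indices


def toy_model(x):
    """Toy response in which the second parameter dominates the variance."""
    return x[0] + 2.0 * x[1]


sobol = first_order_sobol(toy_model, n_inputs=2)
```

For this additive model the analytical indices are 0.2 and 0.8, so the estimate should attribute most of the output variance to the second parameter. In a multiscale setting, `toy_model` would be replaced by the full modeling chain, and the indices would show which lower-scale parameters deserve the most careful calibration.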

Methodological Innovations: AI, Machine Learning and Computational Frameworks for Relationship Mapping

The validation of structure-property relationships is a cornerstone of materials research, enabling the prediction of material behavior from its atomic structure. Traditional machine learning models often operate as "black boxes," providing accurate predictions but limited physical insights. Interpretable deep learning addresses this limitation by making the reasoning behind predictions transparent. Among these approaches, the Self-Consistent Attention Neural Network (SCANN) framework incorporates attention mechanisms to explicitly identify which structural features most influence property predictions. This guide provides a comparative analysis of SCANN against other prominent architectures, detailing their performance, experimental protocols, and applicability for research in materials science and drug development.

Comparative Analysis of Interpretable Architectures

Key Architectures and Their Interpretability Approaches

| Architecture | Core Interpretability Mechanism | Primary Materials Application | Key Advantage for Interpretation |

|---|---|---|---|

| SCANN [3] | Self-consistent local & global attention weights | Predicting formation energies, molecular orbital energies [3] | Quantifies attention of atomic local structures to the global material representation |

| CGCNN [33] | Crystal graph convolutional networks | Accurate and interpretable prediction of material properties [33] | Provides atomic-level chemical insight from graph representations |

| Matformer [33] | Periodic self-attention | Crystal material property prediction [33] | Captures long-range interactions in periodic structures |

| ALIGNN [33] | Graph neural networks with line graphs | Improved materials property predictions [33] | Incorporates bond angles for richer geometric insight |

| GATGNN [33] | Global attention on graph nodes | Materials property prediction [33] | Uses attention to weight the importance of different nodes |

Quantitative Performance Comparison

The predictive accuracy and computational efficiency of an architecture are critical for its practical adoption. The table below summarizes benchmark results for key interpretable models on common materials informatics tasks.

Table: Performance comparison of interpretable deep learning models on material property prediction.

| Model | Dataset | Target Property | MAE | Key Interpretable Output |

|---|---|---|---|---|

| SCANN [3] | QM9, Materials Project | Formation energy, Molecular orbital energy | Comparable to SOTA | Attention scores for local atom structures |

| CGCNN [33] | Materials Project | Formation energy | 0.039 eV/atom (reported in the original CGCNN paper) | Atomic-level contributions |

| Matformer [33] | Materials Project | Formation energy | 0.026 eV/atom (reported) | Attention maps between atoms |

| ALIGNN [33] | Materials Project | Formation energy | ~0.026 eV/atom (reported) | Contributions from atoms and bonds |

| GATGNN [33] | Various | Multiple properties | Improved over GCNNs | Global importance of atomic nodes |

Experimental Protocols for SCANN Validation

Workflow for SCANN-Based Structure-Property Analysis

[Figure not reproduced: SCANN Experimental Workflow, the primary workflow for applying the SCANN framework to establish structure-property relationships.]

Detailed Methodological Framework

The SCANN framework employs a structured multi-step process to learn and interpret structure-property relationships [3].

Input Representation and Voronoi Tessellation

- Input Data: Each material structure S is represented using the atomic numbers and corresponding coordinates of its M atoms [3].

- Neighbor Identification: Voronoi tessellation is applied to identify a set of neighboring atoms \( \mathcal{N}_i \) for each atom \( a_i \) in the structure S. This method is chosen as it clearly determines neighboring atoms based on material domain knowledge [3].

- Geometrical Influence Vector: A vector \( \mathbf{g}_{ij}^{0} \) is defined as the geometrical influence of a neighboring atom \( a_j \) on atom \( a_i \) based on Euclidean distance and Voronoi solid angle between them [3].

Embedding and Local Attention Layers

- Atom Embedding: An embedding layer expresses the atomic information of each atom \( a_i \) in S by an h-dimensional vector \( \mathbf{c}_{i}^{0} \) [3].

- Recursive Local Attention: The architecture comprises a series of L local attention layers that iteratively learn and enhance the consistency of local structure representations. The representation vector \( \mathbf{c}_{i}^{l+1} \) of the local structure \( \{a_i, \mathcal{N}_i\} \) at the (l+1)th local attention layer is derived using attention mechanisms [3]:

\[ \mathbf{c}_{i}^{l+1} = \mathrm{LocalAttention}^{l+1}\left(\mathbf{c}_{i}^{l}, \mathbf{C}_{\mathcal{N}_i}^{l} \times \mathbf{G}_{\mathcal{N}_i}^{l}\right) = \mathrm{Attention}\left(\mathbf{q}_{i}^{l}, \mathbf{K}_{\mathcal{N}_i}^{l}\right) + \mathbf{q}_{i}^{l} \]

where \( \mathbf{C}_{\mathcal{N}_i}^{l} = [\mathbf{c}_{j}^{l}]_{a_j \in \mathcal{N}_i} \) denotes neighboring local structures and \( \mathbf{G}_{\mathcal{N}_i}^{l} = [\mathbf{g}_{ij}^{l}]_{a_j \in \mathcal{N}_i} \) represents geometrical influences [3].
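The residual attention update in the local attention layer can be sketched in a few lines. This is a simplified illustration rather than the SCANN implementation [3]: it assumes the query and the neighbor keys have already been computed, uses scaled dot-product scores, and reuses the keys as values. In the actual model these vectors come from learned projections of the atom representations and the geometrical influences.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def local_attention(query, keys):
    """Return (Attention(q, K) + q, attention weights over the neighbors)."""
    h = len(query)
    scores = [
        sum(q * k for q, k in zip(query, key)) / math.sqrt(h) for key in keys
    ]
    weights = softmax(scores)
    # Weighted sum of neighbor vectors (keys double as values here).
    context = [sum(w * key[d] for w, key in zip(weights, keys)) for d in range(h)]
    # Residual connection: add the query back, as in the update rule above.
    return [c + q for c, q in zip(context, query)], weights


# Central atom with two neighbors in a 2-dimensional embedding space.
updated, weights = local_attention([1.0, 0.0], [[2.0, 0.0], [0.0, 0.0]])
```

The returned weights are what make the layer interpretable: they show how strongly each neighbor contributes to the updated representation of the central atom.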

Global Attention and Interpretation

- Global Attention Layer: After processing through local attention layers, a global attention layer quantitatively measures the degree of attention given to each local structure when determining the representation of the entire material structure [3].

- Interpretation of Attention Weights: The trained model uses attention mechanisms to identify which local atomic environments most significantly influence the target property prediction, providing direct insight into structure-property relationships [3] [34].
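A minimal sketch of how global attention weights can double as an interpretability signal is shown below. It assumes a simple scoring scheme (dot product with a fixed vector `w`, which would be learned in practice) followed by a softmax; this is an illustrative pooling scheme rather than the exact layer from [3], and the local representations are made-up numbers.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def global_attention(local_reps, w):
    """Pool local-structure vectors into one structure-level vector.

    local_reps : list of local-structure representations (each of length h)
    w          : scoring vector (assumed learned; fixed by hand here)
    Returns the pooled vector and the per-local-structure attention weights.
    """
    scores = [sum(a * b for a, b in zip(c, w)) for c in local_reps]
    weights = softmax(scores)
    h = len(w)
    pooled = [sum(wt * c[d] for wt, c in zip(weights, local_reps))
              for d in range(h)]
    return pooled, weights


# Made-up representations for three local structures.
local_reps = [[0.2, 0.1], [1.5, 0.3], [0.4, 0.9]]
w = [1.0, 0.5]

structure_rep, attn = global_attention(local_reps, w)

# Interpretation step: rank local structures by the attention they receive.
ranking = sorted(range(len(attn)), key=lambda i: attn[i], reverse=True)
print("local structures ranked by attention:", ranking)
```

In this toy case the second local structure receives the largest weight, so it would be the first candidate to inspect when asking which atomic environment drives the predicted property.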

The Scientist's Toolkit: Essential Research Reagents

Table: Key computational resources for implementing interpretable deep learning in materials research.

| Resource Category | Specific Tool / Dataset | Function in Research |
|---|---|---|
| Benchmark Datasets | QM9 Dataset [3] | Standardized quantum chemical data for organic molecules with 19 thermodynamic properties |
| Benchmark Datasets | Materials Project Dataset [3] | Computational database of crystal structures and their calculated properties |
| Software Frameworks | CGCNN Codebase [33] | Open-source implementation for crystal graph convolutional neural networks |
| Software Frameworks | ALIGNN Implementation [33] | Code for atomistic line graph neural network for improved predictions |
| Interpretability Tools | Attention Visualization [3] [34] | Methods to visualize and quantify attention scores from models like SCANN |
| Interpretability Tools | SHAP Analysis [34] | Comparative interpretability method to validate attention-based explanations |
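One simple way to compare attention-based explanations with an independent attribution method is to check whether both rank the local structures in a similar order. The sketch below computes a Spearman rank correlation in plain Python. The two score lists are invented for illustration (they are not results from [3] or [34]), the attribution scores merely stand in for the output of a method such as SHAP, and the helper names are hypothetical.

```python
def ranks(values):
    """Rank values from 1 (largest) to n (smallest); assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    result = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result


def spearman_rho(x, y):
    """Spearman rank correlation for two equal-length lists without ties."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d2 / (n * (n ** 2 - 1))


# Invented importance scores for four local structures.
attention_scores = [0.50, 0.30, 0.15, 0.05]    # e.g. global attention weights
attribution_scores = [0.40, 0.35, 0.05, 0.20]  # e.g. mean |attribution| values

rho = spearman_rho(attention_scores, attribution_scores)
print(f"rank agreement (Spearman rho): {rho:.2f}")
```

A value near 1 indicates that both methods highlight the same environments; a low value suggests the attention weights should not be read as explanations without further checks.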

Attention Mechanism Performance in Comparative Studies

Efficiency and Accuracy Trade-offs

Studies across domains demonstrate that specialized attention mechanisms can maintain performance while significantly improving efficiency [35] [36].

Table: Performance of attention variants in specialized deep learning applications.

| Application Domain | Model/Attention Type | Accuracy | Efficiency Advantage |
|---|---|---|---|
| Radio Signal Classification [35] | Baseline Multi-Head Attention | 85.05% | Baseline (reference) |
| Radio Signal Classification [35] | Causal Attention | ~84% | Inference time reduced by 83% |
| Radio Signal Classification [35] | Sparse Attention | ~84% | Inference time reduced by 75% |
| MRI Tumor Classification [37] | ResNet50V2 (Baseline) | 92.6% | Baseline (reference) |
| MRI Tumor Classification [37] | Squeeze-and-Excitation (SE) | 98.4% | Best performance among attention types |
| MRI Tumor Classification [37] | Convolutional Block Attention | 93.5% | Moderate improvement |
| Traffic Forecasting [36] | Dot-Product Attention | SOTA level | Quadratic complexity bottleneck |
| Traffic Forecasting [36] | Efficient Attention Variants | On par with baseline | Training times reduced by up to 28% |
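Percentage reductions in run time are easier to compare once converted to speedup factors, since a reduction of r corresponds to a speedup of 1 / (1 - r). The short sketch below applies this conversion to the reductions reported in the table; the function name and dictionary keys are hypothetical labels.

```python
def speedup_from_reduction(reduction):
    """Convert a fractional time reduction (0 <= r < 1) into a speedup factor."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return 1.0 / (1.0 - reduction)


# Fractional reductions taken from the table above.
reported = {
    "causal attention (inference)": 0.83,
    "sparse attention (inference)": 0.75,
    "efficient variants (training, upper bound)": 0.28,
}

speedups = {name: speedup_from_reduction(r) for name, r in reported.items()}
for name, factor in speedups.items():
    print(f"{name}: {factor:.2f}x")
```

Read this way, the causal and sparse variants trade roughly one percentage point of accuracy for about a 5.9x and 4x faster inference respectively, while the traffic-forecasting variants gain at most about 1.4x in training speed.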

Interpretability Analysis Across Domains

The interpretability of attention mechanisms has been validated across multiple domains, demonstrating their utility for scientific insight:

Materials Science: In the analysis of ferroelectric properties of PbTiO₃ thin films, attention-based Transformer models successfully identified the influence of distinct domain patterns on polarization switching processes. The attention scores provided physically meaningful interpretations that aligned with domain knowledge [34].

Cultural Heritage: Studies comparing Vision Transformers (ViTs) with CNNs for classifying pigment manufacturing processes found that while ViTs achieved superior accuracy (100% vs. 97-99%), CNNs offered more detailed interpretations through class activation maps, highlighting the ongoing trade-off between performance and interpretability in some applications [38].

Medical Imaging: Enhanced ResNet50V2 models with Squeeze-and-Excitation attention demonstrated not only improved classification accuracy for brain tumors (98.4% vs. 92.6%) but also more precise localization of relevant features, providing both diagnostic and interpretative benefits [37].

Taken together, these cross-domain studies suggest that attention mechanisms can improve performance or efficiency while offering useful interpretability, although the pigment classification study shows that the benefit is not uniform across applications [38]. SCANN is specifically designed to leverage these advantages for materials science applications.

The discovery and development of new materials have long relied on a combination of quantitative modeling and human expertise. While artificial intelligence (AI) has dramatically accelerated materials research, many properties of the world's most advanced materials, particularly quantum materials, exist beyond the reach of purely quantitative modeling. Understanding these complex systems has traditionally required human expert reasoning and intuition—elements that even the most powerful AI cannot spontaneously replicate [39]. This gap between human insight and computational power represents a significant bottleneck in the acceleration of materials discovery.

The emerging paradigm of Expert-Curated AI addresses this fundamental challenge by systematically integrating human scientific intuition into machine learning frameworks. At the forefront of this approach is the Materials Expert-Artificial Intelligence (ME-AI) framework, developed through a collaboration between Cornell University and Princeton University researchers [39]. This innovative methodology "bottles" valuable human intuition into quantifiable descriptors that can predict functional material properties, creating a powerful synergy between human expertise and machine learning capabilities. This comparison guide examines the performance of ME-AI against other AI approaches in validating structure-property relationships, providing researchers with actionable insights for implementing expert-curated AI strategies in materials and drug development workflows.

Comparative Analysis of AI Approaches in Materials Research

The landscape of AI-driven materials discovery encompasses several distinct methodologies, each with characteristic strengths and limitations. The table below provides a systematic comparison of the ME-AI framework against other prevalent AI approaches in materials informatics.

Table 1: Comparison of AI Approaches in Materials Discovery

| AI Approach | Core Methodology | Data Requirements | Interpretability | Domain Expertise Integration | Best-Suited Applications |
|---|---|---|---|---|---|
| ME-AI Framework [39] | Transfer of expert knowledge via curated data and fundamental feature selection | Expert-curated and labeled datasets | High (reproduces human reasoning process) | Direct and foundational | Quantum materials, complex functional properties, limited data scenarios |
| Foundation Models [40] | Self-supervised pre-training on broad data followed by fine-tuning | Massive, diverse datasets (millions to billions of data points) | Variable (often low without specific design) | Indirect through fine-tuning data | General property prediction, molecular generation, synthesis planning |
| Generative AI [41] [42] | Generation of new structures/molecules via deep learning architectures | Large-scale materials databases | Moderate to Low (black-box generation) | Limited to embedded patterns in training data | Inverse design, de novo molecular generation, hypothesis generation |
| Traditional ML [41] [43] | Statistical learning on hand-crafted features and descriptors | Moderate, structured datasets | High (feature importance analysis) | Manual feature engineering | Quantitative structure-property relationship (QSPR) modeling, screening |

Table 2: Performance Metrics Across AI Approaches for Property Prediction

| Approach | Accuracy on Complex Quantum Properties | Data Efficiency | Generalization to Unseen Material Classes | Experimental Validation Rate |
|---|---|---|---|---|
| ME-AI Framework [39] | High (matches expert intuition) | Very High (879 materials in validation study) | Demonstrated capability | Not explicitly reported |
| Foundation Models [40] | Variable (depends on training data coverage) | Low (requires massive datasets) | Moderate (through transfer learning) | Emerging |
| Generative AI [42] | Limited for complex quantum behaviors | Low | Limited for out-of-distribution materials | Growing but inconsistent |
| Traditional ML [43] | Moderate for well-defined properties | Moderate | Often poor without retraining | Established |

The ME-AI Framework: Methodology and Experimental Validation

Core Principles and Workflow

The ME-AI framework represents a fundamental shift in how human expertise interfaces with machine learning. Rather than treating AI as an autonomous discovery tool, ME-AI positions it as an amplifier of human intelligence, specifically designed to capture and systematize the intuitive reasoning processes of domain specialists [39].

The framework operates on several core principles:

- Knowledge Transfer: Experts transfer their knowledge, particularly intuition and insight, by curating data and deciding on the fundamental features of the model

- Process Transparency: The machine learns from data to think the way experts think, making the reasoning process apparent in the conclusions

- Generalization: The captured intuition can be applied to expand predictions beyond the immediate training data

[Figure: The complete ME-AI workflow, from expert knowledge transfer to model validation and application.]

Experimental Protocol and Case Study

The validation of the ME-AI framework was conducted through a specific quantum materials problem, focusing on identifying which of 879 materials shared a particular desirable characteristic [39]. The experimental methodology followed a rigorous protocol to ensure proper knowledge transfer from human expert to machine learning model.

Table 3: Key Research Reagents and Computational Tools for ME-AI Implementation

| Research Component | Function/Role | Implementation in ME-AI Study |
|---|---|---|
| Expert-Curated Dataset [39] | Foundation for knowledge transfer; ensures relevant feature space | Human expert (Leslie Schoop group) curated and labeled training data |
| Machine Learning Model [39] | Learns and quantifies expert intuition | Model architecture trained to reproduce expert decision patterns |
| Descriptor Quantification Algorithm [39] | Translates learned intuition into predictive descriptors | Generated descriptors predicting functional material properties |
| Validation Material Sets [39] | Tests model performance and generalization | 879-material set for primary validation; additional sets for generalization testing |

Detailed Experimental Protocol:

Problem Identification Phase

- A specific quantum materials problem was identified with a clearly defined desirable characteristic

- A group of 879 materials was selected as the primary test set

Expert Knowledge Capture Phase

- Human experts (Leslie Schoop and her research group at Princeton) curated and labeled the training data

- Experts determined the fundamental features and descriptors for the initial model

- This process explicitly encoded domain knowledge and intuition into the data structure

Model Training Phase

- The machine learning model was trained using the expert-curated data

- The training objective was to reproduce the expert's classification of materials based on the target characteristic

- The model learned to associate expert-identified features with the target property

Intuition Bottling Phase

- The trained model generated quantifiable descriptors that captured the expert's intuitive reasoning

- These descriptors formalized the previously implicit decision-making process

Validation and Generalization Phase

- The model's predictions were validated against expert judgments on the test set

- Generalization was tested by applying the model to different material sets

- Unexpected insights generated by the model were evaluated by experts for scientific validity

The successful implementation of this protocol demonstrated that the ME-AI framework could not only reproduce expert insight but expand upon it. Notably, the model generated insights that the human expert recognized as valid, stating, "Oh, that makes a lot of sense," when presented with the model's output [39].
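The protocol above can be illustrated with a deliberately simple stand-in model. The source does not specify the ME-AI model architecture, so the sketch below uses a one-feature threshold rule (a decision stump) purely as an assumption: it searches the expert-chosen features for the rule that best reproduces the expert labels, reports that rule as an explicit descriptor, and then checks agreement on a held-out set. All data are synthetic, and the feature names `feature_a` and `feature_b` are hypothetical placeholders.

```python
def fit_threshold_descriptor(rows, labels, feature_names):
    """Find the single-feature threshold rule that best matches expert labels.

    Returns (accuracy, feature name, threshold, sign), where sign=+1 means
    "predict True above the threshold" and sign=-1 means "predict True below".
    """
    best = None
    for f, name in enumerate(feature_names):
        values = sorted({row[f] for row in rows})
        # Candidate thresholds: midpoints between consecutive observed values.
        candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]
        for t in candidates:
            for sign in (+1, -1):
                preds = [(row[f] > t) if sign > 0 else (row[f] < t)
                         for row in rows]
                acc = sum(p == y for p, y in zip(preds, labels)) / len(labels)
                if best is None or acc > best[0]:
                    best = (acc, name, t, sign)
    return best


def apply_descriptor(rows, feature_names, descriptor):
    """Apply a fitted threshold rule to new materials."""
    _, name, t, sign = descriptor
    f = feature_names.index(name)
    return [(row[f] > t) if sign > 0 else (row[f] < t) for row in rows]


feature_names = ["feature_a", "feature_b"]  # expert-chosen (hypothetical)

# Synthetic expert-labeled training set: (feature_a, feature_b) -> label.
train_rows = [(0.90, 2.1), (0.85, 3.0), (0.95, 1.2),
              (1.10, 2.8), (1.20, 1.5), (1.05, 2.2)]
train_labels = [True, True, True, False, False, False]

descriptor = fit_threshold_descriptor(train_rows, train_labels, feature_names)
acc, name, threshold, sign = descriptor
print(f"descriptor: {name} {'>' if sign > 0 else '<'} {threshold:.2f} "
      f"(training agreement {acc:.0%})")

# Generalization check on synthetic held-out materials with expert labels.
test_rows = [(0.80, 2.5), (1.15, 2.0)]
test_labels = [True, False]
test_preds = apply_descriptor(test_rows, feature_names, descriptor)
agreement = sum(p == y for p, y in zip(test_preds, test_labels)) / len(test_labels)
print(f"held-out agreement with expert: {agreement:.0%}")
```

The point of the sketch is the shape of the workflow rather than the model: the output is a human-readable rule that an expert can inspect and either accept or challenge, which mirrors the evaluation step described in the protocol.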

Performance Analysis and Comparative Advantages

Quantitative Performance Metrics

In the validation study, the ME-AI framework demonstrated several significant performance advantages over conventional AI approaches:

Accuracy and Fidelity: The ME-AI model successfully reproduced the human expert's intuition and classification decisions across the 879-material test set. More importantly, it achieved this while providing explicit descriptors that quantified the previously implicit reasoning process [39].

Generalization Capability: The framework generalized beyond its training set, successfully predicting similar materials among different compound sets not included in the original training data. This suggests that the captured intuition was fundamental enough to transfer across material classes [39].

Data Efficiency: By leveraging expert guidance in feature selection and data curation, the ME-AI approach achieved high performance with relatively modest dataset sizes compared to the massive datasets required by foundation models and other data-heavy AI approaches [39] [40].

Unique Advantages for Structure-Property Relationship Validation

The ME-AI framework provides distinctive benefits specifically for validating structure-property relationships in materials research:

Interpretability and Scientific Insight: Unlike black-box models that provide predictions without explanation, ME-AI generates explicit descriptors that illuminate the relationship between material structure and functional properties. This explicates the "why" behind predictions, advancing scientific understanding rather than just providing empirical correlations [39].

Handling Complex Quantum Phenomena: For quantum materials and other complex systems where first-principles modeling is computationally prohibitive and human intuition is essential, ME-AI provides a structured approach to formalizing expert knowledge. This is particularly valuable for properties that emerge from complex interactions not fully captured by existing physical models [39].