Validating Material Properties: Bridging In Vitro and In Vivo Studies for Safer Biomedical Products


Abstract

This article provides a comprehensive overview of the critical process of validating material properties for biomedical applications, from initial biocompatibility screening to advanced in vivo correlation. Aimed at researchers, scientists, and drug development professionals, it explores foundational principles, established and emerging methodologies, strategies for troubleshooting and optimization, and robust validation frameworks. By integrating current standards like ISO 10993 with cutting-edge approaches such as 3D tissue models, in silico simulations, and sensor technologies, this resource offers a strategic roadmap for enhancing predictive accuracy, ensuring regulatory compliance, and accelerating the development of safe and effective medical devices and therapeutics.

The Bedrock of Biocompatibility: Principles and Preclinical Requirements

Biocompatibility is a foundational requirement for any medical device, defined by its ability to function within a biological system without eliciting an unacceptable adverse biological response. This evaluation is not a single test but a systematic process conducted within a risk management framework to ensure patient safety and device efficacy. The International Standard ISO 10993-1, titled "Biological evaluation of medical devices - Part 1: Evaluation and testing within a risk management process," serves as the cornerstone document for this assessment, providing manufacturers with a globally recognized approach to evaluating biological safety [1] [2]. This standard emphasizes that biocompatibility assessment must consider the complete medical device in its final finished form, including the impacts of manufacturing processes, sterilization, and potential interactions between components [3].

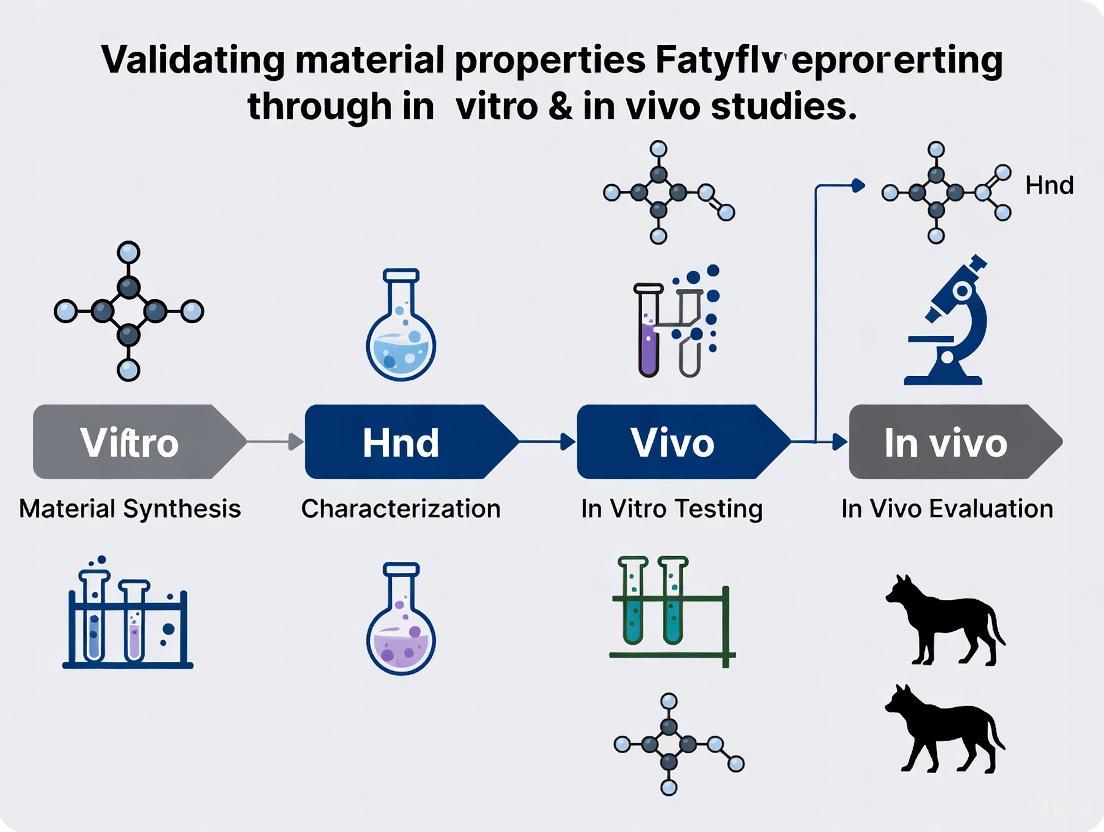

The journey from material selection to clinical implementation involves rigorous evaluation through both in vitro (laboratory) and in vivo (animal) studies. Technological advances, ethical considerations, and regulatory support are driving a growing industry trend toward alternative in vitro methods [4]. This review examines the structured approach to defining and validating biocompatibility, from the ISO 10993 standards to clinical safety, and provides researchers and drug development professionals with a detailed comparison of testing methodologies, experimental protocols, and the essential toolkit required for biological evaluation.

The ISO 10993 Series: A Structured Approach to Biological Evaluation

The Risk Management Foundation

The ISO 10993 series represents a comprehensive collection of standards that guide the biological evaluation of medical devices throughout their development lifecycle. These standards operate within a risk management process aligned with ISO 14971, requiring manufacturers to systematically identify, evaluate, and control potential biological risks [2]. This process begins with a thorough characterization of the device, including its material composition, manufacturing processes, intended anatomical location, and the frequency and duration of patient exposure [3]. The fundamental question driving the evaluation is whether the device materials, in their final processed form, present any unacceptable risk of adverse biological reactions when placed in contact with body tissues [1].

The framework requires assessment of the medical device in its final finished form, as this represents the state that will have clinical contact with patients. However, understanding the biocompatibility of individual components remains crucial, particularly when component interactions could mask or complicate interpretation of biological responses [3]. This systematic approach ensures that all potential biological hazards are considered, including toxicity, irritation, sensitization, and other tissue-specific reactions that might compromise clinical safety.

Key Standards and Their Applications

The ISO 10993 series comprises multiple specialized documents, each addressing specific aspects of biological evaluation. These standards provide detailed methodologies for assessing various biological endpoints and material interactions.

Table 1: Key Standards in the ISO 10993 Series for Biocompatibility Evaluation

| Standard Number | Focus Area | Key Application in Biocompatibility Assessment |
|---|---|---|
| ISO 10993-1 | Evaluation and testing within a risk management process [2] | Provides overarching principles and the risk management framework for all biological evaluations [2] |
| ISO 10993-2 | Animal welfare requirements [5] | Guides ethical treatment of animals and emphasizes reduction, replacement, and refinement of animal testing [4] |
| ISO 10993-5 | Tests for in vitro cytotoxicity [5] | Details procedures for assessing cell death and toxicity using mammalian cell cultures [4] |
| ISO 10993-10 | Tests for skin sensitization [5] | Outlines methods for evaluating potential allergic contact dermatitis responses [4] |
| ISO 10993-23 | Tests for irritation [5] | Provides tests to predict and classify irritation potential of devices or their extracts [5] |

Additional specialized standards address specific biological endpoints and material considerations. ISO 10993-3 covers genotoxicity, carcinogenicity, and reproductive toxicity testing, while ISO 10993-4 focuses on interactions with blood [5]. Standards 10993-12 through 10993-19 provide critical guidance on sample preparation, degradation product identification, and material characterization, forming the chemical basis for biological safety assessments [5]. This comprehensive suite of standards enables manufacturers to develop a testing strategy tailored to their specific device characteristics and intended clinical application.

The "Big Three" Biocompatibility Tests: Core Methodologies and Protocols

Cytotoxicity Testing: Assessing Cellular Response

Cytotoxicity testing evaluates whether a medical device or its extracts cause damage to living cells, serving as the most fundamental biocompatibility assessment. As specified in ISO 10993-5:2009, this testing typically involves exposing cultured mammalian cells to device extracts for approximately 24 hours, then evaluating multiple endpoints including cell viability, morphological changes, cell detachment, and cell lysis [4]. Commonly used cell lines include Balb 3T3 fibroblasts, L929 fibroblasts, and Vero kidney-derived epithelial cells, which provide consistent and reproducible models for assessing cellular responses [4].

Quantitative assessment of cell viability employs several established methods. The MTT assay measures mitochondrial function via reduction of 3-(4,5-dimethylthiazol-2-yl)-2,5-diphenyl-2H-tetrazolium bromide to formazan crystals, while the XTT assay uses a similar principle with 2,3-bis-(2-methoxy-4-nitro-5-sulfophenyl)-2H-tetrazolium-5-carboxanilide [4]. The neutral red uptake assay assesses lysosomal integrity and cellular health through the ability of living cells to incorporate and bind the supravital dye neutral red. Additional methods include the Bradford protein assay, crystal violet staining, resazurin dye reduction, and trypan blue exclusion testing [4]. While ISO 10993-5 does not define strict pass/fail acceptance criteria, it provides guidance for data interpretation: cell viability of 70% or more relative to the untreated control is generally taken to indicate no cytotoxic potential, particularly when testing neat extracts [4].
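The viability calculation behind that 70% guidance can be sketched in a few lines. This is a minimal illustration, not a procedure taken from the standard: the function names, the blank-subtraction step, and the optical density readings are all assumptions made for the example.

```python
from statistics import mean


def percent_viability(test_od, control_od, blank_od=0.0):
    """Viability of extract-treated wells as a percentage of the untreated control.

    test_od / control_od are replicate optical densities (e.g. formazan
    absorbance in an MTT assay); blank_od is the medium-only background.
    """
    test_signal = mean(test_od) - blank_od
    control_signal = mean(control_od) - blank_od
    if control_signal <= 0:
        raise ValueError("control signal must exceed the blank")
    return 100.0 * test_signal / control_signal


def is_potentially_cytotoxic(viability_pct, threshold_pct=70.0):
    """Flag viability below the commonly used 70% guidance value."""
    return viability_pct < threshold_pct


# Hypothetical readings, for illustration only.
control_wells = [1.00, 0.98, 1.02]
extract_wells = [0.80, 0.78, 0.82]

viability = percent_viability(extract_wells, control_wells, blank_od=0.05)
flagged = is_potentially_cytotoxic(viability)
print(f"viability = {viability:.1f}% of control, flagged = {flagged}")
```

Keeping the threshold as a parameter reflects the point made above: the 70% figure is interpretive guidance, and a laboratory may justify a different criterion for a given assay.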

Irritation Testing: Evaluating Localized Tissue Responses

Irritation testing assesses the potential of a device, its materials, or extracts to cause localized inflammatory responses in tissues. ISO 10993-23 provides specific test methods designed to predict and classify the irritation potential of medical devices [5]. These evaluations typically utilize reconstructed human epidermis models, such as the EpiDerm RhE model, which follows OECD guideline 439 [6] [4]. These advanced in vitro models offer human-relevant data while reducing reliance on animal testing, aligning with the 3Rs principles (Replacement, Reduction, and Refinement) emphasized in Directive 2010/63/EU [4].

The test protocol involves applying device extracts to the reconstructed epidermis and measuring cell viability after a defined exposure period. A significant reduction in viability compared to controls indicates potential irritation. These models have been validated for their ability to distinguish between irritant and non-irritant materials, providing valuable data for classifying medical device irritation potential without animal testing [6]. For devices with specific tissue contact profiles, such as those contacting mucosal membranes or implanted tissues, additional specialized irritation models may be employed to more accurately simulate the intended clinical exposure.

Sensitization Testing: Assessing Allergic Potential

Sensitization testing evaluates the potential of a medical device to cause allergic contact dermatitis, a delayed-type hypersensitivity reaction mediated by T lymphocytes. This endpoint is particularly important for devices that contact skin or mucosal membranes repeatedly or for extended durations. The current approach increasingly utilizes in vitro methods that follow OECD guideline 442D, which assesses key events in the skin sensitization adverse outcome pathway [6] [4].

These in vitro and in chemico methods measure individual key events such as peptide reactivity, keratinocyte activation (the endpoint addressed by OECD 442D), or dendritic cell activation to predict sensitization potential without animal testing. The assays evaluate the molecular initiating events and cellular responses associated with the development of allergic contact dermatitis, providing human-relevant data for safety assessments [4]. When properly validated and implemented, these methods can effectively identify potential sensitizers, enabling manufacturers to select materials that minimize allergic risks for patients and healthcare providers using medical devices.

Comparative Analysis: In Vitro versus In Vivo Testing Approaches

Methodological Comparison and Applications

The biological evaluation of medical devices employs both in vitro and in vivo testing approaches, each with distinct advantages, limitations, and applications. A comparative analysis of these methodologies enables researchers to design optimized testing strategies that maximize scientific value while addressing ethical considerations and regulatory requirements.

Table 2: Comparative Analysis of In Vitro versus In Vivo Biocompatibility Testing

| Parameter | In Vitro Testing | In Vivo Testing |
|---|---|---|
| Definition | Methods used to identify potential health hazards from a sample without the use of in vivo animal testing [6] | Evaluation of biological responses in living organisms, typically animals [7] |
| Experimental Model | Cell cultures (e.g., mammalian cells), reconstructed human tissues (e.g., EpiDerm), bacterial systems (Ames test) [6] [4] | Living animals (e.g., guinea pigs, mice, rabbits) with implanted devices or injected extracts [7] |
| Key Advantages | More humane, cost-effective, faster results, controlled environment, mechanistic insights, high-throughput capability [6] | Provides integrated whole-organism response, accounts for metabolic processes and systemic effects, currently broader regulatory acceptance for certain endpoints [4] |
| Key Limitations | May not fully replicate complex tissue interactions and systemic physiology of whole organisms [4] | Ethical concerns, higher costs, longer duration, species-specific variations may not always predict human responses [4] |
| Primary Applications | Initial screening, mechanistic studies, quality control, lot release testing, sensitization and irritation assessment using reconstructed tissues [6] [4] | Assessment of complex endpoints like implantation effects, systemic toxicity, pyrogenicity when in vitro methods are insufficient [4] [7] |
| Regulatory Status | Increasing acceptance, particularly for specific endpoints like cytotoxicity, irritation, and sensitization [6] [4] | Still required for certain endpoints when existing scientific data and in vitro studies provide insufficient information [4] |

Strategic Implementation in Device Development

The integration of in vitro and in vivo approaches follows a strategic sequence throughout device development. In vitro methods typically serve as initial screening tools during early research and development, identifying potential biological risks before proceeding to more complex and costly in vivo studies [6]. This tiered testing approach allows for early identification and mitigation of biocompatibility concerns, potentially reducing the need for animal testing in later stages of development.

According to ISO 10993-1 and FDA guidance, animal testing should only be conducted when existing scientific data and in vitro studies fail to provide sufficient information for a comprehensive safety assessment [4] [3]. This principle aligns with the "3Rs" framework (Replacement, Reduction, and Refinement) embedded in European Directive 2010/63/EU and incorporated into the Medical Device Regulation (EU 2017/745) [4]. The continuing evolution of sophisticated in vitro models, including three-dimensional tissue constructs and organ-on-a-chip technologies, promises to further enhance the predictive capacity of non-animal methods for biocompatibility assessment.

Experimental Design and Workflow Visualization

The biological evaluation of medical devices follows a structured workflow that begins with material characterization and progresses through a risk-based selection of appropriate tests. This systematic approach ensures comprehensive safety assessment while avoiding unnecessary testing.

Biocompatibility Testing Workflow

Sample Preparation and Testing Stratification

Critical to any biological evaluation is proper sample preparation, as detailed in ISO 10993-12:2021. Medical devices are typically tested as extracts prepared by immersing the device or its components in appropriate extraction solvents such as physiological saline, vegetable oil, or cell culture medium under specified conditions [4]. The extraction conditions (time, temperature, surface area to volume ratio) are carefully selected based on the device's intended use and the chemical properties of its materials. This process standardizes the assessment of potential leachables that could interact with biological systems during clinical use.
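As a rough illustration of how the extraction parameters fit together, the sketch below derives the solvent volume from a device's surface area and a chosen surface-area-to-volume ratio. The class name, field names, and every numeric value (60 cm², 6 cm²/mL, 37 °C, 72 h) are assumptions for the example; the actual ratio and conditions should be taken from ISO 10993-12 for the device in question.

```python
from dataclasses import dataclass


@dataclass
class ExtractionPlan:
    """Hypothetical container for the extraction parameters named in the text."""

    surface_area_cm2: float
    ratio_cm2_per_ml: float  # surface area to volume ratio, chosen per the standard
    temperature_c: float
    duration_h: float
    solvent: str

    @property
    def solvent_volume_ml(self) -> float:
        """Solvent volume needed to reach the chosen surface-area-to-volume ratio."""
        if self.ratio_cm2_per_ml <= 0:
            raise ValueError("ratio must be positive")
        return self.surface_area_cm2 / self.ratio_cm2_per_ml


# Illustrative values only; not a recommendation for any specific device.
plan = ExtractionPlan(
    surface_area_cm2=60.0,
    ratio_cm2_per_ml=6.0,
    temperature_c=37.0,
    duration_h=72.0,
    solvent="cell culture medium",
)
print(f"{plan.solvent_volume_ml:.1f} mL of {plan.solvent} "
      f"at {plan.temperature_c} C for {plan.duration_h} h")
```

Recording the conditions alongside the computed volume keeps the extract traceable to the rationale used to select them.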

The selection of specific biological endpoints for evaluation follows a risk-based approach stratified according to the nature and duration of body contact. The FDA-modified matrix, outlined in the guidance "Use of International Standard ISO 10993-1," provides a structured framework for determining which tests are necessary based on device categorization [1] [3]. This stratification ensures that the testing burden is appropriate to the device's risk profile, with more extensive evaluations required for implantable devices and those with prolonged contact with critical tissues like blood or nervous system structures.
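The duration axis of this stratification can be expressed as a small classifier. The sketch below assumes the three contact-duration categories used in ISO 10993-1 (limited: up to 24 h; prolonged: more than 24 h and up to 30 days; long-term: more than 30 days). The enum and function names are hypothetical, and the code deliberately does not attempt to reproduce the endpoint matrix itself.

```python
from enum import Enum


class ContactDuration(Enum):
    LIMITED = "limited (up to 24 h)"
    PROLONGED = "prolonged (more than 24 h, up to 30 d)"
    LONG_TERM = "long-term (more than 30 d)"


def classify_duration(cumulative_hours: float) -> ContactDuration:
    """Map cumulative patient contact time to a duration category."""
    if cumulative_hours < 0:
        raise ValueError("contact time cannot be negative")
    if cumulative_hours <= 24:
        return ContactDuration.LIMITED
    if cumulative_hours <= 30 * 24:
        return ContactDuration.PROLONGED
    return ContactDuration.LONG_TERM


# Hypothetical devices, for illustration only.
for name, hours in [("examination glove", 2), ("wound dressing", 96), ("bone screw", 5 * 365 * 24)]:
    print(f"{name}: {classify_duration(hours).value}")
```

In practice the category is combined with the nature of body contact (surface, externally communicating, or implant) before the relevant endpoints are looked up in the matrix.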

Testing Stratification by Contact

The Scientist's Toolkit: Essential Reagents and Materials

Successful biocompatibility testing requires specific research reagents and materials carefully selected and standardized to ensure reproducible and meaningful results. The following toolkit details essential components for conducting proper biological evaluations of medical devices.

Table 3: Essential Research Reagent Solutions for Biocompatibility Testing

| Reagent/Material | Function and Application | Standardized Reference |
|---|---|---|
| Cell Culture Lines | Mammalian cells (e.g., L929, Balb 3T3 fibroblasts) used as biological models for cytotoxicity testing [4] | ISO 10993-5 specifies appropriate cell lines and culture conditions [4] |
| Extraction Media | Solvents (physiological saline, vegetable oil, culture medium) used to prepare device extracts [4] | ISO 10993-12 provides guidelines for selection based on device properties [4] |
| Viability Assays | Chemical indicators (MTT, XTT, Neutral Red) that measure metabolic activity and cell health [4] | ISO 10993-5 describes validated methods for quantitative assessment [4] |
| Reconstructed Human Epidermis | 3D human skin models (e.g., EpiDerm) for irritation and corrosion testing [6] | OECD Guideline 439 standardizes protocol for in vitro skin irritation testing [6] |
| Reference Materials | Control articles with known biological responses to validate test system performance [4] | ISO 10993-12 describes use of positive and negative controls [4] |
| Culture Media Components | Nutrients, growth factors, and supplements that maintain cell viability and function during testing [4] | Specific formulations referenced in ISO 10993-5 for different cell types [4] |

Additional specialized reagents may be required for specific evaluations, including those for genotoxicity assessment (Ames test components, micronucleus assay materials), hemocompatibility testing (whole blood, anticoagulants, platelet function reagents), and implantation studies (histological stains, tissue processing chemicals). The selection of all reagents should consider their compatibility with the test device, particularly regarding potential interactions that might confound results. Proper preparation, qualification, and documentation of all research reagents are essential for generating reliable data that will support regulatory submissions and clinical safety determinations.

The journey from the ISO 10993 standards to clinical safety represents a carefully structured scientific process that integrates material science, biology, and risk management to ensure medical device safety. The evaluation begins with comprehensive material characterization and proceeds through a tiered testing approach that emphasizes scientifically valid methods while respecting ethical considerations. The "Big Three" assessments (cytotoxicity, irritation, and sensitization) form the essential foundation of this evaluation, required for nearly all medical devices regardless of their classification or contact duration [4].

The future of biocompatibility testing continues to evolve toward more sophisticated in vitro models that better predict human responses, driven by scientific advancement, regulatory acceptance, and ethical imperatives. The successful navigation of this landscape requires researchers to maintain current knowledge of both ISO standards and region-specific regulatory expectations, particularly as the FDA and other global authorities update their guidance documents to reflect scientific progress [1] [3]. Through rigorous application of these principles and methodologies, researchers and drug development professionals can confidently advance medical devices from concept to clinical implementation, ensuring patient safety while facilitating access to innovative healthcare technologies.

In vitro assays have become indispensable tools in toxicology and drug development, offering a pathway to more human-relevant, efficient, and ethical safety assessments. The global regulatory landscape is undergoing a significant transformation, actively promoting the adoption of New Approach Methodologies (NAMs). In a landmark decision, the U.S. Food and Drug Administration (FDA) now advocates for the use of technologies like organ-on-a-chip systems and cytotoxicity tests to replace traditional animal models in certain contexts, particularly for monoclonal antibody therapies [8]. This shift is driven by the need to improve drug safety profiling, accelerate evaluation processes, and reduce development costs [8].

Similarly, the European Union is advancing this paradigm shift. The recent EU Commission Regulation (EU) 2023/464 has formally removed the two-generation reproductive toxicity study (OECD 416) and the Unscheduled DNA Synthesis (UDS) test, replacing them with modern in vitro methods and the extended one-generation reproductive toxicity test (OECD 443) [9]. These changes underscore a global move toward a next-generation risk assessment (NGRA) framework, where in vitro assays for cytotoxicity, metabolism, and membrane integrity provide the critical data needed to validate material properties and assess chemical safety.

This guide objectively compares the performance of established and emerging in vitro technologies within this new paradigm, providing experimental data and protocols to inform researchers and drug development professionals.

Cytotoxicity Assays

Cytotoxicity assays form the first line of screening in toxicological assessments, evaluating the fundamental ability of a substance to cause cell damage or death.

Performance Comparison of Cytotoxicity Assays

The choice of cytotoxicity assay can significantly impact the sensitivity, throughput, and relevance of the data obtained. The table below compares several common and emerging methods.

Table 1: Performance Comparison of Common Cytotoxicity Assays

| Assay Type | Mechanistic Endpoint | Throughput | Key Advantages | Key Limitations | Example Experimental Data (IC50) |
|---|---|---|---|---|---|
| MTT Assay | Mitochondrial reductase activity | Medium | Well-established, inexpensive | Can be influenced by metabolic perturbations; not suitable for suspension cells | Doxorubicin: 0.5 µM (HepG2 cells, 48h) |
| Neutral Red Uptake | Lysosomal integrity and cell viability | Medium | Simple, cost-effective, good for adherent cells | Limited for non-phagocytic cells; affected by pH | Cadmium Chloride: 15 µM (NIH/3T3 cells, 24h) |
| High-Content Screening (HCS) | Multiparametric (membrane integrity, mitochondrial membrane potential, etc.) | Low to Medium (image-based) | Provides rich, multi-parameter data on single-cell level | Requires specialized equipment and analysis; more complex | Not applicable (multiparametric output) |
| Organ-on-a-Chip | Integrated tissue/organ function (e.g., albumin production, beating) | Low (complex models) | Human-relevant; captures tissue-level complexity and dynamics; can model organ-specific toxicity | Higher cost; longer assay time; more variable | Aflatoxin B1 (Liver Chip): 10x higher sensitivity in predicting human hepatotoxicity than 2D models |
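The IC50 values in the last column are typically derived from a dose-response series. The sketch below estimates an IC50 by linear interpolation on a log-concentration axis between the two doses that bracket 50% viability. This is a simplified stand-in for the four-parameter logistic fit that is more commonly used, and the function name and data points are hypothetical.

```python
import math


def ic50_log_interpolation(concentrations, viabilities):
    """Estimate IC50 by interpolating on log10(concentration).

    Returns None when the viability series never crosses 50%.
    Concentrations must be positive; viabilities are % of control.
    """
    pairs = sorted(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(pairs, pairs[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            # Fraction of the way from the lower to the higher dose at which
            # viability reaches 50%.
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Hypothetical dose-response data (µM, % viability), for illustration only.
doses_um = [0.01, 0.1, 1.0, 10.0]
viability_pct = [98.0, 80.0, 20.0, 5.0]

ic50 = ic50_log_interpolation(doses_um, viability_pct)
print(f"estimated IC50 = {ic50:.3f} µM")
```

Interpolation is adequate for quick comparisons across assays, but a fitted curve gives confidence intervals and is less sensitive to the spacing of the dilution series.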

Experimental Protocol: High-Content Analysis for Multiparametric Cytotoxicity

This protocol assesses multiple cytotoxicity endpoints simultaneously in a 96-well format, providing a comprehensive profile.

- Cell Seeding: Seed adherent cells (e.g., HepG2) at a density of 10,000 cells/well in a black-walled, clear-bottom 96-well plate. Culture for 24 hours to allow adherence.
- Compound Treatment: Expose cells to a dilution series of the test compound and a negative control (vehicle) for 24-48 hours. Include a positive control (e.g., 1% Triton X-100).
- Staining: After treatment, load cells with a cocktail of fluorescent probes:
  - Hoechst 33342 (2 µg/mL): Incubate for 15 minutes to label nuclei.
  - Propidium Iodide (PI, 1 µg/mL) and TMRM (Tetramethylrhodamine, Methyl Ester, 100 nM): Add both for the final 30 minutes of incubation to assess plasma membrane integrity (PI) and mitochondrial membrane potential (TMRM).
- Image Acquisition and Analysis: Wash plates with PBS and image using a high-content imaging system. Acquire at least 4 fields per well. Analyze images to determine:
  - Total Cell Count from Hoechst signal.
  - % Dead Cells from PI-positive nuclei.
  - % Cells with Depolarized Mitochondria from cells with low TMRM signal.
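The three readouts in the analysis step can be computed from per-cell intensity measurements once segmentation is done. The sketch below assumes each segmented nucleus has already been reduced to a PI intensity and a TMRM intensity; the dictionary keys, the thresholds, and the example values are hypothetical, and both percentages are expressed relative to all Hoechst-identified cells.

```python
def summarize_well(cells, pi_threshold, tmrm_threshold):
    """Summarize one well from per-cell PI and TMRM intensities.

    cells: list of dicts with 'pi' and 'tmrm' keys, one per Hoechst-stained nucleus.
    A cell counts as dead when PI >= pi_threshold and as depolarized when
    TMRM < tmrm_threshold.
    """
    total = len(cells)
    if total == 0:
        return {"total_cells": 0, "pct_dead": None, "pct_depolarized": None}
    dead = sum(1 for cell in cells if cell["pi"] >= pi_threshold)
    depolarized = sum(1 for cell in cells if cell["tmrm"] < tmrm_threshold)
    return {
        "total_cells": total,
        "pct_dead": 100.0 * dead / total,
        "pct_depolarized": 100.0 * depolarized / total,
    }


# Hypothetical per-cell intensities (arbitrary units), for illustration only.
well_cells = [
    {"pi": 50, "tmrm": 900},
    {"pi": 60, "tmrm": 850},
    {"pi": 800, "tmrm": 100},
    {"pi": 40, "tmrm": 200},
]

summary = summarize_well(well_cells, pi_threshold=300, tmrm_threshold=400)
print(summary)
```

In a real analysis the thresholds would be set from the vehicle and positive control wells on the same plate rather than fixed in advance.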

High-content cytotoxicity assay workflow.

Metabolism Assays

Understanding how a substance is metabolized and its potential to disrupt metabolic pathways or cause organ-specific metabolic damage is crucial for safety assessment.

Performance Comparison of Metabolism Assays

Metabolism assays range from simple enzyme activity tests to complex models that predict whole-body metabolic interactions.

Table 2: Performance Comparison of Metabolism Assays

| Assay Type | Biological Model | Metabolic Capability | Key Applications | Throughput | Human-Relevance | Notes |
|---|---|---|---|---|---|---|
| Microsomal Stability | Liver microsomes (human/animal) | Phase I oxidation | Intrinsic clearance prediction; metabolic stability | High | Medium (lacks full cellular context) | |
| Hepatocyte Assays | Primary hepatocytes (human/animal) or cell lines | Phase I & II metabolism | Metabolite ID; bioactivation; hepatotoxicity | Medium | High (primary human) | |
| Metabolomics (e.g., AMIX) | Cell lines, biofluids, tissues | Profiling of endogenous metabolites | Discovery of metabolic biomarkers; mode-of-action analysis | Low (data analysis) | High (human-derived samples) | Platform can integrate NMR, LC-MS, and UV data for comprehensive profiling [10] |
| Metabolite Prediction (e.g., MMINP) | In silico from microbial data | Predicts metabolite profiles from microbiome data | Hypothesis generation; biomarker discovery | High (computational) | Context-dependent | In one IBD study, 61.2% of metabolites were accurately predicted from microbial gene data [11] |
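For the microsomal stability row, intrinsic clearance is usually derived from the first-order disappearance of the parent compound. The sketch below fits ln(% remaining) against time by least squares, converts the slope to a half-life, and scales it to an in vitro intrinsic clearance per mg of microsomal protein. The function names, the 0.5 mg/mL protein concentration, and the synthetic time course are assumptions for illustration.

```python
import math


def fit_elimination_rate(times_min, pct_remaining):
    """Least-squares slope of ln(% remaining) versus time; returns k in 1/min."""
    ys = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys))
    return -sxy / sxx  # the slope is negative for a disappearing compound


def half_life_min(k):
    """First-order half-life from the elimination rate constant."""
    return math.log(2) / k


def intrinsic_clearance_ul_min_mg(k, protein_mg_per_ml):
    """CLint = k x incubation volume per mg protein (1000 µL/mL / mg/mL)."""
    return k * 1000.0 / protein_mg_per_ml


# Synthetic time course with a 15 min half-life, for illustration only.
times = [0, 5, 15, 30, 45]
remaining = [100.0 * 0.5 ** (t / 15.0) for t in times]

k = fit_elimination_rate(times, remaining)
t_half = half_life_min(k)
clint = intrinsic_clearance_ul_min_mg(k, protein_mg_per_ml=0.5)
print(f"k = {k:.4f} 1/min, t1/2 = {t_half:.1f} min, CLint = {clint:.1f} µL/min/mg")
```

Scaling this in vitro value to a whole-liver clearance requires further physiological scaling factors that are outside the scope of this sketch.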

Experimental Protocol: Untargeted Metabolomics Using LC-MS for Hepatotoxicity Screening

This protocol is used to discover metabolic shifts induced by compound treatment, which can reveal mechanisms of toxicity.

- Sample Preparation:
  - Cell Treatment: Treat HepaRG cells or primary human hepatocytes with the test article and vehicle control for 24 hours. Use at least 6 biological replicates.
  - Metabolite Extraction: Wash cells quickly with cold saline. Quench metabolism with 80% cold methanol (-80°C) and scrape cells. Centrifuge at 14,000 × g for 15 minutes at 4°C to pellet proteins.
  - Sample Storage: Transfer the supernatant (containing metabolites) to a new vial and dry under a gentle stream of nitrogen. Store at -80°C until analysis.
- LC-MS Analysis:
  - Reconstitution: Reconstitute dried extracts in 100 µL of water:acetonitrile (1:1).
  - Chromatography: Inject 5 µL onto a HILIC or reverse-phase UHPLC column. Use a gradient from water (0.1% formic acid) to acetonitrile (0.1% formic acid) over 15 minutes.
  - Mass Spectrometry: Acquire data in both positive and negative ionization modes on a high-resolution mass spectrometer (e.g., Q-TOF) with a mass range of 50-1000 m/z.

- Data Processing:

Untargeted metabolomics workflow.

Membrane Integrity Tests

Membrane integrity assays are vital for detecting acute cytotoxic effects resulting from chemical disruption of cellular or organellar membranes.

Performance Comparison of Membrane Integrity Assays

These assays detect the physical compromise of membranes, a classic hallmark of necrosis and other forms of cell death.

Table 3: Performance Comparison of Membrane Integrity Assays

| Assay Type | Principle | Throughput | Direct/Indirect Measure | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Lactate Dehydrogenase (LDH) Release | Measures release of cytosolic enzyme LDH into supernatant | High | Indirect, functional | Easy, scalable, quantitative | Can be confounded by serum in media; not real-time |
| Propidium Iodide (PI) / SYTOX Uptake | Fluorescent DNA dyes excluded by intact membranes; entry indicates rupture | Medium (flow cytometry) | Direct, morphological | Real-time kinetics possible (with plate reader); specific for dead cells | Requires permeabilization for intracellular targets |
| Bubble Point Test (Adapted for biological membranes) | Measures pressure required to displace liquid from a membrane's largest pore [13] [14] | Low | Direct, physical | Highly sensitive for detecting large pores/defects | Primarily used for filter validation; adaptation to cellular systems is complex |
| Trans-Epithelial Electrical Resistance (TEER) | Measures integrity of tight junctions in cell monolayers | Low | Functional, non-invasive | Ideal for barrier models (e.g., intestine, BBB); real-time | Only applicable to barrier-forming cell cultures |
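Two of the readouts in this table reduce to short calculations that are easy to get wrong by a missing control or unit. The sketch below shows the conventional LDH percent-cytotoxicity formula, which scales the sample signal between the spontaneous-release and maximum-release controls, and the area-normalized TEER value. Function names and all numeric inputs are hypothetical.

```python
def ldh_percent_cytotoxicity(sample, spontaneous, maximum):
    """Percent cytotoxicity from LDH signals.

    spontaneous: signal from untreated cells (background release).
    maximum: signal from fully lysed cells (total releasable LDH).
    """
    if maximum <= spontaneous:
        raise ValueError("maximum release must exceed spontaneous release")
    return 100.0 * (sample - spontaneous) / (maximum - spontaneous)


def teer_ohm_cm2(measured_ohm, blank_ohm, membrane_area_cm2):
    """Area-normalized TEER: (monolayer resistance - blank insert) x membrane area."""
    return (measured_ohm - blank_ohm) * membrane_area_cm2


# Hypothetical readings, for illustration only.
ldh_pct = ldh_percent_cytotoxicity(sample=0.8, spontaneous=0.2, maximum=1.4)
teer = teer_ohm_cm2(measured_ohm=650.0, blank_ohm=150.0, membrane_area_cm2=0.33)
print(f"LDH cytotoxicity = {ldh_pct:.1f}%, TEER = {teer:.1f} ohm*cm^2")
```

Multiplying by the membrane area, rather than dividing, is what makes TEER values comparable across insert sizes.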

Experimental Protocol: Real-time Kinetic Analysis of Membrane Integrity Using Propidium Iodide

This protocol allows for the continuous monitoring of membrane integrity in a 96-well format, providing temporal data on the onset of cytotoxicity.

- Cell Seeding and Staining:
  - Seed cells in a 96-well black-walled plate and culture until 80% confluent.
  - Replace the medium with a Hanks' Balanced Salt Solution (HBSS) containing 1 µM Propidium Iodide (PI) and 5 µg/mL Hoechst 33342.
  - Incubate for 20 minutes at 37°C to allow dye equilibration.
- Baseline Measurement:
  - Place the plate in a pre-warmed (37°C) fluorescent plate reader.
  - Measure fluorescence (Ex/Em for PI: ~535/617 nm; for Hoechst: ~350/461 nm) to establish a baseline for 3-5 cycles.
- Compound Addition and Kinetic Reading:
  - Without removing the plate, use the injector system to add a 5X concentrated solution of the test compound.
  - Immediately initiate kinetic readings, taking measurements every 5 minutes for 2-4 hours.
  - Maintain temperature at 37°C.
- Data Analysis:
  - Normalize the PI fluorescence signal to the Hoechst signal (cell number) for each well.
  - Plot normalized PI fluorescence versus time. Calculate the time-to-onset and rate of membrane integrity loss.
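The data analysis step can be sketched as follows. The protocol does not define how onset or rate should be computed, so this example makes two assumptions: onset is the first time point at which the normalized signal exceeds a chosen fold-change over the mean baseline, and rate is the steepest increase between consecutive readings. The function names, the 1.5-fold criterion, and the fluorescence values are hypothetical.

```python
def normalize_pi(pi_signal, hoechst_signal):
    """PI fluorescence divided by Hoechst fluorescence at each time point."""
    return [pi / h for pi, h in zip(pi_signal, hoechst_signal)]


def time_to_onset(times_min, normalized, baseline_points=3, fold=1.5):
    """First time the normalized signal exceeds fold x mean baseline, else None."""
    baseline = sum(normalized[:baseline_points]) / baseline_points
    for t, value in zip(times_min[baseline_points:], normalized[baseline_points:]):
        if value > fold * baseline:
            return t
    return None


def max_rate_per_min(times_min, normalized):
    """Steepest increase in normalized signal between consecutive readings."""
    slopes = [
        (v1 - v0) / (t1 - t0)
        for (t0, v0), (t1, v1) in zip(
            zip(times_min, normalized), zip(times_min[1:], normalized[1:])
        )
    ]
    return max(slopes)


# Hypothetical single-well trace read every 5 minutes, for illustration only.
times = [0, 5, 10, 15, 20, 25, 30]
pi = [100, 100, 100, 110, 200, 400, 600]
hoechst = [1000, 1000, 1000, 1000, 1000, 1000, 1000]

trace = normalize_pi(pi, hoechst)
onset = time_to_onset(times, trace)
rate = max_rate_per_min(times, trace)
print(f"onset at {onset} min, max rate = {rate:.3f} per min")
```

A baseline-plus-multiple-of-standard-deviation criterion is a common alternative when replicate baseline cycles are noisy.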

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful execution of these in vitro assays relies on high-quality, well-characterized reagents and models. The following table details key solutions for the featured fields.

Table 4: Essential Research Reagents and Tools for In Vitro Toxicology

| Category | Item | Function in Assays | Example Application |
|---|---|---|---|
| Cell Models | Primary Human Hepatocytes | Gold standard for hepatic metabolism and toxicity studies | Metabolite identification, hepatotoxicity screening |
| Cell Models | Immortalized Cell Lines (e.g., HepG2, Caco-2) | Consistent, scalable models for high-throughput screening | Initial cytotoxicity, genotoxicity, mechanistic studies |
| Cell Models | Organ-on-a-Chip Systems (e.g., Liver-chip) | Physiologically relevant models that mimic human organ microstructure and function | Predictive toxicology, disease modeling, ADME studies [8] |
| Critical Reagents | Fluorescent Viability Probes (e.g., PI, TMRM, Hoechst) | Label cellular components and report on health status (membrane integrity, MMP) | High-content screening, live-cell imaging, flow cytometry |
| Critical Reagents | Matrices for 3D Culture (e.g., BME, alginate hydrogels) | Provide a 3D scaffold to support complex cell growth and tissue-like organization | Spheroid and organoid culture, enhancing in vitro model relevance |
| Critical Reagents | LC-MS Grade Solvents | Ensure minimal background interference and high signal-to-noise in analytical chemistry | Sample preparation for metabolomics, pharmaceutical analysis |
| Software & Databases | Metabolic Profiling Software (e.g., AMIX) | Processes and statistically analyzes complex spectral data from NMR and MS | Metabolite identification, biomarker discovery [10] |
| Software & Databases | Network Analysis Tools (e.g., Filigree, CorrelationCalculator) | Constructs data-driven interaction networks from omics data | Identifying novel associations between metabolites/microbes in toxicology [12] |
| Software & Databases | Pathway Databases (e.g., KEGG, Reactome) | Annotate and map experimental data onto known biological pathways | Functional interpretation of transcriptomic and metabolomic results |

The landscape of toxicology is unequivocally shifting towards an integrated use of in vitro assays and in silico tools, a move strongly endorsed by global regulatory bodies [8] [9] [15]. As emphasized by experts, the future lies in Next Generation Risk Assessment (NGRA), which leverages these new approach methodologies (NAMs) to build more predictive and human-relevant safety cases [15].

The assays detailed in this guide—cytotoxicity, metabolism, and membrane integrity—are not standalone tests but essential, interconnected components of a robust safety assessment strategy. The most powerful applications will come from their integration within defined testing strategies, such as the use of metabolism data to inform cytotoxicity study concentrations, or the application of membrane integrity tests to validate findings from high-content cytological profiling.

For researchers, the path forward involves the thoughtful combination of these tools, leveraging their respective strengths. This includes using high-throughput cytotoxicity screens for initial prioritization, employing metabolomics and organ-chip models for deeper mechanistic insight, and utilizing computational tools to extrapolate and predict outcomes. As these technologies continue to mature and their regulatory acceptance expands, they will form the cornerstone of a more efficient, ethical, and biologically accurate paradigm for validating material properties and ensuring drug and chemical safety.

In the field of drug development and chemical safety assessment, international standards provide the critical foundation for ensuring reliability, reproducibility, and regulatory acceptance of scientific data. The landscape is primarily shaped by three key players: the Organisation for Economic Co-operation and Development (OECD), the International Council for Harmonisation (ICH), and various regional regulatory agencies including the European Medicines Agency (EMA) and the United States Food and Drug Administration (FDA). These organizations develop complementary yet distinct guidelines that researchers must navigate to validate material properties through both in vitro and in vivo studies [16] [17] [18].

The contemporary approach to validation has evolved from a discrete, compliance-driven exercise to a proactive, science-based lifecycle model integrated throughout product development and commercial manufacturing [17]. This paradigm shift, championed globally, emphasizes that quality must be built into products through profound process understanding rather than merely verified through end-product testing. For researchers and drug development professionals, understanding the intricate relationships between these frameworks is not merely administrative—it is fundamental to designing studies that will generate mutually acceptable data across jurisdictions, thereby accelerating global market access while upholding rigorous safety standards [16] [18].

Organizational Profiles and Core Mandates

OECD: Setting the Standard for Chemical Safety

The OECD Test Guidelines form the universal benchmark for non-clinical environmental and health safety testing of chemicals and chemical products [16]. These guidelines are uniquely positioned within the international regulatory ecosystem because they are formally linked to the Mutual Acceptance of Data (MAD) system. Under MAD, data generated in accordance with OECD Test Guidelines and Good Laboratory Practice (GLP) in one member country must be accepted by all others, eliminating costly and duplicative testing [16] [18]. This system has yielded significant benefits, saving millions of dollars and countless test animals by preventing redundant studies [18].

The OECD Guidelines are organized into five comprehensive sections:

- Section 1: Physical Chemical Properties

- Section 2: Effects on Biotic Systems

- Section 3: Environmental Fate and Behaviour

- Section 4: Health Effects

- Section 5: Other Test Guidelines [16]

These guidelines are living documents, continuously expanded and updated to reflect scientific progress. A notable update in June 2025 revised numerous guidelines to incorporate New Approach Methodologies (NAMs), promote best practices, and further the principles of Replacement, Reduction, and Refinement (3Rs) of animal testing [16]. For instance, Test Guideline 497 on Defined Approaches for Skin Sensitisation was updated to include a new Defined Approach for determining the point of departure for skin sensitization potential, illustrating the integration of advanced in vitro methods [16].

ICH: Harmonizing Pharmaceutical Regulation

The International Council for Harmonisation (ICH) brings together regulatory authorities and the pharmaceutical industry to discuss scientific and technical aspects of product registration. Its mission is to achieve greater harmonization worldwide to ensure safe, effective, and high-quality medicines are developed and registered in the most resource-efficient manner. While the OECD focuses broadly on chemical safety, ICH guidelines specifically address the entire pharmaceutical product lifecycle, from development to manufacturing [17].

ICH guidelines form the conceptual bedrock for modern quality systems, with several being particularly relevant to validation:

- ICH Q8 (Pharmaceutical Development): Advocates for a systematic approach to development, including establishing a Design Space.

- ICH Q9 (Quality Risk Management): Provides a systematic process for risk assessment and management.

- ICH Q10 (Pharmaceutical Quality System): Describes a comprehensive model for an effective pharmaceutical quality system [17].

These guidelines collectively advocate for a system where product quality is ensured through scientific understanding and proactive risk management rather than being confirmed solely by end-product testing [17].

Regional Regulators: Implementing and Enforcing Standards

Regional regulatory agencies such as the EMA and FDA operationalize the principles established by international harmonization efforts. While they increasingly align with ICH guidelines, they maintain distinct regional requirements and emphases in their regulatory frameworks.

The FDA's guidance on process validation establishes a structured, three-stage lifecycle model: Process Design, Process Qualification, and Continued Process Verification [17] [19]. This framework requires manufacturers to demonstrate deep process understanding and implement ongoing verification programs to ensure processes remain in a state of control [19].

The EMA incorporates similar lifecycle concepts but expresses them through EU Good Manufacturing Practice (GMP) Annex 15, which acknowledges multiple validation approaches including prospective, concurrent, and retrospective validation [17] [20]. A distinctive feature of the EU framework is its explicit classification of processes as 'standard' or 'non-standard,' which directly dictates the level of validation data required in regulatory submissions [17].

Figure 1: Relationship Between International Standard-Setting Bodies. This diagram illustrates how OECD, ICH, and regional regulators establish complementary frameworks that collectively support global market access for pharmaceuticals and chemicals.

Comparative Analysis of Regulatory Frameworks

Process Validation Lifecycle Approaches

While all major regulatory bodies have embraced a lifecycle approach to process validation, their implementation frameworks show notable differences, particularly between the FDA and EMA.

FDA's Three-Stage Model:

- Stage 1: Process Design: Building and capturing process knowledge to establish a robust control strategy.

- Stage 2: Process Qualification: Confirming the process design through rigorous evaluation, culminating in the Process Performance Qualification (PPQ).

- Stage 3: Continued Process Verification: Ongoing monitoring during commercial production to ensure the process remains in a state of control [17] [19].

EMA's Flexible Framework:

- Is not explicitly divided into stages, but covers prospective, concurrent, and retrospective validation.

- Strongly recommends the use of a Validation Master Plan.

- Requires ongoing process verification, but offers multiple pathways to demonstrate validation [17] [20].

A critical distinction lies in the EU's formal recognition of different development approaches. A "traditional approach" defines set points and operating ranges, while an "enhanced approach" uses scientific knowledge and risk management more extensively. This distinction directly influences the validation strategy permitted; an enhanced approach is a prerequisite for utilizing Continuous Process Verification [17].

Key Divergences in Validation Requirements

The following table summarizes the principal differences between FDA and EMA expectations for process validation, which researchers must accommodate when designing global development programs.

Table 1: Comparison of FDA and EMA Process Validation Requirements

| Aspect | US FDA | EU EMA |

|---|---|---|

| Process Stages | Clearly defined 3-stage model | Life-cycle focused, less explicitly staged |

| Validation Master Plan | Not mandatory, but expected equivalent | Mandatory |

| Use of Statistics | High emphasis | Encouraged, but flexible |

| Retrospective Validation | Discouraged | Permitted with justification |

| Number of PQ Batches | Minimum 3 recommended (commercial scale) | Risk-based, scientifically justified |

| Approach to CPV/OPV | Continued Process Verification (CPV) with statistical process control | Ongoing Process Verification (OPV) incorporated in Product Quality Review |

These divergences have profound strategic implications. A company developing a product for both US and EU markets must devise two distinct validation submission strategies. For the US, the focus is on executing a comprehensive PPQ. For the EU, the strategy involves justifying the process classification and choosing between providing full traditional validation data or justifying a Continuous Process Verification model [17].
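The statistical process control mentioned for Continued Process Verification can be illustrated with an individuals control chart. The sketch below is an assumption-laden example rather than a method prescribed by either agency: the function names and the assay values are hypothetical, and the limits use the conventional moving-range estimate (center ± 2.66 × mean moving range).

```python
from statistics import mean


def individuals_chart_limits(values):
    """Return (lower, center, upper) limits for an individuals (I-MR) chart."""
    if len(values) < 2:
        raise ValueError("need at least two observations")
    # Moving range: absolute difference between consecutive observations.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    center = mean(values)
    # 2.66 = 3 / d2, with d2 = 1.128 for moving ranges of span 2.
    half_width = 2.66 * mean(moving_ranges)
    return center - half_width, center, center + half_width


def out_of_control(new_values, baseline):
    """List (index, value) pairs in new_values outside the baseline limits."""
    lower, _, upper = individuals_chart_limits(baseline)
    return [(i, v) for i, v in enumerate(new_values) if v < lower or v > upper]


# Hypothetical assay results (% of label claim), for illustration only.
baseline = [99.8, 100.2, 100.1, 99.7, 100.4, 99.9, 100.0, 100.3]
new_batches = [100.1, 99.6, 101.9]

lower, center, upper = individuals_chart_limits(baseline)
flagged = out_of_control(new_batches, baseline)
print(f"limits: {lower:.2f} to {upper:.2f}, flagged: {flagged}")
```

A real monitoring program would typically add run rules and capability indices; this sketch only shows the basic limit calculation.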

Experimental Applications and Case Studies

Integrating Omics Technologies into Regulatory Frameworks

The convergence of international standards is particularly evident in the emerging field of omics technologies (transcriptomics, metabolomics, proteomics) for chemical safety assessment. A 2025 review highlighted the critical role of standards in facilitating the uptake of these New Approach Methodologies (NAMs) into regulatory testing [18].

Experimental Protocol: Transcriptomics-Based In Vitro Method

- Experimental Design: Determine number of biological and technical replicates using statistical power analysis.

- Test System Exposure: Expose in vitro system to test chemical following OECD Guidance on Good In Vitro Method Practices (GIVIMP).

- Sample Collection: Preserve biomolecular profiles (e.g., by immediate cell washing and freezing).

- Sample Preparation: Extract and purify RNA using standardized protocols.

- Data Generation: Perform RNA sequencing (RNA-seq) following established library preparation and sequencing standards.

- Data Processing & Analysis: Apply bioinformatics pipelines for quality control, alignment, and differential expression analysis.

- Data Interpretation: Use standardized approaches to derive toxicological conclusions.

- Reporting: Document all steps according to relevant reporting standards [18].
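The first step of the protocol, choosing replicate numbers by power analysis, can be sketched as follows. This is a simplified illustration, not the procedure from the cited review: it assumes a two-sided comparison of two groups, a standardized effect size (Cohen's d), and the normal approximation, which slightly underestimates the replicate number when groups are small. The function name and default values are hypothetical.

```python
import math
from statistics import NormalDist


def replicates_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate replicates per group for a two-sided, two-group comparison.

    Uses the normal approximation n = 2 * ((z_(1-alpha/2) + z_power) / d)^2.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    n = 2 * ((z_alpha + z_power) / effect_size) ** 2
    return math.ceil(n)


# Illustrative effect sizes only; real values come from pilot data.
for d in (0.5, 1.0, 2.0):
    print(d, replicates_per_group(d))
```

Omics studies test many features at once, so in practice the significance level would usually be tightened or a false-discovery-rate approach used, which increases the required replicate number.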

This workflow demonstrates how existing documentary standards can be leveraged across different stages of omics-based methods. For transcriptomics using RNA-seq, standards have been produced by formal standardization bodies like ISO, while for metabolomics using mass spectrometry, best practices have primarily been driven by the scientific community [18].

Case Study: GARDskin Test Method

The Genomic Allergen Rapid Detection (GARD) test method for skin sensitization assessment illustrates the multi-stage pathway for regulatory acceptance of novel methodologies. GARD distinguishes between skin sensitizers and non-sensitizers through measurement of gene expression in a cell-based test system [18].

Key Milestones:

- 2011: Pre-submission to EURL ECVAM (Tracking System for Alternative methods towards Regulatory acceptance - TSAR)

- 2019: Inter-laboratory validation study published

- 2021: Formal scientific peer review by ESAC (EURL ECVAM Scientific Advisory Committee)

- 2022: Incorporated into OECD TG 442E on in vitro skin sensitization [18]

This case study reveals that the path to regulatory acceptance of NAMs is a multistage, technically complex, resource-intensive endeavor requiring rigorous validation to meet MAD requirements. The entire process from pre-submission to OECD adoption spanned over a decade, highlighting both the meticulous nature of regulatory acceptance and the critical importance of standardization throughout the process [18].

Figure 2: Regulatory Acceptance Pathway for New Approach Methodologies. This workflow illustrates the multi-stage process from initial development to formal adoption into OECD Test Guidelines, as demonstrated by the GARDskin case study.

Research Reagent Solutions for Omics-Based Methods

The successful implementation of standardized omics methods requires specific research reagents and materials. The following table details essential solutions for conducting transcriptomics and metabolomics studies aligned with regulatory standards.

Table 2: Essential Research Reagents for Omics-Based Regulatory Studies

| Reagent/Material | Function | Application in Standardized Methods |

|---|---|---|

| Reference Materials | Characterize analytical repeatability and reproducibility within and across laboratories | Essential for demonstrating method reliability as required by OECD TGs |

| RNA Extraction Kits | Isolate and purify high-quality RNA from in vitro test systems | Must follow standardized protocols for sample preparation (e.g., ISO standards) |

| Library Preparation Kits | Prepare RNA-seq libraries for next-generation sequencing | Should incorporate unique molecular identifiers to control for technical variability |

| Mass Spectrometry Standards | Calibrate instruments and enable metabolite quantification | Critical for achieving reproducible metabolomics data across laboratories |

| Quality Control Materials | Monitor performance of analytical platforms over time | Required for maintaining longitudinal data quality in compliance with GLP |

Strategic Implementation for Global Drug Development

Navigating Divergent Regulatory Expectations

For researchers and drug development professionals, successfully navigating the complex landscape of international standards requires strategic planning from the earliest stages of program development. The divergences between regulatory frameworks, particularly between FDA and EMA, necessitate thoughtful approaches.

Strategic Considerations:

- Early Assessment: Determine target markets during preclinical development to shape validation strategies accordingly.

- Documentation Strategy: Prepare a Validation Master Plan even though the FDA does not mandate one, as it satisfies EMA requirements and provides comprehensive documentation.

- Batch Justification: For the EU, develop scientifically rigorous risk-based justifications for the number of validation batches rather than defaulting to three.

- Statistical Planning: Incorporate robust statistical approaches for Continued Process Verification that will satisfy FDA's emphasis while meeting EMA's flexible expectations [17] [20].

The EU's explicit connection between development approach and permitted validation pathway creates a tangible regulatory incentive for adopting enhanced, science-based development principles. Companies targeting the EU market should consider investing in the enhanced development approach outlined in ICH Q8, as this opens the door to Continuous Process Verification, potentially reducing long-term validation burdens [17].

Leveraging International Harmonization

Despite areas of divergence, significant convergence has been achieved through international harmonization efforts. The universal adoption of the lifecycle approach to validation represents a fundamental shift in regulatory philosophy, emphasizing continuous verification over one-time validation events [17].

The foundational role of ICH guidelines (Q8, Q9, Q10) across both FDA and EMA frameworks provides a common language and set of principles that researchers can leverage [17]. Furthermore, the OECD's MAD system offers a powerful mechanism to avoid redundant testing, underscoring the value of adhering to OECD Test Guidelines and GLP principles for nonclinical safety studies [16] [18].

As noted in a 2025 analysis, "Modern process validation for pharmaceutical products has undergone a significant global transformation, moving from a retrospective, compliance-driven exercise to a proactive, science- and risk-based lifecycle model" [17]. This transformation, embodied in the evolving guidelines of OECD, ICH, and regional regulators, provides a more efficient and scientifically robust pathway for validating the safety and efficacy of pharmaceuticals and chemicals worldwide.

The intricate ecosystem of international standards—spanning OECD, ICH, and regional regulators—creates both challenges and opportunities for researchers validating material properties through in vitro and in vivo studies. While divergences exist, particularly in implementation details between FDA and EMA, the overarching trend is toward greater harmonization grounded in scientific understanding and risk-based approaches.

The successful 21st-century researcher must therefore be not only a scientific expert but also a strategic navigator of this regulatory landscape. By understanding the distinct roles, requirements, and interrelationships of these standard-setting bodies, and by implementing robust, standardized experimental protocols from the earliest research stages, professionals can design development programs that efficiently meet global regulatory expectations while advancing the shared goals of product quality, patient safety, and environmental protection.

Limitations of Traditional 2D Monolayer Cultures and the Need for Advanced Models

For decades, two-dimensional (2D) monolayer cultures have been the standard workhorse in biological research, drug discovery, and toxicity testing. Grown on flat, rigid plastic substrates, these models are valued for their cost-effectiveness, simplicity, and high reproducibility [21]. However, a growing body of evidence underscores a critical weakness: their frequent failure to accurately predict drug efficacy and toxicity in living organisms (in vivo) [21]. This limitation is a significant contributor to the high attrition rate in drug development, where at least 75% of novel drugs that demonstrate efficacy during preclinical testing fail in clinical trials [21]. The primary reason for this discrepancy is the inability of 2D models to replicate the intricate tissue microenvironment found in vivo, where cells are surrounded by an extracellular matrix (ECM) and engage in complex three-dimensional interactions with neighboring cells [21]. This article will objectively compare the performance of traditional 2D cultures with advanced three-dimensional (3D) models, framing the discussion within the broader thesis of validating material properties and biological responses through integrated in vitro and in vivo studies.

Core Limitations: A Quantitative and Qualitative Comparison

The table below provides a structured, objective comparison of the core characteristics of 2D and 3D culture models, highlighting the fundamental limitations of the traditional approach.

Table 1: Fundamental Comparison of 2D and 3D Cell Culture Models

| Feature | Traditional 2D Models | Advanced 3D Models |

|---|---|---|

| Cell Morphology & Polarization | Flat, elongated; partial polarization due to forced apical-basal polarity on a single surface [21]. | In vivo-like morphology; allows for correct cell polarization and architecture [22]. |

| Cell-Cell & Cell-ECM Interactions | Limited to a single plane; lack physiologically relevant interactions [21]. | Physiologically high levels of interaction; strong cell-cell adhesion and cell-ECM engagement [21]. |

| Microenvironment | Homogeneous exposure to nutrients, oxygen, and drugs; no gradients formed [21]. | Recapitulates physiological gradients of oxygen, nutrients, pH, and metabolic waste [21]. |

| Predictivity of Drug Effects | Often fails to accurately predict in vivo efficacy and toxicity [21]. | Better predictors of clinical outcomes; more accurately reflect drug responses in vivo [23]. |

| Phenotypic & Gene Expression | Altered phenotype and gene expression due to non-physiological growth conditions [22]. | Preserves native tissue-specific functions and gene expression profiles [22]. |

| Throughput & Cost | High reproducibility and performance; ease-of-use; low cost [21]. | More expensive and time-consuming; culture procedures are more complicated [21]. |

These limitations are not merely theoretical, and they extend beyond 2D cultures to animal models. For instance, in neurological research, genetically engineered mice expressing human microcephaly-related gene mutations have failed to recapitulate the severely reduced brain size seen in human patients, highlighting the translatability gap between animal models and human disease [24]. Furthermore, numerous prospective drugs for stroke, traumatic brain injury, and Alzheimer's disease that were effective in animal experiments failed in clinical trials, a failure attributed in part to the inability of existing models to adequately represent human neurological disorders [24]. The advent of human induced pluripotent stem cells (iPSCs) has opened new avenues, but their potential is best realized when they are differentiated in 3D environments that better mimic the complex architecture of the human brain [24].

Experimental Validation: Data from Comparative Studies

The superior biological relevance of 3D models translates into tangible differences in experimental outcomes, particularly in drug screening. The following table summarizes key experimental findings that compare the performance of both models.

Table 2: Experimental Data Comparison in Drug Screening Applications

| Experimental Parameter | Observation in 2D Models | Observation in 3D Models | Implications for Drug Discovery |

|---|---|---|---|

| Drug Sensitivity & IC50 | Often shows higher sensitivity to chemotherapeutics; lower IC50 values [21]. | Demonstrates higher resistance; IC50 values can be several-fold higher [21]. | 3D models can identify false positives from 2D screens, preventing costly late-stage failures. |

| Tumor Microenvironment | Fails to replicate the core and periphery of tumors, including oxygen gradients [22]. | Forms nutrient/oxygen gradients; reproduces tumor physiology for immunotherapy testing [22]. | Enables study of immune cell homing, tumor cytotoxicity, and immune evasion in a more realistic setting [22]. |

| Cell Surface Area Exposure | ~50% of cell surface exposed to media and compounds [22]. | Nearly 100% of surface area in contact with other cells or matrix [22]. | Alters compound penetration and kinetics, providing a more accurate assessment of bioavailability. |

| Correlation with In Vivo Outcomes | Poor correlation for many drug candidates, contributing to high clinical failure rates [21]. | Serves as a better predictor of in vivo drug responses, improving clinical translatability [23]. | Bridges the gap between conventional cell culture and in vivo models, de-risking the pipeline [23]. |
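The IC50 shift between 2D and 3D cultures noted in the table can be quantified as a fold change. The sketch below is a simplified illustration: it estimates IC50 by log-linear interpolation between the two tested doses that bracket 50% viability, whereas a four-parameter logistic fit is the more common choice. The function name and all dose-response values are hypothetical and do not come from the cited studies.

```python
import math


def ic50_by_interpolation(concentrations, viabilities):
    """Estimate IC50 from ascending doses and viabilities (% of control).

    Interpolates linearly in log10(dose) between the two adjacent doses
    whose viabilities bracket 50%.
    """
    points = list(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            fraction = (v_lo - 50.0) / (v_lo - v_hi)
            log_lo, log_hi = math.log10(c_lo), math.log10(c_hi)
            return 10 ** (log_lo + fraction * (log_hi - log_lo))
    raise ValueError("50% viability is not bracketed by the tested doses")


# Hypothetical data: same compound, same doses (micromolar), two culture formats.
doses_um = [0.1, 1.0, 10.0, 100.0]
viability_2d = [90.0, 60.0, 40.0, 10.0]
viability_3d = [98.0, 85.0, 60.0, 40.0]

ic50_2d = ic50_by_interpolation(doses_um, viability_2d)
ic50_3d = ic50_by_interpolation(doses_um, viability_3d)
fold_shift = ic50_3d / ic50_2d
print(f"IC50 2D: {ic50_2d:.2f} uM, IC50 3D: {ic50_3d:.2f} uM, fold: {fold_shift:.1f}")
```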

Methodologies: Protocols for Model Generation and Validation

Protocol 1: Generating Neural Stem Cells (NSCs) from iPSCs in 2D

This protocol is foundational for neurological disease modeling [24].

- iPSC Culture: Maintain human iPSCs as uniform flat colonies on a suitable substrate.

- Embryoid Body (EB) Formation: Detach iPSC colonies and culture them in low-attachment dishes with a chemically defined medium to form 3D aggregates called embryoid bodies, mimicking early embryogenesis.

- Neural Induction: Transfer EBs to a medium containing specific growth factors like fibroblast growth factor 2 (FGF-2) to promote the formation of neural rosettes—radial arrangements of cells that mimic the neural tube.

- NSC Expansion: Manually isolate and re-plate the neural rosettes in a monolayer culture. The resulting population, characterized by triangle-like morphology and expression of markers like SOX2 and Nestin, consists of proliferative NSCs [24].

- Differentiation: NSCs can be further differentiated into neurons, astrocytes, and oligodendrocytes by altering culture conditions.

Protocol 2: Establishing 3D Spheroid Models for High-Throughput Screening

Spheroids are simple yet powerful 3D models suitable for drug screening [21].

- Cell Preparation: Create a single-cell suspension at a predetermined concentration.

- Spheroid Formation: Seed the cells into 96-well or 384-well spheroid microplates that are coated with an Ultra-Low Attachment (ULA) surface. This coating prevents cell adhesion to the plastic, forcing cells to aggregate.

- Culture and Maturation: Centrifuge the plate to ensure all cells are gathered at the bottom of the well. Incubate the plate for 3-5 days, during which a single, multicellular spheroid will form in the center of each well.

- Drug Treatment and Analysis: Add chemical compounds or drug candidates directly to the wells. Assess spheroid viability, size, and morphology using high-content imaging and assays like ATP-based luminescence.
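The viability readout in the final step is usually expressed relative to untreated controls. As a minimal sketch, assuming raw luminescence values from an ATP-based assay with cell-free blank wells and vehicle-treated control wells on the same plate, percent viability could be computed as below. The function name and the readings are hypothetical.

```python
from statistics import mean


def percent_viability(treated_rlu, vehicle_rlu, blank_rlu):
    """Background-corrected viability of treated wells as % of vehicle control.

    All inputs are raw luminescence readings (relative light units).
    """
    blank = mean(blank_rlu)
    vehicle_signal = mean(vehicle_rlu) - blank
    if vehicle_signal <= 0:
        raise ValueError("vehicle control signal must exceed the blank")
    return [100.0 * (rlu - blank) / vehicle_signal for rlu in treated_rlu]


# Hypothetical plate readings, for illustration only.
blank_wells = [100, 100]
vehicle_wells = [10100, 10100]
treated_wells = [5100, 2600]

viability = percent_viability(treated_wells, vehicle_wells, blank_wells)
print(viability)
```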

Validation Workflow: Integrating In Vitro and In Vivo Data

The path to validating a new model or material involves a structured framework to establish its reliability and relevance for a defined purpose [25]. The following diagram illustrates this integrated validation workflow.

Diagram 1: Model Validation Workflow. This diagram outlines the sequential process for validating new test methods, from analytical rigor to regulatory application, as guided by frameworks from ICCVAM and IOM [25].
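One common way to express the relevance of a new method is its concordance with in vivo reference outcomes. The sketch below assumes binary classifications (for example, toxic versus non-toxic) and computes sensitivity, specificity, and accuracy; it is an illustration, not a procedure taken from the ICCVAM or IOM frameworks. The function name and the example calls are hypothetical.

```python
def concordance_metrics(predicted, reference):
    """Compare binary in vitro calls against in vivo reference outcomes.

    Returns a dict with sensitivity, specificity and accuracy; a metric is
    None when its denominator is zero.
    """
    if len(predicted) != len(reference) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    pairs = list(zip(predicted, reference))
    tp = sum(1 for p, r in pairs if p and r)
    tn = sum(1 for p, r in pairs if not p and not r)
    fp = sum(1 for p, r in pairs if p and not r)
    fn = sum(1 for p, r in pairs if not p and r)
    return {
        "sensitivity": tp / (tp + fn) if (tp + fn) else None,
        "specificity": tn / (tn + fp) if (tn + fp) else None,
        "accuracy": (tp + tn) / len(pairs),
    }


# Hypothetical results for ten reference chemicals (True = positive).
in_vivo = [True, True, True, True, False, False, False, False, False, False]
in_vitro = [True, True, True, False, False, False, False, False, False, True]

metrics = concordance_metrics(in_vitro, in_vivo)
print(metrics)
```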

Advanced 3D Model Technologies: Moving Beyond the Monolayer

Several biofabrication technologies have been developed to create more physiologically relevant 3D models [21]. The choice of technology depends on the research question, required throughput, and complexity.

- Scaffold-Based Models (Hydrogels): Natural (e.g., Collagen, Matrigel, fibrin) or synthetic hydrogels are used to encapsulate cells, providing an ECM-like 3D structure that supports physiological cell functions and modulates drug responses [21].

- Scaffold-Free Models (Spheroids & Organoids): These are self-assembled aggregates of cells. Spheroids are often used for cancer research, while organoids are more complex, self-organizing structures that can mimic specific organ features [24] [23].

- Bioprinting: This technology uses automated printers to deposit cells, biomaterials, and growth factors (bioinks) in a precise, layer-by-layer manner to create complex, heterocellular 3D tissue constructs [26].

- Organ-on-a-Chip (OOAC): These are microfluidic devices that culture living cells in continuously perfused, micrometer-sized chambers to simulate the activities, mechanics, and physiological responses of entire organs and organ systems [23].

The Scientist's Toolkit: Essential Reagents for 3D Research

Table 3: Key Research Reagent Solutions for 3D Cell Culture

| Reagent / Material | Function and Application in Advanced Models |

|---|---|

| Matrigel Matrix | A natural, ECM-based hydrogel derived from mouse sarcoma. It is the "gold standard" for providing a biologically active scaffold that supports complex 3D growth and differentiation, such as in organoid cultures [22]. |

| Ultra-Low Attachment (ULA) Plates | Microplates with a covalently bonded hydrogel coating that inhibits cell attachment. This forces cells to aggregate and form spheroids in a high-throughput manner, ideal for drug screening [22]. |

| Induced Pluripotent Stem Cells (iPSCs) | Somatic cells (e.g., from skin or blood) reprogrammed to an embryonic-like state. They are the foundational cell source for generating patient-specific neurons, glial cells, and complex organoids for disease modeling [24]. |

| EDC/NHS Crosslinker | A chemical crosslinking system used to modify and stabilize natural polymer scaffolds (e.g., collagen). It enhances the mechanical integrity and degradation profile of biomaterial scaffolds for in vivo implantation [27]. |

| Specialized Bioreactors | Devices like Rotating Wall Vessel (RWV) bioreactors that create a low-shear, simulated microgravity environment. This promotes the formation of large, complex 3D tissue aggregates that are difficult to achieve in static culture [23]. |

The evidence overwhelmingly indicates that traditional 2D monolayer cultures, while historically indispensable, possess profound limitations in their ability to model human physiology and predict therapeutic outcomes. Their two-dimensional nature, lack of a tissue-specific microenvironment, and altered cellular phenotypes contribute directly to the high failure rates in drug development. The scientific community is therefore increasingly adopting advanced 3D models—including spheroids, organoids, and bioprinted tissues—that bridge the critical gap between conventional cell culture and in vivo reality. Framing the development and use of these advanced models within a rigorous validation framework, which integrates both in vitro and in vivo data, is essential for improving their predictive power and gaining regulatory acceptance. This paradigm shift is not merely a technical improvement but a necessary evolution to enhance the efficacy and safety of future therapeutics.

The clinical success of any implantable biomaterial—from orthopedic screws to drug-eluting scaffolds—is fundamentally governed by its interaction with the host's biological environment. These interactions are not random but are directly orchestrated by specific physicochemical properties of the material itself [28]. The paradigm in regenerative medicine has shifted from merely minimizing the host's reaction to actively modulating the immune response through intelligent material design to trigger and control tissue regeneration [29]. Achieving this requires a deep understanding of the cause-and-effect relationships between a material's properties and the biological cascades they initiate. This guide provides a comparative analysis of key material properties—surface, mechanical, and chemical characteristics—and their documented influence on biological responses, framing this discussion within the critical context of validating these relationships through robust in vitro and in vivo experimental models.

Comparative Analysis of Key Material Properties and Biological Responses

The following section synthesizes experimental data from published literature to compare how specific material properties influence cellular and tissue-level outcomes. The tables below summarize these relationships, providing a reference for researchers to anticipate biological responses based on material design choices.

Table 1: Influence of Surface and Mechanical Properties on Biological Responses

| Property Category | Specific Parameter | Experimental Data & Observed Biological Response | Reported Model (In Vitro/In Vivo) |

|---|---|---|---|

| Surface Properties | Topography & Roughness (Rq) | • Rq ~5 nm (Smooth): Poor cell adhesion [29]. • Rq ~225 nm (CHCl3 etched): Significant increase in fibroblast adhesion and proliferation; 2-fold upregulation of TGF-β1, indicating pro-regenerative signaling [29]. • Micro-porous structures (0.5-20 μm): Enhanced cell interlocking and homogeneous tissue layer formation [29]. | In vitro co-culture (fibroblasts/macrophages) [29]. |

| | Wettability (Contact Angle) | • Hydrophilic (40°-70°): Promoted more homogeneous cell layer formation; associated with M2-like wound healing cytokine profile (e.g., TGF-β1) [29]. • Hydrophobic (e.g., 158.6°): Created cell-repellent surfaces; minimal cell spread observed [30]. | In vitro cell culture [29] [30]. |

| Mechanical Properties | Stiffness | • Optimized Stiffness: Materials with mechanical properties matching the target tissue support correct cell differentiation and prevent adverse fibrotic reactions [28]. • Extreme Values: High stiffness can lead to stress shielding in bone applications, while very low stiffness may not provide necessary structural support. | Reviews of in vivo outcomes [28] [31]. |

| | Degradation Rate | • Controlled, matching tissue regeneration: Supports constructive remodeling and M2 macrophage polarization [32]. • Rapid or no degradation: Can lead to excessive inflammation, foreign body reaction, or fibrous encapsulation [33] [32]. | Rodent skeletal muscle and abdominal wall defect models [32]. |

Table 2: Influence of Chemical and Biological Properties on Biological Responses

| Property Category | Specific Parameter | Experimental Data & Observed Biological Response | Reported Model (In Vitro/In Vivo) |
|---|---|---|---|
| Chemical Properties | Surface Chemistry & Functional Groups | • High oxygen content (from Ar/O₂ plasma): Increased hydrophilicity and TGF-β1 production (pro-healing) [29]. • Introduction of C-F bonds (from CHF₃ plasma): Created hydrophobic surfaces, decreased TGF-β1 production in fibroblast cultures [29]. | In vitro co-culture (fibroblasts/macrophages) [29]. |
| Chemical Properties | Material Composition | • Synthetic polymers (e.g., PCL, PEOT/PBT): Can be tailored for mechanical properties; surface modification is often crucial for bioactivity [29]. • Natural polymers (e.g., ECM, chitosan): ECM scaffolds demonstrated constructive remodeling and M2 macrophage presence; chitosan showed immunomodulatory effects via IL-10 and NF-κB suppression [34] [32]. | Rodent skeletal muscle and abdominal wall models [32]; in vitro macrophage assays [34]. |
| Biological Properties | Bioactivity & Innate Motifs | • Presence of bioactive motifs (e.g., in collagen, silk): Enhances cell adhesion, signaling, and tissue-specific regeneration [34]. • Decellularized ECM (vs. crosslinked ECM): Non-crosslinked ECM promoted constructive remodeling, while crosslinked versions triggered a foreign body reaction and dominant M1 macrophage response [32]. | In vivo rodent implantation; in vitro human macrophage model [32]. |
| Biological Properties | Drug Delivery Functionality | • Laser-modified surfaces (Grid/Line patterns): Showed increased prednisolone (PDS) retention and controlled release. The released PDS maintained its anti-inflammatory effect, reducing M1 macrophage cytokines [30]. | In vitro drug release and macrophage culture [30]. |

Experimental Protocols for Validating Biomaterial Responses

To generate comparative data as summarized above, standardized and rigorous experimental protocols are essential. Below are detailed methodologies for key assays cited in the literature, providing a template for researchers to validate material properties.

Protocol 1: In Vitro Macrophage Immunomodulation Assay

This protocol, adapted from a study predicting in vivo responses, uses human macrophages to profile the immunomodulatory potential of biomaterials [32].

- Objective: To characterize the dynamic inflammatory response of human macrophages to biomaterials and correlate it with in vivo remodeling outcomes.

- Materials:

  - Research Reagents: Human monocytes isolated from peripheral blood; Macrophage colony-stimulating factor (M-CSF) for differentiation; Cell culture media (RPMI-1640 + 10% FBS); Biomaterial test specimens (sterilized, 2-cm diameter discs); Multiplex cytokine ELISA kits (e.g., for IL-1β, IL-6, IL-10, TNF-α, TGF-β1); RNA extraction kit; reagents for flow cytometry (antibodies for CD68, CD80, CD206).

- Methodology:

  - Macrophage Differentiation: Isolate CD14+ monocytes from human peripheral blood and differentiate them into macrophages by culturing with 50 ng/mL M-CSF for 7 days.

  - Material Exposure: Seed the matured macrophages onto the test biomaterial discs or a tissue culture plastic control. Maintain cultures for a time course (e.g., 1, 3, 7 days).

  - Sample Collection: At each time point, collect:

    - Supernatant: For cytokine secretion analysis via ELISA.

    - Cells: For RNA extraction (qPCR analysis of M1/M2 markers) and flow cytometry for surface marker expression.

  - Data Analysis: Use multivariate in silico analysis techniques, such as Principal Component Analysis (PCA) and Dynamic Network Analysis (DyNA), to identify patterns in the high-dimensional dataset (cytokines, genes) that distinguish material responses.

  - Validation: The in vitro macrophage response profiles are then associated with the in vivo host response (e.g., macrophage polarization and tissue remodeling) in a corresponding animal model [32].
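The PCA step of the data analysis above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the analysis pipeline of the cited study [32]: the function name `pca_scores`, the material labels, and all cytokine values are hypothetical, each feature is standardized before decomposition, and DyNA is not shown.

```python
import numpy as np

def pca_scores(X, n_components=2):
    """Project samples onto their first principal components.

    X: (n_samples, n_features) array, e.g. rows = cultures
    (material x time point), columns = cytokine concentrations.
    Returns (scores, explained_variance_ratio).
    """
    X = np.asarray(X, dtype=float)
    # Standardize each feature so cytokines measured on different
    # concentration scales contribute equally.
    mean = X.mean(axis=0)
    std = X.std(axis=0, ddof=1)
    std[std == 0] = 1.0  # guard against constant features
    Z = (X - mean) / std
    # The SVD of the standardized matrix yields the principal axes (rows of Vt).
    U, S, Vt = np.linalg.svd(Z, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    explained = (S ** 2) / np.sum(S ** 2)
    return scores, explained[:n_components]

# Hypothetical cytokine panel (pg/mL); values are invented for illustration.
cytokines = ["IL-1b", "IL-6", "TNF-a", "IL-10", "TGF-b1"]
X = np.array([
    [120.0, 300.0, 210.0, 40.0, 55.0],   # material A, day 1
    [110.0, 280.0, 190.0, 45.0, 60.0],   # material A, day 3
    [30.0, 90.0, 60.0, 150.0, 220.0],    # material B, day 1
    [25.0, 80.0, 50.0, 170.0, 240.0],    # material B, day 3
])

scores, explained = pca_scores(X)
print(scores.round(2))     # one row of PC coordinates per culture
print(explained.round(3))  # fraction of variance captured by each PC
```

In a plot of the first two score columns, materials that drive similar macrophage responses would be expected to cluster together, which is the kind of pattern the protocol uses to distinguish material responses.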

Protocol 2: In Vivo Rodent Abdominal Wall Implantation Model

This model is a standard for evaluating the host response and functional integration of biomaterials intended for soft tissue repair [32].

- Objective: To assess the host remodeling response and macrophage polarization to implanted biomaterials in a relevant in vivo environment.

- Materials:

  - Research Reagents: Female Sprague-Dawley rats (250–300 g); Test and control biomaterial coupons (1x1 cm², sterilized); Isoflurane anesthetic; Sutures (4-0 Prolene for fixation, 4-0 Vicryl for skin); Neutral buffered formalin for fixation; Paraffin for embedding; Hematoxylin and Eosin (H&E) stain; Antibodies for immunofluorescence (e.g., CCR7 for M1, CD206 for M2 macrophages).

- Methodology:

  - Surgical Implantation: Create a 1x1 cm² partial-thickness defect in the abdominal wall muscles (external and internal oblique), leaving the transversalis fascia intact.

  - Graft Placement: Inlay the test material coupon into the defect and fix it to the adjacent muscle at the four corners with non-degradable sutures.

  - Endpoint Analysis: Euthanize animals at pre-determined time points (e.g., 14 and 35 days).

  - Histological Remodeling Score: Process explants for H&E staining. Score sections semiquantitatively (0-3) for each of five criteria: foreign body giant cell formation, degradation, connective tissue organization, encapsulation, and muscle ingrowth. A higher total score indicates more favorable remodeling.

  - Macrophage Phenotyping: Perform immunofluorescent staining on tissue sections for M1 (CCR7) and M2 (CD206) markers. Quantify the ratio and spatial distribution of macrophage phenotypes at the implant interface.
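The two quantitative endpoints above, the summed remodeling score and the macrophage phenotype ratio, can be tallied as in the following sketch. It assumes that each of the five criteria has already been graded 0-3 such that a higher grade is more favorable (for example, fewer giant cells receives a higher grade), giving a maximum total of 15. The names `CRITERIA`, `remodeling_score`, and `m2_m1_ratio`, and the example counts, are hypothetical and not taken from the cited study [32].

```python
# The five semiquantitative criteria named in the protocol.
CRITERIA = (
    "foreign_body_giant_cells",
    "degradation",
    "connective_tissue_organization",
    "encapsulation",
    "muscle_ingrowth",
)

def remodeling_score(section_scores):
    """Sum the five 0-3 criterion grades for one histological section.

    Assumes each grade is oriented so that higher = more favorable.
    Raises ValueError if a criterion is missing or out of range.
    """
    missing = [c for c in CRITERIA if c not in section_scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    total = 0
    for criterion in CRITERIA:
        grade = section_scores[criterion]
        if not 0 <= grade <= 3:
            raise ValueError(f"{criterion} grade {grade} is outside 0-3")
        total += grade
    return total

def m2_m1_ratio(cd206_count, ccr7_count):
    """Ratio of CD206+ (M2) to CCR7+ (M1) cells counted at the interface."""
    if cd206_count < 0 or ccr7_count < 0:
        raise ValueError("cell counts must be non-negative")
    if ccr7_count == 0:
        # Undefined when no cells were counted; unbounded when only M2 cells exist.
        return float("nan") if cd206_count == 0 else float("inf")
    return cd206_count / ccr7_count

# Hypothetical grades and cell counts for a single explant section.
example = {
    "foreign_body_giant_cells": 3,
    "degradation": 2,
    "connective_tissue_organization": 2,
    "encapsulation": 3,
    "muscle_ingrowth": 1,
}
print(remodeling_score(example))
print(m2_m1_ratio(cd206_count=30, ccr7_count=10))
```

Validating the grade range at entry catches transcription errors before scores from multiple sections and time points are pooled.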

Protocol 3: Surface Modification and Cell-Material Interaction Screening

This in vitro protocol systematically correlates surface properties with cell activity to predict the fate of regenerated tissue [29].

- Objective: To decipher the effect of surface properties (wettability, topography, chemistry) on cellular behavior and ECM secretion.

- Materials:

  - Research Reagents: Polymeric materials (e.g., PCL, PEOT/PBT); Solvents for etching (e.g., CHCl₃, NaOH); Gas plasma systems (Ar, O₂, CHF₃); Atomic Force Microscopy (AFM) equipment; Contact Angle Goniometer; X-ray Photoelectron Spectroscopy (XPS) system; Cell culture of fibroblasts and macrophages; ELISA kits for cytokines (IL-1β, IL-6, TGF-β1, IL-10) and ECM proteins (Collagen, Elastin).

- Methodology:

  - Surface Modification: Create a library of surface properties by treating smooth polymer rods with various methods (gas plasma, solvent etching) and varying exposure times/concentrations.

  - Material Characterization: For each modification, quantify:

    - Topography/Roughness: via AFM.

    - Wettability: via contact angle goniometry.

    - Surface Chemistry: via XPS.

  - Cell Culture Screening: Culture fibroblasts and macrophages on the modified surfaces, both in mono-culture and in co-culture conditioned medium.

  - Response Measurement: Assess:

    - Cell Adhesion & Proliferation: Using microscopy and metabolic assays.

    - Soluble Factor Secretion: Measure pro/anti-inflammatory cytokines and growth factors in the medium via ELISA.

    - ECM Production: Quantify collagen and elastin synthesis.
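Two of the characterization outputs above reduce to simple calculations, sketched here. The root-mean-square roughness Rq is the standard deviation of surface heights about their mean, and wettability is commonly binned by water contact angle. The function names are hypothetical, the 90° and 150° thresholds are widely used conventions rather than values from the cited studies, and real AFM data would normally be plane-leveled before Rq is computed (not shown).

```python
import numpy as np

def rms_roughness(height_map_nm):
    """Root-mean-square roughness Rq of an AFM height map.

    Rq = sqrt(mean((z - mean(z))**2)), returned in the units of the input
    (nm here). Assumes the map has already been leveled.
    """
    z = np.asarray(height_map_nm, dtype=float)
    return float(np.sqrt(np.mean((z - z.mean()) ** 2)))

def wettability_class(contact_angle_deg):
    """Bin a water contact angle using common convention thresholds."""
    if not 0 <= contact_angle_deg <= 180:
        raise ValueError("contact angle must lie between 0 and 180 degrees")
    if contact_angle_deg < 90:
        return "hydrophilic"
    if contact_angle_deg < 150:
        return "hydrophobic"
    return "superhydrophobic"

# Hypothetical 2x2 height map (nm) and two contact angles quoted in Table 1.
heights = [[0.0, 2.0], [2.0, 0.0]]
print(rms_roughness(heights))
print(wettability_class(55.0))
print(wettability_class(158.6))
```

Tabulating Rq and the wettability class for every surface in the library gives the property side of the property-response comparison that the screening step then fills in with cell data.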

The workflow for this integrated validation approach, from material processing to outcome analysis, is depicted below.

Diagram 1: Integrated experimental workflow for validating biomaterial properties, combining in vitro screening, in silico modeling, and in vivo validation.

Signaling Pathways in Biomaterial-Host Interactions

The biological responses to biomaterials are mediated by specific biochemical signaling pathways that are triggered by material properties. Understanding these pathways is key to rational biomaterial design.

Macrophage Polarization (M1 vs. M2): The phenotype of macrophages at the implant site is a critical determinant of outcome.

- M1 (Pro-inflammatory) Pathway: Can be activated by adsorbed proteins or damage-associated molecular patterns (DAMPs) from tissue injury. This often involves NF-κB signaling, leading to the production of cytokines like IL-1β and IL-6 [34] [32].

- M2 (Pro-regenerative) Pathway: Promoted by specific material topographies and bioactive factors (e.g., from ECM). Key pathways include STAT3 and STAT6 phosphorylation, driving the expression of CD206 and the secretion of anti-inflammatory cytokines like IL-10 and TGF-β1, which promote tissue repair and angiogenesis [33] [34] [32].

Foreign Body Giant Cell (FBGC) Formation: A hallmark of the foreign body reaction to non-degradable or bioinert materials. FBGCs are formed by the fusion of macrophages, a process mediated by IL-4 and IL-13 signaling, which activates STAT6. The persistence of FBGCs is associated with the chronic release of reactive oxygen species and degradative enzymes, leading to material disintegration and failure [35].

Cell Adhesion and Mechanotransduction: The initial attachment of cells to a material surface is governed by the adsorption of proteins (e.g., fibronectin, vitronectin) and the engagement of integrin receptors. This triggers intracellular signaling cascades, including focal adhesion kinase (FAK) and Rho GTPase pathways, which regulate cytoskeletal organization, cell spreading, and downstream gene expression. Surface properties like topography and stiffness directly influence these mechanotransduction pathways [28].

The pivotal role of macrophage polarization, driven by material properties, is illustrated in the following pathway diagram.

Diagram 2: Macrophage polarization pathways influenced by biomaterial properties, leading to distinct clinical outcomes.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and materials essential for conducting experiments in biomaterial-biological response validation, as cited in the featured research.

Table 3: Essential Research Reagent Solutions for Biomaterial Testing

| Reagent/Material | Function & Application in Research | Example from Literature |
|---|---|---|
| Polycaprolactone (PCL) | A synthetic, biodegradable polymer used to fabricate scaffolds for tissue engineering. Easily processable and modifiable. | Used as a base material for extruded rods; surface etching with CHCl₃ significantly improved cell adhesion and TGF-β1 secretion [29]. |
| Decellularized Extracellular Matrix (dECM) | A naturally derived biomaterial (e.g., from urinary bladder, dermis) that retains innate bioactive motifs. Serves as a gold standard for pro-regenerative scaffolds. | MatriStem (urinary bladder ECM) promoted constructive remodeling and M2 macrophage polarization in vivo, unlike its crosslinked version [32]. |
| Chitosan | A natural polysaccharide with inherent immunomodulatory properties. Used in scaffolds and drug delivery. | Shown to induce IL-10 secretion and suppress colitis in animal models via modulation of NF-κB signaling [34]. |
| Gas Plasma Systems | Equipment for surface modification (e.g., with Ar, O₂, CHF₃) to alter topography, chemistry, and wettability without changing bulk properties. | Used to create defined hydrophilic (Ar, O₂) and hydrophobic (CHF₃) surfaces on polymers to study their effect on cell activity [29]. |
| Femtosecond Laser Systems | High-precision equipment for surface patterning of biomaterials to create micro/nano-topographies for controlling cell behavior and drug loading. | A novel High Focus Laser Scanning (HFLS) system created "Line" (hydrophilic) and "Grid" (hydrophobic) patterns on polystyrene, controlling cell spread and drug release [30]. |
| Cytokine ELISA Kits | Essential reagents for quantifying the secretion of soluble factors (e.g., IL-1β, IL-6, IL-10, TGF-β1) in cell culture supernatants to profile immune responses. | Used to measure macrophage cytokine secretion profiles in response to different biomaterials for subsequent PCA and DyNA [32] [29]. |
| Antibodies for Flow Cytometry/IF | Tools for identifying and quantifying specific cell types and phenotypes (e.g., CCR7 for M1 macrophages, CD206 for M2 macrophages) in in vitro and in vivo samples. | Used in rodent implantation studies to characterize the macrophage phenotype at the implant site via immunofluorescence [32]. |

The journey from a novel biomaterial concept to a clinically successful implant is guided by a rigorous understanding of structure-function-response relationships. As this guide has detailed, properties such as surface roughness, wettability, chemical composition, and degradation profile are not mere material specifications but are direct levers controlling critical biological processes like macrophage polarization, foreign body reaction, and tissue integration. The future of biomaterial development lies in the strategic manipulation of these properties to create "instructive" materials that actively guide the host response toward regeneration. This endeavor is critically dependent on integrated validation strategies that combine predictive in vitro and in silico models with definitive in vivo studies, ensuring that safety and efficacy are built into the material design from the outset.

Advanced Testing Models: From 3D Cultures to In Vivo Sensor Technologies