Validating Machine Learning for XRD Phase Identification: A Guide for Biomedical Researchers

Abstract

The integration of machine learning (ML) with X-ray diffraction (XRD) is transforming phase identification in materials science and drug development. This article provides a comprehensive guide for researchers and pharmaceutical professionals on validating these powerful ML-driven methods. We explore the foundational principles of XRD and the unique capabilities of ML, detail specific algorithms like convolutional neural networks (CNNs) and their application to biomedical phantoms and polymorph screening, address critical troubleshooting and data quality requirements, and finally, present a rigorous validation framework. This framework compares ML performance against traditional rule-based methods using metrics such as classification accuracy and area under the curve (AUC), ensuring these new tools meet the stringent standards required for research and regulatory acceptance in clinical applications.

The Foundation: Understanding XRD and the Machine Learning Revolution in Phase Analysis

Core Principles of X-ray Diffraction and Bragg's Law

X-ray Diffraction (XRD) is a powerful analytical technique that has been fundamental to understanding the atomic structure of crystalline materials for over a century [1]. The technique relies on the principle that when monochromatic X-rays interact with a crystalline material, they undergo constructive and destructive interference caused by the periodic arrangement of atoms within the crystal lattice [1]. This interference generates a diffraction pattern that can be recorded and analyzed to deduce structural information about the sample [1].

The theoretical foundation of XRD lies in Bragg's Law, formulated by Sir William Lawrence Bragg and his father Sir William Henry Bragg in 1913 [2] [1]. This law provides the mathematical relationship that predicts the angles at which constructive interference of X-rays occurs in a crystal lattice [1]. Bragg's Law states that constructive interference occurs when the path difference between X-rays reflected from successive crystal planes equals an integer multiple of the wavelength [3] [4] [5]. This condition is expressed by the famous equation:

$$n\lambda = 2d\sin\theta$$

Where:

- n is the order of reflection (a positive integer)

- λ is the wavelength of the incident X-rays

- d is the interplanar spacing of the crystal lattice

- θ is the angle between the incident ray and the crystal plane

The profound importance of Bragg's Law stems from its ability to connect a measurable quantity (the diffraction angle θ) with atomic-scale structural information (the interplanar spacing d) [4]. This connection enables researchers to identify crystalline phases, determine their relative abundances, and investigate microstructural features such as crystallite size and lattice strain [1]. For their pioneering work, the Braggs were awarded the Nobel Prize in Physics in 1915, making Lawrence Bragg the youngest Nobel laureate at that time [2].

Fundamental Principles of Bragg's Law

Physical Interpretation of Bragg's Law

Bragg's Law can be understood through a physical model that treats crystal structures as composed of discrete parallel planes of atoms separated by a constant distance d [2]. When X-rays interact with these atomic planes, they are scattered in all directions. However, constructive interference occurs only when the conditions of Bragg's Law are satisfied [3] [2] [5].

The derivation of Bragg's Law considers the path difference between two parallel X-ray waves scattering from adjacent crystal planes [2] [6]. As illustrated in Figure 1, this path difference is equal to 2d sinθ. When this path difference equals an integer multiple of the X-ray wavelength (nλ), the scattered waves remain in phase and produce a strong diffracted beam [2]. At other angles, destructive interference occurs, resulting in weak or no detectable signal [5].
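The geometric argument can be written out in two lines. This is a sketch under an assumed labeling that is not taken from the original figure: B is the scattering point on the lower plane, and A and C are the feet of the perpendiculars dropped from the upper-plane scattering point onto the incident and scattered rays of the lower wave.

```latex
\begin{aligned}
\Delta &= \overline{AB} + \overline{BC} = d\sin\theta + d\sin\theta = 2d\sin\theta, \\
\Delta &= n\lambda \quad\Longrightarrow\quad n\lambda = 2d\sin\theta .
\end{aligned}
```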

It is important to note that while Bragg's conceptual model describes diffraction as "reflection" from crystal planes, the actual physical process involves scattering by the electrons surrounding atoms [5]. This distinction explains why Bragg's Law represents a special case of the more general Laue diffraction theory [2] [6]. Nevertheless, the plane reflection analogy proved to be a tremendous simplification that made XRD accessible for practical structure determination [5].

Applications of Bragg's Law in Materials Characterization

Bragg's Law enables two primary applications in materials characterization [4] [6]:

Crystal Structure Determination: In XRD analysis, the wavelength λ is known, and measurements are made of the incident angles (θ) at which constructive interference occurs [4]. Solving Bragg's Equation yields the d-spacings between crystal lattice planes, which serve as a unique fingerprint for crystal identification [4]. Crystals with high symmetry (e.g., cubic systems) tend to produce relatively few diffraction peaks, while those with low symmetry (triclinic or monoclinic systems) typically generate numerous peaks [4].

Elemental Analysis: In techniques like X-ray fluorescence spectroscopy (XRF) or Wavelength Dispersive Spectrometry (WDS), crystals of known d-spacings are used as analyzing crystals [4] [6]. Since each element produces X-rays of characteristic wavelengths, positioning the crystal at angles satisfying Bragg's Law for specific wavelengths enables detection and quantification of elements of interest [4] [6].
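Both applications reduce to solving the same equation for a different unknown. The following minimal sketch shows the two directions; the function names and the default Cu Kα1 wavelength of 1.5406 Å are assumptions chosen for illustration, not values prescribed by the cited sources.

```python
import math

CU_K_ALPHA1_ANGSTROM = 1.5406  # assumed source wavelength (Cu K-alpha1), in angstroms


def d_spacing(two_theta_deg, wavelength=CU_K_ALPHA1_ANGSTROM, order=1):
    """Structure determination: solve n*lambda = 2*d*sin(theta) for d."""
    theta = math.radians(two_theta_deg / 2.0)
    if not 0.0 < theta < math.pi / 2.0:
        raise ValueError("2-theta must lie strictly between 0 and 180 degrees")
    return order * wavelength / (2.0 * math.sin(theta))


def two_theta_for(d, wavelength=CU_K_ALPHA1_ANGSTROM, order=1):
    """Analyzing-crystal use: solve the same equation for the angle, given d."""
    ratio = order * wavelength / (2.0 * d)
    if ratio > 1.0:
        raise ValueError("no diffraction possible: n*lambda exceeds 2*d")
    return 2.0 * math.degrees(math.asin(ratio))


# Illustrative input: a peak measured at 2-theta = 28.44 degrees.
d = d_spacing(28.44)
print(f"d = {d:.3f} angstrom; back-calculated 2-theta = {two_theta_for(d):.2f} degrees")
```

The guard in `two_theta_for` reflects a physical limit: a reflection exists only when nλ ≤ 2d.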

Traditional XRD Analysis Methods: Experimental Protocols

Traditional XRD analysis has relied on well-established methodologies for data collection and interpretation. The standard workflow involves sample preparation, data acquisition, and structural analysis based on Bragg's Law.

Experimental Setup and Data Collection

Conventional XRD instrumentation typically includes [1]:

- An X-ray source (sealed tube or synchrotron radiation source)

- A collimation system to produce a parallel or divergent beam

- A goniometer for precise angular positioning of sample and detector

- A detector (e.g., scintillation counter or semiconductor detector) that records diffracted X-ray intensity as a function of angle (2θ)

The most common configuration for powdered samples is the Bragg-Brentano geometry. In its θ-2θ form the source is fixed, the sample rotates through θ, and the detector rotates through 2θ (twice the angular speed) to maintain the focusing condition; in the θ-θ form the sample is fixed and the source and detector each rotate through θ [1]. For single crystal analysis, four-circle diffractometers are employed to collect comprehensive diffraction data from multiple crystal orientations [7].

Phase Identification and Rietveld Refinement

The primary method for quantitative phase analysis in traditional XRD is Rietveld refinement [8] [7]. This approach involves:

Initial Phase Identification: Manual comparison of diffraction patterns with reference patterns from databases such as the International Centre for Diffraction Data (ICDD) or Inorganic Crystal Structure Database (ICSD) [9] [7].

Pattern Fitting: Iterative refinement of structural parameters (lattice constants, atomic positions, thermal parameters) and instrumental parameters until the calculated pattern matches the observed diffraction data [8] [7].

Quantitative Analysis: Calculation of phase fractions based on scale factors derived during the refinement process [8].

The Rietveld method can achieve high accuracy in quantitative phase analysis but requires significant expertise and is time-consuming, particularly for complex multi-phase systems or large datasets [8].
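The quantitative step can be illustrated with the widely used Hill and Howard relation, in which the weight fraction of phase p is W_p = S_p(ZMV)_p / Σ_i S_i(ZMV)_i, where S is the refined scale factor, Z the number of formula units per cell, M the formula mass, and V the unit-cell volume. The source does not state which relation [8] uses, so treat this as one common choice; the phase names and numbers below are made up for illustration.

```python
def weight_fractions(phases):
    """Hill-Howard weight fractions from refined scale factors.

    phases maps a phase name to (S, Z, M, V):
    scale factor, formula units per cell, formula mass, cell volume.
    """
    szmv = {name: s * z * m * v for name, (s, z, m, v) in phases.items()}
    total = sum(szmv.values())
    if total <= 0.0:
        raise ValueError("scale factors must give a positive total")
    return {name: value / total for name, value in szmv.items()}


# Hypothetical refinement output for a two-phase mixture (values are illustrative).
refined = {
    "phase_A": (1.2e-4, 6, 100.0, 370.0),
    "phase_B": (0.8e-4, 6, 300.0, 300.0),
}
for name, fraction in weight_fractions(refined).items():
    print(f"{name}: {100.0 * fraction:.1f} wt%")
```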

Machine Learning Approaches for XRD Analysis

The emergence of machine learning (ML) has introduced transformative approaches to XRD data analysis, particularly for handling large datasets generated by high-throughput experimentation [9] [1] [7].

ML-Driven Phase Identification and Quantification

Recent ML approaches for XRD analysis include:

- Convolutional Neural Networks (CNNs) for phase identification from diffraction patterns [10] [8]

- Non-negative Matrix Factorization (NMF) for solving phase mapping problems in combinatorial libraries [9]

- Deep Neural Networks (DNNs) for quantitative phase analysis, trained exclusively on synthetic data derived from crystallographic information files [8]

These methods can automatically extract features from XRD patterns and correlate them with specific crystal structures or phase mixtures, significantly reducing analysis time compared to traditional methods [8] [1].

Adaptive XRD Guided by Machine Learning

A particularly innovative application integrates ML directly with the diffraction experiment itself [10]. This adaptive XRD approach uses real-time pattern analysis to guide data collection:

- An initial rapid scan identifies potential phases and their confidence levels [10]

- Class Activation Maps (CAMs) highlight diagnostically important regions of the pattern [10]

- The system selectively acquires additional data in angular regions that maximize information gain [10]

- This process iterates until confidence thresholds are met [10]

This method has demonstrated improved detection of trace phases and identification of short-lived intermediate phases during in situ studies [10].

Performance Comparison: Traditional vs. ML-Based Approaches

Quantitative Analysis Accuracy

Table 1: Comparison of Quantitative Phase Analysis Performance

| Method | Typical Phase Quantification Error | Analysis Time | Multi-phase Capability | Expertise Required |

|---|---|---|---|---|

| Traditional Rietveld | 1-5% (highly dependent on analyst expertise) [8] | Hours to days | Typically ≤ 5 phases | Advanced crystallographic knowledge |

| Neural Network (Synthetic Data) | 0.5% on synthetic test sets, 6% on experimental data [8] | Seconds to minutes | Demonstrated for 4-phase systems [8] | Basic ML implementation |

| Non-negative Matrix Factorization | Varies with system complexity [9] | Minutes | Successful on 3+ phase systems [9] | Understanding of algorithm parameters |

| Adaptive XRD | Improved trace phase detection [10] | Optimized data collection | Multi-phase capable [10] | Cross-disciplinary expertise |

Throughput and Scalability

Table 2: Throughput Comparison for Different Analysis Methods

| Method | Patterns Processed per Day | Suitable for High-Throughput | Automation Potential | Large Dataset Handling |

|---|---|---|---|---|

| Manual Rietveld | 5-20 patterns [8] | Limited | Low | Impractical |

| Automated Rietveld | 50-100 patterns [8] | Moderate | Medium | Requires significant tuning |

| ML Classification | 1,000+ patterns [1] | Excellent | High | Native capability |

| Unsupervised ML | 10,000+ patterns [9] [1] | Excellent | High | Native capability |

Experimental Protocols for ML-Based XRD Analysis

Automated Phase Mapping Protocol

A recently developed automated workflow for high-throughput XRD analysis involves [9]:

Candidate Phase Identification: Collect relevant candidate phases from crystallographic databases (ICDD, ICSD), followed by elimination of duplicates and thermodynamically unstable phases based on first-principles calculations [9].

Domain Knowledge Integration: Encode crystallographic knowledge, thermodynamic data, and composition constraints into the loss function of optimization algorithms [9].

Iterative Pattern Fitting: Use simulated XRD patterns of candidate phases to fit experimental data, solving for phase fractions and peak shifts with an encoder-decoder neural network structure [9].

Solution Refinement: Prioritize "easy" samples (1-2 major phases) first to establish reliable solutions, then address complex multi-phase samples using previously determined solutions as constraints [9].

This approach has been successfully applied to experimental combinatorial libraries including V-Nb-Mn oxide, Bi-Cu-V oxide, and Li-Sr-Al oxide systems, identifying previously missed phases such as α-Mn₂V₂O₇ and β-Mn₂V₂O₇ [9].
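The core of the pattern-fitting step, finding non-negative phase amounts whose simulated patterns reproduce the measurement, can be sketched without a neural network. The example below swaps in plain projected gradient descent for the encoder-decoder described in [9] and ignores peak shifts, so it shows the idea rather than the published method. All names and the toy patterns are hypothetical.

```python
def fit_phase_fractions(candidates, observed, steps=2000):
    """Non-negative least squares by projected gradient descent.

    candidates: list of simulated patterns (one list of intensities per phase).
    observed:   measured pattern on the same grid.
    Returns phase amounts normalized to sum to 1.
    """
    n_phases, n_points = len(candidates), len(observed)
    # Safe step size: 1 / (squared Frobenius norm) bounds the curvature.
    step = 1.0 / sum(v * v for pattern in candidates for v in pattern)
    weights = [0.0] * n_phases
    for _ in range(steps):
        model = [sum(weights[k] * candidates[k][i] for k in range(n_phases))
                 for i in range(n_points)]
        residual = [m - o for m, o in zip(model, observed)]
        gradient = [sum(r * c for r, c in zip(residual, candidates[k]))
                    for k in range(n_phases)]
        # Gradient step followed by projection onto the non-negative orthant.
        weights = [max(0.0, w - step * g) for w, g in zip(weights, gradient)]
    total = sum(weights)
    return [w / total for w in weights] if total > 0.0 else weights


# Toy candidate patterns on a 4-point grid (illustrative only).
phase_a = [1.0, 0.0, 0.0, 0.5]
phase_b = [0.0, 1.0, 0.0, 0.2]
phase_c = [0.0, 0.0, 1.0, 0.0]
measured = [0.7 * a + 0.3 * b for a, b in zip(phase_a, phase_b)]
print(fit_phase_fractions([phase_a, phase_b, phase_c], measured))
```

The non-negativity projection is what lets the fit discard a candidate that is absent from the sample instead of assigning it a small negative amount.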

Neural Network Training Protocol for Quantitative Analysis

For deep learning-based quantitative phase analysis, the following protocol has demonstrated success [8]:

Synthetic Data Generation: Calculate XRD patterns from crystallographic information files, incorporating variability in lattice parameters, crystallite size, and preferred orientation [8].

Data Augmentation: Apply instrument-specific corrections including absorption phenomena and wavelength convolution to match experimental conditions [8].

Network Architecture: Implement convolutional neural networks with specifically designed loss functions (e.g., Dirichlet modeling) for proportion inference [8].

Validation: Test trained networks on both synthetic and experimental patterns, with performance benchmarks against Rietveld refinement results [8].

This approach achieved 0.5% phase quantification error on synthetic test sets and 6% error on experimental data for a four-phase system containing calcite, gibbsite, dolomite, and hematite [8].
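The synthetic data generation step can be sketched as follows. This is a deliberately crude stand-in: real pipelines compute peak positions and intensities from crystallographic information files, whereas the peak lists here are hypothetical placeholders. Peak width, a small position stretch, and random intensity scaling act only as rough proxies for crystallite size, lattice-parameter variation, and preferred orientation.

```python
import math
import random


def gaussian_pattern(peaks, grid, fwhm):
    """Sum of Gaussian peaks; peaks is a list of (position_deg, height)."""
    sigma = fwhm / (2.0 * math.sqrt(2.0 * math.log(2.0)))
    return [sum(h * math.exp(-0.5 * ((x - p) / sigma) ** 2) for p, h in peaks)
            for x in grid]


def sample_fractions(n_phases, rng, alpha=1.0):
    """Draw phase fractions from a symmetric Dirichlet via normalized gammas."""
    draws = [rng.gammavariate(alpha, 1.0) for _ in range(n_phases)]
    total = sum(draws)
    return [d / total for d in draws]


def make_example(phase_peaks, grid, rng):
    """Return (mixed pattern, phase fractions) for one training sample."""
    fractions = sample_fractions(len(phase_peaks), rng)
    mixture = [0.0] * len(grid)
    for fraction, peaks in zip(fractions, phase_peaks):
        fwhm = rng.uniform(0.1, 0.4)                # proxy for crystallite size
        stretch = 1.0 + rng.uniform(-0.002, 0.002)  # crude lattice-parameter proxy
        varied = [(p * stretch, h * rng.uniform(0.7, 1.3))  # crude texture proxy
                  for p, h in peaks]
        for i, value in enumerate(gaussian_pattern(varied, grid, fwhm)):
            mixture[i] += fraction * value
    return mixture, fractions


# Hypothetical peak lists for two phases; not real reference data.
PHASE_PEAKS = [[(20.0, 1.0), (35.0, 0.4)], [(28.0, 1.0), (47.0, 0.6)]]
GRID = [10.0 + 0.05 * i for i in range(1001)]  # 10 to 60 degrees 2-theta

pattern, fractions = make_example(PHASE_PEAKS, GRID, random.Random(0))
print(len(pattern), [round(f, 3) for f in fractions])
```

Because every sample carries its exact fractions as the label, the same generator serves both classification and proportion-regression training.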

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials and Tools for Modern XRD Research

| Item | Function | Examples/Specifications |

|---|---|---|

| Reference Crystals | Calibration and method validation | NIST standard reference materials (e.g., Si, Al₂O₃) |

| Crystallographic Databases | Phase identification reference | ICDD PDF-4+, ICSD, Crystallography Open Database [9] [7] |

| High-Throughput Sample Libraries | Accelerated materials discovery | Composition-spread thin films; 317-sample V-Nb-Mn oxide library [9] |

| Specialized Diffractometers | Data collection for specific sample types | Bragg-Brentano (powders), 4-circle (single crystals), grazing incidence (thin films) [7] |

| ML Analysis Software | Automated phase identification and quantification | XRD-AutoAnalyzer [10], AutoMapper [9], custom neural networks [8] |

| Synchrotron Access | High-resolution, time-resolved studies | Beamline facilities for in situ/operando experiments [9] [7] |

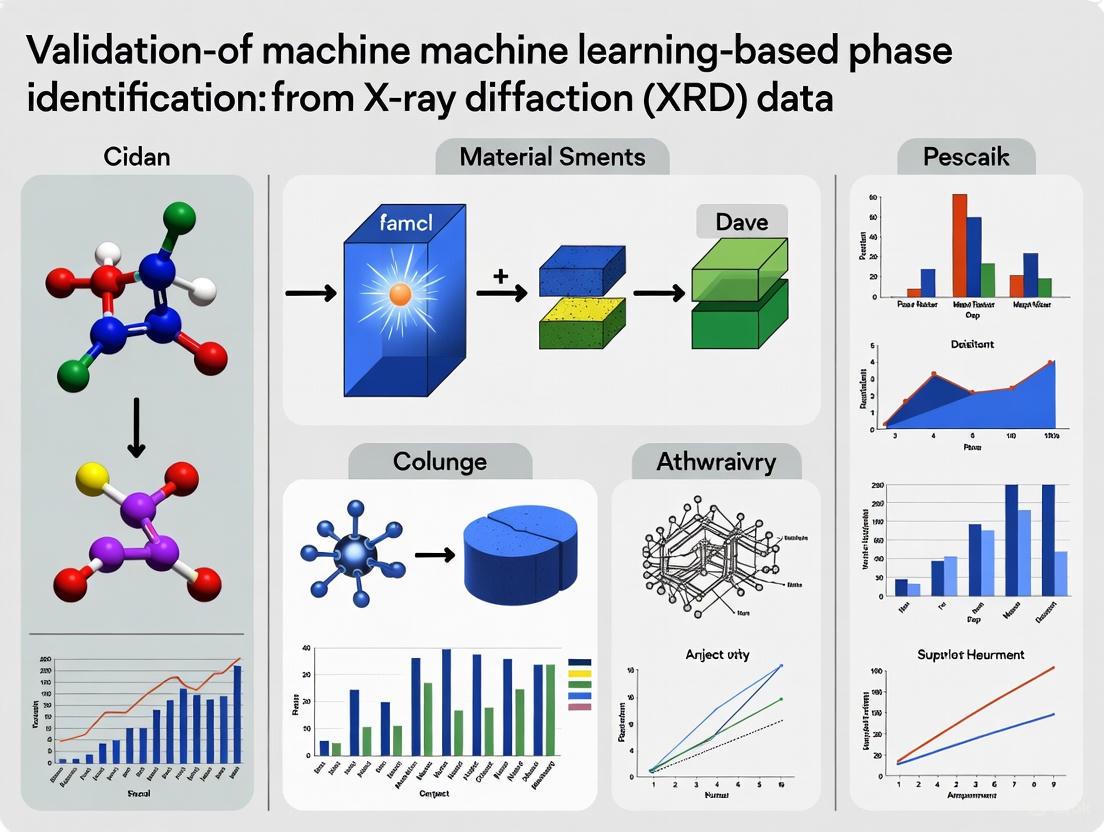

Workflow Visualization: Traditional vs. ML-Based XRD Analysis

The following diagram illustrates the key steps and decision points in traditional versus machine learning-based approaches to XRD analysis:

Traditional vs. ML-Based XRD Analysis Workflow

The integration of machine learning with X-ray diffraction represents a significant advancement in materials characterization. While Bragg's Law remains the fundamental principle underlying all XRD analysis, ML methods have demonstrated compelling advantages for certain applications:

Performance Advantages of ML Approaches:

- Speed: ML algorithms can analyze thousands of patterns in the time required for manual analysis of a single pattern [9] [1]

- Scalability: Unsupervised ML methods naturally handle large datasets from high-throughput experiments [9]

- Adaptive Optimization: ML-guided data collection improves measurement efficiency and trace phase detection [10]

Persistent Challenges:

- Interpretability: ML models often function as "black boxes" with limited physical insight [7]

- Data Requirements: Effective training requires extensive, high-quality datasets [8] [7]

- Generalizability: Models trained on specific material systems may not transfer well to unrelated chemistries [7]

The most promising path forward involves hybrid approaches that combine the physical foundation of Bragg's Law with the computational power of machine learning [7]. By encoding domain knowledge—crystallography, thermodynamics, kinetics—into ML algorithms, researchers can develop systems that leverage the strengths of both paradigms [9]. This integration is particularly valuable for autonomous materials discovery platforms, where rapid structural analysis is essential for establishing composition-structure-property relationships [9].

As ML methodologies continue to evolve and incorporate more physical constraints, they are poised to become increasingly reliable tools for XRD analysis, complementing rather than replacing the fundamental principles established by Bragg over a century ago.

Why ML? Overcoming Limitations of Traditional Rule-Based XRD Analysis

X-ray diffraction (XRD) is a cornerstone technique for determining the crystal structure and phase composition of materials, crucial for fields ranging from drug development to materials science. For decades, analysis of XRD data has relied on traditional, rule-based methods. However, the emergence of machine learning (ML) is now overcoming their fundamental limitations. This guide objectively compares the performance of these two paradigms, providing researchers with the data to validate ML-based phase identification.

Head-to-Head: Rule-Based Analysis vs. Machine Learning

The table below summarizes the core limitations of traditional methods and how specific ML approaches address them.

| Traditional Rule-Based Limitation | ML Solution | Key Experimental Evidence |

|---|---|---|

| Laborious, manual process | Full automation of phase identification and quantification. | A CNN model identified phases in multiphase inorganic compounds in less than a second, a task requiring several hours for an expert using Rietveld refinement [11]. |

| Poor scalability for high-throughput analysis. | Real-time, high-throughput analysis of large datasets and even autonomous steering of experiments [10] [12]. | ML models have enabled the interpretation of XRD patterns up to three orders of magnitude faster than traditional techniques, making real-time analysis feasible [12]. |

| Struggles with complex mixtures (overlapping peaks, trace phases). | High accuracy in identifying multiple phases and detecting trace impurities, even with peak overlap [11] [10]. | A deep-learning technique achieved nearly 100% accuracy in phase identification and 86% accuracy in three-step-phase-fraction quantification on real experimental data [11]. |

| "Black-box" process reliant on expert intuition. | Quantified Uncertainty and Interpretability via Bayesian methods and explainable AI (XAI). | A Bayesian-VGGNet model provided uncertainty estimates, while SHAP analysis quantified the importance of input features, aligning model decisions with physical principles [13]. |

| Difficulty with imperfect data (noise, preferred orientation). | Enhanced robustness through data augmentation and graph-based representations. | A GCN-based framework, which represents XRD patterns as graphs, achieved a precision of 0.990 and recall of 0.872, demonstrating robustness to overlapping peaks and noise [14]. |

Experimental Protocols and Performance Data

Protocol for Multi-Phase Mixture Identification

Objective: To automate the identification and quantification of constituent phases in a multiphase inorganic compound mixture [11].

- Dataset Construction: A large-scale synthetic dataset was generated by combinatorically mixing the simulated powder XRD patterns of 170 known inorganic compounds, resulting in 1,785,405 synthetic XRD patterns for training [11].

- Model Architecture: A Convolutional Neural Network (CNN) was built and trained on the large prepared dataset. The model treats the XRD pattern as a 1D image and learns underlying features without human intervention [11].

- Validation: The fully trained CNN model was tested on both a hold-out set of 100,000 simulated patterns and 100 real experimental XRD patterns measured in the lab [11].

Results:

| Test Dataset | Model Accuracy |

|---|---|

| Simulated XRD Test Dataset | ~100% [11] |

| Real Experimental XRD Data (Li₂O-SrO-Al₂O₃ mixture) | 100% [11] |

| Real Experimental XRD Data (SrAl₂O₄-SrO-Al₂O₃ mixture) | 97.33% - 98.67% [11] |
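The combinatorial mixing used to build such a dataset can be sketched as below. The helper names, the fraction grid, and the toy library are assumptions; the sketch makes no attempt to reproduce the exact sampling scheme or the pattern count reported in [11].

```python
from itertools import combinations


def compositions(total, parts):
    """Yield all tuples of `parts` positive integers that sum to `total`."""
    if parts == 1:
        yield (total,)
        return
    for first in range(1, total - parts + 2):
        for rest in compositions(total - first, parts - 1):
            yield (first,) + rest


def mix_library(library, max_phases=3, steps=10):
    """Yield (pattern, multi-hot label, fractions) for every phase combination.

    library maps a phase name to its simulated single-phase pattern.
    Fractions are positive multiples of 1/steps that sum to 1.
    """
    names = sorted(library)
    n_points = len(library[names[0]])
    for k in range(1, max_phases + 1):
        for combo in combinations(names, k):
            for counts in compositions(steps, k):
                fractions = [c / steps for c in counts]
                pattern = [sum(f * library[name][i] for f, name in zip(fractions, combo))
                           for i in range(n_points)]
                label = [1 if name in combo else 0 for name in names]
                yield pattern, label, dict(zip(combo, fractions))


# Toy three-phase library on a 3-point grid (illustrative only).
toy_library = {"A": [1.0, 0.0, 0.0], "B": [0.0, 1.0, 0.0], "C": [0.0, 0.0, 1.0]}
samples = list(mix_library(toy_library, max_phases=2, steps=4))
print(len(samples), samples[-1])
```

The sample count grows combinatorially with library size and fraction resolution, which is how a modest set of single-phase patterns can yield a very large training set.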

Protocol for Autonomous and Adaptive XRD Measurement

Objective: To autonomously steer XRD measurements for faster and more confident phase identification, especially for detecting trace phases or monitoring dynamic processes [10].

- Workflow: An ML algorithm is physically coupled with a diffractometer in a closed loop.

- Initial Scan: A rapid, low-resolution scan is performed over a limited angular range (e.g., 2θ = 10°–60°).

- Analysis & Decision: A pre-trained deep learning algorithm (XRD-AutoAnalyzer) predicts phases and, crucially, assesses its own confidence. It also uses Class Activation Maps (CAMs) to identify which angular regions are most important for distinguishing between the most probable phases.

- Adaptive Steering: If confidence is below a threshold (e.g., 50%), the algorithm commands the diffractometer to either:

  - Resample specific, high-value 2θ regions with higher resolution.

  - Expand the angular range to collect more data.

- This process repeats until confidence is high or a maximum angle is reached [10].

- Validation: The method was tested on both simulated and experimentally acquired patterns from the Li-La-Zr-O chemical space [10].

Results: The adaptive approach consistently outperformed conventional fixed-time scans, providing more precise detection of impurity phases with significantly shorter measurement times. It also successfully identified a short-lived intermediate phase during the in situ synthesis of LLZO, a phase that was missed by conventional measurements [10].
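The closed-loop logic of this protocol can be sketched as a short control loop. This is not the XRD-AutoAnalyzer API: `measure` and `classify` are hypothetical callables standing in for the diffractometer and the pre-trained model, and the maximum angle, expansion step, and the order of resampling versus expanding are assumptions.

```python
def adaptive_scan(measure, classify, start_range=(10.0, 60.0), max_angle=140.0,
                  threshold=0.5, expand_by=10.0):
    """Resample flagged regions, then widen the range, until confident.

    measure(low, high, mode) returns one scan segment.
    classify(scans) returns (phases, confidence, important_regions).
    """
    low, high = start_range
    scans = [measure(low, high, "fast")]
    resampled = False
    while True:
        phases, confidence, regions = classify(scans)
        if confidence >= threshold:
            return phases, confidence, scans
        if regions and not resampled:
            # Re-measure the regions the model flags as most discriminating.
            scans.extend(measure(a, b, "slow") for a, b in regions)
            resampled = True
        elif high < max_angle:
            # Otherwise extend the scan to higher angles.
            new_high = min(high + expand_by, max_angle)
            scans.append(measure(high, new_high, "fast"))
            high = new_high
            resampled = False
        else:
            return phases, confidence, scans  # angular range exhausted


def fake_measure(low, high, mode):
    """Hypothetical instrument stub: records what was requested."""
    return (low, high, mode)


def fake_classify(scans):
    """Hypothetical model stub: confidence grows with the amount of data."""
    return ["phase_A"], 0.25 * len(scans), [(28.0, 32.0)]


print(adaptive_scan(fake_measure, fake_classify))
```

Every pass through the loop either returns, resamples once, or widens the range, so the loop always terminates by the time `max_angle` is reached.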

Protocol for Robust Phase Identification with Graph Convolutional Networks

Objective: To accurately identify phases in multi-phase materials by capturing complex, non-Euclidean relationships between diffraction peaks, even in the presence of overlap and noise [14].

- Data Preprocessing: XRD patterns are not treated as simple 1D signals. Instead, each diffraction peak is represented as a node in a graph. Edges between nodes encode interactions, such as peak proximity and intensity relationships.

- Model Architecture: A Graph Convolutional Network (GCN) is used to learn from this graph-structured data. The GCN propagates information between connected nodes, allowing it to capture both local and global patterns within the XRD spectrum [14].

- Data Augmentation: Techniques like noise injection and synthetic data generation are employed to simulate experimental variations (e.g., instrumental noise, slight peak shifts), making the model more robust [14].

Results:

| Metric | Model Performance |

|---|---|

| Precision | 0.990 |

| Recall | 0.872 |

The framework outperformed traditional ML models with minimal hyperparameter tuning, showing high accuracy despite overlapping peaks and noisy data [14].
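The graph representation and the message-passing step can be sketched in a few lines. Edges here use peak proximity only, with a hypothetical 5° cutoff, whereas the framework in [14] also encodes intensity relationships. The propagation rule is the standard symmetric normalization of the adjacency matrix with self-loops, shown without the learned weight matrices a trained GCN would apply.

```python
import math


def build_peak_graph(peaks, max_gap_deg=5.0):
    """Adjacency matrix with self-loops; peaks is a list of (two_theta, intensity)."""
    n = len(peaks)
    adjacency = [[0.0] * n for _ in range(n)]
    for i in range(n):
        adjacency[i][i] = 1.0  # self-loop keeps each node's own features
        for j in range(i + 1, n):
            if abs(peaks[i][0] - peaks[j][0]) <= max_gap_deg:
                adjacency[i][j] = adjacency[j][i] = 1.0
    return adjacency


def gcn_propagate(adjacency, features):
    """One weight-free propagation step: D^(-1/2) A D^(-1/2) X."""
    degree = [sum(row) for row in adjacency]
    n, width = len(adjacency), len(features[0])
    output = []
    for i in range(n):
        row = [0.0] * width
        for j in range(n):
            if adjacency[i][j]:
                weight = adjacency[i][j] / math.sqrt(degree[i] * degree[j])
                for f in range(width):
                    row[f] += weight * features[j][f]
        output.append(row)
    return output


# Illustrative peaks: two close neighbours and one isolated reflection.
peaks = [(20.0, 100.0), (22.0, 50.0), (60.0, 30.0)]
graph = build_peak_graph(peaks)
node_features = [[intensity] for _, intensity in peaks]
print(gcn_propagate(graph, node_features))
```

After one step the two neighbouring peaks share information while the isolated peak keeps its own value, which is how stacked layers let overlapping reflections inform each other.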

Visualizing the Analytical Workflows

The diagrams below illustrate the fundamental differences in how rule-based and ML-driven analyses operate.

Rule-Based XRD Analysis Workflow

ML-Driven XRD Analysis Workflow

For researchers looking to implement or validate ML-based XRD analysis, the following tools and data resources are essential.

| Item | Function in ML-Based XRD Analysis |

|---|---|

| Crystallographic Databases (ICSD, COD, MP) | Provide the structural information (CIF files) required to generate large-scale synthetic training datasets of XRD patterns [13] [15]. |

| Synthetic Data Generation Software | Creates training data by simulating XRD patterns from CIF files, incorporating parameters like peak width and instrumental factors to enhance realism [11] [12]. |

| Pre-Trained ML Models (e.g., XRD-AutoAnalyzer) | Offer ready-made solutions for phase identification, allowing researchers to bypass the resource-intensive training phase and apply ML directly to their data [10]. |

| Data Augmentation Tools | Improve model robustness by programmatically adding noise, shifting peaks, and creating variations to simulate real-world experimental conditions [14]. |

| Explainable AI (XAI) Libraries (e.g., SHAP) | Provide post-hoc interpretations of ML model predictions, helping to validate that the model's reasoning aligns with established physical principles [13]. |

Key Insights for Researchers

The experimental data confirms that machine learning is not merely an incremental improvement but a paradigm shift in XRD analysis. ML models deliver superior speed, accuracy, and scalability, enabling previously challenging or impossible applications like real-time phase identification and autonomous self-steering experiments. The integration of uncertainty quantification and interpretability methods is critical for building trust and integrating these tools into the scientific workflow. For research and drug development professionals, adopting ML-based XRD analysis translates to faster materials discovery, more reliable characterization, and the ability to extract deeper insights from complex data.

The identification and quantification of crystalline phases from X-ray diffraction (XRD) data is fundamental to materials science, chemistry, and pharmaceutical development. Traditional analysis methods, such as Rietveld refinement, require significant expertise, are time-consuming, and struggle with the analysis of very large datasets generated by high-throughput methodologies [8] [1]. The emergence of machine learning (ML) offers a promising alternative, capable of automating and accelerating this process. However, a primary limitation for supervised ML is the scarcity of large, accurately labeled experimental datasets, particularly for rare phases or complex mixtures [16] [11].

This challenge has propelled synthetic data generation to the forefront of ML-based XRD analysis. By creating large, realistic, and perfectly labeled datasets in silico, researchers can train robust neural network models that would otherwise be infeasible. This guide provides a comparative analysis of synthetic data generation methods and the neural network architectures they support, framing them within the experimental protocols essential for validating ML-based phase identification in research.

Comparative Analysis of Synthetic Data Generation Methods

Various methodologies exist for generating synthetic XRD data, each with distinct advantages, limitations, and optimal use cases. The choice of method significantly impacts the quality, diversity, and ultimate utility of the data for training ML models.

Table 1: Comparison of Synthetic XRD Data Generation Methods

| Method | Core Principle | Strengths | Weaknesses | Best-Suited For |

|---|---|---|---|---|

| Physics-Based Simulation [8] [11] | Uses crystallographic information files (CIFs) and physics models (e.g., Bragg's law, structure factors) to calculate theoretical XRD patterns. | High physical accuracy; generates pristine, perfectly labeled data; can model variations in lattice parameters, crystallite size, and strain. | May lack experimental noise and artifacts; requires robust CIF databases and simulation parameters. | Creating large-scale foundational training datasets; systems with well-defined crystal structures. |

| Data Augmentation & Mixing [11] | Creates new patterns by combinatorically mixing simulated single-phase patterns with varying relative fractions. | Efficiently generates a vast number of complex multi-phase patterns from a limited set of single-phase patterns. | Underrepresents peak shifts from solid solutions or strain; pattern complexity is limited by the base single-phase library. | Multi-phase identification and quantification tasks, especially in high-throughput screening. |

| Generative AI (e.g., GANs) [17] [18] | Employs generative models, like Generative Adversarial Networks (GANs), to learn the distribution of experimental data and generate new, realistic patterns. | Can capture complex, non-ideal characteristics of experimental data, including noise and peak broadening. | Requires large experimental datasets for training; risk of generating physically implausible patterns if not properly constrained. | Augmenting experimental datasets; learning and replicating specific instrumental or microstructural signatures. |

| Rule-Based & Stochastic [19] | Generates data based on predefined rules (e.g., peak positions for known phases) or stochastic (random) processes. | Simple and computationally inexpensive; useful for testing data structures. | Lacks physical realism and meaningful information content; random data is not useful for model training. | Software testing and initial system validation, not for training ML models. |

The selection of a method is not mutually exclusive. A common and powerful paradigm in XRD analysis involves training models on synthetic data and testing them on experimental data [8] [11]. This approach leverages the scalability and perfect labels of simulation while aiming for model generalizability to real-world conditions.
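Because simulated patterns may lack experimental noise and artifacts, a light augmentation pass is often layered on top of the simulation before training. The sketch below applies a random rigid shift and additive Gaussian noise; the function name, noise level, and shift range are assumed values, and a rigid shift is only a rough proxy for effects such as sample displacement.

```python
import random


def augment(pattern, rng, noise_level=0.02, max_shift_bins=3):
    """Return a shifted, noisy copy of a simulated pattern.

    noise_level is the noise standard deviation relative to the tallest peak;
    max_shift_bins is the largest rigid shift, in grid points.
    """
    n = len(pattern)
    shift = rng.randint(-max_shift_bins, max_shift_bins)
    # Shift right for positive values, padding the vacated bins with zeros.
    shifted = [pattern[i - shift] if 0 <= i - shift < n else 0.0 for i in range(n)]
    scale = max(shifted) or 1.0
    # Clip at zero because counted intensities cannot be negative.
    return [max(0.0, v + rng.gauss(0.0, noise_level * scale)) for v in shifted]


clean = [0.0, 0.0, 1.0, 0.2, 0.0, 0.0, 0.5, 0.0]
print([round(v, 3) for v in augment(clean, random.Random(42))])
```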

Synthetic Data Generation Workflow for XRD Phase Identification

Neural Network Architectures for XRD Phase Analysis

Once a synthetic dataset is generated, the next critical step is selecting an appropriate neural network architecture to learn the mapping between XRD patterns and phase information.

Table 2: Comparison of Neural Network Architectures for XRD Analysis

| Architecture | Common Application in XRD | Key Features | Reported Performance Highlights |

|---|---|---|---|

| Convolutional Neural Network (CNN) [8] [11] | Phase identification and classification in multi-phase mixtures. | Treats XRD patterns as 1D images; excels at detecting local patterns (peaks) and hierarchical features; requires minimal feature engineering. | Trained on ~1.7M synthetic patterns, achieved nearly 100% accuracy on experimental phase identification and 86% on 3-step-phase-fraction quantification [11]. |

| Fully Connected/Dense Network (Multilayer Perceptron) [20] | Regression tasks for predicting microstructural descriptors (e.g., dislocation density, phase fraction). | Connects every neuron in one layer to every neuron in the next; good for learning global patterns from flattened input vectors. | Evaluated in [20] on a non-XRD task (software effort prediction), where performance varied with dataset size and architecture; cited here as an analogue for regressing material properties from XRD features. |

| Hybrid & Custom Architectures [9] | Automated phase mapping integrating domain knowledge. | Combines neural networks (e.g., encoder-decoders) with optimization constraints based on crystallography and thermodynamics. | Outperforms standard NMF by integrating material constraints; identifies subtle phases like α/β-Mn₂V₂O₇ missed in prior analyses [9]. |

A critical consideration is model transferability. Models trained on synthetic data from a specific set of crystal orientations may not generalize well to data from new orientations or polycrystalline systems unless the training data is diverse enough to encompass this variability [16]. Incorporating multiple crystallographic orientations and microstructural states during synthetic data generation is essential for building robust models.

Neural Network Architectures for XRD Analysis

Experimental Protocols for Method Validation

Robust validation is the cornerstone of establishing credibility for any ML-based phase identification pipeline. The following protocols are essential.

Train-Synthetic, Test-Real (TSTR) Validation

This is the gold-standard validation protocol for models trained on synthetic data. The model is trained exclusively on a large, synthetic dataset and then evaluated on a separate set of real, experimental XRD patterns [8] [11]. This tests the model's ability to generalize from ideal, simulated data to noisy, complex real-world data. Successful application of this protocol demonstrates the physical realism and utility of the synthetic data generation process.
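The protocol is easiest to see with a toy model. In the sketch below a nearest-centroid classifier stands in for the neural network, and the "experimental" patterns are invented numbers, so only the structure carries over: fit on synthetic data alone, then score on measured data the model has never seen.

```python
def train_centroids(patterns, labels):
    """Average the synthetic patterns of each phase label."""
    sums, counts = {}, {}
    for pattern, label in zip(patterns, labels):
        accumulator = sums.setdefault(label, [0.0] * len(pattern))
        for i, value in enumerate(pattern):
            accumulator[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(centroids, pattern):
    """Return the label whose centroid is closest in squared distance."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, pattern))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


def tstr_accuracy(synthetic_x, synthetic_y, real_x, real_y):
    """Train on synthetic patterns only, then score on real patterns only."""
    centroids = train_centroids(synthetic_x, synthetic_y)
    hits = sum(predict(centroids, x) == y for x, y in zip(real_x, real_y))
    return hits / len(real_y)


# Toy data (illustrative): clean synthetic patterns, noisier "measured" ones.
synthetic_x = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0], [0.0, 0.0, 1.0]]
synthetic_y = ["A", "A", "B"]
real_x = [[0.8, 0.2, 0.1], [0.1, 0.1, 0.7]]
real_y = ["A", "B"]
print(tstr_accuracy(synthetic_x, synthetic_y, real_x, real_y))
```

Keeping the real patterns out of training entirely is the point: any tuning against them would turn the test into a measure of fit rather than of generalization.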

Benchmarking Against Traditional Methods

The performance of the ML model must be compared against traditional analysis methods like Rietveld refinement. Key metrics for comparison include:

- Accuracy of Phase Identification: Percentage of correct phase labels identified in a multi-phase mixture [11].

- Quantification Error: The difference between the predicted phase fraction and the ground truth, often measured by Mean Absolute Error (MAE) or similar metrics. One study reported a quantification error of 0.5% on synthetic test data and 6% on experimental data for a four-phase system [8].

- Processing Speed: A significant advantage of ML is speed. A trained CNN can identify phases in "less than a second," a task that might take an expert several hours using Rietveld refinement [11].
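The first two metrics above are straightforward to compute once predictions and ground truth are aligned. The sketch below assumes one particular reading of identification accuracy (a sample counts as correct only when the full set of predicted phases matches the ground truth) and assumes phase fractions are listed in the same phase order for prediction and truth; the function names and example values are illustrative only.

```python
def identification_accuracy(predicted, truth):
    """Fraction of samples whose predicted phase set exactly matches the ground truth."""
    hits = sum(set(p) == set(t) for p, t in zip(predicted, truth))
    return hits / len(truth)

def fraction_mae(predicted, truth):
    """Mean absolute error over all phase fractions (same phase order in both inputs)."""
    errors = [abs(p - t) for ps, ts in zip(predicted, truth) for p, t in zip(ps, ts)]
    return sum(errors) / len(errors)

# Made-up example: three samples for identification, two for quantification.
pred_phases = [["A", "B"], ["A"], ["B", "C"]]
true_phases = [["A", "B"], ["A", "C"], ["B", "C"]]
pred_fractions = [[0.5, 0.5], [0.7, 0.3]]
true_fractions = [[0.6, 0.4], [0.7, 0.3]]

print(identification_accuracy(pred_phases, true_phases))
print(fraction_mae(pred_fractions, true_fractions))
```

A per-label variant (counting each phase label separately) is an equally reasonable definition; whichever is used should be stated alongside the reported number.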

Incorporating Domain Knowledge as Constraints

To ensure solutions are physically reasonable, advanced workflows integrate domain-specific knowledge directly into the model's loss function or architecture. This can include:

- Compositional Constraints (L_comp): Ensuring the sum of phase fractions and their cationic composition matches the known sample composition [9].

- Thermodynamic Constraints: Using data from first-principles calculations to penalize the selection of highly unstable phases [9].

- Diffraction Fidelity (L_XRD): Minimizing the difference between the reconstructed pattern (from predicted phases) and the experimental pattern, similar to the Rietveld method [9].
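One way to picture how these three terms combine is a weighted sum evaluated for a candidate set of phase fractions. The sketch below is an assumption-laden illustration, not the loss used in [9]: the squared-error forms, the weights `w_comp` and `w_thermo`, and the use of an energy-above-hull value per phase as the thermodynamic penalty are all choices made here for clarity.

```python
def combined_loss(fractions, phase_patterns, observed,
                  phase_compositions, target_composition,
                  energy_above_hull, w_comp=1.0, w_thermo=0.1):
    """Weighted sum of diffraction, composition, and thermodynamic penalties."""
    n = len(observed)

    # Diffraction fidelity: mean squared error between reconstructed and observed pattern.
    reconstructed = [sum(f * p[i] for f, p in zip(fractions, phase_patterns)) for i in range(n)]
    l_xrd = sum((r - o) ** 2 for r, o in zip(reconstructed, observed)) / n

    # Compositional constraint: mixed cation composition must match the known sample,
    # and phase fractions must sum to one.
    mixed = {
        el: sum(f * comp.get(el, 0.0) for f, comp in zip(fractions, phase_compositions))
        for el in target_composition
    }
    l_comp = sum((mixed[el] - target_composition[el]) ** 2 for el in target_composition)
    l_comp += (sum(fractions) - 1.0) ** 2

    # Thermodynamic constraint: penalize weight placed on unstable phases.
    l_thermo = sum(f * e for f, e in zip(fractions, energy_above_hull))

    return l_xrd + w_comp * l_comp + w_thermo * l_thermo

# Toy two-phase example with made-up patterns and compositions.
patterns = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
observed = [0.5, 0.5, 0.0]
compositions = [{"Mn": 1.0}, {"V": 1.0}]
target = {"Mn": 0.5, "V": 0.5}
stability = [0.0, 0.0]

good = combined_loss([0.5, 0.5], patterns, observed, compositions, target, stability)
bad = combined_loss([1.0, 0.0], patterns, observed, compositions, target, stability)
print(good, bad)
```

In a trained model these terms would be differentiable functions of the network output; the plain-Python version only shows which quantities enter each penalty.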

Successful implementation of an ML-driven XRD analysis pipeline relies on a suite of key resources and tools.

Table 3: Essential Research Reagents and Resources

| Resource Category | Specific Examples | Function in the Workflow |
|---|---|---|
| Crystallographic Databases | Inorganic Crystal Structure Database (ICSD), Crystallography Open Database (COD) [11] [9] | Provides the foundational CIF files required for physics-based simulation of XRD patterns for known phases. |
| Synthetic Data Generation Code | Custom XRD pattern calculation codes (e.g., using LAMMPS diffraction package [16] or other simulation software) | Generates the raw synthetic data used for training. The code must model instrumental parameters and microstructural effects. |
| ML Frameworks & Libraries | TensorFlow, Keras, PyTorch, Scikit-learn [20] | Provides the programming environment to build, train, and validate the neural network models (CNNs, Dense networks, etc.). |
| High-Performance Computing (HPC) | GPU clusters, cloud computing resources [19] | Accelerates the computationally intensive processes of generating large synthetic datasets and training complex neural network models. |
| Experimental Validation Datasets | In-house measured XRD patterns, published combinatorial libraries (e.g., V-Nb-Mn oxide [9]) | Serves as the ground-truth benchmark for evaluating the real-world performance and transferability of the trained ML models. |

The Critical Importance of High-Quality, Crystalline Samples for ML Success

The integration of machine learning (ML) with X-ray diffraction (XRD) analysis has ushered in a new era of high-throughput materials discovery and characterization. However, the performance and reliability of these ML models are fundamentally constrained by the quality and characteristics of the crystalline samples used for both training and application. This guide objectively compares how different sample quality factors influence the success of ML-based phase identification from XRD data, providing researchers with a structured framework for evaluating and optimizing their experimental approaches.

The critical relationship between sample quality and ML performance stems from the fundamental nature of how these models learn. Unlike traditional analysis methods that explicitly encode physical principles, many ML approaches are fundamentally pattern recognition systems that identify statistical relationships within data [7]. When these patterns are obscured by poor crystallinity, preferred orientation, or phase impurities, the models' ability to learn and generalize is severely compromised.

Sample Quality Parameters and Their Impact on ML Performance

Crystallinity and Phase Purity

The degree of crystallinity and phase purity in samples directly influences the signal-to-noise ratio in XRD patterns, which is a critical factor for ML model accuracy. Models trained on high-quality simulated data often struggle with experimental data due to factors like amorphous backgrounds, impurity phases, and peak broadening that are not fully represented in training sets [13].

Table 1: Impact of Crystallinity on ML Model Performance

| Sample Characteristic | Effect on XRD Pattern | Impact on ML Models | Experimental Evidence |
|---|---|---|---|
| High Crystallinity | Sharp, well-defined peaks with high intensity | High accuracy in phase identification and structure determination | PXRDGen achieved 96% accuracy with high-quality samples [21] |
| Low Crystallinity/Amorphous Content | Broadened peaks, elevated background, reduced peak intensity | Decreased model confidence, misclassification, difficulty detecting minor phases | Bayesian models show increased uncertainty with noisy data [13] |
| Phase Impurities | Additional peaks not present in reference patterns | Incorrect multi-phase identification, confusion in classification | CrystalShift uses probabilistic labeling to handle minor impurities [22] |

Preferred Orientation and Texture

Preferred orientation in powdered samples or textured thin films presents a significant challenge for ML models, as it alters relative peak intensities from their reference values. This effect is particularly pronounced in materials with anisotropic crystal structures, such as perovskites used in photovoltaic applications [23].

Table 2: Impact of Texture and Orientation on Model Transferability

| Sample Type | XRD Characteristics | ML Performance Challenges | Mitigation Strategies |
|---|---|---|---|
| Ideally Random Orientation | Peak intensities match powder reference patterns | Optimal performance for models trained on simulated powder data | Standard powder preparation techniques (side-loading) |
| Textured Polycrystals | Altered relative intensities, missing peaks | Reduced accuracy if texture not represented in training data | Data augmentation with simulated textures [23] |
| Single Crystals | Single orientation pattern, not representative of powder average | Models trained on powder data fail completely | Orientation-specific training sets [16] |

The transferability of ML models across different sample orientations was systematically investigated in shock-loaded copper crystals, revealing that models trained on specific single-crystal orientations showed limited ability to predict microstructural descriptors for other orientations [16]. However, training on multiple orientations significantly improved transferability to both new orientations and polycrystalline systems.

Comparative Analysis of ML Approaches Under Different Sample Conditions

Performance Across Material Systems

Different ML approaches exhibit varying robustness to sample quality issues, with physics-informed models generally demonstrating better performance on imperfect experimental data compared to purely data-driven approaches.

Table 3: ML Approach Comparison for Different Sample Qualities

| ML Method | Ideal Sample Performance | Degraded Sample Performance | Key Limitations |
|---|---|---|---|
| Deep Learning (B-VGGNet) | 84% accuracy on simulated spectra [13] | Drops to 75% on external experimental data [13] | Requires large diverse datasets, black-box nature |
| Physics-Informed (CrystalShift) | Robust probability estimates [22] | Handles peak shifting and background effectively [22] | Requires candidate phase list |
| Traditional ML (Random Forest) | 83.62% crystal system accuracy [23] | Vulnerable to peak shifting and intensity variations | Limited capacity for complex patterns |
| Time Series Forest (TSF) | 97.76% crystal system accuracy [23] | Maintains performance with data augmentation | Treats XRD as time series data |

Experimental Protocols for Quality Assessment

To ensure ML model success, researchers should implement standardized quality assessment protocols before submitting samples for analysis:

- Crystallinity Validation: Calculate crystallinity index from the ratio of crystalline peak areas to total scattering area [1].

- Phase Purity Check: Compare experimental patterns with database references using similarity metrics before ML analysis.

- Texture Assessment: Analyze relative peak intensity deviations from reference patterns to identify preferred orientation.

- Signal-to-Noise Quantification: Measure background intensity relative to strongest peak to predict model confidence.
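Two of these checks reduce to simple area and ratio calculations. The sketch below assumes the instrumental background has already been removed and that an estimate of the amorphous contribution (for example, a fitted broad hump) is supplied by the user as `amorphous`; both the helper names and the toy data are illustrative, and the peak-to-background ratio is only one possible proxy for signal-to-noise.

```python
def trapezoid_area(x, y):
    """Area under y(x) by the trapezoidal rule."""
    return sum(0.5 * (y[i] + y[i + 1]) * (x[i + 1] - x[i]) for i in range(len(x) - 1))

def crystallinity_index(two_theta, total, amorphous):
    """Ratio of crystalline peak area to total scattering area."""
    crystalline = [max(t - a, 0.0) for t, a in zip(total, amorphous)]
    return trapezoid_area(two_theta, crystalline) / trapezoid_area(two_theta, total)

def peak_to_background(total, amorphous):
    """Height of the strongest peak above the baseline, relative to the baseline there."""
    idx = max(range(len(total)), key=lambda i: total[i] - amorphous[i])
    return (total[idx] - amorphous[idx]) / amorphous[idx]

# Toy pattern: flat baseline of 1 with a single peak reaching 3.
two_theta = [0.0, 1.0, 2.0, 3.0, 4.0]
amorphous = [1.0, 1.0, 1.0, 1.0, 1.0]
total = [1.0, 1.0, 3.0, 1.0, 1.0]

print(crystallinity_index(two_theta, total, amorphous))
print(peak_to_background(total, amorphous))
```

Thresholds for accepting or rejecting a sample would need to be calibrated against the specific model and material system.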

The Bayesian-VGGNet model developed for perovskite classification illustrates how uncertainty quantification can automatically flag samples whose prediction confidence is low because of quality issues. The model reached 84% accuracy on simulated data and 75% on external experimental data [13].

Visualization of the ML-Sample Quality Relationship

The following diagram illustrates the critical relationship between sample quality factors and ML model success, highlighting how quality issues propagate through the analysis pipeline:

Experimental Workflow for Reliable ML-Based XRD Analysis

The following workflow outlines a robust methodology for preparing and analyzing samples to maximize ML model performance:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Research Materials for High-Quality XRD Samples

| Material/Solution | Function in Sample Preparation | Impact on ML Success |
|---|---|---|
| Standard Reference Materials (NIST Si, Al₂O₃) | Instrument calibration and peak position reference | Ensures pattern alignment with database entries |
| Isotropically Orienting Additives | Reduce preferred orientation in powder samples | Maintains correct relative peak intensities |
| Crystallization Solvents | Control crystal growth rate and habit | Influences crystallite size and phase purity |
| Matrix Matching Compounds | Dilute samples without interfering patterns | Enable analysis of minor phases in mixtures |
| Internal Standards | Quantify amorphous content and strain | Provides quality metrics for pattern validation |

The critical importance of high-quality, crystalline samples for ML success in XRD analysis cannot be overstated. The comparative data presented here indicate that sample quality factors, particularly crystallinity, phase purity, and preferred orientation, directly control the accuracy, confidence, and transferability of ML models. Researchers can optimize their experimental workflows by selecting ML approaches based on a sample quality assessment: physics-informed models like CrystalShift offer robust solutions for lower-quality samples, while models like B-VGGNet (deep learning) and TSF (a tree-ensemble method) provide high accuracy for well-characterized systems. As ML continues to transform materials characterization, adherence to rigorous sample preparation standards remains the foundation for reliable, reproducible results that accelerate materials discovery and development.

ML in Action: Algorithms, Workflows, and Biomedical Applications

The identification of crystalline phases from X-ray diffraction (XRD) data is a fundamental task in materials science, chemistry, and pharmaceutical development. Traditional methods, while effective, often require significant expert intervention and can be time-consuming for analyzing large datasets or complex multi-phase mixtures. Machine learning (ML) has emerged as a powerful alternative, promising to automate and accelerate this process. This guide provides a comparative analysis of three prominent ML classifiers—Convolutional Neural Networks (CNNs), Support Vector Machines (SVMs), and Shallow Neural Networks (SNNs)—within the context of phase identification from XRD patterns. The objective is to validate their performance, elucidate their operational protocols, and offer a clear framework for researchers to select the appropriate tool based on their specific project needs, data availability, and desired level of interpretability.

Performance Comparison at a Glance

The following table summarizes the key performance metrics and characteristics of CNNs, SVMs, and Shallow Neural Networks as reported in recent literature on XRD-based phase identification.

Table 1: Comparative performance of ML classifiers for XRD phase identification.

| Classifier | Reported Accuracy | Best For | Strengths | Limitations |
|---|---|---|---|---|
| Convolutional Neural Network (CNN) | ~75% to ~100% on experimental data [11] [13] [24] | Complex, multi-phase mixtures; Raw XRD pattern analysis | High accuracy with raw data; Automatic feature extraction; Robust to peak shifts/overlap [11] [25] | High computational cost; Requires very large datasets (~10^5 - 10^6 samples) [14] [13] |
| Support Vector Machine (SVM) | ~64% to ~95% [26] | Smaller, curated datasets with pre-computed features | Effective in high-dimensional spaces; Less prone to overfitting than SNNs with small data [26] | Performance depends on manual feature engineering (e.g., δ, VEC, ΔH) [26] [1] |
| Shallow Neural Network (SNN) / DNN | ~74% to >95% [26] | Balanced performance with metallurgical parameters | High accuracy with good feature sets; Can model complex non-linear relationships [26] | Requires manual feature curation; Risk of overfitting with small datasets [26] |

Detailed Experimental Protocols

A clear understanding of the methodologies behind the cited performance metrics is crucial for validation and replication.

Convolutional Neural Network Protocol

CNNs are designed to process XRD patterns as one-dimensional images, automating the feature extraction process.

- Data Preparation: A large dataset of synthetic XRD patterns is generated to train the model. For instance, one study created 1,785,405 synthetic patterns by combinatorically mixing the simulated patterns of 170 known inorganic compounds in a quaternary system [11]. Another study used a Template Element Replacement (TER) strategy to generate a perovskite chemical space, creating a dataset of 24,645 virtual XRD spectra to enhance model robustness [13].

- Data Augmentation: To bridge the gap between simulated and real-world data, techniques like noise injection, peak shifting, and blending with real structure spectral data (RSS) are employed. This step is critical for improving generalization to experimental data [14] [13].

- Model Architecture & Training: A typical architecture uses multiple convolutional layers to automatically detect relevant features (e.g., peaks, backgrounds) from the raw XRD data. For example, a study employed a VGGNet-style architecture, trained with Bayesian methods to quantify prediction uncertainty. The model was trained on synthetic data and tested on reserved experimental data, achieving 84% accuracy on simulated spectra and 75% on external experimental data [13]. Another protocol used a CNN for phase identification, followed by other ML models for phase-fraction regression, achieving a 91.11% identification accuracy and a phase-fraction regression MSE of 0.0024 on real-world data [24].
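The data preparation and augmentation steps can be illustrated with a small sketch. It covers only three of the operations named above (weighted mixing of single-phase patterns, random peak shifting, and noise injection) and leaves out blending with real structure spectra. The function names, the integer-bin shift, and the noise level are assumptions chosen for readability, not the settings used in [11], [13], or [14].

```python
import random

def mix(patterns, weights):
    """Weighted sum of single-phase patterns to emulate a multi-phase mixture."""
    return [sum(w * p[i] for w, p in zip(weights, patterns)) for i in range(len(patterns[0]))]

def augment(pattern, max_shift=3, noise_sigma=0.02, rng=None):
    """Shift the pattern by a random number of bins, add Gaussian noise, renormalize to max 1."""
    rng = rng or random.Random()
    n = len(pattern)
    shift = rng.randint(-max_shift, max_shift)
    shifted = [pattern[i - shift] if 0 <= i - shift < n else 0.0 for i in range(n)]
    noisy = [max(v + rng.gauss(0.0, noise_sigma), 0.0) for v in shifted]  # clip negatives
    peak = max(noisy) or 1.0  # avoid division by zero for an all-zero pattern
    return [v / peak for v in noisy]

# Two made-up single-phase patterns on an 11-point grid.
phase_a = [0, 0, 0, 0, 0, 1.0, 0, 0, 0, 0, 0]
phase_b = [0, 0, 0, 0.5, 0, 0, 0, 0.8, 0, 0, 0]

rng = random.Random(1)
mixture = mix([phase_a, phase_b], [0.7, 0.3])
training_samples = [augment(mixture, rng=rng) for _ in range(5)]
print(len(training_samples), len(training_samples[0]))
```

Production pipelines usually shift peaks in 2θ rather than by whole bins and also vary peak width and relative intensity; those refinements follow the same pattern.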

Support Vector Machine & Shallow Neural Network Protocol

SVMs and SNNs typically rely on a curated set of descriptor features derived from materials science principles.

- Feature Engineering: A dataset is constructed where each alloy or compound composition is described by a set of metallurgy-specific predictor features. These commonly include [26]:

  - Valence Electron Concentration (VEC)

  - Atomic Size Difference (δ)

  - Mixing Enthalpy (ΔH)

  - Electronegativity Difference

  - Configurational Entropy (ΔS)

- Model Training & Validation: Various algorithms are trained on this curated dataset. One study curated a dataset of 1,193 phase observations and trained models including SVM, Random Forest (RF), Deep Neural Networks (DNN), and XGBoost [26]. The models were validated against experimentally synthesized high-entropy alloys (HEAs). Among them, DNN and XGBoost demonstrated superior predictive performance, with classification accuracies exceeding 95%, while SVM's performance was lower in comparison [26].
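Three of the descriptors listed above follow directly from composition using their commonly cited definitions: VEC as the composition-weighted valence electron count, δ as the composition-weighted spread of atomic radii around the mean (in percent), and ΔS as the ideal configurational entropy. The sketch below assumes those definitions rather than the exact feature code of [26]. The radii in `ELEMENTS` are approximate illustrative values and should be replaced with a vetted table. Mixing enthalpy is omitted because it requires tabulated pairwise enthalpies.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)

# Illustrative inputs: (approximate metallic radius in angstrom, valence electron count).
ELEMENTS = {"Co": (1.25, 9), "Cr": (1.28, 6), "Fe": (1.26, 8), "Ni": (1.24, 10)}

def descriptors(composition):
    """Return VEC, atomic size difference (percent), and ideal mixing entropy."""
    total = sum(composition.values())
    c = {el: amount / total for el, amount in composition.items()}  # atomic fractions

    vec = sum(c[el] * ELEMENTS[el][1] for el in c)
    r_mean = sum(c[el] * ELEMENTS[el][0] for el in c)
    delta = 100.0 * math.sqrt(sum(c[el] * (1.0 - ELEMENTS[el][0] / r_mean) ** 2 for el in c))
    ds_mix = -R * sum(x * math.log(x) for x in c.values() if x > 0)

    return {"VEC": vec, "delta_percent": delta, "dS_mix": ds_mix}

features = descriptors({"Co": 1, "Cr": 1, "Fe": 1, "Ni": 1})
print(features)
```

A feature vector like `features` is what an SVM or shallow network in this protocol would receive in place of the raw diffraction pattern.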

Workflow Visualization

The diagram below illustrates the typical machine learning workflow for XRD phase identification, highlighting the divergent paths for CNN-based and feature-based (SVM/SNN) approaches.

Successful implementation of ML for XRD analysis relies on key databases, software, and computational resources.

Table 2: Key resources for ML-based XRD phase identification.

| Resource Name | Type | Function in Research |
|---|---|---|
| Inorganic Crystal Structure Database (ICSD) | Database | Primary source for crystal structures used to simulate training data for CNNs and validate identified phases [11] [13] [9]. |
| International Centre for Diffraction Data (ICDD) | Database | Repository of reference powder diffraction patterns used for phase identification and validation [9]. |
| Synthetic XRD Data | Computational Data | Large, computationally generated datasets of XRD patterns, crucial for training data-intensive models like CNNs and mitigating data scarcity [11] [27] [24]. |
| Materials Project Database | Database | Source of thermodynamic data and crystal structures used to filter plausible candidate phases and enrich training datasets [13] [9]. |
| Domain Knowledge Features (VEC, δ, ΔH, etc.) | Curated Features | Physicochemical descriptors required for training non-CNN models like SVMs and SNNs, bridging composition and structure [26]. |
| High-Performance Computing (HPC) / GPU | Hardware | Essential for training complex models like deep CNNs on large synthetic datasets in a reasonable time frame [14]. |

The choice between CNNs, SVMs, and Shallow Neural Networks for XRD phase identification involves a fundamental trade-off between data requirements and model capability. CNNs excel in handling raw, complex data and achieving high accuracy but demand substantial computational resources and large training datasets, often necessitating sophisticated synthetic data generation. In contrast, SVMs and Shallow Neural Networks offer a more accessible entry point for projects with well-defined, pre-computed features and smaller datasets, though their performance is inherently limited by the quality and completeness of the manual feature engineering. The validation of these tools within materials science underscores that there is no single "best" classifier; the optimal choice is dictated by the specific research context, data availability, and the desired balance between automation and interpretability.

Adaptive X-ray diffraction (XRD) represents a paradigm shift in materials characterization, moving from static measurement collection to an intelligent, closed-loop process guided by machine learning (ML). This workflow integrates an ML model directly with a physical diffractometer, enabling the experiment to autonomously steer itself towards the most informative data points in real-time. By making on-the-fly decisions about where and how long to measure, adaptive XRD achieves more confident phase identification, especially for trace impurities or transient intermediate phases, while significantly reducing total measurement time compared to conventional approaches [10] [28]. This guide provides a detailed comparison of this emerging methodology against established alternatives, supported by experimental data and protocols.

What is Adaptive XRD?

Traditional XRD analysis is a linear process: a full diffraction pattern is collected over a predetermined angular range, and the data is analyzed afterward, often manually. In contrast, adaptive XRD creates a feedback loop between data collection and analysis. The process begins with a rapid, initial scan. An ML algorithm then analyzes this preliminary data and assesses its own confidence in identifying the crystalline phases present. If confidence is below a set threshold, the algorithm autonomously directs the diffractometer to collect additional data only in specific regions that will maximize information gain, such as areas with distinguishing peaks between candidate phases [10].

This "smart" resampling, often guided by techniques like Class Activation Maps (CAMs) that highlight discriminative features in the pattern, avoids the need for time-consuming, high-resolution scans of the entire angular range [10]. The core innovation is this real-time, ML-driven decision-making, which optimizes the experiment for speed and precision simultaneously.

Comparative Analysis: Adaptive XRD vs. Alternative Methods

The performance of adaptive XRD can be objectively evaluated against traditional methods and other ML-assisted approaches. The table below summarizes key differentiators, while subsequent sections provide experimental validation.

Table 1: Comparison of XRD Phase Identification Methods

| Method | Core Principle | Human Intervention | Multi-Phase & Trace Detection | Speed & Efficiency | Interpretability & Data Use |
|---|---|---|---|---|---|
| Adaptive XRD [10] | ML-guided real-time feedback loop | Minimal (post-validation) | High; excels at identifying minor impurities | Fast; optimized, selective data collection | High via CAMs; uses experimental data |
| Search/Match Libraries [29] | Pattern matching against a database | High for complex mixtures | Low; struggles with novel phases and peak overlap | Moderate for screening | Low; relies on pre-existing database |
| Rietveld Refinement [29] [8] | Physics-based model fitting | High; requires expert input | Moderate; can be sensitive to initial model | Slow; computationally intensive | High; provides full structural parameters |
| Standard ML (CNN) Models [11] [29] | One-shot pattern classification | Model training, then minimal | High for trained phases, but static | Very fast post-training | Often a "black-box"; uses static datasets |

Performance Benchmarking: Key Experimental Data

The comparative advantages of adaptive XRD are demonstrated in quantitative studies. The following table summarizes results from key experiments that benchmark its performance against conventional non-adaptive XRD.

Table 2: Experimental Performance Benchmarking

| Study / System | Metric | Adaptive XRD Performance | Non-Adaptive Performance (conventional scan or standard ML) |
|---|---|---|---|
| Li-La-Zr-O System (Simulated) [10] | Accuracy of phase detection in multi-phase mixtures | Consistently high accuracy with shorter measurement times. | Required longer scans to achieve comparable accuracy. |
| Li-La-Zr-O System (Experimental, in situ) [10] | Identification of short-lived intermediate phases | Successfully identified a transient intermediate phase. | Missed the intermediate phase with standard scan protocols. |
| Multi-phase Mineral System (Experimental) [8] | Quantitative phase analysis error (4 phases) | N/A (Standard ML used) | Standard ML CNN achieved ~6% error vs. Rietveld. |
| Sr-Li-Al-O System (Experimental) [11] | Phase identification accuracy | N/A (Standard ML used) | A deep CNN model achieved nearly 100% accuracy on real experimental data. |

Experimental Protocols for Adaptive XRD

The validation of adaptive XRD, as documented in the literature, follows a rigorous and reproducible protocol [10]:

1. Initialization: The experiment begins with a fast, low-resolution scan over a strategically chosen angular range (e.g., 2θ = 10° to 60°). This range is optimized to capture a sufficient number of peaks for an initial ML prediction while conserving time.

2. ML Analysis & Confidence Assessment: The acquired pattern is fed into a convolutional neural network (CNN) model (e.g., XRD-AutoAnalyzer) trained to identify crystalline phases. The model outputs the predicted phases and, crucially, a confidence score (0-100%) for each.

3. Decision Point: If the confidence for all suspected phases exceeds a predefined threshold (e.g., 50%), the analysis is complete. If not, the algorithm initiates an adaptive loop.

4. Adaptive Action - Resampling: The algorithm uses Class Activation Maps (CAMs) to identify the specific 2θ regions where the diffraction patterns of the two most likely phases differ most. It then commands the diffractometer to rescan only these critical regions with higher resolution (slower scan rate) to clarify distinguishing peaks [10].

5. Adaptive Action - Range Expansion: If confidence remains low after resampling, the scan range can be iteratively expanded (e.g., in +10° steps) to capture additional peaks that may assist identification.

6. Termination: The loop (Steps 2-5) continues until the ML model's confidence threshold is met or a maximum scan angle (e.g., 140°) is reached.
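The control flow of this protocol can be written as a short loop. The sketch below is a schematic under stated assumptions, not the implementation from [10]: `measure`, `classify`, and `pick_regions` are hypothetical callables standing in for the diffractometer interface, the CNN, and the CAM-based region selection. Confidence is collapsed to a single scalar (for example, the lowest per-phase confidence), and the `fake_*` functions exist only to make the loop runnable.

```python
def adaptive_scan(measure, classify, pick_regions, start=(10.0, 60.0),
                  threshold=0.5, step=10.0, max_angle=140.0):
    """Closed-loop scan: rescan informative regions, then widen the range, until confident."""
    lo, hi = start
    data = dict(measure(lo, hi, slow=False))             # fast initial scan
    while True:
        phases, confidence = classify(data)              # prediction plus confidence
        if confidence >= threshold:                      # decision point
            return phases, confidence, hi
        for r_lo, r_hi in pick_regions(data):            # slow rescan of discriminative regions
            data.update(measure(r_lo, r_hi, slow=True))
        phases, confidence = classify(data)
        if confidence >= threshold or hi >= max_angle:   # termination
            return phases, confidence, hi
        new_hi = min(hi + step, max_angle)               # range expansion
        data.update(measure(hi, new_hi, slow=False))
        hi = new_hi

# Hypothetical stand-ins so the loop can run without hardware or a trained model.
def fake_measure(lo, hi, slow):
    return {angle: (2 if slow else 1) for angle in range(int(lo), int(hi))}

def fake_classify(data):
    return ["phase_A"], min(1.0, sum(data.values()) / 120.0)

def fake_regions(data):
    return [(30.0, 35.0)]

phases, confidence, reached = adaptive_scan(fake_measure, fake_classify, fake_regions)
print(phases, round(confidence, 3), reached)
```

With these stand-ins the loop performs one resampling pass and one range expansion before the confidence crosses the threshold; with a real instrument the same structure applies, but each `measure` call costs acquisition time.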

Workflow Visualization: The Adaptive XRD Feedback Loop

The following diagram illustrates the closed-loop, adaptive process, integrating the physical instrument with the ML algorithm in real-time.

The Scientist's Toolkit: Essential Research Reagents & Materials

The implementation of an adaptive XRD workflow, as validated in recent studies, relies on a combination of computational and experimental components.

Table 3: Essential Research Reagents & Solutions for Adaptive XRD

| Item | Function in the Workflow | Example/Description |
|---|---|---|
| ML Model (CNN) [10] [11] | Performs real-time phase identification and confidence quantification from diffraction patterns. | e.g., XRD-AutoAnalyzer; a CNN trained on synthetic or experimental patterns from a target chemical space (Li-La-Zr-O, Sr-Li-Al-O). |
| Class Activation Maps (CAMs) [10] | Provides model interpretability and guides adaptive sampling by highlighting discriminative 2θ regions. | A gradient-based technique that generates a heatmap overlay on the XRD pattern, showing areas most important for the ML's classification. |
| Synthetic Training Data [11] [8] | Used to train the initial ML model where experimental data is scarce; allows for massive, variable datasets. | Large datasets (e.g., >1 million patterns) generated by simulating XRD patterns for known crystal structures and combinatorically mixing them. |
| Laboratory Diffractometer [10] | The physical instrument that performs the measurements; must be software-controlled to accept real-time commands. | A standard in-house X-ray diffractometer, demonstrating the method's applicability without requiring synchrotron sources. |
| Candidate Phase Database [9] | A curated list of potential phases used to train the ML model and validate results. | Entries from crystallographic databases (ICSD, ICDD) filtered by chemical system and thermodynamic stability. |

The evidence confirms that the adaptive XRD workflow represents a significant advance over traditional and static ML methods for phase identification. Its primary strength lies in its autonomous efficiency, achieving high-confidence results—particularly for challenging scenarios involving trace phases or transient reaction intermediates—in a fraction of the time required by conventional methods [10]. By creating a closed-loop system that strategically collects only the most valuable data, adaptive XRD moves beyond mere automation to true intelligent experimentation. This workflow is a powerful tool for accelerating materials discovery and characterization, promising to unlock new insights into dynamic solid-state reactions and complex multi-phase systems.

The validation of machine learning (ML) for phase identification from X-ray diffraction (XRD) data represents a critical frontier in materials characterization, with significant implications for biomedical imaging and diagnostic development. This guide objectively compares the performance of rules-based and ML-based classifiers applied to XRD images of medically relevant phantoms. Such phantoms provide essential well-characterized ground-truths for quantitatively testing classification algorithms before transitioning to complex biological tissues [30] [31]. Researchers utilize tissue surrogates like water and polylactic acid (PLA) plastic to simulate cancerous and healthy tissue, respectively, enabling controlled evaluation of classification performance across spatially complex environments that mimic real clinical scenarios [31]. The experimental data and comparative analyses presented herein provide researchers, scientists, and drug development professionals with critical benchmarks for selecting appropriate classification methodologies for XRD-based material analysis.

Experimental Protocols for Classifier Comparison

Phantom Design and Data Acquisition

Medically relevant phantoms were constructed with varying spatial complexity and biologically relevant features to facilitate quantitative testing of classifier performance [30] [31]. Water and polylactic acid (PLA) plastic served as validated simulants for cancerous and adipose (fat) tissue, respectively, based on their closely matching XRD spectral characteristics [31]. The phantoms provided perfectly known material locations, enabling direct comparison between ground truth and classifier-predicted results [31].

A previously developed X-ray fan-beam coded-aperture imaging system acquired co-registered transmission and diffraction images [31]. Transmission images used fan slice exposures of 80 kVp, 6 mA, and 100 ms; XRD acquisition used 160 kVp, 3 mA, and 15 s [31]. The system achieved an XRD spatial resolution of ≈1.4 mm² and a momentum transfer (q) resolution of 0.01 Å⁻¹, reconstructing the XRD spectrum at each pixel from raw scatter data using a physics-based forward model [31].

Classifier Implementation and Training

The study compared two rules-based classifiers—cross-correlation (CC) and linear least-squares (LS) unmixing—against two machine learning classifiers—support vector machines (SVM) and shallow neural networks (SNN) [30] [31].

- Rules-based classifiers: Implemented using reference XRD spectra measured by a commercial diffractometer (Bruker D2 Phaser) [31].

- Machine learning classifiers: Trained on 60% of measured XRD pixels, utilizing the remaining data for testing [30] [31].
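The two rules-based classifiers can be sketched for a two-material problem. The code below is an illustration under assumptions: cross-correlation is taken as the zero-lag Pearson correlation against each reference spectrum, least-squares unmixing is solved without non-negativity constraints via the 2×2 normal equations, and the reference spectra are made-up numbers rather than measured water or PLA spectra. The implementation in [31] may differ in all of these respects.

```python
import math

def normalized_correlation(a, b):
    """Zero-lag Pearson correlation between two spectra."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    num = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    den = math.sqrt(sum((x - mean_a) ** 2 for x in a) * sum((y - mean_b) ** 2 for y in b))
    return num / den

def classify_cc(spectrum, references):
    """Assign the material whose reference spectrum correlates best with the pixel."""
    return max(references, key=lambda name: normalized_correlation(spectrum, references[name]))

def unmix_ls(spectrum, ref_a, ref_b):
    """Least-squares abundances of two references (unconstrained normal equations)."""
    dot = lambda u, v: sum(x * y for x, y in zip(u, v))
    aa, bb, ab = dot(ref_a, ref_a), dot(ref_b, ref_b), dot(ref_a, ref_b)
    sa, sb = dot(spectrum, ref_a), dot(spectrum, ref_b)
    det = aa * bb - ab * ab
    return (sa * bb - sb * ab) / det, (sb * aa - sa * ab) / det

# Made-up reference spectra and a pixel containing 70% "water" and 30% "pla".
references = {"water": [0, 1, 3, 1, 0, 0], "pla": [0, 0, 1, 0, 3, 1]}
pixel = [0.7 * w + 0.3 * p for w, p in zip(references["water"], references["pla"])]

label = classify_cc(pixel, references)
w_water, w_pla = unmix_ls(pixel, references["water"], references["pla"])
print(label, round(w_water, 3), round(w_pla, 3))
```

The unmixing output is what makes a threshold-based decision possible for mixed pixels: the abundance of one material can be swept against a cutoff to trace out an ROC curve.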

Performance was quantified using the area under the receiver operating characteristic curve (AUC) and classification accuracy at the midpoint threshold for each classifier [30] [31].
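Both metrics can be computed from per-pixel classifier scores and ground-truth labels. The sketch below uses the rank-based definition of AUC (the probability that a randomly chosen positive pixel scores higher than a randomly chosen negative one, with ties counted as one half). It interprets "midpoint threshold" as halfway between the lowest and highest score, which is an assumption about the source's definition. The scores and labels are made up.

```python
def roc_auc(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def accuracy_at_midpoint(scores, labels):
    """Accuracy when thresholding at the midpoint of the observed score range."""
    threshold = (min(scores) + max(scores)) / 2.0
    predictions = [1 if s >= threshold else 0 for s in scores]
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)

# Made-up scores for four pixels (1 = water, 0 = PLA).
scores = [0.1, 0.4, 0.35, 0.8]
labels = [0, 0, 1, 1]

print(roc_auc(scores, labels))
print(accuracy_at_midpoint(scores, labels))
```

The pairwise AUC is quadratic in the number of pixels, which is fine for a sketch; for full images a sort-based implementation or a library routine would be preferable.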

Table 1: Classifier Performance Comparison on XRD Images of Medical Phantoms

| Classifier Type | Specific Algorithm | Overall Accuracy (%) | AUC | Boundary Region Accuracy* (%) |
|---|---|---|---|---|
| Rules-based | Cross-correlation (CC) | 96.48 | 0.994 | 89.32 |
| Rules-based | Least-squares (LS) | 96.48 | 0.994 | 89.32 |
| Machine Learning | Support Vector Machine (SVM) | 97.36 | 0.995 | 92.03 |
| Machine Learning | Shallow Neural Network (SNN) | 98.94 | 0.999 | 96.79 |

*Boundary regions defined as pixels ±3 mm from water-PLA boundaries where partial volume effects occur due to imaging resolution limits [30] [31].

Comparative Performance Analysis

All classifiers demonstrated strong performance when applied to XRD image data, significantly outperforming classification by transmission data alone, which achieved only 85.45% accuracy and an AUC of 0.773 [31]. As shown in Table 1, machine learning classifiers, particularly the shallow neural network, delivered superior performance across both overall accuracy and AUC metrics [30] [31]. The SNN achieved near-perfect AUC (0.999) and the highest overall classification accuracy (98.94%), indicating exceptional capability in distinguishing materials based on their XRD signatures [30].

Performance in Challenging Boundary Regions

The comparative advantage of ML classifiers became more pronounced in boundary regions, where partial volume effects occur due to imaging resolution limits [30] [31]. In these areas, the accuracy gap between approaches widened substantially (Table 1): the SNN maintained 96.79% accuracy at boundaries, compared with 89.32% for the rules-based approaches [30] [31]. This indicates that ML algorithms cope considerably better when multiple materials occupy a single voxel, a common scenario in clinical imaging where tissues interface [30].

Broader Context in ML for XRD Analysis

These findings align with broader developments in machine learning applied to XRD data analysis. Recent research continues to validate that ML models can successfully identify crystalline phases [10] [1], quantify phase fractions [8], and even adaptively steer XRD measurements toward features that improve identification confidence [10]. The integration of ML with XRD instrumentation enables autonomous phase identification and significantly improved detection of trace materials and short-lived intermediate phases [10].

Experimental and Analytical Workflow for Classifier Comparison

The Scientist's Toolkit: Essential Research Materials

Table 2: Key Research Reagent Solutions for XRD Phantom Experiments

| Item | Function/Application | Specific Examples/Parameters |
|---|---|---|
| Tissue Surrogates | Simulate biological tissues with matching XRD spectral characteristics | Water (cancer surrogate), PLA plastic (adipose tissue surrogate) [31] |
| XRD Imaging System | Acquire co-registered transmission and diffraction images | Fan-beam coded aperture system: 160 kVp/3 mA/15 s exposures, 1.4 mm² spatial resolution [31] |
| Reference Diffractometer | Measure reference XRD spectra for rules-based classifiers | Bruker D2 Phaser commercial diffractometer [31] |
| Classification Algorithms | Implement and compare material classification approaches | CC, LS unmixing, SVM, shallow neural networks [30] [31] |
| Performance Metrics | Quantitatively evaluate and compare classifier performance | AUC, classification accuracy at boundaries and overall [30] [31] |

The experimental comparison demonstrates that machine learning classifiers, particularly shallow neural networks, outperform rules-based approaches for classifying tissue surrogates in medical phantoms using XRD imaging data. Their advantage is largest in boundary regions where partial volume effects occur, which highlights their potential in clinical applications where precise tissue discrimination is critical [30] [31]. These findings support the broader validation of ML-based phase identification from XRD data, indicating that ML approaches can more effectively harness the rich information content of XRD imaging to improve material analysis for research, industrial, and clinical applications [30]. For researchers and drug development professionals, the results provide evidence for adopting ML methodologies in XRD-based classification tasks, particularly those involving complex material interfaces or requiring high spatial precision.

Polymorph screening is a crucial and mandatory step in pharmaceutical development, as the crystalline form of an Active Pharmaceutical Ingredient (API) fundamentally influences its solubility, stability, bioavailability, and manufacturability [32]. Different polymorphs of the same compound can exhibit dramatically different properties; a less stable form can undergo phase transformation during storage or processing, potentially compromising drug product quality and efficacy. The ritonavir case of the late 1990s, in which a previously unknown polymorph with markedly lower solubility emerged and forced a reformulation, underscores the substantial regulatory and financial risks of inadequate polymorph screening [32].

Traditionally, polymorph screening has been a time-consuming and labor-intensive process, relying on extensive experimental crystallization trials to explore a vast landscape of possible conditions. However, recent advancements in artificial intelligence (AI) and machine learning (ML) are revolutionizing this field. These computational approaches, particularly when applied to X-ray diffraction (XRD) data analysis, are enabling faster, more accurate, and more comprehensive identification of polymorphic forms. This review compares these emerging AI/ML-driven methodologies against traditional experimental approaches, framing the discussion within the broader thesis of validating ML-based phase identification from XRD data. The integration of these technologies is creating a new paradigm for de-risking drug development and accelerating the journey from candidate selection to clinical formulation [32] [33].

Methodologies and Experimental Protocols in Modern Polymorph Screening

Traditional Experimental Screening

Conventional experimental polymorph screening involves a systematic approach to crystallize an API under diverse conditions. Key steps and reagents include:

- Sample Preparation: The API is subjected to a wide array of crystallization experiments. This includes varying solvents (polar, non-polar, protic, aprotic), techniques (slow evaporation, cooling crystallization, slurry conversion), and environmental conditions (temperature, humidity).

- Data Collection: Solid forms obtained from these experiments are analyzed primarily using X-ray Powder Diffraction (XRPD). Each distinct polymorph produces a unique XRD pattern, which serves as a fingerprint for its crystal structure.

- Data Analysis: Traditionally, experts interpret these XRD patterns by comparing them to known databases, a process that requires significant crystallographic expertise and can be subjective and slow, especially for complex or multi-phase samples [1].
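The database comparison in the data analysis step can be illustrated with a simple similarity score between a measured pattern and each reference pattern. The sketch below uses the normalized (Pearson) correlation at zero shift as the score. It is an illustrative assumption, not the search-match algorithm of any specific software package, and the reference names and intensity values are invented. It also assumes all patterns are already sampled on the same 2θ grid.

```python
import math
from typing import Dict, Sequence, Tuple


def normalized_correlation(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation of two patterns sampled on the same 2-theta grid."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("patterns must be non-empty and of equal length")
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    da = [x - mean_a for x in a]
    db = [y - mean_b for y in b]
    denom = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    if denom == 0.0:
        return 0.0  # a flat pattern carries no peak information
    return sum(x * y for x, y in zip(da, db)) / denom


def best_match(measured: Sequence[float],
               references: Dict[str, Sequence[float]]
               ) -> Tuple[str, Dict[str, float]]:
    """Return the best-scoring reference name and all similarity scores."""
    scores = {name: normalized_correlation(measured, ref)
              for name, ref in references.items()}
    return max(scores, key=scores.get), scores


# Hypothetical reference patterns (intensities on a shared 8-point grid).
references = {
    "form_I": [0.0, 5.0, 0.0, 0.0, 9.0, 0.0, 1.0, 0.0],
    "form_II": [0.0, 0.0, 7.0, 0.0, 0.0, 8.0, 0.0, 2.0],
}
# Hypothetical measurement: form_I with small intensity errors.
measured = [0.2, 4.6, 0.3, 0.1, 9.4, 0.2, 1.1, 0.0]

best, scores = best_match(measured, references)
print(best, scores)
```

A score like this breaks down for multi-phase samples, peak shifts, or preferred orientation. This is part of why expert interpretation has traditionally been needed and why learned classifiers are attractive.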

Computational and AI-Driven Screening

Computational methods have emerged as powerful complements to experiments. A notable large-scale study published in Nature Communications in 2025 validates a robust Crystal Structure Prediction (CSP) method [33]. Its protocol is hierarchical:

- Systematic Crystal Packing Search: A novel algorithm explores possible crystal packing arrangements for a given molecule across different space group symmetries.

- Hierarchical Energy Ranking:

  - Initial Ranking: Molecular dynamics (MD) simulations using a classical force field.

  - Re-ranking: Structure optimization using a Machine Learning Force Field (MLFF) to improve accuracy.

  - Final Ranking: Periodic Density Functional Theory (DFT) calculations provide a precise ranking of the most energetically favorable polymorphs.

- Validation: The method was validated on 66 diverse molecules encompassing 137 known polymorphic forms. It reproduced all known structures and identified previously unreported low-energy polymorphs that could pose a development risk [33].
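The hierarchical protocol above follows a funnel pattern: a cheap energy model prunes the candidate pool, and progressively more expensive models re-rank only the survivors. A minimal sketch of that control flow is shown below. The candidate structures, their energies, and the number kept at each stage are invented for illustration; in practice each stage would call a force-field, MLFF, or DFT code rather than read a precomputed value, and the cited study's actual cutoffs are not given here.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Candidate = Dict[str, object]
Stage = Tuple[Callable[[Candidate], float], int]  # (energy function, keep)


def hierarchical_rank(candidates: Sequence[Candidate],
                      stages: Sequence[Stage]) -> List[Candidate]:
    """Rank by each stage's energy (lower is better), keeping the top
    `keep` candidates before passing them to the next, costlier stage."""
    pool = list(candidates)
    for energy_fn, keep in stages:
        pool = sorted(pool, key=energy_fn)[:keep]
    return pool


# Hypothetical candidate packings with toy energies (arbitrary units).
candidates: List[Candidate] = [
    {"id": "A", "ff": -10.0, "mlff": -9.0, "dft": -9.2},
    {"id": "B", "ff": -9.5, "mlff": -9.8, "dft": -9.9},
    {"id": "C", "ff": -9.0, "mlff": -9.5, "dft": -9.4},
    {"id": "D", "ff": -5.0, "mlff": -11.0, "dft": -12.0},
    {"id": "E", "ff": -8.0, "mlff": -7.0, "dft": -7.5},
]

stages: List[Stage] = [
    (lambda c: c["ff"], 4),    # stand-in for classical force-field MD
    (lambda c: c["mlff"], 3),  # stand-in for MLFF optimization
    (lambda c: c["dft"], 3),   # stand-in for periodic DFT
]

ranked = hierarchical_rank(candidates, stages)
ranked_ids = [c["id"] for c in ranked]
print(ranked_ids)
```

Candidate D is deliberately constructed to show the main design trade-off: it is the most stable structure at the highest level of theory but is discarded by the cheapest stage. The number of candidates retained early in the funnel therefore has to be generous enough to tolerate the error of the cheap model.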

Machine Learning for XRD Phase Identification

ML models are being developed to automate the analysis of XRD patterns, a critical step in high-throughput screening. A key challenge is the "black box" nature of many models. To address this, a 2025 study employed SHAP (SHapley Additive exPlanations) to interpret a Bayesian-VGGNet model, quantifying the importance of specific XRD features to the model's crystal symmetry predictions [13]. To overcome data scarcity, the same study used a Template Element Replacement (TER) strategy: it generated a "virtual" library of perovskite structures by substituting elements within a known template framework, thereby augmenting the training dataset and improving the model's grasp of the relationship between XRD patterns and crystal structure [13]. Another study, focused on multi-phase mixtures, trained a deep Convolutional Neural Network (CNN) on ~1.8 million synthetic XRD patterns simulating mixtures of 170 inorganic compounds. This model achieved near-perfect accuracy in phase identification on both simulated and real experimental test data [34].
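Synthetic training data for multi-phase models is typically built by simulating single-phase patterns and combining them with chosen phase fractions. The sketch below illustrates the idea with Gaussian peak profiles and a weighted sum. It is a simplified assumption, not the generation procedure of the cited study: the peak lists, the 0.2° peak width, the 2θ grid, and the 70/30 mixing ratio are invented, and real pipelines usually add noise, background, and peak-shape variation.

```python
import math
from typing import List, Sequence, Tuple

Peak = Tuple[float, float]  # (2-theta position in degrees, intensity)


def simulate_pattern(peaks: Sequence[Peak], two_theta: Sequence[float],
                     fwhm: float = 0.2) -> List[float]:
    """Sum of Gaussian peaks evaluated on the given 2-theta grid."""
    sigma = fwhm / (2.0 * math.sqrt(2.0 * math.log(2.0)))
    return [
        sum(inten * math.exp(-0.5 * ((x - pos) / sigma) ** 2)
            for pos, inten in peaks)
        for x in two_theta
    ]


def mix_patterns(patterns: Sequence[Sequence[float]],
                 fractions: Sequence[float]) -> List[float]:
    """Weighted sum of single-phase patterns, scaled to a maximum of 1."""
    if len(patterns) != len(fractions):
        raise ValueError("one fraction is required per pattern")
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("phase fractions must sum to 1")
    n = len(patterns[0])
    mixed = [sum(f * p[i] for f, p in zip(fractions, patterns))
             for i in range(n)]
    top = max(mixed)
    return [v / top for v in mixed]


# Shared grid: 10 to 60 degrees 2-theta in 0.05 degree steps.
two_theta = [10.0 + 0.05 * i for i in range(1001)]

# Hypothetical peak lists for two phases.
phase_a = simulate_pattern([(20.0, 100.0), (35.0, 40.0)], two_theta)
phase_b = simulate_pattern([(25.0, 80.0), (45.0, 60.0)], two_theta)

# One synthetic training example: 70% phase A, 30% phase B.
mixture = mix_patterns([phase_a, phase_b], [0.7, 0.3])
label = {"phase_a": 0.7, "phase_b": 0.3}
strongest_angle = two_theta[mixture.index(max(mixture))]
print(len(mixture), round(strongest_angle, 2))
```

Repeating this over many random phase combinations and fractions yields a labeled dataset of arbitrary size, which is what makes training on millions of patterns feasible without measuring each one.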

Comparative Performance Analysis

Comparison of Screening Approaches

The table below summarizes the core characteristics of the main screening methodologies.

Table 1: Comparison of Polymorph Screening Approaches

| Feature | Traditional Experimental Screening | Computational Crystal Structure Prediction (CSP) | ML-Based XRD Analysis |
|---|---|---|---|
| Primary Focus | Empirically discovering crystallizable forms | Predicting thermodynamically stable crystal structures from a molecule's chemical structure | Rapidly identifying phases from experimental XRD patterns |
| Throughput | Low to Medium (weeks to months) | Medium (days to weeks for simulation) | Very High (minutes for pattern analysis) |
| Key Advantage | Direct experimental evidence of crystallizable forms | Identifies potentially missed, high-risk stable forms | High speed and automation for phase ID |
| Main Limitation | Can miss metastable or elusive forms; time/resource intensive | Computationally expensive; accuracy depends on force fields | Requires large, high-quality training data; model generalizability |
| Data Source | Laboratory crystallization experiments | Molecular structure (e.g., SMILES string) | Experimental XRD patterns |
| Key Output | Physical samples of solid forms for characterization | Ranked list of predicted crystal structures and their energies | Phase identity and/or crystal system classification |

Performance Metrics of ML Models for XRD Analysis

The performance of ML models varies based on their architecture, training data, and specific task. The following table consolidates quantitative results from recent studies.

Table 2: Performance Metrics of Recent ML Models for XRD-Based Classification

| Study (Context) | ML Model | Dataset | Task | Key Performance Metric |
|---|---|---|---|---|
| Massuyeau et al. (Hybrid Perovskites) [23] | Convolutional Neural Network (CNN) | 23 samples | Perovskite vs. Non-perovskite Classification | 92% Accuracy |
| DeepXRD (Perovskites) [23] | Deep Neural Network | 37,211+ samples | Predicting XRD from Composition | Peak Position Match: ~68% |
| TSF Model (Perovskites) [23] | Time Series Forest (TSF) | Augmented XRD data | Crystal System Prediction | 97.76% Accuracy, F1 Score: 0.92 |
| Bayesian-VGGNet (General Crystals) [13] | Bayesian-VGGNet | 24,645 virtual + real spectra | Space Group Classification | 84% Accuracy (simulated data), 75% Accuracy (experimental data) |
| Multi-phase CNN (Inorganic Mixtures) [34] | Convolutional Neural Network (CNN) | ~1.8 million synthetic patterns | Phase Identification in Mixtures | ~100% Accuracy (simulated), ~100% Accuracy (real test data) |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful high-throughput polymorph screening relies on a suite of specialized reagents, tools, and software.

Table 3: Key Reagents and Solutions for Polymorph Screening

| Item | Function/Description | Application Context |
|---|---|---|
| High-Purity API | The active pharmaceutical ingredient of interest, required in high purity to avoid confounding crystallization results. | Foundational for all screening approaches (Experimental and Computational). |
| Organic Solvent Library | A diverse collection of solvents (e.g., alcohols, ketones, esters, ethers, hydrocarbons) to explore a wide crystallization space. | Essential for experimental screening to induce crystallization under different conditions. |
| Crystallization Plates | High-throughput microplates (e.g., 96-well or 384-well) that allow for parallel small-volume crystallization trials. | Experimental screening. |
| X-ray Diffractometer | Instrument for generating X-ray diffraction patterns from solid samples, the primary source of data for phase identification. | Experimental screening and data generation for ML analysis. |
| Reference XRD Databases (CSD, ICDD) | Databases of known crystal structures and their reference XRD patterns for comparison and identification. | Traditional XRD analysis and validation of ML/AI predictions. |
| Machine Learning Force Fields (MLFFs) | AI-derived force fields that enable accurate and faster energy calculations for molecular packing during simulation. | Computational CSP (e.g., used in the hierarchical ranking protocol [33]). |
| CSP Software Suites | Integrated software for crystal structure prediction, often combining molecular dynamics, quantum mechanics, and data analysis tools. | Computational screening (e.g., methods described in [33]). |
| Data Augmentation Algorithms (e.g., TER) | Computational methods like Template Element Replacement to generate synthetic but physically plausible crystal structures and XRD data for training. | Addressing data scarcity in ML model training [13]. |

Integrated Workflow and Future Outlook

The future of polymorph screening lies in the tight integration of computational and experimental methods, creating a closed-loop, AI-driven design-make-test-analyze cycle. This synergistic workflow is depicted in the following diagram.

Diagram 1: AI-Driven Polymorph Screening Workflow. This integrated approach uses computational predictions to guide experiments and ML to accelerate analysis, creating a continuous learning cycle.

This workflow demonstrates how computational CSP acts as a risk-assessment tool first, guiding the experimental design toward high-risk conditions. High-throughput experiments then generate real-world data, which is rapidly interpreted by ML models. The results are fed into a growing digital database that not only supports final form selection but also refines the computational and ML models, creating a powerful feedback loop. This synergy, as seen in the merger of Recursion's phenomic screening with Exscientia's generative chemistry, is building full end-to-end AI-powered discovery platforms [35].