Validating High-Throughput Computational Screening Workflows: A Framework for Accelerating Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating high-throughput computational screening (HTCS) workflows. It covers the foundational principles of HTCS and its role in revolutionizing pharmaceutical R&D by overcoming the cost, time, and high failure rates of traditional methods. The scope extends to methodological advances, including the integration of AI and machine learning for virtual screening and de novo molecule design. It also addresses critical troubleshooting and optimization strategies to enhance data reliability and decision-making, and concludes with rigorous validation frameworks and comparative analysis of HTCS approaches, using real-world case studies from recent literature to illustrate the path from in silico prediction to confirmed biological activity.

Laying the Groundwork: Core Principles and the Rise of Computational Screening in Modern Biology

Defining High-Throughput Computational Screening (HTCS) and Its Strategic Value

High-throughput computational screening (HTCS) is a paradigm in materials science and molecular discovery that uses automated, multi-stage computational workflows to rapidly evaluate vast libraries of candidates for targeted properties. [1] This approach integrates physics-based models, surrogate predictors, machine learning, and robust database infrastructure to triage, prioritize, and rank candidates with substantially reduced labor and computational cost compared to traditional one-at-a-time simulations. [1] Within the context of workflow validation research, HTCS provides a formal, quantifiable framework for maximizing the yield of high-performing candidates—or "hits"—while adhering to strict computational budgets. [1]

Table of Contents

- Principles and Mathematical Foundations of HTCS

- The Multi-Fidelity Screening Pipeline

- Domain-Specific Workflows and Validation

- Implementation and Workflow Validation

- Quantitative Benchmarks and Limitations

- Conclusions and Outlook

Principles and Mathematical Foundations of HTCS

The formal structure of an HTCS pipeline is a sequential, multi-stage process. A candidate library \( \mathbb{X} \), which can contain from \( 10^4 \) to over \( 10^8 \) distinct entities (molecules, crystals, defects, etc.), is filtered through a series of \( N \) surrogate models of increasing fidelity and cost: \( S_1 \to S_2 \to \dots \to S_N \). [1]

Each stage \( S_i \) is defined by a triplet \( (f_i, \lambda_i, c_i) \), where:

- \( f_i \) is a predictive model that assigns a score \( y_i = f_i(x) \) to candidate \( x \).

- \( \lambda_i \) is a threshold value that the score must meet or exceed for the candidate to proceed.

- \( c_i \) is the computational cost of applying \( f_i \) to a single candidate. [1]

The final set of validated "positives" or "hits" is defined as \( \mathbb{Y} = \{x \in \mathbb{X}_N : f_N(x) \geq \lambda_N\} \), where \( \mathbb{X}_N \) is the set of candidates that reach the final stage. [1]

The central optimization problem in HTCS pipeline design is to maximize the Return on Computational Investment (ROCI). The objective is to find the threshold values \( \psi^* = [\lambda_1, \dots, \lambda_{N-1}] \) that maximize the expected yield \( r(\lambda) \) while ensuring the total computational cost \( h(\lambda) \) does not exceed a budget constraint \( C \). [1]

\[ \psi^* = \operatorname*{arg\,max}_{\psi = [\lambda_1, \dots, \lambda_{N-1}]} r([\psi, \lambda_N]) \quad \text{subject to} \quad h([\psi, \lambda_N]) \leq C \]

These thresholds are tuned via grid or gradient-based search, often using numerically estimated joint score distributions \( p(y_1, \dots, y_N) \) learned through methods like Expectation-Maximization (EM) algorithms. [1]
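To make the optimization concrete, the following is a minimal sketch of a grid search over the free thresholds under a cost budget. It is an illustration under stated assumptions, not the method of [1]: the scores are synthetic, the expected yield is approximated by the empirical hit count, and the names `run_pipeline` and `tune_thresholds` are hypothetical.

```python
import itertools
import random


def run_pipeline(scores, thresholds, costs):
    """Filter candidates stage by stage; return (hit indices, total cost).

    scores[i][k] is the stage-k score of candidate i. A candidate advances
    past stage k only if that score is >= thresholds[k].
    """
    survivors = list(range(len(scores)))
    total_cost = 0.0
    for k, (lam, cost) in enumerate(zip(thresholds, costs)):
        total_cost += cost * len(survivors)  # each survivor is evaluated once
        survivors = [i for i in survivors if scores[i][k] >= lam]
    return survivors, total_cost


def tune_thresholds(scores, costs, final_lambda, grid, budget):
    """Grid-search the first N-1 thresholds; lambda_N stays fixed."""
    best = None
    for psi in itertools.product(grid, repeat=len(costs) - 1):
        hits, cost = run_pipeline(scores, list(psi) + [final_lambda], costs)
        if cost > budget:
            continue  # violates the budget constraint h <= C
        key = (len(hits), -cost)  # more hits first, then lower cost
        if best is None or key > best[0]:
            best = (key, psi, hits, cost)
    return None if best is None else best[1:]


# Synthetic two-stage example: a cheap noisy proxy, then the "exact" score.
rng = random.Random(0)
truth = [rng.gauss(0.0, 1.0) for _ in range(2000)]
scores = [(t + rng.gauss(0.0, 0.5), t) for t in truth]
costs = [1.0, 50.0]
best = tune_thresholds(scores, costs, final_lambda=1.5,
                       grid=[-1.0, 0.0, 0.5, 1.0, 1.5], budget=20000.0)
psi, hits, cost = best
print(f"stage-1 threshold={psi[0]}, hits={len(hits)}, cost={cost:.0f}")
```

An exhaustive grid scales poorly with the number of stages, which is one reason gradient-based search or a learned joint score distribution may be preferred for longer pipelines.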

The Multi-Fidelity Screening Pipeline

HTCS strategically exploits models of differing accuracy and cost, known as multi-fidelity models. The general principle is to apply rapid, low-fidelity surrogates in the initial stages to prune the search space, reserving expensive, high-fidelity methods for a smaller subset of promising candidates. [1]

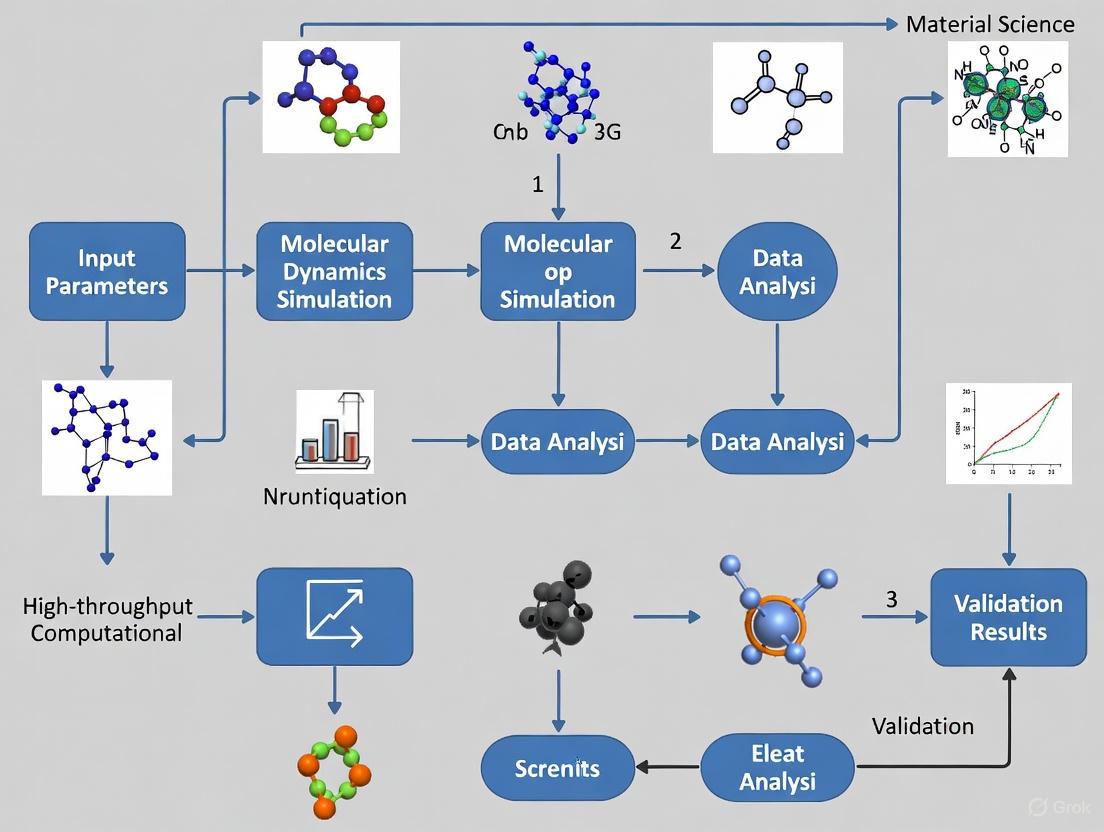

Figure 1: A generalized multi-stage HTCS pipeline. Candidates are filtered through successive stages of increasing computational cost and fidelity. Thresholds (λ) at each stage are optimized to maximize return on investment.

Adaptive sampling strategies allow for dynamic adjustment of thresholds (\( \lambda_i \)) in response to real-time monitoring of pass rates and budget consumption. Empirically, high inter-stage score correlation (e.g., \( \rho \sim 0.8 \) to \( 0.9 \)) yields near-maximal cost savings. Even moderate correlation (\( \rho \sim 0.5 \)) provides substantial gains over single-fidelity approaches. In one documented deployment screening ~50,000 molecules, an adaptive four-stage pipeline achieved over 44% cost savings while maintaining accuracy greater than 96%. [1]
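One simple way to adapt a threshold to budget consumption is to tighten it until the survivors fit what the next stage can afford. The sketch below shows that heuristic only; it is an assumption for illustration and not the adaptive scheme used in the cited deployment. The function name and its parameters are hypothetical.

```python
def adaptive_threshold(stage_scores, remaining_budget, next_stage_cost, floor):
    """Pick the lowest threshold whose survivors fit the remaining budget.

    `floor` is the most permissive threshold allowed; the result is never
    looser than it. Ties at the cutoff score may let a few extra candidates
    through, so callers should re-check the budget after filtering.
    """
    affordable = int(remaining_budget // next_stage_cost)
    if affordable <= 0:
        return float("inf")  # nothing can advance
    ranked = sorted(stage_scores, reverse=True)
    if affordable >= len(ranked):
        return floor  # budget is not binding
    return max(floor, ranked[affordable - 1])


stage_scores = [0.9, 0.1, 0.5, 0.7, 0.3]
tight = adaptive_threshold(stage_scores, remaining_budget=25.0,
                           next_stage_cost=10.0, floor=0.0)
loose = adaptive_threshold(stage_scores, remaining_budget=100.0,
                           next_stage_cost=10.0, floor=0.0)
print(tight, loose)
```

With a budget of 25 and a per-candidate cost of 10, only two candidates can advance, so the threshold rises to the second-best score; with a budget of 100 the floor is returned unchanged.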

Domain-Specific Workflows and Validation

HTCS methodologies are highly adaptable and have been successfully tailored to a range of material properties and discovery goals. The table below summarizes several validated domain-specific workflows.

Table 1: Domain-Specific HTCS Workflows and Their Experimental Validation

| Application Domain | Screening Descriptor/Method | High-Fidelity Validation | Performance and Validation Metrics |
|---|---|---|---|
| Thermal Conductivity [1] | Quasi-harmonic Debye (AGL) models computing Debye temperature (ΘD) and lattice conductivity (κl) from DFT energy/volume curves. | Full Boltzmann Transport Equation (BTE) phonon calculations. | Throughput: 1-2 orders of magnitude faster than BTE. Pearson r ≈ 0.88, Spearman ρ ≈ 0.80 to experiment. |
| Thermoelectrics [1] | Descriptor χ (effective mass, deformation potential) for power factor; descriptor γ (elastic constants) for anharmonicity. | Full electron-phonon BTE calculations. | Enables rapid ranking, bypassing computationally prohibitive BTE. |
| Ion Conductors [1] | "Pinball model" using frozen-host electrostatic potential energy surface (PES) for automated molecular dynamics. | On-the-fly DFT molecular dynamics (DFT-MD). | Drastically accelerates Li-diffusion screening. Led to discovery of Li10Ge2P4S24 and Li5ClO3. |
| Catalysis [1] | Density of states (DOS)-based pattern similarity metrics for bimetallic catalysts. | Full slab DFT calculations of productivity/selectivity. | Identified Ni61Pt39, achieving 9.5-fold cost-normalized productivity increase over Pd. |
| Porous Materials (MOFs) [1] | Geometric descriptors (PLD, LCD, void fraction), Henry coefficient (K_H), followed by GCMC or ML-predicted selectivity. | Grand Canonical Monte Carlo (GCMC) simulations; ML-potentials (e.g., PFP) for flexibility. | Identified MOFs with six-membered aromatic rings and N-rich linkers for high iodine capture. |

Implementation and Workflow Validation

Robust automation and data management frameworks are critical for the success and reproducibility of HTCS campaigns. Best practices have been established to ensure workflow validity and reliability. [1]

Essential Software Infrastructure and Research Toolkit

HTCS relies on specialized software for workflow orchestration, data management, and atomic-scale simulation. The table below catalogues key tools that form the modern HTCS researcher's toolkit.

Table 2: Essential Research Toolkit for HTCS Workflow Implementation

| Tool Category | Representative Solutions | Primary Function in HTCS Workflow |
|---|---|---|
| Workflow Orchestration [1] | FireWorks, AiiDA, MAPTLAB, Custodian | Manages complex job dependencies, schedules calculations on HPC systems, and automates error recovery. |
| Data Management & Analysis [1] [2] | pymatgen, ASE, JSON/HDF5 checkpointing | Provides structural analysis, input file generation, and standardized data storage for computational materials data. |
| Atomic-Scale Simulation [1] | VASP, VASPsol, Quantum ESPRESSO | Performs high-fidelity ab initio calculations (e.g., DFT) for energy and property evaluation. |
| Machine Learning Integration [1] [3] | Graph Neural Networks, Active Learning Loops | Accelerates property prediction and guides iterative candidate selection for complex systems like MOFs and perovskites. |

Best Practices for Workflow Validation

To ensure robustness and reproducibility, HTCS workflows should incorporate several key practices, which also serve as focal points for validation research: [1]

- Systematic Parameterization: Carefully define structural and computational parameters, such as max_area and max_mismatch for interfaces, slab thickness, and vacuum size for surfaces.

- Automated Failure Recovery: Implement protocols to handle common simulation failures, such as electronic convergence issues in DFT or ionic instability, allowing the workflow to proceed gracefully.

- Provenance Tracking and Checkpointing: Use versioned storage and detailed record-keeping for all calculations. Checkpointing allows workflows to be restarted from intermediate points, which is essential for large-scale campaigns on HPC systems.

- Multi-Fidelity Calibration: Conduct preliminary exploratory runs with low-fidelity models to calibrate the workflow and establish appropriate thresholds before committing to full-scale, high-fidelity screening.
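The failure-recovery and checkpointing practices above can be sketched with nothing more than a JSON file and a retry loop. This is a minimal illustration, not how FireWorks, AiiDA, or Custodian implement these features; `run_with_checkpoint` and `toy_evaluate` are hypothetical names, and the "calculation" is a stand-in for a real simulation job.

```python
import json
import os
import tempfile


def run_with_checkpoint(candidates, evaluate, path, max_retries=2):
    """Evaluate candidates, skipping finished ones and retrying failures.

    Candidate identifiers must be strings because they become JSON keys.
    Candidates that exhaust their retries are recorded with an "error"
    entry and are not re-run on resume.
    """
    results = {}
    if os.path.exists(path):
        with open(path) as fh:
            results = json.load(fh)  # resume from an earlier run
    for cand in candidates:
        if cand in results:
            continue
        for attempt in range(max_retries + 1):
            try:
                value = evaluate(cand, attempt)
                results[cand] = {"value": value, "attempts": attempt + 1}
                break
            except RuntimeError as err:
                results[cand] = {"error": str(err), "attempts": attempt + 1}
        with open(path, "w") as fh:
            json.dump(results, fh)  # checkpoint after every candidate
    return results


def toy_evaluate(name, attempt):
    # Hypothetical stand-in for a DFT job: "B" fails on its first attempt,
    # mimicking a convergence error that a relaxed retry would fix.
    if name == "B" and attempt == 0:
        raise RuntimeError("SCF not converged")
    return len(name) * 1.5


ckpt = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
first = run_with_checkpoint(["A", "B"], toy_evaluate, ckpt)
second = run_with_checkpoint(["A", "B", "C"], toy_evaluate, ckpt)
print(second)
```

The second call reuses the stored results for "A" and "B" and only evaluates "C". A production workflow would additionally write the checkpoint atomically and record provenance such as code versions and input parameters.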

Quantitative Benchmarks and Limitations

HTCS frameworks have led to pivotal discoveries across multiple domains, demonstrating their tangible strategic value. Key achievements include the identification of novel solid electrolytes like Li10Ge2P4S24 and Li5ClO3, the discovery of high-performance catalytic alloys such as Ni61Pt39, and the finding of iodine-capture MOFs with specific structural motifs. [1]

The performance of these workflows can be quantified. For instance, in the screening of porous materials for iodine capture, machine learning-driven HTCS can achieve a top-k recall exceeding 90% against full simulation, while screening hundreds of thousands to millions of candidates. [1] Adaptive multi-stage pipelines have demonstrated the ability to reduce computational costs by over 44% while maintaining accuracy levels above 96%. [1]
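Top-k recall, as used above, measures how much of the reference top-k set a surrogate recovers. A minimal sketch follows; the candidate names and scores are made-up placeholders, and `top_k_recall` is a hypothetical helper name.

```python
def top_k_recall(surrogate_scores, reference_scores, k):
    """Fraction of the reference top-k that also appears in the surrogate top-k.

    Both arguments map candidate identifiers to scores, higher being better.
    """
    def top(scores):
        return set(sorted(scores, key=scores.get, reverse=True)[:k])

    return len(top(surrogate_scores) & top(reference_scores)) / k


# Placeholder scores: the reference is the expensive simulation,
# the surrogate is the fast model being validated.
reference = {"a": 9.0, "b": 8.0, "c": 7.0, "d": 1.0}
surrogate = {"a": 0.9, "b": 0.2, "c": 0.8, "d": 0.5}
print(top_k_recall(surrogate, reference, k=2))
```

Here the surrogate's top two are "a" and "c" while the reference's are "a" and "b", so half of the true top-2 is recovered.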

Despite these successes, several limitations must be acknowledged and addressed in validation research:

- Model Accuracy: The accuracy of force fields or surrogate models can be insufficient for certain properties, such as host-guest energetics in flexible metal-organic frameworks (MOFs) or non-linear mixing enthalpies in complex alloys. [1]

- Database Biases: The libraries screened are often constructed from existing databases, which may contain biases and not fully represent the entire chemical space of interest. [1]

- Synthetic Feasibility: A primary limitation is the gap between in silico predictions and the actual synthetic accessibility of the proposed materials. [1]

- Accuracy vs. Ranking Trade-off: Descriptor-driven pipelines necessarily sacrifice some physical detail for scale. Consequently, predictions of absolute property magnitudes may differ from experimental measurements, though the ordinal ranking of candidates (hit identification) is generally robust. [1]
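The last point, that ranking can remain reliable even when absolute values are off, can be illustrated by comparing a rank correlation with an absolute error on the same data. The numbers below are invented for illustration, and the Spearman formula used assumes no tied values.

```python
def ranks(values):
    """Rank positions (1 = smallest); assumes all values are distinct."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, i in enumerate(order, start=1):
        result[i] = rank
    return result


def spearman(x, y):
    """Spearman rho for tie-free data: 1 - 6*sum(d^2) / (n*(n^2 - 1))."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d2 / (n * (n ** 2 - 1))


def mean_abs_error(x, y):
    return sum(abs(a - b) for a, b in zip(x, y)) / len(x)


# Invented example: the descriptor overestimates every value
# but preserves the ordering of the candidates.
measured = [1.0, 2.0, 4.0, 8.0]
predicted = [3.0, 4.5, 9.0, 20.0]
print(spearman(predicted, measured), mean_abs_error(predicted, measured))
```

The rank correlation is perfect while the mean absolute error is large, which is the situation described above: hit identification is robust even though the predicted magnitudes are not.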

Figure 2: The HTCS pipeline optimization framework. The goal is to find pipeline parameters that maximize a performance objective (like ROCI) within defined computational constraints.

Conclusions and Outlook

High-Throughput Computational Screening represents a fundamental shift in materials and molecule discovery. By formalizing the process into a multi-stage, multi-fidelity pipeline optimized for return on computational investment, HTCS enables the efficient navigation of vast chemical spaces that are intractable with traditional methods. Its strategic value is demonstrated by its successful application across diverse domains, from thermoelectrics and ion conductors to catalysis and porous materials, leading to the discovery of novel, high-performing compounds.

For workflow validation research, the future of HTCS lies in addressing its current limitations. Key focus areas will be the development of more accurate and transferable machine learning potentials, the creation of better and less biased chemical libraries, and the increasing integration of AI and active learning to create closed-loop, self-improving discovery systems. [1] [3] As these tools and methodologies mature, HTCS is poised to become an even more powerful and indispensable engine for innovation across the chemical and materials sciences.

The Evolution from Manual Screening to Automated and AI-Driven Workflows

The pursuit of scientific discovery has always been constrained by the scale and speed at which researchers can explore complex experimental spaces. Traditional manual screening methods, while valuable for detailed investigation, are fundamentally limited by human bandwidth, inherent variability, and temporal constraints. The emergence of automated and AI-driven workflows represents a paradigm shift in scientific research, enabling the rapid evaluation of thousands to millions of candidates through integrated computational and experimental pipelines. This evolution is particularly critical for validating high-throughput computational screening workflows, where the accuracy, reproducibility, and predictive power of these accelerated methods must be rigorously established. As the volume of generated data grows, robust validation frameworks ensure that discoveries made through high-throughput methods translate to real-world applications across materials science, drug discovery, and biotechnology.

The transition from manual to automated screening is not merely a change in velocity but a fundamental restructuring of the scientific process itself. Manual screening typically involves researchers proposing, synthesizing, and testing one material or compound at a time, a process that can take months or even years per material [4]. In contrast, high-throughput (HT) methods involve setups or techniques designed for fully synthesizing, characterizing, screening, or analyzing multiple samples in a significantly shorter time than traditional benchtop approaches [4]. This acceleration is made possible through the integration of robotic automation, advanced computational modeling, and machine learning algorithms that can identify patterns and relationships beyond human perception capacity.

Theoretical Foundations: From Manual Execution to Computational Intelligence

The Limitations of Traditional Manual Screening

Traditional manual screening methodologies have served as the backbone of scientific discovery for centuries, but their limitations become increasingly apparent when addressing complex, multi-parameter research questions. Manual approaches typically involve researchers conducting individual experiments through sequential processes including literature review, hypothesis formation, experimental design, manual execution, data collection, and analysis. While this method allows for deep investigation of specific phenomena, it suffers from several critical constraints:

- Low throughput: The serial nature of manual experimentation severely limits the number of candidates that can be evaluated within practical timeframes [4]

- Operator dependency: Results are often influenced by individual technique, experience level, and subjective interpretation [5]

- Experimental variability: Inconsistent execution across experiments and research groups challenges reproducibility

- High resource consumption: Significant requirements for materials, time, and specialized personnel [5]

- Limited exploration space: Practical constraints restrict investigation to narrow, predetermined chemical or biological spaces

These limitations become particularly problematic in fields like drug discovery, where the potential chemical space is estimated to contain >10^60 compounds, or materials science, where compositional variations create virtually infinite possibilities.

Computational Foundations of Automated Screening

The theoretical underpinnings of automated screening rest on advances in computational power, algorithmic sophistication, and data integration. Density Functional Theory (DFT) has emerged as a cornerstone computational method due to its relatively low computational cost and semiquantitative accuracy in predicting material properties based on electronic structure [6] [4]. DFT enables researchers to screen on the order of 10^6 materials in a single project, providing insight into electronic structure and enabling prediction of properties such as bandgaps, adsorption energies, and catalytic activity [4].

The development of effective descriptors—quantifiable representations of specific properties that connect complex electronic structure calculations to macroscopic properties—has been crucial for large-scale material screening. In electrocatalyst research, for example, the reactivity descriptor quantifying catalyst activity is often represented by the Gibbs free energy (ΔG) associated with the rate-limiting step of a reaction [4]. Similar descriptor-based approaches have been successfully applied to biological systems, such as screening natural compounds that enhance butyrate production by targeting specific bacterial enzymes [7].
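As a small illustration of a descriptor in use, the sketch below ranks catalysts by the free-energy change of their most endergonic elementary step, one common simplification of the "rate-limiting step" idea. The catalyst names and ΔG values (in eV) are invented placeholders, not results from [4].

```python
def limiting_step(step_free_energies):
    """Return (index, dG) of the most endergonic elementary step."""
    idx = max(range(len(step_free_energies)),
              key=step_free_energies.__getitem__)
    return idx, step_free_energies[idx]


def rank_by_descriptor(catalysts):
    """Sort catalyst names by ascending limiting-step dG (lower is better)."""
    return sorted(catalysts, key=lambda name: limiting_step(catalysts[name])[1])


# Invented per-step free energies in eV for three hypothetical catalysts.
catalysts = {
    "cat_A": [0.4, 1.1, 0.3],
    "cat_B": [0.6, 0.7, 0.5],
    "cat_C": [1.6, 0.2, 0.1],
}
print(rank_by_descriptor(catalysts))
```

Reducing each candidate to a single scalar in this way is what makes screening at scale tractable, at the cost of ignoring kinetics and any effects the descriptor does not capture.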

Table 1: Key Computational Methods in High-Throughput Screening

| Method | Theoretical Basis | Applications | Throughput Capacity |
|---|---|---|---|
| Density Functional Theory (DFT) | Quantum mechanics, electronic structure analysis | Prediction of material properties, catalytic activity, piezoelectric coefficients | 10^3-10^6 compounds [6] [4] |
| Molecular Docking | Molecular recognition, binding affinity prediction | Drug discovery, enzyme-ligand interactions, virtual screening | 10^4-10^5 compounds/day [7] |
| Machine Learning | Pattern recognition, predictive modeling | Quantitative Structure-Activity Relationships (QSAR), material property prediction | 10^6+ compounds with trained models [4] |
| Computer Vision | Image segmentation, classification | Crystal morphology analysis, cellular imaging, phenotypic screening | 10^3-10^4 images/experiment [5] |

Experimental Design and Protocol Development

Computational Screening Workflows

Modern computational screening employs structured, hierarchical workflows that combine multiple computational techniques to efficiently explore vast chemical spaces. A representative protocol for virtual screening of compound libraries demonstrates this integrated approach [7] [8]:

Library Preparation

- Compile comprehensive compound libraries from databases like FooDB, PubChem, or ZINC (typically 25,000-1,000,000+ compounds) [7]

- Convert 2D chemical structures to 3D conformers using software like Open Babel

- Perform energy minimization and convert to appropriate formats (PDBQT) with defined rotatable bonds

Target Preparation

- Retrieve protein structures from PDB or generate 3D models via homology modeling (SWISS-MODEL)

- Prepare active site through identification of binding cavities (ProteinsPlus server)

- Define grid boxes around predicted active sites for docking simulations

Docking Execution

- Perform virtual screening using AutoDock Vina v1.2 with exhaustiveness levels of 8-12 [7]

- Set binding energy thresholds for hit selection (typically ≤ -10 kcal/mol)

- Analyze top-ranking binding poses using Discovery Studio or similar software to evaluate hydrogen bond networks, hydrophobic interactions, and key contact residues

This protocol can be automated through sequential scripting, significantly reducing human intervention and enabling continuous operation [6] [8].
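A minimal sketch of such scripting is shown below, assuming the AutoDock Vina executable is available as `vina` on the PATH (an assumption, along with all file names and box coordinates). It builds the command line, parses affinities from the `REMARK VINA RESULT` lines that Vina writes to its output PDBQT, and applies the hit cutoff. The command is only constructed here, not executed.

```python
import re


def vina_command(receptor, ligand, center, size, out, exhaustiveness=8):
    """Build an AutoDock Vina argument list for one ligand (not executed)."""
    cx, cy, cz = center
    sx, sy, sz = size
    return ["vina", "--receptor", receptor, "--ligand", ligand,
            "--center_x", str(cx), "--center_y", str(cy), "--center_z", str(cz),
            "--size_x", str(sx), "--size_y", str(sy), "--size_z", str(sz),
            "--exhaustiveness", str(exhaustiveness), "--out", out]


RESULT_LINE = re.compile(r"^REMARK VINA RESULT:\s+(-?\d+(?:\.\d+)?)", re.MULTILINE)


def best_affinity(pdbqt_text):
    """Lowest (best) affinity in kcal/mol found in Vina output, or None."""
    values = [float(v) for v in RESULT_LINE.findall(pdbqt_text)]
    return min(values) if values else None


def select_hits(affinities, cutoff=-10.0):
    """Names with affinity <= cutoff, strongest predicted binder first."""
    passing = [n for n, a in affinities.items() if a is not None and a <= cutoff]
    return sorted(passing, key=affinities.get)


# Placeholder file names, box geometry, and output text for illustration.
cmd = vina_command("target.pdbqt", "lig1.pdbqt", center=(10.0, 12.5, -3.0),
                   size=(20, 20, 20), out="lig1_out.pdbqt")
sample_output = (
    "MODEL 1\nREMARK VINA RESULT:    -10.4      0.000      0.000\nENDMDL\n"
    "MODEL 2\nREMARK VINA RESULT:     -9.1      1.200      2.300\nENDMDL\n"
)
affinities = {"lig1": best_affinity(sample_output), "lig2": -9.1, "lig3": -11.0}
print(" ".join(cmd))
print(select_hits(affinities))
```

In a real campaign the argument list would be passed to `subprocess.run` for each ligand, with the output files parsed afterwards; keeping command construction separate from execution makes the pipeline easier to test.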

Integrated Computational-Experimental Validation

Validating computational predictions through experimental confirmation represents a critical phase in workflow validation research. The following protocol outlines a robust approach for experimental validation of computationally screened hits:

Experimental Validation Phase

- Culture target biological systems (bacteria, cells) under controlled conditions

- Treat with top-ranked computational hits across concentration gradients over a 0-48 hour time course

- Measure response outcomes (butyrate production, cell viability, etc.) using analytical methods like gas chromatography, HPLC, or spectrophotometry [7]

- Assess gene expression changes via qRT-PCR for relevant pathway genes

- Evaluate protein-level changes through Western blotting or immunofluorescence

Data Integration and Model Refinement

- Compare experimental results with computational predictions

- Calculate correlation metrics to validate screening accuracy

- Refine computational models based on experimental discrepancies

- Iterate screening with refined parameters for improved hit rates

This integrated approach demonstrated remarkable success in identifying natural compounds that enhance butyrate production, with computational predictions strongly correlating with experimental measurements (e.g., hypericin showing 2.5-fold upregulation for BCD enzyme and 0.58 mM butyrate production) [7].
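The "calculate correlation metrics" step of the data-integration phase can be sketched as a Pearson correlation between predicted and measured values plus a confirmation rate for the tested hits. All numbers below are invented placeholders rather than data from [7], and the activity cutoff is an arbitrary assumption.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def confirmation_rate(measured, active_cutoff):
    """Fraction of tested hits whose measured response meets the cutoff."""
    return sum(m >= active_cutoff for m in measured) / len(measured)


# Invented values: docking scores in kcal/mol and a measured response
# in arbitrary units for four tested compounds.
docking = [-11.2, -10.8, -10.5, -10.1]
response = [0.9, 0.6, 0.7, 0.2]

# Negate docking scores so that stronger predicted binding is larger.
r = pearson([-d for d in docking], response)
rate = confirmation_rate(response, active_cutoff=0.5)
print(f"r={r:.2f}, confirmation rate={rate:.2f}")
```

With only a handful of tested compounds, a correlation coefficient carries wide uncertainty, so it is best reported alongside the sample size and the confirmation rate.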

Figure: Computational-experimental validation workflow.

Quantitative Comparison: Manual vs. Automated Screening Performance

The performance differential between manual and automated screening approaches can be quantified across multiple dimensions, providing compelling evidence for the adoption of accelerated workflows. The data reveal not merely incremental improvements but order-of-magnitude enhancements in research efficiency.

Table 2: Performance Metrics - Manual vs. Automated Screening

| Performance Metric | Manual Screening | Automated/AI-Driven Screening | Improvement Factor |
|---|---|---|---|
| Screening Throughput | 1 material or compound per months to years [4] | 10^3-10^6 compounds via DFT [4] | 1000x-1,000,000x |
| Screening Time (recruitment analogue) | 20+ hours/hire for resume screening [9] | 3x faster candidate screening with 87% accuracy [10] | 70% reduction in time [10] |
| Experimental Consistency | High variability between operators [5] | Standardized evaluation criteria [9] | Qualitative improvement |
| Bias Reduction | Unconscious human bias in evaluation [9] | Structured assessment minimizes bias [9] | Qualitative improvement |
| Resource Requirements | High material consumption [5] | Miniaturized approaches (e.g., computer vision) [5] | 50-90% reduction in materials |
| Data Generation Volume | Limited by manual processing capacity | Massive datasets from HT experiments [5] | 10-1000x increase |

The performance advantages extend beyond simple velocity metrics to include qualitative improvements in research outcomes. For example, in piezoelectric materials discovery, high-throughput DFT screening of ~600 noncentrosymmetric organic structures identified numerous crystals with promising piezoelectric properties, some exceeding the performance of well-known inorganic materials [6]. The validation of these computational predictions against experimental data demonstrated strong correlations, with γ-glycine showing experimental strain coefficients of 5.33 pC/N (d16) and 11.33 pC/N (d33) compared to DFT-predicted values of 5.15 pC/N and 10.72 pC/N, respectively [6].
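The agreement for γ-glycine can be expressed as a relative deviation of the DFT value from experiment. The short calculation below uses only the four numbers quoted above; `relative_error` is a hypothetical helper name.

```python
def relative_error(predicted, measured):
    """Signed relative deviation of a prediction from the measurement."""
    return (predicted - measured) / measured


# (DFT, experiment) in pC/N for gamma-glycine, as quoted in the text.
glycine = {"d16": (5.15, 5.33), "d33": (10.72, 11.33)}
errors = {name: relative_error(dft, exp) for name, (dft, exp) in glycine.items()}
for name, err in errors.items():
    print(f"{name}: {100 * err:+.1f}%")
```

Both components are under-predicted, by roughly 3% for d16 and 5% for d33, which is the scale of deviation that the text describes as a strong correlation.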

Similarly, in recruitment screening—a parallel application of screening methodologies—AI-powered platforms reduced time-to-hire by up to 63% with automated workflows while delivering 3x faster candidate screening at 87% accuracy compared to manual reviews [10]. These consistent findings across disparate fields suggest fundamental advantages to automated approaches that transcend specific application domains.

Research Reagent Solutions for Screening Workflows

The implementation of robust automated screening workflows requires specialized computational tools, experimental platforms, and data analysis resources. These components form an integrated technological ecosystem that supports the end-to-end screening process from initial candidate generation to validated hits.

Table 3: Essential Research Reagents and Platforms for Screening Workflows

| Resource Category | Specific Tools/Platforms | Function in Workflow | Application Examples |
|---|---|---|---|
| Compound Libraries | FooDB, PubChem, ZINC, ChEMBL | Source of screening candidates | Natural compound screening [7] |
| Computational Docking | AutoDock Vina, SWISS-MODEL | Structure-based virtual screening | Molecular docking against enzyme targets [7] |
| Materials Databases | Crystallographic Open Database (COD) | Source of material structures | Piezoelectric materials discovery [6] |
| Property Prediction | DFT codes (VASP, Quantum ESPRESSO) | Prediction of material properties | Piezoelectric coefficient calculation [6] |
| High-Throughput Experimentation | Computer Vision-Assisted HTPS [5] | Automated experimental screening | Crystal morphology regulation [5] |
| Data Analysis | Machine Learning (scikit-learn, TensorFlow) | Pattern recognition, model building | Structure-property relationships [4] |

Workflow Automation and Integration Platforms

Beyond individual tools, integrated platforms provide end-to-end solutions for automating the entire screening workflow. In recruitment screening, which offers an analogous case study for research screening, platforms like MokaHR, HireVue, and TestGorilla demonstrate the power of integrated automation [10]. These systems combine AI-powered resume analysis, skills assessments, video interviews, and automated communication to create seamless workflows that reduce manual intervention while improving selection quality [10].

Similar integration paradigms are emerging in scientific screening, with platforms that combine computational prediction, experimental design, automated execution, and data analysis. For example, the computer vision-assisted high-throughput additive screening system (CV-HTPASS) integrates high-throughput screening devices, in situ imaging equipment, and AI-assisted image-analysis algorithms to regulate crystal properties [5]. This system generated thousands of crystal images with diverse morphologies, with AI algorithms successfully segmenting, classifying, and extracting valuable crystal information from massive datasets [5].

Validation Frameworks and Quality Control Measures

Computational Validation Protocols

Establishing the validity of computational predictions is fundamental to validating high-throughput screening workflows. Several systematic approaches have emerged as standards for computational method validation:

Benchmarking Against Experimental Data

- Compile historical data with known outcomes for validation sets

- Calculate correlation metrics between predicted and observed values

- Establish confidence intervals for prediction accuracy

- For piezoelectric materials discovery, comparison of DFT-predicted values with experimental measurements demonstrated strong correlations across 16 single-crystal systems and 30 distinct components of the piezoelectric strain tensor [6]

Cross-Validation Techniques

- Implement k-fold cross-validation to assess model robustness

- Utilize hold-out validation sets untouched during model development

- Apply statistical measures (R², RMSE) to quantify predictive performance
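The cross-validation steps above can be sketched without external libraries. The example below fits a one-variable least-squares line on each training split and reports R² and RMSE on the pooled out-of-fold predictions. The data are synthetic, folds are assigned by interleaving rather than shuffling, and the function names are hypothetical; in practice a library such as scikit-learn would typically be used.

```python
import math


def fit_line(xs, ys):
    """Ordinary least squares for y = a*x + b."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return a, my - a * mx


def k_fold_scores(xs, ys, k):
    """Return (R^2, RMSE) computed on pooled out-of-fold predictions."""
    preds = [0.0] * len(xs)
    for fold in range(k):
        test = [i for i in range(len(xs)) if i % k == fold]
        train = [i for i in range(len(xs)) if i % k != fold]
        a, b = fit_line([xs[i] for i in train], [ys[i] for i in train])
        for i in test:
            preds[i] = a * xs[i] + b  # predicted by a model that never saw i
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - p) ** 2 for y, p in zip(ys, preds))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot, math.sqrt(ss_res / len(ys))


# Synthetic data: a linear trend with a small alternating perturbation.
xs = [float(i) for i in range(10)]
ys = [2 * x + 1 + (0.1 if i % 2 else -0.1) for i, x in enumerate(xs)]
r2, rmse = k_fold_scores(xs, ys, k=5)
print(f"R^2={r2:.4f}, RMSE={rmse:.3f}")
```

Scoring only out-of-fold predictions is what distinguishes this from a goodness-of-fit number: every point is predicted by a model that did not see it during fitting.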

Protocol Validation

- Replicate published studies using established protocols

- Confirm ability to reproduce previously reported results

- The automated virtual screening protocol described by [8] provides a validated workflow for library generation and docking evaluation that can be implemented and verified across research groups

Experimental Validation Standards

Experimental validation of computational predictions requires rigorous standards to ensure reliability and reproducibility:

Multi-level Validation Hierarchy

- Primary validation: Direct measurement of target properties/activities

- Secondary validation: Assessment of related properties/pathway effects

- Tertiary validation: Functional outcomes in relevant systems/environments

Quantitative Assessment Metrics

- Statistical significance testing between experimental conditions

- Dose-response relationships for bioactive compounds

- Consistency across technical and biological replicates

- In butyrate enhancement studies, researchers implemented comprehensive validation including bacterial growth (OD600), butyrate production (gas chromatography), gene expression (qRT-PCR), and signaling pathway analysis (Western blot) [7]

Cross-platform Verification

- Correlation of results across different measurement technologies

- Orthogonal validation methods to eliminate technique-specific artifacts

- Independent replication across research laboratories

The evolution from manual screening to automated and AI-driven workflows represents a fundamental transformation in scientific methodology that transcends specific disciplines. This paradigm shift enables researchers to navigate exponentially larger exploration spaces while extracting deeper insights from complex data relationships. The validated workflows discussed herein demonstrate consistent performance advantages across multiple domains, from materials science to drug discovery to biotechnology.

The future trajectory of screening methodologies points toward increasingly integrated and autonomous systems. Closed-loop discovery platforms that combine computational prediction, automated experimentation, and machine learning analysis are emerging as the next frontier [4]. These systems minimize human intervention while maximizing learning efficiency through iterative design-test-learn cycles. The development of autonomous laboratories represents the ultimate expression of this trend, with systems capable of self-directed hypothesis generation, experimental execution, and knowledge extraction [4].

For researchers validating high-throughput computational screening workflows, several critical challenges remain. These include improving the accuracy of predictive models for complex multi-parameter systems, developing standardized validation frameworks across domains, addressing data quality and standardization issues, and creating more efficient integration between computational and experimental components. Additionally, consideration of practical implementation factors such as cost, safety, and scalability must be embedded earlier in the screening process [4].

As these methodologies continue to mature, their impact will extend beyond acceleration of discovery to enabling entirely new research modalities. The ability to systematically explore vast experimental spaces will uncover phenomena and relationships that would remain inaccessible through traditional approaches. This represents not merely an improvement in efficiency but a fundamental expansion of human capacity to understand and manipulate the natural world.

High-Throughput Computational Screening (HTCS) has become a cornerstone of modern drug discovery, enabling the rapid identification of hit compounds by seamlessly integrating advanced computational simulations with experimental validation. This guide details the core components and workflows essential for a robust HTCS campaign, framed within the critical context of validation research to ensure predictive accuracy and experimental reproducibility.

Compound Libraries: The Foundation of Screening

The screening library is the foundational element of any HTCS workflow. Its quality, diversity, and drug-likeness directly influence the probability of identifying viable hit compounds.

Library Composition and Curation

A well-curated library provides a broad coverage of biologically relevant chemical space while maintaining lead-like properties. Key characteristics of high-quality libraries from leading providers are summarized in the table below.

Table 1: Composition of Representative High-Throughput Screening Compound Libraries

| Library / Provider | Total Compounds | Key Characteristics | Quality Control |
|---|---|---|---|
| HTS Compound Collection (Life Chemicals) [11] | >575,000 stock compounds | Drug-like compounds, optimal physicochemical properties, broad chemical space | >90% purity confirmed by 400 MHz NMR and/or LCMS |
| LeadFinder Diversity Library (Sygnature) [12] | 150,000 compounds | Low molecular weight, lead-like, high diversity, strict similarity control | Vetted by senior medicinal chemists; fresh solids sourced |
| Screening Library (Evotec) [13] | >850,000 compounds | Includes diverse compounds, fragments, natural products, macrocycles, and covalent libraries | Continual reinvestment and curation for purity and solubility |

Key Considerations for Library Selection and Validation

From a validation perspective, several factors are paramount:

- Chemical Diversity and Novelty: Libraries should encompass a wide range of scaffolds and structures to maximize the chances of discovering novel hit series. Computational methods like Extended-Connectivity Fingerprints (ECFPs) and Tanimoto coefficients are often used to quantify diversity [11].

- Drug-Likeness and Lead-Likeness: Compounds should adhere to rules such as Lipinski's Rule of Five and possess optimal physicochemical properties (e.g., molecular weight, lipophilicity) to enhance their potential for successful optimization [11] [13].

- Quality Assurance: Rigorous quality control, typically via LCMS and NMR to confirm identity and purity (often >90%), is non-negotiable for generating reliable screening data [11] [12].
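The diversity criterion above can be illustrated with a short calculation. The following is a minimal sketch, assuming each compound's fingerprint is already available as a set of "on" bit indices; the toy fingerprints and the function names `tanimoto` and `mean_pairwise_distance` are hypothetical and are not taken from any cited library or provider. In practice the fingerprints would come from a cheminformatics toolkit.

```python
from itertools import combinations


def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto coefficient for fingerprints stored as sets of 'on' bit indices."""
    if not fp_a and not fp_b:
        return 1.0  # convention chosen here: two empty fingerprints are identical
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def mean_pairwise_distance(fingerprints: list[set[int]]) -> float:
    """Average Tanimoto distance (1 - similarity) over all compound pairs."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)


# Hypothetical toy fingerprints (bit indices), for illustration only.
library = [{1, 2, 3, 4}, {3, 4, 5, 6}, {7, 8, 9}]
diversity = mean_pairwise_distance(library)
print(f"Mean pairwise Tanimoto distance: {diversity:.3f}")
```

A value close to 1 indicates that library members share few fingerprint bits, while a value close to 0 indicates a library dominated by near-duplicates.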

The Integrated HTCS Workflow

A validated HTCS protocol is not a linear path but an iterative cycle of computational prediction and experimental testing. The key stages of this cycle are described below.

High-Throughput Computational Screening

The initial phase involves using computational power to prioritize a manageable number of candidates from vast virtual or tangible libraries.

- Diversity-Based High-Throughput Virtual Screening (D-HTVS): This efficient method first screens a diverse set of molecular scaffolds from a large database (e.g., the ChemBridge library). Based on docking scores, the top scaffolds are selected, and all structurally related molecules (e.g., with a Tanimoto score >0.6) are retrieved for a more thorough secondary docking analysis [14]. This two-stage process balances broad exploration with focused assessment.

- Descriptor-Based Screening: An alternative or complementary approach uses physically relevant descriptors to predict catalytic or binding properties. For instance, in bimetallic catalyst discovery, the similarity of electronic Density of States (DOS) patterns to a known catalyst (like Palladium) has been successfully used as a screening descriptor. The similarity can be quantified using a root-mean-square difference metric, weighted by a Gaussian function near the Fermi energy to emphasize the most relevant electronic states [15].

- Molecular Docking and Dynamics: Docking algorithms (e.g., AutoDock Vina) predict the binding pose and affinity of a compound to the target protein [14]. For higher-fidelity validation, atomistic Molecular Dynamics (MD) simulations (e.g., using GROMACS with OPLS/AA forcefield) are employed to understand the stability and dynamics of protein-ligand complexes in a solvated, physiological environment. Subsequent binding free energy calculations using methods like MM-PBSA (Molecular Mechanics Poisson-Boltzmann Surface Area) provide a more accurate estimate of binding affinity than docking scores alone [14].
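The Gaussian-weighted root-mean-square comparison described for descriptor-based screening can be sketched as follows. This is a minimal, discretized illustration rather than the implementation used in [15]: the function name `dos_difference`, the shared energy grid, the normalization by the sum of the weights, and the default width `sigma` are all assumptions made here.

```python
import math


def dos_difference(energies, dos_ref, dos_cand, fermi=0.0, sigma=1.0):
    """Gaussian-weighted RMS difference between two DOS curves.

    All three sequences must be sampled on the same energy grid. The
    weight peaks at the Fermi energy, so mismatches near it count most.
    """
    weights = [math.exp(-((e - fermi) ** 2) / (2.0 * sigma ** 2)) for e in energies]
    weighted_sq = sum(
        w * (cand - ref) ** 2 for w, ref, cand in zip(weights, dos_ref, dos_cand)
    )
    return math.sqrt(weighted_sq / sum(weights))


# Hypothetical five-point grid (eV, relative to the Fermi energy).
energies = [-4.0, -2.0, 0.0, 2.0, 4.0]
reference = [0.0, 0.0, 0.0, 0.0, 0.0]
differs_near_fermi = [0.0, 0.0, 1.0, 0.0, 0.0]
differs_far_from_fermi = [1.0, 0.0, 0.0, 0.0, 0.0]

near = dos_difference(energies, reference, differs_near_fermi)
far = dos_difference(energies, reference, differs_far_from_fermi)
print(f"near-Fermi mismatch: {near:.4f}, far mismatch: {far:.4f}")
```

Candidates would then be ranked by ascending difference from the reference material, so that those whose electronic structure most closely resembles the reference near the Fermi energy are examined first.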

Experimental Screening and Hit Identification

Computational predictions must be rigorously tested experimentally. This phase is designed to confirm activity and eliminate false positives.

- Primary Screen: The computationally selected compounds are tested at a single concentration ("single shot") against the biological target using a robust, miniaturized, and automated assay [12] [13]. This high-throughput step identifies initial "actives."

- Hit Confirmation: Active compounds from the primary screen are re-tested using the same assay conditions to confirm the activity is reproducible [13].

- Dose-Response and Potency Assessment: Confirmed hits are tested over a range of concentrations to generate concentration-response curves and determine half-maximal inhibitory/effective concentrations (IC50/EC50), providing a quantitative measure of potency [12] [13].

- Counter-Screening and Orthogonal Assays: To eliminate compounds that interfere with the assay technology (e.g., fluorescent compounds in a fluorescence-based assay), counter-screens are essential [13]. Orthogonal assays, which use a different technology or readout, provide further confirmation of the target engagement and biological effect [12] [13].

- Secondary Functional Assays: These assays evaluate the functional consequences of target engagement in a more physiologically relevant context, such as a cell-based model, confirming the desired biological outcome [13] [14].

Essential Research Reagents and Materials

The execution of a validated HTCS campaign relies on a suite of specialized reagents, software, and materials.

Table 2: Essential Research Reagent Solutions for HTCS

| Item / Resource | Function / Application | Example Specifications / Notes |
|---|---|---|
| Kinase Assay Kit | Biochemical screening for enzyme activity and inhibition. | Commercial kits available (e.g., BPS Bioscience EGFR/HER2 Kinase Assay Kits) [14]. |
| Cell Lines | Secondary and phenotypic screening in a physiological context. | Cancer cell lines such as KATO III and SNU-5 for gastric cancer research [14]. |
| Protein Structures | Foundation for computational docking and simulations. | Retrieved from PDB (e.g., 4HJO for EGFR, 3RCD for HER2); processed by removing water and adding hydrogens [14]. |
| Automation & Dispensing | Enables rapid, precise, and reproducible assay execution. | Platforms like HighRes Biosolutions with Echo acoustic dispensing technology [12]. |
| Data Analysis Software | Manages and analyzes large, complex HTS datasets. | Genedata Screener for data processing and analysis [12]. |
| Simulation Software | Performs molecular dynamics and free energy calculations. | GROMACS simulation package with OPLS/AA forcefield and MM-PBSA analysis [14]. |

Advanced Data Analytics and Hit Prioritization

The volume of data generated in HTCS necessitates sophisticated data analysis and management. Integrated informatics platforms, such as Titian Mosaic SampleBank, are used for precise compound tracking, while data processing tools like Genedata Screener handle the complex dataset analysis [12]. Furthermore, Artificial Intelligence and Machine Learning (AI/ML) are increasingly deployed for hit expansion and prioritization. AI/ML models can be trained on primary screening data to identify additional active compounds from virtual libraries and to prioritize confirmed hits based on predicted activity, off-target effects, and drug-likeness [13].

A Case Study in Validation: Discovery of a Dual EGFR/HER2 Inhibitor

A published study on discovering a dual EGFR/HER2 inhibitor for gastric cancer provides a concrete example of a validated HTCS workflow [14].

- Computational Screening: Diversity-based high-throughput virtual screening (D-HTVS) of the ChemBridge library was performed against EGFR and HER2 kinase structures. Top scaffolds were identified, and related compounds were subjected to standard docking.

- Validation through Dynamics: The top candidate, compound C3, underwent rigorous validation via 100 ns molecular dynamics simulations. The stability of the protein-ligand complexes was analyzed, and the binding free energy was calculated using MM-PBSA, confirming a strong affinity for both kinases.

- Experimental Confirmation:

  - Biochemical Assays: C3 inhibited EGFR and HER2 kinases with IC50 values of 37.24 nM and 45.83 nM, respectively.

  - Cellular Efficacy: The compound demonstrated potent anti-proliferative effects in gastric cancer cell lines (KATO III and SNU-5), with GI50 values of 84.76 nM and 48.26 nM, respectively.

  - Dual Inhibition: The study identified a novel lead-like molecule with plausible dual inhibitory activity, showcasing the power of the integrated HTCS protocol for tackling complex therapeutic targets.

This end-to-end process, from computational prediction to experimental validation across multiple assay types, exemplifies the rigorous approach required for validating an HTCS workflow and generating high-quality, translatable hit compounds.

The pharmaceutical industry operates at the nexus of profound scientific innovation and immense financial risk. Its development process is a decade-plus marathon marked by high costs, high attrition rates, and significant timeline uncertainty [16]. The journey of a new drug from laboratory concept to patient bedside is governed by a rigorous, multi-stage process that is designed to ensure safety and efficacy and that consequently creates a long and complex path to market [16]. Industry analyses consistently place the average time to develop a single new medicine at 10 to 15 years from initial discovery through regulatory approval [16]. This protracted timeline, coupled with high failure rates, creates a high-stakes environment in which any improvement in predictability or efficiency can yield substantial returns. Within this context, high-throughput computational screening represents a paradigm shift, transforming intellectual property from a reactive legal necessity into a proactive, predictive tool for structuring and de-risking multi-billion-dollar research and development timelines [16].

Table 1: The Drug Development Lifecycle by the Numbers

| Development Stage | Average Duration (Years) | Probability of Transition to Next Stage | Primary Reason for Failure |
|---|---|---|---|
| Discovery & Preclinical | 2-4 | ~0.01% (to approval) | Toxicity, lack of effectiveness |
| Phase I | 2.3 | ~52% | Unmanageable toxicity/safety |
| Phase II | 3.6 | ~29% | Lack of clinical efficacy |
| Phase III | 3.3 | ~58% | Insufficient efficacy, safety |
| FDA Review | 1.3 | ~91% | Safety/efficacy concerns |

Quantifying the Bottlenecks: Economic and Attrition Challenges

The true cost of drug development is not merely the sum of direct, out-of-pocket expenses for research, materials, and trials but must account for the capitalized cost, which includes the time value of money and the opportunity cost of investing vast sums of capital for over a decade with no guarantee of return [16]. This distinction explains why cost estimates vary widely, with one study estimating average out-of-pocket costs at $172.7 million per drug, ballooning to $879.3 million when accounting for failures and capital costs; other widely cited estimates place the average capitalized cost even higher, at $2.6 billion per approved drug [16]. The clinical trial process represents the most significant financial burden, accounting for approximately 68-69% of total out-of-pocket R&D expenditures [16].

The immense cost of drug development is a direct consequence of the very low probability of success. For every 10,000 compounds that begin in preclinical research, only one will ultimately receive FDA approval and reach the market [16]. The overall likelihood of approval for a drug candidate entering Phase I clinical trials is only 7.9%, meaning that more than nine out of every ten drugs that begin human testing will fail [16]. Phase II represents the single largest hurdle in drug development, with a success rate of only 29% to 40% [16]. Between 40% and 50% of all clinical failures are due to a lack of clinical efficacy discovered at this stage, positioning Phase II as the epicenter of value destruction and the most crucial leverage point for intervention [16].
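The clinical attrition figures can be cross-checked with a short calculation. The sketch below multiplies the stage-to-stage transition probabilities listed in the lifecycle table; treating the stages as independent is a simplifying assumption made here, and the variable names are illustrative.

```python
from math import prod

# Stage-to-stage transition probabilities from the lifecycle table.
transition_probability = {
    "Phase I": 0.52,
    "Phase II": 0.29,
    "Phase III": 0.58,
    "FDA Review": 0.91,
}

# Probability that a Phase I entrant clears every remaining stage,
# assuming the stages are independent (a simplifying assumption).
overall_success = prod(transition_probability.values())

# Expected number of Phase I entrants needed for one approval.
entrants_per_approval = 1.0 / overall_success

print(f"Overall likelihood of approval: {overall_success:.4f}")
print(f"Phase I entrants per approval: {entrants_per_approval:.1f}")
```

The product is roughly 0.08, which is consistent with the quoted overall likelihood of approval of 7.9% for candidates entering Phase I. Because Phase II carries the smallest factor, an improvement there moves the overall figure more than an equal relative improvement at any other stage would in absolute terms.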

High-Throughput Computational Screening: A Paradigm Shift

High-throughput computational screening offers a transformative approach to these challenges by enabling the rapid evaluation of massive parametric spaces that would be impossible to investigate through traditional laboratory work alone [17]. Paired with artificial intelligence and machine learning, this methodology represents a new research paradigm that unites data science with chemistry and has proven to be an efficient tool for analyzing computational data, revealing structure-property relationships, identifying promising candidates, and guiding molecular design [18]. The convergence of artificial intelligence and comprehensive data transforms intellectual property into a proactive, predictive tool for building dynamic, probabilistic timelines that allow for forecasting competitor milestones, predicting litigation and regulatory risks, and strategically identifying low-competition innovation pathways [16].

Core Methodological Framework

The implementation of high-throughput computational screening follows a systematic workflow that integrates multiple computational disciplines. As demonstrated in research on metal-organic frameworks for iodine capture—a relevant case study for computational material discovery—the process begins with establishing a large-scale database of candidate structures [18]. In this study, researchers selected 1,816 I2-accessible MOF materials from the well-established CoRE MOF 2014 database, employing Grand Canonical Monte Carlo simulations to study their adsorption performance [18]. This computational approach enables the investigation of relationships between structural characteristics and adsorption properties to identify optimal parameters [18].

Following initial screening, researchers extract multiple classes of descriptors, including structural features (pore limiting diameter, largest cavity diameter, void fraction, pore volume, surface area, density), molecular features (types of metal and ligand atoms, bonding modes), and chemical features (heat of adsorption, Henry's coefficient) [18]. These comprehensive descriptor sets are then used to train machine learning algorithms—such as Random Forest and CatBoost—to predict performance properties and assess feature importance to determine the relative influence of various factors [18]. Finally, molecular fingerprint techniques can be introduced to provide comprehensive and detailed structural information, revealing key structural motifs that enhance target properties [18].

Experimental Protocols and Implementation

High-Throughput Screening Protocol

The implementation of high-throughput computational screening requires meticulous protocol design to ensure robust and reproducible results. Based on established methodologies in the field, the screening process typically follows this detailed protocol [18]:

- Database Curation: Begin with a well-established database of potential candidates (e.g., CoRE MOF 2014 database for materials research). Apply initial accessibility filters based on physical constraints (e.g., pore limiting diameter > 3.34 Å for iodine molecules) to generate a refined candidate set [18].

- Molecular Simulations: Employ Grand Canonical Monte Carlo simulations using specialized software (e.g., RASPA) to evaluate target properties under specific environmental conditions. For gas adsorption studies, simulate conditions replicating operational environments (e.g., humid air conditions for iodine capture) [18].

- Descriptor Calculation: Compute comprehensive descriptor sets encompassing structural parameters (pore limiting diameter, largest cavity diameter, void fraction, pore volume, surface area, density), molecular features (elemental types, hybridization states, bonding modes), and chemical properties (heat of adsorption, Henry's coefficient) [18].

- Performance Metrics: Calculate relevant performance metrics based on simulation results. For adsorption applications, this includes adsorption capacity and selectivity. Establish optimal value ranges for each structural parameter through relationship analysis between structure and performance [18].
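The accessibility filter in the database curation step can be sketched as below. This is a minimal illustration, assuming the pore limiting diameter of each framework has already been computed; the `Framework` class, the `accessible` function, and the three example entries are hypothetical and do not come from the CoRE MOF database.

```python
from dataclasses import dataclass

MIN_PLD_ANGSTROM = 3.34  # accessibility threshold for iodine quoted in the protocol


@dataclass
class Framework:
    name: str
    pld: float  # pore limiting diameter, in angstroms


def accessible(frameworks, min_pld=MIN_PLD_ANGSTROM):
    """Keep only frameworks whose pore limiting diameter exceeds the threshold."""
    return [f for f in frameworks if f.pld > min_pld]


# Hypothetical candidate entries, for illustration only.
candidates = [
    Framework("MOF-A", 2.90),
    Framework("MOF-B", 3.80),
    Framework("MOF-C", 5.20),
]

refined = accessible(candidates)
print([f.name for f in refined])
```

Applying the cheap geometric filter before any Monte Carlo simulation keeps the expensive step restricted to structures that the guest molecule can physically enter.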

Machine Learning Integration Protocol

The integration of machine learning with high-throughput screening follows a structured approach to maximize predictive accuracy and interpretability [18]:

- Feature Set Construction: Incorporate feature sets of increasing complexity step by step, beginning with basic structural descriptors, then adding molecular descriptors, and finally adding chemical descriptors to enhance prediction accuracy [18].

- Model Training and Validation: Employ multiple machine learning algorithms (e.g., Random Forest, CatBoost) to train regression models for predicting target properties. Use appropriate validation techniques to assess model performance and prevent overfitting [18].

- Feature Importance Analysis: Use built-in feature importance metrics from machine learning algorithms to determine the relative influence of various descriptors on target properties. Identify the most crucial factors governing performance [18].

- Molecular Fingerprint Analysis: Introduce molecular fingerprint techniques (e.g., Molecular ACCess System (MACCS) keys) to provide comprehensive structural information. Identify specific structural features that correlate with enhanced performance [18].
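The fingerprint analysis step can be illustrated with a simple enrichment calculation. The sketch below is a deliberately simplified stand-in, not the procedure used in [18]: for each fingerprint bit it compares the mean performance of structures that contain the bit with the mean of those that do not. The function name `bit_enrichment`, the four toy fingerprints, and the performance values are hypothetical.

```python
from statistics import mean


def bit_enrichment(fingerprints, performance, n_bits):
    """Mean performance with a bit set minus mean performance without it.

    Bits that are present in all structures or in none are skipped,
    because no comparison is possible for them.
    """
    enrichment = {}
    for bit in range(n_bits):
        with_bit = [p for fp, p in zip(fingerprints, performance) if bit in fp]
        without_bit = [p for fp, p in zip(fingerprints, performance) if bit not in fp]
        if with_bit and without_bit:
            enrichment[bit] = mean(with_bit) - mean(without_bit)
    return enrichment


# Hypothetical fingerprints (sets of 'on' bits) and performance values.
fingerprints = [{0, 1}, {0}, {1, 2}, {2}]
performance = [5.0, 4.0, 2.0, 1.0]

enrichment = bit_enrichment(fingerprints, performance, n_bits=3)
ranked_bits = sorted(enrichment, key=enrichment.get, reverse=True)
print(ranked_bits, enrichment)
```

A positive value suggests that the structural feature encoded by the bit is associated with better performance, although a difference in means does not by itself separate the effect of one feature from that of correlated features.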

Table 2: Research Reagent Solutions for High-Throughput Computational Screening

| Research Tool | Function | Application Example |
|---|---|---|
| CoRE MOF Database | Curated database of metal-organic frameworks | Provides initial candidate structures for screening [18] |
| RASPA Software | Molecular simulation package for adsorption/diffusion | Performs Grand Canonical Monte Carlo simulations [18] |
| Molecular Fingerprints | Structural representation using bit strings | Identifies key structural features enhancing performance [18] |
| Random Forest Algorithm | Ensemble machine learning method | Predicts target properties from descriptor sets [18] |
| CatBoost Algorithm | Gradient boosting on decision trees | Handles categorical features in predictive modeling [18] |

Workflow Validation and Structure-Performance Relationships

Validating the high-throughput computational screening workflow requires establishing robust structure-performance relationships that provide actionable insights for candidate selection and design. Research on metal-organic frameworks for iodine capture demonstrates how these relationships can be quantified and visualized [18]. For instance, analysis of 1,816 MOF structures revealed that the largest cavity diameter optimal for iodine capture falls between 4 and 7.8 Å, with steric hindrance limiting adsorption below 4 Å and diminished molecule-framework interaction reducing performance above 7.8 Å [18]. Similarly, the optimal void fraction was found to lie between 0 and 0.17: performance increased up to a void fraction of 0.09 and then declined as the void fraction grew toward 0.6 [18].

The integration of machine learning enhances these insights by quantifying feature importance across descriptor categories. In the referenced study, Henry's coefficient and heat of adsorption were identified as the two most crucial chemical factors governing iodine adsorption performance [18]. Molecular fingerprint analysis further revealed that the presence of six-membered ring structures and nitrogen atoms in the MOF framework were key structural factors that enhanced iodine adsorption, followed by the presence of oxygen atoms [18]. These insights establish a robust guideline framework for accelerating the screening and targeted design of high-performance materials, demonstrating how computational approaches can systematically elucidate the multifaceted factors governing performance.

The implementation of high-throughput computational screening represents a fundamental shift in addressing the traditional bottlenecks of drug discovery: cost, time, and high failure rates. By leveraging automated workflows, high-throughput technologies, and AI-driven data analysis, researchers can explore optimization spaces that are inaccessible at the throughput of traditional laboratory work [17]. These workflows also generate robust data for training artificial intelligence and machine learning models, creating a virtuous cycle of improved prediction and design [17]. Despite considerable breakthroughs, implementation in biological domains still faces hurdles, but the direction of travel is clear [17].

In an era where R&D productivity is paramount, the integration of AI-driven intelligence is not merely an operational enhancement; it is a strategic imperative for securing a competitive advantage and ensuring long-term viability [16]. The ability to move beyond deterministic project plans to build dynamic, probabilistic timelines allows for forecasting competitor milestones, predicting litigation and regulatory risks, and strategically identifying low-competition innovation pathways [16]. The ultimate goal is to compress the lengthy development cycle, thereby maximizing the commercially valuable period of patent exclusivity and delivering innovative therapies to patients more efficiently [16]. As these computational methodologies continue to mature and validate against experimental results, they establish a new paradigm for accelerating discovery while managing the profound risks inherent in pharmaceutical development.

The Expanding Role of HTCS in Precision Medicine and Personalized Therapeutic Development

The advent of high-throughput computational screening (HTCS) has fundamentally transformed the paradigm of therapeutic development for precision medicine. This computational approach enables the rapid evaluation of vast chemical and biological spaces to identify candidate compounds with specific therapeutic properties, effectively bridging the gap between large-scale data generation and personalized treatment strategies. The evolution of HTCS technologies has allowed researchers to move beyond traditional one-size-fits-all drug development toward tailored therapeutic solutions that account for individual genetic, proteomic, and metabolic variations [19]. In quantitative HTS (qHTS), concentration–response data can be generated simultaneously for thousands of different compounds and mixtures, creating rich datasets for identifying personalized treatment options [19].

The integration of HTCS within precision medicine frameworks addresses several critical challenges in modern therapeutics. First, it enables the systematic identification of compound candidates that target specific molecular pathways altered in individual patients or patient subpopulations. Second, it facilitates the prediction of adverse drug reactions and toxicity profiles based on personal genomic information, thereby enhancing treatment safety. Third, HTCS allows for the repurposing of existing drugs for new indications by systematically screening established compounds against novel cellular models or genetic profiles. This approach is particularly valuable for rare diseases and oncology applications, where traditional drug development pipelines are often economically challenging or temporally impractical [20].

Core Computational Workflows in HTCS for Precision Medicine

Integrated HTCS Workflow for Personalized Therapeutic Development

The implementation of robust computational workflows is essential for reliable HTCS in precision medicine applications. These workflows typically follow a structured pathway that begins with data curation and proceeds through multiple analytical stages to identify and validate candidate therapeutics. A well-designed computational workflow for HTCS incorporates several critical components: (1) comprehensive data curation and preparation; (2) ADME/T (absorption, distribution, metabolism, excretion, and toxicity) profiling; (3) assessment of promiscuous binders or frequent HTS hitters; (4) evaluation of chemical diversity; (5) similarity assessment to known active compounds; and (6) comparison to existing compound collections [20]. Such workflows have been successfully deployed across multiple screening projects targeting rare diseases such as Leukoencephalopathy with vanishing white matter (VWM disease), amyotrophic lateral sclerosis (ALS), and cystic fibrosis (CF) [20].

The critical path for HTCS workflow implementation proceeds through the stages described in the following subsections.

Data Curation and Preparation

The foundation of any successful HTCS campaign lies in rigorous data curation and preparation. This initial phase involves collecting, cleaning, and standardizing chemical and biological data from diverse sources to ensure consistency and reliability in subsequent analyses. Data curation addresses several critical challenges: (1) identification and correction of erroneous chemical structures that may be present in available databases (reported to be up to 10% in some public repositories); (2) standardization of chemical representations and descriptors; and (3) normalization of biological activity measurements across different experimental systems and platforms [20]. For precision medicine applications, this stage must also incorporate comprehensive patient-specific data, including genomic variants, protein expression profiles, and clinical parameters, all of which require careful harmonization to enable meaningful computational screening.

Effective data curation employs multiple computational techniques to ensure data quality. Structure validation checks identify chemically improbable or impossible structures, while standardization routines normalize tautomeric representations, charge states, and stereochemistry. For biological data, outlier detection methods identify potentially erroneous measurements, and normalization procedures account for systematic biases across different experimental batches. Additionally, cheminformatics tools perform chemical structure unification to ensure consistent representation of compounds across different databases. This meticulous approach to data preparation is essential for building reliable predictive models that can accurately identify personalized therapeutic options [20].
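Two of the curation steps for biological data, outlier detection and batch normalization, can be sketched as follows. This is a minimal illustration under stated assumptions: the modified z-score based on the median absolute deviation with a cutoff of 3.5 is one common convention chosen here, not a method prescribed by [20], and the function names and readings are hypothetical.

```python
from collections import defaultdict
from statistics import median


def modified_z_scores(values):
    """Robust z-scores based on the median and the median absolute deviation."""
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return [0.0] * len(values)  # no spread, so nothing can be flagged
    return [0.6745 * (v - center) / mad for v in values]


def flag_outliers(values, threshold=3.5):
    """True for each measurement whose robust z-score exceeds the cutoff."""
    return [abs(z) > threshold for z in modified_z_scores(values)]


def center_by_batch(records):
    """Subtract each batch's median so that batches share a common baseline."""
    by_batch = defaultdict(list)
    for batch, value in records:
        by_batch[batch].append(value)
    medians = {batch: median(vals) for batch, vals in by_batch.items()}
    return [(batch, value - medians[batch]) for batch, value in records]


# Hypothetical replicate readings with one suspicious value.
readings = [10.1, 9.8, 10.3, 10.0, 25.0]
print(flag_outliers(readings))

# Hypothetical readings from two plates with different baselines.
records = [("plate1", 1.0), ("plate1", 2.0), ("plate1", 3.0),
           ("plate2", 11.0), ("plate2", 12.0), ("plate2", 13.0)]
print(center_by_batch(records))
```

Median-based statistics are used because a single extreme value distorts the mean and standard deviation, which can mask the very outlier being sought.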

Screening Library Design Strategies

The design of screening libraries represents a critical strategic decision in HTCS for precision medicine, significantly influencing the probability of success in identifying effective personalized therapeutics. Library design strategies generally fall into two main categories: focused (biased) libraries and diverse (unbiased) libraries. Focused libraries are employed when prior knowledge about the biological target or disease mechanism exists, allowing researchers to enrich the screening collection with compounds likely to exhibit activity. In contrast, diverse libraries are preferable when targeting novel pathways or when the precise disease mechanism is incompletely understood, as they maximize the exploration of chemical space and increase the probability of identifying novel chemotypes [20].

Several key factors inform screening library selection and design for precision medicine applications. Chemical diversity ensures broad coverage of chemical space and is typically assessed using pairwise distances between library members in a predefined descriptor space, with two-dimensional fingerprints coupled with the Tanimoto coefficient serving as effective metrics for diversity assessment [20]. ADME/T properties (absorption, distribution, metabolism, excretion, and toxicity) must be considered early in the screening process to prioritize compounds with favorable pharmacokinetic and safety profiles; this includes adherence to Lipinski's "rule of five," Veber's rules, and specific toxicophore filters [20]. Additionally, libraries should be evaluated for the presence of promiscuous binders or frequent HTS hitters that often produce false-positive results, using substructure filters to identify and potentially exclude such compounds [20].
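The rule-based part of ADME/T filtering can be sketched in a few lines. The example below assumes that the physicochemical properties have already been computed by a cheminformatics toolkit. The thresholds are the commonly cited ones (molecular weight at most 500 Da, logP at most 5, at most 5 hydrogen-bond donors and 10 acceptors for Lipinski; at most 10 rotatable bonds and a polar surface area of at most 140 Å² for Veber). Tolerating one Lipinski violation is a common convention adopted here as an assumption, and the compound names and property values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Properties:
    name: str
    mol_weight: float      # Da
    logp: float
    h_donors: int
    h_acceptors: int
    rotatable_bonds: int
    tpsa: float            # topological polar surface area, in square angstroms


def lipinski_violations(p: Properties) -> int:
    """Count how many of the four rule-of-five criteria are violated."""
    return sum([
        p.mol_weight > 500,
        p.logp > 5,
        p.h_donors > 5,
        p.h_acceptors > 10,
    ])


def passes_veber(p: Properties) -> bool:
    """Veber criteria: limited flexibility and polar surface area."""
    return p.rotatable_bonds <= 10 and p.tpsa <= 140


def passes_filters(p: Properties, max_violations: int = 1) -> bool:
    return lipinski_violations(p) <= max_violations and passes_veber(p)


# Hypothetical compounds with illustrative property values.
compounds = [
    Properties("cmpd-1", 350.4, 2.1, 2, 5, 4, 78.0),
    Properties("cmpd-2", 612.7, 5.8, 3, 9, 7, 110.0),
    Properties("cmpd-3", 480.0, 4.2, 1, 6, 14, 95.0),
]

kept = [p.name for p in compounds if passes_filters(p)]
print(kept)
```

Toxicophore and frequent-hitter filters would be added as further predicates in the same way, typically as substructure matches rather than numeric thresholds.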

Table 1: Key Considerations for Screening Library Design in Precision Medicine

| Consideration | Description | Application in Precision Medicine |
|---|---|---|
| Library Type Selection | Choice between focused or diverse libraries based on available knowledge | Focused libraries for known targets; diverse libraries for novel mechanisms |
| Chemical Diversity | Assessment of structural variety using molecular descriptors and fingerprints | Ensures broad coverage of chemical space for identifying personalized therapeutics |
| ADME/T Profiling | Evaluation of absorption, distribution, metabolism, excretion, and toxicity properties | Prioritizes compounds with favorable pharmacokinetic and safety profiles |
| Promiscuous Binder Filtering | Identification and potential exclusion of compounds prone to nonspecific binding | Reduces false positives and identifies more specific therapeutic candidates |
| Similarity to Known Actives | Assessment of chemical similarity to established therapeutic compounds | Enables targeted exploration around known successful chemotypes |

Data Analysis and Hit Identification

The analysis of HTCS data and identification of promising hits constitute a crucial phase in the personalized therapeutic development pipeline. This process involves applying statistical models and machine learning algorithms to extract meaningful patterns from large-scale screening data and prioritize compounds for further investigation. The Hill equation (HEQN) serves as the fundamental model for analyzing concentration-response relationships in qHTS data, providing parameters that characterize compound potency and efficacy [19]. The logistic form of the Hill equation is expressed as:

\[ R_i = E_0 + \frac{E_\infty - E_0}{1 + \exp\{-h[\log C_i - \log AC_{50}]\}} \]

where \(R_i\) represents the measured response at concentration \(C_i\), \(E_0\) is the baseline response, \(E_\infty\) is the maximal response, \(AC_{50}\) is the concentration for half-maximal response, and \(h\) is the shape parameter [19].
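The logistic form of the Hill equation translates directly into code. The sketch below evaluates the model for given parameters; it assumes that the logarithm is the natural logarithm, in which case the expression is equivalent to the familiar power form with the ratio of \(AC_{50}\) to concentration raised to the power \(h\). The function name `hill_response` and the parameter values are illustrative.

```python
import math


def hill_response(conc, e0, e_inf, ac50, h):
    """Response predicted by the logistic Hill equation at one concentration.

    Assumes natural logarithms; conc and ac50 must be positive and share units.
    """
    exponent = -h * (math.log(conc) - math.log(ac50))
    return e0 + (e_inf - e0) / (1.0 + math.exp(exponent))


# Hypothetical compound: baseline 0, maximal response 100, AC50 of 1 micromolar.
params = dict(e0=0.0, e_inf=100.0, ac50=1e-6, h=1.0)

for conc in (1e-8, 1e-7, 1e-6, 1e-5, 1e-4):
    print(f"{conc:.0e} M -> {hill_response(conc, **params):6.2f}")
```

At a concentration equal to \(AC_{50}\) the exponent is zero, so the response sits exactly halfway between \(E_0\) and \(E_\infty\), which is a convenient sanity check for any fitting code built on this function.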

Despite its widespread use, fitting data to the Hill equation presents significant statistical challenges, particularly in the context of HTCS. Parameter estimates can be highly variable when the tested concentration range fails to include at least one of the two asymptotes, when responses exhibit heteroscedasticity, or when concentration spacing is suboptimal [19]. This variability can lead to both false negatives (truly active compounds misclassified as inactive) and false positives (inactive compounds misclassified as active), potentially derailing personalized therapeutic development efforts. The implementation of robust statistical approaches that account for parameter estimate uncertainty is therefore essential for reliable hit identification in precision medicine applications [19].

Table 2: Key Parameters in HTS Data Analysis Using the Hill Equation

| Parameter | Symbol | Interpretation | Impact on Therapeutic Development |
|---|---|---|---|
| Baseline Response | \(E_0\) | Response in absence of compound | Establishes baseline activity for patient-specific models |
| Maximal Response | \(E_\infty\) | Maximum achievable response | Indicates compound efficacy for personalized treatment |
| Half-Maximal Activity Concentration | \(AC_{50}\) | Concentration producing 50% of maximal response | Measures compound potency; informs dosing considerations |
| Hill Coefficient | \(h\) | Steepness of concentration-response curve | Suggests cooperative binding mechanisms; informs mechanism of action |

Validation Frameworks for HTCS Workflows in Regulatory Environments

Standards for Computational Workflow Communication

As HTCS becomes increasingly integral to therapeutic development, establishing robust validation frameworks and communication standards has emerged as a critical requirement, particularly in regulatory contexts. The complexity of modern computational analyses, especially in precision medicine applications, creates significant challenges in effectively communicating methodological details, parameters, and results to stakeholders including regulatory agencies. The BioCompute Objects (BCOs) standard (IEEE 2791-2020) addresses this challenge by providing a formal framework for documenting and sharing computational workflows, ensuring transparency, reproducibility, and regulatory compliance [21]. This standard establishes a structured mechanism for reporting computational analyses in sufficient detail to enable informed decisions and experimental repeats, which is particularly crucial for applications in regulatory submissions [21].

The implementation of standardized computational workflow documentation offers several significant advantages for precision medicine. First, it enhances reproducibility across different research groups and institutions, facilitating collaboration and verification of findings. Second, it provides regulatory agencies with clear insight into analytical methods and parameters, streamlining the review process for personalized therapeutics. Third, it enables more effective knowledge transfer between research and development teams, particularly important in the complex landscape of precision medicine where multiple specialized analyses must be integrated. The adoption of such standards is especially valuable for complex computational pipelines such as those used in viral contaminant detection in biological manufacturing, which share methodological similarities with precision medicine applications [21].
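
As a rough illustration of what such structured documentation can look like, the sketch below assembles a BCO-shaped record for a screening workflow in Python. The domain names follow our reading of IEEE 2791-2020 and should be verified against the published schema; the function name, the placeholder identifiers, and the way the checksum is computed are assumptions made for illustration, and the output is not schema-validated.

```python
import hashlib
import json

# Domain names as we understand them from IEEE 2791-2020 (verify against the schema).
DOMAINS = (
    "provenance_domain", "usability_domain", "description_domain",
    "execution_domain", "parametric_domain", "io_domain", "error_domain",
)


def build_bco_sketch(name, version, usability, steps, parameters):
    """Assemble a BCO-shaped dictionary for a workflow (illustrative only)."""
    body = {
        "provenance_domain": {"name": name, "version": version},
        "usability_domain": list(usability),
        "description_domain": {
            "pipeline_steps": [
                {"step_number": i + 1, "name": step}
                for i, step in enumerate(steps)
            ]
        },
        "execution_domain": {},  # scripts, software prerequisites, environment
        "parametric_domain": [
            {"step": str(step), "param": param, "value": str(value)}
            for step, param, value in parameters
        ],
        "io_domain": {},         # references to input and output files
        "error_domain": {},      # empirical and algorithmic error estimates
    }
    # Assumption: a SHA-256 digest of the serialized domains stands in for the etag.
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    etag = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return {
        "object_id": "<placeholder object identifier>",
        "spec_version": "<placeholder schema reference>",
        "etag": etag,
        **body,
    }


if __name__ == "__main__":
    record = build_bco_sketch(
        name="Docking-based virtual screen",
        version="1.0",
        usability=["Rank a compound library against a single target structure."],
        steps=["protein preparation", "ligand preparation", "docking", "ranking"],
        parameters=[(3, "exhaustiveness", 8), (3, "num_modes", 9)],
    )
    print(json.dumps(record, indent=2))
```

Recording every parameter alongside the step that uses it is what allows a reviewer or collaborator to repeat the analysis without consulting the original authors.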

Benchmarking and Performance Assessment

The validation of HTCS workflows requires rigorous benchmarking against established reference datasets and performance metrics to ensure reliability and predictive accuracy. The development of specialized benchmark datasets, such as the HTSC-2025 dataset for superconducting materials, illustrates the importance of standardized evaluation in computational screening methodologies [22]. Although focused on superconductors rather than therapeutic compounds, the HTSC-2025 benchmark demonstrates key principles relevant to precision medicine: comprehensive compilation of reference data, systematic categorization of materials, and standardized performance metrics including mean absolute error (MAE) and prediction success rates across different value intervals [22]. Similar benchmarking approaches can be adapted for therapeutic screening by establishing well-characterized compound collections with thoroughly validated activity profiles against specific therapeutic targets.

Performance assessment in HTCS for precision medicine should incorporate multiple complementary metrics to comprehensively evaluate workflow effectiveness. Predictive accuracy measures how well computational models forecast experimental results, typically quantified using metrics such as MAE, root mean square error (RMSE), or area under the receiver operating characteristic curve (AUC-ROC). Reproducibility assesses the consistency of results across repeated experiments or different implementations of the same workflow. Robustness evaluates performance stability in the presence of noisy or incomplete data, reflecting real-world screening conditions. Computational efficiency measures the resource requirements of the screening workflow, particularly important for large-scale personalized therapeutic screening. Together, these metrics provide a comprehensive framework for validating HTCS workflows in precision medicine applications [22] [19].
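
The predictive-accuracy metrics named above are simple to compute. The following dependency-free Python sketch shows one way to do so; the function names are our own, and the AUC-ROC is computed with the pairwise-ranking definition (the probability that a randomly chosen active scores higher than a randomly chosen inactive), which is adequate for small benchmark sets but scales quadratically.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error between measured and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean square error; penalizes large deviations more than MAE."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def auc_roc(labels, scores):
    """AUC-ROC via pairwise comparison of actives (1) and inactives (0).

    Ties between an active and an inactive count as half a win.
    """
    actives = [s for lab, s in zip(labels, scores) if lab == 1]
    inactives = [s for lab, s in zip(labels, scores) if lab == 0]
    if not actives or not inactives:
        raise ValueError("AUC-ROC needs at least one active and one inactive")
    wins = 0.0
    for a in actives:
        for i in inactives:
            if a > i:
                wins += 1.0
            elif a == i:
                wins += 0.5
    return wins / (len(actives) * len(inactives))


if __name__ == "__main__":
    measured = [1.0, 2.0, 3.0]    # illustrative values only
    predicted = [1.0, 2.0, 5.0]
    print(mae(measured, predicted), rmse(measured, predicted))
    print(auc_roc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))
```

Reporting MAE and RMSE together is informative because a large gap between them indicates that a few compounds dominate the error.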

Essential Research Reagents and Computational Tools for HTCS

The successful implementation of HTCS in precision medicine relies on a comprehensive toolkit of research reagents and computational resources that enable large-scale screening and analysis. These essential components form the foundation for robust, reproducible screening campaigns aimed at identifying personalized therapeutic options.

Table 3: Essential Research Reagent Solutions for HTCS in Precision Medicine

| Reagent/Tool Category | Specific Examples | Function in HTCS Workflow |
|---|---|---|
| Compound Libraries | Commercial libraries (e.g., ChemDiv, Enamine), focused libraries, diversity sets | Source of chemical matter for screening against patient-specific targets |
| Cell-Based Assay Systems | Patient-derived primary cells, iPSC-derived models, engineered cell lines | Provide biologically relevant systems for evaluating compound effects |
| Assay Reagents | Fluorescent dyes, luminescent substrates, antibody conjugates | Enable detection and quantification of biological responses in HTS formats |
| Bioinformatics Databases | PubChem, ChEMBL, DrugBank, COSMIC, TCGA | Provide reference data on compound properties, targets, and disease associations |
| Cheminformatics Tools | Molecular fingerprints, descriptor packages, similarity search algorithms | Enable computational representation and analysis of chemical compounds |
| ADME/T Prediction Tools | QSAR models, PBPK modeling software, toxicity predictors | Forecast compound pharmacokinetics and safety profiles |
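
As a small illustration of the cheminformatics row in Table 3, the sketch below computes the Tanimoto coefficient between two fingerprints and uses it for a naive similarity search. Fingerprints are represented here as sets of "on" bit indices, which is a simplifying assumption; the compound names and bit values are invented for the example, and a production workflow would obtain fingerprints from a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    a, b = set(fp_a), set(fp_b)
    if not a and not b:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / len(a | b)


def most_similar(query_fp, library, top_k=3):
    """Return the `top_k` (name, similarity) pairs most similar to the query."""
    scored = [(name, tanimoto(query_fp, fp)) for name, fp in library.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]


if __name__ == "__main__":
    # Hypothetical fingerprints given as sets of on-bit indices.
    library = {
        "cmpd_A": {1, 2, 3, 8},
        "cmpd_B": {2, 3, 4},
        "cmpd_C": {10, 11},
    }
    query = {1, 2, 3}
    for name, similarity in most_similar(query, library, top_k=2):
        print(name, round(similarity, 2))
```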

The computational infrastructure supporting HTCS continues to evolve, incorporating increasingly sophisticated algorithms and modeling approaches. Graph neural networks have demonstrated strong performance in predicting material properties, with models such as the atomistic line graph neural network (ALIGNN) achieving mean absolute errors of less than 2 K in predicting superconducting transition temperatures [22]. Similar approaches can be adapted for therapeutic compound screening by representing molecules as graphs and using neural network architectures to learn structure-activity relationships. Additional advanced modeling techniques include bootstrapped ensemble methods, 3D vision transformer architectures, and equivariant graph neural networks, all of which contribute to more accurate prediction of compound properties and activities [22]. The integration of these advanced computational methods with comprehensive experimental reagent systems creates a powerful platform for identifying personalized therapeutics through HTCS.

The field of HTCS in precision medicine continues to evolve rapidly, driven by advances in computational methods, screening technologies, and biological understanding. Several emerging trends are poised to further expand the role of HTCS in personalized therapeutic development. The integration of artificial intelligence and machine learning approaches with HTCS data is enabling more accurate prediction of compound efficacy and toxicity, potentially reducing the need for extensive experimental screening [22]. The development of more sophisticated patient-derived cellular models, including complex organoid systems and microphysiological devices, is providing more clinically relevant screening platforms that better recapitulate patient-specific biology. Additionally, the increasing availability of multi-omics data from individual patients is creating opportunities for truly personalized screening approaches that account for the unique genomic, proteomic, and metabolic features of each individual.

The expanding role of HTCS in precision medicine represents a paradigm shift in therapeutic development, moving away from population-based approaches toward truly personalized strategies. The structured computational workflows, validation frameworks, and research tools described in this article provide a foundation for implementing effective HTCS campaigns aimed at identifying personalized therapeutic options. As these technologies continue to mature and integrate with clinical care, HTCS promises to accelerate the development of tailored treatments for individual patients, particularly those with rare diseases or specific genetic profiles that are not adequately addressed by conventional therapeutics. The ongoing standardization of computational workflows and enhanced collaboration between computational scientists, screening specialists, and clinical researchers will be essential for realizing the full potential of HTCS in precision medicine.

Executing the Screen: Advanced Methodologies and Cross-Disciplinary Applications

High-throughput computational screening (HTCS) has revolutionized the early stages of drug discovery by enabling the rapid identification and optimization of potential lead compounds [23]. This paradigm leverages advanced algorithms, molecular simulations, and increasing computational power to efficiently explore vast chemical spaces that are experimentally intractable. The core pillars of this structure-based approach include molecular docking, molecular dynamics (MD), and binding free energy calculations, each providing distinct and complementary insights into molecular recognition events [24] [23]. Within the context of workflow validation research, understanding the capabilities, limitations, and appropriate application of these tools is paramount. This guide provides an in-depth technical examination of these computational methodologies, detailing their theoretical foundations, practical implementation, and role in a validated high-throughput screening pipeline.

Molecular Docking in Virtual Screening

Theoretical Foundations and Methodological Classification

Molecular docking is a computational process that predicts the preferred orientation and binding mode of a small molecule (ligand) when bound to a target macromolecule (receptor) [24]. The primary goal is to predict the binding affinity and geometry of the resulting complex. Whether a receptor and ligand bind, and how strongly, depends on the change in free energy ($\Delta G_{\text{binding}}$) that accompanies binding:

$$\Delta G_{\text{binding}} = -RT \ln K_i = \Delta H_{\text{binding}} - T\,\Delta S_{\text{binding}}$$

where $R$ is the gas constant, $T$ is the absolute temperature, $K_i$ is the binding constant, $\Delta H_{\text{binding}}$ is the enthalpy change, and $\Delta S_{\text{binding}}$ is the entropy change [24]. With this sign convention $K_i$ acts as an association constant, so a larger $K_i$ gives a more negative (more favorable) $\Delta G_{\text{binding}}$; if the constant is instead reported as a dissociation or inhibition constant, the sign of the logarithmic term flips. In practice, most docking scoring functions approximate the interaction energy ($E_{\text{interaction}}$) using simplified terms:

$$E_{\text{interaction}} = E_{\text{VDW}} + E_{\text{electrostatic}} + E_{\text{H-bond}}$$

These functions often ignore entropic effects and more complex enthalpy contributions to maintain computational efficiency, which is a key limitation affecting accuracy [24].
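
To give a sense of scale for these quantities, the short Python sketch below converts a binding constant into a binding free energy and sums the three simplified score terms. It assumes that the constant is expressed as an association constant relative to a 1 M standard state and that the temperature is 298.15 K; the function names and the example numbers are illustrative only.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal mol^-1 K^-1


def delta_g_binding(k_assoc, temperature=298.15):
    """Binding free energy (kcal/mol) from an association constant: dG = -RT ln K."""
    if k_assoc <= 0:
        raise ValueError("the binding constant must be positive")
    return -R_KCAL * temperature * math.log(k_assoc)


def interaction_energy(e_vdw, e_electrostatic, e_hbond):
    """Simplified docking score: sum of van der Waals, electrostatic and H-bond terms."""
    return e_vdw + e_electrostatic + e_hbond


if __name__ == "__main__":
    # An association constant of 1e9 (nanomolar-range affinity) corresponds
    # to roughly -12.3 kcal/mol at room temperature.
    print(round(delta_g_binding(1e9), 2))
    # Each tenfold change in the constant shifts dG by about 1.36 kcal/mol.
    print(round(delta_g_binding(1e8) - delta_g_binding(1e9), 2))
    # Hypothetical per-term energies from a scoring function.
    print(interaction_energy(-6.2, -1.5, -0.8))
```

Because a tenfold change in affinity is worth only about 1.4 kcal/mol, scoring errors of a few kcal/mol, which are typical when entropy is ignored, can reorder a ranked list substantially.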

Docking methods are broadly classified into three categories based on their treatment of molecular flexibility:

- Rigid Docking: Neither the receptor nor the ligand conformation changes during docking. This method is often used for examining large systems like protein-protein interactions [24].

- Flexible Docking: Both ligand and target structures are free to change during the docking process. This provides more accurate recognition modeling but demands significantly more computational resources [24].

- Semi-flexible Docking: The ligand is allowed to be flexible while the receptor structure remains rigid. This approach offers a practical balance between accuracy and computational cost and is most commonly used for virtual screening in drug discovery [24].

Common Docking Software and Algorithms

More than 100 software applications are available for molecular docking, each with distinct algorithmic approaches and scoring functions [24]. The table below summarizes key features of commonly used docking software.

Table 1: Commonly Used Molecular Docking Software and Their Algorithmic Features

| Software | License | Algorithm Features | Scoring Function |
|---|---|---|---|
| AutoDock Vina [24] | Free | Iterated local search global optimizer with gradient optimization | Knowledge-based and empirical combination |
| AutoDock [24] | Free | Lamarckian Genetic Algorithm | Empirical binding free energy function |
| rDock [24] | Free | Stochastic/deterministic search techniques | Fast intermolecular scoring + pseudo-energy terms |
| DOCK 6 [24] | Free | Anchor-and-grow search algorithm | Footprint similarity scoring |
| LeDock [24] | Free | Simulated annealing & genetic algorithm | Based on AutoDock 4 with hydrogen bonding penalty |
| Glide [24] | Commercial | Complete systematic search | Emodel (ChemScore, force-field terms, solvation) |
| GOLD [24] | Commercial | Genetic algorithm | Hydrogen bonding, dispersion potentials, MM terms |
| FlexX [24] | Commercial | Fragment growth method | Empirical scoring function |

Experimental Docking Protocol

A standardized molecular docking protocol involves several critical steps to ensure reproducible and reliable results:

Protein Preparation: Obtain the three-dimensional structure of the target from databases like the Protein Data Bank (PDB). Using MOE (Molecular Operating Environment) or similar software, prepare the protein by adding hydrogen atoms, assigning protonation states of titratable residues (e.g., using the Protonate-3D tool at pH 7.4), and removing water molecules and original ligands [25]. Energy minimization may be performed to relieve steric clashes.

Ligand Library Preparation: Collect small molecules from chemical databases (e.g., ZINC, ChEMBL). Generate 3D structures for each ligand and optimize their geometry using a force field such as MMFF94x until the root mean square (RMS) gradient falls below 0.05 kcal mol⁻¹ Å⁻¹ [25]. Define rotatable bonds and generate possible tautomers and stereoisomers.

Binding Site Definition: The binding site on the protein target must be clearly defined. This can be done by using the known location of a co-crystallized native ligand or by using computational methods to predict potential binding pockets.

Docking Execution: Perform the docking calculation using the chosen software (e.g., Vina, LeDock). For virtual screening, ensure consistent parameters across all ligands in the library. The software will generate multiple putative binding poses for each ligand.

Pose Scoring and Ranking: The scoring function ranks the generated poses based on predicted binding affinity. It is standard practice to output multiple top-scoring poses (e.g., 5-20) per ligand for subsequent analysis. Post-docking analysis often involves visual inspection of top-ranked complexes and clustering of similar binding modes.
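
The last three steps of the protocol can be sketched in a few lines of Python. The example below derives a search box from the coordinates of a co-crystallized ligand, builds an AutoDock Vina command line with identical settings for every ligand, and ranks ligands by their best pose score. The padding value, the default settings, the file names, and the assumption that the executable is called `vina` are illustrative choices rather than recommendations from the cited protocol; the commands are only constructed here, not executed.

```python
def box_from_ligand(coords, padding=4.0):
    """Search-box center and edge lengths (angstroms) enclosing a reference ligand."""
    xs, ys, zs = zip(*coords)
    center = tuple((max(v) + min(v)) / 2.0 for v in (xs, ys, zs))
    size = tuple((max(v) - min(v)) + 2.0 * padding for v in (xs, ys, zs))
    return center, size


def vina_command(receptor, ligand, out, center, size,
                 exhaustiveness=8, num_modes=9, seed=42):
    """Argument list for one Vina run; reuse the same settings for every ligand."""
    cmd = ["vina", "--receptor", receptor, "--ligand", ligand, "--out", out]
    for axis, c, s in zip("xyz", center, size):
        cmd += [f"--center_{axis}", f"{c:.3f}", f"--size_{axis}", f"{s:.3f}"]
    cmd += ["--exhaustiveness", str(exhaustiveness),
            "--num_modes", str(num_modes),
            "--seed", str(seed)]  # a fixed seed aids reproducibility
    return cmd


def rank_ligands(pose_scores, top_n=5):
    """Keep the `top_n` best poses per ligand and rank ligands by their best score.

    `pose_scores` maps ligand id -> list of predicted scores in kcal/mol,
    where lower (more negative) means more favorable.
    """
    best = {lig: sorted(scores)[:top_n] for lig, scores in pose_scores.items() if scores}
    return sorted(best.items(), key=lambda item: item[1][0])


if __name__ == "__main__":
    # Hypothetical heavy-atom coordinates of a co-crystallized ligand.
    reference = [(10.0, 4.0, -2.0), (12.5, 6.0, 0.5), (11.0, 5.0, 1.0)]
    center, size = box_from_ligand(reference)
    for ligand in ("lig_001.pdbqt", "lig_002.pdbqt"):
        out = ligand.replace(".pdbqt", "_out.pdbqt")
        print(" ".join(vina_command("receptor.pdbqt", ligand, out, center, size)))
    # Hypothetical scores parsed from the docking output.
    scores = {"lig_001": [-7.1, -6.0], "lig_002": [-9.2, -8.8, -5.0]}
    print(rank_ligands(scores, top_n=2))
```

Generating every command from one function is a simple way to guarantee that box definition, exhaustiveness, and random seed are identical across the library, so that score differences reflect the ligands rather than the settings.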

Molecular Dynamics in Binding Validation

The Role of MD in Refining Docking Results