Validating Density Functional Theory: Bridging Computational Predictions with Experimental Results in Materials and Drug Discovery



Abstract

This article provides a comprehensive overview of the validation of Density Functional Theory (DFT) against experimental results, a critical process for establishing its reliability in predicting material and molecular properties. We explore the foundational principles of DFT and the critical need for experimental benchmarking. The article then delves into diverse methodological applications, from predicting the mechanical properties of semiconductors to optimizing chemotherapy drugs. We address common challenges and troubleshooting strategies, including the selection of functionals and basis sets for complex systems like actinides. Finally, we present a rigorous framework for the validation and comparative analysis of DFT methods, highlighting benchmark studies against experimental data for properties like reduction potential and electron affinity. This resource is tailored for researchers and professionals in computational chemistry, materials science, and drug development, offering practical insights for employing DFT with confidence in real-world applications.

The Principles and Imperative of DFT Validation

Core Tenets of Density Functional Theory

Density Functional Theory (DFT) stands as a cornerstone computational method in quantum chemistry and materials science, enabling the prediction of electronic structure and properties of many-body systems from first principles. This whitepaper details the core theoretical tenets of DFT, its practical computational methodologies, and its integral role in a modern research pipeline that validates theoretical predictions with experimental results. Designed for researchers and scientists in fields ranging from drug development to energy storage, this guide provides a technical foundation for employing DFT as a robust tool for rational material design and mechanistic investigation, with a specific focus on bridging theoretical calculations and experimental validation.

Density Functional Theory (DFT) is a computational quantum mechanical modelling method used to investigate the electronic structure of many-body systems, particularly atoms, molecules, and condensed phases [1]. Its foundational principle is a paradigm shift from the traditional wavefunction-based approach. In conventional quantum chemistry, the complex many-electron wavefunction, which depends on 3N spatial coordinates for an N-electron system, is the central quantity [2]. DFT, by contrast, uses the electron density n(r) as the fundamental variable [1]. The electron density is a function of only three spatial coordinates, making it a conceptually and computationally simpler quantity than the many-body wavefunction [2]. The properties of a many-electron system can be determined by using functionals—functions of a function—which in this case are functionals of the electron density [1].

DFT has become one of the most popular and versatile methods available in condensed-matter physics, computational physics, and computational chemistry due to its favorable balance between computational cost and accuracy [1]. Its applicability spans from predicting molecular geometries and reaction energies to calculating spectroscopic properties and designing novel materials for energy and biomedical applications [2] [3]. The theory's power lies in its ability to provide a first-principles pathway to understanding and predicting material behavior with few or no system-specific empirical parameters, thus serving as a virtual experimental platform for researchers [4].

Theoretical Foundations

The modern formulation of DFT rests on two fundamental theorems introduced by Hohenberg and Kohn, and the subsequent practical implementation developed by Kohn and Sham.

The Hohenberg-Kohn Theorems

The rigorous theoretical basis for DFT was established by the Hohenberg-Kohn theorems [1].

The First Hohenberg-Kohn Theorem states that the ground-state electron density n(r) of a system uniquely determines the external potential V(r), and consequently, the full many-body Hamiltonian and all ground-state properties [2] [1]. This means that the electron density is as valid a fundamental quantity as the many-body wavefunction for describing a system's ground state. It provides the justification for using the electron density, instead of the complex wavefunction, as the central variable.

The Second Hohenberg-Kohn Theorem defines a universal energy functional E[n] for any external potential. This theorem states that the ground-state electron density is the density that minimizes this energy functional, and the value of the functional at this minimum is the exact ground-state energy [1]. This variational principle provides a strategy for finding the ground-state density: minimize the energy functional with respect to the density.
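In symbols, the two theorems are commonly summarized as a constrained minimization. The notation below is a sketch: the symbol F[n] for the universal, system-independent part of the functional and N for the electron count are introduced here for illustration, while n(r) and V(r) follow the text above.

```latex
E[n] = F[n] + \int V(\mathbf{r})\, n(\mathbf{r})\, d\mathbf{r},
\qquad
E_0 = \min_{n} E[n]
\quad \text{subject to} \quad
\int n(\mathbf{r})\, d\mathbf{r} = N
```

The first theorem guarantees that E[n] is well defined for a given V(r); the second states that the minimizing density is the ground-state density and that the minimum value is the ground-state energy.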

The Kohn-Sham Equations

While the Hohenberg-Kohn theorems are exact, they do not provide a practical way to compute the energy or density. The Kohn-Sham approach addresses this by introducing a clever mapping [2]. It replaces the complex, interacting system of electrons with a fictitious system of non-interacting electrons that has the same ground-state density as the original, interacting system [1]. For this non-interacting system, the many-body problem simplifies to solving a set of single-electron equations, known as the Kohn-Sham equations:

[ \left[-\frac{\hbar^2}{2m}\nabla^2 + V_{ext}(\mathbf{r}) + V_{H}(\mathbf{r}) + V_{XC}(\mathbf{r})\right] \psi_i(\mathbf{r}) = \epsilon_i \psi_i(\mathbf{r}) ]

In these equations:

- (\psi_i(\mathbf{r})) are the Kohn-Sham orbitals.

- (V_{ext}(\mathbf{r})) is the external potential from the nuclei.

- (V_{H}(\mathbf{r})) is the Hartree potential, representing the classical electrostatic repulsion between electrons.

- (V_{XC}(\mathbf{r})) is the exchange-correlation potential, which encapsulates all the complex many-electron interactions, including quantum mechanical exchange and correlation effects [1].

The electron density is constructed from the Kohn-Sham orbitals: (n(\mathbf{r}) = \sum_{i=1}^{N} |\psi_i(\mathbf{r})|^2). The Kohn-Sham equations must be solved self-consistently because the potentials (V_{H}) and (V_{XC}) themselves depend on the density (n(\mathbf{r})).
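The self-consistency requirement can be illustrated with a deliberately small toy model. The sketch below is not a DFT code: it assumes a two-site lattice with two electrons, a hopping parameter `t`, and a Hartree-like on-site term `u` that stands in for the density-dependent potentials. All names and parameter values are hypothetical. It only shows the loop structure: build the potential from the density, solve the single-particle problem, rebuild the density, mix, and repeat.

```python
import math


def lowest_orbital(a, b, t):
    """Lowest eigenpair of the symmetric 2x2 matrix [[a, -t], [-t, b]] for t > 0."""
    eps = 0.5 * (a + b) - math.hypot(0.5 * (a - b), t)
    c1, c2 = t, a - eps                      # unnormalised eigenvector
    norm = math.hypot(c1, c2)
    return eps, (c1 / norm, c2 / norm)


def density_from_potential(v_ext, density, t, u):
    """One 'Kohn-Sham-like' step: density -> effective potential -> new density."""
    # Toy density-dependent potential (assumption): v_eff = v_ext + u * n / 2
    v_eff = [v + 0.5 * u * n for v, n in zip(v_ext, density)]
    eps, (c1, c2) = lowest_orbital(v_eff[0], v_eff[1], t)
    # Two electrons doubly occupy the lowest orbital.
    return eps, [2.0 * c1 * c1, 2.0 * c2 * c2]


def run_scf(v_ext=(0.0, 1.0), t=1.0, u=2.0, mix=0.5, tol=1e-10, max_iter=200):
    """Iterate to self-consistency with simple linear density mixing."""
    density = [1.0, 1.0]                     # initial guess: one electron per site
    for iteration in range(1, max_iter + 1):
        eps, new_density = density_from_potential(v_ext, density, t, u)
        change = max(abs(n - o) for n, o in zip(new_density, density))
        density = [(1.0 - mix) * o + mix * n for o, n in zip(density, new_density)]
        if change < tol:
            return density, eps, iteration
    raise RuntimeError("SCF did not converge")


if __name__ == "__main__":
    density, eps, iterations = run_scf()
    print(f"site occupations: {density}, orbital energy: {eps:.6f}, "
          f"iterations: {iterations}")
```

Production codes replace the linear mixing used here with more robust schemes, but the fixed-point structure of the loop is the same.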

Key Approximations and Computational Methodology

The accuracy of any DFT calculation is contingent on the approximations used for the exchange-correlation functional and the numerical techniques employed.

Hierarchy of Exchange-Correlation Functionals

The exact form of the exchange-correlation functional (E_{XC}[n]) is unknown, and its approximation is the most significant challenge in DFT. The following table summarizes the primary classes of functionals.

Table 1: Hierarchy of Common Exchange-Correlation (XC) Functionals in DFT

| Functional Class | Description | Key Feature(s) | Examples | Typical Use Cases |
|---|---|---|---|---|
| Local Density Approximation (LDA) | Depends only on the local value of the electron density (n(\mathbf{r})) [2]. | Models the homogeneous electron gas; tends to overbind [2]. | - | Solid-state physics; often a starting point for more advanced functionals [2]. |
| Generalized Gradient Approximation (GGA) | Depends on both the local density and its gradient (\nabla n(\mathbf{r})) [2]. | Improved accuracy for inhomogeneous electron densities, such as in atoms and molecules [2]. | PBE [5], BP86 [2] | Good performance for structural parameters and geometries [2]. |
| Hybrid Functionals | Mixes GGA with a portion of exact Hartree-Fock exchange [2]. | Generally provides improved accuracy for a wide range of molecular properties, including energetics [2]. | B3LYP [2] | The dominant choice for transition metal-containing molecules; widely used in quantum chemistry [2]. |
| Meta-GGA & Double Hybrids | Meta-GGA adds a higher-derivative ingredient (the kinetic energy density). Double hybrids mix exact exchange with a perturbative nonlocal correlation term [2]. | Offer improved energetics and spectroscopic properties; higher computational cost [2]. | TPSSh (hybrid meta-GGA) [2], B2PLYP (double hybrid) [2] | Increasing use for high-accuracy calculations of energies and spectroscopic parameters [2]. |

Specialized corrections, such as the DFT+U method, which adds a Hubbard term to better describe strongly correlated electrons, are often necessary for specific materials like transition metal oxides [5]. The choice of functional is a critical step that depends on the system and properties of interest.

Basis Sets and Numerical Implementation

To solve the Kohn-Sham equations numerically, the Kohn-Sham orbitals (\psi_i(\mathbf{r})) must be expanded in a basis set. Two prevalent approaches are:

- Plane-Wave Basis Sets: Commonly used in solid-state physics for periodic systems [5]. The orbitals are expanded in a basis of plane waves, with an energy cutoff determining the basis set size. The interaction between core and valence electrons is typically handled using pseudopotentials, which reduce the computational cost [5].

- Localized Basis Sets: Often used in molecular quantum chemistry. These include Gaussian-type orbitals (e.g., 6-31G*) or numerical atomic orbitals. Basis sets of valence triple-zeta quality plus polarization are generally recommended for accurate geometry optimizations [2].
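Because the plane-wave energy cutoff fixes the basis set size, it is usually chosen by a convergence test rather than by default. The helper below is a minimal sketch of such a test. `energy_fn` is a hypothetical callable that would wrap a call to whatever DFT code is in use, and the exponential `toy_energy` function is a stand-in invented here so that the example runs without one.

```python
import math


def converge_cutoff(energy_fn, cutoffs, tol=1e-3):
    """Return the first (cutoff, energy) whose energy changes by less than
    `tol` when the cutoff is raised to the next value in `cutoffs`."""
    previous_cutoff, previous_energy = None, None
    for cutoff in cutoffs:
        energy = energy_fn(cutoff)
        if previous_energy is not None and abs(energy - previous_energy) < tol:
            return previous_cutoff, previous_energy
        previous_cutoff, previous_energy = cutoff, energy
    raise ValueError("energy not converged within the supplied cutoffs")


def toy_energy(cutoff):
    """Stand-in for a real total-energy calculation (assumption): the energy
    approaches its converged value exponentially as the cutoff grows."""
    return -10.0 + 5.0 * math.exp(-cutoff / 10.0)


if __name__ == "__main__":
    cutoff, energy = converge_cutoff(toy_energy, range(20, 101, 10), tol=5e-3)
    print(f"converged cutoff: {cutoff}, energy: {energy:.4f}")
```

The same pattern applies to k-point meshes and, for localized basis sets, to stepping through basis sets of increasing size; the tolerance should be set by the property being compared with experiment.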

The workflow for a typical DFT calculation involves structural optimization, where the forces on atoms are minimized to find a stable configuration, followed by a single-point energy calculation or property prediction on the optimized structure [4].

DFT in the Research Workflow: Integration with Experiment

DFT's true power is realized when integrated into a cyclical research workflow that connects theoretical prediction with experimental validation. This synergy is critical for validating models, refining methodologies, and achieving genuine scientific insight.

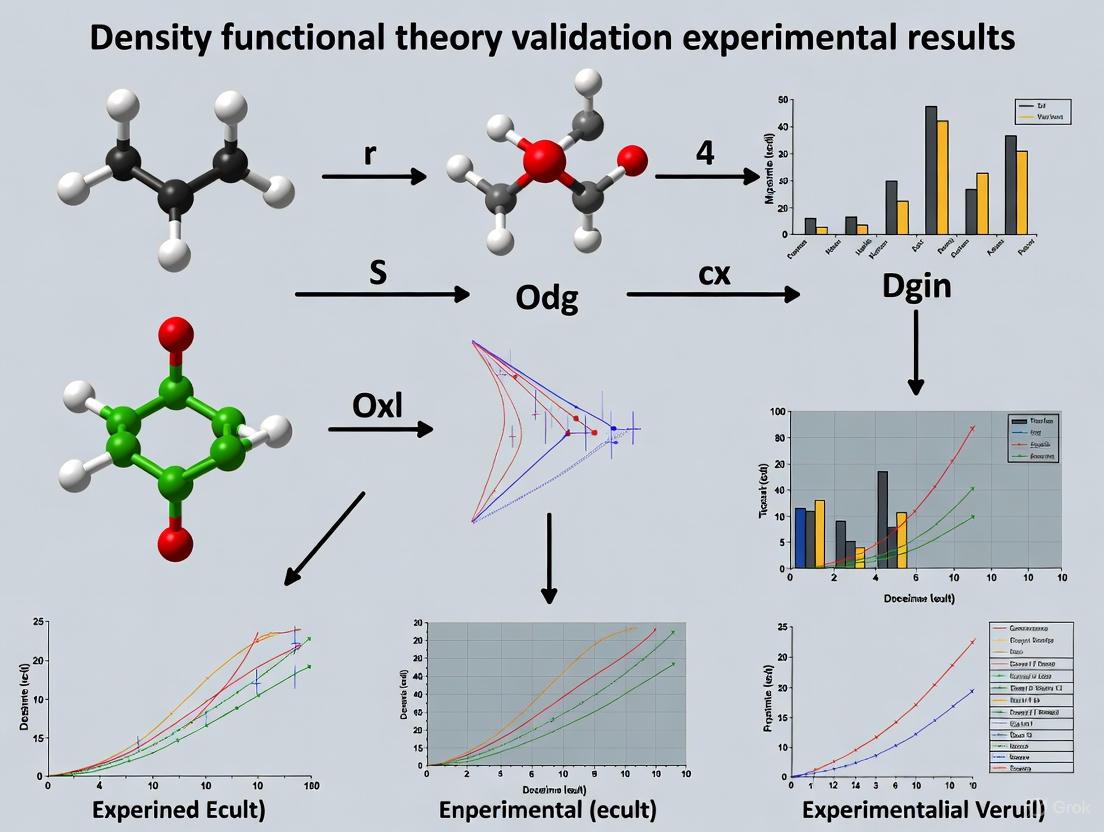

Diagram 1: DFT-Experimental Validation Workflow. This shows the iterative cycle of prediction and validation.

Case Study: Validating an Electrocatalyst for Urea Oxidation

A recent study on a NiMoO₄@MXene electrocatalyst for direct urea fuel cells (DUFCs) exemplifies this workflow [6].

- Experimental Observation: The need for efficient, bifunctional electrocatalysts for energy generation from urea and urine waste.

- DFT Modeling & Prediction: DFT calculations were used to disclose the underlying reaction mechanism, specifically analyzing adsorption energies and electronic interactions at the NiMoO₄@MXene interfaces [6].

- Experimental Validation: The fabricated NiMoO₄@MXene film was tested in a DUFC, achieving a power density of 35.4 mW cm⁻² with urea and significant power densities using human and cow urine [6]. The consistency between the predicted interfacial interactions and the observed high performance supported the DFT model.

Case Study: Graphene for CO₂ Capture

Another example involves using combined DFT and Molecular Dynamics (MD) simulations to study graphene-CO₂ interactions [7].

- DFT/MD Prediction: Simulations predicted an increase in CO₂ adsorption energy with the application of an electric field [7].

- Experimental Validation: Subsequent experiments under an electric field exhibited enhanced CO₂ uptake, confirming the accuracy of the computational model and providing a pathway for optimizing carbon capture systems [7].

Experimental Protocols and Research Reagents

For researchers seeking to implement or reproduce DFT-guided experimental studies, understanding the key materials and methodologies is essential.

The Scientist's Toolkit: Key Research Reagents

The following table details essential materials and their functions, as derived from the cited electrocatalyst study [6].

Table 2: Key Research Reagents for Electrocatalyst Synthesis and Testing

| Reagent / Material | Function in Research | Specific Example / Note |
|---|---|---|
| Transition Metal Salts | Precursors for active catalytic materials. Provide redox-active metal centres (e.g., Ni²⁺/Ni³⁺) [6]. | Nickel molybdate (NiMoO₄) nanorods [6]. |
| MXene (Ti₃C₂) | Two-dimensional catalyst support. Provides high electrical conductivity, functionalizable surface, and stabilizes metal oxides [6]. | Synthesized by etching Ti₃AlC₂ (MAX phase) with HCl/LiF [6]. |
| Urea / Urine Samples | Acts as the fuel in the oxidation reaction. Enables electricity generation and wastewater remediation [6]. | Real samples of human and cow urine were used [6]. |
| Alkaline Electrolyte | Essential reaction medium for the Urea Oxidation Reaction (UOR). Facilitates the necessary redox chemistry [6]. | Typically a concentrated KOH or NaOH solution. |

Detailed Experimental Protocol: Electrocatalyst Fabrication and Testing

This protocol outlines the key steps for synthesizing and validating the NiMoO₄@MXene electrocatalyst, based on the integrated DFT-experimental study [6].

Synthesis of MXene Support:

- Method: Etching of the MAX phase.

- Procedure: Disperse 1.0 g of Ti₃AlC₂ in a mixture of 20 mL HCl (6 M) and 1.5 g LiF. Stir magnetically at 35 °C for 18 hours.

- Workup: Recover the product (Ti₃C₂Tₓ) via centrifugation. Wash with deionized water until the supernatant reaches pH ~6. Re-disperse the final cake in DI water and collect the supernatant after ultrasonication and centrifugation to obtain a colloidal solution of MXene [6].

Preparation of NiMoO₄@MXene Hybrid:

- Method: Hydrothermal treatment followed by calcination.

- Procedure: Mix the MXene colloidal solution with stoichiometric amounts of nickel and molybdenum precursors (e.g., nitrates or ammonium salts). Transfer the mixture to a Teflon-lined autoclave and heat (e.g., 120-180 °C for several hours).

- Calcination: Recover the hydrothermally formed product, dry it, and then calcine it in an inert atmosphere at a specified temperature (e.g., 400 °C) to crystallize the NiMoO₄ nanorods on the MXene support [6].

Fabrication of Freestanding Electrode:

- Method: Vacuum filtration.

- Procedure: Create a homogeneous dispersion of the NiMoO₄@MXene hybrid. Use vacuum filtration to deposit the material onto a suitable filter membrane, forming a freestanding, flexible film that can be used directly as an electrode [6].

Electrochemical Testing in DUFC:

- Setup: Assemble a Direct Urea Fuel Cell (DUFC) using the NiMoO₄@MXene film as the anode (UOR electrode) and a suitable cathode (e.g., Pt/C for oxygen reduction).

- Fuel & Conditions: Use an alkaline electrolyte solution. Feed the anode with a solution of urea (e.g., 0.33 M in 1 M KOH) or real samples like human/cow urine.

- Measurement: Record the polarization curves (current density vs. voltage) to calculate the maximum power density (in mW cm⁻²). Quantify urea removal efficiency after operation via standard analytical techniques [6].
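The maximum power density in the measurement step follows directly from the polarization curve, since power density is the product of current density and cell voltage at each point. The sketch below assumes the curve is available as (current density in mA cm⁻², voltage in V) pairs; the numbers are illustrative placeholders, not data from [6].

```python
def peak_power_density(polarization):
    """Return (power, current_density, voltage) at the maximum-power point.

    `polarization` is an iterable of (current density in mA/cm^2, voltage in V);
    their product is a power density in mW/cm^2.
    """
    points = [(j * v, j, v) for j, v in polarization]
    if not points:
        raise ValueError("polarization curve is empty")
    return max(points)


if __name__ == "__main__":
    # Illustrative curve only (assumption); replace with measured values.
    curve = [(0, 0.90), (20, 0.75), (40, 0.60), (60, 0.45), (80, 0.30), (100, 0.10)]
    power, current, voltage = peak_power_density(curve)
    print(f"peak power density: {power:.1f} mW/cm^2 "
          f"at {current} mA/cm^2 and {voltage:.2f} V")
```

With sparse data the true maximum can lie between measured points, so a finer current sweep near the peak gives a more reliable value.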

Calculable Properties and Research Applications

DFT can compute a wide array of physical and chemical properties, making it applicable across diverse scientific domains [2] [4].

Table 3: Key Physical Properties Accessible via DFT Calculations

| Property Category | Specific Calculable Properties | Relevance to Research |
|---|---|---|
| Structural Properties | Bond lengths, bond angles, lattice constants, stable molecular geometries, elastic constants (Young's modulus, bulk modulus) [2] [4]. | Validates against XRD/EXAFS data; assesses mechanical stability and stiffness of materials [2] [5]. |
| Electronic Properties | Band structure, band gap, molecular orbital energies (HOMO, LUMO), atomic charges, density of states [4]. | Predicts electrical conductivity, optical absorption, and chemical reactivity; essential for semiconductor and catalyst design [5] [4]. |
| Spectroscopic Properties | Infrared (IR) and Raman spectra, NMR chemical shifts, Mössbauer parameters, magnetic properties (EPR) [2]. | Allows direct comparison with experimental spectra for structural elucidation, e.g., in complex bioinorganic systems like Photosystem II [2]. |
| Energetic & Thermodynamic Properties | Reaction energies, activation barriers (transition states), adsorption energies, phonon dispersion, free energy, entropy, heat capacity [5] [4]. | Determines reaction feasibility and mechanisms; evaluates catalytic activity; models temperature-dependent behavior and stability [6] [4]. |

These calculable properties underpin DFT's significant role in accelerating research in energy storage (e.g., optimizing electrode materials and ion transport in batteries) and biomedical applications (e.g., modeling drug-target interactions and the surface reactivity of implant materials) [3].

The core tenets of Density Functional Theory provide a powerful and versatile framework for understanding and predicting the behavior of matter at the quantum mechanical level. Its capacity to calculate a vast range of properties from first principles makes it an indispensable tool in the modern researcher's toolkit. However, as this guide has emphasized, its predictive power is most robust when deployed within an iterative framework of experimental validation and model refinement.

The future of DFT lies in its increasing integration with other powerful computational and data-driven approaches. The rise of machine-learned potentials is extending the spatial and time scales accessible to quantum-accurate simulations [3]. Furthermore, the integration of artificial intelligence with DFT descriptors is opening new paths for autonomous materials discovery and the rational design of complex systems, from efficient electrocatalysts to pharmaceutical compounds [6] [8]. As these methodologies mature, the synergy between density functional theory, high-throughput computing, and experimental science will continue to be a central driver of innovation across scientific disciplines.

Density Functional Theory (DFT) has established itself as the cornerstone of modern computational materials science, drug discovery, and catalyst design. This quantum mechanical approach enables researchers to calculate the electronic structure of multi-electron systems by focusing on electron density rather than the more complex many-body wavefunction [2]. The practical implementation of DFT through the Kohn-Sham equations has made it possible to predict a wide range of molecular and material properties, including geometries, energies, reaction mechanisms, and spectroscopic parameters with reasonable accuracy [2].

Despite its widespread adoption and numerous successes, DFT possesses inherent limitations that create a critical gap between computational prediction and experimental reality. The fundamental challenge lies in the approximation of the exchange-correlation functional—the term that accounts for quantum mechanical exchange and correlation effects that is not known exactly [2]. This theoretical shortcoming manifests as systematic errors in property predictions that can significantly impact research outcomes and technological development across multiple disciplines. The following sections examine the quantitative evidence for these discrepancies, detail methodologies for experimental validation, and provide frameworks for bridging this persistent gap.

Quantitative Evidence: Documenting the DFT-Experiment Gap

Formation Energy Discrepancies in Materials Databases

Large-scale comparisons between DFT-calculated and experimentally measured formation energies reveal systematic errors that affect materials discovery efforts. Multiple studies have quantified these discrepancies across major computational materials databases, as summarized in Table 1.

Table 1: Documented Errors in DFT-Predicted Formation Energies Across Major Databases

| Database | Mean Absolute Error (eV/atom) | Reference Dataset | Primary Source of Error |
|---|---|---|---|
| Materials Project | 0.133-0.172 | 1,670 experimental measurements [9] | Temperature difference (0 K vs. 300 K) [9] |
| Open Quantum Materials Database (OQMD) | 0.108 | 1,670 experimental measurements [9] | Phase transformations between 0 and 300 K [9] |
| JARVIS | 0.095 | 463 materials from Matminer [9] | Systematic functional error [9] |
| Materials Project (recent) | 0.078 | 463 materials from Matminer [9] | Element-specific errors (Ce, Na, Li, Ti, Sn) [9] |

These discrepancies are particularly pronounced for compounds containing elements that undergo phase transformations between 0 K (the temperature to which standard DFT total energies correspond) and room temperature (where experiments are typically conducted) [9]. For certain applications, these errors can approach or exceed 0.1 eV/atom, which is sufficient to incorrectly predict phase stability in complex ternary systems [10].
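The mean absolute error reported in the table above is simple to compute once calculated and measured formation energies are paired by compound. The sketch below also reports the signed mean error, which helps separate a systematic offset from random scatter. The four energy pairs are invented for illustration and do not come from any of the cited databases.

```python
def mean_absolute_error(predicted, measured):
    """Average magnitude of the prediction errors (same units as the inputs)."""
    if len(predicted) != len(measured) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - m) for p, m in zip(predicted, measured)) / len(predicted)


def mean_signed_error(predicted, measured):
    """Average signed error; a value far from zero indicates systematic bias."""
    if len(predicted) != len(measured) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(p - m for p, m in zip(predicted, measured)) / len(predicted)


if __name__ == "__main__":
    # Hypothetical formation energies in eV/atom (assumption, for illustration).
    dft = [-1.20, -0.85, -2.10, -0.40]
    experiment = [-1.30, -0.95, -2.05, -0.55]
    print(f"MAE:         {mean_absolute_error(dft, experiment):.3f} eV/atom")
    print(f"signed mean: {mean_signed_error(dft, experiment):+.3f} eV/atom")
```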

Property-Specific Errors in Functional Materials

Beyond formation energies, specific material properties critical for technological applications show significant DFT-experiment gaps:

Bandgap Predictions: Standard DFT protocols exhibit approximately 20% failure rates during bandgap calculations for 3D materials, primarily due to sensitivities in pseudopotential selection, plane-wave basis-set cutoff energy, and Brillouin-zone integration parameters [11].

Mechanical and Thermal Properties: For zinc-blende CdS and CdSe semiconductors, the choice of exchange-correlation functional significantly impacts predictions of elastic constants and thermal expansion behavior. The PBE+U approach provides better alignment with experimental data compared to standard LDA or PBE functionals [5].

Alloy Formation Enthalpies: DFT calculations of ternary phase diagrams for high-temperature alloys (Al-Ni-Pd and Al-Ni-Ti) demonstrate intrinsic energy resolution errors that limit predictive capability for phase stability without correction schemes [10].

Experimental Validation Methodologies

Core Experimental Protocols for DFT Validation

Robust validation of DFT predictions requires carefully designed experimental protocols that target specific property measurements. The following methodologies represent gold-standard approaches across different domains:

Table 2: Key Experimental Methods for DFT Validation

| Experimental Method | Target DFT Property | Protocol Details | Critical Parameters |
|---|---|---|---|
| X-ray Diffraction (XRD) | Geometries, lattice parameters | Single-crystal or powder diffraction with Rietveld refinement | Bond lengths (accuracy: ±2 pm for ligands, ±5 pm for metal-ligand bonds) [2] |
| Calorimetry | Formation enthalpies | Solution or reaction calorimetry at controlled temperatures | ΔH measurement precision (±0.01 eV/atom) [10] |
| Solid-State NMR | Local coordination environments | Ultra-wideline NMR with Monte Carlo simulation of spectra | Chemical shift tensor parameters (δiso/Ω/κ) for metal sites [12] |
| X-ray Absorption Spectroscopy (XAS) | Oxidation states, local structure | Extended X-ray absorption fine structure (EXAFS) analysis | Coordination numbers, interatomic distances [2] |

Workflow for Systematic DFT Validation

The following diagram illustrates an integrated workflow for systematic validation of DFT predictions through experimental verification:

DFT Validation Workflow: Systematic cycle for validating computational predictions.

This workflow emphasizes the iterative nature of computational-experimental collaboration, where discrepancies lead to refinement of computational parameters rather than representing outright failures.

Cross-Domain Case Studies

Pharmaceutical Formulation Design

In drug development, DFT predictions of molecular interactions between active pharmaceutical ingredients (APIs) and excipients require experimental validation to prevent formulation failures. Specifically:

Co-crystal Stability: DFT calculations of Fukui functions predict reactive sites for API-excipient co-crystallization, but require validation through X-ray diffraction and stability testing under various temperature and humidity conditions [13].

Solvation Effects: COSMO solvation models combined with DFT predict drug release kinetics, but these must be verified through in vitro dissolution testing with UV-Vis spectroscopy or HPLC quantification [13].

The consequences of insufficient validation are significant—approximately 60% of formulation failures for BCS II/IV drugs stem from unforeseen molecular interactions between APIs and excipients that could be identified through proper DFT-experiment correlation [13].
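The Fukui functions mentioned above for co-crystal site prediction are often evaluated in condensed, per-atom form from the atomic charges of the neutral molecule and its one-electron anion and cation, all at the neutral geometry. The sketch below uses that finite-difference definition. The atom labels and charges are hypothetical, and the choice of population analysis (Mulliken, Hirshfeld, and so on) is left open because the source does not specify one.

```python
def condensed_fukui(q_neutral, q_anion, q_cation):
    """Condensed Fukui indices from atomic charges.

    f_plus[k]  = q_k(N) - q_k(N+1): susceptibility to nucleophilic attack
    f_minus[k] = q_k(N-1) - q_k(N): susceptibility to electrophilic attack
    """
    f_plus = {atom: q_neutral[atom] - q_anion[atom] for atom in q_neutral}
    f_minus = {atom: q_cation[atom] - q_neutral[atom] for atom in q_neutral}
    return f_plus, f_minus


if __name__ == "__main__":
    # Hypothetical atomic charges in units of e (assumption, for illustration).
    neutral = {"O1": -0.50, "N1": -0.30, "C1": 0.80}   # sums to  0
    anion = {"O1": -0.70, "N1": -0.45, "C1": 0.15}     # sums to -1
    cation = {"O1": -0.20, "N1": 0.10, "C1": 1.10}     # sums to +1
    f_plus, f_minus = condensed_fukui(neutral, anion, cation)
    print("most electrophilic site:", max(f_plus, key=f_plus.get))
    print("most nucleophilic site: ", max(f_minus, key=f_minus.get))
```

Each set of indices sums to one over the molecule, which is a quick sanity check on the input charges before the predicted sites are compared with diffraction data.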

Single-Atom Catalyst Characterization

The development of platinum single-atom catalysts (SACs) on nitrogen-doped carbon supports illustrates the critical role of experimental validation in resolving local coordination environments:

¹⁹⁵Pt Solid-State NMR: This specialized technique validates DFT-predicted coordination environments by measuring chemical shift tensor parameters (δiso/Ω/κ) that are sensitive to Pt oxidation state, coordination number, and ligand identity [12].

Experimental Challenges: Ultra-wideline NMR methodologies requiring low temperatures (to enhance signal-to-noise) and fast repetition rates enable acquisition of complete ¹⁹⁵Pt NMR spectra for SACs with Pt contents as low as 1 wt% [12].

Without experimental verification, DFT models of SAC structures remain speculative, as conventional techniques like XPS and XAS provide only partial or average structural information that is often interpreted through intuition and computational modeling [12].

Isotope Geochemistry

In organic geochemistry, DFT predictions of clumped isotope fractionation for organic molecules guide analytical development and calibration:

Equilibrium Fractionation Factors: DFT calculations predict Δ values (deviations from stochastic isotope distribution) for DD, ¹³CD, ¹³C¹³C, ¹³C¹⁵N, and ¹³C¹⁸O clumping in 32 organic molecules across the 300-1000 K temperature range [14].

Experimental Constraints: These predictions provide frameworks for assessing instrumental precision requirements but must be validated through laboratory measurements using gas source isotope ratio mass spectrometry with appropriate standardization [14].

The validation process confirms that reduced mass of atoms, bond multiplicity, and hybridization collectively explain 80% of observed differences between bond types [14].

Emerging Solutions: Bridging the Gap

Machine Learning-Enhanced DFT

Machine learning (ML) approaches now demonstrate the capability to surpass standalone DFT in predicting certain material properties:

Error Correction: Neural network models trained on DFT-experiment discrepancies can predict and correct systematic errors in formation enthalpy calculations, reducing errors in ternary alloy systems (Al-Ni-Pd, Al-Ni-Ti) below DFT-only limitations [10].

Transfer Learning: Deep neural networks pre-trained on large DFT datasets (OQMD, Materials Project) and fine-tuned on experimental measurements can achieve mean absolute errors of 0.064 eV/atom—significantly better than DFT alone (>0.076 eV/atom) for formation energy prediction [9].
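The idea behind error correction can be shown with a much simpler model than the neural networks used in [9] and [10]. The sketch below swaps in an ordinary least-squares line, fitted so that corrected DFT energies match experiment on a training set. It is a stand-in technique, and the synthetic data are constructed here purely so that the example runs.

```python
def fit_linear_correction(dft, experiment):
    """Least-squares slope and intercept such that experiment ~ slope*dft + intercept."""
    n = len(dft)
    if n < 2 or n != len(experiment):
        raise ValueError("need at least two paired values")
    mean_x = sum(dft) / n
    mean_y = sum(experiment) / n
    sxx = sum((x - mean_x) ** 2 for x in dft)
    if sxx == 0.0:
        raise ValueError("DFT values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(dft, experiment))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def apply_correction(dft, slope, intercept):
    """Apply the fitted correction to new DFT values."""
    return [slope * x + intercept for x in dft]


if __name__ == "__main__":
    # Synthetic training data in eV/atom (assumption): experiment differs from
    # DFT by a pure scale factor and offset, so the fit recovers it exactly.
    dft_train = [-2.0, -1.5, -1.0, -0.5]
    exp_train = [1.1 * x - 0.05 for x in dft_train]
    slope, intercept = fit_linear_correction(dft_train, exp_train)
    print(f"slope = {slope:.3f}, intercept = {intercept:.3f}")
    print("corrected prediction for -1.25 eV/atom:",
          apply_correction([-1.25], slope, intercept)[0])
```

As with any learned correction, the fit should be assessed on compounds held out of the training set; in-sample agreement alone says little about predictive accuracy.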

Automated Computational Frameworks

Recent developments in automated DFT workflows reduce human-induced variability and improve reproducibility:

Multi-Agent Systems: The DREAMS framework employs a hierarchical, multi-agent system with Large Language Model planners for atomistic structure generation, DFT convergence testing, and error handling, achieving errors below 1% compared to human experts for lattice constant predictions [8].

Reproducibility Protocols: Standardized computational protocols for bandgap calculations address failures related to pseudopotential selection, plane-wave cutoff energies, and Brillouin-zone integration through systematic parameter optimization and error minimization [11].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational and Experimental Resources for DFT Validation

| Resource | Type | Function | Application Context |
|---|---|---|---|
| Quantum ESPRESSO | Software | Plane-wave pseudopotential DFT code | Mechanical, thermal properties of semiconductors [5] |
| ¹⁹⁵Pt NMR Spectrometer | Instrumentation | Ultra-wideline solid-state NMR | Local coordination in single-atom catalysts [12] |
| COSMO Solvation Model | Computational | Implicit solvation for DFT | Drug release kinetics in pharmaceutical formulations [13] |
| EMTO-CPA | Software | Exact muffin-tin orbital method | Alloy formation enthalpies with chemical disorder [10] |
| Matminer | Software | Materials data mining toolkit | Benchmarking DFT predictions against experimental data [9] |

The critical gap between DFT predictions and experimental measurements represents both a challenge and an opportunity for advancing materials science, drug development, and catalyst design. Systematic documentation of discrepancies in formation energies, bandgaps, and structural properties has illuminated the fundamental limitations of current exchange-correlation functionals. However, emerging methodologies—including machine learning correction schemes, automated computational frameworks, and sophisticated experimental validation protocols—provide promising pathways toward reconciling computation and experiment.

The most productive approach recognizes DFT not as a standalone predictive tool, but as one component in an iterative validation cycle where computational predictions inform experimental design and experimental results refine computational models. This collaborative paradigm, leveraging both DFT's comprehensive screening capability and experiment's ground-truth validation, will ultimately accelerate materials discovery and technological innovation while establishing greater confidence in computational predictions.

The validation of computational methods through rigorous experimental benchmarking is a cornerstone of modern materials science research. Within the framework of density functional theory (DFT) validation, this process is critical for establishing the reliability and predictive power of simulations. The concordance between computed results and experimental data across multiple property categories—from fundamental structural parameters to complex thermodynamic behavior—serves as the ultimate test for theoretical approaches. This guide provides a comprehensive technical overview of the key experimental benchmarks essential for validating DFT calculations, detailing specific methodologies, protocols, and benchmarks that form the foundation of credible computational materials research.

The critical importance of this validation process is highlighted by large-scale benchmarking efforts like the JARVIS-Leaderboard, which integrates thousands of method comparisons to address reproducibility challenges across computational and experimental modalities. [15] Without systematic benchmarking, computational predictions risk remaining unverified hypotheses, limiting their utility in guiding experimental research and materials design.

Core Experimental Benchmark Categories

Structural Parameters: The Foundation of Validation

The most fundamental comparison between computation and experiment begins with structural parameters. The unit cell dimensions—lattice constants (a, b, c), angles (α, β, γ), and volume—provide the primary validation metric for optimized crystal structures.

Table 1: Benchmarking Lattice Parameters in ZrCr₂ Laves Phases

| Crystal Phase | Space Group | Calculated a (Å) | Calculated c (Å) | Experimental a (Å) | Experimental c (Å) | Deviation |
|---|---|---|---|---|---|---|
| C15 (cubic) | Fd(\bar{3})m | 7.210 | 7.210 | 7.205 | 7.205 | <0.07% |
| C14 (hexagonal) | P6₃/mmc | 5.106 | 8.292 | 5.100 | 8.280 | <0.12% |
| C36 (hexagonal) | P6₃/mmc | 5.240 | 8.580 | 5.230 | 8.560 | <0.19% |

Data adapted from first-principles calculations of ZrCr₂ Laves phases, showing excellent agreement with experimental values with deviations less than 1.37%. [16]
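The deviation metric used for lattice parameters is simple arithmetic, and a short helper keeps it consistent across studies. The sketch below is illustrative only and is not taken from the cited work; the function name `percent_deviation` is hypothetical, and the numbers are the C15 values from Table 1.

```python
def percent_deviation(calculated: float, experimental: float) -> float:
    """Signed relative deviation of a calculated value from experiment, in percent."""
    return 100.0 * (calculated - experimental) / experimental


# C15 (cubic) ZrCr2 lattice parameter a from Table 1, in angstrom
a_calc, a_exp = 7.210, 7.205
print(f"C15 a: {percent_deviation(a_calc, a_exp):+.3f} %")
```

Keeping the sign makes it possible to distinguish systematic overestimation from underestimation when many structures are compared.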

For complex framework materials like SiO₂ polymorphs, benchmarking extends to finer structural details including bond lengths (Si–O) and bond angles (Si–O–Si), which significantly impact material properties. Comprehensive assessments involving 27 DFT approaches against experimental data for multiple silica structures have established that the best-performing functionals achieve mean unsigned errors of approximately 0.2 T atoms per 1000 ų for framework densities. [17]

Thermodynamic Properties

Thermodynamic properties represent a more complex validation tier, testing a method's ability to capture temperature-dependent behavior and phase stability.

Heat Capacity Measurements: Experimental determination of heat capacity (Cₚ) over a temperature range (e.g., 2–300 K) provides critical validation data. For LuB₂C, the temperature dependence of heat capacity was fitted using the approximation Cₚ(T) = aT + ΣC_D(T) + C_E(T) + C_TLS(T), where the terms are the electronic, Debye, Einstein, and two-level system contributions, respectively. [18]
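A model of this kind can be evaluated numerically with textbook expressions for each term. The following is a minimal sketch under stated assumptions: the Debye integral, Einstein function, and Schottky-type two-level term are the standard forms, which may differ in detail from the fit used in [18], and all weights and characteristic temperatures are hypothetical placeholders rather than fitted LuB₂C parameters.

```python
import math

R = 8.314462618  # molar gas constant, J mol^-1 K^-1


def debye_term(T, theta_d, n_steps=2000):
    """Debye heat capacity per mole of atoms (midpoint-rule integration)."""
    x_max = theta_d / T
    h = x_max / n_steps
    integral = 0.0
    for i in range(n_steps):
        x = (i + 0.5) * h
        em = math.exp(-x)
        # x^4 e^x / (e^x - 1)^2, rewritten with e^-x to avoid overflow
        integral += x**4 * em / (1.0 - em) ** 2 * h
    return 9.0 * R * (T / theta_d) ** 3 * integral


def einstein_term(T, theta_e):
    """Einstein heat capacity per mole of atoms."""
    x = theta_e / T
    em = math.exp(-x)
    return 3.0 * R * x**2 * em / (1.0 - em) ** 2


def two_level_term(T, delta):
    """Schottky-type term for a two-level system with splitting delta (in K)."""
    x = delta / T
    em = math.exp(-x)
    return R * x**2 * em / (1.0 + em) ** 2


def heat_capacity(T, a, debye=(), einstein=(), tls=()):
    """Sum of electronic (a*T), Debye, Einstein and two-level contributions.

    debye, einstein, tls: iterables of (weight, characteristic temperature in K).
    """
    total = a * T
    total += sum(w * debye_term(T, th) for w, th in debye)
    total += sum(w * einstein_term(T, th) for w, th in einstein)
    total += sum(w * two_level_term(T, th) for w, th in tls)
    return total


# Hypothetical parameters, for illustration only
cp_50 = heat_capacity(50.0, a=2e-3, debye=[(2.0, 400.0)],
                      einstein=[(2.0, 900.0)], tls=[(0.01, 20.0)])
print(f"Model C_p(50 K) = {cp_50:.3f} J mol^-1 K^-1")
```

In a real fit, these functions would be passed to a least-squares routine together with the measured Cₚ(T) data.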

Phase Stability: Relative Gibbs free energy calculations across temperature and pressure ranges validate predictive capability for phase transitions. In ZrCr₂ systems, C15 is the stable phase at low temperatures (0–1420 K at 0 GPa), C36 serves as an intermediate phase (1420–2070 K), and C14 becomes prevalent at high temperatures. [16] Above 10 GPa, C14 loses stability across the studied temperature range.
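The stable phase at a given temperature and pressure is the one with the lowest Gibbs free energy, and a transition temperature is where the free-energy difference between two phases changes sign. The sketch below illustrates both steps; the function names and all numerical values are hypothetical and are not data from [16].

```python
def stable_phase(gibbs_by_phase: dict[str, float]) -> str:
    """Return the phase with the lowest Gibbs free energy."""
    return min(gibbs_by_phase, key=gibbs_by_phase.get)


def transition_temperature(t1: float, dg1: float, t2: float, dg2: float) -> float:
    """Linearly interpolate the temperature where dG = G_A - G_B crosses zero.

    (t1, dg1) and (t2, dg2) must bracket the sign change.
    """
    if dg1 * dg2 > 0 or dg1 == dg2:
        raise ValueError("the two points do not bracket a sign change")
    return t1 + (t2 - t1) * dg1 / (dg1 - dg2)


# Hypothetical free energies (kJ/mol) at one temperature
print(stable_phase({"C15": -10.2, "C36": -10.0, "C14": -9.8}))

# Hypothetical dG values (kJ/mol) on a coarse temperature grid
print(transition_temperature(1400.0, -1.0, 1500.0, 1.0))
```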

Formation Enthalpy: The formation enthalpy of compounds at 0 K provides a fundamental thermodynamic benchmark. For ZrCr₂ phases, DFT-calculated formation enthalpies show excellent agreement with experimental values, establishing baseline thermodynamic stability. [16]
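At 0 K and zero pressure, the formation enthalpy per atom is commonly approximated as the total energy of the compound minus the energies of its constituent elements in their reference states, divided by the number of atoms. The sketch below shows that bookkeeping; the function name and all energies are hypothetical placeholders, not values from [16].

```python
def formation_enthalpy_per_atom(e_compound: float,
                                composition: dict[str, int],
                                e_elements: dict[str, float]) -> float:
    """Formation enthalpy per atom (eV/atom) from total energies.

    e_compound: total energy of one formula unit (eV)
    composition: atoms of each element per formula unit
    e_elements: energy per atom of each element in its reference phase (eV)
    """
    n_atoms = sum(composition.values())
    e_reference = sum(n * e_elements[el] for el, n in composition.items())
    return (e_compound - e_reference) / n_atoms


# Hypothetical energies, for illustration only
dh = formation_enthalpy_per_atom(
    e_compound=-28.0,
    composition={"Zr": 1, "Cr": 2},
    e_elements={"Zr": -8.5, "Cr": -9.5},
)
print(f"Formation enthalpy: {dh:.3f} eV/atom")
```

A negative value indicates that the compound is stable with respect to decomposition into the chosen elemental reference phases.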

Table 2: Thermodynamic Benchmarking Data Across Material Systems

| Material | Property | Temperature Range | Computational Method | Experimental Validation |

|---|---|---|---|---|

| ZrCr₂ Laves phases | Phase stability | 0–2500 K | First-principles Gibbs free energy | Phase transformation sequences |

| LuB₂C | Heat capacity Cₚ(T) | 2–300 K | Ab initio band theory | Calorimetry measurements |

| LuB₂C | Thermal expansion | 5–300 K | DFT with PBE functional | X-ray diffraction |

| Energetic ionic purine derivative | Thermodynamic functions | Not specified | DFT with GGA-PBE | Experimental IR frequencies |

Electronic Structure and Mechanical Properties

Electronic Properties: Band structure and density of states calculations require validation through experimental proxies. For Mg₃TeO₆, hybrid HSE06 functionals predicted a wide bandgap and p-type conductivity (6.95 S cm⁻¹), suggesting potential as a transparent conductive oxide pending experimental verification. [19] Similarly, for novel energetic materials, DFT calculations provide electronic structure analysis where direct experimental data may be scarce. [20]

Mechanical Properties: Elastic constants, bulk modulus, and shear modulus can be derived from DFT calculations and validated against mechanical testing. In ZrCr₂ systems, all three phases (C14, C15, C36) were confirmed to be ductile at 0 K and 0 GPa, with ductility decreasing as temperature rises and pressure decreases. [16]
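The source does not state which ductility criterion was applied. One widely used choice is Pugh's ratio B/G, where values above roughly 1.75 are usually read as ductile. The sketch below assumes a cubic crystal and Voigt-averaged moduli; the elastic constants are hypothetical and the function names are illustrative.

```python
def cubic_voigt_moduli(c11: float, c12: float, c44: float) -> tuple[float, float]:
    """Voigt-averaged bulk and shear moduli of a cubic crystal (same units as input)."""
    bulk = (c11 + 2.0 * c12) / 3.0
    shear = (c11 - c12 + 3.0 * c44) / 5.0
    return bulk, shear


def is_ductile_pugh(bulk: float, shear: float, threshold: float = 1.75) -> bool:
    """Pugh criterion: B/G above the threshold suggests ductile behavior."""
    return bulk / shear > threshold


# Hypothetical elastic constants in GPa
B, G = cubic_voigt_moduli(c11=250.0, c12=150.0, c44=60.0)
print(f"B = {B:.1f} GPa, G = {G:.1f} GPa, ductile: {is_ductile_pugh(B, G)}")
```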

Experimental Methodologies and Protocols

Structural Characterization Protocols

X-ray Diffraction (XRD):

- Sample Preparation: High-purity powders should be finely ground and uniformly loaded into sample holders to minimize preferred orientation. For temperature-dependent lattice parameter studies, samples are mounted in specialized stages with controlled atmosphere.

- Data Collection: Using Cu Kα radiation (λ = 1.5418 Å), collect data over a 2θ range of 10–90° with a step size of 0.01–0.02°. For structural refinement, higher resolution data may be necessary.

- Analysis: Rietveld refinement against XRD patterns determines lattice parameters with high precision (<0.1% error). For LuB₂C, lattice parameters a(T), b(T), and c(T) were determined across 5–300 K, revealing strong thermal expansion anisotropy. [18]
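Before a full Rietveld refinement, individual peak positions can be converted to d-spacings with Bragg's law, and for cubic phases directly to a lattice parameter. The sketch below is a quick consistency check, not a substitute for refinement; the function names are illustrative, and the example peak is the Si (111) reflection near 2θ ≈ 28.44° for Cu Kα radiation.

```python
import math

CU_K_ALPHA = 1.5418  # weighted Cu K-alpha wavelength in angstrom


def d_spacing(two_theta_deg: float, wavelength: float = CU_K_ALPHA) -> float:
    """Interplanar spacing from Bragg's law (first order), in angstrom."""
    theta = math.radians(two_theta_deg / 2.0)
    return wavelength / (2.0 * math.sin(theta))


def cubic_lattice_parameter(two_theta_deg: float, hkl: tuple[int, int, int],
                            wavelength: float = CU_K_ALPHA) -> float:
    """Lattice parameter a of a cubic cell from one indexed reflection."""
    h, k, l = hkl
    return d_spacing(two_theta_deg, wavelength) * math.sqrt(h * h + k * k + l * l)


a_si = cubic_lattice_parameter(28.44, (1, 1, 1))
print(f"a(Si) from the (111) peak: {a_si:.3f} angstrom")
```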

Thermodynamic Measurement Techniques

Heat Capacity Measurement:

- Apparatus: Commercial physical property measurement systems (PPMS) or custom calorimeters.

- Protocol: Using the quasi-adiabatic heat pulse method, apply small temperature increments (≤1% of base temperature) and measure energy input and temperature change. For LuB₂C, measurements spanned 2–300 K with temperature increments gradually increasing from 0.1 K at lowest temperatures to 2–3 K near room temperature. [18]

- Data Processing: Calculate molar Cₚ from the measured heat input, the resulting temperature change, and the sample mass and molar mass. Fit the data to theoretical models incorporating electronic, lattice, and specialized contributions.
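For a single heat pulse, the molar heat capacity follows from the heat delivered, the temperature rise, and the amount of substance. The sketch below shows that conversion under simplifying assumptions (ideal adiabatic conditions, a known addenda contribution from the sample platform); the function name and all numbers are hypothetical.

```python
def molar_heat_capacity(heat_j: float, delta_t_k: float,
                        sample_mass_g: float, molar_mass_g_mol: float,
                        addenda_j_per_k: float = 0.0) -> float:
    """Molar heat capacity (J mol^-1 K^-1) from one heat pulse.

    addenda_j_per_k: heat capacity of platform and grease, subtracted
    from the total before normalizing by the amount of sample.
    """
    n_mol = sample_mass_g / molar_mass_g_mol
    total_j_per_k = heat_j / delta_t_k
    return (total_j_per_k - addenda_j_per_k) / n_mol


# Hypothetical pulse: 1 mJ raises a 20 mg sample (M = 200 g/mol) by 0.1 K
cp = molar_heat_capacity(1e-3, 0.1, 0.020, 200.0)
print(f"C_p = {cp:.1f} J mol^-1 K^-1")
```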

Phase Stability Studies:

- In-situ High-Temperature XRD: For ZrCr₂ phase stability determination, samples are heated in controlled atmosphere chambers while collecting XRD patterns at temperature intervals. Monitor specific peak positions and intensities corresponding to different polymorphs.

- Differential Scanning Calorimetry (DSC): Identify phase transitions through enthalpy changes during heating/cooling cycles. Heating rates of 5–20 K/min typically balance resolution and thermal lag.

Computational Methods for Benchmarking

Density Functional Theory Approaches

Successful benchmarking requires careful selection of DFT methodologies balanced with computational efficiency:

Exchange-Correlation Functionals: The Perdew-Burke-Ernzerhof (PBE) generalized gradient approximation (GGA) provides a standard choice for structural properties, often with Grimme's dispersion corrections (DFT-D3) for improved van der Waals interactions. [20] [17] For electronic properties, hybrid functionals like HSE06 offer improved bandgap accuracy at increased computational cost. [19]

Basis Sets: Plane-wave basis sets with projector-augmented wave (PAW) potentials typically offer favorable performance for periodic systems, with the kinetic-energy cutoff as the main convergence parameter. Basis set convergence testing is essential; for Gaussian-type basis sets, such as those used in CP2K, triple-zeta quality often provides the best accuracy-efficiency balance. [17]

Software Packages: Various codes are employed, including VASP, CASTEP, and CP2K, with CP2K demonstrating favorable scaling for complex zeolite structures. [18] [17]

Beyond Standard DFT: Neural Network Potentials

For properties requiring extensive configurational sampling, neural network potentials (NNPs) like EMFF-2025 trained on DFT data enable molecular dynamics simulations at near-DFT accuracy. These achieve mean absolute errors within ±0.1 eV/atom for energies and ±2 eV/Å for forces while accessing nanosecond timescales for studying thermal decomposition. [21]

Workflow Integration

Diagram: Integrated computational-experimental benchmarking workflow.

The Researcher's Toolkit: Essential Materials and Reagents

Table 3: Essential Research Reagents and Materials for Benchmarking Studies

| Material/Reagent | Function in Benchmarking | Application Example |

|---|---|---|

| High-purity elemental powders (Zr, Cr ≥99.9%) | Synthesis of intermetallic compounds for structural and thermodynamic benchmarking | ZrCr₂ Laves phase formation [16] |

| Lutetium hydride, boron, carbon | Precursors for complex compound synthesis | LuB₂C carboboride synthesis [18] |

| Argon atmosphere (high purity) | Inert gas protection during high-temperature synthesis | Prevents oxidation during annealing [18] |

| Silicon standard reference material | XRD instrument calibration for accurate lattice parameter determination | Verify instrument alignment before measurements |

| Single crystal sapphire | Heat capacity calibration standard | Validation of calorimeter measurements [18] |

| pH 13 electrolyte (0.1M NaOH + 0.25M Na₂SO₄) | Electrochemical characterization of functional materials | OER catalyst activity screening [22] |

Robust benchmarking of computational methods against experimental data remains essential for advancing materials discovery and design. The integration of quantitative structural, thermodynamic, and electronic property validation creates a foundation for predictive materials modeling. As benchmarking efforts expand through community initiatives like JARVIS-Leaderboard, which reports 1281 contributions to 274 benchmarks, the materials science community moves toward increasingly reproducible and reliable computational predictions. [15] Future developments will likely focus on automating benchmarking workflows, expanding into more complex material behaviors, and strengthening the feedback loop between computation and experiment to accelerate the design of novel materials with tailored properties.

Validation provides the critical foundation for trust in scientific and engineering disciplines, ensuring that computational models, experimental data, and technological systems perform as intended within their specified operational contexts. Systematic validation extends beyond simple verification checks to establish comprehensive evidence-based confidence in results through rigorously designed processes. The National Institute of Standards and Technology (NIST) has pioneered numerous validation frameworks that offer transferable lessons across scientific domains, from fundamental materials science to applied cybersecurity.

For researchers working at the intersection of computational and experimental sciences, particularly in density functional theory (DFT) validation against experimental results, adopting structured validation approaches is paramount. This guide synthesizes validation methodologies from NIST programs and industry best practices, translating them into actionable protocols for the research community. By implementing these systematic approaches, scientists can enhance the reliability, reproducibility, and translational potential of their computational research, especially in critical fields like drug development where accurate prediction of molecular interactions directly impacts therapeutic efficacy and safety.

NIST Validation Frameworks and Principles

NIST establishes validation as a measurement science, creating frameworks that emphasize independent testing, standardized methodologies, and evidence-based conformity assessment. Rather than providing direct certification, NIST operates validation programs where independent accredited laboratories test products and implementations against consistent standards [23]. This separation of testing authority from standards development maintains objectivity while promoting widespread adoption of validated technologies.

Core Structure of NIST Validation Programs

The NIST validation model operates through several interconnected components that together create a robust ecosystem for establishing trust in technologies and measurements. The Computer Security Division (CSD) serves as the validation authority that issues validation certificates based on test results from accredited laboratories [23]. This structure ensures that testing meets consistent quality standards while allowing for specialization across different technological domains. The National Voluntary Laboratory Accreditation Program (NVLAP) provides the oversight mechanism for testing laboratories, maintaining the authoritative list of accredited laboratories qualified to perform validation testing [23] [24].

Several specialized validation programs operate under this overarching structure. The Cryptographic Module Validation Program (CMVP) tests and validates cryptographic modules against Federal Information Processing Standards (FIPS) PUB 140-2 requirements, with validated modules listed on NIST-maintained registers [23]. The recently concluded Security Content Automation Protocol (SCAP) Validation Program advanced standardized security automation and vulnerability management from 2009 until its phased conclusion in 2025 [25]. These programs share common principles of standardized test requirements, independent verification, and public listing of validated implementations to inform procurement and deployment decisions.

Adaptable Validation Principles for Scientific Research

Several core principles from NIST validation frameworks translate effectively to scientific research contexts, particularly for computational method validation:

Conformance Testing Through Accredited Laboratories: The use of independent, accredited laboratories for conformance testing [23] translates to scientific research through the implementation of core facility models where specialized instrumentation and expertise undergo regular proficiency testing and quality control procedures.

Standardized Test Methods and Requirements: Validation programs employ standardized test requirements [23], analogous to standardized experimental protocols and assay validation in scientific research that enable cross-laboratory reproducibility and comparison.

Publicly Available Validation Lists: NIST maintains public registers of validated modules and products [23], similar to scientific practices of publishing detailed methodological supplements, negative results, and complete datasets to enable community verification.

Evolutionary Framework Adaptation: NIST retires programs that no longer serve evolving field needs, as demonstrated by the SCAP Validation Program conclusion [25], highlighting the importance of regularly reassessing validation frameworks against current scientific requirements.

Table: NIST Validation Programs and Their Research Applications

| NIST Program | Primary Focus | Research Application | Key Transferable Principle |

|---|---|---|---|

| Cryptographic Module Validation Program (CMVP) | Information security through validated cryptographic modules [23] | Verification of computational infrastructure for research | Independent testing against consensus standards |

| AI Test, Evaluation, Validation and Verification (TEVV) | Metrics and evaluations for AI technologies including accuracy, robustness, bias [26] | Validation of machine learning applications in research | Multidimensional assessment framework |

| SCAP Validation Program (2009-2025) | Standardized security automation and vulnerability management [25] | Automated validation pipelines for computational research | Standardized protocols for automated assessment |

| Density Functional Theory Validation Project | Assessing DFT accuracy for materials-oriented systems [27] | Direct methodology for computational chemistry | Systematic comparison across methods and experimental data |

Industry DFT Validation Practices

Industry approaches to Design for Test (DFT) principles in electronics manufacturing provide complementary systematic validation strategies that emphasize cost-effectiveness, scalability, and integration throughout the development lifecycle. While originating in hardware engineering, these methodologies offer valuable insights for computational chemistry validation, particularly in balancing comprehensiveness with practical constraints.

Core DFT Methodologies with Research Applications

Industry employs several established DFT techniques that translate effectively to computational chemistry workflows, particularly for validating complex computational methods against experimental results:

Boundary Scan Testing (JTAG): This technique uses a series of shift registers to input test data and capture outputs without physical probes, reducing testing time by up to 50% in densely packed designs [28]. For computational chemistry, this translates to building validation checkpoints directly into computational workflows through scripted sanity checks, boundary condition tests, and automated comparison with reference data at key stages of calculation setup and execution.

In-Circuit Testing (ICT): ICT employs a "bed-of-nails" fixture to make direct contact with test points on printed circuit boards, checking for issues like shorts, opens, and component values [28]. The computational research equivalent involves establishing specific validation nodes within complex computational pipelines where intermediate results are compared against known standards or reference calculations to isolate errors in multi-step computational procedures.

Built-In Self-Test (BIST): BIST integrates testing hardware directly into systems, allowing self-testing without external equipment [28]. For research software and computational methods, this translates to implementing automated validation suites that run each time calculations are initiated, verifying internal consistency, conservation laws, and known limits before proceeding with production calculations.

Functional Testing: This approach simulates real-world operating conditions to verify performance as expected [28]. In computational chemistry, this corresponds to validating methods against well-characterized experimental systems that represent the actual application domain, such as benchmarking DFT methods for adsorption properties against experimental measurements when studying metal-organic frameworks [27].
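The built-in self-test analogy can be made concrete with a small pre-flight routine that inspects calculation settings before any compute time is spent. The sketch below is purely illustrative: the settings keys, the whitelist of functionals, and the minimum cutoff are hypothetical and would be replaced by a laboratory's own validated choices.

```python
ALLOWED_FUNCTIONALS = {"PBE", "PBE-D3", "HSE06"}  # hypothetical lab whitelist
MIN_CUTOFF_EV = 400.0                             # hypothetical lower bound


def preflight_checks(settings: dict) -> list[str]:
    """Return a list of problems; an empty list means the job may start."""
    problems = []
    functional = settings.get("functional")
    if functional not in ALLOWED_FUNCTIONALS:
        problems.append(f"functional {functional!r} is not on the validated list")
    if settings.get("cutoff_ev", 0.0) < MIN_CUTOFF_EV:
        problems.append("plane-wave cutoff is below the converged minimum")
    if min(settings.get("kpoints", (0, 0, 0))) < 1:
        problems.append("k-point mesh needs at least one point per axis")
    return problems


job = {"functional": "PBE", "cutoff_ev": 520.0, "kpoints": (4, 4, 4)}
issues = preflight_checks(job)
print("OK" if not issues else issues)
```

Returning a list of messages rather than raising on the first failure lets a workflow report every problem in one pass.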

Proactive DFT Implementation Framework

Successful industry DFT implementation follows a structured, proactive approach rather than treating testing as an afterthought. This methodology directly informs systematic validation for computational research:

Early Integration: Industry best practices emphasize integrating test considerations during schematic design, not after layout completion [29]. For computational research, this means designing validation protocols during method development rather than after implementation, ensuring testability is built into the computational approach from its conception.

Risk-Based Test Point Allocation: Rather than treating all components equally, industry practice uses "actionable statistical analysis" to target test resources based on probability of failure [29]. For research validation, this translates to focusing validation efforts on computational components with highest sensitivity or uncertainty, such as specific functionals in DFT calculations or particular molecular interactions in drug design studies.

Structured Implementation Process: Industry employs a stepwise approach beginning with objective definition, followed by early test point incorporation, standard interface adoption, manufacturing collaboration, and comprehensive documentation [28]. This systematic process directly translates to computational method validation through defined test objectives, embedded validation checkpoints, standard benchmark adoption, cross-disciplinary collaboration, and thorough methodological documentation.

Table: Industry DFT Techniques Adapted for Computational Research

| Industry DFT Technique | Original Application | Computational Research Adaptation | Implementation Example |

|---|---|---|---|

| Boundary Scan (JTAG) | Testing interconnections without physical probes [28] | Automated workflow validation checkpoints | Scripted verification of calculation inputs/outputs at process boundaries |

| In-Circuit Testing (ICT) | Direct measurement at specific test points [28] | Validation nodes in computational pipelines | Energy component analysis at specific simulation stages |

| Built-In Self-Test (BIST) | Integrated self-testing hardware [28] | Automated software validation suites | Pre-calculation verification of basis sets and functionals |

| Flying Probe Testing | Flexible probe-based testing with collision avoidance [29] | Adaptive validation targeting specific system aspects | Focused validation of problematic molecular interactions or conformations |

| Functional Testing | Real-world condition simulation [28] | Experimental benchmark validation | Direct comparison with spectroscopic or thermodynamic data |

Experimental Protocols for DFT Validation

Robust validation of density functional theory requires systematic experimental protocols that generate high-quality, comparable data. These protocols establish standardized methodologies for benchmarking computational predictions against physical measurements, creating a foundation for assessing and improving theoretical methods.

Reference Data Generation for Solid-State Systems

For validation of DFT methods applied to materials relevant to pharmaceutical solid forms, including pure and alloy solids with crystal structures important in CALPHAD methods, specific experimental protocols provide critical validation data [27]:

Single-Crystal X-ray Diffraction: High-resolution structural determination provides benchmark geometric parameters for comparison with DFT-optimized structures. The protocol requires measurement at multiple temperatures (typically 100 K, 150 K, 200 K, and 298 K) to assess thermal expansion effects and enable comparison with zero-Kelvin computational results through extrapolation. Data collection should achieve a resolution of at least 0.8 Å with an R-factor below 5% for high-quality reference data.

Inelastic Neutron Scattering: Phonon density of states measurements provide direct experimental validation of computational vibrational predictions. Measurements should be performed over a 5–500 K temperature range with energy resolution better than 2% for direct comparison with DFT-calculated vibrational spectra and thermodynamic properties derived from partition functions.

High-Precision Calorimetry: Determination of enthalpy of formation and heat capacity provides thermodynamic validation data. Measurement protocol requires multiple samples (minimum n=5) with purity verification through chromatography, using calibration standards traceable to NIST reference materials with reported uncertainty budgets accounting for systematic error sources.
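The extrapolation of multi-temperature structural data toward 0 K can be sketched with an ordinary least-squares line. This is a deliberately crude assumption: thermal expansion flattens at low temperature and zero-point effects remain, so a linear fit is only a first estimate. The function name and the data points are hypothetical.

```python
def extrapolate_to_zero_kelvin(temps_k, values):
    """Least-squares straight line through (T, value); returns (intercept, slope).

    The intercept is the linear estimate of the value at T = 0 K.
    """
    n = len(temps_k)
    if n != len(values) or n < 2:
        raise ValueError("need at least two matching data points")
    mean_t = sum(temps_k) / n
    mean_v = sum(values) / n
    sxx = sum((t - mean_t) ** 2 for t in temps_k)
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(temps_k, values))
    slope = sxy / sxx
    return mean_v - slope * mean_t, slope


# Hypothetical lattice parameters (angstrom) at the protocol temperatures
temps = [100.0, 150.0, 200.0, 298.0]
a_values = [5.0100, 5.0150, 5.0200, 5.0298]
a0, da_dt = extrapolate_to_zero_kelvin(temps, a_values)
print(f"a(0 K) ~ {a0:.4f} angstrom, slope = {da_dt:.2e} angstrom/K")
```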

Molecular System Validation Protocols

For drug development applications focusing on molecular systems, specialized protocols address the validation of electronic structure predictions:

Gas-Phase Electron Diffraction: Provides experimental benchmark molecular geometries for comparison with DFT-optimized structures. The protocol requires supersonic expansion with nozzle temperatures optimized for each compound, data collection at multiple detector distances (typically 0.5 m and 1.0 m), and sophisticated refinement accounting for vibrational averaging effects.

Vibrational Spectroscopy Reference Standards: High-resolution infrared and Raman spectroscopy of isolated molecules in supersonic jets provides rotational-vibrational spectra for validation. Measurements should include absolute intensity calibration using standard reference materials with wavenumber accuracy better than 0.1 cm⁻¹ for fundamental vibrations and 1.0 cm⁻¹ for overtone and combination bands.

NMR Chemical Shift Determination: The protocol for reference NMR measurements includes temperature control to ±0.1 K, internal referencing using IUPAC-recommended standards, and reporting of full uncertainty budgets, including magnetic field drift corrections and digital resolution limitations, for direct comparison with DFT-calculated chemical shifts.

Visualization of Systematic Validation Workflows

Systematic validation requires structured workflows that integrate computational and experimental components. The following diagrams illustrate key processes for implementing robust validation frameworks in computational chemistry research.

Comprehensive DFT Validation Workflow

This diagram outlines the complete validation lifecycle for density functional theory methods, integrating both computational and experimental components in a cyclic framework that drives method improvement.

Diagram: Comprehensive DFT validation workflow showing the integrated computational-experimental cycle.

NIST-Inspired Validation Framework

This workflow adapts the structured approach of NIST validation programs for research contexts, emphasizing independent verification and standardized assessment.

Diagram: NIST-structured validation framework emphasizing independent testing and certification.

Systematic validation requires carefully selected reference systems, computational tools, and data resources. The following table details essential components for establishing a robust validation framework for computational chemistry research, particularly focusing on DFT validation against experimental results.

Table: Essential Research Reagents and Resources for DFT Validation

| Category | Specific Resource | Function in Validation | Example Instances |

|---|---|---|---|

| Reference Molecular Systems | NIST Computational Chemistry Comparison and Benchmark Database (CCCBDB) [27] | Provides experimental reference data for method benchmarking | Gas-phase reaction energies, molecular geometries, vibrational frequencies |

| Standardized Computational Functionals | Validated DFT functionals for materials [27] | Established baseline for method performance assessment | Functionals specifically validated for transition metal nanoparticles, metal-organic frameworks |

| Reference Materials | Certified reference materials for experimental validation | Ensures experimental data quality and traceability | NIST-standard thermochemical reference compounds, diffraction calibration standards |

| Validation Software Tools | Automated validation pipelines and analysis scripts | Enables consistent application of validation protocols | Custom scripts for statistical comparison, uncertainty propagation analysis |

| Experimental Data Validation Tools | Thermodynamics data validation systems [30] | Assesses quality and consistency of experimental reference data | Tools for global validation of thermophysical property data used in benchmarks |

| Standardized Protocols | ISO biotechnology standards for predictive computational models [31] | Provides framework for model construction, verification and validation | ISO/TS 9491-1:2023 for personalized medicine research models |

Implementation Guide for Research Laboratories

Implementing systematic validation frameworks requires both technical approaches and cultural shifts within research organizations. The following actionable guidelines facilitate effective adoption of NIST and industry-inspired validation practices.

Structured Implementation Pathway

Research laboratories should adopt a phased approach to implementing systematic validation:

Validation Infrastructure Establishment: Create a core set of benchmark systems and reference data aligned with research specializations. For pharmaceutical applications, this should include relevant molecular scaffolds, intermolecular interaction prototypes, and solid-form systems. Implement automated validation pipelines that run standardized tests when methodological changes occur, similar to continuous integration practices in software development.

Validation Metrics Definition: Establish quantitative metrics for method performance assessment beyond simple correlation coefficients. These should include uncertainty quantification, application boundary delimitation, and performance degradation monitoring under extrapolation conditions. Adapt the multidimensional assessment approach from NIST's AI evaluation framework [26] covering accuracy, robustness, and limitations documentation.

Cross-Disciplinary Collaboration Protocols: Implement structured collaboration between computational and experimental groups based on the NIST model of independent testing. Establish formal procedures for blind validation studies where experimentalists generate reference data without knowledge of computational predictions to prevent confirmation bias.
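The quantitative metrics mentioned above usually start from a small set of error statistics computed over a benchmark set. The sketch below is one minimal way to collect them; the function name and the sample numbers are hypothetical.

```python
import math


def error_metrics(predicted, reference):
    """Mean signed error, mean absolute error, RMSE and maximum absolute error."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    n = len(errors)
    return {
        "MSE": sum(errors) / n,                     # signed: reveals systematic bias
        "MAE": sum(abs(e) for e in errors) / n,
        "RMSE": math.sqrt(sum(e * e for e in errors) / n),
        "MAX": max(abs(e) for e in errors),
    }


# Hypothetical computed vs. experimental values (arbitrary units)
metrics = error_metrics([1.0, 2.0, 4.0], [1.0, 3.0, 2.0])
print(metrics)
```

Reporting the signed error alongside the absolute error separates systematic bias from scatter, which a correlation coefficient alone does not show.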

Documentation and Reporting Standards

Adopt comprehensive documentation practices inspired by NIST validation programs:

Methodological Transparency: Document all computational parameters, functional selections, basis sets, convergence criteria, and post-processing techniques with sufficient detail to enable exact reproduction. Follow the model of NIST's publicly available validation certificates [23] that provide complete test results and implementation details.

Uncertainty Budgeting: Implement quantitative uncertainty estimation for both computational and experimental results, identifying and quantifying major error sources. Adopt approaches similar to NIST's measurement uncertainty frameworks that provide traceable uncertainty budgets for all reported values.

Performance Boundary Mapping: Explicitly document the domains where validated methods demonstrate acceptable performance and where limitations emerge. This practice mirrors NIST's approach to establishing application-specific validation boundaries [27] rather than claiming universal applicability.
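A simple form of uncertainty budgeting combines independent standard uncertainties in quadrature and then asks whether computation and experiment agree within an expanded uncertainty. The sketch below assumes uncorrelated error sources and a coverage factor of 2; the function names and numbers are hypothetical.

```python
import math


def combined_uncertainty(components):
    """Root-sum-of-squares of independent standard uncertainties."""
    return math.sqrt(sum(u * u for u in components))


def agrees_within(calc, exp, u_calc, u_exp, coverage=2.0):
    """True if |calc - exp| lies within coverage * combined standard uncertainty."""
    return abs(calc - exp) <= coverage * combined_uncertainty([u_calc, u_exp])


# Hypothetical experimental budget: calibration, repeatability, sample purity
u_exp = combined_uncertainty([0.10, 0.15, 0.05])
print(f"u_exp = {u_exp:.3f}")
print(agrees_within(calc=10.0, exp=10.5, u_calc=0.2, u_exp=0.2))
```

If error sources are correlated, the quadrature sum no longer applies and covariance terms must be included.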

Systematic validation transforms computational research from isolated calculations to credible scientific evidence. By adapting the rigorous frameworks developed by NIST and industry, researchers can build trust in their computational predictions and accelerate the translation of molecular-level insights to practical applications in drug development and materials design.

DFT in Action: Successful Applications Across Scientific Fields

Predicting Mechanical and Thermal Properties of Materials

The accurate prediction of mechanical and thermal properties of materials is a cornerstone of modern materials science and drug development, enabling the targeted design of novel compounds and delivery systems. Density Functional Theory (DFT) serves as a fundamental computational tool for this purpose, providing insights into electronic-scale properties. However, a significant challenge persists: DFT calculations exhibit inherent discrepancies when compared to experimental results, primarily because they are typically performed at 0 K, whereas experiments are conducted at room temperature or higher [9]. This gap highlights the critical need for robust validation frameworks that integrate computational predictions with experimental measurements. Recent advances, particularly the integration of machine learning (ML) interatomic potentials and transfer learning techniques, are now enabling predictions that can surpass the accuracy of standard DFT computations, moving closer to experimental-level accuracy [32] [9]. This guide details the methodologies and protocols for achieving this integration.

Computational Frameworks for Property Prediction

Density Functional Theory (DFT) Fundamentals

DFT remains one of the most effective computational tools for quantitatively predicting and rationalizing the mechanical response of crystalline materials [33]. Its applications extend from predicting elastic constants to understanding elastic anisotropy in complex systems.

- Best-Practice Protocols: For reliable results, it is crucial to move beyond outdated functional/basis set combinations like B3LYP/6-31G*. Recommended modern alternatives include the composite methods B3LYP-3c and r2SCAN-3c, as well as B97M-V/def2-SVPD, which offer better accuracy and robustness by accounting for London dispersion effects and reducing basis set superposition error (BSSE) [34].

- Addressing DFT-Experiment Discrepancy: Systematic errors exist between DFT-computed and experimentally measured formation energies, with Mean Absolute Errors (MAE) reported in major databases such as the Materials Project (0.078–0.172 eV/atom) and the Open Quantum Materials Database (0.108 eV/atom) [9]. These discrepancies necessitate careful validation and correction strategies.

Advanced Machine Learning and Hybrid Approaches

To overcome the limitations of DFT, machine learning approaches have been developed that leverage large DFT-computed datasets while achieving higher accuracy.

- Hybrid Transformer-Graph Framework (CrysCo): This framework uses a Graph Neural Network (GNN) that updates up to four-body interactions (atom type, bond lengths, angles, dihedral angles) and a parallel transformer network for compositional features. This hybrid model excels at predicting energy-related properties (formation energy, band gap) and data-scarce mechanical properties (bulk modulus, shear modulus) [35].

- Machine Learning Interatomic Potentials (MLIPs):

  - MLIP for Uranium Nitride (UN): A machine learning interatomic potential was developed using the moment tensor potential (MTP) framework and trained on DFT data. It was validated against energies, forces, elastic constants, and phonon dispersion, and was subsequently used in molecular dynamics (MD) simulations to predict thermal expansion, specific heat, and thermal conductivity, with excellent agreement against new experimental measurements [32].

  - EMFF-2025 for Energetic Materials: This general neural network potential (NNP) for systems composed of C, H, N, and O uses a transfer learning strategy that requires minimal new DFT data. It achieves DFT-level accuracy in predicting structures, mechanical properties, and decomposition characteristics of high-energy materials (HEMs) [21].

- AI Surpassing DFT Accuracy: Deep transfer learning, which involves pre-training a model on a large DFT dataset (source task) and fine-tuning it on a smaller set of experimental data (target task), has enabled AI models to predict formation energies from material structure and composition with an MAE of 0.064 eV/atom. This accuracy significantly outperforms DFT computations alone for the same task [9].

Table 1: Comparison of Computational Methods for Property Prediction

| Method | Key Features | Typical Properties Predicted | Advantages | Limitations |
|---|---|---|---|---|
| Density Functional Theory (DFT) | First-principles quantum mechanical method [34] | Formation energy, elastic constants, electronic structure [33] [9] | Formally exact in principle; no empirical parameters needed | High computational cost; discrepancies with room-temp experiments [9] |
| Classical Molecular Dynamics (MD) | Newtonian physics for atomic motion [21] | Thermal decomposition, diffusion, melting point [21] | Allows larger spatial/temporal scales than DFT | Relies on pre-defined force fields; inaccurate for bond breaking/formation [21] |
| Machine Learning Interatomic Potentials (MLIP) | Trained on DFT data; approximates potential energy surface [32] [21] | Energies, forces, thermal properties, mechanical properties [32] | Near-DFT accuracy with MD-like speed; can describe complex reactions [21] | Requires careful training and validation; transferability can be limited |
| Graph Neural Networks (GNNs) | Learns from material structure represented as graphs [35] | Formation energy, band gap, elastic moduli [35] | High predictive accuracy for multiple properties; uncovers structure-property relationships | Requires large training datasets; can be prone to overfitting with scarce data [35] |

Key Properties and Validation Metrics

Mechanical Properties

The mechanical properties of a material, such as its response to external forces, are critical for assessing its mechanical stability and durability.

- Elastic Constants: These are fundamental properties that can be derived from DFT calculations and describe the material's stiffness [33]. They are crucial for understanding how materials deform under stress.

- Bulk Modulus (K) and Shear Modulus (G): These are key mechanical properties that indicate a material's resistance to uniform compression and shear stress, respectively. They are considered "secondary properties" in databases like the Materials Project, meaning they are less frequently calculated due to the significant additional computational resources required [35].
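To illustrate how K and G follow from elastic constants, the sketch below applies the standard Voigt-Reuss-Hill averages for a cubic crystal, preceded by the Born stability check for cubic symmetry. Restricting to cubic symmetry is a simplification chosen here, and the input constants are hypothetical values in GPa, not data from [33] or [35].

```python
def cubic_moduli(c11, c12, c44):
    """Voigt-Reuss-Hill bulk and shear moduli for a cubic crystal.

    Inputs are the three independent cubic elastic constants; outputs
    are in the same units as the inputs.
    """
    # Born mechanical stability criteria for cubic symmetry.
    if not (c11 - c12 > 0 and c11 + 2 * c12 > 0 and c44 > 0):
        raise ValueError("elastic constants violate the cubic stability criteria")

    # For cubic crystals the Voigt and Reuss bulk moduli coincide.
    bulk = (c11 + 2 * c12) / 3
    # Voigt (uniform strain) upper bound on the shear modulus.
    shear_voigt = (c11 - c12 + 3 * c44) / 5
    # Reuss (uniform stress) lower bound on the shear modulus.
    shear_reuss = 5 * (c11 - c12) * c44 / (4 * c44 + 3 * (c11 - c12))
    # Hill average of the two bounds.
    shear_hill = (shear_voigt + shear_reuss) / 2
    return bulk, shear_voigt, shear_reuss, shear_hill


# Hypothetical elastic constants in GPa (illustrative only).
K, G_voigt, G_reuss, G_hill = cubic_moduli(c11=250.0, c12=150.0, c44=100.0)
print(f"K = {K:.1f} GPa, G_Hill = {G_hill:.1f} GPa")
```

For these inputs the bulk modulus is about 183.3 GPa and the Hill shear modulus about 75.7 GPa, with the Voigt (80.0 GPa) and Reuss (71.4 GPa) values bracketing it.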

Thermal Properties

Thermal properties are equally vital for applications involving temperature variations or thermal management.

- Thermal Conductivity: A measure of a material's ability to conduct heat. MLIP-based MD simulations have been successfully used to predict this property for materials like uranium nitride, showing strong agreement with experimental measurements [32].

- Thermal Expansion, Specific Heat, and Melting Point: These are standard thermal properties that can be accurately predicted using MLIP-driven molecular dynamics simulations, providing a comprehensive view of a material's behavior across temperatures [32].

- Decomposition Mechanisms: For energetic materials, understanding the thermal decomposition pathways and mechanisms at high temperatures is essential. General NNPs like EMFF-2025 can map these mechanisms and uncover unexpected universal behaviors across different materials [21].
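As one example of post-processing MD output into a thermal property, the sketch below estimates a linear thermal expansion coefficient, alpha = (1/a_ref) da/dT, from lattice parameters sampled at several temperatures. The straight-line fit assumes a constant coefficient over the range, which real data may not satisfy, and the temperatures and lattice parameters are synthetic values generated for illustration rather than results from [32].

```python
import numpy as np


def linear_thermal_expansion(temperatures, lattice_parameters, t_ref):
    """Estimate alpha = (1/a_ref) * da/dT from a straight-line fit of a(T).

    a_ref is the fitted lattice parameter at the reference temperature t_ref.
    """
    temperatures = np.asarray(temperatures, dtype=float)
    lattice_parameters = np.asarray(lattice_parameters, dtype=float)
    slope, intercept = np.polyfit(temperatures, lattice_parameters, 1)
    a_ref = slope * t_ref + intercept
    return float(slope / a_ref)


# Synthetic lattice parameters (angstrom) built from an assumed coefficient,
# standing in for averages taken from MD runs at each temperature (K).
assumed_alpha = 8.0e-6
temps = np.array([300.0, 600.0, 900.0, 1200.0, 1500.0])
lattice = 4.89 * (1.0 + assumed_alpha * (temps - 300.0))

alpha = linear_thermal_expansion(temps, lattice, t_ref=300.0)
print(f"alpha = {alpha:.2e} 1/K")
```

With noisy simulation data, a higher-order fit or a temperature-dependent coefficient may be more appropriate than the single slope used here.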

Table 2: Summary of Key Validation Metrics and Performance

| Property Category | Specific Property | Computational Method | Reported Performance / Validation |
|---|---|---|---|
| Energy Properties | Formation Energy (Ef) | AI with Transfer Learning [9] | MAE = 0.064 eV/atom on experimental test set |
| Energy Properties | Formation Energy (Ef) | DFT (OQMD, Materials Project) [9] | MAE = 0.078-0.172 eV/atom vs. experiments |
| Energy Properties | Energy Above Convex Hull (EHull) | Hybrid Transformer-Graph (CrysCo) [35] | Accurate prediction of thermodynamic stability |
| Mechanical Properties | Bulk Modulus (K), Shear Modulus (G) | Hybrid Transformer-Graph with TL (CrysCoT) [35] | Addresses data scarcity; accurate prediction |
| Mechanical Properties | Elastic Constants | DFT [33] | Quantitative correlation with nanoindentation experiments |
| Thermal Properties | Thermal Conductivity | MLIP (MTP) + MD for UN [32] | Strong agreement with single-crystal measurements |
| Thermal Properties | Melting Point, Thermal Expansion | MLIP (MTP) + MD for UN [32] | Excellent agreement with DFT and prior experiments |
| Structural & Chemical | Decomposition Mechanisms | General NNP (EMFF-2025) for HEMs [21] | Revealed universal high-temperature decomposition pathways |
| Structural & Chemical | CO₂ Adsorption Energy | DFT-MD Simulations [7] | Close agreement with experiment under electric field |

Experimental Validation Protocols

Core Experimental Techniques

The following experimental methodologies are essential for validating computational predictions.

- Nanoindentation: This technique is used to measure mechanical properties like hardness and elastic modulus at small scales. It has been shown to quantitatively correlate with DFT predictions of elastic constants [33].

- High-Pressure X-ray Crystallography: This method provides direct experimental insight into the mechanical response of a crystal structure under pressure, serving as a key validation point for DFT-predicted structural behavior [33].

- Thermal Conductivity Measurement: For validating predicted thermal properties, direct measurement on fabricated samples (e.g., uranium nitride) is performed. The results are compared against MLIP-driven MD simulation results [32].

- Experimental Adsorption Uptake: In studies involving surface interactions, such as CO₂ capture by graphene-based materials, the actual gas uptake under controlled conditions (e.g., with and without an applied electric field) is measured. This experimental data is used to confirm the trends and values predicted by DFT-MD simulations [7].

Integrated Workflow for Validation

The diagram below illustrates the continuous cycle of prediction and experimental validation, which is central to modern materials science.

The Scientist's Toolkit: Essential Research Reagents and Materials

This section details key computational and experimental "reagents" essential for research in this field.

Table 3: Key Research Reagents and Computational Tools

| Item / Solution | Function / Role | Example Context |
|---|---|---|
| Density Functional Theory (DFT) Codes | Solves electronic structure to compute total energy, forces, and primary properties [35] [34]. | VASP, Quantum ESPRESSO; used for generating training data for ML models. |
| Machine Learning Interatomic Potentials (MLIPs) | Provides a fast, accurate force field for molecular dynamics simulations at near-DFT accuracy [32] [21]. | EMFF-2025 for HEMs; MTP for Uranium Nitride. |
| Graph Neural Network (GNN) Models | Represents crystal structures as graphs to predict properties from structure and composition [35]. | CrysCo model; ALIGNN (incorporates three-body interactions). |
| Transfer Learning Framework | Leverages knowledge from data-rich tasks (e.g., formation energy) to improve performance on data-scarce tasks (e.g., elastic moduli) [35] [9]. | Fine-tuning a formation energy-pre-trained model on a small dataset of experimental mechanical properties. |
| High-Purity Material Samples | Serves as the physical subject for experimental validation of predicted properties. | Fabricated UN sample for thermal conductivity measurement [32]. |
| Applied Electric Field Setup | Modifies the interaction environment to enhance material properties for study and application. | Enhanced CO₂ uptake on graphene in experiments, corroborating DFT-MD findings [7]. |

Detailed Experimental Methodologies

Protocol: Validating Graphene-CO₂ Interaction via DFT-MD and Experiment

This protocol is adapted from studies on graphene-based CO₂ capture systems [7].

Computational Simulation Setup:

- Model Construction: Create an atomic model of the graphene sheet, assuming complete surface accessibility for CO₂ binding.

- DFT-MD Parameters: Perform Density Functional Theory Molecular Dynamics (DFT-MD) simulations under varying conditions, including the application of an external electric field.

- Data Extraction: Calculate the CO₂ adsorption energy and analyze the structural dynamics of the interaction from the simulation trajectories.

Experimental Validation:

- Sample Preparation: Fabricate the actual graphene-based adsorbent material. Note that experimental constraints typically result in a surface coverage of approximately 50-80% due to limitations in coating homogeneity.

- Adsorption Testing: Measure the CO₂ uptake capacity of the material under conditions mirroring the simulations, including the application of a similar electric field.

- Validation Metric: Compare the trend in adsorption energy/uptake enhancement under an electric field between simulation and experiment. A close agreement confirms the accuracy of the computational model.
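The trend comparison in the validation step can be sketched as follows. Because simulation yields an adsorption energy and experiment yields an uptake, the sketch compares relative changes under the field rather than absolute values. The helper names and all numbers are hypothetical placeholders, not results from [7], and binding strength is taken as the magnitude of the adsorption energy so that a positive change means stronger adsorption in both data sets.

```python
def relative_change(with_field, without_field):
    """Fractional change of a quantity when the electric field is applied."""
    if without_field == 0:
        raise ValueError("baseline value must be nonzero")
    return (with_field - without_field) / abs(without_field)


def trends_agree(sim_change, exp_change):
    """True when simulation and experiment change in the same direction."""
    return sim_change * exp_change > 0


# Hypothetical values for illustration only.
# Simulation: magnitude of the CO2 adsorption energy (eV).
sim_no_field, sim_field = 0.20, 0.26
# Experiment: measured CO2 uptake (mmol/g).
exp_no_field, exp_field = 1.00, 1.25

sim_change = relative_change(sim_field, sim_no_field)
exp_change = relative_change(exp_field, exp_no_field)

print(f"simulated enhancement:    {sim_change:.0%}")
print(f"experimental enhancement: {exp_change:.0%}")
print("same trend:", trends_agree(sim_change, exp_change))
```

A direction check like this is only a first pass; how closely the two relative enhancements should match depends on factors such as the incomplete surface coverage noted in the sample preparation step.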

Protocol: Developing and Validating an MLIP for Thermal Properties

This protocol is derived from the development of a machine learning potential for uranium nitride [32].

MLIP Training:

- Data Generation: Perform high-throughput DFT calculations to generate a database of energies, forces, and stresses for a wide range of atomic configurations.

- Model Training: Train a Machine Learning Interatomic Potential (e.g., using the Moment Tensor Potential framework) on the generated DFT data.

- Initial Validation: Validate the trained MLIP against a held-out set of DFT data, ensuring excellent agreement for energies, forces, elastic constants, and phonon dispersion spectra.
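A minimal sketch of the held-out check in the initial validation step is shown below, using two common error measures: the per-atom energy MAE and the root-mean-square error over force components. The function names and the tiny arrays are hypothetical stand-ins for real MLIP predictions and DFT reference data; no specific MTP software interface is assumed.

```python
import numpy as np


def energy_mae_per_atom(e_pred, e_ref, n_atoms):
    """Mean absolute energy error per atom over a set of configurations."""
    e_pred = np.asarray(e_pred, dtype=float)
    e_ref = np.asarray(e_ref, dtype=float)
    n_atoms = np.asarray(n_atoms, dtype=float)
    return float(np.mean(np.abs(e_pred - e_ref) / n_atoms))


def force_rmse(f_pred, f_ref):
    """Root-mean-square error over all Cartesian force components."""
    diff = np.asarray(f_pred, dtype=float) - np.asarray(f_ref, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


# Hypothetical held-out data: total energies (eV) for two configurations
# and forces (eV/angstrom) on two atoms, for illustration only.
e_dft = [-100.0, -200.0]
e_mlip = [-100.4, -199.2]
atoms_per_config = [8, 16]

f_dft = np.zeros((2, 3))
f_mlip = np.array([[0.1, 0.0, 0.0],
                   [0.0, -0.1, 0.0]])

e_err = energy_mae_per_atom(e_mlip, e_dft, atoms_per_config)
f_err = force_rmse(f_mlip, f_dft)
print(f"energy MAE: {e_err:.3f} eV/atom")
print(f"force RMSE: {f_err:.3f} eV/angstrom")
```

Elastic constants and phonon dispersion would need separate comparisons; the two metrics here cover only the energy and force part of the validation.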

Property Prediction and Experimental Correlation:

- Molecular Dynamics Simulations: Employ the validated MLIP in large-scale molecular dynamics (MD) simulations to predict key thermal properties, such as thermal conductivity, thermal expansion, and specific heat.

- Experimental Benchmarking: Fabricate a high-quality sample of the material (e.g., single-crystal uranium nitride) and perform new thermal conductivity measurements that are representative of the intrinsic material properties.

- Performance Assessment: Directly compare the MLIP-MD predictions with the new experimental measurements. Strong agreement demonstrates the reliability and predictive capability of the developed potential.

Rational drug design represents a paradigm shift from traditional trial-and-error discovery to a targeted approach based on the knowledge of a biological target and its three-dimensional structure [36]. In the context of cancer therapy, this methodology enables researchers to design chemotherapy agents and formulations that specifically interact with molecular targets involved in tumor growth and survival. The core principle involves designing molecules that are complementary in shape and charge to their biomolecular targets, typically proteins or nucleic acids, thereby achieving highly specific binding [36]. This approach is particularly valuable in oncology, where targeted therapies can potentially maximize anticancer efficacy while minimizing damage to healthy tissues.

The modern framework for rational cancer therapeutics incorporates several key components: modular design, image guidance, and oligonucleotide-based targeting technologies [37]. Modularity allows for the synthesis of therapeutic agents that can be optimized for specific indications or individual patients through adjustments in size, surface properties, and targeting moieties. Image guidance enables the monitoring of drug delivery and distribution within the patient's body, facilitating personalized dosing and administration schedules. Oligonucleotide therapeutics leverage Watson-Crick complementarity to target specific genetic sequences aberrant in cancer cells, representing a prime example of computationally driven design [37].

Computational Foundations: Density Functional Theory in Drug Design

Theoretical Principles and Validation

Density Functional Theory (DFT) provides the quantum mechanical foundation for many computational approaches in rational drug design. DFT serves as a workhorse for quantum mechanics calculations of molecular and periodic structures, enabling researchers to predict the electronic properties and interaction energies of potential drug molecules with their targets [27]. The accuracy and applicability of DFT have been demonstrated across countless studies, though rigorous validation for specific biologically relevant systems remains essential [27].