Unlocking Materials Innovation: A Comprehensive Guide to PSPP Relationships in Biomedical Research

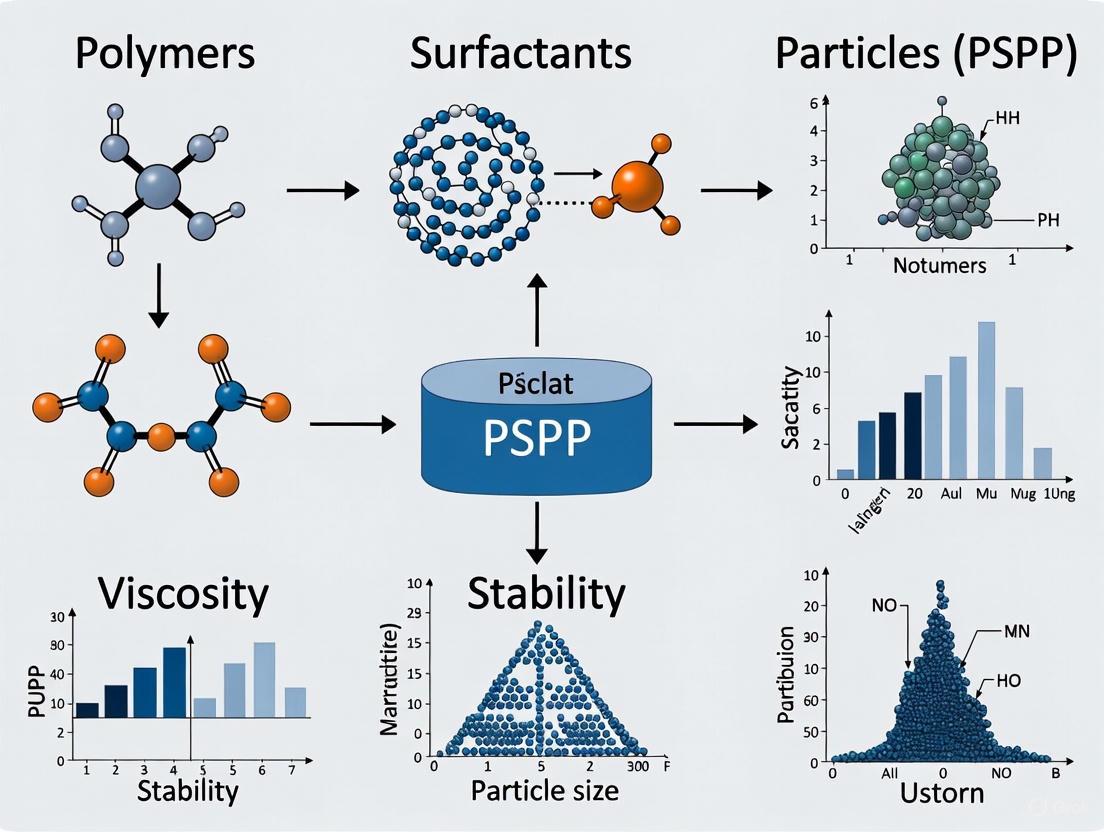

Abstract

This article provides a comprehensive exploration of Processing-Structure-Property-Performance (PSPP) relationships in materials science, with specialized focus for biomedical researchers and drug development professionals. It covers foundational PSPP principles, advanced methodologies including multi-information source fusion and deep learning, optimization frameworks for material design, and validation techniques for biomedical applications. The content bridges fundamental materials science with practical implementation strategies for developing advanced biomaterials, drug delivery systems, and medical devices.

Understanding PSPP Relationships: The Fundamental Framework of Materials Science

The Processing–Structure–Property–Performance (PSPP) paradigm represents the fundamental framework guiding modern materials science research and development. This holistic chain of relationships describes how a material's synthesis and processing conditions (Processing) dictate its internal architecture across multiple length scales (Structure), which in turn determines its measurable characteristics (Properties) and ultimately its effectiveness in real-world applications (Performance). The PSPP framework extends the traditional Process-Structure-Property (PSP) relationship by explicitly incorporating the critical element of performance, thereby connecting fundamental materials science directly to engineering applications [1] [2].

In goal-oriented materials design, the central challenge involves inverting these PSPP relationships to map desired performance characteristics back to the necessary processing conditions through optimal microstructures [2]. This paradigm is particularly vital for addressing society's most pressing challenges, from developing clean energy technologies to creating biomedical implants, where the current 20-year average timeline for new materials commercialization is unacceptably long [1]. The materials science field is currently undergoing a paradigm shift, with traditional experimental methods being augmented by computational techniques and data-driven approaches collectively known as Materials Informatics (MI), which leverage historical materials data to build predictive models that can dramatically accelerate the discovery and development process [1].

Foundational Principles of PSPP Relationships

The Hierarchical Nature of Materials

A fundamental challenge in applying the PSPP framework lies in the hierarchical nature of materials, where structures form over multiple time and length scales [1]. At the atomic scale, interactions between elements establish short-range order in the form of lattice structures or repeat units. These repeat units collectively produce unique microstructures at increasing length scales that correspond to a material's macroscopic properties and morphology. This multi-scale complexity means that seemingly minor changes at the processing stage can create cascading effects throughout the PSPP chain, resulting in dramatically different performance outcomes [1].

The seemingly infinite number of ways to arrange and rearrange atoms and molecules into new lattice structures creates a diverse universe of materials with unique mechanical, optical, dielectric, and conductive properties [1]. Navigating this vast design space to discover materials with targeted performance characteristics represents the core challenge of materials design. Consequently, countless materials remain undiscovered, because testing every possible composition through trial-and-error approaches would require astronomical timescales and significant resources [1].

The Central Paradigm of Materials Science

The PSP relationship serves as the central paradigm of materials science, creating the foundational understanding that materials processing governs microstructure, which in turn determines properties [2]. The expansion to PSPP explicitly incorporates how these properties enable specific functions in application environments. In practice, however, materials design has often been microstructure-agnostic, with the microstructure merely mediating the process-property (PP) connection rather than being actively used as an optimization parameter [2].

This pragmatic approach to materials design raises a fundamental question: is explicit knowledge and manipulation of microstructure necessary for efficient materials design, or can materials be successfully optimized by treating the microstructure as a "black box" and focusing solely on PP relationships? [2] Research indicates that while microstructure-agnostic design can succeed in finding optimal processing parameters, explicit incorporation of microstructure knowledge significantly enhances the efficiency and effectiveness of the materials optimization process [2].

Computational and Experimental Methodologies

Data-Driven Materials Informatics

Materials Informatics (MI) represents a transformative approach to navigating PSPP relationships by leveraging data science techniques to accelerate materials discovery and development [1]. MI encompasses the acquisition and storage of materials data, the development of surrogate models to make rapid property predictions, and experimental confirmation of new materials with the core objective of dramatically reducing development timelines [1].

The MI framework establishes a mapping between a suitable representation of a material (its "fingerprint") and any of its properties from existing data [1]. This fingerprint consists of an optimal number of descriptors that the model uses to learn what a material is and accurately predict its properties. In essence, the material fingerprint functions as the DNA code, with descriptors acting as individual "genes" that connect empirical or fundamental characteristics of a material to its macroscopic properties [1]. Once validated, these predictive models can instantaneously forecast the properties of existing, new, or hypothetical material compositions based solely on past data, prior to performing expensive computations or physical experiments [1].
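
The fingerprint-to-property mapping described above can be illustrated with a very small surrogate model. The sketch below is only an assumption-laden toy: it uses k-nearest-neighbor averaging rather than any model named in the source, and the descriptors, the property values, and the function name `knn_predict` are hypothetical.

```python
import math

def knn_predict(fingerprints, properties, query, k=2):
    """Predict a property as the mean over the k nearest known fingerprints."""
    # Rank known materials by Euclidean distance to the query fingerprint.
    ranked = sorted(
        (math.dist(fp, query), prop)
        for fp, prop in zip(fingerprints, properties)
    )
    nearest = ranked[:k]
    return sum(prop for _, prop in nearest) / len(nearest)

# Hypothetical descriptors: (filler volume fraction, crosslink density), arbitrary units.
known_fingerprints = [(0.10, 0.2), (0.20, 0.2), (0.30, 0.8), (0.40, 0.8)]
# Made-up property values (arbitrary units), for illustration only.
known_modulus = [1.0, 1.5, 3.0, 3.5]

estimate = knn_predict(known_fingerprints, known_modulus, query=(0.35, 0.8))
print(estimate)
```

In practice the choice of descriptors matters more than the choice of regressor: a fingerprint that omits a governing feature cannot be rescued by a more elaborate model.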

Microstructure-Aware Bayesian Optimization

Recent advances have demonstrated the superiority of microstructure-aware approaches over traditional black-box optimization methods. In a rigorous computational study comparing PSP and PP paradigms for designing dual-phase steels, researchers developed a novel microstructure-aware closed-loop multi-fidelity Bayesian optimization framework [2]. This approach explicitly incorporated microstructure knowledge through a low-fidelity model based on microstructural descriptors, which was then fused with high-fidelity property data.

The methodology involved formulating the materials design problem as finding the right combination of material chemistry and processing conditions that maximizes a targeted mechanical property. The input space included processing parameters (intercritical annealing temperature) and material chemistry (carbon, silicon, and manganese content), while the output was a targeted mechanical property (stress-normalized strain hardening rate) [2]. The key innovation was the simultaneous learning of two Gaussian process models: one linking inputs to microstructural features (PS relationship), and another linking microstructural features to the property of interest (SP relationship) [2].
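
As a hedged illustration of the output quantity, the stress-normalized strain hardening rate, (1/τ)(dτ/dε_pl), can be estimated from a sampled flow curve by finite differences. The function name and the power-law test curve below are assumptions made for this sketch; they are not taken from the cited study.

```python
def normalized_hardening_rate(strain, stress):
    """Return (1/tau) * d(tau)/d(eps_pl) at interior points via central differences."""
    rates = []
    for i in range(1, len(strain) - 1):
        slope = (stress[i + 1] - stress[i - 1]) / (strain[i + 1] - strain[i - 1])
        rates.append(slope / stress[i])
    return rates

# Hypothetical power-law flow curve tau = K * eps^n, used only as a sanity check:
# its exact normalized hardening rate is n / eps.
K, n = 500.0, 0.2
eps = [0.01 * i for i in range(1, 21)]
tau = [K * e ** n for e in eps]

rates = normalized_hardening_rate(eps, tau)
print(rates[8], n / eps[9])  # numerical estimate vs. exact value at eps = 0.10
```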

Table 1: Key Differences Between Microstructure-Agnostic and Microstructure-Aware Approaches

| Aspect | Microstructure-Agnostic (PP) | Microstructure-Aware (PSP) |
|---|---|---|
| Optimization Focus | Direct processing-property relationships | Explicit process-structure-property chains |
| Microstructure Role | Black box mediator | Active optimization parameter |
| Data Utilization | Single-fidelity property data | Multi-fidelity microstructural and property data |
| Model Complexity | Single Gaussian process model | Coupled Gaussian process models |
| Experimental Efficiency | Requires more high-fidelity evaluations | More efficient high-fidelity evaluation strategy |

The results demonstrated that the microstructure-aware (PSP) approach identified the global optimum in the materials design space with significantly fewer high-fidelity evaluations compared to the microstructure-agnostic (PP) approach [2]. This provides compelling evidence that explicit inversion of PSP relationships represents a superior paradigm for materials design, at least for problems where microstructure plays a crucial role in determining properties.

Workflow Visualization

Figure: Comparative workflows for microstructure-agnostic (PP) versus microstructure-aware (PSP) materials design approaches.

Case Study: PSPP in Magnetic Polymer Composites

Application in Magnetic Robotics

The application of the PSPP paradigm is particularly well-demonstrated in the development of magnetically responsive polymer composites (MPCs) for untethered miniature robots [3]. These systems require precise control over processing-structure-property-performance relationships to achieve targeted locomotion and functionality in biomedical, environmental, and industrial applications.

In this context, the Processing parameters include techniques such as hot-pressing, dip-coating, solvent casting, photolithography, replica molding, and 3D printing [3]. The Structure encompasses the distribution of magnetic fillers (e.g., homogeneous distribution versus directionally assembled structures), the architecture of the polymer matrix (thermoset vs. thermoplastic), and the overall robot geometry. The Properties include magnetic anisotropy, mechanical stiffness, thermal stability, and rheological behavior. The Performance is measured by the robot's locomotion capabilities (pulling, rolling, crawling, undulating) and its effectiveness in applications such as targeted drug delivery, microfluidic control, or pollutant removal [3].

Critical Processing Considerations

The processing of MPCs requires careful consideration of multiple factors that influence the resulting PSPP relationships. For mixing magnetic particles in polymer matrices, the rheological properties of the polymer are critical [3]. High-viscosity thermoset precursors or thermoplastic melts can prevent sedimentation of micro-scale magnetic particles, whereas low-viscosity polymer solutions may require viscosity-tuning fillers to reduce the high terminal velocity of particles. For nano-scale magnetic particles, thermodynamic and kinetic stabilization strategies are essential to enhance polymer-particle interactions against polymer-polymer and particle-particle attractive forces [3].
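
The effect of matrix viscosity on sedimentation can be estimated with a short calculation. The source does not name a settling model; the sketch below assumes Stokes' law for an isolated sphere at low Reynolds number, and the particle size, densities, and viscosities are illustrative values rather than data from [3].

```python
def stokes_terminal_velocity(radius_m, rho_particle, rho_fluid, viscosity_pa_s, g=9.81):
    """Terminal settling velocity (m/s) of a small sphere under Stokes drag."""
    return 2.0 * radius_m ** 2 * (rho_particle - rho_fluid) * g / (9.0 * viscosity_pa_s)

# Illustrative inputs (assumptions): a 5 micrometer radius particle of a dense
# magnetic filler in a low-viscosity solution versus a viscous precursor.
radius = 5e-6          # m
rho_filler = 7500.0    # kg/m^3
rho_matrix = 1000.0    # kg/m^3

v_low_viscosity = stokes_terminal_velocity(radius, rho_filler, rho_matrix, 1e-3)
v_high_viscosity = stokes_terminal_velocity(radius, rho_filler, rho_matrix, 1.0)
print(v_low_viscosity, v_high_viscosity)
```

Because the velocity scales with the square of the radius and inversely with viscosity, raising the viscosity a thousandfold slows settling by the same factor, which is the rationale for viscosity-tuning fillers in dilute polymer solutions.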

Thermal properties represent another crucial consideration in the PSPP chain for MPCs. Processing above the polymer's glass transition temperature (Tg) or melting temperature (Tm) can unintentionally demagnetize magnetic fillers and erase pre-programmed magnetization profiles when the processing temperature approaches the fillers' Curie temperature (Tcurie), above which ferromagnetic order is lost and the response follows the Curie-Weiss law [3]. Conversely, localized heating above the Curie temperature of magnetic fillers enables selective reprogramming of magnetization in designated areas of magnetic robots. The thermal stability of polymer composites is equally important, as temperatures exceeding the thermal degradation temperature (Td) can cause undesired defect formation in polymeric bodies [3].

Table 2: Key Processing Parameters and Their Impact on PSPP Relationships in Magnetic Polymer Composites

| Processing Parameter | Structural Impact | Property Influence | Performance Outcome |
|---|---|---|---|
| Magnetic Field Application During Processing | Directional particle alignment | Enhanced magnetic anisotropy | Improved locomotion efficiency and directional control |
| Particle Size Distribution | Homogeneity of filler dispersion | Uniform vs. localized magnetic response | Consistent vs. targeted actuation behavior |
| Polymer Matrix Selection (Thermoset vs. Thermoplastic) | Cross-link density or crystalline structure | Mechanical stiffness and elasticity | Shape-morphing capabilities and durability |
| Processing Temperature | Polymer chain mobility and filler distribution | Thermal stability and magnetic strength | Operation temperature range and actuation force |
| Manufacturing Technique (3D Printing vs. Molding) | Architectural complexity and resolution | Anisotropic properties based on build direction | Customized locomotion modes and application-specific designs |

Research Reagent Solutions for Magnetic Polymer Composites

Table 3: Essential Materials and Their Functions in Magnetic Polymer Composite Research

| Material Category | Specific Examples | Function in PSPP Workflow |
|---|---|---|
| Magnetic Fillers | Nickel (Ni) nanolayers, Neodymium–iron–boron (NdFeB) microflakes, Iron (Fe) microspheres, Magnetite (Fe₃O₄) nanospheres | Provide magnetic responsiveness for actuation under external magnetic fields |
| Polymer Matrices | Thermosets (epoxy, acrylates), Thermoplastics (PLA, PEG) | Form structural body of robot, determine mechanical properties and processability |
| Surface Modifiers | Silane coupling agents, polymer grafts (e.g., polyacrylic acid) | Enhance polymer-filler compatibility, improve dispersion, prevent aggregation |
| Solvent Systems | Dichloromethane, chloroform, dimethylformamide (DMF) | Enable processing through solvent casting, regulate viscosity for filler dispersion |
| Photoinitiators | Irgacure 2959, LAP | Facilitate photopolymerization in UV-based processing techniques |
| Viscosity Modifiers | Fumed silica, cellulose nanocrystals | Adjust rheological properties for specific manufacturing techniques |

Advancing the PSPP Paradigm

The future of the PSPP paradigm lies in the continued integration of data-driven approaches with fundamental materials science principles. As demonstrated in the case of microstructure-aware Bayesian optimization, explicit incorporation of structural information throughout the design process significantly enhances efficiency in identifying optimal processing parameters for targeted performance [2]. This approach is particularly valuable for problems where microstructure plays a determining role in property outcomes.

The ongoing development of autonomous materials research (AMR) platforms represents the next frontier in implementing the PSPP paradigm [2]. These closed-loop systems integrate computational prediction, automated synthesis, high-throughput characterization, and machine learning to continuously refine PSPP models with minimal human intervention. The success of such platforms depends critically on the formulation of accurate PSPP relationships that can guide the autonomous decision-making process.

The PSPP paradigm provides an essential framework for accelerated materials design and development. While microstructure-agnostic approaches that focus solely on PP relationships can succeed in identifying optimal processing parameters, rigorous computational studies have demonstrated the superiority of explicitly modeling and optimizing the complete PSP chain [2]. This microstructure-aware approach enables more efficient navigation of the complex materials design space, reducing the number of expensive high-fidelity experiments required to reach performance targets.

The application of the PSPP paradigm to diverse material systems, from structural alloys to functional polymer composites, underscores its universal importance in materials science [2] [3]. As the field continues to evolve through the integration of data-driven methodologies and autonomous research platforms, the explicit inversion of PSPP relationships will become increasingly central to materials innovation. This approach promises to substantially compress the traditional 20-year materials development timeline, enabling more rapid translation of new materials from fundamental discovery to practical application [1].

The foundational paradigm of materials science is the Processing-Structure-Property-Performance (PSPP) relationship, which describes how a material's processing history dictates its internal microstructure, which in turn determines its properties and ultimate performance in applications [4] [2]. A material's microstructure encompasses the arrangement of phases, defects, and interfaces at various length scales, from atomic to macroscopic dimensions [5]. This internal arrangement is not static; it evolves dynamically through competitive formation processes with different physical origins, leading to spatially ordered configurations that define the material's characteristics [6]. Understanding and controlling these microstructural features is essential for designing advanced materials for demanding applications in aerospace, energy, healthcare, and transportation [7] [4].

The central role of microstructure is that it mediates the connection between the processing conditions a material undergoes and the final properties it exhibits [2]. For example, in structural alloys, the morphological features formed during thermomechanical processing (such as grain size, phase distribution, and defect density) directly control mechanical properties like strength, toughness, and ductility [8]. These microstructure-property relationships have been investigated intensively for centuries, and the pursuit of a fundamental understanding of them continues to drive innovation in structural materials [8].

Fundamental Microstructural Features and Their Property Relationships

Microstructures are "unbounded irregular structures" that can be precisely characterized using global parameters expressible as totals in a unit volume [9]. These fundamental parameters include volume fraction, surface area, length of line, curvature, and connectivity. When a physical property relates simply to one of these parameters, the relationship becomes shape-insensitive, meaning it is independent of other geometric properties of the structure [9].

Table 1: Fundamental Microstructural Parameters and Their Property Influences

| Microstructural Parameter | Description | Influence on Material Properties |
|---|---|---|
| Volume Fraction | Proportion of a specific phase or component in a unit volume | Directly controls composite properties (e.g., rule of mixtures) [9] |
| Interfacial Area | Total area of boundaries between phases or grains | Influences strength (Hall-Petch relationship) and corrosion resistance [9] |
| Grain Boundary Characteristics | Crystallographic misorientation and boundary geometry | Affects deformation transfer, corrosion, and electrical properties [7] |
| Connectivity | Degree of interconnection between phases | Determines electrical/thermal conductivity and fracture behavior [9] |
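
Two of the relationships named in the table have simple closed forms, sketched here under stated assumptions. The rule of mixtures is written in its standard upper (iso-strain) and lower (iso-stress) forms, and the Hall-Petch relation as σ_y = σ_0 + k_y/√d. All numerical inputs are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import math

def rule_of_mixtures_upper(vf, prop_filler, prop_matrix):
    """Iso-strain (Voigt) estimate: volume-weighted arithmetic mean."""
    return vf * prop_filler + (1.0 - vf) * prop_matrix

def rule_of_mixtures_lower(vf, prop_filler, prop_matrix):
    """Iso-stress (Reuss) estimate: volume-weighted harmonic mean."""
    return 1.0 / (vf / prop_filler + (1.0 - vf) / prop_matrix)

def hall_petch(sigma_0, k_y, grain_size):
    """Yield strength from friction stress, Hall-Petch coefficient, and grain size."""
    return sigma_0 + k_y / math.sqrt(grain_size)

# Hypothetical composite: 30 vol% filler with modulus 200, matrix modulus 3 (same units).
upper = rule_of_mixtures_upper(0.3, 200.0, 3.0)
lower = rule_of_mixtures_lower(0.3, 200.0, 3.0)

# Hypothetical alloy: sigma_0 = 100 MPa, k_y = 0.5 MPa*m^0.5, grain size 25 micrometers.
yield_strength = hall_petch(100.0, 0.5, 25e-6)
print(upper, lower, yield_strength)
```

The gap between the two mixture estimates is a reminder that volume fraction alone fixes only bounds; where a real composite falls between them depends on connectivity and phase arrangement.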

The grain boundary character is particularly important in governing how deformation propagates through a material. In TiAl-based alloys, for instance, high-angle grain boundaries act as strong barriers to deformation twin propagation, requiring specific dislocation-based mechanisms to transfer strain across boundaries [7]. The ability of incoming twinning dislocations to react with grain boundaries and generate reflected and transmitted glide dislocations determines how effectively a material can accommodate plastic deformation without fracturing [7].

Advanced Characterization and Analysis Techniques

Multi-Modal Electron Microscopy

Modern microstructure characterization increasingly relies on multi-modal approaches that combine different imaging and spectroscopy techniques. Scanning Transmission Electron Microscopy (STEM) generates various signals—imaging, spectroscopic, and diffraction—that collectively inform the microstructure [5]. The challenge lies in integrating these data streams to reconstruct a comprehensive picture of the material's internal structure.

A multi-modal machine learning approach has been demonstrated for the complex oxide La₁₋ₓSrₓFeO₃, combining High-Angle Annular Dark-Field (HAADF) imaging with Energy Dispersive X-ray Spectroscopy (EDS) [5]. This approach applies:

- Graph-based segmentation requiring minimal prior knowledge

- Unsupervised clustering assuming a known number of discrete regions

- Semi-supervised few-shot classification using limited user-selected examples [5]
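
The unsupervised option in the list above, clustering with a known number of regions, can be sketched with plain k-means on per-chip features that combine both modalities. This is a generic illustration and not the algorithm of [5]; the two features per chip and their values are hypothetical.

```python
import math

def kmeans(points, centroids, iterations=10):
    """Plain Lloyd's k-means; the number of clusters is fixed by len(centroids)."""
    centroids = [tuple(c) for c in centroids]
    labels = []
    for _ in range(iterations):
        # Assignment step: label each point with its nearest centroid.
        labels = [
            min(range(len(centroids)), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for j in range(len(centroids)):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centroids[j] = tuple(sum(col) / len(members) for col in zip(*members))
    return labels, centroids

# Hypothetical per-chip features: (mean HAADF intensity, La fraction from EDS).
chips = [(0.90, 0.48), (0.88, 0.50), (0.92, 0.49),   # film-like chips
         (0.40, 0.02), (0.42, 0.00), (0.38, 0.01)]   # substrate-like chips

labels, centers = kmeans(chips, centroids=[chips[0], chips[3]])
print(labels)
```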

Table 2: Multi-Modal Characterization Techniques for Microstructural Analysis

| Technique | Signal Type | Information Obtained | Applications |
|---|---|---|---|
| HAADF-STEM | Scattered electrons | Atomic number contrast, crystal structure | Imaging perovskite lattices, defect structures [5] |
| Energy Dispersive X-ray Spectroscopy (EDS) | Characteristic X-rays | Elemental composition, chemical distribution | Delineating material layers, identifying chemical order [5] |
| 4D-STEM | Diffraction patterns | Crystallographic orientation, strain mapping | Nanostructure analysis, phase identification [5] |
| Atom Probe Microscopy (APM) | Ion evaporation | 3D atomic-scale elemental mapping | Determining atomic identity and position [7] |

Automated Data Extraction Frameworks

The growing volume of materials data has necessitated automated extraction methods. ChatExtract is an advanced approach that uses conversational large language models (LLMs) with engineered prompts to accurately extract materials data from research papers with both precision and recall close to 90% [10]. The method involves:

- Initial relevancy classification to identify sentences containing target data

- Expansion to text passages including title, preceding sentence, and target sentence

- Separation of single-valued and multi-valued data extraction

- Uncertainty-inducing redundant prompts to minimize hallucinations [10]

This workflow demonstrates how prompt engineering in a conversational context can overcome traditional limitations of LLMs for technical data extraction, enabling efficient database development for microstructure-property relationships [10].
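
The first two steps of the workflow, relevancy classification and passage expansion, can be sketched without calling any language model. In the sketch below the relevancy check is a hypothetical keyword stand-in for the LLM prompt, and the function names, title, and sentences are invented for illustration; none of this is the ChatExtract implementation.

```python
def build_passages(title, sentences, is_relevant):
    """Return (index, passage) pairs; each passage is title + preceding + target sentence."""
    passages = []
    for i, sentence in enumerate(sentences):
        if not is_relevant(sentence):
            continue
        preceding = sentences[i - 1] if i > 0 else ""
        parts = [title, preceding, sentence]
        passages.append((i, " ".join(part for part in parts if part)))
    return passages

def mentions_value_with_unit(sentence):
    # Hypothetical stand-in for the LLM relevancy prompt: a simple unit match.
    return any(unit in sentence for unit in ("GPa", "MPa"))

# Invented example text, for illustration only.
title = "Elastic properties of example alloys"
sentences = [
    "Samples were annealed before testing.",
    "The Young's modulus of alloy A was 110 GPa.",
    "Further work is planned.",
]

passages = build_passages(title, sentences, mentions_value_with_unit)
print(passages)
```

Each passage would then be sent to the extraction prompts, with single-valued and multi-valued cases handled separately as described above.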

Computational Frameworks for Microstructure-Property Prediction

Microstructure-Aware Bayesian Optimization

The fundamental question of whether microstructure information genuinely accelerates materials design has been addressed through a novel microstructure-aware closed-loop multi-fidelity Bayesian optimization framework [2]. This approach explicitly incorporates microstructure knowledge into the materials design process, contrasting with traditional microstructure-agnostic methods that only consider processing-property (PP) relationships.

In a case study optimizing the chemistry and processing parameters of dual-phase steels, the microstructure-aware approach reached the optimum with fewer high-fidelity evaluations than the microstructure-agnostic approach [2]. This indicates that, for problems in which microstructure strongly influences the target property, explicitly modeling PSP relationships is a more efficient route to optimization than relying on PP relationships alone [2].
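
The idea of coupling a process-structure model with a structure-property model can be sketched with two small Gaussian process regressors chained together. This is a deliberately reduced illustration: it uses only the posterior mean with a squared-exponential kernel, omits predictive uncertainty and multi-fidelity fusion, and works with one-dimensional inputs. The helper names and all training values are hypothetical, not data from [2].

```python
import math

def rbf(a, b, length):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-((a - b) ** 2) / (2.0 * length ** 2))

def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x

def fit_gp_mean(xs, ys, length, noise=1e-6):
    """Return the GP posterior mean function (zero prior mean, RBF kernel)."""
    gram = [[rbf(a, b, length) + (noise if i == j else 0.0)
             for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    weights = solve(gram, ys)
    return lambda x: sum(w * rbf(x, xi, length) for w, xi in zip(weights, xs))

# Hypothetical, made-up training data in scaled units (illustration only).
anneal_temp = [0.0, 0.25, 0.5, 0.75, 1.0]        # processing input
martensite_frac = [0.05, 0.25, 0.5, 0.75, 0.95]  # microstructure descriptor
hardening = [1.0, 2.0, 3.0, 2.2, 1.2]            # property of interest

process_to_structure = fit_gp_mean(anneal_temp, martensite_frac, length=0.25)
structure_to_property = fit_gp_mean(martensite_frac, hardening, length=0.2)

def process_to_property(x):
    """Chain the PS and SP models: processing -> microstructure -> property."""
    return structure_to_property(process_to_structure(x))

print(process_to_structure(0.5), process_to_property(0.5))
```

A microstructure-agnostic counterpart would fit a single model from `anneal_temp` directly to `hardening`; the chained form exposes the intermediate descriptor, which is what allows cheaper microstructural data to inform the search.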

Machine Learning Mimicking Metallurgical Thinking

A machine learning framework implementing metallurgists' thought processes has been developed to identify microstructural features critically affecting material properties [6]. This approach recognizes that material microstructures comprise finite kinds of characteristic small-scale structures that develop through competitive formation kinetics with completely different physical backgrounds [6].

The framework combines:

- Vector Quantized Variational Autoencoder (VQVAE) to extract characteristic microstructures

- PixelCNN to determine spatial order among the extracted features [6]
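
The vector-quantization step at the heart of a VQVAE can be sketched in a few lines: each encoded patch is replaced by the index of its nearest codebook vector, and the resulting grid of indices is what an autoregressive model such as PixelCNN would then learn. The encoder, the training of the codebook, and the PixelCNN itself are omitted; the codebook and feature vectors below are hypothetical.

```python
import math

def quantize(vectors, codebook):
    """Map each feature vector to the index of its nearest codebook entry."""
    return [
        min(range(len(codebook)), key=lambda k: math.dist(v, codebook[k]))
        for v in vectors
    ]

# Hypothetical 2D codebook: each entry stands for one characteristic microstructure.
codebook = [(0.0, 0.0), (1.0, 1.0), (0.0, 1.0)]

# Hypothetical encoder outputs for four patches laid out as a 2 x 2 grid.
encoded_patches = [(0.1, -0.1), (0.9, 1.2), (0.2, 0.8), (0.05, 0.1)]

codes = quantize(encoded_patches, codebook)
code_grid = [codes[0:2], codes[2:4]]  # discrete map of microstructure types
print(code_grid)
```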

When applied to optimize fracture elongation in dual-phase steels using the Gurson-Tvergaard-Needleman (GTN) fracture model, this framework successfully identified critical microstructural regions affecting fracture properties, matching results from numerical simulations based on explicit physical models [6].

Phase Field Modeling

Phase field method simulations have emerged as powerful tools for quantitatively predicting spatiotemporal evolution of microstructures during thermal processing [7]. By integrating thermodynamic modeling with phase field simulation, researchers can explicitly account for precipitate morphology, spatial arrangement, and anisotropy. For example, phase field simulations of Ti-6Al-4V have successfully modeled the formation of side plates (α-phase lamellae growing off grain boundary α) by introducing random fluctuations at the α/β interface and simulating their evolution into colonies of side plates [7]. These simulations capture both the spatial variation and shape anisotropy in precipitate microstructure that traditional average-value models cannot represent.
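
To give a feel for how a phase field evolves from small random fluctuations, the sketch below integrates a one-dimensional Allen-Cahn equation with a double-well free energy using explicit time stepping. It is a generic toy model chosen for brevity, not the thermodynamically coupled Ti-6Al-4V simulation of [7]; every parameter value is an assumption.

```python
import random

def allen_cahn_step(phi, dt=0.1, dx=1.0, kappa=1.0, well=1.0):
    """One explicit Euler step of d(phi)/dt = kappa * laplacian(phi) - f'(phi).

    The bulk free energy is f = well * phi^2 * (1 - phi)^2, with periodic boundaries.
    """
    n = len(phi)
    new = []
    for i in range(n):
        lap = (phi[(i + 1) % n] - 2.0 * phi[i] + phi[i - 1]) / dx ** 2
        dfdphi = 2.0 * well * phi[i] * (1.0 - phi[i]) * (1.0 - 2.0 * phi[i])
        new.append(phi[i] + dt * (kappa * lap - dfdphi))
    return new

# Start from the unstable state phi = 0.5 plus small seeded random fluctuations.
rng = random.Random(0)
phi = [0.5 + 0.01 * (rng.random() - 0.5) for _ in range(64)]

for _ in range(400):
    phi = allen_cahn_step(phi)

print(min(phi), max(phi))
```

The fluctuations grow until the order parameter settles near the two wells at 0 and 1, which is the one-dimensional analogue of a perturbed interface developing into distinct precipitate regions.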

Experimental Protocols for Microstructure-Property Analysis

Protocol for Multi-Modal Electron Microscopy Analysis

Objective: To characterize microstructural order and chemical distribution in complex oxide materials [5].

Materials and Methods:

- Sample Preparation: Epitaxially grow LaFeO₃ (LFO) thin films on single-crystal SrTiO₃ (STO) substrates. Prepare both pristine samples and samples with intentional structural defects (columnar regions with varying composition/crystallinity).

- Irradiation: Irradiate subsets of samples to 0.1 displacements per atom (dpa) using appropriate radiation sources to induce crystalline and chemical disorder.

- TEM Sample Preparation: Deposit protective capping layers (Cr or Pt) on film surfaces and prepare cross-sectional STEM samples using focused ion beam (FIB) milling or conventional thinning methods.

- Data Acquisition:

  - Collect High-Angle Annular Dark-Field (HAADF) images to visualize perovskite lattices and defect structures.

  - Acquire Energy Dispersive X-ray Spectroscopy (EDS) spectra to determine elemental distribution across interfaces.

  - Register images from different modalities to ensure spatial alignment.

- Data Pre-processing:

  - Sub-divide images into small uniform "chips" (0.5-1 nm size) to capture meaningful structural motifs.

  - For EDS data, process full spectral information or derive atomic percentages from detected elements.

- Multi-Modal Computer Vision Analysis:

  - Apply graph-based, unsupervised clustering, or semi-supervised few-shot classification approaches.

  - Evaluate segmentation performance by examining elemental composition and crystallinity of identified clusters.

  - Compare uni-modal and multi-modal results to identify latent correlations informing material disordering.
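
The pre-processing step in this protocol, sub-dividing registered images into chips and building one feature vector per chip, can be sketched as follows. The chip size is given here in pixels, so converting the 0.5-1 nm target to pixels depends on the image calibration, which is not specified. The function names and the tiny 4 x 4 arrays are hypothetical.

```python
def split_into_chips(image, chip_size):
    """Split a 2D list (rows of pixels) into non-overlapping square chips."""
    rows, cols = len(image), len(image[0])
    chips = []
    for r in range(0, rows - chip_size + 1, chip_size):
        for c in range(0, cols - chip_size + 1, chip_size):
            chips.append([row[c:c + chip_size] for row in image[r:r + chip_size]])
    return chips

def chip_features(haadf_chip, eds_chip):
    """Combine modalities: (mean HAADF intensity, mean EDS composition) for one chip."""
    flat_h = [v for row in haadf_chip for v in row]
    flat_e = [v for row in eds_chip for v in row]
    return (sum(flat_h) / len(flat_h), sum(flat_e) / len(flat_e))

# Hypothetical registered 4 x 4 images: an intensity ramp and a two-region composition map.
haadf = [[r * 4 + c for c in range(4)] for r in range(4)]
eds = [[1, 1, 0, 0] for _ in range(4)]

features = [
    chip_features(h, e)
    for h, e in zip(split_into_chips(haadf, 2), split_into_chips(eds, 2))
]
print(features)
```

Because both images are tiled identically, the chips stay spatially aligned only if the modalities were registered beforehand, which is why registration precedes this step.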

Protocol for Microstructure-Aware Materials Optimization

Objective: To identify optimal chemistry and processing parameters that maximize targeted mechanical properties in dual-phase steels using microstructure-aware Bayesian optimization [2].

Materials and Methods:

- Define Input and Output Spaces:

  - Input space (X_I): Intercritical annealing temperature (T_IA), and concentrations of carbon (X_C), silicon (X_Si), and manganese (X_Mn).

  - Output space (X_O): Stress-normalized strain hardening rate, (1/τ)(dτ/dε_pl).

- Initial Data Collection:

  - Generate initial dataset through experiments or simulations covering representative points in the input space.

  - For each point, characterize resulting microstructure (e.g., phase fractions, grain sizes) and measure mechanical response.

- Model Construction:

  - Build Gaussian process models using initial data for both microstructure-agnostic (PP) and microstructure-aware (PSP) approaches.

  - For microstructure-aware approach, include microstructural descriptors as intermediate variables.

- Closed-Loop Optimization:

  - Implement multi-fidelity Bayesian optimization framework.

  - Iteratively select next evaluation points based on acquisition function (e.g., expected improvement).

  - Update models with new data after each evaluation.

- Performance Comparison:

  - Compare convergence rates and final achieved properties between microstructure-agnostic and microstructure-aware approaches.

  - Analyze selected optimal conditions and corresponding microstructures to identify governing PSP relationships.
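
The point-selection step of the closed loop can be illustrated with the expected improvement acquisition function for a maximization problem. The formula is the standard one for a Gaussian predictive distribution; the candidate names and their predicted means and standard deviations are hypothetical placeholders for the output of a surrogate model.

```python
import math

def expected_improvement(mean, std, best_so_far):
    """Expected improvement over best_so_far for a Gaussian prediction (maximization)."""
    if std <= 0.0:
        return max(mean - best_so_far, 0.0)
    z = (mean - best_so_far) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mean - best_so_far) * cdf + std * pdf

# Hypothetical surrogate predictions: candidate -> (predicted mean, predicted std).
predictions = {
    "A": (1.00, 0.10),  # matches the incumbent, low uncertainty
    "B": (0.90, 0.50),  # lower mean, high uncertainty
    "C": (1.05, 0.00),  # slightly better, no uncertainty
}
best_observed = 1.0

scores = {
    name: expected_improvement(mu, sigma, best_observed)
    for name, (mu, sigma) in predictions.items()
}
next_point = max(scores, key=scores.get)
print(scores, next_point)
```

In this toy case the uncertain candidate wins despite its lower predicted mean, which shows how the acquisition function balances exploration against exploitation when choosing the next expensive evaluation.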

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Microstructure-Property Studies

| Research Reagent/Material | Function/Application | Specific Examples |
|---|---|---|
| Dual-Phase Steel Systems | Model material for studying microstructure-property relationships | Fe-C-X alloys for investigating phase transformations [2] [6] |
| Complex Oxide Thin Films | Investigating interface effects and radiation damage | La₁₋ₓSrₓFeO₃, LaMnO₃/SrTiO₃ heterostructures [5] |
| TiAl-Based Alloys | Studying deformation mechanisms and grain boundary effects | γ-TiAl alloys with duplex microstructures [7] |
| Refractory High-Entropy Alloys | Developing high-temperature materials with superior properties | Alloys optimized for enhanced ductility [2] |
| Undercooled Liquid Alloys | Investigating solidification kinetics and microstructure formation | Refractory alloys studied in space microgravity [11] |
| Shape Memory Alloys | Studying phase transformations and functional properties | Fe-Mn-Al-Ni alloys fabricated via laser powder bed fusion [8] |

The field of microstructure-property relationships is rapidly evolving with several emerging trends. Multi-modal computer vision approaches are enabling more reproducible, scalable, and informed microstructural descriptors compared to traditional human-in-the-loop analyses [5]. Space materials science offers unique opportunities to study microstructural evolution under microgravity conditions, providing insights into fluid flow, crystal nucleation, and growth kinetics without gravitational effects [11]. The integration of advanced characterization with computational methods and new processing techniques like additive manufacturing is creating unprecedented capabilities for controlling microstructures [8].

The explicit incorporation of microstructure information into materials design frameworks has been shown to make optimization more efficient, indicating that PSP relationships offer an advantage over simple PP relationships for goal-oriented materials design, at least where microstructure plays a crucial role in determining properties [2]. As machine learning frameworks continue to evolve, their ability to mimic metallurgists' thinking processes and identify critical microstructural features will further bridge the gap between computational prediction and experimental realization [6]. The continuing mastery of microstructural insights will enable the development of next-generation materials with tailored properties for extreme environments and advanced technologies.

The Property-Structure-Processing-Performance (PSPP) relationship, often visualized as the materials tetrahedron, represents a foundational paradigm in materials science and engineering. This framework provides a systematic approach for understanding the complex interdependencies that govern material behavior, enabling the rational design of new materials for specific applications. The four facets of the tetrahedron are deeply interconnected: a material's intrinsic and extrinsic properties are dictated by its structure across multiple length scales (atomic, micro-, meso-, and macro-), which is itself a direct consequence of the processing techniques and conditions employed during synthesis and manufacturing. Ultimately, the combination of properties and structure determines a material's performance in real-world applications, closing the iterative design loop.

This framework moves beyond a theoretical concept to become a practical scaffold for data-driven materials development. It is particularly crucial for addressing complex challenges in sustainability and advanced technology, where traditional trial-and-error approaches are prohibitively time-consuming and costly. The application of this tetrahedron to polyhydroxyalkanoate (PHA) biopolymers exemplifies its power in guiding the development of sustainable material alternatives, illustrating how deliberate manipulation at one vertex inevitably induces changes throughout the entire system [12].

The PSPP Tetrahedron: A Detailed Analysis

Property-Structure Relationships

The connection between a material's structure and its resulting properties is perhaps the most fundamental relationship in materials science. Structure encompasses everything from atomic arrangement and chemical bonding to crystalline phases, microstructural features, and defect populations.

- Atomic and Molecular Structure: At the most fundamental level, the specific elements present, their bonding characteristics (covalent, ionic, metallic), and bond strengths determine intrinsic properties such as density, electrical conductivity, and chemical stability. For biopolymers like PHAs, the molecular weight, stereoregularity, and side-chain chemistry directly influence thermal and mechanical behavior [12].

- Microstructure: This includes features such as grain size and orientation, phase distribution, porosity, and the presence of interfaces. Microstructure profoundly impacts mechanical properties (strength, toughness, hardness) and transport phenomena (electrical and thermal conductivity). Processing history is the primary determinant of microstructure.

- Hierarchical Structures: Many advanced materials, including biological and bio-inspired systems, exhibit complex structures across multiple length scales. The interaction between these hierarchical levels often leads to emergent properties not predictable from constituents alone.

Processing-Structure Relationships

Processing encompasses all methods used to synthesize, shape, and manufacture a material, from initial synthesis to final forming. It is the primary tool engineers use to manipulate and control structure.

- Synthesis: The initial creation of a material, whether from melt, solution, or vapor phase, establishes the initial phase, composition, and often the crystal structure. For PHAs, biosynthesis conditions (e.g., carbon source, microbial strain) directly control monomer incorporation and molecular weight [12].

- Thermomechanical Processing: Techniques such as heat treatment (annealing, quenching, aging), mechanical deformation (forging, rolling, extrusion), and their combinations enable precise control over microstructural evolution, including recrystallization, phase transformations, and texture development.

- Additive and Advanced Manufacturing: Modern techniques like 3D printing allow for the creation of complex geometries and tailored microstructures previously impossible to achieve, opening new frontiers in the processing-structure relationship.

Performance-Property Relationships

Performance describes how a material behaves in a specific application or environment, representing the ultimate criterion for material selection and design.

- Functional Performance: This includes characteristics such as efficiency in energy conversion (e.g., in batteries or catalysts), sensitivity and selectivity in sensing applications, and durability in harsh environments. Performance metrics are always application-specific.

- Structural Performance: For load-bearing applications, performance is measured by metrics like fatigue life, fracture resistance, creep tolerance, and stability under operational stresses and temperatures.

- In-Service Degradation: Performance must be evaluated over a component's entire lifecycle, accounting for property evolution due to environmental interactions (corrosion, oxidation, UV degradation) and mechanical damage accumulation. For degradable materials like PHAs, the degradation profile is a key performance metric [12].

Table 1: Key Processing Techniques and Their Influences on Structure and Performance

| Processing Method | Key Structural Controls | Resulting Properties & Performance |
|---|---|---|
| Biosynthesis (for PHAs) | Molecular weight, copolymer composition, crystallinity | Biocompatibility, degradation rate, mechanical flexibility [12] |
| Melt Extrusion | Grain orientation, density, anisotropy | Tensile strength (direction-dependent), barrier properties |
| Heat Treatment | Grain size, phase distribution, stress relief | Hardness, toughness, thermal stability, electrical conductivity |
| Additive Manufacturing | Porosity, custom geometry, graded structure | Design freedom, lightweight potential, complex functionality |

Experimental Characterization for PSPP Workflows

Establishing robust PSPP relationships requires comprehensive experimental characterization at each vertex of the tetrahedron. The following protocols outline key methodologies relevant to advanced material systems, including polymers, ceramics, and metals.

Protocol 1: Structural Characterization Suite

This protocol details the determination of material structure across multiple length scales.

Materials & Reagents:

- Sample Material: Prepared specimens appropriate for each technique (e.g., powder for XRD, thin section for microscopy).

- Sample Preparation Kits: Including mounting resins, polishing suspensions (e.g., diamond paste), and chemical etchants specific to the material system.

- Reference Standards: Certified standard materials for instrument calibration (e.g., silicon powder for XRD, latex beads for SEM).

Methodology:

X-ray Diffraction (XRD):

- Grind a representative portion of the sample to a fine powder (< 44 µm).

- Pack the powder into a sample holder, ensuring a flat, level surface.

- Mount the holder in the diffractometer and run a scan from 5° to 80° 2θ with a step size of 0.02° and a counting time of 1-2 seconds per step.

- Identify crystalline phases by comparing peak positions and intensities with reference patterns in the International Centre for Diffraction Data (ICDD) database.
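The scan parameters and the peak-matching step above lend themselves to a quick calculation. The following is a minimal sketch, not part of the original protocol: it counts the measurement points in the step scan, estimates the counting time, and converts a peak position to a lattice spacing with Bragg's law. The function names are illustrative, and the default wavelength assumes Cu Kα1 radiation (1.5406 Å), which the protocol does not specify.

```python
import math


def scan_steps(start_deg, stop_deg, step_deg):
    """Number of measurement points in a step scan, both endpoints included."""
    return round((stop_deg - start_deg) / step_deg) + 1


def scan_minutes(start_deg, stop_deg, step_deg, seconds_per_step):
    """Total counting time in minutes (goniometer travel overhead is ignored)."""
    return scan_steps(start_deg, stop_deg, step_deg) * seconds_per_step / 60.0


def d_spacing_angstrom(two_theta_deg, wavelength_angstrom=1.5406):
    """Bragg's law, n*lambda = 2*d*sin(theta), solved for d with n = 1.

    The default wavelength is an assumption (Cu K-alpha1); replace it with
    the value for the X-ray source actually installed on the instrument.
    """
    theta = math.radians(two_theta_deg / 2.0)
    return wavelength_angstrom / (2.0 * math.sin(theta))
```

For the 5° to 80° scan at 0.02° per step, `scan_steps` gives 3751 points, so counting alone takes roughly 63 to 125 minutes at 1 to 2 seconds per step. Converting measured peak positions to d-spacings in this way makes the comparison against reference patterns independent of the wavelength used.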

Scanning Electron Microscopy (SEM):

- Cut a representative sample to a size of ~1 cm².

- Mount the sample on an aluminum stub using conductive carbon tape.

- Sputter-coat the sample with a thin layer (5-10 nm) of gold or platinum to ensure conductivity.

- Image the sample under high vacuum at accelerating voltages of 5-20 kV, using both secondary electron (SE) and backscattered electron (BSE) detectors to reveal topography and atomic number contrast, respectively.

Atomic Environments Analysis:

- For crystalline inorganic materials, search platforms like the Materials Platform for Data Science (MPDS) to identify coordination polyhedra (e.g., TiO₆, HgX₁₂) [13].

- This analysis reveals the local bonding environment of specific atoms, which is a critical determinant of property-structure relationships.

Protocol 2: Thermo-Mechanical Property Mapping

This protocol characterizes the thermal and mechanical properties, which are critical performance predictors.

Materials & Reagents:

- Differential Scanning Calorimetry (DSC) Pans: Hermetically sealed aluminum pans and lids.

- Tensile Test Specimens: Dog-bone specimens machined or molded to standard geometries (e.g., ASTM D638).

- Calibration Standards: Indium and Zinc for DSC temperature and enthalpy calibration.

Methodology:

Differential Scanning Calorimetry (DSC):

- Weigh 5-10 mg of sample into a tared DSC pan and seal it hermetically.

- Load the pan into the DSC alongside an empty reference pan.

- Run a heat/cool/heat cycle under nitrogen purge (e.g., -50°C to 300°C at 10°C/min).

- From the second heating cycle, determine the glass transition temperature (Tg), melting temperature (Tm), and enthalpy of fusion (ΔH_f).
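The enthalpy of fusion from the second heating cycle is commonly converted to a degree of crystallinity, which links the DSC result back to the structure vertex. The sketch below is an illustration under stated assumptions rather than a step of the protocol: it uses the standard ratio of the measured enthalpy to that of a hypothetical 100% crystalline polymer. The reference enthalpy must come from the literature for the specific polymer; the 146 J/g used in the test for P(3HB) is a frequently quoted value and is treated here as an assumption.

```python
def percent_crystallinity(delta_h_fusion_j_per_g,
                          delta_h_100_j_per_g,
                          polymer_mass_fraction=1.0):
    """Degree of crystallinity (%) from a DSC melting endotherm.

    delta_h_fusion_j_per_g: measured enthalpy of fusion of the sample.
    delta_h_100_j_per_g:    literature enthalpy of fusion of a fully
                            crystalline polymer (an external assumption).
    polymer_mass_fraction:  fraction of the sample mass that is polymer,
                            below 1.0 for filled or blended samples.
    """
    if delta_h_100_j_per_g <= 0:
        raise ValueError("reference enthalpy must be positive")
    if not 0 < polymer_mass_fraction <= 1:
        raise ValueError("polymer mass fraction must be in (0, 1]")
    return 100.0 * delta_h_fusion_j_per_g / (
        delta_h_100_j_per_g * polymer_mass_fraction
    )
```

This simple form ignores cold crystallization during heating; if the thermogram shows a cold-crystallization exotherm, its enthalpy would need to be subtracted from the melting enthalpy first.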

Tensile Testing:

- Measure the cross-sectional dimensions of the gauge section of the dog-bone specimen using a calibrated micrometer.

- Mount the specimen in the tensile tester grips, ensuring proper alignment.

- Apply a uniaxial tensile strain at a constant crosshead speed (e.g., 5 mm/min) until failure.

- Record the stress-strain curve and calculate properties: Young's modulus (slope of initial linear region), yield strength, ultimate tensile strength, and elongation at break.
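The property extraction in the last step can be sketched in a few lines. This is a minimal illustration, not a standards-compliant implementation: the function names and the `elastic_limit_strain` cutoff are hypothetical, the strain is computed from crosshead extension (an extensometer would be more accurate), and yield strength by the offset method is deliberately left out.

```python
def engineering_curve(forces_n, extensions_mm, area_mm2, gauge_length_mm):
    """Convert force/extension data to engineering stress (MPa) and strain."""
    stress = [f / area_mm2 for f in forces_n]  # N/mm^2 is numerically MPa
    strain = [e / gauge_length_mm for e in extensions_mm]
    return stress, strain


def linear_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values are all identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def tensile_summary(stress_mpa, strain, elastic_limit_strain):
    """Young's modulus, ultimate tensile strength, and elongation at break.

    elastic_limit_strain is a user-chosen upper bound of the initial linear
    region (an assumption of this sketch, chosen by inspecting the curve).
    """
    elastic = [(e, s) for e, s in zip(strain, stress_mpa)
               if e <= elastic_limit_strain]
    if len(elastic) < 2:
        raise ValueError("need at least two points in the linear region")
    xs = [e for e, _ in elastic]
    ys = [s for _, s in elastic]
    return {
        "youngs_modulus_mpa": linear_slope(xs, ys),
        "uts_mpa": max(stress_mpa),
        "elongation_at_break_pct": 100.0 * strain[-1],
    }
```

Fitting the slope by least squares over the whole linear region, rather than taking the ratio of two points, reduces sensitivity to noise in individual load readings.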

Data-Driven Materials Science and the PSPP Framework

The modern application of the PSPP tetrahedron is increasingly powered by data science and materials informatics. Platforms like the Materials Platform for Data Science (MPDS), which is based on the manually curated PAULING FILE database, provide critical experimental data for establishing and validating PSPP relationships [13]. This platform integrates crystallographic data, phase diagrams, and physical properties, allowing researchers to search across multiple criteria, including chemical elements, physical properties, and structural prototypes. The ability to query such integrated data enables the discovery of previously hidden correlations between processing conditions, resulting structures, and final material performance, thereby accelerating the materials design cycle.

Furthermore, machine learning (ML) models are now being trained on these vast materials datasets to predict new structures with desired properties and to recommend optimal synthesis pathways. As highlighted in the context of PHA research, machine learning can be used to study complex relationships, such as degradation profiles, and to optimize biomanufacturing processes [12]. This represents a paradigm shift from intuition-guided experimentation to predictive, data-validated material design, fully leveraging the interconnected nature of the PSPP tetrahedron.

Table 2: Quantitative Property Ranges for Select Polyhydroxyalkanoate (PHA) Biopolymers Illustrating PSPP Links

| PHA Type | Processing Method | Crystallinity (%) | Tensile Strength (MPa) | Young's Modulus (GPa) | Degradation Time (Months) |
|---|---|---|---|---|---|
| P(3HB) | Biosynthesis & Solvent Casting | 60-80 | 24-40 | 3.5-4.0 | 24-36 [12] |
| P(3HB-co-3HV) | Biosynthesis & Melt Extrusion | 30-60 | 20-25 | 0.5-1.5 | 18-24 [12] |
| P(4HB) | Biosynthesis & Electrospinning | ~45 | ~50 | ~0.15 | 12-18 [12] |
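Tabulated ranges like these can be queried in the inverse direction, starting from a performance target and asking which materials qualify. The sketch below is only an illustration of that idea: it encodes the modulus and degradation columns of Table 2 as (low, high) ranges, with approximate single values stored as degenerate ranges, and filters by overlap with a target window. The data structure and function names are hypothetical.

```python
# Ranges transcribed from Table 2; "~" values are stored as (value, value).
PHA_TABLE = [
    {"name": "P(3HB)", "modulus_gpa": (3.5, 4.0),
     "degradation_months": (24, 36)},
    {"name": "P(3HB-co-3HV)", "modulus_gpa": (0.5, 1.5),
     "degradation_months": (18, 24)},
    {"name": "P(4HB)", "modulus_gpa": (0.15, 0.15),
     "degradation_months": (12, 18)},
]


def overlaps(reported, low, high):
    """True if the reported (low, high) range intersects the target window."""
    return reported[0] <= high and reported[1] >= low


def candidates(table, modulus_window_gpa, degradation_window_months):
    """Names of materials whose reported ranges intersect both targets."""
    return [
        row["name"]
        for row in table
        if overlaps(row["modulus_gpa"], *modulus_window_gpa)
        and overlaps(row["degradation_months"], *degradation_window_months)
    ]
```

Calling `candidates(PHA_TABLE, (0.0, 1.5), (12, 24))`, for a flexible material that degrades within two years, excludes P(3HB) on stiffness and returns the other two entries. A lookup of this kind is only a first screen; it says nothing about how processing shifts a material within its reported range.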

Visualization of PSPP Relationships

The following diagrams, created using Graphviz's DOT language, illustrate the core concepts and workflows of the PSPP framework.

The Core Materials Tetrahedron

Diagram 1: The PSPP Materials Tetrahedron. The bidirectional relationships form an iterative design loop. The dashed line from Performance to Processing represents the feedback that drives material re-design and optimization.

A Data-Driven PSPP Research Workflow

Diagram 2: Data-Driven PSPP Workflow. This chart outlines a modern research cycle where data from successful experiments is fed into a database, informing machine learning models that generate new, improved processing hypotheses, thereby accelerating discovery.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools and Databases for PSPP Studies

| Tool / Resource | Type | Primary Function in PSPP Research |
|---|---|---|
| MPDS Platform | Database | Provides manually curated experimental data on inorganic crystals (structures, phase diagrams, properties) to establish and validate PSPP relationships [13]. |
| PAULING FILE | Foundational Database | The underlying relational database integrating crystallography, phase diagrams, and physical properties, upon which systems like MPDS are built [13]. |
| Contrasting Color Algorithm | Software Tool | Evaluates color pairs against a background to select the option with the best visual contrast (e.g., using APCA), crucial for creating accessible and clear data visualizations [14]. |
| BioRender | Diagramming Tool | Enables the creation of professional-quality scientific diagrams, particularly useful for visualizing complex biological or chemical processes in materials synthesis [15]. |

The Processing-Structure-Property-Performance (PSPP) paradigm represents a fundamental framework for understanding and engineering materials across multiple scientific disciplines, including biomedical research and drug development. This framework establishes critical relationships between how a material is processed, its resulting internal structure, its measurable properties, and its ultimate performance in specific applications [4]. In the context of drug development, PSPP principles enable researchers to systematically design and optimize biomaterials, protein-based therapeutics, and drug delivery systems with enhanced efficacy and safety profiles.

The integration of PSPP methodologies has become increasingly vital in addressing complex challenges in pharmaceutical development. By applying structure-property relationship analysis to biological systems, researchers can predict how molecular modifications will affect drug behavior, stability, and therapeutic performance [16]. This approach is particularly valuable for understanding and engineering protein-based therapeutics, where subtle changes in structure can significantly impact biological activity, immunogenicity, and pharmacokinetics. The PSPP framework provides a systematic methodology for optimizing these critical parameters during drug development.

PSPP Fundamentals and Computational Frameworks

Core Principles of PSPP Analysis

The PSPP framework operates on the fundamental principle that a material's (or biomolecule's) internal structure dictates its observable properties and ultimate performance. In biomedical contexts, this translates to understanding how molecular and supramolecular structures influence biological activity, stability, and safety. The paradigm encompasses multiple hierarchical levels of structural organization, from atomic arrangements to macroscopic morphology, each contributing to the overall performance characteristics of pharmaceutical compounds and biomaterials [4] [17].

Computational implementation of PSPP relies on sophisticated pipelines that integrate multiple analytical tools and prediction algorithms. These systems typically employ a structured workflow beginning with sequence preprocessing and analysis, progressing through secondary and tertiary structure prediction, and culminating in performance characterization [16]. The centerpiece of many PSPP pipelines involves fold recognition and structural modeling programs that can predict three-dimensional configurations from primary sequence data, enabling researchers to connect structural features with functional outcomes in biological systems.

PROSPECT-PSPP Computational Pipeline

The PROSPECT-PSPP pipeline represents an advanced implementation of the PSPP framework specifically designed for protein structure prediction and analysis. This automated computational system integrates multiple specialized tools through a SOAP (Simple Object Access Protocol)-based architecture, enabling comprehensive structural analysis and property prediction [16]. The pipeline's modular design allows for targeted application to various aspects of biomolecular characterization relevant to drug development.

As illustrated in the following workflow, the PROSPECT-PSPP system employs a sequential approach to protein structure analysis:

Table 1: Key Components of the PROSPECT-PSPP Computational Pipeline

| Pipeline Stage | Tool/Program | Function in Drug Development Context |
|---|---|---|
| Sequence Preprocessing | SignalP | Identifies and removes signal peptide sequences to focus on mature protein structure |
| Protein Type Classification | SOSUI | Distinguishes between soluble and membrane proteins, informing formulation strategies |
| Domain Partition | ProDom | Identifies structural domains for targeted therapeutic development |
| Secondary Structure Prediction | Prospect-SSP | Predicts local structural elements (α-helices, β-sheets) affecting stability and binding |
| Fold Recognition | PROSPECT | Identifies structural homologs and templates for unknown proteins |
| 3D Model Generation | Homology Modeling | Constructs atomic-level structural models for binding site analysis |

The PROSPECT threading program serves as the centerpiece of this pipeline, employing a divide-and-conquer algorithm that rigorously treats pairwise residue contacts [16]. This approach enables the identification of distant structural relationships that may not be detectable through sequence-based methods alone, providing crucial insights for engineering protein therapeutics with modified properties. The system also incorporates a confidence index using a combined z-score scheme that quantifies prediction reliability—a critical consideration when applying computational predictions to drug development decisions.
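The idea behind a z-score confidence measure can be shown with a generic calculation. The sketch below is not the combined z-score scheme used by PROSPECT, whose details are not given here; it only standardizes one template's raw threading score against a background distribution of scores, and all names are illustrative.

```python
import statistics


def z_score(raw_score, background_scores):
    """Standard deviations between a raw score and the background mean.

    background_scores is assumed to hold the scores of the same query
    threaded against unrelated or shuffled templates (a hypothetical setup).
    """
    mean = statistics.fmean(background_scores)
    std = statistics.pstdev(background_scores)
    if std == 0:
        raise ValueError("background scores have zero spread")
    return (raw_score - mean) / std
```

For energy-like scores where lower is better, a strongly negative z-score marks a template that stands out from the background. The threshold that separates a reliable fold assignment from noise has to be calibrated on benchmark data and is not something this sketch can supply.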

Special Considerations for Drug Development Applications

Biomaterial Characterization and Optimization

In drug development, PSPP methodologies enable systematic characterization and optimization of biomaterials used in formulations and delivery systems. Researchers can correlate processing parameters (e.g., lyophilization conditions, emulsion methods) with structural features (e.g., crystallinity, porosity) and resulting properties (e.g., dissolution rate, stability) to optimize drug product performance [17]. This approach is particularly valuable for complex formulations such as controlled-release systems, where material structure directly controls drug release kinetics.

Advanced characterization techniques, including Scanning Electron Microscopy and Transmission Electron Microscopy, provide the structural analysis component of PSPP by resolving material microstructures from the micrometer scale down to, in the case of high-resolution TEM, near-atomic detail [17]. These structural insights guide the optimization of processing parameters to achieve desired performance characteristics. For example, PSPP principles have been applied to aircraft braking materials to enhance strength, reduce weight, and improve reliability, and the same considerations carry over to medical equipment and device manufacturing.

Federated Learning for Collaborative Drug Development

The complexity and proprietary nature of pharmaceutical research creates significant barriers to data sharing, potentially limiting the application of PSPP approaches that benefit from large datasets. Federated Learning (FL) has emerged as a promising framework to address this challenge by enabling collaborative model training without centralizing sensitive data [18]. This approach is particularly valuable for PSPP-based drug development, where structural and property data may be distributed across multiple institutions.

Federated Learning operates on the principle of transmitting machine learning models to the locus of data rather than moving sensitive data to a central repository. Local models are trained on distributed datasets, and only model parameter updates are shared to refine a global model [18]. This architecture maintains data privacy and security while leveraging the collective insights available across multiple organizations. The MELLODDY (MachinE Learning Ledger Orchestration for Drug DiscoverY) project demonstrated the potential of this approach, with ten pharmaceutical companies collaboratively analyzing 20 million small molecule drug candidates across 40,000 biological screens without sharing proprietary assay details [18].
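The train-locally, share-parameters loop described above can be sketched with a toy model. The example below uses federated averaging (a sample-size-weighted mean of locally trained parameters), which is one common aggregation rule and an assumption here, since the text does not name the rule used. The one-dimensional linear model, the learning rate, and all function names are hypothetical; this is not the MELLODDY implementation.

```python
def local_update(weights, data, lr=0.05, epochs=20):
    """Gradient descent on mean squared error, run on one site's private data."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(updates, sizes):
    """Server step: average site parameters, weighted by local sample count."""
    total = sum(sizes)
    w = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    b = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return (w, b)


def train(sites, rounds=100):
    """Alternate local training and aggregation; raw data never leaves a site."""
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(global_weights, data) for data in sites]
        global_weights = federated_average(updates, [len(d) for d in sites])
    return global_weights
```

Only the `(w, b)` tuples cross site boundaries, which mirrors the privacy argument in the text. Production systems typically add secure aggregation or differential privacy on top, because parameter updates alone can still leak information.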

The following diagram illustrates how Federated Learning integrates with PSPP workflows in multi-institutional drug development:

Accelerating Neurodegenerative Disease Drug Development

PSPP approaches show particular promise in addressing the complex challenges of developing treatments for neurodegenerative diseases such as Parkinson's Disease (PD), which affects nearly 12 million people worldwide [18]. The multifaceted pathophysiology and heterogeneous clinical manifestations of PD necessitate therapeutic approaches that can accommodate diverse biological mechanisms and patient-specific factors. PSPP methodologies contribute to this effort by enabling more precise structure-based drug design and biomarker development.

Digital monitoring technologies generate high-dimensional data that can be analyzed within the PSPP framework to identify subtle structure-property-performance relationships in therapeutic development. These technologies provide objective, frequent assessments of patient functioning that complement traditional rating scales, capturing subclinical changes that may reflect underlying biological processes [18]. When analyzed through federated learning approaches, these datasets can reveal structural features of biomarkers or therapeutic targets that correlate with disease progression or treatment response, accelerating the development of disease-modifying therapies.

Experimental Protocols and Methodologies

Protocol for Protein Structure-Function Analysis in Therapeutic Development

Objective: To characterize the structure-property-performance relationships of protein-based therapeutics using computational and experimental PSPP approaches.

Materials and Reagents:

Table 2: Essential Research Reagents for PSPP-Based Protein Therapeutic Development

| Reagent/Material | Specifications | Function in PSPP Analysis |
|---|---|---|
| Target Protein Sequence | >85% purity, confirmed sequence | Primary input for structural prediction and analysis |
| Reference Structural Templates | PDB-deposited structures with >30% sequence identity | Template for homology modeling and fold recognition |
| Molecular Biology Reagents | PCR reagents, cloning vectors, expression systems | Experimental validation of computational predictions |
| Chromatography Materials | HPLC, FPLC systems with specialized columns | Purification and characterization of protein properties |
| Biophysical Analysis Tools | CD spectroscopy, DSC, light scattering | Experimental determination of structural properties |
| Cell-Based Assay Systems | Relevant disease models, reporter systems | Functional performance assessment |

Methodology:

Sequence Preprocessing and Domain Analysis

- Input protein sequence into the PROSPECT-PSPP pipeline

- Identify and remove signal peptides using SignalP tool

- Classify protein type (soluble/membrane) using SOSUI

- Partition sequence into structural domains using ProDom

- Document potential cleavage sites and post-translational modifications

Secondary Structure Prediction

- Generate sequence profiles using iterative PSI-BLAST

- Apply Prospect-SSP neural network for secondary structure prediction

- Identify α-helical, β-sheet, and coiled regions with confidence scores

- Compare predictions across multiple algorithms for consensus

Fold Recognition and Tertiary Structure Modeling

- Search PDB for structural homologs using sequence-based methods

- Perform threading analysis using PROSPECT with divide-and-conquer algorithm

- Generate residue-level alignments with template structures

- Calculate confidence z-scores for fold assignment reliability

- Construct atomic-level models using homology modeling approaches

Structure-Property Correlation

- Map known functional residues (active sites, binding regions) to predicted structure

- Correlate structural features with experimentally determined properties (stability, activity)

- Identify potential immunogenic regions based on surface accessibility and sequence features

- Predict aggregation-prone regions that may affect product stability and performance

Experimental Validation and Model Refinement

- Express and purify target protein using appropriate expression system

- Determine secondary structure content using circular dichroism spectroscopy

- Assess thermal stability using differential scanning calorimetry

- Measure biological activity using relevant functional assays

- Iteratively refine computational models based on experimental data

Data Analysis: Evaluate prediction accuracy by comparing computational models with experimental structures (when available). Calculate root-mean-square deviation (RMSD) for backbone atoms between predicted and experimental structures. Establish correlation coefficients between predicted structural features and measured properties (e.g., melting temperature, specific activity).
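Both metrics named in the data analysis step are short formulas. The following is a minimal sketch with illustrative function names. It assumes the predicted and experimental backbone coordinates are already superimposed and listed in the same atom order; an optimal superposition step (for example the Kabsch algorithm) is not included.

```python
import math


def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two equal-length lists of (x, y, z).

    Assumes the two structures are already superimposed and atom-matched.
    """
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and equal length")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))


def pearson_r(x, y):
    """Pearson correlation between a predicted feature and a measured property."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / math.sqrt(sxx * syy)
```

Without prior superposition, the RMSD also reflects any rigid-body offset between the two coordinate frames, so the value would overstate the structural disagreement.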

Applications in Pharmaceutical Development

Protein Therapeutic Optimization

PSPP methodologies directly support the development of optimized protein therapeutics by enabling systematic analysis of structure-function relationships. By correlating specific structural features with clinically relevant properties such as half-life, immunogenicity, and potency, researchers can implement targeted modifications to enhance therapeutic performance. For example, understanding how glycosylation patterns affect both protein structure and pharmacokinetic properties allows for engineering of biologics with optimized clearance profiles and reduced immunogenicity.

The PROSPECT-PSPP pipeline has demonstrated the capability to generate backbone structures with approximately 4 Å root-mean-square deviation (RMSD) accuracy for a substantial class of proteins [16]. This level of predictive accuracy enables highly useful functional inferences, such as identifying residues involved in protein-protein interactions or predicting the effects of point mutations on structural stability. These insights directly inform the rational design of therapeutic proteins with enhanced properties, reducing the empirical optimization typically required in biopharmaceutical development.

Biomaterial Selection and Formulation Design

In drug formulation development, PSPP principles guide the selection and engineering of materials based on their structural characteristics and resulting properties. By understanding how processing parameters (e.g., spray-drying conditions, crystal polymorph selection) influence material structure and subsequent performance (e.g., dissolution rate, stability), formulation scientists can more efficiently develop robust drug products with predictable performance characteristics [4] [17].

Recent applications include the development of materials with enhanced thermal and electrical properties for specialized drug delivery systems, where microstructural engineering enables precise control over drug release kinetics [17]. Similarly, research on strengthening lightweight metals through microstructural control has parallels in the development of medical devices and delivery systems where material properties directly impact product performance and patient experience.

The integration of PSPP methodologies into biomedical research and drug development represents a promising approach to addressing the complex challenges of modern therapeutic development. As computational power increases and algorithms become more sophisticated, PSPP-based predictions will likely achieve greater accuracy across a broader range of biological targets, reducing the empirical component of drug design. The incorporation of federated learning approaches will further enhance these capabilities by enabling collaborative model refinement while preserving data privacy and proprietary interests.

Future advancements will likely include more sophisticated multi-scale modeling approaches that connect atomic-level structural features with macroscopic material properties and biological performance. The integration of real-world evidence from digital monitoring technologies will further enrich PSPP frameworks, creating more predictive models of how structural features translate to clinical outcomes. For neurodegenerative diseases and other complex disorders, these approaches offer particular promise in developing the first disease-modifying therapies by revealing previously unrecognized structure-property-performance relationships.

In conclusion, PSPP represents a powerful paradigm for systematic therapeutic development, connecting fundamental structural characteristics with clinically relevant performance metrics. Through continued refinement of computational methods, strategic application of federated learning approaches, and thoughtful integration with experimental validation, PSPP methodologies will play an increasingly important role in accelerating the development of safe, effective therapeutics for diverse medical needs.

Historical Evolution of PSPP Frameworks in Materials Science

The Process-Structure-Property-Performance (PSPP) framework represents a foundational paradigm in materials science, providing a systematic approach to understanding how manufacturing processes influence material microstructure, which in turn determines macroscopic properties and ultimate performance in applications [1]. This framework encapsulates the fundamental principle that materials possess hierarchical structures evolving over multiple time and length scales, from atomic arrangements to macroscopic features, with each level influencing the overall behavior of the material [1]. The historical development of PSPP methodologies has evolved from experience-based trial-and-error approaches to increasingly sophisticated, data-driven, and computationally enhanced frameworks capable of inverting these relationships to design materials with targeted properties [19] [1].

This evolution has been driven by the recognition that the traditional pace of materials development—often requiring 20 years or more to move from discovery to commercial application—is inadequate to address urgent global challenges in clean energy, healthcare, and sustainable manufacturing [1]. The materials science field is consequently undergoing a paradigm shift, augmenting traditional experimental methods with techniques acquired from cross-fertilization with computer and data science disciplines, leading to the emerging field of Materials Informatics (MI) [1]. This review examines the historical trajectory of PSPP frameworks, from their conceptual origins to their current expression in integrated computational materials engineering and autonomous discovery platforms.

The Traditional PSPP Framework

Foundational Principles

The traditional PSPP framework established a causal chain through materials systems: Processing conditions (e.g., heat treatment, mechanical deformation) dictate the evolution of material Structure across multiple scales (atomic, microstructural, macroscopic), which governs resultant material Properties (mechanical, electrical, thermal), ultimately determining component Performance in service conditions [1] [20]. This relationship is visually summarized in Figure 1.

This linear conceptual model provided materials scientists with a systematic approach to materials selection and processing optimization. For example, in metallurgy, specific heat treatment temperatures and cooling rates were known to produce characteristic microstructural features (phase distributions, grain boundaries), which directly influenced mechanical properties like strength, ductility, and toughness [19]. The framework was primarily employed in a forward direction: given a known process, scientists could predict the likely structure and resulting properties, but the inverse problem—determining which process would yield a desired property—remained challenging and often relied on empirical trial-and-error or deeply specialized expert knowledge [1].
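The asymmetry between the forward and inverse directions can be made concrete with a toy chain. In the sketch below, the structure-to-property step uses the Hall-Petch relation, σ_y = σ_0 + k/√d, while the process-to-structure step is a purely hypothetical grain-coarsening curve invented for illustration. All constants are placeholders, not data for any real alloy.

```python
import math


def grain_size_um(anneal_temp_c):
    """Hypothetical process -> structure map: grains coarsen with temperature."""
    return 5.0 * math.exp((anneal_temp_c - 500.0) / 150.0)


def yield_strength_mpa(grain_um, sigma0_mpa=100.0, k_mpa_sqrt_um=300.0):
    """Hall-Petch structure -> property map; constants are placeholders."""
    return sigma0_mpa + k_mpa_sqrt_um / math.sqrt(grain_um)


def forward(anneal_temp_c):
    """Forward problem: evaluate the chain for one known process setting."""
    return yield_strength_mpa(grain_size_um(anneal_temp_c))


def inverse(target_mpa, candidate_temps_c):
    """Inverse problem by brute force: search the process space for the
    setting whose predicted strength is closest to the target."""
    return min(candidate_temps_c, key=lambda t: abs(forward(t) - target_mpa))
```

The forward call is a single function evaluation, whereas the inverse call has to search candidate settings, for example `inverse(200.0, range(400, 901, 10))`. With one process variable a grid search is trivial; with many interacting variables the search space grows quickly, which is why the inverse problem historically fell back on trial-and-error.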

Experimental and Characterization Methods

Traditional PSPP analysis relied heavily on physical experiments and characterization techniques. Key methodological approaches included:

- Process Variation: Systematically altering manufacturing parameters (e.g., laser power in sintering, heat treatment temperature, composition) and observing outcomes [19] [21].

- Multi-scale Structural Characterization: Using microscopy (optical, electron) across different length scales to quantify microstructural features such as grain size, phase distribution, and defect concentration [20].

- Property Measurement: Employing standardized mechanical tests (tensile, hardness, fracture toughness) and other property evaluations to establish structure-property relationships [22].

- Statistical Design of Experiments: Utilizing methods developed by Box, Behnken, and Taguchi to identify key variables within process-structure or structure-property linkages, though these were typically constrained to small subsets of the full PSPP chain due to experimental complexity [1].

A significant limitation of these traditional approaches was that they could not efficiently survey all relationships across multiple length scales and PSPP linkages. When key variables were overlooked, the resulting materials could fall short of their target properties [1].

The Computational Revolution in PSPP

Early Computational Materials Science

Growing computational power from the 1950s onward enabled first-principles calculations of material behavior based on quantum mechanics. Techniques like Density Functional Theory (DFT) allowed electronic structure and thermodynamic properties to be calculated from first principles, providing insights previously inaccessible through experimentation alone [1]. As computing power advanced, High-Throughput (HT) computational methods emerged, capable of screening thousands of material compositions in silico, dramatically accelerating the initial discovery phase [1]. These approaches marked a significant shift from purely empirical PSPP studies toward theoretically grounded predictions.

Integrated Computational Materials Engineering

The field evolved further with the emergence of Integrated Computational Materials Engineering (ICME), which sought to explicitly link models across different length scales and physical phenomena to create integrated PSPP chains [19]. ICME frameworks aimed to bridge process simulations (e.g., thermal-fluid models for additive manufacturing), microstructural evolution models (e.g., phase-field simulations), and property prediction (e.g., crystal plasticity finite element analysis) [21]. However, these explicit integrations presented significant challenges due to model complexity, computational cost, and difficulties in managing information transfer between different simulation tools [19].
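To make the idea of an explicitly linked chain concrete, here is a deliberately small sketch in which the output of a processing-to-structure model feeds a structure-to-property model. It is not an ICME implementation: the two links (parabolic grain growth with an Arrhenius rate constant, and the Hall-Petch relation) are classical textbook forms chosen for brevity, and every numerical constant except the gas constant is a hypothetical placeholder.

```python
import math

R = 8.314  # J/(mol K), universal gas constant

def grain_size_um(temp_k, time_s, d0_um=5.0, k0=1.0e8, q_j_mol=2.0e5):
    """Processing -> structure: parabolic grain growth, d^2 = d0^2 + k*t.

    k0 (um^2/s) and q_j_mol (J/mol) are hypothetical, not fitted values.
    """
    k = k0 * math.exp(-q_j_mol / (R * temp_k))
    return math.sqrt(d0_um ** 2 + k * time_s)

def yield_strength_mpa(d_um, sigma0=100.0, k_hp=500.0):
    """Structure -> property: Hall-Petch relation with hypothetical constants."""
    return sigma0 + k_hp / math.sqrt(d_um)

def chain(temp_k, time_s):
    """Pass the structure predicted by one model into the next model."""
    d = grain_size_um(temp_k, time_s)
    return {"grain_size_um": d, "yield_strength_mpa": yield_strength_mpa(d)}

cool = chain(923.0, 3600.0)   # lower annealing temperature
hot = chain(1123.0, 3600.0)   # higher annealing temperature
```

Even in this toy form, the hand-off between models is the fragile point: the structure descriptor produced upstream (here a single grain size) must be exactly what the downstream model expects, which hints at why information transfer becomes difficult with real simulation tools.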

Table 1: Evolution of Computational Approaches in PSPP Frameworks

| Era | Primary Approach | Key Technologies | Limitations |
|---|---|---|---|
| Pre-1950s | Empirical Trial-and-Error | Experimental observation, Basic characterization | Slow, resource-intensive, limited fundamental understanding |
| 1950s-1990s | Early Computational Methods | Density Functional Theory, Finite Element Analysis | Limited to specific scales, disconnected models |
| 1990s-2010s | Integrated Computational Materials Engineering | Multi-scale modeling, Phase-field simulations, Crystal plasticity | High computational cost, challenging integration, limited experimental validation |
| 2010s-Present | Data-Driven Materials Informatics | Machine learning, High-throughput screening, Bayesian optimization | Data quality and quantity requirements, interpretability challenges |

Modern Data-Driven PSPP Frameworks

The Rise of Materials Informatics

The limitations of purely physics-based modeling, combined with increasing volumes of materials data, catalyzed the emergence of Materials Informatics (MI)—a field dedicated to the acquisition, storage, and analysis of materials data to accelerate discovery and development [1]. MI leverages data-driven algorithms to identify complex, often non-linear patterns in PSPP relationships that may be difficult to capture with physics-based models alone [1] [21]. This approach enables researchers to explore significantly more PSP linkages and multiscale relationships than previously possible.

The core of modern data-driven PSPP modeling involves establishing a mapping between a suitable representation of a material (its "fingerprint" or "DNA") and its properties through machine learning algorithms [1]. This fingerprint consists of an optimal set of descriptors that the model uses to learn what a material is and predict its properties. Once validated, these predictive models can instantaneously forecast properties of new or hypothetical material compositions, guiding targeted computational or experimental validation [1].
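A minimal sketch of the fingerprint idea follows, assuming a very simple descriptor set: the composition-weighted mean and spread of two elemental properties. The descriptor choice, the function names, and the 1-nearest-neighbour learner are illustrative assumptions, and the training labels are placeholders rather than measurements.

```python
# Standard atomic weights (g/mol) and Pauling electronegativities
ELEMENTS = {
    "Fe": {"mass": 55.845, "chi": 1.83},
    "C": {"mass": 12.011, "chi": 2.55},
    "Mn": {"mass": 54.938, "chi": 1.55},
    "Si": {"mass": 28.085, "chi": 1.90},
}

def fingerprint(atomic_fractions):
    """Composition-weighted mean and standard deviation of each elemental property."""
    total = sum(atomic_fractions.values())
    fracs = {el: x / total for el, x in atomic_fractions.items()}
    features = []
    for prop in ("mass", "chi"):
        mean = sum(x * ELEMENTS[el][prop] for el, x in fracs.items())
        var = sum(x * (ELEMENTS[el][prop] - mean) ** 2 for el, x in fracs.items())
        features.extend([mean, var ** 0.5])
    return features

def predict_nearest(query, training):
    """1-nearest-neighbour prediction: label of the closest training fingerprint."""
    def dist(a, b):
        return sum((u - v) ** 2 for u, v in zip(a, b)) ** 0.5
    return min(training, key=lambda item: dist(item[0], query))[1]

# Placeholder property labels (NOT measured data), one per training composition
training = [
    (fingerprint({"Fe": 0.98, "C": 0.02}), 1.0),
    (fingerprint({"Fe": 0.90, "Mn": 0.10}), 2.0),
]
prediction = predict_nearest(fingerprint({"Fe": 0.97, "C": 0.03}), training)
```

In practice the features would be scaled before computing distances (here the mass terms dominate), and the learner would be validated on held-out data before being trusted on hypothetical compositions.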

Multi-Information Source Fusion and Bayesian Optimization

A significant advancement in modern PSPP frameworks is the ability to fuse information from multiple sources—varying in fidelity, cost, and underlying physics—within a unified optimization scheme. As highlighted in Acta Materialia, Bayesian Optimization (BO)-based frameworks are increasingly used in materials design as they efficiently balance exploration and exploitation of design spaces under resource constraints [19]. These frameworks can integrate computational models at different length scales, empirical models, and experimental data, using statistical correlation to maximize agreement with available information while minimizing responses at odds with observations [19].

This multi-information source approach addresses a critical limitation of earlier frameworks, which typically relied on a single model per linkage along PSPP chains. By leveraging Gaussian Process regression and knowledge gradient acquisition functions, these frameworks determine both where to sample next in the design space and which information source to use for querying, dramatically improving optimization efficiency [19]. The workflow for such a framework is illustrated in Figure 2.
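The two decisions described above (how to combine sources, and which source to query next) can be sketched in a much-simplified form. The code below assumes the sources give independent Gaussian estimates, so it uses plain inverse-variance weighting rather than the correlation-aware fusion of the cited framework, and it replaces the knowledge gradient with a simpler variance-reduction-per-cost heuristic. All numbers and source names are hypothetical.

```python
def fuse(estimates):
    """Fuse independent Gaussian estimates given as (mean, variance) pairs."""
    precision = sum(1.0 / var for _, var in estimates)
    mean = sum(m / var for m, var in estimates) / precision
    return mean, 1.0 / precision

def pick_source(current_var, sources):
    """Pick the source with the largest expected variance reduction per unit cost.

    sources maps a name to (noise_variance, cost). One new observation with
    noise variance s shrinks a Gaussian belief of variance v by v**2 / (v + s).
    """
    def gain_per_cost(item):
        noise_var, cost = item[1]
        return current_var ** 2 / (current_var + noise_var) / cost
    return max(sources.items(), key=gain_per_cost)[0]

# Hypothetical predictions of one property at one design point
estimates = [(10.0, 4.0), (14.0, 4.0)]
fused_mean, fused_var = fuse(estimates)

# Hypothetical sources: (noise variance, relative cost)
sources = {"analytical": (4.0, 1.0), "finite_element": (1.0, 10.0)}
next_source = pick_source(fused_var, sources)
```

The fused variance is never larger than the smallest input variance, which is the basic reason combining a cheap, noisy model with an expensive, accurate one can outperform either alone.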

Microstructure-Aware Bayesian Optimization

The Critical Role of Microstructure

A recent paradigm shift in PSPP frameworks involves explicitly incorporating microstructural information as a central element of the design process, rather than treating it as an emergent by-product. As noted in a 2026 Acta Materialia publication, "Microstructures form the critical link between chemistry, processing protocols, and the resulting properties and performance of materials" [20]. This microstructure-aware approach addresses a fundamental limitation in traditional materials design, which often focused exclusively on direct chemistry-process-property relationships, overlooking microstructure as an active design component [20].

Modern frameworks now integrate microstructural descriptors as latent variables, creating a comprehensive process-structure-property mapping that enhances both predictive accuracy and optimization efficiency [20]. Dimensionality reduction techniques like the Active Subspace Method identify the most influential microstructural features, reducing computational complexity while maintaining accuracy in the design process [20]. For example, in thermoelectric materials, fine-tuning grain size, phase distribution, and defect concentration can significantly enhance performance by reducing thermal conductivity while maintaining electrical conductivity [20].
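The dimensionality reduction step can be illustrated with a small sketch of the active subspace computation: average the outer product of response gradients over sampled inputs, then keep the leading eigenvectors. The response function below is a hypothetical stand-in that depends on three descriptors only through one linear combination; it is an assumption for illustration, not a model from the cited work.

```python
import numpy as np

def active_subspace(grad_fn, sample_inputs, n_active=1):
    """Eigen-decompose the average gradient outer product C = E[grad grad^T]."""
    grads = np.array([grad_fn(x) for x in sample_inputs])
    C = grads.T @ grads / len(grads)
    eigvals, eigvecs = np.linalg.eigh(C)      # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]         # re-sort to descending
    return eigvals[order], eigvecs[:, order[:n_active]]

# Hypothetical response f(x) = (w . x)^2 over three microstructural descriptors
w = np.array([0.8, 0.6, 0.0])

def grad_response(x):
    return 2.0 * (w @ x) * w                  # analytical gradient of (w . x)^2

rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(200, 3))     # sampled descriptor vectors
eigvals, W1 = active_subspace(grad_response, X)
reduced = X @ W1                              # descriptors projected onto the active direction
```

A sharp drop after the first eigenvalue signals that a one-dimensional subspace captures the response, so the optimizer can work with `reduced` instead of the full descriptor vector. When analytical gradients are unavailable they would have to be estimated, for example by finite differences on a surrogate.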

Experimental Protocols for Microstructure-Aware Design

Implementing a microstructure-aware Bayesian optimization framework involves several key methodological steps:

- Design Space Definition: Establish the ranges of chemistry and processing parameters to be explored (e.g., for dual-phase steels: C 0.05-1 wt%, Si 0.1-2 wt%, Mn 0.15-3 wt%, heat treatment temperatures 650-850°C) [19].

- Microstructural Prediction: Use thermodynamic models (e.g., surrogate models built from Thermo-Calc predictions) to predict phase constitution and composition after processing [19].

- Microstructural Descriptor Extraction: Quantify key microstructural features (phase volume fractions, grain size distributions, interface characteristics) that serve as latent variables in the optimization [20].

- Property Prediction: Utilize multiple micromechanical models of varying fidelity (from analytical models to microstructure-based finite element analysis) to predict mechanical properties from microstructural descriptors [19] [20].

- Bayesian Optimization Loop: Employ Gaussian Process regression to build surrogate models, followed by knowledge gradient acquisition to determine the next design point and information source to query, balancing exploration and exploitation of the design space [19] [20].
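The final step can be sketched as a bare-bones loop. This is a simplified illustration, not the cited framework: it uses a single information source, a one-dimensional design variable, a fixed-length-scale RBF kernel, and expected improvement in place of the knowledge gradient. The `objective` function is a hypothetical stand-in for an experiment or simulation.

```python
import math
import numpy as np

def rbf(a, b, length=0.2):
    """Squared-exponential kernel with unit amplitude (length scale is assumed)."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length) ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-6):
    """Zero-mean GP posterior mean and standard deviation via Cholesky."""
    K = rbf(x_train, x_train) + noise * np.eye(len(x_train))
    Ks = rbf(x_train, x_query)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    v = np.linalg.solve(L, Ks)
    var = np.clip(1.0 - np.sum(v ** 2, axis=0), 1e-12, None)
    return Ks.T @ alpha, np.sqrt(var)

def expected_improvement(mean, std, best):
    """Expected improvement for maximization."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def objective(x):
    # Hypothetical response with its maximum at x = 0.7 (normalized design variable)
    return -(x - 0.7) ** 2

grid = np.linspace(0.0, 1.0, 201)             # candidate design points
x_obs = np.array([0.1, 0.5, 0.9])             # initial designs
y_obs = objective(x_obs)
for _ in range(8):
    y_std = (y_obs - y_obs.mean()) / y_obs.std()   # standardize for the unit-amplitude kernel
    mean, std = gp_posterior(x_obs, y_std, grid)
    x_next = grid[np.argmax(expected_improvement(mean, std, y_std.max()))]
    x_obs = np.append(x_obs, x_next)
    y_obs = np.append(y_obs, objective(x_next))
best_x = x_obs[np.argmax(y_obs)]
```

A multi-source, microstructure-aware version would keep one surrogate per information source and let the acquisition step also choose which source to query, at the price of considerably more bookkeeping.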

Table 2: Quantitative Performance Comparison of PSPP Frameworks for Dual-Phase Steel Design

| Framework Type | Number of Experiments to Convergence | Computational Cost | Optimal Normalized Strain Hardening Rate Achieved | Key Limitations |
|---|---|---|---|---|
| Traditional Trial-and-Error | 50+ | Low | 0.72 | Resource intensive, slow convergence |
| Physics-Based Modeling Only | 15-20 | Very High (100s CPU hours) | 0.81 | Integration challenges, high computational cost |
| Basic Bayesian Optimization | 10-12 | Medium | 0.85 | Limited to single information sources, microstructure agnostic |
| Microstructure-Aware Bayesian Optimization | 6-8 | Medium-High | 0.89 | Requires microstructural characterization, model complexity |

PSPP in Additive Manufacturing

The Additive Manufacturing Challenge

Additive manufacturing (AM) presents both unique challenges and opportunities for PSPP frameworks. The layer-by-layer manufacturing scheme introduces complex physical phenomena including powder dynamics, laser-material interactions, heat transfer, fluid flow, and phase transformations that occur across multiple spatial and temporal scales [21]. These interacting phenomena create highly complex PSP relationships that are difficult to decipher using traditional approaches. For example, in metal AM, steep temperature gradients and repeated thermal cycles cause solid-state phase transformations that influence residual stress, distortion, and mechanical properties [21].

The flexibility of AM process parameters (laser power, scan speed, scan strategy, layer thickness) creates a high-dimensional design space that challenges conventional experimental approaches [22] [21]. Additionally, quality inconsistencies in AM (variations in porosity, surface roughness, microstructural heterogeneity) further complicate the establishment of reliable PSPP linkages [21].

Integrated Multiscale Modeling for AM

Recent research has addressed these challenges through integrated multiscale modeling approaches. A 2025 study established a "comprehensive suite of high-fidelity computational models that integrate multiscale and multiphysics simulations to capture the full Selective Laser Sintering (SLS) additive manufacturing process—from initial melting and solidification to mechanical response under external loads" [22]. This framework links process simulations with mechanical analysis through Representative Volume Elements (RVEs), explicitly connecting laser characteristics and powder properties to resulting crystallinity, density, porosity distribution, and ultimately mechanical performance [22].

For metal AM, data-driven modeling has proven particularly valuable in establishing PSP relationships while circumventing costly experiments and high-fidelity simulations. Gaussian process regression models have been successfully employed to predict molten pool geometry, porosity, and defect formation from process parameters, enabling optimization of manufacturing parameters for desired part quality [21]. These surrogate models can then be used in inverse design to identify process parameters that yield target microstructural features and mechanical properties.
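As a small illustration of the inverse-design step, the sketch below searches a grid of process parameters for the setting whose predicted response is closest to a target. The `surrogate_porosity` function is a hypothetical closed-form stand-in for a trained surrogate (for example a Gaussian process mean), and its coefficients and the parameter ranges are invented for demonstration.

```python
from itertools import product

def surrogate_porosity(power_w, speed_mm_s):
    """Hypothetical stand-in for a trained surrogate; returns porosity in percent."""
    line_energy = power_w / speed_mm_s           # J/mm
    return 0.2 + 50.0 * (line_energy - 0.25) ** 2

def inverse_design(surrogate, target, powers, speeds):
    """Return the (power, speed) pair whose predicted response is closest to target."""
    return min(product(powers, speeds),
               key=lambda ps: abs(surrogate(*ps) - target))

powers = [150 + 10 * i for i in range(16)]       # 150 to 300 W
speeds = [600 + 50 * i for i in range(17)]       # 600 to 1400 mm/s
best_power, best_speed = inverse_design(surrogate_porosity, 0.2, powers, speeds)
```

Several parameter pairs reach the same predicted porosity here, and `min` simply returns the first one it encounters. This non-uniqueness is typical of inverse PSP problems, so a practical workflow would add secondary criteria such as build time or predictive uncertainty to choose among equivalent settings.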

Implementing modern PSPP frameworks requires specialized computational and experimental resources. The following toolkit outlines essential components for contemporary PSPP research in materials science.

Table 3: Essential Research Toolkit for Modern PSPP Frameworks

| Tool Category | Specific Tools/Techniques | Function in PSPP Research | Example Applications |
|---|---|---|---|
| Process Simulation | Thermal-fluid CFD, Multiphysics Object-Oriented Simulation Environment (MOOSE) | Model manufacturing processes, temperature histories, phase transformations | Predicting molten pool dynamics in additive manufacturing [22] [21] |
| Microstructural Characterization | Scanning Electron Microscopy, Electron Backscatter Diffraction, X-ray Tomography | Quantify microstructural features (grain size, phase distribution, porosity) | Constructing Representative Volume Elements for mechanical prediction [22] [20] |
| Microstructural Modeling | Phase-field Models, Cellular Automata, CALPHAD | Predict microstructural evolution during processing | Estimating phase fractions in dual-phase steels [19] |
| Property Prediction | Crystal Plasticity FEM, Micromechanical Models, Representative Volume Elements | Predict mechanical properties from microstructure | Stress-strain response prediction in SLS parts [22] |
| Data-Driven Modeling | Gaussian Process Regression, Bayesian Optimization, Active Learning | Build surrogate models, optimize design spaces, guide experiments | Multi-information source fusion for alloy design [19] [20] |
| High-Performance Computing | Parallel Computing Architectures, Cloud Computing | Enable multiscale simulations, high-throughput screening | High-throughput density functional theory calculations [1] |

The historical evolution of PSPP frameworks in materials science reveals a clear trajectory from qualitative, experience-based approaches toward quantitative, integrated, and increasingly autonomous methodologies. The field has progressed from simple linear PSPP models to sophisticated frameworks that explicitly account for microstructure as a central design variable, leverage multiple information sources through Bayesian optimization, and harness data-driven surrogate models to accelerate materials discovery [22] [19] [20].