Unlocking Innovation: A Guide to Multimodal Data Extraction from Materials Patents


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive overview of multimodal AI for extracting valuable data from materials patents. It covers the fundamental principles of processing text, images, and metadata from patent documents, explores advanced methodologies and tools for practical application, addresses common challenges and optimization strategies, and evaluates current capabilities through benchmarks and comparative analysis. The goal is to equip professionals with the knowledge to accelerate materials discovery and R&D by effectively leveraging the rich, yet complex, information embedded in patent literature.

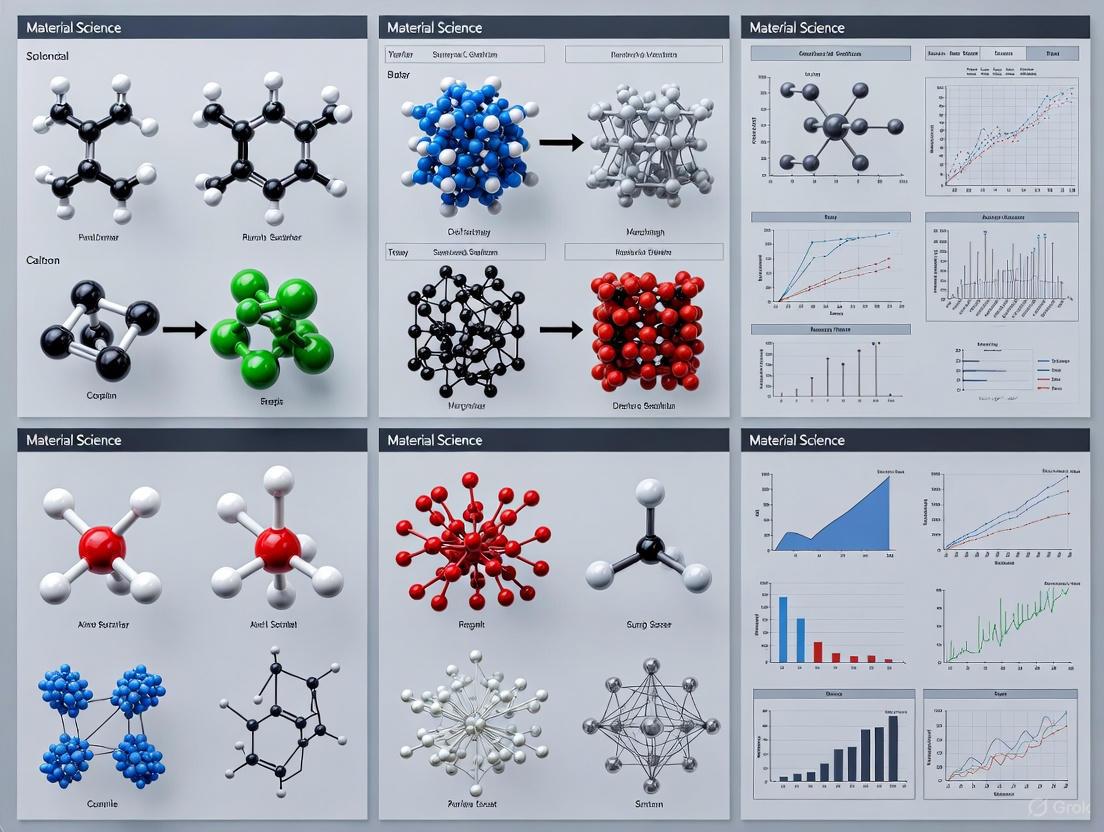

Why Multimodal Data is the Key to Unlocking Materials Patents

For researchers and scientists in drug development and materials science, a modern patent is a rich, multimodal dataset. Moving beyond traditional text-centric analysis, this application note details protocols for extracting and integrating data from chemical structures, visual illustrations, and quantitative property measurements. By treating patents as structured data repositories, professionals can accelerate innovation, strengthen intellectual property positions, and perform more comprehensive competitive landscape analyses. The methodologies outlined here are designed for integration within a broader research framework focused on multimodal data extraction from materials patents.

The Multimodal Nature of Materials Patents

A materials patent is a composite entity where protection is secured through the interplay of multiple data modalities. The claim defines the legal scope, the written description enables reproduction, and the visual and quantitative data provide the evidence of novelty and structure. A recent ruling by the U.S. Court of Appeals for the Federal Circuit underscores the critical importance of defining a specific, non-natural material with measurable parameters that reflect the material's underlying structure [1]. This legal precedent reinforces the need for a multimodal approach to both drafting and analyzing patents, as the validity of a claim can hinge on the successful linkage of a measurable property to a structural feature.

The following table summarizes the key data modalities present in a typical advanced materials patent and their primary functions in establishing patentability.

Table 1: Core Data Modalities in a Materials Patent

| Modality | Primary Function | Examples in Materials Science |
|---|---|---|
| Textual Claims | Define the legal boundaries of the invention. | Composition of matter claims, method of use claims. |
| Structural Formulas | Depict the molecular or atomic architecture. | Chemical structures, polymer repeating units, crystalline lattices. |
| Micrographic Evidence | Provide visual proof of structure and morphology. | Scanning Electron Microscope (SEM) images, Transmission Electron Microscope (TEM) images. |
| Quantitative Property Data | Demonstrate novelty and utility through measurable characteristics. | Melting point, tensile strength, catalytic activity, porosity, conductivity. |
| Graphical Data | Illustrate performance advantages over prior art. | X-ray Diffraction (XRD) patterns, Differential Scanning Calorimetry (DSC) thermograms, performance comparison charts. |

Application Note: A Protocol for Multimodal Data Extraction

This protocol provides a step-by-step methodology for systematically deconstructing a materials patent to create a structured, machine-readable dataset suitable for analysis, validation, and trend forecasting.

Phase 1: Document Acquisition and Preprocessing

Objective: Obtain a high-fidelity digital copy of the complete patent document.

Step 1: Source Identification

- Retrieve the patent document from official repositories such as the USPTO, Google Patents, or the European Patent Office's Espacenet. The PatentsView platform also provides enhanced, disambiguated U.S. patent data for large-scale analysis [2].

- Prioritize the full-document PDF, which includes the front page, drawings, description, claims, and, for EP and WO publications, the search report.

Step 2: Quality Assurance and Optical Character Recognition (OCR)

- Visually inspect the document for clarity and completeness. For older patents available only as scanned images, apply a modern OCR engine (e.g., Tesseract OCR, Adobe Acrobat Pro) configured for scientific and technical terminology.

- Output: A searchable PDF file with selectable text.

Phase 2: Modality-Specific Data Extraction

Objective: Isolate and digitize information from each distinct modality.

Step 3: Textual Data Extraction

- Tool: Use natural language processing (NLP) libraries (e.g., spaCy, scispaCy) or custom scripts.

- Protocol:

- Segment the document text into fields: Title, Abstract, Background, Summary, Detailed Description, Claims.

- From the "Claims" section, extract composition claims, focusing on the specific, measurable parameters that define the material [1].

- From the "Detailed Description," identify all passages that link a measurable property to a structural feature of the material.
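The segmentation and claim-parsing steps can be prototyped with regular expressions before moving to an NLP library. The sketch below works under stated assumptions. It expects plain text with upper-case section headings at line starts, and the heading list is hypothetical. It splits claims on leading claim numbers and treats a claim as independent when it does not refer back to another claim.

```python
import re

# Illustrative heading list (an assumption): real full texts differ by office and
# drafter, e.g. US claims often follow "What is claimed is:" rather than "CLAIMS".
SECTION_HEADINGS = ["ABSTRACT", "BACKGROUND", "SUMMARY", "DETAILED DESCRIPTION", "CLAIMS"]

# An upper-case heading at the start of a line; the rest of that line
# (e.g. "OF THE INVENTION") is treated as part of the heading.
HEADING_RE = re.compile(
    r"^[ \t]*(" + "|".join(re.escape(h) for h in SECTION_HEADINGS) + r")\b.*$",
    re.MULTILINE,
)


def segment_patent_text(text: str) -> dict[str, str]:
    """Split plain patent text into {heading: body} using HEADING_RE."""
    matches = list(HEADING_RE.finditer(text))
    sections = {}
    for i, m in enumerate(matches):
        end = matches[i + 1].start() if i + 1 < len(matches) else len(text)
        sections[m.group(1)] = text[m.end():end].strip()
    return sections


def split_claims(claims_text: str) -> list[str]:
    """Split a claims block on claim numbers such as '1.' at line starts."""
    parts = re.split(r"(?m)^[ \t]*\d+\.\s+", claims_text)
    return [p.strip() for p in parts if p.strip()]


def independent_claims(claims: list[str]) -> list[str]:
    """Keep claims that do not refer back to another claim ('of claim 1')."""
    return [c for c in claims if not re.search(r"\bclaims?\s+\d+", c, re.IGNORECASE)]
```

Drafting conventions vary, and body text that happens to begin with a heading word would be mis-split. When the structured XML full text published by patent offices is available, it is usually a more reliable starting point than OCR output.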

Step 4: Visual Data Extraction and Analysis

- Tool: Image processing libraries (e.g., OpenCV, Pillow) and multimodal AI models.

- Protocol:

- Identify and separate all figures from the patent document.

- Classify figures using an image classifier fine-tuned from a pre-trained backbone (e.g., ResNet) or a rule-based system (e.g., caption analysis) into categories: Chemical Structure, Micrograph, Graph/Plot, Process Flowchart, Schematic Diagram.

- For chemical structure images, use an optical chemical structure recognition tool (e.g., OSRA or DECIMER) to convert the image into a machine-readable format (e.g., SMILES, InChI).

- For micrographs and diagrams, extract key regions of interest. Advanced methods can use a text-guided multimodal relationship extraction approach, where the textual description of a figure guides the visual encoder to focus on relevant parts of the image, reducing interference from irrelevant visual information [3].
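Step 4 mentions caption analysis as a rule-based alternative to a trained classifier. Below is a minimal sketch of that idea. The keyword rules are illustrative assumptions, not a validated taxonomy. They are checked in order, so the first matching category wins.

```python
import re

# Illustrative keyword rules (an assumption); checked in order, first match wins.
CAPTION_RULES = [
    ("Micrograph", r"\b(SEM|TEM|micrograph\w*|microscop\w*)\b"),
    ("Graph/Plot", r"\b(XRD|DSC|TGA|spectr\w*|curves?|plots?|graphs?|patterns?|isotherms?)\b"),
    ("Chemical Structure", r"\b(chemical structure|structural formula|formula|compound \d+)\b"),
    ("Process Flowchart", r"\b(flow ?charts?|flow diagrams?|process steps?)\b"),
    ("Schematic Diagram", r"\b(schematic|cross-section\w*|apparatus|device)\b"),
]


def classify_caption(caption: str) -> str:
    """Assign a figure category from its caption text, or 'Unclassified'."""
    for label, pattern in CAPTION_RULES:
        if re.search(pattern, caption, re.IGNORECASE):
            return label
    return "Unclassified"
```

Captions that match no rule can be routed to the image classifier or to manual review.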

Step 5: Numerical and Graphical Data Extraction

- Tool: Data extraction software (e.g., WebPlotDigitizer) or custom algorithms.

- Protocol:

- Identify graphs and charts containing performance data (e.g., stress-strain curves, dose-response plots).

- Use WebPlotDigitizer to manually or automatically extract numerical (x,y) data points from these graphs.

- Compile all numerically stated properties from the text (e.g., "a melting point of 150°C ± 5°C") into a structured table.
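The property-compilation step can be prototyped with a regular expression. The sketch below assumes a small, hypothetical vocabulary of property names and units. It only handles the phrasing "<property> of/is/was <value> [± <tolerance>] <unit>". Ranges, inequalities, and unit conversion are out of scope.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical vocabularies; extend them for the target materials domain.
PROPERTIES = ["melting point", "tensile strength", "porosity", "conductivity", "surface area"]
UNITS = ["°C", "MPa", "GPa", "S/cm", "m2/g", "%"]

NUM = r"\d+(?:\.\d+)?"
UNIT = "(?:" + "|".join(re.escape(u) for u in UNITS) + ")"

PROPERTY_RE = re.compile(
    r"(?P<prop>" + "|".join(PROPERTIES) + r")\s+(?:of|is|was)\s+(?:about\s+)?"
    r"(?P<value>" + NUM + r")\s*(?P<unit1>" + UNIT + r")?"
    r"(?:\s*±\s*(?P<tol>" + NUM + r")\s*(?P<unit2>" + UNIT + r")?)?",
    re.IGNORECASE,
)


@dataclass
class PropertyValue:
    prop: str
    value: float
    tolerance: Optional[float]
    unit: str


def extract_properties(text: str) -> list[PropertyValue]:
    """Return one structured row per property statement found in the text."""
    rows = []
    for m in PROPERTY_RE.finditer(text):
        tol = m.group("tol")
        rows.append(PropertyValue(
            prop=m.group("prop").lower(),
            value=float(m.group("value")),
            tolerance=float(tol) if tol is not None else None,
            # The unit may appear after the value, after the tolerance, or both.
            unit=m.group("unit1") or m.group("unit2") or "",
        ))
    return rows
```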

Phase 3: Data Fusion and Structured Output

Objective: Integrate the extracted multimodal data into a unified representation.

Step 6: Entity Resolution and Linkage

- Create a central database record for the patent (using the publication number as a unique key).

- Link all extracted data to this central record. For example, link a specific SEM image (Visual Modality) to the quantitative porosity data (Numerical Modality) it supports and the specific claim (Textual Modality) it enables.
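Step 6 amounts to a keyed record with links between pieces of evidence. Below is a minimal in-memory sketch. The class names, the `ref` labels, and the placeholder publication number are hypothetical. A real deployment would more likely use a relational or graph database.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    modality: str   # e.g. "visual", "numerical", "textual"
    ref: str        # local label within the patent, e.g. "FIG. 2" or "claim 1"
    payload: dict = field(default_factory=dict)


@dataclass
class PatentRecord:
    publication_number: str                       # unique key for the record
    evidence: dict = field(default_factory=dict)  # ref -> Evidence
    links: set = field(default_factory=set)       # unordered pairs of refs

    def add(self, item: Evidence) -> None:
        self.evidence[item.ref] = item

    def link(self, ref_a: str, ref_b: str) -> None:
        """Record that two pieces of evidence support each other."""
        for ref in (ref_a, ref_b):
            if ref not in self.evidence:
                raise KeyError(f"unknown evidence {ref!r}")
        self.links.add(frozenset((ref_a, ref_b)))

    def linked_to(self, ref: str) -> list:
        """All refs directly linked to `ref`, sorted."""
        return sorted(other for pair in self.links if ref in pair for other in pair if other != ref)
```

Storing links as unordered pairs means that a link from an SEM figure to a porosity value can be queried from either side.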

Step 7: Cross-Modal Validation

- Validate consistency across modalities. For instance, check that the crystalline structure depicted in an XRD pattern (Graphical Modality) is consistent with the crystal phase described in the text (Textual Modality).
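One concrete form of this check compares peak positions digitized from an XRD pattern with the reference peaks of the phase named in the text. The sketch assumes both are lists of 2θ values in degrees and ignores intensities. The tolerance, the 80% match threshold, and the peak list in the example are placeholders, not real phase data.

```python
def match_peaks(observed: list[float], reference: list[float], tol: float = 0.2):
    """Split reference 2θ peaks into those found in `observed` (within tol) and those missing."""
    matched, missing = [], []
    for ref in reference:
        if any(abs(obs - ref) <= tol for obs in observed):
            matched.append(ref)
        else:
            missing.append(ref)
    return matched, missing


def phase_is_consistent(observed, reference, tol=0.2, min_fraction=0.8) -> bool:
    """Flag the text/figure pair as consistent if enough reference peaks are present."""
    matched, _ = match_peaks(observed, reference, tol)
    return len(matched) / len(reference) >= min_fraction
```

Returning the missing peaks, and not only a yes/no flag, lets a reviewer see why a text/figure pair was flagged.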

The following diagram illustrates the complete multimodal data extraction and fusion workflow.

Experimental Protocol: Text-Guided Visual Feature Extraction

This protocol details a specific technique for refining visual data extraction from patent figures, using textual context to guide the process and improve accuracy.

Objective: To extract visual feature encodings from a patent figure that are specifically relevant to the accompanying text, thereby filtering out irrelevant visual noise [3].

Principle: A pre-trained visual encoder (e.g., CLIP) is modulated by a text-based "top-down attention" signal. The text representation acts as a prior, guiding the visual encoder to re-weight visual features based on their semantic relevance to the text.

Workflow:

- Input: A global image (e.g., a complex diagram from a patent) and its corresponding descriptive text.

- Text Encoding: The descriptive text is processed by a text encoder to generate a text feature representation (φ).

- Initial Visual Encoding: The global image is processed by a visual encoder to generate an initial visual feature representation.

- Re-weighting and Feedback: The similarity between the initial visual features and the text features (φ) is calculated. A decoder generates a top-down signal (x_td) that is fed back to the self-attention modules of the visual encoder, updating its Value matrices.

- Secondary Forward Propagation: The image is processed again by the updated visual encoder, which now produces a refined visual feature encoding that is more aligned with the text semantics.

- Output: A visual feature representation that is semantically relevant to the input text.
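The core idea of this protocol, weighting visual features by their similarity to the text feature φ, can be illustrated without a neural network. The sketch below is a deliberate simplification. It re-weights a set of patch feature vectors with a softmax over cosine similarity to φ and pools them in a single pass. It does not implement the decoder, the feedback into the encoder's Value matrices, or the second forward pass described in [3]. The temperature value is arbitrary.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def text_guided_pool(patch_feats, text_feat, temperature=0.1):
    """Re-weight patch features by relevance to the text feature, then pool.

    Weights are softmax(cosine(patch, text) / temperature); returns (weights, pooled).
    Assumes patch and text features live in one shared embedding space.
    """
    scores = [cosine(p, text_feat) / temperature for p in patch_feats]
    top = max(scores)  # subtract the max for a numerically stable softmax
    exps = [math.exp(s - top) for s in scores]
    z = sum(exps)
    weights = [e / z for e in exps]
    dim = len(patch_feats[0])
    pooled = [sum(w * p[d] for w, p in zip(weights, patch_feats)) for d in range(dim)]
    return weights, pooled
```

A lower temperature concentrates the weight on the few patches most similar to the text. That is the "filtering out irrelevant visual noise" effect in its simplest form.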

The logical flow and data transformation of this protocol are shown below.

Key Reagent Solutions:

- Pre-trained CLIP Model: A neural network pre-trained on a vast number of image-text pairs. It provides the foundational text and visual encoders that understand the relationship between language and visual concepts.

- Computational Framework (e.g., PyTorch/TensorFlow): An open-source machine learning library that facilitates the construction, training, and deployment of deep learning models, including the custom architecture for top-down feedback.

- Patent Image Dataset: A curated collection of figures and diagrams extracted from materials patents, annotated with corresponding descriptive text from the specification.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential tools and resources for implementing the protocols described in this application note.

Table 2: Essential Tools for Multimodal Patent Analysis

| Tool/Resource | Type | Primary Function | Relevance to Protocol |
|---|---|---|---|
| ChemDataExtractor | Software Library | Chemical Information Extraction | Automatically extracts chemical names, properties, and measurements from text and tables. |
| OSRA | Software Utility | Image-to-Structure Conversion | Converts images of chemical structures into SMILES or InChI strings. |
| WebPlotDigitizer | Web Application | Graphical Data Extraction | Digitizes data points from graphs and charts in patent documents. |
| spaCy/scispaCy | NLP Library | Text Processing and Entity Recognition | Segments patent text and identifies key scientific entities and relationships. |
| OpenCV/Pillow | Image Processing Library | Image Processing | Handles image preprocessing, segmentation, and basic analysis of patent figures. |
| PatentsView | Data Platform | Enhanced Patent Data | Provides bulk, disambiguated U.S. patent data for large-scale trend analysis [2]. |
| The Lens Platform | Data Platform | Patent & Scholarly Work Metadata | Offers extensive data on patent-literature linkages for analyzing science-innovation trends [4]. |
| Locarno Classification | Classification System | International Standard for Design Patents | Essential for classifying the ornamental aspects of materials-related designs [5]. |

The paradigm for materials patent analysis is shifting from a text-locked review to an integrated, multimodal interrogation. By adopting the protocols and tools outlined in this application note, researchers and drug development professionals can unlock a deeper, more structured understanding of intellectual property. This approach not only accelerates R&D cycles by facilitating faster prior art searches and competitive analysis but also provides a robust framework for drafting stronger, more defensible patents grounded in the explicit linkage of measurable properties to material structure. Integrating these multimodal extraction techniques is fundamental to advancing research in the field of materials patent informatics.

Application Notes: Multimodal Data Extraction in Patent Research

The analysis of materials and drug patents requires the processing of diverse, unstructured data types. Effective multimodal data extraction systems parse these elements into a structured, machine-readable format, enabling comprehensive prior art searches, trend analysis, and competitive intelligence [6]. The core data types present unique challenges and opportunities for automation.

Textual Claims form the legal foundation of a patent. Advanced Natural Language Processing (NLP) and Large Language Models (LLMs) are now used to understand technical context beyond simple keyword matching. This allows R&D intelligence platforms to extract key concepts, identify white space opportunities, and connect patents with relevant scientific literature [6].

Schematic Images and Flowcharts illustrate complex processes, device diagrams, and experimental workflows. Computer vision techniques can segment and classify these images. A related indexing approach comes from video search: a scene detector identifies scene boundaries in a video input, and the system formulates a composite embedding that indexes the visual content and makes it searchable [7].

Molecular Structures are critical in pharmaceutical and chemical patents. Traditional rule-based segmentation tools struggle with graphical variability and noise [8]. Deep learning models, such as the Vision Transformer (ViT)-based Chemistry-Segment Anything Model (ChemSAM), achieve state-of-the-art results by identifying and locating chemical structure depictions at the pixel level, then clustering the generated masks to extract pure single structures [8].

Tabular Data presents statistical summaries and experimental results. Well-designed tables aid comparison, reduce visual clutter, and increase readability. Key principles for effective tables include right-flush alignment of numbers, use of tabular fonts, and avoiding heavy grid lines to facilitate accurate data extraction and interpretation [9].

The integration of these extracted data types into a unified index, such as through an embedding aggregator, is a key function of modern multimodal extraction systems, powering sophisticated search and analysis capabilities for R&D teams [7].
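An embedding aggregator can be as simple as averaging normalized per-modality embeddings into one vector per patent, then ranking patents by cosine similarity to a query. The sketch below assumes that all modality encoders map into one shared vector space of equal dimension, as CLIP-style models do for text and images. Mean pooling is an illustrative choice here, not necessarily the aggregator described in [7].

```python
import math


def l2_normalize(v):
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v] if n else list(v)


def aggregate(modality_embeddings):
    """Composite embedding: mean of L2-normalized per-modality vectors, re-normalized."""
    unit = [l2_normalize(e) for e in modality_embeddings]
    mean = [sum(col) / len(unit) for col in zip(*unit)]
    return l2_normalize(mean)


class CompositeIndex:
    """Brute-force cosine-similarity index over one composite vector per patent."""

    def __init__(self):
        self.keys, self.vectors = [], []

    def add(self, key, modality_embeddings):
        self.keys.append(key)
        self.vectors.append(aggregate(modality_embeddings))

    def search(self, query, k=3):
        q = l2_normalize(query)
        scored = [(sum(a * b for a, b in zip(q, v)), key) for key, v in zip(self.keys, self.vectors)]
        scored.sort(reverse=True)
        return [key for _, key in scored[:k]]
```

Normalizing each modality before averaging keeps one modality with a large vector norm from dominating the composite. For large collections, an approximate nearest-neighbour library would replace the brute-force scan.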

Experimental Protocols

Protocol: Chemical Structure Segmentation from Patent Documents Using ChemSAM

Purpose: To automatically identify and segment depictions of chemical structures from image-based sources, such as scanned patent documents or scientific articles, into isolated, machine-readable image files.

Principle: This deep learning-based method uses a Vision Transformer (ViT) encoder-decoder architecture to perform pixel-level classification, distinguishing pixels belonging to chemical structures from the background. This approach is robust to variations in image quality and style and avoids the need for handcrafted features [8].

Research Reagent Solutions

- ChemSAM Model: A deep learning model adapting the Segment Anything Model (SAM) for chemistry. Its core components are an image encoder, a prompt encoder, and a mask decoder. It uses adapter modules to incorporate chemical domain knowledge without full fine-tuning [8].

- Input Image: A document image in PNG or similar format, derived from a PDF or scan.

- Python/PyTorch Environment: The backend for running ChemSAM inference.

- Post-processing Algorithm: Custom code for clustering generated masks based on connectivity and refining them to ensure each contains a single chemical structure.

Procedure

- Input Preparation: Convert the patent document (PDF) into an image file (e.g., PNG). Ensure the image resolution is sufficient for feature detection.

- Model Inference: Process the input image through the ChemSAM network.

- The image encoder, based on a pre-trained ViT, processes the image into an embedding.

- The mask decoder uses this embedding to generate initial probability masks, where each pixel value indicates the likelihood it belongs to a chemical structure.

- Mask Post-processing:

- Cluster the generated masks based on their spatial connectivity.

- Apply refinement algorithms to update each mask cluster, ensuring it cleanly encapsulates a single chemical structure and excludes non-molecular parts like arrows or labels.

- Output Generation: Export the final, segmented images of individual chemical structures for downstream tasks, such as conversion to SMILES or chemical graph data [8].
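The mask post-processing step can be approximated with classic connected-component labeling. The sketch below binarizes a per-pixel probability map, groups foreground pixels into 8-connected regions, and discards fragments smaller than a minimum area. It returns one bounding box per candidate structure. It is a generic stand-in written for illustration, and the threshold and minimum area are placeholders. ChemSAM's own clustering and refinement algorithm [8] is more involved.

```python
from collections import deque


def segment_structures(prob, threshold=0.5, min_area=2):
    """Return (y0, x0, y1, x1) boxes of 8-connected regions with prob >= threshold.

    `prob` is a 2-D list of per-pixel probabilities, e.g. a mask decoder output.
    Regions with fewer than `min_area` pixels are treated as noise and dropped.
    """
    h, w = len(prob), len(prob[0])
    fg = [[p >= threshold for p in row] for row in prob]
    seen = [[False] * w for _ in range(h)]
    boxes = []
    for y in range(h):
        for x in range(w):
            if not fg[y][x] or seen[y][x]:
                continue
            # Breadth-first flood fill from this unvisited foreground pixel.
            seen[y][x] = True
            queue = deque([(y, x)])
            y0, x0, y1, x1, area = y, x, y, x, 0
            while queue:
                cy, cx = queue.popleft()
                area += 1
                y0, x0 = min(y0, cy), min(x0, cx)
                y1, x1 = max(y1, cy), max(x1, cx)
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = cy + dy, cx + dx
                        if 0 <= ny < h and 0 <= nx < w and fg[ny][nx] and not seen[ny][nx]:
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            if area >= min_area:
                boxes.append((y0, x0, y1, x1))
    return boxes
```

The bounding boxes can then be used to crop each candidate structure from the page image before OCSR.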

Visualization of Workflow

Protocol: Knowledge Component Extraction for Patent Analysis Using Large Multimodal Models

Purpose: To automatically extract Knowledge Components (KCs)—acquired units of cognitive function or structure—from multimodal educational or technical content to enhance knowledge tracing and trend analysis in patent landscapes.

Principle: Instruction-tuned Large Multimodal Models (LMMs) can parse text and images to identify and describe inherent knowledge components. These extracted KCs can be clustered and used to model relationships within a technology domain, providing a structured understanding of the knowledge required to solve specific problems described in patents [10].

Research Reagent Solutions

- Large Multimodal Model (LMM): An instruction-tuned model (e.g., GPT-4o) capable of processing both text and images via an API.

- Parsed Content Data: Text and images extracted from patent documents or scientific literature.

- Sentence Embedding Model: A model (e.g., from sentence-transformers) to convert LMM-generated KC descriptions into numerical vectors.

- Clustering Algorithm: An algorithm (e.g., K-means) to group similar KCs based on their sentence embeddings.

Procedure

- Data Preprocessing: Parse the target corpus (e.g., patent collection) to extract textual descriptions and schematic images.

- LMM-based KC Extraction: Use the LMM API to analyze the parsed content. The model identifies and describes the key knowledge components required to understand the technical concepts.

- Embedding and Clustering: Generate sentence embeddings for all extracted KC descriptions. Use a clustering algorithm to group similar KCs, creating a structured ontology of knowledge for the domain.

- Validation and Integration: The utility of the generated KCs can be validated by using them as features in knowledge tracing models (e.g., Performance Factors Analysis) and comparing their performance to human-tagged labels [10]. The final clustered KCs serve as a search index and map of technological knowledge.
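The embedding-and-clustering step can be sketched with plain k-means. The example assumes the KC descriptions have already been embedded, for instance with a sentence-transformers model. The 2-D vectors in the test are toy values standing in for real embeddings. The deterministic farthest-point initialization is a simplification of the usual k-means++ seeding.

```python
import math


def kmeans(points, k, iters=100):
    """Plain k-means on lists of floats; returns (cluster index per point, centroids)."""
    # Deterministic farthest-point seeding (a simplification of k-means++).
    centroids = [list(points[0])]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        centroids.append(list(farthest))
    assign = None
    for _ in range(iters):
        new_assign = [min(range(k), key=lambda j: math.dist(p, centroids[j])) for p in points]
        if new_assign == assign:
            break  # converged: no point changed cluster
        assign = new_assign
        for j in range(k):
            members = [p for p, a in zip(points, assign) if a == j]
            if members:
                centroids[j] = [sum(dim) / len(members) for dim in zip(*members)]
    return assign, centroids


def group_kcs(descriptions, embeddings, k):
    """Group KC descriptions by the k-means cluster of their embeddings."""
    assign, _ = kmeans(embeddings, k)
    groups = {}
    for text, cluster in zip(descriptions, assign):
        groups.setdefault(cluster, []).append(text)
    return list(groups.values())
```

Choosing k is itself a modelling decision. One option is to compare several values against the knowledge-tracing validation described above.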

Visualization of Workflow

Table 1: Performance Comparison of Chemical Structure Segmentation Tools

| Model / Tool | Primary Methodology | Key Strengths | Noted Limitations |
|---|---|---|---|
| ChemSAM [8] | Deep Learning (Vision Transformer + Adapter) | State-of-the-art on benchmarks; robust to image quality/style; pixel-level accuracy. | Requires post-processing to ensure single-structure segments. |
| DECIMER-Segmentation [8] | Deep Learning (Mask R-CNN) | Detects and segments chemical structures. | Segments may include non-molecular parts (arrows, lines); may overlook some text within structures. |
| OSRA [8] | Rule-based | Open-source solution using feature density (black pixel ratio). | Struggles with wavy bonds, overlapping lines, and is sensitive to noise. |
| Staker et al. [8] | Deep Learning (U-Net) | Uses a U-Net model trained on semi-synthetic data. | Overall segmentation and resolution accuracy reported between 41% and 83%. |

Table 2: Key Features of Selected Patent Search and Analysis Tools (2025) [6]

| Platform | Primary Focus | Distinguishing Features | Ideal Use Case |
|---|---|---|---|
| Cypris | R&D Intelligence | Processes 500M+ technical documents; multimodal search (e.g., structure upload); proprietary R&D ontology. | Enterprise R&D teams needing technical insight and innovation opportunity identification. |
| PatSnap | IP Analytics & Management | Comprehensive global patent coverage; detailed analytics and visualization dashboards; patent valuation. | Large enterprises with dedicated IP departments requiring portfolio management. |
| Derwent Innovation | Patent Data Curation | Human-enhanced patent abstracts (Derwent World Patents Index); strong chemical structure search. | Pharmaceutical and chemical companies conducting prior art and FTO analysis. |
| The Lens | Academic-Industrial Intelligence | Integrates patents with scholarly literature; open-access model; PatSeq for biological sequences. | Academic institutions and researchers tracking innovation impact and technology transfer. |

Table 3: Principles for Effective Tabular Data Presentation [9]

| Design Principle | Specific Guideline | Rationale |
|---|---|---|
| Aid Comparisons | Right-flush align numbers and their headers. | Aligns place values vertically, making numeric comparison easier. |
| Aid Comparisons | Use a tabular font for numeric columns. | Ensures each number has equal width, maintaining vertical alignment. |
| Reduce Visual Clutter | Avoid heavy grid lines. | Removes unnecessary visual elements that distract from the data. |
| Increase Readability | Ensure headers stand out from the body. | Helps guide the reader and clearly defines data categories. |
| Increase Readability | Use active, concise titles. | Clearly communicates the table's purpose and key takeaway. |
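The first two principles can be approximated in plain-text output, where a monospaced font already gives every digit equal width. Below is a small sketch that right-aligns numeric columns and their headers and left-aligns text columns. The function name and layout choices are illustrative. The example values are the CRISPR and cyanobacteria patent counts cited elsewhere in this note.

```python
def format_table(headers, rows, gap="  "):
    """Render a plain-text table; numeric columns and their headers are right-flush."""
    ncols = len(headers)
    numeric = [all(isinstance(r[i], (int, float)) for r in rows) for i in range(ncols)]
    # Thousands separators keep place values readable once right-aligned.
    body = [[f"{v:,}" if numeric[i] else str(v) for i, v in enumerate(r)] for r in rows]
    cells = [[str(h) for h in headers]] + body
    widths = [max(len(row[i]) for row in cells) for i in range(ncols)]

    def render(row):
        parts = [c.rjust(widths[i]) if numeric[i] else c.ljust(widths[i]) for i, c in enumerate(row)]
        return gap.join(parts).rstrip()

    # A single light rule under the header instead of a full grid.
    lines = [render(cells[0]), gap.join("-" * w for w in widths)]
    lines += [render(row) for row in body]
    return "\n".join(lines)


print(format_table(["Landscape", "Patents"], [["CRISPR", 60776], ["Cyanobacteria", 33489]]))
```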

Patent documents serve as a critical repository of technical knowledge, yet they present a formidable challenge for automated analysis due to a fundamental semantic gap between their specialized language and what computational models can readily understand. This gap arises from the unique structural and linguistic characteristics of patent texts, which combine legal, technical, and scientific terminology within a highly structured format [11]. The problem is particularly acute in multimodal data extraction from materials and drug development patents, where technical descriptions of chemical structures, biological processes, and experimental protocols require sophisticated interpretation beyond conventional natural language processing capabilities.

The patent life cycle, spanning from initial conception through examination to grant and maintenance, further compounds these challenges [11]. At each stage, different stakeholders—inventors, examiners, attorneys, and researchers—interact with the documents with varying interpretive frameworks, widening the semantic gap. This application note provides structured methodologies and analytical frameworks to bridge this divide, enabling more effective extraction and utilization of knowledge embedded within pharmaceutical and materials patents through advanced computational approaches.

Quantitative Landscape of Patent Semantics

Statistical Characterization of Patent Documents

Table 1: Quantitative Analysis of Patent Document Characteristics

| Characteristic | Statistical Measure | Data Source | Implications for Semantic Gap |
|---|---|---|---|
| Global Patent Applications | 3.46 million applications in 2022 worldwide [12] | WIPO Statistics | Scale necessitates automated processing despite semantic complexity |
| CRISPR Patent Landscape | 60,776 patents referencing 193,517 scholarly works [4] | Lens Platform (2023) | Demonstrates dense interconnection between patents and academic literature |
| Cyanobacteria Patent Landscape | 33,489 patents with 84,415 referenced scholarly works [4] | Lens Platform (2023) | Highlights interdisciplinary knowledge integration challenges |
| International Patent Classifications | 7,288 IPCs for cyanobacteria; 5,118 for CRISPR patents [4] | World Intellectual Property Organization | Classification complexity requires nuanced semantic understanding |
| Academic Patent Contributions | Australian universities: 3.18% of publications but only 0.15% of global patent filings [13] | Australian Research Council | Indicates systemic barriers in knowledge translation |

Table 2: Patent Citation Typology and Semantic Significance

| Citation Type | Definition | Semantic Significance | Frequency in Analysis |
|---|---|---|---|
| X-Type Citations | Documents that are novelty or inventive step-destroying [13] | High semantic value for determining patent boundaries | 47% of significant citations in CRISPR dataset |
| Y-Type Citations | Documents that render claims obvious when combined [13] | Medium semantic value indicating combinatorial prior art | 32% of significant citations in CRISPR dataset |
| A-Type Citations | Background documents without substantive impact on claims [13] | Low semantic value for novelty assessment | 21% of citations in analytical samples |
| Non-Patent Literature | Academic papers, technical journals, websites cited by examiners [13] | Critical for tracing scientific foundation of inventions | 193,517 scholarly works in CRISPR patents |

Experimental Protocols for Semantic Gap Analysis

Protocol 1: Multimodal Patent Image Retrieval

Objective: Implement and validate a language-informed, distribution-aware multimodal approach for patent image feature learning to enhance semantic understanding of patent drawings and diagrams [14].

Materials and Reagents:

- DeepPatent2 dataset (or equivalent patent image corpus)

- Pre-trained Visual Language Model (e.g., CLIP or similar architecture)

- Large Language Model API access (e.g., GPT-4, Claude, or equivalent)

- Computational resources with GPU acceleration

- Python 3.8+ with PyTorch/TensorFlow ecosystem

Procedure:

Data Preprocessing

- Collect patent images and corresponding textual descriptions from target domain (e.g., materials science, drug development)

- Apply image normalization and augmentation techniques

- Extract and clean accompanying text descriptions, claims, and abstracts

Language Model Enhancement

- Generate detailed, alias-containing, free-form descriptions using LLM prompting [14]

- Create semantic embeddings for both original and enhanced text descriptions

- Implement cross-modal alignment between visual and textual representations

Model Training with Distribution-Aware Losses

- Implement InfoNCE loss for contrastive learning between image-text pairs

- Add coarse-grained losses with uncertainty factors tailored for long-tail distribution of patent classifications [14]

- Train the model for a minimum of 50 epochs, with the batch size adapted to the available hardware

Validation and Metrics

- Evaluate using mean Average Precision (mAP), Recall@10, and MRR@10

- Compare performance against baseline methods without multimodal enhancement

- Conduct ablation studies to quantify contribution of each component
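For reference, the standard InfoNCE objective used in CLIP-style image-text training treats each image's matching caption as the positive and every other caption in the batch as a negative. The plain-Python sketch below computes it from a precomputed similarity matrix. The temperature of 0.07 is a common default, not a value taken from [14]. The coarse-grained, uncertainty-weighted losses of that work are not reproduced.

```python
import math


def info_nce(sim, temperature=0.07):
    """Mean InfoNCE loss where sim[i][j] is the similarity of image i and text j.

    Matching pairs lie on the diagonal; all other texts in the batch are negatives.
    """
    total = 0.0
    for i, row in enumerate(sim):
        logits = [s / temperature for s in row]
        top = max(logits)  # log-sum-exp with max subtraction for stability
        log_z = top + math.log(sum(math.exp(x - top) for x in logits))
        total += log_z - logits[i]  # cross-entropy against the diagonal entry
    return total / len(sim)


def symmetric_info_nce(sim, temperature=0.07):
    """Average of the image-to-text and text-to-image directions."""
    sim_t = [list(col) for col in zip(*sim)]
    return 0.5 * (info_nce(sim, temperature) + info_nce(sim_t, temperature))
```

When all similarities are equal, the loss reduces to log(batch size). This offers a quick sanity check before training.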

Expected Outcomes: State-of-the-art or comparable performance in image-based patent retrieval with demonstrated improvements of mAP +53.3%, Recall@10 +41.8%, and MRR@10 +51.9% over baseline methods [14].
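The three metrics named in the validation step can be computed per query and averaged. Below is a minimal sketch. It assumes each query yields a ranked list of patent-image identifiers and a set of relevant identifiers. Average precision here is normalized by the total number of relevant items, which is one common convention.

```python
def average_precision(ranked, relevant):
    """AP over the full ranking, normalized by the number of relevant items."""
    hits, total = 0, 0.0
    for rank, item in enumerate(ranked, start=1):
        if item in relevant:
            hits += 1
            total += hits / rank  # precision at each relevant hit
    return total / len(relevant) if relevant else 0.0


def recall_at_k(ranked, relevant, k=10):
    return len(set(ranked[:k]) & set(relevant)) / len(relevant) if relevant else 0.0


def reciprocal_rank_at_k(ranked, relevant, k=10):
    for rank, item in enumerate(ranked[:k], start=1):
        if item in relevant:
            return 1.0 / rank
    return 0.0  # no relevant item in the top k


def evaluate(runs, k=10):
    """Average the metrics over queries; runs is a list of (ranked_ids, relevant_ids)."""
    n = len(runs)
    return {
        "mAP": sum(average_precision(r, rel) for r, rel in runs) / n,
        f"Recall@{k}": sum(recall_at_k(r, rel, k) for r, rel in runs) / n,
        f"MRR@{k}": sum(reciprocal_rank_at_k(r, rel, k) for r, rel in runs) / n,
    }
```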

Protocol 2: Patent-Literature Association Analysis

Objective: Identify statistically significant associations between patents and scholarly works to map knowledge flows and semantic relationships in targeted technological domains [4].

Materials and Reagents:

- Patent dataset from Lens platform or equivalent database

- Statistical computing environment (R 4.0+ or Python with SciPy/StatsModels)

- Network analysis software (Cytoscape, Gephi, or networkx library)

- Scholarly publication databases (PubMed, Web of Science, Crossref)

Procedure:

Data Collection and Curation

- Download comprehensive patent datasets for target technology (e.g., "CRISPR" or "cyanobacteria")

- Extract all referenced scholarly works from patent documents

- Collect international patent classification codes for all patents

Time-Series Analysis for Innovation Trends

- Count patents per priority year and IPC

- Model counts using negative binomial distribution [4]

- Identify statistically significant changes in innovation trends (p ≤ 10⁻¹⁰ and changes ≥100 patents)

Enrichment Analysis

- Select top 10 IPCs with most significant trend changes

- Collect all patents and referenced scholarly works for selected IPCs

- Perform one-sided Fisher's exact test for each scholarly work

- Apply false-discovery rate multiple-testing adjustment (adjusted p-values ≤ 0.001)

Network Visualization and Interpretation

- Construct bipartite network connecting patents to scholarly works

- Identify key publications significantly associated with innovation trends

- Interpret associations in context of technical requirements
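The enrichment step can be sketched with the standard library. For each scholarly work, a 2×2 table counts patents inside versus outside the selected IPCs that do or do not cite it. A one-sided Fisher's exact test (the upper hypergeometric tail) gives its p-value, and the Benjamini-Hochberg procedure adjusts for multiple testing. This is an illustrative implementation. At the scale of the Lens datasets, the usual library routes are `scipy.stats.fisher_exact(table, alternative="greater")` and `statsmodels.stats.multitest.multipletests(pvals, method="fdr_bh")`.

```python
from math import comb


def fisher_greater(a: int, b: int, c: int, d: int) -> float:
    """One-sided Fisher's exact p-value, P(X >= a), for the 2x2 table [[a, b], [c, d]].

    Rows: patents in the selected IPCs (a, b) vs. all other patents (c, d).
    Columns: patents citing the scholarly work (a, c) vs. not citing it (b, d).
    """
    n_trend = a + b
    n_cite = a + c
    total = a + b + c + d
    upper = min(n_trend, n_cite)
    # Sum hypergeometric probabilities for every count at least as extreme as a.
    tail = sum(comb(n_cite, x) * comb(total - n_cite, n_trend - x) for x in range(a, upper + 1))
    return tail / comb(total, n_trend)


def benjamini_hochberg(pvals: list[float]) -> list[float]:
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

Works whose adjusted p-value falls at or below the protocol's 0.001 cut-off would then be carried into the bipartite network.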

Expected Outcomes: Identification of ~1,000 scholarly works from ~254,000 publications that are statistically significantly over-represented in patents from changing innovation trends, revealing key scientific foundations for technological advances [4].

Visualization of Analytical Workflows

Multimodal Patent Analysis Framework

Multimodal Patent Analysis: This workflow illustrates the integration of visual and textual patent information with distribution-aware learning to bridge the semantic gap in patent analysis.

Semantic Gap Bridging Protocol

Semantic Gap Bridging: This diagram outlines the methodological approach to addressing fundamental challenges in patent semantic understanding through advanced computational techniques.

Research Reagent Solutions

Table 3: Essential Computational Reagents for Patent Semantic Analysis

| Reagent/Tool | Function | Application Context | Implementation Example |
|---|---|---|---|
| Visual Language Models (VLM) | Cross-modal alignment of images and text [14] | Patent image retrieval and multimodal understanding | CLIP, BLIP, or custom-trained variants |
| Large Language Models (LLM) | Semantic augmentation of patent text descriptions [14] | Generating detailed, alias-containing descriptions | GPT-4, Claude, or domain-fine-tuned models |
| Distribution-Aware Contrastive Loss | Handling long-tail distribution of patent classifications [14] | Improving performance on underrepresented classes | Modified InfoNCE with uncertainty factors |
| Patent Citation Analytics | Measuring research impact and knowledge flows [13] | Identifying significant scholarly works | X/Y-type citation analysis with de-duplication |
| Negative Binomial Modeling | Statistical analysis of patent time-series data [4] | Identifying significant innovation trends | R or Python implementation with goodness of fit testing |
| Enrichment Analysis Framework | Identifying statistically over-represented scholarly works [4] | Connecting academic research to patent trends | Fisher's exact test with FDR correction |
| International Patent Classification | Standardized categorization of patent content [11] | Structural understanding of patent domains | WIPO classification scheme 2023.01 |

The role of patent data has undergone a fundamental transformation, evolving from a narrow focus on legal protection to a broad strategic resource for research and development (R&D) intelligence. This shift is particularly evident in data-intensive fields such as materials science and pharmaceutical development, where patent documents represent a rich, structured repository of technical knowledge [15]. The integration of advanced analytical techniques, including artificial intelligence (AI), machine learning, and natural language processing (NLP), has enabled this evolution, allowing researchers to extract meaningful insights from millions of patent documents [6] [16]. For R&D teams in drug development, modern patent intelligence platforms now serve as critical tools for identifying white space opportunities and accelerating innovation pipelines, with reported reductions in research time of up to 80% [6]. This application note details the methodologies and tools required to leverage patent data as a core component of multimodal R&D intelligence, with specific protocols for materials and pharmaceutical research.

The Evolving Patent Analytics Landscape: From Legal Documents to Technical Knowledge Assets

Quantitative Analysis of Patent Research Domains

Traditional patent analysis focused primarily on legal metrics and basic statistical counts. Modern approaches leverage computational power to analyze patent data across multiple dimensions, from technical content to commercial impact. The table below summarizes key quantitative indicators used in contemporary patent analytics.

Table 1: Key Quantitative Indicators in Modern Patent Analytics

| Indicator Category | Specific Metrics | Application in R&D Intelligence |
|---|---|---|
| Legal & Protection | Patent families, grant status, remaining term, freedom-to-operate analysis | Assessing protection scope and infringement risks [15] |
| Commercial & Value | Citation counts, renewal data, patent valuation scores, market coverage | Identifying high-impact technologies and investment opportunities [15] |
| Technical & Technological | IPC/CPC classifications, keyword frequency, semantic similarity, claim breadth | Mapping technology landscapes and identifying emerging technical areas [16] |
| Temporal & Evolutionary | Application trends, technology life cycle analysis, growth rates | Forecasting technology development and identifying maturation points [15] |

The Integration of Advanced Analytical Techniques

The field of patent analytics has progressively incorporated more sophisticated methodologies. Initially dominated by basic information retrieval systems in the 1950s that focused on metadata fields, patent analysis expanded to full-text document analysis in the 1960s-1970s [15]. The 1970s-1980s marked a significant shift toward using patent statistics as proxies for innovation and technological change, with scholars examining correlations between R&D investment and patent counts [15]. Contemporary patent analytics now integrates advanced techniques including:

- Text mining and natural language processing (NLP) for semantic understanding of technical content [15]

- Network analysis for mapping citation patterns and knowledge flows [15]

- Machine learning and deep learning for classification, prediction, and pattern recognition [16]

- AI-enhanced claim interpretation for understanding claim scope and structure [17]

These techniques have enabled a paradigm shift from document retrieval to insight generation, with modern platforms capable of processing over 500 million technical documents including patents, scientific papers, and market sources [6].

Experimental Protocols for Multimodal Patent Data Extraction in Materials Science

Protocol 1: Technology Landscape Analysis for Novel Materials Identification

Purpose: To identify emerging materials technologies and white space opportunities through comprehensive patent analysis.

Materials and Reagents:

- Data Source: PATSTAT, USPTO, or commercial platform (e.g., PatSnap, Cypris)

- Analytical Software: Python with Natural Language Processing libraries (NLTK, spaCy)

- Visualization Tools: Gephi for network visualization, Matplotlib for trend analysis

Procedure:

- Define Technology Scope: Identify relevant International Patent Classification (IPC) and Cooperative Patent Classification (CPC) codes for target material domain (e.g., C01B31/04 for graphite) [18].

- Data Collection: Execute the search query in the selected database with a temporal filter (e.g., 2013-2023 for a decade analysis). As an indication of scale, the graphite landscape reported in [18] covers 6,985 patented inventions from 32,385 patent applications across 32 countries.

- Text Mining: Extract key concepts and technical terms from titles, abstracts, and claims using TF-IDF and n-gram analysis.

- Network Construction: Generate co-occurrence networks of technical terms to identify technology clusters.

- Trend Analysis: Calculate annual growth rates for identified clusters to detect emerging areas.

- White Space Identification: Map competitor positions and identify underexplored technical areas.
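The text-mining and network-construction steps can be sketched in plain Python. This is a minimal illustration under stated assumptions: each patent is reduced to a single title or abstract string, the stop-word list is a hypothetical placeholder, and the helper names (`tfidf_top_terms`, `cooccurrence_edges`) are illustrative rather than part of NLTK or spaCy, which a production pipeline would normally use for tokenization.

```python
import math
import re
from collections import Counter
from itertools import combinations

# Hypothetical minimal stop-word list; a real pipeline would use a domain-specific list.
STOP = {"a", "an", "and", "by", "for", "in", "method", "of", "on", "the", "to", "with"}


def tokenize(text):
    """Lower-case word tokens (at least two characters), with stop words removed."""
    return [t for t in re.findall(r"[a-z][a-z0-9-]+", text.lower()) if t not in STOP]


def ngrams(tokens, n_max=2):
    """Unigrams plus contiguous n-grams up to length n_max."""
    grams = list(tokens)
    for n in range(2, n_max + 1):
        grams += [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    return grams


def tfidf_top_terms(docs, k=3):
    """Top-k n-grams per document, scored as raw term frequency * log(N / df)."""
    counts_per_doc = [Counter(ngrams(tokenize(d))) for d in docs]
    df = Counter(g for counts in counts_per_doc for g in counts)  # document frequency
    n_docs = len(docs)
    top = []
    for counts in counts_per_doc:
        scores = {g: tf * math.log(n_docs / df[g]) for g, tf in counts.items()}
        # Highest score first; ties broken alphabetically for reproducibility.
        ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
        top.append([g for g, _ in ranked[:k]])
    return top


def cooccurrence_edges(term_lists):
    """Undirected edge weights: how often two key terms are selected for the same patent."""
    edges = Counter()
    for terms in term_lists:
        for a, b in combinations(sorted(set(terms)), 2):
            edges[(a, b)] += 1
    return edges


docs = [
    "Expanded graphite anode for lithium battery",
    "Graphene coating on graphite anode",
    "Method for purifying natural graphite",
]
key_terms = tfidf_top_terms(docs, k=3)
edges = cooccurrence_edges(key_terms)
print(key_terms)
```

A term that occurs in every document (here "graphite") gets an IDF of zero and drops out, which is the intended behaviour for a domain-wide term. The `edges` counter maps term pairs to co-occurrence counts; written out as a source, target, weight table it can be loaded into a network tool such as Gephi for cluster detection.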

Expected Outcomes: Identification of 3-5 emerging technology subdomains with growth rates exceeding 15% annually, plus mapping of key players and innovation networks.
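The trend-analysis step and the 15% threshold in the expected outcomes can be made concrete with a compound annual growth rate (CAGR). A minimal sketch, assuming per-cluster yearly filing counts are already available; the cluster names, counts, and the `emerging_clusters` helper are hypothetical.

```python
def cagr(first_count, last_count, years):
    """Compound annual growth rate between two yearly counts `years` apart."""
    if first_count <= 0 or years <= 0:
        raise ValueError("need a positive starting count and a positive time span")
    return (last_count / first_count) ** (1 / years) - 1


def emerging_clusters(counts_by_cluster, threshold=0.15):
    """Return {cluster: CAGR} for clusters whose growth exceeds `threshold`."""
    result = {}
    for cluster, by_year in counts_by_cluster.items():
        start, end = min(by_year), max(by_year)
        rate = cagr(by_year[start], by_year[end], end - start)
        if rate > threshold:
            result[cluster] = rate
    return result


# Hypothetical filing counts per technology cluster (first and last year of the window).
counts = {
    "graphene anodes": {2013: 40, 2023: 250},
    "graphite purification": {2013: 120, 2023: 150},
}
print({name: round(rate, 3) for name, rate in emerging_clusters(counts).items()})
```

Using only the endpoints keeps the example short; a fitted trend (e.g., a regression on log counts) is less sensitive to a single unusual year.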

Protocol 2: Prior Art Analysis for Novelty Assessment

Purpose: To conduct comprehensive prior art search for assessing patentability of new materials or formulations.

Materials and Reagents:

- Primary Databases: Google Patents, PatentScope, DEPATISnet [19]

- Specialized Tools: Patlytics for AI-assisted claim interpretation [17]

- Reference Management: Zotero or Mendeley for organizing relevant documents

Procedure:

- Claim Deconstruction: Break down invention claims into individual limitations using automated claim breakdown tools [17].

- Keyword Generation: Develop comprehensive search vocabulary including synonyms, technical equivalents, and broader/narrower terms for each limitation.

- Multimodal Search Execution:

  - Text-based search across title, abstract, claims, and description fields

  - Chemical structure search where applicable (e.g., for pharmaceutical compounds)

  - Citation analysis to identify seminal patents

- Relevance Assessment: Apply Boolean operators to combine search results and filter for relevance.

- Semantic Analysis: Use NLP techniques to identify semantically similar documents that may not share exact keywords [16].

- Documentation: Compile relevant prior art with annotations on relevance to each claim limitation.
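A simplified claim chart illustrates the claim-deconstruction, Boolean relevance and documentation steps. This sketch does not reproduce Patlytics or any other tool: it assumes a claim whose limitations follow "comprising:" and are separated by semicolons, and it uses hypothetical synonym sets that are OR-ed within each limitation, with plain substring matching standing in for real search.

```python
import re


def split_limitations(claim):
    """Split a claim into its preamble and semicolon-delimited limitations."""
    preamble, _, body = claim.partition("comprising:")
    parts = [p.strip(" ;.") for p in re.split(r";\s*(?:and\s+)?", body)]
    return preamble.strip(" ,"), [p for p in parts if p]


def claim_chart(limitation_terms, references):
    """Map each reference id to the indices of limitations it appears to disclose.

    limitation_terms: one synonym set per limitation (OR within a set).
    references: {reference_id: full text}.
    """
    chart = {}
    for ref_id, text in references.items():
        lowered = text.lower()
        chart[ref_id] = [
            i for i, terms in enumerate(limitation_terms)
            if any(term in lowered for term in terms)
        ]
    return chart


claim = ("1. A composite electrode, comprising: a graphite core; "
         "a silicon coating; and a polymer binder.")
preamble, limitations = split_limitations(claim)

# Hypothetical search vocabulary: one synonym set per limitation.
vocabulary = [{"graphite"}, {"silicon", "si layer"}, {"binder", "pvdf"}]
references = {
    "REF-1": "Graphite particles coated with silicon.",
    "REF-2": "A PVDF binder for cathodes.",
}
chart = claim_chart(vocabulary, references)
# References hitting every limitation (AND across limitations) are candidates for closer review.
full_hits = [ref for ref, hits in chart.items() if len(hits) == len(limitations)]
print(chart, full_hits)
```

References that cover only some limitations, like the two here, are the ones typically combined in an obviousness-style analysis, which is why the chart keeps per-limitation hits rather than a single relevance score.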

Expected Outcomes: Comprehensive prior art report with categorization of references by relevance to specific claim elements, enabling accurate novelty assessment.

Visualization of Patent Intelligence Workflows

Multimodal Patent Data Extraction Workflow

AI-Enhanced Patent Analysis Process

The Scientist's Toolkit: Essential Research Reagent Solutions for Patent Intelligence

Table 2: Essential Tools for Modern Patent Intelligence in Materials and Pharmaceutical Research

| Tool Category | Specific Platform Examples | Function in R&D Workflow |
|---|---|---|
| Comprehensive R&D Intelligence Platforms | Cypris, PatSnap | Integrate patents with scientific literature and market data for holistic innovation intelligence; enable reduction of research time by up to 80% [6] |
| AI-Powered Patent Analysis | Patlytics, IP Copilot | Provide automated claim breakdown, AI-enhanced interpretation, and contextual prior art surfacing [17] |
| Traditional Patent Databases with Enhanced Content | Derwent Innovation, Questel Orbit | Offer expert-curated abstracts (Derwent) and strong multilingual capabilities for global patent coverage [6] |
| Free Access & Open Science Tools | Google Patents, The Lens | Provide free basic search capabilities and integration of patents with scholarly literature [6] [19] |
| Specialized Chemical/Materials Analysis | WIPO Patent Analytics Reports, Derwent Chemical Search | Deliver technology-specific landscape reports (e.g., graphite, titanium) and structure search capabilities [18] |

The evolution of patent data from legal protection to R&D intelligence represents a fundamental shift in how research organizations approach innovation. For materials scientists and drug development professionals, modern patent intelligence platforms provide unprecedented capabilities to extract technical insights, identify emerging opportunities, and accelerate research cycles. The protocols and methodologies outlined in this application note provide a framework for systematically integrating patent intelligence into multimodal R&D workflows. As AI and NLP technologies continue to advance, the role of patent data as a strategic knowledge asset will only grow in importance, enabling more efficient and targeted research investments across the materials science and pharmaceutical sectors.

International patent classifications are foundational frameworks that enable the systematic organization, retrieval, and analysis of patent documents worldwide. Within the context of multimodal data extraction for materials patents research, these classification systems provide the essential taxonomic structure that transforms raw, unstructured patent data into machine-readable knowledge graphs. The International Patent Classification (IPC) and Locarno Classification serve as critical infrastructures for different intellectual property domains. IPC, established by the Strasbourg Agreement of 1971, provides a hierarchical system of language-independent symbols for classifying patents and utility models according to their relevant technological areas [20]. In contrast, the Locarno Classification, established by the Locarno Agreement (1968), serves as the international standard specifically for classifying industrial designs [21]. For researchers and drug development professionals, understanding these systems is paramount for conducting precise prior art searches, analyzing competitive landscapes, and identifying white space opportunities through automated data extraction pipelines.

Classification Systems: Technical Specifications and Applications

IPC: Technological Taxonomy for Invention Patents

The International Patent Classification system organizes technological knowledge into a hierarchical structure that enables precise categorization of invention patents and utility models. The system undergoes annual updates, with a new version entering into force each January 1, ensuring it evolves with technological advancements [20]. The IPC's structure is particularly valuable for materials science and pharmaceutical research, where precise categorization of chemical compounds, formulations, and manufacturing processes is essential. For multimodal data extraction projects, the IPC provides standardized markers that can be linked to scientific literature, experimental data, and technical specifications across distributed research databases.

WIPO provides specialized assistance tools to enhance IPC implementation, including IPCCAT for categorization assistance and STATS for statistical predictions based on specified search terms [20]. The IPC Green Inventory represents a specialized resource that facilitates searches for patent information relating to Environmentally Sound Technologies, particularly relevant for sustainable materials development and green chemistry applications in pharmaceutical research.

Locarno Classification: Specialized System for Industrial Designs

The Locarno Classification specifically addresses the unique requirements of industrial design registration, focusing on the ornamental or aesthetic aspects of products rather than their technical functionality [21]. This system is administered through the Locarno Union Assembly, which meets in ordinary session once every two years, and a Committee of Experts that convenes at least once every five years to decide on classification changes and updates [21]. For materials researchers, the Locarno Classification is particularly relevant for drug delivery systems, medical devices, and packaging where design elements intersect with functional materials properties.

Within multimodal data extraction frameworks, design patents present unique challenges as they typically consist of "sparse, templated textual content and a set of schematic illustrations" that require integrated analysis of both visual and textual elements [5]. The Locarno Classification provides the essential semantic structure for categorizing these multimodal design representations, enabling more effective computer vision and natural language processing applications in design patent analysis.

Table 1: International Patent Classification Systems Comparison

| Feature | International Patent Classification (IPC) | Locarno Classification |
|---|---|---|
| Scope | Invention patents and utility models | Industrial designs |
| Legal Framework | Strasbourg Agreement (1971) | Locarno Agreement (1968) |
| Subject Matter | Technical functionalities | Ornamental/aesthetic designs |
| Update Frequency | Annual updates | Revised through Committee of Experts sessions (at least every 5 years) |
| Primary Users | Patent examiners, R&D researchers, technology analysts | Design professionals, product developers, design examiners |
| Relevance to Materials Research | Chemical compounds, manufacturing processes, material compositions | Product form, surface patterns, material aesthetics |

Multimodal Data Extraction from Classified Patent Documents

Multimodal Fusion Framework for Patent Analysis

The integration of international classifications with multimodal data extraction technologies represents a transformative approach to patent analytics. Advanced classification methods now employ multimodal feature fusion that integrates textual, visual, and metadata features to achieve more comprehensive patent analysis [5]. This approach is particularly valuable for design patents within the Locarno system, where traditional text-centric classification falls short in capturing the multimodal semantics inherent in design patents that combine schematic visual representations with limited textual cues [5].

For materials science research, this multimodal framework enables more sophisticated analysis of patents covering complex material systems where structural diagrams, chemical formulations, and process flows complement textual descriptions. The multimodal classification approach specifically addresses domain-specific challenges through tailored extraction strategies for each data modality [5]:

- Textual Data: Domain-relevant keywords are distilled from classification corpora and embedded in context-aware representations

- Visual Data: Local geometric details and global shape structures are jointly encoded to retain both fine-grained and holistic design features

- Metadata: Applicant's historical distribution across classification subclasses is transformed into a normalized vector functioning as a semantic prior
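The metadata prior in the last bullet can be sketched as a normalized count vector. A minimal illustration, assuming an applicant's past filings are available as a list of class codes and a fixed class vocabulary; the optional additive smoothing parameter `alpha` and the example codes are assumptions, not details of the cited method [5].

```python
from collections import Counter


def applicant_prior(past_classes, vocabulary, alpha=0.0):
    """Normalized distribution of an applicant's past filings over `vocabulary`.

    alpha > 0 applies additive (Laplace) smoothing so unseen classes keep a
    small non-zero weight; alpha = 0 gives raw relative frequencies.
    """
    counts = Counter(c for c in past_classes if c in vocabulary)
    total = sum(counts.values()) + alpha * len(vocabulary)
    if total == 0:
        # No usable history: fall back to a uniform prior.
        return [1.0 / len(vocabulary)] * len(vocabulary)
    return [(counts[c] + alpha) / total for c in vocabulary]


vocab = ["09-01", "09-03", "24-01", "28-03"]  # hypothetical Locarno subclass codes
history = ["24-01", "24-01", "09-01", "24-01"]
print(applicant_prior(history, vocab))
```

The resulting vector has one entry per subclass and sums to one, so it can be concatenated with, or used to reweight, the text and image predictions.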

Experimental Protocol: Multimodal Feature Extraction from Classified Patents

Objective: Implement and validate a multimodal data extraction pipeline for materials-related patents using IPC and Locarno classifications as organizational frameworks.

Materials and Reagents:

- Data Source: USPTO patent grants (2005-2017) converted to RDF format following Linked Data principles [22]

- Classification Resources: IPC and Locarno classification schemas from WIPO standards [21] [20]

- Processing Tools: Natural Language Processing libraries (spaCy, NLTK), Computer Vision libraries (OpenCV, TensorFlow), Metadata parsers

Procedure:

Data Collection and Preprocessing

- Retrieve patent documents from designated years (2005-2017) using bulk access methods

- Perform XML to RDF conversion using RDF Mapping Language (RML) following established protocols [22]

- Segment composite documents into individual patent files with standardized markup

Multimodal Feature Extraction

- Textual Features: Apply a transformer-based architecture with a multi-head attention mechanism to extract semantic vectors from patent claims and descriptions [23]

- Visual Features: Implement convolutional neural networks (CNNs), which apply learned convolution kernels across the image, to extract local features from patent diagrams and chemical structures [23]

- Metadata Features: Parse classification codes, inventor information, and citation networks using ontology-based extraction [22]
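The convolution behind the visual-feature step can be illustrated generically. As an illustration only (not necessarily the exact formulation used in [23]), a CNN layer slides a small kernel over the image and takes a dot product with each patch; the sketch computes this "valid" cross-correlation, which is what deep-learning libraries implement under the name convolution, followed by a ReLU. The drawing and kernel values are hypothetical.

```python
def conv2d_valid(image, kernel, bias=0.0):
    """'Valid' 2D cross-correlation: out[i][j] = sum_m sum_n image[i+m][j+n] * kernel[m][n] + bias."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + m][j + n] * kernel[m][n] for m in range(kh) for n in range(kw)) + bias
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Element-wise ReLU non-linearity applied after the convolution."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# A vertical-edge kernel responds where a binarized line drawing switches from 0 to 1.
drawing = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
vertical_edge = [[-1, 1], [-1, 1]]
print(relu(conv2d_valid(drawing, vertical_edge)))
```

In a trained CNN the kernel weights are learned, many kernels run in parallel, and stacked layers combine these local responses into features for strokes, hatching, or ring structures.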

Cross-Modal Integration

- Implement an attention-based fusion mechanism to capture interactions among modalities

- Apply an adaptive weight fusion algorithm to generate comprehensive fused feature vectors [23]

- Map extracted features to classification codes using domain-specific ontologies
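Adaptive weight fusion can take several forms, and the exact formula of [23] is not reproduced here. One common form, assumed for illustration, scores each modality embedding h_m with a vector w, turns the scores into weights alpha_m with a softmax, and returns the weighted sum of the embeddings. In a trained model `w` would be learned; below it is a fixed hypothetical value, and all modality embeddings are assumed to be projected to the same dimension.

```python
import math


def softmax(scores):
    """Numerically stable softmax."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def adaptive_fusion(embeddings, w):
    """Fuse same-length modality embeddings with weights alpha_m = softmax_m(w . h_m).

    Returns (fused_vector, weights) so each modality's contribution can be
    inspected, e.g. when running the ablation studies in the validation step.
    """
    names = list(embeddings)
    scores = [sum(wi * hi for wi, hi in zip(w, embeddings[n])) for n in names]
    alphas = softmax(scores)
    fused = [
        sum(a * embeddings[n][d] for a, n in zip(alphas, names))
        for d in range(len(w))
    ]
    return fused, dict(zip(names, alphas))


# Hypothetical 3-dimensional embeddings for one patent.
h = {
    "text": [0.9, 0.1, 0.3],
    "image": [0.2, 0.8, 0.5],
    "metadata": [0.1, 0.1, 0.9],
}
fused, weights = adaptive_fusion(h, w=[1.0, 0.5, 0.0])
print({name: round(a, 3) for name, a in weights.items()})
```

Because the weights depend on the content of each embedding, a patent with an uninformative drawing can shift weight toward text and metadata without retraining separate models.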

Validation and Analysis

- Conduct comparative evaluation against baseline models using accuracy, precision, recall, and F1 score metrics

- Perform ablation studies to determine contribution of individual modalities

- Validate classification results against expert-annotated test sets

Troubleshooting Notes:

- Address semantic sparsity in design patents through domain-specific feature enhancement

- Resolve modality alignment issues through cross-attention mechanisms

- Mitigate class imbalance in classification datasets through strategic sampling

Research Toolkit: Essential Solutions for Patent Data Extraction

Table 2: Research Reagent Solutions for Patent Data Extraction

| Tool/Resource | Function | Application Context |
|---|---|---|
| Linked USPTO Patent Data (RDF) | Provides semantically rich, machine-readable patent data in Resource Description Framework format | Foundation for structured patent analysis; enables integration with other data sources [22] |
| WIPO Classification Systems | Standardized taxonomies (IPC, Locarno) for organizing patent documents | Essential for categorization, prior art searches, and technology landscape analysis [21] [20] |
| Multimodal Fusion Algorithm | Integrates text, image, and metadata features using attention mechanisms | Improves classification accuracy by capturing complementary information across modalities [5] |
| Transformer-based Architecture | Processes textual content with multi-head attention mechanisms | Extracts semantic meaning from patent claims and descriptions [23] |
| Convolutional Neural Networks | Extracts visual features from patent diagrams and chemical structures | Analyzes graphical elements in design patents and material diagrams [5] [23] |
| Adaptive Weight Fusion | Dynamically balances contribution of different modalities based on content | Optimizes multimodal representation for specific classification tasks [23] |
| Reinforcement Learning Model | Enables continuous improvement of classification through reward feedback | Adapts to emerging technologies and classification patterns [23] |

Workflow Visualization: Multimodal Patent Data Extraction

The integration of international classification systems with advanced multimodal data extraction methodologies represents a paradigm shift in patent analytics for materials research. The structured frameworks provided by IPC and Locarno classifications enable researchers to transform heterogeneous patent data into standardized, machine-readable knowledge graphs that support sophisticated analysis and prediction tasks. The experimental protocols and technical workflows outlined in this document provide a foundation for implementing these approaches in drug development and materials science research contexts.

Future developments in this field will likely focus on enhanced cross-modal alignment techniques, real-time classification using reinforcement learning models [23], and deeper integration with scientific literature through linked data principles [22]. As artificial intelligence continues to transform intellectual property analysis, the critical role of international classifications as semantic anchors for multimodal data extraction will only increase in importance for researchers, scientists, and drug development professionals seeking to navigate complex patent landscapes.

Advanced Techniques and Tools for Multimodal Patent Extraction

In materials science and drug development, a significant volume of critical information, including novel compound data, synthesis methods, and property specifications, is embedded within unstructured documents such as patent filings, scientific publications, and technical reports [24]. Extracting this information into a structured, machine-readable format like JSON is essential for accelerating research, enabling large-scale data analysis, and powering artificial intelligence (AI) applications [25]. This process, known as document parsing, requires a robust pipeline capable of handling multi-modal data—text, images, tables, and chemical structures—commonly found in these documents [24]. This protocol details the steps for constructing a parsing pipeline, from initial layout analysis to the final output of structured JSON, specifically tailored for the complex demands of materials patents research.

Document Parsing Fundamentals

Document parsing is the automated process of converting unstructured or semi-structured documents into organized data. For materials research, this goes beyond simple Optical Character Recognition (OCR) to include understanding the semantic meaning and relationships within the document's content [25] [24].

A key challenge in this domain is the multi-modal nature of scientific information. A single patent may describe a novel polymer using textual claims, a graphical molecular structure, a table of experimental results, and a plot of thermal stability [24]. An effective pipeline must therefore integrate specialized models for each data type:

- Textual Descriptions: Processing scientific nomenclature and natural language.

- Molecular Structures: Identifying and interpreting chemical diagrams.

- Tabular Data: Extracting numerical data and property relationships from tables.

- Graphical Representations: Parsing data from charts and spectra [24].

Pipeline Architecture and Workflow

A document parsing pipeline is composed of sequential, modular components. The following diagram illustrates the complete workflow and the logical relationships between its core stages.

Diagram 1: Document parsing pipeline workflow.

Workflow Stage Protocols

Document Ingestion & Preparation

- Input: The pipeline accepts documents in PDF, DOCX, or image formats (TIFF, PNG, JPEG) [25]. In an automated system, documents can be ingested via API upload, email forwarding, or webhook triggers.

- Protocol: For scanned documents or image-based PDFs, the first step is to apply AI-powered OCR to convert visual text into machine-encoded characters [25]. Digital-native PDFs may bypass this step, though they often require extraction of embedded text streams. The output is a digital text representation of the entire document, with metadata on source quality.

Layout Analysis

- Objective: To identify and classify the geometric regions of a document page, such as text blocks, headings, figures, tables, and captions [26].

- Protocol: Use a computer vision model, such as a Vision Transformer, to analyze the page structure [24]. Tools like `spacy-layout` or Docling can parse the document and create a structured `Doc` object in which each detected region is a span with a label (e.g., "title", "text", "table") and associated bounding box coordinates [26]. This spatial understanding is crucial for separating and correctly routing different content types.
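Routing regions to modality-specific extractors can be sketched independently of any particular layout tool. In this sketch the `Region` dataclass stands in for a labeled layout span; tools such as spacy-layout expose comparable label and bounding-box information, but the field names, label set, and module names here are hypothetical, and coordinates are assumed to use a top-left origin.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Region:
    """A labeled page region; bbox is (x0, y0, x1, y1) with y increasing downward."""
    label: str
    page: int
    bbox: tuple
    text: str = ""


# Hypothetical mapping from layout label to the extraction module that handles it.
ROUTES = {
    "text": "ner",
    "title": "ner",
    "section_header": "ner",
    "table": "table",
    "picture": "figure",
    "figure": "figure",
}


def route_regions(regions):
    """Group regions by target module, preserving reading order (page, top, left)."""
    queues = defaultdict(list)
    for reg in sorted(regions, key=lambda reg: (reg.page, reg.bbox[1], reg.bbox[0])):
        queues[ROUTES.get(reg.label, "unrouted")].append(reg)
    return dict(queues)


page = [
    Region("table", 1, (50, 400, 550, 600)),
    Region("title", 1, (50, 40, 550, 80), "Graphite anode composition"),
    Region("picture", 1, (50, 120, 300, 380)),
    Region("footnote", 1, (50, 750, 550, 770), "Page 1"),
]
queues = route_regions(page)
print({module: [reg.label for reg in regs] for module, regs in queues.items()})
```

Keeping an explicit "unrouted" queue makes it easy to audit labels the pipeline does not yet handle instead of silently dropping them.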

Multi-Modal Data Extraction

This stage runs specialized extraction modules in parallel on the regions identified by the layout analyzer.

- Textual Content & Named Entity Recognition (NER):

  - Protocol: Process the text from "text" spans using a pre-trained natural language processing (NLP) pipeline. A key component is a NER model fine-tuned on scientific and chemical corpora to identify and tag entities such as Material Names, Properties (e.g., "Young's modulus"), Synthesis Conditions, and Application Contexts [24]. This can be implemented using spaCy's entity recognition capabilities [26].

- Table Extraction:

  - Protocol: For regions labeled as "table", employ a table structure recognition model like TableFormer [26]. The model identifies rows, columns, and merged cells. The content is then reconstructed into a structured format, typically a `pandas.DataFrame`, which preserves the tabular relationships [26]. This dataframe can be anchored back to its position in the original document text.


- Image & Diagram Analysis:

  - Protocol: For "figure" regions, use a multi-modal approach. A vision model can classify the image type (e.g., "chemical structure", "graph", "micrograph"). Specialized algorithms then perform specific extractions:

    - Molecular Structures: Utilize Vision Transformers or Graph Neural Networks to convert a 2D chemical diagram into a standardized linear notation like SMILES or SELFIES [24].

    - Data Plots: Tools like DePlot or Plot2Spectra can extract numerical data points from charts and graphs, converting them into structured data tables [24].

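Before a fine-tuned NER model is available, a rule-based pass is a common way to bootstrap annotations. The sketch below is a deliberately simple stand-in, not the model-based approach described above: it uses a hypothetical, hand-written property lexicon and unit list with a regular expression to pull (property, value, unit) triples from sentences of the kind found in patent examples.

```python
import re

# Hypothetical lexicons; a trained NER model would replace these hand-written lists.
PROPERTIES = ["young's modulus", "tensile strength", "glass transition temperature", "density"]
UNITS = r"GPa|MPa|kPa|°C|g/cm3"

PATTERN = re.compile(
    r"(?P<prop>" + "|".join(re.escape(p) for p in PROPERTIES) + r")"
    r"[^0-9]{0,30}?"                       # short non-numeric gap, e.g. " of about "
    r"(?P<value>\d+(?:\.\d+)?)\s*"
    r"(?P<unit>" + UNITS + r")",
    re.IGNORECASE,
)


def extract_properties(text):
    """Return (property, value, unit) triples found in `text`."""
    return [
        (m.group("prop").lower(), float(m.group("value")), m.group("unit"))
        for m in PATTERN.finditer(text)
    ]


example = ("The film had a Young's modulus of 2.5 GPa and a glass transition "
           "temperature of about 105 °C.")
print(extract_properties(example))
```

Triples produced this way are useful as silver-standard training data, but they miss ranges, tolerances, and properties outside the lexicon, which is where a fine-tuned NER model earns its keep.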

Data Structuring & Validation

- Objective: To unify the outputs from all extraction modules into a coherent, validated JSON schema.

- Protocol: Define a JSON schema that reflects the target data model for materials patents (e.g., containing fields for `material_name`, `properties`, `synthesis_method`, `related_structures`). Map the extracted entities, table rows, and chemical data into this schema. Implement post-processing logic to normalize data (e.g., standardizing units, date formats) and validate the output against the schema to ensure data integrity [25].
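The structuring and validation step can be sketched with the standard library alone. The record layout follows the example fields `material_name`, `properties`, `synthesis_method`, and `related_structures`; the unit conversion table and the `validate_record` checks are hypothetical simplifications of what a formal JSON Schema validator would enforce.

```python
import json

# Hypothetical conversion factors to one canonical unit (MPa) for mechanical properties.
TO_CANONICAL = {"GPa": ("MPa", 1000.0), "MPa": ("MPa", 1.0), "kPa": ("MPa", 0.001)}

# Required top-level fields and their accepted Python types.
REQUIRED = {
    "material_name": str,
    "properties": list,
    "synthesis_method": (str, type(None)),
    "related_structures": list,
}


def normalize_property(prop):
    """Convert a property value to its canonical unit when a conversion is known."""
    if prop.get("unit") in TO_CANONICAL:
        canonical, factor = TO_CANONICAL[prop["unit"]]
        return {**prop, "value": prop["value"] * factor, "unit": canonical}
    return prop


def validate_record(record):
    """Return a list of schema violations; an empty list means the record passed."""
    errors = []
    for field, expected in REQUIRED.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected):
            errors.append(f"wrong type for {field}")
    props = record.get("properties")
    if isinstance(props, list):
        for i, prop in enumerate(props):
            if not isinstance(prop, dict) or not isinstance(prop.get("value"), (int, float)):
                errors.append(f"properties[{i}].value is not numeric")
    return errors


record = {
    "material_name": "silicon-coated graphite",
    "properties": [{"name": "young's modulus", "value": 2.5, "unit": "GPa"}],
    "synthesis_method": "chemical vapour deposition",
    "related_structures": [],
}
record["properties"] = [normalize_property(p) for p in record["properties"]]
errors = validate_record(record)
if errors:
    raise ValueError(errors)
print(json.dumps(record, indent=2))
```

Returning a list of violations rather than raising on the first one makes it easier to log every problem in a document before routing it for manual review.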

Quantitative Performance Metrics

The performance of document parsing pipelines is typically evaluated using standard information retrieval and computer vision metrics. The following table summarizes key quantitative benchmarks for different pipeline components.

Table 1: Performance metrics for parsing pipeline components.

| Pipeline Component | Key Metric | Typical Benchmark (Current State-of-the-Art) | Evaluation Protocol |
|---|---|---|---|
| Optical Character Recognition (OCR) | Word Accuracy | >99% on high-quality scans [25] | Compute on a ground-truth dataset of scanned documents using the Word Error Rate (WER) metric. |
| Layout Analysis | Mean Average Precision (mAP) | ~0.95 mAP (averaged over IoU thresholds 0.50-0.95) on the PubLayNet dataset | Use the Intersection over Union (IoU) metric to compare predicted bounding boxes against human-annotated ground truth for document regions. |
| Named Entity Recognition (NER) | F1-Score | ~0.85-0.90 on custom materials science corpora [24] | Evaluate on a held-out test set of annotated scientific text, calculating precision and recall for entity tags. |
| Table Structure Recognition | Tree-Edit Distance (TED) | <5.0 on complex tables | Compare the HTML structure of the extracted table against a ground-truth structure, measuring the number of operations needed to match them. |
| End-to-End Accuracy | Field-Level Accuracy | 98-99% for simple forms; lower for complex patents [25] | Manually verify the correctness of each field in the output JSON for a representative set of input documents. |
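Two of the metrics in the table can be computed in a few lines. The sketch shows bounding-box IoU (the overlap criterion underlying layout mAP) and word error rate via word-level Levenshtein distance; treating word accuracy as 1 - WER is a common simplification, and boxes are assumed to be (x0, y0, x1, y1) tuples.

```python
def iou(a, b):
    """Intersection over Union of two axis-aligned boxes (x0, y0, x1, y1)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def wer(reference, hypothesis):
    """Word error rate: (substitutions + deletions + insertions) / reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] holds the edit distance between the processed ref prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(prev[j] + 1,              # deletion
                         cur[j - 1] + 1,           # insertion
                         prev[j - 1] + (r != h))   # substitution (0 if words match)
        prev = cur
    return prev[-1] / max(1, len(ref))


print(iou((0, 0, 10, 10), (5, 0, 15, 10)))
print(wer("graphite anode with silicon coating", "graphite anode silicon coatings"))
```

For mAP, a predicted region counts as a true positive only if its IoU with a same-label ground-truth box exceeds the threshold; COCO-style evaluation repeats this over thresholds from 0.50 to 0.95 and averages the resulting precision values.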

The Scientist's Toolkit: Research Reagent Solutions

Implementing a document parsing pipeline requires a suite of software tools and libraries. The following table details the essential "research reagents" for this task.

Table 2: Essential software tools for building document parsing pipelines.

| Tool / Library | Primary Function | Application in Pipeline |
|---|---|---|
| Docling / spacy-layout [26] | Document Layout Analysis | Parses PDFs and DOCX files to identify text blocks, titles, figures, and tables, outputting a structured Doc object. |
| Tesseract OCR [25] | Optical Character Recognition | Converts text within scanned images or PDFs into machine-encoded text. Often used as a foundational OCR engine. |
| spaCy [26] | Natural Language Processing | Provides robust pipelines for tokenization, part-of-speech tagging, and named entity recognition (NER), which can be fine-tuned for scientific texts. |
| TableFormer [26] | Table Structure Recognition | A deep learning model specifically designed to identify the structure (rows, columns, headers) of tables in document images. |
| Vision Transformer (ViT) [24] | Image Classification & Analysis | A state-of-the-art model architecture for general image understanding tasks, such as classifying figure types in scientific documents. |
| Plot2Spectra / DePlot [24] | Data Extraction from Plots | Specialized algorithms that convert visual representations of data (e.g., charts, spectra) into structured, tabular data. |
| Parseur API [25] | End-to-End Document Parser | A cloud-based service that combines OCR, parsing, and AI to extract data from documents and output structured JSON, useful for rapid prototyping. |

Experimental Protocol for Pipeline Benchmarking

To evaluate and benchmark the performance of a newly constructed document parsing pipeline, follow this detailed experimental protocol.

Dataset Curation:

- Assemble a benchmark dataset of 50-100 materials patent documents (PDFs) that represent the expected input to the system.

- Manually annotate this dataset to create ground truth. This involves:

  - Labeling bounding boxes for layout regions (text, table, figure).

  - Transcribing text and tagging key entities (material, property, value).

  - Extracting table contents and chemical structures into structured formats.

Pipeline Execution:

- Process each document in the benchmark dataset through the parsing pipeline.

- Configure the pipeline to output its results in a structured JSON format based on a predefined schema.

Metric Calculation:

- For Layout Analysis, calculate the Mean Average Precision (mAP) by comparing the predicted bounding boxes and labels against the ground truth annotations.

- For NER, compare the system-extracted entities with the ground truth tags, calculating Precision, Recall, and F1-Score.

- For End-to-End Accuracy, perform a field-level comparison between the output JSON and the ground truth JSON, reporting the percentage of correctly populated fields.
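The NER and end-to-end comparisons in the metric-calculation step can be sketched as exact-match scoring. Assumptions: entities are compared as (type, text) tuples with no partial credit for overlapping spans, and field-level accuracy compares top-level JSON fields by equality; both are simplifications of a full manual verification, and the example entities are hypothetical.

```python
def prf1(predicted, gold):
    """Exact-match precision, recall and F1 over sets of (entity_type, text) tuples."""
    pred, ref = set(predicted), set(gold)
    tp = len(pred & ref)
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(ref) if ref else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def field_accuracy(output, truth):
    """Share of ground-truth fields whose value in `output` is exactly equal."""
    if not truth:
        return 1.0
    correct = sum(1 for key, value in truth.items() if output.get(key) == value)
    return correct / len(truth)


gold = [("MATERIAL", "graphite"), ("PROPERTY", "density"), ("VALUE", "2.2 g/cm3")]
pred = [("MATERIAL", "graphite"), ("PROPERTY", "density"), ("MATERIAL", "binder")]
print(prf1(pred, gold))
print(field_accuracy({"material_name": "graphite", "synthesis_method": None},
                     {"material_name": "graphite", "synthesis_method": "CVD"}))
```

Reporting per-field accuracy alongside the aggregate helps locate which extraction module (text, table, or figure) is responsible for most errors.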

The transformation of unstructured materials patents into structured JSON data via a multi-modal parsing pipeline is a powerful enabler for research and development. By systematically decomposing documents into their constituent parts—text, tables, and images—and applying specialized models to each, researchers can unlock vast repositories of latent knowledge. This structured data feed is indispensable for training foundation models in materials science [24], populating knowledge graphs, and ultimately accelerating the cycle of discovery and innovation in drug development and materials engineering.

Leveraging Transformer Architectures for Text and Vision in Patents

Application Notes: Multimodal Data Extraction in Materials Patents

The application of multimodal transformer architectures is revolutionizing the extraction of technical information from materials patents by simultaneously processing textual descriptions and visual drawings. These systems address the critical challenge of interpreting complex, interrelated information presented in different formats within patent documents.

Core Architectural Approaches

Modern multimodal systems for patent analysis employ several specialized architectural configurations to achieve effective cross-modal understanding:

Specialist Stack Architecture: This approach utilizes separate, best-in-class models for text processing (e.g., transformer-based language models) and visual processing (e.g., specialized vision encoders), with a fusion mechanism to combine their outputs [27]. This method provides flexibility and leverages state-of-the-art unimodal models but introduces integration complexity.

Unified Transformer Architecture: These systems convert all modalities (text, images) into a common token representation processed by a single transformer backbone [27]. This simplifies the architecture and enables deeper modality fusion but requires extensive multimodal training data and computational resources.

Text-Guided Visual Processing: This innovative approach uses textual descriptions to guide visual feature extraction, reducing interference from irrelevant visual information [3]. The text encoder output serves as prior input to the visual encoder, enabling the vision system to focus on image regions semantically relevant to the textual context.
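The text-guided idea can be illustrated with one cross-attention step in which a text embedding acts as the query over image-patch features, so patches semantically close to the text receive more weight. This is a simplified single-head sketch, not the specific architecture of [3]: it assumes text and patch embeddings already share one dimension and uses the raw patch features as both keys and values, with no learned projections; the vectors are hypothetical.

```python
import math


def text_guided_pooling(text_query, patch_features):
    """Scaled dot-product attention with a single text query over image patches.

    Returns the attention weights and the attended (pooled) visual vector.
    """
    d = len(text_query)
    scores = [sum(q * k for q, k in zip(text_query, p)) / math.sqrt(d) for p in patch_features]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]  # stable softmax over patches
    total = sum(exps)
    weights = [e / total for e in exps]
    pooled = [sum(w * p[i] for w, p in zip(weights, patch_features)) for i in range(d)]
    return weights, pooled


# Hypothetical 2-d embeddings: the query describes a "layered electrode";
# patch 0 shows the electrode stack, patch 1 a legend box, patch 2 whitespace.
query = [1.0, 0.0]
patches = [[2.0, 0.1], [0.1, 1.5], [0.0, 0.0]]
weights, pooled = text_guided_pooling(query, patches)
print([round(w, 3) for w in weights])
```

The pooled vector is dominated by the patch that matches the text, which is the mechanism by which textual context suppresses irrelevant regions of a drawing.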

Domain-Specific Adaptation for Patents

Effective patent analysis requires specialized adaptations to handle the unique characteristics of technical documentation:

Patent-Specific Vision Encoders: Standard vision encoders often struggle with the structural elements of patent figures. Dedicated encoders like PatentMME are specifically trained to capture the unique schematic, flowchart, and technical drawing elements prevalent in patent documents [28].

Domain-Adapted Language Models: Language components fine-tuned on patent corpora (e.g., PatentLLaMA, derived from LLaMA) develop an understanding of the technical jargon and legal phrasing specific to intellectual property documents [28].

Optimized Visual Tokenization: Patent drawings require specialized processing that preserves structural information within computational constraints. Advanced tokenization methods embed images as vectors and then convert these into pre-fusion coded vectors using codebooks designed to reduce the number of feature codes [29].
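The codebook idea can be illustrated with a minimal vector-quantization sketch. It assumes that each patch embedding is snapped to its nearest entry in a shared codebook, so that each patch is represented by a single integer code. The codebook size, the dimensions, and the function `quantize_patches` are illustrative assumptions. The specific codebook design in [29] is not reproduced here.

```python
import numpy as np

def quantize_patches(patches: np.ndarray, codebook: np.ndarray):
    """Map each patch embedding to the index of its nearest codebook vector.

    patches:  (n_patches, d) embedded image patches
    codebook: (k, d) code vectors (random here, learned in practice)
    Returns (codes, quantized) where codes has shape (n_patches,).
    """
    # Squared Euclidean distance between every patch and every code vector
    dists = ((patches[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=-1)
    codes = dists.argmin(axis=1)            # one integer per patch
    return codes, codebook[codes]           # quantized embeddings

rng = np.random.default_rng(42)
patches = rng.normal(size=(196, 64))   # e.g. a 14x14 patch grid
codebook = rng.normal(size=(32, 64))   # 32 shared codes
codes, quantized = quantize_patches(patches, codebook)
print(codes.shape, quantized.shape)    # (196,) (196, 64)
```

Downstream fusion layers then only see at most 32 distinct vectors. This is where the saving in feature coding comes from.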

Table 1: Quantitative Performance Comparison of Multimodal Architectures for Patent Analysis

| Architecture Type | Technical Drawing Comprehension Accuracy (%) | Chemical Structure Recognition F1 Score | Processing Latency (ms) | Training Data Requirements |
|---|---|---|---|---|
| Specialist Stack | 87.3 | 0.89 | 120 | Medium |
| Unified Transformer | 92.1 | 0.94 | 85 | Very High |
| Text-Guided Visual | 94.5 | 0.96 | 105 | High |
| PatentLMM | 96.2 | 0.98 | 95 | Medium-High |

Experimental Protocols

Protocol 1: Implementing Text-Guided Multimodal Relationship Extraction

This protocol details the methodology for extracting semantic relationships between entities in patent documents using text-guided visual processing.

Materials and Equipment

- Hardware: GPU cluster with at least 16 GB of VRAM per device

- Software: Python 3.8+, PyTorch 1.12+, Transformers library

- Datasets: PatentDesc-355K (355K patent figures with descriptions) [28]

Procedure

Input Preparation:

- Extract and preprocess text passages containing entity mentions from patent documents

- Obtain corresponding patent figures and extract multiple local target objects from global images using object detection models [3]

Feature Encoding:

- Process text through a pretrained text encoder (e.g., BERT-base) to obtain text feature representations

- Generate initial visual encoding representations of both global images and local target objects

- Input initial visual encodings into a pretrained visual encoder (e.g., CLIP-based) to obtain visual feature representations [3]

Text-Guided Visual Reprocessing:

- Calculate similarity between initial visual encoding representations and text feature representations

- Perform re-weighting based on similarity scores to obtain re-weighted visual features

- Pass the re-weighted features to the decoder of the visual encoder to generate top-down signals

- Feed the top-down signals back to the self-attention modules of the visual encoder to update their value matrices

- Perform secondary forward propagation with updated matrices to obtain refined visual features [3]
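The similarity and re-weighting steps can be sketched as follows. The sketch assumes cosine similarity and a softmax over visual tokens. The temperature, the function name `reweight_visual_tokens`, and the shapes are illustrative assumptions. The top-down feedback into the value matrices and the second forward pass are not shown.

```python
import numpy as np

def reweight_visual_tokens(visual: np.ndarray, text: np.ndarray,
                           temperature: float = 0.1) -> np.ndarray:
    """Scale each visual token by its relevance to a pooled text feature.

    visual: (n_tokens, d) initial visual encodings (global image + local objects)
    text:   (d,) pooled text feature, assumed projected to the same d
    """
    v_norm = visual / np.linalg.norm(visual, axis=1, keepdims=True)
    t_norm = text / np.linalg.norm(text)
    sim = v_norm @ t_norm                    # cosine similarity per token
    logits = sim / temperature
    weights = np.exp(logits - logits.max())  # numerically stable softmax
    weights /= weights.sum()
    # Multiply by n_tokens so that uniform weights would leave features unchanged
    return visual * (weights[:, None] * len(visual))

rng = np.random.default_rng(0)
visual = rng.normal(size=(5, 16))
text = visual[2] + 0.01 * rng.normal(size=16)   # text that "describes" token 2
out = reweight_visual_tokens(visual, text)
scale = np.linalg.norm(out, axis=1) / np.linalg.norm(visual, axis=1)
print(scale.argmax())  # 2
```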

Cross-Modal Fusion:

- Implement cross-attention mechanisms where text features serve as queries and visual features serve as keys and values

- Generate cross-modal text feature encodings enriched with visual information [3]
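Single-head cross-attention can be sketched in NumPy, with text tokens as queries and visual tokens supplying the keys and values. The projection matrices are random placeholders, and the function name `cross_attention` is hypothetical. Production models use multi-head attention with learned weights, residual connections, and layer normalization.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(text: np.ndarray, visual: np.ndarray,
                    w_q: np.ndarray, w_k: np.ndarray, w_v: np.ndarray):
    """text: (n_text, d); visual: (n_vis, d). Returns (fused, attn)."""
    q = text @ w_q                     # queries from text tokens
    k = visual @ w_k                   # keys from visual tokens
    v = visual @ w_v                   # values from visual tokens
    scores = q @ k.T / np.sqrt(q.shape[-1])   # (n_text, n_vis)
    attn = softmax(scores, axis=-1)           # each text token attends over the image
    return attn @ v, attn                     # visually enriched text features

rng = np.random.default_rng(1)
d = 32
text = rng.normal(size=(12, d))     # 12 text tokens
visual = rng.normal(size=(49, d))   # 49 visual tokens (e.g. a 7x7 grid)
w_q, w_k, w_v = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(3))
fused, attn = cross_attention(text, visual, w_q, w_k, w_v)
print(fused.shape)                          # (12, 32)
print(np.allclose(attn.sum(axis=1), 1.0))   # True
```

The output keeps one row per text token, so the result can be passed directly to a text-side classifier.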

Relationship Classification:

- Process cross-modal features through a softmax classifier for relationship categorization

- Optimize using cross-entropy loss with iterative refinement [3]
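The classification head can be sketched minimally as a linear layer, a softmax, and a mean cross-entropy loss over a batch. The relation labels in `RELATIONS`, the dimensions, and the random weights are hypothetical examples. The source does not specify a label set.

```python
import numpy as np

RELATIONS = ["has_component", "made_of", "has_property", "no_relation"]  # hypothetical

def relation_logits(features: np.ndarray, w: np.ndarray, b: np.ndarray) -> np.ndarray:
    return features @ w + b            # (batch, n_relations)

def cross_entropy(logits: np.ndarray, labels: np.ndarray) -> float:
    """Mean cross-entropy computed via log-softmax for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-log_probs[np.arange(len(labels)), labels].mean())

rng = np.random.default_rng(5)
features = rng.normal(size=(8, 64))      # cross-modal features for 8 entity pairs
w = rng.normal(size=(64, len(RELATIONS))) * 0.01
b = np.zeros(len(RELATIONS))
labels = rng.integers(len(RELATIONS), size=8)
logits = relation_logits(features, w, b)
loss = cross_entropy(logits, labels)
predicted = [RELATIONS[i] for i in logits.argmax(axis=1)]
```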

Validation and Quality Control

- Perform 5-fold cross-validation with held-out test sets

- Establish baseline comparisons with unimodal text-only models

- Manually verify a random 5% sample of the extracted relationships
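The fold assignment and the review sample can be generated reproducibly with the standard library alone. This is a minimal sketch: the fixed seeds and the helper names `k_fold_indices` and `manual_review_sample` are illustrative assumptions. In practice, scikit-learn's `KFold` serves the same purpose.

```python
import random

def k_fold_indices(n_items: int, k: int = 5, seed: int = 0):
    """Yield (train_idx, test_idx) pairs for k-fold cross-validation."""
    idx = list(range(n_items))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]       # near-equal, disjoint folds
    for i in range(k):
        test = sorted(folds[i])
        train = sorted(j for f in folds[:i] + folds[i + 1:] for j in f)
        yield train, test

def manual_review_sample(relations, fraction: float = 0.05, seed: int = 0):
    """Draw a reproducible random sample (at least one item) for expert review."""
    n = max(1, round(len(relations) * fraction))
    return random.Random(seed).sample(relations, n)

splits = list(k_fold_indices(103, k=5))
print([len(test) for _, test in splits])   # [21, 21, 21, 20, 20]
review = manual_review_sample([f"rel_{i}" for i in range(400)])
print(len(review))                         # 20
```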

Protocol 2: Training Patent-Specific Multimodal Models

This protocol covers the specialized training regimen required for developing high-performance multimodal transformers tailored to patent documentation.

Materials and Equipment

- Training Data: PatentDesc-355K dataset (355K patent figures with brief and detailed descriptions) [28]

- Pretrained Models: LLaMA base models, CLIP vision transformers

- Computational Resources: 8× A100 GPU configuration recommended

Procedure

Data Preprocessing:

- Extract figures and corresponding descriptions from patent documents

- Apply text normalization and technical term standardization

- Perform image enhancement including contrast adjustment and resolution standardization

- Implement data augmentation through rotation, cropping, and color adjustment
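The enhancement and augmentation steps can be sketched using NumPy only, for a grayscale drawing stored as a 2-D array with values in [0, 1] after stretching. Percentile contrast stretching, nearest-neighbor resizing, rotations limited to multiples of 90 degrees (chosen so that thin strokes are not resampled), brightness jitter as the "color adjustment", and all parameter values are illustrative assumptions. Production pipelines would typically use an image library such as Pillow or torchvision.

```python
import numpy as np

def stretch_contrast(img, low_pct=2, high_pct=98):
    """Linearly map the [low, high] percentile range to [0, 1]."""
    lo, hi = np.percentile(img, [low_pct, high_pct])
    return np.clip((img - lo) / max(hi - lo, 1e-8), 0.0, 1.0)

def resize_nearest(img, size=384):
    """Nearest-neighbor resize to size x size (384 matches Table 2)."""
    rows = np.arange(size) * img.shape[0] // size
    cols = np.arange(size) * img.shape[1] // size
    return img[rows[:, None], cols[None, :]]

def augment(img, rng, crop_frac=0.9):
    """Random 90-degree rotation, random crop, and brightness jitter."""
    img = np.rot90(img, k=int(rng.integers(4)))
    h, w = img.shape
    ch, cw = int(h * crop_frac), int(w * crop_frac)
    top, left = rng.integers(h - ch + 1), rng.integers(w - cw + 1)
    img = img[top:top + ch, left:left + cw]
    return np.clip(img * rng.uniform(0.9, 1.1), 0.0, 1.0)

rng = np.random.default_rng(7)
drawing = rng.integers(0, 256, size=(600, 800)).astype(float)  # stand-in scan
x = resize_nearest(augment(stretch_contrast(drawing), rng))
print(x.shape)   # (384, 384)
```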

Specialized Vision Encoder Training (PatentMME):

- Initialize with CLIP vision transformer weights

- Fine-tune using patent figures with focus on technical drawing elements

- Employ contrastive learning to align image embeddings with textual descriptions [28]

- Optimize for structural element recognition in schematic diagrams
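The contrastive alignment objective can be sketched as a CLIP-style symmetric InfoNCE loss over a batch of matched figure/description embeddings. This is a minimal sketch that assumes row *i* of each matrix forms a matched pair. The temperature of 0.07 (CLIP's initial value) and the function names are assumptions, not confirmed details of PatentMME training.

```python
import numpy as np

def log_softmax(x: np.ndarray, axis: int) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def clip_contrastive_loss(img_emb, txt_emb, temperature=0.07):
    """Symmetric InfoNCE; matched pairs lie on the diagonal of the logit matrix."""
    img = img_emb / np.linalg.norm(img_emb, axis=1, keepdims=True)
    txt = txt_emb / np.linalg.norm(txt_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature          # (batch, batch) similarities
    diag = np.arange(len(img))
    loss_i2t = -log_softmax(logits, axis=1)[diag, diag].mean()  # image -> text
    loss_t2i = -log_softmax(logits, axis=0)[diag, diag].mean()  # text -> image
    return float((loss_i2t + loss_t2i) / 2)

rng = np.random.default_rng(11)
img = rng.normal(size=(8, 64))
txt_matched = img + 0.05 * rng.normal(size=(8, 64))
txt_shuffled = np.roll(txt_matched, 1, axis=0)      # pairs deliberately misaligned
print(clip_contrastive_loss(img, txt_matched) < clip_contrastive_loss(img, txt_shuffled))  # True
```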

Domain-Adapted Language Model Training (PatentLLaMA):

- Initialize with LLaMA base weights

- Continue pretraining on patent corpora comprising technical descriptions

- Fine-tune with instruction-tuning on patent description generation tasks [28]

- Optimize for technical terminology and structured description output

Multimodal Integration:

- Combine PatentMME and PatentLLaMA components

- Train with cross-attention mechanisms between modalities

- Employ masked modality modeling for robust cross-modal understanding

Loss Optimization:

- Implement visual encoder training loss combining contrastive and generative objectives

- Use cross-entropy loss for description generation tasks

- Apply gradient clipping and learning rate scheduling for training stability [28]
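Both stability measures can be sketched minimally: a linear warmup followed by cosine decay, and clipping gradients by their global L2 norm. The cosine decay shape, `max_norm=1.0`, and `total_steps=60_000` are assumptions. The peak learning rate (3e-5) and the warmup length (3,000 steps) are taken from the full-PatentLMM column of Table 2. In PyTorch, the clipping step corresponds to `torch.nn.utils.clip_grad_norm_`.

```python
import math
import numpy as np

def lr_at(step, peak_lr=3e-5, warmup_steps=3_000, total_steps=60_000):
    """Linear warmup to peak_lr, then cosine decay to zero at total_steps."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return 0.5 * peak_lr * (1 + math.cos(math.pi * min(progress, 1.0)))

def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale a list of gradient arrays so their joint L2 norm is <= max_norm."""
    total = math.sqrt(sum(float((g ** 2).sum()) for g in grads))
    scale = min(1.0, max_norm / (total + 1e-6))
    return [g * scale for g in grads], total

clipped, norm_before = clip_by_global_norm([np.full(4, 3.0)], max_norm=1.0)
norm_after = math.sqrt(sum(float((g ** 2).sum()) for g in clipped))
```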

Validation and Quality Control

- Evaluate description quality using BLEU, ROUGE, and BERTScore metrics

- Conduct expert review of generated technical descriptions

- Perform ablation studies to quantify component contributions
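The overlap metrics can be illustrated with a pure-Python ROUGE-L F1 computed from the longest common subsequence of lowercase whitespace tokens. This is a simplified sketch. For reported numbers, established packages such as `rouge-score` or `sacrebleu` (for BLEU) are preferable, since they handle tokenization, stemming, and multiple references consistently.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence via dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]

def rouge_l_f1(candidate: str, reference: str) -> float:
    cand, ref = candidate.lower().split(), reference.lower().split()
    if not cand or not ref:
        return 0.0
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return 2 * precision * recall / (precision + recall)

ref = "FIG. 3 is a cross-sectional view of the laminated electrode"
gen = "FIG. 3 shows a cross-sectional view of the electrode"
print(round(rouge_l_f1(gen, ref), 3))   # 0.842
```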

Table 2: PatentLMM Training Configuration Parameters

| Training Parameter | PatentMME (Vision) Value | PatentLLaMA (Language) Value | Full PatentLMM Value |
|---|---|---|---|
| Batch Size | 256 | 128 | 64 |
| Learning Rate | 5e-5 | 2e-5 | 3e-5 |
| Warmup Steps | 5,000 | 2,000 | 3,000 |
| Training Epochs | 30 | 15 | 20 |
| Sequence Length | N/A | 4,096 | 4,096 |
| Image Resolution | 384×384 | N/A | 384×384 |
| Optimizer | AdamW | AdamW | AdamW |
| Weight Decay | 0.05 | 0.1 | 0.08 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Multimodal Patent Research

| Resource Name | Type | Function/Purpose | Access Information |
|---|---|---|---|
| PatentDesc-355K Dataset | Dataset | Large-scale collection of ~355K patent figures with descriptions for training and evaluation [28] | Research use, academic licensing |
| PatentLMM Model | Pretrained Model | Specialized multimodal model for generating descriptions of patent figures [28] | Available for research purposes |
| CLIP Vision Transformer | Model Component | Base vision encoder for image understanding adaptable to patent figures [27] | Open source (MIT License) |
| LLaMA Base Models | Model Component | Foundation language models for domain adaptation to patent text [28] | Research licensing |
| Derwent World Patents Index | Data Source | Expert-curated patent abstracts with enhanced clarity and searchability [6] | Commercial subscription |
| Cypris Platform | Analysis Tool | Multimodal search for patent intelligence with visual and structural query support [6] | Enterprise subscription |
| USPTO Patent Database | Data Source | Official US patent collections with full-text and drawing resources [30] | Free public access |
| Patent Drawing Colorizer | Preprocessing Tool | Color normalization and enhancement for patent drawing analysis | Custom development required |

Advanced Implementation Considerations

Handling Color Drawings in Patent Analysis

Patent drawings traditionally use monochrome representations, but color is increasingly employed in specific technical contexts. Multimodal systems must accommodate this variation:

Regulatory Compliance: The USPTO requires formal petitions for color drawings, granting approximately 71% of requests with an average 93-day turnaround [31]. The EPO now accepts electronically filed color drawings without petition requirements [31].

Technical Implementation: When color is essential for understanding complex structures, functional differentiation, material composition, or graphical user interfaces [30], systems should:

- Process color information while maintaining grayscale compatibility

- Implement color legend interpretation for consistent semantic understanding

- Employ color normalization to account for reproduction variations
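Two of these steps can be sketched for an RGB drawing stored as an `(H, W, 3)` uint8 array. The first is per-channel min-max normalization, a simple stand-in for more careful color-constancy methods, to damp reproduction variation. The second is a grayscale copy computed with the ITU-R BT.601 luma weights, so that monochrome-trained models still receive a compatible input. The function names and the choice of these two techniques are assumptions. Legend interpretation is not covered.

```python
import numpy as np

BT601 = np.array([0.299, 0.587, 0.114])   # ITU-R BT.601 luma coefficients

def normalize_channels(rgb: np.ndarray) -> np.ndarray:
    """Stretch each color channel to [0, 1] independently (min-max)."""
    x = rgb.astype(float)
    lo = x.min(axis=(0, 1), keepdims=True)
    hi = x.max(axis=(0, 1), keepdims=True)
    return (x - lo) / np.maximum(hi - lo, 1e-8)

def to_grayscale(rgb01: np.ndarray) -> np.ndarray:
    """Weighted sum of the R, G, B channels -> (H, W) luminance image."""
    return rgb01 @ BT601

def process_drawing(rgb: np.ndarray):
    """Return a color-normalized version and a grayscale-compatible version."""
    color = normalize_channels(rgb)
    return color, to_grayscale(color)

rng = np.random.default_rng(2)
rgb = rng.integers(0, 256, size=(4, 5, 3), dtype=np.uint8)
color, gray = process_drawing(rgb)
```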

Multimodal Clustering for Patent Taxonomy Development

Advanced analysis employs multimodal clustering techniques to organize patent information across data types:

- Feature Generation: Process heterogeneous patent data (text, images, numerical values) into unified feature representations [32]

- Cross-Modal Similarity: Implement similarity measures that incorporate both visual and textual semantics

- Taxonomy Development: Generate cluster classifications that reveal technological relationships across patent collections [32]
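One simple cross-modal similarity is a weighted mix of text-embedding and image-embedding cosine similarities, which can then be grouped by a greedy threshold rule, sketched below. The weight `alpha`, the threshold, and the grouping rule are hypothetical choices for illustration, and the clustering method in [32] is not reproduced. A real taxonomy would more likely run an established algorithm, such as agglomerative clustering, on the same matrix.

```python
import numpy as np

def cosine_matrix(x: np.ndarray) -> np.ndarray:
    x = x / np.linalg.norm(x, axis=1, keepdims=True)
    return x @ x.T

def cross_modal_similarity(text_emb, image_emb, alpha=0.6):
    """alpha weights textual semantics; (1 - alpha) weights visual semantics."""
    return alpha * cosine_matrix(text_emb) + (1 - alpha) * cosine_matrix(image_emb)

def threshold_clusters(sim: np.ndarray, threshold=0.5) -> np.ndarray:
    """Greedy grouping: each unassigned patent seeds a cluster of close neighbors."""
    labels = -np.ones(len(sim), dtype=int)
    next_label = 0
    for i in range(len(sim)):
        if labels[i] >= 0:
            continue
        members = (sim[i] >= threshold) & (labels < 0)   # includes i itself
        labels[members] = next_label
        next_label += 1
    return labels

rng = np.random.default_rng(4)
a, b = np.eye(8)[0], np.eye(8)[1]            # two underlying "technologies"
base = np.stack([a, a, b, b])
text_emb = base + 0.05 * rng.normal(size=(4, 8))
image_emb = base + 0.05 * rng.normal(size=(4, 8))
labels = threshold_clusters(cross_modal_similarity(text_emb, image_emb))
```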

Performance Optimization Strategies

- Efficient Tokenization: Use pre-fusion codebooks to reduce the quantity of image feature codes and the associated computational requirements [29]

- Modality Balancing: Address training imbalances between text and visual data through sampling strategies and loss weighting

- Accelerated Processing: Implement specialized accelerators for document layout analysis and optical character recognition to enhance input quality [33]
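The modality-balancing item can be sketched with inverse-frequency sample weights. Under these weights, each modality contributes equally to a weighted loss or a weighted sampler in expectation. The helper name and the 90/10 split are hypothetical. In PyTorch, such weights could be passed to `torch.utils.data.WeightedRandomSampler`.

```python
from collections import Counter

def inverse_frequency_weights(modalities):
    """Per-sample weights so that each modality's total weight is equal."""
    counts = Counter(modalities)
    n_mod = len(counts)
    return [len(modalities) / (n_mod * counts[m]) for m in modalities]

samples = ["text"] * 90 + ["image"] * 10     # imbalanced training pool
weights = inverse_frequency_weights(samples)
text_total = sum(w for w, m in zip(weights, samples) if m == "text")
image_total = sum(w for w, m in zip(weights, samples) if m == "image")
```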

The exponential growth of scientific and patent literature presents a formidable challenge for researchers, scientists, and drug development professionals. Manually tracking innovations across disciplines is increasingly impractical. Within this vast information landscape, patents constitute a particularly valuable resource, offering detailed disclosures of novel materials, their properties, and synthesis methods—often years before such information appears in journal publications. The emerging discipline of specialized information extraction (IE) addresses this challenge by leveraging computational methods to automatically identify and structure key technical entities from unstructured text. This process is evolving from traditional, single-modality approaches (text-only) toward multimodal data extraction, which integrates text, images, and metadata to construct a more comprehensive understanding of technological domains such as advanced materials and pharmaceuticals [34] [5]. This application note details the frameworks, protocols, and practical tools for implementing these specialized extraction methodologies within a research environment.

Multimodal Extraction Frameworks for Patent Analysis

Specialized extraction has moved beyond simple keyword searches to sophisticated systems that understand context and relationships. The integration of multiple data types—or modalities—is crucial for achieving high accuracy.

Core Architecture of Multimodal Systems

A robust multimodal extraction system typically processes information through a structured pipeline. For video or image-rich documents, a scene detector first identifies coherent segments or boundaries within the content. A metadata extractor then analyzes the content of each segment to extract features corresponding to several different modes or information types. Subsequently, a metadata embedding process converts these features into a numerical representation for each mode, and an embedding aggregator formulates a single, unified representation (an aggregated embedding) for the segment, effectively indexing its content [7]. This aggregated embedding serves as a powerful index for searching and retrieving specific technical information from large content libraries.
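The aggregation and retrieval steps can be sketched minimally, assuming each segment already has one embedding per mode (e.g., OCR text, image features, metadata) projected to a common dimension. Averaging L2-normalized mode embeddings is only one possible aggregator, and the names here are hypothetical. [7] does not prescribe this specific rule.

```python
import numpy as np

def l2n(x: np.ndarray) -> np.ndarray:
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def aggregate_segment(mode_embeddings: dict) -> np.ndarray:
    """Average the normalized per-mode embeddings into one segment index vector."""
    stacked = np.stack([l2n(v) for v in mode_embeddings.values()])
    return l2n(stacked.mean(axis=0))

def search(index: np.ndarray, query: np.ndarray, top_k: int = 3) -> np.ndarray:
    """Return indices of the top_k segments by cosine similarity to the query."""
    scores = index @ l2n(query)
    return np.argsort(-scores)[:top_k]

rng = np.random.default_rng(9)
segments = [{m: rng.normal(size=128) for m in ("text", "image", "metadata")}
            for _ in range(10)]
index = np.stack([aggregate_segment(s) for s in segments])   # (10, 128)
hits = search(index, segments[4]["text"])                    # query by text alone
```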

When dealing with multiple data modalities (e.g., text, image, metadata), a neural network-based approach can be highly effective. The processing flow involves:

- Input Subnetwork: Receives the raw multimodal data and outputs a first, modality-specific feature for each modality.

- Cross-Modal Feature Subnetworks: Each dedicated to a pair of modalities (e.g., text-image, text-metadata), these networks take the first features of two modalities and output a cross-modal feature that captures their interactions.

- Cross-Modal Fusion Subnetworks: For each modality, the relevant cross-modal features are integrated to produce a refined second feature for that modality.

- Output Subnetwork: Finally, the refined features from all modalities are combined to generate a unified output, such as a classification or a structured data representation [35].

This architecture allows the model to learn not just from each individual data type, but crucially, from the relationships between them.
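The four-stage flow can be sketched in NumPy, assuming three modalities that share a feature dimension. The concrete operations are illustrative placeholders, not the specific subnetworks described in [35]:

- a per-modality linear input layer
- an element-wise product plus a linear map for each modality pair
- averaging the cross-modal features that involve each modality
- concatenation before a linear output layer

All weights are random.

```python
import itertools
import numpy as np

rng = np.random.default_rng(3)
D = 16
MODALITIES = ["text", "image", "metadata"]
PAIRS = list(itertools.combinations(MODALITIES, 2))

# Hypothetical random weights standing in for trained subnetworks
W_in = {m: rng.normal(size=(D, D)) / np.sqrt(D) for m in MODALITIES}
W_pair = {p: rng.normal(size=(D, D)) / np.sqrt(D) for p in PAIRS}
W_out = rng.normal(size=(len(MODALITIES) * D, 4)) / np.sqrt(D)   # 4 output classes

def forward(inputs: dict) -> np.ndarray:
    # 1. Input subnetwork: modality-specific first features
    first = {m: np.tanh(inputs[m] @ W_in[m]) for m in MODALITIES}
    # 2. Cross-modal feature subnetworks: one per modality pair
    cross = {(a, b): np.tanh((first[a] * first[b]) @ W_pair[(a, b)]) for a, b in PAIRS}
    # 3. Cross-modal fusion: refine each modality with the pair features it belongs to
    second = {
        m: first[m] + np.mean([f for p, f in cross.items() if m in p], axis=0)
        for m in MODALITIES
    }
    # 4. Output subnetwork: combine all refined features into class logits
    return np.concatenate([second[m] for m in MODALITIES]) @ W_out

sample = {m: rng.normal(size=D) for m in MODALITIES}
logits = forward(sample)
print(logits.shape)  # (4,)
```

With three modalities there are three pairwise subnetworks. The number of pairs grows quadratically, which is worth keeping in mind before adding further modalities.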

Application in Design Patent Classification