The Reproducibility Crisis in Materials Science: Causes, Solutions, and Paths to Robust Research

Abstract

This article addresses the reproducibility crisis, a critical challenge undermining progress in materials science and biomedical research. It explores the fundamental causes, including systemic incentives and methodological variability, and provides actionable solutions for researchers and drug development professionals. Covering foundational concepts, practical methodologies, troubleshooting strategies, and validation frameworks, the content synthesizes current expert insights and data to guide the community toward more reliable, transparent, and reproducible scientific practices that enhance research translatability.

Defining the Crisis: Understanding the Scale and Root Causes in Materials Research

The reproducibility crisis presents a fundamental challenge to scientific progress, particularly in fields like materials science and drug development where findings directly influence high-stakes research and development. This crisis is characterized by the accumulation of published scientific results that other researchers are unable to reproduce [1]. In materials science, this manifests when novel material properties or synthesis methods reported in high-impact journals cannot be consistently replicated by independent laboratories, leading to wasted resources, misdirected research efforts, and delayed innovation.

A 2022 analysis highlighted the severity of this issue, noting that up to 65% of researchers have tried and failed to reproduce their own research, and that irreproducible research in the United States alone wastes an estimated $28 billion in research funding each year [2]. These concerns are not confined to any single discipline; a 2021 survey of over 100 researchers confirmed that the reproducibility crisis affects multiple scientific fields, identifying insufficient metadata, lack of publicly available data, and incomplete methodological information as primary contributing factors [3].

Addressing this crisis begins with clear terminology. Inconsistent use of terms like reproducibility, replicability, and robustness across scientific disciplines creates confusion that hampers effective communication about scientific validity [4] [5]. This guide establishes precise, actionable definitions for these critical concepts, providing materials scientists and research professionals with a common framework for assessing and improving the reliability of their research.

Defining the Terminology

Core Concepts and Definitions

Despite their central importance in scientific discourse, the terms reproducibility and replicability lack universal definitions and are often used inconsistently across different scientific fields [4] [5]. The following table summarizes the two predominant definitional frameworks identified in the literature:

Table 1: Contrasting Terminology Frameworks

| Term | Claerbout & Karrenbach Framework | ACM Framework |
|---|---|---|
| Reproducibility | Authors provide all data and computer codes to run the analysis again, re-creating the results [5]. | (Different team, different setup) An independent group obtains the same result using artifacts they develop independently [5]. |
| Replicability | A study arrives at the same findings as another study, collecting new data (possibly with different methods) [5]. | (Different team, same setup) An independent group obtains the same result using the author's artifacts [5]. |

The terminology used by Claerbout and Karrenbach is prevalent in many computational and scientific fields. Within this framework, reproducibility is considered a more minimal standard—it should be achievable if the original researchers provide their complete data and analysis code [6]. In contrast, replication represents a more substantial test of a finding's validity, as it involves collecting new data to verify whether the same scientific conclusions hold [6].

An Expanded View: Reproducibility, Replicability, and Robustness

Building on these core concepts, The Turing Way project provides an expanded taxonomy that incorporates robustness and generalizability, offering a more nuanced understanding of research reliability [5].

Table 2: Expanded Definitions of Research Reliability

| Concept | Definition | Testing Question |
|---|---|---|
| Reproducible | The same analysis steps performed on the same dataset consistently produce the same answer [5]. | "Can I obtain the same results from the same data using the same code?" |
| Replicable | The same analysis performed on different datasets produces qualitatively similar answers [5]. | "Do I get similar results when applying the same method to new data?" |
| Robust | The same dataset subjected to different analysis workflows produces qualitatively similar answers [5]. | "Do different analytical methods applied to the same data yield consistent conclusions?" |
| Generalisable | Combining replicable and robust findings allows us to form results that apply across different datasets and analytical methods [5]. | "Is the finding valid across different data and different analysis methods?" |

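These concepts build on one another as a pathway toward generalizable knowledge: a reproducible result that also proves replicable on new data and robust to alternative analyses can be treated as generalizable [5].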

This conceptual framework reveals that narrow robustness (reproducibility) and broad robustness (replicability) represent different but complementary aspects of scientific reliability [7]. A finding that is merely reproducible may only be valid under highly specific conditions, whereas a replicable finding demonstrates consistency across different datasets, and a robust finding withstands variations in analytical approach [7] [5].
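The distinctions in Table 2 can be made concrete with a toy example. The following Python sketch is purely illustrative: the simulated measurements, the function names (`simulate_measurements`, `analyze`, `alternative_analyze`), and the tolerance of 0.5 are assumptions introduced here and are not part of any cited framework.

```python
import random
import statistics


def simulate_measurements(seed, n=500, true_value=5.0, noise=1.0):
    """Hypothetical data source: n noisy measurements of a fixed quantity."""
    rng = random.Random(seed)  # seeded generator makes the dataset repeatable
    return [rng.gauss(true_value, noise) for _ in range(n)]


def analyze(data):
    """Primary analysis workflow: estimate the quantity with the mean."""
    return statistics.mean(data)


def alternative_analyze(data):
    """Different analysis workflow applied to the same data: the median."""
    return statistics.median(data)


TOLERANCE = 0.5  # assumed threshold for "qualitatively similar" answers

original = simulate_measurements(seed=1)

# Reproducible: same data (same seed) and same code give the same answer.
is_reproducible = analyze(simulate_measurements(seed=1)) == analyze(original)

# Replicable: same analysis on a newly collected dataset gives a similar answer.
new_data = simulate_measurements(seed=2)
is_replicable = abs(analyze(new_data) - analyze(original)) < TOLERANCE

# Robust: a different analysis on the same dataset gives a similar answer.
is_robust = abs(alternative_analyze(original) - analyze(original)) < TOLERANCE

print(is_reproducible, is_replicable, is_robust)
```

In real studies the tolerance would have to be justified for the quantity being measured; the fixed value here only serves to separate the three checks.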

Quantitative Evidence of the Reproducibility Problem

Empirical studies across multiple disciplines have quantified the scope of the reproducibility challenge, revealing systematic concerns about research reliability:

Table 3: Reproducibility Assessments Across Scientific Fields

| Field/Context | Reported Figure | Study Details | Source |
|---|---|---|---|
| Medical Research | <0.5% | Of studies published since 2016 that shared analytical code | [8] |
| Preclinical Cancer Research | <50% | High-impact papers assessed by the Reproducibility Project: Cancer Biology | [2] |
| Biomedical Research (Industry) | 11-20% | Landmark findings in preclinical oncology (Amgen & Bayer reports) | [1] |
| Psychology | Varies (17-82%) | Estimates of reproducible papers among those sharing code and data | [8] |
| General Science | ~65% | Researchers who have tried and failed to reproduce their own research | [2] |

Beyond these quantitative measures, surveys of researchers reveal important insights about the underlying causes. A 2021 exploratory study identified the most significant barriers to reproducibility as insufficient metadata, lack of publicly available data, and incomplete information in study methods [3]. These findings suggest that technical and cultural factors in research dissemination, rather than just methodological flaws in study design, contribute substantially to the reproducibility crisis.

Practical Frameworks for Enhancing Reproducibility

Methodological Recommendations

Based on an analysis of coding practices within the population-based Rotterdam Study cohort, medical researchers have formulated five practical recommendations to improve research reproducibility [8]:

Make reproducibility a priority by explicitly allocating time and resources throughout the research lifecycle. This includes recognizing that reproducible practices benefit individual researchers through enhanced efficiency, reduced errors, and greater impact of their work [8].

Implement systematic code review by peers to ensure adherence to coding standards and improve overall code quality. This process helps identify bugs and small errors, and it fosters discussion about analytical choices [8].

Write comprehensible code through clear structure, adequate commenting, and use of ReadMe files. Comprehensibility is essential as research that cannot be understood by third parties cannot be adequately reproduced [8].

Report decisions transparently by documenting all analytical choices directly within the code or associated documentation. This includes providing annotated workflow code for data cleaning, formatting, and sample selection procedures [8].

Focus on accessibility by sharing code and data as openly as possible via institutional repositories. When sensitive data cannot be shared, researchers should provide detailed metadata and synthetic datasets that allow others to understand the research process [8].

Tool-Based Solutions

Emerging technologies offer promising approaches to standardizing research processes and enhancing reproducibility:

ReproSchema is an ecosystem that addresses inconsistencies in survey-based data collection through a schema-centric framework [9]. This approach standardizes survey design by linking each data element with its metadata, supporting version control, and ensuring consistency across studies and research sites [9]. Unlike conventional survey platforms, ReproSchema provides a structured, modular approach for defining and managing survey components, enabling interoperability across diverse research settings [9].

GPT4Designer represents another approach to reproducibility, focusing on the creation of accurate, modifiable, and reproducible scientific graphics [10]. This framework uses a novel "envision-first" strategy that combines detailed prompting and guided envisioning to generate scientific images with consistent styles aligned with initial specifications [10]. Such approaches are particularly valuable in materials science, where visual representations of molecular structures, experimental setups, and results need to be both precise and consistent across publications.

The Scientist's Toolkit: Essential Materials for Reproducible Research

Table 4: Key Research Reagent Solutions for Reproducible Experiments

| Reagent/Resource | Function | Reproducibility Considerations |
|---|---|---|
| Antibodies | Detection of specific proteins in assays like Western blotting, immunohistochemistry | Inconsistent quality, manufacturing variations, and improper storage affect performance; requires strict quality control and detailed documentation [2] |
| Cell Lines | Model systems for studying biological processes and drug responses | Contamination, misidentification, and genetic drift between laboratories; requires authentication and regular monitoring [2] |
| Chemical Reagents | Synthesis, modification, and analysis of materials | Batch-to-batch variability in purity and composition; requires precise documentation of sources and lot numbers [2] |
| Software & Code | Data processing, analysis, and visualization | Version dependencies, undocumented parameters, and platform-specific issues; requires version control, documentation, and containerization [8] |
| Research Protocols | Standardized procedures for experimental workflows | Variations in implementation across research teams; requires detailed documentation and version control [9] |

Experimental Protocol for Reproducible Research

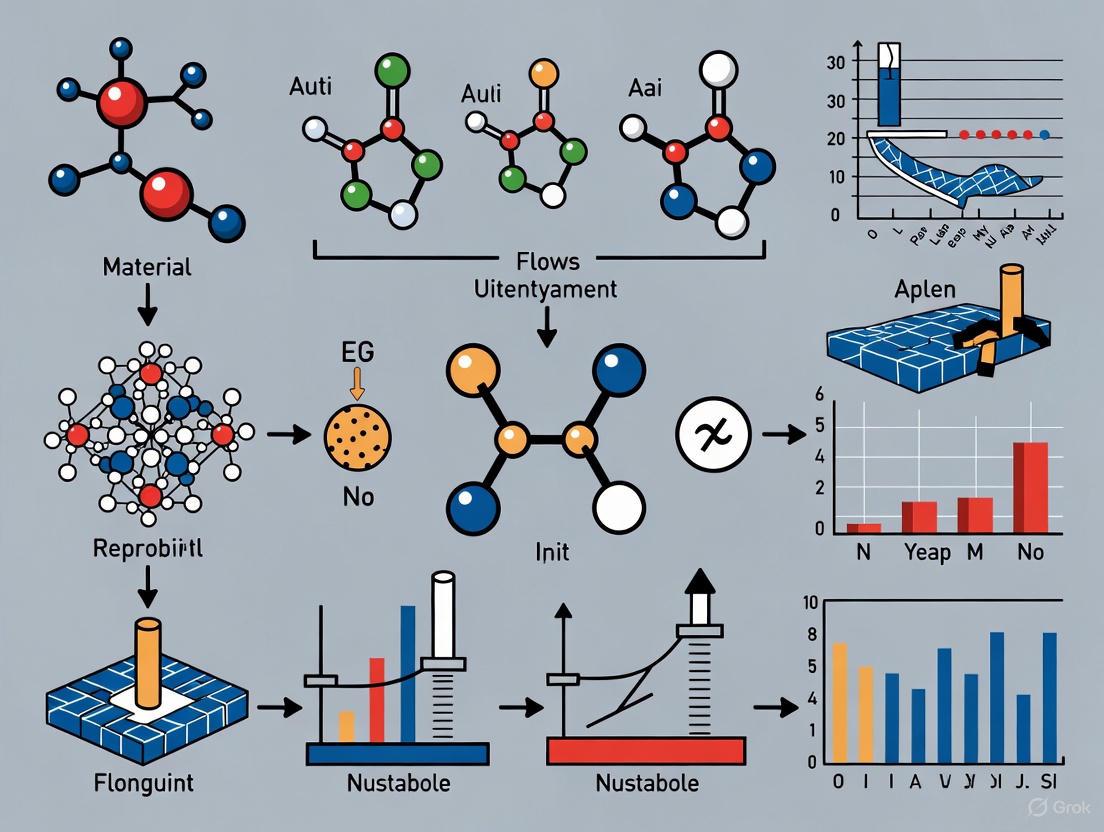

Implementing a standardized workflow is essential for achieving reproducible outcomes in materials science and drug development. The following diagram outlines a comprehensive protocol that integrates computational and experimental components:

This workflow emphasizes several critical components:

- Preregistration: Defining experimental parameters and analysis plans before conducting research to reduce selective reporting [2].

- Standardized Data Collection: Using tools like ReproSchema to implement version-controlled protocols that ensure consistency across research teams and time points [9].

- Computational Reproducibility: Implementing version control systems (e.g., Git) and containerization approaches (e.g., Docker) to capture the complete computational environment, including specific software versions and dependencies [8].

- Comprehensive Documentation: Recording all analytical decisions, parameter choices, and data processing steps through well-structured code comments and README files [8].

- Open Sharing: Depositing data, code, and materials in open repositories to enable both reproducibility (verification of analysis) and replicability (testing on new data) [8] [6].
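As a lightweight complement to the version control and containerization mentioned above, an analysis script can record its own environment alongside its results. The sketch below is a minimal illustration using only the Python standard library; the function name `capture_environment`, the package list, and the seed value are hypothetical choices made for this example.

```python
import json
import platform
import random
from importlib import metadata


def capture_environment(packages, seed):
    """Return a JSON-serializable snapshot of the computational environment."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # package is not installed in this environment
    return {
        "python_version": platform.python_version(),
        "platform": platform.platform(),
        "random_seed": seed,
        "package_versions": versions,
    }


SEED = 12345  # hypothetical seed; fixing it makes stochastic steps repeatable
random.seed(SEED)

# The package names are examples; list whatever the analysis actually imports.
manifest = capture_environment(["numpy", "pandas"], SEED)
manifest_json = json.dumps(manifest, indent=2, sort_keys=True)
print(manifest_json)
```

Saving `manifest_json` next to each output file gives later readers a record of which interpreter, platform, and library versions produced a result. It does not replace a container image, which captures the environment itself rather than a description of it.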

The distinction between reproducibility, replicability, and robustness provides a crucial framework for addressing the reproducibility crisis in materials science and drug development. While reproducibility (obtaining the same results from the same data) represents a minimum standard for verifying analytical procedures, replicability (obtaining similar results from new data) and robustness (obtaining consistent conclusions across different analytical methods) represent more rigorous tests of scientific claims [5].

Addressing the reproducibility crisis requires both technical solutions and cultural shifts within the research community. Technical approaches include implementing standardized data collection frameworks [9], adopting comprehensive computational workflows [8], and developing tools for creating reproducible scientific visuals [10]. Cultural changes involve prioritizing reproducibility throughout the research lifecycle [8], reexamining incentive structures that emphasize novel findings over reliable ones [2], and fostering a scientific environment where replication attempts are valued rather than stigmatized [6].

For materials scientists and drug development professionals, embracing these principles is not merely an academic exercise but a practical necessity. The credibility of scientific findings, the efficiency of research pipelines, and the ultimate translation of discoveries into real-world applications all depend on a foundational commitment to reproducible, replicable, and robust research practices.

The reproducibility crisis refers to the accumulation of published scientific results that independent researchers are unable to reproduce. This phenomenon undermines a cornerstone of the scientific method—that empirical findings should be verifiable through repetition. While discussions of this crisis frequently center on psychology and medicine, its effects extend across virtually all scientific domains, including materials science and preclinical drug development. The crisis carries profound implications, eroding public trust in science and incurring massive economic costs estimated at $28 billion annually in the United States alone due to irreproducible preclinical research [11] [12].

Quantifying this crisis reveals alarming patterns. In preclinical biomedical research, replication rates are distressingly low. A project by the Center for Open Science found that 54% of attempted preclinical cancer studies could not be replicated, a figure considered conservative since many originally scheduled studies were excluded because the original authors did not cooperate [13]. Earlier investigations by Bayer HealthCare and Amgen reported even starker outcomes: only 7% of projects were fully reproducible at Bayer, and only 11% of landmark studies were confirmed at Amgen [13] [14]. These statistics highlight a systemic problem that demands rigorous quantification and methodological scrutiny.

Quantitative Failure Rates Across Scientific Disciplines

Reproducibility failure rates vary across disciplines but remain concerningly high throughout. The following table summarizes key findings from large-scale replication projects across multiple fields:

Table 1: Replication Failure Rates Across Scientific Disciplines

| Field | Replication Failure Rate | Key Studies & Projects |
|---|---|---|
| Psychology | 61-74% [11] | Reproducibility Project: Psychology found only 39% of studies could be replicated [11] [1] |
| Preclinical Cancer Research | 54-89% [13] | Center for Open Science (54%), Amgen (89%), Bayer HealthCare (93% including partial failures) [13] |
| Neuroscience | 65% [11] | Various replication initiatives reporting majority of published findings failed replication |
| Social Sciences | ~50% [11] | Average failure rate across multiple sub-disciplines |
| Biomedical Research | 75-80% [14] [11] | Prinz et al. validation studies showing only 20-25% of projects aligned with published data |
| Physics | ~10% [11] | Notably higher replication success compared to other fields |
| Machine Learning-Based Science | Widespread data leakage [15] | Survey found 294 papers across 17 fields affected by data leakage issues |

Beyond these field-specific rates, surveys of researcher perceptions further illuminate the crisis. A 2024 survey of biomedical researchers found that 72% believed there is a reproducibility crisis in biomedicine, with 27% considering it "significant" [16]. Additionally, 47% of researchers reported encountering difficulties reproducing their own previously published results [11]. These perceptions underscore that the problem is not merely theoretical but regularly affects active researchers.

The economic impact extends beyond wasted research funding. The drug development pipeline faces particular challenges, with a 90% failure rate for drugs progressing from Phase 1 trials to final approval—due in part to unreliable preclinical findings [17]. Each replication attempt conducted by pharmaceutical companies to validate academic research requires 3 to 24 months of work and costs between $500,000 and $2 million [12], creating substantial inefficiencies in translating basic research to clinical applications.

Methodologies for Quantifying Reproducibility

Defining Reproducibility and Replicability

A critical foundation for quantifying reproducibility involves establishing precise definitions. While terminology varies across disciplines, the improving Reproducibility In SciencE (iRISE) consortium provides helpful distinctions [18]:

Replicability: "The extent to which design, implementation, analysis, and reporting of a study enable a third party to repeat the study and assess its findings." This focuses on the clarity and completeness of methodological reporting.

Reproducibility: "The extent to which the results of a study agree with those of replication studies." This concerns the consistency of scientific findings when studies are repeated.

These definitions enable more precise measurement of different aspects of the research process, from methodological transparency to verifiability of findings.

Metrics and Assessment Frameworks

A 2025 scoping review identified approximately 50 different metrics used to quantify reproducibility, which can be categorized into several types [18]:

Table 2: Categories of Reproducibility Metrics

| Metric Category | Description | Common Applications |
|---|---|---|
| Statistical Significance | Replication is considered successful if it finds a statistically significant effect in the same direction as the original study | Psychology, Social Sciences |
| Effect Size Comparison | Success determined by similarity between effect sizes of replication and original study | Biomedical Research, Medicine |
| Meta-Analytic Methods | Combining results from original and replication studies to assess consistency | Large-scale replication projects |
| Subjective Assessments | Researcher judgment of whether replication confirms original findings | Multidisciplinary use |
| Frameworks & Questionnaires | Structured tools to assess transparency and methodological rigor | Institutional quality control |

The selection of appropriate metrics depends heavily on research context and goals. No single metric has emerged as superior across all conditions, as simulation studies reveal varying performance under different degrees of publication bias and research practices [18].
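To see why the choice of metric matters, the following Python sketch applies two of the criteria from the table above to the same hypothetical replication. It assumes effect estimates that are approximately normally distributed with known standard errors; the function names and all numbers are invented for illustration and do not come from the cited review.

```python
from statistics import NormalDist


def significance_criterion(orig_effect, rep_effect, rep_se, alpha=0.05):
    """Success if the replication is significant and in the original direction."""
    z = rep_effect / rep_se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided p-value
    same_direction = (orig_effect > 0) == (rep_effect > 0)
    return p_value < alpha and same_direction


def effect_size_criterion(orig_effect, rep_effect, rep_se, level=0.95):
    """Success if the original effect lies inside the replication's interval."""
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    lower = rep_effect - z_crit * rep_se
    upper = rep_effect + z_crit * rep_se
    return lower <= orig_effect <= upper


# Hypothetical numbers: the original reported 0.50, the replication found 0.20.
by_significance = significance_criterion(0.50, 0.20, rep_se=0.08)
by_effect_size = effect_size_criterion(0.50, 0.20, rep_se=0.08)
print(by_significance, by_effect_size)
```

With these inputs the replication is significant in the same direction, yet the original estimate falls outside the replication's 95% interval, so the two criteria disagree about whether the replication succeeded.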

Large-Scale Replication Projects

Major replication initiatives have developed standardized protocols for assessing reproducibility across studies:

The Reproducibility Project: Cancer Biology established a framework for replicating key experiments from high-impact cancer studies [13]. Their protocol involved:

- Systematic selection of original studies based on impact and feasibility

- Collaborative engagement with original authors to obtain unpublished methodological details

- Registered reports with peer-reviewed protocols before experimentation

- Comprehensive documentation of all methodological variations from original studies

- Power-appropriate sample sizes to detect original effect sizes with high probability

The Reproducibility Project: Psychology similarly evaluated 100 studies from three high-ranking psychology journals [1]. Their approach included:

- Direct replication attempts adhering as closely as possible to original methods

- Large sample sizes to achieve adequate statistical power

- Multidisciplinary collaboration with original authors during study design

- Transparent reporting of all methodological decisions and deviations

- Multiple criteria for success including statistical significance, effect size comparison, and subjective assessment

These large-scale projects demonstrate that rigorous reproducibility assessment requires substantial resources, coordination, and methodological standardization.

Visualizing the Reproducibility Crisis Framework

The diagram below illustrates the complex ecosystem of factors contributing to the reproducibility crisis and the interconnected solutions required to address it:

Figure: Reproducibility Crisis Ecosystem

Experimental Protocols for Reproducibility Assessment

Direct Replication Methodology

Direct replication attempts to repeat an experimental procedure as exactly as possible. The protocol involves:

Pre-Replication Design Phase:

- Comprehensive literature review to identify candidate studies for replication

- Statistical power analysis to determine appropriate sample size

- Detailed protocol mapping of original study methods

- Consultation with original authors to clarify ambiguous methodological details

- Preregistration of replication protocol with analysis plan
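The power analysis step can be sketched with a standard normal-approximation formula for comparing two group means, n per group = 2((z_{1-alpha/2} + z_{power}) / d)^2, where d is the standardized effect size. This is a simplified illustration under that assumption, not the procedure of any particular replication project; the function name `sample_size_per_group` is hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means."""
    if effect_size_d == 0:
        raise ValueError("effect size must be non-zero")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_power = NormalDist().inv_cdf(power)          # quantile for desired power
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


# Example: a medium standardized effect (d = 0.5) reported by the original study.
n_80 = sample_size_per_group(0.5, power=0.80)
n_90 = sample_size_per_group(0.5, power=0.90)
print(n_80, n_90)
```

Because published effect sizes are often inflated, replication teams may choose to power the study for a smaller effect than the one originally reported, which increases the required sample size.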

Experimental Execution Phase:

- Reagent validation including cell line authentication and compound purity verification

- Blinded procedures where feasible to prevent experimenter bias

- Positive and negative controls to confirm assay performance

- Detailed documentation of any deviations from original protocol

Analysis and Interpretation Phase:

- Comparison of effect sizes between original and replication study

- Meta-analytic combination of original and replication results

- Sensitivity analyses to assess impact of protocol deviations

- Transparent reporting of all findings regardless of outcome
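The meta-analytic combination step can be illustrated with fixed-effect, inverse-variance pooling, one common way to combine an original and a replication estimate. The sketch below assumes each study reports an effect estimate and its standard error; the function name and the example values are hypothetical.

```python
import math


def fixed_effect_pool(studies):
    """Pool (effect, standard_error) pairs using inverse-variance weights."""
    weights = [1 / se ** 2 for _, se in studies]
    weighted_sum = sum(w * effect for w, (effect, _) in zip(weights, studies))
    pooled_effect = weighted_sum / sum(weights)
    pooled_se = math.sqrt(1 / sum(weights))
    return pooled_effect, pooled_se


# Hypothetical estimates: a small original study and a larger replication.
original = (0.50, 0.20)
replication = (0.20, 0.10)
pooled_effect, pooled_se = fixed_effect_pool([original, replication])
print(round(pooled_effect, 3), round(pooled_se, 3))
```

The more precise replication receives four times the weight of the original here, so the pooled estimate sits closer to the replication result. A random-effects model would be the usual alternative when the studies are expected to differ beyond sampling error.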

Data Leakage Detection in Machine Learning

In machine-learning-based science, data leakage—where information from the test set inadvertently influences model training—represents a significant threat to reproducibility. Detection methodology includes:

Data Collection Assessment:

- Temporal validation to ensure training data precedes test data chronologically

- Identity mapping to detect duplicate entries across training and test splits

- Feature legitimacy analysis to identify proxies for the target variable

Pre-processing Evaluation:

- Pipeline isolation to confirm preprocessing parameters derived only from training data

- Distribution analysis to compare training and test set characteristics

- Cross-validation audit to ensure no leakage between folds

Model Validation:

- Baseline comparison to simple models like logistic regression

- Performance discrepancy analysis between validation and test sets

- Ablation studies to assess contribution of potentially illegitimate features

The prevalence of data leakage is substantial, affecting 294 papers across 17 fields according to one survey, often leading to "wildly overoptimistic conclusions" [19].
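Three of the checks listed above (identity mapping, temporal validation, and pipeline isolation) can be sketched in a few lines of Python. This is a minimal illustration on invented data; the function names and the dictionary layout are assumptions made for the example and do not reproduce the methodology of the cited survey.

```python
from statistics import mean, stdev


def overlapping_ids(train_ids, test_ids):
    """Identity mapping: return sample IDs that appear in both splits."""
    return set(train_ids) & set(test_ids)


def violates_temporal_order(train_times, test_times):
    """Temporal validation: True if any training record is not strictly
    earlier than every test record."""
    return max(train_times) >= min(test_times)


def fit_standardizer(train_values):
    """Pipeline isolation: derive scaling parameters from training data only."""
    mu = mean(train_values)
    sigma = stdev(train_values)
    return lambda x: (x - mu) / sigma


# Hypothetical splits containing one duplicated sample and overlapping years.
train = {"ids": ["s1", "s2", "s3"], "year": [2018, 2019, 2020], "x": [1.0, 2.0, 3.0]}
test = {"ids": ["s3", "s4"], "year": [2020, 2021], "x": [4.0, 5.0]}

duplicates = overlapping_ids(train["ids"], test["ids"])
temporal_leak = violates_temporal_order(train["year"], test["year"])

scale = fit_standardizer(train["x"])  # the test set is never used for fitting
test_scaled = [scale(x) for x in test["x"]]
print(duplicates, temporal_leak, test_scaled)
```

The key design choice is that `fit_standardizer` only ever sees training values; computing the mean and standard deviation over the full dataset before splitting is a common route by which test information leaks into training.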

Research Reagent Solutions for Enhanced Reproducibility

Certain key reagents and materials play critical roles in ensuring experimental reproducibility. The following table details essential solutions for reliable research:

Table 3: Research Reagent Solutions for Enhanced Reproducibility

| Reagent/Material | Function | Reproducibility Enhancement |
|---|---|---|
| Authenticated Cell Lines | Basic experimental units for in vitro studies | Prevents contamination and misidentification; ICLAC maintains database of contaminated lines [12] |
| Validated Antibodies | Target protein detection and quantification | Ensures specificity; reduces false positive/negative results |
| Reference Materials | Analytical standards and controls | Enables cross-laboratory calibration and comparison |
| Standardized Assay Kits | Modular experimental protocols | Reduces protocol variability between laboratories |
| Electronic Lab Notebooks | Documentation of experimental procedures | Ensures comprehensive method recording; maintains data integrity through ALCOA principles [12] |

Implementation of Good Cell Culture Practice (GCCP) provides a framework for standardizing cell culture procedures across laboratories, addressing a fundamental source of variability in experimental biology [12]. Similarly, the application of ALCOA principles (Attributable, Legible, Contemporaneous, Original, Accurate) to data management creates an audit trail that enhances transparency and verification potential [12].
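One way to picture how the ALCOA principles translate into a data structure is a notebook entry that records who made an observation, when, and a checksum of the raw data. The sketch below is an assumption-laden illustration rather than a description of any electronic lab notebook product: the `LabRecord` class, its field names, and the mapping of fields to ALCOA letters are all introduced here for the example.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: a record cannot be modified after creation
class LabRecord:
    author: str        # Attributable: who made the observation
    recorded_at: str   # Contemporaneous: UTC timestamp taken at entry time
    description: str   # Legible: plain-text account of what was done
    raw_sha256: str    # Original and Accurate: fingerprint of the raw data


def make_record(author, description, raw_bytes):
    """Create a record whose checksum ties it to the original raw data."""
    return LabRecord(
        author=author,
        recorded_at=datetime.now(timezone.utc).isoformat(),
        description=description,
        raw_sha256=hashlib.sha256(raw_bytes).hexdigest(),
    )


def verify(record, raw_bytes):
    """Check that the data presented later matches what was recorded."""
    return hashlib.sha256(raw_bytes).hexdigest() == record.raw_sha256


# Hypothetical entry: the author name and data bytes are invented.
raw = b"sample_id,absorbance\nA1,0.412\n"
entry = make_record("j.doe", "Absorbance reading, plate 3", raw)
print(verify(entry, raw), verify(entry, raw + b"A2,0.500\n"))
```

Any later change to the raw file alters its hash, so `verify` fails and the discrepancy is visible in the audit trail.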

Pathways to Improved Reproducibility

The following diagram outlines key pathways for addressing the reproducibility crisis, from foundational principles to practical implementation:

Figure: Reproducibility Solutions Pathway

Substantive progress requires addressing systemic factors. Surveys indicate that researchers view "pressure to publish" as the leading cause of irreproducibility, with 62% identifying it as a frequent contributor [16]. Institutional reforms that value research quality over quantity, alongside funding mechanisms that specifically support replication work, are essential components of a comprehensive solution.

Funding allocations for reproducibility are increasing, with approximately 25% of grant funding now dedicated to replication and reproducibility projects, up from 10% five years ago [11]. This investment aligns with evidence that studies with open data policies demonstrate a 4-fold increase in reproducibility [11] and that funding agencies requiring data sharing see a 50% increase in reproducibility success rates [11].

For materials science and drug development specifically, adopting frameworks from clinical research—such as rigorous blinding, randomization, predefined statistical analysis plans, and prospective registration—could substantially enhance the reliability of preclinical findings [14] [12]. As research becomes increasingly interdisciplinary and complex, these methodological safeguards grow ever more critical for ensuring that scientific progress builds upon a foundation of verifiable evidence.

The reproducibility crisis represents a fundamental challenge to the integrity of scientific research, particularly in fields like materials science where findings directly influence downstream drug development and technological innovation. This crisis is characterized by an "alarming inability of scientists to replicate the findings of many published studies" [20]. In biomedical research specifically, a substantial majority of researchers acknowledge the problem, with nearly three-quarters (72%) of biomedical researchers believing there is a reproducibility crisis according to a recent survey [21]. The situation is quantified in replication attempts—a 2021 study attempting to replicate 53 different cancer research studies achieved only a 46% success rate [22], highlighting the systemic nature of the problem.

While the reproducibility crisis affects multiple disciplines, its implications are particularly profound in materials science and drug development, where unreliable findings can waste precious research resources, misdirect scientific trajectories, and ultimately delay the delivery of critical therapies to patients. This whitepaper examines how deeply embedded systemic drivers, primarily rooted in the "publish or perish" culture and misaligned incentive structures, create and perpetuate this crisis.

Quantifying the Problem: Key Data on Reproducibility and Research Integrity

The tables below synthesize quantitative evidence that illuminates the scope and primary causes of the reproducibility crisis.

Table 1: Survey Findings on Perceived Causes of the Reproducibility Crisis

| Survey Focus | Sample Size & Population | Key Finding | Primary Cited Causes |
|---|---|---|---|
| Perceived Reproducibility Crisis [21] | 1,600+ Biomedical Researchers | 72% believe there is a reproducibility crisis | Pressure to publish; small sample sizes; cherry-picking of data |
| Academic Reward Systems [23] | 3,000+ Researchers, Publishers, Funders, Librarians | Only 33% believe academic reward and recognition systems are working well | Publish-or-perish culture; volume over quality; failure to recognize diverse contributions |

Table 2: Empirical Data on Replication Success and Result Bias

| Study Focus | Replication Rate / Result Prevalence | Implications |
|---|---|---|
| Cancer Biology Replication [22] | 46% success rate in replicating 53 cancer studies | Highlights tangible difficulties in verifying published scientific findings. |
| Positive-Result Bias (1990-2007) [24] | 85% of published papers had positive results by 2007 (a 22% increase since 1990) | Indicates a systematic bias against publishing null or negative findings. |
| High-Replication Protocol [25] | Achieved an "ultra-high" replication rate in experimental psychology | Demonstrates that reproducibility can be significantly improved through methodological rigor. |

The Core Systemic Drivers

The "Publish or Perish" Culture and the Prestige Economy

The "publish or perish" culture is overwhelmingly identified as a primary driver of the reproducibility crisis [21] [23] [26]. This culture describes a research environment where career advancement, tenure, and funding are predominantly contingent upon a researcher's volume of publications in high-profile journals. This system creates a "prestige economy" where researchers are incentivized to prioritize journal brand recognition over scientific rigor [27].

The underlying mechanism is one of misaligned incentives. As Trueblood and colleagues note, "The major factors that influence tenure and promotion in science and many other academic disciplines are publications, citations, and grant funding. These factors are interdependent, as the likelihood of obtaining grants is affected by one’s publication record, and the ability to publish is dependent on getting one’s research funded. Both of these factors put a great deal of pressure on researchers, especially in the early stages of their careers" [27]. This pressure can lead to problematic research practices, including rushing studies, neglecting thorough validation, and fragmenting findings into "least publishable units" to maximize publication count.

Publication Bias and the File-Drawer Problem

Publication bias, also known as the "file-drawer problem," remains a deeply entrenched issue that distorts the scientific record. This bias arises from the systematic reluctance or inability to publish negative or null results [28]. The consequence is a published literature that overwhelmingly represents positive, novel, or statistically significant findings, while null results—which are equally critical for scientific progress—remain in researchers' file drawers.

The impact of this bias is severe and multifaceted:

- Wasted Resources: It leads to unnecessary duplication of effort, as researchers unknowingly repeat experiments that have already failed [28].

- Distorted Meta-Analyses: It results in biased meta-analyses and exaggerated effect sizes, which can misdirect entire research fields [28].

- Impaired AI Development: The rise of artificial intelligence and machine learning in fields like materials science is hampered when models are trained on incomplete data sets that lack negative results, leading to flawed predictions [24].

Despite widespread recognition of this problem, a 2022 survey showed that while 81% of researchers had produced relevant negative results and 75% were willing to publish them, only 12.5% had the opportunity to do so [24], indicating a significant gap between intent and action.
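How a file drawer exaggerates effect sizes can be illustrated with a small simulation. The sketch below is only an illustration under stated assumptions: the true effect (0.2), sample size (20), unit variance, and the rule that only positive results with z > 1.96 get "published" are all hypothetical choices, not values taken from the cited studies.

```python
import random
import statistics

def simulate_file_drawer(true_effect=0.2, n_per_study=20, n_studies=5000, seed=0):
    """Run many identical studies and 'publish' only the significant ones.

    Assumes normally distributed measurements with known unit variance,
    so each study reduces to a simple z-test on its sample mean.
    """
    rng = random.Random(seed)
    z_crit = 1.96                      # conventional 5% two-sided threshold
    se = 1 / n_per_study ** 0.5        # standard error of each study's mean
    all_estimates, published = [], []
    for _ in range(n_studies):
        sample = [rng.gauss(true_effect, 1.0) for _ in range(n_per_study)]
        estimate = statistics.fmean(sample)
        all_estimates.append(estimate)
        if estimate / se > z_crit:     # positive and significant: leaves the file drawer
            published.append(estimate)
    return (
        statistics.fmean(all_estimates),   # what a complete literature would show
        statistics.fmean(published),       # what the published literature shows
        len(published) / n_studies,        # share of studies that get published
    )

mean_all, mean_published, share_published = simulate_file_drawer()
print(f"all studies: {mean_all:.2f}, published only: {mean_published:.2f}, "
      f"published share: {share_published:.0%}")
```

Under these assumptions, the average over all studies stays close to the true effect, while the average over published studies alone is necessarily larger than the significance cutoff, which is the mechanism behind inflated meta-analytic estimates.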

Hypercompetition and the "Gollum Effect"

A hypercompetitive environment for limited funding and positions fosters behaviors that further hinder reproducible science. A recent global study in ecology and conservation sciences identified the "Gollum Effect"—a phenomenon of academic territoriality where researchers engage in possessive behaviors to guard resources, data, and research niches [29].

This study found that 44% of respondents had experienced such territorial behaviors, which often manifest as obstructing access to data, methods, or materials, all of which are essential for replication. The problem disproportionately affects early-career and marginalized researchers [29]. This culture of competition, as opposed to cooperation, discourages the openness and transparency required for reproducible research, as researchers may feel that sharing detailed methodologies and materials aids their competitors [22].

Consequences of Misaligned Incentives

Erosion of Scientific Integrity and Public Trust

The cumulative effect of these systemic pressures is a tangible erosion of scientific integrity. When the reward structure prioritizes novelty and quantity over robustness and verification, the reliability of the scientific record is compromised. This erosion ultimately diminishes public trust in science, a critical asset especially in areas like drug development and public health policy [27]. Even the phrase "replication crisis" can undermine confidence in scientific institutions.

Questionable Research Practices and Fraud

In extreme cases, the intense pressure to publish can lead to questionable research practices (QRPs) or even outright fraud. QRPs include practices like p-hacking (manipulating data analysis to achieve statistical significance) and HARKing (Hypothesizing After the Results are Known) [20]. While the exact prevalence of fraud is difficult to ascertain, a 2024 meta-analysis of 75,000 studies across various fields suggested that as many as one in seven may have been at least partially faked [22]. Such practices directly contribute to the proliferation of non-reproducible findings.
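The statistical cost of p-hacking can be made concrete with a short calculation. The sketch below assumes the simplest case, in which a researcher runs several independent tests on effects that are truly null and reports whichever one reaches p < 0.05; real analyses are rarely fully independent, so the numbers are indicative only.

```python
def familywise_false_positive_rate(n_tests, alpha=0.05):
    """Probability of at least one p < alpha among n independent tests of true nulls."""
    return 1 - (1 - alpha) ** n_tests

for n_tests in (1, 5, 10, 20):
    rate = familywise_false_positive_rate(n_tests)
    print(f"{n_tests:>2} tests -> chance of a spurious 'finding': {rate:.1%}")
```

With ten such tests, the chance of at least one spurious significant result is about 40%, compared with the nominal 5% for a single planned test.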

Pathways to Solutions: Realigning the System

Addressing the reproducibility crisis requires a fundamental rethinking of academic incentives and a shift toward practices that prioritize transparency and rigor.

Reforming Research Assessment and Incentives

A pivotal strategy is to reform how researchers are evaluated. Key recommendations include:

- Weakening the Link: Cambridge University Press has urged institutions to "weaken the link between academic reward and recognition and journal article output, and to adopt more holistic approaches to evaluating academic performance and contribution" [23].

- Valuing All Contributions: Assessment should recognize a broader range of scholarly contributions, including peer review, mentoring, data sharing, and the publication of null results [23] [28].

- Creating Career Paths for Replication: Academia should establish clear career paths and funding for researchers dedicated to conducting replication studies [22].

Embracing Open Science Practices

Open Science provides a suite of practical solutions to enhance reproducibility by promoting transparency, collaboration, and accountability [20]. The diagram below illustrates the core ecosystem of Open Science practices and their virtuous cycle in fostering more reliable research.

The following table details key research reagents and infrastructure that support the implementation of these Open Science principles, particularly in fields like materials science.

Table 3: Research Reagent Solutions for Open and Reproducible Science

| Resource / Solution | Primary Function | Role in Enhancing Reproducibility |

|---|---|---|

| Electronic Lab Notebooks (ELNs) | Digital documentation of experiments and results | Ensures detailed, time-stamped, and unalterable method records; facilitates data sharing. |

| Open Reaction Database [24] | Repository for organic reaction data, including negative results. | Provides complete data sets (positive & negative) for training AI models and prevents repetition of failed experiments. |

| Preprint Servers (e.g., arXiv, bioRxiv) | Rapid dissemination of findings pre-peer-review. | Accelerates scientific communication and allows for broader community scrutiny before formal publication. |

| Data Repositories (e.g., Figshare, Zenodo) | Archiving and sharing of raw data, code, and protocols. | Enables independent validation of results and re-analysis of data, a core tenet of reproducibility. |

Promising Publishing Models and Protocols

Innovative publishing models are being developed to directly counter perverse incentives:

- Registered Reports: This format involves peer review of the study protocol and methodology before data collection. If the proposed research is sound, the journal commits to publishing the final paper regardless of the outcome. This directly counters publication bias and rewards methodological rigor over exciting results [20] [28].

- Dedicated Journals for Null Results: Platforms like the Journal of Trial & Error provide a dedicated venue for publishing well-conducted studies that yield negative or null results, helping to solve the "file-drawer problem" [24].

- High-Replication Protocols: Evidence shows that methodological rigor can dramatically improve reproducibility. In experimental psychology, a field hit hard by the crisis, four groups successfully replicated each other's work at an "ultra-high" rate by adhering to best practices, including close consultation with original researchers and high statistical power [25]. The workflow for establishing such a protocol is illustrated below.

A Collective Way Forward

Overcoming the reproducibility crisis demands concerted, system-wide action. No single stakeholder can solve this alone. Researchers must adopt more rigorous and open practices. Institutions and funders must radically redesign their evaluation criteria to reward reproducibility and quality over volume and journal prestige. Publishers must continue to develop and promote innovative models like Registered Reports and lower barriers to publishing null results. As Brian Nosek of the Center for Open Science notes, "The reward system for science is not necessarily aligned with scientific values" [22]. Realigning these values is the fundamental challenge—and opportunity—facing the scientific community. By tackling the systemic drivers of the "publish or perish" culture, we can build a more robust, efficient, and trustworthy scientific enterprise, which is especially critical for accelerating discovery in materials science and drug development.

The reproducibility crisis represents a fundamental challenge across scientific disciplines, where published findings fail to stand up to independent verification. This phenomenon undermines cumulative knowledge production, delays therapeutic development, and wastes substantial research resources [30]. In materials science and related fields, the adoption of complex methodologies, including machine learning (ML), has introduced new dimensions to this crisis, particularly through subtle but critical errors like data leakage that compromise research validity [19] [15]. The crisis is not merely methodological but represents a systemic issue involving research incentives, reporting standards, and technical practices. Surveys indicate that a majority of researchers have personally encountered irreproducible results, with over 70% of researchers in one Nature survey reporting they had been unable to reproduce published data at least once [31]. This article examines the financial and scientific costs of irreproducibility, with particular attention to implications for materials science research and drug development.

The Staggering Financial Toll of Irreproducible Research

Direct Economic Costs

Irreproducible research imposes massive financial burdens on the scientific enterprise and society. Conservative estimates indicate that the cumulative prevalence of irreproducible preclinical research exceeds 50%, meaning that approximately $28 billion per year is spent in the United States alone on preclinical research that cannot be replicated [30]. This figure represents roughly half of the estimated $56.4 billion spent annually on preclinical research in the U.S. [30].

Table 1: Estimated Economic Impact of Irreproducible Preclinical Research in the United States

| Category | Annual Value (USD) | Notes |

|---|---|---|

| Total U.S. investment in life sciences research | $114.8 billion | Based on 2012 data extrapolation |

| Amount spent on preclinical research | $56.4 billion | 49% of total life sciences research spending |

| Estimated waste from irreproducible preclinical research | $28 billion | Based on 50% irreproducibility rate |

| Cost to replicate a single academic study (industry cost) | $500,000 - $2,000,000 | Requires 3-24 months per study [30] |
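The headline figures in Table 1 follow from simple arithmetic, which the short check below reproduces. The variable names are illustrative, and the 50% rate is the conservative estimate quoted above rather than a measured value.

```python
# All monetary values in billions of USD, as reported in Table 1.
total_life_sciences = 114.8
preclinical = 56.4
irreproducibility_rate = 0.50   # conservative estimate cited in the text

preclinical_share = preclinical / total_life_sciences
estimated_waste = preclinical * irreproducibility_rate

print(f"Preclinical share of life sciences spending: {preclinical_share:.0%}")
print(f"Estimated annual waste: ${estimated_waste:.1f} billion")
```

The product is $28.2 billion, which the source rounds to $28 billion.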

Downstream Economic Impacts

Beyond direct research waste, irreproducibility creates substantial downstream costs. Pharmaceutical companies investing in drug development based on irreproducible academic research face significant losses when attempting to replicate findings. Each replication attempt within industry takes between 3 and 24 months and costs between $500,000 and $2,000,000 [30]. These replication failures delay lifesaving therapies and increase pressure on research budgets across the therapeutic development pipeline. If reproducibility rates improved substantially, the added return on taxpayer investment would amount to billions of dollars per year in the U.S. alone [30].

Data Leakage: A Critical Threat to ML-Based Science

The Pervasiveness of Data Leakage

In machine-learning-based science, data leakage has emerged as a pervasive cause of irreproducibility. Leakage occurs when information from outside the training dataset inadvertently influences the model, creating overly optimistic performance estimates that cannot be replicated in real-world applications [19] [15]. This issue affects numerous scientific fields applying ML methods, from materials science to biomedical research.

A comprehensive survey of literature found 17 fields where leakage has been identified, collectively affecting 294 papers and in some cases leading to wildly overoptimistic conclusions [19]. More recent updates to this survey indicate the problem has grown to affect 648 papers across 30 fields [15].

Table 2: Prevalence of Data Leakage Across Scientific Fields Using Machine Learning

| Field | Number of Papers Reviewed | Number with Leakage Pitfalls | Common Leakage Types |

|---|---|---|---|

| Clinical Epidemiology | 71 | 48 | Feature selection on train and test set [15] |

| Radiology | 62 | 16 | No train-test split; duplicates in datasets [15] |

| Neuroimaging | 122 | 18 | Non-independence between train and test sets [15] |

| Software Engineering | 58 | 11 | Temporal leakage [15] |

| Law | 171 | 156 | Illegitimate features; temporal leakage [15] |

| Molecular Biology | 59 | 42 | Non-independence [15] |

A Taxonomy of Data Leakage

Data leakage manifests in multiple forms, ranging from basic procedural errors to subtle methodological flaws:

- Lack of clean separation between training and test sets: The model has access to test set information during training [15].

- Use of illegitimate features: Features that should not be legitimately available, such as proxies for the outcome variable [15].

- Test set not from distribution of interest: Performance evaluation on data that doesn't match the intended application domain [15].

- Temporal leakage: Training on data collected after the events in the test set, so that the model effectively uses future information to predict past events [15].

- Pre-processing on combined data: Applying scaling, normalization, or feature selection before train-test splitting [15].

- Duplicates across train-test splits: Non-independent observations appearing in both training and test sets [15].

- Feature selection without proper validation: Optimizing feature sets using information from the test set [15].

- Sampling bias: Systematic errors in how data is collected or selected for analysis [15].
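Three of the leakage types above (pre-processing on combined data, duplicates across splits, and temporal leakage) can be guarded against with a few simple habits. The following is a minimal standard-library sketch; the function names are hypothetical, and a real project would more likely rely on an established ML library's pipeline and splitting utilities.

```python
import random
import statistics

def split_then_standardize(values, test_fraction=0.25, seed=0):
    """Split first, then fit the scaler on the training portion only.

    Assumes the training values are not all identical (non-zero spread).
    """
    rng = random.Random(seed)
    shuffled = list(values)
    rng.shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    test, train = shuffled[:n_test], shuffled[n_test:]
    mean = statistics.fmean(train)       # statistics come from train only
    std = statistics.pstdev(train)

    def scale(xs):
        return [(x - mean) / std for x in xs]

    return scale(train), scale(test), (mean, std)

def shared_records(train_rows, test_rows):
    """Return exact duplicate records that appear in both splits."""
    return set(train_rows) & set(test_rows)

def temporal_split(rows, cutoff):
    """Split (timestamp, value) rows so every training row precedes the cutoff."""
    train = [row for row in rows if row[0] < cutoff]
    test = [row for row in rows if row[0] >= cutoff]
    return train, test

train, test, (mean, std) = split_then_standardize([float(i) for i in range(20)])
print(len(train), len(test))
print(shared_records([(1, 2), (3, 4)], [(3, 4), (5, 6)]))
```

The key design choice is ordering: the test set is set aside before any statistic is computed, so nothing about it can influence how the training data are transformed.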

Case Study: Irreproducibility in Civil War Prediction

Experimental Protocol and Methodology

A revealing case study examined the reproducibility of prominent studies on civil war prediction where complex ML models were claimed to substantially outperform traditional statistical methods like logistic regression [19] [15]. The reproduction study followed this rigorous protocol:

- Data Collection: Acquisition of identical datasets used in original studies

- Code Review: In-depth analysis of original implementation code

- Reimplementation: Careful reconstruction of experiments with leakage prevention

- Comparative Testing: Evaluation of both complex ML models and traditional baselines under corrected conditions

- Sensitivity Analysis: Testing the impact of various potential leakage sources

Results and Implications

When data leakage was identified and corrected, the supposed superiority of complex ML models disappeared—they performed no better than decades-old logistic regression models [19]. This case illustrates how methodological errors can create the illusion of scientific progress while actually impeding it. Importantly, none of these errors could have been detected by reading the original papers alone, highlighting the necessity of access to code and data for proper evaluation [15].

Consequences for Materials Science and Drug Development

Translational Challenges

In materials science and drug development, irreproducibility creates particularly severe consequences. The drug development pipeline depends heavily on robust preclinical findings to make substantial investments in clinical trials. When early-stage research proves irreproducible, it creates false hope for patients waiting for lifesaving cures and points to systemic inefficiencies in how preclinical studies are designed, conducted, and reported [30]. The problem is exacerbated in emerging fields like digital medicine, where hyperbolic claims about algorithmic performance may outpace methodological rigor [32].

Biological and Methodological Complexity

Materials science and biomedical research face unique reproducibility challenges related to biological variability and standardization limitations. As noted in cancer research, the effect of a treatment might depend on the particular metabolic or immunological state of a biological system, meaning that what appears to be a "failed" replication might actually reveal important boundary conditions for a phenomenon [33]. High levels of standardization in animal models, while intended to increase reproducibility, may actually reduce generalizability by limiting genetic diversity [33].

Solutions and Mitigation Strategies

Model Info Sheets for Leakage Prevention

To address data leakage in ML-based science, researchers have proposed model info sheets—structured documentation that requires researchers to justify the absence of different leakage types [19] [15]. These sheets provide a systematic framework for connecting ML model performance to scientific claims, addressing failure modes prevalent across scientific applications of machine learning.
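One way to picture such a sheet is as a structured record in which every leakage type needs a written justification before the model's results are reported. The sketch below is a hypothetical illustration only: the class name, fields, and checklist wording paraphrase the taxonomy in this article and are not the published model info sheet template.

```python
from dataclasses import dataclass, field

# Hypothetical checklist items, paraphrased from the leakage taxonomy above.
LEAKAGE_QUESTIONS = (
    "Train and test sets are cleanly separated",
    "All features are legitimately available at prediction time",
    "The test set matches the distribution of interest",
    "No future information is used to predict earlier events",
    "Pre-processing was fitted on the training set only",
    "No duplicates are shared between train and test sets",
)

@dataclass
class ModelInfoSheet:
    scientific_claim: str
    justifications: dict = field(default_factory=dict)  # question -> free text

    def justify(self, question, text):
        """Record the argument for why one leakage type is absent."""
        if question not in LEAKAGE_QUESTIONS:
            raise KeyError(f"Unknown checklist item: {question}")
        self.justifications[question] = text

    def unanswered(self):
        """List checklist items that still lack a non-empty justification."""
        return [q for q in LEAKAGE_QUESTIONS
                if not self.justifications.get(q, "").strip()]

    def is_complete(self):
        return not self.unanswered()

sheet = ModelInfoSheet("Model X predicts property Y better than a linear baseline")
sheet.justify(LEAKAGE_QUESTIONS[4], "Scaler is fitted inside each cross-validation fold.")
print(sheet.unanswered())
```

Treating an empty justification as "unanswered" reflects the intent described in the text: the burden is on the researcher to argue that each leakage type is absent, not merely to tick a box.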

Research Reagent Solutions and Essential Materials

Table 3: Key Research Reagent Solutions for Enhancing Reproducibility

| Reagent/Material | Function | Reproducibility Benefit |

|---|---|---|

| Certified Reference Materials | Provide standardized benchmarks | Enables calibration across laboratories and experiments |

| Authenticated Cell Lines | Ensure biological consistency | Prevents misidentification and contamination [30] |

| Versioned Code Repositories | Track computational methods | Enforces computational reproducibility [15] |

| Standardized Protocols | Detailed methodological descriptions | Facilitates exact replication of experimental conditions [33] |

| Data Sharing Platforms | Provide access to raw datasets | Allows independent verification and reanalysis [32] |

Proposed Workflow for Reproducible Research

A three-stage process to publication has been proposed to enhance reproducibility while preserving innovation [33]:

- Exploratory Stage: Initial studies generating hypotheses without the yoke of extreme statistical rigor

- Confirmatory Stage: Independent replication performed with the highest levels of methodological rigor

- Multi-Center Validation: Large-scale verification creating foundation for application or translation

The high cost of irreproducibility—both financial and scientific—demands systematic reforms across research practice. For materials science and drug development professionals, addressing this crisis requires heightened attention to methodological rigor, particularly as machine learning approaches become more prevalent. Solutions must address both technical dimensions (like data leakage prevention) and systemic factors (including incentive structures and publication practices). By implementing structured approaches like model info sheets, adopting standardized reagents and protocols, and fostering a culture that values replication as much as innovation, the research community can reduce the staggering waste associated with irreproducibility and accelerate the discovery of robust, reliable scientific knowledge.

Building Better Science: Practical Methodologies and Open Science Frameworks

The scientific method is fundamentally built upon the principle that research findings should be verifiable through independent reproduction. However, across multiple scientific fields, including materials science, concerns have grown about a "reproducibility crisis"—a widespread inability to replicate previously published results. In preclinical biomedical research, which includes much of materials science for drug development, meta-analyses suggest that only about 50% of studies are reproducible, costing an estimated US $28 billion annually in wasted preclinical research in the United States alone [33]. This crisis delays lifesaving therapies, increases pressure on research budgets, and raises the costs of drug development [33].

The crisis stems from a complex interplay of factors. A significant vested interest in positive results exists across the research ecosystem: authors have grants and careers at stake, journals seek strong stories for headlines, pharmaceutical companies have invested heavily in positive outcomes, and patients yearn for new therapies [33]. This environment is further complicated by a divergence in needs; preclinical researchers require freedom to explore knowledge boundaries, while clinical researchers depend on replication to weed out false positives before human trials [33]. As noted by Professor Vitaly Podzorov, this crisis is fueled by the desire for rapid publications and an overreliance on scientometrics for evaluating scientists, which can prioritize career advancement over making lasting scientific contributions [34].

Defining the Concepts: Reproducibility, Replicability, and Open Science

A critical first step in addressing this challenge is to establish clear and consistent terminology. While often used interchangeably, the terms reproducibility, replicability, and related concepts have distinct meanings crucial for scientific discourse.

Table 1: Key Terminology in the Reproducibility Discourse

| Term | Definition | Key Differentiator |

|---|---|---|

| Repeatability | The original researchers perform the same analysis on the same dataset and consistently produce the same findings [35]. | Same team, same data, same analysis. |

| Reproducibility | Other researchers perform the same analysis on the same dataset and consistently produce the same findings [35] [36]. | Different team, same data, same analysis. |

| Replicability | Other researchers perform new analyses on a new dataset and consistently produce the same findings [35]. Also defined as testing the same question with new data to see if the original finding recurs [34]. | Different team, new data, same question. |

| Robustness | Testing whether the original finding is sensitive to different analytical choices, i.e., using different analyses on the same data [34]. | Same data, different analysis. |

Open Science is a broader movement that encompasses making the methodologies, datasets, analyses, and results of research publicly accessible for anyone to use freely [37]. Its core components include:

- Open Data: Making datasets and their documentation publicly available under a permissive license [37].

- Open Materials: Sharing tools, source code, and their documentation [37].

- Open Methodology: Detailing the full workflow and processes used to conduct the research [37].

- Preregistration: Publishing a research plan, including hypotheses and analysis strategy, before conducting the study to prevent outcome-driven reporting [37].

The Role of Open Science in Mitigating the Reproducibility Crisis

Embracing Open Science principles directly addresses the root causes of the reproducibility crisis by enhancing transparency, facilitating validation, and re-aligning incentives toward robust and reliable research.

Enhancing Transparency and Scrutiny

Transparency is the bedrock of a "show-me enterprise," not a "trust-me enterprise" [34]. Confidence in scientific claims stems from the ability to interrogate the evidence and how it was generated. When researchers share their detailed methodologies, raw data, and analytical code, it allows the scientific community to thoroughly evaluate and build upon the work. This process helps identify errors, omissions, or questionable practices that might otherwise go unnoticed. For example, the Center for Open Science has found that many research papers provide too little methodological detail, forcing replication teams to spend excessive time chasing down protocols and reagents [33]. Open Science practices fill this critical gap.

Facilitating Direct Replication and Robustness Checks

Open Data and Open Materials are prerequisites for efficient reproduction and replication. They provide the necessary resources for independent teams to:

- Verify computational results by re-running analyses on the original dataset [35].

- Perform robustness checks by applying different analytical methods to the same data [34].

- Conduct direct replication studies by using the original protocols and materials to collect new data [36].
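Re-running an analysis on "the original dataset" presupposes that the independent team really has the same bytes the authors used. A common safeguard is to publish a checksum alongside the data; the sketch below shows the idea with Python's standard `hashlib` module. The function names are illustrative and not part of any repository's API.

```python
import hashlib

def sha256_of_bytes(data: bytes) -> str:
    """Hex digest of in-memory data."""
    return hashlib.sha256(data).hexdigest()

def sha256_of_file(path, chunk_size=1 << 20):
    """Hex digest of a file, read in chunks so large datasets fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def matches_published_digest(data: bytes, published_digest: str) -> bool:
    """True if the downloaded data are byte-identical to what the authors hashed."""
    return sha256_of_bytes(data) == published_digest.lower()

original = b"sample_id,modulus_GPa\nA1,71.2\nA2,69.8\n"
published = sha256_of_bytes(original)          # authors publish this with the data
print(matches_published_digest(original, published))
print(matches_published_digest(original + b"A3,70.1\n", published))
```

A matching digest does not show that an analysis is correct, only that a discrepancy in results cannot be blamed on a silently altered or truncated data file.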

The inability to replicate can sometimes lead to new discoveries by revealing that a treatment effect is conditional on specific, previously unrecognized parameters, such as the metabolic state of a test animal [33]. Open Science makes these investigative paths feasible.

Creating Positive Incentives and Improving Efficiency

Beyond error detection, Open Science offers positive benefits for the research ecosystem:

- Accelerated Discovery: Sharing data, code, and detailed methods accelerates scientific discovery by making more research elements available for reuse and recombination [35].

- Increased Impact and Collaboration: Researchers who share their underlying data and methods often experience higher citation rates and open the door to new partnerships [35].

- Efficiency in Research: Reproducible research allows others to reuse data and methods, avoiding duplication of effort and preventing wasted time on analyses that are unlikely to yield results [35].

- Higher-Quality Peer Review: Reviewers with access to data and analytical processes can conduct more in-depth reviews, catching errors earlier and reducing back-and-forth during the publication process [35].

A Framework for Implementation: Practical Guidance for Researchers

Transitioning to Open Science requires concrete changes to research workflows. The following section provides actionable strategies and tools for materials scientists and related professionals.

Adopting Open Data and Materials Practices

A core tenet of Open Science is making research outputs FAIR (Findable, Accessible, Interoperable, and Reusable).

Table 2: Essential Research Reagent Solutions for Open Science

| Item Category | Specific Example | Function in Research | Open Science Practice |

|---|---|---|---|

| Data Repository | Open Science Framework (OSF) [37] | A free, open-source platform for managing, sharing, and preserving research projects across their entire lifecycle. | Create a project, upload datasets, code, and protocols, and use it for collaboration. |

| Code Repository | GitHub, GitLab | Version control platforms for managing source code, enabling collaboration, and tracking changes. | Share analysis scripts and software with open-source licenses. |

| Protocol Platform | Protocols.io | A platform for detailing and sharing experimental methods with dynamic, executable instructions. | Publish step-by-step methods that expand on the limited space in a manuscript. |

| Data Visualization Tool | R/ggplot2, Python/Matplotlib [38] | Programming libraries that implement robust visualization principles and the "Grammar of Graphics" for creating effective figures. | Share code used to generate publication figures to ensure complete reproducibility. |

| Preregistration Portal | OSF Preregistration, AsPredicted | Services for creating a time-stamped, immutable research plan before beginning a study. | Submit a preregistration to detail hypotheses, design, and analysis plan to reduce bias. |

Implementing Robust Methodologies and Reporting

To counter publication bias and the limited external validity that can come with highly standardized studies, researchers should:

- Report Negative Results: Encourage journals to include sections on what was tried and did not work, saving others time and providing full transparency [34].

- Use Registered Reports: This publication format involves peer review of the study plan before data collection. If the protocol is sound, the journal commits to publishing the results regardless of the outcome, mitigating publication bias [36].

- Adopt a Multi-Stage Workflow: One proposed solution involves a three-stage process: 1) exploratory studies to generate hypotheses, 2) an independent, highly rigorous confirmatory study, and 3) a multi-center study to create a foundation for clinical trials. Only after successful stage 2 would a paper be published [33].

The workflow for implementing an open, reproducible research project, from planning to sharing, can be visualized as follows:

Principles for Effective Data Visualization

Clear communication of results is vital for reproducibility. Effective data visualization ensures that the message of the data is accurately and efficiently conveyed.

Table 3: Quantitative Data Visualization: Chart Selection Guide

| Goal | Recommended Chart Type | Best Use-Case Scenario | Principles to Apply |

|---|---|---|---|

| Compare Amounts | Bar Chart [38] [39] | Comparing sales figures across different regions. | Avoid for group means with distributional information; use for counts [38]. |

| Show Trends | Line Chart [38] [39] | Displaying stock price fluctuations or temperature over time. | Ideal for continuous time-series data. |

| Display Distribution | Box Plot, Histogram [38] | Showing data distribution, including median, quartiles, and outliers. | Reveals patterns and information about data density. |

| Reveal Relationships | Scatter Plot [38] [39] | Showing the relationship between advertising spend and sales revenue. | Layer information by modifying point symbols, size, or color. |

| Show Composition | Stacked Bar Chart, Treemap [38] | Showing market share of different products. | Pie charts have fallen out of favor due to difficulties in visual comparison [38]. |

The following diagram outlines a principled approach to creating scientific visuals, emphasizing the importance of planning and design before software implementation:

Key principles for visualization include:

- Diagram First: Prioritize the information you want to share and design the visual mentally or with pen and paper before using any software [38].

- Use an Effective Geometry: Choose a geometric representation (e.g., dots, lines, bars) that best fits the data's narrative, aiming for a high data-ink ratio by removing non-data ink [38].

- Show Data: Avoid relying solely on data summaries like bar plots for group means. Instead, use geometries like box plots or violin plots that show the underlying data distribution [38].
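The "Show Data" principle is easy to demonstrate numerically. In the sketch below, two made-up groups have the same mean, so a bar chart of means would draw them identically, while the five-number summary that underlies a box plot reveals very different spreads. The data values are invented for illustration.

```python
import statistics

# Invented measurements: same mean, very different distributions.
group_a = [4.8, 4.9, 5.0, 5.0, 5.1, 5.2]
group_b = [1.0, 2.0, 5.0, 5.0, 8.0, 9.0]

def five_number_summary(values):
    """Minimum, first quartile, median, third quartile, maximum (box plot inputs)."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return min(values), q1, median, q3, max(values)

for name, group in (("A", group_a), ("B", group_b)):
    summary = five_number_summary(group)
    print(f"group {name}: mean={statistics.fmean(group):.2f}, summary={summary}")
```

Sharing a script like this together with the plotting code lets readers regenerate both the figure and the numbers behind it.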

The reproducibility crisis presents a significant challenge to the integrity and efficiency of materials science and drug development. However, it also represents an opportunity for profound improvement in scientific practice. By fully embracing the principles of Open Science—through the widespread adoption of Open Data, Open Materials, detailed methodologies, and preregistration—the research community can directly address the systemic and cultural drivers of this crisis. This transition fosters a more collaborative, efficient, and self-correcting scientific ecosystem. The result will be accelerated discovery, strengthened public trust, and a more effective translation of preclinical research into the lifesaving therapies that patients await.

The Power of Pre-registration and Registered Reports for Transparent Research

The reproducibility crisis represents a fundamental challenge across scientific disciplines, where published findings frequently fail to be replicated in subsequent investigations. In materials science and drug development, this crisis manifests through inflated effect sizes, publication biases favoring positive results, and analytical flexibility that undermines research credibility [40]. These issues stem from practices such as post-hoc hypothesizing (HARKing) and selective reporting of results, which dramatically increase false-positive rates and create unreliable foundational knowledge for future research and development [41].

Pre-registration and Registered Reports have emerged as powerful methodological solutions to combat these issues by shifting the focus from outcomes to process. Pre-registration involves publicly documenting research hypotheses, methodologies, and analysis plans before conducting experiments or analyzing data [42]. This approach distinguishes confirmatory hypothesis testing from exploratory research, preserving the diagnostic value of statistical findings. Registered Reports extend this concept by subjecting the study design to peer review before data collection, with journals committing to publish the final research regardless of outcome, provided the pre-registered protocol is followed [43]. For materials science researchers and drug development professionals, these frameworks offer a structured approach to enhance methodological rigor and transparency.

Understanding Pre-registration

Core Principles and Mechanisms

Pre-registration functions as a time-stamped research plan that creates a clear distinction between hypothesis-generating (exploratory) and hypothesis-testing (confirmatory) research. By specifying analytical decisions before data collection or access, it prevents both conscious and unconscious manipulation of results based on outcome patterns [42]. The process establishes decision independence, ensuring that analytical choices are not contingent upon observed data patterns, thereby reducing researcher degrees of freedom that contribute to false positives [44].

The distinction between exploratory and confirmatory research is fundamental to pre-registration. Exploratory research serves as hypothesis-generating, curiosity-driven investigation where minimizing false negatives is prioritized. In contrast, confirmatory research involves rigorous testing of specific predictions derived from theory, where controlling false positives takes precedence [42]. Pre-registration preserves this distinction by creating a verifiable record of what was planned versus what was discovered during analysis.

Benefits for Research Credibility

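Mitigates Inflation of Effect Sizes: When statistical power is low and only significant results are reported, published effect sizes become highly inflated, because the estimates that reach significance are disproportionately the ones that overshoot the true effect. This inflation translates directly into low reproducibility. Pre-registration counteracts it by committing researchers to a confirmatory analysis and to reporting the planned outcomes whatever their size [40].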

Reduces Questionable Research Practices: By eliminating HARKing (Hypothesizing After Results are Known) and restricting analytical flexibility, pre-registration addresses key drivers of irreproducibility [41]. This is particularly valuable in preventing selective reporting of statistically significant outcomes while neglecting null findings.

Enhances Power Analysis Accuracy: When original studies are pre-registered with transparent effect sizes, replication studies can design more accurate power analyses rather than overestimating statistical power based on inflated effects from the literature [40].
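The effect of planning a replication from an inflated estimate can be sketched with a standard normal-approximation power calculation for a two-sided, two-group comparison of means. The function names and the effect sizes used (0.8 as an inflated literature value, 0.4 as the true value) are assumptions chosen for illustration, not figures from the cited studies.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def n_per_group(effect_size_d: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate sample size per group for a two-sided, two-sample test.

    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2
    """
    z_alpha = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    z_power = _STD_NORMAL.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


def achieved_power(effect_size_d: float, n: int, alpha: float = 0.05) -> float:
    """Approximate power with n samples per group (ignores the negligible opposite tail)."""
    z_alpha = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    return _STD_NORMAL.cdf(effect_size_d * math.sqrt(n / 2) - z_alpha)


# Assumed values for illustration only.
planned_n = n_per_group(0.8)   # sample size planned from an inflated estimate
needed_n = n_per_group(0.4)    # sample size the smaller true effect would require
actual_power = achieved_power(0.4, planned_n)
print(planned_n, needed_n, round(actual_power, 2))
```

Because the required sample size scales with 1/d², halving the effect size roughly quadruples the sample needed, so a replication planned on an inflated estimate can be badly underpowered without anyone noticing.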

Practical Implementation

Pre-registration can be implemented at various stages of research, including right before data collection, after being asked to collect more data during peer review, or before analyzing an existing dataset [42]. Several templates are available through registries like the Open Science Framework (OSF), with specialized forms for different research contexts [42].

Table: Types of Pre-registration Based on Data Status

| Data Status | Description | Considerations |
|---|---|---|
| No Data Collected | Data do not exist at submission | Researcher certifies data have not been collected [42] |
| Data Exist, Not Observed | Data exist but not quantified or observed by anyone | Must certify no human observation has occurred [42] |
| Data Exist, Not Accessed | Data exist but researcher has not accessed them | Researcher explains who has accessed data and justifies confirmatory nature [42] |
| Data Exist, Not Analyzed | Data accessed but no analysis conducted related to research plan | Common for large datasets or split samples; must justify confirmatory nature [42] |

Registered Reports: A Paradigm Shift in Scientific Publishing

Concept and Workflow

Registered Reports represent a transformative publication model that addresses publication bias by conducting peer review before data collection. This format judges research based on the importance of the question and robustness of the methodology rather than the direction or strength of results [43]. The process represents a fundamental shift from evaluating what was found to evaluating what will be investigated and how.

The typical Registered Report workflow involves two stages. In Stage 1, authors submit their introduction, literature review, hypotheses, and detailed methodology, which undergoes rigorous peer review. If accepted, the journal provisionally commits to publishing the final paper regardless of results. In Stage 2, authors complete the research following their approved protocol and submit the full manuscript for final review, ensuring adherence to the pre-registered plan [43].
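The two-stage logic can be sketched as a pair of decision functions. This is a simplified model under stated assumptions: the status names and function names are hypothetical, and real journals handle revisions and justified deviations in more nuanced ways than a single boolean.

```python
from enum import Enum


class Status(Enum):
    PROVISIONALLY_ACCEPTED = "provisionally accepted"
    PUBLISHED = "published"
    NOT_ACCEPTED = "not accepted"


def stage1_decision(question_important: bool, methods_robust: bool) -> Status:
    """Stage 1: review of the question and methodology, before any data exist."""
    if question_important and methods_robust:
        return Status.PROVISIONALLY_ACCEPTED
    return Status.NOT_ACCEPTED


def stage2_decision(stage1: Status, followed_protocol: bool) -> Status:
    """Stage 2: review of the completed study against the approved protocol.

    No parameter describes the direction or significance of the results:
    the outcome of the study is deliberately not an input to the decision.
    """
    if stage1 is Status.PROVISIONALLY_ACCEPTED and followed_protocol:
        return Status.PUBLISHED
    return Status.NOT_ACCEPTED


decision = stage2_decision(stage1_decision(True, True), followed_protocol=True)
```

The design point is in the signatures: neither function can see whether the findings were positive or null.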

Advantages for Scientific Progress

Removes Publication Bias: By pre-approving studies based on methodological rigor rather than results, Registered Reports eliminate the preference for statistically significant findings that plagues traditional publishing [43].

Enhances Methodological Quality: The upfront peer review process improves study design through expert feedback before implementation, strengthening methodological decisions and analytical approaches [43].

Protects Against Questionable Practices: The format inherently discourages p-hacking and selective reporting because the outcomes are unknown during the review phase, creating a firewall against result-dependent analytical decisions [43].

Increases Efficiency: Early feedback on methodology prevents costly mistakes in research execution and ensures appropriate statistical power before resources are committed to data collection [43].

Application to Materials Science and Drug Development

Adapting Pre-registration for Experimental Research

While pre-registration originated in social sciences, its application to materials science and drug development requires adaptation to domain-specific methodologies. For experimental research, pre-registration should comprehensively detail synthesis protocols, characterization methods, performance testing procedures, and data processing algorithms. This specificity ensures that analytical flexibility in interpreting experimental outcomes does not undermine result validity.

In drug development, pre-registration can document preclinical study designs with explicit endpoints, statistical analysis plans for dose-response relationships, and standard operating procedures for high-throughput screening. This transparency is particularly valuable for establishing robust baselines and reducing false leads in early-stage discovery.

Pre-registration of Preexisting Data Analyses

Materials science frequently involves analyzing existing datasets from literature, computational databases, or previous experimental campaigns. Pre-registration of these analyses presents unique challenges but offers significant benefits [41]. When working with preexisting data, researchers should:

- Document the extent of prior knowledge or exploration of the dataset

- Specify contingency plans for unexpected data characteristics

- Pre-register data preprocessing, feature selection, and model specification

- Clearly distinguish between replication analyses and novel investigations
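When an existing dataset is split so that part of it stays untouched for confirmatory testing, the split rule itself can be fixed in advance. The sketch below is one possible approach, not a prescribed protocol: it assigns each record by hashing a stable identifier, so the assignment is reproducible and does not depend on any measured value. The function name, the salt string, and the identifier format are assumptions.

```python
import hashlib


def assign_split(sample_id: str, salt: str = "prereg-v1",
                 confirmatory_fraction: float = 0.5) -> str:
    """Deterministically assign a sample to the exploratory or confirmatory set.

    The assignment depends only on the sample identifier and the salt,
    never on measured properties, so it can be specified before data access.
    """
    digest = hashlib.sha256(f"{salt}:{sample_id}".encode("utf-8")).digest()
    # Map the first 4 bytes to a number in [0, 1).
    u = int.from_bytes(digest[:4], "big") / 2**32
    return "confirmatory" if u < confirmatory_fraction else "exploratory"


# Hypothetical identifiers for illustration.
sample_ids = [f"sample-{i}" for i in range(1000)]
splits = {s: assign_split(s) for s in sample_ids}
```

Recording the salt and fraction in the pre-registration lets anyone regenerate the same partition later and check that the confirmatory set was not used during exploration.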

For coordinated data analyses across multiple datasets—common in computational materials science—specialized pre-registration approaches are needed that address dataset selection, variable harmonization, model specification across studies, and results synthesis [44].

Table: Template for Pre-registering Coordinated Data Analyses in Materials Science

| Component | Key Elements to Pre-register | Example from Materials Science |
|---|---|---|
| Dataset Selection | Inclusion/exclusion criteria, search strategy for datasets | Databases to search (e.g., ICSD, Materials Project), required characterization data |
| Variable Harmonization | Operationalization of constructs across datasets with different measurements | Standardization of material properties across different experimental conditions |
| Model Harmonization | Statistical model specification across diverse data structures | Consistent DFT calculation parameters across different computational studies |
| Results Synthesis | Approach to summarizing findings across studies | Meta-analytic techniques for combining effect sizes from multiple material systems |
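As an illustration of the results-synthesis row, the sketch below pools per-dataset effect estimates with a fixed-effect, inverse-variance weighted mean. This is one common meta-analytic technique chosen here as an example; the source does not specify which method to use, and a random-effects model may be more appropriate when material systems differ substantially. The input values are made up.

```python
import math


def fixed_effect_pool(effects: list[float], std_errors: list[float]) -> tuple[float, float]:
    """Inverse-variance weighted mean of effect estimates and its standard error."""
    if len(effects) != len(std_errors) or not effects:
        raise ValueError("effects and std_errors must be non-empty and equal in length")
    weights = [1.0 / se**2 for se in std_errors]   # more precise estimates weigh more
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Made-up effect sizes and standard errors from three hypothetical datasets.
pooled, pooled_se = fixed_effect_pool([0.42, 0.30, 0.55], [0.10, 0.15, 0.20])
```

Pre-registering the pooling rule, along with the model choice, fixes how results will be combined before any individual dataset's result is known.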

Implementation Workflow

[Figure: the complete pre-registration and Registered Report workflow, adapted for materials science research]

Essential Research Reagent Solutions for Transparent Science

Implementing pre-registration and Registered Reports requires both conceptual understanding and practical tools. The following table details key resources that support transparent research practices in experimental fields like materials science and drug development.

Table: Research Reagent Solutions for Transparent Science

| Tool Category | Specific Resources | Function & Application |
|---|---|---|
| Pre-registration Templates | OSF Preregistration Template [42] | General template for study pre-registration |
| | Secondary Data Analysis Template [41] | Specialized for analyzing existing datasets |
| | Coordinated Analysis Add-on [44] | Template for multi-dataset coordination projects |
| Registries & Platforms | Open Science Framework (OSF) [42] | Public repository for pre-registration documents |
| | ClinicalTrials.gov | Domain-specific registry for clinical research |
| | AsPredicted.org | Simple pre-registration platform for quick studies |
| Data Analysis Tools | Power Analysis Software | Calculating appropriate sample sizes before data collection |
| | Data Splitting Protocols [42] | Separating data into exploratory and confirmatory sets |
| | Version Control Systems | Tracking analytical decisions and code changes |
| Transparency Resources | Transparent Changes Document [42] | Documenting deviations from pre-registered plans |
| | Open Materials Checklists | Ensuring complete documentation of research materials |
| | Data Sharing Platforms | Making research data accessible for verification |

Pre-registration and Registered Reports represent proactive methodological interventions that directly address core drivers of the reproducibility crisis in materials science and drug development. By emphasizing question importance and methodological rigor over results, these frameworks align scientific incentives with credible research practices. The materials science community stands to gain substantially from adopting these approaches, particularly as the field increasingly relies on complex datasets, computational models, and high-throughput experimentation where analytical flexibility threatens result reliability.

While implementation requires adapting templates and workflows to domain-specific research practices, the fundamental benefits—reduced bias, improved methodological quality, and enhanced credibility—transcend disciplinary boundaries. As these practices evolve, they promise to reshape how research is evaluated, published, and ultimately trusted within the scientific ecosystem and society at large [43].

The scientific community is currently grappling with a pervasive reproducibility crisis, a state where the results of many published studies are difficult or impossible to reproduce independently [45]. This crisis raises fundamental questions about research validity and practice, particularly in fields like materials science, life sciences, and drug development [45]. Notably, a study found that over 70% of life sciences researchers could not replicate the findings of others, and about 60% could not reproduce their own results [45]. A primary contributor to this crisis is the failure in record-keeping: experimental procedures, data, and protocols are often inadequately captured, recorded, and shared [46]. This is where modern digital tools—Electronic Lab Notebooks (ELNs) and version control systems—transition from being mere conveniences to essential components of robust, trustworthy scientific practice.

Electronic Lab Notebooks (ELNs): A Core Tool for Reproducible Research

What is an Electronic Lab Notebook?

An Electronic Laboratory Notebook (ELN) is a software platform designed to replace the traditional paper lab notebook. It serves as a centralized, digital environment where researchers can record and store experimental results, protocols, and data [47]. Unlike paper notebooks or general-purpose note-taking software, ELNs are custom-built for scientific research, enabling the integration of complex data types such as chemical structures, bioassay protocols, spectral data, and raw data files from instruments [48] [47]. The core function of an ELN is to aggregate all critical research information into a single, searchable, and reusable digital space, thereby moving beyond the limitations of handwritten notes [47].

How ELNs Alleviate the Reproducibility Crisis

ELNs directly address several root causes of the reproducibility crisis:

- Improved Data Integrity and Traceability: ELNs provide a permanent and secure archive for research data [47]. Features like immutable audit trails, electronic signatures, and time-stamped entries ensure that every change is logged, creating a verifiable record of the research process [49] [47]. This is crucial for complying with regulatory standards such as 21 CFR Part 11, and it also helps protect intellectual property [48] [50].

- Enhanced Sharing and Collaboration: ELNs facilitate seamless sharing of protocols, data, and concepts within and between research groups [48]. Cloud-based ELNs, in particular, enable secure, real-time collaboration for globally distributed teams, ensuring that all members work from the most current information [50] [51]. This transparency within a research group accelerates the pace of discovery [47].

- Structured Data Capture and Searchability: The ability to use templates for frequently used protocols standardizes data entry and reduces errors [48]. Furthermore, ELNs make research records fully searchable, eliminating the problem of "lost" data buried in paper notebooks and allowing researchers to quickly find past experiments, materials, or results [47]. This is a significant improvement, given that not creating digital records accounts for 17% of data loss [47].
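The notion of an immutable, time-stamped audit trail can be illustrated with a hash chain, in which each entry stores the hash of the previous one so that any later alteration becomes detectable. This is a conceptual sketch only: it does not describe how any particular ELN product is implemented, it is not a 21 CFR Part 11 compliance mechanism, and the function and field names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
_FIELDS = ("author", "text", "timestamp", "prev_hash")


def _entry_hash(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, author: str, text: str, timestamp: str) -> None:
    """Append an entry that is cryptographically linked to the previous one."""
    body = {
        "author": author,
        "text": text,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    log.append({**body, "hash": _entry_hash(body)})


def verify_log(log: list) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: entry[k] for k in _FIELDS}
        if entry["prev_hash"] != prev or _entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


# Made-up notebook entries for illustration.
log: list = []
append_entry(log, "researcher-a", "Annealed sample S1 at 450 C for 2 h", "2024-01-10T09:00:00Z")
append_entry(log, "researcher-a", "XRD scan of S1 recorded", "2024-01-10T13:30:00Z")
```

Editing the text of the first entry after the fact would change its hash and break the link stored in the second entry, so `verify_log` would return False.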

ELN Market Trends and Quantitative Data

The adoption of ELNs is rapidly growing, driven by laboratory digitization, regulatory demands, and the need for better data management. The market data reflects this strategic shift.

Table 1: Global Electronic Lab Notebook (ELN) Market Overview

| Metric | Value | Source/Timeframe |
|---|---|---|