The Reproducibility Crisis in AI-Driven Materials Discovery: A Framework for Robust Science and Accelerated Drug Development


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals tackling the critical challenge of reproducibility in AI-driven materials experiments. We first explore the root causes of irreproducibility, from data drift to algorithmic bias. We then detail established and emerging methodologies, including FAIR data principles and version-controlled computational environments, for building reproducible workflows. A dedicated troubleshooting section addresses common pitfalls in experimental design and model validation. Finally, we present a framework for rigorous validation and comparative analysis against traditional high-throughput experimentation (HTE). This guide synthesizes current best practices to enhance the reliability, trustworthiness, and clinical translatability of AI-accelerated materials science.

Why AI-Driven Materials Experiments Fail: Diagnosing the Root Causes of Irreproducibility

Technical Support Center: Troubleshooting Guides & FAQs

FAQ: Data & Preprocessing

Q1: My ML model performs excellently on one dataset but fails on a new batch of experimental data. What's wrong?

A: This is a classic sign of dataset shift or poor feature standardization. Ensure your data preprocessing pipeline is reproducible and applied identically to all data.

- Troubleshooting Steps:

- Check Feature Distributions: Compare summary statistics (mean, variance) of key descriptors between the original training set and the new data.

- Validate Preprocessing Scripts: Ensure that every random seed is recorded and that normalization parameters (min/max) computed on the training set are reused, not recalculated on the new data.

- Audit Data Provenance: Verify the synthesis and characterization protocols for the new materials batch match the original exactly.
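The first two steps can be sketched as follows. This is a minimal illustration, assuming a single numeric descriptor held in plain Python lists; the function names (`fit_minmax`, `apply_minmax`, `shift_report`) and the numbers are hypothetical, not part of any library.

```python
import statistics

def fit_minmax(train_values):
    """Learn min/max normalization parameters from the training set only."""
    return min(train_values), max(train_values)

def apply_minmax(values, params):
    """Scale values with previously fitted parameters (never refit on new data)."""
    lo, hi = params
    span = hi - lo
    if span == 0:
        return [0.0 for _ in values]
    return [(v - lo) / span for v in values]

def shift_report(train_values, new_values):
    """Summary statistics for comparing a descriptor across two datasets."""
    return {
        "train_mean": statistics.mean(train_values),
        "new_mean": statistics.mean(new_values),
        "train_var": statistics.variance(train_values),
        "new_var": statistics.variance(new_values),
    }

# Hypothetical descriptor values (illustrative numbers only).
train = [1.0, 2.0, 3.0, 4.0, 5.0]
new_batch = [4.0, 5.0, 6.0, 7.0]

params = fit_minmax(train)                    # fitted once, on training data
new_scaled = apply_minmax(new_batch, params)  # reused for the new batch
report = shift_report(train, new_batch)
print(params, new_scaled, report)
```

Scaled values above 1.0 in the new batch are themselves a useful warning: they show the new data lies outside the range the model was trained on.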

Q2: How do I handle missing or inconsistent data from public materials databases?

A: Inconsistent data entry is a major source of irreproducibility. Implement a rigorous data curation pipeline.

- Troubleshooting Protocol:

- Define Exclusion Criteria: A priori, define thresholds for physically impossible values (e.g., negative bandgap) or measurement error margins.

- Use Consensus Values: For properties reported in multiple sources, use the median value and flag entries with high dispersion.

- Document All Decisions: Maintain a complete log of all removed data points and the reason for removal. Share this log with published work.
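A minimal sketch of such a curation step, assuming records arrive as `(material_id, source, bandgap_eV)` tuples. The 20% relative-dispersion threshold, the identifiers, and the values are illustrative assumptions, not recommendations from the sources.

```python
import statistics

def curate(records, max_rel_dispersion=0.2):
    """Apply an exclusion rule, compute consensus medians, and log removals.

    records: list of (material_id, source, bandgap_eV) tuples.
    Returns (consensus, removal_log).
    """
    removal_log = []
    grouped = {}
    for material_id, source, value in records:
        if value < 0:  # exclusion criterion defined a priori: impossible value
            removal_log.append({
                "material": material_id,
                "source": source,
                "value": value,
                "reason": "negative bandgap",
            })
            continue
        grouped.setdefault(material_id, []).append(value)

    consensus = {}
    for material_id, values in grouped.items():
        med = statistics.median(values)
        spread = max(values) - min(values)
        # Flag entries whose sources disagree by more than the threshold.
        flagged = med > 0 and spread / med > max_rel_dispersion
        consensus[material_id] = {
            "median": med,
            "n_sources": len(values),
            "high_dispersion": flagged,
        }
    return consensus, removal_log

records = [
    ("mat-1", "db_a", 1.10), ("mat-1", "db_b", 1.12), ("mat-1", "db_c", 1.08),
    ("mat-2", "db_a", 2.0), ("mat-2", "db_b", 3.0),
    ("mat-3", "db_a", -0.5),
]
consensus, removal_log = curate(records)
print(consensus)
print(removal_log)
```

The removal log is returned as plain dictionaries so it can be serialized and shared alongside the published work.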

FAQ: Model Development & Training

Q3: My neural network yields different results every time I retrain, even on the same data. How can I stabilize it?

A: This indicates high variance due to uncontrolled randomness.

- Stabilization Protocol:

- Set All Random Seeds: Explicitly set seeds for Python (`random.seed()`), NumPy (`numpy.random.seed()`), and deep learning frameworks (e.g., `torch.manual_seed()`).

- Enable Deterministic Algorithms: Where possible, use deterministic CUDA convolutions (e.g., `torch.backends.cudnn.deterministic = True`). Note: This may impact performance.

- Report Aggregate Metrics: Train the model multiple times (e.g., 10 runs) with different seeds but fixed hyperparameters. Report mean performance ± standard deviation.
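The stabilization steps can be collected into one helper. The sketch below seeds Python's RNG unconditionally and seeds NumPy and PyTorch only if they are installed; the helper names `set_global_seed` and `summarize_runs` are illustrative, not library functions.

```python
import random
import statistics

def set_global_seed(seed):
    """Seed every RNG that is available in the current environment."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not installed; nothing to seed
    try:
        import torch
        torch.manual_seed(seed)
        # Deterministic cuDNN kernels; this may reduce throughput.
        torch.backends.cudnn.deterministic = True
        torch.backends.cudnn.benchmark = False
    except ImportError:
        pass  # PyTorch not installed; nothing to seed

def summarize_runs(scores):
    """Mean and sample standard deviation over repeated training runs."""
    return statistics.mean(scores), statistics.stdev(scores)

# Re-seeding with the same value reproduces the same random stream.
set_global_seed(42)
first = [random.random() for _ in range(3)]
set_global_seed(42)
second = [random.random() for _ in range(3)]
print(first == second)

# Hypothetical scores from three runs with different seeds.
mean_score, std_score = summarize_runs([0.90, 0.92, 0.94])
print(f"{mean_score:.3f} ± {std_score:.3f}")
```

Seeding alone does not guarantee bit-identical GPU results on every hardware and driver combination, which is why reporting the spread across seeds remains useful.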

Q4: How should I split my dataset to avoid data leakage and overoptimistic performance?

A: Standard random splits fail for correlated materials data.

- Methodology for Robust Splitting:

- Temporal Split: If data was collected over time, train on older data, validate/test on newer data.

- Structural Split: Use algorithmic clustering (e.g., on composition fingerprints) to place similar materials in the same set, ensuring splits are structurally distant.

- Protocol: Use `TimeSeriesSplit` for temporal splits, or a group-aware splitter such as `GroupKFold` with cluster labels passed as groups, from libraries like scikit-learn. Always specify the exact method and random seed in your publication.
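Both splitting strategies can be illustrated without any external library. The sketch below assumes cluster labels have already been computed (for example from composition fingerprints); the functions `group_split` and `temporal_split` are hypothetical helpers written for this example, and `test_fraction` in `group_split` refers to the fraction of clusters, not of samples.

```python
import random

def group_split(cluster_labels, test_fraction=0.2, seed=0):
    """Assign whole clusters to train or test so similar materials never straddle the split."""
    clusters = sorted(set(cluster_labels))
    rng = random.Random(seed)
    rng.shuffle(clusters)
    n_test = max(1, round(test_fraction * len(clusters)))
    test_clusters = set(clusters[:n_test])
    train_idx = [i for i, c in enumerate(cluster_labels) if c not in test_clusters]
    test_idx = [i for i, c in enumerate(cluster_labels) if c in test_clusters]
    return train_idx, test_idx

def temporal_split(timestamps, test_fraction=0.2):
    """Train on the oldest records, test on the newest ones."""
    order = sorted(range(len(timestamps)), key=lambda i: timestamps[i])
    n_test = max(1, round(test_fraction * len(order)))
    cut = len(order) - n_test
    return order[:cut], order[cut:]

# Hypothetical cluster labels for ten materials.
labels = ["A", "A", "B", "B", "C", "C", "D", "D", "E", "E"]
train_idx, test_idx = group_split(labels, test_fraction=0.2, seed=42)

# Hypothetical collection years for five measurements.
years = [2019, 2021, 2018, 2022, 2020]
train_t, test_t = temporal_split(years, test_fraction=0.2)
print(train_idx, test_idx, train_t, test_t)
```

Saving the returned index lists to file, together with the seed, lets others reuse exactly the same partition.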

FAQ: Reporting & Replication

Q5: What minimal information is required for someone to exactly replicate my computational experiment?

A: Follow the MIAMI (Minimum Information About Materials Informatics) checklist.

- Essential Items:

- Data: Exact version of the database used, with DOI or download date. Full preprocessing code.

- Code: Versioned code repository (Git) with commit hash. Explicit list of dependencies with versions (e.g., via `requirements.txt` or Conda `environment.yml`).

- Model: Final trained model weights published in a persistent repository (e.g., Zenodo).

- Hyperparameters: All hyperparameters, including those searched over and the final chosen values. The exact configuration file is ideal.
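Much of this checklist can be captured automatically at run time. The following is a rough sketch, assuming the script runs inside a Git working copy (the commit is recorded as `None` otherwise); the manifest layout, the `build_manifest` helper, and the example values are assumptions made for illustration.

```python
import json
import platform
import subprocess
from importlib import metadata

def git_commit():
    """Return the current commit hash, or None if Git is unavailable."""
    try:
        out = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        )
        return out.stdout.strip()
    except (OSError, subprocess.CalledProcessError):
        return None

def installed_packages():
    """Map installed distribution names to their versions."""
    packages = {}
    for dist in metadata.distributions():
        meta = dist.metadata
        name = meta["Name"] if meta is not None else None
        if name:
            packages[name] = dist.version
    return packages

def build_manifest(hyperparameters, seeds, dataset_version):
    """Collect the items a replicator needs into one JSON-serializable dict."""
    return {
        "python": platform.python_version(),
        "platform": platform.platform(),
        "git_commit": git_commit(),
        "packages": installed_packages(),
        "hyperparameters": hyperparameters,
        "seeds": seeds,
        "dataset_version": dataset_version,
    }

manifest = build_manifest(
    hyperparameters={"learning_rate": 1e-3, "layers": 3},  # illustrative values
    seeds=[42],
    dataset_version="hypothetical-db v1, downloaded 2024-01-01",
)
manifest_json = json.dumps(manifest, indent=2, sort_keys=True)
print(manifest_json[:200])
```

Depositing this JSON file next to the model weights keeps the code, environment, and data description in one citable artifact.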

Table 1: Reported Causes of Irreproducibility in Materials Informatics Studies

| Cause Category | Frequency (%) | Primary Impact |

|---|---|---|

| Inadequate Data Documentation & Sharing | 45% | Prevents validation and reuse |

| Uncontrolled Randomness in ML Pipelines | 30% | Leads to differing model outputs |

| Non-Standardized Preprocessing | 15% | Introduces hidden biases |

| Overfitting to Small/Noisy Datasets | 10% | Produces non-generalizable models |

Table 2: Impact of Reproducibility Practices on Model Performance Variation

| Practice Adopted | Reduction in Performance Std. Dev. (%) | Key Requirement |

|---|---|---|

| Fixed Random Seeds | 60-70% | Document all seeds in code |

| Versioned Code & Data | 40-50% | Use Git & DOI repositories |

| Hyperparameter Reporting | 30-40% | Publish full search space and results |

| Structured Data Splitting | 25-35% | Specify clustering or time-based method |

Experimental Protocols

Protocol 1: Reproducible Hyperparameter Optimization for a Graph Neural Network (GNN)

Objective: To find and report optimal GNN hyperparameters for predicting material bandgaps in a reproducible manner.

- Environment Setup: Create a Conda environment from a version-locked `environment.yml` file. Record the OS and CUDA driver versions.

- Data Preparation: Load the dataset from a fixed, versioned source (e.g., Materials Project API version X.Y.Z on DD/MM/YYYY). Apply the preprocessing script `preprocess_v1.py`, which includes normalization based on training set statistics.

- Splitting: Perform a clustered split using the `Matbench` protocol. Save the indices for train/validation/test sets to file (`split_indices.json`).

- Search Setup: Use the Optuna framework with seed=`42`. Define the search space: layers [2, 3, 4], hidden_dim [64, 128, 256], learning_rate [log-uniform, 1e-4 to 1e-2].

- Execution: Run 100 trials. Save the complete Optuna study object (`study.pkl`).

- Reporting: In the manuscript, state the best parameters and attach the `study.pkl` file, allowing exact replication of the search trajectory.
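To show what a replayable search trajectory looks like, the sketch below uses a seeded random search from the Python standard library as a stand-in. It is not Optuna's API; with Optuna the seed would be passed to the sampler instead. The search space mirrors the protocol, while `toy_objective` is a made-up placeholder for training the GNN and returning a validation error.

```python
import json
import math
import random

SEARCH_SPACE = {
    "layers": [2, 3, 4],
    "hidden_dim": [64, 128, 256],
    "learning_rate": (1e-4, 1e-2),  # bounds for log-uniform sampling
}

def sample_config(rng):
    """Draw one configuration from the search space."""
    lo, hi = SEARCH_SPACE["learning_rate"]
    return {
        "layers": rng.choice(SEARCH_SPACE["layers"]),
        "hidden_dim": rng.choice(SEARCH_SPACE["hidden_dim"]),
        "learning_rate": 10 ** rng.uniform(math.log10(lo), math.log10(hi)),
    }

def toy_objective(config):
    """Placeholder for 'train the model, return validation error' (hypothetical)."""
    return abs(math.log10(config["learning_rate"]) + 3) + 0.1 * abs(config["layers"] - 3)

def run_search(n_trials=100, seed=42):
    """Seeded random search; the same seed replays the same trajectory."""
    rng = random.Random(seed)
    trials = []
    for number in range(n_trials):
        config = sample_config(rng)
        trials.append({"number": number, "params": config, "value": toy_objective(config)})
    best = min(trials, key=lambda t: t["value"])
    return trials, best

trials, best = run_search()
trajectory_json = json.dumps(trials)  # the artifact to archive with the paper
print(best)
```

Archiving the full list of trials, not just the best one, is what lets a reader verify that the reported optimum was not cherry-picked.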

Protocol 2: Cross-Laboratory Validation of a Synthesis Prediction Model

Objective: To validate a model predicting successful synthesis conditions across two independent research groups.

- Model Transfer: Group A provides Group B with: a) the trained model file (`model.pt`), b) the preprocessing code/container, c) the specific software environment manifest.

- Blind Prediction: Group B uses the model to predict outcomes for 50 new, unpublished target materials within the model's claimed domain.

- Experimental Ground Truth: Both groups attempt synthesis of the same 50 materials using the same documented protocol (see Toolkit below).

- Analysis: Compare the success rate predicted by the model against the experimentally observed success rate from both labs. Calculate inter-lab agreement (Cohen's Kappa) on experimental outcomes to account for lab-specific variability.
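The inter-lab agreement in the analysis step is Cohen's kappa, κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e the agreement expected by chance. A small worked sketch follows; the ten outcomes per lab are invented for illustration (1 = synthesis succeeded, 0 = failed).

```python
def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both label lists must be non-empty and equally long")
    n = len(labels_a)
    categories = set(labels_a) | set(labels_b)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    expected = sum(
        (labels_a.count(c) / n) * (labels_b.count(c) / n) for c in categories
    )
    if expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)

def success_rate(outcomes):
    """Fraction of successful syntheses (outcomes coded as 1/0)."""
    return sum(outcomes) / len(outcomes)

# Hypothetical outcomes for the same ten targets in two labs.
lab_a = [1, 1, 1, 0, 0, 1, 0, 1, 1, 0]
lab_b = [1, 1, 0, 0, 0, 1, 0, 1, 1, 1]

kappa = cohens_kappa(lab_a, lab_b)
print(f"kappa = {kappa:.3f}")
print(f"lab A success rate = {success_rate(lab_a):.2f}")
print(f"lab B success rate = {success_rate(lab_b):.2f}")
```

Here the labs agree on 8 of 10 targets (p_o = 0.8) and chance agreement is p_e = 0.52, so κ ≈ 0.58. A low kappa would indicate that lab-to-lab variability, rather than the model, limits the achievable agreement.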

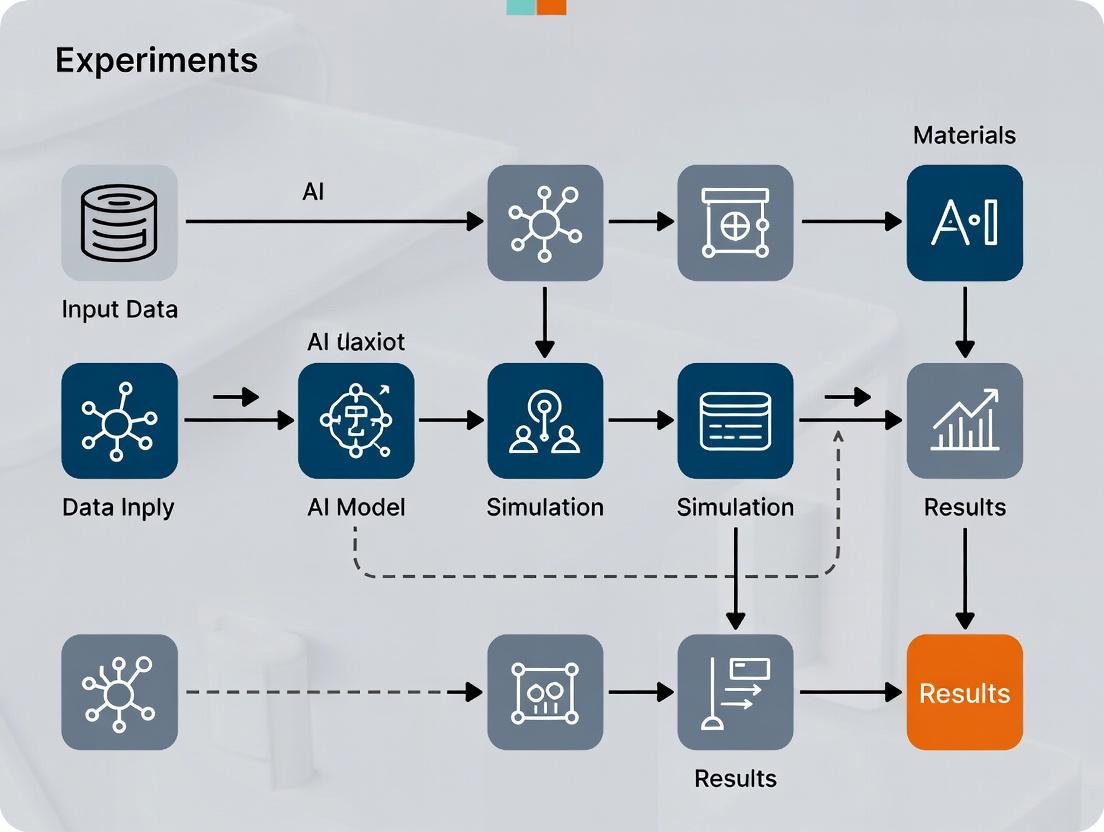

Visualization Diagrams

[Diagram: Reproducible ML Workflow for Materials]

[Diagram: Causes and Impacts of the Crisis]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Reproducible Materials Informatics Research

| Item / Solution | Function & Purpose | Example / Note |

|---|---|---|

| Version Control System | Tracks all changes to code, scripts, and configuration files. | Git with platforms like GitHub or GitLab. |

| Environment Manager | Encapsulates all software dependencies to guarantee identical runtime conditions. | Conda, Docker, or Singularity containers. |

| Data Repository | Provides persistent, versioned storage for raw and processed datasets. | Zenodo, Figshare, Materials Data Facility. |

| ML Experiment Tracker | Logs hyperparameters, metrics, and model artifacts for each training run. | Weights & Biases, MLflow, TensorBoard. |

| Standardized File Formats | Ensures data is portable and interpretable by different tools/labs. | CIF for structures, JSON/XML for metadata, HDF5 for arrays. |

| Electronic Lab Notebook | Digitally records experimental synthesis/characterization protocols linked to computational work. | LabArchives, SciNote, openBIS. |

| Persistent Identifier | Uniquely and permanently identifies every digital artifact (data, code, model). | Digital Object Identifier (DOI). |

Technical Support Center: Reproducibility in AI-Driven Materials & Drug Discovery

Troubleshooting Guides

Issue 1: AI Model Performance Degrades Rapidly on New Experimental Batches

- Symptoms: High validation accuracy during training plummets when the model encounters data from a new synthesis batch or assay run. Predictions become unreliable.

- Diagnosis: This is a classic sign of batch effect noise and non-FAIR metadata. The model has learned latent variables specific to the initial experimental conditions (e.g., specific lab humidity, reagent lot, instrument calibration) rather than the fundamental material or biological properties.

- Resolution Protocol:

- Implement Systematic Metadata Tagging: Ensure every data point is annotated with a controlled-vocabulary tag for: Reagent Lot/Batch ID, Instrument Serial Number & Calibration Date, Operator ID, Environmental Conditions (if critical).

- Apply Computational Batch Correction: Use algorithms like ComBat, limma, or Singular Value Decomposition (SVD) to remove technical artifacts. Caution: Estimate the correction parameters on the training data only, then apply the fitted correction to validation and test data; fitting on the entire dataset causes data leakage.

- Re-train with Augmented Data: Use data augmentation techniques (e.g., adding simulated noise within instrument error bounds, slight spectral shifts) to make the model invariant to minor technical variances.
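The augmentation step can be sketched as follows, assuming each sample is a `(features, label)` pair and that the instrument error is reasonably modeled as zero-mean Gaussian noise with a known standard deviation. Both the noise model and the `augment_with_noise` helper are assumptions for illustration.

```python
import random

def augment_with_noise(samples, instrument_sigma, copies=3, seed=0):
    """Return the original samples plus noisy copies with unchanged labels."""
    rng = random.Random(seed)  # seeded so the augmented set is reproducible
    augmented = []
    for features, label in samples:
        augmented.append((list(features), label))  # keep the original
        for _ in range(copies):
            noisy = [x + rng.gauss(0.0, instrument_sigma) for x in features]
            augmented.append((noisy, label))
    return augmented

# Hypothetical two-feature measurements with binary labels.
samples = [([1.00, 0.50], 1), ([0.20, 0.90], 0)]
augmented = augment_with_noise(samples, instrument_sigma=0.01, copies=3, seed=7)
print(len(augmented))
```

Augmentation of this kind should be applied to the training split only, so that validation and test data remain untouched measurements.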

Issue 2: Inconsistent Results When Replicating a Published AI-Driven Synthesis Protocol

- Symptoms: Inability to reproduce a published material's properties (e.g., perovskite quantum yield, MOF surface area) using the AI-predicted synthesis parameters.

- Diagnosis: Inconsistent and non-standardized data in the original training corpus. Critical procedural details (e.g., "sonicated for 1 hour" without power or temperature specs, "washed thoroughly") are ambiguous and not machine-readable.

- Resolution Protocol:

- Adopt Structured Experiment Digitalization: Use Electronic Lab Notebooks (ELNs) with predefined templates that enforce mandatory fields for key parameters.

- Deploy Semantic Ontologies: Tag procedures and materials with standard identifiers (e.g., ChEBI for chemicals, ChEMBL for compounds, NanoParticle Ontology terms).

- Verify with Control Experiments: Before full replication, run a series of small-scale control experiments using the parsed protocol to identify the most sensitive (and likely missing) variable.

Issue 3: Model Fails to Generalize Across Different Material Classes or Protein Families

- Symptoms: A model trained on oxide perovskites fails completely when predicting for halide perovskites. A QSAR model for kinase inhibitors is useless for GPCR targets.

- Diagnosis: Hidden biases in non-FAIR data. The training data does not satisfy the Accessible and Interoperable principles and resides in siloed, incompatible formats. Features are not aligned or computed consistently across domains.

- Resolution Protocol:

- Feature Auditing & Alignment: Audit the feature vectors used for each domain. Use tools like RDKit for consistent molecular descriptor calculation or matminer for consistent materials features.

- Employ Transfer Learning with Caution: Start from a model pre-trained on the larger domain (e.g., general small molecules) and fine-tune it on your specific, smaller dataset. This requires aligned feature spaces.

- Seek & Integrate FAIR Data Repositories: Prioritize data from sources that adhere to community standards (e.g., Materials Project, PubChem, The Protein Data Bank).

Frequently Asked Questions (FAQs)

Q1: We've collected terabytes of historical lab data. It's messy but valuable. What's the first, most critical step to make it usable for AI?

A: The first step is audit and provenance reconstruction. Create a data inventory. For each dataset, document: Who generated it, When, on What equipment, using Which protocol version, and What were the raw, unprocessed outputs? This metadata is the foundation for all subsequent cleaning and FAIRification. Without it, you cannot assess noise levels or consistency.

Q2: What are the minimum metadata fields required for an AI-ready materials synthesis experiment?

A: At a minimum, your metadata should be structured to answer the following, using standard identifiers where possible:

| Metadata Category | Example Fields | FAIR Principle Addressed |

|---|---|---|

| Provenance | Researcher ORCID, Institution, Date/Time | Findable, Reusable |

| Material Inputs | Precursor IDs (e.g., PubChem CID), Purity, Supplier/Lot#, Concentrations | Interoperable, Reusable |

| Synthesis Protocol | Method (e.g., sol-gel, CVD), Parameters (Temp, Time, Pressure), Equipment Model/ID | Reusable |

| Characterization Data | Technique (e.g., XRD, HPLC), Instrument ID & Settings, Raw Data File Link | Accessible, Interoperable |

| Derived Results | Calculated property (e.g., bandgap, IC50), Processing Code Version | Interoperable, Reusable |
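One way to enforce these fields is a typed record that is checked before data enters the database. The sketch below is a hypothetical schema: the field names paraphrase the table and every example value is a placeholder.

```python
from dataclasses import dataclass, fields, asdict

@dataclass
class SynthesisRecord:
    # Provenance
    researcher_orcid: str
    institution: str
    timestamp_utc: str
    # Material inputs
    precursor_ids: list
    supplier_lot: str
    # Synthesis protocol
    method: str
    parameters: dict
    # Characterization data
    technique: str
    instrument_id: str
    raw_data_uri: str
    # Derived results
    derived_property: str
    processing_code_version: str

def missing_fields(record):
    """Names of fields left empty (empty string, None, empty list or dict)."""
    return [
        f.name for f in fields(record)
        if getattr(record, f.name) in ("", None, [], {})
    ]

record = SynthesisRecord(
    researcher_orcid="0000-0000-0000-0000",       # placeholder ORCID
    institution="Example University",
    timestamp_utc="2024-01-01T12:00:00Z",
    precursor_ids=["CID-placeholder-1"],
    supplier_lot="",                               # deliberately left empty
    method="sol-gel",
    parameters={"temp_C": 80, "time_h": 2},
    technique="XRD",
    instrument_id="xrd-01",
    raw_data_uri="file:///data/placeholder.raw",
    derived_property="bandgap_eV",
    processing_code_version="v1",
)
print(missing_fields(record))
print(sorted(asdict(record)))
```

Rejecting records with a non-empty `missing_fields` result at capture time is far cheaper than reconstructing the metadata later.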

Q3: How can we quickly check if our dataset has significant "noise" from inconsistent labeling?

A: Implement a simple intra-duplicate analysis. Identify all experiments in your database that have identical or nearly identical input parameters (within instrument precision). Plot the distribution of their output results (e.g., yield, activity). A wide variance in outputs for "identical" inputs is a direct measure of inconsistency and noise. See Protocol 1 (Intra-Duplicate Analysis for Noise Quantification) below.

Q4: Are there automated tools to help make our lab data FAIR?

A: Yes, an evolving ecosystem exists. Key tools include:

- Electronic Lab Notebooks (ELNs): LabArchives, RSpace, eLabJournal (often have FAIR export modules).

- Data Validation & Pipelines: `great_expectations` (for data quality), `Pachyderm`/`Nextflow` (for reproducible pipelines).

- Standards & Converters: `pymatgen` (materials), `RDKit` (cheminformatics), `Bio2RDF` (life sciences) for format interoperability.

- Repository Platforms: `Dataverse`, `CKAN`, or institutional repositories that assign persistent identifiers (DOIs).

Detailed Experimental Protocols

Protocol 1: Intra-Duplicate Analysis for Noise Quantification

- Objective: Quantify the intrinsic inconsistency (noise) in an experimental dataset.

- Methodology:

- Data Query: From your cleaned database, write a query to cluster experiments where all controlled input variables (e.g., precursor concentrations, temperature, time) differ by less than a defined threshold (e.g., <1% for continuous, exact match for categorical).

- Group Formation: Each cluster of 2 or more experiments is an "intra-duplicate set."

- Statistical Calculation: For each set, calculate the mean and standard deviation (σ) of the primary output variable (e.g., catalytic activity). Compute the Coefficient of Variation (CV = σ/mean) for each set.

- Aggregate Metric: Compute the median CV across all intra-duplicate sets in your database. This median CV is a robust indicator of your dataset's experimental noise floor.

- Interpretation: A median CV > 10-15% for a well-established assay indicates high noise, suggesting AI models will struggle to learn signals weaker than this noise level.
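The calculation can be sketched in a few lines. As a simplification, near-identical inputs are grouped by rounding continuous values rather than by the <1% relative threshold described above, and σ is taken as the sample standard deviation; the experiment records are invented for illustration.

```python
import statistics
from collections import defaultdict

def median_cv(experiments, input_keys, output_key, decimals=2):
    """Median coefficient of variation over sets of (near-)duplicate experiments."""
    groups = defaultdict(list)
    for exp in experiments:
        # Round continuous inputs; categorical inputs must match exactly.
        key = tuple(
            round(exp[k], decimals) if isinstance(exp[k], float) else exp[k]
            for k in input_keys
        )
        groups[key].append(exp[output_key])

    cvs = []
    for outputs in groups.values():
        if len(outputs) >= 2:  # an intra-duplicate set needs 2+ members
            mean = statistics.mean(outputs)
            if mean != 0:
                cvs.append(statistics.stdev(outputs) / abs(mean))
    return statistics.median(cvs) if cvs else None

experiments = [
    {"temp_C": 80.0, "conc_M": 0.10, "solvent": "DMF", "yield": 50.0},
    {"temp_C": 80.0, "conc_M": 0.10, "solvent": "DMF", "yield": 60.0},
    {"temp_C": 120.0, "conc_M": 0.25, "solvent": "DMF", "yield": 90.0},
    {"temp_C": 120.0, "conc_M": 0.25, "solvent": "DMF", "yield": 110.0},
    {"temp_C": 100.0, "conc_M": 0.50, "solvent": "EtOH", "yield": 30.0},
]
noise_floor = median_cv(experiments, ["temp_C", "conc_M", "solvent"], "yield")
print(f"median CV = {noise_floor:.3f}")
```

In this toy dataset the two duplicate sets have CVs of about 12.9% and 14.1%, giving a median CV of roughly 13.5%, which would fall in the high-noise range described in the interpretation step.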

Protocol 2: Implementing a FAIR Data Capture Workflow for a New Synthesis Experiment

- Objective: Ensure a new experiment generates AI-ready, FAIR data from inception.

- Methodology:

- Pre-register in ELN: Create a new experiment entry in an ELN before starting lab work. Use a mandatory-field template.

- Digital Identifier Assignment: Assign a unique, persistent sample ID (e.g., barcode) to all material vials/tubes. Link this ID in the ELN.

- Structured Data Capture:

- Inputs: Weigh samples on balances connected to the ELN (auto-capture). Scan barcodes of reagents.

- Process: Record deviations from protocol in a structured "deviation" field, not free-text comments.

- Outputs: All characterization instruments should output standard digital formats (e.g., `.cif` for XRD, `.mzML` for MS). Auto-upload these files to a data lake with the sample ID as the filename/key.

- Automated Metadata Harvesting: Use instrument APIs or lab middleware (e.g., `Labguru`, `Clarity LIMS`) to pull instrument settings and conditions directly into the ELN record.

- Publish to Repository: Upon experiment completion, use the ELN's export function to package metadata and data into a standard schema (e.g., ISA-Tab, NOMAD meta-info) and deposit in a public or institutional repository to obtain a DOI.

Diagrams

[Diagram: FAIR Data Pipeline for AI-Driven Research]

[Diagram: How Data Problems Sabotage AI Model Outcomes]

The Scientist's Toolkit: Research Reagent & Solution Essentials

| Item | Function & Relevance to Reproducibility |

|---|---|

| Internal Standard (e.g., Deuterated Solvents, Certified Reference Materials) | Added in precise concentration to analytical samples (e.g., NMR, LC-MS) to calibrate instrument response and correct for variability in sample preparation and analysis, directly combating "noisy data." |

| Certified Reference Material (CRM) / Standard | A material with a precisely known property (e.g., particle size, elemental composition, enzyme activity). Used to calibrate instruments and validate entire experimental protocols, ensuring consistency across labs and time. |

| Stable Isotope-Labeled Compounds (¹³C, ¹⁵N, D) | Used as tracers in synthesis or metabolic studies. Provides unambiguous, machine-detectable signatures to track pathways, reducing inference noise in complex systems. |

| Single-Lot, Large-Stock Reagents | For a long-term study, purchasing a large, single lot of a critical reagent (e.g., catalyst, growth serum, enzyme) minimizes batch-to-batch variability, a major source of inconsistency. |

| Electronic Grade Solvents & High-Purity Precursors | Minimizes unintended doping or side-reactions in materials synthesis and biochemical assays. Variable impurity profiles in lower-grade chemicals are a hidden source of non-reproducibility. |

| Automated Liquid Handling System | Replaces manual pipetting for critical steps (serial dilutions, plate formatting), dramatically reducing human-introduced volumetric errors and improving data consistency. |

| Sample Tracking LIMS with Barcoding | Provides a chain of custody and unique, persistent identifier for every physical sample and data file, addressing the Findable and Accessible principles of FAIR data. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My model performance varies wildly between training runs with the same hyperparameters. What is the primary cause and how do I fix it?

A: This is a classic symptom of high sensitivity to the random seed. The random seed controls weight initialization, data shuffling order, and dropout masks. To mitigate:

- Implement Explicit Seeding: Set seeds for Python, NumPy, and your deep learning framework (e.g., TensorFlow, PyTorch) at the start of your script.

- Average Multiple Runs: For your final reported result, train the model multiple times (e.g., 5-10 runs) with different seeds and report the mean and standard deviation.

- Use Deterministic Algorithms: Where possible, enable deterministic operations in your framework (e.g., `torch.use_deterministic_algorithms(True)`), noting this may impact performance.

Q2: How do I systematically evaluate hyperparameter sensitivity to improve reproducibility?

A: Conduct a sensitivity analysis using a grid or random search, but with a crucial addition:

- For each hyperparameter set, perform multiple training runs with different random seeds.

- Record the average performance and the variance across seeds for each configuration.

- Identify hyperparameter regions where performance is both high and stable (low variance across seeds).

Protocol:

- Define your hyperparameter search space (e.g., learning rate: [1e-4, 1e-3, 1e-2], batch size: [16, 32, 64]).

- For each (learning_rate, batch_size) combination, run 5 training sessions with seeds 42, 123, 456, 789, 999.

- Calculate the mean R² score and its standard deviation.

- Select the configuration that meets your performance threshold with the smallest standard deviation.
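The protocol above can be sketched with a toy training function. `train_and_score` is a hypothetical stand-in that returns a fake R² score; its shape (best near a learning rate of 1e-3, noisier for small batches) is an arbitrary assumption chosen only to make the selection step visible.

```python
import math
import random
import statistics

SEEDS = [42, 123, 456, 789, 999]
LEARNING_RATES = [1e-4, 1e-3, 1e-2]
BATCH_SIZES = [16, 32, 64]

def train_and_score(learning_rate, batch_size, seed):
    """Hypothetical stand-in for a real training run; returns a fake R² score."""
    rng = random.Random(seed)
    base = 0.90 - 0.05 * abs(math.log10(learning_rate) + 3)
    noise_scale = 0.005 * (64 / batch_size)  # toy assumption: small batches are noisier
    return base + rng.gauss(0.0, noise_scale)

def sensitivity_table():
    """Mean and standard deviation across seeds for every grid point."""
    rows = []
    for lr in LEARNING_RATES:
        for bs in BATCH_SIZES:
            scores = [train_and_score(lr, bs, s) for s in SEEDS]
            rows.append({
                "learning_rate": lr,
                "batch_size": bs,
                "mean": statistics.mean(scores),
                "std": statistics.stdev(scores),
            })
    return rows

def select_stable(rows, min_mean):
    """Among configurations meeting the threshold, pick the lowest-variance one."""
    eligible = [r for r in rows if r["mean"] >= min_mean]
    return min(eligible, key=lambda r: r["std"]) if eligible else None

rows = sensitivity_table()
best = select_stable(rows, min_mean=0.85)
for row in rows:
    print(row)
print("selected:", best)
```

Replacing `train_and_score` with a real training call leaves the aggregation and selection logic unchanged.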

Q3: My materials property prediction model fails to generalize when trained on a different dataset split. What steps should I take?

A: This indicates potential instability related to data sampling and model complexity.

- Stratified Splitting: Ensure your train/validation/test splits preserve the distribution of critical features (e.g., crystal system, value range of the target property).

- Use Nested Cross-Validation: Employ an outer loop for performance estimation and an inner loop for hyperparameter tuning. This provides a more robust generalization error estimate.

- Regularize Your Model: Increase dropout rates, apply L1/L2 weight regularization, or reduce network complexity to prevent overfitting to idiosyncrasies of a specific data split.
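For a continuous target, stratification is commonly approximated by binning the target into quantiles and sampling from each bin. A minimal sketch under that assumption follows; `stratified_split` is a hypothetical helper and the target values are synthetic.

```python
import random
from collections import defaultdict

def stratified_split(targets, n_bins=4, test_fraction=0.2, seed=0):
    """Split indices so every quantile bin of the target appears in train and test."""
    order = sorted(range(len(targets)), key=lambda i: targets[i])
    bins = defaultdict(list)
    for rank, idx in enumerate(order):
        bins[rank * n_bins // len(order)].append(idx)  # equal-frequency bins

    rng = random.Random(seed)
    train, test = [], []
    for b in sorted(bins):
        members = bins[b]
        rng.shuffle(members)
        n_test = max(1, round(test_fraction * len(members)))
        test.extend(members[:n_test])
        train.extend(members[n_test:])
    return sorted(train), sorted(test)

# Synthetic, already sorted target values (e.g., bandgaps in eV).
targets = [0.1 * i for i in range(20)]
train_idx, test_idx = stratified_split(targets, n_bins=4, test_fraction=0.2, seed=1)
print(train_idx)
print(test_idx)
```

With 20 samples and four bins, each bin contributes exactly one test sample, so the test set spans the full range of the target rather than clustering in one region.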

Q4: What are the best practices for logging to ensure a materials AI experiment is fully reproducible?

A: Maintain a complete "digital twin" of each experiment. Log:

- Code Snapshot: Git commit hash.

- Full Environment: Conda/Pip freeze output (`conda list --export` or `pip freeze`).

- Hyperparameters: All values, including defaults.

- Random Seeds: Every seed used.

- Data Version & Splits: Hash of dataset and exact indices used for splits.

- Hardware: GPU type and driver version, as some operations have hardware-level non-determinism.
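The "Data Version & Splits" item is often the least standardized, so a short sketch may help. It assumes the dataset is available as bytes (for example, the contents of a file read in binary mode); the record layout and the `split_record` helper are illustrative.

```python
import hashlib
import json

def sha256_hex(data):
    """SHA-256 digest of a bytes object, as a hex string."""
    return hashlib.sha256(data).hexdigest()

def split_record(dataset_bytes, train_idx, val_idx, test_idx, seed):
    """Bundle the dataset hash, exact split indices, and seed into one record."""
    splits = {
        "train": sorted(train_idx),
        "val": sorted(val_idx),
        "test": sorted(test_idx),
    }
    # Hash a canonical JSON encoding so the digest does not depend on key order.
    canonical = json.dumps(splits, sort_keys=True).encode("utf-8")
    return {
        "dataset_sha256": sha256_hex(dataset_bytes),
        "splits": splits,
        "splits_sha256": sha256_hex(canonical),
        "seed": seed,
    }

# Hypothetical dataset contents and split indices.
dataset_bytes = b"formula,bandgap\nSi,1.1\nGaAs,1.4\nGe,0.7\n"
record = split_record(dataset_bytes, train_idx=[0, 2], val_idx=[], test_idx=[1], seed=42)
print(json.dumps(record, indent=2))
```

Comparing `dataset_sha256` values is a quick way for a replicator to confirm they hold exactly the same file before investigating any metric discrepancy.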

Table 1: Impact of Random Seed on Model Performance (Benchmark on QM9 Dataset)

| Model Architecture | Metric | Mean Value (5 seeds) | Std. Dev. | Min Value | Max Value | Range |

|---|---|---|---|---|---|---|

| Graph Neural Network | MAE (eV) | 0.042 | 0.0035 | 0.038 | 0.047 | 0.009 |

| Random Forest | R² Score | 0.921 | 0.014 | 0.901 | 0.937 | 0.036 |

| Dense Neural Network | MAE (eV) | 0.089 | 0.0112 | 0.072 | 0.104 | 0.032 |

Table 2: Hyperparameter Sensitivity Analysis for a MLFF (Machine Learning Force Field)

| Hyperparameter Set | Learning Rate | Batch Size | Noise std. dev. | Mean Force Error (meV/Å) | Std. Dev. across seeds |

|---|---|---|---|---|---|

| A | 1e-3 | 5 | 0.05 | 48.2 | ± 2.1 |

| B | 1e-3 | 10 | 0.10 | 45.7 | ± 6.8 |

| C | 5e-4 | 5 | 0.01 | 42.1 | ± 5.3 |

| D | 5e-4 | 10 | 0.05 | 43.5 | ± 3.9 |

Experimental Protocols

Protocol 1: Reproducibility-Centric Training Run

- Set Seeds: Initialize Python (`random.seed()`), NumPy (`np.random.seed()`), and framework-specific (e.g., `torch.manual_seed()`) seeds.

- Log Setup: Log all environment details, hyperparameters, and the commit hash.

- Data Preparation: Load the dataset. Create splits using a seeded function (e.g., `sklearn.model_selection.train_test_split` with `random_state=`). Save the split indices.

- Model Initialization: Instantiate the model. Its weights are now determined by the seed.

- Training Loop: Run for the specified epochs. Log training/validation metrics per epoch.

- Evaluation: Evaluate on the held-out test set. Record all metrics.

- Artifact Saving: Save the final model, training logs, and configuration file together.

Protocol 2: Sensitivity Analysis for Hyperparameter Tuning

- Define Search Space: List hyperparameters and value ranges (e.g., learning rate: log-scale from 1e-5 to 1e-2).

- Define Seed Pool: Create a fixed list of random seeds (e.g., [1, 2, 3, 4, 5]).

- Outer Loop (HP Config): Sample a hyperparameter configuration (e.g., via Bayesian search).

- Inner Loop (Seed Iteration): For each seed in the seed pool, execute Protocol 1 using the current HP config and the current seed.

- Aggregate: Calculate the mean and standard deviation of the target metric (e.g., validation loss) across all seeds for the current HP config.

- Recommendation: After the search, select the HP config that optimizes for high mean performance and low standard deviation.


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Reproducible ML in Materials Research

| Item | Function & Rationale |

|---|---|

| Weights & Biases (W&B) / MLflow | Experiment tracking platforms to automatically log hyperparameters, code state, metrics, and output models for full lineage. |

| Poetry / Conda | Dependency management tools to create exact, portable software environments needed to replicate the computational experiment. |

| DVCS (e.g., Git) | Version control for all code, configuration files, and scripts. The commit hash is the cornerstone of reproducibility. |

| Seedbank Library | Libraries to help manage and orchestrate multiple random seeds across different modules and libraries in a single run. |

| Deterministic CUDA | Enabling deterministic GPU operations (e.g., CUBLAS_WORKSPACE_CONFIG) reduces non-determinism at the cost of potential speed. |

| Scikit-learn's `check_random_state` | Utility function to accept either a seed integer or a RandomState object, ensuring consistent random number generation streams. |

| Stratified splitters (e.g., scikit-learn's `StratifiedShuffleSplit`) | Data splitting methods that maintain the distribution of key features across splits, crucial for small materials datasets. |

| HDF5 / Parquet File Format | Standardized, self-describing file formats for storing features, targets, and metadata together to avoid data corruption or misalignment. |

Troubleshooting Guides and FAQs

Q1: I am trying to reproduce a materials property prediction model from a published paper. The authors mention using a "standard" dataset, but I find multiple versions with different pre-processing steps. Which one should I use, and why are my accuracy metrics 8% lower?

A1: This is a classic symptom of the benchmarking gap. The lack of a canonical, version-controlled dataset leads to fragmentation.

- Diagnosis: You are likely using a different data split, filtering criterion (e.g., for invalid entries), or featurization method.

- Solution:

- Contact the Authors: Request their exact data file and splitting script.

- Use a Repository: Check whether the task is hosted on a platform such as the Open Catalyst Project or Matbench, which provide frozen datasets.

- Document Your Version: If you must choose, explicitly document your source (e.g., "Materials Project v2022.10, filtered for thermodynamic stability < 50 meV/atom") and report metrics on all common variants in a table.

Q2: My computational screening of perovskite candidates yielded a top-10 list completely different from a comparable study. How do I determine which protocol is more reliable?

A2: Discrepancy often stems from differing evaluation protocols, not just models.

- Diagnosis: Compare these protocol elements side-by-side.

- Solution: Conduct a "protocol ablation study." Isolate each difference and test its impact.

| Protocol Element | Your Study | Comparative Study | Impact Test Suggestion |

|---|---|---|---|

| Initial Structure Source | ICSD | Materials Project | Fix all other steps, run with both sources. |

| Relaxation Convergence | 0.05 eV/Å | 0.01 eV/Å | Re-relax your top candidates with tighter criteria. |

| Stability Metric | Hull distance < 0.1 eV/atom | Hull distance < 0.2 eV/atom | Recalculate stability for all candidates with both thresholds. |

| Final Property | PBE bandgap | HSE06 bandgap | Perform single-point HSE06 calculation on your PBE-relaxed structures. |

Q3: When reporting a new diffusion Monte Carlo (DMC) method for formation energy, what is the minimum set of benchmarks I must run to claim improvement?

A3: To ensure reproducibility and meaningful comparison, you must benchmark against a standardized hierarchy of data.

- Diagnosis: Claiming improvement without rigorous, multi-fidelity benchmarks is a major contributor to the reproducibility crisis.

- Solution: Follow this experimental protocol:

Experimental Protocol: Benchmarking a New DMC Method

- Reference Data Selection: Use a standardized set of reference formation energies (e.g., a subset of the Materials Project formation energies from high-throughput DFT).

- Compute Baseline Metrics: Calculate Mean Absolute Error (MAE) and Root Mean Square Error (RMSE) for your method against the reference. Use a consistent training/test split (e.g., 80/20).

- Systematic Error Analysis: Plot error vs. elemental composition, band gap, and magnetic moment to identify biases.

- Computational Cost Tracking: Report the average CPU-hour per calculation, normalized to a standard node configuration (e.g., 32-core, 2.5 GHz).

- Uncertainty Quantification: Provide error bars for your DMC results, derived from statistical analysis of the Monte Carlo steps.
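The baseline metrics and error bars in this protocol reduce to a few formulas: MAE = (1/N) Σ|ŷᵢ − yᵢ|, RMSE = sqrt((1/N) Σ(ŷᵢ − yᵢ)²), and a standard error of s/√n for the Monte Carlo mean. The sketch below uses invented numbers and a naive standard error that assumes uncorrelated samples; real DMC samples are serially correlated, so a blocking analysis would normally be needed.

```python
import math
import statistics

def mae(predicted, reference):
    """Mean absolute error."""
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)

def rmse(predicted, reference):
    """Root mean square error."""
    return math.sqrt(
        sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference)
    )

def mc_mean_and_stderr(samples):
    """Mean and naive standard error (assumes uncorrelated samples)."""
    mean = statistics.mean(samples)
    stderr = statistics.stdev(samples) / math.sqrt(len(samples))
    return mean, stderr

# Hypothetical formation energies in eV/atom.
predicted = [-1.0, -2.0, -3.0]
reference = [-1.1, -1.8, -3.0]
print(f"MAE  = {mae(predicted, reference):.4f} eV/atom")
print(f"RMSE = {rmse(predicted, reference):.4f} eV/atom")

# Hypothetical local-energy samples from one calculation.
mean_e, stderr_e = mc_mean_and_stderr([1.0, 2.0, 3.0, 4.0])
print(f"E = {mean_e:.3f} ± {stderr_e:.3f}")
```

Reporting RMSE alongside MAE is informative because a large gap between the two points to a few outliers dominating the error.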

Q4: How can I ensure my experimental protocol for high-throughput polymer synthesis is reproducible across labs?

A4: Standardize every variable possible and use controlled reference materials.

- Diagnosis: Subtle differences in solvent lot, impurity levels, or mixing dynamics can cause significant variance.

- Solution: Implement the following:

- Internal Reference Reaction: Include a known polymerization (e.g., a specific PET synthesis) in every batch. Monitor its yield and molecular weight distribution as a batch quality control.

- Reagent Metadata: Log detailed supplier and lot information for all precursors.

- Environmental Logging: Record ambient temperature and humidity during synthesis.

- Instrument Calibration Log: Maintain a shared log for all relevant instruments (GPC, NMR, rheometers).

Visualizations

[Diagram: Origins of the Benchmarking Gap in AI Materials Science]

[Diagram: Reproducibility Checklist Workflow for AI Materials Research]

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale | Example/Standard |

|---|---|---|

| Frozen Benchmark Datasets | Version-controlled, immutable datasets to ensure all researchers evaluate on identical data, enabling fair comparison. | Matbench, The Open Catalyst Project OC20 dataset, QM9. |

| Containerized Software | Pre-configured computational environments (Docker/Singularity) that encapsulate all dependencies, eliminating "works on my machine" issues. | Published Docker Hub images accompanying a paper. |

| Standardized Evaluation Harness | A unified code package that defines train/test splits, metrics, and reporting formats for a specific task. | Matminer's Benchmark Framework, OGB (Open Graph Benchmark) loaders. |

| Reference Materials (Experimental) | Well-characterized physical materials with certified properties, used to calibrate and validate experimental high-throughput pipelines. | NIST Standard Reference Materials (e.g., for XRD, thermal conductivity). |

| Persistent Identifiers (PIDs) | Unique, permanent identifiers for digital assets like datasets, codes, and samples, ensuring permanent access and citation. | DOIs (DataCite) for data, RRIDs for reagents. |

| Electronic Lab Notebook (ELN) | A system to digitally and reproducibly record procedures, observations, and metadata in a structured, searchable format. | LabArchives, RSpace. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our AI model predicts a high-yield synthesis for a target perovskite nanocrystal, but our lab consistently achieves lower yields and different optical properties. What are the primary protocol variables we should audit?

A1: This is a classic reproducibility failure often stemming from overlooked synthesis protocol variables. Focus on these critical parameters:

- Precursor Injection Dynamics: The AI training data may assume an instantaneous injection, while manual or even pump-based injections in your lab have a finite rate and mixing profile.

- Local Temperature Gradients: The model likely uses reactor bulk temperature. Verify thermocouple placement and ensure consistent stirring to minimize local cold/hot spots during exothermic reactions.

- Ambient Oxygen/Moisture Traces: Many synthesis databases underreport glovebox or Schlenk line conditions. Trace O₂/H₂O can dramatically affect nucleation.

Recommended Protocol Audit Checklist:

- Record injection speed (mL/min) and needle gauge/position.

- Calibrate all temperature sensors and document their location in the reactor.

- Quantify glovebox atmosphere (O₂ & H₂O ppm) for every synthesis run.

Q2: When characterizing metal-organic framework (MOF) porosity, our BET surface area measurements from the same sample batch show high inter-lab variance despite using "standard" protocols. What gives?

A2: BET measurement is highly sensitive to pre-treatment (activation) protocol. Variability often originates here:

- Solvent Exchange History: The type and number of solvent exchanges prior to activation critically impact pore collapse.

- Outgassing Temperature Ramp Rate: A rapid ramp can trap solvent and block pores. Ramp rates, especially slow ones, are often not reported in literature methods.

- Degas Endpoint Criteria: Using a fixed time vs. using a pressure-rate endpoint (e.g., <5 µmHg/min change) leads to different residual solvent loads.

Standardized Activation Protocol:

- Exchange with acetone (3x over 24h), then with dichloromethane (3x over 24h).

- Transfer to tared analysis tube.

- Under flowing N₂, heat at 5°C/min to 80°C, hold for 1h.

- Switch to vacuum. Heat at 1°C/min to 120°C, hold for 1h.

- Continue heating at 0.5°C/min to the target activation temperature (e.g., 200°C).

- Hold at activation temperature under dynamic vacuum until the pressure rate criterion is met (<2 µmHg/min change over 30 min).

- Backfill with N₂ and re-weigh.
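The pressure-rate endpoint in the activation protocol above can be checked programmatically from logged gauge readings. This is a minimal sketch, assuming readings arrive as matched lists of strictly increasing times (minutes) and pressures (µmHg); the function name and the example readings are hypothetical.

```python
def degas_endpoint_reached(times_min, pressures_umHg, max_rate=2.0, window_min=30.0):
    """Return True if the pressure change rate stayed below max_rate (µmHg/min)
    between every pair of consecutive readings in the trailing window.
    Assumes times_min is strictly increasing."""
    if len(times_min) != len(pressures_umHg) or len(times_min) < 2:
        raise ValueError("need two or more matched readings")
    t_end = times_min[-1]
    if t_end - times_min[0] < window_min:
        return False  # not enough history to cover the window
    # Keep only readings inside the trailing window.
    recent = [(t, p) for t, p in zip(times_min, pressures_umHg) if t >= t_end - window_min]
    if len(recent) < 2:
        return False  # readings too sparse to judge the rate
    for (t0, p0), (t1, p1) in zip(recent, recent[1:]):
        if abs(p1 - p0) / (t1 - t0) >= max_rate:
            return False
    return True

# Hypothetical log: one reading every 10 minutes.
times = [0, 10, 20, 30, 40]
print(degas_endpoint_reached(times, [500, 200, 190, 185, 182]))  # settled
print(degas_endpoint_reached(times, [500, 200, 150, 140, 138]))  # still outgassing
```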

Q3: Our AI-driven screening identifies a promising organic semiconductor thin film, but our charge carrier mobility measurements are inconsistent and lower than predicted. Which characterization steps are most prone to operator-induced variability?

A3: Thin-film electrical characterization is a minefield of protocol variability. Key issues are:

Table 1: Common Variability Sources in Thin-Film Mobility Measurement

| Variable | Typical Range in Literature | Impact on Mobility | Recommended Standard |

|---|---|---|---|

| Electrode Annealing | Not mentioned, 30°C-150°C | Order-of-magnitude change | 100°C for 10 min in N₂, specified for each metal. |

| Measurement Atmosphere | Air, N₂ glovebox, vacuum | H₂O/O₂ doping/de-doping | High vacuum (<10⁻⁵ Torr) with a slow ramp to bias. |

| Voltage Conditioning | Often omitted | Alters contact interfaces | Apply gate bias for 300s before mobility calculation. |

| Thickness Measurement | Stylus profilometer (spot) vs. ellipsometry (avg) | Directly impacts calculated field | Use & report method; ellipsometry average preferred. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Reproducible Nanomaterial Synthesis

| Item | Function & Protocol Criticality | Notes for Reproducibility |

|---|---|---|

| Tri-n-octylphosphine oxide (TOPO), Technical Grade | High-temp solvent & ligand for QD synthesis. | High Criticality: Technical grade contains variable levels of phosphorus-containing impurities (e.g., phosphinic and phosphonic acids) that dramatically affect kinetics. Always source from the same lot, or switch to a purified grade and add the relevant additives explicitly. |

| Oleic Acid (cis-9-Octadecenoic acid), >90% | Common capping ligand. | Medium Criticality: Aldehyde impurities can cross-link nanoparticles. Purify by distillation or use a >99% grade from a reliable supplier. |

| Deuterated Solvents for NMR | For reaction monitoring & quantification. | High Criticality: Residual water content (H₂O in DCM-d₂, etc.) varies. Store over molecular sieves and report water ppm from NMR spectrum. |

| Molecular Sieves (3Å, powder) | For solvent drying. | Medium Criticality: Activation protocol (time, temperature under vacuum) dictates water capacity. Activate at 250°C under dynamic vacuum for >12h. |

Experimental Protocol Visualization

Title: AI-Driven Experiment Reproducibility Loop

Title: MOF BET Measurement Variability & Control

Building Reproducible AI-Materials Pipelines: From FAIR Data to Containerized Workflows

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My dataset passes automated FAIR checkers but is still not reusable by my collaborators. What foundational step am I missing? A: Automated checkers often validate only technical compliance (e.g., valid metadata schema). The most common missing foundational step is the provision of a detailed, machine-actionable Experimental Protocol. This ensures reproducibility, which is critical for AI model training. See the protocol below for essential elements.

Q2: When converting my lab notebook into a machine-readable metadata file, how do I balance detail with efficiency? A: Use a structured template focusing on materials synthesis and characterization parameters. Incomplete provenance linking (e.g., connecting a final composite material to the exact synthesis conditions of each component) is a primary point of failure. Implement a granular, linked-data approach as outlined in the workflow diagram.

Q3: How do I assign a persistent identifier (PID) to a non-digital research sample like a specific batch of a polymer? A: This is a core challenge in materials science. The foundational practice is to:

- Assign a unique, immutable ID (e.g., a UUID) to the physical sample at creation.

- Register this ID with a sample-specific repository (e.g., BioSamples, IGSN) or your institution's PID service to get a resolvable PID (e.g., a DOI).

- Ensure all digital data (spectra, images, properties) generated from that sample explicitly references this PID in its metadata using a controlled vocabulary (e.g., `sourceSampleID`).
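The first and third steps above can be sketched in a few lines. This is an illustrative example only: the record layout and the function names are assumptions, and registering the ID with a repository such as IGSN is a separate step that is not shown.

```python
import json
import uuid

def new_sample_record(description):
    """Create a record for a physical sample with an immutable local ID."""
    return {"sampleID": str(uuid.uuid4()), "description": description}

def tag_measurement(sample, technique, filename):
    """Build measurement metadata that points back to the source sample."""
    return {
        "sourceSampleID": sample["sampleID"],
        "technique": technique,
        "file": filename,
    }

# Hypothetical polymer batch and one measurement derived from it.
sample = new_sample_record("polymer batch, hypothetical example")
meta = tag_measurement(sample, "GPC", "batch_gpc.csv")
print(json.dumps(meta, indent=2))
```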

Q4: My AI model for predicting material properties performs poorly on data from other labs. Is this a FAIR data issue? A: Very likely. This is a classic reproducibility crisis symptom in AI-driven materials research. The root cause is often insufficient metadata richness (lack of detailed experimental conditions, instrument calibration data, pre-processing steps) in the training data, violating the "R" (Reusability) principle. Implementing rich, structured metadata protocols is non-negotiable for robust AI.

Detailed Experimental Protocol for FAIR Materials Data Generation

Protocol Title: Solution-Based Spin Coating of Perovskite Thin Films with FAIR Data Capture

Objective: To synthesize MAPbI₃ perovskite films while concurrently capturing all experimental parameters as structured metadata for AI/ML analysis.

Materials: See "Research Reagent Solutions" table below.

Methodology:

- Pre-Experiment PID Generation:

  - Generate a unique UUID for the experiment run.

  - Pre-register the experiment on an Electronic Lab Notebook (ELN) or institutional platform, linking to the project DOI.

- Substrate Preparation & FAIR Linking:

  - Clean FTO-coated glass substrates.

  - Scan the barcode/label on the substrate container. This PID is automatically logged in the experiment metadata file under `substrate.sourceID`.

- Solution Preparation with Digital Provenance:

  - Weigh precursors (PbI₂, MAI) using a balance interfaced with the ELN.

  - Record solvent (DMF, DMSO) batch numbers and vendor IDs.

  - The metadata file records each chemical's PID (e.g., PubChem CID) and exact measured mass, linked to the instrument calibration certificate DOI.

- Deposition Process & Parameter Logging:

  - Use a programmable spin coater. The tool's software exports a JSON file of spin speed, acceleration, and duration.

  - This JSON file is linked as a `hasPart` of the overall experiment dataset.

  - For the annealing step, log hotplate temperature profile (with sensor ID) and ambient humidity (from a logged sensor).

- Characterization with Instrument Metadata:

  - Perform XRD. Save the raw `.ras` file alongside the processed `.csv`.

  - The metadata file includes the instrument model, software version, and a link to the standard calibration file used.

  - The generated data file is named using the experiment UUID and characterization type (e.g., `[UUID]_XRD.ras`).

- Data Packaging:

  - Aggregate all raw data files, processed data, and the structured metadata file (in JSON-LD format using a schema.org/OPM extension).

  - Upload the package to a domain-specific repository (e.g., NOMAD, Materials Data Facility) or a generalist repository (e.g., Zenodo) to obtain a DOI.
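The packaging step above can be illustrated with a small metadata builder. This is a sketch under stated assumptions: it uses the schema.org `Dataset` type with `hasPart`, names files as `[UUID]_<type>` as the protocol describes, and adds a SHA-256 checksum per file. The helper names and the in-memory placeholder file contents are hypothetical, and a real package would follow whatever schema extension the target repository requires.

```python
import hashlib
import json
import uuid

def sha256_bytes(data):
    """Hex digest used to detect later changes to a data file."""
    return hashlib.sha256(data).hexdigest()

def build_metadata(run_id, files):
    """files maps a characterization label (e.g. 'XRD.ras') to raw bytes."""
    parts = []
    for label, content in sorted(files.items()):
        parts.append({
            "@type": "DataDownload",
            "name": f"{run_id}_{label}",
            "sha256": sha256_bytes(content),
        })
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "identifier": run_id,
        "hasPart": parts,
    }

# Placeholder contents stand in for real instrument files.
run_id = str(uuid.uuid4())
metadata = build_metadata(run_id, {"XRD.ras": b"raw counts", "XRD.csv": b"2theta,intensity"})
print(json.dumps(metadata, indent=2))
```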

Research Reagent Solutions

| Item | Function in Protocol | Critical FAIR Metadata to Capture |

|---|---|---|

| Lead(II) Iodide (PbI₂) | Precursor for perovskite layer | Vendor Catalog #, Lot #, Purity, PubChem CID, Storage Conditions |

| Methylammonium Iodide (MAI) | Organic precursor component | Vendor Catalog #, Lot #, Purity, Custom Synthesis Protocol DOI (if applicable) |

| Dimethylformamide (DMF) | Solvent | Vendor Catalog #, Lot #, Purity, Water Content, Storage History |

| FTO-coated Glass | Substrate & Electrode | Vendor, Sheet Resistance, Dimensions, Surface Cleaning Protocol DOI |

| N₂ Gas Cylinder | Inert atmosphere during spin-coating | Gas Purity, Flow Rate Calibration Certificate ID |

Table 1: Impact of FAIR Implementation on Data Reusability in a Simulated AI Study

| Metric | Before FAIR Implementation (n=100 datasets) | After FAIR Implementation (n=100 datasets) |

|---|---|---|

| Average Time to Understand Dataset | 4.2 hours | 1.1 hours |

| Datasets with Machine-readable Protocols | 12% | 98% |

| Successful Automated Meta-analysis Runs | 45% | 94% |

| Datasets with PIDs for Physical Samples | 5% | 88% |

Table 2: Common FAIR Principle Violations in Materials Science Repositories (Spot Check)

| FAIR Principle | Common Violation | Estimated Frequency* | Impact on Reproducibility |

|---|---|---|---|

| F2 (Rich Metadata) | Missing detailed synthesis parameters (e.g., ambient humidity). | 65% | High - Prevents experimental replication. |

| I1 (Formal Knowledge) | Use of free-text fields without controlled vocabularies. | 80% | Medium - Hinders automated data integration. |

| R1.2 (Usage License) | Clear license not specified. | 40% | Medium - Creates legal uncertainty for reuse. |

| A1.1 (Free Protocol) | Access requires proprietary software to read data. | 30% (e.g., certain microscopy formats) | High - Locks data behind paywalls. |

*Frequency based on recent sampling of 200 datasets from public repositories.


Troubleshooting Guides & FAQs

Q1: My Conda environment builds successfully on my laptop but fails on our lab's high-performance computing (HPC) cluster with a "Solving environment" error. What should I do? A: This is often due to platform-specific package dependencies or channel priority conflicts.

- Solution: Use explicit, platform-agnostic environment files.

  - On your local machine, export your environment with exact build versions (e.g., `conda env export > environment.yml`).

  - Manually edit the resulting `environment.yml` to remove any platform-specific prefixes (e.g., `linux-64::`, `osx-64::`).

  - For the HPC cluster, create the environment with strict channel priority (e.g., `conda config --set channel_priority strict`, then `conda env create -f environment.yml`).

  - If issues persist, use `conda-lock` to generate fully reproducible lock files for different platforms.
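The manual edit of `environment.yml` can be scripted. The sketch below is one possible approach, not an official Conda feature: it assumes the exported file uses `name=version=build` entries, may carry `channel/platform::` prefixes, and ends with a machine-specific `prefix:` line. Dropping build strings trades exactness for portability, so per-platform lock files remain the stricter option. The function name and the example file contents are hypothetical.

```python
def make_portable(yml_text):
    """Drop the 'prefix:' line, channel/platform prefixes such as
    'conda-forge/linux-64::', and trailing build strings."""
    out = []
    for line in yml_text.splitlines():
        if line.startswith("prefix:"):
            continue
        stripped = line.strip()
        if stripped.startswith("- ") and "=" in stripped:
            indent = line[: len(line) - len(line.lstrip())]
            spec = stripped[2:]
            if "::" in spec:
                spec = spec.split("::", 1)[1]
            fields = spec.split("=")
            # Leave pip-style pins (name==version) untouched.
            if "==" not in spec and len(fields) == 3:
                spec = "=".join(fields[:2])  # name=version=build -> name=version
            line = f"{indent}- {spec}"
        out.append(line)
    return "\n".join(out) + "\n"

# Hypothetical export from a Linux laptop.
example = "\n".join([
    "name: demo",
    "channels:",
    "  - conda-forge",
    "dependencies:",
    "  - conda-forge/linux-64::numpy=1.26.4=py311h64a7726_0",
    "  - python=3.11.8=hab00c5b_0_cpython",
    "  - pip:",
    "    - requests==2.31.0",
    "prefix: /home/user/miniconda3/envs/demo",
])
portable = make_portable(example)
print(portable)
```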

Q2: After a git pull, my Python script breaks due to a change in a dependent library's API. How can I quickly identify which dependency change caused this?

A: Use Git bisect in combination with your environment manager.

- Solution:

  - Ensure you have a Conda environment file (`environment.yml`) or a Dockerfile committed to the repository.

  - Identify a known good commit (`good_hash`) and the current bad commit.

  - Start the bisection (e.g., `git bisect start`, `git bisect bad`, then `git bisect good good_hash`).

  - At each step, Git checks out a commit. Automate the test by rebuilding the environment from the committed file and running the failing script.

  - Based on the script's success/failure, run `git bisect good` or `git bisect bad`.

  - Git will pinpoint the exact commit that introduced the breaking change.
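The per-commit test can be handed to `git bisect run`, which reads the exit code of a command: 0 marks the commit good, 125 tells Git to skip it, and other codes from 1 to 127 mark it bad. A minimal sketch of such a test script follows; the setup and test commands are placeholders that you would replace with your own environment rebuild and failing script.

```python
import subprocess
import sys

GOOD, BAD, SKIP = 0, 1, 125  # exit codes understood by `git bisect run`

def bisect_exit_code(setup_cmd, test_cmd):
    """Map the outcome of one commit's setup and test to a bisect exit code."""
    if subprocess.run(setup_cmd).returncode != 0:
        return SKIP  # environment could not be built; commit is untestable
    return GOOD if subprocess.run(test_cmd).returncode == 0 else BAD

def main():
    # Placeholder commands: substitute your environment update and test script.
    setup = [sys.executable, "-c", "raise SystemExit(0)"]
    test = [sys.executable, "-c", "raise SystemExit(0)"]
    return bisect_exit_code(setup, test)

# In a real bisect script, end with sys.exit(main()) instead of printing.
print(main())
```

Saved as, say, `bisect_test.py` (a hypothetical filename) and ended with `sys.exit(main())`, it could be invoked as `git bisect run python bisect_test.py`.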

Q3: My Docker container runs out of memory during a materials simulation, but the host machine has plenty free. How do I fix this? A: This is typically a Docker resource limit configuration issue.

- Solution:

  - Check current limits: `docker info | grep -i memory`

  - Increase memory allocation:

    - Docker Desktop (GUI): Settings -> Resources -> Memory.

    - Command line (when running): `docker run --memory="32g" <image_name>`

    - For production orchestration (e.g., Kubernetes): Specify memory requests and limits (`resources.requests.memory` and `resources.limits.memory`) in your pod/deployment YAML.

Q4: I need to archive my entire experiment for a publication. What is the minimal set of files to ensure long-term reproducibility? A: You must archive the code, data, and environment triad.

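- Solution: Create a project archive that holds the source code, the raw and processed data, and the environment definition (`environment.yml`, `Dockerfile`) together with the saved container image.

  - Critical Step: Use `docker save -o experiment_image.tar <image:tag>` to save the exact container image.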
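A checksum manifest makes it possible to verify later that an archive is complete and unmodified. The sketch below is one way to do this, assuming a code/data/environment directory layout; the folder names, the `MANIFEST.json` filename, and the demo files are hypothetical. It reads each file fully into memory, so very large files would need chunked hashing instead.

```python
import hashlib
import json
import tempfile
from pathlib import Path

def write_manifest(root):
    """Hash every file under root and write MANIFEST.json at the top level."""
    root = Path(root)
    entries = {}
    for path in sorted(root.rglob("*")):
        if path.is_file() and path.name != "MANIFEST.json":
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            entries[path.relative_to(root).as_posix()] = digest
    (root / "MANIFEST.json").write_text(json.dumps(entries, indent=2))
    return entries

# Demo on a throwaway directory with a hypothetical layout.
with tempfile.TemporaryDirectory() as tmp:
    base = Path(tmp)
    for rel in ("code/run_experiment.py", "data/raw/sample.csv", "environment/environment.yml"):
        target = base / rel
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(f"placeholder for {rel}\n")
    manifest = write_manifest(base)
    print(sorted(manifest))
```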

Q5: How do I handle large datasets (e.g., DFT calculation outputs, molecular dynamics trajectories) in Git for provenance? A: Never store large binary files directly in Git. Use a dedicated system.

- Solution: Implement Git LFS (Large File Storage) or a data versioning tool.

  - Configure Git LFS (e.g., `git lfs install`, then `git lfs track` with a pattern that matches your large files).

  - Alternative for massive data: Use DVC (Data Version Control). Store data in a remote bucket (S3, GCS, lab server) and version the `*.dvc` pointer files in Git.

Key Experimental Protocols for Reproducibility

Protocol 1: Creating a Fully Versioned Computational Experiment

- Initialize: `git init` in a new project directory.

- Environment: Create `environment.yml` (Conda) and `Dockerfile`. Commit them.

- Data: Place raw data in `data/raw/`. Use DVC or Git LFS if files are large. Create a `data/README.md` describing source and hash.

- Code: Develop scripts in `src/`. Commit early and often with descriptive messages (e.g., "FIX: corrected lattice constant unit conversion").

- Execution: Use a workflow manager (e.g., `snakemake`, `nextflow`) or a master `run_experiment.py` script. Record the exact command in `PROTOCOL.md`.

- Snapshot: Once results are generated, create a Docker image tagged with the Git commit hash: `docker build -t experiment:$(git rev-parse --short HEAD) .`

- Archive: Push all code to a remote Git server. Push data to its remote storage. Push the Docker image to a container registry.

Protocol 2: Replicating a Published Computational Experiment from a Repository

- Obtain Artifacts: Clone the Git repository. Fetch versioned data via DVC (`dvc pull`) or Git LFS (`git lfs pull`).

- Inspect: Read `environment.yml` and `Dockerfile`. Check for a `README.md` or `reproduce.md` file.

- Rebuild Environment: The preferred method is to build the provided Docker image: `docker build -t replicated_experiment .`. Alternatively, use Conda: `conda env create -f environment.yml`.

- Execute: Run the exact command documented in the protocol, inside the container or environment.

- Verify: Compare output logs and key result files against the original publication's figures or data tables.
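The verification step can be made explicit by comparing key numbers against the published values with a stated tolerance. A minimal sketch, assuming both result sets are available as dictionaries of metric name to value; the metric names, the numbers, and the default tolerance are illustrative assumptions.

```python
import math

def compare_results(reproduced, published, rel_tol=1e-3):
    """Return metric -> (reproduced, published) for values that are missing
    or differ by more than the relative tolerance."""
    mismatches = {}
    for key, expected in published.items():
        got = reproduced.get(key)
        if got is None or not math.isclose(got, expected, rel_tol=rel_tol):
            mismatches[key] = (got, expected)
    return mismatches

# Hypothetical values from a paper table and from a rerun.
published = {"band_gap_eV": 1.55, "mae": 0.032}
reproduced = {"band_gap_eV": 1.5502, "mae": 0.041}
print(compare_results(reproduced, published))
```

An empty dictionary means every published metric was reproduced within tolerance. The tolerance itself should be reported alongside the comparison.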

Table 1: Common Reproducibility Failures in Computational Materials Science

| Failure Category | Frequency (%)* | Primary Mitigation Tool |

|---|---|---|

| Missing Dependencies / Incorrect Versions | ~65% | Conda, Docker, Pipenv |

| Undocumented Data Pre-processing Steps | ~45% | Versioned Jupyter Notebooks, Workflow Scripts |

| Platform-Specific Build Issues | ~30% | Docker, Singularity |

| Random Seed Not Fixed | ~25% | Explicit seed setting in code |

| Outdated Code for Published Results | ~20% | Git tags, Zenodo DOI for releases |

*Frequency estimates based on analysis of 50 retraction notices and "failed replication" comments in Chemistry of Materials and npj Computational Materials (2022-2024).

Table 2: Tool Selection Guide for Computational Provenance

| Task | Recommended Tool | Key Command for Provenance | Traceability Output |

|---|---|---|---|

| Code Versioning | Git | `git tag -a v1.0 -m "Paper submission version"` | Commit hash, Tag |

| Environment Isolation | Docker | `docker build --build-arg COMMIT_HASH=$GIT_TAG .` | Immutable Image ID |

| Package Management | Conda/Mamba | `conda env export --from-history` | `environment.yml` |

| Data Versioning | DVC | `dvc repro` (re-runs pipeline) | `.dvc` files, Data hash |

| Workflow Automation | Snakemake | `snakemake --configfile params.yaml` | Directed Acyclic Graph (DAG) |

| Interactive Analysis | Jupyter | `jupyter nbconvert --to html notebook.ipynb` | Executed notebook output |

Visualizations

Title: Computational Provenance Workflow for Full Traceability

Title: Troubleshooting Guide for Reproducibility Failures

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Experiment | Example / Specification |

|---|---|---|

| Version Control System (Git) | Tracks all changes to source code, scripts, and configuration files, enabling collaboration and rollback to any prior state. | git, hosted on GitHub, GitLab, or private Gitea instance. |

| Environment Manager (Conda/Mamba) | Creates isolated, reproducible software environments with specific package versions, resolving dependency conflicts. | conda-forge channel; environment.yml file. |

| Containerization (Docker/Singularity) | Captures the entire operating system and software stack in an immutable image, guaranteeing identical runtime across platforms. | Dockerfile for building; Singularity for HPC. |

| Data Version Control (DVC) | Manages large datasets and machine learning models outside of Git, while maintaining versioning and pipeline reproducibility. | dvc with remote storage (S3, SSH, Google Drive). |

| Workflow Manager (Snakemake/Nextflow) | Automates multi-step computational pipelines, ensuring correct execution order and documenting the data transformation process. | Snakefile or nextflow.config. |

| Notebook Platform (Jupyter) | Provides an interactive computational environment for exploratory data analysis, with outputs embedded for documentation. | JupyterLab, with nbconvert for export. |

| Metadata & Logging | Records critical parameters, random seeds, and hardware/software context automatically during experiment execution. | Python logging module; MLflow or Weights & Biases. |

| Archive & DOI | Provides a permanent, citable snapshot of the complete research artifact (code, data, environment) upon publication. | Zenodo, Figshare, or institutional repository. |

Technical Support Center

FAQs & Troubleshooting Guides

Q1: My ELN template for AI-driven materials screening does not enforce the required minimal information fields (e.g., precursor purity, solvent lot number, synthesis parameters). How can I ensure consistent data entry? A: This is a common configuration issue. You must define and apply a Minimal Information About a Materials Experiment (MIAME-nano) template within your ELN's administrative settings. The protocol is as follows:

- Access the ELN's Administration Panel.

- Navigate to Template Management > Create New Template.

- Define mandatory fields (starred in the template) for each experimental phase:

  - Synthesis: Precursor ID, Supplier, Lot #, Purity, Solvent Details, Reaction Time, Temperature.

  - Characterization: Instrument Model, Software Version, Calibration Date, Raw Data File Path.

  - AI Model Training: Dataset Version, Feature Set Description, Hyperparameters (JSON string).

- Apply this template to the relevant project or group. Users cannot create new entries without completing the starred fields.

Q2: After an automated experiment, my characterization data (e.g., SEM images, XRD spectra) is saved on a local instrument PC. How do I automatically ingest this into the correct ELN entry with proper metadata? A: Implement a standardized file-naming convention and use the ELN's API or a watched folder system.

Protocol: Automated Data Ingestion via Watched Folder

- Configure Instrument Output: Set all instruments to save files using a structured naming convention: `[ProjectID]_[SampleID]_[Date]_[Instrument].extension` (e.g., `ProjA_ZnO-25_20231027_SEM.tiff`).

- Establish Watched Folder: Create a network-accessible folder. Configure the ELN's auto-import agent (see ELN admin guide) to monitor this folder.

- Set Parsing Rules: In the ELN, define rules to parse the filename into metadata fields and map it to an existing experiment entry using `[ProjectID]_[SampleID]`.

- Validation: The ELN should log the import and flag any files with non-conforming names for manual review.
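The parsing and validation rules can be prototyped outside the ELN before they are configured in it. The sketch below assumes that project, sample, and instrument IDs contain no underscores and that the date is written as `YYYYMMDD`, as in the example filename; the function name and the returned field names are hypothetical and do not correspond to any particular ELN's API.

```python
import re
from datetime import datetime

# [ProjectID]_[SampleID]_[Date]_[Instrument].extension
PATTERN = re.compile(
    r"^(?P<project>[^_]+)_(?P<sample>[^_]+)_(?P<date>\d{8})_(?P<instrument>[^_.]+)\.(?P<ext>\w+)$"
)

def parse_filename(name):
    """Return metadata parsed from a conforming filename, or None."""
    match = PATTERN.match(name)
    if match is None:
        return None
    fields = match.groupdict()
    try:
        fields["date"] = datetime.strptime(fields["date"], "%Y%m%d").date().isoformat()
    except ValueError:
        return None  # eight digits that are not a real calendar date
    # Key used to look up the existing experiment entry.
    fields["entry_key"] = f"{fields['project']}_{fields['sample']}"
    return fields

parsed = parse_filename("ProjA_ZnO-25_20231027_SEM.tiff")
print(parsed)
print(parse_filename("scan001.tiff"))  # non-conforming: flag for manual review
```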

Q3: When trying to share my ELN experiment for peer review, the recipient cannot access or interact with the linked raw data files. What is the proper sharing workflow? A: This indicates sharing was limited to the notebook entry only, not the underlying data. Use the "Export for Peer Review" function, if available, which bundles all metadata and data.

Protocol: Reproducible Package Export

- Within the ELN entry, select Export > Reproducible Package (FAIR).

- Ensure the export options include: PDF Summary Report, Structured Metadata (JSON-LD), and All Linked Raw Data Files.

- The ELN will generate a ZIP file containing:

- A human-readable PDF.

- A machine-readable JSON-LD file with all metadata structured according to minimal information standards.

- A `/data` subfolder with all attached files in their original format.

- Share this ZIP via a persistent data repository (e.g., Zenodo, institutional repository) and cite the generated DOI in publications.

Q4: Our AI model for predicting polymer properties performed well during internal validation but failed when another lab tried to reproduce the results using our shared ELN entry. What minimal information might be missing? A: This classic reproducibility failure in AI-driven research often stems from omitted computational environment details. Your ELN must capture the exact software context. Troubleshooting Checklist:

- Algorithm & Version: Was the specific AI library (e.g., TensorFlow 2.10.0, scikit-learn 1.2.2) documented?

- Random Seeds: Were the random seeds for model initialization and data splitting recorded?

- Data Splits: Are the exact compositions of the training, validation, and test sets identifiable (e.g., via hash of the indices)?

- Hardware: Were the GPU model and driver version (which can affect floating-point calculations) noted?

Add a "Computational Environment" section to your ELN template to record these.
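Most items on this checklist can be captured automatically at training time. The following is a minimal sketch using only the Python standard library; the record layout and function names are assumptions. It does not query GPU model or driver version, which would need a vendor tool, and package versions are read only for modules that are already imported.

```python
import hashlib
import json
import platform
import random
import sys

def split_fingerprint(indices):
    """Order-independent SHA-256 fingerprint of a set of sample indices."""
    canonical = ",".join(str(i) for i in sorted(indices))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def capture_environment(seed, train_idx, test_idx, packages=()):
    """Collect the context a second lab would need to rerun the model."""
    versions = {}
    for name in packages:
        module = sys.modules.get(name)
        versions[name] = getattr(module, "__version__", "not imported")
    return {
        "python": platform.python_version(),
        "platform": platform.platform(),
        "seed": seed,
        "train_split_sha256": split_fingerprint(train_idx),
        "test_split_sha256": split_fingerprint(test_idx),
        "packages": versions,
    }

# Hypothetical 80/20 split of ten samples with a recorded seed.
seed = 42
random.seed(seed)
indices = list(range(10))
random.shuffle(indices)
record = capture_environment(seed, indices[:8], indices[8:], packages=("numpy",))
print(json.dumps(record, indent=2))
```

The resulting JSON could be pasted or uploaded into the "Computational Environment" section of the ELN entry.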

Data Summary Tables

Table 1: Common ELN Integration Issues & Resolution Times

| Issue Category | Average Incidence (%) | Mean Time to Resolution (Hours) | Primary Solution |

|---|---|---|---|

| Data Import/Export Failure | 35% | 2.5 | API configuration & file format validation |

| Template/Protocol Non-compliance | 28% | 1.0 | Admin enforcement & user retraining |

| Permission & Sharing Errors | 20% | 0.5 | Role-based access control (RBAC) review |

| Search & Retrieval Difficulties | 12% | 1.5 | Metadata schema optimization |

| Versioning Conflicts | 5% | 3.0 | Merge protocol implementation |

Table 2: Impact of Minimal Information Standards on Experiment Reproducibility

| Research Domain | Without Standards (Reproducibility Rate) | With Enforced Standards (Reproducibility Rate) | Key Standard Adopted |

|---|---|---|---|

| Nanoparticle Synthesis | ~40% | ~85% | MIAME-nano |

| Polymer Property Prediction (AI) | ~30% | ~75% | MINIMAL (for ML in materials) |

| High-Throughput Battery Material Screening | ~50% | ~90% | ISA-TAB-Nano |

Experimental Protocols

Protocol: Validating an ELN-Integrated AI-Driven Screening Workflow

Objective: To ensure that an automated materials characterization pipeline correctly logs all minimal information into the ELN.

- Sample Preparation: Prepare 10 distinct material samples (e.g., metal oxide variants) using a documented synthesis protocol. Log each into the ELN using the mandatory synthesis template.

- Automated Characterization Queue: Load samples into an automated SEM/EDS system. Initiate the run via a script that passes each sample's ELN-generated UUID to the instrument software.

- Data Capture & Metadata Binding: Configure the instrument software to embed the sample UUID into each image's metadata header. Upon completion, files are automatically transferred to the "watched folder."

- ELN Ingestion Verification: In the ELN, confirm that each characterization file is attached to the correct sample entry. Validate that instrument metadata (accelerating voltage, detector type, calibration date) is parsed and stored.

- AI Model Trigger: A successful data ingest triggers an automated script to run a pre-trained AI model for phase identification. The script logs its version, hyperparameters, and results (predicted phase, confidence score) back to the same ELN entry.

- Audit: Generate an audit trail report from the ELN for one sample, tracing from synthesis to AI prediction.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in AI-Driven Materials Research |

|---|---|

| Certified Reference Materials (CRMs) | Essential for calibrating instruments (e.g., SEM, XRD) to ensure data quality for AI model training. |

| High-Purity Precursors (Trace Metal Basis) | Critical for reproducible synthesis; lot-to-lot variability is a major confounder that AI models must account for. |

| Stable Isotope-Labeled Compounds | Used to trace reaction pathways; the data informs mechanistic models and AI predictions. |

| Standardized Solvent Systems | Reduces unpredictable synthesis outcomes. Must document water content and stabilizer information. |

| Cell Culture Media (for biomaterials) | Batch-specific performance must be recorded. Vital for reproducible biological assays of material biocompatibility. |

| Software Version "Reagents" | Specific versions of AI/ML libraries (e.g., PyTorch, RDKit) are digital reagents and must be logged with the same rigor as physical ones. |

Visualization: Workflow & Signaling

Diagram 1: ELN-Centric Reproducible Research Workflow

Diagram 2: Data & Metadata Flow in an Integrated Lab

Technical Support Center

This support center provides troubleshooting and FAQs for implementing closed-loop, AI-driven workflows in materials science and drug development. The guidance is framed within the critical thesis of enhancing experimental reproducibility.

Frequently Asked Questions (FAQs)

Q1: Our AI model's predictions are accurate in simulation but fail when guiding physical synthesis. What could be the cause? A: This is a common issue known as the "reality gap" or "sim-to-real" transfer problem. Key troubleshooting steps:

- Check Domain Shift: Verify that the training data for your predictive model accurately represents the parameter space of your physical synthesis platform (e.g., CVD furnace, liquid handler). Retrain with data from high-fidelity digital twins or a small set of calibration experiments.

- Validate Uncertainty Quantification: Ensure your model provides reliable uncertainty estimates. Failed syntheses often occur when acting on predictions with high epistemic (model) uncertainty. Implement acquisition functions that prioritize exploration in uncertain regions.

- Characterization-Synthesis Alignment: Confirm that the characterization data used to train the predictor is directly comparable to the in-situ or ex-situ characterization data fed back in the loop. Normalize spectra and images using identical protocols.

Q2: How can we diagnose and fix a breakdown in the autonomous loop where the system keeps proposing similar experiments? A: This indicates a failure in the experimental design (acquisition) function.

- Symptom: Stagnation of performance metric or material property optimization.

- Troubleshooting Guide:

- Acquisition Function Check: Switch from pure exploitation (e.g., selecting the highest predicted performance) to an exploratory function like Expected Improvement (EI) or Upper Confidence Bound (UCB). Increase the weight on the exploration term.

- Check the Data Update Path: Ensure newly characterized results are being appended correctly to the training dataset and that the model is retraining on the updated set.

- Diversity Enforcement: Implement a diversity metric (e.g., Tanimoto similarity for molecules, Euclidean distance for process parameters) and penalize proposed experiments that are too similar to previous trials.
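The acquisition and diversity steps above can be combined in a single scoring function. This is an illustrative sketch, assuming the surrogate model already supplies a predicted mean and standard deviation per candidate and that process parameters are compared by Euclidean distance; the exploration weight `kappa`, the distance threshold, and the penalty size are hypothetical tuning values.

```python
import math

def ucb(mean, std, kappa=2.0):
    """Upper Confidence Bound: reward predicted value plus uncertainty."""
    return mean + kappa * std

def select_next(candidates, tried, kappa=2.0, min_dist=0.1, penalty=1.0):
    """candidates: list of (params, predicted_mean, predicted_std).
    tried: list of params already run. Returns the params to run next."""
    best_params, best_score = None, -math.inf
    for params, mean, std in candidates:
        score = ucb(mean, std, kappa)
        # Penalize proposals that sit too close to an earlier experiment.
        if any(math.dist(params, old) < min_dist for old in tried):
            score -= penalty
        if score > best_score:
            best_params, best_score = params, score
    return best_params

# Hypothetical normalized process parameters and surrogate predictions.
candidates = [
    ((0.50, 0.50), 0.90, 0.01),
    ((0.52, 0.50), 0.89, 0.01),
    ((0.10, 0.90), 0.60, 0.20),
]
print(select_next(candidates, tried=[(0.50, 0.50)]))   # explores the distant point
print(select_next(candidates, tried=[], kappa=0.0))    # pure exploitation
```

With `kappa=0.0` and no penalty the selector reduces to pure exploitation, which is the behavior that tends to produce the stagnation described above.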

Q3: What are the primary sources of irreproducibility in closed-loop workflows, and how can we mitigate them? A: Irreproducibility stems from multiple points in the loop:

| Source of Irreproducibility | Mitigation Strategy |

|---|---|

| Uncontrolled Synthesis Variables | Implement strict SPC (Statistical Process Control) for all synthesis equipment. Log all environmental data (humidity, temperature). |

| Characterization Instrument Drift | Perform daily calibration with certified standard samples. Use robust data pre-processing (Standard Normal Variate, Savitzky-Golay). |

| Non-Stationary AI Models | Version-control all models and training datasets. Use fixed random seeds. Employ periodic retraining on consolidated data. |

| Human Intervention Errors | Use a digital experiment log (ELN) that automatically records all loop parameters. Implement change-control protocols for hardware/software. |

Q4: How do we handle the integration of disparate data types (e.g., spectra, images, categorical outcomes) into a single AI model for decision-making? A: Utilize a multi-modal or fusion model architecture.

- Step 1: Create separate encoders for each data type. Use a CNN for images, a 1D CNN or transformer for spectra, and an embedding layer for categorical data.

- Step 2: Fuse the latent representations either by early fusion (concatenation) or late fusion (separate model heads followed by combining).

- Step 3: Train with a composite loss function that respects the different scales of your target properties.

- Protocol: Always validate each encoder separately on a sub-task before full fusion to ensure each data stream is being learned effectively.

Experimental Protocols for Key Validation Steps

Protocol 1: Calibrating the Synthesis-Characterization Data Link

Objective: Ensure characterization output (Y) is a reliable proxy for the material property of interest.

Method:

- Synthesize 5-10 samples across your parameter space using manual, documented protocols.

- Characterize each sample with your in-loop technique (e.g., Raman spectroscopy).

- Characterize the same samples with a gold-standard off-line technique (e.g., SEM, XRD, HPLC).

- Perform a robust statistical correlation (e.g., Pearson's R, Spearman's ρ) between the key features of the in-loop data and the gold-standard results.

- Acceptance Criterion: R² > 0.85 for the target property correlation. If not met, re-engineer in-loop characterization features or select a different technique.
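The correlation and acceptance check can be computed without external libraries. A minimal sketch follows, assuming one in-loop feature value and one gold-standard value per sample; the data are invented for illustration, and the 0.85 threshold is the acceptance criterion stated above.

```python
from statistics import mean

def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def ranks(values):
    """Average ranks (1-based), so tied values share the same rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result

def spearman_rho(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson_r(ranks(x), ranks(y))

def passes_calibration(x, y, threshold=0.85):
    return pearson_r(x, y) ** 2 > threshold

# Hypothetical in-loop Raman feature vs. gold-standard measurement.
in_loop = [0.12, 0.25, 0.31, 0.48, 0.55, 0.70]
gold = [1.1, 2.0, 2.9, 4.2, 5.1, 6.3]
print(pearson_r(in_loop, gold), spearman_rho(in_loop, gold))
print(passes_calibration(in_loop, gold))
```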

Protocol 2: Benchmarking Autonomous Loop Performance

Objective: Quantitatively compare the closed-loop AI agent against traditional search methods.

Method:

- Define a bounded search space (e.g., chemical composition A%–B%, temperature X°C–Y°C).

- Run the closed-loop workflow for a fixed budget of N experiments (e.g., N=50).

- Run a human-designed Design of Experiments (DoE, e.g., full factorial) and a Bayesian Optimization (BO) baseline without automated synthesis/characterization for the same budget N.

- Record the max target property value discovered and the iteration at which it was found for each method.

- Analysis: Plot cumulative max performance vs. iteration number. The AI-driven closed loop should outperform or match the baselines with less human time-in-the-loop.
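
The "cumulative max vs. iteration" analysis reduces to a running maximum over each method's measured values. The sketch below assumes each run is stored as a list of target-property values in experiment order; the run names and numbers are hypothetical placeholders, not results.

```python
from itertools import accumulate

def best_so_far(values):
    """Running maximum: the best target value found up to each iteration."""
    return list(accumulate(values, max))

def iteration_of_best(values):
    """1-based iteration at which the overall maximum was first observed."""
    return values.index(max(values)) + 1

def summarize(runs):
    """Collect the quantities Protocol 2 asks to record for each method."""
    return {
        name: {
            "max": max(values),
            "found_at": iteration_of_best(values),
            "curve": best_so_far(values),
        }
        for name, values in runs.items()
    }

# Hypothetical measurements with the same budget N for every method.
runs = {
    "closed_loop": [0.42, 0.55, 0.51, 0.73, 0.70, 0.81],
    "doe_full_factorial": [0.40, 0.44, 0.61, 0.58, 0.66, 0.64],
    "bo_baseline": [0.39, 0.52, 0.60, 0.59, 0.78, 0.77],
}

summary = summarize(runs)
for name, stats in summary.items():
    print(name, stats["max"], stats["found_at"], stats["curve"])
```

Each `curve` list can be passed directly to a plotting library, with the iteration number on the x-axis.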

Workflow & Relationship Diagrams

Title: Core Closed-Loop Autonomous Materials Discovery Workflow

Title: Reproducibility Threats and Corresponding Mitigation Controls

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Closed-Loop Workflow |

|---|---|

| High-Throughput Synthesis Platform (e.g., CVD array, robotic liquid handler) | Enables rapid, scriptable physical synthesis of sample libraries based on AI-generated proposals. |

| In-situ/Inline Characterization Probe (e.g., Raman spectrometer, UV-Vis flow cell) | Provides immediate, automated feedback on material properties without breaking the experimental loop. |

| Laboratory Information Management System (LIMS) | Acts as the centralized data warehouse, linking synthesis parameters, characterization results, and model predictions with strict metadata tagging. |

| Containerized AI/ML Environment (e.g., Docker/Kubernetes with MLflow) | Ensures model training and inference are reproducible and portable across different compute resources. |

| Calibration Standard Materials | Certified reference samples used to periodically validate and correct for characterization instrument drift. |

| Automated Electronic Lab Notebook (ELN) API | Software layer that automatically records all actions, parameters, and environmental conditions, eliminating manual logging errors. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQs on Repositories & Metadata

Q1: My dataset is over 50GB. What is the best platform, and how do I upload it? A: GitHub is unsuitable for files this large. Zenodo accepts up to 50GB per record by default, so a dataset above that limit will not fit in a single standard record. Host it in an institutional repository or a data lake (e.g., AWS Open Data) and publish a data descriptor on Zenodo whose persistent DOI links to the external storage.

Q2: How do I choose an open-source license for my code and data? A: Use the table below for guidance. Always include a LICENSE file in your repository's root directory.

| Item to License | Recommended License | Key Purpose | Link |

|---|---|---|---|

| Software/Code | MIT License | Permissive; allows commercial use as long as the copyright and license notice are retained. | https://opensource.org/licenses/MIT |

| Software/Code | GNU GPLv3 | Ensures derivative works remain open-source. | https://www.gnu.org/licenses/gpl-3.0 |

| Datasets/Models | CC BY 4.0 | Requires attribution; standard for scholarly data. | https://creativecommons.org/licenses/by/4.0 |

| Datasets/Models | CC0 1.0 | Public domain dedication; maximizes reuse. | https://creativecommons.org/publicdomain/zero/1.0 |

Q3: What metadata is essential for AI model reproducibility on Zenodo? A: Beyond the basic title and authors, you must include:

- Version: The specific git commit hash or model version tag.

- Resource Type: "Software" or "Dataset" supplemented by "Training data" or "Machine learning model".

- Description: Full software/hardware dependencies (see protocol below).

- Related Identifiers: Link to the GitHub code repository.

- License: As chosen above.

Troubleshooting Guide: "It Works on My Machine"

Issue: A published AI model fails to run or produces different results when others try to replicate it.

| Symptom | Probable Cause | Solution |

|---|---|---|

| ImportError or ModuleNotFoundError | Missing or incompatible Python libraries. | Use pip freeze > requirements.txt to export exact versions. For complex environments, publish a Docker container image. |

| Different numerical outputs | Random seeds not fixed; GPU/non-deterministic algorithms. | Implement and document a seeding protocol (see below). |

| CUDA/cuDNN errors | GPU driver, CUDA toolkit, or cuDNN version mismatch. | Explicitly state the exact versions used in the README.md. Consider publishing a Docker image with the correct drivers. |

| Model loads but predictions are wrong | Preprocessing steps (normalization, tokenization) were not packaged with the model. | Bundle preprocessing code and trained weights together using formats like TorchScript (torch.jit) or ONNX. |

Experimental Protocol for Reproducible AI Model Publication

Objective: To ensure a trained machine learning model for materials property prediction can be executed identically by independent researchers.

Materials & Reagent Solutions

| Item | Function & Specification |

|---|---|

| Computational Environment | Use Docker to encapsulate the OS, Python, and library versions, or Conda to pin Python and library versions. |

| Version Control (Git) | Track all code, configuration files, and documentation. |

| Model Serialization Format | Use standard formats: a state_dict saved with torch.save (PyTorch, pickle-based), SavedModel (TensorFlow), or ONNX. |

| Data Snapshot | A fixed, versioned copy of the training/validation dataset with unique DOI. |

| Seeding Script | A script that sets random seeds for random, numpy, torch, etc. |

Methodology:

Environment Capture: Before training, create an environment specification.

Seeding for Reproducibility: Insert this code at the very beginning of your training script.

Packaging: Organize your GitHub repository as follows:

Archiving: Link the GitHub repo to Zenodo via GitHub's release system. Create a new release on GitHub. Zenodo will automatically archive it and assign a DOI. Upload the frozen dataset separately to Zenodo and link it.
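
The environment-capture and seeding steps of the methodology can be sketched as below. This is one possible arrangement under stated assumptions, not the original authors' script: the function names `set_global_seed` and `capture_environment`, the seed value, and the package list are illustrative. NumPy and PyTorch are seeded only if they are installed.

```python
import os
import platform
import random
import sys
from importlib import metadata

def set_global_seed(seed: int) -> None:
    """Seed every random number generator available in this environment."""
    random.seed(seed)
    # Only affects hash randomization in Python processes started after this point.
    os.environ["PYTHONHASHSEED"] = str(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass
    try:
        import torch
        torch.manual_seed(seed)
        if torch.cuda.is_available():
            torch.cuda.manual_seed_all(seed)
        # Trade speed for repeatable cuDNN kernel selection.
        torch.backends.cudnn.deterministic = True
        torch.backends.cudnn.benchmark = False
    except ImportError:
        pass

def capture_environment(packages):
    """Record interpreter, platform, and installed versions of named packages."""
    spec = {"python": sys.version.split()[0], "platform": platform.platform()}
    for name in packages:
        try:
            spec[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            spec[name] = "not installed"
    return spec

if __name__ == "__main__":
    set_global_seed(42)  # hypothetical seed; record whichever value is used
    print(capture_environment(["numpy", "torch", "scikit-learn"]))
```

Fixed seeds do not guarantee bit-identical results on GPUs, since some operations remain non-deterministic, so the recorded environment and hardware details still belong in the README.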

Workflow Diagram: AI Model Publication & Validation Pipeline

Title: Open Science Pipeline for AI Model Sharing

Relationship Diagram: Thesis Context for Reproducibility

Title: Thesis Framework for AI Research Reproducibility

Debugging Irreproducible Results: A Step-by-Step Guide for Scientists

FAQs & Troubleshooting Guides

Q1: My model performs well on training data but fails on new experimental batches. What preprocessing step might I have missed? A: This is often a batch effect issue. Ensure you have implemented and validated a batch correction method (e.g., ComBat, SVA). Check whether you included batch identifiers as a covariate during scaling or normalization. A common mistake is fitting normalization parameters separately within each batch, so that every batch receives a different transform, instead of fitting one set of parameters on the training data and applying it to all batches.

Q2: After feature engineering, my features show very high multicollinearity, causing unstable model coefficients. How can I troubleshoot this? A: High multicollinearity often arises from creating derived features (e.g., ratios, polynomials) from the same base measurements. Steps to resolve:

- Diagnose: Calculate Variance Inflation Factor (VIF).

- Threshold: Remove one feature from any pair with absolute correlation |r| > 0.9.

- Apply Dimensionality Reduction: Use PCA on the correlated group and use the principal components as new features.

- Use Regularization: Switch to Ridge or Elastic Net regression which can handle correlated features better.
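
The thresholding step can be sketched as a greedy pairwise filter. The example is a plain-Python illustration with hypothetical feature names and values; the rule of keeping the first feature seen and dropping later ones correlated with it is an assumption, and in practice domain knowledge should decide which member of a pair to keep.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation; returns 0.0 if either column is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy) if sxx > 0 and syy > 0 else 0.0

def drop_correlated(features, threshold=0.9):
    """Greedy filter over a dict of {name: column}.

    A feature is dropped if |r| with an already-kept feature exceeds the
    threshold. Returns (kept_names, {dropped_name: kept_partner}).
    """
    kept, dropped = [], {}
    for name, column in features.items():
        partner = next(
            (k for k in kept if abs(pearson_r(features[k], column)) > threshold),
            None,
        )
        if partner is None:
            kept.append(name)
        else:
            dropped[name] = partner
    return kept, dropped

# Hypothetical descriptors: density_squared is derived from density.
features = {
    "density": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0],
    "density_squared": [1.0, 4.0, 9.0, 16.0, 25.0, 36.0],
    "band_gap": [2.1, 0.5, 3.3, 1.0, 2.8, 0.9],
}

kept, dropped = drop_correlated(features)
print("kept:", kept)
print("dropped:", dropped)
```

Logging the `dropped` mapping alongside the model makes the filtering decision auditable when the dataset is later extended.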

Q3: I am missing values for some critical material properties in my dataset. Can I simply drop these entries? A: Dropping entries can introduce bias. Follow this protocol:

| Method | Best Used When | Risk to Reproducibility |

|---|---|---|

| Median/Mean Imputation | Data is Missing Completely At Random (MCAR), <5% missing. | Low if condition met, otherwise distorts distribution. |

| k-Nearest Neighbors (KNN) Imputation | Data has strong local correlations, <15% missing. | Medium. Depends on similarity metric chosen. Document parameters. |

| MissForest Imputation | Data has complex, non-linear relationships. | Medium-High. Computationally intensive; seed must be fixed. |

| Indicator-Based Imputation | You suspect data is Missing Not At Random (MNAR). | High. Creates a new "missingness" feature for the model. |

Experimental Protocol for Imputation Validation:

- Artificially remove 5% of known values from a complete subset of your data.

- Apply your chosen imputation method.

- Calculate the reconstruction error (e.g., NRMSE).

- Compare error against a baseline (e.g., median imputation). Only proceed if error is significantly lower.
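
The validation protocol above can be sketched as a mask-impute-score loop. The helper names, the synthetic data column, and the choice to normalize RMSE by the range of the true values are assumptions for illustration; other NRMSE normalizations (e.g., by standard deviation) are also in use.

```python
import random
from math import sqrt
from statistics import mean, median

def mask_values(values, fraction, seed):
    """Hide a random fraction of known values; return (masked_copy, hidden_indices)."""
    rng = random.Random(seed)  # fixed seed so the masked set is reproducible
    n_mask = max(1, round(fraction * len(values)))
    hidden = sorted(rng.sample(range(len(values)), n_mask))
    masked = list(values)
    for i in hidden:
        masked[i] = None
    return masked, hidden

def median_impute(values):
    """Baseline imputer: fill missing entries with the median of observed ones."""
    fill = median(v for v in values if v is not None)
    return [fill if v is None else v for v in values]

def mean_impute(values):
    """Alternative imputer used here only as a comparison candidate."""
    fill = mean(v for v in values if v is not None)
    return [fill if v is None else v for v in values]

def nrmse(truth, imputed, hidden):
    """RMSE over the hidden entries, normalized by the range of the true values."""
    spread = max(truth) - min(truth)
    if spread == 0:
        raise ValueError("cannot normalize: all true values are identical")
    rmse = sqrt(mean((truth[i] - imputed[i]) ** 2 for i in hidden))
    return rmse / spread

def validate_imputer(values, impute, fraction=0.05, seed=0):
    """Run the protocol once for a given imputation function."""
    masked, hidden = mask_values(values, fraction, seed)
    return nrmse(values, impute(masked), hidden)

# Hypothetical complete column of 40 measurements.
rng = random.Random(7)
complete = [round(rng.uniform(1.0, 5.0), 3) for _ in range(40)]

baseline_error = validate_imputer(complete, median_impute)
candidate_error = validate_imputer(complete, mean_impute)
print(f"median baseline NRMSE = {baseline_error:.3f}, candidate NRMSE = {candidate_error:.3f}")
```

Repeating the run over several seeds and comparing the distributions of errors gives a sounder basis for "significantly lower" than a single masked draw.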

Q4: My feature distributions vary widely in scale. I applied StandardScaler, but my tree-based model's performance got worse. Why? A: Tree-based models (Random Forest, XGBoost) split on feature thresholds, so they are invariant to monotonic rescaling and StandardScaler should not change their performance. The perceived drop is most likely due to random seed variation or a different train/test split. Standard scaling matters for linear models, SVMs, and neural networks, but is unnecessary for tree-based models. Troubleshoot by:

- Re-running without scaling using a fixed random seed.

- Ensuring the train/test split was identical in both experiments.

Q5: How do I validate that my feature selection process is not leaking data and harming reproducibility? A: Feature selection must be nested within the cross-validation loop. A common error is selecting features using the entire dataset before CV.

- Incorrect Workflow: Full Dataset → Feature Selection → CV Split → Train/Validate.

- Correct Workflow: Full Dataset → CV Split: {For each fold: (Training Fold → Feature Selection → Train Model) → Validate on Test Fold}.
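
The correct workflow can be sketched as follows: feature ranking is recomputed inside every fold from the training rows only. The sketch uses a correlation-based selector and a 1-nearest-neighbour predictor purely to stay dependency-free; both, along with the synthetic data, are illustrative assumptions rather than recommended modelling choices.

```python
import random
from math import sqrt
from statistics import mean

def abs_corr(x, y):
    """Absolute Pearson correlation; 0.0 if either column is constant."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return abs(sxy) / sqrt(sxx * syy) if sxx > 0 and syy > 0 else 0.0

def select_top_k(X, y, k):
    """Indices of the k features most correlated with y, using only the rows given."""
    n_features = len(X[0])
    scores = [abs_corr([row[j] for row in X], y) for j in range(n_features)]
    return sorted(range(n_features), key=lambda j: scores[j], reverse=True)[:k]

def predict_1nn(X_train, y_train, x):
    """Predict with the target of the closest training row (squared Euclidean)."""
    dists = [sum((a - b) ** 2 for a, b in zip(row, x)) for row in X_train]
    return y_train[dists.index(min(dists))]

def cross_validate(X, y, k_features, n_splits=5, seed=0):
    """K-fold CV with feature selection nested inside each fold."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::n_splits] for i in range(n_splits)]
    results = []
    for test_idx in folds:
        held_out = set(test_idx)
        train_idx = [i for i in idx if i not in held_out]
        X_tr = [X[i] for i in train_idx]
        y_tr = [y[i] for i in train_idx]
        # Selection sees the training fold only; the test fold stays unseen.
        cols = select_top_k(X_tr, y_tr, k_features)
        X_tr_sel = [[row[j] for j in cols] for row in X_tr]
        errors = [
            (predict_1nn(X_tr_sel, y_tr, [X[i][j] for j in cols]) - y[i]) ** 2
            for i in test_idx
        ]
        results.append({"features": cols, "mse": mean(errors)})
    return results

# Hypothetical synthetic data: only feature 0 carries signal.
rng = random.Random(42)
X = [[rng.gauss(0, 1) for _ in range(6)] for _ in range(60)]
y = [2.0 * row[0] + rng.gauss(0, 0.1) for row in X]

results = cross_validate(X, y, k_features=2)
for fold, r in enumerate(results, start=1):
    print(f"fold {fold}: features={r['features']}, mse={r['mse']:.3f}")
```

With scikit-learn, the same nesting is obtained by placing the selector and the estimator in a `Pipeline` and passing that pipeline to `cross_val_score`, which refits the selector on each training fold. If the selected features differ widely between folds, that instability is itself a reproducibility warning.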

Title: Correct Feature Selection Within Cross-Validation

The Scientist's Toolkit: Research Reagent Solutions

| Item / Software | Function in Preprocessing & Feature Engineering |

|---|---|

| Python: scikit-learn | Provides robust, version-controlled implementations for scaling (StandardScaler), imputation (SimpleImputer, KNNImputer), and feature selection (SelectKBest, RFE). |

| R: sva Package | Contains ComBat function for empirical Bayes batch effect correction, critical for multi-batch materials data. |

| Python: missingpy Library | Provides MissForest implementation for advanced, model-based missing value imputation. |

| Cookiecutter Data Science | A project template for organizing data, code, and models to enforce a logical workflow and ensure auditability. |

| DVC (Data Version Control) | Tool for versioning datasets and ML models, tracking pipelines, and linking data+code to results. |

| Weights & Biases (W&B) / MLflow | Experiment tracking platforms to log all preprocessing parameters, feature sets, and resulting metrics for full lineage. |

Table 1: Impact of Common Data Issues on Model Generalizability in Materials Science

| Data Issue | Typical Performance Drop (Test vs. Train AUC/Accuracy) | Most Effective Mitigation Step |

|---|---|---|

| Uncorrected Batch Effects | 15-25% | Batch-aware normalization (e.g., ComBat) |

| Data Leakage in Scaling | 10-30% | Fit scalers on training fold only |

| Improper Handling of MNAR Data | 20-40% | Indicator-based imputation + domain analysis |

| Overly Aggressive Correlation Filtering | 5-15% | Use domain knowledge to guide removal; prefer regularization |

Title: Systematic Audit Pipeline for Preprocessing and Feature Engineering

Technical Support Center

Frequently Asked Questions & Troubleshooting Guides

Q1: My model's uncertainty estimates are consistently overconfident (low predictive variance for incorrect predictions). How can I diagnose and fix this? A: This is a common sign of poorly calibrated uncertainty. Perform the following diagnostic protocol.

- Diagnostic Experiment: Calibration Curve Plot

- Method: For a regression task, bin your test set predictions based on their predicted variance (or standard deviation). For each bin, calculate the average predicted variance and the empirical error (e.g., mean squared error between prediction and true value). Plot empirical error (y-axis) against predicted variance (x-axis).

- Interpretation: A well-calibrated model will show points aligning with the y=x line. Points above the line (empirical error larger than predicted variance) indicate overconfidence; points below the line indicate underconfidence.

- Troubleshooting: If overconfident, consider implementing Temperature Scaling (for probabilistic models) or using a Deep Ensemble. For ensemble methods, ensure member networks are sufficiently diverse by using different weight initializations or training data subsets.
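
The binning behind the calibration curve can be sketched as below. This is a minimal illustration assuming the model returns a predictive mean and variance per test sample; the equal-count binning, the function names, and the toy numbers are assumptions made for the example.

```python
from statistics import mean

def calibration_bins(pred_mean, pred_var, y_true, n_bins=5):
    """Group test points by predicted variance (equal-count bins).

    Returns a list of (average predicted variance, empirical MSE) pairs,
    ordered from the lowest-variance bin to the highest.
    """
    order = sorted(range(len(pred_var)), key=lambda i: pred_var[i])
    n = len(order)
    bins = []
    for b in range(n_bins):
        idx = order[b * n // n_bins:(b + 1) * n // n_bins]
        if not idx:
            continue  # fewer samples than bins
        avg_var = mean(pred_var[i] for i in idx)
        mse = mean((pred_mean[i] - y_true[i]) ** 2 for i in idx)
        bins.append((avg_var, mse))
    return bins

def error_exceeds_variance(bins):
    """Fraction of bins where the observed error is larger than the
    uncertainty the model reported for that bin."""
    return sum(mse > var for var, mse in bins) / len(bins)

# Hypothetical toy predictions: 5 low-variance and 5 high-variance points.
pred_mean = [0.0] * 10
pred_var = [0.01] * 5 + [1.0] * 5
y_true = [1.0] * 5 + [0.5] * 5

bins = calibration_bins(pred_mean, pred_var, y_true, n_bins=2)
for avg_var, mse in bins:
    print(f"predicted variance = {avg_var:.2f}, empirical MSE = {mse:.2f}")
print("fraction of bins with error above variance:", error_exceeds_variance(bins))
```

Plotting the second element of each pair against the first, together with the y=x reference line, gives the calibration curve described in the method.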

Q2: When using Bayesian Neural Networks (BNNs) for materials property prediction, training becomes prohibitively slow and memory-intensive. What are the practical alternatives? A: Full BNNs are often computationally challenging for large-scale materials datasets. Consider these efficient alternatives:

Solution 1: Monte Carlo (MC) Dropout