The Materials Tetrahedron: Mastering Processing-Structure-Properties-Performance for Advanced Drug Development



Abstract

This article provides a comprehensive exploration of the Materials Science Tetrahedron, a foundational paradigm defining the interdependent relationships between a material's processing, structure, properties, and performance. Tailored for researchers, scientists, and drug development professionals, we dissect this framework from four critical angles: establishing its core principles, detailing methodological applications in pharmaceuticals, addressing troubleshooting and optimization challenges, and examining validation through case studies like PHA biopolymers and magnetic drug delivery systems. The content synthesizes current research and emerging trends, including the role of AI and high-throughput experimentation, to offer an actionable guide for accelerating the rational design of advanced therapeutic materials.

Deconstructing the Paradigm: A Primer on the Materials Tetrahedron and Its Core Principles

The Materials Tetrahedron is a foundational conceptual framework that captures the essence of materials science and engineering by illustrating the interdependence of four fundamental elements: processing, structure, properties, and performance (PSPP). This paradigm, formally introduced by the National Research Council in its 1989 report on materials science and engineering for the 1990s, has remained an enduring visual icon of the discipline for over three decades [1]. The tetrahedron's symbolic power lies in its depiction of these four elements not as a linear sequence, but as vertices of a polyhedron, with each connecting edge representing a bidirectional relationship that must be understood and optimized for successful materials design [1]. In doing so, it forms a scientific basis for the design and development of new materials systems [2]. In today's context of accelerated materials development, the PSPP relationship provides a framework for understanding and manipulating materials behavior across diverse fields, from sustainable polymers to pharmaceutical development and national security applications.

Core Principles of the PSPP Framework

The Four Vertices: Definitions and Interrelationships

The four vertices of the materials tetrahedron form an integrated system where each element influences and is influenced by the others:

Processing refers to the methods and conditions used to synthesize, manufacture, or shape a material, including techniques such as additive manufacturing, heat treatment, casting, and chemical synthesis [3] [2]. In pharmaceuticals, this includes unit operations like milling, granulation, and compaction [2].

Structure encompasses the material's arrangement at multiple length scales, from atomic configuration and crystal structure to microstructural features, morphology, and defect architecture [4] [2]. Structure is hierarchically organized, with features at each level influencing the material's behavior.

Properties are the material's measurable responses to external stimuli, including mechanical, thermal, electrical, optical, and chemical characteristics [4] [2]. These represent what the material is capable of in terms of function.

Performance describes how the material behaves in real-world applications and service conditions, encompassing factors such as efficiency, durability, reliability, and biocompatibility [4] [5]. Performance is the ultimate criterion for materials selection.

The following table summarizes key aspects and examples for each vertex of the PSPP framework:

Table 1: Detailed Elements of the PSPP Framework Vertices

| Vertex | Key Aspects | Representative Examples |
|---|---|---|
| Processing | Synthesis methods, manufacturing techniques, heat treatment, shaping processes | Additive manufacturing, casting, milling, compaction, annealing [3] [2] |
| Structure | Atomic arrangement, crystal structure, microstructure, morphology, defects | Crystal polymorphs, grain boundaries, phase distribution, porosity [4] [2] |
| Properties | Mechanical, thermal, electrical, optical, chemical characteristics | Tensile strength, conductivity, refractive index, dissolution rate [4] [2] |
| Performance | Application behavior, service life, reliability, biocompatibility, efficacy | Drug release profile, tablet stability, component durability [4] [2] [5] |

Visualizing the Interconnections: The PSPP Workflow

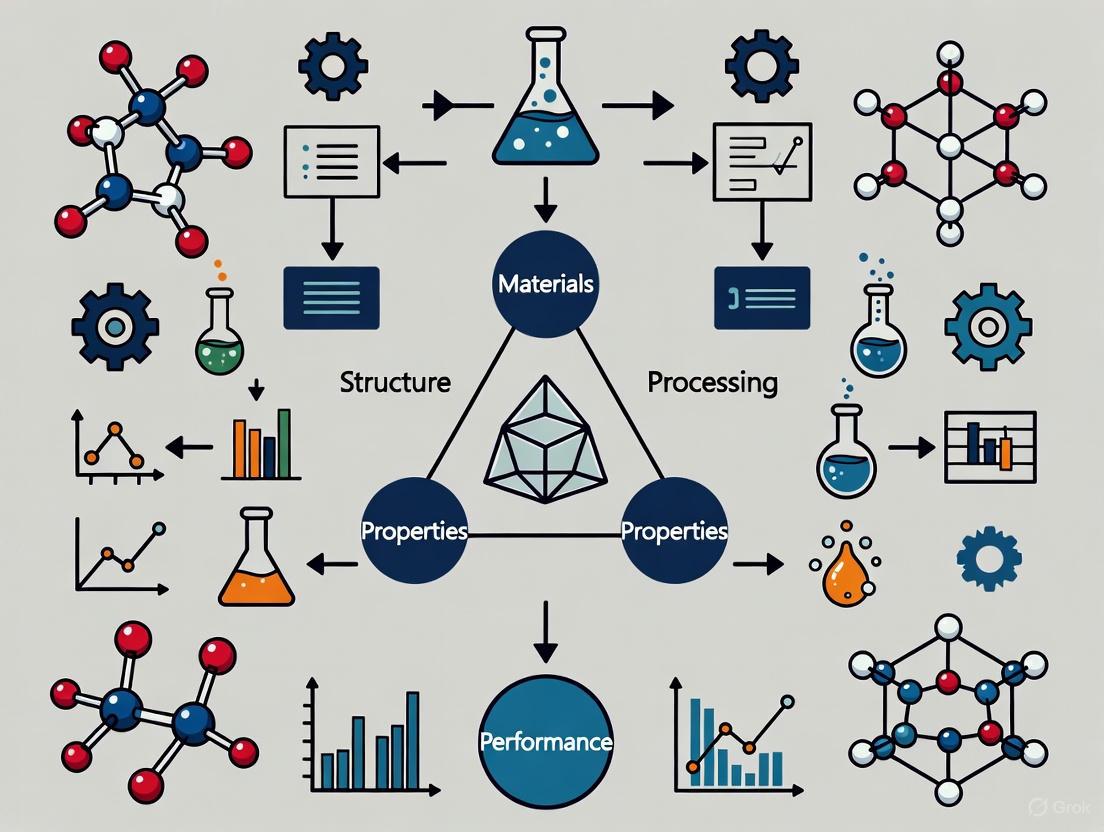

The following diagram illustrates the fundamental relationships and iterative optimization cycle of the PSPP framework:

Diagram 1: PSPP Relationship Cycle

The diagram captures the fundamental PSPP workflow where processing conditions determine the material's internal structure, which governs its properties, which in turn influence its performance in practical applications. The critical feedback loop from performance back to processing enables iterative optimization of the material system.
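The forward chain and its feedback loop can be sketched in a few lines of Python. This is a toy illustration only: the two exponential models, their constants, and the function names (`porosity_from_pressure`, `strength_from_porosity`, `optimize_pressure`) are hypothetical placeholders and are not taken from the cited work. In practice each function would be replaced by a calibrated model or a measurement.

```python
import math


def porosity_from_pressure(pressure_mpa: float) -> float:
    """Processing -> structure. Placeholder model with made-up constants."""
    return 0.40 * math.exp(-0.01 * pressure_mpa)


def strength_from_porosity(porosity: float) -> float:
    """Structure -> property. Placeholder model with made-up constants (MPa)."""
    return 10.0 * math.exp(-8.0 * porosity)


def meets_target(strength_mpa: float, target_mpa: float) -> bool:
    """Property -> performance. Here performance is a simple pass/fail check."""
    return strength_mpa >= target_mpa


def optimize_pressure(target_mpa, start_mpa=50.0, step_mpa=10.0, max_mpa=400.0):
    """Feedback loop: raise the processing parameter until performance passes.

    Returns (pressure, porosity, strength) for the first passing setting,
    or None if no setting up to max_mpa reaches the target.
    """
    pressure = start_mpa
    while pressure <= max_mpa:
        porosity = porosity_from_pressure(pressure)
        strength = strength_from_porosity(porosity)
        if meets_target(strength, target_mpa):
            return pressure, porosity, strength
        pressure += step_mpa  # performance feeds back into processing
    return None


result = optimize_pressure(target_mpa=2.0)
print(result)
```

The loop makes the direction of information flow explicit: the forward functions predict, and the performance check decides whether processing must change. A real workflow would typically replace the fixed step with an optimizer.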

Evolution and Modern Extensions of the Tetrahedron

The Digital Transformation: Materials-Information Twin Tetrahedra

As computational and data science methods have transformed materials research, the classic tetrahedron has been augmented with parallel concepts from information science. The Materials-Information Twin Tetrahedra (MITT) framework, inspired by the concept of a "digital twin," creates an interdependent relationship between the physical materials tetrahedron and a corresponding information tetrahedron [1]. This contemporary extension provides a holistic perspective of materials science and engineering in the presence of modern digital tools and infrastructures [1].

The information tetrahedron comprises complementary elements: methods/workflows (corresponding to processing), representations (structure), attributes (properties), and efficacy (performance), with the additional dimensions of validation and viability ensuring data quality and sustainability [1]. This dual framework incorporates FAIR data principles (Findable, Accessible, Interoperable, Reusable) and recognizes how materials systems impact and interact with other systems throughout their life cycle [1].

The TETRA Initiative: Accelerated Materials Development

The transformative potential of integrating modern computational and robotic methods with the PSPP framework is demonstrated by initiatives such as the Transforming Evaluation and Testing via Robotics and Acceleration (TETRA) program at Johns Hopkins Applied Physics Laboratory [3]. This effort reimagines the traditional tetrahedron by integrating robotics and accelerated synthesis to simultaneously explore composition and processing variants that influence properties and performance [3].

TETRA leverages advanced manufacturing techniques, including blown-powder directed energy deposition, to create hundreds of alloy variants on a single build plate, with robotic mechanical property measurement enabling rapid evaluation [3]. The ultimate vision includes creating AI "co-engineers" that work alongside human researchers, learning from materials development data to automatically recommend subsequent experiments [3].

Experimental Approaches for PSPP Investigation

Methodologies for Establishing PSPP Relationships

Establishing quantitative PSPP relationships requires carefully designed experimental and computational approaches. The following methodological framework provides a structured approach for investigating these relationships across different material systems:

Table 2: Experimental Methodologies for PSPP Relationship Analysis

| Research Focus | Experimental Methodology | Key Measurements & Characterization | Data Analysis Approaches |
|---|---|---|---|
| Processing-Structure | Combinatorial synthesis, controlled processing parameters, heat treatment variations [3] | Microscopy (SEM, TEM), diffraction (XRD), spectroscopy [6] | Microstructural quantification, statistical correlation [6] |
| Structure-Properties | Structure-property testing, in-situ characterization, property mapping [6] | Mechanical testing, thermal analysis, electrical measurements [6] [2] | Regression modeling, structure-property linkages [6] |
| Properties-Performance | Performance testing under application conditions, lifetime studies [4] [2] | Service condition simulation, accelerated aging, biocompatibility testing [4] | Failure analysis, performance prediction models [4] |
| PSP Integration | Closed-loop experimental design, multi-fidelity Bayesian optimization [6] | High-throughput characterization, autonomous data collection [3] [6] | Machine learning, Gaussian process regression [6] |
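The simplest of the data analysis approaches listed in Table 2, statistical correlation and regression, can be sketched with the Python standard library alone. The martensite fractions and strengths below are invented for illustration and are not data from [6]; `pearson_r` and `linear_fit` are hypothetical helper names. Gaussian process regression and Bayesian optimization would need a dedicated library and are not attempted here.

```python
from math import sqrt
from statistics import fmean


def _centered_sums(x, y):
    """Return (Sxy, Sxx, Syy) about the sample means."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy, sxx, syy


def pearson_r(x, y):
    """Pearson correlation between a structure descriptor and a property."""
    sxy, sxx, syy = _centered_sums(x, y)
    return sxy / sqrt(sxx * syy)


def linear_fit(x, y):
    """Ordinary least-squares line y = slope * x + intercept."""
    sxy, sxx, _ = _centered_sums(x, y)
    slope = sxy / sxx
    intercept = fmean(y) - slope * fmean(x)
    return slope, intercept


# Illustrative, made-up data: martensite volume fraction vs. tensile strength (MPa)
martensite_fraction = [0.10, 0.20, 0.30, 0.40, 0.50]
tensile_strength_mpa = [520.0, 610.0, 690.0, 805.0, 890.0]

slope, intercept = linear_fit(martensite_fraction, tensile_strength_mpa)
print(f"r = {pearson_r(martensite_fraction, tensile_strength_mpa):.3f}")
print(f"strength ~ {slope:.0f} * fraction + {intercept:.0f} MPa")
```

A high correlation coefficient on such data only indicates a linear association within the sampled range; it does not by itself establish the structure-property mechanism.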

Reagent Solutions and Research Materials

The experimental investigation of PSPP relationships requires specialized materials and analytical tools. The following table outlines key research reagents and materials used in PSPP studies, particularly relevant to pharmaceutical and polymer applications:

Table 3: Essential Research Reagents and Materials for PSPP Investigations

| Reagent/Material | Function in PSPP Research | Application Examples |
|---|---|---|
| Polyhydroxyalkanoates (PHAs) | Model biodegradable polymer system for studying structure-property relationships in sustainable materials [7] [4] | Biodegradable packaging, medical implants, drug delivery systems [4] |
| Microcrystalline Cellulose | Pharmaceutical excipient for studying compaction behavior and tablet performance [2] | Tablet formulation, compactibility studies, dissolution performance [2] |
| Dual-Phase Steel Alloys | Model system for investigating microstructure-property relationships in metallic materials [6] | Mechanical property optimization, automotive components [6] |
| Polymorphic API Systems | Active pharmaceutical ingredients with multiple crystal forms for structure-property studies [2] | Solid dosage form development, bioavailability optimization [2] |

Case Studies and Applications

PSPP Framework in Pharmaceutical Development

The application of the materials tetrahedron has proven particularly valuable in pharmaceutical materials science, where it provides a scientific foundation for the design and development of drug products [2]. In this context, the tetrahedron framework has been implemented to systematize the traditionally empirical process of formulation development.

A prominent example involves the optimization of tablet compaction, where processing parameters (compression force, speed) determine the microstructure (porosity, bond formation), which governs properties (tensile strength, dissolution rate), ultimately influencing performance (drug release profile, stability) [2]. Similarly, the investigation of polymorphic systems demonstrates how crystal structure (structure) affects compaction behavior (properties) and tableting success (performance) through careful control of crystallization conditions (processing) [2].
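Two of the quantities named in the compaction example, porosity (structure) and tensile strength (property), are commonly computed from routine tablet measurements. The sketch below uses the standard diametral-compression relation for flat-faced cylindrical tablets, σ = 2F / (πDt), and porosity from apparent versus true density. These relations are not taken from [2], the function names are hypothetical, and the numerical inputs, including the true density, are illustrative assumptions.

```python
import math


def tablet_porosity(mass_mg, diameter_mm, thickness_mm, true_density_g_cm3):
    """Porosity = 1 - apparent density / true density (flat-faced cylinder).

    mg/mm^3 is numerically equal to g/cm^3, so no unit conversion is needed.
    """
    volume_mm3 = math.pi * (diameter_mm / 2.0) ** 2 * thickness_mm
    apparent_density = mass_mg / volume_mm3
    return 1.0 - apparent_density / true_density_g_cm3


def diametral_tensile_strength_mpa(breaking_force_n, diameter_mm, thickness_mm):
    """Tensile strength from a diametral compression test.

    sigma = 2F / (pi * D * t); with N and mm the result is in MPa.
    Valid for flat-faced round tablets that fail in tension.
    """
    return 2.0 * breaking_force_n / (math.pi * diameter_mm * thickness_mm)


# Illustrative inputs only (assumed values, not measurements from the source)
porosity = tablet_porosity(mass_mg=400.0, diameter_mm=10.0,
                           thickness_mm=4.0, true_density_g_cm3=1.59)
strength = diametral_tensile_strength_mpa(breaking_force_n=100.0,
                                          diameter_mm=10.0, thickness_mm=4.0)
print(f"porosity = {porosity:.3f}, tensile strength = {strength:.2f} MPa")
```

Repeating the calculation across a series of compression forces yields the processing-structure and structure-property curves that the paragraph above describes qualitatively.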

Sustainable Biopolymers: PHA Development

The PSPP framework has been systematically applied to polyhydroxyalkanoate (PHA) biopolymers to address challenges in sustainable material development [7] [4]. This approach examines how production methods (processing) control chemical structure and morphology (structure), which determines thermal and mechanical behavior (properties), ultimately influencing biodegradation and application suitability (performance) [4].

Research has revealed that the limited chemical diversity of commercially available PHAs creates materials selection challenges, including narrow thermal processing windows and mechanical properties that are sometimes unsuitable as direct replacements for conventional plastics [4]. The PSPP framework provides a structured approach to expand the PHA design space by systematically exploring these relationships.

Computational Implementation: PROSPECT-PSPP Pipeline

The PROSPECT-PSPP computational pipeline for protein structure prediction offers an instructive analogy from bioinformatics [8]. In that name, PSPP abbreviates "protein structure prediction pipeline" rather than processing-structure-properties-performance, but its staged workflow can be loosely mapped onto the tetrahedron: sequence preprocessing and domain identification (processing), secondary structure prediction and fold recognition (structure), model quality assessment (properties), and functional inference (performance) [8].

The pipeline illustrates how such staged, result-dependent workflows can be automated, with the pipeline manager controlling the flow of the prediction process by calling various tools based on results from previous steps [8].

Advanced Implementation: Workflow for Microstructure-Aware Materials Design

The following diagram illustrates an advanced, microstructure-aware closed-loop optimization framework that explicitly incorporates PSPP relationships in computational materials design:

Diagram 2: Microstructure-Aware Optimization

This workflow illustrates the advantage of the full PSPP approach over simplified process-property (PP) relationships. The cited study reports that explicitly incorporating microstructure knowledge into the design framework significantly improves the optimization process in cases where microstructure intervenes to influence the properties of interest [6].

The Materials Tetrahedron, with its integrated framework of processing-structure-properties-performance relationships, continues to provide a fundamental paradigm for materials science and engineering more than three decades after its formal introduction. As the field evolves with new computational tools, characterization techniques, and data science methods, the PSPP framework has demonstrated remarkable adaptability, expanding to incorporate digital twins, artificial intelligence, and sustainable design principles.

The continued relevance of the tetrahedron lies in its ability to provide a common conceptual framework that bridges traditional materials domains—from metallic alloys to pharmaceutical systems—while enabling communication and collaboration across academia, industry, and government sectors. As materials challenges become increasingly complex and interdisciplinary, the PSPP relationship offers a structured approach for navigating the design, development, and deployment of next-generation materials systems that address critical needs in sustainability, healthcare, and national security.

In materials science and engineering, the Process-Structure-Property-Performance (PSPP) framework provides a foundational paradigm for understanding the complex relationships that govern material behavior and application suitability. This framework, often visualized as a materials tetrahedron representing the interconnected nature of these four elements, is essential for systematic materials design and development. It establishes that a material's ultimate performance in real-world applications is directly determined by its properties, which in turn emerge from its internal structure, which is controlled through specific processing techniques [9]. A comprehensive understanding of these relationships enables researchers to reverse-engineer materials, moving backward from desired performance requirements to identify the necessary structures and processing routes to achieve them.

This PSPP approach is particularly critical in advanced fields such as polymeric magnetic robotics for biomedical applications and additive manufacturing, where precise control over material behavior is necessary for functionality [9] [10]. For drug development professionals, this framework provides a structured methodology for designing biomaterials with tailored drug release profiles, biodegradation rates, and mechanical compatibility with biological tissues. This technical guide examines each component of the PSPP framework and the relationships among them, and provides experimental methodologies for their investigation, with a focus on applications relevant to advanced material systems.

Deconstructing the Four Corners: Core Concepts and Definitions

Processing

Processing encompasses the manufacturing techniques and conditions used to transform raw materials into a final form with specific structural characteristics. In advanced materials, processing parameters directly dictate the resulting hierarchical structures. Key processing techniques include:

- Additive Manufacturing: Techniques such as Selective Laser Sintering (SLS) and Direct Ink Writing (DIW) enable precise control over material architecture across multiple scales [9] [10].

- Magnetic Field-Assisted Processing: Applied during material solidification to induce directional particle assembly and enhance magnetic anisotropy in polymer composites [9].

- Thermal Processing: Controlled heating and cooling protocols that influence crystallinity, phase distribution, and structural integrity.

Critical processing parameters include temperature profiles, pressure application, magnetic field strength, and processing environment, all of which must be optimized to achieve target structures while avoiding detrimental effects such as filler demagnetization or polymer degradation [9].

Structure

Structure refers to the material's internal architecture across multiple length scales, from atomic arrangements to macroscopic features. Key structural elements include:

- Microstructure: Crystal structure, grain boundaries, phase distribution, and defects observable at microscopic scales.

- Nanoscale Features: Molecular ordering, nanoparticle dispersion, and interfacial characteristics.

- Mesoscale Architecture: Porosity, fiber orientation, and domain patterns that emerge between microscopic and macroscopic scales.

- Macroscale Geometry: Overall shape and dimensions of the finished component.

In magnetic polymer composites, structure encompasses both the polymer matrix morphology (crystalline/amorphous regions) and the spatial distribution of magnetic fillers (uniform vs. aligned configurations), which collectively determine magnetic responsiveness and mechanical behavior [9].

Properties

Properties are the measurable responses of a material to external stimuli, representing the material's capabilities and limitations. These can be categorized as:

- Physical Properties: Density, thermal conductivity, magnetic permeability

- Mechanical Properties: Elastic modulus, yield strength, fracture toughness, ductility

- Functional Properties: Shape-memory behavior, self-healing capability, drug release kinetics

- Magnetic Properties: Saturation magnetization, magnetic anisotropy, coercivity

Properties serve as the critical link between a material's internal structure and its external performance, with structure-property relationships often quantified through computational models and experimental characterization [9] [10].

Performance

Performance describes how a material system functions under actual application conditions, representing the ultimate measure of its suitability. Performance metrics are application-specific:

- Biomedical Applications: Drug delivery efficiency, targeting precision, biocompatibility, degradation profile

- Robotic Systems: Locomotion efficiency, actuation speed, operational lifetime, environmental adaptability

- Structural Components: Load-bearing capacity, durability under cyclic loading, resistance to environmental degradation

- Electronics: Charge/discharge efficiency, signal integrity, thermal management capability

For magnetic robots in drug delivery applications, key performance metrics include navigation precision through complex biological environments, controlled drug release at target sites, and minimal tissue damage during operation [9].

Quantitative PSPP Relationships in Advanced Materials

The following tables summarize key PSPP relationships in advanced material systems, highlighting how processing parameters influence structure, which subsequently determines properties and ultimate performance.

Table 1: Processing-Structure Relationships in Selective Laser Sintering of PA12

| Processing Parameter | Structural Characteristic | Quantitative Relationship | Experimental Method |
|---|---|---|---|
| Laser Power | Porosity | 62 W power reduces porosity to ~2% | Micro-CT Analysis [10] |
| Scan Speed | Crystallinity | Optimal speed achieves ~30% crystallinity | DSC [10] |
| Powder Bed Temperature | Layer Adhesion | Higher temperature improves interlayer bonding | SEM Cross-section [10] |
| Magnetic Field During Curing | Particle Alignment | 500 mT field creates chain-like structures | Microscopy with Image Analysis [9] |

Table 2: Structure-Property Relationships in Magnetic Polymer Composites

| Structural Feature | Material Property | Quantitative Impact | Measurement Technique |
|---|---|---|---|
| Magnetic Particle Alignment | Magnetic Anisotropy | 3-5x increase in directional response | VSM [9] |
| Porosity Distribution | Tensile Strength | 1% porosity increase reduces strength by 5-7% | Uniaxial Testing [10] |
| Interfacial Bond Quality | Fracture Toughness | Strong interface doubles energy absorption | Fracture Mechanics Tests [9] |
| Crystallinity Degree | Elastic Modulus | 10% crystallinity increase raises modulus by 15% | DMA [10] |
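The sensitivities in Table 2 can be turned into quick estimates. The sketch below is one possible reading, under stated assumptions: "1%" and "10%" are taken as absolute percentage points, the strength loss is compounded per point of porosity, and the modulus gain is scaled linearly. The table does not specify these choices, the baseline values are hypothetical, and the extrapolation should only be trusted for small changes.

```python
def strength_range_after_porosity_increase(baseline_mpa, added_porosity_pct,
                                           loss_per_pct=(0.05, 0.07)):
    """Return (pessimistic, optimistic) tensile strength in MPa.

    Assumes each added percentage point of porosity removes 5-7% of the
    current strength (compounding), per the range quoted in Table 2.
    """
    low_loss, high_loss = loss_per_pct
    optimistic = baseline_mpa * (1.0 - low_loss) ** added_porosity_pct
    pessimistic = baseline_mpa * (1.0 - high_loss) ** added_porosity_pct
    return pessimistic, optimistic


def modulus_after_crystallinity_increase(baseline_mpa, added_crystallinity_pct,
                                         gain_per_10pct=0.15):
    """Elastic modulus in MPa, assuming a linear 15% gain per 10 points."""
    return baseline_mpa * (1.0 + gain_per_10pct * added_crystallinity_pct / 10.0)


# Hypothetical baseline part: 50 MPa strength, 1600 MPa modulus
low, high = strength_range_after_porosity_increase(50.0, added_porosity_pct=2)
print(f"strength after +2 points porosity: {low:.1f} to {high:.1f} MPa")
print(f"modulus after +5 points crystallinity: "
      f"{modulus_after_crystallinity_increase(1600.0, 5):.0f} MPa")
```

Reporting a range rather than a single value keeps the uncertainty in the quoted 5-7% sensitivity visible in downstream design decisions.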

Table 3: Property-Performance Relationships in Medical Robotics

| Material Property | Performance Metric | Correlation | Assessment Method |
|---|---|---|---|
| Magnetic Responsiveness | Navigation Precision | Higher anisotropy enables sharper turning | Tracking in Phantoms [9] |
| Mechanical Stiffness | Locomotion Efficiency | Optimal modulus matches tissue compliance | Motion Analysis [9] |
| Biodegradation Rate | Tissue Compatibility | Controlled degradation prevents inflammation | Histology [9] |
| Surface Chemistry | Drug Loading Capacity | Functional groups increase payload by 40-60% | Spectroscopy & HPLC [9] |

Experimental Protocols for PSPP Characterization

Protocol 1: Multiscale Modeling of PSPP Relationships in Additive Manufacturing

This integrated computational framework establishes PSPP relationships for Selective Laser Sintering (SLS) of polyamide 12 (PA12) components [10].

Process Simulation

- Develop a powder-scale model simulating laser-powder interaction using optical, thermal, and geometrical properties of PA12 powder.

- Incorporate heat transfer model coupled with crystallization kinetics and densification models.

- Calculate temperature profiles, cooling rates, and sintered density with spatial resolution of 10-50 μm.

Structure Prediction

- Predict crystallinity distribution using experimentally calibrated crystallization kinetics models.

- Estimate porosity distribution from densification models with validation through micro-CT scanning.

- Construct Representative Volume Elements (RVEs) incorporating predicted porosity and crystallinity distributions.
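The protocol does not name the crystallization kinetics model it uses. As a minimal illustration of what such a model looks like, the sketch below implements the classical isothermal Avrami equation, X(t) = 1 - exp(-k tⁿ). This is an assumption made for illustration: SLS cooling is non-isothermal, so a calibrated model for the actual process would generally need a non-isothermal extension. The rate constant, exponent, and crystallinity ceiling are made-up values.

```python
import math


def avrami_relative_crystallinity(time_s, rate_constant, exponent):
    """Relative crystallinity X(t) = 1 - exp(-k * t**n), between 0 and 1."""
    if time_s < 0:
        raise ValueError("time must be non-negative")
    return 1.0 - math.exp(-rate_constant * time_s ** exponent)


def crystallization_half_time(rate_constant, exponent):
    """Time at which X = 0.5: t_half = (ln 2 / k) ** (1 / n)."""
    return (math.log(2.0) / rate_constant) ** (1.0 / exponent)


def absolute_crystallinity(time_s, rate_constant, exponent, max_crystallinity):
    """Scale relative crystallinity by the attainable maximum fraction."""
    return max_crystallinity * avrami_relative_crystallinity(
        time_s, rate_constant, exponent)


# Hypothetical parameters: k in s^-n, n dimensionless, ceiling of 30%
k, n, ceiling = 1.0e-3, 3.0, 0.30
t_half = crystallization_half_time(k, n)
print(f"half-time = {t_half:.2f} s")
print(f"crystallinity at 2 * t_half = "
      f"{absolute_crystallinity(2 * t_half, k, n, ceiling):.3f}")
```

Evaluating such a function at every element of the thermal simulation is what produces the spatial crystallinity distribution that feeds the RVE construction step.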

Property Prediction

- Implement multi-mechanism constitutive model calibrated with experimental tensile tests.

- Apply periodic boundary conditions to RVEs for stress-strain response prediction.

- Calculate effective elastic modulus, yield strength, and failure strain from simulated mechanical response.
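The last step, extracting modulus and yield strength from a simulated stress-strain curve, might look like the following. This is a sketch under assumptions: the modulus is fitted as a least-squares slope through the origin over a user-chosen elastic range, and yield is defined by the conventional 0.2% offset method. The source does not state which definitions were used, the function names are hypothetical, and the curve is synthetic.

```python
def elastic_modulus(strain, stress, max_elastic_strain):
    """Least-squares slope through the origin over the initial linear region."""
    pts = [(e, s) for e, s in zip(strain, stress) if 0 < e <= max_elastic_strain]
    if not pts:
        raise ValueError("no data points inside the elastic range")
    return sum(e * s for e, s in pts) / sum(e * e for e, _ in pts)


def offset_yield_strength(strain, stress, modulus, offset=0.002):
    """Stress where the curve crosses the line modulus * (strain - offset).

    Linearly interpolates between the two bracketing points; returns None
    if the curve never crosses the offset line.
    """
    gaps = [s - modulus * (e - offset) for e, s in zip(strain, stress)]
    for i in range(1, len(gaps)):
        if gaps[i - 1] > 0 >= gaps[i]:
            frac = gaps[i - 1] / (gaps[i - 1] - gaps[i])
            return stress[i - 1] + frac * (stress[i] - stress[i - 1])
    return None


# Synthetic elastic-perfectly-plastic curve (illustrative values, MPa)
strain = [0.00, 0.01, 0.02, 0.03, 0.04, 0.05, 0.06]
stress = [min(1000.0 * e, 40.0) for e in strain]

modulus = elastic_modulus(strain, stress, max_elastic_strain=0.03)
yield_mpa = offset_yield_strength(strain, stress, modulus)
print(f"E = {modulus:.0f} MPa, yield = {yield_mpa:.1f} MPa")
```

The same two functions can be applied unchanged to an experimental tensile curve, which gives a like-for-like comparison when calibrating the constitutive model.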

Performance Validation

- Correlate simulated mechanical properties with experimental performance metrics under application-specific loading conditions.

- Validate framework accuracy by comparing predicted and measured dimensional stability, fatigue resistance, and functional performance.

This protocol enables inverse design by establishing quantitative PSPP relationships that allow researchers to determine optimal processing parameters for desired performance outcomes [10].

Protocol 2: Experimental Characterization of Magnetic Polymer Composites

This methodology evaluates PSPP relationships in magnetically responsive polymer composites for biomedical robotics [9].

Material Processing and Fabrication

- Prepare polymer composite by homogeneously dispersing magnetic particles (Fe₃O₄, NdFeB) in polymer matrix (thermoset or thermoplastic).

- Apply rotational or static magnetic fields (100-500 mT) during curing to induce particle alignment.

- Fabricate test specimens using replica molding, micro-molding, or direct-write 3D printing.

Structural Characterization

- Analyze particle distribution and alignment using scanning electron microscopy with image analysis.

- Quantify degree of alignment through orientation index calculation from microscopic images.

- Characterize polymer morphology using differential scanning calorimetry and X-ray diffraction.
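The protocol does not define its orientation index. One common choice for in-plane alignment is the two-dimensional order parameter S = ⟨cos 2(θ − θ_ref)⟩, which the sketch below computes from particle or chain angles already extracted by image analysis. The choice of this metric, the function name, and the sample angles are assumptions for illustration.

```python
import math


def orientation_order_parameter(angles_deg, reference_deg=0.0):
    """2D order parameter S = mean(cos(2 * (theta - theta_ref))).

    S = 1 for perfect alignment with the reference direction, about 0 for
    random in-plane orientation, and -1 for alignment perpendicular to it.
    Angles that differ by 180 degrees are treated as equivalent.
    """
    if not angles_deg:
        raise ValueError("at least one angle is required")
    total = sum(math.cos(2.0 * math.radians(a - reference_deg))
                for a in angles_deg)
    return total / len(angles_deg)


# Hypothetical chain angles (degrees) measured relative to the applied field
aligned_sample = [-4.0, 2.0, 5.0, -1.0, 3.0, 0.0]
unaligned_sample = [0.0, 30.0, 60.0, 90.0, 120.0, 150.0]

print(f"field-cured: S = {orientation_order_parameter(aligned_sample):.3f}")
print(f"no field:    S = {orientation_order_parameter(unaligned_sample):.3f}")
```

Because the metric is a single number per image, it can be tabulated directly against field strength during curing to build the processing-structure relationship.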

Property Measurement

- Measure magnetic properties using vibrating sample magnetometry to determine saturation magnetization and coercivity.

- Characterize mechanical properties through tensile testing, dynamic mechanical analysis, and rheology.

- Evaluate functional properties such as shape-memory behavior and actuation capability under magnetic stimulation.

Performance Evaluation

- Assess actuation performance by measuring locomotion efficiency in simulated biological environments.

- Quantify targeting precision and maneuverability under controlled magnetic fields.

- Evaluate application-specific functionality (drug release profiles, diagnostic capability, therapeutic efficacy).

This comprehensive protocol enables researchers to establish quantitative correlations between processing conditions, resulting structures, emergent properties, and ultimate performance in biomedical applications [9].

Visualization of PSPP Relationships

The following diagrams illustrate key relationships and experimental workflows within the PSPP framework for advanced materials.

PSPP Framework Interrelationships

PSPP Experimental Characterization Workflow

The Scientist's Toolkit: Essential Research Materials and Reagents

Table 4: Key Research Reagents and Materials for PSPP Studies

| Material/Reagent | Function in PSPP Research | Application Examples |
|---|---|---|
| Magnetic Fillers (Fe₃O₄, NdFeB, SrFe) | Provide magnetic responsiveness | Actuation, targeting, manipulation [9] |
| Polymer Matrices (PA12, Thermosets, Hydrogels) | Structural framework | Mechanical support, biocompatibility, degradation control [9] [10] |
| Surface Modifiers (Silanes, Polymers) | Enhance particle-matrix interface | Improve dispersion, stress transfer, functionality [9] |
| Photoinitiators (Irgacure 2959, LAP) | Enable photopolymerization | 3D printing, stereolithography, patternable composites [9] |
| Rheology Modifiers (Fumed Silica, Clays) | Control viscosity and stability | Prevent sedimentation, enable 3D printing [9] |
| Crosslinking Agents (Glutaraldehyde, PEGDA) | Modify mechanical properties | Control stiffness, swelling, degradation rate [9] |

The Process-Structure-Property-Performance framework provides an essential systematic approach for advanced materials development, particularly in complex interdisciplinary fields such as biomedical robotics and additive manufacturing. By establishing quantitative relationships between these four elements, researchers can transcend traditional trial-and-error approaches and implement rational, predictive materials design [9] [10]. The continued development of multiscale modeling frameworks coupled with advanced characterization techniques will further enhance our ability to navigate this materials tetrahedron, accelerating the development of next-generation materials with tailored performance characteristics for specific biomedical and technological applications. For drug development professionals, this PSPP approach offers a structured methodology for designing carrier systems with optimized therapeutic delivery profiles, bridging the gap between materials science and pharmaceutical applications.

For over three decades, the materials tetrahedron has served as the fundamental conceptual framework of materials science and engineering, visually representing the interdependence of four core elements: processing, structure, properties, and performance [1] [11]. This symbolic polyhedron illustrates that a material's final performance is not determined by a single factor, but emerges from the complex interplay between how it is made (processing), its internal architecture across multiple length scales (structure), and its resulting characteristics (properties) [11]. The framework provides researchers with a systematic approach for rational materials design, whether moving from desired performance to required processing parameters or understanding how processing changes affect the final material behavior.

This article explores the enduring relevance of the materials tetrahedron, its modern evolution through digital twin technologies, and its practical application in developing advanced materials from metal-organic frameworks to sustainable biopolymers.

The Core Framework: Processing, Structure, Properties, Performance

The four vertices of the materials tetrahedron form a continuous cycle of cause-and-effect relationships that guide materials development:

- Processing encompasses the synthesis and manufacturing techniques used to create a material, directly influencing its internal architecture.

- Structure refers to the material's arrangement at atomic, microscopic, and macroscopic levels, including crystal structure, defects, grain boundaries, and porosity.

- Properties describe the material's characteristics and responses to external stimuli, including mechanical, electrical, thermal, and chemical behaviors.

- Performance defines how the material functions in its intended application, encompassing durability, efficiency, and reliability under operational conditions.

The framework's power lies in its bidirectional utility. The forward path (processing → structure → properties → performance) helps predict application suitability, while the inverse path (desired performance → required properties → necessary structure → appropriate processing) enables rational, target-oriented design [11].

The Digital Evolution: The Materials-Information Twin Tetrahedra

As materials research enters an era of data-intensive science, the classic tetrahedron has evolved to incorporate digital twin technology. The Materials-Information Twin Tetrahedra (MITT) framework creates an interdependent counterpart in information science, connecting physical materials research with computational approaches [1] [11].

This dual framework enables:

- Accelerated discovery through inverse design algorithms and high-throughput computational screening

- Enhanced prediction via multiphysics simulations and machine learning methods

- Improved data utility through FAIR principles (Findable, Accessible, Interoperable, Reusable) for materials data [1]

The integration of digital tools creates a virtuous cycle where materials systems generate data, information systems analyze and model this data, and the resulting insights guide further materials optimization [11].

Digital Twin Framework: The MITT connects physical materials research with information science [1] [11].

Case Study I: MOF-Based Catalysts for the Biginelli Reaction

Metal-organic frameworks (MOFs) exemplify the tetrahedron framework in designing advanced heterogeneous catalysts. Their development for the Biginelli reaction—a multicomponent process for synthesizing dihydropyrimidinone scaffolds with pharmacological importance—demonstrates precise control across all tetrahedron vertices [12].

Processing-Structure Relationships in MOF Design

MOF processing involves coordinating metal nodes with organic linkers to create crystalline porous materials. Researchers deliberately engineer structural features including:

- Ultra-high surface areas and tunable porosity for efficient reactant diffusion

- Intrinsic acidic sites at metal nodes or functionalized organic linkers

- Structural flexibility through varying metal nodes and organic linkers

- Multivariate functionalization incorporating multiple metals and ligands within a single framework [12]

These structural characteristics directly address the catalytic requirements of the Biginelli reaction, a one-pot, three-component condensation whose transition state is entropically disfavored because three reactants must come together. MOF cavities function as nanoreactors that confine the reactants and stabilize the transition state, reducing activation energy and accelerating reaction rates [12].
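To make the link between a lower activation energy and a faster reaction concrete, the Arrhenius relation gives the rate ratio of two pathways as exp(ΔEa/RT), provided both share the same pre-exponential factor. The sketch below evaluates that ratio. The 10 kJ/mol reduction and the 75°C temperature are illustrative assumptions, not values reported in [12].

```python
import math

R = 8.314  # universal gas constant, J/(mol*K)

def rate_enhancement(delta_ea_kj_per_mol: float, temp_c: float) -> float:
    """Ratio k_catalyzed / k_uncatalyzed from the Arrhenius equation.

    Assumes both pathways have the same pre-exponential factor, so only
    the reduction in activation energy (delta_ea) matters.
    """
    temp_k = temp_c + 273.15
    return math.exp(delta_ea_kj_per_mol * 1000.0 / (R * temp_k))

# Hypothetical example: a 10 kJ/mol reduction at 75 °C
print(f"{rate_enhancement(10.0, 75.0):.1f}x faster")
```

Because the dependence is exponential, even a modest stabilization of the transition state can change the rate by an order of magnitude.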

Properties-Performance Advantages in Catalysis

The tailored structures of MOF catalysts yield enhanced properties that translate to superior performance in the Biginelli reaction:

Table 1: MOF Catalyst Advantages in Biginelli Reaction

| Structural Feature | Resulting Property | Performance Advantage |

|---|---|---|

| Abundant acidic sites | Enhanced carbonyl activation | Reduced reaction time and temperature |

| Tunable pore size | Molecular sieving capability | Improved reaction selectivity |

| Crystalline framework | Defined active site geometry | Controlled reaction mechanism |

| Insolubility in the reaction medium | Easy catalyst separation | Reusability and reduced waste |

The performance benefits extend beyond catalytic efficiency to environmental advantages. MOFs enable replacement of traditional homogeneous acid catalysts (HCl, H₂SO₄) that present corrosion, toxicity, and regeneration challenges, aligning with green chemistry principles [12].

Experimental Protocol: MOF-Catalyzed Biginelli Reaction

Materials and Equipment:

- Benzaldehyde and ethyl acetoacetate (equimolar amounts), with urea or thiourea in slight excess (see Procedure)

- MOF catalyst (e.g., Zr-based UiO-66 or similar acid-functionalized framework)

- Ethanol solvent

- Round-bottom flask equipped with condenser

- Heating mantle with temperature control

- Centrifuge for catalyst separation

Procedure:

- Catalyst Activation: Pre-activate MOF catalyst (typically 5-10 mol%) under vacuum at 100-150°C for 2 hours to remove solvent molecules from pores.

- Reaction Setup: Charge flask with aldehyde (1 mmol), β-ketoester (1 mmol), urea/thiourea (1.2 mmol), activated MOF catalyst, and ethanol (5 mL).

- Reaction Execution: Heat mixture at 70-80°C with continuous stirring for 1-4 hours, monitoring reaction progress by TLC or GC-MS.

- Product Isolation: Centrifuge reaction mixture to separate solid catalyst. Concentrate supernatant under reduced pressure.

- Purification: Recrystallize the crude product from ethanol to obtain the pure dihydropyrimidinones (DHPMs).

- Catalyst Reuse: Wash recovered MOF catalyst with ethanol, reactivate, and reuse for subsequent cycles to assess stability.
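The quantities in the procedure above can be turned into weighable masses and a theoretical yield with a few lines of arithmetic. This is a minimal sketch that assumes benzaldehyde, ethyl acetoacetate, and urea as the three components and uses standard molar masses. The function names are illustrative, and the catalyst mass is left out because it depends on the formula weight of the specific MOF.

```python
# Standard molar masses in g/mol
MOLAR_MASS = {
    "benzaldehyde": 106.12,
    "ethyl acetoacetate": 130.14,
    "urea": 60.06,
    "water": 18.015,
}

# Amounts from the procedure, in mmol
AMOUNTS_MMOL = {"benzaldehyde": 1.0, "ethyl acetoacetate": 1.0, "urea": 1.2}

def reagent_masses_mg(amounts_mmol):
    """mass (mg) = amount (mmol) * molar mass (g/mol)."""
    return {name: n * MOLAR_MASS[name] for name, n in amounts_mmol.items()}

def dhpm_molar_mass():
    """The condensation joins one of each reagent and releases two waters."""
    reagents = ("benzaldehyde", "ethyl acetoacetate", "urea")
    return sum(MOLAR_MASS[r] for r in reagents) - 2 * MOLAR_MASS["water"]

def theoretical_yield_mg(amounts_mmol):
    """With 1:1:1 stoichiometry, the smallest amount is the limiting reagent."""
    return min(amounts_mmol.values()) * dhpm_molar_mass()

def percent_yield(isolated_mg, amounts_mmol):
    """Isolated mass as a percentage of the theoretical maximum."""
    return 100.0 * isolated_mg / theoretical_yield_mg(amounts_mmol)

for name, mass in reagent_masses_mg(AMOUNTS_MMOL).items():
    print(f"{name}: {mass:.1f} mg")
print(f"theoretical DHPM yield: {theoretical_yield_mg(AMOUNTS_MMOL):.1f} mg")
```

Tracking `percent_yield` across reuse cycles gives a simple numerical handle on the catalyst stability assessed in the final step.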

Characterization Techniques:

- MOF Catalyst: PXRD for structural integrity, BET surface area analysis, FT-IR for functional groups, SEM/TEM for morphology

- Reaction Products: NMR, MS, and melting point determination for structural confirmation

Case Study II: Polyhydroxyalkanoate (PHA) Biopolymers

The development of sustainable polyhydroxyalkanoate (PHA) biopolymers demonstrates the tetrahedron framework's application to environmentally-conscious materials design, addressing the critical need for alternatives to conventional petroleum-based plastics [13].

Processing-Structure Challenges in PHA Development

PHA processing faces significant challenges that impact structural development and commercial viability:

- Microbial Synthesis: PHAs are synthesized and accumulated by microbes under excess-carbon conditions, where they serve as intracellular carbon and energy reserves

- Production Limitations: Current industrial production faces hurdles in feedstock availability, low productivity/yield, and complex genetic engineering requirements

- Cost Factors: High production costs ($1.81-3.20 per lb for PHB vs $0.45-0.68 per lb for polypropylene) limit widespread adoption [13]
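The cost gap quoted above is easier to compare as a price ratio and in metric units. The short sketch below uses only the ranges cited from [13]; the helper names are illustrative.

```python
KG_PER_LB = 0.45359237  # exact definition of the avoirdupois pound

PHB_USD_PER_LB = (1.81, 3.20)  # low, high
PP_USD_PER_LB = (0.45, 0.68)   # low, high

def to_usd_per_kg(price_per_lb: float) -> float:
    """Convert a price per pound into a price per kilogram."""
    return price_per_lb / KG_PER_LB

def premium_range(candidate, incumbent):
    """Smallest and largest price ratio implied by two (low, high) ranges."""
    smallest = candidate[0] / incumbent[1]  # cheapest candidate vs. dearest incumbent
    largest = candidate[1] / incumbent[0]   # dearest candidate vs. cheapest incumbent
    return smallest, largest

low, high = premium_range(PHB_USD_PER_LB, PP_USD_PER_LB)
print(f"PHB costs {low:.1f}x to {high:.1f}x as much as polypropylene")
print(f"PHB: ${to_usd_per_kg(PHB_USD_PER_LB[0]):.2f} to "
      f"${to_usd_per_kg(PHB_USD_PER_LB[1]):.2f} per kg")
```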

These processing challenges constrain structural diversity, with commercially available PHAs dominated by narrow chemistry ranges, primarily polyhydroxybutyrate (PHB) and its copolymers PHBV and PHBHHx [13].

Properties-Performance Relationships in Application

The structural limitations of commercially available PHAs directly impact their properties and performance as plastic alternatives:

Table 2: PHA Biopolymer Properties and Performance

| PHA Type | Key Properties | Performance Advantages | Performance Limitations |

|---|---|---|---|

| PHB (P3HB) | High crystallinity, brittleness | Biocompatibility, non-toxic degradation | Narrow processing window, limited degradation rate |

| PHBV | Reduced crystallinity, improved toughness | Enhanced processability, tunable degradation | Higher cost, limited chemical diversity |

| PHBHHx | Increased flexibility, lower melting point | Improved mechanical properties | Scalability challenges, production complexity |

Despite current limitations, PHAs offer crucial performance advantages for a circular plastic economy, including effective degradation in aquatic and soil environments, recyclability through chemical and biological means, and generation of non-toxic degradation products [13].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Tetrahedron-Guided Materials Design

| Reagent/Material | Function in Research | Application Examples |

|---|---|---|

| Metal Salts (e.g., ZrCl₄, Zn(NO₃)₂) | Metal node precursors for MOF synthesis | Creating secondary building units (SBUs) in framework materials [12] |

| Organic Linkers (e.g., terephthalic acid, biphenyl-dicarboxylates) | Bridging ligands for framework construction | Establishing porosity and functionalization sites in MOFs [12] |

| Microbial Strains (e.g., Cupriavidus necator) | Biological factories for polymer synthesis | Producing PHA biopolymers from renewable feedstocks [13] |

| Solvothermal Reactors | High-pressure/temperature synthesis | Crystal growth for MOFs and coordination polymers [12] |

| Fermentation Bioreactors | Controlled biological production | Scaling up PHA synthesis with optimized nutrient conditions [13] |

The materials tetrahedron remains an indispensable framework for rational materials design, providing both foundational understanding for students and a strategic roadmap for advanced research. Its evolution through digital twin technologies has enhanced its predictive power and accelerated discovery cycles. As materials challenges grow increasingly complex—from sustainable polymers to energy storage and pharmaceutical development—the tetrahedron continues to offer a systematic approach for navigating the intricate relationships between processing, structure, properties, and performance. By integrating this classical framework with modern computational tools, researchers can more effectively design the advanced materials needed to address global technological and environmental challenges.

The materials tetrahedron is a fundamental conceptual framework in materials science that visualizes the dynamic and interdependent relationship between a material's processing, its resulting structure, its observable properties, and its ultimate performance in application [7] [3]. This paradigm, whose underlying logic has guided centuries of metallurgical advancement, now provides an essential lens for understanding and innovating modern pharmaceutical development. In metallurgy, this relationship has long been understood: the method of cooling and forging steel (processing) determines its crystalline microstructure (structure), which directly dictates its hardness and tensile strength (properties), and thus its suitability for a bridge or a tool (performance). This article argues that the same foundational principle is now being applied to the complex world of drug formulation and manufacturing, enabling a new era of advanced pharmaceuticals and personalized medicine.

The migration of this framework from metals to medicines represents a significant evolution in scientific approach. Where traditional pharmaceutical development often relied on empirical, batch-based methods, the modern paradigm, informed by the materials tetrahedron, seeks to establish predictive, science-driven models. This shift is critical for addressing contemporary challenges such as the formulation of poorly soluble Active Pharmaceutical Ingredients (APIs), the creation of complex drug delivery systems, and the move toward decentralized, on-demand manufacturing. By systematically exploring the connections between how a drug product is made, its internal architecture, its measurable characteristics, and its biological effect, researchers can accelerate development and achieve more precise therapeutic outcomes.

The Materials Science Tetrahedron: A Foundational Model

Core Principles and Definitions

The four components of the materials tetrahedron form a continuous cycle of cause and effect that is central to rational materials design. The following diagram illustrates the core interrelationships of this framework:

Figure 1: The Core Interrelationships of the Materials Tetrahedron

- Processing: The set of operations and conditions used to synthesize and shape a material. In pharmaceuticals, this encompasses unit operations like hot melt extrusion, spray drying, roller compaction, and 3D printing, as well as conditions such as temperature, pressure, and shear rate [14].

- Structure: The material's internal architecture across multiple length scales, including its molecular arrangement (e.g., crystalline vs. amorphous solid-state), microstructure (e.g., particle size and shape, porosity), and macrostructure. Structure is a direct consequence of processing.

- Properties: The measurable physical and chemical characteristics resulting from the structure. These include mechanical properties (hardness, elasticity), thermal properties (melting point, glass transition), solubility, dissolution rate, and stability.

- Performance: The material's effectiveness in its intended application or environment. For a drug product, this translates directly to therapeutic efficacy, safety profile, shelf-life, and manufacturability at scale [7].

Historical Metallurgical Origins

The empirical understanding of the PSPP relationship is ancient. Early blacksmiths manipulated the processing of steel (e.g., heating and quenching) to achieve a harder structure (martensite), which yielded properties better suited to weaponry. The TETRA program at Johns Hopkins Applied Physics Laboratory (APL) is a modern embodiment of this principle, leveraging advanced tools to accelerate this discovery loop for defense-grade metallic components [3]. Historically, developing a new alloy was a painstakingly slow process, requiring the production of large ingots, sequential testing, and numerous iterative cycles. APL's TETRA approach disrupts this by using combinatorial synthesis and additive manufacturing to create and test hundreds of material variants on a single build plate, simultaneously exploring the entire composition and processing landscape [3]. This accelerated paradigm, pioneered in metallurgy, provides a template for pharmaceutical innovation.

The Paradigm Shift to Modern Pharmaceuticals

Drivers for Adoption in Pharma

The pharmaceutical industry is increasingly adopting the materials tetrahedron framework, driven by several powerful forces:

- Supply Chain Resilience: Recent geopolitical events and pandemics have exposed vulnerabilities in global pharmaceutical supply chains, prompting a wave of onshoring and investment in new, advanced manufacturing plants. Major announcements from companies like Eli Lilly, AstraZeneca, and Johnson & Johnson represent a strategic shift toward more resilient and controllable production networks [15].

- Regulatory and Policy Support: Governments are actively supporting this transition through initiatives like the U.S. FDA's "PreCheck" program, designed to fast-track the review and approval of new domestic manufacturing facilities, acknowledging the need for a more robust supply of essential medicines [15].

- Technological Innovation: The rise of Industry 4.0 technologies is making the implementation of this framework feasible. Cyber-physical systems, digital twins, and advanced data analytics allow for the real-time monitoring and control of the PSPP relationship in a way that was previously impossible [14].

- Product Complexity: The development of complex APIs, biologics, and personalized medicines demands a more sophisticated understanding of materials than ever before. Empirical methods are insufficient for engineering sophisticated drug delivery systems, necessitating a first-principles, science-based approach.

Industry 4.0 and the Smart Factory

The concept of Industry 4.0—the integration of cyber-physical systems, the Internet of Things (IoT), and cloud computing—is transforming pharmaceutical manufacturing into a data-rich, agile endeavor. This aligns perfectly with the materials tetrahedron framework [14]. Key technologies enabling this shift include:

- Digital Twin: A virtual counterpart of a physical product or process. It allows for real-time simulation, process optimization, and predictive analysis without disrupting actual production, dramatically decreasing development time and increasing confidence in the system [14].

- Additive Manufacturing (3D Printing): This technology epitomizes the direct link between processing and structure. It enables the fabrication of solid oral dosage forms with complex geometries (structure) that are impossible with traditional compression, allowing for customized release profiles (performance) [14].

- Advanced Robotics and AI: As demonstrated in the TETRA program, AI and robotics can act as "co-investigators," learning from development data to recommend the next experiment or even autonomously running a self-optimizing lab [3].

Applying the Tetrahedron: From Drug Formulation to Finished Product

The Pharmaceutical Materials Tetrahedron in Practice

In pharmaceutical development, the tetrahedron framework translates directly to the journey from a raw API to a finished drug product. The following workflow provides a concrete example of how this framework is applied in the development of a solid dispersion formulation, a common technique for enhancing the solubility of poorly soluble drugs:

Figure 2: A Pharmaceutical Workflow for Solid Dispersion Development

Quantitative Analysis of Processing Parameters

The relationship between processing parameters and final product properties is quantifiable. The table below summarizes key parameters and their measurable impacts on product structure and properties, illustrating the direct cause-and-effect relationships central to the tetrahedron.

Table 1: Impact of Hot Melt Extrusion (Processing) Parameters on Drug Product Attributes

| Processing Parameter | Typical Experimental Range | Impact on Solid Dispersion Structure | Resulting Property Changes |

|---|---|---|---|

| Barrel Temperature | 100°C - 180°C | Degree of API mixing & amorphous conversion [7] | Dissolution rate, physical stability |

| Screw Speed | 50 - 500 rpm | Shear-induced molecular dispersion | API particle size, homogeneity |

| Feed Rate | 0.2 - 2.0 kg/hr | Residence time in extruder | Extent of degradation, crystallinity |

| Cooling Rate | Quench vs. Slow Cool | Glassy state vs. crystalline formation | Stability, dissolution profile |
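One way to explore these cause-and-effect relationships systematically is a two-level full-factorial screening design built from the ends of each range in the table. The sketch below enumerates such a design; the variable names are illustrative, and a real study would usually add center points and replicates.

```python
from itertools import product

# Low and high levels taken from the experimental ranges in the table
LEVELS = {
    "barrel_temp_c": (100, 180),
    "screw_speed_rpm": (50, 500),
    "feed_rate_kg_per_hr": (0.2, 2.0),
    "cooling": ("quench", "slow"),
}

def full_factorial(levels):
    """Return every combination of factor levels as a list of run dicts."""
    names = list(levels)
    return [dict(zip(names, combo)) for combo in product(*levels.values())]

runs = full_factorial(LEVELS)
print(f"{len(runs)} runs")  # 2 levels ** 4 factors
for run in runs[:3]:
    print(run)
```

Each run would then be characterized for structure (XRPD, DSC) and properties (dissolution), so that every processing condition is paired with its measured outcome.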

Experimental Protocols and Methodologies

Protocol: Formulating a Solid Dispersion via Hot Melt Extrusion

This protocol provides a detailed methodology for investigating the materials tetrahedron using Hot Melt Extrusion (HME) to enhance the solubility of a poorly water-soluble API.

1. Objective: To process an API-polymer blend via HME and characterize the resulting solid dispersion to understand the relationships between processing parameters, amorphous structure formation, and the resulting dissolution performance.

2. Materials (The Scientist's Toolkit):

Table 2: Essential Research Reagents and Materials

| Item | Function / Rationale |

|---|---|

| Poorly Soluble API (e.g., Itraconazole) | Model compound to demonstrate solubility enhancement. |

| Polymer Carrier (e.g., HPMCAS, PVPVA) | Matrix former that inhibits crystallization and maintains supersaturation. |

| Plasticizer (e.g., Triethyl Citrate) | Lowers processing temperature, mitigating API thermal degradation. |

| Twin-Screw Hot Melt Extruder | Provides the necessary shear and thermal energy to create a molecularly mixed amorphous dispersion. |

| Differential Scanning Calorimeter (DSC) | Confirms the conversion from crystalline API to an amorphous state. |

| X-Ray Powder Diffractometer (XRPD) | Provides definitive evidence of the loss of crystalline structure. |

| Dissolution Testing Apparatus (USP II) | Quantifies the performance enhancement (dissolution rate and extent). |

3. Detailed Procedure:

Step 1: Pre-blending

- Weigh the API and polymer in a predetermined ratio (e.g., 20:80 w/w).

- Add plasticizer at 5-10% w/w of the polymer mass.

- Blend the mixture in a Turbula mixer for 15 minutes to ensure homogeneity before extrusion.
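The pre-blending ratios above translate into component masses as follows. This is a minimal sketch: the function name and the 100 g batch size are assumptions for illustration. Note that adding plasticizer on top of the API-polymer blend lowers the API loading of the final mixture slightly below the nominal 20%.

```python
def preblend_masses(api_plus_polymer_g, api_fraction=0.20, plasticizer_pct_of_polymer=5.0):
    """Component masses for an API:polymer blend with added plasticizer.

    api_fraction is the API share of the API + polymer mass (0.20 for 20:80 w/w).
    plasticizer_pct_of_polymer is expressed relative to the polymer mass.
    """
    api = api_plus_polymer_g * api_fraction
    polymer = api_plus_polymer_g - api
    plasticizer = polymer * plasticizer_pct_of_polymer / 100.0
    total = api + polymer + plasticizer
    return {
        "api_g": api,
        "polymer_g": polymer,
        "plasticizer_g": plasticizer,
        "total_g": total,
        "api_loading_pct": 100.0 * api / total,
    }

# Hypothetical 100 g batch at the lower (5%) and upper (10%) plasticizer levels
for pct in (5.0, 10.0):
    print(pct, preblend_masses(100.0, plasticizer_pct_of_polymer=pct))
```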

Step 2: Hot Melt Extrusion (Processing)

- Set the extruder barrel temperature profile along multiple zones, typically ramping from a low temperature in the feeding zone (e.g., 80°C) to a higher temperature in the melting and mixing zones (e.g., 150°C). The specific temperatures depend on the glass transition temperature (Tg) of the polymer and the melting point of the API.

- Set the screw speed to a defined value, e.g., 200 rpm, to control shear stress.

- Calibrate the feeder and initiate the extrusion process at a fixed feed rate, e.g., 0.5 kg/hr.

- Collect the extrudate as it exits the die and cool it rapidly on a chilled roller.

Step 3: Post-Processing

- Milling: Size-reduce the cooled, brittle extrudate using a centrifugal mill fitted with a 1.0 mm screen.

- Storage: Store the milled powder in a sealed container under desiccated conditions until analysis.

4. Characterization (Linking Processing to Structure and Properties):

Solid-State Characterization (Structure):

- XRPD Analysis: Place powdered sample in a holder. Scan from 5° to 40° 2θ. The absence of sharp, crystalline peaks indicates the formation of an amorphous solid dispersion.

- DSC Analysis: Load 3-5 mg of sample into a sealed pan. Run a heating scan from 25°C to 250°C at 10°C/min. The absence of the API's melting endotherm, ideally accompanied by a single glass transition, is consistent with successful amorphization.

Performance Testing (Properties → Performance):

- Dissolution Testing: Use a USP Apparatus II (paddles). Add a sample equivalent to 50 mg of API to 900 mL of dissolution medium (e.g., 0.1N HCl or phosphate buffer pH 6.8) at 37°C ± 0.5°C. Set the paddle speed to 75 rpm. Withdraw samples at 5, 10, 15, 30, 45, and 60 minutes, filter, and analyze by HPLC/UV. Compare the dissolution profile against the unprocessed crystalline API.
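Converting the measured concentrations from this test into percent dissolved is a simple mass balance on the 50 mg dose and 900 mL of medium. The sketch below assumes concentrations are reported in µg/mL and ignores the small volume removed at each sampling point. The function names are illustrative, and no concentration data from the source are implied.

```python
DOSE_MG = 50.0
MEDIUM_ML = 900.0
TIMEPOINTS_MIN = (5, 10, 15, 30, 45, 60)

def max_concentration_ug_per_ml():
    """Concentration reached if the whole dose dissolves."""
    return DOSE_MG * 1000.0 / MEDIUM_ML

def percent_dissolved(conc_ug_per_ml):
    """Dissolved mass as a percentage of the dose (no volume correction)."""
    dissolved_mg = conc_ug_per_ml * MEDIUM_ML / 1000.0
    return 100.0 * dissolved_mg / DOSE_MG

def dissolution_profile(concs_ug_per_ml):
    """Pair each sampling time with its percent dissolved."""
    if len(concs_ug_per_ml) != len(TIMEPOINTS_MIN):
        raise ValueError("expected one concentration per time point")
    return [(t, percent_dissolved(c)) for t, c in zip(TIMEPOINTS_MIN, concs_ug_per_ml)]

print(f"100% dissolved corresponds to {max_concentration_ug_per_ml():.1f} µg/mL")
```

Running `dissolution_profile` on both the extrudate and the unprocessed crystalline API gives the two curves the protocol asks to compare.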

Protocol: Powder X-Ray Diffraction (XRPD) for Solid-State Analysis

1. Objective: To determine the crystalline or amorphous nature of the processed material.

2. Materials:

- X-Ray Powder Diffractometer

- Sample powder from the hot melt extrusion protocol above

- Standard glass or zero-background sample holder

3. Procedure:

- Gently compress the powder into the sample holder to create a flat, uniform surface.

- Mount the holder in the diffractometer.

- Set parameters: Cu Kα radiation (λ = 1.5418 Å), voltage 45 kV, current 40 mA.

- Scan continuously from 5° to 40° 2θ with a step size of 0.02° and a dwell time of 1 second per step.

- Analyze the resulting diffractogram. A broad, featureless halo indicates an amorphous material, while sharp Bragg peaks indicate crystallinity.
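Two quantities follow directly from the scan settings above: the range of lattice spacings the scan can probe, from Bragg's law (nλ = 2d sin θ), and the total acquisition time. The sketch below computes both from the stated wavelength, angular range, step size, and dwell time; the function names are illustrative.

```python
import math

WAVELENGTH_ANGSTROM = 1.5418  # Cu K-alpha, as specified in the procedure

def d_spacing(two_theta_deg, order=1):
    """Lattice spacing (Å) from Bragg's law: n * lambda = 2 * d * sin(theta)."""
    theta = math.radians(two_theta_deg / 2.0)
    return order * WAVELENGTH_ANGSTROM / (2.0 * math.sin(theta))

def scan_minutes(start_deg=5.0, stop_deg=40.0, step_deg=0.02, dwell_s=1.0):
    """Approximate scan duration from the number of steps and the dwell time."""
    n_steps = round((stop_deg - start_deg) / step_deg)
    return n_steps * dwell_s / 60.0

print(f"d at 5°: {d_spacing(5.0):.2f} Å, d at 40°: {d_spacing(40.0):.2f} Å")
print(f"scan time: {scan_minutes():.1f} min")
```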

The journey from metallurgy to modern pharmaceuticals, guided by the enduring principles of the materials tetrahedron, represents a profound maturation of drug development. The framework provides a systematic, predictive, and scientific foundation for understanding how processing defines structure, structure governs properties, and properties ultimately dictate therapeutic performance. The adoption of this paradigm, supercharged by Industry 4.0 technologies like digital twins and additive manufacturing, is transforming the pharmaceutical landscape. It enables the development of more complex and effective drugs, enhances supply chain resilience through advanced manufacturing, and paves the way for truly personalized medicine. As the industry continues to embrace this holistic view, the path from a novel molecule to a reliable, high-performing medicine will become faster, more efficient, and fundamentally more robust.

The materials tetrahedron is a foundational conceptual framework in materials science and engineering that visually captures the fundamental, interdependent relationships between a material's processing, its resulting structure across multiple length scales, its intrinsic and extrinsic properties, and its final performance in application [16] [1]. First formally presented by the National Research Council in 1989, this enduring model has served for over three decades as a central paradigm for the field, guiding research, development, and education by illustrating that a change in one element necessarily affects the others [1] [5]. The tetrahedron's core principle is that a material's performance in service is not an isolated outcome but the culmination of a chain of relationships: processing conditions dictate the internal structure, which governs the material's properties, which ultimately determines its performance in a specific application [16] [17]. This framework provides a systematic approach for designing new materials and for troubleshooting existing ones, making it an indispensable mental model for researchers and engineers aiming to develop materials that meet extreme or novel application demands, from sustainable polymers to mission-critical defense components [7] [3] [5].

Core Principles and Elements of the Tetrahedron

The four vertices of the materials tetrahedron represent the critical domains of knowledge required for a holistic understanding of any material system. Their definitions and interrelationships are detailed below.

- Processing: This refers to the synthesis and manufacturing methods used to create a material or component. It encompasses the specific conditions, parameters, and pathways involved in transforming raw materials or precursors into a final form. Examples include casting, forging, additive manufacturing, heat treatment, and thin-film deposition. Processing is the initial, causative factor that instigates the chain of relationships within the tetrahedron [3] [17].

- Structure: Structure describes the material's internal architecture across multiple length scales, from the atomic and molecular arrangement (e.g., crystal structure, chemical bonding) to the microscopic features (e.g., grain boundaries, phase distribution) and up to the macroscopic morphology. Structure is the direct consequence of the processing history and is the primary determinant of the material's properties [7] [9].

- Properties: Properties are the measurable attributes and responses of a material to external stimuli. These include mechanical properties (e.g., strength, ductility, hardness), thermal properties (e.g., conductivity, expansion coefficient), electrical properties (e.g., conductivity, permittivity), optical properties, and chemical properties (e.g., corrosion resistance). Properties are the manifestation of the material's structure [7] [17].

- Performance: Performance characterizes how well a material functions in its intended application or service environment. It is a measure of the material's ability to meet specific engineering and economic requirements, such as fatigue life in an aerospace component, degradation rate in a biomedical implant, or efficiency in an energy conversion device. Performance is the final outcome, governed by the material's properties [7] [5].

The power of the tetrahedron model lies in the bidirectional relationships along its edges, which can be traversed in a "cause-and-effect" manner from processing to performance or in a "goal-oriented" manner backward from desired performance to required processing [1].
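As a small illustration of this geometry, the tetrahedron can be written as a graph with four vertices and six edges, with the forward and inverse traversals as two orderings of the same vertices. The names below are illustrative only.

```python
from itertools import combinations

VERTICES = ("processing", "structure", "properties", "performance")

# Every pair of vertices is connected, giving the six edges of a tetrahedron
EDGES = list(combinations(VERTICES, 2))

FORWARD_PATH = list(VERTICES)            # cause-and-effect direction
INVERSE_PATH = list(reversed(VERTICES))  # goal-oriented direction

def path_edges(path):
    """Consecutive vertex pairs visited along a path."""
    return list(zip(path, path[1:]))

print(len(EDGES), "edges")
print("forward:", " -> ".join(FORWARD_PATH))
print("inverse:", " -> ".join(INVERSE_PATH))
```

Only three of the six edges lie on either path. The remaining edges, such as processing to performance, capture direct couplings like manufacturability that the linear chain leaves out.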

Quantitative Data in Tetrahedron Research

Table 1: Prevalence of Tetrahedron-Related Data in Materials Science Literature. A study of 2,536 peer-reviewed publications quantified where different types of information are reported [18].

| Information Entity | Reported in Text | Reported in Tables |

|---|---|---|

| Material Compositions | 33.21% of compositions | 85.92% of compositions |

| Material Properties | Information primarily in text | 82% of articles |

| Processing Conditions | Mostly reported in text | Less frequent |

| Testing Conditions | Mostly reported in text | Less frequent |

| Raw Materials/Precursors | 80% of articles | Less frequent |

Table 2: Distribution of Composition Table Types. An analysis of 100 randomly selected composition tables revealed structural variations that challenge automated information extraction [18].

| Table Type | Description | Prevalence |

|---|---|---|

| MCC-CI | Multi-Cell Composition with Complete Information | 36% |

| SCC-CI | Single-Cell Composition with Complete Information | 30% |

| MCC-PI | Multi-Cell Composition with Partial Information | 24% |

| SCC-PI | Single-Cell Composition with Partial Information | 10% |

Visualizing the Tetrahedron and Its Modern Extensions

The classical materials tetrahedron provides a static view of relationships. Modern research, however, requires frameworks that incorporate dynamic data flows and the digital tools used for discovery.

The Classical Materials Tetrahedron

The following diagram represents the fundamental four-element relationship that forms the core of materials science and engineering.

The Materials-Information Twin Tetrahedra (MITT) Framework

The classic tetrahedron has been reimagined for the digital age. The Materials-Information Twin Tetrahedra (MITT) framework introduces a "digital twin" for the physical materials tetrahedron, creating a nexus between materials science and information science [1] [19]. This paradigm accounts for the data, models, and digital workflows that are now central to materials research and development. The information tetrahedron comprises parallel elements: Methods/Workflows (corresponding to Processing), Representations (corresponding to Structure), Attributes (corresponding to Properties), and Efficacy (corresponding to Performance), along with the critical dimensions of Validation and Viability guided by FAIR (Findable, Accessible, Interoperable, Reusable) data principles [1]. The MITT framework facilitates a continuous, iterative cycle where materials systems generate data, and information systems provide insights that guide the improvement of materials systems.
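The vertex correspondence described above can be captured as a simple lookup, which is convenient when tagging datasets or workflow steps with both their materials and information roles. This is a minimal sketch; the dictionary and function names are assumptions, not part of the MITT publications.

```python
# Materials vertex -> information-tetrahedron counterpart, as listed in the text
MITT_TWINS = {
    "Processing": "Methods/Workflows",
    "Structure": "Representations",
    "Properties": "Attributes",
    "Performance": "Efficacy",
}

# Reverse lookup, built once from the forward mapping
MATERIALS_TWINS = {info: mat for mat, info in MITT_TWINS.items()}

def information_twin(materials_vertex):
    """Information-science counterpart of a materials vertex."""
    return MITT_TWINS[materials_vertex]

def materials_twin(information_vertex):
    """Materials counterpart of an information vertex."""
    return MATERIALS_TWINS[information_vertex]

print(information_twin("Structure"))
print(materials_twin("Efficacy"))
```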

The Integrated Research Cycle for Materials Science

The practical application of the tetrahedron occurs within a structured research cycle. This cycle integrates the scientific method with literature review and emphasizes that research is a process for expanding the community's collective knowledge, not just an individual pursuit [16]. The following workflow diagram visualizes this iterative process.

Experimental Protocols for Establishing PSPP Relationships

Establishing robust Processing-Structure-Property-Performance (PSPP) relationships requires carefully designed experimental and computational protocols. The following methodologies are drawn from cutting-edge research.

Protocol 1: Combinatorial Synthesis and High-Throughput Testing for Metallic Alloys

This protocol, as implemented in the TETRA program, leverages advanced manufacturing and robotics to dramatically accelerate the exploration of metallic materials [3].

Combinatorial Synthesis via Directed Energy Deposition (DED):

- Objective: To rapidly fabricate a library of alloy specimens with varied chemical compositions on a single build plate.

- Method: Use a blown-powder DED system. A laser melts metal powder as it is fed into the build area, solidifying layer-by-layer. The chemical composition is varied in each specimen by controlling the feed rate of different elemental or pre-alloyed powders. This allows for hundreds of discrete alloy variations to be synthesized in a single automated build cycle [3].
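The relationship between powder feed rates and specimen composition can be sketched with a simple mass balance. The example assumes the deposited composition tracks the mass of each powder delivered, with equal capture efficiency for all powders, which is an idealization. The powder names and feed values are hypothetical.

```python
def mass_fractions(feed_rates_g_per_min):
    """Nominal mass fraction of each powder from its feed rate."""
    total = sum(feed_rates_g_per_min.values())
    if total <= 0:
        raise ValueError("total feed rate must be positive")
    return {name: rate / total for name, rate in feed_rates_g_per_min.items()}

def feed_rates_for(target_fractions, total_feed_g_per_min):
    """Feed rate of each powder needed to hit a target composition."""
    if abs(sum(target_fractions.values()) - 1.0) > 1e-9:
        raise ValueError("target fractions must sum to 1")
    return {name: frac * total_feed_g_per_min for name, frac in target_fractions.items()}

# Hypothetical two-powder gradient: step powder_B from 0% to 100% in 25% increments
for b in (0.0, 0.25, 0.5, 0.75, 1.0):
    target = {"powder_A": 1.0 - b, "powder_B": b}
    print(target, feed_rates_for(target, total_feed_g_per_min=4.0))
```

Stepping the target fractions across the build plate in this way is what produces a library of discrete compositions in one automated build.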

Automated Heat Treatment and Forging:

- Objective: To subject the combinatorial samples to a range of thermo-mechanical processing conditions.

- Method: Transfer the build plate to custom, automated heat treatment furnaces and hot forging equipment. Program these systems to apply different time-temperature profiles and deformation paths to individual or groups of samples, thereby exploring the effect of processing history on microstructure [3].

Robotic Mechanical Property Measurement:

- Objective: To autonomously test the mechanical properties of the synthesized and processed specimens.

- Method: Employ a robotic system to grip standard-sized test specimens from the build plate and load them into a mechanical testing frame. The system conducts tests (e.g., micro-tensile, hardness) and records the resulting property data (e.g., yield strength, elongation) for each unique processing-composition variant [3].

Protocol 2: Data-Driven Modeling for Metal Additive Manufacturing

This protocol uses machine learning to establish process-structure-property (PSP) links where traditional physics-based modeling is computationally prohibitive [20].

Data Generation and Curation:

- Objective: To create a dataset linking process parameters to structural features and properties.

- Method: Collect data from a combination of controlled experiments and high-fidelity thermal-fluid simulations. Key input variables (Processing) include laser power, scan speed, and scan strategy. Key output variables include Structure (e.g., porosity, lack-of-fusion defects, grain size) and Properties (e.g., yield strength, ultimate tensile strength) [20].

Surrogate Model Development and Training:

- Objective: To build a predictive model that maps process parameters to structural and property outcomes.

- Method: Employ machine learning algorithms such as Gaussian Process Regression or Deep Neural Networks. These are trained on the curated dataset to act as fast-running "surrogate models," bypassing the need for expensive simulations or experiments for new parameter sets [20].

Model Validation and Optimization:

- Objective: To validate model predictions and use the model for process optimization.

- Method: Compare model predictions against a held-out set of experimental results. Once validated, use the model in an inverse design loop to identify the optimal process parameters (e.g., laser power and scan speed) that will minimize porosity or achieve a target tensile strength [20].
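The validation and inverse-design steps can be sketched in a few lines. In the example below, `toy_surrogate` is a placeholder standing in for a trained Gaussian process or neural network. Its functional form and every number in it are invented for illustration and carry no physical meaning. The inverse design step is a plain grid search, chosen here for simplicity rather than taken from [20].

```python
import math
from itertools import product

def toy_surrogate(laser_power_w, scan_speed_mm_s):
    """Placeholder for a trained model: returns a predicted porosity (%).

    The formula is invented for illustration only.
    """
    ratio = laser_power_w / scan_speed_mm_s
    return 0.1 + 20.0 * (ratio - 0.25) ** 2 + 1e-6 * (scan_speed_mm_s - 1000) ** 2

def rmse(model, held_out):
    """Root-mean-square error on held-out (power, speed, measured) triples."""
    squared = [(model(p, v) - y) ** 2 for p, v, y in held_out]
    return math.sqrt(sum(squared) / len(squared))

def inverse_design(model, powers, speeds):
    """Grid search for the parameter pair that minimizes the prediction."""
    return min(product(powers, speeds), key=lambda pv: model(*pv))

# Hypothetical candidate grid
best = inverse_design(toy_surrogate, range(150, 401, 50), range(600, 1601, 200))
print("suggested (power W, speed mm/s):", best)
```

In practice the grid search would run only after `rmse` on the held-out experiments is acceptably low, and the suggested parameters would be confirmed with a new build.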

Protocol 3: Fabrication and Actuation of Magnetic Polymer Composites

This protocol outlines the synthesis and testing of polymer composites for untethered magnetic robotics, highlighting specific PSPP considerations [9].

Composite Processing and Anisotropy Programming:

- Objective: To fabricate a soft polymer composite with homogeneously dispersed or directionally assembled magnetic particles.

- Method: Mix magnetic particles (e.g., NdFeB microflakes, Fe₃O₄ nanospheres) into a thermoset precursor (e.g., uncured silicone) or a thermoplastic melt. To induce magnetic anisotropy, apply an external magnetic field during the curing or solidification process. This causes particles to align into chains, programming a directional magnetic response into the material's structure [9].

Thermal Considerations During Processing:

- Objective: To preserve the programmed magnetization during fabrication.

- Method: Carefully control processing temperatures. Processing above the polymer matrix's glass transition (Tg) or melting (Tm) temperature can unintentionally demagnetize the fillers. Also keep processing temperatures below the Curie temperature (Tc) of the magnetic filler to avoid erasing the pre-programmed magnetic structure [9].
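A simple pre-processing check along these lines might look like the sketch below. The class and function names, the safety margin, and the example temperatures are all hypothetical placeholders chosen for illustration; actual Tg, Tm, and Curie temperatures should come from the material datasheets or measurements.

```python
from dataclasses import dataclass

@dataclass
class ThermalLimits:
    matrix_transition_c: float  # Tg or Tm of the polymer matrix, in degrees C
    filler_curie_c: float       # Curie temperature of the magnetic filler, in degrees C

def check_processing_temperature(process_temp_c, limits, safety_margin_c=20.0):
    """Return a list of warnings for a planned processing temperature."""
    warnings = []
    if process_temp_c >= limits.matrix_transition_c:
        warnings.append("above matrix Tg/Tm: risk of unintended demagnetization")
    if process_temp_c >= limits.filler_curie_c - safety_margin_c:
        warnings.append("at or near filler Curie temperature: programmed magnetization may be erased")
    return warnings

# Placeholder values for illustration only, not measured material data
limits = ThermalLimits(matrix_transition_c=60.0, filler_curie_c=310.0)
for temp in (25.0, 80.0, 300.0):
    print(temp, check_processing_temperature(temp, limits))
```

Returning a list of warnings rather than a single pass/fail flag keeps the two failure modes separate, so a process engineer can see which limit a planned step violates.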

Actuation Performance Testing:

- Objective: To characterize the locomotion performance of the magnetic robot in response to external magnetic fields.

- Method: Place the fabricated robot in a testing environment (e.g., in a liquid or on a surface) and subject it to controlled, time-varying magnetic fields below 100 mT. Use high-speed cameras to track and quantify locomotion modes (e.g., rolling, crawling, swimming), which are the performance metrics that result directly from the magnetic properties and soft structure of the composite [9].
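Once the camera footage has been reduced to a sequence of centroid positions, basic locomotion metrics can be computed as in the sketch below. The function names, the choice of metrics, and the example track are assumptions made for illustration; the tracking step itself (extracting positions from video) is outside the scope of this snippet.

```python
import math

def path_length(track):
    """Total distance travelled along a list of (x, y) positions."""
    return sum(math.dist(p, q) for p, q in zip(track, track[1:]))

def mean_speed(track, frame_rate_hz):
    """Average speed along the path, in position units per second."""
    duration_s = (len(track) - 1) / frame_rate_hz
    return path_length(track) / duration_s

def straightness(track):
    """Net displacement divided by path length (1.0 means a perfectly straight path)."""
    return math.dist(track[0], track[-1]) / path_length(track)

# Hypothetical centroid positions in mm, recorded at 2 frames per second
track = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]
print(mean_speed(track, frame_rate_hz=2.0), straightness(track))
```

Comparing these metrics across field strengths or frequencies gives a quantitative link from the magnetic properties of the composite to its locomotion performance.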

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key materials and reagents used in the experimental protocols for investigating PSPP relationships in advanced material systems.

Table 3: Key Research Reagent Solutions for Tetrahedron-Related Experiments

| Item Name | Function / Role in Experiment |
|---|---|
| Metal Alloy Powder Blends | Precursors for combinatorial synthesis via Directed Energy Deposition (DED); varying composition to explore its effect on structure and properties [3]. |
| Magnetic Fillers (NdFeB, Fe₃O₄) | Functional particles incorporated into polymer matrices to impart magnetic responsiveness, enabling the actuation of soft robots [9]. |
| Thermoset Polymer Precursors | Low-viscosity resins (e.g., silicone elastomers) that serve as the matrix for composites, allowing particle mixing and alignment before cross-linking [9]. |
| FAIR-Compliant Datasets | Curated, Findable, Accessible, Interoperable, and Reusable data from experiments/simulations; the essential "reagent" for training and validating data-driven PSP models [20] [1]. |
| Gaussian Process Regression Models | A class of non-parametric, probabilistic machine learning models used as surrogate models for predicting process-structure and structure-property relationships with uncertainty quantification [20]. |

From Theory to Therapy: Applying the PSPP Framework in Pharmaceutical R&D

Integrating the Tetrahedron into the Pharmaceutical Research Cycle

The development of a new drug product is a complex, interdisciplinary endeavor that requires a deep understanding of the Active Pharmaceutical Ingredient (API) beyond its molecular structure. The "materials tetrahedron", a conceptual framework illustrating the interdependence of processing, structure, properties, and performance, provides a powerful paradigm for navigating this complexity [21] [11]. Within pharmaceutical sciences, this framework is pivotal for ensuring that a lead solid form possesses the requisite bioavailability, physical and chemical stability, and manufacturability for successful development and commercialization [21]. The relationship between the internal structure of a solid form, its properties, and its performance within a drug product has been specifically described within a "pharmaceutical materials science" tetrahedron [21]. This section details how the systematic integration of this tetrahedron framework into the pharmaceutical research cycle de-risks development and accelerates the creation of robust, high-quality medicines.

The Tetrahedron Framework in Pharmaceutical Development

Core Elements of the Pharmaceutical Tetrahedron

In the pharmaceutical context, the four vertices of the tetrahedron take on specific, critical meanings:

- Processing: This encompasses the synthesis and manufacturing parameters of the API, including crystallization conditions (solvent, temperature, rate), milling, drying, and subsequent formulation steps. It defines the pathway to create the final drug product.

- Structure: This refers to the solid-form landscape of the API, including its molecular conformation, crystal packing, polymorphism, and potential formation of salts, co-crystals, hydrates, or solvates. The three-dimensional crystal packing arrangement is a primary determinant of material properties [21].

- Properties: These are the physicochemical characteristics resulting from the structure, such as solubility, dissolution rate, chemical stability, hygroscopicity, flowability, and mechanical properties (e.g., hardness, compaction).

- Performance: This represents the critical quality attributes (CQAs) of the final drug product, including therapeutic efficacy (influenced by bioavailability), stability throughout its shelf life, and manufacturability at scale.

The power of the framework lies in the dynamic interrelationships between these elements; a change in one necessarily affects the others. For instance, a change in crystallization processing can lead to a different polymorphic structure, which alters the solubility (properties), ultimately impacting the drug's bioavailability (performance) [21].

The Digital Twin and Informatics-Based Risk Assessment

Modern pharmaceutical development is augmenting the classical tetrahedron with digital tools. The concept of a materials–information twin tetrahedra (MITT) has been proposed, creating a "digital twin" for the materials tetrahedron [11]. This parallel information tetrahedron manages the data, representations, and workflows that describe the physical system, enabling predictive in-silico approaches.

A key application of this informatics-based approach is the Solid Form Health Check [21]. This is a digital risk assessment workflow that compares the crystal structure of a candidate API to knowledge derived from the Cambridge Structural Database (CSD). It analyzes:

- Intramolecular geometry to identify high-energy conformations.

- Hydrogen-bond parameters to identify weak interactions.

- Donor-acceptor pairings to rank interaction likelihoods based on statistical models [21].

This analysis, which can be performed in a matter of days once a crystal structure is obtained, provides invaluable early insight into potential stability risks and influences experimental design throughout the API development process [21].
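The core comparison in such a check, testing whether an observed geometric parameter is unusual relative to a reference distribution, can be sketched as below. This snippet does not call the CSD Python API; the reference samples, parameter names, and the z-score threshold are hypothetical placeholders standing in for distributions that would in practice be derived from the CSD.

```python
from statistics import mean, stdev

def unusual_parameters(observed, reference, z_threshold=2.0):
    """Flag observed parameters that lie far from their reference distribution.

    observed:  dict mapping parameter name -> value in the candidate structure
    reference: dict mapping parameter name -> list of values from reference structures
    """
    flags = {}
    for name, value in observed.items():
        samples = reference[name]
        z = (value - mean(samples)) / stdev(samples)
        if abs(z) > z_threshold:
            flags[name] = round(z, 2)
    return flags

# Hypothetical reference samples (not real CSD data)
reference = {
    "N-H...O distance (A)": [2.85, 2.90, 2.88, 2.95, 2.92, 2.87, 2.91, 2.89],
    "amide torsion (deg)": [178.0, 175.5, 179.2, 176.8, 177.4, 180.0, 174.9, 178.6],
}
observed = {"N-H...O distance (A)": 3.20, "amide torsion (deg)": 177.0}
print(unusual_parameters(observed, reference))
```

A z-score is a deliberately simple criterion that assumes a roughly unimodal distribution. Torsion angles in particular are often multimodal, in which case a histogram- or density-based measure of how populated the observed value is would be more appropriate.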

Implementation: An Integrated Workflow for Solid Form De-risking

The following workflow, combining informatics, energetic calculations, and targeted experimentation, exemplifies the tetrahedron's integration into the pharmaceutical research cycle.

Experimental Protocol: A Combined Informatics and Energetic Health Check

This methodology is designed to comprehensively understand the solid form landscape of a given API and proactively identify stability risks [21].

- Objective: To ensure the selection of a thermodynamically or kinetically stable solid form for development by evaluating known polymorphs and assessing the risk of new forms emerging.

- Materials: The API of interest (e.g., PF-06282999, a molecule with conformational flexibility and multiple hydrogen bond donors/acceptors); materials for solvent-based and solid-state screening (various solvents, milling equipment); computational resources for informatics and density functional theory (DFT) calculations [21].

Step-by-Step Procedure:

Solid Form Screening:

- Execute a comprehensive polymorph screen using techniques like solvent-mediated transformation, slurrying, vapor sorption, and cryomilling to experimentally identify as many solid forms as possible [21].

- Characterize all discovered forms (e.g., Forms 1, 2, 3, and 4) using techniques like X-ray Powder Diffraction (XRPD) and thermal analysis.

Informatics Health Check:

- Obtain crystal structures for all identified forms.

- Using the CSD Python API, perform a Health Check analysis on each structure [21].

- Compare intramolecular geometry (bond lengths, angles, torsion angles) of the API to relevant fragments in the CSD to identify high-energy conformations.

- Analyze hydrogen-bond parameters (e.g., D-H···A distances and angles) against CSD-derived distributions to identify weak or suboptimal interactions.

- Rank hydrogen bond donor-acceptor pairings based on statistical modeling of their observed frequency in the CSD [21].

Energetic Calculations:

- Perform gas-phase Density Functional Theory (DFT) calculations to determine the relative conformational energies of the molecules as they exist in the different crystal structures.

- Calculate the lattice energies of the predicted and observed crystal structures to understand their relative thermodynamic stability [21].

- Integrate these energy calculations with the informatics analysis to gauge the risk associated with each form. A high-energy conformation in a crystal structure may indicate metastability.

Data Integration and Risk Assessment:

- Synthesize the findings from the experimental screening, informatics analysis, and energetic calculations.

- Create a relative stability ranking (e.g., Form 2 is most stable, Form 1 is metastable) to guide the final solid-form nomination [21].
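The final integration step can be sketched as a small ranking routine that combines relative lattice energies with the number of informatics flags per form. The form labels, energies, flag counts, and risk thresholds below are hypothetical placeholders for illustration; they are not the PF-06282999 results, and the risk rules are an assumption rather than the scheme used in [21].

```python
def rank_forms(forms, energy_window_kj_mol=5.0, max_flags=1):
    """Rank solid forms by lattice energy (most negative first) and assign a coarse risk label."""
    lowest = min(f["lattice_energy_kj_mol"] for f in forms)
    ranked = []
    for form in sorted(forms, key=lambda f: f["lattice_energy_kj_mol"]):
        relative = form["lattice_energy_kj_mol"] - lowest
        if relative > energy_window_kj_mol or form["health_flags"] > max_flags:
            risk = "high"
        elif relative == 0 and form["health_flags"] == 0:
            risk = "low"
        else:
            risk = "medium"
        ranked.append({"form": form["form"], "relative_energy_kj_mol": relative, "risk": risk})
    return ranked

# Placeholder inputs: lattice energies from calculations, flag counts from the informatics check
forms = [
    {"form": "B", "lattice_energy_kj_mol": -147.5, "health_flags": 0},
    {"form": "C", "lattice_energy_kj_mol": -141.0, "health_flags": 3},
    {"form": "A", "lattice_energy_kj_mol": -150.0, "health_flags": 0},
]
for row in rank_forms(forms):
    print(row)
```

Keeping the thresholds as explicit parameters makes the risk criteria visible and adjustable, which matters because calculated energy differences between polymorphs are often small compared with the uncertainty of the method.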

The workflow for this integrated de-risking strategy is outlined in the diagram below.

Quantitative Data from a Case Study: PF-06282999

A study on PF-06282999 demonstrates the quantitative output of this workflow. The table below summarizes the key findings for its four polymorphs [21].

Table 1: Solid Form Health Check and Stability Analysis for PF-06282999 Polymorphs [21]

| Form ID | Relative Stability | Informatics Health Check Summary | Energetic Analysis (DFT) | Form Nomination Risk |
|---|---|---|---|---|
| Form 2 | Most Stable | Favorable hydrogen bonding and geometry. | Lowest lattice energy. | Low Risk |
| Form 1 | Metastable | Good hydrogen bonding; intramolecular geometry within populated distributions. | Higher energy than Form 2. | Medium Risk (Metastable) |
| Form 3 | Metastable | Reasonable hydrogen bonding; geometry within database distributions. | Higher energy than Form 2. | Medium Risk (Metastable) |
| Form 4 | Least Stable | Poor hydrogen bonding; unfavorable intramolecular geometry. | Highest lattice energy; high conformational strain. | High Risk |

The Scientist's Toolkit: Essential Reagents and Materials

Successful implementation of this integrated workflow requires specific computational and experimental tools.

Table 2: Research Reagent Solutions for Tetrahedron-Based Solid Form Development

| Item / Reagent | Function / Explanation | Application in Workflow |
|---|---|---|
| Cambridge Structural Database (CSD) | A database of over 1.3 million small-molecule organic and metal-organic crystal structures used for informatics-based risk assessment and knowledge-based analysis [21]. | Informatics Health Check |
| CSD Python API | A programming interface that allows for partial automation of the Health Check analysis against the CSD [21]. | Informatics Health Check |
| Density Functional Theory (DFT) | A computational method for electronic structure calculations used to determine lattice energies and conformational metastability of crystal structures [21]. | Energetic Calculations |
| Crystal Structure Prediction (CSP) | A resource-intensive computational method to predict possible crystal structures ranked by their relative energies, used to assess the risk of unobserved polymorphs [21]. | Energetic & Risk Analysis |
| Solid Form Screening Kits | Collections of solvents and materials for executing high-throughput experimentation to explore the polymorphic landscape (e.g., via solvent-mediated transformation, slurrying) [21]. | Experimental Screening |

The integration of the materials tetrahedron into the pharmaceutical research cycle moves solid-form development from an empirical exercise to a predictive, knowledge-driven science. By systematically exploring the PSPP relationships and leveraging modern tools like informatics health checks, computational chemistry, and the digital twin concept, researchers can de-risk the development process. This integrated approach ensures the selection of a robust solid form, safeguarding drug product performance from the API manufacturing campaign through to the patient, and ultimately accelerating the delivery of new therapies.

The development of advanced drug delivery systems represents a significant challenge in modern medicine, requiring materials that are precisely engineered for performance, safety, and biodegradability. Polyhydroxyalkanoates (PHAs), a diverse class of microbially synthesized polyesters, have emerged as promising candidates for this application. This case study examines the design of PHA-based drug delivery systems through the conceptual framework of the materials tetrahedron, which illustrates the fundamental interrelationships between processing, structure, properties, and performance [16] [5]. This framework provides a systematic approach for materials scientists and engineers to understand how manipulation at one vertex of the tetrahedron necessarily induces changes throughout the entire system.