Streamlining HTS Assay Validation: A Practical Guide for Robust and Reproducible Drug Discovery

Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive framework for streamlining the validation of High-Throughput Screening (HTS) assays. It covers the foundational principles of HTS and the importance of validation, then examines the choice between biochemical and cell-based assays and the application of key quality metrics. It also addresses common troubleshooting and optimization challenges, such as false positives and data bottlenecks, and establishes validation and comparative analysis protocols that support screen reproducibility and reliable hit identification. By integrating current best practices and emerging trends such as AI and 3D models, the guide aims to improve efficiency and success rates in early-stage drug discovery.

The Bedrock of Success: Core Principles and Business Case for HTS Assay Validation

High-Throughput Screening Technical Support Center

Defining HTS and Its Pivotal Role in Modern Drug Discovery

High-Throughput Screening (HTS) is an automated method for scientific discovery that enables researchers to rapidly conduct millions of chemical, genetic, or pharmacological tests using robotics, data processing software, liquid handling devices, and sensitive detectors [1]. This approach allows for the systematic screening of vast compound libraries to identify active molecules ("hits") that modulate specific biomolecular pathways, providing crucial starting points for drug design and understanding biological interactions [1] [2].

In modern drug discovery, HTS has evolved from manual methods into a sophisticated, integrated process. It removes traditional bottlenecks by testing thousands to millions of compounds in parallel, accelerating hit identification and lead optimization while reducing costs through miniaturization and automation [3] [4]. Ultra-high-throughput screening (uHTS) systems can screen over 100,000 compounds per day, and recent microfluidic technologies enable even greater throughput [1] [4].

Key HTS Applications in Drug Discovery

| Application Area | Specific Uses | Impact on Drug Discovery |

|---|---|---|

| Target Identification | Screening compound libraries against novel disease targets [5] | Identifies starting points for therapeutic development |

| Hit Identification | Primary screening of large compound libraries [3] | Rapidly identifies active compounds from thousands of candidates |

| Lead Optimization | SAR studies, potency testing, selectivity profiling [3] [5] | Refines drug candidates for improved efficacy and safety |

| Toxicity Screening | Cytotoxicity assays, metabolic stability testing [4] | Early identification of potential safety issues |

| Mechanism of Action | Pathway analysis, target engagement studies [6] | Elucidates how compounds produce biological effects |

HTS Troubleshooting Guides

Common Experimental Issues and Solutions

Problem: High False Positive Rates

Symptoms: Compounds appear active in initial screening but fail confirmation; irregular plate patterns; edge effects.

Potential Causes and Solutions:

- Assay Interference: Some compounds may interfere with detection methods. Solution: Implement counter-screens and use orthogonal assay technologies to confirm hits [3] [7].

- DMSO Incompatibility: High DMSO concentrations can affect assay results. Solution: Ensure final DMSO concentration is ≤1% for cell-based assays and test DMSO compatibility during assay validation [8].

- Compound Contamination: Contaminants in compound libraries. Solution: Use quality-controlled libraries and verify compound purity [5].

- Insufficient Controls: Lack of proper controls for normalization. Solution: Include positive and negative controls on every plate [1] [8].

Problem: Poor Assay Reproducibility

Symptoms: High well-to-well variability; inconsistent results between plates; day-to-day fluctuations.

Potential Causes and Solutions:

- Liquid Handling Inconsistency: Manual pipetting variability. Solution: Implement automated liquid handling systems with verification features (e.g., DropDetection technology) [7].

- Reagent Instability: Degradation of critical reagents. Solution: Conduct stability studies for all reagents; establish proper storage conditions [8].

- Environmental Fluctuations: Temperature or humidity changes. Solution: Implement environmental monitoring and control systems.

- Protocol Deviations: Lack of standardized procedures. Solution: Develop detailed SOPs and automate processes where possible [7].

Problem: Inadequate Signal-to-Noise Ratio

Symptoms: Poor distinction between positive and negative controls; low Z-factor values; difficulty identifying true hits.

Potential Causes and Solutions:

- Assay Design Issues: Inadequate separation between maximum and minimum signals. Solution: Optimize assay conditions through signal window experiments [8].

- Detection Limitations: Insensitive detection methods. Solution: Implement more sensitive detection technologies (e.g., TR-FRET, fluorescence polarization) [9] [5].

- Incubation Time: Suboptimal reaction times. Solution: Conduct time-course experiments to determine optimal incubation periods [8].

Data Quality Assessment and Metrics

Key Quality Control Parameters

| Quality Metric | Calculation Formula | Acceptable Range | Interpretation |

|---|---|---|---|

| Z'-factor | 1 - 3(σ₊ + σ₋) / \|μ₊ - μ₋\| | 0.5 - 1.0 [5] | Excellent assay robustness |

| Signal-to-Background Ratio | Mean Signal / Mean Background | ≥3:1 [1] | Sufficient signal separation |

| Signal-to-Noise Ratio | (Mean Signal - Mean Background) / SD Background | ≥5:1 [1] | Adequate signal detection |

| Coefficient of Variation (CV) | (Standard Deviation / Mean) × 100 | <10% [5] | Acceptable well-to-well variability |

| Strictly Standardized Mean Difference (SSMD) | (Mean₁ - Mean₂) / √(SD₁² + SD₂²) | >3 for strong hits [1] | Effect size measurement |
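
The formulas in the table above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the function name `qc_metrics`, the dictionary keys, and the example well readings are assumptions made for this sketch, not part of any cited tool. It treats the positive-control wells as "signal" and the negative-control wells as "background".

```python
from math import sqrt
from statistics import mean, stdev


def qc_metrics(pos, neg):
    """Compute plate QC metrics from control-well readings.

    pos: readings from positive-control (signal) wells
    neg: readings from negative-control (background) wells
    """
    mu_p, mu_n = mean(pos), mean(neg)
    sd_p, sd_n = stdev(pos), stdev(neg)  # sample standard deviations
    return {
        "z_prime": 1 - 3 * (sd_p + sd_n) / abs(mu_p - mu_n),
        "s_b": mu_p / mu_n,                  # signal-to-background
        "s_n": (mu_p - mu_n) / sd_n,         # signal-to-noise
        "cv_pos_pct": 100 * sd_p / mu_p,     # CV of positive controls
        "cv_neg_pct": 100 * sd_n / mu_n,     # CV of negative controls
        "ssmd": (mu_p - mu_n) / sqrt(sd_p**2 + sd_n**2),
    }


# Hypothetical control readings (arbitrary units)
pos = [1000, 1020, 980, 1010, 990]
neg = [100, 110, 90, 105, 95]
metrics = qc_metrics(pos, neg)
print({name: round(value, 2) for name, value in metrics.items()})
```

With these made-up readings the Z'-factor comes out at roughly 0.92 and the S/B at 10, both inside the acceptable ranges listed in the table.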

Streamlining Validation for HTS Assays

Essential Validation Protocols

Plate Uniformity and Signal Variability Assessment

Purpose: To evaluate well-to-well and plate-to-plate consistency in assay performance [8].

Experimental Design:

- Conduct over 2-3 days using independent reagent preparations

- Test three critical signals: "Max" (maximum signal), "Min" (background signal), and "Mid" (midpoint signal)

- Use interleaved-signal format with systematic well placement

- Maintain consistent DMSO concentration throughout [8]

Procedure:

- Prepare assay plates according to standardized layout

- For agonist assays: "Max" = maximal cellular response; "Min" = basal signal; "Mid" = EC₅₀ concentration of reference agonist

- For inhibitor assays: "Max" = EC₈₀ concentration of agonist; "Min" = EC₈₀ agonist + maximal inhibitor; "Mid" = EC₈₀ agonist + IC₅₀ inhibitor

- Run complete assay procedure with all controls

- Measure signals using appropriate detection method

- Repeat across multiple days with fresh preparations [8]

Data Analysis:

- Calculate Z'-factor for each plate

- Determine signal window and coefficient of variation

- Assess positional effects across the plate

- Verify consistent mid-point accuracy
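
One simple way to look for positional effects is to compare row and column means against the plate mean for wells of a single signal type. The sketch below is an assumption-laden illustration: the function name `positional_deviation`, the 10% flagging threshold, and the small example plate are all hypothetical, and real plate-uniformity analyses may use more formal drift and edge-effect tests.

```python
from statistics import mean


def positional_deviation(plate, threshold_pct=10.0):
    """Flag rows/columns whose mean deviates from the plate mean.

    plate: list of rows, each a list of readings for ONE signal type
           (e.g., only the "Max" wells, laid out on a regular grid).
    threshold_pct: hypothetical cutoff, in percent of the plate mean.
    """
    overall = mean(v for row in plate for v in row)
    row_dev = [100 * (mean(row) - overall) / overall for row in plate]
    col_dev = [100 * (mean(col) - overall) / overall for col in zip(*plate)]
    flagged_rows = [i for i, d in enumerate(row_dev) if abs(d) > threshold_pct]
    flagged_cols = [j for j, d in enumerate(col_dev) if abs(d) > threshold_pct]
    return row_dev, col_dev, flagged_rows, flagged_cols


# Hypothetical 4 x 6 grid where the last column reads low (an edge effect)
plate = [[100, 100, 100, 100, 100, 70] for _ in range(4)]
_, col_dev, rows, cols = positional_deviation(plate)
print(rows, cols)  # [] [5]
```

A flagged edge row or column would point toward evaporation or thermal gradients, while a flagged interior column is more suggestive of a dispenser channel problem.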

Reagent Stability and Compatibility Studies

Purpose: To establish shelf-life and handling conditions for critical assay components [8].

Experimental Design:

- Test stability under storage conditions (frozen, refrigerated)

- Evaluate freeze-thaw cycle tolerance

- Assess working solution stability at assay temperature

- Determine DMSO compatibility range [8]

Procedure:

- Prepare multiple aliquots of critical reagents

- Store under different conditions (-80°C, -20°C, 4°C, room temperature)

- Test activity at predetermined timepoints

- Subject to multiple freeze-thaw cycles (if applicable)

- Test assay performance with DMSO concentrations from 0-10%

- Use standardized assay conditions for all tests [8]

Data Analysis:

- Compare activity to fresh preparations

- Establish acceptable storage duration and conditions

- Determine maximum tolerable DMSO concentration

- Define quality acceptance criteria for reagents
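
The DMSO tolerance step can be reduced to a small calculation once the titration is run. The following sketch assumes a hypothetical acceptance rule, namely that the signal must stay at or above 90% of the DMSO-free signal; both that cutoff and the function name `max_tolerated_dmso` are illustrative choices rather than values taken from the cited guidance.

```python
def max_tolerated_dmso(signal_by_dmso, min_fraction=0.9):
    """Return the highest tested DMSO % that still meets the cutoff.

    signal_by_dmso: dict mapping DMSO concentration (% v/v) to mean signal;
                    must include a 0.0 entry as the reference.
    min_fraction:   hypothetical cutoff, as a fraction of the 0% signal.
    The scan stops at the first concentration that falls below the cutoff,
    so a later "recovery" at higher DMSO is not accepted.
    """
    reference = signal_by_dmso[0.0]
    tolerated = 0.0
    for conc in sorted(signal_by_dmso):
        if signal_by_dmso[conc] / reference < min_fraction:
            break
        tolerated = conc
    return tolerated


# Hypothetical titration across the 0-10% range
titration = {0.0: 1000, 0.5: 990, 1.0: 960, 2.0: 910, 5.0: 700, 10.0: 300}
print(max_tolerated_dmso(titration))  # 2.0
```

The same pattern applies to freeze-thaw or storage-time data: replace the DMSO concentration with the cycle count or timepoint and compare against the fresh preparation.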

Streamlined Validation Framework

For prioritization applications where HTS assays identify high-concern subsets of chemicals, a streamlined validation approach can be implemented while maintaining reliability [6]. This framework includes:

- Increased Use of Reference Compounds: Demonstrate assay reliability and relevance using well-characterized reference compounds with established biological effects [6].

- Modified Cross-Laboratory Testing: De-emphasize extensive multi-laboratory testing for prioritization applications, focusing instead on internal reproducibility [6].

- Expedited Peer Review: Implement transparent, web-based review processes that recognize the quantitative nature of HTS data [6].

- Fitness-for-Purpose Evaluation: Establish relevance through ability to detect key biological events with documented links to adverse outcomes [6].

HTS Technical FAQs

Assay Development and Validation

Q: What are the essential steps for validating a new HTS assay? A: A comprehensive validation includes: (1) Stability and process studies for all reagents [8], (2) Plate uniformity assessment over 2-3 days testing Max, Min, and Mid signals [8], (3) Replicate-experiment study to establish reproducibility [8], and (4) Determination of key quality metrics including Z'-factor, signal-to-noise ratio, and CV [1] [5].

Q: How do I determine the appropriate number of replicates for my HTS assay? A: The replication strategy depends on the screening stage. Primary screens often run without replicates using methods like z-score that assume consistent variability [1]. Confirmatory screens should include replicates (typically 2-3) to enable variability estimation for each compound using t-statistic or SSMD methods [1].

Q: What is the difference between full validation and assay transfer? A: Full validation requires 3-day plate uniformity studies and comprehensive performance characterization for new assays [8]. Assay transfer for previously validated assays moving to a new laboratory requires only 2-day plate uniformity studies and replicate-experiment studies to confirm equivalent performance [8].
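
To illustrate the z-score approach mentioned for unreplicated primary screens, the sketch below scores each well against the plate's sample wells. It also includes a median/MAD variant, which is a commonly used outlier-resistant alternative; that variant, the function names, and the example readings are assumptions added for illustration.

```python
from statistics import mean, median, stdev


def z_scores(values):
    """Classic z-score: distance from the mean in sample-SD units."""
    mu, sd = mean(values), stdev(values)
    return [(v - mu) / sd for v in values]


def robust_z_scores(values):
    """Median/MAD z-score; 1.4826 scales the MAD to match the SD
    for normally distributed data."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    scale = 1.4826 * mad
    if scale == 0:
        raise ValueError("MAD is zero; robust z-score is undefined")
    return [(v - med) / scale for v in values]


# Hypothetical inhibition screen: the last well is a strong inhibitor
wells = [100, 102, 98, 101, 99, 103, 97, 100, 40]
plain = z_scores(wells)
robust = robust_z_scores(wells)
hits_plain = [i for i, z in enumerate(plain) if z <= -3]
hits_robust = [i for i, z in enumerate(robust) if z <= -3]
print(hits_plain, hits_robust)  # [] [8]
```

In this small example the active well inflates the standard deviation enough that its own plain z-score never reaches -3, while the median-based score flags it clearly. This is one reason outlier-resistant statistics are often preferred for hit selection.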

Technical Troubleshooting

Q: How can I reduce false positives in my HTS campaigns? A: Implement multiple strategies: (1) Use confirmatory screens with slightly modified conditions [3], (2) Employ orthogonal assays with different detection methods [3], (3) Include interference counterscreens [5], (4) Apply robust statistical methods (e.g., z*-score, SSMD*) that are less sensitive to outliers than the plain z-score [1], and (5) Use concentration-response testing (qHTS) when possible [1] [2].

Q: What are the most common sources of variability in HTS? A: Major variability sources include: (1) Liquid handling inconsistencies (addressed by automation) [7], (2) Reagent stability issues (mitigated by proper storage and handling) [8], (3) Environmental fluctuations (temperature, humidity), (4) Cell passage number and condition (for cell-based assays), and (5) Operator technique (reduced through automation and SOPs) [7].

Q: How can automation improve my HTS results? A: Automation enhances HTS by: (1) Reducing human error and variability [7], (2) Increasing throughput and efficiency [3], (3) Enabling miniaturization (reducing reagent consumption by up to 90%) [7], (4) Improving data quality through verification features (e.g., drop detection) [7], and (5) Standardizing processes across users and sites [7].

Research Reagent Solutions

Essential Materials for HTS Assays

| Reagent Category | Specific Examples | Function in HTS | Quality Considerations |

|---|---|---|---|

| Detection Reagents | Fluorescent probes, Luminescent substrates, Antibodies | Enable signal generation for activity measurement | Batch-to-batch consistency, Stability, Minimal background interference [9] [5] |

| Enzymes/Targets | Kinases, Proteases, GPCRs, Ion channels | Primary biological targets for screening | Activity validation, Purity, Appropriate storage conditions [8] |

| Cell Lines | Engineered reporter lines, Primary cells, Stem cell-derived models | Provide physiological context for cellular assays | Authentication, Passage number control, Mycoplasma testing [4] |

| Compound Libraries | Small molecule collections, Natural product extracts, Fragment libraries | Source of potential drug candidates | Purity verification, Solubility, Structural diversity [3] [5] |

| Microplates | 96-, 384-, 1536-well formats | Miniaturized reaction vessels | Surface treatment, Well geometry, Optical clarity [1] [2] |

| Buffer Components | Salts, Detergents, Cofactors, Substrates | Maintain optimal assay conditions | Grade/purity, Compatibility, Stability [8] |

Advanced Detection Technologies

| Technology | Principle | Applications | Advantages |

|---|---|---|---|

| Fluorescence Polarization (FP) | Measures molecular rotation changes upon binding | Receptor-ligand interactions, Enzyme activity [5] | Homogeneous format, No separation steps [9] |

| TR-FRET | Time-resolved fluorescence resonance energy transfer | Protein-protein interactions, Post-translational modifications [5] | Reduced background, High sensitivity [9] |

| Surface Plasmon Resonance (SPR) | Measures biomolecular interactions in real-time | Binding kinetics, Affinity measurements [9] | Label-free, Provides kinetic data [9] |

| Scintillation Proximity Assay (SPA) | Radiation-based detection when molecules bind to beads | Radioactive assays, Receptor binding [9] | Homogeneous format, No separation steps [9] |

| High-Content Screening | Multiparametric imaging of cellular phenotypes | Cytotoxicity, Morphological changes, Subcellular localization [3] | Rich data collection, Multiple endpoints [3] |

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing Poor Assay Robustness (Low Z'-factor)

Symptoms: High data variability, inconsistent results between plates, inability to distinguish true signals from background noise.

Troubleshooting Steps:

- Recalculate your Z'-factor. A Z'-factor between 0.5 and 1.0 indicates an excellent assay. Values below 0.5 require investigation [10] [11].

- Check reagent integrity. Prepare fresh positive and negative control reagents to rule out degradation [12].

- Investigate liquid handling precision. Use a dye-based test to verify that dispensers are delivering accurate and consistent volumes across the entire microplate, especially in 384-well and 1536-well formats [10] [12].

- Mitigate edge effects. Pre-incubate assay plates at room temperature after seeding to allow for thermal equilibration. Use plate sealers or humidified incubators to minimize evaporation in edge wells [10] [12].

- Optimize assay signal window. If the dynamic range between positive and negative controls is small, re-examine assay component concentrations (e.g., enzyme, substrate) or incubation times [10].

Guide 2: Mitigating High Rates of False Positives

Symptoms: Compounds identified as "hits" in the primary screen fail in confirmatory assays; activity is due to non-specific interference rather than true target engagement.

Troubleshooting Steps:

- Run an orthogonal assay. Confirm hits using a secondary assay with a different detection technology (e.g., switch from fluorescence to mass spectrometry) to rule out method-specific interference [12] [13].

- Perform a counter-screen. Test compounds in an assay that detects common interference mechanisms, such as aggregation-based inhibition or fluorescence quenching [12].

- Apply computational filters. Use software to flag compounds containing Pan-Assay Interference Compounds (PAINS) substructures or other undesirable chemical motifs [12] [13].

- Review hit chemical structures. Manually inspect the structures of potential hits for known reactive functional groups or impurities that could cause artifacts [13].

- Optimize assay conditions. Include detergents like Triton X-100 or BSA in the assay buffer to disrupt compound aggregation [12].

Frequently Asked Questions (FAQs)

Q1: What are the most critical statistical metrics for validating an HTS assay, and what are their acceptable ranges?

A: The following metrics are essential for quantifying assay robustness [10] [11]:

Table: Key Quality Control Metrics for HTS Assay Validation

| Metric | Definition | Excellent Range | Purpose |

|---|---|---|---|

| Z'-factor | A measure of assay robustness and signal dynamic range, incorporating the separation band and data variation of both positive and negative controls. | 0.5 to 1.0 [11] | Assesses the overall quality and suitability of an assay for HTS. |

| Signal-to-Background (S/B) | The ratio of the mean signal of the positive control to the mean signal of the negative control. | >3 (assay-dependent) [10] | Indicates the strength of the measurable signal. |

| Signal Window (SW) | Similar to S/B, but accounts for variability of the controls. | >3 (assay-dependent) [10] | A more robust indicator of signal strength than S/B. |

| Coefficient of Variation (CV) | The ratio of the standard deviation to the mean, expressed as a percentage. | <10% [10] | Measures the well-to-well and plate-to-plate reproducibility of controls. |
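
The table describes the signal window without giving a formula. One commonly used per-well definition divides the gap between the 3-SD bands of the two controls by the standard deviation of the high-signal control; the sketch below assumes that definition. The function name `signal_window` and the example readings are hypothetical.

```python
from statistics import mean, stdev


def signal_window(high, low):
    """Signal window, assuming the definition
    SW = (|mean_high - mean_low| - 3 * (sd_high + sd_low)) / sd_high
    where `high` and `low` are the high- and low-signal control wells.
    """
    mu_h, mu_l = mean(high), mean(low)
    sd_h, sd_l = stdev(high), stdev(low)
    return (abs(mu_h - mu_l) - 3 * (sd_h + sd_l)) / sd_h


# Hypothetical control readings (arbitrary units)
high = [1000, 1020, 980, 1010, 990]
low = [100, 110, 90, 105, 95]
print(round(signal_window(high, low), 1))
```

Because the numerator is the same separation band that appears in the Z'-factor, an assay with heavily overlapping control distributions yields a negative signal window under this definition.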

Q2: Our assay performs well manually but fails in the automated HTS workflow. What are the common causes?

A: This is a frequent challenge when transitioning from bench to automation. Key areas to investigate are [10] [12]:

- Liquid Handling Precision: Automated dispensers may be less accurate with low-volume, viscous, or solvent-containing solutions. Calibrate instruments and use non-contact dispensers for better accuracy.

- Timing and Incubation: Automated workflows have fixed time points. Ensure that reaction kinetics and incubation times are compatible with the robotic system's speed.

- Material Compatibility: Some assay reagents (e.g., proteins, cells) may adsorb to plastic tubing or reservoirs in automated systems. Use low-binding plates and surface-treated tubing.

- Solvent Tolerance: Verify that the assay can tolerate the concentration of DMSO or other solvents used to dissolve the compound library, as this can affect protein stability and cellular health [12].

Q3: What is "Plate Drift" and how can we correct for it?

A: Plate drift is a systematic temporal error where the assay's signal window or statistical performance changes over the duration of a screening run. This can be caused by reagent degradation, instrument warm-up, or environmental fluctuations [10].

Mitigation Strategies:

- Pre-run Instrument Calibration: Allow plate readers and detectors to warm up fully before starting a screen.

- Strategic Control Placement: Distribute positive and negative controls across the entire plate (e.g., in a checkerboard pattern) rather than just in the first and last columns. This allows for spatial correction of signals during data analysis.

- Plate Design: Include control wells on every plate to normalize for plate-to-plate variation [10] [12].
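
Per-plate normalization and a basic drift check can be sketched as follows. The example assumes an inhibition assay in which uninhibited wells define 0% and fully inhibited wells define 100%; the function names, the use of a least-squares slope over run order, and the example numbers are illustrative assumptions rather than a prescribed method.

```python
from statistics import mean


def percent_inhibition(raw, uninhibited_mean, inhibited_mean):
    """Normalize a raw reading to the controls on the SAME plate."""
    return 100 * (uninhibited_mean - raw) / (uninhibited_mean - inhibited_mean)


def drift_slope(control_means):
    """Least-squares slope of a per-plate control mean against run order.

    A slope near zero suggests a stable run; a consistent trend suggests
    drift (e.g., reagent degradation or instrument warm-up).
    """
    xs = list(range(len(control_means)))
    x_bar, y_bar = mean(xs), mean(control_means)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, control_means))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


# Hypothetical uninhibited-control means for five plates, in run order
run = [1000, 990, 980, 970, 960]
print(drift_slope(run))                    # -10.0 signal units per plate
print(percent_inhibition(550, 1000, 100))  # 50.0
```

Normalizing each plate to its own controls absorbs most plate-to-plate drift in the reported activity values, while tracking the slope shows whether the raw signal is degrading fast enough to threaten the signal window late in the run.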

Experimental Protocols

Protocol: Assay Validation for High-Throughput Screening

This protocol outlines the key steps for validating a biochemical or cell-based assay before a full-scale HTS campaign.

1. Define Assay Objectives

- Clearly state the biological question and the desired output (e.g., identify inhibitors of Enzyme X).

2. Develop a Miniaturized Protocol

- Scale down the assay to the desired microplate format (e.g., 384-well). Optimize concentrations of all components (enzyme, substrate, cells, co-factors) for the smaller volume [10].

3. Establish Controls

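- Positive Control: A compound or condition known to produce the full assay response (e.g., a known potent inhibitor giving complete inhibition in an inhibition assay).

- Negative Control: A compound or condition known to produce no response (e.g., vehicle-only wells such as DMSO, in which the target remains fully active). A no-enzyme well, by contrast, mimics complete inhibition in a biochemical assay and serves as a background reference [10] [12].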

4. Perform a Plate Uniformity Test

- Run at least one full microplate containing only positive and negative controls distributed across the entire plate.

- Calculate the Z'-factor, S/B, SW, and CVs. The assay is not ready for HTS until these metrics are consistently within acceptable ranges [10] [11].

5. Conduct a Compound Tolerance Test

- Test the assay's tolerance to the solvent (typically DMSO) and a small set of diverse compounds to check for interference [12].

6. Assess Inter-day Reproducibility

- Repeat the plate uniformity test on three separate days to ensure the assay is robust over time [12].
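
The go/no-go decision in steps 4 and 6 can be expressed as a simple check over per-day metrics. The sketch below uses the acceptance ranges quoted in this guide's QC tables (Z'-factor of at least 0.5, S/B above 3, CV below 10%) as default thresholds; the function name, dictionary keys, and example values are hypothetical, and the thresholds should be adjusted to the assay.

```python
def validate_across_days(daily_metrics, min_z_prime=0.5, min_s_b=3.0,
                         max_cv_pct=10.0):
    """Check per-day plate-uniformity metrics against acceptance criteria.

    daily_metrics: list of dicts with keys "z_prime", "s_b", "cv_pct",
                   one dict per validation day, in order.
    Returns (passed, failures), where failures lists (day, metric) pairs.
    """
    failures = []
    for day, m in enumerate(daily_metrics, start=1):
        if m["z_prime"] < min_z_prime:
            failures.append((day, "z_prime"))
        if m["s_b"] <= min_s_b:
            failures.append((day, "s_b"))
        if m["cv_pct"] >= max_cv_pct:
            failures.append((day, "cv_pct"))
    return (not failures, failures)


# Hypothetical three-day validation run; day 3 fails two criteria
days = [
    {"z_prime": 0.72, "s_b": 8.1, "cv_pct": 4.5},
    {"z_prime": 0.68, "s_b": 7.9, "cv_pct": 5.2},
    {"z_prime": 0.41, "s_b": 7.5, "cv_pct": 11.0},
]
passed, failures = validate_across_days(days)
print(passed, failures)  # False [(3, 'z_prime'), (3, 'cv_pct')]
```

Returning the list of failing metrics, rather than only a pass/fail flag, makes it easier to decide which troubleshooting path to follow before repeating the test.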

Essential Research Reagent Solutions

Table: Essential Materials for HTS Assay Development and Validation

| Item | Function | Key Considerations |

|---|---|---|

| Microplates | The platform for miniaturized, parallel reactions. | Choose well density (96, 384, 1536), surface treatment (e.g., tissue-culture treated, low-binding), and material (e.g., polystyrene, polypropylene) based on assay needs [10]. |

| Liquid Handling Systems | Automated dispensers for precise, high-speed transfer of reagents and compounds. | Select between tip-based (for larger volumes) and non-contact acoustic dispensers (for nanoliter volumes) to minimize reagent use and cross-contamination [14] [12]. |

| Detection Reagents | Chemistries that generate a measurable signal (e.g., fluorescence, luminescence). | Select robust, homogeneous ("mix-and-read"), and interference-resistant reagents. Universal detection methods (e.g., ADP detection for kinases) can simplify workflows [11]. |

| Control Compounds | Pharmacologically active tools that define the upper and lower limits of the assay signal. | Source high-purity, well-characterized compounds for reliable results. Their performance is the benchmark for all QC metrics [10] [12]. |

| Compound Library | A curated collection of small molecules or biologics for screening. | Quality is paramount. Libraries should be designed for diversity and drug-likeness, and stored properly to minimize degradation and precipitation [15] [11]. |

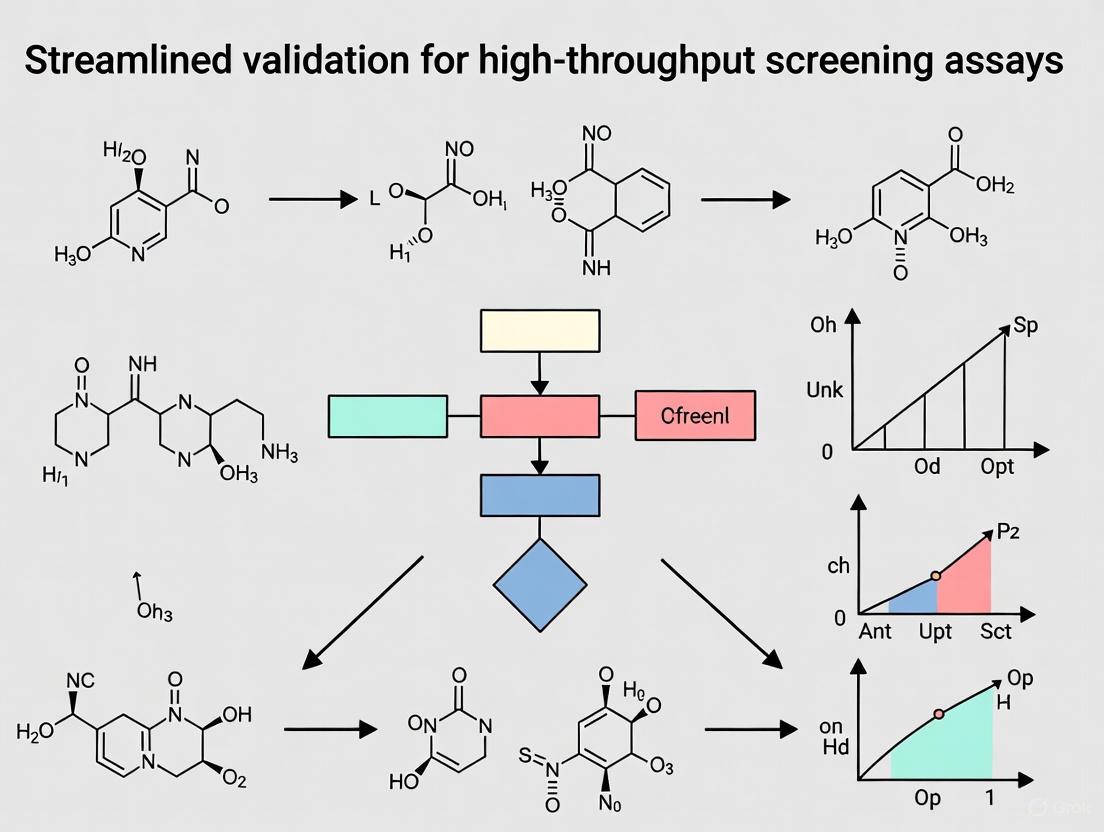

Workflow Visualization

[Figure: HTS Assay Validation Workflow. Logical workflow and decision points for validating a high-throughput screening assay.]

[Figure: QC Metrics Inform Decision. Relationship between the key quality control metrics used to monitor assay performance.]

Troubleshooting Guides

Guide 1: Addressing Poor Reproducibility in Technical Replicates

Problem: High variability between replicate screening runs leads to unreliable data and inconsistent hit identification.

Investigation & Resolution:

- Check Traditional Quality Control (QC) Metrics: Begin by calculating standard plate-based QC metrics. The Z'-factor is a robust statistical parameter for assessing assay quality. A Z'-factor between 0.5 and 1.0 is considered excellent, indicating a high-quality, reproducible assay [16] [17].

- Analyze for Spatial Artifacts: Plates passing traditional QC may still harbor systematic spatial errors that compromise reproducibility [17]. Inspect raw data heatmaps for patterns like edge effects, column-wise striping, or gradients indicative of pipetting errors, evaporation, or temperature drift.

- Implement Advanced QC Metrics: Calculate the Normalized Residual Fit Error (NRFE), a control-independent metric that detects systematic artifacts in drug-containing wells by analyzing deviations in dose-response curves [17].

- Action Threshold: Plates with an NRFE >15 should be excluded or carefully reviewed, as they show a 3-fold lower reproducibility among technical replicates. Plates with NRFE between 10-15 require additional scrutiny [17].

- Verify Liquid Handling Systems: Calibrate robotic liquid handlers to minimize pipetting inaccuracies that cause column/row-wise artifacts. For critical applications, consider systems with non-contact liquid dispensing [14] [17].

Summary of Key QC Metrics:

| Metric | Target Value | Purpose | Limitation |

|---|---|---|---|

| Z'-factor [16] [17] | > 0.5 (Excellent) | Assesses assay robustness by measuring the separation between positive and negative controls. | Relies only on control wells; cannot detect spatial artifacts in sample wells. |

| NRFE [17] | < 10 (Acceptable) | Identifies systematic spatial errors and poor dose-response fitting directly from drug-well data. | Does not replace Z'-factor; should be used as a complementary, orthogonal metric. |

| Signal-to-Background (S/B) [17] | > 5 | Measures the ratio of mean signals from positive and negative controls. | Weak correlation with other QC metrics; less reliable alone [17]. |
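
The thresholds above lend themselves to a small triage function. The sketch below assumes the NRFE value has already been computed elsewhere (for example, by a dedicated QC package) and only applies the cutoffs quoted in this guide; the function name and the returned labels are hypothetical.

```python
def classify_plate(z_prime, nrfe):
    """Triage a plate using control-based and control-independent QC.

    z_prime: Z'-factor computed from the plate's control wells
    nrfe:    precomputed Normalized Residual Fit Error for the plate
    Cutoffs follow the text: NRFE > 15 exclude or review, 10-15 scrutinize,
    Z'-factor below 0.5 investigate.
    """
    if nrfe > 15:
        return "exclude_or_review"
    if nrfe >= 10:
        return "scrutinize"
    if z_prime < 0.5:
        return "investigate_controls"
    return "accept"


# Hypothetical plates: (Z'-factor, NRFE)
for z, n in [(0.70, 5), (0.70, 12), (0.70, 20), (0.30, 5)]:
    print(z, n, classify_plate(z, n))
```

Checking NRFE first reflects the point made in the table: a plate can pass on its controls and still carry spatial artifacts in the sample wells, so a good Z'-factor alone does not lead to acceptance here.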

Guide 2: Mitigating False-Positive Hits

Problem: A high rate of false-positive hits wastes resources on follow-up studies for invalid leads.

Investigation & Resolution:

- Identify Assay Interference Mechanisms:

  - Compound Fluorescence/Absorbance: Test compounds that interfere with optical detection methods (e.g., fluorescence, luminescence) are a common cause [13].

  - Chemical Reactivity: Compounds with reactive functional groups can cause undesirable chemical reactions with assay components [13].

  - Colloidal Aggregation: Molecules can form aggregates that non-specifically inhibit enzymes [13].

  - Mechanism-Specific Interference: Even mass spectrometry (MS)-based screens such as RapidFire MRM, which are free from classical artefacts, have their own recently identified false-positive mechanisms [18].

- Employ Orthogonal Assay Technologies: Confirm initial hits using a detection method with a different readout technology. For example, confirm a fluorescence-based HTS hit using a mass spectrometry-based assay, which is less susceptible to optical interference [18].

- Implement Counter-Screens: Develop secondary assays designed specifically to identify common interferents. For instance, use detergent-based counterscreens to break up compound aggregates [19].

- Apply In-Silico Triage: Use computational filters, such as Pan-Assay Interference Compound (PAINS) filters, to flag compounds with substructures known to cause false positives [13] [16]. Machine learning models trained on historical HTS data can also help rank compounds by their probability of being true hits [13].

Guide 3: Ensuring End-to-End Data Integrity

Problem: Data integrity issues undermine the validity of the entire screening campaign and its conclusions.

Investigation & Resolution:

- Automate Data Capture: Integrate instrumentation with a Laboratory Information Management System (LIMS) to automate data transfer, minimizing manual entry errors [20].

- Ensure Regulatory Compliance: For screens supporting regulatory filings, systems must comply with standards like 21 CFR Part 11, which sets requirements for electronic records and signatures [20].

- Standardize Data Formats: Use standardized data formats (e.g., ASTM, HL7) to facilitate seamless communication between different instruments and software components, reducing errors and increasing efficiency [20].

- Implement Robust Data Analysis Pipelines: Employ automated pipelines that integrate multiple QC metrics (like Z'-factor and NRFE) to systematically flag unreliable data and improve cross-dataset correlation [17].

Frequently Asked Questions (FAQs)

Q1: Our HTS assay has a good Z'-factor (>0.5), but we still see poor reproducibility between replicates. What could be wrong? A: The Z'-factor only assesses control wells and can miss spatial artifacts in the drug wells [17]. We recommend implementing the Normalized Residual Fit Error (NRFE) metric, which evaluates quality directly from the drug response data. Plates with high NRFE (>15) show significantly lower reproducibility, even with a passing Z'-factor [17].

Q2: What is the most effective strategy to minimize false positives from our screening campaigns? A: A multi-pronged approach is most effective:

- Careful Assay Design: Use simple, mix-and-read assays without coupling enzymes to reduce complexity and opportunities for artefacts [16] [18].

- Orthogonal Confirmation: Always confirm primary hits with a secondary assay that uses a different detection technology (e.g., mass spectrometry) [18].

- Computational Triage: Apply PAINS filters and machine learning models to flag likely interferents during data analysis [13].

Q3: What are the key performance metrics for validating a new HTS assay? A: A well-validated HTS assay should be robust, reproducible, and sensitive. Key metrics to report include [16] [17]:

- Z'-factor: > 0.5 indicates an excellent assay.

- Signal-to-Noise Ratio (S/N) & Signal Window: To distinguish active from inactive compounds.

- Coefficient of Variation (CV): Across wells and plates.

- Dynamic Range: The assay's ability to measure a wide range of responses.

Q4: How is Artificial Intelligence (AI) helping to overcome HTS challenges? A: AI and machine learning are reshaping HTS by [14] [13]:

- Analyzing Massive Datasets: AI enables predictive analytics and advanced pattern recognition to identify potential drug candidates from HTS data with unprecedented speed and accuracy.

- Reducing False Positives: ML models trained on historical HTS data can help triage output and rank compounds by their probability of success.

- Optimizing Processes: AI supports process automation, minimizing manual intervention in repetitive tasks to accelerate workflows and reduce human error.

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in HTS |

|---|---|

| Microplates (96-, 384-, 1536-well) [20] [13] | Miniaturized assay formats that maximize throughput while minimizing reagent use. |

| Liquid Handling Robots & Automation Systems [14] [20] | Precisely dispense nanoliter to microliter volumes for efficient sample preparation and assay setup. |

| Cell-Based Assays [14] [13] | Provide physiologically relevant data by replicating complex biological systems for drug discovery and disease research. |

| Biochemical Assays (e.g., Transcreener) [16] | Measure direct enzyme activity (kinases, GTPases, etc.) in a defined system for highly quantitative, interference-resistant readouts. |

| CRISPR-based Screening Systems (e.g., CIBER) [14] | Enable genome-wide functional studies to identify gene functions and regulators of biological processes. |

| Mass Spectrometry (MS) Detection [18] | Provides a direct, label-free method for detecting enzyme reaction products, free from classical fluorescence-based artefacts. |

| QC Software Packages (e.g., plateQC R package) [17] | Provides a robust toolset for calculating advanced metrics like NRFE to enhance data reliability and consistency. |

This technical support center is designed to help researchers navigate the challenges of selecting and validating high-throughput screening (HTS) assays. Choosing the right assay format—biochemical, cell-based, or phenotypic—is critical for generating reliable, reproducible data that accurately reflects biological activity. Each approach offers distinct advantages and limitations that must be carefully considered within the context of your screening goals, whether for target identification, hit validation, or lead optimization. The following guides and FAQs provide practical troubleshooting advice and methodological frameworks to streamline your assay validation process and improve the translational potential of your screening outcomes.

Assay Format Comparison

Table 1: Key Characteristics of Major Screening Assays

| Parameter | Biochemical Assays | Cell-based Assays | Phenotypic Screening |

|---|---|---|---|

| Core Principle | Measures interaction with or modulation of a purified target (e.g., enzyme inhibition) [21] | Measures compound effect in a live cellular environment, often on a specific pathway or reporter [22] [21] | Identifies compounds that produce a desired cellular or organismal phenotype without a predefined molecular target [23] [21] |

| Complexity | Defined system with minimal components [21] | More complex than biochemical, but target/pathway is often known or engineered [24] | Highly complex biological system; target is typically unknown at outset [23] |

| Throughput | Typically very high [21] | High [24] | Can be high, but often lower due to complex readouts [25] |

| Key Advantage | High precision, controlled conditions, direct mechanism of action (MOA) [21] | Cellular context provides permeability and early toxicity data [24] | Potential for novel biology and first-in-class therapies; biologically relevant [23] |

| Primary Challenge | May not reflect cellular physiology (e.g., compound permeability, off-target effects) [26] | Reproducibility can be affected by cell status (passage number, culture conditions) [27] [24] | Hit triage and target deconvolution are complex and time-consuming [23] |

| Typical Readouts | Fluorescence, TR-FRET, Absorbance, Luminescence [26] [21] | Luminescence, Fluorescence, Cell Viability, High-Content Imaging [22] [24] | High-Content Imaging, Morphological Changes, Behavioral Changes (in vivo) [25] |

Troubleshooting Guides

General Microplate Assay Setup

Table 2: Common Microplate Reader and Assay Setup Issues

| Problem | Potential Cause | Solution |

|---|---|---|

| High Background | Incorrect microplate color (e.g., using clear for fluorescence) [28] | Use black microplates for fluorescence, white for luminescence, and clear for absorbance [28]. |

| | Insufficient washing [29] | Increase wash number; add a 30-second soak step between washes [29]. |

| | Autofluorescence from media components [28] | Use imaging-optimized media or PBS+; utilize bottom optics for reading [28]. |

| High Variability (Poor Duplicates) | Pipetting errors [22] | Use calibrated multichannel pipettes; prepare a master mix for reagents [22]. |

| | Uneven cell seeding or coating [29] | Ensure homogeneous cell suspension; check coating procedure and plate quality [29]. |

| | Instrument setting issues [28] | Increase the number of flashes for fluorescence/absorbance reads; use well-scanning for uneven samples [28]. |

| Weak or No Signal | Low transfection efficiency (reporter assays) [22] | Test and optimize DNA-to-transfection reagent ratios [22]. |

| | Non-functional or old reagents [22] [29] | Use newly prepared reagents; check substrate stability (e.g., luciferin) [22]. |

| | Incorrect instrument setup (TR-FRET) [26] | Verify the correct emission and excitation filters are installed for your assay [26]. |

| Poor Assay-to-Assay Reproducibility | Variations in cell culture conditions [27] | Use consistent passage numbers, seeding densities, and media batches [27] [24]. |

| | Reagent or protocol variations [29] | Adhere strictly to the same protocol; use fresh buffers and plate sealers for each run [29]. |

Assay Troubleshooting Workflow

Biochemical Assay Specific Issues

Problem: No Assay Window in TR-FRET

- Cause: The most common reason is an incorrect choice of emission filters. Unlike other fluorescence assays, TR-FRET is highly dependent on using the exact filters recommended for your instrument [26].

- Solution: Consult instrument setup guides for your specific microplate reader model. Test your reader's TR-FRET setup with known control reagents before running your actual assay [26].

Problem: Differences in EC50/IC50 Between Labs

- Cause: This is often traced back to differences in the preparation of stock compound solutions [26].

- Solution: Standardize the preparation and storage of stock solutions across collaborating labs. Verify compound solubility and stability.

Cell-based Assay Specific Issues

Problem: Weak Signal in Luciferase Reporter Assays

- Cause: Low transfection efficiency, non-functional reagents, or a weak promoter [22].

- Solution:

- Check the quality of your plasmid DNA and the functionality of your luciferase reagents.

- Systematically test different ratios of plasmid DNA to transfection reagent to find the optimal condition.

- If possible, replace the promoter with a stronger one [22].

Problem: High Variability in Luciferase Assays

- Cause: Pipetting errors, using different reagent batches between experiments, or unstable luminescent reagents [22].

- Solution:

- Prepare a master mix for your working solution.

- Use a luminometer with an injector to dispense the bioluminescent reagent.

- Normalize your data using an internal control reporter, such as in a dual-luciferase assay system (e.g., firefly vs. Renilla luciferase) [22].

Problem: Signal Interference in Bioluminescent Assays

- Cause: Some test compounds (e.g., resveratrol, certain flavonoids or dyes) can inhibit the luciferase enzyme or quench the signal [22].

- Solution:

- Avoid known inhibitory compounds where possible.

- Include proper controls to identify interference.

- Lower the concentration of the test compound or modify the incubation time [22].

Phenotypic Screening Specific Issues

Problem: Difficulties with Hit Triage and Validation

- Cause: Unlike target-based screening, phenotypic hits act through a variety of unknown mechanisms within a large biological space, making it difficult to prioritize and validate them [23].

- Solution: Successful triage is enabled by leveraging biological knowledge in three key areas: known mechanisms of action, underlying disease biology, and safety profiles. Avoid relying solely on structure-based triage in the early stages, as it may be counterproductive to discovering novel biology [23].

Frequently Asked Questions (FAQs)

Q1: What is a Z'-factor, and what value should I aim for? The Z'-factor is a key metric for assessing the quality and robustness of an HTS assay. It takes into account both the assay window (the difference between the maximum and minimum signals) and the data variation (the standard deviations of those signals) [26]. A Z'-factor between 0.5 and 1.0 indicates an excellent assay that is suitable for screening, with strong separation between your positive and negative controls [26] [21].

Q2: When should I use biochemical vs. cell-based assays? The choice depends on your goal. Use biochemical assays when you need to understand the direct interaction between a compound and a purified target (e.g., enzyme inhibition) and require high precision and throughput [21]. Use cell-based assays when you need the cellular context to account for factors like membrane permeability, metabolism, or toxicity, and when studying a specific pathway or reporter in a live environment [24] [21].

Q3: How can I improve the reproducibility of my cell-based assays? Reproducibility in cell-based assays can be improved by:

- Using consistent cell passage numbers and seeding densities [27].

- Moving towards more defined and consistent cell models, such as human iPSC-derived cells with reduced batch-to-batch variability [24].

- Strictly adhering to the same protocols and environmental conditions (e.g., incubation time and temperature) across experiments [29].

Q4: What are the key considerations for transitioning from immortalized cell lines to iPSC-derived models? Human iPSC-derived models offer greater human physiological relevance but can suffer from poor purity and batch variability with conventional differentiation protocols [24]. Next-generation deterministic programming technologies (e.g., opti-ox) can generate highly consistent iPSC-derived cells (ioCells), which help reduce variability at the source and provide more reproducible, scalable systems for phenotypic screening [24].

Q5: How do I handle hits from a phenotypic screen where the mechanism of action is unknown? Hit triage for phenotypic screening should be guided by biological knowledge rather than purely structural information. Focus on known mechanisms of action, disease biology, and safety considerations to prioritize compounds for further investigation. This approach is more likely to lead to successful validation and novel target discovery [23].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Screening Assays

| Item | Function/Application | Key Considerations |

|---|---|---|

| Microplates (96-, 384-, 1536-well) | The physical platform for running miniaturized, high-throughput assays [21]. | Color matters: Use clear for absorbance, black for fluorescence, white for luminescence [28]. Avoid cell culture-treated plates for absorbance, as they increase meniscus formation [28]. |

| TR-FRET Detection Kits | Enable homogeneous, ratiometric assays for targets like kinases (LanthaScreen Eu) [26]. | Filter selection is critical. The acceptor/donor emission ratio corrects for pipetting variance and reagent variability [26]. |

| Dual-Luciferase Reporter Assay System | Allows normalization of experimental reporter (Firefly) to a co-transfected control reporter (Renilla) [22]. | Crucial for reducing variability caused by differences in transfection efficiency and cell viability [22]. |

| Transcreener HTS Assays | Universal biochemical assays detecting ADP or GDP for various enzyme classes (kinases, GTPases) [21]. | Offers a flexible, mix-and-read format (FP, FI, TR-FRET) for multiple targets, streamlining the screening process [21]. |

| Human iPSC-derived Cells (e.g., ioCells) | Provide a human-relevant, consistent, and scalable cell source for phenotypic and target-based screening [24]. | Look for defined identity and high lot-to-lot consistency to ensure assay reproducibility and reduce background noise [24]. |

| White LED Light Box & IR Cameras | Essential equipment for behavioral phenotypic screening in model organisms like zebrafish [25]. | Allows precise control of light/dark stimuli and high-quality tracking of movement for high-throughput analysis [25]. |

Assay Selection Decision Tree

The Economic and Scientific Impact of a Streamlined Validation Process

This technical support center provides troubleshooting guides and FAQs to help researchers and scientists overcome common challenges in high-throughput screening (HTS) assay validation. A streamlined validation process is crucial for accelerating drug discovery, reducing costs, and ensuring data integrity and reproducibility.

Troubleshooting Common HTS Validation Issues

Q: How can I reduce variability and improve reproducibility in my HTS assays?

A: High inter-user variability and manual errors are primary sources of irreproducibility, with over 70% of researchers reporting an inability to reproduce others' work [7]. Implement these solutions:

- Automate Liquid Handling: Use non-contact dispensers with integrated verification features. For example, the I.DOT Liquid Handler's DropDetection technology verifies dispensed volumes, identifying and documenting errors in real-time [7].

- Standardize Protocols: Develop exhaustive validation plans detailing every step and resource. Use automated, mix-and-read assay formats to minimize manual steps and variability [30] [31].

- Conduct Plate Uniformity Studies: Assess signal variability over multiple days using interleaved-signal plate formats with Max, Min, and Mid controls to establish baseline performance [8].

Q: My HTS assay is producing a high rate of false positives/negatives. What steps should I take?

A: False results lead to wasted resources and missed opportunities [7]. Troubleshoot using the following approach:

- Validate Assay Performance Metrics: Ensure your assay meets robust statistical standards. A Z'-factor > 0.5 indicates a robust assay suitable for HTS. Calculate the Signal-to-Background ratio (S/B) and Control Coefficient of Variation (CV) to monitor quality [31] [10].

- Check Reagent Stability and DMSO Tolerance: Determine the stability of all reagents under storage and assay conditions. Conduct DMSO compatibility tests early in validation, typically using concentrations from 0% to 10%, but aim to keep final DMSO under 1% for cell-based assays [8].

- Implement Orthogonal Assays: Use universal assay platforms (e.g., Transcreener) that detect common enzymatic products (like ADP for kinases) for broader applicability and confirmation of primary screen hits [31].

Q: What are the best strategies for managing and analyzing the vast amounts of data generated by HTS?

A: HTS produces vast volumes of multiparametric data that can be challenging to manage [7].

- Automate Data Management: Use automated data pipelines and analysis software. Implement Z-score normalization or Percent Inhibition/Activation calculations to convert raw signals into biologically meaningful metrics [7] [10].

- Leverage AI and Machine Learning: Apply AI-driven analytics for predictive modeling and pattern recognition. These tools can analyze massive HTS datasets, optimize compound libraries, and streamline assay design, significantly reducing time to identify drug candidates [14].

- Establish Rigorous QC Metrics: Continuously monitor the Z'-factor, S/B ratio, and CV throughout the screen. Plates failing pre-defined QC thresholds (e.g., Z' < 0.5) should be flagged or repeated [10].
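The normalization step mentioned above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the function names, the control values, and the convention that Max controls represent 0% inhibition are assumptions made for the example.

```python
import statistics

def percent_inhibition(raw, max_mean, min_mean):
    """Percent inhibition of one well relative to the plate controls.

    Assumed convention: max_mean is the mean of the uninhibited (Max)
    controls (0% inhibition) and min_mean is the mean of the fully
    inhibited (Min) controls (100% inhibition).
    """
    return 100.0 * (max_mean - raw) / (max_mean - min_mean)

def z_scores(values):
    """Z-score of each sample well relative to all sample wells on the plate."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)  # sample standard deviation
    return [(v - mean) / sd for v in values]

# Hypothetical raw signals from one plate
max_controls = [1000.0, 980.0, 1020.0]
min_controls = [100.0, 110.0, 90.0]
samples = [990.0, 1005.0, 550.0, 975.0, 1010.0]

max_mean = statistics.mean(max_controls)
min_mean = statistics.mean(min_controls)
inhibition = [percent_inhibition(s, max_mean, min_mean) for s in samples]
scores = z_scores(samples)

for raw, inh, z in zip(samples, inhibition, scores):
    print(f"raw={raw:7.1f}  inhibition={inh:6.1f}%  z={z:6.2f}")
```

Percent inhibition depends on the plate controls, while the Z-score depends only on the distribution of sample wells, so the two can disagree on plates with many actives or with drifting controls.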

Economic Impact of Streamlined Validation

Streamlining the validation process directly enhances research efficiency and reduces operational costs, offering a significant return on investment.

Table: Economic Benefits of Streamlined HTS Validation

| Benefit Area | Impact of Streamlining | Quantitative Evidence |

|---|---|---|

| Reagent Cost Reduction | Automation enables miniaturization, drastically reducing reagent consumption. | Cost reduction by up to 90% through miniaturization [7]. |

| Increased Throughput | Automated systems screen large compound libraries more efficiently. | Screening thousands of compounds in a short timeframe; 5-fold improvement in hit identification rates [14] [32]. |

| Reduced Development Timelines | Faster, more reliable validation and screening accelerates drug discovery. | HTS can reduce development timelines by approximately 30% [32]. |

| Capital Efficiency | Focused screening via AI triage optimizes resource use. | AI/ML in-silico triage can shrink required wet-lab library size by up to 80% [33]. |

Essential Experimental Protocols for Robust Validation

Protocol 1: Plate Uniformity and Signal Variability Assessment

This protocol evaluates the robustness and signal window of an assay before a full-scale screen [8] [10].

- Objective: To assess day-to-day and within-plate variability and ensure adequate signal separation.

- Plate Layout: Use an interleaved-signal format on 96- or 384-well plates. The plate should contain three types of control wells distributed in a pre-defined pattern:

- Max Signal (H): Represents the maximum assay response (e.g., uninhibited enzyme activity, maximal agonist response).

- Min Signal (L): Represents the background or minimum signal (e.g., fully inhibited enzyme, basal cellular response).

- Mid Signal (M): Represents a mid-point signal (e.g., IC50 or EC50 concentration of a control compound).

- Procedure: Run at least three plates per day for three separate days using independently prepared reagents.

- Data Analysis:

- Calculate the Z'-factor for each day and overall: Z' = 1 - [3*(σpositive + σnegative) / |μpositive - μnegative|].

- An assay is considered excellent if Z' > 0.5, and marginal if Z' falls between 0 and 0.5 [10].

- Analyze data for spatial patterns or drift across the plate.
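As a rough sketch of the data analysis step, the Z'-factor can be computed per plate and grouped by day. The data structure, the readings, and the use of the sample standard deviation are assumptions for illustration; a real study would use the full set of Max and Min wells from three plates on each of three days.

```python
import statistics

def z_prime(max_wells, min_wells):
    """Z' = 1 - 3*(sd_max + sd_min) / |mean_max - mean_min|."""
    spread = 3.0 * (statistics.stdev(max_wells) + statistics.stdev(min_wells))
    window = abs(statistics.mean(max_wells) - statistics.mean(min_wells))
    if window == 0:
        raise ValueError("Max and Min control means are identical")
    return 1.0 - spread / window

def z_prime_by_day(study):
    """Return {day: [Z' of each plate run on that day]}."""
    return {day: [z_prime(h, l) for h, l in plates]
            for day, plates in study.items()}

# Hypothetical, shortened data set: {day: [(max_wells, min_wells), ...]}
study = {
    "day1": [([100.0, 110.0, 90.0], [10.0, 12.0, 8.0]),
             ([105.0, 95.0, 100.0], [11.0, 9.0, 10.0])],
    "day2": [([98.0, 102.0, 100.0], [10.0, 13.0, 7.0])],
}

for day, values in z_prime_by_day(study).items():
    print(day, [round(z, 2) for z in values], "lowest:", round(min(values), 2))
```

Reporting the lowest per-plate value for each day, rather than only a pooled figure, makes a single bad plate visible.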

Protocol 2: Reagent Stability and DMSO Compatibility Testing

This ensures reagents perform consistently and that the assay tolerates the solvent used for compound libraries [8].

- Reagent Stability:

- Storage Stability: Test reagent activity after storage under proposed conditions (e.g., -80°C, -20°C).

- Freeze-Thaw Stability: Subject reagents to multiple freeze-thaw cycles (e.g., 3-5 cycles) and test activity compared to a fresh aliquot.

- In-Assay Stability: Hold critical reagents for various times at assay temperature before addition to test tolerance for operational delays.

- DMSO Compatibility:

- Prepare assay plates with a final DMSO concentration series (e.g., 0%, 0.5%, 1%, 2%, 5%).

- Run the assay under standard conditions without test compounds.

- Plot the assay signal (e.g., Max and Min) against DMSO concentration. The chosen DMSO concentration should not significantly affect the signal window or Z'-factor.
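One way to summarize a DMSO tolerance series is to express the signal window at each concentration as a fraction of the window at the lowest concentration tested. The sketch below assumes mean Max and Min signals are already available for each concentration; the 90% cutoff and all readings are hypothetical and should be replaced by criteria appropriate to the assay.

```python
def signal_window_by_dmso(readings):
    """readings: {dmso_percent: (mean_max, mean_min)}.

    Returns {dmso_percent: window relative to the lowest DMSO concentration},
    ordered by increasing concentration.
    """
    baseline = min(readings)
    base_max, base_min = readings[baseline]
    base_window = base_max - base_min
    return {conc: (mx - mn) / base_window
            for conc, (mx, mn) in sorted(readings.items())}

def highest_tolerated(readings, min_fraction=0.9):
    """Highest DMSO % for which it and every lower concentration retain
    at least min_fraction of the baseline signal window."""
    tolerated = None
    for conc, fraction in signal_window_by_dmso(readings).items():
        if fraction < min_fraction:
            break
        tolerated = conc
    return tolerated

# Hypothetical mean signals (Max, Min) at each final DMSO concentration
readings = {
    0.0: (1000.0, 100.0),
    0.5: (990.0, 100.0),
    1.0: (960.0, 105.0),
    2.0: (850.0, 110.0),
    5.0: (600.0, 130.0),
}

for conc, fraction in signal_window_by_dmso(readings).items():
    print(f"{conc:4.1f}% DMSO -> {fraction:.2f} of baseline window")
print("Highest tolerated:", highest_tolerated(readings), "%")
```

The same loop can be repeated with the Z'-factor in place of the window if replicate wells are recorded at each concentration.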

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Reagents for HTS Assay Validation

| Reagent / Solution | Function in Validation | Application Notes |

|---|---|---|

| Universal Assay Kits (e.g., Transcreener) | Detects universal products of enzymatic reactions (e.g., ADP, SAH). | Simplifies development for multiple targets within an enzyme family; uses mix-and-read formats (FI, FP, TR-FRET) [31]. |

| Positive Control Agonist/Inhibitor | Generates Max, Mid, and Min signals for statistical validation. | Critical for calculating Z'-factor; used in plate uniformity studies [8]. |

| Cell Viability/Cytotoxicity Assays | Counterscreens for identifying non-specific cytotoxic compounds in cell-based HTS. | Essential for distinguishing specific target modulation from general toxicity [14]. |

| Stable Cell Lines with Fluorescent Reporters | Provides consistent, physiologically relevant models for cell-based assays. | Enables high-content phenotypic screening and complex pathway analysis [14] [33]. |

HTS Assay Validation Workflow

The following diagram illustrates the key stages and decision points in a streamlined HTS assay validation workflow, from initial setup to full-scale screening.

Statistical Decision Process for HTS Validation

This diagram outlines the logical process for analyzing data from a plate uniformity study to determine if an assay is ready for high-throughput screening.

Building a Robust Workflow: Method Selection, Metrics, and Execution

Troubleshooting Guide: Common Issues in Assay Miniaturization

This guide addresses frequent challenges encountered when adapting assays to 384-well and 1536-well formats, providing targeted solutions to ensure robust and reliable results.

1. Problem: Poor Assay Robustness and Low Z′-Factor in 1536-Well Format

- Question: My assay Z′-factor has dropped below 0.5 after moving from a 384-well to a 1536-well plate. What steps can I take to improve robustness?

- Investigation & Solution:

A low Z′-factor often signals high variability or a diminished signal window.

- Check Liquid Handling Precision: At volumes of 5-8 µL, even minor pipetting errors become significant. Verify the calibration and performance of your liquid handler. Use technologies with built-in droplet verification to confirm dispensed volumes [7].

- Re-optimize Reader Settings: Instrument parameters from 384-well formats do not directly translate. You must empirically optimize settings like gain, focal height, and the number of flashes per well for the 1536-well format [34]. For example, one study found gains needed to be increased and the focal height lowered when transitioning to a 1536-well plate [34].

- Combat Evaporation: The high surface-to-volume ratio makes low-volume assays susceptible to evaporation, leading to edge effects and concentration changes. Use effective plate seals, humidity-controlled incubators, or enclosure devices to minimize evaporation [34].

2. Problem: Inconsistent Results Across the Microplate

- Question: I am observing an "edge effect," where wells on the perimeter of my 384-well plate show different activity levels compared to interior wells.

- Investigation & Solution:

Inconsistent results across a plate are frequently caused by environmental or dispensing inhomogeneity.

- Confirm Sealing and Incubation: Ensure plate seals are applied uniformly and are compatible with your incubation conditions. Inadequate sealing exacerbates evaporation and can lead to cross-contamination between wells in high-density formats [34].

- Validate Dispenser Uniformity: Check that your liquid dispenser provides consistent volume delivery across all wells, not just the center. Perform a volume verification test using a colorimetric method across the entire plate [35].

- Review Plate Layout: When possible, avoid confining all critical controls to a single area of the plate. Distributing controls across the plate, including edges and the center, helps identify and account for spatial variations [36].
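A quick numerical check for an edge effect is to compare the mean signal of perimeter wells with that of interior wells on a plate that received the same treatment in every well. The following is a minimal sketch; the function names and the small 4 x 6 example grid are illustrative only, and the acceptable bias is left for the user to define.

```python
import statistics

def split_edge_interior(plate):
    """plate: list of rows, each a list of well signals.

    Returns (edge_values, interior_values), where edge wells are those in
    the first or last row or column.
    """
    n_rows, n_cols = len(plate), len(plate[0])
    edge, interior = [], []
    for r, row in enumerate(plate):
        for c, value in enumerate(row):
            on_edge = r in (0, n_rows - 1) or c in (0, n_cols - 1)
            (edge if on_edge else interior).append(value)
    return edge, interior

def edge_bias_percent(plate):
    """Difference between edge and interior means, as % of the interior mean."""
    edge, interior = split_edge_interior(plate)
    interior_mean = statistics.mean(interior)
    return 100.0 * (statistics.mean(edge) - interior_mean) / interior_mean

# Hypothetical uniform-treatment plate with a depressed perimeter
plate = [
    [90.0, 90.0, 90.0, 90.0, 90.0, 90.0],
    [90.0, 100.0, 100.0, 100.0, 100.0, 90.0],
    [90.0, 100.0, 100.0, 100.0, 100.0, 90.0],
    [90.0, 90.0, 90.0, 90.0, 90.0, 90.0],
]
print(f"Edge bias: {edge_bias_percent(plate):.1f}%")
```

A 384-well plate would be passed as 16 rows of 24 values; the same function also works on dispenser verification data from a colorimetric test.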

3. Problem: High Incidence of False Positives or Negatives

- Question: My miniaturized HTS campaign is yielding an unusually high rate of false positives and negatives.

- Investigation & Solution:

Artifactual results can stem from compound interference or data handling errors.

- Assay Technology: Consider using detection technologies less prone to compound interference. Assays employing far-red fluorescent tracers, for example, can reduce interference from compound autofluorescence, which is a common source of false positives [34] [37].

- Implement Stringent QC Thresholds: Define and enforce pre-set quality control metrics for each plate, such as a minimum Z′-factor (e.g., >0.5) and acceptable control CVs. Any plate failing these criteria should be flagged for re-testing [34] [37].

- Verify Sample Tracking: In high-throughput environments, misidentified assay plates or compound mixes can lead to erroneous results. Implement a robust barcode system for plates and compounds to ensure data integrity from the beginning to the end of the workflow [38].

4. Problem: Software and Hardware Integration Hurdles

- Question: Integrating my new automated liquid handler with the existing Laboratory Information Management System (LIMS) and data analysis software is creating data silos and workflow bottlenecks.

- Investigation & Solution:

Interoperability issues are common in automated workflows involving multiple vendors.

- Adopt a Modular, Vendor-Agnostic Approach: Prioritize systems designed with interoperability in mind. Investing in a modular architecture allows you to swap or add components without disrupting the entire workflow [39].

- Select for API and Open Standards: Choose hardware and software that offer well-documented Application Programming Interfaces (APIs) and support open data standards. This facilitates smoother communication between different systems, such as liquid handlers, plate readers, and data management platforms [39].

- Leverage Integration Platforms: Consider using a unified software platform that acts as a "wrapper" to integrate your disparate systems. Such platforms can manage the entire workflow, from compound management and experiment definition to data analysis, creating a single source of truth [39].

Experimental Protocol: Transitioning a Biochemical Assay to 1536-Well Format

The following detailed methodology, adapted from a Transcreener ADP² assay optimization guide, provides a step-by-step framework for validating assay performance in a 1536-well plate [34].

1. Plate and Reagent Preparation

- Plate Selection: Use a 1536-well low volume plate with black walls and a flat bottom (e.g., Corning #3728) to minimize background fluorescence and maximize signal detection [34].

- Reaction Volume: Scale the total reaction volume down to ~8 µL. Maintain the same reagent ratios that were optimized in your 384-well assay during initial testing [34].
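Scaling a reaction while keeping reagent ratios constant is simple arithmetic, sketched below. The component names and the 20 µL starting volumes are hypothetical and are not taken from the cited guide.

```python
def scale_volumes(volumes_ul, target_total_ul):
    """Scale every component by the same factor so the total equals
    target_total_ul while the ratios between components are unchanged."""
    total = sum(volumes_ul.values())
    factor = target_total_ul / total
    return {name: vol * factor for name, vol in volumes_ul.items()}

# Hypothetical 384-well recipe (20 µL total) scaled to an 8 µL reaction
recipe_384 = {"enzyme_mix": 5.0, "substrate_mix": 5.0, "detection_mix": 10.0}
recipe_1536 = scale_volumes(recipe_384, 8.0)

for name, vol in recipe_1536.items():
    print(f"{name}: {vol:.2f} µL")
```

The computed volumes still need to be checked against the minimum reliable dispense volume of the liquid handler in use.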

2. Instrument Calibration and Setup

- Liquid Handler: Calibrate the liquid handler for precise nanoliter-volume dispensing. Validate performance by dispensing a colored solution and measuring well-to-well uniformity using a plate reader [7] [35].

- Plate Reader: Configure the reader with settings optimized for the 1536-well format. Do not reuse settings from 384-well assays. Example parameters for a BMG PHERAstar Plus are provided in the table below [34].

3. Assay Validation and QC Metrics

- Generate a Standard Curve: Create a dilution series of ADP in the presence of ATP to mimic enzyme conversion (e.g., 0%, 10%, 50%, 100% conversion). This curve validates the assay's ability to detect the product across a dynamic range [34].

- Calculate Z′-Factor: Perform the assay with positive controls (e.g., full reaction) and negative controls (e.g., no enzyme) on the same plate. Calculate the Z′-factor as Z′ = 1 - [3(σp + σn) / |μp - μn|], where σ and μ are the standard deviations and means of the positive (p) and negative (n) controls. A Z′ ≥ 0.7 is recommended for a robust HTS assay [34] [37].

- Pilot Screen: Before running a full-scale screen, execute a pilot campaign of 10,000–50,000 wells to monitor real-world performance, including hit rates, plate-to-plate variability, and throughput [34].
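The composition of each standard curve point can be worked out as below. This sketch assumes the total adenine nucleotide concentration (ADP + ATP) is held constant at the ATP concentration used in the enzyme reaction, so that each point mimics a given percent conversion; that convention and the function name are assumptions for the example.

```python
def standard_curve(total_nucleotide_um, conversions_percent):
    """Return (percent_conversion, adp_um, atp_um) for each curve point,
    keeping ADP + ATP equal to total_nucleotide_um."""
    points = []
    for pct in conversions_percent:
        adp = total_nucleotide_um * pct / 100.0
        atp = total_nucleotide_um - adp
        points.append((pct, adp, atp))
    return points

# Curve mimicking a reaction run at 10 µM ATP
for pct, adp, atp in standard_curve(10.0, [0, 10, 50, 100]):
    print(f"{pct:3d}% conversion: {adp:5.2f} µM ADP + {atp:5.2f} µM ATP")
```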

Key Performance Metrics from a Transcreener ADP² Assay in 1536-Well Format [34]

| ATP Concentration | Z′-factor (at 10% conversion) | ΔmP (Signal Window) |

|---|---|---|

| 1 µM | 0.83 | >95 mP |

| 10 µM | 0.78 | >95 mP |

| 100 µM | 0.87 | >95 mP |

Optimized Plate Reader Settings for 1536-Well Format [34]

| Parameter | 384-Well Setting | 1536-Well Setting |

|---|---|---|

| Gain A | 1550 | 2000 |

| Gain B | 1695 | 2100 |

| Focal Height | 11.2 mm | 9.5 mm |

| Flashes per Well | 50 | 200 |

Frequently Asked Questions (FAQs)

Q1: What is the primary driver for moving from 96-well to 384-well or 1536-well assays? The primary drivers are cost reduction and increased throughput. Miniaturization drastically reduces reagent consumption, especially for precious enzymes and compounds, which can lead to cost savings of up to 90% [7]. Furthermore, 1536-well plates allow researchers to screen hundreds of thousands of compounds in a much smaller footprint and shorter time, significantly accelerating the drug discovery process [40] [34].

Q2: How do I know if my assay is a good candidate for miniaturization to a 1536-well format? Assays with a robust signal-to-background ratio, a homogeneous "mix-and-read" format (no wash steps), and low susceptibility to solvent evaporation are ideal candidates [40] [34]. Biochemical assays that have been successfully run in 384-well format with a high Z′-factor (e.g., >0.7) are excellent starting points. Cell-based assays can be more challenging due to increased complexity but can also be miniaturized with careful optimization.

Q3: What is the most critical parameter to monitor during miniaturization? The Z′-factor is the most critical statistical parameter for assessing assay quality and robustness in an HTS environment. It accounts for both the dynamic range of the assay signal and the variation of the positive and negative controls. A Z′-factor between 0.5 and 1.0 is considered excellent [34] [37].

Q4: Our automated workflow is fast, but we are facing audit findings for data integrity. How can automation help? Automation should be used to enforce controls, not just speed up processes. Ensure your automated systems are configured to maintain a complete and immutable audit trail for all actions, with unique user logins and electronic signatures that comply with 21 CFR Part 11 [41]. Furthermore, integrating barcode tracking for every assay plate and compound tube throughout the workflow prevents misidentification and creates a reliable chain of custody, which is a common source of errors and audit findings [38].

Q5: We use equipment from multiple vendors. How can we ensure they work together seamlessly? To overcome hardware interoperability challenges, invest in a modular and vendor-agnostic software architecture [39]. Work closely with your vendors to understand their API capabilities and driver support. Selecting equipment that supports open standards for communication and data formats, rather than proprietary, closed systems, will significantly ease integration efforts [39].

Assay Miniaturization and Automation Workflow

The diagram below illustrates the key stages and decision points in a successful assay miniaturization and automation project.

The Scientist's Toolkit: Key Research Reagent Solutions

This table lists essential materials and technologies used in the development and execution of miniaturized, automated assays.

| Item | Function in Miniaturized Assays |

|---|---|

| 1536-Well Low Volume Plates | Microplates specifically designed with a small well volume and optimal optical properties for fluorescence-based readouts in ultra-high-throughput screening [34]. |

| Precision Liquid Handler | Automated systems (e.g., non-contact dispensers) capable of accurately and reproducibly dispensing liquid volumes in the microliter to nanoliter range, which is critical for 384-well and 1536-well formats [7]. |

| Homogeneous Assay Kits | Ready-to-use reagent systems (e.g., Transcreener, HTRF) that operate on a "mix-and-read" principle without wash steps, making them ideal for automation and miniaturization [34] [37]. |

| Barcode Labels | Unique identifiers applied to microplates and tube racks that enable reliable, automated tracking of samples and data throughout complex workflows, preventing misidentification [38]. |

| Laboratory Information Management System (LIMS) | Software that manages samples, associated experimental data, and laboratory workflows. It is central to standardizing data and ensuring traceability in an automated environment [39] [38]. |

FAQs on Key Performance Metrics

What is the Z'-factor and why is it the preferred metric for HTS assay quality?

The Z'-factor is a statistical measure used to assess the quality and robustness of high-throughput screening (HTS) assays. It is preferred over simpler metrics like signal-to-background (S/B) ratio because it incorporates both the dynamic range (the difference between the means of the positive and negative controls) and the variability (the standard deviations) of both controls into a single value [42] [43]. This provides a more accurate prediction of an assay's suitability for screening by quantifying how well it can distinguish between positive and negative signals on a large scale [43]. A good Z'-factor indicates that the assay can reliably identify true hits with minimal false positives and false negatives [44].

How do I calculate the Z'-factor, S/N ratio, and CV?

The formulas for calculating these key metrics are as follows:

- Z'-factor: The formula is

Z' = 1 - [3(σp + σn) / |μp - μn|], where σp and σn are the standard deviations of the positive and negative controls, and μp and μn are their means.

- Signal-to-Noise (S/N) Ratio: This is calculated as

S/N = (μp - μn) / σn, where the noise is represented by the variability of the negative control [44].

- Coefficient of Variation (CV): This is calculated as

CV = (σ / μ) * 100%, expressed as a percentage. It represents the ratio of the standard deviation to the mean, showing the extent of variability in relation to the mean signal [46].

My assay has an excellent S/B ratio but a poor Z'-factor. What does this mean?

This is a common scenario that highlights the importance of using Z'-factor. An excellent S/B ratio indicates a large difference between the average positive and negative signals. However, a poor Z'-factor reveals that the data has high variability (large standard deviations) in one or both controls [43]. This means that despite the strong signal, the data distributions overlap significantly, making it difficult to reliably distinguish between true hits and background noise during a screen, leading to potential false positives or negatives [43] [44].
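The scenario can be reproduced numerically. In the sketch below, two hypothetical sets of control wells share the same means, and therefore the same S/B, but differ in spread; the readings are invented for illustration and the sample standard deviation is assumed.

```python
import statistics

def assay_metrics(pos, neg):
    """Compute S/B, S/N, control CVs and Z' from positive and negative control wells."""
    mu_p, mu_n = statistics.mean(pos), statistics.mean(neg)
    sd_p, sd_n = statistics.stdev(pos), statistics.stdev(neg)
    return {
        "S/B": mu_p / mu_n,
        "S/N": (mu_p - mu_n) / sd_n,
        "CV_pos_%": 100.0 * sd_p / mu_p,
        "CV_neg_%": 100.0 * sd_n / mu_n,
        "Z'": 1.0 - 3.0 * (sd_p + sd_n) / abs(mu_p - mu_n),
    }

# Same control means (1000 vs 100), very different variability
tight = assay_metrics([1000, 1020, 980, 1010, 990], [100, 105, 95, 102, 98])
noisy = assay_metrics([1000, 1300, 700, 1200, 800], [100, 140, 60, 120, 80])

for label, metrics in (("tight", tight), ("noisy", noisy)):
    print(label, {name: round(value, 2) for name, value in metrics.items()})
```

Both data sets give an S/B of 10, yet only the tight set yields a Z'-factor above 0.5, which is why S/B alone is not a sufficient acceptance criterion.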

What is an acceptable Z'-factor for my HTS assay?

While the ideal Z'-factor is 1, this is not achievable in practice. The following table provides the standard interpretation guidelines for Z'-factor values in HTS [42] [43] [45]:

| Z'-factor Range | Assay Quality | Interpretation |

|---|---|---|

| 0.8 – 1.0 | Excellent | Ideal separation and low variability. Highly robust for HTS. |

| 0.5 – 0.8 | Good | Suitable for HTS. Clear separation between controls. |

| 0 – 0.5 | Marginal | The assay may be usable but requires optimization for HTS. |

| < 0 | Poor | Significant overlap between controls. Screening is essentially unreliable. |

For complex assays like high-content screening (HCS), a Z'-factor in the marginal range (0 to 0.5) may sometimes be acceptable if the biological hits are considered valuable [45].
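The interpretation table can be turned into a small lookup for use in QC scripts. This is only a sketch: the function name is hypothetical, and because the table's ranges share their end points, assigning each boundary value (0.8, 0.5, 0) to the higher band is an assumption.

```python
def classify_z_prime(z):
    """Map a Z'-factor onto the quality bands from the table above.

    Boundary values are assigned to the higher band (an assumption;
    the table itself lists overlapping end points).
    """
    if z > 1:
        raise ValueError("Z'-factor cannot exceed 1")
    if z >= 0.8:
        return "Excellent"
    if z >= 0.5:
        return "Good"
    if z >= 0:
        return "Marginal"
    return "Poor"


for value in (0.92, 0.65, 0.30, -0.20):
    print(f"Z' = {value:+.2f} -> {classify_z_prime(value)}")
```

For HCS or other complex assays, a "Marginal" label from this function would be a prompt for review rather than an automatic rejection.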

How can I improve a low Z'-factor?

A low Z'-factor can be systematically diagnosed and improved by targeting its components:

- If signal variability (σp) is high: Optimize reagent concentrations, pipetting accuracy, or incubation times for the positive control [43].

- If background variability (σn) is high: Improve washing steps, stabilize buffer conditions, or check for contamination [43].

- If the dynamic range (|μp - μn|) is low: Increase substrate concentration, optimize detection chemistry, or use a stronger positive control to enhance the signal window [43].
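One way to decide which of these three levers to pull is to split the Z' penalty term into its positive-control and negative-control parts, and to ask how wide the signal window would need to be at the current variability. The sketch below does this; the function name, the example statistics, and the default target of 0.5 are illustrative assumptions. The required window follows from rearranging the Z' formula: Z' ≥ t is equivalent to |μp - μn| ≥ 3(σp + σn) / (1 - t).

```python
def diagnose_z_prime(mu_p, sd_p, mu_n, sd_n, target=0.5):
    """Break Z' into its components to show what limits it."""
    window = abs(mu_p - mu_n)
    pos_penalty = 3 * sd_p / window  # share of the penalty due to the positive control
    neg_penalty = 3 * sd_n / window  # share of the penalty due to the negative control
    return {
        "z_prime": 1 - pos_penalty - neg_penalty,
        "pos_penalty": pos_penalty,
        "neg_penalty": neg_penalty,
        "dominant_variability": "positive control" if pos_penalty >= neg_penalty else "negative control",
        # Window that would reach the target Z' if the standard deviations stayed the same.
        "window_needed": 3 * (sd_p + sd_n) / (1 - target),
    }


# Hypothetical summary statistics for a struggling assay.
report = diagnose_z_prime(mu_p=1000.0, sd_p=200.0, mu_n=100.0, sd_n=50.0)
for key, value in report.items():
    print(f"{key}: {value}")
```

In this invented case the positive control contributes most of the penalty, so tightening σp would be the first thing to try. Reaching Z' = 0.5 by window alone would need a signal difference of 1500 units instead of 900.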

Experimental Protocol: Assessing Assay Robustness with a Plate Uniformity Study

This protocol, adapted from the Assay Guidance Manual, is designed to validate assay performance across multiple plates and days, providing robust data for calculating Z'-factor, S/N, and CV [8].

1. Objective

To assess the signal variability, dynamic range, and overall robustness of an HTS assay under conditions that simulate a full-scale screen.

2. Materials and Reagents

- Research Reagent Solutions:

- Positive Control (Max signal): Represents the maximum assay response (e.g., enzyme with saturating substrate, maximal agonist) [8].

- Negative Control (Min signal): Represents the background or baseline signal (e.g., no enzyme, solvent control, full inhibitor) [8].

- Mid-Point Control (Mid signal): An intermediate control (e.g., EC50 or IC50 concentration of a reference compound) to assess variability across the signal range [8].

- Assay buffer and plates (96-, 384-, or 1536-well)

- DMSO at the concentration used for compound delivery

3. Procedure

- Days 1-3: Run the assay on each of the three days, using independently prepared reagents each day.

- Plate Layout: Use an interleaved-signal format on each plate to control for spatial bias. A recommended layout for a 384-well plate is shown below [8].

- Execution: On each day, run multiple plates. Include the intended screening concentration of DMSO in all wells. Use the same plate reader and liquid handling systems intended for the production screen [8].
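To make the interleaved-signal idea concrete, the sketch below generates one possible 384-well layout in which Max, Mid, and Min wells cycle by column, with the starting signal shifted from plate to plate. The column-wise cycling, the three-plate rotation, and all names are assumptions for illustration; consult the layout in [8] for the exact pattern recommended there.

```python
SIGNALS = ("Max", "Mid", "Min")


def interleaved_layout(n_rows=16, n_cols=24, offset=0):
    """Return a row-major grid of control labels, cycling Max/Mid/Min by column."""
    return [
        [SIGNALS[(col + offset) % len(SIGNALS)] for col in range(n_cols)]
        for _ in range(n_rows)
    ]


# Three plates, each shifted by one column, so that every signal type
# occupies every column position somewhere in the set.
plates = [interleaved_layout(offset=k) for k in range(3)]

for k, plate in enumerate(plates, start=1):
    print(f"Plate {k}, row A, columns 1-6:", plate[0][:6])
```

Because each signal type is spread across the whole plate, a left-to-right drift or an edge effect shows up as extra variability within a control type instead of being confounded with it.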

4. Data Analysis

- For each control type (Max, Min, Mid) on each day, calculate the mean (μ) and standard deviation (σ).

- Input these values into the formulas provided above to calculate the Z'-factor, S/N ratio, and CV for the assay.

- The data from the Mid-point control is valuable for understanding how variability might affect partial hits.
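The per-day summary described in this step might be scripted along the following lines. The record format (day, control type, signal), the function names, and the readings are all hypothetical, and the sample standard deviation is again an assumption.

```python
from collections import defaultdict
from statistics import mean, stdev


def summarize(records):
    """Group (day, control, value) records and compute mean, SD, and CV per group."""
    groups = defaultdict(list)
    for day, control, value in records:
        groups[(day, control)].append(value)
    return {
        key: {"mean": mean(vals), "sd": stdev(vals), "cv_pct": stdev(vals) / mean(vals) * 100}
        for key, vals in groups.items()
    }


def z_prime_by_day(summary):
    """Compute Z' for each day from that day's Max and Min control statistics."""
    result = {}
    for day in sorted({day for day, _ in summary}):
        hi, lo = summary[(day, "Max")], summary[(day, "Min")]
        result[day] = 1 - 3 * (hi["sd"] + lo["sd"]) / abs(hi["mean"] - lo["mean"])
    return result


# Hypothetical readings: three wells per control per day (real studies use many more).
raw = {
    (1, "Max"): [1000, 1020, 980], (1, "Mid"): [540, 560, 550], (1, "Min"): [100, 110, 90],
    (2, "Max"): [950, 1000, 1050], (2, "Mid"): [500, 520, 540], (2, "Min"): [95, 105, 100],
}
records = [(day, ctrl, v) for (day, ctrl), vals in raw.items() for v in vals]

summary = summarize(records)
for day, zp in z_prime_by_day(summary).items():
    print(f"Day {day}: Z' = {zp:.2f}, Mid CV = {summary[(day, 'Mid')]['cv_pct']:.1f}%")
```

Keeping the days separate, as here, makes day-to-day drift visible. Pooling all days before computing Z' would fold that drift into the standard deviations.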

Troubleshooting Guide: Addressing Common Problems

| Problem | Potential Causes | Solutions |

|---|---|---|

| Low Z'-factor | High variability in controls; Small signal window. | Identify source of variability (σp or σn); Increase dynamic range by optimizing reagent concentrations [43]. |

| High CV in Positive Control | Unstable reagents; Inconsistent pipetting; Evaporation. | Aliquot and test reagent stability; Calibrate liquid handlers; Use sealed plates [8]. |

| Inconsistent S/N Ratio | Fluctuating background signal; Unstable instrumentation. | Identify and stabilize source of background noise (e.g., buffers, washing); Perform regular instrument maintenance [44]. |

| Edge Effects on Plate | Temperature and evaporation gradients across the plate. | Use plates with lids; Ensure uniform incubation; Consider using spatially alternating controls for normalization [45]. |

| Z'-factor > 0.5, but poor hit confirmation | Controls are not representative of sample behavior. | Ensure positive control strength is similar to expected hits; Re-evaluate control selection [45]. |

Relationship Between Key Metrics and Assay Quality

The following diagram summarizes how the different critical metrics interact to define the overall quality and decision-making process for an HTS assay.

Key Takeaways for Streamlining Validation

Integrating the assessment of Z'-factor, S/N ratio, and CV from the initial stages of assay development is crucial for streamlining the validation process for HTS.

- Use Z'-factor as Your Primary Metric: It provides the most comprehensive assessment of assay robustness for screening [43] [44].

- Go Beyond S/B: Do not rely solely on the signal-to-background ratio, as it can be misleading [43].

- Monitor CV for Process Control: Tracking the coefficient of variation of your controls is an excellent way to monitor assay precision and stability over time [46].

- Validate with Realistic Conditions: Plate uniformity studies conducted over multiple days with the final screening parameters are essential for predicting success in a full-scale HTS campaign [8].

Strategic Plate Design for Positive and Negative Controls

In High-Throughput Screening (HTS), controls are not merely supplementary; they are fundamental to validating your assay and interpreting your data with confidence. They serve as the benchmark for determining whether your experimental results are biologically meaningful or a consequence of technical artifact. A well-designed experiment includes controls to aid troubleshooting, confirm the assay is functioning as expected, rule out alternative interpretations, and calibrate the system against biological variation [47]. Strategic plate design ensures these controls are positioned to maximize data quality and minimize the impact of systematic biases, forming the cornerstone of streamlined assay validation [48] [49].

The Purpose and Types of Controls

Understanding the distinct roles of different controls is the first step in designing a robust HTS experiment.

Experimental vs. Biological Controls

- Experimental Controls are primarily for troubleshooting a multi-stage protocol. They help you identify where a problem occurred if the experiment fails. These include a known sample that should yield a positive result and a blank or vehicle sample that should yield a negative result at each stage of the process [47].

- Biological Controls validate that your results are real by confirming both positive and negative outcomes. A positive biological control shows that it was possible to detect an effect, while a negative biological control confirms that a detected signal is specific to the experimental condition [47].

The Essential Control Toolkit

The table below summarizes the key controls used in HTS and their specific functions.

| Control Type | Primary Function | Example in HTS |

|---|---|---|

| Positive Control | Confirms the assay can detect a true positive signal and "works." Provides a reference for maximum response [47] [19]. | A known agonist for a target receptor or a compound that induces a specific phenotypic change. |

| Negative Control | Establishes the baseline or background signal in the absence of the effect being measured. Critical for proving a positive result is specific [47]. | A vehicle control (e.g., DMSO), an untreated cell population, or a non-targeting siRNA. |

| Fluorescence-Minus-One (FMO) | Serves as a gating control in flow and mass cytometry to accurately distinguish negative from dimly positive cell populations, especially in multicolor panels [50]. | Cells stained with all antibodies except one, used to set boundaries for flow cytometry analysis. |

| Isotype Control | Helps determine the contribution of non-specific antibody binding to the signal, reducing false positives [50]. | An antibody with the same species and isotype as the primary antibody but no target specificity. |

| Counter-Screens | Identifies and filters out compounds that interfere with the assay read-out mechanism itself (e.g., auto-fluorescent compounds) [19] [51]. | A secondary assay designed to detect general interference like luciferase inhibition or fluorescence. |

Strategic Plate Layout Design

The physical location of your samples and controls on the microplate can significantly affect the resulting data due to "plate effects," such as evaporation in edge wells or temperature gradients across the plate [49]. A strategic layout is designed to mitigate these biases.

Core Principles of Plate Design

- Mitigate Edge Effects: Plate edges can exhibit different behaviors due to increased evaporation. Strategic layouts do not concentrate all critical controls on the perimeter.

- Distribute Controls Evenly: Placing controls throughout the plate allows for the detection and statistical correction of positional biases during data normalization.

- Ensure Representativeness: Controls should be subjected to the same average conditions as your test samples to be valid comparators.

- Facilitate Accurate QC Metrics: Common quality metrics like Z'-factor and Strictly Standardized Mean Difference (SSMD) can be inflated by poor plate design, giving a false sense of data quality [48] [49]. A good design reduces this risk.
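A quick check for the edge effects mentioned in these principles is to compare the mean signal of perimeter wells with that of interior wells on a plate where every well received the same treatment. The sketch below assumes such a uniform plate supplied as a list of rows; the function name and the toy 4 x 6 plate are hypothetical.

```python
from statistics import mean


def edge_vs_interior(plate):
    """Compare mean signal in perimeter wells against interior wells.

    `plate` is a list of rows of numeric signals from identically treated wells.
    """
    n_rows, n_cols = len(plate), len(plate[0])
    edge, interior = [], []
    for r, row in enumerate(plate):
        for c, value in enumerate(row):
            on_edge = r in (0, n_rows - 1) or c in (0, n_cols - 1)
            (edge if on_edge else interior).append(value)
    edge_mean, interior_mean = mean(edge), mean(interior)
    return {
        "edge_mean": edge_mean,
        "interior_mean": interior_mean,
        "pct_diff": (edge_mean - interior_mean) / interior_mean * 100,
    }


# Toy plate: perimeter wells read 10% lower than interior wells.
toy_plate = [
    [90, 90, 90, 90, 90, 90],
    [90, 100, 100, 100, 100, 90],
    [90, 100, 100, 100, 100, 90],
    [90, 90, 90, 90, 90, 90],
]
print(edge_vs_interior(toy_plate))
```

What size of difference counts as a problem depends on the assay. The point of the check is only to show whether controls confined to the perimeter would see systematically different conditions from the samples.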

Common Plate Layout Strategies

The following diagram illustrates three common strategies for arranging positive (Pos) and negative (Neg) controls on a microplate.

Quantitative Quality Control Metrics

Once controls are strategically placed, their data is used to calculate objective metrics for assessing assay quality. The table below compares two common metrics.

| Quality Metric | Formula / Principle | Interpretation | Advantage |

|---|---|---|---|

| Z'-Factor [19] | `1 - (3*(σp + σn) / \|μp - μn\|)`, where σ = standard deviation, μ = mean, p = positive control, n = negative control. | > 0.5: Excellent assay; 0 to 0.5: Marginally acceptable; < 0: Low separation, poor assay. | A simple, widely used metric for assay robustness. |

| Strictly Standardized Mean Difference (SSMD) [48] | `(μp - μn) / √(σp² + σn²)` | Accounts for the variability and effect size between controls. Provides a probabilistic basis for hit selection. | Provides consistent QC results for multiple positive controls with different effect sizes, unlike Z'-factor [48]. |
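The two formulas can be compared side by side on the same control statistics. The sketch below uses invented numbers for a strong and a weak positive control measured against the same negative control; the function names are hypothetical, and no SSMD acceptance thresholds are implied because the text above does not give any.

```python
import math


def z_prime(mu_p, sd_p, mu_n, sd_n):
    """Z' = 1 - 3(sd_p + sd_n) / |mu_p - mu_n|."""
    return 1 - 3 * (sd_p + sd_n) / abs(mu_p - mu_n)


def ssmd(mu_p, sd_p, mu_n, sd_n):
    """SSMD = (mu_p - mu_n) / sqrt(sd_p^2 + sd_n^2)."""
    return (mu_p - mu_n) / math.sqrt(sd_p ** 2 + sd_n ** 2)


# Hypothetical statistics: two positive controls, one shared negative control.
negative = dict(mu_n=100.0, sd_n=40.0)
controls = {
    "strong positive": dict(mu_p=1000.0, sd_p=30.0),
    "weak positive": dict(mu_p=400.0, sd_p=30.0),
}

for name, pos in controls.items():
    zp = z_prime(**pos, **negative)
    s = ssmd(**pos, **negative)
    print(f"{name}: Z' = {zp:.2f}, SSMD = {s:.1f}")
```

Note that SSMD is signed and unbounded, so it scales with the size of the effect, whereas Z' is capped at 1 and is compressed toward that ceiling for strong controls.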

Troubleshooting Guides and FAQs

High Background Signal

- Problem: The signal from negative controls is unusually high, compressing the dynamic range and potentially obscuring true positive hits.

- Investigation & Solutions:

- Check Reagent Contamination: Impurities in buffers, fixatives, or permeabilization reagents can cause high background. Test new batches of reagents [52].

- Confirm Blocking Steps: For assays involving antibodies, non-specific binding can occur. Ensure you have included a blocking step with an appropriate buffer (e.g., Fc receptor block) prior to staining [52] [50].

- Optimize Wash Steps: Increase the volume, number, or duration of wash steps to ensure all unbound reagents are removed [50].

- Review Antibody Titration: The antibody concentration may be too high. Re-titrate antibodies to find the optimal signal-to-noise ratio [50].

- Assess Cell Health: Use a viability dye. Dead cells and cells from dissociated tissues can exhibit high autofluorescence and non-specific binding [50].

No or Weak Marker Signal

- Problem: The positive control fails, or the expected signal from test samples is absent or very weak.

- Investigation & Solutions:

- Verify Cell Viability and Preparation: Use fresh, highly viable cells. If using frozen cells, ensure they are properly resuscitated. Check that enzymatic digestion (e.g., trypsin) has not destroyed the epitope of interest [52] [50].

- Confirm Target Accessibility: For intracellular targets, ensure the fixation and permeabilization steps are appropriate and have been optimized for your specific target molecule [52] [50].

- Titrate Antibodies and Reagents: The staining concentration of antibodies or other detection reagents may be too low. Perform a titration experiment. Also, titrate the concentration of fixation and permeabilization reagents [52] [50].

- Check Instrumentation: Ensure the correct lasers and filter sets are being used for your fluorochrome or detection method. Verify laser alignment and instrument performance using calibration beads [50].

High Well-to-Well Variance in Controls

- Problem: Replicate control wells show inconsistent results, leading to unreliable quality metrics.

- Investigation & Solutions:

- Review Liquid Handling: Check the precision of automated liquid handlers. Ensure they are properly calibrated and are dispensing consistently across the plate.

- Confirm Cell Counts: Normalize the number of cells per well to a consistent value. High variance in cell number will directly translate to signal variance [52].

- Check Reagent Consistency: Use the same master mix of reagents for all samples and controls to minimize preparation variance. Note the shelf life of antibodies and use fresh batches where possible [52].

- Inspect Plate Sealing: Ensure plates are properly sealed during incubation to prevent edge effects caused by evaporation.

The Scientist's Toolkit: Essential Research Reagents

A successful HTS assay relies on a suite of well-characterized reagents. The following table details key materials and their functions.