Strategic Cost Reduction in High-Throughput Experimentation: 2025 Guide for Research Efficiency


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals seeking to implement effective cost reduction strategies in high-throughput experimentation (HTE) workflows. Covering foundational principles to advanced applications, we explore how strategic automation, AI integration, workflow optimization, and validation methodologies are transforming HTE economics. Readers will gain practical insights into minimizing operational expenses while maintaining research quality and accelerating discovery timelines across pharmaceutical and materials science applications. The content synthesizes current industry best practices, real-world case studies, and emerging trends to deliver actionable frameworks for building more efficient and sustainable research operations.

Understanding HTE Cost Drivers and Fundamental Efficiency Principles

High-Throughput Experimentation (HTE) has become a cornerstone of modern research, particularly in drug discovery and materials science. While HTE enables the rapid testing of thousands of compounds or conditions, it requires significant financial investment. The core financial challenge of HTE lies in balancing the high upfront and operational costs against the potential for long-term savings through accelerated research cycles and more efficient resource utilization. This technical support center supports that cost-reduction effort by helping you identify and troubleshoot the specific issues that contribute to budget overruns and inefficiency.

Frequently Asked Questions (FAQs): Cost and Operational Management

Q1: What are the largest cost drivers in a typical HTE workflow? The largest cost drivers in HTE are personnel, specialized instrumentation, and reagents/consumables. Personnel costs are high due to the need for specialized expertise to operate and maintain complex automated systems. Instrumentation, including liquid handlers, detectors, and automated solid dispensers, represents a major capital expense and requires ongoing maintenance. Furthermore, while miniaturization reduces volume, the vast number of experiments run in HTE leads to substantial cumulative spending on chemical reagents, assay kits, and consumables like tips and microplates [1] [2].

Q2: How can automation specifically lead to cost reduction in HTE? Automation reduces costs in several key ways. It directly cuts labor costs by handling repetitive tasks and enables miniaturization of reaction scales, reducing reagent consumption by up to 90% [3]. Automated systems also enhance reproducibility and data quality, minimizing the costly repetition of failed experiments due to human error. Furthermore, automation increases throughput, allowing more candidates to be screened in less time and accelerating the overall research timeline [4] [2].

Q3: My HTE results suffer from high variability, leading to costly repeats. What could be the cause? High variability often stems from manual processes, which are subject to inter- and intra-user differences. Inconsistent liquid handling, errors in compound dilution, and improper powder weighing at small scales are common culprits. Implementing automated liquid handlers and powder-dosing robots can standardize these processes. Additionally, data handling challenges can introduce variability; using integrated software for data capture and analysis ensures consistency [3].

Q4: What is a common pitfall when first implementing an HTE strategy to manage costs? A common pitfall is focusing solely on the purchase of hardware without investing in the corresponding software and personnel training. Successful HTE requires robust data management systems to handle the vast amounts of data generated. Furthermore, colocating HTE specialists with general researchers fosters a cooperative, efficient approach rather than a slow, service-led model, ensuring the technology is used to its full potential [2].

Troubleshooting Guides

Problem 1: Inconsistent Results in Automated Assays

Symptoms: High well-to-well or plate-to-plate variability, high rates of false positives/negatives, and poor reproducibility of dose-response curves.

Diagnostic Table:

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Liquid Handler Error | Use built-in verification features (e.g., DropDetection). Run a dye-based dispense test to visualize volume accuracy and consistency [3]. | Re-calibrate the liquid handler. Check for clogged tips or worn seals. For non-contact dispensers, optimize parameters for specific liquid viscosity. |
| Sample Evaporation | Check for volume loss in edge wells over time, especially in long-running assays. | Use sealed or covered microplates. Employ automation systems with resealable gaskets to prevent evaporation [2]. |
| Cell Culture Contamination | Check for microbial growth under a microscope. Assess cell viability and morphology. | Review aseptic techniques. Use antibiotics/antimycotics in media. Regularly test for mycoplasma. |
| Compound Precipitation | Visually inspect wells for turbidity. | Optimize solvent (e.g., use DMSO). Include detergents in the assay buffer to improve compound solubility. |
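The dye-based dispense test in the table above is usually summarized as a coefficient of variation (CV) per dispenser channel. The following is a minimal sketch of that calculation; the readings, the channel names, and the 5% acceptance threshold are illustrative assumptions, not values from the cited sources.

```python
from statistics import mean, stdev


def dispense_cv(readings):
    """Return the coefficient of variation (%) of per-well dye readings."""
    if len(readings) < 2:
        raise ValueError("need at least two wells to estimate variability")
    avg = mean(readings)
    if avg == 0:
        raise ValueError("mean reading is zero; check the dye or the reader")
    return 100.0 * stdev(readings) / avg


def flag_channels(readings_by_channel, max_cv_percent=5.0):
    """List channels whose CV exceeds the threshold (5% is an assumed default)."""
    return [
        channel
        for channel, values in readings_by_channel.items()
        if dispense_cv(values) > max_cv_percent
    ]


# Hypothetical absorbance readings from a dye test, grouped by channel.
example = {
    "channel_1": [0.50, 0.51, 0.49, 0.50],
    "channel_2": [0.50, 0.62, 0.41, 0.55],
}
print(flag_channels(example))
```

A channel flagged this way is a candidate for the re-calibration, tip, and seal checks listed in the Solution column.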

Problem 2: Unexplained High Operational Costs

Symptoms: Budget overruns on reagents and consumables, frequent need to repeat screens, and higher-than-expected costs per data point.

Diagnostic Table:

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Non-Optimized Reagent Use | Audit reagent consumption against theoretical usage. Compare actual costs per plate to projected costs. | Implement low-volume (nanoliter) dispensing to miniaturize assays [3] [5]. Use automated systems to precisely dispense expensive reagents. |
| High Repeat Rate | Analyze data to determine the percentage of experiments that must be repeated due to poor quality or failure. | Identify the root cause of failures using other guides in this document. Improve initial assay robustness and quality control steps. |
| Manual Powder Dosing Errors | Weigh manually dosed samples on a high-precision balance to check for deviations from target mass. | Implement an automated powder dosing system (e.g., CHRONECT XPR), which can dose from sub-mg to grams with high accuracy, eliminating human error and saving time [2]. |
| Inefficient Workflow Design | Map the current workflow and track time and resource use at each stage. | Re-design the workflow to leverage automation for parallel processing. Automate data analysis to reduce the time from experiment to insight [3]. |
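Two of the diagnostics above, cost per data point and the share of experiments that must be repeated, reduce to simple arithmetic. The sketch below shows one way to compute them; the function names and all figures in the example are hypothetical, and it assumes that repeated wells consume budget without adding new data points.

```python
def repeat_rate(wells_run, wells_repeated):
    """Fraction of wells that had to be repeated."""
    if wells_run <= 0:
        raise ValueError("wells_run must be positive")
    return wells_repeated / wells_run


def cost_per_data_point(total_cost, wells_run, wells_repeated):
    """Total spend divided by the number of wells that yielded usable data."""
    usable = wells_run - wells_repeated
    if usable <= 0:
        raise ValueError("no usable data points")
    return total_cost / usable


# Hypothetical campaign: ten 384-well plates, one plate's worth of repeats.
wells_run = 10 * 384
wells_repeated = 384
total_cost = 1920.0  # reagents and consumables, in any currency unit

print(f"repeat rate: {repeat_rate(wells_run, wells_repeated):.1%}")
print(f"cost per data point: {cost_per_data_point(total_cost, wells_run, wells_repeated):.3f}")
```

Tracking both numbers per campaign makes it easier to see whether overruns come from reagent use or from repeats.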

Essential Research Reagent Solutions

The following table details key materials and reagents critical for successful and cost-effective HTE operations.

| Item | Function in HTE | Cost-Reduction Consideration |
|---|---|---|
| Liquid Handling Systems | Automates precise dispensing and mixing of small sample volumes across thousands of wells [5]. | Enables miniaturization, reducing reagent consumption by up to 90%. High precision reduces error-related repeat costs [3]. |
| Automated Solid Dispensers | Precisely weighs and dispenses solid reagents (catalysts, starting materials) into reaction vials [2]. | Eliminates significant human error in manual weighing, especially at sub-mg scales. Reduces weighing time from 5-10 minutes/vial to minutes for an entire plate [2]. |
| Cell-Based Assay Kits | Provide optimized reagents and protocols for high-throughput phenotypic screening [5]. | While potentially expensive per kit, they save on development and validation time, accelerating research and providing more physiologically relevant data. |
| Guard Columns & In-Line Filters | Protects the main analytical column from particulates and contaminants [6] [7]. | A low-cost consumable that extends the life of expensive analytical HPLC columns, preventing costly replacements and downtime. |
| High-Purity Solvents & Buffers | Used as mobile phases in analytical chemistry and as reaction solvents. | Using HPLC-grade solvents prevents baseline noise and column contamination, which can lead to costly instrument downtime and repeated analyses [7]. |

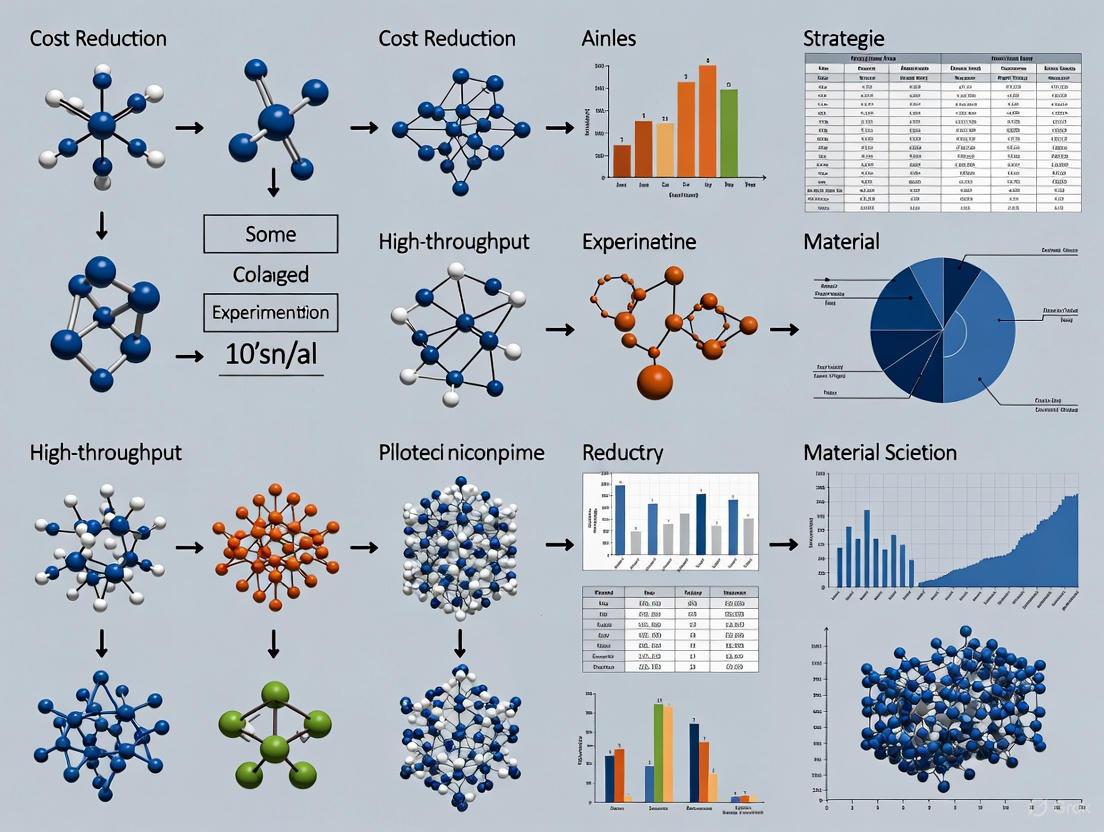

Visualizing Cost-Reduction Strategies in HTE

The following diagram illustrates the logical relationship between common HTE pain points, the underlying causes, and the targeted cost-reduction strategies that address them.

Standard Operating Procedure: Implementing an Automated Powder Dosing System

Objective: To reliably and accurately dispense solid reagents in milligram to gram quantities for HTE, reducing human error, saving time, and cutting material costs.

Background: Manual weighing of solids is a major bottleneck and source of error, especially for small masses. Automated powder dosing ensures consistency and frees highly trained personnel for more complex tasks [2].

Materials and Equipment:

- Automated Powder Dosing System (e.g., CHRONECT XPR)

- Source material (free-flowing, fluffy, or electrostatic powders)

- Target vials (e.g., 2 mL, 10 mL, 20 mL sealed or unsealed vials)

- Balance for verification

Step-by-Step Protocol:

- System Setup: Install the CHRONECT XPR system within an inert atmosphere glovebox if handling air- or moisture-sensitive materials. Load up to 32 standard dosing heads with the required compounds [2].

- Method Programming: Using the control software, create a dispensing method. Specify the target mass for each component (range: 1 mg to several grams) and assign the target vials in the array.

- Dispensing Execution: Initiate the automated sequence. The system will dispense each component. Typical dispensing time is 10-60 seconds per component, depending on the compound's properties [2].

- Quality Control: After the run is complete, randomly select a subset of vials and verify the dispensed mass using a calibrated high-precision balance.

- Acceptance Criteria: For low masses (sub-mg to low single-mg), a deviation of <10% from the target is acceptable. For higher masses (>50 mg), deviation should be <1% [2].

- Downstream Processing: Once dispensing is verified, proceed with adding liquids and running the HTE reactions.
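The acceptance criteria in the Quality Control step can be expressed as a small check. This is a sketch under stated assumptions: the protocol gives limits only for low masses (<10%) and for masses above 50 mg (<1%), so the 5 mg boundary for "low single-mg" is an assumption, and targets between the two cutoffs are returned as "review" rather than given an invented tolerance.

```python
def check_dispense(target_mg, measured_mg, low_cutoff_mg=5.0, high_cutoff_mg=50.0):
    """Classify a verified vial as 'pass', 'fail', or 'review'.

    low_cutoff_mg is an assumed boundary for "low single-mg" masses.
    Targets between the cutoffs have no stated limit in the protocol.
    """
    if target_mg <= 0:
        raise ValueError("target mass must be positive")
    deviation = abs(measured_mg - target_mg) / target_mg
    if target_mg > high_cutoff_mg:
        return "pass" if deviation < 0.01 else "fail"
    if target_mg <= low_cutoff_mg:
        return "pass" if deviation < 0.10 else "fail"
    return "review"


# Hypothetical verification weighings: (target mg, measured mg).
for target, measured in [(1.0, 1.05), (0.5, 0.6), (100.0, 102.0), (20.0, 20.1)]:
    print(target, measured, check_dispense(target, measured))
```

Vials that come back as "review" need a tolerance agreed within the lab before the run is accepted.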

Troubleshooting this Protocol:

- Issue: Poor accuracy for fluffy or electrostatic powders.

- Solution: Ensure the dosing head is appropriate for the powder type. The system is designed to handle a wide range of powder characteristics, but optimization might be needed [2].

- Issue: The process is not faster than manual weighing for a simple 8-vial experiment.

- Solution: The primary time savings and error reduction are realized in complex screens (e.g., 96-well plates for catalytic cross-coupling) where manual errors are "significant" [2]. The benefit scales with the number of data points.

This technical support center provides troubleshooting guides and FAQs to help researchers identify and eliminate waste in their experimental workflows. By applying the five core principles of Lean manufacturing—Define Value, Map the Value Stream, Create Flow, Establish Pull, and Pursue Perfection—you can significantly reduce costs and increase efficiency in high-throughput experimentation environments [8] [9].

Troubleshooting Guides: Applying Lean Principles to Common Research Problems

How can I identify non-value-added steps in my experimental protocol?

Problem: The experimental process feels slow, costly, or fails to deliver the expected quality of results. Solution: Systematically analyze your workflow to identify and eliminate waste.

- Define Value from a Customer Perspective: In a research context, the "customer" can be the project lead, a collaborating team, or the end goal of a drug development pipeline. Clearly define what a successful outcome looks like (e.g., a specific data point, a purified compound, a validated target) [8].

- Map the Value Stream: Visually map every step of your experimental protocol, from reagent preparation to data analysis. Use a flowchart to document each action, decision point, and transfer [8].

- Identify Waste (The 8 Wastes in Research): Compare your value stream map against common types of waste. The table below adapts classic Lean wastes to a research setting [8] [9].

| Type of Waste | Description | Research Workflow Example |
|---|---|---|
| Waiting | Idle time between process steps | Waiting for access to shared equipment (e.g., centrifuge, plate reader); waiting for approval to proceed. |
| Over-production | Producing more than is needed | Generating more data or samples than required for the immediate next step, consuming unnecessary reagents. |
| Over-processing | Doing more work than is required | Using a high-precision, costly assay when a simpler method would suffice; collecting data that is not used. |
| Inventory | Excess materials or samples | Stockpiling reagents beyond their usable shelf life, leading to spoilage and waste. |
| Motion | Unnecessary movement of people | Poor lab layout requiring scientists to walk long distances to gather supplies or use instruments. |
| Transport | Unnecessary movement of materials | Inefficient sample transport between labs or buildings, increasing risk of damage or delay. |
| Defects | Errors or rework | Experimental errors requiring the entire process to be repeated, wasting time and materials. |
| Unused Talent | Underutilizing skills and knowledge | Not leveraging a team member's expertise in automation or data analysis that could streamline the process. |
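Once the value stream is mapped, one common way to quantify waste is the share of total elapsed time spent on value-adding steps. The sketch below assumes each mapped step has a duration and a value-added flag; the step names and durations are hypothetical.

```python
def value_added_ratio(steps):
    """Return value-added time divided by total elapsed time.

    Each step is a (name, minutes, adds_value) tuple.
    """
    total = sum(minutes for _, minutes, _ in steps)
    if total <= 0:
        raise ValueError("total time must be positive")
    value_added = sum(minutes for _, minutes, adds_value in steps if adds_value)
    return value_added / total


# Hypothetical value stream for a plate-based assay.
assay_steps = [
    ("prepare reagents", 30, True),
    ("wait for plate reader", 90, False),      # Waiting
    ("run assay", 60, True),
    ("manual data transfer", 20, False),       # Motion / Defects risk
]
print(f"value-added ratio: {value_added_ratio(assay_steps):.0%}")
```

The non-value-added steps with the largest durations are the natural first targets when removing waste.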

How can I reduce bottlenecks and improve the flow of my experiments?

Problem: Experiments are frequently delayed at specific points, creating a backlog and slowing down overall research progress. Solution: Reconfigure steps to create a smooth, uninterrupted flow [8].

- Break Down and Reconfigure Steps: Analyze the bottleneck step. Can it be subdivided or performed differently? For example, if cell culture is a bottleneck, can passages be scheduled more evenly?

- Level the Workload: Balance tasks across team members and instruments to prevent overloading a single resource.

- Create Cross-Functional Understanding: Ensure researchers are trained on multiple instruments or procedures to provide flexibility when bottlenecks occur [8].

- Automate Where Possible: Implement work automation platforms to manage repetitive tasks, such as data entry or sample tracking, reducing manual errors and freeing up researcher time [9].

How can I implement a "Just-in-Time" (Pull) system for lab supplies?

Problem: Lab space is cluttered with excess inventory, reagents expire before use, and capital is tied up in unused supplies. Solution: Establish a pull-based inventory system to order supplies only as they are needed [8].

- Analyze Usage Patterns: Track the consumption rate of key reagents and consumables over time.

- Establish Reorder Points: Define a minimum quantity for each item that triggers a reorder. The reorder point should be calculated based on the lead time from your supplier and your average usage rate.

- Use a Kanban System: Implement a visual system, such as a two-bin system, where an empty bin signals the need to reorder that item. This creates a simple "pull" signal based on actual consumption [9].
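The reorder-point rule described above (supplier lead time multiplied by average usage) can be sketched as follows. The optional safety stock term is an added assumption, not part of the text, and the example quantities are hypothetical.

```python
def reorder_point(avg_daily_usage, lead_time_days, safety_stock=0):
    """Quantity at which a reorder should be triggered.

    safety_stock is an optional buffer (an assumption added here).
    """
    if avg_daily_usage < 0 or lead_time_days < 0 or safety_stock < 0:
        raise ValueError("inputs must be non-negative")
    return avg_daily_usage * lead_time_days + safety_stock


def needs_reorder(on_hand, avg_daily_usage, lead_time_days, safety_stock=0):
    """True when stock on hand has fallen to or below the reorder point."""
    return on_hand <= reorder_point(avg_daily_usage, lead_time_days, safety_stock)


# Hypothetical item: pipette tip boxes, 4 used per day, 5-day supplier lead time.
print(reorder_point(4, 5, safety_stock=4))
print(needs_reorder(on_hand=30, avg_daily_usage=4, lead_time_days=5, safety_stock=4))
print(needs_reorder(on_hand=22, avg_daily_usage=4, lead_time_days=5, safety_stock=4))
```

In a two-bin Kanban setup, the second bin would hold roughly the reorder-point quantity, so that emptying the first bin is the reorder signal.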

Frequently Asked Questions (FAQs)

What are the core principles of Lean, and how do they apply to research?

Lean is built on five principles that provide a recipe for improving workplace efficiency [8]:

- Define Value: Determine what the research customer (e.g., your project) values most.

- Map the Value Stream: Identify all steps in your workflow and eliminate those that do not add value.

- Create Flow: Ensure the remaining steps proceed smoothly without interruptions.

- Establish Pull: Shift from a "push" (make everything in advance) to a "pull" system (work is triggered by demand) to limit inventory and work-in-progress.

- Pursue Perfection: Continuously strive for improvement by making Lean thinking part of your lab's culture.

My research is creative and unpredictable. Can Lean still help?

Yes. Lean is not about stifling creativity but about eliminating unnecessary, repetitive waste that hinders it. By streamlining predictable tasks like lab maintenance, supply ordering, and data management, you free up more time and mental energy for the creative aspects of experimental design and analysis [9].

How can I track if my Lean improvements are working?

Establish Key Performance Indicators (KPIs) linked to your cost-reduction and efficiency goals. Monitor metrics such as [9]:

- Experiment Cycle Time: Time from starting an experiment to obtaining analyzed data.

- Reagent Waste Cost: Monetary value of expired or discarded materials.

- Equipment Utilization Rate: Percentage of time equipment is in productive use.

- Error/Repetition Rate: Frequency of experiments that must be repeated due to error.
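The four KPIs listed above can be computed from basic lab records. The following is a minimal sketch; the function names, record shapes, and example values are illustrative assumptions rather than a prescribed reporting format.

```python
from datetime import datetime


def cycle_time_hours(started, analyzed):
    """Hours from starting an experiment to obtaining analyzed data."""
    return (analyzed - started).total_seconds() / 3600


def reagent_waste_cost(discarded_items):
    """Total monetary value of expired or discarded materials."""
    return sum(cost for _, cost in discarded_items)


def utilization_rate(productive_hours, available_hours):
    """Fraction of available time the equipment was in productive use."""
    if available_hours <= 0:
        raise ValueError("available_hours must be positive")
    return productive_hours / available_hours


def repetition_rate(repeated, total):
    """Fraction of experiments that had to be repeated."""
    if total <= 0:
        raise ValueError("total must be positive")
    return repeated / total


# Hypothetical records for one reporting period.
print(cycle_time_hours(datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 8, 15, 0)))
print(reagent_waste_cost([("expired assay kit", 420.0), ("spoiled media", 80.0)]))
print(utilization_rate(productive_hours=30, available_hours=40))
print(repetition_rate(repeated=3, total=24))
```

Comparing these values before and after a Lean change gives a direct reading on whether the change helped.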

What is the most important Lean principle for a lab to start with?

While all principles are interconnected, "Define Value" is the most critical starting point. Without a clear understanding of what constitutes value for your specific research, you cannot effectively identify which activities are waste. Engage your team in a discussion to define value for your key projects before mapping your value streams [8].

Workflow Visualization: Value Stream Mapping for a Standard Assay

The following diagram illustrates a simplified, non-Lean workflow for a cell-based assay, highlighting common sources of waste.

Non-Lean Assay Workflow with Waste

After applying Lean principles, the workflow is streamlined by introducing a pull system for reagents, standardizing protocols, and automating data transfer to reduce waiting, errors, and over-production.

Lean Assay Workflow with Waste Reduced

The Scientist's Toolkit: Key Research Reagent Solutions

Efficient management of reagents and materials is fundamental to reducing waste and cost. The following table details essential material categories and their functions in a high-throughput context.

| Category & Item | Function in High-Throughput Experimentation |
|---|---|
| Cell Culture | |
| Pre-measured Media & Supplement Kits | Reduces preparation time, measurement errors, and batch-to-batch variability. Enables just-in-time use. |
| Cryopreserved "Ready-to-Assay" Cells | Eliminates constant cell maintenance, allowing experiments to be initiated on demand (Pull System). |
| Assay Execution | |
| Multi-channel Pipettes & Electronic Repeaters | Dramatically increases speed and reproducibility of liquid handling in microplates. |
| Assay Kits with Lyophilized Reagents | Minimizes waste by reconstituting only the volume needed; improves stability and consistency. |
| Data Management | |
| Electronic Lab Notebook (ELN) | Centralizes protocols and data, reducing search time and risk of using outdated methods (Creating Flow). |
| Laboratory Information Management System (LIMS) | Tracks samples and reagents, monitors inventory levels, and automates data capture from instruments. |
| Integrated Data Analysis Platforms | Automates data processing and visualization, reducing manual manipulation and associated errors (Defects). |

Defining Strategic and Tactical Cost Cutting

In high-throughput experimentation research, distinguishing between strategy and tactics is fundamental to implementing sustainable cost reductions.

Strategy is the long-term vision that defines how your research organization will achieve and sustain a competitive advantage. It involves a set of choices guiding how you compete, allocate scarce resources, and adapt to achieve long-term objectives [10]. For a high-throughput lab, a strategic cost reduction goal might be: "Become a socially responsible brand known for sustainability," with an associated objective to "reduce our supply chain's carbon footprint by 10%" [10].

Tactics are the short-term, specific actions, methods, or initiatives taken to achieve strategic goals [11]. They are the concrete steps taken to head in the direction of your long-term strategy [11]. A tactical response to the above strategy could be: "Implementing green logistics operations and adopting biodegradable packaging for our products" [10].

The core difference lies in their scope and timeframe:

- Strategy is the "what" and "why"—the overarching plan and rationale [11]. It typically looks out three to five years [11].

- Tactics are the "how"—the day-to-day actions [11]. They have a finite timeline, often spanning six months to a year [11].

Confusing these concepts can be costly. A strategy without tactics is just a vision that never gets executed, while tactics without a strategic foundation are often disjointed actions that fail to produce meaningful, long-term results [10] [11].

Table: Comparison of Strategic and Tactical Cost-Cutting Elements

| Element | Strategic Cost Cutting | Tactical Cost Cutting |
|---|---|---|
| Time Horizon | Long-term (3-5 years) [11] | Short-term (6-12 months) [11] |
| Focus | "What" and "Why" – Fundamental goals and rationale [11] | "How" – Specific actions and methods [11] |
| Objective | Sustainable competitive advantage, transformative efficiency [12] | Immediate cost savings, quick wins [12] |
| Example | Adopt AI-driven discovery to fundamentally reshape R&D costs [4] [13] | Renegotiate supplier contracts for consumables [14] |

Strategic Cost Optimization Framework

Modern cost management emphasizes cost optimization over traditional, reactive cost cutting. Optimization is a perpetual efficiency play that continually rebalances the cost structure with an eye on strategic objectives, rather than simply slashing budgets [12].

Core Principles of a Sustainable Framework

- Connect Costs to Value: Not all costs should be reduced. Link expenses to the business value and strategic objectives they drive. Analyze the impact of potential cuts on your organization's ability to innovate and grow [12].

- Focus on Realized Savings: There is often a stark difference between projected and realized savings. Employ sophisticated, data-driven projections and ensure they translate into actual cost reductions [12].

- Reallocate Savings to Innovation: Companies cannot cut their way to profitability or disruptor status. Treat saved spend as capital, reallocating it to customer experience enhancements, strategic innovation projects, and other initiatives essential to future growth [12].

- Adopt an "Always-On" Approach: Cost optimization should be performed continuously, not just in response to economic downturns. This makes it a standard operating procedure and a key pathway to sustaining agility and resilience [10] [12].

The SpaceX Cost Transformation Model

A radical but effective approach to strategic cost optimization involves the following steps [15]:

- Make Requirements Less Dumb: Question the necessity and efficiency of every requirement in a process.

- Delete the Part or Process Step: If it's not essential, remove it entirely.

- Optimize: After deletion, refine the remaining steps for maximum efficiency.

- Accelerate: Speed up the process where feasible without sacrificing quality.

- Automate: Use technology to handle repetitive tasks, reducing error and cost.

Essential Tools for the Research Scientist

Implementing a cost-efficient strategy requires a toolkit of specific reagents, technologies, and methodologies.

Table: Key Research Reagent Solutions for Cost-Efficient High-Throughput Screening

| Reagent / Material | Primary Function in HTS | Cost & Efficiency Consideration |
|---|---|---|
| Compound Libraries | Collections of chemical/biological samples for screening; include FDA-approved drugs, natural extracts, or novel molecules [16]. | Leverage shared access through collaborative networks (e.g., NIH programs) to reduce costs [16]. |
| Assay Reagents | Enable biological tests to measure specific activity (e.g., enzyme activity, cell viability) [16]. | Opt for homogeneous assay formats (e.g., FLINT) to minimize liquid handling steps and save on reagents and time [16]. |
| Cell Lines | Engineered biological systems (e.g., reporter gene lines) used to model disease and test compound effects [16]. | Use cryopreservation to create stable cell banks, ensuring consistency and reducing the need for continuous cell culture. |
| Multi-Well Plates (384, 1536) | Miniaturized platforms that allow thousands of parallel experiments [16]. | Higher density plates (e.g., 1536-well) drastically reduce reagent volumes and costs per data point [16]. |

High-Throughput Experimentation Cost-Saving Protocols

Protocol: Implementing an AI-Guided Screening Cascade

This methodology uses computational analyses to reduce expensive laboratory work by prioritizing the most promising compounds for physical testing [13] [16].

Objective: To reduce the cost and time of hit identification by minimizing the number of wet-lab experiments required.

Background: AI and virtual screening can predict compound efficacy and toxicity, offering a cost-effective way to accelerate discovery and reduce experimental overhead [13].

Materials:

- Compound library (in silico format)

- AI/ML prediction software (e.g., for target affinity or ADMET properties)

- High-throughput screening robotics and assay reagents [16]

Procedure:

- Virtual Screening: Use AI models to screen your entire virtual compound library against the target of interest. This prioritizes a subset of compounds with the highest predicted activity [13] [16].

- Tactical AI Triage: Apply additional AI filters to predict pharmacokinetics and toxicity (ADMET). This further narrows the list to compounds with a higher probability of success, eliminating those likely to fail later [17].

- Focused Experimental HTS: Conduct physical high-throughput screening only on the computationally prioritized compound subset. This step validates the AI predictions [16].

- Hit Confirmation: Take the "hits" from the focused HTS and conduct secondary assays for confirmation and initial characterization.

- Iterative Learning: Feed the experimental results from steps 3 and 4 back into the AI models to refine and improve future prediction cycles [4].
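The first two steps of the cascade above (virtual screening, then ADMET triage) amount to a rank-and-filter funnel that decides which compounds reach the wet lab. The sketch below illustrates only that funnel logic: the `Candidate` fields, the scores, and the thresholds are hypothetical stand-ins for the output of whatever prediction software is used, not the API of any specific tool.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    compound_id: str
    predicted_activity: float  # hypothetical model score, higher is better
    predicted_toxicity: float  # hypothetical ADMET risk score, lower is better


def virtual_screen(library, top_n):
    """Step 1: keep the top_n compounds by predicted activity."""
    ranked = sorted(library, key=lambda c: c.predicted_activity, reverse=True)
    return ranked[:top_n]


def admet_triage(candidates, max_toxicity):
    """Step 2: drop compounds whose predicted risk exceeds the threshold."""
    return [c for c in candidates if c.predicted_toxicity <= max_toxicity]


# Hypothetical in silico library with precomputed predictions.
library = [
    Candidate("A", 0.90, 0.10),
    Candidate("B", 0.80, 0.60),
    Candidate("C", 0.70, 0.20),
    Candidate("D", 0.20, 0.05),
]

shortlist = admet_triage(virtual_screen(library, top_n=3), max_toxicity=0.3)
print([c.compound_id for c in shortlist])  # compounds sent to focused HTS
```

Only the shortlisted compounds consume assay reagents in step 3, which is where the cost saving arises; the measured results can then be used to retrain the models as described in the final step.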

Protocol: Miniaturization and Automation for Lean HTS

This protocol focuses on reducing per-experiment costs through miniaturization and full automation, enabling massive parallel testing.

Objective: To achieve up to 50% cost reduction in screening operations and accelerate development cycles by up to 70% [4].

Background: High-throughput labs use robotics and miniaturization to conduct hundreds of parallel experiments, continuously analyzing results and adjusting parameters in real-time [4].

Materials:

- Automated liquid handling robots (e.g., Tecan, Hamilton) [16]

- High-density microplates (384-well or 1536-well) [16]

- Assay reagents optimized for miniaturization

- Robotic plate handlers and incubators

- Integrated data analysis software

Procedure:

- Assay Miniaturization: Scale down your established assay to a 384-well or 1536-well format. This reduces reagent volumes by 4-8 times compared to a standard 96-well plate [16].

- Automated Library Reformatting: Use liquid handling robots to precisely dispense compound libraries and reagents into the high-density plates. This ensures accuracy and reproducibility while saving time [16].

- Robotic Assay Execution: Integrate all steps—dispensing, incubation, and reading—into a single, automated workflow using robotic arms to transfer plates between instruments. This enables 24/7 operation without human intervention [4].

- Real-Time Data Streamlining: Configure software to automatically collect and analyze data from plate readers as soon as a run is complete. This provides immediate feedback on assay quality (e.g., Z'-factor calculation) and compound activity [4] [16].

- Data-Driven Iteration: Use real-time insights to adjust subsequent experimental parameters instantly, creating a self-optimizing feedback loop that minimizes wasted resources on unproductive experiments [4].
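The Z'-factor mentioned in the data step is computed from the positive and negative control wells on each plate as Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The sketch below applies that standard formula; the control readings are hypothetical, and the 0.5 cutoff is a commonly used rule of thumb that should be treated as an assumption for any particular assay.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor from positive and negative control well signals."""
    separation = abs(mean(positive_controls) - mean(negative_controls))
    if separation == 0:
        raise ValueError("control means are identical; no assay window")
    spread = stdev(positive_controls) + stdev(negative_controls)
    return 1.0 - 3.0 * spread / separation


def plate_passes(positive_controls, negative_controls, threshold=0.5):
    """True when the plate meets the assumed quality threshold."""
    return z_prime(positive_controls, negative_controls) >= threshold


# Hypothetical control readings from one plate.
pos = [100, 102, 98, 100]
neg = [10, 12, 8, 10]
print(round(z_prime(pos, neg), 2))
print(plate_passes(pos, neg))
```

Running this check automatically after each read lets a failing plate be flagged before further reagents are committed to follow-up.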

Troubleshooting Guide and FAQs

This section addresses common operational challenges in high-throughput research from a cost-efficiency perspective.

FAQ: How can we justify the high initial investment in automation and AI? Answer: Frame the investment not as an expense but as a strategic cost transformation. The ROI includes a ~50% reduction in testing costs, up to 70% faster development cycles, and a 10x acceleration in materials discovery [4]. Calculate the long-term savings from reduced reagent use, lower labor costs, and increased output.
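A simple payback calculation can support the ROI argument above. This sketch uses the ~50% testing-cost reduction quoted in the answer as the default; the investment and annual cost figures are hypothetical, and the model deliberately ignores maintenance, training, and discounting.

```python
def annual_savings(annual_testing_cost, reduction_fraction=0.5):
    """Yearly savings if testing costs fall by reduction_fraction."""
    if not 0 <= reduction_fraction <= 1:
        raise ValueError("reduction_fraction must be between 0 and 1")
    return annual_testing_cost * reduction_fraction


def payback_years(investment, annual_testing_cost, reduction_fraction=0.5):
    """Years needed for cumulative savings to cover the initial investment."""
    savings = annual_savings(annual_testing_cost, reduction_fraction)
    if savings <= 0:
        raise ValueError("no savings; the investment never pays back")
    return investment / savings


# Hypothetical figures: 500,000 invested, 400,000 spent on testing per year.
print(payback_years(500_000, 400_000))
```

A fuller business case would add the labor and throughput effects mentioned in the answer as separate line items.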

FAQ: Our experimental data is vast and siloed. How can we use it to reduce costs? Answer: Implement process mining and task mining tools. These data-driven approaches deconstruct workflows to identify non-essential tasks and process inefficiencies that are not visible at a surface level. They provide the objective data needed to build a business case for change and target optimization efforts effectively [12].

FAQ: We are experiencing a high rate of false positives in our HTS, wasting resources on follow-up. How can we mitigate this? Answer:

- Problem: High false positive rate in primary screening.

- Cause: Compound interference (e.g., assay noise, non-specific binding).

- Solution: Implement robust counter-screens and use orthogonal assay technologies early in the cascade to triage artifacts [16]. Incorporate AI tools that are trained to recognize and flag compounds with characteristics linked to promiscuous activity.

FAQ: How do we maintain research quality and innovation when facing budget pressure? Answer: Prioritize cost optimization over blunt cost cutting. Cutting arbitrarily undermines strategy and innovation [12]. Instead, optimize by:

- Diversifying Funding: Pursue grants from foundations, industry partners, and global charities to avoid reliance on a single source [17] [13].

- Forging Collaborations: Share infrastructure, data, and costs through international or public-private partnerships [17] [13].

- Strategic Supplier Management: Build partnerships with suppliers to jointly identify waste and share savings, rather than just seeking the lowest price [14].

FAQ: Our organization has significant technical debt in its data systems. How can we modernize without a massive write-down? Answer: This is a common challenge [15]. Address it incrementally. Start by building a business case for modernization focused on the Total Cost of Ownership (TCO) of the current system, including hidden costs of workarounds and lost productivity. Then, phase the migration, prioritizing modules that will deliver the fastest ROI in efficiency and cost savings, funding subsequent phases from the initial gains [15].

In the demanding field of high-throughput experimentation (HTE), particularly in early drug discovery, the traditional paradigm of large-scale synthesis is becoming economically and environmentally unsustainable. Conservative estimates indicate that drug discovery processes alone produce approximately 2 million kilograms of waste per year, with an additional 1.5 million kilograms generated during preclinical studies [18]. This resource-intensive approach is being fundamentally disrupted by the adoption of nanoscale operations. Miniaturization, powered by technologies like acoustic dispensing, transforms discovery workflows by performing chemical synthesis and screening on a nanomole scale, dramatically reducing the consumption of precious reagents, compounds, and solvents [18]. This article establishes the economic case for this shift, demonstrating how nanoscale operations serve as a powerful cost-reduction strategy while simultaneously enhancing research efficiency and sustainability.

Quantitative Economic Benefits of Nanoscale Operations

The transition from milligram to nanogram and nanoliter scales directly impacts key financial metrics in research and development. The following table summarizes the core economic advantages:

| Economic Benefit | Traditional HTS Scale | Nanoscale Operation | Impact and Cost Reduction |

|---|---|---|---|

| Reagent Consumption | Milligram (millimole) scale | Nanomole scale (e.g., 500 nmol per well) [18] | Reduces reagent purchase costs by several orders of magnitude. |

| Chemical Waste Production | ~2 million kg/year in discovery [18] | Drastically reduced (Theoretical ~99%+ reduction) | Lower waste disposal costs and reduced environmental footprint. |

| Material Utility | 1 mg for a limited number of tests | 1 μg enables ~1,500 HTS campaigns [18] | Massive increase in data points per unit of synthesized material. |

| Library Synthesis Volume | Multi-milliliter reactions | 3.1 μL total reaction volume [18] | Enables massive library generation (e.g., 1536 compounds) with minimal solvent use. |

| Screening Throughput | Limited by reagent availability | 1536 compounds synthesized and screened on-the-fly [18] | Accelerates discovery timelines, reducing labor and overhead costs. |

The data underscores a powerful principle: by minimizing material input, nanoscale operations systemically reduce costs across reagent acquisition, waste management, and overall research efficiency [18].
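To make the scale of the reduction concrete, the arithmetic can be sketched as follows. The 1 mmol baseline is an assumption chosen for illustration (it matches the scale-up quantity used later in this guide); only the 500 nmol per-well amount and the 1536-well library size come from the table above.

```python
# Illustrative arithmetic only: the 1 mmol baseline is an assumption,
# not a figure from the cited study.
BASELINE_MMOL = 1.0       # assumed conventional reaction scale
NANOSCALE_NMOL = 500.0    # per-well amount quoted in the table
WELLS = 1536              # library size quoted in the table

def scale_reduction_factor(baseline_mmol: float, nanoscale_nmol: float) -> float:
    """Return how many times less material one nanoscale reaction uses."""
    baseline_nmol = baseline_mmol * 1_000_000  # 1 mmol = 1,000,000 nmol
    return baseline_nmol / nanoscale_nmol

factor = scale_reduction_factor(BASELINE_MMOL, NANOSCALE_NMOL)
library_total_mmol = WELLS * NANOSCALE_NMOL / 1_000_000

print(f"Per-reaction reduction: {factor:,.0f}x")
print(f"Material for the full library: {library_total_mmol:.3f} mmol per reagent position")
```

Under these assumptions, an entire 1536-well library consumes less of each reagent class than a single conventional 1 mmol reaction.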

Essential Protocols for Nanoscale High-Throughput Synthesis

Automated Nano-Synthesis Using Acoustic Dispensing

This protocol details the synthesis of a 1536-compound library via the Groebke–Blackburn–Bienaymé three-component reaction (GBB-3CR) using acoustic dispensing technology [18].

- Objective: To autonomously synthesize and screen a diverse library of heterocycles on a nanomole scale to identify novel binders for the menin protein.

- Materials:

- Building Blocks: 71 isocyanides, 53 aldehydes, 38 cyclic amidines.

- Instrument: Echo 555 acoustic dispensing instrument.

- Labware: Source microplates, 1536-well destination microplates.

- Solvents: Anhydrous ethylene glycol or 2-methoxyethanol.

- Methodology:

- Stock Solution Preparation: Prepare 0.1 M stock solutions of all building blocks in appropriate, compatible solvents (e.g., DMSO, ethylene glycol).

- Library Design: Use a randomization script to assign building block combinations to the 1536 destination wells to maximize chemical diversity and avoid bias.

- Acoustic Dispensing:

- Position the source plate and an inverted 1536-well destination plate in the Echo 555.

- The instrument uses focused sound energy to eject 2.5 nL droplets from the source into the destination wells.

- Dispense the required volumes to deliver 500 nanomoles of each reagent into each well, resulting in a total reaction volume of 3.1 μL.

- Reaction Incubation: Seal the destination plate and incubate at room temperature for 24 hours.

- Quality Control: After incubation, dilute each well with 100 μL of ethylene glycol and analyze reaction success by direct-injection mass spectrometry. Categorize outcomes as successful (main peak is product), partial (product present but not main peak), or unsuccessful [18].

- Troubleshooting:

- Low Reaction Success: Verify solvent compatibility and building block solubility. Ensure stock solution concentrations are accurate.

- Dispensing Failure: Check for air bubbles in source wells and confirm that the liquid level meets the instrument's minimum requirements.
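The library design and dispensing steps above can be sketched in a few lines. This is a minimal illustration, not the script used in the cited work: the function names and the fixed seed are assumptions, while the building-block counts, the 1536-well format, the 2.5 nL droplet size, and the 3.1 μL total volume are taken from the protocol.

```python
import random

N_ISOCYANIDES, N_ALDEHYDES, N_AMIDINES = 71, 53, 38  # counts from the materials list
N_WELLS = 1536
DROPLET_NL = 2.5  # droplet volume quoted in the protocol

def design_library(seed: int = 0) -> list[tuple[int, int, int]]:
    """Pick N_WELLS distinct (isocyanide, aldehyde, amidine) index triples at random."""
    total = N_ISOCYANIDES * N_ALDEHYDES * N_AMIDINES
    rng = random.Random(seed)  # fixed seed so the plate map can be regenerated
    picks = rng.sample(range(total), N_WELLS)  # sampling without replacement
    library = []
    for flat in picks:
        # Decode the flat index back into one index per building-block class
        rest, amidine = divmod(flat, N_AMIDINES)
        isocyanide, aldehyde = divmod(rest, N_ALDEHYDES)
        library.append((isocyanide, aldehyde, amidine))
    return library

def droplets_for(volume_nl: float) -> int:
    """Number of fixed-size droplets needed to transfer a volume given in nL."""
    return round(volume_nl / DROPLET_NL)

plate_map = design_library(seed=42)
total_combinations = N_ISOCYANIDES * N_ALDEHYDES * N_AMIDINES
print(len(plate_map), "wells assigned from", total_combinations, "possible combinations")
print("Droplets for a 3.1 uL total volume:", droplets_for(3100))
```

Sampling without replacement from the flattened combination space guarantees that no well duplicates another, and recording the seed keeps the plate map reproducible.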

Scale-Up Validation: From Nanomole to Millimole

A critical step in validating nanoscale workflows is demonstrating that reactions can be successfully scaled up to produce meaningful quantities for further characterization.

- Objective: To confirm that hit compounds identified from nanoscale screening can be produced on a milligram scale for secondary assays and structural analysis.

- Protocol:

- Hit Identification: From the primary nanoscale screen, select wells showing desired activity (e.g., in a DSF assay for menin binding).

- Reaction Optimization: Using the same building blocks, test a small set of reaction conditions (solvent, catalyst, temperature) in a 1 mL reaction volume to identify optimal milligram-scale conditions.

- Scale-Up Synthesis: Perform the synthesis using standard laboratory glassware at the 1 mmol scale.

- Purification and Validation: Purify the compound using flash chromatography or preparative HPLC. Confirm structure and purity via NMR and LC-MS. Compare the analytical data with the crude analysis from the nanoscale reaction to ensure consistency [18].

The entire workflow, from nanoscale library generation to hit identification and scale-up, is visualized below.

The Scientist's Toolkit: Essential Reagents & Materials

The successful implementation of miniaturized workflows relies on specialized materials and reagents. The table below lists key components for the featured nanoscale synthesis protocol.

| Item | Function in the Protocol | Key Consideration for Miniaturization |

|---|---|---|

| Acoustic Dispenser | Contact-less, precise transfer of nanoliter droplets using sound energy. | Enables high-density, low-volume reactions in 1536-well plates. Fast and accurate [18]. |

| GBB Reaction Components | Core building blocks for the synthesis of imidazo[1,2-a]pyridines. | Diversity of building blocks (isocyanides, aldehydes, amidines) is key to exploring large chemical space with minimal material [18]. |

| 1536-Well Microplates | Miniaturized reaction vessels for high-density library synthesis. | Standard format ensures compatibility with automation and other laboratory instrumentation [18]. |

| Polar Protic Solvents | Reaction medium (e.g., ethylene glycol, 2-methoxyethanol). | Must be compatible with acoustic dispensing technology and support the GBB-3CR [18]. |

| Mass Spectrometer | High-throughput quality control of crude reaction mixtures. | Direct injection capability is essential for rapid analysis of thousands of nanoscale reactions without purification [18]. |

Troubleshooting Guide & FAQs for Nanoscale Experiments

FAQ 1: Our nanoscale synthesis in the 1536-well plate shows low reaction success rates. What are the primary factors we should investigate?

- Cause A: Solvent and Building Block Incompatibility. Not all solvents or concentrated stock solutions are optimal for acoustic dispensing.

- Solution: Use solvents recommended for acoustic dispensing, such as DMSO, DMF, ethylene glycol, or 2-methoxyethanol [18]. Ensure building blocks are fully dissolved and solutions are free of precipitate.

- Cause B: Inaccurate Liquid Handling. The success of nanoliter dispensing is sensitive to plate positioning and liquid properties.

- Solution: Regularly maintain and calibrate the acoustic dispenser. Ensure source plates are properly filled and free of air bubbles. Verify that the destination plate is correctly positioned and level.

- Cause C: Chemical Reactivity Issues. The chosen reaction may not be optimal for the miniaturized, solvent-limited environment.

- Solution: Before full-library execution, run a small pilot plate with a subset of building blocks to validate the reaction conditions. Consider testing a Lewis acid catalyst if the reaction is known to be catalyzed [18].

FAQ 2: We are encountering high background noise and artefacts during the nanoscale characterization of our materials. How can we improve image quality?

- Cause A: Tip Artefacts (for AFM/SPM). A contaminated or broken probe tip is one of the most common causes of image duplication and distortion.

- Solution: Replace the AFM probe with a new, sharp one. If a new probe is not available, attempt to clean the tip by engaging and indenting on a soft, clean sample (e.g., gold film) to dislodge debris [19].

- Cause B: Environmental Noise and Vibration. At the nanoscale, external vibrations from building equipment, doors, or traffic can severely degrade image resolution.

- Solution: Ensure the instrument is on a functioning anti-vibration table. If possible, schedule sensitive imaging for quieter times (e.g., evenings). Relocate the instrument to a basement lab if vibrations are persistent [19].

- Cause C: Electrical or Laser Interference. Repetitive lines in images can be caused by 50/60 Hz electrical noise or laser interference from reflective samples.

- Solution: Use probes with a reflective coating to minimize laser interference. Try to identify and isolate the instrument from sources of electrical noise [19].

FAQ 3: From a project management perspective, how do we justify the initial capital investment in automation and miniaturization equipment?

- Answer: The justification is based on Total Cost of Ownership (TCO) and Return on Investment (ROI) through dramatically reduced recurring costs.

- Reagent Cost Savings: A single nanomole-scale screen can save thousands of dollars in reagent costs compared to a traditional milligram-scale screen, especially for expensive or complex building blocks.

- Accelerated Timelines: The ability to synthesize and screen thousands of compounds "on-the-fly" compresses discovery cycles from months to weeks, leading to faster time-to-decision and significant labor savings [18].

- Waste Disposal Cost Reduction: A ~99% reduction in solvent and chemical waste volume directly translates into lower hazardous waste disposal costs [18].

- Increased Success Rate: Access to a larger and more diverse chemical space at minimal marginal cost increases the probability of finding high-quality hits early in the process, avoiding costly late-stage failures.
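A simple payback calculation is often the first number a budget committee asks for. The sketch below shows the arithmetic; every monetary figure in it is hypothetical and should be replaced with your own quotes and measured savings.

```python
def payback_months(capital_cost: float, monthly_savings: float) -> float:
    """Months needed for recurring savings to cover the upfront investment."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive for a payback to exist")
    return capital_cost / monthly_savings

# Hypothetical figures for illustration only
capital_cost = 300_000.0       # instrument purchase and installation
monthly_savings = {
    "reagents": 8_000.0,       # smaller reaction scale
    "waste_disposal": 1_500.0, # lower solvent and chemical waste volume
    "labor": 3_000.0,          # fewer manual handling hours
}

months = payback_months(capital_cost, sum(monthly_savings.values()))
print(f"Estimated payback period: {months:.1f} months")
```

Keeping the savings itemized by category makes it easier to defend each assumption separately when the business case is reviewed.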

The economic case for miniaturization in high-throughput research is unequivocal. By adopting nanoscale operations, research organizations can directly and significantly reduce their largest variable costs: reagents and materials. This strategy transcends mere cost-cutting; it enables a more agile, sustainable, and productive research paradigm. As the field evolves, the integration of artificial intelligence (AI) with these miniaturized platforms promises to further accelerate discovery, guiding the design of new libraries and the analysis of screening data towards the most promising outcomes. For research organizations aiming to maintain a competitive edge, the strategic implementation of miniaturization is no longer optional—it is an economic and scientific imperative.

Identifying Hidden Cost Centers in Automated Experimentation Platforms

Automated experimentation platforms are powerful tools for accelerating high-throughput research in fields like drug development. However, their total cost of ownership extends far beyond initial software licensing. This guide helps researchers, scientists, and R&D professionals identify and troubleshoot hidden cost centers that can impact research budgets, framed within a broader thesis on cost-reduction strategies for high-throughput experimentation.

Frequently Asked Questions (FAQs)

Q1: Our experimentation platform budget focused on software licenses. What are the most commonly overlooked cost centers we should anticipate?

The most frequently overlooked costs extend beyond initial licensing to ongoing operational expenses. These typically include data preparation and cleaning (often 20-30% of project budgets), infrastructure upgrades for increased data processing (adding 30-50% to initial estimates), and annual maintenance ranging from 15-25% of initial implementation costs [20]. Additional hidden expenses include employee training programs (10-15% of implementation budgets) and legacy system integration, which can increase project costs by 40-60% [20].

Q2: Our experimental data quality is inconsistent, leading to failed experiments and costly repeats. How can we troubleshoot this systematically?

Inconsistent data quality often stems from upstream process issues. Follow this troubleshooting methodology:

- Audit Data Sources: Identify all data generation points and their quality control measures

- Validate Collection Protocols: Ensure standardized procedures across all research personnel

- Check Integration Points: Verify data transfer integrity between instruments and platforms

- Implement Automated Quality Checks: Deploy validation rules to flag anomalies in real-time

- Establish Data Lineage Tracking: Monitor data from origin through all transformations

Q3: We're experiencing "configuration drift" in our experiment templates, causing inconsistent results. How can we maintain reproducibility without excessive manual oversight?

Configuration drift is a common hidden cost center. Implement these safeguards:

- Version Control for Templates: Treat experiment configurations as code with proper versioning

- Automated Validation Checks: Implement pre-execution checks for configuration consistency

- Environment Snapshots: Capture complete experiment states for reproducibility

- Change Control Procedures: Formalize modification approval processes

- Regular Audit Protocols: Schedule periodic configuration reviews
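One lightweight way to implement a pre-execution consistency check is to fingerprint the approved template and compare it before every run. The sketch below assumes templates are stored as JSON-serializable dictionaries; the field names are hypothetical.

```python
import hashlib
import json

def template_fingerprint(template: dict) -> str:
    """Return a stable SHA-256 digest of a template, independent of key order."""
    canonical = json.dumps(template, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def has_drifted(template: dict, approved_fingerprint: str) -> bool:
    """True if the template no longer matches the approved version."""
    return template_fingerprint(template) != approved_fingerprint

# Hypothetical template fields for illustration
approved = {"plate": "1536-well", "incubation_h": 24, "temperature_c": 22}
approved_fp = template_fingerprint(approved)

edited = dict(approved, incubation_h=18)  # someone shortened the incubation

print(has_drifted(approved, approved_fp))  # False
print(has_drifted(edited, approved_fp))    # True
```

Storing the approved fingerprint alongside the version-control commit gives a cheap gate that can block a run whenever a template has been changed outside the approval workflow.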

Q4: Our team spends significant time preparing data for analysis rather than analyzing results. What optimization strategies can reduce this overhead?

Data preparation is a major hidden cost, typically consuming 20-30% of project budgets [20]. Implement these efficiency strategies:

- Automated Data Pipelines: Create standardized preprocessing workflows

- Centralized Data Validation: Implement organization-wide quality standards

- Metadata Enforcement: Require complete experimental metadata at collection

- Self-Service Preparation Tools: Empower researchers with user-friendly cleaning interfaces

- Dedicated Data Engineering Support: Allocate specialized resources for pipeline optimization

Q5: How can we accurately calculate the true ROI of our automated experimentation platform given these hidden costs?

True ROI calculation requires comprehensive cost tracking:

- Track All Labor Costs: Include researcher time for setup, maintenance, and troubleshooting

- Quantify Experiment Repeat Costs: Measure resources wasted on failed experiments

- Calculate Speed-to-Insight Value: Quantify the business impact of accelerated discovery

- Monitor Infrastructure Scaling Costs: Track expenses related to increased data volumes

- Factor in Training and Onboarding: Include costs of bringing new researchers onto the platform
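The effect of including hidden costs can be sketched with a basic ROI formula, ROI = (benefit - total cost) / total cost. The cost categories mirror the list above; all amounts are hypothetical placeholders.

```python
from dataclasses import dataclass

@dataclass
class PlatformCosts:
    """Annual cost categories for an experimentation platform (illustrative)."""
    licensing: float
    labor: float
    experiment_repeats: float
    infrastructure: float
    training: float

    def total(self) -> float:
        return (self.licensing + self.labor + self.experiment_repeats
                + self.infrastructure + self.training)

def roi(benefit: float, total_cost: float) -> float:
    """Return on investment as a fraction of total cost."""
    return (benefit - total_cost) / total_cost

# Hypothetical annual figures for illustration only
costs = PlatformCosts(licensing=100_000, labor=60_000, experiment_repeats=20_000,
                      infrastructure=40_000, training=30_000)
annual_benefit = 400_000  # estimated value of faster, cheaper experiments

naive_roi = roi(annual_benefit, costs.licensing)  # licences only
true_roi = roi(annual_benefit, costs.total())     # all tracked costs

print(f"Licence-only ROI: {naive_roi:.0%}")
print(f"ROI with hidden costs: {true_roi:.0%}")
```

The gap between the two figures is the part of the business case that a licence-only budget leaves unexamined.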

Quantitative Analysis of Hidden Costs

Table 1: Common Hidden Cost Centers in Automated Experimentation Platforms

| Cost Category | Typical Impact Range | Primary Contributors | Mitigation Strategies |

|---|---|---|---|

| Data Preparation & Cleaning | 20-30% of project budget [20] | Manual data formatting, quality validation, standardization | Automated data pipelines, standardized collection protocols |

| Infrastructure Upgrades | 30-50% added to initial estimates [20] | Increased storage needs, processing power, specialized hardware | Cloud scaling options, performance optimization |

| Ongoing Maintenance & Monitoring | 15-25% of initial cost annually [20] | Software updates, performance tuning, security patches | Strategic vendor partnerships, dedicated platform teams |

| Training & Workforce Development | 10-15% of implementation budget [20] | Researcher onboarding, advanced feature training, skill maintenance | Internal certification programs, knowledge sharing systems |

| Legacy System Integration | 40-60% cost increase [20] | Custom connectors, data transformation, compatibility layers | API-based architecture, phased modernization |

| Experiment Repeats Due to Quality Issues | Varies by organization | Poor data quality, configuration errors, protocol drift | Automated quality gates, template version control |

Table 2: Troubleshooting Guide for Common Cost-Related Issues

| Problem Symptom | Root Cause | Immediate Actions | Long-Term Solutions |

|---|---|---|---|

| Increasing experiment repeat rates | Inconsistent data quality or configuration drift | Audit recent changes, review quality metrics | Implement automated validation checks, version control |

| Slowing experiment throughput | Inadequate infrastructure for data volume | Monitor system performance, identify bottlenecks | Right-size computing resources, optimize data workflows |

| Rising platform maintenance time | Increasing system complexity or technical debt | Document pain points, prioritize critical fixes | Establish dedicated platform team, refactor problem areas |

| Growing training demands | High researcher turnover or complex features | Develop quick-reference guides, peer mentoring | Create tiered training program, simplify user interfaces |

| Expanding data storage costs | Unoptimized data retention policies | Archive old experiments, compress existing data | Implement data lifecycle policies, tiered storage |

Experimental Protocols for Cost Optimization

Protocol 1: Data Quality Validation Framework

Objective: Establish standardized data quality checks to reduce experiment repeats.

Materials: Automated validation scripts, quality metrics dashboard, data provenance tracking.

Methodology:

- Implement automated pre-experiment quality gates

- Establish quantitative quality metrics for all input data

- Create data lineage tracking from source through analysis

- Develop threshold-based alerting for quality deviations

Expected Outcome: 25-40% reduction in experiment repeats due to data quality issues
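A pre-experiment quality gate of the kind described in this protocol can start as a small rule-based check. The sketch below is one possible shape, with hypothetical field names and limits; real gates would draw their rules from your own instrument specifications.

```python
from typing import Any

def quality_gate(records: list[dict[str, Any]],
                 required: list[str],
                 limits: dict[str, tuple[float, float]]) -> list[tuple[int, str]]:
    """Return (record_index, problem) pairs for every rule a record violates."""
    problems = []
    for i, rec in enumerate(records):
        # Completeness rule: every required field must be present and non-empty
        for field in required:
            if rec.get(field) is None:
                problems.append((i, f"missing {field}"))
        # Threshold rule: numeric fields must fall inside their allowed range
        for field, (low, high) in limits.items():
            value = rec.get(field)
            if value is not None and not (low <= value <= high):
                problems.append((i, f"{field}={value} outside [{low}, {high}]"))
    return problems

# Hypothetical input records for illustration
records = [
    {"well": "A1", "volume_nl": 3100, "operator": "jd"},
    {"well": "A2", "volume_nl": 9000, "operator": "jd"},  # volume out of range
    {"well": "A3", "volume_nl": 3100},                    # operator missing
]
issues = quality_gate(records,
                      required=["well", "volume_nl", "operator"],
                      limits={"volume_nl": (2500, 3500)})
for index, problem in issues:
    print(f"record {index}: {problem}")
```

An experiment would be allowed to start only when the returned list is empty; the same list can feed the alerting step.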

Protocol 2: Experiment Template Management System

Objective: Maintain configuration consistency across research teams.

Materials: Version control system, template repository, change management protocol.

Methodology:

- Implement Git-based version control for all experiment templates

- Establish template review and approval workflow

- Create automated consistency checks for template modifications

- Develop template performance monitoring across use cases

Expected Outcome: 30-50% reduction in configuration-related experiment failures

Workflow Visualization

Automated Experimentation Platform Cost Centers

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Cost-Effective Experimentation Management

| Tool Category | Example Solutions | Primary Function | Cost Considerations |

|---|---|---|---|

| Data Quality Tools | Automated validation scripts, Quality metrics dashboards | Ensure data integrity before experiment execution | Open-source options available; commercial tools offer advanced features |

| Version Control Systems | Git, Subversion | Track experiment template changes and maintain reproducibility | Open-source with minimal licensing costs; training required |

| Infrastructure Monitoring | Prometheus, Datadog | Track system performance and identify resource bottlenecks | Open-source and commercial options with varying capability levels |

| Experiment Template Repositories | Internal knowledge bases, Commercial template libraries | Standardize experimental protocols across teams | Development time for internal solutions; licensing for commercial |

| Automated Pipeline Tools | Nextflow, Snakemake | Streamline data processing and analysis workflows | Open-source with computational infrastructure requirements |

Implementing Automation, AI and Advanced Technologies for HTE Efficiency

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: Our high-throughput screening (HTS) generates vast data, but we struggle with low model accuracy and poor decision-making. How can we improve this?

A1: The core issue often lies in data quality and relevance, not just quantity. Historical data may lack the quality needed for effective machine learning (ML) modeling [21].

- Actionable Protocol:

- Implement FAIR Data Principles: Ensure all data is Findable, Accessible, Interoperable, and Reusable. Use a well-designed data repository and query system [22].

- Adopt Comprehensive Data Capture: Move beyond basic observables. Integrate automated, high-throughput, label-free techniques that probe reaction chemistry in finer detail to create information-rich datasets for ML [21].

- Use Dimensionality Reduction: For high-dimensional data, apply techniques such as Principal Component Analysis (PCA) or variational autoencoders (VAEs) before running ML-guided methods such as Bayesian optimization [21].
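To illustrate what the dimensionality-reduction step does, the sketch below extracts the first principal component with plain power iteration on the covariance matrix. It is a teaching example on synthetic data; in practice a numerical library would normally be used, and the loadings and noise level here are assumptions.

```python
import random

def top_principal_component(rows: list[list[float]], iterations: int = 200):
    """Return (means, direction, explained_variance_ratio) for the first component."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix (d x d)
    cov = [[sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(d)]
           for a in range(d)]
    # Power iteration converges to the eigenvector with the largest eigenvalue
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    eigenvalue = sum(v[a] * sum(cov[a][b] * v[b] for b in range(d)) for a in range(d))
    total_variance = sum(cov[a][a] for a in range(d))
    return means, v, eigenvalue / total_variance

def project(rows, means, direction):
    """Reduce each row to a single score along the principal direction."""
    return [sum((r[j] - means[j]) * direction[j] for j in range(len(direction)))
            for r in rows]

# Synthetic data: five correlated readouts driven by one hidden factor (hypothetical)
rng = random.Random(0)
weights = [1.0, 0.8, -0.5, 0.3, 0.6]
rows = []
for _ in range(300):
    t = rng.gauss(0, 1)
    rows.append([t * w + rng.gauss(0, 0.05) for w in weights])

means, direction, ratio = top_principal_component(rows)
scores = project(rows, means, direction)
print(f"First component explains {ratio:.1%} of the variance")
```

When a single component captures most of the variance, as in this synthetic case, downstream optimization can work on one score per sample instead of five correlated readouts.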

Q2: Our HTE platform is inflexible; modifying workflows requires specialized control-systems knowledge we lack. What solutions exist?

A2: This is a common barrier to entry. The solution involves investing in more accessible control software.

- Actionable Protocol:

- Evaluate Platform Architecture: Seek robust control software that translates model predictions into machine-executable tasks without deep specialized knowledge [21].

- Consider All-in-One Platforms: Investigate integrated scientific platforms (e.g., Sapio Sciences Exemplar) that combine workflow management, analysis, and knowledge extraction, reducing integration overhead [22].

- Plan for Operational Design: Prioritize broadly accessible systems in your procurement process to ensure long-term adaptability and reduce reliance on specialized support [21].

Q3: How can we balance the need for high throughput with the requirement for detailed sample analysis in our HTE pipeline?

A3: This is a classic trade-off in HTE. A tiered approach is often most effective [21].

- Actionable Protocol:

- Initial High-Throughput Phase: Use a small set of low-cost observables to quickly screen large chemical libraries and identify the most promising candidates.

- Secondary Detailed Analysis: Route only the promising hits from the first phase to a more detailed, lower-throughput investigation offline. This ensures comprehensive analysis is reserved for the most valuable samples [21].
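The tiered approach amounts to a two-stage funnel, which can be sketched as below. The scoring functions and the 5% cut-off are hypothetical stand-ins for a cheap primary observable and a slower secondary assay.

```python
def tiered_screen(library, cheap_assay, detailed_assay, top_fraction=0.05):
    """Rank everything with the cheap assay, then run the detailed assay on the top slice."""
    ranked = sorted(library, key=cheap_assay, reverse=True)
    n_hits = max(1, int(len(ranked) * top_fraction))
    return {compound: detailed_assay(compound) for compound in ranked[:n_hits]}

# Hypothetical stand-ins for real measurements
library = list(range(1536))   # compound identifiers

def cheap(compound):
    """Placeholder for a fast, low-cost primary readout."""
    return (compound * 37) % 101

detailed_calls = []

def detailed(compound):
    """Placeholder for a slower, more expensive secondary measurement."""
    detailed_calls.append(compound)
    return cheap(compound) / 100

results = tiered_screen(library, cheap, detailed)
print(f"Detailed assay run on {len(results)} of {len(library)} compounds")
```

The saving comes from the ratio between the two counts: the expensive measurement is paid for only on the fraction that passes the first tier.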

Troubleshooting Guides

Issue: Inefficient Experimental Design Leading to Redundant Data and High Costs

Symptoms: Experiments are taking too long, consuming excessive reagents, and generating data that does not effectively narrow the search space or lead to optimal outcomes.

Diagnosis and Solution:

| Step | Action | Expected Outcome |

|---|---|---|

| 1 | Shift from Brute-Force to AI-Driven Design | More informative experiments, reduced redundant information. |

| 2 | Implement Bayesian Optimization (BO) | Efficient navigation of high-dimensional chemical space by balancing exploration and exploitation [21]. |

| 3 | Apply Active Learning (AL) | In data-scarce domains like materials science, AL selects samples that maximize learning efficiency, optimizing libraries with fewer experiments [22]. |

Detailed Methodology for Bayesian Optimization:

- Define Objective: Clearly state the goal (e.g., maximize yield, minimize byproducts).

- Choose Surrogate Model: Start with a Gaussian Process (GP) to model the relationship between input variables and your objective. For very high dimensions, use a Random Forest or Neural Network with uncertainty estimation [21].

- Select Acquisition Function: Use a function (e.g., Expected Improvement) to determine the next most promising experiment to run.

- Run Experiment & Update: Execute the experiment, collect the result, and update the surrogate model to inform the next iteration [21].
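The four steps above can be sketched as a self-contained loop. This is a minimal, dependency-free illustration with a zero-mean Gaussian Process, an RBF kernel, and Expected Improvement over one variable; the kernel length scale, noise term, starting points, and the `run_experiment` function are all assumptions standing in for a real system.

```python
import math

def rbf(a: float, b: float, length: float = 0.2) -> float:
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(A):
    """Lower-triangular Cholesky factor of a positive-definite matrix."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def solve_chol(L, b):
    """Solve (L L^T) x = b by forward then backward substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def gp_posterior(xs, ys, x_new, noise=1e-4):
    """Posterior mean and standard deviation of the surrogate at x_new."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    k_star = [rbf(a, x_new) for a in xs]
    mean = sum(k * a for k, a in zip(k_star, solve_chol(L, ys)))
    var = rbf(x_new, x_new) - sum(k * w for k, w in zip(k_star, solve_chol(L, k_star)))
    return mean, math.sqrt(max(var, 1e-12))

def expected_improvement(mean, std, best):
    """Expected Improvement acquisition value for a maximization problem."""
    z = (mean - best) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2 * math.pi)
    cdf = 0.5 * (1 + math.erf(z / math.sqrt(2)))
    return (mean - best) * cdf + std * pdf

def run_experiment(x):
    """Hypothetical stand-in for a real experiment: response peaks at x = 0.7."""
    return math.exp(-((x - 0.7) ** 2) / 0.02)

candidates = [i / 100 for i in range(101)]   # normalized condition, e.g. temperature
xs = [0.0, 0.5, 1.0]                         # initial experiments
ys = [run_experiment(x) for x in xs]

for _ in range(10):                          # ten sequential experiments
    best = max(ys)
    untested = [c for c in candidates if c not in xs]
    x_next = max(untested,
                 key=lambda c: expected_improvement(*gp_posterior(xs, ys, c), best))
    xs.append(x_next)
    ys.append(run_experiment(x_next))

best_x = xs[ys.index(max(ys))]
print(f"Best condition found: x = {best_x:.2f}, response = {max(ys):.3f}")
```

The loop runs 13 experiments in total against a grid of 101 candidates, which is the practical point of the method: the surrogate decides where the next measurement is most informative instead of testing every condition.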

Issue: Low Throughput and Reproducibility in Synthesis and Screening

Symptoms: Inability to scale experiments, inconsistent results, and long cycle times for discovery.

Diagnosis and Solution:

| Step | Action | Expected Outcome |

|---|---|---|

| 1 | Leverage Full Lab Automation | Greater reproducibility, faster experiment turnaround, increased efficiency [22]. |

| 2 | Integrate Synthesis and Analytics | Use modular workstations for synthesis and fast serial analytical platforms (e.g., plate-based analyses) integrated into IT systems [22]. |

| 3 | Ensure FAIR-Compliant Data Capture | Use Electronic Lab Notebooks (ELNs) and Lab Information Management Systems (LIMS) to capture all data systematically, enabling reproducibility and future reuse [22]. |

Experimental Workflow for an ML-Enhanced HTE Cycle

The following diagram illustrates the self-reinforcing cycle of ML-enhanced HTE, which is key to systematic cost reduction.

Research Reagent Solutions for HTE

The following table details key resources and their functions in a modern HTE platform.

| Item | Function in HTE | Application Note |

|---|---|---|

| Lab Automation & Robotics | Executes fast, parallel, and serial experiments with high consistency. Includes liquid handlers, solid dispensers, and robotic arms. [22] | Vendors: Tecan, Hamilton, Molecular Devices. Essential for HTS in drug discovery. [22] |

| Design of Experiments (DOE) | Statistical framework for designing experiments to maximize information gain while minimizing resource use. [22] | Critical for moving beyond brute-force methods. Used for reaction optimization with a small number of variables. [21] |

| FAIR-Compliant Data Repository | Centralized system to capture, store, and manage all experimental data, making it findable and reusable. [22] | Foundational for all ML efforts. Initiatives like the Open Reaction Database provide guidance. [21] |

| Bayesian Optimization (BO) | An efficient experimental design strategy for navigating complex, high-dimensional search spaces. [21] | Uses a surrogate model (e.g., Gaussian Process) to relate inputs to outputs and suggest the next best experiment. [21] |

| Electronic Lab Notebook (ELN) | Captures experimental requests, protocols, and results in a digital, structured format. [22] | Often integrated with a LIMS to manage the end-to-end experimental workflow. [22] |

Intelligent powder dosing systems represent a transformative technology for high-throughput experimentation (HTE) in research and drug development. By automating one of the most variable and time-consuming manual processes in the laboratory, these systems directly address critical cost pressures. This technical support center provides researchers with practical guidance to maximize the benefits of automated powder dosing, focusing on troubleshooting common issues and implementing best practices to enhance experimental reproducibility while reducing operational expenses.

Quantifiable Benefits for High-Throughput Research

Implementing intelligent powder dosing systems delivers measurable improvements in operational efficiency and resource utilization, which are central to cost reduction in research.

Table 1: Impact of Automated Powder Dosing on Research Efficiency

| Metric | Manual Process | Automated System | Impact |

|---|---|---|---|

| Optimization Time | Baseline | ~4x reduction [23] | Accelerated development cycles |

| Reactions per Chemist | Baseline | 150-200 reactions; goal of 1,000+/week [23] | Dramatically increased output |

| Dosing Accuracy | Variable (human-dependent) | ±0.1% or better [24] | Improved reproducibility, reduced waste |

| Overall Equipment Effectiveness (OEE) | Baseline | 25% improvement [24] | Better asset utilization |

| Dosing Errors | Baseline | Up to 40% reduction [24] | Lower reagent loss and failed experiment costs |

Troubleshooting Common Powder Dosing Issues

Even advanced systems can encounter issues. The following guide addresses common problems, their causes, and solutions.

Table 2: Powder Dosing System Troubleshooting Guide

| Problem | Possible Causes | Solutions |

|---|---|---|

| No Flow | High humidity, irregularly shaped particles, material coatings causing bridging [25] | Install a mechanical agitator before feeder entry; add a vibrator to the hopper; use air pads to aerate the product [25]. |

| Low Flow | Obstructions above feeder, misalignments, material too thick, feeder too small [25] | Upgrade to a larger feeder; add a variable frequency drive; change the reducer on the drive [25]. |

| Decreasing Flow Over Time | Static build-up causing material to stick to feeder surfaces [25] | Ground the feeder frame; use an electro-polished finish on the feeder; add a Teflon coating to the feeder [25]. |

| Material Flooding | Over-aeration, excessive feed speed [25] | Vent the hopper; install a slide gate or butterfly valve; use a smaller feeder; lower the drive speed; incline the feeder [25]. |

| Inconsistent Dosing Rates | Air bubbles in the system, worn pump components, clogged injection points [26] | Check for leaks and inspect pump components; perform regular system flushing [26]. |

| Insufficient Flow/Blockage | Blocked suction pipe, foreign matter in valves, diaphragm deformation [27] | Clean and dredge the suction pipe; clean the one-way valve; repair or replace worn components [27]. |

Frequently Asked Questions (FAQs)

Q1: What are the most significant motivations for automating our powder dosing processes? The primary motivations are avoiding tedious and time-consuming manual processing, cutting cycle times to significantly increase productivity, and conserving limited solid compounds by reducing wastage [28]. This directly translates to lower labor costs and more efficient use of valuable research materials.

Q2: What types of powders are most problematic for automated systems? Survey respondents reported that 63% of compounds present dispensing challenges. The most frequent issues are with light/low-density/fluffy solids (21% of the time), sticky/cohesive/gum-like solids (18%), and large crystals/granules/lumps (10%) [28]. Modern systems with adaptive technologies are specifically designed to handle this wide spectrum of powder characteristics [24].

Q3: What is the single biggest concern with automated powder dispensing technology? The largest concern is a large "dead volume"—the minimum starting mass required or the residual compound lost in the process itself. This is closely followed by minimum dispense mass, system robustness, and cross-contamination [28]. These factors directly impact the conservation of often scarce and expensive research compounds.

Q4: How does automation enhance safety and compliance? Automated systems enhance safety by operating within enclosed environments, limiting worker exposure to airborne particles and potent compounds [29]. They provide detailed electronic logs of every weighing operation, which is crucial for perfect traceability and regulatory compliance (e.g., FDA, GMP) [30] [31]. Automated cleaning cycles also minimize cross-contamination risks [29].

Q5: What role do Industry 4.0 technologies play in modern dosing systems? The integration of the Internet of Things (IoT) and Artificial Intelligence (AI) is transformative. IoT enables real-time monitoring and predictive maintenance, improving Overall Equipment Effectiveness (OEE) by 25% [24]. AI-driven algorithms use historical data to predict and adjust dosing parameters in real-time, reducing errors by up to 40% by accounting for variables like humidity and powder flow characteristics [24].

Experimental Workflow for Automated Solid Handling

The following diagram illustrates a generalized workflow for implementing an automated powder dosing system in a high-throughput experimentation setting.

Automated Powder Dosing Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Components of an Automated Powder Dosing System

| System Component | Function | Considerations for High-Throughput Research |

|---|---|---|

| Gravimetric Dispensing Unit (GDU) | Precisely weighs powder directly into destination vials or reactors [28]. | Look for systems with high-resolution load cells for micro-dosing and dynamic weight correction, with accuracy as tight as ±0.05% [24]. |

| Dosing Mechanism (e.g., Auger) | Volumetrically or gravimetrically transfers powder from source to destination [29]. | Variable pitch screws adapt to different powder flowabilities. Vibration-assisted feeding prevents bridging of cohesive powders [24]. |

| Collaborative Robot (Cobot) | Automates the weighing and handling of powder containers [32]. | Frees highly skilled researchers from repetitive tasks, enabling them to manage 150+ reactions simultaneously [23]. |

| Hopper & Storage Vessels | Holds bulk powder before dispensing [25]. | Agitators, vibrators, or air pads can be added to prevent no-flow issues. Sizes range from 50L to 300L [25] [31]. |

| Control Software & IoT | Manages recipes, data logging, and system integration [24]. | Essential for batch traceability and replicability. Integration with ERP and LIMS ensures seamless production processes [32] [29]. |

Advanced Protocols for Optimal Performance

Protocol: System Calibration for Micro-Dosing Applications

Objective: To achieve dosing accuracy of ±0.1 mg or better for masses under 10 mg.

- Pre-Calibration: Ensure the system is in a temperature-stable environment, away from air currents and vibrations.

- Weight Standard: Use a certified, high-precision calibration weight appropriate for the target mass range (e.g., 10 mg).

- Multi-Point Calibration: Execute the system's internal calibration routine at multiple points across the intended operational range (e.g., 1 mg, 5 mg, 10 mg, 20 mg) to ensure linearity [24].

- Performance Verification: Dispense a known, challenging powder (e.g., a fluffy, low-density material) at the minimum target mass ten times. Weigh each dispense on an independent, calibrated micro-balance.

- Acceptance Criteria: The coefficient of variation (CV, the standard deviation divided by the mean) of the ten dispenses should be ≤10% [28]. If not, adjust the system's adaptive feed control parameters and repeat.
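The acceptance check in the last step can be sketched in a few lines of Python. This is a minimal illustration, reading CV as the coefficient of variation (sample standard deviation divided by the mean); the function names and the example readings are hypothetical, and only the 10% limit comes from the protocol.

```python
from statistics import mean, stdev


def coefficient_of_variation(masses_mg):
    """Return the CV in percent: sample standard deviation divided by the mean."""
    return 100.0 * stdev(masses_mg) / mean(masses_mg)


def passes_acceptance(masses_mg, cv_limit_pct=10.0):
    """True if the CV of the dispenses is at or below the limit (10% in the protocol)."""
    return coefficient_of_variation(masses_mg) <= cv_limit_pct


# Hypothetical micro-balance readings (mg) for ten dispenses at a 1 mg target.
readings = [1.02, 0.97, 1.05, 0.99, 1.01, 0.95, 1.03, 1.00, 0.98, 1.04]
cv = coefficient_of_variation(readings)
print(f"CV = {cv:.1f}%  pass = {passes_acceptance(readings)}")
```

Using the sample standard deviation (`stdev`) rather than the population form is a deliberate choice here, since ten dispenses are a small sample of the system's behaviour.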

Protocol: Handling Problematic Powders

Objective: To reliably dispense light, fluffy, or cohesive powders.

- System Preparation: Activate all available material handling aids (for example, the agitators, vibrators, air pads, or vibration-assisted feeding listed in Table 3).

- Parameter Optimization: Utilize the system's "AutoTeaching" or AI-driven algorithm to determine the optimal feed rate, vibration frequency, and discharge timing for the specific powder [28] [24].

- Validation Run: Perform a short run of 5-10 dispenses and verify weight consistency. Manually inspect destination vials for incomplete or clumped transfers.

- Documentation: Save the optimized parameters as a dedicated "method" within the control software for future use, ensuring recipe replicability [31].

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why is my AI model performing well on training data but failing to predict successful experimental outcomes accurately?

This is typically caused by the "distributional shift" problem, where the training data does not adequately represent real-world experimental conditions. To address this:

- Implement transfer learning: Fine-tune pre-trained models with a smaller set of high-fidelity experimental data specific to your domain. This approach combines broad pattern recognition with domain-specific knowledge [33].

- Create hybrid training sets: Augment computational data with strategically collected experimental data to fill critical knowledge gaps. Research shows that intentionally gathering high-throughput experimental data to address model weaknesses leads to better predictions [34].

- Validate with iterative testing: Establish a "lab in a loop" workflow where model predictions are experimentally validated, and results are fed back to continuously retrain and improve the model [33].

Q2: How can I prioritize which experiments to run when facing resource constraints?

AI-guided experimental platforms can dramatically reduce the number of required tests. Focus on:

- Bayesian optimization algorithms: These can identify the most informative experiments to run, maximizing knowledge gain while minimizing resource consumption [34].

- Multi-objective optimization: Consider multiple parameters simultaneously (e.g., efficacy, cost, safety) to identify Pareto-optimal experimental conditions [35].

- High-throughput robotics with AI-guided prioritization: One research team needed to test fewer than 10% of roughly 2,000 possible combinations to identify optimal solvent mixtures for energy storage solutions [34].
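To make the idea of Pareto-optimal conditions concrete, the following sketch filters a set of candidate conditions down to those that no other candidate beats on every objective. It is an illustration only: the condition names and scores are hypothetical, and every objective is expressed so that lower is better (efficacy is negated).

```python
def dominates(a, b):
    """True if a is at least as good as b on every objective and strictly
    better on at least one. All objectives are minimized."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(candidates):
    """Return the names of candidates not dominated by any other candidate."""
    return [
        name
        for name, objs in candidates.items()
        if not any(
            dominates(other, objs)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]


# Hypothetical conditions scored as (cost, -efficacy, safety risk); lower is better.
conditions = {
    "A": (10.0, -0.80, 0.2),
    "B": (12.0, -0.90, 0.2),
    "C": (15.0, -0.85, 0.3),  # worse than B on all three objectives
    "D": (8.0, -0.60, 0.1),
}
print(pareto_front(conditions))
```

Condition C drops out because B is cheaper, more effective, and no riskier; the remaining conditions represent genuine trade-offs that a researcher still has to choose between.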

Q3: What are the common data quality issues that undermine AI model performance in experimental design?

- Inconsistent data formatting: Establish standardized data collection protocols across all experiments.

- Inadequate metadata: Ensure all experimental conditions are thoroughly documented.

- Small dataset bias: Leverage data augmentation techniques or synthetic data generation to expand training sets.

- Experimental noise: Implement outlier detection algorithms and data cleaning pipelines.
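As one possible form of the outlier detection mentioned in the last item, the sketch below flags replicate measurements using the modified z-score based on the median absolute deviation (MAD). The 0.6745 scaling constant and the 3.5 cutoff are a widely used convention rather than something the source prescribes, and the example values are hypothetical.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Return indices of values whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread to judge against; flag nothing
    return [
        i
        for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > threshold
    ]


# Hypothetical replicate yields (%) from one plate; one well clearly failed.
yields = [71.2, 69.8, 70.5, 70.9, 12.3, 70.1]
print(mad_outliers(yields))
```

A median-based rule is used instead of a mean and standard deviation because a single failed well would otherwise inflate the spread estimate and mask itself.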

Q4: How can we effectively integrate AI predictions with researcher expertise?

The most successful implementations combine AI capabilities with human domain knowledge:

- Develop intuitive interfaces: Create visualization tools that make AI predictions interpretable to domain experts.

- Establish feedback mechanisms: Enable researchers to easily correct model errors and incorporate their insights.

- Focus on collaborative workflows: One successful approach "leveraged the speed of high-throughput and human intuition to better train AI" [34].

Common Error Messages and Solutions

| Error Type | Possible Causes | Solutions |
|---|---|---|
| Poor Generalization | Insufficient training data; overfitting; dataset shift | Apply regularization techniques; implement cross-validation; augment with experimental data [34] |
| Algorithm Convergence Failure | Inappropriate hyperparameters; local minima trapping; noisy gradients | Systematic hyperparameter tuning; try alternative optimizers; gradient clipping |
| Feature Encoding Problems | High dimensionality; sparse features; multicollinearity | Dimensionality reduction (PCA, t-SNE); feature selection algorithms; regularization methods |

Experimental Protocols and Methodologies

Protocol 1: AI-Guided High-Throughput Screening for Material Discovery

This protocol details the methodology used to identify optimal solvent mixtures for redox flow batteries, which achieved a threefold improvement in compound dissolution [34].

Materials and Reagents:

- Organic solvent library (2000+ possible combinations)

- Target compound for dissolution

- High-throughput robotic screening system

- AI computing infrastructure

Procedure:

- Initial Data Collection: Run a limited set of diverse experiments (50-100 combinations) to generate initial training data.

- Model Training: Train machine learning models (random forest or neural networks) on collected data.

- Prediction Phase: Use trained models to predict performance of untested combinations.

- Selection and Validation: Test the top 5-10% most promising predictions experimentally.

- Iterative Refinement: Feed results back into model training and repeat the cycle 2-4 times.
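The procedure above can be sketched as a short loop. This is a simplified illustration under stated assumptions, not the method used in [34]: `run_experiment` is a hypothetical stand-in for the robotic measurement, the two-feature grid is invented, and a k-nearest-neighbour average replaces the random forest or neural network so the example needs only the standard library. Selection is purely greedy on predicted performance, so it does not include the uncertainty term a Bayesian optimizer would use.

```python
import random


def run_experiment(x):
    """Hypothetical stand-in for a robotic measurement (e.g. dissolution)."""
    a, b = x
    return -((a - 0.7) ** 2 + (b - 0.3) ** 2)  # best response near (0.7, 0.3)


def predict(x, tested, k=3):
    """k-nearest-neighbour surrogate: mean outcome of the k closest tested points."""
    nearest = sorted(
        tested, key=lambda t: (t[0] - x[0]) ** 2 + (t[1] - x[1]) ** 2
    )[:k]
    return sum(tested[t] for t in nearest) / len(nearest)


def lab_loop(candidates, n_initial=50, top_fraction=0.05, n_rounds=3, seed=0):
    rng = random.Random(seed)
    # Step 1: initial data collection on a random subset.
    tested = {x: run_experiment(x) for x in rng.sample(candidates, n_initial)}
    for _ in range(n_rounds):
        # Steps 2-3: "train" the surrogate and predict untested candidates.
        untested = [x for x in candidates if x not in tested]
        ranked = sorted(untested, key=lambda x: predict(x, tested), reverse=True)
        # Step 4: test the most promising fraction experimentally.
        batch = ranked[: max(1, int(top_fraction * len(untested)))]
        # Step 5: feed results back for the next round.
        tested.update({x: run_experiment(x) for x in batch})
    return tested


grid = [(i / 20, j / 20) for i in range(21) for j in range(21)]  # 441 candidates
results = lab_loop(grid)
best = max(results, key=results.get)
print(f"tested {len(results)} of {len(grid)}; best condition so far: {best}")
```

In practice the surrogate would be swapped for the trained model and `run_experiment` for a call into the screening platform; the loop structure stays the same.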

Key Parameters:

- Input features: solvent chemical properties, concentrations, temperature

- Output targets: dissolution capacity, stability metrics

- Validation: Cross-validation with hold-out experimental sets

Protocol 2: "Lab in a Loop" for Drug Discovery

This protocol implements Genentech's approach to integrating AI with experimental validation [33].

Workflow:

- Data Aggregation: Compile diverse data sources (lab experiments, clinical studies, literature).

- Model Development: Design custom ML algorithms for specific prediction tasks (target identification, molecule design).

- Experimental Validation: Test model predictions in wet lab settings.

- Continuous Learning: Incorporate new experimental results to retrain and improve models.

Applications:

- Neoantigen selection for cancer vaccines

- Antibody design optimization

- Small-molecule activity prediction

Performance Data and Results

Table 1: Experimental Efficiency Improvements with AI Guidance

| Metric | Traditional Approach | AI-Guided Approach | Improvement |
|---|---|---|---|
| Experiments required to identify optimal conditions | 200-400 | 15-40 | 85-92% reduction [34] |
| Time to solution identification | 6-12 months | 2-4 months | 60-75% reduction [34] |
| Resource utilization | High | Optimized | 70-85% reduction [34] |
| Success rate in experimental outcomes | 10-15% | 35-50% | 3-4x improvement [35] |

Table 2: AI Model Performance Comparison for Drug Discovery Applications

| Application | Algorithm Type | Performance Metrics | Traditional Methods |
|---|---|---|---|
| Virtual Screening | Deep Neural Networks | 30-50% higher hit rate compared to random screening [35] | QSAR models with limited predictivity [35] |
| ADMET Prediction | Deep Learning | Significant improvement across 15 ADMET datasets [35] | Traditional ML with lower accuracy [35] |
| Chemical Property Prediction | Multilayer Perceptron | R² > 0.9 for solubility, logP predictions [35] | Experimental measurement only |

Research Reagent Solutions

Essential Materials for AI-Enhanced Experimentation

| Reagent/Material | Function | Application Notes |
|---|---|---|
| High-Throughput Screening Robots | Automated experimental execution | Enables rapid testing of AI-predicted conditions; critical for generating training data [34] |
| Specialized Chemical Libraries | Diverse compound collections | Provides broad coverage of chemical space for AI pattern recognition [35] |
| Multi-parameter Assay Kits | Simultaneous measurement of multiple outcomes | Generates rich datasets for training more sophisticated AI models [35] |
| Data Management Platforms | Structured storage of experimental results | Ensures data quality and accessibility for continuous model retraining [33] |

Workflow Diagrams

AI-Guided Experimental Workflow

AI Experimental Troubleshooting Process

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary cost-saving advantages of switching from batch to flow chemistry for High-Throughput Experimentation (HTE)?

Flow chemistry reduces costs in HTE by enabling more efficient and safer processes. Key advantages include:

- Reduced Reagent and Solvent Consumption: Microreactors use minimal volumes for screening, drastically cutting material costs [36].

- Minimized Re-optimization during Scale-up: Conditions optimized in flow can be scaled directly to production by extending operation time or "numbering-up" identical reactors, avoiding the costly and time-consuming re-optimization often required in batch scale-up [36] [37].

- Lower Waste Disposal Costs: Enhanced control and efficiency typically lead to a 10–12% reduction in waste generation, directly cutting disposal costs and environmental impact [38].

- Improved Safety Profile: Flow systems safely contain hazardous reagents and intermediates, reducing risks and associated costs [36] [37].
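The scale-up argument above comes down to simple arithmetic: with conditions held fixed, output grows linearly with run time and with the number of identical reactors. The sketch below illustrates this under idealized assumptions (steady state, constant yield, no downtime); the function name and all numbers are hypothetical.

```python
def product_output_g(flow_rate_ml_min, conc_mol_l, molar_mass_g_mol,
                     yield_fraction, run_time_h, n_reactors=1):
    """Mass of product (g) from continuous operation of n identical reactors."""
    # Litres of reagent stream processed per reactor over the run.
    volume_l = flow_rate_ml_min * 60 * run_time_h / 1000
    moles = volume_l * conc_mol_l * yield_fraction
    return moles * molar_mass_g_mol * n_reactors


# Hypothetical screening-scale run: 1 mL/min, 0.1 M substrate, 200 g/mol, 80% yield.
screening = product_output_g(1.0, 0.1, 200.0, 0.8, run_time_h=8)
# Same conditions, scaled by running 24 h on four parallel reactors.
scaled = product_output_g(1.0, 0.1, 200.0, 0.8, run_time_h=24, n_reactors=4)
print(f"{screening:.2f} g -> {scaled:.2f} g")
```

Because flow rate, concentration, and yield are unchanged between the two calls, the chemistry itself does not need to be re-optimized; only time and reactor count differ.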

FAQ 2: How can I prevent clogging in my microreactor system, especially with heterogeneous mixtures or solid-forming reactions?

Clogging is a common challenge. Mitigation strategies include:

- Segmented Flow: Using an immiscible fluid (e.g., gas or perfluorinated solvent) to create segments, isolating the reaction mixture and preventing solid deposition on reactor walls [37].

- Sonication: Integrating an ultrasonic transducer directly onto the reactor can dislodge microparticles and prevent agglomeration [37].

- Reaction Homogenization: Prior HTE should focus on identifying homogeneous reaction conditions. For instance, switching from a heterogeneous to a homogeneous photocatalyst was key to successfully scaling a photoredox fluorodecarboxylation reaction in flow [36].

FAQ 3: Our HTE workflow is slowed down by offline analysis. How can we accelerate data acquisition?

Integrating Process Analytical Technology (PAT) is the solution. Inline or online analytical tools like IR, UV, or mass spectrometry can be connected directly to the flow stream [38] [37]. This allows for:

- Real-time reaction monitoring.

- Immediate feedback for process control.

- A reported 15–18% increase in reaction monitoring efficiency, which significantly accelerates high-throughput screening campaigns [38].

FAQ 4: Are flow reactors suitable for photochemical HTE, and what are the benefits?

Yes, flow reactors are particularly advantageous for photochemistry [36] [37]. Benefits include:

- Superior Photon Efficiency: The short path length in microreactors ensures uniform and efficient light penetration, overcoming the light penetration issues of batch photoreactors [37].

- Precise Control of Irradiation Time: Residence time in the irradiated zone is exactly controlled, preventing product decomposition from over-irradiation [36].

- Safer Operation: Flow systems safely contain the high-energy light source and often allow for the use of milder, visible-light-driven reactions [37].
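The control of irradiation time described above follows from the standard relation between residence time, irradiated reactor volume, and volumetric flow rate (residence time = volume / flow rate). The sketch below applies that relation; the function names and the 10 mL coil are hypothetical, and plug flow through the irradiated zone is assumed.

```python
def residence_time_min(irradiated_volume_ml, flow_rate_ml_min):
    """Mean time (min) the reaction mixture spends in the irradiated zone."""
    return irradiated_volume_ml / flow_rate_ml_min


def flow_rate_for(irradiated_volume_ml, target_time_min):
    """Flow rate (mL/min) needed to reach a target irradiation time."""
    return irradiated_volume_ml / target_time_min


# Hypothetical 10 mL photoreactor coil.
print(residence_time_min(10.0, 0.5))  # irradiation time at 0.5 mL/min
print(flow_rate_for(10.0, 5.0))       # flow rate for a 5 min irradiation
```

Screening irradiation time in HTE then amounts to stepping the pump flow rate, with no change to the reactor or light source.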

FAQ 5: What are the common pitfalls when translating a batch-optimized reaction to a flow system?

Common pitfalls and how to avoid them:

- Unaccounted Mixing Dynamics: Do not assume that mixing in flow is instantaneous. Reactor geometry, flow rate (and hence Reynolds number), and viscosity all affect mixing and must be considered.

- Incompatible Solvent Systems: Solvents must be compatible with the reactor construction material (e.g., avoid aggressive solvents with certain polymers) and have suitable properties for pumping and pressure control.