Strategic Approaches to Minimize Downtime in Robotic Laboratory Systems

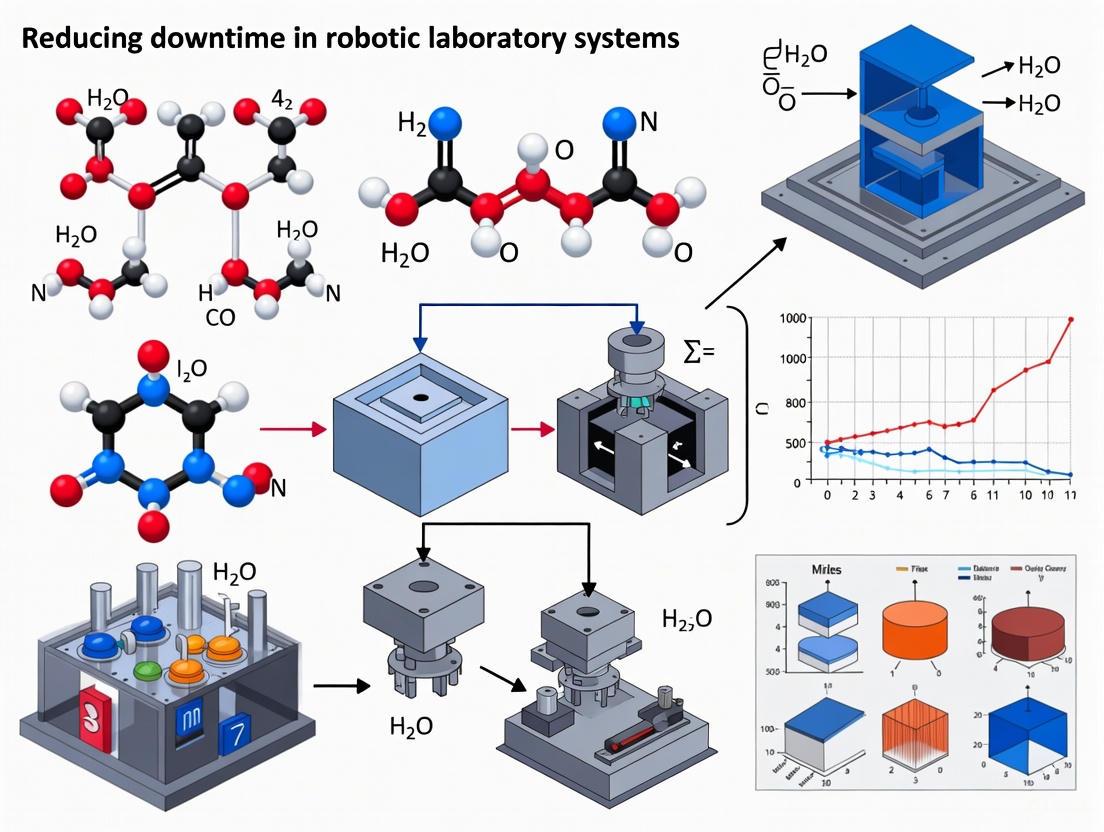


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on reducing unplanned downtime in robotic laboratory systems. It explores the foundational causes of downtime, details methodological applications of preventive and predictive maintenance, offers advanced troubleshooting and optimization techniques leveraging AI and IoT, and presents validation frameworks for measuring success and ROI. By synthesizing current industry data and emerging trends, this resource equips laboratories with actionable strategies to enhance operational efficiency, protect valuable research, and accelerate discovery timelines.

Understanding the Root Causes and High Cost of Lab Robotics Downtime

Quantifying the Impact: Data on Laboratory Downtime

Understanding the true cost of laboratory equipment downtime is the first step toward mitigating its effects on research and development (R&D) timelines. The data reveal a direct correlation between equipment reliability and operational efficiency.

Table 1: Laboratory Equipment Downtime Benchmarks and Interpretations

| Downtime Rate | Performance Rating | Operational Implications |
|---|---|---|
| < 2% | Excellent | Indicates robust maintenance protocols and effective scheduling [1]. |
| 2% - 5% | Acceptable | Tolerable, but should be monitored for emerging issues [1]. |
| > 5% | Concerning | Requires immediate investigation and corrective action [1]. |

The consequences of exceeding acceptable downtime thresholds are severe. A case study from a leading pharmaceutical company demonstrated that when downtime reached 12%, it caused significant delays in drug development and increased operational costs [1]. Furthermore, a study on Electronic Health Record (EHR) downtime, which disrupts connected laboratory systems, showed that during such events, laboratory testing results were delayed by an average of 62% compared to normal operation [2]. For labs operating on tight schedules, such delays can directly translate into postponed clinical trials and extended time-to-market for new therapies.
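
The Table 1 bands can be applied mechanically once a downtime rate is available. The sketch below assumes the rate is computed as downtime hours divided by scheduled operating hours; the source does not define the calculation, so that definition and the function names are illustrative.

```python
def downtime_rate(downtime_hours: float, scheduled_hours: float) -> float:
    """Return downtime as a percentage of scheduled operating time.

    Assumption: rate = downtime / scheduled time; the article gives no formula.
    """
    if scheduled_hours <= 0:
        raise ValueError("scheduled_hours must be positive")
    return 100.0 * downtime_hours / scheduled_hours


def rate_performance(rate_percent: float) -> str:
    """Map a downtime percentage onto the Table 1 bands."""
    if rate_percent < 2.0:
        return "Excellent"
    if rate_percent <= 5.0:
        return "Acceptable"
    return "Concerning"


# Example: 72 hours lost out of 600 scheduled hours is a 12% downtime rate.
print(rate_performance(downtime_rate(72, 600)))
```

Tracking the rate per instrument rather than per lab makes it easier to see which asset pushes the total above the 5% band.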

Table 2: Financial and Operational Costs of Downtime

| Impact Area | Quantified Effect | Source Context |
|---|---|---|
| Drug Development Timelines | Can take 10-15 years on average; delays are costly [3]. | Drug Development |
| Unplanned Downtime Cost | Can cost up to $8,600 per hour [4]. | Manufacturing |
| Corrective Action Outcome | A pharmaceutical company reduced downtime from 12% to 4%, saving an estimated $25MM [1]. | Laboratory Management |

Troubleshooting Guides: Addressing Common Downtime Issues

FAQ 1: What are the most common causes of unexpected downtime in robotic laboratory systems?

Unexpected downtime typically stems from mechanical failures, inadequate maintenance, operator error, and environmental factors.

- Mechanical Wear and Tear: Common failure points include moving mechanical components such as robotic arms and pipettes, degraded sensors, and blocked fluid-handling lines [5]. Regular inspection and preventive maintenance are crucial to identify signs of wear before they lead to failure.

- Inadequate Maintenance Schedules: Failing to implement a preventive maintenance schedule is a major pitfall that leads to unexpected breakdowns [1]. Without regular checks, equipment is more likely to fail at critical times.

- Improper Operation: Neglecting to train staff on proper equipment operation can result in misuse and accidents, causing damage and subsequent downtime [1].

- Software and Integration Issues: Modern lab robotics often integrate systems from multiple vendors, which can lead to software conflicts and connectivity problems that halt operations [5].

- Environmental Factors: Laboratory conditions such as temperature fluctuations, humidity variations, and exposure to corrosive chemicals can accelerate wear and tear on sensitive robotic components [5].

FAQ 2: How can we quickly diagnose the root cause of a system failure?

Implementing a structured diagnostic workflow can significantly reduce the time to identify and resolve system failures. The following diagram outlines a logical troubleshooting pathway.

FAQ 3: What data should we collect during a downtime event to facilitate analysis?

Collecting granular, actionable data during a downtime event is essential for root cause analysis and preventing future occurrences. Your data collection method should capture the following for every stoppage [4]:

- Machine Identifier: Which specific instrument or system failed.

- Start and Stop Time: Accurate timing of the downtime duration.

- Category of Stoppage: A forced choice from a standardized list (e.g., machine problem, tool adjustment, unplanned maintenance, no operator available).

- Shift and Personnel: Who was operating or maintaining the machine during the event.

- Descriptive Notes: Any relevant observations about the failure mode, error messages, or environmental conditions.

Automated data collection via a Computerized Maintenance Management System (CMMS) linked to the machine's control system is preferable to manual logs: it records timing more accurately and can block a restart until a reason for the stoppage has been entered [4].
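
The fields listed above map naturally onto a small record type. The following is a minimal sketch, not a CMMS schema: the class names, field names, and the sample values are hypothetical, and the category list simply reuses the examples given in the text.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class StoppageCategory(Enum):
    """Forced-choice list; values taken from the examples in the text."""
    MACHINE_PROBLEM = "machine problem"
    TOOL_ADJUSTMENT = "tool adjustment"
    UNPLANNED_MAINTENANCE = "unplanned maintenance"
    NO_OPERATOR = "no operator available"


@dataclass(frozen=True)
class DowntimeEvent:
    machine_id: str
    start: datetime
    stop: datetime
    category: StoppageCategory
    shift: str
    personnel: str
    notes: str = ""

    def __post_init__(self) -> None:
        # Reject records whose timing cannot be right.
        if self.stop < self.start:
            raise ValueError("stop time precedes start time")

    @property
    def duration(self) -> timedelta:
        return self.stop - self.start


# Hypothetical example record.
event = DowntimeEvent(
    machine_id="liquid-handler-02",
    start=datetime(2024, 3, 4, 9, 15),
    stop=datetime(2024, 3, 4, 10, 45),
    category=StoppageCategory("machine problem"),
    shift="day",
    personnel="operator-A",
    notes="Pipetting channel 3 reported a pressure error.",
)
print(event.duration)
```

Using an enumeration for the category enforces the forced choice: a free-text value that is not on the list raises an error instead of being stored.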

Experimental Protocols: Proactive Downtime Reduction

Protocol: Implementing a Proactive Preventive Maintenance (PM) Program

A well-structured PM program is the most effective defense against unplanned downtime. The following workflow ensures maintenance is systematic and data-driven.

Detailed Methodology:

- Comprehensive Equipment Audit: Begin with a complete audit of all laboratory equipment. Identify critical assets that have the greatest impact on R&D timelines if they fail [1]. For each piece of equipment, create a profile that includes its maintenance history, technical manuals, and critical spare parts list.

- Establish a Preventive Maintenance Schedule: Develop a time-based or usage-based maintenance schedule. This should include [5]:

  - Daily Inspections: Visual checks of mechanical components, fluid levels, and system alerts.

  - Weekly Calibrations: Verification of measurement accuracy and system performance.

  - Monthly Deep Cleaning: Thorough cleaning of accessible components and replacement of consumables.

  - Quarterly Assessments: Comprehensive system evaluation, including software updates and hardware inspections.

  - Annual Overhauls: Complete system teardown, component replacement, and performance verification.

- Proper Technician Training: Ensure maintenance technicians receive proper training on the specific robotic systems. This includes understanding system operation, best practices for maintenance, and proper lubrication procedures as outlined in the equipment manuals [6].

- Execution and Documentation: Perform all maintenance activities according to the schedule. Before starting, create image and program backups to prevent data loss [6]. Maintain detailed records of all performed activities, parts replacements, and calibration certificates for regulatory compliance and lifecycle tracking [5].

- Analyze Performance Data: Utilize a CMMS or other downtime tracking software to aggregate data. Calculate key metrics like Mean Time Between Failures (MTBF) and Mean Time To Repair (MTTR) to quantify equipment reliability and maintenance effectiveness [4].

- Refine PM Strategy: Use the data collected to optimize your maintenance schedule. If data shows a particular component fails frequently before its scheduled PM, adjust the replacement interval accordingly.
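
The MTBF and MTTR metrics named in the methodology can be computed from a simple repair log. This sketch uses the common definitions (operating time per failure, and repair time per failure); the function name and the input layout are assumptions, not the output format of any particular CMMS.

```python
def mtbf_mttr(observed_hours: float, repair_hours: list[float]) -> tuple[float, float]:
    """Return (MTBF, MTTR) in hours for one asset.

    observed_hours: total scheduled time in the reporting period.
    repair_hours:   duration of each failure-driven repair in that period.
    """
    failures = len(repair_hours)
    if failures == 0:
        raise ValueError("at least one failure is needed to compute MTBF and MTTR")
    total_repair = sum(repair_hours)
    uptime = observed_hours - total_repair
    return uptime / failures, total_repair / failures


# Example: three repairs (2 h, 4 h, 6 h) in 1,000 scheduled hours.
mtbf, mttr = mtbf_mttr(1000.0, [2.0, 4.0, 6.0])
print(f"MTBF = {mtbf:.1f} h, MTTR = {mttr:.1f} h")
```

A falling MTBF for one component ahead of its scheduled PM is the kind of signal the "Refine PM Strategy" step is meant to act on.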

Table 3: Research Reagent Solutions for Downtime Management

| Tool or Solution | Function | Application in Downtime Reduction |
|---|---|---|
| CMMS Software | A computerized system to schedule, track, and document maintenance activities. | Automates maintenance scheduling, tracks work orders, stores equipment manuals, and analyzes MTBF/MTTR metrics [4]. |
| Predictive Maintenance Sensors | IoT sensors that monitor equipment conditions (vibration, temperature, etc.). | Provides early warning of component failure by detecting anomalies, allowing for intervention before a breakdown occurs [5]. |
| Critical Spare Parts Inventory | An organized stock of high-failure-rate components. | Expedites repairs by ensuring essential parts are readily available, minimizing waiting times during a breakdown [1]. |
| Image and Program Backups | Complete backups of a system's software and configuration. | Enables rapid recovery after a system failure or during battery replacement, preventing lengthy reprogramming [6]. |
| Standard Operating Procedures (SOPs) | Documented, step-by-step instructions for operation and maintenance. | Ensures consistency, reduces operator error, and provides clear guidelines for troubleshooting and recovery [1]. |
| Laboratory Information System (LIS) | A software system for managing laboratory operations and data. | A modern, cloud-native LIS can provide real-time monitoring of equipment and maintenance schedules, reducing manual tracking errors [7]. |

Troubleshooting Guides

Guide 1: Diagnosing Mechanical Drive Failures in Robotic Arms

Problem: Robotic arm exhibits reduced positioning accuracy, unusual noises (grinding or clicking), or complete failure to move under load. These symptoms are common in collaborative robots (cobots) and precision industrial arms.

Investigation Methodology:

- Vibration Analysis: Use an accelerometer sensor to monitor high-frequency vibrations on the drive housing. Analyze the data for specific signatures:

  - Wear Signature: An increase in overall vibration amplitude across a broad frequency range.

  - Pitting Signature: The appearance of specific frequency components related to bearing and gear tooth fault frequencies [8].

- Performance Monitoring: Track the robot's positional error against the commanded input. A gradual increase in error is a key indicator of wear-induced backlash in components like harmonic drives [8].

- Visual Inspection: During scheduled maintenance, inspect for visible signs of wear, pitting, or cracks on the flexspline and circular spline components of the harmonic drive [8].
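
The two vibration signatures can be checked numerically. The sketch below is one simple way to do so, using overall RMS amplitude as a proxy for the broadband wear signature and a single-frequency discrete Fourier sum for the pitting signature. The 120 Hz fault frequency and the synthetic signals are placeholders; real fault frequencies depend on the bearing and gear geometry of the specific drive.

```python
import cmath
import math


def rms(samples: list[float]) -> float:
    """Overall vibration level; a rise over baseline suggests broadband wear."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def amplitude_at(samples: list[float], freq_hz: float, sample_rate_hz: float) -> float:
    """Amplitude of the component at freq_hz (single-bin discrete Fourier sum)."""
    n = len(samples)
    acc = sum(
        x * cmath.exp(-2j * math.pi * freq_hz * k / sample_rate_hz)
        for k, x in enumerate(samples)
    )
    return 2.0 * abs(acc) / n


# Synthetic one-second recordings sampled at 1 kHz (illustrative only).
fs = 1000.0
t = [k / fs for k in range(1000)]
baseline = [0.5 * math.sin(2 * math.pi * 50 * x) for x in t]
# Same drive with an added 120 Hz component standing in for a fault frequency.
faulty = [b + 0.1 * math.sin(2 * math.pi * 120 * x) for b, x in zip(baseline, t)]

print(f"RMS baseline {rms(baseline):.3f}, current {rms(faulty):.3f}")
print(f"120 Hz amplitude: {amplitude_at(faulty, 120.0, fs):.3f}")
```

In practice the comparison is made against a baseline recorded when the drive was known to be healthy, and alert thresholds are set from that baseline rather than from fixed numbers.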

Solution: Based on the diagnostic data, proceed with the following:

- If early wear is detected: Adjust the preventive maintenance schedule and continue monitoring. Verify lubrication levels and quality [8].

- If advanced pitting or cracking is identified: Plan for immediate component replacement to prevent catastrophic failure and costly unplanned downtime [8].

Guide 2: Resolving Sensor Spoofing and Data Integrity Issues

Problem: A cyber-physical system behaves erratically based on incorrect sensor data, despite the sensor itself appearing functional. This can lead to safety incidents or corrupted experimental data.

Investigation Methodology:

- Log Audit: Check system logs for critical security events. A key vulnerability is the failure to log events like repeated failed authentication attempts or unauthorized configuration changes [9].

- Signal Analysis: Use an oscilloscope or spectrum analyzer to examine the sensor's output signal for anomalies. Look for unexpected signal patterns or frequencies that do not correspond to the physical environment.

- Vulnerability Assessment: Determine if the sensor is susceptible to Out-of-Band (OOB) vulnerabilities. These occur when a physical stimulus outside the sensor's intended operational range (e.g., using lasers on a microphone) creates a false in-band measurement [10]. Two types are distinguished:

  - Out-of-Range Vulnerability: The attack uses the correct signal type (e.g., acoustic) but at an amplitude or frequency the sensor cannot handle correctly.

  - Cross-Field Vulnerability: The attack uses a different signal modality (e.g., magnetic) that the sensor unintentionally converts into an electrical signal [10].

Solution:

- Implement Secure Logging: Ensure all critical security events are logged, stored securely to prevent tampering, and monitored with real-time alerts [9].

- Sensor Hardening: Physically shield sensors from unintended environmental influences. For critical measurements, use sensor fusion from multiple, different sensor types to cross-verify data [10].

- Component Testing: During system design, test sensors against known OOB stimuli to characterize and mitigate their vulnerabilities [10].
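
Cross-verification through sensor fusion can be as simple as comparing each reading with the median of several independent sensors measuring the same quantity. This is a minimal sketch of that idea; the sensor names, the tolerance, and the median rule are assumptions chosen for illustration, not a method prescribed by the cited work.

```python
from statistics import median


def cross_check(readings: dict[str, float], tolerance: float) -> list[str]:
    """Return the sensors whose reading deviates from the median by more than tolerance."""
    mid = median(readings.values())
    return sorted(
        name for name, value in readings.items() if abs(value - mid) > tolerance
    )


# Hypothetical temperature readings (deg C) from three different sensor types.
readings = {"thermocouple": 25.1, "rtd": 24.9, "infrared": 31.0}
print(cross_check(readings, tolerance=1.0))
```

Because the sensors rely on different physical principles, a stimulus that spoofs one of them is less likely to shift the others in the same way, so the disagreement itself becomes the alarm.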

Guide 3: Addressing Fluidic System Control Failures in Soft Robotics

Problem: A soft fluidic robot responds slowly, moves erratically, or fails to actuate. This is common in systems with multiple fluidic actuators or degrees of freedom (DoFs) that rely on external pressure sources.

Investigation Methodology:

- Leak & Obstruction Check: Examine all fluidic lines and connectors for leaks or physical blockages [5].

- Valve Bank Inspection: For systems with external control, verify the operation of each individual valve controlling pressure to the actuators. Mismatched hardware or software incompatibility can cause communication breakdowns [11] [12].

- Onboard Controller Evaluation: If the robot uses integrated microfluidic control, assess the control method's capabilities against the system's requirements. Key metrics to consider are shown in the table below [12].

Solution:

- For tethered systems: Replace faulty valves and ensure software drivers are compatible and updated [11].

- For autonomous systems: Redesign the control system to integrate more appropriate onboard control hardware, such as specialized soft valves, to reduce dependence on external connections and improve response speed [12].

Table: Comparison Metrics for Onboard Fluidic Control Methods in Soft Robotics [12]

| Metric | Description | Why It Matters |
|---|---|---|
| Controllable DoFs | Number of independent actuators that can be managed. | Determines the complexity of tasks the robot can perform. |
| External Connections | Number of fluidic/electrical lines needed from outside the robot. | Impacts autonomy, miniaturization, and freedom of movement. |
| Scalability | How small the control components can be made and integrated. | Critical for applications with strict size constraints (e.g., medical robots). |
| Maximum Pressure | Highest pressure the control method can support or generate. | Dictates the force and stroke capabilities of the actuators. |
| Bandwidth | The speed of the control system's response. | Affects the robot's reaction speed and dynamic performance. |

Frequently Asked Questions (FAQs)

Q1: Our lab's robotic automation system suffers from frequent, unplanned downtime. What is the most effective maintenance strategy?

A: A Preventive Maintenance (PM) program is the most effective strategy to maximize uptime. Reactive maintenance (fixing after failure) leads to costly interruptions. A robust PM program for laboratory robotics should include [5]:

- Daily: Visual inspections of mechanical components and fluid levels.

- Weekly: Calibration of measurement accuracy and system performance checks.

- Monthly: Deep cleaning of components and replacement of consumables.

- Quarterly: Comprehensive system evaluation, including software updates and hardware inspections.

Implementing a digital maintenance management system can organize schedules, track parts, and ensure compliance, helping to achieve over 98% uptime [5].

Q2: We are designing a new soft robot for a biomedical application. How can we make it more resilient to pressure surges that could cause catastrophic failure?

A: Consider integrating controlled failure mechanisms into the design. Research has shown that by intentionally designing specific, well-understood failure points into a soft fluidic device (e.g., in heat-sealed textiles), the system can be made to fail in a predictable and non-catastrophic way. This allows the device to relieve excess pressure and can even enable a single system to perform multiple tasks by leveraging these designed failure modes [13].

Q3: Our robotic cell's harmonic drive failed unexpectedly. Are there advanced methods to predict such failures before they happen?

A: Yes, Prognostics and Health Management (PHM) is an advanced approach that moves from scheduled maintenance to condition-based and predictive maintenance. PHM involves [8]:

- Condition Monitoring: Using sensors (e.g., vibration, temperature) to continuously monitor the drive's state.

- Diagnostics: Analyzing the data to identify early signs of degradation, such as specific wear patterns.

- Prognostics: Using data-driven or physics-based models (digital twins) to forecast the component's Remaining Useful Life (RUL). This allows you to schedule maintenance right before a predicted failure, maximizing component use and preventing unexpected downtime [8].
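
As a rough illustration of the prognostics step, the sketch below fits a straight line to a health indicator (for example, vibration RMS) and extrapolates to a failure threshold. Linear degradation is a strong simplifying assumption; real PHM models are usually more elaborate, and the threshold value here is hypothetical.

```python
from typing import Optional


def estimate_rul(
    hours: list[float], indicator: list[float], failure_level: float
) -> Optional[float]:
    """Estimate Remaining Useful Life in hours by linear extrapolation.

    Fits indicator = slope * hours + intercept by least squares and returns the
    time from the last observation until failure_level is reached.
    Returns None when the indicator shows no upward trend.
    """
    n = len(hours)
    if n < 2 or n != len(indicator):
        raise ValueError("need two or more paired observations")
    mean_t = sum(hours) / n
    mean_y = sum(indicator) / n
    sxx = sum((t - mean_t) ** 2 for t in hours)
    if sxx == 0:
        raise ValueError("observation times must differ")
    slope = sum((t - mean_t) * (y - mean_y) for t, y in zip(hours, indicator)) / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_t
    hours_at_failure = (failure_level - intercept) / slope
    return max(0.0, hours_at_failure - hours[-1])


# Hypothetical trend: indicator rises 0.2 units every 100 operating hours.
rul = estimate_rul([0, 100, 200, 300], [1.0, 1.2, 1.4, 1.6], failure_level=2.0)
print(rul)
```

The estimate is only as good as the trend assumption, so it is best treated as a planning aid and refreshed as new condition-monitoring data arrives.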

Diagnostic Workflows & System Relationships

[Diagram: Sensor Vulnerability Assessment Workflow]

[Diagram: Relationship Between CPS Features and Sensor Defense]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Resources for Robotic System Reliability Research

| Item | Function/Application |
|---|---|
| Accelerometer Sensors | Used for vibration analysis to detect early-stage mechanical wear in drives and gears [8]. |
| Digital Maintenance Management Platform | Software to organize preventive maintenance schedules, track parts inventory, and ensure regulatory compliance [5]. |
| Signal Generator & Amplifier | Essential equipment for conducting vulnerability assessments on sensors, allowing researchers to inject out-of-band signals [10]. |
| Oscilloscope / Spectrum Analyzer | For analyzing sensor output signals to identify spoofing attacks or unintended signal noise [10]. |
| Microfluidic Valves & Control Components | The fundamental building blocks for creating onboard control systems in soft fluidic robots, reducing the need for external tethers [12] [14]. |
| Heat-Sealable Textiles | Common materials in sheet-based fluidic devices for soft robotics; understanding their failure thresholds is key to designing controlled failure mechanisms [13]. |

In automated laboratories, where drug discovery and research depend on precision, unplanned downtime is a serious threat. A significant portion of this downtime stems from environmental factors that progressively degrade robotic systems. This technical support center provides researchers and scientists with targeted troubleshooting guides and FAQs to identify, mitigate, and prevent failures caused by temperature fluctuations, humidity, and chemical exposure, in support of the broader goal of maximizing uptime in robotic laboratory systems.

Quantitative Impact of Environmental Stressors

Understanding the frequency and financial impact of failures is crucial for prioritizing mitigation strategies. The following data summarizes how environmental factors and other common issues contribute to robotic downtime.

Table 1: Common Causes of Robot Downtime and Their Impact

| Cause of Downtime | Contribution to Downtime | Key Statistics |
|---|---|---|
| Software & Control Issues [15] | 42% | Leading cause of unplanned stoppages |
| Hardware Failures [15] | 35% | Often linked to mechanical wear from environmental stress |
| Sensor Malfunctions [15] | 8-12% | Frequently caused by dust, moisture, heat, or misalignment |
| Connectivity Issues [15] | 10-15% | Disruptions in networked robotic systems |
| Average Unplanned Downtime Cost [15] | N/A | Up to $260,000 per hour for manufacturers |

Table 2: Reliability Metrics and Proactive Maintenance Benefits

| Metric | Typical Range | Implication for Lab Operations |
|---|---|---|
| Mean Time Between Failures (MTBF) [15] | 30,000 - 60,000 hours | Aids in planning system overhauls and replacements |
| Mean Time To Repair (MTTR) [15] | 3 - 6 hours | Highlights importance of repair preparedness |
| Predictive Maintenance Uptime Boost [15] | Reduces downtime by 30-50% | Justifies investment in condition-monitoring sensors |
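
The MTBF and MTTR ranges above can be combined into a steady-state availability figure using the standard reliability relation. The worked values below pair the least favorable and most favorable ends of the two ranges; this pairing is an illustration, and the result counts only failure-driven repair time, not planned maintenance or logistics delays.

```latex
A = \frac{\mathrm{MTBF}}{\mathrm{MTBF} + \mathrm{MTTR}}

A_{\text{low}}  = \frac{30\,000}{30\,000 + 6} \approx 0.9998
\qquad
A_{\text{high}} = \frac{60\,000}{60\,000 + 3} \approx 0.99995
```

Because repair time is so small relative to MTBF in these ranges, most of the downtime a lab actually observes is likely to come from the factors outside this formula, such as waiting for parts, diagnosis, and revalidation.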

Troubleshooting Guide: A Systematic Workflow

Adopting a logical, step-by-step methodology is essential for efficiently resolving issues. The following workflow, based on established troubleshooting frameworks, helps narrow down the root cause of robotic failures [16] [17].

Frequently Asked Questions (FAQs)

How does temperature variation specifically affect my robotic arm's accuracy?

Temperature fluctuations cause thermal expansion and contraction in metal components, leading to positional drift. High temperatures can also lead to overheating motors and controllers, triggering protective shutdowns [15]. For example, a robot's repeatability specification can degrade significantly outside its rated operating temperature. Mitigation includes maintaining a stable lab temperature and allowing the robot to warm up to its operating temperature before running high-precision tasks.
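
The scale of thermal drift can be estimated with the linear expansion relation, change in length = coefficient × length × temperature change. The sketch below assumes an aluminium link with a typical expansion coefficient of about 23 × 10⁻⁶ per kelvin; the link length and temperature swing are hypothetical, and a real arm's drift also depends on its geometry and any compensation in the controller.

```python
# Typical linear expansion coefficient for aluminium (approximate textbook value).
ALUMINIUM_ALPHA_PER_K = 23e-6


def thermal_growth_um(
    length_m: float, delta_t_k: float, alpha_per_k: float = ALUMINIUM_ALPHA_PER_K
) -> float:
    """Return the change in length, in micrometres, for a temperature change."""
    return alpha_per_k * length_m * delta_t_k * 1e6


# Hypothetical case: a 0.5 m link and a 5 K change in lab temperature.
print(f"{thermal_growth_um(0.5, 5.0):.1f} micrometres")
```

A change of this size can matter for high-precision tasks, which is why a stable lab temperature and a warm-up period are recommended.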

Our lab uses various solvents. What are the first signs of chemical-induced wear?

Early signs include cracking or swelling of cable jackets and protective boots, corrosion on metallic joints and end-effectors, and hazing or etching of optical sensor lenses [18]. Pneumatic components like suction cups can also degrade, losing grip strength [19]. Regularly inspect cables and joints for tackiness, stiffness, or discoloration, which precede failure.

High humidity is causing condensation inside our instrument enclosures. What is the immediate risk?

Condensation poses a severe risk of short circuits on printed circuit boards (PCBs) and corrosion on electrical contacts, leading to catastrophic failure [15]. This is a critical issue that requires immediate action. Implement industrial-grade desiccant dehumidifiers in the lab space or localized dry air purges for sensitive electrical cabinets to control moisture levels.

Can environmental factors cause intermittent faults that are hard to diagnose?

Yes. Environmental faults are often intermittent and notoriously difficult to trace [19]. For instance, high humidity can lower the insulation resistance of cables, causing sporadic communication errors. Temperature-dependent faults may only appear when the system has been running for several hours. A logical approach, as shown in the troubleshooting workflow, and data logging of environmental conditions are key to diagnosis [17].

Our robotic vision system is unreliable. Could ambient light be the problem?

Absolutely. Changes in ambient lighting from windows or overhead lamps can dramatically affect the consistency of a machine vision system [19]. A surface's appearance can change with humidity or temperature, further confusing the system. The solution is to use a dedicated, enclosed vision light source to ensure consistent illumination independent of the lab environment.

The Scientist's Toolkit: Essential Reagents & Materials for Mitigation

Proactive maintenance requires specific materials to combat environmental wear. The following table details key solutions for protecting robotic laboratory assets.

Table 3: Research Reagent Solutions for Robotic System Protection

| Item Name | Function | Application Example |
|---|---|---|
| Conformal Coatings | Protects circuit boards from moisture and chemical contamination. | Applied to PCBs within control cabinets to prevent short circuits and corrosion in humid environments. |
| High-Flex, Chemical-Resistant Cables | Withstands repeated motion and exposure to splashes without cracking. | Replacing standard cables in cable carriers exposed to solvents or disinfectants [19]. |
| Specified Greases & Lubricants | Reduces friction and wear in joints while resisting washout. | Used in preventive maintenance on robot axis joints to ensure smooth operation and block moisture [20]. |
| Industrial Desiccants | Controls humidity within enclosed spaces to prevent condensation. | Placed inside control cabinets and vision system enclosures in non-climate-controlled lab areas. |
| Approved Laboratory Cleaners & Solvents | Safely removes contamination without damaging sensitive components. | Used to clean optical surfaces of sensors and cameras without causing hazing or degradation [18]. |

Proactive Maintenance & Monitoring Protocols

Transitioning from reactive troubleshooting to proactive prevention is the most effective strategy for reducing downtime.

- Implement Condition-Based Monitoring: Install sensors to continuously track environmental conditions (temperature, humidity, volatile organic compounds) inside critical enclosures and the lab itself [15]. This data provides an objective baseline and early warning of damaging conditions.

- Establish an Environmental Inspection Routine: Create a weekly checklist that includes:

  - Visual inspection of all cables for stiffness, cracking, or discoloration [19].

  - Check for corrosion on metal surfaces, fasteners, and end-effectors.

  - Verification of airflow and dust accumulation on cooling fans and vents.

- Develop a Targeted Preventive Maintenance Schedule: Move beyond time-based maintenance. Use data from your monitoring systems to trigger maintenance tasks. For example, if humidity sensors consistently read above a set threshold, increase the frequency of inspections for corrosion and electrical connections.
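
The humidity example above amounts to a simple rule that shortens the inspection interval when readings stay above a threshold. The sketch below shows one way to express it; the 60% threshold, the 7-day and 2-day intervals, and the 80% "consistently above" fraction are placeholder values to be replaced with limits from your equipment specifications.

```python
def inspection_interval_days(
    humidity_readings: list[float],
    threshold_pct: float = 60.0,   # hypothetical relative-humidity limit
    base_days: int = 7,            # weekly routine from the checklist
    elevated_days: int = 2,        # hypothetical tightened interval
    min_fraction: float = 0.8,     # share of readings that counts as "consistently"
) -> int:
    """Return the inspection interval implied by recent humidity readings."""
    if not humidity_readings:
        raise ValueError("no humidity readings supplied")
    above = sum(1 for reading in humidity_readings if reading > threshold_pct)
    if above / len(humidity_readings) >= min_fraction:
        return elevated_days
    return base_days


print(inspection_interval_days([65.0, 70.0, 62.0, 68.0, 59.0]))  # mostly above limit
print(inspection_interval_days([40.0, 45.0, 50.0]))              # within limit
```

Requiring a sustained exceedance, rather than reacting to a single reading, avoids rescheduling inspections after a brief spike such as an opened door.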

Troubleshooting Guide: Step-by-Step Diagnostics for System Failure

When faced with a complete or partial system halt, follow this structured diagnostic workflow to identify the root cause related to multi-vendor incompatibility.

1. Initial Problem Recognition and Definition

The first step is to recognize that a problem exists and determine its scope. Ask: Is the entire workflow down, or is one specific robot or device not responding? Check the central management dashboard (if available) for system status alerts. [21] Define whether the issue is likely due to hardware failure, software/communication error, or human error (e.g., mislabeled samples, incorrect commands). [22] This initial triage determines the direction of your troubleshooting.

2. Data Gathering and Questioning

Collect as much information as possible about the failure.

- When did it start? Note the exact time the failure occurred.

- What was happening? Document the experiment step, samples being processed, and devices in use.

- Review logs: Examine activity logs and error messages from all involved systems and devices. Look for error codes or failure notifications. [22]

- Check connections: Verify physical connections (power, network cables) and software-based communication links between systems. [22]

3. Listing and Testing Potential Causes

Create a list of likely and unlikely explanations. Common multi-vendor issues include:

- Incompatible Communication Protocols: Devices cannot understand each other's commands. [21]

- Data Format Inconsistency: One system outputs data in a format the next cannot read. [21]

- Misaligned Equipment: Hardware components are physically unable to interact as intended. [22]

- Failed Software Handshake: An API (Application Programming Interface) call between systems is timing out or being rejected. [23]

Use a process of elimination. If possible, run a simplified version of the workflow to see if the issue recurs. [22]

4. Running Comprehensive Diagnostics

Perform a full review of every system in the workflow. Beyond the robots, this includes:

- Consumables and Reagents: Verify correct barcodes and that items are not expired. [22]

- Sample Storage & Handling: Confirm samples were stored and handled correctly prior to automation. [22]

- Points of Human Interaction: Identify any manual steps where errors could have been introduced. [22]

5. Seeking External Help and Evaluation

If internal diagnostics fail, escalate.

- Consult Colleagues and Forums: Other scientists may have solved similar issues. [22]

- Contact Vendor Support: Provide vendors with the data you've collected. They are aware of common issues and can run deeper system checks. [22]

The flowchart below outlines this logical troubleshooting progression:

FAQs: Addressing Common Multi-Vendor Integration Challenges

Q1: Our lab uses robots from three different manufacturers. Data from each is siloed, making it hard to get a unified view of our experiment's status. What can we do?

A: This is a classic challenge of fragmented data. [21] The solution is to invest in a centralized robot management platform capable of ingesting data from disparate sources. Look for platforms that offer AI-powered data unification, which can normalize inconsistent performance metrics and provide real-time, fleet-wide monitoring from a single interface. [21] This eliminates the need for manual data aggregation and provides predictive insights to prevent unexpected downtime. [21]

Q2: We rushed a new Electronic Data Capture (EDC) system integration, and now we have data inconsistencies and compliance risks. How can we fix this?

A: This scenario often results from skipping critical integration steps. [23] Take the following steps immediately:

- Pause and Assess: Halt the affected processes to prevent further data corruption.

- Develop a Clear Roadmap: Create a detailed plan for the integration process, including data mapping, shared parameters, and timelines. [23]

- Conduct Rigorous Testing: Set up a test environment to simulate the integration and identify the root cause of the inconsistencies. [23]

- Ensure Data Compatibility: Validate that data formats and structures are compatible between your systems and the vendor's. [23]

- Validate for Compliance: Re-validate the integration to ensure it meets GxP regulations, maintaining comprehensive documentation throughout. [23]
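
The data compatibility step can be partly automated with a format check run in the test environment before records cross from one system to the next. The sketch below is illustrative only: the field names and types in `EXPECTED_FIELDS` are hypothetical and would come from the data mapping agreed in your integration roadmap.

```python
# Hypothetical target format agreed during data mapping.
EXPECTED_FIELDS = {"sample_id": str, "well": str, "volume_ul": float}


def find_format_errors(record: dict) -> list[str]:
    """Return a list of mismatches between a record and the expected format."""
    errors = []
    for field, expected_type in EXPECTED_FIELDS.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            errors.append(
                f"{field}: expected {expected_type.__name__}, "
                f"got {type(record[field]).__name__}"
            )
    return errors


# The upstream system exported the volume as text rather than a number.
print(find_format_errors({"sample_id": "S1", "well": "A1", "volume_ul": "50"}))
```

Returning every mismatch, instead of stopping at the first, gives a fuller picture of how far the two systems' formats have diverged.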

Q3: A large part of our system's downtime seems to be spent on activities that aren't the actual repair. How can we reduce this?

A: Downtime is more than just repair time. Research shows that repair actions can constitute only about 50% of total downtime. [24] The remaining time is spent on pre- and post-repair actions. To minimize this:

- Pre-repair: Analyze time spent on failure detection, decision-making, and technician travel. Implement real-time monitoring to speed up detection and standardize decision-making protocols. [24] [25]

- Post-repair: Streamline functional testing and validation procedures. Approximately 30% of overall downtime can be attributed to transportation and operational delays, so optimizing these logistics offers significant improvement opportunities. [24]
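
To see where your own downtime goes, log the time spent in each phase of an event and convert it to shares of the total. The phase names and hours below are hypothetical, chosen so the result mirrors the proportions quoted above.

```python
def downtime_shares(phase_hours: dict[str, float]) -> dict[str, float]:
    """Return each phase's share of total downtime, in percent."""
    total = sum(phase_hours.values())
    if total <= 0:
        raise ValueError("total downtime must be positive")
    return {phase: round(100.0 * hours / total, 1) for phase, hours in phase_hours.items()}


# Hypothetical 5-hour downtime event split by phase.
event_phases = {
    "failure detection": 0.5,
    "decision-making": 0.25,
    "travel and delays": 1.5,
    "repair": 2.5,
    "functional testing": 0.25,
}
print(downtime_shares(event_phases))
```

Aggregating these shares over many events shows whether faster detection, better logistics, or quicker validation would recover the most time.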

Q4: What are the most effective strategies to prevent unplanned downtime in a complex automated lab?

A: A proactive, multi-layered approach is key.

- Implement Preventive Maintenance (PM): Establish scheduled protocols including daily inspections, weekly calibrations, and monthly deep cleaning to identify issues before they cause failures. [5]

- Adopt Predictive Maintenance (PdM): Use monitoring systems (e.g., vibration analysis, temperature tracking) to analyze performance data and predict failures before they occur. [5]

- Ensure Robust Changeovers: Optimize and standardize procedures for switching between experiments or samples to minimize transition delays. [26]

- Invest in Continuous Training: Well-trained staff can identify and resolve issues promptly, reducing errors and speeding up recovery. [26]

Q5: When integrating a new vendor's system, what are the non-negotiable best practices to ensure compatibility and avoid future downtime?

A: Follow these practices:

- Thorough Vendor Assessment: Evaluate vendors not just on their product, but on their ability to integrate with your existing ecosystem. Assess their API capabilities and compliance track record. [23]

- Demand Open APIs: Ensure the vendor uses robust, well-documented APIs. An API-first architecture is crucial for enabling different software systems to communicate and work together seamlessly. [23]

- Test Integration Capabilities Extensively: Never skip rigorous testing in a simulated environment before finalizing the integration. [23]

- Plan for GxP Compliance from the Start: Align your integration plans with regulatory requirements, including risk assessments and validation, from the very beginning. [23]

Quantitative Downtime Analysis and Maintenance Data

The following tables summarize key quantitative data to help you benchmark and analyze downtime in your own systems.

Table 1: Downtime Component Analysis for Heavy Machinery (Case Study) [24]

| Downtime Component | Percentage of Total Downtime | Description of Activities |

|---|---|---|

| Repair Actions | ~50% | Diagnosis, disassembly, parts replacement, reassembly, and testing. |

| Pre- and Post-Repair Actions | ~50% | Vehicle arrival, delays, preparatory work, diagnostics, and performance testing. |

| Transportation & Delays | ~30% (counted within the non-repair share) | Time for travel from repair facility to the machine and operational holdups. |

Table 2: Recommended Preventive Maintenance Schedule for Laboratory Robotics [5]

| Frequency | Maintenance Tasks | Key Performance Indicators |

|---|---|---|

| Daily | Visual checks of mechanical components, fluid levels, system alerts. | System uptime, alert frequency. |

| Weekly | Verification of measurement accuracy, system performance parameters. | Calibration drift, precision metrics. |

| Monthly | Thorough cleaning of accessible components, replacement of consumables. | Contamination rates, consumable usage. |

| Quarterly | Comprehensive system evaluation, software updates, hardware inspections. | Mean Time Between Failures (MTBF), overall equipment effectiveness (OEE). |
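
The quarterly KPIs above can be derived from basic failure records. The sketch below assumes a simple log of operating hours before each failure and repair hours after it, and uses the standard definitions MTBF = total operating time / number of failures and availability = MTBF / (MTBF + MTTR). The function name and sample figures are illustrative, not taken from the cited source.

```python
def reliability_kpis(operating_hours, repair_hours):
    """Compute MTBF, MTTR, and availability from per-failure records.

    operating_hours: hours of operation preceding each failure
    repair_hours: hours needed to restore service after each failure
    """
    if not repair_hours or len(operating_hours) != len(repair_hours):
        raise ValueError("need one operating interval and one repair time per failure")
    failures = len(repair_hours)
    mtbf = sum(operating_hours) / failures   # mean time between failures
    mttr = sum(repair_hours) / failures      # mean time to repair
    availability = mtbf / (mtbf + mttr)
    return mtbf, mttr, availability


# Illustrative data: three failures in one quarter.
mtbf, mttr, availability = reliability_kpis([400, 380, 420], [4, 6, 5])
print(f"MTBF={mtbf:.0f} h, MTTR={mttr:.0f} h, availability={availability:.2%}")
```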

The Researcher's Toolkit: Essential Solutions for Integration and Maintenance

Table 3: Key Research Reagent Solutions for System Integration and Troubleshooting

| Item | Function in Integration & Maintenance |

|---|---|

| Centralized Management Platform | Provides unified observability, operations, and analytics for heterogeneous robotic fleets, breaking down data silos. [21] |

| API (Application Programming Interface) | Acts as a "communication reagent" enabling different software systems to exchange data and commands seamlessly. [23] |

| Preventive Maintenance (PM) Kit | Includes checklists, calibration tools, and replacement consumables for scheduled maintenance to prevent failures. [5] |

| Predictive Monitoring Tools | Software and sensors (e.g., for vibration, temperature) that act as a "diagnostic reagent" by predicting failures before they occur. [5] |

| Standard Operating Procedure (SOP) | A documented "protocol reagent" that ensures consistent and correct procedures for troubleshooting and maintenance. [26] |

| Digital Maintenance Management System | A software "catalyst" that organizes maintenance schedules, tracks parts inventory, and ensures regulatory compliance. [5] |

Technical Support Center

Troubleshooting Guides

Problem: Robotic System Experiences Unplanned Stoppages

| Step | Action | Expected Outcome |

|---|---|---|

| 1 | Check all real-time EtherCAT communication terminals and network connections for faults. [27] | Control system regains communication with all modules; error lights on terminals turn off. |

| 2 | Verify the status of the personnel protection system and all E-stop circuits via the Safety over EtherCAT (FSoE) interface. [27] | Safety system status is reported as "normal"; safety I/O terminals show no active fault codes. |

| 3 | Inspect robotic air casters (if applicable) and seismic anchoring to ensure the system has not shifted from its operational envelope. [27] | System is confirmed to be on a stable, level base and within its defined kinematic mountings. |

| 4 | Review the fault detection and diagnostics (FDD) dashboard for alerts on sensor drift or actuator failure that may have preceded the stoppage. [28] | Root cause is identified (e.g., a drifting humidity sensor, a stuck damper). |

Problem: Laboratory Equipment Fails Calibration or Produces Erroneous Results

| Step | Action | Expected Outcome |

|---|---|---|

| 1 | Confirm that every item of equipment (100%) has been calibrated according to the manufacturer's specifications. [29] | Calibration certificates are current and valid for the instrument. |

| 2 | Run internal quality control samples; ensure ≥98% of results are within acceptable limits. [29] | QC data falls within established control ranges, verifying instrument performance. |

| 3 | Use predictive analytics software to check for subtle anomalies in the equipment's sensor data that indicate early-stage failure. [28] | A potential failing component (e.g., a specific sensor) is identified before it causes a major outage. |

Frequently Asked Questions (FAQs)

Q: What is the industry benchmark for operational uptime in a critical laboratory? A: No single universal uptime percentage applies, but a leading standard is to limit equipment and process downtime to ≤0.5% of total operational hours annually [29]. The primary goal is near-zero unplanned downtime, because interruptions put research outcomes at risk and can incur massive costs, sometimes exceeding $500,000 per hour in pharmaceutical settings [28].

Q: How can we reduce experiment changeover time on a complex robotic positioning system? A: Implementing advanced robotic systems with integrated automation and PC-based control has proven highly effective. For example, at the SLAC National Accelerator Laboratory, a new robotic system reduced equipment changeover time from two days to just 12 hours [27]. This was achieved by enabling off-line setup of experiments and by using user-friendly front-end software to dial in new configurations rapidly [27].

Q: Our lab still uses manual logbooks. What is the advantage of a digital system for compliance and uptime? A: Digital compliance dashboards automate the tracking of critical parameters like temperature, humidity, and pressure. They provide real-time visibility and automatically flag any parameter that goes out of range, creating a permanent digital logbook for audits [28]. This replaces labor-intensive, error-prone manual processes and allows staff to identify and diagnose issues proactively, preventing compliance breaches and downtime [28].

Q: What role does AI play in improving laboratory uptime? A: Artificial intelligence is a key trend for enhancing efficiency and reducing errors. AI can suggest reflex testing based on initial results, shortening the diagnostic journey [30]. In billing and operations, AI can automate data entry, predict claim denials, and provide real-time compliance monitoring, which streamlines workflows and reduces administrative burdens that can impact operational focus [30].

Quantitative Data Tables

| Benchmark Metric | Industry Standard Target |

|---|---|

| Operational Downtime | ≤0.5% of total operational hours annually |

| Turnaround Time (TAT) for STAT Tests | ≤1 hour |

| Turnaround Time (TAT) for Routine Tests | ≤24 hours |

| Turnaround Time (TAT) for Specialized Tests | ≤72 hours |

| Sample Rejection Rate | ≤0.3% |

| First Attempt Specimen Collection Success | ≥98% |

| Process Automation | 80% - 90% of laboratory processes |

| Inventory Turnover | 6 - 8 times per year |

| Facility Type | Estimated Cost of Downtime |

|---|---|

| Hospital (Average) | $7,900 per minute |

| Pharmaceutical Manufacturing | $100,000 - $500,000 per hour |
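
To make these benchmarks concrete, the short calculation below converts the ≤0.5% downtime target into hours per year and applies the pharmaceutical cost range from the table. It is an illustration only: it assumes round-the-clock operation (8,760 hours per year) and assumes the whole budget is consumed, which a given lab may not match.

```python
HOURS_PER_YEAR = 24 * 365  # assumption: the facility operates around the clock


def downtime_budget_hours(target_fraction, operational_hours=HOURS_PER_YEAR):
    """Hours of downtime allowed per year for a given target fraction."""
    return target_fraction * operational_hours


def cost_range(hours, low_per_hour, high_per_hour):
    """Cost exposure (low, high) if the given hours of downtime all occur."""
    return hours * low_per_hour, hours * high_per_hour


budget = downtime_budget_hours(0.005)             # 0.5% target from the benchmark table
low, high = cost_range(budget, 100_000, 500_000)  # pharmaceutical range from the cost table
print(f"budget: {budget:.1f} h/year, exposure: ${low:,.0f} to ${high:,.0f}")
```

Under these assumptions the 0.5% target allows roughly 43.8 hours of downtime per year, which is why even a target that sounds strict can still represent a multi-million-dollar exposure.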

Experimental Protocols

Methodology: Implementing a Predictive Maintenance Program

Objective: To transition from reactive repairs to predictive maintenance, thereby reducing unplanned equipment downtime.

Procedure:

- Sensor Deployment: Install sensors to monitor key operational parameters (e.g., vibration, temperature, pressure, relative humidity) on critical laboratory equipment such as robotic arms, automated incubators, and analytical instruments [28].

- Data Integration: Feed the sensor data into a centralized Fault Detection and Diagnostics (FDD) software platform or a cloud-native Laboratory Information System (LIS) [7] [28].

- Baseline Establishment: Allow the system to collect data during a period of normal operation to establish a performance baseline for each instrument.

- Anomaly Detection: Configure the analytics software to alert facility managers or lab managers when data trends indicate early degradation or anomalous behavior (e.g., a sensor beginning to drift, increased motor vibration) [28]. The system should prioritize alerts for equipment tagged as "critical" [28].

- Proactive Intervention: Schedule maintenance based on the analytics-driven alerts to replace or recalibrate components before they fail completely and cause operational downtime [28].
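
The baseline and anomaly-detection steps above can be sketched as follows. This is a deliberately simple stand-in (a z-score against a recorded baseline), not the analytics used by any particular FDD product. The function names, the 3-sigma limit, and the vibration values are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def build_baseline(readings):
    """Summarize normal-operation readings as (mean, standard deviation)."""
    return mean(readings), stdev(readings)


def is_anomalous(value, baseline, z_limit=3.0):
    """Flag a reading that lies more than z_limit standard deviations from the baseline mean."""
    mu, sigma = baseline
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_limit


# Hypothetical motor vibration readings (arbitrary units) during normal operation.
baseline = build_baseline([0.50, 0.52, 0.49, 0.51, 0.50, 0.48, 0.51, 0.50])

# New readings arriving after the baseline period.
alerts = [v for v in [0.51, 0.49, 0.62, 0.50] if is_anomalous(v, baseline)]
print(alerts)
```

In practice the alert list would be filtered or ranked so that equipment tagged as critical is handled first.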

Methodology: Phased Technology Upgrade with Minimal Disruption

Objective: To replace a legacy laboratory automation or control system while maintaining continuous operation of critical research activities.

Procedure:

- Architecture Planning: Select an open, modular control system architecture (e.g., based on standard protocols like BACnet/IP) to avoid vendor lock-in and ensure future flexibility [28].

- Phased Rollout: Divide the upgrade project into logical phases (e.g., by laboratory wing or by instrument group). Execute cutovers during planned, overnight downtime windows [28].

- Extensive Pre-Testing: Before each cutover, perform extensive bench-testing of new controllers and software on a virtual machine or test environment to validate functionality [28].

- Rapid Cutover: Execute the final system switch, installation, commissioning, and testing within a tightly defined window (e.g., 12 hours) [28].

- Validation: Confirm full system operability and integration with existing platforms (e.g., EHRs, LIS) before resuming all research activities in the upgraded section [29] [28].

System Architecture & Workflow Diagrams

Predictive Maintenance Workflow

Open Architecture for Upgrades

The Scientist's Toolkit: Research Reagent & Solutions

Table 3: Essential Control & Integration Technologies

| Item | Function in Research Setup |

|---|---|

| EtherCAT Communication Terminals | Provide a highly modular, real-time network for connecting sensors, drives, and I/O, enabling precise control of robotic motion systems. [27] |

| Fault Detection and Diagnostics (FDD) Software | Acts as a tireless sentinel, using analytics to monitor equipment data streams for subtle anomalies and early signs of failure before they cause downtime. [28] |

| PC-based Embedded Controller | Serves as an all-in-one automation brain, handling motion control, logic, and machine vision integration seamlessly on a single device. [27] |

| Safety over EtherCAT (FSoE) | Provides a robust, integrated safety system for personnel protection, enabling reliable E-stop functionality and safe access to equipment hutches. [27] |

| Cloud-native LIS (Lab Information System) | A scalable, central nervous system for the lab that integrates instrument data, manages workflows, and provides AI-driven insights to optimize operations and reduce administrative errors. [7] |

Implementing Proactive Maintenance Frameworks: From Schedules to AI Copilots

In robotic laboratory systems research, unplanned downtime directly impacts a facility's ability to deliver data quickly and accurately, hurting productivity and the bottom line [31]. A structured, tiered preventive maintenance (PM) schedule is fundamental to reducing this downtime, extending equipment life, and ensuring the integrity of experimental results [32] [33]. This guide provides a detailed framework, from daily checks to annual overhauls, to help researchers and drug development professionals maintain peak operational efficiency.

Why a Tiered Preventive Maintenance Schedule is Critical

Preventive maintenance is a proactive, organized approach to regularly inspect, service, and manage your lab’s robotics and AI equipment [33]. Implementing a scheduled program can reduce unexpected repairs by 24% [31]. The core benefits include:

- Maximized Uptime: Prevents minor issues from escalating into major failures that halt research [32].

- Extended Equipment Lifespan: Regular servicing reduces wear and tear, protecting your investment [31].

- Enhanced Safety: Regular checks ensure safety systems like emergency stops and interlocks function correctly, protecting personnel [32].

- Data Integrity: Properly maintained instruments ensure the accuracy and repeatability of your results [31].

The Tiered Preventive Maintenance Schedule

The following table outlines a comprehensive maintenance schedule, synthesizing daily, weekly, monthly, quarterly, and annual tasks. Always prioritize the manufacturer's guidelines if they are more stringent than general recommendations [32].

Table 1: Tiered Preventive Maintenance Schedule for Robotic Laboratory Systems

| Frequency | Key Maintenance Tasks and Focus Areas |

|---|---|

| Daily | • Visual Inspection: Check for visible damage, loose connections, or signs of wear [32]. • Cleanliness: Wipe down the robot and components to remove dust, dirt, and debris [32]. • Sensor Check: Ensure sensors are clean and unobstructed [32]. • Software & Alerts: Check for software updates and system alerts [32] [31]. |

| Weekly | • Lubrication: Check and lubricate moving parts such as joints and bearings [32] [33]. • Test Run: Execute a test program to verify proper functioning [32]. • Safety Systems: Verify the functionality of emergency stop buttons and other safety devices [32]. |

| Monthly | • Detailed Inspection: Inspect the end-effectors (e.g., grippers, tools) for alignment and wear [32]. • Calibration: Verify the calibration of sensors, vision systems, and force-torque sensors [32] [33]. • Controller Maintenance: Clean ventilation fans with compressed air and back up the controller's memory [32]. • Battery Check: Test batteries in the controller and robot arm [32]. |

| Quarterly | • Deep Cleaning: Perform a detailed clean of the mechanical unit to remove chips and debris [32]. • Structural Check: Tighten all external bolts and inspect all unit cables for kinks, cuts, or tears [32]. • Joint & Bearing Inspection: Inspect joints and bearings for wear and tear [32]. • Wiring Inspection: Check wiring and connectors for damage [32]. |

| Annually | • Battery Replacement: Replace batteries in the mechanical unit, RAM, and CPU [32]. • Fluid Replacement: Replace grease and oil as recommended by the manufacturer [32]. • Brake Operation: Inspect the operation of the brakes for any delays [32]. • Comprehensive Audit: Perform a full system audit, parts replacement, and thorough functional testing [32] [33]. |
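
A tiered schedule like this one is easy to track programmatically. The sketch below is a minimal example of working out which tiers are due on a given day. The interval lengths for monthly, quarterly, and annual tiers are approximations, and the dates are hypothetical. A CMMS would normally handle this, so treat it as an illustration of the logic rather than a replacement for one.

```python
from datetime import date, timedelta

# Interval per tier in days; monthly, quarterly, and annual values are approximations.
TIER_INTERVAL_DAYS = {
    "daily": 1,
    "weekly": 7,
    "monthly": 30,
    "quarterly": 91,
    "annually": 365,
}


def tasks_due(last_done, today):
    """Return the tiers whose interval has elapsed since they were last completed."""
    return [
        tier
        for tier, interval in TIER_INTERVAL_DAYS.items()
        if today - last_done[tier] >= timedelta(days=interval)
    ]


# Hypothetical completion dates for one robot.
last_done = {
    "daily": date(2024, 6, 9),
    "weekly": date(2024, 6, 5),
    "monthly": date(2024, 5, 1),
    "quarterly": date(2024, 4, 1),
    "annually": date(2023, 9, 1),
}
due = tasks_due(last_done, today=date(2024, 6, 10))
print(due)
```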

Troubleshooting Common Robotic System Issues

Even with a robust PM schedule, issues can arise. Here are answers to frequently asked troubleshooting questions.

FAQ 1: Our robotic arm is not moving to its programmed position accurately. What should we check?

This issue of position deviation or repeatability problems can have several causes [32].

- Methodology: Follow a logical escalation path from simple to complex.

- Check for Mechanical Issues: Visually inspect for signs of grease or oil leakage, and check that all external bolts are tight [32]. Listen for excessive noise or vibrations during movement [32].

- Verify Calibration: Recalibrate the robot's sensors and vision systems. Check and clean any machine vision lenses [32].

- Inspect the Teach Pendant: Examine the teach pendant for any faults or error codes that may indicate a controller issue [32] [19].

- Test Brake Functioning: Inspect the operation of the brakes to ensure there are no delays in engagement or disengagement [32].

FAQ 2: The system has stopped unexpectedly and won't restart. What are the first steps to diagnose the problem?

A full system stoppage requires a swift, methodical response [19].

- Methodology:

- Check for Alarm Codes: Look for fault or alarm codes on the teach pendant. The system's fault history is the primary source of diagnostic information [19].

- Confirm Safety Mechanisms: Ensure all safety interlocks, such as gate switches or light curtains, have not been triggered. A common reason for a robot to stop is an open guard [32] [19].

- Inspect Basic Electrical Components: Check for blown fuses, bad switches, and faulty solenoids. Look for broken wires, especially in high-flex cables [19].

- Cycle Power: A system restart can sometimes clear registers and reset flags that caused an unexplained stoppage [19].

FAQ 3: We are experiencing intermittent, random faults. How can we identify the root cause?

Intermittent faults are among the most challenging to diagnose [19].

- Methodology:

- Analyze Environmental Factors: Explore electrical noise spikes from other equipment (e.g., welders, large pumps) that can cause seemingly random events [19]. Also, monitor lab temperature and humidity, as fluctuations can affect sensitive electronics [5].

- Check Connections: Verify that all electrical connections are tight and secure, and that the robot is properly grounded [32].

- Inspect End-Effector Components: For gripping problems, check suction cups for splits or inspect pneumatic systems for sufficient air pressure [19].

- Review Recent Changes: Determine if any changes were made recently, such as a software update, a modification to the end-effector, or even a change in sample characteristics that could confuse a vision system [19].

Workflow for Implementing a Tiered Maintenance Schedule

The following diagram illustrates the logical relationship and workflow between the different tiers of maintenance and the overarching goal of reducing downtime.

Beyond the schedule itself, successful maintenance programs rely on a suite of tools and documents.

Table 2: Essential Resources for Robotic Lab Maintenance

| Resource | Function and Purpose |

|---|---|

| Maintenance Management Software (CMMS) | Digital platforms for organizing schedules, tracking parts inventory, managing technician assignments, and ensuring regulatory compliance. They automate scheduling and prevent documentation delays [33] [34] [5]. |

| Manufacturer Service Manuals | Provide the definitive source for maintenance intervals, specific procedures, and recommended lubricants and parts. Always adhere to these guidelines where they are stricter than general ones [32] [33]. |

| Predictive Monitoring Tools | Use IoT sensors and data analytics (e.g., for vibration, temperature) to predict failures before they occur, transforming maintenance from scheduled to need-based [33] [5]. |

| Centralized Documentation Log | A secure repository for all maintenance records, parts replacement logs, and calibration certificates. This is essential for regulatory compliance (CAP, CLIA), quality assurance, and tracking equipment history [5]. |

| Calibration Kits & Specialty Tools | Kits containing manufacturer-approved parts and tools required for specific PM tasks, ensuring technicians have everything needed to complete jobs correctly and efficiently [34]. |

Mastering Calibration and Documentation for Unbroken Regulatory Compliance

This technical support center provides troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals minimize downtime in robotic laboratory systems by addressing common calibration and documentation challenges.

Troubleshooting Guides

Guide 1: Resolving Low Positional Accuracy in Robotic Arms

Problem: The robot's end-effector consistently misses its target position by several millimeters, jeopardizing experimental repeatability.

Investigation:

- Step 1: Verify Basic Operation. Execute the motion path through the teach pendant without a payload. Observe if the robot completes the path and note any unusual sounds like grinding or clicking from the joints [35].

- Step 2: Check for Overheating. Feel the servo motors for excessive heat. Overheating can indicate insufficient lubrication, worn bearings, or electrical issues leading to performance drift [36].

- Step 3: Inspect Mechanical Components. With the robot powered off and locked out, check for loose fasteners in the arm and wrist assembly. Look for signs of wear on gears and belts [36].

- Step 4: Review Error Logs. Check the system's controller and the Laboratory Information System (LIS) for recent error codes or alerts that might pinpoint the fault [37] [38].

Resolution: Based on your investigation, proceed as follows:

- If mechanical looseness or wear is found: Tighten fasteners to specification and replace worn components. Re-calibrate the robot after repairs [36].

- If motors are overheating: Clean cooling systems and replace clogged filters. If the problem persists, the servo motor may need replacement [35] [36].

- If no obvious mechanical faults are found: The robot likely requires a full kinematic calibration to correct parametric errors in its geometric model [39] [40]. Proceed with the calibration protocol outlined in the Experimental Protocols section below.

Guide 2: Addressing Compliance Documentation Gaps

Problem: An audit is approaching, and the records for robot calibration and maintenance in the Laboratory Information System (LIS) are incomplete.

Investigation:

- Step 1: Audit the LIS Audit Trail. Use the LIS's built-in audit trail feature to generate a report of all actions related to the robotic systems. This will identify which specific procedures lack a digital record [37] [41].

- Step 2: Reconcile Paper Records. Gather all paper-based logs, technician notes, and instrument output from the periods in question. Cross-reference these with electronic entries [41].

- Step 3: Identify the Root Cause. Determine why records are missing. Common causes include lack of training, cumbersome data entry processes, or failure to integrate an instrument's data output with the LIS [37].

Resolution:

- For missing past records: Digitize the gathered paper records by uploading scanned copies into the LIS's document management system. Ensure each entry is dated and linked to the specific robot and procedure [41].

- For future prevention: Implement and enforce Standard Operating Procedures (SOPs) that mandate real-time data entry into the LIS. Automate data capture where possible by integrating robotic systems directly with the LIS to eliminate manual entry errors and omissions [37] [38]. Schedule recurring training for staff on these SOPs [41].
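
The reconciliation step can be reduced to a set comparison once both sources are in a common form. The sketch below assumes each record has been normalized to a `(robot_id, procedure, date)` tuple. That record shape, the function name, and the sample entries are hypothetical, and real LIS exports will differ.

```python
def find_documentation_gaps(performed, lis_records):
    """Return records that were performed (per paper logs) but are absent from the LIS."""
    return sorted(set(performed) - set(lis_records))


# Hypothetical records gathered from paper logs and technician notes.
performed = [
    ("robot-A", "calibration", "2024-01-15"),
    ("robot-A", "lubrication", "2024-02-01"),
    ("robot-B", "calibration", "2024-01-20"),
]
# Hypothetical records exported from the LIS audit trail.
lis_records = [("robot-A", "calibration", "2024-01-15")]

gaps = find_documentation_gaps(performed, lis_records)
for robot_id, procedure, day in gaps:
    print(f"missing in LIS: {robot_id} {procedure} on {day}")
```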

Frequently Asked Questions (FAQs)

Q1: What is the most cost-effective way to improve our robot's absolute accuracy for high-precision tasks? A: Kinematic calibration is the most cost-effective method. It uses software-based error modeling and parameter identification to enhance pose accuracy without the expense of hardware improvements [39] [40]. For a 6-DOF serial robot, this can reduce position errors from over 1.95 mm to as little as 0.012 mm and orientation errors from 0.0146 rad to 0.000131 rad [40].

Q2: How can we quickly get a malfunctioning robot back online to avoid halting a critical experiment? A: First, perform basic checks: restart the controller, check for tripped breakers, and ensure all safety interlocks are engaged [35]. For more complex issues, utilize a remote robot monitoring and control system if available. These systems allow a specialist to perform remote diagnostics and even correct errors by jogging grippers or resetting configurations without being on-site, dramatically reducing repair time [42].

Q3: Our lab follows GLP. How does an LIS help us demonstrate compliance during an inspection? A: A robust LIS is central to GLP compliance. It provides a centralized, tamper-evident repository for all data [41]. It enforces data integrity through electronic signatures and detailed audit trails that record every action, ensuring full traceability from raw data to final results for auditors [37]. It also manages SOPs, equipment calibration schedules, and personnel training records, keeping all essential compliance documents audit-ready [41].

Q4: What are the key components of a preventative maintenance plan to avoid unexpected robot downtime? A: A comprehensive plan includes [36]:

- Scheduled Mechanical Inspections: Checking joints, belts, gears, and fasteners.

- Regular Lubrication: Following the robot manufacturer's intervals and grease specifications.

- Electrical System Checks: Inspecting cables, connectors, and I/O boards for wear or damage.

- Controller and Software Updates: Keeping firmware and software current.

- Sensor Calibration: Regularly calibrating vision systems and force sensors.

- Program Backups: Maintaining backups of all robot programs and parameters.

Experimental Protocols

Protocol: Kinematic Calibration of a Serial Industrial Robot

This methodology, based on recent research, improves measurement efficiency by decomposing the robot kinematics, which reduces the number of measurement configurations and the controller memory required without sacrificing accuracy [39].

1. Objective: To identify the actual structural parameters of a serial industrial robot in order to improve its absolute positional and orientation accuracy.

2. Equipment and Reagent Solutions

| Item | Function |

|---|---|

| Laser Tracker | High-precision measurement system for capturing the robot end-effector's 6-DOF position and orientation in space [39] [40]. |

| Calibration Sphere | Defines a precise reference point for the measurement system. |

| Mounting Hardware | Securely attaches the reflector (from the laser tracker) to the robot's flange. |

| Kinematic Modeling Software | Software used to establish the error model (e.g., based on Modified Denavit-Hartenberg parameters) and perform parameter identification [40]. |

3. Methodology

Step 1: Kinematics Decomposition.

- Divide the original 6-DOF robot into three lower-mobility virtual sub-robots. These sub-robots share the same base and end-effector as the original robot, avoiding the need to detect any intermediate frames, which reduces measurement complexity and cost [39].

Step 2: Data Collection.

- For each sub-robot, command the robot to a set of measurement configurations that cover the joint motion ranges. The required number of configurations is significantly reduced due to the decomposition [39].

- At each configuration, use the laser tracker to measure the actual 6-DOF pose of the end-effector and record the corresponding nominal joint variables [40].

Step 3: Error Model Establishment and Identification.

- For each sub-robot, treat it as a kinematically equivalent system with configuration-dependent joint motion errors [39].

- Use the measurement data to calculate the equivalent joint motion errors.

- Employ a Least-Squares Support Vector Regression (LS-SVR) model to approximate the function between the nominal joint variables (input) and the observed joint motion errors (output). This model learns the error pattern without a complex physical model [39].

Step 4: Error Prediction and Compensation.

- The trained LS-SVR models can predict joint motion errors for any given joint configuration within the calibrated range.

- These predicted errors are used to compensate the nominal joint variables sent to the robot controller, thereby correcting the pose of the end-effector [39].
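
Steps 3 and 4 can be illustrated with a small, generic LS-SVR implementation. This is a sketch under stated assumptions rather than the implementation from [39]. It uses a single joint, a synthetic error function, an RBF kernel, and hand-picked hyperparameters (`gamma`, `sigma`), and it assumes the joint error is defined as actual minus nominal so that compensation subtracts the prediction. The model is obtained by solving the standard LS-SVR linear system for the bias and the support values.

```python
import math


def rbf(a, b, sigma):
    """Gaussian (RBF) kernel between two joint-variable vectors."""
    return math.exp(-sum((p - q) ** 2 for p, q in zip(a, b)) / (2 * sigma ** 2))


def solve(A, y):
    """Solve A x = y by Gaussian elimination with partial pivoting."""
    n = len(y)
    M = [row[:] + [y[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def fit_ls_svr(X, y, gamma=1000.0, sigma=0.5):
    """Solve [[0, 1^T], [1, K + I/gamma]] [b; alpha] = [0; y]; return (bias, alphas)."""
    n = len(X)
    A = [[0.0] + [1.0] * n]
    for i in range(n):
        A.append([1.0] + [
            rbf(X[i], X[j], sigma) + (1.0 / gamma if i == j else 0.0)
            for j in range(n)
        ])
    sol = solve(A, [0.0] + list(y))
    return sol[0], sol[1:]


def predict(x, X, bias, alphas, sigma=0.5):
    """Predicted joint motion error at joint configuration x."""
    return bias + sum(a * rbf(x, xi, sigma) for a, xi in zip(alphas, X))


def true_error(q):
    """Synthetic joint error in rad (stand-in for laser-tracker-derived data)."""
    return 0.002 * math.sin(q)


X_train = [[-1.5 + 0.25 * k] for k in range(13)]   # nominal joint angles, rad
y_train = [true_error(x[0]) for x in X_train]      # observed joint errors, rad
bias, alphas = fit_ls_svr(X_train, y_train)

q_nominal = 0.6
predicted = predict([q_nominal], X_train, bias, alphas)
q_compensated = q_nominal - predicted              # command sent to the controller
print(f"predicted error {predicted:.5f} rad, compensated command {q_compensated:.5f} rad")
```

For a real 6-DOF robot, the inputs would be full joint vectors and one such model would be trained per sub-robot or per joint error, with hyperparameters tuned on held-out measurement configurations.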

4. Workflow Visualization

Data Presentation

Table 1: Impact of Preventative Maintenance on Robot Downtime

Facilities that implement a proactive maintenance program report significant operational improvements [36].

| Metric | Improvement Range |

|---|---|

| Reduction in Unexpected Downtime | 50 - 75% |

| Extension of Robot Lifespan | 25 - 30% |

| Savings in Repair Costs | 20 - 40% |

Table 2: Calibration Performance Results for a 6-DOF Robot

A calibration experiment on an ABB IRB 2600 robot demonstrated the effectiveness of the kinematics decomposition method in reducing pose errors [39] [40].

| Performance Indicator | Before Calibration | After Calibration |

|---|---|---|

| Maximum Position Error | 1.9536 mm | 0.0122 mm |

| Maximum Orientation Error | 0.0146 rad | 0.000131 rad |
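
The relative improvement implied by these figures can be checked with a short calculation. The helper name is illustrative, and the inputs are the values from the table above.

```python
def percent_reduction(before, after):
    """Percentage by which a value decreased relative to its starting value."""
    return (before - after) / before * 100


position = percent_reduction(1.9536, 0.0122)       # maximum position error, mm
orientation = percent_reduction(0.0146, 0.000131)  # maximum orientation error, rad
print(f"position: {position:.1f}%, orientation: {orientation:.1f}%")
```

Both maximum errors fall by more than 99% in this experiment.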

Leveraging IoT and Smart Sensors for Real-Time Equipment Health Monitoring

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center provides researchers and scientists with practical solutions for implementing IoT-based health monitoring systems to minimize downtime in robotic laboratory environments.

Troubleshooting Common IoT Monitoring Issues

Problem: Inconsistent or Missing Sensor Data

- Step 1: Verify Power and Connectivity - Check that the sensor is receiving stable power and, for wireless sensors, confirm the connection to the gateway (e.g., ESP32) is active. Look for status LEDs [43].

- Step 2: Inspect Data Logging - Ensure your cloud platform (e.g., ThingSpeak, Firebase) is correctly configured to receive data. Check for any errors in the device's data transmission code [43].

- Step 3: Examine Sensor Health - Use a multimeter to check the sensor's output. Compare readings against a known good sensor or a calibrated value to identify drift or failure [44].
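
Missing data is easier to act on when it is detected automatically. The sketch below flags sensors that have been silent for longer than a chosen limit. The sensor IDs, the timestamps (seconds), and the 60-second limit are hypothetical, and the function is not tied to any specific cloud platform.

```python
def stale_sensors(last_seen, now, max_silence_s=60.0):
    """Return IDs of sensors whose latest reading is older than max_silence_s seconds."""
    return sorted(
        sensor_id
        for sensor_id, timestamp in last_seen.items()
        if now - timestamp > max_silence_s
    )


# Hypothetical timestamps (in seconds) of the most recent reading per sensor.
last_seen = {"vibration-01": 1000.0, "temp-02": 940.0, "current-03": 995.0}
stale = stale_sensors(last_seen, now=1010.0)
print(stale)
```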

Problem: High Latency in Real-Time Alerts

- Step 1: Analyze Network Performance - Monitor network latency and packet loss between edge devices and the cloud. High latency can delay critical alerts [45] [44].

- Step 2: Review Data Processing Location - For ultra-low-latency responses, implement edge computing. Preprocess and analyze data on a local gateway (like a Raspberry Pi) to trigger immediate alerts without waiting for cloud round-trips [45].

- Step 3: Optimize Stream Processing - If using cloud processing, ensure your stream processing engine (e.g., Apache Flink) is properly tuned and that data partitions are balanced to prevent bottlenecks [45].

Problem: Rapid Battery Drain in Wireless Sensors

- Step 1: Profile Power Consumption - Use diagnostic tools to track the device's power consumption across different operational states (active, sleep, transmission) [44].

- Step 2: Adjust Transmission Frequency - Program the device to transmit data less frequently or to spend more time in a low-power sleep mode [44].

- Step 3: Implement Adaptive Sensing - Configure the device to collect data based on events or thresholds rather than at fixed, continuous intervals [44].
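
One common way to implement the threshold-based approach in Step 3 is a send-on-delta rule, where a reading is transmitted only when it differs enough from the last transmitted value. The sketch below is a minimal illustration. The 0.5 °C delta and the incubator temperatures are made-up values, and actual firmware would apply this logic on the device itself.

```python
def send_on_delta(readings, delta):
    """Yield only readings that differ from the last transmitted one by at least delta."""
    last_sent = None
    for value in readings:
        if last_sent is None or abs(value - last_sent) >= delta:
            last_sent = value
            yield value


# Hypothetical incubator temperatures in °C, sampled at a fixed interval.
temps = [37.0, 37.1, 37.0, 37.6, 37.7, 38.4, 38.4]
sent = list(send_on_delta(temps, delta=0.5))
print(f"transmitted {len(sent)} of {len(temps)} readings: {sent}")
```

Fewer transmissions mean the radio spends more time asleep, which is usually the dominant factor in battery life for wireless sensors.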

Frequently Asked Questions (FAQs)

Q1: What are the most critical parameters to monitor for a laboratory robotic arm? A: The most critical hardware parameters are CPU usage, memory allocation, and temperature to prevent overheating and performance throttling. For mechanical health, monitor vibration signatures and motor current draw, as anomalies can indicate wear, misalignment, or impending bearing failure [44] [46].

Q2: How can we ensure data security and privacy when transmitting sensitive research data? A: Protecting information requires a multi-layered approach. Implement real-time streaming encryption for data in transit. Establish strong authentication and authorization protocols (e.g., API keys, OAuth) to control device and user access. Ensure your system complies with relevant regulations by incorporating rigorous access control mechanisms across the entire data pipeline [45].

Q3: Our system is generating too many false alerts. How can we improve accuracy? A: To reduce false alerts, avoid using simple, static thresholds. Instead, deploy smart anomaly detection systems that use machine learning to study historical performance data and establish normal operating ranges. These systems can identify subtle, unusual behavior that might signal trouble without triggering on benign, short-lived fluctuations [44].
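
Before adopting a machine-learning system, a simpler step often removes many false alerts: requiring a reading to stay out of range for several consecutive samples. The sketch below shows that persistence rule. It is not the ML-based detection described above, and the limits, the three-sample requirement, and the vibration values are illustrative assumptions.

```python
def debounced_alerts(values, low, high, min_consecutive=3):
    """Return the indices at which a reading has been out of range for
    min_consecutive samples in a row (one alert per sustained excursion)."""
    alerts, streak = [], 0
    for i, value in enumerate(values):
        streak = streak + 1 if (value < low or value > high) else 0
        if streak == min_consecutive:
            alerts.append(i)
    return alerts


# Hypothetical vibration readings: one brief spike, then a sustained excursion.
vibration = [1.0, 1.1, 3.2, 1.0, 1.2, 3.1, 3.3, 3.4, 3.5, 1.1]
alerts = debounced_alerts(vibration, low=0.5, high=2.5)
print(alerts)
```

The isolated spike at index 2 is ignored, while the sustained excursion raises a single alert once it has persisted for three samples.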

Q4: What is the difference between real-time and near-real-time processing for our monitoring application?

- Real-Time Processing: Provides responses within milliseconds to seconds. This is essential for mission-critical applications requiring instant analysis and action, such as immediately stopping a robot upon detecting a dangerous collision [45].

- Near-Real-Time (NRT) Processing: Delivers insights in seconds or minutes. NRT suffices for applications that tolerate small delays, such as generating hourly reports on average equipment utilization or tracking long-term temperature trends in an incubator [45].

Q5: How can we scale our IoT monitoring system from a few devices to hundreds without performance loss? Scaling successfully requires a streaming-first architecture designed for elasticity. Utilize platforms like Apache Kafka that inherently support event-driven architectures and horizontal scaling. Choose cloud-based deployment for flexibility, as it allows you to scale processing and storage resources on-demand without large upfront investments in physical hardware [45] [46].

Quantitative Data for Strategic Planning

The tables below summarize key market and performance data to help justify and plan your IoT monitoring investment.

Table 1: Robotics Downtime Reduction Services Market Data [46]

| Market Segment | 2024 Market Size | Projected 2033 Market Size | CAGR (2025-2033) |
|---|---|---|---|
| Global Market | USD 2.45 Billion | USD 7.15 Billion | 13.2% |
| Service Type: Predictive Maintenance | (Part of global market) | (Part of global market) | (Leading segment) |
| Application: Healthcare | (Part of global market) | (Part of global market) | (Growing segment) |

Table 2: Key IoT Device Health Metrics and Monitoring Impact [44]

| Monitoring Parameter | Impact of Proactive Monitoring | Tools/Methods |
|---|---|---|
| Battery Life / Power | 20% threshold alerts prevent unexpected shutdowns; smart strategies extend battery lifespan. | Voltage tracking, automated notifications [44] |
| Hardware Status (CPU, Temp) | Predictive maintenance reduces upkeep costs by 30% and extends equipment life. | Temperature sensors, usage rate monitoring [44] |
| Connectivity (Signal, Latency) | Maintains operational continuity; analysis reveals needed infrastructure upgrades. | SNR, Network Response Time monitoring [44] |

Experimental Protocol: Implementing an Equipment Health Monitor

This protocol outlines the methodology for setting up a real-time health monitoring system for a critical piece of laboratory equipment, such as an automated liquid handler.

Objective: To deploy a non-invasive IoT sensor kit that monitors equipment status and performance, enabling early fault detection and predictive maintenance.

Materials and Reagents:

Table 3: Essential Research Reagent Solutions and Materials

| Item Name | Function / Explanation |
|---|---|
| ESP32-S3 Microcontroller | A low-cost system-on-chip with integrated Wi-Fi and Bluetooth, serving as the central gateway for sensor data acquisition and transmission to the cloud [43]. |
| DS18B20 Temperature Sensor | A digital temperature sensor using the 1-Wire protocol for accurate readings (±0.5°C) with minimal power consumption, ideal for monitoring motor or ambient temperature [43]. |
| Vibration Sensor (e.g., ADXL345) | A small, low-power accelerometer that detects vibrations and orientation changes, useful for identifying unusual oscillations in motors and moving parts. |
| AC Current Sensor (e.g., SCT-013) | A non-invasive sensor that clamps around a power cable to measure the current draw of the equipment, which can signal mechanical load and motor strain. |
| ThingSpeak / Firebase Cloud Platform | Cloud-based IoT platforms that provide a straightforward way to aggregate, visualize, and analyze live data streams from multiple devices [43]. |

Methodology:

- Sensor Deployment: Mount the DS18B20 temperature sensor on the equipment's primary motor housing using thermal adhesive. Attach the vibration sensor securely to the equipment's frame. Clip the AC current sensor around the main power cord of the device.

- Edge Device Configuration: Connect all sensors to the ESP32-S3 microcontroller. Develop and flash firmware to read sensor values at a defined interval (e.g., every 10 seconds), package the data into a JSON format, and transmit it to the chosen cloud platform via Wi-Fi.

- Cloud Dashboard and Alert Configuration: Within the cloud platform (e.g., ThingSpeak), create a dashboard to visualize the real-time telemetry data. Configure alert rules to trigger notifications (e.g., via email or SMS) when sensor readings exceed predefined thresholds (e.g., temperature > 70°C, vibration amplitude > 0.5g).

- Data Analysis and Model Training: Collect baseline data during normal equipment operation. Use this data to train simple machine learning models for anomaly detection, moving beyond static thresholds to dynamic fault prediction.
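
The packaging and alerting steps above can be sketched in a few lines of Python. This is a host-side illustration rather than ESP32 firmware, and it makes several assumptions: the field names (`temperature_c`, `vibration_g`, `current_a`), the device ID, and the helper functions are hypothetical. Only the two threshold values are taken from the examples in the methodology.

```python
import json
import time

# Thresholds from the alert-rule examples above; tune them per instrument.
THRESHOLDS = {"temperature_c": 70.0, "vibration_g": 0.5}


def build_payload(device_id, temperature_c, vibration_g, current_a, timestamp=None):
    """Package one set of sensor readings as a JSON string."""
    return json.dumps({
        "device_id": device_id,
        "timestamp": time.time() if timestamp is None else timestamp,
        "temperature_c": temperature_c,
        "vibration_g": vibration_g,
        "current_a": current_a,
    })


def check_thresholds(payload_json, thresholds=THRESHOLDS):
    """Return one alert message for every reading above its threshold."""
    reading = json.loads(payload_json)
    return [
        f"{reading['device_id']}: {field}={reading[field]} exceeds {limit}"
        for field, limit in thresholds.items()
        if reading[field] > limit
    ]


# Made-up reading: the motor housing is hot, vibration is within limits.
payload = build_payload("liquid-handler-01", 72.4, 0.31, 1.8, timestamp=1700000000)
for alert in check_thresholds(payload):
    print(alert)
```

Keeping the thresholds in one dictionary makes it straightforward to replace them later with limits derived from the baseline data collected in the final step.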

System Architecture and Workflow Visualizations

The following diagrams illustrate the logical flow and architecture of a robust equipment health monitoring system.

Real-Time Monitoring Data Flow

Fault Alert and Escalation Logic

Integrating Maintenance Management Software for Scheduling and Parts Tracking

This technical support center provides troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals minimize downtime in robotic laboratory systems by effectively integrating maintenance management software, commonly called a computerized maintenance management system (CMMS).

Troubleshooting Guide: Common Integration Issues

This section addresses specific technical problems you might encounter when integrating maintenance management software for scheduling and parts tracking.

Problem: The software does not communicate with laboratory robotic assets, preventing data collection.

- Question: Why can't my CMMS pull operational data like error codes or cycle counts from our high-throughput screening robots?

- Investigation: This is typically a connectivity or configuration issue.

- Solution:

  - Verify Physical Connectivity: Ensure all cables connecting the robotic assets to the network are secure. For wireless systems, check signal strength.

  - Check Communication Protocols: Confirm that the robotic system and the CMMS support a common communication protocol (e.g., OPC UA, MQTT, or manufacturer-specific APIs). Consult both systems' documentation.

  - Validate Data Mapping: Within the CMMS, ensure the asset's unique identifier (e.g., asset tag "HTS-01") is correctly mapped to the data stream from the robot. Incorrect mapping is a common cause of failure [47].

  - Review Firewall Settings: Corporate firewall settings may block the port used for communication. Work with your IT department to ensure the required ports are open.

Problem: Inaccurate parts tracking leads to stockouts of critical consumables.

- Question: Our system shows a spare pipette head is in stock, but the bin is empty, causing an unexpected experiment halt. What went wrong?

- Investigation: The root cause is often a process failure, not a software bug.

- Solution:

  - Audit Inventory Counts: Perform a physical count of all tracked items to reconcile with the digital records in the CMMS.

  - Implement Barcode/QR Code Scanning: Mandate the use of scanners for all check-ins and check-outs of critical parts. This eliminates manual data entry errors [47].

  - Set Reorder Points: For every critical spare part, establish a minimum quantity. The CMMS should automatically generate a purchase request or alert when stock falls below this threshold [48].

  - Review Procedures: Ensure all team members are trained on the correct procedure for withdrawing and recording parts.
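
The reorder-point rule above reduces to a simple comparison per part. The following Python sketch shows the idea with made-up data; the `SparePart` fields, part numbers, and quantities are hypothetical, and a real CMMS would expose this as a configuration setting rather than code.

```python
from dataclasses import dataclass


@dataclass
class SparePart:
    part_number: str       # hypothetical identifier
    description: str
    on_hand: int           # quantity confirmed by the last physical count
    reorder_point: int     # minimum quantity before a purchase request
    reorder_quantity: int  # how many to order when the rule fires


def parts_to_reorder(inventory):
    """Return (part_number, quantity) for parts that fell below their minimum."""
    return [
        (part.part_number, part.reorder_quantity)
        for part in inventory
        if part.on_hand < part.reorder_point
    ]


inventory = [
    SparePart("PH-8CH", "8-channel pipette head", on_hand=0,
              reorder_point=2, reorder_quantity=4),
    SparePart("GR-PAD", "gripper pad set", on_hand=5,
              reorder_point=3, reorder_quantity=10),
]
for part_number, quantity in parts_to_reorder(inventory):
    print(f"purchase request: {quantity} x {part_number}")
```

The rule is only as reliable as the `on_hand` figure, which is why the physical audit and scanning steps come first.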

Problem: Scheduled maintenance tasks are not being generated or assigned.

- Question: I configured a monthly calibration task for our automated liquid handler, but no work orders were created.

- Investigation: The issue likely lies in the task setup or scheduling parameters.

- Solution:

  - Confirm Task Activation: Check that the preventive maintenance (PM) task is set to "Active" and not in "Draft" mode.

  - Review Assignment Rules: Verify the task is assigned to a valid user or team. If the assigned technician is inactive, the task may not generate.

  - Check Schedule Parameters: Ensure the start date for the schedule has passed and the frequency (e.g., every 30 days) is correct. Check for conflicting "downtime" or "holiday" calendars that might be suppressing generation.

  - Validate Trigger Conditions: If the task is condition-based (e.g., trigger after 1000 cycles), confirm the asset's cycle count is being accurately recorded by the CMMS.
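
The checks above can be read as a short decision procedure. The Python sketch below models it for a calendar-based task so that each reason a work order might be suppressed becomes explicit. The `PMTask` fields and function names are assumptions for illustration and do not correspond to any particular CMMS data model.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class PMTask:
    name: str
    active: bool               # "Active" rather than "Draft"
    assignee_active: bool      # assigned user or team is valid
    start_date: date
    frequency_days: int
    last_generated: Optional[date] = None


def blocking_reasons(task, today, blackout_dates=frozenset()):
    """List every configuration problem that would suppress a work order."""
    reasons = []
    if not task.active:
        reasons.append("task is not Active")
    if not task.assignee_active:
        reasons.append("assignee is inactive")
    if today < task.start_date:
        reasons.append("start date has not passed")
    if today in blackout_dates:
        reasons.append("date is on a downtime/holiday calendar")
    return reasons


def next_due(task):
    """First run is due on the start date, later runs one interval apart."""
    if task.last_generated is None:
        return task.start_date
    return task.last_generated + timedelta(days=task.frequency_days)


def should_generate(task, today, blackout_dates=frozenset()):
    return not blocking_reasons(task, today, blackout_dates) and today >= next_due(task)


task = PMTask("Monthly calibration", active=False, assignee_active=True,
              start_date=date(2025, 1, 1), frequency_days=30)
print(blocking_reasons(task, today=date(2025, 2, 1)))
```

Returning the full list of reasons, rather than a single yes/no, mirrors how the troubleshooting steps are meant to be used: several misconfigurations can coexist.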

Problem: The CMMS is generating a high volume of alerts, causing the team to ignore them.

- Question: My team is receiving dozens of automated alerts daily, many of which are low-priority, leading to important alerts being missed.

- Investigation: This is an issue of alert fatigue due to poor configuration.

- Solution:

  - Implement Alert Triage: Classify alerts based on urgency and impact. Use priorities like "Critical," "High," "Medium," and "Low."

  - Customize Notification Rules: Configure the CMMS to send immediate push notifications or emails only for "Critical" alerts (e.g., complete robot failure). "Low" priority alerts can be consolidated into a daily digest email.

  - Utilize Failure Codes: When closing a work order, technicians should assign a failure code. Analyze this data to identify and eliminate recurring, low-importance alerts at their root cause [49].
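
A minimal Python sketch of the triage and notification rules follows. The alert dictionaries and the `route_alerts` function are hypothetical. The sketch also assumes that "High" and "Medium" alerts go into the daily digest alongside "Low" ones, which the guidance above leaves open and which a team may well choose to handle differently.

```python
from collections import defaultdict

PRIORITIES = ("Critical", "High", "Medium", "Low")
IMMEDIATE = {"Critical"}   # only these trigger push notifications or emails


def route_alerts(alerts):
    """Split alerts into immediate notifications and a per-priority daily digest."""
    immediate = []
    digest = defaultdict(list)
    for alert in alerts:
        priority = alert["priority"]
        if priority not in PRIORITIES:
            raise ValueError(f"unknown priority: {priority!r}")
        if priority in IMMEDIATE:
            immediate.append(alert)
        else:
            digest[priority].append(alert)   # assumption: all others are batched
    return immediate, dict(digest)


alerts = [
    {"id": 1, "priority": "Critical", "message": "Robot HTS-01 stopped"},
    {"id": 2, "priority": "Low", "message": "Tip box below half"},
    {"id": 3, "priority": "Medium", "message": "Calibration due in 7 days"},
]
immediate, digest = route_alerts(alerts)
print(f"{len(immediate)} immediate, {sum(len(v) for v in digest.values())} in digest")
```

Rejecting unknown priorities keeps unclassified alerts from silently bypassing triage.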

Frequently Asked Questions (FAQs)

Q1: What is the most critical data to import when first setting up our CMMS for lab robotics? Start with a complete and accurate asset list of all your robotic systems, including make, model, and serial number. Then, import the maintenance manuals, historical work orders, and a current inventory of all critical spare parts. Establishing this "single source of truth" is foundational for effective scheduling and parts tracking [49].

Q2: How can we ensure our team actually uses the new CMMS and follows the new maintenance schedules? Choose a user-friendly CMMS with a mobile app for technicians in the field. Involve the team in the selection and setup process to foster a sense of ownership. Provide comprehensive training and clearly communicate the benefits, such as how the system will make their jobs easier by reducing emergency repairs and improving parts availability [47].

Q3: Our lab operates 24/7. How can we perform maintenance without disrupting critical experiments? Leverage the scheduling flexibility of your CMMS. You can:

- Schedule non-intrusive PMs (like visual inspections) during active run times.

- Use the system to plan and reserve longer maintenance windows between experimental cycles.

- Employ predictive maintenance (PdM) techniques, using sensor data to identify the optimal time for maintenance before a failure occurs, thus avoiding unplanned downtime during a critical run [5].

Q4: What are the key metrics we should track to prove this software is reducing downtime? Your CMMS should help you track and report on the following key performance indicators (KPIs) [50]:

- Mean Time Between Failures (MTBF): This should increase as your maintenance program improves.

- Mean Time To Repair (MTTR): This should decrease as parts tracking and troubleshooting improve.

- Overall Equipment Effectiveness (OEE): A holistic measure of availability, performance, and quality.

- Planned Maintenance Percentage (PMP): The share of total maintenance hours spent on planned rather than unplanned work; a higher percentage indicates a more proactive, efficient program.
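
For reference, these KPIs are usually computed as shown in the Python sketch below. The formulas are the commonly used definitions (PMP as planned hours over total maintenance hours, OEE as availability × performance × quality). The sample figures are invented for illustration and are not benchmarks from [50].

```python
def mtbf(operating_hours: float, failures: int) -> float:
    """Mean Time Between Failures: operating time per failure."""
    return operating_hours / failures


def mttr(repair_hours: float, repairs: int) -> float:
    """Mean Time To Repair: average hours spent per repair."""
    return repair_hours / repairs


def pmp(planned_hours: float, unplanned_hours: float) -> float:
    """Planned Maintenance Percentage: planned share of all maintenance hours."""
    return 100.0 * planned_hours / (planned_hours + unplanned_hours)


def oee(availability: float, performance: float, quality: float) -> float:
    """Overall Equipment Effectiveness: product of three ratios in [0, 1]."""
    return availability * performance * quality


# Invented month of data for one liquid handler.
print(f"MTBF: {mtbf(operating_hours=700, failures=4):.1f} h")
print(f"MTTR: {mttr(repair_hours=10, repairs=4):.1f} h")
print(f"PMP:  {pmp(planned_hours=30, unplanned_hours=10):.1f} %")
print(f"OEE:  {oee(0.95, 0.90, 0.99):.1%}")
```

Tracking the same four numbers before and after the CMMS rollout gives a like-for-like comparison, provided the definitions of "failure" and "operating hours" stay fixed.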

Q5: We have multiple types of robots from different vendors. Can one CMMS handle all of them? Yes, a modern CMMS is designed to be a centralized platform. The key is to ensure it is compatible with the various data outputs from your different systems. This may require some initial configuration or custom API integrations, but it will provide a unified view of your entire lab's maintenance operations [51].

The following table summarizes key quantitative data relevant to maintaining robotic laboratory systems, based on industry findings.

Table 1: Maintenance Performance Metrics and Outcomes

| Metric / Factor | Industry Benchmark or Outcome | Source |
|---|---|---|
| Cost of Unplanned Downtime | Average of $25,000 per hour | [48] |
| Predictive Maintenance Impact | Reduces downtime by 30-50% | [51] |
| Predictive Maintenance Impact | Extends equipment life by 20-40% | [51] |
| Lab Automation Uptime Target | 99.5% requirement for critical systems | [5] |
| Robotic System Uptime | 98%+ achievable with preventive maintenance | [5] |
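
To put the uptime figures in perspective, the short Python sketch below converts an uptime percentage into an annual downtime budget for a system that runs around the clock. The cost line is purely illustrative: it assumes every hour of that budget is unplanned and priced at the table's average hourly figure, which will not hold for every lab.

```python
HOURS_PER_YEAR = 24 * 365      # 8,760 hours, ignoring leap years
COST_PER_HOUR_USD = 25_000     # average from the table; actual costs vary


def allowed_downtime_hours(uptime_pct, period_hours=HOURS_PER_YEAR):
    """Hours of downtime permitted per period at a given uptime percentage."""
    return period_hours * (100.0 - uptime_pct) / 100.0


for target in (99.5, 98.0):
    hours = allowed_downtime_hours(target)
    exposure = hours * COST_PER_HOUR_USD
    print(f"{target}% uptime: {hours:.1f} h/year, about ${exposure:,.0f} if all unplanned")
```

The gap between the two targets is roughly 131 hours per year, which shows why a seemingly small difference in uptime percentage matters for critical systems.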

Experimental Protocol: Implementing a Condition-Based Maintenance (CBM) Workflow

This detailed methodology describes how to set up a CBM program for a robotic arm using integrated sensor data and your CMMS [51].

- Sensor Installation and Calibration: Install appropriate condition monitoring sensors (e.g., vibration, temperature, current draw) directly on the critical components of the robotic arm, such as the joints and gripper. Calibrate all sensors according to manufacturer specifications.

- Baseline Data Collection: Operate the robotic arm under normal conditions for a set period (e.g., 72 hours). Record the sensor data to establish a baseline "healthy" signature for parameters like vibration frequency and operating temperature.