Small Data Machine Learning in Materials Science: Strategies for Overcoming Limited Data Challenges

Abstract

This article provides a comprehensive guide for researchers and drug development professionals facing the common challenge of small datasets in materials machine learning. It explores the fundamental nature of small data problems, details advanced methodological approaches like transfer learning and data augmentation, offers troubleshooting strategies for issues like overfitting and data imbalance, and outlines robust validation techniques to ensure model reliability. By synthesizing the latest research, this guide aims to equip scientists with practical strategies to extract maximum value from limited experimental and computational data, accelerating materials discovery and development.

Understanding the Small Data Dilemma in Materials Informatics

Defining 'Small Data' in the Context of Materials Science

Frequently Asked Questions (FAQs)

FAQ 1: What constitutes a 'Small Dataset' in materials science? A 'Small Dataset' refers to a collection of data that is insufficient in size, diversity, or quality to train a reliable machine learning model using standard methods. In materials science, this challenge is severe because acquiring data from experiments and simulations is costly and slow. The problem is often compounded by limited data diversity, noise, class imbalance, and high dimensionality [1] [2]. The core issue is not just the absolute number of data points but the relationship between that number and the complexity (degrees of freedom) of the machine learning model: with too few samples, a model simple enough to be fitted reliably tends to underfit and show large prediction bias [3], whereas a more flexible model tends to overfit.

FAQ 2: Why is the small data problem so common in materials science? The small data problem is pervasive in materials science due to several constraints specific to the field:

- High Acquisition Costs: Experimental materials synthesis and characterization, as well as high-fidelity computational simulations (e.g., DFT), are often extremely expensive and time-consuming [1] [3].

- Technical and Practical Limits: Data collection can be limited by technical hurdles, ethical considerations, privacy, and security [1].

- Data Imbalance and Quality: Available data may be noisy, contain missing values, or be imbalanced, where one class of materials or properties is significantly underrepresented [2].

FAQ 3: What are the primary consequences of using small datasets for ML? Using small datasets for machine learning typically results in models with poor predictive performance and generalizability. When the model is kept simple to match the available data, the consequence is underfitting, characterized by a large prediction bias [3]; when a flexible model is used anyway, the consequence is overfitting. Either way, the model's ability to make accurate predictions on unseen data is restricted, especially when exploring unknown domains of the materials space [3]. The power of machine learning to recognize complex patterns generally grows with the size of the dataset [2].

FAQ 4: Can I use advanced Deep Learning models with small materials data? Standard Deep Learning models, which typically require tens of thousands to millions of labeled training examples, are often not suitable for small data scenarios [4]. However, strategies have been developed to enable the use of sophisticated models even with limited data. These include data augmentation to artificially expand the dataset, transfer learning where knowledge from a pre-trained model is adapted, and incorporating domain knowledge to guide the model [5] [1] [4].

Troubleshooting Guides

Problem: Your ML Model is Underfitting on a Small Dataset

Symptoms:

- High error on both training and test data.

- Failure to capture underlying trends in the data.

Solutions:

- Integrate Domain Knowledge: Infuse your model with physical laws, empirical rules, or crude property estimations. This provides a foundational understanding that the model can build upon, reducing its reliance on vast amounts of data [3].

- Apply Data Augmentation: Artificially expand your dataset by creating synthetic data points. In materials science, this can be achieved through physical model-based simulations or techniques that introduce realistic variations to existing data [5] [1].

- Utilize Transfer Learning: Start with a model that has been pre-trained on a large, related dataset (even from a different domain). Then, fine-tune the model on your specific, small dataset. This leverages general patterns learned from the large dataset [5] [4].

- Implement Active Learning: Use a cyclical process where the model identifies which new data points would be most informative to acquire. This strategy optimizes experimental or computational resources by focusing them on gathering the most valuable data [5].

Problem: Difficulty in Finding Suitable Datasets for Your Research

Symptoms:

- Inability to locate relevant, high-quality, ML-ready data.

- Discovery of datasets that are too small or lack critical metadata.

Solutions:

- Consult Curated Repositories: Utilize community-driven resources that catalog high-quality datasets. Below is a table of prominent repositories and key datasets in materials science.

Table: Key Resources for Materials Science Datasets

| Resource Name | Type | Notable Datasets (Size) | Format |

|---|---|---|---|

| Awesome Materials & Chemistry Datasets [6] | Curated Repository | A curated list of useful datasets for ML/AI, including OMat24, Materials Project, and Open Catalyst. | Various (CSV, JSON, CIF) |

| Materials Project [6] | Computational Database | >500,000 inorganic compounds | JSON/API |

| Open Catalyst 2020 (OC20) [6] | Computational Dataset | ~1.2M surface relaxations | JSON/HDF5 |

| Crystallography Open Database (COD) [6] | Experimental Database | ~525,000 crystal structures | CIF/SMILES |

| Cambridge Structural Database (CSD) [6] | Experimental Database | ~1.3 million organic crystal structures | CIF |

| OMat24 [6] | Computational (Meta) | 110 million DFT entries | JSON/HDF5 |

- Use Educational Datasets for Method Development: For initial testing and benchmarking of new ML algorithms on small data problems, consider using well-documented educational datasets like those from the Materials Data Science Book (MDS) [7]. These are often simpler and come with extensive documentation.

Table: Example Educational Datasets from the MDS Book

| Dataset | Domain | Size | Description |

|---|---|---|---|

| MDS-1 | Tensile Test | 350 data records | Simulated stress-strain curves for a material at three temperatures. |

| MDS-2 | Microstructure | 5,000 images (64x64) | Microstructure images from Ising model simulations with associated temperatures. |

| MDS-3 | Microstructure | 5,000 images (64x64) | Microstructure images from Cahn-Hilliard simulations of spinodal decomposition. |

Experimental Protocols for Small Data

Protocol: Data Augmentation via Physical Model-Based Simulation

Objective: To generate synthetic data points to augment a small experimental dataset.

Materials: A physical model (e.g., a constitutive law, phase field model, or DFT) that approximates the material behavior of interest.

Methodology:

- Identify Key Parameters: Determine the input parameters for your physical model (e.g., composition, temperature, strain).

- Define Parameter Ranges: Establish realistic ranges for these parameters based on domain knowledge.

- Run Simulations: Execute the model across a designed set of input parameters (e.g., via a grid search or random sampling) to generate synthetic output data (e.g., stress, conductivity, phase).

- Validate: Where possible, check that the simulated data aligns qualitatively with known physical behavior or a subset of held-out experimental data.

- Combine Datasets: Merge the synthetic data with the original experimental data to create a larger, augmented training set for ML [1].
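
The steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a recommended model: the Arrhenius-type conductivity law, its prefactor, the parameter ranges, and the three "experimental" rows are all hypothetical placeholders chosen only to make the workflow concrete.

```python
import numpy as np

K_B = 8.617e-5  # Boltzmann constant in eV/K

def arrhenius_conductivity(temperature_k, activation_ev, prefactor=1.0e3):
    """Hypothetical physical model: sigma = A * exp(-Ea / (kB * T))."""
    return prefactor * np.exp(-activation_ev / (K_B * temperature_k))

rng = np.random.default_rng(0)

# Identify key parameters and define realistic ranges (assumed values).
n_synthetic = 200
temperature = rng.uniform(300.0, 900.0, n_synthetic)   # K
activation = rng.uniform(0.2, 0.6, n_synthetic)        # eV

# Run the model over randomly sampled inputs.
sigma = arrhenius_conductivity(temperature, activation)
synthetic = np.column_stack([temperature, activation, np.log10(sigma)])

# Invented "experimental" rows: (T, Ea, log10 sigma) with small offsets.
experimental = np.array([
    [400.0, 0.30, np.log10(arrhenius_conductivity(400.0, 0.30)) + 0.05],
    [600.0, 0.45, np.log10(arrhenius_conductivity(600.0, 0.45)) - 0.03],
    [800.0, 0.25, np.log10(arrhenius_conductivity(800.0, 0.25)) + 0.02],
])

# Validate: compare the model with the experimental points.
pred = np.log10(arrhenius_conductivity(experimental[:, 0], experimental[:, 1]))
max_abs_dev = np.max(np.abs(pred - experimental[:, 2]))

# Combine, keeping a flag column so synthetic rows can be down-weighted later.
is_synthetic = np.concatenate([np.zeros(len(experimental)), np.ones(n_synthetic)])
augmented = np.column_stack([np.vstack([experimental, synthetic]), is_synthetic])
```

Keeping the `is_synthetic` flag is a design choice of this sketch: it lets a downstream model weight measured points more heavily than simulated ones if the physical model is only approximate.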

Protocol: Implementing a Transfer Learning Workflow

Objective: To leverage a pre-trained model to improve performance on a small, target dataset.

Materials: A large "source" dataset and a small "target" dataset; a suitable ML model architecture (e.g., a Graph Neural Network for molecules/materials).

Methodology:

- Pre-training: Train a model on the large source dataset (e.g., the OMat24 database with 110M DFT entries) until it achieves good performance. This allows the model to learn general feature representations [5] [4].

- Model Adaptation: Remove the final output layer(s) of the pre-trained model.

- Fine-Tuning: Replace the output layers with new ones suited to the specific task on your small target dataset. Re-train the entire model, or just the final layers, using the small target dataset. The learning rate for fine-tuning is often set lower than that used for pre-training [4].
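
As a rough sketch of these three steps, the example below pre-trains a tiny one-hidden-layer network on a large toy "source" set, discards its output layer, and fits a new output layer on a 20-sample "target" set while keeping the hidden layer frozen. Everything here is an assumption for illustration: the data are synthetic, the network is written directly in NumPy rather than a deep learning framework, and only the final layer is re-trained (the "just the final layers" variant).

```python
import numpy as np

rng = np.random.default_rng(0)

def make_data(n, shift):
    """Toy stand-in for materials data: 4 descriptors -> 1 property."""
    x = rng.normal(size=(n, 4))
    y = np.sin(x[:, 0]) + 0.5 * x[:, 1] * x[:, 2] + shift * x[:, 3]
    return x, y

x_src, y_src = make_data(2000, shift=0.0)   # large "source" dataset
x_tgt, y_tgt = make_data(20, shift=0.3)     # small "target" dataset

# Pre-training: one hidden tanh layer, full-batch gradient descent on MSE.
n_hidden, lr = 32, 0.02
w1 = rng.normal(scale=0.5, size=(4, n_hidden))
b1 = np.zeros(n_hidden)
w2 = rng.normal(scale=0.1, size=n_hidden)
b2 = 0.0
losses = []
for _ in range(800):
    h = np.tanh(x_src @ w1 + b1)
    err = h @ w2 + b2 - y_src
    losses.append(np.mean(err ** 2))
    grad_out = 2.0 * err / len(y_src)                 # dL/d(prediction)
    grad_h = np.outer(grad_out, w2) * (1.0 - h ** 2)  # backprop through tanh
    w2 -= lr * (h.T @ grad_out)
    b2 -= lr * grad_out.sum()
    w1 -= lr * (x_src.T @ grad_h)
    b1 -= lr * grad_h.sum(axis=0)

# Model adaptation: drop the old output layer (w2, b2); keep (w1, b1) frozen.
def hidden_features(x):
    return np.tanh(x @ w1 + b1)

# Fine-tuning: fit a new ridge-regularized output layer on the target data.
h_tgt = np.column_stack([hidden_features(x_tgt), np.ones(len(x_tgt))])
ridge = 1.0
new_head = np.linalg.solve(h_tgt.T @ h_tgt + ridge * np.eye(n_hidden + 1),
                           h_tgt.T @ y_tgt)

def predict_target(x):
    h = np.column_stack([hidden_features(x), np.ones(len(x))])
    return h @ new_head
```

Freezing the hidden layer keeps the number of parameters fitted on the target data small, which is one common reason to prefer it over re-training the entire model when only a few dozen target samples exist.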

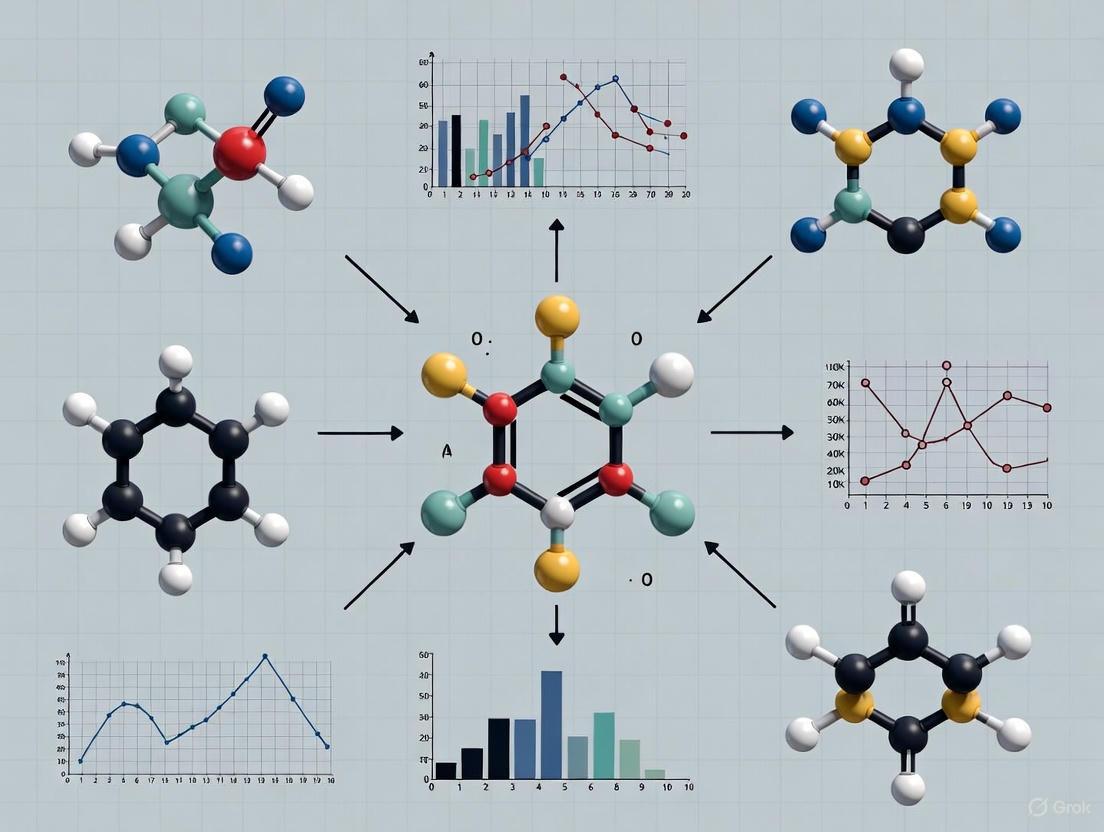

The following diagram illustrates this workflow:

Protocol: Active Learning for Optimal Data Acquisition

Objective: To strategically select the most valuable new experiments or calculations to perform, maximizing model performance with minimal new data.

Materials: An initial small dataset; a machine learning model capable of quantifying its prediction uncertainty.

Methodology:

- Train Initial Model: Train a model on the currently available small dataset.

- Query Strategy: Use the model to predict on a large pool of candidate materials (defined by their features). Select the candidates where the model's prediction uncertainty is highest, or where it would have the most impact on the model [5].

- Acquire Data: Perform the experiment or calculation for the selected candidate(s). This step is the most resource-intensive.

- Update Model: Add the newly acquired data point(s) to the training set and re-train the model.

- Iterate: Repeat steps 2-4 until a desired performance level is reached or resources are exhausted.
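
A minimal sketch of this loop is shown below, assuming a one-dimensional candidate pool and using the spread of a leave-one-out ensemble of polynomial fits as a stand-in for prediction uncertainty. The function `run_experiment` is a hypothetical placeholder for the real measurement or calculation, and the budget of ten acquisitions is arbitrary.

```python
import numpy as np

rng = np.random.default_rng(1)

def run_experiment(x):
    """Hypothetical stand-in for a costly measurement or calculation."""
    return np.sin(3.0 * x) + 0.05 * rng.normal(size=np.shape(x))

def ensemble_predict(x_train, y_train, x_query, degree=2):
    """Leave-one-out ensemble of polynomial fits; spread = uncertainty proxy."""
    preds = []
    for i in range(len(x_train)):
        keep = np.arange(len(x_train)) != i
        coeffs = np.polyfit(x_train[keep], y_train[keep], degree)
        preds.append(np.polyval(coeffs, x_query))
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)

pool = np.linspace(0.0, 2.0, 101)            # candidate materials (1-D feature)
available = np.ones(len(pool), dtype=bool)

# Train initial model: start from five measured candidates.
start = np.array([0, 25, 50, 75, 100])
x_train = pool[start]
y_train = run_experiment(x_train)
available[start] = False

for _ in range(10):                          # budget of 10 new experiments
    _, std = ensemble_predict(x_train, y_train, pool)
    std[~available] = -np.inf                # never re-select measured points
    pick = int(np.argmax(std))               # query: highest uncertainty
    y_new = run_experiment(pool[pick])       # acquire data
    x_train = np.append(x_train, pool[pick])  # update the training set
    y_train = np.append(y_train, y_new)
    available[pick] = False
```

The model is refitted inside `ensemble_predict` on every pass, so "re-train" and "predict" happen together here; with a more expensive model the two would normally be separate steps.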

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools and Data for Small Data ML in Materials Science

| Tool / Resource | Function & Application | Key Characteristics |

|---|---|---|

| Pre-Trained Foundation Models [4] | Provides a starting point for specific ML tasks via transfer learning, drastically reducing the data required. | Models pre-trained on massive datasets (e.g., OMat24, OMol25). |

| Data Augmentation Algorithms [5] [1] | Generates synthetic data to expand training sets, improving model robustness and performance. | Includes physical model-based simulation and other feature-space manipulations. |

| Uncertainty Quantification (UQ) [5] | Identifies the reliability of model predictions, which is critical for guiding active learning and establishing trust. | Methods include ensemble learning, Bayesian neural networks, etc. |

| Domain Knowledge & Crude Estimators [3] | Constrains ML models to physically plausible solutions, reducing the solution space the model must learn. | Includes physical laws, empirical rules, and semi-empirical models. |

| Ensemble Learning Models [1] [2] | Combines multiple models to improve predictive performance and robustness, often outperforming single models on small data. | Includes Random Forest, Gradient Boosting Trees, and model averaging. |

| Curated Data Repositories [6] | Provides access to high-quality, structured datasets for initial model development and pre-training. | Examples: Awesome Materials & Chemistry Datasets, Materials Project. |

For researchers in materials science and drug development, the challenge of extracting profound insights from limited experimental data is a daily reality. Unlike domains with abundant, easily generated data, materials science is inherently a small data domain. This characteristic stems from the high costs, extensive time investments, and extreme complexity associated with materials experiments and synthesis. Operating within this constraint is not a limitation of scientific methodology but rather a fundamental aspect of the discipline. This technical support center provides targeted troubleshooting guides and frameworks to help you effectively navigate these challenges, with a specific focus on strategies for successful machine learning applications in small data contexts.

The following table summarizes the primary constraints that define materials science as a small data domain and set it apart from data-rich fields.

Table: Key Factors Making Materials Science a "Small Data" Domain

| Constraint Factor | Typical Impact on Data Generation | Consequence for ML Research |

|---|---|---|

| High Experimental Costs [8] | Limits the number of feasible experiments, leading to sparse datasets. | High risk of overfitting; model generalization becomes a significant challenge. |

| Extended Experiment Duration [9] | Slow data acquisition rate; data points can take weeks or months to generate. | Iterative model training and validation cycles are prohibitively slow. |

| Complex, Multi-variable Synthesis [9] | Each data point exists in a high-dimensional space (composition, structure, processing). | Requires sophisticated feature engineering and dimensionality reduction. |

| Data Reproducibility Issues [9] | Experimental noise and irreproducibility corrupt data quality and reduce effective dataset size. | Increases uncertainty and requires robust models that can handle noisy data. |

FAQs: Addressing Common Small Data Challenges

Q1: What defines a "small dataset" in materials informatics, and what are the primary bottlenecks in generating larger ones? A "small dataset" in this context refers to a collection of data points that is insufficient for training conventional machine learning models without triggering severe overfitting. The bottlenecks are multifaceted. Financially, the specialized equipment and precursor materials required are often extraordinarily expensive [8]. Temporally, traditional "artisanal" experimentation, often conducted manually by graduate students, can take months for a single cycle of synthesis, characterization, and testing [8] [10]. Technically, achieving reproducibility is a major hurdle, as minute deviations in precursor mixing or environmental conditions can alter material properties, a problem that MIT researchers found required computer vision models to even diagnose [9].

Q2: We have a small internal dataset for a novel polymer. How can we possibly train a reliable predictive model? The most effective strategy is to leverage Transfer Learning. This involves using a model initially pre-trained on a large, general materials dataset (the "source domain"), such as the Materials Project database, and then fine-tuning it with your small, specific polymer dataset (the "target domain") [5] [11]. For example, a study on doped perovskites successfully predicted formation energies by first training a deep learning model on a large ABO3-type perovskite dataset and then fine-tuning it on a much smaller dataset of doped structures [11]. This approach allows the model to incorporate fundamental knowledge of chemistry and physics before specializing.

Q3: With a limited budget for experiments, how should I prioritize which experiments to run next to maximize information gain? Implement an Active Learning framework. This machine learning strategy intelligently selects the most informative experiments to run next. The core workflow involves using a model's own uncertainty to guide the experimental design process [5] [12]. As detailed in the troubleshooting guide below, you start by training an initial model on your existing data. For the next round of experiments, you prioritize synthesizing and testing the materials for which your model's predictions are most uncertain. This ensures that every experiment you conduct provides the maximum possible amount of new information to improve your model.

Q4: Our experimental data is sparse and high-dimensional. What techniques can help reduce the feature space without losing critical information? Integrating Domain Knowledge directly into the model is a powerful method for feature reduction. Instead of relying solely on data-driven descriptors, you can use physics-based or chemistry-based principles to create more meaningful features. This could include using known crystal structure descriptors, thermodynamic parameters, or functional groupings [5]. This approach grounds the model in established science, reducing the risk of it learning spurious correlations from the limited data. Data augmentation techniques, such as slightly perturbing existing data points within physically plausible bounds, can also artificially expand the effective training set [5].
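
As a small illustration of a domain-knowledge descriptor, the sketch below computes two classic perovskite features, the Goldschmidt tolerance factor and the octahedral factor, from ionic radii. The radii are approximate Shannon values entered by hand as an assumption for this example; check them against a reference table before using them in real work.

```python
import math

def tolerance_factor(r_a, r_b, r_x):
    """Goldschmidt tolerance factor t = (r_A + r_X) / (sqrt(2) * (r_B + r_X))."""
    return (r_a + r_x) / (math.sqrt(2.0) * (r_b + r_x))

def octahedral_factor(r_b, r_x):
    """Octahedral factor mu = r_B / r_X."""
    return r_b / r_x

# Approximate ionic radii in angstroms for SrTiO3 (assumed values).
radii = {"Sr": 1.44, "Ti": 0.605, "O": 1.40}

t = tolerance_factor(radii["Sr"], radii["Ti"], radii["O"])
mu = octahedral_factor(radii["Ti"], radii["O"])
features = [t, mu]   # two physically motivated inputs instead of many raw ones
```

Two descriptors of this kind can replace a much larger set of raw elemental properties, which is the feature-reduction effect described above.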

Troubleshooting Guides for Small Data Experiments

Issue: Machine Learning Model Performs Poorly on Small Internal Dataset

Problem: A model trained on a small, proprietary dataset shows high accuracy on training data but fails to predict new, unseen material compositions accurately (i.e., it overfits).

Solution: Implement a Transfer Learning workflow.

Methodology:

- Source Model Selection and Pre-training: Begin with a deep neural network model. Pre-train this model on a large, public-domain dataset that is broadly related to your material class (e.g., the Materials Project for inorganic crystals [11]).

- Feature Representation: Construct a unified feature representation for the source and target data. This typically involves elemental properties (e.g., electronegativity, atomic radius) and structural information [11].

- Model Fine-tuning: Replace the final layers of the pre-trained model and perform additional training (fine-tuning) using your small internal dataset. This process allows the model to adapt its general knowledge to your specific problem.

- Validation: Always validate the transfer-learned model on a held-out portion of your internal data or through subsequent experimental confirmation.

Diagram 1: Transfer Learning Workflow for Small Data

Issue: Optimizing an Experimental Campaign with a Limited Number of Tests

Problem: You have resources for only 20-30 experiments but need to find a material with optimal properties within a vast compositional space.

Solution: Deploy an Active Learning loop with Bayesian optimization.

Methodology:

- Initial Design: Start with a small, diverse set of initial experiments (4-6 data points) selected to cover the compositional space.

- Model and Predict: Train a machine learning model (e.g., a Gaussian process model) on all collected data. The model will provide predictions and, crucially, uncertainty estimates for all unexplored compositions.

- Acquisition Step: Use an acquisition function (e.g., Upper Confidence Bound or Expected Improvement) to select the next experiment. This function balances exploring high-uncertainty regions and exploiting areas with predicted high performance [12].

- Iterate: Run the selected experiment(s), add the new data to the training set, and repeat steps 2-3 until the experimental budget is exhausted. As MIT researchers describe, this is like "Netflix recommending the next movie to watch based on your viewing history, except instead it recommends the next experiment to do" [9].
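
The loop can be sketched with a small hand-written Gaussian process and an Upper Confidence Bound acquisition function. This is an illustrative sketch under several assumptions: `measure` is a made-up response surface standing in for the real experiment, the composition is reduced to a single variable, and the kernel length scale (0.1), noise level, and exploration weight (2.0) are arbitrary choices rather than tuned values.

```python
import numpy as np

def measure(x):
    """Hypothetical experiment: property vs. a 1-D composition variable."""
    return np.exp(-(x - 0.7) ** 2 / 0.02) + 0.5 * np.exp(-(x - 0.2) ** 2 / 0.01)

def rbf(a, b, length=0.1):
    """Squared-exponential kernel with unit prior variance."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP."""
    k = rbf(x_train, x_train) + noise * np.eye(len(x_train))
    k_s = rbf(x_train, x_query)
    mean = k_s.T @ np.linalg.solve(k, y_train)
    var = 1.0 - np.sum(k_s * np.linalg.solve(k, k_s), axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))

candidates = np.linspace(0.0, 1.0, 201)
x_obs = np.array([0.05, 0.35, 0.65, 0.95])   # initial design covering the space
y_obs = measure(x_obs)

for _ in range(16):                          # 4 + 16 = 20 experiments in total
    mean, std = gp_posterior(x_obs, y_obs, candidates)   # model and predict
    ucb = mean + 2.0 * std                               # acquisition (UCB)
    x_next = candidates[int(np.argmax(ucb))]
    x_obs = np.append(x_obs, x_next)                     # run and add the result
    y_obs = np.append(y_obs, measure(x_next))

best_x = x_obs[int(np.argmax(y_obs))]
```

A larger exploration weight pushes the search toward unexplored compositions, while a smaller one concentrates experiments around the current best prediction.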

Diagram 2: Active Learning Loop for Experimental Optimization

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table: Key Solutions for Small Data Challenges in Materials Machine Learning

| Tool / Solution | Function | Application Example |

|---|---|---|

| Transfer Learning [5] [11] | Transfers knowledge from a data-rich "source domain" to a data-poor "target domain", significantly improving model performance. | Fine-tuning a model pre-trained on general perovskites (ABO3) to predict properties of novel doped perovskites (AA'BB'O6) [11]. |

| Active Learning [5] [12] [9] | An iterative process that uses model uncertainty to select the most informative experiments, maximizing the value of each data point. | Guiding a robotic lab to synthesize the next material composition most likely to improve a fuel cell catalyst's performance [9]. |

| Data Augmentation [5] | Artificially expands the training set by creating slightly modified versions of existing data points, based on physically plausible rules. | Generating new virtual data points by applying small random noise to the elemental features of known stable materials. |

| Domain Knowledge Integration [5] [12] | Uses established scientific principles to create meaningful features or constrain models, preventing unphysical predictions. | Using known crystal structure descriptors (e.g., tolerance factor, octahedral factor) as primary inputs for perovskite stability prediction [11]. |

| Ensemble Models [5] | Combines predictions from multiple models to improve accuracy and provide a robust measure of prediction uncertainty. | Using a random forest model, which aggregates many decision trees, to predict material properties with higher confidence. |

Advanced Protocols: Case Study of a Self-Driving Lab

The CRESt (Copilot for Real-world Experimental Scientists) platform developed at MIT provides a robust protocol for overcoming small data challenges through full automation and multimodal learning [9].

Objective: To discover a high-performance, low-cost multielement catalyst for a direct formate fuel cell.

Experimental Workflow:

- Multimodal Knowledge Integration: The system begins by ingesting diverse information sources, including scientific literature text, known chemical compositions, and structural data, to form an initial knowledge base [9].

- Dimensionality Reduction: Principal Component Analysis (PCA) is performed on this "knowledge embedding space" to define a reduced, tractable search space for experimentation [9].

- Robotic Synthesis and Testing: A liquid-handling robot and a carbothermal shock system automatically synthesize candidate compositions. An automated electrochemical workstation then tests their performance [9].

- Continuous Feedback and Optimization: Results from synthesis and testing (including microstructural images from automated electron microscopy) are fed back into the active learning model. This model, which combines Bayesian optimization with literature-derived knowledge, plans the next round of experiments [9].

- Computer Vision Monitoring: Cameras and vision-language models monitor experiments in real-time to detect and suggest corrections for irreproducibility issues, such as a misaligned sample or pipetting error [9].

Outcome: This protocol enabled the exploration of over 900 chemistries and 3,500 tests in three months, leading to the discovery of an eight-element catalyst with a record 9.3-fold improvement in power density per dollar over pure palladium [9]. This showcases the power of integrated AI and robotics to solve small data problems by generating high-quality data at an unprecedented scale.

Troubleshooting Guides

Troubleshooting Guide: Overfitting

Problem: Model performs well on training data but poorly on new, unseen data.

- Check for Data Scarcity: Insufficient training data is a primary cause. In materials science, small datasets are common due to high experimental or computational costs [13].

- Verify Model Complexity: Overly complex models learn noise and idiosyncrasies in the training data. A model that is more complex than necessary for your problem will overfit [14].

- Inspect Training Metrics: Use cross-validation, not just training data accuracy, for evaluation. A high accuracy on training data with a high error rate on test data indicates overfitting [14] [15].

Solutions:

- Apply Regularization: Techniques like L1 (Lasso) or L2 (Ridge) regularization add a penalty for model complexity, discouraging overfitting [15].

- Use Early Stopping: When training an iterative model like a neural network, halt the training process once the error on a validation set stops improving, before the model starts to learn the noise in the data [15].

- Implement Dimensionality Reduction: Use feature selection (pruning) or projection methods like Principal Component Analysis (PCA) to reduce the number of descriptors and eliminate irrelevant features [13] [15].
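
The effect of regularization, and the reason cross-validation is the right diagnostic, can be seen on a toy problem. The sketch below assumes synthetic data (30 samples, 20 descriptors, 3 of them relevant) and a closed-form ridge regression written in NumPy; the penalty values are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(3)

# Toy small-data problem: 30 samples, 20 descriptors, only 3 are relevant.
n_samples, n_features = 30, 20
x = rng.normal(size=(n_samples, n_features))
y = 2.0 * x[:, 0] - 1.0 * x[:, 1] + 0.5 * x[:, 2] + 0.3 * rng.normal(size=n_samples)

# Fixed 5-fold split so every penalty is scored on the same folds.
folds = np.array_split(rng.permutation(n_samples), 5)

def ridge_fit(x_tr, y_tr, alpha):
    """Closed-form L2-regularized least squares (no intercept term)."""
    gram = x_tr.T @ x_tr + alpha * np.eye(x_tr.shape[1])
    return np.linalg.solve(gram, x_tr.T @ y_tr)

def cv_mse(alpha):
    """Mean training and validation MSE across the folds."""
    train_mse, val_mse = [], []
    for val_idx in folds:
        tr_idx = np.setdiff1d(np.arange(n_samples), val_idx)
        w = ridge_fit(x[tr_idx], y[tr_idx], alpha)
        train_mse.append(np.mean((x[tr_idx] @ w - y[tr_idx]) ** 2))
        val_mse.append(np.mean((x[val_idx] @ w - y[val_idx]) ** 2))
    return float(np.mean(train_mse)), float(np.mean(val_mse))

results = {alpha: cv_mse(alpha) for alpha in (1e-6, 0.1, 1.0, 10.0)}
for alpha, (tr, va) in results.items():
    print(f"alpha={alpha:g}  train MSE={tr:.3f}  CV MSE={va:.3f}")
```

With almost no penalty, the training error is far below the cross-validated error, which is the overfitting signature described above; the penalty to use is the one that minimizes the cross-validated error, not the training error.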

Troubleshooting Guide: High-Dimensionality

Problem: Too many features or descriptors compared to the number of data samples, leading to models that are difficult to interpret and prone to overfitting.

- Assess Feature-to-Sample Ratio: A high number of features relative to data samples is a hallmark of this problem, common when using software-generated material descriptors [13] [16].

- Check for Feature Redundancy: Many features may be highly correlated, providing redundant information [13].

Solutions:

- Perform Feature Engineering: This includes feature selection and dimensionality reduction.

  - Feature Selection: Use filter (e.g., correlation-based), wrapper (e.g., recursive feature elimination), or embedded (e.g., Lasso) methods to select the most important descriptors [13].

  - Dimensionality Reduction: Apply linear (e.g., PCA, LDA) or non-linear methods to project high-dimensional data into a lower-dimensional space while preserving key information [13] [16].

- Leverage Domain Knowledge: Generate physically meaningful descriptors based on expert knowledge to create more interpretable and robust models [13].
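
Two of these options, a correlation-based filter and PCA, are sketched below in plain NumPy. The descriptor matrix is synthetic (40 descriptors generated from 3 hidden factors), and the 0.95 thresholds for correlation and explained variance are assumptions chosen for the example.

```python
import numpy as np

rng = np.random.default_rng(4)

# Toy descriptor matrix: 25 samples, 40 descriptors driven by 3 latent factors.
latent = rng.normal(size=(25, 3))
x = latent @ rng.normal(size=(3, 40)) + 0.05 * rng.normal(size=(25, 40))

def drop_correlated(x, threshold=0.95):
    """Filter method: greedily keep columns not highly correlated with kept ones."""
    corr = np.abs(np.corrcoef(x, rowvar=False))
    kept = []
    for j in range(x.shape[1]):
        if all(corr[j, i] < threshold for i in kept):
            kept.append(j)
    return kept

def pca(x, explained=0.95):
    """PCA via SVD; keep the fewest components that reach the variance target."""
    centered = x - x.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    ratio = np.cumsum(s ** 2) / np.sum(s ** 2)
    n_comp = int(np.searchsorted(ratio, explained) + 1)
    return centered @ vt[:n_comp].T, n_comp

kept_columns = drop_correlated(x)
scores, n_components = pca(x)
```

The filter keeps original descriptors and so stays interpretable, whereas the PCA scores are linear mixtures of all descriptors; which trade-off is preferable depends on whether interpretability matters for the study.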

Troubleshooting Guide: Data Imbalance

Problem: The dataset has a disproportionate distribution of classes, causing the model to be biased toward the majority class and perform poorly on the minority class.

- Evaluate Class Distribution: Calculate the number of samples in each class. Imbalance is common in materials science, such as having far more stable material candidates than unstable ones [17].

- Check Model Performance Metrics: Do not rely on accuracy alone. For imbalanced datasets, high accuracy can be misleading if the model simply defaults to predicting the majority class [17] [18].

Solutions:

- Apply Resampling Techniques:

  - Oversampling the Minority Class: Randomly duplicate samples or use synthetic data generation algorithms like SMOTE to create new, synthetic minority samples [17] [18].

  - Undersampling the Majority Class: Randomly remove samples from the majority class or use methods like Tomek Links to clean the data space [17] [18].

- Use Algorithm-Level Approaches: Employ cost-sensitive learning that assigns a higher penalty for misclassifying minority class samples during model training [17] [19].
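
The sketch below illustrates one algorithm-level and one data-level option on made-up labels: inverse-frequency class weights (a common "balanced" heuristic) for cost-sensitive training, and random oversampling of the minority class. The 45-to-5 class split and the random features are assumptions for illustration, and in practice both steps would be applied to the training split only.

```python
import numpy as np

rng = np.random.default_rng(5)

y = np.array([0] * 45 + [1] * 5)             # 45 majority vs. 5 minority labels
x = rng.normal(size=(len(y), 3))

# Cost-sensitive learning: weight each class inversely to its frequency,
# w_c = n_samples / (n_classes * n_c).
classes, counts = np.unique(y, return_counts=True)
class_weight = {int(c): len(y) / (len(classes) * n) for c, n in zip(classes, counts)}
sample_weight = np.array([class_weight[int(label)] for label in y])

# Random oversampling: duplicate minority rows until the classes are balanced.
minority = classes[np.argmin(counts)]
minority_idx = np.flatnonzero(y == minority)
extra = rng.choice(minority_idx, size=counts.max() - counts.min(), replace=True)
x_over = np.vstack([x, x[extra]])
y_over = np.concatenate([y, y[extra]])
```

The per-sample weights can be passed to any training routine that accepts them, so that a misclassified minority sample costs as much as several misclassified majority samples.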

Frequently Asked Questions (FAQs)

Q1: What are the most reliable evaluation metrics for imbalanced classification in materials data? Accuracy is a poor metric for imbalanced datasets. Instead, use a suite of metrics for a comprehensive view [17] [18]. These include:

- Precision: The ability of the model to not label a negative sample as positive.

- Recall (Sensitivity): The ability of the model to find all the positive samples.

- F1-Score: The harmonic mean of precision and recall.

- Confusion Matrix: A table showing correct and incorrect classifications for each class.
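
A short sketch makes the point about accuracy concrete. The labels below are invented: 95 negative and 5 positive samples, scored against a trivial model that always predicts the majority class.

```python
import numpy as np

def binary_metrics(y_true, y_pred):
    """Accuracy, precision, recall, F1, and confusion matrix for 0/1 labels."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = int(np.sum((y_true == 1) & (y_pred == 1)))
    fp = int(np.sum((y_true == 0) & (y_pred == 1)))
    fn = int(np.sum((y_true == 1) & (y_pred == 0)))
    tn = int(np.sum((y_true == 0) & (y_pred == 0)))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "confusion": np.array([[tn, fp], [fn, tp]]),  # rows: true, cols: predicted
    }

y_true = [0] * 95 + [1] * 5
always_negative = [0] * 100
metrics = binary_metrics(y_true, always_negative)
```

Here the accuracy is 0.95 while recall and F1 are both 0, since none of the five positive samples is found; this is why accuracy alone is misleading on imbalanced data.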

Q2: How can I generate a good dataset when experimental data is scarce and expensive to obtain?

- Utilize Transfer Learning: Begin with a model pre-trained on a large, general materials database (even if from computational methods like DFT). Then, fine-tune this model on your small, specific experimental dataset. This leverages knowledge from a related large dataset to improve performance on your small-data task [13].

- Employ Active Learning: This iterative process allows the model to identify which data points would be most valuable to acquire next. The model "asks" for the most informative new experiments, maximizing the value of each costly data point and reducing the total number of experiments needed [13] [20].

- Data Augmentation: For certain types of materials data, you can create modified versions of existing data. In image-based microstructural analysis, this could include rotations, flips, or slight contrast adjustments to artificially expand your dataset [19] [15].
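
For the image-based case, a minimal sketch of rotation, flip, and contrast augmentation is given below. The random array stands in for a real micrograph, and the approach assumes the target property does not depend on image orientation (for example, a statistically isotropic microstructure); if orientation matters, rotations and flips should not be used.

```python
import numpy as np

def dihedral_augment(image):
    """Return the 8 rotation/flip variants of a square 2-D image."""
    variants = []
    for k in range(4):
        rotated = np.rot90(image, k)
        variants.append(rotated)
        variants.append(np.fliplr(rotated))
    return variants

def jitter_contrast(image, factor):
    """Scale contrast about the mean intensity and clip to [0, 1]."""
    mean = image.mean()
    return np.clip(mean + factor * (image - mean), 0.0, 1.0)

rng = np.random.default_rng(6)
micrograph = rng.random((64, 64))             # placeholder for a real image
augmented = dihedral_augment(micrograph)
augmented.append(jitter_contrast(micrograph, 1.1))
```

All augmented copies of one image should stay on the same side of any train/test split, otherwise near-duplicates leak into the test set.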

Q3: What is the fundamental difference between overfitting and underfitting?

- Overfitting: The model is too complex and has learned the training data too well, including its noise and random fluctuations. It has high performance on the training data but fails to generalize to new data (high variance) [14] [15].

- Underfitting: The model is too simple to capture the underlying trend in the data. It performs poorly on both the training data and new data (high bias) [14] [15]. The goal is to find a well-fitted model that balances bias and variance.
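
The contrast can be illustrated by fitting polynomials of increasing degree to a dozen noisy points. The sine-shaped target, the noise level, and the three degrees are assumptions chosen for this sketch.

```python
import numpy as np

rng = np.random.default_rng(7)

# Twelve noisy training points from a smooth underlying trend.
x_train = np.linspace(0.0, 1.0, 12)
y_train = np.sin(2 * np.pi * x_train) + 0.2 * rng.normal(size=x_train.size)

# Noise-free test points from the same trend.
x_test = np.linspace(0.02, 0.98, 50)
y_test = np.sin(2 * np.pi * x_test)

errors = {}
for degree in (1, 3, 9):
    coeffs = np.polyfit(x_train, y_train, degree)
    train_mse = np.mean((np.polyval(coeffs, x_train) - y_train) ** 2)
    test_mse = np.mean((np.polyval(coeffs, x_test) - y_test) ** 2)
    errors[degree] = (train_mse, test_mse)

for degree, (tr, te) in errors.items():
    print(f"degree={degree}  train MSE={tr:.4f}  test MSE={te:.4f}")
```

The straight line (degree 1) has high error on both sets, which is underfitting. Training error keeps falling as the degree rises, so a low training error by itself says nothing about generalization; comparing it with the test error is what reveals whether the high-degree fit has started to follow the noise.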

Table 1: Comparison of Resampling Techniques for Imbalanced Data

| Technique | Type | Brief Methodology | Key Advantages | Key Limitations | Common Applications in Chemistry/Materials Science |

|---|---|---|---|---|---|

| Random Oversampling [18] | Data-level | Randomly duplicates samples from the minority class. | Simple to implement; No loss of information. | Can lead to overfitting. | Drug discovery, Polymer property prediction |

| SMOTE [17] | Data-level | Generates synthetic minority samples by interpolating between existing ones. | Reduces risk of overfitting vs. random oversampling; Creates diverse samples. | May generate noisy samples; High computational cost. | Catalyst design, Polymer materials, Drug discovery [17] |

| Borderline-SMOTE [17] | Data-level | A variant of SMOTE that only oversamples minority instances near the decision boundary. | Focuses on harder-to-learn samples; Improves decision boundary. | Sensitive to noise near the boundary. | Protein-protein interaction site prediction [17] |

| Random Undersampling [17] [18] | Data-level | Randomly removes samples from the majority class. | Reduces dataset size and training time; Simple to implement. | Can discard potentially useful information. | Drug-target interaction prediction, Anti-parasitic peptide prediction [17] |

| Tomek Links [18] | Data-level | Removes majority class samples that form "Tomek Links" (pairs of close opposite-class instances). | Cleans the data space; Can improve the quality of the class boundary. | Does not balance the dataset by itself; Often used as a cleaning step after oversampling. | General data preprocessing for classification |

| NearMiss [17] | Data-level | Selectively undersamples the majority class based on distance to minority class instances (e.g., keeping only the closest majority samples). | Preserves meaningful majority class samples near the boundary. | Can still lead to information loss; Choice of version (e.g., NearMiss-1 vs -2) impacts results. | Protein acetylation site prediction, Molecular dynamics [17] |

Table 2: Dimensionality Reduction and Regularization Methods

| Method | Type | Brief Methodology | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Principal Component Analysis (PCA) [13] [16] | Dimensionality Reduction | Projects data into a lower-dimensional space using orthogonal axes of maximum variance. | Reduces noise and redundancy; Helps visualize high-dimensional data. | Assumes linear relationships; Resulting components can be hard to interpret. |

| Feature Selection (Filter/Wrapper/Embedded) [13] | Feature Engineering | Selects a subset of the most relevant features from the original set based on statistical tests (filter), model performance (wrapper), or built-in model properties (embedded). | Maintains original feature meaning, enhancing interpretability. | Can be computationally expensive (wrapper methods); May miss complex interactions. |

| L1 (Lasso) Regularization [15] | Regularization | Adds a penalty equal to the absolute value of the magnitude of coefficients to the loss function. This can drive some coefficients to zero, performing feature selection. | Creates sparser models; Built-in feature selection. | Can be unstable with correlated features. |

| Early Stopping [15] | Training Technique | Monitors model performance on a validation set during training and halts training when performance begins to degrade. | Prevents the model from learning noise; Simple to implement. | Requires careful selection of a validation set and stopping criteria. |

Detailed Protocol: Applying SMOTE for Imbalanced Materials Data

This protocol is based on applications in predicting mechanical properties of polymers and catalyst design [17].

- Problem Definition: Define the classification task (e.g., classifying materials as "high-strength" vs. "low-strength").

- Data Preprocessing:

- Perform standard scaling or normalization of all feature descriptors.

- Handle any missing values.

- Split the data into training and test sets. Crucially, apply SMOTE only to the training set to avoid data leakage and over-optimistic performance estimates.

- Apply SMOTE to Training Set:

- From the imblearn Python library, import the SMOTE class.

- Fit the SMOTE object on the training features and labels. The algorithm generates synthetic samples for the minority class by: a. Randomly selecting a point from the minority class. b. Finding its k-nearest neighbors (k is a parameter). c. Randomly selecting one of these neighbors and creating a new synthetic point on the line segment between the two points in feature space.

- Model Training and Evaluation:

- Train your chosen classifier (e.g., Random Forest, XGBoost) on the resampled (balanced) training set.

- Evaluate the final model's performance on the original, untouched test set using metrics like F1-score, precision, and recall.
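
The interpolation steps (a) to (c) in the protocol above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the imblearn implementation: the function name `smote_sketch`, the toy "low-strength" and "high-strength" arrays, and the choice of k are all hypothetical, and in practice the library's SMOTE class would normally be used instead.

```python
import numpy as np

def smote_sketch(X_min, n_new, k=5, seed=0):
    """Generate n_new synthetic minority samples by neighbor interpolation."""
    rng = np.random.default_rng(seed)
    X_min = np.asarray(X_min, dtype=float)
    n = len(X_min)
    k = min(k, n - 1)  # cannot use more neighbors than there are other points
    # Pairwise Euclidean distances between minority samples
    d = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)  # a point is not its own neighbor
    neighbors = np.argsort(d, axis=1)[:, :k]
    synthetic = np.empty((n_new, X_min.shape[1]))
    for i in range(n_new):
        a = rng.integers(n)                # (a) random minority point
        b = neighbors[a, rng.integers(k)]  # (b, c) one of its k nearest neighbors
        gap = rng.random()                 # position along the segment a -> b
        synthetic[i] = X_min[a] + gap * (X_min[b] - X_min[a])
    return synthetic

# Hypothetical TRAINING split only: 40 "low-strength" vs 8 "high-strength" samples
rng = np.random.default_rng(1)
X_major = rng.normal(0.0, 1.0, size=(40, 3))
X_minor = rng.normal(2.0, 0.5, size=(8, 3))
X_new = smote_sketch(X_minor, n_new=len(X_major) - len(X_minor))
X_train = np.vstack([X_major, X_minor, X_new])
y_train = np.array([0] * 40 + [1] * (8 + len(X_new)))
```

Because every synthetic point is a convex combination of two real minority samples, it always stays inside the region spanned by the minority class. The test set is never passed to the function, which keeps the evaluation free of leakage.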

Workflow Diagrams

SMOTE Resampling Workflow

Overfitting Detection with Cross-Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Small Data Challenges

| Tool / "Reagent" | Function / Purpose | Relevance to Small Data Challenges |

|---|---|---|

| Imbalanced-Learn (imblearn) [17] [18] | A Python library providing a wide range of oversampling (e.g., SMOTE, ADASYN) and undersampling (e.g., Tomek Links, NearMiss) techniques. | Directly implements data-level methods to mitigate bias from class imbalance. |

| Scikit-learn | A core Python library for machine learning, providing implementations for feature selection, dimensionality reduction (PCA), regularization, and model evaluation (cross-validation). | Offers a unified toolkit for nearly all steps in the ML workflow to combat overfitting and high-dimensionality. |

| SISSO [13] | Sure Independence Screening Sparsifying Operator; a compressed sensing method for feature engineering that creates optimal descriptor sets from a huge pool of primary features. | Crucial for high-dimensional problems; helps identify the most physically meaningful, low-dimensional descriptors from a vast space of possibilities. |

| CTGAN/TVAE [19] | Deep learning models (Generative Adversarial Network and Variational Autoencoder) designed to generate high-quality synthetic tabular data. | Advanced method for data augmentation to increase the size and diversity of small or imbalanced datasets while preserving privacy. |

| Active Learning Loops [13] [20] | A machine learning framework where the model iteratively queries an "oracle" (e.g., an experiment or simulation) for new data that it deems most informative. | Maximizes the value of each expensive data point in materials science, strategically guiding which experiments to run next to build the most informative small dataset. |

The Critical Importance of Data Quality over Quantity

Troubleshooting Guides

Troubleshooting Guide: Addressing Common Small Data Challenges

This guide helps diagnose and resolve frequent issues encountered when working with limited materials data.

| Symptom | Possible Cause | Diagnostic Steps | Solution |

|---|---|---|---|

| Model overfitting | Data size too small, high feature dimensionality [13] | Check performance gap between training and test sets [21] | Apply feature selection (filtered, wrapped, embedded methods) or dimensionality reduction (PCA) [13] |

| Poor generalization | Insufficient or low-quality training data [21] | Evaluate model on a simpler, synthetic dataset [21] | Use data augmentation or integrate domain knowledge to generate descriptors [13] [5] |

| Unreliable predictions | High uncertainty in model | Use ensemble models or quantify prediction uncertainty [5] | Implement active learning to strategically acquire new data points [13] [5] |

| Inconsistent results | Data from publications has mixed quality or inconsistencies [13] | Audit data sources and collection methods | Standardize data preprocessing: normalize or scale features and handle missing values [13] |
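
As a rough illustration of the "check the performance gap" diagnostic in the first row of the table, the sketch below fits polynomials of increasing degree to a small synthetic dataset and records training and test error for each. The data, the degrees, and the 16/8 split are assumptions chosen only to make the effect visible.

```python
import numpy as np

# Hypothetical small dataset: 24 noisy samples of a smooth property curve
rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=24)
y = np.sin(2.5 * x) + rng.normal(scale=0.2, size=24)
x_train, y_train = x[:16], y[:16]
x_test, y_test = x[16:], y[16:]

def rmse(coeffs, x, y):
    """Root-mean-square error of a fitted polynomial on (x, y)."""
    return float(np.sqrt(np.mean((np.polyval(coeffs, x) - y) ** 2)))

# (train RMSE, test RMSE) for models of increasing flexibility
gaps = {}
for degree in (1, 3, 12):
    coeffs = np.polyfit(x_train, y_train, degree)
    gaps[degree] = (rmse(coeffs, x_train, y_train), rmse(coeffs, x_test, y_test))

for degree, (train_err, test_err) in gaps.items():
    print(f"degree {degree:2d}: train RMSE={train_err:.3f}, test RMSE={test_err:.3f}")
```

Training error can only fall as the degree grows, so a low training error alone says little. A test error that is much larger than the training error is the signal that the model has too many degrees of freedom for the available data.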

Troubleshooting Guide: Debugging Machine Learning Models

A systematic approach to diagnosing and fixing machine learning model performance issues.

| Problem | Why It Happens | How to Fix It |

|---|---|---|

| Error explodes during training | Numerical instability, high learning rate [21] | Lower learning rate, check for exponent/log/division operations in code [21] |

| Error oscillates | Incorrect data augmentation, shuffled labels, learning rate too high [21] | Lower learning rate, inspect data pipeline and labels for correctness [21] |

| Error plateaus | Loss function issues, data pipeline errors [21] | Increase learning rate, remove regularization, inspect loss function and data [21] |

| Model fails to learn | Architecture too simple for problem, fundamental bugs [21] | Compare to simple baselines (linear regression), overfit a single batch to test [21] |
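
The "overfit a single batch" check in the last row can be sketched as follows. It assumes a plain linear model trained by gradient descent on a hypothetical batch of four samples; the learning rate and step count are illustrative. If the loss on such a tiny batch does not collapse toward zero, the training code is more likely at fault than the data.

```python
import numpy as np

def overfit_single_batch(X, y, lr=0.05, steps=2000):
    """Run plain gradient descent on one small batch and return the loss history."""
    w = np.zeros(X.shape[1])
    b = 0.0
    losses = []
    for _ in range(steps):
        resid = X @ w + b - y
        losses.append(float(np.mean(resid ** 2)))
        # Gradients of the mean squared error with respect to w and b
        w -= lr * 2.0 * X.T @ resid / len(y)
        b -= lr * 2.0 * resid.mean()
    return losses

# Hypothetical batch: 4 samples, 12 features, so an exact fit exists
rng = np.random.default_rng(0)
X_batch = rng.normal(size=(4, 12))
y_batch = rng.normal(size=4)
history = overfit_single_batch(X_batch, y_batch)
print(f"initial loss={history[0]:.3f}, final loss={history[-1]:.2e}")
```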

Frequently Asked Questions (FAQs)

Q1: Why is data quality more critical than quantity in materials science?

High-quality data consumes fewer resources and provides more reliable information for exploring causal relationships, which is often the goal in materials research [13]. In many cases, the data used for materials machine learning is considered "small data," making the reliability of each data point paramount [13]. Poor quality data in a materials information system reduces its usefulness for engineering design [22].

Q2: What are the primary methods for improving data quality at the source?

The main methods are:

- Data Extraction and Databases: Systematically collecting data from publications and established databases [13].

- High-Throughput Methods: Using high-throughput computations and experiments to generate consistent, high-quality data under unified conditions [13].

- Domain Knowledge: Generating descriptors based on expert knowledge to create more meaningful and interpretable features for modeling [13] [5].

Q3: My model performs well on training data but poorly on test data. Is this a small data problem?

This is a classic sign of overfitting, which is a common challenge with small datasets [13]. When the data scale is small and feature dimensions are high, the model may memorize the training data noise instead of learning the underlying pattern. Solutions include performing feature selection to reduce dimensionality, using regularization techniques, or applying data augmentation strategies to effectively increase your dataset size [13] [5].

Q4: How can I make the most of a limited amount of experimental data?

Employ machine learning strategies designed for small data scenarios:

- Transfer Learning: Leverage knowledge from a model pre-trained on a larger, related dataset or a different but relevant property [13] [5].

- Active Learning: Use the model to identify the most valuable data points to acquire next, optimizing your experimental resources [13] [5]. This creates a closed loop between computation and experiment.

Data Presentation

Data Augmentation and Enhancement Techniques

This table summarizes quantitative information on methods to enhance data for small dataset machine learning.

| Method | Description | Typical Data Gain | Key Consideration |

|---|---|---|---|

| High-Throughput Computation | Using first-principles calculations to generate data [13] | Can generate 100s to 1000s of data points | Accuracy depends on material system and hardware [13] |

| Feature Combination (e.g., SISSO) | Generating new descriptors via mathematical operations on original features [13] | Can create 100s of combined features | Requires subsequent feature selection to avoid overfitting [13] |

| Data Extraction from Publications | Manual curation of data from existing literature [13] | Varies widely; can access latest data | Risk of inconsistency and mixed quality between sources [13] |

Experimental Protocols

Detailed Methodology: Active Learning for Materials Discovery

This protocol outlines the active learning cycle, a core strategy for efficient experimentation with limited data.

Objective: To strategically select the most informative experiments or calculations to perform, maximizing model performance with minimal data.

Workflow Overview: The process is a cycle of model prediction, uncertainty quantification, experimental validation, and model updating, as shown in the diagram below.

Procedure:

- Initial Data Collection: Begin with a small, high-quality initial dataset of materials and their target properties [13].

- Model Training: Train a machine learning model on the current dataset. The model should be capable of quantifying its prediction uncertainty [5].

- Prediction and Selection: Use the model to predict properties for a large pool of candidate materials from the unexplored space. Identify and select the candidates where the model's prediction uncertainty is highest [5].

- Validation: Perform the key experiment or first-principles calculation on the selected high-uncertainty candidates [13].

- Data Update: Add the new, validated data points to the existing training dataset.

- Iteration: Repeat steps 2 through 5 until the model achieves the desired performance or a target material is identified.
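
The cycle above can be sketched end to end with a toy problem. Everything specific here is an assumption for illustration: the `oracle` function stands in for a real experiment or first-principles calculation, the candidate space is one-dimensional, and uncertainty is estimated from the spread of a bootstrap ensemble of polynomial fits rather than from any particular method in the cited works.

```python
import numpy as np

def oracle(x):
    """Stand-in for an experiment or first-principles calculation (hypothetical)."""
    return np.sin(3.0 * x) + 0.5 * x

def features(x, degree=4):
    """Polynomial feature matrix [1, x, x^2, ...]."""
    return np.vander(x, degree + 1, increasing=True)

def ensemble_predict(x_train, y_train, x_pool, n_models=30, seed=0):
    """Bootstrap ensemble of ridge-regularized polynomial fits: mean and std."""
    rng = np.random.default_rng(seed)
    A_pool = features(x_pool)
    preds = []
    for _ in range(n_models):
        idx = rng.integers(len(x_train), size=len(x_train))  # resample with replacement
        A = features(x_train[idx])
        w = np.linalg.solve(A.T @ A + 1e-3 * np.eye(A.shape[1]), A.T @ y_train[idx])
        preds.append(A_pool @ w)
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)

x_pool = np.linspace(-2.0, 2.0, 200)  # candidate materials (1-D for clarity)
labeled = [20, 100, 180]              # step 1: small initial dataset
for cycle in range(10):
    x_train = x_pool[labeled]
    y_train = oracle(x_train)         # steps 4-5: all labels acquired so far
    mean, std = ensemble_predict(x_train, y_train, x_pool, seed=cycle)  # step 2
    std[labeled] = -np.inf            # never re-query an already labeled point
    labeled.append(int(np.argmax(std)))  # step 3: most uncertain candidate
```

In a real campaign the loop would stop on a performance or budget criterion rather than after a fixed ten cycles, and the pure-uncertainty selection rule could be replaced by an acquisition function that also rewards promising predicted values.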

Detailed Methodology: Feature Engineering for Interpretable Models

This protocol uses domain knowledge to create meaningful descriptors, improving model performance with small data.

Objective: To generate optimal descriptor subsets through preprocessing, selection, and transformation to build accurate and interpretable models.

Workflow Overview: The feature engineering process involves preparing the data, selecting the most important features, and optionally creating new ones.

Procedure:

- Data Preprocessing:

- Handle Missing Values: For descriptors with missing values, apply imputation (using mean, median, or adjacent values) or delete the problematic data points [13].

- Normalization/Standardization: Scale descriptor data to a common range (e.g., [0,1]) or standardize to zero mean and unit variance. This removes the influence of measurement units and keeps descriptors with large numerical ranges from dominating the model [13].

- Feature Selection:

- Redundancy Removal: Materials descriptors, especially software-generated ones, are often high-dimensional and contain redundant information, so remove redundant descriptors before modeling [13].

- Selection Methods: Choose a feature selection algorithm based on its interaction with the modeling algorithm. Filtered methods use statistical measures, Wrapped methods use model performance, and Embedded methods perform selection during model training (e.g., Lasso regularization) [13].

- Feature Combination (Optional):

- For problems with too few features, create new combined descriptors using mathematical operations on the original descriptors. Use methods like SISSO for this transformation, followed by another round of feature selection to avoid overfitting [13].
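
A minimal sketch of the preprocessing and feature-combination steps is given below. It is not SISSO: it simply imputes missing values with the column median, adds pairwise products and ratios, and standardizes the result. The descriptor names and values are hypothetical, and the ratios assume strictly positive descriptors.

```python
import numpy as np

def impute_median(X):
    """Replace NaNs in each column with that column's median."""
    X = X.copy()
    med = np.nanmedian(X, axis=0)
    rows, cols = np.where(np.isnan(X))
    X[rows, cols] = med[cols]
    return X

def combine_features(X, names):
    """Append pairwise products and ratios of the original descriptors."""
    cols, new_names = [X], list(names)
    for i in range(X.shape[1]):
        for j in range(i + 1, X.shape[1]):
            cols.append((X[:, i] * X[:, j])[:, None])
            new_names.append(f"{names[i]}*{names[j]}")
            cols.append((X[:, i] / X[:, j])[:, None])
            new_names.append(f"{names[i]}/{names[j]}")
    return np.hstack(cols), new_names

def standardize(X):
    """Zero mean and unit variance per column."""
    return (X - X.mean(axis=0)) / X.std(axis=0)

# Hypothetical descriptor table with one missing entry
names = ["radius", "electronegativity", "valence"]
X_raw = np.array([[1.2, 1.9, 2.0],
                  [1.4, np.nan, 3.0],
                  [1.1, 2.2, 4.0],
                  [1.6, 1.6, 2.0]])
X_imp = impute_median(X_raw)
X_comb, comb_names = combine_features(X_imp, names)
X_std = standardize(X_comb)
```

Three descriptors already become nine after one round of combination, which is why a further feature selection pass is needed before fitting a model to a small dataset.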

The Scientist's Toolkit

Research Reagent Solutions

Essential computational tools and data sources for materials informatics research.

| Item | Function | Application in Small Data Context |

|---|---|---|

| First-Principles Calculations | Quantum mechanics-based computations to predict material properties [13]. | Generates high-quality, consistent data to supplement scarce experimental data [13]. |

| Descriptor Generation Software (Dragon, PaDEL, RDKit) [13] | Software toolkits that generate numerical descriptors from material composition or structure [13]. | Systematically creates feature sets for modeling, but requires subsequent feature selection to manage dimensionality on small datasets [13]. |

| Domain Knowledge Descriptors | Features designed by human experts based on scientific theory or empirical knowledge [13] [5]. | Improves model interpretability and predictive accuracy by guiding the algorithm with physically meaningful features [13]. |

| Transfer Learning | A strategy where a model pre-trained on a large dataset is fine-tuned on a small, target dataset [13] [5]. | Mitigates the small data problem by leveraging related knowledge from a different, larger dataset or task [5]. |

In materials science, the ability to collect large datasets is often constrained by the high cost and time required for experiments and computations. Consequently, many research projects must rely on small data, typically defined by limited sample sizes rather than an absolute number [13]. This reality presents specific challenges and demands tailored machine learning (ML) workflows. The core dilemma is balancing the complex, causal analysis possible with small data against the predictive power typically associated with larger datasets [13]. The essence of working with small data is to consume fewer resources to extract more meaningful information, a process that requires specialized strategies at every stage of the ML pipeline.

Frequently Asked Questions (FAQs) & Troubleshooting

This section addresses common challenges researchers face when applying machine learning to small materials data.

FAQ 1: My dataset has fewer than 50 samples. Can I still use powerful, non-linear machine learning models, or am I stuck with linear regression?

- Answer: While linear regression is valued for its simplicity and robustness in low-data regimes, properly tuned non-linear models can perform on par with or even outperform it [23]. The key is to mitigate overfitting.

- Troubleshooting Guide:

- Problem: Model overfitting on small training data.

- Solution: Employ automated workflows that use Bayesian hyperparameter optimization. This technique incorporates an objective function that explicitly accounts for and penalizes overfitting in both interpolation and extrapolation tasks [23].

- Solution: Use strong regularization techniques within the non-linear models to prevent them from learning the noise in your small dataset.
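
One way to picture an objective that penalizes overfitting in both interpolation and extrapolation is sketched below. This is an assumption-laden illustration, not the workflow of [23]: the objective simply adds a shuffled k-fold error to the error obtained when training on the lowest target values and predicting the highest ones, the model is ridge regression, and a plain grid search stands in for a Bayesian optimizer.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Closed-form ridge regression weights."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)

def rmse(X, y, w):
    return float(np.sqrt(np.mean((X @ w - y) ** 2)))

def interpolation_rmse(X, y, alpha, n_folds=5, seed=0):
    """Shuffled k-fold cross-validation error."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(y)), n_folds)
    errs = []
    for i, val in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        errs.append(rmse(X[val], y[val], ridge_fit(X[train], y[train], alpha)))
    return float(np.mean(errs))

def extrapolation_rmse(X, y, alpha, holdout=0.2):
    """Train on the lowest targets, validate on the highest ones."""
    order = np.argsort(y)
    n_train = int(len(y) * (1 - holdout))
    train, val = order[:n_train], order[n_train:]
    return rmse(X[val], y[val], ridge_fit(X[train], y[train], alpha))

def objective(X, y, alpha):
    """Combined score: lower is better on both kinds of split."""
    return interpolation_rmse(X, y, alpha) + extrapolation_rmse(X, y, alpha)

# Hypothetical dataset: 40 samples, 15 descriptors, 2 of them informative
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 15))
y = X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.3, size=40)
alphas = np.logspace(-4, 2, 13)  # grid search as a stand-in for Bayesian search
scores = [objective(X, y, a) for a in alphas]
best_alpha = float(alphas[int(np.argmin(scores))])
```

A hyperparameter setting that only memorizes the training data scores poorly on both terms, so minimizing the sum favors settings that generalize within and beyond the sampled range.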

FAQ 2: The data I extracted from public databases contains inconsistencies and errors. How can I filter it for reliability?

- Answer: Data quality is more critical than quantity. For domain-specific datasets, conventional filtering may not be sufficient, especially when values reported by multiple sources show high standard deviations [24].

- Troubleshooting Guide:

- Problem: Noisy and inconsistent data from multi-source public repositories.

- Solution: Implement a statistical round-robin error-based data filtering method. This advanced technique can be applied to filter any material property by statistically identifying and removing outliers and inaccurate entries that fall outside expected error bounds [24].

- Solution: Consider a hybrid data curation workflow, manually extracting high-quality data from key publications to supplement and validate the data obtained from large-scale automated extractions [24].

FAQ 3: I am an experimentalist with limited coding experience. How can I implement a complete ML workflow for my small dataset?

- Answer: Use user-friendly software toolkits designed to lower the technical barrier. Platforms like MatSci-ML Studio provide an intuitive graphical user interface (GUI) that encapsulates the entire ML workflow without requiring extensive programming knowledge [25].

- Troubleshooting Guide:

- Problem: Steep learning curve associated with Python and ML libraries.

- Solution: Adopt an interactive, code-free software toolkit. These platforms guide you through data management, preprocessing, feature selection, model training, and hyperparameter optimization via a visual interface, democratizing access to advanced ML analysis [25].

FAQ 4: How can I make the most of my limited data to improve model performance?

- Answer: Enhance your data from both algorithmic and strategic perspectives. This involves improving the data itself and using smarter ML strategies [13] [5].

- Troubleshooting Guide:

- Problem: Insufficient data for robust model training.

- Solution: Data Augmentation: Use feature engineering and domain knowledge to create more informative descriptors [13] [5].

- Solution: Transfer Learning: Leverage models pre-trained on larger, related datasets (even from different domains) and fine-tune them on your small, specific dataset [5].

- Solution: Active Learning: Use a strategy where the ML model itself suggests the next most informative experiments to run, maximizing the value of each new data point and reducing the total number of experiments required [13] [5] [9].

The Complete Machine Learning Workflow for Small Data

The following diagram illustrates the integrated, cyclical workflow for machine learning with small materials data, highlighting strategies to overcome data limitations.

Detailed Experimental Protocols & Methodologies

Protocol: Data Preprocessing for Small Datasets

Data preprocessing is critical, consuming up to 80% of a data practitioner's time [26]. For small datasets, every step must be meticulously executed to preserve valuable information.

Objective: To transform raw, messy materials data into a clean, structured format suitable for machine learning algorithms, while avoiding the loss of critical information.

Step-by-Step Procedure:

- Data Acquisition & Import: Gather data from targeted sources (e.g., publications, databases, experiments) [13]. Load the dataset into your analysis environment (e.g., Python, MatSci-ML Studio) [26].

- Handle Missing Values: Assess the dataset for missing data points. For small datasets, simply deleting rows can lead to significant information loss, so prefer imputation (e.g., with the mean or median) where it is defensible.

- Encode Categorical Data: Convert all non-numerical text data (e.g., synthesis methods, crystal systems) into numerical form using techniques like one-hot encoding so ML algorithms can process them [26].

- Scale Features: Normalize or standardize numerical features to a common scale. This is crucial for distance-based models.

- Data Splitting: Split the preprocessed dataset into training, validation, and testing sets. A typical split for small datasets might be 70% for training, 15% for validation, and 15% for testing [26] [27]. The validation set is used for hyperparameter tuning, and the test set provides a final, unbiased evaluation.
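
The encoding, scaling, and splitting steps can be sketched with NumPy alone. The dataset, the synthesis-route labels, and the helper names are hypothetical. One deliberate design choice differs slightly from the order of the list: the scaling statistics are computed from the training rows only and then reused for the validation and test rows, which avoids leaking information from held-out data.

```python
import numpy as np

def one_hot(values):
    """Return a one-hot matrix and the sorted category names."""
    categories = sorted(set(values))
    index = {c: i for i, c in enumerate(categories)}
    encoded = np.zeros((len(values), len(categories)))
    encoded[np.arange(len(values)), [index[v] for v in values]] = 1.0
    return encoded, categories

def split_indices(n, frac_train=0.70, frac_val=0.15, seed=0):
    """Shuffle indices and cut them into train / validation / test parts."""
    idx = np.random.default_rng(seed).permutation(n)
    n_train = int(round(frac_train * n))
    n_val = int(round(frac_val * n))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

# Hypothetical data: 40 samples, two numeric descriptors and a synthesis route
rng = np.random.default_rng(0)
numeric = rng.normal(loc=[500.0, 2.0], scale=[50.0, 0.3], size=(40, 2))
route = rng.choice(["sol-gel", "solid-state", "hydrothermal"], size=40).tolist()

route_encoded, route_names = one_hot(route)
train, val, test = split_indices(len(numeric))

# Fit scaling statistics on the training rows only, then apply them everywhere
mu, sigma = numeric[train].mean(axis=0), numeric[train].std(axis=0)
scaled = (numeric - mu) / sigma
X = np.hstack([scaled, route_encoded])
X_train, X_val, X_test = X[train], X[val], X[test]
```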

The following diagram details this multi-step preprocessing pipeline.

Protocol: Implementing an Active Learning Cycle

Active learning is a powerful strategy for small data regimes, as it optimizes the experimental process by letting the model select the most valuable data points to acquire next [13] [9].

Objective: To minimize the number of experiments or computations required to achieve a target model performance by iteratively selecting the most informative samples.

Step-by-Step Procedure:

- Initial Model Training: Train a machine learning model on a small, initial set of labeled data (e.g., 10-20 data points).

- Uncertainty Quantification: Use the trained model to make predictions on a large pool of unlabeled or candidate samples. Identify the samples where the model is most uncertain (e.g., using metrics like prediction variance or entropy).

- Query & Experimentation: Select the top-K most uncertain samples from the pool and perform experiments or computations to obtain their true labels (e.g., measure the property of interest for those material compositions).

- Model Update: Add the newly acquired data (samples and their labels) to the training set. Retrain the ML model on this expanded dataset.

- Iterate: Repeat steps 2-4 until a predefined performance threshold or experimental budget is reached.
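
For a classification task, the uncertainty quantification and query steps reduce to ranking pool samples by predictive entropy. The sketch below assumes the class probabilities have already been produced by some trained model; the probability values and function names are hypothetical.

```python
import numpy as np

def predictive_entropy(probs):
    """Shannon entropy of each row of class probabilities."""
    p = np.clip(probs, 1e-12, 1.0)  # avoid log(0)
    return -np.sum(p * np.log(p), axis=1)

def select_top_k(probs, k):
    """Indices of the k pool samples with the highest predictive entropy."""
    return np.argsort(predictive_entropy(probs))[::-1][:k]

# Hypothetical class probabilities predicted for five candidate compositions
pool_probs = np.array([
    [0.98, 0.02],
    [0.55, 0.45],
    [0.80, 0.20],
    [0.50, 0.50],
    [0.10, 0.90],
])
query = select_top_k(pool_probs, k=2)
print(query)  # → [3 1]
```

The two candidates closest to a 50/50 prediction are queried first. For regression, the same ranking can be done on the prediction variance of an ensemble instead of the entropy.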

The following diagram visualizes this iterative, closed-loop process.

Essential Tools & Software for Small Data ML

The table below summarizes key software tools that facilitate machine learning workflows in materials science, especially for users with limited data or coding expertise.

| Tool / Platform | Core Paradigm | Key Features for Small Data | Target Audience |

|---|---|---|---|

| MatSci-ML Studio [25] | Graphical User Interface (GUI) | Integrated project management, intelligent data preprocessing, automated hyperparameter optimization, SHAP interpretability. | Domain experts with limited coding expertise. |

| Automatminer / MatPipe [25] | Code-based (Python) | Automated featurization from composition/structure, automated model benchmarking, pipeline creation. | Computational scientists and programming experts. |

| CRESt Platform [9] | Multimodal AI & Robotics | Incorporates diverse data (literature, images, compositions), uses active learning, integrates robotic high-throughput testing. | Research groups with access to automated lab equipment. |

| Custom Bayesian Optimization Workflows [23] | Code-based (Python) | Mitigates overfitting via Bayesian hyperparameter optimization, suitable for non-linear models in low-data regimes. | Data scientists and computational researchers. |

| Data Source | Type | Description & Relevance to Small Data |

|---|---|---|

| Starrydata2 [24] | Public Database | One of the largest public repositories for experimental thermoelectric data. Requires careful curation (e.g., round-robin filtering) to ensure quality for small-data studies. |

| Materials Project [13] | Public Database | Provides extensive computational data on a vast range of materials. Can be used for pre-training models or generating initial feature sets. |

| Manual Extraction from Publications [13] [24] | Curated Data | A hybrid approach of manually extracting high-fidelity data from key papers ensures data quality, which is paramount when working with small datasets. |

| High-Throughput Experiments [13] | Generated Data | Automated synthesis and testing can systematically generate focused, high-quality datasets to strategically expand a small initial dataset. |

Advanced Techniques and Algorithms for Small Dataset Modeling

Leveraging Domain Knowledge and Physics for Informative Descriptors

FAQs: Enhancing Models with Domain Knowledge

1. Why is integrating domain knowledge particularly critical when working with small materials datasets?

When data is scarce, machine learning models are far more susceptible to overfitting and learning spurious correlations. Integrating domain knowledge acts as a powerful regularizer, constraining the model to physically plausible solutions and helping to compensate for the lack of data [5]. Techniques that use tools from data science alongside domain knowledge are essential for mitigating the issues arising from limited materials data [5].

2. What types of domain-specific knowledge can be incorporated into molecular property prediction?

Domain knowledge can be systematically grouped into several key categories [28]:

- Atom-Bond Properties: This includes atomic properties (e.g., isotope numbers, chirality, hybridization, formal charge) and bond attributes (e.g., bond type, stereochemistry, length). These are fundamental for modeling molecular connectivity and reactivity [28].

- Molecular Substructures: Knowledge of functional groups (e.g., hydroxyl, carboxyl), molecular fragments (e.g., benzene rings), and pharmacophores is crucial as they directly dictate a molecule's chemical behavior and interactions with biological targets [28].

- Chemical Reactions: Information about reaction pathways and mechanisms can guide model development.

- Molecular Characteristics: Global physical and chemical properties also provide valuable constraints.

3. Does integrating molecular substructure information quantitatively improve prediction accuracy?

Yes, quantitative analyses reveal that integrating molecular substructure information leads to statistically significant improvements in model performance. A systematic survey discovered that this integration resulted in an average improvement of 3.98% in regression tasks and 1.72% in classification tasks for molecular property prediction [28].

4. What is a systematic method for selecting informative molecular descriptors to avoid overfitting?

A proven method involves focusing on feature selection to reduce multicollinearity and improve model interpretability [29]. The process includes:

- Starting with a comprehensive set of calculated molecular descriptors.

- Applying statistical techniques (e.g., correlation analysis) to identify and remove highly correlated descriptors, minimizing redundancy.

- Using automated machine learning tools (e.g., Tree-based Pipeline Optimization Tool, TPOT) to help select the most predictive feature set.

- Developing models that are both accurate and interpretable, allowing researchers to explore which features contribute most to the prediction [29].
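
The correlation-analysis step can be sketched as a greedy filter that keeps a descriptor only if it is not strongly correlated with any descriptor already kept. The threshold of 0.9 and the descriptor names are assumptions for illustration, and the automated model selection step (e.g., TPOT) is not shown.

```python
import numpy as np

def drop_correlated(X, names, threshold=0.9):
    """Greedily keep descriptors whose |Pearson r| with every kept one is below threshold."""
    corr = np.abs(np.corrcoef(X, rowvar=False))
    kept = []
    for j in range(X.shape[1]):
        if all(corr[j, i] < threshold for i in kept):
            kept.append(j)
    return X[:, kept], [names[j] for j in kept]

# Hypothetical descriptors: the second is almost a rescaled copy of the first
rng = np.random.default_rng(0)
mass = rng.normal(size=50)
volume = rng.normal(size=50)
X = np.column_stack([mass, 2.0 * mass + 0.01 * rng.normal(size=50), volume])
X_sel, kept_names = drop_correlated(X, ["mass", "mass_scaled", "volume"])
print(kept_names)  # → ['mass', 'volume']
```

The result depends on column order, because the first descriptor of a correlated pair is the one retained. Ordering columns by domain relevance before filtering is one simple way to keep the more interpretable member of each pair.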

Troubleshooting Guides

Problem: Model performance is poor despite trying different algorithms.

- Possible Cause: The chosen molecular descriptors may lack physical relevance or be highly collinear, leading to an uninformative and unstable model.

- Solution: Implement a systematic descriptor selection method. Reduce feature multicollinearity to create a more robust set of inputs. This approach has been shown to yield models with excellent performance (e.g., MAPE of 3.3% to 10.5% for various properties) while offering scientific interpretability [29].

Problem: Your graph-based model for predicting reaction kinetics fails to generalize.

- Possible Cause: The model's architecture or descriptors may not adequately capture the critical topological features of the molecules involved, such as the specific structure of phosphine ligands in a catalytic reaction [30].

- Solution: Incorporate domain knowledge directly into the model's representation. For instance, use graph neural networks (GNNs) with tree-like representations specifically designed to encapsulate the topological features of ligands that are known to be important for the reaction mechanism, moving beyond simpler, standard structural parameters [30].

Quantitative Evidence of Effectiveness

The following table summarizes key quantitative findings on the impact of domain knowledge and multi-modal data, as identified in a systematic survey of deep learning methods [28].

Table 1: Quantitative Impact of Domain Knowledge and Multi-Modality on Molecular Property Prediction (MPP)

| Integration Strategy | Task Type | Average Performance Improvement | Key Finding |

|---|---|---|---|

| Molecular Substructure Information | Regression | 3.98% | Integrating functional groups and molecular fragments significantly enhances prediction accuracy [28]. |

| Molecular Substructure Information | Classification | 1.72% | Substructure knowledge provides a measurable boost in classifying molecular properties [28]. |

| Multi-Modal Data (1D, 2D, & 3D) | MPP (Overall) | Up to 4.2% | Utilizing 3-dimensional spatial information simultaneously with 1D and 2D data substantially enhances predictions [28]. |

Experimental Protocols

Protocol 1: Systematic Selection of Molecular Descriptors for Interpretable Models

This methodology is designed to develop predictive models without sacrificing accuracy or interpretability, which is crucial for small datasets [29].

- Data Collection: Gather a publicly available experimental dataset for the target physicochemical property (e.g., melting point, boiling point).

- Descriptor Calculation: Compute a wide range of molecular descriptors for each molecule in the dataset.

- Feature Selection:

- Analyze the descriptor matrix for multicollinearity.

- Reduce redundancy by removing descriptors that are highly correlated with others.

- This step simplifies the model and helps prevent overfitting.

- Model Training and Optimization:

- Employ an automated tool like the Tree-based Pipeline Optimization Tool (TPOT) to assist in selecting the best model architecture and feature set.

- Train models such as linear regression, decision trees, or ensemble methods on the selected features.

- Validation and Interpretation:

- Validate model performance using held-out test sets and cross-validation.

- Analyze the importance of the selected features to gain new scientific insights into the relationships between molecular structure and the target property [29].

Protocol 2: Incorporating Structure-Based Descriptors in a GNN for Reaction Kinetics

This protocol outlines a case study for predicting activation free energies in Pd-catalyzed Sonogashira reactions [30].

- Problem Framing: Define the prediction target, such as the activation free energy (ΔG‡) for the oxidative addition step of a catalytic cycle.

- Descriptor Design:

- Focus on Domain Knowledge: Instead of using standard structural parameters, design descriptors based on the topological structures of the reactants, specifically the phosphine ligands.

- Graph-Based Representation: Construct a graph neural network (GNN) model that uses tree-like representations for the phosphine ligands. This allows the model to encapsulate complex topological features relevant to the reaction [30].

- Model Training:

- Train the GNN using calculated or experimental free energy values.

- The model learns to map the graph-based descriptor of the ligand to the activation energy.

- Model Validation:

- Test the model on a held-out set of ligands.

- Compare its performance against models using simpler, conventional descriptors to demonstrate the advantage of the domain-informed representation [30].

Workflow Visualization

Essential Research Reagent Solutions

Table 2: Key Computational Tools for Descriptor Generation and Model Development

| Item/Reagent | Function/Benefit |

|---|---|

| RDKit | An open-source toolkit for cheminformatics and machine learning, used to generate 2D molecular images, calculate molecular descriptors, and handle SMILES strings [28]. |

| Tree-based Pipeline Optimization Tool (TPOT) | An automated machine learning tool that can assist in selecting the best model architecture and feature set, helping to develop interpretable models without sacrificing accuracy [29]. |

| Graph Neural Network (GNN) Libraries (e.g., PyTorch Geometric) | Libraries that enable the creation of models using graph-based representations of molecules, allowing for the direct incorporation of topological structure as a descriptor [30]. |

| ColorBrewer | A tool designed for selecting effective and colorblind-safe color palettes for data visualization, ensuring accessibility and clarity in diagrams and charts [31]. |

This technical support guide addresses a central challenge in materials machine learning research: developing robust models with small datasets. A powerful solution to this problem is data augmentation through physics-based modeling. This approach integrates mechanistic physical knowledge with data-driven methods, creating physically consistent synthetic data to significantly enhance model generalization and robustness when experimental data is scarce [32].

The following FAQs, troubleshooting guides, and experimental protocols provide a foundation for implementing these strategies in your research.

FAQs: Core Concepts and Rationale

1. What is physics-based data augmentation, and why is it used for small datasets in materials science?

Physics-based data augmentation uses mathematical models of fundamental physical processes (e.g., heat transfer, grain growth) to generate synthetic data. In materials science, high-fidelity experimental data is often difficult, expensive, or time-consuming to obtain [32] [33]. This creates small datasets that limit the performance of machine learning (ML) models. By augmenting a small set of real experimental data with a larger volume of synthetic data from physical simulations, you provide the ML model with more information to learn from, which improves its predictive accuracy and generalizability without the cost of additional experiments [32].

2. How does this hybrid approach improve upon pure data-driven ML or pure physical modeling?

A hybrid approach combines the strengths of both. Pure ML models can struggle with small data and may produce physically implausible results [32]. Pure physical simulations can be computationally expensive and may rely on simplifications that reduce accuracy [32]. The hybrid framework uses a calibrated physical model to generate cheap, plentiful, and physically meaningful synthetic data, which is then used to train an ML model. The resulting model is both data-efficient and physically interpretable [32].

3. My synthetic data comes from a simulation. How can I ensure it is relevant to my real experimental data?

The key is a technique known as domain adaptation or style transfer. A primary challenge is that simulated data can look structurally correct but lack the visual "style" and noise of real experimental data (e.g., microscopic images) [33]. To bridge this gap, you can use models like Generative Adversarial Networks (GANs) to learn the statistical characteristics of your real data and then apply these characteristics to the simulated data. This process creates synthetic data that retains the exact structural labels from the simulation but has the appearance of real data, making it a more effective tool for training models meant to analyze experimental results [33].
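As a much simpler stand-in for the GAN-based style transfer described above, the following sketch uses intensity histogram matching: each simulated pixel keeps its rank (so the structure is untouched) but takes its value from the intensity distribution of a real image. This is not the method of [33]; the function name `match_histogram` and the random arrays standing in for images are illustrative assumptions.

```python
import numpy as np

def match_histogram(source, reference):
    """Map source intensities onto the reference distribution, preserving pixel rank."""
    src = np.asarray(source, dtype=float)
    ref = np.asarray(reference, dtype=float).ravel()
    # Rank of every source pixel (0 = darkest), converted to a quantile in (0, 1).
    ranks = np.argsort(np.argsort(src.ravel()))
    quantiles = (ranks + 0.5) / src.size
    # Look up the reference intensity at the same quantile.
    matched = np.quantile(ref, quantiles)
    return matched.reshape(src.shape)

rng = np.random.default_rng(0)
simulated = rng.random((16, 16))                  # placeholder "simulated" image
real = rng.normal(120.0, 20.0, size=(32, 32))     # placeholder "real" image
styled = match_histogram(simulated, real)
print(round(float(styled.mean()), 1), round(float(real.mean()), 1))
```

Histogram matching only aligns first-order intensity statistics. Texture and noise patterns, which a GAN can learn, are not transferred by this approach.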

Troubleshooting Guides

Issue 1: Poor Model Generalization Despite Data Augmentation

Problem: Your ML model performs well on the training data (including synthetic data) but poorly on unseen experimental test data.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Domain Gap | Compare the distributions (e.g., mean, variance) of key features between synthetic and real datasets. | Apply domain adaptation techniques (e.g., image style transfer [33]) or calibrate your physical model with experimental parameters [32]. |
| Insufficient Physical Fidelity | Check if synthetic data fails to capture key regimes (e.g., transition modes in melt pool dynamics [32]). | Refine the physical model to cover a wider range of physical scenarios and ensure nonlinear, critical behaviors are represented [32]. |
| Overfitting | Perform learning curve analysis; if training error keeps falling while error on held-out experimental data plateaus or rises as more synthetic data is added, the model may be overfitting to artifacts in the synthetic data. | Introduce regularization techniques (e.g., dropout, L2 regularization) or diversify the synthetic data generation process. |
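The domain-gap diagnostic in the table can be sketched as a per-feature comparison of mean and variance. The example below is a minimal illustration, assuming tabular features; the function `domain_gap_report`, the feature names, and the randomly generated arrays are hypothetical placeholders rather than data from the cited studies.

```python
import numpy as np

def domain_gap_report(real, synthetic, names):
    """Compare per-feature mean and variance between real and synthetic samples."""
    real = np.asarray(real, dtype=float)
    synthetic = np.asarray(synthetic, dtype=float)
    report = {}
    for j, name in enumerate(names):
        r, s = real[:, j], synthetic[:, j]
        # The pooled standard deviation puts the mean shift on a unit-free scale.
        pooled_sd = np.sqrt(0.5 * (r.var(ddof=1) + s.var(ddof=1)))
        report[name] = {
            "std_mean_diff": float((s.mean() - r.mean()) / pooled_sd),
            "variance_ratio": float(s.var(ddof=1) / r.var(ddof=1)),
        }
    return report

rng = np.random.default_rng(0)
# Placeholder data: 36 "real" samples and 500 "synthetic" samples with two features.
real = rng.normal(loc=[100.0, 50.0], scale=[10.0, 5.0], size=(36, 2))
# The second synthetic feature is deliberately shifted and too narrow.
synthetic = rng.normal(loc=[100.0, 60.0], scale=[10.0, 2.0], size=(500, 2))

report = domain_gap_report(real, synthetic, ["width_um", "depth_um"])
for name, stats in report.items():
    print(name, stats)
```

A standardized mean difference far from 0 or a variance ratio far from 1 flags a feature whose synthetic distribution has drifted from the experimental one. Which thresholds count as "far" is a judgment call that depends on the application.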

Issue 2: The Generative Model Produces Low-Quality or Physically Inconsistent Synthetic Data

Problem: The generated synthetic data is noisy, contains artifacts, or violates known physical laws.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Inadequate Training of Generative Model | Check the loss function convergence during the training of models like GANs or VAEs. | Adjust hyperparameters and ensure that the training dataset, even if small, is of high quality and representative. |
| Violation of Physical Constraints | Manually inspect generated samples for obvious physical impossibilities (e.g., negative densities). | Incorporate physical rules directly into the generative model's loss function as penalty terms to create "physics-informed" networks [32]. |
| Mode Collapse | Check for low diversity in generated outputs; all samples look very similar. | Use techniques like mini-batch discrimination or switch to a generative model architecture like a Variational Autoencoder (VAE) that is less prone to mode collapse [34]. |
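Two of the checks in the table lend themselves to a short sketch: a loss with a penalty term for a physical constraint, and a diversity score for spotting mode collapse. The example below is a minimal illustration, assuming the constraint is "density must be non-negative". The function names and the penalty weight are hypothetical, and in practice the penalty would be written in an automatic-differentiation framework so it can be backpropagated.

```python
import numpy as np

def physics_penalized_loss(generated, target, penalty_weight=10.0):
    """Mean squared error plus a penalty on negative (non-physical) densities."""
    generated = np.asarray(generated, dtype=float)
    target = np.asarray(target, dtype=float)
    data_loss = np.mean((generated - target) ** 2)
    # Only values below zero contribute; physically valid samples add nothing.
    violation = np.clip(-generated, 0.0, None)
    return float(data_loss + penalty_weight * np.mean(violation ** 2))

def mean_pairwise_distance(samples):
    """Average Euclidean distance over all sample pairs; near zero suggests mode collapse."""
    x = np.asarray(samples, dtype=float)
    diffs = x[:, None, :] - x[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=-1))
    n = len(x)
    return float(dists.sum() / (n * (n - 1)))

target = [1.0, 2.0, 3.0]
valid = [1.1, 2.0, 2.9]       # all densities positive: no penalty
invalid = [1.1, 2.0, -0.5]    # one negative density: penalized
print(physics_penalized_loss(valid, target), physics_penalized_loss(invalid, target))

rng = np.random.default_rng(0)
print(mean_pairwise_distance(np.ones((5, 3))), mean_pairwise_distance(rng.normal(size=(5, 3))))
```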

Experimental Protocols

Protocol 1: Physics-Informed Augmentation for Melt Pool Geometry Prediction

This protocol is based on a successful study that predicted melt pool geometry in Laser Powder Bed Fusion (L-PBF) with only 36 experimental samples [32].

1. Objective: Train an accurate ML model to predict melt pool width and depth under different laser power and scanning speed conditions.

2. Materials and Reagent Solutions:

| Item | Function / Specification |
|---|---|
| 316L Stainless Steel Powder | Base material for L-PBF single-track experiments. |
| L-PBF System | Equipped with a Yb fiber laser (e.g., 200 W max power). |
| Explicit Thermal Model | A physics-based analytical model for predicting melt pool geometry. Calibrated with variable penetration depth and absorptivity [32]. |
| ML Algorithms (e.g., MLP, Random Forest, XGBoost) | Data-driven models to be trained on the hybrid dataset. |

3. Methodology:

- Step 1: Experimental Data Collection. Systematically conduct 36 single-track L-PBF experiments covering conduction, transition, and keyhole melting regimes. Measure and average melt pool dimensions for each parameter set [32].

- Step 2: Physical Model Calibration. Calibrate the explicit thermal model using the limited experimental data. This involves solving a constrained optimization problem to fit model parameters (e.g., laser penetration depth, absorptivity) to the real data [32].

- Step 3: Synthetic Data Generation. Use the calibrated physical model to generate a large number of synthetic data points across the parameter space. The source study augmented its 36 real samples with synthetic data, creating a significantly larger training set [32].

- Step 4: Model Training and Validation. Train ML models (MLP, Random Forest, XGBoost) on the hybrid (real + synthetic) dataset. Use five-fold cross-validation for robust performance estimation [32].
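Steps 2 to 4 above can be sketched end to end with a toy surrogate. The example below is only an illustration under stated assumptions: the power-law function `toy_melt_pool_width` is a hypothetical stand-in for the explicit thermal model of [32], the "measurements" are randomly generated placeholders rather than experimental data, and the unconstrained log-space least-squares fit replaces the constrained optimization used in the source study.

```python
import numpy as np

def toy_melt_pool_width(power_w, speed_mm_s, a, b):
    """Hypothetical surrogate: width as a power law of linear energy density P/v."""
    return a * (power_w / speed_mm_s) ** b

def calibrate(power_w, speed_mm_s, measured_width):
    """Step 2: fit a and b by linear least squares in log space."""
    x = np.log(power_w / speed_mm_s)
    y = np.log(measured_width)
    design = np.column_stack([np.ones_like(x), x])
    (log_a, b), *_ = np.linalg.lstsq(design, y, rcond=None)
    return float(np.exp(log_a)), float(b)

def five_fold_indices(n_samples, seed=0):
    """Step 4: shuffle sample indices and split them into five folds."""
    order = np.random.default_rng(seed).permutation(n_samples)
    return np.array_split(order, 5)

rng = np.random.default_rng(42)

# Placeholder "experiments": a 6 x 6 grid of settings gives 36 samples.
P, V = [g.ravel() for g in np.meshgrid(np.linspace(100.0, 200.0, 6),
                                       np.linspace(400.0, 1400.0, 6))]
# Made-up parameters (300.0, 0.5) with 3% multiplicative noise; not real measurements.
measured = toy_melt_pool_width(P, V, 300.0, 0.5) * rng.lognormal(0.0, 0.03, size=P.size)

a_hat, b_hat = calibrate(P, V, measured)

# Step 3: evaluate the calibrated model on a dense grid to create synthetic samples.
P_syn, V_syn = [g.ravel() for g in np.meshgrid(np.linspace(100.0, 200.0, 30),
                                               np.linspace(400.0, 1400.0, 30))]
W_syn = toy_melt_pool_width(P_syn, V_syn, a_hat, b_hat)

# Hybrid dataset: real and synthetic rows stacked, with a flag marking the real ones.
X_hybrid = np.concatenate([np.column_stack([P, V]), np.column_stack([P_syn, V_syn])])
y_hybrid = np.concatenate([measured, W_syn])
is_real = np.concatenate([np.ones(P.size, dtype=bool), np.zeros(P_syn.size, dtype=bool)])

folds = five_fold_indices(len(y_hybrid))
print(round(a_hat, 1), round(b_hat, 3), X_hybrid.shape, [len(f) for f in folds])
```

Each fold would then serve once as the validation set while a regressor (MLP, Random Forest, or XGBoost) is fit on the remaining four. Keeping the `is_real` flag makes it possible to report validation error on the experimental rows separately, which may be worth doing because synthetic rows come from the same model that generated much of the training data.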

4. Key Quantitative Results:

The following table summarizes the performance improvements achieved through physics-based augmentation in the source study [32].

| Model | Training Data | R² Score | Key Performance Notes |
|---|---|---|---|
| Multilayer Perceptron (MLP) | Hybrid (Real + Synthetic) | > 0.98 | Notable reduction in MAE and RMSE; especially accurate in unstable transition regions. |
| Multilayer Perceptron (MLP) | Experimental Data Only | Lower than 0.98 | Performance suboptimal due to limited data. |
| Random Forest | Hybrid (Real + Synthetic) | High | Improved accuracy over model trained only on experimental data. |
| XGBoost | Hybrid (Real + Synthetic) | High | Improved accuracy over model trained only on experimental data. |
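For readers who want to reproduce the metrics named in the table, the sketch below computes R², MAE, and RMSE from their standard definitions. The helper name `regression_metrics` and the four example values are hypothetical and are not results from [32].

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """Return R^2, mean absolute error, and root mean squared error."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    residual = y_true - y_pred
    ss_res = np.sum(residual ** 2)                      # unexplained variation
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)      # total variation around the mean
    return {
        "r2": float(1.0 - ss_res / ss_tot),
        "mae": float(np.mean(np.abs(residual))),
        "rmse": float(np.sqrt(np.mean(residual ** 2))),
    }

# Hypothetical melt pool widths (in micrometres) and model predictions.
metrics = regression_metrics([100.0, 120.0, 140.0, 160.0], [102.0, 118.0, 141.0, 159.0])
print(metrics)
```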

Protocol 2: Style Transfer for Augmenting Material Microscopic Images

This protocol details a strategy for generating synthetic microscopic images for tasks like image segmentation when labeled data is scarce [33].

1. Objective: Create a large dataset of realistic synthetic microscopic images with pixel-wise labels to train a high-performance segmentation model.

2. Materials and Reagent Solutions:

| Item | Function / Specification |
|---|---|
| Real Dataset | A small set (e.g., 136 images) of high-quality, manually annotated microscopic images (e.g., polycrystalline iron) [33]. |
| Monte Carlo Potts Model | A simulation model to generate 3D polycrystalline microstructures. Used to create 2D slices with perfect, pixel-accurate labels [33]. |
| Generative Adversarial Network (GAN) | An image-to-image translation model (e.g., CycleGAN) used to transfer the "style" of real images onto simulated labels [33]. |

3. Methodology:

- Step 1: Acquire Real and Simulated Datasets. Collect a small number of real microscopic images and manually annotate them. Separately, run a Monte Carlo Potts simulation to generate a large number of 2D grain structure images with perfect, automatic labels [33].

- Step 2: Train Style Transfer Model. Train a GAN model to learn the mapping from the simulated images to the style and texture of the real microscopic images. This model learns to make simulated data look realistic [33].

- Step 3: Generate Synthetic Data. Feed all simulated label images through the trained style transfer model. The output is a "synthetic dataset" that has the realistic appearance of experimental images but the perfect, pixel-wise labels of the simulation [33].

- Step 4: Train Segmentation Model. Train a segmentation model (e.g., a U-Net) using a combination of the limited real data and the generated synthetic data. The study found that a model trained with synthetic data and only 35% of the real data could achieve performance competitive with a model trained on 100% of the real data [33].
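The simulated side of Step 1 can be sketched with a small Monte Carlo Potts model. The source study simulates 3D microstructures and takes 2D slices [33]; the example below instead runs directly on a 2D periodic lattice with a zero-temperature acceptance rule, purely to show how grain structures and pixel-wise boundary labels come out of the same simulation. The lattice size, number of states, and number of sweeps are illustrative assumptions.

```python
import numpy as np

def boundary_energy(spins):
    """Count unlike nearest-neighbour pairs on a periodic lattice."""
    return int((spins != np.roll(spins, 1, axis=0)).sum()
               + (spins != np.roll(spins, 1, axis=1)).sum())

def potts_grain_growth(size=32, n_states=20, n_sweeps=10, seed=0):
    """Zero-temperature Potts model: a site may adopt a neighbour's orientation."""
    rng = np.random.default_rng(seed)
    spins = rng.integers(0, n_states, size=(size, size))
    offsets = [(-1, 0), (1, 0), (0, -1), (0, 1)]
    for _ in range(n_sweeps * size * size):
        i, j = rng.integers(0, size, size=2)
        neighbours = [spins[(i + di) % size, (j + dj) % size] for di, dj in offsets]
        candidate = neighbours[rng.integers(0, 4)]
        # Local energy = number of unlike neighbours; reject moves that raise it.
        old_energy = sum(n != spins[i, j] for n in neighbours)
        new_energy = sum(n != candidate for n in neighbours)
        if new_energy <= old_energy:
            spins[i, j] = candidate
    return spins

def boundary_mask(spins):
    """Pixel-wise label: True where a pixel differs from its lower or right neighbour."""
    return (spins != np.roll(spins, -1, axis=0)) | (spins != np.roll(spins, -1, axis=1))

initial = np.random.default_rng(0).integers(0, 20, size=(32, 32))  # same start as seed=0
grains = potts_grain_growth(size=32, n_states=20, n_sweeps=10, seed=0)
labels = boundary_mask(grains)
print(boundary_energy(initial), boundary_energy(grains), float(labels.mean()))
```

The `grains` array plays the role of the simulated structure image and `labels` the automatic segmentation mask. In the protocol, the structure images would then be passed through the trained style transfer model while the masks are kept unchanged.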

The workflow for this protocol is visualized below.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational and physical "reagents" essential for experiments in physics-based data augmentation.

| Item | Category | Function / Application |
|---|---|---|
| Explicit Thermal Model | Physical Model | Provides fast, approximate physical simulations for generating synthetic data on parameters like melt pool geometry [32]. |
| Monte Carlo Potts Model | Physical Model | Simulates microstructural evolution, such as grain growth, to generate labeled image data for segmentation tasks [33]. |
| Generative Adversarial Network (GAN) | Generative Model | Translates data between domains (e.g., from simulation to reality) for creating realistic synthetic images [33] [35]. |
| Variational Autoencoder (VAE) | Generative Model | Generates synthetic data and is often more stable to train than GANs; useful for tabular and time-series data [34] [35]. |
| k-Nearest Neighbor Mega-Trend Diffusion (kNNMTD) | Data Generation Algorithm | Generates "pseudo-real" data from small tabular datasets to facilitate the training of deep learning models [34]. |
| AutoAugment | Automated Augmentation | Uses reinforcement learning to automatically discover optimal data augmentation policies for a given dataset [35]. |

FAQs: Core Concepts

What is transfer learning and why is it useful for materials science research? Transfer learning is a machine learning technique where a model (called a "source model") trained on one task or dataset is repurposed as the starting point for a model on a different, yet related, task or dataset [36] [37]. This is particularly beneficial in materials science, where acquiring large, labeled datasets through experiments or computations is often costly and time-consuming [13] [38]. It reduces computational costs, shortens training time, and can improve model performance, especially when the target dataset is small [36] [37] [39].

What is the difference between transfer learning and fine-tuning? These are distinct but related concepts. Transfer learning refers to the broad strategy of adapting a model trained for a "source task" to a new "target task" [36]. Fine-tuning is a specific technique used within transfer learning where the pre-trained model is not used as a static feature extractor, but is instead further trained (i.e., its parameters are updated) on the new target dataset [36] [40] [41]. This process often uses a lower learning rate to avoid destroying the valuable pre-existing knowledge in the model's weights [40].
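The distinction can be made concrete with a tiny two-layer network in NumPy. In the sketch below, a zero backbone learning rate corresponds to using the pre-trained layer as a static feature extractor, while a small non-zero rate corresponds to fine-tuning. The random matrix `W1_pre` merely stands in for pre-trained weights, and the data, layer sizes, and learning rates are illustrative assumptions.

```python
import numpy as np

def mse(X, y, W1, w2):
    """Mean squared error of the network tanh(X @ W1) @ w2."""
    return float(np.mean((np.tanh(X @ W1) @ w2 - y) ** 2))

def adapt(X, y, W1, w2, head_lr=0.05, backbone_lr=0.0, steps=200):
    """Gradient descent on a new head (w2); the backbone (W1) moves only if backbone_lr > 0."""
    W1, w2 = W1.copy(), w2.copy()
    n = len(y)
    for _ in range(steps):
        H = np.tanh(X @ W1)                       # hidden features from the backbone
        err = H @ w2 - y                          # prediction error per sample
        grad_w2 = H.T @ err / n
        grad_W1 = X.T @ (np.outer(err, w2) * (1.0 - H ** 2)) / n
        w2 -= head_lr * grad_w2
        W1 -= backbone_lr * grad_W1               # a zero rate leaves the backbone frozen
    return W1, w2

rng = np.random.default_rng(1)
X = rng.normal(size=(20, 4))                      # small placeholder target dataset
y = np.sin(X[:, 0]) + 0.5 * X[:, 1]
W1_pre = rng.normal(scale=0.5, size=(4, 8))       # stand-in for pre-trained weights
w2_init = np.zeros(8)                             # fresh task-specific head

W1_frozen, w2_frozen = adapt(X, y, W1_pre, w2_init, backbone_lr=0.0)    # feature extraction
W1_tuned, w2_tuned = adapt(X, y, W1_pre, w2_init, backbone_lr=0.005)    # fine-tuning

print(mse(X, y, W1_pre, w2_init), mse(X, y, W1_frozen, w2_frozen), mse(X, y, W1_tuned, w2_tuned))
```

The backbone rate here is ten times smaller than the head rate, mirroring the advice to fine-tune with a lower learning rate so the pre-trained weights are adjusted gently rather than overwritten.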

What is 'negative transfer' and how can I avoid it? Negative transfer occurs when starting from a model pre-trained on a source task leads to worse performance on the target task than training without it [36] [41]. This typically happens when the source and target tasks or their data distributions are too dissimilar [36] [40]. To mitigate this risk, ensure the source and target tasks are related. Techniques like "distant transfer" are also being researched to correct for negative transfer resulting from significant dissimilarity in data distributions [36].

How do I decide which layers of a pre-trained model to freeze and which to train? The decision depends on the size of your target dataset and its similarity to the source data [37]. The general principle is that early layers in a neural network learn general, low-level features (like edges or basic shapes), while later layers learn more task-specific features [40] [37]. The following table provides a general guideline:

| Scenario | Recommended Strategy |
|---|---|
| Small, Similar Dataset | Freeze most layers; only fine-tune the last one or two to prevent overfitting [37]. |
| Large, Similar Dataset | Unfreeze more layers, allowing the model to adapt while retaining learned features [37]. |
| Small, Different Dataset | Fine-tuning layers closer to the input may be necessary, but the risk of negative transfer is higher [37]. |
| Large, Different Dataset | Fine-tuning the entire model can be effective, as the large dataset helps it adapt [37]. |
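The guideline table can be turned into a small helper that selects which layers to leave trainable. The sketch below is one possible reading of the table: the table itself gives no numeric cutoffs, so the fractions in `UNFROZEN_FRACTION`, the function name, and the example layer names are all assumptions made for illustration.

```python
import math

# Assumed share of layers (counted from the output side) left trainable per scenario.
UNFROZEN_FRACTION = {
    ("large", "similar"): 0.5,
    ("small", "different"): 0.75,   # reaches closer to the input layers
    ("large", "different"): 1.0,    # fine-tune the entire model
}

def choose_trainable_layers(layer_names, dataset_size, similarity):
    """Return the layers to train; all other layers are meant to stay frozen."""
    key = (dataset_size, similarity)
    if key == ("small", "similar"):
        n_train = min(2, len(layer_names))          # only the last one or two layers
    elif key in UNFROZEN_FRACTION:
        n_train = math.ceil(UNFROZEN_FRACTION[key] * len(layer_names))
    else:
        raise ValueError(f"unknown scenario: {key}")
    return list(layer_names[-n_train:]) if n_train else []

layers = ["conv1", "conv2", "conv3", "conv4", "fc1", "fc2", "fc3", "out"]
for scenario in [("small", "similar"), ("large", "similar"),
                 ("small", "different"), ("large", "different")]:
    print(scenario, choose_trainable_layers(layers, *scenario))
```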

Troubleshooting Guides

Problem: Poor Model Performance After Transfer Learning

Potential Causes and Solutions:

Domain Mismatch (Negative Transfer): The source model was trained on data that is not sufficiently related to your target problem.

- Solution: Re-evaluate your choice of pre-trained model. Select a source model trained on a domain closer to your target materials science problem (e.g., a model pre-trained on general materials properties rather than one pre-trained on natural images) [36] [38]. If no suitable model exists, training from scratch might be more effective.

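Incorrect Fine-Tuning Strategy: The learning rate might be too high, or the wrong layers might be trainable.

- Solution: Lower the learning rate so that fine-tuning does not overwrite the knowledge stored in the pre-trained weights [40], and choose which layers to freeze according to the guideline table above [37].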

Data Quality Issues in Target Dataset: The small target dataset may have problems like incorrect labels, lack of representativeness, or insufficient predictive features.