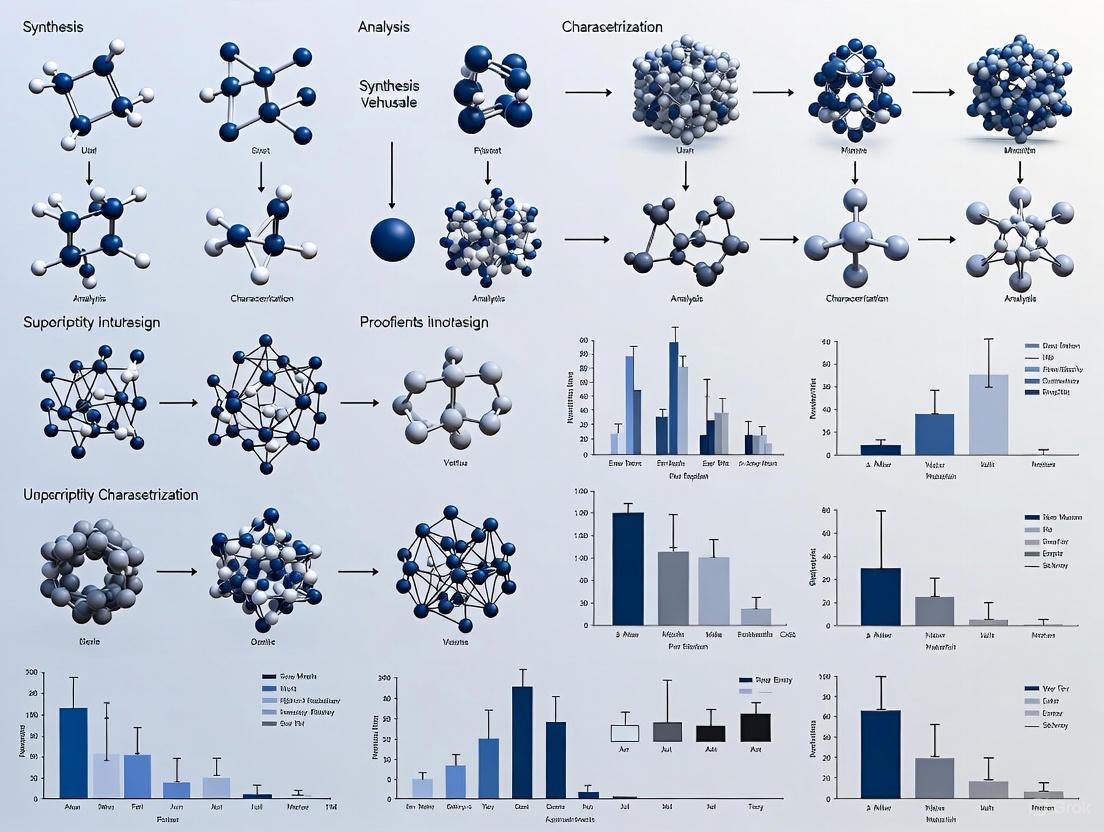

Quantifying Uncertainty in Materials Measurement: From Foundational Principles to Advanced Applications in Research and Development

This article provides a comprehensive framework for understanding and applying uncertainty quantification (UQ) in materials measurement, tailored for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive framework for understanding and applying uncertainty quantification (UQ) in materials measurement, tailored for researchers, scientists, and drug development professionals. It begins by establishing the core concepts of measurement uncertainty, distinguishing between random and systematic errors, and introducing the standard GUM framework. The content then progresses to explore both established and cutting-edge methodological approaches, including Type A/B evaluation and advanced machine learning techniques like Bayesian Neural Networks (BNNs). A practical troubleshooting section addresses the identification and mitigation of key uncertainty sources, such as equipment, operator, and environmental factors, while guiding readers on constructing an uncertainty budget. Finally, the article offers a critical comparison of UQ methods—from Gaussian Process Regression to physics-informed models—evaluating their performance through metrics like coverage and interval width. By synthesizing foundational knowledge with modern applications, this guide aims to enhance the reliability, traceability, and decision-making confidence in materials research and pharmaceutical development.

What is Measurement Uncertainty? Core Concepts and the GUM Framework

In the science of metrology, precise communication and conceptual clarity are not merely beneficial—they are fundamental to the integrity of data. The terms "measurand" and "uncertainty" are central to this discourse, representing a sophisticated framework that moves beyond simplistic notions of error. A measurand is formally defined as the specific quantity intended to be measured [1]. This definition carries crucial nuance: the measurand exists in the domain of theory, while measurement results exist in the domain of observable reality [2]. This distinction is not philosophical pedantry but has practical consequences. In materials science and drug development, where conclusions drawn from measurements inform critical decisions, understanding exactly what is being measured—and the context in which it is measured—is essential for interpreting results correctly. The specification of a measurand is inseparable from its measurement method, as the value of a measurand is always understood within the context of a particular measurement procedure [1].

Uncertainty quantification (UQ) provides the complementary framework for characterizing the quality of these measurements. UQ is defined as the science of characterizing what is known and not known in a given analysis, defining the realm of variation in analytical responses given that input parameters may not be well characterized [3]. This approach represents a fundamental shift beyond simple error analysis, which typically focuses on discrepancies from a "true value." Instead, UQ systematically assesses all possible sources of doubt in both measurement and modeling processes, providing a structured approach to risk assessment and decision-making in research and development.

Defining the Core Concepts

The Measurand: What Is Actually Being Measured?

The concept of the measurand requires careful consideration in materials research. A measurand is a physical quantity or health condition under measurement [1]. In biomedical contexts, this could include biopotentials from the body surface (ECG, EEG), blood pressure, flow, medical images, body temperature, or evoked potentials in response to external stimulation [1]. The critical insight is that a measurand is not merely a label but requires precise definitional boundaries. For instance, in nanoparticle analysis using Single Particle-ICP-MS, multiple measurands may exist for the same analyte, including the number concentration of particles, mass of element per particle, or the equivalent spherical diameter when additional assumptions about shape and composition are applied [1].

Table: Classification of Measurands in Biomedical and Materials Science

| Category | Definition | Examples |
|---|---|---|
| Internal Measurands | Quantities measured within the body | Blood pressure, intracranial pressure |
| Body Surface Measurands | Biopotentials measured at the body surface | ECG, EMG, EOG, EEG signals |
| Peripheral Measurands | External manifestations of physiological processes | Infrared radiation from body surfaces |
| Offline Measurands | Quantities requiring sample extraction | Tissue histology, blood analysis, biopsy results |
| Nanoparticle Measurands | Properties of particulate materials | Number concentration, element mass per particle, equivalent spherical diameter |

A properly defined measurand must be specified with sufficient completeness that it is unaffected by variations in the measurement process that should not influence the measurement result. In synthetic instrumentation systems, this means precisely expressing the measurement through stimulus-response measurement maps, defining abscissas, ordinates, sampling strategies, calibration approaches, and post-processing algorithms [1]. The definition of the measurand thereby becomes synonymous with the complete specification of how the measurement is performed.

Measurement Uncertainty: Beyond Simple Error

Where error represents the difference between a measured value and a "true value," uncertainty quantifies the doubt about the measurement result. The internationally accepted definition describes uncertainty of measurement as "an estimate characterizing the range of values within which the true value of a measurand lies" [1]. This definition acknowledges that the concept of a single "true value" is often problematic in practical measurement scenarios.

Uncertainty arises from multiple potential sources in materials measurement [1]:

- Incomplete definition of the measurand: When the quantity intended to be measured is not defined with sufficient completeness

- Imperfect realization of the measurand: When the measurement does not perfectly correspond to the definition

- Inadequate sampling: When the sample measured may not represent the defined measurand

- Environmental conditions: Imperfect knowledge or control of environmental effects on the measurement

- Instrument resolution: Finite instrument resolution or discrimination threshold

- Reference values: Inexact values of measurement standards and reference materials

- Approximations: Assumptions incorporated in the measurement method and procedure

- Operator effects: Personal bias in reading analogue instruments

A critical distinction in modern uncertainty quantification separates aleatoric and epistemic uncertainty [4]. Aleatoric uncertainty arises from inherent randomness in processes (e.g., scatter among repeated results from the same experiment), while epistemic uncertainty relates to limitations in knowledge due to insufficient data or imperfect models [4]. This distinction is particularly valuable in materials science because it indicates where effort will pay off: epistemic uncertainty can in principle be reduced by collecting more data or improving the model, whereas aleatoric uncertainty reflects irreducible variability that additional data can characterize more precisely but not eliminate.
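One common way to separate the two contributions, sketched here under assumptions rather than taken from the cited work, applies the law of total variance to an ensemble (or a set of posterior samples) in which each member predicts both a mean and a noise variance. The function name and the example numbers are hypothetical.

```python
from statistics import fmean, pvariance


def decompose_uncertainty(member_means, member_variances):
    """Split the predictive variance for one input into two parts.

    member_means: predicted mean from each ensemble member or posterior sample
    member_variances: predicted noise variance from each member
    """
    aleatoric = fmean(member_variances)   # average predicted noise level
    epistemic = pvariance(member_means)   # disagreement between members
    return aleatoric, epistemic, aleatoric + epistemic


# Hypothetical predictions of log10(rupture life) from five ensemble members
means = [3.10, 3.05, 3.20, 3.15, 3.00]
variances = [0.04, 0.05, 0.04, 0.06, 0.05]
aleatoric, epistemic, total = decompose_uncertainty(means, variances)
print(f"aleatoric={aleatoric:.3f}, epistemic={epistemic:.3f}, total={total:.3f}")
```

A large epistemic share suggests that more data or a better model may help, while a large aleatoric share points to variability in the process itself.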

Uncertainty Quantification Framework

Methodologies for Uncertainty Quantification

In materials science and engineering, several computational approaches have emerged for robust uncertainty quantification. Bayesian methods have gained particular prominence for their ability to provide probabilistic frameworks that capture uncertainties in data-driven models [4]. The table below compares major UQ methodologies applied in materials research:

Table: Comparison of Uncertainty Quantification Methods in Materials Science

| Method | Key Features | Strengths | Limitations | Suitable Applications |
|---|---|---|---|---|
| Bayesian Neural Networks (BNNs) | Probabilistic framework capturing uncertainties through posterior distribution of network parameters [4] | High flexibility in model structure; reliable UQ; accommodates physics-informed priors [4] | Computationally intensive; complex implementation | Creep rupture life prediction [4], composite materials property prediction |
| Gaussian Process Regression (GPR) | Non-parametric Bayesian approach using continuous sample paths [4] | Excellent predictive accuracy; inherent uncertainty estimates; well-established theory | Less suitable for material properties with significant microstructural variations [4] | Conventional material property prediction with smooth variations |
| Markov Chain Monte Carlo (MCMC) | Sampling-based approximation of posterior parameter distributions [4] | More reliable UQ compared to variational inference; asymptotically exact | Computationally expensive for high-dimensional problems | Most promising for creep life prediction when accuracy is prioritized [4] |
| Deep Ensembles | Multiple neural networks with different initializations trained on same data [4] | Simple implementation; good uncertainty estimates | Computationally expensive; may overestimate uncertainty | Alternative to BNNs when implementation simplicity is valued |
| Quantile Regression (QR) | Estimates conditional quantiles of response variable [4] | No distributional assumptions; robust to outliers | Lacks closed-form parameter estimation; prone to overestimating uncertainty [4] | Applications requiring quantile estimates rather than full distribution |

Implementing Physics-Informed Bayesian Approaches

Physics-informed Bayesian Neural Networks (BNNs) represent a cutting-edge approach for UQ in materials property prediction. These networks integrate knowledge from governing physical laws to guide models toward physically consistent predictions [4]. The implementation involves several critical steps:

First, physics-informed features are incorporated based on governing creep laws or other relevant physical principles to estimate uncertainties in model predictions [4]. For creep rupture life prediction, this might include incorporating temperature-stress relationships derived from fundamental materials science principles.

Second, the BNN architecture is designed with stochastic parameters, typically implemented through either Variational Inference (VI) or Markov Chain Monte Carlo (MCMC) approximation of the posterior distribution of network parameters [4]. Research indicates that MCMC-based BNNs generally provide more reliable results compared to those based on variational inference approximation [4].

The training process then proceeds with these physics-informed constraints, allowing the model to simultaneously learn from experimental data while respecting fundamental physical laws. This approach has demonstrated competitive or superior performance compared to conventional UQ methods like Gaussian Process Regression in predicting properties such as creep rupture life of steel alloys [4].

Experimental Protocols for UQ in Materials Research

Case Study: Creep Rupture Life Prediction

The experimental protocol for UQ in creep rupture life prediction exemplifies rigorous methodology in materials research. The following workflow outlines the comprehensive approach:

Dataset Composition and Feature Selection: The experimental validation utilizes three distinct creep datasets covering multiple material systems [4]:

- Stainless Steel 316 alloys: 617 test samples with 20 features including material composition (C, Si, Mn, P, S, Ni, Cr, Mo, Cu, Ti, Al, B, N, Nb, Ta), material group, testing conditions (applied stress, temperature), test measurements (elongation percentage, area reduction percentage), and recorded creep rupture life in hours.

- Nickel-based superalloys: 153 test samples with 15 features including material composition (Ni, Al, Co, Cr, Mo, Re, Ru, Ta, W, Ti, Nb, T), testing conditions, and creep rupture life.

- Titanium alloys: 177 test samples with 24 features including material composition (Ti, Al, V, Fe, C, H, O, Sn, Mb, Mo, Zr, Si, B, Cr), testing conditions, finishing conditions (solution treated temperature/time, annealing temperature/time), test measurements (steady-state strain rate, strain to rupture), and creep rupture life.

Physics-Informed Feature Engineering: The protocol incorporates physics-informed features based on governing creep laws, which guide the BNNs toward physically consistent predictions [4]. Integrating this domain knowledge is intended to improve creep life prediction by keeping model outputs consistent with fundamental physical principles.

Model Training and Validation: The BNNs are implemented using both Variational Inference and Markov Chain Monte Carlo approximations, with experimental results demonstrating the superiority of MCMC-based approaches for this application [4]. The models are validated against experimental data using both point prediction metrics (R², RMSE, MAE, Pearson Correlation Coefficient) and uncertainty quality metrics (coverage, mean interval width).

Active Learning Integration for Efficient Experimentation

Uncertainty quantification frameworks can be strategically employed in active learning scenarios to accelerate materials discovery and characterization. The active learning process uses uncertainty estimates to prioritize the most informative experiments.

This approach combines variance reduction techniques with k-means clustering to select the most uncertain and diverse data points for training, balancing exploration and exploitation of the solution space [4]. Research demonstrates that physics-informed BNNs have significant potential to accelerate model training in active learning for material property prediction, potentially reducing experimental costs and time requirements while maintaining robust predictive accuracy.
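The selection idea can be illustrated with a minimal sketch: cluster the candidate experiments with k-means for diversity, then take the most uncertain candidate from each cluster. This is not the procedure of [4]; the plain k-means with deterministic initialization, the function names, and the candidate values (temperature, stress) are all assumptions made for illustration.

```python
import math


def kmeans(points, k, iterations=20):
    """Plain k-means; centroids start at the first k points (a simplification)."""
    centroids = [list(p) for p in points[:k]]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assign each point to its nearest centroid
        labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Move each centroid to the mean of its current members
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def select_batch(candidates, variances, k):
    """Return the index of the most uncertain candidate in each of k clusters."""
    labels = kmeans(candidates, k)
    batch = []
    for c in range(k):
        idx = [i for i, lab in enumerate(labels) if lab == c]
        if idx:
            batch.append(max(idx, key=lambda i: variances[i]))
    return sorted(batch)


# Hypothetical candidates: (temperature, stress) with predictive variances
candidates = [(600, 100), (610, 105), (800, 50), (805, 55), (700, 200), (705, 195)]
variances = [0.10, 0.30, 0.20, 0.05, 0.40, 0.35]
batch = select_batch(candidates, variances, k=3)
print(batch)
```

In practice the features would be scaled before clustering so that no single variable dominates the distance calculation.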

The Researcher's Toolkit: Essential Methods and Reagents

Table: Essential Research Reagent Solutions for Materials Measurement

| Reagent/Method | Function in Measurement Process | Application Context |
|---|---|---|
| Bayesian Neural Networks (BNNs) | Probabilistic framework for predicting material properties with inherent uncertainty quantification [4] | Creep life prediction, composite materials property estimation |
| Markov Chain Monte Carlo (MCMC) | Sampling method for approximating posterior distributions in Bayesian inference [4] | Parameter estimation for complex materials models |
| Gaussian Process Regression | Non-parametric Bayesian approach for spatial and temporal data modeling [4] | Conventional material property prediction with smooth variations |
| Physics-Informed Features | Incorporation of domain knowledge from governing physical laws to constrain predictions [4] | Ensuring physically consistent predictions in creep rupture and other properties |
| Active Learning Framework | Strategic selection of most informative experiments based on uncertainty estimates [4] | Accelerated materials discovery and characterization |
| Uncertainty Decomposition | Separation of aleatoric and epistemic uncertainty sources [4] | Targeted strategy development for uncertainty reduction |
| Contrast Metrics | Quantitative measures for evaluating predictive intervals and uncertainty quality [4] | Validation of uncertainty quantification reliability |

The toolkit for advanced uncertainty quantification extends beyond traditional laboratory reagents to encompass computational methods and metrics. For experimental validation of UQ in materials research, three creep test datasets serve as essential reference materials: Stainless Steel 316 alloys (617 samples), Nickel-based superalloys (153 samples), and Titanium alloys (177 samples) [4]. These datasets provide benchmark cases for evaluating UQ method performance across different material systems and testing conditions.

Evaluation metrics form another critical component of the researcher's toolkit. For point predictions, standard metrics include the coefficient of determination (R²), root-mean-squared error (RMSE), mean absolute error (MAE), and Pearson Correlation Coefficient (PCC) [4]. For uncertainty quality assessment, coverage and mean interval width provide insights into the calibration and precision of predictive intervals [4]. These metrics enable researchers to quantitatively compare different UQ methods and select the most appropriate approach for their specific materials characterization challenge.
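The two interval-quality metrics can be computed directly from predictive intervals. The sketch below assumes each prediction comes with a lower and upper bound; the function name and the example values are hypothetical.

```python
def coverage_and_width(y_true, lower, upper):
    """Fraction of observations inside their interval, and the mean interval width."""
    n = len(y_true)
    inside = sum(lo <= y <= hi for y, lo, hi in zip(y_true, lower, upper))
    mean_width = sum(hi - lo for lo, hi in zip(lower, upper)) / n
    return inside / n, mean_width


# Hypothetical observed values and predictive interval bounds
y_true = [2.9, 3.4, 3.1, 2.5]
lower = [2.7, 3.0, 3.2, 2.2]
upper = [3.1, 3.3, 3.6, 2.8]
coverage, mean_width = coverage_and_width(y_true, lower, upper)
print(f"coverage={coverage:.2f}, mean width={mean_width:.3f}")
```

The two numbers should be read together: coverage close to the nominal level (for example 95%) with narrow intervals indicates well-calibrated and informative uncertainty estimates, whereas high coverage achieved only through very wide intervals is less useful.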

In materials measurements research, all experimental data contains inherent uncertainties that must be rigorously characterized to ensure research validity. The distinction between systematic error (bias) and random error (imprecision) forms the foundational framework for understanding measurement uncertainty in scientific research [5] [6]. For drug development professionals and materials scientists, proper identification, quantification, and control of these error types directly impacts the reliability of research conclusions and the success of development pipelines.

Systematic error refers to consistent, reproducible inaccuracies that skew measurements in a specific direction, while random error constitutes unpredictable statistical fluctuations that create scatter in repeated measurements [5] [7]. The sophisticated management of these errors is particularly crucial in materials characterization, where properties such as tensile strength, thermal conductivity, and surface morphology measurements underpin critical research conclusions and product development decisions.

Theoretical Foundations: Defining Error Types and Characteristics

Systematic Error (Bias)

Systematic error represents a consistent or proportional deviation between observed values and the true value of what is being measured [5]. These errors reproduce consistently across measurements and typically stem from identifiable causes such as instrument calibration issues, methodological flaws, or environmental factors [6] [7]. Unlike random errors, systematic errors cannot be reduced by simply repeating measurements, as they affect all measurements in the same way and direction [8].

Table 1: Characteristics and Examples of Systematic Errors

| Characteristic | Description | Example in Materials Research |
|---|---|---|
| Direction | Consistently skews measurements in one direction | A miscalibrated analytical balance always reading 0.5 mg high [5] |
| Consistency | Reproducible across measurements | Microscope with incorrect stage calibration consistently distorting dimensional measurements [7] |
| Source | Identifiable causes in instrumentation, method, or environment | Temperature-sensitive electronic components in testing equipment causing drift [9] |
| Elimination | Not reducible through repetition; requires correction | Using reference standards to establish correction factors [7] |

Systematic errors manifest in several distinct forms. Offset errors (also called additive errors or zero-setting errors) occur when a measurement instrument isn't properly calibrated to a correct zero point, affecting all measurements by a fixed amount [5] [9]. Scale factor errors (multiplier errors) occur when measurements consistently differ from the true value proportionally, such as by a consistent percentage [5] [9]. In materials testing, this might appear as a load cell consistently overreporting stress by 5% across its measurement range.

Random Error (Imprecision)

Random error comprises unpredictable, statistical fluctuations in measured data that vary in both magnitude and direction between measurements [5] [10]. These errors arise from uncontrollable environmental factors, instrumental sensitivity limits, or subtle variations in experimental execution [8] [10]. Random error primarily affects measurement precision—the degree of reproducibility and consistency in measurements—rather than the average accuracy [5] [8].

Table 2: Characteristics and Examples of Random Errors

| Characteristic | Description | Example in Materials Research |
|---|---|---|
| Direction | Varies unpredictably (positive and negative) | Slight variations in sample positioning in instrument fixtures [10] |
| Consistency | Irregular, non-reproducible fluctuations | Electronic noise in detector circuits during spectroscopic analysis [9] [10] |
| Source | Uncontrollable environmental or instrumental factors | Ambient temperature fluctuations affecting sensitive instrumentation [5] |
| Reduction | Can be minimized through averaging and increased sample size | Repeating tensile tests and averaging results [5] [10] |

Common sources of random error in materials research include natural variations in experimental contexts (e.g., minor temperature fluctuations in a laboratory), imprecise measurement instruments with limited resolution [5], and observer interpretation variations when reading analog instruments or interpreting complex data patterns [5] [8].

Accuracy vs. Precision: The Visual Distinction

The relationship between systematic and random errors is best understood through the framework of accuracy and precision. Accuracy describes how close a measurement is to the true value and is primarily affected by systematic error. Precision refers to how reproducible repeated measurements are and is primarily affected by random error [5] [8].

Diagram 1: Accuracy vs. Precision Relationships. This visualization shows how systematic and random errors combine to affect measurement outcomes. The bullseye represents the true value, while dots represent individual measurements.

Quantitative Analysis: Error Magnitudes and Impacts in Research Data

Comparative Error Significance in Research Outcomes

In scientific research, systematic errors generally pose a more significant threat to validity than random errors [5]. With random error, multiple measurements tend to cluster around the true value, and when collecting data from large samples, errors in different directions often cancel each other out [5]. Systematic errors, however, consistently skew data away from true values, potentially leading to false conclusions about relationships between variables [5].

The mathematical behavior of these errors differs substantially. Random errors in individual measurements, when averaged over many observations, tend toward a mean of zero, following the pattern:

\[ \lim_{n \to \infty} \frac{1}{n} \sum_{i=1}^{n} \epsilon_{\text{random}, i} = 0 \]

where \( \epsilon_{\text{random}, i} \) represents the random error in the i-th measurement [6]. In contrast, systematic error does not diminish with repeated measurements:

\[ \frac{1}{n} \sum_{i=1}^{n} \epsilon_{\text{systematic}, i} = \epsilon_{\text{systematic}} \neq 0 \]
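A short simulation makes the contrast concrete. It is a sketch under stated assumptions: random error is modeled as zero-mean Gaussian noise, systematic error as a constant offset, and the function name, noise level, and bias value are hypothetical.

```python
import random
from statistics import fmean


def mean_error(n, sigma, bias, seed=0):
    """Average error over n simulated measurements.

    Each error is Gaussian noise (standard deviation sigma) plus a constant bias.
    """
    rng = random.Random(seed)
    errors = [rng.gauss(0.0, sigma) + bias for _ in range(n)]
    return fmean(errors)


random_only = mean_error(n=10_000, sigma=1.0, bias=0.0)
with_bias = mean_error(n=10_000, sigma=1.0, bias=0.5)
print(f"mean error, random only:      {random_only:+.3f}")
print(f"mean error, random plus bias: {with_bias:+.3f}")
```

With many repeats the purely random case averages out close to zero, while the biased case stays close to the offset regardless of how many measurements are averaged.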

Error Rates in Data Processing Methods

Empirical studies of data quality in clinical research provide quantitative insights into error rates across different data processing methods, with implications for materials research data management:

Table 3: Error Rates in Data Processing Methods (Clinical Research Data)

| Data Processing Method | Pooled Error Rate | 95% Confidence Interval | Implications for Materials Research |
|---|---|---|---|
| Medical Record Abstraction (MRA) | 6.57% | (5.51%, 7.72%) | Manual data transcription introduces significant error potential |
| Optical Scanning | 0.74% | (0.21%, 1.60%) | Automated methods reduce but don't eliminate errors |
| Single-Data Entry | 0.29% | (0.24%, 0.35%) | Single-person data handling maintains moderate error rates |
| Double-Data Entry | 0.14% | (0.08%, 0.20%) | Independent verification significantly reduces errors [11] |

These quantitative findings underscore the importance of systematic data handling protocols in materials research, where measurement precision is often critical. The roughly 47-fold difference in error rates between the least and most reliable methods (6.57% versus 0.14%) highlights how procedural choices significantly impact data quality [11].

Methodologies for Error Identification and Reduction

Experimental Protocols for Systematic Error Control

Protocol 1: Instrument Calibration and Standardization

Purpose: To identify and correct systematic errors introduced by measurement instrumentation [7].

Procedure:

- Select certified reference materials (CRMs) with known property values traceable to national or international standards

- Conduct measurements on CRMs using identical procedures to those used for test samples

- Compare measured values to certified values across the operational range of the instrument

- Develop correction factors or calibration curves to adjust future measurements

- Document calibration uncertainty and incorporate into overall uncertainty budget [7]

Frequency: Calibration intervals should reflect instrument stability, usage frequency, and criticality of measurements. Calibration is typically performed at least annually, or whenever results begin to show consistent directional drift.
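The comparison and correction steps of Protocol 1 can be sketched as a straight-line calibration, assuming the instrument response is linear so that the intercept captures an offset error and the slope a scale factor error. The function names and the CRM values are hypothetical.

```python
def fit_calibration(certified, measured):
    """Least-squares line: measured = slope * certified + intercept."""
    n = len(certified)
    x_bar = sum(certified) / n
    y_bar = sum(measured) / n
    sxx = sum((x - x_bar) ** 2 for x in certified)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(certified, measured))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept


def correct(reading, slope, intercept):
    """Invert the calibration line to estimate the reference-scale value."""
    return (reading - intercept) / slope


# Hypothetical CRM data: the instrument reads 5% high plus a 0.5-unit offset
certified = [10.0, 20.0, 30.0, 40.0]
measured = [11.0, 21.5, 32.0, 42.5]
slope, intercept = fit_calibration(certified, measured)
corrected = correct(26.75, slope, intercept)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, corrected={corrected:.2f}")
```

The residual scatter around the fitted line, together with the certified uncertainty of the reference materials, would feed the calibration entry of the uncertainty budget.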

Protocol 2: Method Comparison Studies

Purpose: To detect systematic methodological errors by comparing results from different measurement techniques [7].

Procedure:

- Select well-characterized test materials representing the typical sample matrix

- Analyze identical samples using multiple measurement techniques (e.g., SEM, AFM, and optical profilometry for surface topography)

- Apply appropriate statistical tests (t-tests, F-tests, ANOVA) to identify significant differences between methods

- Investigate sources of methodological discrepancies through controlled experiments

- Establish correction factors or preference hierarchies for different sample types

Experimental Protocols for Random Error Quantification

Protocol 3: Repeatability and Reproducibility Assessment

Purpose: To quantify random error components through structured repeated measurements [5] [6].

Procedure:

- Design a balanced experiment with multiple factors: operator, instrument, day, and sample preparation batch

- Perform a minimum of 10 repetitions for each combination of factors

- Calculate variance components for each factor using appropriate statistical models (e.g., nested ANOVA)

- Determine total measurement uncertainty from variance components

- Establish control limits for ongoing quality control based on reproducibility data

Statistical Analysis: Calculate within-run precision (repeatability) and between-run precision (reproducibility) using:

\[ s_{\text{total}} = \sqrt{s_{\text{repeatability}}^2 + s_{\text{reproducibility}}^2} \]

where \( s \) represents standard deviation of the respective components.
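As a simplified illustration of the calculation, the sketch below treats a single factor (operator) in a balanced design rather than the full nested model of the protocol. Repeatability is taken as the pooled within-operator variance, and reproducibility as the between-operator variance after removing the repeatability contribution to the operator means. The function name and data are hypothetical.

```python
import math
from statistics import fmean, variance


def variance_components(groups):
    """Return (s_repeatability, s_reproducibility, s_total) for a balanced design.

    groups: one list of repeated measurements per operator, all of equal length.
    """
    n = len(groups[0])  # repeats per operator (balanced design assumed)
    var_repeat = fmean([variance(g) for g in groups])      # pooled within-operator
    var_of_means = variance([fmean(g) for g in groups])    # spread of operator means
    var_reprod = max(0.0, var_of_means - var_repeat / n)   # between-operator part
    return (
        math.sqrt(var_repeat),
        math.sqrt(var_reprod),
        math.sqrt(var_repeat + var_reprod),
    )


# Hypothetical measurements: three operators, four repeats each
data = [
    [10.1, 10.3, 10.2, 10.0],
    [10.4, 10.6, 10.5, 10.5],
    [9.9, 10.1, 10.0, 10.0],
]
s_repeat, s_reprod, s_total = variance_components(data)
print(f"repeatability={s_repeat:.3f}, reproducibility={s_reprod:.3f}, total={s_total:.3f}")
```

The between-operator estimate is clipped at zero because sampling noise can make the raw difference slightly negative when operators agree closely.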

Protocol 4: Statistical Process Control for Ongoing Monitoring

Purpose: To monitor measurement processes for changes in random error patterns over time.

Procedure:

- Establish control measurements using stable reference materials

- Incorporate control samples in each analytical run at predetermined frequency

- Plot results on control charts with limits set at ±2s (warning) and ±3s (action)

- Implement corrective action procedures when control measurements exceed established limits

- Periodically review and update control limits based on accumulated data
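The control-chart rule in this protocol can be sketched as follows, assuming the center line and standard deviation are estimated from a baseline set of control measurements. The function names and values are hypothetical.

```python
from statistics import fmean, stdev


def control_limits(baseline):
    """Center line and standard deviation estimated from baseline control data."""
    return fmean(baseline), stdev(baseline)


def classify(value, center, s):
    """Apply the +/-2s warning and +/-3s action limits to one control result."""
    deviation = abs(value - center) / s
    if deviation > 3:
        return "action"
    if deviation > 2:
        return "warning"
    return "in control"


# Hypothetical baseline measurements of a stable reference material
baseline = [50.0, 50.2, 49.8, 50.1, 49.9, 50.0, 50.3, 49.7]
center, s = control_limits(baseline)
for result in (50.3, 50.5, 50.7):
    print(result, classify(result, center, s))
```

A fuller implementation would also apply run rules, such as several consecutive results on one side of the center line, which help detect emerging systematic shifts.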

Comprehensive Error Reduction Workflow

The interaction between systematic and random error reduction strategies can be visualized as an integrated workflow:

Diagram 2: Comprehensive Error Reduction Workflow. This diagram illustrates the integrated approach to addressing both systematic and random errors throughout the measurement process.

The Scientist's Toolkit: Essential Materials and Methods for Error Management

Table 4: Research Reagent Solutions for Error Management in Materials Measurement

| Tool/Reagent | Function in Error Management | Application Examples |
|---|---|---|
| Certified Reference Materials (CRMs) | Quantify and correct systematic errors through calibration | Instrument calibration, method validation, quality control |
| Standard Operating Procedures (SOPs) | Minimize random errors from operator variability | Ensuring consistent sample preparation, measurement techniques |
| Statistical Software Packages | Quantify random errors, perform significance testing | Variance component analysis, control chart creation, uncertainty calculation |
| Environmental Monitoring Systems | Control random errors from laboratory conditions | Temperature, humidity, and vibration monitoring in sensitive areas |
| Calibration Standards | Identify and correct systematic instrument errors | Mass weights, dimensional standards, voltage references |
| Blank Samples | Detect systematic contamination or interference effects | Process blanks, reagent blanks, instrument blanks |
| Control Charts | Monitor both systematic shifts and random error changes | Ongoing verification of measurement process stability |

The rigorous distinction between systematic and random errors provides more than just a theoretical framework—it offers practical guidance for enhancing research quality in materials measurement. By implementing systematic protocols for error identification, quantification, and reduction, researchers can significantly improve the reliability of their findings. The integration of regular calibration procedures, appropriate replication strategies, statistical monitoring, and comprehensive uncertainty analysis creates a robust foundation for producing trustworthy scientific data that advances the field of materials research and drug development.

Through conscious attention to both bias and imprecision, the materials research community can strengthen the validity of structure-property relationships, improve reproducibility across laboratories, and accelerate the development of novel materials with tailored characteristics for specific applications.

The Guide to the Expression of Uncertainty in Measurement (GUM) establishes internationally standardized rules for evaluating and expressing measurement uncertainty across various accuracy levels and fields, from fundamental research to industrial production [12] [13]. Developed through international collaboration and published in 1993, the GUM provides a systematic framework that ensures measurement results are reliable, comparable, and traceable to national standards [12].

This standardized approach is vital in materials measurement research, where quantifying uncertainty is essential for validating results, ensuring product quality, and supporting scientific claims. The GUM's methodology allows researchers to move beyond simple point measurements and account for all significant uncertainty components affecting their measurements [14] [15].

Core Principles and Framework

Fundamental Concepts and Terminology

The GUM creates a consistent conceptual framework for measurement uncertainty, addressing the historical lack of standardized nomenclature in the field [15]. It defines measurement uncertainty as a parameter that characterizes the dispersion of values attributed to a measured quantity, recognizing that even repeated measurements with the same instrument will yield varying results due to multiple influencing factors [13].

This framework distinguishes between two types of uncertainty evaluation:

- Type A Evaluation: Uncertainty estimated using statistical analysis of repeated measurements

- Type B Evaluation: Uncertainty estimated using means other than statistical analysis of series of observations, such as manufacturer specifications, calibration certificates, or previous measurement data
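The two evaluation types can be illustrated with a minimal sketch. It assumes the Type A estimate is the standard deviation of the mean of repeated readings, and that the Type B contribution from instrument resolution follows a rectangular distribution with half-width equal to half the resolution. The function names and readings are hypothetical.

```python
import math
from statistics import stdev


def type_a(readings):
    """Standard uncertainty of the mean from repeated readings."""
    return stdev(readings) / math.sqrt(len(readings))


def type_b_rectangular(half_width):
    """Standard uncertainty for a rectangular distribution of given half-width."""
    return half_width / math.sqrt(3)


# Hypothetical repeated length readings (mm) on an instrument with 0.01 mm resolution
readings = [10.02, 10.05, 9.98, 10.01, 10.04]
u_repeat = type_a(readings)
u_resolution = type_b_rectangular(0.01 / 2)
print(f"Type A: {u_repeat:.4f} mm, Type B (resolution): {u_resolution:.4f} mm")
```

Both results are standard uncertainties, so they can be combined on an equal footing regardless of how each was evaluated.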

Standardized Uncertainty Components

The GUM requires identification and quantification of all significant uncertainty sources. The table below outlines common uncertainty components in materials measurement research:

Table: Common Uncertainty Components in Materials Measurement

| Uncertainty Component | Description | Typical Evaluation Method |

|---|---|---|

| Calibration Uncertainty | Uncertainty in reference standards or calibration process | Type B (from calibration certificates) |

| Environmental Factors | Effects of temperature, humidity, pressure variations | Type A (statistical) or Type B (model-based) |

| Measurement Repeatability | Variation under repeated measurement conditions | Type A (statistical analysis of repeats) |

| Instrument Resolution | Finite resolution of digital display or analog scale | Type B (based on instrument specifications) |

| Operator Bias | Systematic effects from different operators | Type A (through comparative measurements) |

| Material Heterogeneity | Non-uniformity in material properties or composition | Type A (multiple sampling measurements) |

The GUM Uncertainty Framework Workflow

The following diagram illustrates the systematic workflow for uncertainty analysis according to GUM methodology:

Quantitative Methods and Calculations

Uncertainty Propagation Framework

The GUM provides mathematical tools for combining uncertainty components from various sources. The combined standard uncertainty ( u_c(y) ) for a measured quantity ( y ) is calculated using the root-sum-square method:

[ u_c(y) = \sqrt{\sum_{i=1}^{N} \left(\frac{\partial f}{\partial x_i}\right)^2 u^2(x_i)} ]

where ( \frac{\partial f}{\partial x_i} ) are sensitivity coefficients quantifying how the output estimate varies with changes in input estimates ( x_i ), and ( u(x_i) ) are the standard uncertainties associated with each input quantity [16].
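A minimal Python sketch of this root-sum-square propagation, assuming uncorrelated inputs and estimating each sensitivity coefficient by a central finite difference. The function name `combined_standard_uncertainty`, the density model, and all numerical values are hypothetical and chosen only for illustration.

```python
import math


def combined_standard_uncertainty(f, x, u, rel_step=1e-6):
    """Return (u_c, coefficients) for y = f(*x), assuming uncorrelated inputs.

    x: input estimates, u: their standard uncertainties (same order).
    """
    coefficients = []
    for i, xi in enumerate(x):
        # Step scaled to the input's magnitude; fall back to rel_step at zero.
        h = abs(xi) * rel_step if xi != 0 else rel_step
        upper = list(x)
        lower = list(x)
        upper[i] = xi + h
        lower[i] = xi - h
        # Central difference approximates the partial derivative df/dx_i.
        coefficients.append((f(*upper) - f(*lower)) / (2 * h))
    u_c = math.sqrt(sum((c * ui) ** 2 for c, ui in zip(coefficients, u)))
    return u_c, coefficients


def density(mass, volume):
    """Hypothetical measurement model: rho = m / V."""
    return mass / volume


# Hypothetical inputs: m = 25.0 g with u = 0.05 g, V = 10.0 cm^3 with u = 0.02 cm^3.
u_c, coeffs = combined_standard_uncertainty(density, [25.0, 10.0], [0.05, 0.02])
print(f"sensitivity coefficients: {coeffs[0]:.4f}, {coeffs[1]:.4f}")
print(f"u_c(rho) = {u_c:.4f} g/cm^3")
```

A numerical derivative keeps the sketch model-agnostic. When the model is simple, analytical derivatives are usually preferable because they avoid step-size choices.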

Experimental Example: Pendulum Gravity Measurement

To illustrate GUM methodology, consider estimating gravitational acceleration ( g ) using a simple pendulum, where ( g ) is derived from length ( L ) and period ( T ) measurements [16]:

[ \hat{g} = \frac{4\pi^2 L}{T^2} ]

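The uncertainty analysis examines how biases in the input quantities affect the derived value. The initial swing angle θ does not appear in the small-angle formula above; it enters through a finite-amplitude correction to the period, which is why it is listed as a third input: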

Table: Sensitivity Analysis for Pendulum Experiment

| Measurement Parameter | Theorized Bias | Resulting Change in g | Fractional Change |

|---|---|---|---|

| Length (L) | -5 mm | -0.098 m/s² | -1.0% |

| Period (T) | +0.02 seconds | -0.068 m/s² | -0.7% |

| Initial Angle (θ) | -5 degrees | +0.006 m/s² | +0.06% |

This sensitivity analysis shows that, for the biases assumed here, the length bias has the largest effect on the derived value of g, so reducing systematic error in the length measurement should take priority [16].
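For the small-angle pendulum formula, the sensitivity coefficients can also be written in closed form. The short derivation below is a sketch that assumes L and T are uncorrelated and ignores the amplitude correction:

```latex
\begin{align}
\frac{\partial g}{\partial L} &= \frac{4\pi^2}{T^2} = \frac{g}{L}, &
\frac{\partial g}{\partial T} &= -\frac{8\pi^2 L}{T^3} = -\frac{2g}{T}, \\
\left(\frac{u_c(g)}{g}\right)^2 &= \left(\frac{u(L)}{L}\right)^2
  + 4\left(\frac{u(T)}{T}\right)^2 .
\end{align}
```

Because g depends on the inverse square of T, a given relative uncertainty in the period contributes twice as much to the relative uncertainty in g as the same relative uncertainty in the length.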

Uncertainty Budget Development

Creating a comprehensive uncertainty budget is essential for rigorous materials measurement research:

Table: Example Uncertainty Budget for Load Cell Calibration

| Uncertainty Source | Value | Probability Distribution | Standard Uncertainty | Sensitivity Coefficient | Contribution |

|---|---|---|---|---|---|

| Calibration Standard | 0.05% | Normal | 0.025% | 1.0 | 0.025% |

| Measurement Repeatability | 0.1% | Normal | 0.1% | 1.0 | 0.1% |

| Temperature Effect | 0.2% | Rectangular | 0.115% | 0.5 | 0.058% |

| Resolution | 0.01% | Rectangular | 0.0058% | 1.0 | 0.0058% |

| Combined Standard Uncertainty | 0.12% | | | | |

| Expanded Uncertainty (k=2) | 0.24% | | | | |

This systematic approach ensures all significant uncertainty components are properly quantified and combined [14].
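The arithmetic behind the load cell budget can be checked with a few lines of Python. This is a sketch under three assumptions: the calibration value is quoted at k = 2 (consistent with the table's conversion of 0.05% to 0.025%), the repeatability entry is already a standard deviation, and rectangular components are divided by √3. The variable names are illustrative.

```python
import math

# (source, quoted value in %, divisor to reach a standard uncertainty, sensitivity coefficient)
budget = [
    ("Calibration standard", 0.05, 2.0, 1.0),          # assumed quoted at k = 2
    ("Measurement repeatability", 0.10, 1.0, 1.0),     # already a standard deviation
    ("Temperature effect", 0.20, math.sqrt(3), 0.5),   # rectangular distribution
    ("Resolution", 0.01, math.sqrt(3), 1.0),           # rectangular distribution
]

# Contribution of each source = sensitivity coefficient * standard uncertainty.
contributions = {
    name: coefficient * value / divisor
    for name, value, divisor, coefficient in budget
}

# Root-sum-square combination, then expansion with coverage factor k = 2.
u_combined = math.sqrt(sum(c ** 2 for c in contributions.values()))
U_expanded = 2.0 * u_combined

for name, contribution in contributions.items():
    print(f"{name:28s}{contribution:8.4f} %")
print(f"{'Combined standard uncertainty':28s}{u_combined:8.2f} %")
print(f"{'Expanded uncertainty (k=2)':28s}{U_expanded:8.2f} %")
```

Rounded to two decimals, the script gives 0.12% and 0.24%, matching the table. The repeatability term dominates, so it is the first place to look when the budget needs to be reduced.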

Experimental Protocols and Implementation

Step-by-Step GUM Implementation Methodology

For researchers implementing GUM principles in materials measurement studies, the following detailed protocol ensures comprehensive uncertainty analysis:

Define the Measurand: Precisely specify the parameter being measured and its units of measure [14]. For materials research, this could include Young's modulus, fracture toughness, thermal conductivity, or chemical composition percentage.

Identify Uncertainty Sources: Document all components of the measurement process and accompanying sources of error [14]. Create a cause-and-effect diagram that maps how each source influences the final result.

Quantify Uncertainty Components: For each identified source, write an expression for its uncertainty and determine its probability distribution (normal, rectangular, triangular, etc.) [14].

Calculate Standard Uncertainties: Convert each uncertainty component to a standard uncertainty using appropriate divisors based on the probability distribution [14].

Construct Uncertainty Budget: Develop a comprehensive budget listing all components, their distributions, standard uncertainties, sensitivity coefficients, and contributions to the combined uncertainty [14].

Combine and Expand: Calculate the combined standard uncertainty using the root-sum-square method, then multiply by a coverage factor (typically k=2, corresponding to a coverage probability of approximately 95% for a normally distributed result) to obtain the expanded uncertainty [14].

Advanced Measurement Systems

Modern measurement systems, particularly optical and camera-based techniques, present unique uncertainty challenges that extend beyond traditional point measurements. These systems require specialized consideration of uncertainties that are not linearly related to readings, including spatial calibration uncertainties, pixel-locking effects in digital image correlation, and variations in lighting conditions that affect measurement accuracy [15].

Essential Research Tools and Reagents

Implementation of GUM principles requires specific tools and analytical resources. The following table details key solutions for uncertainty analysis in materials measurement research:

Table: Essential Tools and Resources for Measurement Uncertainty Analysis

| Tool/Resource | Function in Uncertainty Analysis | Application Context |

|---|---|---|

| GUM Document (JCGM 100) | Primary reference for uncertainty evaluation methodology | All measurement applications requiring standardized uncertainty analysis |

| Monte Carlo Supplement (JCGM 101) | Enables propagation of distributions using computational methods | Complex measurement models where analytical methods are insufficient |

| Statistical Analysis Software | Facilitates Type A uncertainty evaluation through data analysis | Processing repeated measurement data to quantify random effects |

| Calibrated Reference Materials | Provides traceable standards for method validation | Establishing measurement accuracy and identifying systematic errors |

| Urban Institute R Package (urbnthemes) | Open-source ggplot2 theme package for consistently styled charts, including uncertainty visualizations | Preparing publication-quality charts with consistent formatting [17] |

| NIST Technical Note 1297 | Implementation guidelines for GUM approach | Adapting international standards to specific laboratory contexts [12] |

GUM in Conformity Assessment and Regulatory Contexts

The pharmaceutical and biomedical fields increasingly require rigorous uncertainty analysis for regulatory compliance and method validation. GUM principles provide the framework for establishing measurement reliability in drug development, where understanding uncertainty is critical for dosage determination, purity analysis, and clinical measurements [12].

The GUM has been adopted by numerous accreditation bodies including A2LA (American Association for Laboratory Accreditation), NVLAP (National Voluntary Laboratory Accreditation Program), and EA (European Cooperation for Accreditation), making compliance with its principles essential for international recognition of testing and calibration results [12].

The Guide to the Expression of Uncertainty in Measurement provides materials researchers with a standardized, systematic framework for quantifying and expressing measurement reliability. By implementing GUM methodologies through uncertainty budgets, sensitivity analyses, and comprehensive documentation, scientists can enhance the credibility and comparability of their research findings across international boundaries. The ongoing development of supplementary guides addresses emerging measurement challenges, ensuring the GUM framework remains relevant for advanced materials characterization techniques.

Uncertainty analysis is the process of identifying limitations in scientific knowledge and evaluating their implications for scientific conclusions. Measurement uncertainty itself is a non-negative parameter characterising the dispersion of values being attributed to a measurand, based on the information used [18]. This definition distinguishes uncertainty from 'error,' which is formally the difference between a measurement and its reference or true value [18]. In materials science research and drug development, understanding uncertainty is not merely a statistical exercise but a fundamental requirement for reliable decision-making. When comparing experimental results or ensuring regulatory compliance, properly characterized uncertainty provides the essential context for interpreting data and establishing confidence in findings.

The treatment of uncertainty varies significantly across scientific literature, ranging from simple calculations of standard deviation to fully characterized uncertainty trees rooted in fiducial reference measurements [18]. This variability poses particular challenges for materials researchers and drug development professionals who must often reconcile data from multiple sources with differing uncertainty reporting practices. Furthermore, regulatory bodies increasingly require explicit uncertainty analysis, as demonstrated by space agencies mandating per-pixel uncertainty estimates for all Essential Climate Variables they fund [18]. Similar expectations are emerging in pharmaceutical regulation, where uncertainty analysis provides reliable information for decision-making throughout the drug development lifecycle [19].

Fundamental Concepts and Definitions

Types of Uncertainty

Scientific uncertainty manifests in several distinct forms, each with different implications for data comparison and compliance:

Aleatory Uncertainty: Also known as stochastic uncertainty, this arises from natural variability in the system being measured. In materials science, this might include inherent variations in material properties due to processing conditions or microstructural heterogeneities [20].

Epistemic Uncertainty: This results from limited knowledge about the system and can theoretically be reduced through further research or improved measurements. Examples include uncertainty in model parameters or incomplete understanding of underlying physical mechanisms [20].

Parameter Uncertainty: This specifically relates to uncertainty in the input parameters of models used for simulation or prediction. For instance, in modeling ceramic impact performance, parameter uncertainty propagates through both mechanism-based and phenomenological models [20].

Uncertainty can be represented in either parametric or nonparametric ways. Parametric representations assume errors follow a known probability distribution characterized by parameters, such as 'standard uncertainty' (represented after the ± sign), which indicates the standard deviation (σ) of a normal distribution [18]. Nonparametric representations are used when the probability distribution is complex, unknown, or non-symmetric, often expressed as confidence intervals specifying a range of values corresponding to certain probabilities [18].

Uncertainty Versus Variability

A crucial distinction in uncertainty analysis is that between uncertainty and variability. Variability refers to actual differences in attributed values due to heterogeneity, diversity, or temporal changes in the system being studied. In contrast, uncertainty reflects a lack of knowledge about the true value of a quantity [19]. This distinction is particularly important in materials science, where variability in material properties due to processing conditions [20] must be distinguished from uncertainty in measuring those properties. For drug development professionals, confusing these concepts can lead to inappropriate conclusions about drug efficacy or safety.

Methodologies for Uncertainty Analysis

Structured Framework for Uncertainty Analysis

A comprehensive uncertainty analysis follows a structured framework comprising several key elements [19]:

Identifying uncertainties affecting the assessment in a structured way to minimize overlooking relevant uncertainties.

Prioritizing uncertainties within the assessment to focus detailed analysis on the most important uncertainties.

Dividing the uncertainty analysis into manageable parts when dealing with complex assessments.

Ensuring questions or quantities of interest are well-defined such that the true answer or value could be determined, at least in principle.

Characterizing uncertainty for parts of the analysis, which may be done quantitatively or qualitatively.

Combining uncertainty from different parts of the analysis when uncertainty has been quantified separately.

Characterizing overall uncertainty by expressing quantitatively the overall impact of as many identified uncertainties as possible.

Reporting uncertainty analysis clearly and unambiguously in a form compatible with decision-makers' requirements.

Quantitative Methods for Uncertainty Quantification

Several technical approaches exist for quantifying uncertainty in scientific assessments:

Monte Carlo Methods: Traditional approaches requiring repeated sampling from statistical distributions of inputs and subsequent simulation of outputs [20]. These methods are robust but computationally intensive.

Polynomial Chaos Expansion: Expansion-based methods in which the model is represented as a polynomial expanded over suitable orthogonal basis functions of the random input variables [20]. These can be more efficient than Monte Carlo methods for certain types of problems.

Neural-Network Based Surrogates: Using artificial neural networks to create surrogate models that map inputs to outputs from expensive computational models [20]. Multi-layer perceptrons (MLPs) are particularly advantageous as 'universal approximators' that can handle high-dimensional input.

Rigorous Uncertainty Quantification: Methods that compute bounds on design uncertainties with knowledge of ranges of input parameters only [20]. This approach is particularly valuable for high-risk applications where conservative estimates are required.
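As an illustration of the sampling-based approach, the sketch below propagates input distributions through the pendulum relation g = 4π²L/T² by Monte Carlo sampling. It assumes independent, normally distributed inputs; the nominal values, standard uncertainties, seed, and function names are hypothetical, and only the Python standard library is used.

```python
import math
import random
import statistics


def g_pendulum(length, period):
    """Small-angle pendulum model: g = 4 * pi^2 * L / T^2."""
    return 4 * math.pi ** 2 * length / period ** 2


def monte_carlo(model, means, stds, n_draws=50_000, seed=0):
    """Sample independent normal inputs, evaluate the model, summarise the output."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    draws = sorted(
        model(*(rng.gauss(m, s) for m, s in zip(means, stds)))
        for _ in range(n_draws)
    )
    mean = statistics.fmean(draws)
    std = statistics.stdev(draws)  # standard uncertainty of the output
    # Empirical 95% coverage interval taken from the sorted draws.
    lower = draws[int(0.025 * n_draws)]
    upper = draws[int(0.975 * n_draws) - 1]
    return mean, std, (lower, upper)


# Hypothetical inputs: L = 0.500 m (u = 0.001 m), T = 1.419 s (u = 0.005 s).
mean_g, u_g, interval = monte_carlo(g_pendulum, [0.500, 1.419], [0.001, 0.005])
print(f"g = {mean_g:.3f} m/s^2, u(g) = {u_g:.3f} m/s^2")
print(f"95% interval: [{interval[0]:.3f}, {interval[1]:.3f}] m/s^2")
```

For a nearly linear model like this one, the sampled standard deviation should agree closely with the root-sum-square result. The sampling approach becomes more useful when the model is strongly nonlinear or the output distribution is asymmetric.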

Uncertainty Propagation in Multi-Scale Modeling

In materials science, uncertainty propagation often involves multi-scale analysis, as demonstrated in impact modeling of advanced ceramics [20]. This typically involves three scales and two steps:

First Step: Connecting parameters that define mesoscopic features of the material ("materials" scale) to continuum-scale representations of sub-scale deformation mechanisms ("phenomenological" scale).

Second Step: Connecting the phenomenological representation to a performance metric deduced from "structural-scale" simulations.

This multi-scale approach is particularly relevant for materials researchers studying properties that emerge from microstructural characteristics but must be designed for macroscopic performance.

Uncertainty Propagation in Multi-Scale Modeling: This workflow illustrates how uncertainty propagates from microstructural features through computational models to final performance metrics, with formal uncertainty quantification and sensitivity analysis at key stages.

Uncertainty in Materials Research: Experimental Protocols

Protocol for Mechanism-Based Model Calibration

Advanced ceramics impact modeling demonstrates a rigorous approach to uncertainty quantification [20]:

Material Selection: Begin with a well-characterized model material system (e.g., silicon carbide for armor applications) with documented properties and processing history.

Physics-Based Modeling: Implement a validated physics-informed model (e.g., Li and Ramesh 2021 model for SiC) that incorporates statistical defect distribution, rate- and pressure-dependences, and relevant inelastic deformation mechanisms.

Parameter Mapping: Establish connections between mechanistic quantities (inputs in the physics-based model) and phenomenological representations (within an established phenomenological model like JH-2).

Surrogate Model Construction: Develop neural-network based surrogates of specific impact simulations to enable uncertainty propagation analysis across parameter sets.

Uncertainty Propagation: Quantify how uncertainty propagates from the mechanism-based model parameters to the parameters of the phenomenological model using the constructed surrogates.

Performance Metric Evaluation: Determine uncertainty in impact performance metrics from simulation-surrogates using the phenomenological model with uncertain parameters.

Sensitivity Analysis: Conduct sensitivity analysis of impact performance over the large parameter space of the mechanism-based model via the phenomenological parameters.

Data Distribution Analysis in Materials Science

Understanding where data resides within research papers is essential for comprehensive uncertainty analysis [21]:

Paper Selection: Systematically examine materials science papers to discern where key data types reside within textual content, tables, and figures.

Data Categorization: Categorize data into composition, processing conditions, characterization, and performance properties.

Interconnection Analysis: Identify cases where data types are isolated or interconnected across different sources to understand uncertainty propagation through the data ecosystem.

Annotation: Document challenges and limitations faced during the annotation process to improve future data extraction and uncertainty analysis.

This methodology highlights the importance of understanding data distribution within materials science papers, as it has profound implications for data accessibility and integration in the field.

Uncertainty in Regulatory Compliance and Drug Development

Regulatory Requirements for Uncertainty Analysis

Regulatory bodies increasingly require explicit uncertainty analysis in scientific assessments. The European Food Safety Authority (EFSA) states that "all EFSA scientific assessments must include consideration of uncertainties" [19]. This unconditional requirement means assessments must identify sources of uncertainty and characterize their overall impact on assessment conclusions, reported clearly and unambiguously in a form compatible with decision-makers' requirements.

In the pharmaceutical sector, regulatory uncertainty may arise from factors such as FDA staffing reductions, which can lead to longer review timelines for Biologics License Applications (BLAs), New Drug Applications (NDAs), and Investigational New Drug (IND) applications [22]. This operational uncertainty compounds the scientific uncertainties inherent in drug development.

Strategies for Managing Regulatory Uncertainty

Drug development professionals can employ several strategies to navigate regulatory uncertainty [22]:

Anticipate and Plan for Delays: Build extra time into clinical trial and drug approval timelines, file applications early, and engage regulatory consultants to navigate potential shifts in FDA processes.

Strengthen Global Regulatory Strategy: Consider parallel submissions with other regulatory agencies to diversify approval pathways and reduce dependence on any single agency's timeline.

Increase Communication with Regulators: Proactively engage reviewers early in the process to clarify expectations and minimize unexpected regulatory hurdles.

Strengthen Internal Compliance & Data Readiness: Ensure clinical trial data and regulatory submissions are well-prepared to reduce the need for additional review cycles.

These strategies highlight the intersection between scientific uncertainty in drug development and regulatory uncertainty in the approval process, both of which must be managed for successful product development.

Practical Applications and Worked Examples

Case Study: Sea Surface Temperature Measurements

A practical example from environmental science illustrates how uncertainty budgets provide deeper insight into dataset construction [18]. The European Space Agency Climate Change Initiative Sea Surface Temperature product provides not only total uncertainty for each measurement but also a breakdown into components with different correlation length scales:

- Uncorrelated Errors: Primarily related to instrument noise and sampling uncertainty, largest in regions with strong SST gradients.

- Synoptic-Scale Correlated Errors: Arising from errors in atmospheric correction data, correlated over weather system scales.

- Large-Scale Systematic Errors: Dominated by instrument calibration errors, consistent across the observed domain.

This case demonstrates that large uncertainties are not necessarily indicative of bad data. Filtering data based solely on uncertainty thresholds can inadvertently introduce bias by preferentially excluding regions with greater natural variability.

Worked Example: Uncertainty Propagation in Data Aggregation

Uncertainty propagation through data aggregation follows specific mathematical rules [18]. For example, when coarsening or merging data, uncertainties must be properly combined. If combining n measurements x₁, x₂, ..., xₙ with associated standard uncertainties u₁, u₂, ..., uₙ, the uncertainty of the mean is given by:

u(mean) = √(∑(uᵢ²)) / n

This formula assumes the uncertainties are uncorrelated. For correlated uncertainties, additional covariance terms must be included. Worked examples of such calculations are essential for researchers applying uncertainty analysis to their specific datasets.
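A short Python sketch of both cases: the uncorrelated formula, and a general form that adds the covariance terms through a correlation matrix. The function names and the example values are hypothetical. The correlated version assumes the correlation coefficients between errors are known, which in practice is often the hardest part.

```python
import math


def u_mean_uncorrelated(u):
    """Uncertainty of an unweighted mean when the errors are uncorrelated."""
    return math.sqrt(sum(ui ** 2 for ui in u)) / len(u)


def u_mean_correlated(u, r):
    """Uncertainty of an unweighted mean with error correlations.

    r[i][j] is the correlation coefficient between errors i and j (r[i][i] = 1).
    """
    n = len(u)
    variance = sum(
        u[i] * u[j] * r[i][j] for i in range(n) for j in range(n)
    ) / n ** 2
    return math.sqrt(variance)


# Four hypothetical measurements, each with standard uncertainty 0.2.
u = [0.2, 0.2, 0.2, 0.2]
independent = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
fully_correlated = [[1.0] * 4 for _ in range(4)]

print(u_mean_uncorrelated(u))                  # averaging reduces the uncertainty
print(u_mean_correlated(u, independent))       # same result as the uncorrelated formula
print(u_mean_correlated(u, fully_correlated))  # averaging gives no reduction
```

The two limiting cases show why the correlation structure matters: fully correlated (systematic) errors do not average down, whereas independent errors shrink with the square root of the number of measurements.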

Table 1: Essential Resources for Materials Data and Uncertainty Analysis

| Resource Name | Resource Type | Key Features | Application in Uncertainty Analysis |

|---|---|---|---|

| ASM Handbooks Online | Reference Database | Extensive engineering and property data for many metals and non-metallic materials [23] | Provides reference data for uncertainty comparison |

| Springer Materials | Evaluated Data Collection | Compilation of critically evaluated materials science data, from thermodynamics to physical properties [23] | Offers pre-evaluated data with quality indicators |

| Data Citation Index | Data Repository Index | Locates quality data sets across disciplines, displaying data within broader research context [23] | Enables assessment of data provenance and reliability |

| Knovel E-Books | Engineering Reference | Supports property searching and interactive equations [23] | Facilitates uncertainty calculations through tools |

| NIST Chemistry WebBook | Chemical Property Database | Chemical & physical property data for thousands of compounds [23] | Provides certified reference data for uncertainty assessment |

| ASTM Standards | Standards Database | Standard test methods and specifications [23] | Establishes standardized measurement protocols |

| EFSA Uncertainty Analysis Guidance | Methodology Framework | Guidance on characterising, documenting and explaining uncertainties [19] | Provides structured approach to uncertainty analysis |

Computational Tools for Uncertainty Quantification

Table 2: Computational Methods for Uncertainty Quantification

| Method Category | Specific Methods | Strengths | Limitations |

|---|---|---|---|

| Sampling-Based | Monte Carlo Methods [20] | Robust, widely applicable | Computationally intensive for complex models |

| Expansion-Based | Polynomial Chaos Expansion [20] | More efficient than Monte Carlo for certain problems | Requires specialized implementation |

| Surrogate Models | Neural-Network Based Surrogates [20] | Handles high-dimensional input; universal approximators | Requires training data; potential overfitting |

| Rigorous Bounds | Optimal Uncertainty Quantification [20] | Provides conservative estimates for high-risk applications | May yield overly conservative results |

Visualization Techniques for Uncertainty Communication

Uncertainty-Aware Workflow Diagram

Uncertainty-Aware Data Analysis Workflow: This workflow integrates uncertainty identification, prioritization, and propagation throughout the data analysis process, ensuring uncertainties are properly considered in final decision-making.

Best Practices for Uncertainty Presentation

Effective communication of uncertainty information follows several key principles [18]:

Explicit Representation: Always include uncertainty estimates alongside reported values, using either standard uncertainty (± notation) or confidence intervals.

Appropriate Precision: Report uncertainties with appropriate significant figures, typically no more than two digits.

Contextual Explanation: Provide sufficient methodological detail to help users interpret the uncertainty information correctly.

Visual Clarity: Use visualization techniques that clearly represent uncertainty, such as error bars, probability distributions, or uncertainty maps.

Transparency About Limitations: Acknowledge incomplete uncertainty budgets while emphasizing they still add value to observations.
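The precision guideline above can be automated. The following Python sketch rounds a standard uncertainty to two significant figures and rounds the reported value to the same decimal place. The function name `format_with_uncertainty` and the example numbers are hypothetical, and the sketch uses Python's built-in rounding rather than any metrology-specific convention.

```python
import math


def format_with_uncertainty(value, u, sig_figs=2):
    """Format 'value ± u' with u rounded to sig_figs significant figures."""
    if u <= 0:
        raise ValueError("standard uncertainty must be positive")
    decimals = sig_figs - 1 - math.floor(math.log10(u))
    u_rounded = round(u, decimals)
    # Rounding can push u into the next decade (for example 0.0996 -> 0.10),
    # so recompute the decimal place from the rounded uncertainty.
    decimals = sig_figs - 1 - math.floor(math.log10(u_rounded))
    u_rounded = round(u, decimals)
    value_rounded = round(value, decimals)
    places = max(decimals, 0)  # no decimal places when rounding to tens or more
    return f"{value_rounded:.{places}f} ± {u_rounded:.{places}f}"


print(format_with_uncertainty(9.80665, 0.01234))  # 9.807 ± 0.012
print(format_with_uncertainty(1234.6, 12.3))      # 1235 ± 12
```

Rounding the value to the same decimal place as the uncertainty avoids implying more precision than the measurement supports.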

Uncertainty analysis is not merely a technical requirement but a fundamental aspect of scientific rigor that enables meaningful data comparison and regulatory compliance. For materials researchers and drug development professionals, a systematic approach to identifying, quantifying, and propagating uncertainties provides the necessary foundation for reliable decision-making. By implementing the methodologies, tools, and visualization techniques outlined in this guide, scientists can enhance the reliability of their conclusions and more effectively navigate both scientific and regulatory challenges. As uncertainty analysis continues to evolve, its integration throughout the research lifecycle will remain essential for advancing materials science and ensuring the safety and efficacy of pharmaceutical products.

Modern Methods for Uncertainty Quantification: From GUM to Machine Learning

In the domain of materials measurements research, particularly in pharmaceutical development, the completeness of a quantitative result is fundamentally dependent on a rigorous statement of its associated uncertainty. The International Organization for Standardization (ISO) laboratory standard, ISO 15189, mandates that pathology laboratories provide estimates of measurement uncertainty for all quantitative test results, a principle that extends directly to materials science and drug development [24]. A measurement result is considered metrologically incomplete if it lacks an interval characterizing the dispersion of values that could reasonably be attributed to the measurand—the quantity intended to be measured [25]. The Guide to the Expression of Uncertainty in Measurement (GUM), established by the Joint Committee for Guides in Metrology (JCGM), provides the globally recognized framework for evaluating and expressing this uncertainty [24] [25]. This guide delineates two primary methods for uncertainty evaluation: Type A and Type B. These classifications do not indicate different natures of the underlying uncertainty components but rather denote the two distinct methodologies for their evaluation [26]. For researchers and scientists, a proficient understanding of these methods is not merely academic; it is essential for asserting the reliability, traceability, and fitness-for-purpose of measurement data upon which critical decisions in research and development are based.

Core Concepts: Uncertainty, Error, and the Measurand

Distinguishing Between Uncertainty and Error

A fundamental precept in modern metrology is the clear distinction between "error" and "uncertainty." These terms are often used interchangeably in casual discourse but possess critically different meanings [24].

- Measurement Error: Defined as the difference between a measured quantity value and a reference quantity value (often considered the "true" value). Error is, in principle, a single signed value; if it were known exactly, it could be corrected.

- Measurement Uncertainty: A non-negative parameter that characterizes the dispersion of the quantity values being attributed to a measurand. It is an estimate of the possible range of values within which the true value is believed to lie, with a given level of confidence. Unlike error, uncertainty cannot be corrected; it can only be quantified and managed [24] [25].

The GUM procedure operates on the principle that all recognized significant systematic errors (biases) have been corrected, and the remaining uncertainty associated with these corrections, along with all random errors, is what is quantified and combined [24].

Defining the Measurand

The measurand is the specific quantity subject to measurement. A precise definition of the measurand is crucial, as it must encompass the specific measurement system and the conditions under which the measurement is performed [24]. For instance, in materials research, "the tensile strength of Polymer X, measured according to ASTM D638 using a specific universal testing machine at 23°C," defines a measurand more completely than simply "tensile strength." This specificity ensures that the uncertainty evaluation is relevant and correctly scoped.

Type A Evaluation of Uncertainty

Definition and Principle

Type A evaluation of uncertainty is defined as the method of evaluation by a statistical analysis of measured quantity values obtained under defined measurement conditions [26] [24]. In essence, it involves deriving an uncertainty estimate from a series of repeated observations of the same measurand, thereby characterizing the observed frequency distribution. A Type A standard uncertainty is obtained from a probability density function derived from this observed frequency distribution [26].

Standard Evaluation Methodology

The standard methodology for a basic Type A evaluation involves calculating three key statistical parameters from a series of n repeated observations. The following protocol outlines this process for a typical repeatability test in a materials laboratory.

Experimental Protocol 1: Single Repeatability Test

- Objective: To quantify the random uncertainty (repeatability) component of a measurement system.

- Procedure:

  - Under identical conditions (same instrument, operator, location, and short time interval), perform n independent measurements (x₁, x₂, ..., xₙ) of the same measurand.

  - Ensure the measurement process is stable and the sample is homogeneous.

  - Record all individual measurement values.

- Data Analysis:

  - Calculate the arithmetic mean (x̄), which serves as the best estimate of the measurand's value.

  - Calculate the standard deviation (s), which quantifies the dispersion of the individual observations.

  - Calculate the standard uncertainty (u), which is the standard deviation of the mean.

  - Determine the degrees of freedom (ν), which represent the number of independent pieces of information available to estimate the uncertainty.

The calculations for these key parameters are summarized in Table 1.

Table 1: Statistical Formulas for Type A Evaluation

| Parameter | Formula | Description |

|---|---|---|

| Arithmetic Mean | x̄ = (Σx_i)/n | The central value or average of the measurement series. |

| Standard Deviation | s = √[Σ(x_i - x̄)²/(n-1)] | A measure of the dispersion of the data set around the mean. |

| Standard Uncertainty (u) | u = s/√n | The standard uncertainty of the mean value itself. |

| Degrees of Freedom (ν) | ν = n - 1 | The number of independent values in the calculation of the standard deviation. |
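The four formulas in the table map directly onto Python's standard library. The sketch below is illustrative only; the function name `type_a_evaluation` and the five readings are hypothetical.

```python
import math
import statistics


def type_a_evaluation(observations):
    """Return mean, sample standard deviation, standard uncertainty, and degrees of freedom."""
    n = len(observations)
    if n < 2:
        raise ValueError("at least two observations are needed")
    mean = statistics.fmean(observations)
    s = statistics.stdev(observations)  # sample standard deviation, n - 1 denominator
    u = s / math.sqrt(n)                # standard deviation of the mean
    return {"mean": mean, "s": s, "u": u, "dof": n - 1}


# Hypothetical repeatability readings (arbitrary units).
readings = [10.1, 10.3, 9.9, 10.2, 10.0]
result = type_a_evaluation(readings)
print(f"mean = {result['mean']:.3f}, s = {result['s']:.4f}, "
      f"u = {result['u']:.4f}, dof = {result['dof']}")
```

Note that `statistics.stdev` uses the n - 1 denominator, matching the table, whereas `statistics.pstdev` divides by n and would underestimate the dispersion for small samples.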

Advanced Evaluation: Pooled Variance

For measurement systems that are monitored over time, a more robust estimate of repeatability can be obtained by combining data from multiple experiments. This is achieved using the method of pooled variance.

Experimental Protocol 2: Multiple Repeatability Tests

- Objective: To establish a reliable, long-term estimate of a measurement system's repeatability uncertainty.

- Procedure:

  - Conduct k separate repeatability tests (e.g., monthly), each with nᵢ measurements.

  - For each test i, calculate the standard deviation sᵢ.

- Data Analysis:

  - Calculate the pooled standard deviation (s_pooled), which provides a combined estimate of variability across all experiments.

The formula for the pooled standard deviation is:

s_pooled = √[Σ(ν_i * s_i²) / Σν_i] where ν_i = n_i - 1

The standard uncertainty is then u = s_pooled / √n for a future measurement based on n observations.
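
As a rough sketch of Protocol 2, the pooled standard deviation can be computed by weighting each test's variance by its degrees of freedom. The function name `pooled_std` and the monthly values below are hypothetical, chosen only to make the arithmetic easy to follow.

```python
import math


def pooled_std(std_devs, sizes):
    """Pool standard deviations s_i from k tests with n_i observations each.

    Returns (s_pooled, nu_pooled), where nu_pooled is the sum of the
    individual degrees of freedom nu_i = n_i - 1.
    """
    if len(std_devs) != len(sizes):
        raise ValueError("std_devs and sizes must have the same length")
    dof = [n - 1 for n in sizes]
    if any(v < 1 for v in dof):
        raise ValueError("each test needs at least two observations")
    weighted_variance = sum(v * s**2 for v, s in zip(dof, std_devs))
    nu_pooled = sum(dof)
    return math.sqrt(weighted_variance / nu_pooled), nu_pooled


# Hypothetical standard deviations from three monthly tests of five readings.
monthly_s = [0.10, 0.20, 0.20]
monthly_n = [5, 5, 5]
s_pooled, nu_pooled = pooled_std(monthly_s, monthly_n)

# Standard uncertainty of a future result reported as the mean of 3 readings.
u_future = s_pooled / math.sqrt(3)
print(f"s_pooled = {s_pooled:.4f}, nu = {nu_pooled}, u = {u_future:.4f}")
```

Pooling variances rather than averaging the standard deviations directly is what the formula above prescribes, and it gives tests with more observations proportionally more weight.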

Type B Evaluation of Uncertainty

Definition and Principle

Type B evaluation of uncertainty is determined by means other than a Type A evaluation. It is an evaluation based on available knowledge and evidence [26] [24]. This knowledge can come from a variety of sources, including:

- Previous measurement data

- Manufacturer's specifications

- Calibration certificates

- Data from handbooks and reference standards

- The scientific literature

Unlike Type A, a Type B standard uncertainty is obtained from an assumed probability density function (PDF) based on the degree of belief that an event will occur, often called subjective probability [26]. The choice of the appropriate PDF is a critical step in a Type B evaluation.

Standard Evaluation Methodology

The methodology for Type B evaluation involves a systematic process of identifying non-statistical uncertainty sources, selecting appropriate probability distributions, and converting the source information into a standard uncertainty. The core of this evaluation lies in dividing the estimated bounds (±a) of the value by a distribution-specific divisor.

Table 2: Type B Evaluation: Common Probability Distributions

| Distribution Type | Scenario / Use Case | Divisor | Standard Uncertainty (u) | Degrees of Freedom (ν) |
|---|---|---|---|---|
| Rectangular (Uniform) | Manufacturer's tolerance, digital resolution, data quantization. Assumes equal probability of the value lying anywhere within ±a. | √3 | u = a / √3 | Often considered infinite |
| Triangular | Used when values near the center of the range are more likely than those near the extremes. | √6 | u = a / √6 | Often considered infinite |
| Normal (Gaussian) | Uncertainty derived from a calibration certificate reporting an expanded uncertainty with a stated coverage factor k (e.g., k=2). | k | u = U / k | Taken from the certificate |

Experimental Protocol 3: Type B Evaluation from a Calibration Certificate

- Objective: To determine the standard uncertainty associated with the calibration of a reference material or instrument.

- Procedure:

  - Obtain the calibration certificate for the standard.

  - Identify the expanded uncertainty (U) and its coverage factor (k), which is typically 2 for a confidence level of approximately 95%.

- Data Analysis:

  - Calculate the standard uncertainty as u = U / k.

Experimental Protocol 4: Type B Evaluation from Manufacturer's Specification

- Objective: To determine the standard uncertainty associated with an instrument's tolerance or resolution.

- Procedure:

  - Identify the manufacturer's stated tolerance limit (e.g., ±L).

  - Determine the most appropriate probability distribution. For a maximum bound without further information, a rectangular distribution is typically used.

- Data Analysis:

  - For a rectangular distribution, the standard uncertainty is u = L / √3.
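
Protocols 3 and 4 both reduce to dividing a stated value by the appropriate divisor from Table 2. The following is a minimal sketch of that conversion; the helper names and the numerical inputs are hypothetical and serve only as an illustration.

```python
import math

# Divisors from Table 2 for bounds expressed as a half-width (±a).
DIVISORS = {
    "rectangular": math.sqrt(3),
    "triangular": math.sqrt(6),
}


def type_b_from_bounds(half_width, distribution="rectangular"):
    """Convert symmetric bounds ±half_width into a standard uncertainty."""
    if distribution not in DIVISORS:
        raise ValueError(f"unsupported distribution: {distribution}")
    return half_width / DIVISORS[distribution]


def type_b_from_certificate(expanded_uncertainty, k=2.0):
    """Convert a certificate's expanded uncertainty U into u = U / k."""
    if k <= 0:
        raise ValueError("coverage factor k must be positive")
    return expanded_uncertainty / k


# Hypothetical inputs: a certificate stating U = 0.04 with k = 2, and a
# manufacturer's tolerance of ±0.05 with no further information.
u_cal = type_b_from_certificate(0.04, k=2)
u_tol = type_b_from_bounds(0.05, "rectangular")
u_tri = type_b_from_bounds(0.06, "triangular")
print(f"u_cal = {u_cal:.4f}, u_tol = {u_tol:.4f}, u_tri = {u_tri:.4f}")
```

The coverage factor should always be read from the certificate itself; the default of 2 here is only a convenience for the common case.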

Comparative Analysis and Combined Uncertainty

Synthesis of Differences

The practical application of uncertainty analysis requires a clear understanding of the distinctions between Type A and Type B methods. Table 3 provides a structured comparison to guide researchers in selecting the appropriate evaluation method.

Table 3: Comparative Analysis: Type A vs. Type B Evaluation

| Feature | Type A Evaluation | Type B Evaluation |
|---|---|---|
| Basis of Evaluation | Statistical analysis of repeated observations [26]. | Available knowledge and scientific judgment [26]. |
| Source of Data | Current, internal measurement data. | Historical data, certificates, handbooks, manufacturer specs. |
| Probability Distribution | Observed frequency distribution (often normal). | Assumed based on knowledge (rectangular, triangular, normal, etc.). |
| Primary Method | Calculation of mean, standard deviation, and standard uncertainty of the mean. | Application of distribution divisor to estimated bounds. |
| Resource Intensity | Can be resource-intensive (time, materials). | Generally less resource-intensive. |
| Objectivity Perception | Often perceived as more "objective." | Requires expert judgment, sometimes perceived as "subjective." |

The Combined Standard Uncertainty

In a real-world measurement, multiple uncertainty sources, both Type A and Type B, typically contribute to the overall uncertainty of the measurand y. The GUM provides a framework for combining these components into a combined standard uncertainty, denoted u_c(y). For a measurand that is a function of several independent input quantities, y = f(x₁, x₂, ..., x_N), the combined standard uncertainty is calculated using the law of propagation of uncertainty. If the input quantities are uncorrelated, the formula is:

u_c(y) = √[ Σ( (∂f/∂x_i)² * u²(x_i) ) ]

Where (∂f/∂x_i) is the sensitivity coefficient that describes how the output estimate y varies with changes in the input estimate x_i, and u(x_i) is the standard uncertainty associated with x_i.
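
To make the propagation formula concrete, the sketch below estimates each sensitivity coefficient numerically with a central difference and then combines the contributions in quadrature. It assumes uncorrelated inputs, as in the formula above. The function name, the step-size choice, and the density model ρ = m / V are hypothetical illustrations rather than part of the GUM.

```python
import math


def combined_standard_uncertainty(f, x, u, rel_step=1e-6):
    """Apply the law of propagation of uncertainty for uncorrelated inputs.

    f: measurement model taking the input estimates as positional arguments
    x: list of input estimates x_i
    u: list of standard uncertainties u(x_i)
    Returns (u_c, coeffs), where coeffs holds the sensitivity coefficients
    approximated by central finite differences.
    """
    coeffs = []
    variance = 0.0
    for i, (xi, ui) in enumerate(zip(x, u)):
        h = abs(xi) * rel_step or rel_step  # fall back if x_i is zero
        upper = list(x)
        lower = list(x)
        upper[i] = xi + h
        lower[i] = xi - h
        c = (f(*upper) - f(*lower)) / (2 * h)
        coeffs.append(c)
        variance += (c * ui) ** 2
    return math.sqrt(variance), coeffs


# Hypothetical model: density from mass and volume, rho = m / V.
def density(m, v):
    return m / v


u_c, c = combined_standard_uncertainty(density, [20.0, 8.0], [0.02, 0.04])
print(f"c_m = {c[0]:.4f}, c_V = {c[1]:.4f}, u_c = {u_c:.5f}")
```

For simple models the partial derivatives are easy to write analytically; the numerical approach is mainly useful when the model is long or changes often.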

The Scientist's Toolkit: Essential Reagents and Materials for Uncertainty Evaluation

The practical implementation of uncertainty evaluation requires both physical tools and conceptual frameworks. The following table details key "research reagents" and resources essential for robust uncertainty analysis in a materials or drug development laboratory.

Table 4: Essential Toolkit for Measurement Uncertainty Evaluation

| Item / Solution | Function in Uncertainty Analysis |
|---|---|
| Certified Reference Materials (CRMs) | Provide a traceable reference value with a stated uncertainty. Used to evaluate measurement bias (trueness) and its associated uncertainty component. |
| Calibrated Instrumentation | Equipment with valid calibration certificates provides the foundation for Type B uncertainty evaluations related to the measurement standard itself. |
| Stable, Homogeneous Control Material | An essential material for conducting Type A repeatability and reproducibility studies over time, enabling the calculation of pooled standard deviations. |
| Statistical Software Package | Facilitates the computation of means, standard deviations, ANOVA, and the combination of uncertainty components according to the GUM framework. |
| GUM (JCGM 100:2008) & VIM | The foundational reference documents that provide the definitions, principles, and methodologies for a consistent and internationally accepted uncertainty evaluation. |
| Uncertainty Budget Template | A structured spreadsheet or document used to systematically list, quantify, and combine all significant uncertainty components (both Type A and Type B). |

Application in Materials and Drug Development Research

In materials measurements research, the classification and evaluation of uncertainty sources are paramount. For instance, determining the concentration of an active pharmaceutical ingredient (API) in a complex formulation involves multiple potential uncertainty sources. A Type A component would arise from the repeatability of the chromatographic peak area measurement (e.g., HPLC). Type B components would include the uncertainty of the CRM used for calibration, the uncertainty in the purity of the internal standard, and the volumetric tolerance of the glassware used for sample preparation.

Adopting a systematic approach to classifying and evaluating these uncertainties as either Type A or Type B allows researchers to construct a comprehensive uncertainty budget. This budget not only provides a quantitative assurance of result quality but also identifies which components contribute most significantly to the overall uncertainty, thereby guiding efforts for methodological improvement. This rigorous practice ensures that data generated in materials and drug development is not just precise, but also metrologically sound, traceable, and fit for its intended purpose, whether that is formulation optimization, quality control, or regulatory submission.

In materials measurements research, from advanced nanomaterials to pharmaceutical development, the quantification of measurement reliability is as critical as the measurement result itself. An uncertainty budget provides the formal, structured framework for this quantification. It is an itemized table of all components that contribute to the doubt about a measurement result, providing a systematic method for combining them into a single, comprehensive statement of uncertainty [27]. For researchers and drug development professionals, mastering this framework is essential for validating methods, supporting regulatory submissions, and making high-consequence decisions based on experimental data. This guide details the construction, calculation, and practical application of uncertainty budgets within a materials research context.

Core Concepts and Definitions

- Measurand: The particular quantity subject to measurement [28]. In materials research, this could be the concentration of an active pharmaceutical ingredient (API), the thickness of a coating, or the hardness of a metal alloy.

- Measurement Uncertainty: A non-negative parameter characterizing the dispersion of the quantity values attributed to a measurand [28]. It is a quantitative indication of the quality of a measurement.

- Uncertainty Budget: A statement of the complete uncertainty analysis, typically presented in a table that lists all identified sources of uncertainty, their quantified magnitudes, sensitivity coefficients, probability distributions, and the method for combining them [27].

- Standard Uncertainty (u(x_i)): The uncertainty of a measurement result expressed as a standard deviation [29].

- Combined Standard Uncertainty (u_c(y)): The standard uncertainty of the final result (y), obtained by combining the individual standard uncertainties, each weighted by its sensitivity coefficient, using the law of propagation of uncertainty. For uncorrelated inputs this is a root sum of squares (RSS) [27] [29].

- Expanded Uncertainty (U): The final product of the uncertainty analysis, which defines an interval about the measurement result that may be expected to encompass a large fraction of the values that could reasonably be attributed to the measurand. It is calculated by multiplying the combined standard uncertainty by a coverage factor (k), U = k · u_c(y), typically with k = 2 for a confidence level of approximately 95% [27] [30].
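
The definitions above can be tied together in a small budget calculation. The sketch below is only an illustration: the three components, their magnitudes, and the function name `summarise_budget` are hypothetical, and all inputs are assumed to be uncorrelated so that a root sum of squares applies.

```python
import math

# Each row: (source, evaluation type, standard uncertainty u(x_i),
# sensitivity coefficient c_i). All values are hypothetical.
budget = [
    ("Repeatability", "A", 0.030, 1.0),
    ("Reference standard", "B", 0.020, 1.0),
    ("Instrument resolution", "B", 0.005 / math.sqrt(3), 1.0),
]


def summarise_budget(rows, k=2.0):
    """Return (u_c, U, shares) for a budget of uncorrelated components.

    u_c:    combined standard uncertainty (root sum of squares)
    U:      expanded uncertainty, k * u_c
    shares: fraction of the combined variance due to each source
    """
    variances = {name: (c * u) ** 2 for name, _, u, c in rows}
    total = sum(variances.values())
    u_c = math.sqrt(total)
    shares = {name: v / total for name, v in variances.items()}
    return u_c, k * u_c, shares


u_c, U, shares = summarise_budget(budget, k=2.0)
print(f"u_c = {u_c:.4f}, U (k=2) = {U:.4f}")
for name, share in shares.items():
    print(f"  {name}: {share:.1%} of combined variance")
```

Reporting each source as a share of the combined variance makes it easy to see which component dominates and therefore where improvement effort is best spent.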

A Step-by-Step Methodology for Budget Construction

The process of creating a robust uncertainty budget can be broken down into a sequence of deliberate steps.

Step 1: Specify the Measurement Process and Equation

The foundation of a valid uncertainty budget is a clear definition of the measurement. This requires documenting what is being measured (the measurand), the specific method or procedure used, the equipment involved, and the relevant measurement range [31]. Crucially, the mathematical model relating the input quantities to the final result must be established.

For a calibration laboratory, this might be straightforward, such as following a standard like ISO 6789 for torque wrenches [31]. For a materials test lab, the process can be more complex, potentially involving multiple sub-measurements and a derived formula. For instance, determining the tensile strength of a polymer sample involves a formula like σ = F / A, where F is the measured force and A is the cross-sectional area of the specimen. This formula immediately identifies force and area as key input quantities for the uncertainty analysis.
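
As a worked illustration of how the measurement equation drives the analysis, the sensitivity coefficients for σ = F / A follow directly from its partial derivatives. The derivation below assumes that F and A are uncorrelated, which is an assumption made here for illustration and should be checked for a real test setup.

```latex
\begin{aligned}
\sigma &= \frac{F}{A}, \qquad
c_F = \frac{\partial \sigma}{\partial F} = \frac{1}{A}, \qquad
c_A = \frac{\partial \sigma}{\partial A} = -\frac{F}{A^{2}} \\[4pt]
u_c(\sigma) &= \sqrt{\left(\frac{1}{A}\right)^{2} u^{2}(F)
  + \left(\frac{F}{A^{2}}\right)^{2} u^{2}(A)} \\[4pt]
\frac{u_c(\sigma)}{\sigma} &= \sqrt{\left(\frac{u(F)}{F}\right)^{2}
  + \left(\frac{u(A)}{A}\right)^{2}}
\end{aligned}
```

The last line shows a convenient property of quotient models: the relative uncertainties of the inputs combine in quadrature to give the relative uncertainty of the result.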

Step 2: Identify the Sources of Uncertainty

Next, a systematic search for all possible uncertainty contributors must be conducted. A "cause and effect" diagram is an excellent tool for this purpose. For a typical material measurement, sources can be broadly categorized as follows [28]:

- Imprecision (Random Effects): Variability observed in repeated measurements under similar conditions, quantified through standard deviations. This includes within-run and between-day imprecision [28].

- Bias (Systematic Effects): A constant or predictable offset in the measurement result. This must be corrected for, and the uncertainty of the correction itself becomes a component in the budget. Bias is often evaluated through proficiency testing or by comparing against a reference method [28].

- Reference Standards and Calibrators: The uncertainty inherent in the reference materials or calibrators used, as stated in their certificates [28].

- Environmental Factors: Variations in temperature, humidity, and other ambient conditions that can influence the measurement outcome.

- Sample-Related Factors: For biological or pharmaceutical materials, within-subject biological variation can be a significant source of uncertainty [28]. For physical materials, sample homogeneity is a critical factor.

Step 3: Quantify the Uncertainty Components