QIIME vs. Mothur: A Comprehensive Guide to Choosing and Optimizing Your 16S rRNA Bioinformatics Pipeline

This article provides a foundational to advanced overview of the two predominant bioinformatics pipelines, QIIME and mothur, for 16S rRNA amplicon data analysis.

QIIME vs. Mothur: A Comprehensive Guide to Choosing and Optimizing Your 16S rRNA Bioinformatics Pipeline

Abstract

This article provides a foundational to advanced overview of the two predominant bioinformatics pipelines, QIIME and mothur, for 16S rRNA amplicon data analysis. Tailored for researchers, scientists, and drug development professionals, it explores the core principles and appropriate use-cases for each tool. The content delivers detailed methodological workflows, addresses common troubleshooting and optimization challenges, and synthesizes evidence from recent comparative studies to validate pipeline selection. By integrating practical guidance with current research findings, this guide aims to empower users to generate robust, reproducible, and biologically meaningful microbiome data for biomedical and clinical applications.

Understanding 16S rRNA Analysis and the Key Players: QIIME and Mothur

16S ribosomal RNA (rRNA) gene sequencing is a cornerstone molecular method for identifying and classifying bacteria and archaea within complex biological samples. The 16S rRNA gene is approximately 1500 base pairs long and contains nine hypervariable regions (V1-V9) interspersed between conserved regions. This genetic structure makes it an ideal target for phylogenetic studies: the conserved regions enable amplification with universal primers, while the variable regions provide the sequence diversity necessary for taxonomic differentiation [1] [2].

This culture-free approach has revolutionized microbial ecology by allowing researchers to characterize microbial communities that are difficult or impossible to study using traditional laboratory cultivation methods. In biomedical research, 16S rRNA sequencing provides insights into the composition of human-associated microbiota and their roles in health and disease, making it fundamental to understanding the human microbiome [3] [1].

Sequencing Technology Comparisons

The selection of sequencing technology significantly influences the scope and resolution of a 16S rRNA study. The table below compares the key characteristics of the primary platforms used in modern research.

Table 1: Comparison of 16S rRNA Gene Sequencing Platforms

| Sequencing Platform | Read Type | Targeted Region | Typical Taxonomic Resolution | Key Advantages | Primary Considerations |

|---|---|---|---|---|---|

| Illumina MiSeq | Short-read (∼300 bp) | Single hypervariable regions (e.g., V3-V4) | Genus-level | High accuracy (Q30+), low per-read cost | Limited resolution for closely related species |

| Oxford Nanopore (ONT) | Long-read (Full-length) | V1-V9 (∼1500 bp) | Species-level | Real-time sequencing, portable devices, lower instrument cost | Higher raw error rate, though improved with recent chemistry [4] |

| PacBio SMRT | Long-read (Full-length) | V1-V9 (∼1500 bp) | Species-level | High-fidelity (HiFi) reads, high single-read accuracy | Higher cost per sample for equivalent read depth [5] |

Multiple studies have demonstrated that full-length 16S rRNA sequencing improves species-level classification. One study on human microbiome samples showed that while Illumina (V3-V4) and PacBio (V1-V9) assigned a similar percentage of reads to the genus level (∼95%), PacBio assigned a significantly higher proportion to the species level (74.14% vs. 55.23%) [5]. Similarly, Oxford Nanopore's full-length sequencing has been shown to identify more specific bacterial biomarkers for conditions like colorectal cancer compared to Illumina's V3-V4 approach [4].

Experimental Protocol: From Sample to Data

A standard 16S rRNA gene sequencing workflow involves multiple critical steps, from sample collection to sequencing, each of which can impact the final results.

Sample Preparation and DNA Extraction

- Sample Collection: Samples (e.g., feces, saliva, tissue) must be collected with strict adherence to sterility to prevent contamination. Immediate freezing at -20°C or -80°C is crucial to preserve microbial composition. For temporary storage, samples can be held at 4°C or in preservation buffers [1].

- DNA Extraction: This process involves cell lysis (chemical and mechanical), precipitation of DNA using salt and alcohol, and final purification to remove contaminants. The choice of DNA extraction kit should be appropriate for the sample type, as this can significantly impact sequencing results [1].

Library Preparation and Sequencing

- PCR Amplification: The extracted DNA is amplified using primers that target specific hypervariable regions of the 16S rRNA gene. The choice of primer pair and the targeted region (e.g., V3-V4 vs. V1-V9) is a key experimental decision that influences which taxa are detected [1] [5].

- Barcoding and Clean-up: For multiplexing, unique molecular barcodes are added to samples during a second PCR. The amplified DNA is then cleaned, typically with magnetic beads, to remove impurities and select for the correct fragment size [1].

- Sequencing: The final library is loaded onto a sequencer (Illumina, Nanopore, or PacBio) for high-throughput analysis [1].

The following diagram illustrates the complete wet-lab workflow:

Bioinformatics Pipelines for Data Analysis

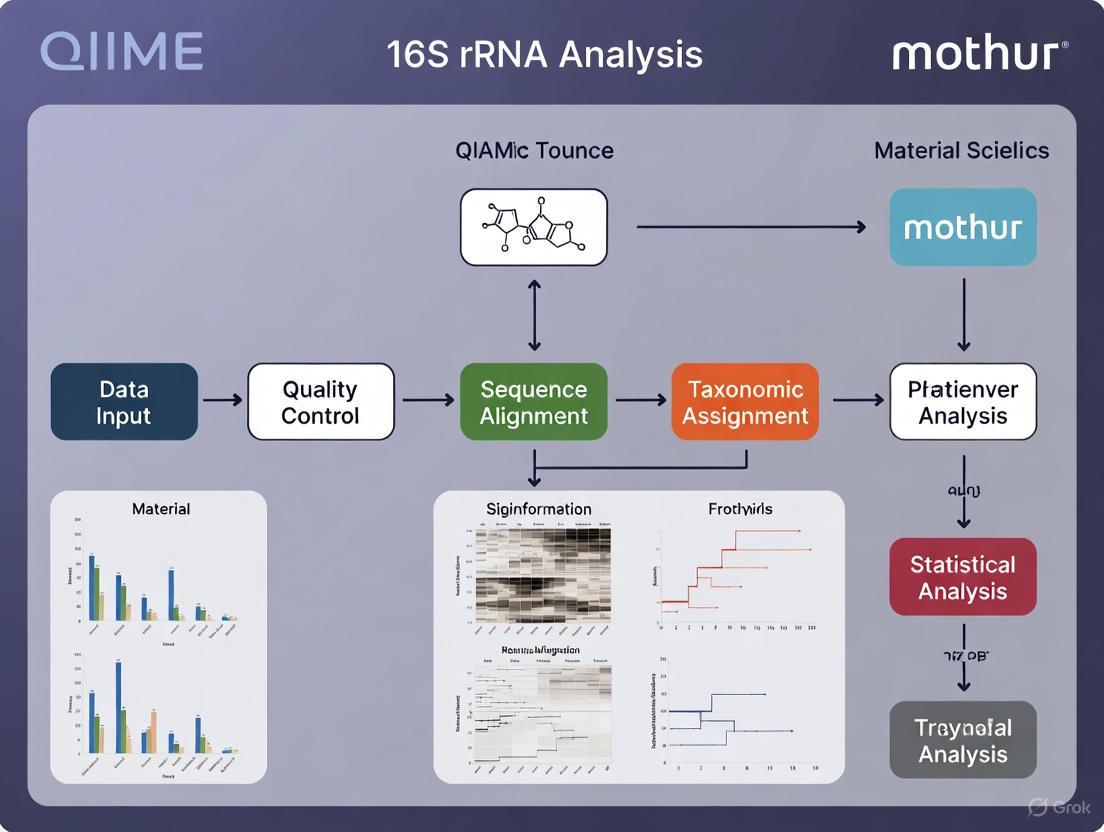

The raw sequencing data must be processed through a bioinformatics pipeline to generate actionable biological insights. Two of the most established pipelines are QIIME and mothur.

Both QIIME and mothur follow a similar core workflow for processing 16S rRNA amplicon data, though their underlying implementations and philosophies differ.

QIIME vs. Mothur: A Comparative Analysis

The choice between QIIME and mothur can influence results, particularly for low-abundance taxa.

Table 2: Comparison of QIIME and Mothur Bioinformatics Pipelines

| Feature | QIIME | Mothur |

|---|---|---|

| Primary Language | Python (wrapper for external tools) [6] | C/C++ (standalone compiled code) [6] |

| Development Strategy | Integrates multiple independent tools [6] | Self-contained; most tools reimplemented in C++ [6] |

| Installation | Can be complex due to dependencies [6] | Straightforward; single executable [6] |

| Performance | Slower for computationally intensive tasks (e.g., alignment) [6] | Faster execution for core algorithms (e.g., 21.9x faster alignment) [6] |

| Database Influence | With GreenGenes, assigned fewer OTUs and genera for RA < 10% [7] [8] | With GreenGenes, higher richness, more genera detected for RA < 10% [7] [8] |

| Recommendation | SILVA database attenuates differences between pipelines [7] [8] | SILVA database is preferred for comparable results with QIIME [7] [8] |

A study on rumen microbiota found that while both tools showed a high correlation (>0.99) for the relative abundance of major genera, mothur detected a larger number of Operational Taxonomic Units (OTUs) and genera, especially for low-abundance microorganisms (relative abundance < 10%) when using the GreenGenes database. These differences can significantly impact beta diversity metrics. However, using the SILVA reference database attenuated these discrepancies, making the outputs of QIIME and mothur more comparable [7] [8].

Essential Research Reagents and Materials

Successful execution of a 16S rRNA sequencing experiment requires careful selection of reagents and reference databases.

Table 3: Key Research Reagent Solutions for 16S rRNA Gene Sequencing

| Item | Function/Description | Examples & Considerations |

|---|---|---|

| DNA Extraction Kit | Isolates microbial genomic DNA from complex samples. | Choice critical for low-biomass samples (e.g., skin swabs). Kits from Molzym GmbH & Co. KG used in clinical samples [9]. |

| PCR Primers | Amplifies the target hypervariable region of the 16S rRNA gene. | Region selection (e.g., V3V4, V1V9) influences results. Examples: 27F and 1492R for full-length [5]. |

| Sequencing Platform | Determines read length, accuracy, and cost. | Illumina for short-read; Oxford Nanopore or PacBio for full-length [4] [5]. |

| Reference Database | Essential for taxonomic classification of sequence reads. | SILVA and GreenGenes are common; database choice greatly influences identified species [7] [4]. |

| Bioinformatics Tools | Process raw sequences into taxonomic and ecological data. | QIIME and mothur are standard pipelines; DADA2 (Illumina) and Emu (ONT) for denoising [4] [10]. |

Applications in Biomedical Research

The application of 16S rRNA sequencing in biomedicine is vast and growing, providing insights into disease diagnostics, forensic science, and therapeutic development.

- Infectious Disease Diagnostics: 16S sequencing is a powerful tool for identifying pathogens in culture-negative clinical samples, especially after antibiotic administration. Next-Generation Sequencing (NGS) outperforms Sanger sequencing in polymicrobial samples, with one study showing a higher positivity rate (72% vs. 59%) and better detection of mixed infections [9].

- Forensic Identification: The human microbiome is unique enough to serve as a "microbial fingerprint." 16S rRNA gene sequencing, combined with machine learning, can match skin, oral, or soil microbiomes to individuals or specific locations with high accuracy, providing evidence for criminal investigations [3].

- Disease Biomarker Discovery: By comparing microbiomes between healthy and diseased individuals, researchers can identify specific microbial biomarkers. For example, full-length 16S sequencing with Oxford Nanopore has identified species like Fusobacterium nucleatum and Bacteroides fragilis as precise biomarkers for colorectal cancer, improving predictive models for the disease [4].

- Therapeutic Monitoring: This technology can track changes in a patient's microbiome in response to interventions such as antibiotics, probiotics, or dietary changes, enabling personalized treatment strategies.

In the field of microbial ecology, the analysis of 16S rRNA gene amplicon sequencing data has become a fundamental methodology for profiling complex microbial communities. Among the bioinformatic tools available, QIIME (Quantitative Insights Into Microbial Ecology) and mothur have emerged as two of the most prominent and widely adopted ecosystems [7] [11]. Since their initial releases within months of each other in 2009-2010, these pipelines have supported thousands of microbiome studies across diverse environments, from the human gut to industrial bioreactors [6]. Despite addressing similar analytical challenges, they embody fundamentally different philosophical approaches to software design, implementation, and user interaction. This article explores the core philosophies of these two ecosystems, provides structured comparisons of their performance, and offers practical guidance for researchers navigating the choice between them within modern bioinformatics workflows for 16S rRNA data analysis.

Philosophical Foundations and Architectural Design

Core Design Philosophies

The divergence between QIIME and mothur begins at the architectural level, reflecting distinct priorities in software design:

mothur adopts a unified toolset approach, implemented primarily in C++ to create a standalone, optimized executable. This design prioritizes computational efficiency, independence from external dependencies, and a consistent user experience through an integrated command-line interface [6]. As noted by its developers, "When you run a function from within mothur, you are running mothur code" [6]. This self-contained nature ensures stability and reduces installation complications, though it may limit community code contributions due to the C++ implementation barrier.

QIIME (particularly the contemporary QIIME 2 platform) embraces a modular, framework-based philosophy. Built primarily in Python, it functions as a "big wrapper that helps users to transition data between independent packages" [6]. This plugin-based architecture encourages community development and method integration, allowing specialized tools to be incorporated with "light wrapper" code while maintaining their original implementations [6]. The platform emphasizes data provenance tracking, reproducibility, and extensibility, with a focus on creating a transparent analytical record that documents every processing step [12] [13].

Implementation and Performance Characteristics

The choice of programming language profoundly influences performance characteristics and user interaction patterns:

Table 1: Implementation Characteristics of mothur and QIIME

| Aspect | mothur | QIIME/QIIME 2 |

|---|---|---|

| Primary Language | C/C++ (compiled) | Python (interpreted) |

| Execution Speed | Faster execution for core algorithms | Slower for computationally intensive tasks |

| Dependencies | Self-contained, minimal dependencies | Multiple external dependencies |

| Installation | Straightforward (single executable) | More complex (dependency management) |

| Extensibility | Limited by core development team | High (plugin architecture) |

| Provenance Tracking | Limited | Comprehensive (core feature) |

As observed in benchmarking studies, "Because of our overall development strategy we have worked very hard to make mothur a standalone software package. When you download mothur, you have mothur. All of it. You don't have to chase down external dependencies or worry about software licenses" [6]. This integrated approach translates to performance advantages for certain computationally intensive tasks, with mothur's aligner performing 21.9-times faster than QIIME's Python-based alternative in one comparison [6].

Conversely, QIIME 2's framework approach has facilitated ongoing innovation, with regular releases adding functionality such as cryptographic signing of results [14], enhanced visualization capabilities [12], and improved reporting features [14]. The provenance system automatically records all analytical steps, parameters, and computational environments, addressing critical reproducibility challenges in bioinformatics [13].

Comparative Performance Benchmarking

Taxonomic Composition and Diversity Assessment

Multiple studies have directly compared the analytical outcomes of QIIME and mothur pipelines using both mock communities and real biological samples. The choice between these platforms can influence the resulting biological interpretations, particularly for low-abundance taxa.

A comprehensive comparison using rumen microbiota samples found that both tools showed a high degree of agreement in identifying the most abundant genera (Bifidobacterium, Butyrivibrio, Methanobrevibacter, Prevotella, and Succiniclasticum), regardless of the reference database used [7] [15]. However, significant differences emerged for less abundant microorganisms (relative abundance < 10%), with mothur assigning OTUs to a larger number of genera and estimating higher relative abundances for these rare taxa [7]. These differences subsequently impacted beta diversity measurements between samples.

A separate evaluation using human fecal samples confirmed these trends, noting that "the use of different bioinformatic pipelines affects the estimation of the relative abundance of gut microbial community, indicating that studies using different pipelines cannot be directly compared" [11]. The study observed statistically significant differences in relative abundance estimates for all phyla and most abundant genera across pipelines [11].

Table 2: Performance Comparison Based on Empirical Studies

| Performance Metric | mothur | QIIME/QIIME 2 | Notes |

|---|---|---|---|

| Sensitivity for Rare Taxa | Higher (more genera identified) | Lower | Particularly with GreenGenes database [7] |

| Richness Estimation | Higher (P < 0.05) | Lower | More favorable rarefaction curves [7] |

| Analytical Specificity | Lower for rare taxa | Higher for rare taxa | mothur may overestimate rare taxa [7] |

| Database Dependence | Significant | Significant | SILVA reduces inter-pipeline differences [7] |

| Reproducibility Across OS | Minimal differences [11] | Minimal differences [11] | Both show good cross-platform consistency |

Reference Database Considerations

The performance differences between pipelines are modulated by the choice of reference database. The SILVA database has been shown to attenuate discrepancies between mothur and QIIME, producing more comparable richness, diversity, and relative abundance estimates for common rumen microbes [7] [15]. This has led to recommendations that "the SILVA database seemed a preferred reference dataset for classifying OTUs from rumen microbiota" [7] when using either pipeline.

Recent QIIME 2 developments have expanded database support, including the incorporation of updated Greengenes2 classifiers [12] and enhanced functionality for creating custom reference databases through plugins like RESCRIPt [13]. The platform's modular architecture facilitates accommodation of new reference datasets as they become available.

Experimental Protocols and Workflows

Standardized Analytical Workflows

The following protocols outline core analytical pathways for both ecosystems, representing standardized approaches for 16S rRNA amplicon analysis:

Detailed Methodological Considerations

mothur Protocol follows a sequential processing approach where quality filtering, alignment to reference databases (SILVA recommended), and distance-based clustering (typically at 97% similarity) form the core workflow [7]. The pipeline produces Operational Taxonomic Units (OTUs) through heuristic algorithms that bin sequences based on similarity thresholds. Critical parameters include quality score thresholds, alignment method (e.g., NAST-based aligners), and clustering algorithm selection (e.g., average neighbor) [16].

QIIME 2 Protocol employs a more modular approach, with denoising algorithms (DADA2 or Deblur) that model and correct sequencing errors to resolve Amplicon Sequence Variants (ASVs) [17] [16]. This method provides single-nucleotide resolution without predefined clustering thresholds. The platform's plugin system allows specialized tools to be incorporated at each step, with provenance tracking automatically recording all parameters and software versions [12].

Multi-Amicon Sequencing Application

For comprehensive taxonomic profiling, multi-amplicon sequencing approaches targeting multiple variable regions have been developed. A recently validated QIIME 2 and R-based pipeline for semiconductor-based sequencing demonstrates the platform's adaptability to complex experimental designs [18]. This standardized workflow integrates data from all targeted 16S regions, generating microbial profiles comparable to proprietary software while maintaining the advantages of open-source transparency and reproducibility [18].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents and Resources for 16S rRNA Analysis

| Resource | Function | Pipeline Compatibility |

|---|---|---|

| SILVA Database | Curated 16S/18S rRNA reference database for taxonomic classification | Both (recommended for consistency) [7] |

| GreenGenes Database | 16S rRNA gene database and taxonomy reference | Both [7] |

| DADA2 Algorithm | Error correction and ASV inference for single-nucleotide resolution | QIIME 2 (as plugin) [17] |

| UNITE Database | Fungal ITS reference database for taxonomic assignment | QIIME 2 (via q2-feature-classifier) [12] |

| RESCRIPt Plugin | Reference database curation and management for custom markers | QIIME 2 [13] |

| Mock Community Standards | Validation and benchmarking of pipeline performance | Both (essential for quality control) [16] |

The QIIME and mothur ecosystems have fundamentally shaped the landscape of 16S rRNA analysis through their complementary philosophical approaches. mothur offers a streamlined, efficient solution with predictable performance characteristics, while QIIME 2 provides an extensible framework with robust provenance tracking and a rapidly evolving method repertoire.

Current evidence suggests that pipeline selection meaningfully impacts analytical outcomes, particularly for low-abundance taxa and beta diversity assessments [7] [11] [17]. The field is increasingly moving toward ASV-based approaches (as implemented in QIIME 2) for improved resolution and cross-study comparability [17] [16], though OTU-based methods retain value for specific applications and historical comparisons.

As the microbiome research field matures, standardization and reproducibility become increasingly critical. The development of validated, open-source pipelines like the QIIME 2-based multi-amplicon workflow [18] represents an important step toward mitigating technical variability and enhancing biological discovery. Both platforms continue to evolve, with recent updates focusing on improved visualization, enhanced database support, and more sophisticated analytical capabilities [14] [12].

Researchers should select their analytical pipeline based on specific experimental requirements, computational resources, and the need for methodological comparability with existing datasets. Whichever ecosystem is chosen, consistent application throughout a study, transparent reporting of parameters, and validation using mock communities remain essential practices for generating robust, interpretable results in microbiome research.

In 16S rRNA gene amplicon sequencing, raw sequence data is processed into structured units that enable quantitative microbial community analysis. The field has primarily utilized two types of units: Operational Taxonomic Units (OTUs) and Amplicon Sequence Variants (ASVs) [19]. OTUs are clusters of sequences grouped based on a predefined similarity threshold, traditionally 97%, which is intended to approximate the species level [20] [21]. In contrast, ASVs are unique sequences inferred from the data through a process of error correction and resolution of single-nucleotide differences, providing a higher-resolution, reproducible representation of microbial diversity without relying on arbitrary clustering thresholds [20] [19] [22]. The final output from both methods is a feature table—either an OTU or ASV table—which is a matrix detailing the abundance of each unit in every sample, forming the basis for all subsequent ecological and statistical analyses [23] [22].

Table 1: Core Concepts: OTUs vs. ASVs

| Feature | Operational Taxonomic Units (OTUs) | Amplicon Sequence Variants (ASVs) |

|---|---|---|

| Definition | Clusters of sequences with a defined similarity (e.g., 97%) [21] | Exact, error-corrected sequences differing by as little as one nucleotide [20] [23] |

| Basis | Identity clustering based on a fixed dissimilarity threshold [19] | Denoising based on statistical error models and probability [20] [19] |

| Typical Resolution | Approximate (e.g., species-level with 97% identity) [20] | High (single-nucleotide) [22] |

| Reproducibility | Variable, depends on clustering method and parameters [19] | High, results are consistent across studies [19] [22] |

| Primary Method | Clustering (de novo, closed-reference, open-reference) [21] [19] | Denoising (e.g., DADA2, Deblur) [20] [22] |

Quantitative Comparisons and Pipeline Performance

The choice of bioinformatics pipeline and reference database can significantly impact the resulting microbial community composition. A study comparing two widely used pipelines, QIIME and mothur, on rumen microbiota from dairy cows revealed both consistencies and critical differences [7] [8].

Table 2: Pipeline and Database Comparison: Mothur vs. QIIME

| Aspect | Finding | Implication |

|---|---|---|

| Abundant Genera (RA > 1%) | High agreement between mothur and QIIME on identity and relative abundance, regardless of database (GreenGenes or SILVA) [7] [8] | Core, high-abundance community members are robustly identified across pipelines. |

| Less Abundant Genera (RA < 10%) | Significant differences (P < 0.05) with GreenGenes; mothur assigned OTUs to more genera and at larger relative abundances [7] [8] | Low-abundance and rare biosphere are more sensitive to analytical choices. |

| Taxonomical Richness | Mothur consistently clustered sequences into a larger number of OTUs, resulting in higher observed richness [7] [8] | mothur may exhibit higher analytical sensitivity, particularly for rare taxa. |

| Database Choice | Differences were attenuated, but not erased, when using the SILVA database instead of GreenGenes [7] [8] | SILVA is preferred for rumen microbiota, leading to more comparable results between pipelines. |

Furthermore, comparisons between OTU and ASV methods show that while the overall biological conclusions about community differences (beta diversity) can be robust, the taxonomic profiles are most comparable at higher taxonomic levels (e.g., family) or when using a 99% OTU identity threshold coupled with frequency filters to remove low-abundance clusters [20].

Experimental Protocols for 16S rRNA Data Analysis

Protocol 1: Constructing an ASV Table with DADA2

The following protocol outlines the steps for constructing a feature table using the DADA2 denoising algorithm within the QIIME 2 environment [20] [22].

Input: Paired-end FASTQ files (demultiplexed, primers removed). Software: QIIME 2 (incorporating DADA2), R [20] [22]. Database: SILVA or GreenGenes for taxonomic assignment [7] [21].

Data Preprocessing and Quality Control:

- Quality Assessment: Use

FastQCto visualize sequence quality profiles [21]. - Trimming and Filtering: Based on quality profiles, trim sequences to remove low-quality bases and filter out sequences with ambiguous bases or expected errors. For the V3-V4 region, forward reads are often trimmed to 280bp and reverse reads to 240bp, or as dictated by the quality plot [22].

- Quality Assessment: Use

Core Denoising with DADA2:

- Learn Error Rates: The algorithm learns the specific error rates of the sequencing run from the data itself, creating an error model [22].

- Dereplication and Denoising: Combine identical sequences and apply the core sample inference algorithm to distinguish true biological sequences from sequencing errors [19] [22].

- Merge Paired-end Reads: Merge the denoised forward and reverse reads, requiring a minimum overlap (e.g., 12bp) and removing mismatches [20].

- Remove Chimeras: Identify and remove chimeric sequences using the

removeBimeraDenovofunction [22].

Construct ASV Table: The output of DADA2 is a sequence table (matrix) reporting the frequency of each non-chimeric, denoised ASV in every sample [22].

Taxonomic Assignment: Assign taxonomy to each ASV by comparing sequences to a reference database using a classifier (e.g., the Naive Bayes classifier in QIIME2) [21].

Protocol 2: Generating OTUs via Open-Reference Clustering

This protocol describes a hybrid method for OTU picking that leverages reference databases while retaining novel sequences, often implemented in QIIME or mothur [21] [19].

Input: High-quality, preprocessed sequences (e.g., stitched, filtered). Software: QIIME or mothur. Database: GreenGenes or SILVA for reference clustering [7] [21].

Initial Closed-Reference Clustering:

- Compare all quality-filtered sequences against a reference database.

- Sequences that match a reference sequence at or above a defined identity threshold (e.g., 97%) are assigned to that reference-based OTU [19].

De Novo Clustering of Failures:

OTU Table Generation:

- The results from the closed-reference and de novo clustering steps are combined into a single OTU table.

- A representative sequence is selected for each OTU (e.g., the most abundant sequence) [21].

Taxonomic Assignment and Chimera Removal:

- Assign taxonomy to the representative sequences using a classifier and reference database.

- Perform chimera detection and removal (e.g., with UCHIME) on the representative sequences or the initial dataset [21].

Table 3: Key Resources for 16S rRNA Gene Analysis

| Category | Item | Function and Application |

|---|---|---|

| Bioinformatics Software | QIIME/QIIME 2 [7] [22] | A comprehensive, modular pipeline for processing amplicon data from raw sequences to statistical analysis. |

| mothur [7] [8] | A single-piece, standalone software pipeline for analyzing 16S rRNA gene sequences. | |

| DADA2 [20] [22] | An R package that performs denoising to infer ASVs from amplicon data with high resolution. | |

| Reference Databases | SILVA [7] [21] | A curated database of aligned rRNA sequences; often preferred for non-human microbiomes like rumen [7]. |

| GreenGenes [7] [21] | A reference database for bacterial and archaeal 16S rRNA gene sequences, historically widely used. | |

| Experimental Controls | Mock Community [23] | A defined mix of microbial cells or DNA with known composition; used as a positive control to evaluate sequencing and bioinformatics performance. |

| No-Template Control (NTC) [23] | A water blank carried through DNA extraction and library preparation to identify laboratory and reagent contamination. | |

| Sequencing Platforms | Illumina MiSeq/HiSeq [22] | High-throughput platforms for short-read sequencing, commonly used for 16S amplicon studies (e.g., V4 region). |

In the field of microbiome research, the choice of sequencing method is a fundamental decision that shapes the scope, resolution, and cost of a study. Two primary techniques dominate this landscape: amplicon sequencing (typically targeting the 16S rRNA gene for bacteria and archaea) and shotgun metagenomic sequencing. Within the context of developing a bioinformatics pipeline for 16S rRNA data analysis, understanding the capabilities and limitations of each method is paramount. Amplicon sequencing, analyzed through pipelines like QIIME and mothur, provides a cost-effective means of taxonomic profiling, whereas shotgun sequencing offers a more comprehensive view of the entire microbial community, including its functional potential [24] [25]. This application note delineates the core concepts of these techniques, provides a structured comparison, and offers clear guidelines for selecting the appropriate method, supported by detailed experimental protocols and data from key studies.

Core Technological Principles

16S rRNA Amplicon Sequencing

The 16S ribosomal RNA (rRNA) gene is a cornerstone of microbial phylogeny and taxonomy. This gene contains nine hypervariable regions (V1-V9), which are flanked by conserved regions. The principle of 16S amplicon sequencing involves using polymerase chain reaction (PCR) with primers designed to bind to these conserved regions, thereby amplifying the intervening hypervariable regions [25] [26]. The resulting PCR amplicons are then sequenced using high-throughput platforms. The hypervariable sequences serve as unique fingerprints, allowing for the identification and classification of bacteria and archaea present in a sample.

The bioinformatics analysis of these sequences, using pipelines such as QIIME and mothur, involves several standardized steps [27] [28]. These include quality filtering, merging of paired-end reads, removal of chimeric sequences, and clustering of sequences into Operational Taxonomic Units (OTUs) or resolving Amplicon Sequence Variants (ASVs). These units are then classified taxonomically by comparing them to reference databases like SILVA or Greengenes [29] [28].

Shotgun Metagenomic Sequencing

In contrast to the targeted approach of amplicon sequencing, shotgun metagenomic sequencing is an untargeted method that fragments all DNA in a sample— microbial, host, and otherwise— into countless small pieces [24] [25]. These fragments are sequenced in a high-throughput manner, generating millions of short reads. Bioinformatics pipelines then assemble these reads into longer contigs or directly align them to comprehensive genomic databases. This process allows for the simultaneous identification of microorganisms across all domains of life (bacteria, archaea, viruses, fungi, and protists) and enables the reconstruction of metabolic pathways and the annotation of gene functions [25] [30].

The following diagram illustrates the fundamental procedural differences between these two sequencing strategies.

The choice between 16S amplicon and shotgun sequencing involves trade-offs between cost, resolution, and analytical depth. The table below synthesizes quantitative and qualitative data from recent studies to provide a clear, side-by-side comparison.

Table 1: Comprehensive comparison of 16S rRNA amplicon sequencing and shotgun metagenomic sequencing.

| Factor | 16S rRNA Amplicon Sequencing | Shotgun Metagenomic Sequencing |

|---|---|---|

| Typical Cost (USD per sample) | ~$50 [24] | Starting at ~$150 (depends on depth) [24] |

| Principle | Targeted PCR amplification of a specific gene region [25] | Untargeted, random sequencing of all DNA [25] |

| Taxonomic Resolution | Genus-level (sometimes species) [24] [30] | Species-level and often strain-level [24] [30] |

| Taxonomic Coverage | Bacteria and Archaea only [24] | All domains: Bacteria, Archaea, Viruses, Fungi [24] [30] |

| Functional Profiling | No (only predicted, e.g., with PICRUSt) [24] | Yes (direct identification of genes and pathways) [24] [30] |

| Bioinformatics Complexity | Beginner to Intermediate [24] | Intermediate to Advanced [24] |

| Sensitivity to Host DNA | Low (PCR targets microbes) [24] | High (can be mitigated by sequencing depth) [24] |

| Reference Databases | Well-established (e.g., SILVA, Greengenes) [29] | Growing, but complex (e.g., NCBI, GTDB, UHGG) [29] |

| Data Sparsity & Diversity | Sparser data, lower alpha diversity [29] | More complete community profile, higher alpha diversity [29] |

| Species-Level Detection | Detects only part of the community [29] [31] | Reveals a wider diversity, including low-abundance species [29] [31] |

Experimental Protocols and Benchmarking Data

To ground this comparison in empirical evidence, we review the methodologies and key findings from two pivotal studies that directly compared these sequencing techniques.

Protocol 1: Comparative Analysis in Colorectal Cancer Microbiota

- Study Objective: To compare the reliability of 16S and shotgun sequencing for bacterial profiling in the context of colorectal cancer (CRC), advanced lesions, and healthy controls [29].

- Sample Collection: 156 human stool samples were collected from a CRC screening program. Samples were stored at -20°C by participants and then preserved at -80°C upon delivery [29].

- DNA Extraction: Two different kits were used for compatibility with each sequencing method: the NucleoSpin Soil Kit (for shotgun) and the Dneasy PowerLyzer Powersoil kit (for 16S) [29].

- Sequencing:

- 16S rRNA: The V3-V4 hypervariable region was amplified and sequenced. An in-house bioinformatics pipeline using DADA2 and additional classification with BLASTN and Kraken2 against the SILVA database was used to enhance species-level classification [29].

- Shotgun: Whole genome sequencing was performed, and human reads were filtered out using Bowtie2 against the GRCh38 human genome [29].

- Key Findings: The study concluded that 16S sequencing detects only a portion of the gut microbiota community revealed by shotgun sequencing. Shotgun data was less sparse and exhibited higher alpha diversity. While machine learning models from both techniques could identify microbial signatures associated with CRC (e.g., Parvimonas micra), only some shotgun models showed predictive power in an independent test set. The study recommends shotgun sequencing for in-depth analysis of stool samples, while noting 16S remains suitable for studies with more targeted aims [29].

Protocol 2: Taxonomic Characterization in Chicken Gut Microbiota

- Study Objective: To evaluate the agreement between 16S and shotgun sequencing for taxonomic characterization of the chicken gut microbiota under different experimental conditions [31].

- Experimental Design: The same DNA samples from a previous intervention study were re-analyzed using both 16S and shotgun sequencing. Conditions included different gastrointestinal tract compartments (crop and caeca) and sampling times [31].

- Methodology: The study focused on comparing relative species abundance distributions, rarefaction curves, and the power of each method to discriminate between experimental conditions using differential abundance analysis (DESeq2) [31].

- Key Findings: Shotgun sequencing, when a sufficient number of reads was available (>500,000), identified a statistically significant higher number of taxa, primarily the less abundant ones. The genera detected only by shotgun sequencing were biologically meaningful and able to discriminate between experimental conditions as effectively as the more abundant genera detected by both methods. The correlation of genus abundances between the two techniques was strong (average Pearson's r = 0.69) for shared taxa, but 16S failed to detect many of the significant changes identified by shotgun sequencing [31].

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table catalogues critical reagents and materials required for executing the sequencing protocols described in the cited studies.

Table 2: Key Research Reagent Solutions for 16S and Shotgun Sequencing Workflows.

| Item | Function/Application | Example Product(s) / Methods |

|---|---|---|

| DNA Extraction Kit | Isolation of high-quality, inhibitor-free microbial DNA from complex samples. | PowerSoil DNA Isolation Kit [32], NucleoSpin Soil Kit [29], Dneasy PowerLyzer Powersoil kit [29] |

| 16S PCR Primers | Amplification of specific hypervariable regions of the 16S rRNA gene. | Primers for V3-V4 region [29] [26], V1-V3 region [26] |

| Library Prep Kit | Preparation of DNA fragments for sequencing, including end-repair, adapter ligation, and index PCR. | NEBNext Ultra DNA Library Prep Kit [32], NEXTflex 16S V1–V3 Amplicon-Seq kit [32] |

| Bioinformatics Pipelines | Software for processing raw sequencing data, taxonomic assignment, and diversity analysis. | QIIME [24] [27], mothur [24] [28], DADA2 [29] |

| Reference Databases | Curated collections of genomic or gene sequences for taxonomic and functional classification. | 16S: SILVA [29], Greengenes [33]. Shotgun: NCBI RefSeq [29], GTDB [29] |

| Computational Resources | Hardware and software environment for data-intensive bioinformatics analysis. | Galaxy platform [28], High-performance computing (HPC) clusters |

Decision Framework: When to Use 16S rRNA Sequencing

The following workflow diagram encapsulates the decision-making process for selecting the appropriate sequencing method, based on research goals, sample type, and resources.

Guidance for Pipeline Development

For researchers building a bioinformatics pipeline for 16S rRNA data analysis using tools like QIIME and mothur, it is critical to recognize the inherent limitations of the data these tools process.

- Taxonomic Resolution: The pipeline will typically achieve reliable genus-level classifications, but species-level identification is limited and dependent on the hypervariable region chosen [33]. Full-length 16S sequencing on long-read platforms can improve this but is not yet the standard.

- Functional Insights: Since 16S data does not directly provide functional information, pipelines often integrate prediction tools like PICRUSt [24] [28]. These predictions are inferences based on reference genomes and should be interpreted with caution.

- Bias Awareness: The pipeline must account for biases introduced during PCR amplification and the choice of primers, which can affect the observed taxonomic composition [29].

Both 16S amplicon and shotgun metagenomic sequencing provide powerful yet distinct lenses for examining microbial communities. 16S rRNA sequencing remains a highly cost-effective and accessible method for large-scale studies focused primarily on the taxonomy of bacteria and archaea, making it an excellent tool for initial surveys and hypothesis generation. In contrast, shotgun metagenomic sequencing offers superior taxonomic resolution, broader kingdom coverage, and direct access to functional insights, at a higher cost and computational burden. The decision between them is not a matter of which is universally better, but which is the most appropriate tool for the specific research question, sample type, and available resources. As the field advances, the development of robust, standardized bioinformatics pipelines for both methods, particularly within user-friendly platforms like Galaxy, is essential for ensuring the reproducibility and translational impact of microbiome research [28].

In the standardized bioinformatics pipeline for 16S rRNA data analysis using tools like QIIME and Mothur, the initial computational processing represents merely the final stage of a long analytical chain. Preceding this, the critical wet-lab decision of primer selection irrevocably shapes all downstream results. Targeted amplicon sequencing of the 16S ribosomal RNA gene serves as the cornerstone method for profiling microbial communities across diverse environments, from the human gut to engineered bioreactors [34] [35]. The 16S rRNA gene contains nine hypervariable regions (V1-V9) flanked by conserved sequences that enable primer design for PCR amplification [36]. However, no universal primer pair exists that perfectly amplifies all bacterial taxa without bias, making primer choice a fundamental determinant of observed microbial composition [37] [38].

The growing recognition of primer-induced biases challenges the assumption that data generated using different hypervariable regions are directly comparable. Recent comprehensive studies demonstrate that specific taxa are systematically underrepresented or completely missing from taxonomic profiles when using suboptimal primer combinations [34] [39]. For instance, the Bacteroidetes phylum may be missed using primers 515F-944R (targeting V4-V5), while the V1-V2 region fails to adequately capture Fusobacteriota without specific modifications [34] [39]. These technical artifacts can lead to biologically erroneous conclusions if not properly accounted for in experimental design.

This Application Note examines how hypervariable region selection biases 16S rRNA sequencing results, provides structured comparisons of primer performance characteristics, and offers practical protocols for optimizing primer choice within standardized bioinformatics pipelines for microbial ecology research.

Theoretical Foundation: Mechanisms of Primer Bias

Primer binding efficiency varies substantially across bacterial taxa due to several molecular mechanisms. Sequence mismatch tolerance differs among DNA polymerases, leading to preferential amplification of templates with perfect primer complementarity [38]. The degeneracy design of primers (incorporating mixed bases at highly variable positions) attempts to mitigate this but introduces variability in primer synthesis efficiency and annealing kinetics [38]. Additionally, secondary structure formation in template regions can block primer access, particularly in GC-rich sequences [38].

Perhaps the most underappreciated source of bias stems from intergenomic variation within conserved regions. Traditionally, primer design has assumed that flanking regions remain sufficiently conserved across all bacteria for universal amplification. However, comprehensive analysis of 20 core gut genera reveals substantial variability even in these supposedly conserved regions [37]. Shannon entropy analysis demonstrates unexpected nucleotide variation at primer binding sites, challenging the concept of truly "universal" primers and explaining systematic amplification failures for specific bacterial lineages [37].

Variable Region Performance Characteristics

Different hypervariable regions offer varying levels of taxonomic resolution due to their distinct evolutionary rates and sequence characteristics. The V4 region provides reliable family-level classification but struggles with species-level discrimination for many taxa, while the V1-V2 regions often enable finer taxonomic resolution but may miss certain phyla [40] [39]. The V3-V4 region, popularized by the Earth Microbiome Project, offers a compromise between length and discriminative power but exhibits particularly problematic off-target amplification in human-derived samples [39] [41].

Table 1: Taxonomic Resolution of Commonly Used Hypervariable Regions

| Hypervariable Region | Optimal Taxonomic Level | Notable Limitations | Recommended Applications |

|---|---|---|---|

| V1-V2 | Genus to species | Poor coverage of Fusobacteriota without modifications | Human biopsy samples, clinical diagnostics |

| V3-V4 | Family to genus | High off-target amplification of human DNA | Environmental samples, stool samples |

| V4 | Family | Limited species-level resolution | Broad microbial surveys, educational use |

| V4-V5 | Family | May miss Bacteroidetes | Industrial microbiome monitoring |

| V6-V8 | Genus | Variable coverage across Firmicutes | Specialized environmental applications |

Experimental Evidence: Documented Impacts of Primer Choice

Comparative Performance Across Sample Types

The severity of primer-induced bias varies considerably across sample types, largely dependent on the ratio of host to bacterial DNA. In human biopsy samples where host DNA predominates (often exceeding 97% of total DNA), primer pairs targeting the V3-V4 region generate 70-98% human-derived sequences in gastrointestinal tract biopsies, breast tissue, and esophageal samples [39] [41]. This massive off-target amplification drastically reduces sequencing depth for bacterial communities and can obscure rare taxa. Switching to V1-V2 targeted primers reduces human DNA alignment to nearly zero while significantly increasing observable bacterial richness [39].

In contrast, stool samples with minimal human DNA contamination show much smaller differences between primer sets, though taxonomic composition shifts remain substantial [34] [35]. Similarly, mock communities with known composition reveal that certain primer pairs consistently fail to detect specific members, with the magnitude of bias increasing with community complexity [34] [36].

Quantitative Comparison of Primer Performance

Systematic evaluation of 57 commonly used primer sets against the SILVA database identified three candidate primers (V3P3, V3P7, and V4_P10) that provide balanced coverage across 20 key genera of the core gut microbiome [37]. Notably, many widely used "universal" primers showed significant limitations in coverage, failing to amplify substantial portions of microbial diversity due to unexpected variability in conserved regions [37].

Table 2: Performance Metrics of Select Primer Pairs in Human Gut Microbiome Profiling

| Primer Pair | Target Region | Average Coverage (%) | Human DNA Amplification | Taxonomic Richness (ASVs) | Reproducibility (R²) |

|---|---|---|---|---|---|

| 68F-338R (V1-V2M) | V1-V2 | 89.7 | <0.1% | 215 ± 34 | 0.96 |

| 341F-785R | V3-V4 | 85.3 | 34-98% | 127 ± 42 | 0.87 |

| 515F-806R | V4 | 82.1 | 55-85% | 98 ± 39 | 0.83 |

| 515F-944R | V4-V5 | 79.4 | <0.1% | 142 ± 28 | 0.91 |

| 1115F-1492R | V7-V9 | 76.8 | <0.1% | 135 ± 31 | 0.89 |

Practical Protocols: A Methodological Framework

Protocol 1: In Silico Primer Validation

Purpose: Computational assessment of primer performance against reference databases before wet-lab experimentation.

Materials:

- Reference database (SILVA, GreenGenes, or RDP)

- Primer sequences in FASTA format

- Computational tools: TestPrime, DECIPHER, or mopo16S

Procedure:

- Database Selection: Download and curate an appropriate 16S rRNA reference database (e.g., SILVA SSU Ref NR 99%).

- Primer Screening: Use TestPrime or equivalent tool to calculate coverage statistics for your primer candidates against the database.

- Mismatch Analysis: Identify potential systematic mismatches, particularly at the 3'-end of primers where they most impact amplification.

- Taxonomic Specificity: Evaluate coverage variation across phyla of interest, noting any systematic exclusions.

- Amplicon Length Verification: Confirm that the resulting amplicon size is compatible with your sequencing platform.

Interpretation: Primer pairs achieving ≥70% coverage across all target phyla and ≥90% coverage for at least four out of 20 representative genera are considered candidates for experimental validation [37].

Protocol 2: Experimental Validation with Mock Communities

Purpose: Empirical evaluation of primer performance using synthetic microbial communities of known composition.

Materials:

- Mock community with staggered or even abundance (e.g., ZymoBIOMICS Gut Microbiome Standard)

- DNA extraction kit suitable for your sample type

- Selected primer pairs for comparison

- High-fidelity PCR master mix

- Access to appropriate sequencing platform

Procedure:

- Sample Preparation: Extract DNA from mock community according to manufacturer's protocol, including negative controls.

- Library Preparation: Amplify using candidate primer pairs with identical cycling conditions and PCR cycle numbers.

- Sequencing: Process all samples in the same sequencing run to minimize technical variation.

- Bioinformatic Processing: Process raw data through standardized pipeline (QIIME2 or Mothur) with identical parameters.

- Bias Quantification: Compare observed composition to expected composition using Bray-Curtis dissimilarity or similar metrics.

Interpretation: Successful primer pairs should recover all expected taxa with relative abundances correlating strongly (R² > 0.85) with expected composition [34] [36].

Protocol 3: Optimization for Host-Associated Microbiomes

Purpose: Specialized protocol for samples with high host DNA content (biopsies, blood, etc.).

Materials:

- V1-V2 targeted primers (68F-338R with modifications)

- High-fidelity PCR enzyme with GC-rich buffer capability

- Human DNA depletion kit (optional)

- Agarose gel electrophoresis equipment

Procedure:

- Human DNA Depletion: Optional step using selective hybridization or enzymatic digestion to reduce host DNA.

- Primer Modification: For V1-V2 primers, include modified forward primer (68F_M) to capture Fusobacteriota.

- Annealing Optimization: Implement touchdown PCR with annealing temperatures between 62-65°C to enhance specificity.

- Cycle Number Titration: Use minimum PCR cycles needed for sufficient library yield (typically 25-30 cycles).

- Size Selection: Purify amplicons of correct size (≈260bp for V1-V2) to exclude primer dimers and non-specific products.

Interpretation: Successful implementation yields >90% bacterial reads with representative diversity across known community members [39].

Bioinformatics Considerations: Pipeline Adjustments for Primer Effects

Database Selection and Customization

The reference database used for taxonomic assignment must align with the amplified region to avoid misclassification. Region-specific training sets significantly improve classification accuracy in QIIME2 and Mothur [34]. For example, using a V1-V2 trained classifier with V4 sequence data introduces substantial misclassification errors. Database nomenclature differences (e.g., Enterorhabdus versus Adlercreutzia) further complicate cross-study comparisons using different primer sets [34].

Truncation Length Optimization

Different hypervariable regions require specific quality trimming parameters to maximize data quality. Systematic testing reveals that appropriate truncation of amplicons is essential, and different truncated-length combinations should be empirically determined for each primer set and study [34]. For the commonly used V3-V4 region (341F-785R), truncation at 260bp forward and 200bp reverse typically optimizes quality without excessive data loss, while V1-V2 amplicons (68F-338R) perform best with 220bp forward and 180bp reverse truncation [39].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents for Primer Evaluation and Optimization

| Reagent/Resource | Specifications | Application | Example Sources |

|---|---|---|---|

| Mock Community B | 20 bacterial strains, even and staggered configurations | Protocol validation | BEI Resources, ATCC |

| ZymoBIOMICS Gut Standard | 19 bacterial and archaeal strains with multiple 16S copies | Primer bias assessment | Zymo Research |

| SILVA SSU Database | Curated 16S rRNA sequences with quality checking | In silico validation | silva-arb.org |

| TestPrime Tool | Online primer coverage analysis | Primer screening | silva-arb.org |

| mopo16S Software | Multi-objective primer optimization | Novel primer design | http://sysbiobig.dei.unipd.it |

| High-Fidelity Polymerase | Low error rate, minimal bias | Library preparation | Multiple vendors |

Decision Framework: Visual Guide to Primer Selection

The following workflow diagram provides a systematic approach to primer selection based on experimental goals and sample characteristics:

Primer selection constitutes a fundamental, often underestimated source of bias in 16S rRNA sequencing studies that propagates through all downstream bioinformatics analyses. The evidence presented demonstrates that hypervariable region choice systematically impacts observed microbial composition, diversity metrics, and taxonomic resolution. To maximize experimental validity:

- Validate primers empirically using mock communities that reflect your sample type's complexity

- Select variable regions based on your specific sample characteristics and research questions

- Account for primer effects when comparing datasets generated with different amplification protocols

- Document primer sequences and amplification conditions thoroughly to enable appropriate cross-study comparisons

No single hypervariable region provides optimal resolution for all research scenarios. Rather, researchers should align primer selection with specific experimental goals while acknowledging the technical constraints inherent in targeted amplicon sequencing. When studying novel environments or when primer biases may substantially impact conclusions, employing a multi-primer approach or supplementing with PCR-independent methods (such as metatranscriptomics or shotgun sequencing) provides valuable verification of community composition [42]. Through deliberate primer selection and appropriate validation, researchers can minimize technical artifacts and focus on meaningful biological variation within their microbial systems.

Step-by-Step Workflows: From Sequence Data to Community Analysis

Within the broader context of bioinformatics pipelines for 16S rRNA data analysis, the mothur tool suite represents a cornerstone methodology for processing amplicon sequencing data. Developed by the Schloss lab, this SOP provides a robust framework for analyzing microbial communities using sequences generated from Illumina's MiSeq platform [43] [44]. The protocol outlined here exemplifies the application of this pipeline to investigate a specific biological question: understanding the effect of normal variation in the gut microbiome on host health, using a longitudinal study of mouse fecal samples [43] [45]. This SOP has been extensively validated through peer-reviewed research and continues to be refined as the field advances [43].

The primary strength of the mothur pipeline lies in its comprehensive approach to error reduction and data curation. Unlike approaches that may sacrifice data quality for throughput, this methodology employs rigorous quality control measures that reduce error rates by as much as two orders of magnitude, providing a reliable foundation for downstream ecological interpretations [44]. The protocol processes paired-end reads that overlap in the V4 region of the 16S rRNA gene (approximately 253 bp), leveraging Illumina's sequencing technology to generate high-quality data for microbial community analysis [43].

Experimental Design and Workflow

The mothur MiSeq SOP follows a logical progression from raw sequencing data to interpreted ecological patterns, with multiple quality checkpoints throughout the process. Figure 1 illustrates the complete workflow from initial setup through final analysis.

Figure 1. Overview of the mothur MiSeq SOP workflow. The diagram illustrates the sequential steps from raw sequencing data processing through alignment, quality control, and final diversity analysis. Reference databases are incorporated at critical junctures to ensure proper sequence alignment and taxonomic classification.

Computational Requirements and Setup

The mothur pipeline can be implemented across various computing environments, from personal computers to high-performance computing clusters. The software is written in C++, is independent of operating system, and requires no dependencies [46]. For larger datasets (e.g., >100 samples), computational resources should be scaled accordingly, with recommended specifications including sufficient memory (≥100 GB RAM for large projects) and multiple processors to leverage mothur's parallel computing capabilities [28] [46].

The initial setup involves creating a dedicated project directory and obtaining the necessary reference files:

- Create project directory: Organize a dedicated folder structure for the analysis

- Obtain reference files: Download the SILVA-based bacterial reference alignment, RDP training set, or alternative databases [43]

- Install mothur: Obtain the latest version of mothur executable [43]

Materials and Reagents

Research Reagent Solutions

Table 1: Essential computational reagents and reference databases for the mothur MiSeq SOP

| Item | Function | Source |

|---|---|---|

| mothur Software | Primary analysis platform for processing 16S rRNA sequences | mothur.org [43] |

| SILVA Reference Database | Reference alignment for sequence alignment and classification | SILVA [43] |

| RDP Training Set | Reference taxonomy for Bayesian classification | RDP [43] |

| Greengenes Database | Alternative reference database for classification | Greengenes [7] |

| Illumina MiSeq Sequences | Raw paired-end FASTQ files from 16S rRNA amplicon sequencing | Experimental data [43] |

| Mock Community DNA | Control sample with known composition for error rate assessment | e.g., HMP_MOCK.v35.fasta [43] |

Sample Data Collection

The exemplary dataset referenced in this SOP was generated from a longitudinal study investigating gut microbiome dynamics in mice. Fresh feces were collected from mice on a daily basis for 365 days post-weaning, focusing specifically on comparing the rapid change period (first 10 days) with a stable period (days 140-150) [43]. To make the tutorial tractable, a subset of this data is provided, representing one animal at 10 time points (5 early and 5 late) plus a mock community sample [43]. The mock community, composed of genomic DNA from 21 bacterial strains, provides an essential control for measuring pipeline error rates and their effect on downstream analyses [43].

Step-by-Step Protocol

Data Preparation and Contig Assembly

The initial phase of the pipeline focuses on organizing input data and assembling paired-end reads into contigs:

Create files list: Execute the

make.filecommand to identify forward and reverse reads and create a stability.files document that maps sample names to their respective FASTQ files [43]:Assemble contigs: Use the

make.contigscommand to combine paired-end reads, creating the reverse complement of the reverse read and joining reads into contigs [43]:This command employs a quality-aware algorithm that resolves disagreements between paired reads by considering quality scores, requiring a quality score difference of ≥6 points when both sequences have a base, or a score >25 when one sequence has a base and the other has a gap [43].

Sequence summary: Generate initial quality metrics using

summary.seqsto assess sequence length distribution and quality [46]:

Quality Control and Alignment

This critical phase removes low-quality sequences and aligns reads to a reference database:

Screen sequences: Remove sequences with ambiguous bases, excessive length, or homopolymers

Align to reference: Align screened sequences to the appropriate reference database (e.g., SILVA) [28]:

Filter alignment: Remove poorly aligned regions and minimize overhangs

Remove redundant sequences: Dereplicate to reduce computational burden

Pre-cluster sequences: Implement a lightweight clustering to reduce sequencing errors

Chimera Removal and Classification

This phase identifies and removes artificial chimeric sequences while assigning taxonomy:

Chimera detection: Identify chimeras using UCHIME or ChimeraSlayer [28]:

Remove chimeras: Filter identified chimeras from the dataset

Taxonomic classification: Assign taxonomy using a Bayesian classifier with an appropriate reference training set [28]:

OTU Clustering and Diversity Analysis

The final phase generates operational taxonomic units and calculates diversity metrics:

Calculate distances: Generate a distance matrix for clustering

Cluster sequences: Cluster sequences into OTUs using an appropriate algorithm (e.g., Opticlust, average neighbor) at 97% similarity [28] [16]:

Classify OTUs: Assign consensus taxonomy to each OTU

Calculate diversity metrics: Generate alpha and beta diversity measures, including rarefaction curves and ordination plots [45]:

Expected Results and Interpretation

Sequence Processing Statistics

When properly executed, the pipeline should yield high-quality data with minimal errors. The mock community sample provides a critical benchmark for assessing pipeline performance.

Table 2: Expected sequence processing statistics at major pipeline stages

| Processing Stage | Expected Result | Quality Indicator |

|---|---|---|

| Initial Contig Assembly | >70% of read pairs successfully assembled | Sequencing quality and library preparation |

| Post-Quality Control | <5% of sequences removed during screening | Initial sequence quality |

| Chimera Removal | 10-30% of sequences identified as chimeric | PCR amplification artifacts |

| Final OTU Clustering | Error rate <0.1% on mock community | Overall pipeline accuracy [44] |

| Taxonomic Classification | >90% of sequences classified to genus level | Reference database appropriateness |

Mock Community Analysis

The mock community with known composition serves as a vital control for quantifying error rates. When processing the 21-strain mock community, the pipeline should correctly identify the expected sequences with minimal errors. Studies have demonstrated that this SOP can reduce error rates by as much as two orders of magnitude compared to uncorrected data [44]. The error rate can be calculated by comparing the observed sequences to the expected reference sequences in the mock community.

Diversity Assessment

For the exemplary mouse gut microbiome data, the pipeline should reveal differences in community structure between early (days 0-10) and late (days 140-150) time points. Alpha diversity metrics (e.g., Shannon index, Chao1) may show higher variability during the early rapid change period compared to the stable late period. Beta diversity measures (e.g., Weighted Unifrac) should demonstrate clustering of samples by time period, indicating distinct community structures.

Troubleshooting and Technical Notes

Common Issues and Solutions

- Low sequence yield after make.contigs: Adjust

trimoverlapparameter and check primer sequences in oligos file [46] - Poor alignment rates: Verify that the reference database matches the target region (e.g., V4 region for V4 primers)

- High chimera rates: Optimize PCR cycle numbers in wet-lab protocol and consider using multiple chimera detection methods

- Low classification rates: Try alternative reference databases (SILVA, Greengenes, RDP) as performance varies by sample type [43]

Methodological Considerations

The choice between OTU clustering and Amplicon Sequence Variants (ASVs) represents a key methodological decision. While this SOP focuses on traditional OTU clustering at 97% similarity, ASV approaches (e.g., DADA2, Deblur) offer single-nucleotide resolution and may reduce spurious OTUs [16]. Recent benchmarking analyses indicate that OTU algorithms (like those in mothur) tend to achieve clusters with lower errors but with more over-merging, while ASV algorithms produce more consistent output but may suffer from over-splitting [16].

When analyzing data from multiple studies with different primer sets or sequencing regions, it is generally recommended to analyze datasets separately rather than attempting to combine them in a single analysis, as alignment artifacts can lead to significant data loss [47].

Alternative Implementations

For researchers seeking a more user-friendly interface, the Galaxy mothur Toolset (GmT) provides a web-based implementation of the entire mothur tool suite, making the pipeline accessible to non-bioinformaticians while maintaining analytical rigor [28]. This implementation preserves all functionality while adding workflow automation and integration with visualization tools like Krona and Phinch [28] [45].

Comparative studies have shown that mothur and QIIME produce comparable results for abundant taxa (>10% relative abundance) but may differ in their handling of rare taxa, with mothur typically assigning OTUs to a larger number of genera for less abundant microorganisms [7]. The choice between these platforms may depend on the specific research question and the importance of detecting rare community members.

Within the broader context of developing robust bioinformatics pipelines for 16S rRNA data analysis, the transition from traditional OTU-clustering methods to Amplicon Sequence Variants (ASVs) represents a significant advance. ASVs offer higher resolution by inferring exact biological sequences, thereby reducing the spurious inflation of diversity metrics common with arbitrary OTU clustering [48]. QIIME 2 has emerged as a comprehensive, reproducible framework that integrates these modern denoising methods, notably through its DADA2 and Deblur plugins, into a cohesive analysis workflow [49] [50]. This protocol details the application of these plugins within the QIIME 2 environment, providing a standardized pipeline for researchers and drug development professionals to process raw 16S rRNA sequencing data into high-resolution, analytically powerful ASVs.

Background: Denoising vs. Clustering

The initial step in marker gene analysis involves grouping similar sequences. This can be achieved through two primary approaches: denoising and clustering [51].

- Denoising (ASV Workflow): Methods like DADA2 and Deblur correct sequence errors and remove chimeras to infer the exact biological sequences present in the original sample, resulting in Amplicon Sequence Variants (ASVs) [48] [52]. This approach provides higher resolution and is often more sensitive than OTU clustering.

- Clustering (OTU Workflow): Methods like VSEARCH group sequences based on a percent similarity threshold (e.g., 97%), creating Operational Taxonomic Units (OTUs). This was the standard approach before denoising methods became prevalent [51].

For most applications, denoising is recommended as it provides a more accurate representation of biological sequences without relying on arbitrary similarity thresholds [52] [51]. The following workflow focuses on this modern ASV-based approach.

The complete QIIME 2 workflow for ASV inference, from raw data to biological insight, involves multiple stages that can be visualized in the following diagram. The denoising step, which is the focus of this protocol, is central to this process.

Detailed Experimental Protocol

Initial Data Preparation and Import

Principle: All data used in QIIME 2 must be imported into a QIIME 2 artifact (.qza file) to ensure type safety and provenance tracking [52] [51]. For raw sequencing data, the most straightforward approach uses a manifest file.

Protocol:

- Create a manifest file: This is a tab-separated text file that maps sample identifiers to the file paths of their forward and reverse (for paired-end) reads [48] [52]. The format must be exact: sample-id | forward-absolute-filepath | reverse-absolute-filepath F26 | /path/to/F261.fq.gz | /path/to/F262.fq.gz F27 | /path/to/F271.fq.gz | /path/to/F272.fq.gz

Import sequences: Use the

qiime tools importcommand, specifying the type of data and the format. For paired-end data with Phred33 quality scores, the command is [48]:Summarize and visualize imported data: Generate an interactive quality plot to determine optimal truncation parameters for denoising [48]:

Open the resulting

.qzvfile at https://view.qiime2.org to inspect quality score distributions and read lengths.

Denoising with DADA2

Principle: DADA2 models and corrects Illumina amplicon errors to infer exact amplicon sequence variants (ASVs), performing quality filtering, dereplication, chimera removal, and read merging (for paired-end data) in a single process [48] [53].

Protocol:

- Execute DADA2 denoising: Based on the quality plots, choose truncation lengths (

--p-trunc-len-fand--p-trunc-len-r) where median quality scores drop significantly (e.g., below Q30). The reads must still overlap after truncation [48] [53].

Key Parameters:

--p-trim-left: Number of nucleotides to trim from the 5' start of reads to remove primers or low-quality bases.--p-trunc-len: Position to truncate reads at the 3' end due to quality drop. Reads shorter than this are discarded.--p-max-ee: Maximum expected errors allowed in a read; reads with higher expected errors are discarded.--p-n-threads: Number of threads to use for parallel processing to speed up computation on multi-core systems [53].

Summarize outputs: Generate visualizations to inspect the feature table, representative sequences, and denoising statistics [48].

Denoising with Deblur

Principle: Deblur uses an error-profile-based approach to remove sequencing errors from Illumina data, resulting in ASVs. It is typically applied to single-end reads and can include a positive filter step for 16S data [52].

Protocol:

- Join paired-end reads (if necessary): Deblur operates on single-end reads. If you have paired-end data and wish to use Deblur, join reads first using

q2-vsearch[51].

- Execute Deblur denoising: For 16S data, use the

denoise-16Saction, which performs a positive filtering step against reference sequences. For other markers (e.g., ITS), usedenoise-other[52]. The--p-trim-lengthparameter is required for Deblur to ensure all reads are the same length for analysis.

Critical Decision Points: DADA2 vs. Deblur

The choice between DADA2 and Deblur depends on your data type and analytical goals. The table below summarizes the key differences to guide your selection.

Table 1: Comparison of DADA2 and Deblur for ASV Inference in QIIME 2

| Feature | DADA2 | Deblur |

|---|---|---|

| Primary Use Case | Paired-end or single-end Illumina reads [53] [52] | Primarily single-end Illumina reads [52] |

| Read Joining | Performs read merging internally as part of denoise-paired [52] |

Requires pre-joined reads via a separate tool (e.g., vsearch join-pairs) [51] |

| Algorithm Core | Error model based on alternation of nucleotides and quality scores [48] | Error profile based on read shifts and specific substitutions [52] |

| Positive Filter (16S) | No positive filtering; classifies all input reads | Optional positive filter against reference database in denoise-16S [52] |

| Key Parameter | --p-trunc-len-f/-r (Truncation length) [53] |

--p-trim-length (Trim all reads to fixed length) [52] |

| Output | Feature table, representative sequences, denoising stats [48] [53] | Feature table, representative sequences, deblurring stats [52] |

Downstream Analysis

Following ASV inference, the resulting feature table and representative sequences form the basis for all subsequent biological interpretations.

Taxonomic Classification: Assign taxonomy to ASVs using a pre-trained classifier [48].

Diversity Analysis: Calculate alpha and beta diversity metrics, which often require building a phylogenetic tree [49] [51].

Visualization: Create interactive barplots and other visualizations to explore taxonomic composition and diversity results [48].

The Scientist's Toolkit

A successful QIIME 2 analysis requires several key components, from raw data to reference databases. The following table details these essential resources.

Table 2: Essential Research Reagents and Resources for QIIME 2 ASV Analysis

| Item | Specifications & Function | Example Sources |

|---|---|---|

| Raw Sequence Data | Demultiplexed FASTQ files (Phred33 encoding). The starting point of the analysis. | Illumina MiSeq/HiSeq instruments; BaseSpace [54] |

| Sample Metadata File | Tab-separated values (.tsv) file with sample-id column and experimental factors. Links biological samples to their data and covariates. |

Researcher-generated; validated with Keemei [55] |

| QIIME 2 Environment | Installed and activated Conda environment. Provides the core platform and all integrated plugins for analysis. | https://docs.qiime2.org [50] [56] |

| Taxonomic Classifier | Pre-trained Naive Bayes classifier artifact (.qza). Used for assigning taxonomy to ASV sequences. | SILVA, Greengenes; or custom-trained with fit-classifier-naive-bayes [48] |

| Reference Databases | Curated sequences and taxonomy files (e.g., FASTA, .txt). Used for classifier training or, in Deblur, for positive filtering. | SILVA, Greengenes, GTDB, UNITE [57] |

| Quality Visualization Tool | Web-based interface for viewing .qzv files. Essential for interactive quality control and result exploration. |

https://view.qiime2.org [48] [50] |

Integrating DADA2 or Deblur within the QIIME 2 framework provides a powerful, standardized, and reproducible pipeline for inferring high-resolution ASVs from 16S rRNA sequencing data. This protocol outlines the critical steps and decision points, empowering researchers to move beyond traditional OTU clustering. The structured workflow from raw data import through denoising to downstream analysis ensures robust, transparent, and reproducible results, forming a solid bioinformatics foundation for microbiome studies in both basic research and drug development contexts.

Within bioinformatics pipelines for 16S rRNA data analysis, such as those implemented in QIIME and mothur, the pre-processing of raw sequencing data is a critical foundational step. The accuracy of all downstream ecological inferences—including taxonomic assignment, diversity analysis, and statistical comparison—is fundamentally dependent on the rigorous application of quality filtering, paired-end read merging, and chimera removal [58] [59]. This protocol outlines detailed methodologies for these key pre-processing steps, providing a standardized framework that ensures data quality and reproducibility in microbial ecology studies. The procedures are designed to be applicable within popular analysis environments, including QIIME, mothur, and USEARCH, and are essential for researchers, scientists, and drug development professionals working with 16S amplicon sequencing data.

The Scientist's Toolkit: Essential Research Reagents & Software

The following table catalogues key software tools and reference databases essential for implementing a robust 16S rRNA pre-processing pipeline.

Table 1: Key Research Reagent Solutions for 16S rRNA Data Pre-processing

| Item Name | Type | Primary Function in Pre-processing |

|---|---|---|

| USEARCH / VSEARCH [60] [59] | Software Tool | Paired-end read merging, quality filtering, dereplication, and chimera checking. VSEARCH is an open-source alternative to USEARCH. |

| DADA2 [58] | Software Tool | A denoising algorithm that infers amplicon sequence variants (ASVs) by modeling and correcting Illumina-sequenced amplicon errors. |

| mothur [45] [59] | Software Pipeline | A comprehensive, open-source software package for processing 16S rRNA gene sequences, including all steps from raw data to statistical analysis. |

| PEAR [61] | Software Tool | An ultrafast, memory-efficient, and highly accurate paired-end read merger. |

| Chimera Slayer [62] | Software Algorithm | A tool for detecting chimeric sequences by identifying reads that are hybrids of multiple parent sequences. |

| SILVA Database [58] [63] | Reference Database | A curated, high-quality alignment of ribosomal RNA genes used for sequence alignment, chimera checking, and taxonomic assignment. |

| GreenGenes Database [58] [63] | Reference Database | A curated 16S rRNA gene database used for taxonomic classification and phylogenetic analysis. |

The pre-processing of 16S rRNA amplicon data follows a logical sequence to transform raw sequencing reads into a high-quality set of non-chimeric sequences ready for downstream analysis. The following diagram illustrates the core workflow and the key decision points at each stage.