Predicting Material Properties: A Machine Learning Roadmap for Accelerated Discovery

Abstract

This article provides a comprehensive overview of machine learning (ML) applications for predicting material properties from structural data, tailored for researchers and drug development professionals. It explores the foundational principles of ML in materials science, delves into advanced methodologies like graph neural networks and image-based learning, and addresses key challenges such as data scarcity and model interpretability. The content also covers rigorous validation techniques and comparative analyses of model performance across different material classes, including emerging modalities like targeted protein degraders. By synthesizing the latest research, this guide aims to equip scientists with the knowledge to leverage ML for accelerating the design and discovery of new materials and therapeutics.

The Foundation: Why Machine Learning is Revolutionizing Materials Science

The field of materials science is undergoing a profound paradigm shift, moving from traditional empirical methods toward sophisticated, data-driven discovery. This transition is critical for addressing society's pressing demands for advanced materials in areas ranging from clean energy to healthcare, where development cycles have historically spanned decades [1]. The core of this transformation lies in the ability to predict material properties from their structure using machine learning (ML), thereby accelerating the discovery and design of novel materials with tailored characteristics.

Traditional materials development relied heavily on experimental trial-and-error or high-throughput computational screening, which are often time-consuming and resource-intensive [2] [3]. The emergence of materials informatics has created new pathways to overcome these limitations by leveraging large-scale data analysis and machine learning algorithms to establish crucial relationships between material compositions, structures, and properties [4] [1]. This approach is particularly powerful for identifying materials with exceptional properties that fall outside known distributions—a capability essential for groundbreaking discoveries [2].

The Data-Driven Revolution in Materials Science

Historical Context and Paradigm Shift

The evolution of materials science reflects a journey through different scientific eras, culminating in the current fourth paradigm of data-driven science. This new era builds upon the previous three—experimental, theoretical, and computational science—by systematically extracting knowledge from large, complex datasets [5]. The dramatic uptake of ML in materials science is evidenced by bibliometric analyses; one assessment noted that titles with ML focus in a leading computational materials journal rose from approximately 16% in 2017 to about 42% in recent years [6].

This shift has been facilitated by several key developments, including the open science movement, substantial national funding initiatives, and remarkable progress in information technology [5]. The proliferation of open materials databases such as the Materials Project, AFLOW, NOMAD, and JARVIS has provided the foundational data resources necessary for training ML models [2] [6]. Concurrently, the development of high-quality open-source software packages including scikit-learn, PyTorch, and JAX has democratized access to advanced ML tools [6].

Key Challenges in Traditional Approaches

Traditional materials development faces significant hurdles that data-driven approaches aim to overcome:

Multiple Length Scale Challenge: Material properties emerge from hierarchical structures forming over multiple time and length scales, from atomic interactions to macroscopic morphology. Understanding these complex process-structure-property (PSP) linkages represents a fundamental challenge in materials design [1].

Computational Limitations: Conventional crystal structure prediction methods based on density functional theory (DFT) provide high accuracy but are computationally expensive, restricting their application to relatively small systems [4].

Temporal and Resource Constraints: The average time for novel materials to reach commercial maturity remains approximately 20 years, creating an urgent need for accelerated discovery approaches [1].

Machine Learning Frameworks for Materials Property Prediction

Core Machine Learning Approaches

Machine learning applications in materials property prediction primarily utilize supervised learning frameworks, where models are trained on labeled datasets to establish mappings between material representations (inputs) and target properties (outputs). These approaches generally fall into classification tasks, such as distinguishing between crystalline and amorphous phases, and regression tasks for predicting continuous properties like formation energy or band gap [4].

The predictive modeling process involves several key steps: selecting appropriate material representations or "fingerprints," choosing suitable algorithm architectures, training models on available data, and validating predictions against unseen data [1]. The material fingerprint acts as a DNA code composed of individual "genes" (descriptors) that connect fundamental material characteristics to macroscopic properties [1].
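As a rough illustration of the steps just listed (representation, algorithm choice, training, validation on unseen data), the sketch below fits a closed-form ridge regression to synthetic descriptor vectors. The data, the descriptor count, and the helper name `fit_ridge` are assumptions made for illustration; none of them come from the cited works.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic "fingerprints": 200 materials x 5 descriptors (illustrative only).
X = rng.normal(size=(200, 5))
true_w = np.array([1.5, -2.0, 0.0, 0.7, 0.0])
y = X @ true_w + 0.1 * rng.normal(size=200)

# Hold out unseen data for validation.
X_train, X_test = X[:150], X[150:]
y_train, y_test = y[:150], y[150:]

def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression: w = (X^T X + alpha I)^-1 X^T y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)

w = fit_ridge(X_train, y_train, alpha=1.0)
test_mae = float(np.mean(np.abs(X_test @ w - y_test)))
print("learned weights:", np.round(w, 2))
print("held-out MAE:", round(test_mae, 3))
```

The learned weights play the role of the "genes" in the fingerprint analogy: each one links a single descriptor to the predicted property, which is what makes this kind of baseline easy to inspect.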

Table 1: Key Machine Learning Algorithms for Materials Property Prediction

| Algorithm Category | Specific Methods | Typical Applications | Key Advantages |
|---|---|---|---|
| Traditional ML | Ridge Regression, Random Forest, Support Vector Machines | Composition-based property prediction, Small to medium datasets | Interpretability, Lower computational requirements |
| Deep Learning | Convolutional Neural Networks (CNNs), Fully Connected Neural Networks, Graph Neural Networks | Crystal property prediction, Image-based classification, Complex structure-property mappings | Automatic feature extraction, Handling complex nonlinear relationships |
| Specialized Architectures | Bilinear Transduction, Ensemble of Experts, CrabNet | Out-of-distribution prediction, Data-scarcity scenarios, Transfer learning | Improved extrapolation, Knowledge transfer between properties |

Addressing Data Scarcity Through Advanced Architectures

Data scarcity poses a significant challenge in materials science, particularly for predicting complex material properties where experimental data is limited. Recent innovations have addressed this limitation through specialized ML architectures:

Ensemble of Experts (EE) Approach: This methodology leverages models ("experts") pre-trained on datasets of different but physically meaningful properties. The knowledge encoded by these experts is then transferred to make accurate predictions on more complex systems, even with very limited training data [7]. The EE framework has outperformed standard artificial neural networks, particularly under severe data scarcity, when predicting properties such as glass transition temperature (Tg) and the Flory-Huggins interaction parameter (χ) [7].

Bilinear Transduction for OOD Prediction: For discovering high-performance materials, extrapolation to out-of-distribution (OOD) property values is critical. Bilinear Transduction reparameterizes the prediction problem by learning how property values change as a function of material differences rather than predicting these values directly from new materials [2]. This approach has shown 1.8× improvement in extrapolative precision for materials and 1.5× for molecules, boosting recall of high-performing candidates by up to 3× [2].

Table 2: Performance Comparison of ML Methods for OOD Property Prediction

| Method | Bulk Modulus MAE | Shear Modulus MAE | Debye Temperature MAE | Extrapolative Precision | Recall of Top Candidates |
|---|---|---|---|---|---|
| Ridge Regression | Baseline | Baseline | Baseline | Baseline | Baseline |
| MODNet | -6.2% | -4.8% | -5.7% | +22% | +45% |
| CrabNet | -8.1% | -6.3% | -7.2% | +31% | +62% |
| Bilinear Transduction | -14.5% | -12.7% | -13.9% | +80% | +200% |

Experimental Protocols and Application Notes

Protocol: Bilinear Transduction for OOD Property Prediction

Objective: To train predictor models that extrapolate zero-shot to higher property value ranges than present in training data, given chemical compositions of solids or molecular graphs and their property values.

Materials and Data Requirements:

- Solid-state materials datasets (AFLOW, Matbench, Materials Project) or molecular datasets (MoleculeNet)

- Stoichiometry-based representations for solids or graph representations for molecules

- Property values spanning a defined range for training, with OOD test sets covering extended ranges

Procedure:

- Data Preparation: Curate datasets containing material compositions and corresponding property values. For solids, focus on compositionally driven variation in properties using stoichiometry-based representations.

- Training-Test Split: Partition data into in-distribution (ID) training and validation sets, and OOD test sets with property values outside the training distribution.

- Model Training: Implement Bilinear Transduction by reparameterizing the prediction problem to learn how property values change as a function of material differences.

- Inference: During prediction, base estimates on known training examples and the difference in representation space between them and new samples.

- Validation: Evaluate using mean absolute error (MAE) for OOD predictions and compute extrapolative precision to measure identification of top OOD candidates.
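The training-test split step in the procedure above can be sketched as follows. This is a minimal illustration, assuming that "OOD" means the highest property values are held out. The function name `ood_split`, the 20% fraction, and the records themselves are hypothetical, not taken from [2].

```python
def ood_split(records, ood_fraction=0.2):
    """Split (material, property) records so the top property values are held out.

    The highest `ood_fraction` of property values form the OOD test set; the
    rest is the in-distribution (ID) pool used for training and validation.
    """
    ordered = sorted(records, key=lambda r: r[1])
    n_ood = max(1, int(round(len(ordered) * ood_fraction)))
    return ordered[:-n_ood], ordered[-n_ood:]

# Hypothetical (composition label, bulk modulus in GPa) pairs; values are made up.
records = [("A2B", 35.0), ("AB", 52.0), ("AB2", 78.0), ("A3B", 110.0),
           ("AB3", 145.0), ("A2B3", 160.0), ("ABC", 190.0), ("A2BC", 220.0),
           ("AB2C", 260.0), ("ABC2", 310.0)]

id_pool, ood_test = ood_split(records, ood_fraction=0.2)
threshold = max(value for _, value in id_pool)   # largest value seen in training
print("ID pool size:", len(id_pool), "| OOD test:", ood_test)
```

The ID pool would then be divided again into training and validation subsets. Every OOD test value lies above `threshold`, so any correct prediction on that set requires extrapolation.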

Validation Metrics:

- Mean Absolute Error (MAE) for OOD predictions

- Extrapolative precision: Fraction of true top OOD candidates correctly identified

- Recall of high-performing candidates: Percentage of materials with exceptional properties successfully identified
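The three metrics above can be written down in a few lines. The sketch below is one plausible reading of the definitions given here (top-k overlap for extrapolative precision, a property threshold for recall); the exact formulations used in [2] may differ, and the numbers are invented.

```python
def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def top_k_indices(values, k):
    """Indices of the k largest values."""
    return set(sorted(range(len(values)), key=lambda i: values[i], reverse=True)[:k])

def extrapolative_precision(y_true, y_pred, k):
    """Fraction of the model's top-k predictions that are true top-k candidates."""
    return len(top_k_indices(y_true, k) & top_k_indices(y_pred, k)) / k

def recall_above(y_true, y_pred, threshold):
    """Fraction of truly exceptional materials (true value > threshold)
    that the model also predicts above the threshold."""
    exceptional = [i for i, t in enumerate(y_true) if t > threshold]
    if not exceptional:
        return 0.0
    found = sum(1 for i in exceptional if y_pred[i] > threshold)
    return found / len(exceptional)

# Made-up true and predicted property values for six candidates.
y_true = [100.0, 250.0, 300.0, 180.0, 320.0, 90.0]
y_pred = [120.0, 230.0, 310.0, 260.0, 240.0, 80.0]

mae = mean_absolute_error(y_true, y_pred)
precision = extrapolative_precision(y_true, y_pred, k=3)
recall = recall_above(y_true, y_pred, threshold=235.0)
print(round(mae, 2), round(precision, 3), round(recall, 3))
```

MAE and the ranking metrics answer different questions: a model can have a modest MAE and still miss most of the exceptional candidates, which is why the protocol reports both.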

Protocol: Ensemble of Experts for Data-Scarce Scenarios

Objective: To predict complex material properties under severe data scarcity conditions by leveraging knowledge transfer from pre-trained models on related physical properties.

Materials:

- Tokenized SMILES strings for molecular representation

- Pre-trained "expert" models on related physical properties

- Limited target property data (Tg for molecular glass formers, χ for polymer-solvent systems)

Procedure:

- Expert Model Preparation: Pre-train multiple expert models on large, high-quality datasets for different but physically meaningful properties.

- Fingerprint Generation: Use these experts to generate molecular fingerprints that encapsulate essential chemical information.

- Target Model Training: Train models on limited target property data using the generated fingerprints as input features.

- Ensemble Integration: Combine predictions from multiple expert-informed models to enhance accuracy and generalization.

- Performance Validation: Compare against standard ANN models trained solely on the limited target data.

Validation Metrics:

- Predictive accuracy (R², MAE) under varying data scarcity conditions (using 10%, 30%, 50% of available data)

- Generalization capability across diverse molecular structures and interactions

- Comparison with standard ANN performance benchmarks

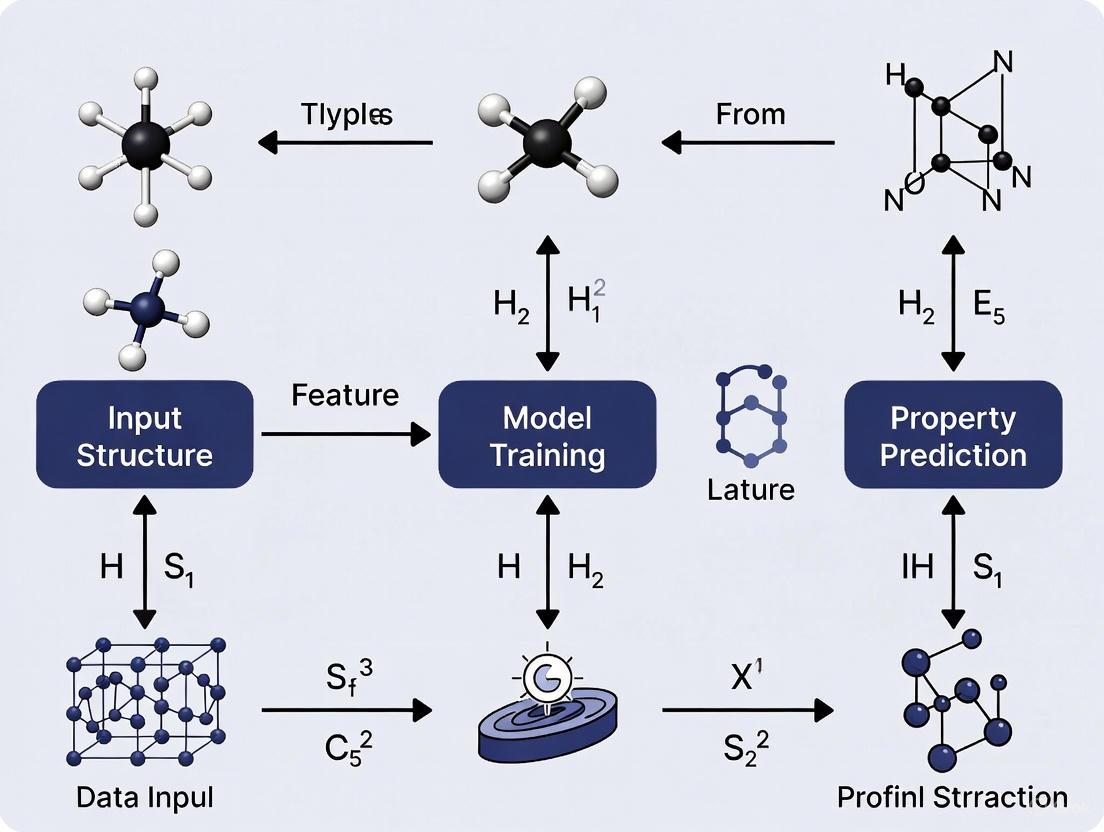

Visualization of Key Workflows

Data-Driven Materials Discovery Workflow

Ensemble of Experts Architecture

Table 3: Key Research Reagent Solutions for Data-Driven Materials Science

| Resource Category | Specific Tools | Function | Application Examples |
|---|---|---|---|
| Materials Databases | Materials Project, AFLOW, NOMAD, JARVIS, OQMD | Provide curated datasets of material structures and properties | Training data for ML models, High-throughput screening |
| Representation Methods | Stoichiometry-based descriptors, Graph representations, SMILES strings, Material fingerprints | Encode material structures in machine-readable formats | Input features for property prediction models |
| ML Frameworks | scikit-learn, PyTorch, JAX, TensorFlow | Implement and train machine learning models | Developing custom prediction pipelines |
| Specialized ML Models | Bilinear Transduction, Ensemble of Experts, CrabNet, MODNet | Address specific challenges like OOD prediction and data scarcity | Extrapolative prediction, Knowledge transfer |
| Validation Tools | Matbench, Various ML reproducibility checklists | Benchmark model performance and ensure research rigor | Comparative analysis, Method standardization |

Future Perspectives and Challenges

The field of data-driven materials discovery continues to evolve rapidly, with several emerging trends and persistent challenges shaping its development. Key among these is the need for improved model interpretability, as understanding the physical basis for ML predictions remains crucial for scientific acceptance and fundamental insight [6]. The development of standardized validation protocols and reproducibility checklists represents an important step toward establishing community-wide best practices [6].

Future advancements will likely focus on enhancing generalization capabilities across diverse materials classes, integrating multi-fidelity data from computational and experimental sources, and developing more sophisticated approaches for uncertainty quantification [2] [7]. As these technical challenges are addressed, data-driven methodologies are poised to become increasingly integral to materials research and development, potentially reducing discovery timelines from decades to months and unlocking new regions of materials property space [8] [1].

The integration of physical knowledge through hybrid modeling approaches, combining ML with domain-inspired constraints and first-principles understanding, represents a particularly promising direction for future research [7]. Such approaches may ultimately fulfill the vision of a "Materials Ultimate Search Engine" (MUSE) that can rapidly identify optimal materials for specific applications, dramatically accelerating innovation across numerous technology sectors [5].

In materials property prediction, the exceptional accuracy of complex Machine Learning (ML) models often comes at the cost of understanding. The most accurate models, such as deep neural networks (DNNs), frequently operate as "black boxes," making it challenging to trust their predictions or gain scientific insights from them [9]. This opacity is particularly problematic in scientific fields like materials science and drug discovery, where understanding the "why" behind a prediction is as crucial as the prediction itself [10] [11]. Two concepts central to addressing this challenge are transparency and explainability. Though sometimes used interchangeably, they represent distinct aspects of understanding AI systems [12] [13]. For researchers and scientists, mastering these concepts is essential for building trustworthy, reliable, and scientifically useful predictive models.

Core Conceptual Definitions

Understanding the precise meaning of key terms is the first step toward their practical implementation. The table below defines the core concepts as they apply to materials and drug discovery research.

Table 1: Core Concepts in ML Model Understanding

| Concept | Core Definition | Primary Focus | Key Question | Example in Materials Science |
|---|---|---|---|---|
| Transparency [12] [13] | Openness about the AI system's design, development, and deployment processes. | The entire system's architecture and data. | "How is the model built and what data was used?" | An open-source ML project on GitHub providing full source code, training dataset, and documentation for a model predicting formation energy [12]. |
| Explainability [12] [13] | The ability to describe, in understandable terms, the reasoning behind a specific decision or output. | The logic behind an individual prediction. | "Why did the model make this specific prediction?" | A model predicting a low bandgap for a perovskite highlights the specific elemental interactions and structural features that led to that prediction [12] [9]. |
| Interpretability [12] [14] | A deeper, often technical, understanding of the model's internal decision-making processes and mechanics. | The inner workings of the algorithm itself. | "How do the model's internal mechanisms lead to its decisions?" | Using a decision tree for a polymer stability prediction where each node represents a clear decision based on a molecular descriptor, allowing the entire path to be traced [12]. |

A crucial technical distinction lies in how explainability and interpretability are achieved. Interpretable models are often inherently transparent, designed from the ground up to be understood by humans (e.g., linear models with non-linear basis functions or short decision trees) [15] [14]. In contrast, explainability is often achieved through post-hoc techniques—external methods applied after a complex "black-box" model has made a prediction to provide a plausible rationale for it [14]. Common techniques include SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) [16] [14].
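To make the post-hoc idea concrete, the sketch below fits a proximity-weighted linear surrogate around a single input, which is the core mechanism behind LIME-style explanations. It is a simplified stand-in written with NumPy and does not use the `lime` package. The function `black_box`, the Gaussian sampling scale, and the sample count are assumptions chosen for illustration.

```python
import numpy as np

def black_box(X):
    """Stand-in for a complex model: nonlinear in two descriptors."""
    return np.sin(X[:, 0]) + X[:, 1] ** 2

def local_linear_explanation(predict, x0, n_samples=2000, scale=0.1, seed=0):
    """Fit a proximity-weighted linear surrogate around x0 (LIME-style)."""
    rng = np.random.default_rng(seed)
    X = x0 + scale * rng.normal(size=(n_samples, x0.size))   # local perturbations
    y = predict(X)
    dist2 = np.sum((X - x0) ** 2, axis=1)
    weights = np.exp(-dist2 / (2 * scale ** 2))              # closer samples count more
    A = np.hstack([np.ones((n_samples, 1)), X - x0])         # intercept + centered features
    sw = np.sqrt(weights)[:, None]
    # Weighted least squares via row scaling by sqrt(weight).
    coef, *_ = np.linalg.lstsq(A * sw, y * sw[:, 0], rcond=None)
    return coef[0], coef[1:]   # local prediction level, local feature slopes

x0 = np.array([0.0, 1.0])
intercept, slopes = local_linear_explanation(black_box, x0)
print("local slopes:", np.round(slopes, 2))
```

Near `x0` the surrogate slopes approximate the local gradient of the black box (about 1 for the first descriptor and about 2 for the second), so the explanation is only valid in that neighborhood. An inherently interpretable model, by contrast, exposes its coefficients globally without this extra fitting step.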

Experimental Protocols for XAI in Materials Science

Implementing Explainable AI (XAI) requires a structured methodology. The following protocol provides a workflow for integrating explainability into a materials property prediction project, from data preparation to insight generation.

Diagram 1: XAI Experimental Workflow

Phase 1: Data Preparation and Feature Engineering

Objective: To curate a dataset with human-interpretable features that represent material structures.

Detailed Steps:

- Data Collection: Assemble a dataset of materials structures and their corresponding target properties (e.g., bandgap, tensile strength, formation energy). Sources can include computational databases (e.g., DFT calculations) or historical experimental data [17].

- Feature Engineering: Transform raw structural data (e.g., composition, crystal structure) into a set of numerical descriptors. The interpretability of the final model depends heavily on this step.

  - Examples: Calculate compositional features (e.g., atomic radii, electronegativity), structural features (e.g., symmetry, coordination numbers), or domain-specific descriptors (e.g., porosity, build direction for additively manufactured materials) [17].

  - Tool: Use libraries like `pymatgen` or `matminer` to automate feature generation.
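As a small illustration of the feature engineering step, the sketch below derives two composition-based descriptors from a chemical formula using only the standard library. It is a hand-rolled stand-in, not the pymatgen or matminer API. The helper names and the four-element lookup table (Pauling electronegativities) are assumptions for illustration, and the parser handles only simple formulas.

```python
import re

# Pauling electronegativities for a few elements (small illustrative table).
ELECTRONEGATIVITY = {"Na": 0.93, "Cl": 3.16, "Ti": 1.54, "O": 3.44}

def parse_formula(formula):
    """Parse a simple formula like 'TiO2' into {'Ti': 1.0, 'O': 2.0}.
    Parentheses and hydrates are not handled."""
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return counts

def composition_features(formula, table=ELECTRONEGATIVITY):
    """Return the fraction-weighted mean and the range of an elemental property."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    values = [table[el] for el in counts]
    mean = sum(table[el] * n / total for el, n in counts.items())
    return {"mean": mean, "range": max(values) - min(values)}

features = composition_features("TiO2")
print(features)
```

Descriptors of this kind stay human-readable: a large electronegativity range, for example, points to more ionic bonding, which is the sort of statement a domain expert can check against a later model explanation.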

Phase 2: Model Development and Training

Objective: To train both a high-accuracy (potentially black-box) model and an inherently interpretable model for comparison.

Detailed Steps:

- Baseline Interpretable Model: Train a simple, transparent model such as Linear Regression or a shallow Decision Tree. This provides a benchmark for both performance and explainability.

- Advanced Model Training: Train a high-performance, potentially black-box model, such as a deep neural network.

- Model Evaluation: Compare models using standard metrics (e.g., Mean Absolute Error, R² score) on a held-out test set.
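The comparison described in this phase might look like the following sketch, which pits an ordinary least-squares baseline against a kernel ridge regression model on a held-out test set. Kernel ridge regression is used here only as a convenient flexible model that fits in a few lines of NumPy. The synthetic data, the RBF width, and the regularization strength are all assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.uniform(-2, 2, size=(300, 2))
y = np.sin(2 * X[:, 0]) + 0.5 * X[:, 1] + 0.05 * rng.normal(size=300)
X_tr, X_te, y_tr, y_te = X[:200], X[200:], y[:200], y[200:]

def mae(y_true, y_pred):
    return float(np.mean(np.abs(y_true - y_pred)))

def r2(y_true, y_pred):
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return float(1.0 - ss_res / ss_tot)

# Baseline: ordinary least squares with an intercept (fully transparent).
A_tr = np.hstack([np.ones((len(X_tr), 1)), X_tr])
A_te = np.hstack([np.ones((len(X_te), 1)), X_te])
coef, *_ = np.linalg.lstsq(A_tr, y_tr, rcond=None)
lin_pred = A_te @ coef

# Flexible model: kernel ridge regression with an RBF kernel.
def rbf(A, B, gamma=1.0):
    d2 = np.sum(A**2, 1)[:, None] + np.sum(B**2, 1)[None, :] - 2 * A @ B.T
    return np.exp(-gamma * d2)

alpha = np.linalg.solve(rbf(X_tr, X_tr) + 1e-2 * np.eye(len(X_tr)), y_tr)
krr_pred = rbf(X_te, X_tr) @ alpha

results = {"linear": (mae(y_te, lin_pred), r2(y_te, lin_pred)),
           "kernel ridge": (mae(y_te, krr_pred), r2(y_te, krr_pred))}
for name, (m, r) in results.items():
    print(f"{name:>12}: MAE={m:.3f}  R2={r:.3f}")
```

Reporting both models side by side shows how much accuracy the transparent baseline gives up. If the gap is small, the interpretable model may be the better scientific choice.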

Phase 3: XAI Analysis and Explanation

Objective: To generate explanations for the model's predictions, both globally and locally.

Detailed Steps:

- Global Explanation (Model-Level):

  - Technique: Apply SHAP to calculate the mean absolute impact of each feature on the model's output across the entire dataset [16].

  - Output: A bar plot of mean(|SHAP value|) reveals the most important features globally.

- Local Explanation (Prediction-Level):

  - Technique: For a single material's prediction, use SHAP or LIME to explain which features were most influential for that specific outcome [16].

  - Output: A force plot or a list of weighted features showing their contribution to pushing the prediction higher or lower.
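The local and global quantities described above can be illustrated with a brute-force Shapley computation. The sketch below enumerates every feature coalition, which is exact but exponential in the number of features. It does not use the `shap` library, whose estimators approximate the same quantity far more efficiently. The model, background set, and samples are invented for illustration.

```python
from itertools import combinations
from math import factorial

def model(x):
    """Stand-in black box: linear terms plus one interaction."""
    return 2.0 * x[0] - 1.0 * x[1] + 0.5 * x[0] * x[2]

def coalition_value(f, x, background, subset):
    """Average prediction when features in `subset` take x's values and the
    remaining features are taken from the background samples."""
    total = 0.0
    for b in background:
        mixed = [x[j] if j in subset else b[j] for j in range(len(x))]
        total += f(mixed)
    return total / len(background)

def shapley_values(f, x, background):
    """Exact Shapley values by enumerating all coalitions of the other features."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                gain = (coalition_value(f, x, background, s | {i})
                        - coalition_value(f, x, background, s))
                phi[i] += weight * gain
    return phi

background = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0], [2.0, 0.0, 1.0], [1.0, 3.0, 2.0]]
samples = [[3.0, 1.0, 2.0], [0.0, 2.0, 1.0]]

# Local explanations: one attribution vector per sample.
local = [shapley_values(model, x, background) for x in samples]
# Global importance: mean absolute attribution per feature.
global_importance = [sum(abs(phi[i]) for phi in local) / len(local) for i in range(3)]
print(local, global_importance)
```

For each sample the attributions sum to the difference between that sample's prediction and the average background prediction, which is the property that makes force-plot style visualizations additive.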

Phase 4: Explanation and Model Validation

Objective: To ensure the explanations are faithful and the model's behavior aligns with physical principles.

Detailed Steps:

- Physical Consistency Check: Analyze if the important features identified by XAI align with known domain knowledge. For example, a model predicting formation energy for elpasolite crystals should assign coefficients that reflect trends across the periodic table [15].

- Sensitivity Analysis: Perturb input features and observe changes in the prediction to check that the model actually responds in the way the XAI output suggests.
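A one-at-a-time perturbation check of the kind described in the sensitivity analysis step could be sketched as follows. The surrogate `predict_strength`, its coefficients, and the feature names are hypothetical and exist only to show the mechanics.

```python
def sensitivity(predict, x, rel_step=0.05):
    """One-at-a-time sensitivity: change in prediction per unit change in each
    feature, using a relative step (absolute step for zero-valued features)."""
    base = predict(x)
    effects = {}
    for name, value in x.items():
        step = rel_step * abs(value) if value != 0 else rel_step
        perturbed = dict(x, **{name: value + step})
        effects[name] = (predict(perturbed) - base) / step
    return effects

# Hypothetical surrogate for tensile strength (MPa); coefficients are made up.
def predict_strength(x):
    return 1000.0 - 40.0 * x["porosity_pct"] + 0.2 * x["laser_power_W"]

sample = {"porosity_pct": 1.5, "laser_power_W": 200.0}
effects = sensitivity(predict_strength, sample)
print(effects)
```

If the sign or relative size of these effects disagrees with the feature attributions, the explanation is suspect. Note that this probes the model's response only; whether the real material behaves the same way still has to be established experimentally.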

Phase 5: Scientific Insight and Hypothesis Generation

Objective: To translate model explanations into actionable scientific knowledge.

Detailed Steps:

- Hypothesis Formulation: Use the feature-property relationships uncovered by XAI to form new hypotheses. For instance, if a specific structural motif is consistently identified as crucial for high ionic conductivity, hypothesize that synthesizing new materials with enhanced versions of this motif will improve performance.

- Inverse Design: Leverage the interpretable model to guide the search for new materials by identifying the combination of features that leads to a desired property [15].

The Scientist's Toolkit: Key Reagents and Computational Solutions

The successful application of XAI in materials informatics relies on a suite of computational tools and methodologies.

Table 2: Essential Research Reagents for XAI in Materials Science

| Tool / Solution | Category | Primary Function | Application Example |
|---|---|---|---|
| SHAP (SHapley Additive exPlanations) [16] | Post-hoc Explainability | Unifies several explanation methods to quantify the contribution of each feature to a single prediction. | Explaining why a specific (AlxGayInz)2O3 compound was predicted to have a high formation energy [15]. |
| LIME (Local Interpretable Model-agnostic Explanations) [16] | Post-hoc Explainability | Approximates a complex model locally with an interpretable one (e.g., linear model) to explain individual predictions. | Creating a local, interpretable model to explain a DNN's prediction of toxicity for a specific small molecule [16]. |
| Inherently Interpretable Models (e.g., SISSO, Linear Models with nonlinear basis) [15] | Interpretable ML | Provides a directly understandable functional form for the structure-property relationship, avoiding the black box. | Creating a predictive, simple bilinear model for TCO formation energy that offers direct insight into cluster-cluster interactions [15]. |
| XpertAI Framework [16] | Advanced XAI Framework | Integrates XAI methods with Large Language Models (LLMs) to generate natural language explanations of structure-property relationships from raw data. | Automatically generating a scientific summary of why certain molecular descriptors correlate with a target property, backed by literature evidence [16]. |

Quantitative Comparison of Model Performance and Explainability

The choice of model often involves a trade-off between predictive accuracy and explainability. The following table summarizes performance data from real-world materials science applications, highlighting that simpler, interpretable models can sometimes achieve accuracy comparable to black-box approaches.

Table 3: Performance Comparison of ML Models in Materials Property Prediction

| Material System | Target Property | Model Type | Performance Metric | Explainability / Insights Gained |
|---|---|---|---|---|
| Ti-6Al-4V Alloy (SLM) [17] | Tensile Strength | Gaussian Process Regression (GPR) | MAE: 23.9 MPa | High explainability against human-centric understanding levels. |
| Ti-6Al-4V Alloy (SLM) [17] | Tensile Strength | Neural Network (NN) | MAE: 28.24 MPa | Slightly worse explainability compared to GPR [17]. |
| Transparent Conducting Oxides (TCOs) [15] | Formation Energy | Kernel Ridge Regression (KRR) | Performance comparable to linear models. | Low; model is a black box. |
| Transparent Conducting Oxides (TCOs) [15] | Formation Energy | Bilinear Model (proposed interpretable) | Accuracy on par with KRR [15]. | High; provides a clear functional form and reveals cluster-cluster interactions [15]. |
| Elpasolite Crystals [15] | Formation Energy | Kernel Ridge Regression (KRR) | Performance comparable to linear models. | Low; model is a black box. |
| Elpasolite Crystals [15] | Formation Energy | Linear Model (proposed interpretable) | Accuracy on par with KRR [15]. | High; coefficients reflect known periodic table trends, enabling validation and guiding new material searches [15]. |

In high-stakes scientific research, such as materials property prediction and drug discovery, a model's accuracy is necessary but not sufficient. Transparency in its construction and explainability in its predictions are critical for building trust, ensuring reliability, and—most importantly—deriving new scientific knowledge [9] [11]. As the field progresses, the integration of frameworks like XpertAI, which combine XAI with literature knowledge, promises to further bridge the gap between data-driven predictions and human scientific reasoning [16]. By adopting the protocols and tools outlined in this document, researchers can move beyond black-box predictions toward a more profound, interpretable understanding of material behavior.

The discovery of next-generation materials and molecules is fundamentally limited by the human capacity to comprehend complex, high-dimensional structure-property relationships. Traditional experimental methods and computational simulations are often resource-intensive and struggle to navigate vast chemical spaces. Machine learning (ML) has emerged as a transformative tool, overcoming these human limits by identifying subtle patterns within complex datasets that are intractable for manual analysis [2] [7]. This is particularly critical for predicting material properties, where the goal is often to discover extremes—materials with property values that fall outside known distributions, thereby unlocking new technological capabilities [2]. This document provides application notes and detailed protocols for applying advanced ML techniques to the challenge of materials property prediction, with a focus on overcoming data scarcity and achieving extrapolation.

Key Methodological Approaches and Protocols

Two advanced ML paradigms addressing core challenges in materials science are detailed below: one for Out-of-Distribution (OOD) property prediction and another for data-scarcity scenarios.

Protocol 1: Bilinear Transduction for OOD Property Prediction

The objective of this protocol is to train predictor models that extrapolate zero-shot to property value ranges higher than those present in the training data, given chemical compositions or molecular graphs [2].

Application Notes

Bilinear Transduction reparameterizes the prediction problem. Instead of predicting a property value from a new candidate material directly, it learns how property values change as a function of material differences. Predictions are made based on a known training example and the difference in representation space between that example and the new sample [2]. This method has been shown to improve extrapolative precision by 1.8× for materials and 1.5× for molecules, and can boost the recall of high-performing candidates by up to 3× [2].

Step-by-Step Experimental Protocol

- Data Preparation: Curate a dataset of material compositions (e.g., as stoichiometry) or molecular graphs (e.g., as SMILES strings) with corresponding property values. The training set should intentionally exclude the high-value region of the target property to simulate an OOD scenario.

- Representation: Convert the material inputs into a numerical representation. For solids, use stoichiometry-based representations; for molecules, use graph-based representations or tokenized SMILES strings [2] [7].

- Model Training (Transductive Learning):

  - For a test candidate material, select an analogous training example.

  - Compute the difference vector between the test candidate's representation and the training example's representation.

  - The model is trained to predict the property value for the test candidate based on the chosen training example's property and the calculated representation difference.

- Inference: During inference, property values for new samples are predicted using the same logic—based on a selected training example and the difference between it and the new sample.

- Validation: Evaluate the model on a held-out test set containing property values outside the training distribution. Key metrics include Mean Absolute Error (MAE) for OOD samples and extrapolative precision, defined as the fraction of true top OOD candidates correctly identified among the model's top predictions [2].
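The mechanics of the protocol above (pair construction, difference vectors, anchor selection at inference) can be sketched with a deliberately simplified bilinear form fitted by least squares. This is not the MatEx implementation and not necessarily the architecture of [2]: the hand-written features `[1, delta]` and `[1, anchor]` stand in for learned embeddings, the anchor-selection rule is one plausible heuristic, and the toy property is linear, so a plain linear model would extrapolate equally well here.

```python
import numpy as np

rng = np.random.default_rng(0)

def target(X):
    # Toy property, linear in the descriptors (made up for illustration).
    return 1.0 + 3.0 * X[:, 0] + 2.0 * X[:, 1]

X_train = rng.uniform(0.0, 1.0, size=(30, 2))     # training inputs in [0, 1]^2
y_train = target(X_train)

def pair_features(delta, anchor):
    """Outer product of [1, delta] and [1, anchor], flattened per row."""
    phi = np.hstack([np.ones((len(delta), 1)), delta])
    psi = np.hstack([np.ones((len(anchor), 1)), anchor])
    return np.einsum("ni,nj->nij", phi, psi).reshape(len(delta), -1)

# Training pairs (i, j), i != j: predict y_i from (x_i - x_j, x_j).
i_idx, j_idx = np.where(~np.eye(len(X_train), dtype=bool))
train_deltas = X_train[i_idx] - X_train[j_idx]
F = pair_features(train_deltas, X_train[j_idx])
W, *_ = np.linalg.lstsq(F, y_train[i_idx], rcond=None)

def predict(x_new):
    """Pick the anchor whose difference to x_new is closest to a difference
    seen in training, then apply the fitted bilinear form."""
    deltas = x_new - X_train                       # one difference per candidate anchor
    gaps = np.linalg.norm(deltas[:, None, :] - train_deltas[None, :, :], axis=2)
    anchor = int(np.argmin(gaps.min(axis=1)))
    feats = pair_features(deltas[anchor:anchor + 1], X_train[anchor:anchor + 1])
    return float((feats @ W)[0])

x_ood = np.array([1.6, 1.7])                       # outside the training input range
y_hat = predict(x_ood)
y_ref = float(target(x_ood[None, :])[0])
print("prediction:", round(y_hat, 3), "| reference:", round(y_ref, 3),
      "| max training value:", round(float(y_train.max()), 3))
```

The point of the reparameterization is that the query is expressed as a known anchor plus a difference that resembles differences already seen in training, so the model is asked to interpolate in difference space even when the target property value lies beyond the training range.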

Protocol 2: Ensemble of Experts for Data-Scarcity Scenarios

The objective of this protocol is to accurately predict complex material properties, such as glass transition temperature (Tg) or the Flory-Huggins interaction parameter (χ), when labeled training data for the target property is severely limited [7].

Application Notes

The Ensemble of Experts (EE) approach overcomes data scarcity by leveraging knowledge from models ("experts") pre-trained on large, high-quality datasets for different but physically related properties. This knowledge is transferred to the new prediction task with limited data, and the resulting predictor significantly outperforms standard artificial neural networks (ANNs) trained from scratch on the small dataset [7].

Step-by-Step Experimental Protocol

- Expert Pre-training: Train multiple independent models (e.g., ANNs) on large, available datasets for foundational material properties (e.g., formation energy, band gap). These properties should be physically relevant to the target property.

- Fingerprint Generation: For each data point in the small target dataset (e.g., Tg), pass the molecular representation (e.g., a tokenized SMILES string) through each pre-trained expert. Extract a feature vector (the "fingerprint") from an intermediate layer of each network.

- Fingerprint Aggregation: Concatenate or otherwise combine the fingerprints generated by all experts to create a comprehensive, knowledge-rich input vector for the target property predictor.

- Target Model Training: Train a final predictor model (e.g., a shallow ANN) on the small target dataset, using the aggregated fingerprints as input features and the target property values (e.g., Tg) as labels.

- Validation: Compare the performance of the EE system against a standard ANN trained directly on the limited target data using metrics like predictive accuracy and generalization across diverse molecular structures [7].
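The steps above can be sketched end to end on synthetic data. Everything in this example is an assumption made for illustration: the inputs are random vectors rather than tokenized SMILES, the experts are tiny one-hidden-layer networks trained with plain gradient descent, and the final predictor and the comparison model are ridge regressions rather than the ANNs used in [7]. It shows the data flow only and does not reproduce any reported result.

```python
import numpy as np

rng = np.random.default_rng(0)
d, h = 8, 16   # descriptor dimension, hidden units per expert

def train_expert(X, y, hidden=h, lr=0.05, epochs=300, seed=0):
    """Train a one-hidden-layer tanh network with full-batch gradient descent
    and return the hidden-layer parameters used for fingerprints."""
    r = np.random.default_rng(seed)
    W1 = r.normal(scale=0.5, size=(X.shape[1], hidden))
    b1 = np.zeros(hidden)
    w2 = r.normal(scale=0.5, size=hidden)
    b2 = 0.0
    n = len(X)
    for _ in range(epochs):
        H = np.tanh(X @ W1 + b1)
        err = H @ w2 + b2 - y                        # prediction residual
        grad_pre = np.outer(err, w2) * (1.0 - H**2)  # backprop through tanh
        w2 -= lr * (H.T @ err) / n
        b2 -= lr * err.mean()
        W1 -= lr * (X.T @ grad_pre) / n
        b1 -= lr * grad_pre.mean(axis=0)
    return W1, b1

def fingerprint(X, experts):
    """Concatenate hidden-layer activations from every expert."""
    return np.hstack([np.tanh(X @ W1 + b1) for W1, b1 in experts])

A = rng.normal(size=(d, 4))            # hidden "physics" shared by all properties
def latent(X):
    return np.tanh(X @ A / np.sqrt(d))

# Step 1: pre-train experts on abundant data for three related properties.
X_big = rng.normal(size=(2000, d))
expert_targets = latent(X_big) @ rng.normal(size=(4, 3))
experts = [train_expert(X_big, expert_targets[:, k], seed=k) for k in range(3)]

# Scarce target-property data (30 labeled samples) and a larger test set.
c = rng.normal(size=4)
X_small = rng.normal(size=(30, d))
y_small = latent(X_small) @ c
X_test = rng.normal(size=(500, d))
y_test = latent(X_test) @ c

def ridge_fit_predict(F_train, y_train, F_test, alpha=1.0):
    mu = y_train.mean()
    w = np.linalg.solve(F_train.T @ F_train + alpha * np.eye(F_train.shape[1]),
                        F_train.T @ (y_train - mu))
    return F_test @ w + mu

# Steps 2-4: fingerprints from all experts, then a small model on top.
ee_pred = ridge_fit_predict(fingerprint(X_small, experts), y_small,
                            fingerprint(X_test, experts))
# Step 5: comparison model trained directly on the raw descriptors.
raw_pred = ridge_fit_predict(X_small, y_small, X_test)
ee_mae = float(np.mean(np.abs(ee_pred - y_test)))
raw_mae = float(np.mean(np.abs(raw_pred - y_test)))
print(f"MAE with expert fingerprints: {ee_mae:.3f}")
print(f"MAE with raw descriptors:     {raw_mae:.3f}")
```

The design choice worth noting is that the experts are frozen after pre-training: only the small final model sees the scarce target data, which keeps the number of parameters fitted on those 30 samples low.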

Experimental Workflow and Logical Diagrams

The following diagrams illustrate the logical workflows for the two primary protocols described in this document.

OOD Prediction via Bilinear Transduction

Ensemble of Experts for Data Scarcity

Performance Metrics and Data

The following tables summarize quantitative performance data for the ML methods discussed.

Table 1: OOD Prediction Performance on Solid-State Materials

Table showing Mean Absolute Error (MAE) for OOD predictions on benchmark datasets (AFLOW, Matbench, Materials Project) across various material properties. Bilinear Transduction is compared against baseline methods. [2]

| Material Property | Ridge Regression | MODNet | CrabNet | Bilinear Transduction |
|---|---|---|---|---|
| Band Gap | 0.41 | 0.39 | 0.38 | 0.35 |
| Bulk Modulus | 0.081 | 0.079 | 0.078 | 0.075 |
| Debye Temperature | 0.061 | 0.060 | 0.059 | 0.056 |
| Shear Modulus | 0.098 | 0.095 | 0.093 | 0.090 |
| Thermal Conductivity | 0.121 | 0.118 | 0.116 | 0.112 |

Table 2: Performance under Data Scarcity

Table comparing the performance of a standard ANN versus the Ensemble of Experts approach when predicting the glass transition temperature (Tg) of molecular glass formers with limited data. [7]

| Training Set Size | Standard ANN (MAE in K) | Ensemble of Experts (MAE in K) |
|---|---|---|
| 50 samples | 12.5 | 8.2 |
| 100 samples | 9.1 | 6.0 |
| 200 samples | 7.2 | 4.8 |
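To make the gap in Table 2 easier to read, the relative MAE reduction can be computed directly from the tabulated values. The short script below is only arithmetic on the numbers above; the helper name `relative_reduction` is introduced here for illustration.

```python
# MAE values (in K) copied from Table 2: (standard ANN, Ensemble of Experts).
table2 = {
    50: (12.5, 8.2),
    100: (9.1, 6.0),
    200: (7.2, 4.8),
}

def relative_reduction(baseline_mae, new_mae):
    """Percentage by which new_mae is lower than baseline_mae."""
    return 100.0 * (baseline_mae - new_mae) / baseline_mae

reductions = {n: relative_reduction(ann, ee) for n, (ann, ee) in table2.items()}
for n, pct in reductions.items():
    print(f"{n} samples: {pct:.1f}% lower MAE with the Ensemble of Experts")
```

For all three training-set sizes the reduction is roughly one third, so in these numbers the relative advantage of the ensemble stays about constant as more data becomes available.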

The Scientist's Toolkit: Research Reagent Solutions

This section details key computational "reagents" essential for conducting experiments in ML-driven materials property prediction.

| Resource Name / Type | Function / Application | Reference / Source |
|---|---|---|
| Tokenized SMILES Strings | A representation for molecular structures that enhances a model's capacity to interpret chemical information compared to traditional one-hot encoding. | [7] |
| Morgan Fingerprints | Encodes chemical substructures as bit vectors; a widely used strategy for featurizing molecules for machine learning models. | [7] |
| MatEx (Materials Extrapolation) | An open-source implementation of the Bilinear Transduction method for OOD property prediction, available for use and validation. | https://github.com/learningmatter-mit/matex |
| Pre-trained Expert Models | Models previously trained on large datasets of related physical properties (e.g., formation energy), used to generate knowledge-rich fingerprints for new tasks. | [7] |
| Coblis / Color Oracle | Color blindness simulators used to preview and ensure that data visualizations and charts are accessible to all researchers. | [18] |

In modern drug development, the journey from a molecular structure to a safe and effective therapeutic is governed by a series of key properties spanning multiple scales. Traditionally, optimizing these properties has been a sequential, resource-intensive process. The integration of machine learning (ML) from materials informatics is revolutionizing this pipeline by enabling the simultaneous prediction of properties from the atomic scale, such as formation energy and crystal structure, to the macroscopic, system-level scale of absorption, distribution, metabolism, and excretion (ADME) profiles [19] [20]. This paradigm shift allows researchers to pre-emptively screen for desirable drug-like behavior, de-risking the development process and accelerating the discovery of advanced lead compounds directed toward specific therapeutic indications [19].

Key Property Targets Across Scales

Effective drug discovery requires the optimization of a hierarchy of properties. The table below summarizes the critical property targets from the atomic level to the full organism-level profile.

Table 1: Key Property Targets in Drug Development

| Scale | Property Target | Description | Impact on Development | Common Prediction Methods |
|---|---|---|---|---|
| Atomic / Molecular | Formation Energy / Stability | The energy of a compound relative to its constituent elements in their reference states; indicates stability [21]. | Determines synthetic feasibility and stability of the solid form (e.g., crystal, salt) [4]. | DFT, Graph Neural Networks (GNNs), Roost [21] [22] |
| Atomic / Molecular | Crystal Structure (CSP) | The three-dimensional arrangement of atoms in a solid [4]. | Critical for bioavailability, solubility, and manufacturability (polymorph control) [4]. | Genetic Algorithms, Particle Swarm Optimization, ML Potentials [4] |
| Atomic / Molecular | Solubility (logS) | Logarithm of aqueous solubility (mol/L) [19]. | Directly impacts drug absorption; a prerequisite for oral bioavailability. | QSPR models, Random Forests, ANNs [19] [23] |
| Physicochemical & In Vitro | ADME Properties (e.g., HIA, PPB) | Absorption, Distribution, Metabolism, Excretion parameters (e.g., % Human Intestinal Absorption, Plasma Protein Binding) [19]. | Predicts in vivo pharmacokinetic behavior and appropriate dosing regimens [19]. | Machine Learning models on curated experimental data [19] |
| Physicochemical & In Vitro | Drug-Target Affinity (DTA) | The strength of interaction between a drug molecule and its protein target [24]. | Defines therapeutic potency and selectivity; crucial for efficacy and avoiding side effects. | Deep Learning, Graph Neural Networks, Transformer models [24] |
| Macroscopic / Clinical | Toxicity & Side Effect Profile | The adverse effects of a compound on biological systems. | Ultimate determinant of clinical safety and patient quality of life. | Multitask Learning, Knowledge Graphs [24] |

Experimental Protocols for Property Prediction

This section details standardized methodologies for building predictive models for key properties, leveraging insights from both materials science and cheminformatics.

Protocol: Predicting Formation Energy for Molecular Stability

Objective: To build a deep transfer learning model that predicts the formation energy of a drug-like molecule from its composition and structure, with an error against experimental values lower than that of traditional Density Functional Theory (DFT) computations [21].

Workflow:

Data Acquisition:

- Source Domain Data: Obtain a large dataset (>100,000 data points) of DFT-computed formation energies and structures from databases like the Open Quantum Materials Database (OQMD), Materials Project (MP), or Joint Automated Repository for Various Integrated Simulations (JARVIS) [21].

- Target Domain Data: Collect a smaller, high-quality experimental dataset of formation energies for pharmaceutically relevant compounds (e.g., from the "exp-formation-enthalpy" database) [21].

Model Pre-training:

- Train a deep neural network (e.g., IRNet) on the large DFT-computed source dataset. The input is the material's composition and crystal structure, and the output is the DFT-predicted formation energy [21].

- This step allows the model to learn a rich set of domain-specific features from the structural data.

Model Fine-tuning:

- Use the smaller experimental dataset to fine-tune the parameters of the pre-trained model. This transfers the knowledge from the DFT domain to the more accurate experimental domain [21].

Model Validation:

- Evaluate the model on a hold-out experimental test set. The target performance is a Mean Absolute Error (MAE) lower than the known discrepancy between DFT computations and experiments (e.g., < 0.076 eV/atom) [21].
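The pre-train, fine-tune, and validate sequence above can be illustrated with a deliberately small toy problem. Everything in this sketch is an assumption made for illustration: the data are synthetic, the model is a one-variable linear fit rather than a deep network such as IRNet, and the constant offset of 0.3 merely mimics a systematic difference between DFT and experiment.

```python
import random

rng = random.Random(0)

def make_data(n, offset):
    """Synthetic 1-D data: y = 2x + offset. The offset mimics a systematic DFT error."""
    xs = [rng.random() for _ in range(n)]
    return xs, [2.0 * x + offset for x in xs]

def fit(xs, ys, w, b, lr, steps):
    """Full-batch gradient descent on mean squared error for y = w*x + b."""
    n = len(xs)
    for _ in range(steps):
        errs = [w * x + b - y for x, y in zip(xs, ys)]
        w -= lr * 2.0 * sum(e * x for e, x in zip(errs, xs)) / n
        b -= lr * 2.0 * sum(errs) / n
    return w, b

def mae(xs, ys, w, b):
    return sum(abs(w * x + b - y) for x, y in zip(xs, ys)) / len(xs)

source_x, source_y = make_data(1000, offset=0.3)  # large, biased "DFT" set
target_x, target_y = make_data(20, offset=0.0)    # small "experimental" set
test_x, test_y = make_data(50, offset=0.0)        # held-out "experimental" test set

# 1) Pre-train on the source domain from a zero initialisation.
w_pre, b_pre = fit(source_x, source_y, w=0.0, b=0.0, lr=0.1, steps=2000)
# 2) Fine-tune on the target domain, starting from the pre-trained parameters.
w_ft, b_ft = fit(target_x, target_y, w=w_pre, b=b_pre, lr=0.05, steps=2000)

# 3) Validate both models on the held-out target-domain test set.
mae_pretrained = mae(test_x, test_y, w_pre, b_pre)
mae_finetuned = mae(test_x, test_y, w_ft, b_ft)
print(f"MAE before fine-tuning: {mae_pretrained:.3f}, after: {mae_finetuned:.3f}")
```

In a real study the final comparison would be against the known DFT-versus-experiment discrepancy quoted in the validation step, not against the pre-trained toy model.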

Protocol: Building a QSPR Model for ADME Properties

Objective: To create a robust Quantitative Structure-Property Relationship (QSPR) model for predicting human intestinal absorption (HIA) using an open-source toolkit [23].

Workflow:

Data Curation:

- Collect a dataset of compounds with experimentally measured HIA values (e.g., from scholarly literature or databases like e-Drug3D) [19].

- Standardize molecular structures from SMILES strings using a tool like RDKit, ensuring consistent representation (e.g., neutralizing charges, removing duplicates) [23].

Featurization:

- Convert the standardized molecules into numerical descriptors. Use the QSPRpred toolkit to generate a combination of features, which can include:

Model Training and Benchmarking:

Model Serialization and Deployment:

- Serialize the final model using QSPRpred's automated system, which saves the model with all required data pre-processing steps. This allows for direct prediction on new compounds from their SMILES strings, ensuring reproducibility and transferability into practice [23].

Visualization of Workflows

The following diagrams illustrate the core computational workflows for the protocols described above.

[Diagram: Deep Transfer Learning for Formation Energy]

[Diagram: QSPR Model Development for ADME Properties]

Successful implementation of property prediction models relies on a suite of computational tools and data resources.

Table 2: Essential Computational Tools for Property Prediction

| Tool / Resource | Type | Primary Function | Application Example |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecule standardization, descriptor calculation, and fingerprint generation [19]. | Calculating topological polar surface area (PSA) and AlogP for QSPR models [19]. |
| QSPRpred | QSPR Modelling Toolkit | End-to-end workflow for data analysis, model building, benchmarking, and deployment [23]. | Building a serialized model for Human Intestinal Absorption (HIA) prediction that can be deployed directly from SMILES. |
| Roost | Structure-Agnostic ML Model | Predicts material properties from stoichiometry alone, without requiring a 3D crystal structure [22]. | Rapid screening of formation energy for novel molecular compositions when structural data is unavailable. |
| Materials Project / OQMD | Computational Database | Databases of DFT-calculated properties for inorganic materials and molecules [21] [22]. | Source of large-scale data for pre-training deep learning models on properties like formation energy. |
| Tokenized SMILES | Data Representation | Represents molecular structures as tokenized arrays, improving chemical interpretation for ML models [7]. | Used as input to neural networks for predicting properties like glass transition temperature (Tg) in polymer-drug systems. |
| Magpie Fingerprint | Fixed-Length Descriptor | A hand-engineered feature vector encoding elemental properties of a material's composition [22]. | Used as a baseline feature set or a pre-training target for structure-agnostic property prediction. |

ML in Action: Advanced Architectures and Real-World Applications

Molecular Representation Learning (MRL) is a foundational discipline in modern computational chemistry and materials science, concerned with translating molecular structures into mathematical formats that machine learning algorithms can process. This translation is crucial for modeling, analyzing, and predicting molecular behavior and properties, thereby accelerating drug design and materials discovery [25]. The primary challenge lies in capturing the complex relationships between molecular structure and key characteristics such as biological activity, physicochemical properties, and multi-scale functionality.

Effective molecular representation must not only encode chemical structure but also enable efficient exploration of the vast, nearly infinite chemical space to identify compounds with desired biological or physical properties [25]. The evolution of representation methods has progressed from traditional, rule-based descriptors to advanced, data-driven artificial intelligence (AI) approaches. These AI-driven strategies extend beyond traditional structural data, facilitating exploration of broader chemical spaces and accelerating critical tasks like scaffold hopping—the discovery of new core structures while retaining biological activity [25].

This document provides Application Notes and Protocols for three dominant molecular representation paradigms—molecular graphs, SMILES strings, and molecular images—framed within the context of machine learning for materials property prediction. It is structured to equip researchers with both the theoretical understanding and practical methodologies needed to implement these representations in predictive modeling workflows.

Molecular Representation Modalities: A Comparative Analysis

Molecular Graphs

Principles and Applications: Molecular graphs represent molecules as mathematical graphs where atoms correspond to nodes and bonds to edges. This representation intuitively captures the topological structure of molecules, making it particularly powerful for predicting properties intrinsically linked to connectivity and atomic environment [25] [26]. Graph Neural Networks (GNNs) are the primary deep learning architecture designed to process this data structure. They operate by passing messages between connected nodes, iteratively updating node embeddings to capture both local atomic environments and global molecular structure [25].

Advantages and Limitations:

- Advantages: Intuitively captures topological structure; naturally models local and global molecular information; particularly powerful for predicting properties related to molecular connectivity and geometry [25] [26].

- Limitations: Can be computationally intensive; performance on small datasets may require transfer learning strategies to mitigate overfitting [27].

SMILES Strings

Principles and Applications: The Simplified Molecular-Input Line-Entry System (SMILES) provides a compact string-based representation of molecular structures, using a grammar of atomic symbols and rules to denote branching, cycles, and bond types [25]. Inspired by advances in Natural Language Processing (NLP), models such as Transformers and BERT have been adapted to process SMILES strings by tokenizing them at the atomic or substructure level [25].

Advantages and Limitations:

- Advantages: Compact and human-readable; vast existing infrastructure for generation and parsing; benefits from direct application of powerful NLP architectures [25].

- Limitations: Inherently sequential nature can obscure spatial relationships; the same molecule can be written as many different SMILES strings unless a canonicalization step is applied; small syntactic changes can lead to invalid or vastly different chemical structures [25].
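Atom-level tokenization and the non-uniqueness limitation can both be shown with the standard library alone. The regular expression below is of the kind widely used for SMILES tokenization; it is an assumption for illustration and not necessarily the tokenizer used in the cited works. The function name `tokenize_smiles` is likewise introduced here.

```python
import re

# Bracket atoms, two-letter halogens, ring-closure digits, and bond or branch
# symbols each become a single token.
SMILES_TOKEN_PATTERN = re.compile(
    r"(\[[^\]]+\]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)

def tokenize_smiles(smiles):
    """Split a SMILES string into atom-level tokens, rejecting unknown characters."""
    tokens = SMILES_TOKEN_PATTERN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"Unrecognised characters in SMILES: {smiles!r}")
    return tokens

print(tokenize_smiles("CCl"))         # chlorine stays one token
print(tokenize_smiles("C[NH3+]"))     # bracket atom stays one token
print(tokenize_smiles("c1ccccc1Br"))  # aromatic ring with a bromine substituent

# Non-uniqueness: all three strings below are valid SMILES for ethanol,
# yet they produce different token sequences.
ethanol_variants = ["CCO", "OCC", "C(O)C"]
token_sequences = [tokenize_smiles(s) for s in ethanol_variants]
```

Because a sequence model sees these three token sequences as different inputs, pipelines typically either canonicalize SMILES before training or deliberately use randomized SMILES as data augmentation.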

Molecular Images

Principles and Applications: Molecular images represent chemical structures as 2D raster images, typically depicting structural formulas with atoms and bonds. This approach offers a model-agnostic featurization that can leverage powerful, pre-trained computer vision models [26]. A significant advantage is the ability to utilize vision foundation models, such as OpenAI's CLIP, as a backbone for molecular encoders, a strategy employed by the MoleCLIP framework [26].

Advantages and Limitations:

- Advantages: Model-agnostic featurization; enables use of powerful, pre-trained computer vision models; less explicit bias introduced compared to engineered descriptors [26].

- Limitations: Images are less explicit and compact than graphs or strings; representation can be sparse (many pixels are empty); may not be the most efficient encoding of structural information [26].

Table 1: Comparative Analysis of Molecular Representation Modalities

| Feature | Molecular Graphs | SMILES Strings | Molecular Images |
|---|---|---|---|
| Primary Data Structure | Graph (Nodes, Edges) | Sequential String | 2D Pixel Grid |
| Key Strengths | Captures topology & geometry | Compact, vast tooling | Leverages vision foundation models |
| Common ML Architectures | GNNs, GCNs, Message-Passing Networks | Transformers, RNNs, LSTMs | CNNs, Vision Transformers (ViTs) |
| Sample Use Cases | Quantum property prediction, formation energy | Large-scale generative chemistry, QSAR | Property prediction, few-shot learning |
| Notable Frameworks | CGCNN, ALIGNN | SMILES-BERT, ChemBERTa | MoleCLIP, ImageMol |

Experimental Protocols for Representation-Specific Model Training

Protocol 1: Fine-Tuning a Graph Neural Network for Property Prediction

Objective: To adapt a pre-trained GNN to predict a specific material property (e.g., formation energy) using a limited target dataset.

Materials:

- Pre-trained GNN model (e.g., on a large source dataset like the Materials Project).

- Curated target dataset with known property values.

- Deep learning framework (e.g., PyTorch, TensorFlow).

- Access to GPU computing resources.

Procedure:

- Model Selection and Initialization: Select a pre-trained GNN architecture such as ALIGNN or CGCNN. Initialize the model weights from those pre-trained on a large, diverse dataset like the OQMD or Materials Project [27].

- Data Preparation: Format your target dataset (e.g., 100-800 data points for fine-tuning) into crystal graph or molecular graph structures. Split the data into training, validation, and test sets (e.g., 80/10/10).

- Strategy Selection: Choose a fine-tuning strategy based on target dataset size and similarity to the pre-training data [27]:

  - Strategy 1 (Full Fine-Tuning): Re-train all layers of the model on the target dataset. Use a low learning rate (e.g., 1e-5 to 1e-4) to avoid catastrophic forgetting.

  - Strategy 2 (Feature Extraction): Freeze the weights of the pre-trained graph encoder layers. Only train the newly initialized property prediction head (regressor). This is suitable for very small datasets (<100 samples).

- Model Training:

  - Use a Mean Absolute Error (MAE) or Mean Squared Error (MSE) loss function.

  - Employ the Adam optimizer with a reduced learning rate.

  - Monitor performance on the validation set to implement early stopping and prevent overfitting.

- Model Evaluation: Evaluate the final fine-tuned model on the held-out test set. Report standard metrics: MAE, R² score, and RMSE. Compare performance against a model trained from scratch on the same target data.
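The data split and the reported metrics in this protocol are easy to get subtly wrong, so a minimal reference sketch may help. It uses only the standard library; the function names `split_indices` and `regression_metrics` are introduced here for illustration, and the 80/10/10 fractions follow the example given in the data preparation step.

```python
import math
import random

def split_indices(n, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle indices 0..n-1 and split them into train, validation, and test lists."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

def regression_metrics(y_true, y_pred):
    """Return MAE, RMSE, and the coefficient of determination R2."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "MAE": sum(abs(r) for r in residuals) / n,
        "RMSE": math.sqrt(ss_res / n),
        "R2": 1.0 - ss_res / ss_tot,
    }

train_idx, val_idx, test_idx = split_indices(100)
metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 2.5, 4.0])
print(len(train_idx), len(val_idx), len(test_idx))
print(metrics)
```

Fixing the shuffle seed keeps the test set identical between the fine-tuned model and the from-scratch baseline, which is what makes the final comparison in the evaluation step meaningful.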

Protocol 2: Implementing a Molecular Image Representation Learner (MoleCLIP)

Objective: To leverage a vision foundation model for molecular property prediction using image representations.

Materials:

- RDKit software for generating molecular images from SMILES.

- Pre-trained MoleCLIP model or OpenAI's CLIP model weights.

- Dataset of molecular SMILES and corresponding property labels.

- Computational environment for deep learning.

Procedure:

- Molecular Image Generation: Use RDKit to convert SMILES strings from your dataset into 2D structure images. Standardize image dimensions (e.g., 224x224 pixels) and formatting [26].

- Model Initialization: Initialize the image encoder using the pre-trained weights from a vision foundation model like CLIP. This model has been trained on hundreds of millions of general image-text pairs, providing a robust starting point [26].

- Molecular Pre-training (Optional but Recommended): Further pre-train the encoder on a large, unlabeled molecular dataset (e.g., ChEMBL-25 with 1.9M molecules). Employ two simultaneous self-supervised tasks [26]:

  - Structural Classification: Assign pseudo-labels via clustering of molecular fingerprints and train the model to classify structures.

  - Contrastive Learning (SimCLR): Generate augmented versions of each molecular image and train the model to pull the embeddings of augmented views of the same molecule together in the latent space while pushing them away from the embeddings of other molecules in the batch.

- Fine-Tuning for Property Prediction: Add a task-specific prediction head (a lightweight Multi-Layer Perceptron). Fine-tune the entire model on the labeled target property dataset. Use a standard regression or classification loss function.

- Validation: Benchmark the performance of MoleCLIP against state-of-the-art graph and string-based models on standard benchmarks like MoleculeNet to validate its efficacy, especially in low-data regimes [26].
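The contrastive pre-training task in this protocol is usually implemented with the NT-Xent loss from SimCLR. The sketch below computes that loss in pure Python on hand-written 2-D embeddings; the embeddings and the temperature of 0.5 are assumptions for illustration, and a real implementation would operate on encoder outputs in a deep learning framework.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def nt_xent(view_a, view_b, temperature=0.5):
    """NT-Xent loss for two lists of embeddings, where view_a[i] and view_b[i]
    come from two augmentations of the same molecular image."""
    z = view_a + view_b
    n = len(view_a)
    total = 0.0
    for i in range(2 * n):
        j = (i + n) % (2 * n)  # index of the positive partner of sample i
        logits = [cosine(z[i], z[k]) / temperature for k in range(2 * n) if k != i]
        positive = cosine(z[i], z[j]) / temperature
        total += math.log(sum(math.exp(l) for l in logits)) - positive
    return total / (2 * n)

anchors = [[1.0, 0.0], [0.0, 1.0]]
aligned_loss = nt_xent(anchors, [[1.0, 0.0], [0.0, 1.0]])  # views agree
swapped_loss = nt_xent(anchors, [[0.0, 1.0], [1.0, 0.0]])  # views mismatched
print(f"aligned: {aligned_loss:.4f}, swapped: {swapped_loss:.4f}")
```

The loss is low when the two views of each molecule map to nearby embeddings and high when they are confused with other molecules, which is the behaviour the pre-training step relies on.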

Protocol 3: Multi-Modal Pre-Training for Enhanced Generalization

Objective: To create a general-purpose molecular encoder by pre-training on multiple data modalities and properties simultaneously.

Materials:

- Multi-modal datasets (e.g., combining structural, compositional, and image data).

- A flexible model architecture (e.g., a transformer-based encoder for each modality).

- High-performance computing cluster for large-scale training.

Procedure:

- Data Curation: Assemble a large and diverse dataset encompassing multiple modalities (e.g., graphs, SMILES, images) and various material properties (e.g., formation energy, band gap, shear modulus) from databases like the Materials Project [28].

- Model Architecture Design: Implement a framework like MultiMat, which uses separate encoders for each input modality. The encoders project different modalities into a shared latent space [28].

- Self-Supervised Pre-Training: Train the model using self-supervised objectives such as:

  - Masked Modeling: Randomly mask portions of the input (atoms in a graph, tokens in a SMILES string) and train the model to reconstruct them.

  - Cross-Modal Contrastive Learning: Maximize the similarity between embeddings of the same molecule represented in different modalities (e.g., its graph and its image) while minimizing similarity with embeddings from different molecules [28].

- Fine-Tuning: For a downstream task, take the pre-trained multi-modal encoder and fine-tune it on the labeled target data, potentially using only one of the input modalities. This approach has been shown to improve performance on tasks with limited data and enhance robustness to distribution shifts [28].

- Evaluation: Test the model's performance on out-of-domain datasets and its ability to extrapolate to property values outside the training distribution to validate its generalization capability [2].
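For the masked-modeling objective in this protocol, the data-preparation side can be sketched as follows. This is an illustrative helper, not part of MultiMat: the `[MASK]` symbol, the `mask_tokens` name, the masking fraction, and the character-level tokens are all assumptions.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_fraction=0.15, seed=0):
    """Replace a random subset of tokens with MASK and return the reconstruction targets."""
    rng = random.Random(seed)
    n_mask = max(1, round(mask_fraction * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    targets = {}
    for pos in positions:
        targets[pos] = masked[pos]  # what the model must predict at this position
        masked[pos] = MASK
    return masked, targets

tokens = list("c1ccccc1O")  # phenol, character-level tokens for simplicity
masked, targets = mask_tokens(tokens, mask_fraction=0.3)
print(masked)
print(targets)

# Filling the targets back in recovers the original sequence.
restored = [targets.get(i, tok) for i, tok in enumerate(masked)]
```

During pre-training the model receives `masked` as input and is penalized only at the positions stored in `targets`; the same pattern applies to masking atoms in a graph.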

Table 2: Key Software and Data Resources for Molecular Representation Learning

| Resource Name | Type | Primary Function | Relevance to Representation |
|---|---|---|---|
| RDKit | Software | Cheminformatics and ML | Generates molecular descriptors, fingerprints, and images from SMILES/Graphs [26]. |
| ALIGNN | Model | Graph Neural Network | Processes atomic graphs and bond angles for accurate material property prediction [27]. |
| CLIP (OpenAI) | Model | Vision Foundation Model | Serves as a backbone for molecular image encoders (e.g., in MoleCLIP) [26]. |
| ChemBERTa | Model | Language Model | Pre-trained transformer for SMILES strings, usable for feature extraction or fine-tuning. |
| Materials Project | Database | Crystalline Materials Data | Primary source of data for pre-training and benchmarking models on solid-state materials [27] [28]. |
| ChEMBL | Database | Bioactive Molecules | Large-scale dataset of drug-like molecules for pre-training molecular encoders [26]. |
| MoleculeNet | Benchmark | Standardized Tasks | Suite of molecular datasets for fair comparison of ML model performance [26]. |

Advanced Applications & Future Directions

Scaffold Hopping and Inverse Design

AI-driven molecular generation methods have emerged as a transformative approach for scaffold hopping. Techniques such as Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs) are increasingly utilized to design entirely new scaffolds absent from existing chemical libraries, while simultaneously tailoring molecules to possess desired properties [25]. These models often use graph or SMILES representations to generate novel molecular structures, enabling efficient exploration of chemical space for novel lead compounds [25] [29].

Predicting Out-of-Distribution Properties

A significant challenge in materials informatics is developing models that can extrapolate to predict property values outside the distribution of the training data (OOD). Recent work has proposed transductive approaches, such as the Bilinear Transduction method, which learns how property values change as a function of material differences rather than predicting values from new materials directly [2]. This method reparameterizes the prediction problem, showing improved extrapolative precision for both molecules and solid-state materials [2].
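The reparameterization idea can be illustrated with a deliberately simplified stand-in. The sketch below is not the Bilinear Transduction model from [2]: it replaces the learned bilinear predictor with a single least-squares slope, uses a made-up linear property of one descriptor, and picks the nearest training point as the anchor. It only shows how a training set is rewritten as (anchor, difference) pairs and how a prediction is then made from an anchor plus a difference.

```python
from itertools import permutations

def property_fn(x):
    """Toy ground-truth property, linear in a single descriptor x (an assumption)."""
    return 3.0 * x

train_x = [0.0, 0.25, 0.5, 0.75, 1.0]
train_y = [property_fn(x) for x in train_x]

# Rewrite the training set as (anchor, difference in input, difference in property).
pairs = [
    (xa, xb - xa, yb - ya)
    for (xa, ya), (xb, yb) in permutations(zip(train_x, train_y), 2)
]

# Least-squares slope through the origin for the property change versus input change.
slope = sum(dx * dy for _, dx, dy in pairs) / sum(dx * dx for _, dx, _ in pairs)

def predict_from_anchor(x_new):
    """Predict by adding the learned change to the nearest training anchor."""
    x_anchor, y_anchor = min(zip(train_x, train_y), key=lambda p: abs(p[0] - x_new))
    return y_anchor + slope * (x_new - x_anchor)

# x = 2.0 lies outside the training inputs, and its property value (6.0)
# lies outside the training targets, which span 0.0 to 3.0.
ood_prediction = predict_from_anchor(2.0)
print(ood_prediction)
```

The point of the reformulation is that the model is asked about differences, which at test time can resemble differences seen between training pairs even when the new material itself lies outside the training distribution.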

Multi-Task and Transfer Learning

The framework of transfer learning is critical for overcoming data scarcity in materials science. Systematic exploration of pre-training and fine-tuning strategies has shown that models pre-trained on large source datasets (even across different properties) consistently outperform models trained from scratch on small target datasets [27]. Furthermore, Multi-Property Pre-Training (MPT), where a model is pre-trained on several different material properties simultaneously, has been shown to outperform pair-wise pre-training on several datasets and fine-tune effectively on completely out-of-domain datasets, such as 2D material band gaps [27].

Workflow and Architecture Diagrams

[Diagram: Molecular Representation Learning Workflow]

[Diagram: Multi-Modal Foundation Model Architecture]

The rapid prediction of material properties from atomic structure represents a cornerstone of modern materials informatics, accelerating the discovery of new functional materials for applications ranging from energy storage to drug development. Traditional methods, such as density functional theory (DFT) calculations, provide high accuracy but are computationally intensive and slow, particularly for complex multicomponent systems [30] [29]. Machine learning (ML) surrogates have emerged as powerful tools that overcome these limitations by analyzing large datasets to reveal complex relationships between chemical composition, microstructure, and material properties [29]. Among ML models, Graph Neural Networks (GNNs), Convolutional Neural Networks (CNNs), and Transformers have demonstrated particular success. GNNs incorporate a natural inductive bias for atomic structures, treating atoms as nodes and bonds as edges in a graph representation, which provides a physically intuitive framework for materials science [31] [32]. This architectural deep dive explores the application of these advanced neural network architectures in predicting materials properties, providing detailed protocols, comparative analyses, and implementation frameworks for researchers and scientists.

Architectural Fundamentals and Comparative Analysis

Graph Neural Networks (GNNs) for Structure-Property Relationships

GNNs have gained significant traction in materials property prediction due to their ability to operate directly on graph-structured representations of molecules and crystals. The fundamental principle involves representing a material's structure as a graph $G = (V, E)$ where atoms comprise the vertex set $V$ and chemical bonds form the edge set $E$ [32]. Most GNNs designed for materials science follow the Message Passing Neural Network (MPNN) framework, which involves iterative steps of message passing, node updating, and graph-level readout [32]. During message passing, node information is propagated through edges to neighboring nodes, with each node updating its embedding based on incoming messages. After $K$ message passing steps, a graph-level embedding is obtained through a permutation-invariant readout function, which is then used for property prediction [32]. This architecture enables GNNs to capture both local atomic environments and global structural information, making them particularly suited for predicting properties governed by atomic interactions and bonding patterns.
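The message passing, node update, and readout steps described above can be traced by hand on a three-atom graph. This sketch is a simplification for illustration: real MPNNs use learned neural networks for the message and update functions, whereas here messages are plain sums of neighbour embeddings and the update is an addition. The graph is the heavy-atom skeleton of ethanol with one-hot atom features.

```python
# Nodes are atoms, edges are bonds: C0 - C1 - O2.
node_features = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}  # one-hot [is_C, is_O]
edges = [(0, 1), (1, 2)]

def neighbours(node):
    """Return the atoms bonded to the given atom."""
    return [b if a == node else a for a, b in edges if node in (a, b)]

def message_passing_step(h):
    """One round: sum neighbour embeddings (message), then add to own embedding (update)."""
    updated = {}
    for v, h_v in h.items():
        message = [sum(h[u][d] for u in neighbours(v)) for d in range(len(h_v))]
        updated[v] = [own + msg for own, msg in zip(h_v, message)]
    return updated

def readout(h):
    """Permutation-invariant graph-level embedding: element-wise sum over all nodes."""
    dim = len(next(iter(h.values())))
    return [sum(h_v[d] for h_v in h.values()) for d in range(dim)]

K = 2  # number of message passing steps
h = node_features
for _ in range(K):
    h = message_passing_step(h)
graph_embedding = readout(h)
print(h)
print(graph_embedding)
```

After two steps the terminal carbon already carries information about the oxygen two bonds away, which is how stacking $K$ rounds lets each node see its $K$-hop neighbourhood; the summed readout does not depend on how the atoms are numbered.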

Advanced GNN architectures have evolved beyond basic MPNNs to incorporate more sophisticated physical principles. For instance, the Atomistic Line Graph Neural Network (ALIGNN) extends representation to inter-bond relationships by creating edges of a line graph, enabling the model to capture higher-order interactions [33]. Other architectures like MEGNet (MatErials Graph Network) incorporate global state attributes to handle multifidelity data and provide greater expressive power [31]. Equivariant GNNs, such as Equiformer and MACE, ensure that predictions of tensorial properties transform correctly under rotations, making them suitable for predicting directional properties like forces and dipole moments [31].

Convolutional Neural Networks (CNNs) for Spatial and Image-Based Data

CNNs excel at processing data with spatial correlations, making them valuable for materials science applications involving image data or spatially distributed properties. While traditionally applied to 2D image data, 3D CNNs have emerged for molecular property prediction by representing molecular structures as voxelized 3D grids, preserving crucial geometric information about atomic arrangements [34]. However, molecular 3D data often exhibits high sparsity, leading to computational inefficiencies from redundant operations on empty voxels [34].
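The sparsity problem is easy to quantify with a small voxelization sketch. The grid parameters (a 16 x 16 x 16 grid of 0.5 Å voxels spanning -4 Å to 4 Å) and the approximate water geometry are illustrative assumptions, not values from the cited work, and the function name `voxelize` is introduced here.

```python
import math

def voxelize(coords, box_min=-4.0, voxel_size=0.5, grid_dim=16):
    """Map Cartesian coordinates (in Å) to the set of occupied voxel indices."""
    occupied = set()
    for x, y, z in coords:
        idx = tuple(math.floor((c - box_min) / voxel_size) for c in (x, y, z))
        if all(0 <= i < grid_dim for i in idx):  # ignore atoms outside the box
            occupied.add(idx)
    return occupied

# Approximate water geometry in Å (O at the origin, two H atoms).
water = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]

occupied = voxelize(water)
sparsity = 1.0 - len(occupied) / 16 ** 3
print(f"{len(occupied)} of {16 ** 3} voxels occupied, sparsity = {sparsity:.4f}")
```

With one-voxel-per-atom occupancy, more than 99.9% of this grid is empty, so a dense 3D convolution spends nearly all of its operations on zeros. Practical encodings usually smear each atom over several voxels, which lowers but does not remove the sparsity.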

Innovative approaches like the Prop3D model address these challenges through kernel decomposition strategies that reduce computational cost while maintaining predictive accuracy [34]. For microstructural analysis, multi-input CNNs can simultaneously process multiple views of materials, such as upper surface, lower surface, and cross-sectional images of particleboards, merging information from different perspectives to enhance prediction accuracy for mechanical properties like modulus of elasticity (MOE) and modulus of rupture (MOR) [35]. These architectures typically employ channel and spatial attention mechanisms (e.g., CBAM) to focus on salient features, improving model generalization and interpretability [34] [35].

Transformer Architectures for Compositional and Sequential Data

Transformers, with their self-attention mechanisms, have shown remarkable success in processing sequential and compositional data in materials science. Originally developed for natural language processing, Transformers effectively capture long-range dependencies and relationships in data sequences [30] [33]. In materials informatics, Transformer architectures process composition-based features and human-extracted physical properties, leveraging attention mechanisms to weigh the importance of different elements and features in property prediction [30].
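The attention weighting mentioned above reduces to a short computation. The sketch below implements scaled dot-product self-attention in pure Python with identity query, key, and value projections, which is an assumption made to keep it short: real Transformers learn separate projection matrices and use multiple heads. The three 2-D "element embeddings" are made-up vectors.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(X):
    """Scaled dot-product self-attention with identity Q/K/V projections."""
    d = len(X[0])
    weights, outputs = [], []
    for q in X:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in X]
        w = softmax(scores)
        weights.append(w)
        # Each output is the attention-weighted average of all input vectors.
        outputs.append([sum(w_j * v[c] for w_j, v in zip(w, X)) for c in range(d)])
    return weights, outputs

# Hypothetical embeddings for three elements in a composition.
element_embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attention_weights, attended = self_attention(element_embeddings)
for row in attention_weights:
    print([round(w, 3) for w in row])
```

Each row of `attention_weights` sums to one and indicates how strongly one element attends to every other element, which is the quantity composition-based models inspect when interpreting which elements drive a prediction.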

The SMILES Transformer has demonstrated effectiveness on limited databases by processing Simplified Molecular-Input Line-Entry System (SMILES) strings representing molecular structures [36]. More recently, Large Language Models (LLMs) like MatBERT—a materials-specific BERT model pre-trained on scientific literature—have been fine-tuned for property prediction tasks, capturing latent knowledge embedded within domain texts [33]. The exceptional ability of these models to understand semantic relationships and syntactic structures in text representations of materials provides complementary insights to structure-focused models [33].

Comparative Architecture Analysis

Table 1: Comparative Analysis of Neural Network Architectures for Materials Property Prediction

| Architecture | Primary Data Representation | Key Strengths | Common Applications | Notable Models |
|---|---|---|---|---|
| Graph Neural Networks (GNNs) | Graph (nodes=atoms, edges=bonds) | Natural representation of atomic structures; captures topological relationships [31] [32] | Formation energy prediction [37] [30]; band gap prediction [37] [30]; mechanical properties [30] | MEGNet [31]; M3GNet [31]; ALIGNN [33]; CGCNN [36] |
| Convolutional Neural Networks (CNNs) | Grid-based (2D/3D images, voxels) | Effective spatial feature extraction; strong performance on image data [34] [35] | Microstructure-property relationships [35]; 3D molecular property prediction [34] | Prop3D [34]; 3D-DenseNet [34]; Multi-input CNN [35] |
| Transformers | Sequences (compositions, SMILES, text) | Captures long-range dependencies; effective for textual and compositional data [36] [30] [33] | Composition-based prediction [30]; literature-based knowledge extraction [33] | CrabNet [30]; MatBERT [33]; SMILES Transformer [36] |

Advanced Hybrid and Integrated Frameworks

Hybrid Architecture Design Principles

Leading research in materials informatics increasingly focuses on hybrid architectures that combine the strengths of multiple neural network paradigms to overcome individual limitations and enhance predictive performance. These integrated frameworks address fundamental challenges in materials property prediction, including data scarcity, limited model interpretability, and the need to capture both local atomic environments and global structural characteristics [36] [30] [33]. The core design principle involves creating complementary information pathways that process different material representations simultaneously, with fusion mechanisms that integrate these diverse perspectives into a unified predictive model.

The CrysCo framework exemplifies this approach by combining a crystal structure-based GNN (CrysGNN) with a composition-based Transformer network (CoTAN) [30]. The GNN branch processes crystal structures using edge-gated attention graph neural networks that capture up to four-body interactions (atom type, bond lengths, bond angles, dihedral angles), while the Transformer branch analyzes compositional features and human-extracted physical properties [30]. This hybrid design enables the model to leverage both detailed structural information and compositional characteristics, resulting in superior performance for energy-related properties including formation energy and energy above the convex hull [30]. The framework particularly addresses the challenge of capturing global crystal structure and periodicity information, which is often limited in conventional GNNs [30].

Dual-Stream Spatial-Topological Models

For molecular property prediction, the TSGNN architecture introduces a dual-stream approach comprising topological and spatial streams [36]. The topological stream employs a GNN that initializes atom representations using a two-dimensional matrix based on the periodic table of elements, providing a more comprehensive depiction of atomic characteristics than alternative initialization methods [36]. The spatial stream uses a CNN to process the spatial information of molecules, capturing three-dimensional geometric arrangements that significantly influence molecular properties [36]. This design addresses a critical limitation of GNNs, which focus primarily on topological relationships and overlook spatial configurations: they can make inaccurate predictions for molecules with identical topologies but distinct spatial arrangements [36].

LLM-GNN Integration Frameworks

The Hybrid-LLM-GNN framework integrates large language models with graph neural networks to improve both prediction accuracy and model interpretability [33]. The architecture extracts structure-aware embeddings from GNNs and contextual word embeddings from pre-trained LLMs, then concatenates these representations for property prediction [33]. The LLM embeddings encode the semantic and syntactic content of the text descriptions, while the GNN embeddings capture geometric information in atomic connections [33]. This integration has demonstrated up to 25% improvement in accuracy compared to GNN-only approaches, particularly for small datasets [33]. Because the inputs include human-readable text, predictions can also be traced back to specific text elements, which aids interpretability [33].

Experimental Protocols and Methodologies

Protocol 1: Transfer Learning with GNNs for Data-Scarce Properties

Application Context: Predicting material properties with limited available data (e.g., piezoelectric modulus, mechanical properties) using transfer learning from data-rich source properties [37] [30].

Data Preparation and Preprocessing:

- Source Dataset Selection: Identify a data-rich property for pre-training (e.g., DFT Formation Energy with 132,752 materials from Materials Project) [37].

- Target Dataset Preparation: Curate target property dataset (e.g., Piezoelectric Modulus with 941 structures) [37].

- Data Standardization: Standardize or normalize features and target values so that the source and target datasets are on comparable scales [37].

- Graph Representation: Convert crystal structures to graph representations using tools like MatGL's graph converter [31]. Use a consistent cutoff radius (typically 5-8 Å) to define bonds between atoms [31].
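
The graph-representation step can be illustrated with a minimal, framework-free sketch. This is not MatGL's converter: the function name `build_edges` and the toy coordinates are assumptions made for illustration, and periodic images are ignored, which a real crystal graph would have to include.

```python
import math

def build_edges(coords, cutoff=5.0):
    """Return directed edges (i, j, distance) for atom pairs within `cutoff` angstroms.

    Simplification: periodic boundary conditions are ignored, so this only
    suits isolated clusters or molecules, not bulk crystals.
    """
    edges = []
    for i, ri in enumerate(coords):
        for j, rj in enumerate(coords):
            if i == j:
                continue  # no self-loops
            d = math.dist(ri, rj)
            if d <= cutoff:
                edges.append((i, j, d))
    return edges

# Toy three-atom cluster (Cartesian coordinates in angstroms, hypothetical values).
coords = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (0.0, 7.0, 0.0)]
edges = build_edges(coords, cutoff=5.0)
```

Using the same cutoff for the source and target datasets keeps the graph statistics (for example, neighbors per atom) comparable between pre-training and fine-tuning.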

Model Architecture and Training:

- Pre-training Phase:

- Transfer Learning Strategy Selection:

- Fine-tuning Implementation:

- Strategy Selection: Choose from four fine-tuning strategies based on target dataset size and similarity to source [37]:

- Unfreezing All Layers: Allows entire model to adapt during fine-tuning.

- Adding a New Prediction Head: Introduces new layer while keeping existing layers frozen.

- Unfreezing Only the Last Layer: Re-trains only the final layer.

- Unfreezing Selective Layers: Allows only specific layers to update.

- Hyperparameter Tuning: Carefully select learning rate (typically lower than pre-training), number of frozen layers, and dataset size used for both pre-training and fine-tuning [37].
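
The four fine-tuning strategies differ only in which layers remain trainable. The sketch below makes that explicit without depending on a deep learning framework; the strategy labels, the `trainable_layers` helper, and the layer names are hypothetical and are not taken from [37].

```python
def trainable_layers(layer_names, strategy, selected=()):
    """Return the layer names left trainable under a fine-tuning strategy.

    `layer_names` is ordered from input to output. The strategy labels are
    hypothetical names for the four options listed above.
    """
    if strategy == "all":
        return list(layer_names)
    if strategy == "new_head":
        # Existing layers stay frozen; only the newly added head is trained.
        return ["new_head"]
    if strategy == "last_layer":
        return [layer_names[-1]]
    if strategy == "selective":
        wanted = set(selected)
        unknown = wanted - set(layer_names)
        if unknown:
            raise ValueError(f"unknown layers: {sorted(unknown)}")
        return [name for name in layer_names if name in wanted]
    raise ValueError(f"unknown strategy: {strategy!r}")

# Hypothetical layer names for a pre-trained GNN.
layers = ["embedding", "conv1", "conv2", "conv3", "readout"]
plan = {
    s: trainable_layers(layers, s, selected=("conv3", "readout"))
    for s in ("all", "new_head", "last_layer", "selective")
}
```

In PyTorch, for example, the returned names could decide which parameters keep `requires_grad=True`, with the remaining parameters frozen.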

Performance Evaluation:

- Use appropriate metrics (MAE, RMSE, R²) on held-out test set not used during training [37].

- Compare against baseline models trained from scratch on target dataset [37].

- Evaluate generalization on completely different datasets (e.g., 2D material band gap dataset) to assess out-of-distribution performance [37].
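
As a rough illustration of the evaluation step, the following sketch computes MAE, RMSE, and R² for a fine-tuned model and a from-scratch baseline on the same held-out targets. The numbers are made-up toy values, and `regression_metrics` is a hypothetical helper rather than part of any cited workflow.

```python
import math

def regression_metrics(y_true, y_pred):
    """Compute MAE, RMSE, and the coefficient of determination R^2."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    ss_res = sum(e * e for e in errors)
    rmse = math.sqrt(ss_res / n)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot
    return {"mae": mae, "rmse": rmse, "r2": r2}

# Toy held-out targets and predictions (illustrative values only).
y_test = [1.0, 2.0, 3.0, 4.0]
fine_tuned = regression_metrics(y_test, [1.1, 1.9, 3.2, 3.8])
scratch = regression_metrics(y_test, [1.5, 1.5, 3.5, 3.5])
```

Reporting both models on an identical test split is what makes the transfer-learning gain attributable to pre-training rather than to a different data partition.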

Protocol 2: Multi-Input CNN for Microstructure-Property Prediction

Application Context: Predicting mechanical properties from microstructural images of materials (e.g., particleboard MOE/MOR from surface and cross-section images) [35].

Data Preparation and Preprocessing:

- Image Acquisition: Collect images of material from multiple perspectives (upper surface, lower surface, cross-section) under standardized lighting and magnification conditions [35].

- Image Preprocessing: Apply normalization, resizing to consistent dimensions, and data augmentation (rotation, flipping) to increase dataset size [35].

- Density Integration: Optionally include density information as additional input channel alongside images, as density significantly influences mechanical properties [35].
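
The flip and rotation augmentations mentioned above can be sketched on a plain nested-list image. This is a minimal illustration under two assumptions: images are 2D grayscale arrays, and the target property is unchanged by these transforms, which should be checked for each image type. The helper names are hypothetical.

```python
def hflip(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def vflip(img):
    """Mirror the image top to bottom."""
    return [list(row) for row in img[::-1]]

def rot90(img):
    """Rotate the image clockwise by 90 degrees."""
    return [list(row) for row in zip(*img[::-1])]

def augment(img):
    """Return the original image plus flipped and rotated variants."""
    return [img, hflip(img), vflip(img), rot90(img), rot90(rot90(img))]

# Toy 2x2 "image"; every variant would share the label of the original sample.
img = [[1, 2], [3, 4]]
variants = augment(img)
```

Augmentation is normally applied to the training split only, so that validation and test metrics reflect unmodified images.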

Model Architecture and Training:

- Single-Input Baseline Models:

- Develop separate CNN models for each image type (upper surface, lower surface, cross-section).

- Use standard CNN architecture with convolutional blocks (convolution, activation, pooling) followed by fully connected layers [35].

- Multi-Input CNN Architecture:

- Early Fusion (Type #1): Merge information from different images after first convolutional block, minimizing parameters and adapting better to relatively small datasets [35].

- Intermediate Fusion (Type #2): Process each image stream through multiple convolutional blocks before merging, allowing specialized feature extraction from each view [35].

- Late Fusion (Type #3): Process each image through complete CNN backbone before merging features, maximizing specialized processing but requiring more data [35].

- Attention Mechanisms: Incorporate channel and spatial attention modules (e.g., CBAM) after convolutional blocks to focus on salient features [34] [35].

- Training Procedure: Use regression loss function, appropriate optimizer (Adam), and learning rate scheduling. Apply regularization techniques (dropout, weight decay) to prevent overfitting [35].

Interpretation and Analysis:

- Regression Activation Maps: Visualize image features strongly correlated with predictions using gradient-based methods [35].

- Feature Importance Analysis: Identify critical morphological factors (e.g., resin distribution, particle alignment, interface characteristics) influencing mechanical properties [35].

Protocol 3: Hybrid LLM-GNN Framework for Enhanced Prediction

Application Context: Enhancing property prediction accuracy and interpretability by combining structural information from GNNs with textual knowledge from LLMs [33].

Data Preparation and Preprocessing:

- Structure Representation: Convert crystal structures to graph representations for GNN processing [33].

- Text Representation Generation: Generate textual descriptions of materials using domain-specific tools (Robocrystallographer, ChemNLP) [33].

- Data Splitting: Use standard splits (80:10:10) with random shuffling for training, validation, and testing [33].
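
A seeded 80:10:10 split can be sketched as follows. The function name `split_indices` and the seed value are assumptions; the point is only that shuffling once with a fixed seed makes the partition reproducible.

```python
import random

def split_indices(n, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle indices 0..n-1 and split them into train, validation, and test lists."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)  # local RNG, so global state is untouched
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

train_idx, val_idx, test_idx = split_indices(1000)
```

The same index lists should be applied to both the graph and the text representation of each material, so the two modalities stay aligned across splits.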

Model Architecture and Training:

- GNN Embedding Extraction:

- Employ pre-trained GNN model (e.g., ALIGNN trained on formation energy) as knowledge model [33].

- Extract structure-aware embeddings from intermediate layers.

- LLM Embedding Extraction:

- Utilize pre-trained LLM (BERT or domain-specific MatBERT) [33].

- Process generated text descriptions through LLM.

- Extract embeddings from final hidden layer by averaging token representations.

- Feature Integration:

- Concatenate GNN and LLM embeddings to create hybrid representations [33].

- Pass combined features through fully-connected deep neural network for prediction.

- Training Strategy: For small datasets, freeze feature extractors and train only the final prediction head. For larger datasets, fine-tune entire architecture end-to-end [33].
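
The embedding extraction and feature integration steps reduce to two operations: averaging token vectors into one text embedding, then concatenating it with the structure embedding. The sketch below uses plain lists and tiny made-up vectors; `mean_pool` and `hybrid_features` are hypothetical names, and real embeddings would have hundreds of dimensions.

```python
def mean_pool(token_embeddings):
    """Average a list of token vectors into one fixed-length vector."""
    n_tokens = len(token_embeddings)
    dim = len(token_embeddings[0])
    return [sum(tok[k] for tok in token_embeddings) / n_tokens for k in range(dim)]

def hybrid_features(gnn_embedding, token_embeddings):
    """Concatenate a structure embedding with a mean-pooled text embedding."""
    return list(gnn_embedding) + mean_pool(token_embeddings)

# Toy inputs: a 3-dimensional GNN embedding and three 2-dimensional token vectors.
gnn_vec = [0.2, -0.1, 0.5]
tokens = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
features = hybrid_features(gnn_vec, tokens)
```

With batched, padded inputs, the average would usually be restricted to non-padding tokens; that masking is omitted here for brevity.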

Interpretation and Analysis:

- Ablation Studies: Evaluate contributions of GNN-only, LLM-only, and hybrid approaches [33].

- Text Erasure Analysis: Examine model predictions by systematically removing parts of text representation to identify critical descriptive elements [33].

- Domain Adaptation Assessment: Compare performance of general-purpose (BERT) versus domain-specific (MatBERT) language models [33].
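
Text erasure analysis can be sketched as a leave-one-sentence-out loop. The `predict` argument stands in for a trained hybrid model; `toy_predict` below is a deliberately artificial substitute so the sketch runs, and the description is a hand-written toy example, not actual Robocrystallographer output.

```python
def erasure_importance(sentences, predict):
    """Rank sentences by how much the prediction shifts when each one is removed."""
    baseline = predict(" ".join(sentences))
    scores = []
    for i, sentence in enumerate(sentences):
        ablated = " ".join(s for j, s in enumerate(sentences) if j != i)
        scores.append((sentence, abs(predict(ablated) - baseline)))
    return sorted(scores, key=lambda item: item[1], reverse=True)

def toy_predict(text):
    """Hypothetical stand-in for a trained model: keyword count plus text length."""
    return 1.0 * text.count("octahedra") + 0.1 * len(text.split())

description = [
    "TiO2 crystallizes in the tetragonal P4_2/mnm space group.",
    "Ti is bonded to six O atoms to form TiO6 octahedra.",
    "The structure is three-dimensional.",
]
ranking = erasure_importance(description, toy_predict)
```

The same loop can be run at the word or phrase level; sentence-level erasure is simply the cheapest variant, requiring one forward pass per sentence.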

Visualization of Architectural Workflows

Diagram 1: Experimental protocols for materials property prediction, showing three distinct methodologies with their data flows and decision points.

Table 2: Essential Research Resources for Materials Property Prediction Experiments

| Resource Category | Specific Tools/Libraries | Function and Application | Key Features |
|---|---|---|---|
| Graph Deep Learning Libraries | Materials Graph Library (MatGL) [31] | "Batteries-included" library for developing GNN models and interatomic potentials | Built on DGL and Pymatgen; implements M3GNet, MEGNet, CHGNet; pre-trained foundation potentials [31] |
| Benchmark Datasets | Materials Project (MP) [37] [30] | Source of DFT-computed material structures and properties | ~146K material entries; formation energies, band gaps, elastic tensors [30] |
| Benchmark Datasets | JARVIS-DFT [37] [33] | Repository of DFT-computed properties for diverse materials | 75,993 materials; formation energies, band gaps, spectroscopic properties [33] |
| Text Representation Tools | Robocrystallographer [33] | Generates textual descriptions of crystal structures from atomic coordinates | Automates creation of domain-knowledge descriptions for LLM processing [33] |
| Text Representation Tools | ChemNLP [33] | Natural language processing library for chemical and materials science text | Domain-specific text processing capabilities [33] |
| Pre-trained Models | ALIGNN [33] | Graph neural network incorporating bond-angle information | State-of-the-art performance; enables transfer learning [33] |
| Pre-trained Models | MatBERT [33] | Domain-specific BERT model pre-trained on materials science literature | Captures materials science terminology and scientific reasoning [33] |
| Simulation Interfaces | Atomic Simulation Environment (ASE) [31] | Python library for working with atoms | Interface for atomistic simulations; compatible with MatGL [31] |
| Simulation Interfaces | LAMMPS [31] | Classical molecular dynamics simulator | Integration with machine learning potentials [31] |

The architectural landscape for materials property prediction continues to evolve toward increasingly sophisticated and integrated frameworks. The comparative analysis of GNNs, CNNs, and Transformers reveals distinct strengths and applications, with GNNs excelling in structure-property relationships, CNNs in spatial and image-based data, and Transformers in compositional and sequential data [37] [34] [30]. The emergence of hybrid architectures such as dual-stream GNN-CNN models [36], transformer-GNN frameworks [30], and LLM-GNN integrations [33] demonstrates the field's trajectory toward leveraging complementary representations and knowledge sources.

Future advancements will likely focus on several key areas: improving data efficiency through advanced transfer learning and few-shot learning techniques [37] [33], enhancing model interpretability to build trust and provide scientific insights [33], developing more sophisticated physics-informed architectures that respect fundamental constraints [38], and creating unified foundation models capable of handling diverse materials classes and properties [31]. The continued development of comprehensive libraries like MatGL [31] will lower barriers to entry and standardize implementation practices across the research community. As these architectural innovations mature, they promise to further accelerate the discovery and design of novel materials with tailored properties for specific applications across energy, electronics, medicine, and beyond.

The accurate prediction of material properties from atomic structure is a cornerstone of accelerated materials discovery and drug development. Traditional machine learning models, particularly Graph Neural Networks (GNNs), have demonstrated remarkable success by representing materials as topological graphs, where atoms are nodes and chemical bonds are edges [36]. However, a significant limitation of these topology-only models is their neglect of spatial atomic arrangements and global structural context [36] [30]. Molecules or crystals with identical bond topology but distinct spatial conformations can exhibit vastly different properties [36]. This gap necessitates a paradigm shift towards architectures that explicitly integrate spatial information. Dual-stream models, which process topological and spatial features in parallel, have emerged as a powerful framework to address this limitation, enabling more robust and accurate property prediction across diverse chemical spaces [36] [30] [39].

Conceptual Framework and Key Innovations

Dual-stream models are founded on the principle of feature decoupling, where separate dedicated network streams learn complementary representations of a material's structure.

- The Topological Stream: This component is typically a GNN that operates on the molecular or crystal graph. Its strength lies in learning from the local connectivity and bonding environment of each atom. Innovations in this stream include using advanced GNN architectures like edge-gated attention networks [30] and enriching initial node embeddings with comprehensive atomic features, such as representations derived from the periodic table [36].

- The Spatial Stream: This component is designed to capture global structural information that is inherently missing from the graph topology. Implementations vary, including: