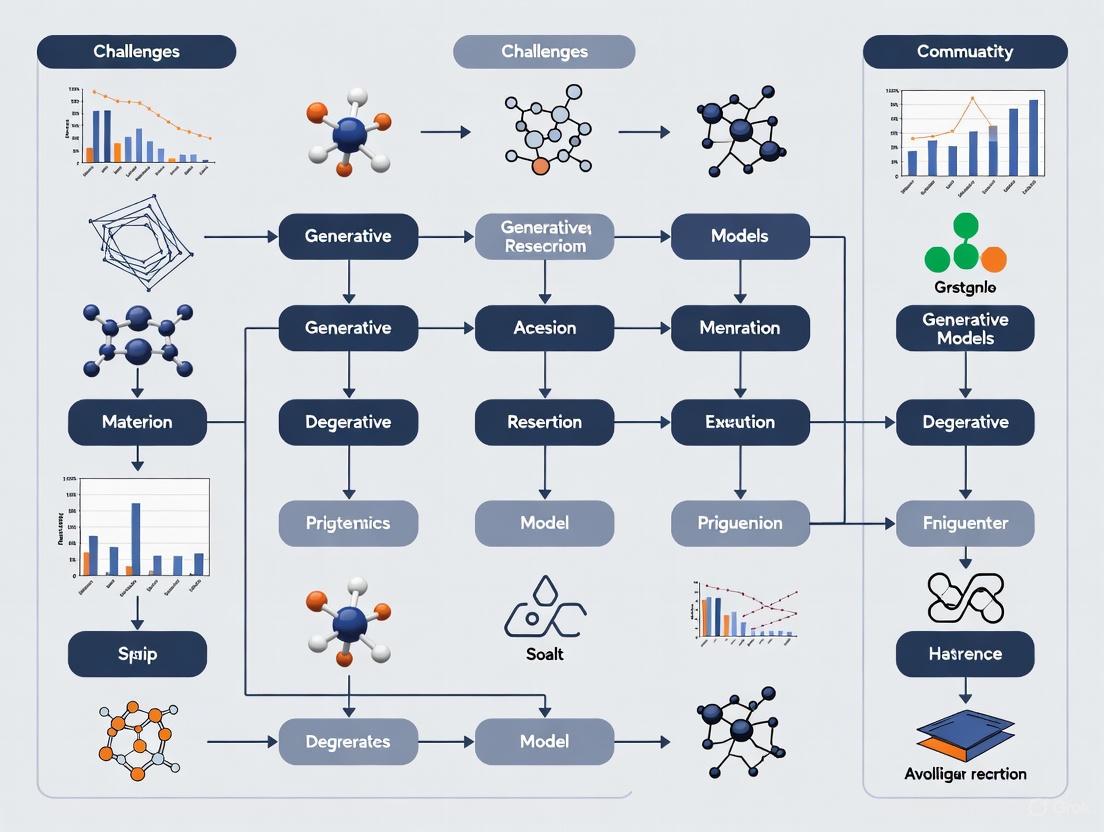

Overcoming the Hurdles: Key Challenges and Solutions for Generative AI in Materials Research and Drug Development


Abstract

Generative artificial intelligence holds transformative potential for accelerating the discovery of novel materials and therapeutic compounds. However, its application in scientific research faces significant, domain-specific challenges. This article provides a comprehensive analysis for researchers and drug development professionals, exploring the foundational data and computational limitations of generative models. It delves into methodological advances for designing nanoporous materials and small molecules, outlines critical strategies for troubleshooting model instability and bias, and finally, establishes a rigorous framework for validating and benchmarking AI-generated candidates to ensure they are stable, diverse, and ready for experimental pursuit.

The Core Hurdles: Understanding Data Scarcity, Cost, and Fundamental Model Limitations

In materials science, the discovery of new materials is often bottlenecked by the "small data" problem. Unlike data-rich domains, the acquisition of high-quality materials data through experiments or high-fidelity computations is typically slow, expensive, and resource-intensive [1]. This creates a fundamental challenge for generative AI models, which require large datasets to learn from. These models, designed for the "inverse design" of new materials with desired properties, often struggle when data is scarce, producing candidates that are unstable, non-synthesizable, or lacking the target exotic properties [2] [3]. This technical support center addresses the specific issues researchers encounter when applying generative models to small data environments, providing practical guides and solutions to accelerate materials discovery.

Frequently Asked Questions (FAQs)

FAQ 1: What defines a "small data" problem in materials science? The concept is relative, but "small data" in materials science primarily focuses on a limited sample size of available data [1]. This often arises when data is sourced from human-conducted experiments, which are costly and time-consuming, rather than from large-scale, automated observations. The quality and targeted information of this data are often prioritized over sheer quantity [1].

FAQ 2: Why do generative AI models fail to propose viable quantum materials? Popular generative models from major tech companies are often optimized to generate materials that are structurally stable [3]. However, materials with exotic quantum properties (e.g., superconductivity, unique magnetic states) require specific, and often unstable, geometric atomic patterns (like Kagome or Lieb lattices) to function [3]. Models trained on general datasets typically do not generate these unconventional structures, creating a bottleneck for discovering groundbreaking quantum materials.

FAQ 3: Can synthetic data truly solve the problem of data scarcity? Yes, but with caveats. Synthetic data generated by models like Con-CDVAE can improve property prediction models in data-scarce scenarios [4]. However, its effectiveness varies. In some cases, using a combination of real and synthetic data for training yields the best performance, while in others, training solely on synthetic data can underperform models trained only on real data [4]. The quality and distribution of the synthetic data are critical.

FAQ 4: Is it possible for an AI model to make accurate predictions beyond its training data? Conventional machine learning models are generally interpolative, meaning their predictions are reliable only for materials similar to those in their training set [5]. However, novel algorithms like E2T (Extrapolative Episodic Training) have been developed to enable extrapolative predictions. This meta-learning approach trains a model on a large number of artificially generated "extrapolative tasks," allowing it to learn how to make predictions for material features not present in the original training data [5].

Troubleshooting Guides

Problem 1: Generative Model Produces Physically Implausible or Non-Synthesizable Materials

This is a common issue when models are trained on small or biased datasets and learn incorrect structure-property relationships.

- Step 1: Integrate Physical Constraints. Use a tool like SCIGEN to enforce geometric constraints during the generation process. This steers the model to create structures known to give rise to desired quantum properties [3].

- Step 2: Implement a Multi-Stage Screening Pipeline. Do not rely on the generative model alone. Pass generated candidates through a rigorous funnel:

  - Stability Screening: Use computational tools (e.g., Density Functional Theory) to filter for thermodynamic stability [3].

  - Synthesizability Check: Screen candidates against known synthesis protocols or use predictive models for synthesizability.

- Step 3: Iterate with Active Learning. Use an active learning loop. Select the most promising (and diverse) generated candidates for actual synthesis or high-fidelity simulation. Feed this new, high-quality data back into the model to iteratively improve its performance [6].
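The selection step in the active learning loop can be sketched as a greedy trade-off between predicted merit and diversity. This is a minimal illustration, not a method from the cited work: the function `select_batch`, the `diversity_weight` parameter, and the candidate pool are all hypothetical, and the 2-D descriptor stands in for whatever structural fingerprint a real pipeline would use.

```python
import math

def select_batch(candidates, batch_size, diversity_weight=1.0):
    """Greedily pick candidates that score well and differ from earlier picks.

    candidates: list of (name, predicted_score, descriptor_vector) tuples.
    """
    remaining = list(candidates)
    chosen = []
    while remaining and len(chosen) < batch_size:
        def utility(cand):
            _, score, vec = cand
            if not chosen:
                return score
            # Distance to the closest already-chosen candidate rewards novelty.
            nearest = min(math.dist(vec, c[2]) for c in chosen)
            return score + diversity_weight * nearest
        best = max(remaining, key=utility)
        chosen.append(best)
        remaining.remove(best)
    return [name for name, _, _ in chosen]

# Hypothetical pool: predicted figure of merit plus a 2-D structural descriptor.
pool = [
    ("A", 0.90, (0.0, 0.0)),
    ("B", 0.88, (0.1, 0.0)),   # near-duplicate of A
    ("C", 0.70, (1.0, 1.0)),   # lower score, but structurally distinct
    ("D", 0.40, (0.0, 0.1)),
]
picked = select_batch(pool, batch_size=2)
print(picked)
```

With these toy numbers the near-duplicate B is skipped in favor of the structurally distinct C, which is the behavior the "promising and diverse" criterion asks for. The selected candidates would then go to synthesis or high-fidelity simulation, and their measured values would be added to the training set.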

Problem 2: Poor Performance of Predictive Models Trained on Limited Data

This problem arises when your dataset is too small to train an accurate property prediction model, which in turn hampers the evaluation of generated materials.

- Step 1: Apply Data Augmentation. Use feature combination methods or conditional generative models to create synthetic data. The MatWheel framework is an example of this approach, which can be used in both fully-supervised and semi-supervised learning scenarios [4].

- Step 2: Leverage Transfer Learning. Begin with a pre-trained model (a foundation model) that has been trained on a large, versatile dataset from a related domain. Fine-tune this model on your small, specific dataset to achieve higher predictive accuracy with less data [6] [5].

- Step 3: Incorporate Domain Knowledge. Generate descriptors based on domain knowledge (e.g., physical laws, empirical rules) to construct more interpretable and robust machine learning models. This helps the algorithm capture key information more effectively [1].

- Step 4: Utilize Extrapolative Algorithms. For exploring completely new material spaces, employ algorithms specifically designed for extrapolation, such as the E2T method, which can maintain higher accuracy for materials with features outside the training distribution [5].

Problem 3: High Experimental Cost and Slow Data Generation

The core of the small data problem is the expense and time required to acquire new data points.

- Step 1: Deploy High-Throughput Methods. Where possible, use high-throughput computations (e.g., high-throughput first-principles calculations) or high-throughput experimentation tools to generate data more rapidly [1] [2].

- Step 2: Adopt a Centralized Data Management System. Use a platform like MaterialsZone's Centralized Data Hub to aggregate data from diverse sources (spreadsheets, databases, ERP systems). This minimizes inconsistencies, streamlines workflows, and ensures that all existing data is readily available and usable, maximizing the value of every data point [7].

- Step 3: Practice Research Data Management (RDM). Adhere to the FAIR Guiding Principles—making data Findable, Accessible, Interoperable, and Reusable. This promotes collaboration, reduces redundant data generation, and accelerates the overall research lifecycle [8].

Experimental Protocols

Protocol 1: The MatWheel Framework for Synthetic Data Generation

This protocol outlines the methodology for using the MatWheel framework to generate and utilize synthetic materials data to improve property prediction models under data scarcity [4].

1. Objective: To enhance the performance of a material property prediction model by incorporating synthetic data generated by a conditional generative model.

2. Methodology:

- Scenario A: Fully-Supervised Learning

  - Train Conditional Generative Model: Train a model (e.g., Con-CDVAE) using the entire available set of real training data.

  - Generate Synthetic Data: Sample the trained model using scalar properties as conditions to create a synthetic dataset (e.g., 1,000 samples).

  - Train Predictive Model: Train the property prediction model (e.g., CGCNN) on a combined dataset of real and synthetic data.

- Scenario B: Semi-Supervised Learning (The Data Flywheel)

  - Initial Training: Train the predictive model on only a small fraction (e.g., 10%) of the real training data.

  - Generate Pseudo-Labels: Use this initial model to infer pseudo-labels for the remaining 90% of the training data.

  - Train Generative Model: Train the conditional generative model (e.g., Con-CDVAE) on the combined set of real-labeled and pseudo-labeled data.

  - Generate Synthetic Data: Use the trained generative model to create an expanded synthetic dataset.

  - Re-train Predictive Model: Finally, re-train the predictive model using a combination of the original small real dataset and the new synthetic dataset.

3. Materials/Models Used:

- Property Prediction Model: CGCNN (Crystal Graph Convolutional Neural Network).

- Conditional Generative Model: Con-CDVAE (Conditional-Crystal Diffusion Variational Autoencoder).

- Data Source: Data-scarce property datasets from the Matminer database (e.g., Jarvis2d exfoliation, MP poly total) [4].
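To make the order of operations in Scenario B concrete, the sketch below runs the five flywheel steps on a toy one-dimensional problem. It is only an illustration under strong assumptions: a least-squares line stands in for CGCNN, a nearest-label perturbation sampler stands in for Con-CDVAE, and every function name and number is hypothetical. It does not reproduce the MatWheel implementation or its results.

```python
import random

def fit_line(xs, ys):
    """Least-squares fit y = a*x + b; a toy stand-in for a property predictor."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return a, my - a * mx

def mae(model, xs, ys):
    a, b = model
    return sum(abs(a * x + b - y) for x, y in zip(xs, ys)) / len(xs)

def sample_conditional(xs, ys, target_y, rng, k=5, jitter=0.05):
    """Toy conditional generator: perturb a stored point whose label is near target_y."""
    nearest = sorted(zip(xs, ys), key=lambda p: abs(p[1] - target_y))[:k]
    x, _ = rng.choice(nearest)
    return x + rng.gauss(0.0, jitter)

rng = random.Random(0)
xs = [rng.uniform(0, 10) for _ in range(200)]
ys = [2.0 * x + 1.0 + rng.gauss(0, 0.5) for x in xs]
train_x, train_y = xs[:150], ys[:150]
test_x, test_y = xs[150:], ys[150:]

# 1. Initial training on 10% of the real training data.
n_lab = len(train_x) // 10
predictor = fit_line(train_x[:n_lab], train_y[:n_lab])

# 2. Pseudo-label the remaining 90%.
a, b = predictor
pseudo_y = [a * x + b for x in train_x[n_lab:]]

# 3. "Train" the generator on real-labeled plus pseudo-labeled data
#    (this toy generator is non-parametric, so training is just storing the data).
gen_x = train_x
gen_y = train_y[:n_lab] + pseudo_y

# 4. Generate synthetic samples conditioned on target property values.
targets = [rng.uniform(min(gen_y), max(gen_y)) for _ in range(100)]
syn_x = [sample_conditional(gen_x, gen_y, t, rng) for t in targets]
syn_y = targets

# 5. Re-train on the small real set plus the synthetic set.
retrained = fit_line(train_x[:n_lab] + syn_x, train_y[:n_lab] + syn_y)

baseline_mae = mae(predictor, test_x, test_y)
flywheel_mae = mae(retrained, test_x, test_y)
print(f"baseline MAE={baseline_mae:.3f}, flywheel MAE={flywheel_mae:.3f}")
```

Whether the re-trained model beats the baseline depends on how faithful the synthetic samples are, which mirrors the mixed outcomes the framework reports across datasets [4].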

The workflow for this framework is illustrated below.

Protocol 2: Constrained Generation of Quantum Materials with SCIGEN

This protocol describes the process of using the SCIGEN tool to constrain a generative AI model to produce materials with specific geometric lattices associated with exotic quantum properties [3].

1. Objective: To generate candidate materials with specific geometric structural patterns (e.g., Archimedean lattices) that are likely to host exotic quantum phenomena.

2. Methodology:

- Tool Integration: Apply the SCIGEN computer code to a generative diffusion model (e.g., DiffCSP).

- Define Constraints: Input user-defined geometric structural rules (e.g., Kagome lattice, Lieb lattice) that the model must follow at each step of the generation process.

- Generate Candidates: Run the constrained model to produce a large pool of candidate materials (e.g., millions of candidates).

- Screen for Stability: Filter the generated candidates for thermodynamic stability.

- Simulate & Validate: Select a subset of stable candidates for detailed simulation (e.g., using supercomputers to model atomic behavior) and, ultimately, experimental synthesis to validate the model's predictions.

3. Materials/Models Used:

- Generative Model: DiffCSP (a diffusion model for crystal structure prediction).

- Constraining Tool: SCIGEN (Structural Constraint Integration in GENerative model).

- Validation: Synthesis in lab settings (e.g., TiPdBi and TiPbSb compounds were synthesized and tested) [3].

The following diagram outlines this constrained generation and validation pipeline.

The following table summarizes quantitative results from key studies that tackled the small data problem, providing a comparison of their performance.

Table 1: Performance Comparison of Small Data Solutions on Benchmark Tasks

| Method / Model | Core Function | Dataset(s) Used | Key Result / Performance |
|---|---|---|---|
| MatWheel [4] | Synthetic data generation for property prediction | Jarvis2d exfoliation (636 samples) | Combining real + synthetic data gave best performance (MAE*: 57.49) vs. real data only (MAE: 62.01). |
| MatWheel [4] | Synthetic data generation for property prediction | MP poly total (1056 samples) | Real data only performed best (MAE: 6.33), highlighting variable success of synthetic data. |
| SCIGEN [3] | Constrained generation of quantum materials | Application with DiffCSP model | Generated 10M+ candidates with Archimedean lattices; 41% of a 26k-sample subset showed magnetism in simulation. |
| E2T (Extrapolative Episodic Training) [5] | Meta-learning for extrapolative prediction | 40+ property prediction tasks for polymers & inorganics | Outperformed conventional ML in extrapolative accuracy in almost all cases, with comparable performance on interpolative tasks. |

*MAE: Mean Absolute Error (lower is better).
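For readers comparing the MAE figures in Table 1, the short sketch below shows how MAE is computed and how the two Jarvis2d exfoliation values translate into a relative improvement. The helper names are illustrative; only the two MAE values come from the table.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between true and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def relative_improvement(baseline_mae, new_mae):
    """Percentage reduction in MAE relative to the baseline."""
    return 100.0 * (baseline_mae - new_mae) / baseline_mae

# MAE values reported in Table 1 for Jarvis2d exfoliation.
real_only, real_plus_synthetic = 62.01, 57.49
gain = relative_improvement(real_only, real_plus_synthetic)
print(f"MAE reduction: {gain:.1f}%")
```

The reduction works out to roughly 7.3%, a useful reminder that synthetic data gave a modest rather than dramatic gain on this dataset.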

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools and Models for Small Data Materials Research

| Tool / Model Name | Type | Primary Function in Small Data Context |
|---|---|---|
| Con-CDVAE [4] | Conditional Generative Model | Generates synthetic crystal structures conditioned on target properties to augment small datasets. |
| SCIGEN [3] | Generative AI Constraint Tool | Applies geometric rules to generative models, steering them to produce materials with specific, target structures. |
| E2T Algorithm [5] | Meta-Learning Algorithm | Enables models to make accurate predictions for material features that lie outside the training data distribution. |
| CGCNN [4] | Property Prediction Model | A graph neural network that predicts material properties from crystal structure, effective even with limited data. |
| MaterialsZone Hub [7] | Data Management Platform | A centralized system to aggregate and manage disparate materials data, ensuring maximum utility of existing data. |
| Active Learning Cycles [6] | Machine Learning Strategy | Intelligently selects the most valuable data points to acquire next, optimizing the cost of data generation. |

Skyrocketing Computational Costs and Environmental Impact of Model Training

The integration of artificial intelligence, particularly generative models, into materials research and drug development has inaugurated a new paradigm of scientific discovery, enabling the inverse design of novel materials and molecules. However, this revolution is accompanied by two formidable challenges: skyrocketing computational costs and a significant environmental footprint. Training state-of-the-art AI models now requires financial investments that can exceed hundreds of millions of dollars, effectively placing frontier model development beyond the reach of all but the most well-funded organizations [9] [10]. Concurrently, the immense computational power demanded by these models translates into massive electricity consumption and water usage for cooling, raising urgent sustainability concerns for the field [11] [12]. This technical support center is designed to help researchers and scientists navigate these challenges by providing practical, actionable guidance for optimizing computational efficiency and mitigating environmental impact within their experiments.

Frequently Asked Questions (FAQs)

Q1: What is the typical cost range for training different tiers of AI models in materials science?

Training costs vary dramatically based on the model's size and complexity. The following table summarizes estimated benchmarks for different tiers [9] [10].

Table: AI Model Training Cost Benchmarks

| Model Tier | Example Models | Typical Training Cost (Compute) | Primary Use Cases |
|---|---|---|---|
| Frontier Models | GPT-4, Gemini Ultra, Llama 3.1-405B | $100 million - $192 million [9] | General-purpose, state-of-the-art foundational models |
| Mid-Scale Models | GPT-3, Mistral Large | $4.6 million - $41 million [9] [10] | Strong performance for commercial applications |
| Efficient/Compact Models | DeepSeek-V3, Llama 2-70B | $3 million - $6 million [9] [10] | Domain-specific tasks, fine-tuning base |
| Small-Scale & Fine-Tuning | RoBERTa Large, Domain-specific adaptations | Thousands to hundreds of thousands of dollars [10] | Specialized tasks, proof-of-concept studies |

Q2: Why is AI model training so resource-intensive and environmentally impactful?

The resource intensity stems from several factors:

- Computational Demand: Training models with billions or trillions of parameters requires thousands of high-performance GPUs/TPUs running continuously for weeks or months [11] [13].

- Energy Consumption: This computational process consumes enormous electricity. For instance, training OpenAI's GPT-3 was estimated to use 1,287 MWh, enough to power about 120 U.S. homes for a year [12].

- Inference Costs: After training, using the model (inference) accounts for 80-90% of AI's total computing power and energy demand. A single ChatGPT query can use five times more electricity than a simple web search [12] [13].

- Cooling Overhead: Data centers require massive water and energy for cooling, with an estimated 2 liters of water used for every kilowatt-hour of energy consumed [12].
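The figures above can be sanity-checked with a few lines of arithmetic. The sketch uses only the numbers quoted in this answer; applying the per-kWh cooling estimate to the GPT-3 training figure is an illustrative extrapolation, not a number reported by the cited sources.

```python
GPT3_TRAINING_MWH = 1_287   # training energy estimate quoted above
HOMES_POWERED = 120         # "about 120 U.S. homes for a year"
WATER_L_PER_KWH = 2         # cooling water estimate quoted above

# Implied annual electricity use per home, in MWh.
mwh_per_home_year = GPT3_TRAINING_MWH / HOMES_POWERED

# Illustrative cooling water for the same run: MWh -> kWh, then liters per kWh.
cooling_water_liters = GPT3_TRAINING_MWH * 1_000 * WATER_L_PER_KWH

print(f"{mwh_per_home_year:.2f} MWh per home per year")
print(f"{cooling_water_liters:,} liters of cooling water")
```

The implied household figure is about 10.7 MWh per year, and the same energy at 2 liters per kWh would correspond to roughly 2.6 million liters of cooling water.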

Q3: What are the key components that contribute to the total cost of a training run?

The cost is not just for compute cycles. A comprehensive budget includes the following components [10]:

Table: Breakdown of Neural Network Training Cost Components

| Cost Component | Share of Total Cost | Description |
|---|---|---|
| GPU/TPU Accelerators | 40% - 50% | Rental or amortized purchase cost of the primary processing hardware. |
| Research & Engineering Staff | 20% - 30% | Salaries for scientists and engineers designing and running experiments. |
| Cluster Infrastructure | 15% - 22% | Servers, storage, and crucially, high-speed interconnects. |
| Networking & Synchronization | 9% - 13% | (Included in cluster infrastructure) Overhead for coordinating thousands of chips. |
| Energy & Electricity | 2% - 6% | Direct power consumption for computation and cooling. |

Q4: What strategies can my research team adopt to reduce costs and environmental impact?

- Prioritize Model Efficiency: Use architectures like Mixture-of-Experts (e.g., DeepSeek-V3), which activates only a fraction of total parameters, dramatically cutting compute needs [10].

- Leverage Parallel Computing: Utilize Message Passing Interface (MPI) libraries like MPI4Py to parallelize data preprocessing and model training across multiple processors, significantly speeding up workflows and reducing rental time [14].

- Employ Lower Precision: Using FP8 precision instead of BF16 for most operations can effectively double calculation speed with minimal quality loss [10].

- Focus on Fine-Tuning: Instead of training from scratch, fine-tune existing pre-trained models for your specific domain. This is far less costly and computationally intensive [10].

- Optimize Data Center Selection: Choose cloud providers or data centers that are powered by renewable energy sources to lower the carbon footprint of your computations [11].
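The Mixture-of-Experts idea in the first strategy, activating only a fraction of the parameters per input, can be illustrated with a toy top-k router. This is a generic sketch and not DeepSeek-V3's routing scheme; the function name, the gate logits, and the choice of eight experts with k=2 are all assumptions made for illustration.

```python
import math

def top_k_route(gate_logits, k):
    """Return {expert_index: weight} for the k experts with the highest gate logits."""
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax restricted to the selected experts so their weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = {i: math.exp(gate_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

# Hypothetical gate scores for one token across eight experts.
logits = [0.1, 2.0, -1.0, 1.5, 0.3, -0.2, 0.0, 0.9]
routing = top_k_route(logits, k=2)
active_fraction = len(routing) / len(logits)
print(routing, active_fraction)
```

Only the two selected experts would run for this token, so a quarter of the expert parameters are exercised; that is the source of the compute savings described above.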

Troubleshooting Guides

Issue 1: Experiment Costs Exceeding Budget

Problem: Your model training runs are consuming more computational resources than allocated, leading to unexpected costs and stalled projects.

Diagnosis and Solutions:

- Step 1: Audit Compute Usage. Use cloud monitoring tools to analyze your past runs. Identify if costs are high due to long training times, inefficient use of hardware, or overly large experimental batches.

- Step 2: Implement Early Stopping. Define clear performance metrics and stop training runs early if the model is not converging as expected. This prevents wasting resources on unproductive experiments.

- Step 3: Start Small. Use a smaller subset of your data for initial model architecture and hyperparameter experiments. Scale up to the full dataset only once you have a promising configuration.

- Step 4: Optimize Hyperparameters. Systematically search for optimal hyperparameters (e.g., learning rate, batch size) using efficient methods like Bayesian optimization instead of exhaustive grid searches, which can be prohibitively expensive.
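Step 2 (early stopping) is simple to implement by hand when a framework callback is not available. The sketch below is one common patience-based formulation; the class name, the `patience` and `min_delta` defaults, and the loss values are illustrative assumptions.

```python
class EarlyStopper:
    """Signal a stop when validation loss has not improved for `patience` checks."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.stale = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            # Meaningful improvement: record it and reset the counter.
            self.best = val_loss
            self.stale = 0
        else:
            self.stale += 1
        return self.stale >= self.patience

# Hypothetical validation losses, one per epoch.
losses = [1.00, 0.80, 0.70, 0.71, 0.72, 0.70, 0.69, 0.68]
stopper = EarlyStopper(patience=3, min_delta=0.001)
stopped_at = None
for epoch, loss in enumerate(losses):
    if stopper.should_stop(loss):
        stopped_at = epoch
        break
print(stopped_at)
```

Here training halts at epoch 5, before the later small improvements. A larger `patience` trades extra compute for a lower risk of stopping during a plateau.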

Issue 2: High Energy and Carbon Footprint

Problem: Your lab or institution is concerned about the sustainability of your AI-driven research, citing high energy usage or carbon emissions.

Diagnosis and Solutions:

- Step 1: Measure Your Footprint. Utilize tools like the codecarbon library to track the energy consumption and estimated carbon emissions of your code runs directly.

- Step 2: Schedule for "Greener" Times. If using cloud resources, configure training jobs to run during off-peak hours when the local energy grid may have a higher mix of renewable sources, or align with periods of peak renewable availability [11].

- Step 3: Select Efficient Hardware. When provisioning resources, choose the latest-generation AI accelerators that offer better performance-per-watt compared to older hardware.

- Step 4: Consolidate Experiments. Bundle multiple inference or testing tasks together to maximize GPU utilization and reduce the total active compute time, rather than running jobs sporadically.
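Scheduling for "greener" times (Step 2) reduces to picking the start hour that minimizes average grid carbon intensity over the job's duration. The sketch below assumes an hourly forecast is already available as a list; the forecast values and the function name are hypothetical, and fetching a real forecast from a grid operator or cloud provider is outside its scope.

```python
def greenest_window(intensity_by_hour, job_hours):
    """Return (start_hour, mean_intensity) of the contiguous window with the lowest mean."""
    if not 0 < job_hours <= len(intensity_by_hour):
        raise ValueError("job_hours must fit inside the forecast")
    best_start, best_mean = 0, float("inf")
    for start in range(len(intensity_by_hour) - job_hours + 1):
        window = intensity_by_hour[start:start + job_hours]
        mean = sum(window) / job_hours
        if mean < best_mean:
            best_start, best_mean = start, mean
    return best_start, best_mean

# Hypothetical forecast in gCO2/kWh for hours 0..11.
forecast = [420, 410, 400, 380, 300, 220, 180, 170, 210, 320, 400, 430]
start, mean_intensity = greenest_window(forecast, job_hours=3)
print(start, round(mean_intensity, 1))
```

For this forecast a three-hour job is best started at hour 6. Measured energy use from Step 1 can then be multiplied by the window's intensity to estimate the emissions avoided.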

Issue 3: Managing Data and Feature Complexity in Materials ML

Problem: The materials science dataset is messy, with high-dimensional feature spaces, leading to long preprocessing times and inefficient model training.

Diagnosis and Solutions:

- Step 1: Standardize Data Preprocessing. Use robust Python packages like Matminer for inorganic materials or RDKit for molecular data to automatically generate and standardize descriptors, ensuring consistency and saving time [15].

- Step 2: Apply Feature Selection. Before training, employ feature selection methods (e.g., filter methods like maximum information coefficient, or embedded methods like LASSO) to identify and retain only the most relevant features. This reduces model complexity and training time [15].

- Step 3: Parallelize Preprocessing. Use parallel computing frameworks like MPI4Py to distribute data cleaning, feature engineering, and normalization tasks across multiple CPU cores, drastically speeding up this critical stage [14].
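A filter-style feature selection (Step 2) can be sketched without any external dependencies. Note the substitution: the example ranks features by absolute Pearson correlation, a simpler linear measure swapped in for the maximum information coefficient named above. The descriptor names and values are hypothetical.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 for constant inputs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return 0.0 if sx == 0 or sy == 0 else cov / (sx * sy)

def select_features(columns, target, keep):
    """Rank feature columns by |Pearson r| with the target and keep the top `keep`."""
    ranked = sorted(columns, key=lambda name: abs(pearson(columns[name], target)), reverse=True)
    return ranked[:keep]

# Hypothetical descriptors for five materials and a target property.
features = {
    "mean_atomic_radius": [1.0, 2.0, 3.0, 4.0, 5.0],
    "electronegativity_diff": [5.0, 3.9, 3.1, 2.0, 1.0],
    "random_noise": [0.3, -0.1, 0.4, -0.2, 0.1],
    "constant_flag": [1.0, 1.0, 1.0, 1.0, 1.0],
}
band_gap = [1.1, 2.0, 2.9, 4.2, 5.0]
selected = select_features(features, band_gap, keep=2)
print(selected)
```

The noisy and constant columns are dropped. A linear filter like this misses nonlinear relationships, which is one reason measures such as MIC or embedded methods such as LASSO are often preferred in practice.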

Experimental Protocol: Cost-Effective Model Training with Parallelization

This protocol outlines a methodology for leveraging parallel computing to reduce the time and cost of training a generative model for molecular design.

1. Objective: To train a variational autoencoder (VAE) for generating novel molecular structures, while minimizing training time and associated cloud compute costs.

2. Hypothesis: Implementing data parallelism using MPI4Py will significantly reduce model training time compared to a serial implementation, leading to a direct reduction in computational costs.

3. Materials and Reagents (Computational):

Table: Research Reagent Solutions for Computational Experiment

| Item Name | Function/Description | Example/Alternative |
|---|---|---|
| HPC Cluster/Cloud VM | Provides the computational backbone with multiple nodes/CPUs. | AWS ParallelCluster, Google Cloud VMs, Azure HPC. |
| MPI Implementation | Enables communication and coordination between processes. | OpenMPI, MPICH. |
| MPI4Py Python Library | Provides Python bindings for MPI, allowing Python scripts to run in parallel [14]. | pip install mpi4py |
| Training Dataset | Curated set of molecular structures (e.g., in SMILES string format). | ZINC database, PubChem. |
| Deep Learning Framework | Provides the infrastructure for building and training neural networks. | PyTorch, TensorFlow, JAX. |

4. Methodology:

- Step 1: Data Preparation and Partitioning. Load and preprocess the entire molecular dataset. The root process (Rank 0) will then partition the dataset into N nearly equal subsets, where N is the total number of processes.

- Step 2: Model and Optimizer Initialization. Initialize the identical VAE model architecture and optimizer on every computational process.

- Step 3: Parallelized Training Loop.

  - Scatter Data: The root process scatters one data subset to each process (including itself).

  - Local Forward/Backward Pass: Each process performs a forward pass, calculates the loss, and a backward pass on its local data subset.

  - Gradient Synchronization: All processes communicate to average the locally computed gradients using MPI4Py's Allreduce operation.

  - Parameter Update: Each optimizer updates the model parameters using the averaged gradients, ensuring all models remain synchronized.

- Step 4: Validation and Checkpointing. A designated process can periodically evaluate the model on a validation set and save checkpoints.
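The logic of the parallelized training loop in Step 3 can be checked in a single process before any cluster time is spent. The sketch below simulates four ranks training a one-parameter model; `allreduce_mean` is a hypothetical stand-in for the collective step, which in an actual MPI4Py run would be a call such as `comm.Allreduce(sendbuf, recvbuf, op=MPI.SUM)` followed by division by the number of processes. The model, data, and learning rate are toy assumptions, not the VAE from this protocol.

```python
def local_gradient(w, shard):
    """Gradient of mean squared error for the model y = w * x on one data shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)

def allreduce_mean(values):
    """Single-process stand-in for Allreduce(SUM) followed by division by world size."""
    total = sum(values)
    return [total / len(values)] * len(values)

data = [(x, 3.0 * x) for x in range(1, 9)]                  # toy dataset, true weight is 3
world_size = 4
shards = [data[r::world_size] for r in range(world_size)]   # "scatter": one shard per rank

weights = [0.0] * world_size                                # identical initialization on every rank
lr = 0.01
for step in range(200):
    # Each simulated rank computes a gradient on its own shard only.
    grads = [local_gradient(weights[r], shards[r]) for r in range(world_size)]
    # Every rank receives the same averaged gradient.
    synced = allreduce_mean(grads)
    # Identical updates keep the replicas synchronized.
    weights = [w - lr * g for w, g in zip(weights, synced)]

print(weights)
```

Because every replica applies the same averaged gradient, the weights stay identical across ranks and converge to the true value, which is the invariant the real Allreduce step is meant to preserve.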

5. Workflow Visualization:

The Scientist's Toolkit: Key Reagents for Sustainable AI Research

Table: Essential "Reagents" for Cost-Effective and Sustainable AI Research

| Tool / Technique Name | Category | Brief Function & Explanation |
|---|---|---|
| MPI4Py | Parallel Computing | A library for parallel execution of Python code, crucial for speeding up data preprocessing and distributed model training [14]. |
| Matminer / RDKit | Data Handling | Python libraries for automatically generating standardized, domain-aware feature descriptors for inorganic and organic materials, respectively [15]. |
| Mixture-of-Experts (MoE) | Model Architecture | A neural network design that uses only a subset of parameters per input, drastically reducing computation and cost during training and inference [10]. |
| FP8 Precision Training | Numerical Optimization | Using 8-bit floating-point precision for computations, which increases speed and reduces memory usage with minimal impact on model accuracy [10]. |
| CodeCarbon | Sustainability Tracking | A Python package that estimates the energy consumption and carbon emissions of your computational code, enabling measurement and accountability. |

Instability and Lack of Diversity in Generated Material Structures

Frequently Asked Questions (FAQs)

FAQ 1: Why does my generative model produce chemically implausible or unstable material structures? Several factors contribute. Generative models such as diffusion models are often trained on datasets that optimize for structural stability rather than exotic properties, which can cause them to miss promising candidates for applications like quantum computing. Furthermore, models trained on 2D representations (like SMILES) may omit critical 3D conformational information, leading to structures that are invalid in three-dimensional space [16]. Finally, the model's input space may not be smooth with respect to parameter variation, which makes optimization difficult and leads to unstable generations [17].

FAQ 2: My model's outputs lack diversity, often generating slight variations of the same structure. What is causing this? This problem, known as mode collapse, is a fundamental limitation of several generative models, particularly Generative Adversarial Networks (GANs) [18]. It occurs when the model learns to produce a limited variety of outputs that it has determined are "successful," failing to explore the wider design space. This is especially problematic for complex metamaterials like kirigami, where the design space has non-trivial restrictions. If the model relies on an inappropriate similarity metric like Euclidean distance, it can get stuck in one region of the design space [19].

FAQ 3: How can I steer my generative model to produce materials with specific target properties, like a particular geometric lattice? Constraining a model requires specialized techniques. One approach is to use a tool like SCIGEN, which can be integrated with diffusion models. SCIGEN works by blocking model generations that do not align with user-defined structural rules at each iterative step of the generation process [3]. This allows researchers to enforce specific geometric patterns (e.g., Kagome or Lieb lattices) known to give rise to desired quantum properties.

FAQ 4: Why do generative models that work well for images struggle with my materials data? Images typically exist in a design space where a simple metric like Euclidean distance is a reasonable measure of similarity. However, for material structures, the Euclidean distance between two parameter sets can be a poor indicator of their actual similarity in terms of function or admissibility. A short path in Euclidean space might pass through a region of invalid materials, making it an ineffective guide for the model [19]. This is a key reason why models struggle with geometrically complex metamaterials.
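The point about Euclidean paths crossing invalid regions can be seen in a two-dimensional toy example. The sketch assumes an artificial admissibility rule (designs are valid only inside a ring around the origin); the rule, radii, and function names are hypothetical and chosen purely for illustration.

```python
import math

def is_admissible(p, r_min=1.0, r_max=2.0):
    """Toy admissibility rule: a design is valid only inside a ring around the origin."""
    return r_min <= math.hypot(*p) <= r_max

def straight_path(a, b, steps):
    """Evenly spaced points on the Euclidean line segment from a to b."""
    return [tuple(ai + (bi - ai) * t / steps for ai, bi in zip(a, b)) for t in range(steps + 1)]

start, end = (-1.5, 0.0), (1.5, 0.0)   # both are valid designs
path = straight_path(start, end, steps=10)
invalid = [p for p in path if not is_admissible(p)]
print(f"{len(invalid)} of {len(path)} points on the straight path are invalid")
```

Both endpoints are admissible, yet most of the straight line between them lies in the forbidden interior. A model that treats Euclidean proximity as similarity would interpolate through exactly that region.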

FAQ 5: What are the best metrics to evaluate the diversity and quality of my generated materials? Evaluation should be multi-faceted. The table below summarizes key quantitative metrics. It is also crucial to validate model outputs with physical simulations (e.g., for stability and magnetic properties) and, ultimately, experimental synthesis to confirm that the generated materials can be created and exhibit the predicted properties [3] [20].

Table 1: Key Metrics for Evaluating Generative Model Outputs

| Metric | Description | Application in Materials Science |
|---|---|---|
| Fréchet Inception Distance (FID) [20] | Assesses realism by comparing distributions of real and generated data. | Can be adapted to compare distributions of material properties or structural descriptors. |
| Inception Score (IS) [20] | Balances quality and variety of generated outputs. | Useful for a high-level assessment of diversity, though may require domain adaptation. |
| Self-BLEU [20] | Measures diversity by comparing generated outputs to each other. | Lower scores suggest higher diversity in generated structures. |
| Mode Coverage [20] | Measures how many unique categories or modes the model captures. | Ensures the model explores different classes of crystal structures or compositions. |
| Synthesizability Score | (Proposed) Prediction of whether a proposed material can be synthesized. | Would require a separate model trained on experimental synthesis data. |
| Stability Screening | Percentage of generated materials predicted to be thermodynamically stable [17]. | A high failure rate indicates the model is generating implausible structures. |
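Some of the simpler quantities in this table can be computed directly from a list of generated candidates. The sketch below shows a uniqueness ratio, mode coverage, and a stability pass rate; the function names, the example labels, and the 0.1 eV/atom energy-above-hull threshold are assumptions for illustration, not values taken from the cited studies.

```python
def uniqueness(generated):
    """Fraction of generated items that are distinct."""
    return len(set(generated)) / len(generated)

def mode_coverage(generated_labels, reference_labels):
    """Fraction of reference classes that appear at least once among generated items."""
    reference = set(reference_labels)
    return len(reference & set(generated_labels)) / len(reference)

def stability_pass_rate(energies_above_hull, threshold=0.1):
    """Share of candidates whose energy above hull (eV/atom) is within the threshold."""
    passed = sum(1 for e in energies_above_hull if e <= threshold)
    return passed / len(energies_above_hull)

# Hypothetical generated set.
formulas = ["NaCl", "NaCl", "KCl", "MgO"]
crystal_systems = ["cubic", "cubic", "hexagonal"]
known_systems = ["cubic", "hexagonal", "tetragonal", "orthorhombic"]
hull_energies = [0.0, 0.05, 0.3, 0.12]

print(uniqueness(formulas))
print(mode_coverage(crystal_systems, known_systems))
print(stability_pass_rate(hull_energies))
```

Low uniqueness or low mode coverage is an early warning of mode collapse, and a low stability pass rate indicates that the generator is drifting toward implausible structures.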

Experimental Protocols & Methodologies

Protocol: Integrating Structural Constraints with SCIGEN

This protocol is based on the methodology developed by MIT researchers to generate materials with specific geometric lattices using the SCIGEN tool [3].

Objective: To steer a generative diffusion model (e.g., DiffCSP) to produce crystal structures that conform to a user-defined geometric pattern.

Workflow:

- Define Constraint: Precisely define the target structural constraint (e.g., a specific Archimedean lattice like Kagome).

- Model Integration: Integrate the SCIGEN code with your chosen generative diffusion model.

- Constrained Generation: Run the generative process. At each iterative denoising step, SCIGEN evaluates the emerging structure.

- Rule Enforcement: If the structure violates the predefined geometric rule, SCIGEN blocks that generation path, steering the model toward admissible structures.

- Output: The final output is a set of material candidates that conform to the target geometry.
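The enforce-at-every-step idea in this workflow can be illustrated with a toy iterative sampler. The code below is not SCIGEN or DiffCSP: the "denoiser" is an arbitrary placeholder, and the constraint is enforced by overwriting a fixed subset of atoms with target lattice sites after each step, which is only one simple way to keep a trajectory inside the admissible set. All function names and parameters are hypothetical.

```python
import random

def denoise_step(points, rng, noise):
    """Placeholder denoiser: pull each point toward the centroid and add shrinking noise."""
    cx = sum(p[0] for p in points) / len(points)
    cy = sum(p[1] for p in points) / len(points)
    return [
        (x + 0.1 * (cx - x) + rng.gauss(0, noise), y + 0.1 * (cy - y) + rng.gauss(0, noise))
        for x, y in points
    ]

def constrained_generation(n_free, constraint_sites, steps=50, seed=0):
    """Run the toy denoiser while re-imposing fixed lattice sites after every step."""
    rng = random.Random(seed)
    k = len(constraint_sites)
    points = [(rng.uniform(0, 1), rng.uniform(0, 1)) for _ in range(k + n_free)]
    for step in range(steps):
        noise = 0.05 * (1 - step / steps)
        points = denoise_step(points, rng, noise)
        # Constraint enforcement: overwrite the constrained atoms so the
        # trajectory never leaves the admissible set.
        points[:k] = constraint_sites
    return points

# Fractional coordinates of the three sites of a kagome motif in a hexagonal cell.
kagome_sites = [(0.5, 0.0), (0.0, 0.5), (0.5, 0.5)]
structure = constrained_generation(n_free=2, constraint_sites=kagome_sites)
print(structure)
```

The first three atoms end exactly on the kagome sites while the remaining atoms are free to settle around them, which mirrors how a constrained generator can fix the target motif and let the model fill in the rest of the structure.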

The following diagram illustrates this iterative constraint-enforcement workflow.

Protocol: Validating Generative Output for Quantum Materials

This protocol details the steps taken to validate AI-generated materials, as described in the MIT study that led to the synthesis of new compounds [3].

Objective: To screen, simulate, and experimentally validate material candidates generated by a constrained AI model.

Workflow:

- Initial Generation: Use a constrained generative model to produce a large pool of candidate structures (e.g., millions).

- Stability Screening: Apply a stability filter to remove thermodynamically unstable candidates, significantly reducing the pool.

- Property Simulation: Use high-performance computing (HPC) and Density Functional Theory (DFT) or other advanced simulations on a smaller sample (e.g., tens of thousands) to predict quantum properties like magnetism.

- Candidate Selection: Select the most promising candidates based on simulation results for experimental synthesis.

- Experimental Validation: Synthesize the selected materials (e.g., via solid-state reaction) and characterize their properties (e.g., using X-ray diffraction and magnetic susceptibility measurements) to compare with AI predictions.

The following flowchart outlines this multi-stage validation process.
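The screening stages of this funnel can be sketched as a simple filter-then-rank step. The sketch below assumes each candidate already carries an energy above hull from a stability filter and a simulated magnetic moment; the field names, the threshold, and the candidate values are placeholders invented for illustration, not results from the cited study.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    e_hull: float            # eV/atom, from a stability filter (placeholder values)
    predicted_moment: float  # simulated magnetic moment (placeholder values)


def screen(candidates, e_hull_max=0.1, top_k=2):
    """Drop unstable candidates, then keep the top_k by predicted moment."""
    stable = [c for c in candidates if c.e_hull <= e_hull_max]
    ranked = sorted(stable, key=lambda c: c.predicted_moment, reverse=True)
    return ranked[:top_k]


pool = [
    Candidate("cand-A", 0.02, 1.8),
    Candidate("cand-B", 0.35, 3.0),  # high moment but unstable: filtered out
    Candidate("cand-C", 0.08, 0.2),
    Candidate("cand-D", 0.00, 2.4),
]
shortlist = screen(pool)
print([c.name for c in shortlist])  # candidates to send for synthesis
```

Filtering on stability before ranking by property mirrors the order of the workflow: the cheap filter shrinks the pool before the expensive simulations are spent on it.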

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational and Experimental Tools for AI-Driven Materials Discovery

| Tool / Resource | Type | Function in Research |
|---|---|---|
| DiffCSP [3] | Generative Model | A crystal structure prediction model that can be augmented with constraint tools for targeted generation. |
| SCIGEN [3] | Constraint Tool | Computer code that enforces user-defined geometric rules during the generative process. |
| Archimedean Lattices [3] | Design Blueprint | A collection of 2D lattice tilings (e.g., Kagome) used as target constraints for generating materials with exotic quantum properties. |
| High-Performance Computing (HPC) [3] | Computational Resource | Essential for running large-scale stability and property simulations (e.g., DFT) on thousands of AI-generated candidates. |
| Stability Prediction Model [17] | Screening Tool | A separate machine learning model used to predict the thermodynamic stability of a generated structure, filtering out implausible candidates. |
| Wasserstein GAN (WGAN) [19] | Generative Model | A variant of GAN that can be more stable in training, though it may still struggle with complex geometric constraints. |
| Denoising Diffusion Model [19] | Generative Model | A state-of-the-art model that excels at generating high-quality outputs; its iterative nature is well-suited to constraint integration. |

In the field of materials science, generative AI models offer unprecedented capabilities for accelerating the discovery of new compounds. However, these models are susceptible to "hallucinations" – the generation of implausible, incorrect, or physically impossible material designs – and can inherit and amplify biases present in their training data. This technical support guide helps researchers identify, troubleshoot, and mitigate these issues within their experimental workflows.

Frequently Asked Questions (FAQs)

Q1: What exactly is an "AI hallucination" in the context of materials design? A hallucination occurs when a generative AI model produces a material structure that is superficially plausible but is factually incorrect, physically invalid, or non-synthesizable [21]. In materials science, this often manifests as structurally unstable crystals, compositions that violate chemical rules, or properties that defy physical laws [22].

Q2: How do inherited biases affect generative models for materials? Biases in training data can severely limit a model's creativity and applicability. For instance, if a model is trained predominantly on stable, common crystal structures, it may be biased against generating novel materials with exotic, target properties like the geometric lattices needed for quantum spin liquids [3]. This results in a generative process optimized for historical stability rather than groundbreaking discovery.

Q3: What are the most common types of hallucinations to look for?

- Structural Hallucinations: Generation of crystal structures with impossible coordination environments or periodic lattices [22].

- Compositional Hallucinations: Proposing material compositions with incompatible elements or unstable stoichiometries.

- Property Hallucinations: Predicting exotic physical properties (e.g., superconductivity, specific magnetic behaviors) that are not supported by the generated structure [21].

Q4: Can hallucinations ever be beneficial for research? While often problematic, the uncontrolled "creativity" of hallucinations can be harnessed in a constrained environment for idea generation and to explore highly novel, non-obvious material spaces that might not be proposed through traditional reasoning [23]. The key is to implement rigorous validation to separate plausible breakthroughs from implausible noise.

Troubleshooting Guide: Identifying and Mitigating Hallucinations

Problem 1: Model Generates Structurally Unstable Materials

Symptoms:

- Generated structures have very high energy upon Density Functional Theory (DFT) relaxation [22].

- Structures collapse into different configurations during simulation.

- Low success rate in proposing stable crystals [22].

Solutions:

- Impose Geometric Constraints: Use tools like SCIGEN to enforce specific structural rules (e.g., Archimedean lattices) during the generation process, guiding the model toward physically realistic and interesting geometries [3].

- Refine with Adapter Modules: Fine-tune pre-trained base models on smaller, high-fidelity datasets labeled with property data (e.g., formation energy). This steers generation toward stability without requiring massive retraining [22].

- Implement Confidence Thresholds: Where the model or an auxiliary predictor exposes a confidence or uncertainty score, reject generated samples that score poorly, since low confidence often signals fabrication.

Experimental Protocol for Validation:

- DFT Relaxation: Use software like VASP or Quantum ESPRESSO to relax the generated atomic structure and calculate its total energy.

- Stability Check: Compute the energy above the convex hull (Ehull). A material on the hull (Ehull = 0) is thermodynamically stable; in practice, candidates with Ehull below 0.1 eV/atom are commonly counted as stable [22].

- Phonon Dispersion Calculation: Perform a phonon calculation to confirm dynamic stability (no imaginary frequencies).
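To make the stability check concrete, the sketch below computes Ehull for a binary system A(1-x)B(x) from formation energies per atom. It is a minimal pure-Python illustration under simplifying assumptions: only two elements, elemental references fixed at zero formation energy, and invented example energies. Production workflows would normally use a dedicated phase-diagram tool instead.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    hull = []
    for p in sorted(points):
        # Pop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x, e_form, known):
    """Ehull (eV/atom) of a candidate at composition x with formation energy e_form.

    known: list of (x, formation energy per atom) for competing phases.
    A negative result means the candidate would lower the existing hull.
    """
    best = {0.0: 0.0, 1.0: 0.0}  # elemental references define zero energy
    for xi, ei in known:
        best[xi] = min(ei, best.get(xi, ei))  # keep the lowest phase per composition
    hull = lower_hull(list(best.items()))
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            hull_energy = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return e_form - hull_energy
    raise ValueError("composition x must lie in [0, 1]")


# Invented competing phases: (fraction of B, formation energy in eV/atom).
known_phases = [(0.25, -0.10), (0.50, -0.40)]
e_hull = energy_above_hull(0.25, -0.15, known_phases)
print(f"Ehull = {e_hull:.3f} eV/atom")  # prints "Ehull = 0.050 eV/atom"
```

In this example the phase at x = 0.25 with -0.10 eV/atom is itself above the hull, so the hull runs straight from pure A to the x = 0.5 phase, and the candidate sits 0.05 eV/atom above that line.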

Problem 2: Model is Biased Towards Common Structures and Lacks Diversity

Symptoms:

- Generated materials are minor variations of known crystals in the training database.

- The model fails to propose materials with user-specified, exotic properties.

- Outputs lack novelty and do not constitute a meaningful expansion of chemical space.

Solutions:

- Data Curation and Augmentation: Augment training datasets with hypothetical structures, under-represented chemical systems, and materials with target properties to balance intrinsic data biases [24].

- Employ Retrieval-Augmented Generation (RAG): Ground the generative model by retrieving relevant information from a trusted, curated database of materials (e.g., Materials Project) before generating an output, improving factual accuracy and relevance [25] [26].

- Leverage Classifier-Free Guidance: During sampling, use techniques that allow you to steer the generation by specifying a desired property (e.g., "high magnetic density"), pushing the model away from its default, biased distribution [22].
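The guidance step itself is a one-line combination of two noise predictions. The sketch below uses one common convention (unconditional prediction plus a scaled difference); the function and argument names are illustrative, and the two input vectors stand in for the outputs of a trained model evaluated with and without the property condition.

```python
def guided_noise(eps_uncond, eps_cond, guidance_scale):
    """Classifier-free guidance: eps_uncond + scale * (eps_cond - eps_uncond)."""
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


eps_uncond = [0.0, 1.0]  # model prediction without the property condition
eps_cond = [1.0, 3.0]    # model prediction with the property condition

print(guided_noise(eps_uncond, eps_cond, 0.0))  # [0.0, 1.0]: condition ignored
print(guided_noise(eps_uncond, eps_cond, 1.0))  # [1.0, 3.0]: plain conditional
print(guided_noise(eps_uncond, eps_cond, 2.0))  # [2.0, 5.0]: pushed past conditional
```

Scales above 1 extrapolate beyond the conditional prediction, which strengthens adherence to the requested property but typically reduces sample diversity, so the scale is usually tuned per task.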

Problem 3: Model Generates Physically Implausible Property Values

Symptoms:

- Predicted property values (e.g., band gap, magnetic moment) are extreme outliers with no physical justification.

- Property predictions are inconsistent with the generated structure (e.g., predicting metallicity for a wide-gap insulator).

Solutions:

- Incorporate Physical Constraints: Integrate known physical laws (e.g., symmetry constraints, thermodynamic boundaries) directly into the model's loss function or architecture to penalize unphysical outputs [21].

- Human-in-the-Loop Validation: Never trust AI-generated outputs blindly. Establish a mandatory review step where domain experts critically evaluate the plausibility of generated materials and their properties before further investment [25] [26].

- Uncertainty Quantification: Use models that provide uncertainty estimates for their predictions. Treat high-uncertainty outputs as likely hallucinations and subject them to greater scrutiny [21].
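One simple way to obtain an uncertainty estimate is to compare predictions from several independently trained models and flag candidates where they disagree. The sketch below assumes an ensemble of three property predictors; the band-gap values and the 0.5 eV threshold are invented for illustration.

```python
from statistics import mean, stdev


def flag_uncertain(predictions_by_model, max_std):
    """Return (candidate index, mean, std) where the ensemble spread exceeds max_std.

    predictions_by_model: one list of predictions per model, same candidate order.
    """
    flagged = []
    for index, preds in enumerate(zip(*predictions_by_model)):
        spread = stdev(preds)
        if spread > max_std:
            flagged.append((index, mean(preds), spread))
    return flagged


# Predicted band gaps (eV) for three candidates from three hypothetical models.
ensemble = [
    [1.1, 0.0, 3.2],
    [1.2, 2.5, 3.1],
    [1.0, 5.0, 3.3],
]
suspect = flag_uncertain(ensemble, max_std=0.5)
print(suspect)  # only the second candidate (index 1) is flagged
```

Flagged candidates are not necessarily wrong; they are the ones that most deserve expert review or a direct DFT check before further investment.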

Quantitative Data on Model Performance and Hallucination Rates

The following table summarizes the performance of different generative models, highlighting their propensity to generate stable versus hallucinated structures.

Table 1: Performance Comparison of Generative Models for Materials Design

| Model / Method | Stable, Unique, and New (SUN) Materials | Average RMSD to DFT-Relaxed Structure | Key Mitigation Strategy |
|---|---|---|---|
| MatterGen (Base Model) | 75% below 0.1 eV/atom hull [22] | < 0.076 Å [22] | Diffusion model with physical constraints [22] |
| MatterGen-MP | 60% more SUN materials than CDVAE/DiffCSP [22] | 50% lower than CDVAE/DiffCSP [22] | Trained on diverse dataset (Alex-MP-20) [22] |
| SCIGEN + DiffCSP | Generated 10M candidates; 1M stable [3] | N/A (Focused on lattice constraints) | Hard-coded geometric constraints [3] |
| CDVAE / DiffCSP | (Baseline for comparison) Lower SUN yield [22] | (Baseline for comparison) Higher RMSD [22] | Standard generative approach |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational and Experimental Tools for Validating AI-Generated Materials

| Item / Tool | Function / Purpose |
|---|---|
| Density Functional Theory (DFT) Codes | The foundational computational method for validating structural stability and predicting electronic properties of generated materials. |
| Phonopy Software | Calculates phonon spectra to confirm the dynamic stability of a crystal structure (absence of imaginary frequencies). |
| SCIGEN | A tool for applying hard geometric constraints to generative models, forcing them to produce specific lattice types (e.g., Kagome) [3]. |
| Adapter Modules | Small, tunable components added to a pre-trained base model that allow for efficient fine-tuning on small, property-specific datasets [22]. |
| High-Throughput Synthesis Workflow | An experimental setup for rapidly synthesizing and characterizing a shortlist of the most promising AI-generated candidates. |

Workflow Diagram for Hallucination Mitigation

The following diagram illustrates a robust experimental workflow to integrate generative AI into materials discovery while proactively identifying and mitigating hallucinations and biases.

[Figure: AI-Driven Materials Discovery and Validation Workflow]

Hallucinations and inherited biases are not terminal flaws but inherent challenges of generative AI. By understanding their origins and implementing a rigorous, multi-layered validation protocol—combining constrained generation, computational physics checks, and irreplaceable human expertise—researchers can harness the transformative power of AI while maintaining the integrity of the scientific discovery process.

Generative AI in Action: From MOFs to Molecules - Methods and Real-World Applications

Technical Support & Troubleshooting Hub

This section addresses common technical challenges encountered when deploying generative models for materials discovery. The FAQs and troubleshooting guides are framed within the context of a broader thesis on overcoming instability, data scarcity, and computational constraints in materials research.

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary trade-offs when choosing between GANs, VAEs, and Diffusion Models for generating new crystal structures?

The choice involves a fundamental trade-off between sample quality, diversity, and training stability [27]. The table below summarizes the key performance characteristics based on current research:

Table 1: Comparative Analysis of Generative Models for Materials Science

| Feature | Generative Adversarial Networks (GANs) | Variational Autoencoders (VAEs) | Diffusion Models |
|---|---|---|---|
| Sample Quality | High-fidelity, sharp samples [27] [28] | Often blurrier, lower fidelity outputs [27] | High-fidelity and diverse samples [27] |
| Sample Diversity | Can suffer from mode collapse (low diversity) [27] [28] | High diversity, better data coverage [27] | High diversity [27] |
| Training Stability | Unstable, sensitive to hyperparameters [29] [28] | Generally more stable due to likelihood-based training [28] | More stable than GANs [29] |
| Training Speed | Faster training [29] | - | Slower training [29] |
| Sampling Speed | Fast sampling [30] | Fast sampling [30] | Slow, iterative sampling [27] [30] |
| Latent Space | Implicit, less interpretable [28] | Explicit, structured, and meaningful [28] | - |

FAQ 2: Our Diffusion Model for molecule generation is computationally slow. What strategies can accelerate sampling?

The slow sampling of diffusion models is a known challenge, as they require many iterative steps to denoise a sample [27] [30]. Several strategies have been developed to address this:

- Advanced Solvers: Use dedicated ODE/SDE (Ordinary/Stochastic Differential Equation) solvers designed to reduce the number of steps required without significantly compromising quality [30].

- Model Distillation: Distill a complex, multi-step diffusion model into a model that can generate samples in fewer steps (e.g., one or a handful) [30].

- Latent Diffusion: Perform the diffusion process in a lower-dimensional latent space instead of the raw data space. Models like Stable Diffusion use a VAE to achieve this, dramatically reducing computational cost [31] [32].

FAQ 3: How can we improve the stability and physical realism of crystals generated by our model?

Ensuring generated crystals are stable and physically plausible is a core challenge. Beyond choosing an appropriate model architecture, you can:

- Incorporate Physics-Guided Loss Functions: Introduce loss terms that penalize physically impossible structures, such as atoms that are too close or too far apart [29].

- Leverage Reinforcement Learning (RL) with Energy Feedback: Fine-tune a pre-trained diffusion model using reinforcement learning, where the reward is based on formation energy (a measure of stability) calculated from Density Functional Theory (DFT). This directly guides the model to generate more stable structures [33].

- Use Symmetry-Aware Representations: Employ crystal representations like CrysTens [29] or other graph-based methods that inherently respect the periodic and symmetric nature of crystal structures.
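As an illustration of a physics-guided loss term, the sketch below penalizes atom pairs that sit closer than a minimum distance, taking periodic boundaries into account. It covers only the "too close" case, assumes a cubic cell for simplicity, and uses a placeholder threshold of 1.0 Å; in a real training loop the same expression would be written in an autodiff framework so that it can be backpropagated.

```python
import math


def min_image_distance(frac_a, frac_b, cell_length):
    """Distance (Å) between two atoms in a cubic cell under periodic boundaries."""
    total = 0.0
    for a, b in zip(frac_a, frac_b):
        d = a - b
        d -= round(d)  # wrap the fractional difference into [-0.5, 0.5]
        total += (d * cell_length) ** 2
    return math.sqrt(total)


def overlap_penalty(frac_coords, cell_length, d_min=1.0):
    """Sum of squared hinge terms max(0, d_min - d)^2 over all atom pairs."""
    penalty = 0.0
    for i in range(len(frac_coords)):
        for j in range(i + 1, len(frac_coords)):
            d = min_image_distance(frac_coords[i], frac_coords[j], cell_length)
            penalty += max(0.0, d_min - d) ** 2
    return penalty


well_separated = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
# These two atoms are 0.4 Å apart across the cell boundary, not 3.6 Å.
overlapping = [(0.05, 0.0, 0.0), (0.95, 0.0, 0.0)]

print(overlap_penalty(well_separated, cell_length=4.0))  # no penalty
print(overlap_penalty(overlapping, cell_length=4.0))     # positive penalty
```

The second example shows why the periodic wrap matters: without it, the pair would look well separated and the overlap would go unpenalized.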

FAQ 4: Our GAN for material generation is suffering from mode collapse. What are the remediation steps?

Mode collapse occurs when the generator produces a limited variety of samples [27] [28].

- Switch to More Stable GAN Variants: Implement advanced GAN architectures like Wasserstein GAN (WGAN) [29], which uses a different loss function to improve training stability and mitigate mode collapse.

- Modify Training Techniques: Use techniques like minibatch discrimination, which allows the discriminator to look at multiple data samples simultaneously, helping it to identify a lack of diversity in the generator's output.

- Adjust Hyperparameters: Carefully tune the learning rates and the relative update frequency of the generator and discriminator. A common strategy is to train the discriminator more frequently than the generator.

Troubleshooting Guides

Issue: Unstable Training and Mode Collapse in Generative Adversarial Networks (GANs)

- Symptoms: The generator produces a very limited variety of structures, or the quality of generated samples oscillates wildly during training. The loss values for the generator and discriminator may become unstable.

- Diagnosis: This is a classic sign of GAN training instability and/or mode collapse [28].

- Resolution Protocol:

    - Implement Gradient Penalties: Switch from a standard GAN to a Wasserstein GAN with Gradient Penalty (WGAN-GP). This imposes a constraint on the discriminator's gradients, leading to more stable training [29].

    - Review Network Architecture: Ensure the generator and discriminator are balanced in capacity, so that neither overpowers the other. A common practice is to use a structured design like Deep Convolutional GANs (DCGANs).

    - Adjust Training Schedule: Try training the discriminator several times (e.g., 3-5) for each generator training step.

    - Monitor Progress: Use multiple, fixed noise vectors to generate samples throughout training to visually monitor for mode collapse, rather than relying solely on loss values.
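For reference, the gradient penalty mentioned in the first step is usually written as an extra term in the discriminator (critic) loss. The notation below is introduced here for illustration: $D$ is the critic, $p_r$ and $p_g$ are the real and generated distributions, and $\lambda$ is the penalty weight.

$$
L_D = \mathbb{E}_{\tilde{x} \sim p_g}\big[D(\tilde{x})\big] - \mathbb{E}_{x \sim p_r}\big[D(x)\big] + \lambda \, \mathbb{E}_{\hat{x}}\Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\big)^2\Big],
\qquad \hat{x} = \epsilon x + (1 - \epsilon)\tilde{x}, \quad \epsilon \sim U[0, 1]
$$

The penalty is evaluated at points $\hat{x}$ interpolated between real and generated samples and pulls the critic's gradient norm toward 1. The weight $\lambda$ is a hyperparameter and may need tuning for crystal or molecular data.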

Issue: Blurry or Over-Smoothed Outputs from a Variational Autoencoder (VAE)

- Symptoms: Generated crystal structures or material images lack sharp, defined features and appear blurry.

- Diagnosis: This is a known limitation of VAEs, often resulting from the use of pixel-based reconstruction loss (like MSE) and the inherent averaging in the latent space [27] [31].

- Resolution Protocol:

    - Modify the Loss Function: Tune the weight of the KL divergence term in the VAE loss function. Lowering the weight favors reconstruction fidelity and usually sharpens outputs, at the cost of a less regular latent space; raising it pushes the latent space toward the prior but tends to make outputs blurrier. Alternatively, consider using a different reconstruction loss.

    - Use a Hybrid Approach: Use the VAE as a tool for learning a compressed, meaningful latent space. Then, train a separate generative model (like a GAN or a diffusion model) on this latent space to generate new, sharp samples [31].

    - Explore Alternative Architectures: For tasks requiring high visual fidelity, consider transitioning to a GAN or diffusion model, which are generally better at producing sharp outputs [27].

Issue: Extremely Slow Sampling with Diffusion Models

- Symptoms: Generating a single new material structure takes a prohibitively long time, hindering high-throughput screening.

- Diagnosis: This is a fundamental characteristic of diffusion models, which require many denoising steps (often hundreds or thousands) [27] [30].

- Resolution Protocol:

    - Employ a Distilled Model: If available, use a version of your diffusion model that has undergone model distillation. This can reduce the number of sampling steps to 10 or fewer [30].

    - Utilize an Advanced Solver: Integrate a fast ODE/SDE solver (e.g., DPM-Solver) into your sampling pipeline. These solvers are designed to take fewer, smarter steps [30].

    - Validate Output Quality: After implementing acceleration techniques, always validate that the quality, diversity, and stability (e.g., via formation energy calculations) of the generated materials have not significantly degraded.
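Most step-reduction approaches start from the same ingredient: a shorter list of timesteps chosen from the original training schedule. The helper below is a hypothetical sketch of that selection only. It does not implement a solver, and the sampler that consumes these timesteps must itself support skipping steps.

```python
def subsample_timesteps(n_train_steps, n_sample_steps):
    """Evenly spaced, decreasing timesteps from n_train_steps - 1 down to 0."""
    if not 1 < n_sample_steps <= n_train_steps:
        raise ValueError("need 1 < n_sample_steps <= n_train_steps")
    stride = (n_train_steps - 1) / (n_sample_steps - 1)
    return [round(i * stride) for i in reversed(range(n_sample_steps))]


timesteps = subsample_timesteps(n_train_steps=1000, n_sample_steps=10)
print(timesteps)  # starts at 999 and ends at 0, in strides of 111
```

Going from 1000 to 10 denoising steps cuts the number of model evaluations per sample by a factor of 100, which is why the validation step above is essential: the savings are only useful if stability and diversity survive.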

Experimental Protocols & Workflows

This section details specific methodologies cited in research for developing and optimizing generative models in materials science.

Protocol: Reinforcement Learning Fine-Tuning with Formation Energy Feedback (RLFEF)

This protocol describes a method to fine-tune a pre-trained material diffusion model to generate crystals with lower formation energy, implying higher stability [33].

- Objective: To shift the output distribution of a diffusion model towards regions of the chemical space that correspond to more stable materials.

- Primary Materials:

    - A pre-trained crystal diffusion model (e.g., CDVAE, DiffCSP).

    - A dataset of crystal structures with computed formation energies (e.g., from Pearson's Crystal Database).

    - A DFT code (e.g., VASP) or a pre-computed database for formation energy calculation.

Table 2: Research Reagent Solutions for RLFEF Protocol

| Reagent / Resource | Function in the Experiment |
|---|---|
| Pre-trained Diffusion Model | Serves as the foundation model that already understands the general distribution of crystal structures. Provides the initial policy for the RL agent. |
| Formation Energy (from DFT) | Functions as the reward signal in the RL framework. Guides the model update towards generating more stable structures. |
| Reinforcement Learning Algorithm | The optimization framework (e.g., Policy Gradient) that updates the diffusion model's parameters based on the formation energy reward. |

- Methodology:

    - Formulate the MDP: Model the denoising process of the diffusion model as a Markov Decision Process (MDP). Each denoising step is an action, and the state is the partially denoised crystal.

    - Compute Rewards: For each fully generated crystal structure, compute its formation energy using DFT. A lower (more negative) formation energy should result in a higher reward.

    - Policy Gradient Update: Using a reinforcement learning algorithm (like REINFORCE), calculate the policy gradient. The key theoretical insight is that optimizing the expected reward in RL is equivalent to applying policy gradient updates to the diffusion model [33].

    - Fine-tune the Model: Update the parameters of the diffusion model using the calculated gradient, effectively teaching it to generate crystals that are more likely to yield a high reward (low formation energy).

    - Symmetry Assurance: Theoretically, it has been proven that this fine-tuning process can be designed to maintain the fundamental physical symmetries (e.g., invariance to rotation, translation) of the crystal structures [33].
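The reward and update steps can be illustrated on a deliberately tiny problem. In the sketch below, the "generator" is a softmax policy over four fixed candidate structures rather than a diffusion model, the formation energies are invented, and the update uses the exact expectation of the REINFORCE gradient (tractable here because the action space is so small). It shows only how an energy-based reward shifts the output distribution, not the method of [33].

```python
import math


def softmax(logits):
    m = max(logits)
    exps = [math.exp(value - m) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fine_tune(formation_energies, lr=0.5, n_steps=500):
    """Gradient ascent on expected reward, with reward = -formation energy."""
    rewards = [-e for e in formation_energies]  # lower energy -> higher reward
    logits = [0.0] * len(rewards)               # uniform starting policy
    for _ in range(n_steps):
        probs = softmax(logits)
        baseline = sum(p * r for p, r in zip(probs, rewards))  # expected reward
        # Exact policy gradient for a softmax policy: p_k * (r_k - baseline).
        logits = [value + lr * p * (r - baseline)
                  for value, p, r in zip(logits, probs, rewards)]
    return softmax(logits)


# Invented formation energies (eV/atom) for four candidate structures.
energies = [-0.2, -1.1, 0.3, -0.6]
probs = fine_tune(energies)
print([round(p, 3) for p in probs])  # mass concentrates on the -1.1 eV/atom candidate
```

Subtracting the expected reward as a baseline means candidates are reinforced only when they beat the policy's current average, which is also the standard variance-reduction device when the gradient is estimated from samples.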

Protocol: Crystal Generation using CrysTens and Diffusion Models

This protocol outlines the process of generating novel crystal structures using the CrysTens representation and a diffusion model, as described by Alverson et al. (2024) [29].

- Objective: To generate theoretical, synthesizable crystal structures by leveraging a standardized image-like crystal embedding.

Primary Materials:

- Pearson's Crystal Database (PCD): A comprehensive source of Crystallographic Information Files (CIFs).

- CrysTens Representation: A pre-processing pipeline to convert CIFs into a 64x64x4 tensor representation.

Methodology:

- Data Curation: Filter a large collection of CIFs from the PCD. Remove any structures with more than 52 atoms in the basis and any erroneous or incomplete files. A final dataset of ~53,000 CIFs is used [29].

- Create CrysTens: For each CIF, generate its CrysTens representation. This tensor is designed to capture both chemical and structural crystal properties in an image-like format, making it suitable for image-generation models [29].

- Model Training: Train a diffusion model on the dataset of CrysTens. The model learns the data distribution by gradually adding noise to the CrysTens (forward process) and then learning to reverse this process (reverse process).

- Generation & Validation: Sample new crystal structures by running the reverse diffusion process from random noise. The output is a novel CrysTens, which can be decoded back into a standard CIF format for analysis and validation using domain expertise and stability metrics.

The Scientist's Toolkit

This section catalogs essential computational resources, datasets, and representations used in modern generative materials discovery research.

Table 3: Key Research Reagents in Generative Materials Science

| Tool / Resource | Type | Primary Function |
|---|---|---|
| CrysTens [29] | Crystal Representation | An image-like tensor representation (64x64x4) that encodes crystal structure and composition, compatible with standard image-generation models. |
| Formation Energy [33] | Stability Metric | A property calculated via DFT that measures a crystal's stability; used as a reward signal to guide generative models. |
| Reinforcement Learning (RL) [33] | Optimization Framework | A machine learning paradigm used to fine-tune generative models by optimizing for specific objectives (e.g., low formation energy). |
| Diffusion Model [29] [30] | Generative Model | A state-of-the-art model that generates data by iteratively denoising from random noise; known for high-quality and diverse samples. |
| Generative Adversarial Network (GAN) [29] [28] | Generative Model | A model comprising a generator and discriminator in an adversarial game; can produce high-fidelity samples but may be unstable. |
| Variational Autoencoder (VAE) [31] [28] | Generative Model | An encoder-decoder model that learns a probabilistic latent space; useful for interpolation and ensuring diverse outputs. |
| Pearson's Crystal Database (PCD) [29] | Dataset | A large, curated database of Crystallographic Information Files (CIFs) used for training crystal generative models. |

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center addresses common challenges researchers face when using generative AI for designing novel small molecules and proteins. The guidance is framed within the broader thesis that generative models for materials research must overcome issues of data scarcity, computational cost, and model interpretability to achieve real-world impact [2].

Frequently Asked Questions (FAQs)

FAQ 1: My generative model produces invalid molecular structures. What could be the cause? This is often a problem with the training data or the model's representation of molecules.

- Potential Cause 1: The model was trained on a dataset containing invalid or noisy chemical structures.

    - Solution: Curate a high-quality, clean dataset. Use tools like the CAS Content Collection, a human-curated repository of scientific information, to ensure data integrity [34].

- Potential Cause 2: The method for representing molecules (e.g., SMILES strings) allows for grammatically incorrect sequences during generation.

    - Solution: Utilize advanced molecular representation techniques. Models like ChemBERTa or MolBERT learn molecular embeddings from SMILES notation, which can improve the validity of generated structures [35]. Alternatively, use approaches built for de novo molecular design that learn or encode the rules of valid chemical structures, such as DeepSMILES (a SMILES variant designed to reduce syntax errors in generated strings) or ReLeaSE (a reinforcement-learning framework for generating molecules) [35].
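To see what "grammatically incorrect" means for a SMILES string, the sketch below checks two of the most common failure modes in generated strings: unbalanced parentheses and ring-closure labels that are opened but never closed. It is a crude, hypothetical pre-filter, not a chemistry check; it ignores valence, aromaticity, and many other rules. A cheminformatics toolkit such as RDKit (whose `Chem.MolFromSmiles` returns `None` for strings it cannot parse) is the appropriate tool for real validation.

```python
def smiles_syntax_ok(smiles):
    """Crude check: parentheses balance and ring-closure labels pair up."""
    depth = 0
    open_rings = set()
    i = 0
    while i < len(smiles):
        ch = smiles[i]
        if ch == "[":  # bracket atom: skip its contents (isotopes, charges, H counts)
            end = smiles.find("]", i)
            if end == -1:
                return False
            i = end
        elif ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
        elif ch == "%":  # two-digit ring-closure label, e.g. %12
            label = smiles[i + 1:i + 3]
            if len(label) != 2 or not label.isdigit():
                return False
            open_rings ^= {label}  # toggle: first occurrence opens, second closes
            i += 2
        elif ch.isdigit():  # single-digit ring-closure label
            open_rings ^= {ch}
        i += 1
    return depth == 0 and not open_rings


for candidate in ["c1ccccc1", "c1ccccc", "CC(=O)O", "CC(C", "[13CH4]"]:
    print(candidate, smiles_syntax_ok(candidate))
```

Benzene (`c1ccccc1`), acetic acid (`CC(=O)O`), and `[13CH4]` pass, while the truncated ring `c1ccccc` and the unclosed branch `CC(C` are rejected. Tracking the fraction of generated strings that pass such a filter is a cheap first diagnostic before investing in a change of representation.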

FAQ 2: How can I improve my model's prediction of protein-ligand binding affinity? Accurate prediction of Drug-Target Interaction (DTI) is crucial for efficacy.

- Solution 1: Employ advanced deep learning architectures. Transformer models, which use self-attention mechanisms, are highly effective at analyzing vast datasets of protein-ligand interactions to suggest potential drug candidates [35].

- Solution 2: Use specialized diffusion models. Models like DiffDock enhance drug binding prediction by simulating how molecules fit into protein binding sites, providing a more dynamic assessment of interaction [35].

- Methodology: For a typical DTI prediction experiment, fine-tune a pre-trained transformer model on a dataset of known protein-ligand pairs with measured binding affinities (e.g., Ki, Kd). The model will learn to map structural features of the protein and ligand to the binding strength.

FAQ 3: My AI-designed compound failed in wet-lab testing. How can I make the models more predictive of real-world behavior? This highlights the "synthesizability" and "accuracy" challenges in generative AI for materials research [2].

- Solution 1: Integrate physics-informed architectures. Use AI models that incorporate known physical laws and constraints, which can make the generated molecules more realistic and synthesizable [2].

- Solution 2: Implement closed-loop discovery systems. Integrate AI generation with high-throughput experimentation tools, where AI proposes candidates, they are tested in the lab, and the results are fed back to retrain and improve the AI model [2].

- Solution 3: Perform early validation with in silico models. Before lab testing, simulate biological responses to drug candidates using AI-powered digital twins or quantitative systems pharmacology (QSP) models to evaluate toxicity risks and off-target effects, weeding out weak compounds early [34] [35].

FAQ 4: What are the best practices for using generative AI to design a PROTAC? PROteolysis TArgeting Chimeras (PROTACs) are a promising class of drugs that degrade target proteins.

- Challenge: Most designed PROTACs act via a limited set of E3 ligases (e.g., cereblon, VHL) [34].

- Solution: Use AI to expand the E3 ligase toolbox. Leverage predictive models to identify and design PROTACs that utilize novel or less common E3 ligases, such as DCAF16, DCAF15, or KEAP1. This can enable the targeting of previously inaccessible proteins [34].

- Experimental Protocol:

    - Data Collection: Compile a dataset of known E3 ligases, their structures, and known binders.

    - Ligase Selection: Use a transformer model to predict the compatibility of a target protein with various E3 ligases.

    - Linker Design: Employ a diffusion model or RNN to generate potential chemical linkers that connect the E3 ligase binder to the target protein binder, optimizing for length and stability.

    - Validation: Use molecular dynamics simulations and in vitro binding assays to validate the designed PROTAC.

Experimental Data and Protocols

Table 1: Key AI Techniques in Drug Discovery

| AI Technique | Primary Function | Example Models/Tools | Key Application in Drug Discovery |
|---|---|---|---|
| Transformer Models [35] | Processes large-scale biological data using self-attention. | AlphaFold, ChemBERTa, MolBERT | Protein structure prediction, molecular representation learning, drug-target interaction prediction. |
| Diffusion Models [35] | Generates structures by iteratively refining noise. | PocketDiffusion, DiffDock | Molecular generation, ligand-protein docking, de novo drug design. |
| Recurrent Neural Networks (RNNs) [35] | Processes sequential data; ideal for SMILES strings. | DeepSMILES, ReLeaSE | De novo molecular design, molecular property prediction, optimization of drug candidates. |

Table 2: Recent Breakthroughs in AI-Driven Drug Discovery (2025)

| Breakthrough Area | Key Finding | Quantitative Impact | Significance |
|---|---|---|---|
| Personalized CRISPR Therapy [34] | A seven-month-old infant with CPS1 deficiency received personalized CRISPR base-editing therapy. | Developed in just 6 months; marked the first use of CRISPR tailored to a single patient. | Demonstrates feasibility of rapid, individualized gene editing for rare diseases with no existing treatments. |
| AI-Powered Clinical Trials [34] | AI-powered digital twins and "virtual patient" platforms simulate disease trajectories. | AI-augmented virtual cohorts can reduce placebo group sizes considerably, ensuring faster timelines. | Accelerates clinical trial process and provides more confident data without losing statistical power. |
| PROTAC Development [34] | Sharp increase in PROTAC-related publications in less than 10 years. | More than 80 PROTAC drugs are in the development pipeline, with over 100 commercial organizations involved. | Demonstrates significant therapeutic potential and commercial interest in AI-driven protein degradation. |

Research Reagent Solutions

Table 3: Essential Research Reagents for AI-Driven Drug Discovery

| Reagent / Material | Function in the Experimental Workflow |
|---|---|
| E3 Ligase Assay Kits | Validate the binding and functionality of AI-designed PROTACs against specific E3 ubiquitin ligases (e.g., VHL, cereblon) [34]. |
| Cell Lines for Target Validation | Engineered cell lines (e.g., for specific cancer types) used to test the efficacy and cytotoxicity of AI-generated small molecules in in vitro models. |
| Protein Crystallization Kits | Used to determine the 3D structure of target proteins or protein-ligand complexes, providing critical data for training and validating AI models like AlphaFold [35]. |
| Lipid Nanoparticles (LNPs) | A delivery system for in vivo CRISPR therapies, enabling the transport of gene-editing machinery to target cells [34]. |

Workflow Visualizations

[Figure: AI-Driven Drug Discovery Workflow]

[Figure: PROTAC Mechanism of Action]

Synthetic Data Generation to Overcome Clinical Data Scarcity and Privacy Issues

Frequently Asked Questions

Q1: What is synthetic data and how can it help with data scarcity in medical research? Synthetic data is artificially generated information that mimics the statistical properties of real patient data without containing any sensitive personal information [36]. It is a promising solution for rare disease research, where small patient populations lead to limited data, hindering the development of AI-driven diagnostics and treatments [36]. By providing diverse and privacy-preserving datasets, synthetic data enables the training of robust AI models, the simulation of clinical trials, and secure collaboration across institutions [36] [37].

Q2: What are the main technical methods for generating synthetic clinical data? The primary methods can be grouped into three categories [36]:

- Rule-based approaches: Use predefined rules and statistical distributions (e.g., for age or gender) to create artificial patient records.

- Statistical modelling: Relies on techniques like Bayesian Networks or Markov chains to capture and replicate relationships between variables in real data.

- Machine learning-based techniques: State-of-the-art methods, including:

    - Generative Adversarial Networks (GANs): Two neural networks (a generator and a discriminator) are trained together to produce highly realistic data. Variants include Conditional GANs (cGANs) for generating data with specific diseases, and Tabular GANs for numerical and categorical data [36].

    - Variational Autoencoders (VAEs): Use probabilistic modeling to encode data into a latent space and decode it to generate new datasets. They often have a lower computational cost than GANs [36].
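
As a minimal sketch of the rule-based approach listed above, the following generator draws artificial patient records from predefined distributions and one deterministic rule. The field names, the distribution parameters, and the blood-pressure rule are hypothetical values chosen only for illustration; they are not taken from any real cohort or from [36].

```python
import random

# Hypothetical rules, chosen only for illustration (not from a real cohort).
SEX_WEIGHTS = {"F": 0.5, "M": 0.5}
AGE_MEAN, AGE_SD = 55.0, 15.0


def generate_patients(n, seed=0):
    """Create n artificial patient records from predefined rules."""
    rng = random.Random(seed)
    records = []
    for i in range(n):
        # Statistical rule: age is normally distributed, clipped to 18-90.
        age = min(90, max(18, round(rng.gauss(AGE_MEAN, AGE_SD))))
        # Statistical rule: sex is drawn from fixed category weights.
        sex = rng.choices(list(SEX_WEIGHTS), weights=list(SEX_WEIGHTS.values()))[0]
        # Deterministic rule plus noise: systolic pressure rises with age.
        systolic = round(100 + 0.5 * age + rng.gauss(0, 8))
        records.append({"id": i, "age": age, "sex": sex, "systolic_bp": systolic})
    return records


patients = generate_patients(100, seed=1)
print(patients[0])
```

Because every rule is written out explicitly, this kind of generator is easy to audit, but it only reproduces the relationships someone thought to encode, which is why the learned methods above are preferred for complex data.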

Q3: My model trained on synthetic data is performing poorly on real-world data. What could be wrong? This is often a sign of a simulation-to-reality gap [38], where the synthetic data fails to capture some crucial complexity of the real world. Key issues and solutions include:

- Data Fidelity: The synthetic data may not accurately replicate complex relationships between variables in the original dataset. Solution: Validate the synthetic data's statistical similarity to a held-out set of real data and refine the generative model [38].

- Missing Edge Cases: Generative models can miss rare but critical anomalies. Solution: Actively identify and oversample these edge cases during the data generation process, if possible [38].

- Model Collapse (Data Pollution): When a model is trained on synthetic data produced by another AI model, errors and omissions in that data are inherited and can compound, degrading output quality. Solution: Whenever possible, use fresh, real-world data for validation and final tuning [38].

Q4: How can I ensure the synthetic data I generate preserves patient privacy? Synthetic data reduces privacy risk, but it is not automatically anonymous. High-fidelity synthetic records can resemble real records closely enough that individuals could potentially be re-identified [38]. To mitigate this:

- Use Privacy-Preserving Techniques: Incorporate methods like Differential Privacy into your generative models. Calibrated noise is added during training or to the released outputs, so that the result changes very little whether or not any single individual's record is included [36] [38].

- Conduct Disclosure Risk Assessments: Before sharing synthetic datasets, perform rigorous tests to evaluate the risk of re-identifying individuals [36].

- Maintain Provenance Tracking: Keep clear records of the real datasets used to create the synthetic version to enable audits [38].
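
To make the idea of "calibrated noise" concrete, here is a minimal sketch of the Laplace mechanism applied to a single count query. It illustrates the principle only and is not a differentially private generative model; the function names, the toy records, and the chosen `epsilon` are assumptions made for this example.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def private_count(records, predicate, epsilon, rng):
    """Release a count with epsilon-differential privacy.

    Adding or removing one record changes a count by at most 1, so the
    sensitivity is 1 and the Laplace noise scale is 1 / epsilon.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical toy cohort: 30 of 100 patients carry the diagnosis.
cohort = [{"diagnosed": i < 30} for i in range(100)]
rng = random.Random(0)
noisy = private_count(cohort, lambda r: r["diagnosed"], epsilon=1.0, rng=rng)
print(f"true count = 30, released count = {noisy:.2f}")
```

A smaller `epsilon` gives stronger privacy and a noisier answer; choosing it is a policy decision as much as a technical one.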

Q5: What are the best practices for validating the quality of synthetic data? A multi-faceted validation approach is essential [38]:

- Statistical Validation: Check that the synthetic data matches the real data's distributions, correlations, and other statistical properties.

- Utility Validation: Train a standard model on the synthetic data and test it on a held-out real dataset. Performance close to a model trained on real data indicates high utility.

- Privacy Validation: Perform penetration tests and attempt re-identification attacks on the synthetic dataset to uncover privacy vulnerabilities.

- Avoid Circular Validation: Never validate synthetic data using another synthetic dataset, as this creates a "hall of mirrors" effect and gives false confidence [38].
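
For the statistical validation step, one simple per-variable check is the two-sample Kolmogorov-Smirnov statistic, the largest gap between the empirical distribution functions of a real column and its synthetic counterpart. The sketch below is an illustration under assumed toy data (the Gaussian "real" and "synthetic" columns are invented); it checks one marginal distribution only and says nothing about correlations, utility, or privacy.

```python
import bisect
import random


def ks_statistic(real, synthetic):
    """Two-sample KS statistic: maximum gap between the two empirical CDFs."""
    real_sorted, synth_sorted = sorted(real), sorted(synthetic)
    gap = 0.0
    # The maximum gap is always attained at one of the observed values.
    for x in real_sorted + synth_sorted:
        cdf_real = bisect.bisect_right(real_sorted, x) / len(real_sorted)
        cdf_synth = bisect.bisect_right(synth_sorted, x) / len(synth_sorted)
        gap = max(gap, abs(cdf_real - cdf_synth))
    return gap


# Hypothetical columns: a held-out real variable and two synthetic versions.
rng = random.Random(0)
real = [rng.gauss(50, 10) for _ in range(2000)]
faithful = [rng.gauss(50, 10) for _ in range(2000)]
shifted = [rng.gauss(60, 10) for _ in range(2000)]

print("faithful generator:", round(ks_statistic(real, faithful), 3))
print("shifted generator: ", round(ks_statistic(real, shifted), 3))
```

A value near 0 means the two marginals are nearly indistinguishable, while a value near 1 means they barely overlap. Note that the comparison is made against held-out real data, in line with the warning against circular validation.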

Troubleshooting Guides

Problem: Generative model fails to learn complex relationships in clinical data. Applicability: Issues with GANs or VAEs generating low-quality, nonsensical, or oversimplified data.

| Step | Action & Description |
|---|---|
| 1 | Verify Data Preprocessing. Ensure categorical variables are properly encoded and continuous variables are normalized. The model may be struggling with inconsistent data formats. |
| 2 | Inspect Model Architecture. For GANs, a common failure is "mode collapse," where the generator produces limited varieties of samples. Consider using advanced GAN architectures like Wasserstein GAN (WGAN) or CTGAN for tabular data [36]. |
| 3 | Adjust Hyperparameters. Systematically tune learning rates, batch sizes, and the number of training epochs. The discriminator and generator must be balanced to avoid one overpowering the other [36]. |
| 4 | Implement Hybrid Models. If using a VAE, the output may be blurry or lack sharpness. A VAE-GAN hybrid can combine the stability of VAEs with the sharp output of GANs [36]. |
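
Step 1 can be illustrated with a minimal preprocessing sketch: min-max scaling for a continuous column and one-hot encoding for a categorical one. This is a generic baseline written for illustration, with made-up column values; dedicated tabular generators may apply their own, more elaborate transformations.

```python
def min_max_scale(values):
    """Rescale a numeric column to [0, 1]; a constant column maps to 0.0."""
    lo, hi = min(values), max(values)
    span = hi - lo
    return [(v - lo) / span if span else 0.0 for v in values]


def one_hot(values):
    """Encode a categorical column as one-hot rows, categories in sorted order."""
    categories = sorted(set(values))
    index = {c: i for i, c in enumerate(categories)}
    rows = [[1 if index[v] == j else 0 for j in range(len(categories))] for v in values]
    return categories, rows


# Hypothetical columns from a small clinical table.
ages = [10, 20, 30]
blood_types = ["B", "A", "B"]

print(min_max_scale(ages))   # [0.0, 0.5, 1.0]
print(one_hot(blood_types))  # (['A', 'B'], [[0, 1], [1, 0], [0, 1]])
```

Keeping the fitted minimum, maximum, and category order is what allows generated samples to be mapped back to the original units and labels afterwards.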

Problem: Synthetic data is amplifying existing biases. Applicability: The generated data under-represents certain patient subgroups (e.g., based on ethnicity, age, or gender), leading to biased AI models.

| Step | Action & Description |
|---|---|
| 1 | Audit the Source Data. Profile the original, real-world dataset to identify and quantify existing biases in the representation of different groups [38]. |
| 2 | Use Conditional Generation. Employ conditional generative models (e.g., cGANs, or Con-CDVAE in the analogous materials setting) to explicitly generate data for underrepresented subgroups, effectively oversampling them in the synthetic dataset [36] [4]. |
| 3 | Apply Fairness Metrics. Use metrics like demographic parity or equalized odds to evaluate the synthetic data and the models trained on it, ensuring fairness across groups [38]. |
| 4 | Engage Domain Experts. Involve clinicians and patient advocates to review the synthetic data and the choices made during generation, ensuring they are clinically and ethically sound [38]. |
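
The two fairness metrics named in Step 3 can be computed directly from a model's binary predictions. The sketch below is a minimal illustration on a hypothetical eight-patient example; the function names and data are invented, and the code assumes every group contains both positive and negative true labels.

```python
def demographic_parity_difference(y_pred, groups):
    """Largest gap in positive-prediction rate between any two groups."""
    rates = []
    for g in set(groups):
        preds = [p for p, gg in zip(y_pred, groups) if gg == g]
        rates.append(sum(preds) / len(preds))
    return max(rates) - min(rates)


def _rate(y_true, y_pred, groups, group, label):
    """P(pred = 1 | true = label, group); assumes that cell is non-empty."""
    sel = [p for t, p, g in zip(y_true, y_pred, groups) if g == group and t == label]
    return sum(sel) / len(sel)


def equalized_odds_difference(y_true, y_pred, groups):
    """Larger of the between-group gaps in true- and false-positive rate."""
    gaps = []
    for label in (1, 0):  # label 1 yields TPR, label 0 yields FPR
        rates = [_rate(y_true, y_pred, groups, g, label) for g in set(groups)]
        gaps.append(max(rates) - min(rates))
    return max(gaps)


# Hypothetical predictions for two patient subgroups, A and B.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]

print(demographic_parity_difference(y_pred, groups))      # 0.5
print(equalized_odds_difference(y_true, y_pred, groups))  # 0.5
```

A value of 0 on either metric means the groups are treated identically by that criterion; what gap is acceptable is a judgment for the domain experts mentioned in Step 4.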

Problem: High computational cost and long training times for generative models. Applicability: Training large-scale generative models on high-dimensional medical data (e.g., MRI images, genomic sequences) is prohibitively slow.

| Step | Action & Description |
|---|---|
| 1 | Start with a Smaller Model. Begin with a less complex model, such as a VAE, which generally has a lower computational cost than GANs, to establish a baseline [36]. |
| 2 | Use Transfer Learning. Leverage a pre-trained generative model from a similar domain (e.g., a general image GAN) and fine-tune it on your specific clinical dataset. |
| 3 | Optimize Hardware. Utilize GPUs or TPUs, which are specifically designed for parallel processing of the matrix operations fundamental to deep learning. |
| 4 | Implement Distributed Training. Split the training process across multiple machines or processors to reduce the overall time required. |

Experimental Protocols & Data

Table 1: Comparison of Synthetic Data Generation Techniques

| Method | Key Mechanism | Best For Data Type | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Generative Adversarial Networks (GANs) [36] | Two-network adversarial training (Generator vs. Discriminator) | Images (MRIs, X-rays), tabular data, time-series (ECG) | Produces very high-quality, sharp data samples | Training can be unstable; prone to mode collapse |
| Variational Autoencoders (VAEs) [36] | Probabilistic encoding/decoding to a latent space | Numerical data, bio-signals, smaller datasets | More stable and robust training than GANs | Generated data can be blurrier than GAN output |
| Conditional Generative Models (e.g., cGAN, Con-CDVAE) [36] [4] | Generation conditioned on specific input parameters (e.g., disease type, material property) | Creating data for specific subpopulations or property targets | Enables targeted data generation; improves control | Requires labeled data for conditioning |
| Rule-based & Statistical Models [36] | Predefined rules and statistical distributions (Gaussian Mixture Models, etc.) | Simple tabular data, data with known distributions | Highly interpretable and transparent | Struggles with complex, high-dimensional data |

Table 2: Performance of Predictive Models Using Synthetic Data Augmentation (Materials Science Example)

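The following table, taken from a materials science study, illustrates both the potential and the limits of synthetic data in a data-scarce environment, which is analogous to many clinical research scenarios [4]. Values are Mean Absolute Error (MAE); lower is better. Adding synthetic data to the real data lowers the MAE in three of the four scenarios but raises it for MP Poly Total in the fully-supervised setting (7.21 versus 6.33), so augmentation is not guaranteed to help.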

| Dataset & Scenario | Training on Real Data Only | Training on Synthetic Data Only | Training on Real + Synthetic Data |
|---|---|---|---|
| Jarvis2d Exfoliation (Fully-Supervised) | 62.01 | 64.52 | 57.49 |
| MP Poly Total (Fully-Supervised) | 6.33 | 8.13 | 7.21 |
| Jarvis2d Exfoliation (Semi-Supervised) | 64.03 | 64.51 | 63.57 |
| MP Poly Total (Semi-Supervised) | 8.08 | 8.09 | 8.04 |
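
To read Table 2 at a glance, it can help to express the effect of augmentation as a percent change in MAE relative to the real-only baseline. The short calculation below uses only the numbers in the table; the helper name `relative_change` is introduced here for illustration.

```python
# MAE values copied from Table 2: (real data only, real + synthetic data).
results = {
    "Jarvis2d Exfoliation (Fully-Supervised)": (62.01, 57.49),
    "MP Poly Total (Fully-Supervised)": (6.33, 7.21),
    "Jarvis2d Exfoliation (Semi-Supervised)": (64.03, 63.57),
    "MP Poly Total (Semi-Supervised)": (8.08, 8.04),
}


def relative_change(baseline, augmented):
    """Percent change in MAE versus the baseline (negative means improvement)."""
    return 100.0 * (augmented - baseline) / baseline


for name, (real_only, combined) in results.items():
    print(f"{name}: {relative_change(real_only, combined):+.1f}%")
```

This prints -7.3%, +13.9%, -0.7%, and -0.5% respectively: a clear gain on fully-supervised Jarvis2d Exfoliation, a clear loss on fully-supervised MP Poly Total, and changes of under one percent in both semi-supervised scenarios.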

Experimental Protocol: Using Conditional Generation for a Data-Scarce Study

This protocol is adapted from the MatWheel framework for materials science and is applicable to clinical data [4].

- Objective: Augment a small dataset of patient records or material properties to improve a predictive model.

- Data Splitting:

    - Split the full dataset into Training (70%), Validation (15%), and Test (15%) sets.

    - For a semi-supervised scenario, further split the Training set into a small labeled portion (e.g., 10%) and a larger unlabeled portion.

- Generative Model Training:

    - Train a conditional generative model (e.g., CTGAN, Con-CDVAE) on the labeled training data. The model learns to generate samples based on specific property or disease conditions.

- Synthetic Data Sampling:

    - Use Kernel Density Estimation (KDE) on the training data's property distribution to create a conditional input distribution.

    - Sample from this KDE to generate conditional inputs for the generative model, producing a synthetic dataset.

- Predictive Model Training & Evaluation:

    - Train the predictive model (e.g., a classifier or regressor) on three setups: the original real data only, the synthetic data only, and a combined real+synthetic dataset.

    - Evaluate each of the three resulting models on the same held-out real test set.
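
The data splitting and KDE sampling steps of this protocol can be sketched as follows. This is an illustrative outline under stated assumptions, not the MatWheel implementation: the function names are hypothetical, the property is treated as a single scalar, and the bandwidth uses the normal-reference rule of thumb (1.06 × standard deviation × n^(-1/5)), which the source does not specify.

```python
import random
import statistics


def split_dataset(items, seed=0, train=0.70, val=0.15):
    """Shuffle and split into train/validation/test; the remainder is the test set."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * val)
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])


def sample_from_kde(values, n_samples, seed=0):
    """Draw conditioning targets from a Gaussian KDE over 1-D property values.

    Sampling from a Gaussian KDE is equivalent to picking a training value at
    random and adding Gaussian noise with standard deviation equal to the
    bandwidth (assumed here: the normal-reference rule of thumb).
    """
    rng = random.Random(seed)
    bandwidth = 1.06 * statistics.stdev(values) * len(values) ** (-1 / 5)
    return [rng.choice(values) + rng.gauss(0.0, bandwidth) for _ in range(n_samples)]


# Hypothetical property values for 100 labeled samples.
data_rng = random.Random(1)
properties = [data_rng.gauss(5.0, 1.0) for _ in range(100)]

train_set, val_set, test_set = split_dataset(properties)
targets = sample_from_kde(train_set, n_samples=500)
print(len(train_set), len(val_set), len(test_set), len(targets))
```

Each sampled target would then be passed as the conditioning input to the trained conditional generator, so that the synthetic dataset follows roughly the same property distribution as the real training data.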

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Synthetic Data Generation in Research

| Tool / Solution | Type | Primary Function | Relevance to Research |
|---|---|---|---|
| GANs & VAEs [36] | Algorithm Family | Generate high-fidelity synthetic data of various types (images, tabular, time-series). | Core engine for creating artificial datasets where real data is scarce or sensitive. |
| Differential Privacy [36] [38] | Privacy Framework | A mathematical guarantee that limits the disclosure of individual information in a dataset. | Integrated into generative models to provide robust privacy protection for synthetic data. |
| Conditional Generative Models (e.g., cGAN, Con-CDVAE) [36] [4] | Specialized Algorithm | Generate data samples that meet specific, predefined criteria or conditions. | Crucial for creating targeted data for rare disease subtypes or materials with desired properties. |
| Synthea [37] | Open-Source Software | A synthetic patient population simulator that generates realistic but fictional patient health records. | Provides a readily available, standardized source of synthetic clinical data for method development and testing. |
| CTAB-GAN+ [36] | Specialized Algorithm | A GAN variant specifically designed for generating synthetic tabular data. | Effective for creating synthetic electronic health records (EHRs) that mimic complex, mixed-type real-world tables. |

Workflow Visualization

Diagram 1: GAN Training for Data Generation

Diagram 2: Conditional Synthetic Data Flywheel

Debugging the Design: Strategies to Enhance Stability, Fairness, and Efficiency

Mitigating Thermodynamic Instability in AI-Proposed Materials

Generative artificial intelligence offers a promising avenue for accelerating the discovery of new inorganic crystals, a process that has traditionally been slow and resource-intensive [39]. However, a significant challenge persists: many materials proposed by these models are thermodynamically unstable and thus not synthetically viable [39] [40]. These models sometimes lack rigorous physical constraints, leading to structures that are energetically unfavorable [40]. This guide provides targeted troubleshooting and methodologies to help researchers identify, mitigate, and overcome the root causes of instability in AI-driven materials discovery.

Troubleshooting Guides & FAQs

Generative Model Outputs

Q: Why do my generative models produce materials that are thermodynamically unstable? A: This is a common issue often stemming from two sources: the model's architecture and its training data. Generative models learn the probability distribution of known materials; without explicit physical constraints, they can sample from regions of this distribution that represent high-energy, unstable structures [40]. Furthermore, if the training data lacks diversity or sufficient examples of stable configurations, the model's outputs will reflect this limitation.

- Troubleshooting Steps:

- Audit Your Training Data: Ensure your dataset is comprehensive and includes formation energies or other stability metrics. A model trained only on structural data without energetic information has no signal to learn what "stable" means.

- Incorporate Physical Inductive Biases: Utilize or develop models that embed physical laws. For example, SE(3)-equivariant models respect the translational and rotational symmetry of atomic structures, and some diffusion models can be trained to output gradients that drive atomic coordinates toward lower energy states [40].