Overcoming Key Limitations in Material Property Prediction: From Data Scarcity to Robust AI Models

Abstract

Accurately predicting material properties is crucial for accelerating the discovery of new materials and drugs, yet researchers face significant challenges including data scarcity, an inability to extrapolate beyond training data, and poor model generalizability. This article explores the current landscape of machine learning for property prediction, detailing foundational challenges and innovative solutions. It provides a comprehensive overview of advanced methodologies like transductive learning, ensemble models, and novel descriptors that enhance extrapolation and data efficiency. The article also offers practical troubleshooting strategies for imbalanced datasets and model optimization, and concludes with a rigorous validation framework comparing the performance and robustness of various state-of-the-art models. Tailored for researchers, scientists, and drug development professionals, this review serves as a strategic guide for navigating and overcoming the most pressing limitations in the field.

The Core Hurdles: Understanding Fundamental Challenges in Material Property Prediction

The Critical Problem of Data Scarcity in Materials Science and Drug Discovery

Technical Support Center: Troubleshooting Guides and FAQs

Troubleshooting Common Experimental Roadblocks

This section addresses frequent challenges researchers face when building predictive models with limited data.

FAQ 1: My predictive model is overfitting on a small dataset. What regularization strategies are most effective?

| Strategy | Description | Best Used When | Key Performance Metric |
|---|---|---|---|
| Multi-task Learning (MTL) [1] | A single model learns several related tasks simultaneously, sharing representations to improve generalization. | Multiple, related property datasets are available, even if some are small. | Mean Absolute Error (MAE) improvement across all tasks. |
| Transfer Learning (TL) [1] [2] | A model pre-trained on a large, data-rich "source" task is fine-tuned on the data-scarce "target" task. | A large source dataset exists, and its property is related to your target property. | MAE on the target task vs. training from scratch. |
| Mixture of Experts (MoE) [2] [3] | Combines multiple pre-trained models ("experts") via a gating network that weights their contributions for each prediction. | You have access to multiple models pre-trained on different, complementary tasks or data types. | Outperforms pairwise Transfer Learning on data-scarce tasks [2]. |

Experimental Protocol: Implementing a Mixture of Experts (MoE) Framework

- Objective: To accurately predict a data-scarce material property (e.g., piezoelectric modulus) by leveraging knowledge from multiple pre-trained models.

- Materials:

  - Pre-trained Expert Models: Multiple Crystal Graph Convolutional Neural Networks (CGCNNs), each trained on a different data-abundant property (e.g., formation energy, band gap) [2].

  - Downstream Dataset: Your small, target property dataset (e.g., < 1000 samples).

- Method:

  - Feature Extraction: For each material in your target dataset, obtain feature vectors from all pre-trained expert models.

  - Aggregation: The MoE framework uses a trainable gating network to compute a weighted sum of these feature vectors. The gating network learns which experts are most relevant for the target task [2].

  - Prediction: The aggregated feature vector is passed through a property-specific head network to make the final prediction.

  - Training: Only the gating network and the final head network are trained on the target task, preventing overfitting and catastrophic forgetting in the expert models [2].
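The aggregation and prediction steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the implementation from [2]: the function names, the toy feature values, the linear head, and the choice of one input-independent gate score per expert are all assumptions made for the example. In practice the expert features would come from frozen pre-trained models, and only `gate_logits` and the head parameters would be updated during training.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D array."""
    z = z - np.max(z)
    e = np.exp(z)
    return e / e.sum()

def moe_aggregate(expert_features, gate_logits):
    """Weighted sum of expert feature vectors.

    expert_features: (n_experts, feat_dim) features from frozen experts
    gate_logits:     (n_experts,) trainable gate scores (assumed input-independent)
    """
    weights = softmax(gate_logits)
    return weights @ expert_features, weights

def head_predict(features, w, b):
    """Hypothetical linear head; a real head would usually be a small MLP."""
    return float(features @ w + b)

# Toy features for one material from three hypothetical experts
expert_features = np.array([[1.0, 0.0, 2.0, 1.0],
                            [0.0, 1.0, 0.0, 3.0],
                            [2.0, 2.0, 1.0, 0.0]])
gate_logits = np.zeros(3)  # untrained gate: every expert gets the same weight

aggregated, weights = moe_aggregate(expert_features, gate_logits)
prediction = head_predict(aggregated, w=np.ones(4), b=0.0)
print(weights, aggregated, prediction)
```

With an untrained gate the experts are simply averaged; training would shift the gate weights toward the experts whose source property is most informative for the target.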

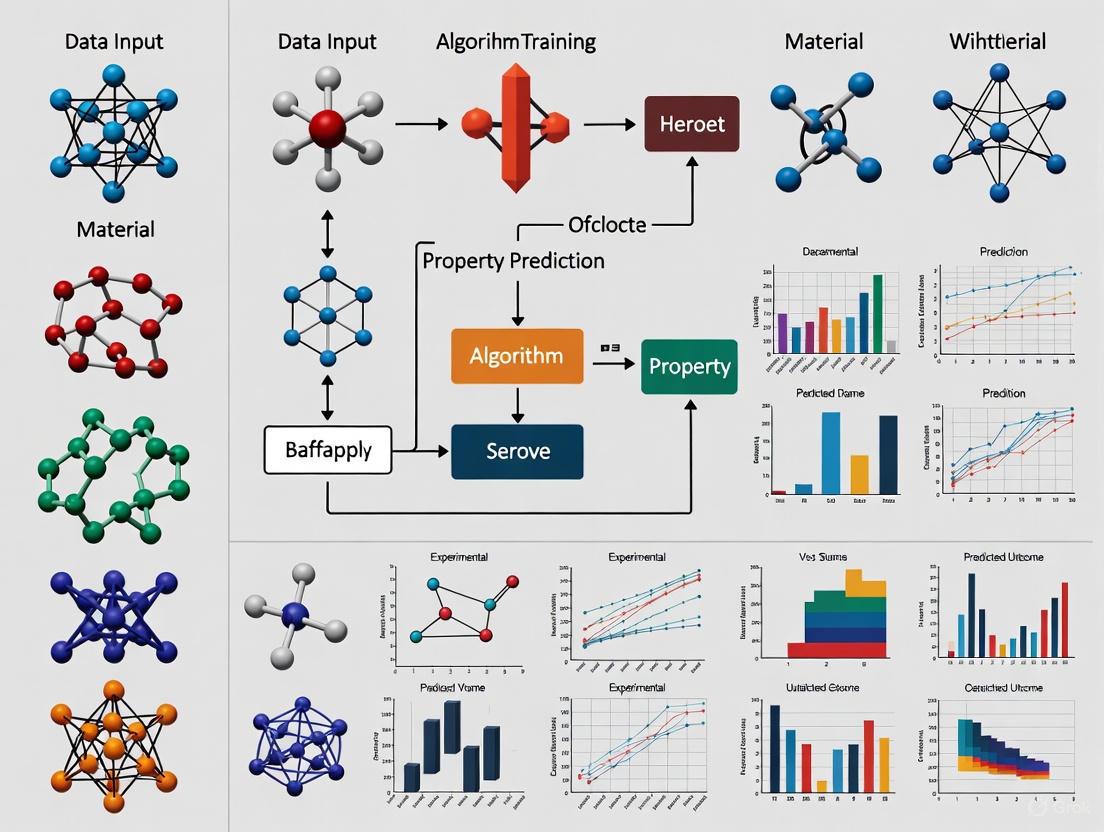

Diagram 1: Mixture of Experts (MoE) workflow for materials property prediction.

FAQ 2: For drug-target affinity (DTA) prediction, how can I leverage unlabeled data and multiple data types?

| Strategy | Description | Application Context |
|---|---|---|
| Semi-Supervised Multi-task Training [4] | Combines DTA prediction with masked language modeling on paired data and uses large-scale unpaired molecules/proteins for representation learning. | Labeled DTA data is scarce, but large libraries of unlabeled molecular and protein sequences are available. |
| Mixture of Synergistic Experts [5] | Uses separate experts for intrinsic (e.g., molecular structure) and extrinsic (e.g., biological network) data, fusing them adaptively and using mutual supervision. | Input data is incomplete or scarce for some drugs/targets, and/or interaction labels are limited. |

Experimental Protocol: Semi-Supervised Multi-task Training for DTA

- Objective: Improve DTA prediction accuracy by learning better drug and target representations.

- Materials:

  - Labeled Data: A small benchmark dataset like BindingDB or DAVIS.

  - Unlabeled Data: Large-scale molecular (e.g., ZINC) and protein sequence databases.

- Method:

  - Pre-training: Train a model on large corpora of unpaired molecules and proteins using masked language modeling. This step helps the model learn fundamental biochemical "grammar" [4].

  - Multi-task Fine-tuning: Further train the model on your labeled DTA data, but simultaneously have it perform auxiliary tasks like predicting masked tokens in the drug and target sequences. This acts as a regularizer [4].

  - Interaction Modeling: Use a lightweight cross-attention module to model the interaction between the encoded drug and target representations, leading to the final affinity prediction [4].
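To make the masking and multi-task objective concrete, here is a small pure-Python sketch. It only illustrates the bookkeeping: the `[MASK]` token, the character-level tokenization, the masking rate, and the `aux_weight` value are assumptions for the example and are not taken from [4], and the loss values are placeholders rather than outputs of a real model.

```python
import random

MASK = "[MASK]"  # hypothetical mask token

def mask_tokens(tokens, mask_rate, rng):
    """Replace a random subset of tokens with MASK.

    Returns the masked sequence and a dict {position: original token}
    that a model would be trained to recover.
    """
    masked, targets = [], {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_rate:
            masked.append(MASK)
            targets[i] = tok
        else:
            masked.append(tok)
    return masked, targets

def multitask_loss(dta_loss, mlm_loss_drug, mlm_loss_target, aux_weight=0.1):
    """Affinity loss plus down-weighted masked-token losses (the regularizer)."""
    return dta_loss + aux_weight * (mlm_loss_drug + mlm_loss_target)

# Character-level tokens of the SMILES string CC(=O)O (acetic acid)
smiles_tokens = ["C", "C", "(", "=", "O", ")", "O"]
masked, targets = mask_tokens(smiles_tokens, mask_rate=0.3, rng=random.Random(0))

# Placeholder loss values, as if returned by the three heads for one batch
total = multitask_loss(dta_loss=0.8, mlm_loss_drug=2.0, mlm_loss_target=3.0)
print(masked, targets, total)
```

The auxiliary weight controls how strongly the masked-token tasks regularize the affinity model and would normally be tuned on a validation set.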

Diagram 2: Semi-supervised multi-task training for drug-target affinity prediction.

Comparative Analysis of Low-Data Handling Methods

The table below provides a high-level comparison of common techniques to guide your strategy selection [1].

| Method | Mechanism | Advantages | Limitations & Technical Considerations |
|---|---|---|---|
| Transfer Learning (TL) | Transfers knowledge from a data-rich source task to a data-scarce target task. | Reduces data needs; leverages existing models. | Risk of negative transfer if source and target are dissimilar; requires careful layer freezing [1]. |
| Multi-task Learning (MTL) | Jointly learns multiple related tasks in a single model. | Improved generalization via shared representations; data efficiency. | Difficult training due to task interference; sensitive to hyperparameters; hard to find optimal task groupings [1] [2]. |
| Active Learning (AL) | Iteratively selects the most informative data points to be labeled. | Optimizes labeling costs; focuses resources. | Requires an oracle/experiment to label points; initial model may be poor [1]. |
| Data Augmentation (DA) | Creates new training examples via label-preserving transformations. | Artificially expands dataset size; improves robustness. | Confidence in transformations is crucial; less established for molecular data vs. images [1]. |
| Data Synthesis (DS) | Generates entirely new synthetic data using generative models. | Can create data for rare scenarios or where real data is hard to acquire. | Quality and fidelity of synthetic data must be rigorously validated [1]. |
| Federated Learning (FL) | Trains a model across decentralized data sources without sharing the data itself. | Solves data privacy and silo issues; enables collaboration. | Emerging in drug discovery; computational overhead; model aggregation challenges [1]. |

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" and their functions for building robust models in low-data regimes.

| Research Reagent | Function & Application |
|---|---|
| Pre-trained Expert Models [2] [3] | Models pre-trained on large, public datasets (e.g., formation energy). They serve as feature extractors or base models for transfer learning, providing a strong prior of chemical or physical rules. |
| Tokenized SMILES Strings [3] | A representation of molecular structure that enhances a model's capacity to interpret chemical information compared to traditional one-hot encoding, improving learning on small datasets. |
| Molecular Fingerprints (e.g., Circular/Morgan) [6] | Fixed-length vector representations of molecules that capture key substructures. Often yield competitive performance with simple models (e.g., Random Forest) in low-data scenarios. |
| Graph Neural Networks (GNNs) [2] | Neural networks that operate directly on the graph structure of a molecule or crystal, learning representations from atomic connections. Powerful but typically require more data. |
| Multi-task Benchmark Datasets [6] | Curated datasets (e.g., from MoleculeNet) containing multiple properties for the same set of molecules, essential for developing and evaluating MTL and TL methods. |

In materials property prediction, a model performs Out-of-Distribution (OOD) extrapolation when it makes predictions for materials that are significantly different from those in its training data. This is distinct from the easier task of interpolation, where test samples fall within the training data distribution [7]. Traditional evaluation methods, which randomly split datasets into training and test sets, often lead to over-optimistic performance estimates due to high redundancy and similarity in standard materials databases [7]. In real-world discovery, scientists actively search for novel, high-performing materials that are, by definition, OOD. This makes overcoming extrapolation failures a critical frontier for accelerating the discovery of new materials and molecules [8].

Frequently Asked Questions (FAQs)

Q1: Why does my model perform well during validation but fails in real-world material discovery? This common issue often stems from the standard practice of random train-test splits. When a dataset contains many highly similar materials, a random split will create test sets that are very similar to the training set, a scenario known as Independent and Identically Distributed (i.i.d.) testing. Your model excels here because it is essentially performing interpolation. However, real-world discovery targets novel materials that are OOD. Studies have shown that state-of-the-art Graph Neural Networks (GNNs) can experience significant performance degradation when evaluated on properly constructed OOD test sets, revealing a substantial generalization gap [7].

Q2: What is the difference between OOD generalization in the input space versus the output space? This is a crucial distinction for materials informatics [8] [9]:

- Input Space (Materials/Chemical Space): This refers to the model's ability to generalize to new types of materials, such as predicting properties for ceramics when it was only trained on metals. The core challenge is that the model encounters new compositions or crystal structures.

- Output Space (Property Value Range): This refers to extrapolating to property values that are outside the range seen during training. The primary challenge is identifying materials with exceptionally high or low values for a target property, which is essential for finding high-performance candidates [8].

Q3: My goal is to discover materials with exceptional, record-breaking properties. What is my biggest challenge? Your primary challenge is output-space extrapolation. Classical machine learning regression models are inherently poor at predicting property values that fall outside the distribution of the training data [8] [9]. This is why some approaches reframe the problem as a classification task, setting a high threshold to identify "top-performing" candidates, though this is a workaround for the fundamental difficulty of regression-based extrapolation [8].

Q4: What data splitting strategies should I use to realistically evaluate my model's OOD performance? Avoid random splits. Instead, use splitting strategies that deliberately place dissimilar materials in the test set. The table below summarizes several rigorous methods.

Table 1: Data Splitting Strategies for Realistic OOD Evaluation

| Strategy Name | Core Principle | Best For |
|---|---|---|
| Leave-One-Cluster-Out (LOCO) [10] | Clusters the entire dataset (e.g., by composition/structure) and uses entire clusters as test sets. | General-purpose OOD evaluation. |
| SparseX [10] | Selects test samples from low-density regions of the material descriptor space (e.g., using Magpie features). | Testing on chemically novel or unique materials. |
| SparseY [10] | Selects test samples with property values from the extremes (tails) of the overall property distribution. | Testing output-value extrapolation for high-performance screening. |
| SOAP-LOCO [11] | Uses Smooth Overlap of Atomic Positions (SOAP) descriptors to cluster materials by local atomic environment, then applies LOCO. | Structure-based models; provides a fine-grained, challenging OOD test. |
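As an illustration of an output-space split in the spirit of SparseY, the sketch below holds out the lowest and highest property values as the test set. It is a simplified quantile-tail version written for this guide, not the exact procedure from [10]; the function name, the `tail_fraction` parameter, and the band-gap values are made up for the example.

```python
def tail_split(values, tail_fraction=0.1):
    """Split sample indices so the test set holds both tails of the property range.

    Returns (train_indices, test_indices). At least one sample is taken
    from each tail.
    """
    n = len(values)
    k = max(1, int(n * tail_fraction))
    order = sorted(range(n), key=lambda i: values[i])  # indices, low to high value
    test = sorted(order[:k] + order[-k:])
    train = sorted(order[k:-k])
    return train, test

# Hypothetical band gaps in eV
band_gaps = [0.1, 1.2, 0.8, 3.5, 2.0, 1.5, 0.05, 5.1, 1.1, 0.9]
train_idx, test_idx = tail_split(band_gaps, tail_fraction=0.1)
print(train_idx, test_idx)
```

A model evaluated on `test_idx` must predict values outside the range it saw during training, which is the situation faced when screening for record-setting materials.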

Troubleshooting Guides

Issue 1: Poor Performance on Structurally Novel Materials

Problem: Your model fails to accurately predict properties for materials with crystal structures or chemical compositions not represented in the training data.

Solution: Implement structure-aware models and domain adaptation.

- Upgrade to Advanced Graph Neural Networks (GNNs): Move beyond simple composition-based models to structure-based GNNs that can capture local atomic interactions. Models like ALIGNN (which incorporates bond angles) and CGCNN have demonstrated more robust OOD performance in benchmarks [7].

- Fuse Spatial and Topological Information: Consider dual-stream models like TSGNN. One stream processes the topological graph of the crystal, while the other processes spatial information using a CNN, overcoming the limitation that molecules with the same topology but different spatial configurations can have different properties [12].

- Apply Domain Adaptation (DA): If you know the characteristics of your target OOD materials, use DA techniques. DA incorporates information from the target test set (compositions/structures only, not labels) during training to guide the model to adapt to the new distribution [10].

Table 2: Research Reagent Solutions for Structurally Aware Modeling

| Reagent / Method | Function | Key Implementation Note |
|---|---|---|
| SOAP Descriptors [11] | Atomic-scale descriptor that captures the local chemical environment around each atom. | Used for creating rigorous OOD splits (SOAP-LOCO) or as model input features. |
| ALIGNN Model [7] | A GNN that explicitly incorporates bond angle information in addition to atom and bond features. | Captures more detailed geometric information, leading to better OOD generalization. |
| Domain Adaptation (DA) [10] | A set of techniques that adapts a model trained on a source domain to perform well on a different (but related) target domain. | Requires access to the unlabeled target OOD materials during training. |

Experimental Protocol: Evaluating with SOAP-LOCO Split

- Descriptor Generation: Compute averaged SOAP descriptors for all crystal structures in your dataset.

- Clustering: Use a clustering algorithm like K-means on the SOAP descriptors to group materials with similar local atomic environments.

- Splitting: For a rigorous OOD test, leave out one entire cluster for testing and use the remaining clusters for training. Repeat this process for all clusters (leave-one-cluster-out cross-validation) [11].

- Evaluation: Train your model on the training clusters and evaluate its performance on the held-out test cluster. The average performance across all folds provides a realistic measure of OOD generalization.
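The splitting and evaluation steps can be sketched as follows, assuming the cluster labels have already been computed (for example by K-means on averaged SOAP descriptors). The labels, target values, and the mean-value baseline that stands in for a real model are all hypothetical.

```python
from collections import defaultdict

def leave_one_cluster_out(cluster_labels):
    """Yield (held_out_label, train_indices, test_indices), one fold per cluster."""
    members = defaultdict(list)
    for idx, label in enumerate(cluster_labels):
        members[label].append(idx)
    for held_out in sorted(members):
        test = members[held_out]
        train = sorted(i for lab, idxs in members.items() if lab != held_out for i in idxs)
        yield held_out, train, test

def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical cluster assignments and target property values
labels = [0, 1, 0, 2, 1, 2, 0]
y = [1.0, 4.0, 1.2, 9.0, 4.4, 8.6, 0.8]

folds = list(leave_one_cluster_out(labels))
fold_maes = []
for held_out, train, test in folds:
    # A mean predictor stands in for "train your model on the training clusters"
    baseline = sum(y[i] for i in train) / len(train)
    fold_maes.append(mean_absolute_error([y[i] for i in test], [baseline] * len(test)))

loco_mae = sum(fold_maes) / len(fold_maes)  # average over all held-out clusters
print(fold_maes, loco_mae)
```

Comparing `loco_mae` with the MAE from a random split on the same data gives a direct estimate of the generalization gap.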

Diagram: SOAP-LOCO evaluation workflow.

Issue 2: Failure in Extrapolating to Extreme Property Values

Problem: Your model cannot identify materials with property values outside the range present in the training data, which is crucial for finding high-performance candidates.

Solution: Reframe the prediction problem and use transductive or matching-based methods.

- Adopt a Transductive Approach: Instead of learning a direct mapping from material to property, use a method like Bilinear Transduction. This approach learns how property values change as a function of the difference between two materials in representation space. During inference, it predicts a new material's property based on a known training example and their representational difference, which can improve extrapolation [8] [9].

- Use a Matching-based Framework: The MEX (Matching-based EXtrapolation) framework reframes property regression as a material-property matching problem, which can alleviate the complexity of direct regression and has shown state-of-the-art performance on extrapolation benchmarks [13].

- Leverage Generative Models for Data Imputation: Deep generative models can "imagine" missing data in incomplete databases. They learn the joint distribution of all data (descriptors and properties), which can improve prediction accuracy for extrapolation tasks, especially when working with small datasets (<100 records) [14].

Experimental Protocol: Implementing a Bilinear Transduction Workflow

- Representation: Convert all material compositions or structures into a fixed-length descriptor vector (e.g., using Magpie, SOAP, or a pre-trained GNN).

- Model Training: Train the bilinear model on pairs of training samples. The model learns to predict the difference in their target property values based on the difference in their descriptor vectors.

- Inference:

  - For a new test material, select a base material from the training set.

  - Compute the difference vector between the test material and the base material.

  - The model uses this difference vector to predict the property difference, which is then added to the base material's known property to get the final prediction for the test material [8] [9].
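The anchor-plus-difference idea in this protocol can be illustrated with a deliberately simplified sketch. Note the assumptions: the difference model here is plain linear least squares, used as a stand-in for the bilinear model of [8] [9]; the base material is chosen as the nearest training sample, which is one possible choice rather than the published rule; and the two-dimensional descriptors and target values are synthetic.

```python
import numpy as np

def fit_difference_model(X, y):
    """Least-squares fit of dy ~ w . dx over all unordered training pairs."""
    i, j = np.triu_indices(len(X), k=1)
    dX = X[i] - X[j]          # descriptor differences
    dy = y[i] - y[j]          # property differences
    w, *_ = np.linalg.lstsq(dX, dy, rcond=None)
    return w

def predict_from_anchor(x_new, X_train, y_train, w):
    """Pick the nearest training sample as base, then add the predicted difference."""
    anchor = np.argmin(np.linalg.norm(X_train - x_new, axis=1))
    return y_train[anchor] + (x_new - X_train[anchor]) @ w

# Synthetic descriptors and a linear property, so the outcome is easy to check
X_train = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 1.0]])
y_train = 3.0 * X_train[:, 0] - 1.0 * X_train[:, 1] + 0.5

w = fit_difference_model(X_train, y_train)
x_new = np.array([4.0, 0.0])   # lies outside the training descriptor range
y_new = predict_from_anchor(x_new, X_train, y_train, w)
print(w, y_new, y_train.max())
```

Because the difference model in this sketch is linear, it behaves like ordinary linear regression; the published method conditions the predicted difference on the base material as well, which is what the bilinear form provides.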

Diagram: Bilinear Transduction workflow.

Issue 3: Overconfident and Unreliable Predictions on OOD Data

Problem: Your model makes incorrect predictions on OOD materials but assigns high confidence to these wrong answers, which is dangerous for guiding experiments.

Solution: Integrate Uncertainty Quantification (UQ) into your training and evaluation pipeline.

- Implement Uncertainty-Aware Training: Use techniques like Monte Carlo Dropout (MCD) or Deep Evidential Regression (DER). These methods allow the model to estimate both the predicted value and its associated uncertainty. Combining MCD and DER in a unified protocol has been shown to reduce prediction errors by an average of 70.6% on challenging OOD tasks [11].

- Benchmark with a Unified UQ Framework: Use frameworks like MatUQ to benchmark your model's predictive accuracy and uncertainty quality. MatUQ introduces metrics like D-EviU, which combines stochastic forward passes with evidential parameters and shows a strong correlation with prediction errors, helping you identify when the model is uncertain [11].

- Calibrate Your Expectations: Understand that no single model is universally best. The MatUQ benchmark, which includes over 1,300 OOD tasks, reveals that different GNN architectures (e.g., SchNet, ALIGNN, CrystalFramer) excel at different types of OOD problems. Task-specific model selection is critical [11].
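For orientation, Deep Evidential Regression is usually formulated with a Normal Inverse-Gamma distribution whose parameters $(\gamma, \nu, \alpha, \beta)$ are output by the network. The symbols below follow that common formulation and are an assumption here; [11] may use different notation. Under it, the prediction and the two uncertainty components have closed forms for $\alpha > 1$:

$$
\underbrace{\mathbb{E}[\mu] = \gamma}_{\text{prediction}}, \qquad
\underbrace{\mathbb{E}[\sigma^2] = \frac{\beta}{\alpha - 1}}_{\text{aleatoric}}, \qquad
\underbrace{\mathrm{Var}[\mu] = \frac{\beta}{\nu(\alpha - 1)}}_{\text{epistemic}}
$$

The epistemic term shrinks as $\nu$ grows, so a small $\nu$ on an OOD input signals that the model has little evidence for its prediction.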

Table 3: Key Uncertainty Quantification (UQ) Techniques

| Technique | Mechanism | Advantage |
|---|---|---|
| Monte Carlo Dropout (MCD) [11] | Performs multiple forward passes with dropout enabled at inference time. The variance across predictions estimates model (epistemic) uncertainty. | Simple to implement; requires no change to model architecture. |
| Deep Evidential Regression (DER) [11] | Model directly learns parameters of a higher-order evidential distribution (e.g., a Normal Inverse-Gamma). | Provides a single-forward-pass estimate of both aleatoric and epistemic uncertainty. |
| Model Ensembles [11] | Trains multiple models independently and aggregates their predictions. | A robust and powerful method, but computationally expensive. |
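The Monte Carlo Dropout row can be made concrete with a small NumPy sketch. The two-layer network, its random (untrained) weights, the dropout probability, and the number of passes are all placeholders chosen for illustration; a real workflow would apply the same loop to a trained GNN with its dropout layers left active.

```python
import numpy as np

def mc_dropout_predict(x, W1, b1, w2, b2, drop_prob, n_passes, rng):
    """Run n_passes stochastic forward passes with dropout kept on.

    Returns the mean prediction and the standard deviation across passes,
    the latter serving as an estimate of model (epistemic) uncertainty.
    """
    preds = []
    for _ in range(n_passes):
        hidden = np.maximum(0.0, x @ W1 + b1)          # ReLU hidden layer
        keep = rng.random(hidden.shape) >= drop_prob   # Bernoulli keep mask
        hidden = hidden * keep / (1.0 - drop_prob)     # inverted-dropout scaling
        preds.append(hidden @ w2 + b2)
    preds = np.array(preds)
    return preds.mean(), preds.std()

rng = np.random.default_rng(0)
W1, b1 = rng.normal(size=(3, 16)), np.zeros(16)   # placeholder weights
w2, b2 = rng.normal(size=16), 0.0
x = np.array([0.2, -1.0, 0.5])                    # placeholder descriptor

mean, std = mc_dropout_predict(x, W1, b1, w2, b2, drop_prob=0.2, n_passes=200, rng=rng)
_, std_off = mc_dropout_predict(x, W1, b1, w2, b2, drop_prob=0.0, n_passes=10, rng=rng)
print(mean, std, std_off)
```

With dropout switched off the passes are identical and the spread collapses to zero, which is why dropout must stay enabled at inference time for this technique to work.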

Limitations of Traditional Descriptors and Black-Box Models

Frequently Asked Questions (FAQs)

1. What are the main limitations of traditional molecular descriptors in property prediction? Traditional molecular descriptors often require significant manual feature engineering and expert knowledge to select and calculate. They can be time-consuming to compute for large datasets, and their applicability domain is often limited, meaning models may not perform well on compounds that are structurally different from the training set [15].

2. Why are "black-box" models problematic in scientific research? Black-box models, such as complex deep neural networks, lack transparency because their internal decision-making process is not easily interpretable. This makes it difficult to trust their predictions, debug errors, or extract scientifically meaningful insights from the model, which is critical in fields like drug development and materials science where understanding structure-property relationships is key [16] [17].

3. What are "activity cliffs" and why do they challenge machine learning models? Activity cliffs occur when two molecules are structurally very similar but exhibit a large difference in their biological activity or potency. These edge cases are particularly challenging for ML models, which operate on the principle that similar structures have similar properties. Consequently, models often make significant prediction errors on these compounds [18].

4. How can I assess if my model will fail on new, unseen data? Performance degradation often occurs due to data distribution shifts. Techniques to foresee this issue include:

- Using Uniform Manifold Approximation and Projection (UMAP) to visualize whether your test data lies outside the feature space of your training data.

- Analyzing the disagreement (e.g., high variance) between predictions from multiple models on the test data, which can illuminate out-of-distribution samples [19].

5. What can be done to improve model interpretability? Several methods exist to shed light on black-box models:

- SHapley Additive exPlanations (SHAP): This method quantifies the contribution of each input feature (e.g., a molecular substructure) to a specific prediction, helping to explain the model's reasoning [15] [17].

- Using interpretable models by design: Simpler models like regression trees or ensemble methods based on them are inherently more interpretable than deep neural networks [20].

- Text-based representations: Using human-readable text descriptions of materials as input to transformer models can provide more transparent reasoning, as the explanations can be traced back to known chemical terms [21].
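To show what a SHAP value measures, the sketch below computes exact Shapley values by brute-force enumeration for a toy model with three features. This is not the `shap` library, which relies on faster approximations and model-specific algorithms; the toy model, the feature meanings, and the all-zeros baseline are assumptions for the example, and the enumeration scales exponentially with the number of features.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values; features outside a coalition take the baseline value."""
    n = len(x)

    def value(coalition):
        masked = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(masked)

    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi += weight * (value(s | {i}) - value(s))
        phis.append(phi)
    return phis

def toy_model(f):
    """Hypothetical property model: one additive feature plus one interaction."""
    return 2.0 * f[0] + 1.0 * f[1] * f[2]

x = [1.0, 1.0, 1.0]          # e.g., three substructure-presence flags
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print(phi)
```

The additive feature receives its full effect, while the interaction term is shared equally between the two features involved, and the attributions sum to the difference between the prediction and the baseline prediction.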

Troubleshooting Guides

Issue 1: Poor Model Performance on Structurally Similar Molecules with Divergent Properties

Problem: Your model performs well overall but makes significant errors on pairs or groups of molecules that are highly similar yet have very different target property values (i.e., activity cliffs).

Diagnosis Steps:

- Identify Activity Cliffs: Calculate the pairwise structural similarity (e.g., using Tanimoto coefficient on ECFP fingerprints) and potency difference for all compounds in your dataset [18].

- Benchmark Performance: Use a dedicated benchmark like MoleculeACE (Activity Cliff Estimation) to evaluate your model's performance specifically on these challenging cliff compounds [18].

Solutions:

- Model Selection: Consider using machine learning approaches based on molecular descriptors, which have been shown to outperform more complex deep learning methods on activity cliff compounds in some benchmarks [18].

- Data-Centric Approach: Actively seek out or synthesize data for known activity cliffs to include in your training set, ensuring the model is exposed to these edge cases.

Issue 2: Model Fails to Generalize to New Data or Different Material Classes

Problem: A model trained on one version of a database (e.g., Materials Project 2018) shows severely degraded performance when predicting properties for new compounds in an updated database (e.g., Materials Project 2021) [19].

Diagnosis Steps:

- Visualize Data Distribution: Use UMAP to project the training and new test data into a 2D or 3D space. If the test data forms clusters well outside the training data distribution, your model is extrapolating and likely to be unreliable [19].

- Query by Committee: Train multiple different models on your data. High disagreement (variance) in their predictions on the new data is a strong indicator of out-of-distribution samples [19].

Solutions:

- Active Learning: Implement an acquisition strategy like UMAP-guided or query-by-committee sampling. By adding a small amount (e.g., 1%) of the new, challenging data to your training set, you can significantly improve the model's robustness and accuracy [19].

- Universal Descriptors: Explore the use of more fundamental, physics-grounded descriptors. For materials, the electronic charge density is a universal descriptor that is uniquely determined by the material's structure and composition and contains the information needed to predict a wide range of properties, potentially improving transferability [22].

Issue 3: Lack of Trust in Model Predictions Due to "Black-Box" Nature

Problem: You cannot understand or explain why your model made a specific prediction, making it difficult to trust and act upon the results, especially in a regulatory or high-stakes R&D environment [16] [23].

Diagnosis Steps:

- Audit Model Explanations: Use interpretability tools like SHAP on a few key predictions. Check if the model's reasoning aligns with established domain knowledge or if it is relying on spurious, non-meaningful features [15] [17].

- Simplify the Model: If possible, try a simpler, more interpretable model (e.g., a regression tree) on the same task. If its performance is comparable, it may be a more suitable and trustworthy choice [20].

Solutions:

- Implement Explainable AI (XAI) Techniques: Integrate methods like SHAP directly into your prediction workflow. This provides post-hoc explanations for individual predictions, revealing which functional groups or structural features the model deemed most important [15] [17].

- Adopt Interpretable Architectures: Consider using models that offer a balance between performance and interpretability. For example, text-based transformer models that use human-readable crystal descriptions can provide explanations consistent with expert rationales [21]. Similarly, ensemble models based on regression trees are more transparent than deep neural networks [20].

Experimental Protocols & Data

Protocol: Benchmarking Model Performance on Activity Cliffs

Objective: To quantitatively evaluate a machine learning model's susceptibility to errors when predicting the properties of activity cliff compounds.

Methodology:

- Data Curation: Obtain a bioactivity dataset (e.g., from ChEMBL). Curate it by removing duplicates, standardizing structures, and checking for consistency in experimental values [18].

- Cliff Identification: For all molecular pairs in the dataset:

  - Calculate structural similarity using the Tanimoto coefficient with Extended Connectivity Fingerprints (ECFPs).

  - Calculate the absolute difference in potency (e.g., pIC50, pKi).

  - Define activity cliffs using a threshold (e.g., similarity > 0.85 and potency difference > 100-fold, i.e., 2 log units) [18].

- Model Evaluation: Train your model on a standard training set. Evaluate its performance not just on a random test set, but specifically on the subset of molecules identified as part of activity cliff pairs. Use metrics like Mean Absolute Error (MAE).

Expected Outcome: A clear measure of model performance (e.g., MAE) on activity cliffs, which is often significantly worse than the overall test set performance, highlighting a key model weakness [18].
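The cliff identification step can be sketched in pure Python as shown below. Fingerprints are represented as sets of on-bit indices; in practice they would be ECFPs computed with a cheminformatics toolkit. The bit sets and pIC50 values are invented for the example, and the thresholds simply reuse the example values from the protocol.

```python
from itertools import combinations

def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two sets of on-bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0

def find_activity_cliffs(fingerprints, potencies, sim_threshold=0.85, potency_gap=2.0):
    """Return index pairs that are structurally similar but differ in potency.

    potencies are on a log scale (e.g., pIC50), so a gap of 2.0 is 100-fold.
    """
    cliffs = []
    for i, j in combinations(range(len(fingerprints)), 2):
        similar = tanimoto(fingerprints[i], fingerprints[j]) > sim_threshold
        divergent = abs(potencies[i] - potencies[j]) > potency_gap
        if similar and divergent:
            cliffs.append((i, j))
    return cliffs

# Hypothetical on-bit sets standing in for ECFP fingerprints
fingerprints = [
    set(range(0, 20)),
    set(range(0, 19)) | {25},
    set(range(40, 60)),
    set(range(0, 20)) | {30},
]
potencies = [8.5, 5.9, 6.0, 8.3]  # hypothetical pIC50 values

cliffs = find_activity_cliffs(fingerprints, potencies)
cliff_molecules = sorted({idx for pair in cliffs for idx in pair})
print(cliffs, cliff_molecules)
```

The molecules in `cliff_molecules` form the subset on which the model's MAE should be reported separately from the overall test set. The pairwise loop is quadratic in dataset size, so large datasets would need a vectorized or indexed similarity search.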

Protocol: Assessing Model Generalizability with Data Distribution Shift

Objective: To test whether a model trained on an existing database will perform reliably on new, previously unseen types of materials or compounds.

Methodology:

- Temporal Data Split: Instead of a random train-test split, split your data temporally. For example, train your model on data from an older version of a database (e.g., Materials Project 2018) and test it on entries added in a newer version (e.g., Materials Project 2021) [19].

- Feature Space Analysis:

  - Reduce the dimensionality of both the training and test set features using UMAP.

  - Plot the UMAP projections to visually inspect if the test data falls within the distribution of the training data [19].

- Committee Disagreement:

  - Train three or more different model architectures (e.g., a graph neural network, a descriptor-based model, and a boosting model) on the training set.

  - Calculate the standard deviation of their predictions for each point in the test set. High standard deviation indicates high model uncertainty and potential extrapolation [19].

Expected Outcome: Identification of a potential performance drop on new data. Visualization of the distribution shift and quantification of model uncertainty, guiding the need for model retraining or active learning.
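The committee disagreement step reduces to a per-sample standard deviation across models, as in the sketch below. The three prediction lists are invented placeholders for the outputs of already-trained models, and the population standard deviation is used here as one reasonable choice; the source does not specify which estimator to use.

```python
from statistics import pstdev

def committee_disagreement(predictions_by_model):
    """Per-sample standard deviation across models.

    predictions_by_model: list of per-model prediction lists of equal length.
    """
    return [pstdev(sample_preds) for sample_preds in zip(*predictions_by_model)]

def select_for_labeling(disagreement, k):
    """Indices of the k samples the committee disagrees on most."""
    ranked = sorted(range(len(disagreement)), key=lambda i: disagreement[i], reverse=True)
    return ranked[:k]

# Hypothetical formation-energy predictions (eV/atom) for four test materials
gnn_preds        = [0.10, 0.52, 1.90, 0.31]
descriptor_preds = [0.12, 0.50, 0.40, 0.29]
boosting_preds   = [0.11, 0.49, 1.10, 0.33]

disagreement = committee_disagreement([gnn_preds, descriptor_preds, boosting_preds])
flagged = select_for_labeling(disagreement, k=1)
print(disagreement, flagged)
```

Samples returned by `select_for_labeling` are natural candidates for the query-by-committee acquisition step in an active learning loop.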

Table 1: Benchmark Performance of ML Models on Activity Cliff Compounds across 30 Macromolecular Targets [18]

| Model Category | Specific Method | Key Finding on Activity Cliffs |
|---|---|---|
| Machine Learning (Descriptor-Based) | Random Forest, SVM, etc. | Outperformed more complex deep learning methods, though all methods struggled. |
| Deep Learning (Graph-Based) | Graph Neural Networks, etc. | Generally showed poorer performance on activity cliff compounds compared to descriptor-based methods. |
| Overall Conclusion | 24 methods tested | All models struggled in the presence of activity cliffs, highlighting a pressing limitation of molecular ML. |

Table 2: Performance Degradation of a State-of-the-Art Model on New Data [19]

| Dataset (Formation Energy Prediction) | Mean Absolute Error (MAE) (eV/atom) | Coefficient of Determination (R²) |
|---|---|---|
| Training Set (MP18 - Alloys of Interest) | 0.013 | High (Not specified) |
| Test Set (MP21 - New Alloys of Interest) | 0.297 | 0.194 |
| Observation | Error increased by ~22x, with severe underestimation for high-formation-energy alloys. | Model failed to make even qualitatively correct predictions. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Computational Tools and Datasets for Material Property Prediction

| Tool / Resource | Type | Function & Application |
|---|---|---|
| MoleculeACE [18] | Software Benchmark | A dedicated platform for benchmarking ML model performance on activity cliff compounds. |
| SHAP (SHapley Additive exPlanations) [15] [17] | Interpretation Library | Explains the output of any ML model by quantifying the contribution of each input feature to a single prediction. |
| UMAP (Uniform Manifold Approximation and Projection) [19] | Dimensionality Reduction Tool | Visualizes high-dimensional data to assess the overlap between training and test datasets and identify distribution shifts. |
| Electronic Charge Density [22] | Universal Descriptor | A fundamental, physics-grounded input for ML models that can predict multiple material properties, improving transferability. |
| MatBERT / Text-based Transformers [21] | Language Model | Uses human-readable text descriptions of materials for property prediction, often yielding more interpretable results. |
| Ensemble Learning (RF, XGBoost) [20] | Modeling Technique | Combines multiple simple models (e.g., regression trees) to create a robust and more interpretable predictor. |

Workflow Diagrams

Frequently Asked Questions

Q1: Why do my machine learning models fail to generalize on new molecular datasets, even when using standard fingerprints? The failure often stems from a topological mismatch between the molecular representation you've chosen and the underlying property landscape of your data. If the feature space of your representation is topologically "rough" – meaning it contains many discontinuities like Activity Cliffs (ACs) – standard machine learning models will struggle to learn a smooth, generalizable function [24]. Structurally similar molecules with large property differences break the fundamental principle that "similar molecules have similar properties" [24].

Q2: My high-throughput DFT screening suggests many topological materials, but experimental validation finds far fewer. What is the cause of this discrepancy? This is a classic electronic structure representation bottleneck. High-throughput screenings have often relied on semi-local DFT functionals (like PBE) due to computational cost. However, these can underestimate electronic interactions, leading to an over-prediction of topological states [25]. Using more advanced hybrid functionals (like HSE), which incorporate exact Hartree-Fock exchange, provides a more accurate electronic structure. Studies show this can reduce the identified fraction of topological materials from ~30% to ~15%, bringing computational predictions in line with experimental reality [25].

Q3: How can I predict material properties accurately when I only have a very small amount of experimental data? In severe data scarcity scenarios, avoid training standard models from scratch. Instead, use an Ensemble of Experts (EE) approach [3]. This method uses pre-trained models ("experts") on large datasets of related physical properties. The knowledge from these experts is combined to create informative molecular fingerprints, which are then used to make accurate predictions for your complex target property, even with very limited data [3].

Q4: Is there a single best molecular representation for all drug discovery tasks? No. Systematic benchmarking studies reveal that no single representation is universally superior [24]. The performance of a representation is highly task-dependent. While traditional fingerprints (like ECFP) are often favored for their interpretability and efficiency, modern learned representations (from GNNs or Transformers) can capture more complex patterns but may underperform with small datasets [24] [26]. The choice depends on your specific data and task.

Troubleshooting Guides

Problem: Inaccurate Topological Classification of Materials

Issue: Computational workflows misclassify a material's topological state (e.g., trivial vs. topological insulator), often due to an inadequate approximation of the electronic exchange-correlation functional [25].

Diagnosis and Solution: Adopt a high-fidelity DFT workflow that integrates both atomic structure optimization and hybrid functional calculations.

- Step 1: Structure Optimization. Begin with an experimental crystal structure (e.g., from the Materials Project Database). Perform a DFT-based geometry optimization to find the theoretical ground-state atomic configuration, as experimental structures may not be optimized for DFT calculations [25].

- Step 2: Charge Density Calculation. Using the optimized structure, run a self-consistent field (SCF) calculation with a semi-local functional (e.g., PBE) to obtain the converged charge density [25].

- Step 3: High-Fidelity Electronic Structure. Calculate the wavefunctions at high-symmetry points in the Brillouin zone using both PBE and the HSE hybrid functional. This comparative step is critical to assess the sensitivity of your results to the exchange-correlation approximation [25].

- Step 4: Topological Analysis. Process the wavefunctions (e.g., using VASP2Trace) to compute symmetry operators and plane-wave coefficients. Finally, feed this data to a classification tool like CheckTopologicalMat on the Bilbao Crystallographic Server, which uses symmetry indicators and elementary band representations to determine the topological class [25].

Problem: Handling Rough and Discontinuous Molecular Property Landscapes

Issue: Model performance is poor due to Activity Cliffs—pairs of structurally similar molecules with large property differences that create a complex, "rough" property landscape [24].

Diagnosis and Solution: Quantify the landscape's roughness and select a representation whose feature space topology is compatible with it.

- Step 1: Quantify Landscape Roughness. Before model training, calculate topological and roughness indices for your dataset and representation.

- SALI (Structure-Activity Landscape Index): Identifies individual Activity Cliffs by measuring the ratio of property difference to structural dissimilarity for molecule pairs [24].

- ROGI (Roughness Index): Measures global surface roughness by observing how property dispersion changes as the dataset is progressively coarse-grained. A higher ROGI value predicts a higher potential model error [24].

- Step 2: Select an Optimal Representation. Use a predictive model like TopoLearn, which correlates the topological descriptors of a representation's feature space with expected machine learning generalization error. This provides a data-driven method to select the most effective representation for your specific dataset, avoiding exhaustive empirical testing [24].

- Step 3: Consider a Universal Descriptor. For a fundamentally different approach, use the real-space electronic charge density as your descriptor. According to the Hohenberg-Kohn theorem, this single object uniquely determines all ground-state molecular properties and has shown promise as a highly transferable input for multi-task property prediction [22].
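The SALI calculation from Step 1 can be sketched in a few lines. This is a minimal illustration, assuming fingerprints are given as sets of on-bit indices, Tanimoto similarity as the structural measure, and the commonly used form SALI = |ΔA| / (1 − similarity). The function names, the `eps` guard, and the toy data are assumptions made for this example, not part of [24].

```python
from itertools import combinations

def tanimoto(a: set, b: set) -> float:
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def sali_pairs(fingerprints, activities, eps=1e-6):
    """Return (i, j, SALI) for every molecule pair, steepest cliff first."""
    scores = []
    for i, j in combinations(range(len(fingerprints)), 2):
        sim = tanimoto(fingerprints[i], fingerprints[j])
        # eps keeps the ratio finite when two fingerprints are identical
        sali = abs(activities[i] - activities[j]) / (1.0 - sim + eps)
        scores.append((i, j, sali))
    return sorted(scores, key=lambda t: t[2], reverse=True)

# Toy data (made up): molecules 0 and 1 are similar but differ strongly in activity
fps = [{1, 2, 3, 4}, {1, 2, 3, 5}, {7, 8, 9}]
pki = [5.0, 8.0, 5.2]
ranked = sali_pairs(fps, pki)
print(ranked[0])  # the pair flagged as the steepest activity cliff
```

Pairs at the top of the ranking are candidate Activity Cliffs; a dataset with many high-SALI pairs is a warning sign before any model is trained.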

Experimental Data & Protocols

| Functional Type | Total Materials Calculated | Topological Insulators (NLC & SEBR) | Topological Semimetals (ES & ESFD) | Total Topological Materials |

|---|---|---|---|---|

| PBE (Semi-local) | 12,035 | 1,350 (11.2%) | 2,070 (17.2%) | 28.4% |

| HSE (Hybrid) | 9,757 | 705 (7.2%) | 749 (7.7%) | ~15.0% |
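As a quick arithmetic check, the percentages in the table can be recomputed from the raw counts. The short script below only uses the numbers shown in the table; the dictionary layout and key names are illustrative.

```python
# Counts copied from the table above
counts = {
    "PBE": {"total": 12035, "insulators": 1350, "semimetals": 2070},
    "HSE": {"total": 9757, "insulators": 705, "semimetals": 749},
}

def topological_percent(row):
    """Percentage of calculated materials classified as topological."""
    return 100.0 * (row["insulators"] + row["semimetals"]) / row["total"]

pbe_pct = topological_percent(counts["PBE"])
hse_pct = topological_percent(counts["HSE"])
print(f"PBE: {pbe_pct:.1f}%  HSE: {hse_pct:.1f}%")
```

The hybrid-functional fraction comes out at roughly half the semi-local one, consistent with the "~30% to ~15%" summary given in the FAQ.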

Protocol for Topological Classification (as in Table 1):

- Workflow: Follow the detailed hybrid DFT workflow outlined in the troubleshooting guide above.

- Classification Logic: The CheckTopologicalMat tool classifies materials based on band structure analysis [25]:

- Trivial Insulator: Band structure matches a sum of elementary band representations (EBRs).

- Topological Insulator (SEBR/NLC): Band structure involves split EBRs or cannot be expressed as a linear combination of EBRs.

- Topological Semimetal (ES/ESFD): Band crossings are enforced by symmetry, either at (ESFD) or not necessarily at (ES) the Fermi level.

Table 2: A Researcher's Toolkit for Molecular Representation

| Representation | Type | Key Function | Best Use Case |

|---|---|---|---|

| ECFP Fingerprints [26] [24] | Traditional | Encodes molecular substructures as a fixed-length binary vector, capturing local atomic environments. | Similarity searching, virtual screening, and models where interpretability and speed are key [24]. |

| SMILES/SELFIES [27] [3] | Language-Based | Represents molecular structure as a string of characters, enabling use of NLP models (Transformers). | Generative tasks and property prediction using large pre-trained chemical language models [27]. |

| Graph Neural Networks [26] [24] | Learned (AI) | Learns representations directly from the molecular graph (atoms as nodes, bonds as edges). | Capturing complex structure-property relationships when sufficient data is available [24]. |

| Electronic Charge Density [22] | Physical | Uses the 3D electron density distribution as a universal descriptor of the material. | Multi-task learning and predicting diverse properties from a single, physically rigorous input [22]. |

| TopoLearn Model [24] | Meta-Model | Predicts the optimal molecular representation for a given dataset based on the topology of its feature space. | Guiding representation selection to improve model generalizability, especially on challenging landscapes [24]. |

Essential Research Reagent Solutions

The following computational "reagents" are essential for designing experiments to overcome representation bottlenecks.

- Hybrid DFT Functionals (e.g., HSE): Used for high-fidelity electronic structure calculation. Their function is to mix exact Hartree-Fock exchange with DFT exchange, providing a more accurate description of band gaps and electronic states, which is critical for identifying topological materials [25].

- Symmetry Indicator Tools (e.g., CheckTopologicalMat): A software tool available on the Bilbao Crystallographic Server. Its function is to automate the topological classification of materials by analyzing band representations and symmetry data derived from DFT calculations [25].

- Topological Data Analysis (TDA) Descriptors: Mathematical tools (e.g., persistent homology) that quantify the shape and connectivity (topology) of a high-dimensional data cloud. Their function is to characterize the "roughness" of a molecular property landscape and correlate it with expected machine learning performance [24].

- Ensemble of Experts (EE) Framework: A machine learning architecture. Its function is to leverage knowledge from models pre-trained on large, related datasets to make accurate predictions for complex properties, effectively overcoming severe data scarcity [3].

Next-Generation Solutions: Advanced Methods for Accurate and Generalizable Prediction

Leveraging Transductive Learning for Improved OOD Extrapolation

Frequently Asked Questions

Q1: What is the primary advantage of using transductive learning for Out-of-Distribution (OOD) property prediction?

Transductive learning methods, such as Bilinear Transduction, significantly improve extrapolation capabilities by reparameterizing the prediction problem. Instead of predicting property values directly from new materials, these methods predict based on a known training example and the difference in representation space between the known and new material. This approach learns how property values change as a function of material differences, leading to more accurate OOD predictions. For solid-state materials and molecules, this method has been shown to improve extrapolative precision by 1.8× and 1.5× respectively, and boost recall of high-performing candidates by up to 3× [8] [9].

Q2: My model performs well during validation but fails to identify promising OOD candidates during screening. What could be wrong?

This common issue often stems from using conventional random cross-validation, which tends to overestimate performance on OOD data. Standard cross-validation assesses models primarily on interpolative tasks, where test samples fall within the training distribution. For true OOD extrapolation, consider implementing leave-one-group-out validation, where each chemical family is held out in turn and the model is evaluated on properties for that entirely unseen family [28]. This approach provides a more realistic assessment of extrapolation capability and helps select models that remain accurate on novel material classes.
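A leave-one-group-out split is simple to sketch without any dependencies. The example below assumes each sample carries a chemical-family label; the function name and the family labels are made up for illustration. scikit-learn's `LeaveOneGroupOut` splitter provides the same behaviour if that library is already in use.

```python
from collections import defaultdict

def leave_one_group_out(groups):
    """Yield (held_out_group, train_idx, test_idx), holding out one group at a time."""
    by_group = defaultdict(list)
    for idx, g in enumerate(groups):
        by_group[g].append(idx)
    for g, test_idx in by_group.items():
        train_idx = [i for i in range(len(groups)) if groups[i] != g]
        yield g, train_idx, test_idx

# Hypothetical family label for each sample in a small dataset
families = ["oxide", "oxide", "nitride", "sulfide", "nitride", "sulfide"]
splits = list(leave_one_group_out(families))
for name, train_idx, test_idx in splits:
    print(f"held out: {name:8s} train={train_idx} test={test_idx}")
```

Because no member of the held-out family appears in training, the score from each fold reflects extrapolation to an unseen family rather than interpolation.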

Q3: How does Multi-Anchor Latent Transduction (MALT) improve upon single-anchor approaches?

MALT overcomes limitations of fixed descriptors and single-anchor comparisons by operating directly within a learned latent space and leveraging multiple relevant analogues of query molecules. By selecting multiple anchors and integrating their embeddings with the query embedding, MALT provides more robust predictions that consistently improve OOD generalization over standard inductive baselines while matching or surpassing their in-distribution performance [29].

Q4: What are the most common failure modes when applying transductive learning to molecular property prediction?

The primary failure modes include: (1) Inadequate anchor selection, where chosen training examples don't sufficiently represent the query's chemical space; (2) Representation mismatch, where the embedding space doesn't capture meaningful chemical relationships; and (3) Property-specific challenges, where certain molecular properties exhibit discontinuous behavior across chemical space. Rigorous validation using scaffold splits or time splits can help identify these issues early [28].

Troubleshooting Guides

Issue: Poor OOD Performance Despite High In-Distribution Accuracy

Symptoms: Model achieves low MAE on validation data but fails to identify true high-performance candidates during virtual screening.

Diagnosis: This indicates overfitting to the training distribution and poor extrapolation capability.

Solution:

- Implement Bilinear Transduction by reparameterizing predictions to use analogical reasoning

- Apply leave-one-cluster-out cross-validation during development

- Utilize multi-anchor approaches like MALT to leverage multiple relevant analogues

- Verify that the representation space captures chemically meaningful relationships

Verification: Check if the method improves recall of true top candidates in the OOD set. Successful implementation should yield at least 2× improvement in identifying high-performing OOD materials [8] [9].

Issue: Inconsistent Performance Across Different Material Classes

Symptoms: Model performs well on some material families but poorly on others, particularly novel chemical scaffolds.

Diagnosis: The model likely relies too heavily on specific chemical features present in the training data.

Solution:

- Apply stratified sampling based on chemical families during training

- Use domain adaptation techniques to improve transfer across families

- Implement multi-anchor latent transduction to leverage diverse analogues

- Incorporate additional descriptors that capture broader chemical relationships

Verification: Evaluate performance separately for each material family in the test set. The performance gap between seen and unseen families should decrease significantly with proper implementation [28].

Issue: High Variance in OOD Predictions

Symptoms: Predictions for similar OOD candidates show unexpected large variations.

Diagnosis: Instability in the transduction process, potentially from poor anchor selection or representation inconsistencies.

Solution:

- Increase the number of anchors used in multi-anchor approaches

- Implement anchor selection criteria based on both structural similarity and property space

- Regularize the bilinear transformation to prevent overfitting to specific anchor-query pairs

- Use ensemble methods combining multiple transduction instances

Verification: Monitor prediction stability across similar query molecules and confirm that the coefficient of variation of their predictions decreases after the changes.
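The stability check can be made concrete with the coefficient of variation (standard deviation divided by the absolute mean). The sketch below uses only the standard library; the two prediction lists are invented numbers standing in for predictions on a group of near-identical query molecules before and after a change such as adding anchors.

```python
import statistics

def coefficient_of_variation(predictions):
    """Sample standard deviation divided by the absolute mean."""
    mean = statistics.fmean(predictions)
    if mean == 0:
        raise ValueError("coefficient of variation is undefined for a zero mean")
    return statistics.stdev(predictions) / abs(mean)

# Made-up predictions for four structurally similar OOD candidates
single_anchor = [110.0, 150.0, 95.0, 140.0]
multi_anchor = [121.0, 126.0, 119.0, 124.0]

cv_before = coefficient_of_variation(single_anchor)
cv_after = coefficient_of_variation(multi_anchor)
print(f"CV before: {cv_before:.3f}, CV after: {cv_after:.3f}")
```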

Performance Comparison of OOD Methods

Table 1: Comparative Performance of Transductive vs. Baseline Methods on Materials Property Prediction

| Dataset | Property | Ridge Regression | CrabNet | Bilinear Transduction |

|---|---|---|---|---|

| AFLOW | Bulk Modulus (GPa) | 74.0 ± 3.8 | 59.25 ± 3.2 | 47.4 ± 3.4 |

| AFLOW | Debye Temperature (K) | 0.45 ± 0.03 | 0.38 ± 0.02 | 0.31 ± 0.02 |

| AFLOW | Shear Modulus (GPa) | 0.69 ± 0.03 | 0.55 ± 0.02 | 0.42 ± 0.02 |

| Matbench | Yield Strength (MPa) | 972 ± 34 | 740 ± 49 | 591 ± 62 |

| Materials Project | Bulk Modulus (GPa) | 151 ± 14 | 57.8 ± 4.2 | 45.8 ± 3.9 |

Table 2: Extrapolative Precision Improvement for Top 30% OOD Candidates

| Domain | Baseline Precision | Transductive Precision | Improvement Factor |

|---|---|---|---|

| Solid-State Materials | 22% | 40% | 1.8× |

| Molecules | 17% | 26% | 1.5× |
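For readers who want to reproduce this kind of metric, the sketch below assumes "extrapolative precision" means the fraction of the model's predicted top-30% candidates that truly belong to the top 30%; the exact definition used in [8] [9] may differ. The function name and toy values are illustrative. The last two lines recompute the improvement factors from the table.

```python
def extrapolative_precision(y_true, y_pred, top_fraction=0.3):
    """Fraction of predicted top candidates that are truly in the top fraction."""
    n_top = max(1, round(top_fraction * len(y_true)))
    order_true = sorted(range(len(y_true)), key=lambda i: y_true[i], reverse=True)
    order_pred = sorted(range(len(y_pred)), key=lambda i: y_pred[i], reverse=True)
    true_top = set(order_true[:n_top])
    hits = sum(1 for i in order_pred[:n_top] if i in true_top)
    return hits / n_top

# Toy example: the model ranks one wrong candidate among its top three
y_true = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
y_pred = [1, 2, 3, 4, 5, 6, 9, 8, 7, 10]
precision = extrapolative_precision(y_true, y_pred)
print(f"precision@30% = {precision:.2f}")

# Improvement factors implied by the table (transductive / baseline)
solid_factor = 40 / 22
molecule_factor = 26 / 17
```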

Experimental Protocols

Protocol 1: Implementing Bilinear Transduction for Materials Property Prediction

Purpose: To accurately predict material properties for out-of-distribution values using analogical reasoning.

Materials and Representations:

- Input: Chemical compositions (stoichiometry) for solids or molecular graphs/SMILES for molecules

- Representations: Stoichiometry-based descriptors or learned molecular representations

- Property values: Electronic, mechanical, thermal properties from standard databases

Procedure:

- Data Preparation: Split data into training and OOD test sets, ensuring test set contains property values outside training range

- Representation Learning: Generate material representations using appropriate encoders

- Anchor Selection: For each test sample, identify the most similar training examples based on representation similarity

- Bilinear Transformation: Learn how property differences relate to representation differences using bilinear model

- Prediction: For test sample \( x_{\text{test}} \), predict property as: \( y_{\text{test}} = y_{\text{anchor}} + f(x_{\text{test}} - x_{\text{anchor}}) \)

- Validation: Use leave-one-cluster-out validation to assess OOD performance

Expected Outcomes: Significant improvement in OOD MAE and recall of high-performing candidates compared to standard regression approaches [8] [9].
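The procedure above can be sketched with NumPy. This is a simplified illustration, not the implementation from [8] [9]: it assumes fixed numeric descriptors, models the property difference as a bilinear form in the representation difference and the anchor, fits it by ridge regression over all training pairs, and averages predictions over the k nearest anchors. All function names and the synthetic data are assumptions.

```python
import numpy as np

def pair_features(delta, anchor):
    """Bilinear features: outer product of the difference vector and [anchor, 1]."""
    return np.outer(delta, np.append(anchor, 1.0)).ravel()

def fit_bilinear(X, y, ridge=1e-8):
    """Fit w so that y_i - y_j ~ pair_features(x_i - x_j, x_j) @ w on all training pairs."""
    n = len(X)
    pairs = [(i, j) for i in range(n) for j in range(n) if i != j]
    Phi = np.array([pair_features(X[i] - X[j], X[j]) for i, j in pairs])
    dy = np.array([y[i] - y[j] for i, j in pairs])
    A = Phi.T @ Phi + ridge * np.eye(Phi.shape[1])  # ridge term regularizes the map
    return np.linalg.solve(A, Phi.T @ dy)

def predict(x_query, X, y, w, k=3):
    """Average y_anchor + f(x_query - x_anchor) over the k nearest training anchors."""
    anchors = np.argsort(np.linalg.norm(X - x_query, axis=1))[:k]
    return float(np.mean([y[a] + pair_features(x_query - X[a], X[a]) @ w for a in anchors]))

# Synthetic data: training descriptors lie in [0, 1]^3, so training targets never exceed 2.5
rng = np.random.default_rng(0)
X_train = rng.uniform(0.0, 1.0, size=(30, 3))
coef = np.array([2.0, -1.0, 0.5])
y_train = X_train @ coef

# Query whose true property value (5.0) lies outside the training range
x_ood = np.array([2.0, 0.0, 2.0])
w = fit_bilinear(X_train, y_train)
y_pred = predict(x_ood, X_train, y_train, w)
print(y_pred, x_ood @ coef)
```

The target here is deliberately linear so that the learned difference function extrapolates exactly; real property landscapes are not this forgiving, which is why the OOD validation step remains essential.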

Protocol 2: Multi-Anchor Latent Transduction (MALT) for Molecular Property Prediction

Purpose: To improve OOD generalization for molecular properties using multiple analogues in latent space.

Materials:

- Molecular encoders: Pre-trained models for molecular representation

- Property datasets: ESOL, FreeSolv, Lipophilicity, BACE from MoleculeNet

- Implementation: MALT framework operating in learned latent space

Procedure:

- Latent Space Construction: Generate molecular embeddings using pre-trained encoder

- Multi-Anchor Selection: Identify k-most relevant training analogues for each query molecule

- Feature Integration: Combine query and anchor embeddings through attention mechanism

- Property Prediction: Generate final prediction through integrated representation

- Evaluation: Assess on rigorous OOD benchmarks targeting shifts in property values and chemical features

Validation Metrics: OOD MAE, precision-recall for high-value candidates, and comparison to standard inductive baselines [29].
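The multi-anchor selection and feature-integration steps can be illustrated with a deliberately simplified stand-in. The sketch below is not the MALT architecture from [29]: it assumes embeddings are already available, picks the k most cosine-similar anchors, and uses fixed (not learned) softmax attention weights to combine the anchors' property values. Names, the temperature parameter, and the random data are assumptions.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(z - z.max())
    return e / e.sum()

def multi_anchor_predict(query, anchor_emb, anchor_y, k=3, temperature=1.0):
    """Attention-weighted average of the property values of the k most similar anchors."""
    norms = np.linalg.norm(anchor_emb, axis=1) * np.linalg.norm(query)
    sims = anchor_emb @ query / norms          # cosine similarity to every anchor
    top = np.argsort(-sims)[:k]                # indices of the k closest anchors
    weights = softmax(sims[top] / temperature) # attention weights over those anchors
    return float(weights @ anchor_y[top]), top, weights

# Random stand-ins for encoder embeddings and measured properties
rng = np.random.default_rng(1)
anchor_emb = rng.normal(size=(20, 8))
anchor_y = rng.normal(size=20)
query = rng.normal(size=8)

pred, top, weights = multi_anchor_predict(query, anchor_emb, anchor_y, k=3)
print(pred, top, weights)
```

In a learned version, the attention weights and the final prediction head would be trained end to end; the fixed weighting here only shows how several anchors can contribute to a single prediction.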

Workflow Diagrams

Multi-Anchor Latent Transduction Workflow

OOD Validation Strategy

Research Reagent Solutions

Table 3: Essential Computational Resources for Transductive OOD Prediction

| Resource | Function | Implementation Examples |

|---|---|---|

| Molecular Encoders | Generate latent representations for molecules | Pre-trained GNNs, Transformer models |

| Material Descriptors | Represent solid-state materials | Stoichiometry-based features, composition embeddings |

| Similarity Metrics | Measure distance in representation space | Cosine similarity, Euclidean distance, learned metrics |

| Anchor Selection | Identify relevant training analogues | k-NN, similarity thresholding, diversity sampling |

| Bilinear Models | Learn property difference relationships | Matrix factorization, regularized regression |

| Benchmark Datasets | Evaluate OOD performance | AFLOW, Matbench, Materials Project, MoleculeNet |

Frequently Asked Questions (FAQs)

Q1: What is an Ensemble of Experts (EE) model and how does it help with small datasets? An Ensemble of Experts (EE) is a machine learning framework that combines knowledge from multiple pre-trained models, or "experts." These experts are first trained on large, high-quality datasets for physical or chemical properties that are related to your target property. When you need to predict a complex property (like glass transition temperature) but have very little training data, the EE system uses the knowledge already encoded in these experts to make accurate predictions, significantly outperforming standard models trained from scratch on your small dataset [3].

Q2: My dataset has fewer than 100 data points. Can the EE approach work for me? Yes. Research has demonstrated that the EE framework is particularly effective under "severe data scarcity conditions," where it maintains higher predictive accuracy and better generalization compared to standard artificial neural networks (ANNs). Its ability to leverage pre-existing knowledge makes it suitable for scenarios where collecting large datasets is impractical [3].

Q3: What is the minimum data required to start using an EE system? While the EE is designed for data-scarce environments, a related guideline for AI in drug delivery, the "Rule of Five" (Ro5), suggests that a robust formulation dataset should contain at least 500 entries and cover a minimum of 10 drugs and all significant excipients [30]. For the EE, the focus is less on a fixed minimum and more on leveraging the pre-trained experts; however, ensuring your small dataset is high-quality and representative is critical.

Q4: How should I represent molecular structures for the best results in an EE model? Using tokenized SMILES (Simplified Molecular Input Line Entry System) strings is recommended. This approach enhances the model's capacity to interpret complex chemical information and relationships compared to traditional one-hot encoding methods, leading to more accurate predictions of material properties [3].

Q5: What are common reasons for poor EE model performance even with the correct architecture?

- Low-Quality Expert Pre-Training: The experts were not trained on sufficiently large or physically relevant datasets.

- Irrelevant Expert Knowledge: The properties on which the experts were trained are not meaningfully related to your target property.

- Data Imbalance: Your small target dataset does not adequately represent the chemical space you are trying to predict.

- Incorrect Gating Function: The mechanism that routes inputs to the most relevant expert(s) may not be functioning optimally, leading to poor model specialization [3] [31].

Troubleshooting Guides

Issue: Model Fails to Generalize to New Types of Molecules

Problem: Your EE model performs well on molecules similar to those in your small training set but fails on new molecular structures or polymer-solvent systems.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Experts lack diverse knowledge. | Check the diversity of chemicals in the experts' original training datasets. | Incorporate additional experts that were pre-trained on more diverse chemical databases, or retrain experts on a broader set of compounds [3]. |

| Gating function is not learning meaningful routes. | Analyze the gating patterns to see if similar molecules are consistently routed to the same expert. | Adjust the gating function's design, for example, by ensuring it promotes a balanced use of experts to prevent model collapse and encourage specialization [31]. |

Issue: Model Performance is Highly Variable Across Different Training Runs

Problem: When you retrain the EE model on the same small dataset, you get significantly different performance metrics each time.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| High variance from small dataset. | Perform multiple training runs with different random seeds and calculate the standard deviation of key metrics. | Employ bootstrap aggregation (bagging). Train multiple EE models on different bootstrap samples of your small dataset and average their predictions. This has been shown to enhance reliability and provide uncertainty quantification [32]. |

| Unstable training dynamics. | Monitor the loss landscape and router behavior during training for large fluctuations. | Implement training stabilization techniques specific to MoE models, such as a router z-loss penalty, which helps ensure training stability in complex architectures [31]. |
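The bagging remedy from the table can be sketched as follows. This is a minimal illustration in which a small ridge regressor stands in for the full EE model; the function names, hyperparameters, and synthetic data are assumptions. The spread of the ensemble's predictions serves as a rough uncertainty estimate.

```python
import numpy as np

def fit_ridge(X, y, alpha=1e-2):
    """Ridge regression with an intercept column (stand-in for one EE model)."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return np.linalg.solve(Xb.T @ Xb + alpha * np.eye(Xb.shape[1]), Xb.T @ y)

def bagged_predict(X, y, X_new, n_models=50, seed=0):
    """Train on bootstrap resamples; return mean prediction and its spread."""
    rng = np.random.default_rng(seed)
    X_new_b = np.hstack([X_new, np.ones((len(X_new), 1))])
    preds = []
    for _ in range(n_models):
        idx = rng.integers(0, len(X), size=len(X))  # sample with replacement
        preds.append(X_new_b @ fit_ridge(X[idx], y[idx]))
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)

# Small synthetic dataset (20 points) with mild noise
rng = np.random.default_rng(42)
X = rng.uniform(0.0, 1.0, size=(20, 2))
y = X @ np.array([1.0, -2.0]) + rng.normal(scale=0.1, size=20)

# One query inside the training range, one far outside it
X_new = np.array([[0.5, 0.5], [5.0, 5.0]])
mean_pred, std_pred = bagged_predict(X, y, X_new)
print(mean_pred, std_pred)
```

A much larger spread for the second query flags it as an extrapolation, which is the kind of outlier signal the bagging approach is meant to provide.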

Experimental Protocol for an Ensemble of Experts Workflow

The following workflow outlines the key steps for developing and training an Ensemble of Experts model for material property prediction, based on established methodologies [3] [32].

Detailed Methodology

Step 1: Assemble Expert Datasets

- Action: Gather large, high-quality datasets for properties that are physically related to your target property. For example, if predicting the Flory-Huggins parameter (χ), relevant expert properties might include solubility parameters or other interaction energies.

- Data Sources: Utilize public material databases such as the Materials Project [32], Supercon Material Database [32], or other experimental compilations [32].

- Key Consideration: The size and quality of these datasets directly determine the knowledge base of your experts. Aim for datasets with thousands to tens of thousands of entries where possible [33].

Step 2: Pre-train Expert Models

- Action: Train individual neural network models (the "experts") on each of the datasets assembled in Step 1.

- Model Architecture: Standard Artificial Neural Networks (ANNs) or Graph Neural Networks (GNNs) are commonly used. For molecular data, models that take tokenized SMILES strings as input, or GNNs that operate on element graphs, are effective [3] [32].

- Output: The goal is to have fully trained models that can accurately predict their respective expert properties.

Step 3: Prepare Target Dataset

- Action: Compile your small, target dataset for the complex property you wish to predict (e.g., glass transition temperature Tg).

- Representation: Represent each molecule in this dataset using its tokenized SMILES string [3].

Step 4: Generate Molecular Fingerprints

- Action: Pass the tokenized SMILES strings from your target dataset through the pre-trained expert models.

- Output: The activations from an intermediate layer of each expert model are extracted. These activations serve as a "fingerprint" that encapsulates the chemical knowledge of each expert. These fingerprints are then used as the input features for the final EE model [3].

Step 5: Build and Train the EE Model

- Action: Construct a final model (e.g., a linear model or a shallow neural network) that takes the combined expert fingerprints as input and predicts the target property.

- Training: This final model is trained only on your small target dataset. Because the inputs are rich, knowledge-dense fingerprints, the model can learn effectively even with limited data.

Step 6: Evaluate and Deploy

- Action: Rigorously evaluate the EE model's performance using hold-out test sets or cross-validation. Compare its performance against a standard ANN trained directly on your small dataset to quantify the improvement.

- Deployment: Use the trained EE model to screen new candidate materials and predict their properties.
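Steps 4 and 5 can be sketched end to end with NumPy. This is a toy illustration under loud assumptions: the "experts" are single hidden layers with fixed random weights standing in for genuinely pre-trained networks, the inputs are plain numeric vectors standing in for encoded tokenized SMILES, and the final model is a ridge-regression head. The class and function names are hypothetical.

```python
import numpy as np

class FrozenExpert:
    """Stand-in for a pre-trained expert: one hidden layer with fixed weights."""
    def __init__(self, n_in, n_hidden, seed):
        r = np.random.default_rng(seed)
        self.W = r.normal(size=(n_in, n_hidden))
        self.b = r.normal(size=n_hidden)

    def fingerprint(self, X):
        """Return intermediate-layer activations used as the expert's fingerprint."""
        return np.tanh(X @ self.W + self.b)

def build_fingerprints(experts, X):
    """Step 4: concatenate the fingerprints from every expert."""
    return np.hstack([e.fingerprint(X) for e in experts])

def fit_head(F, y, alpha=1.0):
    """Step 5: ridge-regression head trained only on the small target dataset."""
    Fb = np.hstack([F, np.ones((len(F), 1))])
    return np.linalg.solve(Fb.T @ Fb + alpha * np.eye(Fb.shape[1]), Fb.T @ y)

def predict_head(w, F):
    """Apply the trained head to a fingerprint matrix."""
    return np.hstack([F, np.ones((len(F), 1))]) @ w

# Small target dataset: 25 samples, 6 input features (synthetic)
rng = np.random.default_rng(0)
X_small = rng.normal(size=(25, 6))
y_small = np.sin(X_small[:, 0]) + 0.5 * X_small[:, 1]

experts = [FrozenExpert(n_in=6, n_hidden=8, seed=s) for s in (1, 2, 3)]
F = build_fingerprints(experts, X_small)  # shape (25, 24)
w = fit_head(F, y_small)
preds = predict_head(w, F)
print(F.shape, preds[:3])
```

Only the head's weights are fitted on the target data, which is what keeps the number of trainable parameters small relative to training a full network from scratch.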

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and data resources essential for building an Ensemble of Experts framework.

| Item Name | Function / Role in the EE Workflow | Key Characteristics |

|---|---|---|

| Tokenized SMILES Strings | Represents molecular structure as a sequence of tokens for model input. | Enhances chemical interpretation compared to one-hot encoding; captures complex structural relationships [3]. |

| Graph Neural Networks (GNNs) | Serves as the architecture for expert models, especially for crystalline or molecular data. | Naturally represents materials as graphs (atoms=nodes, bonds=edges); automatically learns relevant features [33] [32]. |

| Bootstrap Aggregation (Bagging) | A resampling technique used to improve model stability and quantify uncertainty. | Trains multiple models on different subsets of data; combined outputs reduce variance and highlight outliers [32]. |

| Public Material Databases | Provides the large, high-quality datasets needed to pre-train the expert models. | Examples: Materials Project (DFT data), Supercon (superconductivity), NIST (experimental data) [32]. |

| Gating Function / Router | The mechanism within the EE that dynamically selects the most relevant expert(s) for a given input. | Critical for model efficiency and performance; often a linear function with softmax; must balance expert specialization with load balancing [31]. |
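To make the router row concrete, the sketch below shows a linear-plus-softmax gate together with the router z-loss mentioned in the troubleshooting table. It assumes the commonly cited form of the z-loss, the batch mean of the squared log-sum-exp of the router logits; the function names, shapes, and random inputs are illustrative and not taken from [31].

```python
import numpy as np

def router(x, W):
    """Linear router followed by a row-wise softmax over experts."""
    logits = x @ W                                        # (batch, n_experts)
    shifted = logits - logits.max(axis=1, keepdims=True)  # for numerical stability
    probs = np.exp(shifted)
    probs /= probs.sum(axis=1, keepdims=True)
    return logits, probs

def router_z_loss(logits):
    """Mean squared log-sum-exp of the logits; penalizes large router logits."""
    m = logits.max(axis=1, keepdims=True)
    lse = m[:, 0] + np.log(np.exp(logits - m).sum(axis=1))
    return float(np.mean(lse ** 2))

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 16))  # 5 inputs, 16 features
W = rng.normal(size=(16, 4))  # 4 experts
logits, probs = router(x, W)

z_small = router_z_loss(logits)
z_large = router_z_loss(10.0 * logits)  # inflated logits incur a larger penalty
z_zero = router_z_loss(np.zeros((2, 4)))
print(probs.sum(axis=1), z_small, z_large)
```

In training, this penalty would be added to the task loss with a small coefficient so that the gate stays numerically well behaved without dominating the objective.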

Accurate material property prediction is crucial for accelerating the discovery of new materials for applications in energy, catalysis, and drug development. Traditional methods, like Density Functional Theory (DFT), are computationally expensive, limiting large-scale screening [34]. While machine learning models, particularly Graph Neural Networks (GNNs), offer a faster alternative by representing materials as graphs (atoms as nodes, bonds as edges), they face significant challenges [34]. Data scarcity for specific properties (e.g., mechanical properties like elastic modulus) and difficulties in capturing complex global crystal structure and periodicity often lead to model overfitting and restricted performance [34]. Dual-stream GNN architectures represent a promising advancement by integrating multiple, complementary data processing pathways to create a more comprehensive and powerful representation of materials, thereby overcoming these fundamental limitations.

Frequently Asked Questions (FAQs)

Q1: My dual-stream model is overfitting on a data-scarce mechanical property dataset. What strategies can I use?

A1: For data-scarce properties like bulk or shear modulus, consider these approaches:

- Leverage Transfer Learning (TL): Pre-train your model on a data-rich "source task" (e.g., predicting formation energy or total energy) before fine-tuning it on your data-scarce "downstream task." This leverages learned features and acts as a regularizer to reduce overfitting [34].

- Employ a Modular Framework: Use a framework like MoMa, which centralizes specialized modules trained on diverse high-resource tasks. For a new, data-scarce task, an adaptive composition algorithm selects and combines the most synergistic modules, effectively transferring knowledge without retraining a full model from scratch [35].

- Utilize a Hybrid Architecture: Implement a model like CrysCo, which combines a structure-based GNN stream with a composition-based Transformer network. This allows the model to learn from both atomic structure and human-extracted compositional/physical properties, enriching the feature set even when structural data is limited [34].

Q2: How can I ensure my GNN captures both local atomic environments and global structural features of a crystal?

A2: Relying on a single, shallow GNN often fails to capture global context. To address this:

- Implement a Deeper GNN Architecture: Use a deep GNN model (e.g., with 10 layers) that explicitly incorporates higher-order interactions. The CrysGNN model, for instance, uses an Edge-Gated Attention Graph Neural Network (EGAT) to update representations based on up to four-body interactions (atom type, bond lengths, bond angles, and dihedral angles), capturing more complex structural patterns [34].

- Adopt a Dual-Stream Approach: A parallel architecture is highly effective. One stream (e.g., a GNN) can focus on local topological structure, while the other (e.g., a Transformer) captures global relationships and long-range dependencies beyond immediate neighbors [36]. A late fusion strategy then combines these complementary perspectives [36].

Q3: My model's predictions lack interpretability. How can I understand which atomic structures or compositions drive the results?

A3: For Text-Attributed Graphs (TAGs), you can use post-hoc explanation frameworks.

- Use an LLM-based Explainer: Frameworks like Logic project GNN node embeddings into the embedding space of a Large Language Model (LLM). The LLM then reasons over the GNN's internal representations to generate natural language explanations and concise explanation subgraphs, making the model's decision-making process more human-interpretable [37].

- Analyze Elemental Contributions: Some hybrid models, like the Transformer and Attention Network (TAN) in CoTAN, offer built-in interpretability by highlighting the importance of different elemental contributions from the composition, providing physical insights into the model's predictions [34].

Troubleshooting Common Experimental Issues

Problem: Poor Performance on Heterophilous Graphs

- Symptoms: Model performance is worse than a simple Multi-Layer Perceptron (MLP) that ignores graph structure.

- Background: GNNs were traditionally believed to work best on homophilous graphs, where connected nodes share similar features and labels. However, in material graphs, nodes (atoms) with different properties (e.g., different elements) can be connected (heterophily) [38].

- Solution: Do not assume GNNs are unsuitable for heterophily. GNNs can still achieve discriminative representations if nodes from the same class share a similar neighborhood distribution, even if the immediate neighbors are from a different class. The key is the consistency of the connection pattern [38]. Empirically validate your graph's properties rather than relying on assumptions.
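One way to validate a graph's properties empirically is to measure both its edge homophily and the per-class distribution of neighbor labels. The sketch below is a minimal illustration in plain Python; the function names and the toy graph are assumptions made for this example and are not taken from [38].

```python
from collections import Counter, defaultdict

def edge_homophily(edges, labels):
    """Fraction of edges whose two endpoints share a label."""
    same = sum(1 for u, v in edges if labels[u] == labels[v])
    return same / len(edges)

def neighborhood_distributions(edges, labels):
    """For each class, the distribution of labels among its neighbors
    (the graph is treated as undirected)."""
    counts = defaultdict(Counter)
    for u, v in edges:
        counts[labels[u]][labels[v]] += 1
        counts[labels[v]][labels[u]] += 1
    return {
        cls: {lab: n / sum(c.values()) for lab, n in c.items()}
        for cls, c in counts.items()
    }

# Toy graph (hypothetical): every edge joins an "A" node to a "B" node.
labels = {0: "A", 1: "B", 2: "A", 3: "B"}
edges = [(0, 1), (1, 2), (2, 3), (3, 0)]

h = edge_homophily(edges, labels)
dists = neighborhood_distributions(edges, labels)
print(h)      # 0.0
print(dists)  # {'A': {'B': 1.0}, 'B': {'A': 1.0}}
```

Here the homophily ratio is zero, yet every node of a given class sees the same neighborhood distribution. This is the consistent connection pattern described above, under which a GNN can still separate the classes.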

Problem: Ineffective Fusion of Dual Streams

- Symptoms: The combined model performs no better, or even worse, than the individual streams run independently.

- Background: Simply running two streams in parallel does not guarantee beneficial interaction. Poorly designed fusion can introduce noise or fail to leverage complementary information [36] [34].

- Solution:

  - Refine Fusion Strategy: Implement and test different fusion strategies, such as a weighted late fusion of the prediction scores (logits) from each stream, rather than simply averaging intermediate features [36].

  - Systematic Evaluation: Conduct an ablation study to rigorously test the contribution of each stream and the fusion mechanism. The table below shows a template for such an analysis.

Table: Template for Ablation Study on Fusion Strategy Performance

| Model Configuration | Test MAE (Formation Energy) | Test Accuracy (Band Gap Classification) |
|---|---|---|
| GNN Stream Only | | |
| Transformer Stream Only | | |
| Early Feature Fusion | | |
| Late Prediction Fusion | | |
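The weighted late fusion of logits suggested in the solution can be sketched as follows. This is a minimal NumPy illustration, not the implementation from [36]: the function names, the grid search over the fusion weight, and the validation logits are all assumptions made up for the example.

```python
import numpy as np

def late_fusion(logits_a, logits_b, alpha):
    """Weighted late fusion: alpha * stream A + (1 - alpha) * stream B."""
    return alpha * logits_a + (1.0 - alpha) * logits_b

def pick_alpha(logits_a, logits_b, y_val, grid=None):
    """Grid-search the fusion weight that maximises validation accuracy."""
    if grid is None:
        grid = np.linspace(0.0, 1.0, 11)
    best_alpha, best_acc = 0.0, -1.0
    for alpha in grid:
        pred = late_fusion(logits_a, logits_b, alpha).argmax(axis=1)
        acc = float((pred == y_val).mean())
        if acc > best_acc:  # keep the first weight that reaches the best score
            best_alpha, best_acc = float(alpha), acc
    return best_alpha, best_acc

# Made-up validation logits: 4 samples, 2 classes, one array per stream.
logits_gnn = np.array([[2.0, 0.0], [0.0, 1.0], [1.5, 0.0], [0.2, 0.0]])
logits_tf = np.array([[0.0, 1.0], [0.0, 2.0], [1.0, 0.0], [0.0, 3.0]])
y_val = np.array([0, 1, 0, 1])

acc_gnn = float((logits_gnn.argmax(axis=1) == y_val).mean())  # GNN stream only
acc_tf = float((logits_tf.argmax(axis=1) == y_val).mean())    # Transformer only
alpha, acc_fused = pick_alpha(logits_gnn, logits_tf, y_val)
print(acc_gnn, acc_tf, acc_fused)  # 0.75 0.75 1.0
```

In this toy case each stream misclassifies a different sample, so a suitable weight lets the fused model outperform both. The weight should be chosen on validation data only, so that the test rows of the ablation table remain unbiased.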

Experimental Protocols & Methodologies

Protocol 1: Implementing a Hybrid Transformer-Graph (CrysCo) Framework

This protocol is designed for predicting energy-related properties (e.g., formation energy, energy above convex hull) and data-scarce mechanical properties [34].

Data Preparation:

- Source: Use computational data from public databases like the Materials Project (MP).

- Inputs:

  - Crystal Structure Graph: Represent the crystal structure as a graph. The CrysGNN stream uses three distinct graphs: the original graph (Gδ), its line graph L(Gδ), and the line graph of the deltahedral graph L(Gδd) to capture four-body interactions [34].

  - Compositional Features: For the CoTAN stream, input compositional features and human-extracted physical properties [34].
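To make the role of line graphs concrete, the sketch below builds a generic line graph in plain Python: each bond becomes a node, and two bonds are linked when they share an atom, which corresponds to one bond angle. It is an illustration of the general idea only; it ignores periodic images and is not the exact graph construction used by CrysGNN. The function name and the toy chain are assumptions.

```python
from itertools import combinations

def line_graph(edges):
    """Return (nodes, links) of the line graph of an undirected graph.

    Each node of the line graph is one edge of the input graph. Two nodes
    are linked when the underlying edges share an endpoint; the shared
    endpoint is stored as the third element of the link.
    """
    nodes = [tuple(sorted(e)) for e in edges]
    links = []
    for a, b in combinations(nodes, 2):
        shared = set(a) & set(b)
        if shared:
            links.append((a, b, shared.pop()))
    return nodes, links

# Toy chain of four atoms: 0-1-2-3 (hypothetical example)
bonds, angles = line_graph([(0, 1), (1, 2), (2, 3)])

# Applying the construction once more pairs up angles that share a bond,
# which is where dihedral-like (four-body) information comes from.
_, dihedrals = line_graph([(a, b) for a, b, _ in angles])

print(angles)          # [((0, 1), (1, 2), 1), ((1, 2), (2, 3), 2)]
print(len(dihedrals))  # 1
```

A four-atom chain has three bonds, two bond angles, and a single dihedral, which matches the counts produced above.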

Model Architecture:

- Stream A (CrysGNN): A 10-layer Edge-Gated Attention GNN (EGAT) that performs message-passing on the three graph representations to update node (atom) and edge (bond) features, capturing complex local and multi-body interactions [34].

- Stream B (CoTAN): A Transformer and Attention network inspired by CrabNet that processes compositional data and learns from elemental relationships [34].

- Fusion: Train the two streams in a single, hybrid manner, allowing the model to simultaneously learn from structure and composition [34].

Training with Transfer Learning (for data-scarce tasks):

- Pre-train the entire CrysCo model on a large dataset of primary properties (e.g., formation energy).

- Use this pre-trained model as the initialization for fine-tuning on the smaller, target dataset (e.g., shear modulus) [34].
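The pre-train then fine-tune pattern can be illustrated without any deep learning framework. In the sketch below, a linear model trained by gradient descent stands in for the full CrysCo network, and synthetic data stand in for the source and target properties. Every name, size, and hyperparameter is an assumption chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def fit(X, y, w_init, lr=0.05, steps=200):
    """Gradient descent on mean squared error, starting from w_init."""
    w = w_init.copy()
    for _ in range(steps):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w

def mse(X, y, w):
    return float(np.mean((X @ w - y) ** 2))

d = 20
w_source = rng.normal(size=d)                   # stand-in for the data-rich task
w_target = w_source + 0.1 * rng.normal(size=d)  # related, data-scarce task

X_src = rng.normal(size=(5000, d))
y_src = X_src @ w_source
X_tgt = rng.normal(size=(10, d))                # only 10 labelled target samples
y_tgt = X_tgt @ w_target
X_test = rng.normal(size=(1000, d))
y_test = X_test @ w_target

w_pre = fit(X_src, y_src, np.zeros(d))                 # 1) pre-train on source
w_ft = fit(X_tgt, y_tgt, w_pre, steps=50)              # 2) fine-tune from checkpoint
w_scratch = fit(X_tgt, y_tgt, np.zeros(d), steps=50)   # baseline without pre-training

mse_ft = mse(X_test, y_test, w_ft)
mse_scratch = mse(X_test, y_test, w_scratch)
print(mse_ft, mse_scratch)
```

With fewer target samples than parameters, the target data alone cannot pin down the model. Starting from the pre-trained weights supplies the missing information, which is why the fine-tuned model should generalize better here than the one trained from scratch.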

Diagram: Dual-stream architecture for material property prediction.

Protocol 2: Adaptive Module Composition with MoMa

This protocol is for scenarios where you need to adapt quickly to multiple, disparate material property prediction tasks with varying data availability [35].

Module Training & Centralization:

- Train a multitude of specialized modules (e.g., for thermal, electronic, mechanical properties) on high-resource datasets. Each module can be a fully fine-tuned model or a parameter-efficient adapter.

- Centralize these trained modules in a repository called MoMa Hub [35].

Adaptive Module Composition (AMC):

- For a new downstream task, the AMC algorithm estimates the performance of each module in the hub on the target data in a training-free manner.

- It then heuristically optimizes a weighted combination of the most synergistic modules.

- The final step is to fine-tune this composed module on the target task for optimal adaptation [35].
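The composition step can be pictured with a deliberately simplified sketch. It scores frozen modules on a handful of labelled target samples without training, keeps the best ones, and weights them with a softmax over their negative errors. This is a hypothetical stand-in: the proxy and optimizer used by the published AMC algorithm [35] may differ, and all names and numbers below are assumptions.

```python
import numpy as np

def score_modules(module_preds, y):
    """Training-free proxy: mean absolute error of each frozen module."""
    return np.array([np.mean(np.abs(p - y)) for p in module_preds])

def compose_weights(errors, top_k=2, temperature=0.1):
    """Keep the top_k modules and weight them by a softmax over -error."""
    keep = np.argsort(errors)[:top_k]
    logits = -errors[keep] / temperature
    w = np.exp(logits - logits.max())  # subtract the max for numerical stability
    weights = np.zeros_like(errors)
    weights[keep] = w / w.sum()
    return weights

# Made-up outputs of three frozen hub modules on five target samples
y = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
module_preds = np.array([
    y + 0.1,          # module 0: close to the target property
    y - 0.3,          # module 1: somewhat related
    np.full(5, 3.0),  # module 2: unrelated, predicts a constant
])

errors = score_modules(module_preds, y)
weights = compose_weights(errors)
composed = weights @ module_preds  # starting point for the final fine-tuning
composed_error = float(np.mean(np.abs(composed - y)))
print(weights, composed_error)
```

In this toy case the two retained modules err in opposite directions, so the weighted combination has a lower error than the best single module. The composed model would then be fine-tuned on the target task as the protocol describes.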

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for Dual-Stream GNN Experiments

| Research Reagent | Function & Explanation |
|---|---|
| Crystallographic Data (e.g., from Materials Project) | Provides the foundational graph structure. Atomic coordinates and species define the nodes, while interatomic distances and bond types define the edges in the topological stream [34]. |
| Compositional Descriptors | These are the input features for the compositional stream. They can include stoichiometry, elemental properties (e.g., electronegativity, atomic radius), and other human-engineered features that describe the material's chemical makeup [34]. |
| Pre-trained Model Checkpoints (for Transfer Learning) | Models pre-trained on large, generic material datasets (e.g., formation energies). They act as a form of "pre-trained knowledge," providing a strong starting point to improve performance and convergence on data-scarce tasks [34] [35]. |
| Modular Framework (e.g., MoMa Hub) | A centralized repository of specialized, pre-trained modules. This allows researchers to "mix and match" expert modules without retraining from scratch, facilitating rapid adaptation to new prediction tasks and mitigating data scarcity [35]. |
| LLM Explanation Framework (e.g., Logic) | A tool for model interpretability. It translates the complex, internal representations of the GNN into natural language narratives and key subgraphs, helping researchers understand and trust the model's predictions on text-attributed graphs [37]. |

Fundamental Concepts & Data Acquisition

What is electronic charge density and why is it a physically-grounded descriptor?

Answer: Electronic charge density, denoted as ρ(r), is a fundamental quantum mechanical observable that describes the probability per unit volume of finding any electron at a specific point in space, expressed in units of eÅ⁻³ or atomic units [39] [40]. For an N-electron system, it is defined by ρ(r) = N ∫ ψ*ψ dτ, where ψ is the stationary state wavefunction and τ denotes the spin and spatial coordinates of all electrons but one [39].

Its role as a physically-grounded descriptor is anchored by the Hohenberg-Kohn theorem of Density Functional Theory (DFT), which establishes that the ground-state electron density uniquely determines all properties of a quantum system, including its total energy and wavefunction [22] [41]. This one-to-one correspondence makes it an excellent universal descriptor for machine learning models, as it inherently encodes information about atomic species, structural symmetry, chemical bonding, and valence electron states without requiring ad-hoc feature engineering [22].
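A direct consequence of the definition of ρ(r) is that it integrates to the number of electrons N over all space. This gives a quick sanity check for any density stored on a grid. The sketch below uses a synthetic Gaussian density; the box size, grid, and width are arbitrary assumptions.

```python
import numpy as np

def integrate_density(rho, cell_volume):
    """Riemann-sum estimate of the electron count: sum(rho) * dV."""
    dV = cell_volume / rho.size
    return float(rho.sum() * dV)

# Toy density: one Gaussian blob holding N = 8 electrons in a 10 Å cubic box
n_electrons, box, n_grid, sigma = 8.0, 10.0, 48, 0.8
axis = np.linspace(0.0, box, n_grid, endpoint=False)
x, y, z = np.meshgrid(axis, axis, axis, indexing="ij")
r2 = (x - box / 2) ** 2 + (y - box / 2) ** 2 + (z - box / 2) ** 2

# Normalised 3D Gaussian scaled by the electron count, in e/Å^3
rho = n_electrons * np.exp(-r2 / (2 * sigma**2)) / (2 * np.pi * sigma**2) ** 1.5

n_integrated = integrate_density(rho, box**3)
print(n_integrated)  # close to 8
```

When applying the same check to real files, confirm the storage convention first. VASP's CHGCAR, for example, stores the density multiplied by the cell volume rather than the density itself.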

How can I obtain the electronic charge density for a material?

Answer: You can acquire electronic charge density through both theoretical computation and experimental measurement.

| Method | Brief Description | Key Outputs/Analyses |
|---|---|---|
| Theoretical Calculation (DFT) | Uses quantum mechanical codes (e.g., VASP) to solve the Kohn-Sham equations iteratively until the self-consistent field (SCF) cycle converges [42] [41]. | CHGCAR files (VASP); cube files; total energy, band structure, bonding analysis [22] [41] [43]. |
| Experimental Measurement (X-ray Diffraction) | Measures the intensities of Bragg reflections. The electron density is reconstructed via Fourier summation and refined using multipolar models [39] [40]. | Deformation density maps; topological analysis of bonds; experimental structure factors [39] [40]. |

Experimental Protocol for X-ray Diffraction:

- Data Collection: Collect high-resolution, high-redundancy X-ray diffraction data on a single crystal at low temperature to minimize thermal vibrations [40].

- Structural Refinement: Perform a preliminary refinement of the crystal structure in the spherical atom approximation using software such as SHELX [39].

- Multipolar Model Refinement: Refine a multipolar expansion model (e.g., Hansen & Coppens model) against the measured structure factors using dedicated software such as XD or MoPro to obtain the static experimental electron density [39] [40].

What software can I use to visualize charge density and related fields?

Answer: Multiple software packages offer visualization capabilities, often directly reading output files from standard DFT codes.

- AMSview: This module from the Amsterdam Modeling Suite can visualize isosurfaces of the SCF density, color them by the electrostatic potential, and create difference density maps. It allows you to adjust grids for smoothness and use various colormaps [43].

- ChemTools: A Python package that can calculate and visualize the Electron Localization Function (ELF) and electron density from Gaussian cube files. It can also interpolate values to arbitrary points [44].

- VESTA, VMD, and Jmol are other widely used programs for visualizing volumetric data like charge density.

Technical Issues & Troubleshooting

My DFT calculations are slow to converge or fail to converge. How can charge density help?

Answer: Slow SCF convergence is a common bottleneck. Machine-learning models can predict a highly accurate initial charge density, which can serve as an excellent starting point for the DFT calculation, significantly reducing the number of SCF iterations required.

Experimental Evidence: ChargE3Net, trained on more than 100,000 materials from the Materials Project, was used to initialize DFT calculations on unseen materials. This reduced the median number of SCF steps by 26.7% compared to standard initialization methods [42].

I work with large systems; can I use machine learning to predict charge density efficiently?

Answer: Yes, this is an active and promising research area. The key is to use models whose cost scales linearly with system size. For example, the ChargE3Net architecture has demonstrated the capability to predict charge density for systems containing over 10,000 atoms, a scale that is computationally prohibitive for standard DFT calculations because of their O(N³) scaling [42].

My charge density data has different grid dimensions for different materials, which is problematic for my ML model. How can I standardize this?

Answer: This is a major challenge when using 3D grid-based data for machine learning. The solution is to bring every density onto the same grid, or into the same fixed-size representation, before it reaches the model.

Standardization Protocol:

- Fourier Interpolation: Leverage the periodicity of the crystal. Take the discrete Fourier transform of your real-space charge density grid, pad the spectrum with zero-valued high-frequency components, and then apply the inverse transform to obtain a consistently up-sampled grid [41].

- Resampling to a Common Grid: Use the up-sampled data to resample the charge density onto a common, material-agnostic grid using linear interpolation, ensuring all data inputs have a unified dimension for the ML model [22] [41].

- Image Representation: Some modern frameworks convert the 3D charge density matrix into a series of 2D image snapshots along specific crystal directions, which can then be processed by standard convolutional neural networks (CNNs) [22].

Diagram 1: Data standardization workflow for machine learning.
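The Fourier interpolation step of the protocol can be sketched with NumPy's FFT routines. This is a minimal illustration that assumes a fully periodic grid and a target shape at least as large as the input along every axis. The function name and the test signal are assumptions, and the sketch up-samples directly to the common shape rather than performing a separate linear resampling step.

```python
import numpy as np

def fourier_upsample(rho, new_shape):
    """Up-sample a periodic 3D grid by zero-padding its Fourier spectrum.

    Assumes new_shape[i] >= rho.shape[i] along every axis.
    """
    old_shape = rho.shape
    spec = np.fft.fftshift(np.fft.fftn(rho))  # zero frequency at index n // 2
    padded = np.zeros(new_shape, dtype=complex)
    # Copy the old spectrum so that the zero frequencies line up.
    slices = tuple(
        slice(n // 2 - o // 2, n // 2 - o // 2 + o)
        for o, n in zip(old_shape, new_shape)
    )
    padded[slices] = spec
    out = np.fft.ifftn(np.fft.ifftshift(padded)).real
    # ifftn divides by the new point count; rescale to preserve the values.
    return out * (np.prod(new_shape) / np.prod(old_shape))

def sample(shape):
    """Smooth periodic test field evaluated on a grid of the given shape."""
    grids = [np.arange(n) / n for n in shape]
    x, y, z = np.meshgrid(*grids, indexing="ij")
    return 2.0 + np.cos(2 * np.pi * x) + np.sin(4 * np.pi * y) * np.cos(2 * np.pi * z)

coarse = sample((8, 10, 12))                   # material-specific grid
fine = fourier_upsample(coarse, (16, 16, 16))  # common grid for the ML model
max_err = float(np.abs(fine - sample((16, 16, 16))).max())
print(fine.shape, max_err)
```

Because the test field is band-limited, the up-sampled grid matches the analytic values to numerical precision. The 2D snapshots mentioned in the last step of the protocol can then be taken as plain slices of the standardized array, for example `fine[:, :, k]`.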

Data Handling & Modeling Challenges

How can I ensure my ML-predicted charge density is physically meaningful?

Answer: To ensure physical meaningfulness, your machine learning model must respect the inherent symmetries of the system. This is achieved by building E(3)-equivariance into the model architecture. E(3)-equivariance means that rotating, reflecting, or translating the input atomic system transforms the output charge density field in exactly the same way.

Implementation with Higher-Order Tensors: Modern architectures like ChargE3Net go beyond simple scalar and vector features. They use higher-order equivariant features in the form of irreducible representations (irreps) of the SO(3) rotation group. These features are operated on using equivariant functions like the tensor product (governed by Clebsch-Gordan coefficients), which guarantees that the model's predictions transform correctly under symmetry operations, leading to more accurate and physically credible results [42].
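Whatever architecture is used, the symmetry property itself can be tested numerically: transform the atoms and the query points together and check that the predicted density values are unchanged. The sketch below applies such a test to a toy density built from atom-centred Gaussians, which satisfies the property by construction because it depends only on distances. It is not ChargE3Net and uses no irreps or tensor products; all names are assumptions.

```python
import numpy as np

def toy_density(points, atoms, width=0.5):
    """Sum of atom-centred Gaussians; depends only on point-atom distances."""
    d2 = ((points[:, None, :] - atoms[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-d2 / (2 * width**2)).sum(axis=1)

def random_rotation(rng):
    """Random proper rotation from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))  # fix the sign ambiguity of the factorisation
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]       # flip one axis so that det(q) = +1
    return q

rng = np.random.default_rng(0)
atoms = rng.normal(size=(5, 3))     # hypothetical atomic positions
points = rng.normal(size=(100, 3))  # query points for the density
Q, t = random_rotation(rng), rng.normal(size=3)

rho_before = toy_density(points, atoms)
# Apply the same rotation and translation to the atoms and the query points.
rho_after = toy_density(points @ Q.T + t, atoms @ Q.T + t)
max_dev = float(np.abs(rho_before - rho_after).max())
print(max_dev)  # numerically zero for an equivariant model
```

The same harness can wrap a trained model in place of `toy_density`. A deviation well above floating-point noise would indicate that the model does not respect the symmetry.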

What is the typical accuracy of ML-predicted charge densities?

Answer: The accuracy is quantitatively measured by how well the ML-predicted density reproduces the DFT-calculated ground truth. Performance varies by model and dataset, but current state-of-the-art models show high fidelity. The table below summarizes key quantitative findings from recent research.

Table 2: Performance Metrics of ML Models for Charge Density Prediction

| Model / Study | Dataset | Key Performance Metric | Result |
|---|---|---|---|
| Universal MSA-3DCNN [22] | Materials Project | Average Coefficient of Determination (R²) | R² = 0.66 (Single-Task), R² = 0.78 (Multi-Task) |
| ChargE3Net [42] | Diverse Molecules & Materials | Reduction in SCF Iterations | 26.7% median reduction on unseen materials |
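For reference, the coefficient of determination reported in the table can be computed as in the short sketch below. The numbers are made up for illustration and are unrelated to the results in [22].

```python
import numpy as np

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

# Hypothetical reference values and model predictions for one property
y_true = np.array([1.0, 2.0, 3.0, 4.0])
y_pred = np.array([1.1, 1.9, 3.2, 3.8])

r2 = r2_score(y_true, y_pred)
print(round(r2, 2))  # 0.98
```

An average R² across tasks, as reported for the multi-task setting, would be the mean of this quantity computed separately for each property.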