Orthogonal Characterization in Autonomous Workflows: Ensuring Reliability in AI-Driven Scientific Discovery

The integration of autonomous artificial intelligence (AI) with orthogonal characterization—the use of multiple, independent analytical methods—is revolutionizing scientific research and drug development.

Orthogonal Characterization in Autonomous Workflows: Ensuring Reliability in AI-Driven Scientific Discovery

Abstract

The integration of autonomous artificial intelligence (AI) with orthogonal characterization—the use of multiple, independent analytical methods—is revolutionizing scientific research and drug development. This article explores the foundational principles of this synergy, demonstrating how it enhances the reliability, reproducibility, and decision-making capabilities of self-driving laboratories. By examining real-world applications from chemical synthesis to biopharmaceutical profiling, we provide a methodological framework for implementation, address key troubleshooting and optimization challenges, and present validation strategies that compare autonomous systems against conventional research. This synthesis is intended to equip researchers and development professionals with the knowledge to build more robust, trustworthy, and efficient AI-driven research platforms.

The Pillars of Trust: Defining Orthogonal Characterization and Agentic Autonomy

The landscape of artificial intelligence in science is undergoing a fundamental transformation, evolving from narrowly-scoped computational tools toward autonomous, end-to-end research partners. This progression marks a pivotal stage in the AI for Science paradigm, where AI systems have moved from acting as computational oracles for targeted tasks toward the emergence of what is now termed Agentic Science [1]. In this advanced stage, AI operates as an autonomous scientific agent capable of formulating hypotheses, designing and executing experiments, interpreting results, and iteratively refining theories with significantly reduced human guidance [1]. This evolution is particularly pronounced in fields like drug development and synthetic chemistry, where the integration of orthogonal characterization techniques—using multiple, independent measurement methods to validate findings—has become a critical component of autonomous workflows [2]. The shift from tools to partners represents not merely improved algorithms but a fundamental reimagining of the scientific process itself, with AI systems now demonstrating capabilities in complex reasoning, planning, and collaborative problem-solving that were once considered exclusively human domains [3] [1].

Defining the Spectrum: From AI Tools to Autonomous Partners

The transition to Agentic Science can be understood as an evolution through distinct levels of autonomy and capability. This progression begins with AI as a specialized tool and advances toward AI as a fully autonomous scientific partner. The terminology surrounding this field has crystallized into three distinct but interconnected concepts: AI Agents, Agentic AI, and Autonomous AI [3].

Table 1: Key Definitions in the Spectrum of Scientific AI

| Term | Definition | Core Characteristics | Scientific Analogy |

|---|---|---|---|

| AI Agents [3] | Foundational systems that perceive their environment and act to meet predefined goals within fixed rules. | Task-specific automation, limited adaptability, reliable in predictable environments. | A specialized lab instrument that performs a single, repetitive measurement. |

| Agentic AI [3] [4] | Systems that exhibit planning, learning, and context-aware adaptability for dynamic goal achievement. | Multi-step reasoning, dynamic task decomposition, adaptability to new information, collaboration. | A research assistant who can plan a series of experiments and adjust protocols based on initial results. |

| Autonomous AI [3] [1] | Systems capable of self-initiated decision-making and long-term planning with minimal human oversight. | Self-initiation, adaptation to novel situations, long-term planning, minimal supervision. | A principal investigator who defines research directions, formulates hypotheses, and directs entire projects. |

The conceptual relationship between these systems can be visualized as a progressive increase in capabilities, with each stage building upon the last.

Diagram 1: The AI Autonomy Spectrum

The Evolutionary Stages of AI in Science

Formally, this evolution can be categorized into distinct levels of scientific autonomy:

Level 1: AI as a Computational Oracle (Expert Tools): At this foundational level, AI operates as a collection of highly specialized, non-agentic models designed to solve discrete, well-defined problems within a human-led workflow. These expert tools excel at tasks such as prediction and generation but lack autonomy; they function as sophisticated function approximators that require constant human guidance for task definition, execution, and interpretation of results [1]. The core of the scientific process remains entirely in the hands of the human researcher.

Level 2: AI as an Automated Research Assistant (Partial Agentic Discovery): This level marks the introduction of AI as an Automated Research Assistant. Here, AI systems exhibit partial autonomy, functioning as agents that can execute specific, pre-defined stages of the research workflow. These agents can integrate multiple tools and carry out sequences of actions to complete well-defined sub-goals, such as running a series of experiments or performing a standardized data analysis pipeline. However, the high-level scientific direction, including the initial hypothesis, is still provided by human researchers [1].

Level 3: AI as an Autonomous Research Partner (Full Agentic Discovery): This represents the current frontier of Agentic Science, where AI systems operate as full research partners capable of end-to-end scientific investigation. These systems can formulate novel hypotheses, design complete experimental campaigns, execute methodologies through integrated platforms, analyze resulting data, and iteratively refine their understanding with minimal human intervention [1]. This level is characterized by robust multi-agent collaboration, where different AI specialists (e.g., design agents, analysis agents, validation agents) work in concert to solve complex problems [4] [1].

Case Study: Autonomous Discovery in Synthetic Chemistry

A landmark demonstration of Level 3 Autonomous AI recently emerged from synthetic chemistry, where researchers developed a modular autonomous platform for general exploratory synthesis using mobile robots [2]. This system exemplifies the core principles of Agentic Science and provides a compelling case study for evaluating orthogonal characterization in autonomous workflows.

Experimental Protocol and Workflow Design

The autonomous chemistry platform was designed to mimic human decision-making processes while leveraging the persistence and precision of robotic systems. The methodology centered on a closed-loop synthesis-analysis-decision cycle that integrated multiple analytical techniques for robust characterization [2].

Table 2: Core Experimental Protocol for Autonomous Chemical Discovery

| Protocol Phase | Description | Agentic Capability Demonstrated |

|---|---|---|

| Automated Synthesis | Reactions performed using a Chemspeed ISynth synthesizer with automated aliquot sampling and reformatting for different analysis types. | Task execution, sample handling |

| Orthogonal Characterization | Samples autonomously transported by mobile robots to UPLC-MS and benchtop NMR instruments for parallel analysis. | Tool integration, multi-modal perception |

| Heuristic Decision-Making | Custom algorithm processes both UPLC-MS and NMR data to provide binary pass/fail grading based on expert-defined criteria. | Reasoning, decision logic, goal orientation |

| Workflow Progression | System autonomously selects successful reactions for scale-up or further elaboration based on combined analytical results. | Planning, iterative learning, goal achievement |

The complete workflow, integrating physical robotics with algorithmic decision-making, represents a sophisticated embodiment of agentic science principles.

Diagram 2: Autonomous Chemistry Workflow

The Scientist's Toolkit: Research Reagent Solutions

The successful implementation of this autonomous workflow depended on carefully selected research reagents and instrumentation that enabled reliable, reproducible operations with minimal human intervention.

Table 3: Essential Research Reagents and Platforms for Autonomous Discovery

| Tool/Platform | Function | Role in Autonomous Workflow |

|---|---|---|

| Chemspeed ISynth Synthesizer | Automated chemical synthesis platform | Core reaction execution with integrated aliquot sampling |

| Mobile Robots with Multipurpose Grippers | Sample transportation and equipment operation | Physical linkage between modules; enables shared equipment use |

| UPLC-MS System | Ultra-high performance liquid chromatography with mass spectrometry | Primary characterization providing molecular weight and purity data |

| Benchtop NMR Spectrometer | Nuclear magnetic resonance spectroscopy | Orthogonal characterization for structural elucidation |

| Heuristic Decision Algorithm | Custom software for data interpretation | Autonomous decision-making based on multiple analytical inputs |

| Python Control Scripts | Customizable automation protocols | Orchestrates data acquisition and instrument control |

Orthogonal Characterization in Autonomous Workflows

A critical innovation in this platform was its emphasis on orthogonal characterization through combining UPLC-MS and NMR spectroscopic analysis [2]. Unlike earlier autonomous systems that relied on single analytical techniques, this approach mirrored human experimental practice by employing multiple, independent measurement methods to validate findings. This orthogonal methodology was particularly valuable for exploratory synthesis where reactions could yield multiple potential products, such as in supramolecular self-assembly processes [2]. The heuristic decision-maker processed these orthogonal datasets to make context-aware decisions about which reactions to advance, effectively dealing with the complexity inherent in chemical discovery where some products might yield complex NMR spectra but simple mass spectra, while others showed the reverse behavior [2].

Performance Comparison: Quantitative Assessment of AI Systems

Evaluating the performance of agentic AI systems requires multiple metrics beyond traditional computational benchmarks. The following comparative analysis examines both the capabilities and current limitations of these systems across different domains and task types.

Table 4: Performance Comparison of AI Systems Across Domains

| Domain/System | Key Performance Metrics | Strengths | Limitations/Challenges |

|---|---|---|---|

| Synthetic Chemistry Automation [2] | Successful autonomous navigation of multi-step synthetic pathways; Integration of orthogonal characterization (UPLC-MS + NMR) | Human-like decision-making; Equipment sharing without lab monopolization; Handling of exploratory synthesis | Limited to predefined chemistry spaces; Heuristic rules may overlook novel phenomena |

| Software Development [5] | -19% speed impact on experienced developers; 20-24% expected vs. actual performance gap | Effective for algorithmic tasks and benchmarks; Useful for prototyping and single-use code | Slows developers on complex, real-world codebases; Struggles with implicit requirements and high-quality standards |

| Drug Discovery Platforms [6] | AI-designed drugs reaching clinical trials in ~2 years vs. traditional ~5 years; 70% faster design cycles with 10x fewer compounds | Dramatically compressed discovery timelines; Efficient lead optimization; Integration of patient-derived biology | No AI-discovered drugs fully approved yet; Questions about better success vs. faster failure |

| Scientific Benchmark Performance [7] | 18.8-67.3 percentage point increases on demanding new benchmarks (MMMU, GPQA, SWE-bench) | Rapid performance improvements on specialized tasks; High scores on algorithmic evaluation | Performance may not translate to real-world scientific tasks; Potential for overestimation of capabilities |

The Validation Challenge: Reconciling Different Performance Metrics

The performance data reveals significant disparities between AI capabilities measured in controlled benchmarks versus real-world applications. While AI systems demonstrate impressive results on specialized benchmarks—with scores on demanding tests like MMMU, GPQA, and SWE-bench increasing by 18.8, 48.9, and 67.3 percentage points respectively [7]—their performance in practical scientific settings reveals important limitations. For instance, a randomized controlled trial with experienced software developers found that AI assistance actually resulted in a 19% slowdown when working on real-world codebases from large open-source projects [5]. This contrast highlights the critical importance of orthogonal validation methodologies that assess AI systems not just through algorithmic benchmarks but through realistic workflow integration and outcome measurement.

Current Landscape and Future Trajectory

AI in Pharmaceutical Development: The 2025 Outlook

The pharmaceutical industry represents a critical testing ground for Agentic Science, with AI-driven platforms demonstrating tangible progress. By mid-2025, over 75 AI-derived drug candidates had reached clinical stages, representing exponential growth from essentially zero in 2020 [6]. Leading AI drug discovery companies have advanced candidates into clinical trials, with notable examples including:

- Insilico Medicine's generative-AI-designed idiopathic pulmonary fibrosis drug progressing from target discovery to Phase I in 18 months, compared to the typical 5-year timeline [6].

- Exscientia's platform achieving approximately 70% faster design cycles while requiring 10x fewer synthesized compounds than industry norms [6].

- Schrödinger's physics-enabled design strategy advancing the TYK2 inhibitor zasocitinib into Phase III clinical trials [6].

The U.S. Food and Drug Administration (FDA) has recognized this trend, reporting a significant increase in drug application submissions using AI/ML components, and has established the CDER AI Council in 2024 to provide oversight and coordination of AI-related activities [8]. This regulatory engagement underscores the transition of AI from experimental curiosity to clinical utility.

Technical and Implementation Challenges

Despite promising advances, Agentic Science faces significant hurdles before achieving widespread adoption:

Reproducibility and Validation: The ability of autonomous AI systems to make genuinely novel discoveries that are reproducible and valid remains unproven. As noted in one survey, "AI's advancing capabilities have captured policymakers' attention, leading to an increase in AI-related policies worldwide" [7], reflecting concerns about reliability and accountability.

Integration with Existing Infrastructure: Successful autonomous systems must operate within established laboratory environments without monopolizing equipment or requiring extensive redesign. The mobile robot approach in synthetic chemistry demonstrates one solution, enabling "robots to share existing laboratory equipment with human researchers without monopolizing it" [2].

Reasoning Limitations: Current AI systems still struggle with complex reasoning benchmarks. As the 2025 AI Index Report notes, AI models "often fail to reliably solve logic tasks even when provably correct solutions exist, limiting their effectiveness in high-stakes settings where precision is critical" [7].

Trust and Communication Barriers: In pharmaceutical applications, concerns about "data security, algorithmic bias, and the reproducibility of AI's predictions contribute to hesitation among stakeholders" [9]. Bridging communication gaps between domain scientists and AI specialists remains challenging.

The evolution from AI tools to autonomous partners represents a fundamental transformation in scientific methodology. The integration of orthogonal characterization approaches—both in analytical techniques and performance validation—will be crucial for advancing Agentic Science from demonstration projects to reliable research partners. As autonomous systems increasingly handle exploratory tasks in complex domains like synthetic chemistry and drug discovery, their ability to leverage multiple, independent measurement and validation techniques will separate symbolic automation from genuine scientific advancement.

The most promising developments combine sophisticated AI reasoning with physical laboratory automation, creating closed-loop systems that can navigate the iterative, often ambiguous nature of scientific discovery. As these systems evolve, the focus must remain on robust validation, transparent methodology, and complementary human-AI collaboration rather than wholesale replacement of human researchers. The future of Agentic Science lies not in autonomous systems working in isolation, but in effectively orchestrated partnerships that leverage the unique strengths of both human and artificial intelligence to accelerate the pace of scientific discovery.

Defining Orthogonal Characterization

In scientific research and development, orthogonal characterization refers to the strategy of using multiple, independent analytical methods to measure the same essential property of a sample. The core principle is that each technique operates on a different physical or chemical measurement principle, thus providing independent data streams that cross-validate one another [10] [11].

This approach is fundamentally linked to complementary methods, but with a key distinction:

- Orthogonal Methods target the same property (e.g., particle size) using different physical principles (e.g., flow imaging microscopy vs. light obscuration) to minimize method-specific biases and provide independent confirmation [10] [11].

- Complementary Methods provide information about different properties of a sample (e.g., particle size and protein conformation) to build a more comprehensive profile [10].

The power of orthogonality lies in its ability to mitigate the inherent biases and limitations of any single analytical technique. By comparing results from methods with different systematic errors, scientists can achieve a more accurate and reliable measurement of Critical Quality Attributes (CQAs), which are essential for ensuring the safety and efficacy of products like biopharmaceuticals [10] [12].

The Critical Role of Orthogonal Characterization

Orthogonal characterization matters because it is a cornerstone of reliability and accuracy in complex scientific fields. Its importance is most evident in several key areas:

Ensuring Product Quality and Safety

In the pharmaceutical and biopharmaceutical industries, orthogonal methods are essential for characterizing complex biological products like monoclonal antibodies, vaccines, and cell therapies [12]. For instance, combining Flow Imaging Microscopy (FIM) with Light Obscuration (LO) provides a more accurate assessment of subvisible particles and protein aggregates in a drug product than either method alone, ensuring batch consistency and patient safety [10].

Building Robust Analytical Methods

During drug development, orthogonal methods are used to validate primary analytical techniques. As shown in Table 1, a systematic approach using multiple chromatographic conditions can reveal impurities or degradation products that a single method might miss, ensuring the primary control method is truly stability-indicating [13].

Enabling Autonomous Discovery

The use of orthogonal data is becoming crucial for advanced research workflows, including autonomous laboratories. A 2024 study in Nature demonstrated a robotic platform that uses UPLC-MS and benchtop NMR to autonomously characterize reaction outcomes. The heuristic decision-maker processes this orthogonal data to select successful reactions for further exploration, mimicking the multifaceted decision-making of a human researcher [14].

Table 1: Summary of Orthogonal Method Applications Across Industries

| Field/Industry | Common Orthogonal Technique Pairs | Property Measured | Primary Benefit |

|---|---|---|---|

| Biopharmaceuticals | Flow Imaging Microscopy (FIM) & Light Obscuration (LO) [10] | Subvisible particle size & concentration | Cross-validation for accurate particle counting and regulatory compliance. |

| Analytical Chemistry | Multiple HPLC methods with different columns and mobile phases [13] | Impurity and degradation product profiles | Ensures no critical impurities are overlooked by the primary stability-indicating method. |

| Antibody Engineering | Dynamic Light Scattering (DLS), Size Exclusion Chromatography (SEC), & Mass Photometry [15] | Protein aggregation, size, and oligomeric state | Robust evaluation of conformational stability and aggregation propensity. |

| Autonomous Chemistry | UPLC-MS & Benchtop NMR Spectroscopy [14] | Reaction outcome and product identity | Enables robotic platforms to make reliable, human-like decisions on synthetic success. |

Experimental Protocols: Implementing an Orthogonal Workflow

The following case studies illustrate detailed protocols for implementing orthogonal characterization.

Case Study 1: Orthogonal Screening for HPLC Method Development

This protocol ensures a primary HPLC method can separate all potential impurities and degradation products [13].

- Sample Generation: Collect all available batches of drug substance and product. Generate potential degradation products through forced decomposition studies (e.g., exposure to heat, light, acid, base, oxidants).

- Initial Screening: Analyze the generated samples using a single, broad generic gradient HPLC method to identify samples with unique impurity profiles for further study.

- Orthogonal Screening: Screen the selected samples using a matrix of 36 different chromatographic conditions. This typically involves six different broad gradients, each run on six different column chemistries (e.g., C18, C8, PFP, phenyl) with varying pH modifiers (e.g., formic acid, trifluoroacetic acid, ammonium acetate) [13].

- Method Selection & Optimization: From the screening data, select a primary method that separates all components of interest. Identify a second, orthogonal method that provides a distinctly different selectivity profile. Use modeling software to fine-tune both methods.

- Validation and Deployment: Validate the primary method for release and stability testing. Use the orthogonal method to screen samples from new synthetic routes or pivotal stability studies to ensure the primary method remains specific over time [13].

Case Study 2: Orthogonal Analysis of Engineered Antibodies

This protocol characterizes the stability and aggregation propensity of various antibody constructs (e.g., full-length IgG, scFv fragments) [15].

- Sample Preparation: Express and purify the panel of antibody constructs (e.g., in Expi293 cells using transient transfection and Protein-G purification).

- Multi-Technique Analysis: Subject each construct to a suite of orthogonal analytical techniques:

- Size Exclusion Chromatography (SEC): To monitor oligomeric state and quantify soluble aggregates.

- Dynamic Light Scattering (DLS): To determine hydrodynamic size distribution and polydispersity.

- Mass Photometry: To measure molecular mass and quantify oligomers in solution.

- nano-Differential Scanning Fluorimetry (nanoDSF): To assess thermal stability by measuring protein unfolding.

- Circular Dichroism (CD): To evaluate secondary and tertiary structure.

- Data Integration: Correlate findings across all techniques. For example, an early elution peak in SEC, an increase in polydispersity from DLS, and a shift in thermal unfolding from nanoDSF collectively provide orthogonal confirmation of reduced stability and increased aggregation propensity in engineered fragments compared to full-length antibodies [15].

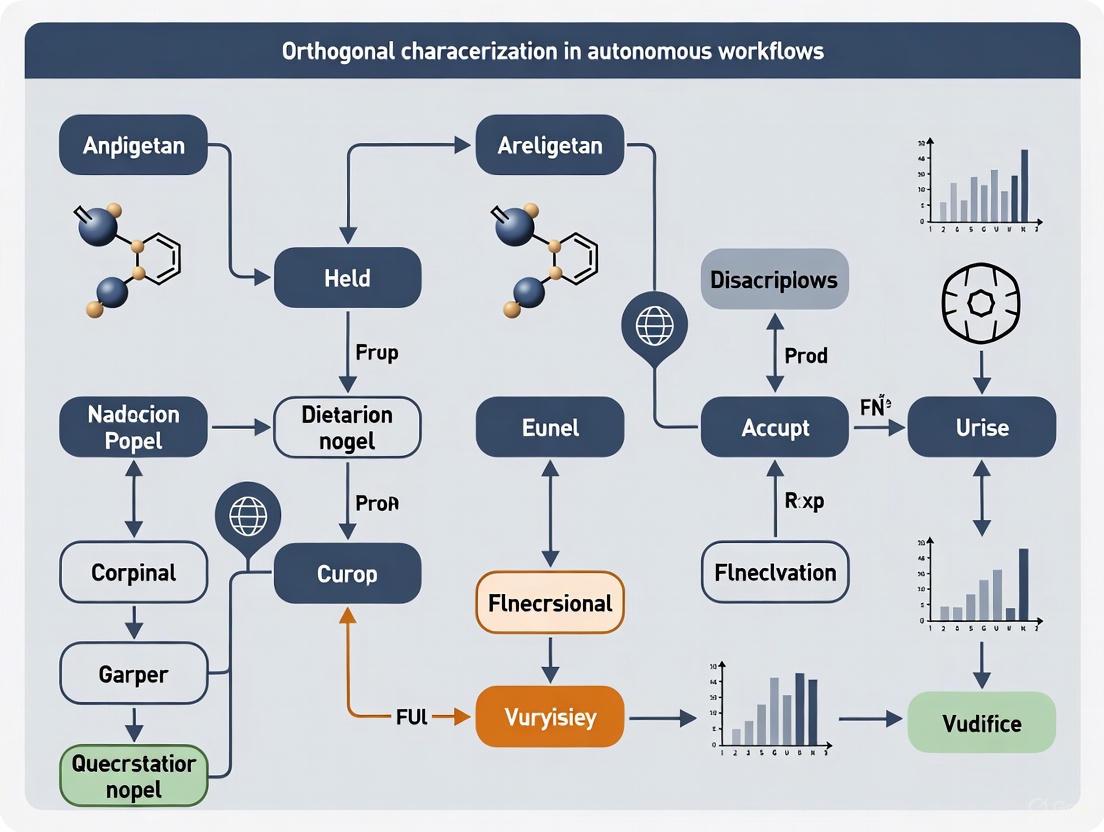

Orthogonal Characterization in Autonomous Workflows

The integration of orthogonal characterization is a key enabler for the next generation of autonomous laboratories. The workflow, as demonstrated by the mobile robot platform, can be visualized as a cyclic process of synthesis, orthogonal analysis, and heuristic decision-making.

Diagram 1: Autonomous Orthogonal Workflow. This cycle shows how a synthesis platform, coupled with orthogonal analysis and a decision-maker, can operate autonomously.

In this workflow, the robot handles samples and operates standard, unmodified laboratory equipment like UPLC-MS and NMR spectrometers [14]. The "heuristic decision-maker" processes the orthogonal data streams (e.g., MS molecular weight information and NMR structural information) to assign a pass/fail grade to each reaction. This allows the system to autonomously select successful reactions for scale-up or further diversification, and to check the reproducibility of screening hits, all based on multifaceted data that mimics human judgment [14].

The Scientist's Toolkit: Key Reagents and Instruments

Table 2: Essential Research Solutions for Orthogonal Characterization

| Category | Item / Technique | Primary Function in Orthogonal Workflows |

|---|---|---|

| Separation & Analysis | Size Exclusion Chromatography (SEC) | Separates biomolecules by size to analyze aggregation and oligomeric state [15]. |

| Dynamic Light Scattering (DLS) | Measures hydrodynamic size distribution and polydispersity of particles in solution [15]. | |

| UPLC/HPLC-MS | Separates complex mixtures (UPLC/HPLC) and provides molecular weight/identity data (MS) [14]. | |

| Structural Analysis | Nuclear Magnetic Resonance (NMR) | Provides detailed information on molecular structure, dynamics, and environment [14]. |

| Circular Dichroism (CD) | Assesses protein secondary and tertiary structure and folding stability [15]. | |

| nanoDSF | Measures thermal unfolding to evaluate protein conformational stability [15]. | |

| Imaging & Counting | Flow Imaging Microscopy (FIM) | Takes images of individual particles for size, count, and morphological analysis [10]. |

| Light Obscuration (LO) | Counts and sizes particles based on light blockage, often for pharmacopeial compliance [10]. | |

| Material Characterization | Orthogonal Experimental Design | Statistically optimizes multiple parameters (e.g., in battery thermal management) with minimal experimental runs [16] [17]. |

Orthogonal characterization is far more than a technical best practice; it is a fundamental paradigm for ensuring data integrity and making reliable decisions in science. By deliberately employing multiple independent measurement techniques, researchers can control for methodological biases, uncover hidden complexities, and build a more truthful understanding of their samples. As scientific challenges grow more complex, particularly with the advent of autonomous discovery platforms, the principle of orthogonality will remain a critical tool for ensuring that our measurements are robust, our products are safe, and our discoveries are sound.

The evolution of autonomous scientific systems represents a fundamental shift in research methodology, moving from single-measurement optimization to multifaceted, data-rich decision-making. Autonomous laboratories, particularly in fields like chemical synthesis and drug discovery, now demonstrate that integrating multiple, independent data streams significantly enhances the robustness and discovery potential of self-directed research. This approach, termed orthogonal characterization, leverages complementary analytical techniques to create a more comprehensive understanding of experimental outcomes than any single method could provide. Unlike traditional automated systems designed to maximize a single, known output, modern autonomous workflows must navigate complex, open-ended problems where multiple potential outcomes exist and the "correct" answer may not be predefined. The synergy created by fusing these orthogonal data streams enables autonomous systems to make nuanced decisions that more closely emulate human expert reasoning, thereby accelerating scientific discovery while remaining open to novel findings that might otherwise be overlooked.

Theoretical Foundation: From Single-Stream to Multi-Stream Data Integration

The Limitations of Single-Stream Automation

Traditional automated research workflows often rely on bespoke equipment with hard-wired characterization techniques, forcing decision-making algorithms to operate with limited analytical information [14]. This single-stream approach works adequately for well-defined optimization problems, such as maximizing the yield of a known catalyst, where a single scalar output (e.g., chromatographic peak area) suffices [14]. However, it fails dramatically in exploratory science where outcomes are multivariate and unknown in advance. In drug discovery, for instance, early-stage research has seen widespread AI adoption (76% of use cases in molecule discovery), while later clinical phases remain cautious (only 3% in clinical outcomes analysis), partly due to limitations in validation frameworks for complex, multi-faceted decision-making [18].

The Principle of Orthogonality in Data Streams

Orthogonal characterization combines measurement techniques that provide independent, non-redundant information about a system's properties. The power of this approach lies in the statistical independence of the data streams - where one method might fail or provide ambiguous results, another offers complementary insights. For example, in chemical synthesis, mass spectrometry reveals molecular weight information, while nuclear magnetic resonance spectroscopy elucidates molecular structure [14]. A product might yield highly complex NMR spectra but simple mass spectra, or vice versa [14]. Autonomous systems leveraging such orthogonal measurements can make context-based decisions about which data streams to prioritize, much like human researchers do, creating decision-making resilience that single-characterization systems lack.

Experimental Evidence: Quantitative Comparisons of Workflow Performance

Case Study: Autonomous Exploratory Chemistry

A landmark study in Nature (2024) directly demonstrates the superiority of multi-stream autonomous workflows. Researchers developed a modular platform using mobile robots to operate a synthesis platform, UPLC-MS, and benchtop NMR spectrometer, with a heuristic decision-maker processing the orthogonal measurement data [14]. The system was tested across three domains: structural diversification chemistry, supramolecular host-guest chemistry, and photochemical synthesis [14].

Table 1: Performance Comparison of Single vs. Multiple Data Streams in Autonomous Chemistry

| Workflow Configuration | Characterization Techniques | Decision Accuracy | Novelty Detection | Reproducibility Verification |

|---|---|---|---|---|

| Single-Stream (Chromatography) | UPLC only | Limited to known peak identification | Low - misses non-chromophoric products | Partial - based on retention time only |

| Single-Stream (Spectroscopy) | NMR only | Moderate for structural confirmation | Moderate - identifies novel structures | Good for structural reproducibility |

| Multi-Stream Orthogonal | UPLC-MS + NMR | High - combinatorial assessment | High - captures diverse product types | Comprehensive - structural + compositional |

The experimental results demonstrated that reactions needed to pass both orthogonal analyses to proceed to the next step, with the combined assessment effectively selecting successful reactions and automatically checking the reproducibility of screening hits [14]. This approach proved particularly valuable in supramolecular chemistry where self-assembly processes can produce diverse combinations from the same starting materials, frequently giving complex product mixtures [14].

Case Study: Autonomous Biomedical Research System

The Data-dRiven self-Evolving Autonomous systeM (DREAM) represents another advanced implementation of multi-stream decision-making in biomedical research. This fully autonomous system operates without human intervention, autonomously formulating scientific questions, configuring computational environments, and performing result evaluation and validation [19].

Table 2: Performance Metrics of DREAM Autonomous Research System

| Evaluation Metric | DREAM Performance | Top Human Scientists | Graduate Students | GPT-4 |

|---|---|---|---|---|

| Question Difficulty Score | Exceeded top-tier articles by 5.7% | Baseline | 56.0% lower than DREAM | 58.6% lower than DREAM |

| Question Originality | 12.3% gain over initial questions | Baseline | >40% lower than DREAM | >40% lower than DREAM |

| Research Efficiency (Framingham Heart Study) | 10,000x average scientists | Baseline | Not measured | Not measured |

| Success Rate in Environment Configuration | Higher than experienced human researchers | Baseline | Not measured | Not measured |

DREAM's architecture incorporates multiple data interpretation modules (dataInterpreter, questionRaiser, variableGetter, taskPlanner, codeMaker, dockerMaker, codeDebugger, resultJudger, resultAnalyzer, resultValidator, deepQuestioner) that process diverse data streams to enable robust autonomous decision-making [19]. After four evolutionary rounds, 68% of DREAM's generated questions were successfully addressed, with 10% surpassing published articles in originality and complexity [19].

Methodologies: Experimental Protocols for Orthogonal Workflows

Protocol: Modular Robotic Workflow for Exploratory Synthesis

The autonomous chemistry platform exemplifies a meticulously designed protocol for orthogonal characterization [14]:

Synthesis Module: Reactions are performed in a Chemspeed ISynth synthesizer, which automatically takes aliquots of each reaction mixture upon completion.

Sample Reformating: The synthesizer reformats samples separately for MS and NMR analysis to ensure optimal preparation for each technique.

Mobile Robot Transportation: Free-roaming mobile robots handle samples and transport them to the appropriate analytical instruments (UPLC-MS and benchtop NMR), enabling physical integration of distributed laboratory equipment.

Parallel Data Acquisition: Customizable Python scripts autonomously operate both analytical instruments, with resulting data saved to a central database.

Heuristic Decision-Making: A domain-expert-designed algorithm processes both UPLC-MS and 1H NMR data, applying experiment-specific pass/fail criteria to each analytical technique.

Combinatorial Assessment: Binary results from each analysis are combined to give pairwise grading for each reaction, determining which experiments proceed to subsequent stages.

This protocol successfully bridges the gap between automated experimentation (where researchers make decisions) and true autonomy (where machines interpret data and make decisions) [14]. The modular design allows instruments to be shared with human researchers without monopolization or requiring extensive laboratory redesign [14].

Protocol: Fully Autonomous Biomedical Research System

The DREAM system implements a different but equally sophisticated protocol for autonomous research [19]:

Data Interpretation: The dataInterpreter module autonomously interprets information from structured biomedical datasets, including omics and clinical data.

Question Generation: The questionRaiser module generates research questions directly from data, filtered for research value using defined scoring criteria.

Variable Screening: Relevant variables are identified (variableGetter) for each research question.

Task Planning: The taskPlanner designs appropriate analysis tasks and steps.

Code Generation: Analytical code is automatically written (codeMaker) to implement the planned analyses.

Environment Configuration: Computational environments are automatically configured (dockerMaker) without human intervention.

Execution and Debugging: The codeDebugger executes and debugs analytical code as needed.

Result Judgment: The resultJudger evaluates results against research questions.

Interpretation and Validation: Results are interpreted (resultAnalyzer) and validated (resultValidator) against literature and cross-datasets.

Self-Evolution: The deepQuestioner formulates more complex questions based on previous outcomes, enabling continuous research progression.

This UNIQUE paradigm (Question, codE, coNfIgure, jUdge) enables fully autonomous operation across the entire research lifecycle [19].

Visualization: Workflow Architectures for Orthogonal Data Integration

Orthogonal Characterization Workflow in Autonomous Chemistry

Self-Evolving Autonomous Research System Architecture

Implementation: The Researcher's Toolkit for Autonomous Workflows

Successful implementation of orthogonal characterization in autonomous workflows requires specific technical components and analytical resources. The following table details essential research reagent solutions and their functions in enabling robust multi-stream decision-making.

Table 3: Research Reagent Solutions for Orthogonal Characterization Workflows

| Component Category | Specific Solution | Function in Autonomous Workflow | Key Capabilities |

|---|---|---|---|

| Robotic Hardware | Mobile robot agents with multipurpose grippers | Sample transportation and instrument operation | Free-roaming mobility enables distributed instrument access without laboratory redesign [14] |

| Synthesis Platform | Chemspeed ISynth synthesizer | Automated chemical synthesis with aliquot capability | Combinatorial chemistry execution with automatic sample reformatting for multiple analyses [14] |

| Analytical Instrumentation | UPLC-MS system | Molecular separation and mass detection | Provides retention time, peak area, and molecular weight data for reaction assessment [14] |

| Analytical Instrumentation | Benchtop NMR spectrometer | Molecular structure characterization | Delivers structural information complementary to MS data [14] |

| Decision Algorithms | Heuristic decision-maker | Orthogonal data integration and pass/fail assessment | Combines binary results from multiple analyses using domain-expert-defined criteria [14] |

| Control Software | Customizable Python scripts | Instrument control and data acquisition | Enables autonomous operation of unmodified laboratory equipment [14] |

| Data Management | Central database | Storage and retrieval of multimodal analytical data | Maintains integrated data from multiple characterization techniques [14] |

| Autonomous Research System | DREAM framework | End-to-end autonomous research without human intervention | Implements UNIQUE paradigm for continuous self-evolving research [19] |

Regulatory and Practical Considerations

The implementation of multi-stream autonomous workflows operates within an evolving regulatory landscape, particularly for drug development applications. The U.S. FDA has established the CDER AI Council to provide oversight, coordination, and consolidation of activities around AI use, responding to a significant increase in drug application submissions using AI components [8]. The European Medicines Agency has articulated a risk-based approach focusing on 'high patient risk' applications and 'high regulatory impact' cases [18]. Notably, the EMA framework prohibits incremental learning during clinical trials to ensure the integrity of clinical evidence generation, while permitting continuous model enhancement in post-authorization phases with rigorous validation and monitoring [18].

Practical implementation must also address computational efficiency concerns. Methods like Orthogonal Recursive Fitting (ORFit) demonstrate approaches for one-pass learning that update parameters in directions orthogonal to past gradients, minimizing disruption of previous predictions while incorporating new data [20]. This is particularly valuable for autonomous systems operating on streaming data where storing and reprocessing all previous data is computationally prohibitive.

The integration of multiple orthogonal data streams represents a fundamental advancement in autonomous research systems, enabling decision-making robustness that exceeds the capabilities of single-characterization approaches. Experimental evidence from both chemical synthesis and biomedical research demonstrates that systems leveraging complementary data streams achieve superior performance in identifying successful experiments, generating novel insights, and maintaining reproducibility. As these technologies mature, their impact will increasingly transform scientific discovery from a human-directed process to a collaborative partnership between researchers and autonomous systems. The continued evolution of regulatory frameworks, computational methods, and instrumentation integration will further enhance the capabilities of these systems, potentially accelerating the pace of scientific discovery by orders of magnitude and opening new frontiers in exploratory science.

In the development of complex biologics, ensuring product quality, safety, and efficacy is paramount. Unlike small-molecule drugs, biologics are large, complex molecules produced by living systems, making them inherently heterogeneous and sensitive to manufacturing conditions [21] [22]. This complexity necessitates a rigorous framework for defining and controlling Critical Quality Attributes (CQAs)—physical, chemical, biological, or microbiological properties that must remain within appropriate limits to ensure desired product quality [21]. Among these, Identity, Potency, Purity, and Stability stand as the four foundational pillars. With the advent of autonomous workflows and advanced analytical techniques, the pharmaceutical industry is undergoing a transformation in how these attributes are characterized and controlled. This guide provides a comparative analysis of the experimental methodologies used to assess these key attributes, focusing on the integration of orthogonal characterization within modern, automated research environments.

Identity Confirmation

Core Concept and Analytical Techniques

Identity refers to the definitive confirmation of a biologic's molecular structure, including its primary amino acid sequence and higher-order structure. Verifying identity ensures that the product is what it claims to be, a fundamental requirement for safety and consistency [23].

- Primary Structure Analysis: Peptide mapping using Liquid Chromatography-Mass Spectrometry (LC-MS) is a gold standard for confirming the amino acid sequence and identifying post-translational modifications such as oxidation or deamidation [23]. High-resolution mass spectrometry further pinpoints these modifications and can confirm disulfide bond arrangements [23].

- Higher-Order Structure Analysis: Techniques like Circular Dichroism (CD) and Fourier-Transform Infrared (FTIR) spectroscopy probe the secondary and tertiary structure of the protein, confirming its correct folding [23]. Hydrogen–deuterium exchange mass spectrometry (HDX-MS) is an advanced method for characterizing higher-order structure and dynamics in solution [23].

Autonomous Workflow Integration

In autonomous laboratories, the identity confirmation workflow can be seamlessly integrated. A robotic system can prepare samples from a synthesis module, transport them via a mobile robot to a benchtop NMR spectrometer and a UPLC-MS for analysis, and feed the orthogonal data into a central database for a heuristic decision-maker to provide a pass/fail grade [2]. This closed-loop system mimics human protocols but with enhanced reproducibility and speed.

Table 1: Key Analytical Techniques for Assessing Identity

| Quality Attribute | Analytical Technique | Key Information Provided | Suitability for Autonomous Workflows |

|---|---|---|---|

| Identity | Peptide Mapping (LC-MS) | Amino acid sequence verification, post-translational modifications | High (Automated sample processing and data analysis) |

| High-Resolution Mass Spectrometry | Precise molecular weight, disulfide bond confirmation | High | |

| Circular Dichroism (CD) | Secondary and tertiary structure confirmation | Medium (Requires specific sample preparation) | |

| HDX-MS | Higher-order structure and dynamics in solution | Medium (Complex data interpretation) |

Potency Assessment

Core Concept and Bioassays

Potency is a quantitative measure of a biologic's biological activity, directly linked to its mechanism of action and therapeutic effect. It ensures that each batch of the product can elicit the desired clinical response [22] [24].

- Cell-Based Bioassays: These assays measure a functional response, such as antibody-dependent cell-mediated cytotoxicity (ADCC) or cytokine neutralization, reflecting the biologic's intended mechanism of action in a live-cell system [23].

- Binding Assays: Techniques like Enzyme-Linked Immunosorbent Assay (ELISA) determine affinity and specificity. Surface Plasmon Resonance (SPR) provides kinetic data (on-rate/off-rate) and active concentration, offering a more detailed immunological profile [23].

Data-Driven Decision-Making

Potency is a primary driver for lead selection in discovery. When multiple candidates show equivalent potency, other developability properties are used for differentiation. Hierarchical clustering analysis (HCA) can be applied to high-dimensional data from potency and other developability assays to systematically rank molecules and identify optimal leads with the best combination of properties, streamlining decision-making [25].

Purity and Impurity Analysis

Core Concept and Variants

Purity refers to the freedom from product-related and process-related impurities. Product-related variants include aggregates, fragments, and charge isoforms, while process-related impurities can include host cell proteins and DNA [24] [23].

- Size Variants: Size Exclusion Chromatography-High Performance Liquid Chromatography (SEC-HPLC) is critical for differentiating aggregates and high molecular weight species from the desired monomeric product, which are key indicators of instability [24] [23]. Capillary electrophoresis (CE-SDS) is also routinely used [23].

- Charge Variants: Ion Exchange (IEX)-HPLC and isoelectric focusing are recommended for monitoring charge state variants that arise from modifications like deamidation or sialylation [24].

- Orthogonality in Autonomous Systems: The combination of UPLC-MS and NMR spectroscopy, as used in modular robotic workflows, provides a powerful orthogonal approach to purity analysis, mitigating the uncertainty of relying on a single measurement [2].

Table 2: Key Analytical Techniques for Assessing Purity and Stability

| Quality Attribute | Analytical Technique | Key Information Provided | Key Measured Output(s) |

|---|---|---|---|

| Purity | SEC-HPLC | Quantification of aggregates and fragments | % Monomer, % High-Molecular-Weight Species |

| IEX-HPLC | Quantification of acidic and basic charge variants | % Acidic Peak, % Main Peak, % Basic Peak | |

| CE-SDS | Purity and aggregation under denaturing conditions | % Purity, % Fragments | |

| Stability | SEC-HPLC (Stability Indicating) | Monitoring aggregate formation over time | Increase in % Aggregates over time |

| First-Order Kinetic Modeling | Predicting long-term stability and shelf-life | Rate constant (k), Predicted shelf-life | |

| Accelerated Stability Studies | Identifying degradation pathways under stress | Degradation rate at elevated temperatures |

Stability Profiling

Core Concept and Degradation Pathways

Stability is the ability of a drug substance or product to retain its properties within specified limits throughout its shelf life. For biologics, instability often manifests as fragmentation or aggregation, which can lead to a loss of efficacy or increased immunogenicity [24].

- Stability-Indicating Methods: As per ICH Q5C, a stability-testing program should include long-term, accelerated, and stress studies [24]. SEC-HPLC is a cornerstone method for monitoring stability, as it can track the increase in aggregates and fragments over time [24].

- Predictive Kinetic Modeling: Traditionally, predicting long-term stability was challenging. However, recent advances demonstrate that simplified first-order kinetic models combined with the Arrhenius equation can accurately predict long-term stability for various quality attributes, including aggregates, across different protein modalities (e.g., IgG1, bispecifics, scFv) [26]. This Accelerated Predictive Stability (APS) approach is more precise than linear extrapolation and is being incorporated into revised ICH guidelines [26].

Experimental Protocol: Predictive Stability Modeling

The protocol for APS involves:

- Study Design: Expose the biologic to a range of temperatures (e.g., 5°C, 25°C, 40°C) for a defined period (e.g., 12-36 months) [26].

- Sample Pull Points: At predefined intervals, samples are taken and analyzed using a stability-indicating method like SEC to quantify attributes like % aggregates [26].

- Data Modeling: The degradation data at each temperature is fitted using a first-order kinetic model. The model is simplified to avoid overfitting, often focusing on a single dominant degradation pathway relevant to storage conditions [26].

- Arrhenius Plotting: The reaction rate constants (k) at different temperatures are used in the Arrhenius equation to extrapolate the degradation rate at the recommended storage temperature (e.g., 2-8°C), enabling shelf-life prediction [26].

The Autonomous Workflow: Integrating Orthogonal Characterization

Modern autonomous laboratories are revolutionizing biologics characterization by integrating disparate modules into a single, closed-loop workflow. This approach leverages robotics and heuristic or AI-driven decision-making to execute exploratory synthesis and characterization with minimal human intervention [2].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents, materials, and instruments essential for the characterization of biologics, particularly within advanced automated workflows.

Table 3: Essential Research Reagent Solutions for Biologics Characterization

| Item | Function/Application | Key Characteristics |

|---|---|---|

| UPLC-MS System | Orthogonal analysis for identity (peptide mapping) and purity. Combines chromatographic separation with mass detection. | High resolution, sensitivity, and compatibility with automated data pipelines [2] [23]. |

| Benchtop NMR Spectrometer | Orthogonal analysis for identity and higher-order structure confirmation. Provides atomic-level structural information. | Lower footprint for lab integration, operable by robotic agents [2]. |

| Size Exclusion Chromatography (SEC) Column | Critical for purity and stability analysis, separating monomers from aggregates and fragments. | High resolution for quantitating low-abundance species; used with specific mobile phases [26] [24]. |

| Surface Plasmon Resonance (SPR) Chip | Functional characterization for potency; measures binding kinetics (kon, koff) and affinity (KD). | Coated with antigen or other binding partner for specific interaction studies [23]. |

| Automated Synthesis Platform (e.g., Chemspeed ISynth) | Executes synthetic operations and sample preparation autonomously based on AI/heuristic instructions. | Modular, integrable with robotic sample handlers for end-to-end automation [2]. |

| Mobile Robot Agents | Physical linkage between synthesis and analysis modules; transport samples and labware. | Free-roaming, capable of operating standard laboratory equipment [2]. |

The rigorous assessment of Identity, Potency, Purity, and Stability is non-negotiable for developing safe and effective complex biologics. The landscape of characterization is being profoundly reshaped by the adoption of autonomous workflows that integrate orthogonal analytical techniques like UPLC-MS and NMR, coupled with data-driven decision-making through heuristic algorithms or machine learning. These advanced approaches, including predictive kinetic modeling for stability and hierarchical clustering for lead selection, enable a more efficient, reproducible, and in-depth understanding of Critical Quality Attributes. As these technologies mature, they promise to accelerate the pace of biologics development from discovery to commercial manufacturing, ensuring that high-quality therapeutics reach patients faster and more reliably.

The biological complexity of Cell and Gene Therapy (CGT) products, comprising viable cells, genetic material, and viral vectors, represents a fundamental departure from traditional small-molecule drugs [27]. This complexity necessitates rigorous quality control strategies to ensure product efficacy, patient safety, and batch-to-batch consistency [27]. An orthogonal approach—which employs multiple independent analytical methods to assess the same quality attribute—has become a regulatory expectation and scientific necessity for comprehensive product characterization [27]. This methodology mitigates the risk of false results inherent to any single analytical technique and provides a more complete understanding of Critical Quality Attributes (CQAs). Furthermore, the emergence of autonomous laboratories and AI-driven workflows is poised to integrate these orthogonal methods into seamless, automated characterization pipelines, accelerating development while maintaining rigorous quality standards [28].

Critical Quality Attributes and the Orthogonal Approach

For CGT products, key CQAs typically include identity, potency, purity, and for cell-based products, viability [27]. The orthogonal strategy is applied by using different analytical techniques that provide independent but complementary data on each attribute.

Table 1: Orthogonal Methods for Critical Quality Attribute Analysis

| Critical Quality Attribute | Analytical Technique 1 | Analytical Technique 2 | Additional Techniques | Primary Application |

|---|---|---|---|---|

| Identity (Cell Therapy) | Flow Cytometry (Phenotype) [27] | STR Profiling (Genotype) [27] | Karyological Analysis [27] | Confirms cell population and donor source [27] |

| Identity (Viral Vector) | Restriction Analysis [27] | Transgene Sequencing [27] | Dynamic Light Scattering (DLS) [27] | Verifies vector construct and physical properties [27] |

| Potency | Functional Cell-Based Assays [27] | Cytokine Secretion Profile [27] | Transgene Expression Analysis [27] | Measures biological activity [27] |

| Purity (Full/Empty Capsids) | Analytical Ultracentrifugation (AUC) [27] [29] | SEC-MALS [27] [29] | Mass Photometry, dPCR/ELISA [29] | Quantifies product-related impurities [27] |

| Genome Integrity | digital PCR (dPCR) [29] | Next-Generation Sequencing (NGS) [29] | Gel Electrophoresis [29] | Assesses integrity of packaged genetic material [29] |

Identity Testing

Identity confirmation ensures the product contains the correct biological components. For cell therapies, this involves a multi-level characterization:

- Phenotypic Analysis: Techniques like flow cytometry confirm the identity of the cell population by detecting specific surface and intracellular markers [27].

- Genotypic Analysis: Short Tandem Repeat (STR) profiling provides a genetic fingerprint, crucial for verifying the autologous or allogeneic origin of cells and ensuring they have not been cross-contaminated [27].

- Karyological Analysis: This assesses genetic stability, providing indirect evidence of safety concerning tumorigenic potential [27].

For viral vector-based gene therapies, identity is confirmed through a combination of methods that analyze the vector itself and its functional output. Restriction analysis and transgene sequencing characterize the genetic construct, while biophysical methods like Dynamic Light Scattering (DLS) can determine the size of viral particles, helping to distinguish between full and empty capsids [27].

Potency and Purity Assessment

Potency, a measure of the product's biological activity, is often evaluated using functional assays tailored to the mechanism of action. For a CAR-T cell product, this could involve measuring target cell killing or cytokine secretion upon target engagement [27]. Purity often focuses on quantifying product-related impurities, with the full-to-empty capsid ratio being a major CQA for AAV-based gene therapies. The presence of empty capsids is an impurity that can reduce efficacy and trigger immune responses [27].

Table 2: Orthogonal Methods for Full/Empty Capsid Ratio and Genome Integrity Analysis

| Method | Principle | Key Advantage | Key Limitation | Role in Orthogonality |

|---|---|---|---|---|

| Analytical Ultracentrifugation (AUC) | Separates particles by buoyant density under centrifugal force [27]. | Considered a gold standard; can resolve full, partial, and empty capsids [27]. | Low-throughput, not ideal for GMP release [27]. | Primary method for in-depth characterization [27]. |

| SEC-MALS | Separates by size, then measures mass via light scattering [27]. | Suitable for quality control in GMP release [27]. | Cannot separate partially filled capsids [27]. | Orthogonal QC method correlated with AUC [29]. |

| Mass Photometry | Measures mass of individual particles by light scattering [29]. | Rapid, label-free analysis at the single-particle level. | Emerging technique, requires further standardization. | Provides orthogonal mass measurements. |

| dPCR/ELISA | dPCR quantifies genome copies; ELISA quantifies total capsids [29]. | High sensitivity and suitability for routine QC [29]. | Indirect ratio calculation; requires two separate assays. | Fast, cost-effective orthogonal check [29]. |

The evaluation of genome integrity—the proportion of full-length, correctly assembled genetic sequences within viral vectors—has emerged as a critical parameter closely linked to potency. Digital PCR (dPCR) is advancing as a key tool here, with multiplex assays designed to target different regions of the genome (e.g., promoter, poly-A tail, and internal regions) to provide a percentage of intact genomes [29]. This data has shown strong correlation with potency assay results, explaining observed variations in biological activity [29]. dPCR results are often validated orthogonally by Next-Generation Sequencing (NGS), which provides base-by-base sequence information but is more time-consuming and costly [29].

The Autonomous Workflow: Integrating Orthogonal Characterization

The future of CGT characterization lies in the integration of orthogonal methods into intelligent, automated systems. Autonomous laboratories are demonstrating how AI-driven decision-making can be coupled with robotic experimentation to create closed-loop discovery and characterization cycles [28] [14].

These systems seamlessly integrate various instruments. For instance, a modular robotic workflow can use mobile robots to transport samples between an automated synthesis platform, a liquid chromatography–mass spectrometer (UPLC-MS), and a benchtop NMR spectrometer [14]. A central heuristic decision-maker then processes this orthogonal analytical data (MS and NMR spectra) to automatically grade reaction outcomes and determine the next experimental steps, mimicking human expert judgment [14].

The diagram above illustrates a generalized autonomous R&D workflow. The critical phase of "Orthogonal Analysis" is where multiple characterization techniques are executed, and their data is fed into the decision-making algorithm. This mirrors the manual orthogonal approach but achieves unprecedented speed and consistency by eliminating human downtime and subjective bias [28] [14]. As noted in research on AI-driven labs, "By tightly integrating these stages... autonomous labs aim to turn processes that once took months of trial and error into routine high-throughput workflows" [28].

Experimental Protocols in Practice

Protocol: Genome Integrity Analysis via Multiplex Digital PCR

Purpose: To determine the percentage of intact versus fragmented viral genomes in an AAV-based gene therapy product [29].

Key Reagent Solutions:

- QIAGEN CGT Viral Vector Lysis Kit: For digesting host cell DNA and releasing the viral genome for analysis. The included DNAse is critical for removing contaminating DNA [29].

- Cell and Gene Therapy Assays for dPCR (QIAGEN): Pre-designed assays targeting specific regions of the genome (e.g., ITR, promoter, poly-A tail, and internal gene sequence) [29].

- Universal AAV Standard (Agathos Biologics): A well-characterized control template that allows for comparative analysis and validation of dPCR assays across different targets and products [29].

Methodology:

- Lysis and Digestion: Incubate the A vector sample with the lysis kit to break open the capsids and digest any external DNA [29].

- Assay Design: A multiplex dPCR assay is designed with probes targeting at least two, but ideally more, distinct regions of the viral genome. A common strategy is to place one probe at the 5' end (e.g., near a promoter) and another at the 3' end (e.g., near the poly-A tail). The co-localization of signals from both probes in a single droplet indicates an intact genome [29].

- dPCR Run: The lysed sample is partitioned into thousands of nanodroplets, and PCR amplification is performed [29].

- Data Analysis: The software (e.g., QIAcuity Software v3.1) automatically calculates the percentage of droplets positive for all target regions, providing the genome integrity percentage. Advanced software features include cross-talk compensation to prevent signal bleed-through between different fluorescent probes [29].

Orthogonal Validation: The results from the dPCR integrity assay are validated using Next-Generation Sequencing (NGS), which provides direct sequence information to confirm the presence of full-length, correct sequences [29].

Protocol: Full/Empty Capsid Ratio Analysis

Purpose: To quantify the ratio of genome-filled capsids (full) to non-genome-containing capsids (empty) in a final AAV product lot.

Methodology 1: Analytical Ultracentrifugation (AUC)

- Principle: Capsids are separated in a density gradient under high centrifugal force. Full capsids (denser due to the DNA genome) sediment at a different rate than empty capsids (less dense) [27].

- Procedure: The product is loaded into a centrifuge cell with a stabilizing gradient medium (e.g., cesium chloride). After prolonged centrifugation, the separated bands are detected optically, and their relative areas are quantified to determine the ratio [27].

- Application: Best suited for in-depth product characterization during development due to its resolution but lower throughput [27].

Methodology 2: Size-Exclusion Chromatography with Multi-Angle Light Scattering (SEC-MALS)

- Principle: SEC separates particles by size, with empty capsids typically eluting slightly later than full capsids. The connected MALS detector then measures the absolute molar mass of the eluting particles, providing unambiguous distinction between full and empty populations based on mass [27] [29].

- Procedure: The product is injected into an SEC column. The eluent passes through a UV detector and a MALS detector. The mass data from MALS is cross-referenced with the elution volume to identify and quantify each population [27].

- Application: More suitable than AUC for quality control during GMP batch release due to higher throughput and automation compatibility [27].

The adoption of orthogonal methods is non-negotiable for the rigorous characterization required to bring safe and effective CGT products to market. The synergistic use of techniques like dPCR/AUC/SEC-MALS for capsid analysis and flow cytometry/STR for cell identity provides a robust safety net against analytical errors and a deeper product understanding. The field is rapidly evolving toward the integration of these methods into AI-driven autonomous workflows, where robotic systems execute synthesis, orthogonal analysis, and data-driven decision-making in a continuous loop. This convergence of rigorous analytical science and intelligent automation promises to accelerate the development of these transformative therapies while upholding the highest standards of quality and safety.

From Theory to Practice: Implementing Orthogonal Workflows in Self-Driving Labs

The field of scientific discovery is undergoing a profound transformation, driven by the integration of artificial intelligence (AI), robotics, and orthogonal characterization techniques into a continuous, closed-loop cycle. Autonomous laboratories, or "self-driving labs," represent a powerful strategy to accelerate scientific experimentation by seamlessly combining these elements into workflows that require minimal human intervention [28]. At the core of this paradigm shift is the move from traditional, linear research processes to an iterative cycle where AI plans experiments, robotic systems execute them, and multiple analytical techniques provide complementary (orthogonal) data on the results. This data then informs the next cycle of AI-driven planning [2] [28]. This article objectively compares the performance of several pioneering autonomous platforms, focusing on their architectural approaches to integrating orthogonal characterization—the use of multiple, independent measurement techniques to unambiguously identify reaction products—a critical capability for exploratory research in fields like drug development and materials science [2].

Comparative Analysis of Autonomous Platforms

The following section compares three distinct architectural implementations of the closed-loop principle, highlighting their unique strategies for integrating AI, robotics, and analysis.

The Mobile Robotics Platform for Exploratory Chemistry

A modular autonomous platform for exploratory synthetic chemistry demonstrates a highly flexible architecture. It uses free-roaming mobile robots to physically connect an automated synthesis platform (Chemspeed ISynth) with standalone analytical instruments: an ultrahigh-performance liquid chromatography–mass spectrometer (UPLC-MS) and a benchtop nuclear magnetic resonance (NMR) spectrometer [2]. This setup allows robots to share existing laboratory equipment with human researchers without requiring extensive redesign or monopolizing instruments [2].

- Decision-Making Protocol: Unlike optimization-focused systems, this platform employs a heuristic decision-maker designed by domain experts. It processes orthogonal UPLC-MS and NMR data, assigning a binary pass/fail grade to each analysis based on experiment-specific criteria. Reactions must pass both analyses to proceed to the next stage, such as scale-up or functional assays, mimicking human expert judgment [2].

- Performance and Application: This workflow has been successfully applied to structural diversification chemistry, supramolecular host-guest chemistry, and photochemical synthesis. In supramolecular chemistry, where reactions can yield multiple products, the "loose" heuristic decision-maker remains open to novelty, facilitating genuine chemical discovery rather than just optimizing for a single, known metric [2].

A-Lab: The AI-Driven Materials Synthesis Platform

A-Lab is a fully autonomous solid-state synthesis platform specifically designed for inorganic materials discovery [28]. Its workflow is a tightly integrated, computationally driven closed loop.

- Decision-Making Protocol: A-Lab's intelligence is rooted in AI and machine learning. It begins by selecting novel, theoretically stable materials from large-scale ab initio databases. Natural-language models trained on vast literature data generate synthesis recipes. After robotic execution, machine learning models, particularly convolutional neural networks, analyze X-ray diffraction (XRD) patterns for phase identification. An active-learning algorithm (ARROWS3) then iteratively improves synthesis routes based on the results [28].

- Performance and Application: In a seminal demonstration, A-Lab operated continuously for 17 days, successfully synthesizing 41 out of 58 target inorganic materials predicted by density functional theory (DFT), achieving a 71% success rate with minimal human input [28]. This showcases the power of a dedicated, AI-centric platform for high-throughput materials discovery.

The Pyiron Framework: Integrating Simulation and Experimentation

The pyiron framework offers an integrated development environment (IDE) originally designed for high-throughput computational materials science that has been extended to include experimental data acquisition [30]. This approach focuses on fusing data from simulations and experiments within a single platform.

- Decision-Making Protocol: Pyiron implements an Active Learning loop with a direct interface to experimental equipment. It uses Gaussian Process Regression (GPR) to model a material's property (e.g., electrical resistance across a composition spread) and to suggest the most informative next measurement point. A key feature is its ability to use prior knowledge from density functional theory (DFT) simulations and literature mining to accelerate the learning process [30].

- Performance and Application: This strategy optimizes the measurement process itself, drastically reducing the number of actual measurements required to characterize a material library with a defined uncertainty. By leveraging existing knowledge, it accelerates the entire materials discovery cycle, demonstrating a pathway toward partially autonomous research systems where computational and experimental resources collaborate [30].

Quantitative Performance Comparison

The table below summarizes the key performance metrics and characteristics of the three platforms.

Table 1: Performance Comparison of Autonomous Laboratory Platforms

| Platform Feature | Mobile Robotics Platform [2] | A-Lab [28] | Pyiron Framework [30] |

|---|---|---|---|

| Primary Domain | Exploratory Synthetic Chemistry | Inorganic Materials Synthesis | Materials Characterization & Discovery |

| Central AI Model | Heuristic Decision-Maker | Natural Language Models, Convolutional Neural Networks, Active Learning | Gaussian Process Regression, Active Learning |

| Key Robotic Component | Free-roaming Mobile Robots | Integrated Robotic Arms | Interface to Measurement Devices |

| Orthogonal Characterization | UPLC-MS & Benchtop NMR | X-ray Diffraction (XRD) | Electrical Resistance, prior DFT/data |

| Reported Success Rate/Outcome | Successful application in multi-step synthesis & host-guest assays | 71% (41/58 target materials synthesized) | Order-of-magnitude reduction in required measurements |

| Key Strength | Flexibility, use of existing lab equipment | High-throughput, end-to-end autonomy | Fusion of simulation and experimental data |

Experimental Protocols for Orthogonal Characterization

The reliability of an autonomous workflow hinges on its experimental protocols. This section details the methodologies for the key analytical techniques cited.

Protocol 1: Heuristic Analysis of UPLC-MS and ¹H NMR Data

This protocol is designed for the mobile robotics platform to assess the outcome of organic and supramolecular synthesis reactions [2].

- Sample Preparation: Upon reaction completion, the Chemspeed ISynth synthesizer automatically takes an aliquot of the reaction mixture and reformats it into separate vials suitable for MS and NMR analysis.

- Sample Transport: Mobile robots retrieve the vials and transport them to the respective instruments (UPLC-MS and benchtop NMR), which are located elsewhere in the laboratory.

- Data Acquisition:

- UPLC-MS: The system runs a standardized method to separate reaction components and acquire mass spectrometry data.

- ¹H NMR: The benchtop NMR spectrometer acquires proton nuclear magnetic resonance spectra.

- Autonomous Data Processing & Decision:

- MS Analysis: The decision-maker uses a precomputed m/z lookup table to identify expected and unexpected masses.

- NMR Analysis: The decision-maker uses dynamic time warping to detect reaction-induced spectral changes compared to controls.

- Heuristic Fusion: Each analysis receives a binary pass/fail. The results are combined, and a reaction must pass both to be considered a "hit" and proceed to the next stage (e.g., replication or scale-up).

Protocol 2: ML-Driven Phase Identification for Solid-State Materials

This protocol is central to the A-Lab's operation for identifying synthesized inorganic materials [28].

- Synthesis: Robotic arms handle precursor powders, mix them, and pelletize the mixture. The pellet is heated in a furnace at a temperature suggested by the AI.

- Characterization: After synthesis, the sample is automatically transferred to an X-ray diffractometer for structural characterization.

- Phase Analysis: The acquired XRD pattern is fed into a machine learning model (a convolutional neural network) trained on a vast database of known diffraction patterns.

- Identification and Optimization: The ML model identifies the crystalline phases present in the sample. If the target material is not formed or is impure, the active learning algorithm (ARROWS3) analyzes the result and proposes a modified synthesis recipe (e.g., different precursors or heating temperature) for the next iteration.

Workflow Architecture Visualization

The following diagram illustrates the core closed-loop logic that is common to advanced autonomous laboratories, integrating the key stages of planning, execution, and analysis.

Diagram 1: Generic autonomous laboratory workflow.

The Scientist's Toolkit: Essential Research Reagents & Platforms

This section details the key hardware and software components that form the foundation of modern autonomous research workflows.

Table 2: Key Research Reagents and Platforms for Autonomous Workflows

| Tool / Platform Name | Type | Primary Function in the Workflow |

|---|---|---|

| Chemspeed ISynth | Automated Synthesis Platform | Performs automated liquid handling, reagent dispensing, and reaction control in an inert atmosphere [2]. |

| UPLC-MS | Analytical Instrument | Provides orthogonal data on reaction components through separation (chromatography) and mass identification (spectrometry) [2]. |

| Benchtop NMR | Analytical Instrument | Provides orthogonal data on molecular structure and reaction progress via nuclear magnetic resonance spectroscopy [2]. |

| X-ray Diffractometer | Analytical Instrument | Identifies crystalline phases and structure in solid-state materials synthesis [28]. |

| Mobile Robots | Robotic Agent | Transports samples between modular stations (synthesis, MS, NMR), enabling flexibility and shared lab equipment [2]. |

| Pyiron | Software Framework | An integrated development environment (IDE) that manages data, automates workflows, and combines simulation and experimental data [30]. |

| Gaussian Process Regression | AI/ML Model | A surrogate model used in active learning to predict material properties and suggest optimal next experiments [30]. |

The development of modern biopharmaceuticals, particularly complex engineered proteins and antibody-based therapeutics, demands a rigorous analytical approach to ensure product quality, safety, and efficacy. Reliable biophysical characterization is essential for assessing critical quality attributes such as purity, folding stability, aggregation propensity, and overall conformational integrity [15]. Orthogonal analytical strategies—which employ multiple, independent measurement techniques to cross-validate results—have become foundational to autonomous workflows in pharmaceutical research. By integrating techniques like UPLC-MS/MS, NMR, DLS, SEC, and NanoDSF, scientists can build comprehensive and robust datasets that overcome the limitations of any single method. This guide provides an objective comparison of these key instrumentation tools, supported by experimental data, to inform their application in therapeutic development pipelines.

Each technique in the analytical toolbox provides unique insights into different aspects of a molecule's properties. The following table summarizes their primary functions, key performance metrics, and comparative advantages.

Table 1: Performance Comparison of Key Analytical Techniques

| Technique | Primary Function | Key Measured Parameters | Typical Analysis Time | Sample Consumption | Key Strengths |

|---|---|---|---|---|---|

| UPLC-MS/MS | Quantitative analysis of small molecules and some biologics [31] | Retention time, mass-to-charge ratio, concentration [32] | 2-5 min per sample [32] | Low (µL volumes) [32] | High sensitivity, specificity, and throughput [32] |

| NanoDSF | Protein conformational stability [33] | Melting temperature (Tm), onset of unfolding (Ton) [33] | 30-90 min (including temp. ramp) | Low (10 µL capillaries) [15] | Label-free, uses intrinsic fluorescence [34] |

| DLS | Hydrodynamic size and aggregation [15] | Hydrodynamic radius (Rh), polydispersity [15] | Minutes | Low (µL volumes) | Measures size distribution in native state |

| SEC | Size-based separation and purity [15] | Elution volume/profile, molecular weight [15] | 10-30 min | Moderate (50-100 µL) | Gold standard for quantifying aggregates |

| NMR | Atomic-level structure and dynamics | Chemical shift, relaxation times | Hours to days | High (mg amounts) | Provides atomic-resolution structural data |

Table 2: Quantitative Performance Data from Representative Studies