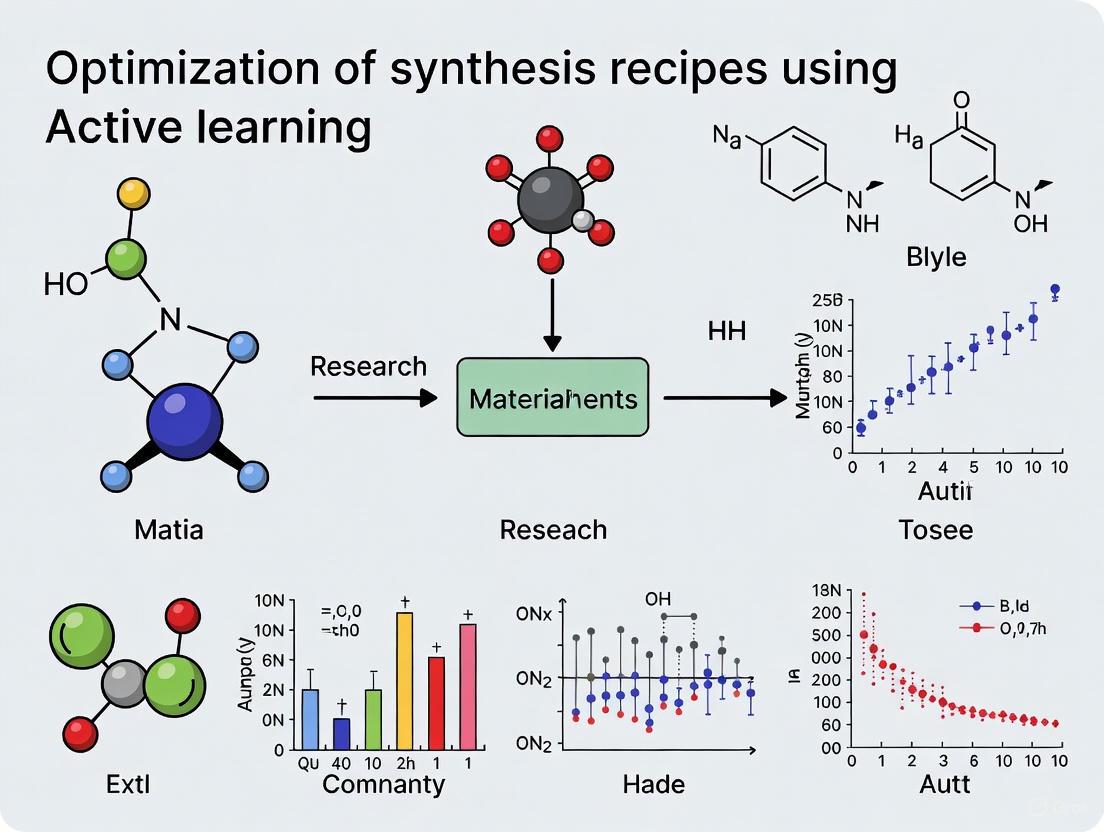

Optimizing Synthesis Recipes with Active Learning: A Strategic Guide for Drug Development


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging Active Learning (AL) to optimize complex synthesis processes. It covers the foundational principles of AL as a data-efficient machine learning strategy, detailing its iterative feedback loop that integrates model predictions with experimental design. The content explores advanced methodological frameworks, including the integration of AL with Automated Machine Learning (AutoML) and generative models, and addresses key challenges such as model generalization and multi-objective optimization. Through benchmarking studies and real-world case studies from catalyst and drug molecule development, the article validates AL's significant potential to accelerate discovery, reduce experimental costs by over 90%, and improve yields, positioning it as a transformative tool for sustainable and efficient biomedical research.

What is Active Learning and Why Does it Matter for Synthesis Optimization?

Frequently Asked Questions

What is the biggest advantage of using Active Learning in drug discovery? Active Learning can lead to significant resource savings. In one study, novel batch AL methods achieved better model performance with fewer experiments, offering "significant potential saving in the number of experiments needed" compared to traditional approaches [1].

My initial dataset is very small. Can Active Learning still work? Yes. A key strength of AL is its effectiveness in low-data regimes. For instance, a generative AI workflow for drug design used a Variational Autoencoder (VAE), which is noted for its "robust, scalable training that performs well even in low-data regimes" [2]. The AL process itself iteratively improves the model from this small starting point.

How do I choose which molecules to test in the next batch? Selection is based on criteria designed to maximize information gain. Common strategies include:

- Uncertainty Sampling: Choosing data points where the model's prediction is least certain.

- Diversity Sampling: Selecting a batch of molecules that are diverse from one another to cover the chemical space broadly.

- Combined Methods: Advanced methods like COVDROP select batches that maximize joint entropy, considering both the "uncertainty" and the "diversity" of the samples simultaneously [1].
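The interplay between uncertainty and diversity can be illustrated with a small greedy heuristic. This is a minimal sketch, not the COVDROP algorithm from [1]: the function name `select_batch`, the Euclidean feature distance, and the additive `diversity_weight` are all assumptions made for illustration.

```python
import math

def euclidean(a, b):
    """Distance between two feature vectors (stand-in for a molecular similarity metric)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def select_batch(candidates, uncertainty, batch_size, diversity_weight=1.0):
    """Greedily pick indices that are uncertain AND far from already-picked candidates."""
    chosen = []
    remaining = list(range(len(candidates)))
    while remaining and len(chosen) < batch_size:
        def score(i):
            if not chosen:
                return uncertainty[i]
            # Distance to the closest molecule already in the batch
            nearest = min(euclidean(candidates[i], candidates[j]) for j in chosen)
            return uncertainty[i] + diversity_weight * nearest
        best = max(remaining, key=score)
        chosen.append(best)
        remaining.remove(best)
    return chosen

# Hypothetical 2-D descriptors: two tight clusters of candidates
candidates = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0)]
uncertainty = [0.9, 0.8, 0.3, 0.2]

pure_uncertainty = select_batch(candidates, uncertainty, 2, diversity_weight=0.0)
with_diversity = select_batch(candidates, uncertainty, 2, diversity_weight=1.0)
print(pure_uncertainty)  # both picks come from the same cluster
print(with_diversity)    # second pick comes from the other cluster
```

With the diversity term switched off, both selections fall in the same cluster and are largely redundant; with it switched on, the batch spans both regions.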

What is a "nested" Active Learning cycle? A nested AL cycle uses two levels of iteration to refine molecules more effectively [2]:

- Inner Cycle: Focuses on chemical properties like drug-likeness and synthetic accessibility.

- Outer Cycle: Uses more computationally expensive, physics-based evaluations (e.g., molecular docking) to assess target affinity. Molecules that pass the inner cycle criteria graduate to the outer cycle, creating a focused and efficient optimization funnel.

Why are my generated molecules not synthetically accessible? This is a common challenge. To address it, you can integrate a synthetic accessibility (SA) predictor as a "chemoinformatic oracle" within your AL loop [2]. This filter scores generated molecules on how easy they are to synthesize, allowing the model to prioritize and fine-tune towards more practical candidates.

Troubleshooting Guides

Problem: Model Performance Stagnates After a Few AL Cycles

Possible Causes and Solutions:

Cause 1: Lack of Diversity in Selected Batches. The model may be stuck exploring a local optimum and fail to find new, promising regions of chemical space.

- Solution: Implement a batch selection method that explicitly maximizes diversity. For example, the COVDROP method selects batches by maximizing the determinant of the epistemic covariance matrix, which "enforces batch diversity by rejecting highly correlated batches" [1].

- Action: Switch from a simple uncertainty sampling method to one that incorporates a diversity metric.

Cause 2: High Epistasis in the Genotype-Phenotype Landscape. In complex landscapes where small sequence changes lead to large, non-linear effects on the outcome (high epistasis), one-shot optimization can fail.

- Solution: Ensure your AL framework is iterative and can handle complexity. Research has shown that "active learning can outperform one-shot optimization approaches in complex landscapes with a high degree of epistasis" [3].

- Action: Verify that your model is being retrained with new data from each AL cycle, allowing it to learn the complex landscape progressively.

Problem: Generative Model Produces Invalid or Low-Quality Molecules

Possible Causes and Solutions:

- Cause: The decoder in the VAE is not properly constrained.

- Solution: Integrate chemoinformatic oracles early in the loop. A published workflow does this by using an "inner AL cycle" where generated molecules are evaluated for "druggability, SA, and similarity" using fast computational filters. Only molecules passing these filters are used to fine-tune the model in the next round [2].

- Action: Add a validation step immediately after molecule generation that filters out invalid structures, molecules with undesirable properties, or those with low synthetic accessibility scores before they are added to the training set.

Problem: The Workflow is Computationally Too Expensive

Possible Causes and Solutions:

- Cause: Using high-fidelity simulations (e.g., docking) on every generated molecule.

- Solution: Implement a nested AL framework. Use cheap, fast filters (drug-likeness, SA) in the inner cycles to narrow down the candidate pool. Then, run the expensive, high-fidelity simulations (docking, free energy calculations) only on the top candidates during the less-frequent outer cycles [2].

- Action: Profile the computational cost of each step and design your AL loop so that the most expensive oracle is called as few times as possible.
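The cost argument above can be made concrete by counting oracle calls. The following is a rough sketch under stated assumptions: `nested_screen`, the `ease` and `dock` fields, and the convention that a lower docking score is better are hypothetical placeholders, not part of the workflow published in [2].

```python
def nested_screen(candidates, cheap_score, expensive_score, cheap_threshold, top_k):
    """Run the cheap oracle on everything, the expensive oracle on at most top_k survivors."""
    calls = {"cheap": 0, "expensive": 0}
    passed = []
    for cand in candidates:
        calls["cheap"] += 1
        score = cheap_score(cand)
        if score >= cheap_threshold:
            passed.append((score, cand))
    # Keep only the best-ranked survivors for the expensive step
    passed.sort(key=lambda pair: pair[0], reverse=True)
    shortlist = [cand for _, cand in passed[:top_k]]
    results = []
    for cand in shortlist:
        calls["expensive"] += 1
        results.append((cand, expensive_score(cand)))
    # Assumption: lower (more negative) docking score means stronger predicted binding
    results.sort(key=lambda pair: pair[1])
    return results, calls

# Hypothetical candidates with precomputed toy scores
molecules = [
    {"name": "m1", "ease": 0.9, "dock": -7.0},
    {"name": "m2", "ease": 0.2, "dock": -9.5},
    {"name": "m3", "ease": 0.7, "dock": -8.2},
    {"name": "m4", "ease": 0.6, "dock": -6.1},
]
results, calls = nested_screen(
    molecules,
    cheap_score=lambda m: m["ease"],
    expensive_score=lambda m: m["dock"],
    cheap_threshold=0.5,
    top_k=2,
)
ranked_names = [m["name"] for m, _ in results]
print(ranked_names, calls)
```

Note the trade-off this exposes: `m2` has the best toy docking score but is never docked because it fails the cheap filter, which is the intended behavior when synthetic accessibility is a hard requirement.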

Experimental Protocols & Data

Table 1: Summary of Active Learning Performance on Various Molecular Datasets

This table summarizes the performance of different AL methods on public benchmark datasets, demonstrating the efficiency gains possible. A lower RMSE is better.

| Dataset | Property Target | Number of Molecules | Best Performing AL Method | Key Result |
|---|---|---|---|---|
| Aqueous Solubility [1] | Solubility (LogS) | 9,982 | COVDROP | Achieved lower RMSE faster than random sampling and other batch methods [1] |
| Lipophilicity [1] | Lipophilicity (LogD) | 1,200 | COVDROP | Led to better model performance with fewer experiments [1] |
| Cell Permeability (Caco-2) [1] | Effective Permeability | 906 | COVDROP | Quicker convergence to high accuracy compared to other methods [1] |
| CDK2 Inhibitors [2] | Binding Affinity (via Docking) | Target-specific | VAE with Nested AL | Generated novel scaffolds; 8 out of 9 synthesized molecules showed in vitro activity [2] |

Protocol: Implementing a Nested Active Learning Cycle for Molecular Optimization

This protocol is based on a successfully demonstrated workflow for generating novel drug molecules [2].

Data Representation & Initial Training:

- Represent your initial, target-specific training molecules as SMILES strings and tokenize them.

- Train a Variational Autoencoder (VAE) first on a general molecular dataset, then fine-tune it on your specific training set.

Inner AL Cycle (Cheminformatics Filtering):

- Generation: Sample the VAE to generate new molecules.

- Validation & Filtering: Evaluate generated molecules for chemical validity, drug-likeness (e.g., Lipinski's Rule of Five), and synthetic accessibility (SA) using a predictor.

- Similarity Check: Assess similarity to known active molecules to ensure novelty.

- Fine-tuning: Add molecules that pass the filters to a temporal-specific set. Use this set to fine-tune the VAE. Repeat for a set number of iterations.
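The drug-likeness part of the inner-cycle filter can be sketched with Lipinski's Rule of Five. This is a minimal illustration that assumes the descriptors were already computed elsewhere (for example with a cheminformatics library); the molecule names and descriptor values are made up, and allowing at most one violation is a common convention rather than something specified in [2].

```python
def lipinski_violations(mol_weight, logp, h_donors, h_acceptors):
    """Count Rule of Five violations from precomputed descriptors."""
    return sum([
        mol_weight > 500,   # molecular weight above 500 Da
        logp > 5,           # octanol-water logP above 5
        h_donors > 5,       # more than 5 hydrogen-bond donors
        h_acceptors > 10,   # more than 10 hydrogen-bond acceptors
    ])

def filter_drug_like(molecules, max_violations=1):
    """Return names of molecules with at most max_violations rule violations."""
    return [
        name for name, desc in molecules.items()
        if lipinski_violations(**desc) <= max_violations
    ]

# Hypothetical generated molecules with illustrative descriptor values
generated = {
    "candidate_a": {"mol_weight": 342.4, "logp": 2.8, "h_donors": 2, "h_acceptors": 5},
    "candidate_b": {"mol_weight": 612.7, "logp": 6.3, "h_donors": 3, "h_acceptors": 9},
    "candidate_c": {"mol_weight": 518.0, "logp": 4.1, "h_donors": 1, "h_acceptors": 7},
}
kept = filter_drug_like(generated)
print(kept)
```

In a real loop, only the molecules in `kept` would move on to the synthetic accessibility and similarity checks before being added to the fine-tuning set.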

Outer AL Cycle (Affinity Optimization):

- Evaluation: Take the accumulated molecules from the inner cycle and evaluate them using a physics-based affinity oracle (e.g., molecular docking simulations).

- Selection: Transfer molecules with favorable docking scores to a permanent-specific set.

- Fine-tuning: Use this high-quality, permanent set to fine-tune the VAE. Subsequent inner cycles will now assess similarity against this improved set.

Candidate Selection:

- After multiple outer cycles, apply stringent filtration to the permanent-specific set.

- Use advanced molecular modeling simulations (e.g., PELE, Absolute Binding Free Energy calculations) to further validate and select the top candidates for synthesis and experimental testing [2].

The Scientist's Toolkit

Table 2: Essential Research Reagents & Solutions for an AL-Driven Drug Discovery Project

| Item | Function in the Active Learning Workflow |
|---|---|
| Variational Autoencoder (VAE) | The core generative model; maps molecules to a latent space and generates novel molecular structures from it [2]. |
| Synthetic Accessibility (SA) Predictor | A computational oracle that scores how easily a computer-generated molecule can be synthesized in a lab, crucial for practical drug design [2]. |
| Molecular Docking Software | A physics-based oracle used in the outer AL cycle to predict how strongly a generated molecule binds to a target protein [2]. |
| Cheminformatics Library (e.g., RDKit) | Used to calculate molecular descriptors, filter for drug-likeness, and handle molecular representations like SMILES [2]. |
| Active Learning Batch Selection Algorithm | The algorithm (e.g., COVDROP, BAIT) that intelligently selects the most informative batch of molecules for the next round of evaluation [1]. |

Workflow Diagrams

Core Active Learning Loop for Drug Discovery

Nested AL Cycle with VAE

What is the core principle of Active Learning (AL) in synthesis optimization?

Active Learning is a machine learning paradigm designed to overcome the inefficiency of traditional trial-and-error experimentation and the high cost of exhaustively evaluating vast chemical or material spaces. Its core principle is intelligent data selection: rather than testing conditions at random or exhaustively, an AL system uses a surrogate model to predict outcomes and an acquisition function (a query strategy, such as uncertainty sampling or query-by-committee) to pick the most informative or promising experiments to run next. The results from these targeted experiments are used to retrain and improve the model, creating a self-improving cycle that converges on optimal solutions with far fewer experiments than exhaustive testing [2] [4].
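The predict, select, experiment, retrain cycle described above can be sketched in a few lines. Everything here is a toy assumption: `hidden_yield` stands in for a real experiment, the surrogate is a nearest-neighbour lookup whose "uncertainty" is simply the distance to the closest measured point, and the acquisition function is prediction plus `kappa` times that distance. A real campaign would typically use a Gaussian process or an ensemble instead.

```python
def hidden_yield(temp_c):
    """Stand-in for running an experiment (hypothetical response surface)."""
    return 90.0 - 0.02 * (temp_c - 75.0) ** 2

def surrogate(x, labelled):
    """Nearest-neighbour surrogate: returns (prediction, uncertainty proxy)."""
    nearest_x = min(labelled, key=lambda known: abs(known - x))
    return labelled[nearest_x], abs(nearest_x - x)

def active_learning(pool, initial_points, n_rounds, kappa=0.5):
    """Iteratively query the candidate with the highest acquisition value."""
    labelled = {x: hidden_yield(x) for x in initial_points}
    for _ in range(n_rounds):
        unlabelled = [x for x in pool if x not in labelled]
        if not unlabelled:
            break
        def acquisition(x):
            mean, sigma = surrogate(x, labelled)
            return mean + kappa * sigma  # favor high predictions and unexplored regions
        query = max(unlabelled, key=acquisition)
        labelled[query] = hidden_yield(query)  # "run" the experiment, grow the dataset
    return labelled

pool = list(range(20, 125, 5))  # candidate temperatures, 20 to 120 degrees C
labelled = active_learning(pool, initial_points=[20, 120], n_rounds=6)
best_temp = max(labelled, key=labelled.get)
print(f"measured {len(labelled)} of {len(pool)} candidates; best temperature: {best_temp}")
```

In this toy run the loop measures 8 of the 21 candidates and still locates the best temperature in the pool, which is the kind of saving the paragraph above describes.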

Why is AL particularly suited to addressing the high cost of synthesis?

Synthesis optimization—whether for new drug molecules or material processing parameters—involves exploring a high-dimensional space with countless combinations of variables. AL is uniquely suited for this because it:

- Minimizes Experimental Burden: By focusing only on high-potential experiments, AL can reduce the number of synthesis and testing cycles required, saving time, materials, and computational resources [4].

- Accelerates Discovery: AL frameworks can pinpoint optimal parameters or molecules much faster than traditional one-variable-at-a-time (OVAT) or full-factorial Design of Experiments (DoE) approaches [5].

- Navigates Complex Trade-offs: It efficiently handles multi-objective optimization problems, such as balancing a drug candidate's potency with its synthetic accessibility or a material's strength with its ductility [2] [4].

FAQs and Troubleshooting Guides

Implementation and Strategy

FAQ 1: How do I design an effective AL cycle for my synthesis project?

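An effective AL cycle integrates computational prediction with targeted experimental validation. A generalized structure for a synthesis optimization campaign is: train a surrogate model on the initial data, use an acquisition function to select the next experiments, run and characterize those experiments, then retrain the model on the enlarged dataset and repeat.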

Troubleshooting Guide: My AL model seems to be stuck in a local optimum and is not exploring new areas.

- Problem: The algorithm keeps proposing similar experiments, limiting the diversity of the discovered solutions.

- Potential Causes & Solutions:

- Cause 1: The acquisition function is too exploitative. Solution: Adjust the acquisition function to favor exploration. For example, if using Upper Confidence Bound (UCB), increase the weight on the uncertainty term. Alternatively, use a purely exploratory function like maximum uncertainty sampling for a few cycles [4].

- Cause 2: The initial training data is not diverse enough. Solution: Introduce a diversity metric into the selection criteria. The algorithm can be forced to select candidates that are dissimilar to the existing dataset in the feature space [2].

- Cause 3: The model's uncertainty estimates are poorly calibrated. Solution: Validate the surrogate model's performance on a held-out test set. Consider using a different model architecture (e.g., ensemble methods) that provides more reliable uncertainty quantification [4].
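One simple way to check the calibration mentioned in Cause 3 is to measure how often held-out values fall inside the model's predicted interval. The sketch below assumes the surrogate returns a mean and a standard deviation per point and that errors are roughly Gaussian, in which case about 95% of points should land within 1.96 standard deviations; the function name and the data are illustrative only.

```python
def interval_coverage(y_true, y_pred, y_std, z=1.96):
    """Fraction of held-out points whose true value lies within pred +/- z * std."""
    inside = sum(
        1 for truth, mean, std in zip(y_true, y_pred, y_std)
        if abs(truth - mean) <= z * std
    )
    return inside / len(y_true)

# Hypothetical held-out measurements and surrogate outputs
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.1, 2.5, 2.9, 6.0]
y_std = [0.1, 0.2, 0.1, 0.5]

coverage = interval_coverage(y_true, y_pred, y_std)
print(f"coverage at the nominal 95% level: {coverage:.0%}")
```

A coverage far below the nominal level, as in this toy data, suggests the model is overconfident, so an uncertainty-driven acquisition function will under-explore.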

FAQ 2: What are the key differences between AL and other high-throughput or machine learning approaches?

| Feature | Active Learning (AL) | High-Throughput Screening (HTS) | Traditional Machine Learning (ML) |
|---|---|---|---|
| Core Philosophy | Iterative, closed-loop; “learns what to test next” | Parallel, one-shot; “tests a vast library quickly” | One-off; “learns from a static dataset” |
| Data Selection | Intelligent, model-driven querying | Pre-defined, often random or based on simple rules | Uses entire available dataset for training |
| Resource Efficiency | High; minimizes experiments via smart selection | Low to Medium; requires large initial library synthesis and screening | N/A (only predictive) |
| Adaptability | High; continuously adapts its search strategy based on new data | Low; the search space is fixed from the start | Low; model must be manually retrained |
| Best Suited For | Optimizing in vast spaces where experiments are expensive | Initial hit finding from diverse but finite libraries | Building predictive models when large, representative datasets exist |

Experimental and Technical Considerations

FAQ 3: What are the essential components needed to set up an AL-driven synthesis lab?

Implementing a physical AL workflow requires integrating several key components into a cohesive, automated system.

Table 1: Essential Components of an AL-Driven Synthesis Lab

| Component | Function | Examples & Notes |
|---|---|---|
| Surrogate Model | Predicts outcomes of proposed experiments; the "brain" of the operation. | Gaussian Process Regressor (for uncertainty), Random Forest, Neural Networks [4]. |
| Acquisition Function | Selects the most informative experiments from the candidate pool. | Expected Improvement (EI), Upper Confidence Bound (UCB), Expected Hypervolume Improvement (EHVI) for multi-objective [4]. |
| Automated Synthesis Platform | Executes the chemical or material synthesis with minimal human intervention. | Automated reactors, liquid handling robots, laser powder bed fusion for alloys [5]. |
| Analytical & Testing Unit | Characterizes the products of synthesis to provide feedback data. | In-line spectrometers, HPLC systems, mechanical testers (for materials) [5]. |
| Data Management Platform | Manages the flow of information between all components; central database. | Custom software platforms (e.g., based on Python) to control the closed loop [2] [5]. |

Troubleshooting Guide: The experimental results from my automated platform do not match the model's predictions.

- Problem: High discrepancy between predicted and observed values breaks the AL loop's learning capability.

- Potential Causes & Solutions:

- Cause 1: Experimental noise or failure. Solution: Implement quality control checks on the automated platform. Replicate experiments with high uncertainty to confirm results [5].

- Cause 2: The feature representation of the synthesis parameters is inadequate. Solution: Re-evaluate the feature engineering. Incorporate more domain-knowledge-based descriptors or use a different molecular/material representation [2].

- Cause 3: Model drift. The chemical space being explored has shifted beyond the model's initial applicability domain. Solution: Periodically retrain the model from scratch or on a larger subset of the accumulated data to refit its parameters [2].

FAQ 4: Can you provide a specific case study where AL successfully reduced synthesis costs?

A recent study in drug discovery showcases a successful application. Researchers developed a generative AI model for designing new drug molecules, integrated with a physics-based AL framework.

Experimental Protocol: AL for Novel CDK2 Inhibitor Discovery [2]

- Objective: Generate novel, synthesizable, high-affinity inhibitors for the CDK2 target.

- Model Architecture: A Variational Autoencoder (VAE) was used to generate molecular structures.

- AL Workflow: The system employed nested AL cycles:

- Inner Cycle: Generated molecules were evaluated by fast chemoinformatics oracles (drug-likeness, synthetic accessibility). Promising molecules were used to fine-tune the VAE.

- Outer Cycle: Periodically, accumulated molecules were evaluated by a more expensive physics-based oracle (molecular docking). High-scoring molecules were added to a permanent set for VAE fine-tuning.

- Outcome: The workflow generated molecules with novel scaffolds. Of 9 molecules synthesized and tested, 8 showed in vitro activity, including one with nanomolar potency. This demonstrates a high success rate, directly minimizing the cost of failed synthesis and testing [2].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Computational and Experimental Tools for AL-Driven Synthesis

| Item / Reagent | Function in AL-Driven Synthesis | Specific Example / Note |
|---|---|---|
| Gaussian Process Regressor (GPR) | A surrogate model that provides predictions with built-in uncertainty estimates, crucial for acquisition functions. | Ideal for continuous parameter optimization (e.g., reaction conditions, processing parameters) [4]. |
| Variational Autoencoder (VAE) | A generative model that learns a continuous latent representation of molecular structures, enabling exploration of novel chemical space. | Used in de novo molecular design to generate novel drug candidates [2]. |
| Expected Hypervolume Improvement (EHVI) | An acquisition function for multi-objective optimization; it selects points that maximize the dominated area in the objective space. | Used to balance trade-offs like strength vs. ductility in materials or potency vs. solubility in drugs [4]. |
| Synthetic Accessibility (SA) Score | A computational oracle that predicts how easy a molecule is to synthesize, filtering out impractical candidates early. | Integrated into the inner AL cycle to ensure generated molecules are synthetically feasible [2]. |
| Molecular Docking Software | A physics-based oracle that predicts how a small molecule binds to a protein target, providing an affinity estimate. | Used in the outer AL cycle for more accurate, target-specific scoring (e.g., for CDK2/KRAS targets) [2]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are exploitation and exploration in an Active Learning context, and why is balancing them critical? In Active Learning (AL), exploitation refers to selecting subsequent experiments based on the surrogate model's current best prediction to maximize immediate performance. In contrast, exploration prioritizes sampling from areas of high predictive uncertainty to improve the model itself. Balancing this trade-off is crucial because pure exploitation may cause the model to get stuck in a local optimum, while pure exploration can be inefficient. Multi-objective Bayesian optimization acquisition functions, like the Expected Hypervolume Improvement (EHVI), are specifically designed to balance these two goals, leading to a more efficient discovery of optimal solutions [6].

FAQ 2: My AL model seems to have converged on poor results. How can I break out of this local optimum? This is a classic sign of an algorithm overly focused on exploitation. To address this:

- Adjust your acquisition function: Switch from a purely exploitative strategy to one that explicitly balances exploration and exploitation, such as EHVI [6].

- Incorporate human expertise: A domain expert can identify when the model is stagnating and manually propose an experiment in an unexplored region of the parameter space. This intervention can provide the novel data needed to guide the model toward a more promising search area.

- Re-evaluate initial data: Ensure your initial training set is diverse enough to allow the model to learn a meaningful representation of the search space from the outset.

FAQ 3: How does human expertise integrate with the automated Active Learning cycle? Human expertise is not replaced by but is integrated into the AL cycle. Experts are crucial for:

- Framing the problem: Defining the relevant search space, objectives, and constraints.

- Designing the initial dataset: Curating a representative set of data to train the initial surrogate model.

- Interpreting results: Providing physicochemical or biological context to the model's predictions, which can validate findings or flag potential errors [6].

- Guiding refinement: Making strategic decisions when the model performance plateaus, such as adjusting the workflow or incorporating new oracles, as seen in generative AI workflows for drug design [2].

FAQ 4: How many AL iterations are typically needed to find a good solution? The number of iterations is highly dependent on the problem complexity and the initial data. However, AL is designed to find optimal solutions with significantly fewer experiments than traditional methods. For example, in material science, one study found the optimal Pareto front by sampling only 16% to 23% of the entire search space using EHVI [6]. Another study on Ti-6Al-4V alloy synthesis used an initial dataset of 119 known combinations to efficiently explore 296 candidates through iterative AL cycles [4].

Troubleshooting Guides

Issue: The surrogate model's predictions are inaccurate and are leading the AL cycle to poor experimental suggestions.

- Potential Cause 1: Insufficient or poor-quality initial training data.

- Solution: Expand or refine the initial dataset to ensure it is representative of the broader search space. Data cleaning and normalization are essential first steps.

- Potential Cause 2: The model's features do not adequately capture the underlying physics or chemistry of the process.

- Solution: Conduct a feature importance analysis to identify which parameters are most critical for predictions [6]. Consult with a domain expert to incorporate more meaningful descriptors or features.

- Potential Cause 3: The chosen machine learning model is not suitable for the problem.

- Solution: Benchmark different surrogate models (e.g., Gaussian Process Regressors, Random Forests) to identify the best performer for your specific data and objectives. Gaussian Process Regressors are commonly used as they provide uncertainty estimates [4].

Issue: The algorithm is successfully optimizing one target property but severely compromising another.

- Potential Cause: The acquisition function is not effectively handling the trade-off between conflicting objectives.

- Solution: Implement a multi-objective optimization strategy. Use the Expected Hypervolume Improvement (EHVI) as your acquisition function, which is specifically designed to find a set of non-dominated solutions, known as the Pareto front, that balance multiple objectives [6]. This avoids over-optimizing a single property at the expense of others.
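The Pareto front and the hypervolume it dominates can be computed directly for two objectives. The sketch below is a simplification: it evaluates the deterministic hypervolume gain of one candidate whose outcome is assumed known, whereas EHVI takes the expectation of that gain over the surrogate's predictive distribution. The strength and elongation values and the reference point are invented for illustration.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better somewhere (maximization)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

def hypervolume_2d(front, ref):
    """Area dominated by the front relative to a reference point (two maximized objectives)."""
    pts = sorted(
        (p for p in front if p[0] > ref[0] and p[1] > ref[1]),
        key=lambda p: p[0],
        reverse=True,
    )
    area, best_y = 0.0, ref[1]
    for x, y in pts:
        if y > best_y:
            # Add the horizontal strip this point contributes above the previous best
            area += (x - ref[0]) * (y - best_y)
            best_y = y
    return area

# Hypothetical (strength in MPa, elongation in %) measurements
observed = [(1000, 8), (1100, 6), (900, 12), (950, 7), (1050, 5)]
ref = (800, 4)

front = pareto_front(observed)
hv_before = hypervolume_2d(front, ref)

candidate = (1050, 10)  # assumed outcome of a proposed experiment
hv_after = hypervolume_2d(pareto_front(observed + [candidate]), ref)
improvement = hv_after - hv_before
print(front, hv_before, improvement)
```

A candidate that improves only one objective while degrading the other far enough to become dominated yields zero improvement, which is how a hypervolume-based criterion discourages over-optimizing a single property.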

Issue: The AL process is slow, and each iteration is computationally expensive.

- Potential Cause: The evaluation of proposed experiments (e.g., high-fidelity simulations or complex molecular docking) is inherently resource-intensive.

- Solution: Implement a nested or multi-fidelity AL framework. For example, in drug discovery, a workflow can use a fast, inexpensive oracle (e.g., a chemoinformatic filter for drug-likeness) in an "inner" cycle to screen many candidates, and a slower, expensive oracle (e.g., molecular docking) in an "outer" cycle to evaluate only the most promising candidates [2].

Experimental Data & Protocols

Table 1: Performance Comparison of Acquisition Functions in a Multi-Objective Active Learning Study [6]

This table summarizes quantitative results from a study applying different acquisition functions to discover materials with optimal electronic and mechanical properties. The key metric is the percentage of the total search space that needed to be sampled to find the optimal Pareto Front (PF).

| Acquisition Function | Strategy Type | Sampling % to Find Optimal PF (C2DB Database) | Key Advantage |
|---|---|---|---|
| EHVI | Balanced | 16% - 23% | Best balance of exploitation vs. exploration |
| Exploitation | Performance-focused | 36% less efficient than EHVI in data-deficient cases | Maximizes immediate performance gains |
| Exploration | Uncertainty-focused | 36% less efficient than EHVI in data-deficient cases | Maximizes global model understanding |
| Random Selection | None | 36% less efficient than EHVI | Baseline for comparison |

Protocol 1: Implementing a Pareto Active Learning Framework for Material Synthesis [4]

This protocol outlines the workflow for optimizing process parameters for additive-manufactured Ti-6Al-4V to achieve high strength and ductility.

1. Construct Initial Dataset: Compile a dataset of known combinations of process parameters (e.g., laser power, scan speed) and post-heat treatment conditions (e.g., temperature, time) and their corresponding outcomes (e.g., Ultimate Tensile Strength, Total Elongation). The cited study used 119 such data points [4].

2. Define Unexplored Search Space: Establish a set of candidate parameter combinations (e.g., 296 candidates) that are scientifically plausible but untested.

3. Train Surrogate Model: Train a multi-output surrogate model, such as a Gaussian Process Regressor (GPR), on the initial dataset to predict the outcomes of new parameter sets.

4. Select Acquisition Function: Choose a multi-objective acquisition function like Expected Hypervolume Improvement (EHVI) to balance the strength-ductility trade-off.

5. Run Active Learning Loop:

   a. Use the trained GPR and EHVI to select the most promising candidate(s) from the unexplored set.

   b. Perform the experiment(s) (e.g., fabricate and test the alloy) to obtain the true outcome values.

   c. Add the new data point (parameters and measured outcomes) to the training dataset.

   d. Retrain the GPR model on the updated, enlarged dataset.

6. Iterate: Repeat step 5 for a set number of iterations or until a performance target is met.

Protocol 2: A Nested Active Learning Workflow for Generative Molecular Design [2]

This protocol describes a methodology for generating novel, drug-like molecules with high predicted affinity for a specific biological target.

- Initial Model Training: Train a generative model (e.g., a Variational Autoencoder or VAE) on a general set of known drug-like molecules to learn viable chemical structures.

- Fine-tune on Target Data: Further fine-tune the VAE on a small, target-specific set of molecules (e.g., known inhibitors of a protein).

- Inner AL Cycle (Chemical Optimization): a. Generate: Sample the VAE to generate new molecular structures. b. Evaluate with Cheminformatic Oracle: Filter generated molecules using fast computational tools to assess drug-likeness, synthetic accessibility, and novelty compared to the training set. c. Fine-tune: Use the molecules that pass this filter to fine-tune the VAE, steering generation toward chemically desirable compounds. Repeat this inner cycle several times.

- Outer AL Cycle (Affinity Optimization): a. After several inner cycles, take the accumulated, chemically-valid molecules and evaluate with a Physics-based Oracle (e.g., molecular docking simulations) to predict target affinity. b. Fine-tune: Use the molecules with the best docking scores to fine-tune the VAE, steering generation toward high-affinity compounds.

- Iterate: Conduct further rounds of nested inner and outer AL cycles to progressively refine the generated molecules.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational and Experimental Tools for Active Learning-driven Synthesis

| Item / Solution | Function in Active Learning Workflows |
|---|---|
| Gaussian Process Regressor (GPR) | A surrogate model that predicts the properties of unexplored parameter sets and, crucially, provides an estimate of its own uncertainty, which is essential for acquisition functions [4]. |
| Expected Hypervolume Improvement (EHVI) | An acquisition function for multi-objective optimization that selects experiments likely to maximize the dominated volume of the objective space, efficiently balancing exploitation and exploration [4] [6]. |
| Variational Autoencoder (VAE) | A generative model that learns a compressed, continuous representation (latent space) of molecular structures, enabling the generation of novel molecules with tailored properties [2]. |
| Cheminformatic Oracle | A computational filter (e.g., for drug-likeness, synthetic accessibility) used to quickly evaluate and prioritize generated molecules before more costly assessments [2]. |
| Physics-based Oracle (e.g., Docking) | A more computationally expensive simulation (e.g., molecular docking, absolute binding free energy calculations) used to predict the biological activity or affinity of a candidate molecule [2]. |

Workflow Visualization

Diagram 1: High-Level Active Learning Cycle for Synthesis Optimization

This diagram illustrates the core, high-level iterative loop of an Active Learning process, highlighting the step where human expertise can be applied to perform the proposed experiment.

Diagram 2: Nested AL for Molecular Design with Dual Oracles

This diagram details the nested active learning workflow used in generative molecular design [2], showing how fast (cheminformatic) and slow (physics-based) oracles are used in different cycles to efficiently optimize molecules.

The Expanding Role of AL in Drug Discovery and Materials Science

Frequently Asked Questions (FAQs)

FAQ 1: What is the core benefit of using Active Learning over high-throughput screening? Active Learning (AL) optimizes the experimental process by iteratively selecting the most informative experiments to perform, rather than relying on random or exhaustive screening. This approach directly addresses the challenge of navigating vast combinatorial spaces where desired outcomes, such as synergistic drug pairs or stable materials, are rare. By leveraging a surrogate model and an acquisition function, AL balances the exploration of unknown regions with the exploitation of promising areas, dramatically reducing the time and cost required for discovery [7] [8].

FAQ 2: How do I choose the right acquisition function for my experiment? The choice of acquisition function depends on your primary goal. The table below summarizes common functions and their applications:

| Acquisition Function | Primary Goal | Application Example |
|---|---|---|
| Expected Improvement | Find the best possible outcome | Maximizing the synergy score of a drug pair [8] |
| Upper Confidence Bound | Balance performance and uncertainty | Discovering new solder alloys with optimal strength & ductility [9] |
| Uncertainty Sampling | Improve the overall model accuracy | Selecting drug-cell line combinations where the model's prediction is least certain [7] |
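For readers who want to see what Expected Improvement computes, here is a minimal sketch of its standard closed form for a maximization problem, assuming the surrogate's prediction at a candidate is Gaussian with a given mean and standard deviation. The candidate values are invented and are not taken from [8].

```python
import math

def expected_improvement(mean, std, best_so_far):
    """Closed-form EI for maximization under a Gaussian predictive distribution."""
    if std <= 0.0:
        # No uncertainty: improvement is deterministic
        return max(mean - best_so_far, 0.0)
    z = (mean - best_so_far) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best_so_far) * cdf + std * pdf

best_so_far = 0.50  # best synergy score measured so far (hypothetical)

# A confident, slightly better candidate vs. an uncertain, slightly worse one
ei_confident = expected_improvement(mean=0.52, std=0.01, best_so_far=best_so_far)
ei_uncertain = expected_improvement(mean=0.48, std=0.10, best_so_far=best_so_far)
print(f"confident: {ei_confident:.4f}, uncertain: {ei_uncertain:.4f}")
```

In this toy case the uncertain candidate scores higher even though its predicted mean is below the current best, because its wide predictive distribution leaves more room for a large improvement.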

FAQ 3: My AL model seems to get stuck in a local optimum. How can I encourage more exploration? This is a classic issue of over-exploitation. You can address it by:

- Adjusting the batch size: Using smaller batch sizes has been shown to increase the discovery yield of rare events by allowing the model to re-prioritize more frequently [7].

- Tuning the acquisition function: For functions like the Gaussian Upper Confidence Bound, you can dynamically adjust the weight assigned to the uncertainty term. A higher weight encourages exploration of less-characterized areas of the search space [9].

- Incorporating domain knowledge: Using physically meaningful features or biasing the model with known thermodynamic data can guide it away from physically implausible regions [10].

FAQ 4: What are the common reasons for synthesis failure in autonomous materials discovery, and how can AL help? The A-Lab study identified several failure modes, including slow reaction kinetics, precursor volatility, and amorphization [11]. AL helps by:

- Learning from Failure: Each failed experiment is fed back into the model, updating its understanding of the synthesis landscape and helping it avoid similar unproductive paths in the future.

- Pathway Optimization: AL algorithms can prioritize synthesis routes that avoid intermediates with low driving forces to form the final target, thus circumventing kinetic traps [11].

Troubleshooting Guides

Issue 1: Poor Model Performance in Low-Data Regimes

Problem: The AL algorithm performs poorly when starting with very little initial training data, leading to uninformative experimental selections.

Solutions:

- Prioritize Data-Efficient Features: In drug synergy prediction, molecular encoding (e.g., Morgan fingerprints) has a limited impact in low-data scenarios. Instead, prioritize informative cellular context features. Using gene expression profiles of the target cell line can significantly boost prediction performance even with small training sets [7].

- Start with a Diverse Initial Set: If possible, the initial batch of experiments for training the model should be chosen to be as diverse as possible in the feature space (e.g., covering diverse chemistries and structures) to provide the model with a broad foundational understanding [8].

- Use a Simpler Model: In the initial stages, a parameter-light model like logistic regression or a simple neural network may outperform very large, complex models (e.g., transformers with millions of parameters) that are prone to overfitting on small datasets [7].
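One common way to obtain a diverse initial set is greedy farthest-point (maximin) selection in feature space. The sketch below assumes each candidate is already encoded as a numeric feature vector; the function name `farthest_point_selection` and the toy pool are illustrative and do not come from the cited studies.

```python
import math

def farthest_point_selection(points, k, start=0):
    """Greedily pick k indices so each new point is as far as possible
    from the points already chosen."""
    chosen = [start]
    # Distance from every point to its nearest chosen point so far.
    nearest = [math.dist(p, points[start]) for p in points]
    while len(chosen) < min(k, len(points)):
        nxt = max(range(len(points)), key=lambda i: nearest[i])
        chosen.append(nxt)
        for i, p in enumerate(points):
            nearest[i] = min(nearest[i], math.dist(p, points[nxt]))
    return chosen

# Toy 2-D feature vectors: two tight clusters and one isolated point.
pool = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (1.0, 1.0), (0.9, 1.0), (1.0, 0.0)]
initial_batch = farthest_point_selection(pool, k=3)
print(initial_batch)
```

On this toy pool the selection takes one point from each cluster plus the isolated point, rather than three near-duplicates from the same cluster.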

Issue 2: Inefficient Experimental Loops

Problem: The iterative loop of experiment selection, execution, and model update is not yielding improvements quickly enough.

Solutions:

- Optimize Batch Size and Learning Cycles: A study on drug synergy found that an active learning framework discovered 60% of synergistic pairs while exploring only 10% of the combinatorial space. This efficiency depended on careful tuning of the batch size and the number of learning cycles [7]. The workflow for such an optimized loop is illustrated below.

- Implement Adaptive Weights: In multi-objective optimization (e.g., maximizing strength and ductility), use an acquisition function with adaptive weights that decay over iterations. This strategy starts with a strong emphasis on exploration and gradually shifts towards exploitation, efficiently navigating the trade-off [9].

- Leverage Prior Knowledge: Pre-train your model on large, publicly available datasets (e.g., the Materials Project for materials, ChEMBL for drugs) before initiating the active learning cycle. This provides the model with a strong foundational understanding, as demonstrated in the RECOVER model for drug synergy [7] [11].
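The adaptive-weight idea from the solutions above can be illustrated with a single-objective simplification: an Upper Confidence Bound whose uncertainty weight decays over iterations. The exponential schedule, its parameters (`kappa_start`, `kappa_end`, `decay`), and the two candidates are assumptions chosen for illustration; the cited multi-objective work may use a different schedule.

```python
def exploration_weight(iteration, kappa_start=3.0, kappa_end=0.5, decay=0.8):
    """Exponentially decay the uncertainty weight from kappa_start toward kappa_end."""
    return kappa_end + (kappa_start - kappa_end) * decay ** iteration

def ucb(mean, std, kappa):
    return mean + kappa * std

# Two hypothetical candidates: one well characterised, one uncertain.
safe = (0.75, 0.02)
risky = (0.60, 0.10)

for t in (0, 5, 20):
    kappa = exploration_weight(t)
    pick = "risky" if ucb(*risky, kappa) > ucb(*safe, kappa) else "safe"
    print(t, round(kappa, 2), pick)
```

Early on, the large weight favours the uncertain candidate (exploration); as the weight decays, the well-characterised candidate with the higher predicted mean wins (exploitation).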

Experimental Protocols

Protocol 1: Predicting Synergistic Drug Combinations with Active Learning

This protocol is adapted from a study that used AL to efficiently discover synergistic drug pairs [7].

1. Objective: To iteratively identify drug combinations with a high Loewe synergy score (>10) while minimizing the number of experimental measurements.

2. Materials and Data:

- Initial Dataset: Public drug synergy data (e.g., O'Neil or ALMANAC datasets) for pre-training.

- Drug Features: Morgan fingerprints or other molecular representations.

- Cellular Features: Gene expression profiles of cancer cell lines (e.g., from GDSC database).

- AI Algorithm: A multi-layer perceptron (MLP) or other data-efficient model.

3. Methodology:

- Step 1 - Pre-training: Pre-train the MLP model on the initial dataset using drug and cellular features as input to predict the synergy score.

- Step 2 - Initial Selection: Use an acquisition function (e.g., Upper Confidence Bound) on the unmeasured drug-cell space to select the first batch of combinations for testing.

- Step 3 - Iterative Loop:

  - a. Experiment: Measure the synergy score for the selected batch of drug combinations in vitro.

  - b. Update: Add the new experimental results to the training dataset.

  - c. Retrain: Retrain the MLP model on the augmented dataset.

  - d. Select: Use the updated model to select the next most informative batch of experiments.

- Step 4 - Termination: The loop continues until a predefined number of synergistic pairs is found or the experimental budget is exhausted.

4. Key Quantitative Findings:

| Metric | Random Screening | Active Learning |
|---|---|---|
| Experiments to find 300 synergistic pairs | 8,253 | 1,488 |
| Percentage of combinatorial space explored | ~100% | 10% |
| Synergistic pairs found | 300 | 300 (with 82% cost savings) |

Data derived from benchmark studies [7].
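The iterative loop of Protocol 1 (select a batch, measure, update, retrain, repeat until enough hits or the budget is spent) can be sketched with a simulated assay. Everything in this example is a stand-in: the one-dimensional feature, the `measure` response surface, the k-nearest-neighbour surrogate used in place of a pre-trained MLP, and the parameter values are all assumptions chosen to keep the sketch self-contained.

```python
import random

random.seed(0)
THRESHOLD = 10.0  # synergy score above which a pair counts as synergistic

# Hypothetical pool: one feature value stands in for drug + cell-line features.
pool = [i / 199 for i in range(200)]

def measure(x):
    """Stand-in for the in vitro assay: a made-up response surface plus noise."""
    return 30.0 * max(0.0, 1.0 - abs(x - 0.7) / 0.1) + random.gauss(0.0, 1.0)

def predict(x, labeled, k=3):
    """k-NN surrogate: mean of the k nearest labels, distance to the nearest as uncertainty."""
    nearest = sorted(labeled, key=lambda item: abs(item[0] - x))[:k]
    mean = sum(y for _, y in nearest) / len(nearest)
    return mean, abs(nearest[0][0] - x)

def run_active_learning(batch_size=5, budget=60, target_hits=10, kappa=100.0):
    unlabeled = list(pool)
    labeled = []
    for x in random.sample(unlabeled, batch_size):  # random initial batch
        unlabeled.remove(x)
        labeled.append((x, measure(x)))
    while len(labeled) < budget and unlabeled:
        if sum(y > THRESHOLD for _, y in labeled) >= target_hits:
            break  # termination: enough synergistic pairs found

        def ucb(x):
            mean, unc = predict(x, labeled)  # "retraining" is implicit for k-NN
            return mean + kappa * unc

        batch = sorted(unlabeled, key=ucb, reverse=True)[:batch_size]
        for x in batch:  # experiment, then update the training data
            unlabeled.remove(x)
            labeled.append((x, measure(x)))
    hits = sum(y > THRESHOLD for _, y in labeled)
    return len(labeled), hits

n_used, hits = run_active_learning()
print(f"{n_used} measurements, {hits} synergistic pairs found")
```

Unlike the protocol, this sketch starts from a random batch rather than a pre-trained model; swapping `predict` for a real surrogate and `measure` for the experimental readout leaves the loop structure unchanged.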

Protocol 2: Autonomous Synthesis of Novel Inorganic Materials

This protocol is based on the workflow of the A-Lab, which successfully synthesized 41 novel compounds [11].

1. Objective: To autonomously synthesize and characterize novel, computationally predicted inorganic materials.

2. Materials and Setup:

- Robotics: Integrated stations for powder dispensing, mixing, heat treatment, and X-ray diffraction (XRD) characterization.

- Precursors: Library of solid powder precursors.

- Computational Data: Phase stability data from ab initio databases (e.g., Materials Project).

- AI Models: (a) Natural language processing model trained on literature for initial recipe suggestion; (b) Active learning algorithm (ARROWS³) for recipe optimization.

3. Methodology:

- Step 1 - Target Selection: Receive a list of air-stable target materials predicted to be thermodynamically stable.

- Step 2 - Recipe Proposal: The NLP model proposes up to five initial synthesis recipes based on analogy to known materials.

- Step 3 - Synthesis & Characterization: Robotic systems execute the recipe: weigh and mix precursors, load into a furnace for heating, cool, and then characterize the product via XRD.

- Step 4 - Phase Analysis: ML models analyze the XRD pattern to identify phases and quantify target yield via automated Rietveld refinement.

- Step 5 - Active Learning Optimization: If the target yield is below 50%, the ARROWS³ algorithm proposes a new recipe. It uses a database of observed pairwise reactions and thermodynamic driving forces to avoid intermediates that leave little driving force to form the target, and to suggest more efficient pathways.

- Step 6 - Iteration: Steps 3-5 are repeated until a high-yield synthesis is achieved or all options are exhausted.
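The control flow of Steps 2 to 6 can be sketched as a retry loop. This is only a schematic of the decision logic, not ARROWS³ itself: `optimize_target`, `run_and_analyze`, `propose_next`, and `max_followups` are hypothetical names, and trying the initial recipes in order and stopping at the first success is an assumption.

```python
YIELD_TARGET = 0.50       # yield threshold from Step 5
MAX_INITIAL_RECIPES = 5   # "up to five" initial recipes from Step 2

def optimize_target(initial_recipes, run_and_analyze, propose_next, max_followups=10):
    """Try initial recipes, then AL-proposed ones, until the yield target is met
    or no further recipe is available.

    run_and_analyze(recipe) -> yield fraction (stands in for Steps 3-4)
    propose_next(history)   -> next recipe, or None when exhausted (Step 5)
    """
    history = []
    for recipe in initial_recipes[:MAX_INITIAL_RECIPES]:
        y = run_and_analyze(recipe)
        history.append((recipe, y))
        if y >= YIELD_TARGET:
            return recipe, y, history
    for _ in range(max_followups):
        recipe = propose_next(history)
        if recipe is None:  # all options exhausted
            break
        y = run_and_analyze(recipe)
        history.append((recipe, y))
        if y >= YIELD_TARGET:
            return recipe, y, history
    # No recipe reached the target: report the best attempt.
    best = max(history, key=lambda item: item[1], default=(None, 0.0))
    return best[0], best[1], history

# Toy stand-ins for the robotic workflow and the recipe proposer.
toy_yields = {"r1": 0.10, "r2": 0.35, "r3": 0.62}

def toy_propose(history):
    tried = {r for r, _ in history}
    remaining = [r for r in toy_yields if r not in tried]
    return remaining[0] if remaining else None

recipe, y, history = optimize_target(["r1"], toy_yields.get, toy_propose)
print(recipe, y, len(history))
```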

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table lists key resources used in the cited experiments for drug and materials discovery.

| Item Name | Function / Application | Example from Research |
|---|---|---|
| Morgan Fingerprints | A numerical representation of molecular structure used as input for AI models in drug discovery. | Used as molecular features for predicting drug synergy scores [7]. |
| Gene Expression Profiles | Genomic data describing the cellular environment, critical for context-specific predictions. | Profiles from the GDSC database were used to model the response of specific cancer cell lines [7]. |
| Solid Powder Precursors | High-purity inorganic powders used as starting materials for solid-state synthesis. | The A-Lab used a library of such powders to synthesize novel oxides and phosphates [11]. |
| ARROWS³ Algorithm | An active learning algorithm that integrates observed reaction data and thermodynamics to optimize solid-state synthesis routes. | Used by the A-Lab to improve synthesis yields by avoiding low-driving-force intermediates [11]. |
| Gaussian Process Regression (GPR) Model | A surrogate model that provides predictions with uncertainty estimates, essential for Bayesian optimization. | Used to model the strength and ductility of solder alloys, guiding the AL search [9]. |

Active Learning for Multi-Objective Optimization

Optimizing for multiple properties often involves trade-offs, such as the strength-ductility trade-off in alloys. The following diagram illustrates how AL navigates this challenge.

Implementing Active Learning Frameworks: From Theory to Practical Workflows

Frequently Asked Questions

FAQ 1: What is the most critical factor for a successful initial Active Learning (AL) cycle? The quality of your data representation, or embeddings, is paramount [12]. High-quality embeddings capture relevant semantic information, which allows your AL query strategies to more effectively identify ambiguous or informative instances. Initializing your labeled pool with a diversity-based sampling method, rather than a purely random one, can create a strong synergy with these good embeddings and boost performance in the crucial early AL iterations [12].

FAQ 2: Is there a single best query strategy I should always use? No. Benchmark results show that there is no universally best query strategy [13]. The optimal choice is highly sensitive to the quality of your underlying data embeddings and the specific target task [12]. While some computationally inexpensive strategies like Margin sampling can perform well on specific datasets, hybrid strategies such as BADGE often demonstrate greater robustness across diverse tasks [12]. You should plan to evaluate several strategies in your specific context.

FAQ 3: Why does my model's performance seem to plateau despite continued AL cycles? This is a common observation. The effectiveness of AL is most pronounced when labeled data is scarce. As the size of your labeled set grows, the performance gap between different AL strategies and random sampling typically narrows, indicating diminishing returns [13]. This is a sign that you may need to refine your search space, incorporate new data sources, or consider that the model may be approaching its performance limit for the given data and architecture.

FAQ 4: How can I debug issues of poor reproducibility in my AL experiments? Integrating computer vision and vision language models to monitor experiments can help automate the debugging process [14]. These systems can detect subtle issues, such as a millimeter-sized deviation in a sample's shape or a misplacement by automated equipment. The model can then hypothesize sources of this irreproducibility and suggest corrective actions, serving as an invaluable experimental assistant [14].

Active Learning Query Strategy Benchmark

The table below summarizes the performance of various AL query strategies based on a benchmark in materials science regression tasks. Performance can vary significantly based on embedding quality and the specific task [12] [13].

| Strategy Type | Example Methods | Key Principle | Performance Notes |
|---|---|---|---|
| Uncertainty-Based | LCMD, Tree-based-R, Margin Sampling [13] [12] | Selects instances where the model's prediction is least confident. | Often shows strong performance early in the AL cycle; Margin sampling can be computationally efficient [13]. |
| Diversity-Based | CoreSet, ProbCover, TypiClust [12] | Selects instances that represent the underlying data distribution. | Helps avoid redundant samples and can be crucial for initial pool selection [12]. |
| Hybrid | BADGE, RD-GS, DropQuery [12] [13] | Combines uncertainty and diversity principles. | Generally offers greater robustness across different tasks and embedding qualities [12]. |
| Representativeness | GSx, EGAL [13] | Selects instances that are most representative of the unlabeled pool. | In benchmarks, geometry-only heuristics can be outperformed by uncertainty-driven or hybrid methods early on [13]. |

Experimental Protocol: Benchmarking AL Strategies with AutoML

This protocol is adapted from a benchmark study that integrated AL with Automated Machine Learning (AutoML) for small-sample regression, a common scenario in materials science and drug development [13].

1. Problem Definition & Data Preparation:

- Define the regression task (e.g., predicting a material's property or a compound's activity).

- Start with a dataset where only a small subset, `L = {(x_i, y_i)}_{i=1}^l`, is labeled. The majority of the data should be an unlabeled pool, `U = {x_i}_{i=l+1}^n` [13].

- Partition the data into training and test sets with an 80:20 ratio [13].

2. Initial Pool Selection (IPS):

- Instead of a purely random selection, consider a diversity-based method (e.g., TypiClust) to select the initial `n_init` labeled samples from `U`. This can establish a better-performing initial model [12].

3. Iterative AL Cycle: The core process involves repeating the following steps:

- Model Training & Validation: Fit an AutoML model on the current labeled set `L`. The AutoML system should automatically handle model selection (e.g., from linear regressors to tree-based ensembles) and hyperparameter tuning, using 5-fold cross-validation [13].

- Query Instance Selection: Use the chosen AL query strategy (e.g., BADGE, Margin Sampling) to select the most informative sample `x*` from the unlabeled pool `U` [13].

- Annotation & Update: Obtain the label `y*` for `x*` (in a benchmark, this is simulated by revealing the held-back ground-truth label). Add the newly labeled sample `(x*, y*)` to `L` and remove `x*` from `U` [13].

4. Performance Evaluation:

- At each iteration, test the updated model on the held-out test set.

- Track performance metrics like Mean Absolute Error (MAE) and the Coefficient of Determination (R²) over the number of acquired samples [13].

- Compare the learning curve of your AL strategies against a baseline of random sampling.

5. Stopping Criterion:

- The process can be stopped when the performance plateaus or when a pre-defined budget for data acquisition is exhausted [13].
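The benchmarking protocol above can be sketched end to end on a toy regression problem. To stay self-contained, this example swaps the AutoML system for a 1-nearest-neighbour regressor and compares only two query strategies, random sampling and a feature-space greedy sampling heuristic in the spirit of GSx; the dataset, function names, and parameter values are illustrative assumptions.

```python
import math
import random

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def r2(y_true, y_pred):
    mean_t = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def predict_1nn(x, labeled):
    """Label of the nearest labeled point (stand-in for the AutoML model)."""
    return min(labeled, key=lambda item: abs(item[0] - x))[1]

def query_random(unlabeled, labeled, rng):
    return rng.choice(unlabeled)

def query_gsx(unlabeled, labeled, rng):
    """Greedy sampling in feature space: the pool point farthest from the labeled set."""
    return max(unlabeled, key=lambda x: min(abs(x - lx) for lx, _ in labeled))

def learning_curve(query, n_queries=15, seed=0):
    rng = random.Random(seed)
    xs = [rng.uniform(0.0, 6.0) for _ in range(100)]
    truth = {x: math.sin(x) for x in xs}            # toy ground truth
    rng.shuffle(xs)
    train, test = xs[:80], xs[80:]                  # 80:20 split
    labeled = [(x, truth[x]) for x in train[:3]]    # small initial labeled set L
    unlabeled = train[3:]                           # unlabeled pool U
    y_test = [truth[x] for x in test]
    curve = []
    for _ in range(n_queries):
        x_star = query(unlabeled, labeled, rng)
        unlabeled.remove(x_star)
        labeled.append((x_star, truth[x_star]))     # reveal the held-back label
        preds = [predict_1nn(x, labeled) for x in test]
        curve.append((len(labeled), mae(y_test, preds), r2(y_test, preds)))
    return curve

curve_random = learning_curve(query_random)
curve_gsx = learning_curve(query_gsx)
for (n, mae_r, _), (_, mae_g, _) in zip(curve_random, curve_gsx):
    print(n, round(mae_r, 3), round(mae_g, 3))
```

Both strategies see the same data and split because they share the seed, so the printed columns can be read as two learning curves over the number of labeled samples.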

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in AL-Driven Synthesis |
|---|---|
| Automated Liquid-Handling Robot | Precisely dispenses precursor molecules and solvents according to recipes suggested by the AL model, enabling high-throughput synthesis [14]. |
| Carbothermal Shock System | Allows for the rapid synthesis of materials (e.g., catalysts) by subjecting precursors to very high temperatures for short durations, accelerating the experimental loop [14]. |
| Automated Electrochemical Workstation | Performs high-throughput testing of material properties (e.g., catalytic activity, power density) to generate labeled data for the AL model [14]. |
| Automated Electron Microscopy | Provides microstructural images and characterization data. This multimodal information can be fed back to the AL model to inform subsequent experiment design [14]. |
| Frozen LLM Embeddings | Serves as a high-quality, fixed feature extractor to represent textual or structural data (e.g., scientific literature, molecule SMILES strings), forming the basis for calculating data diversity and similarity in AL strategies [12]. |
| Bayesian Optimization (BO) | A core algorithm that acts as a recommendation engine, suggesting the next experiment to run based on all previous results and a knowledge base, guiding the search for optimal recipes [14]. |

Workflow Diagram: The CRESt AL System for Synthesis Optimization

Workflow Diagram: Generic Pool-Based Active Learning Cycle

Performance Benchmarks of Active Learning Strategies

The table below summarizes the performance of various Active Learning (AL) query strategies in regression tasks, as benchmarked on small-sample materials science datasets. This data can help you select the most appropriate strategy for your specific experimental conditions [13].

| Strategy Category | Example Strategies | Performance in Data-Scarce Phase | Performance as Data Grows | Key Characteristics |
|---|---|---|---|---|
| Uncertainty-Based | LCMD, Tree-based-R | Clearly outperforms baseline and geometry heuristics [13] | Gap with other strategies narrows [13] | Targets points where model is most uncertain, often using predictive variance [15] [13] |
| Diversity-Based | GSx, EGAL | Lower performance compared to uncertainty methods early on [13] | Converges with other methods [13] | Aims to cover the feature space, selecting maximally different data points [16] |
| Hybrid | RD-GS | Outperforms baseline; balances uncertainty and diversity [13] | Converges with other methods [13] | Combines multiple principles (e.g., uncertainty & diversity) for more robust selection [17] [18] |
| Expected Model Change | EMCM | Not top performer in benchmark [13] | Converges with other methods [13] | Selects samples expected to cause the largest change in the model [15] [13] |

Frequently Asked Questions for Experimental Troubleshooting

Q1: My regression model's performance plateaus or even degrades after the first few AL cycles. What could be wrong? This is a common sign that your query strategy is selecting outliers or redundant samples. An uncertainty-only approach can be "myopic," focusing on a specific region of the feature space and failing to explore globally [15] [19].

- Solution A: Implement a Hybrid Strategy. Combine your uncertainty sampling with a diversity component. For instance, the RD-GS strategy or the ε-weighted hybrid query strategy (ε-HQS) are explicitly designed to balance exploration (diversity) and exploitation (uncertainty), preventing the model from getting stuck [13] [17].

- Solution B: Use Adaptive Weighting. The Adaptive Weighted Uncertainty Sampling (AWUS) method dynamically balances pure uncertainty sampling with random exploration based on how much the model changes between iterations, automatically adapting from exploration to exploitation [18].

Q2: How do I implement an effective stopping criterion for my AL cycle to avoid wasting resources? A general stopping criterion has three parts: the metric being tracked, the dataset on which it is evaluated, and the condition that triggers the stop. Simply using performance on a small, potentially biased validation set can lead to unstable and impractical results [15].

- Solution: Monitor Model Stability. One approach is to track the change in a relevant metric, such as the model's overall uncertainty or its predictions on a held-out set. When the change between iterations falls below a pre-defined threshold, the AL process can be halted. The AWUS method, for example, uses the magnitude of model change between iterations to guide its sampling probability, which can also inform a stopping decision [15] [18].
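A stability-based stopping rule of this kind can be sketched as a check on the recent history of the tracked metric. The `tolerance` and `patience` parameters and the example error values are assumptions for illustration; they are not taken from the AWUS method or the cited benchmark.

```python
def should_stop(metric_history, tolerance=0.01, patience=3):
    """Stop when the metric has changed by less than `tolerance`
    for `patience` consecutive iterations."""
    if len(metric_history) <= patience:
        return False
    recent = metric_history[-(patience + 1):]
    changes = [abs(b - a) for a, b in zip(recent, recent[1:])]
    return all(change < tolerance for change in changes)

# Hypothetical held-out error after each AL iteration.
mae_per_iteration = [0.90, 0.60, 0.45, 0.41, 0.405, 0.402, 0.401]

# First iteration (1-based) at which the rule would halt the loop.
stop_at = next(
    (i for i in range(1, len(mae_per_iteration) + 1)
     if should_stop(mae_per_iteration[:i])),
    None,
)
print(stop_at)
```

Requiring several consecutive small changes, rather than a single one, makes the rule less sensitive to one noisy evaluation.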

Q3: For optimizing synthesis recipes, should I use a pool-based or query-synthesis approach? The choice depends on whether your candidate recipes are pre-defined or can be generated on-demand.

- Pool-Based Approach: Your AL algorithm selects the most informative samples from a fixed, unlabeled pool of existing candidate recipes. This is the most common method [20].

- Query-Synthesis Approach: Your algorithm generates synthetic, optimal query points from the entire input space. This is particularly powerful for optimization tasks like finding the best synthesis parameters. Research has shown that the query-synthesis approach can be "less-myopic" and significantly superior for objective optimization, as it is not limited by the existing pool [20].

Q4: How can I ensure my AL-generated models are useful for real-world synthesis optimization and not just accurate predictors? Standard AL strategies often focus only on maximizing prediction accuracy. For synthesis optimization, your goal is often to maximize a utility function (e.g., product yield, material strength) [20].

- Solution: Adopt an Exploration-Exploitation Framework. Frame your AL process to explicitly handle the trade-off between:

- Exploration: Choosing synthesis conditions to minimize the uncertainty of your property prediction model.

- Exploitation: Choosing synthesis conditions predicted to maximize your target utility function (e.g., yield).

Strategies designed for this balance will more efficiently guide your experiments toward optimal recipes [20].

Experimental Protocol: Implementing a Hybrid Query Strategy

This protocol outlines the steps to implement a pool-based active learning cycle with a hybrid query strategy for a regression task, such as predicting the property of a synthesized material.

1. Problem Formulation and Initial Setup

- Define Objective: Clearly state the target variable to be predicted (e.g., reaction yield, material bandgap).

- Prepare Data Pool: Assemble a pool of unlabeled data points, where each point is a vector of features describing a synthesis recipe (e.g., precursors, temperature, time) [13].

- Establish Initial Labeled Set: Randomly select a small number of data points from the pool, synthesize and characterize them to obtain the target variable value, and place them in the initial labeled set `L` [13].

2. Active Learning Cycle

Repeat the following steps until a stopping criterion is met (e.g., performance plateau, budget exhaustion) [15] [13]:

- Step A - Model Training: Train a regression model (can be an AutoML system) on the current labeled set `L`.

- Step B - Hybrid Query Selection:

  - For all data points in the unlabeled pool `U`, calculate an uncertainty score (e.g., the predictive variance of the model) [13].

  - For all data points in `U`, calculate a diversity score. This can be done by clustering the feature space and selecting points from underrepresented clusters, or using a representativeness measure [16].

  - Combine the two scores into a single selection metric. A simple method is a weighted sum: `Selection_Score = α * Uncertainty_Score + (1-α) * Diversity_Score`.

  - Select the data point `x*` with the highest combined score.

- Step C - Experimentation and Model Update:

  - Perform the wet-lab synthesis and characterization for the selected recipe `x*` to obtain its true label `y*`.

  - Update the data sets: `L = L ∪ {(x*, y*)}` and `U = U \ {x*}` [13].
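The hybrid query selection step of this protocol can be sketched as follows. Two choices here are assumptions added for illustration: both scores are min-max normalized so that the weighted sum combines quantities on the same scale, and diversity is measured as the distance to the nearest already-labeled recipe. The function names and the toy uncertainty values are hypothetical.

```python
import math

def min_max(values):
    """Rescale a list of scores to the range [0, 1]."""
    lo, hi = min(values), max(values)
    return [0.0 if hi == lo else (v - lo) / (hi - lo) for v in values]

def select_hybrid(unlabeled, labeled_x, uncertainty_fn, alpha=0.5):
    """Return the pool point maximizing
    alpha * uncertainty + (1 - alpha) * diversity, and its score."""
    uncertainty = min_max([uncertainty_fn(x) for x in unlabeled])
    # Diversity: distance from each pool point to its nearest labeled recipe.
    diversity = min_max(
        [min(math.dist(x, lx) for lx in labeled_x) for x in unlabeled]
    )
    scores = [alpha * u + (1 - alpha) * d for u, d in zip(uncertainty, diversity)]
    best = max(range(len(unlabeled)), key=lambda i: scores[i])
    return unlabeled[best], scores[best]

# Toy recipes as 2-D feature vectors, with made-up model uncertainties.
labeled_x = [(0.0, 0.0)]
unlabeled = [(0.1, 0.0), (1.0, 1.0), (0.5, 0.5)]
toy_uncertainty = {(0.1, 0.0): 0.9, (1.0, 1.0): 0.2, (0.5, 0.5): 0.6}

x_star, score = select_hybrid(unlabeled, labeled_x, toy_uncertainty.get, alpha=0.5)
print(x_star, round(score, 3))
```

With `alpha=1.0` the rule reduces to pure uncertainty sampling and with `alpha=0.0` to pure diversity sampling; intermediate values can favour a point that is neither the most uncertain nor the most distant.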

Active Learning Workflow for Synthesis Optimization

The diagram below illustrates the iterative, closed-loop process of an Active Learning framework applied to optimizing synthesis recipes.

The Scientist's Toolkit: Essential Research Reagents

The table below lists key computational "reagents" and frameworks used in building active learning pipelines for regression.

| Tool / Framework | Function / Application |
|---|---|
| modAL [15] | A flexible, modular Active Learning framework for Python3, built on scikit-learn. It allows for rapid implementation of custom AL workflows with support for uncertainty-based, committee-based, and other strategies. |
| AutoML [13] | Automated Machine Learning systems are used to automatically search and optimize between different model families and their hyperparameters. This is particularly valuable when the underlying surrogate model in an AL cycle may change. |
| Bayesian Linear Regression [20] | A probabilistic model that provides native uncertainty estimates, which are crucial for calculating uncertainty scores in query strategies. It is a common choice for regression tasks in AL. |
| Variational Autoencoder (VAE) [2] | A type of generative model that can be integrated with AL cycles to generate novel molecular structures or synthesis parameters, rather than selecting from a fixed pool (query-synthesis). |
| Expected Model Change Maximization (EMCM) [15] [13] | A query principle that selects data points which are expected to cause the largest change in the model parameters, often estimated using the gradient of the loss function. |

This section provides troubleshooting guides and FAQs for researchers integrating Active Learning (AL) with Generative AI and Automated Machine Learning (AutoML), focusing on practical challenges in drug discovery. The guidance is tailored for scientists developing AI-driven molecular discovery pipelines [2].

Experimental Protocols & Methodologies

This section details a core methodology for integrating a Variational Autoencoder (VAE) with nested Active Learning cycles, a proven framework for generating novel, drug-like molecules [2].

Core Workflow: VAE with Nested Active Learning Cycles

The following diagram illustrates the iterative workflow of a generative model integrated with nested active learning cycles for molecular optimization.

Diagram Title: VAE with Nested Active Learning Workflow

Protocol Steps:

- Data Representation & Initial Training: Represent training molecules as tokenized SMILES strings. The VAE is first trained on a general molecular dataset and then fine-tuned on a target-specific set to learn viable chemistry and initial target engagement [2].

- Molecule Generation: The trained VAE is sampled to generate new, previously unseen molecules [2].

- Inner AL Cycle (Chemoinformatics Oracle): Generated molecules are evaluated by computational oracles for:

  - Drug-likeness: Adherence to rules like Lipinski's Rule of Five.

  - Synthetic Accessibility (SA): Estimated ease of laboratory synthesis.

  - Similarity: Dissimilarity to molecules in the training set to ensure novelty.

  Molecules passing these filters are added to a "temporal-specific set" and used to fine-tune the VAE. This cycle iterates to prioritize chemically desirable properties [2].

- Outer AL Cycle (Affinity Oracle): After a set number of inner cycles, molecules from the temporal-specific set are evaluated by a physics-based affinity oracle, such as molecular docking simulations. Molecules with favorable docking scores are promoted to a "permanent-specific set" and used for another round of VAE fine-tuning, directly steering generation toward high-affinity candidates [2].

- Candidate Selection: After multiple outer cycles, the most promising molecules from the permanent-specific set undergo stringent filtration and advanced molecular modeling (e.g., binding free energy simulations) for final selection and experimental validation [2].
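The nesting of the two cycles in the protocol steps above can be sketched as two loops, with the cheap oracle called on every generated molecule and the expensive oracle called only on the survivors. This is a control-flow sketch only: the four callables are hypothetical placeholders for the VAE sampler, the fine-tuning step, the cheminformatic filters, and the docking oracle; "molecules" are plain numbers in the toy usage; and resetting the temporal set after each outer cycle is an assumption.

```python
import random

def nested_active_learning(generate, fine_tune, fast_oracle, slow_oracle,
                           n_outer=3, n_inner=2, n_samples=50):
    """Run n_outer outer cycles, each containing n_inner inner cycles."""
    temporal_set, permanent_set = [], []
    for _ in range(n_outer):
        for _ in range(n_inner):
            # Inner cycle: cheap filters on freshly generated molecules.
            passed = [m for m in generate(n_samples) if fast_oracle(m)]
            temporal_set.extend(passed)
            fine_tune(temporal_set)
        # Outer cycle: expensive oracle on the accumulated survivors only.
        promoted = [m for m in temporal_set if slow_oracle(m)]
        permanent_set.extend(promoted)
        temporal_set = []  # assumption: start each outer cycle with an empty set
        fine_tune(permanent_set)
    return permanent_set

# Toy stand-ins: a "generator" whose sampling centre shifts when fine-tuned.
rng = random.Random(0)
state = {"center": 0.0}
calls = {"slow": 0}

def toy_generate(n):
    return [rng.gauss(state["center"], 1.0) for _ in range(n)]

def toy_fine_tune(molecules):
    if molecules:
        state["center"] = sum(molecules) / len(molecules)

def toy_slow_oracle(m):
    calls["slow"] += 1  # count expensive evaluations
    return m > 1.0

result = nested_active_learning(
    toy_generate, toy_fine_tune, lambda m: m > 0.0, toy_slow_oracle
)
print(len(result), "promoted;", calls["slow"], "expensive evaluations")
```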

Quantitative Performance Data

The table below summarizes key quantitative findings from a study that applied this VAE-AL workflow to two pharmaceutical targets, CDK2 and KRAS [2].

| Metric | CDK2 | KRAS |
|---|---|---|
| Molecules Synthesized | 9 | N/A (In-silico) |
| Experimentally Active Molecules | 8 | N/A (In-silico) |
| Potent Molecule (Nanomolar) | 1 | N/A (In-silico) |
| Key Achievement | Novel scaffolds with high predicted affinity and synthetic accessibility generated and validated. | Novel scaffolds distinct from known inhibitors (e.g., Amgen's scaffold) generated with high predicted affinity [2]. |

The Scientist's Toolkit: Research Reagent Solutions

This table lists essential computational tools and frameworks for building integrated AL-Generative AI-AutoML architectures.

| Tool / Framework | Type | Function in the Experiment |
|---|---|---|
| Variational Autoencoder (VAE) | Generative Model | Generates novel molecular structures from a continuous latent space; chosen for stability and efficient sampling [2] [21]. |
| mljar-supervised | AutoML Framework | Automates the entire ML pipeline for predictive tasks, including data preprocessing, feature engineering, algorithm selection, and hyperparameter tuning [22]. |
| Azure Machine Learning | AI Development Platform | Provides cloud environment to build, deploy, and manage machine learning models and pipelines at scale; supports open-source frameworks [23]. |
| GPT-4 / Azure OpenAI | Large Language Model (LLM) | Used for tasks like summarizing research literature, generating code templates, or aiding in data analysis and report generation [23] [21]. |
| Encord Active | Active Learning Platform | Facilitates building active learning pipelines to strategically select the most informative data points for labeling, reducing annotation costs [24]. |
| Retrieval Augmented Generation (RAG) | Architecture Pattern | Grounds a generative LLM on specific, private data sources (e.g., proprietary research papers) to provide more accurate and context-aware responses [23]. |

Troubleshooting Guides and FAQs

FAQ 1: Our generative model produces molecules, but they are chemically invalid or have poor synthetic accessibility (SA). How can we fix this?

- Problem: The generated output violates basic chemical rules or is too complex to synthesize.

- Solution:

- Implement Robust Validity Checks: Integrate cheminformatics libraries (e.g., RDKit) into your generation pipeline to filter out invalid SMILES strings as a first-pass filter [2].

- Incorporate SA Filters: Use a synthetic accessibility oracle within your Active Learning loop. This can be a rule-based scorer or a predictive model that penalizes molecules with complex ring systems or rare functional groups, steering the generation toward more tractable chemistries [2].

- Refine Training Data: Ensure your initial training set is curated for drug-like molecules and good SA. The model learns patterns from its training data; "garbage in, garbage out" applies.

FAQ 2: Our integrated AL-Generative AI pipeline is slow and computationally expensive to run, especially the docking simulations. How can we optimize it?

- Problem: The physics-based evaluations (e.g., molecular docking) create a computational bottleneck.

- Solution:

- Leverage Multi-Fidelity Oracles: Use a fast, approximate method (like a QSAR model) as a primary filter in an inner AL cycle. Only the top candidates from this stage are passed to the more computationally expensive, high-fidelity docking simulation in the outer AL cycle [2].

- Optimize Resource Allocation: Use cloud-based high-performance computing (HPC) resources, such as those available through Azure Machine Learning, to parallelize the docking evaluations across multiple compute nodes [23].

- Use AutoML for Surrogate Models: Employ AutoML frameworks to quickly build and train efficient surrogate models that can approximate the docking score, reducing the number of direct docking calls needed [25].

FAQ 3: How can we effectively guide the generative model to explore novel chemical space rather than just reproducing known actives from the training set?

- Problem: The model generates molecules too similar to the training data, lacking novelty.

- Solution:

- Active Learning with Diversity Sampling: Implement a diversity sampling strategy in your AL query step. This ensures that the molecules selected for oracle evaluation and subsequent model fine-tuning are maximally dissimilar from each other and from the existing training set, forcing the model to explore new regions of chemical space [24] [2].

- Explicit Novelty Reward: Structure the AL reward function or the fine-tuning step to explicitly favor molecules that are dissimilar to the known actives in the training set [2].
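A novelty filter of the kind described above is often built on Tanimoto similarity between molecular fingerprints. The sketch below represents each fingerprint as a set of on-bit indices so that it runs without a cheminformatics library; in practice the bit sets would come from a fingerprinting tool. The `min_novelty` threshold and the example bit sets are made-up values.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two fingerprints,
    each given as a set of on-bit indices."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def novelty(candidate, reference_set):
    """One minus the highest similarity to any reference molecule."""
    return 1.0 - max(tanimoto(candidate, ref) for ref in reference_set)

def filter_novel(candidates, reference_set, min_novelty=0.6):
    """Keep only candidates sufficiently dissimilar from every reference."""
    return [c for c in candidates if novelty(c, reference_set) >= min_novelty]

# Hypothetical fingerprints: known actives and two generated molecules.
known_actives = [frozenset({1, 2, 3, 4}), frozenset({2, 3, 5, 8})]
generated = [frozenset({1, 2, 3, 9}), frozenset({10, 11, 12, 2})]

novel = filter_novel(generated, known_actives)
print(novel)
```

The first generated molecule shares three of five bits with a known active and is rejected; the second shares only one bit with either reference and is kept.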

FAQ 4: We are struggling with the initial setup and integration of the different components (AL, Generative AI, AutoML). Are there platforms that can simplify this?

- Problem: The architectural complexity of integrating multiple advanced AI components is a significant barrier.

- Solution:

- Use Integrated Platforms: Leverage comprehensive platforms like Microsoft Foundry, which provides tools to experiment with foundation models, build AI agents, and manage knowledge stores in a unified environment, simplifying integration [23].

- Adopt Reference Architectures: Study and implement well-architected framework guides for AI workloads, which provide best practices for designing scalable and maintainable systems that incorporate these components [23].

- Start with AutoML: Begin by using AutoML tools to handle the predictive modeling parts of your pipeline (e.g., property prediction). This reduces the initial complexity and allows you to focus on the integration between AL and the generative model [22] [25].

FAQ 5: Our generative model seems to have "mode collapse," where it generates a limited variety of structures. How can we improve diversity?

- Problem: The generative model produces a small set of similar molecules repeatedly.

- Solution:

- Architecture Choice: If using a Generative Adversarial Network (GAN), mode collapse is a known challenge. Consider switching to a VAE, which is generally more robust to this issue due to its structured latent space [2] [21].

- Adjust AL Query Strategy: Balance your Active Learning query strategy between "exploration" (selecting diverse samples) and "exploitation" (selecting uncertain samples predicted to be high-affinity). Over-emphasizing uncertainty can sometimes reduce diversity [24].

- Examine the Training Data: Ensure your initial training dataset is itself diverse and representative of the broad chemical space you wish to explore.

Frequently Asked Questions (FAQs)

Q1: What types of research problems is Bayesian Optimization best suited for? Bayesian Optimization (BO) is best suited to optimizing expensive, black-box functions for which no gradient information is available and evaluations may be noisy. This makes it a good fit for problems such as catalyst development, hyperparameter tuning for machine learning models, and experimental parameter optimization in drug development [26].

Q2: How do I choose between different acquisition functions for my catalyst screening project? The choice depends on your desired balance between exploration and exploitation. Expected Improvement (EI) is widely recommended as it generally provides a good balance, considering both the probability and magnitude of improvement. Probability of Improvement (PI) tends to over-exploit areas near the current best sample, while Lower Confidence Bound (LCB) has a tunable parameter to explicitly control exploration-exploitation trade-offs [27] [28].

Q3: Why use Gaussian Process Regression as the surrogate model in Bayesian Optimization? Gaussian Process Regression (GPR) provides a flexible, probabilistic model that not only predicts the mean performance of a catalyst but also quantifies the uncertainty (variance) of that prediction at any point in the parameter space. This uncertainty quantification is essential for the acquisition function to make informed decisions about where to sample next [29] [26].

Q4: Our catalyst dataset is relatively small (<100 data points). Can Bayesian Optimization still be effective? Yes. Bayesian optimization has been successfully applied to stereoselective polymerization catalyst discovery starting with just 56 literature data points, demonstrating superior search efficiency compared to random search even with limited initial data [30].

Q5: What are the computational bottlenecks when applying BO-GP to high-dimensional problems? The primary computational cost comes from inverting (in practice, factorizing) the n × n covariance matrix during GPR fitting, which scales with the cube of the number of data points, O(n³). For a large dataset (e.g., 10,000 points), this means working with a 10,000 × 10,000 matrix at every model update, which becomes computationally expensive [27].
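
To make the cubic scaling concrete, the following back-of-the-envelope sketch estimates the memory and arithmetic needed for one exact GP fit. It assumes a dense double-precision covariance matrix and the textbook figure of roughly n³/3 floating-point operations for a Cholesky factorization; `gp_exact_cost` is a hypothetical helper name.

```python
def gp_exact_cost(n: int, bytes_per_float: int = 8):
    """Rough cost of one exact GP fit on n data points.

    Returns (memory in GB for the dense n x n covariance matrix,
             approximate flop count of its Cholesky factorization).
    """
    memory_gb = n * n * bytes_per_float / 1e9
    cholesky_flops = n ** 3 / 3
    return memory_gb, cholesky_flops


for n in (100, 1_000, 10_000):
    mem, flops = gp_exact_cost(n)
    print(f"n={n:>6}: {mem:8.4f} GB, ~{flops:.2e} flops")
```

For n = 10,000 this gives about 0.8 GB for the matrix alone, and doubling n multiplies the factorization cost by eight. At the dataset sizes typical of experimental campaigns (tens to hundreds of points) the cost is negligible.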

Troubleshooting Guides

Issue 1: Slow Convergence or Poor Performance

Problem: The optimization process requires too many iterations to find a good candidate, or the final performance is unsatisfactory.

| Potential Cause | Diagnosis Steps | Solution |
|---|---|---|
| Inadequate initial sampling of the parameter space | Check if initial samples cover the domain uniformly (e.g., using a Sobol sequence) [29]. | Increase the number of initial quasi-random points or ensure they are space-filling. |
| Mis-specified Gaussian Process kernel or hyperparameters | Review the model's fit on known data; poor extrapolation suggests an inappropriate kernel [27]. | Experiment with different kernels (e.g., Matern, RBF) and optimize hyperparameters via marginal likelihood maximization. |
| Improperly tuned acquisition function | Analyze whether the process is overly exploring (sampling only high-uncertainty areas) or exploiting (ignoring promising, uncertain regions) [28]. | For LCB, adjust the κ parameter; for EI or PI, introduce or tune an ε parameter to encourage more exploration early on [27] [28]. |
| Irrelevant or poorly chosen molecular descriptors for the catalyst system | Perform feature importance analysis; if descriptors lack mechanistic relevance, the model will struggle to learn [30]. | Use mechanistically meaningful descriptors (e.g., %Vbur, EHOMO from DFT calculations) and consider feature selection techniques [30]. |
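
A space-filling initial design can be generated with any low-discrepancy sequence. The sketch below uses a Halton sequence as a simpler stand-in for the Sobol sequence mentioned in the table, because it fits in a few lines of standard-library Python; in practice a library generator such as `scipy.stats.qmc.Sobol` would be the more usual choice. The parameter names and bounds in the usage lines are hypothetical.

```python
from typing import List, Sequence, Tuple


def radical_inverse(index: int, base: int) -> float:
    """Van der Corput radical inverse of `index` in the given base."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result


def halton_design(
    n_points: int,
    bounds: Sequence[Tuple[float, float]],
    primes: Sequence[int] = (2, 3, 5, 7, 11, 13),
) -> List[List[float]]:
    """Return n_points quasi-random points scaled to the given (low, high) bounds."""
    if len(bounds) > len(primes):
        raise ValueError("add more primes to cover every dimension")
    design = []
    for i in range(1, n_points + 1):  # skip index 0, which maps to the lower corner
        design.append(
            [lo + radical_inverse(i, primes[d]) * (hi - lo)
             for d, (lo, hi) in enumerate(bounds)]
        )
    return design


# Hypothetical two-parameter design: temperature (deg C) and catalyst loading (mol%)
initial_points = halton_design(8, [(20.0, 100.0), (0.5, 5.0)])
```

Unlike independent uniform random draws, successive points fill the gaps left by earlier ones, so a small initial budget still covers the domain reasonably evenly.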

Issue 2: Poor Model Predictions and High Uncertainty

Problem: The Gaussian Process surrogate model provides inaccurate predictions or fails to generalize.

| Potential Cause | Diagnosis Steps | Solution |
|---|---|---|
| Noisy or inconsistent experimental measurements of catalyst performance | Check for high variance in replicate experiments. | Increase replicate measurements for critical data points; ensure consistent experimental protocols. |
| Insufficient quantity of training data | Evaluate learning curves or performance on a held-out validation set [30]. | Incorporate an active learning loop to strategically acquire the most informative new data points, as guided by the acquisition function [29]. |
| Incorrect noise level assumption in the Gaussian Process model | Review the estimated noise level from the GP hyperparameters. | Allow the GP to learn the noise level from the data by optimizing the marginal likelihood. |
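
The replicate check in the first row can be automated with a simple coefficient-of-variation screen. This is a minimal sketch: the 10% threshold and the catalyst labels are illustrative assumptions, not values from the cited studies.

```python
from statistics import mean, stdev
from typing import Dict, List


def flag_noisy_conditions(
    replicates: Dict[str, List[float]], max_cv: float = 0.10
) -> Dict[str, float]:
    """Return conditions whose replicate coefficient of variation exceeds max_cv."""
    flagged = {}
    for condition, values in replicates.items():
        if len(values) < 2:
            continue  # a single measurement carries no variance information
        m = mean(values)
        cv = stdev(values) / abs(m) if m else float("inf")
        if cv > max_cv:
            flagged[condition] = round(cv, 3)
    return flagged


# Hypothetical replicate selectivity measurements for two catalysts
measurements = {
    "cat_A": [0.80, 0.82, 0.81],
    "cat_B": [0.50, 0.75, 0.62],
}
noisy = flag_noisy_conditions(measurements)
```

Conditions flagged here are candidates for additional replicates before their values are trusted as GP training targets. The pooled replicate variance also gives a sanity check on the noise level the GP learns.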

Structured Data Presentation

Table 1: Comparison of Common Acquisition Functions in Bayesian Optimization

| Acquisition Function | Mathematical Formula | Key Characteristics | Best Use Cases |
|---|---|---|---|
| Expected Improvement (EI) | $\alpha_{EI}(x) = (\mu(x) - f(x^+) - \epsilon)\,\Phi(Z) + \sigma(x)\,\phi(Z)$, where $Z = \frac{\mu(x) - f(x^+) - \epsilon}{\sigma(x)}$ | Balances exploration and exploitation; considers magnitude of improvement [27] [28]. | Recommended default choice for most applications, including catalyst design [27]. |
| Probability of Improvement (PI) | $\alpha_{PI}(x) = \Phi\left(\frac{\mu(x) - f(x^+) - \epsilon}{\sigma(x)}\right)$ | Focuses on likelihood of improvement; tends to over-exploit [27] [28]. | When probability of improvement is more critical than the magnitude. |
| Lower Confidence Bound (LCB) | $\alpha_{LCB}(x) = \mu(x) - \kappa\sigma(x)$ | Explicit exploration parameter κ; simple interpretation [27]. Written in the minimization convention; when maximizing (as in the EI and PI rows), the counterpart is the upper confidence bound $\mu(x) + \kappa\sigma(x)$. | When explicit control over the exploration-exploitation trade-off is desired. |
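
The formulas in Table 1 translate directly into code. In the sketch below, `mu` and `sigma` are the GP posterior mean and standard deviation at a candidate point, `best` is the best observed value f(x⁺), and Φ and φ are the standard normal CDF and PDF. The handling of `sigma == 0` is a convention chosen here for illustration, and the two candidates in the usage lines are hypothetical.

```python
import math


def _pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, best: float, eps: float = 0.0) -> float:
    """EI for a maximization problem."""
    if sigma <= 0.0:  # no uncertainty: improvement is deterministic
        return max(mu - best - eps, 0.0)
    z = (mu - best - eps) / sigma
    return (mu - best - eps) * _cdf(z) + sigma * _pdf(z)


def probability_of_improvement(mu: float, sigma: float, best: float, eps: float = 0.0) -> float:
    """PI for a maximization problem."""
    if sigma <= 0.0:
        return 1.0 if mu - best - eps > 0.0 else 0.0
    return _cdf((mu - best - eps) / sigma)


def lower_confidence_bound(mu: float, sigma: float, kappa: float = 2.0) -> float:
    """LCB as written in Table 1 (minimization convention)."""
    return mu - kappa * sigma


# Two hypothetical candidates, with the current best observation at 0.80
safe = dict(mu=0.81, sigma=0.01)   # slightly better, almost certain
risky = dict(mu=0.78, sigma=0.10)  # slightly worse on average, very uncertain
pi_safe, pi_risky = (probability_of_improvement(best=0.80, **c) for c in (safe, risky))
ei_safe, ei_risky = (expected_improvement(best=0.80, **c) for c in (safe, risky))
```

Here PI ranks the safe candidate higher while EI ranks the risky one higher, which illustrates the over-exploitation tendency of PI noted in the table.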

Table 2: Performance of Bayesian Optimization vs. Random Search in Catalyst Discovery

| Optimization Method | Number of Initial Data Points | Iterations to Convergence | Average Final Performance (Pm/Pr) | Key Findings |
|---|---|---|---|---|
| Bayesian Optimization | 56 (literature data) | ≤7 (for 10 independent runs) [30] | >0.8 [30] | Superior search efficiency; convergence achieved reliably [30]. |
| Random Search | 56 (literature data) | No convergence within 12 iterations [30] | Not Reported | Failed to converge within the same iteration budget, demonstrating lower efficiency [30]. |

Table 3: Comparison of Molecular Descriptors for Gaussian Process Regression in Catalyst Optimization

| Descriptor Type | Example Descriptors | Regression Performance (Mean Error) | Advantages | Limitations |
|---|---|---|---|---|
| DFT-Calculated | %Vbur, EHOMO [30] | Lowest mean errors [30] | Provides rich, mechanistically meaningful chemical information [30]. | Computationally expensive to generate for large datasets [30]. |
| Electrotopological-State Index | Atom-type indices [30] | Low mean errors [30] | Captures atom-level electronic and topological influences. | May require careful interpretation. |
| Mordred | 2D molecular descriptors [30] | Low mean errors [30] | Computationally efficient; generates a comprehensive set of descriptors. | Can produce high-dimensional feature space requiring feature selection. |
| One-Hot-Encoding | Binary fragment indicators [30] | Higher mean errors [30] | Simple implementation for categorical variables. | Lacks quantitative chemical information; can lead to poor regression performance [30]. |

Experimental Protocols

Detailed Methodology: Bayesian Optimization for Stereoselective Catalyst Discovery

Objective: To discover Al complexes with high stereoselectivity (Pm or Pr > 0.8) for the ring-opening polymerization of racemic lactide [30].

Step-by-Step Procedure:

1. Initial Data Curation

- Collect 56 unique data points for salen- and salan-type Al complexes from literature, including their reported stereoselectivity (Pm or Pr values) [30].

- Critical Step: Ensure consistent measurement conditions and accuracy of reported performance metrics.

2. Ligand Fragmentation & Descriptor Generation

- Fragment each catalyst ligand into an arene ring (fragment Am, containing R1 and R2 groups) and an amine linker (fragment BnCp, containing R3 and C groups) [30].

- Generate molecular descriptors for each fragment using the descriptor sets compared in Table 3: DFT-calculated descriptors (e.g., %Vbur, EHOMO), electrotopological-state indices, Mordred 2D descriptors, or one-hot encoding [30].

- Combinatorially concatenate the fragment descriptors to represent each whole catalyst.

3. Surrogate Model Training

- Train a Gaussian Process Regression model on the initial dataset using a 5-fold cross-validation scheme [30].

- Use the surrogate model to predict catalyst performance and associated uncertainty across the design space.

4. Acquisition Function Optimization

- Apply the Expected Improvement (EI) acquisition function to identify the most promising catalyst candidate for the next experimental iteration [30].

- Optimize the acquisition function using standard numerical methods to propose specific ligand structures for synthesis.

5. Experimental Validation & Model Update

- Synthesize the proposed Al complex and evaluate its performance in the ring-opening polymerization of rac-LA.

- Measure the stereoselectivity (Pm or Pr) of the resulting PLA.

- Add the new catalyst data (descriptors and performance) to the training set.

- Update the Gaussian Process surrogate model with the expanded dataset.

- Repeat steps 4-5 until convergence (identification of catalyst with Pm/Pr > 0.8) or exhaustion of the experimental budget [30].
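
The loop formed by the surrogate training, acquisition, and validation steps can be sketched in a few dozen lines. This is a minimal illustration, not the implementation from [30]. It assumes a finite pool of candidate descriptor vectors, an RBF kernel with fixed hyperparameters (a real study would fit them by marginal likelihood), and a hypothetical `measure` callable standing in for synthesis plus stereoselectivity measurement.

```python
import math
import numpy as np


def rbf_kernel(a, b, length_scale=0.3):
    """Unit-variance RBF kernel between the rows of a and the rows of b."""
    sq = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq / length_scale ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and std of a GP fitted to mean-centred targets."""
    y_mean = y_train.mean()
    k = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    chol = np.linalg.cholesky(k)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train - y_mean))
    k_star = rbf_kernel(x_train, x_query)
    mu = k_star.T @ alpha + y_mean
    v = np.linalg.solve(chol, k_star)
    var = np.clip(1.0 - (v ** 2).sum(axis=0), 1e-12, None)  # prior variance is 1
    return mu, np.sqrt(var)


def expected_improvement(mu, sigma, best, eps=0.0):
    """Vectorized EI for maximization."""
    z = (mu - best - eps) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mu - best - eps) * cdf + sigma * pdf


def run_bo(pool, measure, init_idx, budget, target=0.8):
    """Iterate fit -> EI -> measure until the target is exceeded or the budget is spent."""
    tested = list(init_idx)
    y = [measure(pool[i]) for i in tested]
    for _ in range(budget):
        if max(y) > target:
            break
        untested = [i for i in range(len(pool)) if i not in tested]
        if not untested:
            break
        mu, sigma = gp_posterior(pool[tested], np.array(y), pool[untested])
        pick = untested[int(np.argmax(expected_improvement(mu, sigma, max(y))))]
        tested.append(pick)           # "synthesize" the proposed candidate
        y.append(measure(pool[pick])) # and add its result to the training set
    return tested, y
```

Selecting from a finite pool replaces the numerical optimization of the acquisition function: EI is simply evaluated on every untested candidate and the maximizer is chosen. This matches a setting where candidates are enumerated from combinations of ligand fragments, but the fixed kernel settings would need tuning for real descriptor data.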

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials and Computational Tools for BO-GP Catalyst Development

| Item Name | Type/Source | Function in the Experiment |
|---|---|---|
| Salen-/Salan-type Ligands | Chemical Reagents | Scaffolds for constructing Al complexes; structural variations enable exploration of the chemical space [30]. |
| Aluminum Precursors | Chemical Reagents | Metal sources for forming active Al catalysts for ring-opening polymerization [30]. |
| Racemic Lactide (rac-LA) | Monomer (Chemical Reagent) | Substrate for ring-opening polymerization to produce poly(lactic acid) and evaluate catalyst stereoselectivity [30]. |
| Gaussian Program | Computational Software | Performs DFT calculations to generate electronic and steric descriptors (e.g., %Vbur, EHOMO) for catalyst ligands [30]. |
| Mordred Program | Computational Software/Package | Generates a comprehensive set of 2D molecular descriptors directly from chemical structures [30]. |
| Gaussian Process Regression (GPR) Model | Computational Model | Serves as the probabilistic surrogate model within Bayesian optimization, predicting catalyst performance and uncertainty [30]. |
| Expected Improvement (EI) | Algorithm/Acquisition Function | Guides the iterative selection of the most promising catalyst candidates by balancing exploration and exploitation [27] [30] [28]. |

## Technical Support Center

This support center provides troubleshooting guides and FAQs for researchers implementing a generative AI workflow for de novo drug design, based on the study "Optimizing drug design by merging generative AI with a physics-based active learning framework" [2] [31].

### Troubleshooting Guide: Common Experimental Issues

1. Issue: Generative Model Struggles with Target Engagement

- Problem: The generated molecules have poor predicted affinity for the specific biological target.

- Solution: Integrate physics-based oracles earlier in the workflow. Use the outer Active Learning (AL) cycle to fine-tune the Variational Autoencoder (VAE) with molecules that meet predefined molecular docking score thresholds, thereby steering the generation toward the target's pharmacological space [2].

2. Issue: Generated Molecules Have Poor Synthetic Accessibility (SA)

- Problem: The proposed molecules are theoretically valid but difficult or impossible to synthesize.