Optimizing Machine Learning Features for Property Prediction: Advanced Feature Engineering and Selection Techniques

This article provides a comprehensive guide for researchers and data scientists on optimizing feature sets to enhance the accuracy of machine learning models in property price prediction.

Abstract

This article provides a comprehensive guide for researchers and data scientists on optimizing feature sets to enhance the accuracy of machine learning models in property price prediction. It covers the foundational importance of feature engineering, explores methodological applications of selection algorithms, addresses common troubleshooting and optimization challenges, and presents rigorous validation and comparative analysis frameworks. By synthesizing current methodologies and empirical findings, this resource aims to equip professionals with the practical knowledge needed to build more robust and reliable predictive models in real estate analytics.

The Critical Role of Feature Engineering in Real Estate Prediction Models

Understanding the Impact of Feature Quality on Model Performance

FAQs on Feature Quality and Model Performance

1. How does poor feature quality directly impact the performance of a machine learning model?

Poor feature quality directly leads to unreliable models that produce poor decisions and inaccurate predictions [1]. Specifically, training data with inaccuracies, inconsistencies, duplicates, or missing values results in skewed results and compromised model performance [2]. The model's ability to learn the underlying patterns in the data is diminished, which affects its generalization capability on new, unseen data.

2. What are the most critical data quality dimensions to check for when preparing features for a drug-target interaction (DTI) prediction model?

While comprehensive data quality is important, key dimensions include accuracy, completeness, and consistency [1]. For DTI models, the accurate representation of molecular features (like MACCS keys for drugs) and target biomolecular features (like amino acid compositions) is paramount. Inconsistencies or errors in these representations can significantly degrade the model's predictive power.

3. My model has high accuracy but poor clinical relevance. What feature-related issue might be the cause?

This can often be traced to a problem of data imbalance in your features. In DTI prediction, for instance, the minority class of positive drug-target interactions is often underrepresented, leading to models with reduced sensitivity and higher false negative rates [3]. A model can appear accurate overall while failing to identify the crucial, but rare, positive interactions. Addressing this with techniques like data balancing is essential for clinical utility.

4. What is a proven methodological approach to improve feature quality and model performance in a DTI prediction task?

A robust methodology involves a hybrid framework combining advanced feature engineering with data balancing [3]. This includes:

- Feature Engineering: Using MACCS keys to extract structural drug features and amino acid/dipeptide compositions to represent target properties.

- Data Balancing: Employing Generative Adversarial Networks (GANs) to create synthetic data for the minority class, effectively reducing false negatives.

- Model Training: Utilizing a Random Forest Classifier, optimized for high-dimensional data, to make final predictions.

Troubleshooting Guide: Common Feature Quality Issues and Solutions

| Problem | Symptom | Diagnostic Check | Solution & Experimental Protocol |

|---|---|---|---|

| Data Imbalance | High accuracy but low sensitivity/recall; model fails to predict rare positive interactions [3] [2]. | Check the distribution of the target variable. Calculate the ratio between majority and minority classes. | Protocol: Use Generative Adversarial Networks (GANs) to synthesize data for the minority class. Train the GAN on the minority class instances, then add the generated synthetic samples to the training set before model training [3]. |

| Irrelevant or Redundant Features | Model performance does not improve with more features; training is slow; model is difficult to interpret [4] [5]. | Apply Filter Methods (e.g., correlation analysis) or Embedded Methods (e.g., from Random Forest) to rank feature importance. | Protocol: Use Permutation Feature Importance. Train a model, then shuffle each feature's values and measure the drop in model performance (e.g., accuracy). Features causing a large drop are critical [5]. |

| Low-Quality or Noisy Data | Unreliable model predictions; poor generalization to test data; inconsistent results [1] [2]. | Perform data profiling to identify inaccuracies, missing values, and inconsistencies. | Protocol: Implement rigorous data preprocessing. This includes data cleaning (handling missing values, removing duplicates), denoising, and data normalization. For insufficient data, consider using synthetic data generation tools [2]. |

| Improper Feature Integration | Model cannot capture complex biochemical relationships, even with individually good features [3] [6]. | Review the feature fusion strategy. Are chemical and biological features being effectively combined? | Protocol: Leverage a unified feature representation. For example, create a single feature vector that combines drug fingerprints (e.g., MACCS keys) and target compositions (e.g., amino acid sequences) before feeding it into the model [3]. |
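The permutation feature importance protocol from the table above can be sketched as follows. This is a minimal illustration, assuming NumPy is available; the synthetic data, the least-squares "model", and the use of mean squared error instead of accuracy are all assumptions made for the example, not part of the cited protocol.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumption): column 0 drives the target, column 1 is pure noise.
n = 500
X = rng.normal(size=(n, 2))
y = 3.0 * X[:, 0] + rng.normal(scale=0.1, size=n)

# Hold out the last 100 rows so importance is measured on unseen data.
X_train, X_test = X[:400], X[400:]
y_train, y_test = y[:400], y[400:]

# Stand-in model: ordinary least squares with an intercept column.
A_train = np.column_stack([X_train, np.ones(len(X_train))])
coef, *_ = np.linalg.lstsq(A_train, y_train, rcond=None)

def mse(X_eval, y_eval):
    """Mean squared error of the fitted model on the given data."""
    A = np.column_stack([X_eval, np.ones(len(X_eval))])
    return float(np.mean((A @ coef - y_eval) ** 2))

baseline = mse(X_test, y_test)

def permutation_importance(col, n_repeats=10):
    """Average increase in test MSE after shuffling one column."""
    increases = []
    for _ in range(n_repeats):
        X_perm = X_test.copy()
        X_perm[:, col] = rng.permutation(X_perm[:, col])
        increases.append(mse(X_perm, y_test) - baseline)
    return float(np.mean(increases))

importances = [permutation_importance(c) for c in range(X.shape[1])]
```

A large increase in error after shuffling marks a feature the model relies on; an increase near zero suggests the feature could be dropped.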

Quantitative Impact of Feature Quality and Balancing Techniques

The table below summarizes the performance gains achieved by a hybrid framework that combined comprehensive feature engineering with GAN-based data balancing for Drug-Target Interaction prediction on the BindingDB dataset [3].

| Dataset | Model | Accuracy | Precision | Sensitivity (Recall) | Specificity | F1-Score | ROC-AUC |

|---|---|---|---|---|---|---|---|

| BindingDB-Kd | GAN + Random Forest | 97.46% | 97.49% | 97.46% | 98.82% | 97.46% | 99.42% |

| BindingDB-Ki | GAN + Random Forest | 91.69% | 91.74% | 91.69% | 93.40% | 91.69% | 97.32% |

| BindingDB-IC50 | GAN + Random Forest | 95.40% | 95.41% | 95.40% | 96.42% | 95.39% | 98.97% |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Application |

|---|---|

| MACCS Keys | A set of molecular fingerprints used to structurally encode drug molecules into a binary bit string, enabling the model to learn from chemical features [3]. |

| Amino Acid/Dipeptide Composition | A feature engineering method to represent target proteins by their amino acid building blocks and sequences, capturing essential biomolecular properties for the model [3]. |

| Generative Adversarial Network (GAN) | A deep learning framework used to address data imbalance by generating high-quality synthetic data for the underrepresented class (e.g., active drug-target interactions) [3]. |

| Permutation Feature Importance | A model-agnostic method to evaluate the contribution of each feature by randomly shuffling its values and observing the impact on model performance [5]. |

| BindingDB Database | A public, web-accessible database of measured binding affinities, focusing chiefly on the interactions of proteins considered to be drug-targets with small, drug-like molecules [3] [6]. |

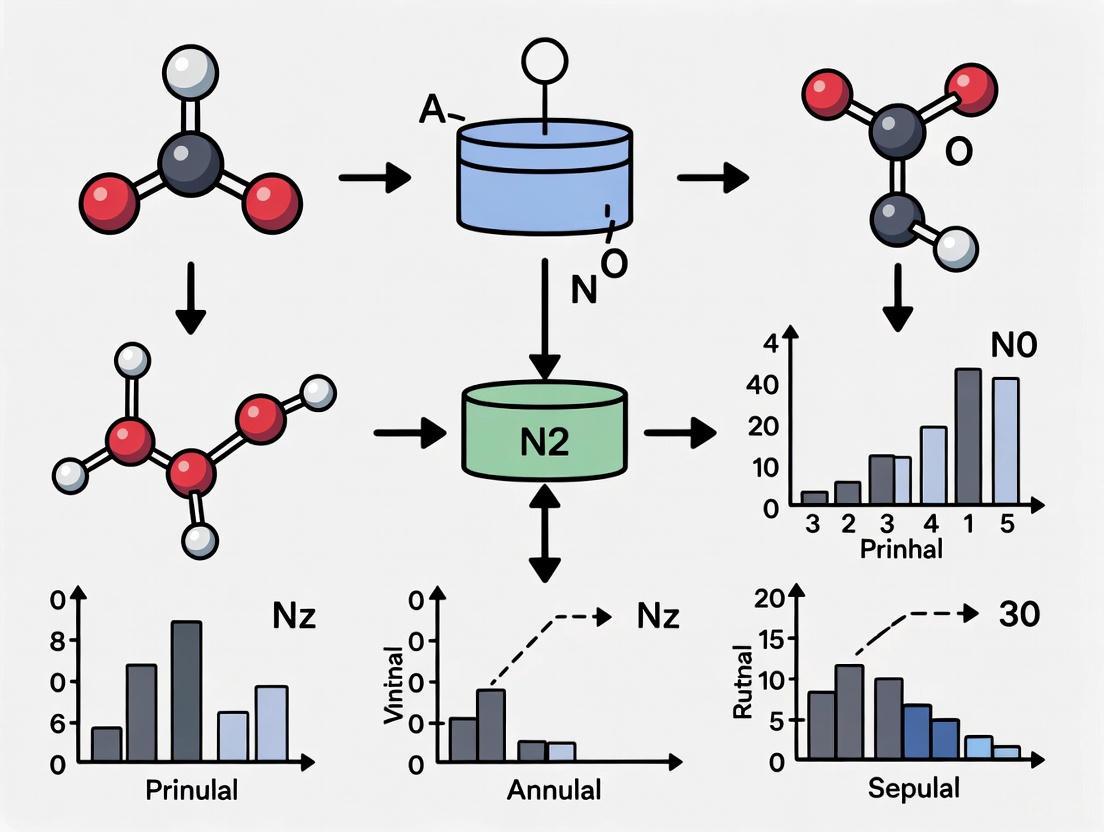

Workflow: Feature Engineering & Data Balancing for DTI Prediction

Decision Process for Feature Quality Problems

FAQ: Understanding the Feature Engineering Process

What is the primary goal of feature engineering in property prediction? The primary goal is to modify existing features or create new ones from raw data to improve the performance of machine learning models. Effective feature engineering helps the model better understand underlying patterns, leading to more accurate predictions of property values and market trends [7].

Why can't raw property data be used directly in ML models? Raw data is often incomplete, contains outliers, and features can be on drastically different scales. Machine learning models require clean, structured, and relevant data to learn effectively. Using raw data directly leads to poor performance, inaccurate predictions, and models that fail to generalize [7] [8].

What are the most common data issues that hinder model performance? Common issues include [7]:

- Missing Data: When a percentage of values are absent from a dataset.

- Imbalanced Data: When data is skewed towards one target class.

- Outliers: Values that distinctly stand out and do not fit within a dataset.

- Differing Scales: Features that vary tremendously in magnitude, units, and range.

How do I know which features are the most important for my model? You can determine feature importance through several methods [7]:

- Statistical Tests: Using univariate or bivariate selection (e.g., correlation, ANOVA) to find features strongly related to the output variable.

- Model-Based Selection: Leveraging algorithms like Random Forest that provide an intrinsic importance score for each feature.

- Dimensionality Reduction: Using algorithms like Principal Component Analysis (PCA) to project the features onto a smaller set of components that capture most of the variance. Note that PCA builds new combined components rather than ranking the original features.

FAQ: Troubleshooting Common Experimental Problems

My model performs well on training data but poorly on new data. What is happening? This is a classic sign of overfitting. It occurs when a model learns the training data too well, including its noise and outliers, and thus performs poorly on new, unseen data because it has become too specialized [7]. Solutions include:

- Applying regularization techniques.

- Simplifying the model.

- Using cross-validation to select a more generalizable model.

- Increasing the amount of training data.

The model's predictions are consistently inaccurate, even on the training data. What is the cause? This is likely underfitting. It happens when the model is too simple and has not learned the underlying patterns in the data adequately. This can be due to a model that is not complex enough, or a dataset that is too small [7]. To address this:

- Increase the model's complexity.

- Perform additional feature engineering to create more relevant features.

- Reduce the constraints of regularization if they are too high.

Despite having a large dataset, my model's accuracy is low. What could be wrong? The issue likely lies with data quality or relevance, not just quantity [7]. You should:

- Audit your data: Check for and handle missing values and outliers.

- Ensure data balance: If your data is imbalanced (e.g., 90% of properties are in one price range), the model will be biased. Use resampling or data augmentation techniques.

- Re-evaluate features: The input data may contain many features that do not contribute to the output. Use feature selection to choose only the most useful ones.

Experimental Protocols for Feature Optimization

Protocol 1: Data Preprocessing and Cleaning

Objective: To transform raw, unstructured property data into a clean, complete dataset suitable for machine learning.

Methodology:

- Handle Missing Data: For each feature with missing values, decide to either remove the data entries with excessive missingness or impute the missing values using the mean, median, or mode of that feature [7].

- Address Outliers: Use box plots to identify values that stand out from the rest of the dataset. These outliers can be removed or transformed to prevent them from skewing the model's learning [7].

- Balance the Dataset: If the target variable (e.g., 'price range') is imbalanced, employ techniques like resampling the data (oversampling the minority class or undersampling the majority class) to create a more balanced distribution [7].

- Scale the Features: Apply feature normalization or standardization to bring all input features onto the same scale. This ensures that no single feature dominates the model's learning process due to its inherent magnitude [7].
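The imputation, outlier, and scaling steps above can be sketched with the Python standard library alone. The sample values are hypothetical, the 1.5 × IQR rule is the usual box-plot convention rather than something the protocol prescribes, and the balancing step is left out because it applies to the target rather than to a single feature column.

```python
from statistics import mean, median, pstdev, quantiles

# Hypothetical living areas in square feet; None marks a missing value.
sqft = [850, 900, None, 1100, 1200, 1250, None, 1400, 1500, 12000]

# 1) Impute missing values with the median of the observed values.
observed = [v for v in sqft if v is not None]
fill = median(observed)
imputed = [fill if v is None else v for v in sqft]

# 2) Drop outliers with the box-plot rule: outside [Q1 - 1.5*IQR, Q3 + 1.5*IQR].
q1, _, q3 = quantiles(imputed, n=4)
iqr = q3 - q1
low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
cleaned = [v for v in imputed if low <= v <= high]

# 3) Standardize to zero mean and unit variance.
mu, sigma = mean(cleaned), pstdev(cleaned)
standardized = [(v - mu) / sigma for v in cleaned]
```

In a real pipeline the median, the outlier bounds, and the scaling statistics would be computed on the training split only and then reused on validation and test data.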

Protocol 2: Advanced Feature Engineering and Selection

Objective: To create and select the most predictive set of features for the model.

Methodology:

- Create New Features: Modify existing features or create new ones. This can include [7]:

- Featurization of Categorical Data: Convert categorical text data (e.g., neighborhood names) into numerical vectors using techniques like One-Hot Encoding.

- Text-to-Vector Conversion: For descriptive text, use methods like Bag of Words (BOW) or TF-IDF to convert them into a numerical format the model can understand.

- Select Key Features: Reduce dimensionality and training time by selecting the most important features.

- Use the SelectKBest method from Scikit-learn to find the features with the strongest statistical relationship to the output [7].

- Use a Random Forest algorithm to rank features based on their importance [7].

- Apply Principal Component Analysis (PCA) to reduce the data to its most informative components [7].
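As an illustration of the SelectKBest step, the sketch below scores three synthetic features against a synthetic price and keeps the best two. It assumes NumPy and scikit-learn are installed; the feature names, coefficients, and the choice of f_regression as the scoring function are assumptions for the example.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_regression

rng = np.random.default_rng(42)
n = 300

# Synthetic features (assumption): two informative columns and one noise column.
sqft = rng.uniform(500, 3000, n)
bedrooms = rng.integers(1, 6, n).astype(float)
noise = rng.normal(size=n)
price = 150 * sqft + 50_000 * bedrooms + rng.normal(scale=20_000, size=n)

feature_names = ["sqft", "bedrooms", "noise"]
X = np.column_stack([sqft, bedrooms, noise])

# Keep the k features with the highest univariate F-score against the target.
selector = SelectKBest(score_func=f_regression, k=2)
X_selected = selector.fit_transform(X, price)

selected = [name for name, keep in zip(feature_names, selector.get_support()) if keep]
```

Univariate scores ignore interactions between features, so a model-based ranking is a useful cross-check on the result.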

Protocol 3: Model Validation and Selection

Objective: To reliably evaluate model performance and select the best model while avoiding overfitting and underfitting.

Methodology:

- Apply k-Fold Cross-Validation: Divide the data into 'k' equal subsets (folds). Iteratively use k-1 folds for training and the remaining fold for validation, repeating the process k times. The final performance is the average across all folds, providing a robust estimate of how the model will perform on unseen data [7].

- Perform Hyperparameter Tuning: For each algorithm, tune its hyperparameters (e.g., 'k' in k-Nearest Neighbors) to find the values that yield the best-performing model. This is typically done by training the algorithm with different hyperparameter values and comparing the results on validation data (for example, the cross-validation folds) rather than on the data used for fitting [7].

- Evaluate the Bias-Variance Tradeoff: Select the final model based on a balance between bias (error from erroneous assumptions) and variance (error from sensitivity to small fluctuations in the training set). A good model has a balanced bias and variance [7].
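The validation protocol above can be sketched in plain Python: a k-fold splitter, a one-dimensional nearest-neighbour regressor, and a small search over the number of neighbours. All names and the synthetic data are hypothetical; the number of neighbours is called n_neighbors here to keep it distinct from the number of folds k.

```python
import random

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs; each sample is validated exactly once."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        val = folds[i]
        train = [j for f_i, fold in enumerate(folds) if f_i != i for j in fold]
        yield train, val

def knn_predict(x_train, y_train, x, n_neighbors):
    """Average the targets of the nearest training points (1-D feature)."""
    order = sorted(range(len(x_train)), key=lambda j: abs(x_train[j] - x))
    nearest = order[:n_neighbors]
    return sum(y_train[j] for j in nearest) / n_neighbors

def cv_mse(x, y, n_neighbors, k=5):
    """Mean squared error averaged over k validation folds."""
    fold_errors = []
    for train, val in k_fold_indices(len(x), k):
        xt, yt = [x[j] for j in train], [y[j] for j in train]
        errs = [(knn_predict(xt, yt, x[j], n_neighbors) - y[j]) ** 2 for j in val]
        fold_errors.append(sum(errs) / len(errs))
    return sum(fold_errors) / k

# Hypothetical data: price grows with area, plus noise.
rng = random.Random(1)
area = [rng.uniform(500, 3000) for _ in range(100)]
price = [120 * a + rng.gauss(0, 15_000) for a in area]

# Hyperparameter search: pick the neighbour count with the lowest CV error.
scores = {n: cv_mse(area, price, n) for n in (1, 3, 5, 10, 20)}
best_n_neighbors = min(scores, key=scores.get)
```

Very small neighbour counts tend toward high variance and very large ones toward high bias, so the cross-validated error gives a direct view of the tradeoff described in the last step.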

The following workflow diagram illustrates the complete experimental pipeline from raw data to a validated predictive model:

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below details key computational "reagents" and their functions in the feature optimization process for property prediction.

| Research Reagent / Tool | Function in Experiment |

|---|---|

| Scikit-learn Library | An open-source Python library that provides simple and efficient tools for data mining and analysis. It is used for implementing preprocessing, feature selection, and model training algorithms [7]. |

| Feature Selection Algorithms (e.g., SelectKBest, Random Forest) | Algorithms used to automatically identify and select the most relevant features from the raw dataset that contribute most to the prediction variable [7]. |

| Cross-Validation Scheduler | A technique for rigorously evaluating model performance by partitioning the data into subsets, ensuring the model's robustness and generalizability [7]. |

| Data Preprocessing Tools (e.g., for imputation, scaling) | Software functions used to clean and prepare raw data by handling missing values, normalizing feature scales, and encoding categorical variables [7]. |

| Hyperparameter Tuning Methods (e.g., Grid Search) | Systematic search methods used to find the optimal configuration of a model's parameters that result in the best performance [7]. |

The following table summarizes key quantitative metrics and thresholds relevant to designing and evaluating property prediction experiments.

| Metric / Factor | Target Value / Consideration | Impact on Model Accuracy |

|---|---|---|

| Feature Scale Magnitude | Features should be on a similar scale via Normalization/Standardization. | Prevents model from being skewed by high-magnitude features, significantly improving performance [7]. |

| Data Balance Ratio | Avoid high skew (e.g., 90%/10%) between target classes. | Prevents model bias towards the majority class, ensuring accurate predictions across all categories [7]. |

| Cross-Validation Folds (k) | Common values are 5 or 10. | Provides a robust estimate of model performance on unseen data, helping to select a model that generalizes well [7]. |

| Feature Importance Score | Varies by algorithm; select features with high scores. | Using fewer, high-importance features improves model performance and reduces training time [7]. |

For researchers and data scientists in property prediction, raw data is often insufficient for building highly accurate models. Creating meaningful derived features through feature engineering is a critical step to capture hidden patterns and complex relationships that raw variables miss. This process directly injects domain knowledge into the dataset, allowing machine learning algorithms to learn more effectively and significantly boosting predictive performance [9] [10].

This guide addresses common technical challenges encountered during this process, providing troubleshooting advice and methodological protocols to ensure the robustness and reliability of your features.

Frequently Asked Questions (FAQs)

1. Why is "Price per Square Foot" a better feature than raw price and size?

Raw price and total square footage, taken as separate columns, make it hard to compare properties of different sizes. Price per Square Foot serves as a normalization metric: it expresses price relative to size, allowing a direct comparison between properties of different sizes. It is also highly effective for identifying statistical outliers, which are properties that are significantly overpriced or underpriced relative to their size [11] [12]. Because it is computed from the price itself, it is best used for outlier detection or as the prediction target; leaving it among the inputs when the target is price leaks the target into the features.

2. How should we handle high-cardinality categorical features like 'Location' or 'Neighborhood'?

High-cardinality features (those with many unique categories) can lead to sparse data and model overfitting when using encoding techniques like one-hot encoding. A common solution is feature grouping: categories that appear infrequently in the dataset (e.g., in fewer than 10 records, or below another chosen threshold) are grouped into a single new category, such as "Other" [11] [12]. This substantially reduces dimensionality and noise, creating a more manageable and informative feature for the model.
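A minimal sketch of this grouping, using only the standard library. The neighborhood names are hypothetical, and the threshold of 10 simply mirrors the example value in the text.

```python
from collections import Counter

def group_rare(values, min_count=10, other_label="Other"):
    """Replace categories seen fewer than min_count times with other_label."""
    counts = Counter(values)
    return [v if counts[v] >= min_count else other_label for v in values]

# Hypothetical neighborhood column: two common and two rare categories.
locations = (
    ["Downtown"] * 25 + ["Riverside"] * 12 + ["Hilltop"] * 3 + ["Old Mill"] * 1
)
grouped = group_rare(locations, min_count=10)
grouped_counts = Counter(grouped)
```

Here four categories collapse to three, so a later one-hot encoding produces three columns instead of four; on real data with hundreds of sparse locations the reduction is far larger.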

3. Our model performance dropped after adding new derived features. What could be the cause?

This is a classic sign of overfitting or the introduction of data leakage. Derived features that are too specific to the training set can cause the model to fail on new, unseen data [9]. To troubleshoot:

- Audit your features: Ensure that no engineered feature inadvertently contains information from the future or the target variable itself.

- Apply regularization: Use regularized linear models such as Ridge or Lasso, or constrain tree-based models (XGBoost, Random Forest) through limits on depth and leaf size, to penalize overly complex relationships [13] [9].

- Validate rigorously: Always use hold-out validation sets or cross-validation to test the model's performance on data not used during feature creation and training [9].

4. What is the most effective way to identify and remove outliers before modeling?

Outlier removal should be guided by domain knowledge and statistical methods. The following table summarizes a multi-step protocol for a robust cleaning process [11] [12]:

Table: Outlier Detection and Removal Protocol

| Outlier Type | Detection Method | Rationale & Action |

|---|---|---|

| Irrational Property Specifications | Flag rows that fall below a minimum area per bedroom (e.g., total_sqft / bhk < 300). | Based on the domain knowledge that a minimum square footage per bedroom is expected. Remove the flagged properties, which violate this logical constraint [11]. |

| Extreme Price per SqFt | Calculate the mean and standard deviation of price_per_sqft within each location. Remove values more than one standard deviation from the location mean. | Statistical filtering that accounts for local market price variations, removing values that are extreme for their location and can skew the model [11] [12]. |

| Illogical Bathroom Count | Keep only rows that satisfy a constraint (e.g., bath < bhk + 2). | Based on the understanding that the number of bathrooms in a home rarely exceeds the number of bedrooms by a large margin. Removes likely data entry errors [11]. |

| Price Anomalies by Bedroom | Visual analysis (scatter plots) and logical filtering. For a given location and square footage, a 2 BHK property should not be priced higher than a 3 BHK. | Ensures logical price hierarchies based on key home characteristics, removing inconsistencies that confuse the model [11] [12]. |
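The two rule-based checks in the table (area per bedroom and bathroom count) can be sketched with pandas as below. The column names follow the table; the sample rows are hypothetical, and 300 and 2 are the example thresholds from the table rather than fixed constants.

```python
import pandas as pd

# Hypothetical listings; rows 1 and 3 are built to violate one rule each.
df = pd.DataFrame({
    "total_sqft": [1200, 600, 1500, 2000],
    "bhk":        [2,    6,   3,    3],
    "bath":       [2,    6,   3,    6],
})

# Rule 1: keep rows with at least 300 sqft per bedroom.
enough_space = df["total_sqft"] / df["bhk"] >= 300

# Rule 2: keep rows where bathrooms < bedrooms + 2.
plausible_baths = df["bath"] < df["bhk"] + 2

cleaned = df[enough_space & plausible_baths].reset_index(drop=True)
```

Keeping each rule as a named boolean mask makes it easy to count how many rows each constraint removes before committing to the filter.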

The following workflow diagram illustrates the logical sequence for integrating feature engineering and outlier removal into a property prediction pipeline:

Experimental Protocols

Protocol 1: Creating a Robust 'Price per Square Foot' Feature

Objective: To normalize the target variable and create a powerful feature for outlier detection and model training.

- Data Preparation: Ensure the price and total_sqft columns are cleaned and converted to numerical formats (e.g., float). Handle any missing values appropriately [12].

- Calculation: Create the new feature using the formula: df['price_per_sqft'] = df['price'] / df['total_sqft'] [11] [12].

- Validation: Use descriptive statistics (df['price_per_sqft'].describe()) to inspect the distribution of the new variable and confirm the calculation is correct.
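Put together, the three steps of this protocol might look like the sketch below. It assumes pandas is installed; the sample rows are hypothetical, and dropping rows with unparseable or zero values is one possible way to "handle missing values appropriately", not the only one.

```python
import pandas as pd

# Hypothetical raw data with string columns, one bad price and one zero area.
df = pd.DataFrame({
    "price": ["250000", "410000", "n/a", "180000"],
    "total_sqft": ["1000", "2050", "1500", "0"],
})

# Data preparation: convert to numeric; unparseable entries become NaN.
for col in ("price", "total_sqft"):
    df[col] = pd.to_numeric(df[col], errors="coerce")

# Drop rows that cannot produce a valid ratio (missing values or zero area).
df = df.dropna(subset=["price", "total_sqft"])
df = df[df["total_sqft"] > 0].copy()

# Calculation: the derived feature.
df["price_per_sqft"] = df["price"] / df["total_sqft"]

# Validation: inspect the distribution of the new column.
summary = df["price_per_sqft"].describe()
```

Filtering out zero areas before dividing avoids infinite values that would otherwise distort the summary statistics.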

Protocol 2: Systematic Outlier Removal using Statistical Methods

Objective: To remove properties with extreme price_per_sqft values that could distort the predictive model.

- Group by Location: Segment the dataset by the location (or Neighborhood) feature.

- Calculate Statistics: For each location group, calculate the mean (mean_pps) and standard deviation (std_pps) of the price_per_sqft.

- Apply Filter: For each location, retain only the properties whose price_per_sqft falls within one standard deviation of the mean. The logical condition is: (price_per_sqft > (mean_pps - std_pps)) & (price_per_sqft <= (mean_pps + std_pps)) [11] [12].

- Concatenate Results: Combine the filtered data from all locations into a new, cleaned DataFrame.
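A sketch of this per-location filter with pandas follows. The function name remove_pps_outliers and the sample data are hypothetical; the filter condition is the one stated in the protocol.

```python
import pandas as pd

def remove_pps_outliers(df):
    """Keep rows within one standard deviation of their location's mean."""
    kept = []
    for _, group in df.groupby("location"):
        mean_pps = group["price_per_sqft"].mean()
        std_pps = group["price_per_sqft"].std()
        mask = (group["price_per_sqft"] > mean_pps - std_pps) & (
            group["price_per_sqft"] <= mean_pps + std_pps
        )
        kept.append(group[mask])
    # Combine the filtered groups into one cleaned DataFrame.
    return pd.concat(kept, ignore_index=True)

# Hypothetical data: each location has one extreme value.
df = pd.DataFrame({
    "location": ["A"] * 5 + ["B"] * 5,
    "price_per_sqft": [100, 105, 110, 95, 400, 300, 310, 290, 305, 50],
})
cleaned = remove_pps_outliers(df)
```

Two caveats are worth checking on real data: a location with a single listing has an undefined standard deviation and is dropped entirely by this condition, and a one-standard-deviation band is strict enough to discard a sizeable share of legitimate listings.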

Protocol 3: Engineering a Comprehensive 'Total Area' Feature

Objective: To create a unified feature that captures the total usable space of a property, which may be more informative than separate area features.

- Identify Component Features: Select relevant area columns from the dataset, such as GrLivArea (Above grade living area), TotalBsmtSF (Basement area), and GarageArea [13] [14].

- Handle Missing Values: Impute missing values in the component features with 0, assuming that a missing value indicates the absence of that feature (e.g., no basement) [13].

- Summation: Create the new feature by summing the constituent areas: df['TotalSF'] = df['GrLivArea'] + df['TotalBsmtSF'] + df['GarageArea'] [13] [9].
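The same protocol as a short pandas sketch. The column names come from the protocol; the sample rows are hypothetical.

```python
import pandas as pd

area_cols = ["GrLivArea", "TotalBsmtSF", "GarageArea"]

# Hypothetical rows: one house has no basement value, one has no garage value.
df = pd.DataFrame({
    "GrLivArea":   [1500, 1200, 2000],
    "TotalBsmtSF": [800, None, 1000],
    "GarageArea":  [400, 300, None],
})

# Missing component areas are treated as "feature absent", i.e. zero area.
df[area_cols] = df[area_cols].fillna(0)

# Sum the component areas row by row.
df["TotalSF"] = df[area_cols].sum(axis=1)
```

Filling with zero is only appropriate when a missing value really means the component does not exist; if values are missing because they were never recorded, the sum will understate the true area.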

The Researcher's Toolkit

The following table details essential software and libraries required to implement the feature engineering and modeling protocols described above.

Table: Essential Research Reagents & Software

| Tool / Library | Primary Function | Application in Feature Engineering |

|---|---|---|

| Pandas (Python) | Data manipulation and analysis | Loading CSV data, handling missing values, creating new columns (e.g., price_per_sqft), and filtering outliers [9] [12] [15]. |

| NumPy (Python) | Numerical computing | Performing mathematical operations and statistical calculations (e.g., mean, standard deviation) for outlier detection [11] [12] [15]. |

| Scikit-learn (Python) | Machine learning | Encoding categorical variables, scaling features, implementing regression models (Ridge, Lasso), and evaluating model performance [15] [16]. |

| XGBoost / Random Forest | Advanced ML algorithms | Tree-based models that can capture non-linear relationships and are robust to feature interactions, often yielding state-of-the-art results [13]. |

| Matplotlib/Seaborn | Data visualization | Creating scatter plots, box plots, and histograms for exploratory data analysis (EDA) and visual outlier inspection [11] [12] [15]. |

Integrating derived features like Price per Square Foot and Total Area is a foundational step for optimizing property prediction models. A rigorous approach, combining these techniques with systematic outlier removal and validation, directly addresses core challenges in predictive accuracy. For researchers, mastering this workflow is not merely a data preprocessing task but a critical methodology for injecting domain expertise into machine learning pipelines, leading to more robust, interpretable, and accurate predictive models.

Frequently Asked Questions (FAQs)

FAQ 1: What are the most effective techniques for optimizing features derived from external data, such as environmental and neighborhood information, to improve prediction accuracy?

Feature optimization, which encompasses feature engineering (FE) and feature selection (FS), is critical for enhancing model performance. Effective FE techniques for environmental data include log transformation to reduce skew and min-max normalization to bring variables with very different ranges onto a common scale. For creating a robust, smaller feature subset, Principal Component Analysis (PCA) has been shown to enhance accuracy across multiple machine learning models. For FS, Recursive Feature Elimination (RFE) is a high-performing technique that successfully reduces model complexity without sacrificing prediction accuracy [17]. When working with spatial environmental data, incorporating explicit spatial covariates (e.g., coordinates, proximity to features) into your model is a highly effective method for accounting for underlying spatial patterns [18].
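To make the effect of the log transformation and min-max normalization mentioned above concrete, the sketch below applies them to a small right-skewed series. The readings are hypothetical, and log1p is used instead of a plain logarithm so that zero values remain valid.

```python
import math

def log_transform(values):
    """Compress large values; log1p(x) = log(1 + x) is defined at x = 0."""
    return [math.log1p(v) for v in values]

def min_max(values):
    """Rescale values linearly to the [0, 1] interval."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

# Hypothetical right-skewed pollutant readings with one extreme value.
readings = [3.0, 4.5, 5.0, 6.2, 8.0, 150.0]

scaled_raw = min_max(readings)                 # bulk squeezed near 0
scaled_log = min_max(log_transform(readings))  # bulk spread more evenly
```

Without the log step, the single extreme reading pushes every other value into the bottom few percent of the scaled range; after it, the typical readings occupy a usable share of the interval.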

FAQ 2: My model performance has plateaued. How can I diagnose if the issue is related to the spatial nature of my external data?

A common issue is spatial autocorrelation, where data points close to each other in space are more similar than those farther apart, violating the assumption of independence in many standard models. To diagnose this, incorporate spatial exploratory data analysis into your workflow. This includes:

- Spatial Splitting: Instead of random splitting, use spatial partitioning (e.g., by coordinates or clusters) for training and testing to prevent data leakage and over-optimistic performance estimates [18].

- Independent Exploratory Analysis: Calculate spatial autocorrelation metrics like Moran's I on your model's residuals. Significant spatial autocorrelation in residuals indicates the model has not captured all the spatial patterns in the data [18].
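For the residual check, Moran's I can be computed as $I = \frac{n}{\sum_{i,j} w_{ij}} \cdot \frac{\sum_{i,j} w_{ij}(x_i - \bar{x})(x_j - \bar{x})}{\sum_i (x_i - \bar{x})^2}$, where $w_{ij}$ are spatial weights. The sketch below implements this directly; the chain-shaped neighbourhood and the toy residuals are assumptions chosen so the result can be checked by hand.

```python
def morans_i(values, weights):
    """Global Moran's I for values with a square spatial weight matrix."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    w_sum = sum(sum(row) for row in weights)
    num = sum(
        weights[i][j] * dev[i] * dev[j] for i in range(n) for j in range(n)
    )
    den = sum(d * d for d in dev)
    return (n / w_sum) * (num / den)

def chain_weights(n):
    """Binary weights for n sites on a line: only adjacent sites are neighbours."""
    return [[1.0 if abs(i - j) == 1 else 0.0 for j in range(n)] for i in range(n)]

w = chain_weights(6)

# Toy residuals: similar values side by side vs. strictly alternating values.
clustered = morans_i([1, 1, 1, -1, -1, -1], w)
alternating = morans_i([1, -1, 1, -1, 1, -1], w)
```

Values well above zero, as in the clustered case, indicate that neighbouring residuals resemble each other and that spatial structure remains unmodelled. In practice the weights would come from distances or shared boundaries between properties, and significance would be assessed with a permutation test.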

FAQ 3: Which machine learning models are best suited for integrating diverse external data sources for property prediction?

The optimal model depends on your data and specific prediction goal. Research shows that ensemble methods often deliver superior performance:

- XGBoost: Demonstrates superior prediction accuracy and generalization ability in tasks like predicting rural residential carbon emissions based on spatial form factors [19]. It has also achieved highly accurate forecasts (R² > 0.95) for environmental variables like carbon monoxide [20].

- Random Forest: A robust model that is frequently used as a benchmark in environmental and property prediction studies [19] [20].

- Neural Networks: BP Neural Networks and other deep learning architectures can model complex, non-linear relationships but may require more data and computational resources [19] [20].

FAQ 4: How can I ensure my predictive models are both accurate and interpretable for stakeholders?

There is a growing demand for Explainable AI (XAI) to build trust and provide insights. To balance accuracy and interpretability:

- Use Interpretable Models: Algorithms like Random Forest and Generalized Additive Models offer more inherent transparency [21].

- Apply Post-Hoc Explainability Techniques: For complex "black-box" models like XGBoost or deep neural networks, use methods like SHapley Additive exPlanations (SHAP) to quantify the importance and impact of each feature on individual predictions [21].

- Leverage Language-Centric Models: Emerging research uses human-readable text descriptions as model inputs. Transformer models trained on this text can achieve high accuracy while providing explanations consistent with domain expert rationales [21].

Troubleshooting Guides

Issue: Poor Model Generalization to New Geographic Areas

Problem: A model trained on property data from one city performs poorly when predicting prices in a different, unseen metropolitan area.

Diagnosis: This is often caused by spatial non-stationarity, where the relationships between your features (e.g., proximity to parks, school quality) and the target variable (property price) are not consistent across the geographic space. The model learned rules that are too specific to the training region.

Solution:

- Incorporate Spatial Features: Add explicit spatial features to your dataset, such as latitude/longitude coordinates, distance to central business districts, or spatial lag variables (average target value in the surrounding area) [18].

- Use Spatial Cross-Validation: Implement a cross-validation strategy where the data is split into spatially distinct folds (e.g., by ZIP code or census tract). This ensures the model is validated on geographically separate areas, providing a better test of its generalizability [18].

- Employ Geographically Weighted Models: Consider using techniques like Geographically Weighted Regression (GWR), which allows the relationships between variables to vary across the map, directly addressing spatial non-stationarity.
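The spatial cross-validation step can be sketched as a grouped split in which every ZIP code lands in exactly one fold. The helper name group_k_fold and the ZIP codes are hypothetical; scikit-learn's GroupKFold serves the same purpose if that library is already in use.

```python
from collections import defaultdict

def group_k_fold(groups, k):
    """Yield (train_idx, test_idx) so that no group appears on both sides."""
    by_group = defaultdict(list)
    for i, g in enumerate(groups):
        by_group[g].append(i)

    # Assign the largest groups first, each to the currently smallest fold.
    folds = [[] for _ in range(k)]
    for g in sorted(by_group, key=lambda name: -len(by_group[name])):
        min(folds, key=len).extend(by_group[g])

    for i in range(k):
        test = sorted(folds[i])
        train = sorted(j for f, fold in enumerate(folds) if f != i for j in fold)
        yield train, test

# Hypothetical ZIP code label for each of 12 properties.
zip_codes = ["10001"] * 4 + ["10002"] * 3 + ["10003"] * 3 + ["10004"] * 2
splits = list(group_k_fold(zip_codes, k=3))
```

Because whole ZIP codes are held out together, each test fold behaves like an unseen area, which gives a more honest estimate of how the model transfers geographically than a random split.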

Issue: Model Performance is Skewed by Rare, High-Impact Amenities

Problem: Your model undervalues or overvalues properties that are near unique amenities (e.g., a premier ski resort, a highly-ranked specialized school) because these features are rare in the overall dataset.

Diagnosis: The model has not effectively learned the non-linear, high-value impact of these specific amenities due to their low frequency.

Solution:

- Feature Engineering: Create interaction terms between the rare amenity and other relevant features. For example, create a feature that is the product of "Distance to Ski Resort" and "Median Income of Neighborhood" to capture their combined effect.

- Target Encoding: For categorical amenities (e.g., school district name), use target encoding, which replaces each category with the mean target value observed for that category, smoothed toward the overall mean so that rare categories are not encoded from only a handful of observations.

- Ensemble Methods: Leverage models like XGBoost and Random Forest, which are particularly adept at capturing complex, non-linear relationships and interactions from a large number of features, even if some are rare [19] [20].
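The smoothed target encoding described above can be sketched as follows. The additive smoothing formula, the smoothing strength, and the district names and prices are assumptions for illustration; other smoothing schemes exist.

```python
from collections import defaultdict

def target_encode(categories, targets, smoothing=10.0):
    """Map each category to its target mean, shrunk toward the global mean.

    encoded = (category_sum + smoothing * global_mean) / (count + smoothing)
    """
    global_mean = sum(targets) / len(targets)
    sums, counts = defaultdict(float), defaultdict(int)
    for c, t in zip(categories, targets):
        sums[c] += t
        counts[c] += 1
    return {
        c: (sums[c] + smoothing * global_mean) / (counts[c] + smoothing)
        for c in counts
    }

# Hypothetical data: a common district and a rare, expensive one (prices in $1000s).
districts = ["North"] * 20 + ["Elite"] * 2
prices = [300.0] * 20 + [900.0] * 2

global_mean = sum(prices) / len(prices)
encoding = target_encode(districts, prices, smoothing=5.0)
```

The rare district is pulled strongly toward the global mean because it has only two observations, while the common district stays close to its own average. To avoid leaking the target, the encoding should be fitted on training folds only and then applied to validation data.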

Experimental Protocols & Data Presentation

Table 1: Comparison of Feature Optimization Techniques on Model Performance

This table summarizes the impact of different feature optimization techniques on the performance of various machine learning models, based on a large-scale study of traffic incident duration prediction [17].

| Machine Learning Model | Baseline Performance (RMSE) | Feature Engineering Technique | Performance with FE (RMSE) | Feature Selection Technique | Performance with FS (RMSE) |

|---|---|---|---|---|---|

| Decision Trees | 45.2 | Log Transformation + Min-Max Normalization | 41.5 | Recursive Feature Elimination | 40.1 |

| Support Vector Regressor | 38.7 | Principal Component Analysis (PCA) | 35.1 | Wrapper Method | 36.9 |

| K-Nearest Neighbors | 48.9 | Min-Max Normalization | 44.3 | Filter Method | 45.8 |

| Artificial Neural Networks | 36.5 | Principal Component Analysis (PCA) | 32.8 | Embedded Method | 34.2 |

Table 2: Machine Learning Model Performance for Environmental & Spatial Prediction

This table compares the performance of different ML models in predicting real-world environmental and spatial phenomena [19] [20].

| Application Domain | Prediction Task | Top-Performing Model(s) | Reported Performance Metric | Key Influential Features |

|---|---|---|---|---|

| Rural Residential Carbon Emissions [19] | Predicting carbon emissions from spatial form | XGBoost | Superior prediction accuracy and generalization; >10% emission reduction in optimization | Floor area ratio, number of floors, building orientation |

| Urban Air Quality [20] | Forecasting Carbon Monoxide (CO) levels | XGBoost, CatBoost | R² > 0.95, RMSE = 0.0371 ppm | 3-h rolling mean of CO, wind speed, temperature |

| Materials Property Prediction [21] | Classifying material properties from text descriptions | Transformer (BERT-domain) | Outperformed crystal graph networks on 4/5 properties | Human-readable text on composition, crystal symmetry |

This protocol provides a detailed methodology for building a robust predictive model that integrates environmental and neighborhood data.

Step 1: Data Collection and Preprocessing

- Data Assembly: Gather your target variable (e.g., property price) and all potential external data sources. For neighborhood amenities, this includes point-of-interest data (schools, parks, transit stops), walkability scores, and crime statistics. For environmental data, include air quality measurements, temperature, and wind speed [19] [20] [22].

- Geospatial Joining: Use a GIS platform like ArcGIS or Python's GeoPandas to spatially join all external data to your primary property dataset based on geographic coordinates or administrative boundaries [19].

- Data Cleaning: Handle missing or inconsistent values. For environmental sensor data, this may involve outlier detection and imputation [20].

Step 2: Feature Engineering and Quantification

- Create Proximity Features: Calculate the distance from each property to key amenities (e.g., nearest park, school, public transit station) [22].

- Create Density Features: Calculate the count of specific amenities within a predefined buffer (e.g., number of restaurants within 1 km).

- Temporal Feature Engineering: For environmental data, create rolling statistics (e.g., 3-hour rolling mean) to capture temporal patterns [20].

- Address Skewness: Apply a log transformation (for example, log(1 + x) when values can be zero) to continuous, highly skewed data such as distance to amenities or historical pollution levels [17].
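The proximity, density, and skewness steps above can be sketched with NumPy alone. The coordinates are invented, the 1 km buffer is the example value from the text, and the haversine formula is one reasonable choice for great-circle distance (a GIS library would normally handle projections for you).

```python
import numpy as np

EARTH_RADIUS_KM = 6371.0

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between points given in degrees."""
    lat1, lon1, lat2, lon2 = map(np.radians, (lat1, lon1, lat2, lon2))
    a = (np.sin((lat2 - lat1) / 2) ** 2
         + np.cos(lat1) * np.cos(lat2) * np.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * np.arcsin(np.sqrt(a))

# Hypothetical (lat, lon) pairs for two properties and three parks.
properties = np.array([[40.7128, -74.0060], [40.7306, -73.9352]])
parks = np.array([[40.7128, -74.0060], [40.7829, -73.9654], [40.7300, -73.9400]])

# Pairwise distance matrix of shape (n_properties, n_parks) via broadcasting.
dist = haversine_km(properties[:, None, 0], properties[:, None, 1],
                    parks[None, :, 0], parks[None, :, 1])

nearest_park_km = dist.min(axis=1)            # proximity feature
parks_within_1km = (dist <= 1.0).sum(axis=1)  # density feature
log_nearest_park = np.log1p(nearest_park_km)  # skew-reducing transform, safe at zero
print(nearest_park_km, parks_within_1km, log_nearest_park)
```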

Step 3: Feature Selection

- Correlation Analysis: Use Pearson correlation analysis to identify features strongly correlated with the target variable and remove redundant features [19].

- Recursive Feature Elimination (RFE): Implement RFE with a chosen estimator (e.g., XGBoost) to recursively remove the least important features and arrive at an optimal subset [17].
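A hedged sketch of the two selection steps above, on synthetic data. The 0.95 correlation cut-off and the column names are illustrative assumptions, and scikit-learn's `RandomForestRegressor` stands in for XGBoost so that the example needs no extra dependency.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import RFE

rng = np.random.default_rng(0)
n = 300
X = pd.DataFrame({
    "area": rng.normal(size=n),
    "dist_park": rng.normal(size=n),
    "noise_a": rng.normal(size=n),
    "noise_b": rng.normal(size=n),
})
# A near-duplicate of "area" to simulate a redundant feature.
X["area_copy"] = X["area"] + rng.normal(scale=0.01, size=n)
y = 3 * X["area"] - 2 * X["dist_park"] + rng.normal(scale=0.1, size=n)

# Step 1: drop one feature from every pair with |Pearson r| above the cut-off.
corr = X.corr().abs()
upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))
to_drop = [col for col in upper.columns if (upper[col] > 0.95).any()]
X_reduced = X.drop(columns=to_drop)

# Step 2: RFE repeatedly refits the estimator and removes the weakest feature.
rfe = RFE(RandomForestRegressor(n_estimators=50, random_state=0),
          n_features_to_select=2)
rfe.fit(X_reduced, y)
selected = list(X_reduced.columns[rfe.support_])
print("dropped as redundant:", to_drop)
print("kept by RFE:", selected)
```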

Step 4: Model Training and Spatial Validation

- Model Selection: Train multiple models, prioritizing ensemble methods like Random Forest and XGBoost based on their proven performance [19] [20].

- Spatial Cross-Validation: Do not use a simple random train-test split. Instead, partition your data using a spatial method, such as sklearn's `GroupShuffleSplit`, where groups are defined by spatial clusters or ZIP codes. This provides a realistic estimate of model performance on unseen geographic areas [18].
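As a minimal sketch of a group-aware split, the snippet below assigns synthetic ZIP-code labels to random data and checks that no ZIP code appears on both sides of the split. The data and the `zip_codes` array are placeholders.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

rng = np.random.default_rng(42)
n = 100
zip_codes = rng.integers(0, 10, size=n)  # hypothetical ZIP-code label per property
X = rng.normal(size=(n, 3))
y = rng.normal(size=n)

# Whole groups go to either train or test, never both.
splitter = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=42)
train_idx, test_idx = next(splitter.split(X, y, groups=zip_codes))

overlap = set(zip_codes[train_idx]) & set(zip_codes[test_idx])
print("ZIP codes shared between train and test:", overlap)
```

For repeated evaluation, `GroupKFold` from the same module applies the same idea across several folds.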

Workflow Visualization

Diagram 1: Feature Optimization Workflow

Diagram 2: Spatial Autocorrelation Diagnosis

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Technique | Function / Purpose | Application Context |

|---|---|---|

| XGBoost (Extreme Gradient Boosting) | A high-performance, scalable ensemble learning algorithm based on decision trees. Excellent for structured/tabular data and capturing complex feature interactions. | The top-performing model for predicting rural carbon emissions [19] and urban air quality [20]. Ideal for the final predictive model after feature optimization. |

| Principal Component Analysis (PCA) | A dimensionality reduction technique that transforms a large set of features into a smaller, uncorrelated set of components while retaining most of the original variation. | Used in feature engineering to reduce multicollinearity and model complexity. Shown to improve accuracy across multiple ML models [17]. |

| Recursive Feature Elimination (RFE) | A wrapper-style feature selection method that recursively removes the least important features and builds a model with the remaining features. | Effectively reduces the number of features without sacrificing prediction performance, optimizing model complexity [17]. |

| SHapley Additive exPlanations (SHAP) | A unified framework for interpreting model output by quantifying the marginal contribution of each feature to the final prediction. | A post-hoc Explainable AI (XAI) technique used to make complex models like XGBoost interpretable, providing insights into feature importance [21]. |

| Spatial Cross-Validation | A validation technique where data is split into spatially distinct folds (e.g., by location clusters) to prevent over-optimistic performance from spatial autocorrelation. | Critical for evaluating a model's ability to generalize to new, unseen geographic areas, ensuring robust performance estimates [18]. |

The Direct Link Between Feature Optimization and Prediction Accuracy (R² 0.715 to 0.868)

Troubleshooting Guide: Feature Optimization

Q1: My model's performance (R²) has plateaued. Could irrelevant features be the cause, and how can I identify them?

A: Yes, irrelevant or redundant features are a common cause of performance plateaus. They introduce noise, increase the risk of overfitting, and can obscure the underlying patterns in your data, leading to diminished prediction accuracy (R²) [4] [23].

To identify them, systematically apply these feature selection techniques:

- Filter Methods: Use statistical measures to assess the relationship between each feature and the target variable. These are fast and model-agnostic [4] [23].

- Wrapper Methods: Evaluate feature subsets by iteratively training and testing your model. These are computationally expensive but can yield better performance [4] [23].

- Forward Selection: Start with no features and add the one that most improves model performance at each step.

- Backward Elimination: Start with all features and remove the least significant one at each step based on model performance [23].

- Embedded Methods: Leverage models that perform feature selection as part of their training process. These are efficient and effective [4] [23].
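To make the filter and embedded categories above concrete, here is a small sketch on synthetic data in which only features 0 and 2 carry signal. The choice of `f_regression` as the filter score and `alpha=0.1` for the Lasso are illustrative assumptions.

```python
import numpy as np
from sklearn.feature_selection import SelectFromModel, SelectKBest, f_regression
from sklearn.linear_model import Lasso

rng = np.random.default_rng(1)
X = rng.normal(size=(200, 6))
y = 4 * X[:, 0] - 3 * X[:, 2] + rng.normal(scale=0.1, size=200)

# Filter: rank features by a univariate F-statistic, independent of any model.
filt = SelectKBest(score_func=f_regression, k=2).fit(X, y)
filter_idx = sorted(np.flatnonzero(filt.get_support()).tolist())

# Embedded: the L1 penalty zeroes out weak coefficients during training.
embedded = SelectFromModel(Lasso(alpha=0.1)).fit(X, y)
embedded_idx = sorted(np.flatnonzero(embedded.get_support()).tolist())

print("filter keeps:", filter_idx)
print("embedded keeps:", embedded_idx)
```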

Q2: After feature selection, my model performs well on the training data but poorly on the validation set. What is happening?

A: This is a classic sign of overfitting. Your model has learned the noise and specific patterns of the training set, including those from any remaining irrelevant features, rather than generalizable relationships [23].

To address this:

- Re-evaluate Your Selection Method: Wrapper methods, while powerful, are prone to overfitting on the validation set used for selection. Consider combining a filter method for a coarse selection first, then applying a less greedy wrapper method or an embedded method [4] [23].

- Validate with Cross-Validation: Use k-fold cross-validation during feature selection to get a more robust estimate of model performance and ensure the selected features generalize well [25].

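- Apply Regularization: Use models with built-in regularization, such as LASSO or Ridge regression, which penalize model complexity and help prevent overfitting. Only LASSO (L1) acts as an embedded selection method, because it can shrink coefficients exactly to zero; Ridge (L2) shrinks coefficients but keeps every feature [4].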

- Check for Data Leakage: Ensure that no information from your validation or test set was used during the feature selection or training process. Maintain strict separation between your training, validation, and test datasets [26].
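One common way to combine the cross-validation and leakage advice above is to place the selector inside a scikit-learn `Pipeline`, so that it is refitted on the training portion of every fold. The sketch below uses synthetic data; the choice of `k=5` and of Ridge as the model are assumptions for illustration.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import Pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(150, 20))
y = 2 * X[:, 0] + X[:, 1] + rng.normal(scale=0.5, size=150)

# Selection is a pipeline step, so each fold selects features from its own
# training data only; the held-out fold never influences the selection.
pipe = Pipeline([
    ("select", SelectKBest(f_regression, k=5)),
    ("model", Ridge(alpha=1.0)),
])
cv = KFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipe, X, y, cv=cv, scoring="r2")
print("R² per fold:", scores.round(3))
```

Running the selector on the full dataset before splitting would let validation rows influence which features are kept, which is the leakage the text warns about.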

Q3: How can I ensure my feature optimization process is reproducible?

A: Reproducibility is a cornerstone of reliable research. To achieve it in feature optimization [25]:

- Version Everything: Use version control systems like Git for your code and specialized tools like DVC for your datasets and models. This allows you to track the exact state of your data, code, and model for every experiment [25].

- Log All Parameters and Metrics: For every feature selection run, meticulously log the hyperparameters, the list of features selected, and the resulting model performance metrics (e.g., R²). Dedicated experiment tracking tools like MLflow can automate this process [25] [26].

- Set Random Seeds: Initialize the random number generators for your algorithms (e.g., in statistical tests, wrapper methods, or model training) with a fixed seed. This ensures that stochastic processes yield the same results every time [25].
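The seeding and logging practices above can be illustrated with the standard library alone. `run_selection` is a toy stand-in for a stochastic selection routine, and `log_run` is a hypothetical helper; a tool such as MLflow would replace the hand-rolled record in a real project.

```python
import hashlib
import json
import random

def run_selection(seed, n_features=10, k=3):
    """Toy stand-in for a stochastic feature-selection run."""
    rng = random.Random(seed)  # local generator, so the seed fully determines the result
    return sorted(rng.sample(range(n_features), k))

def log_run(seed, selected, r2=None):
    """Build a log record; the run_id is a hash of the record's contents."""
    record = {"seed": seed, "selected_features": selected, "r2": r2}
    payload = json.dumps(record, sort_keys=True)
    record["run_id"] = hashlib.sha256(payload.encode()).hexdigest()[:12]
    return record

first = log_run(42, run_selection(42))
second = log_run(42, run_selection(42))
print(json.dumps(first, indent=2))
print("identical reruns:", first == second)
```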

Experimental Protocols & Data Presentation

The following table summarizes a real-world methodology from materials science research that achieved a significant boost in prediction accuracy through rigorous feature optimization, demonstrating the principles discussed above [24].

Table 1: Methodology for Data-Driven Polymer Property Prediction

| Component | Technique(s) Used | Function in the Workflow |

|---|---|---|

| Data Ingestion | FTIR spectra, Raman spectra, mechanical test data (DMA, tensile), compositional data. | Collects multi-modal raw data on polymer properties and composition [27]. |

| Feature Engineering | Feature normalization; Mantel correlation analysis; Recursive Feature Elimination (RFE). | Prepares and refines the dataset, selecting the most relevant features for model training [24]. |

| Model Training & Selection | Evaluation of 7 ML algorithms; Light Gradient Boosting Machine (LGBM) selected. | Identifies the best-performing algorithm (LGBM achieved R² of 0.95, 0.92, 0.87 on key properties) [24]. |

| Multi-Objective Optimization | Multi-Objective Bayesian Optimization (MOBO) integrated with LGBM. | Generates a Pareto front to balance multiple performance targets (e.g., tensile strength vs. cost) [24]. |

| Validation & Interpretation | TOPSIS method for final parameter selection; SHAP value analysis. | Identifies optimal manufacturing conditions and provides mechanistic interpretation of feature impact [24]. |

Table 2: Impact of Feature Optimization on Model Performance (Illustrative Example)

This table quantifies the potential improvement in prediction accuracy (R²) from applying feature optimization techniques, as demonstrated in research settings [23] [24].

| Model Scenario | Features Used | R² Score | Key Actions |

|---|---|---|---|

| Baseline Model | All available raw features without selection. | 0.715 | Raw data ingestion and model training without optimization. |

| Optimized Model | Features selected via Recursive Feature Elimination and correlation analysis. | 0.868 | Application of feature selection to remove redundancies and irrelevant inputs [24]. |

Workflow Visualization

The following diagram illustrates the logical workflow for a feature optimization pipeline that leads to improved prediction accuracy.

Feature Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Feature Optimization

| Tool / Solution | Function in Feature Optimization |

|---|---|

| Scikit-learn | A core Python library providing implementations for all major feature selection techniques, including filter methods (e.g., correlation, chi-square), wrapper methods (e.g., RFE), and embedded methods (e.g., LASSO, tree-based importance) [4]. |

| LightGBM (LGBM) | A high-performance gradient boosting framework that serves as a powerful embedded feature selection method. It provides built-in feature importance scores, helping identify the most predictive variables during model training [24]. |

| MLflow | An open-source platform for managing the machine learning lifecycle. It is crucial for tracking experiments, logging the features, parameters, and metrics for each feature optimization run to ensure reproducibility [25] [26]. |

| SHAP (SHapley Additive exPlanations) | A game-theoretic approach to explain the output of any machine learning model. It quantifies the contribution of each feature to a single prediction, providing interpretability and validating the relevance of selected features [24]. |

| Bayesian Optimization | An efficient strategy for hyperparameter tuning, including those in complex wrapper and embedded selection methods. It is particularly useful for optimizing objectives that are costly to evaluate [27] [24]. |

A Practical Guide to Feature Selection Algorithms and Techniques

Troubleshooting Guide: Common Issues with Filter Methods

Issue 1: Model Performance Decreases After Feature Selection

- Problem: You've applied a filter method, but your final predictive model has lower accuracy or higher error.

- Diagnosis: This can occur when the filter method removes features that are weakly correlated with the target variable individually but contribute meaningful information when combined with other features in a multivariate model [28].

- Solution:

- Re-evaluate Filter Method: Consider using a multivariate filter method that assesses the combined importance of features, rather than purely univariate methods [29].

- Adjust Feature Set Size: Experiment with selecting a larger number of top-ranked features. Overly aggressive feature reduction can discard valuable information [29].

- Try a Different Filter: If using a complex filter, test a simpler one. Benchmark studies have shown that simple methods like the variance filter can sometimes outperform more elaborate ones [29].

Issue 2: Inconsistent Feature Selection Results

- Problem: The set of selected features changes significantly when the dataset is slightly perturbed or split differently, indicating low stability.

- Diagnosis: Some filter methods, particularly those sensitive to small data fluctuations, can yield unstable feature rankings. This undermines the reliability of your findings [29].

- Solution:

- Prioritize Stable Filters: Choose filter methods known for higher stability. For example, the variance filter has been identified as a stable performer [29].

- Ensemble Feature Selection: Run the filter method on multiple data subsamples and aggregate the results (e.g., select features that appear frequently across subsamples) to improve robustness [29].
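A minimal sketch of the subsample-and-aggregate idea, using the variance filter mentioned above. The function name, the 80% subsample fraction, and the 0.8 selection-frequency threshold are all assumptions chosen for illustration.

```python
import numpy as np

def stable_variance_selection(X, k, n_rounds=50, frac=0.8, min_freq=0.8, seed=0):
    """Run a top-k variance filter on random subsamples and keep the
    features selected in at least min_freq of the rounds."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    counts = np.zeros(p)
    for _ in range(n_rounds):
        idx = rng.choice(n, size=int(frac * n), replace=False)
        top_k = np.argsort(X[idx].var(axis=0))[-k:]  # indices of the k largest variances
        counts[top_k] += 1
    freq = counts / n_rounds
    return np.flatnonzero(freq >= min_freq), freq

# Synthetic data: the first two columns have much larger variance than the rest.
rng = np.random.default_rng(1)
scales = np.array([5.0, 4.0, 1.0, 1.0, 1.0, 1.0])
X = rng.normal(size=(200, 6)) * scales

stable, freq = stable_variance_selection(X, k=2)
print("selection frequency per feature:", freq)
print("stable features:", stable.tolist())
```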

Issue 3: Handling of Missing and Categorical Data

- Problem: The chosen filter method cannot process datasets with missing values or specific data types.

- Diagnosis: Many statistical filter methods require complete numeric data to compute scores.

- Solution:

- Data Preprocessing: Implement robust data cleaning as a prerequisite. This includes [7]:

- Imputation: Handle missing values using mean, median, or mode imputation, or use model-based imputation for more complex patterns.

- Encoding: Convert categorical features into numerical formats using one-hot encoding or other featurization techniques [7].


Issue 4: Prohibitively Long Run Time on High-Dimensional Data

- Problem: The feature selection step is taking too long, slowing down the overall research workflow.

- Diagnosis: While filter methods are generally faster than wrapper or embedded methods, some can still be computationally intensive on datasets with a very large number of features (e.g., gene expression data) [30] [29].

- Solution:

Frequently Asked Questions (FAQs)

Q1: Is there a single best filter method I should use for all my projects? A1: No. Benchmark studies conclusively show that no single group of filter methods consistently outperforms all others across diverse datasets [30] [29]. The best choice depends on your specific data characteristics and the model you plan to use. It is advisable to test several high-performing methods.

Q2: Can filter methods be combined with machine learning models that have built-in feature selection? A2: Yes. A common and effective strategy is to use a filter method for initial, rapid dimensionality reduction. This can heavily reduce the run time and complexity for a subsequent model—like a regularized regression (Lasso) or tree-based model (Random Forest)—that then performs a more refined feature selection [29].

Q3: My dataset has a survival outcome (time-to-event data). Are filter methods suitable? A3: Yes, filter methods can be applied to survival data. Benchmark studies on high-dimensional gene expression survival data have shown that simple filters like the variance filter can be very effective. More elaborate methods like the correlation-adjusted regression scores (CARS) filter are also strong alternatives [29].

Q4: How many features should I select using a filter method? A4: There is no universal rule. The optimal number is often determined empirically. A practical approach is to use cross-validation to evaluate the performance of your final model (e.g., predictive accuracy) when trained on different numbers of top-ranked features, then select the number that yields the best performance [29].
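The empirical approach in A4 can be sketched by treating the number of selected features as a hyperparameter and tuning it with cross-validation. The data are synthetic (three informative features out of fifteen) and the candidate values of `k` are arbitrary.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_regression
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 15))
y = 3 * X[:, 0] + 2 * X[:, 1] + X[:, 2] + rng.normal(scale=0.5, size=200)

pipe = Pipeline([
    ("select", SelectKBest(f_regression)),
    ("model", LinearRegression()),
])
# Each candidate k is scored by 5-fold cross-validated R².
search = GridSearchCV(pipe, {"select__k": [1, 2, 3, 5, 10, 15]}, cv=5, scoring="r2")
search.fit(X, y)
best_k = search.best_params_["select__k"]
print("best k:", best_k, "mean CV R²:", round(search.best_score_, 3))
```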

Performance Comparison of Filter Methods

The following table summarizes quantitative findings from benchmark studies on high-dimensional classification and survival data, providing a guide for method selection [30] [29].

Table 1: Benchmark Results of Filter Methods on High-Dimensional Data

| Filter Method Category | Example Methods | Key Findings (Accuracy & Performance) | Key Findings (Runtime & Stability) |

|---|---|---|---|

| Variance-Based | Variance Filter | Often outperforms more complex methods; allows fitting models with high predictive accuracy [29]. | Very fast computation; demonstrates high feature selection stability [29]. |

| Multivariate Model-Based | Correlation-Adjusted Regression Scores (CARS) | Identified as a more elaborate alternative with similar predictive accuracy to the variance filter [29]. | More computationally intensive than simple univariate filters. |

| Information-Theoretic | Mutual Information-based methods | Performance varies; no consistent top performer across all data sets [30]. | Computational cost can be higher, especially for continuous data. |

| Univariate Statistical | Chi-squared, ANOVA F-value | Can be effective but may be outperformed by multivariate methods on some data [30]. | Generally fast to compute; stability can vary [29]. |

Table 2: Impact of Feature Selection on Classifier Performance (Heart Disease Prediction Example)

This table illustrates how the effect of feature selection is not uniform and depends on the classifier used [28].

| Machine Learning Algorithm | Impact of Feature Selection | Observed Outcome (Example) |

|---|---|---|

| Support Vector Machine (SVM) | Significant Improvement | Accuracy improved by +2.3 points with CFS/Info Gain filters [28]. |

| Decision Tree (j48) | Significant Improvement | Performance showed notable improvement [28]. |

| Random Forest (RF) | Performance Decrease | Model performance was reduced after feature selection [28]. |

| Multilayer Perceptron (MLP) | Performance Decrease | Model performance was reduced after feature selection [28]. |

Experimental Protocol: Benchmarking Filter Methods

This protocol provides a detailed methodology for evaluating and comparing different filter methods for feature selection, as used in foundational benchmark studies [30] [29].

Objective: To systematically evaluate the performance of multiple filter methods based on predictive accuracy, runtime, and stability when applied to high-dimensional data.

Materials & Datasets:

- Data: Multiple high-dimensional datasets (e.g., 11+ gene expression datasets with thousands of features) [29].

- Software: A machine learning framework that supports unified implementation (e.g., R with the `mlr` or `mlr3` package) [30] [29].

- Filter Methods: A selection of filter methods from different categories (e.g., 14-22 methods), including variance, correlation-based, and information-theoretic filters [30] [29].

Procedure:

- Data Preparation:

Feature Selection & Model Fitting:

- For each filter method, and for different subset sizes (e.g., top 10, 20, ... 100 features):

- Apply the filter to the training set only to compute feature importance scores.

- Select the top k features based on the scores.

- Train a predictive model (e.g., Cox regression for survival data, SVM for classification) using only the selected features on the training set [30] [29].

- Use the trained model to make predictions on the test set and compute a performance metric (e.g., Integrated Brier Score for survival, accuracy for classification) [29].


Performance Evaluation:

- Predictive Accuracy: Calculate the average performance metric across all resampling iterations for each filter and subset size [30] [29].

- Runtime: Measure the average time taken to compute the filter scores [30].

- Stability: Assess the robustness of the selected feature sets across different resampling iterations using a stability index [29].
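The protocol above does not name a specific stability index. As one simple possibility, the sketch below uses the mean pairwise Jaccard similarity between the feature sets selected in different resampling iterations; the feature names and runs are invented.

```python
from itertools import combinations

def jaccard(a, b):
    """Jaccard similarity of two feature sets (1.0 means identical)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def mean_pairwise_jaccard(feature_sets):
    """Average Jaccard similarity over all pairs of selected feature sets."""
    pairs = list(combinations(feature_sets, 2))
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)

# Hypothetical selections from three resampling iterations.
runs = [
    ["rm", "lstat", "nox"],
    ["rm", "lstat", "dis"],
    ["rm", "lstat", "nox"],
]
stability = mean_pairwise_jaccard(runs)
print(f"stability index: {stability:.3f}")
```

Values near 1 indicate that the filter picks nearly the same features regardless of the resample; values near 0 indicate unstable selection.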

Analysis:

Experimental Workflow Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Feature Selection Research

| Item | Function / Description |

|---|---|

| R mlr3 Package | A unified, object-oriented machine learning framework for R. It provides a consistent API to integrate data preprocessing, filter-based feature selection, model training, and evaluation, which is essential for reproducible benchmarking [29]. |

| Scikit-learn (Python) | A comprehensive machine learning library for Python. It offers built-in feature selection methods (e.g., SelectKBest, Variance Threshold) and is ideal for building end-to-end analysis pipelines [7]. |

| High-Dimensional Datasets | Publicly available benchmark datasets, such as gene expression data from repositories like The Cancer Genome Atlas (TCGA). These are crucial for validating method performance in a realistic research context [30] [29]. |

| Cross-Validation Resampling | A statistical technique (e.g., 5-fold cross-validation) used to reliably estimate model performance and avoid overfitting. It is a critical component of any experimental protocol for evaluating feature selection [7]. |

| Performance Metrics | Specific evaluation measures tailored to the research question, such as the Integrated Brier Score for survival data or Accuracy and F-measure for classification tasks [28] [29]. |

In the field of machine learning, particularly within research aimed at optimizing property prediction accuracy, feature selection is a critical preprocessing step. It improves model performance, reduces overfitting, and enhances interpretability by selecting the most relevant input features [4]. Among the various feature selection techniques, Wrapper Methods stand out for their ability to find high-performing feature subsets by directly using the predictive performance of a specific machine learning model as their guiding criterion [4] [31]. This guide focuses on two fundamental greedy search strategies—Forward Selection and Backward Elimination—providing troubleshooting and methodological support for researchers and scientists, especially those in drug development, applying these techniques to their predictive modeling experiments.

FAQs: Core Concepts of Wrapper Methods

1. What are Wrapper Methods, and how do they differ from Filter and Embedded methods?

Wrapper Methods are a category of feature selection that treats the selection process as a search problem. They evaluate different subsets of features by training and testing a specific machine learning model on them, selecting the subset that yields the best model performance (e.g., highest accuracy or R-squared) [4] [32]. This contrasts with:

- Filter Methods: These methods select features based on statistical measures (like correlation or mutual information) independently of the model. They are faster and computationally cheaper but may not always yield the best feature set for a specific algorithm [4] [33].

- Embedded Methods: Feature selection is built into the model training process itself (e.g., L1 regularization in LASSO). They are efficient but can be less interpretable and not universally applicable across all models [4] [33].

The primary advantage of Wrapper Methods is their model-specific optimization, which can lead to superior performance. Their main drawback is computational expense, as they require training models on numerous feature subsets [4] [31].

2. Why are Forward Selection and Backward Elimination considered "greedy" algorithms?

Both Forward Selection and Backward Elimination are termed "greedy" because they make the locally optimal choice at each step without considering the global optimal solution [4]. Forward Selection adds the single best feature at each step, while Backward Elimination removes the single worst feature. While this approach is computationally more feasible than trying all possible feature combinations, it may miss the optimal feature subset if it requires adding or removing multiple features simultaneously [31] [32].

3. In the context of property prediction, when should I prefer Forward Selection over Backward Elimination?

The choice often depends on your dataset and hypotheses:

- Use Forward Selection when you suspect that only a small number of features are truly predictive. It starts from an empty set and is computationally efficient in the early stages, making it suitable for datasets with a very large number of features [31] [32].

- Use Backward Elimination when you have strong reason to believe that most features are relevant and you want to remove the redundant ones. It starts with all features, which can be computationally intensive if the initial feature set is large [31] [32].

For high-dimensional data, such as in molecular property prediction, Forward Selection is often the more practical starting point due to its lower initial computational cost.

4. What are the common evaluation metrics used for feature subsets in Wrapper Methods?

The metric should align with your overall modeling goal. Common choices include:

- For Regression (e.g., predicting property values): R-squared, Adjusted R-squared, or p-values of the features [31].

- For Classification: Accuracy, F1-score, Precision, or Recall [31] [32].

Troubleshooting Common Experimental Issues

Problem: The feature selection process is taking too long to complete.

- Cause: Wrapper methods are inherently computationally expensive, as they require repeatedly training and evaluating a model [4] [32].

- Solutions:

- Reduce the feature space initially: Use a Filter method (e.g., correlation analysis) for a quick pre-filtering to remove obviously irrelevant features before applying a Wrapper method [4].

- Use a faster model: For the wrapper search, use a less complex, faster-to-train model (e.g., Logistic Regression instead of Random Forest) to evaluate the subsets. The optimal features found are often transferable to your final, more complex model [32].

- Increase the step size: In Recursive Feature Elimination (RFE), increasing the `step` parameter allows you to remove more features per iteration, reducing the total number of training cycles [32].

Problem: The final model with selected features is overfitting.

- Cause: Wrapper methods can overfit the feature subset to the evaluation metric, especially with small datasets or when the search is not properly constrained [4].

- Solutions:

- Use cross-validation: Always use a robust evaluation method like k-fold cross-validation within the feature selection process to assess the true performance of a feature subset, not just its performance on the entire training set. The `RFECV` class in `sklearn` is designed for this [32].

- Set a stopping criterion: Define a stopping criterion, such as when the performance improvement after adding/removing a feature falls below a certain threshold [4] [31].
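A minimal sketch of cross-validated recursive elimination with scikit-learn's `RFECV`, on synthetic data where only features 0 and 4 are informative. The estimator, the fold count, and `step=1` are illustrative choices; a larger `step` removes several features per iteration and shortens the search.

```python
import numpy as np
from sklearn.feature_selection import RFECV
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 8))
y = 3 * X[:, 0] - 2 * X[:, 4] + rng.normal(scale=0.3, size=200)

selector = RFECV(
    estimator=LinearRegression(),
    step=1,                      # features removed per iteration
    cv=KFold(n_splits=5, shuffle=True, random_state=0),
    scoring="r2",
    min_features_to_select=1,
)
selector.fit(X, y)

kept = np.flatnonzero(selector.support_).tolist()
print("number of features kept:", selector.n_features_)
print("kept feature indices:", kept)
```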

Problem: I get a different set of optimal features every time I run the selection with a slightly different dataset.

- Cause: High variance in feature selection can be due to instability in the underlying model or high correlation between features.

- Solutions:

- Stratify your data: Ensure your training data is representative and stratified, especially for classification tasks [32].

- Ensemble methods: Run the feature selection process multiple times on different data resamples (e.g., bootstraps) and aggregate the results to find the most consistently selected features.

- Tune model hyperparameters: An unstable model can lead to unstable feature selection. Ensure your base estimator is properly tuned before feature selection.

Experimental Protocols and Workflows

Protocol 1: Implementing Forward Selection

Forward selection starts with no features and iteratively adds the feature that most improves the model until a stopping criterion is met [31] [32].

Detailed Methodology:

- Choose a Significance Level: Select a statistical significance level (e.g., SL=0.05) for p-value evaluation [31].

- Initialize: Start with an empty set of best features (`best_features = []`).

- Iterate and Evaluate:

- For each remaining feature not in `best_features`, fit the model (e.g., Linear Regression) on `best_features + [new_feature]`.

- Calculate the evaluation metric (e.g., p-value of the new feature or model R-squared).

- Identify the feature that provides the most significant improvement (lowest p-value).

- Check Stopping Criterion: If the lowest p-value is less than the significance level, add that feature to `best_features` and repeat Step 3. Otherwise, terminate the process [31].

- Output: The final set of `best_features`.

Python Implementation with mlxtend:

Protocol 2: Implementing Backward Elimination

Backward elimination begins with all features and iteratively removes the least significant feature until a stopping criterion is met [31] [32].

Detailed Methodology:

- Choose a Significance Level: (e.g., SL=0.05).

- Initialize: Start with a model that includes all features.

- Iterate and Evaluate:

- Fit the model with the current set of features.

- Calculate the p-value for each feature.

- Identify the feature with the highest p-value.

- Check Stopping Criterion: If the highest p-value is greater than or equal to the significance level, remove that feature and repeat Step 3. Otherwise, terminate the process [31].

- Output: The final set of remaining features.

Python Implementation with mlxtend:

Workflow Visualization

The following diagram illustrates the logical workflow and decision process for both Forward Selection and Backward Elimination, helping to visualize the "greedy" search path.

Wrapper Method Greedy Search Workflow

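The table below summarizes the performance of Forward Selection and Backward Elimination on the Boston Housing dataset, a common regression benchmark for predicting property values (in this case, median house price). Note that scikit-learn deprecated its bundled copy of this dataset in version 1.0 and removed it in version 1.2, so reproducing these results requires loading the data from another source.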

Table: Feature Selection Performance on Boston Housing Dataset [31]

| Method | Optimal Number of Features Selected | Selected Features (Abbreviated) | Model Performance (R²) |

|---|---|---|---|

| Forward Selection | 11 | CRIM, ZN, CHAS, NOX, RM, DIS, RAD, TAX, PTRATIO, B, LSTAT | Optimized for the selected subset |

| Backward Elimination | 11 | CRIM, ZN, CHAS, NOX, RM, DIS, RAD, TAX, PTRATIO, B, LSTAT | Optimized for the selected subset |

Note: The specific R² value depends on the training/test split and cross-validation. The key outcome is the set of features identified as most predictive.

The Scientist's Toolkit: Essential Research Reagents & Software

Table: Key Resources for Feature Selection Experiments

| Item Name | Function / Purpose | Example / Implementation |
|---|---|---|
| Scikit-learn | A core machine learning library providing datasets, algorithms, and feature selection tools like RFE and SelectFromModel. | from sklearn.feature_selection import RFE [34] [32] |
| MLxtend | A library extending scikit-learn, providing easy-to-use implementations of Sequential Feature Selector (SFS) for Forward/Backward selection. | from mlxtend.feature_selection import SequentialFeatureSelector [31] [32] |
| Statsmodels | A library for statistical modeling, often used for detailed statistical output like p-values, which can drive custom feature selection code. | import statsmodels.api as sm [31] |
| Pandas | A data manipulation and analysis library, essential for handling structured data (DataFrames) during feature subset creation and evaluation. | import pandas as pd [31] |
| Recursive Feature Elimination (RFE) | A wrapper method that recursively removes features, building a model with the remaining features and removing the least important ones. | RFE(estimator=LogisticRegression(), n_features_to_select=5) [34] [32] |

Frequently Asked Questions (FAQs)

FAQ 1: What are embedded methods and how do they differ from other feature selection techniques?

Embedded methods integrate the feature selection process directly into the model training algorithm, combining the efficiency of filter methods and the accuracy of wrapper methods. Unlike filter methods that evaluate features independently of the model, or wrapper methods that iteratively train models on different feature subsets, embedded methods perform feature selection automatically during training. This makes them faster than wrapper methods and often more accurate than filter methods because they consider feature interactions with the specific model being trained [35] [36] [37].

FAQ 2: When should I use Lasso over Random Forest for feature selection in my research?

The choice depends on your data characteristics and project goals. Lasso (L1 Regularization) is particularly effective when you have many features and want to create a very sparse, interpretable model, as it can shrink coefficients of irrelevant features to exactly zero [38] [36]. Random Forest feature importance is better suited for capturing complex, non-linear relationships and interactions between features without assuming linearity [39] [35]. For drug property prediction where interpretability is key, Lasso might be preferable; for complex bioactivity prediction where accuracy is paramount, Random Forest may perform better.

FAQ 3: Why are my Lasso regression results selecting what seem to be irrelevant features?

This common issue can stem from several causes. First, your regularization strength (λ or alpha) may be set too low, providing insufficient penalty to shrink coefficients to zero. Try increasing the alpha parameter. Second, high multicollinearity among features can cause instability in feature selection; consider using Elastic Net, which combines L1 and L2 regularization to handle correlated features better [38] [37]. Finally, ensure your features are properly scaled, as Lasso is sensitive to feature scale [35].
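The effect of the regularization strength can be checked empirically. The sketch below assumes synthetic data with two informative features (one deliberately placed on a much larger scale) and 18 noise features; the helper `n_selected` and the alpha grid are illustrative choices, not recommended values. Scaling is done inside a pipeline so that the penalty treats all features comparably.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)
n = 300
X = rng.normal(size=(n, 20))
X[:, 0] *= 1000.0  # put one informative feature on a much larger raw scale

# Only features 0 and 1 influence the target; the rest are noise.
y = 0.002 * X[:, 0] + 1.5 * X[:, 1] + rng.normal(scale=1.0, size=n)


def n_selected(alpha):
    """Count non-zero Lasso coefficients after standardizing the features."""
    model = make_pipeline(StandardScaler(), Lasso(alpha=alpha, max_iter=10000))
    model.fit(X, y)
    return int(np.sum(model[-1].coef_ != 0))


counts = {alpha: n_selected(alpha) for alpha in (0.001, 0.01, 0.1, 0.5)}
for alpha, count in counts.items():
    print(f"alpha={alpha}: {count} features kept")
```

If the count barely drops as alpha grows, multicollinearity or unscaled inputs are more likely culprits than the penalty itself.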

FAQ 4: How can I improve the reliability of feature importance scores from Random Forest?

To enhance reliability, consider these approaches: Increase the number of trees (n_estimators) to produce more stable importance estimates. Use permutation importance rather than Gini importance, as it is less biased toward high-cardinality features [40]. Ensure your dataset is representative and sufficient in size. Implement recursive feature elimination with cross-validation (RFECV) that repeatedly trains Random Forest and removes the weakest features, which can provide more robust feature subsets [35] [41].
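As a rough illustration of the difference between the two importance measures, the sketch below fits a Random Forest on synthetic data with one informative feature and one uninformative feature that takes many distinct values. The data, the variable names, and the hyperparameters are assumptions chosen for the example. Permutation importance is computed on held-out data with scikit-learn's `permutation_importance`.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n = 600
signal = rng.normal(size=n)
# Uninformative feature with many distinct values (high cardinality).
high_card_noise = rng.integers(0, 500, size=n).astype(float)
X = np.column_stack([signal, high_card_noise])
y = 2.0 * signal + rng.normal(scale=0.5, size=n)

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)
forest = RandomForestRegressor(n_estimators=300, random_state=0).fit(X_train, y_train)

# Impurity-based (Gini / MDI) importance, computed from the training data.
impurity = forest.feature_importances_

# Permutation importance, computed on held-out data.
perm = permutation_importance(forest, X_test, y_test, n_repeats=10, random_state=0)

print("impurity importance:   ", impurity)
print("permutation importance:", perm.importances_mean)
```

Comparing the two printed vectors shows how much weight each measure assigns to the noise column; the permutation scores are measured on data the forest has not seen.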

FAQ 5: Can embedded methods handle highly correlated features in pharmaceutical datasets?

Different embedded methods handle correlated features differently. Lasso tends to arbitrarily select one feature from a correlated group, which can be problematic for interpretation. Ridge regression (L2) shrinks coefficients but keeps all features. Elastic Net, which combines L1 and L2 regularization, often performs best with correlated features as it tends to select or deselect groups of correlated features together [38] [37]. Random Forest can handle correlated features reasonably well, though importance scores may be distributed across correlated variables [35].
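The behaviour described above can be inspected on a small synthetic case. In the sketch below, two features are near-duplicates of the same underlying signal and a third is independent noise; the penalty strengths are arbitrary illustrative values, and the exact coefficients will vary with the data.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso, Ridge
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(1)
n = 400
base = rng.normal(size=n)

# Columns 0 and 1 are near-duplicates; column 2 is independent noise.
X = np.column_stack([
    base + rng.normal(scale=0.01, size=n),
    base + rng.normal(scale=0.01, size=n),
    rng.normal(size=n),
])
y = 2.0 * base + rng.normal(scale=0.5, size=n)
X = StandardScaler().fit_transform(X)

coefs = {
    "lasso": Lasso(alpha=0.1, max_iter=10000).fit(X, y).coef_,
    "ridge": Ridge(alpha=1.0).fit(X, y).coef_,
    "elastic_net": ElasticNet(alpha=0.1, l1_ratio=0.5, max_iter=10000).fit(X, y).coef_,
}
for name, coef in coefs.items():
    print(f"{name:12s}", np.round(coef, 3))
```

Looking at how each model divides the weight between columns 0 and 1 gives a quick read on whether a correlated group is being kept together or collapsed onto a single member.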

Troubleshooting Guides

Issue 1: Poor Model Performance After Lasso Feature Selection

Symptoms: Model accuracy decreases significantly after applying Lasso feature selection, or too many features are eliminated.

Diagnosis and Resolution:

Check regularization strength: The alpha parameter may be too high, causing excessive feature removal.

- Solution: Use cross-validation (e.g., LassoCV in scikit-learn) to find the optimal alpha value that minimizes prediction error [35].

Verify feature scaling: Lasso is sensitive to feature scale.

- Solution: Standardize all features (zero mean, unit variance) before applying Lasso [35].

Consider alternative methods: If important features are consistently eliminated, try Elastic Net or Random Forest.

- Solution: Implement Elastic Net with balanced L1 and L2 ratios [37].

Issue 2: Inconsistent Feature Selection Across Different Runs

Symptoms: Different features are selected when the same algorithm is run on different data samples or with different random seeds.

Diagnosis and Resolution:

Increase model stability:

- For Random Forest: Increase n_estimators (number of trees) and set a fixed random state for reproducibility [35].

Use ensemble feature selection:

Apply statistical testing:

- Solution: Use methods like SelectFromModel with appropriate thresholding instead of selecting a fixed number of features [35].
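A minimal sketch of threshold-based selection with a fixed random state is shown below. It assumes synthetic regression data with two informative features; the helper `select` and the choice of `threshold="mean"` are illustrative, and other thresholds may suit real data better.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import SelectFromModel

rng = np.random.default_rng(3)
n = 400
X = rng.normal(size=(n, 10))
# Features 0 (linear) and 1 (quadratic) carry the signal; the rest are noise.
y = 3.0 * X[:, 0] + 2.0 * X[:, 1] ** 2 + rng.normal(scale=0.5, size=n)


def select(seed):
    """Keep features whose forest importance is at least the mean importance."""
    forest = RandomForestRegressor(n_estimators=300, random_state=seed)
    selector = SelectFromModel(forest, threshold="mean").fit(X, y)
    return selector.get_support()


mask_a = select(seed=0)
mask_b = select(seed=0)   # same seed, same data: the mask should be identical

print("selected feature indices:", np.flatnonzero(mask_a))
print("reproducible:", np.array_equal(mask_a, mask_b))
```

Fixing the seed makes a single run repeatable; it does not by itself show that the selection is stable across different data samples, which still needs to be checked separately.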

Issue 3: Handling High-Dimensional Data with Limited Samples

Symptoms: Performance degradation with thousands of features but only hundreds of samples, common in genomic and proteomic studies.

Diagnosis and Resolution:

Implement two-stage feature selection:

- Solution: Combine filter and embedded methods. First, use a fast filter method (e.g., variance threshold, mutual information) to reduce feature space, then apply embedded methods [39].

Utilize specialized high-dimensional techniques:

- Solution: For Random Forest, use methods like varSelRF or VSURF that implement backward elimination based on variable importance [40].

Apply more aggressive regularization:

- Solution: Increase Lasso alpha parameter or use stability selection with Lasso to identify robust features [42].
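The two-stage approach described above might look as follows in scikit-learn. This is a sketch on synthetic data (200 samples, 500 features, two of them informative), with the filter size `k=50` picked arbitrarily. For brevity the filter is fit on the full dataset; in a real study it should be fit inside each cross-validation fold to avoid leaking information.

```python
from functools import partial

import numpy as np
from sklearn.feature_selection import SelectKBest, VarianceThreshold, mutual_info_regression
from sklearn.linear_model import LassoCV
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n, p = 200, 500
X = rng.normal(size=(n, p))
X[:, -10:] = 1.0  # ten constant columns for the variance filter to drop
y = 3.0 * X[:, 0] - 3.0 * X[:, 1] + rng.normal(scale=0.5, size=n)

# Stage 1a: remove zero-variance features.
variance_filter = VarianceThreshold(threshold=0.0).fit(X)
kept = np.flatnonzero(variance_filter.get_support())
X_var = variance_filter.transform(X)

# Stage 1b: keep the 50 features with the highest mutual information with y.
score_func = partial(mutual_info_regression, random_state=0)
mi_filter = SelectKBest(score_func, k=50).fit(X_var, y)
kept = kept[mi_filter.get_support()]
X_mi = mi_filter.transform(X_var)

# Stage 2: embedded selection with cross-validated Lasso on the reduced set.
lasso = LassoCV(cv=5, random_state=0).fit(StandardScaler().fit_transform(X_mi), y)
final = kept[lasso.coef_ != 0]  # indices into the original feature matrix

print(f"{p} -> {X_var.shape[1]} -> {X_mi.shape[1]} -> {final.size} features")
```

Tracking `kept` through each stage keeps the final indices aligned with the original columns, which matters when the selected features have to be reported by name.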

Experimental Protocols & Methodologies

Protocol 1: Lasso Feature Selection for QSAR Modeling

This protocol details the application of Lasso regression for feature selection in Quantitative Structure-Activity Relationship (QSAR) studies for drug property prediction [42].

Materials and Reagents:

- Dataset: Chemical compounds from PubChem or ChEMBL with associated biological activity or property data [42] [43]

- Molecular descriptors: Topological indices, molecular fingerprints (e.g., ECFP, FCFP), physicochemical properties [42]

- Software: Python with scikit-learn, RDKit for descriptor calculation

Procedure:

Data Preparation:

- Calculate molecular descriptors and fingerprints for all compounds

- Split data into training (70%), validation (15%), and test (15%) sets

- Standardize features to zero mean and unit variance

Model Training with Cross-Validation:

- Perform k-fold cross-validation (typically k=5 or 10) on the training set to determine the optimal α value

- Train Lasso regression with the optimal α on the entire training set

- Identify features with non-zero coefficients as the selected feature subset

Validation:

- Evaluate model performance on validation and test sets using MSE and R² metrics [42]

- Compare with full feature model and other feature selection methods
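The procedure above can be sketched end to end with scikit-learn. A random matrix stands in for the molecular descriptor table here, since descriptor calculation with RDKit is outside the scope of the example; the data, the coefficient values, and all variable names are assumptions. The scaler is fit on the training split only, and the full-feature baseline is an ordinary linear regression.

```python
import numpy as np
from sklearn.linear_model import LassoCV, LinearRegression
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a descriptor matrix: 5 of 100 features are informative.
rng = np.random.default_rng(0)
n, p = 600, 100
X = rng.normal(size=(n, p))
true_coef = np.zeros(p)
true_coef[:5] = [3.0, -2.0, 1.5, 1.0, -1.0]
y = X @ true_coef + rng.normal(scale=1.0, size=n)

# 70% training, then the remaining 30% split evenly into validation and test.
X_train, X_rest, y_train, y_rest = train_test_split(X, y, test_size=0.30, random_state=0)
X_val, X_test, y_val, y_test = train_test_split(X_rest, y_rest, test_size=0.50, random_state=0)

# Standardize using statistics from the training set only.
scaler = StandardScaler().fit(X_train)
X_train_s, X_val_s, X_test_s = (scaler.transform(a) for a in (X_train, X_val, X_test))

# 5-fold CV chooses alpha; the final model is refit on the whole training set.
lasso = LassoCV(cv=5, random_state=0).fit(X_train_s, y_train)
selected = np.flatnonzero(lasso.coef_)
print(f"alpha={lasso.alpha_:.4f}, {selected.size} of {p} features selected")

for split, Xs, ys in [("validation", X_val_s, y_val), ("test", X_test_s, y_test)]:
    pred = lasso.predict(Xs)
    print(f"{split}: MSE={mean_squared_error(ys, pred):.3f}, R2={r2_score(ys, pred):.3f}")

# Baseline: ordinary least squares on all features.
full = LinearRegression().fit(X_train_s, y_train)
lasso_r2 = r2_score(y_test, lasso.predict(X_test_s))
full_r2 = r2_score(y_test, full.predict(X_test_s))
print(f"test R2: lasso={lasso_r2:.3f}, full model={full_r2:.3f}")
```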

Workflow Diagram:

Protocol 2: Random Forest Feature Importance for Drug-Target Interaction Prediction

This protocol describes using Random Forest feature importance for predicting drug-target interactions (DTIs), a key task in drug discovery [44] [43].

Materials and Reagents:

- Dataset: Known drug-target pairs from databases like ChEMBL or BindingDB [43]

- Drug features: Molecular fingerprints (e.g., E3FP, ECFP), physicochemical properties [43]

- Target features: Sequence descriptors, structural features

- Software: Python with scikit-learn, RDKit, specialized DTI packages

Procedure:

Feature Engineering:

- Compute drug features using molecular fingerprinting algorithms

- Calculate target protein features using sequence or structure-based descriptors

- Create combined feature vectors for drug-target pairs

Random Forest Training:

- Train Random Forest classifier with sufficient trees (typically 500-1000)

- Use out-of-bag (OOB) samples for internal validation

- Calculate feature importance using mean decrease impurity or permutation importance
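The feature engineering and training steps above can be illustrated with placeholder data. In the sketch below, random binary vectors stand in for drug fingerprints and random Gaussian vectors for target descriptors; the interaction label is synthetic and depends on one drug bit and one target descriptor. All names and sizes are assumptions. Setting `oob_score=True` asks scikit-learn to report accuracy on the out-of-bag samples.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
n_pairs = 800
drug_fp = rng.integers(0, 2, size=(n_pairs, 64))   # stand-in for binary fingerprints
target_desc = rng.normal(size=(n_pairs, 20))       # stand-in for sequence descriptors

# One combined feature vector per drug-target pair.
X = np.hstack([drug_fp, target_desc])

# Synthetic label: interaction requires drug bit 0 set AND target descriptor 0 > 0.
y = ((drug_fp[:, 0] == 1) & (target_desc[:, 0] > 0)).astype(int)

forest = RandomForestClassifier(n_estimators=500, oob_score=True, random_state=0)
forest.fit(X, y)
print(f"OOB accuracy: {forest.oob_score_:.3f}")

# Rank features by mean decrease in impurity; columns 0-63 are drug bits,
# columns 64-83 are target descriptors.
top = np.argsort(forest.feature_importances_)[::-1][:10]
print("top features by impurity importance:", top)
```

Keeping track of which column ranges belong to the drug and which to the target makes the importance ranking easier to interpret once real descriptors are used.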

Feature Selection and Evaluation:

Workflow Diagram:

Performance Comparison Tables

Table 1: Comparison of Embedded Feature Selection Methods

| Method | Key Mechanism | Best Use Cases | Advantages | Limitations |
|---|---|---|---|---|
| Lasso (L1) | Shrinks coefficients to zero via L1 penalty [38] [36] | High-dimensional data, linear relationships, interpretability [42] | Creates sparse models, feature elimination, interpretable [37] | Struggles with correlated features, linear assumptions [38] |
| Random Forest | Feature importance based on impurity reduction [35] | Complex non-linear relationships, interaction effects [39] [40] | Handles non-linearity, robust to outliers, no linearity assumption [35] | Computationally intensive, less interpretable, biased toward high-cardinality features [40] |
| Elastic Net | Combines L1 and L2 regularization [38] [37] | Correlated features, grouped feature selection [37] | Handles correlated features, balances selection and shrinkage [38] | Two parameters to tune (α, l1_ratio), more complex [37] |
| Regularized Logistic Regression | L1 penalty on logistic loss function [35] [38] | Binary classification problems, high-dimensional data [35] | Sparse solutions for classification, interpretable [38] | Limited to classification, linear decision boundary [35] |

Table 2: Quantitative Performance in Drug Discovery Applications

| Application Domain | Method | Reported Performance | Key Findings | Reference |
|---|---|---|---|---|
| Drug-Target Interaction Prediction | Lasso + Random Forest | Acc: 94.88-98.09%, AUC: ~0.99 [44] [43] | Lasso effectively removes redundant features before RF classification [44] | [44] |
| QSAR Property Prediction | Lasso Regression | MSE: 3540.23, R²: 0.9374 [42] | Excellent for datasets with inherent linear relationships [42] | [42] |
| QSAR Property Prediction | Ridge Regression | MSE: 3617.74, R²: 0.9322 [42] | Handles multicollinearity effectively [42] | [42] |
| Two-Stage RF + Genetic Algorithm | RF + Improved GA | Significant improvement in classification performance [39] | Combines advantages of filter and wrapper methods [39] | [39] |

Research Reagent Solutions

Table 3: Essential Tools for Embedded Feature Selection Experiments

| Tool/Reagent | Function/Purpose | Example Applications | Implementation Notes |
|---|---|---|---|
| scikit-learn SelectFromModel | Meta-transformer for feature selection based on importance weights [35] | General-purpose feature selection with any estimator with feature_importances_ or coef_ attribute [35] | Useful for threshold-based selection after model training [35] |
| LassoCV/ElasticNetCV | Lasso/Elastic Net with built-in cross-validation for parameter tuning [35] | Automated optimization of regularization parameters [42] | More efficient than manual grid search [35] |
| RandomForestClassifier/Regressor | Implementation of Random Forest with feature importance calculation [35] | Non-linear feature selection, complex biological data [39] [40] | Prefer permutation importance over Gini for reliable results [40] |
| RDKit | Cheminformatics library for molecular descriptor calculation [43] | Generation of molecular fingerprints and descriptors for drug discovery [43] | Essential for pharmaceutical and chemical informatics [43] |
| varSelRF/VSURF | R packages for Random Forest feature selection with backward elimination [40] | High-dimensional biological data, genomic studies [40] | Implements sophisticated wrapper-embedded hybrid approaches [40] |

Within the scope of thesis research focused on optimizing machine learning features for property price prediction, managing high-cardinality categorical data is a critical challenge. This technical support center provides troubleshooting guides and FAQs to help researchers effectively handle features like location (zip codes, neighborhoods) and property type, which contain a large number of unique categories, to enhance model accuracy and generalizability.

Frequently Asked Questions (FAQs)