Optimizing High-Throughput Workflow Selection for Autonomous Experimentation in 2025

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on selecting and optimizing high-throughput workflows for autonomous experimentation. It covers foundational principles, practical methodologies for implementation, strategies for troubleshooting and optimization, and rigorous validation approaches. Drawing on the latest trends in AI, automation, and real-world case studies from pharmaceutical R&D and materials science, this resource is designed to help scientific teams accelerate discovery, improve data quality, and enhance operational efficiency in their labs.

The Foundations of Autonomous Experimentation and High-Throughput Workflows

Autonomous Experimentation (AE), also referred to as Self-Driving Labs (SDLs), represents a transformative paradigm in scientific research that combines artificial intelligence (AI), robotics, and automation to execute iterative research cycles without human intervention. This approach is defined as "an iterative research loop of planning, experiment, and analysis [that is] carried out autonomously" [1]. AE systems are designed to accelerate materials discovery and development—processes that can traditionally take decades—by orders of magnitude through closed-loop operation [2] [3]. Unlike automated systems that simply perform predefined tasks rapidly, genuine AE incorporates AI to dynamically design and select subsequent experiments based on real-time analysis of incoming data, effectively placing the "human on the loop" rather than "in the loop" [3]. This capability allows AE systems to investigate richer, more complex phenomena across high-dimensional parameter spaces that would be intractable for human researchers trained to reduce variables to manageable levels [2].

The value proposition of AE extends beyond mere acceleration. These systems can generate and test scientific hypotheses faster and more effectively than human researchers alone, producing deeper scientific understanding of materials phenomena and enabling rational investigations beyond naïve machine learning approaches [3]. The emerging infrastructure for AE envisions network effects where interconnected research robots collectively multiply the impact of each individual contribution, creating a tipping point in research productivity [2]. As the field advances, AE is poised to revolutionize materials synthesis, characterization, and development across diverse domains including pharmaceuticals, electronics, and energy applications.

Core Principles and Workflow Selection Framework

The Autonomous Experimentation Cycle

The fundamental operating principle of AE systems is a continuous, closed-loop process comprising three core phases: planning, execution, and analysis. In the planning phase, AI algorithms use mathematical models to design the next experiment based on accumulated data and campaign objectives. The execution phase involves robotic systems carrying out the physical experiment, often with in situ monitoring. In the analysis phase, data is processed and interpreted to inform the next planning cycle [1] [3]. This iterative process continues autonomously until the research objective is met or experimental resources are exhausted.

A critical capability of advanced AE systems is balancing exploration (probing unexplored regions of parameter space to discover new phenomena) against exploitation (refining conditions near known optima) [3]. The acquisition function—the algorithm that selects subsequent experiments—determines this balance based on the campaign objectives. For example, Bayesian optimization approaches using Gaussian process models can effectively guide measurement sequences across combinatorial libraries, as demonstrated in the discovery of improved phase-change memory materials [3].
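The exploration and exploitation trade-off can be made concrete with a small sketch. The code below is an illustration only, not the method used in the cited studies: it assumes a one-dimensional parameter, a zero-mean Gaussian process with a squared-exponential kernel, and an upper confidence bound (UCB) acquisition function, where the hypothetical `kappa` parameter sets how strongly uncertain regions are favored. The `simulated_measurement` function is a made-up response surface standing in for a real experiment.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.15):
    """Squared-exponential kernel between two 1-D arrays of points."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length_scale) ** 2)

def gp_posterior(x_obs, y_obs, x_query, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP (prior variance 1)."""
    k_oo = rbf_kernel(x_obs, x_obs) + noise * np.eye(len(x_obs))
    k_oq = rbf_kernel(x_obs, x_query)
    solved = np.linalg.solve(k_oo, k_oq)            # K^-1 k(x_obs, x_query)
    mean = solved.T @ y_obs
    var = 1.0 - np.sum(k_oq * solved, axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))

def next_experiment(x_obs, y_obs, candidates, kappa):
    """UCB acquisition: pick the candidate maximizing mean + kappa * std."""
    mean, std = gp_posterior(x_obs, y_obs, candidates)
    return candidates[np.argmax(mean + kappa * std)]

def simulated_measurement(x):
    """Hypothetical response surface with a single optimum at x = 0.7."""
    return np.exp(-((x - 0.7) ** 2) / 0.02)

candidates = np.linspace(0.0, 1.0, 101)
x_obs = np.array([0.1, 0.5, 0.9])                   # initial experiments
y_obs = simulated_measurement(x_obs)

for _ in range(10):                                 # closed-loop iterations
    x_new = next_experiment(x_obs, y_obs, candidates, kappa=2.0)
    x_obs = np.append(x_obs, x_new)
    y_obs = np.append(y_obs, simulated_measurement(x_new))

best_x = x_obs[np.argmax(y_obs)]
```

A larger `kappa` pushes the planner toward unexplored regions, while a value near zero makes it refine the neighborhood of the current best result.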

Framework for High-Value Workflow Selection

Selecting optimal data collection workflows is essential for AE efficiency. A robust framework enables AE systems to dynamically identify highest-value workflows that generate structured materials information according to user-defined objectives [1]. This framework follows a structured approach:

- Objective Establishment: The user defines the quantitative objective guiding workflow development

- Procedure Enumeration: The user specifies procedures, methods, and models available for workflow consideration

- Fast Search Implementation: The system rapidly filters possible workflows to identify high-quality candidates

- Fine Search Execution: The system selects the optimal workflow from high-quality candidates [1]

The framework rests on a simple chain: a well-designed workflow generates relevant information, and that information delivers measurable value, written as Workflow → Information → Value [1]. The value of information is proportional to its Quality (accuracy, precision, certainty) and its Actionability (utility for achieving objectives) [1]. Expressing value this way allows workflows to be evaluated and selected quantitatively rather than fixed in advance as static, human-designed protocols.

Table 1: Workflow Value Determination Factors

| Factor | Sub-Factor | Description | Impact on Value |

|---|---|---|---|

| Quality | Accuracy | Proximity to ground truth | Higher accuracy increases value |

| Quality | Precision | Reproducibility of results | Higher precision increases value |

| Quality | Certainty | Confidence in information | Higher certainty increases value |

| Actionability | Decision Support | Utility for high-value decisions | Critical objectives increase value |

| Actionability | Cost Efficiency | Resource requirements | Lower cost increases net value |

| Actionability | Temporal Efficiency | Time requirements | Faster collection increases value |
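
One way to turn these factors into a single score is sketched below. The functional form is an assumption made for illustration: quality is taken as the geometric mean of accuracy, precision, and certainty, and value as quality times actionability divided by acquisition time. The source states only the proportionalities, so the class name, field names, and example numbers are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class WorkflowEstimate:
    name: str
    accuracy: float       # 0..1, agreement with ground truth
    precision: float      # 0..1, reproducibility
    certainty: float      # 0..1, confidence in the result
    actionability: float  # 0..1, user-defined usefulness for the objective
    cost_hours: float     # acquisition time, used here as a stand-in for total cost

def quality(w: WorkflowEstimate) -> float:
    """Geometric mean, so one poor sub-factor drags the whole score down."""
    return (w.accuracy * w.precision * w.certainty) ** (1.0 / 3.0)

def value(w: WorkflowEstimate) -> float:
    """Assumed form: value rises with quality and actionability, falls with cost."""
    return quality(w) * w.actionability / w.cost_hours

# Illustrative numbers only.
slow = WorkflowEstimate("slow_scan", 0.98, 0.97, 0.95, 0.9, cost_hours=5.0)
fast = WorkflowEstimate("fast_scan_denoised", 0.94, 0.93, 0.90, 0.9, cost_hours=1.0)

best = max([slow, fast], key=value)
```

With these numbers the faster workflow scores higher even though its quality is slightly lower, because the cost term dominates.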

Quantitative Performance of Autonomous Experimentation Systems

AE systems have demonstrated remarkable efficiency improvements across multiple materials science domains. The performance gains are quantified through reduced experimentation time, fewer required experiments, and accelerated discovery cycles compared to traditional approaches.

In a case study focusing on characterization workflows for additively manufactured Ti-6Al-4V samples, an AE system employing the workflow selection framework identified an optimal high-throughput workflow that reduced collection time for backscattered electron scanning electron microscopy (BSE-SEM) images by a factor of 85 compared to a previously published study, and by a factor of 5 compared to the case study's benchmark workflow [1]. This dramatic improvement was achieved through the integration of a deep-learning based image denoiser that enabled faster data acquisition without compromising information quality.

In materials synthesis, autonomous systems have demonstrated similar efficiencies. In the determination of eutectic phase diagrams for Sn-Bi binary thin-film systems, an AE campaign achieved accurate phase mapping with a six-fold reduction in the number of required experiments compared to conventional approaches [3]. This was accomplished through real-time, self-driving cyclical interaction between experiments and computational predictions, with the system autonomously guiding the sampling of composition-temperature space.

Table 2: Quantitative Performance Improvements in Autonomous Experimentation

| Application Domain | Traditional Approach | AE Approach | Performance Improvement |

|---|---|---|---|

| Image Characterization (Ti-6Al-4V BSE-SEM) | 85X time requirement (previous study) | Deep-learning denoising | 85X faster than previous study; 5X faster than benchmark [1] |

| Phase Diagram Mapping (Sn-Bi thin films) | Comprehensive sampling of parameter space | Gaussian process-guided sampling | 6X reduction in number of experiments required [3] |

| Material Discovery (Ge-Sb-Te system) | Full compositional range measurement | Targeted sampling of promising regions | Identified optimal material after measuring only a fraction of full range [3] |

| Carbon Nanotube Synthesis (CVD growth optimization) | One-variable-at-a-time or full factorial | Iterative optimal experimental design | Rapid probing of 500°C temperature window and 8-10 orders of magnitude pressure [3] |

Experimental Protocols and Methodologies

Protocol: Autonomous Chemical Vapor Deposition of Carbon Nanotubes

The ARES (Autonomous Research System) protocol for carbon nanotube synthesis represents a pioneering implementation of AE for materials synthesis [3].

Objective Definition: Define the campaign goal, which may be either (1) black-box optimization of target properties (e.g., maximizing CNT growth rate or minimizing diameter variation) or (2) hypothesis testing to confirm or reject scientific hypotheses (e.g., the dependence of catalyst activity on oxidation state) [3].

Workflow Setup:

- Equipment Configuration: Cold-wall CVD system with precursor gas introduction system, microreactor silicon pillars with pre-deposited catalysts, high-power laser heating system, and in situ Raman spectroscopy capability [3].

- Parameter Definition: Define adjustable parameters including temperature (adjustable over 500°C range), gas flow rates (hydrocarbon, hydrogen, oxidants), and partial pressure ratios (spanning 8-10 orders of magnitude) [3].

- Acquisition Function Selection: Choose appropriate algorithm (e.g., Bayesian optimization) to balance exploration and exploitation based on campaign objectives [3].

Execution Cycle:

- Planning Phase: AI planner selects growth conditions for next experiment based on all previous results and campaign objectives [3].

- Synthesis Phase: Focus laser on single silicon pillar microreactor to reach target temperature, introduce growth gases to initiate CNT synthesis [3].

- In Situ Characterization: Monitor CNT growth in real time using Raman spectroscopy of scattered laser light [3].

- Analysis Phase: Analyze spectral data to determine CNT characteristics (growth rate, quality, dimensions) [3].

- Iteration Decision: Assess if campaign objective is met; if not, return to planning phase for next iteration [3].
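
The execution cycle above can be sketched as a plain loop. This is a toy illustration rather than the ARES control software: `simulated_growth_rate` is a made-up response surface, the planner is a simple random-then-perturb heuristic standing in for the AI planner, and the temperature bounds are hypothetical values chosen to span a 500°C window.

```python
import random

def simulated_growth_rate(temperature_c):
    """Hypothetical response: growth rate peaks at 800 °C (illustrative only)."""
    return max(0.0, 1.0 - ((temperature_c - 800.0) / 250.0) ** 2)

def plan(history, low, high, rng):
    """Stand-in planner: explore at random first, then perturb the best point."""
    if len(history) < 5:
        return rng.uniform(low, high)
    best_t, _ = max(history, key=lambda rec: rec[1])
    return min(high, max(low, best_t + rng.gauss(0.0, 20.0)))

def run_campaign(target, budget, low=600.0, high=1100.0, seed=0):
    rng = random.Random(seed)
    history = []                                      # (temperature, growth rate)
    for _ in range(budget):
        temperature = plan(history, low, high, rng)   # planning phase
        rate = simulated_growth_rate(temperature)     # synthesis and in situ measurement (simulated)
        history.append((temperature, rate))           # analysis phase: record the result
        if rate >= target:                            # iteration decision
            break
    return history

history = run_campaign(target=0.99, budget=200)
best_temperature, best_rate = max(history, key=lambda rec: rec[1])
```

The loop stops either when the target is reached or when the experiment budget runs out, mirroring the two termination conditions described for AE campaigns.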

Validation: Compare final optimized conditions or hypothesis conclusions with literature values and physical models. For hypothesis testing campaigns, design experiments specifically to probe contrasting predictions of competing hypotheses.

Protocol: High-Throughput Workflow Selection for Material Characterization

This protocol enables AE systems to autonomously select the characterization workflow that extracts the required information at the highest value [1].

Objective Establishment: Define the characterization goal (e.g., grain size measurement, defect density quantification, phase identification) and value metric (time minimization, information quality maximization, or balanced objective) [1].

Workflow Space Definition:

- Procedure Enumeration: List all available characterization techniques (e.g., SEM, TEM, XRD), operational modes (e.g., accelerating voltage, magnification, detector type), and data processing methods (e.g., deep-learning denoising, conventional filtering) [1].

- Constraint Specification: Define resource constraints (time, cost, computational resources) and minimum quality thresholds [1].
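
As a rough sketch of how a workflow space might be enumerated and constrained, the code below builds every combination of a few options and keeps those within a time budget. All option values, the image size, the overhead figures, and the 2-second limit are hypothetical; a real system would take them from instrument specifications.

```python
from itertools import product

PIXELS = 1024 * 1024                       # hypothetical image size
DWELL_TIMES_US = [0.1, 1.0, 10.0]          # hypothetical per-pixel dwell times
DETECTORS = ["BSE", "SE"]
PROCESSING_OVERHEAD_S = {                  # hypothetical post-processing cost
    "none": 0.0,
    "conventional_filter": 0.1,
    "dl_denoiser": 0.5,
}

def acquisition_time_s(dwell_us, processing):
    """Scan time plus processing overhead, in seconds."""
    return dwell_us * 1e-6 * PIXELS + PROCESSING_OVERHEAD_S[processing]

# Every combination of dwell time, detector, and processing method.
workflow_space = list(product(DWELL_TIMES_US, DETECTORS, PROCESSING_OVERHEAD_S))

MAX_TIME_S = 2.0                           # user-defined resource constraint
feasible = [
    (dwell, detector, processing)
    for dwell, detector, processing in workflow_space
    if acquisition_time_s(dwell, processing) <= MAX_TIME_S
]
```

Here the 10 µs dwell time exceeds the budget for every processing option, so one third of the space is removed before any quality evaluation takes place.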

Workflow Selection Process:

- Fast Search Implementation: Rapidly screen workflow space to eliminate low-quality candidates that fail to meet minimum information quality thresholds [1].

- Fine Search Execution: Evaluate high-quality workflow candidates using multi-objective optimization that balances information quality, actionability, and resource consumption [1].

- Optimal Workflow Selection: Identify workflow that delivers highest value according to user-defined objective function [1].
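
The two-stage selection can be illustrated with a minimal sketch. It assumes each candidate carries a cheap quality estimate for the fast search and a more expensive measured quality for the fine search; the field names, numbers, and the quality-per-second value score are hypothetical choices, not the scoring used in [1].

```python
# Illustrative candidates; all numbers are made up.
workflows = [
    {"name": "slow_scan", "est_quality": 0.95, "measured_quality": 0.97, "time_s": 100.0},
    {"name": "fast_scan_raw", "est_quality": 0.55, "measured_quality": 0.50, "time_s": 5.0},
    {"name": "fast_scan_filtered", "est_quality": 0.82, "measured_quality": 0.80, "time_s": 8.0},
    {"name": "fast_scan_dl_denoised", "est_quality": 0.90, "measured_quality": 0.92, "time_s": 7.0},
]

fine_evaluations = []   # records which workflows received the expensive evaluation

def fast_search(candidates, min_quality):
    """Cheap screen: drop workflows whose estimated quality is below threshold."""
    return [w for w in candidates if w["est_quality"] >= min_quality]

def detailed_value(w):
    """Stand-in for the expensive evaluation: measured quality per second."""
    fine_evaluations.append(w["name"])
    return w["measured_quality"] / w["time_s"]

def fine_search(candidates):
    """Evaluate each shortlisted workflow once and return the best."""
    return max(candidates, key=detailed_value)

shortlist = fast_search(workflows, min_quality=0.8)
selected = fine_search(shortlist)
```

The point of the split is that `detailed_value` runs only on the shortlist, so the expensive evaluation is never spent on workflows that could not meet the quality threshold.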

Execution and Validation:

- Workflow Implementation: Execute selected workflow on target sample(s) [1].

- Performance Monitoring: Track actual information quality, resource utilization, and actionability compared to predictions [1].

- Database Update: Incorporate performance results into workflow knowledge base for future selection improvements [1].

- Iterative Refinement: Return to workflow selection if objectives are not met or new information needs emerge [1].

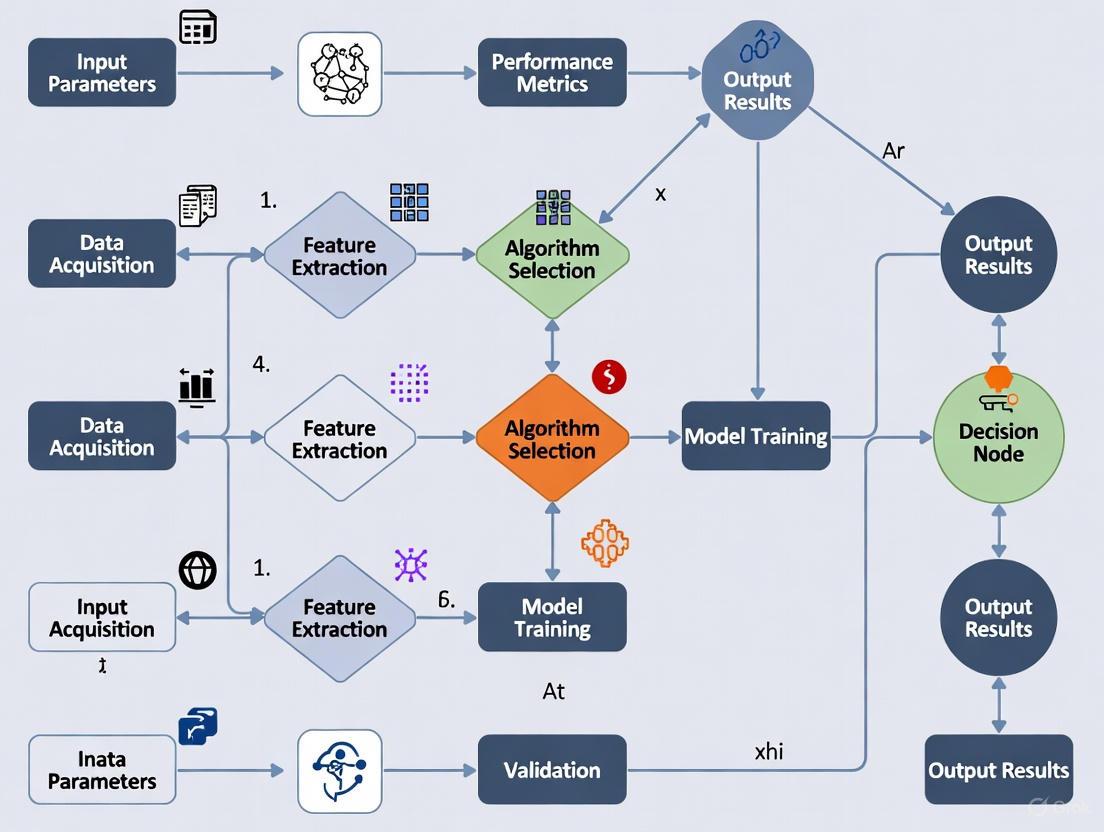

Diagram 1: Autonomous Workflow Selection Process

Essential Research Reagent Solutions and Materials

Successful implementation of AE requires both physical components and computational infrastructure. The table below details essential resources for establishing autonomous experimentation capabilities.

Table 3: Essential Research Reagent Solutions for Autonomous Experimentation

| Category | Item | Function | Application Examples |

|---|---|---|---|

| Sample Management | Universal Sample Holders | Standardized interface for handling diverse sample form factors (thin films, bulk samples, powders) [4] | Multi-material platforms; high-throughput screening |

| Instrument Control | SiLA/EPICS/MQTT Protocols | Standardized communication for instrument control and data acquisition [4] | Robotics integration; real-time monitoring |

| Data Management | FAIR Data Standards | Ensure machine-actionable, AI-ready data (Findable, Accessible, Interoperable, Reusable) [4] | Knowledge graphs; metadata management |

| AI/ML Infrastructure | Scientific AI Software Stack (PyTorch, TensorFlow, scikit-learn) | Physics-aware machine learning specialized for scientific data [4] | Bayesian optimization; deep-learning denoising |

| Synthesis Reagents | CVD Precursor Gases (e.g., ethylene, hydrogen) | Feedstock materials for vapor phase deposition processes [3] | Carbon nanotube growth; thin film deposition |

| Catalyst Systems | Metal Nanoparticle Catalysts (e.g., Fe, Co, Ni) | Seed and template nanostructure growth [3] | CNT synthesis; nanomaterial fabrication |

| Characterization Tools | In Situ Monitoring Systems (e.g., Raman spectroscopy) | Real-time material characterization during synthesis [3] | Process optimization; growth mechanism studies |

| Computational Frameworks | Autonomous Experimentation Environment (e.g., BlueSky, ChemOS) | High-level abstraction layer for experimental control [4] | Method portability; algorithm comparison |

Standards and Infrastructure Requirements

The development of robust AE ecosystems depends on establishing standards across multiple domains. The National Institute of Standards and Technology (NIST) is leading efforts to develop standards for modular and autonomous laboratory ecosystems [4].

Sample Management Standards: Universal sample holder standards are needed to handle diverse materials forms including thin films, bulk samples, and powders. These standards would define physical form factors, size specifications, temperature ranges, and atmospheric control requirements, similar to the USB-C standard which defines multiple aspects at varying support levels [4].

Instrument Control and Communication Standards: Digital connectivity between AI infrastructure and physical laboratory equipment requires robust communication protocols. Existing frameworks including SiLA (Standardization in Lab Automation), EPICS (Experimental Physics and Industrial Control System), and IoT protocols like MQTT provide foundations, but require adaptation for materials-specific challenges [4].

Data and Knowledge Management Standards: Machine-actionable, AI-ready data are essential for autonomous systems. The FAIR (Findable, Accessible, Interoperable, Reusable) principles must be implemented through standardized data interchange formats and knowledge graphs that prioritize key instrument types [4].

Algorithm and Model Integration Standards: Portable algorithms that can operate across multiple autonomous systems require abstraction layers similar to the Atomic Simulation Environment used in computational materials science. Open-source autonomous experimentation environments (e.g., BlueSky, ChemOS, Hermes, HELAO) provide precursors for these standards [4].

Diagram 2: AE Standards and Infrastructure

In autonomous experimentation research, the strategic selection of high-throughput workflows is paramount for accelerating scientific discovery. The value generated by any experimental workflow is not a direct product of the data collected, but of the quality and actionability of the information extracted from that data [1]. This principle forms the foundation for effective, scalable research, particularly in fields like drug development where resources are precious and the cost of non-actionable data is high. This document outlines the core principles and provides practical protocols for researchers to quantify these concepts, enabling the systematic selection of optimal workflows that maximize informational value.

Core Conceptual Framework

The value-generation pathway of a workflow can be conceptualized as a direct chain: Workflow → Information → Value [1]. The value of the derived information is proportional to its quality and its actionability.

Defining Information Quality

Information Quality is an objective measure of the faithfulness of the extracted information to the true state of the system under investigation. It is proportional to the information's accuracy and precision with respect to a predetermined ground truth or reference [1]. High-quality information reliably reduces uncertainty about the system.
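
A minimal sketch of how accuracy and precision might be scored from repeated measurements follows. The definitions are assumptions chosen for illustration (one minus the relative bias of the mean, and one minus the coefficient of variation); the source does not prescribe specific formulas, and the grain-size numbers are invented.

```python
from statistics import mean, stdev

def accuracy(measurements, ground_truth):
    """Assumed score: 1 minus the relative bias of the mean, floored at 0."""
    bias = abs(mean(measurements) - ground_truth)
    return max(0.0, 1.0 - bias / abs(ground_truth))

def precision(measurements):
    """Assumed score: 1 minus the coefficient of variation, floored at 0."""
    return max(0.0, 1.0 - stdev(measurements) / abs(mean(measurements)))

# Hypothetical repeated grain-size measurements (µm) against a reference value.
reference_um = 10.0
repeats_um = [9.8, 10.1, 10.3, 9.9, 10.4]

acc = accuracy(repeats_um, reference_um)
prec = precision(repeats_um)
```

Both scores lie between 0 and 1, so they can be combined with an actionability score on the same scale when ranking workflows.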

Defining Information Actionability

Information Actionability is a user-defined decision function that quantifies how useful the information is in achieving a specific objective [1]. It is context-dependent. For example:

- High-Actionability Information: The ground-truth defect density of a critical medical implant component for predicting failure probability.

- Lower-Actionability Information: An estimate of area fraction from a 2D image used as a proxy for 3D volume fraction when the latter is too expensive or difficult to measure [1].

A workflow's ultimate value is determined by the intersection of these two properties. A highly accurate measurement (high quality) is of little value if it does not inform the decision at hand (low actionability). Conversely, a highly actionable piece of information must be of sufficient quality to be trusted.

Quantitative Framework for Workflow Selection

To move from concept to practice, the value of a workflow must be quantified. The following table defines the key metrics that form the basis for an optimal workflow selection framework [1].

Table 1: Core Metrics for Quantifying Workflow Value

| Metric | Definition | Measurement Approach | Role in Workflow Selection |

|---|---|---|---|

| Information Quality | Fidelity of the information to the true system state. | Quantified by Accuracy (proximity to ground truth) and Precision (reproducibility) [1]. | Ensures the generated information is trustworthy and reduces epistemic uncertainty. |

| Information Actionability | Usefulness of information for achieving a specific objective. | A user-defined scoring function based on the decision context (e.g., cost of wrong decision, time-sensitivity) [1]. | Aligns the workflow output with the overarching experimental goal. |

| Acquisition Cost | Total resource expenditure for data collection. | Includes time, computational resources, consumables, and labor [1]. | Introduces practical constraints and enables cost-benefit analysis. |

A Protocol for Optimal Workflow Selection

The following protocol, adapted from a framework for autonomous materials characterization, provides a step-by-step methodology for selecting the highest-value workflow [1].

Protocol 1: Optimal Workflow Selection for Autonomous Experimentation

- Objective: To algorithmically select a high-throughput data collection workflow that maximizes information value for a given objective.

- Application Context: This protocol is designed for integration into autonomous experimentation loops, such as high-throughput screening in drug development or materials characterization [1] [5].

- Inputs: User-defined objective; a set of candidate procedures, methods, and models.

- Outputs: A selected optimal workflow with summary statistics of its performance.

| Step | Procedure | Rationale & Notes |

|---|---|---|

| 1. Establish Objective | The user defines a quantifiable objective to guide workflow development. | The objective must be clear and measurable, as it defines the actionability function. Example: "Identify the formulation with the highest binding affinity at a throughput of 100 samples/hour." |

| 2. Define Workflow Space | The user enumerates all potential procedures, methods, and models to be considered. | This creates the universe of possible workflows. Example components: sample preparation methods, imaging techniques (e.g., SEM, fluorescence), and data processing models (e.g., denoising algorithms) [1]. |

| 3. Fast Search | Conduct a broad search over the possible workflows to filter for high-quality candidates. | Uses simplified models or heuristics to quickly eliminate workflows that are clearly suboptimal in terms of quality or cost [1]. |

| 4. Fine Search | Perform a detailed evaluation of the shortlisted high-quality workflows. | The workflows are run and evaluated based on the precise metrics of Information Quality and Acquisition Cost, all measured against the user-defined objective (Actionability) [1]. |

| 5. Select & Deploy | Select the workflow with the highest value score. | The value score is a function of Quality, Actionability, and Cost. The selected workflow is then deployed in the autonomous experimental loop [1]. |

Case Study & Experimental Protocol

A case study on characterizing an additively manufactured Ti-6Al-4V sample illustrates the power of this framework. The objective was to collect high-quality backscattered electron SEM (BSE-SEM) images. The framework was used to select a workflow that incorporated a deep-learning-based image denoiser, allowing for much faster image acquisition times without significant loss of information quality [1].

Table 2: Research Reagent Solutions for High-Throughput Characterization

| Item | Function in the Experiment | Specification Notes |

|---|---|---|

| Ti-6Al-4V Sample | The system under investigation; a model material system. | Additively manufactured; prepared via standard metallographic procedures (cutting, mounting, polishing). |

| Scanning Electron Microscope (SEM) | Primary data collection instrument for high-resolution imaging. | Configured for Backscattered Electron (BSE) imaging to reveal material phase contrast. |

| Deep-Learning Denoiser | Computational model to reduce noise in images acquired with low electron dose or short dwell times. | The key enabling technology for high throughput; allows an 85-fold reduction in collection time relative to a previously published study [1]. |

| Workflow Selection Software | Executes the framework logic (fast search, fine search) to select the optimal data collection parameters. | Can be custom-built or integrated into AE control software. |

The workflow selected by the framework acquires a fast-scan raw image and passes it through the denoising model before information extraction; this integration of the denoiser is what enabled high-throughput operation. Protocol 2 lists the steps.

Protocol 2: High-Throughput BSE-SEM Imaging with Integrated Denoising

- Objective: To acquire high-quality microstructural information from a Ti-6Al-4V sample with image collection up to 85 times faster than a previously published workflow [1].

- Materials: Ti-6Al-4V sample, Scanning Electron Microscope, pre-trained deep-learning denoising model.

| Step | Procedure | Parameters & Notes |

|---|---|---|

| 1. Sample Preparation | Prepare the material sample for SEM imaging using standard metallographic techniques. | Ensure a flat, scratch-free surface to avoid imaging artifacts. |

| 2. Workflow Selection | Execute the Workflow Selection Framework (Protocol 1) to identify optimal SEM imaging parameters. | The framework will select a "low dose / fast scan" parameter set that minimizes acquisition time, justifying the use of the denoiser. |

| 3. Data Collection | Acquire the BSE-SEM image using the selected fast-scan parameters. | Results in a noisy image with low signal-to-noise but acquired in a fraction of the time (e.g., 5-85x faster) [1]. |

| 4. Data Processing | Apply the deep-learning-based denoising algorithm to the raw, noisy image. | The model infers and reconstructs a high-quality, denoised image. Model must be pre-validated on similar data. |

| 5. Information Extraction | Analyze the denoised image to extract quantitative microstructural information. | Perform tasks such as phase identification, grain size measurement, or particle counting. |

| 6. Validation | Compare the information extracted from the denoised image against a ground-truth image (e.g., a slow-scan, high-quality image). | Quantify accuracy and precision to confirm that information quality has been maintained despite the faster throughput. |
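
The validation step can be sketched on synthetic data. Everything here is a stand-in: the two-phase test image is artificial, Gaussian noise plays the role of a fast scan, and a simple mean filter (`box_denoise`) replaces the trained deep-learning denoiser, which is not reproduced here. The sketch only shows the comparison logic: extract the same quantity from raw and denoised images and measure each against ground truth.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic two-phase ground truth: bright stripes on a dark matrix.
truth = np.zeros((128, 128))
truth[:, 32:64] = 1.0
truth[:, 96:112] = 1.0            # bright phase covers (32 + 16) / 128 = 37.5 %

noisy = truth + rng.normal(0.0, 0.6, truth.shape)   # fast-scan stand-in

def box_denoise(image, radius=2):
    """Mean filter, used only as a placeholder for the trained denoiser."""
    padded = np.pad(image, radius, mode="reflect")
    out = np.zeros_like(image)
    size = 2 * radius + 1
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + image.shape[0], dx:dx + image.shape[1]]
    return out / size**2

def area_fraction(image, threshold=0.5):
    """Fraction of pixels classified as the bright phase."""
    return float(np.mean(image > threshold))

def rmse(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))

denoised = box_denoise(noisy)
true_fraction = area_fraction(truth)
report = {
    "noisy_fraction_error": abs(area_fraction(noisy) - true_fraction),
    "denoised_fraction_error": abs(area_fraction(denoised) - true_fraction),
    "noisy_rmse": rmse(noisy, truth),
    "denoised_rmse": rmse(denoised, truth),
}
```

In practice the acceptance criterion would be set by the objective, for example a maximum tolerated error in the extracted area fraction, rather than by pixel-level error alone.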

Results and Interpretation

In the cited case study, the selected workflow reduced BSE-SEM image collection time by a factor of 85 compared to a previously published study and by a factor of 5 compared to the case study's own benchmark workflow [1]. This was achieved because the framework correctly identified that the deep-learning denoiser could compensate for the lower information quality of the fast-scan raw images, producing denoised images of sufficient quality for the objective. The large reduction in acquisition cost made this workflow the highest-value option. This demonstrates a core principle: the highest-value workflow is not always the one that generates the highest-quality data in an absolute sense, but the one that delivers fit-for-purpose quality at a drastically reduced cost.

The Role of AI and Machine Learning as the Central 'Brain' of Autonomous Labs

Autonomous laboratories represent a paradigm shift in scientific research, transitioning from human-directed experimentation to self-driving labs where Artificial Intelligence (AI) and Machine Learning (ML) serve as the central decision-making "brain." These systems integrate robotics, data infrastructure, and AI into a continuous closed-loop cycle, enabling them to conduct scientific experiments with minimal human intervention [6]. Within the context of high-throughput workflow selection for autonomous experimentation, the AI brain is responsible for learning from data, designing experiments, and dynamically allocating resources to optimize the research process. This approach accelerates discovery timelines and enhances the reproducibility and scalability of scientific research. Reported industry results include development cycles cut by up to 70%, testing costs reduced by 50%, and materials discovery accelerated tenfold [7]. In drug discovery, this shift enables a faster understanding of biological mechanisms and allows prospective drug candidates to be tested more quickly and efficiently [8].

Core Architecture of the Autonomous Lab 'Brain'

The intelligence of an autonomous laboratory is not a single monolithic system but a layered architecture where different AI components specialize in specific cognitive functions. This multi-agent structure enables the lab to perform complex, multi-stage experiments autonomously.

Hierarchical AI Decision-Making Framework

A modern implementation of this architecture uses a hierarchical multi-agent system. For example, the ChemAgents framework features a central Task Manager that coordinates four role-specific agents:

- Literature Reader: Searches and synthesizes existing scientific knowledge

- Experiment Designer: Formulates testable hypotheses and designs experimental procedures

- Computation Performer: Runs simulations and predictions to inform experimental plans

- Robot Operator: Translates digital plans into executable commands for physical laboratory hardware [6]
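
The coordination pattern can be sketched in a few lines. This is a hypothetical illustration of a task manager dispatching to role-specific agents, not the ChemAgents implementation or its API: the function names mirror the roles listed above, and each agent simply returns a placeholder string where a real system would call a language model, a simulator, or hardware.

```python
from typing import Callable, Dict, List

Context = Dict[str, str]
Agent = Callable[[Context], Context]

def literature_reader(ctx: Context) -> Context:
    return {"background": f"prior work relevant to: {ctx['goal']}"}

def experiment_designer(ctx: Context) -> Context:
    return {"plan": f"procedure informed by ({ctx['background']})"}

def computation_performer(ctx: Context) -> Context:
    return {"prediction": f"simulated outcome of ({ctx['plan']})"}

def robot_operator(ctx: Context) -> Context:
    return {"commands": f"hardware steps for ({ctx['plan']})"}

class TaskManager:
    """Runs role-specific agents in order, passing a shared context along."""

    def __init__(self, agents: List[Agent]) -> None:
        self.agents = agents

    def run(self, goal: str) -> Context:
        ctx: Context = {"goal": goal}
        for agent in self.agents:
            ctx.update(agent(ctx))    # each agent adds its output to the context
        return ctx

manager = TaskManager([literature_reader, experiment_designer,
                       computation_performer, robot_operator])
result = manager.run("optimize synthesis yield")
```

Keeping the agents as independent callables behind one coordinator is what allows an individual role to be swapped or upgraded without touching the others.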

The Closed-Loop Workflow

This AI brain operates through a continuous, self-optimizing closed loop of planning, execution, and analysis, which forms the core functional circuit of an autonomous laboratory.

This continuous loop minimizes downtime between experiments, eliminates subjective decision points, and enables rapid exploration of novel materials and optimization strategies [6]. The AI system's ability to adapt based on incoming data distinguishes it from simple automation, creating a truly learning system that improves its experimental strategy with each iteration.

Quantified Impact of AI-Driven Workflows

The integration of AI as the central nervous system of autonomous laboratories has produced measurable performance improvements across multiple industries. The following table summarizes key quantitative benefits observed in real-world implementations.

Table 1: Performance Metrics of AI-Driven Autonomous Laboratories

| Application Domain | Reported Efficiency Gains | Key Performance Indicators | Reference Implementation |

|---|---|---|---|

| Battery Materials Development | Development cycles reduced by up to 70% | 50% cost reduction in testing; 10x acceleration in materials discovery | BASF, Samsung SDI, Wildcat Discovery Technologies [7] |

| Pharmaceutical Research | Significant acceleration in understanding biological mechanisms | Faster testing of prospective drug candidates; Improved reproducibility | AstraZeneca, Evotec, Bayer [8] |

| Inorganic Materials Synthesis | 71% success rate in synthesizing predicted materials | 41 of 58 target materials successfully created in continuous operation | A-Lab autonomous synthesis platform [6] |

| Proteomics Workflow | 5x faster sequence identification | High-throughput analysis of 100+ sequences enabled by semi-automated tools | PeptoidSeq workflow for 20mer peptidomimetics [9] |

| Antibody Purification Process | Accelerated development timeframe | Minimized resource consumption; Automated data manipulation | High Throughput Process Development (HTPD) workflow [10] |

These performance improvements stem from the AI system's ability to optimize experimental plans, reduce redundant testing, and identify promising research directions that might elude human researchers. For instance, in battery engineering, AI-driven laboratories can conduct hundreds of parallel experiments, continuously analyzing results and adjusting parameters in real-time [7]. This represents a fundamental shift from sequential testing approaches that dominated traditional laboratories.

Implementation Protocols for AI-Driven Workflow Selection

Protocol: AI-Optimized High-Throughput Experimental Design

Objective: Systematically explore a multi-parameter experimental space to identify optimal conditions for material synthesis or biological response using AI-guided design.

Materials and Equipment:

- Robotic liquid handling system (e.g., Chemspeed ISynth synthesizer)

- Analytical instruments (UPLC-MS, benchtop NMR, or XRD)

- AI/ML software platform (Monolith AI, ChemOS, or custom Python implementation)

- Centralized data management system

Procedure:

- Define Parameter Space: Identify critical variables (temperature, concentration, pH, reaction time) and their feasible ranges based on literature and prior knowledge [6].

- Initial DoE Setup: Implement a Design of Experiments (DoE) with AI-generated initial points, leveraging Latin Hypercube Sampling or similar space-filling algorithms for maximum coverage [10].

- Active Learning Loop:

  - Execute first batch of experiments using robotic systems

  - Collect analytical data (spectra, yields, performance metrics)

  - Train surrogate models (Gaussian Process Regression) on accumulated data

  - Use acquisition functions (Expected Improvement, Upper Confidence Bound) to select next most informative experiments [6]

- Multi-Fidelity Optimization: Incorporate computational predictions and historical data to prioritize expensive experimental trials [4].

- Termination Criteria: Continue until performance targets are met, the budget is exhausted, or a convergence criterion is reached (for example, minimal improvement over 3 consecutive iterations).
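The initial design and termination steps of this procedure can be sketched in a few lines. This is a minimal illustration rather than a reference implementation: it assumes a standard-library Latin Hypercube sampler and reads "minimal improvement over 3 iterations" as a best-so-far gain below a tolerance. The function names `latin_hypercube` and `should_stop`, the parameter ranges, and the tolerance are all hypothetical.

```python
import random

def latin_hypercube(bounds, n_samples, seed=0):
    """Draw n_samples points so that each variable's range is split into
    n_samples equal strata and every stratum is used exactly once."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds.values():
        # One random position inside each stratum, then shuffle the strata.
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(strata)
        columns.append([low + u * (high - low) for u in strata])
    names = list(bounds)
    return [dict(zip(names, row)) for row in zip(*columns)]

def should_stop(best_so_far, window=3, tol=0.01):
    """True when the best observed value improved by less than tol
    over the last `window` iterations (maximization assumed)."""
    if len(best_so_far) <= window:
        return False
    return best_so_far[-1] - best_so_far[-1 - window] < tol

# Hypothetical parameter space, for illustration only.
bounds = {"temperature_C": (20.0, 80.0), "pH": (4.0, 9.0), "time_min": (5.0, 120.0)}
design = latin_hypercube(bounds, n_samples=8)
```

In a real campaign the `design` list would be handed to the robotic platform as the first batch, and `should_stop` would be checked after each active-learning iteration alongside the budget and performance-target criteria.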

Validation:

- Confirm optimal conditions through triplicate validation experiments

- Compare AI-derived optimum with traditional approaches

- Characterize resulting materials/products with orthogonal analytical methods

Protocol: Autonomous Discovery of Functional Materials

Objective: Discover and optimize new energy storage materials through fully autonomous synthesis and characterization.

Materials and Equipment:

- Powder handling robotics for solid-state synthesis

- High-temperature furnaces with automated loading/unloading

- X-ray diffractometer (XRD) with automated sample changer

- ML models for phase identification from XRD patterns

- Active-learning driven optimization algorithm (e.g., ARROWS3) [6]

Procedure:

- Target Selection: Identify novel theoretically stable materials using large-scale ab initio phase-stability databases (Materials Project, Google DeepMind) [6].

- Synthesis Recipe Generation: Use natural-language models trained on literature data to propose precursor combinations and synthesis temperatures [6].

- Robotic Synthesis: Execute solid-state synthesis recipes using automated powder handling and furnace systems.

- Phase Identification: Analyze XRD patterns with convolutional neural networks to identify successful synthesis and quantify phase purity [6].

- Iterative Optimization: Use active learning to modify synthesis routes based on results:

  - Adjust precursor ratios

  - Modify temperature profiles

  - Explore alternative precursors

- Validation: Characterize successful materials for application-specific properties (e.g., electrochemical performance for battery materials).

Key Considerations:

- Implement sample tracking through standardized sample holders [4]

- Ensure FAIR (Findable, Accessible, Interoperable, Reusable) data principles throughout workflow [4]

- Maintain synthesis failure logs to improve AI decision-making

Essential Research Reagent Solutions

The implementation of AI-driven autonomous laboratories requires specialized materials and informatics tools. The following table details key research reagent solutions that enable high-throughput experimentation and data generation.

Table 2: Essential Research Reagents and Materials for Autonomous Laboratories

| Category | Specific Examples | Function in Workflow | Implementation Example |

|---|---|---|---|

| Specialized Linkers & Handles | Charged C-terminal lysine linker with ivDde protection | Enables sequencing of uncharged oligomers by amplifying y-ion detection in MS/MS | Peptoid sequencing with MALDI-TOF MS [9] |

| Solvatochromic Probes | Reichardt's dye | Colorimetric conformational analysis of immobilized macromolecules through environmental polarity sensing | High-throughput screening of peptoid library conformations [9] |

| Standardized Sample Holders | Universal holders for thin films, bulk samples, powders | Enables automated handling and transfer of diverse material formats between instruments | NIST sample management standards for modular labs [4] |

| Chromatography Resins | CEX resin candidates for antibody purification | High-throughput screening of purification conditions using minimal resources | HTPD workflow for antibody purification [10] |

| Characterization Standards | Peptide standards for LC-MS retention time calibration | Enables transfer of peptide target information between different instrument types | Rapid development of targeted proteomic assays [11] |

Integration and Standardization Frameworks

The full potential of AI-driven autonomous laboratories can only be realized through robust integration frameworks and standardization. The National Institute of Standards and Technology (NIST) is leading efforts to develop standards for a modular and autonomous laboratory ecosystem, addressing four critical areas [4]:

Sample Management Standards: Universal sample holders that handle different sample form factors (thin films, bulk samples, powders) with defined specifications for size, temperature range, and atmospheric control [4].

Instrument Control and Communication Standards: Evaluation of existing protocols (SiLA, EPICS, MQTT) to address the unique challenges of materials research hardware, moving beyond "fragile hacks" to robust interfaces [4].

Data and Knowledge Management Standards: Emphasis on FAIR data principles with consensus on data interchange formats and knowledge graphs to ensure AI-ready data [4].

Algorithm and Model Integration Standards: Development of high-level interfaces (similar to Atomic Simulation Environment) for experimental materials science to enable algorithm portability across different autonomous systems [4].

The relationship between these components creates a foundation for interoperable autonomous research systems, as shown in the following architecture diagram.

This standards-based approach dramatically reduces the cost of engineering an autonomous platform while reducing the risk of obsolescence by ensuring expandability and upgradeability [4]. Just as the internet revolution was enabled by low-level communication standards, the laboratory revolution will be powered by this standards-based modular ecosystem.

Regulatory and Compliance Considerations

As autonomous laboratories become more prevalent, particularly in regulated industries like pharmaceuticals, compliance with emerging AI regulations becomes essential. Key considerations include:

- Algorithmic Impact Assessments: Required before deploying automated decision-making tools under frameworks such as the Colorado AI Act; California SB 1047 proposed related obligations but was vetoed in 2024 [12].

- AI Governance Structures: Establishment of multidisciplinary ethics committees and bias mitigation strategies throughout the AI lifecycle [12].

- Documentation Standards: Maintaining detailed records of AI system decision-making processes, training data sources, and bias mitigation measures [12].

- Transparency Requirements: Content disclosures under the California AI Transparency Act and adherence to FAIR data principles for regulatory submissions [12].

Companies implementing autonomous laboratories should develop tiered governance structures that align with risk levels while maintaining operational efficiency, incorporating regular audit mechanisms to validate adherence to established guidelines [12].

AI and machine learning have evolved from supportive tools to the central cognitive system of autonomous laboratories, capable of designing experiments, executing them through robotic systems, analyzing results, and iterating based on learned knowledge. This transformation enables unprecedented efficiency gains, with documented reductions in development cycles by up to 70% and cost reductions of 50% in industrial applications [7]. The implementation of standardized interfaces for sample management, instrument control, data management, and algorithm integration [4] will further accelerate adoption across diverse scientific domains.

For researchers selecting high-throughput workflows for autonomous experimentation, success depends on selecting appropriate AI decision architectures, implementing robust data standards, and addressing emerging regulatory requirements. As these systems mature, the role of human scientists will shift from manual execution to creative problem-solving and strategic oversight [8], potentially unlocking new frontiers in materials science, drug discovery, and beyond. The organizations that most effectively integrate AI as the central brain of their research operations will gain significant competitive advantages in the rapidly evolving landscape of scientific discovery.

Application Note

The evolution of high-throughput screening (HTS) into autonomous experimentation represents a paradigm shift in drug discovery and biological research. This transformation is powered by the strategic integration of three core technological pillars: advanced robotic systems for physical task execution, sophisticated AI planning for experimental design and decision-making, and comprehensive automated analysis platforms for data interpretation. Together, these components create closed-loop systems capable of designing, executing, and analyzing experiments with minimal human intervention, dramatically accelerating the pace of scientific discovery while reducing costs and improving reproducibility [13] [14].

Traditional drug discovery has been hampered by extensive timelines (10-15 years), high costs (exceeding $2 billion per therapeutic), and failure rates exceeding 90% before clinical testing [13]. The integration of robotic automation, AI, and automated analysis addresses these inefficiencies by enabling researchers to explore vast chemical spaces—estimated at 10⁶⁰ potential molecules—that were previously inaccessible through manual methods [13]. This application note examines the key components of these integrated systems and provides detailed protocols for their implementation in autonomous research workflows.

System Components and Their Integration

Robotic Systems for Physical Automation

Modern robotic systems for high-throughput experimentation have evolved from fixed automation to modular, adaptable platforms featuring collaborative robots that can safely operate alongside researchers [14]. These systems incorporate multiple specialized components:

- Liquid handling devices capable of dispensing reagents in microliter to nanoliter volumes with precision, minimizing reagent consumption while enabling miniaturization [15].

- Robotic workstations that transfer microplates between different stations (pipetting, incubation, reading) within the automated workflow [16] [15].

- Integrated automation systems that manage sample and reagent transfer, mixing, and final readout with minimal human intervention [15].

- Modular unguarded systems that provide enhanced flexibility and accessibility while maintaining screening productivity [14].

These robotic platforms typically process samples in 96- to 3456-well microplates, with ultra-high-throughput systems capable of analyzing over 100,000 samples daily [15]. The transition to modular systems allows research institutions to adapt quickly to changing experimental demands without replacing entire automation infrastructures.

AI Planning and Experimental Design

Artificial intelligence serves as the cognitive center of autonomous experimentation, dramatically accelerating the design-make-test-analyze (DMTA) cycle through several key capabilities:

- Target Identification and Validation: AI algorithms mine omics datasets, scientific literature, and clinical data to identify novel disease-relevant biological targets. Machine learning models detect patterns invisible to human researchers, such as subtle gene expression correlations or pathway perturbations [13].

- Virtual Screening and Hit Discovery: Instead of experimentally screening millions of compounds, AI models predict which molecules are most likely to interact with target proteins. Generative AI models, including variational autoencoders and diffusion models, can design entirely novel molecular structures optimized for specific binding characteristics [13].

- Lead Optimization: AI predicts absorption, distribution, metabolism, excretion, and toxicity (ADME/Tox) profiles, enabling chemists to modify molecular structures intelligently before synthesis [13].

- Adaptive Experimental Planning: AI systems dynamically redesign experiments based on incoming results, focusing resources on the most promising regions of chemical or experimental space [13].

Companies like Insilico Medicine, Exscientia, and Atomwise have demonstrated the power of AI-driven drug discovery, with examples such as Insilico's fibrosis drug reaching Phase II clinical trials in under three years—an unprecedented timeline compared to traditional approaches [13].

Automated Analysis Systems

Automated analysis platforms transform raw experimental data into actionable insights through multiple interconnected technologies:

- High-throughput detectors and plate readers that assess fluorescence, luminescence, absorption, and other specific parameters for thousands of samples with minimal human input [15].

- Data processing software that handles the enormous data volumes generated by HTS systems, employing quality control measures to identify issues such as pipetting errors and edge effects caused by evaporation [15].

- Machine learning-powered data quality tools that automatically detect anomalies and ensure data reliability before analysis [17].

- Cloud-based data integration that connects instrument data, AI-driven analytics, and molecular design databases through laboratory information management systems (LIMS) and electronic lab notebooks (ELNs) [13].

These automated analysis systems incorporate sophisticated quality control measures, including plate-based controls that characterize assay performance and sample-based controls that measure variability in biological responses [15].
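Plate-based controls are often summarized with the Z'-factor, a widely used assay-quality statistic. The source does not name a specific metric, so its use here is an assumption. The sketch below computes Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg| from control-well readings; the function name and the example values are hypothetical.

```python
from statistics import mean, stdev

def z_prime(positive_controls, negative_controls):
    """Z'-factor from positive- and negative-control wells on one plate."""
    mu_p, mu_n = mean(positive_controls), mean(negative_controls)
    sd_p, sd_n = stdev(positive_controls), stdev(negative_controls)
    separation = abs(mu_p - mu_n)
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (sd_p + sd_n) / separation

# Made-up raw signals from control wells.
positive = [980.0, 1010.0, 1005.0, 995.0]
negative = [102.0, 98.0, 95.0, 105.0]
quality = z_prime(positive, negative)
```

Values above roughly 0.5 are conventionally taken to indicate a well-separated, robust assay, so a pipeline could flag plates that fall below a chosen cutoff for repeat runs.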

Performance Metrics and Applications

Table 1: Performance Metrics of Integrated Autonomous Experimentation Systems

| Metric Category | Traditional Approach | AI-Automation Integrated System | Improvement Factor |

|---|---|---|---|

| Discovery Timeline | 10-15 years (drug development) | 3 years (INS018_055 example) | 3-5x faster [13] |

| Screening Throughput | Limited by manual processes | >100,000 samples per day | >10x improvement [15] [18] |

| Sample Preparation | Hours to days for 96 samples | 2.5 hours for 96 samples | ~10x faster [18] |

| Data Processing | Manual analysis with high error rate | Automated analysis with ML-powered QC | Significantly improved accuracy [17] [15] |

| Hit Identification | Low hit rates due to limited exploration | AI-predicted binding affinities | Dramatically increased hit rates [13] |

Integrated autonomous systems have demonstrated remarkable success across multiple application domains:

- Drug Discovery: Identification of biologically active compounds against therapeutic targets, such as the use of high-throughput screening to identify small molecules that bind to cardiac MyBP-C for heart failure treatment [15].

- Precision Medicine: Screening of anticancer drug libraries against patient-derived tumor samples to identify optimal treatment strategies [15].

- Toxicology Assessment: Utilization of model organisms like C. elegans in high-throughput assays to evaluate compound toxicity across multiple lifecycles [15].

- Target Identification: Automated proteomics platforms that identify both primary targets and off-target effects of drug candidates, as demonstrated by the autoSISPROT platform's analysis of kinase inhibitors [18].

Implementation Challenges and Solutions

Despite their transformative potential, implementing integrated autonomous experimentation systems presents several challenges:

- Data Quality and Integration: Incomplete or biased datasets can skew AI predictions. Solution: Implement curated, standardized assay databases and cloud-based workflow orchestration [13].

- System Interoperability: Legacy instruments and software often lack interoperability. Solution: Utilize modular systems with API-driven integration capabilities [13] [14].

- Cultural Resistance: Researchers may fear job displacement. Solution: Develop training programs focused on AI-human collaboration [13].

- Interpretability: Deep learning models often function as "black boxes." Solution: Incorporate explainable AI (XAI) tools to enhance transparency [13].

Protocols

Protocol 1: Implementation of an Integrated Screening Workflow for Compound Identification

Purpose

To establish a complete autonomous workflow for identifying biologically active compounds against a specific therapeutic target through the integration of robotic systems, AI planning, and automated analysis.

Materials and Equipment

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Reagents and Materials for Autonomous Screening

| Item | Function | Specifications |

|---|---|---|

| Compound Libraries | Source of potential active molecules | Thousands to millions of compounds; commercially available or custom-synthesized [16] |

| Assay Plates | Platform for biochemical reactions | 96- to 3456-well microplates; type selected based on assay requirements [15] |

| Biological Targets | Disease-relevant proteins or pathways | Purified proteins or cell lines expressing target of interest [16] |

| Detection Reagents | Enable measurement of interactions | Fluorescent, luminescent, or absorption-based markers [16] [15] |

| Liquid Handling Consumables | Precision delivery of reagents | Disposable tips, reservoirs, tubing compatible with automated systems [16] |

Equipment

- Modular robotic platform with collaborative robots [14]

- Automated liquid handling systems [15]

- High-capacity microplate incubators and storage systems [16]

- Multi-mode plate readers (fluorescence, luminescence, absorption) [15]

- High-performance computing infrastructure for AI/ML processing [13]

- Laboratory Information Management System (LIMS) [13]

Procedure

Target Selection and Validation

- Utilize AI algorithms to mine omics datasets and scientific literature for disease-relevant targets [13]

- Validate selected targets through pathway analysis and expression profiling

- Document target selection rationale in electronic lab notebook (ELN)

Assay Development and Optimization

- Develop either cell-based or biochemical assays sensitive to target-compound interactions

- Optimize assay conditions for automation compatibility and miniaturization

- Implement appropriate controls (positive, negative, vehicle) for quality assessment

- Validate assay performance using known ligands or modulators if available

AI-Driven Compound Selection and Library Design

- Employ virtual screening to prioritize compounds from existing libraries [13]

- Utilize generative AI models to design novel compounds if needed

- Apply quantitative structure-activity relationship (QSAR) models to estimate binding affinities and ADME properties

- Curate final compound set for experimental testing

Automated Sample Preparation and Screening

- Program liquid handlers to transfer compounds and reagents to assay plates

- Implement robotic systems to manage plate movement between stations

- Execute screening runs with appropriate sample randomization

- Monitor system performance through integrated sensors and cameras
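Sample randomization in the screening step can be illustrated with a short layout generator. This is a sketch under stated assumptions: a 96-well plate, the first and last columns reserved for controls, and a fixed random seed so the layout can be reproduced. The function name, well naming, and compound identifiers are hypothetical.

```python
import random
import string

def randomized_layout(compound_ids, rows=8, cols=12, control_cols=(1, 12), seed=42):
    """Assign compounds to random non-control wells of a plate.
    Returns {well_name: compound_id}; control columns are left free."""
    wells = [f"{string.ascii_uppercase[r]}{c}"
             for r in range(rows) for c in range(1, cols + 1)
             if c not in control_cols]
    if len(compound_ids) > len(wells):
        raise ValueError("more compounds than available sample wells")
    rng = random.Random(seed)  # fixed seed keeps the layout reproducible
    rng.shuffle(wells)
    return dict(zip(wells, compound_ids))

compounds = [f"CMPD-{i:03d}" for i in range(80)]
layout = randomized_layout(compounds)
```

The resulting mapping could be exported as a worklist for the liquid handler, with the seed recorded in the LIMS so that the plate map can be regenerated during analysis.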

Automated Data Collection and Analysis

- Collect readouts using appropriate detectors (fluorescence, luminescence, etc.)

- Process raw data using automated analysis pipelines

- Apply quality control metrics to identify and exclude problematic assays

- Identify "hits" based on predetermined activity thresholds
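Hit identification against a predetermined threshold can be sketched as follows. The example assumes an inhibition assay in which vehicle wells define 0% inhibition and a reference inhibitor defines 100%; the 50% threshold, the function names, and the readout values are hypothetical and would be set per assay.

```python
from statistics import mean

def percent_inhibition(signal, vehicle_controls, inhibitor_controls):
    """Normalize a raw signal to the plate's control window."""
    high = mean(vehicle_controls)     # 0% inhibition reference
    low = mean(inhibitor_controls)    # 100% inhibition reference
    return 100.0 * (high - signal) / (high - low)

def call_hits(readouts, vehicle_controls, inhibitor_controls, threshold=50.0):
    """Return compounds whose inhibition meets or exceeds the threshold."""
    hits = {}
    for compound, signal in readouts.items():
        inhibition = percent_inhibition(signal, vehicle_controls, inhibitor_controls)
        if inhibition >= threshold:
            hits[compound] = round(inhibition, 1)
    return hits

# Made-up plate-reader signals.
readouts = {"CMPD-001": 950.0, "CMPD-002": 420.0, "CMPD-003": 130.0}
hits = call_hits(readouts,
                 vehicle_controls=[1000.0, 990.0, 1010.0],
                 inhibitor_controls=[100.0, 95.0, 105.0])
```

Normalizing each plate to its own controls before applying the threshold keeps hit calls comparable across plates and screening days.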

AI-Powered Iterative Optimization

- Feed experimental results back to AI models for continuous learning

- Design subsequent rounds of compounds based on structure-activity relationships

- Prioritize confirmed hits for secondary assays and counter-screens

Timing

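- Steps 1-2 (Target Selection and Validation; Assay Development and Optimization): 2-4 weeks (initial setup)

- Steps 3-5 (compound selection through automated data collection and analysis): 1-3 days per screening cycle

- Step 6 (AI-Powered Iterative Optimization): Continuous iterative process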

Protocol 2: Automated Proteomics Sample Preparation for Target Deconvolution

Purpose

To implement a fully automated proteomics workflow for identifying drug targets and off-target interactions using the autoSISPROT platform or equivalent system.

Materials and Equipment

- Automated proteomics sample preparation platform (e.g., autoSISPROT) [18]

- Thermal proteome profiling (TPP) reagents and buffers

- Isobaric labeling tags (TMT) if required [18]

- Mass spectrometry-grade solvents and digestion enzymes

- High-resolution mass spectrometer with data-independent acquisition (DIA) capability [18]

Procedure

Sample Processing

- Program automated platform to process up to 96 samples in parallel

- Implement all-in-tip operations for protein digestion and desalting

- Optional: Incorporate TMT labeling for multiplexed quantification

Thermal Proteome Profiling

- Treat samples with compounds of interest at relevant concentrations

- Perform heat denaturation at controlled temperatures

- Separate soluble fractions for subsequent analysis

Automated Sample Preparation

- Execute protein digestion using the automated platform (achieving >94% efficiency)

- Perform TMT labeling if applicable (>98% efficiency)

- Clean up and concentrate samples for mass spectrometry analysis

Data Acquisition and Analysis

- Acquire proteomics data using data-independent acquisition (DIA) mode

- Process raw data using automated quantification pipelines

- Identify stabilized and destabilized proteins across temperature ranges

- Annotate known targets and potential off-targets based on melting curves
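The melting-curve comparison in the final step can be illustrated with a simplified calculation. The sketch estimates a melting temperature as the point where the soluble fraction crosses 0.5, using linear interpolation between measured temperatures. This is an assumption made for brevity, since production thermal proteome profiling pipelines typically fit sigmoidal curves. The function name and all data values are hypothetical.

```python
def melting_temperature(temps, soluble_fraction, level=0.5):
    """Temperature at which the soluble fraction first drops through
    `level`, by linear interpolation between neighbouring points."""
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= level > f1:
            return t0 + (f0 - level) * (t1 - t0) / (f0 - f1)
    return None  # the curve never crosses the level in the measured range

# Made-up soluble fractions for one protein, normalized to the lowest temperature.
temps = [37, 41, 45, 49, 53, 57, 61]
vehicle = [1.00, 0.98, 0.90, 0.60, 0.30, 0.10, 0.02]
treated = [1.00, 0.99, 0.96, 0.85, 0.55, 0.25, 0.05]

# A positive shift suggests the compound stabilizes the protein.
delta_tm = melting_temperature(temps, treated) - melting_temperature(temps, vehicle)
```

Proteins with a large positive or negative shift relative to vehicle would then be annotated as candidate targets or off-targets, subject to replicate agreement.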

Timing

- Total processing time: <2.5 hours for 96 samples (additional 1 hour if TMT labeling required) [18]

Workflow Visualization

[Figures not reproduced: Autonomous Experimentation Workflow; Integrated System Architecture; Data Analysis Pipeline]

Implementing High-Throughput Workflows: From Design to Real-World Application

In autonomous experimentation research, the selection of a high-throughput workflow is a critical strategic decision that directly impacts the pace and reliability of scientific discovery. An optimal workflow functions as an integrated system that transforms experimental inputs into high-value information, a relationship defined by the core principle: Workflow → Information → Value [1]. The value of the generated information is proportional to its quality and its actionability for achieving specific research objectives [1].

The modern experimental landscape is characterized by multi-fidelity models and complex, automated pipelines. The design and operation of these systems often rely on expert intuition, which can lead to suboptimal performance and inefficient use of computational and physical resources [19]. This article outlines a systematic framework for selecting and optimizing high-throughput workflows to maximize the return on computational investment (ROCI) without compromising data quality, enabling researchers to navigate the inherent trade-offs between speed, cost, and accuracy [19].

Core Principles of the Optimal Workflow Selection Framework

The proposed framework is built on a two-stage selection process designed to efficiently navigate the vast space of possible workflows [1].

Stage 1: Fast Search for High-Quality Workflows

The first stage involves a rapid screening of user-defined possible workflows to quickly filter for those capable of generating high-quality information relevant to the established objective. This broad search prioritizes identification of workflows with the fundamental potential for success.

Stage 2: Fine Search for the Highest-Value Workflow

The second stage performs a more rigorous, fine-grained evaluation of the shortlisted high-quality workflows from Stage 1. The goal is to select the single optimal workflow that generates the highest-value information, as defined by a user-specified objective function, such as maximizing ROCI [1] [19].
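The two stages can be expressed as a cheap filter followed by a more expensive ranking. The sketch below is one possible reading of the framework, not the cited authors' implementation: `quick_score` and `detailed_score` are hypothetical callables supplied by the user, and the value-per-cost ratio used as the Stage 2 objective is only a stand-in, since the source does not give a formula for ROCI.

```python
def select_workflow(candidates, quick_score, detailed_score, quality_floor=0.7):
    """Two-stage selection: cheap screen first, expensive ranking second."""
    # Stage 1: fast search keeps workflows that clear the quality floor.
    shortlist = [w for w in candidates if quick_score(w) >= quality_floor]
    if not shortlist:
        raise ValueError("no workflow passed the Stage 1 quality screen")
    # Stage 2: fine search evaluates only the shortlist with the full objective.
    return max(shortlist, key=detailed_score)

# Made-up candidate workflows with illustrative scores.
candidates = [
    {"name": "A", "quality": 0.9, "value": 10.0, "cost": 5.0},
    {"name": "B", "quality": 0.6, "value": 50.0, "cost": 1.0},
    {"name": "C", "quality": 0.8, "value": 9.0, "cost": 3.0},
]
best = select_workflow(
    candidates,
    quick_score=lambda w: w["quality"],
    detailed_score=lambda w: w["value"] / w["cost"],
)
```

Because the detailed objective is only evaluated on the shortlist, the expensive evaluation budget scales with the number of workflows that pass Stage 1 rather than with the full candidate set.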

Table 1: Key Performance Indicators for Benchmarking Workflows

| Key Performance Indicator | Definition | Impact on Autonomous Experimentation |

|---|---|---|

| Throughput Speed | The number of experimental iterations or data points processed per unit time. | A 5x increase in throughput enables significantly faster model iteration cycles [20]. |

| Data Quality & Accuracy | Measured by error rates, signal-to-noise ratio, or conformity to ground-truth data. | A 30% increase in annotation accuracy can directly translate to a 15% improvement in downstream task performance (e.g., robotic grasping precision) [20]. |

| Cost per Experiment | The total resource expenditure (computational, material, time) per experimental unit. | Intelligent data selection and automation can reduce dataset annotation requirements by 35% and cut associated costs by over 33% [20]. |

| Automation Capability | The degree to which the workflow can operate with minimal human intervention. | Hybrid AI-human workflows reduce repetitive manual effort and can lead to a 60-95% reduction in time spent on repetitive tasks [20] [21]. |

Quantitative Metrics for Workflow Benchmarking

To implement the framework, workflows must be benchmarked using quantifiable metrics. The following table summarizes critical metrics for evaluating workflow performance in the context of autonomous experimentation.

Table 2: Workflow Performance Metrics from Real-World Case Studies

| Experimental Domain | Metric Category | Baseline Performance | Optimized Performance | Key Enabling Factor |

|---|---|---|---|---|

| Materials Characterization (BSE-SEM) [1] | Collection Time | Benchmark Workflow | 5x reduction vs. benchmark | Optimal HTVS workflow selection |

| Computer Vision Data Labeling [20] | Data Throughput | Legacy Annotation Platform | 5x improvement | AI-assisted pre-labeling (e.g., SAM2) |

| Computer Vision Data Labeling [20] | Project Setup Time | Legacy Platform (2 months) | 4x faster (2 weeks) | User-friendly interfaces & workflow templates |

| Robotic Grasping Precision [20] | Task Accuracy | Outsourced Labeling | 15% boost | High-precision data pipeline & nested ontologies |

| Business Process Automation [21] | Repetitive Task Time | Manual Execution | 60-95% reduction | Workflow automation software |

Figure 1: The two-stage Optimal Workflow Selection Framework. This process systematically moves from a broad set of user-defined workflows to the selection of a single, optimal workflow designed to maximize Return on Computational Investment (ROCI) [1] [19].

Experimental Protocols for Workflow Implementation

Protocol: Deployment of a Hybrid AI-Human Labeling Workflow

This protocol is adapted from successful implementations in computer vision for physical AI and robotics [20].

4.1.1 Research Reagent Solutions

- AI-Assisted Labeling Platform (e.g., Encord): A platform integrating models like SAM2 for smart pre-labeling. Its function is to automate repetitive annotation tasks, drastically reducing manual effort [20].

- Nested Ontology Management System: A structured hierarchy of labels and classes. Its function is to ensure labeling consistency and accuracy for complex objects [20].

- Analytics Dashboard: A real-time monitoring tool. Its function is to track annotator throughput, label accuracy, and project progress [20].

4.1.2 Step-by-Step Procedure

- Workflow Setup: Import raw data and configure the nested ontology within the labeling platform. Define project-specific guidelines.

- AI Pre-labeling: Run the AI-assisted labeling model to generate initial annotations on the entire dataset.

- Human-in-the-Loop Review: Direct human annotators to review and correct AI-generated labels, focusing on complex edge cases and low-confidence model predictions.

- Quality Assurance: Implement a review pipeline where a second annotator or project lead audits a subset of corrected labels.

- Model Retraining: Use the human-corrected labels to fine-tune the AI pre-labeling model, creating a feedback loop for continuous improvement.
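The human-in-the-loop review step can be sketched as a routing rule on model confidence. This is an illustrative assumption about how low-confidence predictions might be prioritized and does not describe any labeling platform's API. The threshold, field names, and function name are hypothetical.

```python
def route_predictions(predictions, confidence_threshold=0.85):
    """Split AI pre-labels into auto-accepted items and a human review queue."""
    auto_accepted, needs_review = [], []
    for item in predictions:
        if item["confidence"] >= confidence_threshold:
            auto_accepted.append(item)
        else:
            needs_review.append(item)
    # Lowest-confidence items first, so annotators see the hardest cases early.
    needs_review.sort(key=lambda item: item["confidence"])
    return auto_accepted, needs_review

# Made-up pre-labeling output.
predictions = [
    {"id": "img1", "label": "box", "confidence": 0.97},
    {"id": "img2", "label": "bag", "confidence": 0.40},
    {"id": "img3", "label": "box", "confidence": 0.70},
]
accepted, review_queue = route_predictions(predictions)
```

A quality-assurance audit would still sample from the auto-accepted set, since a high confidence score does not guarantee a correct label.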

4.1.3 Expected Outcomes: Following this protocol can result in a 5x improvement in data throughput, a 30-35% reduction in labeling costs, and a significant increase in annotation accuracy, as demonstrated by companies like Pickle Robot [20].

Protocol: Bayesian Optimization for Medium Conditioning in Bioproduction

This protocol is adapted from an autonomous lab system used to optimize medium conditions for a glutamic acid-producing E. coli strain [22].

4.2.1 Research Reagent Solutions

- Modular Autonomous Lab System: A system comprising culturing, preprocessing, and analysis modules. Its function is to autonomously execute a closed loop from culture to analysis [22].

- Bayesian Optimization Algorithm: A machine learning algorithm. Its function is to model the relationship between input parameters and objective variables to intelligently suggest the next best experiment [22].

- M9 Minimal Medium: A defined growth medium. Its function is to serve as a base for optimization, allowing for the exclusive quantification of target metabolites without background interference [22].

4.2.2 Step-by-Step Procedure

- Initial Dataset Construction: Conduct a preliminary experiment to measure objective variables against a wide range of component concentrations.

- Algorithmic Setup: Input the initial dataset into the Bayesian optimization algorithm, defining the objective variables.

- Autonomous Experimentation Loop:

  a. The algorithm proposes the next set of component concentrations to test.

  b. The autonomous lab system prepares the medium, cultures the cells, and measures the outcomes.

  c. The new data is fed back into the algorithm.

- Iteration: Repeat the Autonomous Experimentation Loop (Step 3) until a convergence criterion is met or the objective is satisfactorily achieved.

4.2.3 Expected Outcomes: This protocol allows an autonomous system to efficiently navigate a complex multi-parameter space to find optimal conditions for cell growth or product yield, significantly accelerating the bioproduction strain development process [22].
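The closed loop in this protocol can be sketched with a small, dependency-free Bayesian optimization example. Several simplifications are assumed: a single normalized concentration in [0, 1], a Gaussian process surrogate with a fixed RBF kernel, an Upper Confidence Bound acquisition rule, and a synthetic `run_experiment` function standing in for the autonomous lab. None of these choices are taken from the cited system, and all names and constants are hypothetical.

```python
import math

def rbf(a, b, length=0.2):
    """Squared-exponential kernel with unit prior variance."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def gp_posterior(xs, ys, x_new, noise=1e-4):
    """Posterior mean and standard deviation of the surrogate at x_new."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    mean_y = sum(ys) / len(ys)
    alpha = solve(K, [y - mean_y for y in ys])
    v = solve(K, k_star)
    mu = mean_y + sum(k * a for k, a in zip(k_star, alpha))
    var = max(rbf(x_new, x_new) - sum(k * w for k, w in zip(k_star, v)), 0.0)
    return mu, math.sqrt(var)

def propose_next(xs, ys, candidates, kappa=2.0):
    """Pick the candidate that maximizes the upper confidence bound."""
    def ucb(x):
        mu, sd = gp_posterior(xs, ys, x)
        return mu + kappa * sd
    return max(candidates, key=ucb)

def run_experiment(x):
    # Stand-in for the autonomous lab: synthetic yield peaking at x = 0.6.
    return math.exp(-((x - 0.6) ** 2) / 0.02)

xs = [0.0, 0.5, 1.0]                      # initial dataset construction
ys = [run_experiment(x) for x in xs]
grid = [i / 100 for i in range(101)]      # candidate concentrations
for _ in range(10):                       # autonomous experimentation loop
    x_next = propose_next(xs, ys, grid)   # a. algorithm proposes
    xs.append(x_next)
    ys.append(run_experiment(x_next))     # b./c. lab measures, data fed back
best_x = xs[ys.index(max(ys))]
```

A real deployment would optimize several medium components at once and would more likely rely on an established optimization library than on a hand-written solver. The loop structure of propose, execute, and update stays the same.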

Figure 2: The Autonomous Experimentation Loop. This workflow, powered by Bayesian optimization, enables closed-loop, high-throughput experimentation for domains like bioproduction [22].

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key solutions and materials essential for implementing high-throughput workflows in autonomous experimentation.

Table 3: Essential Research Reagent Solutions for Autonomous Experimentation

| Tool Category | Specific Tool Example | Function in Workflow |

|---|---|---|

| No-Code Data Automation | Mammoth Analytics [23] | Provides an intuitive, drag-and-drop interface for cleaning and analyzing large datasets without coding, enabling non-technical researchers to automate data pipelines. |

| AI-Powered Data Labeling | Encord [20] | Integrates AI models for pre-labeling data (e.g., with SAM2), reducing manual annotation effort by up to 75% and accelerating the creation of training data for machine learning. |

| Cloud-Based Workflow Automation | CloudPipe [23] | Offers a serverless, pay-per-use architecture for running data workflows, providing auto-scaling capabilities and minimizing infrastructure management overhead. |

| Modular Autonomous Lab Hardware | Autonomous Lab (ANL) System [22] | A system of modular, movable devices for culturing, preprocessing, and analysis that can be reconfigured for different biological experiments. |

| Bayesian Optimization Software | Custom Algorithm [22] | Models the relationship between experimental parameters and outcomes to intelligently propose the next highest-value experiment, maximizing the efficiency of resource allocation. |

The Framework for Optimal Workflow Selection provides a systematic, value-driven methodology for designing and operating high-throughput experimental pipelines. By moving beyond static, human-designed workflows and adopting a dynamic, metrics-based approach, researchers can significantly improve their ROCI. The integration of hybrid AI-human workflows and Bayesian optimization loops, as demonstrated in the accompanying protocols, offers a tangible path toward more efficient, reliable, and scalable autonomous experimentation in scientific research and drug development.

The adoption of high-throughput experimentation (HTE) in pharmaceutical research marks a paradigm shift towards autonomous experimentation, enabling the rapid exploration of complex biological and chemical spaces. This article details practical applications and standardized protocols for two transformative automation technologies: a fully automated workflow for 3D cell culture and the CHRONECT XPR system for automated solid dosing. These case studies provide a framework for researchers selecting and implementing high-throughput workflows, highlighting measurable gains in reproducibility, efficiency, and data quality essential for autonomous research pipelines.

Case Study: Fully Automated 3D Midbrain Organoid Generation & Screening

Application Note

The manual generation and analysis of complex 3D cell cultures, such as organoids, present significant challenges for reproducible, high-throughput screening. A fully automated workflow was developed to address this, enabling the production of highly homogeneous human midbrain organoids in a standard 96-well format [24]. This integrated system automates the entire process from generation to high-content analysis.

Key quantitative outcomes from the automated workflow demonstrate its precision and robustness [24]:

Table 1: Performance Metrics of Automated Organoid Workflow

| Performance Metric | Result | Implication for HTE |
|---|---|---|
| Sample Retention (over 30 days) | 99.7% (SD ± 0.7%) | Enhanced reliability and reduced material waste in long-term studies. |
| Post-Analysis Sample Retention | 96.5% (SD ± 3.1%) | High process stability for complex, multi-step protocols. |
| Sample Rejection Rate (Imaging) | 6.1% (SD ± 1.3%) | Low incidence of imaging artifacts, ensuring high-quality data output. |
| Intra-batch Size Variability (CV) | 3.56% (min 2.2%, max 5.6%) | Exceptional morphological homogeneity, critical for screening consistency. |

This workflow allows for assessing drug effects at single-cell resolution within a complex 3D environment, providing a more physiologically relevant model for neurodegenerative diseases like Parkinson's disease in a scalable, HTS-compatible format [24].
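The intra-batch size variability reported in Table 1 is a coefficient of variation (CV), that is, the standard deviation divided by the mean, expressed as a percentage. The sketch below shows that arithmetic. The diameters are invented for illustration and are not data from [24], and using diameter as the size measure is an assumption.

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """Return the sample CV in percent: 100 * standard deviation / mean."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical organoid diameters (micrometres) for one 8-well sample of a batch.
diameters_um = [612, 598, 630, 605, 621, 590, 615, 609]

cv = coefficient_of_variation(diameters_um)
print(f"Intra-batch size CV: {cv:.2f}%")
```

A lower CV indicates a more homogeneous batch, which is why the metric is useful as a quick acceptance check before screening.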

Experimental Protocol

Aim: To generate, maintain, and analyze homogeneous human midbrain organoids in a fully automated, high-throughput manner.

Starting Material: Small molecule neural precursor cells (smNPCs) derived from pluripotent stem cells [24].

Key Methodological Details:

- Automation Platform: All steps are performed using an Automated Liquid Handling System (ALHS) with a 96-channel pipetting head [24].

- Culture Format: Organoids are generated and maintained one-per-well in standard 96-well plates to minimize batch effects from paracrine signaling, unless such signaling is desired [24].

- Critical Omission: The protocol intentionally omits Matrigel embedding to reduce batch-to-batch variability [24].

- Quality Control: The workflow includes automated steps for fixation, whole-mount immunostaining, and tissue clearing, culminating in high-content imaging that provides quantitative data at the single-cell level [24].

Case Study: Automated Powder Dispensing with CHRONECT XPR

Application Note

In pharmaceutical development, accurate and reproducible handling of solid materials is a major bottleneck. Manual weighing of powders, especially in the milligram range, is time-consuming and prone to human error. The CHRONECT XPR workstation is an integrated system designed to fully automate powder dosing and subsequent liquid addition, enabling high-throughput experimentation for applications like formulation development and compound library management [25] [26] [27].

Table 2: Technical Specifications of the CHRONECT XPR Workstation

| Feature | Specification | Benefit for Pharmaceutical HTE |
|---|---|---|
| Dosing Precision | Sub-milligram to several grams [26] | Reduces variability, increases reproducibility for sensitive assays. |
| Powder Handling Capacity | Up to 32 different powders per run [26] | Enables many-to-many formulation strategies from many starting materials [25]. |
| Vial Handling | Automatic decapping, sealing, and transfer of 1 mL to 20 mL vials [26] | Minimizes manual intervention and exposure to hazardous compounds. |
| Software & Traceability | Chronos software; RFID tracking of dosing heads [26] | Ensures data integrity, full traceability, and integration with LIMS. |
| Footprint | Compact, benchtop design [26] | Fits into standard lab spaces or safety cabinets. |

This system enhances productivity by automating repetitive tasks such as capping, vortexing, and liquid addition, freeing valuable scientist time for data analysis and experimental design [26].

Experimental Protocol

Aim: To perform automated, precise dispensing of multiple solid powders and prepare them for subsequent liquid addition and reaction.

Starting Materials: Up to 32 different powder compounds; appropriate solvent vials [26].

Key Methodological Details:

- Dosing Principle: The system uses gravimetric dosing, leveraging a high-precision balance isolated from environmental vibration for maximum accuracy [26].

- Workflow Integration: The CHRONECT XPR platform follows a "many-to-many" approach, allowing numerous formulations to be created from many starting materials quickly and reproducibly without manual interaction [25].

- Operational Environment: The system can support inert gas environments for the safe handling of oxygen- or moisture-sensitive compounds [26].

- Software Control: The Chronos software provides intuitive, sample-based control without the need for complex robot programming, facilitating integration with existing data management systems [26].
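The gravimetric dosing principle listed above can be pictured as a feedback loop: dispense a portion of the remaining mass, read the balance, and repeat until the reading is within tolerance of the target. The sketch below simulates that idea only. `SimulatedBalance`, the 80% approach fraction, and the ±10% delivery error are hypothetical and do not represent the CHRONECT XPR hardware or the Chronos software interface.

```python
import random

class SimulatedBalance:
    """Hypothetical balance plus dosing head; not a vendor API."""

    def __init__(self, seed=0):
        self.mass_mg = 0.0
        self._rng = random.Random(seed)

    def dispense(self, requested_mg):
        # Assume the delivered amount deviates by up to +/-10% from the request.
        delivered = requested_mg * self._rng.uniform(0.9, 1.1)
        self.mass_mg += delivered
        return delivered

    def read(self):
        return self.mass_mg

def dose_gravimetric(balance, target_mg, tolerance_mg=0.05,
                     fraction=0.8, max_steps=100):
    """Dose in shrinking increments until the reading is within tolerance.

    Returns the final mass and the number of dispense steps used.
    """
    for step in range(max_steps):
        remaining = target_mg - balance.read()
        if abs(remaining) <= tolerance_mg:
            return balance.read(), step
        if remaining < 0:
            # Powder cannot be removed once dispensed, so stop and flag the vial.
            raise RuntimeError("overshoot beyond tolerance")
        # Request only a fraction of the shortfall to avoid overshooting.
        balance.dispense(remaining * fraction)
    raise RuntimeError("target not reached within max_steps")

balance = SimulatedBalance(seed=1)
final_mg, steps = dose_gravimetric(balance, target_mg=5.0)
print(f"Dosed {final_mg:.3f} mg in {steps} steps")
```

Requesting less than the full shortfall at each step trades a few extra dispense cycles for protection against overshoot, which matters because excess powder cannot be taken back out of the vial.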

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful implementation of the aforementioned automated workflows relies on a suite of specialized materials and equipment.

Table 3: Essential Materials for Automated HTE in Pharmaceuticals

| Item | Function/Description | Example in Use |
|---|---|---|
| Automated Liquid Handler | A robotic system for precise, high-volume liquid transfers. | Pipetting medium changes and reagents in 3D organoid workflow [24]. |
| Robotic Powder Dispenser | A system for accurate, hands-free dispensing of solid materials. | CHRONECT XPR for sub-milligram solid dosing [26] [27]. |
| High-Content Imaging System | An automated microscope with analytical software for detailed cellular analysis. | Quantitative whole-mount analysis of organoids with single-cell resolution [24]. |
| Standard Multi-Well Plates | Industry-standard plasticware for cell culture and assays. | 96-well plates used for organoid generation and screening [24]. |
| Specialized Cell Culture Media | Chemically defined media supporting specific cell types and 3D growth. | Differentiation and maintenance of midbrain organoids [24]. |
| Tissue Clearing Reagents | Chemical solutions that render biological samples optically transparent. | Enabling deep imaging of whole, intact organoids [24]. |
| Primary & Secondary Antibodies | For specific detection of proteins via immunostaining. | Characterizing protein expression and cellular composition in organoids [24]. |
| Laboratory Information Management System (LIMS) | Software for tracking samples and experimental data. | Integrating with Chronos software for complete data traceability [26]. |

Leveraging Low-Code Platforms and Modular Systems for Accessible Workflow Design

The paradigm of scientific research, particularly in fields like chemistry and drug development, is shifting from manual, sequential experimentation towards autonomous, high-throughput systems. This transition is largely driven by the integration of two powerful concepts: low-code platforms for orchestrating complex decision-making and modular systems for physical execution. Together, they create a framework for accessible workflow design that accelerates the cycle of hypothesis, experimentation, and analysis.

Low-code workflow automation uses visual tools and pre-built components to automate processes with minimal manual coding, making powerful automation accessible to researchers who are domain experts but not necessarily software developers [28] [29]. This agility is critical for Autonomous Experimentation (AE) systems, defined as iterative research loops of planning, experiment, and analysis carried out autonomously [1]. When combined with modular hardware systems—discrete, interchangeable units for tasks like synthesis, purification, and analysis—these digital platforms enable the creation of robust, self-driving laboratories (SDLs) capable of rapidly exploring vast experimental spaces [30] [6].

Core Concepts and Definitions

Low-Code/No-Code Platforms

- Low-Code Platform: A software development environment that enables the creation of applications and workflows through visual interfaces, drag-and-drop components, and configuration, significantly reducing the need for hand-written code [28] [31]. Low-code platforms offer more flexibility than no-code platforms but have a steeper learning curve [29].

- No-Code Platform: A subset of low-code platforms that allows users with no coding experience to build applications and automate processes entirely through visual tools [28].

- Citizen Developer: A business user or, in this context, a researcher with domain expertise but limited formal coding skills, who is empowered to create and manage automated workflows [28] [29].

Modular Systems in Science

- Modular Bioprocessing Platform: A system composed of discrete, interchangeable units (modules) engineered for integration, rapid deployment, and scalability. Instead of rebuilding for every new product, operators mix and match modules, building on a flexible backbone [30].

- Self-Driving Lab (SDL): A system that combines robotic automation with artificial intelligence (AI) to conduct high-throughput scientific experiments with minimal human intervention [32] [6].
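To make the "mix and match" idea behind modular platforms concrete, the sketch below treats each module as a function with a shared interface and assembles pipelines by listing modules in order. The module names, the dictionary-based sample record, and the QC rule are hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, List

# Every module takes a sample record and returns an updated copy.
Module = Callable[[Dict], Dict]

def synthesis(sample: Dict) -> Dict:
    return {**sample, "synthesized": True}

def downstream_processing(sample: Dict) -> Dict:
    return {**sample, "purified": True}

def quality_control(sample: Dict) -> Dict:
    # Illustrative rule: only purified samples pass QC.
    return {**sample, "qc_pass": bool(sample.get("purified", False))}

def build_pipeline(modules: List[Module]) -> Callable[[Dict], Dict]:
    """Chain interchangeable modules into a single workflow."""
    def run(sample: Dict) -> Dict:
        for module in modules:
            sample = module(sample)
        return sample
    return run

# The same modules are reused in two different configurations.
full_pipeline = build_pipeline([synthesis, downstream_processing, quality_control])
quick_check = build_pipeline([synthesis, quality_control])

print(full_pipeline({"id": "S-001"}))
print(quick_check({"id": "S-002"}))
```

Because every module shares one interface, adding or swapping a step means editing the list passed to `build_pipeline` rather than rewriting the workflow.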

The Workflow Selection Framework

A critical process for AE systems involves selecting the optimal data collection workflow. This can be framed as [1]:

Workflow → Information → Value

The value of the information generated is proportional to its Quality and Actionability. A well-designed workflow generates high-quality, actionable information that adds significant value to the broader scientific objective.
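One simple way to operationalize this framing is to score each candidate workflow by the product of an estimated quality and an estimated actionability, then pick the highest score. The sketch below assumes both quantities can be expressed on a 0 to 1 scale. The product form, the workflow names, and the numbers are illustrative assumptions rather than the scoring scheme from [1].

```python
from dataclasses import dataclass
from typing import List

@dataclass(frozen=True)
class Workflow:
    name: str
    quality: float        # estimated information quality, 0..1 (assumed scale)
    actionability: float  # estimated actionability, 0..1 (assumed scale)

def information_value(workflow: Workflow) -> float:
    """Value taken as proportional to quality times actionability."""
    return workflow.quality * workflow.actionability

def select_workflow(workflows: List[Workflow]) -> Workflow:
    """Return the candidate with the highest estimated information value."""
    return max(workflows, key=information_value)

# Hypothetical candidates for one decision point.
candidates = [
    Workflow("fast_screen", quality=0.6, actionability=0.9),
    Workflow("full_characterization", quality=0.95, actionability=0.5),
    Workflow("targeted_assay", quality=0.8, actionability=0.8),
]

best = select_workflow(candidates)
print(f"Selected workflow: {best.name}")
```

A multiplicative score penalizes workflows that are strong on one dimension but weak on the other, which matches the idea that information must be both high-quality and actionable to carry value.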

Quantitative Comparison of Enabling Technologies

The following tables summarize key low-code platforms and modular system components relevant to autonomous research.

Table 1: Comparison of Low-Code Automation Platforms for Scientific Workflows

| Platform | Primary Use Case | Technical Proficiency Required | Key Strengths | Scalability & Governance |
|---|---|---|---|---|
| Vellum AI [33] | AI-native workflow orchestration | Low (No-code prompting) | Built-in evaluations, versioning, AI-native primitives (retrieval, semantic routing) | Strong versioning, dev/stage/prod environments, RBAC, VPC/on-prem deployment |
| Appian [34] | Enterprise process automation | Medium (Steeper learning curve) | Powerful BPMN-compliant process modeling, deep legacy system integration, high-security standards | Enterprise-grade, ideal for regulatory-heavy industries (finance, healthcare) |
| AppSheet [34] | Data-driven internal apps | Low (Business-user-friendly) | Quick deployment for internal tools, integrates with Google Sheets/Excel, offline functionality | Scalable via Google Workspace SSO & permissions |
| OutSystems [35] | Enterprise-grade application development | Medium (Basic coding knowledge) | High scalability, built-in support for microservices and containers, versatile for various app types | Scalable for all application sizes, strong security and governance features |
| n8n [33] | Technical workflow automation | High (Developer-focused) | Open-source/self-host option, flexible for technical teams | Self-hosted option provides control, suitable for technical teams |

Table 2: Typical Modules in a Modular Bioprocessing or SDL Platform [30]

| Module Type | Core Function | Scale Options | Example Research & Development Use Cases |
|---|---|---|---|
| Upstream Processing | Sterile growth of cells/microbes | Pilot to Large Scale | Vaccine cell culture, fermentation for bio-therapeutics |
| Downstream Processing | Purification and isolation of products | Bench to Production | Protein harvest, enzyme extraction, purification of synthesized compounds |
| Formulation/Fill | Final product preparation | Lab to Commercial | Sterile vial filling for drug candidates, media blends |
| Quality Control (QC) | Real-time analytics and sampling | Any Scale | On-line monitoring (e.g., pH, metabolites), release testing for product quality |
| Synthesis | Chemical reaction execution | Micro-scale to Production | Solid-state synthesis, organic molecule construction, nanoparticle fabrication |

Application Notes: Implementation in Autonomous Research

Enabling Closed-Loop Experimentation

The core of an SDL is the closed-loop cycle, where AI plans an experiment, robotics execute it, data is analyzed, and results inform the next AI-driven decision [6]. Low-code platforms act as the orchestration layer that manages this cycle, while modular systems provide the physical means. For instance, a platform like Vellum can manage a workflow that involves a mobile robot transporting a sample from a synthesis module (e.g., Chemspeed ISynth) to an analysis module (e.g., UPLC-MS or benchtop NMR), then process the analytical data to decide the next experimental step [6].
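The plan, execute, analyze, decide cycle described above can be sketched as a small orchestration function that accepts each stage as a pluggable callable. Everything in the example is a hypothetical stand-in: the naive step-halving planner, the simulated yield curve with its optimum at 60 °C, and the function names do not correspond to the Vellum, Chemspeed, or instrument interfaces.

```python
from typing import Any, Callable, List, Tuple

History = List[Tuple[Any, Any]]

def closed_loop(plan: Callable[[History], Any],
                execute: Callable[[Any], Any],
                analyze: Callable[[Any], Any],
                n_cycles: int) -> History:
    """Run plan -> execute -> analyze repeatedly, feeding results back to the planner."""
    history: History = []
    for _ in range(n_cycles):
        params = plan(history)      # AI or heuristic proposes the next experiment
        raw = execute(params)       # robotics run it and return raw data
        result = analyze(raw)       # analysis reduces raw data to a figure of merit
        history.append((params, result))
    return history

def plan_temperature(history: History) -> float:
    """Naive planner: keep stepping while results improve, else reverse and halve the step."""
    if not history:
        return 20.0
    if len(history) == 1:
        return history[0][0] + 10.0
    (t_prev, r_prev), (t_last, r_last) = history[-2], history[-1]
    step = t_last - t_prev
    return t_last + step if r_last > r_prev else t_last - step / 2

def simulated_reaction(temperature_c: float) -> float:
    """Hypothetical yield signal that peaks at 60 degrees C."""
    return -(temperature_c - 60.0) ** 2

def passthrough_analysis(raw: float) -> float:
    return raw

history = closed_loop(plan_temperature, simulated_reaction, passthrough_analysis, n_cycles=10)
best_params, best_result = max(history, key=lambda item: item[1])
print(f"Best temperature found: {best_params} C")
```

Keeping the stages as separate callables mirrors the division of labor in the text: the orchestration layer owns the loop, while planners, hardware drivers, and analysis routines can be replaced independently.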

Enhancing Robustness with Real-Time Inspection