Optimizing High-Throughput Experimentation: A Strategic Guide to Design of Experiments for Biomedical Research

This article provides a comprehensive framework for implementing Design of Experiments (DOE) in High-Throughput Experimentation (HTE) workflows, specifically tailored for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive framework for implementing Design of Experiments (DOE) in High-Throughput Experimentation (HTE) workflows, specifically tailored for researchers, scientists, and drug development professionals. It bridges the gap between statistical theory and practical application, covering foundational principles, advanced methodological strategies, systematic troubleshooting for process optimization, and rigorous validation protocols. By synthesizing current best practices, the guide empowers scientists to efficiently explore vast experimental spaces, accelerate discovery, enhance data quality, and ensure robust, reproducible results in biomedical and clinical research.

Laying the Groundwork: Core Principles of DOE for Robust HTE

In the demanding fields of drug development and materials science, the pursuit of innovation is often a race against time and resources. Two methodologies have emerged as critical tools for accelerating this process: High-Throughput Experimentation (HTE) and statistical Design of Experiments (DoE). Individually, each offers distinct advantages; HTE delivers unparalleled scale, while DoE provides statistical rigor. However, their true transformative potential is realized when they are synergistically integrated. This whitepaper defines HTE and DoE, elucidates their individual roles, and details how their fusion creates a workflow that is greater than the sum of its parts, enabling researchers to explore complex experimental spaces with unprecedented efficiency and insight. This approach is fundamentally reshaping discovery and optimization pipelines, particularly in the development of novel radiopharmaceuticals and other precision therapies [1].

Core Definitions and Foundational Concepts

High-Throughput Experimentation (HTE)

HTE is an automated, parallelized approach to scientific investigation that allows for the rapid execution and analysis of a vast number of experiments. Its primary value proposition is scale. By leveraging robotics, miniaturization, and automated data analysis, HTE enables the empirical testing of thousands of hypotheses that would be impractical to conduct manually. The core challenges of traditional, scattered HTE workflows include their reliance on multiple disconnected software systems, manual configuration of equipment, and the tedious process of connecting analytical results back to experimental conditions, all of which introduce inefficiencies and potential for error [2].

Design of Experiments (DoE)

DoE is a structured, statistical method for planning, conducting, and analyzing experiments to efficiently determine the relationship between factors affecting a process and its output. Unlike the "one-factor-at-a-time" (OFAT) approach, DoE involves the deliberate variation of multiple input factors simultaneously to identify not only their individual main effects but also their complex interactions. The use of statistical models, such as response surface methodology, allows researchers to build predictive models of the experimental landscape, guiding them directly to optimal conditions with a minimal number of experimental runs [1].
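To make the contrast with OFAT concrete, the following is a minimal sketch of a two-level full factorial analysis. The three factor names and the synthetic response function are hypothetical and chosen only for illustration; they are not taken from any study cited here.

```python
import itertools
import numpy as np

# Coded levels (-1 = low, +1 = high) for three hypothetical factors.
factors = ["temperature", "concentration", "catalyst"]
design = np.array(list(itertools.product([-1, 1], repeat=len(factors))))


def simulated_yield(run):
    """Synthetic response with a built-in temperature x catalyst interaction."""
    t, c, k = run
    return 50 + 5 * t + 2 * c + 3 * k + 4 * t * k


y = np.array([simulated_yield(run) for run in design], dtype=float)


def effect(contrast, response):
    """Effect = mean response at +1 minus mean response at -1."""
    return response[contrast == 1].mean() - response[contrast == -1].mean()


# Main effects use each factor column; the interaction uses the column product.
main_effects = {name: effect(design[:, i], y) for i, name in enumerate(factors)}
interaction_tk = effect(design[:, 0] * design[:, 2], y)

# OFAT comparison: vary temperature only, holding the other factors low.
ofat_temperature = simulated_yield((1, -1, -1)) - simulated_yield((-1, -1, -1))

print(main_effects, interaction_tk, ofat_temperature)
```

In this synthetic example the factorial analysis recovers a temperature effect of 10 and an interaction of 8, whereas the OFAT sweep with the catalyst held low sees a temperature effect of only 2, because the interaction masks it.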

The Synergy: Integrating DoE with HTE Workflows

The integration of DoE and HTE represents a paradigm shift. The brute-force scale of HTE is strategically directed by the statistical intelligence of DoE. Instead of blindly testing a massive grid of conditions, an HTE platform is used to execute a sophisticated, information-rich DoE design. This allows for the efficient exploration of a high-dimensional factor space—including variables like temperature, concentration, solvent composition, and catalyst loading—in a single, coordinated experimental campaign. The outcome is a robust, predictive model that maps the influence of all factors and their interactions on the desired outcome, all achieved in a fraction of the time and with significantly less consumption of valuable starting materials [1].

This synergy directly addresses the "scattered workflow" problem. Integrated software platforms are now designed to support this combined approach, providing a chemically intelligent environment that connects experimental design directly to inventory, automated execution, and analytical data processing. This creates a closed-loop system where data flows seamlessly from design to decision, ensuring data integrity and making it immediately usable for AI/ML modeling [2].

Case Study: Accelerated Development of a Novel Radiopharmaceutical

A compelling demonstration of the HTE-DoE synergy is documented in the development of a novel radiopharmaceutical, [18F]crizotinib, a process that would be prohibitively slow and costly using traditional methods [1].

Experimental Objectives and Challenges

The primary objective was to optimize the Cu-mediated radiofluorination (CMRF) reaction for the synthesis of [18F]crizotinib. The key challenge was the extremely limited availability of the precious crizotinib boronate precursor, which made extensive, non-systematic screening impossible.

Integrated HTE-DoE Methodology

High-Throughput Experimental Protocol

The researchers developed a miniaturized HTE protocol to maximize information gain from minimal material [1]:

- Reaction Setup: Experiments were conducted in parallel in a 24-well or 96-well aluminum heating block. Each reaction was performed on a miniaturized scale (75-100 µL volume), using one-tenth of a typical production scale.

- Azeotropic Drying-Free 18F Processing: 18F- was trapped on a cartridge and eluted as [18F]TBAF in methanol. Aliquots (30-50 µL) were distributed into the reaction plates and evaporated to dryness (100 °C, 3 minutes), allowing any reaction solvent to be added directly.

- Parallel Execution: Reaction mixtures were added to the [18F]TBAF residue in all wells and performed in parallel with stirring (120 °C, 30 minutes).

- High-Throughput Analysis: Reactions were analyzed using radio-thin-layer chromatography (rTLC). The protocol was validated against more resource-intensive methods (PET scanner and gamma counter), showing strong correlation (R²=0.974) and low error, confirming its reliability for high-throughput analysis.

DoE Design and Progression

The statistical approach was executed in two phases [1]:

- Initial Screening DoE: A low-resolution DoE study was first conducted using a more readily available model precursor to screen categorical variables (solvents, ligand additives) and continuous variables (Cu(OTf)₂ loading). This identified imidazo[1,2-b]pyridazine (IMPY) as the optimal ligand and DMI as the optimal solvent.

- Optimization DoE: Informed by the screening results, a high-resolution, 24-run D-optimal design was constructed to model the effects of four continuous factors on the Radiochemical Conversion (RCC):

- Cu(OTf)₂ loading (1-5 µmol)

- Crizotinib precursor loading (0.25-2 µmol)

- IMPY loading (1-40 µmol)

- Percentage of n-BuOH co-solvent (0-25%)

Table 1: Key Factors and Ranges for the D-Optimal Optimization DoE

| Factor | Low Level | High Level | Units |

|---|---|---|---|

| Cu(OTf)₂ Loading | 1 | 5 | µmol |

| Precursor Loading | 0.25 | 2 | µmol |

| IMPY Ligand Loading | 1 | 40 | µmol |

| n-BuOH Co-solvent | 0 | 25 | % |
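The source does not state which model form or software produced the 24-run design, so the following is only a sketch of how a D-optimal design over these four ranges could be generated. It assumes a full quadratic model (15 terms), a three-level candidate grid in coded units, and a simple point-exchange search; all three choices are illustrative assumptions.

```python
import itertools
import numpy as np

# Factor ranges from the table above: (low, high) in the stated units.
RANGES = {
    "Cu(OTf)2 (umol)": (1.0, 5.0),
    "Precursor (umol)": (0.25, 2.0),
    "IMPY (umol)": (1.0, 40.0),
    "n-BuOH (%)": (0.0, 25.0),
}


def model_matrix(points):
    """Assumed full quadratic model: intercept, linear, two-factor, squared terms."""
    n, k = points.shape
    cols = [np.ones(n)]
    cols += [points[:, i] for i in range(k)]
    cols += [points[:, i] * points[:, j]
             for i, j in itertools.combinations(range(k), 2)]
    cols += [points[:, i] ** 2 for i in range(k)]
    return np.column_stack(cols)


def log_det(points):
    """log|X'X|, with a tiny ridge so singular designs still get a finite score."""
    x = model_matrix(points)
    info = x.T @ x + 1e-6 * np.eye(x.shape[1])
    return np.linalg.slogdet(info)[1]


def d_optimal(candidates, n_runs, seed=0, max_passes=20):
    """Point exchange: swap one run at a time whenever log|X'X| improves."""
    rng = np.random.default_rng(seed)
    idx = rng.choice(len(candidates), size=n_runs, replace=False)
    best = log_det(candidates[idx])
    for _ in range(max_passes):
        improved = False
        for row in range(n_runs):
            for cand in range(len(candidates)):
                trial = idx.copy()
                trial[row] = cand
                score = log_det(candidates[trial])
                if score > best + 1e-9:
                    idx, best, improved = trial, score, True
        if not improved:
            break
    return candidates[idx], best


# Candidate set: every combination of low / centre / high in coded units.
coded_candidates = np.array(list(itertools.product([-1.0, 0.0, 1.0], repeat=4)))
coded_design, score = d_optimal(coded_candidates, n_runs=24)

# Map coded levels (-1..+1) back to the real factor ranges.
lows = np.array([lo for lo, _ in RANGES.values()])
highs = np.array([hi for _, hi in RANGES.values()])
actual_design = lows + (coded_design + 1.0) / 2.0 * (highs - lows)
print(np.round(actual_design, 2))
```

With 15 model terms, 24 runs leave 9 degrees of freedom for estimating error, which is one plausible reason a design of this size suits a four-factor quadratic model. Dedicated DoE software typically uses more refined exchange algorithms than this sketch.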

Results and Quantitative Outcomes

The entire 24-run DoE optimization study was completed in a single 3-hour experimental session, consuming only 27.8 µmol of the limited precursor [1]. The response surface model generated from the data successfully predicted optimal conditions:

- Predicted RCC: 55%

- Experimental Validation RCC: 57% (n=1)

Furthermore, the model identified a sub-optimal condition set that used less than half the precursor while still delivering an acceptable RCC of 40% (predicted 36%), providing a valuable alternative for resource-constrained situations.
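As an illustration of how a response surface model yields a predicted optimum, the sketch below fits a two-factor quadratic model by least squares and solves for its stationary point. The data are synthetic and noise-free, the coefficients are arbitrary, and nothing here reproduces the study's actual model or results.

```python
import itertools
import numpy as np


def quadratic_terms(x1, x2):
    """Columns: intercept, x1, x2, x1*x2, x1^2, x2^2."""
    return np.column_stack([np.ones_like(x1), x1, x2, x1 * x2, x1**2, x2**2])


# Synthetic, noise-free responses on a 3x3 grid in coded units (-1, 0, +1).
grid = np.array(list(itertools.product([-1.0, 0.0, 1.0], repeat=2)))
x1, x2 = grid[:, 0], grid[:, 1]
true_coef = np.array([50.0, 4.0, -3.0, 1.5, -5.0, -2.0])  # arbitrary values
y = quadratic_terms(x1, x2) @ true_coef

# Least-squares fit of the response surface.
coef, *_ = np.linalg.lstsq(quadratic_terms(x1, x2), y, rcond=None)
b0, b1, b2, b12, b11, b22 = coef

# Stationary point: the gradient b + 2 B x equals zero.
b = np.array([b1, b2])
B = np.array([[b11, b12 / 2], [b12 / 2, b22]])
x_star = -0.5 * np.linalg.solve(B, b)
y_star = float((quadratic_terms(x_star[:1], x_star[1:]) @ coef)[0])

# The stationary point is a maximum only if B is negative definite.
is_maximum = bool(np.all(np.linalg.eigvalsh(B) < 0))
print(x_star, y_star, is_maximum)
```

In practice the predicted optimum should be confirmed by a validation run, as was done in the case study, and a stationary point falling outside the coded range signals that the optimum lies on the boundary of the explored region.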

Table 2: Summary of Experimental Outcomes from the HTE-DoE Campaign

| Metric | Outcome |

|---|---|

| Total DoE Runs | 24 |

| Total Experiment Time | 3 hours |

| Total Precursor Consumed | 27.8 µmol |

| Optimal Condition RCC (Predicted) | 55% |

| Optimal Condition RCC (Validated) | 57% |

| Alternative Condition RCC (Predicted) | 36% |

| Alternative Condition RCC (Validated) | 40% |

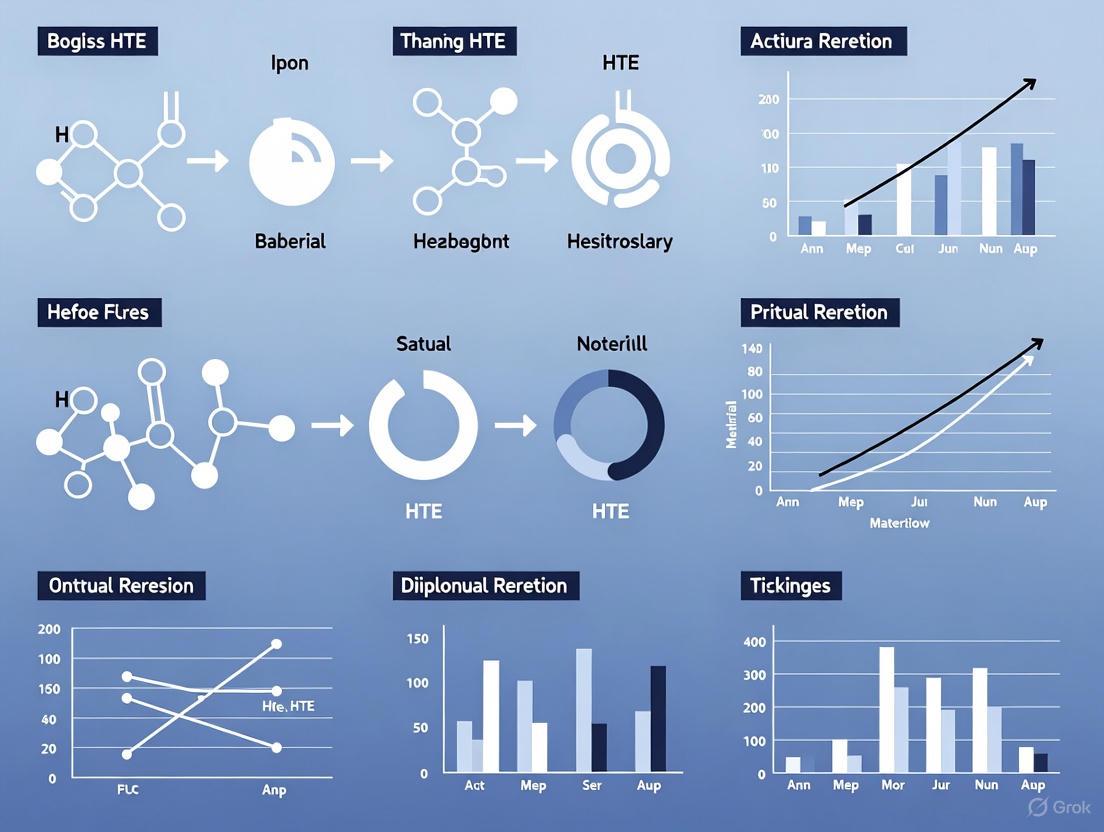

Visualizing the Integrated Workflow

The following diagram illustrates the seamless, cyclic workflow of an integrated HTE-DoE campaign, from initial design through to decision and future prediction.

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful execution of an integrated HTE-DoE campaign, as in the radiochemistry case study, relies on a specific set of reagents and materials [1].

Table 3: Key Research Reagent Solutions for HTE-DoE in Radiochemistry

| Reagent / Material | Function in the Workflow |

|---|---|

| Crizotinib Boronate Precursor | The scarce, valuable starting material for the radiofluorination reaction; its conservation was a primary driver for the HTE-DoE approach. |

| Cu(OTf)₂ | The source of copper catalyst for the Cu-mediated radiofluorination (CMRF) reaction. |

| Imidazo[1,2-b]pyridazine (IMPY) | The optimal ligand identified by the initial DoE screen, crucial for stabilizing the copper catalyst and facilitating the transformation. |

| Solvents (DMI, n-BuOH) | The reaction medium. DMI was identified as the optimal primary solvent, and n-BuOH was a co-solvent factor in the optimization DoE. |

| TBAF in Methanol | The elution solution used to recover 18F- from the QMA cartridge, forming [18F]TBAF for distribution into reaction wells. |

| QMA Cartridge (KOTf conditioned) | Used to trap and purify the 18F- isotope before its distribution into the HTE plate. |

| Glass Micro Vials / 96-Well Plate | The miniaturized reaction vessel, enabling parallel experimentation and minimal reagent consumption. |

| Aluminum Heating Block | Provides uniform heating to all wells in the plate during the parallel reaction step. |

The integration of High-Throughput Experimentation and statistical Design of Experiments represents a cornerstone of modern scientific methodology in drug development. This synergy is not merely a technical improvement but a fundamental shift in research strategy. It replaces empirical, resource-intensive guesswork with a directed, intelligent, and predictive exploration of chemical space. As demonstrated in the development of [18F]crizotinib, this approach dramatically accelerates optimization cycles, minimizes the consumption of precious materials, and generates high-quality, structured data that is ideal for building robust AI/ML models. For researchers and drug development professionals, mastering the combined HTE-DoE workflow is no longer optional but essential for achieving rapid, reliable, and impactful innovation.

In high-throughput experimental (HTE) workflows, the fundamental challenge of distinguishing correlation from causation is amplified by the scale and complexity of the data. Research strategies primarily fall into two methodological paradigms: observational studies, where researchers observe the effect of a risk factor, diagnostic test, or treatment without trying to change who is or isn't exposed to it, and experimental studies, where researchers introduce an intervention and systematically study its effects [3]. The hierarchy of evidence places systematic reviews and randomized controlled trials (RCTs) at the pinnacle of reliability, followed by cohort studies and case-control studies [3]. Within the context of HTE systems—which may encompass genomic screens, proteomic profiling, and large-scale biochemical assays—the choice between these approaches carries significant implications for resource allocation, biological resolution, and the validity of causal conclusions. This guide examines the principles, applications, and methodological integration of these approaches to enable robust causal inference in high-throughput biology.

Fundamental Concepts: Defining Study Paradigms

Core Characteristics and Definitions

Observational studies are defined by the passive role of the investigator, who collects data without manipulating the system under study. These approaches are particularly valuable when exploring the initial stages of hypothesis generation or when practical or ethical constraints prevent experimental manipulation [3] [4]. For instance, it would be unethical to design a randomized controlled trial deliberately exposing workers to a potentially harmful situation [3].

In contrast, controlled experiments actively manipulate the system to isolate causal relationships. In these studies, researchers introduce an intervention and study the effects, typically using randomization to assign subjects to different groups [3]. The RCT represents the classic experimental design, where eligible people or biological units are randomly assigned to one of two or more groups, with one group receiving the intervention and another serving as a control that receives nothing or an inactive placebo [3].

Key Differences Structured for Comparison

Table 1: Fundamental Differences Between Observational and Experimental Studies

| Characteristic | Observational Studies | Controlled Experiments |

|---|---|---|

| Role of Investigator | Passive observer of naturally occurring variations | Active interventionist who manipulates variables |

| Assignment to Groups | Determined by existing characteristics, exposures, or preferences | Random assignment to treatment and control groups |

| Control of Confounding | Limited; relies on statistical adjustment post-hoc | High; achieved primarily through randomization |

| Establishing Causality | Limited capacity, prone to confounding biases | Strong capacity, particularly when randomized and blinded |

| Primary Utility | Hypothesis generation, studying long-term/ethical exposures | Hypothesis testing, establishing efficacy |

| Real-World Generalizability | Often high (reflects "real-world" conditions) | Potentially limited by strict inclusion criteria |

| Typical Settings | Epidemiology, comparative effectiveness research, toxicology | Clinical trials, preclinical drug development, mechanistic biology |

Methodological Approaches for High-Throughput Systems

Causal Biological Networks for High-Throughput Data Interpretation

High-throughput measurement technologies produce data sets with potential to elucidate biological impact of disease, drug treatment, and environmental agents, but present challenges in analysis and interpretation [5]. A powerful approach structures prior biological knowledge of cause-and-effect relationships into network models describing specific biological processes. This enables quantitative assessment of network perturbation in response to a given stimulus [5].

The Network Perturbation Amplitude (NPA) scoring method leverages high-throughput measurements and literature-derived knowledge in the form of network models to characterize activity change for a broad collection of biological processes at high resolution [5]. The methodology uses structures called "HYPs" (derived from "hypothesis"), which are specific types of network models comprised of causal relationships connecting a particular biological activity to measurable downstream entities that it regulates [5].

Table 2: NPA Scoring Methods for High-Throughput Data

| Method | Calculation Approach | Primary Advantage | Optimal Application Context |

|---|---|---|---|

| Strength | Mean differential expression of downstream genes, adjusted for causal connection sign | Simplicity and interpretability | Initial screening of pathway activity |

| Geometric Perturbation Index (GPI) | Strength method weighted by statistical significance of differential expression | Balances magnitude and reliability of changes | Data with variable measurement precision |

| Measured Abundance Signal Score (MASS) | Change in absolute quantities supporting upstream increase, divided by total absolute quantity | Normalization for technical variation | Cross-platform or cross-experiment comparisons |

| Expected Perturbation Index (EPI) | "Smoothed" GPI averaged over significance thresholds | Robustness to statistical threshold selection | Noisy data or small sample sizes |
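As a minimal sketch of the simplest entry in this table, the Strength score can be read as the mean of the downstream differential expression values after each is multiplied by the sign of its causal connection. The function name, the use of log2 fold changes, and the five-gene example are assumptions for illustration; the weighted variants (GPI, MASS, EPI) are not reproduced here because their exact formulas are not given above.

```python
import numpy as np


def strength_score(log2_fold_changes, causal_signs):
    """Mean differential expression, sign-adjusted by the expected direction.

    causal_signs holds +1 where the upstream activity is expected to increase
    the gene and -1 where it is expected to decrease it.
    """
    fc = np.asarray(log2_fold_changes, dtype=float)
    signs = np.asarray(causal_signs, dtype=float)
    if fc.shape != signs.shape:
        raise ValueError("one causal sign is required per downstream gene")
    return float(np.mean(signs * fc))


# Hypothetical HYP with five downstream genes (values are made up).
log2_fc = [1.2, 0.8, -0.5, -1.0, 0.3]
signs = [+1, +1, -1, -1, +1]
score = strength_score(log2_fc, signs)
print(round(score, 2))
```

A positive score indicates that the measured changes are, on average, consistent with increased upstream activity; a score near zero indicates no consistent direction.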

Experimental Design Principles for High-Throughput Workflows

Good scientific practice for HTE requires careful consideration of resource allocation and variability. Experimental design rationalizes the tradeoffs imposed by finite resources, limited measurement precision, and practical sample size constraints [6]. Basic principles include:

- Balancing and avoidance of confounding: Ensuring comparison groups are comparable across known sources of variation

- Blocking: Grouping experimental units to account for nuisance factors (e.g., different labs, operators, technology batches)

- Randomization: Assigning experimental units randomly to treatment groups to minimize unconscious bias

- Replication: Distinguishing between technical replicates (multiple measurements of same biological sample) and biological replicates (multiple independent biological samples) [6]
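The blocking, randomization, and replication principles above can be combined in a randomized complete block layout. The sketch below treats each plate as a block and shuffles the run order independently within it; the treatment and plate names are hypothetical.

```python
import random
from collections import Counter


def randomized_block_layout(treatments, blocks, replicates=1, seed=0):
    """Place every treatment in every block, in an independently shuffled order."""
    rng = random.Random(seed)  # fixed seed so the layout can be regenerated
    layout = {}
    for block in blocks:
        runs = list(treatments) * replicates
        rng.shuffle(runs)
        layout[block] = runs
    return layout


layout = randomized_block_layout(
    treatments=["vehicle", "dose_low", "dose_mid", "dose_high"],
    blocks=["plate_1", "plate_2", "plate_3"],  # e.g. plates, days, or operators
    replicates=2,
)
for block, runs in layout.items():
    print(block, runs, dict(Counter(runs)))
```

Because each plate contains the same number of wells per treatment, plate-to-plate differences cannot be confounded with treatment effects. Note that wells repeated within one plate from the same sample are technical replicates; biological replication requires independent samples.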

The efficiency of HTE workflows can be dramatically improved through strategic design. For instance, manual Design of Experiments (DOE) approaches can take weeks or months, but automated high-throughput DOE implementations can achieve the same goals with accuracy and confidence in a fraction of the time [7].

Diagram 1: Study design selection workflow for HTE

Comparative Analysis: Strengths, Limitations, and Applications

When to Prefer Observational Approaches

Observational studies provide distinct advantages in several research scenarios relevant to high-throughput systems:

- Studying rare conditions or outcomes: For rare health problems, a case-control study (which begins with existing cases) may be the most efficient way to identify potential causes [3].

- Investigating long-term outcomes: When little is known about how a problem develops over time, a cohort study may be the best design [3]. RCTs would not be the right approach for outcomes that take a long time to appear [3].

- Ethical constraints: When random assignment to a potentially harmful exposure would be unethical, observational designs provide the only feasible approach [3] [4].

- Real-world effectiveness: Observational studies may better reflect the 'real clinical world' than RCTs performed in homogeneous subgroups of patients under ideal conditions [4].

A prominent example comes from transfusion medicine, where numerous observational studies have compared liberal versus restrictive transfusion strategies across diverse medical and surgical populations, sometimes yielding conflicting results that highlight the complexity of these clinical decisions [4].

When Controlled Experiments Are Indispensable

Randomized controlled trials remain the "gold standard" for producing reliable evidence about intervention efficacy because they minimize confounding through random assignment [3] [4]. Their strengths include:

- Causal inference: The prospective study protocol with strict inclusion/exclusion criteria, well-defined intervention, and predefined endpoints enables strong causal conclusions [4].

- Bias minimization: Randomization, blinding, and placebo controls reduce various forms of bias that plague observational research.

- Precise effect estimation: The controlled environment allows more precise quantification of treatment effects under ideal conditions.

However, RCTs have recognized limitations: they are time-consuming, expensive, often restricted by how many participants researchers can manage, and may not reflect real-world conditions due to strict inclusion criteria [3] [4].

Empirical Comparisons of Results Across Methodologies

Contrary to long-standing assumptions, empirical evidence suggests that well-conducted observational studies and RCTs often produce similar estimates of treatment effects. One comprehensive comparison identified 136 reports about 19 diverse treatments and found that "in most cases, the estimates of the treatment effects from observational studies and randomized, controlled trials were similar" [8]. In only 2 of the 19 analyses did the combined magnitude of effect in observational studies lie outside the 95% confidence interval for the combined magnitude in the randomized trials [8].

Integration and Advanced Applications in High-Throughput Workflows

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for High-Throughput Experimentation

| Tool/Platform | Primary Function | Application in HTE Workflows |

|---|---|---|

| SPT Labtech Dragonfly | Non-contact liquid dispensing using positive displacement | Enables use of 96, 384, and 1,536-well plates for simple method transfer to high-throughput workflows [7] |

| Synthace Platform | DOE implementation and experimental planning | Provides provenance of liquid contents in multi-well plates and automates experimental design optimization [7] |

| Selventa Knowledgebase | Literature-curated causal biological relationships | Provides structured "cause and effect" relationships for constructing biological network models [5] |

| Reverse-Causal Reasoning (RCR) | Deductive algorithm for upstream activity inference | Uses measurable downstream entities to deduce activity of upstream biological controllers from high-throughput data [5] |

| Statistical Platforms (JMP, etc.) | Statistical modeling and experimental design | Facilitates implementation of sophisticated DOE approaches compatible with high-throughput hardware [7] |

A Hybrid Approach: Combining Strengths for Causal Inference

Rather than viewing observational and experimental approaches as mutually exclusive, modern high-throughput research benefits from their integration:

- Observational studies for target discovery: Large-scale observational data (e.g., transcriptomic screens of patient cohorts) can identify potential therapeutic targets or biomarkers.

- Controlled experiments for mechanistic validation: High-throughput functional screens (e.g., CRISPR-based gene perturbation) can experimentally validate candidates from observational discovery.

- Translational bridging: Basic research findings from controlled laboratory experiments can be validated in human populations through observational studies long before experimental therapeutics could be tested in RCTs [4].

Diagram 2: Causal network model for NPA scoring

Statistical Framework for Network Perturbation Assessment

The NPA scoring framework incorporates companion statistics to qualify the significance and specificity of results:

- Uncertainty: A confidence interval for a particular NPA score, providing a measure of precision and reliability.

- Specificity: Tests whether an NPA score is specific to the downstream genes represented by a particular HYP, and not due to a general trend in the data [5].

This approach was successfully validated in transcriptomic data sets of normal human bronchial epithelial cells treated with TNFα and HCT116 colon cancer cells treated with a CDK inhibitor, demonstrating its ability to quantify perturbation amplitude for specific network models when compared against independent measures of pathway activity [5].

Establishing causality in high-throughput systems requires thoughtful selection and integration of observational and experimental approaches. While controlled experiments, particularly RCTs, provide the strongest foundation for causal inference, observational studies offer complementary strengths for specific research contexts. The growing sophistication of statistical methods, including multivariable logistic regression and propensity score matching, has enhanced the value of observational studies for assessing safety and effectiveness of different therapeutic strategies [4].

In high-throughput biology, causal network models and perturbation scoring methods provide a quantitative framework for interpreting large-scale data in biologically meaningful contexts. The most robust research programs will leverage both observational and experimental paradigms, recognizing that "all types of evidence rely primarily on the rigour with which individual studies were conducted (regardless of the methodological approach) and the care with which they are interpreted" [4]. By understanding the characteristic strengths, limitations, and appropriate applications of each approach, researchers can design more efficient and informative high-throughput experiments that yield reliable insights into causal biological mechanisms.

In the pursuit of personalized medicine, the accurate estimation of Heterogeneous Treatment Effects (HTE) is paramount. (From this point on, HTE denotes heterogeneous treatment effects rather than high-throughput experimentation.) HTE analysis seeks to understand how treatment effects vary across subpopulations, enabling more targeted and effective therapeutic interventions. However, a fundamental challenge in this process is the decomposition of overall variability into two distinct components: bias and noise. Bias represents systematic errors that consistently skew results in one direction, while noise constitutes random, unsystematic variability that obscures true treatment signals [9]. The interplay between these elements, often termed the bias-variance tradeoff in machine learning, directly impacts the reliability, interpretability, and ultimate utility of HTE estimates in drug development workflows [9].

Understanding this tradeoff is not merely a statistical exercise; it is a critical prerequisite for robust experimental design in clinical research. High bias can lead to overly simplistic models that overlook crucial patient subgroups, potentially missing valuable therapeutic opportunities. Conversely, high variance can result in models that are overly sensitive to random fluctuations in the data, identifying spurious subgroups that do not generalize to broader populations [9]. This paper provides a comprehensive technical framework for quantifying, managing, and partitioning these sources of variability, with methodologies tailored specifically for pharmaceutical researchers and clinical scientists operating within complex experimental paradigms.

Theoretical Foundations: Deconstructing Bias and Noise

Formal Definitions and Mathematical Framework

In HTE analysis, we consider a dataset comprising patient covariates ( X ), a treatment assignment ( T ), and an outcome ( Y ). The goal is to estimate the conditional average treatment effect ( \tau(x) = E[Y(1) - Y(0) | X = x] ), where ( Y(1) ) and ( Y(0) ) represent potential outcomes under treatment and control, respectively.
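One simple way to estimate ( \tau(x) ) in a randomized setting is to fit separate outcome models for the treated and control arms and take their difference (often called a T-learner). The sketch below uses synthetic data with a single covariate and linear outcome models; the data-generating process and every parameter value are assumptions for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 4000
x = rng.uniform(-1, 1, size=n)        # single patient covariate X
t = rng.integers(0, 2, size=n)        # randomized treatment assignment T
true_tau = 1.0 + 2.0 * x              # synthetic effect that grows with x
y = 0.5 * x + t * true_tau + rng.normal(0, 0.5, size=n)  # outcome Y


def fit_line(x_arm, y_arm):
    """Least-squares fit of y = a + b * x within one arm."""
    design = np.column_stack([np.ones_like(x_arm), x_arm])
    coef, *_ = np.linalg.lstsq(design, y_arm, rcond=None)
    return coef


coef_treated = fit_line(x[t == 1], y[t == 1])   # models E[Y | X, T = 1]
coef_control = fit_line(x[t == 0], y[t == 0])   # models E[Y | X, T = 0]


def cate(x_new):
    """Estimated tau(x): difference between the two fitted outcome models."""
    x_new = np.asarray(x_new, dtype=float)
    mu1 = coef_treated[0] + coef_treated[1] * x_new
    mu0 = coef_control[0] + coef_control[1] * x_new
    return mu1 - mu0


print(cate([-0.5, 0.0, 0.5]))  # true values in this simulation: 0.0, 1.0, 2.0
```

Randomization is what justifies reading the difference between arm-specific models as a causal effect here; with observational data the same calculation would also require adjustment for confounding.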

The expected prediction error at any point ( x ) can be decomposed as follows:

$$ E[(y - \hat{f}(x))^2] = \text{Bias}[\hat{f}(x)]^2 + \text{Var}[\hat{f}(x)] + \sigma^2 $$

Where:

- Bias quantifies the difference between the expected prediction of our model and the true underlying function: ( \text{Bias}[\hat{f}(x)] = E[\hat{f}(x)] - f(x) ) [9].

- Variance measures how much the model's predictions fluctuate across different training datasets: ( \text{Var}[\hat{f}(x)] = E[\hat{f}(x)^2] - E[\hat{f}(x)]^2 ) [9].

- ( \sigma^2 ) represents the irreducible error or noise inherent in the data generation process.

This decomposition reveals a critical insight: as model complexity increases, bias typically decreases while variance increases, and vice versa [9]. The optimal model complexity achieves the best balance between these competing error sources.
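The tradeoff can be seen directly in a small Monte Carlo simulation where the true function is known. The sketch below refits polynomials of increasing degree to repeated synthetic training sets and averages squared bias and variance over a test grid; the sine-shaped truth, the noise level, and the chosen degrees are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
sigma = 0.3                              # noise standard deviation (assumed)
x_train = np.linspace(0, 1, 25)
x_test = np.linspace(0.05, 0.95, 50)


def true_f(x):
    """Known underlying function f(x) for the simulation."""
    return np.sin(2 * np.pi * x)


def decompose(degree, n_sims=300):
    """Average squared bias and variance of a degree-d polynomial fit."""
    preds = np.empty((n_sims, x_test.size))
    for s in range(n_sims):
        # A fresh training set with the same x values and new noise.
        y_train = true_f(x_train) + rng.normal(0, sigma, x_train.size)
        coefs = np.polyfit(x_train, y_train, degree)
        preds[s] = np.polyval(coefs, x_test)
    bias_sq = np.mean((preds.mean(axis=0) - true_f(x_test)) ** 2)
    variance = np.mean(preds.var(axis=0))
    return bias_sq, variance


results = {degree: decompose(degree) for degree in (1, 3, 7)}
for degree, (bias_sq, variance) in results.items():
    print(f"degree={degree}  bias^2={bias_sq:.4f}  variance={variance:.4f}")
```

The straight-line model should show the largest squared bias and the smallest variance, with the ordering reversed for the degree-7 polynomial.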

Implications for HTE Estimation

In clinical trial settings, bias often manifests as systematic underestimation or overestimation of treatment effects for specific patient subgroups. This can arise from:

- Confounding bias: When treatment assignment correlates with patient prognosis factors.

- Selection bias: When participants in subgroups differ systematically from the target population.

- Measurement bias: When outcome assessments are applied inconsistently across sites or subgroups.

Noise in HTE contexts typically originates from:

- Biological variability: Intrinsic patient-to-patient differences in treatment response.

- Measurement error: Imperfect assays, diagnostic tools, or clinical assessments.

- Data quality issues: Missing values, transcription errors, or protocol deviations.

The following table summarizes key characteristics of these error sources in HTE data:

Table 1: Characteristics of Bias and Noise in HTE Analysis

| Characteristic | Bias (Systematic Error) | Noise (Random Variability) |

|---|---|---|

| Directionality | Consistent directional deviation from true effect | Non-directional fluctuations around true effect |

| Impact on HTE | Missed subgroup effects or spurious subgroup identification | Reduced precision in treatment effect estimates |

| Reducibility | Potentially correctable through improved study design | Can be reduced but not eliminated through larger samples |

| Sources in Clinical Trials | Confounding, selection bias, measurement bias | Biological variability, measurement error, data quality issues |

| Detection Methods | Sensitivity analyses, negative controls, balance diagnostics | Resampling methods, reliability assessments, variance decomposition |

Quantitative Frameworks for Variability Partitioning

Experimental Metrics and Diagnostic Tools

Quantifying the relative contributions of bias and noise requires specialized metrics tailored to HTE contexts. The following diagnostic measures enable researchers to partition variability and identify dominant error sources:

Table 2: Quantitative Metrics for Partitioning Variability in HTE Data

| Metric Category | Specific Metric | Calculation | Interpretation in HTE Context |

|---|---|---|---|

| Bias Diagnostics | Standardized Mean Difference | ( \frac{\bar{X}_t - \bar{X}_c}{\sqrt{(s_t^2 + s_c^2)/2}} ) | Values >0.1 indicate meaningful covariate imbalance between treatment subgroups |

| Bias Diagnostics | Calibration Slope | Slope from regression of observed vs. predicted outcomes | Slope <1 suggests overfitting; slope >1 suggests underfitting of HTE model |

| Variance Diagnostics | Predictive R² | ( 1 - \frac{\sum(y_i - \hat{y}_i)^2}{\sum(y_i - \bar{y})^2} ) | Measures proportion of outcome variance explained by the model |

| Variance Diagnostics | ICC (Subgroup Consistency) | ( \frac{\sigma^2_{\text{between}}}{\sigma^2_{\text{between}} + \sigma^2_{\text{within}}} ) | Values near 1 indicate high consistency of treatment effects within subgroups |

| Bias-Variance Decomposition | MSE Decomposition | ( \frac{1}{n}\sum(\hat{y}_i - y_i)^2 = \text{Bias}^2 + \text{Variance} + \sigma^2 ) | Direct quantification of error components |

| Bias-Variance Decomposition | Cross-validation Error | Average prediction error across k folds | Estimates model's expected predictive performance on new data |
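Three of the metrics in this table are short enough to sketch directly. The function names and the small example arrays below are hypothetical; the formulas follow the table, with sample variances computed using n - 1 in the denominator.

```python
import numpy as np


def standardized_mean_difference(x_treated, x_control):
    """(mean_t - mean_c) / sqrt((s_t^2 + s_c^2) / 2) for one covariate."""
    x_t = np.asarray(x_treated, dtype=float)
    x_c = np.asarray(x_control, dtype=float)
    pooled_sd = np.sqrt((x_t.var(ddof=1) + x_c.var(ddof=1)) / 2)
    return (x_t.mean() - x_c.mean()) / pooled_sd


def predictive_r2(y, y_hat):
    """1 - SSE / SST, evaluated on predictions for the given outcomes."""
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    return 1 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)


def calibration_slope(y, y_hat):
    """Slope from regressing observed outcomes on predicted outcomes."""
    slope, _intercept = np.polyfit(np.asarray(y_hat, dtype=float),
                                   np.asarray(y, dtype=float), 1)
    return slope


# Made-up example values, for illustration only.
smd = standardized_mean_difference([52, 60, 58, 65], [50, 61, 55, 62])
r2 = predictive_r2([1, 2, 3, 4], [1.1, 1.9, 3.2, 3.8])
slope = calibration_slope([1, 2, 3, 4], [0, 2, 4, 6])
print(round(smd, 3), round(r2, 3), round(slope, 3))
```

In the last example the predictions are twice as spread out as the observations, so the slope is below 1, which matches the table's reading of predictions that are too extreme (overfitting). Predictive R² is meaningful as a diagnostic only when the predictions come from data not used for fitting.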

Methodological Protocols for Variability Assessment

Protocol 1: Bootstrap-Based Bias-Variance Decomposition

This protocol enables empirical estimation of bias and variance components using resampling techniques:

- Data Preparation: From the original dataset D of size n, generate B bootstrap samples ( D^{(b)} ) by drawing n observations with replacement.

- Model Training: For each bootstrap sample ( D^{(b)} ), train the HTE estimation model ( \hat{f}^{(b)}(x) ).

- Prediction Generation: For each patient i, generate predictions ( \hat{f}^{(b)}(x_i) ) across all bootstrap samples.

- Component Calculation:

- Bootstrap aggregate prediction: ( \hat{f}_{\text{bag}}(x_i) = \frac{1}{B}\sum_{b=1}^{B} \hat{f}^{(b)}(x_i) )

- Bias² estimate: ( [\hat{f}_{\text{bag}}(x_i) - y_i]^2 )

- Variance estimate: ( \frac{1}{B}\sum_{b=1}^{B} [\hat{f}^{(b)}(x_i) - \hat{f}_{\text{bag}}(x_i)]^2 )

- Aggregation: Average bias and variance estimates across all patients to obtain global metrics.
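The steps of Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: a one-covariate least-squares line stands in for the HTE estimation model, and `fit_line`, `bootstrap_bias_variance`, and the simulated data are hypothetical names and values. One caveat worth keeping in mind is that the bias² term compares the bagged prediction with the observed outcome, so it also absorbs irreducible noise in the observations.

```python
import random
from statistics import mean

def fit_line(xs, ys):
    """Least-squares fit of y = a + b*x; returns a prediction function."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:  # degenerate resample (all x equal): predict the mean
        return lambda x: y_bar
    b = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    a = y_bar - b * x_bar
    return lambda x: a + b * x

def bootstrap_bias_variance(xs, ys, fit, n_boot=200, seed=0):
    """Return (mean bias^2, mean variance) following Protocol 1."""
    rng = random.Random(seed)
    n = len(xs)
    preds = []  # preds[b][i]: prediction for unit i from bootstrap model b
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # sample with replacement
        model = fit([xs[j] for j in idx], [ys[j] for j in idx])
        preds.append([model(x) for x in xs])
    bias_sq, var = [], []
    for i in range(n):
        column = [p[i] for p in preds]
        f_bag = mean(column)                      # bootstrap aggregate prediction
        bias_sq.append((f_bag - ys[i]) ** 2)      # bias^2 estimate for unit i
        var.append(mean((v - f_bag) ** 2 for v in column))  # variance estimate
    return mean(bias_sq), mean(var)

# Simulated example: a curved signal fitted with a straight line.
data_rng = random.Random(1)
xs = [i / 10 for i in range(30)]
ys = [x ** 2 + data_rng.gauss(0, 0.2) for x in xs]
b2, v = bootstrap_bias_variance(xs, ys, fit_line)
print(f"bias^2 = {b2:.3f}, variance = {v:.3f}")
```

Swapping `fit_line` for a more flexible learner would be expected to shift error from the bias² term toward the variance term, which is the tradeoff the protocol is meant to expose.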

Protocol 2: Cross-Validation for Hyperparameter Tuning

This protocol systematically evaluates model complexity to optimize the bias-variance tradeoff:

- Parameter Grid: Define a grid of hyperparameter values that control model complexity (e.g., regularization strength, tree depth, number of neighbors).

- Data Splitting: Partition data into K folds of approximately equal size.

- Iterative Validation: For each hyperparameter combination:

  - For k = 1 to K:

    - Train model on all folds except fold k

    - Calculate predictions for patients in fold k

  - Aggregate predictions across all folds

  - Compute overall bias² and variance using decomposition formula

- Optimal Selection: Identify hyperparameter values that minimize the sum of bias² and variance.

- Validation: Refit model with optimal hyperparameters on full training set and evaluate on held-out test set.
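The loop structure of Protocol 2 might look like the following sketch. It uses a one-dimensional k-nearest-neighbor regressor as a stand-in model, with the number of neighbors as the complexity hyperparameter, and it scores each setting by cross-validated mean squared error as a practical proxy for the sum of bias² and variance. All function names and the simulated data are hypothetical.

```python
import math
import random
from statistics import mean

def knn_predict(train_x, train_y, x, k):
    """Average the outcomes of the k training points closest to x."""
    nearest = sorted(range(len(train_x)), key=lambda j: abs(train_x[j] - x))[:k]
    return mean(train_y[j] for j in nearest)

def kfold_indices(n, n_folds, seed=0):
    """Shuffle indices 0..n-1 and deal them into n_folds roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::n_folds] for f in range(n_folds)]

def cv_mse(xs, ys, k, n_folds=5, seed=0):
    """Cross-validated mean squared error for a given number of neighbors."""
    sq_errors = []
    for held_out in kfold_indices(len(xs), n_folds, seed):
        held = set(held_out)
        train = [j for j in range(len(xs)) if j not in held]
        tr_x = [xs[j] for j in train]
        tr_y = [ys[j] for j in train]
        for i in held_out:  # predict only for units in the held-out fold
            sq_errors.append((knn_predict(tr_x, tr_y, xs[i], k) - ys[i]) ** 2)
    return mean(sq_errors)

def select_k(xs, ys, grid, n_folds=5, seed=0):
    """Return the grid value with the lowest CV error, plus all scores."""
    scores = {k: cv_mse(xs, ys, k, n_folds, seed) for k in grid}
    return min(scores, key=scores.get), scores

# Simulated example data.
data_rng = random.Random(7)
xs = [i / 10 for i in range(40)]
ys = [math.sin(x) + data_rng.gauss(0, 0.3) for x in xs]
best_k, scores = select_k(xs, ys, grid=[1, 3, 5, 9, 15])
print("selected k:", best_k)
```

The same folds are reused for every hyperparameter value (fixed `seed`), so differences in score reflect the hyperparameter rather than the split. The final held-out test set from the last step of the protocol is not shown.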

Experimental Design for Optimal Bias-Noise Tradeoff

Stratified Randomization and Covariate Balancing

Minimizing bias in HTE estimation begins with robust experimental design. Covariate-adaptive randomization techniques significantly reduce systematic imbalances between treatment subgroups:

Protocol 3: Minimization-Based Randomization for HTE Studies

- Define Prognostic Factors: Identify 4-8 patient characteristics strongly predictive of outcome (e.g., disease severity, biomarkers, age).

- Implement Minimization Algorithm:

  - For each new patient, calculate imbalance scores for each treatment arm based on marginal sums of prognostic factors

  - Assign patient to the arm that minimizes overall imbalance with probability 0.75-0.80

  - Use random assignment with remaining probability to maintain unpredictability

- Validate Balance: After randomization, formally test for residual imbalances using standardized mean differences (<0.1 indicates adequate balance).
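A minimal sketch of the minimization step in Protocol 3 is given below. It follows the general marginal-totals idea (in the style of Pocock and Simon), using the range of arm counts as the imbalance score; that scoring choice, the tie-handling rule, the function name, and the example factors are assumptions made for illustration.

```python
import random

def minimization_assign(new_patient, enrolled, arms=("A", "B"), p_best=0.8, rng=random):
    """Assign new_patient (dict: factor -> level) given prior assignments.

    enrolled is a list of (patient_dict, arm) tuples for patients already randomized.
    """
    imbalance = {}
    for candidate in arms:
        total = 0
        for factor, level in new_patient.items():
            # Marginal counts of enrolled patients sharing this factor level.
            counts = {a: 0 for a in arms}
            for patient, arm in enrolled:
                if patient.get(factor) == level:
                    counts[arm] += 1
            counts[candidate] += 1  # hypothetically place the new patient here
            total += max(counts.values()) - min(counts.values())
        imbalance[candidate] = total
    best_score = min(imbalance.values())
    best_arms = [a for a in arms if imbalance[a] == best_score]
    if len(best_arms) == len(arms):
        return rng.choice(arms)        # all arms tie: simple randomization
    if rng.random() < p_best:
        return rng.choice(best_arms)   # favor the imbalance-minimizing arm
    return rng.choice([a for a in arms if a not in best_arms])

# Simulated enrollment of 100 patients with two hypothetical prognostic factors.
rng = random.Random(42)
enrolled = []
for _ in range(100):
    patient = {
        "severity": rng.choice(["mild", "severe"]),
        "age_group": rng.choice(["<65", ">=65"]),
    }
    enrolled.append((patient, minimization_assign(patient, enrolled, rng=rng)))
print({arm: sum(1 for _, a in enrolled if a == arm) for arm in ("A", "B")})
```

After enrollment, balance on each prognostic factor would still be checked with standardized mean differences, as the final step of the protocol describes.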

Sample Size Planning for HTE Detection

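Adequate power for HTE detection requires substantially larger samples than are needed to detect an overall treatment effect, because each subgroup contrast is estimated from only part of the study population. The following protocol helps ensure sufficient precision: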

Protocol 4: Power Calculation for Subgroup Treatment Effects

- Define Key Subgroups: Identify 2-4 primary subgroups of interest based on biological rationale.

- Specify Effect Sizes: Define clinically meaningful treatment effect differences between subgroups (θ).

- Calculate Sample Requirements:

  - For continuous outcomes: \( n = \frac{4\sigma^2 (Z_{1-\alpha/2} + Z_{1-\beta})^2}{\theta^2} \)

  - Adjust for multiple comparisons using Bonferroni correction if testing multiple subgroups

  - Incorporate variance inflation factors for continuous subgrouping variables

- Sensitivity Analysis: Evaluate power across a range of plausible effect sizes and variance components.
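The sample size formula for continuous outcomes can be evaluated directly, as in the sketch below. The text does not define n, so the code assumes it is the number of patients per treatment arm within each of two equally sized subgroups. Under that reading, the difference-of-differences contrast has variance 4σ²/n, which is consistent with the formula. The function name and the example inputs are hypothetical.

```python
import math
from statistics import NormalDist

def n_per_cell(sigma, theta, alpha=0.05, power=0.80, n_tests=1):
    """Sample size from n = 4*sigma^2*(z_{1-alpha/2} + z_{1-beta})^2 / theta^2.

    alpha is divided by n_tests as a Bonferroni adjustment.
    Assumption: n counts patients per arm within each subgroup.
    """
    std_normal = NormalDist()
    alpha_adj = alpha / n_tests
    z_alpha = std_normal.inv_cdf(1 - alpha_adj / 2)
    z_beta = std_normal.inv_cdf(power)
    return math.ceil(4 * sigma ** 2 * (z_alpha + z_beta) ** 2 / theta ** 2)

# Sensitivity analysis over a range of plausible subgroup effect differences.
for theta in (0.3, 0.5, 0.8):
    print(theta, n_per_cell(sigma=1.0, theta=theta),
          n_per_cell(sigma=1.0, theta=theta, n_tests=3))
```

Looping over several values of θ and σ in this way corresponds to the sensitivity analysis step; variance inflation for continuous subgrouping variables is not modeled here.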

Advanced Methodologies for Noise Control and Bias Correction

Regularization Approaches for HTE Estimation

Regularization techniques explicitly manage the bias-variance tradeoff by penalizing model complexity. The following advanced methods show particular promise for HTE applications:

Protocol 5: Adaptive Regularization for Causal Forests

- Base Learner Specification: Implement causal forest with honesty constraint (sample splitting).

- Tuning Parameter Grid:

  - alpha: Imbalance penalty (values: 0.001, 0.01, 0.05, 0.10, 0.15)

  - lambda: Regularization strength (values: 0.0001, 0.001, 0.01, 0.1)

  - min.node.size: Terminal node size (values: 1, 5, 10, 20)

- Targeted Regularization:

  - Calculate gradient-based weights to downweight high-variance observations

  - Apply cross-fitting to debias estimates

  - Use efficiency augmentation with outcome model

- Validation: Estimate asymptotic variance using bootstrap or infinitesimal jackknife.

Ensemble Methods for Variance Reduction

Combining multiple HTE estimation approaches through ensemble methods can substantially reduce variance while maintaining low bias:

Protocol 6: Super Learner for HTE Meta-Estimation

- Library Definition: Create diverse library of HTE estimators including:

  - Parametric models (linear interaction, logistic regression)

  - Nonparametric methods (causal forests, BART, neural networks)

  - Semi-parametric approaches (propensity score stratification)

- Cross-Validation: Estimate performance of each algorithm using V-fold cross-validation.

- Optimal Weighting: Calculate ensemble weights that minimize cross-validated risk.

- Final Prediction: Generate weighted combination of algorithm-specific HTE estimates.
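The weighting and prediction steps of Protocol 6 can be illustrated with a deliberately simple sketch. It assumes that cross-validated predictions from each learner are already available and that a target for the loss exists (for HTE, where individual effects are not observed, this would have to be a surrogate such as a pseudo-outcome; that choice is outside this sketch). Convex weights are found by a coarse grid search over the simplex rather than by the constrained optimizers a full Super Learner implementation would typically use. All names are hypothetical.

```python
from itertools import product

def simplex_grid(n_learners, steps=20):
    """Yield weight vectors with entries in {0, 1/steps, ..., 1} that sum to 1."""
    for combo in product(range(steps + 1), repeat=n_learners - 1):
        if sum(combo) <= steps:
            yield [c / steps for c in combo] + [(steps - sum(combo)) / steps]

def cv_risk(weights, cv_preds, target):
    """Mean squared error of the weighted combination of learner predictions."""
    n = len(target)
    total = 0.0
    for i in range(n):
        combined = sum(w * preds[i] for w, preds in zip(weights, cv_preds))
        total += (combined - target[i]) ** 2
    return total / n

def super_learner_weights(cv_preds, target, steps=20):
    """Return the grid point on the simplex with the lowest cross-validated risk."""
    return min(simplex_grid(len(cv_preds), steps),
               key=lambda w: cv_risk(w, cv_preds, target))

def ensemble_predict(weights, learner_preds):
    """Weighted combination of learner-specific estimates for new units."""
    n = len(learner_preds[0])
    return [sum(w * preds[i] for w, preds in zip(weights, learner_preds))
            for i in range(n)]

# Hypothetical cross-validated predictions from three learners.
cv_preds = [[0.9, 2.1, 2.8], [1.5, 1.5, 1.5], [0.0, 4.0, 2.0]]
target = [1.0, 2.0, 3.0]
weights = super_learner_weights(cv_preds, target)
print("weights:", weights)
```

Restricting the weights to be non-negative and to sum to one keeps the ensemble within the range spanned by its members, which is one reason this constraint is commonly used.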

Table 3: Research Reagent Solutions for HTE Analysis

| Reagent Category | Specific Tool/Method | Primary Function | Considerations for HTE Research |

|---|---|---|---|

| Statistical Software | R causalForest package | Nonparametric HTE estimation with honesty constraints | Handles high-dimensional covariates; provides uncertainty quantification |

| Python Libraries | EconML, CausalML | Metalearners for HTE (S-, T-, X-learners) | Integration with scikit-learn; supports multiple data types |

| Bias Diagnostics | cobalt R package | Balance assessment for propensity score methods | Comprehensive visualization; supports multiple study designs |

| Variance Estimation | grf R package | Efficient variance estimation via bootstrap of little bags | Debiased inference; small-sample corrections |

| Sensitivity Analysis | sensemakr R package | Quantifies robustness to unmeasured confounding | Formal bounds on confounding strength; visualization tools |

| Clinical Data Standards | CDISC SDTM/ADaM | Standardized clinical trial data structures | Facilitates pooling across studies; regulatory acceptance |

Effectively partitioning variability into bias and noise components represents a fundamental advancement in HTE research methodology. The frameworks and protocols presented herein enable researchers to systematically diagnose, quantify, and mitigate sources of error that compromise treatment effect estimation. By adopting these approaches, drug development professionals can enhance the reliability of subgroup identification, improve clinical trial efficiency, and ultimately advance the precision medicine paradigm.

The integration of robust experimental design with advanced statistical learning methods creates a powerful foundation for HTE discovery. Future methodological developments should focus on adaptive designs that dynamically balance bias-variance tradeoffs throughout trial execution, Bayesian approaches that formally incorporate prior information about subgroup structures, and machine learning methods that explicitly optimize for transportability of HTE estimates to target populations. Through continued methodological innovation and rigorous application of these principles, the research community can overcome the challenges of variability partitioning and fully realize the potential of heterogeneous treatment effect analysis in drug development.

In the rigorous context of High-Throughput Experimentation (HTE) for drug development, a precise understanding of experimental design is not merely beneficial—it is fundamental to generating reliable, interpretable, and actionable data. HTE workflows enable researchers to rapidly test a vast number of hypotheses by conducting many parallel experiments [2]. However, the value of this massive data output is entirely dependent on the soundness of the underlying experimental architecture. This guide details three foundational concepts—experimental units, treatment factors, and lurking variables—that form the bedrock of any valid experiment. Mastery of these concepts ensures that HTE delivers not just high quantity, but high quality of information, accelerating the journey from experimental data to scientific insight and decision-making [2] [10].

The power of a well-designed experiment lies in its ability to establish cause-and-effect relationships. By systematically manipulating inputs and observing outputs, researchers can move beyond correlation to true causation, a critical requirement when optimizing chemical reactions or biological assays in pharmaceutical research. This document provides a technical guide for scientists and researchers, framing these core principles within the specific challenges and opportunities of modern HTE workflows.

Defining the Foundational Components

A well-designed experiment is built upon clearly defined components. Misidentification of these elements can lead to pseudoreplication, invalid statistical analysis, and incorrect conclusions [11]. The following sections break down the essential terminology.

The Experimental Unit

The experimental unit is the physical entity to which a specific treatment combination is applied independently of all other units [11] [12]. It is the primary unit of interest in a specific research objective and the entity about which researchers wish to draw inferences [13]. Correct identification of the experimental unit is critical because it directly determines the sample size for statistical analysis; mistaking sub-units for independent experimental units artificially inflates the sample size and invalidates statistical tests [11].

Table 1: Identification of the Experimental Unit in Different Contexts

| Experimental Scenario | Description | Experimental Unit | Rationale |

|---|---|---|---|

| Individual Animal Study [11] | An animal is individually administered a treatment (e.g., by injection). | The individual animal | The treatment is assigned to and affects each animal independently. |

| Cage of Animals [11] | A treatment (e.g., medicated diet) is administered to a whole cage of group-housed animals. | The entire cage | All animals within the cage receive the same treatment; the intervention is applied to the cage as a whole. |

| Skin Patch Application [11] | Different patches on a single animal's skin receive distinct topical treatments. | The patch of skin | Each patch can be assigned a different treatment independently of others on the same animal. |

| Litter Study [11] | A pregnant female receives a treatment, and measurements are taken on the pups. | The entire litter | The treatment is applied to the dam, and all pups in the litter are exposed to the same experimental condition. |

| HTE Plate [2] | A 96-well plate is used to screen different catalyst combinations. | The individual well | Each well can receive a unique combination of reactants, making it an independent treatment entity. |
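To make the consequence of the table concrete, the sketch below collapses sub-unit measurements (for example, individual animals in a cage) to one value per experimental unit before any comparison is made, so that the effective sample size equals the number of experimental units. The function name, the record layout, and the numbers are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def summarize_by_unit(measurements):
    """Collapse (unit_id, treatment, value) records to one mean per experimental unit.

    Returns a dict mapping each treatment to the list of unit-level means.
    """
    per_unit = defaultdict(list)
    treatment_of = {}
    for unit_id, treatment, value in measurements:
        per_unit[unit_id].append(value)
        # An experimental unit receives exactly one treatment.
        if treatment_of.setdefault(unit_id, treatment) != treatment:
            raise ValueError(f"unit {unit_id!r} has more than one treatment")
    by_treatment = defaultdict(list)
    for unit_id, values in per_unit.items():
        by_treatment[treatment_of[unit_id]].append(mean(values))
    return dict(by_treatment)

# Hypothetical cage study: the cage, not the animal, is the experimental unit.
records = [
    ("cage1", "medicated", 10), ("cage1", "medicated", 12),
    ("cage2", "medicated", 14),
    ("cage3", "control", 8), ("cage3", "control", 8),
]
unit_means = summarize_by_unit(records)
print({t: len(v) for t, v in unit_means.items()})  # units per treatment
```

Here five animal-level measurements yield only three experimental units, which is the sample size a statistical test should use.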

Treatments and Factors

In experiments, a treatment is something that researchers administer to experimental units [14]. It is a specific combination of the levels of the factors being studied. A factor is a controlled independent variable—a variable whose levels are set by the experimenter [12]. Different treatments constitute different levels of a factor. For example, in an experiment testing the effect of training methods on runners, the "type of training" is the factor, and the three different training regimens are the treatments [14].

- Factor Levels: The settings of a factor are its levels. For instance, if the factor is temperature, it could have levels set at 50°C, 70°C, and 90°C [12].

- Treatment Combination: In a factorial experiment with multiple factors, a treatment combination is the unique set of factor levels applied in a single experimental run. For example, a reaction might be run at 70°C (one level of Factor A, temperature) and 2.5 mol% catalyst (one level of Factor B, catalyst loading) [12].

Lurking Variables

A lurking variable is an extra variable that is not included in the experimental study but that can affect the results and the relationship between the explanatory and response variables [15]. Unlike controlled factors, lurking variables are not managed or measured by the researcher, creating a risk that the observed effects will be incorrectly attributed to the planned treatment.

A classic example is a study investigating the effectiveness of vitamin E. If subjects who take vitamin E also tend to exercise more and eat a healthier diet, then exercise and diet are lurking variables. Any observed health benefits could be due to these other factors, not the vitamin E itself [15]. The primary method for controlling lurking variables is randomization, which randomly assigns experimental units to treatment groups. Random assignment tends to balance potential lurking variables across groups, so that systematic differences in the response can be attributed to the treatment [15] [14].

Experimental Protocols and Methodologies

A Generalized DOE Workflow for HTE

The Design of Experiments (DOE) workflow provides a structured framework for planning, executing, and analyzing experiments. This is especially critical in HTE to manage complexity and ensure data quality [10]. The typical workflow consists of six key steps:

Diagram 1: Core DOE Workflow

- Define: Clearly state the experiment's purpose, identify the responses to measure, and define the factors to manipulate along with their meaningful ranges [10]. In HTE, this involves defining the chemical space to be explored [2].

- Model: Propose an initial statistical model (e.g., a first-order model for screening or a second-order model for optimization) that the experiment is intended to support [10].

- Design: Generate an experimental design—a collection of runs (treatment combinations)—that can efficiently estimate the proposed model. This includes determining the number of replicates and randomization schemes [10].

- Data Entry: Execute the experiment according to the design and record the response data for each run. HTE software can automate data capture from analytical instruments, linking results directly to each experimental well [2] [10].

- Analyze: Fit the statistical model to the data to identify significant factors and interactions, and refine the model by removing inactive terms [10].

- Predict: Use the confirmed model to predict response values under new factor settings and find optimal conditions to achieve the desired response goals [10].
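The Design step of the workflow above can be sketched as follows: enumerate every treatment combination of a full factorial design, replicate it, and randomize the run order. The temperature levels come from the earlier example; the second catalyst level, the function name, and the factor names are hypothetical, and real HTE software would add plate and well mapping on top of this.

```python
import random
from itertools import product

def full_factorial(factors, replicates=1, seed=None):
    """Return a randomized run list covering every combination of factor levels.

    factors maps factor name -> list of levels; each run is a dict.
    """
    names = list(factors)
    runs = [
        dict(zip(names, combo))
        for _ in range(replicates)
        for combo in product(*(factors[name] for name in names))
    ]
    random.Random(seed).shuffle(runs)  # randomize run order
    return runs

design = full_factorial(
    {"temperature_C": [50, 70, 90], "catalyst_mol_pct": [1.0, 2.5]},
    replicates=2,
    seed=11,
)
for run_number, run in enumerate(design, start=1):
    print(run_number, run)
```

With three temperature levels, two catalyst levels, and two replicates, the design contains 12 runs. Fractional or optimal designs would reduce that count when the number of factors grows.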

Protocol for a Randomized Experiment: Aspirin and Heart Attacks

The following protocol illustrates how core concepts are integrated into a real-world study design.

- Research Objective: To investigate whether taking aspirin regularly reduces the risk of heart attack in men [15].

- Experimental Units: 400 men between the ages of 50 and 84 recruited for the study. Each man is an experimental unit [15].

- Explanatory Variable: Type of oral medication [15].

- Treatments:

  - Treatment 1: Aspirin

  - Treatment 2: Placebo (a pill with no active medication) [15]

- Response Variable: Whether a subject had a heart attack during the study period [15].

- Methodology:

  - Random Assignment: The 400 men are divided randomly into two groups. Randomization balances lurking variables (e.g., diet, exercise habits, genetic predisposition) between the groups on average, so that neither group is systematically favored [15].

  - Blinding: The study is double-blinded. Neither the subjects nor the researchers interacting with them know which treatment (aspirin or placebo) each subject is receiving. This prevents the power of suggestion from influencing the outcomes (the placebo effect) and prevents researchers from unconsciously treating the groups differently [15].

  - Execution: Each man takes one pill daily for three years [15].

  - Data Collection: Researchers count the number of men in each group who have had heart attacks at the end of the study [15].
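The random assignment step in this protocol can be sketched in a few lines. The sketch shuffles the subject identifiers and alternates treatments, which yields two groups of 200; the function name, the integer subject identifiers, and the seed are hypothetical, and blinding (for example, replacing treatment names with coded labels) is not shown.

```python
import random
from collections import Counter

def randomize(unit_ids, treatments=("aspirin", "placebo"), seed=None):
    """Shuffle units, then deal them to treatments in turn for equal group sizes."""
    ids = list(unit_ids)
    random.Random(seed).shuffle(ids)
    return {uid: treatments[i % len(treatments)] for i, uid in enumerate(ids)}

assignment = randomize(range(400), seed=2024)
print(Counter(assignment.values()))
```

Recording the seed makes the allocation reproducible for audit, while the shuffle keeps any individual subject's assignment unpredictable in advance.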

The Scientist's Toolkit: Key Reagents and Solutions for HTE

In HTE for drug development, specialized tools and reagents are essential for efficiently executing complex experimental designs.

Table 2: Essential Research Reagent Solutions for HTE

| Item / Solution | Function in HTE Workflow |

|---|---|

| HTE Plates (96, 384, 1536-well) [2] [7] | The physical platform for running parallel experiments. Higher well densities enable greater throughput. |

| Automated Liquid Dispenser [7] | Provides accurate, low-volume dispensing for 96, 384, and 1536-well plates, enabling rapid and precise preparation of treatment combinations. |

| Chemically Intelligent Software [2] | Allows scientists to design experiments by dragging and dropping chemical structures, ensuring the design covers the appropriate chemical space and automatically links chemical identity to each reaction well. |

| Pre-dispensed Reagent Kits [2] | Pre-prepared plates of reagents or catalysts that allow for quick experiment setup and increase throughput by minimizing manual preparation time. |

| Integrated AI/ML Module [2] | Algorithms like Bayesian Optimization for design of experiments (DoE) that reduce the number of experiments needed to find optimal conditions by intelligently selecting the next set of experiments to run. |

Advanced Considerations and Diagramming Relationships

Complexities in Defining Experimental Units

Correctly identifying the experimental unit prevents the statistical error of pseudoreplication, where sub-units are mistakenly treated as independent replicates [11]. The decision framework for identifying the true experimental unit can be visualized as follows:

Diagram 2: Experimental Unit Decision Tree

In advanced designs, a single experiment can have multiple experimental units. Consider a "split-plot" experiment in mice investigating diet (administered in the cage's food) and a vitamin supplement (administered by injection). Here, the experimental unit for the diet is the entire cage (as all mice in a cage get the same diet), while the experimental unit for the vitamin supplement is the individual mouse (as mice in the same cage can get different supplements) [11]. Such designs are powerful but require complex statistical analysis.

The Critical Role of Control and Randomization

Control and randomization are the twin pillars that defend an experiment against bias and lurking variables [14].

- Control Groups: A control group is given a placebo or a baseline treatment that cannot influence the response variable. This group helps researchers balance the effects of simply being in an experiment against the effects of the active treatments [15]. Without a proper control, as in the example of the farmer comparing two differently irrigated fields, it is impossible to attribute changes in the response to the treatment itself [14].

- Randomization: Random assignment of experimental units to treatment groups is the most reliable way to balance lurking variables, both known and unknown, across the groups on average. It yields comparable treatment groups and prevents the experimenter's conscious or unconscious biases from influencing the group assignments [15] [14]. As stated in the NIST handbook, "The importance of randomization cannot be overstressed. Randomization is necessary for conclusions drawn from the experiment to be correct, unambiguous and defensible" [12].

Within the high-stakes, high-throughput environment of modern drug development, a rigorous grasp of experimental units, treatment factors, and lurking variables is non-negotiable. These concepts are not abstract statistical ideas but are practical necessities for designing efficient and valid experiments. Correctly identifying the experimental unit ensures statistical analyses are sound and conclusions are valid. A clear definition of factors and treatments allows for the efficient exploration of complex chemical and biological spaces. Diligently controlling for lurking variables through randomization and blinding ensures that observed effects are truly causal, providing the confidence needed to make critical decisions in the research and development pipeline. By building these foundational concepts into HTE workflows, scientists can fully leverage the power of high-throughput platforms to accelerate innovation.

In the demanding world of high-throughput experiments (HTE), where resources are finite and the margin for error is small, the adage "fail fast, learn fast" has never been more relevant. The concept of 'Dailies'—adopted from the film industry where directors review each day's footage to correct issues before they affect entire productions—provides a powerful framework for experimental scientists [16]. This practice involves initiating data analysis as soon as the first experimental results are acquired, rather than waiting until all data collection is complete. This approach allows researchers to track unexpected sources of variation and adjust protocols in real-time, preventing the costly propagation of errors throughout lengthy experimental workflows [16]. Within the broader thesis of experimental design for HTE workflows, embracing 'Dailies' represents a fundamental shift from reactive problem-solving to proactive process control, enabling researchers to manage the inherent tradeoffs between resource constraints, instrument limitations, and biological complexity more effectively.

The Scientific and Economic Rationale for Early Analysis

Partitioning Error: Distinguishing Bias from Noise

A core benefit of early analysis is the ability to distinguish between different types of experimental error at a stage when they can still be addressed. Statistical theory broadly categorizes error into two distinct types that require different management strategies [16]:

- Noise: This type of error "averages out" with sufficient replication. It is easily recognized by looking at replicates and becomes less impactful as more data is analyzed.

- Bias: This systematic error remains consistent across replicates and does not diminish with increased sample size. Bias can be difficult to detect without careful analysis and often requires quantitative modeling to measure and adjust for.

The practice of 'Dailies' enables researchers to identify bias early, when corrective actions are most effective. As noted in experimental design literature, "No amount of replication will remedy the fact that the center of the points is in the wrong place" when bias is present [16]. This distinction is particularly crucial in high-throughput settings where undetected bias can compromise entire experimental campaigns.
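A small simulation makes the distinction between noise and bias tangible. In the sketch below, each reading is the true value plus a fixed systematic offset plus Gaussian noise; the function name and all numeric values are hypothetical.

```python
import random
from statistics import mean

def simulate_mean_error(true_value, bias, noise_sd, n_replicates, seed=0):
    """Error of the replicate mean when each reading = truth + bias + noise."""
    rng = random.Random(seed)
    readings = [true_value + bias + rng.gauss(0, noise_sd)
                for _ in range(n_replicates)]
    return mean(readings) - true_value

for n in (3, 30, 300, 3000):
    noise_only = simulate_mean_error(10.0, bias=0.0, noise_sd=1.0, n_replicates=n)
    with_bias = simulate_mean_error(10.0, bias=0.5, noise_sd=1.0, n_replicates=n)
    print(f"n={n:5d}  noise only: {noise_only:+.3f}   with bias: {with_bias:+.3f}")
```

As the number of replicates grows, the noise-only error is expected to shrink toward zero, while the error in the biased case settles near the offset of 0.5 rather than disappearing.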

The Economic Imperative in Drug Development

The economic implications of early troubleshooting are magnified in pharmaceutical development, where the cost of bringing a single product to market averages $2.2 billion distributed over more than a decade of research [17]. With novel drug and biologic approvals averaging just 56 per year over the past decade, the efficiency of each experimental workflow carries tremendous financial consequences [17]. The high attrition rates at various regulatory stages further underscore the need for early problem detection. Recent FDA initiatives aimed at modernizing preclinical research reflect a growing recognition that strengthening the reliability of translational studies represents a critical leverage point for improving overall development efficiency [17].

Table 1: Economic Context for Early Troubleshooting in Drug Development

| Metric | Value | Significance for Troubleshooting |

|---|---|---|

| Average Cost to Bring Product to Market | $2.2 billion | Early error detection prevents costly downstream failures |

| Average Development Timeline | >10 years | Early analysis compresses development cycles |

| Annual Novel Drug/Biologic Approvals | ~56 | Highlights competitive landscape and efficiency premium |

| R&D Spending (Biopharmaceutical Sector) | >$100 billion/year | Context for resource allocation decisions |

Implementing Dailies: Methodologies and Workflows

The Pipettes and Problem Solving Framework

A structured approach called "Pipettes and Problem Solving" has been developed and implemented at the University of Texas at Austin to formally teach troubleshooting skills to graduate students [18]. This methodology, designed as a journal-club style meeting lasting 30-60 minutes, provides a replicable framework for putting the 'Dailies' principle into practice:

- Scenario Preparation: Before each meeting, an experienced researcher creates 1-2 slides describing a hypothetical experimental setup with unexpected outcomes, along with relevant background information (instrument calibration records, laboratory environmental conditions, concurrent research activities) [18].

- Consensus-Driven Investigation: Participants must reach a full consensus on proposed troubleshooting experiments, fostering collaboration and ensuring thorough evaluation of possibilities [18].

- Iterative Experimentation: The group typically proposes a limited number of experiments (usually three), with the leader providing mock results after each proposal to guide subsequent investigative steps [18].

- Constraint Integration: Leaders can reject experiments deemed too expensive, dangerous, time-consuming, or requiring unavailable equipment, mirroring real-world research constraints [18].

This framework explicitly addresses the challenge that "PhD students rarely receive formal training in troubleshooting, and are expected to acquire this skill 'on the fly' as they progress through graduate school" [18].

Workflow Integration and Sequential Design

The integration of 'Dailies' into HTE workflows follows principles of sequential experimental design, where information from early results informs subsequent experimental phases [16]. This approach recognizes that despite advanced planning, "intermediate data analyses and visualizations will track unexpected sources of variation and enable you to adjust the protocol" [16]. The workflow for implementing this approach can be visualized as follows:

Diagram 1: Dailies Implementation Workflow

Classification of Troubleshooting Scenarios

The Pipettes and Problem Solving framework distinguishes between two fundamental types of troubleshooting scenarios, each requiring different analytical approaches [18]:

Table 2: Classification of Troubleshooting Scenarios

| Scenario Type | Description | Training Focus | Example |

|---|---|---|---|

| Known Outcome with Atypical Results | Experiments where controls return unexpected results (e.g., negative control giving positive signal) | Fundamentals of appropriate controls, instrument technique, and recognizing researcher-driven shortcuts | MTT assay with unusually high variance and error bars [18] |

| Unknown Target Outcome | Developing new assays or protocols where the "correct" outcome isn't established | Hypothesis development, advanced analytical techniques, proper control implementation | Creating novel assays that require characterization of compounds or samples before the original experiment can be reattempted [18] |

Essential Research Reagents and Materials for Effective Troubleshooting

Successful implementation of 'Dailies' requires ready access to key research materials that enable rapid investigative follow-up. The following toolkit represents essential resources for effective troubleshooting in experimental workflows:

Table 3: Research Reagent Solutions for Experimental Troubleshooting

| Reagent/Material | Function in Troubleshooting | Application Context |

|---|---|---|

| Cytotoxic Compounds (range) | Serves as appropriate negative controls in viability assays | MTT assays for cytotoxicity studies [18] |

| Defined Cell Culture Media | Controls for culturing condition variables | Mammalian cell line studies [18] |

| Enzyme Variants | Tests protocol robustness to reagent batch effects | PCR, cloning, and molecular biology workflows [18] |

| Calibration Standards | Verifies instrument performance and detection limits | Analytical chemistry and spectroscopy [18] |

| Antibody Panels | Validates specificity and identifies cross-reactivity | Immunoassays, Western blotting, flow cytometry [18] |

Error Modeling and Analytical Approaches

Conceptualizing Experimental Variability

The analytical foundation of 'Dailies' rests on sophisticated error modeling that acknowledges the complex nature of variability in biological systems. Rather than asking whether effects are fundamentally random or deterministic, a more productive framework considers "whether we care to model it deterministically (as bias), or whether we ignore the details, treat it as stochastic, and use probabilistic modeling (noise)" [16]. In this context, probabilistic models become "a way of quantifying our ignorance, taming our uncertainty" [16]. This conceptual framework can be visualized through the relationship between different error types and their appropriate management strategies:

Diagram 2: Error Modeling Framework

Addressing Latent Factors and Batch Effects

A critical challenge in early analysis is dealing with latent factors—unknown variables that systematically affect measurements but lack explicit documentation. As noted in experimental literature, "with high-dimensional data, noise caused by latent factors tends to be correlated, and this can lead to faulty inference" [16]. The practice of 'Dailies' provides opportunity to detect patterns suggesting such latent factors before they compromise entire datasets. When known factors like different reagent batches create systematic effects (batch effects), these can be explicitly modeled and accounted for in analysis [16]. Computational tools like DESeq2 offer specific functionalities for handling these challenges, allowing researchers to specify "sample- and gene-dependent normalization factors for a matrix" intended to contain explicit estimates of such biases [16].

Future Directions: AI and Technological Enablement

The practice of 'Dailies' is poised for transformation through artificial intelligence and connected technologies. By the end of 2025, artificial intelligence is predicted to "transform clinical operations, dramatically improving efficiency and productivity" through automation of labor-intensive tasks and predictive analytics [19]. Specific AI applications with relevance to early troubleshooting include:

- Predictive Analytics: Leveraging historical and real-time operational data to forecast outcomes and optimize resource allocation [19].

- Protocol Automation: Using AI to "extract key information from protocol documents to populate downstream systems, reducing manual entry errors and increasing speed" [19].

- Site Selection Optimization: Identifying optimal experimental sites with the greatest likelihood for success by analyzing factors like demographics, past performance, and resource availability [19].

Concurrently, integration of previously isolated technologies creates opportunities for more seamless troubleshooting. As sites report increasing frustration with disconnected systems, technology providers are shifting "from fixing individual pain points to building a unified, interoperable framework that brings together data and processes across the study start-up ecosystem" [19]. This connectivity enables the real-time data sharing and analysis essential for effective 'Dailies' implementation in distributed research environments.

The adoption of 'Dailies' represents a paradigm shift in high-throughput experimental workflows, moving the analytical process from a concluding phase to a continuous activity running parallel to data collection. This approach acknowledges the profound wisdom in R.A. Fisher's observation that "to consult the statistician after an experiment is finished is often merely to ask him to conduct a post mortem examination" [16]. By starting analysis early, researchers transform troubleshooting from retrospective autopsy to prospective quality control. For the drug development professionals and researchers navigating increasingly complex experimental landscapes, embedding this practice into organizational culture offers a pathway to more efficient resource utilization, accelerated discovery timelines, and ultimately, more reliable scientific conclusions.

Strategic Frameworks: Selecting and Executing DOE Designs for HTE

Within a design of experiments (DoE) strategy for High-Throughput Experimentation (HTE) workflows, screening designs are a critical first step in the research pipeline. They enable researchers and drug development professionals to sift efficiently through a large number of potential factors and identify the few variables that significantly affect a process or outcome. In HTE contexts, where resources are finite and the number of candidate factors can be enormous, screening designs provide a systematic way to ration resources, allowing pragmatic choices that are both feasible and informative [20]. The fundamental challenge they address is achieving "good enough" results within constraints of cost, time, and material while ensuring that truly important factors are not overlooked.

High-throughput screening experiments are particularly reliant on specialized designs that maximize the amount of information gained per experimental unit. As noted in research on saturated row-column designs for primary high-throughput screening, these approaches allow "the maximum number of compounds arranged in each microplate" while effectively eliminating positional effects that could confound results [21]. This efficiency is paramount in early-stage drug discovery where thousands of compounds must be evaluated rapidly, and where the cost of full factorial experimentation across all potential factors would be prohibitive.

Foundational Principles of Effective Screening

Core Statistical Principles

Effective screening designs are built upon several interconnected statistical principles that ensure reliable identification of key factors:

Effect Sparsity: This principle assumes that among many potential factors being investigated, only a relatively small number will have substantial effects on the response variable. Screening designs leverage this sparsity to efficiently distinguish active compounds from inactive ones in primary screening environments [21]. The practical implication is that researchers can investigate many factors with relatively few experimental runs.

Randomization and Blocking: Proper screening designs incorporate randomization to avoid confounding of factor effects with unknown nuisance variables. As illustrated in a toy example of two-group comparison, fatal confounding can occur when batch effects align perfectly with experimental conditions, making valid conclusions impossible [20]. Blocking known sources of variation (such as measurement date, technician, or equipment) increases the sensitivity for detecting genuine factor effects.

Replication Strategy: A nuanced understanding of replication is essential. The distinction between technical replicates (multiple measurements of the same biological unit) and biological replicates (measurements across different biological units) must be carefully considered in experimental planning [20]. In HTE for drug discovery, this might extend to different CRISPR guides for the same target gene or different cell line models for the same biological system.
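As a minimal sketch of the randomization and blocking principles above, the following Python snippet places every treatment in every block and shuffles the run order independently within each block. The function name, treatment labels, and block labels are hypothetical and chosen only for illustration.

```python
import random

def randomized_block_layout(treatments, blocks, seed=0):
    """Return {block: run order}, with every treatment appearing once per block."""
    rng = random.Random(seed)  # fixed seed so the layout can be reproduced and audited
    layout = {}
    for block in blocks:
        order = list(treatments)
        rng.shuffle(order)  # randomize run order within the block
        layout[block] = order
    return layout

# Hypothetical example: three conditions measured on two days (the blocks).
layout = randomized_block_layout(
    ["control", "drug_A", "drug_B"], ["day_1", "day_2"], seed=42
)
for block, order in layout.items():
    print(block, order)
```

Because each block contains every treatment, a shift between days cannot align perfectly with a single treatment, which is the fatal confounding scenario described above.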

Addressing Variability in HTE

Biological and technical variability presents particular challenges for screening designs. The efficiency of a screening design depends heavily on properly accounting for different sources of variation:

Variance Decomposition: Analysis of variance (ANOVA) techniques allow partitioning of total variability into components attributable to different factors [20]. This decomposition is crucial for distinguishing genuine factor effects from background noise.
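To make the partitioning concrete, the sketch below computes a one-way ANOVA decomposition by hand: total variability is split into a between-group and a within-group sum of squares. The grouping by plate and the numbers are invented for illustration; a real analysis would typically involve more factors.

```python
from statistics import mean

def one_way_anova(groups):
    """Partition total sum of squares into between-group and within-group parts."""
    all_values = [v for values in groups.values() for v in values]
    grand_mean = mean(all_values)
    ss_between = sum(
        len(values) * (mean(values) - grand_mean) ** 2 for values in groups.values()
    )
    ss_within = sum(
        (v - mean(values)) ** 2 for values in groups.values() for v in values
    )
    ss_total = sum((v - grand_mean) ** 2 for v in all_values)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return {
        "ss_between": ss_between,
        "ss_within": ss_within,
        "ss_total": ss_total,
        "f_stat": f_stat,
    }

# Hypothetical readouts from three plates.
readouts = {
    "plate_1": [10.0, 12.0, 11.0],
    "plate_2": [14.0, 15.0, 16.0],
    "plate_3": [9.0, 8.0, 10.0],
}
result = one_way_anova(readouts)
print(result)
```

The between-group and within-group sums of squares add up to the total, and a large F statistic indicates that the grouping factor explains much more variability than background noise.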

Normalization Methods: Many biological assays lack universal units, requiring normalization techniques to make measurements comparable [20]. These methods aim to remove technical variation while preserving biological variation, with the signal-to-noise ratio serving as a key figure of merit.
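One common normalization, shown here only as an illustrative sketch, rescales raw readouts to a percentage of the window between negative and positive controls on the same plate. The signal-to-noise definition used below (control window divided by the standard deviation of the negative controls) is one of several in use and is an assumption, as are all function names and values.

```python
from statistics import mean, stdev

def percent_of_control(raw, neg_controls, pos_controls):
    """Rescale raw readouts so negative controls map to 0 and positive controls to 100."""
    neg = mean(neg_controls)
    pos = mean(pos_controls)
    return [100.0 * (x - neg) / (pos - neg) for x in raw]

def signal_to_noise(neg_controls, pos_controls):
    """Assumed definition: control window divided by the SD of the negative controls."""
    return (mean(pos_controls) - mean(neg_controls)) / stdev(neg_controls)

# Hypothetical control wells and sample wells from a single plate.
neg = [90.0, 100.0, 110.0]
pos = [990.0, 1000.0, 1010.0]
normalized = percent_of_control([100.0, 550.0, 1000.0], neg, pos)
snr = signal_to_noise(neg, pos)
print(normalized, snr)
```

Normalizing per plate removes plate-level scale differences (technical variation) while keeping the relative differences between samples on that plate.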

Regular vs. Catastrophic Noise: While regular noise can be modeled with standard probability distributions, screening designs must also contend with catastrophic noise events where entire measurement batches may be compromised [20]. Quality assessment procedures and outlier detection mechanisms are therefore essential components of screening workflows.

Types of Screening Designs and Their Applications

Saturated Row-Column Designs

Saturated row-column designs represent a specialized approach for high-throughput screening experiments where positional effects within microplates must be controlled. These designs are particularly valuable in primary screening where all compounds need to be comparable within each microplate despite the existence of row and column effects [21]. The efficiency of these designs comes from their ability to accommodate the maximum number of experimental units (e.g., compounds) while systematically accounting for positional biases.
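The cited work does not spell out its analysis here, so the following is not the saturated row-column method itself. As a simpler, related illustration of removing additive row and column effects from a microplate, the sketch uses Tukey's median polish on a small invented plate with one strong well.

```python
from statistics import median

def median_polish(plate, n_iter=10):
    """Return residuals after iteratively removing row and column medians."""
    resid = [row[:] for row in plate]  # work on a copy
    n_cols = len(resid[0])
    for _ in range(n_iter):
        for row in resid:  # sweep out row medians
            m = median(row)
            for j in range(n_cols):
                row[j] -= m
        for j in range(n_cols):  # sweep out column medians
            m = median([row[j] for row in resid])
            for row in resid:
                row[j] -= m
    return resid

# Hypothetical 3 x 4 plate: baseline 100, additive row effects (0, 5, 10),
# additive column effects (0, 1, 2, 3), and one active well at row 1, column 2 (+50).
row_eff = [0.0, 5.0, 10.0]
col_eff = [0.0, 1.0, 2.0, 3.0]
plate = [[100.0 + r + c for c in col_eff] for r in row_eff]
plate[1][2] += 50.0

residuals = median_polish(plate)
for row in residuals:
    print(row)
```

Medians are used instead of means so that the active well itself does not distort the estimated row and column effects.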

Table 1: Comparison of Screening Design Types and Their Characteristics

| Design Type | Key Features | Optimal Use Cases | Limitations |
|---|---|---|---|
| Saturated Row-Column Designs | Controls for row and column effects; maximizes compounds per plate | Primary HTS with microplates; when positional effects are significant | Requires specialized statistical analysis methods |
| Two-Group Comparative Designs | Simple structure with control and treatment groups | Preliminary screening with limited factors; clear binary comparisons | Vulnerable to confounding without proper randomization |
| Factorial Screening Designs | Systematically varies multiple factors simultaneously | Identifying interaction effects; balanced factor exploration | Resource intensive with many factors; resolution limitations |

Statistical Analysis Methods for Screening

The analysis of data from screening experiments requires specialized statistical approaches that align with the screening context:

Effect Sparsity Utilization: Modern statistical methods for analyzing nonorthogonal saturated designs take full advantage of effect sparsity in primary screening [21]. These methods recognize that most factors will have negligible effects, allowing analytical focus on the few potentially significant factors.
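The methods in [21] target nonorthogonal saturated designs and are not reproduced here. As a simpler illustration of how effect sparsity can be exploited, the sketch below applies Lenth's pseudo standard error (PSE), a technique for unreplicated orthogonal designs, to a set of invented effect estimates. The cutoff used to flag effects is an illustrative assumption, not Lenth's t-based margin of error.

```python
from statistics import median

def lenth_pse(effects):
    """Lenth's pseudo standard error, estimated from the presumably inactive effects."""
    abs_effects = [abs(e) for e in effects]
    s0 = 1.5 * median(abs_effects)
    # Drop effects that look active, then re-estimate the noise scale.
    trimmed = [a for a in abs_effects if a < 2.5 * s0]
    return 1.5 * median(trimmed)

# Hypothetical effect estimates for seven factors.
effects = {"A": 21.5, "B": 0.8, "C": -1.1, "D": 0.4, "E": -14.2, "F": 0.9, "G": -0.6}
pse = lenth_pse(list(effects.values()))

ILLUSTRATIVE_CUTOFF = 4.0  # assumption for this sketch only
active = [name for name, e in effects.items() if abs(e) / pse > ILLUSTRATIVE_CUTOFF]
print(pse, active)
```

The approach works only when most effects are negligible: the small effects define the noise scale against which the few large ones stand out.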

False Positive/Negative Balance: An effective screening method maintains a balanced approach to false positives and false negatives [21]. In drug discovery, this balance is critical: too many false positives waste resources on follow-up testing, while too many false negatives cause promising compounds to be overlooked.

Multiple Testing Corrections: Given the large number of comparisons typically made in screening experiments, appropriate statistical corrections for multiple testing are essential to control the family-wise error rate or false discovery rate.
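As one example of false discovery rate control, the sketch below implements the Benjamini-Hochberg step-up procedure. The source does not name a specific correction, so this choice and the p-values are illustrative assumptions.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans marking which hypotheses are rejected at FDR level alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices by ascending p-value
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_rank = rank  # largest rank whose p-value passes its threshold
    rejected = [False] * m
    for idx in order[:max_rank]:
        rejected[idx] = True
    return rejected

# Hypothetical p-values for five compounds.
p_values = [0.041, 0.001, 0.2, 0.008, 0.039]
rejected = benjamini_hochberg(p_values, alpha=0.05)
print(rejected)
```

Controlling the false discovery rate is usually less conservative than controlling the family-wise error rate, which suits primary screens where hits are re-tested in confirmation studies.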

Implementation Workflows and Protocols

Screening Design Workflow

The following diagram illustrates the standard workflow for implementing screening designs in HTE contexts:

Experimental Protocol for High-Throughput Screening

The following detailed protocol outlines the key steps for implementing a screening design in HTE environments:

Factor Selection and Range Determination:

- Identify all potential factors that may influence the response variable

- Define appropriate ranges for each continuous factor based on preliminary knowledge

- For categorical factors, define meaningful levels to test

- Document all factors and their ranges in an experimental registry

Design Matrix Construction:

- Select an appropriate screening design based on the number of factors and available resources

- Generate a design matrix that specifies factor levels for each experimental run

- Incorporate randomization to avoid confounding

- Include appropriate controls for quality assessment

Experimental Execution:

- Execute experiments according to the design matrix

- Implement blocking for known sources of variation (e.g., plate effects, day effects)

- Maintain detailed documentation of any deviations from protocol

- Conduct intermediate data analysis ("dailies") to identify potential issues early [20]

Data Analysis and Hit Selection:

- Perform statistical analysis using methods appropriate for the design type

- Apply effect sparsity principles to identify potentially significant factors

- Use appropriate multiple testing corrections

- Select "hits" or significant factors for confirmation studies

Validation and Confirmation:

- Design and execute confirmation experiments for selected hits

- Validate findings using independent experimental approaches

- Document false positive and false negative rates for method improvement
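The Design Matrix Construction step in the protocol above can be sketched as follows. The example builds a two-level half-fraction factorial for four factors (the fourth factor is set to the product of the first three, giving 8 runs instead of 16) and randomizes the run order. Factor names are hypothetical, and this is one possible screening design rather than a recommendation from the source.

```python
import itertools
import random

def half_fraction_design(base_factors, generated_factor, seed=0):
    """Build a 2-level design where the generated factor equals the product of the base factors."""
    runs = []
    for levels in itertools.product((-1, 1), repeat=len(base_factors)):
        run = dict(zip(base_factors, levels))
        product = 1
        for level in levels:
            product *= level
        run[generated_factor] = product  # generator: D = A*B*C in the example below
        runs.append(run)
    random.Random(seed).shuffle(runs)  # randomized run order to avoid confounding with time
    return runs

# Hypothetical factors coded as -1 (low) and +1 (high).
design = half_fraction_design(
    ["temperature", "pH", "concentration"], "incubation_time", seed=1
)
for run_number, run in enumerate(design, start=1):
    print(run_number, run)
```

With this generator, main effects are not aliased with two-factor interactions, but pairs of two-factor interactions are aliased with each other, which is the kind of resolution limitation noted for factorial screening designs.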

Research Reagent Solutions and Materials

Table 2: Essential Research Reagent Solutions for Screening Experiments

| Reagent/Material | Function in Screening Experiments | Implementation Considerations |
|---|---|---|
| Statistical Design Software | Generates optimal design matrices for efficient screening | Must accommodate chemical information; integration with chemical structure display [2] |
| Laboratory Information Management Systems (LIMS) | Tracks samples, reagents, and experimental conditions | Essential for maintaining data integrity across large screening campaigns |
| High-Throughput Screening Platforms | Enables rapid testing of multiple compounds or conditions | Robotics and automation to minimize manual intervention [2] |
| Analytical Instrumentation | Provides quantitative readouts for response variables | Should support >150 instrument vendor data formats for automated processing [2] |
| Chemical Inventory Systems | Manages compounds and reagents used in screening | Integration with experimental design software for direct compound selection [2] |

Data Analysis and Visualization Approaches

Statistical Analysis Methods

The analysis of screening data requires specialized approaches that account for the unique characteristics of HTE:

Nonorthogonal Saturated Design Analysis: Specialized methods have been developed for analyzing nonorthogonal saturated designs using effect sparsity [21]. These approaches recognize the inherent limitation of saturated designs: the number of experimental units equals the number of parameters to estimate, leaving no residual degrees of freedom for a conventional error estimate.

Mixed-Effects Models: These models are particularly useful for screening data with hierarchical structure (e.g., compounds within plates, plates within batches). They properly account for both fixed effects (the factors of interest) and random effects (sources of variation not of primary interest).

Robust Statistical Methods: Given the potential for outliers and non-normal distributions in screening data, robust statistical methods provide more reliable identification of significant factors.
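A simple example of a robust method is the robust z-score, which replaces the mean and standard deviation with the median and the median absolute deviation (MAD). The sketch below is illustrative only: the data are invented, and the hit threshold of 3 is an assumed convention rather than a value from the source.

```python
from statistics import median

def robust_z_scores(values):
    """Z-scores based on median and MAD, which are insensitive to a few extreme wells."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        raise ValueError("MAD is zero; robust z-scores are undefined for this data")
    scale = 1.4826 * mad  # makes MAD comparable to a standard deviation under normality
    return [(v - med) / scale for v in values]

# Hypothetical well readouts with one extreme value.
readouts = [10.0, 11.0, 9.0, 10.0, 12.0, 8.0, 10.0, 50.0]
z = robust_z_scores(readouts)

HIT_THRESHOLD = 3.0  # assumption for this sketch
hits = [i for i, score in enumerate(z) if abs(score) > HIT_THRESHOLD]
print(hits)
```

With a classical z-score, the extreme well would inflate the standard deviation and partly mask itself; the median and MAD avoid that.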

Data Visualization for Screening Results

Effective visualization methods are essential for interpreting screening results and communicating findings:

Hit Selection Visualizations: Specialized plots such as z-score plots or volcano plots (showing effect size versus statistical significance) are particularly valuable for distinguishing true hits from background noise.

Quality Control Charts: Control charts monitoring various quality metrics across plates or batches help identify systematic issues that might compromise screening results.
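The numbers behind such a chart can be sketched as follows: control limits are derived from reference plates, and later plates falling outside the limits are flagged. The three-sigma limits, the metric (mean control signal per plate), and all values are assumptions for illustration.

```python
from statistics import mean, stdev

def control_limits(reference, n_sigma=3.0):
    """Return (lower, center, upper) limits estimated from reference plates."""
    center = mean(reference)
    spread = stdev(reference)
    return center - n_sigma * spread, center, center + n_sigma * spread

def out_of_control(values, limits):
    """Return indices of plates whose metric falls outside the control limits."""
    lower, _, upper = limits
    return [i for i, v in enumerate(values) if v < lower or v > upper]

# Hypothetical mean control signal per plate.
reference_plates = [100.0, 102.0, 98.0, 101.0, 99.0]
new_plates = [100.5, 97.0, 110.0, 103.0]

limits = control_limits(reference_plates)
flagged = out_of_control(new_plates, limits)
print(limits, flagged)
```

Flagged plates are candidates for the catastrophic noise events discussed earlier and would normally be inspected before their wells enter hit selection.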

Interactive Visualization Tools: Modern HTE software platforms provide interactive visualization capabilities that allow researchers to explore screening results from multiple perspectives [2].

Case Study: Screening Design in Medical Physics

A compelling example of screening design application comes from volumetric-modulated arc therapy (VMAT) in radiation oncology. Researchers developed an original optimization tool using DoE to determine optimal field configuration selections [22]. The study investigated multiple input factors including couch angles, arc angles, collimator angles, field sizes, and beam energy to optimize dose distributions in brain tumor treatments.

The screening approach allowed efficient assessment of these factors before resource-intensive dose calculations. Results demonstrated that the DoE-optimized configurations provided the same or slightly superior plan quality compared to clinical plans created by experts [22]. This case illustrates how screening designs can efficiently identify influential factors in complex systems while removing dependence on individual practitioner experience.

Integration with Broader HTE Workflows

Screening designs do not exist in isolation but function as a critical component within comprehensive HTE workflows. Effective integration requires:

Data Structure Compatibility: Screening data must be structured to enable export for use in AI/ML frameworks, requiring normalization of data from heterogeneous systems [2].

Workflow Connectivity: End-to-end HTE platforms connect experimental design to analytical results, eliminating manual transcription and reducing errors [2]. This connectivity is essential for maintaining data integrity throughout the screening process.

Iterative Design Implementation: Modern approaches increasingly use machine learning-enabled DoE, such as Bayesian optimization modules, to reduce the number of experiments needed to achieve optimal conditions [2]. These iterative approaches use information from initial screening results to guide subsequent experimental designs.

The proper implementation of screening designs within HTE workflows represents a powerful approach for accelerating research and development across multiple domains, from pharmaceutical discovery to materials science. By efficiently identifying truly influential factors from among many candidates, these designs enable more focused and productive subsequent research phases.