Optimal Experimental Design for Functional Materials: Accelerating Discovery with Statistical and Machine Learning Methods

This article provides a comprehensive guide to optimal experimental design (OED) for researchers and professionals developing functional materials.

Abstract

This article provides a comprehensive guide to optimal experimental design (OED) for researchers and professionals developing functional materials. It covers foundational statistical principles, explores advanced methodologies including machine learning-guided design and scalable algorithms for complex design spaces, addresses critical challenges in model robustness and constraint handling, and outlines rigorous validation frameworks. By synthesizing modern computational strategies with practical experimental validation, this resource aims to equip scientists with the tools to drastically reduce experimental costs and accelerate the discovery of next-generation materials for biomedical and clinical applications.

Laying the Groundwork: Core Principles and the Need for Optimal Design in Complex Materials

Defining Optimal Experimental Design and its Impact on Materials Research

Optimal Experimental Design (OED) refers to a systematic framework for guiding the sequence of experiments or simulations to maximize the information gain while minimizing resource consumption. In the context of functional materials research, OED addresses the fundamental challenge that materials search spaces are vast due to the complex interplay of structural, chemical, and microstructural degrees of freedom, yet only a small fraction can be experimentally investigated due to cost and time constraints [1]. The core principle of OED is to use knowledge from previously completed experiments to recommend the next experiment that most effectively reduces the model uncertainty affecting the materials properties of interest [1]. This approach moves beyond traditional trial-and-error or high-throughput methods, which can be inefficient, toward a guided, intelligent discovery process. For materials researchers and drug development professionals, adopting OED principles can dramatically accelerate the discovery of new functional materials, such as shape memory alloys, catalysts, or drug delivery systems, by strategically targeting experimental efforts where materials with desirable properties are most likely to be found.

Core Methodological Frameworks

Mean Objective Cost of Uncertainty (MOCU)

The Mean Objective Cost of Uncertainty (MOCU) is an objective-based uncertainty quantification scheme that measures uncertainty based on the increased operational cost it induces [1]. Unlike general uncertainty measures, MOCU specifically quantifies how uncertainty deteriorates the performance of a designed operator, such as a material simulation, in achieving a targeted objective. The MOCU-based experimental design selects the next experiment that is expected to most reduce this operational cost, thereby directly steering the experimental campaign toward the ultimate material performance goal [1].

Mathematical Definition: For a vector of uncertain parameters θ with a prior probability distribution f(θ), and a cost function C(θ, a) that depends on both the parameters and a designed operator a, the robust operator a_R is the one that minimizes the expected cost given the current uncertainty. The MOCU is then defined as the expected cost increase due to implementing this robust operator instead of the ideal operator that would be chosen if the true parameters were known [1].
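Written out in symbols, the definition above takes the following form. The symbol a_I(θ) for the ideal operator under known θ is notation introduced here for illustration, and the expectation is taken with respect to the prior f(θ).

```latex
a_R = \arg\min_{a} \, \mathbb{E}_{\theta}\!\left[ C(\theta, a) \right],
\qquad
a_I(\theta) = \arg\min_{a} \, C(\theta, a),
\qquad
\mathrm{MOCU} = \mathbb{E}_{\theta}\!\left[ C(\theta, a_R) - C\big(\theta, a_I(\theta)\big) \right].
```

Because a_I(θ) minimizes the cost for each θ, the bracketed difference is never negative, so MOCU is zero only when the robust operator is already ideal for every parameter value carrying prior mass.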

Information-Matching Approach

A recent information-theoretic approach formulates OED as a convex optimization problem that selects training data to ensure sufficient information is available to learn the parameter combinations necessary for predicting downstream Quantities of Interest (QoIs) [2]. This method is based on the Fisher Information Matrix (FIM) and is particularly useful for models with many unidentifiable parameters, as it focuses on learning only the parameter combinations that the QoIs depend on [2]. This makes it scalable to large models and datasets and effective for active learning in materials science applications.

Comparative Analysis of OED Methods

The table below summarizes key OED methodologies discussed, highlighting their core principles, advantages, and applicability to materials research.

Table 1: Comparison of Optimal Experimental Design (OED) Methodologies

| Method | Core Principle | Key Advantage | Primary Application Context |

|---|---|---|---|

| MOCU-Based Design [1] | Selects experiments that maximally reduce the expected deterioration in a performance objective caused by parameter uncertainty. | Directly ties experimental selection to the end-use performance goal, ensuring efficient resource allocation for specific targets. | Materials design with a targeted property (e.g., minimizing energy dissipation in shape memory alloys). |

| Information-Matching [2] | Selects data that provides the most information for learning the specific parameter combinations that influence downstream Quantities of Interest (QoIs). | Efficiently handles models with many "sloppy" parameters; formulated as a scalable convex optimization problem. | Active learning for large-scale models in material science and other fields where QoIs depend on a subset of parameters. |

| Random Selection [1] | Chooses subsequent experiments uniformly at random from the candidate pool. | Simple to implement with no required model. | Serves as a baseline for comparing the performance of more sophisticated OED strategies. |

| Pure Exploitation [1] | Selects the experiment with the best-predicted performance based on the current model. | May quickly find a reasonably good solution. | Can lead to suboptimal performance by getting stuck in local optima and failing to reduce overall uncertainty. |

Application Notes: A Case Study in Shape Memory Alloys

Experimental Objective and Setup

This application note outlines the use of MOCU-based OED to discover shape memory alloy (SMA) compositions with minimal energy dissipation during superelastic (SE) cycles. Energy dissipation, quantified by the hysteresis area in the stress-strain curve, is a critical property affecting the fatigue life of SMA-based devices such as cardiovascular stents [1]. The goal of the OED is to recommend the best dopant and its concentration for the next simulation by leveraging data from previous Time-dependent Ginzburg-Landau (TDGL) simulations.

Computational Model (TDGL Oracle): The mesoscale TDGL theory serves as the computational oracle that captures the underlying physics of the shape memory effect and superelasticity [1]. In this model, chemical doping is mimicked by varying model parameters, specifically the dimensionless scaled temperature (h) and the stress scaling factor (σ). For a given parameter set (h, σ), the TDGL simulation computes the stress-strain curve, from which the energy dissipation, D(h, σ), is calculated as the area enclosed by the hysteresis loop.

Detailed Experimental Protocol

Table 2: Reagent Solutions for Computational Materials Discovery

| Research Reagent / Computational Tool | Function / Description |

|---|---|

| Time-dependent Ginzburg-Landau (TDGL) Model | A phase-field model that acts as the "experimental oracle," calculating microstructural evolution and resulting stress-strain curves for a given set of material parameters [1]. |

| Uncertain Parameter Vector (θ = [h, σ]) | A computational analogue to chemical reagents. The parameters h (scaled temperature) and σ (stress scaling factor) mimic the effect of different dopants and concentrations on material behavior [1]. |

| Prior Probability Distribution, f(θ) | Encodes initial belief or knowledge about the plausible values of the uncertain parameters h and σ before new "experiments" are conducted. A uniform distribution is often used when prior knowledge is limited [1]. |

| Cost Function, C(θ, a) = D(θ) | The objective function to be minimized. In this case, the cost is defined as the energy dissipation D calculated from the hysteresis loop for a material with parameters θ [1]. |

Protocol: MOCU-Based Iterative Design for SMA Discovery

Pre-experimental Planning:

- Define Objective: Clearly state the primary objective. In this case, it is to find the material parameters θ = (h, σ) that minimize the energy dissipation D(θ).

- Define Uncertainty Class: Specify the range of values for the uncertain parameters h and σ that will be considered, forming the uncertainty class Θ.

- Specify Prior Distribution: Establish a prior probability distribution f(h, σ) over the uncertainty class. In the absence of prior knowledge, a uniform distribution is a standard starting point [1].

- Identify Candidate Experiments: Enumerate the set of possible next experiments. In this study, each candidate is a specific dopant-concentration pair, computationally represented by a specific (h, σ) parameter set [1].

Procedure: This procedure is iterative. The five steps below are repeated until a stopping criterion is met (e.g., a sufficiently low dissipation is found, or a computational budget is exhausted).

- Compute Robust Material: Find the robust material parameter set θ_R that minimizes the expected dissipation given the current uncertainty: θ_R = argmin_a E_Θ[C(θ, a)] = argmin_a E_Θ[D(θ)].

- Calculate MOCU: Compute the Mean Objective Cost of Uncertainty as MOCU = E_Θ[C(θ, θ_R) − C(θ, θ_I(θ))], where θ_I(θ) is the ideal material if θ were known [1].

- Evaluate Expected MOCU Reduction: For every candidate experiment i (e.g., each dopant-concentration pair), calculate the expected MOCU reduction after measuring that candidate. This involves considering all possible outcomes x of the experiment, updating the prior distribution to a posterior f(h, σ | X_i = x), and computing the resulting MOCU for each posterior [1].

- Select Next Experiment: Choose the candidate experiment i that provides the largest expected MOCU reduction.

- Perform Experiment & Update: Run the TDGL simulation (the "experiment") for the selected candidate parameters. Use the outcome (the calculated dissipation value) to update the prior probability distribution f(h, σ) to a posterior distribution f(h, σ | X_i = x) using Bayes' theorem [1].
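The selection logic of this procedure can be sketched on a toy problem. The sketch below is an illustration only, not the TDGL workflow of [1]: it assumes a discrete uncertainty class with three parameter vectors, a hypothetical cost matrix `cost[k, a]`, and hypothetical experiments described by likelihood tables `likelihood[k, x] = P(X = x | θ_k)`. All of these names and numbers are assumptions made for the example.

```python
import numpy as np

def mocu(prior, cost):
    """Return (MOCU, robust action) for a discrete uncertainty class.

    prior: shape (K,), probabilities of theta_1..theta_K.
    cost:  shape (K, A), cost[k, a] = C(theta_k, a).
    """
    expected_cost = prior @ cost                  # expected cost of each action
    robust_action = int(np.argmin(expected_cost))
    ideal_cost = cost.min(axis=1)                 # cost of the ideal action per theta_k
    value = float(prior @ (cost[:, robust_action] - ideal_cost))
    return value, robust_action

def expected_remaining_mocu(prior, cost, likelihood):
    """Average the posterior MOCU over all outcomes x of one experiment."""
    total = 0.0
    for x in range(likelihood.shape[1]):
        p_x = float(prior @ likelihood[:, x])     # marginal probability of outcome x
        if p_x == 0.0:
            continue
        posterior = prior * likelihood[:, x] / p_x  # Bayes update
        total += p_x * mocu(posterior, cost)[0]
    return total

def select_experiment(prior, cost, likelihoods):
    """Pick the experiment with the smallest expected remaining MOCU."""
    scores = [expected_remaining_mocu(prior, cost, L) for L in likelihoods]
    return int(np.argmin(scores)), scores

# Hypothetical toy data: 3 parameter vectors, 3 candidate actions.
prior = np.full(3, 1.0 / 3.0)
cost = np.array([[0.0, 1.0, 2.0],
                 [1.0, 0.0, 1.0],
                 [2.0, 1.0, 0.0]])
likelihoods = [
    np.full((3, 2), 0.5),                            # uninformative experiment
    np.eye(3),                                       # identifies theta exactly
    np.array([[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]),  # separates theta_1 only
]
best, scores = select_experiment(prior, cost, likelihoods)
print(best, scores)
```

Minimizing the expected remaining MOCU is the same as maximizing the expected MOCU reduction, since the current MOCU is a constant across candidates. In this toy setting the fully identifying experiment is selected because it drives the remaining cost of uncertainty to zero.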

Reporting Guidelines: Adhere to scientific reporting standards to ensure reproducibility [3]. Key reported data elements should include:

- Objective: A clear statement of the targeted material property.

- Uncertain Parameters: Definitions and ranges for all uncertain variables.

- Prior Distribution: Justification and description of the initial prior.

- Computational Model: Details of the TDGL model (or other oracle) used.

- Experimental Results: For each iteration, report the selected candidate, the outcome, and the updated posterior distribution.

- Final Recommendation: The parameter set identified as optimal and its predicted performance.

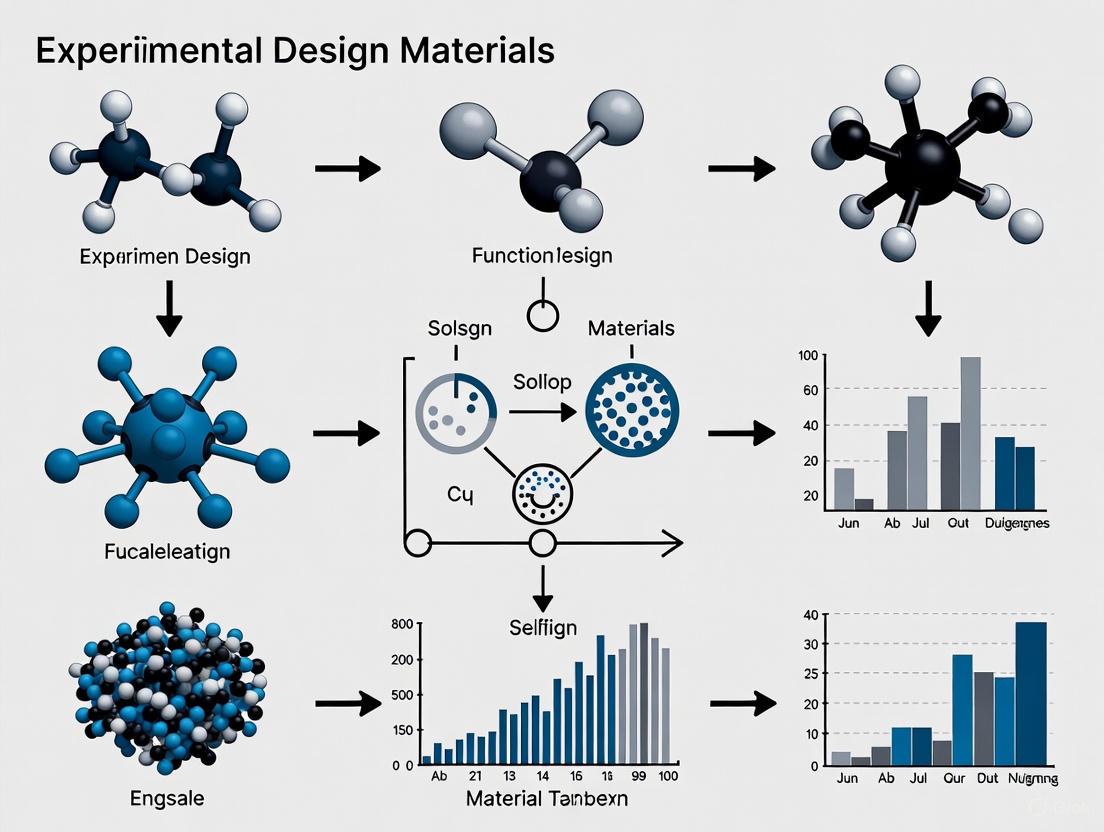

Workflow and System Diagram

The following diagram visualizes the logical flow of the MOCU-based optimal experimental design process applied to materials discovery.

MOCU-Based OED Workflow

The implementation of Optimal Experimental Design in materials research represents a paradigm shift from data-intensive to intelligence-intensive discovery. The MOCU-based framework has demonstrated superior performance in computational studies, significantly outperforming random selection and pure exploitation strategies by efficiently guiding the search for low-dissipation shape memory alloys [1]. This results in a marked reduction in the number of experiments or simulations required to achieve a performance target, translating directly into saved time and computational resources.

The broader impact of OED extends across functional materials research and drug development. The information-matching approach shows that a relatively small set of optimally chosen training data can often provide the necessary information for precise predictions, which is encouraging for active learning in large machine-learning models [2]. As materials models grow in complexity and the demand for novel materials accelerates, OED provides a mathematically rigorous and practical framework for navigating vast design spaces. By focusing experimental efforts on the most informative directions, OED empowers researchers and scientists to systematically overcome the challenges of complexity and cost, ultimately accelerating the discovery and deployment of next-generation functional materials.

In the field of functional materials research, where experimental resources are often limited and the relationship between material structure and performance is complex, the strategic design of experiments is paramount. Optimal experimental design (OED) provides a statistical framework for maximizing the information gain from each experimental run, thereby accelerating the discovery and optimization of new materials. These model-specific designs are generated computationally to optimize a particular statistical criterion, ensuring the most efficient use of resources when classical designs like full factorials are impractical due to constraints or excessive run requirements [4] [5].

The core principle of OED is to select a set of experimental points (design points) that minimize the uncertainty associated with the parameters of a pre-specified model or the predictions made by that model. The "optimality" of a design is always defined relative to a chosen statistical criterion, each with distinct objectives and applications. For researchers calibrating models that map processing conditions to material properties—a common scenario in functional materials research—understanding these criteria is essential for designing efficient and informative experiments [5] [6].

Mathematical Foundations of Optimality

The Information Matrix and Its Role

The foundation of most optimality criteria is the Fisher Information Matrix, denoted as \( M(\xi, \theta) \). This matrix captures the amount of information an experimental design \( \xi \) provides about the unknown parameters \( \theta \) of a model. For a nonlinear model \( f(x, \theta) \), the information matrix for a design \( \xi \) is defined as:

\[ M(\xi, \theta) = \int_X m(x, \theta) \, \xi(dx) = \int_X D_\theta f(x,\theta)^T \cdot \Sigma^{-1} \cdot D_\theta f(x,\theta) \, \xi(dx) \]

where \( D_\theta f(x,\theta) \) is the Jacobian matrix of the model with respect to its parameters, and \( \Sigma \) is the covariance matrix of the measurement errors [6]. The information matrix is inversely related to the covariance matrix of the parameter estimates from the least-squares estimator: \( \text{Cov}(\theta_{LSE}) \approx M(\xi, \theta)^{-1} \) [6]. Different optimality criteria are functionals of this information matrix or its inverse, each aiming to minimize a different aspect of estimation or prediction uncertainty [5].
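For a design supported on finitely many points, the integral reduces to a weighted sum over the support points. The following minimal sketch evaluates it for an illustrative two-parameter model f(x, θ) = θ₀·exp(−θ₁·x) with scalar noise variance; the model, the parameter values, and the function names are assumptions chosen for the example rather than anything taken from [6].

```python
import numpy as np

def jacobian(x, theta):
    """1 x 2 Jacobian D_theta f(x, theta) for f = theta0 * exp(-theta1 * x)."""
    t0, t1 = theta
    e = np.exp(-t1 * x)
    return np.array([[e, -t0 * x * e]])

def information_matrix(points, weights, theta, sigma2=1.0):
    """M(xi, theta) = sum_i w_i * J_i^T * (1 / sigma2) * J_i for a discrete design."""
    M = np.zeros((2, 2))
    for x, w in zip(points, weights):
        J = jacobian(x, theta)
        M += w * (J.T @ J) / sigma2
    return M

theta = np.array([2.0, 1.0])   # nominal parameter guess (assumed)
M = information_matrix([0.0, 1.0], [0.5, 0.5], theta, sigma2=0.01)

# Approximate covariance of the estimates per unit of design mass.
# With weights given as proportions, divide by the number of runs N
# to approximate the covariance after N observations.
cov_approx = np.linalg.inv(M)
print(M)
print(cov_approx)
```

A design concentrated on a single point yields a rank-one matrix here, which is one way to see why at least as many distinct support points as parameters are needed for a scalar response.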

The Kiefer-Wolfowitz Equivalence Theorem

A landmark result in OED theory is the Kiefer-Wolfowitz Equivalence Theorem [6]. It establishes that D-optimality and G-optimality (defined below) are equivalent for continuous designs, that is, designs treated as probability measures over the design space. In practice, this gives a way to verify whether a numerically generated design is truly D-optimal by checking its G-efficiency: for a linear model with p parameters, a design is D-optimal exactly when its maximum standardized prediction variance over the design space equals p. The theorem underpins the algorithms that generate optimal designs and assures researchers of the quality of the computed design [5] [6].
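The verification idea can be illustrated with a textbook case: quadratic regression on [−1, 1], where the design placing weight 1/3 on each of −1, 0, and 1 is known to be D-optimal. The sketch below checks that the standardized prediction variance d(x, ξ) = f(x)ᵀ M(ξ)⁻¹ f(x) peaks at p = 3 on a grid; the grid resolution and the comparison design are arbitrary choices made for illustration.

```python
import numpy as np

def f(x):
    """Regression vector for the quadratic model 1, x, x^2."""
    return np.array([1.0, x, x ** 2])

def info_matrix(points, weights):
    """Information matrix of a continuous design with finite support."""
    return sum(w * np.outer(f(x), f(x)) for x, w in zip(points, weights))

def max_standardized_variance(points, weights, grid):
    """Largest value of f(x)^T M^{-1} f(x) over the grid."""
    M_inv = np.linalg.inv(info_matrix(points, weights))
    return max(float(f(x) @ M_inv @ f(x)) for x in grid)

grid = np.linspace(-1.0, 1.0, 201)
p = 3  # number of model parameters

d_max_opt = max_standardized_variance([-1.0, 0.0, 1.0], [1 / 3, 1 / 3, 1 / 3], grid)
d_max_alt = max_standardized_variance([-1.0, 0.0, 1.0], [0.25, 0.5, 0.25], grid)

g_eff_opt = p / d_max_opt   # equals 1 for a D-optimal design
g_eff_alt = p / d_max_alt   # below 1 for the center-heavy alternative
print(d_max_opt, g_eff_opt, d_max_alt, g_eff_alt)
```

A G-efficiency below 1 signals that the design is not D-optimal for the assumed model, which is how the theorem is typically used as a diagnostic for numerically generated designs.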

Detailed Criterion Explanations and Applications

D-Optimality (Determinant)

D-optimality seeks to minimize the generalized variance of the parameter estimates. Mathematically, a D-optimal design maximizes the determinant of the information matrix \( M(\xi, \theta) \), which is equivalent to minimizing the determinant of the parameter covariance matrix \( M(\xi, \theta)^{-1} \) [4] [5]. This criterion results in the smallest possible confidence ellipsoid for the parameter estimates, providing the most precise estimates overall.

- Primary Application: Ideal for factor screening and model discrimination, where the primary goal is to identify which factors or model terms have significant effects on the material's performance [7] [8]. It is the best choice when the research objective is precise parameter estimation for powerful statistical tests of significance [7].

- Design Characteristic: D-optimal designs typically place experimental runs at the extreme boundaries (edges) of the design space to achieve this goal [9] [7].

- Software Note: Algorithms for generating D-optimal designs (e.g., coordinate-exchange, row-exchange) are available in major statistical software like JMP, SAS, R, and MATLAB's Statistics and Machine Learning Toolbox (the `cordexch` and `rowexch` functions) [4] [10].
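To make the criterion concrete, the sketch below selects four runs from a nine-point candidate set (two factors at three levels) for a model with an intercept, two main effects, and their interaction. It uses exhaustive search, which is only feasible for very small problems and is not the exchange algorithm implemented by the software packages named above; the factor levels and model are assumptions for the example.

```python
import itertools
import numpy as np

def model_matrix(points):
    """Columns: intercept, x1, x2, x1*x2."""
    pts = np.asarray(points, dtype=float)
    x1, x2 = pts[:, 0], pts[:, 1]
    return np.column_stack([np.ones(len(pts)), x1, x2, x1 * x2])

def d_criterion(points):
    """Determinant of X'X for the runs in `points`."""
    X = model_matrix(points)
    return float(np.linalg.det(X.T @ X))

levels = [-1.0, 0.0, 1.0]
candidates = list(itertools.product(levels, levels))   # 3 x 3 candidate set

# Exhaustive search over all 4-run subsets (126 combinations).
best_design = max(itertools.combinations(candidates, 4), key=d_criterion)
print(sorted(best_design), d_criterion(best_design))
```

The search returns the four corner points of the design region, consistent with the tendency of D-optimal designs to place runs at the boundaries of the design space.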

I-Optimality (Integrated Variance)

I-optimality focuses on prediction quality. It aims to minimize the average prediction variance across the entire design space [5] [9]. While D-optimality is concerned with the "variance of the estimates," I-optimality is concerned with the "variance of the predicted response."

- Primary Application: Excellent for response surface methodology (RSM) and process optimization, where the fitted model will be used to find factor settings that produce a desired material property or performance outcome [7] [8]. It is the preferred criterion when the goal is to use the model for making precise predictions [7].

- Design Characteristic: To minimize the average prediction variance, I-optimal designs tend to include more points in the interior of the design space compared to D-optimal designs [9] [7].
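A small numerical comparison illustrates the difference from D-optimality. For a quadratic model in one factor on [−1, 1], the average prediction variance (in units of the noise variance) can be written as trace((X'X)⁻¹ W), where W is the matrix of region moments. The two four-run designs below are hypothetical examples chosen because they share the same determinant yet differ in average prediction variance.

```python
import numpy as np

def model_matrix(xs):
    """Columns: 1, x, x^2."""
    xs = np.asarray(xs, dtype=float)
    return np.column_stack([np.ones_like(xs), xs, xs ** 2])

# W = (1/2) * integral over [-1, 1] of f(x) f(x)^T dx, with f = [1, x, x^2].
W = np.array([[1.0, 0.0, 1.0 / 3.0],
              [0.0, 1.0 / 3.0, 0.0],
              [1.0 / 3.0, 0.0, 1.0 / 5.0]])

def average_prediction_variance(xs):
    """I-criterion: mean of f(x)^T (X'X)^{-1} f(x) over the region."""
    X = model_matrix(xs)
    return float(np.trace(np.linalg.inv(X.T @ X) @ W))

def d_value(xs):
    """D-criterion: determinant of X'X."""
    X = model_matrix(xs)
    return float(np.linalg.det(X.T @ X))

edge_heavy = [-1.0, -1.0, 0.0, 1.0]     # replicate at the boundary
center_heavy = [-1.0, 0.0, 0.0, 1.0]    # replicate at the center

for name, design in [("edge-heavy", edge_heavy), ("center-heavy", center_heavy)]:
    print(name, d_value(design), average_prediction_variance(design))
```

Both designs have the same determinant, so the D-criterion cannot separate them, but replicating the center point gives the lower average prediction variance. This matches the observation that I-optimal designs favor interior points.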

A-, E-, and G-Optimality

A-Optimality ("Average" or Trace): This criterion seeks to minimize the trace of the inverse of the information matrix [5]. This is equivalent to minimizing the average variance of the individual parameter estimates [5] [7]. A-optimality can be seen as a compromise between D- and I-optimality, though it is not scale-invariant. It offers the possibility to emphasize particular terms in the model by adjusting weights before summing the variances [7].

E-Optimality (Eigenvalue): This design maximizes the minimum eigenvalue of the information matrix [5]. Because the smallest eigenvalue corresponds to the longest axis of the confidence ellipsoid, E-optimality targets the worst-case direction in parameter space and shortens that axis, preventing any single parameter (or linear combination of parameters) from being estimated with excessively high variance relative to the others.

G-Optimality (Global Variance): A G-optimal design minimizes the maximum prediction variance over the design space [5]. It is directly concerned with the worst-case prediction error within the region of interest. As per the Kiefer-Wolfowitz theorem, it is equivalent to D-optimality for continuous design measures [5] [8].
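For a linear model, all of these criteria can be computed directly from the model matrix X of a candidate design. The sketch below does so for a 2² factorial with a main-effects model; approximating the G-criterion by a maximum over a finite grid is an assumption of the example, as are the function and variable names.

```python
import itertools
import numpy as np

def criteria(X, X_grid):
    """Evaluate D-, A-, E-, and G-criteria for model matrix X.

    X_grid holds the model rows of the points over which the
    prediction variance is maximized (a finite stand-in for the region).
    """
    M = X.T @ X
    M_inv = np.linalg.inv(M)
    # Prediction variance f(x)^T M^{-1} f(x) for every grid point.
    pred_var = np.einsum("ij,jk,ik->i", X_grid, M_inv, X_grid)
    return {
        "D": float(np.linalg.det(M)),            # maximize
        "A": float(np.trace(M_inv)),             # minimize
        "E": float(np.linalg.eigvalsh(M).min()), # maximize
        "G": float(pred_var.max()),              # minimize
    }

def main_effects(points):
    """Columns: intercept, x1, x2."""
    pts = np.asarray(points, dtype=float)
    return np.column_stack([np.ones(len(pts)), pts])

corners = list(itertools.product([-1.0, 1.0], repeat=2))
grid = list(itertools.product(np.linspace(-1.0, 1.0, 11), repeat=2))

values = criteria(main_effects(corners), main_effects(grid))
print(values)
```

For this orthogonal design X'X equals 4 times the identity, so the determinant is 64, the trace of the inverse is 0.75, the smallest eigenvalue is 4, and the largest prediction variance (0.75, in units of the noise variance) occurs at the corners.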

The table below provides a consolidated comparison of these key criteria for quick reference.

Table 1: Summary of Key Optimality Criteria in Experimental Design

| Criterion | Statistical Objective | Primary Application in Materials Research | Key Advantage |
|---|---|---|---|
| D-Optimality | Maximize \( \det(X'X) \) / Minimize generalized variance of parameters [4] [5] | Factor screening, model discrimination, precise parameter estimation [7] | Powerful significance tests for model effects [7] |
| I-Optimality | Minimize average prediction variance over design space [5] [9] | Response surface optimization, prediction-based modeling [7] [8] | Best for predicting material properties within the design space [7] |
| A-Optimality | Minimize trace of \( (X'X)^{-1} \) / average parameter variance [5] | Focusing on specific model terms, a balanced approach to parameter estimation [7] | Allows for weighting of specific terms of interest [7] |
| E-Optimality | Maximize the minimum eigenvalue of \( X'X \) [5] | Preventing large variance in any single parameter estimate | Improves the conditioning of the information matrix |
| G-Optimality | Minimize the maximum prediction variance [5] | Ensuring no region of the design space has poor prediction capability | Directly controls worst-case prediction error |

Experimental Protocols for Functional Materials Research

Protocol 1: Sequential D-Optimal Design for Model Calibration

This protocol is ideal for iteratively building and refining a model that describes the relationship between material synthesis parameters and a key performance metric.

- Define Initial Model and Space: Specify an initial candidate model (e.g., a first-order model with potential interactions) and define the feasible region of your design variables (e.g., temperature, pressure, precursor concentration).

- Generate and Execute Initial Design: Use software to generate a D-optimal design with a small number of runs (e.g., 8-12) from the candidate set. Randomize the run order and perform the experiments to measure the response.

- Calibrate Model and Assess Fit: Fit the initial model to the collected data. Analyze residuals and statistical significance of terms.

- Sequential Augmentation: If the model fit is inadequate or parameter uncertainties are high, use a D-augmentation algorithm (e.g., `daugment` in MATLAB) to add a further set of runs to the existing design to estimate additional terms (e.g., quadratic effects) or to reduce variances [6] [10].

- Iterate Until Satisfied: Repeat the calibration and augmentation steps, potentially updating the model as new information is gained, until the parameter estimates are sufficiently precise for the research goals [6].
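The augmentation step can be sketched with a simple greedy rule. By the matrix determinant lemma, adding a run with model row f multiplies det(M) by 1 + fᵀM⁻¹f, so each iteration adds the candidate with the largest fᵀM⁻¹f. This is a simplified stand-in and does not reproduce MATLAB's `daugment`; the small ridge term, which keeps the matrix invertible before the quadratic terms are estimable, and all names are assumptions of the sketch.

```python
import itertools
import numpy as np

def expand(points):
    """Full quadratic model in two factors: 1, x1, x2, x1*x2, x1^2, x2^2."""
    pts = np.atleast_2d(np.asarray(points, dtype=float))
    x1, x2 = pts[:, 0], pts[:, 1]
    return np.column_stack([np.ones(len(pts)), x1, x2, x1 * x2, x1 ** 2, x2 ** 2])

def augment(existing, candidates, n_add, ridge=1e-6):
    """Greedily add n_add candidate runs that most increase det(F'F)."""
    design = [tuple(p) for p in existing]
    F_cand = expand(candidates)
    for _ in range(n_add):
        F = expand(design)
        # Ridge keeps the matrix invertible while the design is still rank deficient.
        M_inv = np.linalg.inv(F.T @ F + ridge * np.eye(F.shape[1]))
        gain = np.einsum("ij,jk,ik->i", F_cand, M_inv, F_cand)
        design.append(tuple(candidates[int(np.argmax(gain))]))
    return design

# Initial design: 2^2 factorial, which cannot estimate the quadratic terms.
initial = list(itertools.product([-1.0, 1.0], repeat=2))
candidates = list(itertools.product([-1.0, 0.0, 1.0], repeat=2))

augmented = augment(initial, candidates, n_add=5)
print(augmented)
print(np.linalg.matrix_rank(expand(initial)), np.linalg.matrix_rank(expand(augmented)))
```

The initial factorial supports only four of the six quadratic-model terms; after augmentation the model matrix reaches full column rank, so the curvature terms become estimable.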

Protocol 2: I-Optimal Design for Response Surface Optimization

This protocol is used when the goal is to locate optimal processing conditions to maximize or minimize a material's property.

- Define the Response Surface Model: Specify a second-order (quadratic) model, which is standard for capturing curvature in response surfaces.

- Generate I-Optimal Design: Using the defined model and a fixed number of available experimental runs, generate an I-optimal design. This design will minimize the integrated variance of prediction.

- Execute Experiments and Fit Model: Perform the experiments in a randomized order and measure the responses. Fit the full quadratic model to the data.

- Validate Model and Generate Contour Plots: Use diagnostic plots to check model validity. Then, use the fitted model to create contour and surface plots of the response over the design space.

- Identify Optimal Settings: Analyze the response surface plots to find the factor settings that yield the optimal (maximum or minimum) predicted response. Conduct confirmation runs at these settings to validate the prediction.
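The fitting and optimization steps of this protocol can be sketched as follows. The example fits a full quadratic model by least squares and locates the stationary point x* = −½ B⁻¹ b, where b holds the linear coefficients and B the symmetric matrix of second-order coefficients. The response function is synthetic and noise-free, and a 3×3 grid stands in for an I-optimal design that would normally come from design software; both are assumptions for illustration.

```python
import itertools
import numpy as np

def quadratic_features(X):
    """Columns: 1, x1, x2, x1*x2, x1^2, x2^2."""
    x1, x2 = X[:, 0], X[:, 1]
    return np.column_stack([np.ones(len(X)), x1, x2, x1 * x2, x1 ** 2, x2 ** 2])

def fit_quadratic(X, y):
    """Least-squares estimate of the six quadratic-model coefficients."""
    beta, *_ = np.linalg.lstsq(quadratic_features(X), y, rcond=None)
    return beta

def stationary_point(beta):
    """Solve gradient = b + 2 B x = 0 and classify via the eigenvalues of B."""
    b = beta[1:3]
    B = np.array([[beta[4], beta[3] / 2.0],
                  [beta[3] / 2.0, beta[5]]])
    x_star = -0.5 * np.linalg.solve(B, b)
    return x_star, np.linalg.eigvalsh(B)

def synthetic_response(X):
    """Hypothetical property with a maximum at (0.3, -0.2) in coded units."""
    return 5.0 - (X[:, 0] - 0.3) ** 2 - 2.0 * (X[:, 1] + 0.2) ** 2

design = np.array(list(itertools.product([-1.0, 0.0, 1.0], repeat=2)))
y = synthetic_response(design)

beta = fit_quadratic(design, y)
x_star, eigenvalues = stationary_point(beta)
print(x_star, eigenvalues)
```

All eigenvalues of B being negative indicates a maximum, all positive a minimum, and mixed signs a saddle point. With real, noisy data the predicted optimum should still be checked with confirmation runs, as the protocol describes.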

Workflow Diagram: Optimal Design Selection

The following diagram illustrates the decision-making process for selecting an appropriate optimality criterion based on the research objective, a common scenario in functional materials development.

Figure 1: A workflow for selecting an optimality criterion based on research goals.

The Scientist's Toolkit: Essential Reagent Solutions

The implementation of optimal designs, particularly in functional materials research, relies on a combination of statistical software and domain-specific tools.

Table 2: Essential Research Reagent Solutions for Optimal Design Implementation

| Tool / Reagent | Category | Function in Optimal Design |
|---|---|---|
| JMP Custom Design | Statistical Software | A comprehensive platform for generating D-, I-, A-, and other optimal designs. It provides heuristic guidance to select the best criterion based on the user's defined model and goals [9] [7]. |
| R (packages: `DoE.base`, `skpr`) | Statistical Software | Open-source environment with extensive packages for generating and analyzing a wide variety of optimal designs, offering high customizability for advanced users [5]. |
| MATLAB (`cordexch`, `rowexch`) | Statistical Software | Provides algorithms for generating D-optimal designs using coordinate-exchange and row-exchange methods, suitable for integrating design generation with custom simulation and modeling workflows [10]. |
| SAS PROC OPTEX | Statistical Software | A procedure in the SAS/QC package dedicated to finding optimal experimental designs based on a specified candidate set and optimality criterion [5]. |
| Candidate Set | Computational Concept | A data table containing all possible treatment combinations (e.g., from a full factorial) from which the optimal design algorithm selects the most informative runs [4]. |
| Fisher Information Matrix | Mathematical Construct | A matrix that quantifies the amount of information an experiment carries about the unknown parameters; the foundation for computing all optimality criteria [6]. |

Advanced Topics and Future Directions

Robustness and Model Uncertainty

A significant challenge in optimal design, particularly for nonlinear models common in materials science, is that the "optimal" design depends on the specified model and, for nonlinear models, on the values of the parameters \( \theta \) themselves, which are unknown a priori [5] [6]. This model dependence means a design optimal for one model may perform poorly for another. To address this, robust optimal design approaches have been developed:

- Bayesian Optimal Designs: These incorporate prior knowledge or uncertainty about the parameters \( \theta \) by using a probability distribution. The design is then optimized to maximize the expected information, providing robustness over a range of plausible parameter values [5] [6].

- Maxi-Min Designs: These aim to find a design that performs well across a set of possible models or parameter values by maximizing the minimum efficiency relative to the best design for each scenario [6].
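The parameter dependence and the Bayesian remedy can be illustrated with a one-parameter decay model f(x, θ) = exp(−θx). Its Fisher information for a single measurement at x is x²·exp(−2θx), so the locally optimal point is x = 1/θ and shifts with the unknown θ. The sketch below compares these local optima with a design point that maximizes the prior-averaged log information; the three equally weighted prior draws and the grid are hypothetical choices.

```python
import numpy as np

def log_information(x, theta):
    """log of x^2 * exp(-2 * theta * x): Fisher information with unit noise variance."""
    return 2.0 * np.log(x) - 2.0 * theta * x

theta_samples = np.array([0.5, 1.0, 1.5])     # hypothetical prior draws, equal weight
x_grid = np.linspace(0.05, 5.0, 991)          # candidate measurement locations

# Locally optimal point for each assumed parameter value.
x_local = {float(t): float(x_grid[np.argmax(log_information(x_grid, t))])
           for t in theta_samples}

# Bayesian criterion: average the log information over the prior draws.
expected_log_info = np.array([log_information(x, theta_samples).mean() for x in x_grid])
x_bayes = float(x_grid[np.argmax(expected_log_info)])

print(x_local)
print(x_bayes)
```

For this particular model the Bayesian optimum lands at the reciprocal of the prior mean of θ, a single compromise location between the local optima. A maxi-min variant would instead maximize the worst-case efficiency across the three parameter values.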

Sequential Design and Active Learning

In complex functional materials optimization, a fixed design may be inefficient. Sequential design or active learning strategies are increasingly employed [6] [11]. The process involves:

- Starting with an initial small design (whose size may be optimized for the design space) [11].

- Fitting a surrogate model (e.g., a Gaussian process or a quadratic model) to the collected data.

- Using an acquisition function (which can be based on optimality criteria) to select the next most informative experiment.

- Iterating this process, continually updating the model and refining the experimental focus towards regions of interest, such as the optimum or a boundary. This approach is highly aligned with the iterative workflow of materials discovery and optimization [6] [11].
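The loop described above can be sketched in a few lines. This minimal example uses a quadratic surrogate in one variable and a lower-confidence-bound acquisition (predicted mean minus a multiple of the predicted standard deviation) to choose each new point. The oracle function, the assumed noise scale, and the value of `kappa` are hypothetical; a Gaussian process surrogate or a different acquisition function could be substituted without changing the structure.

```python
import numpy as np

def features(x):
    """Quadratic surrogate basis: 1, x, x^2."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    return np.column_stack([np.ones_like(x), x, x ** 2])

def oracle(x):
    """Hypothetical stand-in for an experiment or simulation (lower is better)."""
    return (x - 0.6) ** 2

def active_learning(candidates, x_init, n_iter=5, kappa=1.0, noise_sd=0.05):
    xs = list(x_init)
    ys = [oracle(x) for x in xs]
    F_cand = features(candidates)
    for _ in range(n_iter):
        # 1) Fit the surrogate to all data collected so far.
        F = features(xs)
        beta, *_ = np.linalg.lstsq(F, np.array(ys), rcond=None)
        # 2) Predicted mean and standard deviation at each candidate.
        M_inv = np.linalg.inv(F.T @ F)
        mean = F_cand @ beta
        sd = noise_sd * np.sqrt(np.einsum("ij,jk,ik->i", F_cand, M_inv, F_cand))
        # 3) Acquisition: lower confidence bound, since the goal is minimization.
        x_next = float(candidates[int(np.argmin(mean - kappa * sd))])
        # 4) Run the "experiment" and add the result to the data set.
        xs.append(x_next)
        ys.append(oracle(x_next))
    best = int(np.argmin(ys))
    return xs[best], ys[best], xs

candidates = np.linspace(0.0, 1.0, 21)
x_best, y_best, history = active_learning(candidates, x_init=[0.0, 0.5, 1.0])
print(x_best, y_best, history)
```

The `kappa` parameter controls the balance between exploiting the predicted optimum and exploring poorly covered regions; setting it to zero reduces the rule to pure exploitation.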

The drive to develop next-generation functional materials, from advanced alloys to targeted drug delivery systems, is increasingly focused on architectures that are hierarchical and non-uniform. These complex structures are not random; their specific spatial arrangements across multiple length scales dictate their macroscopic properties and performance. However, this complexity presents a profound challenge for traditional characterization and design methods, which often struggle with the infinite design possibilities and the computational cost of exploring them via trial-and-error or intuition-based approaches [12]. Framing this challenge within the principles of Optimal Experimental Design (OED) provides a powerful strategy to navigate this complexity efficiently. OED uses mathematical criteria to plan experiments that extract the maximum amount of information with minimal resources, a critical advantage when dealing with costly simulations or physical experiments [13] [6]. This Application Note outlines integrated, OED-driven protocols that combine machine learning (ML) and advanced statistical design to accelerate the characterization and inverse design of hierarchical and non-uniform materials.

Quantitative Analysis of ML and OED Approaches

Selecting the appropriate computational methodology is crucial for managing complexity. The table below summarizes and compares the key quantitative findings from recent advancements in machine learning and optimal experimental design for materials research.

Table 1: Comparison of Quantitative Findings from ML and OED Studies

| Study Focus | Key Metric/Performance | Methodology | Implication for Materials Characterization |

|---|---|---|---|

| Non-Uniform Cellular Materials Design [12] | Accurate prediction of mechanical response curves; Framework capable of generating matching geometric patterns for a target response. | Deep Neural Network (DNN) with 5 hidden layers, trained on 65,536 possible 4x4 unit cell patterns. | Demonstrates ML's capability to replace costly finite element simulations for rapid property prediction and inverse design. |

| Optimal Design for Small Samples [13] | Introduction of nature-inspired metaheuristics (e.g., Particle Swarm Optimization) and an efficient rounding method for small-N experiments (N ≈ 10-15). | Particle Swarm Optimization (PSO) for generating model-based optimal designs applicable to small-sample toxicology. | Provides a solution for efficient experimentation under tight budgetary or regulatory constraints, common in early-stage research. |

| Surrogate-Based Active Learning [11] | Investigation of optimal initial data sizes for efficient convergence in functional materials optimization. | Surrogate-based active learning coupled with quantum computing for navigating complex design spaces. | Reduces computational costs by determining the minimum data required to initiate an efficient optimization process. |

| Optimal Design Under Uncertainty [6] | Methods (clustering & local approximation) to manage uncertainty in optimal designs for nonlinear models. | Local approximation of confidence regions for optimal design points in nonlinear model calibration. | Ensures robust experimental designs even when initial parameter estimates are uncertain, improving model reliability. |

Integrated Experimental Protocols

The following protocols integrate the OED and ML approaches detailed in Table 1 into a cohesive workflow for characterizing and designing non-uniform materials.

Protocol: ML-Accelerated Characterization & Inverse Design of Cellular Materials

This protocol is adapted from the machine-learning framework for non-uniform cellular materials [12].

1. Objective: To rapidly predict the mechanical properties of a given non-uniform geometric pattern (forward prediction) and to discover geometric patterns that yield a target mechanical response (inverse design).

2. Research Reagent Solutions (Computational):

- Data Generation Engine: Finite Element Analysis (FEA) software (e.g., ABAQUS) to simulate mechanical response and generate training data.

- Machine Learning Framework: A Deep Neural Network (DNN) environment, such as MATLAB or Python (with TensorFlow/PyTorch). The referenced study used a 5-hidden-layer DNN [12].

- Computational Resources: GPU-accelerated hardware to train deep learning models efficiently.

3. Methodology:

1. Data Preparation:

* Define the design space for your unit cell (e.g., a 4x4 grid of binary states representing material presence/absence).

* Generate a comprehensive set of "unique" patterns, leveraging geometric symmetries (rotations, flips) to minimize redundant simulations.

* Run FEA simulations for all unique patterns to obtain mechanical response curves (e.g., stress-strain) as ground truth.

2. Model Training:

* Represent each geometric pattern as a binary matrix (input) and its corresponding response curve as a vector (output).

* Partition the data into training and testing sets (e.g., 80%/20%).

* Train the DNN using a stochastic gradient descent algorithm (e.g., stochastic conjugate gradient backpropagation) to learn the mapping from geometry to mechanical response.

3. Implementation:

* Forward Prediction: Use the trained DNN to predict the response of any new geometric pattern within the design domain in a fraction of the time required for FEA.

* Inverse Design: Construct a databank from the model's predictions. To find a pattern for a target response, query the databank for the closest-matching prediction.
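The symmetry-reduction step in data preparation can be sketched as follows. Each binary pattern is mapped to a canonical representative, the smallest of its eight images under rotations and mirror flips, and patterns sharing a representative are simulated only once. Treating exactly these eight symmetries as equivalent is an assumption of the sketch; the symmetry set used in [12] may differ depending on loading and boundary conditions.

```python
def transforms(grid):
    """Yield the 8 images of a square grid (tuple of row tuples) under rotations and flips."""
    g = grid
    for _ in range(4):
        yield g
        yield tuple(row[::-1] for row in g)   # mirror image of the current rotation
        g = tuple(zip(*g[::-1]))              # rotate 90 degrees clockwise

def canonical(grid):
    """Smallest image under the symmetry group, used as the pattern's unique key."""
    return min(transforms(grid))

def unique_patterns(n):
    """Enumerate all n x n binary grids and keep one representative per symmetry class."""
    seen = set()
    for code in range(2 ** (n * n)):
        bits = [(code >> i) & 1 for i in range(n * n)]
        grid = tuple(tuple(bits[r * n:(r + 1) * n]) for r in range(n))
        seen.add(canonical(grid))
    return seen

# Small cases run instantly; n = 4 enumerates 65,536 grids and takes a few seconds.
for n in (2, 3):
    print(n, 2 ** (n * n), len(unique_patterns(n)))
```

Under this symmetry group, a Burnside count gives 8,548 distinct classes for the 4x4 case out of 65,536 raw patterns, roughly an eightfold reduction in the number of FEA runs needed for ground truth.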

4. Visualization of Workflow: The diagram below illustrates the integrated DBTL (Design-Build-Test-Learn) cycle enhanced with ML and OED principles.

Protocol: OED for Small-Sample Materials Testing Using PSO

This protocol is adapted from applications of optimal design in toxicology to the context of materials science [13].

1. Objective: To determine the most efficient set of experimental conditions (e.g., composition, processing temperature) for model calibration when the total number of experiments is severely limited (N < 15).

2. Research Reagent Solutions:

- Statistical Software: R, Python (with pyswarm or similar), or a custom web-app implementing Particle Swarm Optimization (PSO).

- Experimental Setup: Standard materials characterization tools (e.g., mechanical tester, spectrometer) with precise control over input parameters.

3. Methodology:

1. Define Model and Criterion:

* Posit a statistical model (e.g., a dose-response model linking a material's composition to its strength).

* Select a design criterion (e.g., D-optimality) to maximize the precision of the model parameters.

2. Generate Optimal Design with PSO:

* Use a nature-inspired metaheuristic algorithm like PSO to find the optimal set of design points (e.g., specific compositions) and the proportion of replicates at each point.

* PSO efficiently navigates the complex design space without restrictive assumptions.

3. Implement the Exact Design:

* For a small sample size N, convert the optimal proportions into an implementable, exact design (a specific number of replicates at each point) using an Efficient Rounding Method (ERM). The ERM ensures the integer number of tests sums to N while preserving statistical efficiency.
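The rounding step can be illustrated with a short sketch. The source does not specify which rounding rule its ERM uses; the version below assumes the widely cited Pukelsheim-Rieder apportionment, and the function name and example weights are hypothetical. It also assumes all weights are positive and that N is at least the number of support points.

```python
import math

def efficient_rounding(weights, n_total):
    """Round continuous design weights to integer replicate counts that sum to n_total."""
    k = len(weights)
    # Initial apportionment: n_i = ceil((N - k/2) * w_i).
    counts = [math.ceil((n_total - k / 2) * w) for w in weights]
    # Adjust one run at a time until the counts sum to N.
    while sum(counts) != n_total:
        if sum(counts) < n_total:
            # Add a run where the point is most under-represented relative to its weight.
            j = min(range(k), key=lambda i: counts[i] / weights[i])
            counts[j] += 1
        else:
            # Remove a run where doing so hurts the least relative to the weight.
            j = max(range(k), key=lambda i: (counts[i] - 1) / weights[i])
            counts[j] -= 1
    return counts

# Hypothetical optimal weights at three compositions, with N = 10 available tests.
print(efficient_rounding([0.5, 0.3, 0.2], 10))
```

The ratio-based adjustment rule is what distinguishes this scheme from naive rounding, which can leave the total above or below N.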

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and analytical "reagents" essential for implementing the protocols described above.

Table 2: Essential Research Reagent Solutions for Complex Materials Characterization

| Item | Function / Application |

|---|---|

| Finite Element Analysis (FEA) Software | Generates high-fidelity mechanical or physical response data for training machine learning models where experimental data is scarce or expensive [12]. |

| Deep Neural Network (DNN) Frameworks | Serves as a surrogate model to learn the complex, non-linear relationships between material structure and properties, enabling rapid prediction and inverse design [12]. |

| Particle Swarm Optimization (PSO) Algorithm | A nature-inspired metaheuristic used to efficiently find model-based optimal experimental designs, especially for problems with complex, non-convex design spaces [13]. |

| Abstraction Hierarchy Framework | Provides a modular framework (Project -> Service -> Workflow -> Unit Operation) to standardize and streamline complex, multi-step experimental and computational workflows, improving reproducibility [14]. |

| Optimal Experimental Design (OED) Web Apps | User-friendly tools that allow researchers to input their model and generate various types of optimal designs without deep expertise in optimization algorithms [13]. |

Characterizing hierarchical and non-uniform materials requires a fundamental shift from sequential, intuition-based methods to integrated, closed-loop strategies. The protocols outlined herein demonstrate that combining Optimal Experimental Design for strategic data acquisition with Machine Learning for building fast, accurate surrogate models creates a powerful, synergistic framework. This approach directly addresses the challenge of complexity by making the design process data-driven, efficient, and systematic. By adopting these OED-guided, ML-accelerated workflows, researchers in functional materials and drug development can significantly reduce computational and experimental costs while accelerating the discovery and optimization of next-generation materials with tailored properties.

Why Traditional Methods Fail for Modern Functional Materials

Modern functional materials, engineered for specific advanced properties, are defining the next frontier of technological progress. These materials, including shape memory alloys (SMAs), metamaterials, and advanced aerogels, are characterized by their complex, multi-phase structures and dynamic, responsive behaviors. Their development is a key priority: the U.S. Department of Defense is investing billions to grow and modernize the defense industrial base and seeks to cut the time needed to take new materials from concept to certified samples from years to less than three months [15]. Similarly, industry leaders like LG Innotek face the immense pressure of global markets, where they must innovate on radically compressed timelines, iterating new materials and technologies in "three to six months" rather than years [15].

However, this drive for acceleration is clashing with a fundamental methodological challenge. The traditional materials discovery paradigm, often reliant on sequential trial-and-error, linearly executed experiments, and intuition-driven design, is proving inadequate. These conventional approaches fail to account for the complex, non-linear interactions within functional materials and their extreme sensitivity to manufacturing parameters. This article details the specific limitations of traditional methods and establishes a modern framework based on Optimal Experimental Design (OED) and machine learning to navigate these challenges efficiently.

Defining the "Modern Functional Material"

Modern functional materials are distinguished from traditional structural materials by their active, tailored responses to external stimuli. Their performance is not merely a function of their bulk composition but is critically derived from their intricate micro-architectures and phase transformations.

- Shape Memory Alloys (SMAs): These metals exhibit the shape memory effect and pseudoelasticity, driven by a reversible, diffusionless solid-state phase transformation between austenite and martensite phases. Their functional properties are governed by four critical transformation temperatures (Ms, Mf, As, Af) and are highly sensitive to compositional variations and thermomechanical processing history [16].

- Metamaterials: These artificially engineered materials gain their properties—such as a negative refractive index or the ability to manipulate electromagnetic, acoustic, or seismic waves—from their designed architecture rather than their innate chemistry. Their performance is a direct result of their nanoscale structuring, requiring fabrication techniques like 3D printing and lithography with extreme precision [17].

- Aerogels: Once confined to thermal insulation, these ultra-lightweight, highly porous materials are now engineered for applications in energy storage and biomedical engineering. Their functionality, such as superior electrical conductivity or drug delivery efficacy, depends on their dendritic microstructure and surface chemistry, which are dictated by novel synthesis and drying methods [17].

Table 1: Key Characteristics of Modern Functional Materials

| Material Class | Defining Functional Property | Source of Complexity | Example Applications |

|---|---|---|---|

| Shape Memory Alloys | Shape memory effect, superelasticity | Phase transformation temperatures, sensitivity to stress and thermal history | Medical implants, aerospace actuators, seismic dampers [16] |

| Metamaterials | Properties not found in nature (e.g., negative refraction) | Nanoscale architecture and structural ordering | Improving 5G networks, earthquake protection, invisibility cloaks [17] |

| Advanced Aerogels | Ultra-high porosity, tunable surface chemistry | Synthesis and drying process determining pore structure | Energy storage supercapacitors, drug delivery systems, advanced insulation [17] |

The Critical Failures of Traditional Methodologies

The development and manufacturing of these advanced materials expose several fundamental weaknesses in traditional R&D approaches.

Inability to Handle Multi-Parameter Optimization

Traditional one-factor-at-a-time (OFAT) experimentation is inefficient and often ineffective for functional materials, where properties emerge from the complex interplay of numerous parameters. For instance, laser machining of carbon fiber-reinforced polymers (CFRPs) requires the simultaneous optimization of laser power, cutting speed, pulse frequency, and environmental conditions to minimize the heat-affected zone (HAZ) and preserve tensile strength [18]. An OFAT approach would require an impractically large number of experiments and would likely miss optimal parameter combinations because it cannot detect interaction effects between factors.
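A toy calculation makes the interaction problem concrete. The response function below is purely hypothetical (a coded quality score that improves only when two factors are raised together); it is not a model of laser machining from [18].

```python
import itertools

def response(power, speed):
    """Hypothetical coded quality score: improvement appears only when both factors are high."""
    return 5.0 + 4.0 * power * speed

baseline = (0, 0)

# OFAT: vary one factor at a time from the baseline.
ofat_runs = {
    baseline: response(*baseline),
    (1, 0): response(1, 0),
    (0, 1): response(0, 1),
}
ofat_effects = {
    "power": ofat_runs[(1, 0)] - ofat_runs[baseline],
    "speed": ofat_runs[(0, 1)] - ofat_runs[baseline],
}

# Full 2x2 factorial: one extra run exposes the interaction.
factorial_runs = {levels: response(*levels)
                  for levels in itertools.product((0, 1), repeat=2)}
interaction = (factorial_runs[(1, 1)] - factorial_runs[(1, 0)]
               - factorial_runs[(0, 1)] + factorial_runs[(0, 0)])

print("OFAT effects:", ofat_effects)
print("Interaction contrast from factorial:", interaction)
print("Best factorial setting:", max(factorial_runs, key=factorial_runs.get))
```

In this constructed case OFAT reports no effect for either factor, while the factorial design identifies the combined setting as the best one.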

High Sensitivity and "Extreme" Requirements

Functional materials are often deployed in extreme environments—high temperatures, corrosive media, or under intense mechanical loads—where their performance is highly sensitive to minor variations in composition or processing. A material that performs well in a lab setting may fail in the field. This sensitivity, combined with the push from industry and government to accelerate development and qualification cycles, renders the traditional, slow, and iterative "PhD-length" development cycles obsolete [15].

The Scalability Gap

A material's lab-scale performance is no guarantee of its commercial viability. A key industrial definition of an "extreme material" is one that can be manufactured at an extremely large scale. LG Innotek, for example, produces substrates for semiconductor chips at a scale of nine billion units annually [15]. Traditional methods often fail to predict how synthesis and processing conditions translate from gram-scale batches in a laboratory to ton-scale industrial production, leading to unexpected failures in performance, durability, or cost-effectiveness.

A New Framework: Optimal Experimental Design for Functional Materials

Optimal Experimental Design (OED) provides a powerful, mathematically rigorous framework to overcome the limitations of traditional methods. OED moves beyond classical statistical designs by proactively selecting design points (experimental conditions) that maximize the information content of each experiment, leading to more precise mathematical models with fewer resources [6].

Core Principles of OED

At its heart, OED for non-linear models, which are ubiquitous in materials science, involves maximizing a scalar function of the Fisher Information Matrix (FIM). The FIM contribution of a single design point ( x ) for a model ( f(x, \theta) ) is: [ m(x,\theta) = D_\theta f(x,\theta)^T \cdot \Sigma^{-1} \cdot D_\theta f(x,\theta) ] where ( D_\theta f ) is the Jacobian matrix of the model with respect to its parameters ( \theta ), and ( \Sigma ) is the covariance matrix of measurement errors. The overall information matrix for a design ( \xi ) is ( M(\xi, \theta) = \int_X m(x,\theta) \, \xi(dx) ) [6]. By choosing the design ( \xi ) to maximize a scalar measure of ( M(\xi, \theta) ), such as its determinant, we minimize the uncertainty in the estimated parameters ( \theta ).
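For a discrete design, the integral defining the information matrix reduces to a weighted sum over design points. The sketch below assumes a hypothetical two-parameter exponential model with a scalar output and a parameter guess chosen only for illustration; none of these specifics come from [6].

```python
import numpy as np

def jacobian(x, theta):
    """Sensitivities of f(x, theta) = theta[0] * exp(-theta[1] * x) w.r.t. theta, as a 1 x 2 row."""
    a, b = theta
    return np.array([[np.exp(-b * x), -a * x * np.exp(-b * x)]])

def fisher_information(points, weights, theta, sigma=1.0):
    """M(xi, theta) = sum_i w_i * J_i^T Sigma^{-1} J_i for a discrete design xi."""
    sigma_inv = np.array([[1.0 / sigma**2]])  # scalar output, so Sigma is 1 x 1
    M = np.zeros((len(theta), len(theta)))
    for x, w in zip(points, weights):
        J = jacobian(x, theta)
        M += w * J.T @ sigma_inv @ J
    return M

theta_guess = (1.0, 0.5)  # assumed nominal parameter values

# A single design point cannot identify two parameters: M is rank deficient.
M_one = fisher_information([1.0], [1.0], theta_guess)
# Two distinct points with equal weight give a non-singular matrix.
M_two = fisher_information([0.5, 3.0], [0.5, 0.5], theta_guess)

print("det, one point :", np.linalg.det(M_one))
print("det, two points:", np.linalg.det(M_two))
```

Because the Jacobian depends on `theta_guess`, changing the guess changes the matrix, which is the parameter dependence that robust and sequential designs address.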

A significant challenge in OED for nonlinear models is that the optimal design depends on the very parameters ( \theta ) that are unknown. This creates a circular problem. Modern approaches to handle this uncertainty include:

- Average Case Design: The objective function is replaced with its expected value over the uncertain parameters.

- Worst-Case (Minimax) Design: The design is optimized for the least favorable possible value of the parameters, providing a robust design [6].

- Sequential Design: Experiments are conducted iteratively; after each batch of experiments, the parameter estimates are updated, and the next optimal design is computed based on the new estimates [6].
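The difference between the first two strategies can be seen on a one-parameter toy problem. The sketch assumes a hypothetical decay model f = exp(-theta * x), a single measurement, three equally plausible parameter scenarios, and the log of the Fisher information as the criterion; the worst-case rule shown maximizes the minimum criterion value over the scenarios. In a sequential design the scenario list would instead be replaced by the latest parameter estimate after each batch.

```python
import numpy as np

def log_information(x, theta, sigma=1.0):
    """Log Fisher information of one observation of f = exp(-theta * x) at condition x."""
    sensitivity = -x * np.exp(-theta * x)  # df/dtheta
    return np.log(sensitivity**2 / sigma**2)

candidates = np.linspace(0.01, 3.0, 300)     # candidate experimental conditions
theta_scenarios = np.array([0.5, 1.0, 2.0])  # assumed plausible parameter values

# Rows: parameter scenarios; columns: candidate conditions.
table = np.array([log_information(candidates, th) for th in theta_scenarios])

# Average-case design: best expected criterion over the scenarios.
x_average = candidates[np.argmax(table.mean(axis=0))]
# Worst-case design: best guaranteed criterion over the scenarios.
x_maximin = candidates[np.argmax(table.min(axis=0))]

print("average-case condition:", round(float(x_average), 2))
print("worst-case condition  :", round(float(x_maximin), 2))
```

The worst-case choice sits closer to the optimum of the least favorable scenario, which illustrates why minimax designs trade some average efficiency for robustness.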

The following workflow diagram illustrates this powerful iterative process.

Protocol: Implementing a Sequential OED for a Kinetic Model

This protocol is adapted from a study using OED and Artificial Neural Networks (ANNs) for kinetic model identification [19].

Objective: To identify the correct set of equations defining a kinetic model for a batch reaction system with a minimal number of experiments.

Reagents and Equipment:

Table 2: Research Reagent Solutions

| Reagent/Equipment | Function in Protocol |

|---|---|

| Batch Reactor System | A controlled environment for carrying out the chemical reactions. |

| In-line Spectrophotometer | For real-time monitoring of reactant and product concentrations. |

| Artificial Neural Network (ANN) Software | For classifying experimental data into candidate kinetic models. |

| OED Optimization Algorithm | To compute the next best experiment based on current model uncertainty. |

Procedure:

1. Initialization: Begin with a small set of initial experiments (e.g., 2-3 runs at different temperatures and initial concentrations) to form a preliminary dataset.

2. Model Calibration: Use the available data to calibrate the parameters of several candidate kinetic models.

3. ANN Training: Train an ANN classifier to distinguish between the candidate kinetic models based on simulated concentration-time profiles.

4. Optimal Design Computation: Using the OED framework, compute the experimental conditions (e.g., temperature, initial concentration) that maximize the expected information gain for the ANN classifier. The goal is to find the conditions where the candidate models predict the most divergent outcomes, making them easiest to distinguish.

5. Experiment Execution: Conduct the experiment at the computed optimal conditions.

6. Model Identification and Update: Input the new experimental data into the ANN classifier to identify the most probable kinetic model. Update the dataset.

7. Iteration: Repeat steps 2-6 until the ANN's confidence in a single model exceeds a pre-defined threshold (e.g., 95%).

Key Benefit: This methodology has been shown to effectively reduce the number of required experiments while enhancing the ANN's accuracy in identifying the correct kinetic model structure [19].
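The idea behind the optimal design computation, choosing conditions where candidate models disagree most, can be sketched without the ANN. The example below is a simplified stand-in for the information-gain criterion of [19]: it assumes two hypothetical candidate rate laws with fixed, already calibrated rate constants, and scores each candidate condition by the absolute difference between their predicted concentrations.

```python
import itertools
import math

def first_order(c0, t, k=0.30):
    """Predicted concentration for a first-order rate law (assumed rate constant)."""
    return c0 * math.exp(-k * t)

def second_order(c0, t, k=0.30):
    """Predicted concentration for a second-order rate law (assumed rate constant)."""
    return c0 / (1.0 + k * c0 * t)

def divergence(condition):
    """Absolute disagreement between the two candidate models at (c0, t)."""
    return abs(first_order(*condition) - second_order(*condition))

def most_discriminating(conditions):
    """Condition at which the candidate models predict the most different outcomes."""
    return max(conditions, key=divergence)

initial_concs = [0.5, 1.0, 2.0]   # hypothetical initial concentrations
times = [1.0, 2.0, 5.0, 10.0]     # hypothetical sampling times
grid = list(itertools.product(initial_concs, times))

best = most_discriminating(grid)
print("next experiment (c0, t):", best, "divergence:", round(divergence(best), 3))
```

A fuller treatment would weight the disagreement by measurement noise and by the current uncertainty in each model's parameters, which is the role the OED framework plays in the protocol.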

The Role of Machine Learning and Advanced Computation

Machine Learning (ML) acts as a force multiplier for OED, providing the tools to model complex structure-property relationships that are intractable with traditional physical models.

- Property Prediction: ML algorithms can screen novel materials with good performance by learning Quantitative Structure-Activity Relationships (QSARs) from existing data, dramatically accelerating the discovery process [20].

- Process Optimization: In laser manufacturing, ML algorithms optimize laser parameters (power, speed, pulse duration) in real-time, enhancing precision, reducing thermal damage, and enabling the fabrication of complex geometries in hard-to-machine materials like CFRPs and ceramics [18]. The synergy between ML and laser technologies is a cornerstone of smart manufacturing.

- Uncertainty Quantification: ML models can help characterize the uncertainty in parameter estimates, which directly feeds into the robust OED methods (like average-case or minimax design) discussed above, ensuring experimental designs are informative even under parameter uncertainty [6].

The integration of machine learning into the experimental design and fabrication process creates a powerful, closed-loop system for materials development, as visualized below.

The unique complexities of modern functional materials—their multi-parameter dependencies, extreme sensitivities, and scalability challenges—have rendered traditional, sequential trial-and-error methods obsolete. These approaches are too slow, too costly, and too likely to fail for the demands of modern industry and government. The path forward lies in the integrated adoption of Optimal Experimental Design and Machine Learning. The OED framework provides a mathematical foundation for maximizing information gain from every experiment, while ML provides the computational power to model complex relationships and optimize processes. Together, they form a new paradigm for functional materials research: a data-driven, iterative, and efficient process that is equal to the challenge of discovering and manufacturing the next generation of advanced materials.

The design of advanced functional materials demands a paradigm shift from traditional, empirical approaches to rational, strategy-led methodologies. This is particularly true for complex material classes like relaxor ferroelectrics (RFEs), where the intricate relationship between a heterogeneous structure and its macroscopic electromechanical properties dictates performance. The integration of optimal experimental design (OED) principles provides a powerful framework for navigating this complexity, enabling researchers to extract maximal information from minimal experiments and efficiently bridge design strategies to material function. This Application Note details the theoretical and practical protocols for implementing this integrated approach, using the groundbreaking design of a liquid-matter nematic relaxor ferroelectric (nRFE) as a central case study [21].

Theoretical Foundation: From Normal Ferroelectrics to Relaxors

Fundamental Polar States

Ferroelectric materials are characterized by a spontaneous electric polarization that can be reversed by an external electric field. The nature of this polarization varies significantly, primarily distinguishing between normal ferroelectrics and relaxor ferroelectrics.

- Normal Ferroelectrics: These materials exhibit a long-range, uniform polar order. Below the Curie temperature, their state is described by a double-well free-energy landscape, resulting in a stable, non-zero spontaneous polarization (Ps) and a characteristic square-shaped P-E hysteresis loop [21].

- Relaxor Ferroelectrics (RFEs): In contrast, RFEs are characterized by a disordered polar state consisting of polar nanoregions (PNRs) embedded within a non-polar matrix [21] [22]. This structure creates a broadened energy bottom in the free-energy landscape, leading to strong polarization fluctuations, a slim, slanted P-E hysteresis loop, and a diffuse phase transition [21]. Their properties include ultrahigh dielectric permittivity, large field-induced polarization, and superior electromechanical responses compared to normal ferroelectrics [21] [23].

The Role of Optimal Experimental Design (OED)

Optimizing the design of functional materials like RFEs requires precise model calibration, where OED is critical. The core of OED involves maximizing a function of the Fisher Information Matrix (FIM) to reduce the uncertainty of parameter estimates for a mathematical model [6] [24].

For a model (f(x, \theta)) predicting outputs from design variables (x) with parameters (\theta), the FIM for a design (\xi) is: [ M(\xi, \theta) = \int_X m(x,\theta) \, \xi(dx), \quad \text{where} \quad m(x,\theta) = D_\theta f(x,\theta)^T \cdot \Sigma^{-1} \cdot D_\theta f(x,\theta) ] Here, (D_\theta f) is the model Jacobian, and (\Sigma) is the measurement noise covariance [6]. Optimal designs (\xi^*) are found by optimizing criteria such as:

- D-optimality: Maximizes (\det(M(\xi, \theta))), minimizing the volume of the confidence ellipsoid for the parameters.

- A-optimality: Minimizes (\operatorname{tr}(M(\xi, \theta)^{-1})), reducing the average variance of the parameter estimates [6] [24].

For nonlinear models, the FIM depends on the unknown (\theta), necessitating iterative or robust OED approaches [6].
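The two criteria can be compared on a small textbook case. The sketch assumes a quadratic regression model on the interval [-1, 1] with unit noise variance, chosen because its information matrix does not depend on the parameters; the two candidate designs are illustrative only.

```python
import numpy as np

def information_matrix(points, weights):
    """M(xi) = sum_i w_i f(x_i) f(x_i)^T for the regression vector f(x) = (1, x, x^2)."""
    F = np.array([[1.0, x, x**2] for x in points])
    return F.T @ (np.asarray(weights)[:, None] * F)

def d_criterion(M):
    """D-optimality: larger determinant means a smaller confidence ellipsoid."""
    return np.linalg.det(M)

def a_criterion(M):
    """A-optimality: smaller trace of the inverse means lower average parameter variance."""
    return np.trace(np.linalg.inv(M))

# Design A: three points at the ends and center, equal weights.
design_a = information_matrix([-1.0, 0.0, 1.0], [1 / 3, 1 / 3, 1 / 3])
# Design B: four evenly weighted points that avoid the center.
design_b = information_matrix([-1.0, -0.5, 0.5, 1.0], [0.25] * 4)

for name, M in (("A", design_a), ("B", design_b)):
    print(name, "det(M) =", round(d_criterion(M), 4),
          "tr(M^-1) =", round(a_criterion(M), 3))
```

Design A scores better on both criteria here. For a nonlinear model the same comparison would have to be repeated at each parameter guess, since the ranking of designs can change with the parameters.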

Case Study: Design of a Liquid-Matter Nematic Relaxor Ferroelectric

Design Strategy and Conceptual Workflow

The discovery of a fluid nRFE demonstrates a direct "design-by-concept" strategy [21]. The core principle was to artificially introduce polar nanoregions (PNRs) with nematic order (nPNRs) into a dielectric nematic environment to create a heterogeneous polarity, mimicking the structural disorder of solid-state RFEs in a liquid crystal system [21].

The diagram below illustrates the logical workflow connecting the initial design concept to material characterization and validation.

Quantitative Comparison of Ferroelectric States

The table below summarizes key characteristics of different ferroelectric states, highlighting the functional outcome of the nRFE design strategy.

Table 1: Comparison of Ferroelectric Material States

| Feature | Normal Ferroelectric | Relaxor Ferroelectric (RFE) | Nematic Relaxor Ferroelectric (nRFE) [21] |

|---|---|---|---|

| Polar Order | Long-range, uniform | Short-range, disordered PNRs | Nematic PNRs (nPNRs) in apolar matrix |

| Free-Energy Landscape | Double-well | Broadened bottom | Broadened bottom, single-well at high T |

| P-E Hysteresis | Square loop (S-shaped) | Slim, slanted loop (Shrunk S-shape) | Slim loop, high field-induced polarization (1.1 μC·cm⁻²) |

| Key Characteristic | Stable Ps | Ultrahigh permittivity, strong fluctuations | High fluidity, stable >30 K range, field-induced transition |

| Typical Material | PZT ceramics, BaTiO₃ | Pb(Mg₁/₃Nb₂/₃)O₃ (PMN) | Designed liquid crystal mixture |

Experimental Protocols

Protocol 1: Material Synthesis and nPNR Stabilization

This protocol outlines the procedure for creating a liquid-matter nRFE system via molecular mixing [21].

Objective: To synthesize a composite system with well-dispersed nematic polar nanoregions (nPNRs) of controlled size within an apolar nematic background.

Principle: Achieving an intermediate length scale (200-400 nm) for nPNRs is critical. This is governed by balancing the energy stabilization within nPNRs against the energy penalties from polarization gradients and depolarization fields at the apolar-polar interface [21].

Materials:

- Polar Nematic Molecules: High dipole moment molecules that form the nPNRs.

- Apolar Nematic Host: Dielectric nematic liquid crystal material.

- Solvent (if needed, for homogeneous pre-mixing).

Procedure:

- Selection and Preparation: Select polar and apolar molecules with carefully tuned chemical structures and concentrations to favor micro-phase separation over full molecular solubility or macroscopic segregation.

- Solution Mixing: Dissolve the polar and apolar components in a common solvent at a defined ratio. Use sonication for 30-60 minutes to ensure a homogeneous mixture at the molecular level.

- Solvent Evaporation: Gradually evaporate the solvent under controlled temperature and pressure conditions to allow the slow self-assembly and phase separation of the components.

- Annealing: Anneal the resulting film or bulk sample at a temperature within the nematic phase range of the apolar host for several hours to facilitate the growth and stabilization of nPNRs to their equilibrium size.

Protocol 2: Characterization via Free-Energy Landscape Reconstruction

This protocol describes how to determine the polar state of the synthesized material by reconstructing its free-energy landscape from polarization-field (P-E) measurements [21].

Objective: To experimentally distinguish between normal ferroelectric, relaxor, and paraelectric states by reconstructing the Landau-Ginzburg-Devonshire (LGD) free-energy landscape.

Principle: The free energy density (F) can be related to the P-E data via (E = \partial F / \partial P). Integration of the P-E curve allows for the reconstruction of (F(P)) [21].

Materials:

- Synthesized nRFE material (e.g., in a capacitor cell with aligned electrodes).

- Precision ferroelectric tester (hysteresis loop tracer).

- Temperature-controlled stage.

Procedure:

- Cell Preparation: Fabricate a test cell with transparent electrodes (e.g., ITO) and a spacer to define a precise thickness. Fill the cell with the synthesized material and align the nematic director uniformly.

- P-E Hysteresis Measurement: Place the cell in the temperature-controlled stage. Measure a series of P-E hysteresis loops at different temperatures across the region of interest (e.g., from 20 K below to 20 K above the suspected transition).

- Data Processing: For each temperature, use the measured P-E data points ((E_i, P_i)) to compute the free energy density (F) by numerical integration: [ F(P) \approx \int_0^P E(P') \, dP' ] Use a suitable numerical integration method (e.g., trapezoidal rule).

- Landscape Analysis: Plot (F) as a function of polarization (P) for each temperature.

- A double-well landscape indicates a normal ferroelectric.

- A broadened, single-well or shallow basin around (P=0) indicates a relaxor state.

- A sharp, single-well indicates a paraelectric.
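The data-processing and landscape-analysis steps can be sketched as below. The example uses synthetic, single-valued E(P) data generated from an assumed LGD form F = (a/2)P^2 + (b/4)P^4 with illustrative coefficients; real hysteresis loops would first need to be reduced to a single-valued branch, and that step is not shown. Function names are hypothetical.

```python
import numpy as np

def reconstruct_free_energy(P, E):
    """Cumulative trapezoidal integral of E dP, shifted so F = 0 at the sample nearest P = 0."""
    order = np.argsort(P)
    P = np.asarray(P, dtype=float)[order]
    E = np.asarray(E, dtype=float)[order]
    increments = 0.5 * (E[1:] + E[:-1]) * np.diff(P)
    F = np.concatenate(([0.0], np.cumsum(increments)))
    return P, F - F[np.argmin(np.abs(P))]

def count_minima(F):
    """Number of strict interior local minima in the sampled landscape."""
    interior = F[1:-1]
    return int(np.sum((interior < F[:-2]) & (interior < F[2:])))

# Synthetic data from E = dF/dP = a*P + b*P^3 (assumed coefficients, arbitrary units).
P = np.linspace(-1.5, 1.5, 301)
E_double_well = -1.0 * P + 1.0 * P**3   # a < 0: normal ferroelectric-like
E_single_well = 1.0 * P + 1.0 * P**3    # a > 0: single minimum at P = 0

for label, E in (("a < 0", E_double_well), ("a > 0", E_single_well)):
    _, F = reconstruct_free_energy(P, E)
    print(label, "-> number of minima:", count_minima(F))
```

Counting minima separates the double-well case from the single-well cases. Telling a broadened relaxor basin from a sharp paraelectric well additionally requires comparing the curvature near P = 0 across temperatures, for which no threshold is assumed here.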

Workflow for Integrated OED and Material Characterization

The following diagram outlines the sequential workflow for applying OED principles to the iterative optimization of a functional material, integrating the protocols above.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for RFE Design

| Item Name | Function/Description | Application Context |

|---|---|---|

| Apolar Nematic Host | Provides a fluid, dielectric background with long-range orientational order. | Creates the nematic environment for dispersing nPNRs [21]. |

| High-μₑ Polar Dopant | Molecules with large permanent dipole moments to form the core of PNRs. | Source of local polarity for nPNR formation [21]. |

| Landau-Ginzburg-Devonshire (LGD) Model | Phenomenological theory describing free energy and phase transitions. | Reconstructing free-energy landscapes from P-E data to identify polar states [21] [25]. |

| Phase-Field Simulation | Mesoscopic computational method to simulate domain evolution. | Modeling polarization switching and electric breakdown paths in material design [25]. |

| Ferroelectric Tester | Instrument for measuring polarization vs. electric field (P-E) hysteresis loops. | Key experimental characterization for ferroelectric and relaxor materials [21]. |

| Fisher Information Matrix (FIM) | Metric quantifying the information content of an experimental design. | Optimizing design points (e.g., composition, temperature) for efficient model calibration [6] [24]. |

Advanced Methodologies: Implementing OED with Machine Learning and Scalable Algorithms

Adaptive Machine Learning Workflows for Guiding Experiments and Computations

The discovery and development of functional materials are critical for advancing technologies in renewable energy, catalysis, electronics, and medicine. Traditional experimental approaches, often relying on trial-and-error or researcher intuition, are inefficient when confronting the vastness of chemical and compositional space. Optimal Experimental Design (OED) provides a statistical framework for maximizing information gain while minimizing experimental costs. When integrated with adaptive machine learning (ML) workflows, OED transforms materials discovery into a guided, iterative process of computational prediction and experimental validation. These workflows actively learn from data to redirect subsequent simulations or experiments toward the most promising regions of materials space, dramatically accelerating the discovery cycle [26] [1] [6].

This document outlines application notes and detailed protocols for implementing such adaptive ML workflows, framed within the context of functional materials research. We focus on practical methodologies that have successfully discovered novel materials, including thermodynamically stable crystals and high-temperature superconductors.

Table 1: Representative Adaptive Machine Learning Systems for Materials Discovery

| System Name | Core Methodology | Primary Application | Key Performance Metrics |

|---|---|---|---|

| InvDesFlow-AL [27] | Active Learning-based Diffusion Model | Inverse design of functional materials (crystals, superconductors) | RMSE of 0.0423 Å in crystal structure prediction (32.96% improvement); Discovered 1,598,551 materials with Ehull < 50 meV/atom. |

| GNoME [28] | Scalable Graph Neural Networks (GNNs) with Active Learning | Discovery of stable inorganic crystals | Discovered 2.2 million stable structures, 381,000 on the convex hull; Prediction error of 11 meV/atom. |

| ME-AI [29] | Gaussian Process with Chemistry-Aware Kernel | Identification of topological semimetals and insulators | Translates expert intuition into quantitative descriptors; Demonstrated transferability across material classes. |

| MOCU-based Framework [1] | Mean Objective Cost of Uncertainty | Minimizing energy dissipation in shape memory alloys | Recommends next experiment to most effectively reduce model uncertainty affecting target properties. |

Detailed Experimental Protocols

Protocol 1: Inverse Design of Crystals using InvDesFlow-AL

This protocol describes the workflow for inverse designing crystalline materials with target properties, such as low formation energy or specific electronic properties.

1. Preparation and Pre-training

- Data Curation: Assemble a large-scale dataset of inorganic crystal structures. The InvDesFlow-AL model was pre-trained on a combined dataset of 607,683 materials from Alex-MP-20 and 381,000 materials from the GNoME database [27].

- Model Initialization: Pre-train a diffusion model to generate crystal structures by learning to reverse a fixed corruption process applied to atom types (A), coordinates (X), and periodic lattice (L) of known crystals.

2. Active Learning Cycle

- Step 1 – Conditional Fine-tuning: Fine-tune the pre-trained model on a subset of data rich in the target property (e.g., crystals with formation energy Eform < -0.5 eV/atom).

- Step 2 – Candidate Generation: Use the fine-tuned model to generate novel crystal structures.

- Step 3 – Selection and Filtering: Apply a Query-By-Committee (QBC) strategy with multi-objective functions to select the most valuable generated candidates. Filter for compositional uniqueness against existing databases [27].

- Step 4 – Validation and Data Flywheel:

- Perform atomic-scale structural relaxation on selected candidates using an interatomic potential (e.g., DPA-2) with DFT-level accuracy [27].

- Discard structures that fail force convergence criteria (e.g., interatomic forces > 1e-4 eV/Å).

- Calculate target properties (e.g., formation energy, energy above convex hull) for the relaxed structures.

- Add the successfully validated structures and their properties to the training dataset.

- Step 5 – Model Update: Use the expanded dataset to fine-tune the generative model for the next round. Repeat from Step 2.
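The selection step of this cycle can be illustrated with a bare-bones query-by-committee rule. The source describes QBC combined with multi-objective functions [27]; the sketch below keeps only the disagreement component, and the committee predictions are made-up numbers standing in for formation energies predicted by several models.

```python
import numpy as np

def select_by_committee(committee_predictions, n_select):
    """Return indices of the candidates on which committee members disagree most.

    committee_predictions has shape (n_members, n_candidates).
    """
    disagreement = np.std(committee_predictions, axis=0)
    ranked = np.argsort(disagreement)[::-1]  # largest disagreement first
    return ranked[:n_select].tolist()

# Hypothetical predicted formation energies (eV/atom): 3 models, 4 generated candidates.
predictions = np.array([
    [-0.52, -0.10, -0.80, -0.30],
    [-0.50, -0.45, -0.79, -0.31],
    [-0.51,  0.20, -0.81, -0.10],
])

chosen = select_by_committee(predictions, n_select=2)
print("candidates sent to relaxation and validation:", chosen)
```

Candidates with high disagreement are the ones whose validated labels are expected to change the models most, which is what drives the data flywheel in Step 4.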

3. Validation and Characterization

- Stability Assessment: Confirm the thermodynamic stability of final candidates by verifying a low energy above the convex hull (Ehull), typically < 50 meV/atom [27].

- Property Verification: Use higher-fidelity methods, such as Density Functional Theory (DFT), to verify the electronic, catalytic, or superconducting properties of the discovered materials.

Protocol 2: Large-Scale Discovery of Stable Crystals using GNoME

This protocol outlines a scaled-up active learning process for expanding the realm of known stable crystals.

1. Candidate Generation via Two Parallel Frameworks

- Structural Path: Generate candidate structures by modifying existing crystals using symmetry-aware partial substitutions (SAPS) and other substitutions, biasing probabilities toward discovery [28].

- Compositional Path: Generate candidate compositions using relaxed chemical constraints (e.g., beyond strict oxidation-state balancing). For each promising composition, initialize 100 random structures using ab initio random structure searching (AIRSS) [28].

2. Filtration with Graph Neural Networks (GNNs)

- Model Architecture: Employ GNNs where crystal structures are represented as graphs, with atoms as nodes and connections between neighboring atoms as edges.

- Prediction and Uncertainty: The GNN predicts the decomposition energy (stability) of each candidate. Use volume-based test-time augmentation and deep ensembles for uncertainty quantification [28].

- Selection: Retain candidates predicted to be stable with respect to the known convex hull.

3. DFT Validation and Active Learning

- Energy Calculation: Evaluate the filtered candidates using DFT calculations (e.g., in VASP) with standardized settings to determine their accurate energy and stability [28].

- Data Integration: Incorporate the DFT-validated structures and their energies into the training set for the GNN model.

- Iterative Refinement: Repeat the cycle. With each iteration, the model's prediction error decreases and its ability to identify stable crystals improves, a trend consistent with neural scaling laws.
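The filtration step can be sketched with a small ensemble that yields a mean prediction and a spread for every candidate. This is only an illustration under loud assumptions: bootstrap ridge regressors on synthetic feature vectors stand in for the deep ensembles of GNNs in [28], and the conservative `mean + std < 0` rule is a hypothetical variant, not the published selection criterion.

```python
import numpy as np

rng = np.random.default_rng(0)

def fit_ridge(X, y, lam=1e-3):
    """Closed-form ridge regression; stand-in for one ensemble member."""
    A = X.T @ X + lam * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ y)

def ensemble_predict(X_train, y_train, X_new, n_members=10):
    """Fit each member on a bootstrap resample; return mean and std of predictions."""
    n = len(y_train)
    preds = []
    for _ in range(n_members):
        idx = rng.integers(0, n, size=n)          # sample with replacement
        w = fit_ridge(X_train[idx], y_train[idx])
        preds.append(X_new @ w)
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)

# Synthetic data: three made-up descriptors and a linear "decomposition energy".
true_w = np.array([0.5, -0.8, 0.3])
X_train = rng.normal(size=(40, 3))
y_train = X_train @ true_w + 0.05 * rng.normal(size=40)
X_cand = rng.normal(size=(200, 3))

mean, std = ensemble_predict(X_train, y_train, X_cand)

# Retain candidates predicted to be stable (negative decomposition energy).
keep = np.where(mean < 0.0)[0]
# Hypothetical stricter rule: require stability even one std above the mean.
keep_conservative = np.where(mean + std < 0.0)[0]
print(len(keep), "retained;", len(keep_conservative), "under the stricter rule")
```

In a full loop, the retained candidates would be passed to DFT, and the validated energies appended to `X_train` and `y_train` before refitting.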

Protocol 3: Embedding Expert Intuition with ME-AI

This protocol uses machine learning to codify and extend the chemical intuition of materials experts for identifying materials with specific functional properties.

1. Expert-Curated Dataset Creation

- Define Material Class: Focus on a specific class of materials where expert intuition exists. The ME-AI framework was demonstrated on square-net compounds [29].

- Select Primary Features (PFs): Choose atomistic and structural features based on expert knowledge. For square-net compounds, this included 12 PFs such as electronegativity, electron affinity, valence electron count, and key structural distances (dsq, dnn) [29].

- Label Data: Have experts label each compound with the target property (e.g., "topological semimetal" or "trivial"), using available experimental or computational band structures and, for related compounds without them, chemical logic [29].

2. Model Training and Descriptor Discovery

- Model Training: Train a Dirichlet-based Gaussian Process model with a chemistry-aware kernel on the curated dataset [29].

- Descriptor Extraction: Analyze the trained model to uncover the emergent descriptors—combinations of primary features—that are most predictive of the target property. ME-AI successfully recovered the known "tolerance factor" and identified new chemical descriptors like hypervalency [29].

3. Prediction and Generalization

- Screening: Use the trained model and discovered descriptors to screen new or existing material databases for candidates with a high probability of possessing the target property.

- Cross-System Validation: Test the model's generalizability by evaluating its performance on material classes outside the original training set (e.g., predicting topological insulators in rocksalt structures using a model trained on square-net compounds) [29].
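For readers unfamiliar with Dirichlet-based Gaussian Process classification, the sketch below shows the core idea: class labels are converted into log-space regression targets with label-dependent noise, and one GP regression is solved per class. It assumes the standard label transform from the Dirichlet-based GP literature, a plain RBF kernel in place of the chemistry-aware kernel of [29], a zero prior mean, and two made-up primary features; it does not reproduce the ME-AI implementation or its descriptor extraction.

```python
import numpy as np

def rbf(A, B, length=1.0):
    """Squared-exponential kernel between row-wise feature vectors."""
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length**2)

def dirichlet_targets(labels, n_classes, alpha_eps=0.01):
    """Turn integer labels into log-space targets and per-point noise variances."""
    alpha = np.full((len(labels), n_classes), alpha_eps)
    alpha[np.arange(len(labels)), labels] += 1.0
    var = np.log(1.0 / alpha + 1.0)
    y_tilde = np.log(alpha) - 0.5 * var
    return y_tilde, var

def predict_proba(X, labels, X_new, n_classes=2, length=1.0):
    """Approximate class probabilities from per-class GP posterior means."""
    y_tilde, var = dirichlet_targets(labels, n_classes)
    K = rbf(X, X, length)
    Ks = rbf(X_new, X, length)
    means = []
    for c in range(n_classes):
        # Heteroscedastic GP regression for class c.
        coef = np.linalg.solve(K + np.diag(var[:, c]), y_tilde[:, c])
        means.append(Ks @ coef)
    f = np.stack(means, axis=1)
    p = np.exp(f - f.max(axis=1, keepdims=True))   # softmax of posterior means
    return p / p.sum(axis=1, keepdims=True)

# Toy data: two hypothetical primary features; label 1 = "topological semimetal".
X = np.array([[-2.0, 0.0], [-1.5, 0.2], [-1.8, -0.3],
              [1.5, 0.0], [2.0, 0.1], [1.7, -0.2]])
labels = np.array([0, 0, 0, 1, 1, 1])
X_new = np.array([[-1.7, 0.0], [1.8, 0.0]])

proba = predict_proba(X, labels, X_new)
print(np.round(proba, 3))
```

Using the softmax of posterior means is a simplification; sampling the latent functions before applying the softmax would propagate predictive uncertainty more faithfully.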

Workflow Visualization

Active ML Workflow for Materials Discovery

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational and Data Resources for Adaptive ML-Driven Discovery

| Category | Item | Function and Notes |
|---|---|---|
| Software & Algorithms | Graph Neural Networks (GNNs) | Models crystal structures as graphs for property prediction [28]. |
| Software & Algorithms | Diffusion Models | Generative models for creating novel, valid crystal structures [27]. |
| Software & Algorithms | Gaussian Processes (with custom kernels) | For interpretable descriptor discovery and uncertainty-aware prediction [29]. |
| Databases | Materials Project (MP), Inorganic Crystal Structure Database (ICSD), Open Quantum Materials Database (OQMD) | Sources of known crystal structures and properties for initial model training and candidate generation [27] [28]. |
| Validation Tools | Density Functional Theory (DFT) Codes (e.g., VASP) | High-fidelity computational validation of predicted structures and properties [27] [28]. |
| Validation Tools | Learned Interatomic Potentials (e.g., DPA-2) | Faster, near-DFT accuracy for structural relaxation and screening [27]. |
| Experimental Design | Mean Objective Cost of Uncertainty (MOCU) | An objective-based uncertainty quantification to recommend the next most informative experiment [1]. |
| Frameworks | Automated ML (AutoML) Frameworks (e.g., AutoGluon, TPOT) | Automates model selection and hyperparameter tuning to improve efficiency [26]. |

Scalable Algorithms for Exponentially Large and Constrained Design Spaces

The discovery and development of functional materials underpin advances in renewable energy, electronics, and drug development. However, the design space for these materials is often exponentially large and subject to multiple complex constraints, which makes exhaustive exploration by traditional experimental methods infeasible. Optimal Experimental Design (OED) provides a statistical framework to maximize information gain while minimizing resource expenditure [6]. This section details scalable algorithms and practical protocols for navigating these design spaces efficiently, in support of the broader thesis that adaptive, computational OED is crucial for accelerating functional materials research.

Algorithmic Foundations and Comparative Analysis

Navigating large design spaces requires a diverse set of algorithms, each with specific strengths. The table below summarizes key scalable algorithms suited for different challenges in functional materials design.

Table 1: Scalable Algorithms for Large and Constrained Design Spaces

| Algorithm Class | Core Principle | Strengths | Ideal for Design Spaces That Are... | Key References |
|---|---|---|---|---|
| Nature-Inspired Metaheuristics (e.g., PSO) | Mimics collective intelligence (e.g., bird flocking) to explore complex spaces. | Highly versatile; assumption-free; effective for non-convex, black-box problems. | ...highly nonlinear, multi-modal, and where gradient information is unavailable. | [13] |
| Surrogate-Based Active Learning | Uses a fast, approximate model (surrogate) to guide the selection of high-fidelity evaluations. | Dramatically reduces computational cost by minimizing calls to expensive simulations. | ...governed by computationally expensive high-fidelity models (e.g., DFT). | [11] |
| Large Circuit Models (LCMs) | AI-native foundation models that learn from multi-modal circuit data (netlists, layouts). | Captures intricate structure-property relationships; enables holistic power, performance, and area (PPA) optimization. | ...defined by complex structural topologies and multi-physics interactions. | [30] |
| Adaptive Strategy Management (ASM) | Dynamically switches between multiple solution-generation strategies based on real-time feedback. | Enhances efficiency in computationally expensive optimization; ensures stability in large designs. | ...very large-scale and require robust, adaptive optimization strategies. | [31] |
| High-Throughput Computing (HTC) Pipelines | Leverages parallel processing to automate large-scale simulation and screening. | Enables rapid evaluation of vast material libraries; excellent for initial screening. | ...vast and combinatorial, requiring brute-force initial screening. | [32] [33] |

Experimental Protocols and Workflows

Protocol: Particle Swarm Optimization (PSO) for Small-Sample Toxicology Studies

This protocol adapts the metaheuristic PSO algorithm for finding optimal experimental designs in resource-constrained settings, such as toxicology studies with small sample sizes (N < 15) [13].

1. Research Reagent Solutions

Table 2: Key Research Reagents and Computational Tools

| Item/Tool | Function in Protocol |
|---|---|
| Dose-Response Model (e.g., Hormesis) | A statistical model (e.g., Brain-Cousens model) representing the biological phenomenon where low doses of a toxin stimulate a response. |
| Particle Swarm Optimization (PSO) Algorithm | The core metaheuristic algorithm that optimizes the experimental design by navigating the dose space. |
| Web-Based Optimal Design App | A user-friendly tool (as developed in [13]) to execute PSO and generate designs without deep programming expertise. |
| Efficient Rounding Method (ERM) | A mathematical procedure to convert an optimal "approximate" design (with proportional weights) into an implementable "exact" design (with integer subject allocations). |

2. Procedure

- Step 1: Problem Formulation. Define the statistical dose-response model (e.g., a hormesis model) and the primary objective of the study (e.g., model parameter estimation, threshold dose estimation).

- Step 2: Criterion Selection. Formalize the study objective into a mathematical design criterion (e.g., D-optimality for parameter estimation).

- Step 3: PSO Execution. Configure and run the PSO algorithm via a web-based app [13]. The algorithm will search for the set of dose levels and their optimal proportional allocation of experimental units that maximize the chosen criterion.

- Step 4: Design Realization. Apply an Efficient Rounding Method (ERM) to the PSO-generated optimal approximate design. The ERM converts the proportional allocations into integer subject counts that sum to the given small sample size N, while keeping the loss of statistical efficiency small so that the design is directly implementable [13].

- Step 5: Validation. Implement the exact design in the wet lab. Use the collected data to calibrate the model and assess the precision of the parameter estimates, validating the design's efficiency.
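Steps 3 and 4 can be sketched end to end in a few lines. The example below is a rough illustration, not the web app of [13]: it assumes a quadratic dose-response model on a coded dose range [-1, 1] in place of a hormesis model, uses a bare-bones PSO with hand-picked inertia and acceleration constants, and implements the classical efficient apportionment rule as one possible ERM. All function names are hypothetical.

```python
import numpy as np

def info_matrix(doses, weights):
    """Information matrix for the assumed model y = b0 + b1*x + b2*x^2."""
    F = np.vander(doses, 3, increasing=True)       # rows f(x) = [1, x, x^2]
    return F.T @ (weights[:, None] * F)

def decode(z, k):
    """Map a particle to k doses in [-1, 1] and k weights that sum to one."""
    doses = np.clip(z[:k], -1.0, 1.0)
    w = np.abs(z[k:]) + 1e-12
    return doses, w / w.sum()

def log_det(z, k):
    """D-criterion: log-determinant of the information matrix."""
    sign, val = np.linalg.slogdet(info_matrix(*decode(z, k)))
    return val if sign > 0 else -np.inf

def pso(k=3, n_particles=40, n_iter=300, seed=0):
    rng = np.random.default_rng(seed)
    pos = rng.uniform(-1, 1, size=(n_particles, 2 * k))
    vel = np.zeros_like(pos)
    pbest = pos.copy()
    pbest_val = np.array([log_det(p, k) for p in pos])
    gbest = pbest[np.argmax(pbest_val)].copy()
    for _ in range(n_iter):
        r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
        # Inertia plus attraction to personal and global bests.
        vel = 0.7 * vel + 1.5 * r1 * (pbest - pos) + 1.5 * r2 * (gbest - pos)
        pos = pos + vel
        vals = np.array([log_det(p, k) for p in pos])
        improved = vals > pbest_val
        pbest[improved], pbest_val[improved] = pos[improved], vals[improved]
        gbest = pbest[np.argmax(pbest_val)].copy()
    return decode(gbest, k)

def efficient_rounding(weights, N):
    """Convert design weights into integer allocations that sum to N."""
    k = len(weights)
    n = np.ceil((N - 0.5 * k) * weights).astype(int)
    while n.sum() < N:
        n[np.argmin(n / weights)] += 1
    while n.sum() > N:
        n[np.argmax((n - 1) / weights)] -= 1
    return n

doses, weights = pso()
counts = efficient_rounding(weights, N=10)
det_approx = np.linalg.det(info_matrix(doses, weights))
print("doses:", np.round(doses, 3), "weights:", np.round(weights, 3))
print("subjects per dose:", counts, "det:", round(det_approx, 4))
```

For this quadratic model the theoretical optimum places equal weight on the doses -1, 0, and 1 with determinant 4/27, which gives a convenient reference for judging how close the swarm came. A nonlinear model such as Brain-Cousens would additionally require nominal parameter values when building the information matrix.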

Protocol: Surrogate-Based Active Learning for Redox-Active Material Screening

This protocol outlines a computational campaign for efficiently screening organic materials to identify candidates with specific redox potentials (RP) for energy storage applications [32] [11].

1. Research Reagent Solutions

Table 3: Key Components for Virtual Screening Pipeline

| Item/Tool | Function in Protocol |
|---|---|
| High-Fidelity Model (e.g., DFT) | Provides accurate but computationally expensive RP predictions for a given molecular structure. |
| Set of Surrogate Models | Faster, less complex models (e.g., machine learning regressors) of varying accuracy used to approximate the high-fidelity model's predictions. |
| Active Learning Logic | The decision-making algorithm that uses surrogate uncertainty to select the most informative candidates for high-fidelity evaluation. |
| Molecular Database | A large library of candidate organic material structures (e.g., from PubChem) to be screened. |

2. Procedure

- Step 1: Pipeline Construction. Design a multi-stage high-throughput virtual screening (HTVS) pipeline. The initial stages use fast, low-fidelity surrogate models to filter out clearly non-viable candidates. Promising candidates from early stages are evaluated with more accurate, expensive surrogates in later stages [32].

- Step 2: Optimal Initialization. Determine the optimal initial data size for the active learning loop. As shown in [11], using an adequate number of initial high-fidelity data points is critical for the surrogate model to learn effectively and ensure faster convergence.

- Step 3: Active Learning Loop.

  - Train Surrogate: Train a surrogate model (e.g., a Gaussian Process regressor) on the current set of high-fidelity data.

  - Predict and Select: Use the surrogate to predict RPs for all unscreened candidates. Select the candidates where the surrogate's prediction is most uncertain or has the highest potential to meet the target RP.

  - Evaluate and Update: Run the high-fidelity model on the selected candidates and add the new data points to the training set.

- Step 4: Iteration. Repeat Step 3 until a predefined stopping condition is met (e.g., a candidate meeting the target RP is found, or a computational budget is exhausted). This process maximizes the Return-on-Computational-Investment (ROCI) [32].
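The loop in Steps 2 to 4 can be sketched as follows. Everything specific here is an assumption made for illustration: a one-dimensional descriptor, an analytic function standing in for the DFT redox-potential calculation, a hand-written GP with fixed hyperparameters, and an acquisition score that trades predicted distance to the target against surrogate uncertainty through a hypothetical weight `kappa`.

```python
import numpy as np

def rbf(a, b, length=0.15):
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length**2)

def gp_posterior(x_train, y_train, x_test, noise=1e-4):
    """GP regression with a constant mean set to the average of the training data."""
    K = rbf(x_train, x_train) + noise * np.eye(len(x_train))
    Ks = rbf(x_test, x_train)
    mu0 = y_train.mean()
    mean = mu0 + Ks @ np.linalg.solve(K, y_train - mu0)
    var = 1.0 - np.einsum("ij,ji->i", Ks, np.linalg.solve(K, Ks.T))
    return mean, np.sqrt(np.clip(var, 0.0, None))

def high_fidelity_rp(x):
    """Hypothetical stand-in for an expensive redox-potential calculation (V)."""
    return 2.0 + np.sin(6.0 * x) + 0.5 * x

rng = np.random.default_rng(1)
candidates = np.linspace(0.0, 1.0, 201)      # descriptor value per candidate
target, tol, budget, kappa = 2.5, 0.02, 30, 1.0

# Step 2: initial high-fidelity data (size chosen arbitrarily here).
labeled = list(rng.choice(len(candidates), size=5, replace=False))
y = [high_fidelity_rp(candidates[i]) for i in labeled]

# Steps 3 and 4: iterate until a hit is found or the budget is spent.
while len(labeled) < budget and min(abs(np.array(y) - target)) > tol:
    pool = np.setdiff1d(np.arange(len(candidates)), labeled)
    mean, std = gp_posterior(candidates[labeled], np.array(y), candidates[pool])
    # Low score = predicted close to the target and/or highly uncertain.
    score = np.abs(mean - target) - kappa * std
    pick = pool[np.argmin(score)]
    labeled.append(pick)
    y.append(high_fidelity_rp(candidates[pick]))

best = int(np.argmin(abs(np.array(y) - target)))
print(f"{len(labeled)} high-fidelity calls; best RP = {y[best]:.3f} V "
      f"at x = {candidates[labeled[best]]:.3f}")
```

Raising `kappa` pushes the loop toward exploration of uncertain regions, while lowering it favors exploitation of candidates already predicted to be near the target; the initial sample size plays the role discussed in Step 2.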

Workflow Visualization

Optimal Experimental Design Workflow

This diagram illustrates the iterative, adaptive workflow for optimal experimental design, which is central to managing parameter uncertainty in nonlinear models [6].

Hybrid AI-Driven Materials Design Workflow

This diagram outlines a modern, integrated framework for digitized material design, combining physics-based simulations with data-driven AI models [33].

D-Optimal Design for High-Order Polynomial and Nonlinear Models

D-optimal design is a model-based statistical approach that facilitates the most accurate parameter estimation for complex models by minimizing the generalized variance of the parameter estimates. This is achieved by maximizing the determinant of the Fisher information matrix, which minimizes the volume of the confidence ellipsoid for the parameters [34] [35]. For nonlinear models commonly encountered in functional materials research and drug development, the design criterion depends on the unknown model parameters, necessitating the use of locally optimal designs based on nominal parameter values from prior knowledge or pilot studies [34].

The application of D-optimal design is particularly valuable in high-dimensional problems where multiple factors interact, creating a non-separable optimization landscape. Classical gradient-based optimization techniques often fail for such problems due to premature convergence at local optima [34]. This protocol outlines methodologies for constructing D-optimal designs for high-order polynomial and nonlinear models, with specific applications in functional materials science and pharmacodynamic research.
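A locally D-optimal design can be checked numerically with the general equivalence theorem: a design is D-optimal for a model with p parameters if the sensitivity function d(x) = f(x)^T M^{-1} f(x) never exceeds p on the design region, where f is the gradient of the mean response with respect to the parameters and M is the information matrix. The sketch below applies this check to a Michaelis-Menten model, chosen here only as a convenient example with a known closed-form optimum; the nominal parameter values and the design interval are assumptions.

```python
import numpy as np

def sensitivities(x, theta):
    """Gradient of the mean theta1*x/(theta2 + x) with respect to theta."""
    t1, t2 = theta
    return np.stack([x / (t2 + x), -t1 * x / (t2 + x) ** 2], axis=-1)

def info_matrix(support, weights, theta):
    """Fisher information (up to the noise variance) of a weighted design."""
    F = sensitivities(np.asarray(support, dtype=float), theta)
    return F.T @ (np.asarray(weights)[:, None] * F)

theta0 = (1.0, 0.5)   # nominal parameter values (assumed prior guess)
b = 2.0               # upper limit of the design interval [0, b]

# Closed-form locally D-optimal design for this model: equal weights at
# x = theta2*b/(2*theta2 + b) and at the upper limit x = b.
support = [theta0[1] * b / (2 * theta0[1] + b), b]
weights = [0.5, 0.5]

M = info_matrix(support, weights, theta0)
grid = np.linspace(0.0, b, 401)
Fg = sensitivities(grid, theta0)

# Sensitivity function on the grid; optimality requires d(x) <= p = 2.
d = np.einsum("ij,jk,ik->i", Fg, np.linalg.inv(M), Fg)
d_support = np.einsum("ij,jk,ik->i",
                      sensitivities(np.array(support), theta0),
                      np.linalg.inv(M),
                      sensitivities(np.array(support), theta0))
print("max d(x) on grid:", round(float(d.max()), 6))
print("d(x) at support points:", np.round(d_support, 6))
```

Because the design depends on `theta0`, repeating the check with perturbed nominal values gives a quick sense of how sensitive the locally optimal design is to a poor prior guess.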

Computational Implementation Using Advanced Optimization

Differential Evolution Algorithm for High-Dimensional Problems

For high-dimensional, non-separable design problems, nature-inspired metaheuristic algorithms such as Differential Evolution (DE) have demonstrated superior performance compared to classical techniques. The following NovDE algorithm incorporates a novelty-based mutation strategy to preserve population diversity and escape local optima [34]:

Algorithm 1: NovDE for D-Optimal Design

- Initialization: Generate initial population of design candidates

- While stopping criterion not met do

- Novelty-based Mutation: For selected individuals, sample difference vectors with largest angle differences from previous generation to explore novel regions

- Collaborative Mutation: Apply 'DE/rand/2' and 'DE/current-to-rand/1' strategies to balance exploration and exploitation