Navigating the Chemical Universe: AI and Computational Strategies for Accelerated Materials Discovery

Abstract

This article provides a comprehensive overview of the computational methods and artificial intelligence (AI) tools revolutionizing the exploration of chemical space for materials discovery. Aimed at researchers and drug development professionals, it covers the foundational concepts of chemical space, including the biologically relevant chemical space (BioReCS) and underexplored regions. It delves into advanced methodological approaches such as generative AI, foundation models, virtual screening, and global optimization algorithms. The content also addresses key challenges like synthetic accessibility and the confined nature of known chemical space, offering troubleshooting and optimization strategies. Finally, it presents a comparative analysis of validation frameworks and performance metrics, synthesizing how these integrated computational workflows are streamlining the path to novel material and drug development.

Mapping the Vastness of Chemical Space: From BioReCS to Underexplored Frontiers

The concept of the chemical universe, or chemical space, provides a foundational framework for modern computational drug discovery and materials science. It can be defined as the vast, theoretically infinite universe of all possible chemical compounds, encompassing both known and hypothetical molecules [1]. This space includes all conceivable combinations of atoms and bonds, forming a multi-dimensional universe where each dimension represents a distinct molecular property or structural feature [2] [1]. For practical applications, researchers often focus on subsets of this space, such as the synthetically accessible chemical space, estimated to contain between 10²³ and 10⁶⁰ molecules depending on constraints such as molecular size, stability, and lead-like properties [1]. The sheer scale of this universe presents both an extraordinary opportunity for discovery and a significant challenge for efficient exploration [3]. Technological advances and practical applications of the chemical space concept have attracted substantial scientific interest, particularly in drug discovery, natural product research, and materials science, where researchers must develop sophisticated methods to navigate this vast terrain effectively [2].

Theoretical Foundations: Defining Chemical Space and Multiverse

Evolving Definitions of Chemical Space

The conceptualization of chemical space has evolved through several refined definitions, as summarized in Table 1. Dobson's early definition encompassed "all possible small organic molecules, including those present in biological systems," while Lipinski and Hopkins drew an analogy to the cosmological universe, noting its vastness with "chemical compounds populating space instead of stars" [2]. Reymond and colleagues expanded this to the "ensemble of all known and possible molecules described by their chemical properties," emphasizing the inclusion of both existing and potential compounds [2]. A more mathematical perspective was introduced by Varnek and Baskin, who defined it as an "ensemble of graphs or descriptor vectors" that must contain defined relations between objects [2].

Table 1: Key Definitions of Chemical Space

| Author(s) | Chemical Space Definition | Key Emphasis |
|---|---|---|
| Dobson | "All possible small organic molecules, including those present in biological systems" [2] | Inclusivity of biological molecules |
| Lipinski and Hopkins | "Chemical space can be viewed as being analogous to cosmological universe in its vastness..." [2] | Vast scale and stellar analogy |
| Reymond et al. | "Ensemble of all known and possible molecules described by their chemical properties" [2] | Known and hypothetical molecules |
| Varnek and Baskin | "Ensemble of graphs or descriptor vectors forms a chemical space..." [2] | Mathematical representation |
| von Lilienfeld et al. | "Combinatorial set of all compounds from possible combinations of N_I atoms and N_e electrons..." [2] | Atomic and electronic combinations |

The Chemical Multiverse: A Multi-Descriptor Paradigm

Building upon these foundational definitions, a crucial advancement is the concept of the chemical multiverse [2]. Unlike physical space, chemical space is not unique; each ensemble of molecular graphs and descriptors defines its own distinct chemical space [2]. The chemical multiverse refers to the comprehensive analysis of compound datasets through several chemical spaces, each defined by a different set of chemical representations [2]. This concept acknowledges that molecules with fundamentally different chemical natures—such as small organic molecules, peptides, metal-containing compounds, and biologics—require divergent chemical spaces and descriptors for meaningful representation [2]. This multi-descriptor approach stands in contrast to the related idea of a consensus chemical space, offering a more nuanced framework for understanding molecular relationships across different representation systems.

Methodological Approaches: Navigating the Chemical Multiverse

Dimensionality Reduction and Visualization Techniques

The high-dimensional nature of chemical space, often containing hundreds or thousands of descriptors, necessitates the implementation of dimensionality reduction techniques to generate interpretable visual representations [2]. These methods transform complex multi-dimensional data into two-dimensional (2D) or three-dimensional (3D) maps that researchers can visually analyze. Several powerful techniques have been developed for this purpose, including t-distributed Stochastic Neighbor Embedding (t-SNE), which is particularly effective for preserving local structure; Principal Component Analysis (PCA), which identifies the directions of maximum variance in the dataset; Self-Organizing Maps (SOMs), which use neural networks to produce low-dimensional representations; and Generative Topographic Mapping (GTM), which provides a probabilistic alternative to SOMs [2]. Additionally, Chemical Space Networks offer alternative visualization approaches that represent molecules as nodes and their relationships as edges in a network graph [2]. The choice of technique depends on the specific analysis goals, dataset characteristics, and the aspects of chemical space (global vs. local structure) that researchers wish to emphasize.
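As a minimal illustration of the simplest of these techniques, the sketch below implements PCA in plain Python using power iteration on the covariance matrix. The descriptor matrix is invented toy data and helper names such as `pca_2d` are hypothetical; in practice a library implementation (for example in scikit-learn) would normally be used instead.

```python
import math
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def center(X):
    """Subtract the column means so each descriptor has zero mean."""
    n, d = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(d)]
    return [[row[j] - means[j] for j in range(d)] for row in X]


def covariance(Xc):
    """Sample covariance matrix of already-centered data."""
    n, d = len(Xc), len(Xc[0])
    return [[sum(r[a] * r[b] for r in Xc) / (n - 1) for b in range(d)]
            for a in range(d)]


def top_eigenvector(C, iters=1000, seed=0):
    """Power iteration: repeatedly apply C and renormalize."""
    rng = random.Random(seed)
    v = [rng.random() + 0.1 for _ in C]
    norm = math.sqrt(dot(v, v))
    v = [x / norm for x in v]
    for _ in range(iters):
        w = [dot(row, v) for row in C]
        norm = math.sqrt(dot(w, w))
        if norm < 1e-12:  # no variance left in this matrix
            break
        v = [x / norm for x in w]
    return v


def pca_2d(X):
    """Return 2D coordinates plus the two principal axes."""
    Xc = center(X)
    C = covariance(Xc)
    pc1 = top_eigenvector(C, seed=0)
    lam1 = dot(pc1, [dot(row, pc1) for row in C])
    # Deflation: remove the variance already explained by pc1
    d = len(C)
    C2 = [[C[a][b] - lam1 * pc1[a] * pc1[b] for b in range(d)]
          for a in range(d)]
    pc2 = top_eigenvector(C2, seed=1)
    coords = [(dot(r, pc1), dot(r, pc2)) for r in Xc]
    return coords, pc1, pc2


# Toy descriptor matrix: 6 "molecules" x 3 made-up descriptors
X = [
    [1.0, 2.0, 0.5],
    [2.0, 4.1, 0.4],
    [3.0, 6.2, 0.6],
    [4.0, 7.9, 0.5],
    [5.0, 10.1, 0.7],
    [6.0, 12.0, 0.3],
]
coords, pc1, pc2 = pca_2d(X)
for i, (x, y) in enumerate(coords):
    print(f"molecule {i}: ({x:+.3f}, {y:+.3f})")
```

Because PCA is linear and deterministic, the same input always yields the same map up to the sign of each axis, which is one reason it is often used for a first look before moving to t-SNE or GTM.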

Crystal Structure Prediction-Informed Evolutionary Optimization

Recent methodological advances have addressed a critical limitation in materials discovery: traditional searches of chemical space have largely focused on molecular properties while ignoring the significant effects of crystal packing on material properties [3]. To overcome this, researchers have developed an evolutionary algorithm (EA) that incorporates crystal structure prediction (CSP) into the evaluation of candidate molecules [3]. This CSP-informed EA allows fitness evaluation based on predicted materials properties rather than molecular properties alone, leading to more effective identification of promising candidates for functional materials [3].

Table 2: Crystal Structure Prediction Sampling Schemes

| Sampling Scheme | Space Groups | Structures per Group | Global Minima Found | Low-Energy Structures Recovered |
|---|---|---|---|---|
| SG14-500 | 1 (P2₁/c) | 500 | 12/20 | 25.7% |
| SG14-2000 | 1 (P2₁/c) | 2000 | 15/20 | 33.9% |
| Sampling A | 5 (biased) | 2000 | 18/20 | 73.4% |
| Top10-2000 | 10 (most common) | 2000 | 19/20 | 77.1% |

The experimental protocol for CSP-informed evolutionary optimization involves several key steps. First, researchers must select an appropriate CSP sampling scheme that balances computational cost with prediction accuracy (Table 2) [3]. For molecular semiconductors, the algorithm then proceeds through generations of candidate evaluation, starting with fully automated CSP calculations that generate and optimize trial crystal structures from a line notation description of the molecule [3]. The lattice energy surface is explored to identify low-energy crystal structures, typically defined as those within 7.2 kJ mol⁻¹ of the global minimum based on polymorph energy difference studies [3]. For each candidate molecule, charge carrier mobility is calculated from the predicted crystal structures, serving as the primary fitness criterion for selection [3]. The fittest candidates are selected as parents to generate new molecular designs through evolutionary operations, carrying forward favorable characteristics to subsequent generations [3]. This process iterates until convergence, successfully identifying molecules with high predicted electron mobilities that would be missed by searches based solely on molecular properties [3].

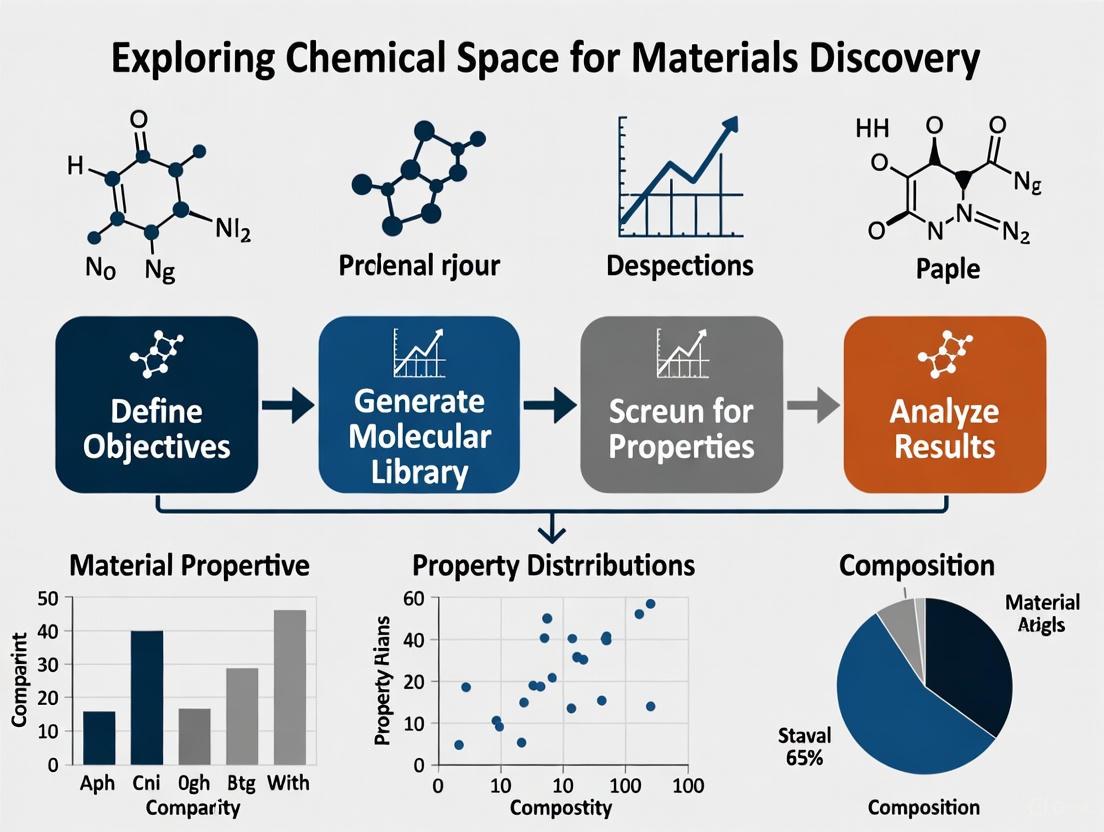

Diagram 1: CSP-Informed Evolutionary Algorithm Workflow. This process integrates crystal structure prediction with evolutionary optimization to identify materials with enhanced properties.
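The loop described in this section can be sketched in a few lines of Python. Everything molecule-specific is replaced by stand-ins: candidate molecules are toy bit-string genomes, `mock_csp` is a hypothetical placeholder for a real CSP run, and taking the best mobility within the low-energy window as the fitness is an assumption, since the text does not state how mobilities from several predicted structures are combined.

```python
import random

ENERGY_WINDOW = 7.2  # kJ/mol above the global minimum, as quoted in the text


def low_energy_structures(structures):
    """Keep (energy, mobility) pairs within ENERGY_WINDOW of the minimum."""
    e_min = min(e for e, _ in structures)
    return [(e, mu) for e, mu in structures if e - e_min <= ENERGY_WINDOW]


def fitness_from_structures(structures):
    """Assumed fitness: highest mobility among the low-energy structures."""
    return max(mu for _, mu in low_energy_structures(structures))


def mock_csp(molecule, rng):
    """Hypothetical stand-in for CSP: random (energy, mobility) pairs
    with a toy dependence on the genome."""
    base = sum(molecule)
    return [(-100.0 - base + rng.uniform(0.0, 20.0),
             rng.uniform(0.0, 1.0) * (1 + base))
            for _ in range(20)]


def mutate(molecule, rng):
    """Flip one bit of the genome (stand-in for a chemical mutation)."""
    child = list(molecule)
    i = rng.randrange(len(child))
    child[i] = 1 - child[i]
    return tuple(child)


def crossover(a, b, rng):
    """Single-point crossover between two parent genomes."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def evolve(pop_size=12, genome_len=8, generations=10, n_parents=4, seed=0):
    rng = random.Random(seed)
    population = [tuple(rng.randint(0, 1) for _ in range(genome_len))
                  for _ in range(pop_size)]
    history = []  # best fitness seen in each generation
    for _ in range(generations):
        scored = sorted(
            ((fitness_from_structures(mock_csp(m, rng)), m)
             for m in population),
            reverse=True,
        )
        history.append(scored[0][0])
        parents = [m for _, m in scored[:n_parents]]
        children = []
        while len(children) < pop_size - n_parents:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = parents + children  # parents carry over unchanged
    return population, history


population, history = evolve()
print("best fitness per generation:", [round(h, 2) for h in history])
```

In a real workflow the expensive step is the CSP call inside the loop, which is why the choice of sampling scheme in Table 2 matters so much for overall cost.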

Computational Tools for Chemical Space Navigation

The exploration of chemical space relies on sophisticated computational tools that enable researchers to analyze, visualize, and navigate molecular diversity. Key platforms include ChemGPS, which acts as a global positioning system for chemical space, providing stable coordinates and visual representations of chemical diversity [1]; the ZINC Database, a comprehensive collection of commercially available compounds that covers a substantial portion of known chemical space and is widely used for virtual screening [1]; PubChem, a public repository containing millions of chemical molecules and their biological activities, serving as a valuable resource for exploring biologically relevant chemical space [1]; and RDKit, an open-source cheminformatics toolkit that provides fundamental capabilities for molecular representation, analysis, and chemical space visualization [1]. These tools form the foundation for most chemical space exploration initiatives, providing both data and analytical capabilities necessary for effective navigation.

Research Reagent Solutions for Chemical Space Exploration

Table 3: Essential Research Reagents and Tools for Chemical Space Exploration

| Tool/Resource | Type | Primary Function | Application in Research |
|---|---|---|---|
| ZINC Database | Compound Library | Curated collection of commercially available compounds | Virtual screening against biological targets; accessing diverse chemical structures [1] |
| PubChem | Database | Repository of chemical molecules and their bioactivities | Exploring structure-activity relationships; benchmarking compound collections [1] |
| RDKit | Software Toolkit | Cheminformatics and machine learning algorithms | Calculating molecular descriptors; similarity searching; chemical space visualization [1] |
| CSP Algorithms | Computational Method | Crystal structure prediction | Evaluating solid-state properties of candidate molecules for materials design [3] |
| Evolutionary Algorithm | Optimization Method | Guided search through chemical space | Generating and optimizing molecular structures with desired properties [3] |

Advanced Applications in Materials Discovery and Drug Development

Materials Discovery Through Chemical Space Exploration

The application of chemical space exploration to materials discovery represents a frontier in computational materials science. Organic molecular crystals offer diverse potential applications across pharmaceuticals, organic electronics, optical materials, and porous materials for gas storage and separation [3]. The challenge lies in the prohibitive expense of exhaustively searching chemical space to find novel molecules with promising solid-state properties [3]. The CSP-informed evolutionary algorithm described earlier under Crystal Structure Prediction-Informed Evolutionary Optimization has demonstrated significant success in identifying organic molecular semiconductors with high predicted electron mobilities, outperforming approaches based solely on molecular properties [3]. This methodology is particularly valuable because charge carrier mobilities in organic semiconductors are highly sensitive to crystal packing, making the incorporation of CSP essential for accurate property prediction [3]. By enabling crystal structure-aware searches, this approach opens new possibilities for the computational design of functional organic materials with tailored electronic, optical, and mechanical properties.

Drug Discovery Applications

In pharmaceutical research, chemical space exploration enables several critical applications that accelerate drug discovery. It facilitates molecular diversity analysis, allowing researchers to identify diverse structural motifs that increase the chances of finding novel drug candidates with unique properties [1]. Through virtual screening, computational tools can efficiently evaluate large libraries of compounds within chemical space to identify potential ligands for biological targets [1]. The approach also supports lead identification by helping researchers pinpoint promising regions of chemical space for specific biological targets [1]. Once initial leads are identified, chemical space exploration aids in lead optimization by examining analogs and derivatives that may possess enhanced potency, selectivity, or improved ADMET (absorption, distribution, metabolism, excretion, and toxicity) properties [1]. Finally, de novo drug design directly explores chemical space to generate novel drug-like molecules with desired properties by building molecules computationally without screening pre-existing libraries [1]. These applications demonstrate how chemical space concepts directly contribute to reducing the time and cost associated with traditional drug discovery approaches.

Future Directions and Challenges in Chemical Space Exploration

The field of chemical space exploration continues to evolve rapidly, facing several significant challenges and opportunities. The ongoing growth of chemical libraries to millions of compounds creates substantial demands for efficient visualization methods capable of handling this scale while remaining interpretable to human researchers [4]. Deep generative modeling represents a promising direction, potentially enabling interactive exploration of chemical space through human-in-the-loop approaches that combine computational efficiency with human intuition [4]. As chemical space visualization extends beyond simple compounds to include reactions and entire chemical libraries, new analytical frameworks will be needed to represent these more complex relationships [4]. Additionally, chemical space maps are finding unconventional applications, including visual validation of quantitative structure-activity relationship (QSAR) and quantitative structure-property relationship (QSPR) models, and even emerging as a form of digital art that communicates scientific complexity through aesthetic representation [4]. These developments highlight the dynamic nature of chemical space research and its expanding role across scientific disciplines.

The concept of the chemical multiverse continues to gain importance as researchers recognize the limitations of single-representation approaches [2]. Different molecular descriptors emphasize different aspects of chemical structure and properties, making the comprehensive analysis of compound datasets through multiple complementary chemical spaces essential for thorough understanding [2]. This multi-faceted perspective enables more robust diversity analysis, virtual screening, and structure-activity relationship studies that account for the complex, multi-dimensional nature of molecular similarity and difference [2]. As chemical space exploration continues to mature, the integration of multiple representation systems, combined with advanced optimization algorithms like CSP-informed evolutionary approaches, will likely become standard methodology for tackling the fundamental challenge of finding novel functional molecules within the vastness of possible chemical structures.

The Biologically Relevant Chemical Space (BioReCS) represents the vast collection of molecules exhibiting biological activity, encompassing both beneficial and detrimental effects [5]. This space includes not only therapeutic agents but also compounds relevant to agrochemistry, sensory chemistry, food science, and natural product research [5]. For materials discovery research, charting BioReCS provides an essential framework for identifying functional molecules with tailored biological properties. The concept of "chemical space" (CS) is fundamentally multidimensional, with molecular properties defining coordinates and relationships between compounds [5]. Within this framework, BioReCS can be viewed as a critical subspace distinguished by shared biological functionality, offering an organizing principle for exploring nature's chemical diversity and guiding the design of novel bioactive materials [6].

Computational Frameworks for BioReCS Navigation

Molecular Descriptors and Representations

The systematic study of BioReCS requires molecular descriptors that define the dimensionality of the space. The choice of descriptors depends on project goals, compound classes, and dataset characteristics [5]. For large chemical libraries used in modern discovery projects, descriptors must balance computational efficiency with chemical relevance [5]. Several descriptor types have been developed for this purpose:

- Structural Fingerprints: Morgan circular fingerprints encode the presence and frequency of substructures within a molecule by capturing atomic environments up to a certain radius, while MACCS keys provide a fixed-length binary representation based on predefined structural features [7].

- Neural Network Embeddings: ChemDist embeddings are obtained from graph neural networks trained using deep metric learning, generating continuous vector representations that quantify molecular similarity based on distances in the embedding space [7].

- Universal Descriptors: Ongoing efforts aim to develop structure-inclusive, general-purpose descriptors like molecular quantum numbers and the MAP4 fingerprint, which can accommodate entities ranging from small molecules to biomolecules [5]. Neural network embeddings from chemical language models show particular promise in encoding chemically meaningful representations [5].
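To make the idea of fingerprint-based similarity concrete, the sketch below compares binary fingerprints with the Tanimoto coefficient. The text does not name a similarity metric, so Tanimoto is an assumption here (it is a common choice for binary fingerprints such as MACCS keys), and the fingerprints themselves are invented sets of on-bit indices rather than output from a real toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) coefficient between two sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0  # convention chosen for this sketch
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_by_similarity(query_name, library):
    """Sort all other library entries by decreasing similarity to the query."""
    query = library[query_name]
    scores = [(tanimoto(query, fp), name)
              for name, fp in library.items() if name != query_name]
    return sorted(scores, reverse=True)


# Hypothetical fingerprints: each set lists the bits that are switched on
fingerprints = {
    "mol_A": {1, 4, 7, 9, 15},
    "mol_B": {1, 4, 7, 10, 15},
    "mol_C": {2, 3, 20},
}

for score, name in rank_by_similarity("mol_A", fingerprints):
    print(f"{name}: {score:.3f}")
```

Count-based fingerprints and learned embeddings need a different measure (for example a count-aware Tanimoto variant or a Euclidean or cosine distance), which is one reason the descriptor choice and the similarity metric have to be selected together.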

Dimensionality Reduction for Chemical Space Visualization

Dimensionality reduction (DR) techniques are essential for visualizing high-dimensional chemical data in human-interpretable 2D or 3D maps, a process known as "chemography" [7]. These methods transform feature vectors representing chemical structures into spatial coordinates that preserve chemical relationships.

Table 1: Comparison of Dimensionality Reduction Methods for BioReCS Visualization

| Method | Type | Key Characteristics | Optimal Use Cases | Neighborhood Preservation Performance |
|---|---|---|---|---|
| PCA | Linear | Fast, deterministic, preserves global structure | Initial exploration, large datasets | Lower performance for complex non-linear structures [7] |
| t-SNE | Non-linear | Preserves local structure, clusters similar compounds | Detailed analysis of local relationships | High local neighborhood preservation [7] [8] |
| UMAP | Non-linear | Balances local and global structure, computational efficiency | General-purpose mapping of diverse compound sets | Strong performance in neighborhood preservation [7] |
| GTM | Non-linear | Generative model, produces interpretable landscapes | Property prediction, activity landscape modeling | Supports highly neighborhood-preserving landscapes [7] |

The performance of these DR methods is typically evaluated using neighborhood preservation metrics, including the percentage of preserved nearest neighbors (PNNk), co-k-nearest neighbor size (QNN), and trustworthiness [7]. Non-linear methods generally outperform linear methods in preserving local neighborhoods, though global structure may be better captured by linear techniques [7].
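One common way to formulate the percentage of preserved nearest neighbors is to compare, for every compound, its k nearest neighbors in descriptor space with its k nearest neighbors on the map, and average the overlap. The sketch below follows that formulation with Euclidean distances; the exact definition used in [7] may differ in detail, and the point sets are toy data.

```python
import math


def knn_indices(points, k):
    """For each point, the index set of its k nearest neighbors (Euclidean)."""
    neighbors = []
    for i, p in enumerate(points):
        dists = sorted((math.dist(p, q), j)
                       for j, q in enumerate(points) if j != i)
        neighbors.append({j for _, j in dists[:k]})
    return neighbors


def pnn_k(high_dim, low_dim, k):
    """Percentage of k-nearest neighbors shared between the two spaces."""
    nn_high = knn_indices(high_dim, k)
    nn_low = knn_indices(low_dim, k)
    shared = sum(len(a & b) for a, b in zip(nn_high, nn_low))
    return 100.0 * shared / (k * len(high_dim))


# Toy example: four points on a line, and a 1D "map" that scrambles them
descriptors = [(0.0, 0.0), (1.0, 0.0), (10.0, 0.0), (11.0, 0.0)]
good_map = [(0.0,), (1.0,), (10.0,), (11.0,)]
bad_map = [(0.0,), (10.0,), (1.0,), (11.0,)]

print("good map PNN_1:", pnn_k(descriptors, good_map, k=1))
print("bad map PNN_1:", pnn_k(descriptors, bad_map, k=1))
```

The brute-force neighbor search is quadratic in the number of compounds, so for large libraries an approximate nearest-neighbor index would be needed.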

Experimental Protocols for BioReCS Mapping

Workflow for Chemical Space Analysis

Diagram: Workflow for mapping and analyzing BioReCS using dimensionality reduction techniques.

Data Collection and Preprocessing Protocol

Objective: To assemble a representative dataset from BioReCS for analysis [7].

Materials:

- Public compound databases (ChEMBL, PubChem, ZINC, COCONUT)

- Cheminformatics software (RDKit, OpenBabel)

- Computing environment with Python/R capabilities

Procedure:

- Database Selection: Retrieve target-specific subsets from curated databases like ChEMBL, applying quality filters based on experimental confidence and data completeness [7].

- Dataset Criteria: Select subsets containing sufficient compounds (typically >400) covering a range of intrinsic dimensionality values [7].

- Standardization: Apply consistent molecular standardization including neutralization of charges, removal of duplicates, and normalization of specific functional groups.

- Curate Inactive Compounds: Include confirmed inactive compounds from sources like InertDB, which contains 3,205 curated inactive compounds and 64,368 putative inactive molecules [5].

Dimensionality Reduction Implementation Protocol

Objective: To project high-dimensional chemical descriptors into 2D/3D visualizations [7].

Materials:

- Python/R with DR libraries (scikit-learn, OpenTSNE, umap-learn)

- High-performance computing resources for large datasets

- Visualization frameworks (Matplotlib, Plotly, MolCompass)

Procedure:

- Descriptor Calculation:

Data Preprocessing:

- Remove all zero-variance features to reduce dimensionality.

- Standardize remaining features to zero mean and unit variance.
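The two preprocessing steps above can be sketched in plain Python as follows. The function names and the toy descriptor matrix are illustrative only; the standard deviation uses the population formula (dividing by n), which matches the behavior of scikit-learn's `StandardScaler`.

```python
import math


def drop_zero_variance(X):
    """Remove columns whose value is identical for every row.
    Returns the reduced matrix and the indices of the kept columns."""
    keep = [j for j in range(len(X[0])) if len({row[j] for row in X}) > 1]
    return [[row[j] for j in keep] for row in X], keep


def standardize(X):
    """Scale every column to zero mean and unit (population) variance.
    Assumes zero-variance columns were already removed."""
    n, d = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(d)]
    stds = [math.sqrt(sum((row[j] - means[j]) ** 2 for row in X) / n)
            for j in range(d)]
    return [[(row[j] - means[j]) / stds[j] for j in range(d)] for row in X]


# Toy descriptor matrix; the middle column is constant and carries no signal
X_raw = [
    [1.0, 0.0, 2.0],
    [2.0, 0.0, 4.0],
    [3.0, 0.0, 6.0],
]
X_reduced, kept = drop_zero_variance(X_raw)
X_scaled = standardize(X_reduced)
print("kept columns:", kept)
print("scaled first row:", [round(v, 4) for v in X_scaled[0]])
```

Dropping constant columns first matters because they would otherwise cause a division by zero in the scaling step.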

Model Optimization:

Model Validation:

Table 2: Essential Research Reagents and Computational Tools for BioReCS Exploration

| Category | Resource/Tool | Key Function | Application in BioReCS |
|---|---|---|---|
| Compound Databases | ChEMBL [5] | Annotated bioactive molecules | Source of poly-active compounds and promiscuous structures |
| | PubChem [5] | Large-scale screening data | Access to massive compound collections with activity data |
| | InertDB [5] | Curated inactive compounds | Definition of non-biologically relevant chemical space |
| Computational Tools | RDKit [7] | Cheminformatics toolkit | Molecular descriptor calculation and fingerprint generation |
| | MolCompass [8] | Chemical space visualization | Parametric t-SNE implementation for deterministic mapping |
| | TMAP [8] | Large-scale visualization | Tree-based mapping for datasets exceeding 10⁷ compounds |
| DR Algorithms | Parametric t-SNE [8] | Neural network-based DR | Deterministic projection enabling consistent coordinate system |
| | UMAP [7] | Manifold learning | Efficient handling of large datasets with global structure preservation |
| | GTM [7] | Generative mapping | Creation of interpretable property landscapes with probability basis |

Advanced Applications in Materials Discovery

Visual Validation of QSAR/QSPR Models

Chemical space visualization enables critical assessment of quantitative structure-activity/property relationship (QSAR/QSPR) models through visual validation [8]. This approach addresses the "black-box" nature of complex models by mapping prediction errors across chemical space, helping researchers identify regions where models perform poorly and refine their applicability domains [8]. Tools like MolCompass implement this by coloring chemical space maps according to prediction errors, revealing model cliffs analogous to activity cliffs in traditional QSAR [8].
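The underlying idea can be illustrated without any plotting library: bin the 2D map coordinates into grid cells and average the absolute prediction error in each cell. This is a hedged sketch of the general approach, not MolCompass's actual implementation or API; the function name, grid scheme, and data are all invented for illustration.

```python
import math
from collections import defaultdict


def error_map(coords, y_true, y_pred, cell_size=1.0):
    """Mean absolute error per grid cell of a 2D chemical space map."""
    cells = defaultdict(list)
    for (x, y), truth, pred in zip(coords, y_true, y_pred):
        key = (math.floor(x / cell_size), math.floor(y / cell_size))
        cells[key].append(abs(truth - pred))
    return {key: sum(errs) / len(errs) for key, errs in cells.items()}


# Toy data: map coordinates with measured and predicted property values
coords = [(0.2, 0.3), (0.8, 0.1), (2.5, 2.5)]
y_true = [5.0, 6.0, 7.0]
y_pred = [5.5, 6.5, 4.0]

errors = error_map(coords, y_true, y_pred)
worst_cell = max(errors, key=errors.get)
print("mean absolute error per cell:", errors)
print("least reliable region:", worst_cell)
```

Coloring each cell by its mean error gives the kind of map described above, and cells with high error or with no training compounds at all mark regions likely to fall outside the model's applicability domain.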

Navigating Underexplored Regions of BioReCS

Significant portions of BioReCS remain underexplored due to computational challenges [5]. These include:

- Metal-containing molecules: Often excluded from standard analyses due to limitations in descriptor systems and modeling tools [5].

- Beyond Rule of 5 (bRo5) compounds: Including macrocycles, peptides, and protein-protein interaction modulators with complex structural features [5].

- Dark regions: Compounds with undesirable biological effects, such as toxic chemicals, which are crucial for understanding the boundaries between beneficial and harmful bioactivity [5].

Recent efforts have developed specialized approaches for these regions, including tailored descriptors for metallodrugs and analysis frameworks for macrocycles and PPIs [5].

Future Directions and Integrative Approaches

The field of BioReCS exploration is evolving toward more integrated and automated approaches. Parametric t-SNE represents a significant advancement, enabling deterministic projection of new compounds into predefined regions of chemical space [8]. This creates a consistent coordinate system for chemical space, allowing researchers to reference specific regions in a manner analogous to geographical coordinates [8]. Additionally, the integration of deep generative models with chemical space visualization enables interactive exploration and targeted generation of compounds with desired properties [4] [8]. As these tools mature, they will increasingly support the rational navigation of BioReCS for accelerated discovery of bioactive materials across multiple application domains.

Public Databases and Molecular Descriptors for Systematic Exploration

Systematic exploration of chemical space is a foundational strategy in modern materials discovery and drug development. This whitepaper provides a technical guide to the essential computational infrastructure—public databases and molecular descriptors—that enables researchers to navigate this vast space efficiently. We detail experimentally validated protocols for employing these resources in large-scale virtual screening and optimization campaigns, with a particular focus on crystal structure-aware methods for materials informatics. The integration of these tools, powered by both traditional chemistry and modern artificial intelligence (AI), creates a powerful framework for accelerating the design of novel functional molecules.

The concept of "chemical space" (CS) refers to the multidimensional universe of all possible chemical compounds, where each dimension represents a distinct molecular property or structural feature [5]. Navigating this space is a central challenge in chemistry, with profound implications for materials science and drug discovery. Within this vast universe, the biologically relevant chemical space (BioReCS) encompasses molecules with documented biological activity, while subspaces exist for specific applications like organic electronics [5] [3]. The sheer number of possible organic molecules makes exhaustive experimental screening impossible [3]. Computational methods, therefore, rely on public databases to access known regions of chemical space and molecular descriptors to represent and quantify molecular structures, enabling virtual exploration, pattern recognition, and predictive modeling.

Public Databases for Chemical Space Mapping

Public compound databases are key resources for exploring the CS and are central to chemoinformatics [5]. These repositories vary in size, specialization, and the type of annotations they provide, allowing researchers to target specific regions of chemical space. The table below summarizes representative public databases critical for systematic exploration.

Table 1: Representative Public Databases for Chemical Space Exploration

| Database Name | Primary Focus | Key Features & Content | Relevance to Exploration |
|---|---|---|---|
| ChEMBL [5] | Bioactive molecules | Curated database of drug-like small molecules with binding, functional, and ADMET information. | Major source for poly-active and promiscuous structures; essential for drug discovery. |
| PubChem [5] | Chemical substances | Massive repository of chemical structures and their biological activities, integrating multiple sources. | Provides a broad view of bioactive space; useful for similarity searching and activity prediction. |
| InertDB [5] | Inactive compounds | Collection of curated and AI-generated molecules known or predicted to lack bioactivity. | Defines the non-biologically relevant chemical space, crucial for model accuracy. |
| PHYSPROP [9] | Physicochemical properties | Dataset of experimental physicochemical properties, including log P values. | Foundational for developing and validating Quantitative Structure-Property Relationship (QSPR) models. |

Beyond these, specialized databases exist for underexplored regions, such as metallodrugs, macrocycles, and PROTACs, though these classes are often underrepresented in broader cheminformatics tools [5].

Molecular Descriptors: Quantifying Molecular Structures

Molecular descriptors are numerical representations that translate chemical structures into a quantifiable format for computational analysis. The choice of descriptor is critical and depends on the project's goals, the compound classes involved, and the required balance between computational efficiency and chemical relevance [5].

A Taxonomy of Molecular Descriptors

Diagram: Major categories of molecular descriptors and their relationships, from classical to AI-driven approaches.

Key Descriptor Types and Their Applications

Table 2: Categories and Examples of Molecular Descriptors

| Descriptor Category | Key Examples | Calculation Basis | Best Use Cases |
|---|---|---|---|
| Empirical Scales | Kamlet-Taft, Abraham, Catalan parameters [10] | Experimentally derived from solvatochromic measurements, chromatography, etc. | Linear Solvation Energy Relationships (LSER); modeling solvation-related properties. |
| Quantum Chemical (QC) | COSMO-Based Descriptors (VCOSMO*, αCOSMO, βCOSMO, δCOSMO) [10] | Low-cost DFT/COSMO computations of screening charge densities. | Predicting acidity, basicity, and charge distribution; theory-independent QSPR. |
| 3D-Structure-Based | Optimized 3D-MoRSE (opt3DM) [9] | Weighted atomic distances within a molecule, optimized with a scale factor (sL). | Machine learning prediction of properties like log P; materials informatics. |
| AI-Driven Embeddings | Graph Neural Networks, Transformer-based Models [11] | Learned from large datasets using deep learning; high-dimensional vectors. | Scaffold hopping; capturing non-linear structure-property relationships. |

Experimental Protocols for Systematic Exploration

This section outlines detailed methodologies for leveraging databases and descriptors in materials discovery workflows.

Protocol 1: QSPR Modeling for log P Prediction

The partition coefficient (log P) is a critical parameter in drug design and materials science. The following protocol, based on the development of the opt3DM descriptor, enables highly accurate log P prediction [9].

Step-by-Step Methodology:

- Dataset Curation: Obtain a high-quality training set of molecules with experimental log P values. The M-dataset (from PHYSPROP), containing 13,952 molecules with SMILES strings and log P values, is a robust starting point [9].

- Descriptor Generation:

- Generate 3D molecular structures from SMILES strings using a toolkit like RDKit.

- Calculate the opt3DM descriptor using a customized code. The core function is:

$$I(s) = \sum_{i,j} A_i A_j \, \frac{\sin(s \, s_L \, r_{ij})}{s \, s_L \, r_{ij}}$$

where $s$ is a scattering parameter, $r_{ij}$ is the interatomic distance, and $A_i$ and $A_j$ are atomic weights (e.g., mass, electronegativity) [9].

- Optimization: Fine-tune the descriptor by setting the scale factor $s_L = 0.5$ and the descriptor dimension $N_s = 500$ for optimal performance [9].

- Machine Learning Model Building:

- Implement ML algorithms such as Automatic Relevance Determination (ARD) regression, Ridge regression, or Bayesian Ridge regression using the scikit-learn library.

- Use a feature selector (e.g., SelectFromModel) to identify the most relevant opt3DM descriptors before fitting the regressor [9].

- Model Validation:

- Perform standard train-test validation on the M-dataset.

- Use external benchmark challenges like SAMPL6 and SAMPL9 to test the model's predictive power on novel, drug-like molecules. This protocol achieved a root mean square error (RMSE) of 0.31 on the SAMPL6 data, outperforming many quantum chemical and molecular dynamics methods [9].
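The descriptor formula from the Descriptor Generation step can be sketched in plain Python. This is a minimal illustration, not the customized code from [9]: the function name `morse_descriptor`, the choice to count each atom pair once (i < j), and the integer grid of s values are assumptions made here, and the 3D coordinates and atomic weights are taken as given (for example, from an RDKit conformer).

```python
import math

def morse_descriptor(coords, weights, s_values, scale=0.5):
    """Evaluate I(s) = sum_{i<j} A_i * A_j * sin(x) / x, with x = s * sL * r_ij.

    coords   : list of (x, y, z) atomic positions
    weights  : list of atomic weights A_i (e.g., mass or electronegativity)
    s_values : scattering parameters at which I(s) is evaluated
    scale    : the scale factor sL (0.5 is the value quoted in the protocol)
    """
    n = len(coords)
    descriptor = []
    for s in s_values:
        total = 0.0
        for i in range(n):
            for j in range(i + 1, n):  # assumption: each atom pair counted once
                r_ij = math.dist(coords[i], coords[j])
                x = s * scale * r_ij
                # sin(x)/x tends to 1 as x -> 0, so handle that limit explicitly
                sinc = math.sin(x) / x if x != 0.0 else 1.0
                total += weights[i] * weights[j] * sinc
        descriptor.append(total)
    return descriptor

# Toy usage: three atoms with unit weights and Ns = 500 values of s (0 .. 499).
coords = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.2, 0.0)]
weights = [1.0, 1.0, 1.0]
vector = morse_descriptor(coords, weights, s_values=range(500))
print(len(vector), vector[0])
```

The resulting vector would then be passed to the feature selector and regressor described in the Machine Learning Model Building step.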

Protocol 2: Crystal Structure Prediction-Informed Evolutionary Optimization

For materials whose properties depend on solid-state packing, evolutionary algorithms (EAs) guided by crystal structure prediction (CSP) can outperform methods based on molecular properties alone. This protocol is demonstrated for discovering organic semiconductors with high electron mobility [3].


Step-by-Step Methodology:

- Algorithm Initialization: Define a search space (e.g., organic semiconductors based on azapentacenes) and generate an initial population of candidate molecules, represented by InChI strings [3].

- Fitness Evaluation via CSP: For each molecule in the population, perform an automated crystal structure prediction.

- Efficient Sampling: To manage computational cost, use a reduced CSP scheme. A biased sampling of 5-10 of the most common space groups (e.g., P2₁/c, P-1, P2₁2₁2₁), generating 1000-2000 structures per space group, can recover ~75% of the low-energy landscape at a fraction of the cost of a comprehensive search [3].

- Property Calculation: For the lowest-energy predicted crystal structures, calculate the target material property, such as electron mobility derived from the transfer integral and reorganization energy. This property value is assigned as the molecule's fitness [3].

- Evolutionary Operations:

- Selection: Prioritize molecules with higher fitness scores (e.g., higher electron mobility) as "parents."

- Crossover & Mutation: Generate new "child" molecules by combining fragments from parents and introducing random structural changes (e.g., heteroatom substitution, functional group changes) [3].

- Iteration: The new generation of molecules undergoes the same CSP-informed fitness evaluation. This process repeats, guiding the population toward regions of chemical space with superior solid-state properties. This method has been shown to identify molecules with significantly higher predicted charge carrier mobility than EAs optimized for molecular properties like reorganization energy alone [3].
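The loop described above (evaluate, select, recombine, mutate, repeat) can be sketched as follows. Everything chemistry-specific is replaced by stand-ins: candidates are bit lists rather than InChI strings, and `toy_fitness` is a hypothetical placeholder for the CSP and mobility calculation, which in practice dominates the cost. The elitism step (carrying the best candidate over unchanged) is a design choice made for this sketch and is not stated in [3].

```python
import random

# Hypothetical "ideal" substitution pattern; stands in for the unknown optimum.
TARGET = [1, 0, 0, 1, 0, 1, 1, 0, 0, 1, 0, 1]

def toy_fitness(genome):
    """Placeholder for CSP-derived mobility: counts positions matching TARGET."""
    return sum(1 for g, t in zip(genome, TARGET) if g == t)

def evolve(pop_size=20, generations=30, mutation_rate=0.1, seed=0, fitness=toy_fitness):
    rng = random.Random(seed)
    length = len(TARGET)
    population = [[rng.randint(0, 1) for _ in range(length)] for _ in range(pop_size)]
    history = []  # best fitness seen in each generation
    for _ in range(generations):
        ranked = sorted(population, key=fitness, reverse=True)
        history.append(fitness(ranked[0]))
        parents = ranked[: pop_size // 2]      # selection: keep the fitter half
        children = [ranked[0][:]]              # elitism: best survives unchanged
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, length)     # one-point crossover
            child = a[:cut] + b[cut:]
            # mutation: flip each position with a small probability
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            children.append(child)
        population = children
    best = max(population, key=fitness)
    return best, history

best, history = evolve(seed=1)
print(toy_fitness(best), history[0], history[-1])
```

In a real workflow the `fitness` argument would wrap the reduced CSP sampling and property calculation, so caching fitness values per molecule becomes important.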

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Chemical Space Exploration

| Tool / Resource | Type | Function in Exploration |

|---|---|---|

| RDKit [9] | Cheminformatics Library | Handles molecule I/O from SMILES, calculates 2D/3D coordinates, and computes fundamental molecular descriptors. |

| scikit-learn [9] | Machine Learning Library | Provides a wide array of ML algorithms (e.g., ARD, Ridge Regression) and feature selectors for building QSPR models. |

| Amsterdam Modeling Suite (with ADF/COSMO-RS) [10] | Quantum Chemistry Software | Performs low-cost DFT/COSMO computations to generate quantum chemical descriptors like σ-profiles and COSMO-based acidity/basicity scales. |

| Crystal Structure Prediction (CSP) Software [3] | Modeling Software | Automatically generates and ranks polymorphs for a given molecule, enabling materials property prediction in evolutionary algorithms. |

| ECFP Fingerprints [11] | Molecular Fingerprint | Encodes molecular substructures as bit strings, widely used for similarity searching and as input for machine learning models. |

| Transformer Models (e.g., FP-BERT) [11] | AI Model | Learns high-dimensional molecular representations from SMILES or fingerprints, enabling advanced tasks like scaffold hopping. |

Emerging Trends and Future Outlook

The field of chemical space exploration is rapidly evolving. Key emerging trends include:

- Universal Descriptors: Efforts are underway to develop descriptors, such as the MAP4 fingerprint and neural network embeddings, that work consistently across diverse molecular classes, from small organics to peptides and metallodrugs, enabling integrated analysis of disparate chemical subspaces [5].

- Advanced AI and Foundation Models: Large-scale scientific foundation models like MIST are being trained on vast molecular datasets to predict a wide range of atomistic, thermodynamic, and kinetic properties across multiple domains, promising a more generalized understanding of chemical space [12].

- Sustainable Exploration (SusML): A growing focus on developing Efficient, Accurate, Scalable, and Transferable (EAST) methodologies aims to reduce the computational energy and data storage footprint of large-scale chemical space exploration [13].

The concept of "chemical space" is a foundational theoretical framework in cheminformatics and materials discovery, representing a multidimensional universe where each molecule occupies a unique position defined by its structural and functional properties [5]. This conceptual space is vast; the region of small organic molecules alone is estimated to exceed 10⁶⁰ possible compounds, presenting both an extraordinary opportunity and a significant challenge for discovery efforts [14]. Within this nearly infinite expanse, research has naturally concentrated on specific subspaces (ChemSpas) with desired functions, while leaving others relatively untouched. The biologically relevant chemical space (BioReCS) comprises molecules with biological activity, both beneficial and detrimental, spanning applications from drug discovery to agrochemistry and materials science [5]. Navigating this space effectively requires sophisticated computational and experimental approaches that can bridge the gap between molecular design and functional application, particularly for materials discovery research where properties often depend critically on solid-state packing and structural arrangement [3].

This whitepaper provides a technical guide to the heavily explored and underexplored regions of chemical space, focusing on three critical compound classes: small molecules, peptides, and metallodrugs. We synthesize current methodologies, experimental protocols, and research tools to empower researchers in strategically navigating these domains for advanced materials discovery.

Mapping the Chemical Universe: Explored and Underexplored Territories

Table 1: Characteristics of Explored vs. Underexplored Chemical Subspaces

| Chemical Subspace | Exploration Status | Key Databases/Resources | Structural Features | Research Challenges |

|---|---|---|---|---|

| Small Molecule Drug Candidates | Heavily Explored | ChEMBL, PubChem, DrugBank [5] [14] | Rule of 5 compliant, primarily organic, low molecular weight | Limited structural diversity in corporate collections; dark chemical matter prevalent [5] |

| Natural Products | Heavily Explored | Dictionary of Natural Products [14] | Complex stereochemistry, diverse scaffolds | Synthesis complexity, supply limitations |

| Metallodrugs | Underexplored | Limited specialized databases | Metal-carbon covalent bonds, diverse geometries | Modeling challenges; often filtered out in standard cheminformatics [5] [15] |

| Macrocycles & bRo5 Compounds | Underexplored | Emerging specialized collections | Rings of ≥12 atoms, beyond Rule of 5 space | Poor membrane permeability, synthetic complexity [5] |

| Peptide-Based Therapeutics | Moderately Explored | Peptide-specific databases emerging [5] | Mid-sized chains (5-50 amino acids), modified backbones | Metabolic instability, poor oral bioavailability |

| Protein-Protein Interaction Inhibitors | Underexplored | Limited curated datasets | Large surface area binders, unique pharmacophores | Difficulty in identifying druggable hotspots |

The Biologically Relevant Chemical Space (BioReCS)

The BioReCS encompasses all compounds with biological activity, including both therapeutic and detrimental effects. This space is systematically explored through distinct chemical subspaces characterized by shared structural or functional features [5]. Key public databases such as ChEMBL and PubChem serve as major sources for biologically active small molecules, primarily containing organic compounds with extensive biological activity annotations [5]. These repositories have enabled the identification of poly-active and promiscuous structures, but have also revealed significant biases in chemical space coverage.

A critical consideration in BioReCS exploration is the inclusion of negative biological data—compounds known to lack bioactivity—which helps define the non-biologically relevant portions of chemical space [5]. Notable examples include dark chemical matter, comprising small molecules from corporate collections that repeatedly fail to show activity in high-throughput screening assays, and InertDB, a collection of curated inactive compounds from PubChem [5]. These negative datasets provide crucial boundaries for medicinal chemistry efforts.

Quantifying Chemical Space Expansion

The exponential growth of chemical databases raises fundamental questions about whether increased library size translates to greater chemical diversity. Recent research employing innovative cheminformatics methods like the iSIM framework and BitBIRCH clustering algorithm has revealed that simply adding more molecules does not automatically increase diversity [14]. The iSIM framework bypasses the quadratic scaling problem of traditional similarity indices by comparing all molecules simultaneously, enabling efficient diversity assessment of libraries containing millions of compounds [14].
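To illustrate why a simultaneous comparison scales linearly, the sketch below computes a Tanimoto-type index from per-bit column sums instead of looping over molecule pairs. It follows our reading of the iSIM idea (for each bit position, count how many pairs share the bit and how many disagree); the function names are hypothetical, at least one bit is assumed to be set somewhere in the library, and the exact definition used in [14] should be checked before relying on the numbers.

```python
from itertools import combinations

def isim_tanimoto(fingerprints):
    """Linear-time Tanimoto-type index from column sums of binary fingerprints."""
    n = len(fingerprints)
    n_bits = len(fingerprints[0])
    # k[b] = number of molecules with bit b switched on (one pass over the data)
    k = [sum(fp[b] for fp in fingerprints) for b in range(n_bits)]
    shared = sum(kb * (kb - 1) // 2 for kb in k)   # pairs with the bit on in both
    mismatched = sum(kb * (n - kb) for kb in k)    # pairs with the bit on in one only
    return shared / (shared + mismatched)

def tanimoto(fp_a, fp_b):
    """Ordinary pairwise Tanimoto similarity of two bit lists."""
    both = sum(a & b for a, b in zip(fp_a, fp_b))
    either = sum(a | b for a, b in zip(fp_a, fp_b))
    return both / either

def mean_pairwise_tanimoto(fingerprints):
    """Quadratic-cost reference: average Tanimoto over all molecule pairs."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

library = [
    [1, 1, 0, 0, 1, 0],
    [1, 0, 0, 1, 1, 0],
    [1, 1, 1, 0, 0, 0],
    [0, 1, 0, 0, 1, 1],
]
print(isim_tanimoto(library), mean_pairwise_tanimoto(library))
```

The two values are close but not identical in general, because the column-sum index is a ratio of sums while the reference is a mean of ratios; for a two-molecule library they coincide.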

Time-evolution analyses of major databases including ChEMBL, DrugBank, and PubChem show that while cardinality is growing rapidly, diversity metrics do not always follow the same trajectory [14]. This highlights the importance of strategic compound selection rather than exhaustive library expansion for effectively exploring chemical space.

Heavily Explored Regions: Small Molecules and Natural Products

Small Organic Molecules

Small molecule drug candidates represent the most heavily explored region of chemical space, with ChEMBL alone containing over 2.4 million compounds and 20 million bioactivity measurements [14]. These compounds are predominantly characterized by "drug-like" properties adhering to the Rule of Five, with molecular weights typically under 500 Da and favorable lipophilicity profiles [5]. The extensive exploration of this subspace has enabled the development of robust quantitative structure-activity relationship (QSAR) models and predictive algorithms for property optimization.

The chemical space of small molecules has been systematically mapped using molecular fingerprints and descriptors that encode structural patterns, physicochemical properties, and topological features [14]. These representations enable efficient similarity searching, clustering, and virtual screening—essential tools for navigating such extensively explored territory. However, even within this crowded space, opportunities remain for innovative approaches, particularly in targeting challenging biomacromolecules such as RNA.

Table 2: Experimental Protocol for RNA-Targeted Small Molecule Discovery

| Step | Methodology | Key Parameters | Output |

|---|---|---|---|

| Library Design | Cobalamin (Cbl) hosting of unfavorable RNA-binding ligands [16] | β-axial moiety variation; π-stacking optimization | Diverse Cbl derivative library (e.g., compounds 8-44) |

| Affinity Screening | Fluorescence displacement assay [16] | Competitive titration with CNCbl–5×PEG-ATTO590 probe | KD values (submicromolar range) |

| QSAR Analysis | Multivariate analysis with 347 physicochemical descriptors [16] | Linear discriminant analysis (LDA) of β-axial groups | Identification of tight (compound 29, 7 ± 7 nM), moderate, and weak binders |

| Cell-Based Validation | Regulatory activity assays [16] | Antagonism of riboswitch function | Functional characterization beyond binding affinity |

Evolutionary Algorithms for Small Molecule Optimization

For materials discovery, evolutionary algorithms (EAs) have emerged as powerful tools for navigating small molecule chemical space. Recent advances incorporate crystal structure prediction (CSP) into the fitness evaluation of candidate molecules, enabling optimization based on solid-state properties rather than molecular properties alone [3]. This CSP-informed EA approach has demonstrated superior performance in identifying organic molecular semiconductors with high electron mobilities, addressing the critical challenge that materials properties often depend strongly on crystal packing [3].

The computational efficiency of this approach relies on balanced CSP sampling schemes that capture essential low-energy crystal structures without prohibitive computational cost. Benchmarking on 20 molecules showed that schemes focusing on 5-10 space groups with 500-2000 structures per space group can recover 73-77% of low-energy crystal structures at a fraction of the cost of comprehensive sampling [3]. This enables practical CSP-guided exploration of chemical space for materials discovery.

Diagram 1: Crystal structure-informed evolutionary algorithm workflow for small molecule optimization.

Underexplored Regions: Peptides and Metallodrugs

Expanding Peptide Chemical Space

Peptides represent a moderately explored but rapidly expanding region of chemical space, occupying a crucial middle ground between small molecules and biologics. Recent innovations focus on novel methodologies for peptide modification that dramatically expand accessible chemical diversity while improving drug-like properties.

A breakthrough acid-mediated chemoselective method enables targeted modification of arginine residues in peptides, converting guanidinium side chains into amino pyrimidine moieties with near-quantitative conversion across diverse substrates [17]. This transformation significantly enhances cellular permeability, a major limitation for peptide therapeutics: modified peptides showed 2-fold increases in membrane permeability in the chloroalkane penetration assay (CAPA), a cell-based permeability assay [17].

Table 3: Experimental Protocol for Arginine-Directed Peptide Modification

| Step | Reaction Conditions | Quality Control | Downstream Applications |

|---|---|---|---|

| Peptide Synthesis | Standard solid-phase peptide synthesis | HPLC purity >95% | Base peptide for modification |

| Arginine Modification | 100 equiv malonaldehyde in 12 M HCl, room temperature, 1h [17] | HPLC monitoring at 220 nm | Amino pyrimidine peptides (e.g., 2f-2k) |

| Byproduct Reversal | Butylamine treatment | HPLC confirmation of side product removal | Purified single products |

| Late-Stage Functionalization | Reaction with 2-bromoacetophenone derivatives, catalytic base [17] | HRMS, NMR characterization | Imidazo[1,2-a]pyrimidinium salts (4a-4d, 63-75% yield) |

Another innovative approach to peptide diversification involves disulfuration of azlactones, providing versatile entry to unnatural, disulfide-linked amino acids and peptides specifically functionalized at the α-position [18]. This method employs base-catalyzed disulfuration of azlactones followed by ring-opening functionalization, yielding disulfurated azlactones in excellent yields across diverse N-dithiophthalimides and azlactones derived from various amino acids and peptides [18]. Because functional molecules and azlactones are joined through an SS linkage in a modular two-step sequence, the method significantly expands the available peptide chemical space.

Metallodrugs: An Emerging Frontier

Metallodrugs constitute a profoundly underexplored region of chemical space, primarily due to modeling challenges that lead to their systematic exclusion from standard cheminformatics workflows [5]. Most chemoinformatics tools are optimized for small organic compounds, automatically filtering out metal-containing molecules during data curation [5]. However, metallodrugs offer unique therapeutic opportunities distinct from purely organic compounds.

Cyclometalated complexes exemplify the promise of metallodrugs, particularly in oncology applications. These compounds are characterized by a metal-carbon covalent bond and chelate formation with σ M–C bonds and coordination bonds (D–M, where D = N, O, P, S, Se, C) [15]. Their exceptional structural versatility enables geometries ranging from linear to octahedral, with fine-tuning possible through ligand modification and oxidation state adjustment [15]. This versatility translates to superior control over intracellular properties including kinetic stability and lipophilicity.

Cyclometalated complexes containing Fe, Ru, and Os exhibit mechanisms of action distinct from those of platinum-based drugs such as cisplatin, targeting molecular sites other than DNA and activating diverse cell death pathways [15]. Iridium and rhodium complexes demonstrate remarkable photophysical and photochemical properties valuable for photodynamic therapy, while nickel and palladium complexes show more efficient cytotoxicity, with mechanisms of action that differ from those of cisplatin [15].

Diagram 2: Design workflow for cyclometalated complexes with groups 8, 9, and 10 metals.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents for Chemical Space Exploration

| Reagent/Category | Function/Application | Specific Examples | Key Characteristics |

|---|---|---|---|

| Crystal Structure Prediction (CSP) | Predicting solid-state packing and materials properties [3] | Evolutionary algorithm with CSP fitness evaluation | Automated from InChI string; quasi-random sampling of structural degrees of freedom |

| Molecular Descriptors | Defining chemical space dimensionality and relationships [5] | MAP4 fingerprint, molecular quantum numbers, neural network embeddings | Structure-inclusive, general-purpose for diverse compound classes |

| iSIM Framework | Quantifying intrinsic similarity/diversity of compound libraries [14] | iSIM Tanimoto (iT) value calculation | O(N) complexity for large libraries; identifies central vs. outlier molecules |

| BitBIRCH Algorithm | Clustering ultra-large chemical libraries [14] | Tree structure clustering of binary fingerprints | Enables O(N) scaling for chemical space analysis |

| Cobalamin Hosting System | RNA-targeted small molecule delivery [16] | Cbl derivatives with variable β-axial moieties | Solubilizes unfavorable RNA-binding ligands; enables base displacement |

| Malonaldehyde Reagent | Arginine-specific peptide modification [17] | Conversion of guanidinium to amino pyrimidine | Chemoselective in 12 M HCl; near-quantitative conversion |

| Azlactone Disulfuration | SS-linked amino acid/peptide synthesis [18] | Base-catalyzed disulfuration of azlactones | Modular integration of functional molecules; excellent yields |

| N-Dithiophthalimides | Bilateral disulfurating reagents [18] | Various substituted derivatives | Modular building blocks for disulfide-linked peptides |

The strategic exploration of chemical space requires balanced attention to both heavily explored and underexplored regions. While small molecules continue to yield valuable discoveries through increasingly sophisticated approaches like CSP-informed evolutionary algorithms, significant opportunities exist in underexplored territories including metallodrugs, macrocycles, and modified peptides.

Future progress will depend on developing universal molecular descriptors capable of representing diverse compound classes beyond traditional small organic molecules [5]. Chemical language models and neural network embeddings show particular promise for encoding chemically meaningful representations across disparate regions of chemical space [5]. Additionally, the integration of artificial intelligence throughout the discovery pipeline—from generative molecular design to autonomous synthesis and characterization—will dramatically accelerate exploration of underexplored regions [19].

For metallodrugs specifically, overcoming the historical exclusion of metal-containing compounds from standard cheminformatics workflows requires dedicated tool development and database curation [5] [15]. The rich structural diversity and unique mechanisms of action offered by cyclometalated complexes and other organometallic structures justify this specialized investment, particularly for challenging therapeutic areas like oncology.

The continued expansion of chemical space—both in terms of cardinality and diversity—will rely on synergistic advances in computational prediction, synthetic methodology, and biological evaluation. By strategically targeting underexplored regions while deepening understanding of heavily explored territories, researchers can unlock novel materials and therapeutics with enhanced properties and functions.

The Challenge of Universal Descriptors for Diverse Compound Classes

The exploration of chemical space for materials discovery presents a combinatorial challenge of staggering proportions. Considering only naturally occurring elements and stoichiometric compositions, the search space includes roughly 3 × 10¹¹ potential quaternary compounds and 10¹³ quinary combinations, with the total number of theoretical materials estimated to be as large as 10¹⁰⁰ [20]. This vastness makes brute-force exploration entirely impractical, even with high-throughput computational methods. The field of medicinal chemistry faces a similar challenge, with the number of potential organic molecules estimated between 10¹³ and 10¹⁸⁰ [20]. This scale creates fundamental challenges for developing universal descriptors that can accurately represent and predict properties across diverse compound classes, from inorganic crystals to large drug-like molecules.

The core challenge lies in creating descriptor frameworks that transcend specific material families while maintaining predictive accuracy. Traditional quantitative structure-property relationship (QSPR) models have often been limited to single families of materials, with narrow applicability outside their training scope [20]. This limitation significantly hinders materials discovery efforts, as researchers cannot leverage insights from one material class to accelerate discovery in another. This technical guide examines the current state of universal descriptor development, provides detailed methodologies for their implementation, and offers a scientific toolkit for researchers pursuing chemical space exploration for materials discovery.

Current Approaches to Universal Descriptors

Property-Labelled Materials Fragments (PLMF)

A significant advancement in universal descriptors comes from the development of Property-Labelled Materials Fragments (PLMF), which adapt fragment descriptors typically used for organic molecules to serve for materials characterization [20]. The PLMF approach represents materials as 'colored' graphs, with vertices decorated according to the nature of the atoms they represent [20]. This methodology requires only minimal structural input while capturing essential chemical information, allowing straightforward implementation of simple heuristic design rules.

The construction of PLMFs involves a multi-step process beginning with determining atomic connectivity within the crystal structure. This is achieved through a computational geometry approach that partitions the crystal structure into atom-centered Voronoi-Dirichlet polyhedra [20]. Connectivity between atoms is established when they share a Voronoi face and their interatomic distance is shorter than the sum of the Cordero covalent radii to within a 0.25 Å tolerance [20]. This approach models strong interatomic interactions (covalent, ionic, and metallic bonding) while ignoring van der Waals interactions.
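The distance half of the connectivity criterion can be sketched as below. This is a simplified illustration under several assumptions: the Voronoi face-sharing test is taken as already done (the `face_sharing_pairs` argument is a hypothetical input), periodic images are ignored, the tolerance is interpreted as d < rᵢ + rⱼ + 0.25 Å, and only two Cordero radii (Na 1.66 Å, Cl 1.02 Å) are tabulated for a toy fragment with roughly the Na-Cl spacing of rock salt.

```python
import math

# Cordero covalent radii in Å for the two elements used in this toy example
COVALENT_RADIUS = {"Na": 1.66, "Cl": 1.02}
TOLERANCE = 0.25  # Å

def adjacency_matrix(symbols, coords, face_sharing_pairs):
    """Build a symmetric 0/1 adjacency matrix from candidate neighbor pairs.

    face_sharing_pairs lists index pairs (i, j) that already passed the
    Voronoi face-sharing test; only the distance criterion is applied here.
    """
    n = len(symbols)
    adj = [[0] * n for _ in range(n)]
    for i, j in face_sharing_pairs:
        distance = math.dist(coords[i], coords[j])
        limit = COVALENT_RADIUS[symbols[i]] + COVALENT_RADIUS[symbols[j]] + TOLERANCE
        if distance < limit:
            adj[i][j] = adj[j][i] = 1
    return adj

symbols = ["Na", "Cl", "Na"]
coords = [(0.0, 0.0, 0.0), (2.82, 0.0, 0.0), (2.82, 2.82, 0.0)]
candidate_pairs = [(0, 1), (1, 2), (0, 2)]
adj = adjacency_matrix(symbols, coords, candidate_pairs)
for row in adj:
    print(row)
```

Here both Na-Cl contacts (2.82 Å, limit 2.93 Å) count as connected, while the Na-Na pair (about 3.99 Å, limit 3.57 Å) does not.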

Table 1: Atomic Properties Used in PLMF Descriptor Differentiation

| Property Category | Specific Properties Included |

|---|---|

| General Properties | Mendeleev group and period numbers (gP, pP), number of valence electrons (NV) |

| Measured Properties | Atomic mass (matom), electron affinity (EA), thermal conductivity (λ), heat capacity (C), enthalpies of atomization (ΔHat), fusion (ΔHfusion) and vaporization (ΔHvapor), first three ionization potentials (IP1,2,3) |

| Derived Properties | Effective atomic charge (Zeff), molar volume (Vmolar), chemical hardness (η), covalent (rcov), absolute, and van der Waals radii, electronegativity (χ) and polarizability (αP) |

The final descriptor vector incorporates both fragment-based and crystal-wide properties, including lattice parameters, their ratios, angles, density, volume, number of atoms, number of species, lattice type, point group, and space group [20]. After filtering out low variance and highly correlated features, the final feature vector captures 2,494 total descriptors, providing a comprehensive representation of the material's chemical and structural identity.

Quantum-Mechanical Descriptors for Drug-like Molecules

For pharmaceutical applications, the Aquamarine (AQM) dataset represents a significant advancement in addressing the challenge of universal descriptors for large drug-like molecules [21]. This extensive quantum-mechanical dataset contains structural and electronic information of 59,783 conformers of 1,653 molecules with total atoms ranging from 2 to 92, containing up to 54 non-hydrogen atoms [21]. The dataset specifically addresses limitations of previous quantum-mechanical datasets that primarily consisted of molecules considerably smaller than those encountered in modern medicinal chemistry.

The AQM dataset includes over 40 global and local physicochemical properties per conformer computed at the tightly converged PBE0+MBD level of theory for gas-phase molecules, while PBE0+MBD with the modified Poisson-Boltzmann (MPB) model of water was used for solvated molecules [21]. By addressing both molecule-solvent and dispersion interactions, the AQM dataset serves as a challenging benchmark for state-of-the-art machine learning methods for property modeling and de novo generation of large solvated molecules with pharmaceutical relevance.

Quantitative Performance of Universal Descriptors

The performance of universal descriptor approaches has been quantitatively evaluated across multiple material properties. When applied to predicting properties of inorganic crystals, PLMF descriptors combined with machine learning methods have demonstrated remarkable accuracy that compares well with the quality of training data for virtually any stoichiometric inorganic crystalline material [20].

Table 2: Prediction Performance of Universal Descriptor Approaches

| Property Category | Specific Properties Predicted | Performance Metrics |

|---|---|---|

| Electronic Properties | Metal/insulator classification, band gap energy | Accuracy comparable to training data quality |

| Thermomechanical Properties | Bulk and shear moduli, Debye temperature | Agrees with available thermomechanical experimental data |

| Thermal Properties | Heat capacities at constant pressure and volume, thermal expansion coefficient | Accurate predictions validated via AEL-AGL framework |

The universal applicability of the PLMF approach is particularly valuable for thermomechanical properties: even the most efficient calculation pathways for these properties require analysis of multiple density functional theory (DFT) runs, adding to the cost of already expensive calculations [20]. Once trained, models using universal descriptors achieve comparable accuracies without the need for further ab initio data, as all necessary input properties are either tabulated or derived directly from geometrical structures [20].

Experimental Protocols for Descriptor Implementation

Protocol 1: Generating Property-Labelled Materials Fragments

Objective: To construct PLMF descriptors for inorganic crystalline materials [20].

Materials and Software Requirements:

- Crystal structure file (CIF format or similar)

- Atomic property database [20]

- Voronoi tessellation software

- Graph analysis tools

Step-by-Step Procedure:

Structure Input: Begin with a well-defined crystal structure containing atomic coordinates and lattice parameters.

Voronoi Tessellation: Partition the crystal structure into atom-centered Voronoi-Dirichlet polyhedra using a computational geometry approach [20]. This partitioning is invaluable in the topological analysis of crystals.

Connectivity Determination: Establish connectivity between atoms by satisfying two criteria:

- The atoms must share a Voronoi face (perpendicular bisector between neighboring atoms)

- The interatomic distance must be shorter than the sum of the Cordero covalent radii to within a 0.25 Å tolerance [20]

Graph Construction: Construct a three-dimensional graph from the connectivity information and generate the corresponding adjacency matrix. The adjacency matrix A of a simple graph with n vertices (atoms) is a square matrix (n × n) with entries aᵢⱼ = 1 if atom i is connected to atom j, and aᵢⱼ = 0 otherwise [20].

Graph Partitioning: Partition the full graph into smaller subgraphs corresponding to individual fragments, restricting the length l to a maximum of three, where l is the largest number of consecutive, non-repetitive edges in the subgraph [20]. This restriction serves to curb the complexity of the final descriptor vector.

Property Assignment: Differentiate fragments by local reference properties, including general properties, measured properties, and derived properties as detailed in Table 1.

Descriptor Vector Assembly: Concatenate all fragment-based and crystal-wide descriptors, then filter out low variance (<0.001) and highly correlated (r²>0.95) features to produce the final descriptor vector containing 2,494 total descriptors [20].
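The final filtering step can be sketched as follows, assuming descriptors are stored as a list of feature columns. The thresholds (variance below 0.001, r² above 0.95) come from the protocol; the use of population variance, the greedy keep-first strategy for correlated pairs, and the helper names are assumptions, since the text does not say which member of a correlated pair is dropped.

```python
from statistics import mean, pvariance

def r_squared(x, y):
    """Squared Pearson correlation between two equally long columns."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return (sxy * sxy) / (sxx * syy)

def filter_features(columns, min_variance=0.001, max_r2=0.95):
    """Return indices of feature columns that survive both filters."""
    kept = []
    for idx, col in enumerate(columns):
        if pvariance(col) < min_variance:
            continue  # nearly constant feature carries no information
        if any(r_squared(col, columns[k]) > max_r2 for k in kept):
            continue  # redundant with a feature that was already kept
        kept.append(idx)
    return kept

columns = [
    [1.0, 2.0, 3.0, 4.0],    # informative
    [2.0, 4.0, 6.0, 8.1],    # almost a multiple of column 0
    [5.0, 5.0, 5.0, 5.0],    # constant
    [1.0, -1.0, 1.0, -1.0],  # weakly correlated with column 0
]
print(filter_features(columns))
```

Checking variance first also guards the correlation step, because a constant column would otherwise cause a division by zero in `r_squared`.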

Troubleshooting Notes:

- Bond ambiguity: In materials, bond order (single/double/triple bond classification) is not considered due to inherent ambiguity [20].

- Limited context: If models perform poorly with new fragments, consider expanding the training dataset to include more diverse structural motifs.

Protocol 2: Quantum-Mechanical Conformer Analysis for Drug-like Molecules

Objective: To generate quantum-mechanical descriptors for large, flexible drug-like molecules accounting for solvent effects [21].

Materials and Software Requirements:

- Molecular structure files

- CREST code (Conformer-Rotamer Ensemble Sampling Tool) [21]

- Quantum chemistry software with DFTB3 and PBE0+MBD capability

- Implicit solvation model implementation (GBSA, MPB)

Step-by-Step Procedure:

Conformer Generation: Generate molecular conformers using the conformational search workflow implemented in the CREST code, which uses the semi-empirical GFN2-xTB method with the GBSA implicit solvent model for water [21].

Geometry Optimization: Optimize a set of representative conformers using the third-order DFTB method (DFTB3) supplemented with a treatment of many-body dispersion (MBD) interactions [21].

Solvent Environment Consideration: Perform calculations in both gas phase and implicit water described by the GBSA model to understand solvent effects [21].

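Property Calculation: For each optimized conformer, compute an extensive number (over 40) of global (molecular) and local (atom-in-a-molecule) quantum-mechanical properties at a high level of theory: PBE0+MBD for gas-phase molecules, and PBE0+MBD with the modified Poisson-Boltzmann (MPB) model of water for solvated molecules [21].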

Dataset Assembly: Compile the AQM-gas and AQM-sol subsets containing quantum-mechanical structural and property data of molecules in gas phase and implicit water, respectively [21].

Validation Steps:

- Compare calculated properties with experimental values where available

- Validate thermomechanical predictions via the AEL-AGL integrated framework [20]

- Assess convergence of electronic properties with basis set size

Visualization of Descriptor Generation Workflows

Diagram 1: PLMF descriptor generation workflow showing the transformation of crystal structures into quantitative descriptors.

Visualization of Quantum-Mechanical Descriptor Generation

Diagram 2: Quantum-mechanical descriptor generation for drug-like molecules, highlighting environment-aware calculations.

Table 3: Essential Computational Tools for Universal Descriptor Research

| Tool/Resource | Type | Function in Research | Access Information |

|---|---|---|---|

| AFLOW Repository | Computational Database | Provides high-throughput ab initio calculation data for training descriptor models | Online access: aflow.org [20] |

| Springer Nature Experiments | Protocols Database | Searchable database of 95,000+ protocols and methods in life and biomedical sciences | Institutional subscription [22] |

| CREST Code | Software Tool | Conformer-Rotamer Ensemble Sampling Tool for generating molecular conformers | Open access [21] |

| JoVE (Journal of Visualized Experiments) | Video Protocols | Peer-reviewed methods in video format, including chemistry and engineering techniques | Institutional subscription [23] |

| protocols.io | Open Access Repository | Platform for creating, organizing, and publishing reproducible research protocols | Open access with premium institutional features [23] |

| Current Protocols | Protocol Series | Collection of over 20,000 updated, peer-reviewed laboratory methods and protocols | Institutional subscription [24] |

The development of universal descriptors for diverse compound classes remains a significant challenge in chemical space exploration, but recent advancements in fragment-based approaches and quantum-mechanical descriptors show considerable promise. The PLMF methodology provides a framework for representing inorganic crystals that transcends traditional material family limitations, while datasets like AQM enable more accurate modeling of drug-like molecules in chemically relevant environments.

Future progress will likely come from improved integration of these approaches, with fragment-based methods incorporating more sophisticated electronic structure information and quantum-mechanical methods becoming efficient enough to handle broader compound classes. As these technologies mature, they will significantly accelerate the discovery of novel materials and pharmaceutical compounds by enabling more effective navigation of the vast chemical space. The experimental protocols and scientific toolkit provided in this guide offer researchers essential methodologies for implementing these approaches in their materials discovery research.

AI-Powered Navigation: Generative Models, Virtual Synthesis, and High-Throughput Screening

Generative AI and Foundation Models for De Novo Molecular Design

The exploration of organic chemical space for functional materials is one of the most significant challenges, and opportunities, in modern materials science. The number of theoretically possible organic molecules, estimated at around 10⁶⁰, offers enormous scope for discovery but makes exhaustive exploration impossible [3]. Traditional experimental approaches that rely on trial and error and empirical rules cannot systematically navigate a design space of this size. Within this context, generative artificial intelligence (AI) and foundation models have emerged as transformative paradigms for de novo molecular design, enabling researchers to algorithmically navigate and construct molecules with tailored properties for specific applications, from pharmaceuticals to organic electronics [25] [19].

This technical review examines the current state of generative AI for molecular design, focusing on the architectural frameworks, methodological considerations, and translational applications that are reshaping materials discovery. By framing these computational advances within the broader thesis of chemical space exploration, we aim to provide researchers with both theoretical understanding and practical protocols for implementing these approaches in their own materials discovery pipelines.

Theoretical Foundations: Generative Architectures for Molecular Representation

Key Algorithmic Architectures

Generative AI for molecular science encompasses several distinct architectural paradigms, each with unique strengths for navigating chemical space [25]:

- Variational Autoencoders (VAEs): These probabilistic models learn a compressed latent representation of molecular structure that enables smooth interpolation and sampling of novel compounds. The encoder network maps molecules to a distribution in latent space, while the decoder reconstructs molecules from points in this space, allowing for gradient-based optimization of desired properties.

- Generative Adversarial Networks (GANs): A generator network and a discriminator network are trained adversarially: the generator proposes molecular structures while the discriminator tries to tell them apart from training compounds, pushing the generator toward outputs that closely resemble the training data.

- Autoregressive Models: Including transformers, these models generate molecular sequences (SMILES, SELFIES) or structures token-by-token, leveraging attention mechanisms to capture long-range dependencies in molecular representation.

- Denoising Diffusion Probabilistic Models (DDPMs): These models progressively add noise to data in a forward process, then learn to reverse this process to generate novel molecular structures from noise, often producing highly diverse and valid outputs.
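
As a concrete illustration of the diffusion idea in the last item, the forward (noising) process has a closed form, x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε with ε drawn from a standard normal distribution. The sketch below is a minimal version under stated assumptions: the linear β schedule and its values (1000 steps, 1e-4 to 0.02) are commonly used defaults rather than settings taken from this article, the function names are hypothetical, and `x0` stands in for any flattened molecular feature vector such as atomic coordinates.

```python
import math
import random


def alpha_bar_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule."""
    alpha_bars, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def forward_noise(x0, t, alpha_bars, rng):
    """Sample x_t from q(x_t | x_0) in closed form; also return the noise."""
    signal = math.sqrt(alpha_bars[t])
    sigma = math.sqrt(1.0 - alpha_bars[t])
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [signal * x + sigma * e for x, e in zip(x0, eps)]
    return xt, eps


# Hypothetical example: noise a toy 3-value feature vector at a late step.
alpha_bars = alpha_bar_schedule()
xt, eps = forward_noise([0.0, 1.2, -0.7], t=900, alpha_bars=alpha_bars,
                        rng=random.Random(0))
```

A trained model learns to predict `eps` from `xt` and `t`; generation then runs the learned reverse process starting from pure noise. Only the forward half is shown here.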

Molecular Representation Schemes

The choice of molecular representation fundamentally shapes the generative approach and its effectiveness:

Table: Molecular Representation Schemes in Generative AI

| Representation | Format | Advantages | Limitations |

|---|---|---|---|

| SMILES | Text-based | Simple, compact string representation | Generated strings can be syntactically or chemically invalid |

| SELFIES | Text-based | Guaranteed molecular validity | Less human-readable |

| Molecular Graphs | Graph-based | Explicit atom-bond relationships | Complex generation process |

| 3D Coordinates | Spatial | Direct structural information for property prediction | Increased computational complexity |
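
To make the SMILES limitation in the table concrete, the sketch below flags two basic ways a generated string can be malformed: unbalanced branch parentheses and ring-closure labels that are opened but never closed. It is a toy check written for illustration (the function name is hypothetical), and it covers only necessary conditions. Full validation, including valence rules and aromaticity, is normally delegated to a cheminformatics toolkit.

```python
DIGITS = "0123456789"


def smiles_syntax_problems(smiles):
    """Return a list of basic syntax problems found in a SMILES string."""
    problems = []
    depth = 0            # current branch nesting level
    open_rings = set()   # ring-closure labels seen an odd number of times
    i = 0
    while i < len(smiles):
        ch = smiles[i]
        if ch == "[":
            # Skip bracket atoms: digits inside are isotopes, H counts, charges.
            end = smiles.find("]", i)
            if end == -1:
                problems.append("unclosed bracket atom")
                break
            i = end + 1
            continue
        if ch == "(":
            depth += 1
        elif ch == ")":
            if depth == 0:
                problems.append("unmatched ')'")
            else:
                depth -= 1
        elif ch == "%":
            # Two-digit ring-closure label, e.g. %12.
            label = smiles[i + 1:i + 3]
            if len(label) == 2 and all(c in DIGITS for c in label):
                open_rings ^= {int(label)}
                i += 3
                continue
            problems.append("malformed '%' ring label")
        elif ch in DIGITS:
            open_rings ^= {int(ch)}  # toggle: open on first sight, close on second
        i += 1
    if depth > 0:
        problems.append("unclosed '('")
    if open_rings:
        labels = ",".join(str(n) for n in sorted(open_rings))
        problems.append("unclosed ring bond: " + labels)
    return problems
```

For example, `smiles_syntax_problems("c1ccccc")` reports the dangling ring label, which is the kind of error a character-level generator can make when it truncates a ring.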

Methodological Implementation: Workflows and Protocols

Crystal Structure Prediction-Informed Evolutionary Optimization

Recent advances have demonstrated the critical importance of incorporating crystal structure prediction (CSP) into evolutionary algorithms for materials discovery. The CSP-informed evolutionary algorithm (CSP-EA) represents a significant advancement over property-based approaches by embedding automated crystal structure prediction directly within the fitness evaluation of candidate molecules [3].

Table: CSP Sampling Schemes for Evolutionary Algorithms

| Sampling Scheme | Space Groups | Structures per Group | Global Minima Found | Low-Energy Structures Recovered | Computational Cost (Core-Hours) |

|---|---|---|---|---|---|

| SG14-500 | 1 (P2₁/c) | 500 | 12/20 | 25.7% | <5 |

| SG14-2000 | 1 (P2₁/c) | 2000 | 15/20 | 33.9% | <5 |

| Sampling A | 5 (biased) | 2000 | 18/20 | 73.4% | ~70 |

| Top10-2000 | 10 | 2000 | 19/20 | 77.1% | ~169 |

| Comprehensive | 25 | 10,000 | 20/20 | 100% | 2533 |
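
The cost/recovery trade-off in the table can be made explicit by asking which scheme is cheapest for a given recovery target. The snippet below is a small sketch that transcribes the table values. Treating "<5" as 5 and "~70" / "~169" as exact numbers is a simplifying assumption, and the function name is hypothetical.

```python
# (name, global minima found out of 20, low-energy recovery in %, core-hours)
# Assumption: "<5" is entered as 5, and "~70" / "~169" as 70 / 169.
SCHEMES = [
    ("SG14-500", 12, 25.7, 5),
    ("SG14-2000", 15, 33.9, 5),
    ("Sampling A", 18, 73.4, 70),
    ("Top10-2000", 19, 77.1, 169),
    ("Comprehensive", 20, 100.0, 2533),
]


def cheapest_scheme(min_recovery_pct, min_global_minima=0):
    """Name of the lowest-cost scheme meeting both targets, or None."""
    candidates = [
        s for s in SCHEMES
        if s[2] >= min_recovery_pct and s[1] >= min_global_minima
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda s: s[3])[0]
```

With a 70% recovery target this selects Sampling A, while requiring all 20 global minima forces the comprehensive search at roughly 36 times the cost (2533 versus about 70 core-hours).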

The workflow for CSP-EA involves fully automated processing from line notation descriptions (e.g., InChI strings) through structure generation, lattice energy minimization, and property assessment [3]. For organic semiconductors, this approach has demonstrated superior performance in identifying molecules with high charge carrier mobilities compared to optimization based solely on molecular properties like reorganization energy.

Diagram 1: CSP-informed evolutionary algorithm workflow for molecular discovery.

Experimental Protocol: CSP-Informed Evolutionary Search

Objective: To identify organic molecular semiconductors with optimized charge carrier mobility through CSP-informed evolutionary algorithms.

Materials and Computational Resources:

- High-performance computing cluster (e.g., 40-core nodes)

- Automated CSP pipeline (handles structure generation, minimization, property calculation)

- Evolutionary algorithm framework with molecular representation and variation operators

Methodology:

- Initialization: Generate an initial population of 50-100 molecules using fragment-based assembly or sampling from known chemical spaces.

- Fitness Evaluation:

  - Perform automated CSP using a balanced sampling scheme (e.g., Sampling A: 5 space groups, 2000 structures/group)

  - Calculate charge carrier mobility from the predicted crystal structures

  - Compute a fitness score based on mobility and stability metrics

- Selection: Apply tournament selection to identify parent molecules based on fitness rankings.

- Variation:

  - Crossover: Combine molecular fragments from two parent structures

  - Mutation: Apply point mutations (atom substitution, bond modification, functional group changes)

- Iteration: Repeat fitness evaluation, selection, and variation for 20-50 generations or until convergence criteria are met.

- Validation: Select top candidates for comprehensive CSP and experimental synthesis validation.
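
The loop described in the methodology can be sketched as follows. This is a toy skeleton under loud assumptions, not the CSP-EA implementation from [3]: molecules are represented as fixed-length lists of hypothetical fragment names, `toy_fitness` is a placeholder for the expensive CSP-plus-mobility evaluation, and all function names are illustrative.

```python
import random

# Hypothetical building blocks; a real search would use chemically valid edits.
FRAGMENTS = ["benzene", "thiophene", "pyrrole", "furan", "pyridine"]


def toy_fitness(molecule):
    """Placeholder for the CSP-based fitness (mobility and stability)."""
    return molecule.count("thiophene")


def tournament(population, scores, rng, k=3):
    """Tournament selection: fittest of k randomly drawn individuals."""
    contenders = rng.sample(range(len(population)), k)
    return population[max(contenders, key=lambda i: scores[i])]


def crossover(parent_a, parent_b, rng):
    """One-point crossover: head of one parent joined to tail of the other."""
    cut = rng.randint(1, len(parent_a) - 1)
    return parent_a[:cut] + parent_b[cut:]


def mutate(molecule, rng, rate=0.1):
    """Swap each position for a random fragment with probability `rate`."""
    return [rng.choice(FRAGMENTS) if rng.random() < rate else frag
            for frag in molecule]


def evolve(fitness=toy_fitness, pop_size=50, length=6, generations=20, seed=0):
    """Run the search; return the best molecule seen, its score, and history."""
    rng = random.Random(seed)
    population = [[rng.choice(FRAGMENTS) for _ in range(length)]
                  for _ in range(pop_size)]
    best, best_score, history = None, float("-inf"), []
    for _ in range(generations):
        scores = [fitness(m) for m in population]       # fitness evaluation
        top = max(range(pop_size), key=lambda i: scores[i])
        if scores[top] > best_score:                    # track best-so-far
            best, best_score = population[top], scores[top]
        history.append(best_score)
        population = [                                  # selection + variation
            mutate(crossover(tournament(population, scores, rng),
                             tournament(population, scores, rng), rng), rng)
            for _ in range(pop_size)
        ]
    return best, best_score, history
```

In a real run the call to `fitness` dominates the cost, since each evaluation involves a full CSP calculation, so evaluations within a generation are the natural unit to parallelize across cluster nodes.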

Key Parameters:

- Low-energy crystal structure threshold: 7.2 kJ mol⁻¹ (captures 95% of known polymorph pairs)

- Charge mobility calculation: Use Marcus theory or Boltzmann transport equation

- Population size: 50-100 molecules per generation

- Mutation rate: 0.05-0.15 per molecular position
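
Two of these parameters lend themselves to a short worked example: the low-energy window applied to CSP output, and the Marcus hopping rate that underlies one of the mobility options, k = (|J|²/ħ)·sqrt(π/(λ·k_B·T))·exp(−(ΔG + λ)²/(4·λ·k_B·T)). The sketch below assumes lattice energies in kJ mol⁻¹ and electronic coupling J and reorganization energy λ in eV. The function names and example values are hypothetical, and turning hopping rates into a mobility requires further steps (for instance a diffusion coefficient and the Einstein relation) that are not shown.

```python
import math

HBAR_EV_S = 6.582119569e-16     # reduced Planck constant in eV s
K_B_EV_PER_K = 8.617333262e-5   # Boltzmann constant in eV / K


def low_energy_structures(lattice_energies, window=7.2):
    """Indices of structures within `window` kJ/mol of the global minimum."""
    e_min = min(lattice_energies)
    return [i for i, e in enumerate(lattice_energies) if e - e_min <= window]


def marcus_rate(coupling_ev, reorg_ev, temperature_k=300.0, delta_g_ev=0.0):
    """Marcus charge-hopping rate in s^-1 between two molecular sites."""
    kt = K_B_EV_PER_K * temperature_k
    prefactor = (coupling_ev ** 2 / HBAR_EV_S
                 * math.sqrt(math.pi / (reorg_ev * kt)))
    activation = (delta_g_ev + reorg_ev) ** 2 / (4.0 * reorg_ev * kt)
    return prefactor * math.exp(-activation)


# Hypothetical inputs: four predicted structures and one molecular pair.
selected = low_energy_structures([-120.0, -115.0, -112.7, -119.9])
rate = marcus_rate(coupling_ev=0.05, reorg_ev=0.2)
```

For equal-energy sites (ΔG = 0) and parameters in this range, halving λ increases the rate several-fold, which is why reorganization energy is often used as a molecular-level proxy. The rate also depends on J, which is set by the crystal packing.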

Advanced Applications: From Small Molecules to Proteins

Drug Discovery and Protein Design

Generative AI has catalyzed a paradigm shift in structure-based drug discovery and protein engineering [25]. For small molecule design, models now optimize multiple pharmacological objectives simultaneously, including target affinity, ADMET profiles (absorption, distribution, metabolism, excretion, toxicity), and synthetic accessibility. In protein engineering, large language models (LLMs) guided by evolutionary sequence data and diffusion-based structure generation pipelines (e.g., RFdiffusion, FrameDiff) have demonstrated remarkable success in de novo protein design.

Table: Generative AI Applications in Biomedical Domains

| Application Domain | Generative Model | Key Achievement | Limitations |

|---|---|---|---|

| Small Molecule Design | VAE, GAN, Diffusion | Multi-property optimization (affinity, ADMET) | Synthetic accessibility challenges |

| Protein Sequence Design | Transformer, LLM | De novo enzyme design with catalytic activity | Limited training data for specific folds |

| Protein Structure Design | Diffusion (RFdiffusion) | Novel protein scaffolds with specified symmetry | Computational intensity for large proteins |

| Retrosynthesis Planning | Transformer, Monte Carlo Tree Search | Novel synthetic routes for complex molecules | Reaction condition prediction less accurate |

| Clinical Data Augmentation | GAN, Diffusion | Privacy-preserving synthetic EHR data | May overlook rare pathologies |

Clinical Translation and Validation

The translational pathway for AI-generated molecules involves multiple validation stages [26]:

- In silico validation: Binding affinity prediction, molecular dynamics simulations, and ADMET profiling

- In vitro testing: Compound synthesis, target binding assays, and cellular efficacy/toxicity assessments

- In vivo evaluation: Animal models for pharmacokinetics and therapeutic efficacy

- Clinical trials: Phase I-III trials for safety and efficacy in human populations

Several AI-designed small molecules have progressed to preclinical and clinical stages, demonstrating the growing maturity of these approaches. For protein therapeutics, generative models have produced novel enzymes, binders, and structural proteins with functions comparable to naturally occurring counterparts.

Diagram 2: Multi-stage validation pipeline for AI-generated therapeutic candidates.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Generative Molecular Design

| Tool Category | Specific Solutions | Function | Application Context |

|---|---|---|---|

| Generative Modeling Frameworks | PyTorch, TensorFlow, JAX | Deep learning implementation | Model development and training |

| Molecular Representation | RDKit, OpenBabel | Chemical informatics and manipulation | Feature extraction, molecule processing |

| Crystal Structure Prediction | Global Lattice Energy Explorer, Random Structure Search | Crystal packing exploration | Materials property prediction |

| Property Prediction | SchNet, DimeNet++, GemNet | Quantum property estimation | Molecular fitness evaluation |

| Protein Design | RFdiffusion, ESMFold, AlphaFold | Protein structure generation and prediction | De novo protein engineering |

| Drug Discovery Platforms | Atomwise, Insilico Medicine, Recursion | Integrated discovery pipelines | Therapeutic candidate identification |

| High-Performance Computing | SLURM, Kubernetes | Computational resource management | Large-scale parallel CSP and model training |

Challenges and Future Directions

Despite significant progress, generative AI for molecular design faces several persistent challenges [26] [19]:

- Data Quality and Quantity: Biomedical datasets often contain noise, missing values, and biases that can propagate through generative models.

- Interpretability and Explainability: The "black box" nature of many deep generative models complicates scientific interpretation and trust.

- Generalization and Transfer Learning: Models trained on one chemical domain often struggle to generalize to unrelated regions of chemical space.