Navigating Experimental Failures: A Practical Guide to Robust Bayesian Optimization in Biomedical Research

Abstract

Bayesian optimization (BO) is a powerful, sample-efficient method for guiding expensive experiments, but its real-world application is often hampered by a pervasive issue: experimental failures. These failures, arising from failed syntheses, unstable compounds, or equipment issues, create missing data that can derail standard BO. This article provides a comprehensive guide for researchers and drug development professionals on handling these unknown constraints and failures. We explore the foundational causes of failures in scientific domains, detail state-of-the-art methodological solutions like feasibility-aware acquisition functions and the 'floor padding trick,' and troubleshoot common pitfalls such as model misspecification and boundary oversampling. Through validation against real-world benchmarks from materials science and drug discovery, we demonstrate how robust BO strategies can accelerate the search for optimal conditions while safely navigating infeasible regions, ultimately enhancing the reliability and efficiency of autonomous experimentation in biomedical research.

The Inevitability of Failure: Understanding Unknown Constraints in Scientific Experimentation

In the application of Bayesian optimization (BO) to experimental science, researchers frequently encounter a critical roadblock: experimental failure. Unlike optimization in purely computational domains where every parameter combination yields a result, physical experiments can fail catastrophically, providing no useful data about the objective function. Within the context of BO, an experimental failure is specifically defined as an evaluation attempt for a parameter set x that does not yield a measurable objective function value y, preventing its use in updating the regression surrogate model [1] [2]. These failures arise from a priori unknown constraints—regions in parameter space that violate unmodeled physical, chemical, or technical limitations of the experimental system [2]. Handling these failures is not merely a technical inconvenience but a fundamental requirement for efficient autonomous experimentation, as they provide critical information about the boundaries of feasible parameter space.

Classification and Characteristics of Experimental Failures

Experimental failures in BO can be categorized through a formal taxonomy based on the nature of the constraint function, c(x), which defines the feasible region X ⊆ Ω where the objective function can be evaluated [2]. The most pertinent category for experimental sciences comprises unknown constraints, characterized by several key properties.

- Non-Quantifiable: The experiment returns only binary information (success/failure) without indicating how close the parameters were to the feasibility boundary [2].

- Unrelaxable: The constraint must be satisfied for an objective measurement to be obtained. A failed experiment means y is simply unavailable [2].

- Simulation (or Measurement) Constraint: Evaluating the constraint incurs a non-negligible cost, similar to the objective function itself, as it requires executing the (often costly) experimental procedure [2].

- Hidden: The constraint is not explicitly known to the researcher before commencing the optimization campaign [2].

Table 1: Properties of Common Experimental Failure Types in Scientific Applications

| Failure Mode | Constraint Type | Impact on Objective Evaluation | Example from Literature |
|---|---|---|---|
| Failed Synthesis/Reaction | Unknown, Unrelaxable | No property measurement possible | SrRuO3 thin film phase not formed during ML-MBE; molecule synthesis fails in drug discovery [1] [2]. |
| Equipment/Instrument Limitation | Unknown, Unrelaxable | Measurement cannot be performed or is invalid | Sensor fault in Organic Rankine Cycle systems; instrument sensitivity limits [2] [3]. |
| Material Property Violation | Unknown, Unrelaxable | Property measurement is precluded | Material is too fragile for characterization; insufficient photoluminescence for analysis [2]. |
| Safety/Operational Boundary | Unknown or Known, Unrelaxable | Experiment is aborted or produces dangerous outcome | Charge delivery curve in neuromodulation causing adverse effects; unstable process conditions [4] [2]. |

Quantifying the Impact: Failure Statistics and Optimization Performance

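The prevalence of experimental failures significantly impacts the sample efficiency and success of BO campaigns. The studies summarized below show that failures must be handled as a routine part of a campaign rather than as edge cases, although they describe failures qualitatively rather than reporting explicit failure rates.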

Table 2: Documented Handling of Experimental Failures in Bayesian Optimization Studies

| Application Domain | How Failures Are Reported | Primary Cause of Failure | Impact on BO Efficiency |
|---|---|---|---|
| Materials Growth (SrRuO3) | Handled explicitly in algorithm | Target phase not formed | Addressed via "floor padding trick"; successful optimization in 35 runs [1]. |
| Polymer Compound Development | Implied by complex feasibility | Trade-off between Young's modulus and impact strength | Over-complication with expert knowledge initially impaired BO performance [5]. |
| Neuromodulation (Simulated) | Implied by safety boundaries | Parameter combinations near safety/charge limits | Standard BO prone to oversampling boundaries; required mitigation strategies [4]. |

Simulation studies further reveal performance degradation of standard BO algorithms as the proportion of infeasible space increases. Naive strategies, such as ignoring failure data or assigning a constant penalty, can lead to suboptimal performance, including excessive sampling of infeasible regions or convergence to local optima [1] [2]. The performance of failure-handling algorithms is often measured by the number of valid experiments required to find a feasible optimum and the best objective value achieved over the course of the optimization [2].
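Both performance measures can be computed directly from a campaign log. The minimal sketch below assumes a log in which each entry is either a measured objective value (to be maximized) or `None` for a failed experiment; the function names and the example log are hypothetical.

```python
from typing import List, Optional


def best_so_far(results: List[Optional[float]]) -> List[Optional[float]]:
    """Best feasible objective value after each experiment (None until the first success)."""
    trace: List[Optional[float]] = []
    best: Optional[float] = None
    for y in results:
        if y is not None and (best is None or y > best):
            best = y
        trace.append(best)
    return trace


def experiments_to_target(results: List[Optional[float]], target: float) -> Optional[int]:
    """Experiments run (failures included) until a feasible value first reaches `target`."""
    for n, y in enumerate(results, start=1):
        if y is not None and y >= target:
            return n
    return None


def valid_experiments_to_target(results: List[Optional[float]], target: float) -> Optional[int]:
    """Same count, but only successful (valid) experiments are counted."""
    valid = 0
    for y in results:
        if y is not None:
            valid += 1
            if y >= target:
                return valid
    return None


# Hypothetical campaign log; None marks an experimental failure.
log = [3.1, None, 4.7, None, None, 6.2, 5.9]
print(best_so_far(log))                       # [3.1, 3.1, 4.7, 4.7, 4.7, 6.2, 6.2]
print(experiments_to_target(log, 6.0))        # 6
print(valid_experiments_to_target(log, 6.0))  # 3
```

Reporting both counts makes the cost of failures visible: the gap between them is the number of experiments spent in infeasible regions before the target was reached.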

Detailed Experimental Protocols for Failure Handling

Protocol 1: The Floor Padding Trick with Gaussian Process BO

This protocol is designed for material growth and synthesis optimization where failures are common [1].

- 1. Objective: To optimize an experimental objective (e.g., residual resistivity ratio) while handling synthesis failures that prevent measurement.

- 2. Materials and Reagents:

- Molecular Beam Epitaxy (MBE) system or analogous synthesis apparatus.

- Precursor materials (e.g., Sr, Ru, O₂ for SrRuO3).

- Characterization equipment (e.g., X-ray diffractometer, electrical transport measurement system).

- 3. Procedure:

- Step 1 - Initialization: Select a small number (e.g., 5) of initial parameter points X = {x₁, ..., xₙ} via random sampling or space-filling design.

- Step 2 - Experiment and Evaluation:

- For each proposed point xₙ, execute the synthesis procedure.

- IF synthesis is successful and the target material is formed:

- Measure the objective value yₙ = S(xₙ) + ε, where S is the unknown objective function and ε is measurement noise.

- ELSE (Experimental Failure):

- Assign yₙ = min(Y), where Y is the set of all successfully measured objective values obtained so far. This is the "floor padding" [1].

- Step 3 - Model Update: Update the Gaussian Process (GP) surrogate model with the new data point (xₙ, yₙ), regardless of whether yₙ is a genuine measurement or a padded value.

- Step 4 - Acquisition and Suggestion: Using the updated GP, maximize an acquisition function (e.g., Expected Improvement) to propose the next experiment xₙ₊₁.

- Step 5 - Iteration: Repeat steps 2-4 until a termination criterion is met (e.g., budget exhaustion, performance target reached).

- 4. Analysis and Notes:

- This method provides an adaptive, worst-case penalty that helps the algorithm avoid regions near failures.

- It leverages all experimental outcomes, including failures, by updating the GP model, guiding subsequent exploration.
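The ELSE branch of Step 2 amounts to a small imputation rule. The minimal sketch below assumes a maximization problem and records failures as `None`. The protocol does not say how to pad a failure that occurs before any successful measurement exists, so the sketch simply skips such points (an assumption, flagged in the code); `floor_padded_dataset` is a hypothetical helper name.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, ...]


def floor_padded_dataset(
    xs: Sequence[Point], ys: Sequence[Optional[float]]
) -> Tuple[List[Point], List[float]]:
    """Build GP training data, padding each failure (None) with min(Y) at that moment.

    Y is the set of successful measurements obtained before the failure.
    """
    train_x: List[Point] = []
    train_y: List[float] = []
    worst: Optional[float] = None  # running min(Y) over successful measurements
    for x, y in zip(xs, ys):
        if y is not None:  # success: keep the genuine measurement
            train_x.append(x)
            train_y.append(y)
            worst = y if worst is None else min(worst, y)
        elif worst is not None:  # failure: floor padding
            train_x.append(x)
            train_y.append(worst)
        # Assumption: a failure before any success has nothing to pad with and is skipped.
    return train_x, train_y


xs = [(0.1,), (0.5,), (0.9,), (0.3,)]
ys = [2.0, None, 1.5, None]  # experiments 2 and 4 failed
print(floor_padded_dataset(xs, ys)[1])  # [2.0, 2.0, 1.5, 1.5]
```

Because each padded value is fixed at the time of the failure, a later and worse success does not retroactively lower earlier padded points, which matches the definition yₙ = min(Y) evaluated when the failure occurs.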

Protocol 2: Feasibility-Aware BO with Variational GP Classification

This protocol uses a classifier to explicitly model the probability of failure, suitable for applications with significant infeasible regions like molecule design [2].

- 1. Objective: To find parameters x that optimize an objective f(x) while respecting an a priori unknown constraint c(x) ≥ 0.

- 2. Materials and Reagents:

- Automated synthesis platform (e.g., for organic molecules).

- Analytical equipment for property verification (e.g., HPLC, mass spectrometer).

- Reagents and starting materials for synthesis.

- 3. Procedure:

- Step 1 - Initialization: Define parameter space and acquire initial data set D = {(xᵢ, yᵢ, ỹᵢ)} for i = 1,...,N, where ỹᵢ is a binary label (1 for feasible, 0 for infeasible) and yᵢ is recorded only when ỹᵢ = 1.

- Step 2 - Surrogate Modeling:

- Train a regression GP model on the feasible data (where ỹᵢ = 1) to model the objective f(x).

- Train a separate Variational Gaussian Process (VGP) classifier on the entire dataset D to model the probability of feasibility, p(ỹ = 1 | x) [2].

- Step 3 - Feasibility-Aware Acquisition:

- Calculate a feasibility-weighted acquisition function, such as the Expected Improvement with Constraint (EIC):

- EIC(x) = EI(x) × p(ỹ = 1 | x) [2].

- Here, EI(x) is the standard Expected Improvement from the regression GP.

- Step 4 - Experiment and Evaluation:

- Propose the next point xₙ₊₁ by maximizing EIC(x).

- Execute the experiment at xₙ₊₁.

- Record both the feasibility label ỹₙ₊₁ and, if feasible, the objective value yₙ₊₁.

- Step 5 - Iteration: Update the datasets and both surrogate models. Repeat steps 2-4 until termination.

- 4. Analysis and Notes:

- This method explicitly balances the pursuit of high-performance points with the avoidance of likely failures.

- It is particularly effective when the feasible region is small and complexly shaped.
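Step 3 can be sketched with the standard closed-form EI for a maximization problem, multiplied by the classifier's feasibility probability. This library-free sketch assumes that the regression GP's posterior mean and standard deviation at x, the incumbent best feasible value, and p(ỹ = 1 | x) have already been computed by whatever GP and VGP implementations are in use; the helper names and the two candidates are illustrative.

```python
import math


def normal_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, best: float, xi: float = 0.0) -> float:
    """Closed-form EI for maximization.

    mu, sigma: regression-GP posterior mean and standard deviation at x.
    best: best objective value among feasible observations (the incumbent).
    """
    improvement = mu - best - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)
    z = improvement / sigma
    return improvement * normal_cdf(z) + sigma * normal_pdf(z)


def eic(mu: float, sigma: float, best: float, p_feasible: float) -> float:
    """EIC(x) = EI(x) * p(y_tilde = 1 | x); p_feasible comes from the feasibility classifier."""
    return expected_improvement(mu, sigma, best) * p_feasible


# Candidate A: higher predicted objective but likely infeasible; candidate B: safer.
ei_a, eic_a = expected_improvement(1.2, 0.3, 1.0), eic(1.2, 0.3, 1.0, p_feasible=0.2)
ei_b, eic_b = expected_improvement(1.0, 0.3, 1.0), eic(1.0, 0.3, 1.0, p_feasible=0.9)
print(ei_a > ei_b, eic_a < eic_b)  # True True: EI prefers A, EIC prefers B
```

The example shows the intended trade-off: plain EI would send the next experiment to the riskier candidate, while the feasibility weighting redirects it to the one the classifier considers likely to succeed.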

Visualization of Failure-Handling Bayesian Optimization Workflows

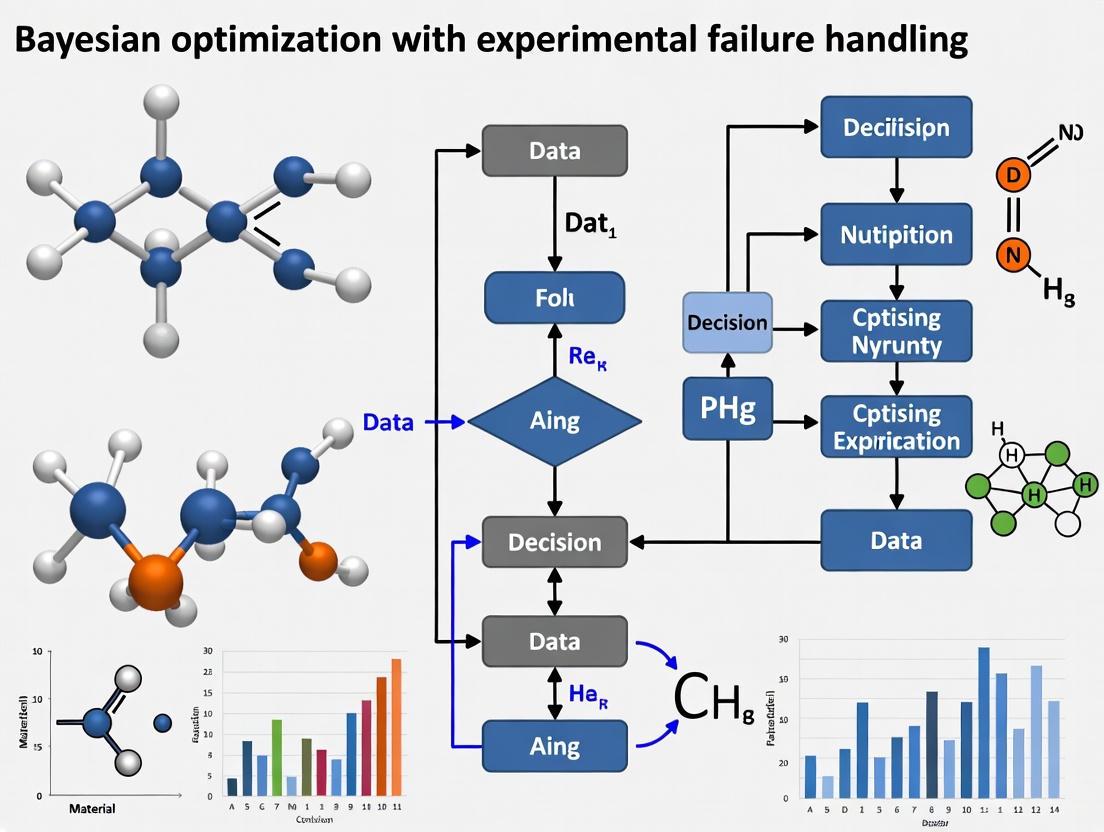

The following diagrams illustrate the core logical structure of BO workflows that incorporate experimental failure handling.

Diagram 1: General workflow for BO with experimental failure handling, illustrating the critical decision point after each experiment and the two paths for successful and failed trials.

Diagram 2: A taxonomy of 'experimental failure' in BO, showing its relationship to unknown constraints, its defining characteristics, and common real-world examples.

The Scientist's Toolkit: Key Reagents and Materials

Table 3: Essential Research Reagents and Materials for Featured BO Experiments

| Item Name | Function/Application | Example Use Case |
|---|---|---|
| Virgin & Recycled Polymers | Base materials for compound formulation with variable properties. | Optimizing polymer compound properties (MFR, Young's modulus) [5]. |
| Impact Modifier & Filler | Additives to modify specific mechanical properties of a polymer compound. | Balancing impact strength and stiffness in recycled plastic compounds [5]. |
| Molecular Beam Epitaxy (MBE) System | High-precision thin film deposition system for materials growth. | Growing high-quality SrRuO3 thin films for electrode applications [1]. |
| Source Materials (Sr, Ru, O₂) | Source materials for the growth of oxide thin films in an MBE system. | Forming the perovskite crystal structure of SrRuO3 [1]. |
| Organic Synthesis Platform | Automated system for performing chemical reactions and synthesizing molecules. | High-throughput synthesis of drug candidates (e.g., BCR-Abl kinase inhibitors) [2]. |
| Cyclopentane Working Fluid | Organic fluid used in the Rankine cycle for waste heat recovery. | Serving as the working medium in an ORC system for sensor fault diagnosis studies [3]. |

In scientific domains such as materials science and drug development, optimizing processes via experimental campaigns is fundamentally hampered by experimental failures. These failures manifest when a suggested experiment cannot be evaluated, yielding no useful data for the objective function. Within the framework of Bayesian optimization (BO)—a sample-efficient, sequential global optimization strategy—such occurrences present a significant challenge, as they can stall the optimization loop and waste precious resources [1]. This article establishes a taxonomy of these failures, categorizing them primarily into synthetic inaccessibility and measurement limitations, and provides structured protocols for handling them within a BO campaign, leveraging the latest research in the field.

A Taxonomy of Experimental Failures

Experimental failures in optimization campaigns can be systematically classified. The following table outlines the core categories and their characteristics.

Table 1: Taxonomy of Experimental Failures in Bayesian Optimization

| Failure Category | Description | Common Examples in Research | Impact on BO |
|---|---|---|---|
| Synthetic Inaccessibility / Unknown Feasibility Constraints | The proposed experimental parameters lie in a region of the search space where the target material cannot be synthesized, the chemical reaction fails, or the target molecule is unstable or unsynthesizable [1] [6]. | Failed thin-film growth in molecular beam epitaxy (MBE); unstable hybrid organic-inorganic halide perovskites; unsynthesizable molecular structures in drug design [1] [6]. | Results in a "missing" or invalid data point. The algorithm must learn to avoid this infeasible region. |
| Measurement Limitations | The experiment is conducted, but a technical fault prevents a valid measurement of the property of interest from being obtained. | Equipment malfunction; sample degradation during measurement; software errors in data acquisition [7]. | Wastes an experimental cycle without yielding an objective function value. |
| System-Level Failures (IoT/ Automated Labs) | Failures arising from the complex, distributed hardware and software systems that operate a self-driving laboratory (SDL). These are particularly relevant to integrated, automated workflows [7]. | A single component (e.g., a robotic arm, sensor, or software controller) in an IoT-based lab fails, causing a cascade that aborts the experiment [7]. | Halts the entire automated workflow until the failure is diagnosed and rectified. |

Bayesian Optimization Algorithms for Handling Failures

The core challenge is to adapt the BO procedure to learn from failures, not just successes. The surrogate model must be updated, and the acquisition function must balance the exploration of promising regions with the avoidance of known failures. Several key strategies have been developed.

The Floor Padding Trick

This method, introduced for high-throughput materials growth, is a simple yet powerful data imputation technique [1]. When an experimental trial for parameter vector \( x_n \) results in a failure, the missing evaluation \( y_n \) is imputed with the worst value observed so far in the campaign, \( y_n = \min_{1 \le i < n} y_i \) [1].

- Rationale: It provides the BO algorithm with a strong, adaptive signal that the attempted parameter set performed poorly, encouraging exploration away from that region without requiring manual tuning of a penalty constant [1].

- Protocol:

- Maintain a running list of all successfully measured objective function values.

- Upon encountering an experimental failure, identify the minimum value from the list of successful observations.

- Assign this minimum value to the failed experiment's output.

- Proceed with the standard BO update of the Gaussian Process surrogate model using this imputed data point.

Feasibility-Aware BO with Binary Classification

A more sophisticated approach involves explicitly modeling the probability of failure using a classifier, often a variational Gaussian process classifier, that is learned on-the-fly [6]. This model predicts whether a given parameter set \( x \) will lead to a feasible (successful) experiment.

- Rationale: It directly learns the unknown feasibility constraint boundary, allowing the acquisition function to actively avoid regions predicted to be infeasible [6].

- Protocol:

- Data Collection: Build a dataset of parameters \( x \) and their corresponding feasibility labels (success or failure).

- Model Training: Train a Gaussian process classifier on this dataset to estimate the probability of feasibility, \( p(\text{feasible} \mid x) \).

- Feasibility-Aware Acquisition: Modify a standard acquisition function, \( \alpha(x) \), to incorporate the feasibility prediction. A common method is the Product of Expectations, \( \alpha_{\text{feas}}(x) = p(\text{feasible} \mid x) \cdot \mathbb{E}[\alpha(x) \mid \text{feasible}] \). This function balances high expected performance with a high probability of success [6].

- Sequential Update: With each new experiment (success or failure), update both the regression surrogate model for the objective and the classification model for feasibility.
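The data flow of this protocol can be illustrated without a GP library. The sketch below swaps in a much simpler stand-in for the variational GP classifier: a kernel-weighted success frequency with Beta pseudo-counts. It is not the Anubis implementation [6]; the lengthscale and prior pseudo-counts are illustrative assumptions, and the acquisition value passed to `feasibility_aware` is assumed to come from the regression GP trained on feasible points, used here as a stand-in for \( \mathbb{E}[\alpha(x) \mid \text{feasible}] \).

```python
import math
from typing import Sequence, Tuple

History = Sequence[Tuple[Sequence[float], bool]]  # (parameters, experiment succeeded?)


def rbf(x: Sequence[float], xp: Sequence[float], lengthscale: float) -> float:
    sq = sum((a - b) ** 2 for a, b in zip(x, xp))
    return math.exp(-0.5 * sq / lengthscale ** 2)


def p_feasible(
    x: Sequence[float],
    history: History,
    lengthscale: float = 0.1,
    prior_success: float = 1.0,
    prior_failure: float = 1.0,
) -> float:
    """Kernel-weighted success frequency with Beta(prior_success, prior_failure) pseudo-counts.

    Stand-in for a GP classifier: far from any data it returns the prior mean,
    near observed failures it falls toward 0, near successes it rises toward 1.
    """
    successes = prior_success
    total = prior_success + prior_failure
    for xi, ok in history:
        w = rbf(x, xi, lengthscale)
        total += w
        if ok:
            successes += w
    return successes / total


def feasibility_aware(alpha_value: float, p: float) -> float:
    """alpha_feas(x) = p(feasible | x) * alpha(x)."""
    return p * alpha_value


history = [((0.2,), True), ((0.8,), False)]
for x in [(0.2,), (0.8,), (5.0,)]:
    print(x, round(p_feasible(x, history), 3))  # about 0.667, 0.333, 0.5
```

A GP classifier additionally provides calibrated uncertainty and learned hyperparameters; the stand-in only reproduces the qualitative behavior (prior far from data, low near failures) that the acquisition weighting relies on.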

Integrated Workflow

The following diagram illustrates the logical workflow of a BO loop that integrates both the floor padding trick and a feasibility classifier to handle experimental failures.

Experimental Protocols

Protocol 1: Optimizing Materials Synthesis with Unknown Stability Constraints

This protocol is adapted from studies on optimizing the growth of SrRuO3 thin films and the inverse design of hybrid perovskites [1] [6].

Objective: To find the growth parameters \( x \) (e.g., temperature, pressure, flux ratios) that maximize a target property \( y \) (e.g., Residual Resistivity Ratio, RRR) while handling failed growth runs.

Materials and Reagents:

- Molecular Beam Epitaxy (MBE) system or other relevant thin-film deposition system.

- Precursor materials for the target material (e.g., Sr, Ru, O2 for SrRuO3).

- Single-crystal substrates.

Procedure:

- Initialization:

- Define a wide, multi-dimensional parameter space based on literature and expert knowledge.

- Initialize the BO campaign using a space-filling design (e.g., Latin Hypercube Sampling) or a more advanced method like HIPE [8] for 5-10 initial experiments.

- Sequential BO Loop:

a. Suggestion: Use a feasibility-aware acquisition function (as described under Feasibility-Aware BO with Binary Classification) to suggest the next parameter set \( x_n \).

b. Execution: Attempt to grow the thin film using the suggested parameters \( x_n \) in the MBE system.

c. Evaluation:

- Success: If a coherent, single-phase film is confirmed (e.g., via in-situ reflection high-energy electron diffraction), proceed to measure the target property \( y_n \) (e.g., RRR).

- Failure: If the film is not formed or is polycrystalline/amorphous, classify the run as a failure. Apply the floor padding trick, setting \( y_n \) to the worst RRR value recorded from successful runs so far [1].

d. Update: Update the Gaussian Process regression model with the new data point \( (x_n, y_n) \). Simultaneously, update the binary feasibility classifier with the new feasibility label for \( x_n \).

- Termination: Continue until a predefined performance threshold is met, a maximum number of experiments is reached, or the system converges.
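The space-filling initialization can be sketched as a basic Latin hypercube design in standard-library Python. This is a generic LHS sketch, not HIPE [8]; the two parameter ranges in the example are hypothetical placeholders, not values from the SrRuO3 study.

```python
import random
from typing import List, Sequence, Tuple


def latin_hypercube(
    n: int, bounds: Sequence[Tuple[float, float]], seed: int = 0
) -> List[List[float]]:
    """Draw n points so that each dimension's range is split into n equal strata,
    with exactly one point per stratum in every dimension."""
    rng = random.Random(seed)
    points = [[0.0] * len(bounds) for _ in range(n)]
    for d, (low, high) in enumerate(bounds):
        strata = list(range(n))
        rng.shuffle(strata)  # random pairing of strata across dimensions
        for i, s in enumerate(strata):
            u = (s + rng.random()) / n  # uniform position inside stratum s
            points[i][d] = low + u * (high - low)
    return points


# Hypothetical ranges for two growth parameters (e.g., a temperature and a flux ratio).
init = latin_hypercube(6, [(600.0, 800.0), (0.8, 1.2)], seed=42)
for p in init:
    print([round(v, 3) for v in p])
```

Compared with purely random sampling, the stratification guarantees that each parameter's range is covered evenly even with only 5 to 10 initial runs.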

Protocol 2: Drug Design with Synthetic Accessibility Constraints

This protocol is informed by benchmarks involving the design of BCR-Abl kinase inhibitors with unknown synthetic accessibility constraints [6].

Objective: To find a molecular structure \( x \) that maximizes a desired property (e.g., binding affinity, selectivity) while being synthetically accessible.

Materials and Reagents:

- Virtual chemical library or generative molecular model.

- Software for predicting molecular properties (e.g., docking software for binding affinity).

- (For validation) Chemical reagents and laboratory equipment for organic synthesis.

Procedure:

- Problem Formulation:

- Represent the molecular design space via a continuous latent space (e.g., from a variational autoencoder) or using molecular descriptors.

- Define the objective function, \( f(x) \), as a composite score incorporating predicted bioactivity and other ADMET properties.

- Sequential BO Loop:

a. Suggestion: The acquisition function suggests a candidate molecule \( x_n \).

b. Feasibility Check: A synthetic accessibility (SA) predictor, which is a binary classifier updated in real time, evaluates \( x_n \). If \( p(\text{synthesizable} \mid x_n) \) is below a threshold, the acquisition function is penalized, and the molecule may be rejected.

c. Evaluation:

- Success (Virtual): If deemed synthesizable, the molecule's properties are evaluated via computational prediction (e.g., docking score).

- Failure (Virtual): If the SA predictor flags the molecule as unsynthesizable, it is recorded as a failure. A penalty (e.g., a very low objective value or floor-padded value) is assigned [6].

d. Update: The regression model for the objective and the SA classifier are updated with the new outcome.
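The rejection rule in the feasibility check can be sketched as a hard filter applied before the acquisition maximum is taken. The sketch assumes candidates arrive as (identifier, acquisition value, predicted synthesizability) tuples from whatever generative model and SA classifier are in use; the 0.5 threshold and the molecule identifiers are hypothetical.

```python
from typing import List, Optional, Tuple

Candidate = Tuple[str, float, float]  # (identifier, acquisition value, p(synthesizable))


def select_candidate(candidates: List[Candidate], threshold: float = 0.5) -> Optional[str]:
    """Return the identifier with the highest acquisition value among candidates whose
    predicted synthesizability clears the threshold; None if every candidate is rejected."""
    admissible = [c for c in candidates if c[2] >= threshold]
    if not admissible:
        return None
    return max(admissible, key=lambda c: c[1])[0]


candidates = [("mol-A", 0.9, 0.3), ("mol-B", 0.6, 0.8), ("mol-C", 0.7, 0.55)]
print(select_candidate(candidates, threshold=0.5))  # mol-C
```

A hard threshold discards promising but risky candidates outright; the soft alternative mentioned in the protocol, penalizing the acquisition value by the predicted probability, keeps them in play at reduced priority.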

Validation:

- The top-performing, synthetically accessible candidates identified by the BO campaign are subjected to actual laboratory synthesis and experimental testing to validate the predictions.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Failure-Aware Bayesian Optimization

| Item | Function in the Context of Failure Handling |
|---|---|
| Gaussian Process (GP) Regression Library (e.g., GPyTorch, scikit-learn) | Serves as the core surrogate model for modeling the objective function. It is updated with imputed values from failures via the floor padding trick [1]. |
| Variational Gaussian Process (VGP) Classifier | Used to model the unknown feasibility constraint function (probability of failure) on-the-fly, a key component of feasibility-aware BO as in the Anubis framework [6]. |
| Bayesian Optimization Suite (e.g., BoTorch, Ax, Atlas) | Provides the infrastructure for defining the optimization problem, combining regression and classification models, and implementing custom acquisition functions like HIPE or feasibility-aware EI [6] [8]. |
| Automated Laboratory Equipment / Self-Driving Lab (SDL) | The physical (or virtual) platform where experiments are executed. Its reliability is crucial; system-level failures can be analyzed using integrated frameworks like Model-Based Systems Engineering (MBSE) and Fault Tree Analysis (FTA) [7]. |
| Fault Tree Analysis (FTA) & Bayesian Network (BN) Models | Used for quantitative failure analysis of the integrated IoT systems within an automated lab, helping to identify and prioritize the weakest links in the experimental hardware/software pipeline [7]. |

In real-world experimental sciences, from materials growth to drug development, the optimization of complex processes is frequently hampered by experimental failures. These failures result in missing data, a problem that severely impedes traditional Bayesian optimization (BO) frameworks. Standard BO algorithms assume that every suggested parameter configuration can be evaluated and will yield a meaningful quantitative result. In practice, however, many experiments fail entirely: synthesis reactions yield no target compound, thin films fail to crystallize properly, or biological assays produce inconclusive results. These scenarios create fundamental challenges for the Gaussian process (GP) surrogate models at the heart of BO, which can be conditioned only on parameter sets that returned an objective value. When experimental failures are treated as simple omissions, the surrogate model retains no record that those regions fail, its uncertainty there stays high, and the acquisition function can keep suggesting suboptimal or repeatedly failing parameters. This article examines the mechanistic reasons for standard BO's failure in the presence of missing data and presents methodological adaptations that make this challenge tractable.

The Mechanistic Breakdown: How Missing Data Compromises Standard BO

The Surrogate Model's Dependency on Complete Data

The Gaussian process surrogate model functions by establishing a covariance structure across the entire parameter space based on observed data points. Each successful evaluation informs the model about the objective function's behavior in its vicinity. Missing data creates "holes" in this structure: regions where the model lacks direct evidence about whether parameters yield good results or simply fail. Consequently, the posterior mean and variance in these regions are shaped only by the prior and the nearest successful points, not by the evidence that experiments there failed. The GP may therefore extrapolate inappropriately across failure zones, leading to misguided predictions.

The Acquisition Function's Misguided Exploration-Exploitation Balance

Acquisition functions like Expected Improvement (EI) and Upper Confidence Bound (UCB) rely on the surrogate model's predictions to balance exploring uncertain regions with exploiting promising ones. When experimental failures are treated as missing observations:

- Over-exploration of failure regions: If failures are simply omitted from the dataset, the surrogate model maintains high uncertainty in these regions. Acquisition functions like UCB, which explicitly favor high-uncertainty areas, may repeatedly sample from parameter configurations that consistently fail [4].

- Failure to recognize constraint boundaries: Missing data often occurs at parameter boundaries where conditions become physically unrealizable. Standard BO lacks mechanisms to infer that these regions should be avoided, wasting experimental resources on invalid configurations.
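The over-exploration effect, and how floor padding counters it, can be reproduced with a toy one-dimensional GP. The dependency-free sketch below rests on stated assumptions: an RBF kernel with a fixed lengthscale of 0.2, a constant prior mean equal to the average observed value, UCB with κ = 2, and made-up data (successes at x = 0 and x = 1, a failure at x = 0.5). A real campaign would use a GP library with fitted hyperparameters.

```python
import math
from typing import List, Sequence


def rbf(a: float, b: float, lengthscale: float = 0.2) -> float:
    return math.exp(-0.5 * (a - b) ** 2 / lengthscale ** 2)


def cholesky(A: List[List[float]]) -> List[List[float]]:
    """Lower-triangular L with A = L L^T (A symmetric positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][m] * L[j][m] for m in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def forward_sub(L: List[List[float]], b: Sequence[float]) -> List[float]:
    """Solve L z = b for lower-triangular L."""
    z: List[float] = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][m] * z[m] for m in range(i))) / L[i][i])
    return z


def gp_ucb(xs: Sequence[float], ys: Sequence[float], x: float,
           kappa: float = 2.0, noise: float = 1e-4) -> float:
    """UCB = posterior mean + kappa * posterior std of a GP with constant mean mean(ys)."""
    m = sum(ys) / len(ys)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    v = forward_sub(L, [rbf(a, x) for a in xs])  # L^{-1} k(X, x)
    w = forward_sub(L, [y - m for y in ys])       # L^{-1} (y - m)
    mean = m + sum(vi * wi for vi, wi in zip(v, w))
    var = max(rbf(x, x) - sum(vi * vi for vi in v), 0.0)
    return mean + kappa * math.sqrt(var)


grid = [i / 20 for i in range(21)]
# Toy data: successes at x = 0.0 and x = 1.0, a failed experiment at x = 0.5.
omit_x, omit_y = [0.0, 1.0], [1.0, 1.2]          # failure simply dropped
pad_x, pad_y = [0.0, 0.5, 1.0], [1.0, 1.0, 1.2]  # failure padded with the worst success, 1.0

next_omit = max(grid, key=lambda x: gp_ucb(omit_x, omit_y, x))
next_pad = max(grid, key=lambda x: gp_ucb(pad_x, pad_y, x))
print(next_omit, next_pad)
```

With the failure omitted, the UCB maximum lands right next to the failed point, because that is where the model is least certain; with the padded point included, the uncertainty there collapses and the next suggestion moves away from it.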

Table 1: Impact of Missing Data on Standard BO Components

| BO Component | Function in Standard BO | Impact of Missing Data |
|---|---|---|
| Gaussian Process Surrogate | Models the objective function across parameter space | Creates inaccurate posterior distributions with poor extrapolation across failure zones |
| Acquisition Function | Balances exploration and exploitation to select next parameters | Suggests points in failure regions due to improperly high uncertainty estimates |
| Experimental Iteration Loop | Sequentially improves model with new data | Wastes resources on failed experiments, slowing convergence |

Advanced Methodologies for Handling Missing Data in BO

The Floor Padding Trick: A Simple Yet Effective Approach

A straightforward but powerful method for handling experimental failures is the "floor padding trick" [1]. This approach assigns a penalty value to failed experiments that actively discourages the algorithm from sampling nearby regions. The implementation is refreshingly simple: when an experiment fails, the missing evaluation is imputed with the worst observed value obtained from successful experiments up to that point.

Mechanism and Workflow:

- Conduct experiments at suggested parameters \( x_n \).

- For successful experiments, record the objective function value \( y_n \).

- For failed experiments, assign \( y_n = \min_{1 \le i < n} y_i \) (the worst value observed so far).

- Update the surrogate model with this completed dataset.

This method provides two critical benefits: it supplies the surrogate model with information that the attempted parameters performed poorly, and it creates a gradient that steers future sampling away from failure regions. The approach is adaptive and automatic, requiring no predetermined penalty values that might require delicate tuning [1].

Binary Classification for Failure Prediction

A more sophisticated approach involves training a binary classifier alongside the regression surrogate model to explicitly predict the probability of experimental failure for any given parameter set [1].

Implementation Protocol:

- Data Collection: Maintain a dataset \( D = \{(x_i, s_i, y_i)\} \), where \( s_i \) is a binary success/failure indicator and \( y_i \) is recorded only for successful experiments.

- Model Training:

- Train a GP classifier on \( \{(x_i, s_i)\} \) to predict the failure probability \( p(\text{fail} \mid x) \).

- Train a GP regressor only on successful experiments, \( \{(x_i, y_i) \mid s_i = \text{success}\} \), to model the objective function.

- Acquisition Modification: Modify the acquisition function \( \alpha(x) \) to incorporate the failure probability:

- \( \alpha_{\text{modified}}(x) = \alpha(x) \cdot (1 - p(\text{fail} \mid x)) \)

- This naturally discourages sampling in high-risk regions.
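The bookkeeping behind this dual-model scheme is simple but easy to get wrong: the classifier is trained on every trial, the regressor only on successes. The minimal sketch below shows that split and the modified acquisition; the `Trial` record and helper names are hypothetical, and model fitting itself is left to whichever GP classifier and regressor are in use.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, ...]


@dataclass
class Trial:
    x: Point
    success: bool
    y: Optional[float] = None  # objective value; only meaningful when success is True


def split_for_dual_models(
    trials: List[Trial],
) -> Tuple[Tuple[List[Point], List[int]], Tuple[List[Point], List[float]]]:
    """Classifier data uses every trial (s_i = 1 success, 0 failure);
    regressor data uses successful trials only."""
    clf_x = [t.x for t in trials]
    clf_s = [1 if t.success else 0 for t in trials]
    reg_x = [t.x for t in trials if t.success and t.y is not None]
    reg_y = [t.y for t in trials if t.success and t.y is not None]
    return (clf_x, clf_s), (reg_x, reg_y)


def modified_acquisition(alpha: float, p_fail: float) -> float:
    """alpha_modified(x) = alpha(x) * (1 - p(fail | x))."""
    return alpha * (1.0 - p_fail)


trials = [Trial((0.1,), True, 2.0), Trial((0.4,), False), Trial((0.7,), True, 3.5)]
(clf_x, clf_s), (reg_x, reg_y) = split_for_dual_models(trials)
print(clf_s, reg_y)  # [1, 0, 1] [2.0, 3.5]
```

Unlike floor padding, this split never feeds an imputed value to the regressor; information about failures reaches the acquisition only through \( p(\text{fail} \mid x) \).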

Constrained Bayesian Optimization with Known Boundaries

When the boundaries between viable and failing parameter regions can be expressed as explicit constraint functions, constrained BO methods excel. These approaches directly incorporate known experimental constraints into the optimization process [9] [10].

Algorithmic Framework:

- Constraint Specification: Define constraint functions ( c_j(x) \leq 0 ) that delineate feasible regions.

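- Feasibility Modeling: Model each constraint function with a separate GP surrogate when its value is measured but its functional form is unknown; a constraint known in closed form can be evaluated directly.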

- Feasible Acquisition: Modify the acquisition function to favor points with high probability of satisfying all constraints:

- \( \alpha_{\text{feasible}}(x) = \alpha(x) \cdot \prod_j p(c_j(x) \le 0) \), where the product assumes the constraint models are independent.

This approach is particularly valuable in chemistry and materials science where physical laws or synthetic accessibility constraints can be formally encoded [9].
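When each constraint GP returns a Gaussian posterior \( \mathcal{N}(\mu_j(x), \sigma_j^2(x)) \), each factor in the product has the closed form \( \Phi(-\mu_j(x)/\sigma_j(x)) \). The sketch below computes the feasibility-weighted acquisition from those posterior moments; it assumes independent constraint models (which is what makes the product valid) and that the moments come from separately fitted GPs not shown here. Function names are illustrative.

```python
import math
from typing import Sequence, Tuple


def normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def prob_constraint_satisfied(mu: float, sigma: float) -> float:
    """p(c(x) <= 0) when the constraint GP's posterior at x is N(mu, sigma^2)."""
    if sigma <= 0.0:
        return 1.0 if mu <= 0.0 else 0.0
    return normal_cdf(-mu / sigma)


def feasible_acquisition(alpha: float, constraints: Sequence[Tuple[float, float]]) -> float:
    """alpha_feasible(x) = alpha(x) * prod_j p(c_j(x) <= 0), assuming independent constraint GPs."""
    p = 1.0
    for mu, sigma in constraints:
        p *= prob_constraint_satisfied(mu, sigma)
    return alpha * p


# Two constraints at one candidate x: clearly satisfied (mean -2, std 0.5) and uncertain (mean 0, std 1).
print(feasible_acquisition(0.8, [(-2.0, 0.5), (0.0, 1.0)]))
```

An uncertain constraint (posterior mean at the boundary) halves the acquisition value, while a confidently satisfied one leaves it almost unchanged.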

Table 2: Comparison of Advanced Methods for Handling Missing Data in BO

| Method | Key Mechanism | Advantages | Limitations |
|---|---|---|---|
| Floor Padding Trick | Imputes failures with worst observed value | Simple, automatic, requires no tuning | May over-penalize near feasible boundaries |
| Binary Classifier | Predicts failure probability explicitly | Actively avoids failure regions | Requires sufficient data to train classifier |
| Constrained BO | Incorporates known constraint functions | Well suited to problems with defined boundaries | Requires explicit constraint formulation |

Experimental Protocols and Validation

Protocol: Bayesian Optimization with Floor Padding for Materials Growth

This protocol adapts the methodology successfully employed in optimizing the growth of SrRuO₃ thin films via molecular beam epitaxy (MBE), which achieved record residual resistivity ratio in only 35 growth runs [1].

Materials and Equipment:

- Molecular beam epitaxy system

- SrRuO₃ source materials

- Appropriate substrates

- Structural characterization (X-ray diffraction)

- Electrical transport measurement system

Procedure:

- Define Parameter Space: Identify critical growth parameters (e.g., temperature, flux ratios, pressure) and their feasible ranges.

- Initialize with Random Sampling: Conduct 5-10 initial growth experiments with randomly selected parameters to establish baseline.

- Implement BO Loop:

a. Characterize each grown film and calculate evaluation metric (e.g., residual resistivity ratio).

b. For failed growths (no film formation, wrong phase), apply floor padding:

- Identify worst successful RRR value from current dataset.

- Assign this value to the failed experiment.

c. Update the GP surrogate model with the completed dataset (successful values plus padded failures).

d. Use the Expected Improvement acquisition function to select the next growth parameters.

e. Repeat until convergence or resource exhaustion.

- Validation: Characterize optimal material properties against literature standards.

Protocol: BO with Failure Classification for Neuromodulation Parameters

This protocol addresses the challenges of optimizing neuromodulation parameters where effect sizes are small and safety constraints are critical, as demonstrated in deep brain stimulation studies [4].

Materials and Equipment:

- Neuromodulation device with programmable parameters

- Physiological and behavioral monitoring equipment

- Safety monitoring systems

Procedure:

- Parameter Definition: Define stimulation parameters (amplitude, frequency, pulse width) and their safe operating ranges.

- Outcome Measures: Establish primary outcome metric (e.g., reaction time improvement) and safety thresholds.

- Dual-Model Implementation:

a. For each parameter set, administer stimulation and measure outcomes.

b. Record binary success (measurable effect within safety bounds) or failure (no effect or adverse events).

c. Train a GP classifier on the entire dataset to predict failure probability.

d. Train a GP regressor only on successful trials to model the outcome function.

e. Implement a modified acquisition function: \( \alpha_{\text{modified}}(\mathbf{x}) = \mathrm{EI}(\mathbf{x}) \cdot \big(1 - p(\text{fail} \mid \mathbf{x})\big) \).

f. Select the next parameters by maximizing the modified acquisition function.

- Safety Monitoring: Implement additional boundary avoidance techniques to prevent parameter selection near safety limits [4].
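Steps c to f of the dual-model procedure can be sketched with scikit-learn's `GaussianProcessClassifier` and `GaussianProcessRegressor`. This is a hedged illustration, not the method of [4]: the one-dimensional normalized amplitude, the outcome values, and the kernel choices are hypothetical placeholders. Failures are labelled 1, so `p_fail` is read from the matching `predict_proba` column.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessClassifier, GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, ConstantKernel, Matern


def modified_acquisition(X_all, failed, y_success, candidates):
    """Return alpha(x) = EI(x) * (1 - p(fail | x)) and p(fail | x) on the candidates.

    X_all: every tested parameter set, shape (n, d)
    failed: boolean mask over X_all (True = failure)
    y_success: outcomes of the successful trials only, in the same order
    """
    # (c) Classifier trained on the entire dataset; label 1 = failure.
    gpc = GaussianProcessClassifier(kernel=1.0 * RBF(length_scale=0.2), random_state=0)
    gpc.fit(X_all, failed.astype(int))
    fail_col = list(gpc.classes_).index(1)
    p_fail = gpc.predict_proba(candidates)[:, fail_col]

    # (d) Regressor trained only on successful trials (no padding in this variant).
    gpr = GaussianProcessRegressor(
        kernel=ConstantKernel(1.0) * Matern(length_scale=0.2, nu=2.5),
        normalize_y=True,
        random_state=0,
    )
    gpr.fit(X_all[~failed], y_success)
    mu, sigma = gpr.predict(candidates, return_std=True)

    # (e) Expected Improvement over the best successful outcome, weighted by 1 - p_fail.
    sigma = np.maximum(sigma, 1e-9)
    best = y_success.max()
    z = (mu - best) / sigma
    ei = (mu - best) * norm.cdf(z) + sigma * norm.pdf(z)
    return ei * (1.0 - p_fail), p_fail


# Hypothetical history on a normalized stimulation amplitude in [0, 1].
X_all = np.array([[0.05], [0.2], [0.35], [0.5], [0.65], [0.8], [0.95]])
failed = np.array([True, False, False, False, False, True, True])
y_success = np.array([0.1, 0.4, 0.6, 0.5])  # e.g. reaction-time improvement (arbitrary units)

candidates = np.linspace(0.0, 1.0, 101).reshape(-1, 1)
alpha, p_fail = modified_acquisition(X_all, failed, y_success, candidates)
x_next = candidates[np.argmax(alpha)]  # (f) next parameters to test
```

Multiplying by \( 1 - p(\text{fail} \mid \mathbf{x}) \) down-weights risky settings smoothly; the additional boundary-avoidance and safety checks described above would still be applied on top of this.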

Visualization: Workflow Diagrams

Diagram 1: Bayesian Optimization Workflow with Experimental Failure Handling

Diagram 2: Experimental Failure Handling Methods

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Tools for BO with Missing Data

| Item | Function/Application | Implementation Notes |
|---|---|---|
| Gaussian Process Framework | Core surrogate model for objective function | Use Matérn kernel for realistic experimental responses; implement in Python with GPyTorch or scikit-learn |
| Binary Classifier Model | Predicts probability of experimental failure | Gaussian Process classifier or Random Forest for mixed parameter types |
| Acquisition Functions | Balances exploration and exploitation | Expected Improvement (EI) or Upper Confidence Bound (UCB), modified for constraints |
| Constraint Handling Toolkit | Encodes known experimental boundaries | PHOENICS or GRYFFIN algorithms for chemistry applications [9] |
| Boundary Avoidance Methods | Prevents oversampling at parameter edges | Iterated Brownian-bridge kernel for low effect-size problems [4] |

The challenge of missing data due to experimental failures represents a critical limitation of standard Bayesian optimization in practical scientific applications. The breakdown occurs fundamentally in the surrogate model's inability to distinguish between genuinely promising but unexplored regions and parameter spaces that lead to experimental failure. Through methodical approaches like the floor padding trick, binary failure classification, and constrained optimization, researchers can transform this limitation into a manageable aspect of experimental design. The protocols and methodologies presented here provide a roadmap for implementing these advanced BO techniques across diverse domains, from materials science to neuromodulation therapy development. By formally addressing the reality of experimental failures, these approaches enable more efficient resource utilization and accelerate scientific discovery in high-dimensional, constrained parameter spaces.

Autonomous experimentation represents a paradigm shift in materials science, leveraging machine learning to navigate high-dimensional parameter spaces efficiently. A critical challenge in this endeavor, particularly for molecular synthesis and materials growth, is the frequent occurrence of experimental failures. These are trials where targeted materials are not formed, yielding no useful property data and creating a "missing data" problem that can stall optimization pipelines [1]. Bayesian optimization (BO) has emerged as a powerful, sample-efficient approach for global optimization, but its standard implementations often fail in these real-world scenarios where a significant portion of experiments does not yield a quantifiable result [1] [11].

This application note details practical strategies for adapting BO to handle experimental failures, enabling robust optimization in the face of incomplete data. We present domain-specific case studies and detailed protocols that frame failure not as a setback, but as an informative guide for subsequent experimentation.

Case Study 1: Failure-Handling in Oxide Thin Film Synthesis

Experimental Background and Objective

The first case study involves the optimization of molecular beam epitaxy (MBE) growth parameters for high-quality strontium ruthenate (SrRuO3) thin films. SrRuO3 is a metallic perovskite oxide widely used as an electrode material in oxide electronics. The goal was to maximize the Residual Resistivity Ratio (RRR), an indicator of sample purity and crystallinity, by searching a wide three-dimensional parameter space. A key challenge was that many parameter combinations, being far from optimal, resulted in failed growth runs where the target phase did not form, leading to missing RRR data [1].

Bayesian Optimization Protocol with Failure Handling

1. Problem Formulation:

- Input Parameters (x): A 3D vector representing key MBE growth conditions (e.g., substrate temperature, ruthenium flux, strontium flux).

- Objective Function (S(x)): The measured RRR of the resulting SrRuO3 film. This function is unknown a priori.

- Constraint: Experimental failure occurs when the designated phase is not formed, resulting in a missing measurement for S(x).

2. Algorithm: Bayesian Optimization with Floor Padding Trick

The core innovation was the "floor padding trick" to handle missing data from failed experiments [1].

- Surrogate Model: A Gaussian Process (GP) model is used to approximate the unknown objective function S(x) based on all previous observations (successful measurements plus floor-padded failures).

- Acquisition Function: An Expected Improvement (EI) function guides the selection of the next experiment by balancing exploration (high uncertainty) and exploitation (high predicted mean).

- Failure Handling (Floor Padding): When a parameter set x_n leads to an experimental failure, the point is not discarded; instead, it is assigned the worst RRR value observed among the successful experiments conducted up to that point (y_n = min(y_i) for i < n). This complemented dataset is used to update the GP model for the next iteration.

3. Experimental Workflow:

- Initialization: Start with a small set of initial growth runs based on domain knowledge or a space-filling design.

- Iterative Loop:

- Characterization: Measure the RRR of the successfully grown film. If the growth failed, note the failure.

- Data Imputation: Apply the floor padding trick to any failed experiments from the last round.

- Model Update: Update the GP surrogate model with the complete dataset (successful measurements and padded failure points).

- Next Experiment Selection: Choose the next growth parameters x_{n+1} by maximizing the acquisition function.

- Termination: The process concludes after a fixed number of runs or when the RRR value converges.

The failure-handling BO algorithm successfully navigated the parameter space, avoiding regions that led to failed synthesis. In just 35 MBE growth runs, it discovered a SrRuO3 film with an RRR of 80.1, the highest value ever reported for a tensile-strained SrRuO3 film [1]. The floor padding trick was crucial for maintaining a stable search trajectory despite a substantial rate of experimental failure.

Table 1: Key Experimental Data from SrRuO3 Thin Film Optimization

| Metric | Result | Significance |
|---|---|---|
| Optimal RRR Achieved | 80.1 | Highest reported for tensile-strained SrRuO3 films [1] |
| Total Number of Growth Runs | 35 | Demonstrates high sample efficiency |
| Search Space Dimensionality | 3-dimensional | Includes substrate temperature, Ru flux, Sr flux |
| Core Failure-Handling Method | Floor Padding Trick | Enabled efficient search despite missing data |

Workflow Visualization

The following diagram illustrates the closed-loop autonomous experimentation system that integrates the Bayesian optimization algorithm with material synthesis and characterization.

Case Study 2: Multi-Objective Optimization in Additive Manufacturing

Experimental Background and Objective

The second case study shifts focus to additive manufacturing (AM), where the goal was to optimize the printing of a test specimen using a syringe extrusion system. The challenge here was multi-objective optimization, aiming to simultaneously maximize the geometric similarity between the printed object and its target while also maximizing the homogeneity of the printed layers. This is a non-trivial problem as these objectives are often interdependent and competing [12].

Multi-Objective Bayesian Optimization (MOBO) Protocol

1. Problem Formulation:

- Input Parameters (x): A set of 5 or more AM process parameters (e.g., print speed, extrusion pressure, nozzle height).

- Objective Functions: Two functions, f1(x) (geometric accuracy) and f2(x) (layer homogeneity), to be maximized simultaneously.

2. Algorithm: Multi-Objective Bayesian Optimization with EHVI

The optimization was performed using the Expected Hypervolume Improvement (EHVI) algorithm [12].

- Surrogate Models: Separate GP models are built for each objective function (f1, f2) based on observed data.

- Acquisition Function: EHVI calculates the expected increase in the hypervolume dominated by the current Pareto front (the set of optimal solutions where no objective can be improved without worsening another) if a candidate point were added. Maximizing EHVI therefore expands the hypervolume dominated by the Pareto front.

- Failure Consideration: While not explicitly detailed in the source, failures in this context (e.g., catastrophic print failure) could be integrated using the floor padding trick for each objective.
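EHVI takes an expectation, under the GP posteriors, of a deterministic quantity: the hypervolume improvement a new point would add to the current Pareto front. The sketch below computes that quantity for two maximized objectives in plain NumPy; the objective values and the reference point are hypothetical, and packages such as BoTorch provide full EHVI acquisition functions that integrate over the posterior.

```python
import numpy as np


def pareto_front(Y):
    """Return the non-dominated rows of Y (both objectives maximized)."""
    Y = np.asarray(Y, dtype=float)
    keep = [
        not np.any(np.all(Y >= yi, axis=1) & np.any(Y > yi, axis=1))
        for yi in Y
    ]
    return Y[np.array(keep)]


def hypervolume_2d(Y, ref):
    """Area dominated by the Pareto front of Y and bounded below by the reference point."""
    front = pareto_front(Y)
    front = front[np.all(front > ref, axis=1)]
    if len(front) == 0:
        return 0.0
    front = front[np.argsort(-front[:, 0])]  # f1 descending, hence f2 ascending
    area, prev_f2 = 0.0, ref[1]
    for f1, f2 in front:  # sum horizontal slabs
        area += (f1 - ref[0]) * (f2 - prev_f2)
        prev_f2 = f2
    return area


def hypervolume_improvement(Y, y_new, ref):
    """Extra dominated area contributed by y_new (zero if y_new is dominated)."""
    return hypervolume_2d(np.vstack([Y, y_new]), ref) - hypervolume_2d(Y, ref)


# Hypothetical (geometric accuracy, layer homogeneity) scores, both in [0, 1].
Y = np.array([[0.9, 0.2], [0.6, 0.6], [0.3, 0.8], [0.5, 0.5]])
ref = (0.0, 0.0)
print(hypervolume_2d(Y, ref), hypervolume_improvement(Y, [0.7, 0.7], ref))
```

A dominated candidate such as (0.4, 0.4) adds no hypervolume, which is why maximizing EHVI pushes sampling toward the trade-off frontier.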

3. Experimental Workflow via AM-ARES: The Additive Manufacturing Autonomous Research System (AM-ARES) executes the following closed-loop workflow [12]:

- Plan: The MOBO planner (EHVI) suggests a new set of print parameters based on the current Pareto front and surrogate models.

- Experiment: AM-ARES uses the parameters to generate machine code and print the specimen. An onboard machine vision system captures an image.

- Analyze: The system analyzes the image to compute scores for both geometric accuracy and layer homogeneity.

- Update: The knowledge base is updated with the new parameters and their multi-objective scores. The MOBO planner is updated for the next iteration.

The MOBO approach successfully identified a set of optimal solutions (the Pareto front) that captured the trade-offs between geometric accuracy and layer homogeneity. This allowed researchers to select printer parameters based on their preferred balance of objectives, a significant advantage over single-objective optimization. The study demonstrated that autonomous experimentation could efficiently handle complex, multi-parameter optimization problems in additive manufacturing [12].

Table 2: Research Reagent Solutions for Autonomous Experimentation

| Item / Reagent | Function in Experimental Protocol |
|---|---|
| Molecular Beam Epitaxy (MBE) System | High-precision thin film deposition tool for the SrRuO3 case study [1]. |
| Strontium (Sr) and Ruthenium (Ru) Sources | Metallic precursors for the synthesis of SrRuO3 perovskite films [1]. |
| Syringe Extrusion System (AM-ARES) | Additive manufacturing tool for depositing materials layer-by-layer in the AM case study [12]. |
| Machine Vision System | Integrated camera system for in-situ characterization of printed specimens, enabling automated analysis of geometry and homogeneity [12]. |
| Gaussian Process (GP) Model | Core statistical model serving as the surrogate for the objective function(s) in Bayesian optimization [1] [12] [11]. |

Multi-Objective Optimization Visualization

The following diagram illustrates the core concept of multi-objective optimization and the Pareto front, which is central to the additive manufacturing case study.

The Scientist's Toolkit: Essential Methods and Software

Implementing failure-resilient Bayesian optimization requires a suite of computational and experimental tools. Below is a summary of key software packages identified in the research.

Table 3: Essential Software Tools for Bayesian Optimization

| Software Package | Key Features | License | Ref. |
|---|---|---|---|
| BoTorch | Built on PyTorch, supports multi-objective and high-throughput BO. | MIT | [11] |
| Ax | Modular, adaptable framework for general-purpose optimization. | MIT | [11] |
| Dragonfly | Comprehensive package with multi-fidelity and constrained BO. | Apache | [11] |
| COMBO | Efficient for problems with multiple categorical parameters. | MIT | [11] |

These case studies demonstrate that experimental failure is not a terminal obstacle but an integral part of the learning process in autonomous materials development. The floor padding trick provides a simple yet powerful data imputation method for handling failed syntheses in single-objective optimization, as demonstrated by the rapid discovery of high-RRR SrRuO3 films. For more complex goals, Multi-Objective Bayesian Optimization (MOBO) techniques like EHVI can effectively manage trade-offs between competing objectives, as shown in the additive manufacturing workflow. By adopting these protocols and integrating them with robust autonomous research systems, scientists and engineers can significantly accelerate the development of new molecules and advanced materials, transforming failure from a roadblock into a guidepost.

Building Resilient Systems: Key Algorithms and Strategies for Handling Failures

Within the framework of advanced research into Bayesian optimization (BO) with experimental failure handling, the management of missing data presents a significant challenge. In high-throughput experimental domains, such as materials growth or drug development, experimental failures are not merely inconveniences; they are inherent sources of missing data that can critically impede the optimization process if not handled appropriately [1]. Traditional methods like listwise deletion or simple mean imputation can introduce bias or fail to utilize the informational value of a failure. The floor padding trick emerges as a simple, yet potent, heuristic designed to integrate these failures directly into the BO framework, thereby turning failed experiments from data liabilities into valuable algorithmic guides [1].

This technique is particularly crucial when searching wide, multi-dimensional parameter spaces where the optimal region is unknown a priori. Restricting the search to a small, "safe" space based on prior experience risks missing the global optimum. The floor padding trick enables a more aggressive and comprehensive search strategy by providing a principled way to learn from failure [1].

Theoretical Foundation and Mechanism

Definition and Principle

The floor padding trick is an imputation strategy for handling missing data resulting from experimental failures. Its core operation is straightforward: when an experiment for a parameter vector x_n fails to yield a measurable outcome, the missing evaluation y_n is imputed with the worst observed value recorded up to that point in the optimization run [1].

Formally, given a sequence of observations (x_1, y_1), ..., (x_{n-1}, y_{n-1}), if the experiment at x_n fails, the complemented value is:

y_n = min{ y_i | 1 ≤ i < n }

This heuristic is founded on two key rationales:

- Algorithmic Guidance: It provides the BO algorithm with a strong, negative signal that the parameter

x_nis undesirable. This discourages the surrogate model from subsequently recommending parameters in the vicinity ofx_n, thereby fulfilling the requirement to avoid regions of experimental failure [1]. - Model Update: It ensures that the failure information is incorporated into the Gaussian Process (GP) surrogate model. This update improves the model's representation of the underlying response surface, making its predictions and uncertainty estimates more accurate across the entire parameter space [1].

Comparison with Alternative Failure-Handling Methods

The following table summarizes the floor padding trick against other common approaches to handling experimental failures in optimization.

Table 1: Comparison of Methods for Handling Experimental Failures in Bayesian Optimization

| Method | Mechanism | Advantages | Limitations |
|---|---|---|---|
| Floor Padding Trick [1] | Imputes with the worst value observed so far. | Adaptive, requires no tuning; provides a strong signal to avoid failure regions; updates the surrogate model. | The negative signal's intensity is dependent on the history of observations. |
| Constant Padding [1] | Imputes with a pre-defined constant value (e.g., 0 or -1). | Simple to implement. | Performance is highly sensitive to the chosen constant; requires careful prior tuning. |
| Binary Classifier [1] | Uses a separate model (e.g., GP classifier) to predict the probability of failure. | Explicitly models failure regions, helping to avoid them. | Does not inherently update the evaluation surrogate model with failure information; often combined with padding. |
| Data Deletion | Simply discards the failed trial from the dataset. | Simple. | Wastes experimental resources; the model gains no knowledge from the failure. |
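The three imputation strategies in the table differ only in what target they hand to the regressor for a failed run. A minimal pure-Python sketch makes the contrast concrete; the function name `impute_failures` and the RRR-like history are hypothetical, and skipping failures that occur before any success is an assumption, since the floor is undefined at that point.

```python
import math


def impute_failures(y, method="floor", constant=0.0):
    """Turn a run history (None = failed experiment) into regression targets.

    Returns (kept_indices, targets):
      'floor'    - worst successful value observed before the failure (adaptive)
      'constant' - a fixed, user-chosen value (must suit the metric's scale)
      'delete'   - failed trials are discarded
    """
    kept, targets = [], []
    worst = math.inf
    for i, yi in enumerate(y):
        if yi is not None:
            worst = min(worst, yi)
            kept.append(i)
            targets.append(yi)
        elif method == "constant":
            kept.append(i)
            targets.append(constant)
        elif method == "floor":
            if worst < math.inf:  # assumption: skip failures that precede any success
                kept.append(i)
                targets.append(worst)
        elif method != "delete":
            raise ValueError(f"unknown method: {method}")
    return kept, targets


history = [12.0, None, 30.0, 5.0, None]  # hypothetical RRR values
for m in ("floor", "constant", "delete"):
    print(m, impute_failures(history, method=m))
```

With a constant of 0 the failed runs sit far below every real RRR value, while a constant of -1 or 100 would distort the GP very differently; the floor value tracks the data's own scale, which is the "requires no tuning" advantage noted in the table.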

Performance and Quantitative Evaluation

The efficacy of the floor padding trick has been demonstrated in both simulation studies and real-world experimental optimization. The key performance metric in such sequential learning tasks is the best evaluation value achieved as a function of the number of experimental observations. A method that reaches a high value with fewer observations is considered more sample-efficient.

In simulation studies using artificially constructed functions with embedded failure regions, the floor padding trick (denoted as method 'F') showed a rapid improvement in evaluation value in the early stages of the optimization process compared to other methods [1]. This indicates its high sample efficiency, a critical property when each observation corresponds to an expensive, time-consuming experiment like a materials growth run.

The table below quantifies the outcomes of a real-world application in materials science, optimizing the growth of SrRuO₃ thin films via Machine-Learning-Assisted Molecular Beam Epitaxy (ML-MBE).

Table 2: Experimental Outcomes from ML-MBE Optimization Using the Floor Padding Trick

| Optimization Aspect | Outcome with Floor Padding Trick | Significance |
|---|---|---|
| Parameter Space Searched | Wide 3-dimensional space | Enabled exploration beyond empirically "safe" regions. |
| Total Growth Runs | 35 | Demonstrates high sample-efficiency. |
| Achieved Residual Resistivity Ratio (RRR) | 80.1 | The highest value ever reported among tensile-strained SrRuO₃ films. |
| Handling of Failed Growths | Successfully complemented and leveraged | Failures informed the model and guided the search away from unstable parameter regions. |

Experimental Protocol: Implementation in Bayesian Optimization

This section provides a detailed, step-by-step protocol for integrating the floor padding trick into a standard Bayesian optimization loop. The example context is the optimization of a physical property (e.g., RRR) in a materials growth experiment.

Workflow and Signaling Logic

The following diagram illustrates the integrated Bayesian optimization workflow with the floor padding trick, highlighting the critical decision point at the experimental failure check.

Step-by-Step Protocol

Step 1: Initialization

- Action: Collect a small initial dataset D_0 = {(x_1, y_1), ..., (x_k, y_k)} through a space-filling design (e.g., Latin Hypercube Sampling) or based on prior literature.

- Reagents & Materials:

- Parameter Ranges: Define the minimum and maximum values for each growth parameter (e.g., temperature, flux ratios) to be optimized.

- Bayesian Optimization Software: Utilize a framework such as BoTorch, GPyOpt, or Ax.

- Experimental Apparatus: The automated or semi-automated system for conducting experiments (e.g., Molecular Beam Epitaxy system).
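A Latin Hypercube design for D_0 places exactly one point in each of n equal-width strata along every parameter axis. The NumPy-only sketch below is one straightforward way to build it (SciPy's `scipy.stats.qmc.LatinHypercube` is an alternative); the function name and the parameter ranges are hypothetical placeholders, not values from [1].

```python
import numpy as np


def latin_hypercube(n, bounds, rng):
    """Draw n points with one point per stratum along every parameter axis.

    bounds: sequence of (min, max) pairs, one per parameter.
    """
    bounds = np.asarray(bounds, dtype=float)
    d = len(bounds)
    # Independently shuffled stratum indices per dimension, jittered within each stratum.
    strata = np.column_stack([rng.permutation(n) for _ in range(d)])
    unit = (strata + rng.uniform(size=(n, d))) / n
    return bounds[:, 0] + unit * (bounds[:, 1] - bounds[:, 0])


# Hypothetical ranges: substrate temperature (deg C), Ru flux and Sr flux (arbitrary units).
bounds = [(500.0, 800.0), (0.1, 1.0), (0.1, 1.0)]
D0_params = latin_hypercube(8, bounds, np.random.default_rng(0))
print(D0_params.round(2))
```

Each row of `D0_params` is one initial growth run; the measured outcomes y_1, ..., y_k then complete D_0.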

Step 2: Model Fitting

- Action: Fit a Gaussian Process (GP) surrogate model to the current dataset D_n. The GP models the mean and uncertainty of the evaluation function S(x) across the parameter space.

- Protocol Notes: Standard kernel functions like the Matérn kernel are a robust default choice.
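One way to realize Step 2 with scikit-learn is sketched below. The specific kernel composition (a Matérn-5/2 kernel with one length scale per parameter plus a `WhiteKernel` noise term) and the synthetic data are assumptions chosen for illustration; inputs are assumed to be scaled to [0, 1] beforehand.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel


def fit_surrogate(X, y):
    """Fit a GP surrogate with a Matern-5/2 kernel.

    One length scale per parameter (ARD) lets the model learn which inputs
    matter; WhiteKernel absorbs measurement noise.
    """
    kernel = (
        ConstantKernel(1.0) * Matern(length_scale=np.ones(X.shape[1]), nu=2.5)
        + WhiteKernel(noise_level=1e-2)
    )
    gp = GaussianProcessRegressor(
        kernel=kernel, normalize_y=True, n_restarts_optimizer=5, random_state=0
    )
    return gp.fit(X, y)


# Hypothetical data: 12 runs over 3 parameters scaled to [0, 1].
rng = np.random.default_rng(1)
X = rng.uniform(size=(12, 3))
y = 50.0 - 40.0 * np.sum((X - 0.5) ** 2, axis=1) + rng.normal(0.0, 1.0, size=12)

gp = fit_surrogate(X, y)
mean, std = gp.predict(rng.uniform(size=(5, 3)), return_std=True)
print(gp.kernel_)  # fitted hyperparameters
```

Inspecting `gp.kernel_` after each refit is a cheap sanity check: very short or very long fitted length scales often indicate too few data points or badly scaled inputs.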

Step 3: Candidate Selection

- Action: Using the GP posterior, maximize an acquisition function a(x) (e.g., Expected Improvement, Upper Confidence Bound) to select the next parameter set x_{n+1} to evaluate.

- Protocol Notes: This step balances exploration (high uncertainty) and exploitation (high predicted mean).
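The two acquisition functions named in Step 3 can be written directly from the GP posterior mean and standard deviation. This sketch assumes a maximization problem (as for RRR); the exploration parameters `xi` and `kappa` and the example posterior values are illustrative assumptions.

```python
import numpy as np
from scipy.stats import norm


def expected_improvement(mu, sigma, best, xi=0.0):
    """EI for maximization: E[max(f(x) - best - xi, 0)] under a Gaussian posterior."""
    sigma = np.maximum(np.asarray(sigma, dtype=float), 1e-12)
    improvement = np.asarray(mu, dtype=float) - best - xi
    z = improvement / sigma
    return improvement * norm.cdf(z) + sigma * norm.pdf(z)


def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB for maximization: larger kappa weights uncertainty (exploration) more."""
    return np.asarray(mu, dtype=float) + kappa * np.asarray(sigma, dtype=float)


# Hypothetical posterior at three candidates; best observed value so far is 11.5.
mu = np.array([10.0, 12.0, 9.0])
sigma = np.array([0.5, 0.1, 2.0])
print(np.argmax(expected_improvement(mu, sigma, 11.5)), np.argmax(upper_confidence_bound(mu, sigma)))
```

Here EI favors the confident candidate just above the incumbent, whereas UCB with `kappa=2.0` favors the highly uncertain one, illustrating how the choice of acquisition function shifts the exploration/exploitation balance.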

Step 4: Experimental Execution and Failure Assessment

- Action: Conduct the experiment at x_{n+1}.

- Critical Check: Determine if the experiment was successful and produced a valid, quantifiable measurement y_{n+1}.

- Success Criteria: Must be predefined. For materials growth, this could be the successful formation of the target crystalline phase confirmed by in-situ characterization.

- Failure Criteria: Failure to form the target material, formation of an incorrect phase, or equipment error.

Step 5: Data Imputation via Floor Padding (upon Failure)

- Action: If the experiment at x_{n+1} fails, apply the floor padding trick:

- Calculate the worst observed value so far: y_{floor} = min( y_i for all i ≤ n ).

- Set y_{n+1} = y_{floor}.

- Append (x_{n+1}, y_{floor}) to the dataset: D_{n+1} = D_n ∪ {(x_{n+1}, y_{floor})}.

- Protocol Notes: This step is the core of the method. The worst value y_floor is recalculated after each iteration, making the heuristic adaptive.
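Step 5 can be implemented as a small dataset-update helper. The sketch below uses pandas and borrows the `current_data['y'].min()` idiom from the custom-script entry in the toolkit table; the helper name `record_result`, the column names, and the values are hypothetical.

```python
import pandas as pd


def record_result(current_data, x_new, y_new):
    """Append one experiment to the dataset, floor-padding it if it failed.

    current_data: DataFrame with one column per parameter, a 'y' column and a
                  boolean 'failed' column; must already hold at least one success.
    x_new: dict of parameter values for the run.
    y_new: measured value, or None if the run failed.
    """
    failed = y_new is None
    if failed:
        if current_data["y"].dropna().empty:
            raise ValueError("floor padding needs at least one successful run")
        # Padded rows always equal an earlier success, so this is the worst success so far.
        y_new = current_data["y"].min()
    row = pd.DataFrame([{**x_new, "y": float(y_new), "failed": failed}])
    return pd.concat([current_data, row], ignore_index=True)


current_data = pd.DataFrame(
    {"temp_C": [650.0, 700.0], "ru_flux": [0.4, 0.6], "y": [12.5, 30.2], "failed": [False, False]}
)
current_data = record_result(current_data, {"temp_C": 760.0, "ru_flux": 0.9}, None)  # failed run
current_data = record_result(current_data, {"temp_C": 690.0, "ru_flux": 0.5}, 41.7)  # success
print(current_data)
```

Keeping a `failed` flag alongside the padded `y` preserves the distinction between real and imputed values, which a binary classifier (see the hybrid method that follows) can reuse.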

Step 6: Iteration

- Action: Return to Step 2 and repeat the loop until a convergence criterion is met (e.g., a performance threshold is reached, a maximum number of experiments is conducted, or the acquisition function value falls below a threshold).

The Scientist's Toolkit

The following table details key computational and experimental components required to implement the described protocol.

Table 3: Research Reagent Solutions for Bayesian Optimization with Floor Padding

| Item | Function/Description | Example Tools / Values |
|---|---|---|
| Gaussian Process Model | Serves as the probabilistic surrogate model to approximate the unknown objective function and quantify uncertainty. | BoTorch (PyTorch), GPyOpt, scikit-learn's GaussianProcessRegressor. |
| Acquisition Function | Guides the search by quantifying the utility of evaluating a new point, balancing exploration and exploitation. | Expected Improvement (EI), Upper Confidence Bound (UCB). |
| Bayesian Optimization Framework | Provides a high-level API for managing the optimization loop, models, and data. | Ax, BoTorch, GPyOpt. |
| Experimental Failure Criteria | Predefined, quantifiable conditions that determine if an experimental run is considered a failure and triggers the floor padding trick. | In-situ reflection high-energy electron diffraction (RHEED) pattern loss; X-ray diffraction peak absence. |
| Floor Padding Function | The algorithmic component that computes the worst observed value and performs the imputation upon failure. | Custom script: y_floor = current_data['y'].min(). |

Advanced Implementation: Synergy with a Binary Classifier

For enhanced performance, the floor padding trick can be combined with a binary classifier that predicts the probability of failure for a given parameter set. This hybrid approach, referred to as 'FB' in the literature, uses two models [1].

The logical relationship between these components is as follows:

Protocol for the FB Hybrid Method:

- Action: In parallel to the GP regressor for the evaluation metric, fit a GP classifier (or another probabilistic classifier) to the binary success/failure data.

- Action: During the candidate selection step (Step 3 in the main protocol), modify the acquisition function to heavily penalize or discard points that the classifier predicts with high probability will lead to failure.

- Action: If a failure nonetheless occurs (indicating a classifier error), the floor padding trick is applied as before to update the GP regressor. The failure data point is also used to update the binary classifier.

This combined strategy actively avoids predicted failure regions while still learning from mispredicted failures, making the overall optimization process more robust and efficient [1].
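A single FB-style selection step can be sketched with scikit-learn as follows. This is an illustrative reading of the protocol, not the code of [1]: the function name `fb_select`, the hard discard threshold `max_p_fail`, the fallback rule, the kernels, and the data are all assumptions. Refitting both models on every call means a mispredicted failure updates the floor-padded regressor and the classifier together.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessClassifier, GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, ConstantKernel, Matern


def fb_select(X, y, candidates, max_p_fail=0.5):
    """Pick the next point using a floor-padded GP regressor plus a GP failure classifier.

    X: all tested points, shape (n, d); y: outcomes with np.nan marking failures.
    """
    failed = np.isnan(y)
    # Floor padding for the regressor: failures take the worst successful value.
    y_pad = np.where(failed, np.nanmin(y), y)
    gpr = GaussianProcessRegressor(
        kernel=ConstantKernel(1.0) * Matern(length_scale=0.2, nu=2.5),
        normalize_y=True,
        random_state=0,
    ).fit(X, y_pad)
    # Binary classifier on success/failure labels (1 = failure).
    gpc = GaussianProcessClassifier(kernel=1.0 * RBF(length_scale=0.2), random_state=0)
    gpc.fit(X, failed.astype(int))
    p_fail = gpc.predict_proba(candidates)[:, list(gpc.classes_).index(1)]

    mu, sigma = gpr.predict(candidates, return_std=True)
    sigma = np.maximum(sigma, 1e-9)
    best = y_pad.max()
    z = (mu - best) / sigma
    ei = (mu - best) * norm.cdf(z) + sigma * norm.pdf(z)
    ei[p_fail > max_p_fail] = -np.inf  # discard candidates the classifier expects to fail
    if np.all(np.isneginf(ei)):
        return candidates[np.argmin(p_fail)]  # fallback: least risky candidate
    return candidates[np.argmax(ei)]


X = np.array([[0.05], [0.2], [0.35], [0.5], [0.65], [0.8], [0.95]])
y = np.array([np.nan, 0.1, 0.4, 0.6, 0.5, np.nan, np.nan])  # hypothetical outcomes
x_next = fb_select(X, y, np.linspace(0.0, 1.0, 101).reshape(-1, 1))
print(x_next)
```

A hard threshold is the "discard" option from the protocol; replacing the masking line with a multiplication by `1 - p_fail` gives the softer "penalize" option instead.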

Within the broader research on Bayesian optimization (BO) with experimental failure handling, a significant challenge is managing a priori unknown feasibility constraints. In scientific domains like drug development and materials science, many experiments fail due to reasons that cannot be perfectly predicted beforehand, such as failed syntheses, unstable compounds, or equipment issues. These failures create nonquantifiable, unrelaxable, hidden constraints on the experimental parameter space [2]. This application note details how binary classifiers can learn these unknown constraint functions on-the-fly, making autonomous scientific experimentation more efficient and robust by avoiding infeasible regions.

Core Concept: The Feasibility-Aware Bayesian Optimization Framework

The standard BO loop is extended by integrating a probabilistic classifier that actively learns the boundary between feasible and infeasible experimental conditions. The core problem is formalized as finding an optimum \( \mathbf{x}^* \) such that:

\[ \mathbf{x}^* = \underset{\mathbf{x} \in \mathcal{S}}{\operatorname{argmin}} \; f(\mathbf{x}), \quad \text{where} \quad \mathcal{S} = \{ \mathbf{x} \in \mathcal{X} \mid c(\mathbf{x}) = 1 \} \]

Here, \( c(\mathbf{x}) \) is a binary constraint function that returns 1 if an experiment at point \( \mathbf{x} \) is feasible (yielding a measurement of the objective \( f(\mathbf{x}) \)) and 0 if it is infeasible (a failure) [2]. This function is initially unknown and is learned sequentially.

Table 1: Key Characteristics of Unknown Constraints in Experimental Optimization

| Characteristic | Description | Experimental Example |
|---|---|---|
| Nonquantifiable | Only binary (pass/fail) information is available, not the degree of violation. | A synthesis either succeeds or fails; no intermediate "ease of synthesis" score is provided [2]. |
| Unrelaxable | The constraint must be satisfied to obtain an objective function measurement. | A compound's bioactivity cannot be measured if its synthesis yields insufficient material [2]. |
| Hidden | The constraint is not known to the researcher before the experimental campaign. | The precise stability region for a new perovskite material is unknown before experimentation [2]. |
| Simulation | Evaluating the constraint involves a costly procedure (e.g., an attempted synthesis). | The "synthetic accessibility" constraint is evaluated via the costly process of attempted synthesis [2]. |

The Role of the Binary Classifier

A binary classifier, typically a Gaussian Process Classifier (GPC) or other probabilistic model, is trained on all data points evaluated so far. For each point \( \mathbf{x} \), it estimates the probability of feasibility, \( p(c(\mathbf{x}) = 1) \). This probability is then integrated into the acquisition function of the BO to balance the exploration/exploitation of the objective with the avoidance of likely-infeasible regions [2] [13].
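One common way to write this integration is the feasibility-weighted Expected Improvement (EI-CF) named in the protocols below, which multiplies EI by the classifier's feasibility probability. The form shown here is a sketch under stated assumptions, and the exact variant used in [2] may differ: it follows the minimization convention of the formulation above, assumes a GP posterior with mean \( \mu(\mathbf{x}) \) and standard deviation \( \sigma(\mathbf{x}) \), takes \( f^{+} \) as the lowest objective value among feasible observations, and uses \( \Phi \) and \( \phi \) for the standard normal CDF and PDF.

\[
\alpha_{\text{EI-CF}}(\mathbf{x}) = \mathrm{EI}(\mathbf{x}) \, p\big(c(\mathbf{x}) = 1\big), \qquad
\mathrm{EI}(\mathbf{x}) = \big(f^{+} - \mu(\mathbf{x})\big)\,\Phi(z) + \sigma(\mathbf{x})\,\phi(z), \qquad
z = \frac{f^{+} - \mu(\mathbf{x})}{\sigma(\mathbf{x})}
\]

Candidates with a promising predicted objective but a low feasibility probability are down-weighted rather than excluded outright, so the search can still probe uncertain regions near the learned constraint boundary.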

Quantitative Benchmarking of Feasibility-Aware Strategies

Several acquisition functions have been proposed to handle unknown constraints. Benchmarks on synthetic and real-world problems reveal their relative performance.

Table 2: Performance Comparison of Feasibility-Aware BO Strategies

| Strategy | Core Principle | Average Performance (Valid Experiments) | Convergence Speed | Best-Suited Scenario |
|---|---|---|---|---|
| Expected Feasibility | Explicitly maximizes the probability of being feasible and optimal. | High [2] | Fast [2] | General purpose, balanced risk. |
| Floor Padding Trick | Assigns the worst observed objective value to failed experiments. | Competitive [1] | Fast initial improvement [1] | Simple baseline; tasks with smaller infeasible regions [2] [1]. |
| Binary Classifier (B) | Uses a separate classifier to predict and avoid failures. | Good [1] | Can be slower initially [1] | When active failure avoidance is a high priority. |
| Entropy-Based Search | Actively queries points with high uncertainty about feasibility. | High [13] | Efficient for learning boundaries [13] | For actively mapping complex constraint boundaries. |
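The simplest version of the entropy-based idea in the last row is uncertainty sampling on the classifier: query the candidate whose predicted feasibility is closest to 0.5, i.e., the one that maximizes the binary entropy. The sketch assumes the feasibility probabilities come from an already-fitted classifier, and the values shown are hypothetical; the methods in [13] may use more elaborate information-theoretic criteria, so this only illustrates the principle.

```python
import numpy as np


def feasibility_entropy(p_feasible):
    """Binary entropy (in nats) of the predicted feasibility probability; maximal at p = 0.5."""
    p = np.clip(np.asarray(p_feasible, dtype=float), 1e-12, 1.0 - 1e-12)
    return -(p * np.log(p) + (1.0 - p) * np.log(1.0 - p))


def select_boundary_query(candidates, p_feasible):
    """Pick the candidate whose feasibility is most uncertain (closest to the learned boundary)."""
    return candidates[np.argmax(feasibility_entropy(p_feasible))]


# Hypothetical classifier output over five candidate settings.
candidates = np.array([[0.1], [0.3], [0.5], [0.7], [0.9]])
p_feasible = np.array([0.02, 0.3, 0.55, 0.9, 0.99])
print(select_boundary_query(candidates, p_feasible))
```

Pure entropy queries spend the budget on mapping the constraint rather than improving the objective, so in practice this term is usually blended with an objective-driven acquisition function.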

Experimental Protocols

The following protocols outline the implementation of feasibility-aware BO in scientific experimentation.

Protocol: Inverse Design of Materials with Stability Constraints

This protocol is adapted from the benchmark on hybrid organic-inorganic halide perovskite materials [2].

1. Objective Definition:

- Primary Objective (\( f \)): Maximize a target property (e.g., photovoltaic efficiency).

- Unknown Constraint (\( c \)): Material stability under specific conditions (feasible = stable, infeasible = unstable).

2. Initialization:

- Design Space (\( \mathcal{X} \)): Define the multi-dimensional parameter space (e.g., composition ratios, processing temperatures).

- Initial Dataset: Conduct a small, space-filling set of experiments (e.g., 5-10 points) to collect initial data on both property and stability.

3. Model Configuration:

- Objective Surrogate: Gaussian Process (GP) regression model.

- Constraint Surrogate: Variational Gaussian Process (VGP) classifier.

- Acquisition Function: Expected Improvement with feasibility probability (EI-CF) or similar.

4. Autonomous Loop Execution:

- Suggestion: Optimize the acquisition function to select the next most promising and likely feasible experiment.

- Making/Measurement: Execute the experiment.

- IF the material is stable, measure its efficiency.

- IF the material is unstable, record it as a failure.

- Update: Augment the dataset and update both the GP regressor and the VGP classifier.

- Iteration: Repeat until a performance target is met or the budget is exhausted.

Protocol: Drug Discovery with Synthetic Accessibility Constraints

This protocol is adapted from the benchmark on designing BCR-Abl kinase inhibitors [2] and principles of handling class imbalance [14].

1. Objective Definition:

- Primary Objective (\( f \)): Maximize inhibitory activity (e.g., pIC50).

- Unknown Constraint (\( c \)): Synthetic accessibility (feasible = synthesizable, infeasible = failed synthesis).

2. Initialization:

- Design Space (\( \mathcal{X} \)): A molecular space (e.g., defined by SELFIES or a chemical descriptor space).

- Initial Dataset: Select a diverse set of molecules from a virtual library for initial synthesis attempts.

3. Model Configuration:

- Objective Surrogate: GP regression on molecular fingerprints.

- Constraint Surrogate: Bernoulli distribution-based GPC, potentially using a Complement Naive Bayes variant to handle the inherent imbalance between feasible and infeasible molecules [15] [14].

- Acquisition Function: A feasibility-aware function like EI-CF.

4. Autonomous Loop Execution:

- Suggestion: Propose a molecule predicted to be active and synthesizable.

- Making/Measurement:

- Attempt the synthesis.

- IF synthesis is successful, proceed to measure bioactivity.

- IF synthesis fails, record the molecule as a failure.

- Update: Update both surrogate models. The classifier learns from the failed synthesis to improve its prediction of synthetic accessibility.

- Iteration: Continue the loop to discover highly active and synthesizable drug candidates.

The Scientist's Toolkit: Essential Research Reagents

This table lists key computational tools and algorithms required to implement the described framework.

Table 3: Key Research Reagents for Feasibility-Aware BO

| Item / Algorithm | Function / Purpose | Example Implementation / Notes |
|---|---|---|
| Gaussian Process (GP) Regressor | Models the unknown objective function \( f(\mathbf{x}) \) and provides uncertainty estimates. | Use a Matérn kernel for modeling scientific functions. |
| Gaussian Process Classifier (GPC) | Models the unknown binary constraint function \( c(\mathbf{x}) \) and provides feasibility probabilities. | A Variational GPC is recommended for robustness [2]. |
| Expected Improvement (EI) | Standard acquisition function for optimizing the objective. | Suggests points with high potential improvement. |
| Feasibility-Weighted EI (EI-CF) | Modifies EI to account for the probability of feasibility. | The workhorse acquisition function for constrained BO [2]. |
| Atlas | Open-source Python library for Bayesian optimization. | Includes implementations of the strategies discussed here [2]. |
| GRYFFIN | Another BO package with some constraint handling capabilities. | Can be used for known constraint problems [13]. |

Workflow and System Diagrams

The following diagrams illustrate the logical workflow of the feasibility-aware BO system and the integration of the classifier.

[Figure: Feasibility-Aware BO Workflow]

[Figure: Classifier Integration Logic]

Within research on Bayesian optimization (BO) that handles experimental failures, feasibility-aware acquisition functions are a critical advance. They turn BO from a pure optimizer of the objective into a decision-making framework that also weighs the risk of failure, which autonomous experimentation requires. They address a pervasive challenge in scientific domains, including drug development: many optimization algorithms do not manage a priori unknown constraints, so they keep proposing experiments that fail and waste resources [2].

Such unknown constraints are ubiquitous, stemming from failed syntheses, unstable molecules, or equipment failures in chemical processes [2] [16]. In materials science, a target material phase may not form, preventing property measurement [1]. In drug discovery, a molecule might be synthetically inaccessible, halting its progression [2]. Naive BO strategies, which only optimize for performance, sample these infeasible regions repeatedly, depleting precious experimental budgets.

Feasibility-aware acquisition functions tackle this by integrating an online-learned probabilistic model of the constraint function directly into the sampling decision. They balance the pursuit of high performance against the risk of constraint violations, enabling more efficient navigation of complex, constrained experimental landscapes. This section compares these methods quantitatively, provides an implementation protocol, and places them within a modern experimental workflow.

Quantitative Analysis of Feasibility-Aware Methods

A comparative analysis of different strategies reveals significant performance variations. The following table synthesizes findings from benchmark studies on synthetic and real-world problems, highlighting the efficiency of different approaches in handling unknown constraints.

Table 1: Comparison of Strategies for Handling Unknown Constraints in Bayesian Optimization

| Strategy Category | Specific Method | Key Mechanism | Average Performance (vs. Naive) | Best-Suited Constraint Scenarios |
|---|---|---|---|---|
| Naive (Baseline) | Constant Penalty [1] | Assigns a fixed, poor objective value (e.g., 0 or -1) to failures. | Highly sensitive to penalty choice; can be suboptimal or lead to slow improvement. | Small, easily avoided infeasible regions. |
| Adaptive Imputation | Floor Padding [1] | Assigns the worst observed objective value from successful experiments to failures. | Quick initial improvement; robust without parameter tuning; final performance can be slightly suboptimal. | General-purpose use when constraint boundaries are unknown. |
| Classification-Guided | Binary Classifier [1] | Uses a separate model (e.g., GP classifier) to predict failure probability and avoids those regions. | Slower initial improvement but better avoidance of failures; less sensitive to penalty value. | Problems where feasibility is a primary concern and can be learned. |
| Integrated Acquisition | Feasibility-Aware EI [2] | Multiplies standard Expected Improvement by the probability of feasibility. | On average, outperforms naive strategies, producing more valid experiments and finding optima at least as fast. | Most scenarios, especially with balanced risk. |
| Feasibility-Driven Search | FuRBO [17] | Uses inspector sampling and a trust region to aggressively guide the search toward feasible regions. | Ties or outperforms alternatives, with superior performance in high-dimensional problems where feasible regions are narrow and hard to find. | High-dimensional problems (dozens of variables) with small, irregular feasible regions. |
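The two imputation rows of Table 1 differ only in which value is written in place of a failed run before the regressor is refit. A minimal NumPy sketch, assuming a maximization objective and a hypothetical helper named `impute_failures`; it illustrates the idea and is not the reference implementation from [1]:

```python
import numpy as np


def impute_failures(y, failed, strategy="floor", penalty=0.0):
    """Return a copy of y with failed entries replaced (objective is maximized).

    strategy="floor":    floor padding, i.e. the worst value among successful runs.
    strategy="constant": a fixed, user-chosen penalty value.
    Values stored at failed positions (e.g. NaN) are ignored.
    """
    y = np.asarray(y, dtype=float).copy()
    failed = np.asarray(failed, dtype=bool)
    if strategy == "floor":
        if not (~failed).any():
            raise ValueError("floor padding needs at least one successful run")
        fill = y[~failed].min()  # worst observed value so far
    elif strategy == "constant":
        fill = float(penalty)
    else:
        raise ValueError(f"unknown strategy: {strategy!r}")
    y[failed] = fill
    return y


y = [0.2, float("nan"), 0.7, float("nan"), 0.5]
failed = [False, True, False, True, False]
print(impute_failures(y, failed))                                   # floor padding
print(impute_failures(y, failed, strategy="constant", penalty=-1.0))
```

With floor padding the fill value moves with the data, which is why Table 1 describes it as robust without parameter tuning, whereas the constant penalty depends entirely on the value chosen.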

Key insights from benchmark studies include:

- Balanced strategies outperform naive ones: Comprehensive testing, as in the Anubis benchmark, shows that feasibility-aware acquisition functions with balanced risk (like Feasibility-Aware EI) on average outperform commonly adopted naive strategies [2]. They produce a higher proportion of valid experiments and locate the global optimum at least as fast.

- Scenario-dependent performance: In tasks where the region of infeasibility is small, naive strategies can be competitive. However, their performance deteriorates significantly as the complexity and size of the infeasible region grow [2].

- The cost of initial learning: Methods that rely on learning a separate classifier, such as the Binary Classifier approach, can exhibit slower initial improvement as the model requires data to learn the constraint boundary [1].

Experimental Protocols

This section provides a detailed, actionable protocol for implementing a feasibility-aware BO campaign, using the discovery of a BCR-Abl kinase inhibitor with unknown synthetic accessibility constraints as a representative example [2].

Protocol: Drug Discovery with Unknown Synthetic Accessibility

1. Problem Formulation and Goal

- Objective: To find a molecule \( x^* \) with high inhibitory activity (e.g., low IC50) against BCR-Abl kinase.

- Unknown Constraint: The synthetic accessibility of proposed molecular candidates. A molecule \( x \) is infeasible if its synthesis fails or its yield is insufficient for the assay.

- Success Metric: Find the molecule with the best activity within a fixed budget of 50-100 total experiments (including both successful and failed syntheses).
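Because the budget counts failed syntheses, progress is naturally tracked per attempt rather than per successful measurement. A small sketch with a hypothetical `best_so_far` helper, assuming larger objective values are better (e.g., pIC50) and that a failed attempt is recorded as `None`:

```python
import math
from typing import List, Optional, Sequence


def best_so_far(outcomes: Sequence[Optional[float]]) -> List[float]:
    """Best objective after each attempt; failed attempts (None) still consume budget."""
    trace: List[float] = []
    best = -math.inf  # nothing measured yet
    for y in outcomes:
        if y is not None and y > best:
            best = y
        trace.append(best)  # one entry per attempt, successful or not
    return trace


print(best_so_far([5.1, None, 6.0, None, 5.5]))
```

Plotting this trace against the attempt index makes the cost of failures visible: a strategy that wastes attempts on infeasible molecules shows flat stretches in the curve.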

2. Experimental Setup and Reagent Solutions

Table 2: Research Reagent Solutions for the Drug Discovery Benchmark

| Reagent / Resource | Function in the Experiment |
|---|---|
| Virtual Chemical Library | A large set of purchasable or easily enumerable molecules (e.g., from the ZINC database) serves as the search space. |
| Retrosynthesis Software | Provides a coarse-grained proxy for evaluating synthetic difficulty during pre-screening (optional; e.g., ASKCOS, IBM RXN). |
| Automated Synthesis Platform | Enables high-throughput execution of chemical synthesis protocols for proposed molecules. |
| LC-MS / NMR Equipment | Used to confirm successful synthesis and purify the compound for biological testing. |
| Bioassay Kit for BCR-Abl Kinase | Measures the primary objective (e.g., IC50) of successfully synthesized molecules. |

3. Bayesian Optimization Workflow

- Step 1 - Initial Design: Select 5-10 initial molecules that cover the search space, using a space-filling design (e.g., Latin Hypercube Sampling over a molecular descriptor space) or expert knowledge.

- Step 2 - "Make" Phase: Attempt to synthesize the proposed molecule using the automated platform.

- Step 3 - "Measure" and Classify:

  - If synthesis is successful: Proceed to measure the IC50 value. Record the activity as \( y = -\log_{10}(\text{IC50}) \) (pIC50, so that larger is better) and the feasibility label \( c = 0 \) (feasible).

  - If synthesis fails: Record no objective value. Record \( c = 1 \) (infeasible).

- Step 4 - Model Update:

  - Update a Gaussian Process (GP) regressor with all data points \( (x, y) \) where synthesis was successful.

  - Update a Variational Gaussian Process (VGP) classifier with all data points \( (x, c) \), where \( c \) is the feasibility label (0 or 1). This model learns the probability of feasibility, \( p(\text{feasible} \mid x) \) [2].

- Step 5 - Suggestion via Acquisition Function:

  - Calculate a feasibility-aware acquisition function, such as Constrained Expected Improvement (CEI), \( \alpha_{\text{CEI}}(x) = \alpha_{\text{EI}}(x) \times p(\text{feasible} \mid x) \), where \( \alpha_{\text{EI}}(x) \) is the standard Expected Improvement from the GP regressor [2].

  - Optimize \( \alpha_{\text{CEI}}(x) \) over the chemical search space to propose the next molecule \( x_{\text{next}} \) for synthesis.

- Step 6 - Iterate: Return to Step 2 and repeat until the experimental budget is exhausted.
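Steps 4 and 5 can be sketched with scikit-learn. This is a minimal illustration under several stated assumptions: candidates are continuous descriptor vectors rather than fingerprints, the objective is maximized (e.g., pIC50), at least one synthesis has already succeeded, and scikit-learn's `GaussianProcessClassifier`, which uses a Laplace approximation, stands in for the variational GP classifier used in Atlas [2]. The function name `constrained_ei` is hypothetical.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessClassifier, GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel


def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization."""
    sigma = np.maximum(sigma, 1e-12)  # avoid division by zero at observed points
    z = (mu - best) / sigma
    ei = (mu - best) * norm.cdf(z) + sigma * norm.pdf(z)
    return np.maximum(ei, 0.0)  # clip tiny negative round-off


def constrained_ei(X_all, c_all, X_obj, y_obj, candidates):
    """Return (index of best candidate, CEI values, p(feasible)).

    c_all follows the protocol's convention: 0 = feasible, 1 = failed.
    """
    X_all, c_all = np.asarray(X_all, dtype=float), np.asarray(c_all, dtype=int)
    X_obj, y_obj = np.asarray(X_obj, dtype=float), np.asarray(y_obj, dtype=float)
    candidates = np.asarray(candidates, dtype=float)

    # Objective surrogate: trained on successful experiments only.
    gpr = GaussianProcessRegressor(
        kernel=ConstantKernel() * Matern(nu=2.5) + WhiteKernel(),
        normalize_y=True, random_state=0)
    gpr.fit(X_obj, y_obj)
    mu, sigma = gpr.predict(candidates, return_std=True)

    # Constraint surrogate: trained on every attempt, failed or not.
    if np.unique(c_all).size < 2:
        # A classifier needs both labels; with no failures yet, treat all as feasible.
        p_feasible = np.ones(len(candidates))
    else:
        gpc = GaussianProcessClassifier(kernel=ConstantKernel() * Matern(nu=2.5), random_state=0)
        gpc.fit(X_all, c_all)
        feasible_col = list(gpc.classes_).index(0)
        p_feasible = gpc.predict_proba(candidates)[:, feasible_col]

    acq = expected_improvement(mu, sigma, best=y_obj.max()) * p_feasible
    return int(np.argmax(acq)), acq, p_feasible
```

Scoring a finite candidate pool and taking the argmax mirrors the discrete nature of a virtual library; in a continuous space the acquisition function would instead be passed to a numerical optimizer.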

4. Critical Steps and Troubleshooting

- Kernel Selection: Use a molecular kernel (e.g., Tanimoto kernel for fingerprints) for both the GP regressor and VGP classifier to capture similarity between molecules effectively.

- Handling Imbalanced Data: Early in the optimization, failed experiments may dominate. A VGP classifier is more robust to such class imbalance than a standard GP classifier.

- Validation: Run multiple optimization trials with different initializations to ensure the robustness of the result. The best-performing molecule from the final iteration should be re-synthesized and re-tested to confirm activity.
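The Tanimoto similarity recommended under Kernel Selection is simple to compute for binary fingerprints: \( |a \cap b| / (|a| + |b| - |a \cap b|) \). A NumPy sketch follows; the function name is hypothetical, wiring it into a particular GP library as a custom kernel is not shown, and treating two all-zero fingerprints as identical is a convention chosen here.

```python
import numpy as np


def tanimoto_kernel(A, B):
    """Tanimoto similarity between rows of two binary fingerprint matrices."""
    A = np.asarray(A, dtype=float)
    B = np.asarray(B, dtype=float)
    inter = A @ B.T                         # |a AND b| for 0/1 vectors
    norm_a = (A * A).sum(axis=1)[:, None]   # |a|, as a column
    norm_b = (B * B).sum(axis=1)[None, :]   # |b|, as a row
    union = norm_a + norm_b - inter         # |a OR b|
    with np.errstate(divide="ignore", invalid="ignore"):
        # Two empty fingerprints have union 0; define their similarity as 1.
        return np.where(union > 0, inter / union, 1.0)


print(tanimoto_kernel([[1, 1, 0, 0]], [[1, 1, 0, 0], [1, 0, 1, 0], [0, 0, 1, 1]]))
```

On binary vectors this similarity is a valid (positive semi-definite) kernel, so the resulting Gram matrix can be used directly as a GP covariance for both the regressor and the classifier.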

Workflow Visualization

The following diagram illustrates the core closed-loop workflow of a feasibility-aware Bayesian optimization, as described in the protocol.

[Figure: Feasibility-Aware BO Workflow. The suggest-make-measure cycle that handles experimental failure. The key difference from a standard BO loop is the decision point after "Make," which routes failed experiments to update only the constraint model.]

The Scientist's Toolkit

Table 3: Essential Software and Computational Tools for Feasibility-Aware BO

| Tool / Library | Function | Key Feature for Feasibility |
|---|---|---|
| BoTorch [17] | A flexible library for Bayesian optimization research and implementation. | Provides built-in constraint-aware acquisition functions, such as ConstrainedExpectedImprovement, for handling black-box constraints. |
| Atlas [2] | An open-source BO package designed for autonomous scientific experimentation. | Implements several feasibility-aware strategies, including the VGP classifier for constraint learning, as used in the Anubis benchmark. |
| Summit [16] | A Python toolkit for chemical reaction optimization and analysis. | Offers multiple optimization strategies, including TSEMO, which can be adapted for constraint handling in reaction spaces. |
| BioKernel [18] | A no-code BO framework designed for biological experiment optimization. | Features modular kernel architecture and heteroscedastic noise modeling, which are beneficial for modeling complex biological constraints. |

Integrating feasibility-aware acquisition functions into Bayesian optimization frameworks is a cornerstone for developing robust and truly autonomous research systems. As benchmarks have demonstrated, moving beyond naive strategies like constant penalty allows researchers to navigate complex experimental landscapes with greater sample efficiency and a higher rate of return on investment [1] [2].

The continued development of algorithms like FuRBO for high-dimensional problems [17] and target-oriented BO for specific property values [19] shows the field's trajectory toward ever-more specialized and powerful optimization tools. For researchers in drug development and materials science, adopting these feasibility-aware protocols is no longer a speculative advantage but a necessary step in accelerating the pace of discovery while effectively managing the inherent risks and costs of experimental failure.

Model-based optimization strategies, particularly Bayesian optimization (BO), have become a cornerstone of autonomous scientific experimentation due to their sample efficiency and flexibility. When combined with automated laboratory equipment in a closed-loop system, they form the core of a self-driving laboratory (SDL), a next-generation technology for accelerating scientific discovery [20] [6]. A pervasive challenge in real-world scientific experimentation, especially in fields like chemistry, materials science, and drug development, is handling unexpected experimental failures. These failures arise from a priori unknown feasibility constraints in the parameter space, stemming from issues such as failed syntheses, unstable materials, unexpected equipment failures, or inaccessible drug dose combinations [20] [1] [21]. Traditional BO algorithms, which assume every suggested parameter combination can be evaluated, often perform poorly when a significant portion of the parameter space is infeasible. This application note details modern computational frameworks, including Anubis, that are specifically designed to handle such unknown constraints, thereby improving the reliability and efficiency of autonomous experimentation.

Several sophisticated frameworks have been developed to navigate the problem of unknown constraints and experimental failures. The table below summarizes the core approaches of three key implementations.

Table 1: Key Frameworks for Bayesian Optimization with Unknown Constraints

| Framework Name | Core Problem Addressed | Primary Strategy | Reported Application Domain |
|---|---|---|---|