Machine Learning Validation of Density Functional Theory: Accelerating Accuracy in Drug Discovery and Materials Science

Abstract

This article explores the transformative integration of Machine Learning (ML) with Density Functional Theory (DFT) to validate and enhance the accuracy of quantum mechanical calculations. Aimed at researchers and drug development professionals, it provides a comprehensive analysis of how data-driven approaches are solving long-standing DFT challenges, such as errors in formation enthalpies and the approximation of exchange-correlation functionals. The review covers foundational concepts, specific methodologies like ML-corrected functionals and interatomic potentials, strategies for troubleshooting model transferability and data quality, and rigorous benchmarking against high-fidelity quantum chemistry data. By synthesizing recent advances, this work demonstrates how ML-validated DFT is accelerating reliable molecular and materials design, reducing experimental cycles, and informing critical decisions in pharmaceutical development.

The Convergence of Machine Learning and Density Functional Theory: Core Principles and Motivations

Density Functional Theory (DFT) has long been a cornerstone of computational chemistry and materials science, providing invaluable insights into electronic structure and molecular properties. However, its practical application is constrained by a fundamental trade-off between computational cost and accuracy. Higher-level DFT methods can yield remarkably accurate results, but they often demand prohibitive computational resources, especially for the large or complex systems relevant to drug discovery and materials development. This accuracy-cost gap is a significant bottleneck for research progress. Machine learning (ML) interatomic potentials trained on massive, high-quality DFT datasets now offer a way around it. This guide compares the performance of this new paradigm against traditional computational methods and shows how ML validation and augmentation are helping to bridge DFT's accuracy gap.

The Dataset Foundation: OMol25 and the New Benchmark

The development of robust ML models hinges on the availability of comprehensive, high-quality training data. The recently released Open Molecules 2025 (OMol25) dataset from Meta's FAIR team represents a quantum leap in this domain, setting a new standard for the field.

Scope and Scale: OMol25 comprises over 100 million quantum chemical calculations that consumed billions of CPU-hours, dwarfing previous datasets [1]. It includes approximately 83 million unique molecular systems of up to 350 atoms, far exceeding the size limits of earlier datasets such as QM9, whose molecules contain at most nine heavy atoms [2].

Chemical Diversity: The dataset's value lies not only in its size but in its unprecedented chemical diversity, encompassing:

- Biomolecules: Protein-ligand complexes, nucleic acid structures, and binding pocket fragments from RCSB PDB and BioLiP2 datasets [1]

- Metal Complexes: Combinatorially generated structures across different metals, ligands, and spin states via the Architector framework [1]

- Electrolytes: Aqueous solutions, ionic liquids, and systems relevant to battery chemistry [1]

- Element Coverage: The first 83 elements (H through Bi), including main-group elements, transition metals, and lanthanides [2]

Methodological Consistency: A key advantage of OMol25 is its consistent use of the ωB97M-V/def2-TZVPD level of theory throughout. ωB97M-V is a state-of-the-art range-separated hybrid meta-GGA functional that avoids many pathologies of earlier density functionals [1] [2]. This consistency eliminates the methodological variations that often complicate comparisons across traditional DFT studies.

Performance Comparison: ML Potentials vs. Traditional Methods

The true test of any new methodology lies in its performance against established approaches. The following tables summarize comprehensive benchmarking data for ML potentials trained on the OMol25 dataset compared to traditional computational methods.

Table 1: Energy and Force Accuracy Across Methodologies

| Methodology | Energy MAE (meV/atom) | Force MAE (meV/Å) | Computational Speed vs DFT | System Size Limit |
|---|---|---|---|---|
| eSEN-md (OMol25) | ~1-2 [2] | Comparable to energy MAE [2] | ~1000x faster [1] | ~350 atoms [2] |
| Traditional DFT (ωB97M-V) | Reference | Reference | 1x (baseline) | ~50-100 atoms (practical) |
| Semi-empirical Methods | 10-100 | 50-200 | ~100x faster | No practical limit |
| Classical Force Fields | Varies widely | Varies widely | ~10,000x faster | No practical limit |

Table 2: Domain-Specific Performance Metrics

| Chemical Domain | ML Model | Key Metric | Performance vs Traditional DFT |
|---|---|---|---|
| Biomolecules | eSEN-conserving | Protein-ligand interaction energy | Matches DFT accuracy at ~1000x speed [1] |
| Metal Complexes | UMA-MoLE | Spin-state energy splitting | Accurate across diverse coordination chemistries [1] |
| Reaction Pathways | GemNet-OC (OMol25) | Transition state barrier height | < 1 kcal/mol error vs reference [2] |
| Battery Materials | eSEN-MoLE | Ion adsorption energy | Accurate for novel electrolyte materials [1] |

Anecdotal reports from early users are consistent with these performance advantages. One Rowan user reported that "OMol25-trained models give much better energies than the DFT level of theory I can afford" and "allow for computations on huge systems that I previously never even attempted to compute" [1]. Another described the release as "an AlphaFold moment" for the field of atomistic simulation [1].

Experimental Protocols and Methodologies

Training Workflows for ML Potentials

The development of high-performance ML potentials follows carefully designed workflows that optimize knowledge transfer from high-quality DFT data.
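The two-phase training scheme summarized later in this article (direct-force pre-training followed by conservative fine-tuning) depends on one property. Conservative forces are the negative gradient of a scalar energy, so the work they do around any closed path is zero. Directly predicted forces need not satisfy this. The sketch below shows a minimal version of that check, using a hypothetical two-dimensional toy energy in place of a trained potential. `toy_energy`, `direct_forces` and its small rotational error term are illustrative inventions, not eSEN or UMA code.

```python
import math

def toy_energy(x, y):
    """Hypothetical stand-in for a learned energy E(x, y) (arbitrary units)."""
    return 0.5 * x**2 + y**2 + 0.1 * x * y

def conservative_forces(x, y, h=1e-5):
    """Forces as the negative central-difference gradient of toy_energy."""
    fx = -(toy_energy(x + h, y) - toy_energy(x - h, y)) / (2 * h)
    fy = -(toy_energy(x, y + h) - toy_energy(x, y - h)) / (2 * h)
    return fx, fy

def direct_forces(x, y):
    """Hypothetical direct-force head: the exact -grad E plus a small rotational
    (curl) term, an error that no scalar energy function can produce."""
    fx = -(x + 0.1 * y) + 0.05 * y
    fy = -(2 * y + 0.1 * x) - 0.05 * x
    return fx, fy

def loop_work(force_fn, radius=1.0, steps=2000):
    """Work done by force_fn around a closed counter-clockwise circle.
    Conservative forces give zero, up to numerical error."""
    work = 0.0
    for i in range(steps):
        t0 = 2 * math.pi * i / steps
        t1 = 2 * math.pi * (i + 1) / steps
        x0, y0 = radius * math.cos(t0), radius * math.sin(t0)
        x1, y1 = radius * math.cos(t1), radius * math.sin(t1)
        fx, fy = force_fn(0.5 * (x0 + x1), 0.5 * (y0 + y1))  # midpoint of segment
        work += fx * (x1 - x0) + fy * (y1 - y0)
    return work

w_conservative = loop_work(conservative_forces)
w_direct = loop_work(direct_forces)
print(f"closed-loop work, conservative forces: {w_conservative:.1e}")
print(f"closed-loop work, direct forces:       {w_direct:.1e}")
```

A nonzero loop integral means the forces can add or remove energy along a closed path. This is the issue that conservative fine-tuning is meant to address.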

Validation Protocols for ML-DFT Integration

Rigorous validation is essential when integrating ML potentials with traditional DFT workflows. The following protocol helps ensure reliability:

Energy Conservation Testing: For molecular dynamics applications, conserving models must demonstrate numerical stability in NVE ensembles with energy drift below 0.1% over nanosecond simulations [1].
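Below is a minimal sketch of the energy-conservation check. It uses a single Morse bond in reduced units as a stand-in for an ML potential; the Morse parameters, reduced mass and time steps are hypothetical. The code integrates NVE dynamics with velocity Verlet and reports the largest relative deviation of the total energy from its initial value. That quantity is stricter than end-to-end drift, and the code compares it against the 0.1% criterion.

```python
import math

def morse(r, d=1.0, a=1.5, r0=1.0):
    """Morse energy and force F = -dE/dr (hypothetical parameters, reduced units)."""
    e = math.exp(-a * (r - r0))
    return d * (1.0 - e) ** 2, -2.0 * d * a * e * (1.0 - e)

def nve_run(dt, n_steps, r=1.3, v=0.0, mu=0.5):
    """Velocity Verlet on the bond coordinate with reduced mass mu.
    Returns (final, maximum) relative deviation of the total energy."""
    energy, force = morse(r)
    e0 = energy + 0.5 * mu * v**2
    e_tot, max_dev = e0, 0.0
    for _ in range(n_steps):
        v += 0.5 * dt * force / mu       # first half kick
        r += dt * v                      # drift
        energy, force = morse(r)         # force at the updated position
        v += 0.5 * dt * force / mu       # second half kick
        e_tot = energy + 0.5 * mu * v**2
        max_dev = max(max_dev, abs(e_tot - e0))
    return abs(e_tot - e0) / abs(e0), max_dev / abs(e0)

THRESHOLD = 1e-3  # the 0.1 % criterion from the protocol text

final_small, max_small = nve_run(dt=0.005, n_steps=20000)
final_large, max_large = nve_run(dt=0.1, n_steps=1000)
print(f"dt=0.005: max relative deviation {max_small:.1e}, pass={max_small < THRESHOLD}")
print(f"dt=0.1:   max relative deviation {max_large:.1e}, pass={max_large < THRESHOLD}")
```

With exactly conservative forces, shrinking the time step brings the deviation below the threshold. A model whose forces are not the gradient of its energy would instead show a systematic drift that a smaller step does not remove.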

Out-of-Distribution Detection: Implement entropy-based uncertainty quantification to identify when systems fall outside the training distribution, triggering fallback to traditional DFT.
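One simple way to realize entropy-based out-of-distribution detection assumes that an ensemble of ML potentials is available. Fit a Gaussian to the ensemble's energy predictions, compute its differential entropy, and route the structure to DFT when the entropy exceeds a tolerance. The ensemble values and the 0.05 eV tolerance below are hypothetical:

```python
import math
import statistics

def gaussian_entropy(ensemble_predictions):
    """Differential entropy (nats) of a Gaussian with the ensemble's sample variance."""
    sigma = statistics.stdev(ensemble_predictions)
    if sigma == 0.0:
        return float("-inf")  # perfect agreement: zero spread
    return 0.5 * math.log(2 * math.pi * math.e * sigma**2)

def entropy_threshold(sigma_max):
    """Entropy of a Gaussian whose standard deviation equals the tolerance sigma_max."""
    return 0.5 * math.log(2 * math.pi * math.e * sigma_max**2)

def route(ensemble_predictions, sigma_max=0.05):
    """Return 'ml' if the ensemble is confident enough, otherwise fall back to 'dft'."""
    if gaussian_entropy(ensemble_predictions) <= entropy_threshold(sigma_max):
        return "ml"
    return "dft"

in_domain = [-10.001, -10.003, -9.999, -10.002]   # hypothetical energies (eV), 4 models
out_of_domain = [-10.20, -9.80, -10.50, -9.90]
print(route(in_domain), route(out_of_domain))  # ml dft
```

For a Gaussian, the entropy is a monotonic function of the standard deviation, so this test is equivalent to thresholding ensemble spread. The entropy form becomes more useful when the predictive distribution is not Gaussian.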

Multi-Fidelity Cross-Verification: Critical results should be verified through a cascade of methods:

- ML Potential: Initial screening and dynamics

- Mid-tier DFT: ωB97X-D3/def2-SVP level single-point verification

- High-tier DFT: ωB97M-V/def2-TZVPD level benchmark calculations
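The cascade can be organized as a screening funnel in which each tier re-scores only the survivors of the previous tier. In this sketch, the three energy functions are hypothetical stand-ins (a made-up quadratic "reference" plus tier-dependent error terms), not real ML or DFT calls. The keep fractions are arbitrary:

```python
import math

def cascade(candidates, stages):
    """Multi-fidelity screening funnel.

    stages: list of (label, energy_fn, keep_fraction), ordered cheap -> expensive.
    Each stage scores only the survivors of the previous stage and keeps the
    lowest-energy fraction. Returns the final (candidate, energy) pairs ranked
    by the last method, plus the number of evaluations spent per stage."""
    survivors = list(candidates)
    ranked, cost = [], {}
    for label, energy_fn, keep_fraction in stages:
        scored = sorted((energy_fn(c), c) for c in survivors)
        cost[label] = len(scored)
        n_keep = max(1, round(keep_fraction * len(scored)))
        ranked = [(c, e) for e, c in scored[:n_keep]]
        survivors = [c for c, _ in ranked]
    return ranked, cost

# Hypothetical stand-ins for the three tiers (not real ML or DFT calls).
def high_tier(c):
    return 0.1 * (c - 7) ** 2

def mid_tier(c):
    return high_tier(c) + 0.05 * math.sin(c)   # small method error

def ml_potential(c):
    return high_tier(c) + 0.3 * math.sin(c)    # larger surrogate error

ranked, cost = cascade(range(20), [
    ("ML potential", ml_potential, 0.25),
    ("mid-tier DFT", mid_tier, 0.4),
    ("high-tier DFT", high_tier, 1.0),
])
print(ranked)  # best candidate first
print(cost)    # {'ML potential': 20, 'mid-tier DFT': 5, 'high-tier DFT': 2}
```

The most expensive tier runs only for the final handful of candidates. The keep fractions trade throughput against the risk of discarding a true hit because of cheap-tier error.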

Domain-Specific Metrics:

- Biomolecules: Protein-ligand interaction energy decomposition (ΔE = E_complex - E_ligand - E_receptor) [2]

- Reaction Pathways: Transition state verification through nudged elastic band calculations

- Electronic Properties: Band gaps, orbital energies, and frontier molecular orbital alignment
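Once the three single-point energies are available, the interaction-energy bookkeeping is simple arithmetic. The minimal sketch below assumes energies in hartree from the same method and basis, with fragment energies evaluated at their geometries within the complex. All numbers are hypothetical:

```python
HARTREE_TO_KCAL_MOL = 627.509474  # 1 hartree in kcal/mol

def interaction_energy(e_complex, e_ligand, e_receptor):
    """ΔE = E_complex - E_ligand - E_receptor; inputs in hartree, result in kcal/mol."""
    return (e_complex - e_ligand - e_receptor) * HARTREE_TO_KCAL_MOL

# Hypothetical single-point energies (hartree) from a reference DFT method and an ML potential.
de_dft = interaction_energy(-1000.0500, -400.0200, -600.0100)
de_ml = interaction_energy(-1000.0505, -400.0201, -600.0102)
print(f"DFT: {de_dft:.2f} kcal/mol, ML: {de_ml:.2f} kcal/mol, "
      f"ML error: {de_ml - de_dft:+.2f} kcal/mol")
```

ΔE is a small difference between large totals. As a result, errors that do not cancel between the complex and the fragments dominate the comparison.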

Research Reagent Solutions: The Computational Toolkit

Table 3: Essential Computational Tools for ML-DFT Integration

| Tool Category | Specific Solutions | Primary Function | Application Context |
|---|---|---|---|
| ML Potential Architectures | eSEN (equivariant Smooth Energy Network) [1] | Direct and conservative force prediction | Molecular dynamics, geometry optimization |
| | UMA (Universal Models for Atoms) [1] | Cross-domain knowledge transfer | Multi-property prediction across chemical spaces |
| | GemNet-OC, MACE [2] | High-accuracy symmetry-adapted potentials | Challenging electronic environments |
| Training Frameworks | Mixture of Linear Experts (MoLE) [1] | Multi-dataset integration | Learning from disparate DFT sources |
| | Two-phase conservative training [1] | Force conservation enforcement | Physically realistic dynamics |
| Dataset Resources | OMol25 (Meta FAIR) [1] [2] | High-quality training data | General molecular ML |
| | OC20, ODAC23, OMat24 [1] | Extended material domains | Catalysts, surfaces, crystals |
| Validation Suites | Wiggle150 [1] | Conformer energy ranking | Drug discovery applications |
| | GMTKN55 [1] | General main-group thermochemistry | Method benchmarking |

The integration of machine learning with density functional theory represents more than an incremental improvement—it constitutes a paradigm shift in computational molecular science. By leveraging massive, high-quality datasets like OMol25 and sophisticated architectures such as eSEN and UMA, researchers can now achieve DFT-level accuracy at a fraction of the computational cost, while simultaneously accessing system sizes previously considered intractable.

This bridging of the accuracy gap has profound implications for drug development and materials science. Pharmaceutical researchers can screen larger compound libraries at near-DFT accuracy, and materials scientists can explore extended time and length scales for complex phenomena. The two-phase training methodology combines direct-force pre-training with conservative fine-tuning. It is designed to ensure that these ML potentials produce physically realistic, energy-conserving dynamics suitable for demanding applications such as drug binding simulations and reaction pathway analysis.

As the field progresses, the universal model approach exemplified by UMA's Mixture of Linear Experts architecture promises even greater generalization across chemical domains, potentially creating comprehensive models that span the entire periodic table. This evolution will further solidify the role of ML validation not as a replacement for traditional DFT, but as an essential partner in extending its reach and reliability, ultimately accelerating scientific discovery across chemistry, materials science, and drug development.

Density Functional Theory (DFT) stands as a cornerstone computational method for predicting the electronic structure of molecules and materials, with profound implications across chemistry, physics, and drug development. Its fundamental principle is elegant: expressing the total energy of a system as a functional of the electron density, thereby drastically simplifying the many-electron Schrödinger equation [3]. In practice, however, the exact functional form for a critical component—the exchange-correlation (XC) energy—remains unknown. This introduces persistent challenges, as approximations to the XC functional lead to systematic errors in energy predictions, limiting the theory's predictive accuracy [3] [4].

The core of the problem lies in the trade-off between computational cost and accuracy, a relationship conceptualized by John Perdew as the "Jacob's Ladder" of DFT [5]. Climbing this ladder towards "chemical heaven" involves adding increasingly complex ingredients to the XC approximation. These run from the local density (LDA) to generalized gradients (GGA), the kinetic-energy density (meta-GGA), and exact exchange (hybrid functionals) [5]. Despite these advances, even modern functionals struggle with fundamental issues such as self-interaction error, electron delocalization, and the inaccurate description of band gaps and charge-transfer processes [3].

Today, a new paradigm is emerging within this long-standing conversation: the integration of machine learning (ML). This guide objectively compares the performance of traditional DFT approximations against a new generation of ML-corrected and ML-constructed models, framing them within the broader thesis of validating and enhancing DFT through data-driven research. By examining experimental protocols and benchmarking data, we provide a clear comparison for researchers seeking to understand the current state and future trajectory of computational accuracy.

The Foundational Challenge: Exchange-Correlation Approximations and Their Shortcomings

The Kohn-Sham equations, formulated in 1965, provide a practical framework for DFT calculations by introducing an auxiliary system of non-interacting electrons [5]. The total energy in this system is expressed as:

\[ E_{\text{total}}[\rho] = T_{\text{KS}}[\rho] + E_{\text{XC}}[\rho] + E_{\text{H}}[\rho] + E_{\text{ext}}[\rho] \]

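Here, \(T_{\text{KS}}[\rho]\) is the kinetic energy of the non-interacting Kohn-Sham electrons, \(E_{\text{H}}[\rho]\) is the classical Hartree (electron-electron) repulsion, and \(E_{\text{ext}}[\rho]\) is the interaction with the external potential of the nuclei. Everything else is folded into \(E_{\text{XC}}[\rho]\): exchange, correlation, and the difference between the true and non-interacting kinetic energies [3]. Since the exact form of this term is unknown, all practical DFT calculations rely on approximations, which are the primary source of systematic errors.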

These approximations lead to several well-documented shortcomings:

Self-Interaction Error (SIE): In the exact functional, the electron's interaction with itself would be perfectly canceled. Semi-local approximations fail to achieve this, leading to spurious electrostatic interactions that can cause excessive electron delocalization [3]. This manifests in the incorrect prediction of charge transfer processes and the underbinding of electrons.
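The one-electron case makes SIE concrete. For a single electron, the exact exchange energy cancels the Hartree self-repulsion exactly (\(E_x = -E_H\)), and the correlation energy is zero. The sketch below evaluates both terms for the exact hydrogen-atom 1s density. It uses fully spin-polarized local spin-density (LSDA) exchange, chosen here as the simplest semi-local example, and omits LSDA correlation. Atomic units are used throughout, with Simpson's rule on a radial grid:

```python
import math

def radial_integral(f, r_max=30.0, n=30000):
    """Simpson's rule for the integral of f(r) from 0 to r_max (n must be even)."""
    h = r_max / n
    total = f(0.0) + f(r_max)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(i * h)
    return total * h / 3

def rho(r):
    """Exact hydrogen 1s density: exp(-2r) / pi."""
    return math.exp(-2 * r) / math.pi

def v_hartree(r):
    """Hartree potential of the 1s density: 1/r - (1 + 1/r) exp(-2r)."""
    if r == 0.0:
        return 1.0  # limit as r -> 0
    return 1 / r - (1 + 1 / r) * math.exp(-2 * r)

# E_H = (1/2) * integral of rho * v_H over all space (spherical shells 4 pi r^2 dr).
e_hartree = 0.5 * radial_integral(lambda r: 4 * math.pi * r**2 * rho(r) * v_hartree(r))

# Fully spin-polarized LSDA exchange: E_x = -(3/4)(6/pi)^(1/3) * integral of rho^(4/3).
c_x = 0.75 * (6 / math.pi) ** (1 / 3)
e_x_lsda = -c_x * radial_integral(lambda r: 4 * math.pi * r**2 * rho(r) ** (4 / 3))

print(f"E_H        = {e_hartree:.4f} Ha (exact 5/16 = 0.3125)")
print(f"E_x (LSDA) = {e_x_lsda:.4f} Ha")
print(f"E_H + E_x  = {e_hartree + e_x_lsda:.4f} Ha (exact exchange gives 0)")
```

The positive remainder is the spurious self-repulsion that semi-local exchange fails to cancel.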

Delocalization Error: Closely related to SIE, and often described as its many-electron counterpart, delocalization error is the tendency of approximate functionals to favor electron densities that are overly spread out in space over more physically realistic, localized distributions [3]. This affects the accuracy of reaction barrier calculations and the description of conjugated systems.

Band Gap Underestimation: The Perdew-Burke-Ernzerhof (PBE) functional, a widely used GGA, is notorious for systematically underestimating the energy band gaps of semiconductors and insulators [6]. This "band gap problem" limits DFT's utility in predicting electronic and optical properties of materials.

Incorrect Energy vs. Electron Number: The exact total energy should vary linearly with the number of electrons between integer values. Semi-local functionals, however, produce a convex curve, which incorrectly stabilizes fractional electron charges and contributes to delocalization error [3].
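The linearity condition suggests a simple numerical diagnostic. Compare the energy at the half-integer point with the straight line through the neighboring integer points. In the sketch below, hypothetical energies stand in for fractional-occupation calculations:

```python
def piecewise_linearity_deviation(e_n, e_half, e_n_plus_1):
    """E(N + 1/2) - [E(N) + E(N + 1)] / 2, in hartree.

    The exact functional gives 0 because E is linear between integers. A
    negative value means the curve bows below the straight line (convex
    behaviour), so the fractional-electron state is spuriously stabilized."""
    return e_half - 0.5 * (e_n + e_n_plus_1)

# Hypothetical energies for N, N + 1/2 and N + 1 electrons.
dev = piecewise_linearity_deviation(-0.500, -0.530, -0.527)
label = "convex (delocalization error)" if dev < 0 else "not convex"
print(f"deviation = {dev:+.4f} Ha -> {label}")
```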

Table 1: Common Types of Systematic Errors in Density Functional Approximations.

| Error Type | Physical Manifestation | Impact on Predicted Properties |
|---|---|---|
| Self-Interaction Error (SIE) | Spurious interaction of an electron with itself | Excessive charge delocalization; inaccurate redox potentials [3] |
| Delocalization Error | Overly diffuse electron densities | Underestimated reaction barriers; incorrect dissociation limits [3] |
| Band Gap Underestimation | Too small gap between occupied and unoccupied states | Inaccurate semiconductor & insulator electronic properties [6] |

Machine Learning as a Validating and Corrective Framework

Machine learning is being deployed in several distinct architectures to address the core challenges of DFT. These approaches move beyond traditional physical approximations by learning from high-quality reference data, either from higher-level theories or experiment.

Taxonomy of ML-DFT Hybrid Approaches

The integration of ML into DFT has crystallized into three primary strategies, each with a different corrective locus [3]:

Machine-Learned XC Functionals: Here, a machine learning model, often a neural network, is trained to represent the entire exchange-correlation functional or a residual correction to an existing functional [3] [7]. The model takes features derived from the electron density (or its derivatives) as input and outputs the XC energy density or potential. This approach directly tackles the problem at its source.
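Below is a minimal sketch of the residual form described above. It assumes a local baseline (spin-unpolarized LDA exchange), two hypothetical per-point features (ρ^(1/3) and the reduced density gradient s), and a tiny neural network with untrained placeholder weights. Real ML functionals use richer features, spin resolution and trained parameters. The hydrogen 1s density on a radial grid serves only as a test input:

```python
import math

KX = -0.75 * (3 / math.pi) ** (1 / 3)   # spin-unpolarized LDA exchange prefactor
CS = 2 * (3 * math.pi**2) ** (1 / 3)    # normalization of the reduced gradient s

def features(rho, grad_rho):
    """Hypothetical per-point inputs: rho^(1/3) and s = |grad rho| / (CS rho^(4/3))."""
    return [rho ** (1 / 3), grad_rho / (CS * rho ** (4 / 3))]

def mlp(x, params):
    """One-hidden-layer tanh network returning a scalar energy per electron."""
    w1, b1, w2, b2 = params
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(w1, b1)]
    return sum(w * hv for w, hv in zip(w2, hidden)) + b2

def e_xc(grid, params):
    """E_xc = sum_i w_i * rho_i * [eps_x^LDA(rho_i) + g_theta(features_i)]."""
    total = 0.0
    for weight, rho, grad_rho in grid:
        eps_base = KX * rho ** (1 / 3)                         # baseline: LDA exchange
        eps_residual = mlp(features(rho, grad_rho), params)    # learned correction
        total += weight * rho * (eps_base + eps_residual)
    return total

# Radial midpoint grid for rho = exp(-2r)/pi, whose gradient magnitude is 2 rho.
dr = 0.01
grid = []
for i in range(3000):
    r = (i + 0.5) * dr
    density = math.exp(-2 * r) / math.pi
    grid.append((4 * math.pi * r**2 * dr, density, 2 * density))

zero_params = ([[0.0, 0.0], [0.0, 0.0]], [0.0, 0.0], [0.0, 0.0], 0.0)
toy_params = ([[0.5, -0.1], [-0.3, 0.2]], [0.0, 0.1], [0.05, -0.02], 0.0)  # untrained placeholders
print(e_xc(grid, zero_params))  # reduces to the LDA exchange energy
print(e_xc(grid, toy_params))
```

With all network weights at zero, the functional collapses to its baseline. This is one reason residual formulations are attractive: the model only has to learn the correction.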

Post-DFT Corrections (Δ-ML): In this "composite" approach, a machine learning model is trained to predict the difference between a property calculated with a low-cost DFT functional and a higher-accuracy reference method [3]. This is a highly effective and transferable strategy for property prediction.

Machine-Learned Hamiltonian Corrections: This method applies ML-derived corrections directly to the Hamiltonian or the effective potential, aiming to fix fundamental errors like self-interaction in a system-specific way [3].

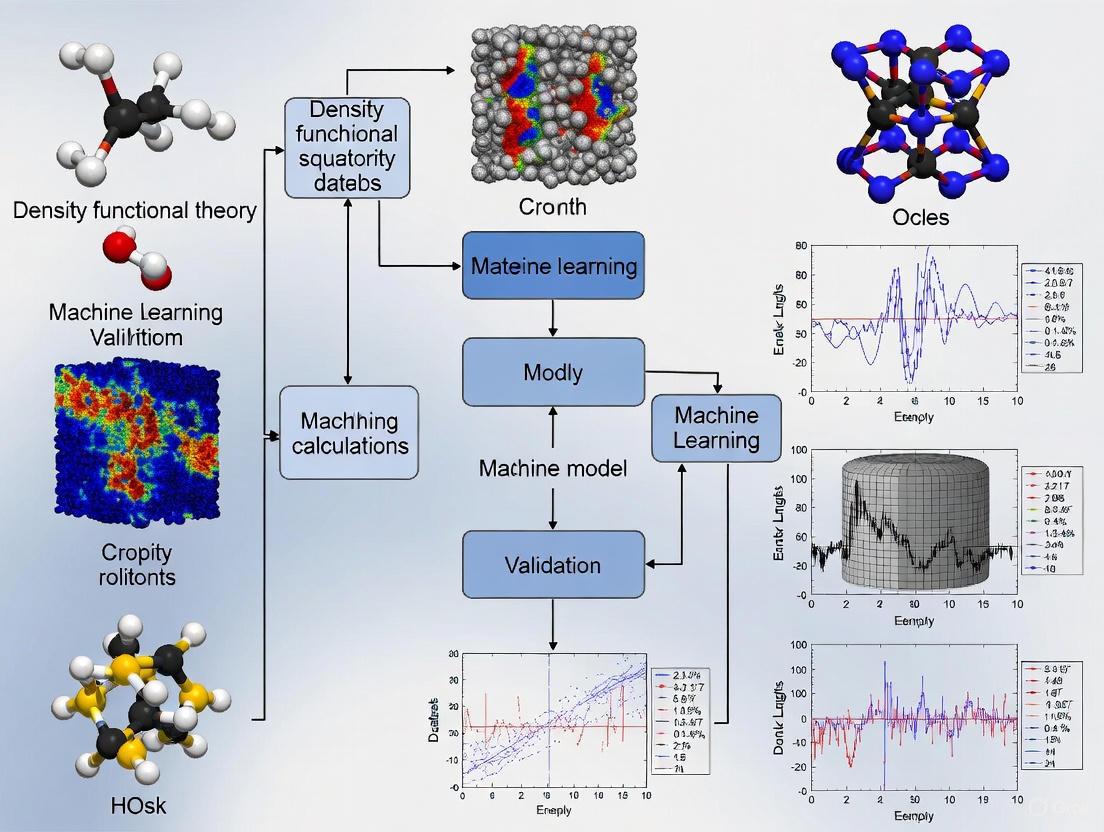

The following diagram illustrates the workflow and logical relationships of these three primary ML-DFT approaches.

Experimental Protocols for Benchmarking ML-DFT Models

Rigorous benchmarking on standardized datasets is critical for objectively comparing the performance of new methods. The protocols below are commonly employed in the field.

Protocol 1: Benchmarking on Quantum Chemistry Sets (e.g., W4-17, G21EA, G21IP)

This protocol evaluates a model's ability to predict molecular properties such as atomization energies (W4-17), electron affinities (G21EA), and ionization potentials (G21IP) [7].

- Data Splitting: Models are trained on a large portion of the dataset and tested on a held-out set of molecules to evaluate generalizability.

- Reference Data: The "ground truth" typically comes from high-level post-Hartree-Fock methods such as CCSD(T) or, in some studies, from expensive hybrid functionals.

- Metric: Root-mean-square error (RMSE) and mean absolute error (MAE) between predicted and reference values are the standard metrics.
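The split and the two metrics can be sketched in a few lines. The split below holds out whole molecules rather than individual conformers, so no molecule appears on both sides; the identifiers, seed and predictions are hypothetical:

```python
import math
import random

def mae(pred, ref):
    """Mean absolute error."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)

def rmse(pred, ref):
    """Root-mean-square error; always >= MAE, and the gap grows with outliers."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))

def split_by_molecule(molecule_ids, test_fraction=0.2, seed=0):
    """Shuffle unique molecule IDs with a fixed seed and hold out a fraction."""
    ids = sorted(set(molecule_ids))
    random.Random(seed).shuffle(ids)
    n_test = max(1, int(len(ids) * test_fraction))
    return ids[n_test:], ids[:n_test]

train_ids, test_ids = split_by_molecule([f"mol{i}" for i in range(10)])
pred, ref = [1.0, 2.5, 2.0], [1.2, 2.0, 2.0]   # hypothetical held-out values
print(len(train_ids), len(test_ids), mae(pred, ref), rmse(pred, ref))
```

Reporting both metrics is informative: an RMSE much larger than the MAE points to a few large errors rather than uniform inaccuracy.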

Protocol 2: Benchmarking on Experimental Redox and Band Gap Data

This protocol tests a model's performance against physical measurements, bridging the gap between computation and experiment [8] [6].

- Property Calculation: For reduction potentials, the electronic energy difference between reduced and oxidized species is computed, often with implicit solvation corrections. For band gaps, the direct Kohn-Sham gap or a derived correction is used.

- Model Comparison: The predictions of ML models, traditional DFT functionals, and semiempirical methods are compared against the experimental values.

- Metric: MAE, RMSE, and the coefficient of determination (R²) are reported for different chemical classes (e.g., main-group vs. organometallic species).
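A minimal sketch of the redox part of this protocol converts total energies into a potential relative to the standard hydrogen electrode (SHE) and scores predictions with R². Two assumptions apply. The energies are in eV and already include any implicit-solvation correction. The absolute SHE potential of 4.44 V is one commonly used value, and other references are also in use. All inputs are hypothetical:

```python
E_ABS_SHE = 4.44  # V; assumed absolute SHE potential (other values are in use)

def reduction_potential(e_ox_ev, e_red_ev, n_electrons=1, e_abs_ref=E_ABS_SHE):
    """Potential (V vs. SHE) for Ox + n e- -> Red from total energies in eV:
    E = -(E_red - E_ox) / n - E_abs(SHE)."""
    return -(e_red_ev - e_ox_ev) / n_electrons - e_abs_ref

def r_squared(pred, ref):
    """Coefficient of determination of predictions against reference values."""
    mean_ref = sum(ref) / len(ref)
    ss_res = sum((r - p) ** 2 for p, r in zip(pred, ref))
    ss_tot = sum((r - mean_ref) ** 2 for r in ref)
    return 1.0 - ss_res / ss_tot

print(reduction_potential(-1000.00, -1005.00))      # hypothetical energies, about 0.56 V
print(r_squared([0.5, 1.0, 1.6], [0.4, 1.1, 1.5]))  # hypothetical predicted vs. measured (V)
```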

Performance Comparison: Traditional DFT vs. Machine Learning Models

This section provides a quantitative comparison of the accuracy achieved by various methods on key chemical properties, highlighting where ML models offer significant improvements.

Accuracy on Molecular Energetics and Redox Properties

The table below summarizes the performance of various methods on high-quality quantum chemistry benchmarks and experimental redox potentials.

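Table 2: Performance comparison of methods on molecular energetics and redox properties. Reduction-potential entries are MAE values in V. Entries for the Residual XC-Uncertain Functional are relative RMSE reductions. "-" marks values not reported here.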

| Method / Model | Type | W4-17 (Atomization Energy) | G21EA (Electron Affinity) | G21IP (Ionization Potential) | Organometallic Reduction Potential (V) |
|---|---|---|---|---|---|
| B3LYP (Hybrid Functional) | Traditional DFT | (Baseline) | (Baseline) | (Baseline) | - |
| DM21 (DeepMind 21) | ML XC Functional | - | - | - | - |
| Residual XC-Uncertain Functional | ML XC Functional | 62% lower RMSE vs. B3LYP [7] | 37% lower RMSE vs. DM21 [7] | - | - |
| B97-3c | Traditional DFT | - | - | - | 0.414 [8] |
| GFN2-xTB | Semiempirical | - | - | - | 0.733 [8] |
| UMA-S (OMol25 NNP) | ML Δ-Correction | - | - | - | 0.262 [8] |

Key Comparisons:

- ML XC Functionals Can Surpass Popular Hybrids: The "Residual XC-Uncertain Functional" demonstrates a dramatic improvement over the widely used B3LYP hybrid functional on quantum chemistry benchmarks, reducing the RMSE by 62% [7]. It also outperforms a previous state-of-the-art ML functional (DM21) by 37% RMSE, showing rapid progress in this area [7].

- NNPs Can Excel in Specific Domains: For predicting experimental reduction potentials of organometallic species, the Universal Model for Atoms (UMA-S), a neural network potential trained on the massive OMol25 dataset, outperforms both the B97-3c DFT functional and the GFN2-xTB semiempirical method, achieving an MAE of just 0.262 V [8]. This is notable as NNPs do not explicitly consider Coulombic physics.
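Relative improvements like these are easy to recompute from the reported MAE values in Table 2 [8]. The sketch below uses only those numbers:

```python
# MAE (V) for organometallic reduction potentials, as reported in Table 2 [8].
mae_v = {"GFN2-xTB": 0.733, "B97-3c": 0.414, "UMA-S": 0.262}

def relative_reduction(baseline, model):
    """Fractional error reduction of `model` relative to `baseline`."""
    return (baseline - model) / baseline

for name in ("B97-3c", "GFN2-xTB"):
    gain = relative_reduction(mae_v[name], mae_v["UMA-S"])
    print(f"UMA-S vs {name}: {gain:.1%} lower MAE")
# UMA-S vs B97-3c: 36.7% lower MAE
# UMA-S vs GFN2-xTB: 64.3% lower MAE
```

By the same arithmetic, a 62% RMSE reduction means the new functional's RMSE is about 0.38 times that of B3LYP.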

Accuracy on Material Band Gaps

The systematic underestimation of band gaps by semi-local DFT is a well-known problem. The following table compares traditional and ML-based approaches for its correction.

Table 3: Performance of different methods in predicting/correcting the band gaps of inorganic materials.

| Method / Model | Type | Target | RMSE (eV) | Key Features / Notes |
|---|---|---|---|---|
| DFT-PBE | Traditional DFT (GGA) | - | (Systematically underestimates) | Standard GGA functional [6] |
| G0W0 Approximation | Many-Body Perturbation | Gold Standard | (High computational cost) | Considered a reliable reference [6] |
| Linear Model (Morales-Garcia) | ML Post-Correction | G0W0 | 0.29 | Uses only PBE band gap [6] |
| Support Vector Machine (Lee et al.) | ML Post-Correction | G0W0 | 0.24 | 270 inorganic compounds [6] |
| Gaussian Process Regression [6] | ML Post-Correction | G0W0 | 0.252 | Minimal set of 5 features [6] |

Key Comparisons:

- ML Enables High Accuracy with Low Cost: Machine learning models successfully bridge the gap between inexpensive DFT-PBE calculations and high-accuracy G0W0 results. The Gaussian Process Regression model achieves an RMSE of 0.252 eV, approaching the accuracy of the more complex SVM model but with a drastically reduced and more interpretable feature set [6].

- Feature Reduction Aids Interpretability: The GPR model highlights a trend in modern ML-DFT: moving away from black-box models with dozens of features. By using only five carefully chosen features (PBE band gap, average atomic distance, oxidation states, etc.), it maintains high accuracy (R²=0.9932) while offering greater physical insight and computational efficiency [6].
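A GPR post-correction can be sketched in standard-library Python. This version is deliberately simpler than the model in [6]. It uses a single feature (the PBE gap) instead of five, fixes the kernel hyperparameters by hand rather than optimizing them, and trains on synthetic targets generated from a made-up linear relation. It does show the property that makes GPR attractive here: a predictive standard deviation that grows away from the training data.

```python
import math

def rbf(a, b, length, variance):
    """Squared-exponential (RBF) kernel for scalar inputs."""
    return variance * math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(m):
    """Lower-triangular L with L L^T = m (m symmetric positive definite)."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(m[i][i] - s)
            else:
                low[i][j] = (m[i][j] - s) / low[j][j]
    return low

def cho_solve(low, b):
    """Solve (L L^T) x = b by forward, then backward substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x

class GaussianProcess:
    def __init__(self, xs, ys, length=1.5, variance=4.0, noise=1e-4):
        self.xs, self.length, self.variance = list(xs), length, variance
        self.mean = sum(ys) / len(ys)  # constant prior mean
        k = [[rbf(a, b, length, variance) + (noise if i == j else 0.0)
              for j, b in enumerate(self.xs)] for i, a in enumerate(self.xs)]
        self.low = cholesky(k)
        self.alpha = cho_solve(self.low, [y - self.mean for y in ys])

    def predict(self, x):
        """Posterior mean and standard deviation at a single input x."""
        k_star = [rbf(x, a, self.length, self.variance) for a in self.xs]
        mean = self.mean + sum(k * a for k, a in zip(k_star, self.alpha))
        v = cho_solve(self.low, k_star)
        var = self.variance - sum(k * w for k, w in zip(k_star, v))
        return mean, math.sqrt(max(var, 0.0))

def synthetic_gw_gap(pbe_gap):
    """Made-up smooth mapping used only to generate toy targets (not data from [6])."""
    return 1.3 * pbe_gap + 0.4

pbe_gaps = [0.5, 1.0, 1.5, 2.0, 3.0, 4.0]            # hypothetical inputs (eV)
gw_gaps = [synthetic_gw_gap(g) for g in pbe_gaps]
gp = GaussianProcess(pbe_gaps, gw_gaps)
mean_in, std_in = gp.predict(2.5)    # inside the training range
mean_out, std_out = gp.predict(8.0)  # far outside it
print(f"PBE 2.5 eV -> {mean_in:.3f} eV (std {std_in:.3f})")
print(f"PBE 8.0 eV -> {mean_out:.3f} eV (std {std_out:.3f})")
```

Far from the data, the prediction reverts toward the prior mean while the standard deviation rises. That rise is the signal a screening workflow would use to distrust the correction.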

For researchers embarking on ML-DFT projects, the following "toolkit" comprises essential datasets, software approaches, and model types that are central to current efforts.

Table 4: Key resources for developing and applying ML-enhanced DFT methods.

| Tool / Resource | Type | Function & Purpose |
|---|---|---|
| OMol25 Dataset | Dataset | A massive dataset of >100 million calculations at ωB97M-V/def2-TZVPD level; used for pre-training general-purpose neural network potentials [8]. |
| ECD Dataset | Dataset | A benchmark for electronic charge density prediction, containing 140,646 crystals with PBE data and 7,147 with high-precision HSE data; vital for models targeting the electron density [9]. |
| Neural Network Potentials (NNPs) | Model | Models like eSEN and UMA that learn the relationship between atomic structure and total energy; act as highly accurate, fast surrogates for DFT energy calculations [8]. |
| Fused XC (FXC) Functional | Model | An ML-based XC functional that uses multi-task learning to improve generalization and robustness across multiple molecular properties [4]. |
| Residual XC-Uncertain Functional | Model | A neural XC functional that models prediction uncertainty, improving accuracy and stability, especially for systems with large systematic errors [7]. |
| Gaussian Process Regression (GPR) | Algorithm | A powerful ML method for property prediction (e.g., band gap correction) that provides uncertainty estimates and performs well with small feature sets [6]. |

The core challenges of DFT—centered on the approximation of the exchange-correlation functional—have long defined the limits of its predictive power. The systematic errors in energy calculations have real-world consequences, from hindering the accurate prediction of catalytic reaction energies to muddying the computational design of novel electronic materials.

The comparisons presented in this guide, however, illustrate a significant shift. Machine learning is no longer just a tool for accelerating simulations; it has matured into a robust framework for validating and correcting the fundamental physics within DFT. The data show that ML-based XC functionals and post-correction models can outperform traditional GGA and hybrid functionals on key benchmarks such as molecular atomization energies and material band gaps [7] [6]. Furthermore, neural network potentials trained on large, high-quality datasets can now rival or even exceed the accuracy of low-cost DFT for specific properties such as reduction potentials, despite not explicitly encoding the underlying physics [8].

The path forward is one of synergistic integration. The future of accurate electronic structure calculation lies not in abandoning the profound physical insights of DFT, but in augmenting them with pattern-recognition capabilities of machine learning. This hybrid approach, built on rigorous benchmarking and scalable data resources, promises to deliver the long-sought "chemical accuracy" for a broader range of systems, ultimately accelerating discovery in nanotechnology, drug development, and materials science.

Computational quantum chemistry, long anchored by first-principles methods like Density Functional Theory (DFT), is undergoing a revolutionary shift driven by machine learning (ML). DFT serves as a powerful tool for modeling electronic structures and predicting molecular properties at a quantum mechanical level by calculating the electron density rather than the wavefunction directly [10] [11]. However, its utility is constrained by prohibitive computational costs, which escalate dramatically with molecular size, making it intractable to simulate scientifically relevant systems of real-world complexity [12]. The emergence of Machine Learning Interatomic Potentials (MLIPs) addresses this fundamental limitation. These surrogate models are trained on DFT-generated data to achieve near-DFT accuracy in predicting energies and atomic forces while reducing computational cost by a factor of up to 10,000, thereby unlocking the simulation of large atomic systems previously out of reach [12] [11]. This article examines the key concepts, terminology, and datasets underpinning this rise of data-driven quantum chemistry, objectively comparing the performance of new, large-scale datasets and the ML models they enable within the critical context of validating and advancing DFT.

Essential Terminology and Concepts

To navigate the field of data-driven quantum chemistry, a clear understanding of its core terminology is essential. The following table defines the key concepts that form the foundation of this interdisciplinary field.

Table 1: Key Concepts and Terminology in Data-Driven Quantum Chemistry

| Term | Definition | Role in Data-Driven Quantum Chemistry |
|---|---|---|
| Density Functional Theory (DFT) | A computational quantum mechanical method for modeling the electronic structure of molecules and materials using electron density [10] [11]. | Serves as the primary source of high-quality, albeit expensive, training data for machine learning models. |
| Machine Learning Interatomic Potentials (MLIPs) | Surrogate models trained on DFT data that learn to predict the total energy and atomic forces of a system from atomic coordinates and numbers [11] [13]. | Provide a fast, efficient alternative to DFT for large-scale atomistic simulations like molecular dynamics. |
| Potential Energy Surface (PES) | A hypersurface governing the energy of a molecular system based on the spatial arrangement of atomic nuclei under the Born-Oppenheimer approximation [11]. | The fundamental relationship that MLIPs are designed to learn and approximate. |
| Geometry Optimization | An iterative process that uses energy gradients (forces) to find a local minimum on the PES, resulting in a stable molecular conformation [11]. | A key application for MLIPs, requiring accurate prediction for both stable and intermediate geometries. |
| Relaxation Trajectory | The complete sequence of intermediate molecular conformations generated during a geometry optimization calculation [11]. | Provides a diverse sampling of the PES, which is crucial for training robust and generalizable MLIPs. |
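Geometry optimization and relaxation trajectories fit together in a few lines. The sketch relaxes a hypothetical two-atom Morse "molecule" on a line by steepest descent and records every visited conformation with its energy and forces, which is the kind of record a trajectory dataset stores. The Morse parameters, step size and force tolerance are assumptions:

```python
import math

def morse_pair(x1, x2, d=1.0, a=1.5, r0=1.0):
    """Energy and per-atom forces for two atoms on a line (hypothetical Morse parameters)."""
    r = x2 - x1
    e = math.exp(-a * (r - r0))
    energy = d * (1 - e) ** 2
    de_dr = 2 * d * a * e * (1 - e)
    return energy, (de_dr, -de_dr)   # F1 = -dE/dx1 = +dE/dr, F2 = -dE/dx2 = -dE/dr

def relax(x1, x2, step=0.05, fmax=1e-4, max_steps=1000):
    """Steepest descent: move each atom along its force until max |F| < fmax.
    Every visited conformation is stored, giving a relaxation trajectory."""
    trajectory = []
    for _ in range(max_steps):
        energy, forces = morse_pair(x1, x2)
        trajectory.append(((x1, x2), energy, forces))
        if max(abs(f) for f in forces) < fmax:
            break
        x1 += step * forces[0]
        x2 += step * forces[1]
    return trajectory

traj = relax(0.0, 1.6)
(final_x1, final_x2), final_energy, _ = traj[-1]
print(f"{len(traj)} frames, final bond length {final_x2 - final_x1:.5f}, energy {final_energy:.2e}")
```

Only the last frame is the optimized structure. The earlier, off-equilibrium frames are the extra PES samples that trajectory-based datasets retain.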

Comparative Analysis of Major Molecular Datasets

The development of accurate and transferable MLIPs is critically dependent on large-scale, high-quality datasets. These datasets provide the foundational information from which models learn the intricacies of the Potential Energy Surface. We objectively compare the specifications and intended applications of several major public datasets below.

Table 2: Comparison of Major Datasets for Molecular Machine Learning

| Dataset Name | Molecules & Conformations | Key Features & Content | Notable Limitations |
|---|---|---|---|
| OMol25 (Open Molecules 2025) [12] | 83M unique molecules; Over 100M 3D snapshots [11]. | Exceptional chemical diversity; Includes biomolecules, electrolytes, and metal complexes; Covers most of the periodic table; Systems up to 350 atoms. | Does not provide full relaxation trajectories [11]. |
| PubChemQCR [11] | ~3.5M trajectories; Over 300M conformations (105M from DFT). | Focuses on relaxation trajectories for organic molecules; Includes energy and atomic force labels for all conformations. | Primarily limited to small organic molecules. |
| SIMG (Stereoelectronics-Infused Molecular Graphs) [14] | N/A (Model, not a dataset) | A molecular representation that incorporates quantum-chemical orbital interactions; A model is provided to generate this representation quickly from standard graphs. | Trained on small molecules; Scope is currently limited but expanding to the entire periodic table [14]. |
| QM9 [11] | ~130,000 small molecules. | A historical benchmark with 19 quantum chemical properties per molecule. | Only one conformation per molecule; limited to 5 atom types; no force labels. |
| ANI-1x [11] | Over 20M conformations; 57,000 unique molecules. | A large dataset of molecular conformations. | Supports only 4 atom types (H, C, N, O). |

Experimental Protocol for Dataset Curation and Benchmarking

The creation and validation of these datasets follow rigorous computational protocols. For OMol25, the curation process involved leveraging massive computing resources to run millions of DFT simulations. The team started with existing datasets to ensure coverage of chemistry important to researchers, performed more advanced DFT simulations on these snapshots, and then identified and filled major gaps in chemical diversity, leading to a dataset with a strong focus on biomolecules, electrolytes, and metal complexes [12]. The computational scale was unprecedented, costing six billion CPU hours [12].

For PubChemQCR, the experimental protocol involved curating the raw geometry optimization outputs from the PubChemQC project [11]. This process preserves the entire relaxation trajectory—each intermediate conformation a molecule passes through on its way to a stable geometry—rather than just the final, optimized structure. Each of these hundreds of millions of conformations is annotated with its total energy and the atomic forces, which are the negative gradients of the energy with respect to atomic positions [11].

To ensure model performance and drive innovation, datasets like OMol25 and PubChemQCR are released with thorough evaluations and benchmarks. These include public leaderboards that rank MLIPs on their ability to complete specific, chemically meaningful tasks accurately, providing a standardized measure of progress and fostering healthy competition within the research community [12] [11].

Benchmarking Machine Learning Interatomic Potentials

The ultimate test for any MLIP is its performance on scientifically relevant tasks. Benchmarks provide objective measures of model accuracy, generalization, and computational efficiency, guiding researchers in selecting the right tool for their application.

Table 3: MLIP Performance Metrics and Benchmarking Criteria

| Benchmarking Aspect | Key Metric | Interpretation & Impact |
|---|---|---|
| Energy Accuracy | Mean Absolute Error (MAE) of predicted vs. DFT total energy. | Lower error indicates a more faithful representation of the potential energy surface, crucial for property prediction. |
| Force Accuracy | Mean Absolute Error (MAE) of predicted vs. DFT atomic forces. | Critical for reliable geometry optimization and molecular dynamics simulations; force errors are often more telling than energy errors. |
| Generalization | Performance on molecular systems or elements not seen during training. | Measures model transferability and practical utility beyond its training set. |
| Geometric Transferability | Accuracy on intermediate, off-equilibrium conformations within a relaxation trajectory [11]. | Essential for MLIPs to function as true surrogates in simulation, not just for predicting stable structures. |
| Computational Speed | Simulation speedup factor compared to direct DFT calculation. | A key practical advantage, with MLIPs being up to 10,000x faster than DFT [12]. |
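The first two rows of the table reduce to simple averages. The following is a minimal sketch, assuming predictions and DFT references are held in NumPy arrays. Normalizing energy errors per atom and averaging force errors over every Cartesian component are common conventions, but they are assumptions here, since some benchmarks report total-energy MAE or force-vector norms instead.

```python
import numpy as np

def energy_mae_per_atom(e_pred, e_ref, n_atoms):
    """MAE of total energies, each error divided by its structure's atom count."""
    e_pred, e_ref, n_atoms = (np.asarray(a, dtype=float) for a in (e_pred, e_ref, n_atoms))
    return float(np.mean(np.abs(e_pred - e_ref) / n_atoms))

def force_mae(f_pred, f_ref):
    """MAE over all Cartesian force components; structures may differ in size."""
    errors = np.concatenate([np.abs(np.asarray(p) - np.asarray(r)).ravel()
                             for p, r in zip(f_pred, f_ref)])
    return float(errors.mean())

# Toy usage with made-up numbers (eV and eV/Å):
print(energy_mae_per_atom([-10.2, -20.0], [-10.0, -20.4], [2, 4]))   # about 0.1 eV/atom
print(force_mae([np.zeros((2, 3))], [np.full((2, 3), 0.05)]))        # about 0.05 eV/Å
```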

Advanced Protocol: Refining MLIPs with Experimental Data

A frontier in data-driven quantum chemistry involves moving beyond the inherent limitations of DFT accuracy. Since MLIPs are trained on DFT data, they inherit any systematic errors of the underlying quantum mechanical method [13]. A cutting-edge protocol to address this uses experimental data to refine pre-trained MLIPs.

As demonstrated in recent research, this process involves:

- Pre-training: An MLIP is first trained on a large dataset of DFT calculations, for example, for a material like uranium dioxide (UO₂).

- Experimental Target Selection: A sensitive experimental probe, such as Extended X-ray Absorption Fine Structure (EXAFS) spectra, which are highly sensitive to the local atomic structure, is selected as the refinement target.

- Trajectory Re-weighting: A trajectory re-weighting technique is combined with transfer learning to minimally adjust the MLIP's parameters. This ensures the structural ensembles generated by the MLIP yield EXAFS spectra that match the experimental data.

- Validation: The refined MLIP is then validated by comparing its predictions for various properties (e.g., lattice parameters, bulk modulus, defect energies) against other experimental or high-fidelity theoretical data. This protocol has been shown to yield significant improvement, creating MLIPs that surpass the accuracy of their base DFT training data [13].
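The re-weighting step relies on a standard statistical-mechanics identity: configurations sampled with a reference potential U_ref can be re-weighted by exp[-β(U_new − U_ref)] to estimate averages under an adjusted potential U_new without re-running the simulation, as long as the two ensembles overlap (monitored here with the Kish effective sample size). The sketch below is a one-dimensional toy, not the cited authors' implementation: a harmonic stiffness k plays the role of the MLIP parameters, ⟨x²⟩ stands in for a simulated EXAFS signal, and the target value is hypothetical.

```python
import numpy as np

def reweight(u_ref, u_new, beta):
    """Normalized weights that retarget samples drawn under u_ref to the potential u_new."""
    log_w = -beta * (np.asarray(u_new) - np.asarray(u_ref))
    log_w -= log_w.max()                     # shift for numerical stability
    w = np.exp(log_w)
    return w / w.sum()

def effective_sample_size(w):
    """Kish ESS; values far below len(w) signal poor ensemble overlap."""
    return 1.0 / np.sum(w ** 2)

rng = np.random.default_rng(0)
beta, k_ref = 1.0, 1.0
x = rng.normal(0.0, 1.0 / np.sqrt(beta * k_ref), size=200_000)   # one reference "trajectory"
u_ref = 0.5 * k_ref * x ** 2

target = 0.8                                  # hypothetical "experimental" value of <x^2>
best_k, best_loss = None, np.inf
for k_new in np.linspace(1.0, 1.5, 51):       # scan trial parameters; no new sampling needed
    w = reweight(u_ref, 0.5 * k_new * x ** 2, beta)
    loss = (np.sum(w * x ** 2) - target) ** 2
    if loss < best_loss:
        best_k, best_loss = k_new, loss
print(best_k)   # analytic answer: 1 / (beta * target) = 1.25
```

A practical refinement would presumably adjust many parameters with gradient-based optimization rather than a one-dimensional scan, and would need fresh sampling once the effective sample size drops too far.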

The Scientist's Toolkit: Essential Research Reagents

In this context, "research reagents" refer to the essential computational tools, datasets, and models that enable work in data-driven quantum chemistry.

Table 4: Essential Research Reagents and Resources

| Resource / "Reagent" | Type | Primary Function |
|---|---|---|
| DFT Software | Software Tool | Generates the high-fidelity data on electronic structure, energies, and forces required to train and validate MLIPs. |
| OMol25 & PubChemQCR Datasets | Training Data | Provides massive, diverse, and publicly available datasets of molecular conformations and relaxation trajectories for training generalizable MLIPs [12] [11]. |
| MLIP Architectures | Model | Acts as the core engine that learns the mapping from atomic structure to system energy and forces (e.g., NequIP, MACE). |
| EXAFS Experimental Data | Experimental Data | Serves as a high-precision refinement target for correcting systematic errors in DFT-trained MLIPs, pushing accuracy beyond the DFT baseline [13]. |
| Public Benchmarks & Evaluations | Benchmarking Tool | Provides standardized challenges and leaderboards to objectively measure, compare, and track the performance of different MLIPs on chemically meaningful tasks [12]. |

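Integrated Workflow

The integration of DFT, machine learning, and experimental validation can be conceptualized as a single workflow for scientific discovery: DFT calculations generate reference energies and forces; MLIPs are trained on these large datasets; public benchmarks assess accuracy, generalization, and speed; and sensitive experimental observables such as EXAFS spectra are used to refine the models beyond the DFT baseline.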

The integration of machine learning (ML) with density functional theory (DFT) has created a paradigm shift in computational chemistry and materials science. This revolution is powered by large-scale, high-quality datasets that serve as the foundational training material for ML models. These datasets enable the development of machine learning interatomic potentials (MLIPs) and other surrogate models that approximate DFT-level accuracy at a fraction of the computational cost [10]. The resulting models can accelerate molecular dynamics simulations, high-throughput screening, and materials discovery by orders of magnitude, effectively bridging the gap between quantum mechanical accuracy and computational tractability for complex systems [15] [16].

This guide provides an objective comparison of major high-throughput datasets fueling the ML-DFT revolution, examining their structural composition, applications, and performance benchmarks to aid researchers in selecting appropriate resources for their scientific objectives.

Comparative Analysis of Major ML-DFT Datasets

Table 1: Specification Comparison of Major High-Throughput DFT Datasets

| Dataset | Primary Focus | Size (Structures) | Elements Covered | Key Properties | Primary Applications |
|---|---|---|---|---|---|
| QCML [17] | Small molecules & chemical space | 33.5M DFT + 14.7B semi-empirical | Large fraction of periodic table | Energies, forces, multipole moments, Kohn-Sham matrices | Training foundation models, ML force fields |
| PubChemQCR [15] | Molecular relaxation trajectories | ~3.5M trajectories, >300M conformations | Organic molecules (H, C, N, O, etc.) | Total energy, atomic forces | Training/evaluating MLIPs, molecular dynamics |
| MP-ALOE [18] | Solid-state materials | ~1M DFT calculations | 89 elements | Cohesive energies, forces, stresses | Universal ML interatomic potentials, materials discovery |
| MatPES [18] | Solid-state materials | Not specified (reference for MP-ALOE) | Multiple elements | Energies, forces from MD trajectories | MLIP training, near-equilibrium properties |

Table 2: Performance and Benchmarking Capabilities

| Dataset | Benchmarking Focus | Key Performance Metrics | Level of Theory | Structural Diversity |
|---|---|---|---|---|
| QCML [17] | ML force field accuracy | Force prediction, energy accuracy | Various DFT and semi-empirical | Equilibrium and off-equilibrium conformations |
| PubChemQCR [15] | MLIP generalization | Energy/force prediction across relaxations | Various DFT levels | Molecular relaxation trajectories |
| MP-ALOE [18] | UMLIP transferability | Equilibrium properties, extreme deformation stability | r2SCAN meta-GGA | Off-equilibrium, high-pressure structures |
| MatPES [18] | Solid-state MLIP accuracy | Formation energy prediction, force accuracy | r2SCAN meta-GGA | Near-equilibrium structures from MD |

Experimental Protocols and Methodologies

Dataset Generation Workflows

The value of ML-DFT datasets depends fundamentally on their generation methodologies. The QCML dataset employs a systematic hierarchical approach beginning with chemical graphs represented as canonical SMILES strings, followed by conformer search and normal mode sampling to generate both equilibrium and off-equilibrium 3D structures [17]. This method ensures comprehensive coverage of chemical space for small molecules up to 8 heavy atoms.
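Normal mode sampling, in the general form used for ML force-field datasets, displaces an equilibrium geometry along the eigenvectors of its Hessian so that each mode carries a thermal-scale energy. The sketch below assumes unit masses, a positive-definite toy Hessian with translational and rotational zero modes already removed, and exponentially distributed (classical Boltzmann) mode energies; QCML's actual temperatures and amplitude distribution are not specified here.

```python
import numpy as np

def normal_mode_sample(x_eq, hessian, kT, rng, n_samples=1):
    """Off-equilibrium geometries from thermally weighted normal-mode displacements.

    Assumes unit masses and strictly positive Hessian eigenvalues.
    """
    k, modes = np.linalg.eigh(hessian)           # force constants, orthonormal mode vectors
    geoms, e_harm = [], []
    for _ in range(n_samples):
        e_mode = rng.exponential(kT, size=k.shape)       # energy assigned to each mode
        sign = rng.choice([-1.0, 1.0], size=k.shape)
        amp = sign * np.sqrt(2.0 * e_mode / k)            # 0.5 * k * amp**2 == e_mode
        geoms.append(x_eq + modes @ amp)
        e_harm.append(e_mode.sum())                       # harmonic energy above the minimum
    return np.array(geoms), np.array(e_harm)

rng = np.random.default_rng(1)
x_eq = np.zeros(2)
H = np.array([[2.0, -1.0], [-1.0, 2.0]])         # toy Hessian with eigenvalues 1 and 3
X, E = normal_mode_sample(x_eq, H, kT=0.1, rng=rng, n_samples=5)
print(X, E)
```

Each sampled geometry would then receive a fresh DFT single-point calculation to supply off-equilibrium energies and forces; for real molecules the Hessian would be mass-weighted.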

For solid-state materials, MP-ALOE utilizes active learning via query by committee (QBC) to strategically sample structures, particularly targeting off-equilibrium configurations with high-energy states and large magnitude forces [18]. This approach efficiently expands the coverage of the potential energy surface beyond equilibrium minima, which is crucial for developing robust universal ML interatomic potentials.
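Query by committee can be sketched in a few lines: train several models on resampled copies of the labeled data, score unlabeled candidates by how much the models disagree, and send the most contested candidates to DFT. In this toy, bootstrapped cubic polynomial fits to sin(3x) stand in for an MLIP committee and a 1D coordinate stands in for configuration space; committee size, model type, and batch size are illustrative assumptions, not MP-ALOE's settings.

```python
import numpy as np

def train_committee(x, y, n_models, degree, rng):
    """One polynomial fit per bootstrap resample of the labeled data."""
    committee = []
    for _ in range(n_models):
        idx = rng.integers(0, len(x), size=len(x))
        committee.append(np.polyfit(x[idx], y[idx], degree))
    return committee

def query_by_committee(committee, candidates, n_select):
    """Indices of the candidates with the largest spread of committee predictions."""
    preds = np.array([np.polyval(coeffs, candidates) for coeffs in committee])
    disagreement = preds.std(axis=0)
    return np.argsort(disagreement)[::-1][:n_select]

rng = np.random.default_rng(0)
x_lab = np.linspace(-1.0, 1.0, 12)               # "already computed" configurations
y_lab = np.sin(3.0 * x_lab)                      # stand-in for DFT energies
committee = train_committee(x_lab, y_lab, n_models=8, degree=3, rng=rng)
candidates = np.linspace(-2.0, 2.0, 41)          # unlabeled pool, partly outside the data
picked = query_by_committee(committee, candidates, n_select=4)
print(candidates[picked])    # expected far outside [-1, 1], where the fits diverge
```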

PubChemQCR takes a different approach by curating relaxation trajectories from the PubChemQC project, capturing the complete pathway from initial molecular configurations to DFT-optimized structures [15]. This provides unique insights into non-equilibrium conformations encountered during geometric optimization processes.

Diagram 1: High-Throughput Dataset Generation Workflow. This generalized workflow shows the multi-stage process for creating comprehensive ML-DFT datasets, from initial chemical space sampling through final quality control.

Benchmarking Methodologies for ML Model Validation

Robust benchmarking is essential for validating ML models trained on these datasets. The Matbench Discovery framework addresses key challenges in materials discovery by emphasizing prospective benchmarking with realistic test data, relevant stability targets (distance to convex hull), and informative classification metrics beyond simple regression accuracy [16]. This approach reveals that accurate regressors can still produce high false-positive rates near decision boundaries, highlighting the importance of task-relevant evaluation.
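The regressor-versus-classifier point is easy to reproduce: threshold predicted and true distances to the convex hull to label each material stable or unstable, then count hits and false alarms. In the toy below (made-up values, not Matbench Discovery data or its API), every prediction is off by the same 0.02 eV/atom, yet three of the five structures flagged as stable are false positives because they sit just above the hull. The 0 eV/atom stability threshold is an assumption; benchmarks may use a small positive tolerance.

```python
import numpy as np

def stability_metrics(e_hull_pred, e_hull_true, threshold=0.0):
    """Label 'stable' as E_hull <= threshold (eV/atom), then score the predictions."""
    e_pred = np.asarray(e_hull_pred, dtype=float)
    e_true = np.asarray(e_hull_true, dtype=float)
    pred, true = e_pred <= threshold, e_true <= threshold
    tp = int(np.sum(pred & true))
    fp = int(np.sum(pred & ~true))
    fn = int(np.sum(~pred & true))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    mae = float(np.mean(np.abs(e_pred - e_true)))
    return {"mae": mae, "precision": precision, "recall": recall, "f1": f1,
            "false_positives": fp}

e_true = [-0.05, 0.01, 0.015, 0.30, -0.10, 0.016]   # eV/atom above hull (made up)
e_pred = [-0.07, -0.01, -0.005, 0.28, -0.12, -0.004]
print(stability_metrics(e_pred, e_true))
```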

For MLIP validation, standard protocols include:

- Equilibrium property prediction - Comparing formation energies, lattice parameters, and band gaps with DFT references [18] [19]

- Force accuracy assessment - Evaluating force predictions on off-equilibrium structures [18]

- Molecular dynamics stability - Testing model stability under extreme temperatures and pressures [18]

- Relaxation trajectory accuracy - Assessing geometric optimization pathways [15]

MP-ALOE benchmarking demonstrates that models trained on this dataset show improved stability in molecular dynamics runs and remain physically sound under extreme hydrostatic pressures up to 100 GPa [18].

Table 3: Key Computational Tools and Resources for ML-DFT Research

| Resource Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Dataset Platforms | Materials Project [18] [16], PubChemQC [15] | Source of initial structures & reference data | High-throughput calculation inputs |
| Active Learning Frameworks | Query by Committee (QBC) [18] | Strategic sampling of configuration space | Efficient dataset expansion |
| MLIP Architectures | MACE [18], Graph Neural Networks [16] | Surrogate potential training | Force field development |
| Benchmarking Suites | Matbench Discovery [16] | Standardized model evaluation | Performance validation |
| DFT Accelerators | Accelerated DFT [20] | Cloud-native DFT computation | Rapid data generation |

Performance Comparison and Research Applications

Dataset-Specific Strengths and Applications

Each major dataset exhibits distinct advantages for particular research domains:

The QCML dataset excels in chemical diversity with coverage of a large fraction of the periodic table, making it particularly valuable for training foundation models intended for broad applicability across chemical space [17]. Its inclusion of both equilibrium and off-equilibrium structures enables the development of ML force fields that can accurately describe molecular deformations and reaction pathways.

PubChemQCR provides unique value through its focus on complete relaxation trajectories, offering unprecedented insight into the geometric optimization process [15]. This makes it particularly valuable for developing MLIPs that can accurately reproduce DFT relaxation pathways, a crucial capability for computational screening of molecular conformations.

For solid-state materials discovery, MP-ALOE offers superior performance in extreme condition modeling due to its inclusion of high-pressure structures and configurations with large magnitude forces [18]. Benchmarks show that MLIPs trained on MP-ALOE maintain physical soundness under hydrostatic pressures up to 100 GPa, significantly outperforming models trained on narrower datasets.

Impact on Materials Discovery Pipelines

The integration of these datasets into materials discovery workflows has demonstrated significant acceleration of computational screening campaigns. Universal MLIPs trained on comprehensive datasets like MP-ALOE can effectively pre-screen thermodynamically stable hypothetical materials, reducing the computational burden on DFT calculations in high-throughput pipelines [16]. This approach has advanced to the point where ML models can achieve prospective discovery success – identifying previously unknown stable materials that are subsequently verified by DFT calculations.

The hybrid DFT+ML approach has shown particular success in challenging prediction tasks such as band gap estimation in metal oxides, where combining DFT+U calculations with machine learning regression achieves accuracy comparable to higher-level theories at substantially reduced computational cost [19].

The ML-DFT revolution continues to evolve with several emerging trends. The development of universal interatomic potentials (UIPs) that approximate DFT-level energies and forces across the periodic table represents a major frontier, with current benchmarks indicating that UIPs surpass the other methodologies tested in both accuracy and robustness for materials discovery [16]. Active learning enables more efficient dataset expansion by strategically sampling regions of chemical space where model uncertainty is high [18].

Future efforts will likely focus on improving model interpretability, enhancing data quality standards, and broadening applicability to increasingly complex systems including disordered materials, interfaces, and dynamic processes [10]. The growing emphasis on prospective validation – testing model predictions on genuinely new materials rather than retrospective benchmarks – represents a crucial step toward reliable real-world deployment [16].

As these high-throughput datasets continue to expand and diversify, they will increasingly serve as the foundation for developing next-generation ML models that further blur the distinction between computational accuracy and efficiency, ultimately accelerating the discovery of novel materials and molecules for technological applications.

Machine Learning Methodologies for Enhancing and Validating DFT Calculations

Density Functional Theory (DFT) stands as one of the most widely used computational tools in materials science and drug development for predicting electronic structure and material properties. Despite its considerable successes, DFT suffers from intrinsic errors in its exchange-correlation functionals that limit its predictive accuracy for key thermodynamic properties, particularly formation enthalpies and phase stability. These errors, often described as a lack of sufficient "energy resolution," become particularly problematic in ternary phase stability calculations where small energy differences determine which phases are stable [21]. The emerging solution that has gained significant traction in recent research involves leveraging machine learning (ML) techniques to systematically correct these DFT errors, thereby enhancing predictive reliability without prohibitive computational cost.

This review compares the current landscape of ML-corrected DFT methodologies, focusing specifically on their application to formation enthalpy prediction and phase stability assessment. We examine multiple approaches, from neural network corrections of alloy thermodynamics to ML-aided high-throughput screening of complex phases, and compare their performance using recently published results. By framing this analysis within the broader thesis of validating DFT with machine learning, we aim to give researchers a practical guide to selecting correction strategies for their specific computational challenges.

Systematic Errors in Standard DFT Implementations

The predictive limitations of DFT manifest most clearly in thermodynamic calculations where energy differences between competing phases or compounds are small but significant. Standard DFT implementations, including the widely used B3LYP functional and EMTO-CPA methods, typically exhibit intrinsic errors in their energy functionals that prevent accurate determination of phase stability, particularly for ternary systems [21] [22]. These limitations arise from several sources:

- Exchange-correlation functional inaccuracies: The unknown form of the exact exchange-correlation functional necessitates approximations that introduce systematic deviations from true energy values [22].

- Error cancellation dependencies: Practical DFT implementations often rely on error cancellation between chemical species to achieve reasonable accuracy for energy differences, but this approach is inherently system-dependent and limits transferability [22].

- Intrinsic energy resolution limits: DFT lacks the fine energy resolution required to distinguish between structurally similar phases with small formation enthalpy differences, particularly problematic for ternary phase diagrams [21].

These fundamental limitations have motivated the development of ML-based correction schemes that target the discrepancy between DFT-calculated and reference values, whether derived from experimental measurements or high-level theoretical calculations.

Machine Learning Correction Paradigms

Several distinct ML correction paradigms have emerged, each with different theoretical foundations and application domains:

- Δ-ML correction of formation enthalpies: This approach trains neural networks to predict the discrepancy between DFT-calculated and experimentally measured formation enthalpies, utilizing features such as elemental concentrations, atomic numbers, and interaction terms to capture chemical effects [21].

- Direct ML prediction of material properties: For computationally expensive phases like the σ phase, ML models can be trained to predict formation enthalpies directly from compositional and structural descriptors, bypassing full DFT calculations for new compositions [23].

- XC functional correction: Rather than correcting final energies, this approach uses ML to model the deviation between density functional approximations (DFAs) such as B3LYP and the exact exchange-correlation functional, operating directly at the level of the functional [22].

- Stability classification: For applications requiring rapid screening, ML classifiers can predict phase stability categories (e.g., solid solution, intermetallic, or mixed) based on compositional descriptors [24] [25].

Table 1: Comparison of ML Correction Paradigms for DFT

| Correction Type | Theoretical Basis | Target Output | Applicable Systems |
|---|---|---|---|
| Δ-ML Enthalpy Correction | DFT vs. experimental enthalpy discrepancy | Corrected formation enthalpy | Binary and ternary alloys [21] |
| Direct Property Prediction | Composition-structure-property relationships | Formation enthalpy directly | Complex phases (σ phase, MAX phases) [23] [25] |
| XC Functional Correction | Deviation from exact functional | Improved XC energy | Molecular systems [22] |
| Stability Classification | Compositional features vs. phase stability | Phase category | High-entropy alloys [24] |

Methodological Approaches: Experimental Protocols and Workflows

Neural Network Correction for Alloy Thermodynamics

The Δ-ML approach for correcting alloy formation enthalpies employs a structured methodology to ensure physical meaningfulness and robustness. Simak et al. detail a protocol where a neural network model is trained to predict the discrepancy between DFT-calculated and experimentally measured formation enthalpies for binary and ternary alloys [21] [26]. The key methodological components include:

- Feature engineering: The input feature set comprises elemental concentrations, atomic numbers, and interaction terms designed to capture essential chemical and structural effects without explicit structural information.

- Network architecture: Implementation as a multi-layer perceptron (MLP) regressor with three hidden layers, optimized through leave-one-out cross-validation (LOOCV) and k-fold cross-validation to prevent overfitting.

- Data curation: Rigorous dataset construction focusing on chemically relevant systems, particularly for high-temperature applications in aerospace and protective coatings (Al-Ni-Pd and Al-Ni-Ti systems).

- Reference data: Experimental formation enthalpy measurements serve as ground truth for training, with the model learning systematic deviations rather than absolute values.
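The Δ-ML idea reduces to learning the residual ΔH_exp − ΔH_DFT and adding the prediction back onto the DFT value. The sketch below is illustrative only: it swaps the paper's MLP for closed-form ridge regression so it runs with NumPy alone, uses a hypothetical feature vector (concentrations, concentration-weighted atomic number, pairwise concentration products, bias) as a stand-in for the published feature set, scores the model with leave-one-out cross-validation, and trains on synthetic residuals rather than experimental data.

```python
import numpy as np

def features(conc, z):
    """Hypothetical descriptor: concentrations, mean atomic number, pairwise terms, bias."""
    conc = np.asarray(conc, dtype=float)
    pairs = [conc[i] * conc[j] for i in range(len(conc)) for j in range(i + 1, len(conc))]
    return np.concatenate([conc, [conc @ np.asarray(z, dtype=float)], pairs, [1.0]])

def fit_ridge(X, y, lam):
    """Closed-form ridge regression (a stand-in for the paper's MLP regressor)."""
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

def loocv_mae(X, y, lam):
    """Leave-one-out CV: refit without sample i, then score the held-out residual."""
    errors = []
    for i in range(len(y)):
        keep = np.arange(len(y)) != i
        w = fit_ridge(X[keep], y[keep], lam)
        errors.append(abs(X[i] @ w - y[i]))
    return float(np.mean(errors))

rng = np.random.default_rng(42)
Z = [13, 28, 22]                                  # atomic numbers of Al, Ni, Ti
C = rng.dirichlet([1.0, 1.0, 1.0], size=40)       # synthetic ternary compositions
# Synthetic target Delta = H_exp - H_DFT in eV/atom (made-up form plus noise)
delta = 0.2 * C[:, 0] * C[:, 1] - 0.1 * C[:, 1] * C[:, 2] + rng.normal(0.0, 0.002, 40)
X = np.array([features(c, Z) for c in C])

cv_error = loocv_mae(X, delta, lam=1e-4)
baseline = float(np.mean(np.abs(delta)))          # error of applying no correction at all
w = fit_ridge(X, delta, lam=1e-4)
# Corrected enthalpy for a new composition c: H_DFT + features(c, Z) @ w
print(cv_error, baseline)
```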

This approach maintains the computational efficiency of DFT while significantly improving accuracy, as the trained ML model adds minimal computational overhead to the standard DFT workflow.

High-Throughput σ Phase Prediction

For complex intermetallic phases like the σ phase, a different methodological approach proves more efficient. Rather than correcting DFT energies, this method uses ML to predict formation enthalpies directly from compositional information, dramatically reducing computational requirements. The protocol involves:

- Database construction: Creating a specialized first-principles database containing 1342 configurations of binary σ phases for model training and testing [23].

- Descriptor selection: Utilizing features including element types at different crystallographic sites, atomic radius, and number of valence electrons to encode essential chemical information.

- Model training and validation: Comparing multiple ML algorithms, among which the multi-layer perceptron (MLP) gave the best predictive accuracy (MAE of 22.881 meV/atom on training data and 34.871 meV/atom on validation data).

- Computational efficiency optimization: The trained model predicts formation enthalpies for 1177 untrained ternary configurations with a significant reduction in computational time (over 59% compared to traditional first-principles calculations).

This approach is particularly valuable for phases with numerous possible configurations where exhaustive DFT calculations would be computationally prohibitive.
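The number of configurations can be made concrete by counting. The σ structure has five inequivalent Wyckoff sites (2a, 4f, and three 8-fold sites, labeled 8i1, 8i2, and 8j here) with 30 atoms per cell. If each site is occupied by a single element, a binary system has 2⁵ = 32 ordered configurations and a ternary system 3⁵ = 243, of which 3⁵ − 3·2⁵ + 3 = 150 contain all three elements. The sketch below enumerates them and builds a site-resolved descriptor of the kind described above; the element labels and property values are placeholders, and the exact descriptor layout used in [23] is not reproduced here.

```python
from itertools import product
import numpy as np

# Sigma-phase Wyckoff sites and multiplicities (30 atoms per conventional cell)
SITES = {"2a": 2, "4f": 4, "8i1": 8, "8i2": 8, "8j": 8}

def enumerate_configs(elements):
    """Every ordered end-member occupation: one element per Wyckoff site."""
    return list(product(elements, repeat=len(SITES)))

def composition(config):
    """Atomic fractions implied by a site occupation."""
    total = sum(SITES.values())
    fractions = {}
    for element, mult in zip(config, SITES.values()):
        fractions[element] = fractions.get(element, 0.0) + mult / total
    return fractions

def descriptor(config, props):
    """Site-resolved features: (atomic radius, valence electrons) for each site in order."""
    return np.array([value for element in config for value in props[element]], dtype=float)

# Placeholder element properties (radius in Å, valence electrons): NOT real data
props = {"A": (1.25, 6), "B": (1.28, 8), "C": (1.40, 5)}
ternary = enumerate_configs(["A", "B", "C"])
truly_ternary = [c for c in ternary if len(set(c)) == 3]
X = np.array([descriptor(c, props) for c in truly_ternary])
print(len(enumerate_configs(["A", "B"])), len(ternary), len(truly_ternary), X.shape)
```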

Figure 1: Generalized workflow for ML-corrected DFT stability prediction

ML-Corrected Density Functional Approximations

A more fundamental approach targets the root cause of DFT inaccuracies—the approximate exchange-correlation functional. An et al. developed a novel ML-based correction to the widely used B3LYP functional that directly targets its deviations from the exact exchange-correlation functional [22]. The methodology includes:

- Reference data generation: Utilizing highly accurate absolute energies as exclusive reference data to eliminate reliance on error cancellation between chemical species.

- Error attribution: Designing a scheme to attribute errors to real-space pointwise contributions rather than global energy differences.

- Double-cycle protocol: Incorporating self-consistent-field (SCF) calculations directly into the training workflow to ensure self-consistency.

- Transferability optimization: Focusing on absolute energies during training rather than relative energies to enhance model transferability across different chemical systems.

This approach addresses the fundamental limitation of conventional DFAs that rely on system-dependent error cancellation, potentially leading to more universally applicable functionals.
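Attributing errors to real-space points presupposes that the XC energy is a quadrature sum of a pointwise energy density. The sketch below shows that structure using LDA (Dirac) exchange, E_x = −(3/4)(3/π)^(1/3) ∫ ρ^(4/3) dr, evaluated on a radial grid for a spherical Gaussian density, plus an optional pointwise correction f(ρ) standing in for a learned model. It is neither B3LYP nor the model of An et al.; the toy correction `0.01 * rho` is purely hypothetical.

```python
import numpy as np

C_X = -0.75 * (3.0 / np.pi) ** (1.0 / 3.0)     # LDA (Dirac) exchange prefactor, Hartree a.u.

def gaussian_density(r, n_elec=2.0, alpha=1.0):
    """Spherical Gaussian density normalized to n_elec electrons."""
    return n_elec * (alpha / np.pi) ** 1.5 * np.exp(-alpha * r ** 2)

def radial_grid(r_max=12.0, n=4000):
    """Radial points with trapezoid weights that include the 4*pi*r^2 volume element."""
    r = np.linspace(0.0, r_max, n)
    h = r[1] - r[0]
    w = np.full(n, h)
    w[0] = w[-1] = 0.5 * h
    return r, w * 4.0 * np.pi * r ** 2

def xc_energy(rho, weights, correction=None):
    """E = sum_g w_g * e(rho_g): a pointwise energy density integrated over the grid.

    `correction` is a hypothetical learned term f(rho) added at every grid point.
    """
    e_density = C_X * rho ** (4.0 / 3.0)
    if correction is not None:
        e_density = e_density + correction(rho)
    return float(np.sum(weights * e_density))

r, w = radial_grid()
rho = gaussian_density(r)
e_lda = xc_energy(rho, w)
# Closed form for this density: C_X * N^(4/3) * (alpha/pi)^2 * (3*pi/(4*alpha))^(3/2)
e_exact = C_X * 2.0 ** (4.0 / 3.0) * (1.0 / np.pi) ** 2 * (3.0 * np.pi / 4.0) ** 1.5
e_corr = xc_energy(rho, w, correction=lambda d: 0.01 * d)   # toy correction: 0.01 * rho
print(e_lda, e_exact, e_corr - e_lda)   # last value is close to 0.01 * N = 0.02
```

Because the correction is added point by point before integration, its contribution to the total can be traced back to individual grid points, which is what makes a real-space error attribution possible.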

Performance Comparison: Quantitative Analysis of ML-DFT Methods

Accuracy Metrics and Computational Efficiency

Direct performance comparison between different ML-DFT methods reveals distinct trade-offs between accuracy, computational efficiency, and applicability. The σ phase prediction model achieves a mean absolute error (MAE) of 22.881 meV/atom on training data and 34.871 meV/atom on validation data, which the authors note is comparable to the error of DFT calculations themselves [23]. This performance comes with a significant computational advantage—the ML approach reduces computational time by over 59% compared to traditional high-throughput DFT calculations for ternary configurations.

For the neural network correction of alloy thermodynamics, no MAE values are quoted in this summary; the authors report "significantly enhanced predictive accuracy" enabling "more reliable determination of phase stability" compared with both uncorrected DFT and simple linear corrections [21]. The ML-corrected B3LYP functional shows notable improvement across diverse thermochemical and kinetic energy calculations, though the degree of improvement varies with the specific property being calculated [22].

Table 2: Performance Metrics of ML-Enhanced DFT Methods

| Method | System Type | Accuracy Metrics | Computational Efficiency | Limitations |
|---|---|---|---|---|
| Neural Network Δ-ML [21] | Binary and ternary alloys | Significant improvement over uncorrected DFT | Minimal overhead after training | Limited to trained chemical spaces |
| Direct σ Phase Prediction [23] | σ phase (binary and ternary) | MAE: 22.881 meV/atom (train), 34.871 meV/atom (validation) | >59% time reduction vs DFT | Specific to σ phase crystal structure |
| ML-B3LYP Functional [22] | Molecular systems | Improved thermochemical and kinetic energies | Similar SCF efficiency to B3LYP | Limited improvement for barrier heights |
| Random Forest Phase Prediction [24] | High-entropy alloys | Accuracy: 0.914, Precision: 0.916, ROC-AUC: 0.97 | Fast screening of compositions | Classification only, no enthalpy values |
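The last row mixes threshold-dependent metrics (accuracy, precision) with the threshold-free ROC-AUC, which equals the probability that a randomly chosen positive is scored above a randomly chosen negative. The following is a minimal sketch for one binary split with made-up labels and scores; for the three phase categories in [24], a one-vs-rest average is one common choice, but the averaging used in that study is not stated here.

```python
import numpy as np

def roc_auc(y_true, scores):
    """Rank form of ROC-AUC: P(score of a positive > score of a negative), ties count 0.5."""
    y_true = np.asarray(y_true, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    diff = scores[y_true][:, None] - scores[~y_true][None, :]
    return float((np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / diff.size)

def binary_metrics(y_true, scores, threshold=0.5):
    """Accuracy and precision at a decision threshold, plus threshold-free ROC-AUC."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(scores, dtype=float) >= threshold
    tp = int(np.sum(y_pred & y_true))
    fp = int(np.sum(y_pred & ~y_true))
    accuracy = float(np.mean(y_pred == y_true))
    precision = tp / (tp + fp) if tp + fp else 0.0
    return {"accuracy": accuracy, "precision": precision, "roc_auc": roc_auc(y_true, scores)}

# Toy labels (1 = e.g. "solid solution") and classifier probabilities, made up:
y = [1, 1, 0, 0, 1, 0]
s = [0.9, 0.6, 0.4, 0.7, 0.8, 0.1]
print(binary_metrics(y, s))   # accuracy 5/6, precision 0.75, roc_auc 8/9
```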

Transferability and Generalization Performance

A critical consideration for ML-enhanced DFT methods is their performance on unseen data—systems or compositions not included in the training set. The ML-corrected B3LYP functional demonstrates that training exclusively on absolute energies rather than energy differences enhances transferability, though challenges remain for certain properties like isomerization energies and reaction barrier heights [22].

For σ phase prediction, the gap between training error (22.881 meV/atom) and validation error (34.871 meV/atom) indicates some degradation in performance on unseen ternary systems, though the validation performance remains chemically meaningful [23]. The neural network correction for alloy thermodynamics employs rigorous cross-validation strategies (LOOCV and k-fold) specifically to enhance generalization beyond the training set [21].

Comparative analysis of NMR shielding predictions reveals an important limitation: correction schemes developed for DFT do not necessarily translate effectively to ML models. ShiftML2, a machine-learning model trained on PBE-calculated NMR data, benefits only marginally from single-molecule PBE0 corrections that significantly improve periodic DFT predictions [27]. This suggests that ML models may learn different aspects of the underlying physics compared to DFT, and thus require specifically tailored correction approaches.
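For reference, the single-molecule hybrid correction is commonly written per nucleus i as follows; this is the generic form, and the cited study's exact fragment-extraction details may differ.

$$
\sigma_i^{\mathrm{corr}} = \sigma_i^{\mathrm{PBE,\,periodic}} + \left(\sigma_i^{\mathrm{PBE0,\,molecule}} - \sigma_i^{\mathrm{PBE,\,molecule}}\right)
$$

When ShiftML2 is used, its prediction replaces the periodic PBE term. The bracketed term cancels only the PBE-specific part of the error, so it presumably helps little when the ML model's residual errors come mainly from its own approximation rather than from the PBE functional.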

Research Reagent Solutions: Essential Tools for ML-DFT Implementation

Table 3: Essential Computational Tools for ML-Enhanced DFT Research

| Tool Category | Specific Examples | Function | Application Context |
|---|---|---|---|
| DFT Codes | EMTO-CPA [21], VASP [23] | First-principles total energy calculations | Basis for ML correction and training data generation |
| Machine Learning Frameworks | Multi-Layer Perceptron [21] [23], Random Forest [24] [25] | Learning DFT error patterns or direct property prediction | Implementing correction models |
| Feature Libraries | Elemental properties, atomic radii, valence electrons [23] [25] | Encoding chemical information for ML | Representing composition-structure relationships |
| Validation Methods | Leave-one-out cross-validation [21], k-fold cross-validation [21] | Preventing overfitting, assessing generalization | Model optimization and performance evaluation |
| High-Performance Computing | Intel Xeon Gold CPUs [23] | Handling computationally intensive calculations | Enabling high-throughput screening |

The integration of machine learning with density functional theory has matured beyond conceptual promise to practical methodology for addressing DFT's systematic errors in formation enthalpy and phase stability prediction. Our comparison reveals that while all ML-DFT approaches offer significant improvements over uncorrected DFT, they exhibit distinct strengths and limitations that make them suitable for different research scenarios.

For researchers focusing on specific material systems like high-temperature alloys or complex intermetallic phases, Δ-ML correction and direct property prediction offer an optimal balance between accuracy and computational efficiency. For those investigating fundamental functional development or requiring broad transferability across chemical space, ML-corrected density functional approximations provide a more foundational approach, though with more modest improvements for certain properties.

Future developments will likely focus on improving transferability through better feature engineering, incorporating physical constraints directly into ML architectures, and developing unified frameworks that combine the strengths of multiple correction strategies. As these methodologies continue to evolve, ML-enhanced DFT is poised to become an increasingly standard approach for reliable materials prediction and design, ultimately reducing the dependency on serendipitous error cancellation and advancing toward truly predictive computational materials science.

Density Functional Theory (DFT) stands as a cornerstone of modern computational materials science, physics, and chemistry, enabling the prediction of electronic structure and material properties from first principles. The accuracy of any DFT calculation, however, critically depends on the approximation chosen for the exchange-correlation (XC) functional, which accounts for quantum mechanical electron-electron interactions. The landscape of XC functionals is vast, ranging from the simple Local Density Approximation (LDA) to more sophisticated Generalized Gradient Approximations (GGA), meta-GGAs, and hybrids.

This guide provides an objective comparison of the performance of different XC functionals, framing the analysis within a broader research context focused on validating and improving density functional theory through machine learning. For researchers in fields like drug development, where accurate predictions of molecular interactions are paramount, selecting the appropriate functional is not an academic exercise but a practical necessity for obtaining reliable data.

Methodology for Comparative Analysis

Benchmarking the performance of exchange-correlation functionals requires a structured and reproducible methodology. The following protocol outlines the key steps for a fair and informative comparison.

Computational Setup and Workflow

A standardized computational workflow is essential to ensure that performance differences are attributable to the functionals themselves and not to variations in calculation parameters. A robust benchmarking protocol proceeds through the following stages.

Step 1: Selection of a Benchmark Set. A diverse set of benchmark materials or molecules should be selected, encompassing a range of bonding types (metallic, ionic, covalent) and properties. For drug development, this would include organic molecules, transition metal complexes, and non-covalent interaction complexes like hydrogen-bonded systems [28].

Step 2: Definition of Computational Parameters. Consistent parameters must be fixed across all calculations. This includes the basis set (or plane-wave cutoff energy), k-point grid for Brillouin zone integration, and convergence criteria for energy and forces. For example, a force convergence limit below 0.01 eV/Å and a high energy cutoff (e.g., 600 eV) are typical [28].

Step 3: Execution of Calculations. The same structural models and computational code (e.g., VASP) should be used to evaluate all XC functionals for the benchmark set. This ensures that differences in implementation do not confound the results.

Step 4: Computation of Properties. Key electronic, structural, and magnetic properties are calculated for each functional. These typically include lattice parameters, band gaps, binding energies, reaction energies, and magnetic moments.

Step 5: Data Analysis and Comparison. The calculated properties are compared against high-quality experimental data or advanced quantum chemistry methods (like quantum Monte Carlo or CCSD(T)) which serve as a reference. The deviation from the reference data is quantified using statistical metrics like mean absolute error (MAE) and root mean square error (RMSE).
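Steps 2, 3 and 5 can be combined in a small bookkeeping sketch: one shared settings record accompanies every functional's results, the comparison refuses to run if the settings differ, and MAE, RMSE, and the mean signed deviation (MSD) are reported against the reference. The field names are hypothetical labels rather than input tags of VASP or any other code, and the numbers are placeholders. The signed deviation is included because it separates systematic bias (such as LDA's typical underestimation of lattice parameters) from scatter.

```python
from dataclasses import dataclass
import numpy as np

@dataclass(frozen=True)
class SharedSettings:
    """Hypothetical record of parameters fixed across every functional (Step 2)."""
    cutoff_eV: float = 600.0
    force_tol_eV_per_A: float = 0.01
    kpoints: tuple = (12, 12, 12)     # placeholder mesh; converge it per system

def error_stats(pred, ref):
    """MAE, RMSE and mean signed deviation (MSD) against reference values."""
    err = np.asarray(pred, dtype=float) - np.asarray(ref, dtype=float)
    return {"MAE": float(np.mean(np.abs(err))),
            "RMSE": float(np.sqrt(np.mean(err ** 2))),
            "MSD": float(np.mean(err))}     # sign reveals systematic under/overestimation

def benchmark(runs, reference):
    """runs maps functional name -> (settings, predictions); settings must all match."""
    settings = {s for s, _ in runs.values()}
    if len(settings) != 1:
        raise ValueError("functionals were run with different computational settings")
    return {name: error_stats(pred, reference) for name, (_, pred) in runs.items()}

shared = SharedSettings()
reference = [3.0, 4.0, 5.0]                   # placeholder values, not data from [28]
runs = {"LDA": (shared, [2.9, 3.9, 4.9]), "PBE": (shared, [3.05, 3.95, 5.0])}
print(benchmark(runs, reference))
```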

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key computational "reagents" and resources essential for conducting research in this field.

Table 1: Essential Computational Tools for DFT and ML Research

| Research Reagent / Tool | Function / Purpose |
|---|---|
| Vienna Ab initio Simulation Package (VASP) | A widely used software package for performing DFT calculations using a plane-wave basis set and pseudopotentials [28]. |
| LibXC Library | An extensive library providing nearly 200 different exchange-correlation functionals, enabling systematic benchmarking and development of new functionals [29]. |
| Benchmark Datasets (e.g., MGDB) | Curated datasets of high-quality experimental and theoretical data for molecules and solids, used to train and validate computational methods. |
| Machine Learning Libraries (e.g., PyTorch, TensorFlow) | Software libraries used to develop and train ML models for predicting density functionals or material properties. |

Performance Comparison of Exchange-Correlation Functionals

The choice of XC functional profoundly impacts the accuracy of predicted material properties. The data below summarizes a comparative analysis based on published studies.

LDA vs. GGA: A Case Study on Magnetic Materials

A study on the L1₀-MnAl compound, a rare-earth-free permanent magnet, provides a clear contrast between two common functionals. The research utilized both the Local Density Approximation (LDA) and the Perdew-Burke-Ernzerhof (PBE) form of the Generalized Gradient Approximation (GGA) to compute structural and magnetic properties [28].

Table 2: Comparison of LDA and GGA (PBE) Performance for L1₀-MnAl [28]

| Property | Experimental / Theoretical Reference | LDA Prediction | GGA (PBE) Prediction | Key Finding |
|---|---|---|---|---|
| Lattice parameter a (Å) | ~3.91 | Underestimated | In good agreement | GGA provides a more accurate structural description. |
| Lattice parameter c (Å) | ~3.57 | Underestimated | In good agreement | GGA provides a more accurate structural description. |
| Magnetic moment (μB/Mn) | ~2.7 | Less accurate | More accurate | GGA better captures magnetic behavior. |
| Electronic structure | N/A | Less accurate density of states (DOS) | Improved agreement | GGA offers a superior description of electronic states. |

The study concluded that for the L1₀-MnAl compound, GGA provides greater accuracy in describing both the electronic structure and magnetic behavior compared to LDA, which tends to underestimate lattice parameters [28].

Expanding the Comparison: Hybrid Functionals and Beyond

While LDA and GGA are efficient, they systematically underestimate band gaps in semiconductors and insulators. Hybrid functionals such as HSE06, which replace a fraction of the GGA exchange with exact (Hartree-Fock) exchange, significantly improve band gap predictions, but at a much higher computational cost. A recent development is a hybrid of Kohn-Sham DFT and one-electron reduced density matrix functional theory (1-RDMFT), designed to capture strong correlation effects at a lower computational cost than traditional hybrid functionals [29].
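For concreteness, the HSE06 exchange-correlation energy is usually written as below. This is the standard form from the HSE literature, not a result of the studies cited here. The Coulomb interaction is split into short-range (SR) and long-range (LR) parts by a screening parameter ω, and 25% of the short-range PBE exchange is replaced by short-range Hartree-Fock exchange. Evaluating the nonlocal Hartree-Fock term is what drives the higher cost relative to GGA.

$$
E_{xc}^{\mathrm{HSE06}} = \tfrac{1}{4}\,E_{x}^{\mathrm{HF,SR}}(\omega) + \tfrac{3}{4}\,E_{x}^{\mathrm{PBE,SR}}(\omega) + E_{x}^{\mathrm{PBE,LR}}(\omega) + E_{c}^{\mathrm{PBE}}, \qquad \omega = 0.11\,a_0^{-1} \approx 0.2\,\text{\AA}^{-1}
$$

Setting ω = 0 recovers the unscreened PBE0 hybrid. Screening the long-range exchange is what keeps HSE06 tractable for periodic solids.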

Table 3: Broader Functional Comparison and Machine Learning Context

| Functional Class | Typical Performance | Computational Cost | Suitability for Drug Development |
|---|---|---|---|
| LDA | Underestimates bond lengths and overbinds; poor for molecules. | Low | Low; poor for molecular systems. |
| GGA (e.g., PBE) | Improves structures and energies over LDA, but underestimates band gaps. | Low | Moderate; good for geometry optimization, but energetics require caution. |
| Hybrid (e.g., HSE06) | Accurate band gaps and reaction energies. | High (10-100x GGA) | High for accurate single-point energies, but prohibitive for large systems. |
| Hybrid 1-RDMFT [29] | Aims to describe strong correlation at mean-field cost. | Moderate | Promising for transition metal complexes in drugs. |
| ML-Augmented Functional | Potentially high accuracy across multiple properties. | Varies (high training cost; inference can be cheap) | High future potential for high-throughput screening. |

Integrating Machine Learning for Functional Validation and Development

Machine learning is increasingly being used to address the challenge of functional choice, offering new approaches both for validating existing functionals and for developing new ones.

ML for Benchmarking and Functional Selection

Machine learning can analyze the large datasets generated by systematically benchmarking hundreds of functionals, such as those available in LibXC [29]. ML models can identify correlations between functional forms and their performance on specific material classes. The result is a predictive map that points researchers toward a suitable functional for their system and reduces the need for exhaustive testing.
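One of the simplest ways to build such a "predictive map" is a k-nearest-neighbour model. It describes each benchmarked system with a feature vector, averages each functional's error over the k most similar systems, and recommends the functional with the lowest predicted error. The sketch below uses that swapped-in technique, which is not a method from the cited work. The descriptors and error values are hypothetical placeholders.

```python
import math


def recommend_functional(query, benchmark, k=3):
    """Recommend the functional with the lowest predicted error for a new system.

    query:     feature vector (list of floats) describing the new system.
    benchmark: list of (features, {functional: absolute_error}) for systems that
               were already benchmarked against reference data.
    The predicted error of each functional is its mean error over the k nearest
    benchmarked systems (Euclidean distance in feature space).
    """
    neighbours = sorted(benchmark, key=lambda item: math.dist(query, item[0]))[:k]
    functionals = neighbours[0][1].keys()
    predicted = {
        xc: sum(errors[xc] for _, errors in neighbours) / len(neighbours)
        for xc in functionals
    }
    best = min(predicted, key=predicted.get)
    return best, predicted


# Hypothetical descriptors: [transition-metal fraction, electronegativity spread].
# The errors are made-up placeholders, not benchmark results.
benchmark = [
    ([0.0, 0.4], {"PBE": 0.30, "HSE06": 0.10}),
    ([0.1, 0.5], {"PBE": 0.25, "HSE06": 0.12}),
    ([0.6, 0.1], {"PBE": 0.08, "HSE06": 0.20}),
    ([0.7, 0.2], {"PBE": 0.10, "HSE06": 0.22}),
]
best, predicted = recommend_functional([0.05, 0.45], benchmark, k=2)
```

In practice the features would need to be scaled to comparable ranges before computing distances. A richer regressor could replace the neighbour average once enough benchmark data exist.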

ML-Driven Functional Design

A more advanced application involves using ML to design entirely new XC functionals. The logical relationship between data, model, and functional design is shown in the following workflow.

By training on high-fidelity data (from experiments or advanced quantum chemistry methods), an ML model learns an approximation to the mapping from electron densities to the exchange-correlation energy and potential, effectively learning a more accurate functional [29]. This approach directly addresses the core thesis of moving "from electron densities to accurate potentials." Such ML-derived functionals could break traditional trade-offs by offering high accuracy across diverse properties without prohibitive computational cost, which is a key objective for large-scale drug discovery projects.
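The core idea can be shown with the simplest possible "learned" functional. The sketch fits a linear model on two GGA-style features (the LDA exchange integrand and its reduced-gradient correction) to reference exchange-correlation energies. It is a toy: the densities are 1D Gaussians, the reference energies are synthetic and generated from a known form, and the gradient coefficient `MU_TRUE` is an assumed value. Real ML functionals use neural networks, richer density features, and usually self-consistent training. The helper names are hypothetical.

```python
import numpy as np

# LDA exchange constant C_x = (3/4)(3/pi)^{1/3}, Hartree atomic units.
C_X = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)
# Gradient coefficient used only to generate synthetic "reference" energies
# (close to the PBE small-gradient value; an assumption for this toy example).
MU_TRUE = 0.2195


def reduced_gradient(n, grad_n):
    """Dimensionless reduced gradient s = |grad n| / (2 (3 pi^2)^{1/3} n^{4/3})."""
    return np.abs(grad_n) / (2.0 * (3.0 * np.pi**2) ** (1.0 / 3.0) * n ** (4.0 / 3.0))


def integrated_features(n, grad_n, weights):
    """Grid-integrated features [sum w n^{4/3}, sum w n^{4/3} s^2] for one density."""
    base = weights * n ** (4.0 / 3.0)
    s2 = reduced_gradient(n, grad_n) ** 2
    return np.array([base.sum(), (base * s2).sum()])


def fit_xc_model(densities, reference_energies):
    """Least-squares fit of E_xc ~ c0 * F0 + c1 * F1 to reference XC energies."""
    X = np.stack([integrated_features(*d) for d in densities])
    coeffs, *_ = np.linalg.lstsq(X, np.asarray(reference_energies), rcond=None)
    return coeffs


# Toy training set: 1D Gaussian densities of different widths on a uniform grid.
x = np.linspace(-6.0, 6.0, 2001)
dx = x[1] - x[0]
densities = []
for sigma in (0.5, 1.0, 1.5, 2.0):
    n = np.exp(-x**2 / (2.0 * sigma**2))
    densities.append((n, np.gradient(n, x), np.full_like(x, dx)))

# Synthetic targets from a known GGA-like form: E = -C_x * (F0 + mu * F1).
true_coeffs = np.array([-C_X, -C_X * MU_TRUE])
targets = [integrated_features(*d) @ true_coeffs for d in densities]

coeffs = fit_xc_model(densities, targets)
```

Because the targets were generated from the same functional form, the fit recovers the coefficients exactly. With real reference data, the residual error would measure how well the chosen form can represent the true functional.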

Density Functional Theory (DFT) has long served as the cornerstone of computational materials science, providing crucial insights into material properties and reaction mechanisms at the quantum mechanical level. However, its computational cost, which in conventional Kohn-Sham implementations scales roughly cubically with system size, restricts its application to relatively small systems and short timescales. Classical molecular dynamics (MD) is computationally efficient, but because it relies on predefined empirical potentials it often lacks the accuracy needed to model complex chemical environments. Machine Learning Interatomic Potentials (MLIPs) have emerged as a transformative solution to this trade-off. They act as surrogate models that learn the relationship between atomic configurations and potential energy surfaces from DFT data. These models achieve near-DFT accuracy while reducing computational costs by several orders of magnitude, enabling large-scale, long-timescale simulations previously inaccessible to first-principles methods [30] [31].

The core innovation of MLIPs lies in their data-driven approach. By training on high-fidelity ab initio datasets, they implicitly capture complex quantum mechanical effects without explicitly solving the electronic structure problem. This paradigm shift has opened new frontiers across diverse domains, from catalysis and battery materials to drug development, where understanding atomistic dynamics is crucial for innovation [32]. This guide provides a comprehensive comparison of state-of-the-art MLIP architectures, evaluating their performance, computational efficiency, and suitability for different research applications within the broader context of validating and augmenting DFT through machine learning.

Comparative Analysis of Major MLIP Architectures

The landscape of MLIP architectures has evolved rapidly, progressing from descriptor-based models to sophisticated geometrically equivariant neural networks. The table below summarizes the key characteristics and performance metrics of leading frameworks.

Table 1: Comparison of State-of-the-Art Machine Learning Interatomic Potentials

| Model | Architectural Approach | Key Features | Reported Accuracy (Force MAE) | Computational Efficiency |
|---|---|---|---|---|
| NequIP [33] [34] | Equivariant Graph Neural Network | Rotationally equivariant representations using higher-order tensors and irreducible representations. | ~47.3 meV/Å (Formate), ~60.2 meV/Å (Defected Graphene) [35] | High accuracy, moderate computational cost [34] |
| MACE [35] [34] | Higher-Order Message Passing | Uses higher body-order messages built from a complete basis of many-body atomic interactions. | Top performer on Al-Cu-Zr system [34] | High accuracy, competitive cost [35] |
| Allegro [34] | Equivariant Architecture | - | Top performer on Al-Cu-Zr system [34] | - |
| AlphaNet [35] | Local-Frame-Based Equivariant Model | Employs learnable geometric transitions and rotary position embedding for multi-body messages. | 42.5 meV/Å (Formate), 19.4 meV/Å (Defected Graphene) [35] | State-of-the-art accuracy with high computational efficiency [35] |
| DPMD / DeePMD [33] [31] | Descriptor-Based Neural Network | Feeds descriptors of each atom's local environment into atomic neural networks. | Errors 1-2 orders of magnitude larger than NequIP for tobermorites [33] | - |
| Nonlinear ACE [34] | Atomic Cluster Expansion | Nonlinear extension of the ACE formalism. | High accuracy [34] | Forms Pareto front for accuracy vs. cost [34] |

Key Architectural Paradigms and Performance Insights

Equivariant Models for Accuracy: Models like NequIP, MACE, and Allegro explicitly embed Euclidean symmetries (rotation, translation, reflection) into their architecture. This geometric equivariance is crucial for correctly modeling vector quantities like atomic forces and leads to superior data efficiency and accuracy. A user-focused benchmark found that MACE and Allegro offered the highest accuracy for a complex metallic system (Al-Cu-Zr), while NequIP excelled for a system with more directional bonding (Si-O) [34].
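Rotational equivariance of forces can be checked numerically for any force model: rotating the input structure by R must rotate the predicted forces by the same R, that is, F(Rx) = R F(x). The sketch below applies this test to a Lennard-Jones pair potential, which stands in for an MLIP. It does not implement NequIP, MACE, or Allegro, and the function names are hypothetical. A model that adds a fixed, unrotated force vector is included in the test as a counterexample that fails.

```python
import numpy as np


def lj_forces(positions, epsilon=1.0, sigma=1.0):
    """Forces from a Lennard-Jones pair potential (a stand-in for any force model)."""
    forces = np.zeros_like(positions)
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            rij = positions[i] - positions[j]
            r2 = rij @ rij
            sr6 = (sigma**2 / r2) ** 3
            # -dU/dr divided by r, so that F_i = coeff * r_ij.
            coeff = 24.0 * epsilon * (2.0 * sr6**2 - sr6) / r2
            forces[i] += coeff * rij
            forces[j] -= coeff * rij
    return forces


def random_rotation(rng):
    """Random proper rotation from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))  # fix column signs
    if np.linalg.det(q) < 0:     # enforce det = +1 (rotation, not reflection)
        q[:, 0] = -q[:, 0]
    return q


def equivariance_error(force_fn, positions, rotation):
    """Max |F(R x) - R F(x)|; close to zero for a rotation-equivariant model."""
    rotated = positions @ rotation.T
    return np.max(np.abs(force_fn(rotated) - force_fn(positions) @ rotation.T))


rng = np.random.default_rng(0)
# Slightly perturbed 2x2x2 cube with 1.2 sigma spacing (avoids atomic overlaps).
base = 1.2 * np.array([[i, j, k] for i in range(2) for j in range(2) for k in range(2)], dtype=float)
positions = base + rng.normal(scale=0.05, size=base.shape)
R = random_rotation(rng)
err = equivariance_error(lj_forces, positions, R)
```

Architectures such as NequIP and MACE satisfy this property by construction. Descriptor-based or unconstrained models satisfy it only approximately, which is one reason the test is useful when validating a trained potential.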

The Efficiency-Accuracy Trade-off: The benchmark establishes that nonlinear ACE and equivariant graph networks like NequIP and MACE form the "Pareto front," representing the optimal balance between computational cost and predictive accuracy [34]. The more recent AlphaNet claims to advance this frontier further, demonstrating state-of-the-art accuracy on multiple datasets while maintaining high computational efficiency [35].
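The "Pareto front" mentioned above is the set of models that no other model beats on both cost and error at once. A minimal sketch of how it can be computed from benchmark results follows. The model names, costs, and errors are placeholders, not measured values for any of the architectures in Table 1.

```python
def pareto_front(models):
    """Return names of models not dominated in (cost, error), both minimised.

    models: dict mapping name -> (cost, error). A model is dominated if another
    model is no worse on both axes and strictly better on at least one.
    The result is sorted by increasing cost.
    """
    front = []
    for name, (cost, err) in models.items():
        dominated = any(
            c <= cost and e <= err and (c < cost or e < err)
            for other, (c, e) in models.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return sorted(front, key=lambda n: models[n][0])


# Placeholder values: (cost per atom per MD step, force RMSE); NOT measured data.
models = {
    "model_a": (1.0, 80.0),
    "model_b": (5.0, 40.0),
    "model_c": (6.0, 45.0),   # slower and less accurate than model_b
    "model_d": (20.0, 30.0),
}
front = pareto_front(models)
```

A claim that a new model "advances the frontier" amounts to the model being non-dominated and also dominating at least one model that was previously on the front.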

Performance on Real-World Systems: Beyond standardized benchmarks, performance can vary significantly with the material system. For example, in modeling tobermorite (a cement analogue), NequIP showed errors 1-2 orders of magnitude smaller than DPMD [33]. Furthermore, AlphaNet demonstrated a significant 20% improvement over other equivariant models on a diverse zeolite dataset [35].

Experimental Protocols for MLIP Benchmarking

Robust and standardized benchmarking is essential for validating the performance of MLIPs against DFT and for making meaningful comparisons between different potentials. The following workflow outlines a comprehensive experimental protocol derived from recent literature.

Diagram 1: MLIP Validation Workflow. The iterative process of generating data, training potentials, predicting properties, and validating against DFT, with active learning closing the loop.

Data Generation and Model Training

The foundation of any reliable MLIP is a high-quality, diverse dataset of atomic configurations with corresponding DFT-calculated energies, forces, and optionally, stress tensors.

Dataset Curation: Datasets are typically generated from ab initio molecular dynamics (AIMD) trajectories, nudged elastic band (NEB) calculations, or random displacements of structures. For universal MLIPs (uMLIPs), datasets like the Materials Project, Alexandria, and OC20 are used, covering a vast chemical space [36] [31]. A critical consideration is ensuring the dataset encompasses all relevant atomic environments the model will encounter during application.
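Of the data-generation strategies listed above, random displacement is the easiest to illustrate. The sketch "rattles" a structure with Gaussian noise and rejects samples in which two atoms come unphysically close. The resulting configurations would then be labelled with DFT energies and forces. The displacement size, the 0.7 Å rejection distance, and the function name are hypothetical choices, and periodic boundary conditions are ignored for brevity.

```python
import numpy as np


def rattle_configurations(positions, n_samples, stdev=0.05, min_dist=0.7,
                          seed=0, max_tries=1000):
    """Generate randomly displaced copies of a structure for DFT labelling.

    Each atom is displaced by Gaussian noise (stdev, in Å). Samples in which any
    pair of atoms comes closer than min_dist (Å) are rejected as unphysical.
    Periodic boundary conditions are ignored in this sketch.
    """
    rng = np.random.default_rng(seed)
    samples = []
    tries = 0
    while len(samples) < n_samples:
        tries += 1
        if tries > max_tries:
            raise RuntimeError("too many rejected samples; reduce stdev or min_dist")
        trial = positions + rng.normal(scale=stdev, size=positions.shape)
        dist = np.linalg.norm(trial[:, None, :] - trial[None, :, :], axis=-1)
        np.fill_diagonal(dist, np.inf)  # ignore self-distances
        if dist.min() >= min_dist:
            samples.append(trial)
    return samples


# Toy 4-atom square with 1.5 Å spacing.
positions = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0],
                      [0.0, 1.5, 0.0], [1.5, 1.5, 0.0]])
samples = rattle_configurations(positions, n_samples=5)
```

Small displacements sample the near-equilibrium region well but rarely reach reaction barriers or defects, which is why rattling is usually combined with AIMD or NEB data.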

Training and Loss Functions: Models are trained to minimize a loss function that is a weighted sum of the squared errors in energy, forces, and stress. A typical loss function is:

$$\mathcal{L} = \lambda_E\,\mathrm{MSE}_E + \lambda_F\,\mathrm{MSE}_F + \lambda_S\,\mathrm{MSE}_S$$

where MSE is the mean squared error and λ_E, λ_F, and λ_S are weights for the energy (E), force (F), and stress (S) terms [31]. Including the force and stress terms trains the potential to reproduce not only energies but also their derivatives with respect to atomic positions and cell strain, that is, the shape of the energy landscape.
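The weighted loss translates directly into code. The sketch below evaluates it with NumPy for a batch of predictions. The dictionary keys and default weights are illustrative assumptions rather than values from [31]. Training frameworks compute the same quantity with automatic differentiation so that it can be minimized with respect to the model parameters.

```python
import numpy as np


def mlip_loss(pred, ref, lambda_e=1.0, lambda_f=10.0, lambda_s=0.1):
    """Weighted sum of energy, force, and stress mean squared errors.

    pred and ref are dicts with keys "energy" (one value per structure, often
    normalised per atom), "forces" (stacked n_atoms x 3 arrays), and "stress"
    (Voigt components). The default weights are illustrative only.
    """
    def mse(key):
        diff = np.asarray(pred[key], dtype=float) - np.asarray(ref[key], dtype=float)
        return float(np.mean(diff**2))

    return lambda_e * mse("energy") + lambda_f * mse("forces") + lambda_s * mse("stress")
```

Because a structure with N atoms has 3N force components but only one energy, the force term usually dominates the data. Reweighting with λ_F controls how strongly the model favours accurate gradients over accurate absolute energies.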

Validation and Benchmarking Metrics

Once trained, MLIPs are rigorously validated against held-out DFT data and used in practical simulations to assess their predictive power.

Primary Accuracy Metrics: The standard metrics are the Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) for energy (typically in meV/atom) and forces (in meV/Å) [33] [35] [34]. These quantify how closely the MLIP reproduces the DFT potential energy surface.

Stability in Molecular Dynamics: A critical test is running extended MD simulations and checking for unphysical energy drift or structural collapse. This assesses the stability and smoothness of the potential energy surface beyond the training data points [35] [31].
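Energy drift in a constant-energy (NVE) run is commonly quantified as the slope of a linear fit to the total energy over time, normalised per atom. A minimal sketch follows. The function name and units (eV and ps in, meV/atom/ps out) are assumptions, and no acceptance threshold is implied.

```python
import numpy as np


def energy_drift(times_ps, total_energies_ev, n_atoms):
    """Linear drift of the total energy in an NVE run, in meV/atom/ps.

    Fits E(t) = a*t + b by least squares. A smooth potential integrated with a
    suitable time step should give a slope close to zero.
    """
    slope_ev_per_ps, _intercept = np.polyfit(times_ps, total_energies_ev, 1)
    return 1000.0 * slope_ev_per_ps / n_atoms
```

Fitting a line, instead of comparing only the first and last frames, makes the estimate robust to the normal bounded fluctuations of the total energy around its mean.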

Prediction of Macroscopic Properties: The ultimate validation is the accurate prediction of macroscopic material properties. This includes:

Table 2: Key Research Reagent Solutions for MLIP Development and Application

| Tool / Resource | Type | Function & Application |
|---|---|---|
| DeePMD-kit [31] | Software Package | Open-source implementation of the Deep Potential method; widely used for training and running MLIP-based MD simulations. |
| Open Catalyst Project (OC20) [35] | Benchmark Dataset | A comprehensive dataset of catalyst relaxations and molecular dynamics trajectories for training and benchmarking MLIPs in catalysis. |