Large Language Models for Materials Discovery: From Data Extraction to Autonomous Research

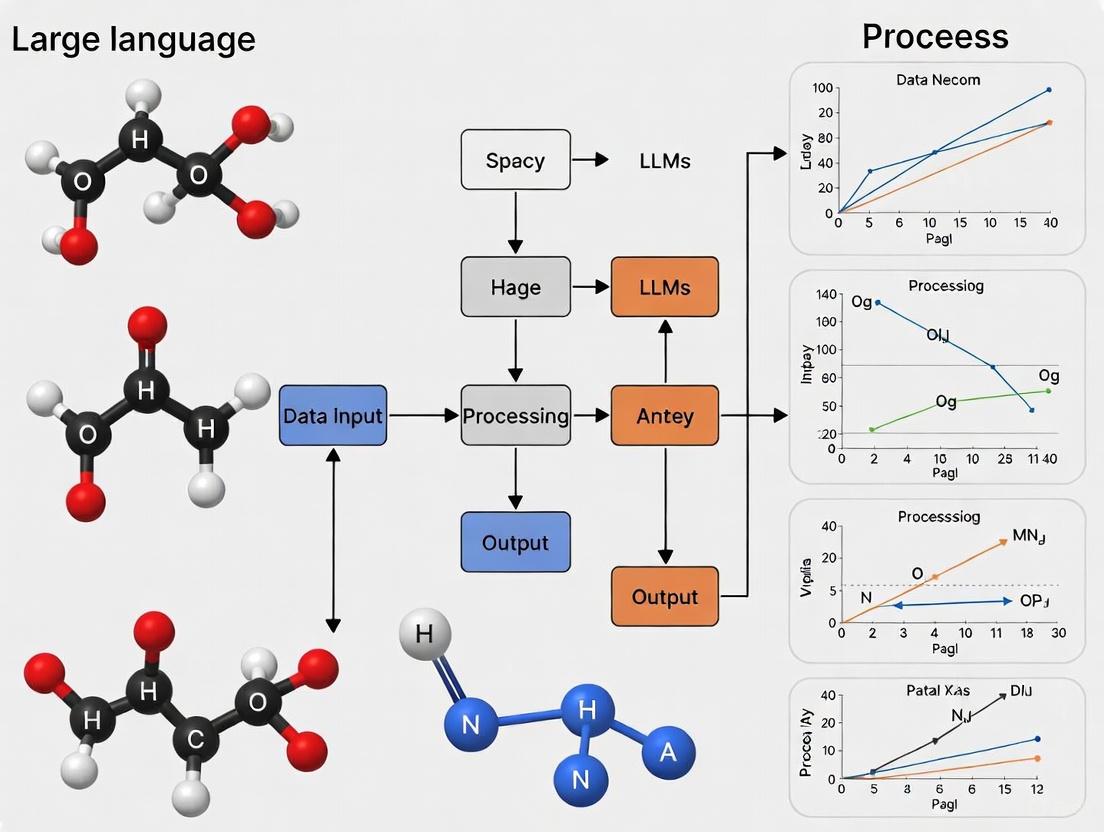



Abstract

This article explores the transformative impact of Large Language Models (LLMs) on accelerating materials discovery and development. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive overview of how foundational models are revolutionizing the field. We cover the evolution of Natural Language Processing (NLP) in materials science, detail cutting-edge methodologies for data extraction and property prediction, and analyze specialized Materials Science LLMs (MatSci-LLMs). The article also addresses critical challenges such as data quality, model reliability, and physical admissibility, offering insights into optimization techniques like physics-aware fine-tuning. Finally, we present a comparative analysis of model performance and validation frameworks, concluding with the future outlook for integrating LLMs into autonomous research workflows and their profound implications for biomedical and clinical research.

The Foundation: How LLMs are Revolutionizing Materials Science

The field of materials science is undergoing a profound transformation driven by artificial intelligence (AI) technologies. Among these, Natural Language Processing (NLP) and Large Language Models (LLMs) have emerged as particularly revolutionary tools for accelerating materials research [1]. The overwhelming majority of materials knowledge resides in published scientific literature, which represents a vast but underutilized resource. Manually collecting and organizing this data from published literature is exceptionally time-consuming, severely limiting the efficiency of large-scale data accumulation [1]. The development of NLP has provided an opportunity for the automatic construction of large-scale materials datasets, giving data-driven materials research a powerful new capability to extract and utilize information from text sources [1]. This technical guide explores the application of NLP tools in materials science within the broader context of leveraging LLMs for materials discovery research, focusing on automatic data extraction, materials discovery, and autonomous research systems.

The Evolution of NLP in Materials Science

Natural Language Processing has a long history dating back to the 1950s, with the objective of making computers understand and generate text through two principal tasks: Natural Language Understanding (NLU) and Natural Language Generation (NLG) [1]. NLU focuses on machine reading comprehension via syntactic and semantic analysis to mine underlying semantics, while NLG involves producing phrases, sentences, and paragraphs within a given context [1].

The development of NLP in materials science has progressed through several distinct phases:

- Handcrafted Rules Era (1950s to late 1980s): Early systems used handwritten rules based on expert knowledge, solving only specific, narrowly defined problems.

- Machine Learning Era (late 1980s onward): ML algorithms began analyzing large corpora of annotated texts to learn relations, though this approach faced challenges with sparse data and the curse of dimensionality.

- Deep Learning Era (2010s onward): Neural network architectures, particularly bidirectional long short-term memory networks (BiLSTM) and Transformers, enabled automatic feature engineering from training data [1].

A pivotal moment came in 2011 when NLP entered the field of materials chemistry for the first time [1], beginning its impact on materials informatics. The most common initial application was the automatic extraction of materials information reported in the literature, including compounds and their properties, synthesis processes and parameters, alloy compositions and properties, and process routes [1].

Core NLP Concepts and Methodologies

Foundational NLP Components

Several key technological advancements have enabled the current capabilities of NLP in materials science:

Word Embeddings: These distributed representations of words enable language models to interpret sentences and underlying concepts similarly to humans [1]. Word embeddings allow words to be represented as dense, low-dimensional vectors that preserve contextual word similarity [1]. Popular implementations include Word2Vec, which learns from local context windows, and GloVe, which is trained on global word-word co-occurrence statistics from large corpora [1].

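Attention Mechanism: Introduced in 2014 as an extension to encoder-decoder models built from two recurrent neural networks, attention lets the decoder weight every encoder state at each output step instead of relying on a single fixed-length summary. It became the core of the Transformer in 2017 and is fundamental to modern NLP architectures [1].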

Transformer Architecture: Characterized by the attention mechanism, Transformer architecture has become the fundamental building block for impactful LLMs [1]. This architecture has been employed to solve numerous problems in information extraction, code generation, and the automation of chemical research [1].

Large Language Models in Materials Science

The emergence of pre-trained models has brought a new era in NLP research and development. Large Language Models (LLMs) such as Generative Pre-trained Transformer (GPT), Falcon, and Bidirectional Encoder Representations from Transformers (BERT) have demonstrated general "intelligence" capabilities via large-scale data, deep neural networks, self-supervised and semi-supervised learning, and powerful hardware [1].

Recently, GPTs have emerged in materials science, offering a novel approach to materials information extraction through prompt engineering, distinct from conventional NLP pipelines [1]. Prompt engineering involves skillfully crafting prompts to direct text generation, with well-designed prompts being essential for maximizing AI effectiveness through elements of clarity, structure, context, examples, constraints, and iterative refinement [1].
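The prompt elements listed above (clarity, structure, context, examples, constraints) can be made concrete with a small prompt builder. This is a minimal sketch rather than a method taken from [1]: the function name `build_extraction_prompt`, the JSON field names, and the example sentence with its numbers are all hypothetical and chosen only for illustration.

```python
import json


def build_extraction_prompt(passage: str, properties: list[str]) -> str:
    """Assemble a property-extraction prompt from reusable parts."""
    # Illustrative one-shot example; the values are made up, not literature data.
    example = (
        'Text: "Sample A showed a Seebeck coefficient of 200 uV/K at 300 K."\n'
        'Output: {"material": "Sample A", "property": "Seebeck coefficient", '
        '"value": 200, "unit": "uV/K", "temperature_K": 300}'
    )
    lines = [
        # Context: tell the model what kind of text it is reading.
        "You are extracting materials data from a scientific passage.",
        # Clarity: name exactly which properties are wanted.
        "Properties of interest: " + ", ".join(properties) + ".",
        # Structure: fix the output format so it can be parsed downstream.
        "Return one JSON object per reported value, one per line.",
        # Constraints: discourage guessing.
        "Report only values stated in the text; use null for missing fields.",
        "Example:",
        example,
        "Text: " + json.dumps(passage),
        "Output:",
    ]
    return "\n".join(lines)


print(build_extraction_prompt("ZT reached its maximum at 700 K.", ["ZT"]))
```

Iterative refinement, the remaining element, then amounts to editing these parts and re-checking the outputs against a small hand-labelled set.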

Table 1: Key LLM Architectures Relevant to Materials Science

| Model Architecture | Key Features | Materials Science Applications |
|---|---|---|
| BERT-based Models | Bidirectional understanding, pre-training on scientific text | Named entity recognition, relation classification [2] |
| GPT Models | Generative capabilities, prompt engineering | Information extraction, materials prediction and design [1] |
| Domain-Specific LLMs | Fine-tuned on materials science literature | Property prediction, synthesis planning [3] |
| Multimodal Models | Integration of text with structural data | Holistic materials understanding and design [4] |

NLP Pipelines for Materials Data Extraction

Traditional Information Extraction

Traditional NLP approaches to materials information extraction have focused on developing algorithms for specific tasks, particularly named entity recognition and relationship extraction in specific domains [1]. This has led to the formation of materials literature data extraction pipelines targeting various types of information:

- Compounds and their properties [1]

- Synthesis processes and parameters [1]

- Alloy compositions and properties [1]

- Process routes [1]

These pipelines have enabled the systematic extraction of structured information from unstructured scientific text, facilitating the creation of large-scale materials databases.

Advanced LLM-Based Extraction

More recently, LLM-based AI agents have been developed for automated data extraction of material properties and structural features. One such workflow autonomously extracts thermoelectric and structural properties from approximately 10,000 full-text scientific articles [5]. This system integrates dynamic token allocation, zero-shot multi-agent extraction, and conditional table parsing to balance accuracy against computational cost [5].

Benchmarking results demonstrate the effectiveness of this approach:

Table 2: Performance of LLM Models on Materials Data Extraction Tasks

| Model | Thermoelectric Properties F1 Score | Structural Fields F1 Score | Computational Cost |
|---|---|---|---|
| GPT-4.1 | 0.91 | 0.838 | High |
| GPT-4.1 Mini | 0.889 | 0.833 | Fraction of GPT-4.1 cost |
| Domain-Specific BERT | Varies by task (~0.75-0.85) | Varies by task (~0.72-0.82) | Moderate [2] |

This workflow has enabled the creation of a dataset of 27,822 temperature-resolved property records with normalized units, spanning figure of merit (ZT), Seebeck coefficient, electrical conductivity, power factor, and thermal conductivity, together with structural attributes such as crystal class, space group, and doping strategy [5].

Experimental Protocol: Large-Scale Data Extraction

For researchers implementing LLM-based data extraction systems, the following methodology has proven effective:

Corpus Collection: Gather full-text scientific articles from targeted materials science journals and repositories. The corpus should represent the diversity of materials classes and property types of interest.

Preprocessing: Implement text cleaning and normalization procedures, including unit conversion, symbol standardization, and terminology harmonization across different literature sources.

Multi-Agent Extraction System: Deploy an LLM-based multi-agent system where different specialized agents focus on specific extraction tasks (e.g., one agent for numerical properties, another for structural descriptions, and a third for synthesis conditions).

Dynamic Token Allocation: Implement token management systems that allocate computational resources based on document complexity, reserving higher token limits for more complex extraction tasks.

Conditional Table Parsing: Develop specialized parsers for extracting data from tables and figures, with conditional logic to handle varying table formats across different publications.

Validation Framework: Establish a multi-tier validation system including:

- Automated consistency checks

- Cross-referencing with existing databases

- Expert manual review of a subset of extractions

- Statistical analysis of outlier values
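Two of the validation tiers above, automated consistency checks and outlier analysis, can be sketched with the standard library. This is an illustrative sketch, not the validation code used in [5]: the bounds in `PLAUSIBLE_RANGES`, the field names, and the 3.5 threshold on the modified z-score are assumptions that a real project would set with domain experts.

```python
import statistics

# Hypothetical plausibility bounds per field (assumed, not taken from [5]).
PLAUSIBLE_RANGES = {"ZT": (0.0, 5.0), "temperature_K": (0.0, 2000.0)}


def range_violations(record: dict) -> list[str]:
    """Return the fields of a record whose values fall outside their bounds."""
    problems = []
    for field, (low, high) in PLAUSIBLE_RANGES.items():
        value = record.get(field)
        if value is not None and not (low <= value <= high):
            problems.append(field)
    return problems


def mad_outliers(values: list[float], threshold: float = 3.5) -> list[float]:
    """Flag values whose modified z-score (median/MAD based) exceeds threshold."""
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    if mad == 0:
        return []  # all values (nearly) identical: nothing to flag
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]


# Made-up extracted ZT values; 12.0 mimics a unit or parsing error.
zt_values = [0.8, 0.9, 1.0, 1.1, 1.2, 12.0]
print(mad_outliers(zt_values))
print(range_violations({"ZT": 12.0, "temperature_K": 300.0}))
```

Flagged records would then be routed to the expert-review tier rather than silently dropped, since an extreme value can also be a genuine result.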

This protocol, when applied to thermoelectric materials, achieved an extraction accuracy of F1 ≈ 0.91 for thermoelectric properties and F1 ≈ 0.838 for structural fields using GPT-4.1 [5].
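The F1 values reported here are the harmonic mean of precision and recall. The sketch below shows the arithmetic under a simplifying assumption of exact-match scoring over (material, property, value) tuples; the matching rules actually used in [5] may be more lenient, and the records are invented for illustration.

```python
def f1_score(predicted: set, gold: set) -> float:
    """Exact-match F1 between extracted records and a hand-labelled gold set."""
    true_positives = len(predicted & gold)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)  # share of extractions that are right
    recall = true_positives / len(gold)          # share of gold records recovered
    return 2 * precision * recall / (precision + recall)


# Invented records: three of four extractions match the gold annotations.
gold = {("A", "ZT", 1.1), ("A", "kappa", 1.5), ("B", "ZT", 0.7), ("B", "S", 180)}
predicted = {("A", "ZT", 1.1), ("A", "kappa", 1.5), ("B", "ZT", 0.7), ("B", "S", 18)}

print(f1_score(predicted, gold))  # precision = recall = 0.75, so F1 = 0.75
```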

Domain-Specific Language Models

Benchmarking and Evaluation

The development of specialized benchmarks has been crucial for advancing domain-specific NLP applications. MatSci-NLP represents the first comprehensive benchmark dataset specifically designed for materials science [3] [2]. This benchmark encompasses seven different NLP tasks, including both conventional NLP tasks like named entity recognition and relation classification, as well as materials-specific tasks such as synthesis action retrieval [2].

Experiments in low-resource training settings have demonstrated that language models pre-trained on scientific text consistently outperform BERT trained on general text [2]. Furthermore, models pre-trained specifically on materials science journals, such as MatBERT, generally achieve the best performance across most tasks [2].

Specialized Models for Materials Science

The HoneyBee large language model represents a significant advancement in domain-specific LLMs for materials science [3]. HoneyBee is fine-tuned specifically for materials science using a novel instruction-based data generation framework called MatSci-Instruct [3]. Key innovations in its development include:

Automatic Instruction Generation: Advanced algorithms parse and comprehend existing materials science literature to create a diverse set of instructions and examples, ensuring training data is both comprehensive and relevant [3].

Progressive Instruction Fine-Tuning: The model employs a continuous feedback loop that combines instruction generation with model ability evaluation, allowing progressive improvement with each iteration [3].

Text-to-Schema Framework: A related approach, proposed alongside the MatSci-NLP benchmark rather than as part of HoneyBee itself, unifies diverse materials science tasks as text-to-schema formats to encourage generalization across multiple tasks [2].

NLP for Materials Discovery and Design

Knowledge Encoding and Materials Similarity

Beyond information extraction, materials science knowledge present in published literature can be efficiently encoded as information-dense word embeddings [1]. These dense, low-dimensional vector representations have been successfully used for materials similarity calculations that can assist in new materials discovery [1]. By representing materials concepts in vector space, NLP models can identify relationships and similarities that may not be immediately apparent through traditional methods.
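Similarity between embedded materials is usually measured with cosine similarity, the cosine of the angle between two vectors. The sketch below uses hand-written three-dimensional toy vectors; they are hypothetical and stand in for the much higher-dimensional embeddings a trained model would provide.

```python
import math


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def most_similar(query: str, embeddings: dict) -> list[tuple[str, float]]:
    """Rank every other entry by cosine similarity to the query entry."""
    scores = [
        (name, cosine_similarity(embeddings[query], vector))
        for name, vector in embeddings.items()
        if name != query
    ]
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Toy vectors, invented for illustration only.
toy_embeddings = {
    "Bi2Te3": [0.9, 0.1, 0.3],
    "PbTe": [0.8, 0.2, 0.4],
    "SiO2": [0.1, 0.9, 0.2],
}

for name, score in most_similar("Bi2Te3", toy_embeddings):
    print(f"{name}: {score:.3f}")
```

With these toy numbers the two tellurides rank closest to each other, which mirrors the kind of neighbourhood structure that literature-trained embeddings are reported to capture.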

Hypothesis Generation

Recent research has explored the potential of LLMs to generate viable hypotheses that, once validated, can expedite materials discovery [6]. Collaborating with materials science experts, researchers have curated novel datasets from recent journal publications featuring real-world goals, constraints, and methods for designing real-world applications [6].

LLM-based agents can generate hypotheses for achieving given goals under specific constraints, with evaluation metrics that emulate the process materials scientists use to critically evaluate hypotheses [6]. This approach represents a significant advancement in leveraging NLP not just for information extraction, but for creative scientific discovery.

Integration with Self-Driving Labs

NLP technologies are increasingly integrated with self-driving laboratories (SDLs) - research systems that combine robotics, AI, and autonomous experimentation [4]. These systems can run and analyze thousands of experiments in real time, accelerating discovery at a scale previously unimaginable [4].

Researchers are developing LLM-based agents that help users navigate experimental datasets, ask technical questions, and propose new experiments using retrieval-augmented generation (RAG) [4], a technique that retrieves relevant documents or data at query time and supplies them to the model as context so that answers are grounded in the source material. This integration creates a closed-loop system where NLP both extracts knowledge from literature and contributes to generating new experimental data.
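The retrieve-then-prompt pattern behind RAG can be sketched in a few lines. This is a deliberately simplified illustration, not the system described in [4]: retrieval here ranks notes by shared word count instead of embedding similarity, the lab notes are invented, and the function names `retrieve` and `build_rag_prompt` are hypothetical. The resulting prompt would be passed to whichever LLM the lab uses.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lower-case a string and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most tokens with the question."""
    query_tokens = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_rag_prompt(question: str, documents: list[str], k: int = 2) -> str:
    """Place the retrieved documents in the prompt ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


# Invented lab-notebook entries standing in for an experimental dataset.
notes = [
    "Run 12: sample annealed at 500 C showed low toughness.",
    "Run 27: printed lattice structure absorbed the most energy.",
    "Run 31: nozzle clogged during printing.",
]
print(build_rag_prompt("Which printed structure absorbed the most energy?", notes, k=1))
```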

Research Reagent Solutions

Implementing NLP approaches for materials discovery requires both computational and experimental resources. The following table outlines key components of the research "toolkit":

Table 3: Essential Research Reagents for NLP-Driven Materials Discovery

| Resource Category | Specific Examples | Function in Research Pipeline |
|---|---|---|
| Computational Models | MatBERT, HoneyBee, GPT-4.1 | Domain-specific language understanding and data extraction |
| Benchmark Datasets | MatSci-NLP, Thermoelectric dataset [5] | Evaluation standards and training data for specialized tasks |
| Experimental Facilities | Self-driving labs (e.g., MAMA BEAR [4]) | High-throughput validation of computational predictions |
| Data Infrastructure | BU Libraries FAIR data repository [4] | Storage, sharing, and curation of experimental results |
| Analysis Tools | Retrieval-augmented generation (RAG) systems | Bridging knowledge gaps between literature and experiments |

Challenges and Future Directions

Despite significant progress, notable gaps remain between the expectations of materials scientists and the capabilities of existing models. A major limitation is the need for models to provide more accurate and reliable predictions in materials science applications [1]. While models such as GPTs have shown promise, they often lack the specificity and domain expertise required for intricate materials science tasks [1].

Key challenges and emerging solutions include:

Domain Knowledge Integration: Materials science involves complex terminology and diverse sub-disciplines. Future models must better leverage domain-specific knowledge to enhance predictive capabilities and provide contextually relevant information [1].

Explainability and Interpretability: Materials scientists require models that provide explanations for predictions, enabling understanding of underlying mechanisms and informed decision-making [1].

Localized Solutions and Resource Optimization: The development of localized solutions using LLMs, optimal utilization of computing resources, and availability of open-source model versions are crucial aspects for advancement [1].

Multi-Modal Integration: Future systems will increasingly integrate textual information with structural data, simulation results, and experimental measurements to create comprehensive materials knowledge graphs [4].

The NSF Artificial Intelligence Materials Institute (AI-MI) exemplifies the future direction of this field, planning to create the AI Materials Science Ecosystem (AIMS-EC), an open, cloud-based portal that couples a science-ready LLM with targeted data streams, including experimental measurements, simulations, images, and scientific papers [4].

Natural Language Processing has evolved from a tool for basic information extraction to a foundational technology enabling accelerated materials discovery. Through specialized language models, automated data extraction pipelines, and integration with autonomous experimentation systems, NLP is transforming how researchers access and utilize the vast knowledge embedded in scientific literature. While challenges remain in accuracy, reliability, and domain specificity, ongoing advances in LLM architectures, training methodologies, and multi-modal integration promise to further enhance the role of NLP in materials science. The convergence of sophisticated language models with high-throughput experimental validation represents a powerful paradigm shift that will continue to drive innovation in materials discovery and design.

In the field of materials science, where knowledge is traditionally encoded in peer-reviewed literature, patents, and experimental reports, the ability to computationally extract and reason with this information has become a critical accelerator for discovery. Word embeddings and representation learning form the foundational layer that enables machines to understand and process this human-generated knowledge. These techniques transform unstructured text into structured, numerical representations that capture semantic relationships, allowing researchers to navigate the vast landscape of materials science literature with unprecedented efficiency. Within the context of large language models (LLMs) for materials research, high-quality embeddings are not merely a convenience—they are a prerequisite for accurate information retrieval, knowledge graph construction, and ultimately, the prediction of new material compositions and properties.

The evolution from traditional sparse representations to dense, neural embeddings has fundamentally changed how natural language processing (NLP) systems interact with scientific text [7]. Where earlier methods treated words as isolated symbols, modern embedding approaches capture nuanced semantic relationships, allowing models to understand that "yttria-stabilized zirconia" and "YSZ" refer to the same material, or that the properties of "MAX phases" are more similar to "ceramics" than to "biopolymers." This capability to encode meaning numerically provides the substrate upon which powerful LLMs for materials science are built and fine-tuned [8].

Theoretical Foundations: From Words to Vectors

The Evolution of Word Representation

Traditional methods for representing words in NLP relied on simplistic, sparse representations that failed to capture semantic meaning. One-Hot Encoding, the most basic approach, represents each word as a vector with a dimension equal to the vocabulary size, where only one element is "hot" (set to 1) to indicate the presence of that specific word [9] [7]. While straightforward, this method suffers from the "curse of dimensionality," lacks semantic information, and cannot represent relationships between words. The Bag-of-Words (BoW) model extends this concept by representing a document as an unordered collection of words with their respective frequencies, but it similarly discards word order and contextual information [9].
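A short sketch makes the limitation of these sparse representations visible. The four-word vocabulary is a hypothetical toy; in practice the vectors would have one dimension per word in the corpus vocabulary.

```python
from collections import Counter

# Toy vocabulary (assumed for illustration); index order defines the dimensions.
vocabulary = ["conductivity", "electrolyte", "ionic", "solid"]


def one_hot(word: str, vocab: list[str]) -> list[int]:
    """Vector of vocabulary size with a single 1 at the word's position."""
    return [1 if entry == word else 0 for entry in vocab]


def bag_of_words(tokens: list[str], vocab: list[str]) -> list[int]:
    """Count how often each vocabulary word occurs, ignoring word order."""
    counts = Counter(tokens)
    return [counts[entry] for entry in vocab]


def dot(u: list[int], v: list[int]) -> int:
    return sum(a * b for a, b in zip(u, v))


print(one_hot("ionic", vocabulary))                                   # [0, 0, 1, 0]
print(bag_of_words(["solid", "electrolyte", "solid"], vocabulary))    # [0, 1, 0, 2]
# Any two distinct one-hot vectors are orthogonal, so no similarity is encoded.
print(dot(one_hot("ionic", vocabulary), one_hot("conductivity", vocabulary)))  # 0
```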

Term Frequency-Inverse Document Frequency (TF-IDF) introduced a statistical measure to assess word importance by considering both how frequently a word appears in a specific document and how rare it is across the entire document collection [9] [10] [7]. The TF-IDF score for a term in a document is calculated as:

TF-IDF(t,d,D) = TF(t,d) × IDF(t,D)

Where:

- Term Frequency (TF) measures how often a term appears in a document: TF(t,d) = (Number of times term t appears in document d) / (Total number of terms in document d)

- Inverse Document Frequency (IDF) measures the importance of a term across a collection: IDF(t,D) = log(Total number of documents / Number of documents containing term t)

While TF-IDF improves upon simpler frequency-based methods by highlighting discriminative words, it still operates on the same fundamental limitation: these are essentially count-based models that cannot capture nuanced semantic relationships or contextual meaning [10] [7].
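The TF-IDF definitions above translate directly into code. The sketch follows the unsmoothed formulas as written, with one added assumption: a term that appears in no document is scored 0 instead of triggering a division by zero. The three tokenized "documents" are invented.

```python
import math


def term_frequency(term: str, document: list[str]) -> float:
    """TF(t, d): occurrences of the term divided by the document length."""
    return document.count(term) / len(document)


def inverse_document_frequency(term: str, corpus: list[list[str]]) -> float:
    """IDF(t, D): log(total documents / documents containing the term)."""
    containing = sum(1 for document in corpus if term in document)
    if containing == 0:
        return 0.0  # assumption: unseen terms score 0 rather than raising
    return math.log(len(corpus) / containing)


def tf_idf(term: str, document: list[str], corpus: list[list[str]]) -> float:
    return term_frequency(term, document) * inverse_document_frequency(term, corpus)


# Invented toy corpus of tokenized abstracts.
corpus = [
    ["perovskite", "solar", "cell", "efficiency"],
    ["perovskite", "oxide", "catalyst"],
    ["perovskite", "crystal", "structure"],
]

# "perovskite" occurs in every document, so IDF = log(3/3) = 0.
print(tf_idf("perovskite", corpus[0], corpus))
# "solar" occurs in one of three documents: (1/4) * log(3).
print(tf_idf("solar", corpus[0], corpus))
```

The example shows why TF-IDF highlights discriminative words: a term shared by every document contributes nothing, however often it appears.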

The Distributional Hypothesis: Core Linguistic Principle

The theoretical foundation underlying modern word embeddings is the Distributional Hypothesis, which posits that words with similar meanings tend to occur in similar contexts [7]. This principle, famously summarized as "a word is characterized by the company it keeps," provides the linguistic basis for learning semantic relationships from text corpora without explicit human supervision. In materials science, this means that terms like "perovskite," "ABO₃," and "crystal structure" will frequently co-occur in related contexts, enabling models to learn their semantic relatedness automatically from scientific literature [8].

Neural Word Embeddings: Capturing Semantic Relationships

The breakthrough in word representation came with the development of neural word embeddings, particularly Word2Vec and GloVe, which represented words as dense, continuous vectors in a relatively low-dimensional space (typically 50-300 dimensions) [11] [7] [12]. Unlike sparse representations, these dense embeddings capture semantic and syntactic relationships through their vector orientations and magnitudes.

Table 4: Comparison of Major Word Embedding Approaches

| Feature | One-Hot Encoding | TF-IDF | Word2Vec | GloVe |
|---|---|---|---|---|
| Vector Type | Sparse | Sparse | Dense | Dense |
| Dimensionality | Vocabulary size | Vocabulary size | 50-300 | 50-300 |
| Semantic Capture | None | Limited | Strong | Strong |
| Training Basis | Vocabulary indexing | Document statistics | Local context | Global co-occurrence |
| Memory Efficiency | Low | Low | High | High |
| Learns from Word Context | No | No | Yes | Yes |

Word2Vec, introduced by Mikolov et al. at Google in 2013, employs two distinct neural architectures to learn word representations [11] [7]:

- Continuous Bag of Words (CBOW): Predicts a target word given its surrounding context words

- Skip-gram: Predicts context words given a target word

The training process involves sliding a context window through text corpora, generating (target, context) word pairs that form the training data. For example, in the sentence "The solid electrolyte showed high ionic conductivity," with a window size of 2, the word "electrolyte" would generate pairs with the two words on either side of it: (electrolyte, The), (electrolyte, solid), (electrolyte, showed), (electrolyte, high). The word "ionic" lies three positions away and therefore falls outside the window. Through iterative training, the model adjusts vector representations so that semantically similar words cluster in the vector space.
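Pair generation with a sliding window can be sketched as follows. The sketch assumes whitespace tokenization and a symmetric window of fixed size; production implementations typically add subsampling of frequent words and randomly shrunk windows, which are omitted here.

```python
def skipgram_pairs(tokens: list[str], window: int = 2) -> list[tuple[str, str]]:
    """Generate (target, context) pairs for every token within the window."""
    pairs = []
    for i, target in enumerate(tokens):
        start = max(0, i - window)
        stop = min(len(tokens), i + window + 1)
        for j in range(start, stop):
            if j != i:  # a word is not its own context
                pairs.append((target, tokens[j]))
    return pairs


sentence = "The solid electrolyte showed high ionic conductivity".split()
electrolyte_pairs = [p for p in skipgram_pairs(sentence, window=2) if p[0] == "electrolyte"]
print(electrolyte_pairs)
# [('electrolyte', 'The'), ('electrolyte', 'solid'),
#  ('electrolyte', 'showed'), ('electrolyte', 'high')]
```

With a window of 2, the context of "electrolyte" is the two tokens on each side; widening the window to 3 would add "ionic".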

GloVe (Global Vectors for Word Representation), developed at Stanford in 2014, takes a different approach by leveraging global word-word co-occurrence statistics from entire corpora [12]. The model is based on the observation that ratios of word co-occurrence probabilities can encode meaning. In the canonical example, "solid" co-occurs more frequently with "ice" than with "steam," while "gas" shows the opposite pattern, so the ratio of the two probabilities singles out the words that distinguish ice from steam. GloVe trains word vectors such that their dot product equals the logarithm of the words' probability of co-occurrence, effectively encoding meaning into vector differences [12].
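The co-occurrence statistics that GloVe starts from can be illustrated on a toy corpus about ice and steam, the word pair used in the GloVe paper's own motivating example. The sketch only builds the counts and conditional probabilities; it does not train vectors. It uses plain counts within a fixed window, whereas GloVe itself down-weights distant pairs, and the three sentences are invented.

```python
from collections import defaultdict


def cooccurrence_counts(sentences: list[list[str]], window: int = 2) -> dict:
    """Count how often each (word, context) pair occurs within the window."""
    counts = defaultdict(float)
    for tokens in sentences:
        for i, word in enumerate(tokens):
            start = max(0, i - window)
            stop = min(len(tokens), i + window + 1)
            for j in range(start, stop):
                if j != i:
                    counts[(word, tokens[j])] += 1.0
    return counts


def cooccurrence_probability(counts: dict, word: str, context: str) -> float:
    """P(context | word): the pair count divided by all counts for the word."""
    total = sum(c for (w, _), c in counts.items() if w == word)
    return counts.get((word, context), 0.0) / total if total else 0.0


# Invented toy corpus.
toy_corpus = [
    ["ice", "is", "solid"],
    ["steam", "is", "gas"],
    ["ice", "is", "cold", "solid"],
]
counts = cooccurrence_counts(toy_corpus, window=2)

for probe in ("solid", "gas"):
    p_ice = cooccurrence_probability(counts, "ice", probe)
    p_steam = cooccurrence_probability(counts, "steam", probe)
    print(probe, p_ice, p_steam)
```

On a realistic corpus neither probability would be exactly zero, and it is the ratio P(probe | ice) / P(probe | steam) that becomes large for "solid" and small for "gas".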

Advanced Embedding Architectures for Scientific Applications

Contextualized Embeddings and Transformer Models

While Word2Vec and GloVe generate static word representations, a significant advancement came with the development of contextualized embeddings through transformer architectures [10]. Models like BERT (Bidirectional Encoder Representations from Transformers) generate dynamic word representations that change based on surrounding context, enabling them to handle polysemy—where words have multiple meanings depending on usage [13].

For example, in materials science, the word "phase" can refer to different concepts in different contexts: "crystal phase" in solid-state chemistry, "phase diagram" in thermodynamics, or "phase separation" in polymer science. Contextual embeddings can disambiguate these meanings by generating distinct vector representations for each usage [13]. This capability is particularly valuable for scientific domains where terminology is often highly specialized and context-dependent.

Domain-Specific Language Models

General-purpose language models often underperform when applied to specialized scientific domains due to unfamiliarity with domain-specific terminology and concepts. This limitation has driven the development of domain-specific language models that are pre-trained on scientific corpora [13].

Table 5: Domain-Specific Language Models for Scientific Applications

| Model | Domain | Base Architecture | Training Corpus | Key Applications |
|---|---|---|---|---|
| MatSciBERT | Materials Science | BERT | 285M words from materials science literature [13] | Named Entity Recognition, Relation Classification, Abstract Classification |
| SciBERT | General Science | BERT | 3.17B words from biomedical and computer science papers [13] | Scientific text processing, Information extraction |
| BioBERT | Biomedicine | BERT | Biomedical literature [14] | Biomedical Named Entity Recognition, Gene-protein extraction |
| MedCPT | Biomedicine | Transformer | PubMed user search logs [15] | Biomedical information retrieval, Semantic search |

MatSciBERT represents a significant advancement for materials science applications [13]. Trained on a carefully curated corpus of approximately 285 million words from peer-reviewed materials science publications across domains including inorganic glasses, metallic glasses, alloys, and cement, MatSciBERT outperforms general-purpose models on key information extraction tasks. The training process involves domain-adaptive pre-training, where an existing language model (SciBERT) is further trained on domain-specific text, allowing it to develop specialized knowledge while retaining general language understanding capabilities [13].

The effectiveness of domain-specific models stems from their familiarity with specialized vocabulary and concepts. For instance, a vocabulary derived from the materials science corpus overlaps 53.64% with SciBERT's vocabulary compared with only 38.90% for standard BERT, which indicates that SciBERT is the better-aligned starting point for MatSciBERT [13]. Better vocabulary coverage enables more accurate tokenization of complex material names like "yttria-stabilized zirconia," which general models might split into less meaningful subwords.
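The effect of vocabulary coverage on subword splitting can be sketched with the greedy longest-match-first procedure that WordPiece tokenizers apply to each word. Both vocabularies below are tiny hypothetical sets written for this example; they are not the real BERT, SciBERT, or MatSciBERT vocabularies.

```python
def wordpiece_tokenize(word: str, vocab: set[str], unk: str = "[UNK]") -> list[str]:
    """Split a word into the longest vocabulary pieces, left to right."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        piece = None
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # marks a word-internal piece
            if candidate in vocab:
                piece = candidate
                break
            end -= 1  # try a shorter prefix
        if piece is None:
            return [unk]  # no piece matches: the whole word is unknown
        pieces.append(piece)
        start = end
    return pieces


# Hypothetical vocabularies for illustration.
general_vocab = {"zir", "##con", "##ia"}
domain_vocab = general_vocab | {"zirconia"}

print(wordpiece_tokenize("zirconia", general_vocab))  # ['zir', '##con', '##ia']
print(wordpiece_tokenize("zirconia", domain_vocab))   # ['zirconia']
```

A vocabulary that contains the domain term keeps it as a single unit, so the model learns one representation for the material instead of composing it from fragments shared with unrelated words.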

Experimental Protocols and Implementation

Training Methodologies for Word Embeddings

The quality of word embeddings depends critically on the training methodology and hyperparameter selection. For Word2Vec, key experimental considerations include:

Architecture Selection: The choice between CBOW and Skip-gram involves trade-offs: CBOW is faster and better for frequent words, while Skip-gram works well with small amounts of data and represents rare words more effectively [11].

Context Window Size: This crucial parameter determines how many surrounding words are considered as context. Smaller windows (2-5 words) capture more syntactic relationships, while larger windows (5-10+ words) capture more semantic/topic relationships [11].

Dimensionality: Word vector size typically ranges from 100-300 dimensions. Lower dimensions may not capture sufficient semantic information, while higher dimensions may lead to overfitting and increased computational cost [11] [15].

Training Corpus: The domain and size of the training text significantly impact embedding quality. For materials science applications, domain-specific corpora such as the Elsevier ScienceDirect database or PubMed are preferable to general web crawls [13].
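The trade-offs above can be captured as two starting configurations. The keyword names (`sg`, `vector_size`, `window`, `min_count`, `epochs`) follow the `Word2Vec` constructor in Gensim 4.x; the specific values are illustrative assumptions within the ranges discussed, not settings recommended by [11] or [13], and should be tuned on a downstream task.

```python
# Skip-gram with a wide window: favours rare terms and topical similarity.
SKIPGRAM_CONFIG = {
    "sg": 1,             # 1 selects Skip-gram
    "vector_size": 200,  # within the 100-300 range discussed above
    "window": 8,         # larger window: more semantic/topic signal
    "min_count": 5,      # ignore words seen fewer than 5 times
    "epochs": 10,
}

# CBOW with a narrow window: faster, favours frequent words and syntax.
CBOW_CONFIG = {
    "sg": 0,             # 0 selects CBOW
    "vector_size": 100,
    "window": 3,         # smaller window: more syntactic signal
    "min_count": 5,
    "epochs": 5,
}

# Usage with Gensim installed, where tokenized_corpus is a list of token lists
# (hypothetical variable name):
#   from gensim.models import Word2Vec
#   model = Word2Vec(sentences=tokenized_corpus, **SKIPGRAM_CONFIG)
```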

[Figure: workflow for training and applying domain-specific word embeddings in materials science research]

Evaluation Metrics for Embedding Quality

Assessing the quality of word embeddings requires multiple evaluation strategies:

Intrinsic Evaluation measures how well the embeddings capture linguistic regularities through tasks like:

- Word similarity/relatedness tests (measuring correlation with human judgments)

- Word analogy tasks (e.g., "King is to Queen as Man is to Woman") [11]

- Clustering quality metrics (silhouette scores, purity)
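Word similarity tests are commonly scored with Spearman's rank correlation between human ratings and model similarities. The sketch below implements the textbook formula for data without ties; the ratings and similarity values are invented, and a real evaluation would use a library routine that handles tied ranks.

```python
def ranks(values: list[float]) -> list[int]:
    """Rank values from 1 (smallest) to n (largest); assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman's rho = 1 - 6 * sum(d^2) / (n * (n^2 - 1)), valid without ties."""
    n = len(x)
    d_squared = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d_squared / (n * (n * n - 1))


# Invented scores for four word pairs.
human_ratings = [9.0, 7.5, 3.0, 1.0]         # expert relatedness judgments
model_similarity = [0.92, 0.80, 0.20, 0.35]  # cosine similarities from a model

print(spearman(human_ratings, model_similarity))  # 0.8: the last two pairs are swapped
```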

Extrinsic Evaluation tests embedding performance on downstream NLP tasks like:

- Named Entity Recognition (NER) for materials science concepts [14] [13]

- Relation Classification between material entities [13]

- Document classification (e.g., glass vs. non-glass abstracts) [13]

For materials science applications, MatSciBERT established state-of-the-art results with F1 scores of 90.18 on the SOFC dataset for named entity recognition, significantly outperforming general-purpose models [13].

Implementation Protocols

Implementing word embeddings for materials discovery involves several concrete steps:

Corpus Collection and Curation: Gather domain-specific text from scientific databases, ensuring coverage of relevant subfields. The MatSciBERT corpus, for example, included 150K papers from inorganic glasses, metallic glasses, alloys, and cement [13].

Preprocessing Pipeline: Implement text cleaning, tokenization, and normalization. For scientific text, this may require special handling of chemical formulas, mathematical notation, and domain-specific terminology.

Model Training and Fine-tuning: Utilize frameworks like Gensim for Word2Vec or Hugging Face Transformers for BERT-based models. For domain adaptation, continue pre-training general models on specialized corpora.

Validation and Iteration: Evaluate on domain-specific benchmarks and refine based on performance gaps.

Applications in Materials Discovery and Sustainability

Knowledge Extraction from Scientific Literature

The primary application of word embeddings in materials science is information extraction from the vast body of existing scientific literature. Named Entity Recognition (NER) systems powered by domain-specific embeddings can automatically identify and categorize materials, properties, synthesis methods, and characterization techniques mentioned in text [14] [13]. For example, in the sentence "The perovskite solar cell achieved 25.3% efficiency with minimal hysteresis," an NER system would identify "perovskite" as a material class, "solar cell" as an application, and "25.3% efficiency" as a performance metric.

This capability enables the automated construction of structured materials databases from unstructured text, dramatically accelerating the curation process that would otherwise require manual expert annotation. The extracted information can populate knowledge graphs that link materials to their properties, synthesis conditions, and performance metrics, creating a searchable network of materials knowledge [8].

Materials Recommendation and Discovery

Word embeddings enable materials recommendation by capturing semantic relationships between material compositions, structures, and properties. The vector representations allow mathematical operations that mirror conceptual relationships—for instance, the vector equation V("high-entropy alloy") - V("CoCrFeNi") + V("Ti") might yield vectors close to representations of "CoCrFeNiTi" and similar compositions [8].

This analogical reasoning capability, famously demonstrated with word embeddings in the general domain (e.g., King - Man + Woman = Queen), can be harnessed for materials discovery by identifying promising compositional variations or substitutions based on learned patterns from existing materials [11] [8]. For high-entropy alloys, where the compositional space is vast, such data-driven approaches significantly narrow the search space for experimental investigation.
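The vector arithmetic behind such analogies can be sketched with a handful of toy vectors. The three-dimensional values below are invented purely for illustration; with trained embeddings the same query would typically be issued through a library call such as Gensim's `most_similar` with positive and negative word lists.

```python
import numpy as np

# Toy 3-dimensional vectors, invented for illustration only.
vectors = {
    "king": np.array([0.9, 0.8, 0.1]),
    "man": np.array([0.1, 0.9, 0.1]),
    "woman": np.array([0.1, 0.1, 0.9]),
    "queen": np.array([0.9, 0.1, 0.9]),
    "alloy": np.array([0.5, 0.5, 0.5]),
}


def cosine(a, b):
    """Cosine similarity between two vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


def analogy(a, b, c, vocab):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = vocab[a] - vocab[b] + vocab[c]
    candidates = {w: v for w, v in vocab.items() if w not in (a, b, c)}
    return max(candidates, key=lambda w: cosine(target, candidates[w]))


result = analogy("king", "man", "woman", vectors)
```

Excluding the query words from the candidates is a standard choice, since the nearest vector to the target is otherwise often one of the inputs.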

Sustainable Materials Design

Word embeddings contribute to sustainable materials design by enabling the identification of materials with improved environmental profiles. NLP models can extract information linking materials to sustainability metrics—energy consumption during synthesis, recyclability, toxicity, and abundance—from literature [8]. By encoding these relationships in vector space, models can recommend material substitutions that maintain performance while improving sustainability.

For example, a model might identify that "cobalt-free cathodes" are being researched as alternatives to "lithium cobalt oxide" batteries due to cobalt's supply chain constraints and ethical concerns, enabling the recommendation of specific cobalt-free compositions for further investigation [8].

Table 3: Research Reagent Solutions for Embedding Implementation

| Resource | Type | Function | Application Context |
|---|---|---|---|
| Gensim Library | Software Library | Implements Word2Vec and other embedding algorithms | General-purpose embedding training [11] |
| Hugging Face Transformers | Software Library | Provides pre-trained transformer models and training utilities | Contextual embedding implementation and fine-tuning [13] |
| MatSciBERT Weights | Pre-trained Model | Domain-specific language model for materials science | Materials science information extraction tasks [13] |
| GloVe Pre-trained Vectors | Pre-trained Embeddings | General-domain word vectors trained on large corpora | Baseline comparisons and transfer learning [12] |
| SciBERT | Pre-trained Model | Language model trained on scientific corpus | Scientific text processing before domain specialization [13] |
| BERT Base Model | Pre-trained Model | General-purpose language understanding | Starting point for domain-adaptive pre-training [13] |
| Text8 Corpus | Training Data | Preprocessed Wikipedia text | General embedding training and benchmarking [11] |
| Materials Science Corpus | Domain Data | Curated collection of materials science publications | Domain-specific model training [13] |

Current Landscape and Future Directions

The 2025 Embedding Ecosystem

The field of word embeddings has evolved significantly, with current models optimized for different operational priorities [15]:

Cloud-Managed Embedding APIs (OpenAI, Cohere, Voyage AI) offer high-quality, scalable embeddings with minimal infrastructure requirements but introduce vendor dependency and ongoing costs.

Open & Self-Hosted Embeddings (BAAI BGE, E5-Mistral) provide transparency and data control, which makes them well suited to privacy-sensitive applications, but they require more technical infrastructure.

Multimodal Embeddings (SigLIP, EVA-CLIP) project text, images, and other modalities into a unified semantic space, enabling cross-modal retrieval valuable for materials science where visual data (micrographs, spectra) complements textual information.

Domain-Specialized Models (MedCPT for biomedicine, FinText for finance) offer the highest precision within narrow domains but sacrifice generalizability.

Emerging Challenges and Research Frontiers

Several challenges remain at the frontier of word embeddings for materials discovery:

Tokenizer Effects: The tokenization process significantly impacts model performance on scientific text. Specialized tokenizers that preserve complete compound names and maintain consistent token counts are crucial for accurate representation of materials science terminology [16].

Multimodal Integration: Future systems will need to seamlessly integrate textual information with structural data, synthesis protocols, and property measurements to create comprehensive materials representations [8].

Knowledge Graph Integration: Combining embedding-based NLP with structured knowledge graphs creates powerful hybrid systems that leverage both statistical patterns from text and explicit relationships from curated databases [8].

Bias and Fairness: Ensuring that embeddings don't perpetuate biases present in scientific literature (e.g., preferential citation of certain research groups or methodologies) requires careful dataset curation and algorithmic consideration [15].

The following diagram illustrates the integration of word embeddings into a complete materials discovery pipeline:

Word embeddings and representation learning have evolved from simple statistical methods to sophisticated contextual representations that form the essential substrate for modern language models in materials discovery. The progression from Word2Vec to domain-specific transformers like MatSciBERT represents a fundamental shift in how machines understand and process materials science knowledge. These technologies enable the extraction of structured information from unstructured text, the discovery of novel material relationships through vector reasoning, and the acceleration of sustainable materials design.

As the field advances, the integration of embeddings with knowledge graphs, multimodal data, and sophisticated reasoning systems will further enhance their utility for materials research. The specialized challenges of materials science—complex nomenclature, heterogeneous information sources, and the need for precise relationship extraction—will continue to drive innovation in embedding techniques. For researchers and professionals in materials science and drug development, understanding and leveraging these representation learning approaches is no longer optional but essential for harnessing the full potential of AI-driven discovery platforms.

In the landscape of artificial intelligence, the transformer architecture has emerged as a foundational technology, catalyzing advances across numerous scientific domains. For researchers in materials discovery and drug development, understanding this architecture is no longer a niche interest but a prerequisite for leveraging the next generation of computational tools. Transformers, built upon the core mechanism of self-attention, have enabled the development of large language models (LLMs) that are reshaping how we approach scientific inquiry [17]. These models are now being tailored to tackle domain-specific challenges, from predicting molecular properties to designing novel therapeutic compounds and advanced materials [18] [19] [20]. This technical guide explores the architectural principles of transformers and their transformative potential in accelerating materials and pharmaceutical research.

The Core Architectural Components

The transformer architecture, introduced in the seminal paper "Attention Is All You Need," represents a departure from previous recurrent and convolutional neural networks [17] [21]. Its design enables unprecedented parallel processing capabilities and effectiveness at capturing long-range dependencies in sequential data—properties that are equally valuable for analyzing molecular sequences, scientific literature, and experimental data.

The Attention Mechanism

The attention mechanism is the transformative innovation at the heart of the transformer architecture. Conceptually, it allows the model to dynamically prioritize different parts of the input sequence when processing each element [22] [21].

Mathematical Formulation: The scaled dot-product attention, as formalized in the original transformer paper, is computed as:

\[ \text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V \]

Where:

- Q (Query) represents the current token or element seeking information.

- K (Key) represents all tokens that can be attended to, providing an identifier.

- V (Value) contains the actual information from each token that will be aggregated.

- \(d_k\) is the dimensionality of the key vectors, and the scaling factor \(\frac{1}{\sqrt{d_k}}\) prevents the softmax function from entering regions of extremely small gradients [22] [21].

This mechanism computes alignment scores between queries and keys, normalizes them into weights using softmax, and uses these weights to create a weighted sum of the value vectors. The result is a context-aware representation for each token that incorporates the most relevant information from across the entire sequence [22].
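The computation described above maps directly onto a few lines of NumPy. The following is a minimal sketch for a single unbatched sequence; the array shapes and random inputs are assumptions chosen only to show the mechanics.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)


def scaled_dot_product_attention(Q, K, V):
    """Return softmax(Q K^T / sqrt(d_k)) V and the attention weights."""
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)      # alignment scores, shape (n_q, n_k)
    weights = softmax(scores, axis=-1)   # each row sums to 1
    return weights @ V, weights          # weighted sum of value vectors


rng = np.random.default_rng(0)
Q = rng.normal(size=(4, 8))    # 4 query tokens, d_k = 8
K = rng.normal(size=(4, 8))    # 4 key tokens
V = rng.normal(size=(4, 16))   # 4 value vectors, d_v = 16
output, weights = scaled_dot_product_attention(Q, K, V)
```

Row `i` of `weights` shows how strongly token `i` attends to every other token, which is also the quantity usually inspected when attention maps are visualized.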

Multi-Head Attention and Transformer Architecture

Transformers extend the basic attention mechanism through multi-head attention, which applies the attention mechanism multiple times in parallel. Each "head" potentially learns to focus on different types of relationships or dependencies within the sequence [17] [22]. The outputs of all heads are concatenated and linearly transformed to produce the final output.
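A compact NumPy sketch of the split, attend, concatenate, and project sequence is shown here. It assumes a single unbatched sequence, omits masking and biases, and uses randomly initialized projection matrices (`W_q`, `W_k`, `W_v`, `W_o`) in place of learned weights.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - np.max(x, axis=axis, keepdims=True)
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)


def multi_head_attention(X, W_q, W_k, W_v, W_o, num_heads):
    """Self-attention over X with num_heads parallel heads."""
    seq_len, d_model = X.shape
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    d_head = d_model // num_heads

    def split_heads(M):
        # (seq_len, d_model) -> (num_heads, seq_len, d_head)
        return M.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split_heads(X @ W_q), split_heads(X @ W_k), split_heads(X @ W_v)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)   # (heads, seq, seq)
    heads = softmax(scores, axis=-1) @ V                   # (heads, seq, d_head)
    # Concatenate the heads back to (seq_len, d_model), then apply the output projection.
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return concat @ W_o


rng = np.random.default_rng(0)
d_model, seq_len, num_heads = 16, 5, 4
X = rng.normal(size=(seq_len, d_model))
W_q, W_k, W_v, W_o = (rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(4))
out = multi_head_attention(X, W_q, W_k, W_v, W_o, num_heads)
```

Because each head works on its own `d_head`-dimensional slice, the total cost is similar to one full-width attention while allowing the heads to specialize.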

Table 1: Key Components of the Transformer Architecture

| Component | Function | Advantage for Scientific Applications |
|---|---|---|
| Multi-Head Attention | Parallel attention mechanisms capturing different relationship types | Can identify diverse molecular patterns simultaneously (e.g., structural, functional) |
| Positional Encoding | Injects information about token position into the model | Critical for understanding sequential data like DNA, proteins, and chemical syntheses |
| Layer Normalization | Stabilizes training by normalizing inputs across features | Enables training of deeper, more capable models for complex scientific predictions |
| Feed-Forward Networks | Applies point-wise transformations to each position | Allows non-linear feature transformation while maintaining positional independence |
| Encoder-Decoder Architecture | Processes input and generates output sequences | Ideal for tasks like reaction prediction or converting material properties to structures |

The full transformer architecture typically follows an encoder-decoder structure. The encoder processes the input sequence to build rich, contextualized representations, while the decoder generates output sequences one element at a time, attending to both the decoder's previous outputs and the full encoded input [17] [21].

Transformers in Materials Discovery and Drug Development

The application of transformer architectures in scientific domains represents a paradigm shift from general-purpose language models to specialized systems that understand the language of molecules, materials, and biological systems.

Current Applications and Performance

Transformers and their attention-based variants are demonstrating significant potential across the materials and pharmaceutical development pipeline.

Table 2: Transformer Applications in Scientific Domains

| Application Area | Specific Tasks | Reported Performance | Key Models/Approaches |
|---|---|---|---|
| Molecular Property Prediction | Predicting efficacy, safety, bioavailability | Superior to traditional MLP and RNN models | Graph Attention Networks (GATs), BERT-style models [20] |
| De Novo Drug Design | Generating novel molecular structures with desired properties | 35% success rate for valid synthesis plans vs. 5% for text-only LLMs | Llamole (multimodal LLM) [23] |
| Drug-Target Interaction | Predicting binding affinity and interaction mechanisms | High accuracy in identifying potential drug candidates | Transformer encoders with protein sequence inputs [20] |
| Materials Property Prediction | Predicting material characteristics from composition or structure | Outperforms classical feature-based models | MatSciBERT, Materials Science LLMs [18] |
| Retrosynthetic Planning | Predicting synthetic pathways for target molecules | 35% success rate vs. 5% for baseline LLMs | Llamole with graph reaction predictor [23] |

Specialized Architectures for Scientific Discovery

The unique challenges of molecular and materials representation have spurred the development of specialized architectures that adapt the core transformer principles to scientific data:

Multimodal LLMs for Molecules: The Llamole architecture exemplifies how transformers can be augmented to handle molecular graph structures while maintaining natural language understanding. This system uses a base LLM as a controller that activates specialized graph modules when needed—switching between natural language processing, molecular structure generation, and synthesis planning through learned trigger tokens [23].

Graph Attention Networks (GATs): For molecular data naturally represented as graphs (atoms as nodes, bonds as edges), GATs apply the attention mechanism to neighborhood aggregation in graphs. Each node computes attention weights over its neighbors, determining how much to weight their features when updating its own representation [20].

Domain-Specific Pre-training: Models like MatSciBERT and BatteryBERT are pre-trained on large-scale scientific corpora, enabling them to develop a fundamental understanding of materials science concepts and terminology before being fine-tuned for specific tasks [18].
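The neighborhood attention used by GATs, described above, can be sketched for a single head in NumPy. This follows the commonly used formulation in which each edge score is a LeakyReLU of a learned vector applied to the concatenated, linearly transformed features of the two nodes; the three-atom chain, feature sizes, and random weights are assumptions made for illustration.

```python
import numpy as np


def gat_layer(H, adj, W, a, slope=0.2):
    """Single-head graph attention: returns updated node features and weights."""
    Z = H @ W                                  # transformed features, (N, F_out)
    f_out = Z.shape[1]
    # a^T [z_i || z_j] separates into a source term and a target term.
    src = Z @ a[:f_out]                        # (N,)
    dst = Z @ a[f_out:]                        # (N,)
    e = src[:, None] + dst[None, :]            # raw scores for every pair (i, j)
    e = np.where(e > 0, e, slope * e)          # LeakyReLU
    e = np.where(adj > 0, e, -np.inf)          # non-neighbors get zero weight
    e = e - e.max(axis=1, keepdims=True)       # stabilize the softmax
    alpha = np.exp(e)
    alpha = alpha / alpha.sum(axis=1, keepdims=True)
    return alpha @ Z, alpha


rng = np.random.default_rng(0)
# Three atoms in a chain (0-1-2); self-loops keep every row non-empty.
adj = np.array([[1, 1, 0],
                [1, 1, 1],
                [0, 1, 1]])
H = rng.normal(size=(3, 4))    # 4 input features per atom
W = rng.normal(size=(4, 2))    # projects to 2 output features
a = rng.normal(size=(4,))      # attention vector of length 2 * F_out
H_new, alpha = gat_layer(H, adj, W, a)
```

The mask is what distinguishes this from sequence attention: atoms 0 and 2 are not bonded, so `alpha[0, 2]` is exactly zero and information between them must flow through atom 1.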

Experimental Protocols and Methodologies

Implementing transformer models for materials discovery requires carefully designed experimental protocols to ensure robust and reproducible results.

Protocol 1: Pre-training Domain-Specific Transformers

Objective: Create a transformer model with foundational knowledge in materials science or chemistry.

- Data Curation: Assemble a large-scale corpus of domain-specific text (scientific papers, patents, datasets). The Materials Project database and related literature serve as valuable sources [18].

- Tokenization: Implement domain-aware tokenization that recognizes scientific nomenclature, chemical formulas, and material identifiers.

- Pre-training Objective: Employ masked language modeling, where 15% of tokens are randomly masked and the model must predict them based on context [18].

- Validation: Evaluate the pre-trained model on cloze-style tests with domain knowledge and named entity recognition tasks.

Protocol 2: Fine-tuning for Molecular Property Prediction

Objective: Adapt a pre-trained transformer to predict specific molecular properties.

Data Preparation:

- Collect labeled dataset of molecules with target properties (e.g., solubility, toxicity, binding affinity)

- Represent molecules as SMILES strings or graph representations

- Split data into training (80%), validation (10%), and test sets (10%)

Model Architecture Selection:

- For sequence-based approaches: Use transformer encoder with regression/classification head

- For graph-based approaches: Implement Graph Attention Network with multiple attention heads

Training Procedure:

- Initialize with pre-trained weights where available

- Use learning rate warmup for first 2% of training steps, followed by decay

- Apply gradient clipping to prevent explosion

- Monitor validation loss for early stopping

Evaluation Metrics:

- Root Mean Square Error (RMSE) for regression tasks

- Area Under ROC Curve (AUC-ROC) for classification tasks

- Mean Absolute Error (MAE) for quantitative predictions
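Several pieces of this protocol are simple enough to write down directly. The sketch below covers the 80/10/10 split, a warmup-then-linear-decay learning rate schedule, and the two regression metrics. The peak learning rate of 2e-5, the linear decay shape, and the random (rather than scaffold-based) split are assumptions, not values from the protocol.

```python
import math
import random


def split_dataset(items, seed=0):
    """Shuffle and split into 80% train, 10% validation, 10% test."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = len(items) * 8 // 10
    n_val = len(items) // 10
    return items[:n_train], items[n_train:n_train + n_val], items[n_train + n_val:]


def learning_rate(step, total_steps, peak_lr=2e-5, warmup_frac=0.02):
    """Linear warmup over the first 2% of steps, then linear decay to zero."""
    warmup_steps = max(1, int(total_steps * warmup_frac))
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    remaining = (total_steps - step) / max(1, total_steps - warmup_steps)
    return peak_lr * max(0.0, remaining)


def rmse(y_true, y_pred):
    """Root mean square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


train, val, test = split_dataset(range(100))
```

RMSE penalizes large individual errors more heavily than MAE, so reporting both gives a quick indication of whether a model's error is dominated by a few outlier molecules.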

Llamole-Style Multimodal Molecular Design

The Llamole framework demonstrates a sophisticated methodology for integrating multiple representation modalities [23]:

Experimental Details:

- Training Data: 400,000 patented molecules augmented with AI-generated natural language descriptions

- Model Architecture: Base LLM with three specialized graph modules (structure generator, structure encoder, reaction predictor)

- Trigger Mechanism: Learned tokens that activate specific modules when predicted by the LLM

- Evaluation: Success rate of generating synthesizable molecules that match specifications

The Scientist's Toolkit: Research Reagent Solutions

Implementing transformer-based approaches requires both computational and data resources. The following table outlines essential components for establishing this capability in a research environment.

Table 3: Essential Resources for Transformer-Based Materials Research

| Resource Category | Specific Tools/Resources | Function/Purpose |
|---|---|---|
| Pre-trained Models | MatSciBERT, BatteryBERT, SciBERT | Domain-specific foundation models that can be fine-tuned for specialized tasks [18] |
| Materials Databases | Materials Project, MatSci NLP, PubChem | Curated datasets for training and benchmarking models [18] |
| Molecular Representations | SMILES, SELFIES, Graph Representations | Standardized formats for encoding molecular structures as model inputs [23] |
| Multimodal Integration Frameworks | Llamole Architecture, Graph Transformers | Systems that combine natural language with structural representations [23] |
| Specialized Attention Mechanisms | Graph Attention Networks, Multi-Head Attention | Architectures designed for scientific data structures [20] |
| Evaluation Benchmarks | MaScQA, MatSci-NLP, MoleculeNet | Standardized tasks and metrics for assessing model performance [18] |

Future Directions and Challenges

While transformer architectures show tremendous promise for materials discovery, significant challenges remain. Current LLMs struggle with comprehending and reasoning over complex, interconnected materials science knowledge, often producing hallucinations or inaccurate predictions [18] [24]. The path forward involves developing more sophisticated multimodal architectures that are explicitly grounded in domain knowledge and physical principles.

Key research priorities include:

- Building high-quality, multimodal datasets that capture valuable materials science principles

- Developing better information extraction methods from scientific literature

- Creating retrieval-augmented generation systems that ground responses in verified knowledge

- Integrating physical simulations with transformer-based reasoning [18]

The ultimate goal is creating end-to-end solutions that automate the entire process of materials design and synthesis—from natural language specification to validated candidate selection. As these technologies mature, they promise to dramatically accelerate the discovery and development of novel materials and therapeutics, potentially reducing discovery timelines from years to weeks or days [23].

The integration of Large Language Models (LLMs) into materials science represents a paradigm shift from traditional data-driven methods to an AI-driven scientific approach, revolutionizing the research landscape [25]. While general-purpose LLMs encode vast general knowledge, the complex, specialized nature of materials science—with its unique terminology and structured knowledge—has driven the need for specialized MatSci-LLMs [26]. These domain-specific models are engineered to move beyond general language understanding, becoming grounded in domain-specific knowledge to enable accurate data extraction, property prediction, and even the autonomous design of novel materials [27] [26]. This transformation is crucial for accelerating materials discovery, as traditional trial-and-error approaches and manual data extraction from millions of scientific publications have created significant bottlenecks in the research pipeline [27] [1].

The development of MatSci-LLMs marks a critical evolution from their use as passive assistants to their deployment as active participants in the research process. These models are increasingly functioning as the central "brain" in research workflows, capable of planning multi-step procedures, interfacing with computational simulation tools, and operating robotic platforms in autonomous laboratories [27] [25]. This whitepaper provides a comprehensive technical overview of the methodologies, applications, and experimental validations underpinning specialized MatSci-LLMs, framed within the broader context of their role in advancing materials discovery research for scientists, researchers, and drug development professionals.

Development Methodologies for MatSci-LLMs

Creating effective MatSci-LLMs requires specialized strategies to embed deep domain knowledge into general-purpose foundation models. Four primary methodologies have emerged, each with distinct advantages and implementation protocols.

Fine-Tuning for Domain Adaptation

Fine-tuning involves further training a pre-existing general LLM on a curated dataset of materials science literature. This process allows the model to internalize the specific language, concepts, and relationships prevalent in the field. Kang and colleagues demonstrated this approach by fine-tuning GPT-3.5-turbo and GPT-4o using textual formulas of Metal-Organic Framework (MOF) precursors, enabling the models to predict synthesis conditions with an 82% similarity score to true experimental conditions [27]. Similarly, Liu et al. achieved a 94.8% accuracy in predicting hydrogen storage performance of MOFs—a 46.7% improvement over baseline models—by fine-tuning on rich natural language descriptions that included composition, node connectivity, and topological features [27].

Experimental Protocol for Fine-Tuning:

- Base Model Selection: Choose a commercially available or open-source foundation model (e.g., GPT series, Llama, Qwen, GLM).

- Dataset Curation: Compile a high-quality, domain-specific corpus from scientific literature, databases, and structured knowledge sources.

- Training Configuration: Employ Parameter-Efficient Fine-Tuning (PEFT) methods like Low-Rank Adaptation (LoRA). A typical setup uses a rank of 32 and optionally 4-bit quantization to reduce computational demands, enabling the fine-tuning of large models (e.g., GLM-4.5-Air) on a system with four AMD Instinct MI250X accelerators [27].

- Validation: Evaluate performance on held-out test sets from the target domain, measuring task-specific accuracy and similarity metrics.
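The reason LoRA reduces computational demands is visible in the arithmetic of the update itself. The NumPy sketch below shows the low-rank reparameterization for one weight matrix at rank 32; the 256 by 256 layer size and the scaling value `alpha = 64` are assumptions for illustration, and no training loop or quantization is included.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, rank, alpha = 256, 256, 32, 64     # alpha is an assumed scaling value

W = rng.normal(size=(d_out, d_in))              # frozen pre-trained weight
A = rng.normal(scale=0.01, size=(rank, d_in))   # trainable, small random init
B = np.zeros((d_out, rank))                     # trainable, zero init


def lora_forward(x, W, A, B, alpha, rank):
    """y = W x + (alpha / rank) * B (A x); only A and B would receive gradients."""
    return W @ x + (alpha / rank) * (B @ (A @ x))


x = rng.normal(size=d_in)
y = lora_forward(x, W, A, B, alpha, rank)

full_params = W.size            # parameters updated by full fine-tuning
lora_params = A.size + B.size   # parameters updated by LoRA
```

Because `B` starts at zero, the adapted layer initially reproduces the pre-trained layer exactly, and training only has to learn the low-rank correction. For real transformer layers, which are far wider than this toy example, the trainable fraction is much smaller than the 25% seen here.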

Retrieval-Augmented Generation (RAG)

RAG enhances LLMs by connecting them to external, verified knowledge bases. When generating responses, the model first retrieves relevant information from authoritative sources, thereby grounding its outputs in factual data and reducing hallucinations. The MatSciAgent framework exemplifies this approach by leveraging databases like the Materials Project and MatWeb to retrieve and summarize materials data, ensuring "grounded, factual responses" [28]. This directly addresses a key limitation of vanilla LLMs, which may generate plausible but incorrect or unverified information [28].
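The retrieve-then-generate pattern can be sketched without any external services. In this minimal example a bag-of-words cosine similarity stands in for a real embedding-based retriever, the three snippets stand in for database records, and the final LLM call is left out; `DOCUMENTS`, `retrieve`, and `build_prompt` are hypothetical names.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base snippets standing in for database records.
DOCUMENTS = [
    "MOF-5 is a zinc-based metal-organic framework studied for hydrogen storage.",
    "Lithium cobalt oxide is a layered cathode material for lithium-ion batteries.",
    "Bismuth telluride is a thermoelectric material used near room temperature.",
]


def bag_of_words(text):
    """Count lowercase alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = bag_of_words(query)
    ranked = sorted(documents, key=lambda d: cosine(q, bag_of_words(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents):
    """Assemble a prompt that restricts the answer to retrieved context."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents))
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


prompt = build_prompt("Which material is used for hydrogen storage?", DOCUMENTS)
```

The grounding comes from the prompt construction: the model is asked to answer from the retrieved record rather than from its parametric memory, which is where hallucinated values tend to originate.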

AI Agent Frameworks

AI agents represent the most advanced application of MatSci-LLMs, transforming them from conversational tools into active problem-solving systems. These agents can comprehend user intent, autonomously design and plan multi-step research procedures, and utilize specialized computational tools [29] [28]. The MatSciAgent framework operates on a modular multi-agent architecture where a master agent interprets natural language queries, identifies the task type, and delegates it to specialized task-specific agents equipped with tools for data retrieval, continuum simulation, crystal structure generation, and molecular dynamics simulation [28].

Specialized Material Representations

Effective MatSci-LLMs often require novel input representations that encode complex materials information in formats suitable for language models. Song and colleagues developed the "Material String" format—a dense, information-rich textual representation that encodes essential structural details like space group, lattice parameters, and Wyckoff positions, enabling complete mathematical reconstruction of a material's primitive cell in 3D [27]. Models fine-tuned on this representation demonstrated remarkable accuracy (98.6%) on synthesizability tests and exceptional generalization, maintaining 97.8% accuracy on complex experimental structures far beyond the 40-atom limit of their training data [27].

Key Applications and Experimental Benchmarks

MatSci-LLMs are delivering transformative capabilities across the materials discovery pipeline. The table below summarizes quantitative performance benchmarks across critical application domains.

Table 1: Performance Benchmarks of MatSci-LLMs Across Applications

| Application Domain | Specific Task | Model/Method Used | Reported Performance | Reference |
|---|---|---|---|---|
| Data Extraction | Mining MOF synthesis conditions | Open-source models (Qwen3, GLM-4.5 series) | >90% accuracy, up to 100% with largest models | [27] |
| Data Extraction | Interpreting reaction scheme images | ReactionSeek with GLM-4V | 91.5% accuracy on diverse images | [27] |
| Property Prediction | Hydrogen storage performance | Fine-tuned LLM with comprehensive descriptions | 94.8% accuracy (46.7% improvement over baseline) | [27] |
| Synthesis Prediction | Predicting synthesis routes | Fine-tuned model with Material String representation | 91.0% accuracy | [27] |
| Synthesis Prediction | MOF synthesis condition recommendation | L2M3 with fine-tuned GPT-3.5/4 | 82% similarity to experimental conditions | [27] |

Intelligent Data Extraction and Curation

The ability to automatically extract structured information from unstructured scientific text represents one of the most immediate applications of MatSci-LLMs. Traditional rule-based extraction methods struggle with the diversity of natural language expressions, while LLMs can understand context and extract information with higher flexibility [27]. Ghosh et al. developed an LLM-driven workflow that extracted key thermoelectric properties and structural characteristics from approximately 10,000 materials science articles, creating the largest LLM-curated thermoelectric dataset with 27,822 temperature-resolved property records [27].

Pruyn et al. advanced this further with "MOF-ChemUnity," which extracts material properties and synthesis procedures while also linking various material names to their co-reference names and crystal structures, forming a knowledge graph that bridges textual synthesis knowledge with atomic-level structural insights [27]. Zhao et al. addressed temporal sequencing with a "sequence-aware" extraction method that captures step-by-step experimental workflows as directed graphs, achieving high F1-scores for both entity (0.96) and relation (0.94) extraction [27].
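Since extraction quality is reported as F1-scores, it helps to recall how that figure is computed from predicted and gold-standard annotations. The sketch below uses exact matching on (text, label) pairs; the example entities are invented and are not drawn from any of the cited datasets.

```python
def precision_recall_f1(predicted, gold):
    """Exact-match precision, recall, and F1 over sets of annotations."""
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Invented annotations for one synthesis sentence.
gold = {("ZnO", "MATERIAL"), ("500 C", "TEMPERATURE"), ("2 h", "TIME"), ("annealing", "ACTION")}
predicted = {("ZnO", "MATERIAL"), ("500 C", "TEMPERATURE"), ("2 h", "TIME"), ("air", "ATMOSPHERE")}

precision, recall, f1 = precision_recall_f1(predicted, gold)
```

The same function applies to relation extraction if each item is a (head, relation, tail) triple, which is why entity and relation scores are usually reported separately.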

Predictive Modeling and Property Prediction

Beyond information retrieval, MatSci-LLMs demonstrate remarkable capability in learning structure-property relationships and predicting material characteristics. The exceptional performance of models fine-tuned on comprehensive material descriptions or specialized representations like Material String underscores their ability to capture complex structural patterns that govern material behavior and functionality [27].

Multi-Agent Autonomous Systems

Agentic MatSci-LLMs represent the frontier of autonomous materials research. These systems can coordinate multiple specialized tools and simulations to execute complex, multi-step research tasks. The MatSciAgent framework demonstrates this capability through its modular architecture, where different agents with specialized functions collaborate to address materials research challenges from data retrieval to simulation [28].

Table 2: MatSci-LLM Agent Types and Functions

| Agent Type | Core Function | Tools/Resources Accessed |
|---|---|---|
| Master Agent | Interprets user query, delegates tasks to specialized agents | Natural language processing capabilities |
| Data Retrieval Agent | Finds and summarizes materials data | Materials Project, MatWeb databases |
| Generative Agent | Proposes plausible crystal structures | Structure generation algorithms |
| Simulation Agent | Conducts continuum and atomistic simulations | Cellular Automata, Monte Carlo Annealing, Molecular Dynamics code |

Experimental Protocols and Workflows

Data Extraction and Curation Workflow

The process of extracting structured materials data from literature follows a systematic pipeline. The following diagram illustrates the sequence-aware data extraction workflow for capturing synthesis procedures:

Diagram 1: Data Extraction Workflow

Step-by-Step Protocol:

- Input Scientific Full-Text: Begin with complete research articles in PDF or text format. Processing entire papers (as done with open-source models) captures additional contextual information that may be missed by chunk-based approaches [27].

- Pre-processing & Text Normalization: Convert documents to plain text, extract tables and captions as distinct high-value data sources [27], and identify relevant experimental paragraphs.

- LLM Processing: Feed the normalized text to a MatSci-LLM (open-source models like Qwen3-32B can achieve >94% accuracy and be deployed on standard workstations) [27].

- Structured Data Extraction: The LLM extracts specific entities (e.g., synthesis conditions, material properties) and their relationships. Sequence-aware extraction captures actions and their order as a directed graph [27].

- Knowledge Graph Construction: Transform extracted data into a structured, queryable knowledge base that links textual information with structural data [27].
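The last two steps of this protocol, capturing ordered actions as a directed graph and making them queryable, can be sketched with standard-library data structures. The step records below are a hypothetical example of what an LLM extraction step might return; the field names and values are assumptions, not a format defined in the cited work.

```python
from collections import defaultdict

# Hypothetical output of an LLM extraction step: ordered synthesis actions.
steps = [
    {"id": "s1", "action": "dissolve", "params": {"solvent": "DMF"}},
    {"id": "s2", "action": "heat", "params": {"temperature_C": 120, "time_h": 24}},
    {"id": "s3", "action": "filter", "params": {}},
    {"id": "s4", "action": "dry", "params": {"temperature_C": 80}},
]


def build_workflow_graph(steps):
    """Link each step to the one that follows it, giving a directed graph."""
    nodes = {s["id"]: s for s in steps}
    edges = defaultdict(list)
    for current, following in zip(steps, steps[1:]):
        edges[current["id"]].append(following["id"])
    return nodes, dict(edges)


def topological_order(nodes, edges):
    """Recover an execution order from the graph (Kahn's algorithm)."""
    indegree = {n: 0 for n in nodes}
    for targets in edges.values():
        for t in targets:
            indegree[t] += 1
    ready = [n for n, d in indegree.items() if d == 0]
    order = []
    while ready:
        node = ready.pop(0)
        order.append(node)
        for t in edges.get(node, []):
            indegree[t] -= 1
            if indegree[t] == 0:
                ready.append(t)
    return order


nodes, edges = build_workflow_graph(steps)
order = topological_order(nodes, edges)
heated_at = [n["params"]["temperature_C"] for n in nodes.values() if n["action"] == "heat"]
```

A linear procedure gives a simple chain, but the same structure accommodates branching workflows, for example two precursor solutions prepared separately and then combined.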

Multi-Agent Task Execution Workflow

The following diagram outlines the operational workflow of a multi-agent MatSci-LLM system for executing complex materials research tasks:

Diagram 2: Multi-Agent Task Workflow

Execution Protocol:

- User Natural Language Query: Researcher submits a request in natural language (e.g., "Find materials with high hydrogen storage capacity and simulate their synthesis").

- Master Agent Task Interpretation: The master agent analyzes the query intent, identifies required subtasks, and determines the sequence of operations [28].

- Specialized Agent Delegation: The master agent delegates to appropriate specialized agents: data retrieval agents for database queries, generative agents for novel structure creation, or simulation agents for computational modeling [28].

- Tool Execution & Data Retrieval: Specialized agents utilize their tools—querying materials databases (Materials Project, MatWeb), running simulations (Molecular Dynamics, Monte Carlo), or generating crystal structures [28].

- Synthesis & Result Return: The master agent synthesizes outputs from specialized agents into a coherent response, providing grounded, factual answers to the user's original query [28].
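The delegation pattern in this protocol can be sketched as a small dispatcher. Everything here is a stand-in: the agent functions return placeholder strings rather than calling databases or simulation codes, and keyword matching replaces the LLM-based intent interpretation that the described framework relies on.

```python
# Stub agents; a real system would call databases and simulation codes here.
def data_retrieval_agent(query):
    return f"[data] records matching: {query}"


def generative_agent(query):
    return f"[structure] candidate for: {query}"


def simulation_agent(query):
    return f"[simulation] result for: {query}"


# Hypothetical keyword routing; in the described framework an LLM interprets the query.
ROUTES = [
    (("find", "retrieve", "lookup"), data_retrieval_agent),
    (("generate", "propose", "design"), generative_agent),
    (("simulate", "anneal", "dynamics"), simulation_agent),
]


def master_agent(query):
    """Select every agent whose keywords appear in the query and merge their outputs."""
    lowered = query.lower()
    selected = [agent for keywords, agent in ROUTES if any(k in lowered for k in keywords)]
    if not selected:
        return "No suitable agent found for this query."
    return "\n".join(agent(query) for agent in selected)


answer = master_agent(
    "Find materials with high hydrogen storage capacity and simulate their synthesis"
)
```

For the example query, both the data retrieval and simulation agents are selected and run in route order. Keeping the agents as plain callables behind a routing table makes it straightforward to add a new tool without changing the master agent.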

Successful implementation of MatSci-LLMs requires both computational tools and data resources. The table below details key components of the research infrastructure.

Table 3: Essential Research Resources for MatSci-LLM Implementation

| Resource Category | Specific Tool/Resource | Function/Purpose | Access Method |
|---|---|---|---|
| Computational Frameworks | MatSciAgent | Multi-agent framework for materials tasks | Research code implementation |
| AI Toolkits | NOMAD AI Toolkit | Interactive analysis of FAIR materials data | Web-based platform, Jupyter notebooks [30] |
| Materials Databases | Materials Project | Repository of crystal structures and properties | API access, web interface [28] |
| Materials Databases | MatWeb | Database of material properties | API access [28] |
| Open-Source LLMs | Qwen3 Series (14B-355B) | Domain-adapted model for materials tasks | Download, local deployment [27] |
| Open-Source LLMs | GLM-4.5 Series | Commercial-grade open-source alternative | Download, local deployment [27] |
| Specialized Representations | Material String Format | Dense representation of crystal structures | Custom implementation [27] |

Specialized MatSci-LLMs represent a transformative advancement in materials research, evolving from information extraction tools to active participants in the discovery process. The development methodologies—fine-tuning, RAG, AI agents, and specialized representations—enable these models to overcome the limitations of general-purpose LLMs for domain-specific tasks. Robust experimental benchmarks demonstrate their capabilities across data extraction, property prediction, and autonomous research.

Despite significant progress, challenges remain in dataset quality, benchmarking standards, hallucination mitigation, and AI safety [25]. The emergence of capable open-source models like Llama 3, Qwen, and GLM offers a promising path toward greater transparency, reproducibility, and cost-effectiveness [27]. As noted in recent research, "open-source alternatives can match performance while offering greater transparency, reproducibility, cost-effectiveness, and data privacy" [27]. Future developments will likely focus on creating more deeply domain-grounded models, improving agentic capabilities for autonomous experimentation, and fostering community-driven open-source platforms that accelerate materials discovery through accessible, flexible AI tools [27] [26].

From Theory to Practice: Methodologies and Real-World Applications

The exponential growth of materials science literature has created a significant bottleneck in knowledge extraction, synthesis, and scientific reasoning [31]. The overwhelming majority of materials knowledge is published as scientific literature in non-machine-readable form, making manual data extraction time-consuming and severely limiting the efficiency of large-scale data accumulation [1]. Natural Language Processing (NLP) and Large Language Models (LLMs) have emerged as transformative technologies to address these challenges by enabling automated analysis of textual data at scale [31].

These technologies have changed how researchers engage with materials information, opening new avenues to accelerate research through efficient information extraction and utilization [1]. NLP makes it possible to construct large-scale materials datasets automatically, giving data-driven materials research a complementary source of data drawn from the vast body of existing scientific literature [1] [32].

Table: Evolution of NLP Techniques in Materials Science

| Era | Primary Approach | Key Technologies | Materials Science Applications |
|---|---|---|---|
| 1950s-1980s | Handcrafted Rules | Expert-crafted rules | Limited to specific, narrowly defined problems |
| 1980s-2010s | Machine Learning | Feature-based algorithms | Early information extraction attempts |
| 2010s-Present | Deep Learning | BiLSTM, Word2Vec, Transformers | Automatic data extraction from literature [1] |
| 2018-Present | Large Language Models | BERT, GPT, Domain-specific models | Materials discovery, property prediction, autonomous research [1] [33] |

The emergence of pre-trained models has brought a new era in NLP research and development. LLMs such as Generative Pre-trained Transformer (GPT), Falcon, and Bidirectional Encoder Representations from Transformers (BERT) demonstrate general "intelligence" capabilities by combining large-scale data, deep neural networks, self-supervised and semi-supervised learning, and powerful hardware [1]. The Transformer architecture, characterized by the attention mechanism, is the fundamental building block of these models and has been applied to problems in information extraction, code generation, and the automation of chemical research [1].

Core NLP Pipeline Components for Materials Data Extraction

Named Entity Recognition (NER) for Materials Science

Named Entity Recognition (NER) forms the foundation of information extraction pipelines in materials science, enabling the identification and categorization of key entities within scientific text. The primary challenge in materials NER involves developing ontologies that capture domain-specific terminology and relationships. A representative pipeline for polymer data extraction demonstrates this capability through an ontology encompassing eight entity types: POLYMER, POLYMERCLASS, PROPERTYVALUE, PROPERTYNAME, MONOMER, ORGANICMATERIAL, INORGANICMATERIAL, and MATERIALAMOUNT [34].

The annotation process requires significant domain expertise, with inter-annotator agreement metrics reaching Fleiss Kappa values of 0.885, indicating good homogeneity in annotations [34]. The standard architecture for materials NER utilizes BERT-based encoders to generate context-aware token embeddings, followed by a linear layer connected to a softmax non-linearity that predicts the probability of the entity type for each token [34]. This approach has been successfully deployed to extract approximately 300,000 material property records from ~130,000 abstracts in just 60 hours [34].
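The classification head described above can be sketched in a few lines. This is a minimal illustration, not the implementation from [34]: it assumes the encoder has already produced one embedding per token, and the three-label subset, the toy weights, and the function names are all made up for the example.

```python
import math

# Illustrative subset of the ontology; "O" marks tokens outside any entity.
LABELS = ["O", "POLYMER", "PROPERTYVALUE"]

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify_token(embedding, weights, bias):
    """Apply a linear layer, then softmax; return (label, probabilities)."""
    logits = [
        sum(w * x for w, x in zip(row, embedding)) + b
        for row, b in zip(weights, bias)
    ]
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABELS[best], probs

# Toy 2-dimensional vector standing in for a BERT token embedding.
weights = [[0.1, 0.0], [2.0, -1.0], [-1.0, 2.0]]  # one row per label
bias = [0.0, 0.0, 0.0]
label, probs = classify_token([1.0, 0.0], weights, bias)
print(label, [round(p, 3) for p in probs])
```

In a real model the weight matrix has one row per entity type and as many columns as the encoder's hidden size, and it is learned during fine-tuning rather than set by hand.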

Relation Extraction and Entity Normalization

Beyond entity recognition, effective pipelines must identify relationships between extracted entities and normalize entity variations. Relation extraction classifies relationships between identified entities, while co-referencing identifies clusters of named entities referring to the same object (such as a polymer and its abbreviation) [34]. Named entity normalization addresses the critical challenge of identifying all naming variations for an entity across numerous documents, which is particularly important for polymers that exhibit non-trivial naming variations and cannot typically be converted to standardized representations like SMILES strings [34].
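One simple way to picture entity normalization is a lookup from surface forms to a canonical name. The sketch below assumes a small hand-curated alias table; the table contents and the helper names are hypothetical, and the pipeline in [34] may cluster naming variations differently.

```python
import re

# Hypothetical alias table: canonical name -> known surface forms.
ALIASES = {
    "polystyrene": ["PS", "poly(styrene)", "polystyrene"],
    "poly(methyl methacrylate)": [
        "PMMA",
        "poly(methyl methacrylate)",
        "polymethylmethacrylate",
    ],
}

def _key(name):
    """Lower-case and drop whitespace and hyphens so trivial variants collide."""
    return re.sub(r"[\s\-]+", "", name).lower()

# Flatten the alias table into a surface-form lookup.
LOOKUP = {_key(form): canon for canon, forms in ALIASES.items() for form in forms}

def normalize(mention):
    """Return the canonical name, or the mention unchanged if it is unknown."""
    return LOOKUP.get(_key(mention), mention)

for mention in ["pmma", "Poly-styrene", "nylon 6"]:
    print(mention, "->", normalize(mention))
```

Unknown mentions pass through unchanged, so they can be reviewed later rather than being forced into the wrong cluster.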

More recent approaches leverage the capabilities of LLMs through prompt engineering and schema-based extraction, offering a novel approach to materials information extraction distinct from conventional NLP pipelines [1] [33]. Well-designed prompts are essential for maximizing the effectiveness of GPTs, encompassing crucial elements of clarity, structure, context, examples, constraints, and iterative refinement [1].
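To make schema-based extraction concrete, the sketch below builds a prompt that states the task, the output schema, and explicit constraints, then validates a response against that schema. The field names, the prompt wording, and the hand-written response are assumptions for illustration; no particular LLM API is called.

```python
import json

# Assumed record schema; real projects define fields to suit their property data.
SCHEMA = {"material": "string", "property": "string", "value": "number", "unit": "string"}

def build_prompt(passage):
    """Assemble an extraction prompt with an explicit schema and constraints."""
    return (
        "Extract every material property record from the passage below.\n"
        "Return only a JSON list of objects with these fields:\n"
        f"{json.dumps(SCHEMA, indent=2)}\n"
        "If a field is not stated, use null. Do not infer values.\n\n"
        f"Passage:\n{passage}"
    )

def parse_records(response_text):
    """Parse a model response and keep only records whose keys match the schema."""
    records = json.loads(response_text)
    return [r for r in records if isinstance(r, dict) and set(r) == set(SCHEMA)]

# A hand-written response standing in for a model call.
fake_response = (
    '[{"material": "polystyrene", "property": "Tg", "value": 100, "unit": "C"},'
    ' {"material": "PMMA"}]'
)
records = parse_records(fake_response)
print(records)
```

Rejecting records that do not match the schema is a cheap guard against malformed output, and inspecting the rejects is one way to drive the iterative prompt refinement mentioned above.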

Multimodal Data Integration

Modern extraction pipelines must handle information presented across multiple modalities. In materials science, significant information is embedded in tables, images, and molecular structures as well as in running text, requiring models capable of multimodal integration [33]. For example, in patent documents, key molecules are often represented by images while the text may contain irrelevant structures, so molecular data must be extracted from multiple modalities [33].

Specialized algorithms can extract data from specific content types, such as Plot2Spectra for extracting data points from spectroscopy plots and DePlot for converting visual representations into structured tabular data [33]. These tools can be integrated with LLMs, which function as orchestrators to enhance overall efficiency and accuracy of data extraction pipelines in materials science [33].

Domain-Specific Language Models and Training Methodologies

Materials-Specific Foundation Models

The development of domain-adapted foundation models represents a significant advancement in materials NLP. These models undergo continued pre-training on extensive corpora of materials literature, enabling them to develop specialized knowledge while maintaining general linguistic capabilities. The LLaMat model family exemplifies this approach, performing strongly on materials-specific NLP tasks and structured information extraction [31]. Its LLaMat-CIF variant targets crystal structure generation and predicts stable crystals with high coverage across the periodic table [31].

Training these models requires careful base model selection. Unexpectedly, LLaMat-2 (based on LLaMA-2) demonstrated better domain-specific performance across diverse materials science tasks than the LLaMA-3-based versions, suggesting potential "adaptation rigidity" in overtrained LLMs [31]. This highlights the importance of evaluating candidate base models against specific domain requirements rather than simply selecting the most powerful one.

Training Data Curation and Preparation

The quality and composition of training data significantly influence model performance in materials science applications. Effective training corpora typically comprise millions of materials science abstracts and papers, carefully filtered for relevance and data quality [34]. The starting point for successful pre-training and instruction tuning of foundational models is the availability of significant volumes of high-quality data, which is particularly critical in materials science where minute details can significantly influence properties [33].

Data extraction from scientific documents must address challenges of noisy, incomplete, or inconsistent information, including discrepancies in naming conventions, ambiguous property descriptions, and poor-quality images [33]. The CRESt platform exemplifies advanced data integration, incorporating diverse information sources including experimental results, scientific literature, imaging and structural analysis, and domain expertise [35].

Table: Domain-Adapted Language Models for Materials Science

| Model Name | Base Architecture | Training Corpus | Specialized Capabilities | Performance Highlights |
|---|---|---|---|---|
| MaterialsBERT [34] | BERT-based | 2.4 million materials science abstracts | Polymer property extraction | Outperforms baseline models in 3/5 NER tasks |
| LLaMat [31] | LLaMA-2/3 | Extensive materials literature + crystallographic data | Structured information extraction, crystal structure generation | Excels in materials-specific NLP while maintaining general capabilities |
| LLaMat-CIF [31] | LLaMA-2/3 | Materials literature + CIF data | Crystal structure generation | Predicts stable crystals with high periodic table coverage |
| CRESt Integration [35] | Multimodal LLM | Scientific literature + experimental data + human feedback | Autonomous materials discovery | 9.3-fold improvement in power density per dollar for fuel cell catalysts |

Evaluation Metrics and Performance Validation

Rigorous evaluation of materials NLP systems requires both quantitative metrics and qualitative expert validation. Standard NLP metrics include accuracy, precision, recall, and F1 scores measured on annotated test sets, with reported classification accuracy ranging from 59% to 76% depending on the model used [36]. The highest-performing Transformer models have been shown to rival inter-annotator agreement, indicating human-level performance on specific extraction tasks [36].
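For reference, precision, recall, and F1 can be computed from the overlap between predicted and gold annotations. The sketch below uses exact-match scoring over (text, entity type) pairs, which is one common convention; the example annotations are invented and the scoring scheme used in the cited studies may differ.

```python
def precision_recall_f1(predicted, gold):
    """Exact-match precision, recall, and F1 over sets of extracted items."""
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Invented annotations: (entity text, entity type) pairs.
gold = {("polystyrene", "POLYMER"), ("100 C", "PROPERTYVALUE"), ("Tg", "PROPERTYNAME")}
pred = {
    ("polystyrene", "POLYMER"),
    ("100 C", "PROPERTYVALUE"),
    ("glass", "POLYMER"),        # spurious entity
    ("Tg", "PROPERTYVALUE"),     # right span, wrong type
}
p, r, f = precision_recall_f1(pred, gold)
print(f"precision={p:.3f} recall={r:.3f} f1={f:.3f}")
```

Here two of the four predictions are correct and two of the three gold entities are found, so precision is 2/4, recall is 2/3, and F1 is their harmonic mean, 4/7.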

Beyond traditional metrics, materials-specific evaluations assess the utility of extracted data for downstream tasks such as property prediction and materials discovery. For example, data extracted using automated pipelines has been successfully used to train machine learning predictors for properties like glass transition temperature, validating the quality and usefulness of the extracted information [34]. Furthermore, successful experimental validation of materials discovered or optimized using these systems provides the ultimate performance metric, as demonstrated by the CRESt platform's discovery of a catalyst material that delivered record power density in fuel cells [35].

Experimental Protocols and Implementation Frameworks

Corpus Construction and Annotation Methodology

Building effective materials NLP pipelines begins with careful corpus construction and annotation. A representative protocol involves these critical steps:

Corpus Collection: Gather a large corpus of materials science papers (e.g., 2.4 million abstracts) from scientific databases and repositories [34].

Domain Filtering: Filter abstracts using domain-specific keywords (e.g., "poly" for polymer research) and regular expressions to identify texts containing numeric information likely to contain property data [34].

Annotation Guideline Development: Create detailed annotation guidelines defining entity types and relationships through iterative refinement with domain experts [34].

Multi-Round Annotation: Implement annotation over multiple rounds using tools like Prodigy, with each round refining guidelines and re-annotating previous abstracts using updated criteria [34].

Inter-Annotator Agreement Assessment: Measure agreement using Cohen's Kappa and Fleiss Kappa metrics on subsets annotated by multiple experts, with target values exceeding 0.85 indicating good homogeneity [34].
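As an illustration of the agreement step, Cohen's Kappa for two annotators compares the observed agreement with the agreement expected by chance from each annotator's label frequencies. The sketch below covers only the two-annotator case (Fleiss Kappa generalizes it to more annotators), and the label sequences are invented.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(labels_a)
    # Fraction of items on which the annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each annotator's marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)

annotator_a = ["POLYMER", "O", "O", "PROPERTYVALUE", "O", "POLYMER"]
annotator_b = ["POLYMER", "O", "O", "PROPERTYVALUE", "POLYMER", "POLYMER"]
kappa = cohens_kappa(annotator_a, annotator_b)
print(round(kappa, 3))
```

In this toy case the annotators agree on 5 of 6 tokens, chance agreement is 13/36, and kappa is 17/23, well below the 0.85 target, which would prompt another round of guideline refinement.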

The final annotated dataset is typically split into training (85%), validation (5%), and test (10%) sets, so that models can be developed and evaluated on held-out data and overfitting can be detected [34].
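The domain filtering and dataset splitting steps above can be sketched as follows. The regular expression, the unit list, and the function names are assumptions chosen for illustration; the filters used in [34] are not reproduced here.

```python
import random
import re

# Assumed pattern: a number followed by a unit-like token. Real filters are broader.
NUMERIC = re.compile(r"\d+(?:\.\d+)?\s*(?:°C|K|MPa|GPa|%|eV)")

def is_candidate(abstract, keyword="poly"):
    """Keep abstracts that mention the keyword and contain a number with a unit."""
    return keyword in abstract.lower() and NUMERIC.search(abstract) is not None

def split_dataset(items, seed=0):
    """Shuffle reproducibly, then split into 85% train, 5% validation, 10% test."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = len(items) * 85 // 100
    n_val = len(items) * 5 // 100
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]
    return train, val, test

abstracts = [
    "Polystyrene films showed a Tg of 100 °C.",
    "Polymers are useful in many applications.",
    "The steel sample reached a strength of 400 MPa.",
]
print([is_candidate(a) for a in abstracts])

train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))
```

Fixing the shuffle seed keeps the split reproducible, so reported test scores always refer to the same held-out abstracts.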

Model Training and Fine-Tuning Procedures

Training effective materials NLP models requires specialized procedures:

Architecture Selection: Choose appropriate base architectures (BERT-based encoders for NER, decoder-only models for generation) [34] [33].

Tokenization: Implement domain-appropriate tokenization strategies, with custom chemistry-focused tokenizers (like SmilesTokenizer) providing mild improvements over standard approaches [36].

Pre-training: Conduct continued pre-training on domain corpora, with the size of the training corpus significantly influencing model performance [1].

Task-Specific Fine-tuning: Add task-specific layers (linear layer with softmax for NER) and fine-tune using annotated datasets with cross-entropy loss [34].