Interlaboratory Comparison Studies for Materials Methods: A Guide to Validation, Harmonization, and Quality Assurance

Abstract

This article provides a comprehensive overview of interlaboratory comparison (ILC) studies, a critical tool for ensuring data quality and methodological reliability in materials science and biomedical research. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of ILCs, detailed methodological approaches for implementation, strategies for troubleshooting and optimizing laboratory performance, and the role of ILCs in formal method validation and comparative analysis. By synthesizing current practices and insights from recent studies across fields like gene therapy, environmental science, and construction materials, this guide aims to support laboratories in achieving harmonized, accurate, and comparable results.

What Are Interlaboratory Comparisons? Establishing the Bedrock of Reliable Data

Interlaboratory Comparisons (ILCs) are systematic procedures in which two or more laboratories analyze the same or similar test items under predetermined conditions to assess their performance. Within the framework of materials methods research, two primary types of ILCs are critical for ensuring data quality and method reliability: Proficiency Testing (PT) and Collaborative Method Validation (often referred to as Ring Trials). These processes play distinct yet complementary roles in the drug development pipeline, serving as essential tools for quality assurance and method standardization [1] [2].

Proficiency Testing operates as an external quality assessment tool, focusing on evaluating a laboratory's competence to perform specific tests or measurements accurately. In contrast, Collaborative Method Validation studies are research and development exercises aimed at establishing the performance characteristics of a new analytical method before it becomes standardized. For researchers and drug development professionals, understanding the distinction between these approaches is fundamental to designing appropriate validation strategies and meeting regulatory requirements for method suitability [2].

The implementation of these interlaboratory studies has become increasingly important with the growing emphasis on biomarker development and the incorporation of novel analytical techniques in pharmaceutical research. Both PT and Collaborative Method Validation provide mechanisms for establishing confidence in measurement results, which is particularly crucial when these results inform critical decisions in the drug development process, from target validation to clinical trial endpoints [3].

Core Concepts and Definitions

Proficiency Testing (PT)

Proficiency Testing is defined as the evaluation of participant performance against pre-established criteria through interlaboratory comparisons [4]. According to ISO/IEC 17043, PT is a formal exercise managed by a coordinating body that includes a reference laboratory, with results issued in a formal report that typically includes performance metrics such as En and Z-scores [4]. The primary objective of PT is to assess a laboratory's technical competence in performing specific analyses and to monitor the continuing effectiveness of their quality management system [1] [5].

In a typical PT scheme, a proficiency testing provider prepares and distributes samples with known but undisclosed values to participating laboratories. Each laboratory then analyzes the samples using their routine methods, equipment, and reagents, exactly as they would for customer samples. The results are returned to the provider for comparison against reference values or the results from other laboratories [1]. This process provides an objective assessment of a laboratory's ability to produce accurate data under normal operating conditions, making it particularly valuable for accreditation purposes under standards such as ISO/IEC 17025 [1] [4].

Collaborative Method Validation (Ring Trials)

Collaborative Method Validation, commonly known as Ring Trials, represents a different type of interlaboratory study with distinct objectives. A Ring Trial is an interlaboratory test where multiple laboratories analyze the same sample under controlled conditions following a standardized protocol [1]. The key distinction from PT lies in its purpose: while PT assesses laboratory competence, Ring Trials evaluate the reproducibility and robustness of analytical methods themselves [1].

These collaborative studies are fundamental to method development and harmonization, particularly in fields requiring standardized analytical procedures. During a Ring Trial, a reference laboratory typically prepares and distributes samples to participating laboratories, all of which adhere to identical protocols, reagents, and equipment specifications whenever possible [1]. This standardized approach minimizes methodological variations, allowing researchers to identify factors influencing precision and accuracy, and enabling procedural refinements before methods are implemented in individual laboratories [1]. Such studies are especially valuable for establishing standardized methods in regulated environments, such as food safety testing and pharmaceutical analysis [2].

Comparative Analysis: Objectives and Applications

The fundamental distinction between Proficiency Testing and Collaborative Method Validation lies in their primary objectives: PT assesses laboratory performance, while Collaborative Method Validation assesses method performance. This distinction drives differences in their design, implementation, and applications within materials methods research and drug development.

Table 1: Key Differences Between Proficiency Testing and Collaborative Method Validation

| Aspect | Proficiency Testing (PT) | Collaborative Method Validation (Ring Trials) |
|---|---|---|
| Main Objective | Assessment of laboratory competence [1] | Evaluation and validation of analytical methods [1] |
| Reference Values | Pre-established and concealed from participants [1] | May be derived from participants' results [1] |
| Frequency | Regular and periodic as part of quality control [1] | Occasional, as needed for method validation [1] |
| Operating Conditions | Each laboratory uses its own method, equipment, and reagents [1] | Standardized protocols to minimize methodological variations [1] |
| Participation | Often mandatory for laboratory accreditation [1] | Usually voluntary for method development [1] |
| Applicable Standards | Complies with ISO/IEC 17043 and ISO/IEC 17025 [1] [4] | Not always ISO-compliant; focused on method-specific parameters [1] |
| Comparison Method | Comparison of laboratory performance to assess technical competence [1] | Comparison among laboratories to improve method reproducibility [1] |
| Sample Preparation | A specialized PT provider supplies samples with hidden values [1] | A reference or organizing laboratory prepares and distributes samples [1] |
| Primary Application | Quality control and compliance with accreditation standards [1] | Development, validation, and harmonization of analytical methods [1] |
| Methodological Flexibility | Allows each laboratory to use its standard methodology [1] | Requires adherence to a common protocol to ensure data comparability [1] |

In the context of drug development, these interlaboratory approaches support different stages of the research pipeline. Collaborative Method Validation is particularly valuable during early method development phases, where establishing robust, transferable analytical methods is crucial for biomarker qualification or assay validation [3]. For instance, when developing methods for biomarker measurements, collaborative validation studies help establish the precision, accuracy, and reproducibility of analytical techniques before they are implemented across multiple sites in clinical trials [3].

Proficiency Testing, conversely, serves as an ongoing quality assurance tool once methods are established. It ensures that different laboratories involved in multi-center trials can generate comparable results over time, providing confidence in data consistency across study sites [5]. This distinction is particularly important in pharmaceutical development, where the FDA recognizes different levels of biomarker validity—from exploratory to known valid biomarkers—with increasing requirements for analytical validation and cross-laboratory verification [3].

Evaluation Methodologies and Performance Metrics

Proficiency Testing Evaluation Protocols

Proficiency Testing employs standardized statistical methods to evaluate participant performance. According to ISO/IEC 17043, two primary metrics are used: normalized error (En) and Z-score [4]. These quantitative measures provide objective assessment of a laboratory's performance relative to reference values and other participants.

The normalized error (En) incorporates measurement uncertainty into the performance assessment. It is calculated as:

$$E_n = \frac{x_{lab} - x_{ref}}{\sqrt{U_{lab}^2 + U_{ref}^2}}$$

Here $x_{lab}$ is the participant's result, $x_{ref}$ is the reference value, $U_{lab}$ is the expanded uncertainty reported by the participant laboratory, and $U_{ref}$ is the expanded uncertainty of the reference value. The criteria for interpreting performance are straightforward: |En| ≤ 1 indicates satisfactory performance, while |En| > 1 indicates unsatisfactory performance [4].
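The following is a minimal Python sketch of this check. It assumes the usual form E_n = (x_lab − x_ref) / √(U_lab² + U_ref²), with both expanded uncertainties stated at the same coverage factor. The function names and the numbers in the example are hypothetical and chosen only for illustration.

```python
import math


def en_score(x_lab: float, x_ref: float, u_lab: float, u_ref: float) -> float:
    """Normalized error: (x_lab - x_ref) / sqrt(U_lab**2 + U_ref**2).

    u_lab and u_ref are expanded uncertainties, assumed to share the same
    coverage factor (commonly k = 2).
    """
    denominator = math.sqrt(u_lab ** 2 + u_ref ** 2)
    if denominator == 0:
        raise ValueError("at least one expanded uncertainty must be non-zero")
    return (x_lab - x_ref) / denominator


def classify_en(en: float) -> str:
    """|En| <= 1 is satisfactory, |En| > 1 is unsatisfactory."""
    return "satisfactory" if abs(en) <= 1 else "unsatisfactory"


# Hypothetical result: participant 10.4 (U = 0.3), reference 10.0 (U = 0.4)
en = en_score(10.4, 10.0, 0.3, 0.4)
print(f"En = {en:.2f} ({classify_en(en)})")
```

E_n divides by the combined uncertainty. A laboratory that understates U_lab is therefore more likely to fail, so the score tests how realistic the uncertainty budget is as well as the result itself.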

The Z-score provides an alternative assessment method. It compares a laboratory's result to the consensus value of all participants, normalized by the standard deviation for proficiency assessment:

$$Z = \frac{x - X}{\sigma}$$

Here $x$ is the participant's result, $X$ is the assigned (consensus) value, and $\sigma$ is the standard deviation for proficiency assessment. Interpretation follows these guidelines: |Z| ≤ 2 indicates satisfactory performance; 2 < |Z| < 3 indicates questionable performance requiring attention; and |Z| ≥ 3 indicates unsatisfactory performance [4].
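The sketch below applies Z = (x − X) / σ to a small, made-up round and classifies each result into the three bands. When no assigned value is supplied, it uses the median of the participants' results as a simple robust consensus. That choice is an assumption made for illustration; real PT schemes may use other robust estimators or a reference value. The function names are hypothetical.

```python
from statistics import median
from typing import Optional


def z_scores(results: dict[str, float], sigma_pt: float,
             assigned: Optional[float] = None) -> dict[str, float]:
    """Z = (x - X) / sigma_pt for every participant.

    If no assigned value X is given, the median of all participant results is
    used as a simple robust consensus value (an illustrative assumption).
    """
    if sigma_pt <= 0:
        raise ValueError("sigma_pt must be positive")
    x_assigned = median(results.values()) if assigned is None else assigned
    return {lab: (x - x_assigned) / sigma_pt for lab, x in results.items()}


def classify_z(z: float) -> str:
    """Apply the |Z| <= 2 / 2 < |Z| < 3 / |Z| >= 3 bands."""
    if abs(z) <= 2:
        return "satisfactory"
    if abs(z) < 3:
        return "questionable"
    return "unsatisfactory"


# Hypothetical round: five laboratories, sigma_pt set by the provider at 0.5
results = {"Lab A": 10.1, "Lab B": 9.8, "Lab C": 10.0, "Lab D": 11.3, "Lab E": 8.4}
for lab, z in z_scores(results, sigma_pt=0.5).items():
    print(f"{lab}: Z = {z:+.1f} ({classify_z(z)})")
```

With the median (10.0) as consensus, Lab D falls in the questionable band (Z ≈ +2.6) and Lab E is unsatisfactory (Z ≈ −3.2). Using the median rather than the mean keeps a single outlying laboratory from dragging the consensus value toward itself.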

Collaborative Method Validation Assessment Protocols

In Collaborative Method Validation studies, the evaluation focuses on method performance rather than laboratory performance. Key metrics include interlaboratory reproducibility, precision, and robustness. These studies typically generate precision statements that capture both within-laboratory repeatability and between-laboratory reproducibility [6].

The statistical analysis in Collaborative Method Validation often involves:

- Precision Assessment: Calculation of repeatability standard deviation (sr) and reproducibility standard deviation (sR) across all participating laboratories

- Bias Evaluation: Determination of systematic errors by comparing results to reference values when available

- Robustness Testing: Assessment of method sensitivity to variations in environmental conditions, reagents, or equipment

These comprehensive assessments establish the method's fitness for purpose and provide data supporting its standardization through organizations like ISO or CEN [2]. Successful collaborative studies demonstrate that the method produces consistent results across multiple laboratories, operating environments, and technicians—a critical requirement for methods intended for widespread use in regulatory applications [2].
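The precision assessment listed above can be sketched for the simplest balanced case, in which every laboratory reports the same number n of replicates on one material. There, sr and sR follow from a one-way analysis of variance in the manner of ISO 5725-2. The sketch assumes outlier screening (for example Cochran and Grubbs tests) has already been done. The function name and the data are hypothetical.

```python
import math
from statistics import mean, variance


def precision_estimates(data: dict[str, list[float]]) -> tuple[float, float]:
    """Return (s_r, s_R) for a balanced design (same replicate count per lab)."""
    sizes = {len(reps) for reps in data.values()}
    if len(sizes) != 1:
        raise ValueError("balanced design required: same replicate count per lab")
    n = sizes.pop()
    if n < 2 or len(data) < 2:
        raise ValueError("need at least 2 laboratories and 2 replicates each")
    # Repeatability variance: pooled within-laboratory variance
    s_r2 = mean(variance(reps) for reps in data.values())
    # Variance of the laboratory means
    s_d2 = variance([mean(reps) for reps in data.values()])
    # Between-laboratory component, truncated at zero if negative
    s_l2 = max(0.0, s_d2 - s_r2 / n)
    # Reproducibility variance = between-lab + repeatability components
    return math.sqrt(s_r2), math.sqrt(s_l2 + s_r2)


# Hypothetical collaborative trial: three laboratories, duplicate results each
trial = {"Lab 1": [10.0, 10.2], "Lab 2": [10.5, 10.7], "Lab 3": [9.8, 10.0]}
s_r, s_R = precision_estimates(trial)
print(f"s_r = {s_r:.3f}, s_R = {s_R:.3f}")
```

In this example the between-laboratory component dominates: sR (about 0.37) is well above sr (about 0.14). A gap like this is the kind of signal that would prompt protocol refinement in a ring trial.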

Table 2: Key Reagents and Materials for Interlaboratory Studies

| Reagent/Material | Function in ILCs | Critical Quality Attributes |
|---|---|---|
| Homogeneous Test Samples | Distributed to all participants as the test material; ensures comparisons are based on identical samples [1] | Homogeneity, stability, commutability with routine samples |
| Certified Reference Materials | Provide traceability to stated references; used for calibration or as verification standards [6] | Certified values with stated uncertainties, stability |
| Method-Specific Reagents | Ensure standardized protocols in Collaborative Validation; may be specified and distributed to participants [1] | Purity, specificity, lot-to-lot consistency |
| Stabilization Solutions | Maintain sample integrity during shipping and storage in distributed schemes [7] | Effective preservation without analyte alteration |
| Blind Control Materials | Incorporated into PT schemes to test routine performance; values unknown to participants [1] | Stability, similarity to routine samples, commutability |

Implementation in Research and Regulatory Contexts

Experimental Design Considerations

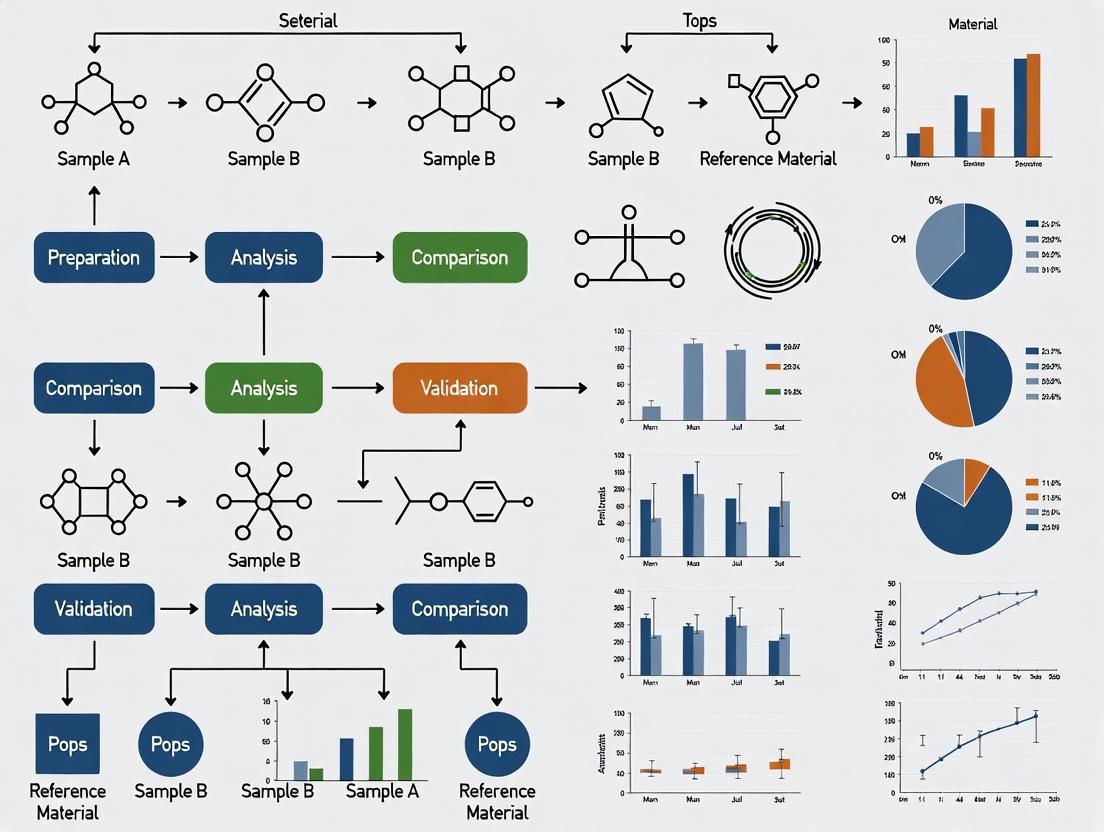

Designing effective interlaboratory studies requires careful consideration of the research objectives. The following diagram illustrates the decision pathway for selecting and implementing the appropriate type of interlaboratory comparison:

For both PT and Collaborative Method Validation, sample homogeneity is paramount to ensure that variations stem from methodological or laboratory differences rather than sample heterogeneity [1]. The organizing body must implement rigorous homogeneity testing and stability assessments to validate that distributed samples are sufficiently uniform for the intended comparisons.
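ISO 13528 describes one widely used homogeneity check. It analyses g randomly chosen units in duplicate, estimates the between-sample standard deviation s_s, and accepts the batch if s_s ≤ 0.3 σ_pt, where σ_pt is the standard deviation for proficiency assessment. The sketch below follows that logic under those assumptions. The function name and the duplicate results are hypothetical.

```python
import math
from statistics import variance


def homogeneity_check(pairs: list[tuple[float, float]],
                      sigma_pt: float) -> tuple[float, bool]:
    """Between-sample SD s_s from duplicate results on g units.

    Returns (s_s, passed), where passed means s_s <= 0.3 * sigma_pt.
    """
    g = len(pairs)
    if g < 2:
        raise ValueError("need duplicate results on at least two units")
    unit_means = [(a + b) / 2 for a, b in pairs]
    s_x2 = variance(unit_means)                            # variance of unit averages
    s_w2 = sum((a - b) ** 2 for a, b in pairs) / (2 * g)   # within-unit variance
    s_s = math.sqrt(max(0.0, s_x2 - s_w2 / 2))             # between-unit SD
    return s_s, s_s <= 0.3 * sigma_pt


# Hypothetical duplicates on five randomly selected units; sigma_pt = 0.5
units = [(10.02, 10.05), (9.98, 10.01), (10.04, 10.00), (10.01, 9.99), (10.03, 10.02)]
s_s, ok = homogeneity_check(units, sigma_pt=0.5)
print(f"s_s = {s_s:.4f}, homogeneous: {ok}")
```

In practice more units are tested (typically ten or more). Five are used here only to keep the example short. Subtracting half the within-unit variance removes the contribution of measurement repeatability, so s_s reflects differences between the units themselves.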

In Proficiency Testing, common schemes include simultaneous participation designs where sub-samples are randomly selected from a material source and distributed to participant laboratories for concurrent testing [4]. These are particularly suitable for reference materials or single-use samples that are consumed during analysis. Sequential participation schemes, such as round-robin or petal tests, circulate artifacts successively between laboratories and are preferred when sample stability permits extended testing periods [4].

In Collaborative Method Validation, the experimental design must carefully control variables to isolate method performance. This typically involves detailed protocols specifying equipment, reagents, environmental conditions, and analysis procedures. Participating laboratories often undergo training to ensure consistent implementation of the method, and pilot studies may precede the full collaborative trial to identify potential issues with the protocol [1].

Regulatory Applications in Drug Development

Interlaboratory comparisons play increasingly important roles in pharmaceutical development and regulatory submissions. The FDA's Critical Path Initiative and the NIH Roadmap have emphasized the importance of biomarkers in rational drug development. This has created a need for robust analytical methods and demonstrated measurement competence [3].

Collaborative Method Validation supports the biomarker qualification process, particularly in transitioning biomarkers from exploratory status to probable valid and known valid biomarkers [3]. Known valid biomarkers require widespread agreement in the scientific community, which is often established through cross-validation experiments across multiple laboratories [3]. For example, biomarker assays for companion diagnostics require demonstration that the method produces consistent results across different testing sites, which is typically established through collaborative validation studies.

Proficiency Testing provides the ongoing quality assurance needed once diagnostic methods are implemented. For laboratories performing tests that guide therapeutic decisions—such as HER2 testing for breast cancer or EGFR mutation analysis for lung cancer—regular participation in PT programs is often mandated by accreditation bodies and regulatory agencies [3]. Successful PT performance demonstrates continuing competence in performing these clinically important assays.

Proficiency Testing and Collaborative Method Validation serve distinct but complementary roles in the landscape of interlaboratory comparisons. PT focuses on assessing and monitoring laboratory competence, using a variety of statistical tools to compare a laboratory's results to reference values or peer performance. In contrast, Collaborative Method Validation establishes the performance characteristics of analytical methods themselves, determining their reproducibility across multiple laboratories and operating conditions.

For researchers and drug development professionals, understanding these distinctions is essential for designing appropriate validation strategies and meeting regulatory requirements. Collaborative Method Validation provides the foundation for standardizing new methods, particularly important with the growing emphasis on biomarker development and personalized medicine approaches. Proficiency Testing offers the ongoing surveillance needed to ensure data quality throughout the drug development pipeline, from preclinical studies to multi-center clinical trials.

Both approaches contribute significantly to the overall quality framework in materials methods research, providing mechanisms to establish confidence in analytical results and ensure that data generated across different locations and timepoints remains comparable and reliable. As analytical technologies continue to evolve and regulatory expectations advance, these interlaboratory comparison approaches will remain essential tools for establishing method validity and demonstrating measurement competence in pharmaceutical research and development.

In materials methods research and drug development, a new analytical procedure is first validated internally by a laboratory. Its acceptance as a "fit-for-purpose" method then relies heavily on robust comparison studies. These studies assess the systematic error, or bias, between a new test method and an established comparative method. They provide critical data on method trueness and help ensure that results are reliable across different laboratories and instrument platforms [8] [9]. The fundamental question these comparisons address is whether two methods can be used interchangeably without affecting patient results or clinical outcomes [9]. As the field advances, international interlaboratory comparisons (ILCs) are becoming essential for harmonizing methods across the global research community, particularly in areas like oxidative potential (OP) measurements of aerosol particles. They move beyond self-assessment toward unified, purpose-driven frameworks [10].

Experimental Protocols for Method Comparison

A well-designed method comparison experiment is foundational to generating reliable, actionable data. The following protocols outline the key considerations for both basic and advanced interlaboratory studies.

Basic Method Comparison Design

The core protocol for comparing a new method against a comparative method involves a structured analysis of patient specimens to estimate systematic error [8].

- Sample Selection and Size: A minimum of 40 different patient specimens is recommended, with 100-200 being preferable to identify unexpected errors from interferences or sample matrix effects. Specimens must be carefully selected to cover the entire clinically meaningful measurement range and represent the spectrum of diseases expected in routine application [8] [9].

- Experimental Procedure: Specimens should be analyzed by both the test and comparative methods within a 2-hour period to maintain specimen stability, unless the analyte is known to have shorter stability. Analysis should be performed over several different analytical runs and a minimum of 5 days to minimize systematic errors from a single run. Ideally, duplicate measurements should be made for both methods to minimize random variation and help identify sample mix-ups or transposition errors [8] [9].

- Comparative Method: The choice of comparative method is critical. A "reference method" with documented correctness through definitive studies is ideal. When using a routine "comparative method," any large, medically unacceptable differences must be carefully interpreted, as the error could originate from either method, potentially requiring additional recovery and interference experiments for resolution [8].

Protocol for Interlaboratory Comparison Exercises (ILC)

Interlaboratory comparisons represent a more comprehensive level of method assessment, focusing on harmonization across multiple research groups.

- Objective: To assess the consistency of measurements between different laboratories applying varied protocols, identify sources of variability, and enhance overall accuracy, reliability, and comparability [10].

- Implementation: A recent ILC for oxidative potential (OP) measurement using the dithiothreitol (DTT) assay was coordinated by a core group of experienced laboratories. This group first produced a harmonized and simplified Standard Operating Procedure (SOP), the "RI-URBANS DTT SOP," which was integrated and tested by the organizing laboratory. This SOP was adapted from several original protocols published in the literature [10].

- Execution: Participating laboratories (20 in the cited study) then performed measurements using both their own "home protocols" and the new harmonized SOP. This approach allowed for a direct analysis of the discrepancies and commonalities arising from differences in experimental procedures, equipment, or techniques [10].
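One simple way to summarize such an exercise is to compare two between-laboratory coefficients of variation (CV): one from results obtained with the home protocols and one from results obtained with the harmonized SOP. The sketch below does this for a single sample. The values are invented for illustration and are not results from the cited study. The function name is hypothetical.

```python
from statistics import mean, stdev


def between_lab_cv(values: list[float]) -> float:
    """Between-laboratory coefficient of variation, in percent."""
    if len(values) < 2:
        raise ValueError("need results from at least two laboratories")
    return 100.0 * stdev(values) / mean(values)


# Hypothetical OP-DTT results (arbitrary units) for one sample from five laboratories
home_protocols = [0.42, 0.61, 0.35, 0.55, 0.48]
harmonized_sop = [0.47, 0.51, 0.45, 0.50, 0.49]

print(f"CV with home protocols: {between_lab_cv(home_protocols):.1f} %")
print(f"CV with harmonized SOP: {between_lab_cv(harmonized_sop):.1f} %")
```

A markedly lower CV under the common SOP would suggest that much of the spread comes from protocol differences rather than from the sample or the instruments.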

Quantitative Data Analysis and Statistical Framework

Once data is collected, appropriate statistical analysis is required to move from raw numbers to meaningful conclusions about method performance. The following table summarizes the key statistical measures used in method comparison studies.

Table 1: Key Statistical Measures in Method Comparison

| Statistical Measure | Description | Application and Interpretation |
|---|---|---|
| Linear Regression | Calculates the slope (b), y-intercept (a), and standard deviation of points about the line ($s_{y/x}$) for the line of best fit [8]. | Preferred for data covering a wide analytical range. Slope indicates proportional error; y-intercept indicates constant error. Systematic error (SE) at a medical decision concentration ($X_c$) is calculated as $SE = (a + bX_c) - X_c$ [8]. |
| Bias (Average Difference) | The average difference between the results from the test method and the comparative method [8]. | Commonly used for data with a narrow analytical range. It represents the constant systematic error between the two methods. |
| Correlation Coefficient (r) | A measure of the strength of the linear relationship between two methods [8] [9]. | Misleading for acceptability. A high r (e.g., 0.99) indicates a strong linear relationship but does not prove comparability; a large, medically unacceptable bias can still exist. It is mainly useful for verifying a wide enough data range for regression [8] [9]. |
| Precision | The closeness of agreement between individual test results from repeated analyses [11]. | Documented as: repeatability (agreement under identical conditions over a short time); intermediate precision (agreement within a laboratory with variations in days, analysts, or equipment); reproducibility (agreement between different laboratories) [11]. |

It is critical to avoid common statistical pitfalls. Neither correlation analysis nor a t-test is sufficient for assessing method comparability. Correlation does not detect bias, and a t-test may miss clinically meaningful differences with small sample sizes or flag statistically significant but clinically irrelevant differences with large samples [9].
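A short sketch can show both the regression-based estimate of systematic error and why a high r is not enough. It fits an ordinary least-squares line of test results (y) on comparative results (x). It then reports slope, intercept, s_y/x, r, average bias, and SE = (a + bX_c) − X_c at a chosen decision level. Ordinary least squares is used here for simplicity and assumes negligible error in x. Deming or Passing-Bablok regression are common alternatives when both methods carry comparable error. The data, the decision level X_c = 200, and the function name are hypothetical.

```python
import math
from statistics import mean


def comparison_stats(x: list[float], y: list[float], x_c: float) -> dict[str, float]:
    """OLS fit of test results (y) on comparative results (x) with summary statistics."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least three paired results")
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx                  # slope: proportional error if it differs from 1
    a = my - b * mx                # intercept: constant error if it differs from 0
    residuals = [yi - (a + b * xi) for xi, yi in zip(x, y)]
    s_yx = math.sqrt(sum(e ** 2 for e in residuals) / (n - 2))
    return {
        "slope": b,
        "intercept": a,
        "s_yx": s_yx,
        "r": sxy / math.sqrt(sxx * syy),
        "bias": my - mx,               # average difference, test minus comparative
        "SE_at_Xc": (a + b * x_c) - x_c,
    }


# Hypothetical paired results: test method reads about 10 % high plus a small offset
comparative = [50, 100, 150, 200, 250, 300]
test = [60, 115, 170, 224, 280, 334]
stats = comparison_stats(comparative, test, x_c=200)
print({k: round(v, 3) for k, v in stats.items()})
```

With these numbers r exceeds 0.999. Yet the estimated systematic error at X_c = 200 is roughly +25 units (about 12 %), which is the situation described in the preceding paragraph.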

Visualization of Data and Workflows

Effective visualization is key to both analyzing data and communicating the results of a comparison study.

Data Visualization Principles

Initial graphical inspection of data is a fundamental step for identifying discrepant results and understanding error patterns.

- Difference Plot (Bland-Altman Plot): Plots the difference between the test and comparative results (y-axis) against the comparative result or the average of the two methods (x-axis). The data should scatter around the line of zero difference, allowing for visual identification of outliers and patterns (e.g., constant or proportional error) [8] [9].

- Comparison Plot (Scatter Plot): Plots the test result (y-axis) against the comparative result (x-axis). A visual line of best fit shows the general relationship. This is useful for displaying the analytical range and linearity of response [8].

- Applying Contrast for Clarity: In any chart, use color strategically to direct the viewer's attention. Employ a bold color for the most important data series or values and use muted colors like gray for less critical context. Titles should be active, stating the key finding, and callouts can be used to annotate specific events or data points [12] [13].
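The coordinates of a difference (Bland-Altman) plot are easy to compute. The sketch below returns three things:

- the average and the difference for each specimen pair;
- the mean difference (bias);
- the conventional 95 % limits of agreement (bias ± 1.96 SD of the differences), which are commonly drawn as horizontal lines on the plot.

The 1.96 SD limits are the standard Bland-Altman convention rather than something specified in this document. The function name and the data are hypothetical.

```python
from statistics import mean, stdev


def bland_altman(test: list[float], comparative: list[float]) -> dict:
    """Difference-plot coordinates, bias and 95 % limits of agreement."""
    if len(test) != len(comparative) or len(test) < 3:
        raise ValueError("need at least three paired results")
    averages = [(t + c) / 2 for t, c in zip(test, comparative)]   # x-axis
    differences = [t - c for t, c in zip(test, comparative)]       # y-axis
    bias = mean(differences)
    sd = stdev(differences)
    return {
        "x": averages,
        "y": differences,
        "bias": bias,
        "limits_of_agreement": (bias - 1.96 * sd, bias + 1.96 * sd),
    }


# Hypothetical paired results for five specimens
result = bland_altman([5.1, 7.9, 10.4, 12.2, 15.3], [5.0, 8.0, 10.0, 12.0, 15.0])
low, high = result["limits_of_agreement"]
print(f"bias = {result['bias']:.2f}, 95 % LoA = ({low:.2f}, {high:.2f})")
```

The `x` and `y` lists can be passed to any plotting library, with the bias and the two limits drawn as horizontal reference lines.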

Experimental Workflow Visualization

The following diagram illustrates the logical workflow and key decision points in a method comparison study, from planning to final assessment.

Method Comparison Decision Workflow

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials commonly used in method validation and comparison studies, with a focus on the widely applied DTT assay for oxidative potential.

Table 2: Essential Research Reagent Solutions for Method Comparison Studies

| Reagent/Material | Function and Application |
|---|---|
| Dithiothreitol (DTT) | A thiol-containing probe that serves as a surrogate for biological antioxidants in the DTT assay. It reacts with redox-active species in particulate matter (PM), and its oxidation rate is measured to determine the oxidative potential (OP) of the sample [10]. |
| Phosphate Buffered Saline (PBS) | A common buffer solution used in many acellular OP assays, such as the DTT and Ascorbic Acid (AA) assays, to maintain a stable pH during the reaction, mimicking physiological conditions [10]. |
| Trichloroacetic Acid (TCA) | Used in the DTT assay to terminate the reaction at specific time points, halting the oxidation of DTT by the sample and allowing for subsequent measurement [10]. |
| 5,5'-Dithio-bis(2-nitrobenzoic acid) (DTNB) | Also known as Ellman's reagent. It is used in the DTT assay to quantify the remaining (unoxidized) DTT after the reaction. DTNB reacts with DTT to produce a yellow-colored compound, 2-nitro-5-thiobenzoic acid (TNB), which can be measured spectrophotometrically [10]. |
| Authentic Reference Materials | Well-characterized standard reference materials (e.g., from NIST) used to assess the accuracy of a method by comparing the measured value to an accepted reference value [11]. |
| Patient-Derived Specimens | Fresh or properly preserved serum, plasma, or other relevant biological samples from a diverse patient population. These are crucial for assessing method performance across a wide clinical range and identifying matrix effects [8] [9]. |
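The sketch below shows one way the DTT assay reagents listed above could combine into a result: it turns remaining-DTT measurements into a blank-corrected DTT consumption rate. It rests on three illustrative assumptions. First, the DTNB/TNB absorbance readings have already been converted to nmol of remaining DTT through a calibration. Second, the rate is the least-squares slope over the TCA-quenched time points. Third, the result is normalized by the volume of air represented by the extract aliquot (nmol min⁻¹ m⁻³). These choices, the function names, and all numbers are assumptions and are not taken from the RI-URBANS SOP.

```python
from statistics import mean


def slope(t: list[float], y: list[float]) -> float:
    """Least-squares slope of y versus t."""
    mt, my = mean(t), mean(y)
    numerator = sum((ti - mt) * (yi - my) for ti, yi in zip(t, y))
    denominator = sum((ti - mt) ** 2 for ti in t)
    return numerator / denominator


def op_dtt(times_min: list[float], dtt_sample_nmol: list[float],
           dtt_blank_nmol: list[float], air_volume_m3: float) -> float:
    """Blank-corrected DTT consumption rate per m^3 of sampled air.

    Remaining DTT decreases over time, so the consumption rate is the negative
    of the fitted slope (nmol/min); the blank rate is subtracted before
    normalizing by the air volume represented in the assay.
    """
    rate_sample = -slope(times_min, dtt_sample_nmol)
    rate_blank = -slope(times_min, dtt_blank_nmol)
    return (rate_sample - rate_blank) / air_volume_m3


# Hypothetical run: remaining DTT (nmol) after TCA quenching at each time point
times = [0, 10, 20, 30, 40]
sample = [50.0, 47.0, 44.0, 41.0, 38.0]
blank = [50.0, 49.5, 49.0, 48.5, 48.0]
print(f"OP-DTT = {op_dtt(times, sample, blank, air_volume_m3=0.5):.3f} nmol/min/m3")
```

Normalizing by sampled PM mass instead of air volume is another common convention. Swapping the divisor changes the interpretation but not the rate calculation.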

Method comparison studies, from internal self-assessment to large-scale interlaboratory exercises, are indispensable for establishing the fitness-for-purpose of analytical methods in research and drug development. A successful study hinges on a rigorous experimental design, appropriate statistical analysis that goes beyond basic correlation, and clear visualization of data and workflows. By adhering to structured protocols and utilizing the essential research tools, scientists can generate defensible data that ensures methodological rigor, promotes harmonization across laboratories, and ultimately supports the safety and efficacy assessments critical to public health.

The Critical Role of ILCs in Accreditation and Regulatory Compliance

Interlaboratory comparisons (ILCs) and proficiency testing (PT) are foundational tools for laboratories seeking to ensure the accuracy and reliability of their results, satisfy stringent accreditation requirements, and comply with regulatory mandates. For researchers and scientists developing and validating materials methods, these exercises offer an independent assessment of technical competence. They also reveal methodological biases and foster confidence in data quality across global scientific communities.

The Accreditation and Regulatory Imperative for ILCs

For any testing or calibration laboratory, participation in ILCs is not merely a best practice but a fundamental requirement of international quality standards. The ISO/IEC 17025 standard for laboratory competence mandates that laboratories must have quality control procedures to monitor the validity of tests and calibrations, which "shall include, where available, participation in interlaboratory comparisons or proficiency testing programmes" [14]. These activities serve as a critical external check, providing objective evidence that a laboratory's methods, personnel, and equipment are performing as expected.

The regulatory landscape is increasingly emphasizing ILC participation. Updates to regulations like the U.S. Clinical Laboratory Improvement Amendments (CLIA) have further tightened standards for proficiency testing, underscoring its importance in the laboratory quality system [15]. Similarly, European directives, such as those governing ambient air quality monitoring, explicitly require laboratories to participate in ILCs [16]. Beyond compliance, these exercises are a strategic asset. They help laboratories prevent the release of substandard products, identify sources of analytical error, take corrective actions, and provide stakeholders—from regulators to clients—with confidence in the quality of testing services [14].

ILCs in Action: A Landscape of Providers and Programs

A diverse ecosystem of accredited PT providers exists to serve the needs of various scientific and industrial sectors. These organizations design programs where laboratories analyze the same or similar homogeneous test materials, allowing them to compare their results against an assigned value or the results of other participants. The table below summarizes key providers and their specialized focus areas.

Table 1: Overview of Accredited Proficiency Testing Providers and Programs

| Provider | Accreditation Status | Key Sectors and Focus Areas | Example Programs (2025-2026) |
|---|---|---|---|
| CMLS [14] | ISO 9001, ISO/IEC 17043 | Agricultural products, food, feed, fertilizers | Annual series programs with multiple rounds |
| Czech Metrology Institute [17] | ISO/IEC 17043 | Metrology, calibration | Annual ILC program, Bilateral ILCs (BILC) on application |
| INERIS [16] | Cofrac Accreditation | Air quality, water quality, stationary source emissions | PFAS, Levoglucosan, PAHs in ambient air; emissions on test bench |
| Collaborative Testing Services (CTS) [6] | ISO/IEC 17043:2023 | Forensics, plastics, metals, agriculture, wine | Programs across multiple industries in over 80 countries |
| Proftest Syke [18] | ISO/IEC 17043 (FINAS) | Environmental measurements, circular economy, built environment | Natural/waste/drinking water analyses, metals, VOC, calorific value |

These providers operate under quality frameworks like ISO/IEC 17043, which sets the general requirements for their competence [17] [6]. This ensures that the design and operation of the proficiency tests are themselves reliable and consistent. The range of available programs is vast, covering everything from classical chemical analyses to highly specialized methodological comparisons.

Table 2: Detailed ILC Programs in Environmental and Material Sciences

| Program Focus | Organizing Body | Specific Measurands/Parameters | Timeline (Sample/Delivery) |
|---|---|---|---|
| PFAS in Atmospheric Emissions [16] | INERIS | 49 semi-volatile PFAS substances (Fraction 1: Filter, Fraction 2: Resin, Fraction 3: Solution) | Oct - Dec 2025 |
| Oxidative Potential (OP) of Aerosols [10] | RI-URBANS Project Consortium | Dithiothreitol (DTT) assay for oxidative potential | 2025 (Study Published) |
| Soluble Aerosol Trace Elements [19] | International Research Collaboration | Soluble fractions of Al, Cu, Fe, Mn, etc., via 8 different leaching protocols | 2025 (Study Published) |
| Metals in Water and Sludge [18] | Proftest Syke | Al, As, Cd, Cr, Hg, Pb, and 15+ other metals | Week 17, 2026 |
| Leaching Behaviour of Solid Waste [18] | Proftest Syke | As, Ba, Cd, Cr, Cu, Hg, Mo, Ni, Pb, Sb, Se, V, Zn, Cl⁻, F⁻, SO₄²⁻, DOC, pH | Week 22, 2026 |

Experimental Protocols: Case Studies from Recent Research ILCs

For researchers, the practical implementation of an ILC is critical. The following case studies illustrate the experimental protocols used in recent, sophisticated ILCs relevant to materials and environmental research.

Case Study 1: Intercomparison of Soluble Aerosol Trace Element Leaching Protocols

A large-scale international ILC was conducted to compare eight widely used leaching protocols for measuring the soluble fraction of aerosol trace elements, a key metric in atmospheric and ocean science [19].

Methodology:

- Sample Collection: Ambient PM10 samples were collected on acid-washed Whatman 41 cellulose fiber filters at sites in Guangzhou and Qingdao, China [19].

- Sample Preparation: Each filter was divided into eight identical discs using a circular titanium hole-punch. These subsamples were distributed to the participating institutions [19].

- Leaching Protocols: Participating labs applied their standard protocols, which fell into three categories based on leaching solution [19]:

  - Ultrapure Water (UPW) Leach: Simulates solubility in pure water.

  - Ammonium Acetate (AmmAc) Leach: A buffer solution.

  - Acetic Acid with Hydroxylamine Hydrochloride (Berger Leach): A more aggressive leach designed to simulate the effect of organic ligands.

- Analysis and Data Treatment: Each group processed and analyzed their leachates using their usual practices (e.g., ICP-MS). No standardization was imposed on these steps to assess the real-world variability introduced by the entire methodological chain [19].
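Because the protocols differ mainly in the leaching solution, one natural way to compare their outputs is fractional solubility: the soluble amount of an element divided by its total amount on the same filter. The sketch below is a minimal illustration, assuming a total-element value is available for the same sample (for example from a separate total digestion); the function name and all numbers are hypothetical, not results from [19].

```python
def fractional_solubility_pct(soluble, total):
    """Soluble amount as a percentage of the total amount of the same element."""
    if total <= 0:
        raise ValueError("total amount must be positive")
    return 100.0 * soluble / total

# Hypothetical Fe results (ng per filter disc) for one shared sample
total_fe = 120.0  # assumed to come from a separate total digestion
soluble_fe = {"UPW": 2.4, "AmmAc": 4.8, "Berger": 18.0}

fractions = {p: fractional_solubility_pct(v, total_fe) for p, v in soluble_fe.items()}
# Ratio of the highest to the lowest protocol result: a simple spread measure
spread = max(fractions.values()) / min(fractions.values())
for protocol, pct in fractions.items():
    print(f"{protocol:>6}: {pct:5.1f} % soluble")
print(f"max/min ratio across protocols: {spread:.1f}")
```

Reporting the protocol alongside each fractional solubility value is what makes results from different groups comparable at all, since the same filter can yield very different "soluble" fractions depending on the leach.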

Key Workflow Diagram for an ILC:

Case Study 2: Harmonizing Oxidative Potential (OP) Measurements

The RI-URBANS project conducted a pioneering ILC involving 20 laboratories to quantify the variability in measuring the oxidative potential (OP) of aerosol particles using the dithiothreitol (DTT) assay [10].

Methodology:

- Development of a Simplified Protocol: A core group of experts developed a harmonized Standard Operating Procedure (SOP)—the "RI-URBANS DTT SOP"—to be tested alongside participants' own "home" protocols [10].

- Sample Distribution: Participants were provided with liquid samples of a reference material (quinone) and PM filter extracts to focus on the analytical measurement itself, isolating it from variability introduced by sample extraction [10].

- Parallel Testing: Labs were required to analyze the provided samples using both their home protocol and the harmonized RI-URBANS SOP. This design allowed for direct comparison of the effect of protocol harmonization on inter-laboratory variability [10].

- Data Analysis: The organizers collected results and performed statistical analysis to identify critical parameters influencing OP measurements (e.g., instrument type, reagent delivery method, analysis timing) [10].
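A simple way to quantify whether harmonization reduced inter-laboratory variability is to compare the between-laboratory coefficient of variation (CV) obtained with home protocols against that obtained with the common SOP. The sketch below assumes each lab reports one value per sample and protocol; the numbers and function name are hypothetical and do not reproduce the statistics reported in [10].

```python
import statistics

def interlab_cv_pct(results):
    """Between-laboratory coefficient of variation (%), using the sample SD."""
    mean = statistics.mean(results)
    return 100.0 * statistics.stdev(results) / mean

# Hypothetical DTT consumption rates (nmol/min) reported by five labs for the
# same PM extract: once with each lab's home protocol, once with the common SOP
home = [0.8, 1.2, 1.0, 1.4, 0.6]
sop = [0.95, 1.05, 1.0, 1.1, 0.9]

print(f"home protocols: CV = {interlab_cv_pct(home):.1f} %")
print(f"harmonized SOP: CV = {interlab_cv_pct(sop):.1f} %")
```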

The Scientist's Toolkit: Key Reagents and Materials for ILCs

Successful participation in ILCs, particularly in method-defined fields, relies on the use of specific, high-quality reagents and materials. The following table details essential items used in the featured experimental case studies.

Table 3: Essential Research Reagents and Materials for Analytical ILCs

| Item Name | Function / Rationale | Example from ILC Case Studies |
|---|---|---|
| Whatman 41 Cellulose Filters | Aerosol particle collection medium. Chosen for low background trace element concentrations after acid-washing. | Used for collecting PM10 samples in the soluble aerosol trace elements ILC [19]. |
| Ultrapure Water (UPW) | Leaching solution simulating pure water solubility; a mild extractant. | One of the three main leaching solutions compared for soluble trace elements [19]. |
| Ammonium Acetate Buffer | A buffered leaching solution, more aggressive than UPW. | Used in several protocols for soluble trace elements to simulate specific environmental conditions [19]. |
| Acetic Acid / Hydroxylamine Hydrochloride | Components of the "Berger leach," a strong leaching solution designed to mimic ligand-promoted dissolution. | Used to assess the more bioaccessible fraction of trace elements [19]. |
| Dithiothreitol (DTT) | A probe compound in an acellular assay that reacts with redox-active species in PM, simulating oxidative stress in the lungs. | The core reagent in the oxidative potential (OP) ILC [10]. |
| Quinone Solutions | Used as a stable, standardized reference material to calibrate or benchmark instrument response in OP assays. | Provided as a liquid sample in the OP ILC to isolate measurement variability [10]. |

Interlaboratory comparisons stand as an indispensable pillar of modern analytical science, directly linking robust methodology to accreditation and regulatory acceptance. For researchers and drug development professionals, they are not simply a compliance exercise but a proactive tool for method validation, quality assurance, and scientific advancement. As methodologies evolve and regulatory scrutiny intensifies, the role of ILCs in ensuring data is not only precise but also comparable across the global scientific community will only become more critical. Engaging with these programs is a direct investment in the integrity and impact of research outcomes.

Interlaboratory comparison studies are a cornerstone of analytical quality assurance, providing a mechanism for laboratories to validate their measurement performance against peers. Within materials methods research, particularly in pharmaceutical development, two key frameworks guide the design and interpretation of these critical studies: ISO/IEC 17043, which outlines requirements for proficiency testing providers, and various IUPAC protocols that provide chemical-specific methodological guidance. These frameworks operate in a complementary fashion, with ISO 17043 establishing the managerial and statistical requirements for running valid proficiency testing schemes, while IUPAC recommendations provide the technical foundation for specific analytical techniques like Nuclear Magnetic Resonance (NMR) spectroscopy.

The revised ISO/IEC 17043:2023 standard represents a significant evolution from its 2010 predecessor, incorporating risk-based thinking, harmonizing with other conformity assessment standards like ISO/IEC 17025, and clarifying requirements for statistical methods based on ISO 13528 [20]. Simultaneously, IUPAC continues to advance analytical science through its validated protocols and terminology, such as its precise definition of NMR spectroscopy as "measurement principle of spectroscopy to measure the precession of magnetic moments placed in a magnetic induction based on absorption of electromagnetic radiation of a specific frequency by an atomic nucleus" [21]. For researchers in drug development, understanding the interaction between these managerial standards and technical protocols is essential for designing robust interlaboratory studies that yield scientifically valid and regulatory-ready data.

Core Concepts and Definitions

ISO/IEC 17043: Proficiency Testing Requirements

ISO/IEC 17043:2023 specifies the general requirements for the competence of proficiency testing (PT) providers, establishing a framework for designing, conducting, and evaluating interlaboratory comparisons [20]. The standard defines proficiency testing as the "evaluation of participant performance against pre-established criteria by means of interlaboratory comparisons" [20]. The 2023 revision introduced several critical updates, including harmonization with ISO 13528 for statistical methods, incorporation of risk-based thinking approaches, and clarification of PT requirements for inspection and sampling activities beyond traditional testing and calibration [20].

The primary purpose of proficiency testing under ISO/IEC 17043 is to provide laboratories with objective evidence of their technical competence, helping to identify potential problems in analytical procedures, educate participating laboratories on methodological nuances, and ultimately build confidence in measurement results [20]. For drug development professionals, this framework ensures that analytical methods used in characterizing active pharmaceutical ingredients, excipients, or final drug products produce consistent and comparable results across different laboratories and geographical locations.

IUPAC Guidelines for Analytical Chemistry

The International Union of Pure and Applied Chemistry (IUPAC) develops and maintains standardized protocols, terminology, and best practices for chemical measurements. While IUPAC covers the entire breadth of chemical sciences, its analytical chemistry recommendations provide essential guidance for specific techniques relevant to materials methods research. Its definition of NMR spectroscopy, quoted above, is one example: it grounds the technique in the precession of nuclear magnetic moments and the absorption of electromagnetic radiation of a specific frequency [21].

IUPAC recommendations typically focus on the fundamental analytical principles, appropriate experimental parameters, data interpretation methods, and reporting standards for specific analytical techniques. The organization's guidelines emphasize technical excellence and methodological rigor, often serving as the scientific foundation upon which accreditation standards like ISO/IEC 17043 are built. For NMR spectroscopy, IUPAC notes that nuclei with suitable magnetic moments include ¹H, ¹³C, ¹⁵N, ¹⁹F, and ³¹P, information that is critical for researchers designing interlaboratory studies involving structural elucidation of drug molecules [21].

Table 1: Key Definitions in Interlaboratory Comparisons

| Term | ISO/IEC 17043:2023 Perspective | IUPAC Perspective |
|---|---|---|
| Proficiency Testing | Evaluation of participant performance against pre-established criteria via interlaboratory comparisons [20] | - |
| NMR Spectroscopy | - | Measurement of magnetic moment precession in magnetic induction via RF absorption [21] |
| Statistical Evaluation | Based on ISO 13528; uses normalized error and comparison uncertainty [22] [23] | Employs robust statistical procedures after removing obvious blunders [23] |
| Primary Purpose | Demonstrate competence, identify problems, provide additional confidence [20] | Determine organic molecule structure, enable quantification [21] |

Comparative Analysis: ISO 17043 vs. IUPAC Guidelines

Scope and Application Focus

The fundamental distinction between ISO/IEC 17043 and IUPAC guidelines lies in their scope and primary focus. ISO/IEC 17043 operates as a managerial standard that specifies requirements for organizations providing proficiency testing schemes, emphasizing the processes needed to ensure valid and comparable results across participating laboratories [20]. It is intentionally broad, designed to be applicable to testing and calibration laboratories, legal regulation by governments, and industrial standards development [20]. In contrast, IUPAC guidelines provide technical recommendations for specific analytical methods, such as the precise experimental conditions for NMR spectroscopy or appropriate statistical approaches for data analysis in chemical measurements [21] [23].

This distinction manifests clearly in their application within pharmaceutical research and development. ISO/IEC 17043 compliance ensures that a proficiency testing program for drug substance characterization is properly designed, implemented, and statistically evaluated, focusing on the process rather than the chemical specifics. Meanwhile, IUPAC recommendations would guide the technical execution of the analytical methods themselves, such as the proper referencing of NMR chemical shifts using tetramethylsilane (TMS) or residual solvent peaks [24]. A robust interlaboratory study in drug development would integrate both frameworks: using IUPAC protocols to ensure analytical correctness and ISO/IEC 17043 requirements to guarantee procedural validity.

Statistical Approaches and Performance Assessment

Both frameworks address statistical evaluation but with different emphases and applications. ISO/IEC 17043 relies heavily on ISO 13528 for its statistical foundation, employing metrics like normalized error (Eₙ) to assess participant performance [23]. The standard acknowledges limitations in the traditional criterion, under which |Eₙ| ≤ 1 indicates acceptable performance, by noting that high values for comparison uncertainty (u_comp) or transfer standard uncertainty (u_TS) can artificially improve performance scores, potentially masking measurement instability [22]. Recent amendments to ISO 13528 have introduced more sophisticated probability-based approaches and the possibility of "inconclusive" results when comparison uncertainty is excessive [23].
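The normalized error compares a participant's deviation from the reference value with the combined uncertainty of both, Eₙ = (x_lab - x_ref) / sqrt(U_lab² + U_ref²). The sketch below assumes U_lab and U_ref are expanded uncertainties (typically k = 2), as in ISO 13528; the values are hypothetical. The third case illustrates the weakness described above: inflating an uncertainty in the denominator turns an unacceptable result into an "acceptable" one without any change in the measured value.

```python
import math

def en_score(x_lab, U_lab, x_ref, U_ref):
    """Normalized error E_n from the expanded uncertainties of lab and reference."""
    denom = math.sqrt(U_lab ** 2 + U_ref ** 2)
    if denom == 0:
        raise ValueError("combined uncertainty must be non-zero")
    return (x_lab - x_ref) / denom

def en_acceptable(en):
    """Traditional criterion: |E_n| <= 1 is acceptable."""
    return abs(en) <= 1.0

# Hypothetical reference value 10.0 with U_ref = 0.4
for x_lab, U_lab in [(10.4, 0.3), (10.9, 0.3), (10.9, 1.2)]:
    en = en_score(x_lab, U_lab, 10.0, 0.4)
    print(f"x={x_lab}, U={U_lab}: En={en:.2f}, acceptable={en_acceptable(en)}")
```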

IUPAC's statistical guidance, particularly evident in its Harmonized Protocol, recommends removing "obvious blunders from a data set at an early stage in an analysis, prior to use of any robust procedure or any test to identify statistical outliers" [23]. This approach prioritizes scientific judgment before applying statistical tests, recognizing that chemical measurements often involve complex contextual factors that pure statistical approaches might miss. For pharmaceutical researchers, this means that IUPAC provides the foundational statistical philosophy for data quality assessment, while ISO standards provide the specific implementation framework for proficiency testing schemes.
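After obvious blunders are removed, a robust procedure estimates a consensus mean and spread that are insensitive to the remaining outliers. One widely used choice is Algorithm A from ISO 13528, a Huber-type estimator that iteratively winsorizes results at 1.5 robust standard deviations from the robust mean. The sketch below follows that iteration; the convergence tolerance and the data are assumptions for illustration, and a given PT scheme may use a different robust estimator.

```python
import statistics

def algorithm_a(values, tol=1e-6, max_iter=100):
    """Robust mean x* and robust SD s*, following ISO 13528 Algorithm A."""
    x = list(values)
    if len(x) < 2:
        raise ValueError("need at least two results")
    # Starting values: median and scaled median absolute deviation
    x_star = statistics.median(x)
    s_star = 1.483 * statistics.median([abs(v - x_star) for v in x])
    if s_star == 0:
        return x_star, 0.0  # over half the results coincide; no spread to estimate
    for _ in range(max_iter):
        delta = 1.5 * s_star
        # Winsorize: values outside x* +/- delta are pulled back to the boundary
        clipped = [min(max(v, x_star - delta), x_star + delta) for v in x]
        new_x = statistics.mean(clipped)
        new_s = 1.134 * statistics.stdev(clipped)
        done = abs(new_x - x_star) < tol and abs(new_s - s_star) < tol
        x_star, s_star = new_x, new_s
        if done:
            break
    return x_star, s_star

# Hypothetical results from seven laboratories; one is clearly discrepant
data = [10.1, 10.2, 9.9, 10.0, 10.3, 9.8, 15.0]
robust_mean, robust_sd = algorithm_a(data)
print(f"plain mean {statistics.mean(data):.3f}, robust mean {robust_mean:.3f}")
print(f"plain SD   {statistics.stdev(data):.3f}, robust SD   {robust_sd:.3f}")
```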

Table 2: Statistical Methods in Interlaboratory Comparisons

| Aspect | ISO 17043/13528 Approach | IUPAC Approach |
|---|---|---|
| Primary Criterion | Normalized error (\|Eₙ\| ≤ 1) [22] | Removal of obvious blunders prior to analysis [23] |
| Key Metric | Comparison uncertainty (u_comp) [22] | Robust statistical procedures after data cleaning [23] |
| Recent Developments | Probability-based criteria; "inconclusive" category [22] [23] | - |
| Limitations Addressed | High u_TS or u_repeat can mask poor performance [22] | - |

Experimental Protocols for Interlaboratory Studies

Designing a Proficiency Test Scheme (ISO 17043 Framework)

Designing a valid proficiency testing scheme according to ISO/IEC 17043 requires meticulous attention to multiple procedural elements. The process begins with defining clear objectives and scope for the study, followed by selecting appropriate test items that adequately represent the analytical challenges laboratories face in routine practice. The standard mandates that PT providers must "document the reasons for any statistical assumptions and demonstrate that the assumptions are reasonable" [23], requiring transparent methodology in establishing assigned values and evaluation criteria.
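A common way to turn an assigned value into pre-established evaluation criteria is the z-score used in ISO 13528 and ISO/IEC 17043, z = (x - x_pt) / σ_pt, where |z| ≤ 2 is satisfactory, 2 < |z| < 3 is questionable, and |z| ≥ 3 is unsatisfactory. The sketch below assumes the provider has already fixed x_pt (for example from a reference value or a robust consensus) and the standard deviation for proficiency assessment σ_pt; the results are hypothetical.

```python
def z_score(x, assigned, sigma_pt):
    """z-score of a participant result against the assigned value."""
    return (x - assigned) / sigma_pt

def classify(z):
    """Conventional performance classes for z-scores."""
    if abs(z) <= 2.0:
        return "satisfactory"
    if abs(z) < 3.0:
        return "questionable"
    return "unsatisfactory"

# Hypothetical round: assigned value 50.0, sigma_pt 2.5
for result in [51.0, 55.5, 58.0]:
    z = z_score(result, 50.0, 2.5)
    print(f"result {result}: z = {z:+.1f} -> {classify(z)}")
```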

A critical requirement in the updated standard is that "testing activities, calibration activities and PT item production conform to the relevant requirements of appropriate ISO conformity assessment standards" [20]. This ensures that the proficiency testing process itself does not introduce additional variables that could compromise result interpretation. For drug development applications, this means that the production of reference materials for PT schemes must follow Good Manufacturing Practice (GMP) principles where appropriate, and their characterization should employ fully validated analytical methods. The standard also introduces risk-based thinking, requiring providers to identify potential sources of uncertainty in the PT scheme and implement appropriate control measures [20].

Implementing IUPAC-Recommended Analytical Techniques

Implementing IUPAC-recommended analytical methods requires strict adherence to technical specifications tailored to each technique. For NMR spectroscopy—a critical tool in pharmaceutical analysis for structural elucidation and quantification—key considerations include proper referencing practices to ensure accurate chemical shift determination. Recent research highlights that discrepancies of up to 1.9 ppm for ¹³C NMR in CDCl₃ can occur without proper referencing protocols [24]. IUPAC-endorsed approaches recommend using tetramethylsilane (TMS) as an internal standard or the solvent's residual peak as a secondary reference, with attention to concentration effects and solvent interactions [24].
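Referencing to a residual solvent peak amounts to shifting the whole spectrum so that the observed solvent signal lands on its agreed reference value. The sketch below assumes the widely tabulated residual CDCl₃ shifts of 7.26 ppm (¹H) and 77.16 ppm (¹³C); these values, the dictionary, and the function name are illustrative assumptions rather than figures from [24]. Because some laboratories still reference ¹³C in CDCl₃ to 77.0 ppm, a shared reference table is itself something an interlaboratory protocol should fix in advance.

```python
# Assumed residual-solvent reference shifts (ppm); extend for other solvents
RESIDUAL_SOLVENT_PPM = {
    ("CDCl3", "1H"): 7.26,
    ("CDCl3", "13C"): 77.16,
}

def rereference(peaks_ppm, observed_solvent_ppm, solvent, nucleus):
    """Shift all peaks so the residual solvent signal sits at its reference value."""
    offset = RESIDUAL_SOLVENT_PPM[(solvent, nucleus)] - observed_solvent_ppm
    return [p + offset for p in peaks_ppm]

# Hypothetical 13C spectrum whose CDCl3 signal was picked at 77.00 ppm
raw_peaks = [128.37, 21.30]
corrected = rereference(raw_peaks, 77.00, "CDCl3", "13C")
print([round(p, 2) for p in corrected])
```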

For complex analyses such as protein-ligand interaction studies, which are highly relevant to drug discovery, IUPAC methodologies support techniques such as Saturation Transfer Difference (STD) NMR and INPHARMA (interligand NOEs for pharmacophore mapping) NMR [24]. These methods allow researchers to investigate ligand binding modes even in proteins with multiple binding sites, providing critical information for structure-activity relationship studies. The experimental workflow involves specific pulse sequences, careful temperature control, and appropriate data processing algorithms to extract meaningful thermodynamic and kinetic parameters from NMR measurements [24].

Diagram: Integration of ISO 17043 and IUPAC frameworks in interlaboratory studies

Case Study: Microplastics Analysis Interlaboratory Comparison

Experimental Design and Methodology

A revealing example of interlaboratory comparison in practice comes from a study of microplastics quantification involving 12 experienced laboratories worldwide [25]. Researchers prepared standardized samples in two plastic bottles by mixing one liter of plastic-free seawater with precisely characterized microplastics (polypropylene, high-density polyethylene, and low-density polyethylene) and artificial particles [25]. This design created a controlled yet realistic scenario that mimicked environmental sample analysis while allowing for exact quantification of measurement accuracy.

The study implemented key requirements of both ISO/IEC 17043 and IUPAC principles by establishing predetermined criteria for success, using homogeneous reference materials, and employing statistical evaluation based on comparison with known quantities. Laboratories applied their preferred analytical methods for microplastics identification and quantification, enabling researchers to assess both methodological variability and individual laboratory performance. The minimum requirements for reliable microplastic quantification were systematically examined by comparing actual numbers of microplastics in sample bottles with numbers measured by each participating laboratory [25].
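Because the number of spiked particles is known, each laboratory's performance reduces to a relative deviation from the true count. A minimal sketch with hypothetical counts and laboratory labels (not data from [25]):

```python
def relative_deviation_pct(measured, actual):
    """Signed deviation of a reported count from the known spiked count (%)."""
    return 100.0 * (measured - actual) / actual

# Hypothetical spiked count and lab-reported counts (illustrative only)
spiked = 200
reported = {"Lab A": 168, "Lab B": 182, "Lab C": 150}

for lab, count in reported.items():
    print(f"{lab}: {relative_deviation_pct(count, spiked):+.1f} %")
```

A consistently negative sign across laboratories points to a shared, method-level bias (such as overlooked particles) rather than to isolated laboratory errors.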

Results and Implications for Method Validation

The interlaboratory comparison revealed significant challenges in microplastics analysis, with the number of microplastics <1 mm being underestimated by 20% even when using best practice methodologies [25]. The uncertainty was attributed to pervasive errors derived from inaccuracies in measuring sizes and/or misidentification of microplastics, including both false recognition and overlooking particles [25]. These findings highlight the critical importance of interlaboratory studies in revealing methodological limitations that might remain undetected in single-laboratory method validation.

Statistical analysis of the results indicated that size distribution of microplastics should be smoothed using a running mean with a length of >0.5 mm to reduce uncertainty to less than ±20% [25]. This finding demonstrates the practical application of statistical methods aligned with ISO 13528 amendments, which emphasize appropriate data treatment to improve comparison reliability. For pharmaceutical researchers, this case study underscores how interlaboratory comparisons can identify systematic methodological biases and establish minimum performance criteria for analytical techniques—whether applied to environmental monitoring or drug product characterization.
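The smoothing step can be sketched as a centered running mean over a binned size distribution. In the sketch below, the 0.1 mm bin width and the 0.6 mm window are assumptions chosen to satisfy the ">0.5 mm" length reported in [25]; the study's own implementation may differ, and the counts are hypothetical. Near the edges of the distribution, only the bins that exist are averaged.

```python
import math

def running_mean(counts, bin_width_mm, window_mm):
    """Centered running mean over a size histogram.

    The window spans an odd number of bins covering at least window_mm.
    """
    n = max(1, math.ceil(window_mm / bin_width_mm - 1e-9))
    if n % 2 == 0:
        n += 1  # keep the window centered on each bin
    half = n // 2
    smoothed = []
    for i in range(len(counts)):
        lo, hi = max(0, i - half), min(len(counts), i + half + 1)
        window = counts[lo:hi]
        smoothed.append(sum(window) / len(window))
    return smoothed

# Hypothetical particle counts in 0.1 mm size bins
counts = [12, 9, 15, 7, 11, 6, 10, 4, 8, 3]
print([round(c, 2) for c in running_mean(counts, 0.1, 0.6)])
```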

Key Research Reagent Solutions

Table 3: Essential Materials for Interlaboratory Studies in Analytical Chemistry

| Item | Function | Application Example |
|---|---|---|
| Deuterated Solvents | Provide locking signal for NMR; residual peaks as secondary reference standards [24] | CDCl₃, DMSO-d₆ for organic compound analysis [24] |
| Tetramethylsilane (TMS) | Primary internal reference for ¹H and ¹³C NMR chemical shift calibration [24] | Establishing 0 ppm reference point in NMR spectra [24] |
| Proficiency Test Items | Well-characterized materials with assigned values for interlaboratory comparison [20] | Microplastics in seawater matrix for method validation [25] |
| Reference Materials | Substances with certified properties for method calibration and validation [20] | Characterized polymers for microplastics analysis [25] |
| Stable Isotope Labels | Enable tracing and quantification in complex matrices via MS or NMR [21] | ¹³C-labeled compounds for metabolic studies in drug development [21] |

Standards and Guidelines Compendium

Successful navigation of interlaboratory comparisons requires access to both current standards and technical recommendations. ISO/IEC 17043:2023 provides the foundational requirements for proficiency testing providers, with its recent revision reflecting updated approaches to risk management and statistical evaluation [20]. The ISO 13528:2022/DAmd 1 amendment offers specific guidance on statistical methods for proficiency testing, including refined approaches for outlier treatment and assigned value determination [23]. For NMR spectroscopy—particularly relevant to pharmaceutical research—the IUPAC Gold Book provides precise definitions and methodological principles, while recent special issues in analytical journals explore emerging applications like machine learning-assisted spectral interpretation and quantum chemical calculations of NMR parameters [21] [24].

Drug development professionals should maintain access to the IUPAC Harmonized Protocol, which recommends procedures for collaborative study design and data analysis, emphasizing the importance of removing obvious blunders before applying robust statistical methods [23]. Additionally, publications like the Marine Pollution Bulletin study on microplastics analysis provide real-world examples of how these standards and guidelines converge in practical interlaboratory comparisons, highlighting both methodological challenges and statistical solutions [25]. This comprehensive toolkit enables researchers to design, implement, and evaluate interlaboratory studies that meet both scientific and regulatory requirements for materials methods research.

Executing Successful ILCs: Protocols, Design, and Real-World Applications

Interlaboratory comparison (ILC) studies are foundational tools for validating analytical methods and ensuring data quality in materials science and drug development. These studies involve the systematic testing of homogeneous, stable samples by multiple laboratories to evaluate and compare their analytical performance. The core objective is to determine the consistency of results across different instruments, operators, and environmental conditions, thereby identifying potential biases and establishing method robustness. A well-executed ILC provides empirical evidence of a method's transferability and reliability, which is critical for regulatory submissions and quality assurance in pharmaceutical development. The structure of an ILC, from participant selection to the final analysis of results, must be meticulously planned to yield statistically sound and actionable data. This guide outlines the essential steps for organizing a conclusive ILC, supported by experimental data and practical protocols.

Participant Selection and Enrollment

The selection and enrollment of participating laboratories are critical first steps that directly influence the validity and scope of an ILC's findings. The goal is to assemble a cohort that represents the typical operational environments where the method will be applied.

A purposeful selection strategy should be employed to ensure diversity in laboratory capabilities and equipment. Participants may be recruited from professional networks, existing collaborations, or through open registration as seen in initiatives like the NORMAN interlaboratory comparison, which involved 37 chromatographic systems, or the IAEA's biennial comparisons [26] [27]. Key selection criteria often include:

- Technical Capability: Laboratories must possess the requisite instrumentation (e.g., LC/HRMS, FTIR) and expertise to perform the analytical method under investigation [26].

- Methodological Diversity: Intentionally including labs that use different instrument models, column chemistries, or mobile phases can help test the method's robustness across technical variations [26].

- Number of Participants: While more participants improve statistical power, a group of 12 to 40 laboratories, as used in recent studies, is often practical and sufficient for meaningful comparison [26].

Once identified, a clear enrollment protocol must be established. This includes defining timelines, roles and responsibilities, and data submission formats to ensure a smooth workflow.

Table: Participant Diversity in a Representative ILC on LC/HRMS

| Characteristic | Number of Laboratories | Percentage of Total (%) |
|---|---|---|
| Total Participating Labs | 37 | 100 |
| Chromatography Column Chemistry | | |
| C18 | 28 | 75.7 |
| C8 | 5 | 13.5 |
| Phenyl/Biphenyl | 4 | 10.8 |
| Mobile Phase Additive | | |
| Acid Only | 22 | 59.5 |
| Acid with Ammonium Salt | 15 | 40.5 |

Experimental Design and Sample Preparation

The experimental design forms the blueprint of the ILC, ensuring that the data collected is comparable, reproducible, and fit for purpose. A core principle is the use of common calibrants and test samples distributed to all participants.

The sample set should include two distinct groups of chemicals: calibrants and suspects (or unknowns). In a recent NTS ILC, 41 calibration chemicals and 45 suspect chemicals were used [26]. The calibrants serve a dual purpose: they are used by participants to calibrate their instruments and by organizers to model the relationship between different chromatographic systems. The suspect chemicals are the actual test items used to evaluate laboratory performance. All samples must be thoroughly tested for homogeneity and stability to ensure that any variation in results is attributable to laboratory performance rather than sample heterogeneity or degradation. This involves verifying that samples are homogeneous at the intended test-portion size (sample intake) and stable for the duration of the study, including during shipment and storage.
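A common homogeneity check, described in ISO 13528, measures randomly selected items in duplicate, estimates the between-item standard deviation s_s, and accepts the batch if s_s ≤ 0.3 σ_pt. The sketch below follows that duplicate-measurement scheme; σ_pt, the data, and the function name are hypothetical.

```python
import math
import statistics

def homogeneity_check(pairs, sigma_pt):
    """Between-item SD from duplicate results, checked against 0.3 * sigma_pt.

    pairs: one (replicate_1, replicate_2) tuple per randomly selected PT item.
    """
    g = len(pairs)
    if g < 2:
        raise ValueError("need at least two PT items")
    item_means = [(a + b) / 2 for a, b in pairs]
    s_x = statistics.stdev(item_means)                              # SD of item averages
    s_w = math.sqrt(sum((a - b) ** 2 for a, b in pairs) / (2 * g))  # within-item SD
    s_s = math.sqrt(max(0.0, s_x ** 2 - s_w ** 2 / 2))              # between-item SD
    return s_s, s_s <= 0.3 * sigma_pt

# Hypothetical duplicate results for five PT items, with sigma_pt = 0.5
data = [(10.1, 10.2), (10.0, 10.1), (10.2, 10.2), (9.9, 10.0), (10.1, 10.1)]
s_s, ok = homogeneity_check(data, sigma_pt=0.5)
print(f"s_s = {s_s:.3f}; sufficiently homogeneous: {ok}")
```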

A detailed experimental protocol is then distributed to all participants. This document must be unambiguous and cover all critical parameters to minimize variability introduced by procedural differences.

Figure 1: ILC Sample Preparation and Distribution Workflow

Table: Essential Components of an ILC Experimental Protocol

| Protocol Section | Key Elements | Purpose |
|---|---|---|
| Sample Handling | Reconstitution procedure, storage conditions (e.g., frozen, light-protected), stability information. | Ensures sample integrity from receipt through analysis. |
| Instrument Calibration | Specification of calibration chemicals and required quality control checks. | Standardizes the initial setup across all instruments. |
| Chromatographic Method | Column type, mobile phase composition (including pH and additives), gradient program, flow rate, column temperature [26]. | Defines the core separation parameters to ensure comparability of retention data. |
| Data Acquisition & Reporting | Required data formats (e.g., retention time, peak area), file naming conventions, metadata to be reported. | Facilitates uniform data collection and simplifies subsequent analysis. |

Sample Shipment and Logistics

The shipment of samples is a logistical operation that demands precision to preserve sample integrity and comply with international regulations. Proper packaging and documentation are non-negotiable.

Samples must be packaged to withstand transit conditions and remain stable. Key requirements include [28]:

- Primary Container: The labeled specimen container must be securely sealed, crush-proof, and leak-proof (e.g., a stoppered or screw-top tube).

- Light Protection: For light-sensitive analytes, samples must be collected and shipped in amber glass or wrapped in aluminum foil [28].

- Secondary Container: Each primary container must be placed in a secondary container with sufficient absorbent material to absorb the entire liquid contents in case of breakage [28].

- Temperature Control:

  - For refrigerated transport: Use a minimum of two frozen gel packs in a foam container.

  - For frozen transport: Ship specimens immediately frozen on sufficient dry ice in a foam container. Note that glass tubes should not be frozen unless placed at a shallow angle to avoid cracking [28].

Regulatory compliance is mandatory, especially for international shipments. For human diagnostic specimens, the outer package must display either the "Exempt Human Specimen" marking (for specimens with minimal likelihood of containing pathogens) or the "UN3373" label (for Category B biological substances) [28]. A completed Importer Certification Statement Form must accompany the shipment. If samples are known or suspected to be infectious, a CDC Import Permit is required, which can take two or more weeks to procure [28]. All required documents, such as analysis requisitions and chain of custody forms, should be placed in a separate sealed plastic bag and included in the same box as the specimens [28].

Data Collection and Performance Assessment

The collection and analysis of data are the culminating phases where the performance of the method and the participating laboratories is quantitatively evaluated.

Data collection should be streamlined, often using electronic templates or dedicated platforms. The focus is on collecting both the raw results (e.g., retention times, peak areas) and the critical metadata describing the chromatographic system (CS) used, such as column chemistry and mobile phase pH [26]. To account for differences in equipment—such as column length and flow rate—that affect absolute retention times, data is often normalized. A common approach is to convert retention times to Retention Time Indices (RTI) using a set of calibration chemicals, scaling values between 0 and 1000 for unified comparison [26].
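The idea behind a retention time index is that a compound's position relative to shared calibrants transfers between systems better than its raw retention time does. The sketch below makes a simplifying assumption, linear scaling between the earliest- and latest-eluting calibrants onto 0 to 1000; the scheme used in [26] may instead interpolate across the full calibrant set. Function name and retention times are hypothetical.

```python
def retention_time_index(rt, rt_first_cal, rt_last_cal):
    """Map a retention time onto a 0-1000 scale anchored by two calibrants.

    Simplifying assumption: linear scaling between the earliest- and
    latest-eluting calibration chemicals.
    """
    span = rt_last_cal - rt_first_cal
    if span <= 0:
        raise ValueError("last calibrant must elute after the first")
    return 1000.0 * (rt - rt_first_cal) / span

# Same compound on two hypothetical systems with different column length/flow
print(retention_time_index(5.0, 2.0, 12.0))   # system A, minutes
print(retention_time_index(9.5, 3.0, 24.0))   # system B, minutes
```

Despite raw retention times that differ by almost a factor of two, the two indices land close together, which is what makes RTIs usable for cross-laboratory comparison.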

Performance assessment typically involves calculating the agreement between reported results and known reference values or the consensus value from all participants. For retention time projection studies, a Generalized Additive Model (GAM) is often fitted on the calibration chemicals to project RTIs from one chromatographic system to another. The accuracy is then evaluated on the suspect chemicals using metrics like Root Mean Square Error (RMSE) [26]. The similarity of the chromatographic systems, particularly in terms of column chemistry and mobile phase pH, has been shown to be a major factor impacting the accuracy of both projection and machine learning prediction models [26].
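The projection-then-score workflow can be sketched as follows: fit a mapping on calibrants measured on both systems, apply it to the suspects, and compute RMSE against the suspects' observed target RTIs. To keep the example dependency-free, a piecewise-linear interpolation stands in for the GAM; this is a deliberate swap, not the model used in [26]. All RTI values are hypothetical.

```python
import bisect
import math

def fit_projection(source_rti, target_rti):
    """Piecewise-linear map from source-CS RTIs to target-CS RTIs.

    Stand-in for a GAM: interpolates between calibrants and extrapolates
    linearly from the outermost segments.
    """
    pts = sorted(zip(source_rti, target_rti))
    xs = [p[0] for p in pts]
    ys = [p[1] for p in pts]
    if len(xs) < 2 or len(set(xs)) != len(xs):
        raise ValueError("need at least two calibrants with distinct source RTIs")

    def project(x):
        # Pick the segment containing x (or the nearest end segment)
        i = min(max(bisect.bisect_left(xs, x), 1), len(xs) - 1)
        x0, x1, y0, y1 = xs[i - 1], xs[i], ys[i - 1], ys[i]
        return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

    return project

def rmse(predicted, observed):
    """Root mean square error between projected and observed RTIs."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed))

# Hypothetical calibrant RTIs on both systems, then suspects used for scoring
project = fit_projection([0, 100, 200, 300], [0, 200, 400, 600])
predicted = [project(x) for x in [50, 250]]
print(predicted, rmse(predicted, [110, 490]))
```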

Table: Comparison of RT Projection vs. Prediction Model Performance

| Model Approach | Key Principle | Data Requirements | Reported Performance (RMSE in RTI units) | Major Influencing Factor |
|---|---|---|---|---|
| Projection Model | Projects experimental RTs from a source CS to a target CS using a statistical model (e.g., GAM) fit on common calibrants [26]. | A small set (10-50) of chemicals measured on both the source and target CS. | Accuracy directly linked to the similarity between the source and target CS [26]. | Mobile phase pH and column chemistry [26]. |
| Prediction Model (Machine Learning) | Predicts RT/RTI directly from chemical structure using a model trained on large datasets [26]. | A large, representative dataset of chemical structures and their RTs/RTIs. | Can perform on par with projection models when the training CS and target CS are similar [26]. | Overlap of chemical space and similarity between the training CS and target CS [26]. |

Figure 2: Typical 12-Week ILC Timeline from Enrollment to Report

The Scientist's Toolkit: Research Reagent Solutions

Successful execution of an ILC relies on a set of well-characterized reagents and materials. The following table details key components used in the featured LC/HRMS study [26].

Table: Essential Research Reagents for an LC/HRMS Interlaboratory Comparison

| Reagent/Material | Function in the Experiment | Example from Case Study |
|---|---|---|
| Calibration Chemicals | A set of known compounds analyzed by all labs to calibrate instruments and model inter-system retention time projections [26]. | 41 diverse chemicals used to establish a Generalized Additive Model (GAM) for RTI projection between different chromatographic systems [26]. |
| Suspect/Target Chemicals | The test compounds whose analysis forms the basis for comparing laboratory performance. Their identity may be blinded to participants. | 45 suspect chemicals used to evaluate the accuracy of the retention time projection and prediction models [26]. |
| Chromatography Column | The stationary phase that separates chemicals based on their chemical properties. Diversity in column chemistry tests method robustness. | Columns included C18, C8, C6-phenyl, and biphenyl phases from all major vendors [26]. |
| Mobile Phase Additives | Modifiers in the solvent that influence separation, ionization, and retention behavior. A key variable in method transfer. | All participating labs used an acidic water phase, containing either just an acid or an acid with an ammonium salt [26]. |

In the field of atmospheric aerosol research, the Oxidative Potential (OP) of particulate matter (PM) has emerged as a pivotal health-relevant metric, quantifying the ability of airborne particles to trigger oxidative stress in the lungs—a key mechanism behind many air pollution-related diseases [29]. Among various analytical techniques, the dithiothreitol (DTT) assay has gained widespread adoption as a sensitive method for quantifying PM's OP by measuring the depletion of this thiol-based surrogate for lung antioxidants [10] [30]. Despite over a decade of increased research activity, the absence of standardized methods has resulted in significant variability in results across different research groups, rendering meaningful comparisons challenging and limiting the potential for synthesizing evidence across studies [10].

To address this critical methodological gap, the RI-URBANS project (Research and Innovation for Urban Air Quality and Health) launched an innovative international interlaboratory comparison (ILC) exercise specifically aimed at harmonizing OP measurements [10]. This pioneering effort represents the first large-scale ILC targeted at standardizing OP assessment methods, setting a new benchmark in the field of health-related aerosol metrics [10]. The exercise engaged 20 laboratories worldwide in a systematic evaluation of the DTT assay, establishing a simplified, harmonized protocol and comparing its performance against the diverse "home" protocols used by participating laboratories [10] [29]. This case study examines the development, implementation, and outcomes of this standardized protocol, providing a framework for methodological harmonization that extends beyond aerosol science to other fields dependent on complex biochemical assays.

The Standardization Challenge: Pre-existing Methodological Variability in DTT Assays

Critical Parameters Contributing to Methodological Variability

The DTT assay operates on the principle that PM components can catalyze the oxidation of DTT, with the rate of DTT consumption serving as a proxy for the material's oxidative potential [30]. This seemingly straightforward measurement is complicated by numerous methodological variables that significantly influence results. Prior to standardization efforts, laboratories employed different versions of the DTT protocol adapted from early seminal publications, including methods described by Li et al. (2003, 2009), Cho et al. (2005), and Kumagai et al. (2002) [10].

Key sources of variability included incubation conditions (time, temperature), initial DTT concentration, sample preparation methods, and instrumentation [10] [30]. Furthermore, the chemical complexity of PM samples introduced additional complications, as different assay conditions varied in their sensitivity to various PM components, including transition metals (e.g., copper, manganese) and organic compounds (e.g., quinones, water-soluble organic carbon) [30]. These methodological differences resulted in substantial interlaboratory variability, undermining the comparability of data across studies and limiting the potential for epidemiological applications of OP metrics [10].

Table 1: Key Methodological Variables in DTT Assays Before Harmonization

| Variable Category | Specific Parameters | Impact on Results |
|---|---|---|
| Reaction Conditions | Incubation time, temperature, initial DTT concentration | Affects reaction kinetics and measured oxidation rates |
| Chemical Environment | Buffer composition, pH, chelating agents | Influences metal reactivity and organic compound behavior |
| Sample Preparation | Extraction method, solvent composition, filter type | Alters bioavailability of redox-active compounds |
| Detection Method | Instrumentation, detection wavelength, reference standards | Affects sensitivity and quantification accuracy |
| Data Expression | Mass-normalized vs. volume-normalized activity | Influences interpretation of health relevance |

Analytical Framework for Interlaboratory Comparisons

Interlaboratory comparison studies provide a systematic approach to quantifying methodological variability and identifying its sources. The RI-URBANS ILC employed a statistical framework consistent with ISO 5725-2, using metrics such as z-scores to evaluate individual laboratory performance against consensus values [29]. This statistical foundation enabled objective assessment of both accuracy and precision across participants, providing a robust evidence base for protocol refinement.
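A z-score expresses how far a laboratory's result lies from the assigned value in units of a proficiency standard deviation, z = (x - X) / σ_pt. The sketch below assumes, for illustration only, a median consensus value and σ_pt taken as the scaled median absolute deviation (1.483 × MAD), a robust choice common in proficiency testing. The statistics actually used by the RI-URBANS evaluators may differ. The thresholds follow the widely used convention of |z| ≤ 2 satisfactory, 2 < |z| < 3 questionable, and |z| ≥ 3 unsatisfactory. Laboratory names and values are hypothetical.

```python
import statistics


def robust_z_scores(results):
    """z = (x - X) / sigma_pt for each lab.

    Illustrative assumptions: assigned value X = median of all results;
    sigma_pt = scaled median absolute deviation (1.483 * MAD).
    """
    values = list(results.values())
    assigned = statistics.median(values)
    mad = statistics.median([abs(v - assigned) for v in values])
    sigma_pt = 1.483 * mad
    if sigma_pt == 0:
        raise ValueError("results show no spread; sigma_pt must be set another way")
    return {lab: (v - assigned) / sigma_pt for lab, v in results.items()}


def classify(z):
    """Conventional proficiency-testing interpretation of a z-score."""
    if abs(z) <= 2:
        return "satisfactory"
    if abs(z) < 3:
        return "questionable"
    return "unsatisfactory"


# Hypothetical DTTv results (nmol min^-1 m^-3) reported for one sample.
results = {"lab1": 1.00, "lab2": 1.10, "lab3": 0.95, "lab4": 1.05, "lab5": 1.60}
for lab, z in robust_z_scores(results).items():
    print(lab, round(z, 2), classify(z))
```

Using the median and MAD keeps a single outlying laboratory (here `lab5`) from inflating σ_pt and masking its own deviation.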

The conceptual framework guiding this harmonization effort recognized that reliable OP quantification requires careful consideration of reaction kinetics and concentration-response relationships. Research has demonstrated that DTT assays typically show first-order kinetics at low PM concentrations but may exhibit non-linear kinetics at higher concentrations, emphasizing the importance of using reduced reaction times and appropriate concentration ranges for reliable quantification [31].
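Under pseudo-first-order kinetics the remaining DTT decays as C(t) = C0·exp(-k·t), so ln(C(t)/C0) is linear in time. The sketch below is illustrative and not part of the RI-URBANS SOP. It estimates k by least squares through the origin and reports the fraction of DTT consumed. The function name and the synthetic data are hypothetical.

```python
import math


def first_order_fit(times_min, dtt_remaining):
    """Fit ln(C_t / C_0) = -k * t by least squares through the origin.

    Returns (k in 1/min, fraction of initial DTT consumed by the last time point).
    The first entry is taken as t = 0 and C_0.
    """
    t0, c0 = times_min[0], dtt_remaining[0]
    ts = [t - t0 for t in times_min]
    ys = [math.log(c / c0) for c in dtt_remaining]
    k = -sum(t * y for t, y in zip(ts, ys)) / sum(t * t for t in ts)
    consumed = 1.0 - dtt_remaining[-1] / c0
    return k, consumed


# Synthetic check: data generated with k = 0.01 per minute should be recovered.
times = [0, 10, 20, 30]
dtt = [100.0 * math.exp(-0.01 * t) for t in times]
k, consumed = first_order_fit(times, dtt)
print(f"k = {k:.4f} 1/min, consumed = {consumed:.1%}")
```

When the consumed fraction is small, exp(-k·t) ≈ 1 - k·t, so a linear depletion rate and a first-order description agree. As depletion grows at higher PM doses the two diverge, which is consistent with the recommendation to keep reaction times short.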

The RI-URBANS Harmonization Initiative: Protocol Development and Implementation

Structured Approach to Protocol Development

The RI-URBANS DTT harmonization initiative employed a systematic, collaborative approach to protocol development. A core group of laboratories with extensive OP measurement experience, including institutions from Greece (FORTH, NOA), the United Kingdom (ICL, UoB), and France (IGE), spearheaded the development of a simplified Standard Operating Procedure (SOP), referred to as the "RI-URBANS DTT SOP" [10]. This core group first conducted a comprehensive review of existing DTT methodologies to identify critical parameters requiring standardization, then developed a simplified protocol that balanced methodological rigor with practical implementability across diverse laboratory settings [10].

The harmonization process focused specifically on the analytical measurement phase using liquid samples, deliberately decoupling this from preceding variables like PM sampling methods and extraction techniques [10]. This strategic decision allowed researchers to isolate and quantify variability specifically associated with the DTT measurement itself, providing a foundation for future standardization efforts addressing earlier steps in the analytical chain. The coordinated exercise was implemented within the broader framework of the RI-URBANS European project, which aims to develop service tools for enhancing air quality monitoring networks and supports the proposed inclusion of OP as a parameter in the new European Air Quality Directive [10].

Key Components of the Standardized DTT Protocol

The RI-URBANS DTT SOP established specific parameters for critical methodological steps based on systematic testing of variables observed in the literature [10]. While the full protocol is documented in the project's internal documents, its key harmonized components include:

- Standardized reagent preparation with specified DTT concentration and buffer composition

- Controlled incubation conditions including precise temperature and time parameters

- Quantification method using trichloroacetic acid (TCA) to quench the reaction and DTNB (5,5'-dithiobis-(2-nitrobenzoic acid)) to develop color for spectrophotometric measurement [30]

- Calibration procedures ensuring consistent quantification across instruments

- Data reporting standards including both mass-normalized (DTTm) and volume-normalized (DTTv) activity where appropriate [30]

This simplified protocol was designed to be readily implementable while controlling for the most significant sources of methodological variability identified in prior methodological studies and the initial assessments of the core group [10].
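The calculation behind DTTv and DTTm values can be sketched as follows. The DTT consumption rate is the negative slope of DTT remaining versus incubation time, the blank rate is subtracted, and the net rate is divided by the sampled air volume (DTTv, nmol min⁻¹ m⁻³) or by the PM mass (DTTm, nmol min⁻¹ µg⁻¹). The sketch assumes the DTT amounts have already been derived from DTNB absorbance via a calibration curve and that the volume and mass passed in correspond to the aliquot analyzed. The time points, names, and values are hypothetical and not taken from the RI-URBANS SOP.

```python
def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def dtt_activity(times_min, sample_nmol, blank_nmol, air_volume_m3, pm_mass_ug):
    """Return (DTTv, DTTm) from DTT remaining at each incubation time.

    Assumes sample_nmol / blank_nmol are nmol DTT remaining (already converted
    from DTNB absorbance) and that air_volume_m3 / pm_mass_ug correspond to the
    aliquot analysed.
    """
    # Consumption rate = negative slope of DTT remaining vs. time (nmol/min).
    rate_sample = -ols_slope(times_min, sample_nmol)
    rate_blank = -ols_slope(times_min, blank_nmol)
    net_rate = rate_sample - rate_blank      # blank-corrected rate
    dtt_v = net_rate / air_volume_m3         # nmol min^-1 m^-3
    dtt_m = net_rate / pm_mass_ug            # nmol min^-1 ug^-1
    return dtt_v, dtt_m


# Hypothetical time course and aliquot equivalents.
times = [0, 10, 20, 30]
dtt_v, dtt_m = dtt_activity(
    times,
    sample_nmol=[50.0, 46.0, 42.0, 38.0],
    blank_nmol=[50.0, 49.5, 49.0, 48.5],
    air_volume_m3=0.5,
    pm_mass_ug=10.0,
)
print(f"DTTv = {dtt_v:.3f} nmol/min/m3, DTTm = {dtt_m:.4f} nmol/min/ug")
```

Reporting both forms from the same net rate keeps them consistent: DTTv reflects exposure per volume of air, while DTTm reflects the intrinsic activity per unit mass of PM.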

Diagram: Workflow of the RI-URBANS DTT Assay Harmonization Process

Experimental Design and Comparative Methodologies

Interlaboratory Comparison Structure

The RI-URBANS ILC employed a systematic experimental design to enable robust comparison between the harmonized protocol and existing laboratory methods. Eighteen participating laboratories from the European Union, United States, Canada, and Australia analyzed identical liquid samples using both the new RI-URBANS DTT SOP and their established "home" protocols [29]. This paired approach allowed for direct assessment of how protocol standardization influenced measurement consistency while controlling for interlaboratory differences in equipment and technical expertise.

The experimental design focused on liquid samples specifically to isolate the measurement protocol from variations introduced by earlier analytical steps such as PM sampling and extraction [10]. This approach recognized that the complete analytical chain involves multiple potential sources of variability, and that systematic harmonization requires addressing each component step by step. Participants followed detailed instructions for sample handling, storage conditions, and analysis timelines to minimize extraneous sources of variation. The entire exercise was coordinated by IGE-CNRS, and the data were processed independently by the European Commission's Joint Research Centre (JRC) following ISO 5725-2 to ensure analytical rigor and impartiality [29].
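For a balanced design, the ISO 5725-2 approach splits variability into a repeatability (within-laboratory) component and a between-laboratory component, which together give the reproducibility standard deviation s_R. The sketch below implements that basic one-way calculation, assuming every laboratory reports the same number of replicates. It omits the outlier tests (e.g., Cochran and Grubbs) that the standard also prescribes, and the data are hypothetical.

```python
import math
import statistics


def repeatability_reproducibility(replicates_by_lab):
    """Return (s_r, s_R) for a balanced one-way interlaboratory design.

    Outlier screening required by ISO 5725-2 is omitted in this sketch.
    """
    sizes = {len(reps) for reps in replicates_by_lab.values()}
    if len(sizes) != 1:
        raise ValueError("sketch assumes the same number of replicates per lab")
    n = sizes.pop()
    lab_means = [statistics.mean(reps) for reps in replicates_by_lab.values()]
    within_vars = [statistics.variance(reps) for reps in replicates_by_lab.values()]
    s_r2 = statistics.mean(within_vars)        # pooled within-lab (repeatability) variance
    s_d2 = statistics.variance(lab_means)      # variance of the lab means
    s_L2 = max(0.0, s_d2 - s_r2 / n)           # between-lab variance component
    return math.sqrt(s_r2), math.sqrt(s_L2 + s_r2)


# Hypothetical triplicates (nmol min^-1 m^-3) for one sample from three labs.
data = {
    "lab_A": [1.0, 1.1, 0.9],
    "lab_B": [1.2, 1.3, 1.1],
    "lab_C": [0.8, 0.9, 0.7],
}
s_r, s_R = repeatability_reproducibility(data)
grand_mean = statistics.mean([statistics.mean(reps) for reps in data.values()])
print(f"s_r = {s_r:.3f}, s_R = {s_R:.3f}, between-lab CV = {100 * s_R / grand_mean:.1f}%")
```

Dividing s_R by the grand mean gives a between-laboratory coefficient of variation, one way to express interlaboratory variability when comparing protocols.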

Comparative Assessment Metrics

The comparative analysis employed multiple quantitative metrics to evaluate protocol performance:

- Z-scores assessing individual laboratory accuracy relative to consensus values

- Relative Standard Deviation (RSD) measuring precision across replicate measurements

- Ranking accuracy evaluating how well laboratories could correctly order samples by OP value

- Comparative statistics analyzing variability between harmonized and home protocols

These metrics provided a multidimensional assessment of how protocol standardization influenced different aspects of analytical performance, from basic precision to more complex analytical capabilities like correct sample differentiation [29].
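The repeatability criterion reported for the harmonized protocol (RSD below 20% on triplicates) can be checked per laboratory as in the sketch below. The function names and replicate values are hypothetical.

```python
import statistics


def rsd_percent(replicates):
    """Relative standard deviation (%) of one lab's replicate measurements."""
    return 100.0 * statistics.stdev(replicates) / statistics.mean(replicates)


def share_below_rsd_limit(replicates_by_lab, limit_percent=20.0):
    """Fraction of labs whose replicate RSD is below limit_percent, plus each lab's RSD."""
    rsds = {lab: rsd_percent(reps) for lab, reps in replicates_by_lab.items()}
    passing = sum(1 for value in rsds.values() if value < limit_percent)
    return passing / len(rsds), rsds


# Hypothetical triplicate DTTv results for one sample.
triplicates = {
    "lab_A": [1.0, 1.1, 0.9],   # RSD about 10%
    "lab_B": [1.0, 1.5, 0.5],   # RSD about 50%
    "lab_C": [2.0, 2.2, 1.8],   # RSD about 10%
    "lab_D": [1.0, 1.3, 0.7],   # RSD about 30%
}
share, per_lab = share_below_rsd_limit(triplicates)
print(share)
```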

Key Findings and Quantitative Results: Harmonized Protocol vs. Home Protocols

Performance Improvement with Standardized Methods

The RI-URBANS ILC yielded compelling quantitative evidence supporting protocol harmonization. Preliminary analysis showed that 54% of participating laboratories achieved acceptable z-scores across all samples when using the standardized approach, indicating improved accuracy relative to consensus values [29]. The exercise also showed good repeatability of the overall measurement procedure: 62% of laboratories achieved relative standard deviations below 20% for triplicate measurements of samples at concentrations typical of European monitoring contexts [29].

Perhaps most notably, 73% of participating laboratories correctly ranked the five samples by their OP values when using the harmonized protocol, showing that relative differences between samples were captured consistently even where some accuracy biases remained [29]. This ranking capability is particularly important for real-world applications, where relative differences in PM toxicity between locations or over time are often of more immediate practical use than absolute quantification.
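Ranking accuracy can be scored by comparing each laboratory's ordering of the samples with a reference ordering. The sketch assumes the reference is the consensus value for each sample and that no two samples tie. Names and values are hypothetical. A laboratory with a uniform bias (for example, every value reading 20% low) still ranks correctly, which is how ranking can succeed even where z-scores flag a bias.

```python
def correctly_ranked(lab_values, reference_values):
    """Return True if the lab orders all samples exactly as the reference does.

    Both arguments map sample IDs to OP values (assumes no ties).
    """
    samples = list(reference_values)
    reference_order = sorted(samples, key=reference_values.get)
    lab_order = sorted(samples, key=lab_values.get)
    return lab_order == reference_order


# Hypothetical consensus values for five samples (nmol min^-1 m^-3).
consensus = {"S1": 0.5, "S2": 1.2, "S3": 2.0, "S4": 2.8, "S5": 3.5}
labs = {
    "lab_A": {s: 0.8 * v for s, v in consensus.items()},               # 20% low, same order
    "lab_B": {"S1": 0.6, "S2": 2.1, "S3": 1.9, "S4": 2.9, "S5": 3.4},  # S2 and S3 swapped
}
share = sum(correctly_ranked(values, consensus) for values in labs.values()) / len(labs)
print(share)
```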

Table 2: Comparative Performance of Harmonized vs. Home Protocols in DTT ILC

| Performance Metric | Harmonized Protocol | Home Protocols | Significance |
|---|---|---|---|
| Laboratories with Acceptable Z-scores | 54% of participants achieved acceptable scores across all samples | Not explicitly reported but indicated as more variable | Improved accuracy with standardized method |
| Measurement Repeatability (RSD) | 62% of labs had <20% RSD on triplicates | Higher variability observed | Enhanced precision with harmonization |
| Sample Ranking Accuracy | 73% of labs correctly ranked all 5 samples | Lower ranking accuracy | Better differentiation capability |
| Interlaboratory Variability | Reduced coefficient of variation | Larger variability between labs | Improved comparability across studies |
| Systematic Bias | Consistent across participants | Tendency to underestimate OP values | More reliable absolute quantification |

Identification of Critical Methodological Parameters