Generative AI for Molecular Discovery: Transforming Drug and Materials Design with Inverse Design

Abstract

This article explores the paradigm shift in molecular discovery driven by generative artificial intelligence. It details how models like VAEs, GANs, diffusion models, and LLMs enable the inverse design of novel molecules and materials, moving beyond traditional screening methods. Covering foundational concepts, key architectures, and real-world applications in drug design and materials science, it also addresses critical challenges in model optimization, validation, and benchmarking. Aimed at researchers and development professionals, this review synthesizes current advances and future trajectories for deploying robust, experimentally aligned generative AI systems in biomedical and industrial research.

The Generative Revolution: Why AI is Reshaping Molecular Exploration

The total chemical space of feasible small organic molecules is estimated to encompass approximately 10^60 compounds, a number so vast that it defies comprehensive exploration through traditional experimental means [1] [2]. This intractability is one of the most significant challenges in modern drug discovery and materials science. Conventional discovery methods such as high-throughput screening (HTS) scale poorly against it: they consume substantial resources yet yield relatively few hit compounds [1]. AI-based generative models, particularly deep generative models for molecular design, have emerged as a transformative approach to this problem. These models learn probability distributions over molecular structures, optionally conditioned on molecular properties. This lets them explore chemical space efficiently and create novel compounds with targeted characteristics, and they are reshaping the drug discovery pipeline [1] [3].

Table 1: Scale and Characteristics of Different Chemical Libraries

| Library Type | Representative Examples | Estimated Size (Number of Compounds) | Key Characteristics |
|---|---|---|---|
| Stock Compound Libraries | In-house pharma collections | 10^6 – 10^7 | Commercially available, "drug-like" compounds with associated historical data |
| Ultra-Large Virtual Libraries | Enamine REAL Space, WuXi GalaXi, Otava CHEMriya | 10^10 – 10^15 | Synthetically accessible on-demand compounds with low inter-library overlap (<10%) |
| Generative Virtual Libraries | GDB-17 (166.4B enumerated molecules), AI-generated spaces | ~10^11 (GDB-17) up to 10^23 – 10^60 (theoretical estimates) | Theoretically feasible compounds enumerated by rules or generative algorithms |

The Generative Modeling Paradigm: Navigating Intractability

Generative molecular models represent a paradigm shift from traditional screening-based approaches to an inverse design framework. Instead of searching existing libraries, these models learn to directly generate novel molecular structures conditioned on desired properties [4] [3]. This inverse design capability is particularly valuable for addressing the chemical space intractability problem, as it focuses exploration on the most promising regions of chemical space.

The theoretical foundation of these approaches lies in their ability to learn conditional probability distributions P(molecule|properties) from existing chemical data, then sample this distribution to generate novel structures with targeted characteristics [5]. When applied to drug discovery, this enables the creation of molecules with specific binding affinities, selectivity profiles, and optimal pharmacokinetic properties.
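A standard identity makes this concrete. By Bayes' rule, the conditional distribution factors into an unconditional generative model p(x) and a property predictor p(y | x). Here x is a molecule and y is the target property vector. Because p(y) does not depend on x, its gradient drops out, and this is the decomposition behind classifier-based guidance in diffusion models. It is shown here as general background, not as the formulation of any specific model cited above.

```latex
p(x \mid y) = \frac{p(y \mid x)\, p(x)}{p(y)}
\quad\Longrightarrow\quad
\nabla_x \log p(x \mid y) = \nabla_x \log p(x) + \nabla_x \log p(y \mid x)
```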

Molecular Representation Strategies

A critical aspect of generative modeling involves how molecules are represented computationally, with each approach offering distinct advantages for capturing chemical information:

- 1D Sequence Representations: Use language-like representations such as SMILES (Simplified Molecular Input Line Entry System), treating molecules as strings of characters following grammatical rules. This approach benefits from natural language processing architectures but may generate invalid structures [3].

- 2D Graph Representations: Depict molecules as graphs with atoms as nodes and bonds as edges, preserving topological connectivity information. Graph-based models naturally capture molecular connectivity but originally lacked 3D structural information [1] [3].

- 3D Structural Representations: Incorporate spatial atomic coordinates, providing critical information about molecular shape, conformation, and complementarity to protein binding pockets. This explicit structural information enables more rational drug design but requires more complex equivariant neural architectures [1] [6].
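To make the three representation levels concrete, the sketch below writes one molecule (ethanol) as a SMILES string, as a heavy-atom graph, and as 3D coordinates. It then derives the molecular formula from the graph and bond lengths from the coordinates. The coordinates are approximate hand-written values, and the default-valence table covers only neutral C, N, and O. Both are simplifying assumptions for illustration, not a cheminformatics toolkit.

```python
"""Toy illustration: one molecule (ethanol) in 1D, 2D and 3D form.

Coordinates are approximate, hand-written values for illustration only.
"""
import math
from collections import Counter

# 1D: a SMILES string (heavy atoms only; hydrogens are implicit)
smiles = "CCO"

# 2D: molecular graph, atoms as nodes and (i, j, bond_order) as edges
atoms = ["C", "C", "O"]
bonds = [(0, 1, 1), (1, 2, 1)]

# 3D: approximate Cartesian coordinates (angstroms) for the heavy atoms
coords = [(0.00, 0.00, 0.00), (1.52, 0.00, 0.00), (2.03, 1.35, 0.00)]

DEFAULT_VALENCE = {"C": 4, "N": 3, "O": 2}  # neutral atoms only (simplification)


def implicit_hydrogens(atoms, bonds):
    """Fill each atom's default valence with hydrogens."""
    used = [0] * len(atoms)
    for i, j, order in bonds:
        used[i] += order
        used[j] += order
    return [DEFAULT_VALENCE[a] - u for a, u in zip(atoms, used)]


def formula(atoms, bonds):
    """Hill-order molecular formula (C first, then H, then others alphabetically)."""
    counts = Counter(atoms)
    counts["H"] = sum(implicit_hydrogens(atoms, bonds))
    order = ["C", "H"] + sorted(k for k in counts if k not in ("C", "H"))
    return "".join(f"{el}{counts[el] if counts[el] > 1 else ''}" for el in order if counts[el])


def bond_lengths(coords, bonds):
    """Euclidean distance between bonded atoms, read from the 3D representation."""
    return [math.dist(coords[i], coords[j]) for i, j, _ in bonds]


print(formula(atoms, bonds))                               # C2H6O
print([round(d, 2) for d in bond_lengths(coords, bonds)])  # [1.52, 1.44]
```

The graph already fixes the formula. Only the 3D layer carries geometric quantities such as bond lengths, which is the information structure-based models rely on.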

Table 2: Molecular Representation Schemes in Generative Models

| Representation Type | Data Structure | Typical Model Architectures | Advantages | Limitations |
|---|---|---|---|---|
| 1D (Sequence) | SMILES, SELFIES strings | RNN, Transformer models | Compact, memory-efficient, easily searchable | SMILES-based models may generate invalid structures (SELFIES is designed so that every string decodes to a valid molecule); lacks explicit spatial information |
| 2D (Graph) | Molecular graphs with atoms (nodes) and bonds (edges) | GNN, GAN, VAE models | Preserves topological connectivity; intuitive representation | Originally lacked 3D structural context |
| 3D (Structural) | Atomic coordinates with element types | Equivariant diffusion models, 3D CNNs | Captures spatial structure crucial for binding interactions | Computationally intensive; requires specialized architectures |

Experimental Protocols for Generative Molecular Design

Protocol 1: Target-Aware 3D Molecular Generation Using Guided Equivariant Diffusion

Application Note: This protocol describes the methodology for DiffGui, a target-conditioned E(3)-equivariant diffusion model that integrates bond diffusion and property guidance for structure-based drug design. The approach addresses key challenges in 3D molecular generation, including structural feasibility and explicit optimization of drug-like properties [6].

Materials and Reagents:

- Dataset: PDBbind or CrossDocked2020 containing protein-ligand complexes with 3D structural information

- Software: RDKit for molecular manipulation and property calculation

- Computational Framework: PyTorch or TensorFlow with E(3)-equivariant graph neural network extensions

- Property Prediction Tools: AutoDock Vina for binding affinity estimation, OpenBabel toolkit for file format conversion

Methodology:

Data Preprocessing:

- Extract protein binding pockets using a distance-based criterion (e.g., all residues within 6.5 Å of any ligand atom)

- Translate each complex so that the pocket is centered at the origin, removing translational freedom; rotational symmetry is then handled by the E(3)-equivariant network rather than by explicit alignment

- Represent ligands as 3D graphs with node features (atom types, positions) and edge features (bond types)
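The pocket-extraction and centering steps can be sketched in plain Python. The 6.5 Å cutoff comes from the text. The data layout (a dict mapping residue ids to atom coordinates), the choice to center on the mean position of the pocket atoms, and the toy complex are assumptions for illustration.

```python
import math


def extract_pocket(residues, ligand_coords, cutoff=6.5):
    """Return ids of residues with any atom within `cutoff` angstroms of any ligand atom.

    residues: dict mapping a residue id to a list of (x, y, z) atom coordinates.
    """
    return [
        res_id
        for res_id, atom_coords in residues.items()
        if any(math.dist(a, lig) <= cutoff for a in atom_coords for lig in ligand_coords)
    ]


def center_complex(pocket_coords, ligand_coords):
    """Translate pocket and ligand so the pocket's mean position sits at the origin."""
    n = len(pocket_coords)
    centroid = [sum(c[k] for c in pocket_coords) / n for k in range(3)]

    def shift(c):
        return tuple(c[k] - centroid[k] for k in range(3))

    return [shift(c) for c in pocket_coords], [shift(c) for c in ligand_coords]


# Hypothetical toy complex: one ligand atom, three residues at increasing distance
ligand = [(0.0, 0.0, 0.0)]
residues = {
    "ALA1": [(3.0, 0.0, 0.0)],
    "GLY2": [(6.0, 1.0, 0.0)],   # about 6.08 angstroms away: inside the cutoff
    "SER3": [(12.0, 0.0, 0.0)],  # far outside the cutoff
}
pocket_ids = extract_pocket(residues, ligand)
print(pocket_ids)  # ['ALA1', 'GLY2']
pocket_atoms = [a for r in pocket_ids for a in residues[r]]
pocket_c, ligand_c = center_complex(pocket_atoms, ligand)
print(ligand_c)  # [(-4.5, -0.5, 0.0)]
```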

Model Architecture:

- Implement an E(3)-equivariant graph neural network using tensor field networks or similar architectures

- Design separate noise schedules for atom diffusion (coordinates and types) and bond diffusion

- Incorporate property prediction heads for binding affinity (Vina Score), drug-likeness (QED), synthetic accessibility (SA), and physicochemical properties (LogP, TPSA)
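To make "E(3)-equivariant" concrete, the sketch below implements a single coordinate update in the style of E(n)-equivariant graph neural networks (EGNN), chosen here as a compact stand-in. It is not the DiffGui architecture. The scalar weight function `phi` is a hypothetical placeholder for a learned network, and the constant `c` is an arbitrary step size. The check at the end confirms that rotating the input rotates the output by the same rotation.

```python
import math


def phi(sq_dist):
    """Hypothetical stand-in for a learned MLP on the rotation-invariant squared distance."""
    return math.tanh(1.0 / (1.0 + sq_dist))


def egnn_coord_update(coords, edges, c=0.1):
    """x_i' = x_i + c * sum_j (x_i - x_j) * phi(||x_i - x_j||^2) over neighbours j."""
    new = []
    for i, xi in enumerate(coords):
        delta = [0.0, 0.0, 0.0]
        for j in edges[i]:
            diff = [xi[k] - coords[j][k] for k in range(3)]
            w = phi(sum(d * d for d in diff))  # depends only on an invariant quantity
            delta = [delta[k] + w * diff[k] for k in range(3)]
        new.append(tuple(xi[k] + c * delta[k] for k in range(3)))
    return new


def rotate_z(coords, theta):
    """Rotate points by angle theta about the z axis."""
    ct, st = math.cos(theta), math.sin(theta)
    return [(ct * x - st * y, st * x + ct * y, z) for x, y, z in coords]


coords = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.0, 1.4, 0.3)]
edges = {0: [1], 1: [0, 2], 2: [1]}

# Equivariance check: update(rotate(x)) should equal rotate(update(x))
a = egnn_coord_update(rotate_z(coords, 0.7), edges)
b = rotate_z(egnn_coord_update(coords, edges), 0.7)
print(max(abs(p - q) for u, v in zip(a, b) for p, q in zip(u, v)) < 1e-12)  # True
```

The update is built only from relative vectors and distances, so it also commutes with translations.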

Training Procedure:

- Phase 1: Diffuse bond types toward prior distribution while minimally perturbing atom types and positions

- Phase 2: Diffuse atom types and positions to their prior distributions

- Utilize a modified evidence lower bound (ELBO) objective with property prediction terms

- Train for 500,000-1,000,000 iterations with batch sizes of 16-32 complexes
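The two-phase ordering can be expressed as a pair of noise levels indexed by a shared time step. The sketch below uses hypothetical linear schedules. Bonds reach their prior by the midpoint while atoms receive only a small perturbation (`atom_phase1_max`), and atoms are then noised over the second half. The schedule shapes and the 0.05 value are assumptions, not DiffGui's published settings.

```python
def two_phase_noise_levels(t, T=1000, atom_phase1_max=0.05):
    """Return (bond_level, atom_level) in [0, 1] at step t (0 = clean data, 1 = prior).

    Phase 1 (t <= T/2): bonds go from clean to their prior; atoms are only lightly perturbed.
    Phase 2 (t >  T/2): bonds stay at the prior; atoms go the rest of the way to their prior.
    Both schedules are hypothetical linear choices for illustration.
    """
    if not 0 <= t <= T:
        raise ValueError("t must lie in [0, T]")
    half = T / 2
    if t <= half:
        frac = t / half
        return frac, atom_phase1_max * frac
    frac = (t - half) / half
    return 1.0, atom_phase1_max + (1.0 - atom_phase1_max) * frac


for t in (0, 250, 500, 750, 1000):
    bond_level, atom_level = two_phase_noise_levels(t)
    print(t, round(bond_level, 3), round(atom_level, 3))
```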

Sampling with Guidance:

- Initialize with pure noise for atom positions, types, and bond types

- Perform reverse diffusion steps with classifier-free guidance for target properties

- Apply bond guidance to ensure chemical validity during the coordinate generation process

- Assemble final molecules using both generated atoms and bonds
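Classifier-free guidance combines two predictions from the same denoising network, one with the property condition and one with the condition dropped. The sketch shows only the general combination rule, with a guidance weight `w` and toy noise vectors as illustrative assumptions. Papers differ in how they parameterize `w`; in this form w = 1 recovers the conditional prediction.

```python
def cfg_combine(eps_uncond, eps_cond, w):
    """Classifier-free guidance: eps = eps_uncond + w * (eps_cond - eps_uncond).

    w = 0 gives the unconditional prediction, w = 1 the conditional one,
    and w > 1 pushes samples further toward the conditioning properties.
    """
    return [u + w * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Toy noise predictions for a 3-dimensional variable (illustrative numbers)
eps_u = [0.2, -0.1, 0.0]
eps_c = [0.5, -0.3, 0.1]
print([round(v, 3) for v in cfg_combine(eps_u, eps_c, 2.0)])
```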

Validation Metrics:

- Structural Quality: Jensen-Shannon divergence between generated and reference distributions of bonds, angles, and dihedrals; RMSD to optimized conformations

- Chemical Validity: Atom stability, molecular stability, RDKit validity, PoseBusters validity

- Drug-like Properties: Quantitative Estimate of Drug-likeness (QED), Synthetic Accessibility (SA), LogP, Topological Polar Surface Area (TPSA)

- Binding Affinity: Estimated using AutoDock Vina or similar docking software
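The Jensen-Shannon divergence used for structural quality compares two histograms, for example of C-C bond lengths in generated versus reference molecules. The stdlib sketch below uses natural logarithms, so the maximum value is ln 2. The bin edges and toy bond lengths are illustrative assumptions.

```python
import math


def histogram(values, edges):
    """Normalized counts of `values` in bins [edges[k], edges[k+1]); out-of-range values are dropped."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for k in range(len(counts)):
            if edges[k] <= v < edges[k + 1]:
                counts[k] += 1
                break
    total = sum(counts)
    return [c / total for c in counts]


def kl(p, q):
    """KL(p || q); terms with p_i = 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def jensen_shannon(p, q):
    """JSD(p, q) = 0.5 * KL(p || m) + 0.5 * KL(q || m), with m the average distribution."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


edges = [1.40, 1.45, 1.50, 1.55, 1.60]
reference = [1.52, 1.53, 1.54, 1.51, 1.53, 1.52]  # toy "reference" C-C lengths (angstroms)
generated = [1.47, 1.52, 1.58, 1.44, 1.53, 1.56]  # toy "generated" C-C lengths
p, q = histogram(reference, edges), histogram(generated, edges)
print(round(jensen_shannon(p, q), 3))  # 0.318
```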

Protocol 2: Large Property Model (LPM) Framework for Multi-Property Inverse Design

Application Note: This protocol outlines the Large Property Model approach, which addresses the data scarcity problem for prized molecular properties by leveraging abundant chemical data across multiple property dimensions. The method directly learns the property-to-molecular-graph mapping, enabling inverse design conditioned on comprehensive property vectors [5].

Materials and Reagents:

- Dataset: PubChem compounds (1.3M molecules with up to 14 heavy atoms, containing only the elements C, H, O, N, F, and Cl)

- Geometry Generation: Auto3D for conformer generation

- Property Calculation: GFN2-xTB for quantum chemical properties (dipole moment, HOMO-LUMO gap, solvation energies, etc.)

- Software: RDKit for descriptor calculation (complexity, H-bond acceptors/donors, logP, TPSA)

Methodology:

Data Curation and Property Calculation:

- Curate molecular set with defined size and elemental constraints

- Generate low-energy 3D conformers using Auto3D or similar tools

- Calculate 23 diverse molecular properties spanning:

  - Quantum Chemical: Dipole moment, HOMO-LUMO gap, ionization potential, electrophilicity index

  - Thermodynamic: Total energy, enthalpy, free energy, heat capacity, entropy

  - Solvation: Free energies of solvation in octanol and water, solvent accessible surface areas

  - Drug-like: Compound complexity, H-bond acceptors/donors, logP, topological polar surface area

Model Architecture:

- Implement transformer-based architecture for property-to-graph mapping

- Design graph decoder that generates molecular structures atom-by-atom

- Use message passing networks for graph refinement

Training Procedure:

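- Formulate training as direct minimization, $\min_{w} \sum_{i} \lVert f_w(p^{(i)}) - G^{(i)} \rVert$, where $f_w$ is the property-to-molecule mapping with parameters $w$, $p^{(i)}$ is the property vector of training molecule $i$, and $G^{(i)}$ is its molecular graph

- Train with property vectors of varying lengths to test the hypothesis that reconstruction accuracy increases with the number of property dimensions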

- Utilize teacher forcing during training with scheduled sampling
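With teacher forcing and scheduled sampling, the decoder at each step is fed either the ground-truth previous token or its own previous prediction. The sketch below assumes an inverse-sigmoid decay for the teacher-forcing probability (with a hypothetical constant `k`) and a toy `predict_next` stand-in for the model. Neither is taken from the LPM work.

```python
import math
import random


def teacher_forcing_prob(step, k=1000.0):
    """Inverse-sigmoid decay: close to 1 early in training, approaching 0 later (hypothetical k)."""
    return k / (k + math.exp(step / k))


def decoder_inputs(target_tokens, predict_next, p_teacher, rng):
    """Build the decoder input sequence for one training example.

    Position t receives the ground-truth token t-1 with probability p_teacher,
    otherwise the model's own prediction for position t-1 given the inputs so far.
    """
    inputs = ["<bos>"]
    for t in range(1, len(target_tokens)):
        if rng.random() < p_teacher:
            inputs.append(target_tokens[t - 1])
        else:
            inputs.append(predict_next(inputs))
    return inputs


def predict_next(prefix):
    """Toy model that always predicts carbon; a real decoder would use the property vector."""
    return "C"


target = ["C", "O", "N", "C"]
print(decoder_inputs(target, predict_next, 1.0, random.Random(0)))  # ['<bos>', 'C', 'O', 'N']
print(decoder_inputs(target, predict_next, 0.0, random.Random(0)))  # ['<bos>', 'C', 'C', 'C']
```

During training, `p_teacher` would be set to `teacher_forcing_prob(step)`. The decoder therefore gradually learns to recover from its own mistakes, as it must at inference time.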

Inverse Design Protocol:

- Specify target property vector including both primary and auxiliary properties

- Generate molecular graphs through transformer decoding

- Validate generated structures using forward property prediction models

- Filter based on synthetic accessibility and chemical novelty

Validation Approach:

- Reconstruction Accuracy: Measure exact match recovery for molecules in test set

- Property Achievement: Assess how generated molecules match target property values

- Chemical Validity: Evaluate using standard metrics (validity, uniqueness, novelty)

- Phase Transition Analysis: Monitor how accuracy changes with increasing property dimensions
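Validity, uniqueness, and novelty are usually computed as nested fractions: uniqueness over valid molecules, and novelty over unique valid molecules. The sketch follows that convention. The `canonicalize` argument is an assumed hook that returns a canonical string for a valid molecule and `None` otherwise; in practice it would wrap a cheminformatics toolkit. The toy canonicalizer here just looks strings up in a fixed table.

```python
def generation_metrics(generated, training_set, canonicalize):
    """Return (validity, uniqueness, novelty) for a list of generated molecule strings."""
    canonical = [canonicalize(s) for s in generated]
    valid = [c for c in canonical if c is not None]
    unique = set(valid)
    validity = len(valid) / len(generated) if generated else 0.0
    uniqueness = len(unique) / len(valid) if valid else 0.0
    novelty = len(unique - set(training_set)) / len(unique) if unique else 0.0
    return validity, uniqueness, novelty


def toy_canonicalize(s):
    """Hypothetical stand-in: maps a few known strings to canonical forms, else None (invalid)."""
    known = {"OCC": "CCO", "CCO": "CCO", "c1ccccc1": "c1ccccc1", "CC(=O)O": "CC(=O)O"}
    return known.get(s)


gen = ["CCO", "OCC", "c1ccccc1", "C1CC", "CC(=O)O"]  # "C1CC" has an unclosed ring
print(generation_metrics(gen, training_set={"CCO"}, canonicalize=toy_canonicalize))
```

Here 4 of 5 strings are valid, 3 of those 4 are distinct, and 2 of the 3 distinct molecules are absent from the training set.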

Table 3: Key Research Reagents and Computational Tools for Generative Molecular Design

| Resource Category | Specific Tools/Databases | Key Function | Application Context |
|---|---|---|---|
| Small-Molecule Databases | ZINC (2B purchasable compounds), ChEMBL (1.5M bioactive molecules), GDB-17 (166.4B enumerated molecules) | Training data for generative models; validation of generated compounds | Ligand-based design; pre-training generative models |
| Ultra-Large Screening Libraries | Enamine REAL (36B compounds), WuXi GalaXi (8B compounds), Otava CHEMriya (11.8B compounds) | Source of synthesizable compounds for virtual screening; benchmark for generative methods | Structure-based design; validation of generative model outputs |
| Macromolecular Structure Resources | Protein Data Bank (PDB), AlphaFold Protein Structure Database | Source of 3D protein structures for structure-based design | Target-aware molecular generation; binding pocket characterization |
| Property Prediction Tools | RDKit, AutoDock Vina, GFN2-xTB, OpenBabel | Calculation of molecular properties; binding affinity estimation | Training property predictors; validating generated molecules |
| Generative Modeling Frameworks | PyTorch/TensorFlow with geometric deep learning extensions (e.g., Tensor Field Networks, SE(3)-Transformers) | Implementation of equivariant generative architectures | Developing custom generative models; research on novel algorithms |

The intractability of chemical space, once considered an insurmountable barrier to systematic molecular discovery, is being transformed by generative AI models into a navigable landscape of opportunity. Through advanced representation strategies, equivariant architectures, and multi-property optimization frameworks, these approaches enable targeted exploration of regions with high probabilities of success. The integration of 3D structural information with comprehensive property guidance represents the current state-of-the-art, moving beyond simple property prediction to holistic molecular design. As these methodologies continue to mature, they promise to accelerate the discovery of novel therapeutic agents and functional materials while dramatically reducing the time and cost associated with traditional discovery approaches. The protocols and resources outlined in this section provide researchers with practical frameworks for implementing these cutting-edge approaches in their molecular discovery pipelines.

Inverse design represents a fundamental paradigm shift in materials science and drug discovery. Unlike the traditional forward design process, which relies on trial-and-error experimentation to find materials with desired properties, inverse design begins with the target properties and works backward to generate optimal molecular structures. [7] This approach has become feasible through advances in generative artificial intelligence (AI), which can navigate the vast chemical space—estimated to contain up to 10^60 theoretically feasible compounds—that is intractable for traditional screening methods. [4] The core of this paradigm is the development of generative models that can create novel, valid molecular structures conditioned on specific property requirements, effectively shortcutting years of experimental work and accelerating the discovery of new materials and therapeutics.

Generative Model Architectures for Inverse Design

Key Model Architectures and Their Applications

Multiple generative AI architectures have been adapted for inverse design tasks, each with distinct strengths and optimal application domains. The table below summarizes the primary model types and their characteristics:

Table 1: Generative Model Architectures for Molecular Inverse Design

| Model Type | Key Mechanism | Strengths | Common Applications |
|---|---|---|---|
| Variational Autoencoders (VAEs) [8] [7] | Encodes inputs into latent space distribution, then samples from this distribution to generate new structures | Smooth latent space enables interpolation; provides explicit probability model | Polymer design, inorganic crystals, small molecules |
| Generative Adversarial Networks (GANs) [8] [7] | Generator creates synthetic data while discriminator distinguishes real from generated samples | High-quality sample generation; no requirement for explicit probability distribution | Molecular generation, image-based material representations |
| Diffusion Models [9] [10] [11] | Learns to reverse a gradual noising process to generate data from noise | State-of-the-art sample quality; relationship to physical forces | Crystal structure generation, drug-like molecules, linker design |
| Transformer-based Models [8] | Self-attention mechanisms process sequential data | Excellent for sequence data (SMILES); handles long-range dependencies | SMILES-based molecular generation, property-conditioned design |
| Reinforcement Learning (RL) [10] [8] | Agents learn through rewards from environment interactions | Direct optimization of complex objectives; minimal labeled data needed | Multi-property optimization, crystal generation |

Specialized Frameworks and Recent Advances

Recent research has produced specialized frameworks that combine multiple approaches to address specific inverse design challenges:

DyRAMO (Dynamic Reliability Adjustment for Multi-objective Optimization): This framework addresses reward hacking, in which the optimizer exploits the prediction models by generating molecules whose favorable predicted properties lie outside the models' reliable prediction domain. DyRAMO dynamically adjusts the reliability level for each property during optimization, so that generated molecules fall within the applicability domain of every prediction model. [9]

MatInvent: A reinforcement learning workflow that optimizes diffusion models for crystal structure generation. MatInvent demonstrates sample efficiency, converging to target properties within approximately 60 iterations (about 1,000 property evaluations) across electronic, magnetic, mechanical, thermal, and physicochemical properties. [10]

SiMGen (Similarity-based Molecular Generation): A zero-shot method that leverages a time-varying local similarity kernel and pretrained descriptors. SiMGen provides exceptional control over generation, enabling fragment-biased generation and shape control via point cloud priors without additional training. [11]

Experimental Protocols and Implementation

Protocol 1: Multi-Objective Molecular Optimization with DyRAMO

This protocol enables reliable multi-property molecular design while preventing reward hacking. [9]

Materials and Computational Requirements

Table 2: Research Reagent Solutions for DyRAMO Implementation

| Component | Specification | Function/Purpose |
|---|---|---|
| Generative Model | ChemTSv2 (RNN + MCTS) | Generates molecular structures via SMILES |
| Property Predictors | Supervised learning models (e.g., Random Forest, Neural Networks) | Predicts target properties (e.g., bioactivity, solubility) |
| Applicability Domain (AD) Metric | Maximum Tanimoto Similarity (MTS) | Defines reliable prediction regions based on training data similarity |
| Optimization Framework | Bayesian Optimization (BO) | Efficiently explores reliability level combinations |
| Programming Environment | Python 3.8+ with RDKit, NumPy, SciPy | Chemical informatics and numerical computing |

Step-by-Step Procedure

Define Multi-Objective Reward Function:

- Identify target properties (e.g., inhibitory activity, metabolic stability, membrane permeability)

- Establish baseline prediction models for each property using historical data

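- Define the reward function:

$$\mathrm{Reward} = \begin{cases} \left(\prod_{i} v_i^{w_i}\right)^{1/\sum_i w_i} & \text{if the molecule lies within all ADs} \\ 0 & \text{otherwise} \end{cases}$$

  where $v_i$ is the predicted value for property $i$ and $w_i$ is its weighting factor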
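The reward above is a weighted geometric mean gated by applicability-domain (AD) membership. The minimal sketch below assumes that the predicted values have already been scaled to be non-negative (for example to [0, 1]) and that the weights are positive, so the geometric mean is well defined. The scaling step, the function name, and the example numbers are assumptions for illustration.

```python
import math


def weighted_geometric_reward(values, weights, in_all_ads):
    """(prod_i v_i ** w_i) ** (1 / sum_i w_i) if the molecule is inside every AD, else 0.

    Assumes scaled, non-negative values and positive weights.
    """
    if not in_all_ads:
        return 0.0
    if any(v < 0 for v in values):
        raise ValueError("expects property values scaled to be non-negative, e.g. to [0, 1]")
    if any(v == 0 for v in values):
        return 0.0  # a zero factor makes the whole product zero
    log_total = sum(w * math.log(v) for v, w in zip(values, weights))
    return math.exp(log_total / sum(weights))


print(round(weighted_geometric_reward([0.8, 0.5, 0.9], [1, 1, 1], True), 4))
print(weighted_geometric_reward([0.8, 0.5, 0.9], [1, 1, 1], False))  # 0.0
```

The geometric mean penalizes a molecule that is poor on any single property more strongly than an arithmetic mean would.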

Initialize Reliability Levels:

- Set initial reliability level ρ_i for each property i (typically 0.7-0.9)

- Define AD for each property: molecule included if maximum Tanimoto similarity to training set > ρ_i
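The AD test reduces to a maximum Tanimoto similarity against each property model's training set. To keep the sketch self-contained, fingerprints are represented as Python sets of "on" bit indices. In practice they would be, for example, RDKit Morgan fingerprints. The bit sets below are made up.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0  # two empty fingerprints: treated as 0


def in_applicability_domain(fp, training_fps, rho):
    """True if the maximum Tanimoto similarity to the training set exceeds rho."""
    return max(tanimoto(fp, t) for t in training_fps) > rho


training_fps = [{1, 2, 3, 4}, {5, 6, 7, 8}]
query = {1, 2, 3, 9}
print(max(tanimoto(query, t) for t in training_fps))          # 0.6
print(in_applicability_domain(query, training_fps, rho=0.5))  # True
print(in_applicability_domain(query, training_fps, rho=0.7))  # False
```

A molecule lies in the AD intersection only if this test passes for every property, each with its own training set and its own ρ_i.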

Execute Iterative Optimization Loop:

- Step 1: Set reliability levels for current iteration

- Step 2: Generate molecules using ChemTSv2 with constraint to remain within AD intersection

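- Step 3: Evaluate the molecular design using the DSS score:

$$\mathrm{DSS} = \left(\prod_{i=1}^{n} \mathrm{Scaler}_i(\rho_i)\right)^{1/n} \times \mathrm{Reward}_{\mathrm{top}\,X\%}$$

  where $n$ is the number of properties, $\mathrm{Scaler}_i$ standardizes the reliability level $\rho_i$ to the range 0-1, and $\mathrm{Reward}_{\mathrm{top}\,X\%}$ is the average reward of the top X% of generated molecules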

- Step 4: Use Bayesian optimization to update reliability levels for next iteration

- Step 5: Repeat until DSS score convergence (typically 20-50 iterations)
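A sketch of the DSS computation follows. It assumes a hypothetical linear scaler that maps each reliability level from [lo, hi] onto [0, 1]; with the defaults this is the identity on [0, 1], and the scaler functions used in DyRAMO may differ. It also assumes a list of rewards from one generation run. The Bayesian-optimization step that proposes the next reliability levels is not shown.

```python
import math


def linear_scaler(rho, lo=0.0, hi=1.0):
    """Hypothetical scaler: map rho linearly from [lo, hi] onto [0, 1], clipped."""
    return min(1.0, max(0.0, (rho - lo) / (hi - lo)))


def dss(rhos, rewards, top_fraction=0.1, scaler=linear_scaler):
    """(prod_i Scaler(rho_i))^(1/n) times the mean of the top `top_fraction` of rewards."""
    n = len(rhos)
    reliability_term = math.prod(scaler(r) for r in rhos) ** (1.0 / n)
    k = max(1, round(len(rewards) * top_fraction))
    top_rewards = sorted(rewards, reverse=True)[:k]
    return reliability_term * sum(top_rewards) / k


rewards = [i / 20 for i in range(20)]  # toy rewards 0.0 ... 0.95 (hypothetical)
print(round(dss([0.8, 0.5], rewards, top_fraction=0.1), 4))
```

The product rewards designs that keep all reliability levels high while still reaching good rewards. Lowering any ρ_i enlarges the search space but reduces the first factor.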

Validation and Selection:

- Select top-performing molecules based on optimized reward function

- Verify synthetic accessibility using SAscore

- Conduct expert review of selected candidates for chemical novelty and feasibility

Protocol 2: Reinforcement Learning for Crystal Design with MatInvent

This protocol outlines the MatInvent workflow for goal-directed crystal structure generation using reinforcement learning with diffusion models. [10]

Materials and Computational Requirements

Table 3: Research Reagent Solutions for MatInvent Implementation

| Component | Specification | Function/Purpose |
|---|---|---|
| Pre-trained Diffusion Model | MatterGen or DiffCSP | Generates 3D crystal structures via denoising process |
| Property Evaluation | DFT, ML potentials, or empirical calculators | Computes target properties (electronic, magnetic, mechanical, etc.) |
| Stability Filter | MLIP geometry optimization + Ehull calculation | Ensures thermodynamic stability (Ehull < 0.1 eV/atom) |
| Diversity Filter | Structural and composition similarity metrics | Prevents mode collapse, encourages exploration |
| Experience Replay Buffer | Storage of high-reward crystals | Improves sample efficiency and learning stability |

Step-by-Step Procedure

Initialize Pre-trained Diffusion Model:

- Load diffusion model pre-trained on large-scale unlabeled crystal dataset (e.g., Alex-MP)

- Configure denoising process as Markov Decision Process with T steps (typically T=1000)

Set Up RL Optimization Framework:

- Define reward function based on target properties (single or multi-objective)

- Configure policy optimization with KL regularization to prevent overfitting

- Initialize experience replay buffer and diversity filter

Execute RL Training Loop:

- Generation Phase: Diffusion model generates batch of m crystal structures

- Stability Screening: Structures undergo MLIP geometry optimization; filter for SUN (Stable, Unique, Novel) criteria:

  - Thermodynamically stable (Ehull < 0.1 eV/atom)

  - Unique compared to previously generated structures

  - Novel compared to training database

- Property Evaluation: Calculate target properties for n randomly selected SUN structures

- Reward Assignment: Assign rewards based on property values and diversity metrics

- Model Update: Fine-tune diffusion model using top k samples ranked by reward, with KL regularization toward pre-trained model
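The filtering and selection stages of one iteration can be sketched as follows. The SUN thresholds follow the text (Ehull < 0.1 eV/atom, unique, novel). Several simplifications are hypothetical:

- each structure is identified by a hashable `key`;
- rewards are assumed to be already computed;
- the diversity filter halves the reward for each earlier selection of the same composition.

The model update itself (fine-tuning with KL regularization) is not shown.

```python
def select_for_update(batch, seen_keys, training_keys, composition_counts, k, ehull_max=0.1):
    """Apply SUN filtering and a simple diversity penalty, then return the top-k keys.

    batch: list of dicts with 'key', 'composition', 'e_hull' (eV/atom) and 'reward'.
    seen_keys: keys generated in earlier iterations (updated in place, for uniqueness).
    training_keys: keys of structures in the training database (for novelty).
    composition_counts: how often each composition was selected before (updated in place).
    """
    sun = []
    for s in batch:
        if s["e_hull"] >= ehull_max:
            continue  # not stable enough
        if s["key"] in seen_keys or s["key"] in training_keys:
            continue  # not unique, or not novel
        seen_keys.add(s["key"])
        sun.append(s)

    # Hypothetical diversity penalty: halve the reward per earlier selection of the composition
    scored = sorted(
        ((s["reward"] * 0.5 ** composition_counts.get(s["composition"], 0), s["key"], s["composition"])
         for s in sun),
        reverse=True,
    )
    top = scored[:k]
    for _, _, comp in top:
        composition_counts[comp] = composition_counts.get(comp, 0) + 1
    return [key for _, key, _ in top]


batch = [
    {"key": "A", "composition": "LiFePO4", "e_hull": 0.02, "reward": 0.9},
    {"key": "B", "composition": "LiFePO4", "e_hull": 0.05, "reward": 0.8},
    {"key": "C", "composition": "NaCl", "e_hull": 0.30, "reward": 1.0},  # unstable
    {"key": "D", "composition": "MgO", "e_hull": 0.00, "reward": 0.7},   # in training set
    {"key": "A", "composition": "LiFePO4", "e_hull": 0.02, "reward": 0.9},  # duplicate
    {"key": "E", "composition": "TiO2", "e_hull": 0.08, "reward": 0.6},
]
print(select_for_update(batch, set(), {"D"}, {"LiFePO4": 1}, k=2))  # ['E', 'A']
```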

Convergence and Output:

- Continue iterations until reward convergence (typically 50-100 iterations)

- Output diverse set of crystals satisfying target property constraints

- Validate top candidates through DFT calculations or experimental synthesis

Performance Metrics and Validation

Quantitative Benchmarking Results

Rigorous evaluation is essential for assessing generative model performance. Recent benchmarking studies provide quantitative comparisons across multiple metrics:

Table 4: Benchmarking Results for Generative Models on Polymer Design Tasks [12]

| Model | Validity (fv) | Uniqueness (f10k) | Similarity to Nearest Neighbor (SNN) | Diversity (IntDiv) | FCD (lower is better) |
|---|---|---|---|---|---|
| CharRNN | 0.97 | 1.00 | 0.76 | 0.86 | 2.45 |
| REINVENT | 0.99 | 1.00 | 0.72 | 0.85 | 1.98 |
| GraphINVENT | 0.94 | 1.00 | 0.69 | 0.84 | 3.12 |
| VAE | 0.89 | 0.98 | 0.65 | 0.82 | 5.34 |
| AAE | 0.85 | 0.97 | 0.63 | 0.81 | 6.01 |
| ORGAN | 0.79 | 0.95 | 0.58 | 0.79 | 8.72 |
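Internal diversity (IntDiv) measures how dissimilar the generated molecules are from one another, based on pairwise Tanimoto similarity. The stdlib sketch below averages T(a, b)^p over all ordered pairs, including self-pairs, and reports one minus its p-th root. Implementations differ in such details, so this is one plausible variant rather than a reference implementation. Fingerprints are sets of on-bits with toy values.

```python
def tanimoto(a, b):
    """Tanimoto similarity between fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def internal_diversity(fps, p=1):
    """1 - (mean over all ordered pairs, incl. self-pairs, of T(a, b)^p)^(1/p)."""
    n = len(fps)
    total = sum(tanimoto(a, b) ** p for a in fps for b in fps)
    return 1.0 - (total / n**2) ** (1.0 / p)


fps = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}]  # two similar toy molecules and one unrelated
print(round(internal_diversity(fps), 4))  # 0.5556
```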

Table 5: MatInvent Performance Across Single-Property Optimization Tasks [10]

| Target Property | Convergence Iterations | Property Evaluations | Success Rate | Diversity Ratio |
|---|---|---|---|---|
| Band Gap (3.0 eV) | 55 | ~990 | 92% | 0.84 |
| Magnetic Density (>0.2 Å⁻³) | 48 | ~864 | 88% | 0.79 |
| Heat Capacity (>1.5 J/g/K) | 62 | ~1116 | 85% | 0.81 |
| Bulk Modulus (300 GPa) | 58 | ~1044 | 90% | 0.76 |

Case Study: Inverse Design of Radiation-Resistant Polymers

A closed-loop generative AI framework demonstrates the practical application of inverse design to radiation-resistant polymers [13]:

Data Preparation: Collected SMILES representations of polymer repeat units, computed 17 RDKit molecular descriptors, and integrated experimental glass transition temperatures (Tg) and mass attenuation coefficients (MAC)

Surrogate Model Development: Trained random forest models to predict Tg and MAC (R² > 0.90 for Tg, R² > 0.99 for MAC) to fill sparse experimental data

Generative Process: Implemented property-conditional Transformer model generating chemically valid SMILES conditioned on target properties

Closed-Loop Optimization: Generated candidates were automatically featurized, evaluated by the surrogate models, and selected through a score-diversity scheme

Results: Identified polymers with Tg ~215°C and MAC > 0.0569 cm²/g, meeting radiation shielding targets while maintaining thermal stability
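The source does not specify the score-diversity scheme. One common way to trade surrogate score against redundancy is greedy maximal-marginal-relevance selection, sketched below as an illustrative assumption rather than the cited framework's method. The trade-off weight `lam`, the similarity function, and the toy scores are hypothetical.

```python
def _utility(cid, score, selected, similarity, lam):
    """lam * score minus (1 - lam) * similarity to the closest already-selected candidate."""
    redundancy = max((similarity(cid, s) for s in selected), default=0.0)
    return lam * score - (1.0 - lam) * redundancy


def greedy_score_diversity(candidates, similarity, k, lam=0.5):
    """Greedily pick up to k ids from `candidates` (id -> surrogate score, higher is better)."""
    selected = []
    remaining = dict(candidates)
    while remaining and len(selected) < k:
        best = max(remaining, key=lambda cid: _utility(cid, remaining[cid], selected, similarity, lam))
        selected.append(best)
        del remaining[best]
    return selected


scores = {"p1": 0.90, "p2": 0.88, "p3": 0.60}  # toy surrogate scores
pair_sim = {frozenset({"p1", "p2"}): 0.95}     # p1 and p2 are near-duplicates


def toy_similarity(a, b):
    return pair_sim.get(frozenset({a, b}), 0.1)


print(greedy_score_diversity(scores, toy_similarity, k=2))           # ['p1', 'p3']
print(greedy_score_diversity(scores, toy_similarity, k=2, lam=1.0))  # ['p1', 'p2']
```

With `lam=1.0` the selection is purely score-ranked. Lowering it trades some predicted score for structural variety in the next round of candidates.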

Future Directions and Challenges

Despite significant progress, inverse design faces several challenges that represent opportunities for future research:

Data Quality and Availability: Limited experimental data for many material classes remains a bottleneck, particularly for polymers where real polymer datasets are orders of magnitude smaller than small molecule databases. [12]

Multi-Objective Optimization: Practical applications require balancing multiple, sometimes conflicting properties. Frameworks like DyRAMO represent initial steps, but more robust multi-objective approaches are needed. [9]

Experimental Validation: While computational results are promising, broader experimental validation is essential to establish real-world efficacy of inverse-designed materials.

Interpretability: Understanding the reasoning behind model-generated structures remains challenging, limiting researcher trust and adoption.

The integration of physical principles directly into generative models—such as physics-informed generative AI that embeds crystallographic symmetry, periodicity, and permutation invariance—represents a promising direction for ensuring generated materials are not only mathematically possible but chemically realistic and synthesizable. [14] As these challenges are addressed, inverse design is poised to become the standard approach for molecular and materials discovery across pharmaceuticals, energy storage, electronics, and beyond.

Generative artificial intelligence (genAI) has emerged as a transformative force in scientific research, enabling the algorithmic creation of novel digital content, from images and text to molecular structures [15] [16]. For researchers in materials science and drug development, these models offer a paradigm shift from traditional discovery methods toward data-driven inverse design [17] [18]. This overview focuses on four key generative architectures—Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Diffusion Models, and Large Language Models (LLMs)—that are currently revolutionizing the field of molecular generation.

The following sections examine each architecture's fundamental principles, then provide a comparative analysis and application notes for molecular design. Framed within the context of materials research, this article serves as a technical reference for scientists seeking to leverage generative AI for accelerated innovation.

Variational Autoencoders (VAEs)

Principles of Operation: VAEs are generative models that learn to compress input data into a lower-dimensional, continuous latent space and then reconstruct it back to the original form [19] [20]. Unlike standard autoencoders, VAEs encode inputs as probability distributions rather than single points, characterized by a mean (μ) and standard deviation (σ) [19]. This probabilistic approach enables the generation of new, similar data by sampling from the learned latent space and decoding the samples [15].

The training objective combines two loss functions: reconstruction loss, which ensures the decoder can accurately rebuild the input, and KL-divergence loss, which regularizes the latent distribution to resemble a standard normal distribution [19]. This ensures the latent space is smooth and continuous, allowing for meaningful interpolation between data points [15] [19].
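For the common case of a diagonal-Gaussian encoder and a standard-normal prior, the KL term of this objective has a closed form. The sketch below computes it together with the reparameterized latent sample in plain Python. The reconstruction loss depends on the decoder and data type, so it is passed in as a number, which is a simplification. The optional `beta` weight (1 for the standard VAE) is included only for illustration.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """z = mu + sigma * eps with eps ~ N(0, 1); keeps sampling differentiable in mu and sigma."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, 1)) summed over latent dimensions."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv for m, lv in zip(mu, log_var))


def vae_loss(reconstruction_loss, mu, log_var, beta=1.0):
    """Negative ELBO: reconstruction term plus (beta-weighted) KL regularizer."""
    return reconstruction_loss + beta * kl_to_standard_normal(mu, log_var)


print(kl_to_standard_normal([0.0, 0.0], [0.0, 0.0]))  # 0.0 (posterior equals prior)
print(kl_to_standard_normal([1.0, 0.0], [0.0, 0.0]))  # 0.5
z = reparameterize([0.0, 0.0], [0.0, 0.0], random.Random(0))  # one latent sample
```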

Strengths and Limitations: VAEs provide a structured and stable framework for unsupervised learning, making them particularly useful for data exploration and anomaly detection [19]. Their probabilistic nature allows them to handle uncertainty and incomplete data effectively [20]. However, a primary limitation is that they often produce blurrier or less detailed outputs compared to other generative models, as they may prioritize learning overarching data distributions over capturing fine-grained details [19] [16].

Generative Adversarial Networks (GANs)

Principles of Operation: GANs operate on an adversarial principle, pitting two neural networks against each other: a generator that creates synthetic data from random noise, and a discriminator that distinguishes between real training data and the generator's fakes [15] [16]. This setup is framed as a minimax game where the generator strives to produce increasingly realistic outputs to fool the discriminator, while the discriminator concurrently improves its judgment capabilities [16].

Through this iterative competition, the generator learns to map from a simple noise distribution to complex, high-dimensional data distributions (like images or molecular structures), eventually producing highly realistic samples [15] [20].
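In practice this minimax game is usually trained with binary cross-entropy losses on the discriminator's outputs. The sketch below computes the discriminator loss and the widely used "non-saturating" generator loss from given discriminator probabilities. Network forward passes are omitted, the probabilities are toy values, and equal-sized real and fake batches are assumed.

```python
import math


def discriminator_loss(d_real, d_fake):
    """-[log D(x) + log(1 - D(G(z)))], averaged over equal-sized real/fake batches."""
    n = len(d_real)
    return -sum(math.log(r) + math.log(1.0 - f) for r, f in zip(d_real, d_fake)) / n


def generator_loss_non_saturating(d_fake):
    """-log D(G(z)): the generator is rewarded when the discriminator is fooled."""
    return -sum(math.log(f) for f in d_fake) / len(d_fake)


# At the theoretical equilibrium the discriminator outputs 0.5 everywhere
print(round(discriminator_loss([0.5, 0.5], [0.5, 0.5]), 4))  # 1.3863 (= 2 ln 2)
print(round(generator_loss_non_saturating([0.5, 0.5]), 4))   # 0.6931 (= ln 2)
```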

Strengths and Limitations: GANs are renowned for their ability to generate sharp, high-fidelity, and highly realistic samples [19] [20]. Their adversarial training process, while unstable, can capture complex data patterns without explicitly modeling the data probability distribution [16]. Key challenges include training instability, where the generator and discriminator may fail to reach equilibrium, and mode collapse, where the generator produces limited varieties of samples [19] [16].

Diffusion Models

Principles of Operation: Diffusion models generate data through a progressive noising and denoising process [19] [16]. The forward process (diffusion) systematically adds Gaussian noise to training data over many steps until it becomes pure noise [15]. The reverse process (denoising) is then learned by a neural network that predicts how to iteratively remove this noise to reconstruct the original data [19]. To generate new content, the model starts with pure noise and applies the learned denoising steps to yield a coherent sample [15].
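For Gaussian noise, the forward process has a closed form that lets training jump directly to any step t. The sketch uses the linear beta schedule from the original DDPM work (1e-4 to 0.02 over 1,000 steps) as an illustrative choice. Molecular diffusion models typically use their own schedules, and for discrete quantities such as atom and bond types they use discrete-noise variants not shown here.

```python
import math
import random


def linear_alpha_bars(T=1000, beta_start=1e-4, beta_end=0.02):
    """alpha_bar_t = prod_{s<=t} (1 - beta_s) for a linear beta schedule (t = 0..T-1)."""
    alpha_bars, running = [], 1.0
    for t in range(T):
        beta = beta_start + (beta_end - beta_start) * t / (T - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def q_sample(x0, t, alpha_bars, rng):
    """Sample x_t ~ q(x_t | x_0) = N(sqrt(alpha_bar_t) * x_0, (1 - alpha_bar_t) * I)."""
    a = alpha_bars[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]


alpha_bars = linear_alpha_bars()
rng = random.Random(0)
x0 = [1.2, -0.7, 0.3]                      # toy data point, e.g. flattened coordinates
print(q_sample(x0, 10, alpha_bars, rng))   # still close to x0
print(q_sample(x0, 999, alpha_bars, rng))  # essentially pure Gaussian noise
```

A denoising network is trained to predict the added noise from x_t and t. Generation then runs the learned reverse steps from t = T - 1 down to 0.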

Strengths and Limitations: Diffusion models excel at producing diverse and high-quality outputs, often surpassing GANs in fidelity and detail in image synthesis tasks [19] [21]. Their training process is generally more stable than that of GANs [16]. A significant drawback is their computational intensity and slow inference speed, as generation requires many sequential denoising steps, though newer methods like Latent Diffusion aim to mitigate this [19] [20].

Large Language Models (LLMs) & Transformers

Principles of Operation: Transformer-based models, including LLMs, utilize a self-attention mechanism that weighs the importance of different parts of the input data when generating output [15] [20]. This allows them to capture long-range dependencies and contextual relationships effectively [19]. In generative tasks, they typically operate autoregressively, predicting the next element in a sequence (e.g., the next word in text or the next atom in a molecular string) based on all previous elements [15] [22].
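The self-attention step can be written in a few lines. The sketch below implements single-head scaled dot-product attention with a causal mask, so each position attends only to itself and earlier positions, as in autoregressive generation. Learned query, key, and value projections are omitted for brevity, so the toy vectors serve directly as all three.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(x):
    """x: list of d-dimensional token vectors; returns one attended vector per position.

    Uses x itself as queries, keys and values (no learned projections, for brevity).
    """
    d = len(x[0])
    out = []
    for i, q in enumerate(x):
        # Scaled dot-product scores against positions 0..i only (causal mask)
        scores = [sum(a * b for a, b in zip(q, x[j])) / math.sqrt(d) for j in range(i + 1)]
        weights = softmax(scores)
        out.append([sum(w * x[j][k] for j, w in enumerate(weights)) for k in range(d)])
    return out


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy embeddings, e.g. of SMILES characters
for row in causal_self_attention(tokens):
    print([round(v, 3) for v in row])
```

The first position can only attend to itself, so its output equals its own vector. Later positions mix information from the whole prefix, which is what lets the model condition each new token on everything generated so far.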

Strengths and Limitations: Transformers are highly scalable and versatile, capable of handling massive datasets and adapting to various data modalities (text, code, molecular representations) [19] [22]. Their ability to manage context over long sequences makes them powerful for complex generation tasks. However, they require immense computational resources for training and inference and can be difficult to interpret due to their "black box" nature [20].

Table 1: Comparative Analysis of Key Generative Architectures

| Architecture | Core Principle | Key Strengths | Key Limitations | Primary Molecular Design Applications |
|---|---|---|---|---|
| VAE [19] [20] | Probabilistic encoding/decoding to a latent space | Stable training; smooth latent space; good for exploration | Often produces blurry outputs; may miss fine details | Inverse molecular design [18]; latent space optimization [17] |
| GAN [15] [16] | Adversarial competition between generator & discriminator | High-quality, sharp outputs; fast inference | Unstable training; mode collapse | Generating novel molecular structures [23] |
| Diffusion Model [19] [21] | Iterative noising and denoising process | State-of-the-art output quality & diversity; stable training | Computationally intensive; slow generation | High-fidelity molecule & protein design [17] [18] |
| LLM/Transformer [15] [22] | Self-attention for context-aware sequence generation | Excellent with long sequences; highly versatile & scalable | High computational demand; poor interpretability | Generating SMILES/SELFIES strings [22]; protein sequence design [17] |

Application Notes for Molecular Generation

Optimizing Molecular Generation with Hybrid Strategies

Standalone generative models are often enhanced with specialized optimization strategies to better navigate the complex chemical space and produce molecules with desired properties [18].

Property-Guided Generation: This approach directly integrates target objectives into the generation process. For instance, the Guided Diffusion for Inverse Molecular Design (GaUDI) framework combines a diffusion model with an equivariant graph neural network for property prediction, enabling the generation of molecules optimized for specific electronic applications with high validity rates [18]. Similarly, VAEs can be used for property-guided generation by integrating property predictors into their latent space, allowing for targeted exploration and optimization of molecular structures [18].

Reinforcement Learning (RL): RL frames molecular generation as a sequential decision-making process. An agent (the generative model) is trained to take actions (e.g., adding atoms or bonds) to construct a molecule, receiving rewards based on how well the molecule achieves predefined objectives like drug-likeness, binding affinity, or synthetic accessibility [18]. The Graph Convolutional Policy Network (GCPN) is a prominent example that uses RL to sequentially build molecular graphs, successfully generating molecules with targeted chemical properties [18].

Bayesian Optimization (BO): BO is particularly useful when evaluating candidate molecules is computationally expensive (e.g., docking simulations). It builds a probabilistic model of the objective function to intelligently propose the most promising candidates for evaluation. In generative models, BO is often performed in the latent space of a VAE, searching for latent vectors that decode into molecules with optimal properties [18].
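The sketch below shows BO over a continuous latent space. It uses a Gaussian-process surrogate with an RBF kernel and the expected-improvement (EI) acquisition function. Several parts are illustrative assumptions rather than a prescribed setup: the 2-D latent space, the quadratic `objective` (a hypothetical stand-in for decoding a latent vector and scoring the resulting molecule, e.g., by docking), random candidate sampling, and all hyperparameters. Production pipelines typically use a dedicated library such as BoTorch and fit the kernel hyperparameters:

```python
import math
import numpy as np

def rbf_kernel(A, B, length_scale=1.0):
    """Squared-exponential kernel with unit signal variance."""
    sq_dist = np.sum(A**2, 1)[:, None] + np.sum(B**2, 1)[None, :] - 2.0 * A @ B.T
    return np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)

def gp_posterior(Z_train, y_train, Z_query, noise=1e-4):
    """Posterior mean and std of a zero-mean GP at the query points."""
    K = rbf_kernel(Z_train, Z_train) + noise * np.eye(len(Z_train))
    K_s = rbf_kernel(Z_train, Z_query)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mu = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.maximum(1.0 - np.sum(v**2, axis=0), 1e-12)
    return mu, np.sqrt(var)

_erf = np.vectorize(math.erf)

def expected_improvement(mu, sigma, best, xi=0.01):
    """EI for maximization: E[max(f - best - xi, 0)] under f ~ N(mu, sigma^2)."""
    imp = mu - best - xi
    z = imp / sigma
    cdf = 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return np.maximum(imp * cdf + sigma * pdf, 0.0)

def objective(z):
    """Hypothetical stand-in for 'decode z, then score the molecule'."""
    target = np.array([0.8, -0.5])
    return -np.sum((z - target) ** 2, axis=-1)

rng = np.random.default_rng(0)
Z = rng.standard_normal((5, 2))      # initial latent points drawn from the prior
y = objective(Z)

for _ in range(20):
    y_std = (y - y.mean()) / (y.std() + 1e-9)       # match the GP's unit prior variance
    candidates = rng.standard_normal((2000, 2))     # random candidates from N(0, I)
    mu, sigma = gp_posterior(Z, y_std, candidates)
    ei = expected_improvement(mu, sigma, best=y_std.max())
    z_next = candidates[np.argmax(ei)]              # evaluate the most promising point
    Z = np.vstack([Z, z_next])
    y = np.append(y, objective(z_next))

best_z = Z[np.argmax(y)]
```

Each iteration calls the expensive `objective` only once. This economy is why BO is attractive when every evaluation is a docking run or a simulation.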

Protocol 1: Property-Guided Molecular Generation with a VAE

This protocol outlines the steps for generating novel molecules with desired properties using a VAE, a common approach in inverse molecular design [18].

Workflow Overview:

- Data Preparation: A dataset of existing molecules (e.g., from PubChem or ZINC) is converted into a standardized representation, such as SMILES (Simplified Molecular-Input Line-Entry System) or SELFIES (Self-Referencing Embedded Strings) [22].

- Model Training: A VAE is trained to encode each molecule into a latent vector and then decode the vector back to the original molecular representation. The loss function is a weighted sum of reconstruction loss (cross-entropy for SMILES) and the KL-divergence loss.

- Property Predictor Training: A separate, simple regression model (e.g., a feed-forward neural network) is trained on the VAE's latent vectors to predict the molecular property of interest (e.g., solubility, binding affinity). This creates a direct mapping from the latent space to the property.

- Latent Space Optimization: An optimization algorithm (e.g., Bayesian Optimization) is used to search the VAE's latent space for vectors that are predicted by the property model to yield high values of the desired property.

- Generation and Validation: The optimized latent vectors are decoded by the VAE's decoder into new molecular structures. The generated molecules are then validated using chemical validation tools and external property prediction methods.
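The property-predictor and latent-space-optimization steps can be sketched as follows. The sketch makes two simplifying assumptions. First, the property predictor is closed-form ridge regression rather than a neural network. Second, the latent search uses gradient ascent with a Gaussian-prior penalty instead of Bayesian optimization, a swap made for brevity. The synthetic `Z` and `y` arrays are placeholders for the trained encoder's latent vectors and the property labels. Decoding `z_opt` requires the trained VAE decoder, which is not shown:

```python
import numpy as np

rng = np.random.default_rng(1)
n, d = 200, 16

# Placeholder data: in practice Z comes from the trained VAE encoder and y
# holds measured or computed property values (e.g., solubility).
Z = rng.standard_normal((n, d))
true_w = rng.standard_normal(d)
y = Z @ true_w + 0.1 * rng.standard_normal(n)

def fit_ridge(Z, y, lam=1.0):
    """Closed-form ridge regression with an unpenalized bias term."""
    Zb = np.hstack([Z, np.ones((len(Z), 1))])   # append a bias column
    reg = lam * np.eye(Zb.shape[1])
    reg[-1, -1] = 0.0                            # do not shrink the bias
    wb = np.linalg.solve(Zb.T @ Zb + reg, Zb.T @ y)
    return wb[:-1], wb[-1]

w, b = fit_ridge(Z, y)                           # latent vector -> property map

def optimize_latent(z0, w, steps=100, lr=0.05, prior_weight=0.5):
    """Gradient ascent on  w.z + b - prior_weight * ||z||^2 / 2.

    The penalty is a scaled negative log-density of the N(0, I) prior. It
    keeps z in regions the decoder saw during training, where decoded
    structures are more likely to be valid.
    """
    z = z0.copy()
    for _ in range(steps):
        z += lr * (w - prior_weight * z)         # gradient of the penalized objective
    return z

z_start = rng.standard_normal(d)
z_opt = optimize_latent(z_start, w)
# Next: decode z_opt with the VAE decoder and validate the molecule.
```

Without the prior penalty, a linear predictor would push `z` to arbitrarily large norms where the decoder is unreliable. The penalty weight therefore trades predicted gains against staying in well-populated regions of the latent space.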

Protocol 2: Reinforcement Learning for Multi-Objective Optimization

This protocol describes using RL to fine-tune a pre-trained generative model to generate molecules that satisfy multiple complex objectives, such as high binding affinity and low toxicity [18].

Workflow Overview:

- Pre-training a Base Model: A generative model (e.g., a GAN or an autoregressive model) is first pre-trained on a broad dataset of molecules to learn the fundamental rules of chemical structure.

- Defining the Reward Function: A critical step is designing a composite reward function that incorporates multiple objectives. For example:

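Reward = w1 * Binding_Affinity + w2 * (1 - Toxicity) + w3 * Synthetic_Accessibility

The weights (w1, w2, w3) balance the importance of each objective. The weighted sum is only meaningful if each term is first normalized to a common scale (e.g., [0, 1]) on which higher means better. For example, raw docking scores (more negative is better) and SA scores (lower means easier to synthesize) must be rescaled or sign-flipped before they enter the sum.

- RL Fine-Tuning: The pre-trained model serves as the initial policy for the RL agent. The agent interacts with the environment by generating molecules, and each generated molecule is scored by the reward function. The model's parameters are then updated with a policy-gradient algorithm (e.g., REINFORCE) to maximize the expected cumulative reward.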

- Iteration and Evaluation: The process repeats for many episodes. The generated molecules from the final model are evaluated using in silico methods and, for promising candidates, through experimental validation.
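The reward-definition and RL fine-tuning steps can be illustrated with a minimal REINFORCE sketch. Everything here is a hypothetical toy. Sequences are 8 integer tokens rather than SMILES. The policy is a table of per-position logits rather than a pre-trained generator. The three proxy scorers stand in for real binding, toxicity, and synthetic-accessibility models, and the weights (0.5, 0.3, 0.2) are arbitrary:

```python
import numpy as np

VOCAB, LENGTH = 4, 8
rng = np.random.default_rng(0)

# Hypothetical objective proxies, each normalized to [0, 1]. A real pipeline
# would call an affinity model, a toxicity classifier, and a rescaled
# synthetic-accessibility estimate here.
def binding_proxy(seq):  return np.mean(seq == 0)
def toxicity_proxy(seq): return np.mean(seq == 3)
def sa_proxy(seq):       return np.mean(seq == 1)

def composite_reward(seq, w=(0.5, 0.3, 0.2)):
    """Reward = w1 * binding + w2 * (1 - toxicity) + w3 * synthetic accessibility."""
    return w[0] * binding_proxy(seq) + w[1] * (1.0 - toxicity_proxy(seq)) + w[2] * sa_proxy(seq)

def softmax(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)

def sample_batch(logits, batch_size):
    """Sample token sequences from a per-position categorical policy."""
    probs = softmax(logits)                                # (LENGTH, VOCAB)
    cols = [rng.choice(VOCAB, size=batch_size, p=probs[i]) for i in range(LENGTH)]
    return np.stack(cols, axis=1), probs                   # seqs: (batch, LENGTH)

def mean_reward(logits, n=512):
    seqs, _ = sample_batch(logits, n)
    return np.mean([composite_reward(s) for s in seqs])

# Tabular "policy" standing in for a pre-trained generator's parameters.
logits = np.zeros((LENGTH, VOCAB))
reward_before = mean_reward(logits)

lr, baseline = 2.0, 0.0
for step in range(200):
    seqs, probs = sample_batch(logits, 32)
    rewards = np.array([composite_reward(s) for s in seqs])
    baseline = 0.9 * baseline + 0.1 * rewards.mean()       # moving-average baseline
    advantages = rewards - baseline                         # reduces gradient variance
    grad = np.zeros_like(logits)
    for seq, adv in zip(seqs, advantages):
        # Gradient of sum_t log pi(a_t) w.r.t. softmax logits: one_hot - probs
        grad += adv * (np.eye(VOCAB)[seq] - probs)
    logits += lr * grad / len(seqs)                         # ascend expected reward

reward_after = mean_reward(logits)
```

Using real scorers only requires replacing the three proxy functions, because the update rule itself is unchanged. Practical systems often also add a regularizer that keeps the fine-tuned policy close to the pre-trained one so that outputs stay chemically plausible.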

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Generative Molecular Design Experiments

| Tool / Resource | Type | Primary Function in Research | Example Instances |
|---|---|---|---|
| Chemical Databases [22] | Data | Provides large-scale, structured molecular data for model training and benchmarking. | PubChem, ChEMBL, ZINC |
| Molecular Representations [22] | Data Format | Standardized text-based representations that allow models to process chemical structures. | SMILES, SELFIES |
| Benchmarking Datasets [23] | Data | Curated datasets for fair evaluation and comparison of different generative models. | QM9, MOSES |
| Deep Learning Frameworks [24] | Software | Provides the foundational libraries for building and training complex generative models. | TensorFlow, PyTorch |
| Specialized Molecular AI Tools [17] [18] | Software/Model | Pre-trained or specialized models for specific generative tasks in chemistry and biology. | GCPN, GraphAF, GaUDI, RFdiffusion |

The field of molecular generation is expanding rapidly from the well-established domain of small-molecule drugs into the more complex territories of polymers and crystalline materials. Generative artificial intelligence (AI) models are reshaping materials discovery by proposing new structures and predicting their properties, which moves the field from empirical trial-and-error toward proactive in silico design [25]. This shift is the latest stage in a longer evolution from heuristic methods, through theory-guided synthesis, to AI-driven generative design [25]. Because these models learn the underlying probability distribution of material structures from large databases, they can generate novel, plausible configurations without the computationally intensive search steps traditionally required [25]. This review details the specific applications, protocols, and experimental frameworks driving this expansion across three critical material classes: protein-based therapeutics, crystalline materials, and advanced polymer systems.

Application Notes: Generative Models Across Material Classes

Protein Therapeutics and Biological Molecules

Generative AI has demonstrated remarkable success in creating novel biological molecules, particularly for addressing challenging therapeutic targets. The BoltzGen model exemplifies this capability as a unified generative framework for protein structure prediction and design [26].

- Novel Binder Generation: Unlike previous models limited to easy targets, BoltzGen can generate novel protein binders for "undruggable" disease targets from scratch. Its architecture unifies protein design and structure prediction while maintaining state-of-the-art performance through built-in physical constraints and a rigorous evaluation process [26].

- Experimental Validation: The model's effectiveness was confirmed through comprehensive testing on 26 diverse targets in eight wet labs across academia and industry. One industry collaborator, Parabilis Medicines, noted BoltzGen's potential to "accelerate our progress to deliver transformational drugs against major human diseases" [26].

Table 1: Generative Models in Protein and Small-Molecule Drug Design

| Model Name | Application Scope | Key Innovation | Experimental Validation |
|---|---|---|---|
| BoltzGen [26] | Protein binder generation for undruggable targets | Unifies structure prediction and protein design; ensures physical constraints | Tested on 26 therapeutically relevant targets; validated in 8 wet labs |
| Generative Models [27] | Small molecule drug candidates | Inverse design based on 3D protein structure and binding pockets | Relies on molecular docking, virtual screening, and binding affinity tests |

Crystalline and Quantum Materials

The application of generative AI to crystalline materials represents one of the most active frontiers, with models now capable of designing structures with exotic quantum properties.

- Crystal Structure Generation: Models like CrystalFlow address the unique challenges of crystalline materials by combining Continuous Normalizing Flows (CNFs) with graph-based equivariant neural networks and symmetry-aware data representations [28]. This architecture efficiently models lattice parameters, atomic coordinates, and atom types, enabling data-efficient learning and high-quality sampling of crystals. CrystalFlow achieves performance comparable to state-of-the-art models while being approximately an order of magnitude more efficient than diffusion-based models [28].

- Targeted Quantum Materials: For quantum materials with specific geometric patterns (e.g., Kagome lattices), standard generative models often fail. The SCIGEN tool addresses this by constraining diffusion models to follow user-defined geometric rules during generation [29]. This approach enabled the generation of over 10 million candidate materials with Archimedean lattices, leading to the synthesis of two previously undiscovered magnetic compounds, TiPdBi and TiPbSb [29]. This demonstrates a critical advance: steering AI models toward materials with high potential impact rather than just high stability.

Table 2: Performance Benchmarks for Crystalline Material Generative Models

| Model/Technique | Architecture | Key Advantage | Reported Output/Performance |
|---|---|---|---|
| CrystalFlow [28] | Flow-based (CNF/CFM) | Data-efficient learning; high sampling efficiency; symmetry-aware | Comparable to state-of-the-art on MP-20 and MPTS-52 benchmarks |
| SCIGEN [29] | Constrained Diffusion Model | Generates materials with specific geometric lattices for quantum properties | Generated >10M candidates; 41% of a 26k sample showed magnetism in simulation |
| Generative AI Taxonomy [25] | VAEs, GANs, Transformers, Flows, Diffusions, LLMs | Conditional generation for target properties (e.g., band gap, superconductivity) | Enables inverse design, moving beyond stability to functional property targeting |

Polymer and Organic Materials

Polymer science benefits from generative models and autonomous systems that navigate the vast design space of possible blends and nanostructures.

- Autonomous Polymer Blending: MIT researchers developed a closed-loop system that uses a genetic algorithm to explore polymer blends, a robotic system to mix and test them, and an iterative feedback loop. This system can identify and test up to 700 new polymer blends per day, autonomously discovering blends that outperform their individual components—for instance, one blend showed an 18% improvement in retained enzymatic activity [30]. This highlights a key insight: optimal blends do not necessarily contain the best individual polymers, underscoring the value of full-formulation-space optimization [30].

- Polymer Nanostructures for Drug Delivery: Controlled "living" polymerization techniques (ATRP, RAFT, ROMP) enable the creation of sophisticated polymer architectures (star polymers, brushes, hyperbranched polymers) for drug delivery. These well-defined nanostructures can be designed with precise size (optimally <50 nm for tumor penetration), surface charge (neutral or slightly negative for better diffusion), and targeting ligands to improve pharmacokinetics and biodistribution via the Enhanced Permeability and Retention (EPR) effect [31].

(Diagram 1: Autonomous discovery workflow for polymers)

Experimental Protocols

Protocol: Autonomous Discovery of Polymer Blends

This protocol details the closed-loop workflow for identifying optimal polymer blends, as demonstrated by MIT researchers [30].

Step 1: Algorithmic Formulation Selection

- Objective: Define target properties (e.g., maximized Retained Enzymatic Activity (REA) for thermal stability).

- Procedure:

- Encode a polymer blend's composition into a digital chromosome for a genetic algorithm.

- Initialize the algorithm with a random population of blends.

- Balance exploration (random search) and exploitation (optimizing previous bests) to select 96 initial blends for testing.

Step 2: Robotic Preparation and Testing

- Equipment: Autonomous liquid handler, pipetting system, heating block, activity assay plates.

- Procedure:

- The robotic system mixes chemicals according to the algorithm's specified compositions.

- The platform prepares polymers for thermal stability testing.

- Measure the REA for each blend using a standardized enzymatic activity assay after heat exposure.

Step 3: Iterative Optimization and Analysis

- Procedure:

- Feed the REA results for all 96 blends back to the genetic algorithm.

- The algorithm uses selection, crossover, and mutation operations to generate a new set of 96 improved blends.

- Repeat Steps 2 and 3 until performance plateaus or the target REA is achieved.

- Synthesize and characterize the final optimal blend(s) using standard analytical techniques.
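The genetic-algorithm loop in Steps 1 to 3 can be sketched as follows. The sketch assumes that each chromosome is a vector of mass fractions over five candidate polymers. The `assay_rea` function is a hypothetical synthetic surrogate for the robotic REA measurement and is not a chemical model. The operators (tournament selection, blend crossover, Gaussian mutation, and elitism) are common generic choices, not necessarily those used in the MIT study [30]:

```python
import numpy as np

N_POLYMERS, BATCH, N_ELITE = 5, 96, 4
rng = np.random.default_rng(42)

# Hypothetical surrogate for the robotic REA assay: a linear term per polymer
# plus symmetric pairwise interaction (synergy/antagonism) terms.
A = rng.uniform(0.2, 0.6, N_POLYMERS)
B = rng.normal(0.0, 0.3, (N_POLYMERS, N_POLYMERS))
B = (B + B.T) / 2

def assay_rea(blends):
    """Score each blend (row of mass fractions); stands in for Step 2."""
    return blends @ A + np.einsum("ni,ij,nj->n", blends, B, blends)

def normalize(x):
    """Project rows back onto valid compositions: non-negative, summing to 1."""
    x = np.clip(x, 0.0, None)
    return x / np.maximum(x.sum(axis=-1, keepdims=True), 1e-12)

def tournament_select(pop, fitness, k=3):
    """Pick each parent as the fittest of k randomly drawn blends."""
    idx = rng.integers(0, len(pop), size=(len(pop), k))
    winners = idx[np.arange(len(pop)), np.argmax(fitness[idx], axis=1)]
    return pop[winners]

def next_generation(pop, fitness, mut_sigma=0.05):
    elite = pop[np.argsort(fitness)[-N_ELITE:]]             # exploitation: keep the best as-is
    parents = tournament_select(pop, fitness)
    mates = parents[rng.permutation(len(parents))]
    alpha = rng.uniform(0.0, 1.0, (len(pop), 1))
    children = alpha * parents + (1.0 - alpha) * mates      # blend crossover
    children += rng.normal(0.0, mut_sigma, children.shape)  # exploration: mutation
    return np.vstack([elite, normalize(children)[: len(pop) - N_ELITE]])

pop = rng.dirichlet(np.ones(N_POLYMERS), size=BATCH)  # Step 1: random initial blends
best_per_round = []
for round_idx in range(15):
    rea = assay_rea(pop)                  # Step 2: measure all 96 blends
    best_per_round.append(rea.max())
    pop = next_generation(pop, rea)       # Step 3: selection, crossover, mutation

best_blend = pop[np.argmax(assay_rea(pop))]
```

Because elite blends are carried over unchanged, the best measured REA never decreases between rounds, while crossover and mutation continue to explore new compositions. The interaction matrix `B` allows a mixture to outscore its best single component, mirroring the observation above that optimal blends need not contain the best individual polymers.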

Protocol: Generating and Validating Quantum Materials with SCIGEN

This protocol describes the process for generating candidate quantum materials with specific geometric constraints and validating them through simulation and synthesis [29].

Step 1: Constraint Definition and Model Integration

- Objective: Generate crystalline materials with a specific Archimedean lattice (e.g., Kagome).

- Procedure:

- Define the desired geometric structural rules as constraints.

- Integrate the SCIGEN code with a base generative diffusion model (e.g., DiffCSP).

- SCIGEN acts as a filter at each generation step, blocking structures that do not adhere to the defined lattice constraints.
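One simple way to impose such constraints during sampling is replacement (inpainting-style) conditioning. After every reverse diffusion step, the constrained atoms are overwritten with the target motif, noised to the current step's level, so that the unconstrained atoms are denoised in context. The sketch below is a generic illustration under stated assumptions and is not SCIGEN's code; SCIGEN's actual mechanism may differ. It uses 2-D toy coordinates, a hypothetical `toy_eps_model` in place of a trained network such as DiffCSP's denoiser, and a triangle motif from an idealized Kagome net:

```python
import numpy as np

T = 200
betas = np.linspace(1e-4, 0.05, T)
alphas = 1.0 - betas
alpha_bar = np.cumprod(alphas)
rng = np.random.default_rng(0)

def toy_eps_model(x_t, t):
    """Hypothetical noise predictor standing in for a trained denoiser.
    It assumes all clean data sit at the origin, so the implied noise is
    x_t / sqrt(1 - alpha_bar_t)."""
    return x_t / np.sqrt(1.0 - alpha_bar[t])

n_atoms = 6
mask = np.array([True, True, True, False, False, False])   # atoms bound by the constraint
template = np.zeros((n_atoms, 2))
# Illustrative motif: one corner-sharing triangle of an ideal Kagome net (a = 1).
template[:3] = [[0.0, 0.0], [0.5, 0.0], [0.25, np.sqrt(3) / 4]]

x = rng.standard_normal((n_atoms, 2))                       # start from pure noise
for t in range(T - 1, -1, -1):
    # Standard DDPM reverse step applied to all atoms.
    eps_hat = toy_eps_model(x, t)
    mean = (x - betas[t] / np.sqrt(1.0 - alpha_bar[t]) * eps_hat) / np.sqrt(alphas[t])
    x = mean + (np.sqrt(betas[t]) * rng.standard_normal(x.shape) if t > 0 else 0.0)
    # Constraint step: overwrite constrained atoms with the template, noised to
    # the level of the state just produced (exactly the template at the end).
    if t > 0:
        noise = rng.standard_normal((int(mask.sum()), 2))
        x[mask] = np.sqrt(alpha_bar[t - 1]) * template[mask] + np.sqrt(1.0 - alpha_bar[t - 1]) * noise
    else:
        x[mask] = template[mask]
```

The final sample places the constrained atoms exactly on the motif, while the remaining atoms are generated by the model conditioned on that motif at every step.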

Step 2: High-Throughput Screening and Simulation

- Procedure:

- Generate a large pool of candidate materials (e.g., 10 million) using the SCIGEN-equipped model.

- Screen the generated candidates for basic stability, reducing the pool (e.g., to 1 million).

- Select a smaller, manageable subset (e.g., 26,000) for detailed simulation on high-performance computing systems (e.g., Oak Ridge National Laboratory supercomputers).

- Run detailed simulations (e.g., Density Functional Theory) to understand atomic behavior and predict properties like magnetism.

Step 3: Synthesis and Experimental Validation

- Procedure:

- Select top candidate materials (e.g., TiPdBi and TiPbSb) from the simulated subset based on predicted properties.

- Synthesize the selected compounds in a materials laboratory.

- Experimentally characterize the synthesized materials using techniques like X-ray diffraction to confirm the crystal structure and magnetic measurements to validate predicted properties.

(Diagram 2: Constrained generation workflow for quantum materials)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Generative Materials Research

| Tool/Resource | Function/Role | Specific Examples & Notes |
|---|---|---|
| Generative AI Models | Core engine for proposing novel material structures. | BoltzGen: Protein binder design [26]. CrystalFlow: Crystal structure generation [28]. DiffCSP: Base model for crystalline systems, often used with constraining tools [29]. |
| Constraining Tools | Steer generative models to produce structures with specific target features. | SCIGEN: Code that forces models to adhere to user-defined geometric constraints during generation [29]. |
| Autonomous Robotic Platform | Physically executes the formulation, mixing, and testing of AI-generated candidates. | Handles liquid transfers, mixing polymers, and preparing samples for high-throughput testing (e.g., 700 blends/day) [30]. |
| Benchmark Datasets | Standardized data for training and evaluating generative models. | MP-20 & MPTS-52: Curated datasets of crystalline materials used for benchmarking model performance [28]. |
| High-Performance Computing (HPC) | Runs detailed simulations to screen and validate AI-generated candidates. | Used for Density Functional Theory (DFT) calculations to predict stability and properties (e.g., magnetism) before synthesis [29]. |

Architectures in Action: A Deep Dive into Generative Models and Their Real-World Uses

The field of molecular discovery is undergoing a transformation through generative artificial intelligence, which enables the exploration of vast chemical spaces estimated to contain up to 10^60 theoretically feasible compounds [4]. Among the diverse generative architectures, three families have proved particularly significant: Variational Autoencoders (VAEs) with their structured latent spaces, Generative Adversarial Networks (GANs) with their adversarial training mechanisms, and Transformers with their sequence-processing capabilities. Each architecture offers distinct strengths that researchers can leverage for specific aspects of molecular generation, from de novo design to synthesizable pathway planning [8] [18]. Careful selection and optimization of these models are therefore crucial in drug discovery and materials science, where generating chemically valid, diverse, and functionally relevant molecules remains difficult [32].

Architectural Strengths and Molecular Applications

Variational Autoencoders: Structured Latent Space Exploration

VAEs excel in molecular design through their probabilistic encoder-decoder architecture that learns continuous latent representations of chemical structures [8] [18]. This structured latent space enables smooth interpolation between molecules and facilitates property-guided optimization through Bayesian optimization techniques [18]. The Transformer Graph VAE (TGVAE) represents a recent advancement that combines graph neural networks with VAE architecture, capturing complex structural relationships within molecules more effectively than string-based representations [32]. This approach addresses common issues like over-smoothing in GNN training and posterior collapse in VAEs, resulting in more robust training and improved generation of chemically valid and diverse molecular structures [32].

Key Applications in Molecular Research:

- Inverse Molecular Design: Generating molecular structures with specific desired properties by navigating the continuous latent space [18]

- Property-Guided Optimization: Using Bayesian optimization in the latent space to identify molecules with optimized properties [18]

- Scaffold Hopping: Discovering structurally novel molecules with similar biological activity by modifying core molecular structures in latent representations [8]

Generative Adversarial Networks: Adversarial Training for Realistic Outputs

GANs employ a competitive training paradigm where a generator network creates synthetic molecules while a discriminator network distinguishes them from real molecular data [8] [33]. This adversarial process pushes the generator toward producing increasingly realistic molecular structures. The RL-MolGAN framework demonstrates how GANs can be adapted for discrete molecular data by incorporating a first-decoder-then-encoder Transformer structure and reinforcement learning with Monte Carlo tree search [34]. To address training instability, RL-MolWGAN incorporates Wasserstein distance and mini-batch discrimination, enhancing stability and performance in molecular generation tasks [34].

Key Applications in Molecular Research:

- High-Fidelity Molecular Generation: Creating novel drug-like molecules with sharp, high-quality structural features [35] [33]

- Property-Optimized Generation: Using reinforcement learning to guide GANs toward molecules with specific chemical properties [34]

- de novo Design: Generating completely novel molecular structures from scratch without scaffold constraints [34]

Transformers: Sequence Modeling for Complex Molecular Representations

Transformers process sequential data through self-attention mechanisms, effectively capturing long-range dependencies in molecular representations such as SMILES strings and synthetic pathways [8] [36]. This architecture has revolutionized natural language processing and has been successfully adapted for molecular generation tasks. The ReaSyn model exemplifies this approach by treating synthetic pathways as reasoning chains using a chain of reaction (CoR) notation, inspired by the chain of thought approach in large language models [36]. This enables the model to reconstruct pathways for synthesizable molecules and project unsynthesizable molecules into synthesizable chemical space.

Key Applications in Molecular Research:

- Retrosynthesis Planning: Predicting synthetic pathways for target molecules through step-by-step reasoning [36]

- Synthesizable Molecular Generation: Creating molecules with feasible synthetic pathways by processing reaction sequences [36]

- Multi-step Molecular Optimization: Guiding molecular modifications through sequential decision-making processes [36]

Quantitative Performance Comparison

Table 1: Performance Metrics of Generative Models on Molecular Tasks

| Model Architecture | Validity Rate | Novelty Rate | Uniqueness Rate | Property Optimization Score |
|---|---|---|---|---|
| Transformer Graph VAE [32] | >90% (Chemical Validity) | High (Previously Unexplored Structures) | High (Diverse Collection) | Improved Property Profiles |
| RL-MolGAN [34] | High on QM9/ZINC | Demonstrated | Demonstrated | Optimized for Desired Chemical Properties |
| ReaSyn (Transformer) [36] | 76.8% (Enamine Retrosynthesis) | N/A | N/A | 0.638 (Graph GA-ReaSyn Optimization) |

Table 2: Architectural Strengths for Molecular Applications

| Model Type | Primary Strength | Optimal Molecular Task | Training Stability | Output Diversity |
|---|---|---|---|---|
| VAEs [35] [18] | Structured Latent Space | Property-Guided Optimization, Scaffold Hopping | Generally Stable | Better Coverage, Less Prone to Mode Collapse |
| GANs [35] [34] | High-Quality Samples | de novo Design, Realistic Structure Generation | Can Be Unstable | Can Experience Mode Collapse |
| Transformers [8] [36] | Sequence Processing & Long-Range Dependencies | Retrosynthesis, Reaction Planning, Multi-step Generation | Stable with Adequate Data | High with Beam Search |

Experimental Protocols and Methodologies

Protocol: Transformer Graph VAE for Molecular Generation

Objective: Generate novel, diverse, and chemically valid molecular structures using graph-based representations [32].

Materials and Reagents:

- Molecular dataset (e.g., ZINC, QM9) with graph representations

- Graph neural network framework (e.g., PyTorch Geometric)

- Chemical validation toolkit (e.g., RDKit)

Methodology:

- Graph Representation: Represent molecules as graphs with atoms as nodes and bonds as edges

- Graph Encoding: Utilize graph neural networks to encode molecular graphs into latent distributions (mean and variance)

- Latent Sampling: Sample latent vectors z from the distribution: z ~ N(μ, σ²)

- Graph Decoding: Employ transformer-based decoder to reconstruct molecular graphs from latent samples

- Loss Optimization: Minimize the combined reconstruction loss and KL divergence: $\mathcal{L} = \mathcal{L}_{\text{recon}} + \beta\,\mathcal{L}_{\text{KL}}$

- Validation: Assess chemical validity of generated structures using RDKit

Technical Notes: Address over-smoothing in GNNs through skip connections and posterior collapse in VAEs through appropriate β scheduling [32].
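The latent-sampling and loss steps can be sketched in NumPy. The closed-form KL term for a diagonal Gaussian posterior against a standard-normal prior is standard. The linear warm-up schedule for β and its 10,000-step length are illustrative assumptions, not the TGVAE authors' settings. `recon_loss` is assumed to be the per-molecule reconstruction loss produced by the graph decoder:

```python
import numpy as np

rng = np.random.default_rng(0)

def reparameterize(mu, log_var):
    """Sample z ~ N(mu, sigma^2) as z = mu + sigma * eps, which keeps the
    sample differentiable with respect to the encoder outputs mu and log_var."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """Closed-form KL( N(mu, diag(sigma^2)) || N(0, I) ), one value per molecule."""
    return 0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var, axis=-1)

def beta_schedule(step, warmup_steps=10_000, beta_max=1.0):
    """Linear KL annealing: beta grows from 0 to beta_max over warmup_steps.
    A weak early KL term lets the decoder learn to use z before the posterior
    is pulled toward the prior, a common mitigation for posterior collapse."""
    return beta_max * min(1.0, step / warmup_steps)

def vae_loss(recon_loss, mu, log_var, step):
    """L = L_recon + beta * L_KL, averaged over the batch."""
    return float(np.mean(recon_loss + beta_schedule(step) * kl_to_standard_normal(mu, log_var)))

# Toy batch: 4 molecules, 8-dimensional latent space (placeholder encoder outputs).
mu, log_var = rng.normal(size=(4, 8)), rng.normal(scale=0.1, size=(4, 8))
z = reparameterize(mu, log_var)
loss_early = vae_loss(np.full(4, 2.0), mu, log_var, step=0)
loss_late = vae_loss(np.full(4, 2.0), mu, log_var, step=20_000)
```

At step 0 the loss is pure reconstruction. Once warm-up ends, the full KL penalty applies and regularizes the latent space that later property-guided search relies on.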

Protocol: RL-MolGAN for Adversarial Molecular Generation

Objective: Generate drug-like molecules with optimized chemical properties using adversarial training with reinforcement learning [34].

Materials and Reagents:

- SMILES strings from molecular databases (e.g., QM9, ZINC)

- Reinforcement learning framework (e.g., OpenAI Gym)

- Property prediction models (e.g., for QED, SA Score)

Methodology:

- Generator Setup: Implement Transformer decoder as generator to produce SMILES strings from random noise

- Discriminator Setup: Implement Transformer encoder as discriminator to evaluate authenticity of generated SMILES

- Adversarial Pre-training: Train generator and discriminator in alternating manner:

- Update discriminator on real and generated samples

- Update generator to fool discriminator

- RL Fine-tuning: Incorporate reinforcement learning with the policy gradient $\nabla_\theta J(\theta) = \mathbb{E}\left[\sum_t \nabla_\theta \log \pi_\theta(a_t \mid s_t)\, R_t\right]$, where $R_t$ is the reward at step $t$ based on chemical properties

- Monte Carlo Tree Search: Use MCTS to explore promising molecular generation paths

- Stability Enhancement: Apply Wasserstein distance and mini-batch discrimination in RL-MolWGAN variant

Technical Notes: The first-decoder-then-encoder structure helps handle discrete SMILES data, while RL integration helps optimize for specific chemical properties [34].
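The reward $R_t$ in the policy-gradient formula above is defined for complete molecules, so intermediate steps need an estimate. RL-MolGAN is described as using Monte Carlo tree search for this purpose. The sketch below instead shows the simpler Monte Carlo rollout estimator popularized by SeqGAN, in which each prefix is completed several times by the generator and the rewards of the completed sequences are averaged. The uniform `rollout_policy` and the token-counting `sequence_reward` are hypothetical stand-ins for the Transformer generator and a property or discriminator score:

```python
import numpy as np

rng = np.random.default_rng(0)
VOCAB, MAX_LEN = 5, 10

def rollout_policy(prefix):
    """Hypothetical generator stand-in: completes a prefix with uniformly
    random tokens up to MAX_LEN."""
    tail = rng.integers(0, VOCAB, size=MAX_LEN - len(prefix))
    return np.concatenate([prefix, tail])

def sequence_reward(seq):
    """Hypothetical full-sequence reward (e.g., discriminator score or QED);
    here simply the fraction of token 2."""
    return np.mean(seq == 2)

def mc_rollout_value(prefix, n_rollouts=64):
    """Estimate the expected final reward of a partial sequence by averaging
    the rewards of n_rollouts sampled completions."""
    return np.mean([sequence_reward(rollout_policy(prefix)) for _ in range(n_rollouts)])

def per_step_rewards(seq, n_rollouts=64):
    """R_t for each prefix seq[:t+1]; the last step uses the exact reward
    because the sequence is already complete."""
    rewards = [mc_rollout_value(seq[: t + 1], n_rollouts) for t in range(len(seq) - 1)]
    rewards.append(sequence_reward(seq))
    return np.array(rewards)

seq = np.array([2, 2, 0, 1, 2, 3, 4, 2, 2, 0])
R = per_step_rewards(seq)    # feeds the R_t term of the policy gradient
print(np.round(R, 3))
```

MCTS refines this idea by reusing statistics across rollouts in a search tree. The basic contract is the same in both cases: an estimated future reward for every partial molecule.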

Protocol: Transformer-based Retrosynthesis with ReaSyn

Objective: Predict synthetic pathways for target molecules using chain-of-reaction reasoning [36].

Materials and Reagents:

- Reaction database with documented synthetic pathways

- Reaction executor (e.g., RDKit)

- Molecular similarity calculation toolkit

Methodology:

- Pathway Representation: Encode synthetic pathways as Chain of Reaction (CoR) sequences using special tokens for reactants, reactions, and products

- Autoregressive Training: Train Transformer model to predict next step in synthetic pathway given previous steps

- Beam Search Generation: Generate multiple pathway candidates using beam search with width k=5-10

- Reinforcement Learning Tuning: Fine-tune with GRPO (Group Relative Policy Optimization), using a reward based on the molecular similarity between the pathway's end product and the input molecule

- Pathway Validation: Execute predicted reactions using reaction executor to validate feasibility

- Multi-path Exploration: Maintain diverse beam search candidates to explore alternative synthetic routes

Technical Notes: The model benefits from intermediate supervision at each synthetic step, providing richer training signals for learning chemical reaction rules [36].
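Beam search keeps the k highest-scoring partial pathways at each decoding step instead of committing greedily to a single one. A minimal generic sketch follows. The random bigram table is a hypothetical stand-in for ReaSyn's Transformer, and the integer tokens stand in for CoR tokens (reactants, reaction steps, products):

```python
import numpy as np

def beam_search(next_log_probs, bos, eos, beam_width=5, max_len=10):
    """Generic beam search over token sequences.

    next_log_probs(seq) returns log-probabilities over the vocabulary for the
    next token. At each step every live beam is expanded by its top
    beam_width tokens, and only the beam_width best partial sequences (by
    summed log-probability) are kept. Sequences ending in eos are set aside.
    """
    beams, finished = [([bos], 0.0)], []
    for _ in range(max_len):
        candidates = []
        for seq, score in beams:
            logp = next_log_probs(seq)
            for tok in np.argsort(logp)[::-1][:beam_width]:
                candidates.append((seq + [int(tok)], score + float(logp[tok])))
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = []
        for seq, score in candidates[:beam_width]:
            (finished if seq[-1] == eos else beams).append((seq, score))
        if not beams:
            break
    finished.extend(beams)   # keep sequences that hit max_len without eos
    return sorted(finished, key=lambda c: c[1], reverse=True)[:beam_width]

# Hypothetical next-token model: a random bigram table standing in for the
# Transformer. Token 0 = start, token 1 = end; others stand in for CoR tokens.
rng = np.random.default_rng(0)
V, BOS, EOS = 8, 0, 1
logits = rng.standard_normal((V, V))
log_table = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))

def bigram_model(seq):
    return log_table[seq[-1]]

results = beam_search(bigram_model, BOS, EOS, beam_width=5, max_len=6)
for seq, score in results:
    print(seq, round(score, 3))
```

Each returned sequence is a candidate pathway. In ReaSyn, such candidates would then be checked by executing the predicted reactions, which the sketch does not model.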

Research Reagent Solutions

Table 3: Essential Research Reagents for Molecular Generation Experiments

| Reagent / Tool | Function | Example Applications |
|---|---|---|
| SMILES Strings [34] | Text-based molecular representation | Sequential molecular generation with Transformer models |
| Molecular Graphs [32] | Graph-structured molecular representation | Capturing structural relationships in Graph VAEs |
| SELFIES [34] | Syntax-guaranteed molecular representation | Ensuring chemical validity in generated structures |
| RDKit [36] | Cheminformatics toolkit | Reaction execution, molecular validation, and descriptor calculation |
| QM9/ZINC Datasets [34] | Curated molecular databases | Model training and benchmarking |
| Property Predictors [18] | Scoring models (e.g., QED, SA Score, DRD2 activity) | Providing rewards for reinforcement learning optimization |

Workflow Visualization

VAE Latent Space Optimization

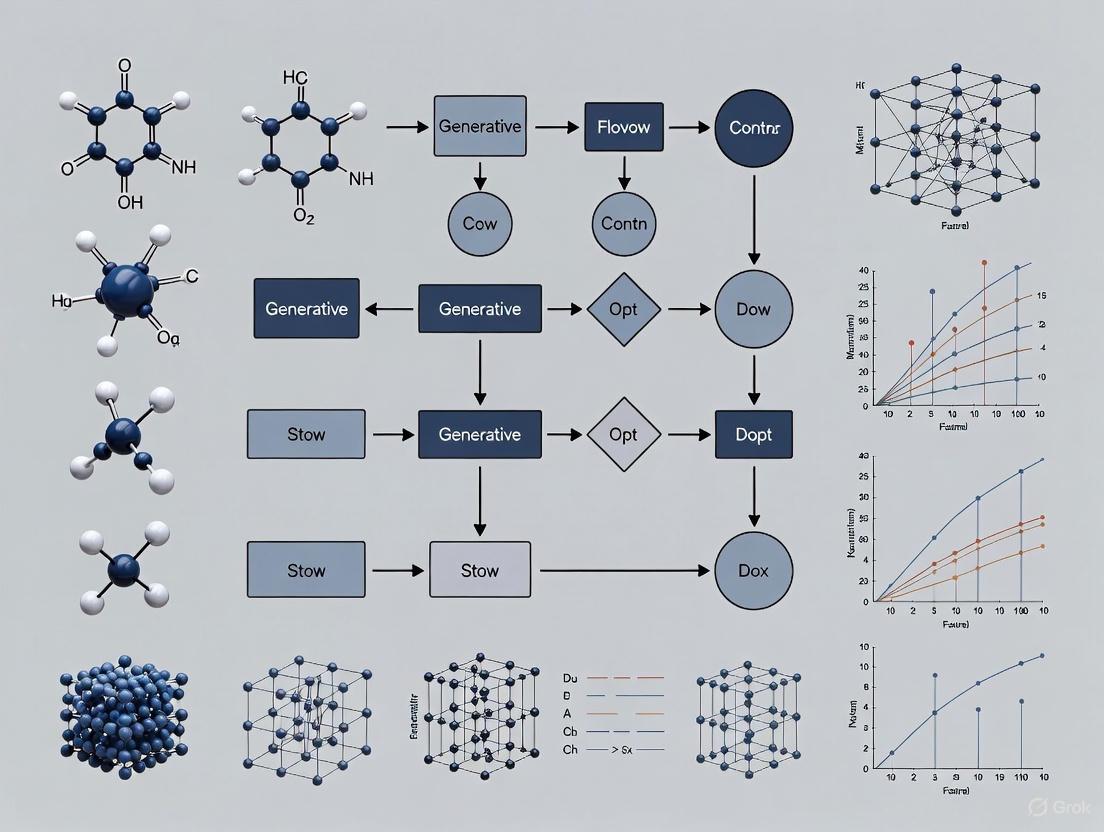

Diagram Title: VAE Latent Space Optimization Workflow

GAN Adversarial Training Process

Diagram Title: GAN Adversarial Training with RL

Transformer Retrosynthesis Planning

Diagram Title: Transformer Retrosynthesis with Beam Search

The strategic application of VAEs, GANs, and Transformers enables researchers to address distinct challenges in molecular generation. VAEs provide exceptional capabilities for property-guided optimization through their structured latent spaces, GANs generate high-fidelity molecular structures through adversarial training, and Transformers excel at complex sequence-based tasks like retrosynthesis planning [32] [34] [36]. The emerging trend of hybrid models—such as Transformer Graph VAEs and GANs enhanced with reinforcement learning—demonstrates how integrating architectural strengths can overcome individual limitations [32] [34]. As generative AI continues to evolve in molecular research, the strategic selection and combination of these architectures will be crucial for accelerating the discovery of novel therapeutics and functional materials.

Application Notes

The application of generative artificial intelligence (AI) is fundamentally reshaping the discovery and design of polymers and crystalline materials. By moving beyond traditional trial-and-error methods, these models enable a targeted inverse design paradigm, where desired properties dictate the search for optimal material structures [37]. This approach is particularly powerful for navigating the vastness of chemical space, allowing researchers to efficiently identify promising candidates for applications ranging from quantum computing to sustainable technologies.

Generative AI for Polymer Inverse Design

In polymer science, generative models are achieving increasingly precise control over molecular design. A key advance is the guaranteed generation of chemically valid polymer structures (100% validity), achieved by pairing robust representations such as Group SELFIES with state-of-the-art generators such as PolyTAO [38]. This model demonstrates on-demand design capabilities, allowing researchers to specify target chemical motifs, polymer classes, and properties. For instance, it has been used to generate polyimides with dielectric constants that deviate by less than 10% from their target values, as validated by first-principles calculations [38]. This level of precision makes such models well suited for integration with high-throughput, self-driving laboratories and industrial synthesis pipelines.

Another compelling application is the inverse design of polymers with specific optical properties. Researchers have developed a predictive platform for designing structural colours in bottlebrush block copolymers (BBCPs) by integrating a strong segregation self-consistent field (SS-SCF) theory model with a multilayer optical framework [39]. This "colour design model" quantitatively links BBCP molecular structure, such as the side-chain lengths ($n_{s,A}$, $n_{s,B}$) and backbone lengths ($n_{b,A}$, $n_{b,B}$), to macroscopic colour via the domain spacing $d$ of the self-assembled nanostructure [39]. The model successfully predicted new polymers exhibiting reversible, nonlinear thermochromism, a property valuable for applications in displays, sensing, and camouflage.
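A simple estimate gives intuition for how the domain spacing $d$ sets the colour. At normal incidence, an ideal lamellar (1-D photonic) stack reflects most strongly near the first-order Bragg wavelength $\lambda_{\text{peak}} \approx 2(n_A d_A + n_B d_B)$, where $d = d_A + d_B$. This is a textbook simplification, not the SS-SCF plus multilayer-optics model of [39]. The refractive indices and layer thicknesses below are illustrative assumptions:

```python
def bragg_peak_wavelength(d_a_nm, d_b_nm, n_a, n_b, order=1):
    """Bragg reflection peak (nm) at normal incidence for an ideal A/B
    lamellar stack: lambda = 2 * (n_a * d_a + n_b * d_b) / order."""
    return 2.0 * (n_a * d_a_nm + n_b * d_b_nm) / order

# Illustrative values only: a 160 nm period with indices typical of organic polymers.
peak = bragg_peak_wavelength(80.0, 80.0, 1.50, 1.60)
print(f"estimated reflection peak: {peak:.0f} nm")
```

With these numbers the estimate is 496 nm. Because the peak scales linearly with the optical thickness of the period, any molecular change that increases $d$ red-shifts the reflected colour.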

Generative AI for Crystalline Materials Inverse Design

For crystalline materials, generative AI is tackling the complex challenge of crystal structure prediction (CSP), which is essential for discovering materials with tailored electronic, magnetic, and optical properties. State-of-the-art models are increasingly symmetry-aware, explicitly incorporating fundamental crystallographic principles such as space group symmetry and periodicity into their architecture. This makes generated crystal structures not only mathematically valid but also more likely to be chemically realistic and synthesizable [28] [14].

Flow-based models, such as CrystalFlow, offer a computationally efficient approach. CrystalFlow uses Continuous Normalizing Flows and Conditional Flow Matching to model the conditional probability distribution over stable or metastable crystal configurations [28]. It represents a unit cell by its chemical composition (A), fractional atomic coordinates (F), and lattice parameters (L), and can generate novel structures under specific conditions, such as a target chemical composition or external pressure [28]. Notably, CrystalFlow is reported to be approximately an order of magnitude more efficient than diffusion-based models in terms of integration steps, without sacrificing performance on established benchmarks [28].
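To make the flow matching idea concrete, the following is a minimal sketch restricted to one part of the representation, the fractional coordinates (F); composition (A), lattice (L), and conditioning are omitted. It assumes a straight-line probability path on the periodic unit interval using minimum-image displacements, and it replaces the trained network with a hypothetical "oracle" velocity pointing at a single known target. It illustrates how training targets are formed and how sampling integrates an ODE with a fixed number of steps; it is not CrystalFlow's architecture or code.

```python
import random

def wrap(x: float) -> float:
    """Map a fractional coordinate back into the periodic unit interval."""
    return x % 1.0

def shortest_displacement(x0: float, x1: float) -> float:
    """Minimum-image displacement from x0 to x1 on the periodic interval [0, 1)."""
    d = (x1 - x0) % 1.0
    return d - 1.0 if d > 0.5 else d

def cfm_training_pair(f0, f1, t):
    """One conditional flow matching example on a straight (minimum-image) path.

    f0: noise sample, f1: data sample (fractional coordinates), t in [0, 1].
    Returns the point f_t on the path and the target velocity u_t onto which a
    network v_theta(f_t, t, condition) would be regressed with a squared loss.
    """
    disp = [shortest_displacement(a, b) for a, b in zip(f0, f1)]
    f_t = [wrap(a + t * d) for a, d in zip(f0, disp)]
    return f_t, disp  # the velocity is constant along a straight path

def euler_generate(f0, velocity_fn, n_steps=10):
    """Sample by integrating df/dt = v(f, t) from t = 0 to t = 1 with explicit Euler.

    n_steps is the number of integration steps, the cost that flow models aim to keep small.
    """
    f = list(f0)
    dt = 1.0 / n_steps
    for k in range(n_steps):
        v = velocity_fn(f, k * dt)
        f = [wrap(x + dt * vx) for x, vx in zip(f, v)]
    return f

def oracle_velocity(target):
    """Hypothetical stand-in for a trained network: conditional velocity toward one target."""
    def v(f, t):
        return [shortest_displacement(x, y) / (1.0 - t) for x, y in zip(f, target)]
    return v

if __name__ == "__main__":
    random.seed(0)
    noise = [random.random() for _ in range(3)]  # three fractional coordinates
    target = [0.0, 0.5, 0.25]
    print(euler_generate(noise, oracle_velocity(target), n_steps=10))
```

With the oracle velocity, the last Euler step lands on the target (modulo the periodic boundary); a learned velocity field would only approximate this, which is why the number of integration steps becomes the main cost to trade off against accuracy.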

An alternative strategy for designing exotic quantum materials, such as those exhibiting superconductivity or unique magnetic states, involves steering existing generative models with specific design rules. The SCIGEN (Structural Constraint Integration in GENerative model) tool, for instance, can be applied to popular diffusion models to force them to adhere to user-defined geometric constraints during generation [29]. This technique was used to generate millions of candidate materials with specific Archimedean lattices (e.g., Kagome lattices), from which two previously unknown magnetic compounds, TiPdBi and TiPbSb, were successfully synthesized [29]. This approach targets a key bottleneck in discovering materials for transformative technologies such as quantum computing.

Table 1: Performance Metrics of Featured Generative AI Models

| Model Name | Material Class | Key Performance Achievement | Validation Method |
|---|---|---|---|
| PolyTAO with Group SELFIES [38] | Polymers | Generated polyimides with dielectric constants deviating <10% from target. | First-principles calculations. |
| Colour Design Model [39] | Bottlebrush Block Copolymers | Accurately predicted domain spacing and structural colour; designed polymers with reversible thermochromism. | Synthesis, DSC, cross-sectional SEM, and reflectance spectroscopy. |
| CrystalFlow [28] | Crystalline Materials | Performance comparable to state-of-the-art models; ~10x fewer integration steps than diffusion models. | Benchmarking on MP-20/MPTS-52 datasets; DFT calculations. |
| SCIGEN with DiffCSP [29] | Crystalline Materials (Quantum) | Generated 10M candidates with target lattices; led to synthesis of 2 new magnetic materials (TiPdBi, TiPbSb). | Simulation (41% of the simulated subset showed magnetism), synthesis, and experimental property measurement. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for AI-Driven Materials Discovery

| Item Name | Function/Description | Application Context |
|---|---|---|
| Generative Model Backends (e.g., DiffCSP, PolyTAO) | Core AI engines for generating novel material structures. | Used as a base model for inverse design; can be enhanced with tools like SCIGEN for constrained generation [29] [38]. |
| Symmetry-Aware Architectures | Neural networks that incorporate inductive biases like SE(3) or periodic-E(3) equivariance. | Critical for generating chemically plausible and stable crystal structures [28]. |
| High-Throughput Synthesis Platforms | Automated systems for rapid synthesis of AI-generated candidates. | Enables quick transition from in silico design to physical sample, as seen in the synthesis of TiPdBi and TiPbSb [29]. |
| Self-Consistent Field Theory (SCFT) | A polymer physics model that predicts nanostructures from molecular architectures. | Used to map polymer chain structures to domain spacing in the colour design model [39]. |
| Multilayer Optical Models | Computational models that simulate light interaction with layered nanostructures. | Translates material domain spacing and refractive index into a predicted macroscopic colour [39]. |
| First-Principles Calculations (DFT) | Quantum-mechanical computational methods for predicting material properties. | Used for high-fidelity validation of AI-generated materials' properties (e.g., dielectric constant, stability) [38] [28]. |

Experimental Protocols

Protocol: Inverse Design of Structural Colours in Bottlebrush Block Copolymers

This protocol details the workflow for inversely designing structurally coloured BBCPs, integrating a generative AI-driven model with synthesis and validation [39].


Materials and Equipment

- Macromonomers: High-purity macromonomers for the desired BBCP chemistry (e.g., for PDMS-b-PEO or PDMS-b-PCL bottlebrushes).

- Catalyst: Grubbs' catalyst for ring-opening metathesis polymerisation (ROMP).

- Solvents: Anhydrous toluene for synthesis and solution casting.

- Synthesis Setup: Schlenk line or glovebox for air-sensitive ROMP.

- Annealing Oven: Vacuum oven capable of maintaining 100°C.

- Characterization:

  - Spectroscopy: UV-Vis-NIR spectrometer for reflectance measurements.

  - Microscopy: Scanning Electron Microscope (SEM) for cross-sectional imaging.

  - Thermal Analysis: Differential Scanning Calorimeter (DSC).

Step-by-Step Procedure

Define Target and Extract Domain Spacing:

- Input the target colour (e.g., CIE coordinates) into the inverse design solver.

- The solver uses the integrated multilayer optical model to calculate the domain spacing (\(d\)) and refractive indices required to produce the target colour [39].
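The full multilayer optical model of [39] is not reproduced here. As a rough first pass, a first-order Bragg (periodic two-layer stack) approximation at normal incidence shows how a target colour maps to a domain spacing: \(\lambda_{\text{peak}} = 2(n_A d_A + n_B d_B)\) with \(d_A = f d\) and \(d_B = (1-f) d\), so \(d = \lambda_{\text{peak}} / \left(2[f n_A + (1-f) n_B]\right)\). The sketch below assumes a lamellar morphology, a target colour reduced to a single peak wavelength, and illustrative refractive indices; all of these are assumptions, not values from the source.

```python
def bragg_domain_spacing(peak_wavelength_nm: float, n_a: float, n_b: float,
                         fraction_a: float = 0.5) -> float:
    """First-order Bragg estimate of the lamellar period d (nm) for a target reflectance peak.

    Uses lambda_peak = 2 * (n_a * d_a + n_b * d_b) with d_a = f * d and d_b = (1 - f) * d,
    i.e. normal incidence on an ideal periodic two-layer stack. d is the full period
    (one A layer plus one B layer).
    """
    if not 0.0 <= fraction_a <= 1.0:
        raise ValueError("fraction_a must lie in [0, 1]")
    n_eff = fraction_a * n_a + (1.0 - fraction_a) * n_b
    return peak_wavelength_nm / (2.0 * n_eff)

# Illustrative values only: assumed refractive indices of about 1.40 (PDMS-like)
# and 1.46 (PEO-like) blocks, symmetric lamellae, green target peak at 550 nm.
d = bragg_domain_spacing(550.0, n_a=1.40, n_b=1.46)
print(f"required domain spacing ~ {d:.1f} nm")  # prints "required domain spacing ~ 192.3 nm"
```

This estimate ignores dispersion, finite layer counts, and disorder, which a proper multilayer (transfer-matrix) calculation would capture; it is useful mainly as a sanity check on the solver's output.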

Generate BBCP Architecture:

- Using the target domain spacing (\(d\)) and known monomer parameters (Kuhn length \(b\), monomer volume \(v\)), the SS-SCF polymer physics model calculates the backbone and side chain lengths (e.g., \(n_{b,\mathrm{PDMS}}\), \(n_{b,\mathrm{PEO}}\), \(n_{s,\mathrm{PDMS}}\), \(n_{s,\mathrm{PEO}}\)) needed to achieve the target \(d\) [39].
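The SS-SCF expressions of [39] are not given in this section, so the sketch below treats the forward model as a black box that predicts \(d\) from a backbone length and inverts it numerically by bisection. This works for any forward model in which \(d\) increases monotonically with backbone length. The power-law function `predict_d_placeholder` and its prefactor and exponent are hypothetical placeholders standing in for the SS-SCF model, not results from the source.

```python
from typing import Callable

def invert_monotone(forward: Callable[[float], float], target: float,
                    lo: float, hi: float, tol: float = 1e-6, max_iter: int = 200) -> float:
    """Find x in [lo, hi] with forward(x) ~= target, assuming forward is increasing."""
    if not forward(lo) <= target <= forward(hi):
        raise ValueError("target domain spacing is outside the model's range on [lo, hi]")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if forward(mid) < target:
            lo = mid  # predicted spacing too small: the root lies above mid
        else:
            hi = mid  # predicted spacing large enough: the root lies at or below mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Hypothetical stand-in for the SS-SCF forward model: d (nm) as a power law in the
# backbone degree of polymerization. The prefactor and exponent are placeholders.
def predict_d_placeholder(n_backbone: float) -> float:
    return 1.2 * n_backbone ** 0.85

n_b = invert_monotone(predict_d_placeholder, target=190.0, lo=10.0, hi=2000.0)
# Degrees of polymerization are integers in practice, so the result would be rounded.
print(round(n_b), predict_d_placeholder(n_b))
```

When both backbone and side chain lengths are free, the inverse problem has many solutions; a common simplification is to fix the side chains (set by the chosen macromonomers) and solve only for the backbone length, as done here.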

Synthesize BBCP:

- Synthesize the designed BBCP via sequential ring-opening metathesis polymerisation (ROMP) under an inert atmosphere to achieve narrow-dispersity, high molecular weight polymers [39].

- Purify the resulting polymer.

Assemble into Photonic Film:

- Dissolve the purified BBCP in toluene (a good solvent) to create a casting solution.

- Cast the solution in a controlled toluene atmosphere to allow slow solvent evaporation, facilitating polymer self-assembly into ordered nanostructures.

- Anneal the cast film in a vacuum oven at 100°C for 8 hours to remove residual solvent and improve structural order [39].

Validate Results:

- Colour Validation: Measure the reflectance spectrum of the film and compare it to the target.

- Structural Validation: Obtain cross-sectional SEM images to confirm the formation of the layered nanostructure and measure the experimental domain spacing (\(d_{\text{expt}}\)).

- Thermal Analysis: Use DSC to determine the melting temperature (\(T_m\)) of crystalline blocks, which is critical for interpreting thermochromic behavior.
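The colour and structural checks above reduce to comparing measured quantities against design targets. A minimal sketch, assuming the reflectance spectrum is available as paired lists of wavelengths and reflectance values; the function names and the example numbers are hypothetical, and taking the highest sampled point is a crude peak estimate (a fitted peak would be more precise on real data).

```python
def peak_wavelength(wavelengths_nm, reflectance):
    """Wavelength at which the sampled reflectance is maximal."""
    if len(wavelengths_nm) != len(reflectance) or not wavelengths_nm:
        raise ValueError("need equal-length, non-empty spectra")
    i_max = max(range(len(reflectance)), key=reflectance.__getitem__)
    return wavelengths_nm[i_max]

def relative_deviation(measured: float, target: float) -> float:
    """Signed relative deviation (measured - target) / target."""
    return (measured - target) / target

# Synthetic example spectrum and illustrative targets, not measured data.
wl = [450, 500, 550, 600, 650]
refl = [0.05, 0.20, 0.45, 0.30, 0.08]
lam_peak = peak_wavelength(wl, refl)
colour_error = relative_deviation(lam_peak, 540.0)   # peak vs target wavelength
spacing_error = relative_deviation(185.0, 192.3)     # d_expt (SEM) vs designed d
print(lam_peak, f"{colour_error:+.1%}", f"{spacing_error:+.1%}")
```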

Protocol: Constrained Generation of Quantum Materials with SCIGEN

This protocol describes using the SCIGEN tool to steer a generative model for the discovery of crystalline materials with specific quantum-relevant geometries [29].

Materials and Equipment

- Base Generative Model: A diffusion model for crystalline materials, such as DiffCSP.

- SCIGEN Code: The computer code that applies structural constraints during generation [29].

- High-Performance Computing (HPC): Access to supercomputing resources for large-scale generation and subsequent simulation (e.g., Oak Ridge National Laboratory's resources were used in the original study [29]).

- Simulation Software: Software for first-principles calculations (e.g., DFT) to simulate electronic and magnetic properties.

- Synthesis Lab: Access to a solid-state chemistry laboratory for synthesis (e.g., arc melting, solid-state reaction) and characterization (e.g., XRD, magnetometry).

Step-by-Step Procedure

Define Target Geometric Constraint:

- Identify the specific geometric pattern (e.g., Kagome and other Archimedean lattices, or the Lieb lattice) known to host the desired quantum phenomena (e.g., quantum spin liquids, flat bands) [29].
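Before generation, the chosen pattern has to be written down as concrete coordinates. A minimal sketch for a 2D Kagome layer, assuming a hexagonal cell with lattice vectors \(a_1 = (1, 0)\) and \(a_2 = (1/2, \sqrt{3}/2)\) (in units of the lattice constant) and three sites at fractional coordinates (0, 0), (1/2, 0), and (0, 1/2). The check confirms the defining Kagome feature: every site has four nearest neighbours at distance \(a/2\), forming corner-sharing triangles. How SCIGEN encodes its templates internally is not shown here.

```python
import math
from itertools import product

A1 = (1.0, 0.0)
A2 = (0.5, math.sqrt(3.0) / 2.0)
KAGOME_SITES = [(0.0, 0.0), (0.5, 0.0), (0.0, 0.5)]  # fractional coordinates

def to_cartesian(frac):
    """Convert fractional (u, v) to Cartesian coordinates in units of the lattice constant."""
    u, v = frac
    return (u * A1[0] + v * A2[0], u * A1[1] + v * A2[1])

def neighbour_distances(site_index, sites=KAGOME_SITES, images=1):
    """Sorted distances from one site to all sites in the surrounding periodic images."""
    x0, y0 = to_cartesian(sites[site_index])
    dists = []
    cells = product(range(-images, images + 1), repeat=2)
    for (i, j), (k, frac) in product(cells, enumerate(sites)):
        if (i, j) == (0, 0) and k == site_index:
            continue  # skip the site itself
        x, y = to_cartesian((frac[0] + i, frac[1] + j))
        dists.append(math.hypot(x - x0, y - y0))
    return sorted(dists)

def coordination_number(site_index, tol=1e-8):
    """Number of neighbours at the shortest distance."""
    d = neighbour_distances(site_index)
    return sum(1 for r in d if abs(r - d[0]) < tol)

for s in range(len(KAGOME_SITES)):
    print(s, coordination_number(s), round(neighbour_distances(s)[0], 6))
```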

Generate Constrained Candidates:

- Apply the SCIGEN tool to a base generative model (e.g., DiffCSP). SCIGEN works by blocking generation steps that do not align with the user-defined structural rules, steering the model to produce structures that conform to the target geometry [29].

- Generate a large pool (e.g., over 10 million) of candidate structures.
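The step above describes SCIGEN as blocking generation steps that violate the structural rules. The sketch below assumes one common way to realise this kind of steering in a diffusion sampler, inpainting-style masking: after every reverse step, the atoms assigned to the template are overwritten with the template positions, noised to the current level, while the remaining atoms evolve freely around them. The base model is replaced by a hypothetical toy denoiser with no chemical knowledge, and the noise schedule and all function names are illustrative; this is not the SCIGEN code from [29].

```python
import random

def toy_denoise_step(p, rng, sigma):
    """Hypothetical stand-in for one reverse step of a base model such as DiffCSP.

    It only drifts each fractional coordinate toward 0.5 and adds noise scaled by
    sigma; it knows nothing about chemistry.
    """
    u, v = p
    return ((u + 0.1 * (0.5 - u) + 0.1 * sigma * rng.gauss(0.0, 1.0)) % 1.0,
            (v + 0.1 * (0.5 - v) + 0.1 * sigma * rng.gauss(0.0, 1.0)) % 1.0)

def constrained_sample(n_atoms, template, n_steps=50, sigma_max=0.5, seed=0):
    """Reverse-diffusion loop with inpainting-style structural constraints.

    template maps atom index -> target fractional coordinate (u, v) on the desired
    lattice; all other atoms are left to the (toy) base model.
    """
    rng = random.Random(seed)
    x = [(rng.random(), rng.random()) for _ in range(n_atoms)]  # start from pure noise
    for step in range(n_steps, 0, -1):
        sigma = sigma_max * (step - 1) / n_steps  # hypothetical linear schedule, 0 at the end
        x = [toy_denoise_step(p, rng, sigma) for p in x]
        # Constraint injection: overwrite the constrained atoms with the template,
        # noised to the current level so they match the partially denoised state.
        for i, (u, v) in template.items():
            x[i] = ((u + sigma * rng.gauss(0.0, 1.0)) % 1.0,
                    (v + sigma * rng.gauss(0.0, 1.0)) % 1.0)
    return x

# Usage: atoms 0-2 are pinned to Kagome sites; atoms 3-4 are generated freely.
kagome = {0: (0.0, 0.0), 1: (0.5, 0.0), 2: (0.0, 0.5)}
structure = constrained_sample(n_atoms=5, template=kagome)
print(structure)
```

Because the noise on the template vanishes at the final step, the constrained atoms end exactly on the target lattice, while the unconstrained atoms are shaped by whatever the base model has learned.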

Screen for Stability:

- Apply computational stability filters (e.g., based on energy above hull) to the generated pool to identify the most thermodynamically plausible candidates. In the MIT study, 1 million of the 10 million generated materials passed this stage [29].
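Energy above hull measures how far a candidate's formation energy lies above the lower convex hull formed by competing phases. A minimal sketch for a binary A-B system, assuming formation energies in eV/atom with the pure elements at 0 eV/atom; real candidates such as TiPdBi are ternary, where an established tool (for example, pymatgen's `PhaseDiagram` with `get_e_above_hull`) would be used instead. The 0.1 eV/atom cutoff and all energies below are illustrative assumptions, not values from [29].

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (fraction of element B, formation energy in eV/atom)

def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull (Andrew's monotone chain) of composition-energy points."""
    pts = sorted(set(points) | {(0.0, 0.0), (1.0, 0.0)})  # elemental references
    hull: List[Point] = []
    for p in pts:
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()  # hull[-1] lies on or above the segment hull[-2] -> p
        hull.append(p)
    return hull

def energy_above_hull(candidate: Point, reference: List[Point]) -> float:
    """Height (eV/atom) of a candidate above the hull of known phases; negative means below it."""
    x, e = candidate
    hull = lower_hull(reference)
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            hull_e = e1 + (e2 - e1) * (x - x1) / (x2 - x1)
            return e - hull_e
    raise ValueError("composition must lie in [0, 1]")

known = [(0.5, -0.40), (0.75, -0.25)]            # illustrative known phases
candidates = [(0.25, -0.15), (0.6, -0.10), (0.4, -0.38)]
E_HULL_CUTOFF = 0.1                              # eV/atom, illustrative threshold
kept = [c for c in candidates if energy_above_hull(c, known) <= E_HULL_CUTOFF]
print(kept)
```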

Simulate Target Properties:

- Perform detailed ab initio simulations on a down-sampled set of stable candidates (e.g., 26,000 materials) to understand their electronic structure and predict properties like magnetism. The MIT study found magnetic behavior in 41% of the simulated subset [29].

Downselect and Synthesize:

- Select the most promising candidates for experimental synthesis. This decision should be based on simulation results, synthetic feasibility, and chemical intuition.

- Synthesize the chosen materials (e.g., TiPdBi and TiPbSb) and characterize their structures and properties to validate the AI model's predictions [29].

The process of discovering novel molecules for medicines and materials is traditionally cumbersome and expensive, consuming vast computational resources and months of human labor to narrow down the enormous space of potential candidates [40]. Generative models for molecular design have emerged as a powerful tool to navigate this complex search space. Within this field, a significant challenge has been the effective integration of different AI paradigms. Large Language Models (LLMs) bring broad domain knowledge and the ability to interpret natural language queries, but they are not natively built to understand the nuanced, non-sequential graph structures of molecules [40]. In contrast, graph-based models are specifically designed for generating and predicting molecular structures but struggle with natural language understanding and can yield results that are difficult to interpret [40]. Multimodal integration, which combines LLMs with graph-based models, creates a unified framework that leverages the strengths of both, promising to streamline the end-to-end process of molecular design from a simple text prompt to a synthesizable candidate [40]. This application note details the protocols, data, and key reagents for implementing such multimodal systems.

Key Multimodal Frameworks and Performance Metrics

Recent research has demonstrated several successful approaches to integrating LLMs with graph models. The following table summarizes the core methodologies and their reported performance.

Table 1: Key Multimodal Frameworks for Molecular Design and Property Prediction

| Framework Name | Core Integration Methodology | Reported Performance Advantages |
|---|---|---|
| Llamole [40] | Uses a base LLM as a gatekeeper to interpret natural language queries and automatically switches to specialized graph modules (diffusion model, neural network, reaction predictor) via trigger tokens. | Improved success ratio for retrosynthetic planning from 5% to 35%; generated molecules better matched user specifications and were more likely to have a valid synthesis plan [40]. |
| MMFRL [41] | Leverages relational learning to enrich embedding initialization during multimodal pre-training. Systematically investigates early, intermediate, and late-stage fusion of graph and other data modalities. | Significantly outperformed baseline methods on MoleculeNet benchmarks with superior accuracy and robustness; intermediate fusion achieved the highest scores in 7 out of 11 tasks [41]. |
| MolLLMKD [42] | Enhances molecular representation with semantic prompts from an LLM, followed by multi-level knowledge distillation between graph neural networks at atom, bond, substructure, and molecule levels. | Achieved state-of-the-art performance on 12 benchmark datasets for molecular property prediction [42]. |
| ExLLM [43] | An LLM-as-optimizer framework that uses a compact, evolving experience snippet for memory, a k-offspring scheme for exploration, and a feedback adapter for multi-objective constraints. | Set a new state-of-the-art on the PMO benchmark with an aggregate score of 19.165 (max 23), ranking first on 17 out of 23 tasks [43]. |
| MolEdit [44] | A knowledge editing framework for Molecule Language Models (MoLMs) that uses a Multi-Expert Knowledge Adapter and Expertise-Aware Editing Switcher to update model knowledge. | Delivered up to 18.8% higher Reliability and 12.0% better Locality than baselines in editing tasks for molecule-caption generation [44]. |
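The trigger-token mechanism described for Llamole amounts to a dispatcher: the LLM writes text, and whenever it emits a trigger span, control passes to a specialised module whose result is spliced back into the output. A minimal sketch of that control flow follows; the token strings (`<design>`, `<retro>`, `<predict>`), the module functions, and their outputs are hypothetical placeholders, not Llamole's actual vocabulary or API.

```python
import re
from typing import Callable, Dict, List, Tuple

# Hypothetical modules. In a real system these would wrap a graph diffusion
# generator, a property-prediction GNN and a reaction/retrosynthesis model.
def design_module(spec: str) -> str:
    return f"[graph generator called with spec: {spec!r}]"

def retro_module(spec: str) -> str:
    return f"[retrosynthesis planner called for: {spec!r}]"

def predict_module(spec: str) -> str:
    return f"[property predictor called for: {spec!r}]"

MODULES: Dict[str, Callable[[str], str]] = {
    "design": design_module,
    "retro": retro_module,
    "predict": predict_module,
}

# Matches <name>...</name> for a registered name; \1 requires the same closing tag.
TRIGGER = re.compile(r"<(design|retro|predict)>(.*?)</\1>", re.DOTALL)

def route(llm_output: str) -> Tuple[str, List[str]]:
    """Replace each trigger span in the LLM output with its module's result.

    Returns the rewritten text and the ordered list of modules that were called.
    """
    calls: List[str] = []

    def _dispatch(match):
        name, spec = match.group(1), match.group(2).strip()
        calls.append(name)
        return MODULES[name](spec)

    return TRIGGER.sub(_dispatch, llm_output), calls

text = ("To meet the request I will <design>non-toxic, MW under 400</design> "
        "and then plan <retro>the generated molecule</retro>.")
print(route(text))
```

In an actual multimodal system the LLM would generate tokens incrementally and pause at each trigger, so module outputs can condition the rest of the text; the post-hoc substitution here only illustrates the routing logic.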

Experimental Protocols for Multimodal Integration