Generative AI for Materials Science: Principles, Models, and Applications in Drug Development

Abstract

This article provides a comprehensive overview of the principles of generative artificial intelligence (AI) and its transformative impact on materials discovery and design, with a special focus on applications for drug development professionals. We explore the foundational concepts of generative models, including Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), Diffusion Models, and Transformer-based architectures, and their specific adaptations for molecular and crystalline materials. The scope extends to methodological applications in inverse design and autonomous laboratories, strategies for overcoming critical challenges like data scarcity and model generalizability, and rigorous validation frameworks comparing AI-generated materials with traditional methods. By synthesizing insights from the latest research, this article serves as an essential resource for researchers and scientists aiming to leverage generative AI to accelerate the development of novel materials and therapeutics.

The Core Principles: How Generative AI Learns the Language of Materials

The discovery of novel materials has long been the cornerstone of technological progress, from the lithium cobalt oxide that powers modern batteries to the advanced composites in aerospace [1]. Historically, this process has been dominated by experiment-driven methods, relying on laborious trial-and-error, human intuition, and phenomenological theories [2] [3]. This approach is not only time-consuming and resource-intensive but is fundamentally limited in its ability to navigate the vastness of chemical space, which is estimated to exceed 10^60 carbon-based molecules alone [2]. Consequently, the timeline from a material's conception to its deployment has often spanned decades.

A profound paradigm shift is now underway, moving from this traditional model to an AI-driven inverse design approach. Inverse design reverses the traditional discovery process: it starts with the desired properties and uses computational models to generate candidate materials that meet those specific criteria [4] [3]. This shift is powered by generative artificial intelligence (AI) models, which learn the complex probability distributions linking material structures to their properties. Once learned, these models can sample from this distribution to propose novel, stable materials with targeted functionalities, dramatically accelerating the discovery pipeline for applications in sustainability, healthcare, and energy innovation [4] [5].

The Evolution of Materials Discovery Paradigms

The journey of materials discovery has evolved through several distinct paradigms, each building upon the previous one while introducing new capabilities and efficiencies.

Table 1: The Evolution of Materials Discovery Paradigms

| Paradigm | Core Approach | Key Tools/Methods | Limitations |
|---|---|---|---|
| Experiment-Driven | Trial-and-error experimentation based on intuition and observation [3]. | Lab synthesis, characterization, serendipitous discovery. | Time-consuming, resource-intensive, limited by human bias and cognitive limits [3]. |
| Theory-Driven | Using theoretical models to predict material behavior and properties [3]. | Density Functional Theory (DFT), molecular dynamics, thermodynamic models. | Computationally expensive, limited to relatively small system sizes, requires expert knowledge [5] [2]. |
| Computation-Driven | High-throughput screening of known or slightly modified material databases [5] [3]. | High-throughput computational screening, combinatorial chemistry. | Fundamentally limited by the size and diversity of the underlying database; cannot propose truly novel structures [6] [1]. |
| AI-Driven Inverse Design | Direct generation of novel material structures conditioned on desired properties [4] [6]. | Generative AI models (e.g., diffusion models, GANs, transformers). | Challenges with data scarcity, model generalizability, and experimental validation [5] [7]. |

The transition to the AI-driven paradigm represents the most significant leap. While computation-driven methods can screen millions of known candidates, they are ultimately constrained by the existing database. As noted in the development of MatterGen, screening-based methods "are still fundamentally limited by the number of known materials," exploring only a tiny fraction of potentially stable inorganic compounds [6]. Generative models break this constraint by exploring the near-infinite space of unknown but plausible materials.

Core Principles of Generative Models for Materials Science

Generative models for materials science are distinguished from discriminative models by their learning objective. Discriminative models learn a mapping function, \( y = f(x) \), to predict a property \( y \) from a structure \( x \). In contrast, generative models learn the underlying probability distribution of the data itself, \( P(x) \) [2]. This allows them to create new samples that resemble the training data.

A critical feature enabling inverse design is the latent space, a lower-dimensional representation that encodes the structure-property relationships of materials. By navigating and sampling from this latent space based on target properties, these models can generate novel, stable material structures that fulfill specific design requirements [2].
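The distinction between the two learning objectives can be illustrated with a deliberately tiny example. The sketch below is not taken from any cited model: the five data points are invented, a least-squares line stands in for the discriminative model, and a single Gaussian stands in for the learned distribution P(x).

```python
import random
import statistics

# Toy dataset of (lattice parameter in angstrom, band gap in eV); values are invented.
data = [(3.9, 1.1), (4.0, 1.3), (4.1, 1.4), (4.2, 1.7), (4.3, 1.8)]
xs = [x for x, _ in data]
ys = [y for _, y in data]


def fit_discriminative(xs, ys):
    """Least-squares line y = a*x + b: predicts a property from a structure."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var = sum((x - mx) ** 2 for x in xs)
    a = cov / var
    return a, my - a * mx


def fit_generative(xs):
    """Gaussian estimate of P(x): a distribution we can draw new inputs from."""
    return statistics.fmean(xs), statistics.stdev(xs)


a, b = fit_discriminative(xs, ys)
mu, sigma = fit_generative(xs)

rng = random.Random(0)
new_samples = [rng.gauss(mu, sigma) for _ in range(3)]

print(f"discriminative: predicted gap at 4.15 = {a * 4.15 + b:.2f} eV")
print("generative: sampled lattice parameters =", [round(s, 2) for s in new_samples])
```

The discriminative fit can only answer questions about a structure it is handed, whereas the generative fit can propose new inputs. Real models replace the Gaussian with a far richer distribution over atom types, coordinates, and lattices.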

Key Generative Model Architectures

Several generative model architectures have been adapted and proven effective for inverse design in materials science, each with unique strengths.

Table 2: Key Generative Model Architectures in Materials Science

| Model Type | Core Principle | Example in Materials Science | Key Application/Strength |
|---|---|---|---|
| Variational Autoencoders (VAEs) | Learn a probabilistic latent space for data generation through an encoder-decoder structure [2]. | CDVAE [6] | Learning a continuous latent representation of material structures. |
| Generative Adversarial Networks (GANs) | Two neural networks (generator and discriminator) are trained adversarially to produce realistic data [2]. | - | Generating realistic molecular structures. |
| Diffusion Models | Generate samples by iteratively denoising data from a simple noise distribution, following a learned reverse process [6] [1]. | MatterGen [6] [1], DiffCSP [6] [8] | State-of-the-art performance in generating stable, diverse 3D crystal structures. |
| Transformers | Use self-attention mechanisms to model long-range dependencies in sequential data [7]. | MatterGPT [2], MEMOS [9] | Property-conditional generation of molecular sequences (e.g., SMILES). |
| Generative Flow Networks (GFlowNets) | Learn a generative policy for sequential decision-making to sample compositional structures with probabilities proportional to a given reward [2]. | Crystal-GFN [2] | Discovering crystal structures with stability rewards. |

Among these, diffusion models have recently shown remarkable success in generating 3D crystal structures. Models like MatterGen employ a customized diffusion process that respects the periodicity and symmetries of crystals, gradually refining atom types, coordinates, and the periodic lattice from a random initial state [6] [1].

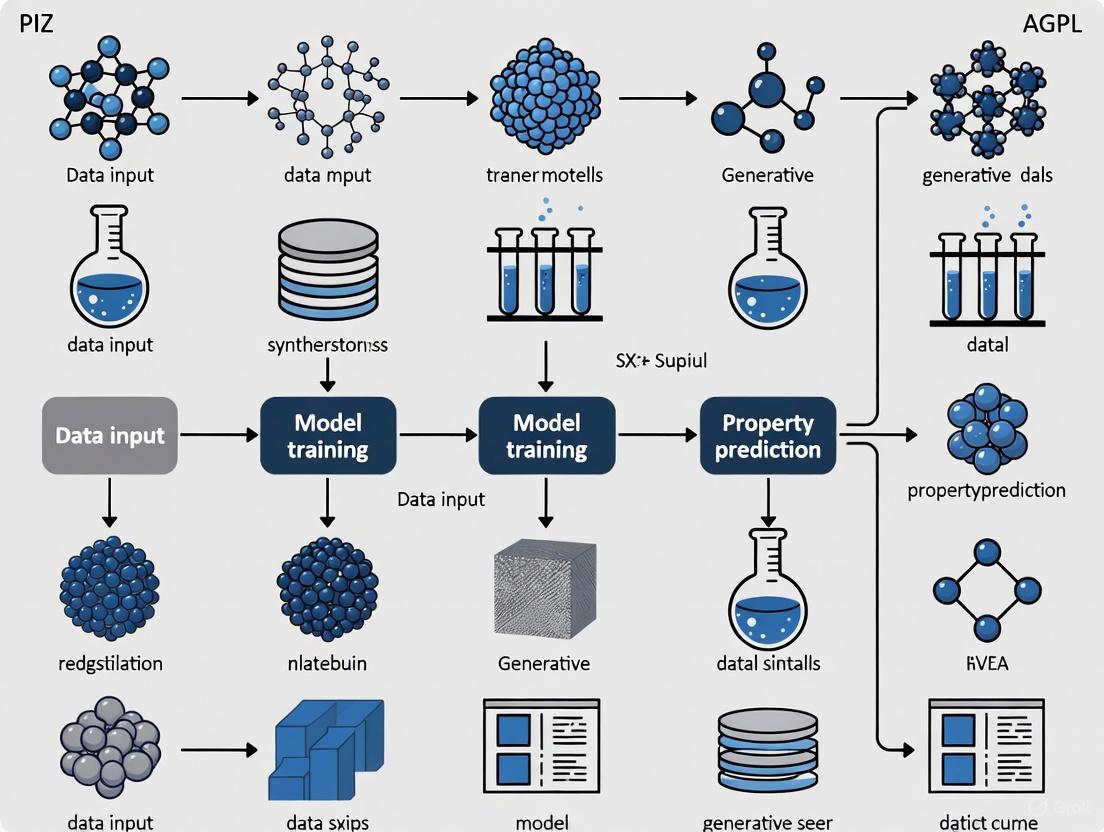

Diagram 1: Simplified workflow of a conditional diffusion model for materials generation. The model learns to reverse a noise process, gradually denoising a random input into a coherent material structure, guided by property constraints.
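One way to see what "respecting periodicity" means for atomic coordinates is to noise fractional coordinates and wrap them back into the unit cell. The following is a minimal sketch under that assumption, not MatterGen's actual noise process; the function names and the noise scale are hypothetical.

```python
import random


def wrap(frac):
    """Map a fractional coordinate back into [0, 1)."""
    wrapped = frac % 1.0
    # Guard against floating-point rounding that can return exactly 1.0.
    return 0.0 if wrapped >= 1.0 else wrapped


def noise_fractional_coords(coords, sigma, rng):
    """Add Gaussian noise to each fractional coordinate, then wrap periodically."""
    return [[wrap(c + rng.gauss(0.0, sigma)) for c in atom] for atom in coords]


def periodic_delta(a, b):
    """Shortest signed displacement from a to b across the cell boundary."""
    return (b - a + 0.5) % 1.0 - 0.5


rng = random.Random(0)
atoms = [[0.0, 0.5, 0.99], [0.25, 0.25, 0.25]]
noisy = noise_fractional_coords(atoms, sigma=0.1, rng=rng)
print(noisy)
print(periodic_delta(0.95, 0.05))  # a small positive step across the boundary
```

Measuring displacements with `periodic_delta` rather than plain subtraction keeps an atom at 0.95 and one at 0.05 close together, which is what a crystal's periodic boundary implies.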

Leading Models and Experimental Protocols

MatterGen: A Foundational Diffusion Model

MatterGen is a diffusion-based generative model designed for creating stable, diverse inorganic materials across the periodic table [6] [1]. Its architecture is specifically tailored for crystalline materials, with a diffusion process that independently handles atom types, coordinates (respecting periodic boundaries), and the periodic lattice.

Key Experimental Protocol and Evaluation: The base MatterGen model was pretrained on a large and diverse dataset (Alex-MP-20) containing 607,683 stable structures from the Materials Project and Alexandria databases [6]. To evaluate its performance, researchers typically:

- Generate a set of candidate structures (e.g., 1,000-10,000 samples).

- Relax the generated structures using Density Functional Theory (DFT) calculations to find their local energy minimum.

- Assess stability by calculating the energy above the convex hull. A structure is considered stable if this energy is within 0.1 eV per atom of the convex hull defined by a large reference dataset (e.g., Alex-MP-ICSD with 850,384 structures) [6].

- Check for novelty and uniqueness using structure matching algorithms to ensure generated materials are both unique compared to other generated samples and new compared to known databases.
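The final bookkeeping step of this protocol, counting candidates that are stable, unique, and new, can be sketched as follows. This assumes the expensive parts (DFT relaxation and structure matching) have already been run; the `Candidate` class and its `fingerprint` field are hypothetical stand-ins for the output of a structure matcher.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    fingerprint: str     # hypothetical canonical ID produced by structure matching
    e_above_hull: float  # eV per atom, computed after DFT relaxation


def sun_fraction(candidates, known_fingerprints, threshold=0.1):
    """Fraction of candidates that are stable, unique, and new."""
    seen = set()
    count = 0
    for cand in candidates:
        # The first occurrence of a fingerprint counts as unique; repeats do not.
        is_unique = cand.fingerprint not in seen
        seen.add(cand.fingerprint)
        is_stable = cand.e_above_hull <= threshold
        is_new = cand.fingerprint not in known_fingerprints
        if is_stable and is_unique and is_new:
            count += 1
    return count / len(candidates) if candidates else 0.0


generated = [
    Candidate("A", 0.02),
    Candidate("A", 0.02),  # duplicate of the first sample
    Candidate("B", 0.30),  # above the stability threshold
    Candidate("C", 0.05),  # already present in the reference set
    Candidate("D", 0.00),
]
print(sun_fraction(generated, known_fingerprints={"C"}))
```

Treating the first occurrence of a duplicated structure as unique is one possible convention; a stricter evaluation could discard every member of a duplicated group.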

Performance Metrics: In benchmark tests, MatterGen demonstrated a substantial improvement over previous state-of-the-art models (CDVAE and DiffCSP) [6]:

- It more than doubled the percentage of generated materials that were stable, unique, and new (SUN).

- Generated structures were more than ten times closer to their DFT-relaxed ground-truth structures.

- 78% of its generated structures fell below the 0.1 eV per atom stability threshold on the Materials Project convex hull.

- 61% of generated structures were new, not present in the extended reference dataset [6].

Constrained Generation with SCIGEN

For designing materials with exotic quantum properties, standard generative models optimized for stability can struggle. SCIGEN (Structural Constraint Integration in GENerative model) is a tool developed by MIT researchers to address this [8]. It is not a standalone model but a constraint layer, implemented as code that can be integrated with existing diffusion models such as DiffCSP.

Key Experimental Protocol: SCIGEN enables the generation of materials with specific geometric patterns (e.g., Kagome or Lieb lattices) known to host quantum phenomena like spin liquids or flat bands [8]. The workflow is as follows:

- Define Geometric Constraints: The user specifies the desired structural pattern, such as an Archimedean lattice.

- Constrained Generation: SCIGEN is applied to a diffusion model, blocking generation steps that do not align with the structural rules at each iterative denoising step.

- High-Throughput Screening: The model generates a massive pool of candidate materials (e.g., over 10 million).

- Stability Pre-screening: Candidates are filtered for stability, yielding a smaller subset (e.g., one million).

- Detailed Simulation: A smaller sample (e.g., 26,000) undergoes detailed DFT simulations to understand atomic behavior and properties like magnetism.

- Synthesis and Validation: Promising candidates are synthesized and experimentally characterized.

In a proof-of-concept, this protocol led to the synthesis of two previously undiscovered compounds, TiPdBi and TiPbSb, with properties that largely aligned with AI predictions [8].
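The idea of enforcing a geometric constraint at every denoising step can be illustrated with a toy loop. This is a simplified sketch and not SCIGEN's implementation: the "denoiser" is a random jitter standing in for a learned network, and the constraint is enforced by overwriting the constrained atoms after each step. The three sites are the kagome positions of a hexagonal cell in fractional coordinates.

```python
import random


def toy_denoise_step(positions, rng, scale):
    """Stand-in for a learned denoiser: perturbs every coordinate slightly."""
    return [[x + rng.gauss(0.0, scale) for x in p] for p in positions]


def apply_constraint(positions, constrained_sites):
    """Overwrite constrained atoms with the lattice sites they must occupy."""
    out = [list(p) for p in positions]
    for index, site in constrained_sites.items():
        out[index] = list(site)
    return out


def constrained_sampling(n_atoms, constrained_sites, steps=50, seed=0):
    """Run the toy reverse process, re-imposing the constraint after each step."""
    rng = random.Random(seed)
    positions = [[rng.random(), rng.random()] for _ in range(n_atoms)]
    for t in range(steps, 0, -1):
        positions = toy_denoise_step(positions, rng, scale=0.01 * t / steps)
        positions = apply_constraint(positions, constrained_sites)
    return positions


# Atom index -> required in-plane fractional position (kagome motif).
kagome_sites = {0: (0.5, 0.0), 1: (0.0, 0.5), 2: (0.5, 0.5)}
result = constrained_sampling(n_atoms=5, constrained_sites=kagome_sites)
for atom in result:
    print([round(x, 3) for x in atom])
```

Atoms 0 to 2 end exactly on the motif while the remaining atoms are free to move, which mirrors the intent of generating a full structure around a fixed geometric pattern.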

MEMOS: Inverse Design for Molecular Emitters

For molecular materials, the MEMOS framework demonstrates inverse design for organic narrowband emitters used in displays [9]. MEMOS combines Markov molecular sampling with multi-objective optimization.

Key Experimental Protocol:

- Surrogate Model Training: A dataset of polymer repeat units (represented as SMILES strings) is annotated with molecular descriptors and available experimental properties (e.g., glass transition temperature Tg). Sparse experimental data is supplemented by training highly accurate surrogate models (e.g., Random Forest with R² > 0.90 for Tg) to predict missing values [10].

- Conditional Generation: A property-conditional Transformer model generates chemically valid SMILES strings conditioned on target properties.

- Closed-Loop Optimization: Generated candidates are automatically featurized, evaluated by the surrogate models, and selected through a score-diversity scheme that balances performance with novelty. This creates an iterative sampling, prediction, and refinement loop [9] [10].

- Validation: Successful frameworks have retrieved well-documented experimental structures and identified new emitters with a success rate up to 80% as validated by DFT calculations [9].
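A score-diversity selection step of the kind described in the closed-loop stage could look like the sketch below. The source does not specify the scheme, so everything here is an assumption: similarity is a crude Jaccard index over character bigrams of the SMILES string (a real pipeline would use chemical fingerprints), and `penalty` is a hypothetical trade-off weight.

```python
def bigrams(text):
    """Set of adjacent character pairs, used as a crude structural feature."""
    return {text[i:i + 2] for i in range(len(text) - 1)}


def jaccard(a, b):
    """Jaccard similarity between two feature sets."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def select_diverse(candidates, k, penalty=0.5):
    """Greedily pick k (smiles, score) pairs, penalizing similarity to earlier picks."""
    features = {smiles: bigrams(smiles) for smiles, _ in candidates}
    chosen = []
    pool = list(candidates)

    def adjusted(item):
        smiles, score = item
        max_sim = max(
            (jaccard(features[smiles], features[c]) for c, _ in chosen),
            default=0.0,
        )
        return score - penalty * max_sim

    while pool and len(chosen) < k:
        best = max(pool, key=adjusted)
        chosen.append(best)
        pool.remove(best)
    return chosen


candidates = [("c1ccccc1", 0.90), ("c1ccccc1C", 0.89), ("CCO", 0.70)]
picked = select_diverse(candidates, k=2)
print(picked)
```

With these inputs the second pick is the lower-scoring but dissimilar candidate, because the near-duplicate of the first pick is penalized.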

The implementation of AI-driven inverse design relies on a suite of computational tools and databases that form the modern materials scientist's toolkit.

Table 3: Key Resources for AI-Driven Materials Discovery

| Resource Name | Type | Function and Relevance | Access |
|---|---|---|---|
| Materials Project (MP) [6] [1] | Database | A core database of computed crystal structures and properties used for training and benchmarking generative models like MatterGen. | Open Access |
| Alexandria [6] [1] | Database | A large-scale materials database used alongside MP to provide a diverse and extensive training dataset for foundational models. | - |
| Inorganic Crystal Structure Database (ICSD) [6] | Database | A comprehensive collection of experimentally determined crystal structures, used as a reference for assessing the novelty of generated materials. | Licensed |
| Density Functional Theory (DFT) | Computational Method | The computational gold standard for relaxing AI-generated structures and verifying their stability and properties. Essential for model training and validation [5] [6]. | Software-dependent |
| Machine Learning Force Fields (MLFF) | Computational Method | Provides the accuracy of ab initio methods at a fraction of the computational cost, enabling large-scale simulations of generated materials [5] [2]. | - |
| SMILES/SELFIES [7] | Representation | String-based representations of molecular structures that enable the use of sequence-based models (e.g., Transformers) for organic molecule generation. | - |
| MatterGen [6] [1] | Generative Model | An open-source, diffusion-based model for generating novel inorganic crystals conditioned on a wide range of property constraints. | Open Source (MIT License) |
| SCIGEN [8] | Generative Tool | A tool for enforcing hard geometric constraints during generation with diffusion models, enabling the discovery of quantum materials. | - |

Challenges and Future Directions

Despite rapid progress, several challenges remain in the field of AI-driven materials discovery. Data scarcity for specific material classes and properties is a significant hurdle, often addressed by training surrogate models or using data augmentation [4] [10]. The synthesizability of AI-proposed materials is another critical concern; a material is only useful if it can be reliably synthesized in the lab. Furthermore, issues of model interpretability, dataset biases, and the computational cost of validation via DFT persist [4] [5].

Future directions focus on overcoming these limitations:

- Multimodal and Foundation Models: Developing models that can process and integrate information from multiple data types (text, images, structures) to build a more comprehensive understanding of materials [7].

- Physics-Informed Architectures: Incorporating physical laws and constraints directly into model architectures to improve generalizability and physical realism [4] [5].

- Closed-Loop Discovery Systems: Fully integrating generative AI, robotic automation, and high-throughput characterization into autonomous laboratories that can propose, synthesize, and test materials with minimal human intervention [5] [10].

- Explainable AI (XAI): Improving the transparency and trustworthiness of models by providing insights into the reasoning behind their predictions and generations [5].

The field of materials discovery is in the midst of a revolutionary paradigm shift, moving from the slow, intuition-guided process of trial-and-error to the targeted, accelerated approach of AI-powered inverse design. Foundational generative models like MatterGen are now capable of directly designing novel, stable inorganic crystals across the periodic table, while tools like SCIGEN and frameworks like MEMOS enable precise design for quantum materials and molecular systems. This shift is underpinned by core principles of generative AI, which learns the probability distribution of material structures to enable sampling from a near-infinite space of possibilities. While challenges remain, the ongoing integration of these models with experimental workflows, multimodal data, and physical knowledge is poised to dramatically accelerate the design of next-generation materials for sustainability, healthcare, and energy innovation.

The discovery and development of new materials are fundamental to advances in sustainability, healthcare, and energy innovation. Traditional experiment-driven approaches, however, often rely on laborious trial and error, so the path from a material's conception to its deployment can span decades [2]. Generative artificial intelligence (genAI) presents a paradigm shift, enabling the inverse design of new materials by generating candidate structures with targeted properties. This approach lets researchers navigate the vast chemical space far more efficiently than trial-and-error search [2] [11]. Among the most impactful architectures for this task are Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), Diffusion Models, and Transformers. Each offers a distinct mechanism for learning the underlying probability distribution of materials data, and thereby for creating novel, plausible structures [2]. This whitepaper is a technical guide to these core generative architectures in the context of materials science research. It details their operating principles, comparative strengths and weaknesses, and practical experimental protocols for their application, as a resource for researchers, scientists, and drug development professionals.

Core Architectural Principles

Variational Autoencoders (VAEs)

VAEs are generative models that combine autoencoders with probabilistic techniques to learn a meaningful latent representation of input data [12]. The architecture consists of an encoder that maps input data into a lower-dimensional latent space by producing parameters (mean and variance) of a probability distribution (e.g., Gaussian), and a decoder that reconstructs data from samples taken from this latent space [12] [13]. A critical component is the reparameterization trick, which allows gradients to flow through the stochastic sampling process, enabling model optimization via stochastic gradient descent [12].
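The reparameterization trick and the accompanying KL regularizer are short enough to write out directly. The sketch below uses plain Python rather than an autodiff framework, so it shows only the arithmetic; parameterizing the encoder output as a log-variance is a common convention and an assumption here, not something the source specifies.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1).

    The randomness lives entirely in eps, so z is a deterministic,
    differentiable function of mu and log_var.
    """
    return [
        m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
        for m, lv in zip(mu, log_var)
    ]


def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, sigma^2) to N(0, 1), summed over dimensions."""
    return 0.5 * sum(
        math.exp(lv) + m * m - 1.0 - lv for m, lv in zip(mu, log_var)
    )


rng = random.Random(0)
mu, log_var = [0.3, -1.2], [-0.5, 0.1]
z = reparameterize(mu, log_var, rng)
print("latent sample:", [round(v, 3) for v in z])
print("KL term:", round(kl_to_standard_normal(mu, log_var), 4))
```

In a full VAE the KL term is added to a reconstruction loss, and an autodiff library propagates gradients through `mu` and `log_var` while treating `eps` as a constant.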

In materials science, the latent space of a VAE can be traversed to interpolate between known structures or sample new ones, making it valuable for exploring continuous regions of the materials space [2]. For instance, a Supramolecular VAE (SmVAE) has been applied to design Metal-Organic Frameworks (MOFs) for carbon dioxide separation, successfully identifying top-performing structures by sampling from the learned distribution [11].

Generative Adversarial Networks (GANs)

GANs operate on an adversarial training paradigm where two neural networks, a generator (G) and a discriminator (D), are pitted against each other [12] [14]. The generator creates synthetic data from random noise, aiming to mimic real data. The discriminator evaluates inputs, attempting to distinguish real data from the generator's fakes. This setup forms a two-player minimax game, mathematically captured by the objective function [14]:

\[ \min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_{z}(z)}[\log (1 - D(G(z)))] \]
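As a worked illustration of the value function, the sketch below estimates V(D, G) from a batch of discriminator outputs. The function name and the sample values are illustrative; the only external fact used is that a discriminator that outputs 0.5 everywhere yields a value of -log 4.

```python
import math


def gan_value(d_real, d_fake):
    """Monte Carlo estimate of V(D, G).

    d_real: discriminator outputs D(x) on real samples, each in (0, 1).
    d_fake: discriminator outputs D(G(z)) on generated samples, each in (0, 1).
    """
    real_term = sum(math.log(d) for d in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - d) for d in d_fake) / len(d_fake)
    return real_term + fake_term


# A confident discriminator pushes V up; a fooled one sits at -log(4).
confident = gan_value(d_real=[0.9, 0.95], d_fake=[0.1, 0.05])
fooled = gan_value(d_real=[0.5, 0.5], d_fake=[0.5, 0.5])
print(round(confident, 4), round(fooled, 4))
```

The discriminator is trained to increase this quantity and the generator to decrease it, which is the minimax structure of the objective.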

For materials discovery, GANs can generate high-fidelity structural data. For example, ZeoGAN, a variant of Wasserstein GAN with gradient penalty (WGAN-GP), was used to generate pure silica zeolite structures with targeted methane adsorption properties, producing 121 new crystalline materials [11].

Diffusion Models

Diffusion models generate data through an iterative noising and denoising process [12]. The forward diffusion process systematically adds Gaussian noise to the training data over many steps until the original structure is destroyed. The reverse denoising process, learned by a neural network (typically a U-Net in image generation; models for atomistic data often use graph neural networks instead), then gradually removes this noise to reconstruct the data from pure noise [12]. In latent diffusion models, like Stable Diffusion, this process occurs in a lower-dimensional latent space encoded by a VAE, significantly improving computational efficiency [12].
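The forward noising process has a closed form in the standard Gaussian formulation, which makes it easy to sketch. The linear schedule and its endpoints below are common defaults chosen for illustration and are assumptions, not values from the source.

```python
import math
import random


def alpha_bar_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule.

    Requires num_steps >= 2.
    """
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def q_sample(x0, alpha_bar_t, rng):
    """Draw x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    signal = math.sqrt(alpha_bar_t)
    noise = math.sqrt(1.0 - alpha_bar_t)
    x_t = [signal * x + noise * e for x, e in zip(x0, eps)]
    return x_t, eps


alpha_bars = alpha_bar_schedule(1000)
rng = random.Random(0)
x0 = [1.0, -1.0, 0.5]
for step in (0, 499, 999):
    x_t, _ = q_sample(x0, alpha_bars[step], rng)
    print(step, [round(v, 3) for v in x_t])
```

Early steps leave the data nearly intact and late steps are almost pure noise. A denoising network is typically trained to predict `eps` from `x_t` and the step index, and sampling runs that prediction in reverse.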

These models excel at producing diverse and high-quality outputs. In materials science, DiffLinker is a diffusion model designed to generate 3D molecular structures, including the linker molecules for MOFs, and has been applied to design materials for CO2 capture [11].

Transformers

Transformers have revolutionized generative AI through the self-attention mechanism, which weighs the importance of different parts of the input data when generating an output [15] [16]. Unlike recurrent networks, transformers process entire sequences in parallel, making them highly efficient and capable of capturing long-range dependencies [16]. In generative tasks, decoder-only transformer architectures are often used to autoregressively produce sequences, such as text, code, or structured representations of materials [16].
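The self-attention computation used by decoder-only models can be sketched in a few lines. This is a single-head, pure-Python illustration of scaled dot-product attention with a causal mask; learned projection matrices are omitted, so the queries, keys, and values are supplied directly.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(q, k, v):
    """Scaled dot-product attention where position i attends to positions 0..i."""
    dim = len(q[0])
    outputs, weights = [], []
    for i, query in enumerate(q):
        scores = [
            sum(a * b for a, b in zip(query, k[j])) / math.sqrt(dim)
            for j in range(i + 1)
        ]
        w = softmax(scores)
        outputs.append(
            [sum(w[j] * v[j][c] for j in range(i + 1)) for c in range(len(v[0]))]
        )
        weights.append(w)
    return outputs, weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy token embeddings
out, attn = causal_self_attention(tokens, tokens, tokens)
for row in attn:
    print([round(x, 3) for x in row])
```

The causal mask is what makes autoregressive generation possible: each new token of a SMILES or SELFIES string is predicted from the tokens already produced.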

For materials science, transformers can operate on sequence-based representations of molecules, such as SELFIES or SMILES strings. Models like MatterGPT and Space Group Informed Transformer learn the syntactic rules of these representations to generate novel and valid material structures from a prompt or by learning the distribution of a training dataset [2].

Comparative Analysis of Architectures

The selection of an appropriate generative architecture depends on the specific requirements of the materials discovery task. The table below provides a structured comparison of GANs, VAEs, Diffusion Models, and Transformers across key performance and operational metrics.

Table 1: Quantitative and Qualitative Comparison of Generative Architectures

| Metric / Characteristic | VAEs [12] [15] | GANs [12] [17] [15] | Diffusion Models [12] [15] | Transformers [15] [16] |
|---|---|---|---|---|
| Output Quality/Realism | Lower; often blurry | High; sharp, realistic | Very High; fine details | State-of-the-art (context-dependent) |
| Training Stability | High; robust training | Low; prone to mode collapse | High; more stable than GANs | High |
| Sample Diversity | Good | Can suffer from mode collapse | Excellent | Excellent |
| Inference Speed | Fast | Fast | Slow (many steps required) | Fast (after training) |
| Computational Cost | Moderate | High (during training) | Very High | Very High |
| Latent Space | Probabilistic, interpretable | Less interpretable | Varies (often in latent space) | Contextual embedding space |
| Key Advantage | Stable training, meaningful latent space | High-quality outputs | High-quality, diverse samples | Captures long-range dependencies |
| Primary Limitation | Blurry outputs | Unstable training, mode collapse | Computationally expensive | High data and compute requirements |
| Materials Science Use Case | Exploring continuous latent spaces, generating initial candidates [11] | Generating high-fidelity crystal structures (e.g., ZeoGAN) [11] | Generating complex 3D molecules (e.g., DiffLinker) [11] | Generating sequence-based material representations (e.g., MatterGPT) [2] |

Experimental Protocols for Materials Discovery

Protocol: Generating Novel Zeolites with a GAN (ZeoGAN)

Objective: To generate novel, stable zeolite structures with high methane adsorption capacity [11].

Workflow:

- Data Preparation:
  - Source: A dataset of 31,713 pure silica zeolite structures.
  - Representation: Convert each zeolite structure into two types of 3D voxelized grids:
    - Material Grid: Encodes the positions of silicon (Si) and oxygen (O) atoms.
    - Energy Grid: Encodes the potential energy of a methane probe molecule at each voxel.
- Model Training:
  - Architecture: Train a Wasserstein GAN with Gradient Penalty (WGAN-GP).
  - Generator (G): Takes random noise as input and outputs a 3D material grid.
  - Discriminator (D): Takes either a real or generated grid and outputs a realism score.
  - Training: The generator and discriminator are trained adversarially until the generator produces grids that the discriminator cannot reliably distinguish from real zeolite grids.
- Structure Generation & Post-processing:
  - Generation: Sample random noise vectors and pass them through the trained generator to produce new 3D material grids.
  - Cleanup: Convert the generated grids into atomic structures. This involves identifying atomic positions and ensuring proper chemical connectivity (e.g., Si-O bonds), which may require automated cleanup steps.
- Validation:
  - Crystallographic Validation: Check the uniqueness and novelty of the generated structures by comparing them to structures in databases such as the International Zeolite Association (IZA) database or the Predicted Crystallography Open Database (PCOD).
  - Property Validation: Use Grand Canonical Monte Carlo (GCMC) molecular simulations to predict the methane adsorption capacity and heat of adsorption of the newly generated zeolites. Successful designs showed a methane heat of adsorption between 18–22 kJ mol⁻¹ [11].
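The representation step of this protocol, turning atomic positions into a 3D grid, can be sketched as below. The source does not say how ZeoGAN fills its grids, so the count-based occupancy, the grid resolution, and the function name are all assumptions made for illustration.

```python
def voxelize(frac_coords, grid_size):
    """Count atoms per voxel on a cubic grid spanning one unit cell.

    frac_coords: iterable of (x, y, z) fractional coordinates.
    Returns a nested list indexed as grid[i][j][k].
    """
    grid = [
        [[0] * grid_size for _ in range(grid_size)] for _ in range(grid_size)
    ]
    for atom in frac_coords:
        # Wrap into [0, 1) and clamp to guard against rounding at the upper edge.
        i, j, k = (
            min(int((c % 1.0) * grid_size), grid_size - 1) for c in atom
        )
        grid[i][j][k] += 1
    return grid


silicon_sites = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5), (0.99, 0.0, 0.0)]
si_grid = voxelize(silicon_sites, grid_size=4)
occupied = [
    (i, j, k)
    for i in range(4) for j in range(4) for k in range(4)
    if si_grid[i][j][k]
]
print(occupied)
```

A material grid with separate Si and O channels would call `voxelize` once per element. An energy grid would instead store a computed probe energy at each voxel center.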

Protocol: Inverse Design of MOFs with a VAE

Objective: To perform inverse design of Metal-Organic Frameworks (MOFs) optimized for CO₂ separation from natural gas [11].

Workflow:

- Data Preparation:
  - Source: A dataset of ~45,000 MOFs with property data (e.g., CO₂ and CH₄ uptake) and an additional ~2 million MOFs without property labels.
  - Representation: Encode MOF structures using the RFcode representation, which describes an MOF in terms of its edges, vertices, and network topology.
- Model Training:
  - Architecture: Train a Supramolecular VAE (SmVAE).
  - Encoder: Maps an RFcode representation to a probability distribution in a latent space.
  - Decoder: Maps a point from the latent space back to an RFcode representation, which can be translated into a full 3D atomistic structure.
- Latent Space Exploration:
  - Sampling: Sample points from the latent space, focusing on regions that decode to MOF structures predicted to have high CO₂ capacity and CO₂/CH₄ selectivity.
  - Interpolation: Interpolate between known high-performing MOFs in the latent space to generate novel candidates with intermediate or improved properties.
- Validation:
  - Structural Validity: Check the chemical stability and synthesizability of the generated MOF structures using molecular dynamics (MD) simulations.
  - Performance Validation: Use high-throughput computational screening, such as GCMC simulations, to evaluate the gas adsorption properties of the top-generated candidates. The SmVAE approach identified a top-performing MOF with a CO₂ capacity of 7.55 mol kg⁻¹ and a CO₂/CH₄ selectivity of 16.0 [11].
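The interpolation step of the latent space exploration can be sketched as a straight line between two latent vectors. The vectors below are invented, and decoding each point back to an RFcode would require a trained SmVAE decoder, which is outside the scope of this illustration.

```python
def interpolate(z_a, z_b, n_points):
    """Evenly spaced points on the segment from z_a to z_b (n_points >= 2)."""
    path = []
    for i in range(n_points):
        t = i / (n_points - 1)
        path.append([(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)])
    return path


# Hypothetical latent codes of two known high-performing MOFs.
z_mof_a = [0.0, 2.0, -1.0]
z_mof_b = [2.0, 0.0, 1.0]

for point in interpolate(z_mof_a, z_mof_b, n_points=5):
    print([round(v, 2) for v in point])
    # A trained decoder would map each point back to a candidate structure here.
```

Linear interpolation is the simplest choice. Because a VAE prior is Gaussian, some workflows prefer spherical interpolation so that intermediate points stay in regions the decoder saw during training.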

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential computational tools, data, and software required to implement the experimental protocols described in this whitepaper.

Table 2: Essential Research Reagents for Generative Materials Science

| Reagent / Resource | Type | Function / Application | Example Use Case |
|---|---|---|---|
| Crystallographic Information Files (CIFs) | Data | Standardized file format for representing crystal structures. | Primary data source for training models on crystalline materials like zeolites and MOFs [17]. |
| Pearson's Crystal Database | Database | A comprehensive database of crystal structures. | Source of training data and benchmark for validating the novelty of generated structures [17]. |
| SMILES/SELFIES/InChI | Representation | String-based representations of molecules and chemical compounds. | Encoding molecular structures for transformer-based or autoregressive models [2]. |
| RFcode | Representation | A specific representation for MOFs, describing edges, vertices, and topology. | Used in VAEs like SmVAE for the inverse design of MOFs [11]. |
| Density Functional Theory (DFT) | Simulation | Computational method for modeling electronic structure. | Provides high-accuracy data on material properties for training datasets [2]. |
| Grand Canonical Monte Carlo (GCMC) | Simulation | A molecular simulation technique for adsorption. | Validating the gas adsorption capacity of generated porous materials like MOFs and zeolites [11]. |
| Molecular Dynamics (MD) | Simulation | Models the physical movements of atoms and molecules over time. | Assessing the thermal stability and synthesizability of generated material structures [11]. |
| PyMC3 / Stan | Software | Probabilistic programming languages. | Implementing Bayesian models and variational inference for VAEs [16]. |
| PyTorch / TensorFlow | Software | Open-source machine learning frameworks. | Building, training, and deploying GANs, VAEs, Diffusion Models, and Transformers [16]. |

Generative models—GANs, VAEs, Diffusion Models, and Transformers—are powerful tools poised to accelerate the discovery of new materials. Each architecture offers a unique set of advantages: VAEs provide a stable and interpretable latent space for exploration; GANs can produce high-fidelity structural data; Diffusion Models excel at generating diverse and high-quality 3D molecules; and Transformers leverage sequence-based representations to capture complex, long-range dependencies in material structures [12] [17] [2]. The choice of model involves trade-offs between computational cost, output quality, training stability, and the specific representation of the material. Future progress will likely hinge on the development of hybrid models, improved multi-scale representations, and, crucially, the tight integration of AI-driven generation with robust physical validation and high-throughput experimental synthesis. By adhering to the detailed experimental protocols and leveraging the toolkit outlined in this guide, researchers can harness these generative architectures to navigate the vast chemical space and usher in a new era of inverse design in materials science.

The exploration of chemical space, estimated to exceed 10^60 carbon-based molecules, presents a monumental challenge for materials discovery [2]. Generative artificial intelligence (AI) offers a transformative paradigm, shifting from traditional trial-and-error approaches to inverse design—the process of generating new materials with pre-determined properties [2] [18]. The core of this paradigm lies in the effective representation or encoding of matter. The way a molecule or crystal is translated into a format understandable by machines critically determines the success of any subsequent generative model [7] [19]. Effective representations must not only capture atomic composition but also structural relationships, symmetries, and, in many cases, physical properties. This technical guide examines the dominant strategies for encoding molecular and crystalline structures, framing them within the principles of generative models for materials science. By providing a detailed overview of representations, their associated generative architectures, and experimental protocols, this review serves as a foundational resource for researchers and scientists aiming to harness AI-accelerated materials discovery.

Molecular Representation Strategies

The encoding of molecules for machine learning involves mapping their physical structure into a numerical or symbolic format that preserves key chemical information. The choice of representation involves a trade-off between simplicity, descriptive power, and ease of integration with generative models [19].

Table 1: Key Strategies for Molecular Representation

| Representation | Format | Key Features | Common Generative Models | Key Challenges |

|---|---|---|---|---|

| Sequence-Based | Text String (e.g., SMILES, SELFIES) | Compact, human-readable; captures atomic connectivity and simple bonds. | Transformer, RNN, LSTM [20] [21] | May generate invalid strings; does not explicitly capture 3D geometry [7]. |

| Graph-Based | Graph (Nodes=Atoms, Edges=Bonds) | Explicitly represents topology and bonding; natural for chemistry. | GVAE, GCPN, GANs [20] [21] | Decoding back to a valid structure can be complex [19]. |

| 3D Geometry-Based | Point Cloud / Set of Coordinates (x, y, z, atom type) | Captures precise spatial arrangement and conformation. | Diffusion Models, Equivariant GNNs [22] | Requires robust methods for handling rotational and translational invariance. |

Sequence-Based Encodings

Simplified Molecular-Input Line-Entry System (SMILES) strings are a prevalent sequential representation, using a grammar of characters and symbols to denote atoms, bonds, and branching [7]. SMILES are compact and easy to generate, but the format is fragile: a single-character edit can change the encoded molecule drastically or produce a string that does not correspond to any valid molecule. To address this, the SELFIES (SELF-referencing Embedded Strings) representation was developed, in which every well-formed string decodes to a valid molecular structure [7]. These representations are naturally processed by sequence-based models like Transformers and Recurrent Neural Networks (RNNs). For instance, MolGPT utilizes the transformer architecture to learn the grammar of SMILES strings, enabling the generation of novel, valid molecules [21].
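To make the sequence view concrete, the following is a minimal sketch of how a SMILES string might be split into tokens before being fed to a sequence model. The regular expression and the function name `tokenize_smiles` are illustrative assumptions, not the tokenizer of MolGPT or any specific library, and the pattern covers only a common subset of the SMILES grammar.

```python
import re

# Multi-character tokens (bracket atoms, two-letter halogens, two-digit ring
# closures) are listed before the single-character fallbacks so they win.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br|Cl|%\d{2}|[BCNOPSFIbcnops]|[=#\-+\\/:~@?*$().]|\d)"
)


def tokenize_smiles(smiles: str) -> list[str]:
    """Split a SMILES string into tokens; fail loudly on unknown characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


# Aspirin: every token here happens to be a single character.
aspirin_tokens = tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O")

# Two-letter elements and bracket atoms stay together as single tokens.
halide_tokens = tokenize_smiles("ClCCBr")
ammonium_tokens = tokenize_smiles("[NH4+]")
```

Treating `Cl`, `Br`, and bracket atoms as single tokens keeps the vocabulary chemically meaningful; a character-level split would force the model to learn that `C` followed by `l` is chlorine rather than carbon.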

Graph-Based Encodings

Graph-based representations offer a more structurally intuitive encoding, where atoms are represented as nodes and chemical bonds as edges. This format naturally captures the molecular topology and is less susceptible to the validity issues of SMILES. Models like Graph Convolutional Policy Networks (GCPN) use reinforcement learning to iteratively build molecular graphs by adding atoms and bonds, optimizing for targeted chemical properties [20]. Similarly, GraphAF combines autoregressive flow-based models with graph representations for efficient sampling [20]. The primary challenge with graph-based models is designing a decoder that can reliably map the latent space back to a realistic and synthetically accessible molecular graph.
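The idea of building a molecular graph step by step while rejecting chemically impossible actions can be sketched as follows. This is a toy illustration under stated assumptions: the `MolGraph` class, the small `MAX_VALENCE` table, and the simple valence rule are hypothetical and far cruder than the validity checks used in models such as GCPN.

```python
from dataclasses import dataclass, field

# Typical maximum valences for a few neutral atoms (illustrative, not exhaustive).
MAX_VALENCE = {"H": 1, "C": 4, "N": 3, "O": 2, "F": 1}


@dataclass
class MolGraph:
    atoms: list[str] = field(default_factory=list)  # node labels
    bonds: dict[tuple[int, int], int] = field(default_factory=dict)  # (i, j) -> order

    def add_atom(self, symbol: str) -> int:
        self.atoms.append(symbol)
        return len(self.atoms) - 1

    def used_valence(self, i: int) -> int:
        """Sum of bond orders over all bonds that touch atom i."""
        return sum(order for pair, order in self.bonds.items() if i in pair)

    def add_bond(self, i: int, j: int, order: int = 1) -> bool:
        """Add a bond only if neither atom would exceed its maximum valence."""
        key = (min(i, j), max(i, j))
        if i == j or key in self.bonds:
            return False
        for k in (i, j):
            if self.used_valence(k) + order > MAX_VALENCE[self.atoms[k]]:
                return False
        self.bonds[key] = order
        return True


# Heavy-atom graph of ethanol (C-C-O); hydrogens are left implicit.
g = MolGraph()
c1, c2, o = g.add_atom("C"), g.add_atom("C"), g.add_atom("O")
ok_cc = g.add_bond(c1, c2)
ok_co = g.add_bond(c2, o)
rejected = g.add_bond(c1, o, order=3)  # oxygen would reach valence 4: refused
```

Because invalid actions are refused at construction time, every intermediate graph respects the valence table, which is one reason graph-based generators suffer less from the validity problems of raw SMILES.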

3D Geometry and Point Cloud Encodings

For tasks where three-dimensional conformation is critical, such as protein-ligand docking or predicting quantum chemical properties, representations that capture spatial coordinates are essential. Point cloud representations treat a molecule as a set of points in 3D space, each point annotated with its atom type and, potentially, other features [22]. Generative models using this representation, particularly Equivariant Graph Neural Networks and Diffusion Models, must account for the necessary symmetries: the probability assigned to a molecule should be invariant to rotation and translation, meaning it does not change if the molecule is rotated or moved, while predicted vector quantities such as coordinates should rotate and translate together with the input (equivariance). The Point Cloud-based Crystal Diffusion (PCCD) model demonstrates the application of this approach for generating bulk crystal structures [22].
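As a small worked check of the symmetry requirement, the sketch below shows that pairwise interatomic distances, one of the simplest features a model can consume, are unchanged when a point cloud is rotated and translated. The water coordinates are approximate and purely illustrative, and the helper names are assumptions.

```python
import math


def rotate_z(points, angle):
    """Rotate 3D points about the z axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in points]


def translate(points, shift):
    """Shift every point by the same vector."""
    return [(x + shift[0], y + shift[1], z + shift[2]) for x, y, z in points]


def pairwise_distances(points):
    """All distances between distinct pairs, in a fixed order."""
    return [math.dist(p, q) for i, p in enumerate(points) for q in points[i + 1:]]


# Approximate water geometry in angstroms (O, H, H); illustrative values only.
water = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
moved = translate(rotate_z(water, math.radians(40.0)), (1.5, -2.0, 0.7))

d_before = pairwise_distances(water)
d_after = pairwise_distances(moved)
max_change = max(abs(a - b) for a, b in zip(d_before, d_after))
```

The raw coordinates of `water` and `moved` differ in every entry, yet `max_change` is zero up to floating-point error, which is why distance-based and equivariant architectures do not need to see every orientation of a molecule during training.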

Crystalline Material Representation Strategies

Representing crystalline materials introduces additional complexity due to periodicity and symmetry. A unit cell, the repeating building block of a crystal, is defined by its atom types, atomic coordinates, and lattice parameters, often denoted as \( \mathcal{M} = (\mathbf{A}, \mathbf{F}, \mathbf{L}) \) [23].
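A minimal data-structure sketch of this unit-cell description is given below, with fields named after the three components A (atom types), F (coordinates, taken here to be fractional), and L (lattice). The `Crystal` class, the row-vector convention for the lattice, and the CsCl-type example values are assumptions made for illustration.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Crystal:
    atom_types: list[str]   # A: one chemical symbol per site
    frac_coords: list[Vec3]  # F: fractional coordinates in [0, 1)
    lattice: list[Vec3]      # L: three lattice vectors as rows, in angstroms

    def cartesian(self) -> list[Vec3]:
        """Convert fractional to Cartesian coordinates: r = f_a*a + f_b*b + f_c*c."""
        return [
            tuple(sum(f[k] * self.lattice[k][d] for k in range(3)) for d in range(3))
            for f in self.frac_coords
        ]


# CsCl-type cubic cell with an illustrative lattice constant of 4.12 angstroms.
a = 4.12
cscl = Crystal(
    atom_types=["Cs", "Cl"],
    frac_coords=[(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)],
    lattice=[(a, 0.0, 0.0), (0.0, a, 0.0), (0.0, 0.0, a)],
)
cart = cscl.cartesian()
```

Fractional coordinates are convenient for generative models because periodicity reduces to arithmetic modulo 1, while the lattice carries all information about cell size and shape.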

Table 2: Key Strategies for Crystalline Material Representation

| Representation | Format | Key Features | Common Generative Models | Key Challenges |

|---|---|---|---|---|

| Graph-Based | Crystal Graph (Periodic bonds) | Captures local coordination environment; can be made E(3)-equivariant. | CDVAE, DiffCSP, CrystalFlow [23] [18] | Defining periodic boundaries and long-range interactions. |

| String-Based | Tokenized Sequence (e.g., CIF, SLICES) | Enables use of transformer architectures; scalable to large datasets. | MatterGPT, CrystalFormer [23] [7] | Does not explicitly encode 3D symmetries. |

| Text-Guided | Text Embedding + Structure | Conditions generation on text prompts (e.g., composition, crystal system). | Chemeleon [24] | Requires high-quality, aligned text-structure data. |

| Point Cloud | Set of fractional coordinates & lattice | Represents atomic positions directly within the unit cell. | PCCD [22] | Handling symmetry and periodicity. |

Graph-Based Representations with Symmetry Awareness

Graph-based models are highly effective for crystals, where atoms are nodes and edges are formed based on interatomic distances within a cutoff radius, accounting for periodic boundary conditions [23]. A significant advancement in this area is the explicit incorporation of physical symmetries. Models like CrystalFlow use Continuous Normalizing Flows and Equivariant Graph Neural Networks to preserve periodic-E(3) symmetry, which includes invariance to permutations, rotations, and periodic translations [23]. This symmetry-aware design enables more data-efficient learning and the generation of physically realistic crystal structures. For example, the lattice is often parameterized using a rotation-invariant vector to decouple rotational and structural information [23].
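The edge construction described above, a distance cutoff combined with periodic boundary conditions, can be sketched in a few lines. This is a brute-force illustration under stated assumptions: the function name `periodic_neighbors` is hypothetical, only images within `max_image` cells are searched (adequate only when the cutoff is smaller than the cell dimensions), and production codes use far more efficient neighbor searches.

```python
import itertools
import math


def periodic_neighbors(frac_coords, lattice, cutoff, max_image=1):
    """Return (i, j, image, distance) for all pairs within `cutoff`,
    counting periodic images of atom j shifted by integer lattice vectors."""

    def to_cart(f):
        return tuple(sum(f[k] * lattice[k][d] for k in range(3)) for d in range(3))

    images = list(itertools.product(range(-max_image, max_image + 1), repeat=3))
    edges = []
    for i, fi in enumerate(frac_coords):
        ri = to_cart(fi)
        for j, fj in enumerate(frac_coords):
            for img in images:
                if i == j and img == (0, 0, 0):
                    continue  # an atom is not its own neighbor in the home cell
                rj = to_cart(tuple(fj[k] + img[k] for k in range(3)))
                d = math.dist(ri, rj)
                if d <= cutoff:
                    edges.append((i, j, img, d))
    return edges


# Simple cubic lattice with one atom per cell and a = 3.0 angstroms.
a = 3.0
lattice = [(a, 0.0, 0.0), (0.0, a, 0.0), (0.0, 0.0, a)]
edges = periodic_neighbors([(0.0, 0.0, 0.0)], lattice, cutoff=3.1)
```

Even though the cell contains a single atom, the graph has six edges, one to each nearest periodic image, which is the coordination number expected for a simple cubic lattice. Storing the image index on each edge is what lets a graph network distinguish these otherwise identical neighbors.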

String-Based and Multi-Modal Representations

An alternative approach involves tokenizing crystal structures into strings, such as the SLICES format or standardized Crystallographic Information Files (CIFs) [23]. These sequential representations allow the application of powerful transformer architectures, similar to those used in natural language processing. Furthermore, multi-modal models are emerging that bridge different types of data. The Chemeleon model, for instance, uses cross-modal contrastive learning to align text embeddings (from a transformer encoder) with graph embeddings (from an equivariant GNN) [24]. This allows the model to generate crystal structures from textual descriptions, such as a reduced composition or a target crystal system, enabling more intuitive and targeted inverse design.

Experimental Protocols and Benchmarking

Robust experimental protocols are essential for developing and validating generative models for materials science.

Model Training and Conditional Generation

The training of a generative model like CrystalFlow involves learning the conditional probability distribution \( p(\mathbf{x} \mid \mathbf{y}) \) over stable crystal structures, where \( \mathbf{x} = (\mathbf{F}, \mathbf{L}) \) represents structural parameters and \( \mathbf{y} = (\mathbf{A}, P) \) represents conditioning variables like chemical composition and external pressure [23]. This is achieved using frameworks like Conditional Flow Matching (CFM) [23]. The Chemeleon model employs a two-stage training process: first, a Crystal CLIP module is pre-trained to align text and graph embeddings via contrastive learning; second, a denoising diffusion model with classifier-free guidance is trained to generate compositions and structures, conditioned on the text embeddings [24].
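To illustrate what a flow-matching training target looks like, the sketch below uses the common straight-line probability path between a noise sample and a data sample. This is an assumption chosen for simplicity: it ignores the periodic treatment of fractional coordinates and the lattice parameterization, so it should not be read as the CrystalFlow implementation. All function names are hypothetical.

```python
import random


def cfm_training_pair(x0, x1, t):
    """Straight-line path x_t = (1 - t) * x0 + t * x1 with target velocity x1 - x0."""
    x_t = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]
    u_t = [b - a for a, b in zip(x0, x1)]
    return x_t, u_t


def cfm_loss(predicted_velocity, target_velocity):
    """Mean squared error between the model's velocity and the target velocity."""
    n = len(target_velocity)
    return sum((p - u) ** 2 for p, u in zip(predicted_velocity, target_velocity)) / n


rng = random.Random(0)
x1 = [0.25, 0.50, 0.75]                # a "data" sample, e.g. flattened coordinates
x0 = [rng.gauss(0.0, 1.0) for _ in x1]  # a sample from the simple base distribution
t = rng.random()                        # time drawn uniformly from [0, 1)

x_t, u_t = cfm_training_pair(x0, x1, t)

# A real model would predict the velocity from (x_t, t, y); a perfect
# prediction gives zero loss, and a zero prediction gives a positive loss.
perfect_loss = cfm_loss(u_t, u_t)
naive_loss = cfm_loss([0.0] * len(u_t), u_t)
```

In a conditional setting the network would also receive the conditioning variables (composition, pressure) as input, and sampling would integrate the learned velocity field from t = 0 to t = 1.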

Benchmarking and Validation

Evaluating the performance of generative models requires standardized benchmarks and metrics. Common quantitative metrics include:

- Validity: The percentage of generated structures that are chemically plausible and have realistic interatomic distances [18] [24].

- Uniqueness: The proportion of generated structures that are distinct from each other; the proportion that is also distinct from structures in the training set is often reported separately as novelty [18].

- Structure Matching: The ability to recover ground-truth crystal structures from a dataset [24].
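The first two metrics can be computed on a toy set of samples as sketched below. Both the 0.5 angstrom minimum-distance threshold and the distance-based `fingerprint` are simplifying assumptions for illustration; published benchmarks typically rely on dedicated structure-matching tools and additional checks such as charge neutrality.

```python
import math


def min_distance(coords):
    """Shortest distance between any two atoms."""
    return min(math.dist(p, q) for i, p in enumerate(coords) for q in coords[i + 1:])


def is_valid(coords, threshold=0.5):
    """Toy validity rule: no two atoms closer than `threshold` angstroms."""
    return len(coords) < 2 or min_distance(coords) > threshold


def fingerprint(species, coords, ndigits=2):
    """Crude identity key: sorted composition plus sorted rounded distances."""
    dists = sorted(
        round(math.dist(p, q), ndigits)
        for i, p in enumerate(coords)
        for q in coords[i + 1:]
    )
    return (tuple(sorted(species)), tuple(dists))


def validity_and_uniqueness(samples):
    valid = [s for s in samples if is_valid(s[1])]
    unique = {fingerprint(species, coords) for species, coords in valid}
    validity = len(valid) / len(samples)
    uniqueness = len(unique) / len(valid) if valid else 0.0
    return validity, uniqueness


samples = [
    (["Na", "Cl"], [(0.0, 0.0, 0.0), (2.8, 0.0, 0.0)]),
    (["Na", "Cl"], [(1.0, 1.0, 1.0), (3.8, 1.0, 1.0)]),  # translated copy of the first
    (["Na", "Cl"], [(0.0, 0.0, 0.0), (0.2, 0.0, 0.0)]),  # overlapping atoms: invalid
    (["K", "Cl"], [(0.0, 0.0, 0.0), (3.1, 0.0, 0.0)]),
]
validity, uniqueness = validity_and_uniqueness(samples)
```

Here three of four samples pass the distance check, and two of those three are distinct, because the translated copy collapses onto the same fingerprint as the original.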

Datasets such as MP-20 and MPTS-52 are widely used for benchmarking crystal structure prediction (CSP) tasks [23]. The ultimate validation often involves density functional theory (DFT) calculations to verify the thermodynamic stability and properties of the newly generated materials, ensuring they reside in low-energy regions of the potential energy surface [23] [22].

The development and application of generative models for materials rely on a suite of computational tools and databases.

Table 3: Key Resources for Generative Materials Science

| Resource Name | Type | Primary Function | Relevance to Generative AI |

|---|---|---|---|

| Materials Project [24] | Database | Repository of computed crystal structures and properties. | Primary source of training data for inorganic crystal generative models. |

| AlphaFold DB [25] | Database | AI-predicted protein structures. | Provides 3D structural data for generative protein design. |

| PubChem, ZINC, ChEMBL [7] | Database | Libraries of small molecules and their bioactivities. | Training data for molecular generative models in drug discovery. |

| Crystal CLIP [24] | Algorithm | Cross-modal contrastive learning for text-structure alignment. | Enables text-guided generation of crystals (e.g., in Chemeleon). |

| Mat2Vec / MatSciBERT [24] | NLP Model | Generates text embeddings from materials science literature. | Provides contextual text representations for multi-modal learning. |

| DFT (VASP, Quantum ESPRESSO) | Software | First-principles electronic structure calculation. | The "gold standard" for validating the stability and properties of generated materials. |

The strategic encoding of molecules and crystals is the cornerstone of modern generative AI for materials science. As the field evolves, future research will focus on developing unified generative frameworks capable of modeling molecules, crystals, and proteins within a single architecture [23] [2]. Key challenges remain, including improving model interpretability, effectively integrating physics-informed constraints, and managing data scarcity for novel material classes [2] [20]. The integration of multi-modal data, such as text and spectroscopy, alongside advances in foundation models pretrained on massive, diverse datasets, promises to further accelerate the inverse design pipeline, leading to faster discoveries in sustainability, healthcare, and energy innovation [7].

The discovery of new materials has historically been a painstaking, trial-and-error process, often spanning decades from conception to deployment. The fundamental challenge lies in navigating the vastness of chemical space, which is estimated to exceed 10^60 carbon-based molecules alone, making exhaustive experimental exploration impractical [2]. Artificial intelligence, specifically generative models, is changing this paradigm by enabling inverse design: the process of generating new materials with user-defined target properties. At the core of this approach lies the latent space, a lower-dimensional, compressed mathematical representation that encodes the essential features and relationships of material structures and their properties [2]. By learning the underlying probability distribution P(x) of the training data, generative models construct a structured latent space where meaningful navigation and sampling become possible. This allows researchers to traverse a continuous landscape of material possibilities, moving beyond discrete, known compounds to discover novel, high-performing candidates for applications in sustainability, healthcare, and energy innovation [4] [5].

Core Architectures for Latent Space Learning

Different generative model architectures learn and structure the latent space in distinct ways, each with unique advantages for capturing the complex, continuous spectrum of material properties.

Model Typology and Principles

Table 1: Core Generative Model Architectures for Materials Science

| Model Architecture | Core Learning Principle | Latent Space Structure | Exemplary Applications in Materials |

|---|---|---|---|

| Variational Autoencoders (VAEs) | Learns a probabilistic latent space via an encoder-decoder structure, regularized by a prior distribution (often Gaussian). | Continuous, probabilistic. Encourages smooth interpolation between data points. | Generation of molecular structures and crystalline materials. |

| Generative Adversarial Networks (GANs) | A generator and discriminator are trained adversarially; the generator learns to produce data that fools the discriminator. | Continuous, but can suffer from mode collapse (limited diversity). | Material design and property optimization. |

| Diffusion Models | Iteratively denoises a random signal to generate data, learning a reversal of a fixed noise-adding process. | Highly expressive, capable of capturing complex, multi-modal distributions. | Crystal structure prediction (e.g., DiffCSP, SymmCD) [2]. |

| Transformers | Uses self-attention mechanisms to weigh the importance of different parts of sequential input data. | Structured based on learned sequential dependencies. | Sequence-based generation (e.g., MatterGPT, Space Group Informed Transformer) [2]. |

| Normalizing Flows | Learns an invertible, bijective mapping between the data distribution and a simple base distribution (e.g., Gaussian). | Invertible and explicitly computable, allowing for exact density estimation. | Crystal structure generation (e.g., CrystalFlow) [2]. |

| Generative Flow Networks (GFlowNets) | Learns a stochastic policy to sequentially construct objects with probability proportional to a given reward function. | Dynamically built through a series of actions; geared towards diversity. | Discovering stable crystalline materials (e.g., Crystal-GFN) [2]. |
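For the VAE row of the table, the two ingredients that shape the latent space, sampling via the reparameterization trick and the Gaussian-prior regularizer, can be written out directly. This is a generic sketch of the standard VAE formulas with a diagonal Gaussian posterior; the function names and the latent vectors are illustrative, and no encoder or decoder network is included.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), where sigma = exp(log_var / 2)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, sigma^2) || N(0, 1) ) summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def interpolate(z_a, z_b, alpha):
    """Linear walk between two latent points; alpha = 0 gives z_a, alpha = 1 gives z_b."""
    return [(1.0 - alpha) * a + alpha * b for a, b in zip(z_a, z_b)]


rng = random.Random(0)
mu, log_var = [0.3, -1.2], [-2.0, -2.0]  # hypothetical encoder outputs for one material
z = reparameterize(mu, log_var, rng)

kl_prior = kl_to_standard_normal([0.0, 0.0], [0.0, 0.0])  # posterior equals prior
kl_shifted = kl_to_standard_normal([1.0], [0.0])          # unit shift in the mean

# Decoding points along this path is what "smooth interpolation" refers to.
path = [interpolate([0.0, 0.0], [1.0, 2.0], k / 4) for k in range(5)]
```

The KL term pulls every encoded material toward the same standard normal prior, which is what keeps neighboring latent points decodable and makes interpolation between two known materials meaningful.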

The Role of Physics-Informed Architectures

A significant frontier in latent space learning is the move beyond purely data-driven approaches to physics-informed generative AI. These models embed fundamental physical constraints (such as crystallographic symmetry, periodicity, and energy conservation) directly into the model's architecture or learning process [26]. For instance, a framework developed at Cornell University ensures that generated crystal structures are not only statistically plausible but also chemically realistic by hard-coding these invariances [26]. This grounding in physical principles ensures that the latent space is not just a statistical abstraction but is structured according to the known laws of materials science, improving the synthesizability and physical meaningfulness of generated candidates.

Material Representations: The Foundation of the Latent Space

The efficacy of a latent space is fundamentally tied to how the material is initially represented. The choice of representation determines which structural features and properties the model can learn to encode.

Table 2: Key Material Representations for Latent Space Learning

| Representation Type | Description | Strengths | Limitations |

|---|---|---|---|

| Sequence-Based (e.g., SMILES, SELFIES) | Represents a molecular structure as a string of characters, akin to a language. | Simple, compatible with powerful NLP models like Transformers. | Can struggle with capturing 3D conformation and long-range interactions [7]. |

| Graph-Based | Atoms as nodes, chemical bonds as edges in a graph. | Naturally captures topological structure and local atomic environments. | Complexity increases with system size; can be computationally intensive [2]. |

| Voxel-Based | A 3D volumetric grid representing the electron density or atomic positions. | Provides a complete 3D picture of the material. | Computationally expensive; resolution-limited. |

| Physics-Informed | Incorporates known physical invariants or uses descriptors like symmetry functions. | Improves physical realism, generalizability, and data efficiency. | Requires domain expertise to implement effectively [2]. |

The emergence of multimodal models is crucial for creating richer latent spaces. These models can jointly process diverse data types—such as text from scientific papers, molecular structures from images, and tabular property data—to build a more holistic latent representation that aligns more closely with a human expert's understanding [7]. Tools like Plot2Spectra and DePlot further enhance this by extracting structured data from scientific plots and charts, making this information accessible for training [7].

Diagram 1: AI-Driven Materials Discovery Workflow

Experimental Validation: From Latent Space to Laboratory

A critical measure of a latent space's quality is its ability to generate novel, valid, and synthesizable material candidates. This requires rigorous experimental protocols to validate AI-generated hypotheses.

Case Study: Constrained Generation of Quantum Materials with SCIGEN

Objective: To design a generative model capable of producing materials with specific geometric patterns (e.g., Kagome, Lieb lattices) known to give rise to exotic quantum properties like superconductivity and magnetic states [8].

Methodology:

- Model and Tool: Researchers at MIT developed SCIGEN (Structural Constraint Integration in GENerative model), a software layer that can be integrated with existing diffusion models (e.g., DiffCSP). SCIGEN acts as a guard, ensuring that at each step of the iterative generation process, the model's output adheres to user-defined geometric structural rules [8].

- Constraint Application: Instead of being limited to generating materials that mirror the stability-optimized distribution of its training data, the SCIGEN-equipped model is steered to produce structures that conform to specific Archimedean lattices—collections of 2D lattice tilings known to host quantum phenomena [8].

- Generation and Screening: The model generated over 10 million candidate materials with the desired lattices. This pool was subsequently screened for stability, yielding ~1 million candidates. A smaller subset of 26,000 was then selected for detailed simulation on supercomputers at Oak Ridge National Laboratory to understand atomic-level behavior [8].

- Synthesis and Characterization: From the simulated candidates, researchers selected and synthesized two previously undiscovered compounds, TiPdBi and TiPbSb. Subsequent experiments confirmed that the AI model's predictions of the materials' magnetic properties largely aligned with the actual measured properties [8].

Implication: This demonstrates that explicitly constraining the generative process within the latent space is a powerful strategy for targeting materials with high-impact, exotic properties that are otherwise rare in known material databases.
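The idea of enforcing a constraint at every step of an iterative generation process can be illustrated with a toy loop. This sketch is written in the spirit of inpainting-style masking and is not the SCIGEN code: the denoiser is a placeholder that simply pulls coordinates toward 0.5, the coordinates are one-dimensional scalars, and all names are hypothetical.

```python
import random


def constrained_denoise(x_init, constraint, mask, denoise_step, n_steps):
    """Run an iterative denoiser, re-imposing constrained entries after every step.

    mask[i] is True where entry i must equal constraint[i] (the geometric motif);
    the remaining entries are left to the generative model.
    """
    x = list(x_init)
    for step in range(n_steps, 0, -1):
        x = denoise_step(x, step)
        x = [c if m else xi for xi, c, m in zip(x, constraint, mask)]
    return x


def toy_denoise_step(x, step):
    """Placeholder for a learned denoiser: move each value toward 0.5."""
    return [xi + (0.5 - xi) / step for xi in x]


rng = random.Random(0)
x_init = [rng.random() for _ in range(4)]  # start from noise
constraint = [0.0, 0.25, 0.0, 0.0]         # motif positions (last two are unused)
mask = [True, True, False, False]          # only the first two entries are constrained

final = constrained_denoise(x_init, constraint, mask, toy_denoise_step, n_steps=10)
```

The constrained entries end exactly on the motif regardless of what the denoiser proposes, while the free entries follow the model. In a real diffusion setting the constrained part would usually be noised to match the current step before being written back, so that the model sees a consistent noise level.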

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Experimental Tools

| Tool / Solution | Type | Function in the Workflow |

|---|---|---|

| Generative AI Models (DiffCSP, GFlowNets) | Software | Core engine for learning the material latent space and generating novel candidate structures [2]. |

| Constraint Algorithms (e.g., SCIGEN) | Software | Steers the generative model to produce structures adhering to specific design rules (geometric, chemical) [8]. |

| High-Throughput Synthesis Platforms | Laboratory Equipment | Enables rapid physical synthesis of AI-predicted materials, such as inkjet or plasma printing systems [2]. |

| High-Performance Computing (HPC) Clusters | Computational Resource | Runs detailed atomic-level simulations (DFT, MD) to screen and validate the properties of generated candidates [8]. |

| Machine-Learned Potentials (MLPs) | Software/Model | Provides a bridge between accurate quantum mechanics and scalable molecular dynamics, enabling faster, larger simulations [2]. |

| Multimodal Data Extraction Tools (Plot2Spectra, DePlot) | Software | Extracts structured materials data from scientific literature, plots, and images to enrich training datasets [7]. |

Diagram 2: Constrained Generation with SCIGEN

The learning of latent spaces represents a fundamental shift in materials science, moving the field from a slow, sequential process of hypothesis and testing to a targeted, generative one. By capturing the continuous spectrum of material properties in a structured, navigable space, AI enables the inverse design of novel candidates for the most pressing technological challenges. Current research is focused on building more powerful foundation models for materials science, developing next-generation representations, and, crucially, improving the physical grounding and interpretability of these models [27] [7].

The future of this field lies in the tight integration of AI with automated experimental workflows, creating closed-loop discovery systems where the AI not only proposes candidates but also directs robotic systems to synthesize and test them, with the results feeding back to refine the latent space [5]. This synergy between computational prediction and physical experimentation, all orchestrated through a deeply understood latent space, promises to dramatically accelerate the journey from material concept to world-changing application.

Foundation models are a class of artificial intelligence models characterized by their training on broad data, typically using self-supervision at scale, which enables them to be adapted to a wide range of downstream tasks [7]. The invention of the transformer architecture in 2017 and its subsequent development into generative pretrained transformer (GPT) models demonstrated a pathway to generalized representations through self-supervised training on large corpora of data [7]. This paradigm decouples the data-hungry task of representation learning from specific downstream applications, allowing target-specific tasks to be accomplished with little or no additional training. In materials science, this approach is revolutionizing how researchers discover and design new materials, enabling a shift from traditional trial-and-error methods toward data-driven inverse design.

The application of foundation models to materials discovery represents a significant advancement over earlier approaches. While traditional expert systems relied on hand-crafted symbolic representations, and later machine learning applications utilized task-specific, hand-crafted features, foundation models learn representations directly from data [7]. This capability is particularly valuable in materials science, where intricate dependencies exist and minute structural details can profoundly influence material properties—a phenomenon known as an "activity cliff" [7]. For instance, in high-temperature cuprate superconductors, critical temperature (Tc) can be dramatically affected by subtle variations in hole-doping levels, requiring models with rich, nuanced understanding.

Core Architectures and Technical Principles

Model Architectures and Their Applications

Foundation models for materials science typically employ either encoder-only or decoder-only architectures, each optimized for different types of downstream tasks. Encoder-only models, drawing from the success of Bidirectional Encoder Representations from Transformers (BERT), focus on understanding and representing input data to generate meaningful representations for further processing or predictions [7]. These are particularly well-suited for property prediction tasks, where the goal is to extract insights from material structures. Decoder-only models are designed to generate new outputs by predicting one token at a time based on given input and previously generated tokens, making them ideal for generating new chemical entities and material structures [7].

The transformer architecture serves as the foundational building block for these models, enabling efficient processing of sequential data through self-attention mechanisms. This capability is crucial for handling diverse material representations, including sequence-based formats like SMILES (Simplified Molecular Input Line Entry System) and SELFIES (Self-Referencing Embedded Strings), graph-based representations, and voxel-based formats [2]. The self-attention mechanism allows the model to weigh the importance of different parts of the input sequence when generating representations or predictions, capturing long-range dependencies that are essential for understanding complex material structures.
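The weighting performed by self-attention can be shown with a small, dependency-free sketch of scaled dot-product attention. For brevity the queries, keys, and values are all set to the raw token embeddings, whereas a real transformer applies learned projection matrices and multiple heads; the embeddings themselves are made-up numbers.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        weights.append(w)
        outputs.append(
            [sum(wi * v[d] for wi, v in zip(w, values)) for d in range(len(values[0]))]
        )
    return outputs, weights


# Three tokens (for example three SMILES symbols) with 2D embeddings.
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(X, X, X)  # self-attention: Q = K = V = X
```

Each row of `weights` sums to one and says how strongly a token attends to every other token, independent of how far apart they sit in the sequence. That distance-independence is what allows ring-closure partners or distant functional groups in a string representation to influence each other directly.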

Key Generative Model Types

Several specialized generative model architectures have been developed specifically for materials discovery applications:

- Variational Autoencoders (VAEs): Learn a probabilistic latent space for data generation, enabling the creation of novel material structures by sampling from this space [2].

- Generative Adversarial Networks (GANs): Employ a generator-discriminator framework where the generator creates candidate materials while the discriminator evaluates their authenticity [2].

- Diffusion Models: Generate samples by reversing a fixed corruption process using a learned score network [6]. Models like MatterGen implement customized diffusion processes that respect the unique periodic structure and symmetries of crystalline materials [6].

- Recurrent Neural Networks (RNNs) and Transformers: Process sequential data representations of materials, with specialized versions like MatterGPT and Space Group Informed Transformer designed for material-specific applications [2].

- Normalizing Flows: Learn invertible transformations between complex data distributions and simple base distributions, enabling both density estimation and sample generation [2].

- Generative Flow Networks (GFlowNets): Frameworks for generating diverse candidates through a series of actions, with applications such as Crystal-GFN for crystalline material design [2].
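For the diffusion entry in the list above, the fixed corruption process that the model learns to reverse can be sketched with the generic Gaussian formulation. The linear noise schedule and its endpoints are common default choices assumed here for illustration; crystal-specific models such as MatterGen use customized corruption processes for atom types, coordinates, and lattices that this sketch does not reproduce.

```python
import math
import random


def linear_beta_schedule(n_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances increasing linearly from beta_start to beta_end."""
    return [beta_start + (beta_end - beta_start) * t / (n_steps - 1) for t in range(n_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def q_sample(x0, t, alpha_bars, rng):
    """Closed-form forward sample: x_t = sqrt(a) * x0 + sqrt(1 - a) * eps."""
    a = alpha_bars[t]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, noise)]
    return x_t, noise


alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
rng = random.Random(0)
x0 = [0.1, 0.4, 0.9]  # a clean sample, e.g. a few coordinates
x_t, noise = q_sample(x0, 500, alpha_bars, rng)

# If the network predicted the noise perfectly, x0 could be recovered exactly.
a = alpha_bars[500]
recovered = [(xt - math.sqrt(1.0 - a) * e) / math.sqrt(a) for xt, e in zip(x_t, noise)]
```

Training a diffusion model amounts to teaching a network to predict `noise` (or an equivalent score) from `x_t` and `t`; generation then applies that prediction repeatedly, starting from pure noise.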

Table 1: Comparison of Major Generative Model Types for Materials Science

| Model Type | Key Principle | Strengths | Common Materials Applications |

|---|---|---|---|

| Variational Autoencoders (VAEs) | Learns probabilistic latent space for generation | Stable training, continuous latent space | Molecular generation, crystal structure design |

| Generative Adversarial Networks (GANs) | Adversarial training between generator and discriminator | High-quality sample generation | Molecular design, synthetic data generation |

| Diffusion Models | Reverses corruption process using learned score network | High sample quality, training stability | Crystal structure generation (e.g., MatterGen, DiffCSP) |

| Transformers | Self-attention mechanisms for sequence processing | Captures long-range dependencies, flexible architecture | Sequence-based molecular generation (e.g., MatterGPT) |

| GFlowNets | Generative process as a flow network | Diverse candidate generation | Crystal structure generation (e.g., Crystal-GFN) |

Data Extraction and Preparation Methodologies

The development of effective foundation models for materials science depends critically on access to large, high-quality datasets. Chemical databases such as PubChem, ZINC, and ChEMBL provide structured information commonly used to train chemical foundation models [7]. However, these sources often face limitations in scope, accessibility due to licensing restrictions, dataset size, and biased data sourcing [7]. A significant volume of relevant materials information exists within scientific documents, including research papers, patents, and technical reports, necessitating robust data-extraction models capable of parsing multiple modalities.

Advanced data extraction approaches must handle information embedded in various formats, including text, tables, images, and molecular structures. For text-based extraction, Named Entity Recognition (NER) approaches identify materials and their properties within documents [7]. For visual data, algorithms utilizing Vision Transformers and Graph Neural Networks can identify molecular structures from images in documents [7]. Multimodal approaches that integrate both textual and visual information are particularly valuable for comprehensive data extraction, especially for complex representations such as Markush structures in patents, which encapsulate key patented molecules [7].

Specialized algorithms can extract specific types of materials data more effectively than general-purpose models. For example, Plot2Spectra demonstrates how specialized algorithms can extract data points from spectroscopy plots in scientific literature, enabling large-scale analysis of material properties that would otherwise be inaccessible to text-based models [7]. Similarly, DePlot converts visual representations such as plots and charts into structured tabular data, which can then be processed by large language models [7]. These tools enhance data extraction pipelines by providing domain-specific processing capabilities.

Data Representation Formats

Materials data can be represented in multiple formats, each with distinct advantages for different applications:

- Sequence-based representations: SMILES and SELFIES strings provide compact, text-based encodings of molecular structures that can be processed similarly to natural language [7]. These representations dominate current literature due to the availability of large datasets using these formats.

- Graph-based representations: Model materials as graphs with atoms as nodes and bonds as edges, naturally capturing connectivity and topological information [2].

- Voxel-based representations: Discretize 3D space into volumetric pixels, enabling convolutional processing of spatial structures [2].

- Physics-informed representations: Incorporate domain knowledge such as symmetry constraints, invariance requirements, and physical principles directly into the representation [2].

The choice of representation involves significant tradeoffs. While 2D representations such as SMILES are prevalent due to dataset availability, they omit critical 3D conformational information that strongly influences material properties [7]. An exception exists for inorganic solids like crystals, where property prediction models typically leverage 3D structures through graph-based or primitive cell feature representations [7]. The development of unified representations that capture essential structural information while remaining computationally tractable remains an active research area.

Property Prediction and Inverse Design

Property Prediction from Structure

Property prediction from structure represents a core application of foundation models in materials discovery, offering an alternative to highly approximate initial screening methods and computationally expensive physics-based simulations. Current models predominantly predict properties from 2D molecular representations, although this approach risks omitting critical 3D conformational information [7]. Encoder-only models based on the BERT architecture are commonly used for property prediction tasks, though architectures based on GPT are becoming increasingly prevalent [7].

The performance of property prediction models depends significantly on the quality and diversity of training data, particularly for capturing subtle effects like activity cliffs where minute structural variations cause substantial property changes [7]. Transfer learning approaches, where models pre-trained on large unlabeled datasets are fine-tuned on smaller labeled datasets for specific properties, have demonstrated strong performance across multiple material classes and property types.

Table 2: Quantitative Performance of Selected Foundation Models for Materials Design

| Model Name | Model Type | Key Performance Metrics | Materials Domain |

|---|---|---|---|

| MatterGen | Diffusion model | 78% of generated structures have an energy above the Materials Project (MP) convex hull below 0.1 eV/atom; 61% are new structures; generated structures are >10x closer to their DFT local energy minimum than those of previous models [6] | Inorganic materials across periodic table |

| CDVAE (Baseline) | Variational Autoencoder | Lower performance on stable, unique, new (SUN) materials metric compared to MatterGen [6] | Crystalline materials |

| DiffCSP (Baseline) | Diffusion model | Lower performance on SUN materials metric and RMSD to DFT-relaxed structures compared to MatterGen [6] | Crystal structure prediction |

| LQMs (Large Quantitative Models) | Physics-informed AI | 95% reduction in prediction time for battery lifespan; 35x greater accuracy with 50x less data; reduced catalyst computation time from 6 months to 5 hours [28] | Battery materials, catalysts, alloys |

Inverse Design with Generative Models

Inverse design represents a paradigm shift in materials discovery, directly generating material structures that satisfy target property constraints rather than screening existing databases. Generative models enable this capability by learning the underlying probability distribution of materials data, allowing them to create novel samples that resemble the training set while satisfying desired constraints [2]. A critical feature enabling inverse design is the latent space—a lower-dimensional representation of the structure-properties relationship that facilitates navigation toward regions with desired characteristics [2].

MatterGen exemplifies advancements in inverse design capabilities for inorganic materials. This diffusion-based generative model creates stable, diverse inorganic materials across the periodic table and can be fine-tuned to steer generation toward specific property constraints [6]. The model introduces a diffusion process that generates crystal structures by gradually refining atom types, coordinates, and the periodic lattice, with adapter modules enabling fine-tuning on desired chemical composition, symmetry, and scalar property constraints [6]. Compared to previous generative models, MatterGen more than doubles the percentage of generated stable, unique, and new materials while producing structures more than ten times closer to their DFT local energy minimum [6].
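The corruption half of such a diffusion process can be illustrated for the coordinate channel alone. The sketch below is a toy under stated assumptions, not MatterGen's implementation: it adds Gaussian noise to fractional coordinates and wraps them back into the unit cell so that periodicity is respected. The function names, the noise levels, and the use of plain Gaussian noise are illustrative choices, and the atom-type and lattice channels are omitted.

```python
import random


def wrap(value):
    """Map a fractional coordinate back into [0, 1)."""
    wrapped = value % 1.0
    # Guard against floating-point rounding that can yield exactly 1.0.
    return 0.0 if wrapped >= 1.0 else wrapped


def corrupt_coords(frac_coords, sigma, rng):
    """Add Gaussian noise of width sigma to every fractional coordinate, then wrap."""
    return [[wrap(x + rng.gauss(0.0, sigma)) for x in atom] for atom in frac_coords]


def periodic_distance(a, b):
    """Distance in fractional space using the nearest periodic image on each axis."""
    total = 0.0
    for x, y in zip(a, b):
        d = abs(x - y)
        total += min(d, 1.0 - d) ** 2
    return total ** 0.5


rng = random.Random(0)  # fixed seed so the sketch is reproducible
clean = [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]]  # hypothetical two-atom cell

# Larger sigma values correspond to later, noisier steps of the forward process.
noisy_by_level = {sigma: corrupt_coords(clean, sigma, rng) for sigma in (0.01, 0.1, 0.5)}
```

A generative model of this kind is trained to undo such corruption step by step. The wrapping step matters because positions 0.99 and 0.01 along an axis are close neighbours in a periodic crystal, which `periodic_distance` reflects.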

The conditioning abilities of advanced generative models enable inverse design for a much wider range of problems than previously possible. After fine-tuning, MatterGen can generate stable new materials with desired chemistry, symmetry, and mechanical, electronic, and magnetic properties [6]. The model can also design materials satisfying multiple property constraints simultaneously, such as high magnetic density combined with a chemical composition that has low supply-chain risk [6]. As validation of this approach, one generated material was synthesized with measured property values within 20% of the target [6].

Experimental Protocols and Validation

Model Training and Fine-tuning Protocols

Successful implementation of foundation models for materials discovery requires careful attention to training methodologies. The typical approach follows a two-stage process: pretraining a base model on broad materials data followed by task-specific fine-tuning. For MatterGen, the base model was trained on the Alex-MP-20 dataset comprising 607,683 stable structures with up to 20 atoms recomputed from the Materials Project and Alexandria datasets [6]. This large and diverse dataset enables the model to learn general representations of inorganic materials across the periodic table.

Fine-tuning leverages adapter modules—tunable components injected into each layer of the base model—to alter outputs depending on given property labels [6]. This approach is particularly valuable when labeled datasets are small compared to unlabeled structure datasets, as is common due to the high computational cost of calculating properties. The fine-tuned model is used with classifier-free guidance to steer generation toward target property constraints [6]. This methodology has been successfully applied to multiple constraint types, producing specialized models for generating materials with target chemical composition, symmetry, or specific properties like magnetic density.
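Classifier-free guidance combines a conditional and an unconditional prediction from the same network at every denoising step. A minimal sketch of that combination follows, using one common convention in which a guidance scale of 0 recovers the unconditional prediction and 1 recovers the conditional one. The function name, the list-based vectors, and the numeric values are illustrative assumptions; the exact parameterization used by MatterGen may differ.

```python
def guided_prediction(cond, uncond, guidance_scale):
    """Blend conditional and unconditional denoiser outputs elementwise.

    guidance_scale = 0 -> unconditional output
    guidance_scale = 1 -> conditional output
    guidance_scale > 1 -> extrapolates past the conditional output
    """
    if len(cond) != len(uncond):
        raise ValueError("conditional and unconditional outputs must have equal length")
    return [u + guidance_scale * (c - u) for c, u in zip(cond, uncond)]


# Hypothetical denoiser outputs for a two-component quantity.
conditional = [1.0, 2.0]
unconditional = [0.0, 1.0]
steered = guided_prediction(conditional, unconditional, guidance_scale=2.0)
```

Scales above 1 push samples further toward the property condition, typically at some cost in diversity, so the scale is usually treated as a tunable hyperparameter.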

For forward design approaches using deep neural networks, active transfer learning with data augmentation enables expansion of reliable prediction domains toward regions with desired properties [29]. This framework gradually updates neural networks by adding relatively sparse, small additional datasets containing materials with incrementally superior properties, improving generalization through iterative refinement [29]. Architectures typically employ unbounded activation functions like leaky ReLU and residual networks with full pre-activation for better generalization performance [29].
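The architectural choice mentioned here can be sketched in a few lines. The block below applies the activation before each weight layer and adds the input back through an identity skip connection, which is the ordering that "full pre-activation" refers to. It is a simplified illustration rather than the architecture of [29]: normalization layers, which usually precede each activation in such blocks, are omitted, and all names, weights, and sizes are hypothetical.

```python
def leaky_relu(x, slope=0.01):
    """Unbounded activation: identity for positive inputs, small slope otherwise."""
    return x if x > 0.0 else slope * x


def dense(vec, weights, bias):
    """Fully connected layer: one output per weight row."""
    return [sum(w * v for w, v in zip(row, vec)) + b for row, b in zip(weights, bias)]


def preact_residual_block(x, w1, b1, w2, b2, slope=0.01):
    """Activation -> dense -> activation -> dense, plus an identity skip connection."""
    h = [leaky_relu(v, slope) for v in x]
    h = dense(h, w1, b1)
    h = [leaky_relu(v, slope) for v in h]
    h = dense(h, w2, b2)
    return [xi + hi for xi, hi in zip(x, h)]


identity = [[1.0, 0.0], [0.0, 1.0]]
zero_bias = [0.0, 0.0]
block_output = preact_residual_block([1.0, -2.0], identity, zero_bias, identity, zero_bias)
```

Because the skip path is a pure identity, a block whose weights are all zero passes its input through unchanged, which is one reason deep stacks of such blocks remain trainable.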

Validation Methodologies

Rigorous validation is essential for establishing the reliability of foundation models in materials discovery. Standard validation protocols assess multiple aspects of model performance:

- Stability assessment: Generated structures are typically relaxed using density functional theory (DFT) calculations, with stability measured by the energy above the convex hull defined by reference datasets [6]. A common threshold considers structures stable if their energy per atom after relaxation is within 0.1 eV per atom above the convex hull [6].

- Uniqueness and novelty: Generated structures are compared to existing databases and other generated structures to ensure uniqueness and novelty [6]. Structure matching algorithms account for compositional disorder effects through ordered-disordered structure matching [6].

- Property accuracy: Predicted properties are compared against DFT calculations or experimental measurements to validate accuracy [6].

- Synthesizability: While challenging to assess computationally, the presence of generated structures that match experimentally verified but unseen structures provides evidence of synthesizability [6].
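The stability criterion can be made concrete with a small worked example. The sketch below builds the lower convex hull of formation energy versus composition for a hypothetical binary system and measures how far a candidate lies above it. All compositions and energies are invented for illustration, and the function names are assumptions. Production workflows typically rely on an established phase-diagram library and handle multicomponent systems, which this two-element toy does not.

```python
STABILITY_THRESHOLD = 0.1  # eV per atom above the hull, the threshold quoted above


def lower_hull(phases):
    """Lower convex hull of (fraction_of_B, formation_energy_per_atom) points."""
    best = {}
    for x, energy in phases:
        best[x] = min(energy, best.get(x, energy))  # keep the lowest phase per composition
    hull = []
    for point in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (point[1] - y1) - (y2 - y1) * (point[0] - x1)
            if cross > 0:
                break  # hull[-1] lies strictly below the chord, so it stays
            hull.pop()
        hull.append(point)
    return hull


def energy_above_hull(x, energy, hull):
    """Candidate energy minus the hull energy interpolated at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            hull_energy = y1 + (x - x1) / (x2 - x1) * (y2 - y1)
            return energy - hull_energy
    raise ValueError("composition lies outside the range of the reference phases")


# Hypothetical reference phases for a system A(1-x)B(x); the elements sit at 0 eV/atom.
references = [(0.0, 0.0), (0.25, -0.3), (0.5, -1.0), (1.0, 0.0)]
hull = lower_hull(references)

candidate_gap = energy_above_hull(0.25, -0.45, hull)
candidate_is_stable = candidate_gap <= STABILITY_THRESHOLD
```

Here the reference phase at x = 0.25 is itself above the hull, so the hull energy at that composition is set by the tie line between the element A and the compound at x = 0.5.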

For MatterGen, validation on 1,024 generated structures showed that 78% fell below the 0.1 eV per atom threshold on the Materials Project convex hull, with 95% of generated structures having RMSD below 0.076 Å compared to their DFT-relaxed structures [6]. The model also demonstrated the ability to generate diverse structures without significant saturation even at large scales, with 61% of generated structures being new relative to expanded reference datasets [6].
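Percentages of this kind come from counting how many generated structures pass each check. A minimal sketch of a combined stable, unique, and new (SUN) count is shown below. It assumes each structure has already been reduced to a hashable key and an energy above the hull; in practice the matching is done with a structure-matching algorithm rather than string keys, and counting only the first occurrence of a duplicated structure as unique is an assumption of this sketch.

```python
def sun_fraction(generated, reference_keys, threshold=0.1):
    """Fraction of generated structures that are stable, unique, and new.

    generated: list of (structure_key, energy_above_hull_in_eV_per_atom)
    reference_keys: set of keys for structures already in reference databases
    """
    if not generated:
        raise ValueError("no generated structures to evaluate")
    seen = set()
    n_sun = 0
    for key, e_above_hull in generated:
        stable = e_above_hull <= threshold
        unique = key not in seen          # first occurrence within the generated set
        new = key not in reference_keys   # absent from the reference databases
        seen.add(key)
        if stable and unique and new:
            n_sun += 1
    return n_sun / len(generated)


# Hypothetical batch: keys stand in for matched crystal structures.
batch = [("A", 0.02), ("A", 0.03), ("B", 0.25), ("C", 0.08), ("D", 0.00)]
known = {"D"}
fraction = sun_fraction(batch, known)
```

In this batch only the first "A" and "C" pass all three checks: the second "A" is a duplicate, "B" is too far above the hull, and "D" is already known.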

Diagram 1: MatterGen Workflow: The complete pipeline for generating novel materials using the MatterGen diffusion model, from pretraining through validation.

Table 3: Essential Research Reagents and Computational Resources for Materials Foundation Models

| Resource Category | Specific Tools/Databases | Function and Application | Key Characteristics |
|---|---|---|---|
| Materials Databases | PubChem, ZINC, ChEMBL [7] | Provide structured chemical information for training foundation models | Varying scope and accessibility; licensing restrictions may apply |
| Crystalline Materials Databases | Materials Project (MP), Alexandria, Inorganic Crystal Structure Database (ICSD) [6] | Source of stable crystal structures for training and validation | Contain DFT-computed properties; curated for materials discovery |
| Data Extraction Tools | Named Entity Recognition (NER), Vision Transformers, Graph Neural Networks [7] | Extract materials information from scientific documents and patents | Handle multiple modalities (text, images, tables) |
| Specialized Extraction Algorithms | Plot2Spectra [7], DePlot [7] | Convert visual data (plots, charts) into structured information | Enable large-scale analysis of material properties from literature |
| Material Representations | SMILES, SELFIES [7], Graph-based, Voxel-based [2] | Encode material structures for model processing | Balance informational completeness with computational efficiency |
| Validation Tools | Density Functional Theory (DFT) codes [6] | Validate stability and properties of generated materials | Computationally expensive but highly accurate |
| High-Performance Computing | GPU clusters, Cloud computing resources [28] | Enable training of large foundation models | Critical for scaling to complex materials and large datasets |

Implementation Workflow for Materials Discovery

Diagram 2: Implementation Workflow: End-to-end process for implementing foundation models in materials discovery, from data collection to experimental synthesis.

The implementation of foundation models for materials discovery follows a systematic workflow that integrates data, models, and validation. The process begins with comprehensive data collection from diverse sources, including publications, patents, and established materials databases. Multimodal data extraction techniques handle information in various formats, followed by representation in formats suitable for model training. Model development involves selecting appropriate architectures based on the target materials domain and application, followed by self-supervised pretraining on broad materials data. Task-specific fine-tuning with adapter modules enables specialization for particular property constraints or material classes.

In the inverse design phase, researchers define target property constraints encompassing chemical composition, symmetry requirements, and electronic, mechanical, or magnetic properties. Conditional generation techniques, such as classifier-free guidance, steer the model toward regions of the materials space satisfying these constraints. The validation loop provides critical feedback, with computational assessments of stability, property verification, and novelty checks preceding experimental synthesis and testing. This iterative process gradually improves model performance and reliability while expanding the reach of materials design into previously unexplored regions of chemical space.

Foundation models represent a transformative approach to materials discovery, leveraging broad data to enable diverse downstream tasks including property prediction, synthesis planning, and molecular generation. The decoupling of representation learning from specific applications allows these models to build generalizable knowledge that transfers across materials classes and property types. Advances in model architectures, particularly diffusion models like MatterGen, have dramatically improved the stability, diversity, and novelty of generated materials while enabling inverse design across a broad range of property constraints.

Future developments will likely focus on integrating multiple data modalities more seamlessly, improving sample efficiency through better physics incorporation, and developing more sophisticated conditioning mechanisms for complex property combinations. The integration of foundation models with automated experimental systems will further accelerate the materials discovery cycle, creating closed-loop systems that continuously refine models based on experimental feedback. As these technologies mature, foundation models are poised to dramatically accelerate the discovery and development of novel materials for applications in energy storage, catalysis, electronics, and beyond.

From Model to Material: Methodologies and Real-World Applications

The discovery of advanced materials has long been the cornerstone of technological progress, traditionally driven by experimental trial-and-error or theoretical predictions. These approaches, while fruitful, are often characterized by extended development cycles, high resource costs, and reliance on serendipity [30]. Materials science is now shifting from these experimentally driven approaches toward AI-driven inverse design [4]. This paradigm shift enables researchers to start with desired material properties as inputs and efficiently generate candidate structures that meet these specifications, essentially inverting the traditional discovery process [31].

Inverse design represents a fundamental departure from conventional materials development. Where traditional "direct" design computes properties from known structures, inverse design begins with target properties and navigates the vast chemical space to identify corresponding structures [31]. This approach is particularly valuable for addressing urgent global challenges in sustainability, healthcare, and energy innovation, where specific material performance characteristics are required [4]. The core challenge of inverse design lies in establishing accurate mappings from desired performance attributes to structural configurations while adhering to physical constraints—a complex, high-dimensional optimization problem that AI is uniquely positioned to solve [30].

Core Methodologies in AI-Driven Inverse Design

Generative Models for Materials Exploration

Generative AI models form the technological backbone of modern inverse design frameworks, enabling the creation of novel material structures conditioned on target properties. These models learn the underlying probability distribution of existing materials data and can sample from this distribution to propose new candidates with desired characteristics [4]. The most advanced frameworks utilize several architectural approaches:

- Diffusion models generate crystal structures by learning to reverse a fixed corruption process, progressively refining atom types, coordinates, and the periodic lattice [32]. These models have produced stable, novel crystal structures across a wide range of inorganic materials.

- Property-conditional Transformers generate chemically valid Simplified Molecular-Input Line-Entry System (SMILES) representations or structural parameters conditioned on target properties, serving as sequence-based generators for molecular materials [10].

- Conditional Generative Adversarial Networks (cGANs) pit a generator against a discriminator during training. This setup can identify multiple viable solutions for a single target property profile, a critical capability for addressing the fundamental "one-to-many" challenge in inverse design [33].

Active Learning and Closed-Loop Systems