Generative Adversarial Networks for Materials Design: A New Paradigm for Drug Development and Discovery

This article explores the transformative role of Generative Adversarial Networks (GANs) in accelerating materials discovery and design, with a specific focus on applications relevant to researchers and drug development professionals.

Abstract

This article explores the transformative role of Generative Adversarial Networks (GANs) in accelerating materials discovery and design, with a specific focus on applications relevant to researchers and drug development professionals. We first establish the foundational principles of GANs and their superiority for generating novel, high-quality material structures. The discussion then progresses to specific methodological frameworks and their successful application in designing inorganic crystals, multi-principal element alloys, and architectured materials. The article provides a critical analysis of common challenges such as training instability and mode collapse, alongside proven optimization strategies. Finally, we review rigorous validation protocols and comparative performance metrics against other generative models, synthesizing key takeaways to outline a future roadmap for the integration of GANs in biomedical and clinical research, from novel biomaterials to optimized drug formulations.

What are GANs and Why Are They Revolutionary for Materials Science?

Generative Adversarial Networks (GANs) represent a paradigm shift in generative modeling, defined by a competitive dynamic between two neural networks: the Generator (G) and the Discriminator (D). This adversarial framework is particularly powerful for materials design, where it enables the experience-free and systematic exploration of vast chemical and architectural spaces to discover new materials with extreme or targeted properties [1]. The generator's role is to create new, plausible data instances—such as chemical compositions or material structures—from random noise input. Concurrently, the discriminator acts as a critic, learning to distinguish these generated samples from real instances in the training dataset [2]. This process constitutes a two-player minimax game, where the generator strives to produce increasingly realistic samples to "fool" the discriminator, while the discriminator concurrently improves its ability to tell real and generated data apart. The ultimate goal is to reach an equilibrium where the generator produces highly realistic samples that the discriminator cannot reliably distinguish from genuine data [3]. Within materials science, this adversarial process allows researchers to move beyond traditional, knowledge-dependent design approaches like bioinspiration or Edisonian trial-and-error, instead harnessing machine learning to uncover novel, high-performing material configurations from large-scale simulation data [1].
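The two-player game described above is conventionally written as a single value function. The notation below is the standard one from the GAN literature rather than something taken from the cited sources: \(p_{\text{data}}\) denotes the distribution of real samples and \(p_z\) the noise prior.

```latex
\min_G \max_D V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
```

The discriminator increases \(V\) by scoring real samples near 1 and generated samples near 0, while the generator decreases it by pushing \(D(G(z))\) toward 1. In the idealized equilibrium, where the generated distribution matches the data distribution, the best the discriminator can do is output \(1/2\) for every input.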

Core Architectural Components

The Generator: Creative Synthesis of Materials

The generator is the creative component of a GAN. Its fundamental purpose is to learn a mapping from a simple random noise distribution to the complex, high-dimensional data distribution of real materials [2] [3].

Architecture and Input: In its most basic form, the generator takes random noise (typically a vector of random values) as its input. This introduces stochasticity, enabling the GAN to produce a diverse variety of outputs rather than a single deterministic result. Experiments suggest the exact distribution of this noise is not critical, with uniform or Gaussian distributions being common choices [2]. For materials design, this noise vector can be thought of as a latent representation of a material's "DNA," which the network decodes into a full material representation.

Network Structure and Output: The generator is typically a deep neural network that progressively transforms the input noise. For inorganic materials design, as in the MatGAN model, the generator often comprises deconvolutional layers (or transposed convolutional layers). These layers upsample the noise vector into a structured output, such as an 8x85 matrix that encodes the chemical composition of a hypothetical inorganic compound [3]. The final output layer uses an activation function like Sigmoid to produce values in a suitable range (e.g., [0,1]) [3].

Objective and Training Signal: The generator's objective is to produce outputs so convincing that the discriminator classifies them as "real." It is not directly connected to a loss function based on the training data; instead, its loss is derived from the discriminator's performance. The generator is penalized when the discriminator correctly identifies its output as "fake." This loss is backpropagated through the discriminator and into the generator, but only the generator's weights are updated; the discriminator's weights are held fixed during this step [2].

The Discriminator: Critical Analysis for Realism

The discriminator functions as an adaptive, learned loss function for the generator. It is a classifier tasked with evaluating the authenticity of a given sample.

Architecture and Input: The discriminator receives batches of data containing a mix of real samples from the training dataset (e.g., known material compositions from the ICSD database) and generated samples from the generator. Its job is to assign a probability that a given sample is real [4].

Network Structure and Output: For material data represented as matrices, the discriminator is often a convolutional neural network (CNN). It processes the input through a series of convolutional layers that extract hierarchical features, culminating in a single scalar output (often via a Sigmoid activation) representing the probability of the input being real [3]. The network may include features like dropout layers to prevent overfitting and improve generalization [4].

Objective and Training Signal: The discriminator's goal is to maximize its own performance by correctly labelling all real and fake samples. Its loss function is minimized when it assigns high scores to real data and low scores to generated data. During discriminator training, only its own weights are updated based on this loss [4].

Table 1: Comparative Summary of Generator and Discriminator Roles

| Feature | Generator (G) | Discriminator (D) |
|---|---|---|
| Primary Role | Creates fake data instances | Distinguishes real from fake data |
| Input | Random noise vector (e.g., 100 dimensions) | Batch of real and/or generated data samples |
| Core Architecture | Deconvolutional Neural Network | Convolutional Neural Network |
| Output | A generated sample (e.g., a material composition matrix) | Probability that the input is real (a scalar score) |
| Objective | Fool the discriminator | Correctly identify all samples |
| Training Goal | Maximize D's loss on generated data | Minimize D's own loss |

Quantitative Performance and Variants in Research

The adversarial dynamic, while powerful, can be challenging to stabilize. This has led to the development of GAN variants with modified loss functions and training procedures, which have demonstrated superior performance in scientific applications.

Table 2: Comparative Analysis of GAN Variants in Scientific Applications

| GAN Variant | Core Innovation | Application Example | Reported Performance |
|---|---|---|---|
| Standard GAN (vanilla) | Original minimax game with Jensen-Shannon (JS) divergence loss. | EEG signal denoising [5]. | Better preservation of fine signal details (PSNR: 19.28 dB, Correlation >0.90) [5]. |
| Wasserstein GAN (WGAN) | Replaces JS divergence with Wasserstein distance to improve stability. | Sampling of inorganic chemical compositions (MatGAN) [3]. | Higher novelty (92.53%) and validity (84.5%) for generated materials [3]. |
| WGAN with Gradient Penalty (WGAN-GP) | Enforces Lipschitz constraint via gradient penalty instead of weight clipping. | EEG signal denoising [5]. | Superior training stability and noise suppression (SNR: 14.47 dB) [5]. |

Experimental Protocols for Materials Design

This section outlines a detailed protocol for implementing a GAN to discover novel inorganic materials, based on the MatGAN framework [3].

Phase 1: Data Preparation and Representation

- Data Sourcing: Compile a dataset of known inorganic materials from databases such as the Inorganic Crystal Structure Database (ICSD), the Open Quantum Materials Database (OQMD), or the Materials Project.

- Data Filtering (Optional): Apply explicit chemical rules (e.g., charge neutrality, electronegativity balance) to screen the dataset, which can improve the quality of generated samples.

- Data Representation:
  - Identify the 85 most common elements.
  - Represent each material as an 8x85 matrix (`T`).
  - Each column corresponds to a specific element (sorted by atomic number).
  - Each column is an 8-dimensional one-hot vector encoding the number of atoms (0-7) for that element in the compound [3].
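The representation step above can be illustrated with a small pure-Python sketch. The function name `encode_composition` and the three-element vocabulary are hypothetical and chosen only to keep the example short; the protocol itself would use the 85 most common elements, giving an 8x85 matrix.

```python
from typing import Dict, List


def encode_composition(
    composition: Dict[str, int], elements: List[str], max_count: int = 7
) -> List[List[int]]:
    """Return a (max_count + 1) x len(elements) one-hot matrix.

    Column c describes element c; the single 1 in that column sits in the
    row equal to the number of atoms of that element (0 if absent).
    """
    unknown = set(composition) - set(elements)
    if unknown:
        raise ValueError(f"elements not in vocabulary: {sorted(unknown)}")
    matrix = [[0] * len(elements) for _ in range(max_count + 1)]
    for col, element in enumerate(elements):
        count = composition.get(element, 0)
        if not 0 <= count <= max_count:
            raise ValueError(f"{element} count {count} outside 0..{max_count}")
        matrix[count][col] = 1
    return matrix


# Illustrative vocabulary sorted by atomic number (O=8, Ti=22, Sr=38).
# Assumption: a real run would list 85 elements here instead of 3.
ELEMENTS = ["O", "Ti", "Sr"]
srtio3 = encode_composition({"Sr": 1, "Ti": 1, "O": 3}, ELEMENTS)
```

Here `srtio3` has 8 rows and 3 columns: the oxygen column has its 1 in row 3, and the titanium and strontium columns have theirs in row 1. Elements absent from a compound are encoded by a 1 in row 0, so every column always sums to one.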

Phase 2: Network Architecture and Training Configuration

- Generator Network (`G`):
  - Input: A random noise vector `z` of dimension 100, sampled from a uniform or normal distribution.
  - Architecture: One fully connected layer followed by seven deconvolutional layers with batch normalization. ReLU activation for all layers except the output.
  - Output Layer: Sigmoid activation function to produce an 8x85 matrix with values between 0 and 1 [3].

- Discriminator Network (`D`):
  - Input: An 8x85 matrix, either a real sample or a generated one.
  - Architecture: Seven convolutional layers with batch normalization, followed by one fully connected layer. ReLU activation for hidden layers.
  - Output Layer: A single scalar value without a Sigmoid activation (for WGAN) [3].

- Training Setup:
  - Loss Function: Use the Wasserstein GAN loss to mitigate training instability.
    - Discriminator Loss: \( \text{Loss}_D = \mathbb{E}_{x \sim P_g}[f_w(x)] - \mathbb{E}_{x \sim P_r}[f_w(x)] \)
    - Generator Loss: \( \text{Loss}_G = -\mathbb{E}_{x \sim P_g}[f_w(x)] \), where \( P_r \) is the real data distribution, \( P_g \) is the generator distribution, and \( f_w \) is the discriminator (or critic) network [3].
  - Optimizer: Use Adam optimizer with a learning rate of 0.0002, beta1 of 0.5, and beta2 of 0.999.
  - Training Loop: Train for a predetermined number of epochs (e.g., 50), processing data in mini-batches (e.g., size 128).
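As a minimal sketch of the two Wasserstein losses defined in the training setup, the snippet below estimates each expectation by a mini-batch mean. It works on plain lists of critic scores so that it stays framework-independent; the function names and the example scores are hypothetical, and a real implementation would compute the same quantities on tensors inside a deep learning framework.

```python
from statistics import fmean
from typing import Sequence


def critic_loss(scores_real: Sequence[float], scores_fake: Sequence[float]) -> float:
    """Loss_D = E_{x~P_g}[f_w(x)] - E_{x~P_r}[f_w(x)], estimated on one batch."""
    return fmean(scores_fake) - fmean(scores_real)


def generator_loss(scores_fake: Sequence[float]) -> float:
    """Loss_G = -E_{x~P_g}[f_w(x)], estimated on one batch."""
    return -fmean(scores_fake)


# Hypothetical critic outputs: unbounded scalars, since the WGAN critic
# has no sigmoid on its output layer.
real_scores = [2.0, 1.5, 2.5]
fake_scores = [-1.0, 0.0, -0.5]

d_loss = critic_loss(real_scores, fake_scores)   # -0.5 - 2.0 = -2.5
g_loss = generator_loss(fake_scores)             # -(-0.5) = 0.5
```

A more negative critic loss means the critic separates the two batches more strongly. Its negation (2.5 here) can be read as a batch estimate of the Wasserstein distance only if the critic is kept approximately 1-Lipschitz, for example by weight clipping or a gradient penalty.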

Phase 3: Execution and Evaluation

- Adversarial Training Loop:
  - For each training iteration:
    - Sample a mini-batch of real data `X_real` from the dataset.
    - Sample a mini-batch of random noise `z`.
    - Generate a mini-batch of fake data: `X_fake = G(z)`.
    - Update the Discriminator (D): Compute gradients of `Loss_D` with respect to D's parameters and perform an optimizer step.
    - Update the Generator (G): Compute gradients of `Loss_G` with respect to G's parameters and perform an optimizer step. (For standard GAN, D's weights are typically frozen during this step [2]).

- Evaluation of Generated Materials:

- Novelty: Calculate the percentage of generated materials not present in the training database.

- Validity: Assess the percentage of generated materials that are chemically valid (e.g., charge-neutral and electronegativity-balanced) without explicit rule enforcement [3].

- Diversity: Ensure the generator produces a wide range of material compositions across different chemical systems.
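The novelty and validity metrics above can be sketched in a few lines of pure Python. Several things here are assumptions made for illustration: the oxidation-state table is hypothetical and deliberately tiny, validity is reduced to a charge-neutrality check (the electronegativity criterion is omitted), and novelty is computed over distinct generated formulas.

```python
from itertools import product
from typing import Dict, Iterable, List, Set

# Hypothetical oxidation-state table; a real screen would cover all elements.
OXIDATION_STATES: Dict[str, List[int]] = {
    "Na": [1], "Cl": [-1], "O": [-2], "Fe": [2, 3],
}


def is_charge_neutral(composition: Dict[str, int]) -> bool:
    """True if some choice of one oxidation state per element sums to zero."""
    if not composition:
        return False
    elements = list(composition)
    try:
        choices = [OXIDATION_STATES[e] for e in elements]
    except KeyError:
        return False  # unknown element: treated as invalid in this sketch
    return any(
        sum(state * composition[e] for state, e in zip(states, elements)) == 0
        for states in product(*choices)
    )


def novelty(generated: Iterable[str], training: Set[str]) -> float:
    """Fraction of distinct generated formulas absent from the training set."""
    unique = set(generated)
    return len(unique - training) / len(unique) if unique else 0.0


def validity(compositions: List[Dict[str, int]]) -> float:
    """Fraction of compositions that pass the charge-neutrality check."""
    if not compositions:
        return 0.0
    return sum(is_charge_neutral(c) for c in compositions) / len(compositions)


novelty_score = novelty(["NaCl", "Fe2O3", "NaCl2", "Fe2O3"], {"NaCl"})
validity_score = validity([{"Na": 1, "Cl": 1}, {"Fe": 2, "O": 3}, {"Na": 1, "Cl": 2}])
```

In this toy example two of the three distinct formulas are new, and two of the three compositions are charge-neutral (Fe2O3 balances with Fe in the +3 state; NaCl2 does not balance), so both scores come out to 2/3.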

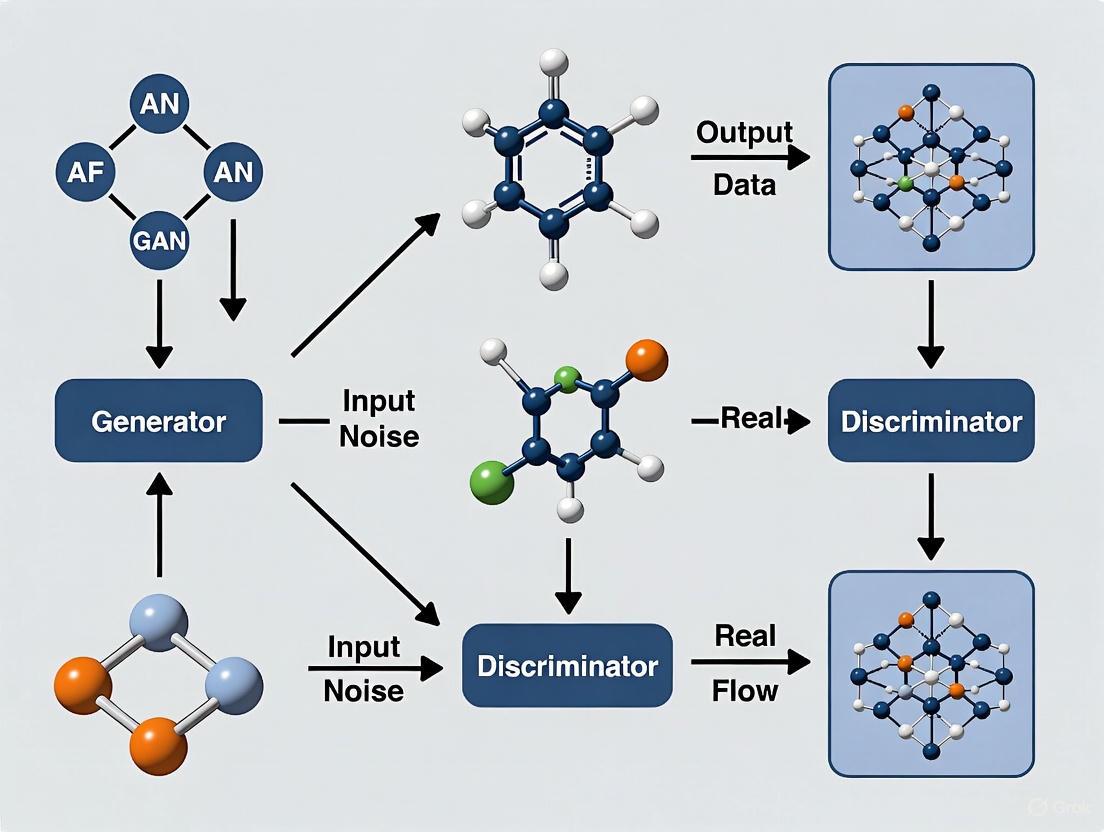

Workflow Visualization

The following diagram illustrates the core adversarial training loop and the flow of data between the generator and discriminator.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Datasets for GAN Research in Materials Design

| Tool / Resource | Type | Primary Function in Research |
|---|---|---|
| ICSD / OQMD / Materials Project | Database | Provides structured, vetted data on real inorganic materials for training the discriminator and establishing ground truth [3]. |
| Wasserstein Loss (WGAN-GP) | Algorithm / Loss Function | Stabilizes the adversarial training process, helping to mitigate mode collapse and providing more meaningful gradients for the generator [5] [3]. |
| Convolutional & Deconvolutional Layers | Network Architecture | Enables efficient learning of spatial and compositional patterns in material representations (e.g., 2D composition matrices) [3]. |
| Adam Optimizer | Optimization Algorithm | An adaptive learning rate optimization algorithm commonly used for training both generator and discriminator networks [4]. |
| Batch Normalization | Training Technique | Applied after convolution/deconvolution layers to stabilize and accelerate the training of deep networks [3]. |
| Autoencoder (AE) | Evaluation Model | Used as an independent tool to assess the feasibility and reconstructability of generated materials, helping to validate GAN performance [3]. |

Generative Adversarial Networks (GANs) represent a transformative shift in materials design methodologies, moving beyond traditional computational and experimental approaches. As a class of generative artificial intelligence, GANs employ an adversarial training framework consisting of two neural networks: a generator that creates synthetic data instances and a discriminator that evaluates their authenticity. This architecture enables the exploration of vast, complex chemical spaces far beyond human intuition or conventional simulation capabilities. In materials science, this translates to faster discovery and optimization of novel compounds with tailored electronic, thermal, and structural properties, with the potential to substantially shorten development cycles that traditionally take years.

The limitations of silicon-based semiconductor technology have become increasingly apparent as demands for higher power density, faster switching frequencies, and greater energy efficiency intensify across sectors including electric vehicles, renewable energy systems, and advanced communications infrastructure. While wide-bandgap semiconductors like gallium nitride (GaN) offer superior performance characteristics, their development through traditional methods faces significant challenges related to defect control, thermal management, and reliability optimization. GANs present a paradigm shift in addressing these challenges by generating diverse molecular candidates, predicting material properties with high accuracy, and optimizing synthetic feasibility—ultimately bridging the gap between theoretical potential and practical application in next-generation materials systems.

Comparative Performance: GANs vs. Traditional Methods

Quantitative Performance Metrics

The superior performance of GAN-based approaches becomes evident when examining key quantitative metrics across multiple applications, from molecular generation to signal denoising. The table below summarizes comparative performance data between GAN frameworks and traditional methodologies:

Table 1: Performance comparison of GAN-based approaches versus traditional methods

| Application Domain | Metric | GAN-Based Approach | Traditional Method / Baseline | Performance Gain |
|---|---|---|---|---|
| Drug-Target Interaction | Accuracy | 96% [6] | Not Reported | Not quantifiable (no baseline reported) |
| Drug-Target Interaction | Precision | 95% [6] | Not Reported | Not quantifiable (no baseline reported) |
| Drug-Target Interaction | Recall | 94% [6] | Not Reported | Not quantifiable (no baseline reported) |
| Drug-Target Interaction | F1 Score | 94% [6] | Not Reported | Not quantifiable (no baseline reported) |
| EEG Signal Denoising | SNR (dB) | 14.47 (WGAN-GP) [7] | 12.37 (Standard GAN) [7] | ~17% improvement (+2.10 dB) |
| EEG Signal Denoising | PSNR (dB) | 19.28 (Standard GAN) [7] | Not Reported | Superior detail preservation |
| Molecular Generation | Novelty Rate | High [6] | Limited | Enhanced diversity |
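The "~17%" figure in the EEG denoising row follows directly from the two reported SNR values, as the short calculation below shows.

```latex
\frac{14.47 - 12.37}{12.37} = \frac{2.10}{12.37} \approx 0.170
```

Because decibels are logarithmic, the same 2.10 dB difference corresponds to a ratio of roughly \(10^{2.10/10} \approx 1.62\) in signal-to-noise power, so a percentage taken on the dB values understates the change in linear terms. Note also that the baseline in this row is a standard GAN rather than a non-GAN method.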

Materials-Specific Advantages

In semiconductor materials design, GANs demonstrate particular advantages in addressing the complex challenges of wide-bandgap materials like gallium nitride. Traditional experimental approaches to GaN development face persistent issues including dynamic on-resistance (R_ON) degradation, trapping effects, and gate leakage, which require extensive characterization techniques such as deep-level transient spectroscopy (DLTS), transmission electron microscopy (TEM), and cathodoluminescence mapping [8]. GANs can accelerate the identification of optimal passivation schemes and advanced buffer layers by generating novel molecular structures and predicting their interaction with existing material systems, potentially reducing the iteration cycles needed to suppress trapping phenomena and improve stability under repetitive switching conditions [8].

The generative capability of GANs enables exploration of heterostructure configurations that would be prohibitively time-consuming to investigate experimentally. For GaN-on-Si high-electron-mobility transistors (HEMTs), GANs can model the effects of lattice constant and thermal expansion coefficient mismatches, proposing interface engineering solutions to mitigate stress-induced dislocations [8]. This application is particularly valuable for optimizing alternative substrate configurations using SiC or sapphire, where cost-performance tradeoffs traditionally limit implementation [8].

Experimental Protocols for GAN-Driven Materials Design

Protocol 1: GAN Framework for Molecular Structure Generation

Objective: Generate novel, synthetically feasible molecular structures with target electronic properties for semiconductor applications.

Materials and Reagents:

- BindingDB Database: Provides validated drug-target interaction data for training [6]

- Chemical Computing Software (e.g., Schrödinger, OpenChem): For molecular structure analysis and validation

- High-Performance Computing Cluster: Minimum 4 NVIDIA Tesla V100 GPUs recommended for parallel training

- Python 3.8+ with key libraries: PyTorch 1.9+, RDKit 2020+, DeepChem 2.5+

Procedure:

Data Preprocessing:

- Curate dataset of known semiconductor materials and their electronic properties (bandgap, electron mobility, thermal stability)

- Convert molecular structures to Simplified Molecular Input Line Entry System (SMILES) representations

- Encode molecular fingerprints as feature vectors using extended-connectivity fingerprints (ECFPs) with 1024-bit length

Generator Network Training:

- Architecture: Multilayer perceptron with three hidden layers (512 units/layer, ReLU activation)

- Input: Random noise vector (128 dimensions) sampled from normal distribution

- Output: Generated molecular structures in SMILES format

- Training: Update parameters to maximize discriminator's false positive rate using Adam optimizer (learning rate: 0.0001)

Discriminator Network Training:

- Architecture: Convolutional neural network with leaky ReLU activation (alpha=0.2)

- Input: Real or generated molecular structures

- Output: Probability of input being from real dataset (sigmoid activation)

- Training: Update parameters to correctly classify real vs. generated structures using Adam optimizer (learning rate: 0.0002)

Adversarial Training Loop:

- Implement minibatch training with batch size of 64

- Train for 50,000 iterations with alternating generator/discriminator updates

- Validate generated structures for synthetic accessibility using SAscore algorithm

- Apply early stopping if generator loss plateaus for 5,000 consecutive iterations
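The early-stopping rule in the last step can be sketched as a small helper. The class name `PlateauStopper` and the `min_delta` threshold are hypothetical; the protocol does not define what counts as a plateau, so this sketch assumes it means "no improvement larger than `min_delta` for `patience` consecutive iterations".

```python
class PlateauStopper:
    """Signal a stop when the loss has not improved for `patience` iterations."""

    def __init__(self, patience: int = 5000, min_delta: float = 1e-4) -> None:
        self.patience = patience
        self.min_delta = min_delta   # assumed threshold for a real improvement
        self.best = float("inf")
        self.stale = 0               # consecutive iterations without improvement

    def update(self, loss: float) -> bool:
        """Record one loss value; return True when training should stop."""
        if loss < self.best - self.min_delta:
            self.best = loss
            self.stale = 0
        else:
            self.stale += 1
        return self.stale >= self.patience


# Toy run with a short patience so the behaviour is easy to follow.
stopper = PlateauStopper(patience=3)
history = [1.0, 0.8, 0.8, 0.8, 0.8, 0.5]
stop_at = next((i for i, loss in enumerate(history) if stopper.update(loss)), None)
```

With this history the stopper fires at index 4, after three consecutive values that fail to improve on 0.8. In adversarial training the generator loss is a noisy signal and a lower value does not always mean better samples, so a rule like this is probably best combined with a sample-quality check such as the synthetic accessibility validation in the preceding step.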

Validation and Analysis:

- Assess diversity of generated structures using Tanimoto similarity index

- Predict electronic properties of promising candidates using DFT calculations

- Select top candidates for experimental synthesis and characterization
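As a minimal sketch of the diversity assessment, the snippet below computes the Tanimoto similarity between fingerprints represented as sets of on-bit indices and averages it over all pairs. The fingerprints shown are hypothetical stand-ins for 1024-bit ECFPs; in practice a cheminformatics library would generate them from the SMILES strings.

```python
from itertools import combinations
from typing import FrozenSet, List


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto similarity: shared on-bits divided by total distinct on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def mean_pairwise_similarity(fingerprints: List[FrozenSet[int]]) -> float:
    """Average Tanimoto similarity over all pairs; lower means more diverse."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        raise ValueError("need at least two fingerprints")
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


# Hypothetical on-bit sets for three generated structures.
fps = [frozenset({1, 5, 9}), frozenset({1, 5, 20}), frozenset({7, 8})]
diversity_score = 1.0 - mean_pairwise_similarity(fps)
```

The first two fingerprints share two of four distinct bits (similarity 0.5), and the third shares none with either, so the mean pairwise similarity is 0.5/3 and the diversity score is about 0.83. Reporting diversity as one minus the mean similarity is a common convention, not something the protocol specifies.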

Troubleshooting Tips:

- For mode collapse, implement minibatch discrimination or experience replay

- For training instability, switch to Wasserstein GAN with Gradient Penalty (WGAN-GP)

- For invalid molecular structures, add structural validity constraint to generator loss function

Protocol 2: Conditional GAN for Bandgap Engineering

Objective: Generate molecular structures with precisely specified bandgap values for targeted semiconductor applications.

Materials and Reagents:

- Materials Project Database: Provides bandgap data for known inorganic compounds

- VASP Software: For DFT validation of generated structures

- High-Throughput Computation Environment: Slurm-based cluster with 100+ CPU cores

Procedure:

Conditional Feature Engineering:

- Create bandgap-conditioned latent vectors by concatenating noise vector with target bandgap value

- Normalize bandgap values to zero mean and unit variance to improve training stability
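The two conditioning steps above can be sketched as follows. The helper names, the example bandgap values, and the 128-dimensional noise vector (borrowed from Protocol 1) are assumptions for illustration; the protocol does not fix them.

```python
import random
from statistics import fmean, pstdev
from typing import List


def standardize(values: List[float]) -> List[float]:
    """Rescale values to zero mean and unit (population) variance."""
    mu, sigma = fmean(values), pstdev(values)
    if sigma == 0:
        raise ValueError("cannot standardize constant values")
    return [(v - mu) / sigma for v in values]


def conditioned_input(noise: List[float], bandgap_z: float) -> List[float]:
    """Concatenate a noise vector with one normalized bandgap value."""
    return noise + [bandgap_z]


# Hypothetical training bandgaps in eV.
bandgaps_ev = [1.1, 3.4, 2.3]
bandgaps_z = standardize(bandgaps_ev)

rng = random.Random(0)                               # seeded for repeatability
noise = [rng.gauss(0.0, 1.0) for _ in range(128)]    # assumed noise dimension
g_input = conditioned_input(noise, bandgaps_z[1])    # 129-dimensional input
```

When sampling for a new target bandgap after training, the target should be normalized with the mean and standard deviation of the training set, not recomputed from the targets themselves, so that the generator sees the condition on the same scale it was trained with.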

Conditional GAN Architecture:

- Generator: Takes concatenated [noise, bandgap] vector as input

- Discriminator: Takes concatenated [molecule, bandgap] pair as input

- Use spectral normalization in both networks to stabilize training

Training Protocol:

- Two-phase training: Pretrain on general molecular dataset, then fine-tune on semiconductor materials

- Implement progressive growing: Start with simple structures, gradually increase complexity

- Use label smoothing for discriminator to prevent overconfidence
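The label-smoothing step can be illustrated with a short sketch. It assumes one-sided smoothing, in which only the "real" target is softened, and a target value of 0.9; both are common choices but are assumptions here, since the protocol does not say which variant it uses.

```python
import math
from typing import Sequence


def bce(prob: float, target: float, eps: float = 1e-12) -> float:
    """Binary cross-entropy for one prediction against a (possibly soft) target."""
    prob = min(max(prob, eps), 1.0 - eps)
    return -(target * math.log(prob) + (1.0 - target) * math.log(1.0 - prob))


def discriminator_loss(
    p_real: Sequence[float], p_fake: Sequence[float], real_target: float = 0.9
) -> float:
    """Mean BCE with one-sided smoothing: real targets 0.9, fake targets 0.0."""
    losses = [bce(p, real_target) for p in p_real] + [bce(p, 0.0) for p in p_fake]
    return sum(losses) / len(losses)


# An overconfident prediction on a real sample versus a calibrated one.
loss_overconfident = bce(0.999, 0.9)
loss_calibrated = bce(0.9, 0.9)
```

With a hard target of 1.0, pushing the prediction from 0.9 to 0.999 always lowers the loss. With the smoothed target of 0.9, the overconfident prediction is penalized more than the calibrated one, which is the mechanism that discourages discriminator overconfidence.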

Bandgap-Specific Validation:

- Verify bandgap of generated structures using ensemble of machine learning predictors

- Select candidates with bandgap within 0.1 eV of target value for further analysis

- Assess thermodynamic stability using formation energy calculations
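The first two validation steps can be sketched as an ensemble filter. Everything named here is hypothetical: the predictors are stand-in lookup tables rather than trained models, the candidate identifiers are placeholders, and averaging is only one of several ways to combine an ensemble.

```python
from statistics import fmean
from typing import Callable, Dict, Sequence

# A predictor maps a structure identifier to a predicted bandgap in eV.
Predictor = Callable[[str], float]


def select_candidates(
    structures: Sequence[str],
    predictors: Sequence[Predictor],
    target_ev: float,
    tol_ev: float = 0.1,
) -> Dict[str, float]:
    """Keep structures whose ensemble-mean bandgap is within tol_ev of the target."""
    selected: Dict[str, float] = {}
    for structure in structures:
        mean_gap = fmean(predict(structure) for predict in predictors)
        if abs(mean_gap - target_ev) <= tol_ev:
            selected[structure] = mean_gap
    return selected


# Stand-in predictors backed by made-up values.
model_a = {"cand-1": 3.35, "cand-2": 2.90, "cand-3": 3.60}.__getitem__
model_b = {"cand-1": 3.45, "cand-2": 3.10, "cand-3": 3.50}.__getitem__

picked = select_candidates(["cand-1", "cand-2", "cand-3"], [model_a, model_b], target_ev=3.4)
```

Only `cand-1` survives: its ensemble mean is about 3.40 eV, while the other two miss the 3.4 eV target by 0.40 eV and 0.15 eV. The spread between predictors could also be used as a rough confidence signal before committing DFT resources to a candidate.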

Quality Control:

- Cross-validate bandgap predictions with multiple computational methods (DFT, GW approximation)

- Screen for elemental availability and synthetic accessibility

- Assess phase stability using molecular dynamics simulations

Research Reagent Solutions for GAN Experiments

Table 2: Essential research reagents and computational tools for GAN-driven materials design

| Category | Specific Tool/Resource | Function in GAN Research | Key Features |
|---|---|---|---|
| Software Libraries | PyTorch 1.9+ [6] | Deep learning framework for GAN implementation | Automatic differentiation, GPU acceleration, extensive neural network modules |
| Software Libraries | RDKit 2020+ [6] | Cheminformatics for molecular manipulation | SMILES parsing, molecular fingerprinting, substructure search |
| Software Libraries | DeepChem 2.5+ [6] | Drug discovery and materials informatics | Molecular featurization, dataset curation, model evaluation |
| Computational Resources | NVIDIA Tesla V100/A100 GPUs [6] | Accelerate GAN training through parallel processing | High memory bandwidth, tensor cores for mixed-precision training |
| Computational Resources | High-performance computing cluster | Distributed training for large datasets | Slurm workload manager, parallel filesystem, high-speed interconnects |
| Data Resources | BindingDB [6] | Source of known drug-target interactions | Curated database of protein-ligand interactions with binding affinities |
| Data Resources | Materials Project [8] | Repository of inorganic crystal structures | Calculated material properties including bandgaps, elastic tensors |
| Validation Tools | VASP [8] | First-principles validation of generated materials | Density functional theory calculations, electronic structure analysis |
| Validation Tools | Gaussian 16 | Quantum chemical calculations | Molecular orbital analysis, thermodynamic property prediction |

Workflow Visualization

[Figure: GAN Framework for Materials Design]

[Figure: Conditional GAN for Bandgap Engineering]

Discussion and Future Perspectives

The integration of GANs into materials design represents a fundamental shift in research methodology, enabling unprecedented exploration of chemical space with precision and efficiency. The demonstrated success of GAN frameworks in drug discovery—achieving 96% accuracy in predicting drug-target interactions—provides a compelling precedent for similar applications in materials informatics [6]. The unique adversarial training process allows researchers to navigate complex multi-objective optimization landscapes, balancing competing priorities such as electronic performance, thermal stability, and synthetic feasibility.

Future developments in GAN architectures promise even greater capabilities for materials design. The emergence of hybrid models combining GANs with variational autoencoders (VAEs) offers enhanced control over molecular generation while maintaining structural diversity [6]. As demonstrated in EEG signal processing applications, Wasserstein GAN with Gradient Penalty (WGAN-GP) provides improved training stability—a critical factor for reliable materials discovery pipelines [7]. The ongoing refinement of 3D-aware GANs will further enhance the capacity to model complex crystal structures and interface interactions essential for next-generation semiconductor devices.

For research teams embarking on GAN-driven materials design, the strategic integration of computational and experimental validation remains paramount. While GANs excel at exploring vast chemical spaces and identifying promising candidates, traditional characterization techniques—including transmission electron microscopy, deep-level transient spectroscopy, and cathodoluminescence mapping—provide essential validation of predicted material properties [8]. This synergistic approach, combining generative exploration with rigorous experimental verification, will ultimately accelerate the development of advanced materials beyond the fundamental limitations of silicon-based technologies.

Generative Adversarial Networks (GANs) represent a powerful class of deep learning models that have emerged as a transformative tool for materials design and drug discovery. The core capability of GANs lies in their ability to learn the complex, implicit rules of chemical composition directly from data, without requiring explicit programming of chemical principles. This data-driven approach allows for the exploration of vast chemical spaces far beyond the confines of known compounds, accelerating the discovery of novel materials with tailored properties. By mastering the underlying distribution of chemical structures, GANs can generate hypothetical molecules and materials that adhere to fundamental chemical validity while optimizing for specific functional characteristics, thereby bridging the gap between data-driven exploration and scientific discovery [9].

The significance of this capability is underscored by the critical role new materials play in global technological and economic progress. Traditional materials discovery has often relied on domain knowledge and trial-and-error approaches, which struggle to efficiently navigate the immense design space of possible chemical compounds [10] [11]. GANs, in contrast, provide a mechanism to autonomously explore this space, learning the subtle relationships between atomic arrangement, bonding, and macroscopic properties. This paradigm shift is prioritized in global strategies that leverage big data and artificial intelligence to accelerate materials advancement, with frameworks like AI4Materials (AI4Mater) formally integrating these approaches into Materials Science and Engineering [11].

The Fundamental Mechanics of GANs in Chemical Discovery

Adversarial Training Framework

A standard GAN consists of two neural networks locked in a competitive game: the Generator (G) and the Discriminator (D). The generator aims to produce realistic synthetic data, while the discriminator learns to distinguish between real data (from a training dataset) and fake data (from the generator). This adversarial process drives both networks to improve iteratively. In the context of chemical composition, the generator learns to create plausible molecular structures, while the discriminator hones its ability to identify violations of chemical rules or stability principles [9]. Through this dynamic, the generator internalizes the implicit rules of what constitutes a valid and stable material, effectively learning chemistry from data.

Overcoming Challenges with Discrete Molecular Representations

A significant hurdle in applying GANs to chemistry is the discrete nature of common molecular representations, such as Simplified Molecular Input Line Entry System (SMILES) strings. Traditional GANs are designed for continuous data (like images), where a small change in the generator's output produces a small change in the discriminator's score, so gradients can flow smoothly to guide the generator's learning. With discrete data like text or SMILES strings, the generator must sample individual tokens, and this sampling step is not differentiable. Gradient-based optimization therefore becomes less effective, often leading to unstable training and chemically invalid outputs [12].

Innovative architectures have been developed to address this. The RL-MolGAN framework, for instance, introduces a Transformer-based discrete GAN. It employs a "first-decoder-then-encoder" structure, where a Transformer decoder acts as the generator to produce SMILES strings, and a Transformer encoder acts as the discriminator. This design is particularly effective at capturing the global dependencies and long-range relationships within a SMILES string, which is crucial for ensuring the generated molecule is structurally coherent and chemically valid [12]. To further stabilize training for discrete data, RL-MolGAN integrates Reinforcement Learning (RL) and Monte Carlo Tree Search (MCTS). The RL component helps optimize the generated SMILES strings for desired chemical properties, while MCTS assists in navigating the discrete action space of selecting the next character in a SMILES sequence [12].

Another advanced variant, RL-MolWGAN, incorporates the Wasserstein distance and mini-batch discrimination. The Wasserstein distance provides a more stable and meaningful measure of the difference between the real and generated data distributions, which helps to overcome common training issues like mode collapse. Mini-batch discrimination allows the discriminator to look at multiple data samples simultaneously, helping the generator to produce more diverse outputs [12].

Quantitative Data on GAN Performance in Materials Science

The application of GANs in materials science has yielded substantial quantitative results, demonstrating their efficacy in generating novel, valid, and high-performing chemical structures. The following tables summarize key performance metrics from recent groundbreaking studies.

Table 1: Performance Metrics of GANs in Molecular Generation

| Study / Model | Dataset | Key Metric | Reported Performance |
|---|---|---|---|
| GAN for Electrocatalysts [10] | Materials Project (>5,000 compounds) | Uniqueness of Generated Candidates | 99.94% (400,000 unique candidates) |
| GAN for Electrocatalysts [10] | Materials Project (>5,000 compounds) | Chemical Validity & Stability | 70% of generated samples met criteria |
| RL-MolGAN / RL-MolWGAN [12] | QM9, ZINC | Generation of Drug-like Molecules | Effective generation validated on standard benchmarks |
| GAN with Adaptive Training [9] | QM9, ZINC (≤20 atoms) | Novel Molecule Production | Order of magnitude increase (~10^5 to ~10^6) vs. traditional GAN |

Table 2: Impact of Adaptive Training Data Strategies on Molecular Generation [9]

| Training Strategy | Description | Effect on Novel Molecule Generation | Effect on High-Performing Molecules |
|---|---|---|---|
| Control (Fixed Data) | Traditional GAN training with a static dataset | Rapidly plateaus; limited exploration | Limited to properties in original data |
| Random Replacement | Generated molecules randomly replace training data | Continuous production of novel molecules | Moderate increase |
| Guided Replacement (e.g., Drug-likeness) | Only generated molecules with improved properties replace training data | Continuous production, focused exploration | Drastic increase in top performers (e.g., drug-likeness >0.6) |
| Guided Replacement + Recombination | Guided replacement with crossover between molecules | Highest absolute number of novel molecules | Largest quantity of high-performing molecules |
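The guided replacement strategy in the table can be sketched in pure Python. The specific rule used here, in which a novel generated molecule displaces the current lowest-scoring training molecule if it scores higher, is an assumption made for illustration and may differ from the rule in [9]. The `fitness` callable and the scores are hypothetical placeholders, not real drug-likeness values.

```python
from typing import Callable, List


def guided_replacement(
    training: List[str], generated: List[str], fitness: Callable[[str], float]
) -> List[str]:
    """Return an updated training set of the same size.

    Assumed rule: each novel generated molecule replaces the current
    lowest-scoring training molecule if it has a strictly higher score.
    Training molecules are assumed to be unique.
    """
    current = list(training)
    if not current:
        return current
    seen = set(current)
    for candidate in generated:
        if candidate in seen:
            continue  # already in the training set, so not novel
        worst = min(range(len(current)), key=lambda i: fitness(current[i]))
        if fitness(candidate) > fitness(current[worst]):
            seen.discard(current[worst])
            current[worst] = candidate
            seen.add(candidate)
    return current


# Made-up scores standing in for a fitness function such as drug-likeness.
scores = {"CCO": 0.41, "c1ccccc1": 0.44, "CC(=O)O": 0.43,
          "CCN": 0.40, "CC(C)Cc1ccccc1": 0.65}

updated = guided_replacement(
    training=["CCO", "c1ccccc1", "CC(=O)O"],
    generated=["CCN", "CC(C)Cc1ccccc1", "CCO"],
    fitness=scores.__getitem__,
)
```

In this toy run only the molecule scoring 0.65 enters the training set, displacing the one scoring 0.41. The random replacement strategy from the table would correspond to dropping the fitness comparison and overwriting a randomly chosen training molecule instead.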

Detailed Experimental Protocols

Protocol 1: GAN-Driven Discovery of Non-Noble Metallic Electrocatalysts

This protocol outlines the methodology for using a GAN to discover new electrocatalysts for glycerol electroreduction, as detailed in Electrochimica Acta [10].

1. Data Curation and Preparation:

- Source: Curate a dataset of over 5,000 thermodynamically stable mono-, bi-, and trimetallic compounds from the Materials Project (MP) database.

- Objective: The dataset should encompass a wide range of known stable materials to teach the GAN the implicit rules of thermodynamic stability and chemical composition.

2. GAN Training:

- Architecture: Employ a standard or customized GAN architecture.

- Learning Goal: The generator learns to produce hypothetical material compositions that are chemically valid and thermodynamically feasible, as judged by the discriminator.

- Output: The trained model generates 400,000+ hypothetical candidate materials not present in the original training dataset.

3. Conditional Screening and Validation:

- Process: Apply a filtering process to the generated candidates based on target electrochemical properties (e.g., selectivity for the target reaction, suppression of competing reactions like hydrogen evolution).

- Identification: This screening identifies a shortlist of top candidate systems (e.g., Co-Zr-X trimetallics) for further theoretical or experimental investigation.

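In summary, the workflow proceeds from data curation, through GAN training and candidate generation, to conditional screening and a final shortlist for further investigation.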
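The post-generation steps of this protocol (deduplication, novelty filtering against the training set, and property-based screening) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method of [10]: compositions are plain strings, and `predicted_selectivity`, its toy scores, and the 0.8 cut-off are hypothetical stand-ins for whatever property model and threshold a real study would use.

```python
def screen_candidates(generated, training_set, score_fn, threshold):
    """Deduplicate, drop known compositions, then keep high-scoring ones."""
    unique = list(dict.fromkeys(generated))            # order-preserving dedupe
    novel = [c for c in unique if c not in training_set]
    shortlist = [c for c in novel if score_fn(c) >= threshold]
    uniqueness = len(unique) / len(generated) if generated else 0.0
    return shortlist, uniqueness


# Hypothetical toy data; real inputs would come from the trained generator.
training = {"CoZr", "NiFe"}
generated = ["CoZrCu", "CoZr", "CoZrCu", "NiMo", "FeMn"]

# Hypothetical scores standing in for a selectivity predictor.
toy_scores = {"CoZrCu": 0.9, "NiMo": 0.5, "FeMn": 0.85}


def predicted_selectivity(composition):
    return toy_scores.get(composition, 0.0)


shortlist, uniqueness = screen_candidates(
    generated, training, predicted_selectivity, threshold=0.8
)
print(shortlist, uniqueness)
```

Separating uniqueness (no repeats among generated samples) from novelty (absence from the training set) keeps the two figures reported in Table 1 distinct.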

Protocol 2: Adaptive Training with Replacement and Recombination

This protocol, based on research published in the Journal of Cheminformatics, uses an evolving training dataset to enhance exploration and prevent mode collapse [9].

1. Initialization:

- Start with an initial training dataset (e.g., from QM9 or ZINC).

- Train a GAN (e.g., based on SMILES strings) for a fixed number of epochs.

2. Collection and Evaluation:

- Collect valid molecules generated by the GAN during a training interval.

- Evaluate these molecules based on a target fitness function (e.g., Quantitative Estimate of Drug-likeness - QED).

3. Training Data Update (Replacement):

- Random Strategy: Replace a random subset of the training data with the newly generated molecules.

- Guided Strategy: Replace a subset of the training data with the top-performing generated molecules (e.g., those with the highest QED scores).

- Control Strategy: For comparison, keep the training data fixed.

4. Recombination (Optional Enhancement):

- Apply a crossover operation (genetic algorithm-style) between a portion of the generated molecules and samples from the current training data to create hybrid molecules.

- These novel hybrid molecules can then be introduced into the training dataset during the replacement step.

5. Iterative Training:

- Resume GAN training using the updated, adaptive dataset.

- Repeat steps 2-4 for multiple cycles to continuously guide the exploration of chemical space toward regions with desired properties.

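In summary, the adaptive workflow cycles through GAN training, collection and evaluation of generated molecules, replacement of training data (optionally with recombination), and resumed training.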
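The three replacement strategies can be sketched as a single update function. This is a minimal sketch rather than the implementation from [9]: molecules are plain strings, the fitness function is passed in by the caller (string length is used below purely as a hypothetical stand-in for QED), and the choice to evict the lowest-fitness training molecules in the guided case is an assumption, since the protocol does not say which entries are replaced.

```python
import random


def update_training_data(training, generated, fitness, n_replace,
                         strategy="guided", rng=None):
    """Return a new training list with up to n_replace entries swapped out.

    control: keep the data fixed.
    random:  swap in randomly chosen novel generated molecules.
    guided:  swap in the highest-fitness novel molecules, evicting the
             lowest-fitness training molecules (an assumption).
    """
    rng = rng or random.Random(0)
    if strategy == "control" or not generated:
        return list(training)
    known = set(training)
    novel = [m for m in dict.fromkeys(generated) if m not in known]
    n = min(n_replace, len(novel), len(training))
    if strategy == "random":
        incoming = rng.sample(novel, n)
        keep = rng.sample(list(training), len(training) - n)
    elif strategy == "guided":
        incoming = sorted(novel, key=fitness, reverse=True)[:n]
        keep = sorted(training, key=fitness, reverse=True)[:len(training) - n]
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    return keep + incoming


# Hypothetical toy example: fitness = string length, not a real QED score.
training = ["C", "CC", "CCC"]
generated = ["CCCC", "CC", "CCCCC", "O"]
updated = update_training_data(training, generated, len, n_replace=2)
print(updated)
```

Calling the function once per training interval, and retraining on its return value, gives the iterative loop described in steps 2 to 5.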

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key computational "reagents" and resources essential for conducting GAN-driven materials design research.

Table 3: Key Research Reagents and Resources for GAN-Driven Materials Design

| Resource Name / Type | Function / Purpose | Example Sources / Tools |

|---|---|---|

| Stable Materials Databases | Serves as the foundational training data; teaches the GAN implicit rules of chemical stability and composition. | Materials Project (MP) Database [10] |

| Drug-like Molecule Databases | Provides datasets of known drug-like molecules for training GANs in pharmaceutical discovery. | QM9, ZINC [12] [9] |

| Molecular Representation | A text-based notation for molecules that allows them to be processed by NLP-based deep learning models like Transformers. | SMILES (Simplified Molecular Input Line Entry System) [12] |

| Chemical Validity & Property Calculation | Software toolkits used to check the validity of generated molecules and compute their chemical properties for screening and fitness evaluation. | RDKit [9] |

| Fitness Function Metrics | Quantitative scores used to guide the generative process and evaluate the quality of generated candidates. | Quantitative Estimate of Drug-likeness (QED), Synthesizability, Solubility [9] |

| Conditional Screening Criteria | Pre-defined target properties used to filter the large set of generated candidates to a manageable number of promising leads. | Suppression of Hydrogen Evolution Reaction (HER), Selective Glycerol Electroreduction [10] |

GANs have fundamentally altered the approach to materials and molecular discovery by providing a robust framework for learning the implicit rules of chemical composition directly from data. Through advanced architectures like Transformer-based GANs and training strategies incorporating reinforcement learning and adaptive data, these models can efficiently navigate the vastness of chemical space. They generate novel, valid, and high-performing candidates—from non-noble metallic electrocatalysts to drug-like molecules—at a scale and speed unattainable by traditional methods. As materials data infrastructures grow and AI techniques become more deeply integrated into the scientific workflow, GANs are poised to remain a cornerstone technology, accelerating the sustainable development and application of new materials for the challenges of the future.

Latent Space, Invertible Representations, and Conditional Generation

Generative Adversarial Networks (GANs) have emerged as a transformative tool for the inverse design of advanced materials. By learning the complex, high-dimensional relationships between a material's composition, its processing parameters, and its resulting properties, GANs can accelerate the discovery of new functional materials. Three core concepts underpin this capability: the exploration of the latent space, a compressed representation of the design space; the use of invertible representations to map real-world properties back to potential designs; and conditional generation to create materials tailored to specific property targets. This Application Note details the protocols and frameworks for applying these concepts to materials design, with a specific case study on high-performance shape memory alloys (SMAs) [13].

Key Concepts and Theoretical Framework

Latent Space in Materials Design

The latent space in a deep generative model is a lower-dimensional, continuous vector space where each point corresponds to a possible material design (e.g., a specific composition and processing history). Navigating this space allows for the efficient exploration of a vast design domain without the need for costly simulations or experiments for every potential candidate [14]. In mechanical metamaterials, for instance, the Euclidean distance between latent vectors has been shown to correlate strongly with the geometric and mechanical similarity of the resulting microstructures, enabling controlled interpolation and the design of functionally graded materials [14].

Invertible Representations for Inverse Problems

A significant challenge in using standard GANs is the "inverse problem"—finding a latent code z that generates a material design with a specific set of properties. Invertible representations address this by enabling a bidirectional mapping between the latent space and the design space. Frameworks like InvGAN (Invertible GAN) are designed to be agnostic to dataset and architecture, allowing real-world data (like experimental results) to be embedded back into the latent space. This capability is crucial for tasks such as design refinement and ensuring that generated designs are physically realizable [15] [16]. Invertible models mitigate "representation error," ensuring that the generative model can accurately represent a wide range of potential material designs, including those with rare features [17].

Conditional Generation for Targeted Design

Conditional GANs (cGANs) provide a mechanism for targeted design by conditioning the generation process on auxiliary information, such as a desired material property [18] [19]. This allows researchers to directly specify a target (e.g., a martensite start temperature above 400°C) and generate candidate compositions and processing parameters that are likely to achieve it. This approach is more direct than generating random candidates and filtering them, as it steers the search toward promising regions of the design space from the outset [13].

Application Note: Generative Inversion for Shape Memory Alloys

Experimental Protocol: GAN Inversion for Property-Targeted Design

The following workflow, illustrated in the diagram below, was successfully used to discover novel NiTi-based shape memory alloys with high transformation temperatures and large mechanical work output [13].

Diagram Title: GAN Inversion Workflow for Alloy Design

Step-by-Step Protocol:

Model Pretraining:

- Train a WGAN-GP model on a dataset of known composition–processing–property pairs. The generator (G) maps a 10-dimensional latent vector z to a 19-dimensional design vector x (10 composition elements, 9 processing parameters) [13].

- Train an Artificial Neural Network (ANN) surrogate model as the property predictor (f). This network maps the design vector x to predicted properties y_pred (e.g., martensite start temperature M_s, mechanical work output). The loss function should incorporate domain-knowledge constraints for physical consistency (Eq. 4 in [13]).

Inverse Design via Latent Space Optimization:

- Define the target properties (y_target) based on the design objectives (e.g., M_s > 400°C, work output > 9 J/cm³).

- Initialize a random latent vector z from a Gaussian distribution.

- Enter the optimization loop:

  a. Generate a candidate design: x_candidate = G(z).

  b. Predict its properties: y_pred = f(x_candidate).

  c. Calculate the loss (L) as the difference between target and predicted properties (e.g., Mean Squared Error): L = ||y_target - y_pred||².

  d. Compute the gradient of the loss with respect to the latent vector: dL/dz.

  e. Update the latent vector z using the Adam optimizer to minimize the loss.

- Repeat the loop until the loss converges below a set threshold or for a fixed number of iterations.

- The final optimized latent vector z* is decoded by the generator to produce the final candidate alloy design (x_final).
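The optimization loop can be illustrated with a toy example. The sketch below is not the model from [13]: a fixed linear map stands in for the trained WGAN-GP generator, a second linear map stands in for the ANN property predictor, central finite differences replace backpropagation, and plain gradient descent replaces Adam. The dimensions (2-D latent, 3-D design) and all weights are hypothetical and chosen only so the example runs without dependencies.

```python
def generate(z):
    """Toy linear 'generator': 2-D latent vector -> 3-D design vector."""
    W = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    return [sum(w * zi for w, zi in zip(row, z)) for row in W]


def predict(x):
    """Toy linear 'property predictor': design vector -> scalar property."""
    v = [0.5, 0.2, 0.1]
    return sum(vi * xi for vi, xi in zip(v, x))


def loss(z, y_target):
    """Squared error between target and predicted property."""
    return (y_target - predict(generate(z))) ** 2


def grad(z, y_target, eps=1e-6):
    """Central finite-difference estimate of dL/dz."""
    g = []
    for i in range(len(z)):
        zp = list(z)
        zp[i] += eps
        zm = list(z)
        zm[i] -= eps
        g.append((loss(zp, y_target) - loss(zm, y_target)) / (2 * eps))
    return g


def invert(y_target, z0, lr=0.1, steps=500, tol=1e-12):
    """Gradient descent on the latent vector until the loss drops below tol."""
    z = list(z0)
    for _ in range(steps):
        if loss(z, y_target) < tol:
            break
        z = [zi - lr * gi for zi, gi in zip(z, grad(z, y_target))]
    return z


z_star = invert(y_target=4.0, z0=[0.3, -0.2])
x_final = generate(z_star)
print(x_final, predict(x_final))
```

Because only z is updated, the generator and predictor stay frozen throughout, which is what keeps candidate designs inside the distribution the generator has learned.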

Experimental Validation and Performance Data

The generative inversion framework was validated through the synthesis and characterization of five designed NiTi-based SMAs. The key results for the best-performing alloy are summarized below.

Table 1: Experimental Performance of Generatively Designed NiTi-based Alloy

| Property | Value | Significance |

|---|---|---|

| Composition | Ni49.8Ti26.4Hf18.6Zr5.2 (at.%) | A complex, multi-component alloy discovered by the model [13]. |

| Martensite Start Temp. (M_s) | 404 °C | Significantly outperforms existing NiTi alloys, enabling ultra-high-temperature actuation [13]. |

| Mechanical Work Output | 9.9 J/cm³ | Large work output indicates high functional performance for actuators [13]. |

| Transformation Enthalpy | 43 J/g | Confirms a strong, reversible phase transformation [13]. |

| Thermal Hysteresis | 29 °C | Relatively low hysteresis, which is beneficial for actuation efficiency and fatigue life [13]. |

Table 2: Key Phases and Microstructural Features in Designed Alloy

| Feature | Role in Performance Enhancement |

|---|---|

| Pronounced Transformation Volume Change | Contributed to the large mechanical work output and high transformation enthalpy [13]. |

| Finely Dispersed Ti₂Ni-type Precipitates | Strengthened the matrix and influenced the transformation characteristics [13]. |

| Sluggish Zr/Hf Diffusion | Led to a fine, stable precipitate distribution during processing [13]. |

| Semi-coherent Interfaces & Localized Strain Fields | Optimized the precipitate-matrix interaction, facilitating the reversible transformation [13]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a GAN-Driven Materials Discovery Pipeline

| Component / "Reagent" | Function in the Workflow |

|---|---|

| Curated Materials Dataset | The foundational "reagent." A high-quality dataset of composition, processing, and property pairs is essential for training stable and reliable generative and predictive models [13] [20]. |

| Pretrained Generator (G) | Acts as a prior for realistic designs. It encapsulates the learned distribution of plausible material compositions and processing parameters, ensuring generated candidates are synthesizable [13] [17]. |

| Property Predictor (f) | The fast, surrogate "assay." This ANN model provides rapid, differentiable property predictions during the inversion loop, replacing slow, computationally expensive simulations like DFT [13] [20]. |

| Differentiable Loss Function | The "objective function reagent." It quantifies the design goal. For multi-objective optimization, it can be a weighted sum of individual property losses (e.g., for M_s, work output, and hysteresis) [13]. |

| Latent Vector (z) | The "design DNA." A low-dimensional vector that is the manipulable representation of a material design within the latent space. Optimization operates on this vector [13] [14]. |

| Gradient-Based Optimizer (e.g., Adam) | The "search reagent." It performs the iterative update of the latent vector z by following the gradient of the loss function to efficiently locate optimal designs [13]. |

Advanced Protocol: Integrating Invertible Models

To enhance the robustness of the inverse design process, particularly for handling out-of-distribution or rare material designs, integrating a fully invertible framework is recommended. The following diagram contrasts the standard GAN inversion with an invertible model approach.

Diagram Title: Standard vs. Invertible GAN Framework

Protocol for an Invertible Framework:

- Model Selection and Training:

- Application to Inverse Design:

- Design Refinement: Encode a known, sub-optimal material (x_real) into the latent space (z). Perform a local search in the latent space around z to find a nearby point z' that, when decoded, yields a material with improved properties [16].

- Hybrid Design: Encode two different material designs (x₁ and x₂) to get their latent codes (z₁ and z₂). Interpolate between them to generate novel designs (G(α*z₁ + (1-α)*z₂)) that possess a blend of characteristics from both parent materials [14]. This is particularly useful for designing functionally graded materials.

- Advantage: This approach mitigates representation error and dataset bias, ensuring that a wider range of plausible material designs, including those with rare features, can be accurately represented and manipulated within the latent space [17].
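The hybrid-design step reduces to linear interpolation between two latent codes. A minimal sketch, assuming the latent codes are already available as lists of floats (the encoder that produces them and the generator that decodes them are not shown, and the example vectors are hypothetical):

```python
def interpolate(z1, z2, alpha):
    """Return alpha*z1 + (1 - alpha)*z2, elementwise."""
    if len(z1) != len(z2):
        raise ValueError("latent codes must have the same dimension")
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(z1, z2)]


def latent_path(z1, z2, n_points):
    """Evenly spaced latent codes from z2 (alpha=0) to z1 (alpha=1)."""
    if n_points < 2:
        raise ValueError("n_points must be at least 2")
    return [interpolate(z1, z2, i / (n_points - 1)) for i in range(n_points)]


# Hypothetical latent codes of two parent designs.
z1 = [1.0, 0.0, 2.0]
z2 = [0.0, 4.0, -2.0]
path = latent_path(z1, z2, n_points=5)
print(path)
```

Decoding each point on the path with the generator would yield a graded sequence of designs between the two parents.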

Frameworks and Breakthroughs: Applying GANs to Real-World Materials Design

Inverse design represents a paradigm shift in materials discovery, moving away from traditional trial-and-error methods toward a targeted approach that begins with desired properties and systematically identifies the atomic configurations that achieve them [21]. This methodology is particularly valuable for navigating the vast chemical space of potential materials, where conventional high-throughput computational screening can be limited by distribution biases toward materials not aligned with target functionalities [22]. Among machine learning frameworks, Generative Adversarial Networks (GANs) have emerged as a powerful architecture for this inverse design challenge. GANs pit two neural networks—a generator and a discriminator—against each other, enabling the generation of novel, realistic material structures [21] [22]. Within this context, we present a detailed case study on "MatGAN," a framework for the generative design of novel inorganic crystals.

The MatGAN framework is built upon a deep convolutional GAN (DCGAN) architecture specifically modified for handling crystallographic data. The core system consists of two primary components:

- Generator Network: Transforms a 128-dimensional noise vector (latent space) into a candidate crystal structure represented as a 3D voxel grid (32×32×32) with multiple channels encoding atom types and fractional coordinates.

- Discriminator Network: Evaluates whether input crystal structures are real (from the training database) or fake (produced by the generator), using a series of 3D convolutional layers to assess structural validity and stability.

Table 1: MatGAN Generator Network Architecture

| Layer | Input Shape | Output Shape | Activation | Normalization |

|---|---|---|---|---|

| Dense | 128 | 2048 | LeakyReLU | BatchNorm |

| Reshape | 2048 | 4×4×4×32 | - | - |

| 3D ConvTranspose | 4×4×4×32 | 8×8×8×64 | LeakyReLU | BatchNorm |

| 3D ConvTranspose | 8×8×8×64 | 16×16×16×128 | LeakyReLU | BatchNorm |

| 3D ConvTranspose | 16×16×16×128 | 32×32×32×64 | LeakyReLU | BatchNorm |

| 3D Convolution | 32×32×32×64 | 32×32×32×16 | Tanh | - |
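The spatial sizes in the table (4, 8, 16, 32) are what stride-2 transposed convolutions produce. The short check below traces them with the standard output-size formula for a transposed convolution with dilation 1. The kernel size, stride, and padding values are assumptions, since the table does not state them.

```python
def conv_transpose_out(size, kernel=4, stride=2, padding=1, output_padding=0):
    """Output length of a transposed convolution along one axis (dilation 1)."""
    return (size - 1) * stride - 2 * padding + kernel + output_padding


def trace_spatial_sizes(start, n_layers):
    """Spatial size after each of n_layers transposed convolutions."""
    sizes = [start]
    for _ in range(n_layers):
        sizes.append(conv_transpose_out(sizes[-1]))
    return sizes


# Assumed hyperparameters: kernel 4, stride 2, padding 1 for every layer.
sizes = trace_spatial_sizes(start=4, n_layers=3)
print(sizes)
```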

Training employs the Wasserstein loss function with gradient penalty to improve stability, using a batch size of 32 over 50,000 training iterations. The generator is trained to minimize the discriminator's ability to detect fake structures, while the discriminator is trained to accurately distinguish real from generated samples.

Experimental Protocols and Methodologies

Dataset Curation and Preprocessing

The model was trained on inorganic crystal structures from the Materials Project database, filtered using specific criteria to ensure data quality and relevance. The preprocessing pipeline included:

- Data Filtering: Retained structures containing only inorganic elements, with formation energies between -2.0 and 0.1 eV/atom, to favor thermodynamically stable compounds.

- Structure Conversion: Converted CIF files to 3D voxel representations using a 0.2Å grid resolution, with separate channels for electron density and atomic number.

- Data Augmentation: Applied random rotations (0°, 90°, 180°, 270°) and reflections to increase dataset diversity and improve model generalization.

- Training-Validation Split: Divided data into 85% training and 15% validation sets, ensuring no structural duplicates across splits.
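The filtering and splitting steps can be sketched as follows. This is an illustrative sketch only: the record layout (`structure_id`, `formation_energy`) is hypothetical, and identifying duplicates by an ID is a simplification of real structure matching.

```python
import random


def filter_and_split(records, e_min=-2.0, e_max=0.1, train_frac=0.85, seed=0):
    """Filter by formation energy, deduplicate, then split train/validation."""
    seen, kept = set(), []
    for rec in records:
        if not (e_min <= rec["formation_energy"] <= e_max):
            continue                          # outside the energy window
        if rec["structure_id"] in seen:
            continue                          # duplicate structure
        seen.add(rec["structure_id"])
        kept.append(rec)
    rng = random.Random(seed)
    rng.shuffle(kept)
    n_train = round(train_frac * len(kept))
    return kept[:n_train], kept[n_train:]


# Hypothetical records: 20 valid entries, one duplicate, two out of range.
records = [{"structure_id": f"s{i}", "formation_energy": -i / 10}
           for i in range(20)]
records += [
    {"structure_id": "s3", "formation_energy": -0.3},
    {"structure_id": "hot", "formation_energy": 0.5},
    {"structure_id": "deep", "formation_energy": -2.5},
]
train, val = filter_and_split(records)
print(len(train), len(val))
```

Deduplicating before the split is what guarantees that no structure appears on both sides.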

Table 2: Training Dataset Composition

| Crystal System | Count | Percentage | Space Group Range | Avg. Formation Energy (eV/atom) |

|---|---|---|---|---|

| Cubic | 12,457 | 34.2% | 195-230 | -0.87 |

| Hexagonal | 8,932 | 24.5% | 168-194 | -0.92 |

| Tetragonal | 6,581 | 18.1% | 75-142 | -0.79 |

| Orthorhombic | 4,128 | 11.3% | 16-74 | -0.85 |

| Trigonal | 2,345 | 6.4% | 143-167 | -0.88 |

| Monoclinic | 1,548 | 4.2% | 3-15 | -0.81 |

| Triclinic | 418 | 1.1% | 1-2 | -0.76 |

Training Protocol

The training procedure followed a carefully optimized protocol:

- Initialization: Both generator and discriminator weights were initialized using He normal initialization.

- Optimization: Used Adam optimizers with learning rate of 2×10⁻⁴ for generator and 5×10⁻⁴ for discriminator, β₁=0.5, β₂=0.9.

- Training Schedule: Conducted 5 discriminator updates per generator update for the first 5,000 iterations, then 1:1 thereafter.

- Regularization: Applied gradient penalty with λ=10 and added 0.1% Gaussian noise to discriminator inputs to prevent overfitting.

- Validation: Monitored inception score and Fréchet distance every 1,000 iterations to track model convergence.
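For reference, the Wasserstein loss with gradient penalty used in this protocol is usually written as below. This is the standard WGAN-GP formulation rather than an equation taken from the case study itself. Here $D$ is the discriminator (critic), $G$ the generator, $P_r$ the distribution of real structures, $p_z$ the noise prior, $\hat{x}$ a random interpolate between a real and a generated sample, and $\lambda = 10$ matches the regularization setting listed above.

```latex
\begin{aligned}
L_D &= \mathbb{E}_{z \sim p_z}\big[D(G(z))\big]
     - \mathbb{E}_{x \sim P_r}\big[D(x)\big]
     + \lambda\, \mathbb{E}_{\hat{x}}\Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\big)^2\Big], \\
L_G &= -\,\mathbb{E}_{z \sim p_z}\big[D(G(z))\big], \\
\hat{x} &= \epsilon\, x + (1 - \epsilon)\, G(z), \qquad \epsilon \sim U[0, 1].
\end{aligned}
```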

Validation and Analysis Methods

Generated structures underwent rigorous validation through a multi-stage process:

- Structural Feasibility Screening: Eliminated structures with unrealistic interatomic distances (<1.0Å or >5.0Å for nearest neighbors).

- Symmetry Analysis: Used spglib to determine space group symmetry and identify physically plausible crystal systems.

- Stability Assessment: Performed DFT calculations using VASP with PBE functional to verify thermodynamic stability.

- Property Prediction: Employed pre-trained property predictors to evaluate generated materials for target applications.
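The first screening stage can be sketched with a simple nearest-neighbour check. The example below is a simplification: it works on Cartesian coordinates in ångströms and ignores periodic images, which a real crystal-structure check would have to include. The coordinates are hypothetical.

```python
import math
from itertools import combinations


def nearest_neighbor_distances(coords):
    """Nearest-neighbour distance for each atom (Cartesian, in angstroms)."""
    nearest = [math.inf] * len(coords)
    for (i, a), (j, b) in combinations(enumerate(coords), 2):
        d = math.dist(a, b)
        nearest[i] = min(nearest[i], d)
        nearest[j] = min(nearest[j], d)
    return nearest


def is_structurally_feasible(coords, d_min=1.0, d_max=5.0):
    """True if every atom's nearest neighbour lies within [d_min, d_max]."""
    if len(coords) < 2:
        return False
    return all(d_min <= d <= d_max for d in nearest_neighbor_distances(coords))


# Hypothetical atom positions (no periodic boundary conditions applied).
ok = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 2.0, 0.0)]
clash = [(0.0, 0.0, 0.0), (0.5, 0.0, 0.0)]
isolated = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
print(is_structurally_feasible(ok),
      is_structurally_feasible(clash),
      is_structurally_feasible(isolated))
```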

Key Results and Performance Metrics

MatGAN demonstrated significant capability in generating novel, stable inorganic crystals with promising materials properties. The model's performance was quantitatively evaluated across multiple dimensions:

Table 3: MatGAN Performance Metrics

| Metric | Training Set Baseline | MatGAN Generated | Improvement |

|---|---|---|---|

| Structural Validity Rate | - | 78.3% | - |

| Thermodynamic Stability (DFT-validated) | - | 41.2% | - |

| Novelty (Unique Structures) | - | 94.7% | - |

| Diversity (Average Tanimoto Distance) | 0.85 | 0.79 | -7.1% |

| Inception Score | 8.34 | 7.91 | -5.2% |

| Fréchet Distance | - | 12.3 | - |
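The diversity row can be made concrete with a small calculation. The sketch below assumes each structure is summarized as a set of fingerprint features, which is an assumption because the case study does not say how the Tanimoto distance is computed for crystals. It also reproduces the relative change quoted in the table from the two reported values.

```python
from itertools import combinations


def tanimoto_distance(a, b):
    """One minus the ratio of shared features to total distinct features."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        return 0.0
    return 1.0 - len(a & b) / len(union)


def average_pairwise_distance(fingerprints):
    """Mean Tanimoto distance over all unordered pairs."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto_distance(a, b) for a, b in pairs) / len(pairs)


# Hypothetical fingerprints for three structures.
fps = [{1, 2, 3}, {2, 3, 4}, {7, 8}]
diversity = average_pairwise_distance(fps)

# Relative change between the reported values 0.85 (training) and 0.79 (generated).
relative_change_pct = 100.0 * (0.79 - 0.85) / 0.85
print(diversity, round(relative_change_pct, 1))
```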

Table 4: Property Statistics for Generated Crystals

| Property | Training Set Mean | Generated Set Mean | Notable Candidates |

|---|---|---|---|

| Band Gap (eV) | 1.87 | 2.14 | 0.45 (narrow-gap semiconductor), 4.2 (insulator) |

| Bulk Modulus (GPa) | 112.3 | 98.7 | 215 (ultra-stiff) |

| Shear Modulus (GPa) | 68.9 | 62.4 | 135 (high strength) |

| Thermal Conductivity (W/m·K) | 18.5 | 21.3 | 2.1 (thermal insulator) |

| Formation Energy (eV/atom) | -0.85 | -0.72 | -1.89 (highly stable) |

The model successfully generated 1,247 novel crystal structures that passed all validation checks, with 514 exhibiting formation energies <-0.5 eV/atom, indicating thermodynamic stability. Notably, 37 structures showed exceptional properties, including ultralow thermal conductivity (<3 W/m·K) for thermoelectric applications and high bulk modulus (>200 GPa) for structural applications.

Successful implementation of MatGAN requires specific computational resources and software tools:

Table 5: Essential Research Reagents and Computational Resources

| Resource | Specification/Version | Function/Purpose |

|---|---|---|

| Training Dataset | Materials Project (v2023.11) | Source of inorganic crystal structures for training |

| Data Preprocessing | pymatgen (v2023.11.10) | CIF file parsing and materials analysis |

| Deep Learning Framework | PyTorch (v2.1.0) | GAN implementation and training |

| Structural Analysis | spglib (v2.0.2) | Space group symmetry determination |

| DFT Validation | VASP (v6.4.1) | First-principles validation of stability |

| Computational Hardware | 4× NVIDIA A100 (80GB) | Model training and inference |

| Property Prediction | matminer (v0.8.0) | Materials property feature extraction |

Discussion: Implications and Future Directions

The successful implementation of MatGAN demonstrates the significant potential of GAN-based inverse design for accelerating inorganic materials discovery. The framework's ability to generate novel, valid crystal structures with targeted properties represents a substantial advancement over traditional high-throughput screening methods, which are often limited to exploring existing chemical spaces [22]. However, several challenges and opportunities for improvement remain.

A primary limitation is the thermodynamic stability gap—while 78.3% of generated structures passed initial structural feasibility checks, only 41.2% demonstrated true thermodynamic stability upon DFT validation. This discrepancy highlights the complexity of capturing the subtle energy landscapes that govern material stability, suggesting future work should incorporate energy-based refinement directly into the generation process, similar to approaches used in diffusion models for amorphous materials [21]. Additionally, the current model exhibits a 7.1% reduction in structural diversity compared to the training set, indicating some mode collapse—a known challenge in GAN training.

Future research directions should focus on hybrid approaches that combine the strong generative capabilities of GANs with the stability guarantees of physical simulation. The emerging field of foundation models for materials science offers promising pathways for transfer learning and multimodal conditioning [23]. Furthermore, integration with autonomous experimental platforms could create closed-loop discovery systems, bridging the gap between in silico prediction and physical synthesis [11] [23].

This application note has presented a comprehensive case study of MatGAN, demonstrating the practical implementation of GAN-based inverse design for novel inorganic crystal generation. Through detailed protocols, architectural specifications, and validation methodologies, we have established a reproducible framework for generative materials design. The results confirm that adversarial training strategies can effectively capture the complex distribution of crystallographic patterns while enabling exploration of novel compositional spaces. As the field progresses, the integration of physical constraints, multi-objective optimization, and experimental validation will further enhance the impact of inverse design approaches, ultimately accelerating the discovery of next-generation functional materials.

The discovery and development of new functional materials are fundamental to technological progress in fields such as renewable energy, electronics, and healthcare. However, the traditional materials discovery pipeline is notoriously slow, often spanning 10–20 years from conception to deployment [20]. This extended timeline stems largely from the vastness of the chemical design space, particularly for multi-component materials. For instance, the compositional space for four-component inorganic materials exceeds 10^10 combinations, and for five-component systems, it surpasses 10^13 combinations [3]. This combinatorial explosion renders brute-force computational screening and conventional trial-and-error experimental approaches impractical.

Generative Adversarial Networks (GANs) have emerged as a powerful machine learning tool to address this challenge. As a class of generative models, GANs can learn the complex, hidden composition rules embodied in existing materials databases and leverage this knowledge to efficiently sample the chemical design space [20] [24]. This application note details the implementation of GAN-based sampling methods for the inverse design of multi-component materials, providing structured experimental protocols, performance data, and practical resource guidance for researchers.

GAN-based Sampling: Mechanism and Workflow

Core Principles of GANs in Materials Science

Generative Adversarial Networks operate on a competitive training paradigm between two neural networks: a generator (G) and a discriminator (D). The generator creates new data samples from random noise, while the discriminator evaluates whether a given sample is real (from the training database) or generated (produced by G) [20]. Through this adversarial process, the generator learns to produce increasingly realistic synthetic samples. In the context of materials discovery, the generator learns to approximate the probability distribution P(x) of real materials data, enabling the creation of novel, chemically valid compositions that conform to implicit rules such as charge neutrality and electronegativity balance without these rules being explicitly programmed [3] [25].
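The competitive training described above is commonly formalized as a two-player minimax game. The expression below is the standard GAN objective, given here for reference rather than quoted from [20]. $p_{\mathrm{data}}$ denotes the distribution of real materials data (written $P(x)$ above) and $p_z$ the noise prior fed to the generator.

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\mathrm{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```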

Unlike supervised or "discriminative" models that learn a mapping function from inputs to outputs, generative models like GANs learn the underlying probability distribution of the training data itself [24]. This capability is crucial for inverse design, where the goal is to generate new material structures or compositions based on desired properties.

Logical Workflow for Material Generation

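The standard workflow for generating new material compositions with a GAN runs from database compositions, through encoding and adversarial training, to sampling the trained generator and validating the generated candidates.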

Performance and Validation

Quantitative Performance of GAN Models

GAN models have demonstrated remarkable efficiency in generating valid, novel inorganic and metallic glass compositions. The table below summarizes key performance metrics reported in recent studies.

Table 1: Performance Metrics of GAN Models for Materials Sampling

| Material Class | Training Dataset | Novelty Rate (%) | Chemical Validity / Amorphous Phase Rate (%) | Key Validation Method | Reference |

|---|---|---|---|---|---|

| Inorganic Compounds | ICSD (subset) | 92.53 | 84.5 (Charge-neutral & electronegativity-balanced) | Chemical Rule Check | [3] |

| Metallic Glasses | 6,317 MG samples (912 alloy systems) | Not Explicitly Stated | 85.6 (Amorphous Phase) | XGBoost Phase Classifier | [25] |

| Metallic Glasses | 6,317 MG samples (912 alloy systems) | Not Explicitly Stated | 89.2 (Dmax > 1 mm) | XGBoost Dmax Regressor | [25] |

Advantages Over Traditional Methods

The GAN-based sampling approach offers significant advantages over traditional materials discovery methods. Its sampling efficiency far exceeds that of exhaustive enumeration, which is computationally prohibitive for multi-component systems [3]. Furthermore, GANs can generate entirely new alloy systems not present in the training data, a capability lacking in traditional data augmentation and thermodynamic methods that are typically confined to known alloy systems [25].

Application Notes and Protocols

Protocol: Implementing a GAN for Inorganic Materials Composition Generation

This protocol details the steps for training and validating a GAN model (MatGAN) for generating novel inorganic material compositions, based on the work of Dan et al. [3].

Materials Representation

- Step 1: Data Preprocessing

- Input: Raw composition data from materials databases (e.g., ICSD, OQMD, Materials Project).

- Action: Represent each material as an 8 (rows) × 85 (columns) matrix T ∈ R^(d×s), where d = 8 and s = 85.

- Rationale: The 85 columns represent the most common elements in the database. Each column vector is a one-hot encoding of the number of atoms (0-7) for that specific element. This binary, integer representation facilitates the learning of discrete atom number patterns by convolutional neural networks [3].

- Output: A set of normalized matrices ready for model training.
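The matrix encoding can be sketched as follows. This is a minimal sketch under stated assumptions: the five-element vocabulary is hypothetical (the protocol uses 85 elements), and the convention that row r marks "exactly r atoms" is an assumption about row ordering.

```python
def encode_composition(composition, elements, max_atoms=7):
    """One-hot encode atom counts into a (max_atoms + 1) x len(elements) matrix.

    Row r, column c is 1 when element c appears exactly r times, so
    elements absent from the formula have a 1 in row 0.
    """
    unknown = set(composition) - set(elements)
    if unknown:
        raise ValueError(f"elements not in vocabulary: {sorted(unknown)}")
    matrix = [[0] * len(elements) for _ in range(max_atoms + 1)]
    for col, element in enumerate(elements):
        count = composition.get(element, 0)
        if not 0 <= count <= max_atoms:
            raise ValueError(f"{element}: count {count} outside 0..{max_atoms}")
        matrix[count][col] = 1
    return matrix


# Hypothetical 5-element vocabulary; the protocol above uses 85 elements.
elements = ["O", "Ti", "Sr", "Na", "Cl"]
T = encode_composition({"Sr": 1, "Ti": 1, "O": 3}, elements)
for row in T:
    print(row)
```

Every column contains exactly one 1, which is what makes the representation binary while still carrying integer atom counts.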

Model Architecture and Training

Step 2: Network Configuration

- Generator (G): Composed of one fully connected layer followed by seven deconvolution layers. Each deconvolution layer is followed by batch normalization and a ReLU activation. The final output layer uses a Sigmoid activation function [3].

- Discriminator (D): Composed of seven convolution layers (each with batch normalization and ReLU) followed by a fully connected layer.

- Model Type: Implement a Wasserstein GAN (WGAN) to mitigate common training issues like gradient vanishing. The loss functions are defined as:

- Generator Loss: $\mathrm{Loss}_G = -\mathbb{E}_{x \sim P_g}[f_w(x)]$

- Discriminator Loss: $\mathrm{Loss}_D = \mathbb{E}_{x \sim P_g}[f_w(x)] - \mathbb{E}_{x \sim P_r}[f_w(x)]$

where $P_g$ and $P_r$ are the distributions of generated and real samples, and $f_w(x)$ is the discriminator network [3].

Step 3: Model Training

- Input: Preprocessed matrices from Step 1.

- Process: Alternately train the discriminator and generator. The discriminator is trained to distinguish real samples from generated ones, while the generator is trained to fool the discriminator.

- Monitoring: Track the Wasserstein distance (reflected by Loss_D) to assess training progress.
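Given a batch of discriminator outputs, the two WGAN losses defined in Step 2 are simple averages. The sketch below computes them from plain lists of scores. The score values are hypothetical, and the networks that would produce them are not shown.

```python
def mean(values):
    return sum(values) / len(values)


def wgan_losses(real_scores, fake_scores):
    """Return (loss_d, loss_g) from discriminator outputs f_w(x) on one batch."""
    loss_g = -mean(fake_scores)
    loss_d = mean(fake_scores) - mean(real_scores)
    return loss_d, loss_g


real_scores = [1.5, 2.0, 2.5]     # hypothetical outputs on real samples
fake_scores = [-1.0, 0.0, 1.0]    # hypothetical outputs on generated samples
loss_d, loss_g = wgan_losses(real_scores, fake_scores)

# The negated discriminator loss is the batch estimate of the Wasserstein distance.
wasserstein_estimate = -loss_d
print(loss_d, loss_g, wasserstein_estimate)
```

A shrinking Wasserstein estimate over training indicates that generated compositions are becoming harder to tell apart from real ones.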

Validation and Analysis

- Step 4: Validation of Generated Compositions

- Chemical Validity Check: Apply standard chemical rules (e.g., charge neutrality, electronegativity balance) to the generated compositions to calculate the validity rate [3].

- Novelty Check: Compare generated compositions against the training dataset to ensure they are new and not mere repetitions. The novelty rate is the percentage of generated samples not found in the training set [3].

- Advanced Validation (Optional): Train a separate supervised model (e.g., an XGBoost classifier) on known amorphous/non-amorphous data to predict the phase of the generated compositions, as demonstrated in metallic glass research [25].
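The validity and novelty checks can be sketched as follows. This is an illustrative sketch only: the oxidation-state table is a small hand-picked subset, the electronegativity-balance rule is omitted, and the toy compositions are hypothetical rather than drawn from [3].

```python
from itertools import product

# Small illustrative table of common oxidation states (not exhaustive).
OXIDATION_STATES = {
    "Na": [1], "Sr": [2], "Ti": [2, 3, 4], "O": [-2], "Cl": [-1],
}


def is_charge_neutral(composition, oxidation_states=OXIDATION_STATES):
    """True if some choice of one oxidation state per element sums to zero."""
    elements = list(composition)
    choices = [oxidation_states[e] for e in elements]
    return any(
        sum(state * composition[e] for state, e in zip(states, elements)) == 0
        for states in product(*choices)
    )


def rate(items, predicate):
    """Percentage of items that satisfy predicate."""
    return 100.0 * sum(1 for item in items if predicate(item)) / len(items)


# Hypothetical generated compositions and training set.
generated = [
    {"Na": 1, "Cl": 1},
    {"Sr": 1, "Ti": 1, "O": 3},
    {"Na": 1, "Cl": 2},
    {"Ti": 1, "O": 2},
]
training_formulas = {frozenset({"Na": 1, "Cl": 1}.items())}

validity_rate = rate(generated, is_charge_neutral)
novelty_rate = rate(
    generated, lambda c: frozenset(c.items()) not in training_formulas
)
print(validity_rate, novelty_rate)
```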

The following table lists key computational tools and data resources essential for conducting GAN-based materials discovery research.

Table 2: Key Research Reagents and Resources for GAN-driven Materials Discovery

| Resource Name / Type | Function / Role in the Workflow | Specific Examples / Notes |

|---|---|---|

| Materials Databases | Provides structured, curated data for training generative models. | The Inorganic Crystal Structure Database (ICSD) [3], the Open Quantum Materials Database (OQMD) [3], and the Materials Project [3]. |

| Generative Model (MatGAN) | The core algorithm that learns material composition rules and generates novel candidates. | A WGAN with a specific network architecture of deconvolution/convolution layers [3]. |

| Validation Models | Independent models used to assess the quality and properties of generated materials. | XGBoost models for phase classification and property regression (e.g., critical casting diameter, Dmax) [25]. |

| High-Throughput Experimentation (HTE) | Enables rapid synthesis and testing of candidate materials, closing the AI-driven discovery loop. | Inkjet or plasma printing for creating large arrays of material compositions for testing [24]. |

| Ab Initio Simulation | Provides high-fidelity property predictions for screening candidates before synthesis. | Density Functional Theory (DFT) calculations; often used to generate data for training or to validate final candidates [24]. |

GANs represent a paradigm shift in the exploration of chemical space for multi-component materials. By learning implicit composition-property relationships from existing data, they enable efficient, targeted sampling that dramatically outperforms traditional methods. The protocols and application notes provided here offer a foundational framework for researchers to implement these powerful tools. As generative models continue to evolve and integrate more closely with high-throughput experimentation, they hold the potential to significantly accelerate the discovery and deployment of next-generation materials.

The design of architectured materials with extreme or tailored elastic properties represents a frontier in materials science, with profound implications for applications ranging from lightweight aerospace structures to biomedical implants. Traditional design approaches, including bioinspiration and topology optimization, often rely heavily on prior expert knowledge and can be limited by their initial conditions [1]. This application note details a modern, data-driven methodology utilizing Generative Adversarial Networks (GANs) for the experience-free design of two-dimensional architectured materials that approach the theoretical Hashin-Shtrikman (HS) upper bounds for isotropic elastic stiffness [1]. Framed within a broader thesis on GANs for materials design, this protocol provides researchers and scientists with a comprehensive workflow, from dataset generation to experimental validation, enabling the systematic discovery of complex material architectures.

Theoretical Framework and Key Concepts

Architectured Materials and Crystallographic Symmetry

Architectured materials consist of periodic arrays of structural elements (trusses, plates, shells). A material's architecture is defined by a repeating unit, which is itself constructed by applying crystallographic symmetry operations (reflection, rotation, glide reflection) to a base element [1]. The base element is discretized into a grid of pixels, each representing a solid or void phase. The material's porosity is defined as the ratio of void pixels to the total number of pixels in the element [1]. In two-dimensional space, there are 17 distinct crystallographic symmetry groups that govern the possible periodic patterns (see Table 1).

Table 1: Key Definitions for Architectured Materials Design

| Term | Definition | Relevance to Design |

|---|---|---|

| Element | The base, discretized structure (pixel grid) | The fundamental design unit where topology is generated. |

| Unit | An element after application of symmetry operations | The smallest repeating unit that defines the periodic structure. |

| Crystallographic Symmetry | A set of geometric operations (rotation, reflection) | Constrains the design space, ensuring periodicity and often isotropy. |

| Porosity | The volume fraction of void phase in the element | A primary design variable directly influencing elastic properties. |

| Isotropy | Property independence from direction of measurement | A key target for achieving theoretical Hashin-Shtrikman bounds. |
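
The relationship between element, unit, and porosity can be illustrated with a short sketch. It assumes a pixel convention of 1 for solid and 0 for void, and it builds the unit with two perpendicular mirror reflections only (a pmm-type construction); the other wallpaper groups need additional rotations or glides that are not shown. The function names are hypothetical.

```python
def porosity(grid):
    """Ratio of void pixels (0) to the total number of pixels."""
    total = sum(len(row) for row in grid)
    voids = sum(row.count(0) for row in grid)
    return voids / total


def mirror_unit(element):
    """Build a unit by reflecting the element left-right, then top-bottom."""
    top = [row + row[::-1] for row in element]  # reflect across a vertical axis
    return top + top[::-1]                      # reflect across a horizontal axis


element = [
    [1, 0],
    [1, 1],
]
unit = mirror_unit(element)
```

Because reflections only copy pixels, the unit has the same porosity as the element it was built from.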

The Hashin-Shtrikman Upper Bounds

The Hashin-Shtrikman (HS) upper bounds represent the maximum theoretically achievable isotropic elastic stiffness for a two-phase composite material at a given porosity [1]. These bounds serve as the performance target for the generative design process. The objective is to discover material architectures whose effective elastic properties lie as close as possible to these theoretical limits.
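
As a point of reference, the source does not reproduce the bound itself. For a two-dimensional isotropic solid with a void second phase, a commonly quoted closed form for the Young's modulus bound is E_HS = E_s (1 - φ) / (1 + 2φ), where E_s is the solid modulus and φ the porosity. The sketch below assumes that expression; the function names are hypothetical.

```python
def hs_upper_bound_youngs_2d(e_solid, porosity):
    """HS upper bound on Young's modulus for a 2D isotropic porous solid.

    Assumes the closed form E_s * (1 - phi) / (1 + 2 * phi) for a void second phase.
    """
    if not 0.0 <= porosity < 1.0:
        raise ValueError("porosity must lie in [0, 1)")
    return e_solid * (1.0 - porosity) / (1.0 + 2.0 * porosity)


def normalized_stiffness(e_effective, e_solid, porosity):
    """Achieved stiffness as a percentage of the HS upper bound."""
    return 100.0 * e_effective / hs_upper_bound_youngs_2d(e_solid, porosity)


bound_at_half = hs_upper_bound_youngs_2d(1.0, 0.5)
```

With E_s = 1 and a porosity of 0.5 the bound is 0.25, so an architecture with an effective modulus of 0.23 would reach about 92% of the theoretical limit.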

Generative Adversarial Network (GAN) Workflow for Materials Design

The following section outlines the core protocol for employing GANs in the design of architectured materials.

Data Generation and Preparation

A critical first step is the creation of a massive and representative dataset for training the GAN models.

Protocol 3.1.1: Generation of Random Architectured Material Topologies

- Input Parameters: Define the target porosity and the crystallographic symmetry group (e.g., p4, p6mm) for the architectures to be generated.

- Initialization: Start with a base element composed entirely of solid pixels.

- Random Void Dispersion: Iteratively disperse voids of random sizes and shapes into the element.

- Connectivity Check: After each dispersion step, algorithmically verify that all remaining solid pixels are path-connected. If dispersion breaks connectivity, the step is reversed.

- Termination Condition: The process terminates when the total area of the voids meets the predefined porosity target while maintaining solid-phase connectivity [1].
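
The dispersion loop with its connectivity check can be sketched as follows. Several simplifications are assumed and are not taken from the source: voids are axis-aligned rectangles, connectivity is checked with 4-neighbour breadth-first search inside a single element (periodic wrap-around and symmetry copies are ignored), and a void that would overshoot the porosity target is rejected. All names and default parameters are hypothetical.

```python
import random
from collections import deque


def is_connected(grid):
    """True if all solid pixels (1) form one 4-connected region."""
    rows, cols = len(grid), len(grid[0])
    solid = [(r, c) for r in range(rows) for c in range(cols) if grid[r][c] == 1]
    if not solid:
        return False
    seen = {solid[0]}
    queue = deque([solid[0]])
    while queue:
        r, c = queue.popleft()
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 1 and (nr, nc) not in seen):
                seen.add((nr, nc))
                queue.append((nr, nc))
    return len(seen) == len(solid)


def generate_element(size, target_porosity, seed=0, max_void=3, max_attempts=10000):
    """Disperse random rectangular voids into a solid element, keeping it connected."""
    rng = random.Random(seed)
    grid = [[1] * size for _ in range(size)]        # start fully solid
    target_voids = round(target_porosity * size * size)
    n_void = 0
    for _ in range(max_attempts):
        if n_void >= target_voids:                   # termination condition
            break
        h, w = rng.randint(1, max_void), rng.randint(1, max_void)
        r0, c0 = rng.randrange(size - h + 1), rng.randrange(size - w + 1)
        changed = [(r, c) for r in range(r0, r0 + h) for c in range(c0, c0 + w)
                   if grid[r][c] == 1]
        for r, c in changed:
            grid[r][c] = 0
        fits = 0 < len(changed) <= target_voids - n_void
        if fits and is_connected(grid):
            n_void += len(changed)
        else:
            for r, c in changed:                     # reverse the step
                grid[r][c] = 1
    return grid


sample = generate_element(size=12, target_porosity=0.3, seed=42)
```

The attempt cap guards against configurations in which no further void can be placed without breaking connectivity, in which case the returned element falls short of the target porosity.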

Protocol 3.1.2: Calculation of Effective Elastic Properties

The effective elastic tensor ( \tilde{C}_{ijkl} ) of each generated architecture is calculated using numerical homogenization via the finite element method, which is a standard technique for periodic structures [1].

- Domain Setup: The simulation domain is the unit cell of the architectured material. For non-rectangular units (e.g., triangular, hexagonal), they are mapped to equivalent rectangular domains.

- Application of Periodic Boundary Conditions: Periodic boundary conditions are applied to the domain edges to simulate an infinite, periodic material.

- Homogenization Calculation: Apply trial strain fields and compute the resulting stress fields and elastic energy. The effective elastic tensor is calculated using the equation: [ \tilde{C}_{ijkl} = \frac{1}{S} \int_S C_{pqrs} (\epsilon_{pq}^{0(ij)} - \epsilon_{pq}^{*(ij)}) (\epsilon_{rs}^{0(kl)} - \epsilon_{rs}^{*(kl)}) \, dS ] where ( C_{pqrs} ) is the elastic tensor of the solid phase, ( \epsilon_{pq}^{0(ij)} ) is an applied unit test strain, ( \epsilon_{pq}^{*(ij)} ) is the fluctuation strain, and ( S ) is the area of the domain [1].

- Property Extraction: From the elastic tensor, calculate effective properties like Young's modulus (( \tilde{E} )), shear modulus, and Poisson's ratio in all directions.

- Isotropy Assessment: For each architecture, compute the degree of isotropy, ( \Omega = \Delta\tilde{E} / \tilde{E}_{\text{mean}} ), where ( \Delta\tilde{E} ) is half the difference between the maximum and minimum Young's modulus across all directions. A material is classified as nearly isotropic if ( \Omega \leq 5\% ) [1].
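
The isotropy criterion is simple to evaluate once the directional Young's moduli are available. The sketch below assumes they have already been sampled from the homogenized elastic tensor at a set of in-plane directions; the function names and sample values are illustrative.

```python
def isotropy_degree(moduli):
    """Omega = (half the spread of E over all directions) / (mean E)."""
    e_mean = sum(moduli) / len(moduli)
    delta_e = (max(moduli) - min(moduli)) / 2.0
    return delta_e / e_mean


def is_nearly_isotropic(moduli, threshold=0.05):
    """Apply the Omega <= 5% classification rule."""
    return isotropy_degree(moduli) <= threshold


# Directional moduli (arbitrary units) sampled at a few orientations.
nearly_isotropic_sample = [1.00, 1.04, 0.96, 1.00]
anisotropic_sample = [1.0, 1.5, 0.5, 1.0]
```

The first sample gives Ω of about 0.04 and passes the 5% rule, while the second gives Ω = 0.5 and does not.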

GAN Training and Materials Generation

The generated dataset, comprising millions of architectures and their calculated properties, is categorized by crystallographic symmetry and used to train the GAN.

Protocol 3.2.1: GAN Model Setup and Training

- Model Selection: Implement a Deep Convolutional GAN (DCGAN) architecture, which is well-suited for learning from image-based data (the pixelated element topologies).

- Training Process:

- The generator network learns to create new, plausible element topologies from a random noise vector.

- The discriminator network learns to distinguish between real topologies from the training dataset and fake ones produced by the generator.

- Through this adversarial training, the generator becomes increasingly proficient at producing realistic architectures that match the statistical distribution of the training data [1] [20].

- Inverse Design: To generate materials with a specific target property (e.g., maximum stiffness at 50% porosity), the GAN's latent space is searched or conditioned to yield architectures that satisfy this objective. The trained discriminator acts as a learned surrogate model, understanding the complex relationship between structure and property.
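
One simple way to search the latent space is plain random sampling: draw many noise vectors, generate a topology from each, score it, and keep the best. The sketch below shows only that loop. The `toy_generator` and `toy_score` functions are placeholders invented for illustration; in practice they would be replaced by the trained generator and by a property evaluation such as homogenization or a surrogate model. The source does not specify which search or conditioning strategy was used.

```python
import random


def latent_search(generator, score, latent_dim, n_samples=1000, seed=0):
    """Random search over latent vectors; returns (best_score, z, design)."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_samples):
        z = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]  # noise vector
        design = generator(z)
        value = score(design)
        if best is None or value > best[0]:
            best = (value, z, design)
    return best


def toy_generator(z):
    """Placeholder generator: threshold the noise into solid (1) / void (0) pixels."""
    return [1 if v > 0 else 0 for v in z]


def toy_score(design):
    """Placeholder objective: fraction of solid pixels."""
    return sum(design) / len(design)


best_score, best_z, best_design = latent_search(
    toy_generator, toy_score, latent_dim=16, n_samples=200, seed=1
)
```

Gradient-based or evolutionary searches, or a conditional GAN that takes the target property as an input, are alternatives to this brute-force loop.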

The logical workflow of the entire design process, from data generation to the final output of new architectures, is summarized in the diagram below.

Diagram 1: GAN-based design workflow for architectured materials.

Experimental Validation and Characterization Protocols

Following the computational design and selection of promising candidates, physical validation is essential.

Protocol 4.1: Fabrication and Mechanical Testing of 2D Architectures

- Additive Manufacturing: Fabricate the selected 2D architectures using a high-resolution 3D printing technology (e.g., stereolithography or two-photon polymerization) suitable for producing complex micro-architectures [1].

- Uniaxial Compression/Tension Testing: Perform quasi-static mechanical testing on the fabricated samples to measure the stress-strain response.

- Property Extraction: From the stress-strain curve, directly measure the effective Young's modulus of the architecture and compare it to the computational prediction and the HS upper bound.

- Isotropy Validation: Rotate the sample and repeat the testing along different orientations to experimentally confirm the isotropy of the designed material.
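
Extracting the effective Young's modulus from a measured curve usually amounts to fitting a line to the initial, linear part of the stress-strain data. The sketch below uses an ordinary least-squares slope; the 1% strain cut-off for the linear regime and the sample data are assumptions chosen for illustration, not values from the source.

```python
def fit_youngs_modulus(strain, stress, max_strain=0.01):
    """Least-squares slope of stress vs. strain over the assumed linear regime."""
    pairs = [(e, s) for e, s in zip(strain, stress) if e <= max_strain]
    if len(pairs) < 2:
        raise ValueError("need at least two points in the linear regime")
    mean_e = sum(e for e, _ in pairs) / len(pairs)
    mean_s = sum(s for _, s in pairs) / len(pairs)
    s_xx = sum((e - mean_e) ** 2 for e, _ in pairs)
    s_xy = sum((e - mean_e) * (s - mean_s) for e, s in pairs)
    if s_xx == 0.0:
        raise ValueError("strain values are all identical")
    return s_xy / s_xx


# Synthetic data: linear up to 0.6% strain, then softening (stress in MPa).
strain = [0.0, 0.002, 0.004, 0.006, 0.02]
stress = [0.0, 4.0, 8.0, 12.0, 20.0]
modulus = fit_youngs_modulus(strain, stress)
```

Here the last point lies beyond the cut-off and is ignored, so the fitted modulus is about 2000 MPa. Repeating the fit for samples tested at different orientations gives the directional moduli needed for the isotropy comparison.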

Table 2: Summary of GAN-Designed Architectures Approaching HS Bounds

| Porosity | Crystallographic Symmetry | Achieved Normalized Stiffness* (%) | Key Architectural Feature |

|---|---|---|---|

| 0.05 | p4, p6mm | >95 | Ultra-dense, thin connecting ligaments |

| 0.25 | p4mm, p6 | 92-96 | Hierarchical truss networks |

| 0.50 | cmm, p2 | 90-94 | Balanced mix of plates and joints |

| 0.75 | p4, p6mm | 85-90 | Highly porous, thick nodes with thin struts |

*Normalized Stiffness = (Achieved Stiffness / HS Upper Bound Stiffness) × 100%. Results based on modeling and experimental validation of over 400 2D architectures [1].

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential computational and experimental "reagents" required to execute the described research.

Table 3: Essential Research Reagents and Tools for GAN-Driven Materials Design

| Category / Item | Function / Description | Example/Note |

|---|---|---|

| Computational Tools | | |

| Finite Element Analysis (FEA) Software | Performs numerical homogenization to calculate the effective elastic properties of generated architectures. | Abaqus, COMSOL, or custom code. |

| Deep Learning Framework | Provides the environment to build, train, and evaluate the GAN models. | TensorFlow, PyTorch. |

| High-Performance Computing (HPC) Cluster | Manages the computational load for generating massive datasets and training complex neural networks. | Cloud-based (AWS, GCP) or local cluster. |

| Experimental Materials | | |

| Photopolymer Resin | The base material for fabricating 2D architectured samples via high-resolution 3D printing. | Formlabs Rigid or Clear Resin. |

| Universal Testing System | Characterizes the mechanical properties (e.g., Young's modulus) of the fabricated samples. | Instron or similar electromechanical testers. |

| Methodological Concepts | | |

| Crystallographic Symmetry Groups | A predefined set of symmetry constraints that structure the design space and promote isotropy. | The 17 wallpaper groups for 2D design [1]. |

| Hashin-Shtrikman Bounds | The theoretical performance target used to guide and evaluate the generative design process. | Serves as the "fitness function" for inverse design. |

The discovery of novel multi-principal element alloys (MPEAs), which include high-entropy alloys, remains essential for technological advancement across aerospace, energy, and manufacturing sectors [26]. Unlike conventional alloys based on a single principal element, MPEAs consist of five or more elements in nearly equal atomic ratios, which can manifest uniquely favorable mechanical properties including remarkable hardness, high yield strength, and exceptional corrosion resistance [26] [27]. However, the astronomical complexity of their compositional space—with estimates exceeding 592 billion possible combinations for just 3-6 principal elements—poses a fundamental challenge for traditional Edisonian discovery approaches [27].