From Linear Models to Deep Learning: A Comprehensive Guide to Modern QSAR in Drug Discovery

Abstract

This article explores the transformative integration of machine learning (ML) with Quantitative Structure-Activity Relationship (QSAR) modeling in drug discovery. It traces the evolution from classical statistical approaches to advanced deep learning and generative models, detailing their application in virtual screening, ADMET prediction, and multi-target drug design. The content addresses critical challenges such as data quality, model interpretability, and overfitting, while providing guidance on rigorous validation practices and regulatory compliance. Aimed at researchers and drug development professionals, this review synthesizes current methodologies, best practices, and emerging trends—including quantum machine learning—to offer a practical roadmap for implementing robust and predictive QSAR workflows.

The Evolution of QSAR: From Classical Foundations to AI-Driven Paradigms

The Origins and Core Principles of Traditional QSAR

Quantitative Structure-Activity Relationship (QSAR) modeling stands as a cornerstone of computational chemistry and ligand-based drug design (LBDD), providing a mathematical framework to connect molecular structure to biological activity [1]. For over six decades, these models have been integral to computer-assisted drug discovery, enabling researchers to rationalize bioactivity measurements and predict the properties of unsynthesized compounds, thereby guiding experimental efforts and reducing costs [2] [3]. The core principle underpinning QSAR is that measurable or calculable molecular descriptors can be quantitatively correlated with a compound's biological potency, affinity, or other relevant endpoints [4] [5]. This article details the historical origins, fundamental principles, and standardized protocols of traditional QSAR, framing them within the context of modern, machine-learning-driven research.

Historical Foundations and Evolution

The conceptual roots of QSAR extend back over a century, long before the formalization of the field. Early observations by Meyer and Overton revealed a correlation between the narcotic properties of gases and organic solvents and their solubility in olive oil, marking one of the first recognitions that biological activity could be linked to a physicochemical property [1].

A pivotal advancement came with the work of Hammett in the 1930s and 1940s, who introduced linear free-energy relationships to physical organic chemistry [1]. His famous equation, log(K) = log(K₀) + ρσ, used a substituent constant (σ) to quantify the electronic effects of substituents on reaction rates and equilibria, providing a quantitative parameter that would become a fundamental descriptor in later QSAR work [1].

The field of QSAR was formally born in the early 1960s with the nearly simultaneous publication of two groundbreaking approaches, as summarized in Table 1.

Table 1: Foundational Methodologies in Traditional QSAR

| Methodology | Key Innovators | Core Principle | Mathematical Formulation |
|---|---|---|---|
| Hansch-Fujita Analysis | Corwin Hansch & Toshio Fujita [1] | Correlates activity with a combination of electronic, steric, and hydrophobic substituent parameters. | log(1/C) = b₀ + b₁σ + b₂logP |
| Free-Wilson Analysis | Spencer M. Free & James W. Wilson [1] | Uses additive group contributions from specific substituent positions to predict biological activity. | Activity = μ + ΣGᵢ |

The Hansch-Fujita approach was revolutionary for its time, multi-parametrically combining Hammett's electronic constant (σ) with hydrophobicity (logP) [1]. This acknowledged that biological activity often depends on a molecule's ability to reach the site of action (governed by hydrophobicity) and then interact with it (governed by electronic effects). The Free-Wilson model, based on the principle of additivity, offered a complementary approach that did not require pre-defined physicochemical parameters, instead deriving the contribution of each structural feature directly from the biological data [1].
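As a minimal sketch of how a Hansch-type equation is fitted, the example below estimates b₀, b₁, and b₂ in log(1/C) = b₀ + b₁σ + b₂logP by ordinary least squares. The σ and logP values and the generating coefficients are made up for illustration; they are not taken from any cited study.

```python
import numpy as np

# Hypothetical substituent data (illustrative values only).
sigma = np.array([-0.17, 0.00, 0.23, 0.54, 0.78, -0.27])  # Hammett constants
logp = np.array([2.1, 1.6, 2.4, 1.9, 1.3, 1.5])           # hydrophobicity

# Synthetic activities generated from known coefficients so the fit can be checked.
true_b = np.array([3.0, 1.2, 0.6])  # b0, b1 (sigma), b2 (logP)
X = np.column_stack([np.ones_like(sigma), sigma, logp])
log_inv_c = X @ true_b

# Ordinary least-squares estimate of b0, b1, b2.
b_hat, *_ = np.linalg.lstsq(X, log_inv_c, rcond=None)
print(dict(zip(["b0", "b1_sigma", "b2_logP"], np.round(b_hat, 3))))
```

Because the synthetic activities contain no noise, the fit recovers the generating coefficients; with real assay data the residuals and coefficient uncertainties would need to be inspected.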

Core Principles and Theoretical Assumptions

Traditional QSAR modeling is built upon several foundational principles and assumptions that guide its application and interpretation.

- The Chemical Space Principle: A QSAR model is considered reliable only for a specific, well-defined chemical space—the theoretical domain defined by the structural and physicochemical properties of the compounds used to train the model [1]. Predictions for compounds outside this space are unreliable.

- The Principle of Parsimony (Occam's Razor): Given the high dimensionality of molecular descriptors and the risk of overfitting, traditional best practices emphasize building models with a reduced number of highly significant descriptors [4] [5]. This leads to more interpretable and robust models.

- The Domain of Applicability: A robust QSAR model must define its applicability domain, which specifies the structural and property space within which the model's predictions are considered reliable [4]. The leverage method is one common technique used to define this domain statistically.

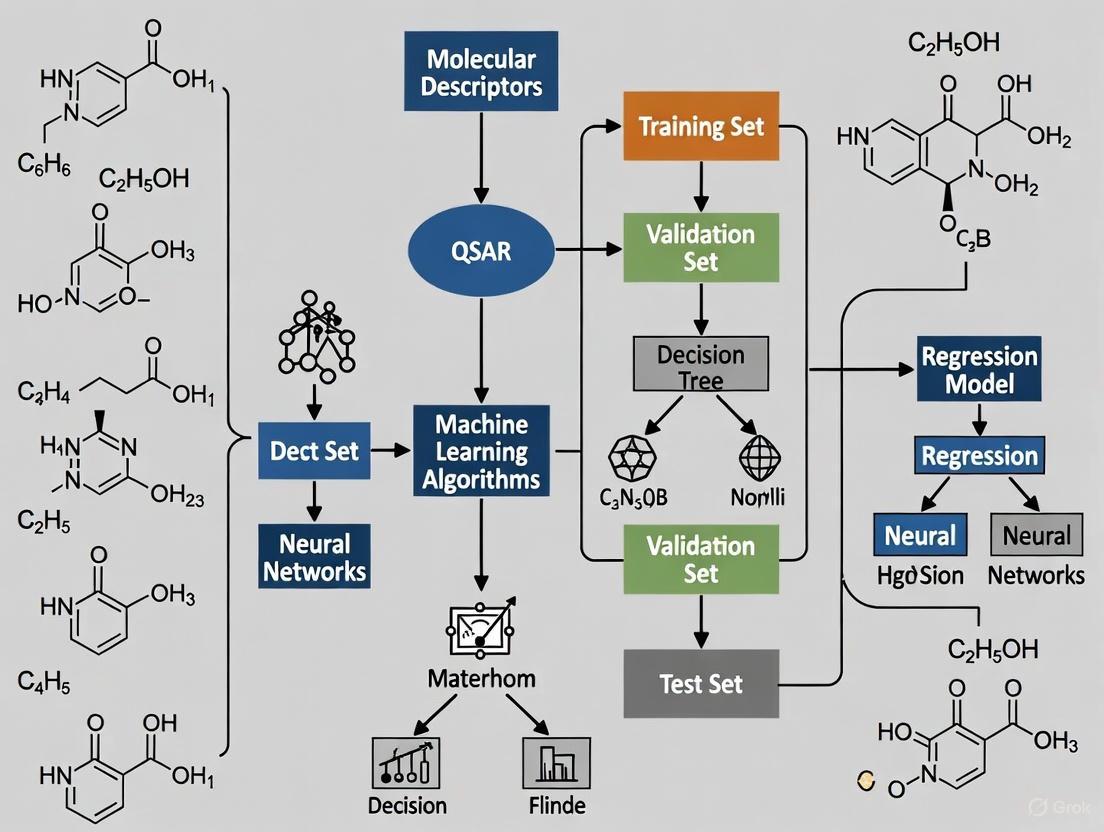

The following workflow diagram illustrates the standard process for developing a traditional QSAR model, from data collection to deployment.

Standard QSAR Methodology and Workflow

The development of a reliable QSAR model follows a rigorous, multi-step protocol designed to ensure predictive power and statistical significance [4]. The key stages are detailed below.

Data Acquisition and Curation

The process begins with assembling a dataset of compounds with consistently measured biological activity values (e.g., IC₅₀, EC₅₀, Ki) [4]. The dataset must be large enough (typically >20 compounds) and contain comparable activity values obtained from a standardized experimental protocol [4].

Molecular Descriptor Calculation and Feature Selection

Each compound is represented by a vector of molecular descriptors, which can include thousands of physicochemical, topological, and structural features [5]. Common descriptors include molecular weight, logP (octanol-water partition coefficient), topological polar surface area, and various connectivity indices [5]. Due to the high risk of overfitting in a high-dimensional space (p ≫ n), feature selection is critical. Methods include:

- Variance thresholding and correlation pruning to remove non-informative or redundant descriptors [5].

- Random Forest feature importance to select top descriptors [5].

- Penalized regression methods like Lasso (L₁ regularization) that automatically drive the coefficients of irrelevant descriptors to zero [5].
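The first of these filters can be sketched in a few lines of NumPy. The function name `prune_descriptors`, the variance tolerance, and the 0.95 correlation cut-off are illustrative assumptions, and the greedy "keep the first of each correlated pair" rule is only one of several reasonable choices.

```python
import numpy as np

def prune_descriptors(X, var_tol=1e-8, corr_max=0.95):
    """Return indices of columns kept after variance and correlation filters."""
    X = np.asarray(X, dtype=float)
    # Step 1: drop constant or near-constant descriptors.
    keep = [j for j in range(X.shape[1]) if X[:, j].var() > var_tol]
    # Step 2: greedily keep a column only if it is not highly correlated
    # with any column that has already been kept.
    selected = []
    for j in keep:
        if all(abs(np.corrcoef(X[:, j], X[:, k])[0, 1]) <= corr_max for k in selected):
            selected.append(j)
    return selected

# Toy descriptor matrix: column 1 nearly duplicates column 0, column 2 is constant.
rng = np.random.default_rng(0)
a = rng.normal(size=50)
b = rng.normal(size=50)
X = np.column_stack([a, 2 * a + 0.001 * rng.normal(size=50), np.ones(50), b])
kept = prune_descriptors(X)
print(kept)
```

To avoid information leakage, such filters would normally be fitted on the training set only and then applied unchanged to the test set.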

Model Construction and Validation

Classical QSAR models often employed Multiple Linear Regression (MLR) to build an interpretable linear model [4]. The model must undergo rigorous validation:

- Internal Validation: Uses techniques like k-fold cross-validation to assess robustness using only the training set [4].

- External Validation: The gold standard, where the model is used to predict a completely held-out test set of compounds not used in training [4].

- Statistical Metrics: Validation relies on metrics such as R² and root mean square error for regression models, and area under the ROC curve for classification models [5].
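For reference, the two regression metrics named above can be computed directly from their definitions. This is a plain-Python sketch with made-up pIC₅₀ values; library implementations would normally be used in practice.

```python
import math

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def rmse(y_true, y_pred):
    """Root mean square error, in the same units as the activity values."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

# Illustrative observed and predicted pIC50 values.
y_obs = [5.0, 6.0, 7.0, 8.0]
y_hat = [5.5, 5.5, 7.5, 7.5]
print(r2_score(y_obs, y_hat), rmse(y_obs, y_hat))
```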

Modern Applications and Evolving Paradigms

While the core principles remain relevant, the application of QSAR in modern drug discovery has necessitated a re-evaluation of some traditional best practices, especially for virtual screening.

A significant paradigm shift concerns the handling of imbalanced datasets, which are common in drug discovery (e.g., high-throughput screening datasets are highly skewed towards inactive compounds) [2]. Traditional best practices recommended dataset balancing and optimizing for Balanced Accuracy (BA) to ensure models could predict both active and inactive classes equally well [2]. However, for the task of virtual screening of ultra-large chemical libraries, where the goal is to select a very small number of top-ranking compounds for experimental testing (e.g., 128 compounds matching a well-plate format), a different metric is more critical [2].

Recent studies demonstrate that models trained on imbalanced datasets and optimized for high Positive Predictive Value (PPV) achieve a hit rate at least 30% higher than models using balanced datasets [2]. The PPV, also known as precision, measures the proportion of true actives among the compounds predicted to be active; computed over the top-ranked selection, it corresponds directly to the experimental hit rate, which matches the economic and practical constraints of experimental follow-up [2].
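To make the metric concrete, the sketch below computes PPV over the k highest-scoring compounds. The helper name `ppv_at_k` and the toy scores and labels are illustrative assumptions; in a real campaign k would be set by the plate format (for example, 128).

```python
def ppv_at_k(scores, labels, k):
    """Fraction of true actives (label 1) among the k highest-scoring compounds."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    top = ranked[:k]
    return sum(label for _, label in top) / len(top)

# Toy virtual-screening output: model scores and experimental labels (1 = active).
scores = [0.95, 0.90, 0.80, 0.70, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 0, 0, 1, 0]
hit_rate = ppv_at_k(scores, labels, k=4)
print(hit_rate)
```

Note that the active ranked seventh does not affect the result: only the composition of the selected top k matters, which is why this metric can diverge from balanced accuracy.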

Furthermore, QSAR is increasingly integrated with modern machine learning techniques. The concept of the "informacophore" has been introduced, extending the traditional pharmacophore by incorporating data-driven insights from computed molecular descriptors, fingerprints, and machine-learned representations of chemical structure [3]. This fusion aims to reduce biased intuitive decisions and accelerate the discovery process.

Experimental Protocol: Developing a QSAR Model for NF-κB Inhibitors

The following protocol provides a detailed, practical guide for constructing a validated QSAR model, using the development of NF-κB inhibitors as a case study [4].

Data Compilation

- Source: Identify 121 compounds with reported IC₅₀ values for NF-κB inhibition from the scientific literature [4].

- Curation: Convert the IC₅₀ values (in molar units) to their negative logarithmic scale (pIC₅₀ = -log₁₀(IC₅₀)) to create a more normally distributed dependent variable for regression.

- Division: Randomly split the dataset into a training set (~80 compounds, ~66% of data) for model development and a test set (~41 compounds, ~34%) for external validation [4].
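The curation and division steps can be sketched as follows. The helper names, the fixed seed, and the use of integer placeholders for compound identifiers are assumptions made for illustration; only the 121-compound total and the 80/41 split come from the protocol above.

```python
import math
import random

def pic50_from_molar(ic50_molar):
    """Convert an IC50 given in mol/L to pIC50 = -log10(IC50)."""
    return -math.log10(ic50_molar)

def random_split(items, n_train, seed=0):
    """Shuffle reproducibly and split into training and test lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    return shuffled[:n_train], shuffled[n_train:]

# 50 nM expressed in molar units gives a pIC50 of about 7.3.
print(round(pic50_from_molar(50e-9), 2))

# Placeholder identifiers for the 121 compounds.
compound_ids = list(range(121))
train_ids, test_ids = random_split(compound_ids, n_train=80)
print(len(train_ids), len(test_ids))
```

IC₅₀ values reported in nM or µM must be converted to molar units before taking the logarithm, otherwise the pIC₅₀ scale is shifted by a constant.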

Descriptor Calculation and Selection

- Software: Use chemical computation software like RDKit, Dragon, or PaDEL to calculate a wide range of 1D, 2D, and 3D molecular descriptors for all 121 compounds [5].

- Pre-processing:

  - Remove descriptors with zero or near-zero variance.

  - Reduce redundancy by excluding one descriptor from any pair with a pairwise correlation coefficient >0.95.

- Feature Selection: Perform an Analysis of Variance (ANOVA) to identify molecular descriptors with high statistical significance for predicting the NF-κB inhibitory activity [4]. Alternatively, use a feature importance method from a Random Forest model to select the top N most relevant descriptors.

Model Construction

- Multiple Linear Regression (MLR): Develop a linear model using the selected descriptors. The general form of the model is:

  pIC₅₀ = β₀ + β₁D₁ + β₂D₂ + ... + βₙDₙ, where β are the coefficients and D are the descriptors [4].

- Artificial Neural Network (ANN): For a non-linear model, train an ANN using the same training set and selected descriptors. A potential architecture is the [8.11.11.1] model, indicating an input layer with 8 descriptors, two hidden layers with 11 neurons each, and a single output neuron [4].
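To illustrate what the [8.11.11.1] notation implies, the sketch below builds an untrained network of that shape in NumPy and counts its adjustable parameters. The tanh activation, the weight initialisation, and the function names are assumptions; the source specifies only the layer sizes.

```python
import numpy as np

def init_mlp(layer_sizes, seed=0):
    """Create (weights, bias) pairs for consecutive layers."""
    rng = np.random.default_rng(seed)
    return [(rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_out))
            for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])]

def forward(params, X):
    """Forward pass: tanh on hidden layers (assumed), linear output for pIC50."""
    h = np.asarray(X, dtype=float)
    for i, (W, b) in enumerate(params):
        h = h @ W + b
        if i < len(params) - 1:
            h = np.tanh(h)
    return h.ravel()

params = init_mlp([8, 11, 11, 1])
n_params = sum(W.size + b.size for W, b in params)
preds = forward(params, np.zeros((5, 8)))  # five dummy compounds, 8 descriptors each
print(n_params, preds.shape)
```

The network has 243 weights and biases, roughly three times the number of training compounds in this case study, which is one reason cross-validation and external validation matter for the ANN at least as much as for the MLR model.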

Model Validation and Analysis

- Internal Validation: For the MLR model, report the coefficient of determination (R²) and adjusted R². For both MLR and ANN, perform Leave-One-Out (LOO) or k-fold cross-validation and report the cross-validated R² (Q²) [4].

- External Validation: Use the held-out test set to evaluate the final model's predictive power. Report the R² and root mean square error between the predicted and actual pIC₅₀ values for the test compounds [4].

- Applicability Domain: Use the leverage method to define the model's applicability domain. Calculate the leverage (h) for each compound and generate a Williams plot (standardized residuals vs. leverage) with a critical leverage threshold of h* = 3p/n, where p is the number of model parameters and n is the number of training compounds [4]. Compounds with h > h* lie outside the domain, and predictions for them should be treated as unreliable.
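The leverage calculation behind a Williams plot can be sketched as below. The random descriptor matrix stands in for real training data, the function name is hypothetical, and p is taken here as the number of descriptors plus one for the intercept, which is one common reading of "number of model parameters".

```python
import numpy as np

def leverages(X_train, X_query):
    """Leverage h_i = x_i (A^T A)^-1 x_i^T, with an intercept column added to both sets."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    Q = np.column_stack([np.ones(len(X_query)), X_query])
    xtx_inv = np.linalg.pinv(A.T @ A)
    return np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)

# Stand-in training set: 80 compounds, 8 descriptors (random values).
rng = np.random.default_rng(1)
X_train = rng.normal(size=(80, 8))

h_train = leverages(X_train, X_train)
p = X_train.shape[1] + 1          # 8 descriptors plus intercept
h_star = 3 * p / len(X_train)     # critical leverage threshold

# A query compound far outside the training descriptor range.
far_compound = np.full((1, 8), 10.0)
h_far = leverages(X_train, far_compound)[0]
print(round(h_star, 4), h_far > h_star)
```

A useful sanity check is that the training leverages sum to p, since they are the diagonal of the hat matrix.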

Table 2: Key Research Reagents and Computational Tools for QSAR Modeling

| Resource / Reagent | Type | Primary Function in QSAR |
|---|---|---|
| ChEMBL [2] | Database | A large-scale, open-access bioactivity database used for compiling training datasets. |
| PubChem [2] | Database | A public repository of chemical molecules and their biological activities. |
| eMolecules Explore / Enamine REAL [2] [3] | Virtual Library | Ultra-large, "make-on-demand" chemical libraries used for virtual screening. |
| RDKit [5] | Software Tool | An open-source cheminformatics toolkit for descriptor calculation, fingerprint generation, and molecular informatics. |
| Dragon [5] | Software Tool | A professional software for the calculation of thousands of molecular descriptors. |
| NF-κB Inhibition Assay [4] | Biological Assay | A functional assay (e.g., reporter gene assay) used to generate experimental IC₅₀ values for model training and validation. |

In the realm of Quantitative Structure-Activity Relationship (QSAR) modeling, molecular descriptors serve as the fundamental translation of chemical structures into a numerical language computable by statistical and machine learning algorithms [6] [7]. These descriptors are numerical values that encode various chemical, structural, or physicochemical properties of compounds, forming the basis for predicting biological activity, toxicity, and other pharmacological properties [8]. The evolution of QSAR from its early dependence on simple physicochemical parameters to its current state, which utilizes thousands of complex descriptors, has been pivotal in enhancing the predictive power and applicability of these models in modern drug discovery [7]. The critical challenge lies in selecting descriptors that comprehensively represent molecular properties, correlate meaningfully with biological activity, are computationally feasible, and possess distinct chemical interpretability [7]. This application note details the characteristics, calculation protocols, and practical applications of 1D through 4D molecular descriptors, providing researchers with a framework for their effective deployment in QSAR studies.

Descriptor Dimensions: Characteristics, Applications, and Comparative Analysis

Molecular descriptors are typically classified by their dimensionality, which corresponds to the level of structural information they encode [8]. Understanding the distinctions between these dimensions is crucial for selecting the appropriate descriptors for a specific QSAR problem.

Table 1: Comparative Analysis of Molecular Descriptor Dimensions in QSAR

| Dimension | Description & Data Encoded | Common Examples | Primary Applications | Key Advantages | Major Limitations |
|---|---|---|---|---|---|
| 1D Descriptors | Simple, atom-based counts and molecular properties [8]. | Molecular weight, atom counts, bond counts, number of rings, log P [6] [8]. | High-throughput initial screening, early-stage prioritization of compound libraries [9]. | Fast and easy to calculate; highly interpretable [10]. | Low informational content; poor at capturing complex structure-activity relationships [9]. |
| 2D Descriptors | Topological indices derived from molecular graph connectivity [6] [8]. | Wiener index, Zagreb indices, connectivity indices, 2D fingerprints [6]. | Ligand-based virtual screening, similarity searching, and predictive ADMET modeling [6] [11]. | Invariant to conformation; fast calculation; good for large datasets [12]. | Lack 3D stereochemical information; may miss critical bioactivity-related features [13]. |
| 3D Descriptors | Geometric and surface properties derived from a single, 3D conformation [12] [9]. | Molecular volume, surface area, polarizability, 3D-MoRSE descriptors, WHIM descriptors [9]. | Modeling ligand-target binding where 3D shape and electrostatic complementarity are critical [12]. | Captures steric and electronic effects directly relevant to binding [12]. | Dependent on correct bioactive conformation; alignment can be challenging and introduce bias [13] [9]. |
| 4D Descriptors | Ensembles of properties from multiple molecular conformations and/or protonation states [9] [8]. | Grid-based occupancy descriptors averaged over an ensemble of structures [9]. | Accounting for ligand flexibility and induced fit in binding; refining QSAR models for complex targets [9]. | Explicitly incorporates molecular flexibility; reduces bias from a single conformation [9]. | Computationally intensive; requires sophisticated sampling and analysis methods [9]. |

The choice of descriptor dimension involves a direct trade-off between computational cost, informational content, and the specific biological context. Higher-dimensional descriptors often provide a more realistic representation of the molecular system but require greater computational resources and more complex model-building protocols [9] [7].

Integrated Workflow for Descriptor Calculation and Selection

The process of moving from a chemical structure to a robust QSAR model involves a structured workflow. The following diagram outlines the key steps, emphasizing the iterative nature of descriptor selection and model validation.

Experimental Protocols for Descriptor Calculation and QSAR Modeling

This section provides detailed methodologies for calculating descriptors and building QSAR models, as applied in recent research.

Protocol 1: Building a Random Forest QSAR Model with Feature Selection

This protocol is adapted from a study that identified tankyrase (TNKS2) inhibitors for colon adenocarcinoma, showcasing a modern machine learning-assisted QSAR approach [11].

Dataset Curation:

- Source: Retrieve a curated dataset of known active and inactive compounds from a reliable database such as ChEMBL. For example, a study used 1100 TNKS inhibitors from ChEMBL (Target ID: CHEMBL6125) [11].

- Activity Data: Compile uniform activity data (e.g., IC₅₀, Ki) and convert to a common scale (e.g., pIC₅₀ = -log₁₀(IC₅₀)) [10].

- Structure Standardization: Standardize chemical structures using tools like RDKit or OpenBabel. This includes removing salts, normalizing tautomers, and handling stereochemistry [10].

Descriptor Calculation:

- Software: Use descriptor calculation software such as PaDEL-Descriptor, DRAGON, or Mordred to generate a comprehensive set of 1D, 2D, and 3D descriptors [6] [10].

- Configuration: For 3D descriptors, an energy minimization step is recommended to generate a reasonable 3D conformation before calculation [12].

Data Preprocessing and Feature Selection:

- Preprocessing: Remove descriptors with zero or near-zero variance. Handle any missing values, either by imputation or removal of the offending descriptors/compounds. Scale the remaining descriptors to have zero mean and unit variance [10].

- Feature Selection: Apply feature selection methods to reduce dimensionality and avoid overfitting.

Model Building and Validation:

- Data Splitting: Split the dataset into a training set (e.g., 80%) for model development and an external test set (e.g., 20%) for final validation. The external test set must be kept completely blind during model training [11] [10].

- Model Training: Build a Random Forest classification or regression model on the training set using the selected features.

- Hyperparameter Tuning: Optimize model hyperparameters (e.g., number of trees, tree depth) using cross-validation on the training set [11] [8].

- Validation: Assess model performance using the external test set. Report metrics such as accuracy, sensitivity, specificity, and Area Under the ROC Curve (AUC-ROC). The cited study achieved an AUC-ROC of 0.98 [11].
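The classification metrics listed in the validation step can be computed from first principles as sketched below. The labels, scores, and 0.5 decision threshold are toy values chosen for illustration and are unrelated to the cited study's results.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, TN, FP, FN) for binary labels where 1 = active."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn

def roc_auc(y_true, scores):
    """AUC as the probability that a random active outscores a random inactive."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Toy external test set.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.35, 0.7, 0.3, 0.2, 0.1, 0.6]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

tp, tn, fp, fn = confusion_counts(y_true, y_pred)
accuracy = (tp + tn) / len(y_true)
sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
auc = roc_auc(y_true, scores)
print(accuracy, sensitivity, specificity, auc)
```

Unlike accuracy, sensitivity, and specificity, the AUC does not depend on the chosen threshold, which makes it convenient for comparing models before a cut-off has been fixed.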

Protocol 2: Utilizing Bioactive Conformations for 3D-QSAR

This protocol, informed by a comparative study of 2D and 3D descriptors, emphasizes the importance of using biologically relevant conformations for 3D-QSAR [12].

Acquisition of Bioactive Conformations:

- Source: Mine the Protein Data Bank (PDB) for high-resolution crystal structures of protein-ligand complexes relevant to the target of interest [12].

- Curation: Compile a dataset of ligands from these complexes. Extract the 3D coordinates of the ligand in its bound (bioactive) conformation. Ensure the activity data (e.g., IC₅₀) for these ligands is uniform and reported in the same assay system [12].

Descriptor Calculation and Modeling:

- Multiple Descriptor Types: Calculate 2D descriptors, 3D descriptors (e.g., using DRAGON), and a combined "2D+3D" descriptor set for each ligand in its bioactive conformation [12].

- Model Building: Model the activity data using multiple machine learning algorithms (e.g., k-Nearest Neighbors, Random Forest, Lasso Regression) for each descriptor set [12].

- Performance Evaluation: Validate models via external test sets. The comparative study found that combining 2D and 3D descriptors often yields more significant models than using either type alone, as they encode complementary molecular information [12].

Protocol 3: Implementing a 4D-QSAR Analysis

4D-QSAR accounts for ligand flexibility by using an ensemble of conformations and/or orientations, thus incorporating an additional dimension beyond 3D-QSAR [9].

Conformational Sampling:

- Generation: For each molecule in the dataset, generate a representative ensemble of low-energy conformations using molecular mechanics or dynamics simulations. Tools like OMEGA or conformer generation functions in RDKit can be used.

- Alignment: Superimpose all conformers of all molecules according to a common pharmacophore or a scaffold present in the series.

Grid and Interaction Field Calculation:

- Grid Construction: Embed the aligned conformational ensembles within a 3D grid.

- Descriptor Generation: At each grid point, calculate interaction field descriptors (e.g., steric, electrostatic) for each conformation. The 4D descriptor is then the occupancy or average energy at each grid point over the entire ensemble of conformations for a given molecule [9].
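The occupancy variant of this descriptor can be sketched as follows, assuming the conformers have already been aligned. The grid origin, spacing, and shape, the two-atom "conformers", and the function name are all illustrative; real 4D-QSAR implementations typically distinguish atom types and use finer grids.

```python
import numpy as np

def grid_occupancy(conformers, origin, spacing, shape):
    """Fraction of conformers that place at least one atom in each grid cell."""
    occupancy = np.zeros(shape, dtype=float)
    for coords in conformers:
        # Map atom coordinates to integer cell indices.
        idx = np.floor((np.asarray(coords, dtype=float) - origin) / spacing).astype(int)
        inside = np.all((idx >= 0) & (idx < np.array(shape)), axis=1)
        cells = np.zeros(shape, dtype=bool)
        cells[tuple(idx[inside].T)] = True
        occupancy += cells
    return occupancy / len(conformers)

# Two pre-aligned toy conformers of a two-atom fragment (coordinates in angstroms).
conformers = [
    [[0.5, 0.5, 0.5], [1.5, 0.5, 0.5]],
    [[0.5, 0.5, 0.5], [0.5, 1.5, 0.5]],
]
occ = grid_occupancy(conformers, origin=np.zeros(3), spacing=1.0, shape=(4, 4, 4))
descriptor_vector = occ.ravel()  # one row of the compounds-by-grid-cells data matrix
print(occ[0, 0, 0], occ[1, 0, 0], occ[0, 1, 0], descriptor_vector.shape)
```

The shared atom yields an occupancy of 1.0 in its cell, while the flexible atom contributes 0.5 to each of the two cells it visits, which is how the ensemble encodes flexibility.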

Data Analysis and Model Building:

- Data Matrix: Construct a data matrix where rows represent compounds and columns represent the 4D grid descriptors.

- Model Development: Use data reduction techniques like Partial Least Squares (PLS) regression to correlate the 4D descriptors with biological activity and build the predictive model [9].

Table 2: Key Research Reagent Solutions for QSAR Modeling

| Tool / Resource | Type | Primary Function | Example Use in Protocol |
|---|---|---|---|
| ChEMBL [11] | Database | Public repository of bioactive molecules with drug-like properties and curated bioactivity data. | Sourcing a reliable dataset of tankyrase inhibitors for model building (Protocol 1). |
| PDB (Protein Data Bank) [12] | Database | Archive of 3D structural data of biological macromolecules, including protein-ligand complexes. | Acquiring bioactive conformations of ligands for accurate 3D-QSAR (Protocol 2). |
| PaDEL-Descriptor [8] [10] | Software | Calculate molecular descriptors and fingerprints. Supports both 2D and 3D descriptor calculation. | Generating a comprehensive set of 1D/2D molecular descriptors as part of the QSAR workflow. |
| DRAGON [8] | Software | Professional software for the calculation of a very large number of molecular descriptors (>5000). | Calculating advanced 2D, 3D, and 4D descriptors for complex QSAR analyses. |
| RDKit [8] [10] | Cheminformatics Library | Open-source toolkit for cheminformatics, including descriptor calculation, machine learning, and molecular operations. | Standardizing chemical structures, generating conformers, and integrating QSAR pipelines. |
| scikit-learn [8] | Software Library | Open-source machine learning library for Python, featuring a wide array of modeling and feature selection algorithms. | Implementing Random Forest, feature selection methods, and model validation (Protocol 1). |

Molecular descriptors are the critical link that transforms chemical intuition into predictive, quantitative models in QSAR research [7]. The strategic selection of descriptor dimension—from the simplicity of 1D to the conformational complexity of 4D—directly controls the balance between interpretability, computational cost, and biological accuracy of the resulting model [9] [7]. As the field advances, the integration of these classical descriptors with modern AI and deep learning methods, which can learn complex representations directly from molecular graphs or SMILES strings, promises to further expand the applicability and predictive power of QSAR in drug discovery [8] [7]. The protocols and tools outlined herein provide a foundation for researchers to rationally select and apply these descriptors, thereby generating more reliable and actionable hypotheses for rational drug design.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a fundamental methodology in modern chemoinformatics and drug discovery, establishing mathematical relationships between chemical structures and their biological activities or physicochemical properties. These models enable researchers to predict the behavior of untested compounds, prioritize synthesis targets, and rationalize molecular design strategies. Among the diverse statistical approaches available, Multiple Linear Regression (MLR) and Partial Least Squares (PLS) regression have emerged as cornerstone classical techniques for constructing interpretable and predictive QSAR models [14]. MLR provides straightforward, transparent models that directly correlate descriptor values to biological response, while PLS offers robust handling of correlated descriptors and high-dimensional data spaces common in chemical descriptor analysis [15] [16].

The continued relevance of these classical approaches persists even alongside advanced machine learning and deep learning methods, particularly when model interpretability is crucial for guiding chemical optimization in drug development pipelines [17] [18]. This application note details the practical implementation, comparative strengths, and appropriate application domains for both MLR and PLS within QSAR modeling workflows.

Theoretical Foundations

Multiple Linear Regression (MLR) in QSAR

Multiple Linear Regression establishes a linear relationship between multiple independent variables (molecular descriptors) and a single dependent variable (biological activity) [19]. The fundamental MLR model takes the form:

Activity = β₀ + β₁D₁ + β₂D₂ + ... + βₙDₙ + ε

Where Activity represents the biological response, β₀ is the intercept, β₁...βₙ are regression coefficients for descriptors D₁...Dₙ, and ε denotes the error term [14]. In QSAR applications, the descriptors (D) quantify specific molecular characteristics including electronic, steric, hydrophobic, or topological properties [19].

A significant advantage of MLR is its high interpretability; each coefficient directly quantifies the contribution of its corresponding descriptor to the biological activity [15]. However, MLR requires careful variable selection to avoid overfitting, particularly when dealing with large descriptor pools where the number of descriptors may approach or exceed the number of compounds [20]. Techniques such as stepwise selection, genetic algorithms, or replacement methods are commonly employed to identify optimal descriptor subsets that yield robust, predictive models [15] [20].
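The sensitivity of MLR to the descriptor-to-compound ratio follows from the standard least-squares estimator, written here in matrix form. The notation (X as the design matrix, y as the activity vector) is introduced for this sketch and is not taken from the cited sources.

```latex
% X: n x (p+1) design matrix (leading column of ones, then p descriptors)
% y: n x 1 vector of activities
\hat{\boldsymbol{\beta}}
  = \arg\min_{\boldsymbol{\beta}} \lVert \mathbf{y} - \mathbf{X}\boldsymbol{\beta} \rVert_2^2
  = (\mathbf{X}^{\top}\mathbf{X})^{-1}\mathbf{X}^{\top}\mathbf{y}
% The inverse exists only if X has full column rank. If p + 1 > n, or if
% descriptors are exactly collinear, X^T X is singular and the coefficients
% are not uniquely determined; near-collinearity makes them unstable.
```

This is why variable selection is treated as an essential step before MLR rather than an optional refinement.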

Partial Least Squares (PLS) in QSAR

Partial Least Squares regression addresses a key limitation of MLR: the inability to effectively handle correlated descriptors and datasets where the number of variables exceeds the number of observations [16]. PLS operates by projecting the original descriptor variables into a new space of orthogonal latent variables (factors) that maximize covariance with the response variable [21] [16].

The PLS algorithm successively extracts factors as linear combinations of original descriptors, with each factor oriented to explain both descriptor variance and activity correlation [16]. This projection enables stable solutions even for correlated descriptor sets, making PLS particularly valuable for analyzing 3D-QSAR fields (e.g., CoMFA) and high-dimensional fingerprint descriptors [21] [19]. A critical step in PLS modeling is determining the optimal number of latent variables through cross-validation to prevent overfitting [16].
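For a single response (PLS1), the factor extraction described above can be summarised as follows. The symbols are introduced for this sketch; they follow common textbook notation rather than any specific cited reference.

```latex
% Decomposition with A latent variables (X and y mean-centred):
\mathbf{X} = \mathbf{T}\mathbf{P}^{\top} + \mathbf{E}, \qquad
\mathbf{y} = \mathbf{T}\mathbf{q} + \mathbf{f}

% Each weight vector maximises covariance with the response on the
% deflated descriptor matrix X_{a-1} (with X_0 = X):
\mathbf{w}_a = \frac{\mathbf{X}_{a-1}^{\top}\mathbf{y}}
                    {\lVert \mathbf{X}_{a-1}^{\top}\mathbf{y} \rVert}, \qquad
\mathbf{t}_a = \mathbf{X}_{a-1}\mathbf{w}_a, \qquad
\mathbf{p}_a = \frac{\mathbf{X}_{a-1}^{\top}\mathbf{t}_a}{\mathbf{t}_a^{\top}\mathbf{t}_a}, \qquad
q_a = \frac{\mathbf{y}^{\top}\mathbf{t}_a}{\mathbf{t}_a^{\top}\mathbf{t}_a}

% Deflation and regression coefficients in the original descriptor space:
\mathbf{X}_a = \mathbf{X}_{a-1} - \mathbf{t}_a\mathbf{p}_a^{\top}, \qquad
\hat{\boldsymbol{\beta}}_{\mathrm{PLS}} = \mathbf{W}(\mathbf{P}^{\top}\mathbf{W})^{-1}\mathbf{q}
```

Because the scores in T are mutually orthogonal, the regression on T remains well conditioned even when the original descriptors are strongly correlated.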

Comparative Analysis of MLR and PLS

Table 1: Characteristics of MLR and PLS Regression in QSAR Modeling

| Feature | Multiple Linear Regression (MLR) | Partial Least Squares (PLS) |
|---|---|---|
| Descriptor Handling | Requires independent, uncorrelated descriptors | Tolerates correlated descriptors effectively |
| Data Dimensionality | Suitable when n(compounds) >> n(descriptors) | Handles n(descriptors) >= n(compounds) |
| Model Interpretability | High - direct coefficient interpretation | Moderate - requires interpretation of latent variables |
| Variable Selection | Essential pre-processing step | Built-in dimensionality reduction |
| Primary QSAR Applications | 2D-QSAR with carefully selected descriptors | 3D-QSAR (CoMFA, CoMSIA), spectral data, high-dimensional descriptors |
| Validation Approach | Leave-one-out, external test set | Cross-validation to determine optimal factors, external validation |
| Implementation Complexity | Low to moderate (with variable selection) | Moderate to high (factor optimization required) |

Table 2: Performance Comparison of MLR, PLS, and Hybrid Approaches

| Method | Advantages | Limitations | Reported Predictive Performance |
|---|---|---|---|
| MLR | Simple interpretation, clear descriptor contributions | Fails with correlated descriptors, overfitting risk | Highly variable depending on variable selection quality [15] |
| PLS | Handles correlated variables, stable with many descriptors | Abstract factors, less intuitive interpretation | Highly predictive for 3D-QSAR fields and complex descriptor sets [21] |
| GA-MLR | Combines robust variable selection with interpretable models | Computationally intensive for large descriptor pools | Superior to stepwise-MLR and comparable to PLS in validation metrics [15] |

Experimental Protocols

Protocol 1: MLR-QSAR Model Development

Objective: Develop a validated MLR-QSAR model using optimal descriptor subset selection.

Materials and Software:

- Chemical structures of compounds with known biological activity (minimum 20 compounds recommended)

- Molecular descriptor calculation software (PaDEL, Mold2, RDKit, or Dragon)

- Statistical analysis environment (R, Python with scikit-learn, or MATLAB)

- Dataset partitioning utility

Procedure:

Dataset Preparation and Curation

- Compile chemical structures and corresponding experimental biological activities (e.g., IC₅₀, Ki, EC₅₀)

- Apply strict quality control: remove duplicates, compounds with ambiguous stereochemistry, and outliers

- Convert structures to standardized representation (e.g., canonical SMILES) and optimize 3D geometry if needed

Molecular Descriptor Calculation

- Calculate comprehensive descriptor set using multiple software tools (e.g., PaDEL for 1444 0D-2D descriptors, Mold2 for 777 descriptors) [20]

- Pre-filter descriptors: remove constant/near-constant variables and those with missing values

- Address collinearity by identifying highly correlated descriptor pairs (r > 0.95) and retaining one from each pair

Descriptor Selection and Model Construction

- Apply variable selection algorithm (Replacement Method, Genetic Algorithm, or Stepwise Regression)

- For Genetic Algorithm-MLR: Implement population size of 100-500, 50-100 generations, crossover probability 0.8, mutation probability 0.01 [15]

- Evaluate model quality using statistical metrics: R², adjusted R², and standard error of estimation

- Select final model based on parsimony principle and statistical significance
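A compact sketch of GA-driven descriptor selection for MLR is shown below. The population size, generation count, crossover probability, and mutation probability follow the ranges quoted above; everything else (binary tournament selection, one-point crossover, adjusted R² as the fitness function, the synthetic data, and the function names) is an assumption made for illustration rather than the procedure of the cited work.

```python
import numpy as np

def adjusted_r2(X, y):
    """Adjusted R2 of an intercept MLR model on the given descriptor columns."""
    n, k = X.shape
    A = np.column_stack([np.ones(n), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    ss_res = np.sum((y - A @ coef) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return 1.0 - (ss_res / (n - k - 1)) / (ss_tot / (n - 1))

def ga_select(X, y, pop_size=100, generations=50, p_cross=0.8, p_mut=0.01, seed=0):
    """Evolve boolean descriptor masks; return the best mask and its fitness."""
    rng = np.random.default_rng(seed)
    n_desc = X.shape[1]
    pop = rng.random((pop_size, n_desc)) < 0.2  # sparse random initial masks

    def fitness(mask):
        return adjusted_r2(X[:, mask], y) if mask.any() else -np.inf

    best_mask, best_fit = None, -np.inf
    for _ in range(generations):
        fits = np.array([fitness(m) for m in pop])
        if fits.max() > best_fit:
            best_fit, best_mask = fits.max(), pop[fits.argmax()].copy()
        # Binary tournament selection of parents.
        a, b = rng.integers(pop_size, size=(2, pop_size))
        parents = np.where((fits[a] >= fits[b])[:, None], pop[a], pop[b])
        children = parents.copy()
        # One-point crossover on consecutive pairs.
        for i in range(0, pop_size - 1, 2):
            if rng.random() < p_cross:
                cut = rng.integers(1, n_desc)
                children[i, cut:] = parents[i + 1, cut:]
                children[i + 1, cut:] = parents[i, cut:]
        # Bit-flip mutation.
        children ^= rng.random(children.shape) < p_mut
        pop = children
    return best_mask, best_fit

# Synthetic data: only descriptors 0 and 3 carry signal.
rng = np.random.default_rng(3)
X = rng.normal(size=(60, 12))
y = 2.0 * X[:, 0] - 1.5 * X[:, 3] + 0.1 * rng.normal(size=60)
best_mask, best_fit = ga_select(X, y)
print(np.flatnonzero(best_mask), round(best_fit, 3))
```

Adjusted R² penalises extra descriptors only mildly, so the selected subset may still contain uninformative variables; a cross-validated fitness or an explicit cap on subset size is a common way to enforce the parsimony criterion.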

Model Validation

- Partition dataset using Balanced Subsets Method or Kennard-Stone algorithm: 70-80% training, 20-30% test [20]

- Perform internal validation: Leave-One-Out (LOO) or Leave-Multiple-Out cross-validation

- Calculate cross-validation metrics: Q², standard error of prediction

- Conduct external validation: Predict test set compounds not used in model building

- Apply Y-scrambling to verify absence of chance correlation (typically 100-500 iterations)
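The internal validation and Y-scrambling steps can be illustrated as follows. This is a sketch on synthetic data: the helper names, the three-descriptor model, and the use of the overall mean in the Q² denominator are assumptions, and a real study would substitute its own curated descriptors and activities.

```python
import numpy as np

def fit_mlr(X, y):
    """Ordinary least squares with an intercept; returns [b0, b1, ..., bp]."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict_mlr(coef, X):
    return coef[0] + X @ coef[1:]

def r_squared(y, y_hat):
    return 1.0 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)

def q2_loo(X, y):
    """Leave-one-out Q2 = 1 - PRESS / total sum of squares."""
    n = len(y)
    press = 0.0
    for i in range(n):
        train = np.arange(n) != i              # hold out compound i
        coef = fit_mlr(X[train], y[train])
        press += (y[i] - predict_mlr(coef, X[i:i + 1])[0]) ** 2
    return 1.0 - press / np.sum((y - y.mean()) ** 2)

def y_scrambling(X, y, n_iter=100, seed=0):
    """R2 of models refitted to randomly permuted activities."""
    rng = np.random.default_rng(seed)
    scores = np.empty(n_iter)
    for k in range(n_iter):
        y_perm = rng.permutation(y)
        scores[k] = r_squared(y_perm, predict_mlr(fit_mlr(X, y_perm), X))
    return scores

# Synthetic stand-in for a 40-compound, 3-descriptor dataset.
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 3))
y = 1.5 * X[:, 0] - 2.0 * X[:, 1] + 0.5 * X[:, 2] + rng.normal(scale=0.3, size=40)

r2_fit = r_squared(y, predict_mlr(fit_mlr(X, y), X))
q2 = q2_loo(X, y)
r2_scrambled = y_scrambling(X, y, n_iter=100)
print(f"R2 = {r2_fit:.3f}, Q2(LOO) = {q2:.3f}, "
      f"max scrambled R2 = {r2_scrambled.max():.3f}")
```

A model that reflects a genuine structure-activity relationship should show scrambled R² values far below the R² and Q² of the original model, as is the case for this synthetic example.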

Model Interpretation and Applicability Domain

- Analyze regression coefficients and their statistical significance

- Define applicability domain using leverage approach or descriptor range analysis

- Generate Williams plots (standardized residuals vs. leverage) to identify outliers and influential compounds
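The leverage approach can be sketched as below. The warning threshold h* = 3(p + 1)/n used here is a commonly quoted convention rather than something stated in this protocol, and the function names and synthetic data are assumptions for illustration.

```python
import numpy as np

def leverages(X_train, X_query):
    """Leverage h_i = x_i^T (X^T X)^-1 x_i, with an intercept column added."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    Q = np.column_stack([np.ones(len(X_query)), X_query])
    xtx_inv = np.linalg.pinv(A.T @ A)
    # Diagonal of Q (X^T X)^-1 Q^T without forming the full matrix.
    return np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)

def warning_leverage(n_train, n_descriptors):
    """Assumed convention: h* = 3 (p + 1) / n."""
    return 3.0 * (n_descriptors + 1) / n_train

# Synthetic training set: 30 compounds, 2 descriptors (hypothetical data).
rng = np.random.default_rng(0)
X_train = rng.normal(size=(30, 2))
# Two query compounds: one at the training centroid, one far outside it.
X_query = np.vstack([X_train.mean(axis=0), [10.0, 10.0]])

h_train = leverages(X_train, X_train)
h_query = leverages(X_train, X_query)
h_star = warning_leverage(n_train=30, n_descriptors=2)
in_domain = h_query <= h_star
print("h* =", h_star, "| query leverages:", np.round(h_query, 3),
      "| inside domain:", in_domain.tolist())
```

For a Williams plot, these leverages would be plotted on the x-axis against standardized residuals on the y-axis, with a vertical line at h* and horizontal lines at the chosen residual cutoff.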

Protocol 2: PLS-QSAR Model Development

Objective: Construct a validated PLS-QSAR model for high-dimensional or correlated descriptor data.

Materials and Software:

- Chemical structures and biological activity data

- Molecular descriptor/fingerprint calculation software

- PLS implementation (SIMCA, R pls package, Python scikit-learn)

- Cross-validation utilities

Procedure:

Data Preparation and Descriptor Calculation

- Prepare standardized molecular structures and experimental activities

- Calculate comprehensive descriptor sets or 3D-field descriptors (for CoMFA/CoMSIA)

- Standardize descriptors: mean-centering and unit variance scaling recommended

Initial Data Analysis and Pre-processing

- Perform exploratory analysis: Principal Component Analysis (PCA) to identify outliers

- Examine descriptor correlation matrix to assess multicollinearity

- Apply unsupervised clustering to verify dataset representativeness

PLS Factor Optimization

- Implement cross-validation (leave-one-out or group-based) to determine optimal number of latent variables [16]

- Plot prediction residual error sum of squares (PRESS) vs. number of components

- Select component number where PRESS is minimized or Q² is maximized

- Consider conservative factor selection to prevent overfitting

Model Training and Validation

- Develop PLS model with optimized number of components

- Calculate model statistics: R²X, R²Y, and Q²

- Validate using external test set prediction

- Perform permutation testing (Y-scrambling) to confirm model robustness

Model Interpretation and Visualization

- Analyze variable importance in projection (VIP) scores to identify influential descriptors

- Examine loading plots to interpret latent variable meaning

- Generate coefficient plots to visualize descriptor-activity relationships

- Create score plots to explore compound clustering and patterns

Table 3: Essential Software Tools for MLR and PLS QSAR Modeling

| Tool Name | Type | Primary Function | QSAR Application |

|---|---|---|---|

| PaDEL-Descriptor | Software | Calculates 1D, 2D molecular descriptors and fingerprints | Generates 1444 molecular descriptors for MLR/PLS input [20] |

| Mold2 | Software | Computes 777 molecular descriptors from 2D structures | Complementary descriptor source for comprehensive coverage [20] |

| QuBiLs-MAS | Software | Calculates 3D molecular descriptors using algebraic forms | Generates 8448 descriptors for complex property encoding [20] |

| R pls package | Library | Implements PLS regression with cross-validation | Factor optimization and model validation [14] |

| Genetic Algorithm | Algorithm | Performs variable selection for MLR | Identifies optimal descriptor subsets from large pools [15] |

| Replacement Method (RM) | Algorithm | Selects descriptor combinations minimizing standard deviation | Efficient alternative to exhaustive search for MLR [20] |

Advanced Applications and Case Studies

PLK1 Inhibitor Modeling Using MLR

A comprehensive study of 530 polo-like kinase-1 (PLK1) inhibitors demonstrated the application of MLR with advanced variable selection. Researchers computed 26,761 initial descriptors using PaDEL, Mold2, and QuBiLs-MAS software, which were pre-filtered to 11,565 linearly independent descriptors [20]. The Replacement Method variable selection technique identified optimal descriptor subsets, producing models with strong predictive performance for external test compounds. This case study highlights the importance of comprehensive descriptor calculation and rigorous variable selection in MLR-QSAR for kinase inhibitors.

3D-QSAR with PLS Regression

In Comparative Molecular Field Analysis (CoMFA) and other 3D-QSAR approaches, PLS regression is the standard statistical method for correlating steric and electrostatic field values with biological activity [19]. The technique successfully handles the thousands of correlated field descriptors generated at lattice points around molecular alignments. Cross-validation determines the optimal number of components, with typical Q² values >0.5 indicating predictive models. The integration of genetic algorithms for field selection further enhances PLS model quality in 3D-QSAR [16].

Troubleshooting and Quality Control

Common Issues and Solutions:

- Overfitting in MLR: Implement stricter variable selection criteria, increase training set size, or apply additional validation techniques

- Low Predictive Power in PLS: Re-evaluate molecular alignment (for 3D-QSAR), examine descriptor relevance, or adjust number of latent variables

- Model Instability: Apply bootstrapping to assess coefficient stability, check for influential outliers, or implement consensus modeling

- Chance Correlation: Always perform Y-randomization tests; significant degradation in scrambled models indicates real structure-activity relationships

Quality Control Metrics:

- For MLR: R² > 0.7, Q² > 0.6, and significance level p < 0.05 for critical descriptors

- For PLS: R²Y > 0.7, Q² > 0.5, and clear PRESS minimum for factor selection

- For both methods: external prediction R² > 0.6 and minimal performance degradation vs. training

MLR and PLS regression continue to be indispensable tools in the QSAR modeling repertoire, each with distinct advantages for specific data scenarios. MLR provides maximum interpretability for carefully curated descriptor sets, while PLS offers robust performance for high-dimensional, correlated data typical of modern chemical descriptor collections. The appropriate selection between these techniques, coupled with rigorous validation practices, enables researchers to develop reliable predictive models that accelerate drug discovery and molecular design.

The fundamental premise of structure-activity relationship (SAR) analysis faces a significant challenge known as the SAR paradox: structurally similar molecules do not always have similar activities [19] [22] [23]. This paradox presents substantial obstacles in drug discovery and quantitative structure-activity relationship (QSAR) modeling, where small structural modifications can unexpectedly lead to dramatic changes in biological properties [24]. This Application Note examines the mechanistic basis of the SAR paradox and provides detailed experimental protocols to identify, characterize, and navigate activity cliffs in pharmaceutical research.

The SAR paradox contradicts the central assumption in medicinal chemistry that structurally similar compounds exhibit predictable biological activities [22]. This phenomenon manifests as "activity cliffs", where minute structural changes result in disproportionate changes in biological activity [24]. Understanding these discontinuities is crucial for developing predictive QSAR models, especially as machine learning approaches become increasingly integral to drug discovery [8] [25].

The paradox arises because different biological activities (e.g., receptor binding, solubility, metabolic stability) may depend on different molecular features, meaning that a "small difference" is not universally defined but varies according to the specific biological context [19] [23]. Recent advances in network pharmacology have further complicated this picture by revealing that drugs typically act on multiple targets rather than single ones, creating complex relationships between structure and activity [24].

Mechanistic Basis of the SAR Paradox

Key Factors Contributing to Activity Cliffs

- Binding Site Specificity: Minor structural modifications can significantly alter binding affinities to protein targets through subtle changes in electrostatic interactions, hydrogen bonding, or steric effects [24].

- Multi-Target Pharmacology: A single compound typically interacts with multiple biological targets, and small structural changes may differentially affect these various interactions [24].

- Molecular Descriptor Limitations: Traditional QSAR descriptors may fail to capture critical three-dimensional and electronic features responsible for discontinuous activity changes [19] [26].

- Physicochemical Property Discontinuities: Small structural changes can lead to disproportionate alterations in key properties like solubility, logP, or membrane permeability [27].

Table 1: Experimental Techniques for SAR Paradox Investigation

| Technique Category | Specific Methods | Information Gained | Throughput |

|---|---|---|---|

| Computational Screening | Matched Molecular Pair Analysis (MMPA), 3D-QSAR, Machine Learning Models | Identifies potential activity cliffs, predicts key molecular descriptors | High |

| Biophysical Assays | Surface Plasmon Resonance (SPR), Isothermal Titration Calorimetry (ITC) | Direct measurement of binding affinity and kinetics | Medium |

| Structural Biology | X-ray Crystallography, Cryo-EM | Atomic-level resolution of ligand-target interactions | Low |

| Cellular Profiling | High-content screening, phenotypic assays | Functional activity in biologically relevant systems | Medium-High |

Visualizing the SAR Paradox Concept

Diagram 1: The SAR Paradox conceptual framework showing how similar structures lead to unexpected activity profiles.

Experimental Protocols

Protocol 1: Systematic Identification of Activity Cliffs Using Matched Molecular Pair Analysis (MMPA)

Purpose: To systematically identify and quantify activity cliffs within compound datasets [19].

Materials:

- Curated chemical structures with associated biological activity data

- Computational tools: RDKit or OpenBabel for structure handling

- MMPA implementation (e.g., Open Source MMP application)

- Statistical analysis software (e.g., R, Python with pandas)

Procedure:

Data Preparation:

- Compile chemical structures and corresponding biological activity measurements (e.g., IC50, Ki)

- Standardize chemical representations (remove salts, neutralize charges, generate canonical tautomers)

- Apply rigorous data quality filters to remove unreliable measurements

Matched Molecular Pair Generation:

- Fragment molecules at single bonds to identify identical structural contexts

- Identify all pairs of compounds differing only at a single site (e.g., -Cl vs -OH substitution)

- Calculate ΔPActivity = |pActivity₁ - pActivity₂| for each pair (where pActivity = -log10[Activity], with the activity expressed in mol/L)

Activity Cliff Definition:

- Set a threshold for a significant activity difference (typically ΔPActivity > 2.0, representing a 100-fold potency change)

- Flag pairs exceeding threshold as potential activity cliffs

- Exclude pairs with poor data quality or insufficient potency measurements
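The cliff definition above reduces to a simple calculation once matched pairs are available. The sketch below assumes the pairs have already been generated by an MMPA tool; the compound identifiers and IC50 values are hypothetical, and activities are assumed to be reported in nM.

```python
import math

def p_activity(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); the input is assumed to be in nM."""
    return -math.log10(ic50_nm * 1e-9)

def find_activity_cliffs(ic50_nm, pairs, threshold=2.0):
    """Flag matched pairs whose potency difference exceeds the threshold."""
    cliffs = []
    for a, b in pairs:
        delta = abs(p_activity(ic50_nm[a]) - p_activity(ic50_nm[b]))
        if delta > threshold:
            cliffs.append((a, b, round(delta, 2)))
    return cliffs

# Hypothetical IC50 values (nM) and pre-computed matched molecular pairs.
ic50_nm = {"cmpd_A": 5.0, "cmpd_B": 2000.0, "cmpd_C": 40.0}
pairs = [("cmpd_A", "cmpd_B"), ("cmpd_A", "cmpd_C"), ("cmpd_B", "cmpd_C")]

cliffs = find_activity_cliffs(ic50_nm, pairs)
print(cliffs)  # [('cmpd_A', 'cmpd_B', 2.6)]
```

Only the first pair is flagged: a 400-fold potency difference corresponds to ΔPActivity of about 2.6, whereas the other two pairs differ by less than 100-fold.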

Context Analysis:

- Categorize cliffs by substitution type (e.g., halogen exchange, functional group changes)

- Analyze local chemical environment around substitution site

- Correlate cliff magnitude with specific molecular descriptors

Validation:

- Select representative cliff pairs for experimental confirmation

- Design synthetic routes for analogous compounds to validate cliff observations

Table 2: Key Research Reagents and Computational Tools for SAR Paradox Studies

| Category | Item | Specifications | Application/Function |

|---|---|---|---|

| Computational Descriptors | DRAGON Molecular Descriptors | 3,300+ descriptors covering structural, topological, electronic properties | Quantifying molecular features for QSAR modeling [24] |

| Machine Learning Algorithms | Random Forest, Support Vector Machines (SVM), Graph Neural Networks | Nonlinear pattern recognition, handling high-dimensional data [8] | Predicting biological activity and identifying descriptor importance [8] [25] |

| Structural Biology Reagents | Cryo-EM Grids | Ultra-thin carbon on 300 mesh gold | High-resolution structure determination of ligand-target complexes |

| Binding Assay Systems | SPR Chips | CM5 sensor chips | Label-free binding affinity and kinetics measurement |

| Chemical Informatics Platforms | RDKit, PaDEL-Descriptor | Open-source cheminformatics libraries | Molecular descriptor calculation and structural analysis [8] |

Protocol 2: Integrated QSAR-Gene Expression Approach to Resolve SAR Paradox

Purpose: To enhance QSAR model performance by integrating structural descriptors with gene expression profiles, addressing cases where structural similarity fails to predict biological activity [24].

Materials:

- Compound library with standardized structures

- Cell line appropriate for target biology

- RNA extraction kit (e.g., RNeasy Mini Kit)

- Microarray or RNA-seq platform

- Statistical software with machine learning capabilities

Procedure:

Gene Expression Profiling:

- Treat biological system (cells, tissues) with compounds showing paradoxical SAR

- Include appropriate vehicle controls and biological replicates (n≥3)

- Extract RNA at optimized time points post-treatment

- Perform transcriptomic analysis using microarray or RNA-seq

Feature Selection:

- Identify differentially expressed genes (fold-change > 2, adjusted p-value < 0.05)

- Apply recursive feature elimination to select most informative genes

- Calculate frequency of selection for each gene across multiple model iterations
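The differential-expression filter in the first feature selection step can be sketched as follows. The protocol does not say which multiple-testing correction is used, so Benjamini-Hochberg adjustment is an assumption here, as is reading "fold-change > 2" as a change in either direction. The input values are hypothetical.

```python
import numpy as np

def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values (false discovery rate control)."""
    p = np.asarray(p_values, dtype=float)
    n = len(p)
    order = np.argsort(p)
    ranked = p[order] * n / np.arange(1, n + 1)
    # Enforce monotonicity, working down from the largest p-value.
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(n)
    adjusted[order] = np.clip(ranked, 0.0, 1.0)
    return adjusted

def select_de_genes(log2_fc, p_values, fc_cutoff=2.0, alpha=0.05):
    """Indices of genes with |fold-change| > cutoff and adjusted p < alpha."""
    adjusted = benjamini_hochberg(p_values)
    mask = (np.abs(log2_fc) > np.log2(fc_cutoff)) & (adjusted < alpha)
    return np.flatnonzero(mask), adjusted

# Hypothetical per-gene statistics from a treated-versus-vehicle comparison.
log2_fc = np.array([2.5, -1.8, 0.3, 1.2, -0.1])
p_raw = np.array([0.001, 0.004, 0.03, 0.20, 0.80])

selected, p_adj = select_de_genes(log2_fc, p_raw)
print("selected gene indices:", selected.tolist())
print("adjusted p-values:", np.round(p_adj, 3).tolist())
```

The retained genes would then be passed to recursive feature elimination together with the molecular descriptors.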

Integrated Model Construction:

- Compute conventional molecular descriptors (topological, electronic, geometrical)

- Combine selected molecular descriptors with gene expression features

- Build predictive models using support vector machines or random forests

- Validate model performance through cross-validation and external test sets

Mechanistic Interpretation:

- Pathway analysis of significant genes using KEGG or GO databases

- Relate key molecular descriptors to identified biological pathways

- Generate testable hypotheses regarding mechanism of activity cliffs

Case Study: Navigating the SAR Paradox in HDAC Inhibitor Development

A recent study on indole-based HDAC inhibitors demonstrates practical approaches to the SAR paradox through Quantitative Activity-Activity Relationship (QAAR) analysis [26]. Researchers developed multiple linear regression models correlating molecular descriptors with selectivity profiles (pIC50HDAC8/HDACX).

Key Findings:

- Selectivity-determining descriptors included ASP-6 (atom-type electrotopological state), SpMin3_Bhv (spectral moment descriptors), and PubchemFP697 (structural fingerprint features)

- Model statistics (R² = 0.920, Q² = 0.769 for HDAC8/HDAC1 selectivity) demonstrated robust predictive capability

- The resulting models enabled rational design of selective inhibitors despite complex SAR patterns

This case study illustrates how advanced modeling techniques can extract meaningful patterns from paradoxical SAR data, enabling more predictive chemical optimization.

The SAR paradox represents both a challenge and an opportunity in drug discovery. By employing integrated experimental and computational approaches, including matched molecular pair analysis, advanced QSAR modeling, and transcriptomic profiling, researchers can better navigate activity cliffs and develop more predictive structure-activity models.

Emerging strategies including AI-integrated QSAR modeling [8], deep learning descriptors [25], and protein-ligand interaction fingerprints show particular promise for resolving paradoxical SAR cases. These approaches will become increasingly important as drug discovery tackles more complex targets and polypharmacological agents.

Diagram 2: Integrated workflow for addressing the SAR Paradox through computational and experimental approaches.

The field of Quantitative Structure-Activity Relationships (QSAR) has undergone a profound transformation, evolving from classical statistical approaches to modern, data-intensive machine learning (ML) and artificial intelligence (AI) methodologies [8]. This shift was catalyzed by the confluence of large-scale chemical databases, substantial increases in computational power, and advanced algorithmic innovations [8] [4]. Where traditional QSAR relied on linear regression models and manually curated molecular descriptors, contemporary frameworks now leverage graph neural networks, deep learning, and ensemble methods to capture complex, non-linear relationships in chemical data across billions of compounds [8]. This data revolution has fundamentally accelerated virtual screening, lead optimization, and toxicity prediction, establishing computational approaches as indispensable tools in modern drug discovery pipelines [8] [4].

The Evolution of Modeling Approaches

The transition from classical to ML-based QSAR represents not merely a methodological upgrade but a fundamental rethinking of how chemical data is analyzed and modeled.

Table 1: Comparison of Classical and Machine Learning QSAR Approaches

| Aspect | Classical QSAR | Modern ML-QSAR |

|---|---|---|

| Primary Methods | Multiple Linear Regression (MLR), Partial Least Squares (PLS) [8] [4] | Random Forests, Support Vector Machines, Artificial Neural Networks, Deep Learning [8] [4] |

| Data Handling | Limited datasets, linear relationships [8] | High-dimensional chemical spaces, non-linear patterns [8] |

| Descriptor Interpretation | Manual selection and interpretation [8] | Automated feature importance (e.g., SHAP, permutation importance) [8] |

| Computational Demand | Low to moderate [4] | High, requiring specialized hardware (GPUs) [8] |

| Applicability Domain | Clearly defined by training data [4] | Complex, often requiring specialized validation [4] |

Classical Foundations

Classical QSAR methodologies, including Multiple Linear Regression (MLR) and Principal Component Regression (PCR), established the foundational principle of correlating numerical molecular descriptors with biological activity [8] [4]. These methods are valued for their interpretability, simplicity, and regulatory acceptance [8]. They perform effectively when relationships between structure and activity are linear and datasets are reasonably small [8]. However, they frequently falter with highly non-linear relationships or noisy, high-dimensional data, limitations that became increasingly apparent as chemical databases expanded [8].

The Machine Learning Rise

Machine learning algorithms have significantly expanded the predictive power and flexibility of QSAR models [8]. Algorithms such as Random Forests (RF), Support Vector Machines (SVM), and k-Nearest Neighbors (kNN) became standard tools due to their ability to manage complex, non-linear descriptor-activity relationships without prior assumptions about data distribution [8]. The development of graph neural networks and SMILES-based transformers further enabled end-to-end learning from molecular structures without manual descriptor engineering, creating more data-driven and adaptable QSAR pipelines [8].

Application Note: Developing a Modern QSAR Model for NF-κB Inhibition

This protocol details the development of a robust QSAR model for predicting Nuclear Factor-κB (NF-κB) inhibition, illustrating the standard workflow that integrates machine learning and rigorous validation [4]. The process, from data collection to model deployment, typically spans several days to weeks, depending on computational resources and dataset size.

Materials and Reagents

Table 2: Essential Research Reagent Solutions for QSAR Modeling

| Reagent/Category | Specific Examples & Details | Primary Function |

|---|---|---|

| Chemical Compound Library | 121 curated NF-κB inhibitors with reported IC₅₀ values [4] | Provides the essential activity data for model training and validation. |

| Molecular Descriptor Calculator | DRAGON, PaDEL, RDKit [8] | Generates numerical representations (descriptors) of chemical structures. |

| Machine Learning Library | scikit-learn, KNIME, AutoQSAR [8] | Provides algorithms (e.g., ANN, SVM) for building the predictive model. |

| Model Validation Framework | QSARINS, Build QSAR [8] | Offers tools for internal/external validation and applicability domain definition. |

| Cloud/High-Performance Computing | Cloud-based platforms for computational modeling [8] | Supplies the processing power required for complex ML model training. |

Step-by-Step Methodology

Step 1: Data Curation and Preparation

- Activity Data Collection: Assemble a dataset of 121 compounds with experimentally determined IC₅₀ values against NF-κB [4].

- Chemical Structure Standardization: Curate and standardize molecular structures using a tool like RDKit to ensure consistency [8].

- Dataset Division: Randomly split the dataset into a training set (~80 compounds, ~66% for model development) and a test set (~41 compounds, ~34% for external validation) [4].

Step 2: Molecular Descriptor Calculation and Selection

- Descriptor Calculation: Compute a wide range of 1D, 2D, and 3D molecular descriptors using software such as DRAGON or PaDEL [8].

- Descriptor Preprocessing: Apply dimensionality reduction techniques like Principal Component Analysis (PCA) or feature selection methods (e.g., LASSO, recursive feature elimination) to identify the most statistically significant descriptors and reduce overfitting [8] [4].

Step 3: Model Training and Optimization

- Algorithm Selection: Train and compare different models, including Multiple Linear Regression (MLR) and Artificial Neural Networks (ANN) [4].

- Hyperparameter Tuning: Optimize model architectures using grid search or Bayesian optimization. For instance, an ANN architecture with topology [8.11.11.1] has demonstrated superior performance for this specific task [4].
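To make the reported topology concrete, the sketch below reads [8.11.11.1] as 8 input descriptors, two hidden layers of 11 neurons each, and a single output. That reading, the tanh activation, and the random initial weights are all assumptions; the cited study's actual activation functions and training procedure are not described here.

```python
import numpy as np

# Assumed interpretation of "[8.11.11.1]": inputs, hidden, hidden, output.
layer_sizes = [8, 11, 11, 1]

def init_params(sizes, seed=0):
    """Small random weights and zero biases for each fully connected layer."""
    rng = np.random.default_rng(seed)
    return [(rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_out))
            for n_in, n_out in zip(sizes[:-1], sizes[1:])]

def forward(params, X):
    """Forward pass: tanh on hidden layers (assumed), linear output layer."""
    h = X
    for i, (W, b) in enumerate(params):
        h = h @ W + b
        if i < len(params) - 1:
            h = np.tanh(h)
    return h.ravel()

params = init_params(layer_sizes)
n_params = sum(W.size + b.size for W, b in params)
print("trainable parameters:", n_params)  # 243

# Five hypothetical compounds described by 8 descriptors each.
X_demo = np.zeros((5, 8))
y_demo = forward(params, X_demo)
print("output shape:", y_demo.shape)
```

With 243 trainable parameters and roughly 80 training compounds, such a network has more parameters than data points, which is one reason the validation steps that follow matter.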

Step 4: Model Validation and Defining Applicability Domain

- Internal Validation: Assess the training set performance using metrics like the coefficient of determination (R²) and cross-validated R² (Q²) [8] [4].

- External Validation: Evaluate the model's generalizability by predicting the activity of the held-out test set [4].

- Applicability Domain: Use the leverage method to define the chemical space where the model's predictions are reliable [4].

Workflow diagram: key stages of this QSAR modeling protocol.

Anticipated Results and Interpretation

- Performance Metrics: A successful ANN model should demonstrate high predictive accuracy on both training and test sets, with metrics such as Q² > 0.6 and R² > 0.8 for the external test set, indicating a robust and non-overfit model [4].

- Model Interpretation: Analyze the MLR model equation or use SHAP (SHapley Additive exPlanations) values for the ANN to identify which molecular descriptors (e.g., hydrophobicity, electronic properties) most significantly influence NF-κB inhibitory activity [8] [4].

- Utility: The validated model enables the efficient virtual screening of large chemical databases to identify new potential NF-κB inhibitor series for synthesis and experimental testing [4].

The Integrated Future: AI and Multi-Method Approaches

The data revolution in QSAR is characterized by the integration of multiple computational disciplines rather than the isolated use of single models. A prominent trend is the combination of ligand-based QSAR with structure-based methods like molecular docking and dynamics simulations [8]. This synergy provides deeper mechanistic insights into ligand-target interactions, enriching the predictive model with structural context. Furthermore, the adoption of cloud-based platforms is democratizing access to advanced modeling capabilities, allowing researchers to perform large-scale virtual screens of chemical libraries containing billions of compounds [8].

Diagram: convergence of these computational approaches in a modern drug discovery pipeline.

Building Predictive Models: Machine Learning Algorithms and Real-World Applications in Drug Discovery

Algorithm Performance in QSAR Modeling

Table 1: Comparative Performance of Key ML Algorithms in QSAR Studies

| Algorithm | Typical QSAR Application | Reported Performance Metrics | Key Advantages for QSAR | Notable Case Studies |

|---|---|---|---|---|

| Random Forest (RF) | Predicting repeat-dose toxicity point-of-departure (POD) values [28] | RMSE: 0.71 log10-mg/kg/day, R²: 0.53 on external test set [28] | Robust to noisy data & outliers, handles high-dimensional descriptors, provides built-in feature importance [8] [29] | Toxicity prediction for 3592 environmental chemicals [28] |

| Support Vector Machine (SVM) | Classification and regression tasks in virtual screening and toxicity prediction [8] [29] | Often requires careful parameter tuning and feature selection for optimal performance [30] | Effective in high-dimensional spaces, works well with a clear margin of separation [8] | ADME evaluation and general molecular property prediction [31] |

| k-Nearest Neighbors (kNN) | Virtual screening, similarity searching, and preliminary compound classification [8] [1] | A simple and rough method to predict and rank molecules [31] | Simple implementation, effective for similarity-based chemical space navigation [1] | Ligand-based virtual screening based on molecular similarity [1] [31] |

Experimental Protocols for QSAR Modeling

Protocol: Developing a Random Forest QSAR Model for Toxicity Prediction

This protocol is adapted from a study that developed QSAR models to predict repeat-dose toxicity point-of-departure values using a large dataset of 3592 chemicals [28].

Reagents and Materials:

- Chemical Dataset: 3592 chemicals with experimentally derived in vivo toxicity data (e.g., from EPA's ToxValDB) [28].

- Software: Computational environment capable of running Random Forest (e.g., Python with scikit-learn, R) [8] [29].

Procedure:

- Data Compilation and Curation: Compile a dataset of chemicals with associated experimental toxicity values (e.g., NOAEL, LOAEL). This dataset may include multiple study types and species [28].

- Descriptor Calculation: Compute molecular descriptors encoding structural and physicochemical properties for each chemical. These can include 1D (e.g., molecular weight), 2D (e.g., topological indices), and 3D descriptors (e.g., molecular surface area) [8] [29].

- Data Preprocessing and Splitting: Split the curated data into a training set (e.g., 80%) for model development and an external test set (e.g., 20%) for final model validation [28].

- Model Training:

- Train a Random Forest regressor on the training set using chemical descriptors as features and the toxicity endpoint (e.g., log10-mg/kg/day) as the target variable [28].

- Optimize hyperparameters (e.g., number of trees, maximum depth) using techniques like grid search or Bayesian optimization within a cross-validation framework on the training set [8].

- Model Validation: Predict the held-out external test set and report error metrics such as RMSE and R² [28].

- Uncertainty Quantification (Optional): To account for experimental variability, construct a distribution for the predicted POD (e.g., with a standard deviation of 0.5 log10-mg/kg/day). Use bootstrap resampling to derive confidence intervals for each prediction [28].

- Model Interpretation: Use the RF model's built-in feature importance metrics or post-hoc interpretation tools (e.g., SHAP, LIME) to identify which molecular descriptors most strongly influence the toxicity predictions [8] [29].
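The training, tuning, and external validation steps of this protocol can be sketched with scikit-learn. The data below are a synthetic stand-in for real descriptors and toxicity values, and the hyperparameter grid is an assumed example rather than the grid used in the cited study.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic stand-in: 300 chemicals, 10 descriptors, non-linear endpoint.
rng = np.random.default_rng(42)
X = rng.normal(size=(300, 10))
y = X[:, 0] - 0.5 * X[:, 1] ** 2 + 0.3 * rng.normal(size=300)

# 80/20 split; the test set is not touched during tuning.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

# Grid search inside 5-fold cross-validation on the training set only.
search = GridSearchCV(
    RandomForestRegressor(random_state=42),
    param_grid={"n_estimators": [100, 200], "max_depth": [None, 8]},
    cv=5,
    scoring="neg_root_mean_squared_error",
)
search.fit(X_train, y_train)
model = search.best_estimator_

# External validation on the held-out test set.
y_pred = model.predict(X_test)
rmse = float(np.sqrt(mean_squared_error(y_test, y_pred)))
r2_ext = float(r2_score(y_test, y_pred))

# Built-in impurity-based feature importance.
importances = model.feature_importances_
print("best hyperparameters:", search.best_params_)
print(f"external RMSE = {rmse:.3f}, external R2 = {r2_ext:.3f}")
print("most important descriptor index:", int(np.argmax(importances)))
```

Impurity-based importances can favor descriptors with many distinct values, so permutation importance or SHAP values are often checked alongside them.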

Protocol: Comparative Analysis of ML Algorithms using a Bioactivity Dataset

This protocol outlines a method for comparing the performance of RF, SVM, and kNN against classical methods, based on a study screening for triple-negative breast cancer (TNBC) inhibitors [31].

Reagents and Materials:

- Bioactivity Dataset: A curated set of compounds with associated bioactivity data (e.g., IC₅₀, Ki). For example, 7,130 molecules with reported inhibitory activities from a source like ChEMBL [31].

- Software: A cheminformatics platform (e.g., KNIME, OCHEM) or programming environment with necessary ML libraries [31].

Procedure:

- Dataset Preparation: Collect and curate a dataset of compounds with reliable bioactivity data. Standardize the activity values (e.g., convert to log units) [31].

- Descriptor Generation: Calculate molecular descriptors or fingerprints for all compounds. The cited study used a combination of 613 descriptors from AlogP, ECFP, and FCFP fingerprints [31].

- Data Splitting: Randomly split the data into a training set (e.g., 85%) and a fixed external test set (e.g., 15%) [31].

- Model Building and Training:

- Train multiple models on the same training set:

- Random Forest: Optimize the number of trees and other parameters [31].

- Support Vector Machine (SVM): Tune hyperparameters such as the kernel type (e.g., RBF) and regularization parameter [31].

- k-Nearest Neighbors (kNN): Optimize the number of neighbors (k) [31].

- Classical Methods (for baseline): Include methods like Partial Least Squares (PLS) or Multiple Linear Regression (MLR) [31].

- Performance Evaluation:

- Use the same external test set to evaluate all trained models.

- Calculate and compare the R²pred (predictive R²) for regression tasks to quantify the models' performance on unseen data [31].

- Analysis of Training Set Size Impact (Optional): Investigate the robustness of each algorithm by repeating the training and evaluation with progressively smaller subsets of the original training data (e.g., 50%, 10%) and observing the change in R²pred on the fixed test set [31].
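The predictive R² used for comparison can be computed as below. The cited study's exact formula is not given in this protocol; the sketch assumes the common QSAR definition, in which test-set residuals are compared with deviations from the training-set mean. The numerical values are hypothetical.

```python
import numpy as np

def r2_pred(y_train, y_test, y_test_pred):
    """R2pred = 1 - sum((y_test - y_hat)^2) / sum((y_test - mean(y_train))^2)."""
    y_train = np.asarray(y_train, dtype=float)
    y_test = np.asarray(y_test, dtype=float)
    y_test_pred = np.asarray(y_test_pred, dtype=float)
    press = np.sum((y_test - y_test_pred) ** 2)
    ss_ref = np.sum((y_test - y_train.mean()) ** 2)
    return 1.0 - press / ss_ref

# Hypothetical activities in log units.
y_train = [5.0, 6.0, 7.0]          # training-set mean is 6.0
y_test = [5.5, 7.5]
y_test_pred = [5.0, 7.0]

score = r2_pred(y_train, y_test, y_test_pred)
print(f"R2pred = {score:.2f}")
```

Applying the same function to every algorithm, always with the same fixed test set, keeps the comparison fair when the training set is progressively reduced.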

Workflow Visualization

Figure 1: Generic QSAR Machine Learning Workflow. This diagram outlines the standard process for developing and validating QSAR models using machine learning algorithms, highlighting the crucial step of external validation [28] [31].

Figure 2: Algorithm Performance in a Comparative Study. This diagram visualizes the setup and findings from a study that compared multiple algorithms, including RF, SVM, and kNN, for bioactivity prediction, showing RF's high predictive accuracy [31].

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational Tools for ML-Driven QSAR

| Tool / Resource | Function / Application | Relevance to QSAR |

|---|---|---|

| Molecular Descriptors (e.g., ECFP, FCFP, 2D/3D descriptors) [31] | Numerical representations of chemical structure and properties. | Serve as the input features (X-variables) for ML models, capturing essential chemical information that correlates with biological activity [8] [31]. |

| Toxicity Value Database (ToxValDB) [28] | A publicly available database of in vivo toxicity data. | Provides high-quality experimental data (e.g., PODs) for training and validating predictive QSAR models for human health risk assessment [28]. |

| scikit-learn, KNIME [8] [29] | Open-source software libraries for machine learning and data analytics. | Provide accessible, standardized implementations of RF, SVM, and kNN algorithms, facilitating rapid model development, testing, and deployment [8] [29]. |

| SHAP (SHapley Additive exPlanations) [8] [29] | A method for interpreting the output of ML models. | Helps deconstruct "black-box" predictions by quantifying the contribution of each molecular descriptor to the final predicted activity, aiding mechanistic understanding [8] [29]. |

| ChEMBL Database [31] | A large-scale bioactivity database for drug discovery. | A rich source of curated, publicly available bioactivity data for thousands of compounds and protein targets, used to build training sets for ML models [31]. |

The field of Quantitative Structure-Activity Relationships (QSAR) has been fundamentally transformed by the integration of advanced deep-learning methodologies. Modern drug discovery now leverages sophisticated algorithms that can directly learn from molecular structures, moving beyond traditional descriptor-based approaches to enable more accurate and generalizable predictions of molecular properties and biological activities [17] [29]. Among these innovations, Graph Neural Networks (GNNs) and SMILES-based Transformers have emerged as particularly powerful architectures, each offering unique advantages for molecular representation learning [32] [25].

GNNs naturally represent molecules as graph structures, with atoms as nodes and bonds as edges, allowing for direct learning from structural topology [33]. Simultaneously, Transformer architectures adapted from natural language processing treat Simplified Molecular Input Line Entry System (SMILES) strings as sequential data, capturing complex patterns through self-attention mechanisms [32]. The convergence of these approaches represents a paradigm shift in QSAR modeling, enabling researchers to predict pharmacological properties, binding affinities, and toxicity profiles with unprecedented accuracy, thereby accelerating the drug discovery pipeline [17] [34].

Molecular Representation in QSAR

Evolution from Classical to Deep Learning Approaches

Traditional QSAR modeling relied heavily on hand-crafted molecular descriptors, which required significant domain expertise and often failed to capture complex structural relationships [29] [32]. Classical statistical methods including Multiple Linear Regression (MLR) and Partial Least Squares (PLS) were limited to linear relationships and predefined feature sets [29]. The advent of machine learning introduced algorithms like Random Forests and Support Vector Machines, which could capture nonlinear patterns but still depended on manual feature engineering [29].

The breakthrough came with deep learning approaches that enable end-to-end learning directly from molecular representations, eliminating the need for manual descriptor calculation and allowing models to discover relevant features automatically [33] [32]. This shift has dramatically expanded the scope and predictive power of QSAR models, particularly through two primary representation paradigms: graph-based structures and SMILES sequences [35].

Comparative Analysis of Molecular Representations

Table 1: Key Molecular Representation Formats in Modern QSAR

| Representation Type | Data Structure | Key Advantages | Limitations |
|---|---|---|---|
| Molecular Graph | Graph (nodes=atoms, edges=bonds) | Direct structural representation; Captures topology naturally [33] | Requires specialized architectures (GNNs); Over-smoothing/squashing issues [35] |
| SMILES String | Sequential text | Leverages NLP advancements; Simple serialization [32] | Loss of explicit structural information; Syntax sensitivity [35] |
| Molecular Fingerprints | Fixed-length binary vectors | Computational efficiency; Interpretability [36] | Information loss; Dependent on predefined patterns [32] |
| 3D Molecular Geometry | 3D coordinates with atomic features | Captures stereochemistry; Essential for binding affinity prediction [36] | Computationally intensive; Conformational flexibility challenges |

Graph Neural Networks for Molecular Property Prediction

Fundamental Principles and Architectures

GNNs operate on the message-passing framework, where information is propagated through the graph structure to learn meaningful molecular representations [33]. In this paradigm, each atom (node) aggregates information from its neighboring atoms and bonds, updating its own representation through multiple iterative steps [33]. The Message Passing Neural Network (MPNN) framework provides a standardized formulation for this process through three core operations: message generation, message aggregation, and node updating [33].
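The three operations can be sketched in plain Python. This is a minimal illustration, not the MPNN formulation from [33]: the two-dimensional atom features, the identity message function, and the sum-based update and readout are all simplifying assumptions chosen to keep the arithmetic visible.

```python
# Toy graph for the heavy atoms of ethanol (C-C-O). Feature vectors are
# illustrative one-hot element codes, not real atom descriptors.
node_features = {
    0: [1.0, 0.0],  # carbon
    1: [1.0, 0.0],  # carbon
    2: [0.0, 1.0],  # oxygen
}
edges = [(0, 1), (1, 2)]  # undirected bonds


def build_neighbors(num_nodes, edges):
    """Return an adjacency list for an undirected graph."""
    neighbors = {i: [] for i in range(num_nodes)}
    for a, b in edges:
        neighbors[a].append(b)
        neighbors[b].append(a)
    return neighbors


def message_passing_step(features, neighbors):
    """One round: message = neighbor state, aggregate = sum, update = self + aggregate."""
    updated = {}
    for node, state in features.items():
        aggregated = [0.0] * len(state)
        for nbr in neighbors[node]:
            message = features[nbr]  # message generation (identity, an assumption)
            aggregated = [a + m for a, m in zip(aggregated, message)]  # aggregation
        updated[node] = [s + a for s, a in zip(state, aggregated)]  # node update
    return updated


def readout(features):
    """Sum all node states into a single graph-level vector."""
    dim = len(next(iter(features.values())))
    return [sum(vec[d] for vec in features.values()) for d in range(dim)]


neighbors = build_neighbors(len(node_features), edges)
h1 = message_passing_step(node_features, neighbors)
h2 = message_passing_step(h1, neighbors)
graph_embedding = readout(h2)
```

After one round the terminal carbon (node 0) has only seen its carbon neighbor; after two rounds its second feature becomes nonzero, showing that information from the oxygen two bonds away has arrived. Real GNN layers replace the identity message and additive update with learned functions.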

Several specialized GNN architectures have demonstrated exceptional performance in molecular property prediction:

- Graph Convolutional Networks (GCNs) apply convolutional operations to graph data, aggregating local neighborhood information [36]

- Graph Attention Networks (GATs) incorporate attention mechanisms to weight the importance of different neighbors during message passing [36]

- Graph Isomorphism Networks (GIN) are designed to be as discriminative as the Weisfeiler-Lehman (WL) graph isomorphism test, which is the upper bound on expressive power for standard message-passing GNNs [37]

- Message Passing Neural Networks (MPNN) provide a general framework that encompasses many GNN variants [33]
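The Weisfeiler-Lehman test referenced for GIN can be illustrated with a short color-refinement routine. The sketch below is a plain 1-WL implementation on carbon skeletons only; the function name `wl_histogram` and the choice of two refinement rounds are assumptions made for illustration.

```python
def wl_histogram(num_nodes, edges, labels, rounds=2):
    """Return the sorted multiset of node colors after 1-WL refinement."""
    neighbors = {i: [] for i in range(num_nodes)}
    for a, b in edges:
        neighbors[a].append(b)
        neighbors[b].append(a)
    colors = list(labels)
    for _ in range(rounds):
        # New color = own color plus the sorted multiset of neighbor colors.
        colors = [
            (colors[i], tuple(sorted(colors[j] for j in neighbors[i])))
            for i in range(num_nodes)
        ]
    return sorted(colors)


# Carbon skeletons only (hydrogens and bond orders ignored for simplicity).
butane = wl_histogram(4, [(0, 1), (1, 2), (2, 3)], "CCCC")
isobutane = wl_histogram(4, [(0, 1), (0, 2), (0, 3)], "CCCC")
ring6 = wl_histogram(6, [(i, (i + 1) % 6) for i in range(6)], "CCCCCC")
two_ring3 = wl_histogram(6, [(0, 1), (1, 2), (2, 0), (3, 4), (4, 5), (5, 3)], "CCCCCC")

print("butane vs isobutane distinguished:", butane != isobutane)
print("six-ring vs two three-rings distinguished:", ring6 != two_ring3)
```

The chain and the branched skeleton receive different color histograms, while a six-membered ring and two disjoint three-membered rings do not, because every atom in both graphs has exactly two identically labeled neighbors. This is the known ceiling that any message-passing network of this kind inherits.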

Advanced GNN Architectures in Recent Applications

Recent research has developed increasingly sophisticated GNN architectures tailored to molecular modeling challenges. The MoleculeFormer architecture introduces a multi-scale feature integration model combining GCN and Transformer components while incorporating rotational equivariance constraints and 3D structural information [36]. This model processes both atom graphs and bond graphs, where bonds are treated as nodes and adjacent bonds are connected, providing complementary structural information [36].

Another significant advancement comes from Equivariant Graph Neural Networks (EGNNs), which maintain rotational and translational equivariance by updating 3D atomic coordinates based on relative positions and preserving distances between adjacent atoms [36]. This approach is particularly valuable for modeling molecular interactions and conformational properties where spatial arrangement is critical.
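One way to see why distance-based updates respect rigid motions is to check numerically that interatomic distances do not change when a structure is rotated and translated. The coordinates below are rough, illustrative values for a three-atom geometry, and the helper names are hypothetical.

```python
import math


def rotate_z(point, angle):
    """Rotate a 3D point about the z-axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)


def translate(point, shift):
    """Shift a 3D point by a constant vector."""
    return tuple(p + t for p, t in zip(point, shift))


def pairwise_distances(coords):
    """Distances for every unordered pair of atoms, in a fixed order."""
    return [math.dist(a, b) for i, a in enumerate(coords) for b in coords[i + 1:]]


# Illustrative coordinates (in angstroms) for a bent three-atom molecule.
coords = [(0.0, 0.0, 0.0), (0.96, 0.0, 0.0), (-0.24, 0.93, 0.0)]
moved = [translate(rotate_z(p, 0.7), (1.5, -2.0, 0.3)) for p in coords]

before = pairwise_distances(coords)
after = pairwise_distances(moved)
print(all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(before, after)))
```

Because messages built from these distances are unaffected by the rigid motion, any scalar prediction derived from them is invariant, and coordinate updates expressed along relative position vectors rotate together with the input.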

Table 2: Performance Comparison of GNN Architectures on Molecular Property Prediction Tasks

| Architecture | Key Features | Benchmark Tasks | Reported Performance |
|---|---|---|---|
| MoleculeFormer [36] | GCN-Transformer hybrid; 3D structural integration; Bond graphs | Efficacy/toxicity prediction; Phenotype screening; ADME evaluation | Robust performance across 28 drug discovery datasets |
| Meta-GTNRP [37] | GNN-Transformer fusion; Meta-learning for few-shot prediction | Nuclear receptor binding activity prediction | Outperforms conventional graph-based approaches on 11 NR targets |
| HRGCN+ [36] | Combined molecular graphs and descriptors | Molecular property prediction | Simple but highly efficient modeling |
| FP-GNN [36] | Integration of molecular fingerprints with graph attention | Molecular property prediction | Enhanced performance and interpretability |

SMILES-Based Transformers in Cheminformatics

Transformer Architecture Adaptation for Molecular Data

Transformer architectures originally developed for natural language processing have been successfully adapted to molecular sequences represented as SMILES strings [32]. The core innovation of Transformers is the self-attention mechanism, which computes pairwise relationships between all elements in a sequence, allowing the model to capture long-range dependencies and complex molecular patterns [32].
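The pairwise computation at the heart of self-attention can be written out in a few lines. This is a deliberately stripped-down sketch: it uses a single head and identity projections (queries, keys, and values are all the raw embeddings), whereas a real Transformer learns separate projection matrices. The token embeddings are made-up values.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(embeddings):
    """Single-head scaled dot-product attention with Q = K = V = embeddings."""
    dim = len(embeddings[0])
    outputs, weights = [], []
    for query in embeddings:
        # Score this token against every token in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in embeddings
        ]
        attn = softmax(scores)
        weights.append(attn)
        # Output is the attention-weighted average of all value vectors.
        outputs.append(
            [sum(w * value[d] for w, value in zip(attn, embeddings)) for d in range(dim)]
        )
    return outputs, weights


tokens = ["C", "C", "O"]  # SMILES tokens for ethanol
embeddings = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # illustrative embeddings
outputs, weights = self_attention(embeddings)
```

Each row of `weights` is a probability distribution over all tokens, so every position can draw on every other position in a single step regardless of how far apart they are in the string.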

The adaptation process involves several key considerations:

- Tokenization: SMILES strings are decomposed into meaningful tokens representing atoms, bonds, and structural patterns [32]

- Positional Encoding: Because self-attention is itself insensitive to token order, positional encodings are added so the model can use the sequence order of the SMILES string [32]

- Pretraining Strategies: Models are often pretrained on large unlabeled molecular datasets using objectives like masked language modeling before fine-tuning on specific property prediction tasks [35]
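The first two considerations can be sketched with the standard library alone. The regular expression below is a simplified, assumed tokenization pattern (bracket atoms, two-letter halogens, ring-closure digits, and common bond and branch symbols), not the tokenizer of any specific published model, and the sinusoidal encoding is one common choice among several.

```python
import math
import re

# Simplified SMILES token pattern (an assumption for illustration).
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+()/\\.@:~*$]")


def tokenize(smiles):
    """Split a SMILES string into tokens, failing loudly on unknown characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


def positional_encoding(position, dim):
    """Sinusoidal encoding for one position; `dim` is assumed to be even."""
    encoding = []
    for i in range(0, dim, 2):
        angle = position / (10000 ** (i / dim))
        encoding.extend([math.sin(angle), math.cos(angle)])
    return encoding


tokens = tokenize("CC(=O)Nc1ccc(Cl)cc1")
encodings = [positional_encoding(pos, 4) for pos in range(len(tokens))]
print(tokens)
```

Keeping `Cl` and bracket atoms such as `[nH]` as single tokens avoids splitting one atom into chemically meaningless pieces. The encoding vectors would be added to the token embeddings before the first attention layer.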

Advanced Transformer Applications and Hybrid Approaches

Recent applications have demonstrated the versatility of Transformer architectures in cheminformatics. ChemBERTa and similar models apply masked language modeling pretraining to SMILES sequences, learning rich molecular representations that transfer effectively to various downstream prediction tasks [35].

The UniMAP framework represents a significant advancement by integrating both SMILES and graph representations within a unified architecture [35]. This multi-modality approach employs four pretraining tasks: Multi-Level Cross-Modality Masking (CMM), SMILES-Graph Matching (SGM), Fragment-Level Alignment (FLA), and Domain Knowledge Learning (DKL) to achieve comprehensive cross-modality fusion [35]. By leveraging both global (molecular-level) and local (fragment-level) alignments, UniMAP captures fine-grained semantics between sequence and graph representations, enabling more nuanced molecular similarity assessments and property predictions [35].

Experimental Protocols and Application Notes

Protocol 1: Implementing a GNN-Transformer Hybrid Model

Purpose: To create a hybrid architecture combining GNNs and Transformers for molecular property prediction, specifically optimized for few-shot learning scenarios with limited labeled data [37].

Workflow:

Molecular Graph Input Processing:

- Convert SMILES to molecular graphs using RDKit [37]

- Initialize node features using atom descriptors (atom type, degree, hybridization, etc.)

- Initialize edge features using bond descriptors (bond type, conjugation, stereochemistry, etc.)

Graph Neural Network Component:

- Implement a GNN backbone (GIN, GAT, or GCN) for local structural feature extraction [37]

- Apply 3-6 message passing layers to capture increasingly larger molecular substructures

- Generate graph-level embedding through hierarchical pooling or attention-based readout

Transformer Component:

- Process GNN-generated node embeddings as input sequence to Transformer encoder [37]

- Apply multi-head self-attention to capture global dependencies between all atom representations

- Utilize positional encodings adapted from molecular graph topology rather than sequence position

Meta-Learning Framework (for few-shot applications) [37]:

- Formulate learning across multiple related NR-binding tasks

- Implement Model-Agnostic Meta-Learning (MAML) for parameter initialization

- Separate training into meta-training and meta-testing phases with support and query sets
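The support/query split in the last step can be sketched as a small episode sampler. Everything below is illustrative: the target names are placeholders, the activity labels are invented purely to make the code run, and the MAML inner and outer optimization loops described in [37] are not implemented here.

```python
import random


def sample_episode(task_data, n_support, n_query, rng):
    """Split one task's labeled compounds into disjoint support and query sets."""
    if n_support + n_query > len(task_data):
        raise ValueError("not enough compounds for this episode")
    chosen = rng.sample(task_data, n_support + n_query)
    return chosen[:n_support], chosen[n_support:]


# Placeholder tasks: (SMILES, label) pairs. Labels are made up, NOT real data.
tasks = {
    "target_A": [("CCO", 0), ("c1ccccc1O", 1), ("CC(=O)O", 0),
                 ("CCN", 1), ("CCCC", 0), ("c1ccncc1", 1)],
    "target_B": [("CCCl", 1), ("CC(C)O", 0), ("c1ccccc1", 1),
                 ("CCOC", 0), ("CC#N", 1)],
}
meta_train_tasks = ["target_A"]  # tasks used to learn the initialization
meta_test_tasks = ["target_B"]   # held-out tasks used to evaluate adaptation

rng = random.Random(0)  # fixed seed for reproducible episodes
for name in meta_train_tasks:
    support, query = sample_episode(tasks[name], n_support=2, n_query=3, rng=rng)
    # In a MAML-style loop, the model would adapt on `support` (inner step)
    # and the loss on `query` would drive the meta-update (outer step).
    print(name, len(support), len(query))
```

Keeping the support and query sets disjoint within each episode is what lets the query loss measure how well the adapted model generalizes rather than how well it memorized the support compounds.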

GNN-Transformer Hybrid Architecture for Molecular Property Prediction

Protocol 2: Multi-Modality Molecular Representation Learning

Purpose: To leverage both SMILES and graph representations through unified pretraining for enhanced performance on diverse molecular property prediction tasks [35].

Workflow:

Multi-Modality Input Representation:

Embedding Layer:

- SMILES Embedding: Map tokens to embedding vectors using learned embeddings

- Graph Embedding: Generate initial atom embeddings using GCN or linear projection [35]

- Positional Encoding: Add learnable position embeddings to SMILES tokens

Transformer Encoder:

- Concatenate SMILES and graph embeddings into unified sequence [35]

- Process through multi-layer Transformer encoder with cross-attention between modalities

- Utilize shared weights across modalities for parameter efficiency

Multi-Task Pretraining:

- Implement Cross-Modality Masking (CMM): Mask tokens and nodes across both modalities

- SMILES-Graph Matching (SGM): Global alignment between modalities

- Fragment-Level Alignment (FLA): Local alignment using BRICS fragments [35]

- Domain Knowledge Learning (DKL): Incorporate chemical knowledge constraints
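The masking step can be illustrated with a generic routine applied to both modalities. This is not UniMAP's actual procedure from [35]: the `[MASK]` placeholder, the 15% ratio (borrowed from common masked-language-modeling practice), and the independent masking of each modality are all assumptions for the sake of a runnable sketch.

```python
import random

MASK = "[MASK]"  # placeholder symbol (an assumption)


def mask_items(items, mask_ratio, rng):
    """Mask a fraction of items; return the masked list and the hidden targets."""
    n_mask = max(1, round(len(items) * mask_ratio))
    positions = sorted(rng.sample(range(len(items)), n_mask))
    masked = list(items)
    targets = {}
    for pos in positions:
        targets[pos] = masked[pos]  # what the model must reconstruct
        masked[pos] = MASK
    return masked, targets


rng = random.Random(42)
smiles_tokens = ["C", "C", "(", "=", "O", ")", "O"]  # acetic acid, CC(=O)O
atom_labels = ["C", "C", "O", "O"]                    # its graph nodes

masked_tokens, token_targets = mask_items(smiles_tokens, 0.15, rng)
masked_atoms, atom_targets = mask_items(atom_labels, 0.15, rng)
print(masked_tokens, token_targets)
print(masked_atoms, atom_targets)
```

During pretraining, the model would be asked to predict each entry in `token_targets` and `atom_targets` from the unmasked context, which in a cross-modality setup includes the other representation of the same molecule.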

Multi-Modal Molecular Representation Learning Workflow

Table 3: Key Research Resources for GNN and Transformer Implementation in QSAR

| Resource Category | Specific Tools/Libraries | Primary Function | Application Notes |
|---|---|---|---|
| Cheminformatics Libraries | RDKit [37], DeepChem [35], PaDEL [29] | Molecular processing, descriptor calculation, fingerprint generation | RDKit essential for SMILES-to-graph conversion; DeepChem provides standardized ML pipelines |
| Deep Learning Frameworks | PyTorch, PyTorch Geometric, TensorFlow, DGL | Implementation of GNN and Transformer architectures | PyTorch Geometric offers specialized GNN layers and molecular datasets |
| Molecular Databases | PubChem [35], ChEMBL [37], BindingDB [37], NURA [37] | Source of labeled molecular data for training and validation | NURA database provides nuclear receptor activity data for 15,247 compounds across 11 NRs [37] |
| Benchmarking Platforms | MoleculeNet [36], TDC | Standardized benchmarks for molecular property prediction | MoleculeNet includes multiple classification and regression tasks for fair model comparison |
| Pretrained Models | ChemBERTa [35], GROVER [35], UniMAP [35] | Transfer learning for molecular property prediction | Pretrained on millions of compounds; can be fine-tuned with limited task-specific data |
| Fingerprint Algorithms | ECFP [36], RDKit fingerprints [36], MACCS keys [36] | Molecular representation for traditional ML or hybrid models | ECFP performs best for classification; MACCS keys favorable for regression tasks [36] |

Performance Benchmarking and Comparative Analysis

Quantitative Performance Assessment

Table 4: Performance Benchmarks of Deep Learning Models on Molecular Property Prediction

| Model Architecture | Representation Type | Nuclear Receptor Binding (AUC) | Toxicity Prediction (AUC) | ADME Properties (RMSE) | Few-Shot Learning Capability |

|---|---|---|---|---|---|