From In Silico to In Vitro: A Strategic Framework for Optimizing Computational Descriptors to Accelerate Experimental Validation in Biomedicine


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on refining computational screening descriptors to enhance the success rate of experimental validation. Covering foundational principles, advanced methodological applications, common troubleshooting strategies, and rigorous validation techniques, it synthesizes current best practices from recent case studies in drug discovery and materials science. The content is designed to bridge the gap between computational prediction and laboratory confirmation, offering actionable insights to reduce experimental attrition and accelerate the development of new therapeutic compounds and materials.

Laying the Groundwork: Core Principles of Computational Descriptors and Screening Pipelines

In modern computational research, particularly in drug discovery and materials science, the prediction of complex properties relies on the calculation of specific numerical descriptors. These parameters are quantitative representations of molecular, energetic, and structural characteristics that enable researchers to predict biological activity, material functionality, and stability without exhaustive experimental testing. Molecular descriptors capture atomic-level interactions and electronic properties, energetic descriptors quantify binding affinities and stability, while structural descriptors define morphological features critical for function. This technical support center provides essential guidance for researchers employing these descriptors in computational screening workflows, focusing on practical implementation, troubleshooting, and optimization for experimental validation.

Frequently Asked Questions (FAQs)

Q1: What are the primary categories of computational descriptors and their main applications in screening?

Computational descriptors are broadly categorized into three domains with distinct applications:

Molecular Descriptors: These include electronic properties, orbital energies (HOMO-LUMO), and pharmacophoric features. They are predominantly used in ligand-based virtual screening and quantitative structure-activity relationship (QSAR) modeling to predict biological activity and optimize lead compounds [1] [2].

Energetic Descriptors: These encompass binding free energy, decomposition enthalpy (ΔHd), and docking scores. They are crucial for structure-based virtual screening (SBVS) to evaluate ligand-target complex stability, predict binding affinity, and assess material stability [3] [1] [4].

Structural Descriptors: These parameters, such as pore limiting diameter (PLD), largest cavity diameter (LCD), and void fraction, are essential in materials science for screening porous materials like metal-organic frameworks (MOFs) for applications in gas separation, adsorption, and catalysis [5] [6].

Q2: Which docking score threshold should I use for virtual screening to identify true hits?

Selecting an appropriate docking score threshold is context-dependent. A common starting point is a binding energy ≤ -10 kcal/mol, which was used to identify 109 natural compounds from 25,000 candidates targeting butyrate biosynthesis enzymes [3]. However, you must validate this threshold for your specific target:

- System-Specific Validation: Conduct a small-scale pilot screen with known actives and decoys to establish optimal thresholds for your target protein.

- Pose Inspection: Never rely solely on scores. Always visually inspect the top-ranking binding poses to confirm plausible interactions like hydrogen bonding and hydrophobic contacts [3] [7].

- Multi-Parameter Filtering: Combine docking scores with other descriptors like ligand efficiency, molecular weight, and interaction patterns to improve hit-rate [8].
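
The multi-parameter filter described above can be sketched in a few lines of Python. This is a minimal illustration, not the protocol from the cited study: the `DockedLigand` record, the example compounds, and the ligand-efficiency and molecular-weight cutoffs (0.3 kcal/mol per heavy atom, 500 g/mol) are assumptions chosen for demonstration. Only the -10 kcal/mol score threshold comes from the text.

```python
from dataclasses import dataclass


@dataclass
class DockedLigand:
    """Hypothetical record for one docked compound."""
    name: str
    score_kcal_mol: float  # docking score; more negative is better
    heavy_atoms: int       # number of non-hydrogen atoms
    mol_weight: float      # g/mol


def ligand_efficiency(lig):
    """Ligand efficiency: negative docking score per heavy atom."""
    return -lig.score_kcal_mol / lig.heavy_atoms


def multi_parameter_filter(ligands, max_score=-10.0, min_le=0.3, max_mw=500.0):
    """Keep ligands that pass the score, efficiency, and weight cutoffs.

    The default cutoffs for min_le and max_mw are illustrative assumptions.
    """
    kept = [
        lig for lig in ligands
        if lig.score_kcal_mol <= max_score
        and ligand_efficiency(lig) >= min_le
        and lig.mol_weight <= max_mw
    ]
    # Best (most negative) score first
    return sorted(kept, key=lambda lig: lig.score_kcal_mol)


# Made-up example compounds
ligands = [
    DockedLigand("cpd_A", -10.5, 30, 420.0),  # passes all three filters
    DockedLigand("cpd_B", -11.2, 48, 670.0),  # good score, but large and inefficient
    DockedLigand("cpd_C", -8.9, 20, 280.0),   # fails the score threshold
]
hit_names = [lig.name for lig in multi_parameter_filter(ligands)]
print(hit_names)
```

Note that `cpd_B` has the best raw score but is rejected, which is the situation the multi-parameter approach is meant to catch. Thresholds should be tuned against the pilot screen of known actives and decoys.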

Q3: My DFT-calculated reaction energies seem inaccurate. What are the best-practice functional and basis set combinations?

Outdated computational protocols are a common source of error. The popular B3LYP/6-31G* combination is known to have inherent errors, including missing dispersion effects and basis set superposition error (BSSE) [9]. Instead, consider these robust, modern alternatives:

- For Geometry Optimization & Energetics: Use composite methods like r²SCAN-3c or B3LYP-3c, which are more accurate and computationally efficient than outdated standards [9].

- For Robust Property Prediction: Employ a multi-level approach. Optimize structures with a fast, reliable functional like B97M-V combined with the def2-SVPD basis set, followed by single-point energy calculations with a higher-level method [9].

- Key Consideration: Ensure your system has a single-reference, closed-shell electronic structure. Multi-reference systems (e.g., biradicals) require more advanced wavefunction methods [9].

Q4: How do I determine the optimal structural descriptors for screening MOFs for gas adsorption?

For gas adsorption applications like carbon capture or iodine removal, key structural descriptors have optimal ranges that maximize performance [5] [6]:

Table: Optimal Structural Descriptor Ranges for MOFs in Iodine Capture

| Structural Descriptor | Optimal Range | Performance Impact |
|---|---|---|
| Largest Cavity Diameter (LCD) | 4.0 - 7.8 Å | Below 4 Å, steric hindrance blocks adsorption; above 7.8 Å, host-guest interactions weaken [6]. |
| Void Fraction (φ) | 0.09 - 0.17 | Lower porosity enhances framework-analyte interactions in competitive adsorption [6]. |
| Density | 0.9 - 2.2 g/cm³ | Lower densities provide more adsorption sites, but very low densities reduce structural stability [6]. |
| Pore Limiting Diameter (PLD) | 3.34 - 7.0 Å | Must be larger than the kinetic diameter of the target gas molecule (e.g., I₂ is 3.34 Å) [6]. |
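
As a rough illustration, the ranges in this table can be applied as a pre-filter over a list of MOF records. The dictionary keys, the `in_optimal_ranges` helper, and the three example entries are hypothetical. In practice the descriptor values would come from a tool such as Zeo++ or Poreblazer.

```python
# Optimal ranges taken from the table above (iodine capture)
OPTIMAL_RANGES = {
    "lcd": (4.0, 7.8),             # largest cavity diameter, Å
    "void_fraction": (0.09, 0.17),
    "density": (0.9, 2.2),         # g/cm³
    "pld": (3.34, 7.0),            # pore limiting diameter, Å
}


def in_optimal_ranges(mof, ranges=OPTIMAL_RANGES):
    """Return True if every descriptor lies inside its optimal range."""
    return all(low <= mof[key] <= high for key, (low, high) in ranges.items())


# Made-up example records; names and values are placeholders
mofs = [
    {"name": "MOF-1", "lcd": 5.2, "void_fraction": 0.12, "density": 1.4, "pld": 4.1},
    {"name": "MOF-2", "lcd": 9.5, "void_fraction": 0.45, "density": 0.7, "pld": 8.0},
    {"name": "MOF-3", "lcd": 4.5, "void_fraction": 0.10, "density": 1.9, "pld": 3.0},
]
candidates = [m["name"] for m in mofs if in_optimal_ranges(m)]
print(candidates)
```

Here `MOF-3` is excluded only because its PLD is below the 3.34 Å kinetic diameter of I₂, even though its other descriptors are in range.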

Q5: What are the key steps for experimental validation of computationally screened hits?

A robust validation pipeline is crucial for translating computational predictions into real-world results. Follow this integrated workflow:

- In Vitro Biological Assays: Culture bacterial strains (e.g., Faecalibacterium prausnitzii) with screened compounds and measure metabolic output (e.g., butyrate production via gas chromatography) and gene expression (e.g., qRT-PCR for enzyme upregulation) [3].

- Ex Vivo/Functional Assays: Treat relevant cell lines (e.g., C2C12 myocytes) with conditioned media from bacterial cultures to assess functional effects like cell viability, gene expression, and inflammatory marker reduction [3].

- Materials Synthesis & Characterization: For materials, synthesize predicted stable compositions (e.g., via solid-state reactions) and characterize structure with X-ray diffraction. Then, test performance (e.g., lithium diffusivity, capacity in batteries) [4].

Troubleshooting Guides

Poor Correlation Between Docking Scores and Experimental Activity

Problem: Compounds with favorable (negative) docking scores show weak or no activity in experimental assays.

Solution:

- Verify Binding Pose Quality: The score may be based on an incorrect pose. Manually inspect the top-ranked pose for key interactions with active site residues. Ensure the pose is chemically plausible [7].

- Incorporate System Flexibility: Standard docking treats the protein as rigid. If possible, use molecular dynamics (MD) simulations to account for protein flexibility and solvation effects, which can provide more accurate binding free energy estimates via methods like free energy perturbation (FEP) [1] [8].

- Use Consensus Scoring: Relying on a single scoring function can be misleading. Re-dock your ligands with multiple scoring functions or use consensus scoring approaches to improve reliability [7].

- Check Ligand Preparation: Ensure ligands were correctly prepared with proper protonation states, tautomers, and stereochemistry, as errors here lead to inaccurate poses and scores [3].

Unrealistic DFT-Calculated Energies or Geometries

Problem: Calculated reaction energies are implausible, or optimized molecular geometries are distorted.

Solution:

- Benchmark Your Method: Test your functional/basis set combination on a small, well-known model system from the literature to check for systematic errors [9].

- Confirm Electronic Ground State: For open-shell systems, use an unrestricted formalism. For potential multi-reference systems (e.g., diradicals, some transition metal complexes), use multi-reference methods instead of standard DFT [9].

- Inspect Initial Geometry: Ensure your input molecular structure is reasonable. Grossly distorted bond lengths or angles in the input can lead to convergence on incorrect local minima.

- Include Dispersion Corrections: Modern DFT requires empirical dispersion corrections (e.g., D3, D4) for accurate treatment of van der Waals interactions, which are critical for binding energies and conformational energies [9].

Machine Learning Models for Descriptor Analysis Have Poor Predictive Power

Problem: Your trained ML model (e.g., Random Forest) fails to accurately predict material or ligand properties based on computed descriptors.

Solution:

- Expand and Curate Feature Set: Poor performance often stems from inadequate descriptors. Move beyond basic structural features. Incorporate molecular features (atom types, bonding modes), chemical features (Henry's coefficient, heat of adsorption), and use molecular fingerprints (e.g., MACCS keys) for a more comprehensive representation [6].

- Assess Feature Importance: Use your ML model's built-in tools (e.g., the `feature_importances_` attribute of tree-based models in scikit-learn) to identify which descriptors are most relevant. Retrain the model using only the top contributors to reduce noise [6].

- Ensure Data Quality and Quantity: ML models require sufficient, high-quality data. Check for errors in your descriptor calculations and ensure your dataset size is adequate for the model complexity.

Experimental Protocols & Workflows

Protocol: Integrated Computational-Experimental Screening for Bioactive Compounds

This protocol outlines the workflow for identifying natural compounds that enhance bacterial butyrate production and validating their effects on muscle cells [3].

A. Computational Screening Phase

- Target Preparation: Obtain 3D structures of key enzymes (e.g., butyryl-CoA dehydrogenase from F. prausnitzii). Use homology modeling (e.g., SWISS-MODEL) if experimental structures are unavailable [3].

- Ligand Library Preparation: Compile a library of natural compounds from databases like FooDB and PubChem. Prepare 3D structures, assign charges, and minimize energy [3].

- Molecular Docking: Perform virtual screening with AutoDock Vina. Use a grid box encompassing the active site and an exhaustiveness level of 8-12. Select compounds with binding energy ≤ -10 kcal/mol for further analysis [3].

- Network Pharmacology Analysis: Input selected compounds into SwissTargetPrediction to identify putative human targets. Construct compound-gene-disease networks using Cytoscape and perform KEGG pathway enrichment analysis to elucidate potential mechanisms [3].

B. Experimental Validation Phase

- Bacterial Culture and Treatment:

  - Culture butyrate-producing bacteria (e.g., F. prausnitzii, A. hadrus) in appropriate anaerobic conditions.

  - Treat bacteria with selected natural compounds (NCs; e.g., hypericin, piperitoside) for 0-48 hours in monoculture and coculture systems.

  - Measure bacterial growth at OD600 and harvest samples for analysis.

- Butyrate and Gene Expression Measurement:

  - Quantify butyrate production from culture supernatants using gas chromatography.

  - Extract bacterial RNA and perform qRT-PCR to measure the expression of butyrate-pathway genes (BCD, BHBD, BCoAT).

- Muscle Cell Assay:

  - Culture C2C12 myoblast cells and differentiate into myotubes.

  - Treat C2C12 cells with filter-sterilized supernatants from NC-treated bacterial cultures.

  - Assess cell viability (e.g., MTT assay), quantify myogenic gene expression (MYOD1, myogenin) via qRT-PCR, and measure inflammatory markers (PTGS2, NF-κB, IL-2) and insulin sensitivity genes (PPARA, PPARG).

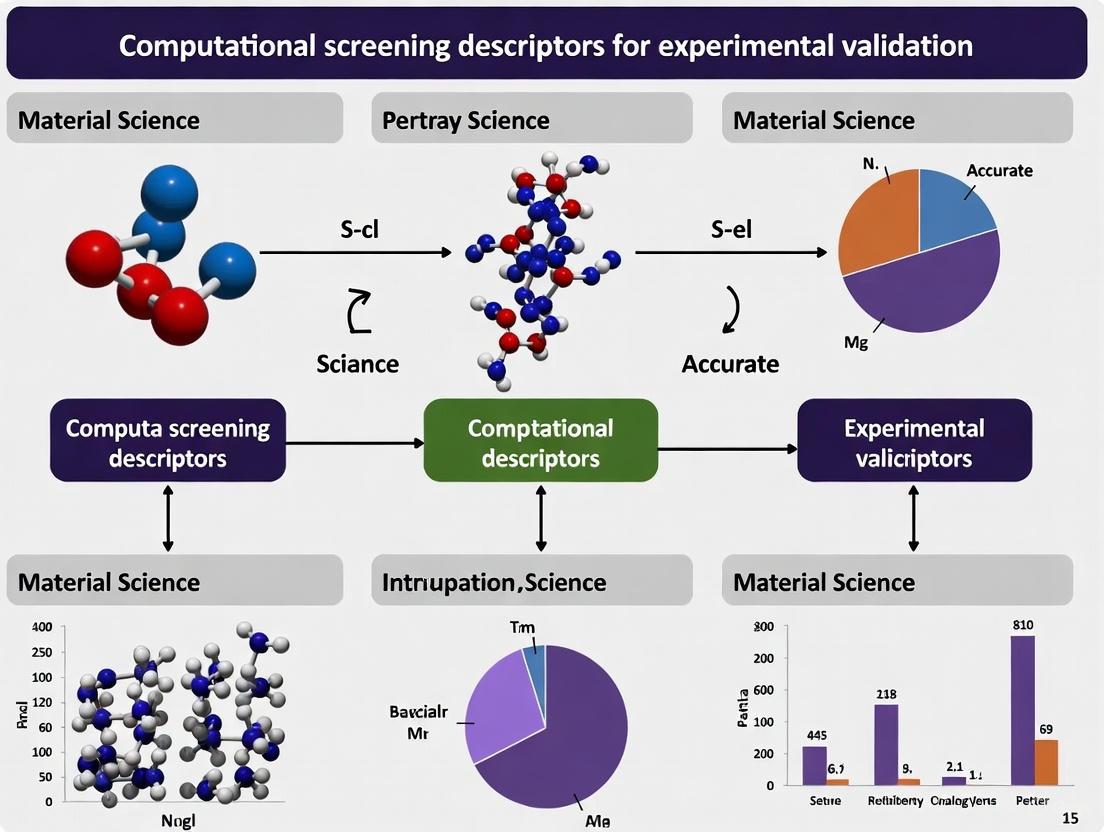

Diagram: Integrated Computational-Experimental Screening Workflow.

Protocol: High-Throughput Screening of Metal-Organic Frameworks (MOFs)

This protocol describes a computational workflow for screening MOF databases for gas adsorption applications like carbon capture or iodine removal [5] [6].

- Database Curation:

  - Select a MOF database (e.g., CoRE MOF 2019, hMOF).

  - Apply initial filters based on the application. For iodine capture, filter for PLD > 3.34 Å (kinetic diameter of I₂) [6].

- Descriptor Calculation:

  - Use computational tools (e.g., Zeo++, Poreblazer) to calculate key structural descriptors: PLD, LCD, void fraction (φ), pore volume, surface area, and density [5].

  - Perform molecular simulations (e.g., Grand Canonical Monte Carlo, GCMC) to calculate chemical descriptors like Henry's coefficient and heat of adsorption [6].

- Machine Learning Integration:

  - Compile a feature set including structural, molecular (atom types, bonding), and chemical descriptors.

  - Train ML regression models (e.g., Random Forest, CatBoost) on a subset of the data to predict adsorption performance.

  - Use feature importance analysis to identify the most critical descriptors (e.g., Henry's coefficient is often a top predictor) [6].

- Identification of Promising Candidates:

  - Apply the trained model to screen the entire database, or focus on MOFs within the optimal descriptor ranges listed in the table "Optimal Structural Descriptor Ranges for MOFs in Iodine Capture" (FAQ Q4).

  - Select top candidates for subsequent experimental synthesis and validation.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Reagents and Materials for Computational-Experimental Validation

| Item Name | Function/Application | Technical Specification & Notes |
|---|---|---|
| Faecalibacterium prausnitzii | Model butyrate-producing gut bacterium used to validate compounds that enhance butyrate synthesis [3]. | Culture in anaerobic conditions; measure growth at OD600. |
| C2C12 Myoblast Cell Line | Mouse skeletal muscle cell line used for ex vivo validation of the effects of bacterial metabolites on muscle cell growth and inflammation [3]. | Differentiate into myotubes; treat with bacterial supernatants. |
| AutoDock Vina | Widely used molecular docking software for structure-based virtual screening of compound libraries [3] [7]. | Open-source; grid box and exhaustiveness are key parameters. |
| CoRE MOF Database | Curated database of experimentally synthesized Metal-Organic Frameworks, used for high-throughput computational screening [5] [6]. | Structures are pre-processed for molecular simulations. |
| Zeo++ / Poreblazer | Software tools for calculating structural descriptors of porous materials, such as pore size distribution and surface area [5]. | Critical for characterizing MOFs and zeolites. |
| Quinoxaline-1,4-dioxide Derivatives | Example small molecules whose electronic properties (HOMO-LUMO, NLO) can be calculated using DFT as a model system for method validation [2]. | Use HF/6-311++G(d,p) or DFT/B3LYP/6-311++G(d,p) levels. |

Visualization of Key Signaling Pathways

The following diagram illustrates the key signaling pathways modulated in C2C12 muscle cells treated with bacterial supernatants from compound-treated F. prausnitzii, as identified in the referenced study [3].

Diagram: Butyrate-Induced Signaling in Muscle Cells.

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between structure-based and ligand-based virtual screening?

Structure-based virtual screening (SBVS) relies on the three-dimensional structure of a biological target, typically using molecular docking to automatically match small molecules from compound databases to a specified binding site on the target. The binding energy of possible binding modes is then calculated using a scoring function to rank compounds [10]. In contrast, ligand-based virtual screening (LBVS) does not require the target's 3D structure. Instead, it predicts compound activity by measuring the chemical similarity to one or more known active ligands, using methods like pharmacophore modeling, quantitative structure-activity relationship (QSAR), or structural similarity analysis [10]. LBVS is often the preferred method when the 3D structures of drug targets are unavailable [10].

FAQ 2: Why would I use consensus docking, and what are its benefits?

Using multiple docking programs and combining their results through consensus scoring can significantly improve the outcome of virtual screening [11]. Individual docking programs differ in their algorithms and scoring functions, and none is universally superior. This variability can lead to false positives or negatives in a screen reliant on a single program. Consensus docking mitigates this by averaging the rank or score of individual molecules from different programs, enhancing the predictive power and reliability of the virtual screening campaign by reducing program-specific biases [11].
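
A rank-averaging consensus, as described above, might look like the following sketch. The three score tables are invented, and the function names `ranks_from_scores` and `consensus_rank` are illustrative rather than part of any docking package. The sketch assumes that a lower (more negative) score is better in every program, so scores from programs with the opposite convention would need to be negated first.

```python
def ranks_from_scores(scores):
    """Map each ligand to its rank (1 = best), assuming lower score is better."""
    ordered = sorted(scores, key=scores.get)
    return {ligand: position + 1 for position, ligand in enumerate(ordered)}


def consensus_rank(score_tables):
    """Average per-program ranks over ligands scored by every program.

    Returns (ligand, mean_rank) pairs, best consensus first.
    """
    rank_tables = [ranks_from_scores(table) for table in score_tables]
    shared = set.intersection(*(set(table) for table in rank_tables))
    mean_rank = {
        ligand: sum(table[ligand] for table in rank_tables) / len(rank_tables)
        for ligand in shared
    }
    # Sort by mean rank, then by name to make ties deterministic
    return sorted(mean_rank.items(), key=lambda item: (item[1], item[0]))


# Made-up scores from three hypothetical docking programs (different scales)
program_a = {"lig1": -9.1, "lig2": -8.4, "lig3": -10.2}
program_b = {"lig1": -7.9, "lig2": -6.0, "lig3": -7.5}
program_c = {"lig1": -55.0, "lig2": -61.0, "lig3": -58.0}

consensus = consensus_rank([program_a, program_b, program_c])
consensus_order = [ligand for ligand, _ in consensus]
print(consensus_order)
```

Averaging ranks rather than raw scores sidesteps the fact that different programs report scores on different scales. In this toy example no single program ranks `lig3` first in all cases, yet it has the best mean rank.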

FAQ 3: My virtual screening hits have good binding scores but poor experimental activity. What could be wrong?

This common issue can stem from several factors in the computational protocol:

- Scoring Function Limitations: Scoring functions are approximations and may not accurately predict absolute binding energies, sometimes favoring molecules that score well but do not bind effectively in reality [12] [11].

- Inadequate Protein Flexibility: If the protein structure used is too rigid and does not account for necessary side-chain or backbone movements upon ligand binding (induced fit), it may miss viable hits or identify false positives [10].

- Oversimplified System Conditions: The calculations might not properly model critical real-world conditions, such as the role of water molecules (solvent effects) in the binding pocket or the protonation states of key residues [10].

- Insufficient Post-Screening Analysis: Hits from docking should be subjected to more rigorous computational validation, such as molecular dynamics simulations and free-energy calculations, to assess binding stability and affinity more reliably before experimental testing [13] [14].

FAQ 4: When should I incorporate Density Functional Theory (DFT) calculations into my screening pipeline?

DFT is highly valuable for characterizing the electronic properties and stability of top candidate compounds identified from initial screening. It is typically used after filtering a large library down to a manageable number of promising hits. Key applications include:

- Assessing Reactivity: Analyzing Frontier Molecular Orbitals (FMOs) - the Highest Occupied (HOMO) and Lowest Unoccupied (LUMO) orbitals - to determine properties like chemical hardness, softness, and electrophilicity [13] [15].

- Identifying Interaction Sites: Generating Electrostatic Potential (ESP) maps to visualize electron-rich and electron-deficient regions on the molecule, which helps predict how it might interact with a protein target [13] [15].

- Validating Stability: Using the calculated HOMO-LUMO energy gap to infer the compound's stability; a larger gap generally suggests higher stability [14].

Troubleshooting Guides

Issue 1: Low Hit Rate and Poor Enrichment in Virtual Screening

Problem: After performing a virtual screen, very few experimentally validated hits are found, or the top-ranked compounds show no activity (poor enrichment).

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Non-representative protein structure | Check if the protein conformation (e.g., Apo vs. Holo) is relevant for ligand binding. | Use a co-crystallized structure with a similar inhibitor if possible. Consider using multiple protein conformations for docking [11]. |
| Incorrectly defined binding site | Verify the binding site location against known catalytic residues or from a structure with a native ligand. | Use literature and databases to define the binding site accurately. Tools like FTMap can help identify potential binding pockets [12]. |
| Limited chemical diversity in screened library | Analyze the chemical space coverage of your compound library. | Curate a diverse screening library or use a larger library encompassing broader chemical space [12]. |
| Inappropriate or biased scoring function | Perform a control docking with known actives and decoys to assess the scoring function's ability to distinguish them. | Switch to a different scoring function or, more effectively, implement a consensus docking approach [11]. |
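
The control docking with known actives and decoys mentioned in the last row is commonly summarized with an enrichment factor (EF): the hit rate in the top-ranked fraction divided by the hit rate in the whole set. The sketch below assumes lower scores are better and uses an invented control screen of 4 actives among 20 compounds. The function name and data are illustrative.

```python
def enrichment_factor(ranked_labels, fraction):
    """Enrichment factor for the top `fraction` of a ranked list.

    ranked_labels: booleans (True = known active), best-scored compound first.
    """
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one known active")
    n_top = max(1, round(n_total * fraction))
    hit_rate_top = sum(ranked_labels[:n_top]) / n_top
    hit_rate_all = n_actives / n_total
    return hit_rate_top / hit_rate_all


# Made-up control screen: (docking score, is_known_active)
control_screen = (
    [(-10.4, True), (-10.1, True), (-9.7, False), (-9.5, True)]
    + [(-9.0 + 0.1 * i, False) for i in range(15)]
    + [(-6.0, True)]
)
# Rank by score, most negative first
ranked_labels = [active for _, active in sorted(control_screen, key=lambda p: p[0])]

ef_10 = enrichment_factor(ranked_labels, 0.10)
ef_20 = enrichment_factor(ranked_labels, 0.20)
print(ef_10, ef_20)
```

An EF near 1 means the scoring function ranks actives no better than random selection for that target, which would point toward switching scoring functions or using consensus docking.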

Issue 2: Unstable Protein-Ligand Complexes in Molecular Dynamics

Problem: Complexes from molecular docking show high root-mean-square deviation (RMSD) and fail to maintain binding pose during molecular dynamics (MD) simulations.

Solutions:

- Refine Initial Poses: Before running long MD simulations, re-score and re-cluster docking poses using more rigorous scoring methods or short MD equilibration to eliminate unstable poses.

- Check System Setup: Ensure the protonation states of key protein residues (like His, Asp, Glu) in the binding site are correct for the simulated pH. Confirm the ligand was properly parameterized.

- Analyze Interactions: Use tools like `gmx hbond` and `gmx energy` to track specific protein-ligand interactions (hydrogen bonds, salt bridges) over the simulation trajectory. A stable complex will typically maintain these key interactions [13] [14]. If hydrogen bonds are constantly breaking and reforming, the binding may be weak.

- Validate with Advanced Calculations: Compute the binding free energy using methods like MM/GBSA or MM/PBSA on frames extracted from the MD trajectory. A favorable and stable free energy value confirms the stability observed in RMSD analysis [14].

Issue 3: Promising Computational Hits Exhibit Poor ADMET Properties

Problem: Identified virtual screening hits with strong predicted binding affinity have unfavorable pharmacokinetic or toxicity profiles, making them poor drug candidates.

Preventive Strategy and Solutions: Integrate ADMET prediction early in the virtual screening workflow. Don't wait until you have a final list of docking hits.

- Initial Filtering: Use tools like ADMETlab 3.0 or SwissADME to filter the entire screening library for compounds with poor drug-likeness (e.g., violating Lipinski's Rule of Five) or predicted toxicity before running computationally expensive docking [13].

- Intermediate Screening: After the primary docking screen, subject the top several hundred or thousand ranked compounds to ADMET profiling. This helps prioritize compounds with a balance of good binding affinity and acceptable properties.

- Hit Validation: For the final shortlist of candidates, use more detailed toxicity prediction tools like ProTox 3.0 to assess endpoints like hepatotoxicity and carcinogenicity [13].
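
The initial drug-likeness filter can be illustrated with a plain Rule of Five check. The sketch assumes the four properties have already been computed (for example, exported from a tool such as SwissADME). The compound records are invented, and allowing at most one violation is a common convention rather than something prescribed by the text.

```python
def lipinski_violations(mol_weight, logp, h_donors, h_acceptors):
    """Count violations of Lipinski's Rule of Five."""
    checks = [
        mol_weight > 500,   # molecular weight above 500 g/mol
        logp > 5,           # octanol-water partition coefficient above 5
        h_donors > 5,       # more than 5 hydrogen-bond donors
        h_acceptors > 10,   # more than 10 hydrogen-bond acceptors
    ]
    return sum(checks)


def passes_rule_of_five(compound, max_violations=1):
    """Assumed convention: tolerate up to `max_violations` violations."""
    n = lipinski_violations(
        compound["mol_weight"], compound["logp"],
        compound["h_donors"], compound["h_acceptors"],
    )
    return n <= max_violations


# Made-up library entries with precomputed properties
library = [
    {"id": "cpd_1", "mol_weight": 342.4, "logp": 2.1, "h_donors": 2, "h_acceptors": 5},
    {"id": "cpd_2", "mol_weight": 712.9, "logp": 6.3, "h_donors": 7, "h_acceptors": 12},
    {"id": "cpd_3", "mol_weight": 515.0, "logp": 4.2, "h_donors": 1, "h_acceptors": 8},
]
drug_like_ids = [c["id"] for c in library if passes_rule_of_five(c)]
print(drug_like_ids)
```

Running a cheap filter like this before docking reduces the number of compounds that reach the expensive steps. Natural products often violate the rule while remaining bioactive, so the cutoff is a judgment call.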

Diagram 1: Integrated VS Workflow with DFT and ADMET

Experimental Protocols & Data

Protocol 1: Standard Workflow for a Structure-Based Virtual Screening Campaign

This protocol outlines key steps for a typical SBVS, from target preparation to hit identification [13] [12].

Protein Target Preparation:

- Obtain the 3D structure of the target protein from the PDB. If an experimental structure is unavailable, use homology modeling (e.g., with SWISS-MODEL) or AI-based prediction (e.g., AlphaFold) [11].

- Prepare the protein structure by adding hydrogen atoms, assigning correct protonation states, and removing water molecules and native ligands.

- Define the binding site coordinates, preferably based on the location of a co-crystallized ligand or known catalytic residues.

Compound Library Preparation:

- Select a database such as ZINC15 for commercially available compounds or an in-house library.

- Prepare ligands: generate 3D structures, assign correct tautomers and protonation states at physiological pH, and minimize their energy.

Molecular Docking:

- Choose a docking program (e.g., AutoDock Vina, DOCK3.7) and validate the docking protocol by re-docking a known ligand and checking if the predicted pose matches the experimental one (low RMSD).

- Run the docking calculation for all compounds in the prepared library.
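
The re-docking check in the first step compares the predicted pose with the experimental one by RMSD. A minimal version is sketched below under two stated assumptions: both poses list the same heavy atoms in the same order, and both are already in the receptor's coordinate frame, so no superposition is applied. The coordinates are invented, and the 2.0 Å cutoff is a widely used convention rather than a value given in the text. Symmetry-equivalent atoms are not handled.

```python
import math


def pose_rmsd(coords_a, coords_b):
    """Root-mean-square deviation (Å) between two poses with matched atoms."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("poses must have the same non-zero number of atoms")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))


# Made-up heavy-atom coordinates (Å) for a three-atom ligand
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
docked_pose = [(0.3, 0.4, 0.0), (1.8, 0.4, 0.0), (1.8, 1.9, 0.0)]

rmsd = pose_rmsd(crystal_pose, docked_pose)
protocol_ok = rmsd <= 2.0  # assumed acceptance cutoff
print(round(rmsd, 3), protocol_ok)
```

If the re-docked pose fails this check, the grid box, protonation states, or docking parameters are worth revisiting before screening the full library.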

Hit Identification and Analysis:

- Rank compounds based on the docking score (e.g., predicted binding affinity).

- Visually inspect the top-ranked poses to check for sensible binding interactions (e.g., hydrogen bonds, hydrophobic contacts).

- Apply consensus scoring or use more advanced scoring functions to re-rank the list and improve hit quality.

Protocol 2: Integrating DFT Calculations for Hit Characterization

This protocol is used for the electronic characterization of top screening hits [13] [15].

Geometry Optimization:

- Input: 3D structure of the ligand in a suitable format (e.g., .mol2).

- Method: Use a quantum chemistry package like Gaussian. Perform geometry optimization at the DFT level, typically using the B3LYP functional and a basis set like 6-31G* or 6-311++G(d,p), until a minimum energy structure is found.

Frontier Molecular Orbital (FMO) Analysis:

- Calculate the Highest Occupied Molecular Orbital (HOMO) and Lowest Unoccupied Molecular Orbital (LUMO) of the optimized structure.

- Derive reactivity descriptors:

  - HOMO-LUMO Gap: ΔE = E_LUMO - E_HOMO

  - Ionization Potential: I = -E_HOMO

  - Electron Affinity: A = -E_LUMO

  - Chemical Hardness: η = (I - A)/2

  - Electrophilicity Index: ω = μ²/(2η), where μ = -(I + A)/2
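
The descriptor formulas above translate directly into code. The sketch below takes orbital energies in eV, as would be read from a quantum chemistry output file. The function name and the example energies are illustrative and do not come from the referenced studies.

```python
def reactivity_descriptors(e_homo, e_lumo):
    """Compute FMO-based reactivity descriptors from orbital energies (eV)."""
    ionization_potential = -e_homo                       # I = -E_HOMO
    electron_affinity = -e_lumo                          # A = -E_LUMO
    gap = e_lumo - e_homo                                # ΔE = E_LUMO - E_HOMO
    hardness = (ionization_potential - electron_affinity) / 2   # η
    chemical_potential = -(ionization_potential + electron_affinity) / 2  # μ
    if hardness <= 0:
        raise ValueError("expected E_LUMO > E_HOMO")
    electrophilicity = chemical_potential ** 2 / (2 * hardness)  # ω
    return {
        "gap": gap,
        "ionization_potential": ionization_potential,
        "electron_affinity": electron_affinity,
        "hardness": hardness,
        "chemical_potential": chemical_potential,
        "electrophilicity": electrophilicity,
    }


# Illustrative orbital energies in eV
descriptors = reactivity_descriptors(e_homo=-6.0, e_lumo=-2.0)
for name, value in descriptors.items():
    print(f"{name}: {value:.2f} eV")
```

With these definitions the hardness is always half the HOMO-LUMO gap, so the two descriptors carry the same information and need not both be used as independent model features.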

Electrostatic Potential (ESP) Mapping:

- Calculate the ESP on the molecular surface.

- Visualize the map to identify regions of negative potential (red; electron-rich and prone to electrophilic attack) and positive potential (blue; electron-poor and prone to nucleophilic attack), which indicate possible interaction sites with the protein.

Table 1: Comparison of Common Virtual Screening Approaches

| Approach | Key Principle | Data Required | Advantages | Limitations |
|---|---|---|---|---|
| Structure-Based (SBVS) [10] | Molecular docking into a protein binding site | 3D protein structure | Can find novel scaffolds; provides binding mode information | Scoring inaccuracy; requires a protein structure |
| Ligand-Based (LBVS) [10] | Chemical similarity to known actives | Set of known active compounds | Fast; no protein structure needed | Cannot find new scaffold classes; dependent on reference ligands |
| Consensus Docking [11] | Averages results from multiple programs | Same as SBVS | Improved reliability and enrichment | Increased computational cost and complexity |
| Machine Learning-Based [13] | Model trained on bioactivity data | Bioassay data for training | Very fast screening of ultra-large libraries | Model quality depends on training data |

Table 2: Key DFT-Based Reactivity Descriptors for Candidate Prioritization

| Descriptor | Formula | Interpretation | Significance in Drug Discovery |
|---|---|---|---|
| HOMO Energy | E_HOMO | High value = easier to donate electrons | Related to nucleophilicity; may indicate potential for metabolic oxidation. |
| LUMO Energy | E_LUMO | Low value = easier to accept electrons | Related to electrophilicity; can be linked to toxicity or reactivity with target. |
| HOMO-LUMO Gap | ΔE = E_LUMO - E_HOMO | Small gap = higher chemical reactivity | Low gap generally indicates higher reactivity and potential instability [15]. |
| Electrophilicity Index | ω = μ²/(2η) | High value = strong electrophile | Quantifies the molecule's propensity to attract electrons; very high values may suggest toxicity [14]. |
| Chemical Hardness | η = (I - A)/2 | High value = low reactivity, high stability | A pharmacologically desirable compound often has moderate hardness, balancing stability and reactivity [13]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Virtual Screening

| Tool Name | Type/Function | Key Use in Workflow |
|---|---|---|
| PyMOL | Molecular Visualization | Protein and ligand structure preparation, visualization of docking poses, and figure generation [13]. |
| AutoDock Vina | Molecular Docking Software | Performing the docking simulation between the protein and ligand library [12]. |
| Gaussian | Quantum Chemistry Package | Running DFT calculations for geometry optimization and electronic property analysis (HOMO, LUMO, ESP) [13]. |
| GROMACS/Desmond | Molecular Dynamics Engine | Running MD simulations to assess the stability of protein-ligand complexes over time [13] [16]. |
| ADMETlab 3.0 | Web-based Predictor | Predicting Absorption, Distribution, Metabolism, Excretion, and Toxicity properties of candidate molecules [13]. |
| PaDEL-Descriptor | Molecular Descriptor Calculator | Generating 1D, 2D, and 3D molecular descriptors for QSAR or machine learning model development [13]. |

Diagram 2: Troubleshooting Poor Hit Rates

Frequently Asked Questions (FAQs)

Q1: Why is my compound library missing critical bioactive molecules after sourcing from general databases?

The completeness of your library depends on the databases you use. Generalist databases like PubChem are comprehensive but may lack specialized bioactive compounds found in FooDB (for food components) or other niche collections. To ensure comprehensive coverage, you must use a multi-source strategy. Furthermore, the integrity of the data is paramount; automated curation and standardization protocols are essential to eliminate errors, maintain sample quality, and ensure that your computational searches and screens are run against a reliable dataset [17].

Q2: How can I resolve issues with compound structures that won't load correctly into my visualization or screening software?

This is often a problem with data formatting or structural representations. Incompatible file formats or non-standard structures can cause failures. First, reprocess your compound data using a robust cheminformatics toolkit like RDKit to standardize structures, remove salts, and ensure all valences are correct [18]. Second, when exporting structures from software like Chimera for use in other platforms (e.g., Unity), be aware that color representations may be lost if they rely on vertex coloring, which requires specific shaders to display. You may need to use custom shaders or reapply colors in the new software environment [19].

Q3: What are the best practices for managing the IT infrastructure of a large, shared compound library?

Successful compound management relies on interoperable hardware and software systems. Key challenges include system maintenance, automation, and ensuring different systems can communicate effectively. Invest in laboratory automation to minimize manual errors like mislabeling and to improve long-term cost-effectiveness. Prioritize next-generation software upgrades to maintain agility and keep pace with evolving stakeholder needs. A well-maintained IT system is critical for timely retrieval of compounds for experiments, preventing costly delays in research [17].

Q4: How do I programmatically color compounds in my library based on specific properties for visualization?

You can use cheminformatics toolkits to compute molecular properties and assign colors accordingly. For instance, in a Python workflow using RDKit and NetworkX, you can create a chemical space network where nodes (compounds) are colored based on an attribute like bioactivity value (e.g., Ki). The code logic involves defining a color map that maps a property value to a specific hex color code for each compound node [18]. Always ensure that the chosen text and background colors have sufficient contrast for accessibility [20] [21].
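
The color-mapping step can be sketched without any plotting library. The function below linearly interpolates between two RGB colors and returns a hex code. The blue-to-red scale, the pKi values, and the 5-10 range are assumptions for illustration and are not taken from the referenced workflow.

```python
def value_to_hex(value, vmin, vmax, low_rgb=(0, 0, 255), high_rgb=(255, 0, 0)):
    """Map a property value to a hex color by linear interpolation.

    Values outside [vmin, vmax] are clamped to the end colors.
    """
    if vmax <= vmin:
        raise ValueError("vmax must be greater than vmin")
    t = min(1.0, max(0.0, (value - vmin) / (vmax - vmin)))
    channels = [round(lo + t * (hi - lo)) for lo, hi in zip(low_rgb, high_rgb)]
    return "#{:02x}{:02x}{:02x}".format(*channels)


# Hypothetical bioactivity values (pKi) keyed by compound ID
pki_values = {"cpd_1": 5.0, "cpd_2": 6.0, "cpd_3": 10.0}
node_colors = {cid: value_to_hex(pki, 5.0, 10.0) for cid, pki in pki_values.items()}
print(node_colors)
```

A list of these hex strings, ordered like the graph's nodes, is the kind of input that network-drawing functions typically accept as node colors.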

Troubleshooting Guides

Issue 1: Inconsistent Structural Data Causing Screening Failures

- Problem: Compounds sourced from multiple databases have inconsistent representations (e.g., with/without salts, different tautomers), leading to errors in virtual screening and analysis.

- Solution: Implement a standardized data curation pipeline.

- Standardization: Use a toolkit like RDKit to parse all SMILES strings, normalize functional groups, neutralize charges, and standardize stereochemistry.

- Desalting: Remove counterions and salts. Validate using RDKit's GetMolFrags function to ensure each compound is a single fragment after desalting [18].

- Deduplication: Identify and merge duplicates by checking standardized SMILES or InChIKeys. For entries with multiple bioactivity values (e.g., Ki), calculate an average to create a single, canonical entry [18].

Issue 2: Poor Performance of Machine Learning Models on the Compound Library

- Problem: Predictive models show low accuracy, potentially due to low-quality data or biased chemical space sampling.

- Solution: Enhance library quality and diversity.

- Data Integrity: Apply the curation steps from Issue 1 to ensure model inputs are clean.

- Diversity Analysis: Generate a Chemical Space Network (CSN) to visualize your library's coverage. Calculate pairwise molecular similarity (e.g., Tanimoto similarity using RDKit 2D fingerprints) and create a network graph. This will reveal clusters and voids in your chemical space [18].

- Strategic Sourcing: Use the CSN analysis to identify underrepresented areas. Proactively source compounds from specialized databases like FooDB to fill these gaps and create a more balanced and diverse library for robust model training.

Issue 3: Compound Identity Mismatch Between Virtual and Experimental Screening

- Problem: A compound identified as a "hit" in computational screening cannot be located or does not match its virtual identity in the physical compound management system.

- Solution: Strengthen the link between digital and physical inventory.

- Unique Identifiers: Ensure every compound in the digital library has a unique, persistent identifier (e.g., InChIKey) that is linked to its physical location (e.g., plate barcode, well position).

- Barcode & Automation: Use automated compound management systems with barcoding to minimize manual handling errors during retrieval [17].

- Version Control: Maintain version control for your digital compound library. Any curation or update should result in a new library version, with a clear record of changes to prevent confusion.

Experimental Protocols & Data

Protocol 1: A Workflow for Curating a Multi-Source Compound Library

This protocol details the steps for creating a clean, unified compound library from FooDB, PubChem, and other sources.

- Data Acquisition: Download compound structures and metadata (e.g., SMILES, bioactivity) in standard formats from FooDB, PubChem, and specialized databases.

- Data Merging: Consolidate all downloaded files into a single dataset, preserving the source information for each entry.

- Standardization with RDKit:

- Read each SMILES string with RDKit.

- Apply molecular standardization (e.g., neutralize charges, standardize aromaticity).

- Remove salts and disconnected fragments, keeping only the largest molecular component.

- Generate canonical SMILES and InChIKeys for each processed molecule.

- Deduplication:

- Identify duplicate entries based on their InChIKey.

- For entries with associated quantitative data (e.g., Ki), compute the average value for all duplicates.

- Create a final, non-redundant set of compounds.

- Library Profiling:

- Calculate molecular descriptors (e.g., molecular weight, LogP) for the final library.

- Perform diversity analysis by generating a Chemical Space Network as described in Protocol 2.
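The deduplication step of this protocol can be sketched in plain Python. The example assumes that InChIKeys have already been generated upstream (for instance with RDKit); the record field names, the placeholder keys, and the Ki values are all invented for illustration.

```python
from collections import defaultdict
from statistics import mean


def deduplicate(records):
    """Merge records that share an InChIKey, averaging Ki and keeping all sources."""
    grouped = defaultdict(list)
    for rec in records:
        grouped[rec["inchikey"]].append(rec)

    merged = []
    for key, group in grouped.items():
        ki_values = [r["ki_nM"] for r in group if r.get("ki_nM") is not None]
        merged.append({
            "inchikey": key,
            "smiles": group[0]["smiles"],
            # Arithmetic mean, as described in the protocol above.
            "ki_nM": mean(ki_values) if ki_values else None,
            "sources": sorted({r["source"] for r in group}),
        })
    return merged


# Placeholder keys and made-up Ki values, for illustration only.
records = [
    {"inchikey": "KEY-PLACEHOLDER-1", "smiles": "CCO", "ki_nM": 10.0, "source": "PubChem"},
    {"inchikey": "KEY-PLACEHOLDER-1", "smiles": "CCO", "ki_nM": 20.0, "source": "FooDB"},
    {"inchikey": "KEY-PLACEHOLDER-2", "smiles": "CCN", "ki_nM": None, "source": "PubChem"},
]

library = deduplicate(records)
```

Keeping the list of sources on each merged entry preserves the provenance information that the data merging step asks for.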

Protocol 2: Creating a Chemical Space Network for Library Visualization

This protocol uses RDKit and NetworkX to visualize relationships within your compound library [18].

- Calculate Pairwise Similarity:

- For every compound in your curated library, compute an RDKit 2D fingerprint.

- Calculate the pairwise Tanimoto similarity for all compounds, resulting in a similarity matrix.

- Define the Network:

- Set a similarity threshold (e.g., 0.7). Only compound pairs with a similarity >= this threshold are connected by an edge in the network.

- Create a NetworkX graph where nodes are compounds and edges represent significant similarity.

- Visualize the CSN:

- Use a force-directed layout (e.g., Fruchterman-Reingold) to position the nodes.

- Color the nodes based on a property, such as bioactivity (Ki) or source database.

- Replace default circular nodes with 2D molecular depictions if desired for a more informative visual.
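The similarity and thresholding steps of this protocol can be sketched without any external dependencies by representing each fingerprint as a set of on-bit indices. In a real workflow the fingerprints would come from RDKit and the edges would be loaded into a NetworkX graph; the compound IDs and bit sets below are made up for illustration.

```python
from itertools import combinations


def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two sets of on-bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def build_edges(fingerprints, threshold=0.7):
    """Return (id_a, id_b, similarity) for every pair at or above the threshold."""
    edges = []
    for id_a, id_b in combinations(sorted(fingerprints), 2):
        sim = tanimoto(fingerprints[id_a], fingerprints[id_b])
        if sim >= threshold:
            edges.append((id_a, id_b, sim))
    return edges


# Hypothetical fingerprints expressed as sets of on-bit indices.
fingerprints = {
    "cpd_1": {1, 2, 3, 4, 5, 6, 7, 8},
    "cpd_2": {1, 2, 3, 4, 5, 6, 7, 9},
    "cpd_3": {20, 21, 22},
}

edges = build_edges(fingerprints, threshold=0.7)

# Compounds with no edge are isolated nodes, i.e. sparsely covered chemical space.
connected = {cid for edge in edges for cid in edge[:2]}
singletons = sorted(set(fingerprints) - connected)
```

The all-pairs loop scales quadratically with library size, so for very large libraries a bulk similarity routine from the cheminformatics toolkit is likely the better choice.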

Chemical Space Network Creation Workflow

Table 1: Key Sourcing Databases for a High-Quality Compound Library

| Database | Focus & Specialty | Key Data Types | Relevance to Experimental Validation |

|---|---|---|---|

| FooDB | Food components and natural products. | Comprehensive chemical data on food constituents. | Essential for sourcing bioactive nutrients and natural products for screening. |

| PubChem | General-purpose, massive repository. | Bioactivity, pathways, depositor-provided screening data. | Provides a broad baseline of chemical space and published bioactivity data. |

| ChEMBL | Manually curated bioactive molecules. | Target-specific bioactivity data (e.g., Ki, IC50). | Critical for sourcing compounds with known, validated biological activities. |

Table 2: Research Reagent Solutions for Compound Library Management

| Item | Function | Example/Tool |

|---|---|---|

| Cheminformatics Toolkit | For programmatic data curation, standardization, and descriptor calculation. | RDKit (Open-Source) |

| Network Analysis Library | For constructing, analyzing, and visualizing chemical space networks. | NetworkX (Python) |

| Compound Management System | Computerized inventory for tracking physical samples and their locations. | Automated compound storage & retrieval systems |

| Visualization Software | For 3D structure visualization and analysis of screening hits. | Mol* Viewer, ChimeraX |

Troubleshooting Guides

Homology Modeling Challenges

Q1: The sequence identity between my target and the best template is only 25%. Can I still proceed with homology modeling, and what are the key risks?

Yes, you can proceed, but the model accuracy will be lower than with higher sequence identity. Key risks and mitigation strategies are outlined below.

| Challenge | Risk Consequence | Recommended Mitigation Strategy |

|---|---|---|

| Inaccurate Sequence Alignment | Misplacement of secondary structures and core elements [22]. | Use profile-based alignment methods (e.g., profile-profile alignment with HHsearch, or profile-sequence search with PSI-BLAST) instead of simple pairwise BLAST [22] [23]. |

| Improper Template Selection | Incorrect overall fold, leading to a useless model [22]. | Use fold recognition (threading) servers or consensus meta-servers to identify the correct template [22]. |

| Poor Loop Modeling | High error (2–4 Å) in loop regions, affecting active site geometry [22]. | Use dedicated loop modeling algorithms and assess models with multiple validation tools [22] [23]. |

| Incorrect Side Chain Packing | Energetically unfavorable conformations, especially in the core [22]. | Perform side chain repacking and refinement using molecular dynamics or Monte Carlo sampling [23]. |

Experimental Protocol for Low-Identity Modeling:

- Template Identification: Submit your target sequence to a sensitive fold-recognition server like HHpred.

- Alignment: Manually inspect and refine the target-template alignment, paying close attention to conserved catalytic residues and secondary structure elements.

- Model Generation: Build multiple models using different software (e.g., MODELLER, Rosetta) and alignments.

- Validation: Critically assess all models. Prioritize those with better scores in the core regions and from servers that correctly identify known active sites [24].

Q2: My homology model has severe steric clashes after automated building. What is the best way to refine it?

Steric clashes indicate local structural inaccuracies that require refinement.

| Clash Location | Potential Cause | Resolution Workflow |

|---|---|---|

| Side Chains | Incorrect rotamer assignment during model building [23]. | 1. Identify clashes with a validation tool (e.g., MolProbity). 2. Use side-chain repacking software (e.g., SCWRL4, Rosetta fixbb). 3. Perform local energy minimization. |

| Loop Regions | Poor fragment assembly or template gaps [22]. | 1. Isolate the loop and use dedicated loop modeling (e.g., MODELLER loop refinement). 2. Check for allowed phi/psi angles in the new conformation. 3. Validate the refined loop geometry. |

| Backbone | Alignment error in a conserved core region (serious issue). | 1. Re-examine the target-template alignment in the problematic region. 2. If alignment is correct, use molecular dynamics simulations in explicit solvent to relax the structure [23]. |

Detailed Refinement Protocol:

- Energy Minimization: Begin with a short, constrained energy minimization using a molecular mechanics force field (e.g., AMBER, CHARMM) to relieve minor clashes without significantly altering the model's overall geometry [23].

- Molecular Dynamics (MD): For more significant refinements, run short MD simulations with positional restraints on the core Cα atoms, allowing loops and side chains to move and relax [23].

- Validation: After each refinement step, re-validate the model to ensure that the process has not introduced new errors or distorted the correct parts of the structure.

Crystal Structure Evaluation

Q3: When selecting a crystal structure from the PDB as a template, what specific quality metrics should I check beyond resolution?

Resolution is a key initial filter, but these additional metrics are critical for assessing reliability.

| Metric | Definition & Interpretation | Threshold for Reliability |

|---|---|---|

| R-value (R-work / R-free) | Measures how well the atomic model fits the experimental X-ray data. R-free is calculated from a subset of data not used in refinement and is less biased [25]. | R-free ≤ 0.25 for structures at ~2.5 Å resolution. Lower is better. A large gap (>0.05) between R-work and R-free indicates potential over-fitting [26]. |

| Clashscore | The number of serious steric overlaps per 1000 atoms. It assesses the stereochemical quality of the atomic packing [26]. | Clashscore < 10 is ideal. Higher scores indicate regions of poor local geometry that may be unreliable. |

| Ramachandran Outliers | Percentage of residues in disallowed regions of the phi/psi torsion angle plot. It assesses backbone conformation reliability [26]. | < 1% outliers is preferred. Models with >5% outliers should be treated with extreme caution, especially if outliers are near the active site. |

| B-factors (Temperature Factors) | Measure the vibrational motion or positional disorder of an atom. High B-factors indicate uncertainty or flexibility [26]. | Look for consistent B-factors across the chain. Peaks in B-factor plots often indicate flexible loops or poorly resolved regions. |
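The thresholds in the table above can be turned into a simple pre-screening check for candidate templates. This is a minimal sketch: the function name and the example metric values are hypothetical, and the R-free cutoff is the one quoted for structures at roughly 2.5 Å, so it may need adjusting for other resolutions.

```python
def assess_structure(r_work, r_free, clashscore, rama_outlier_pct):
    """Return a list of warnings based on the reliability thresholds in the table."""
    warnings = []
    if r_free > 0.25:
        warnings.append("R-free above 0.25")
    if r_free - r_work > 0.05:
        warnings.append("R-free minus R-work above 0.05 (possible over-fitting)")
    if clashscore >= 10:
        warnings.append("Clashscore of 10 or more")
    if rama_outlier_pct > 5:
        warnings.append("More than 5% Ramachandran outliers (extreme caution)")
    elif rama_outlier_pct >= 1:
        warnings.append("1% or more Ramachandran outliers")
    return warnings


# Hypothetical metric values as they might be read from a validation report.
good_template = assess_structure(r_work=0.18, r_free=0.22, clashscore=4.0, rama_outlier_pct=0.3)
poor_template = assess_structure(r_work=0.20, r_free=0.29, clashscore=15.0, rama_outlier_pct=6.0)
```

An empty list means no threshold was violated; it does not replace visual inspection of the region of interest.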

Experimental Protocol for Structure Validation:

- Download the Validation Report: For any PDB entry, always download the official wwPDB validation report from the PDB-101 or RCSB website [25].

- Visual Inspection: Use molecular graphics software (e.g., PyMOL, COOT) to visually inspect the electron density map (e.g., 2Fo-Fc map) in your region of interest, such as the active site. Ensure the atomic model fits the density well [26].

- Check the Header: Review the PDB file header for anomalies or NULL entries, which can sometimes correlate with lower overall model quality [26].

Active Site Identification

Q4: I have a protein structure, but the active site is not annotated. What computational methods can I use to identify potential binding pockets?

Several computational methods can predict binding pockets, ranging from geometry-based to energy-based approaches.

| Method Category | Principle | Example Tools & Techniques |

|---|---|---|

| Geometry-Based | Detects invaginations on the protein surface based on 3D coordinates [27]. | FPocket: Analyzes Voronoi tessellation and alpha spheres to find pockets. CASTp: Identifies and measures surface pockets and cavities. |

| Energy-Based | Probes the protein surface with chemical fragments to find energetically favorable binding spots [27]. | FTMap: Uses small molecular probes to find "hot spots" for binding. GRID: Calculates interaction energies for chemical groups on a 3D grid. |

| Template-Based | Identifies the active site by comparison to evolutionarily related proteins with known functional sites [24]. | SABER: Uses geometric hashing to find pre-arranged catalytic groups from a template (Catalytic Atom Map) in other structures [24]. |

| Machine Learning-Based | Trains algorithms on features of known binding sites to predict new ones [27]. | DeepSite: A deep learning-based method that considers the protein structure in the context of a 3D grid. |

Experimental Protocol for Active Site Validation:

- Consensus Prediction: Run at least two different types of methods (e.g., one geometry-based and one energy-based). Pockets predicted by multiple methods have higher confidence.

- Evolutionary Conservation: Perform a ConSurf analysis or map sequence conservation from a multiple sequence alignment onto your structure. Catalytic residues are often highly conserved.

- Docking & Mutagenesis: Perform computational docking of a known substrate or ligand. The highest-ranking poses should cluster in the predicted pocket. This hypothesis can then be tested experimentally by mutating predicted key residues to alanine and measuring the loss of activity.
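The consensus prediction step can be sketched by comparing the residues that line each predicted pocket. The example assumes each tool's output has already been parsed into sets of residue numbers; the pocket names, residue numbers, and the 0.3 overlap cutoff are illustrative assumptions rather than recommended values.

```python
def jaccard(a, b):
    """Jaccard overlap between two sets of residue numbers."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def consensus_pockets(geometry_pockets, energy_pockets, min_overlap=0.3):
    """Pair pockets from two methods whose lining residues overlap sufficiently."""
    matches = []
    for g_name, g_res in geometry_pockets.items():
        for e_name, e_res in energy_pockets.items():
            score = jaccard(g_res, e_res)
            if score >= min_overlap:
                matches.append((g_name, e_name, round(score, 2), sorted(g_res & e_res)))
    # Highest-overlap pairs first.
    return sorted(matches, key=lambda m: m[2], reverse=True)


# Hypothetical pocket-lining residues from a geometry-based and an energy-based tool.
geometry_pockets = {"G1": {45, 46, 89, 120, 121}, "G2": {200, 201, 230}}
energy_pockets = {"E1": {46, 89, 120, 150}, "E2": {300, 301}}

consensus = consensus_pockets(geometry_pockets, energy_pockets)
```

The shared residues returned for each match are natural first candidates for the conservation mapping and alanine mutagenesis steps.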

Q5: How can I distinguish a true, druggable active site from a superficial surface pocket?

A "druggable" site not only binds ligands but can also bind drug-like molecules with high affinity. Key distinguishing features are compared below.

| Feature | Druggable Active Site | Superficial Surface Pocket |

|---|---|---|

| Geometry | Defined, concave cavity with substantial depth and volume [27]. | Shallow, flat, or convex surface feature. |

| Chemical Environment | Rich in hydrophobic residues and/or has specific features for hydrogen bonding/electrostatic interactions (e.g., charged residues, metal ions) [27]. | Chemically bland, primarily composed of polar side chains solvated by water. |

| Conservation | Residues are evolutionarily conserved across homologs [24]. | Shows low sequence conservation. |

| Probe Binding | Strong, energetic "hot spots" identified by methods like FTMap [27]. | Weak, diffuse probe binding. |

Workflow Visualization

Homology Modeling and Validation Workflow

Crystal Structure Selection Decision Tree

Research Reagent Solutions

Essential computational tools and databases for structure-based research.

| Item Name | Function & Application | Key Features |

|---|---|---|

| SWISS-MODEL [28] | Fully automated protein structure homology modeling server. | User-friendly web interface, integrated template search, model building, and quality assessment. |

| MODELLER [22] | Program for comparative or homology modeling of protein 3D structures. | Uses satisfaction of spatial restraints; highly customizable for expert users. |

| Rosetta [24] | Comprehensive software suite for macromolecular modeling and design. | Powerful for de novo structure prediction, docking, and design; has a steeper learning curve. |

| PyMOL | Molecular graphics system for 3D visualization and analysis. | Industry standard for rendering publication-quality images and analyzing structures. |

| PDB Validation Reports [25] [26] | Standardized reports on the quality of structures in the Protein Data Bank. | Provides key metrics (R-free, Clashscore, Ramachandran) for informed template selection. |

| FPocket [27] | Open-source platform for protein pocket detection and analysis. | Fast, geometry-based pocket detection; useful for initial blind binding site screening. |

| SABER [24] | Software for identifying active sites with specific 3D catalytic group arrangements. | Uses geometric hashing to find scaffolds for enzyme redesign based on a Catalytic Atom Map (CAM). |

| COSMO-RS [29] | Thermodynamic method for predicting solvent and coformer interactions. | Useful in crystal engineering for predicting multicomponent crystal (cocrystal) formation with APIs. |

Frequently Asked Questions

FAQ 1: What is the critical difference between formation enthalpy (ΔHf) and decomposition enthalpy (ΔHd), and why is ΔHd more relevant for assessing compound stability?

Formation enthalpy (ΔHf) measures the stability of a compound relative to its constituent elements in their standard states. In contrast, decomposition enthalpy (ΔHd) measures the stability of a compound relative to all other competing compounds and elements in the same chemical space [30]. It is computed as ΔHd = E(compound) - E(competing phases), where E(competing phases) is the energy of the lowest-energy combination of all other compounds and/or elements with the same overall composition [30]. For high-throughput screening, ΔHd is the more relevant metric because a compound must be stable against all possible decomposition pathways, not just reversion to its elements. Analysis of over 56,000 compounds revealed that only 3% decompose directly into elements (Type 1 decomposition), while 63% decompose exclusively into other compounds (Type 2), and 34% decompose into a mix of compounds and elements (Type 3) [30].

FAQ 2: What are the recommended stability thresholds (γ) for high-throughput screening, and how should they be applied?

In high-throughput screening, compounds are typically considered viable candidates if their decomposition enthalpy is below a specific threshold, i.e., ΔHd < γ. The chosen threshold represents a trade-off between the number of candidates and their likelihood of stability [30]. Commonly used values for γ range from approximately 20 to 200 meV/atom [30]. A stricter threshold (e.g., 20-50 meV/atom) prioritizes synthesizability but may miss promising metastable materials, while a more lenient threshold (e.g., 150-200 meV/atom) expands the candidate pool but includes compounds that may be more difficult to synthesize.
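The trade-off between threshold strictness and candidate count can be made concrete with a small sketch. The candidate names and ΔHd values (in meV/atom) are invented for illustration; only the ΔHd < γ criterion and the 20 to 200 meV/atom range come from the text above.

```python
def viable_candidates(delta_hd_by_compound, gamma):
    """Return compounds whose decomposition enthalpy is below the threshold gamma."""
    return sorted(
        name for name, delta_hd in delta_hd_by_compound.items() if delta_hd < gamma
    )


# Hypothetical decomposition enthalpies in meV/atom.
delta_hd_by_compound = {
    "cand_1": -12.0,
    "cand_2": 35.0,
    "cand_3": 90.0,
    "cand_4": 180.0,
    "cand_5": 260.0,
}

# Compare a strict, an intermediate, and a lenient threshold.
survivors = {
    gamma: viable_candidates(delta_hd_by_compound, gamma) for gamma in (20, 50, 200)
}
```

In this toy set the strict threshold keeps one compound and the lenient one keeps four, which mirrors the balance between synthesizability and coverage of metastable materials described above.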

FAQ 3: My computational screening identified a promising candidate with excellent binding affinity, but experimental validation failed. What are common pitfalls?

A significant pitfall is the over-reliance on a single performance metric, such as binding affinity or catalytic activity, while overlooking critical stability factors [31] [32]. Computational models sometimes simplify complex real-world environments, and the predicted structure may not represent the true experimental conditions. To troubleshoot, ensure your screening workflow integrates multiple stability metrics (thermodynamic, mechanical, thermal) from the beginning [31]. Furthermore, experimental protocols for synthesis, activation, and testing can introduce unforeseen variables not captured in simulations [32].

FAQ 4: How can machine learning (ML) accelerate the prediction of stability and binding affinity?

Machine learning can drastically reduce the computational cost of stability and affinity predictions. For stability, ML models can be trained on existing datasets to predict properties like thermal and activation stability, bypassing the need for more expensive molecular dynamics simulations in initial screening stages [31]. In binding affinity calculations, ML algorithms can be used to develop sophisticated scoring functions that rapidly evaluate protein-ligand interactions, a crucial task in virtual drug screening [33] [34]. These approaches are integral to modern high-throughput workflows, where they help navigate vast chemical spaces efficiently [35].

Troubleshooting Guides

Issue 1: Inconsistent or Inaccurate Decomposition Enthalpy (ΔHd) Calculations

Problem: Calculated ΔHd values do not align with experimental observations of compound stability.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Incorrect reference phases. | Verify the convex hull construction for your chemical system using a trusted database (e.g., Materials Project). | Ensure your calculation includes all relevant competing compounds, not just elements. For a ternary compound, include binaries and other ternaries [30]. |

| Functional inaccuracy. | Benchmark your density functional theory (DFT) functional (e.g., PBE) against experimental data for a known set of compounds. | Consider using a more advanced functional like the meta-GGA SCAN, which shows better agreement with experiment (MAD = 59 meV/atom for ΔHd) compared to PBE (MAD = 70 meV/atom) [30]. |

| Insufficient stability metrics. | Check if thermodynamic stability is the only metric used. | Integrate additional stability checks. For porous materials like MOFs, evaluate mechanical stability via elastic moduli and thermal stability [31]. |

Issue 2: Poor Correlation Between Predicted and Experimental Binding Affinity

Problem: Computationally predicted binding affinities do not correlate well with experimental measurements.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Inadequate scoring function. | Test multiple scoring functions on a small set of ligands with known affinities. | Use a consensus of several scoring functions or employ machine learning-based scoring functions that incorporate more complex descriptors [33] [34]. |

| Incorrect binding site definition. | Validate the predicted binding site against experimental data (e.g., from a crystal structure). | Use a robust binding site prediction tool, which can be based on 3D structure, template similarity, or machine learning/deep learning methods [34]. |

| Ignoring system flexibility. | Assess if the protein's flexible side chains or backbone movements significantly impact ligand binding. | Consider using molecular dynamics (MD) simulations to account for protein flexibility and identify potential cryptic binding sites [34]. |
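One simple way to form a consensus across scoring functions is to average ranks rather than raw scores, since scores from different functions are on different scales. The sketch below is one possible scheme, not the method of the cited references; the function labels, ligand names, and scores are hypothetical, and ties are not treated specially.

```python
def rank_ligands(scores):
    """Map ligand -> rank (1 = best), treating more negative scores as better."""
    ordered = sorted(scores, key=scores.get)
    return {ligand: position + 1 for position, ligand in enumerate(ordered)}


def consensus_rank(score_tables):
    """Average each ligand's rank across all scoring functions."""
    ranks = [rank_ligands(table) for table in score_tables.values()]
    # Only ligands scored by every function can be compared.
    ligands = set.intersection(*(set(r) for r in ranks))
    mean_rank = {lig: sum(r[lig] for r in ranks) / len(ranks) for lig in ligands}
    return sorted(mean_rank.items(), key=lambda item: (item[1], item[0]))


# Hypothetical docking scores (kcal/mol) from two scoring functions.
score_tables = {
    "function_a": {"lig1": -9.1, "lig2": -7.4, "lig3": -8.0},
    "function_b": {"lig1": -10.2, "lig2": -9.0, "lig3": -8.5},
}

ranking = consensus_rank(score_tables)
```

Ligands that rank well under every function rise to the top, while those favored by only one function are pulled toward the middle of the list.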

Issue 3: Unstable Computational Screening Hits

Problem: Top candidates from virtual screening are thermodynamically unstable and not synthesizable.

Solution: Integrate stability screening before or concurrently with performance screening [31].

- Define Stability Metrics: Decide on relevant stability metrics (e.g., thermodynamic, mechanical, thermal).

- Set Thresholds: Establish pass/fail criteria for each metric. For thermodynamic stability of MOFs, a relative free energy (ΔLMF) threshold of ~4.2 kJ/mol above a reference line of experimental MOFs can be used to filter unstable structures [31].

- Implement Workflow: Screen your database first for stability, then for performance, or apply both filters simultaneously. This ensures that only stable, synthesizable candidates are considered top performers [31].

Integrated Screening Workflow

Quantitative Data and Protocols

Table 1: Stability Thresholds and DFT Performance for Compounds

Summary of key metrics from the literature for assessing solid-state materials. [30]

| Metric / Functional | Value / Mean Absolute Difference (MAD) | Applicability & Notes |

|---|---|---|

| Stability Threshold (γ) | 20 - 200 meV/atom | Common range for ΔHd in high-throughput screening; specific choice depends on project goals. |

| PBE (GGA) for ΔHd | 70 meV/atom | MAD vs. experiment for 646 non-trivial decomposition reactions. |

| SCAN (meta-GGA) for ΔHd | 59 meV/atom | Improved accuracy over PBE for the same set of reactions. |

| Prevalence of Type 2 Decomp. | 63% | The most common decomposition type (into other compounds only). |

Common approaches to binding affinity prediction used in drug discovery, with advantages and limitations. [33] [34]

| Method Category | Examples | Key Function | Considerations |

|---|---|---|---|

| Empirical Scoring | Scoring functions based on surface contact, H-bonds. | Fast evaluation of protein-ligand docking poses. | Speed vs. accuracy trade-off; may oversimplify interactions. |

| Structure-Based | Molecular docking, MD simulations. | Predicts binding mode and affinity using 3D protein structure. | Dependent on accurate binding site and force fields. |

| Machine Learning | Deep learning, QSAR models. | Learns complex patterns from data to predict affinity. | Requires large, high-quality training datasets. |

Experimental Protocol 1: Calculating Decomposition Enthalpy (ΔHd)

Objective: To determine the thermodynamic stability of a compound relative to all other compounds in its chemical space.

- Gather Total Energies: Obtain the ground-state total energy (E) for the compound of interest and all other known compounds in the same A-B-C-... chemical system using Density Functional Theory (DFT) [30].

- Construct the Convex Hull: For the composition of your compound, find the set of competing phases that minimizes the total energy. This is equivalent to finding the vertices of the convex hull in the formation enthalpy diagram [30].

- Calculate ΔHd: The decomposition enthalpy is calculated as ΔHd = E(compound) - E(competing phases), where E(competing phases) is the energy of the most stable linear combination of other phases at the same composition [30].

- Interpret Result: A negative ΔHd indicates the compound is stable and lies on the convex hull. A positive ΔHd indicates it is unstable or metastable with respect to the competing phases, with the value representing its energy above the hull.
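The steps above can be sketched for the simplest case, a binary A-B system, where the lowest-energy decomposition involves at most two competing phases. The sketch assumes energies are expressed per atom relative to the elements (so A and B sit at zero); the phase names and energy values are hypothetical. Systems with three or more components would typically need a general convex hull or linear programming routine instead.

```python
def competing_energy(x_target, others):
    """Lowest energy at composition x_target reachable from the other phases.

    Each phase is (x_B, energy_per_atom). Checks single phases at the same
    composition and linear mixtures of every pair that brackets x_target.
    """
    best = float("inf")
    for x, energy in others:
        if abs(x - x_target) < 1e-9:  # another phase at the same composition
            best = min(best, energy)
    for x1, e1 in others:
        for x2, e2 in others:
            if x1 < x_target < x2:
                frac = (x_target - x1) / (x2 - x1)  # lever rule
                best = min(best, (1 - frac) * e1 + frac * e2)
    return best


def decomposition_enthalpy(name, phases):
    """Delta H_d = E(compound) - E(competing phases), excluding the compound itself."""
    x_target, e_target = phases[name]
    others = [phase for other, phase in phases.items() if other != name]
    return e_target - competing_energy(x_target, others)


# Hypothetical phases: (fraction of B, energy in meV/atom relative to the elements).
phases = {"A": (0.0, 0.0), "B": (1.0, 0.0), "AB": (0.5, -400.0), "A3B": (0.25, -150.0)}

dhd_ab = decomposition_enthalpy("AB", phases)    # negative: stable, on the hull
dhd_a3b = decomposition_enthalpy("A3B", phases)  # positive: above the hull
```

In this toy system A3B sits 50 meV/atom above the tie line between A and AB, while AB is stable by 300 meV/atom against decomposition into A3B and B.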

Experimental Protocol 2: Integrating Stability in MOF Screening

Objective: To identify top-performing Metal-Organic Frameworks (MOFs) that are also stable and synthesizable for applications like CO2 capture [31].

- Initial Performance Screening: Shortlist MOFs from a large database based on application-specific performance metrics (e.g., CO2 uptake and CO2/N2 selectivity) [31].

- Assess Thermodynamic Stability:

- Calculate the free energy (F) of the shortlisted MOFs.

- Compare against a reference line (FLM) derived from free energies of similar experimental MOFs (e.g., from the CoRE MOF database).

- Calculate the relative free energy, ΔLMF = F - FLM. MOFs with ΔLMF greater than an upper bound (e.g., ~4.2 kJ/mol) are considered thermodynamically unstable and unlikely to be synthesizable [31].

- Evaluate Mechanical Stability:

- Perform molecular dynamics (MD) simulations to calculate elastic moduli (e.g., bulk modulus K, shear modulus G).

- While low moduli may indicate flexibility, they should be evaluated in the context of the material's intended application [31].

- Predict Thermal and Activation Stability:

- Use pre-trained machine learning (ML) models to predict the thermal decomposition temperature and the ability of the MOF to be activated (solvent removed) without collapsing [31].

- Final Selection: Select only the MOFs that satisfy all performance and stability criteria.
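The selection logic of this protocol can be sketched as a sequence of filters. The record fields, MOF names, uptake values, and the `min_uptake` cutoff are hypothetical; only the ~4.2 kJ/mol upper bound on the relative free energy comes from the text. Mechanical stability is left out of the hard filter because, as noted above, it should be judged in the context of the intended application.

```python
from dataclasses import dataclass


@dataclass
class MofCandidate:
    name: str
    co2_uptake: float     # performance metric (units depend on the simulation setup)
    delta_lm_f: float     # relative free energy F - F_LM, in kJ/mol
    thermal_ok: bool      # e.g., prediction from a thermal-stability ML model
    activation_ok: bool   # e.g., prediction from an activation-stability ML model


def select_candidates(mofs, min_uptake, max_delta_lm_f=4.2):
    """Apply the performance filter first, then the stability filters."""
    shortlisted = [m for m in mofs if m.co2_uptake >= min_uptake]
    return [
        m.name
        for m in shortlisted
        if m.delta_lm_f <= max_delta_lm_f and m.thermal_ok and m.activation_ok
    ]


# Hypothetical candidates, for illustration only.
mofs = [
    MofCandidate("mof_a", 5.1, 2.0, True, True),
    MofCandidate("mof_b", 6.3, 6.5, True, True),   # fails thermodynamic stability
    MofCandidate("mof_c", 1.2, 1.0, True, True),   # fails performance screening
    MofCandidate("mof_d", 4.8, 3.9, True, False),  # fails activation stability
]

selected = select_candidates(mofs, min_uptake=4.0)
```

Here the best raw performer, `mof_b`, is discarded because its relative free energy exceeds the bound, which is the situation the integrated workflow is designed to catch.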

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item | Function | Example / Note |

|---|---|---|

| DFT Codes | Quantum mechanical calculation of total energies, electronic structure. | VASP, Quantum ESPRESSO. Critical for calculating ΔHf and ΔHd. |

| Materials Database | Repository of computed crystal structures and properties for benchmarking and hull construction. | Materials Project [30], NOMAD repository [30]. |

| Docking Software | Prediction of ligand binding pose and affinity. | AutoDock, GOLD. Used for structure-based binding affinity calculation. |

| Molecular Dynamics Software | Simulation of molecular movement over time to assess stability and flexibility. | GROMACS, LAMMPS. Used for evaluating mechanical stability of MOFs [31]. |

| Machine Learning Libraries | Building models for predictive screening of stability or affinity. | Scikit-learn, TensorFlow, PyTorch. Used to predict thermal/activation stability [31]. |

Advanced Screening Methodologies: Implementing AI and Multi-Target Workflows for Enhanced Prediction

Implementing a Multi-Tiered Docking Protocol with AutoDock Vina and Exhaustiveness Settings

Core Concepts and Quantitative Data

Understanding Exhaustiveness and its Impact

Table 1: Exhaustiveness Settings and Typical Outcomes in AutoDock Vina

| Exhaustiveness Value | Computational Effort | Typical Use Case | Expected Impact on Results |

|---|---|---|---|

| 8 (Default) | Low | Preliminary screening, very large libraries | Faster runs; may miss correct poses for challenging ligands [36] |

| 16-24 | Moderate | Standard virtual screening | Improved consistency over default; good balance of speed and accuracy [12] |

| 32 | High | Challenging ligands, final validation | More consistent docking results; higher probability of finding correct pose [36] |

| >32 | Very High | Problematic systems, research purposes | Maximum sampling; significantly increased run time [37] |

The exhaustiveness parameter in AutoDock Vina directly controls the extent of the conformational search. It determines the number of independent docking runs that are performed, each starting from a random conformation [37]. A higher exhaustiveness value leads to a more extensive exploration of the ligand's conformational space within the binding site, increasing the probability of finding the optimal binding mode but at the cost of increased computation time [36].

For the anticancer drug imatinib docked into c-Abl kinase, using the default exhaustiveness of 8 occasionally failed to find the correct pose. Increasing exhaustiveness to 32 yielded more consistent results with a single docked pose closely matching the crystallographic structure [36].

Scoring Functions in AutoDock Vina

Table 2: Comparison of Scoring Functions Available in AutoDock Vina

| Scoring Function | Command Line Flag | Theoretical Basis | Required Files | Sample Binding Affinity (Imatinib-c-Abl) |

|---|---|---|---|---|

| Vina (Default) | (default) | Empirical; combines Gaussians, hydrogen bonding, hydrophobic terms [38] | Receptor PDBQT, Ligand PDBQT | Approximately -13 kcal/mol [36] |

| AutoDock 4.2 | --scoring ad4 | Physics-based; van der Waals, electrostatics, desolvation, hydrogen bonding [38] | Receptor PDBQT, Ligand PDBQT, Affinity maps | Approximately -14.7 kcal/mol [36] |

| Vinardo | --scoring vinardo | Empirical; reweighted terms for improved performance [36] | Receptor PDBQT, Ligand PDBQT | Varies by system |

Experimental Protocols

Multi-Tiered Docking Protocol

We recommend a three-stage docking protocol to maximize efficiency and accuracy in computational screening campaigns.

Stage 1: Rapid Preliminary Screening

- Objective: Rapidly filter large compound libraries to identify potential hits.

- Exhaustiveness setting: 8-16

- Receptor preparation: Rigid receptor model

- Ligand preparation: Standard protonation states, minimal conformation sampling

- Output: Top 10-20% of compounds for Stage 2

Stage 2: Standard Resolution Docking

- Objective: More reliable assessment of binding modes for filtered compounds.

- Exhaustiveness setting: 24-32

- Receptor preparation: Consider flexible side chains if critical residues known

- Ligand preparation: Careful protonation state assessment, expanded conformation sampling

- Output: Top 1-5% of compounds for Stage 3

Stage 3: High-Resolution Validation

- Objective: Detailed characterization of top candidates.

- Exhaustiveness setting: 32 or higher

- Receptor preparation: Flexible side chains in binding site, explicit water molecules if relevant [38]

- Ligand preparation: Tautomer and protonation state enumeration, macrocycle flexibility if needed [38]

- Output: Final candidate selection for experimental validation
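The three stages can be driven from a small script that builds one Vina command per ligand and carries the best-scoring fraction forward. This is a sketch under several assumptions: the executable is assumed to be on the path as `vina`, the file names, box center, and box size are placeholders, and the exhaustiveness and fraction values are single points picked from the ranges above. Nothing is executed here; the command lists would be passed to a process runner.

```python
import math

# One representative setting per stage, chosen from the ranges in the protocol.
STAGES = [
    {"name": "stage1", "exhaustiveness": 8, "keep_fraction": 0.20},
    {"name": "stage2", "exhaustiveness": 24, "keep_fraction": 0.05},
    {"name": "stage3", "exhaustiveness": 32, "keep_fraction": 1.00},
]


def vina_command(receptor, ligand, center, size, exhaustiveness, out_path):
    """Build the argument list for a single AutoDock Vina run."""
    cx, cy, cz = center
    sx, sy, sz = size
    return [
        "vina",
        "--receptor", receptor,
        "--ligand", ligand,
        "--center_x", str(cx), "--center_y", str(cy), "--center_z", str(cz),
        "--size_x", str(sx), "--size_y", str(sy), "--size_z", str(sz),
        "--exhaustiveness", str(exhaustiveness),
        "--out", out_path,
    ]


def top_fraction(scores, fraction):
    """Keep the best-scoring fraction of ligands (more negative is better)."""
    n_keep = max(1, math.ceil(len(scores) * fraction))
    return sorted(scores, key=scores.get)[:n_keep]


# Placeholder inputs: file names and box geometry are hypothetical.
command = vina_command(
    "receptor.pdbqt", "ligand_001.pdbqt",
    center=(0.0, 0.0, 0.0), size=(20.0, 20.0, 20.0),
    exhaustiveness=STAGES[2]["exhaustiveness"], out_path="ligand_001_stage3.pdbqt",
)

# Hypothetical stage 1 scores (kcal/mol) used to pick compounds for stage 2.
stage1_scores = {"lig_a": -9.0, "lig_b": -7.0, "lig_c": -8.0, "lig_d": -6.0, "lig_e": -5.0}
stage2_input = top_fraction(stage1_scores, STAGES[0]["keep_fraction"])
```

Keeping the stage settings in one table makes it easy to record exactly which exhaustiveness produced each result when comparing runs later.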

Essential Research Reagent Solutions

Table 3: Key Software Tools for AutoDock Vina Workflows

| Tool Name | Function | Application in Workflow |

|---|---|---|

| Meeko | Receptor and ligand preparation for Vina | Converts PDB files to PDBQT format; adds partial charges and hydrogens [36] |

| AutoDock Tools (ADFR Suite) | Alternative preparation tool | Generates PDBQT files; useful for visual inspection and manual editing [36] |

| PyMOL | Molecular visualization | Visualizes docking results and binding site boxes [36] |

| Molscrub (scrub.py) | Ligand protonation | Correctly protonates ligands before docking; especially important when starting structures lack hydrogens [36] |

| AutoGrid4 | Affinity map generation | Precalculates interaction grids for AutoDock4.2 scoring function [36] |

Troubleshooting Guides and FAQs

Common Configuration Issues

Q: Why does Vina occasionally fail to find the correct binding pose even with high exhaustiveness? A: This can occur due to several factors:

- Inadequate binding site definition: Ensure your search space completely encompasses the binding pocket with sufficient margin.

- Incorrect protonation states: Verify that both receptor residues and ligand functional groups have physiologically relevant protonation states.

- Limited sampling: For particularly challenging systems with high flexibility, consider further increasing exhaustiveness (48-64) or implementing consensus docking with multiple scoring functions [36] [12].

Q: My docking results show high RMSD values between top poses. What does this indicate? A: High RMSD values between top-ranked poses (e.g., >2-3 Å) suggest that:

- The scoring function may be having difficulty distinguishing between similar energy states

- The ligand might have multiple viable binding modes

- The search may not have converged; increasing exhaustiveness can help reach a more consistent result [36]
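To check pose consistency yourself, the coordinates of each pose can be read from a multi-model PDBQT output and compared pairwise. The sketch below is a plain, unsuperimposed RMSD that assumes atoms appear in the same order in every pose and relies on the PDB-style fixed coordinate columns. It is not the symmetry-aware lower/upper-bound RMSD that Vina prints in its results table, and the function names are illustrative.

```python
from math import sqrt


def parse_models(pdbqt_text):
    """Return one list of (x, y, z) tuples per MODEL block of a PDBQT string."""
    models, current = [], None
    for line in pdbqt_text.splitlines():
        if line.startswith("MODEL"):
            current = []
        elif line.startswith(("ATOM", "HETATM")) and current is not None:
            # PDB-style fixed columns (1-based): x 31-38, y 39-46, z 47-54
            current.append((float(line[30:38]),
                            float(line[38:46]),
                            float(line[46:54])))
        elif line.startswith("ENDMDL") and current is not None:
            models.append(current)
            current = None
    return models


def rmsd(pose_a, pose_b):
    """RMSD without superposition; both poses must list atoms in the same order."""
    if len(pose_a) != len(pose_b) or not pose_a:
        raise ValueError("poses must be non-empty and have equal atom counts")
    total = sum((a - b) ** 2
                for p, q in zip(pose_a, pose_b)
                for a, b in zip(p, q))
    return sqrt(total / len(pose_a))
```

Comparing the top-ranked pose against each lower-ranked pose with `rmsd` gives a quick view of whether the best solutions cluster in one binding mode or spread over several.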

Q: Why are my calculated binding energies different from the tutorial examples when using the same system? A: Binding energies from different scoring functions (Vina vs. AutoDock4.2) are not directly comparable as they use different energy calculations [36]. Additionally:

- Minor differences in preparation protocols can affect results

- The stochastic nature of the algorithm means different random seeds yield slightly different values

- Focus on relative rankings within a single screening campaign rather than absolute energy values

Performance and Technical Issues

Q: How do I determine the optimal search space size for my system? A: The search space should be "as small as possible, but not smaller" [37]:

- Typically 20×20×20 Å is sufficient for most drug-like molecules

- For larger binding sites or multiple ligands, increase size accordingly

- Volumes exceeding 27,000 ų (a 30 × 30 × 30 Å box) may require increased exhaustiveness [37]

- Visualize the box in molecular viewers like PyMOL to ensure complete coverage [36]
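One way to apply these guidelines is to derive the box from the coordinates of a reference ligand (for example, a co-crystallized one) and then check its volume against the 27,000 ų threshold. The following is a minimal sketch: the function names are illustrative and the 5 Å padding is an assumed default, not a value taken from the Vina documentation.

```python
def search_box(coords, padding=5.0):
    """Return (center, size) of an axis-aligned box around coords.

    padding is added on every side of the ligand's bounding box, in angstroms.
    """
    if not coords:
        raise ValueError("coords must not be empty")
    mins = [min(c[i] for c in coords) for i in range(3)]
    maxs = [max(c[i] for c in coords) for i in range(3)]
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


def box_volume(size):
    """Volume of the search box in cubic angstroms."""
    return size[0] * size[1] * size[2]


def needs_more_exhaustiveness(size, threshold=27000.0):
    """Flag boxes whose volume exceeds the threshold discussed above."""
    return box_volume(size) > threshold
```

The returned `center` and `size` tuples map directly onto the `center_x/y/z` and `size_x/y/z` settings of a Vina run; the box should still be inspected visually before docking.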

Q: Vina runs successfully but the output poses look unreasonable. What should I check? A: Follow this diagnostic checklist:

- Verify receptor and ligand preparation, particularly bond order and charges

- Confirm the binding site center coordinates are correct

- Check that the search space size adequately accommodates the ligand

- Validate protonation states of key binding site residues

- Ensure no important water molecules or cofactors were omitted from the receptor [38]

Q: When should I consider using the AutoDock4.2 force field instead of the default Vina scoring?

A: The AutoDock4.2 force field (--scoring ad4) may be preferable when:

- Your system requires explicit electrostatic or desolvation terms [38]

- You need compatibility with specialized methods like hydrated docking or metal coordination [38]

- You're implementing consensus scoring across multiple force fields [12]

- Note that AD4.2 requires precalculated affinity maps and runs approximately 3× slower than standard Vina [38]
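Because energies from different scoring functions are not directly comparable, a consensus across functions is usually built from ranks rather than raw scores. The sketch below averages per-function ranks; rank averaging is one simple choice among several, and the function names and the example score tables are hypothetical.

```python
def rank_map(scores):
    """Map compound -> rank (1 = best); more negative scores rank higher."""
    ordered = sorted(scores, key=lambda name: (scores[name], name))
    return {name: i + 1 for i, name in enumerate(ordered)}


def consensus_ranking(*score_tables):
    """Order compounds by their mean rank across all scoring functions.

    Only compounds scored by every function are considered.
    """
    shared = set(score_tables[0]).intersection(*score_tables[1:])
    ranks = [rank_map({k: table[k] for k in shared}) for table in score_tables]
    mean_rank = {k: sum(r[k] for r in ranks) / len(ranks) for k in shared}
    return sorted(shared, key=lambda k: (mean_rank[k], k))


# Hypothetical scores (kcal/mol) for three compounds under two functions.
vina_scores = {"cmpd_a": -9.0, "cmpd_b": -8.0, "cmpd_c": -7.0}
ad4_scores = {"cmpd_a": -10.0, "cmpd_b": -12.0, "cmpd_c": -6.0}
ranking = consensus_ranking(vina_scores, ad4_scores)
```

Here `cmpd_a` and `cmpd_b` tie on mean rank and are ordered alphabetically, while `cmpd_c` is last under both functions.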

Advanced Feature Implementation

Q: How can I dock multiple ligands simultaneously with AutoDock Vina? A: AutoDock Vina 1.2.0 supports simultaneous docking of multiple ligands, which is useful for fragment-based drug design:

- Prepare each ligand separately as its own PDBQT file

- List all ligand files after the --ligand option in an otherwise standard docking command; Vina then docks them simultaneously

- This approach can reveal cooperative binding effects or identify fragment linking opportunities [38]

Q: When should I implement receptor flexibility in my docking protocol? A: Consider receptor flexibility when:

- Crystal structures show significant side chain movements between apo and holo forms

- Key binding site residues have known conformational heterogeneity

- Rigid docking consistently produces poses that clash with side chains

- In Vina, flexible side chains are specified during receptor preparation and incur significant computational cost [37]

Q: What are the benefits of hydrated docking and when should I use it? A: Hydrated docking explicitly models water molecules that mediate protein-ligand interactions:

- Can improve pose prediction accuracy for systems with key bridging waters

- Particularly valuable when waters are structurally conserved in the binding site

- Implementation requires specialized preparation of hydrated ligand structures

- Has been shown to improve success rates by up to 17 percentage points in some systems [38]

The integration of artificial intelligence (AI) into epitope and molecular property prediction is transforming vaccine design and drug discovery by delivering unprecedented accuracy, speed, and efficiency. Traditional methods for epitope identification, which often relied on experimental screening or basic computational heuristics, are typically time-consuming, costly, and can achieve accuracies as low as 50-60% [39]. AI technologies, particularly deep learning models like Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Graph Neural Networks (GNNs), have revolutionized this field by learning complex sequence and structural patterns from large immunological datasets [39]. These models enable researchers to move beyond simple motif-based rules and capture non-linear correlations between amino acid features and immunogenicity, thereby streamlining the antigen selection process and significantly expanding the diversity of candidate targets [39]. This technical support center is designed to help researchers navigate the practical application of these advanced computational tools, troubleshoot common issues, and effectively bridge the gap between in silico predictions and experimental validation.

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: What are the key performance differences between CNNs, RNNs, and GNNs for epitope prediction, and how do I choose?

Answer: The choice of model architecture significantly impacts the type of data you can leverage and the predictive performance you can achieve. The table below summarizes the core characteristics and benchmark performances of these models to guide your selection.

Table 1: Comparative Analysis of AI Model Architectures for Epitope and Property Prediction

| Model Architecture | Typical Application | Key Strengths | Reported Performance Metrics | Common Tools & Frameworks |

|---|---|---|---|---|

| Convolutional Neural Networks (CNNs) | B-cell and T-cell epitope prediction from sequence data [39]. | Excels at identifying local spatial patterns and motifs in sequences; provides interpretable outputs highlighting critical residues [39]. | ~87.8% accuracy (AUC = 0.945) for B-cell epitopes [39]; ~0.70 ROC AUC for T-cell epitopes with BiLSTM integration [39]. | NetBCE, DeepImmuno-CNN, NetMHC series [39]. |

| Recurrent Neural Networks (RNNs/LSTMs) | Predicting peptide-MHC binding affinity [39]. | Handles variable-length sequential data effectively; models temporal or sequential dependencies. | MHCnuggets (LSTM) showed a fourfold increase in predictive accuracy over earlier methods [39]. | MHCnuggets, DeepLBCEPred (with BiLSTM) [39]. |

| Graph Neural Networks (GNNs) | Molecular property prediction, drug-target interaction, and structure-based epitope analysis [40] [41] [42]. | Naturally models molecular structure (atoms as nodes, bonds as edges); integrates multi-modal data; superior for predicting physicochemical properties and binding affinity [41]. | GearBind GNN optimized SARS-CoV-2 spike antigens, resulting in a 17-fold higher binding affinity [39]. XGDP (GNN) enhanced prediction accuracy over pioneering methods [41]. | GearBind, XGDP, Graph Convolutional Networks (GCN) [39] [41]. |

Troubleshooting Guide:

- Problem: Model performs well on validation data but poorly on your specific experimental data.

- Solution: Investigate data drift. The training data for the pre-trained model may not be representative of your specific antigen or cell line. Consider fine-tuning the model on a smaller, curated dataset specific to your domain [43].

- Problem: GNN predictions lack interpretability, making it difficult to identify which molecular substructures drive the prediction.

- Solution: Leverage explainable AI (XAI) techniques such as GNNExplainer or Integrated Gradients. These methods can highlight salient functional groups of drugs and their interactions with significant genes, thereby revealing the mechanism of action [41].
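For the first problem above, it helps to quantify the gap before fine-tuning: score your own experimentally labelled peptides with the pre-trained model and compute the same metric the benchmark reports. The sketch below computes ROC AUC from its pairwise definition using only the standard library; the function name is illustrative, and the labels and scores in the example are made up.

```python
def roc_auc(labels, scores):
    """ROC AUC as the chance that a random positive outscores a random negative.

    labels are 1 (positive) or 0 (negative); tied scores count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Made-up example: two confirmed epitopes, two confirmed non-epitopes.
example_auc = roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1])  # 3 of 4 pairs won
```

A value on your own data that sits well below the published benchmark is consistent with the data drift described above, although small validation sets give noisy estimates.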

FAQ 2: How can I effectively validate my AI-based epitope predictions experimentally?

Answer: Computational predictions must be translated into actionable experimental workflows. A robust validation pipeline is essential to confirm in silico findings. The following protocol outlines a systematic approach for validating predicted epitopes.

Experimental Validation Protocol for Predicted Epitopes

In Vitro Binding Assays:

- Objective: Confirm the physical binding between the predicted peptide and Major Histocompatibility Complex (MHC) molecules.

- Method: Utilize competitive binding assays (e.g., ELISAs) or surface plasmon resonance (SPR) to measure binding affinity and kinetics. This serves as the first critical check.

- Troubleshooting: A high rate of false positives (predicted binders that do not bind in vitro) may indicate that the model was trained on data not representative of your assay conditions. Cross-reference with models that have proven experimental validation, like MUNIS, which successfully identified novel CD8+ T-cell epitopes validated through HLA binding assays [39].

In Vitro Immunogenicity Assays:

- Objective: Determine if the MHC-bound peptide can be recognized by T-cells and elicit a functional immune response.

- Method: Isolate T-cells and co-culture them with antigen-presenting cells loaded with the predicted epitope. Measure T-cell activation through markers like IFN-γ release (ELISpot) or flow cytometry.

- Troubleshooting: If binding is confirmed but no immunogenicity is observed, the predicted epitope might not be naturally processed and presented. Incorporate mass spectrometry-based immunopeptidomics to verify natural processing [39].

In Vivo Challenge Models:

- Objective: Assess the protective efficacy of the epitope in a living organism.

- Method: Immunize animal models (e.g., mice) with the epitope and later challenge them with the pathogen. Monitor for disease progression and measure pathogen load.

- Troubleshooting: Lack of protection in vivo despite positive in vitro results suggests the epitope may not be immunodominant or the chosen adjuvant/delivery system is suboptimal [39].

FAQ 3: What are the best practices for representing molecular data for GNNs in property prediction?

Answer: A proper graph representation of a molecule is pivotal for GNN performance. Unlike simplified representations like SMILES strings, graphs naturally preserve structural information.

Detailed Methodology: Molecular Graph Construction and Feature Engineering

- Graph Definition: Represent the drug molecule as an undirected graph where atoms are nodes and chemical bonds are edges [41].

- Advanced Node Feature Engineering: Move beyond basic atom features (symbol, degree). Use a circular algorithm inspired by Extended-Connectivity Fingerprints (ECFP) to compute node features. This algorithm incorporates the atom's chemical properties and its surrounding environment by iteratively collecting information from its r-hop neighbors, hashing it, and converting it into a binary feature vector. This provides a richer description of each atom's local chemical environment [41].

- Edge Feature Incorporation: Explicitly incorporate chemical bond types (single, double, triple, aromatic) as edge features in the graph convolutional layers. This allows the GNN to model the strength and type of atomic interactions more accurately [41].
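The three steps above can be illustrated on a toy molecule with only the standard library. This is a simplified sketch of the circular idea, not the feature pipeline of [41] or a real ECFP implementation: atom properties are reduced to element symbol and degree, the hash function, radius, and vector length are arbitrary choices, and all names are hypothetical.

```python
import hashlib

# Toy molecule: heavy atoms of acetic acid, CH3-C(=O)-OH (hydrogens implicit).
ATOMS = ["C", "C", "O", "O"]
BONDS = [(0, 1, "single"), (1, 2, "double"), (1, 3, "single")]


def build_adjacency(n_atoms, bonds):
    """Undirected adjacency list; each entry is (neighbor_index, bond_type)."""
    adj = [[] for _ in range(n_atoms)]
    for i, j, bond_type in bonds:
        adj[i].append((j, bond_type))
        adj[j].append((i, bond_type))
    return adj


def _hash(text):
    """Deterministic 64-bit hash (the built-in hash() is salted per process)."""
    return int.from_bytes(hashlib.sha256(text.encode()).digest()[:8], "big")


def circular_node_features(atoms, bonds, radius=2, n_bits=64):
    """Return one binary vector per atom, one bit per environment radius 0..r."""
    adj = build_adjacency(len(atoms), bonds)
    # Radius 0: identifier built from the atom's own properties.
    ids = [_hash(f"{sym}|{len(adj[i])}") for i, sym in enumerate(atoms)]
    features = [[0] * n_bits for _ in atoms]
    for i, ident in enumerate(ids):
        features[i][ident % n_bits] = 1
    for _ in range(radius):
        new_ids = []
        for i in range(len(atoms)):
            # Sort (bond type, neighbor id) pairs so input order does not matter.
            env = sorted((bond_type, ids[j]) for j, bond_type in adj[i])
            new_ids.append(_hash(f"{ids[i]}|{env}"))
        ids = new_ids
        for i, ident in enumerate(ids):
            features[i][ident % n_bits] = 1
    return features


node_features = circular_node_features(ATOMS, BONDS)
```

Including the bond type in each neighbor tuple means the carbonyl and hydroxyl oxygens receive different identifiers from radius 1 onward, even though they share the same symbol and degree. In a GNN, the bond types would additionally be passed as edge features alongside these node vectors.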

Troubleshooting Guide:

- Problem: The GNN model fails to learn meaningful representations.

- Solution: Verify the integrity of your node and edge features. Ensure that the circular feature computation is implemented correctly and that the bond order information is accurately encoded. The use of novel ECFP-inspired features has been demonstrated to enhance predictive power significantly [41].

Table 2: Key Research Reagent Solutions for AI-Driven Epitope and Property Validation

| Item Name | Function/Brief Explanation | Example Use Case in Validation |

|---|---|---|