From Data to Drugs: A Practical Guide to Machine Learning in QSAR for Modern Drug Discovery

Abstract

This article provides a comprehensive overview of machine learning (ML) applications in Quantitative Structure-Activity Relationship (QSAR) modeling for drug discovery professionals and researchers. It covers foundational principles, from classical statistical methods to advanced deep learning architectures like graph neural networks. The scope includes a detailed walkthrough of the QSAR workflow—data preparation, descriptor selection, and model training—alongside strategies for troubleshooting common pitfalls such as overfitting and data scarcity. A strong emphasis is placed on rigorous model validation, defining applicability domains, and comparative analysis of ML algorithms. Real-world case studies against targets like Plasmodium falciparum and SARS-CoV-2 Mpro illustrate how ML-driven QSAR accelerates lead optimization and virtual screening, offering a roadmap for integrating these powerful computational tools into the drug development pipeline.

From Hansch to Deep Learning: The Evolution and Core Principles of QSAR

Quantitative Structure-Activity Relationship (QSAR) modeling is one of the most significant computational methodologies in medicinal chemistry and drug discovery. Founded in the early 1960s by Corwin Hansch, whose seminal paper appeared in 1962, QSAR was initially conceived as a logical extension of physical organic chemistry into biological activity prediction [1]. The foundational principle of QSAR is that a mathematical relationship can be established between the chemical structure of compounds and their biological activity or physicochemical properties, enabling the prediction of activities for new, untested compounds [2]. The field has evolved from applying relatively simple regression methods to small series of congeneric compounds to analyzing very large datasets of thousands of diverse molecular structures with a wide variety of statistical and machine learning techniques [1]. The integration of artificial intelligence (AI), particularly machine learning (ML) and deep learning (DL), has more recently shifted QSAR from a primarily statistical approach toward a predictive science that can navigate complex chemical spaces with improved accuracy [3] [4].

The Evolution of QSAR Methodologies

Classical QSAR: Statistical Foundations

The earliest QSAR approaches emerged from the recognition that biological activity could be correlated with quantifiable molecular properties through linear regression techniques. Hansch and Fujita pioneered this approach by incorporating Hammett substituent constants (σ) to account for electronic effects and octanol-water partition coefficients (logP) as a surrogate measure of lipophilicity [1]. This established the fundamental QSAR equation form: Activity = f(physicochemical properties) + error [2].
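For concreteness, the linear Hansch-type relationship implied by this description is commonly written in the form below. The coefficient symbols a, ρ, and c are notational choices made here rather than taken from the cited sources, and C denotes the molar concentration that produces a defined biological response.

```latex
\log\frac{1}{C} = a\,\log P + \rho\,\sigma + c
```

A parabolic term in log P, written as -b(log P)^2, is often added when activity passes through an optimum lipophilicity.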

Table 1: Evolution of QSAR Modeling Techniques

| Era | Primary Methods | Molecular Descriptors | Key Applications |
|---|---|---|---|
| Classical (1960s-1980s) | Multiple Linear Regression (MLR), Partial Least Squares (PLS), Principal Component Regression (PCR) | 1D descriptors (molecular weight, logP), substituent constants (π, σ) | Congeneric series analysis, linear free-energy relationships |
| Chemoinformatics (1990s-2010s) | Support Vector Machines (SVM), Random Forests (RF), k-Nearest Neighbors (kNN) | 2D descriptors (topological indices), 3D descriptors (molecular fields) | Virtual screening of larger chemical libraries, toxicity prediction |
| AI-Integrated (2010s-Present) | Deep Neural Networks (DNNs), Graph Neural Networks (GNNs), Transformers, Generative Models | Learned representations from molecular graphs or SMILES, quantum chemical descriptors | De novo drug design, ultra-large virtual screening, multi-parameter optimization |

Classical QSAR relied heavily on statistical regression methods including Multiple Linear Regression (MLR), Partial Least Squares (PLS), and Principal Component Regression (PCR) [3]. These approaches were valued for their simplicity, speed, and interpretability, particularly in regulatory settings where understanding the relationship between molecular features and activity was essential [3]. The molecular descriptors used evolved from simple 1D properties like molecular weight to 2D topological indices and 3D fields capturing molecular shape and electrostatic potentials [3]. Validation of these early models depended on internal metrics such as R² (coefficient of determination) and Q² (cross-validated R²), as well as external validation using test sets of unseen compounds [3] [2].

The Machine Learning Revolution

The advent of machine learning algorithms significantly expanded the capabilities and applicability of QSAR modeling. Unlike classical linear models, ML algorithms could capture nonlinear relationships between molecular descriptors and biological activity without prior assumptions about data distribution [3]. Key algorithms that transformed the field included:

- Support Vector Machines (SVM): Effective in high-dimensional descriptor spaces and with limited samples [3]

- Random Forests (RF): Valued for robustness, built-in feature selection, and ability to handle noisy data [3] [5]

- k-Nearest Neighbors (kNN): Simple instance-based learning leveraging chemical similarity [3]

This era also saw the development of more sophisticated feature selection methods including LASSO (Least Absolute Shrinkage and Selection Operator), recursive feature elimination, and mutual information ranking to identify the most significant molecular descriptors and reduce overfitting [3]. The expansion of public chemical databases and open-source cheminformatics tools like RDKit democratized access to these methods beyond specialized computational groups [3].

Deep Learning and the Emergence of "Deep QSAR"

The most transformative development in QSAR modeling has been the integration of deep learning techniques, giving rise to what is now termed "deep QSAR" [4]. Deep learning has enabled the development of models that learn molecular representations directly from structure data without manual descriptor engineering, capturing hierarchical chemical features that often exceed the predictive power of traditional descriptors [3] [4].

Key deep learning architectures in modern QSAR include:

- Graph Neural Networks (GNNs): Operate directly on molecular graphs, treating atoms as nodes and bonds as edges, naturally capturing structural relationships [3] [4]

- SMILES-Based Transformers: Leverage natural language processing techniques to learn from string-based molecular representations [3] [4]

- Generative Models: Including variational autoencoders (VAEs) and generative adversarial networks (GANs) that can design novel molecular structures with desired properties [4] [6]

These approaches have demonstrated exceptional performance in predicting complex biological activities and physicochemical properties, particularly when applied to large, diverse chemical datasets [3] [4].

Table 2: Comparison of QSAR Model Validation Strategies

| Validation Type | Methodology | Purpose | Best Practices |
|---|---|---|---|
| Internal Validation | Cross-validation (e.g., leave-one-out, k-fold) | Measure model robustness | Use multiple cross-validation schemes; Q² > 0.5 generally acceptable |
| External Validation | Hold-out test set validation | Assess predictive performance on new data | Test set should be statistically representative but not used in training |
| Y-Scrambling | Randomization of response variable | Verify absence of chance correlations | Perform multiple iterations; scrambled models should show poor performance |
| Applicability Domain | Leverage, distance, or similarity measures | Define chemical space where model is reliable | Mandatory for regulatory acceptance; identifies extrapolation risks |
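The similarity-based applicability domain in the last row of the table can be sketched in a few lines. This is a minimal illustration, not a method from the cited sources: fingerprints are assumed to be available already as sets of "on" bit indices, the function names `tanimoto` and `in_domain` are hypothetical, and the 0.3 threshold is an arbitrary placeholder that would need tuning per dataset.

```python
def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    if not a and not b:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / len(a | b)


def in_domain(query: set[int], training: list[set[int]], threshold: float = 0.3) -> bool:
    """True if the query's most similar training compound reaches the threshold."""
    return max(tanimoto(query, fp) for fp in training) >= threshold


# Toy fingerprints: each set holds the indices of bits that are switched on.
training_fps = [{1, 2, 3, 4}, {2, 3, 4, 5}, {10, 11, 12}]
similar_query = {1, 2, 3, 5}     # overlaps with the first two training compounds
dissimilar_query = {20, 21, 22}  # shares no bits with the training set

print(in_domain(similar_query, training_fps))
print(in_domain(dissimilar_query, training_fps))
```

A prediction for a query that falls outside the domain is an extrapolation and is best flagged as such rather than silently reported.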

Experimental Protocols and Methodological Frameworks

Best Practices for QSAR Model Development

The development of robust, predictive QSAR models requires adherence to well-established protocols. The Organisation for Economic Co-operation and Development (OECD) principles provide a foundational framework for regulatory acceptance, emphasizing: (1) a defined endpoint, (2) an unambiguous algorithm, (3) a defined domain of applicability, (4) appropriate measures of goodness-of-fit, robustness, and predictivity, and (5) a mechanistic interpretation when possible [1].

A modern QSAR development workflow typically includes these critical stages:

- Data Curation and Preparation: Compiling a high-quality dataset with reliable, consistent activity measurements. This includes removal of duplicates, error checking, and standardization of chemical structures [1] [5].

- Descriptor Calculation and Selection: Generation of molecular descriptors encoding structural, electronic, and physicochemical properties, followed by feature selection to reduce dimensionality and minimize noise [3] [5].

- Model Training and Optimization: Application of machine learning or deep learning algorithms with appropriate hyperparameter tuning using techniques like grid search or Bayesian optimization [3] [7].

- Validation and Applicability Domain Definition: Rigorous internal and external validation to assess predictive power, with clear definition of the chemical space where the model can be reliably applied [2] [1].

Case Study: Machine Learning-Assisted TNKS2 Inhibitor Identification

A recent study exemplifies the modern integration of machine learning with QSAR for drug discovery. The research aimed to identify novel tankyrase 2 (TNKS2) inhibitors for colorectal cancer treatment through the following protocol [5]:

- Data Collection: 1100 TNKS2 inhibitors with experimental IC₅₀ values were retrieved from the ChEMBL database (Target ID: CHEMBL6125).

- Descriptor Calculation and Feature Selection: 2D and 3D molecular descriptors were computed, followed by feature selection to identify the most relevant descriptors predictive of TNKS2 inhibition.

- Model Development: A Random Forest classification model was trained using the selected descriptors, with hyperparameter optimization through cross-validation.

- Validation: The model achieved a high predictive performance (ROC-AUC = 0.98) on external test sets, demonstrating strong generalizability.

- Virtual Screening and Computational Follow-up: The model was used to screen compound libraries, identifying Olaparib as a potential TNKS2 inhibitor; this prediction was then supported by molecular docking and molecular dynamics simulations [5].

This case study illustrates how combining machine learning with traditional QSAR approaches can accelerate the identification and prioritization of therapeutic candidates for further testing.

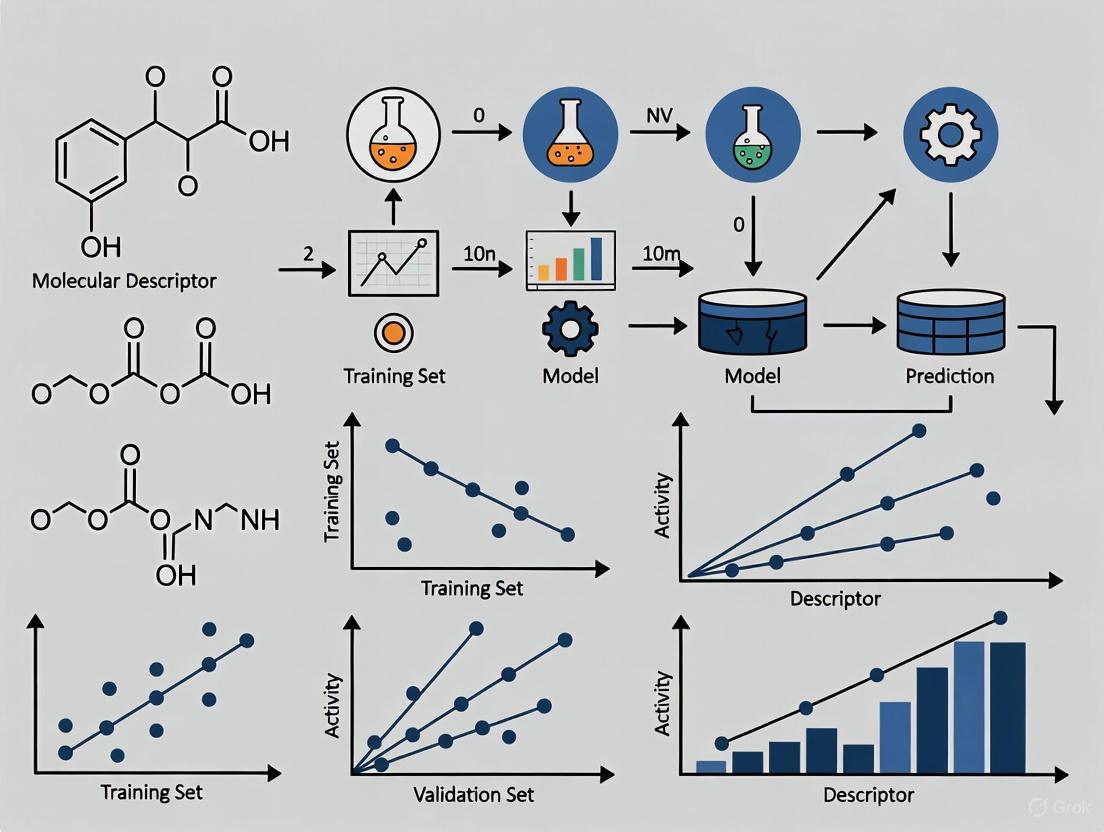

Diagram 1: The historical progression of QSAR methodologies, showing the transition from classical statistical approaches to modern AI-integrated models.

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Modern QSAR research relies on a sophisticated ecosystem of computational tools, databases, and platforms that enable the development and application of predictive models. The table below details key resources cited in recent literature.

Table 3: Essential Computational Tools for Modern QSAR Research

| Tool/Resource | Type | Primary Function | Application in QSAR |
|---|---|---|---|
| DeepAutoQSAR [7] | Commercial Platform | Automated machine learning for QSAR | Training and application of predictive ML models with automated descriptor computation and model evaluation |
| RDKit [3] | Open-source Cheminformatics | Molecular descriptor calculation | Computation of molecular descriptors, fingerprints, and cheminformatics utilities |
| ChEMBL [5] | Public Database | Bioactivity data repository | Source of curated bioactivity data for model training and validation |
| Schrödinger Suite [7] | Commercial Software | Integrated drug discovery platform | Molecular docking, dynamics, and QSAR model implementation |
| PaDEL-Descriptor [3] | Open-source Software | Molecular descriptor calculation | Generation of 2D and 3D molecular descriptors for QSAR modeling |
| AutoQSAR [3] | Automated Modeling Tool | Machine learning workflow automation | Rapid generation and validation of QSAR models with multiple algorithms |

Integrated Workflows: Combining QSAR with Structural and Systems Biology

The most advanced contemporary QSAR applications are embedded within integrated workflows that combine ligand-based and structure-based approaches. As demonstrated in the TNKS2 inhibitor case study, successful drug discovery campaigns now typically combine:

- QSAR Modeling: For initial activity prediction and compound prioritization [5]

- Molecular Docking: To evaluate binding modes and protein-ligand interactions [3] [5]

- Molecular Dynamics Simulations: To assess binding stability and conformational flexibility [3] [5]

- Network Pharmacology: To contextualize targets within broader biological pathways and systems [5]

This integrated approach provides a more comprehensive understanding of the relationship between chemical structure and biological activity, moving beyond simple correlation to mechanistic interpretation [3] [8]. The synergy between these methods creates a powerful framework for rational drug design that leverages both pattern recognition from large datasets and atomic-level understanding from structural biology.

Diagram 2: Modern AI-QSAR integrated workflow showing the iterative process of model development, validation, and experimental integration.

The historical trajectory of QSAR modeling reveals a remarkable evolution from simple linear regression to sophisticated AI-integrated approaches. This journey has transformed QSAR from a specialized statistical tool to a central methodology in modern drug discovery. The integration of deep learning architectures such as graph neural networks and transformers has enabled the development of models that learn directly from molecular structure, capturing complex, hierarchical patterns beyond human intuition or traditional descriptors [3] [4].

Future developments in QSAR modeling are likely to focus on several key areas:

- Enhanced Interpretability: While AI models offer superior predictive power, their "black box" nature remains a challenge for regulatory acceptance and mechanistic understanding. Methods like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) are being increasingly integrated to address this limitation [3].

- Integration with Emerging Technologies: Quantum computing promises to further accelerate QSAR applications, particularly for quantum chemical descriptor calculations and complex molecular simulations [4].

- Democratization through Cloud Platforms: Cloud-based QSAR platforms and open-source resources are making advanced modeling accessible to non-specialists, supporting the democratization of AI-driven drug discovery [3] [7].

- Multi-Modal Data Integration: Future QSAR frameworks will increasingly incorporate diverse data types, including genomics, proteomics, and clinical data, to develop more comprehensive models of drug action and safety [6] [9].

The progression from classical linear regression to AI-integrated models represents not just a methodological shift but a fundamental transformation in how we understand and exploit the relationship between chemical structure and biological activity. This evolution has positioned QSAR as an indispensable component of modern computational drug discovery, capable of navigating the immense complexity of biological systems and chemical space to accelerate the development of novel therapeutics. As AI technologies continue to advance and integrate with experimental validation, QSAR's role in bridging computational prediction and therapeutic innovation will only grow more significant.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone computational methodology in modern cheminformatics and drug discovery. At its core, QSAR is a mathematical modeling approach that relates a chemical compound's molecular structure to its biological activity or physicochemical properties [10] [2]. The fundamental premise of QSAR is that molecular structure, encoded through numerical descriptors, contains deterministic features that predict biological response [11]. This principle enables researchers to move beyond qualitative assessments to quantitative predictions that guide chemical optimization.

The evolution of QSAR methodologies has progressed from classical linear regression models to contemporary machine learning and deep learning approaches [12] [13]. This transformation has significantly expanded the capability to model complex, non-linear relationships in high-dimensional chemical spaces. In the context of machine learning for QSAR research, these methodologies have unleashed considerable potential for processing unstructured data and predicting biological activities with increasing accuracy [12]. The integration of artificial intelligence (AI) with QSAR has further transformed modern drug discovery by empowering faster, more accurate identification of therapeutic compounds [13].

The Fundamental QSAR Equation

The fundamental equation of QSAR establishes a mathematical relationship between biological activity and molecular descriptors representing structural and physicochemical properties [2]. The generalized form of this equation is:

Activity = f(physicochemical properties and/or structural properties) + error [2]

This equation comprises three essential components: the biological activity measurement, the function of the molecular descriptors, and the error term. The biological activity is typically expressed quantitatively as the concentration of a substance required to elicit a specific biological response, such as an IC₅₀ or EC₅₀ value [2]. The descriptor function is the mathematical model that links structural attributes to activity, while the error term encompasses both model bias and observational variability [2].

In practice, this generalized equation takes specific forms depending on the modeling approach:

- Linear QSAR Models: Activity = w₁d₁ + w₂d₂ + ... + wₙdₙ + b + ε [11]

- Non-linear QSAR Models: Activity = f(d₁, d₂, ..., dₙ) + ε [11]

Where wᵢ represents model coefficients, dᵢ are molecular descriptors, b is the intercept, and ε denotes the error term [11]. For non-linear models, the function f can be learned through various machine learning algorithms including neural networks, support vector machines, or random forests [11] [13].
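As a worked instance of the linear form, the sketch below fits Activity = w·d + b by ordinary least squares for a single descriptor. The logP and pIC50 values are invented toy numbers, and the helper names `fit_single_descriptor` and `predict` are hypothetical; real models use many descriptors and a library solver.

```python
def fit_single_descriptor(d, activity):
    """Ordinary least squares for Activity = w * d + b with one descriptor."""
    n = len(d)
    mean_d = sum(d) / n
    mean_a = sum(activity) / n
    # Slope is the covariance of descriptor and activity over the descriptor variance.
    cov = sum((x - mean_d) * (y - mean_a) for x, y in zip(d, activity))
    var = sum((x - mean_d) ** 2 for x in d)
    w = cov / var
    b = mean_a - w * mean_d
    return w, b


def predict(w, b, d_new):
    """Apply the fitted linear QSAR equation to a new descriptor value."""
    return w * d_new + b


# Toy data (invented): one descriptor (logP) and one activity (pIC50) per compound.
logp = [1.0, 2.0, 3.0, 4.0]
pic50 = [5.1, 5.9, 7.1, 7.9]

w, b = fit_single_descriptor(logp, pic50)
residuals = [y - predict(w, b, x) for x, y in zip(logp, pic50)]  # the ε term per compound
print(f"w = {w:.2f}, b = {b:.2f}")
```

The residuals list is the empirical counterpart of the error term ε: whatever the fitted function does not explain.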

Table 1: Components of the Fundamental QSAR Equation

| Component | Description | Examples |
|---|---|---|
| Biological Activity | Quantitative measure of compound's effect | IC₅₀, EC₅₀, Kᵢ, LD₅₀ [2] |
| Descriptor Function | Mathematical model relating structure to activity | Linear regression, PLS, neural networks [11] |
| Molecular Descriptors | Numerical representations of molecular features | Hydrophobicity, steric, electronic, topological descriptors [11] [10] |
| Error Term | Unexplained variability in the relationship | Model bias, observational noise [2] |

Molecular Descriptors in QSAR

Molecular descriptors are numerical values that encode various chemical, structural, or physicochemical properties of compounds [13]. They serve as quantitative fingerprints that capture essential features of molecular structure that influence biological activity. The selection and calculation of appropriate descriptors is a critical step in QSAR model development, as they determine the information content available for modeling structure-activity relationships [11].

Descriptors can be classified based on the dimensionality of the structural representation they encode:

Table 2: Classification of Molecular Descriptors in QSAR

| Descriptor Type | Basis of Calculation | Specific Examples | Applications |
|---|---|---|---|
| 1D Descriptors | Global molecular properties | Molecular weight, atom count, bond count [13] | Preliminary screening, simple property predictions |
| 2D Descriptors | Molecular topology | Topological indices, connectivity indices, path counts [14] [11] | High-throughput screening, large dataset analysis |
| 3D Descriptors | Three-dimensional structure | Molecular surface area, volume, Comparative Molecular Field Analysis (CoMFA) fields [14] [13] | Modeling ligand-receptor interactions, 3D-QSAR |
| 4D Descriptors | Conformational ensembles | Ensemble-based properties, conformational flexibility metrics [13] | Accounting for molecular flexibility, advanced 3D-QSAR |

The calculation of molecular descriptors requires specialized software tools. Commonly used packages include DRAGON, PaDEL-Descriptor, RDKit, Mordred, and OpenBabel [11]. These tools can generate hundreds to thousands of descriptors for a given set of molecules, necessitating careful feature selection to build robust and interpretable QSAR models [11].
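To make the idea of a 1D descriptor concrete without depending on any of those packages, the sketch below derives molecular weight and atom counts from a molecular formula string. It is only an illustration: the atomic-mass table is deliberately abbreviated to four elements, the function names are hypothetical, and a real workflow would use one of the dedicated tools listed above.

```python
import re

# Abbreviated table of standard atomic masses (g/mol); extend as needed.
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999}


def parse_formula(formula: str) -> dict[str, int]:
    """Turn a simple formula such as 'C9H8O4' into element counts (no brackets or charges)."""
    counts: dict[str, int] = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[symbol] = counts.get(symbol, 0) + (int(number) if number else 1)
    return counts


def one_d_descriptors(formula: str) -> dict[str, float]:
    """Compute a few global (1D) descriptors from the element counts."""
    counts = parse_formula(formula)
    return {
        # Raises KeyError for elements missing from the abbreviated mass table.
        "molecular_weight": sum(ATOMIC_MASS[s] * n for s, n in counts.items()),
        "atom_count": sum(counts.values()),
        "heavy_atom_count": sum(n for s, n in counts.items() if s != "H"),
    }


aspirin = one_d_descriptors("C9H8O4")
print(aspirin)
```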

Quantum chemical descriptors represent an advanced category that includes properties such as HOMO-LUMO gap, dipole moment, molecular orbital energies, and electrostatic potential surfaces [13]. These descriptors have found extensive application in QSAR modeling, particularly for drug-like molecules where electronic properties significantly influence bioactivity [13].

QSAR Modeling Workflow and Methodologies

The development of robust QSAR models follows a systematic workflow encompassing multiple critical stages. This process ensures the creation of predictive and reliable models that can be effectively applied to novel compounds.

Figure 1: QSAR Modeling Workflow: The comprehensive process for developing and validating QSAR models, from data preparation through to application.

Data Preparation and Preprocessing

The foundation of any robust QSAR model is high-quality data. The initial stage involves compiling a dataset of chemical structures and their associated biological activities from reliable sources such as literature, patents, and experimental data [11]. Data curation must address several critical aspects: removal of duplicate or erroneous entries, standardization of chemical structures (including handling of salts, tautomers, and stereochemistry), and conversion of biological activities to consistent units [11]. Appropriate data splitting into training, validation, and external test sets is essential for proper model development and evaluation [11].
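Two of the curation steps described above, unit conversion and duplicate handling, can be sketched as follows. The assumptions are loud ones: structure keys are taken to be already standardized (for example canonical SMILES), activities arrive as IC₅₀ in nM, and replicate measurements are merged by their median pIC₅₀, which is one reasonable choice among several. The function names are hypothetical.

```python
import math
from collections import defaultdict
from statistics import median


def ic50_nm_to_pic50(ic50_nm: float) -> float:
    """pIC50 = -log10(IC50 in mol/L); since 1 nM = 1e-9 mol/L this is 9 - log10(IC50 in nM)."""
    return 9.0 - math.log10(ic50_nm)


def curate(records):
    """records: iterable of (structure_key, IC50 in nM). Returns {structure_key: median pIC50}."""
    grouped = defaultdict(list)
    for key, ic50_nm in records:
        if ic50_nm is None or ic50_nm <= 0:
            continue  # drop missing or non-physical measurements
        grouped[key].append(ic50_nm_to_pic50(ic50_nm))
    # Merge replicate measurements of the same structure into one value.
    return {key: median(values) for key, values in grouped.items()}


# Toy records (invented): two replicates, one clean entry, two erroneous entries.
raw = [("CCO", 1000.0), ("CCO", 10.0), ("c1ccccc1", 100.0), ("CCN", -5.0), ("CCN", None)]
curated = curate(raw)
print(curated)
```

Working on the logarithmic pIC₅₀ scale before merging replicates avoids letting a single weak measurement dominate an arithmetic mean of raw concentrations.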

Feature Selection and Model Building

With numerous molecular descriptors typically available, feature selection becomes crucial to identify the most relevant descriptors and avoid overfitting [11]. Common feature selection methods include filter methods (ranking descriptors based on individual correlation), wrapper methods (using the modeling algorithm to evaluate descriptor subsets), and embedded methods (feature selection as part of model training) [11].
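A filter method of the kind described can be sketched as below: descriptors are ranked by absolute Pearson correlation with activity, and a descriptor is skipped when it is too strongly correlated with one already kept. The 0.9 cutoff, the function names, and the toy descriptor values are all illustrative assumptions, not values from the cited sources.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant column carries no information
    return sxy / math.sqrt(sxx * syy)


def filter_select(descriptors, activity, max_intercorr=0.9):
    """descriptors: {name: values}. Returns the names kept, most relevant first."""
    ranked = sorted(
        descriptors,
        key=lambda name: abs(pearson(descriptors[name], activity)),
        reverse=True,
    )
    kept = []
    for name in ranked:
        # Keep a descriptor only if it is not redundant with any already-kept one.
        if all(abs(pearson(descriptors[name], descriptors[k])) <= max_intercorr for k in kept):
            kept.append(name)
    return kept


# Toy data (invented): "mr" nearly duplicates "logP"; "tpsa" is weakly related.
activity = [5.0, 6.0, 7.0, 8.0, 9.0]
descriptors = {
    "logP": [1.0, 2.0, 3.0, 4.0, 5.0],
    "mr": [1.1, 1.9, 3.2, 3.9, 5.1],
    "tpsa": [40.0, 20.0, 50.0, 30.0, 45.0],
}
selected = filter_select(descriptors, activity)
print(selected)
```

Because the ranking uses the activity values, the selection should be run on the training set only; otherwise information from the test compounds leaks into the model.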

The choice of modeling algorithm depends on the complexity of the structure-activity relationship and the available data. Classical approaches include Multiple Linear Regression (MLR) and Partial Least Squares (PLS), while machine learning methods encompass Support Vector Machines (SVM), Random Forests (RF), and Neural Networks (NN) [11] [13].

Table 3: QSAR Modeling Algorithms and Their Applications

| Algorithm | Type | Key Features | Best Suited For |
|---|---|---|---|
| Multiple Linear Regression (MLR) | Linear | Simple, interpretable, assumes linear relationship [11] [10] | Congeneric series with clear linear structure-activity relationships |
| Partial Least Squares (PLS) | Linear | Handles multicollinearity, works with many descriptors [14] [11] | 3D-QSAR (CoMFA, CoMSIA), datasets with correlated descriptors |
| Support Vector Machines (SVM) | Non-linear | Captures complex relationships, robust to overfitting [11] [15] | Non-linear relationships, smaller datasets with complex patterns |
| Random Forests (RF) | Non-linear | Handles noisy data, built-in feature selection [15] [13] | Large, complex datasets, robust predictive modeling |
| Neural Networks (NN) | Non-linear | Flexible, learns intricate patterns, deep learning architectures [16] [13] | Very large datasets, complex non-linear relationships, deep learning applications |

Model Validation and Applicability Domain

Rigorous validation is essential to ensure the reliability and predictive power of QSAR models. Validation assesses both the internal robustness of the model and its external predictivity for new compounds [2].

Validation Techniques

Internal validation employs the training data to estimate model performance, typically through cross-validation techniques. Leave-one-out (LOO) cross-validation involves using a single compound as the test set and the remainder as training, repeating this process for all compounds [11] [10]. k-fold cross-validation divides the training set into k subsets, using k-1 for training and one for testing, rotating through all subsets [11].
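The leave-one-out procedure can be written out directly. The sketch below assumes a one-descriptor linear model for brevity and computes Q² as 1 − PRESS / SS_total, with SS_total taken around the overall mean of the activities; some authors use the per-fold training mean instead, so that choice is an assumption here. Data values and function names are invented for illustration.

```python
def fit_line(x, y):
    """Least-squares slope and intercept for a single descriptor."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return slope, my - slope * mx


def loo_q2(x, y):
    """Leave-one-out cross-validated Q2 for the one-descriptor linear model."""
    press = 0.0  # predictive residual sum of squares
    for i in range(len(x)):
        # Hold out compound i, refit on the rest, then predict the held-out compound.
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (slope * x[i] + intercept)) ** 2
    mean_y = sum(y) / len(y)
    ss_total = sum((v - mean_y) ** 2 for v in y)
    return 1.0 - press / ss_total


# Toy data (invented): nearly linear relationship between logP and pIC50.
logp = [1.0, 2.0, 3.0, 4.0, 5.0]
pic50 = [5.1, 5.9, 7.1, 7.9, 9.0]
q2 = loo_q2(logp, pic50)
print(f"LOO Q2 = {q2:.3f}")
```

k-fold cross-validation follows the same pattern, except that each iteration holds out a block of compounds rather than a single one.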

External validation uses an independent test set that was not involved in model development, providing a more realistic assessment of predictive performance on unseen data [11] [2]. Additional validation methods include data randomization (Y-scrambling) to verify the absence of chance correlations, and assessment of the model's applicability domain (AD) to define the chemical space where reliable predictions can be made [2].
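Y-scrambling is simple enough to sketch in full. The version below shuffles the activity values, refits a one-descriptor linear model each time, and collects the resulting R² values for comparison with the R² on the true labels. The model choice, the number of rounds, the fixed seed, and the toy data are all assumptions made for illustration.

```python
import random


def r_squared(x, y):
    """R2 of a least-squares line fitted to (x, y); equals the squared Pearson correlation."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return (sxy * sxy) / (sxx * syy)


def y_scramble(x, y, n_rounds=100, seed=0):
    """Refit the model on randomly permuted activities and return the scrambled R2 values."""
    rng = random.Random(seed)  # fixed seed so the check is reproducible
    scores = []
    for _ in range(n_rounds):
        shuffled = list(y)
        rng.shuffle(shuffled)  # break the structure-activity pairing
        scores.append(r_squared(x, shuffled))
    return scores


# Toy data (invented): ten compounds with a strong descriptor-activity trend.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
y = [5.2, 5.9, 7.1, 8.0, 8.8, 10.1, 11.0, 12.1, 12.9, 14.0]

true_r2 = r_squared(x, y)
scrambled = y_scramble(x, y)
mean_scrambled = sum(scrambled) / len(scrambled)
print(f"true R2 = {true_r2:.3f}, mean scrambled R2 = {mean_scrambled:.3f}")
```

A model whose scrambled scores approach its true score is fitting chance correlations, which is exactly what this check is meant to expose.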

Figure 2: QSAR Validation Framework: Comprehensive strategy for assessing model robustness, predictivity, and applicability domain.

Validation Metrics

Key metrics for evaluating QSAR models include R² (coefficient of determination) for goodness of fit, Q² (cross-validated R²) for internal predictive ability, and root mean square error (RMSE) for prediction errors [16] [2]. For classification QSAR models, additional metrics such as accuracy, sensitivity, specificity, and receiver operating characteristic (ROC) curves are employed [15].
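The metrics named above follow directly from their definitions, as the sketch below shows. The function names and toy values are illustrative; the classification helper assumes binary labels coded as 1 (active) and 0 (inactive) and at least one compound in each class.

```python
import math


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def rmse(y_true, y_pred):
    """Root mean square error of the predictions."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def classification_metrics(labels, predictions):
    """Accuracy, sensitivity, and specificity for binary labels (1 = active, 0 = inactive)."""
    pairs = list(zip(labels, predictions))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),  # fraction of true actives recovered
        "specificity": tn / (tn + fp),  # fraction of true inactives rejected
    }


# Toy regression and classification results (invented).
print(r2_score([5.0, 6.0, 7.0, 8.0], [5.5, 6.0, 7.0, 7.5]))
print(rmse([5.0, 6.0, 7.0, 8.0], [5.5, 6.0, 7.0, 7.5]))
print(classification_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 1, 0, 0, 0, 1, 0, 1]))
```

Applying `r2_score` to cross-validated predictions rather than fitted values gives Q², so the same function serves both purposes.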

Advanced QSAR Approaches in Machine Learning Research

The integration of machine learning with QSAR modeling has significantly expanded capabilities for drug discovery and chemical property prediction. Contemporary approaches leverage advanced algorithms and novel molecular representations to capture complex structure-activity relationships.

Machine Learning-Enhanced QSAR

Machine learning algorithms have dramatically improved QSAR predictive power, particularly for handling complex, high-dimensional chemical datasets [13]. Random Forests are valued for their robustness, built-in feature selection, and ability to handle noisy data, while Support Vector Machines excel in scenarios with limited samples and high descriptor-to-sample ratios [13]. Recent advances focus on improving model interpretability through techniques such as SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations), which help identify which descriptors most influence predictions [13].

Deep Learning and Graph-Based Approaches

Deep learning architectures have enabled the development of learned molecular representations without manual descriptor engineering [13]. Graph Neural Networks (GNNs) operate directly on molecular graphs, treating atoms as nodes and bonds as edges, thereby capturing inherent structural information [13]. SMILES-based transformers apply natural language processing techniques to chemical structures represented as text strings, allowing the model to learn complex patterns from large chemical databases [13].
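The "atoms as nodes, bonds as edges" idea can be illustrated with a single round of neighbor aggregation on a tiny molecular graph. This is a deliberately stripped-down sketch: it has no learnable weights or nonlinearity, so it is not a trained GNN, and the feature choice (atomic number and heavy-atom degree) and function names are assumptions made for illustration.

```python
def build_adjacency(n_atoms, bonds):
    """Map each atom index to the indices of its bonded neighbours."""
    neighbours = {i: [] for i in range(n_atoms)}
    for a, b in bonds:
        neighbours[a].append(b)
        neighbours[b].append(a)
    return neighbours


def message_pass(features, neighbours):
    """One aggregation round: new feature vector = own vector + sum of neighbours' vectors."""
    updated = []
    for i, own in enumerate(features):
        total = list(own)
        for j in neighbours[i]:
            total = [t + f for t, f in zip(total, features[j])]
        updated.append(total)
    return updated


# Heavy-atom graph of ethanol (SMILES "CCO"): C0 - C1 - O2.
bonds = [(0, 1), (1, 2)]
# Per-atom features: [atomic number, heavy-atom degree].
features = [[6, 1], [6, 2], [8, 1]]

neighbours = build_adjacency(len(features), bonds)
updated = message_pass(features, neighbours)
# Sum-pooling "readout": collapse atom vectors into one molecule-level vector.
readout = [sum(column) for column in zip(*updated)]
print(updated, readout)
```

In a real GNN each aggregation is followed by a learned transformation, and several rounds are stacked so that information from more distant atoms reaches each node.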

3D-QSAR and Structural Methods

3D-QSAR approaches incorporate spatial molecular properties, providing enhanced capability for modeling steric and electrostatic interactions. Comparative Molecular Field Analysis (CoMFA) and Comparative Molecular Similarity Indices Analysis (CoMSIA) are prominent 3D-QSAR techniques that sample steric and electrostatic fields around aligned molecules [14]. These methods typically employ Partial Least Squares (PLS) regression for model building because the field descriptors are high-dimensional and strongly correlated [14]. Recent work integrates machine learning with 3D-QSAR and has reported improved performance over traditional methods [15].

Experimental Protocols and Research Toolkit

Standard QSAR Development Protocol

A typical QSAR development protocol involves the following detailed steps:

- Dataset Compilation: Collect at least 20-30 compounds with consistent biological activity data from a common experimental protocol to ensure comparable potency values [10]. The dataset should cover a diverse chemical space that is relevant to the problem domain [11].

- Structure Standardization: Remove salts, normalize tautomers, handle stereochemistry consistently, and generate canonical representations using tools such as RDKit or OpenBabel [11].

- Descriptor Calculation: Compute molecular descriptors using software such as DRAGON, PaDEL-Descriptor, or Mordred [11]. Include diverse descriptor types (constitutional, topological, electronic, geometric) to represent molecular features comprehensively.

- Data Preprocessing: Address missing values through removal or imputation. Scale descriptors to zero mean and unit variance so that they contribute equally during model training [11]. Split data into training (~70-80%), validation (~10-15%), and external test sets (~10-15%) using algorithms such as Kennard-Stone to ensure representative sampling [11].

- Feature Selection: Apply appropriate feature selection methods (filter, wrapper, or embedded) to identify the most relevant descriptors and reduce overfitting [11]. Ensure selected descriptors are not highly correlated with one another to avoid multicollinearity issues [10].

- Model Training: Build models using the selected algorithms, optimizing hyperparameters through grid search or Bayesian optimization with cross-validation [13]. For neural networks, optimize architecture, learning rate, and regularization parameters.

- Model Validation: Perform internal validation through cross-validation, external validation using the test set, and robustness checks through Y-scrambling [11] [2]. Define the applicability domain to identify where the model can make reliable predictions [2].
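The splitting and scaling mentioned in the data preprocessing step can be sketched as below. The Kennard-Stone routine follows the usual max-min rule (start from the two most distant compounds, then repeatedly add the compound farthest from everything already selected). Performing the split first and computing scaling statistics from the training rows only is a choice made in this sketch to avoid leaking test-set information; the protocol above does not prescribe an order. Function names and the one-descriptor toy data are hypothetical.

```python
import math


def kennard_stone(rows, n_select):
    """Return indices of n_select rows (n_select >= 2) chosen by the Kennard-Stone max-min rule."""
    n = len(rows)
    # Seed the selection with the two mutually most distant compounds.
    first, second = max(
        ((i, j) for i in range(n) for j in range(i + 1, n)),
        key=lambda pair: math.dist(rows[pair[0]], rows[pair[1]]),
    )
    selected = [first, second]
    while len(selected) < n_select:
        remaining = [i for i in range(n) if i not in selected]
        # Add the candidate whose nearest selected compound is farthest away.
        selected.append(
            max(remaining, key=lambda i: min(math.dist(rows[i], rows[s]) for s in selected))
        )
    return selected


def standardize(train_rows, other_rows):
    """Scale columns to zero mean and unit variance using training statistics only."""
    n_cols = len(train_rows[0])
    means = [sum(r[c] for r in train_rows) / len(train_rows) for c in range(n_cols)]
    stds = []
    for c in range(n_cols):
        variance = sum((r[c] - means[c]) ** 2 for r in train_rows) / len(train_rows)
        stds.append(math.sqrt(variance) or 1.0)  # guard against constant columns

    def scale(rows):
        return [[(r[c] - means[c]) / stds[c] for c in range(n_cols)] for r in rows]

    return scale(train_rows), scale(other_rows)


# Toy descriptor matrix (invented): four compounds, one descriptor each.
descriptor_rows = [[0.0], [1.0], [2.0], [10.0]]
train_idx = kennard_stone(descriptor_rows, n_select=3)
test_idx = [i for i in range(len(descriptor_rows)) if i not in train_idx]
train_scaled, test_scaled = standardize(
    [descriptor_rows[i] for i in train_idx], [descriptor_rows[i] for i in test_idx]
)
print(train_idx, test_idx)
```

With several descriptors on very different scales, the distances used by Kennard-Stone are dominated by the largest-valued columns, so in practice the selection is often run on scaled descriptors or principal component scores.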

The QSAR Researcher's Toolkit

Table 4: Essential Resources for QSAR Modeling

| Resource Category | Specific Tools/Software | Primary Function | Key Features |
|---|---|---|---|
| Descriptor Calculation | DRAGON, PaDEL-Descriptor, RDKit, Mordred [11] [13] | Generation of molecular descriptors | Comprehensive descriptor libraries, batch processing capabilities |
| Chemical Structure Handling | RDKit, OpenBabel, ChemAxon [11] | Structure standardization, format conversion | SMILES parsing, tautomer normalization, stereochemistry handling |
| Machine Learning Libraries | scikit-learn, TensorFlow, PyTorch [13] | Implementation of ML algorithms | Pre-built algorithms, neural network architectures, visualization tools |
| Specialized QSAR Software | SYBYL (CoMFA, CoMSIA), QSARINS, Build QSAR [14] [13] | Dedicated QSAR model development | 3D-QSAR capabilities, model validation workflows, visualization |
| Molecular Docking | MOE, Schrödinger Suite, GOLD, AutoDock [14] | Structure-based drug design | Protein-ligand docking, binding pose prediction, scoring functions |
| Data Sources | ChEMBL, PubChem, Food Animal Residue Avoidance Databank [16] | Experimental biological activity data | Large compound databases, curated bioactivity data, ADMET properties |

The fundamental equation of QSAR represents the mathematical embodiment of the structure-activity principle that has guided drug discovery and chemical design for decades. As QSAR methodologies have evolved from classical statistical approaches to contemporary machine learning and deep learning frameworks, the core objective remains unchanged: to establish quantitative, predictive relationships between molecular structure and biological activity.

The integration of artificial intelligence with QSAR modeling has created powerful synergies that enhance predictive accuracy, enable processing of complex chemical spaces, and accelerate therapeutic discovery. These advancements are particularly relevant in the context of modern drug discovery challenges, where the ability to rapidly identify and optimize lead compounds provides significant strategic advantages. As QSAR methodologies continue to evolve, they will undoubtedly remain essential components of the computational chemist's toolkit, bridging the gap between molecular structure and biological function through quantitative, data-driven approaches.

In modern Quantitative Structure-Activity Relationship (QSAR) modeling, molecular descriptors serve as the fundamental language that translates chemical structures into numerical data amenable to machine learning analysis. These quantitative representations of molecular properties provide the input features that enable artificial intelligence (AI) algorithms to establish mathematical relationships between chemical structure and biological activity [3] [17]. The evolution of QSAR from classical statistical methods to advanced machine learning frameworks has further elevated the importance of well-chosen molecular descriptors, as they directly influence model accuracy, interpretability, and predictive power [3].

Molecular descriptors encompass a wide spectrum of chemical information, ranging from simple atom counts to complex quantum-chemical properties and three-dimensional structural parameters [17]. The strategic selection and engineering of these descriptors is crucial for building robust QSAR models that can effectively navigate chemical space and generate reliable predictions for drug discovery applications [3] [18]. This technical guide examines the core categories of molecular descriptors essential for contemporary QSAR research, with particular emphasis on their computational derivation, strategic application in machine learning pipelines, and significance for rational drug design.

Molecular Descriptor Fundamentals

Definition and Purpose in QSAR

Molecular descriptors are numerical representations that encode chemical information derived from a molecule's structure [11] [17]. In QSAR modeling, they function as independent variables (features) that correlate with a dependent biological activity or property, forming the basis for predictive model building [11]. The underlying principle is that structural variations systematically influence biological activity, and these relationships can be captured mathematically through appropriate descriptor-activity mappings [11].

The calculation of molecular descriptors typically occurs after chemical structure standardization, which may include removal of salts, normalization of tautomers, and handling of stereochemistry [11]. Subsequently, specialized software tools generate hundreds to thousands of descriptor values for each compound, creating the feature matrix used for model training and validation [11] [17].

Integration with Machine Learning Workflows

In AI-driven QSAR, molecular descriptors serve as critical inputs for various machine learning algorithms, from traditional methods like Partial Least Squares (PLS) to advanced techniques including Random Forests, Support Vector Machines (SVM), and Graph Neural Networks (GNNs) [3] [11]. The choice and quality of descriptors significantly impact model performance, with optimal feature selection helping to mitigate overfitting and enhance interpretability [3] [18].

Recent innovations include "deep descriptors" learned automatically by neural networks from molecular graphs or SMILES strings, which can capture hierarchical chemical features without manual engineering [3]. However, traditional knowledge-driven descriptors remain vital for model interpretability and providing medicinal chemists with actionable insights for compound optimization [3] [17].

Table 1: Categories of Molecular Descriptors in QSAR Modeling

| Category | Description | Examples | QSAR Applications |

|---|---|---|---|

| Constitutional | Simple counts of atoms, bonds, and functional groups | Molecular weight, number of H-bond donors/acceptors, rotatable bonds | Preliminary screening, drug-likeness filters (e.g., Lipinski's Rule of 5) |

| Topological | Based on molecular connectivity and graph theory | Topological indices, molecular connectivity indices, Kier-Hall indices | Modeling absorption, permeability, and basic pharmacophore patterns |

| Electronic | Describe electronic distribution and properties | HOMO/LUMO energies, dipole moment, molecular orbital energies | Predicting reactivity, metabolism, and target interaction mechanisms |

| 3D Descriptors | Derived from three-dimensional molecular structure | Molecular surface area, volume, polar surface area, shape indices | Protein-ligand docking, binding affinity prediction, complex activity relationships |

| 4D Descriptors | Account for conformational flexibility and ensembles | Conformer-dependent properties, interaction energy fields | Enhanced prediction accuracy for flexible molecules with multiple bioactive conformations |

Constitutional Descriptors

Definition and Calculation

Constitutional descriptors represent the most fundamental category of molecular descriptors, consisting of simple counts of atoms, bonds, and functional groups within a molecule [11] [17]. The simplest of these, such as molecular weight and counts of specific atom types (e.g., carbon, oxygen, nitrogen), are zero-dimensional and follow directly from the molecular formula. Others, including the number of rotatable bonds, hydrogen bond donors and acceptors, and ring counts, are simple tallies over the connection table; none require 3D coordinates or spatial arrangement [17].

The computation of constitutional descriptors is computationally inexpensive and deterministic, requiring only 2D molecular structure information. Tools like RDKit, PaDEL-Descriptor, and Dragon can rapidly generate these descriptors for large compound libraries [11] [17].
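To show how little information the simplest constitutional descriptors need, the sketch below derives molecular weight and atom counts from a flat molecular formula alone. The function names and the short atomic-weight table are assumptions for this example; toolkits such as RDKit compute the same quantities from a parsed structure.

```python
import re

# Standard atomic weights (rounded) for elements common in drug-like molecules.
ATOMIC_WEIGHT = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999,
                 "F": 18.998, "P": 30.974, "S": 32.06, "Cl": 35.45,
                 "Br": 79.904, "I": 126.904}

def atom_counts(formula):
    """Parse a flat molecular formula such as 'C9H8O4' into element counts."""
    counts = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[symbol] = counts.get(symbol, 0) + (int(number) if number else 1)
    return counts

def constitutional_descriptors(formula):
    """Return a few zero-dimensional descriptors computed from the formula."""
    counts = atom_counts(formula)
    mol_wt = sum(ATOMIC_WEIGHT[el] * n for el, n in counts.items())
    heavy = sum(n for el, n in counts.items() if el != "H")
    return {"MolWt": round(mol_wt, 2), "HeavyAtoms": heavy,
            "nC": counts.get("C", 0), "nN": counts.get("N", 0),
            "nO": counts.get("O", 0)}

desc = constitutional_descriptors("C9H8O4")    # aspirin
print(desc)
```

The parser handles only flat formulas without parentheses or charges, which is enough to illustrate the idea but not a general formula reader.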

Applications in Drug Discovery

Constitutional descriptors form the basis for drug-likeness filters such as Lipinski's Rule of Five, which uses molecular weight, H-bond donors, H-bond acceptors, and calculated logP to identify compounds with likely poor oral bioavailability [17]. In QSAR modeling, these descriptors provide baseline chemical information that often correlates with fundamental physicochemical properties and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) characteristics [18].

Despite their simplicity, constitutional descriptors frequently contribute significantly to QSAR models for properties dominated by bulk molecular features. For instance, molecular weight and rotatable bond count are important predictors of membrane permeability and oral bioavailability [17]. However, their limited chemical specificity makes them insufficient alone for modeling complex structure-activity relationships, necessitating supplementation with more sophisticated descriptor types.
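The Rule of Five filter described in this section reduces to four threshold checks on precomputed descriptors. The sketch below assumes the descriptor values have already been calculated elsewhere; the function names, the example values, and the convention of tolerating one violation are assumptions for illustration.

```python
def lipinski_violations(mol_wt, h_donors, h_acceptors, logp):
    """Return the Rule-of-Five criteria that a compound violates."""
    checks = {
        "MolWt > 500": mol_wt > 500,
        "HBD > 5": h_donors > 5,
        "HBA > 10": h_acceptors > 10,
        "logP > 5": logp > 5,
    }
    return [rule for rule, violated in checks.items() if violated]

def passes_rule_of_five(mol_wt, h_donors, h_acceptors, logp, max_violations=1):
    """Flag a compound as drug-like if it has at most max_violations violations."""
    flags = lipinski_violations(mol_wt, h_donors, h_acceptors, logp)
    return len(flags) <= max_violations

# Illustrative descriptor values (approximate for aspirin, hypothetical for the second).
aspirin_flags = lipinski_violations(mol_wt=180.16, h_donors=1, h_acceptors=4, logp=1.2)
big_flags = lipinski_violations(mol_wt=720.0, h_donors=6, h_acceptors=12, logp=6.3)
print(aspirin_flags, big_flags)
print(passes_rule_of_five(720.0, 6, 12, 6.3))
```

Returning the list of violated criteria, rather than a bare pass/fail, keeps the filter interpretable when it is used as a preliminary screen.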

Topological Descriptors

Theoretical Foundation

Topological descriptors, also known as 2D descriptors, are derived from the graph representation of a molecule, where atoms correspond to vertices and bonds to edges [11] [17]. These descriptors encode patterns of molecular connectivity using mathematical approaches from graph theory, capturing structural characteristics such as branching, cyclicity, and molecular complexity without requiring 3D coordinate information [17].

Key topological descriptors include various connectivity indices (e.g., Kier-Hall indices), path counts between atoms, and information-theoretic measures based on molecular symmetry and complexity [17]. These descriptors are typically generated from the hydrogen-suppressed molecular graph, focusing on the heavy atom skeleton and its connectivity pattern.
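Two classic graph-based descriptors make the idea concrete. The sketch below computes the Wiener index (a path-count descriptor) and the simple first-order Randić connectivity index, which is the non-valence form of the connectivity indices, from a hydrogen-suppressed graph given as an adjacency list. The adjacency-list input format is an assumption of this example.

```python
from collections import deque

def wiener_index(adjacency):
    """Sum of shortest-path bond counts over all unordered heavy-atom pairs."""
    total = 0
    for source in adjacency:
        dist = {source: 0}
        queue = deque([source])
        while queue:                       # breadth-first search from source
            atom = queue.popleft()
            for nbr in adjacency[atom]:
                if nbr not in dist:
                    dist[nbr] = dist[atom] + 1
                    queue.append(nbr)
        total += sum(dist.values())
    return total // 2                      # every pair was counted twice

def randic_index(adjacency):
    """First-order connectivity index: sum over bonds of 1/sqrt(deg_i * deg_j)."""
    total = 0.0
    for i, nbrs in adjacency.items():
        for j in nbrs:
            if i < j:                      # visit each bond once
                total += (len(adjacency[i]) * len(adjacency[j])) ** -0.5
    return total

# Hydrogen-suppressed graphs of the two C4H10 isomers (atom index -> neighbours).
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1], 1: [0, 2, 3], 2: [1], 3: [1]}

print(wiener_index(n_butane), wiener_index(isobutane))      # 10 and 9
print(round(randic_index(n_butane), 3), round(randic_index(isobutane), 3))
```

Both isomers share the same molecular formula, so constitutional descriptors cannot tell them apart, while both topological indices are lower for the branched isomer.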

QSAR Applications and Significance

Topological descriptors have demonstrated exceptional utility in QSAR modeling across diverse applications. A comprehensive comparison of descriptor types for ADME-Tox prediction found that 2D descriptors frequently outperform fingerprint-based representations for targets including Ames mutagenicity, hERG inhibition, and blood-brain barrier permeability [18]. The study revealed that models built using traditional 2D descriptors achieved superior performance compared to those using Morgan fingerprints or MACCS keys across multiple machine learning algorithms [18].

The strength of topological descriptors lies in their ability to capture molecular complexity and substructural patterns that correlate with biological activity while remaining invariant to molecular conformation and orientation [17]. This makes them particularly valuable for high-throughput screening applications where 3D structure information may be unavailable or computationally prohibitive. Additionally, certain topological descriptors offer favorable interpretability, allowing medicinal chemists to trace model predictions back to specific structural features for rational drug design [11].

Electronic Descriptors

Quantum Chemical Foundations

Electronic descriptors quantify the electronic distribution and reactivity properties of molecules, derived from quantum mechanical calculations that solve the electronic Schrödinger equation for molecular systems [3] [17]. These descriptors provide insight into how molecules interact with biological targets through electrostatic, polar, and charge-transfer interactions. Essential electronic descriptors include HOMO-LUMO energies (Highest Occupied and Lowest Unoccupied Molecular Orbitals), HOMO-LUMO gap, dipole moment, atomic partial charges, and electrostatic potential surfaces [3] [17].

The computation of electronic descriptors typically involves quantum chemistry methods such as Density Functional Theory (DFT), which offers an optimal balance between accuracy and computational cost for drug-sized molecules [3]. Recent advances include machine learning potentials that dramatically accelerate these calculations while maintaining quantum-level accuracy [19].
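Once orbital energies are available from a quantum chemistry calculation, several electronic descriptors follow by simple arithmetic. The sketch below assumes the common Koopmans-type approximation (ionization energy taken as the negative HOMO energy, electron affinity as the negative LUMO energy); the function name and the example energies are hypothetical.

```python
def frontier_orbital_descriptors(e_homo, e_lumo):
    """Derive simple electronic descriptors from frontier orbital energies (eV).

    Assumes I ~ -E_HOMO and A ~ -E_LUMO (Koopmans-type approximation):
      gap               = E_LUMO - E_HOMO
      hardness          = (I - A) / 2
      electronegativity = (I + A) / 2
    """
    gap = e_lumo - e_homo
    return {
        "gap": gap,
        "hardness": gap / 2.0,
        "electronegativity": -(e_homo + e_lumo) / 2.0,
    }

# Hypothetical orbital energies in eV, as might be read from a DFT output file.
d = frontier_orbital_descriptors(e_homo=-6.2, e_lumo=-1.4)
print({name: round(value, 2) for name, value in d.items()})
```

The quality of these derived values depends entirely on the level of theory used for the orbital energies, so descriptors from different methods should not be mixed in one data set.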

Applications in Mechanism-Based QSAR

Electronic descriptors are indispensable for modeling biological activities where electronic interactions dominate the structure-activity relationship. The HOMO-LUMO gap, representing the energy required for electron excitation, frequently correlates with metabolic stability and reactivity [3]. Dipole moments and electrostatic potential maps help predict binding orientations in protein active sites and solvation effects [17].

In studies of persistent organic pollutants (POPs), HOMOEnergyDMol3 emerged as a critical descriptor for predicting air half-lives, reflecting the importance of electron donation capability in atmospheric degradation processes [20]. For drug discovery applications, electronic descriptors enhance predictions of metabolic transformations, toxicity mechanisms, and targeted protein degradation systems like PROTACs, where electronic properties influence the formation of ternary complexes [3].

3D Molecular Descriptors

Structural and Conformational Representation

3D molecular descriptors encode information derived from the three-dimensional structure of molecules, including spatial arrangement, shape, and surface properties [3] [17]. These descriptors require generation of low-energy conformations, typically through molecular mechanics force fields or quantum chemical optimization [17]. Common 3D descriptors include molecular surface area (van der Waals, solvent-accessible), molecular volume, polar surface area (PSA), radius of gyration, and principal moments of inertia [17].

Advanced 3D descriptors capture more complex spatial properties, such as Comparative Molecular Field Analysis (CoMFA) fields that represent steric and electrostatic interactions at grid points around the molecule, and shape descriptors that quantify molecular similarity based on volume overlap [3]. The generation of these descriptors necessitates careful conformational analysis to identify representative structures, often focusing on the presumed bioactive conformation [17].
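The conformation dependence of 3D descriptors can be seen with one of the simplest, the radius of gyration. The sketch below computes it from atomic coordinates and masses for two toy arrangements of the same four atoms; the coordinates are idealized placeholders, not optimized geometries.

```python
def radius_of_gyration(coords, masses):
    """Mass-weighted radius of gyration, in the same length unit as coords."""
    total = sum(masses)
    center = [sum(m * c[k] for m, c in zip(masses, coords)) / total
              for k in range(3)]
    weighted_sq = sum(
        m * sum((c[k] - center[k]) ** 2 for k in range(3))
        for m, c in zip(masses, coords)
    )
    return (weighted_sq / total) ** 0.5

masses = [12.011] * 4                      # four carbon atoms

# Same atoms, two hypothetical conformations (coordinates in angstroms).
extended = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0), (4.5, 0.0, 0.0)]
folded = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0), (0.0, 1.5, 0.0)]

rg_extended = radius_of_gyration(extended, masses)
rg_folded = radius_of_gyration(folded, masses)
print(round(rg_extended, 3), round(rg_folded, 3))
```

The two values differ even though the 2D structure is identical, which is why the choice of conformer has to be documented alongside any 3D descriptor set.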

Applications in Structure-Based Drug Design

3D descriptors excel in QSAR applications where molecular shape and spatial complementarity to biological targets significantly influence activity. They are particularly valuable for structure-based drug design, enabling correlation of structural features with binding affinity when protein structure information is available [3]. Polar Surface Area (PSA) has become a widely adopted descriptor for predicting membrane permeability, including blood-brain barrier penetration [17] [18].

The evolution beyond 3D to 4D descriptors incorporates conformational flexibility by considering ensembles of molecular structures rather than single static conformations [3]. These ensemble-based descriptors provide more realistic representations of molecules under physiological conditions and have demonstrated improved performance in QSAR refinement and ligand-based pharmacophore modeling [3]. Recent studies indicate that while 3D descriptors can enhance model accuracy for specific endpoints, their performance advantage over comprehensive 2D descriptors is often target-dependent [18].
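One simple way to turn a conformer-dependent property into an ensemble-level descriptor is a Boltzmann-weighted average over conformers. The source does not prescribe a weighting scheme, so Boltzmann weighting, the temperature, and the example values below are assumptions for illustration only.

```python
import math

GAS_CONSTANT = 0.0019872                   # kcal/(mol*K)

def boltzmann_average(values, energies_kcal, temperature=298.15):
    """Average a per-conformer property, weighting each conformer by
    exp(-dE / RT), where dE is its energy above the lowest-energy conformer."""
    e_min = min(energies_kcal)
    weights = [math.exp(-(e - e_min) / (GAS_CONSTANT * temperature))
               for e in energies_kcal]
    z = sum(weights)
    return sum(w * v for w, v in zip(weights, values)) / z

# Hypothetical conformer ensemble: a property value and relative energy for each.
psa_per_conformer = [90.0, 75.0, 60.0]     # e.g. 3D polar surface area
relative_energy = [0.0, 0.5, 2.0]          # kcal/mol

ensemble_psa = boltzmann_average(psa_per_conformer, relative_energy)
print(round(ensemble_psa, 1))
```

With equal energies the function reduces to a plain mean; here the result is pulled toward the value of the lowest-energy conformer.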

Table 2: Computational Tools for Molecular Descriptor Calculation

| Software Tool | Descriptor Coverage | Key Features | License |

|---|---|---|---|

| RDKit | Comprehensive 1D, 2D, limited 3D | Open-source, Python integration, descriptor and fingerprint calculation | Open-source |

| PaDEL-Descriptor | 1D, 2D, 3D, and fingerprint types | Standalone Java software, fast calculation of ~1875 descriptors and 12 fingerprint types | Free |

| Dragon | Extensive (over 5,000 descriptors) | Commercial grade, broad descriptor range, well-validated | Commercial |

| Mordred | 1D, 2D (over 1,800 descriptors) | Python-based, compatible with scikit-learn | Open-source |

| Schrödinger | Comprehensive 2D, 3D, quantum | Integrated drug discovery suite, high-quality 3D structures | Commercial |

Experimental Protocols for Descriptor Evaluation

Benchmarking Methodology for Descriptor Performance

Rigorous evaluation of molecular descriptor sets requires systematic benchmarking protocols. A representative methodology involves curating diverse datasets with known biological activities, calculating multiple descriptor types, and building QSAR models using different machine learning algorithms with standardized validation procedures [18]. For example, in ADME-Tox descriptor comparisons, datasets should include 1,000+ compounds with balanced active/inactive ratios for reliable statistics [18].

The experimental workflow typically includes: (1) data curation (removing duplicates, standardizing structures, handling missing values); (2) descriptor calculation using multiple software tools; (3) descriptor preprocessing (removing constant and highly correlated variables, normalization); (4) model building with various algorithms (e.g., XGBoost, SVM, Neural Networks); and (5) comprehensive validation using both internal (cross-validation) and external test sets [11] [18]. Performance metrics should extend beyond simple accuracy to include area under ROC curve, precision-recall curves, and applicability domain assessment [11] [18].
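To see why accuracy alone can mislead on imbalanced activity data, the sketch below computes the area under the ROC curve directly from its rank interpretation: the probability that a randomly chosen active is scored above a randomly chosen inactive. The labels and scores are made up for the example.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (active, inactive) pairs ranked correctly;
    tied scores count as half a correct pair."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def accuracy(labels, scores, threshold=0.5):
    """Fraction of compounds classified correctly at a fixed score threshold."""
    preds = [1 if s >= threshold else 0 for s in scores]
    return sum(p == l for p, l in zip(preds, labels)) / len(labels)

# Imbalanced toy set: 2 actives, 8 inactives.
labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
model_scores = [0.9, 0.8, 0.4, 0.3, 0.3, 0.2, 0.2, 0.1, 0.1, 0.1]
trivial_scores = [0.0] * 10                # always predicts "inactive"

print(accuracy(labels, model_scores), roc_auc(labels, model_scores))
print(accuracy(labels, trivial_scores), roc_auc(labels, trivial_scores))
```

Both scorers reach the same accuracy on this set, but only the first ranks actives ahead of inactives, which the AUC exposes.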

Case Study: ADME-Tox Descriptor Comparison

A recent benchmark study compared descriptor performance across six ADME-Tox targets (Ames mutagenicity, P-glycoprotein inhibition, hERG inhibition, hepatotoxicity, blood-brain barrier permeability, and CYP 2C9 inhibition) using two machine learning algorithms (XGBoost and RPropMLP) [18]. The research implemented strict data curation protocols including salt removal, element filtering (C, H, N, O, S, P, F, Cl, Br, I), and 3D structure optimization with Schrödinger's Macromodel [18].

Results demonstrated that traditional 2D descriptors frequently outperformed fingerprint-based representations; for almost every dataset, models built on 2D descriptors alone were also better than models built on combinations of descriptor sets [18]. This finding highlights the enduring value of well-curated 2D descriptors despite the increasing popularity of fingerprint-based approaches and deep learning representations.

Visualization of QSAR Workflow with Molecular Descriptors

This workflow diagram illustrates the systematic process of incorporating diverse molecular descriptors into QSAR modeling pipelines, highlighting how different descriptor categories contribute to machine learning-based activity prediction and drug design.

Table 3: Essential Computational Tools for Descriptor-Based QSAR

| Tool/Resource | Type | Primary Function | Application in QSAR |

|---|---|---|---|

| RDKit | Cheminformatics Library | Molecular descriptor calculation and fingerprint generation | Open-source platform for calculating 1D, 2D descriptors and molecular fingerprints [17] |

| PaDEL-Descriptor | Software Package | Molecular descriptor and fingerprint calculation | Standalone tool for calculating ~1875 molecular descriptors and 12 types of fingerprints [11] [17] |

| Dragon | Commercial Software | Comprehensive descriptor calculation | Industry-standard tool generating >5,000 molecular descriptors for QSAR modeling [3] [17] |

| Schrödinger Suite | Commercial Drug Discovery Platform | Molecular modeling and descriptor calculation | Integrated environment for generating high-quality 3D structures and advanced molecular descriptors [18] |

| scikit-learn | Machine Learning Library | Model building and feature selection | Python library for machine learning algorithms, feature selection, and model validation in QSAR [3] |

| AutoDock/Gnina | Molecular Docking Software | Protein-ligand docking and pose prediction | Structure-based approaches that complement ligand-based QSAR; Gnina uses CNN for scoring poses [19] |

Emerging Trends and Future Directions

The field of molecular descriptors continues to evolve with several emerging trends shaping future QSAR research. Causal inference frameworks are being developed to address confounding in high-dimensional descriptor spaces, using methods like Double Machine Learning to identify descriptors with genuine causal effects on biological activity rather than mere correlation [21]. Quantum machine learning approaches demonstrate potential advantages for QSAR prediction, particularly when dealing with limited data availability, as quantum classifiers may offer superior generalization power with reduced feature sets [22].

The integration of AI-generated descriptors with traditional knowledge-based representations represents a promising direction, combining the pattern recognition strength of deep learning with the interpretability of established descriptors [3] [19]. Additionally, federated learning approaches enable collaborative model development across institutions while preserving data privacy, facilitating the creation of more robust QSAR models using diverse chemical datasets without sharing proprietary information [23].

As these advancements mature, molecular descriptors will continue to serve as the foundational elements connecting chemical structure to biological activity, driving innovation in drug discovery through increasingly sophisticated QSAR methodologies that leverage the complementary strengths of computational chemistry and machine learning.

In the contemporary landscape of drug discovery and environmental chemistry, Quantitative Structure-Activity Relationship (QSAR) modeling has emerged as a pivotal computational approach that mathematically links a chemical compound's structure to its biological activity or physicochemical properties [11]. The foundation of any robust QSAR model rests on three critical pillars: a high-quality dataset of molecules with known activities, powerful machine learning algorithms to discern complex patterns, and sophisticated software tools that can translate molecular structures into numerical representations known as descriptors [24]. These molecular descriptors quantitatively capture structural, physicochemical, and electronic properties of molecules, serving as the essential input variables for QSAR models [11] [25].

The evolution of cheminformatics platforms has fundamentally transformed QSAR research from a traditionally linear, hypothesis-driven discipline to a data-rich, artificial intelligence (AI)-powered paradigm [24]. Modern QSAR workflows now leverage advanced machine learning (ML) and deep learning techniques to navigate complex chemical spaces and predict biological activities. This section provides a technical overview of essential software tools that are shaping QSAR research in 2025: open-source solutions such as PaDEL-Descriptor and RDKit, the commercial descriptor calculator Dragon (with its free E-Dragon web interface), and leading commercial platforms. By examining their capabilities, integration potential, and specific applications in ML-driven QSAR workflows, it aims to equip researchers, scientists, and drug development professionals with the knowledge to select and implement the most appropriate tools for their computational research objectives.

Core Open-Source Cheminformatics Tools

Open-source cheminformatics tools have become fundamental components of modern QSAR research pipelines, offering transparency, flexibility, and cost-effectiveness. These tools primarily function as molecular descriptor calculators and chemical intelligence engines that feed machine learning algorithms with structurally encoded information.

RDKit: The Open-Source Standard

RDKit is an open-source cheminformatics toolkit (BSD-licensed) written in C++ with Python bindings that has become a de facto standard in the field due to its comprehensive functionality, high performance, and active community [26]. While RDKit is a library rather than a standalone graphical application, it provides robust core chemistry functions including molecule I/O, substructure search, fingerprint generation, descriptor calculation, and chemical reaction handling [26]. Its continuous development and updating by the community ensures it remains at the forefront of cheminformatics methodology.

RDKit offers a rich set of molecular fingerprint algorithms and similarity functions, including Morgan fingerprints (circular fingerprints akin to ECFP), classical Daylight-type path fingerprints (RDKit Fingerprint), Topological Torsion and Atom Pair fingerprints, and MACCS keys [26]. These fingerprints serve as critical inputs for machine learning models, particularly for similarity searching and clustering tasks. The toolkit also provides extensive capabilities for virtual screening through fast substructure searches and 2D similarity searches on large chemical libraries, especially when combined with its PostgreSQL cartridge or in-memory fingerprint indices [26]. A key strength of RDKit lies in its integration potential; it features Python, C++, Java, and JavaScript interfaces, allowing it to plug into diverse environments and connect with docking programs, machine learning frameworks, and visualization tools [26].
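Fingerprint-based similarity searching usually comes down to a Tanimoto comparison of on-bits. The sketch below implements that comparison on plain Python sets so it runs without a toolkit; the bit indices and compound names are hypothetical, and in practice the bits would come from a fingerprint generator such as RDKit's Morgan fingerprints.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits.
    Two empty fingerprints are scored 0.0 in this sketch."""
    a, b = set(fp_a), set(fp_b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

# Hypothetical on-bit indices for a query and a small screening library.
query = {12, 80, 314, 650, 1021}
library = {
    "cmpd_A": {12, 80, 314, 650, 999},
    "cmpd_B": {12, 77, 500},
    "cmpd_C": {3, 4, 5},
}

# Rank library compounds from most to least similar to the query.
ranked = sorted(library, key=lambda name: tanimoto(query, library[name]),
                reverse=True)
for name in ranked:
    print(name, round(tanimoto(query, library[name]), 3))
```

The same function works for any bit-based fingerprint, so swapping Morgan bits for MACCS keys changes only how the input sets are produced.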

Dragon and E-Dragon: The Descriptor Specialists

Dragon is a specialized application for calculating molecular descriptors, developed by the Milano Chemometrics and QSAR Research Group [25]. It is one of the most comprehensive descriptor calculation tools available: the version described in [25] generates more than 1,600 molecular descriptors divided into 20 logical blocks [25], while the later releases cited elsewhere in this guide exceed 5,000 descriptors. These descriptors range from simple atom type and functional group counts to complex topological and geometrical descriptors [25]. Dragon requires 3D optimized molecular structures with hydrogen atoms as input, accepting common molecular file formats [25].

The E-Dragon platform provides a web-accessible interface to Dragon's descriptor calculation capabilities, with some limitations: it is based on Dragon 5.4 and can analyze a maximum of 149 molecules, each with at most 150 atoms [25]. For researchers requiring 3D structure generation, E-Dragon integrates CORINA (provided by Molecular Networks GmbH) to calculate 3D atom coordinates when they are unavailable in the input files [25]. Dragon's extensive descriptor sets have been widely adopted in regulatory and research applications, forming the computational foundation for numerous QSAR projects and models, including those integrated into the US EPA's Toxicity Estimation Software Tool (TEST) and the European CAESAR project for REACH legislation implementation [27].

PaDEL-Descriptor: The Java-Based Alternative

PaDEL-Descriptor is an open-source, Java-based application that provides a comprehensive suite of descriptor and fingerprint calculation capabilities [11]. It is recognized alongside Dragon, RDKit, Mordred, ChemAxon, and OpenBabel as one of the primary software packages available to calculate a wide variety of molecular descriptors [11]. These tools can generate hundreds to thousands of descriptors for a given set of molecules, making careful selection of the most relevant descriptors crucial for building robust and interpretable QSAR models [11].

Table 1: Comparison of Core Open-Source Cheminformatics Tools

| Tool | Primary Function | Descriptor Count | Key Features | Input Requirements | Integration & Licensing |

|---|---|---|---|---|---|

| RDKit | Comprehensive cheminformatics | Not specified (wide variety) | Multiple fingerprint types, substructure search, 3D conformer generation, Python/C++ APIs | SMILES, SDF, Mol, etc. | Open-source (BSD), Python/C++/Java/JS bindings, KNIME nodes |

| Dragon | Molecular descriptor calculation | >1,600 descriptors [25] | 20 descriptor blocks, extensive topological/geometrical descriptors | 3D optimized structures with hydrogens | Commercial, used in TEST, CAESAR, OCHEM [27] |

| E-Dragon | Online descriptor calculation | >1,600 descriptors [25] | Web-based Dragon interface, integrated 3D structure generation | SMILES, SDF, MOL2 files | Free web service (149 molecule limit) [25] |

| PaDEL-Descriptor | Descriptor & fingerprint calculation | ~1,875 descriptors, 12 fingerprint types [11] [17] | Java-based, cross-platform compatibility | Molecular structure files | Open-source [11] |

Commercial Cheminformatics Platforms

Commercial cheminformatics platforms offer integrated, user-friendly solutions that often combine descriptor calculation, model building, and visualization capabilities within unified environments. These platforms are particularly valuable in regulated industries and for organizations requiring robust technical support.

MOE (Molecular Operating Environment)

The Chemical Computing Group's MOE offers an all-in-one platform for drug discovery that integrates molecular modeling, cheminformatics, and bioinformatics [28]. MOE excels in structure-based drug design, molecular docking, and QSAR modeling, while supporting critical tasks like ADMET prediction and protein engineering [28]. Its user-friendly interface and interactive 3D visualization tools make it accessible for a wide range of researchers, from computational specialists to medicinal chemists. MOE employs modular workflows, machine learning integration, and flexible licensing options, positioning it as a comprehensive solution for organizations of all sizes [28].

Schrödinger Suite

Schrödinger's platform integrates advanced quantum chemical methods with machine learning approaches for molecular catalyst design and drug discovery [28]. Their flagship product, LiveDesign, provides an entry point into most of Schrödinger's tools with scalable licensing. A key differentiator is Schrödinger's development of novel scoring functions, including GlideScore, which is specifically designed to maximize separation of compounds with strong binding affinity from those with little to no binding ability [28]. The platform also includes DeepAutoQSAR, a machine learning solution for predicting molecular properties based on chemical structure [28]. Schrödinger has partnered with Google Cloud to substantially increase the speed and capacity of its physics-based molecule modeling platform, enabling the simulation of billions of potential compounds per week [28].

ChemAxon Suite

ChemAxon offers a comprehensive suite of cheminformatics software tools, including the Plexus Suite and Design Hub, which are widely used in industry for enterprise-level chemical data management [28] [26]. The Plexus Suite is a web-based software package that incorporates ChemAxon's chemistry capabilities for accessing, displaying, searching, and analyzing scientific data [28]. It includes specialized tools such as Plexus Connect for data querying and visualization, Plexus Design for virtual library design, and Plexus Mining for chemically intelligent data mining [28]. Design Hub serves as ChemAxon's platform for compound design and tracking in drug discovery, connecting scientific hypotheses, candidate compound selection, and computational capabilities [28].

OECD QSAR Toolbox

The OECD QSAR Toolbox represents a specialized category of regulatory-focused software, developed to promote the use of (Q)SAR technology in regulatory contexts by making it "readily accessible, transparent, and less demanding in terms of infrastructure costs" [29]. This software application is intended for use by governments, chemical industry, and other stakeholders in filling gaps in (eco)toxicity data needed for assessing the hazards of chemicals [29]. The Toolbox incorporates information and tools from various sources into a logical workflow, with chemical categorization forming a crucial component of its methodology [29]. Its development has occurred in multiple phases, with version 4.7 released in July 2024 and version 4.8 in July 2025 [29].

Table 2: Commercial Cheminformatics Platforms for QSAR Research

| Platform | Primary Focus | Key QSAR Features | Target Users | Licensing Model |

|---|---|---|---|---|

| MOE | Integrated drug discovery | QSAR modeling, molecular docking, ADMET prediction, machine learning integration | Pharmaceutical R&D, academic research | Modular, flexible licensing [28] |

| Schrödinger | Physics-based modeling & ML | DeepAutoQSAR, quantum chemical methods, free energy calculations | Drug discovery organizations, computational chemists | Modular, scalable licensing [28] |

| ChemAxon | Chemical intelligence & enterprise data | Plexus Suite, Design Hub, chemical data management | Enterprise pharmaceutical companies, research institutions | Pay-per-use [28] |

| OECD QSAR Toolbox | Regulatory hazard assessment | Chemical categorization, read-across, (eco)toxicity prediction | Regulators, chemical industry, risk assessors | Free [29] |

| StarDrop | Small molecule design & optimization | Patented AI-guided optimization, QSAR models for ADME/physicochemical properties | Medicinal chemists, lead optimization teams | Modular pricing [28] |

| DataWarrior | Open-source data analysis & visualization | QSAR model development, molecular descriptors, machine learning integration | Academic researchers, small companies | Open-source [28] |

Integrated Workflows: From Molecular Structures to QSAR Predictions

The true power of modern cheminformatics tools emerges when they are integrated into cohesive workflows that transform molecular structures into reliable QSAR predictions. This section outlines standardized protocols and methodologies for leveraging these tools in ML-driven QSAR research.

Standardized QSAR Modeling Workflow

A typical QSAR modeling workflow incorporates multiple steps from data compilation to model validation [11]. The process begins with dataset compilation of chemical structures and associated biological activities from reliable sources, ensuring the dataset is high-quality and representative of the chemical space of interest [11]. This is followed by data cleaning and preprocessing, which involves removing duplicates, standardizing chemical structures (e.g., removing salts, normalizing tautomers, handling stereochemistry), converting biological activities to common units, and handling outliers or missing values [11]. The next step involves molecular descriptor calculation using tools like Dragon, RDKit, or PaDEL-Descriptor to generate numerical representations of the structural, physicochemical, and electronic properties of the compounds [11].
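The unit-conversion and duplicate-handling steps can be sketched in a few lines of plain Python. This is a minimal illustration, not a prescribed protocol: the structure keys are assumed to be already-standardized identifiers (for example canonical SMILES produced by a toolkit such as RDKit), the function names `to_p_activity` and `curate` are hypothetical, and merging replicates by their median is one common convention rather than a rule taken from the cited workflow.

```python
import math
from collections import defaultdict
from statistics import median

# Conversion factors from common concentration units to mol/L.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def to_p_activity(value, unit):
    """Convert a concentration (e.g. IC50 or Ki) to -log10 of its molar value."""
    if value <= 0:
        raise ValueError("concentration must be positive")
    return -math.log10(value * UNIT_TO_MOLAR[unit])


def curate(records):
    """Merge duplicate structures and return {structure_key: median pActivity}.

    `records` holds (structure_key, value, unit) tuples; value is None when
    the measurement is missing. Keys are assumed to be standardized already.
    """
    grouped = defaultdict(list)
    for key, value, unit in records:
        if value is None:  # drop missing measurements
            continue
        grouped[key].append(to_p_activity(value, unit))
    return {key: median(vals) for key, vals in grouped.items()}


# Illustrative records only; the values are not experimental data.
raw = [
    ("CCO", 10.0, "uM"),
    ("CCO", 1000.0, "nM"),     # same structure reported in different units
    ("c1ccccc1", 50.0, "nM"),
    ("CC(=O)O", None, "nM"),   # missing value
]
curated = curate(raw)
```

Working on the negative-log scale before merging keeps replicate measurements that differ by orders of magnitude from being dominated by the largest raw value.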

Feature selection techniques are then applied to identify the most relevant descriptors, which helps avoid overfitting and improves model interpretability [11]. The curated dataset is subsequently split into training and test sets, often using methods like the Kennard-Stone algorithm, to enable proper model validation [11]. The core model building phase employs regression or classification algorithms such as multiple linear regression (MLR), partial least squares (PLS), random forest, or more advanced machine learning techniques [11]. Finally, the models undergo rigorous validation using internal (e.g., cross-validation) and external test sets to assess predictive performance and robustness, with careful evaluation of the applicability domain to determine the chemical space where the models can make reliable predictions [11].
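As a rough illustration of the splitting step, the sketch below implements the usual formulation of the Kennard-Stone procedure: seed the training set with the two most distant compounds, then repeatedly add the compound whose nearest already-selected neighbour is farthest away. Euclidean distance on descriptor vectors is an assumption here (descriptors would normally be scaled first), and the function name `kennard_stone` and the toy data are hypothetical.

```python
import math


def kennard_stone(X, n_train):
    """Return (train_idx, test_idx) chosen by the Kennard-Stone procedure.

    X is a list of descriptor vectors, ideally scaled beforehand.
    """
    n = len(X)
    if not 2 <= n_train <= n:
        raise ValueError("n_train must be between 2 and len(X)")
    dist = [[math.dist(a, b) for b in X] for a in X]

    # Seed with the two most distant samples.
    i0, j0 = max(((i, j) for i in range(n) for j in range(i + 1, n)),
                 key=lambda p: dist[p[0]][p[1]])
    selected = [i0, j0]
    remaining = [k for k in range(n) if k not in selected]

    # Repeatedly add the sample farthest from its nearest selected neighbour.
    while len(selected) < n_train:
        nxt = max(remaining, key=lambda k: min(dist[k][s] for s in selected))
        selected.append(nxt)
        remaining.remove(nxt)
    return selected, remaining


# Toy two-descriptor data set (values are illustrative only).
X = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [10.0, 10.0], [5.0, 5.0], [0.5, 0.5]]
train_idx, test_idx = kennard_stone(X, n_train=4)
```

Because the selected compounds span the extremes of descriptor space, the held-out compounds tend to lie inside the region covered by the training set, which is worth keeping in mind when interpreting external test statistics.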

Diagram 1: QSAR Modeling Workflow. This diagram illustrates the standardized workflow for QSAR model development, highlighting the integration points for various software tools at different stages.

Machine Learning Integration in QSAR

The integration of machine learning with cheminformatics tools has significantly expanded the capabilities of QSAR modeling. Modern QSAR research employs a diverse array of ML algorithms, ranging from traditional methods to advanced deep learning techniques [30]. Commonly used algorithms include Support Vector Machines (SVM), which are particularly effective for handling non-linear relationships in high-dimensional descriptor spaces; Random Forests, which provide robust performance and feature importance metrics; Artificial Neural Networks (ANNs), which can capture complex non-linear patterns; and Gradient Boosting methods, which often deliver state-of-the-art predictive performance [24].

The selection between linear and non-linear QSAR models depends on the complexity of the structure-activity relationship and the size and quality of the available data [11]. Linear models, including Multiple Linear Regression (MLR) and Partial Least Squares (PLS), assume a straightforward relationship between molecular descriptors and biological activity, offering higher interpretability but potentially limited predictive power for complex endpoints [11]. Non-linear models, including those based on ANN or SVM, can capture more intricate patterns but require larger datasets for training and are more prone to overfitting without proper validation [11]. One comparative study illustrates the distinction: linear PLS and non-linear ANN models were both built to predict the antioxidant capacity of phenolic compounds, and the ANN model showed stronger predictive performance, indicating that non-linear relationships between molecular descriptors and biological activity matter for this endpoint and likely for many others [11].
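The trade-off can be made concrete with a toy comparison. The sketch below uses a synthetic, noise-free data set in which activity depends parabolically on a single descriptor, and compares a straight-line fit against a simple non-linear learner (k-nearest-neighbour regression, standing in here for ANN or SVM) using leave-one-out cross-validated Q². The data, function names, and choice of k are assumptions made purely for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x with a single descriptor."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return lambda x: my + b * (x - mx)


def fit_knn(xs, ys, k=2):
    """k-nearest-neighbour regression: mean activity of the k closest compounds."""
    def predict(x):
        nearest = sorted(range(len(xs)), key=lambda i: abs(xs[i] - x))[:k]
        return sum(ys[i] for i in nearest) / k
    return predict


def loo_q2(fit, xs, ys):
    """Leave-one-out cross-validated Q2 = 1 - PRESS / SS_total."""
    mean_y = sum(ys) / len(ys)
    press = 0.0
    for i in range(len(xs)):
        # Refit without compound i, then predict it.
        model = fit(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - model(xs[i])) ** 2
    ss_total = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - press / ss_total


# Synthetic parabolic structure-activity relationship (not experimental data).
xs = [i / 4 for i in range(-8, 9)]
ys = [-(x ** 2) for x in xs]
q2_linear = loo_q2(fit_line, xs, ys)
q2_knn = loo_q2(fit_knn, xs, ys)
```

On this data the straight line cannot follow the curvature, so its Q² falls below zero, while the neighbour-based model recovers most of the variance. With small or noisy real datasets the ranking can easily reverse, which is why the validation step matters more than the model family.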

Advanced Applications: Case Study on Cyclodextrin Complexes

Recent advances in QSAR methodologies are exemplified by sophisticated applications such as predicting the thermodynamic stability of cyclodextrin inclusion complexes [24]. Cyclodextrins are macrocyclic rings composed of glucose residues that form host-guest inclusion complexes, making them valuable in pharmaceutical, cosmetic, and food industries [24]. QSAR/QSPR approaches have been successfully employed to predict stability constants (log K) for these complexes, utilizing molecular descriptors of guest molecules in conjunction with various machine learning algorithms [24].

This application demonstrates the power of integrating comprehensive descriptor sets (such as those generated by Dragon or RDKit) with advanced ML techniques to address complex molecular interaction problems. The success of these models relies on three crucial components: a high-quality dataset of experimental stability constants, comprehensive molecular descriptors characterizing guest structure and physicochemistry, and appropriate ML algorithms that quantitatively express the relationship between descriptors and complex stability [24]. Such advanced applications highlight the growing sophistication of QSAR methodologies and their utility in predicting complex molecular interactions beyond traditional biological activity endpoints.
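Since a stability constant is an equilibrium constant, a predicted log K can be translated into a standard free energy of complexation through the thermodynamic identity ΔG° = -RT ln K. The short sketch below performs that conversion; it assumes K is expressed relative to the usual 1 M standard state and a temperature of 298.15 K, and the function name is hypothetical.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)


def log_k_to_delta_g(log_k, temperature_k=298.15):
    """Standard free energy of complexation (kJ/mol) from log10 K."""
    return -R * temperature_k * math.log(10) * log_k / 1000.0


# Example: a complex with log K = 3 at 25 degrees Celsius.
delta_g = log_k_to_delta_g(3.0)
```

The same factor (about 5.7 kJ/mol per log unit at room temperature) gives a quick way to express a model's error in log K as an error in binding free energy.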

Essential Research Reagents and Computational Solutions

The experimental and computational infrastructure supporting modern QSAR research comprises both software tools and critical data resources. The table below details key "research reagent solutions" essential for conducting robust QSAR studies.

Table 3: Essential Research Reagents & Computational Solutions for QSAR Research

| Resource Category | Specific Tools/Databases | Function in QSAR Research | Access & Licensing |
|---|---|---|---|
| Descriptor Calculation Tools | Dragon, RDKit, PaDEL-Descriptor, Mordred | Generate numerical representations of molecular structures for ML model input | Commercial, Open-source [11] |
| Integrated Modeling Platforms | MOE, Schrödinger, StarDrop, OECD QSAR Toolbox | Provide end-to-end environments for QSAR model building and validation | Commercial, Free [28] [29] |
| Chemical Databases | PubChem, DrugBank, ZINC15, ChEMBL | Supply chemical structures and associated bioactivity data for training sets | Publicly accessible [31] |
| Machine Learning Frameworks | Scikit-learn, TensorFlow, PyTorch, XGBoost | Implement algorithms for building predictive QSAR models | Open-source |
| Specialized QSAR Applications | CAESAR, TEST, OCHEM, VEGA | Offer pre-validated models for specific endpoints (toxicity, environmental fate) | Free, Web-based [32] [27] |
| Data Preprocessing Tools | RDKit, KNIME, Pipeline Pilot | Handle structure standardization, duplicate removal, feature scaling | Open-source, Commercial [11] [31] |

The landscape of cheminformatics tools for QSAR research offers diverse solutions ranging from specialized open-source descriptor calculators to comprehensive commercial platforms. The strategic selection of appropriate tools depends on multiple factors, including research objectives, available computational expertise, regulatory requirements, and budget constraints. Open-source tools like RDKit and PaDEL-Descriptor provide unparalleled transparency and customization potential, making them ideal for academic research and method development. Commercial platforms such as MOE and Schrödinger offer integrated, user-friendly environments with robust technical support, catering well to industrial drug discovery pipelines. Regulatory-focused tools like the OECD QSAR Toolbox address specific needs for hazard assessment within regulatory frameworks.

As QSAR methodology continues to evolve, several trends are shaping tool development and application: the deepening integration of machine learning and artificial intelligence, the expansion of applicability domains to cover more complex endpoints, the growing importance of model interpretability and regulatory acceptance, and the emergence of hybrid approaches that combine multiple tools in optimized workflows. By understanding the capabilities, strengths, and limitations of the various software tools available, researchers can construct more effective, reliable, and predictive QSAR models that accelerate drug discovery, improve chemical safety assessment, and advance our fundamental understanding of structure-activity relationships across diverse chemical domains.

The Critical Importance of Data Curation and Standardization

In Quantitative Structure-Activity Relationship (QSAR) research, accurate, reliable, and broadly applicable machine learning models depend fundamentally on the quality of the underlying data. QSAR modeling mathematically links a chemical compound's structure to its biological activity or properties, and it supports drug discovery and environmental chemistry by prioritizing promising drug candidates and reducing animal testing [11]. These models operate on the principle that structural variations systematically influence biological activity, using physicochemical properties and molecular descriptors as predictor variables [11]. While advances in mathematical algorithms and descriptor development have propelled the field forward, the generalization capability and predictive power of any QSAR model are ultimately constrained by the data from which it is derived [33]. As one comprehensive review notes, "a high-quality dataset is the cornerstone of building an effective QSAR model" [33]. This section explains why rigorous data curation and standardization are not merely preliminary steps but continuous, critical processes that determine the success or failure of computational predictive modeling in the chemical sciences.

The development of QSAR models applicable to general molecules remains a significant challenge, primarily due to issues of molecular structure representation, inadequacy of molecular datasets, and limitations in model interpretability and predictive power [33]. These challenges underscore the necessity for meticulous data management. The "garbage in, garbage out" paradigm is particularly pertinent: without precise molecular descriptors and standardized, high-quality data, even the most sophisticated deep learning architectures will produce unreliable predictions [33]. This section provides researchers, scientists, and drug development professionals with a technical examination of data curation methodologies, experimental protocols for data standardization, and practical visualization tools to enhance the reliability and regulatory acceptance of QSAR models in real-world applications.

The Critical Role of Data in QSAR Modeling

Fundamental Data Requirements for Robust QSAR

QSAR models are fundamentally data-driven, constructed based on molecular training sets that must satisfy several critical criteria to ensure model validity [33]. The quality and representativeness of these datasets directly influence a model's prediction and generalization capabilities [33]. Three primary data characteristics are essential:

- Structural Diversity: Datasets must encompass a wide variety of chemical structures to adequately represent the chemical space of interest, enabling models to recognize patterns beyond narrow structural classes [33] [11].

- Quantitative Activity Data: Biological activity data, typically obtained through rigorous experimentation, should be quantitative, consistently measured, and expressed in standardized units (e.g., Ki, IC50) to allow for meaningful comparative analysis [34] [11].

- Mechanistic Relevance: The structural features and descriptors encoded in the dataset should have plausible correlations with the biological activity or property being modeled, reflecting underlying mechanistic relationships [33].
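Structural diversity, the first requirement above, can be quantified in a simple way as the mean pairwise Tanimoto distance between compound fingerprints. The sketch below assumes each fingerprint is available as a set of "on" bit positions (as could be exported from a toolkit such as RDKit); the example fingerprints and the function names are hypothetical.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def mean_pairwise_distance(fingerprints):
    """Average (1 - Tanimoto) over all compound pairs; higher means more diverse."""
    pairs = list(combinations(fingerprints, 2))
    return sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)


# Hypothetical fingerprints written as sets of 'on' bit positions.
congeneric = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 6}]
diverse = [{1, 2, 3, 4}, {5, 6, 7, 8}, {1, 9, 10, 11}]

diversity_congeneric = mean_pairwise_distance(congeneric)
diversity_diverse = mean_pairwise_distance(diverse)
```

A congeneric series that shares most of its bits scores low on this measure, signalling that a model trained on it is unlikely to generalize far beyond that scaffold.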

Pursuing a universal QSAR model capable of reliably predicting the properties of general molecules poses significant data challenges. It requires "having a sufficient number of structure-activity relationship instances as training data to cope with the complexity and diversity of molecular structures and action mechanisms" [33]. This necessitates not only large volumes of data but also broad coverage of chemical space and biological endpoints.

Consequences of Inadequate Data Practices

Failure to implement robust data curation and standardization protocols leads to several critical failures in QSAR modeling:

- Poor Predictive Accuracy: Models trained on uncurated data often fail to generalize beyond their training set, producing inaccurate predictions for new chemical entities [33].

- Limited Applicability Domain: The chemical space where models can make reliable predictions becomes artificially constrained when datasets lack diversity or contain biases [33].

- Reduced Regulatory Acceptance: Regulatory agencies increasingly require transparent documentation of data quality and standardization procedures, with inadequate practices limiting the use of models in safety assessment [35].

The GenoITS workflow for genotoxicity assessment demonstrates the regulatory importance of standardized data, integrating experimental data and QSAR predictions within a structured framework following REACH regulations [35]. Such integration is only possible with rigorously curated and standardized data sources.
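The applicability-domain limitation listed above can be checked with a simple distance-based heuristic: a query compound is treated as inside the domain if its mean distance to the k nearest training compounds does not exceed a threshold derived from the training set itself. The sketch below is one of several possible formulations; the threshold rule (mean plus z standard deviations), the values of k and z, and all function names are assumptions for illustration, and descriptors are assumed to be scaled beforehand.

```python
import math
from statistics import mean, pstdev


def knn_mean_distance(x, reference, k):
    """Mean Euclidean distance from x to its k nearest points in reference."""
    dists = sorted(math.dist(x, r) for r in reference)
    return mean(dists[:k])


def fit_domain(train, k=3, z=2.0):
    """Distance threshold: mean + z * std of the training set's k-NN distances."""
    scores = []
    for i, x in enumerate(train):
        others = train[:i] + train[i + 1:]  # exclude the compound itself
        scores.append(knn_mean_distance(x, others, k))
    return mean(scores) + z * pstdev(scores)


def in_domain(x, train, threshold, k=3):
    """True if the query lies within the distance-based applicability domain."""
    return knn_mean_distance(x, train, k) <= threshold


# Toy scaled descriptor vectors (illustrative only).
train = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
threshold = fit_domain(train)

near_query_ok = in_domain([0.4, 0.6], train, threshold)
far_query_ok = in_domain([5.0, 5.0], train, threshold)
```

Predictions for compounds flagged as outside the domain are better reported with a warning than silently trusted, which also supports the documentation expectations of regulatory reviewers.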

Data Curation: Methodologies and Protocols

Data curation encompasses the comprehensive process of collecting, cleaning, and preparing chemical and biological data for QSAR modeling. The following experimental protocols detail the key stages of this process.

Dataset Collection and Compilation

The initial phase involves gathering a comprehensive set of chemical structures and their associated biological activities from reliable sources.

- Protocol 3.1.1: Multi-Source Data Aggregation

- Objective: Compile a structurally diverse dataset representing the relevant chemical space.

- Materials: Public databases (e.g., PubChem, ChEMBL), proprietary corporate databases, peer-reviewed literature, and patent sources.

- Procedure:

- Identify relevant data sources for the target endpoint (e.g., genotoxicity [35], ALK tyrosine kinase inhibition [34]).

- Extract chemical structures in standardized formats (SMILES, InChI).

- Collect associated biological activity values, noting experimental conditions and measurement types (Ki, IC50, etc.).