From Composition to Performance: Decoding Process-Structure-Property Relationships for Advanced Materials and Biomedical Applications

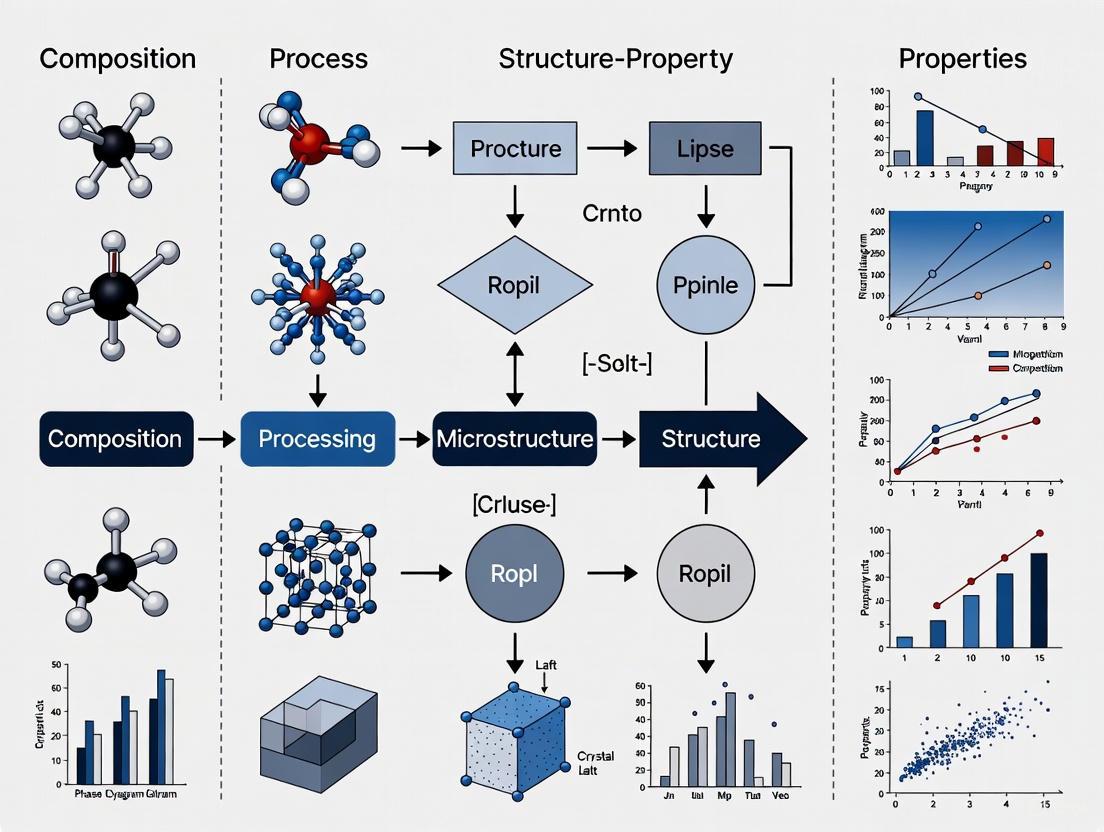

Abstract

This article provides a comprehensive exploration of Composition-Process-Structure-Property (CPSP) relationships, a foundational paradigm in materials science with critical implications for biomedical and pharmaceutical development. We examine the fundamental principles governing how processing conditions and material composition dictate microstructure, which in turn determines critical performance properties. The content covers traditional and emerging data-driven methodologies, including machine learning and generative AI, for modeling these complex relationships. A strong emphasis is placed on troubleshooting common challenges in process optimization, validating predictive models, and conducting comparative analyses of different material systems. This resource is tailored for researchers, scientists, and drug development professionals seeking to leverage CPSP understanding for designing advanced materials, optimizing manufacturing processes, and ensuring product performance and reliability.

The Core Principles: Unraveling the Fundamental Links Between Composition, Processing, and Material Architecture

The Composition-Process-Structure-Property (CPSP) paradigm represents the fundamental framework of materials science and engineering, establishing the causal relationships that govern material behavior and performance. This paradigm posits that a material's ultimate properties are determined by its internal structure, which in turn is controlled by its chemical composition and processing history. Recent advances in computational modeling, machine learning, and data science have transformed this classical concept into a powerful predictive framework for inverse materials design. This technical guide examines the core principles of the CPSP paradigm, explores cutting-edge implementation methodologies across diverse material systems, and provides detailed experimental protocols for establishing robust CPSP relationships. By examining applications from dual-phase steels to high-entropy alloys and drug delivery systems, we demonstrate how the systematic decoding of CPSP linkages enables accelerated development of advanced materials with tailored properties.

The Composition-Process-Structure-Property (CPSP) paradigm serves as the central dogma of materials design, providing a systematic framework for understanding how processing conditions and chemical composition govern microstructural evolution and ultimately determine material properties and performance. This foundational principle recognizes that material behavior cannot be understood through composition alone, but rather through the intricate interrelationships between synthesis parameters, resulting microstructure, and macroscopic manifestations [1] [2].

Traditional materials development has been constrained by forward "process-structure" models that often rely on costly uncertainty quantification and trial-and-error approaches. These methods frequently falter under sparse data conditions and when confronted with complex, heterogeneous microstructures [1]. The inverse design strategy—starting from desired properties and working backward to identify optimal compositions and processing routes—represents a paradigm shift enabled by computational advances. This approach replaces traditional uncertainty quantification with direct "structure-to-process modeling," leveraging real microstructural features to map composition and processing parameters [1].

The evolution of the CPSP paradigm has been accelerated by emerging technologies in artificial intelligence, high-throughput computation, and advanced characterization. Machine learning (ML) techniques now offer powerful tools to uncover underlying patterns from experimental data and predict material properties, providing an effective approach to materials design and optimization [3] [4]. These capabilities are particularly valuable for navigating complex material systems where traditional methods face limitations due to vast compositional spaces and processing parameters [3].

Core Principles of the CPSP Framework

The Fundamental Relationships

The CPSP framework establishes hierarchical relationships between four critical elements in materials design:

- Composition: The chemical identity and proportion of constituent elements, which establishes the fundamental building blocks and potential phase equilibria.

- Processing: The synthesis and manufacturing parameters that dictate how composition transforms into structure through thermal, mechanical, or chemical pathways.

- Structure: The arrangement of material components across multiple length scales, including crystal structure, phase distribution, grain morphology, and defects.

- Properties: The resulting macroscopic behaviors and performance metrics, including mechanical, thermal, electrical, and chemical characteristics.

These relationships are not linear but form an interconnected network with feedback loops. For example, in dual-phase steels, the optimal balance between soft-phase polygonal ferrite (facilitating plastic deformation) and hard-phase martensite (imparting strength) determines mechanical properties, with processing parameters controlling this phase distribution [1]. Similarly, in high-entropy alloys (HEAs), corrosion resistance is influenced not only by chemical composition but also by microstructure and processing techniques, particularly through how crystal structure affects elemental distribution and phase stability [3].

Inverse Design Strategy

A significant advancement in CPSP implementation is the shift from forward models to inverse design strategies. Where traditional approaches follow a "process→structure→property" sequence, inverse design begins with target properties and identifies optimal structures, then determines the composition and processing routes needed to achieve them [1]. This methodology is particularly powerful for applications such as unified dual-phase (UniDP) steels, which enable tailored performance from a single composition, addressing sustainability challenges related to recyclability and weldability [1].

Table 1: Comparison of Traditional vs. Inverse Design Approaches in CPSP

| Aspect | Traditional Forward Design | Inverse CPSP Design |
|---|---|---|
| Sequence | Process → Structure → Property | Property → Structure → Process |
| Uncertainty Handling | Relies on costly uncertainty quantification | Replaces UQ with direct structure-to-process modeling |
| Computational Demand | High for complex systems | Reduced through latent space sampling |
| Data Requirements | Extensive labeled datasets | Leverages both labeled and unlabeled microstructural data |
| Primary Applications | Well-characterized material systems | Complex, multi-component systems with sparse data |

Computational Frameworks for CPSP Modeling

Machine Learning Architectures for CPSP Relationships

Modern CPSP modeling leverages sophisticated machine learning architectures to establish robust relationships across the materials design chain:

Deep Learning Integration: Advanced frameworks integrate variational autoencoders (VAE) to encode authentic microstructural features into a latent space and multilayer perceptrons (MLP) to predict composition, processing routes, and properties [1]. This architecture effectively captures the complexity of free-form microstructures and the nonlinear relationships inherent in material systems, overcoming limitations of traditional microstructural descriptors that fail to capture topological complexity and stochasticity [1].

Knowledge Graph-Driven Approaches: The Mat-NRKG model exemplifies how knowledge graphs can organize and model unstructured process-related data in flexible graph structures, capturing complex relationships among composition, processing, and structure-performance correlations [3]. This approach uses the TransE algorithm for knowledge graph completion to predict crystal structure while integrating compositional and processing information through Graph Convolutional Networks (GCN) augmented with Deep Taylor Block modules [3].
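To make the knowledge graph completion step more concrete, the following is a minimal sketch of the TransE scoring idea, in which a relation is modeled as a translation in embedding space so that a triple (head, relation, tail) is plausible when head + relation lies close to tail. The entity names, the relation name, and the hand-picked 2-D vectors are illustrative assumptions and are not taken from [3]; a real model learns its embeddings from the graph.

```python
import math

# Hypothetical 2-D embeddings chosen by hand for illustration only;
# in practice TransE learns these vectors from the knowledge graph.
entity_vec = {
    "alloy_A": [0.0, 0.0],
    "BCC": [1.0, 0.0],
    "FCC": [0.0, 1.0],
}
relation_vec = {"has_structure": [0.9, 0.1]}


def transe_distance(head, relation, tail):
    """L2 distance ||h + r - t||; smaller means a more plausible triple."""
    h, r, t = entity_vec[head], relation_vec[relation], entity_vec[tail]
    return math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def predict_tail(head, relation, candidates):
    """Link prediction: return the candidate tail with the smallest distance."""
    return min(candidates, key=lambda tail: transe_distance(head, relation, tail))


best = predict_tail("alloy_A", "has_structure", ["BCC", "FCC"])
print(best)
```

With these toy vectors the BCC candidate scores closest, so it would be returned as the completed crystal-structure link.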

Two-Stage Prediction Frameworks: The Composition and Processing-Driven Two-Stage Corrosion Prediction Framework with Structural Prediction (CPSP Framework) hierarchically models composition–processing–structure–performance relationships [3]. This approach first predicts crystal structure from composition and processing data, then integrates this predicted structure with original inputs to forecast properties, eliminating the need for experimentally obtained structural data during inference and improving engineering applicability [3].
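The two-stage idea can be sketched in a few lines. The example below is only a toy under stated assumptions: both stages are stand-in 1-nearest-neighbour lookups rather than the models used in [3], and the two training records, the feature layout, and the target values are made-up numbers. What it shows is the data flow: stage 1 predicts structure from composition and processing, and stage 2 receives the original inputs with the predicted structure appended.

```python
STRUCTURES = ["FCC", "BCC"]

# Toy records with made-up numbers, for illustration only.
# x = [Al atomic fraction, annealing temperature in units of 1000 K]
TRAIN = [
    {"x": [0.00, 1.0], "structure": "FCC", "target": 1.0},
    {"x": [0.20, 1.0], "structure": "BCC", "target": 2.0},
]


def one_hot(structure):
    """Encode a structure label as a 0/1 vector over STRUCTURES."""
    return [1.0 if structure == name else 0.0 for name in STRUCTURES]


def nearest(records, query, field):
    """Return the record whose `field` vector is closest to `query` (1-NN)."""
    return min(
        records,
        key=lambda rec: sum((a - b) ** 2 for a, b in zip(rec[field], query)),
    )


def two_stage_predict(x):
    # Stage 1: predict crystal structure from composition and processing only.
    structure = nearest(TRAIN, x, "x")["structure"]
    # Stage 2: append the predicted structure to the original inputs.
    x_aug = x + one_hot(structure)
    stage2 = [dict(rec, x_aug=rec["x"] + one_hot(rec["structure"])) for rec in TRAIN]
    return structure, nearest(stage2, x_aug, "x_aug")["target"]


structure, target = two_stage_predict([0.18, 1.0])
print(structure, target)
```

Because stage 2 consumes the predicted structure, no measured structural data is needed at inference time, which is the property the framework emphasizes.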

Framework Performance Comparison

Evaluations of different CPSP modeling approaches demonstrate distinct performance characteristics:

Table 2: Performance Comparison of CPSP Modeling Frameworks for HEA Corrosion Prediction

| Model Framework | Base Model | R² Score | MSE | MAE | Key Characteristics |
|---|---|---|---|---|---|
| CP Framework | RF | 0.641 | 0.218 | 0.381 | Composition-only baseline |
| CPP Framework | RF | 0.672 | 0.201 | 0.362 | Includes processing information |
| CPSP Framework | RF | 0.703 | 0.194 | 0.354 | Adds predicted crystal structure |
| CPSP Framework | MLP | 0.689 | 0.207 | 0.368 | Neural network implementation |
| Mat-NRKG | GCN-DTB | 0.823 | 0.146 | 0.291 | Knowledge graph integration |
| Mat-NRKGCPP | GCN-DTB | 0.672 | 0.211 | 0.349 | Ablated without structure prediction |

The CPSP Framework consistently outperforms both Composition-Only (CP) and Composition-Processing-Based (CPP) frameworks, with the knowledge graph-driven Mat-NRKG model achieving the best performance (25% improvement in MSE over the best-performing baseline) [3]. These results quantitatively demonstrate the benefit of incorporating crystal structure information into property prediction processes [3].
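The quoted improvement can be checked directly from the MSE column of Table 2. The short calculation below assumes that "baseline" means the CP, CPP, and CPSP frameworks (the Mat-NRKG ablation is left out); that grouping is an interpretation, not something the source states explicitly.

```python
# MSE values copied from Table 2 (HEA corrosion prediction).
mse = {
    "CP (RF)": 0.218,
    "CPP (RF)": 0.201,
    "CPSP (RF)": 0.194,
    "CPSP (MLP)": 0.207,
    "Mat-NRKG": 0.146,
}

# Assumption: every non-Mat-NRKG entry above counts as a baseline.
baselines = {name: value for name, value in mse.items() if name != "Mat-NRKG"}
best_baseline = min(baselines, key=baselines.get)
improvement = (baselines[best_baseline] - mse["Mat-NRKG"]) / baselines[best_baseline]
print(f"{best_baseline}: {improvement:.1%} lower MSE with Mat-NRKG")
```

The relative reduction against the best baseline (CPSP with RF) comes out at roughly 24.7%, consistent with the rounded 25% figure.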

Experimental Protocols for CPSP Relationship Establishment

Microstructure-Driven Inverse Design Protocol

Objective: To establish inverse CPSP linkages for dual-phase steels using microstructure-centric deep learning.

Materials and Equipment:

- Dataset of microstructural images from prior studies [1]

- Data augmentation pipeline (rotation, flipping, brightness adjustment)

- Computational resources for deep learning (GPU acceleration recommended)

- Variational Autoencoder (VAE) architecture

- Multilayer Perceptron (MLP) network

- Latent space sampling algorithm guided by principles of physical metallurgy

Procedure:

Data Preparation:

- Collect microstructural images from prior studies of dual-phase steels [1]

- Apply binarization and data augmentation to enhance quality and variability

- Curate dataset to ensure representative sampling of microstructural features
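The data preparation steps above can be sketched without any imaging library. This is a minimal illustration that treats an image as a list of pixel rows; the 2×2 patch and the threshold of 128 are assumptions chosen for the example, and a real pipeline would operate on full micrographs with a threshold selected for the dataset.

```python
def binarize(image, threshold):
    """Map a grayscale image (list of rows) to 0/1 phases by thresholding."""
    return [[1 if px >= threshold else 0 for px in row] for row in image]


def flip_horizontal(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def rotate_90(image):
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*image[::-1])]


def augment(image):
    """Return the 4 rotations of the image and of its mirror image (8 variants)."""
    variants = []
    for base in (image, flip_horizontal(image)):
        current = base
        for _ in range(4):
            variants.append(current)
            current = rotate_90(current)
    return variants


gray = [[10, 200], [220, 30]]  # hypothetical 2x2 grayscale patch
binary = binarize(gray, threshold=128)
variants = augment(binary)
print(binary, len(variants))
```

Flips and 90-degree rotations leave the phase fraction unchanged, which is why they are a safe way to add variability to a binarized microstructure dataset.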

Model Architecture Implementation:

- Implement VAE to encode microstructural images into compact latent space

- Design MLP to map latent representations to composition, processing parameters, and mechanical properties

- Establish physics-informed design environment enriched by precise CPSP relationships

Training and Validation:

- Train model using combined VAE-MLP architecture

- Validate predictions against experimental results

- Sample the latent space according to principles of physical metallurgy, so that candidate microstructures remain physically plausible

Inverse Design Application:

- Identify target mechanical properties for three industrial grades

- Traverse latent space to identify microstructures yielding target properties

- Determine optimal composition and processing routes to achieve identified microstructures
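The latent space traversal in the inverse design step can be pictured as a search over candidate latent vectors. The sketch below interpolates between two latent codes and keeps the candidate whose predicted property is closest to the target. The function `predicted_property` is a hypothetical linear stand-in for the trained MLP, and all numbers are arbitrary illustration values rather than results from [1].

```python
def interpolate(z_a, z_b, steps):
    """Evenly spaced latent vectors on the segment from z_a to z_b (inclusive)."""
    return [
        [a + (b - a) * k / (steps - 1) for a, b in zip(z_a, z_b)]
        for k in range(steps)
    ]


def predicted_property(z):
    """Hypothetical stand-in for the trained MLP property head (arbitrary units)."""
    return 600.0 + 200.0 * z[0] + 100.0 * z[1]


def closest_to_target(candidates, target):
    """Pick the latent vector whose predicted property is nearest the target."""
    return min(candidates, key=lambda z: abs(predicted_property(z) - target))


candidates = interpolate([0.0, 0.0], [1.0, 1.0], steps=11)
best = closest_to_target(candidates, target=780.0)
print(best, predicted_property(best))
```

In the full workflow the selected latent vector would then be decoded into a microstructure and passed to the model heads that recommend composition and processing.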

Experimental Validation: Synthesize designed alloys and characterize mechanical properties, comparing with predictions. Successful implementation for UniDP steels has achieved target properties across three performance tiers at lower cost than commercial alloys [1].

Knowledge Graph-Driven CPSP Protocol

Objective: To predict corrosion resistance of high-entropy alloys using the Mat-NRKG model.

Materials and Equipment:

- HEA Corrosion Resistance Dataset (HEA-CRD) with composition, processing, and corrosion current records [3]

- Knowledge graph framework

- Graph Convolutional Network (GCN) with Deep Taylor Block module

- TransE algorithm for knowledge graph completion

- PyTorch or similar deep learning environment

Procedure:

Data Curation:

- Collect corrosion resistance records from literature, encompassing composition, processing techniques, and crystal structures

- Extract and standardize processing technique descriptions

- Annotate crystal structure information

Knowledge Graph Construction:

- Organize unstructured process-related data in flexible graph structure

- Establish nodes for composition elements, processing parameters, and structural features

- Define relationships between entities based on literature evidence

Model Implementation:

- Implement TransE algorithm for knowledge graph completion to predict crystal structure

- Build GCN with DTB module to integrate compositional and processing information

- Train end-to-end model to predict corrosion current

Model Validation:

- Split data into training, validation, and test sets (4:1:1 ratio)

- Repeat the random split several times and report the spread of the metrics, so that results do not depend on a single partition

- Synthesize validation HEAs for experimental confirmation
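The 4:1:1 split with repeated random partitions can be sketched as follows. The record count of 60 and the five seeds are assumptions for illustration; the source does not state how many repeats were used.

```python
import random


def split_4_1_1(records, seed):
    """Shuffle records and split them into train/validation/test at 4:1:1."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n = len(shuffled)
    n_train = (4 * n) // 6
    n_val = n // 6
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


records = list(range(60))  # placeholder for 60 dataset entries
splits = [split_4_1_1(records, seed) for seed in range(5)]
for train, val, test in splits:
    print(len(train), len(val), len(test))
```

Seeding each repeat makes every partition reproducible, so metrics from different runs can be compared on identical splits.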

Experimental Validation: The Mat-NRKG model demonstrated 25% improvement in MSE over best-performing baseline models when predicting corrosion current, with successful experimental validation on five laboratory-synthesized HEAs [3].

CPSP Visualization Frameworks

Inverse Design Workflow

CPSP Inverse Design Workflow: This diagram illustrates the inverse design approach, beginning with target properties and working backward through microstructure analysis, composition optimization, and processing parameter design, culminating in experimental validation.

Two-Stage Prediction Framework

Two-Stage CPSP Prediction: This visualization represents the two-stage prediction framework that first predicts crystal structure from composition and processing parameters, then integrates this information for final property prediction.

Research Reagent Solutions for CPSP Studies

Table 3: Essential Research Reagents and Materials for CPSP Investigations

| Reagent/Material | Function in CPSP Research | Example Applications |
|---|---|---|
| Dual-Phase Steel Systems | Model material for establishing microstructure-property relationships | Unified dual-phase (UniDP) steel development [1] |
| High-Entropy Alloys (Al-Co-Cr-Fe-Cu-Ni-Mn) | Complex composition systems for corrosion resistance studies | CPSP framework validation for corrosion prediction [3] |
| Poly(Lactide-co-Glycolide) (PLGA) | Biopolymer for drug delivery system studies | Long-acting injectables development [4] |
| Variational Autoencoder (VAE) | Microstructural feature encoding into latent space | Inverse design of dual-phase steels [1] |
| Graph Convolutional Network (GCN) | Knowledge graph processing for materials data | Mat-NRKG model for HEA corrosion prediction [3] |
| TransE Algorithm | Knowledge graph completion for structure prediction | Crystal structure prediction from composition/processing [3] |

The CPSP paradigm represents the foundational framework for modern materials design, enabling accelerated development of advanced materials through systematic decoding of composition-process-structure-property relationships. The integration of machine learning, knowledge graphs, and inverse design strategies has transformed this classical materials science concept into a powerful predictive framework capable of navigating complex, multi-dimensional design spaces. The experimental protocols and computational frameworks presented herein provide researchers with robust methodologies for implementing CPSP-driven materials design across diverse material systems, from structural metals to functional polymers. As computational power advances and datasets expand, the CPSP paradigm will continue to evolve, offering increasingly sophisticated approaches for inverse materials design and accelerating the development of next-generation materials with tailored properties and enhanced performance.

Within the composition-process-structure-property (CPSP) framework, the elemental composition of a material is a primary determinant of its final characteristics. It dictates which crystal phases are stable during processing and governs the intrinsic properties (mechanical, magnetic, and functional) that the material exhibits. This principle underpins the goal-oriented design of advanced materials, from high-performance alloys to functional compounds. Moving beyond traditional trial-and-error methods, modern research uses combinatorial experiments and data-driven modeling to unravel these relationships, enabling the inverse design of materials tailored for specific applications [5] [6] [1].

Core Principles: Composition and Phase Stability

The elemental composition of a material system establishes its fundamental thermodynamic landscape, which in turn dictates phase formation. A key manifestation of this principle is observed in High Entropy Alloys (HEAs), multi-principal element systems where the concentration of each element can be strategically varied to tailor structure and properties [5].

Al Content Dictates Crystal Structure

Research on the FeMnCoCrAl system demonstrates that varying the concentration of a single element, such as aluminum, can trigger complete phase transitions. The equiatomic FeMnCoCr base alloy (0 at.% Al) crystallizes in the complex α-Mn structure, whereas adding Al shifts the alloy to a more stable body-centered cubic (BCC) random solid solution [5].

Table 1: Effect of Al Composition on Phase Formation in FeMnCoCrAl Thin Films [5]

| Aluminum Content (at.%) | Crystal Structure Evolution | Key Observations |
|---|---|---|
| 0 | α-Mn | Base alloy structure. |
| 4 | α-Mn + BCC | BCC phase begins to form. |
| 6 | BCC (dominant) | BCC phase becomes predominant. |
| 8 | Single BCC | Single-phase random solid solution; lattice parameter = 2.88 Å. |
| 20 - 38 | BCC | Lattice parameter increases with Al content (up to 1.04%). |
| 40 | BCC + B2 | Appearance of an ordered B2 (superlattice) phase. |
| >50 | Low Crystallinity | Broad, low-intensity XRD peaks; suggests amorphization. |

This structural evolution is consistent with density functional theory (DFT) predictions, which indicate that Al additions increase the stability of the BCC phase over the Face-Centered Cubic (FCC) phase, a phenomenon rationalized by the formation of a pseudogap at the Fermi level [5].

Impact of Composition on Intrinsic Properties

Elemental composition directly controls intrinsic properties by altering the electronic structure and atomic-level interactions within a material. A prime example is the manipulation of magnetic properties through compositional changes.

Magnetic Properties

In the paramagnetic FeMnCoCr base alloy, the addition of Al induces ferromagnetism. The saturation magnetization (Ms) does not increase monotonically; it reaches a maximum at a specific critical composition of 8 at.% Al and then decreases as the concentration of non-ferromagnetic Al is increased further. This trend is consistent with ab initio predictions of the Al concentration-induced changes in the magnetic moment [5].

Table 2: Al Composition and Resulting Magnetic Properties in FeMnCoCrAl [5]

| Aluminum Content (at.%) | Magnetic Behavior | Saturation Magnetization (Ms) |
|---|---|---|
| 0 | Paramagnetic | - |
| 8 | Ferromagnetic | Maximum |
| > 8 | Ferromagnetic | Decreases as Al content increases |
| > 40 | Not Reported | Not Reported |

The initial increase in Ms is attributed to the Al-driven phase transition to the BCC structure, which favors ferromagnetic ordering. The subsequent decrease beyond 8 at.% Al is due to a reduction in the number density of ferromagnetic species (Fe and Co) as they are progressively replaced by non-ferromagnetic Al [5].

Experimental and Computational Methodologies

Establishing robust CPSP links requires sophisticated experimental and computational protocols.

Combinatorial Thin-Film Synthesis & High-Throughput Characterization

This efficient methodology involves depositing compositionally graded thin-film libraries to explore a wide composition space in a single experiment [5].

Experimental Protocol (FeMnCoCrAl Study) [5]:

- Deposition: Five separate FeMnCoCrAl thin-film spreads are deposited with Al concentration gradients ranging from 3.5 to 54.0 at.%.

- Composition Verification: The chemical composition across the library is characterized using Energy Dispersive X-ray (EDX) analysis.

- Phase Identification: X-ray diffraction (XRD) is used to map the phase formation and lattice parameter evolution as a function of Al content.

- Microstructural Analysis: Atom probe tomography (APT) is employed to probe the local chemical composition and distribution of elements at the atomic scale, confirming the formation of a random solid solution without segregation.

Data-Driven and Inverse Design Modeling

To overcome the limitations of costly experiments and high-fidelity simulations, machine learning models are increasingly used to decode process-structure-property (PSP) relationships [6]. A leading-edge approach is microstructure-centric inverse design, which inverts the traditional "process-structure" paradigm [1].

Computational Protocol (Unified Dual-Phase Steel Design) [1]:

- Data Curation: A dataset of microstructural images is collected and pre-processed through binarization and data augmentation.

- Feature Encoding: A variational autoencoder (VAE) is used to encode the high-dimensional microstructural images into a compact, low-dimensional latent space representation.

- Property & Process Mapping: A multilayer perceptron (MLP) maps the latent microstructural representation to the corresponding composition, processing parameters, and mechanical properties, establishing a direct "structure-to-process" link.

- Inverse Design: By sampling the trained latent space, new microstructures that yield target properties can be generated, and the inverse model directly recommends the composition and processing needed to achieve them.

Visualizing Composition-Structure-Property Relationships

The complex relationships between composition, structure, and properties can be visualized as an interconnected workflow, encompassing both experimental and computational pathways.

Diagram 1: The CPSP relationships workflow, showing both forward (experimental) and inverse (data-driven) design pathways.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Composition-Property Research

| Item / Technique | Function in Research |
|---|---|
| Combinatorial Thin-Film Library | A high-throughput platform for synthesizing a continuous gradient of compositions on a single substrate, enabling rapid screening of phase formation and properties [5]. |
| Energy Dispersive X-ray (EDX) Analysis | Used for precise quantitative analysis of the local chemical composition across the material library [5]. |
| X-ray Diffraction (XRD) | A fundamental technique for identifying crystal structures, phases, lattice parameters, and detecting ordering or amorphization [5]. |
| Atom Probe Tomography (APT) | Provides three-dimensional, atomic-scale resolution mapping of the distribution of all elements within a material, confirming solid solution formation or revealing segregation [5]. |
| Vibrating Sample Magnetometer (VSM) / SQUID | Instruments used to measure the magnetic properties of a material, such as saturation magnetization and coercivity [5]. |
| Variational Autoencoder (VAE) | A type of generative machine learning model that encodes complex microstructural images into a lower-dimensional latent space for analysis and inverse design [1]. |
| Multilayer Perceptron (MLP) | A foundational neural network architecture used to establish predictive relationships between encoded microstructures, processing parameters, and final properties [1]. |

Within additive manufacturing (AM) and advanced materials processing, the established composition-process-structure-property (CPSP) relationship is paramount. This technical review posits that thermal cycles, energy input, and fabrication routes act as fundamental architectural elements that directly govern microstructural evolution. Through layer-by-layer fabrication, materials undergo complex thermal histories that trigger solid-state phase transformations, segregation phenomena, and grain restructuring, ultimately dictating the mechanical properties of the final component. This paper synthesizes recent research to provide an in-depth analysis of these relationships, offering a structured guide to the experimental methodologies and data-driven models that are shaping the future of microstructural design.

In traditional manufacturing, process parameters are typically designed to achieve a single set of microstructural characteristics. In contrast, additive manufacturing and similar advanced processes introduce a dynamic thermal landscape where the fabrication route itself becomes an active design variable. The concept of "Processing as the Architect" emerges from the ability to precisely control these thermal cycles to elicit specific microstructural responses from a single material composition.

The sequential nature of these processes means that previously deposited material is subjected to repeated heating and cooling cycles as new layers are added. This intrinsic heat treatment (IHT) leads to a non-equilibrium microstructure that cannot be replicated through conventional means. The following sections deconstruct the core mechanisms of this architectural control, providing quantitative data, experimental protocols, and visualizations of the underlying relationships.

Core Architectural Elements

Thermal Cycles as Microstructural Modulators

Thermal cycles during layered fabrication act as an in-situ heat treatment, profoundly altering microstructure. In laser directed energy deposition (LDED) of GA151K Mg alloy, thermal cycles cause two significant solid-state transformations: the intergranular phase changes from Mg3Gd to Mg5Gd, and fine β′-Mg7Gd precipitates form within the α-Mg matrix from the supersaturated solid solution [7]. These transformations are directly linked to changes in mechanical properties, with microhardness increasing by 6.9 ± 5.0 HV0.2 in areas that experience more thermal cycles [7].

Similarly, in wire-based electron beam directed energy deposition (EB-DED) of nickel-based superalloys, thermal cycling creates an altered morphology along the build height and promotes the formation of Nb-rich interdendritic zones [8]. The thermal history determines the precipitation behavior of strengthening phases (γ″ and δ), with longer deposition times favoring fine γ″ precipitation throughout the build height [8].

Table 1: Microstructural Transformations Induced by Thermal Cycles in Different Alloy Systems

| Alloy System | AM Process | Phase Transformations | Microstructural Effects | Property Changes |
|---|---|---|---|---|
| GA151K (Mg-Gd) [7] | Laser-DED | Mg3Gd → Mg5Gd; Precipitation of β′-Mg7Gd | Reduced fraction of island-like intergranular phase (↓9.3±2.1%) | Microhardness increased by 6.9±5.0 HV0.2 |
| Nickel-based Superalloy [8] | Wire-based EB-DED | Precipitation of γ″ and δ phases | Heterogeneous γ″ distribution along build height; Nb segregation in interdendritic zones | Graded mechanical properties along build direction |
| X70 Pipeline Steel [9] | Laser-DED | Precipitation of Fe3C; Dislocation density reduction | Grain coarsening from bottom to top; Increased polygonal ferrite | Decreasing microhardness along building direction |

Energy Input as a Governing Parameter

Energy input, quantified as Energy Area Density (EAD) or Energy Volume Density (EVD), directly controls thermal profiles and resulting microstructure. In selective laser melting (SLM) of low-alloy steel, increasing EAD from 47 to 142 J/mm² transforms the microstructure from a mixture of lower bainite and martensite-austenite to granular bainite, while remarkably reducing grain size from 6.31 μm to 1.56 μm [10].

For X70 steel repaired by laser directed energy deposition (L-DED), the EVD is calculated as:

EVD = P / (v * d * h)

where P is laser power (W), v is scanning speed, d is laser spot diameter (mm), and h is layer height (mm). The scanning speed is given in mm/min, so it must be converted to mm/s (divided by 60) for EVD to come out in J/mm³. With an EVD of 50 J/mm³, this process produces microstructural inhomogeneity along the building direction: grains gradually coarsen and the proportion of polygonal ferrite increases from bottom to top [9].
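As a worked example of the EVD expression, the helper below applies the formula with an explicit unit conversion, since power in W (J/s) requires the scanning speed in mm/s for the result to be in J/mm³. The function name and the input values are illustrative assumptions; the inputs were picked only so that the result equals 50 J/mm³ and are not the settings reported in [9].

```python
def energy_volume_density(power_w, speed_mm_per_min, spot_diameter_mm, layer_height_mm):
    """Return EVD = P / (v * d * h) in J/mm^3, converting v from mm/min to mm/s."""
    speed_mm_per_s = speed_mm_per_min / 60.0
    return power_w / (speed_mm_per_s * spot_diameter_mm * layer_height_mm)


# Hypothetical parameter set (not from the cited study).
evd = energy_volume_density(
    power_w=1000.0,
    speed_mm_per_min=600.0,
    spot_diameter_mm=2.0,
    layer_height_mm=1.0,
)
print(evd)
```

Skipping the conversion would understate the energy density by a factor of 60, which is worth checking whenever values from different papers are compared.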

Table 2: Energy Input Parameters and Their Microstructural Effects in Metal AM

| Material | AM Process | Energy Parameter | Value Range | Microstructural Effects | Grain Size (μm) |
|---|---|---|---|---|---|
| Low-alloy Steel [10] | Selective Laser Melting | Energy Area Density (EAD) | 47-142 J/mm² | Mixed lower bainite/martensite → Granular bainite; Bainite ferrite: lath → multilateral | 6.31 @ 47 J/mm²; 1.56 @ 142 J/mm² |
| X70 Steel [9] | Laser-DED | Energy Volume Density (EVD) | 50 J/mm³ | Grain coarsening bottom to top; Increased polygonal ferrite; Fe3C precipitation | Inhomogeneous along build direction |
| GA151K Mg Alloy [7] | Laser-DED | Not specified | Not specified | Intergranular phase transformation; β′ precipitation within α-Mg matrix | Fine α-Mg grains (size not specified) |

Fabrication Routes and Thermal Management Strategies

The specific fabrication route and thermal management strategy employed during manufacturing significantly influence the resulting thermal history and microstructure. Research on nickel-based superalloys demonstrates that a discontinuous interpass cooling strategy (ICS) produces homogeneous mechanical properties throughout the build, while a continuous deposition strategy (CDS) results in graded mechanical properties and decreasing strength along the build height due to heterogeneous γ″ distribution [8].

The scanning strategy also plays a crucial role. A zigzag scanning pattern with 45% overlap between adjacent tracks was utilized in SLM of low-alloy steel to ensure proper fusion while controlling thermal accumulation [10]. Similarly, L-DED of X70 steel employed a "layered reciprocating scanning strategy" to build 40×40×4.5 mm samples [9].

Experimental Protocols for Microstructural Analysis

Sample Preparation and Metallography

Standard metallographic procedures are essential for accurate microstructural characterization. For the SLM low-alloy steel, samples were mechanically ground and polished, then etched in a solution of 5 mL HNO3 + 95 mL C2H5OH for approximately 10 seconds [10]. For EBSD analysis, samples were electropolished in 8 mL HClO4 + 92 mL C2H5OH at -15°C for 15 seconds [10].

Microstructural characterization typically utilizes multiple complementary techniques:

- Optical Microscopy (OM) and Scanning Electron Microscopy (SEM) for general microstructural observation [10] [9]

- Electron Backscatter Diffraction (EBSD) for grain morphology and size analysis [10]

- Transmission Electron Microscopy (TEM) for substructure and fine precipitate characterization [10]

Thermal Cycle Monitoring and Analysis

Understanding thermal history is crucial for correlating process parameters with microstructure. Finite element method (FEM) simulations can model the evolution of the temperature field during manufacturing [10]. For accurate simulations, thermophysical properties are measured experimentally up to a limiting temperature (e.g., 900°C) and calculated with specialized software such as JMatPro at higher temperatures [10].

Phase transformation temperatures can be experimentally determined using a thermal expansion tester with specific heating and cooling rates (e.g., 10°C/s heating and 5°C/s cooling) [10].

Mechanical Property Evaluation

Standard mechanical tests correlate microstructural features with performance:

- Microhardness testing (e.g., HV0.2) across different regions reveals property variations [7] [9]

- Tensile testing at room temperature (e.g., 0.6 mm/min) evaluates yield strength, ultimate tensile strength, and elongation [10] [9]

- Fracture surface analysis via SEM identifies fracture mechanisms (ductile vs. brittle) [10]
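As a small worked example of the microhardness step, the sketch below converts an indent measurement into a Vickers number using the standard relation HV = 1.8544 F / d² (F in kgf, d the mean diagonal in mm). The function name and the indent diagonals are hypothetical values chosen for illustration, not data from the cited studies.

```python
def vickers_hardness(load_kgf: float, d1_mm: float, d2_mm: float) -> float:
    """Vickers hardness from the test load (kgf) and both indent diagonals (mm)."""
    d_mean = 0.5 * (d1_mm + d2_mm)
    return 1.8544 * load_kgf / d_mean ** 2


# Hypothetical HV0.2 indent: 0.2 kgf load, diagonals of roughly 40 micrometres.
hv = vickers_hardness(0.2, 0.0402, 0.0398)
print(f"HV0.2 = {hv:.0f}")  # about 232
```

Repeating the calculation for indents placed across different regions of a build gives the hardness profile used to reveal property variations.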

Data-Driven Modeling of Process-Structure-Property Relationships

Traditional physics-based modeling of PSP relationships faces challenges due to computational costs and complex multi-physics phenomena [6]. Data-driven approaches have emerged as powerful alternatives:

Machine Learning Frameworks

Gaussian process regression effectively models nonlinear relationships between process parameters and resulting features like molten pool geometry, requiring relatively small datasets [6]. This approach has successfully predicted molten pool depth in LPBF and identified parameter combinations for desirable conduction mode melting versus keyhole mode [6].
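To make the idea concrete, here is a minimal Gaussian process regression sketch written with the standard library only. It assumes a squared-exponential kernel with a fixed length scale, inputs normalized to [0, 1], and a handful of invented melt-pool depths; none of these values come from [6], and a real study would tune the kernel hyperparameters.

```python
import math


def rbf_kernel(a, b, length_scale=0.5):
    """Squared-exponential covariance between two input vectors."""
    sq_dist = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-0.5 * sq_dist / length_scale ** 2)


def cholesky(matrix):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = matrix[i][j] - sum(lower[i][k] * lower[j][k] for k in range(j))
            lower[i][j] = math.sqrt(s) if i == j else s / lower[j][j]
    return lower


def forward_sub(lower, b):
    """Solve lower @ z = b."""
    z = []
    for i, row in enumerate(lower):
        z.append((b[i] - sum(row[k] * z[k] for k in range(i))) / row[i])
    return z


def backward_sub(lower, z):
    """Solve lower.T @ x = z."""
    n = len(z)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(lower[k][i] * x[k] for k in range(i + 1, n))) / lower[i][i]
    return x


def gp_fit(inputs, targets, noise=1e-4):
    """Standardize the targets and precompute the terms needed for prediction."""
    n = len(targets)
    mean = sum(targets) / n
    std = math.sqrt(sum((t - mean) ** 2 for t in targets) / n)
    cov = [[rbf_kernel(a, b) + (noise if i == j else 0.0)
            for j, b in enumerate(inputs)] for i, a in enumerate(inputs)]
    lower = cholesky(cov)
    scaled = [(t - mean) / std for t in targets]
    alpha = backward_sub(lower, forward_sub(lower, scaled))
    return {"inputs": inputs, "mean": mean, "std": std, "lower": lower, "alpha": alpha}


def gp_predict(model, query):
    """Posterior mean and variance (in target units) at one query point."""
    k_star = [rbf_kernel(query, x) for x in model["inputs"]]
    mu = model["mean"] + model["std"] * sum(k * a for k, a in zip(k_star, model["alpha"]))
    v = forward_sub(model["lower"], k_star)
    var = model["std"] ** 2 * max(1.0 - sum(c * c for c in v), 0.0)
    return mu, var


# Hypothetical data: (normalized laser power, normalized scan speed) -> depth in micrometres.
process_inputs = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0),
                  (0.5, 0.5), (0.5, 0.0), (0.0, 0.5)]
pool_depths = [60.0, 35.0, 140.0, 80.0, 75.0, 100.0, 45.0]

model = gp_fit(process_inputs, pool_depths)
depth, variance = gp_predict(model, (0.75, 0.25))
print(f"predicted depth = {depth:.1f} um, std = {math.sqrt(variance):.1f} um")
```

The predictive variance is what makes this family of models attractive for small datasets: it grows away from the measured parameter combinations, which indicates where another experiment would be most informative.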

Variational autoencoders (VAE) can encode microstructural images into a latent space representation, while multilayer perceptrons (MLP) map this representation to composition, processing parameters, and mechanical properties [1]. This microstructure-centric inverse design strategy has successfully developed unified dual-phase (UniDP) steels that achieve target properties across multiple performance tiers from a single composition [1].

Microstructural Quantification Methods

Data-driven modeling relies on quantitative microstructural descriptors:

- Simplified descriptors: Phase fractions, grain size, morphological parameters [1]

- Complex descriptors: 2-point statistics, N-point statistics, and machine learning-derived features [1]

Generative models like VAEs and generative adversarial networks (GANs) effectively capture free-form microstructural complexity and nonlinear relationships in material systems [1].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Materials and Equipment for Thermal Cycle-Microstructure Studies

| Item Category | Specific Examples | Function/Application | Research Context |
|---|---|---|---|
| Base Materials | GA151K Mg alloy powder (Mg-15Gd-1Al-0.4Zr) [7] | LDED feedstock for studying phase transformations | Mg-RE alloy microstructural evolution under thermal cycles |
| Base Materials | Low-alloy steel powder (C: 0.15-0.25%, Mn: 0.6%, Ni: 1.0%, Mo: 0.5%) [10] | SLM feedstock for bainitic/martensitic transformation studies | Effect of EAD on microstructure in ferrous alloys |
| Base Materials | X70 pipeline steel powder [9] | L-DED repair feedstock | Repair processes and microstructural inhomogeneity |
| Characterization Equipment | Field Emission SEM (e.g., Nova NanoSEM50) [10] | High-resolution microstructural imaging | General microstructural characterization |
| Characterization Equipment | EBSD System [10] | Grain morphology and orientation analysis | Crystallographic texture and grain size measurement |
| Characterization Equipment | Transmission Electron Microscope [10] | Fine precipitate and substructure analysis | Nanoscale precipitation characterization |
| Process Monitoring | Thermal Expansion Tester [10] | Phase transformation temperature measurement | Determination of critical transformation temperatures |
| Process Monitoring | Finite Element Modeling Software | Temperature field simulation | Thermal history prediction |
| Chemical Reagents | Nitric Acid Alcohol Etchant (4% HNO3 in C2H5OH) [9] | Microstructural etching for ferrous alloys | Revealing grain boundaries and phases |
| Chemical Reagents | Electrolyte for Electropolishing (8% HClO4 in C2H5OH) [10] | Sample preparation for EBSD | Creating deformation-free surfaces for diffraction |

Visualization of Process-Microstructure Relationships

Microstructural Architecture Pathway: This diagram illustrates how process parameters govern thermal history, which in turn dictates microstructural evolution and final mechanical properties.

Experimental Workflow: This diagram outlines the comprehensive methodology for investigating thermal cycle effects on microstructure, from sample fabrication through characterization to final data analysis.

The architectural role of processing parameters—specifically thermal cycles, energy input, and fabrication routes—in defining microstructure is now firmly established within materials science research. The evidence demonstrates that these factors directly control phase transformations, precipitation behavior, grain evolution, and elemental segregation across diverse alloy systems.

Future research directions will likely focus on several key areas:

- Advanced thermal management strategies that provide finer control over thermal histories during fabrication

- Integrated computational materials engineering (ICME) approaches that combine multi-scale modeling with experimental validation

- Machine learning-enhanced inverse design methodologies that accelerate the development of materials with tailored properties [1] [6]

The understanding that "processing architects microstructure" provides a powerful framework for designing next-generation materials with spatially graded properties optimized for specific application requirements. This paradigm shift from passive processing to active microstructural design promises to revolutionize materials development across aerospace, automotive, energy, and biomedical sectors.

In the foundational paradigm of composition-process-structure-property relationships, microstructure serves as the pivotal, though often complex, link between how a material is made and how it performs. Microstructure refers to the spatial arrangement and connectivity of internal constituents—such as grains, pores, and phases—at length scales ranging from nanometers to micrometers [11]. Unlike inherent molecular structures, microstructures are emergent properties formed during manufacturing and processing, making their quantification essential for predicting and controlling final product attributes [11]. In pharmaceuticals, microstructure determines Critical Quality Attributes (CQAs) like drug release, stability, and content uniformity [12] [13]. In metallurgy, it governs mechanical properties such as hardness and strength [14] [6]. This technical guide details the evolution of microstructure quantification from simple, low-order descriptors to sophisticated spatial statistics and AI-driven characterization, providing researchers with methodologies to elucidate these critical process-structure-property relationships.

Foundational Microstructural Descriptors

Initial microstructure characterization relies on low-order metrics that provide global averages but lack detailed spatial information.

Table 1: Foundational Microstructural Descriptors and Their Limitations

| Descriptor Category | Specific Metrics | Applications | Key Limitations |
|---|---|---|---|
| Global Composition | Volume fraction, phase fraction | Ti6Al4V analysis [15], PLGA microspheres [12] | Does not capture spatial distribution |
| Basic Morphology | Grain size, particle size, alpha colony size [15], porosity, surface area [13] | Quality control, initial screening | Lacks information on spatial clustering or connectivity |
| Dispersion Form | Crystalline vs. amorphous state, domain size [12] | Predicting drug release kinetics [12] | Does not describe location within the matrix |

While these descriptors are invaluable for quality control and establishing baseline comparisons (e.g., Q1/Q2 similarity in generics [12]), they are insufficient for predicting complex properties like fracture toughness or controlled drug release, which are highly dependent on the spatial arrangement of microstructural features.

Statistical Microstructural Descriptors (SMDs) for Spatial Quantification

To move beyond averages, the field employs Statistical Microstructural Descriptors (SMDs)—mathematical functions that quantify the spatial arrangement and correlation of features.

Two-Point Correlation Functions

The two-point correlation function, \( P_{11}(r) \), is a fundamental SMD defined as the probability that two points separated by a vector \( r \) both lie in the phase of interest (e.g., a particle or pore) [16]. Its orientation-averaged version, estimated from metallographic sections, reveals the degree of spatial clustering [16]. Applications include quantifying clustering in SiC-reinforced aluminum composites, where length scales extracted from the correlation function correlate directly with processing parameters such as particle size ratio and extrusion ratio [16].
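The definition above can be illustrated with a short sketch that estimates the two-point correlation on a binary image by counting pixel pairs along the two grid axes. The function name and the checkerboard test image are illustrative assumptions; a real analysis would use segmented micrographs and sample many more orientations.

```python
def two_point_correlation(image, max_lag):
    """Estimate P11(r) for r = 0..max_lag on a binary image (1 = phase of interest).

    Pairs are sampled along the horizontal and vertical grid directions only,
    without periodic wrapping.
    """
    rows, cols = len(image), len(image[0])
    result = []
    for lag in range(max_lag + 1):
        hits = pairs = 0
        for i in range(rows):
            for j in range(cols):
                if j + lag < cols:  # horizontal pair
                    pairs += 1
                    hits += image[i][j] * image[i][j + lag]
                if i + lag < rows:  # vertical pair
                    pairs += 1
                    hits += image[i][j] * image[i + lag][j]
        result.append(hits / pairs)
    return result


# Synthetic 6 x 6 checkerboard: perfectly ordered, phase fraction 0.5.
checkerboard = [[(i + j) % 2 for j in range(6)] for i in range(6)]
p11 = two_point_correlation(checkerboard, 2)
print(p11)
```

At zero lag the estimate equals the phase fraction, and for a microstructure without long-range order it decays toward the square of the phase fraction at large separations. The lag at which that plateau is reached is one simple clustering length scale.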

Higher-Order Spatial Correlations

Two-point statistics have limitations, as different microstructures can share the same two-point correlation [17]. Higher-order descriptors are needed to uniquely characterize complex systems.

- n-Point Statistics and Polytope Functions: These capture complex morphological information, such as the probability of finding n vertices of a specific polytope (e.g., a triangle or square) within a given phase [17]. They are more powerful for reconstructing and distinguishing complex, multi-scale microstructures.

- Ripley's K and L Functions: Derived from spatial statistics, these functions assess whether features exhibit a random, dispersed, or clustered distribution at a specific spatial scale [18]. The L function, \( \hat{L}(t) \), is a variance-stabilized version of the K function. For a completely random (homogeneous Poisson) pattern, \( \hat{L}(t) \) has an expected value of \( t \), so a plot of \( t - \hat{L}(t) \) against \( t \) helps identify deviations from spatial randomness [18].
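A minimal sketch of the K and L estimators for a planar point pattern follows. It uses the simple estimator K(t) = A / (n(n-1)) times the number of ordered pairs closer than t, with L(t) = sqrt(K(t) / π), and applies no edge correction, which a production analysis would normally add. Both point patterns are synthetic.

```python
import math


def ripley_k(points, t, area):
    """Naive Ripley's K estimate at distance t (no edge correction)."""
    n = len(points)
    close_pairs = sum(
        1
        for i in range(n)
        for j in range(n)
        if i != j and math.dist(points[i], points[j]) <= t
    )
    return area * close_pairs / (n * (n - 1))


def ripley_l(points, t, area):
    """Variance-stabilized L function for a 2D pattern."""
    return math.sqrt(ripley_k(points, t, area) / math.pi)


# Synthetic patterns in a unit square: a regular 5 x 5 grid and five tight clusters.
grid = [(0.1 + 0.2 * i, 0.1 + 0.2 * j) for i in range(5) for j in range(5)]
centers = [(0.2, 0.2), (0.8, 0.2), (0.5, 0.5), (0.2, 0.8), (0.8, 0.8)]
offsets = [(0.0, 0.0), (0.01, 0.0), (-0.01, 0.0), (0.0, 0.01), (0.0, -0.01)]
clustered = [(cx + dx, cy + dy) for cx, cy in centers for dx, dy in offsets]

for name, pattern, t in (("grid", grid, 0.15), ("clustered", clustered, 0.05)):
    l_hat = ripley_l(pattern, t, area=1.0)
    print(f"{name}: t - L(t) = {t - l_hat:+.3f}")
```

With this sign convention, negative values of t - L(t) indicate clustering at scale t and positive values indicate dispersion.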

Fractal Descriptions for Multi-Scale Heterogeneity

Fractal analysis describes how observed variance or heterogeneity changes with the scale of measurement. It is particularly useful for systems exhibiting self-similarity over a range of length scales. The core relationship is a power law:

\[ RD(m) = RD(m_0) \left( \frac{m}{m_0} \right)^{1-D} \]

where \( RD(m) \) is the relative dispersion (coefficient of variation) at a measurement scale of mass \( m \), \( m_0 \) is a reference mass, and \( D \) is the fractal dimension [19]. This dimension provides a measure of spatial correlation, with specific bounds indicating randomness (\( D = 1.5 \)) or perfect uniformity (\( D = 1.0 \)) [19].
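Taking logarithms of the power law gives a straight line, log RD = const + (1 - D) log m, so D can be estimated from the slope of a log-log fit. The sketch below does this with ordinary least squares. The data are generated from the power law itself with an assumed D of 1.2, purely to show that the fit recovers it.

```python
import math


def fractal_dimension(masses, relative_dispersions):
    """Estimate D from the slope of log(RD) versus log(m): slope = 1 - D."""
    xs = [math.log(m) for m in masses]
    ys = [math.log(rd) for rd in relative_dispersions]
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    slope = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
             / sum((x - x_bar) ** 2 for x in xs))
    return 1.0 - slope


# Synthetic measurements following RD(m) = 0.30 * (m / 1.0) ** (1 - 1.2).
sample_masses = [1.0, 2.0, 4.0, 8.0, 16.0]
sample_rd = [0.30 * m ** (1.0 - 1.2) for m in sample_masses]

d_est = fractal_dimension(sample_masses, sample_rd)
print(f"estimated D = {d_est:.3f}")
```

With real measurements the points scatter around the line, and the range of m over which the fit stays linear indicates the scales over which the self-similar description holds.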

The following diagram illustrates the workflow for applying these spatial statistics to quantify a microstructure, from image acquisition to interpretation.

Figure 1: Workflow for Spatial Statistical Analysis of Microstructure

Experimental Protocols for Microstructure Quantification

Automated 2D Microstructural Analysis Protocol

This protocol, validated on Ti6Al4V, enables high-throughput, repeatable quantification of features like grain size and volume fraction of globular alpha grains [15].

- Image Acquisition: Obtain high-resolution 2D images via Scanning Electron Microscopy (SEM) or Optical Microscopy.

- Digital Image Processing: Apply algorithms to isolate individual microstructural features (e.g., grains, colonies).

- Image Segmentation: Use techniques like the watershed algorithm to partition the image into regions representing distinct microstructural entities.

- Morphological Measurement: Extract quantitative data (size, shape, volume fraction) from the segmented regions.

- Validation: Compare results with manual measurements to ensure consistency while achieving drastically improved speed and repeatability [15].
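The segmentation and measurement steps above can be sketched on an already thresholded image. The example labels connected regions with a breadth-first flood fill (a simpler stand-in for the watershed algorithm named in the protocol) and then reports area fraction and equivalent circular diameters. The mask and the pixel size are hypothetical.

```python
import math
from collections import deque


def label_regions(mask):
    """Label 4-connected foreground regions; return the label image and pixel areas."""
    rows, cols = len(mask), len(mask[0])
    labels = [[0] * cols for _ in range(rows)]
    areas = []
    for i in range(rows):
        for j in range(cols):
            if not mask[i][j] or labels[i][j]:
                continue
            areas.append(0)
            current = len(areas)
            labels[i][j] = current
            queue = deque([(i, j)])
            while queue:
                a, b = queue.popleft()
                areas[-1] += 1
                for da, db in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    na, nb = a + da, b + db
                    if (0 <= na < rows and 0 <= nb < cols
                            and mask[na][nb] and not labels[na][nb]):
                        labels[na][nb] = current
                        queue.append((na, nb))
    return labels, areas


def equivalent_diameter(area_px, pixel_size_um):
    """Diameter of the circle with the same area as the region."""
    return 2.0 * math.sqrt(area_px * pixel_size_um ** 2 / math.pi)


# Hypothetical binary mask (1 = feature of interest) with a 0.5 um pixel size.
mask = [
    [0, 0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0, 1, 1, 1],
    [0, 0, 0, 0, 0, 1, 1, 1],
    [0, 0, 0, 0, 0, 0, 0, 0],
]
labels, areas = label_regions(mask)
area_fraction = sum(areas) / (len(mask) * len(mask[0]))
diameters = [equivalent_diameter(a, 0.5) for a in areas]
print(areas, area_fraction, [round(d, 2) for d in diameters])
```

Touching grains would be merged by this simple labeling, which is the situation the watershed step is meant to handle.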

Protocol for 3D Two-Point Correlation Function Estimation

This stereological method allows for unbiased estimation of 3D spatial correlations from 2D vertical sections, crucial for understanding anisotropic microstructures [16].

- Vertical Section Sampling: Identify a symmetry axis (e.g., from an extrusion process). Section the sample along a plane containing this axis.

- Digital Image Montage: Capture a large, high-resolution montage of micrographs from the vertical section to ensure a representative field of view and minimize edge effects.

- Unbiased Lineal Measurement: Using digital image analysis, place test lines of varying length and orientation across the montage. For each line length \( r \), record the fraction of test lines whose two endpoints both lie in the phase of interest.

- Orientation Averaging: Compute the orientation-averaged mean two-point correlation function, \( \langle P_{ij}(r) \rangle \), by integrating measurements over all spatial directions, weighted by \( \sin \theta \) [16].

- Length Scale Extraction: Analyze the resulting correlation function to identify characteristic length scales of clustering, such as the distance at which the function plateaus to its long-range value.
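The orientation-averaging step can be sketched as a sine-weighted numerical integration over the measured directions. The example assumes that θ is measured from the vertical (symmetry) axis and spans 0° to 90°, uses the trapezoidal rule, and normalizes by the integral of the weights; the directional values are invented for illustration.

```python
import math


def orientation_average(angles_deg, values):
    """Sine-weighted average of direction-dependent measurements P(r, theta).

    angles_deg must be sorted; the trapezoidal rule is applied to both the
    weighted values and the weights so a constant input is returned unchanged.
    """
    thetas = [math.radians(a) for a in angles_deg]
    numerator = denominator = 0.0
    for k in range(len(thetas) - 1):
        step = thetas[k + 1] - thetas[k]
        w0, w1 = math.sin(thetas[k]), math.sin(thetas[k + 1])
        numerator += 0.5 * step * (values[k] * w0 + values[k + 1] * w1)
        denominator += 0.5 * step * (w0 + w1)
    return numerator / denominator


# Hypothetical P(r, theta) at one fixed r, measured every 15 degrees.
angles = [0, 15, 30, 45, 60, 75, 90]
p_directional = [0.040, 0.041, 0.043, 0.046, 0.049, 0.051, 0.052]

p_mean = orientation_average(angles, p_directional)
print(f"orientation-averaged P(r) = {p_mean:.4f}")
```

Because of the sine weighting, directions near the symmetry axis contribute less than directions perpendicular to it, which is why the average sits closer to the high-angle measurements.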

The Scientist's Toolkit: Essential Reagents and Materials

Successful microstructural quantification relies on a suite of advanced analytical techniques and computational tools.

Table 2: Key Research Reagent Solutions for Microstructure Characterization

| Tool Category | Specific Technology | Primary Function in Microstructure Analysis |
|---|---|---|
| Advanced Microscopy | Scanning Electron Microscopy (SEM) [15] [11], Focused Ion Beam-SEM (FIB-SEM) [12], Confocal Laser Scanning Microscopy (CLSM) [12] | High-resolution 2D and 3D imaging of surface and sub-surface features. |
| 3D & Chemical Imaging | X-ray Microscopy (XRM) [12], Synchrotron Radiation X-ray μCT (SR-μCT) [12] [17], Confocal Raman Microscopy (CRM) [12] [11] | Non-destructive 3D volumetric imaging; mapping of chemical composition and component distribution. |
| Surface & Spectral Analysis | Time-of-Flight Secondary Ion Mass Spectrometry (ToF-SIMS) [12], Atomic Force Microscopy (AFM) [11], Energy Dispersive Spectroscopy (EDS) [12] | Elemental and isotopic surface mapping; nanoscale topographic imaging. |
| AI & Image Analytics | Generative Adversarial Networks (GANs) [17], Deep Convolutional Neural Networks [17] [6], Digital Image Processing Algorithms [15] [13] | Automated segmentation, feature measurement, and synthetic microstructure reconstruction. |

Advanced & Emerging Methodologies: AI and Multi-Scale Reconstruction

The frontier of microstructure quantification lies in integrating AI and higher-order statistics to overcome imaging limitations and discover new PSP relationships.

Deep Generative Models for Microstructure Reconstruction

Generative Adversarial Networks (GANs), such as Deep Convolutional GAN (DCGAN) and StyleGAN2, are used to synthetically generate statistically equivalent, high-resolution, and potentially 3D microstructures from limited 2D image data [17]. This addresses the critical trade-off between resolution and field of view in imaging technologies. The performance of these models is evaluated by comparing higher-order SMDs (e.g., n-point polytope functions) of the original and synthetic images, ensuring morphological equivalence beyond what two-point correlations can achieve [17].

Data-Driven Modeling of Process-Structure-Property Relationships

Machine learning (ML) is revolutionizing the establishment of high-dimensional PSP linkages. For instance, neural networks have been applied to map process parameters (e.g., laser power, scan speed) to structural indicators (e.g., grain size, porosity) and finally to properties (e.g., hardness, electrical resistivity) in Pt-Au nanocrystalline alloys [14] and metal additive manufacturing [6]. These models can rapidly identify optimal process windows to maximize material performance, a task that is prohibitively time-consuming using purely experimental or physics-based simulation approaches.

The following diagram summarizes this advanced, closed-loop framework for materials development, which integrates characterization, data science, and modeling.

Figure 2: AI-Enhanced Process-Structure-Property Framework

The journey from simplified descriptors to complex spatial statistics represents a paradigm shift in our ability to quantify microstructure. This evolution, powered by advancements in spatial statistics like two-point and n-point correlation functions and accelerated by AI and machine learning, is transforming microstructure from a qualitative micrograph into a rich, quantitative dataset. This quantitative description is the indispensable key to unlocking a fundamental, predictive understanding of composition-process-structure-property relationships. As these techniques continue to mature, they pave the way for the inverse design of materials and pharmaceutical products, enabling researchers to specify a target property and computationally design the optimal microstructure and manufacturing process to achieve it.

Dual-Phase (DP) steels, classified as first-generation Advanced High-Strength Steels (AHSS), have become indispensable materials in modern automotive engineering due to their exceptional combination of high strength and good ductility [20]. These properties are crucial for manufacturing vehicle components that enhance fuel efficiency through lightweighting while maintaining passenger safety [20]. The fundamental characteristic of DP steels is their composite-like microstructure consisting of a soft ferrite matrix with dispersed hard martensite islands, typically comprising 10-40 vol.% martensite [21]. This unique microstructure results in continuous yielding, high initial work hardening rates, and excellent energy absorption properties [21] [22].

This case study explores the intricate composition-process-structure-property relationships in DP steels, focusing specifically on how precise microstructural control enables tailoring of mechanical performance for specific applications. Understanding these relationships is paramount for researchers and engineers seeking to develop next-generation materials that meet increasingly stringent automotive requirements, particularly with the transition toward electric vehicles that demand optimized weight-to-strength ratios for extended battery range [23].

Microstructural Fundamentals of Dual-Phase Steels

Phase Characteristics and Their Roles

The mechanical behavior of DP steels derives from the synergistic interaction between its two constituent phases:

Ferrite Matrix: This body-centered cubic (BCC) iron phase is soft and ductile, contributing primarily to the material's formability and toughness. The ferrite phase typically contains a high density of mobile dislocations, particularly near phase boundaries, which facilitates continuous yielding without a pronounced yield point elongation [20] [21].

Martensite Islands: This hard, body-centered tetragonal (BCT) phase forms via diffusionless transformation from austenite during rapid cooling. The martensite islands act as strengthening components, enhancing strength and resistance to deformation. The carbon content in martensite significantly influences its hardness and the overall strength of the DP steel [20].

A critical microstructural feature is the ferrite-martensite interface, where a high density of geometrically necessary dislocations (GNDs) accumulates due to volume expansion (2-4%) during the austenite-to-martensite transformation [20] [21]. These GNDs are mobile and contribute significantly to the initial strain hardening behavior and continuous yielding phenomenon characteristic of DP steels [20].

Key Microstructural Parameters

Three primary microstructural characteristics govern the mechanical properties of DP steels:

- Ferrite Grain Size (FGS): The size of the ferrite grains in the matrix, which follows the Hall-Petch relationship for strengthening [21].

- Martensite Volume Fraction (MVF): The proportion of martensite in the microstructure, typically ranging from 10% to 40% [21].

- Martensite Morphology (MM): The spatial distribution, size, shape, and connectivity of martensite islands [20].
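The Hall-Petch relationship mentioned for ferrite grain size has the general form σ_y = σ_0 + k_y d^(-1/2). The sketch below evaluates it over the 12 μm to 1 μm range discussed in this case study. The friction stress and Hall-Petch coefficient are illustrative placeholders, not fitted values from [21].

```python
import math


def hall_petch(grain_size_um, sigma_0_mpa=100.0, k_y_mpa_sqrt_um=600.0):
    """Yield strength (MPa) from grain size (um): sigma_0 + k_y / sqrt(d)."""
    return sigma_0_mpa + k_y_mpa_sqrt_um / math.sqrt(grain_size_um)


# Placeholder constants; only the trend with grain size is meaningful here.
for d in (12.0, 4.0, 2.0, 1.0):
    print(f"d = {d:4.1f} um -> sigma_y = {hall_petch(d):.0f} MPa")
```

The inverse square-root dependence means most of the strengthening arrives at the fine end of the range, which is the motivation for the ultrafine-grained processing routes.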

Advanced characterization techniques, particularly three-dimensional EBSD (3D EBSD) conducted via tomographic serial sectioning, have revealed the complex three-dimensional distribution of these phases, providing crucial insights for microstructure-property modeling [21].

Alloying Design and Compositional Control

The chemical composition of DP steels is carefully designed to achieve the desired microstructure after specific thermal processing. Each alloying element plays a distinct role in phase transformation kinetics and final properties [21].

Table 1: Key Alloying Elements in Dual-Phase Steels and Their Functions

| Element | Typical Content (wt.%) | Primary Function | Secondary Effects |
|---|---|---|---|
| Carbon | 0.06-0.15 [21] | Austenite stabilizer; determines martensite hardness | Strengthens martensite; controls phase distribution |
| Manganese | 1.5-3.0 [21] | Austenite stabilizer; retards ferrite formation | Solid solution strengthener in ferrite |
| Silicon | ~0.5-1.0 (inferred) | Promotes ferritic transformation | Enhances carbon enrichment of austenite |
| Chromium/Molybdenum | Up to 0.4 [21] | Retards pearlite and bainite formation | Increases hardenability |
| Microalloying (V, Nb) | Trace amounts [21] | Precipitation strengthening; grain refinement | Inhibits recrystallization; improves toughness |

Carbon content is particularly critical as it directly influences the martensite volume fraction and hardness. Manganese, silicon, chromium, and molybdenum collectively control the transformation kinetics during cooling, enabling the formation of the desired ferrite-martensite microstructure without unwanted phases like pearlite or bainite [21]. Microalloying with vanadium or niobium enables precipitation strengthening and grain refinement through the formation of carbonitrides that pin grain boundaries during processing [21].
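The link between bulk carbon, martensite fraction, and martensite carbon content can be made explicit with a simple mass balance, C_0 = f_m C_m + (1 - f_m) C_α. The sketch below solves it for C_m, assuming that all intercritical austenite transforms to martensite and that ferrite retains a small, fixed carbon content. The 0.02 wt.% ferrite value is an assumed round number.

```python
def martensite_carbon(bulk_c_wt, martensite_fraction, ferrite_c_wt=0.02):
    """Carbon content of martensite (wt.%) from a two-phase mass balance."""
    if not 0.0 < martensite_fraction <= 1.0:
        raise ValueError("martensite_fraction must be in (0, 1]")
    ferrite_share = (1.0 - martensite_fraction) * ferrite_c_wt
    return (bulk_c_wt - ferrite_share) / martensite_fraction


# A 0.10 wt.% C steel at several martensite volume fractions (illustrative).
for f_m in (0.1, 0.2, 0.4):
    print(f"MVF = {f_m:.0%}: C in martensite = {martensite_carbon(0.10, f_m):.2f} wt.%")
```

For a fixed bulk composition, a lower martensite fraction concentrates the carbon into less martensite, so the islands become harder even as their volume shrinks.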

Processing Routes for Microstructural Control

The microstructure of DP steels is primarily achieved through carefully designed thermal and thermomechanical processing routes. The fundamental principle involves intercritical annealing in the (α + γ) two-phase region followed by controlled cooling to transform the austenite to martensite.

Fundamental Processing Routes

Two primary processing methods are employed industrially:

Hot-Rolled DP Steels: Produced by controlled cooling from the austenite phase after hot rolling. The process involves cooling to the intercritical region to form ferrite before rapid quenching transforms remaining austenite to martensite [21].

Cold-Rolled and Annealed DP Steels: Produced by intercritical annealing of cold-rolled sheet in the two-phase ferrite plus austenite region, followed by rapid cooling to transform austenite to martensite [21]. This route typically provides better surface quality and dimensional control.

Advanced Thermomechanical Processing

Researchers have developed advanced processing techniques to achieve ultrafine-grained (UFG) DP steels with enhanced strength-ductility combinations:

Advanced Thermomechanical Processing (ATMP): Includes methods like deformation-induced ferrite transformation (DIFT), large strain warm deformation (LSWD), intercritical hot rolling, and multi-directional rolling [21]. These techniques are suitable for commercial-scale production.

Severe Plastic Deformation (SPD): Comprises methods such as equal-channel angular pressing (ECAP), accumulative roll bonding (ARB), and high-pressure torsion [21]. These techniques can produce ferrite grain sizes of approximately 1 μm but are generally confined to laboratory-scale samples.

A typical two-step processing route for UFG DP steels involves: (1) a deformation treatment to produce UFG ferrite with finely dispersed cementite or pearlite, followed by (2) short intercritical annealing in the ferrite/austenite two-phase field and quenching to transform austenite to martensite [21].

Quantitative Property-Structure Relationships

The mechanical properties of DP steels can be quantitatively correlated with the key microstructural parameters through established relationships.

Table 2: Effect of Microstructural Parameters on Mechanical Properties of DP Steels

| Microstructural Parameter | Effect on Strength | Effect on Ductility | Effect on Strain Hardening | Typical Property Range |
|---|---|---|---|---|
| Martensite Volume Fraction (Increase from 10% to 40%) | Significant increase [21] | Moderate decrease [21] | Increase at low strains [20] | UTS: 400-1200 MPa [21] |
| Ferrite Grain Size (Refinement from 12 μm to 1 μm) | Increase (Hall-Petch) [21] | Slight decrease or maintained [21] | Significant increase [21] | YS: 300-1000 MPa [20] |
| Martensite Carbon Content (Increase) | Increase (martensite strength) [24] | Variable | Moderate effect | Dictates martensite hardness [24] |
| Martensite Morphology (Banded to Dispersed) | Minor effect | Significant improvement [20] | More uniform deformation | Improved hole expansion capacity [21] |

These relationships enable materials engineers to tailor the mechanical performance of DP steels for specific applications. For instance, automotive components requiring high crash resistance benefit from higher martensite volume fractions and refined ferrite grain sizes, which simultaneously increase strength and strain hardening capacity [20].

Experimental Tempering Study

Tempering of DP steels is an important secondary processing step that modifies the as-quenched microstructure. Recent research has systematically modeled microstructural evolution and mechanical properties during tempering of DP steels between 100°C and 550°C [24]. The study identified:

- Two distinct tempering stages: The first stage involves cementite precipitation, while the second stage includes cementite spheroidization and recovery phenomena [24].

- Manganese content effect: Increasing manganese content retards the second tempering stage but does not significantly affect the first stage [24].

- Modeling approach: A JMAK (Johnson-Mehl-Avrami-Kolmogorov) model was developed to predict microstructural evolution during tempering, combined with a Hybrid-mean Field Composite model to predict tensile curves [24].

This modeling approach successfully reproduced the effects of tempering parameters across a wide range of microstructural conditions, providing valuable tools for industrial heat treatment design [24].
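For readers unfamiliar with JMAK kinetics, the sketch below evaluates one common form of the equation, X(t) = 1 - exp(-(k t)^n), with an Arrhenius rate constant k = k_0 exp(-Q / RT). The pre-exponential factor, activation energy, and exponent are hypothetical placeholders and are not the parameters fitted in [24].

```python
import math

GAS_CONSTANT = 8.314  # J/(mol K)


def jmak_fraction(time_s, temp_c, k0_per_s=1.0e8, q_j_per_mol=120_000.0, n=0.5):
    """Transformed fraction after isothermal holding, JMAK with Arrhenius rate."""
    rate = k0_per_s * math.exp(-q_j_per_mol / (GAS_CONSTANT * (temp_c + 273.15)))
    return 1.0 - math.exp(-((rate * time_s) ** n))


# Placeholder kinetics: progress of a tempering reaction after 10 minutes.
for temp in (200, 300, 400, 500):
    print(f"{temp} C: X = {jmak_fraction(600.0, temp):.3f}")
```

Each tempering stage would have its own parameter set in a fitted model, and the predicted fractions can then feed a mechanical model of the kind described above.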

Experimental Protocols for Microstructural Control

Protocol: Intercritical Annealing for DP Microstructure Generation

Objective: To produce a dual-phase microstructure with controlled martensite volume fraction and ferrite grain size.

Materials and Equipment:

- Cold-rolled low-carbon steel sheet (C: 0.1 wt%, Mn: 1.8 wt%, Si: 0.4 wt%)

- Salt bath or tube furnace with protective atmosphere (e.g., nitrogen or argon)

- Quenching system (water or oil quench)

- Metallographic preparation equipment

- Hardness tester and tensile testing machine

Procedure:

- Cut specimens to appropriate dimensions (e.g., 150 × 30 × 1.5 mm)

- Heat treatment in single-phase austenite region at 900°C for 5 minutes for complete austenitization

- Cool to intercritical annealing temperature (e.g., 740-800°C) at a controlled rate of 10°C/s

- Hold at intercritical temperature for 60-300 seconds to form desired volume fraction of ferrite and austenite

- Rapid quench to room temperature at rate >30°C/s to transform austenite to martensite

- Characterize resulting microstructure using optical and scanning electron microscopy

- Measure martensite volume fraction using point counting or image analysis

- Test mechanical properties using uniaxial tensile tests

Key Parameters to Control:

- Intercritical temperature (controls martensite volume fraction)

- Holding time (influences ferrite grain growth)

- Quenching rate (must exceed critical rate to avoid bainite formation)
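The point-counting step of the procedure can be sketched as follows: a regular grid of test points is laid over a segmented phase map, and the fraction of points falling on martensite estimates the volume fraction. The standard error uses a binomial approximation, which is an assumption, and the phase map is synthetic with a known martensite fraction of 25%.

```python
import math


def point_count_fraction(phase_map, step):
    """Systematic point count: fraction of grid points on the phase, plus a
    binomial-approximation standard error."""
    hits = total = 0
    for i in range(0, len(phase_map), step):
        for j in range(0, len(phase_map[0]), step):
            total += 1
            hits += 1 if phase_map[i][j] else 0
    fraction = hits / total
    std_error = math.sqrt(fraction * (1.0 - fraction) / total)
    return fraction, std_error


# Synthetic 40 x 40 map built from 5 x 5 pixel blocks; every fourth block is martensite.
phase_map = [[1 if (i // 5 + j // 5) % 4 == 0 else 0 for j in range(40)]
             for i in range(40)]
true_fraction = sum(map(sum, phase_map)) / 1600

mvf, mvf_se = point_count_fraction(phase_map, step=4)
print(f"point count: {mvf:.2f} +/- {mvf_se:.2f} (true value {true_fraction:.2f})")
```

A finer grid or more fields of view reduces the standard error, which matters when comparing specimens annealed at closely spaced intercritical temperatures.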

Protocol: Grain Refinement via Severe Plastic Deformation

Objective: To produce ultrafine-grained (UFG) DP steel with grain size <2 μm for enhanced strength-ductility combination.

Materials and Equipment:

- Low-carbon steel sheet

- Equal-channel angular pressing (ECAP) setup or cold rolling mill

- Intercritical annealing furnace

- Microhardness tester

- Electron backscatter diffraction (EBSD) system

Procedure:

- Process material using ECAP for 4-8 passes via route Bc (90° rotation between passes) at 200°C

- Alternatively, perform heavy cold rolling with 80-90% thickness reduction

- Conduct short intercritical annealing at 740-780°C for 30-120 seconds

- Quench rapidly to room temperature to form martensite

- Characterize grain structure using EBSD

- Measure mechanical properties using nanoindentation and tensile testing

- Compare with conventionally processed DP steel
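To compare the two deformation options in the procedure on a common scale, the sketch below estimates the accumulated equivalent strain. For ECAP it uses the widely cited Iwahashi relation, ε = (N/√3)[2 cot(Φ/2 + Ψ/2) + Ψ cosec(Φ/2 + Ψ/2)]; for rolling it uses the plane-strain von Mises expression (2/√3) ln(1/(1 - r)). The die geometry (Φ = 90°, Ψ = 0°) is an assumption, since the protocol does not specify it.

```python
import math


def ecap_strain(passes, channel_angle_deg=90.0, curvature_angle_deg=0.0):
    """Equivalent strain after N ECAP passes (Iwahashi relation)."""
    phi = math.radians(channel_angle_deg)
    psi = math.radians(curvature_angle_deg)
    half = 0.5 * (phi + psi)
    per_pass = (2.0 / math.tan(half) + psi / math.sin(half)) / math.sqrt(3.0)
    return passes * per_pass


def rolling_strain(thickness_reduction):
    """Von Mises equivalent strain for plane-strain rolling (reduction as a fraction)."""
    return 2.0 / math.sqrt(3.0) * math.log(1.0 / (1.0 - thickness_reduction))


for n_passes in (4, 8):
    print(f"ECAP, {n_passes} passes: strain = {ecap_strain(n_passes):.2f}")
for reduction in (0.80, 0.90):
    print(f"Rolling, {reduction:.0%} reduction: strain = {rolling_strain(reduction):.2f}")
```

Under these assumptions, four ECAP passes impose noticeably more strain than even a 90% rolling reduction, which is worth keeping in mind when the two routes are compared against conventionally processed material.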

The Researcher's Toolkit: Essential Materials and Reagents

Table 3: Essential Research Reagents and Equipment for DP Steel Studies

| Item | Function/Application | Technical Specifications | Key Considerations |
|---|---|---|---|
| Low Carbon Steel Sheets | Base material for DP steel processing | C: 0.06-0.15%, Mn: 1.5-3.0%, Si: 0.2-1.0% [21] | Composition determines phase transformation behavior |
| Tube Furnace with Atmosphere Control | Intercritical annealing experiments | Maximum temperature: 1000°C, Protective atmosphere (N₂/Ar) | Precise temperature control (±2°C) critical for phase fractions |
| Salt Bath Setup | Rapid heating and cooling for heat treatment | Multiple baths with different temperature ranges | Enables precise intercritical annealing times |
| Electron Backscatter Diffraction (EBSD) | Microstructural and crystallographic analysis | SEM with EBSD detector, resolution <0.1 μm | Essential for phase identification and grain size measurement |
| Thermoelectric Power Measurement | Monitoring tempering kinetics [24] | Sensitivity to microstructural changes | Detects carbon departure from solid solution during tempering |
| Dilatometer | Studying phase transformations | High-precision length measurements | Determines critical transformation temperatures |
| Microhardness Tester | Local mechanical property assessment | Vickers or Knoop indenter, low loads (10-500 gf) | Enables phase-specific property measurements |

This case study has demonstrated the critical relationships between composition, processing, microstructure, and properties in dual-phase steels. Through precise control of three key microstructural parameters—ferrite grain size, martensite volume fraction, and martensite morphology—materials engineers can tailor the mechanical performance of DP steels across a wide spectrum (400-1200 MPa ultimate tensile strength) to meet specific application requirements [21].

The ongoing development of advanced processing routes, particularly those producing ultrafine-grained microstructures, continues to push the boundaries of strength-ductility combinations in these materials [21]. Furthermore, sophisticated modeling approaches, such as the Hybrid-mean Field Composite model for tempering behavior, are providing powerful tools for predicting mechanical properties based on microstructural evolution [24].

As automotive industry demands evolve, particularly with the transition to electric vehicles, DP steels will continue to play a crucial role in lightweight vehicle design. Future research directions will likely focus on further enhancing strength-ductility combinations through nanoscale microstructural control, improving sustainability through reduced alloying content and enhanced recyclability, and developing more sophisticated integrated computational materials engineering (ICME) models for accelerated alloy and process development.

Advanced Tools and Techniques: Data-Driven Modeling and AI for Predicting CPSP Relationships

The exploration of complex composition-process-structure-property (CPSP) relationships has long been a fundamental challenge across scientific and engineering disciplines. Traditional research methodologies have predominantly relied on iterative trial-and-error experimentation and high-fidelity physics-based simulations. While these approaches have yielded significant insights, they are often characterized by substantial time investments, high computational costs, and limited scalability. In fields ranging from materials science to pharmaceutical development, this conventional paradigm has constrained the pace of discovery and innovation. The emergence of data-driven modeling represents a transformative shift from this established methodology, offering a powerful framework for rapid exploration and prediction of complex systems without exhaustive physical testing [6] [25].

Data-driven modeling leverages advanced computational techniques, particularly machine learning (ML) and artificial intelligence (AI), to extract meaningful patterns and relationships from existing experimental and simulation data. This approach enables researchers to construct predictive models that map input parameters to desired outputs, effectively creating surrogates for expensive physical experiments or simulations. The core strength of data-driven modeling lies in its ability to handle high-dimensional, nonlinear relationships that are often intractable through traditional analytical methods. By learning directly from data, these models can uncover hidden correlations and provide quantitative predictions for previously unexplored regions of the parameter space, thereby accelerating the discovery and optimization processes [25] [26].

The integration of data-driven approaches into CPSP research is more than a technical advancement: it changes how investigations are organized. Where traditional approaches often proceed through sequential hypothesis testing and validation, data-driven methods facilitate parallel exploration of multiple design possibilities, which can substantially shorten development timelines. This shift is particularly valuable in contexts where physical experiments are prohibitively expensive, time-consuming, or ethically challenging, such as in pharmaceutical development and advanced materials design. As digital data becomes increasingly abundant and computational power continues to grow, data-driven modeling is poised to become an indispensable tool for researchers seeking to navigate complex design spaces efficiently [27] [26].

Theoretical Foundations: Core Principles of Data-Driven Modeling

Fundamental Concepts and Terminology

Data-driven modeling encompasses a diverse set of methodologies united by their common reliance on empirical data as the foundation for predictive modeling. At its core, data-driven modeling involves the development of mathematical relationships between input variables (features) and output variables (responses) based on observed data, without necessarily incorporating explicit physical laws or first principles. The theoretical framework for these approaches draws from statistics, machine learning, and pattern recognition, creating an interdisciplinary foundation that complements traditional physics-based modeling [25]. Key concepts include features (input variables that characterize the system), labels (output variables to be predicted), training data (historical observations used to build the model), and generalization (the model's ability to perform well on unseen data).

The mathematical foundation of data-driven modeling typically involves identifying a function f that maps input variables X to output variables Y, such that Y = f(X) + ε, where ε represents noise or error. The specific form of f is determined through a learning process that minimizes a loss function quantifying the discrepancy between predictions and observations. This process can be formulated as an optimization problem in which the model parameters θ are adjusted to minimize L(θ) = Σᵢ (yᵢ − f(xᵢ; θ))² for regression tasks, or a classification loss such as cross-entropy for categorical predictions. Regularization techniques, which add a penalty on model complexity to the loss, are often employed to prevent overfitting, so that the model captures underlying patterns rather than memorizing noise in the training data [25] [26].
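As a minimal sketch of this loss minimization, the following fits a one-parameter linear model f(x; θ) = θx by minimizing the squared-error loss with an added L2 (ridge) penalty. The data values, the penalty strength `lam`, and the function names are illustrative assumptions rather than anything taken from the cited work.

```python
def squared_loss(theta, xs, ys, lam=0.0):
    """L(theta) = sum_i (y_i - theta * x_i)^2 + lam * theta^2."""
    residual = sum((y - theta * x) ** 2 for x, y in zip(xs, ys))
    return residual + lam * theta ** 2


def fit_ridge_1d(xs, ys, lam=0.0):
    """Closed-form minimizer of squared_loss for the model f(x) = theta * x.

    Setting dL/dtheta = 0 gives theta = sum(x*y) / (sum(x*x) + lam).
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


# Hypothetical noisy observations of y ≈ 2x (not measured data)
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.1, 3.9, 6.2, 7.8]

theta_ols = fit_ridge_1d(xs, ys)              # no regularization
theta_ridge = fit_ridge_1d(xs, ys, lam=5.0)   # penalty shrinks theta toward zero
```

With these numbers the unregularized fit gives θ ≈ 1.99, while the penalized fit gives a smaller value. This shows the basic trade-off: regularization accepts a slightly worse fit to the training data in exchange for a more conservative model.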

Comparison with Traditional Methodologies

Data-driven modeling differs fundamentally from traditional approaches in both philosophy and implementation. Physics-based modeling derives its predictive power from first principles, mathematical representations of known physical laws, and mechanistic understanding. While these models offer strong interpretability and reliability within their domain of applicability, they often struggle with systems where underlying physics are incompletely understood or where multiple physical phenomena interact in complex ways. In contrast, data-driven models make no inherent assumptions about underlying mechanisms, instead learning relationships directly from data, which enables them to handle systems with poorly understood physics or emergent behaviors [6].

Experimental trial-and-error approaches, while empirically grounded, face significant limitations in efficiency and scalability. The exhaustive exploration of parameter spaces through physical experiments becomes prohibitively expensive as dimensionality increases, because the number of parameter combinations grows exponentially with the number of parameters, a phenomenon known as the "curse of dimensionality." Data-driven modeling mitigates this challenge by building statistical models that can interpolate and, to some extent, extrapolate from limited data, thereby reducing the number of required experiments. However, it is crucial to recognize that data-driven approaches complement rather than replace traditional methods; the most powerful modeling frameworks often integrate data-driven techniques with physical principles and targeted experimentation, creating hybrid approaches that leverage the strengths of each methodology [6] [25].
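The scaling behind the curse of dimensionality can be illustrated with simple arithmetic. The sketch below counts the runs in a full-factorial experimental design; the choice of five levels per parameter is an arbitrary assumption for illustration.

```python
def full_factorial_runs(levels_per_parameter: int, n_parameters: int) -> int:
    """Number of experiments needed to test every combination on a regular grid."""
    return levels_per_parameter ** n_parameters


# Five levels per parameter (assumed), for 2, 4, and 8 process parameters
runs = {d: full_factorial_runs(5, d) for d in (2, 4, 8)}
print(runs)  # {2: 25, 4: 625, 8: 390625}
```

Going from two to eight parameters raises the number of runs from 25 to 390,625, which is why exhaustive physical testing quickly becomes impractical.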

Methodological Framework: Implementing Data-Driven Modeling

Data Acquisition and Preprocessing

The foundation of any effective data-driven model is high-quality, representative data. Data acquisition for CPSP modeling typically involves multiple sources, including experimental measurements, high-fidelity simulations, and historical databases. In materials science and additive manufacturing, for instance, data may encompass process parameters (e.g., laser power, scan speed), structural characteristics (e.g., microstructure images, porosity measurements), and property evaluations (e.g., tensile strength, hardness) [6] [25]. Pharmaceutical applications might include chemical structures, processing conditions, pharmacokinetic parameters, and clinical outcomes [27]. The integration of diverse data types and sources presents significant challenges in data harmonization, quality control, and metadata management.

Data preprocessing is a critical step that profoundly influences model performance. This phase typically involves handling missing values through imputation techniques, detecting and addressing outliers that may represent measurement errors, and normalizing or standardizing features to ensure comparable scaling across variables. For structural data, such as microstructural images or molecular representations, feature engineering techniques are employed to extract quantitative descriptors that capture relevant characteristics. Dimensionality reduction methods such as Principal Component Analysis (PCA) may be applied to mitigate the curse of dimensionality while preserving essential information, and t-distributed Stochastic Neighbor Embedding (t-SNE) is commonly used to visualize high-dimensional feature spaces. The preprocessed dataset is typically partitioned into training, validation, and test sets to facilitate model development and evaluation, with careful attention to maintaining representative distributions across splits [25] [26].
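The preprocessing steps described above can be sketched for a single feature using only the Python standard library. The laser-power values, the split sizes, and all function names here are hypothetical; a real workflow would more likely use a dedicated library. The sketch computes the scaling statistics on the training split only, a common precaution against leaking information from the validation and test sets.

```python
import random
import statistics


def impute_median(column):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in column if v is not None]
    med = statistics.median(observed)
    return [med if v is None else v for v in column]


def standardize(column, mean, std):
    """Z-score a column using statistics supplied by the caller."""
    return [(v - mean) / std for v in column]


def split_indices(n, n_val, n_test, seed=0):
    """Shuffle indices 0..n-1 and cut them into train/validation/test lists."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = n - n_val - n_test
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


# Hypothetical laser-power readings in watts, with two missing entries
laser_power = [180.0, None, 200.0, 220.0, 240.0, None, 260.0, 280.0, 300.0, 320.0]
filled = impute_median(laser_power)

train, val, test = split_indices(len(filled), n_val=2, n_test=2)

# Scaling statistics come from the training split only
train_values = [filled[i] for i in train]
mu = statistics.mean(train_values)
sigma = statistics.stdev(train_values)
scaled = standardize(filled, mu, sigma)
```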

Model Selection and Algorithm Implementation

The selection of appropriate modeling algorithms depends on multiple factors, including the nature of the prediction task (regression vs. classification), data characteristics (size, dimensionality, noise level), and interpretability requirements. Established machine learning algorithms frequently applied in CPSP research include Gaussian Process Regression (GPR), Random Forests (RF), Support Vector Machines (SVM), and various neural network architectures [6] [25] [26]. The following table summarizes the key characteristics of these prevalent algorithms:

Table 1: Common Data-Driven Modeling Algorithms in CPSP Research

| Algorithm | Primary Use Cases | Key Advantages | Limitations |
|---|---|---|---|
| Gaussian Process Regression (GPR) | Process parameter optimization, molecular property prediction | Provides uncertainty estimates, performs well with small datasets | Computational cost scales poorly with large datasets |
| Random Forests (RF) | Material property prediction, crystal structure classification | Robust to outliers, handles high-dimensional data | Limited extrapolation capability, black-box nature |
| Support Vector Machines (SVM) | Classification of material phases, defect detection | Effective in high-dimensional spaces, memory efficient | Sensitivity to parameter tuning, poor performance with noisy data |
| Neural Networks (NN) | Image-based microstructure analysis, complex property prediction | Excellent for complex nonlinear relationships, handles diverse data types | Requires large datasets, computationally intensive training |
| Dynamic Mode Decomposition (DMD) | Pharmaceutical process control, system dynamics modeling | Captures temporal dynamics, interpretable model structure | Primarily for linear systems, extensions needed for nonlinearity |

Implementation of these algorithms requires careful consideration of hyperparameter tuning, which optimizes model performance by adjusting parameters that are not learned directly from the data. Techniques such as grid search, random search, and Bayesian optimization are commonly employed for this purpose. For neural networks, architectural decisions regarding depth, width, and connectivity patterns must be made based on problem complexity and available data. Recent advances in automated machine learning (AutoML) have begun to streamline portions of this process, but domain expertise remains essential for selecting appropriate model classes and evaluating results within scientific context [25] [28] [26].
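To make the difference between grid search and random search concrete, the sketch below applies both to a stand-in objective. The function `validation_error` is a hypothetical placeholder for "train a model with these hyperparameters and return its validation error". The hyperparameter names, ranges, and the shape of the objective are assumptions chosen only for illustration. Bayesian optimization is not shown.

```python
import itertools
import math
import random


def validation_error(learning_rate, l2_penalty):
    """Hypothetical stand-in objective, smallest at lr = 1e-2 and l2 = 1e-3."""
    return (math.log10(learning_rate) + 2) ** 2 + (math.log10(l2_penalty) + 3) ** 2


def grid_search(score_fn, grid):
    """Evaluate every combination in `grid`; return (best_params, best_score)."""
    names = list(grid)
    best_params, best_score = None, float("inf")
    for values in itertools.product(*(grid[n] for n in names)):
        params = dict(zip(names, values))
        score = score_fn(**params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


def random_search(score_fn, bounds, n_iter, seed=0):
    """Sample each hyperparameter log-uniformly within its bounds for n_iter trials."""
    rng = random.Random(seed)
    best_params, best_score = None, float("inf")
    for _ in range(n_iter):
        params = {
            name: 10 ** rng.uniform(math.log10(lo), math.log10(hi))
            for name, (lo, hi) in bounds.items()
        }
        score = score_fn(**params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


grid = {
    "learning_rate": [1e-4, 1e-3, 1e-2, 1e-1],
    "l2_penalty": [1e-4, 1e-3, 1e-2, 1e-1],
}
grid_best, grid_score = grid_search(validation_error, grid)

bounds = {"learning_rate": (1e-4, 1e-1), "l2_penalty": (1e-4, 1e-1)}
rand_best, rand_score = random_search(validation_error, bounds, n_iter=16)
```

Both searches use 16 evaluations here. The grid is restricted to four values per hyperparameter, whereas random search draws 16 distinct values of each. That is one reason random search is often preferred when only a few hyperparameters strongly affect performance.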

Model Validation and Performance Metrics

Rigorous validation is essential to ensure that data-driven models provide reliable predictions for new, unseen data. The validation framework typically involves multiple techniques, including hold-out validation, k-fold cross-validation, and leave-one-out cross-validation, each offering different trade-offs between computational expense and validation reliability. For time-series or sequential data, specialized approaches such as rolling-origin validation are employed to preserve temporal dependencies. It is particularly important in scientific applications to validate models not only on statistical metrics but also against physical principles and domain knowledge to ensure plausible predictions [25] [26].
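The splitting schemes mentioned above differ only in how indices are assigned to training and test sets. The following sketch generates the index sets for k-fold and rolling-origin validation. The function names and the expanding-window variant of rolling-origin validation are assumptions made for illustration. Leave-one-out cross-validation is the special case where k equals the number of samples.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) for k contiguous, near-equal folds.

    Indices are not shuffled here; shuffle the data first if its order is meaningful.
    """
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


def rolling_origin_indices(n_samples, initial_train, horizon):
    """Yield expanding-window splits; each test block follows its training block in time."""
    origin = initial_train
    while origin + horizon <= n_samples:
        yield list(range(origin)), list(range(origin, origin + horizon))
        origin += horizon


k_folds = list(k_fold_indices(10, 3))          # three folds of sizes 4, 3, 3
loo_folds = list(k_fold_indices(5, 5))         # leave-one-out on five samples
rolling = list(rolling_origin_indices(10, initial_train=4, horizon=2))
```

In the rolling-origin splits, every training index precedes every test index, so the model is never evaluated on data that comes before the data it was trained on.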

Performance metrics are selected based on the specific modeling task and application requirements. For regression problems, common metrics include Mean Absolute Error (MAE), Root Mean Square Error (RMSE), and coefficient of determination (R²). Classification tasks typically employ accuracy, precision, recall, F1-score, and area under the Receiver Operating Characteristic (ROC) curve. In addition to these quantitative measures, model robustness should be assessed through sensitivity analysis, which evaluates how predictions change in response to small perturbations in inputs. For high-stakes applications, such as pharmaceutical development, additional validation may include establishing model credibility through comparison with experimental results and demonstrating applicability within the intended context of use [27] [25].
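For reference, the regression and classification metrics named above can be computed directly from their definitions. The sketch below uses small made-up prediction vectors; the values are illustrative only and do not come from any study cited here.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return (MAE, RMSE, R^2) for paired true and predicted values."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return mae, rmse, 1.0 - ss_res / ss_tot


def classification_metrics(y_true, y_pred, positive=1):
    """Return (precision, recall, F1) for a binary classification task."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Made-up regression example: MAE = 0.5, RMSE ≈ 0.612, R² = 0.925
mae, rmse, r2 = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.5, 5.0, 7.5, 10.0])

# Made-up binary labels: 3 true positives, 1 false positive, 1 false negative
precision, recall, f1 = classification_metrics(
    [1, 1, 1, 0, 0, 0, 1, 0],
    [1, 1, 0, 0, 1, 0, 1, 0],
)
```

This sketch assumes at least one predicted and one actual positive; production code would also need to handle the zero-denominator cases.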

Applications Across Disciplines: Case Studies and Implementation

Additive Manufacturing and Materials Design

In additive manufacturing (AM), data-driven modeling has emerged as a powerful approach for establishing process-structure-property (PSP) relationships that are essential for quality control and process optimization. The complex physical phenomena in AM, including powder dynamics, heat transfer, fluid flow, and phase transformations, create challenges for traditional modeling approaches. Data-driven methods address these challenges by learning directly from experimental and simulation data, enabling prediction of structural characteristics and mechanical properties based on process parameters [6] [25]. For instance, Gaussian process regression has been successfully applied to predict molten pool geometry in laser powder bed fusion processes, with models achieving high accuracy in predicting depth and morphology based on laser power, scan speed, and beam size parameters [6].
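To illustrate how Gaussian process regression yields both a prediction and an uncertainty estimate, the sketch below implements the standard GP posterior equations with a squared-exponential kernel in NumPy. It is not the model from the cited work. The two inputs stand in for normalized laser power and scan speed, the response is a synthetic "normalized melt pool depth" generated from an arbitrary linear trend plus noise, and the kernel hyperparameters are fixed by assumption rather than fitted.

```python
import numpy as np


def rbf_kernel(a, b, length_scale, signal_var):
    """Squared-exponential kernel between row-wise inputs a (n, d) and b (m, d)."""
    sq_dist = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * sq_dist / length_scale ** 2)


def gpr_predict(x_train, y_train, x_test,
                length_scale=1.0, signal_var=1.0, noise_var=1e-2):
    """Return the GP posterior mean and standard deviation at x_test."""
    y_mean = y_train.mean()  # use a constant prior mean equal to the data mean
    k_tt = rbf_kernel(x_train, x_train, length_scale, signal_var)
    k_tt = k_tt + noise_var * np.eye(len(x_train))
    k_st = rbf_kernel(x_test, x_train, length_scale, signal_var)

    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train - y_mean))
    mean = k_st @ alpha + y_mean

    v = np.linalg.solve(chol, k_st.T)
    var = signal_var - np.sum(v ** 2, axis=0)
    return mean, np.sqrt(np.maximum(var, 0.0))


# Synthetic training data (NOT measurements): inputs scaled to [-1, 1]
rng = np.random.default_rng(0)
x_train = rng.uniform(-1.0, 1.0, size=(30, 2))   # columns: power, scan speed
y_train = 0.8 * x_train[:, 0] - 0.6 * x_train[:, 1] + rng.normal(0.0, 0.05, size=30)

# One query inside the sampled region, one far outside it
x_test = np.array([[0.0, 0.0], [5.0, 5.0]])
mean, std = gpr_predict(x_train, y_train, x_test)
```

The predicted standard deviation is small for the query inside the sampled region and returns to the prior value far from the data. This behavior is what makes GPR useful for flagging predictions that extrapolate beyond the explored process window.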