Foundation Models for Property Prediction: A New Paradigm in Biomedical Research and Drug Discovery

Abstract

This article explores the transformative role of foundation models in predicting molecular, material, and clinical properties. Tailored for researchers and drug development professionals, it provides a comprehensive overview of the core principles, key architectures, and practical methodologies for applying these models. The content delves into strategies for overcoming common challenges like data scarcity, offers a comparative analysis of model performance across domains such as computational pathology and chemistry, and synthesizes key insights to guide future research and clinical application.

What Are Foundation Models and Why Are They Revolutionizing Property Prediction?

The field of molecular property prediction is undergoing a profound transformation, moving away from isolated, task-specific machine learning models toward versatile, general-purpose artificial intelligence systems known as foundation models. These models are characterized by their training on "broad data (generally using self-supervision at scale)" which enables them to be "adapted (e.g., fine-tuned) to a wide range of downstream tasks" [1]. This paradigm shift is pivotal for accelerating scientific discovery, as it decouples the data-hungry process of learning fundamental chemical representations from the application to specific prediction tasks with limited labeled data [1].

In domains like drug discovery and materials science, this translates to a powerful new capability: a model pre-trained on billions of unlabeled molecular structures can subsequently be fine-tuned with small, labeled datasets to achieve state-of-the-art performance on critical tasks, such as predicting the absorption, distribution, metabolism, excretion, and toxicity (ADMET) of candidate molecules [2]. This report details the core architecture, experimental protocols, and key resources that underpin this emerging paradigm.

The Foundation Model Workflow: Architecture and Implementation

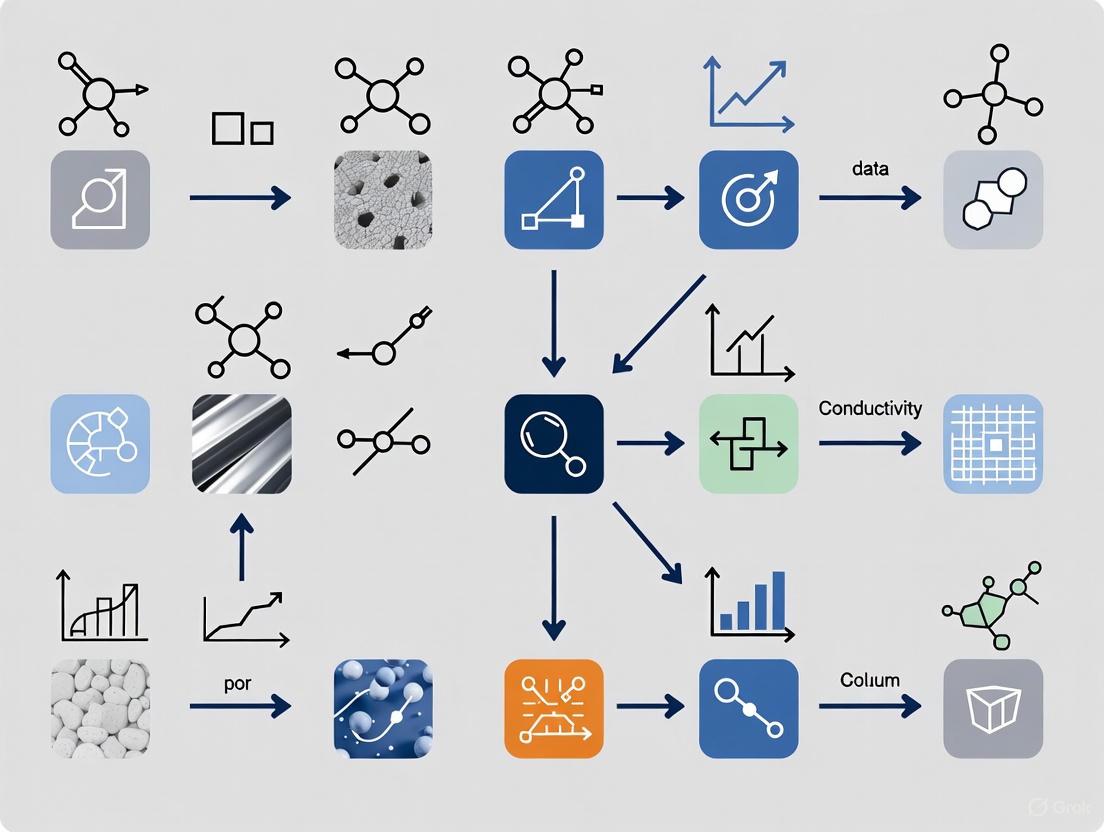

The power of foundation models lies in a structured workflow that progresses from broad pre-training to targeted fine-tuning. The diagram below illustrates this overarching architecture and process.

Core Architectural Components

Foundation models for molecular science are built upon several key components that enable them to process and understand complex chemical structures:

- Encoder-Decoder Structures: Modern architectures often decouple the understanding (encoding) and generation (decoding) of molecular data. Encoder-only models are focused on understanding input data to generate meaningful representations, making them ideal for property prediction tasks. In contrast, decoder-only models are designed for generative tasks, producing new molecular structures token-by-token [1].

- Adapted Transformer Architectures: The transformer architecture, which revolutionized natural language processing, has been successfully adapted for molecular graphs. The MolE (Molecular Embeddings) model, for instance, uses a modified disentangled attention mechanism from DeBERTa that explicitly accounts for relative atom positions in a molecular graph. This is crucial for capturing spatial relationships that define chemical properties [2].

- Multimodal Integration: Advanced frameworks like MultiMat demonstrate the ability to train on diverse material data types simultaneously. This multimodal approach allows models to leverage the rich variety of information available—from textual descriptions to structural graphs and images—creating more robust and generalizable representations [3].

Data Extraction and Preprocessing

The starting point for successful pre-training is the availability of massive, high-quality datasets. For molecular foundation models, this involves sophisticated data extraction pipelines that can parse information from various sources [1]:

- Structured Databases: Resources like PubChem, ZINC, and ChEMBL provide structured information on millions of molecules and are commonly used for training chemical foundation models [1].

- Scientific Literature and Patents: Advanced data-extraction models employ Named Entity Recognition (NER) and computer vision techniques, including Vision Transformers, to identify molecular structures and associated properties from documents, tables, and images in patents and scientific papers [1].

- Specialized Tool Integration: Modular approaches integrate specialized algorithms as intermediary tools. For example, Plot2Spectra can extract data points from spectroscopy plots, while DePlot converts visual charts into structured tabular data, making this information accessible to foundation models [1].

Experimental Protocols for Foundation Model Development

Protocol: Self-Supervised Pre-training for Molecular Graphs (MolE)

This protocol outlines the two-step pre-training strategy used to develop the MolE foundation model, which achieved state-of-the-art performance on 10 of 22 ADMET tasks [2].

Objective: To learn transferable molecular representations from unlabeled graph data.

Step 1: Self-Supervised Pre-training (Learning Chemical Structure)

- Input Representation: Represent molecules as graphs where atoms are nodes and bonds are edges. Compute atom identifiers by hashing atomic properties (Daylight atomic invariants) into a single integer.

- Model Architecture: Use a Transformer with disentangled self-attention. The model takes both atom identifiers and a topological distance matrix as input.

- Masking Strategy: Randomly mask 15% of atoms. For masked atoms, 80% are replaced with a mask token, 10% with a random token, and 10% are left unchanged.

- Training Task: The model is trained to predict the atom environment of radius 2 (all atoms within two bonds) for each masked atom. This forces the model to aggregate information from neighboring atoms.

- Training Data: Train on ~842 million molecular graphs from ZINC20 and ExCAPE-DB.

- Outcome: The model learns rich, local structural features of molecules without requiring labeled data.
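The masking strategy in Step 1 can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the MolE implementation: `MASK_TOKEN`, `mask_atoms`, and the integer `vocab` are hypothetical names, and masking at least one atom per molecule is an added assumption for very small molecules.

```python
import random

MASK_TOKEN = -1  # hypothetical id reserved for the mask token


def mask_atoms(atom_ids, vocab, mask_rate=0.15, rng=None):
    """Corrupt a list of atom identifiers with an 80/10/10 masking scheme.

    Returns the corrupted identifiers and the positions selected for masking.
    """
    rng = rng or random.Random()
    n_atoms = len(atom_ids)
    # Assumption: always mask at least one atom, even in tiny molecules.
    n_mask = max(1, round(mask_rate * n_atoms))
    positions = sorted(rng.sample(range(n_atoms), n_mask))

    corrupted = list(atom_ids)
    for pos in positions:
        roll = rng.random()
        if roll < 0.8:
            corrupted[pos] = MASK_TOKEN          # 80%: replace with mask token
        elif roll < 0.9:
            corrupted[pos] = rng.choice(vocab)   # 10%: replace with random token
        # remaining 10%: leave the original identifier in place
    return corrupted, positions


# Usage with made-up atom identifiers for a 20-atom molecule.
atom_ids = list(range(100, 120))
vocab = list(range(100, 200))
corrupted, positions = mask_atoms(atom_ids, vocab, rng=random.Random(0))
print(positions, corrupted)
```

Because the 80/10/10 choice is drawn independently for each selected atom, the proportions hold in expectation rather than exactly within a single molecule.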

Step 2: Supervised Graph-Level Pre-training (Learning Biological Information)

- Objective: Transition from local atomic features to global, biologically relevant molecular representations.

- Implementation: Follow the pre-training with supervised learning on a large labeled dataset of ~456,000 molecules.

- Rationale: This combined node- and graph-level pre-training helps the model integrate both local chemical and global biological information, enhancing its predictive power for downstream tasks.

The following diagram visualizes this two-step pre-training protocol.

Protocol: Fine-tuning for Downstream Property Prediction

Objective: To adapt a pre-trained foundation model to a specific molecular property prediction task.

- Dataset Curation: Assemble a labeled dataset specific to the target property (e.g., solubility, toxicity, metabolic stability). For low-data scenarios, techniques such as transfer learning and few-shot learning are particularly valuable [4] [5].

- Model Initialization: Initialize the model weights using the pre-trained foundation model.

- Fine-tuning: Train the model on the target task. The learning rate for this stage is typically set lower than during pre-training to avoid catastrophic forgetting.

- Evaluation: Rigorously evaluate the model on held-out test data. Use task-relevant metrics (e.g., ROC-AUC for classification, RMSE for regression) and perform multiple runs with different random seeds so that reported differences can be judged against run-to-run variance [6]. Critical to this process is assessing performance on activity cliffs (molecules with high structural similarity but large property differences), which are a key challenge in chemical generalization [1] [6].
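To make the activity-cliff check concrete, the sketch below flags pairs of molecules that are highly similar yet differ strongly in the measured property. It assumes fingerprints are already available as sets of "on" bit indices (for example from a cheminformatics toolkit); the function names and both thresholds are hypothetical choices for illustration, not values taken from the cited work.

```python
from itertools import combinations


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def find_activity_cliffs(fingerprints, activities,
                         sim_threshold=0.9, act_threshold=1.0):
    """Return (i, j, similarity, activity_gap) for every cliff pair.

    A pair counts as a cliff when it is at least `sim_threshold` similar
    and its activities differ by at least `act_threshold` (both assumed).
    """
    cliffs = []
    for i, j in combinations(range(len(fingerprints)), 2):
        sim = tanimoto(fingerprints[i], fingerprints[j])
        gap = abs(activities[i] - activities[j])
        if sim >= sim_threshold and gap >= act_threshold:
            cliffs.append((i, j, sim, gap))
    return cliffs


# Toy data: molecules 0 and 1 share 10 of 11 bits but differ by 2.5 log units.
fps = [set(range(1, 11)), set(range(1, 12)), {20, 21}]
acts = [5.0, 7.5, 5.1]
cliff_pairs = find_activity_cliffs(fps, acts)
print(cliff_pairs)
```

The pairwise loop is quadratic in the number of molecules, which is fine for a held-out test set but would need a neighbor search for large libraries.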

Performance Benchmarking and Quantitative Analysis

The transition to foundation models is substantiated by significant improvements in predictive performance across diverse chemical tasks. The table below summarizes key benchmark results from recent state-of-the-art models.

Table 1: Performance Benchmarks of Molecular Foundation Models

| Model Name | Architecture / Approach | Key Performance Metrics | Notable Achievements |
|---|---|---|---|
| MolE [2] | Transformer for molecular graphs with disentangled attention & 2-step pre-training. | State-of-the-art (SOTA) on 10/22 ADMET tasks in TDC benchmark (as of Sept 2023). | Outperformed models using pre-computed fingerprints (e.g., RDKit, Morgan) and other GNNs like ChemProp. |
| CheMeleon [7] | Directed Message-Passing Neural Network pre-trained on molecular descriptors. | 79% win rate on Polaris tasks; 97% win rate on MoleculeACE assays. | Outperformed Random Forest (46%), fastprop (39%), and Chemprop (36%) baselines. |
| Edge Set Attention [8] | Graph-based model with attention applied to edges (bonds) instead of nodes (atoms). | Outperformed other methods across >70 graph tasks, including molecular benchmarks. | Showed superior scaling and performance on long-range graph benchmarks. |
| MultiMat [3] | Multimodal foundation model for materials (graph, text, image). | SOTA performance on challenging material property prediction tasks. | Enabled accurate discovery of stable materials with desired properties via latent-space search. |

Table 2: Application to Targeted Protein Degraders (TPDs) [5]

| Property Predicted | Model Performance | Implications for Drug Discovery |
|---|---|---|
| Passive Permeability | Misclassification errors: <4% for glues, <15% for heterobifunctionals. | Demonstrates ML/QSPR models are applicable to novel, complex therapeutic modalities beyond traditional small molecules. |
| CYP3A4 Inhibition | Misclassification errors: <4% for glues, <15% for heterobifunctionals. | |
| Microsomal Clearance | Misclassification errors: 0.8% to 8.1% across all modalities. | Supports ML usage for TPD design to accelerate discovery. |

Building and applying foundation models requires a curated set of data, software, and computational resources. The following table details the key components of the modern computational scientist's toolkit.

Table 3: Key Research Reagents for Molecular Foundation Models

| Resource Name | Type | Function and Utility | Key Features / Examples |
|---|---|---|---|
| OMol25 (Open Molecules 2025) [9] | Dataset | High-accuracy quantum chemistry dataset for biomolecules, metal complexes, and electrolytes. | The largest and most diverse dataset of its kind; enables unprecedented accuracy in atomic-scale design. |
| ZINC20 [2] / ChEMBL [1] | Dataset | Large-scale, publicly available databases of molecular structures. | Provides hundreds of millions of compounds for self-supervised pre-training. |
| Therapeutic Data Commons (TDC) [2] | Benchmark | Curated suite of ADMET prediction tasks. | Standardized benchmark for fair comparison of model performance on clinically relevant properties. |
| Universal Model for Atoms (UMA) [9] | Pre-trained Model | Machine learning interatomic potential trained on >30 billion atoms. | A foundational model providing accurate predictions of atomic interactions; a versatile base for fine-tuning. |
| RDKit [6] | Software Library | Open-source cheminformatics toolkit. | Standard for computing molecular descriptors (e.g., 200+ 2D descriptors), fingerprints (e.g., Morgan/ECFP), and handling SMILES. |
| ChemXploreML [10] | Desktop Application | User-friendly, offline-capable software for molecular property prediction. | Democratizes access to state-of-the-art ML by eliminating the need for deep programming expertise. |
| Adjoint Sampling [9] | Algorithm | Reward-driven generative modeling for scenarios with limited or no training data. | Enables generation of diverse molecules from large-scale energy models like UMA. |

Critical Analysis and Future Directions

Despite their promise, the development and application of foundation models in molecular science require careful consideration of several critical factors:

- Data Quality and Bias: Foundation models are susceptible to learning biases present in their training data. The principle of "garbage in, garbage out" remains pertinent; no model can compensate for a poor-quality dataset [6]. Furthermore, subtle variations in molecular structure can lead to large property changes (activity cliffs), and models must be robust to them [1].

- Representation Limitations: Many current models are trained on 2D molecular representations (e.g., SMILES, 2D graphs), omitting critical 3D conformational information that profoundly influences properties and interactions [1].

- Evaluation Rigor: Heavy reliance on a few benchmark datasets can be misleading. Performance gains must be statistically rigorous and evaluated on data splits that mimic real-world challenges, such as generalizing to novel molecular scaffolds [6].

Future progress will be driven by several key trends: the expansion of multimodal training that integrates text, image, and 3D structural data [4] [3]; the creation of ever-larger and more diverse high-fidelity datasets like OMol25 [9]; and the development of more accessible tools that lower the barrier to entry for chemists and materials scientists [10]. As these elements converge, the paradigm will continue to shift from building single-use models to leveraging and adapting general-purpose AI, fundamentally accelerating the pace of scientific discovery.

Application Notes

Self-supervised learning (SSL) provides a powerful framework for overcoming the labeled data bottleneck in machine learning by leveraging large volumes of unlabeled data to learn transferable representations [11]. This approach is particularly valuable for foundation models in property prediction research, where labeled experimental data is often scarce and expensive to obtain [2]. SSL operates by defining pretext tasks that generate supervisory signals directly from the structure of the data itself, enabling models to learn meaningful representations without manual annotation [11] [12]. These pre-trained models can then be adapted to various downstream tasks through fine-tuning, often achieving state-of-the-art performance with minimal task-specific labeled data [2] [13].

In scientific domains like drug development, SSL has demonstrated remarkable effectiveness. The MolE foundation model exemplifies this approach, utilizing self-supervised pretraining on ~842 million molecular graphs to learn fundamental chemical structures, followed by supervised pretraining to incorporate biological information [2]. This two-stage process enables the model to capture both local atomic environments and global molecular properties, resulting in representations that transfer effectively to specialized downstream tasks such as ADMET property prediction [2].

Key Advantages for Scientific Research

- Reduced Annotation Cost: SSL eliminates the requirement for extensively labeled datasets, which are particularly costly and time-consuming to produce in experimental sciences [11] [2].

- Improved Generalization: Models pretrained with SSL learn robust, general-purpose representations that capture underlying data structures rather than superficial patterns, enhancing performance on specialized downstream tasks [12] [13].

- Domain Adaptation Capability: In domain-specific applications where large-scale pretraining datasets are unavailable, in-domain SSL pretraining with limited data can outperform large-scale general pretraining approaches [13].

Quantitative Performance Data

Table 1: MolE Performance on Therapeutic Data Commons (TDC) ADMET Benchmark [2]

| Task Category | Number of Tasks | Dataset Size Range | State-of-the-Art Performance |
|---|---|---|---|
| Classification | 13 | 475 - ~13,000 compounds | Top performance on 10 of 22 tasks overall (classification and regression combined) |
| Regression | 9 | 475 - ~13,000 compounds | Top performance on 10 of 22 tasks overall (classification and regression combined) |

Table 2: Self-Supervised Learning Outcomes Across Domains

| Domain | Pretraining Data Scale | Key Result | Reference |
|---|---|---|---|
| Molecular Graphs (MolE) | ~842 million molecules | Outperformed best published results on 10/22 ADMET tasks | [2] |
| Computer Vision | Varies (general to domain-specific) | In-domain low-data SSL can outperform large-scale general pretraining | [13] |

Experimental Protocols

Protocol 1: Self-Supervised Pretraining for Molecular Graphs (MolE)

Objective: To learn fundamental chemical structure representations by predicting atomic environments from large-scale unlabeled molecular data [2].

Materials: Unlabeled molecular graphs from ZINC20 and ExCAPE-DB databases (~842 million molecules) [2].

Procedure:

- Graph Tokenization: Convert each molecular graph into input tokens using atom identifiers calculated by hashing Daylight atomic invariants (neighboring heavy atoms, neighboring hydrogens, valence, atomic charge, atomic mass, bond types, ring membership) into a single integer via the Morgan algorithm [2].

- Input Representation: Construct a topological distance matrix d where each element dᵢⱼ represents the shortest path length (in bonds) between atom i and atom j. This matrix encodes graph connectivity as relative position information [2].

- Masking: Randomly select 15% of atoms in each molecular graph for masking. Replace 80% of selected tokens with a mask token, 10% with random tokens from vocabulary, and leave 10% unchanged [2].

- Pretext Task: For each masked atom, task the model with predicting its corresponding atom environment of radius 2 (all atoms within two bonds). This is formulated as a classification task where each possible environment has a predefined label [2].

- Model Training: Train the MolE transformer architecture using disentangled self-attention to incorporate both token information and relative positional relationships between atoms [2].
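The topological distance matrix and the radius-2 neighborhood used in this protocol can be illustrated with a breadth-first search over the bond list. This is a sketch under stated assumptions: atoms are indexed from 0, bonds are given as index pairs, and the helper names are hypothetical. It only shows which atoms fall inside an environment; MolE's actual training label is a hashed environment identifier, which is not reproduced here.

```python
from collections import deque

UNREACHABLE = -1  # marker for atom pairs in disconnected fragments


def topological_distance_matrix(n_atoms, bonds):
    """Shortest path length (in bonds) between every pair of atoms."""
    adjacency = [[] for _ in range(n_atoms)]
    for a, b in bonds:
        adjacency[a].append(b)
        adjacency[b].append(a)

    dist = [[UNREACHABLE] * n_atoms for _ in range(n_atoms)]
    for source in range(n_atoms):
        dist[source][source] = 0
        queue = deque([source])
        while queue:  # breadth-first search from `source`
            u = queue.popleft()
            for v in adjacency[u]:
                if dist[source][v] == UNREACHABLE:
                    dist[source][v] = dist[source][u] + 1
                    queue.append(v)
    return dist


def atoms_within_radius(dist_row, radius=2):
    """Indices of atoms at most `radius` bonds away (including the atom itself)."""
    return [j for j, d in enumerate(dist_row) if 0 <= d <= radius]


# Butane heavy-atom chain: C0-C1-C2-C3
d = topological_distance_matrix(4, [(0, 1), (1, 2), (2, 3)])
print(d)
print(atoms_within_radius(d[0]))
```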

Protocol 2: Supervised Multi-Task Pretraining

Objective: To transfer learned chemical structure representations to biological domain by incorporating labeled data for various properties [2].

Materials: Labeled dataset of ~456,000 molecules with associated property annotations [2].

Procedure:

- Model Initialization: Initialize model weights from the self-supervised pretrained MolE checkpoint [2].

- Task Formulation: Frame the learning as a multi-task problem where the model simultaneously predicts multiple molecular properties from the labeled dataset [2].

- Graph-Level Prediction: Aggregate atomic-level representations into a single molecular representation, then use this to predict target properties through task-specific output heads [2].

- Joint Optimization: Train the model using a combined loss function that optimizes performance across all property prediction tasks simultaneously [2].
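One common way to build such a combined loss is to average a per-task loss over the molecules that actually have a label for that task and then sum across tasks. The sketch below assumes binary classification tasks, marks missing labels with `None`, and uses optional per-task weights; all of these are illustrative assumptions rather than details confirmed for MolE.

```python
import math


def bce_with_logits(logit, label):
    """Numerically stable binary cross-entropy for a single logit/label pair."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))


def multitask_loss(logits, labels, task_weights=None):
    """Sum of per-task mean losses, skipping missing (None) labels.

    logits, labels: one row per molecule, one column per task.
    """
    n_tasks = len(logits[0])
    weights = task_weights or [1.0] * n_tasks
    total = 0.0
    for t in range(n_tasks):
        task_losses = [
            bce_with_logits(row_logits[t], row_labels[t])
            for row_logits, row_labels in zip(logits, labels)
            if row_labels[t] is not None
        ]
        if task_losses:  # a task with no labels in this batch contributes nothing
            total += weights[t] * sum(task_losses) / len(task_losses)
    return total


# Two molecules, two tasks; the first molecule has no label for task 1.
batch_logits = [[0.0, 2.0], [0.0, -1.0]]
batch_labels = [[1, None], [0, 0]]
loss = multitask_loss(batch_logits, batch_labels)
print(loss)
```

Averaging within each task before summing keeps sparsely labeled tasks from being drowned out by densely labeled ones; regression tasks would swap in a squared-error term.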

Protocol 3: Downstream Task Fine-Tuning

Objective: To adapt the pretrained foundation model to specific property prediction tasks with limited labeled data [2] [13].

Materials: Task-specific labeled dataset (e.g., 475 compounds for DILI task, ~13,000 for CYP inhibition) [2].

Procedure:

- Model Selection: Initialize with the fully pretrained MolE model (self-supervised + supervised pretraining) [2].

- Architecture Adaptation: Replace the multi-task prediction heads with a single task-specific output layer appropriate for the target property (classification or regression) [2].

- Transfer Learning: Fine-tune the entire model on the downstream task dataset using standard supervised learning. Employ smaller learning rates for pretrained layers and potentially higher rates for the new output layer [2].

- Evaluation: Assess model performance using task-specific metrics over 5 independent runs with different random seeds, reporting the mean and spread so that differences between models can be judged against run-to-run variance [2].
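Aggregating the per-seed results is straightforward; the sketch below reports the mean and sample standard deviation across runs. The scores are made-up placeholders, not results from the cited study, and `summarize_runs` is a hypothetical helper name.

```python
import statistics


def summarize_runs(scores):
    """Mean and sample standard deviation of a metric across seeded runs."""
    mean = statistics.mean(scores)
    std = statistics.stdev(scores) if len(scores) > 1 else 0.0
    return mean, std


# Placeholder ROC-AUC values from five runs with different random seeds.
run_scores = [0.80, 0.82, 0.81, 0.79, 0.83]
mean, std = summarize_runs(run_scores)
print(f"ROC-AUC: {mean:.3f} +/- {std:.3f} (n={len(run_scores)})")
```

Reporting the spread alongside the mean makes it possible to see whether a gap between two models exceeds the seed-to-seed noise.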

Workflow Visualization

Figure 1: Two-stage pretraining and fine-tuning workflow for molecular foundation models.

Figure 2: MolE architecture with disentangled attention for molecular graphs.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item | Function/Description | Example/Reference |
|---|---|---|
| ZINC20 Database | Primary source of unlabeled molecular structures for self-supervised pretraining (~842 million in total together with ExCAPE-DB) | [2] |
| ExCAPE-DB Database | Additional source of molecular structures for expanding pretraining data | [2] |
| Therapeutic Data Commons (TDC) | Benchmark platform with 22 standardized ADMET tasks for evaluation | [2] |
| RDKit | Open-source cheminformatics toolkit used for computing molecular fingerprints and atom environments | [2] |
| Morgan Algorithm | Method for generating atom identifiers (radius 0) and atom environments (radius 2) for molecular graphs | [2] |
| Disentangled Attention | Modified self-attention mechanism that separately processes content and relative position information | [2] |
| Transformer Architecture | Base model architecture adapted for molecular graphs with modified attention mechanisms | [2] |

Application Notes for Property Prediction

Foundation models are revolutionizing property prediction in drug development by providing powerful, transferable representations of biological and chemical entities. The choice of architecture dictates the model's capabilities and optimal application domain.

Table 1: Architectural Comparison for Property Prediction

| Feature | Encoder-Only (e.g., BERT, RoBERTa) | Decoder-Only (e.g., GPT, LLaMA) | Multimodal (e.g., CLIP, Uni-Mol) |
|---|---|---|---|
| Core Function | Representation Learning & Understanding | Autoregressive Generation & In-Context Learning | Cross-Modal Alignment & Fusion |
| Typical Input | Full sequence (e.g., SMILES, Protein Sequence) | Sequence prompt or context | Multiple modalities (e.g., SMILES + Assay Data, Structure + Text) |
| Primary Mechanism | Bidirectional Attention | Causal Attention (Masked to past) | Fusion Encoder (e.g., Cross-Attention, Concatenation) |
| Property Prediction Use Case | Predicting binding affinity, toxicity, solubility from a single representation. | Generating novel compounds with desired properties via prompt-guided generation. | Predicting drug-target interaction by jointly modeling ligand structure and protein sequence. |
| Sample Benchmark (c-Score) | ~0.75 (Tox21) | ~0.68 (Tox21 via in-context learning) | ~0.82 (Drug-Target Interaction) |
| Parameter Efficiency | High for fine-tuning tasks. | High for few-shot learning; less efficient for fine-tuning. | Lower due to complex fusion architecture. |
| Data Requirement | Large unlabeled corpus for pre-training. | Massive text/sequence corpus for pre-training. | Large, aligned multimodal datasets (e.g., ChEMBL+PubChem). |

Experimental Protocols

Protocol 1: Fine-Tuning an Encoder-Only Model for Toxicity Prediction

Objective: To adapt a pre-trained molecular encoder (e.g., a SMILES-BERT model) to predict compound toxicity on the Tox21 dataset.

Materials:

- Pre-trained SMILES-BERT model weights.

- Tox21 dataset (12,707 compounds across 12 toxicity assays).

- Hardware: Single GPU (e.g., NVIDIA A100 with 40GB VRAM).

- Software: Python, PyTorch, Hugging Face Transformers, RDKit.

Procedure:

- Data Preprocessing:

- Standardize SMILES strings using RDKit.

- Split data into training (80%), validation (10%), and test (10%) sets, ensuring stratified splits per assay.

- Tokenize SMILES strings using the model's pre-defined tokenizer.

- Model Setup:

- Load the pre-trained encoder model.

- Add a custom classification head: a dropout layer (p=0.1) followed by a linear layer that maps the [CLS] token embedding to 12 output logits (one per assay).

- Training:

- Loss Function: Binary Cross-Entropy Loss with logits.

- Optimizer: AdamW (Learning Rate = 2e-5, Weight Decay = 0.01).

- Batch Size: 32.

- Epochs: 10. Perform validation after each epoch.

- Stopping Criterion: Early stopping with patience of 3 epochs based on validation loss.

- Evaluation:

- Calculate the area under the receiver operating characteristic curve (ROC-AUC) for each of the 12 assays on the held-out test set.

- Report the mean ROC-AUC across all assays.
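The evaluation step can be sketched without any ML framework. The example below computes ROC-AUC per assay from pairwise comparisons and then averages across assays, skipping compound/assay pairs with no label (marked `None`, since Tox21 does not provide every assay result for every compound). Function names and the toy data are hypothetical; in practice a library routine would normally replace the quadratic pairwise loop.

```python
def roc_auc(labels, scores):
    """ROC-AUC as the probability that a positive outranks a negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        return None  # undefined when only one class is present
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def mean_roc_auc(label_matrix, score_matrix):
    """Per-assay ROC-AUC and their mean, ignoring missing (None) labels."""
    n_assays = len(label_matrix[0])
    aucs = []
    for a in range(n_assays):
        pairs = [(row_y[a], row_s[a])
                 for row_y, row_s in zip(label_matrix, score_matrix)
                 if row_y[a] is not None]
        auc = roc_auc([y for y, _ in pairs], [s for _, s in pairs])
        if auc is not None:
            aucs.append(auc)
    if not aucs:
        raise ValueError("no assay has both positive and negative labels")
    return sum(aucs) / len(aucs), aucs


# Toy example: 4 compounds, 2 assays, one missing label.
y = [[1, 0], [0, None], [1, 1], [0, 0]]
s = [[0.9, 0.7], [0.3, 0.5], [0.4, 0.6], [0.1, 0.2]]
mean_auc, per_assay = mean_roc_auc(y, s)
print(mean_auc, per_assay)
```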

Protocol 2: Prompt-Based Property Prediction with a Decoder-Only Model

Objective: To leverage a pre-trained molecular decoder (e.g., a GPT-style model) for solubility prediction using in-context learning, without fine-tuning.

Materials:

- Pre-trained molecular generator (e.g., MolGPT).

- A dataset of (SMILES, Solubility Category) pairs for in-context examples.

- Test set of SMILES strings for prediction.

Procedure:

- Prompt Engineering:

- Construct a prompt containing 5-10 (SMILES, Solubility) example pairs. The final line is the SMILES string of the test compound followed by "Solubility:".

- Example Prompt:

CC(=O)O Solubility: Soluble\nC1=CC=CC=C1 Solubility: Insoluble\nC(CO)OC Solubility: Soluble\n[C@H]1[C@@H]2CC[C@]3(...) Solubility:

- Model Inference:

- Tokenize the entire prompt and feed it to the decoder model.

- Perform autoregressive generation to predict the next tokens after "Solubility:".

- The model will generate a text completion (e.g., "Soluble" or "Insoluble").

- Output Parsing:

- Map the generated text to a solubility category.

- For quantitative regression, the prompt can be designed to output a numerical value or a range.
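The prompt-construction and output-parsing steps can be sketched as plain string handling. The model call itself is deliberately left out, since it depends on the specific decoder being used. The helper names are hypothetical, and the layout, in which every example line carries the same "Solubility:" marker as the query line, is an assumption about how the prompt is formatted.

```python
def build_prompt(examples, query_smiles):
    """Assemble an in-context prompt from (SMILES, label) pairs plus one query."""
    lines = [f"{smiles} Solubility: {label}" for smiles, label in examples]
    lines.append(f"{query_smiles} Solubility:")
    return "\n".join(lines)


def parse_completion(completion, categories=("Soluble", "Insoluble")):
    """Map generated text to a known category, or None if it matches nothing.

    Matching on the start of the text avoids reading "Insoluble" as "Soluble",
    which a plain substring test would do.
    """
    text = completion.strip().lower()
    for category in categories:
        if text.startswith(category.lower()):
            return category
    return None


examples = [("CC(=O)O", "Soluble"), ("C1=CC=CC=C1", "Insoluble")]
prompt = build_prompt(examples, "CCO")
print(prompt)
print(parse_completion(" Insoluble\n"))
```

Returning `None` for unrecognized completions makes malformed generations visible instead of silently assigning them to a class.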

Protocol 3: Training a Multimodal Model for Drug-Target Interaction (DTI)

Objective: To train a model that predicts binding affinity by jointly encoding a drug's molecular graph and a protein's sequence.

Materials:

- DTI dataset (e.g., BindingDB) with Ki/Kd values.

- Hardware: Multi-GPU setup recommended.

- Software: PyTorch, PyTorch Geometric, Deep Graph Library (DGL).

Procedure:

- Data Preprocessing:

- Ligand: Convert SMILES to a molecular graph with node (atom) and edge (bond) features.

- Protein: Tokenize amino acid sequence and embed using a pre-trained protein language model (e.g., ESM-2) to get a feature vector per residue.

- Model Architecture:

- Ligand Encoder: A Graph Neural Network (GNN) to produce a single graph-level embedding.

- Protein Encoder: A 1D Convolutional Neural Network (CNN) or Transformer to process the residue embeddings into a single protein vector.

- Fusion Mechanism: Concatenate the ligand and protein embeddings. Pass the fused vector through a multi-layer perceptron (MLP) regressor head.

- Training:

- Loss Function: Mean Squared Error (MSE) for regression on pKi/pKd.

- Optimizer: Adam (Learning Rate = 1e-4).

- Batch Size: 64.

- Evaluation:

- Evaluate on a test set of unseen drug-target pairs using Concordance Index (CI) and MSE.
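Both evaluation metrics are simple to state in code. The sketch below computes the Concordance Index as the fraction of comparable pairs (pairs with different true affinities) whose predicted ordering matches the true ordering, with prediction ties counted as half, alongside MSE. The affinity values are made-up placeholders, and the quadratic pairwise loop is meant for illustration rather than large test sets.

```python
def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs ranked in the correct order (ties in prediction = 0.5)."""
    concordant = 0.0
    comparable = 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue  # pairs with equal true affinity are not comparable
            comparable += 1
            true_diff = y_true[i] - y_true[j]
            pred_diff = y_pred[i] - y_pred[j]
            if pred_diff == 0:
                concordant += 0.5
            elif (true_diff > 0) == (pred_diff > 0):
                concordant += 1.0
    return concordant / comparable if comparable else float("nan")


def mean_squared_error(y_true, y_pred):
    """Average squared difference between true and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Placeholder pKd values for four drug-target pairs.
true_pkd = [5.0, 6.0, 7.0, 8.0]
pred_pkd = [5.2, 5.9, 8.1, 7.5]
ci = concordance_index(true_pkd, pred_pkd)
mse = mean_squared_error(true_pkd, pred_pkd)
print(ci, mse)
```

CI measures ranking quality only, so it complements MSE: a model can rank pairs well while being systematically offset in absolute affinity.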

Visualizations

Diagram 1: Encoder-Only Model Flow

Diagram 2: Multimodal DTI Model

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Foundation Model Experiments

| Item | Function & Application |
|---|---|
| Pre-trained Model Weights (e.g., ChemBERTa, ESM-2) | Provides a foundational understanding of chemical/protein language, drastically reducing required data and compute for task-specific fine-tuning. |
| Curated Benchmark Dataset (e.g., Tox21, BindingDB) | Standardized dataset for fair model evaluation, comparison, and validation of property prediction tasks. |
| High-Performance Computing (HPC) Cluster | Essential for training large foundation models from scratch or for extensive hyperparameter optimization due to immense computational load. |
| Automated Hyperparameter Optimization Tool (e.g., Weights & Biases, Optuna) | Systematically searches the hyperparameter space to identify the optimal model configuration, maximizing predictive performance. |
| Structured Data Serialization Format (e.g., Apache Parquet, HDF5) | Enables efficient storage and rapid loading of large-scale molecular datasets and their associated features for training pipelines. |

The development of foundation models for molecular property prediction represents a paradigm shift in computational drug discovery. These models, pre-trained on vast, unlabeled molecular datasets, learn fundamental chemical and structural principles, which can then be efficiently adapted to specific downstream prediction tasks with limited labeled data. This approach directly addresses one of the most significant challenges in the field: the extreme cost and time required to obtain experimental property data for millions of drug-like compounds. By leveraging large-scale public resources such as PubChem and ZINC, researchers can create models with superior generalization capabilities, thereby accelerating the identification of promising drug candidates and reducing attrition rates in clinical phases [14].

The core advantage of this methodology lies in its ability to learn comprehensive molecular representations that capture intricate relationships from atomic to functional levels. Modern molecular pre-trained models (MPMs) have demonstrated remarkable success by utilizing diverse pre-training strategies on these large datasets, covering aspects from 2D molecular graphs to 3D spatial conformations and chemical functionality [14]. This document provides detailed application notes and experimental protocols for leveraging these data resources effectively within foundation model research, enabling researchers to build robust and accurate predictive models for molecular properties.

Database Characteristics and Access Protocols

Key Molecular Databases for Pre-training

Table 1: Core Characteristics of Major Molecular Databases

| Database | Primary Content | Data Volume | Key Features | Access Methods |
|---|---|---|---|---|
| PubChem [15] | Small molecules, bioactivity data | 119 million compounds; 295 million bioactivities | Highly integrated with biological annotations, extensive bioassay data | Web interface, REST API, PubChemRDF download |
| ZINC [16] | Commercially available compounds, 3D structures | 230 million purchasable compounds | Ready-to-dock 3D formats, focused on drug-like compounds | Web interface, bulk download of subsets |

Data Retrieval and Preprocessing Protocols

Protocol 1: Efficient Data Acquisition from PubChem for Pre-training

- Objective: To programmatically retrieve large-scale molecular structures in SMILES format from PubChem for model pre-training.

- Materials: Computational workstation with internet access and ≥100 GB storage capacity.

- Procedure:

- Identify Relevant Compound Sets: Utilize PubChem's classification trees to select compounds of interest (e.g., "Pharmaceutical Substances," "Bioactive Compounds").

- Batch Retrieval via PUG-REST API: Use the PubChem Power User Gateway (PUG) REST interface to retrieve compounds in SMILES format. The base URL structure is:

https://pubchem.ncbi.nlm.nih.gov/rest/pug/compound/cid/[CID1,CID2,...]/property/CanonicalSMILES/JSON

- List-Based Download: For large-scale downloads (>100,000 compounds), split requests into batches of 10,000 CIDs to avoid server timeouts.

- Structure Standardization: Process raw SMILES strings using a cheminformatics toolkit (e.g., RDKit) to standardize tautomeric forms, remove salts, and neutralize charges, ensuring consistency across the dataset.

- Troubleshooting Note: For very large downloads (>1 million compounds), prefer the FTP bulk download option provided by PubChemRDF to minimize network load.
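The batching step can be sketched as follows. The code only builds request URLs from the pattern given in this protocol and performs no network access; `chunked` and `build_smiles_urls` are hypothetical helper names. One caveat worth checking against the PUG-REST documentation: a URL carrying thousands of CIDs may exceed URL length limits, in which case sending the CID list in a POST body is likely the safer route.

```python
BASE_URL = "https://pubchem.ncbi.nlm.nih.gov/rest/pug/compound/cid"


def chunked(items, size):
    """Yield consecutive slices of `items` with at most `size` elements each."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def build_smiles_urls(cids, batch_size=10_000):
    """One PUG-REST property URL per batch of compound identifiers (CIDs)."""
    return [
        f"{BASE_URL}/{','.join(str(cid) for cid in batch)}/property/CanonicalSMILES/JSON"
        for batch in chunked(cids, batch_size)
    ]


# 25,000 CIDs split into batches of 10,000 gives three requests.
urls = build_smiles_urls(list(range(1, 25_001)))
print(len(urls))
print(build_smiles_urls([2244, 3672])[0])
```

Each URL would then be fetched with an HTTP client of choice, ideally with a short pause between requests to stay within the service's usage limits.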

Protocol 2: Curating a Drug-like 3D Conformer Dataset from ZINC

- Objective: To obtain a high-quality dataset of 3D molecular conformations for geometric deep learning.

- Materials: High-performance computing cluster with molecular mechanics software (e.g., Open Babel, RDKit).

- Procedure:

- Subset Selection: Navigate the ZINC20 interface to filter for "in-stock," "drug-like" compounds with a molecular weight between 200 and 500 Da.

- Download 3D Structures: Download the pre-computed 3D structures in SDF or MOL2 format. These conformers are typically generated using the Merck Molecular Force Field (MMFF94).

- Conformational Energy Minimization (Optional but Recommended): For critical applications, perform further energy minimization on the downloaded structures using a force field like MMFF94s to ensure conformational stability.

- Format Conversion: Convert the final structures into a format compatible with the target deep learning framework (e.g., PyTorch Geometric).

- Validation: Manually inspect a random sample of 100 structures to verify correct geometry and absence of atomic clashes.
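Manual inspection can be complemented by an automated pre-screen for atomic clashes. The sketch below flags atom pairs that sit closer than a distance cutoff. The function name `find_clashes` and the 0.7 Å default are assumptions chosen for illustration (the cutoff is slightly below the H-H bond length), not values from the protocol.

```python
import math
from itertools import combinations
from typing import List, Sequence, Tuple

Coord = Tuple[float, float, float]


def find_clashes(coords: Sequence[Coord],
                 min_dist: float = 0.7) -> List[Tuple[int, int, float]]:
    """Return (i, j, distance) for every atom pair closer than `min_dist` (in Å)."""
    clashes = []
    for (i, a), (j, b) in combinations(enumerate(coords), 2):
        d = math.dist(a, b)  # Euclidean distance, Python 3.8+
        if d < min_dist:
            clashes.append((i, j, d))
    return clashes


# Hypothetical coordinates: atoms 1 and 2 are only 0.1 Å apart.
example_coords = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.6, 0.0, 0.0)]
example_clashes = find_clashes(example_coords)
```

The pairwise loop is quadratic in the number of atoms, which is acceptable for drug-like molecules. Structures with a non-empty result are natural candidates for the manual review described above.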

Foundational Model Architectures and Training Methodologies

State-of-the-Art Model Frameworks

The SCAGE (Self-Conformation-Aware Graph Transformer) architecture exemplifies the advanced integration of large-scale data and sophisticated model design [14]. Its pre-training framework, known as M4, integrates four distinct learning tasks to capture comprehensive molecular semantics, from structure to function. Concurrently, Kolmogorov-Arnold Graph Neural Networks (KA-GNNs) demonstrate how novel network architectures can enhance the expressivity and interpretability of models trained on these datasets [17].

Table 2: Comparative Analysis of Foundation Model Architectures

| Model Architecture | Core Innovation | Pre-training Tasks | Reported Advantages |

|---|---|---|---|

| SCAGE [14] | Multitask pre-training with multiscale conformational learning | (1) Molecular fingerprint prediction; (2) Functional group prediction; (3) 2D atomic distance prediction; (4) 3D bond angle prediction | Superior performance on 9 molecular properties and 30 structure-activity cliff benchmarks; provides atomic-level interpretability. |

| KA-GNN [17] | Integration of Fourier-based Kolmogorov-Arnold Networks into GNN components | Node embedding, message passing, and graph-level readout using learnable univariate functions | Enhanced parameter efficiency, interpretability, and ability to capture both low and high-frequency structural patterns. |

Experimental Protocol for Multi-Task Pre-training

Protocol 3: Implementing the M4 Multi-Task Pre-training Strategy (Inspired by SCAGE)

- Objective: To pre-train a graph transformer model using a balanced multi-task objective that incorporates 2D, 3D, and functional molecular information [14].

- Materials: Pre-processed molecular dataset (from Protocol 1 or 2), PyTorch/TensorFlow environment with geometric deep learning extensions (e.g., PyTorch Geometric).

- Procedure:

- Data Preparation:

- Graph Representation: Convert each molecule into a graph \( G = (V, E) \), where nodes \( V \) represent atoms (featurized by atomic number, hybridization, etc.) and edges \( E \) represent bonds (featurized by bond type, conjugation, etc.).

- 3D Conformation: Generate or retrieve the lowest-energy 3D conformation for each molecule.

- Functional Group Labels: Implement an atomic-level functional group annotation algorithm (e.g., using SMARTS patterns) to assign a unique functional group label to each atom.

- Model Setup: Implement a graph transformer backbone equipped with a Multiscale Conformational Learning (MCL) module to capture both local and global structural contexts.

- Multi-Task Loss Calculation: Compute the combined loss \( \mathcal{L}_{total} \) using a dynamic adaptive weighting strategy that assigns a weight \( w_i \) to each task:

- \( \mathcal{L}_{total} = w_{FP} \cdot \mathcal{L}_{FP} + w_{FG} \cdot \mathcal{L}_{FG} + w_{2D} \cdot \mathcal{L}_{2D} + w_{3D} \cdot \mathcal{L}_{3D} \)

- \( \mathcal{L}_{FP} \) (Fingerprint Prediction): Binary cross-entropy loss for predicting PubChem molecular fingerprints.

- \( \mathcal{L}_{FG} \) (Functional Group Prediction): Cross-entropy loss for classifying the functional group of each atom.

- \( \mathcal{L}_{2D} \) (2D Distance): Mean squared error loss for predicting pairwise atomic distances in the 2D graph.

- \( \mathcal{L}_{3D} \) (3D Bond Angle): Mean squared error loss for predicting bond angles derived from the 3D conformation.

- Dynamic Weighting: Implement a learning strategy that dynamically adjusts \( w_i \) based on the homoscedastic uncertainty of each task or the rate of change of its loss to balance learning across tasks.

- Validation: Monitor the loss convergence for all four tasks simultaneously. A successful pre-training run should show a steady decrease in both individual and total loss without any single task dominating the learning process.
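One way to realize the uncertainty-based option for dynamic weighting is sketched below. It uses a commonly used simplified form of homoscedastic uncertainty weighting, in which each task has a log-variance `s` and contributes `exp(-s) * loss + s` to the total. This is an assumption for illustration and not necessarily the weighting scheme used by SCAGE. In a real training loop the log-variances would be learnable parameters updated by the optimizer, and the loss values here are hypothetical.

```python
import math
from typing import Dict


def uncertainty_weighted_loss(losses: Dict[str, float],
                              log_vars: Dict[str, float]) -> float:
    """Combine task losses as sum_i exp(-s_i) * L_i + s_i, with s_i = log(sigma_i^2)."""
    total = 0.0
    for task, loss in losses.items():
        s = log_vars[task]
        # exp(-s) acts as the task weight; the additive s term penalizes
        # inflating the uncertainty just to shrink the weighted loss.
        total += math.exp(-s) * loss + s
    return total


# Hypothetical per-task losses for the four pre-training tasks.
task_losses = {"FP": 0.8, "FG": 1.2, "2D": 0.5, "3D": 2.0}
equal_weights = {task: 0.0 for task in task_losses}    # all weights equal 1
downweight_3d = {**equal_weights, "3D": math.log(2)}   # weight of 0.5 on the 3D task

total_equal = uncertainty_weighted_loss(task_losses, equal_weights)
total_downweighted = uncertainty_weighted_loss(task_losses, downweight_3d)
```

With all log-variances at zero the total reduces to the plain sum of the task losses, which makes zero a convenient initial value.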

SCAGE M4 Pre-training Workflow: A multi-stage process from raw data to a pre-trained foundation model.

Downstream Application and Transfer Learning Protocols

Task-Similarity Enhanced Transfer Learning

Transfer learning is the critical step that unlocks the value of a pre-trained foundation model for specific, often data-scarce, molecular property predictions. The MoTSE (Molecular Tasks Similarity Estimator) framework provides a principled approach to this process by quantitatively estimating the similarity between the pre-training tasks and the target downstream task, thereby guiding the selection of the most relevant pre-trained model and the optimal fine-tuning strategy [18].

Protocol 4: Fine-tuning with Task Similarity Guidance

- Objective: To effectively adapt a foundation model to a target molecular property prediction task (e.g., toxicity, solubility) by leveraging insights from task similarity.

- Materials: A pre-trained foundation model (from Protocol 3), a labeled dataset for the target task, the MoTSE framework code.

- Procedure:

- Task Similarity Estimation: Use MoTSE to compute the similarity between the pre-training tasks (e.g., fingerprint prediction, functional group prediction) and the target property prediction task. This is achieved by comparing the learned representations of a shared set of probe molecules across task-specific models.

- Model Selection: If multiple pre-trained models are available, select the one whose pre-training tasks show the highest aggregate similarity to the target task.

- Adaptive Fine-tuning:

- High-Similarity Task: For a target task highly similar to the pre-training tasks (e.g., predicting a property tightly linked to functional groups), fine-tune all layers of the model with a low learning rate to achieve subtle adaptation.

- Low-Similarity Task: For a novel or dissimilar target task, consider a two-stage approach: first fine-tune only the task-specific prediction head with the backbone frozen, then unfreeze the entire network for end-to-end fine-tuning with a slightly higher learning rate.

- Performance Validation: Evaluate the fine-tuned model on a held-out test set, using metrics appropriate for the task (e.g., ROC-AUC for classification, RMSE for regression). Compare against a model trained from scratch to quantify the benefit of transfer learning.

- Analysis: The application of this protocol has been shown to consistently improve prediction performance, particularly on small datasets, by effectively leveraging the prior knowledge encoded in the foundation model [18].
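The adaptive fine-tuning decision in this protocol can be expressed as a small planning function. The sketch below assumes the similarity score has been normalized to the range 0 to 1. The threshold of 0.5, the base learning rate, and the learning-rate multipliers are hypothetical placeholders to be tuned per project, and `Stage` and `fine_tuning_plan` are illustrative names that are not part of MoTSE.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Stage:
    trainable: str        # "head" (backbone frozen) or "all" (end-to-end)
    learning_rate: float


def fine_tuning_plan(similarity: float,
                     threshold: float = 0.5,
                     base_lr: float = 1e-5) -> List[Stage]:
    """Map a task-similarity score in [0, 1] to a sequence of fine-tuning stages."""
    if not 0.0 <= similarity <= 1.0:
        raise ValueError("similarity must lie in [0, 1]")
    if similarity >= threshold:
        # High similarity: fine-tune all layers with a low learning rate.
        return [Stage("all", base_lr)]
    # Low similarity: train the head first, then unfreeze everything
    # with a slightly higher learning rate than the high-similarity case.
    return [Stage("head", base_lr * 10), Stage("all", base_lr * 3)]


plan_similar = fine_tuning_plan(0.9)
plan_dissimilar = fine_tuning_plan(0.2)
```

Keeping the plan as data makes it easy to log alongside the results when comparing against the from-scratch baseline.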

Task-Similarity Guided Fine-tuning: A decision workflow for adapting a foundation model based on task relatedness.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for Molecular Foundation Models

| Reagent / Resource | Type | Primary Function in Workflow | Exemplars / Standards |

|---|---|---|---|

| Large-Scale Databases | Data Resource | Provide unlabeled molecular structures for self-supervised pre-training. | PubChem [15], ZINC [16] |

| Geometric Deep Learning Libraries | Software Library | Enable construction and training of graph-based neural networks. | PyTorch Geometric, Deep Graph Library (DGL) |

| Cheminformatics Toolkits | Software Library | Handle molecular I/O, featurization, standardization, and descriptor calculation. | RDKit, Open Babel |

| Force Field Software | Computational Tool | Generate stable 3D molecular conformations for geometric learning. | Merck Molecular Force Field (MMFF) [14], Open Babel |

| Multi-Task Optimization Algorithms | Algorithm | Dynamically balance the contribution of multiple pre-training tasks to total loss. | Dynamic Adaptive Multitask Learning [14], Uncertainty Weighting |

| Task Similarity Estimation Frameworks | Analytical Framework | Quantify relatedness between pre-training and target tasks to guide transfer learning. | MoTSE (Molecular Tasks Similarity Estimator) [18] |

The strategic use of large-scale unlabeled datasets from PubChem and ZINC, combined with advanced multi-task pre-training frameworks like SCAGE and novel architectures like KA-GNNs, establishes a powerful foundation for accurate and generalizable molecular property prediction. The protocols outlined here give researchers a concrete roadmap for implementing these state-of-the-art methods, from data curation and model pre-training to task-aware fine-tuning. As these foundation models continue to evolve, their ability to provide atomic-level interpretability and to handle activity cliffs will further solidify their role as indispensable tools in the next generation of computational drug discovery [14] [17]. The continued growth and curation of public molecular databases will remain the critical fuel for this progress.

In the field of property prediction research, foundation models promise to revolutionize the pace of scientific discovery, particularly in domains like drug development. However, their adoption in scientific applications has been slower than in natural language processing, hampered by two interconnected core challenges: data scarcity and the need for robust generalization [19]. Data scarcity arises because generating reliable, high-quality labels in domains like pharmaceuticals is often prohibitively expensive or time-consuming [20]. Furthermore, models must generalize effectively, not just to unseen data from the same distribution, but often to out-of-distribution (OOD) samples, a common scenario in real-world research and development [21]. These application notes provide a detailed framework, including structured data and experimental protocols, to guide researchers in overcoming these hurdles.

Selecting the appropriate strategy to mitigate data scarcity requires an understanding of the performance characteristics and data requirements of different techniques. The following table summarizes key quantitative findings from recent research.

Table 1: Comparative Performance of Techniques for Low-Data Regimes

| Technique | Reported Performance Gain | Key Application Context | Data Requirements & Characteristics |

|---|---|---|---|

| Multi-task Learning (MTL) with Adaptive Checkpointing (ACS) [20] | Surpassed single-task learning by 8.3% on average; achieved accurate predictions with as few as 29 labeled samples. | Molecular property prediction (e.g., toxicity, fuel properties). | Effective for multiple related tasks, even with severe task imbalance and missing labels. |

| Data Augmentation [22] | Can enhance model accuracy by 5-10% and reduce overfitting by up to 30%. | Computer vision, with principles applicable to other data types. | Requires a foundational dataset; effectiveness depends on the chosen transformations. |

| Soft Causal Learning [21] | Demonstrated strong generalization ability across seven different OOD scenarios. | Molecular property prediction on graph-structured data. | Focuses on learning from "environments" to achieve OOD robustness, bypassing invariant rationales. |

| Noise Injection [23] | Improved model generalization to new, unseen aircraft types. | Aircraft fuel flow estimation. | A regularization technique that adds controlled noise to existing data to simulate variance. |

Detailed Experimental Protocols

This section provides step-by-step methodologies for implementing two of the most powerful techniques outlined above.

Protocol 1: Multi-task Learning with Adaptive Checkpointing (ACS)

ACS is a training scheme designed to mitigate negative transfer (NT) in MTL, which occurs when updates from one task degrade the performance of another [20].

Objective: To train a multi-task Graph Neural Network (GNN) that leverages shared representations across tasks while protecting individual tasks from detrimental parameter updates.

Materials:

- Hardware: Standard GPU-enabled workstation.

- Software: Python, deep learning framework (e.g., PyTorch, TensorFlow), ACS implementation.

- Data: A multi-task molecular property dataset (e.g., Tox21, ClinTox). Data should be split using a Murcko-scaffold protocol to ensure rigorous evaluation [20].

Procedure:

Model Architecture Setup:

- Implement a shared GNN backbone based on message passing to learn general-purpose molecular representations.

- Attach task-specific Multi-Layer Perceptron (MLP) heads to the backbone for each property prediction task.

Training Configuration:

- Use a joint training loss that aggregates task-specific losses (e.g., binary cross-entropy for classification). Apply loss masking for tasks with missing labels.

- Establish a separate validation set for each task to monitor performance.
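Loss masking for missing labels can be illustrated with a small, framework-free sketch. Here a missing label is encoded as `None` and excluded from the average. The function name `masked_bce`, the encoding of missing labels, and the example values are assumptions for illustration. In a deep learning framework the same idea is usually implemented by multiplying the per-element loss with a 0/1 mask tensor.

```python
import math
from typing import List, Optional


def masked_bce(probs: List[List[float]],
               labels: List[List[Optional[int]]],
               eps: float = 1e-7) -> float:
    """Mean binary cross-entropy over labeled (molecule, task) entries only."""
    total, count = 0.0, 0
    for p_row, y_row in zip(probs, labels):
        for p, y in zip(p_row, y_row):
            if y is None:                    # missing label: contributes no loss
                continue
            p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
            total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
            count += 1
    return total / count if count else 0.0


# Two molecules, two tasks; each molecule is labeled for only one task.
example_probs = [[0.9, 0.2], [0.4, 0.7]]
example_labels = [[1, None], [None, 0]]
example_loss = masked_bce(example_probs, example_labels)
```

Averaging over the number of labeled entries, and not over all entries, keeps sparsely labeled tasks from being diluted by their missing values.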

Adaptive Checkpointing:

- Throughout the training process, continuously monitor the validation loss for every single task.

- For each task, checkpoint (save) the model parameters for the shared backbone and its corresponding task-specific head at the point when that task's validation loss reaches a minimum.

- This results in a unique, specialized backbone-head pair for each task, representing the model state that was most beneficial for that specific task during shared training.

Evaluation:

- For each task, evaluate the performance using its specialized checkpoint on the held-out test set.

- Compare against baselines like single-task learning and standard MTL without checkpointing.
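The checkpointing logic described above can be sketched independently of any framework. In this minimal version the model state is represented by plain dictionaries. With PyTorch one would pass copies of the `state_dict()` of the backbone and heads. The class name `AdaptiveCheckpointer` and the validation losses are hypothetical, and this is a simplified reading of the procedure, not the reference ACS implementation.

```python
import copy
from typing import Any, Dict, Iterable, List


class AdaptiveCheckpointer:
    """Keep, for each task, the backbone/head state at its best validation loss."""

    def __init__(self, tasks: Iterable[str]) -> None:
        self.best_loss: Dict[str, float] = {t: float("inf") for t in tasks}
        self.checkpoints: Dict[str, Dict[str, Any]] = {}

    def update(self, val_losses: Dict[str, float],
               backbone_state: Any,
               head_states: Dict[str, Any]) -> List[str]:
        """Record new per-task minima; return the tasks that improved."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                # Deep copies so later training steps cannot mutate the snapshot.
                self.checkpoints[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved


# Hypothetical validation losses over three epochs for two tasks.
history = [
    {"tox": 0.70, "sol": 0.90},
    {"tox": 0.60, "sol": 0.95},
    {"tox": 0.65, "sol": 0.80},
]
checkpointer = AdaptiveCheckpointer(["tox", "sol"])
for epoch, val in enumerate(history):
    backbone = {"epoch": epoch}                 # stand-in for real parameters
    heads = {t: {"epoch": epoch} for t in val}
    checkpointer.update(val, backbone, heads)
```

After the loop, the `tox` checkpoint comes from epoch 1 and the `sol` checkpoint from epoch 2, so each task is evaluated with the shared backbone as it was when that task performed best.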

The workflow for this protocol, which ensures robust model specialization, is detailed in the diagram below.

Protocol 2: Building a Functional Group-Centric Reasoning Dataset

Incorporating fine-grained, domain-specific knowledge like functional groups can significantly enhance model interpretability and generalization [24].

Objective: To construct a dataset (e.g., FGBench) that enables models to reason about molecular properties based on functional group modifications.

Materials:

- Hardware: Standard CPU/GPU compute.

- Software: Chemistry toolkits (e.g., RDKit), pipeline for functional group annotation (e.g., AccFG).

- Data: Source molecular structures and properties (e.g., from PubChem, QM9).

Procedure:

Molecule and Functional Group Annotation:

- Process source molecules to precisely identify and localize all functional groups. This goes beyond simple pattern matching to handle overlapping groups and accurately localize them within the molecular structure [24].

Define Molecular Modifications:

- Define a set of controlled modifications, such as the addition, deletion, or substitution of specific functional groups on a base molecular scaffold.

Generate Question-Answer (QA) Pairs:

- For each modification, generate QA pairs that probe the model's understanding. Structure these into three categories:

- Single Functional Group Impact: "How does adding a hydroxyl group (-OH) affect the solubility?"

- Multiple Functional Group Interactions: "Compare the logP of this molecule (with -COOH) to its analog (with -COO- and -NH₂)."

- Direct Molecular Comparisons: "Which of these two isomers has a higher boiling point and why?" [24]

Validation-by-Reconstruction:

- Implement a critical validation step where the molecular structure resulting from a described functional group modification is reconstructed and verified against a known ground-truth structure to ensure data integrity [24].

Benchmarking:

- Use the curated dataset to benchmark the reasoning capabilities of foundation models, fine-tune them, and perform structure-activity relationship (SAR) analysis.
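The QA generation step can be sketched with a simple template for the single-functional-group category. The record layout (`Modification`), the question template, and the property values below are illustrative placeholders and are not taken from FGBench or from measured data. A real pipeline would fill them from annotated molecules and the validation-by-reconstruction step.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Modification:
    base_smiles: str
    modified_smiles: str
    description: str       # e.g. "adding a hydroxyl group (-OH)"
    prop: str              # name of the property being compared
    base_value: float
    modified_value: float


def make_qa_pair(mod: Modification) -> Dict[str, str]:
    """Turn one functional-group modification into a question-answer pair."""
    delta = mod.modified_value - mod.base_value
    if delta > 0:
        trend = "increases"
    elif delta < 0:
        trend = "decreases"
    else:
        trend = "does not change"
    question = (f"How does {mod.description} to {mod.base_smiles} "
                f"affect its {mod.prop}?")
    answer = (f"The {mod.prop} {trend} from {mod.base_value} to "
              f"{mod.modified_value} ({mod.modified_smiles}).")
    return {"question": question, "answer": answer}


# Placeholder values for illustration only.
example = Modification("c1ccccc1", "Oc1ccccc1",
                       "adding a hydroxyl group (-OH)", "logP", 2.1, 1.5)
qa = make_qa_pair(example)
```

Deriving the answer from stored property values, instead of writing it by hand, keeps every QA pair traceable to the underlying data.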

The logical flow for constructing this dataset, which is crucial for teaching models fine-grained causal relationships, is as follows.

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of the above protocols relies on a suite of software "reagents". The following table lists key tools and their functions in the context of foundation models for property prediction.

Table 2: Key Research Reagent Solutions for Foundation Model Development

| Tool Name | Type | Primary Function in Research |

|---|---|---|

| Neptune [25] | Experiment Tracker | Manages the complexity of ML experimentation, tracking runs, hyperparameters, and results for foundation model development. |

| ChemTorch [26] | Development Framework | Provides modular, standardized pipelines for developing and benchmarking chemical reaction property prediction models, ensuring reproducibility. |

| FGBench [24] | Dataset & Benchmark | Serves as a benchmark and fine-tuning resource for developing LLMs capable of functional group-level molecular property reasoning. |

| Albumentations / NLPAug [27] | Data Augmentation Library | Applies geometric, color-based, and semantic transformations to image and text data, respectively, to artificially expand training sets. |

| imbalanced-learn [27] | Data Augmentation Library | Implements algorithms like SMOTE to generate synthetic samples for minority classes in tabular/structured data, addressing class imbalance. |

| Chronos [19] | Foundation Model | A time series foundation model (TSFM) adapted from language models, useful for forecasting tasks in scientific domains like energy and traffic. |

Architectures, Workflows, and Real-World Applications in Biomedicine

Foundation models are revolutionizing property prediction in scientific domains, offering unprecedented capabilities for drug discovery and materials science. These models, pre-trained on extensive datasets, can be adapted to a wide range of downstream tasks with remarkable efficiency. Among the most impactful architectures are Graph Neural Networks (GNNs), Transformers, and Vision-Language Models (VLMs), each bringing unique strengths to scientific problem-solving. GNNs naturally represent molecular and crystalline structures, Transformers capture complex long-range dependencies, and VLMs integrate multimodal information for enhanced reasoning. This article examines the predominant architectures and their hybrid implementations, and provides detailed protocols for their application in property prediction research, offering scientists a comprehensive toolkit for advancing computational discovery.

Graph Neural Networks for Molecular Representation

Architectural Fundamentals and Advances

Graph Neural Networks have emerged as a fundamental architecture for molecular property prediction due to their inherent ability to represent non-Euclidean data structures. Molecules naturally correspond to graph representations, with atoms as nodes and bonds as edges, enabling GNNs to learn directly from structural information. Conventional GNNs operate through message-passing mechanisms where node representations are iteratively updated by aggregating information from neighboring nodes. This local aggregation process effectively captures atomic environments and bonding patterns essential for predicting chemical properties.

Recent advancements have significantly enhanced GNN capabilities through novel mathematical frameworks. The Kolmogorov-Arnold GNN (KA-GNN) integrates Kolmogorov-Arnold network modules into three fundamental GNN components: node embedding, message passing, and readout functions [17]. This integration replaces standard multi-layer perceptrons with learnable univariate functions based on Fourier series, improving both expressivity and interpretability. The Fourier-based formulation enables effective capture of both low-frequency and high-frequency structural patterns in graphs, benefiting gradient flow and parameter efficiency [17]. Theoretical analysis confirms that Fourier-based KANs possess strong approximation capabilities for square-integrable multivariate functions, providing mathematical foundations for their effectiveness.
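To make the idea of learnable univariate Fourier functions concrete, the sketch below implements a generic Fourier-based KAN layer in plain Python. Each input-output pair has its own truncated Fourier series, and an output is the sum of these univariate functions over all inputs. The exact parameterization used in KA-GNN (for example bias terms or normalization) is not given here, so this form and the name `fourier_kan_layer` are assumptions.

```python
import math
from typing import List

Coeffs = List[List[List[float]]]  # indexed as [output j][input i][harmonic k]


def fourier_kan_layer(x: List[float], a: Coeffs, b: Coeffs) -> List[float]:
    """Compute y[j] = sum_i sum_k a[j][i][k]*cos(k*x[i]) + b[j][i][k]*sin(k*x[i]).

    Harmonics are numbered k = 1..K, so low k captures smooth trends and
    high k captures rapidly varying patterns.
    """
    outputs = []
    for a_j, b_j in zip(a, b):
        y = 0.0
        for x_i, a_ji, b_ji in zip(x, a_j, b_j):
            for k, (a_k, b_k) in enumerate(zip(a_ji, b_ji), start=1):
                y += a_k * math.cos(k * x_i) + b_k * math.sin(k * x_i)
        outputs.append(y)
    return outputs


# Two inputs, one output, two harmonics per edge (hand-picked coefficients).
x_example = [0.0, math.pi / 2]
a_example = [[[1.0, 0.0], [0.0, 0.0]]]   # cos(1 * x0) contributes
b_example = [[[0.0, 0.0], [1.0, 0.0]]]   # sin(1 * x1) contributes
y_example = fourier_kan_layer(x_example, a_example, b_example)
```

In a trained model the coefficients `a` and `b` are the learnable parameters. Inspecting them per edge is one route to the interpretability mentioned above.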

Application Protocols for Molecular Property Prediction

Experimental Protocol: Implementing KA-GNN for Molecular Property Prediction

Data Preprocessing: Convert molecular structures into graph representations using cheminformatics libraries (e.g., RDKit). Node features should include atomic number, hybridization, valence, and other atomic descriptors. Edge features should incorporate bond type, bond length, and stereochemistry.

Model Architecture Configuration:

- Implement Fourier-based KAN layers with harmonic functions as activation functions

- Configure node embedding layer to process concatenated atomic features and neighboring bond features

- Design message-passing layers with residual KAN connections instead of traditional MLPs

- Implement graph-level readout using adaptive pooling followed by KAN transformation

Training Procedure:

- Utilize AdamW optimizer with learning rate 0.001

- Apply gradient clipping with maximum norm 1.0

- Implement early stopping with patience of 50 epochs

- Use balanced sampling for skewed datasets
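Two of the training settings above, gradient clipping at a maximum norm of 1.0 and early stopping with a patience of 50 epochs, can be sketched without a framework. The helper names are hypothetical. In PyTorch, clipping is normally done with `torch.nn.utils.clip_grad_norm_`, and this pure-Python version only shows the arithmetic.

```python
import math
from typing import List


def clip_by_global_norm(grads: List[float], max_norm: float = 1.0) -> List[float]:
    """Rescale gradients so their L2 norm does not exceed `max_norm`."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


class EarlyStopping:
    """Signal a stop after `patience` consecutive epochs without improvement."""

    def __init__(self, patience: int = 50) -> None:
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss: float) -> bool:
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


clipped = clip_by_global_norm([3.0, 4.0])            # original norm is 5.0
stopper = EarlyStopping(patience=2)                  # short patience for the demo
decisions = [stopper.step(v) for v in (1.0, 1.1, 1.2)]
```

Clipping preserves the gradient direction and only shrinks its length, which is why it stabilizes training without biasing individual parameters.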

Interpretation Analysis: Leverage the inherent interpretability of KAN layers to identify chemically meaningful substructures contributing to predictions through activation pattern analysis.

Performance Comparison: KA-GNN architectures have demonstrated consistent outperformance over conventional GNNs across seven molecular benchmarks, achieving 5-15% improvements in prediction accuracy while reducing parameter count by 20-30% [17].

Figure 1: KA-GNN Architecture for Molecular Property Prediction

Research Reagent Solutions: GNN Implementation

Table 1: Essential Research Reagents for GNN Implementation

| Reagent/Tool | Function | Implementation Example |

|---|---|---|

| RDKit | Molecular graph generation and cheminformatics | Convert SMILES to graph representation with atom/bond features |

| PyTorch Geometric | GNN architecture implementation | Prebuilt GNN layers and graph operations |

| DGL (Deep Graph Library) | Scalable graph neural network training | Distributed training for large molecular datasets |

| KAN Implementation | Kolmogorov-Arnold network layers | Fourier-based activation functions for enhanced expressivity |

| QM9 Dataset | Benchmark molecular property dataset | 130k molecules with 19 geometric/energetic properties |

Transformer Architectures for Materials Science

Transformer-Graph Hybrid Frameworks

Transformer architectures have demonstrated remarkable success in materials property prediction, particularly when combined with graph-based representations. The CrysCo framework exemplifies this approach, utilizing a hybrid Transformer-Graph architecture that leverages four-body interactions to capture periodicity and structural characteristics in crystalline materials [28]. This model addresses critical challenges in materials science, including data scarcity for specific properties and capturing thermodynamic stability.

The CrysCo architecture employs two parallel networks: a deep Graph Neural Network (CrysGNN) that processes crystal structures with up to 10 layers of edge-gated attention, and a Transformer and Attention Network (CoTAN) that processes compositional features and human-extracted physical properties [28]. The edge-gated attention mechanism simultaneously updates bond angles and distances by considering adjacent edges and nodes, enabling the model to capture four-body interactions including atom type, bond lengths, bond angles, and dihedral angles. This comprehensive representation surpasses traditional approaches that typically consider only two-body or three-body interactions.

Application Protocols for Materials Property Prediction

Experimental Protocol: CrysCo Framework Implementation

Data Preparation:

- Extract crystal structures from Materials Project database

- Generate three distinct graph representations: atomic graph (G8), line graph (L(G8)), and dihedral line graph (L(G8d))

- Compute compositional features including stoichiometry, element properties, and statistical moments

Model Configuration:

- Implement CrysGNN with 10 layers of edge-gated attention GNN (EGAT)

- Configure CoTAN with multi-head attention mechanisms for compositional features

- Design hybrid fusion layer integrating structural and compositional representations

Transfer Learning Protocol:

- Pre-train model on data-rich source tasks (formation energy prediction)

- Fine-tune on data-scarce downstream tasks (mechanical properties)

- Apply learning rate reduction (factor 0.1) during fine-tuning phase

- Utilize gradient accumulation for small batch sizes on scarce datasets
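The last two steps of the transfer learning protocol can be illustrated on a toy one-parameter model. The sketch below averages gradients over several micro-batches before taking a single optimizer step and applies the 0.1 learning-rate reduction for fine-tuning. The pre-training learning rate of 1e-3, the data, and the function names are hypothetical.

```python
from typing import Sequence, Tuple

Batch = Sequence[Tuple[float, float]]  # (x, y) pairs


def mse_grad(w: float, batch: Batch) -> float:
    """Gradient of the mean squared error for the 1-D model y_hat = w * x."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_step(w: float, micro_batches: Sequence[Batch], lr: float) -> float:
    """Average gradients over all micro-batches, then apply one update."""
    grad = 0.0
    for mb in micro_batches:
        grad += mse_grad(w, mb) / len(micro_batches)
    return w - lr * grad


pretrain_lr = 1e-3                 # hypothetical pre-training learning rate
finetune_lr = pretrain_lr * 0.1    # reduction factor from the protocol

data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
micro = [data[:2], data[2:]]       # two micro-batches of equal size

w_accumulated = accumulated_step(0.0, micro, finetune_lr)
w_full_batch = 0.0 - finetune_lr * mse_grad(0.0, data)
```

With equally sized micro-batches the accumulated update matches the full-batch update, so accumulation gives the stability of a larger batch when memory or data limits the micro-batch size.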

Interpretation Methods:

- Apply attention visualization to identify critical structural motifs

- Use saliency mapping to determine elemental contributions to properties

Performance Metrics: The CrysCo framework has demonstrated state-of-the-art performance across 8 materials property regression tasks, outperforming specialized models including CGCNN, SchNet, MEGNet, and ALIGNN [28]. For energy-related properties and data-scarce mechanical properties, the model achieves 15-30% reduction in mean absolute error compared to existing approaches.

Figure 2: Transformer-Graph Hybrid Architecture for Materials

Research Reagent Solutions: Transformer Implementation

Table 2: Essential Research Reagents for Transformer Implementation

| Reagent/Tool | Function | Implementation Example |

|---|---|---|

| Materials Project API | Access to crystalline structures and properties | JSON-based querying of 146K+ material entries |

| pymatgen | Materials analysis and processing | Crystal structure manipulation and feature generation |

| Transformer Libraries | Architecture implementation | Hugging Face Transformers or custom PyTorch implementations |

| ALIGNN | Higher-order graph representations | Angle-based graph constructions for materials |

| MatDeepLearn | Benchmarking materials ML models | Standardized evaluation across multiple property tasks |

Vision-Language Models for Multimodal Molecular Understanding

Multimodal Fusion Architectures

Vision-Language Models represent an emerging paradigm in molecular property prediction that leverages both structural visual representations and textual descriptions. The MolVision framework exemplifies this approach, integrating molecular structure images with textual information to enhance property prediction accuracy [29] [30]. This multimodal strategy addresses limitations of text-only representations (e.g., SMILES/SELFIES strings) that can be ambiguous and structurally uninformative.

MolVision employs Vision-Language Models (VLMs) pretrained on general vision-language tasks and adapts them to the molecular domain through efficient fine-tuning strategies such as Low-Rank Adaptation (LoRA). The architecture processes 2D molecular depictions as images while simultaneously analyzing textual descriptions of molecular characteristics. Experimental results across nine diverse datasets demonstrate that while visual information alone is insufficient for accurate property prediction, multimodal fusion significantly enhances generalization across molecular properties [30]. The adaptation of vision encoders specifically for molecular images, in conjunction with LoRA fine-tuning, further improves performance.
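The core arithmetic of LoRA is compact enough to show directly. A frozen weight matrix `W` is augmented with a trainable low-rank product `B A`, scaled by `alpha / r`, so that only `A` and `B` are updated during fine-tuning. The sketch below is a generic illustration with hand-picked matrices and hypothetical helper names. It is not MolVision's code, and in practice a library such as Hugging Face PEFT would normally be used.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix `m` (rows x cols) by vector `v` (cols)."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def lora_forward(x: Vector, W: Matrix, A: Matrix, B: Matrix, alpha: float) -> Vector:
    """Compute W x + (alpha / r) * B (A x), where r is the LoRA rank.

    Shapes: W is d_out x d_in (frozen), A is r x d_in, B is d_out x r.
    """
    r = len(A)
    base = matvec(W, x)
    low_rank = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * l for b, l in zip(base, low_rank)]


W = [[1.0, 0.0], [0.0, 1.0]]       # frozen pretrained weights (identity here)
A = [[1.0, 1.0]]                   # rank r = 1
B_zero = [[0.0], [0.0]]            # usual initialization: adapter starts as a no-op
B_trained = [[1.0], [0.0]]         # hypothetical values after some training
x = [1.0, 2.0]

y_initial = lora_forward(x, W, A, B_zero, alpha=2.0)
y_adapted = lora_forward(x, W, A, B_trained, alpha=2.0)
```

Initializing `B` to zero means the adapted model starts out identical to the pretrained one, which is part of why LoRA fine-tuning tends to be stable on small molecular datasets.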

Application Protocols for Multimodal Property Prediction

Experimental Protocol: MolVision Implementation for Molecular Analysis

Multimodal Data Preparation:

- Generate 2D molecular structure images using standardized depiction rules (e.g., RDKit molecular depictions)

- Curate textual descriptions from scientific literature or generate synthetic descriptions using molecular feature extraction

- Align image-text pairs for contrastive learning

Model Adaptation:

- Select pretrained VLM architecture (e.g., CLIP, BLIP)

- Implement LoRA fine-tuning for efficient parameter adaptation

- Adapt vision encoder for molecular structure images through specialized preprocessing

- Design modality fusion mechanisms for image-text integration

Training Strategy:

- Employ contrastive pretraining to align visual and textual representations

- Implement cross-modal attention mechanisms for feature fusion

- Use multi-task learning for diverse property prediction

- Apply progressive unfreezing during fine-tuning

Evaluation Framework:

- Benchmark across diverse molecular property datasets (classification, regression, description tasks)

- Compare zero-shot, few-shot, and fine-tuned performance

- Analyze cross-modal attention weights for interpretability

Performance Analysis: Evaluations of nine different VLMs across multiple settings reveal that multimodal approaches consistently outperform unimodal baselines, with particular advantages in low-data regimes and for complex properties requiring structural reasoning [30].

Figure 3: Vision-Language Model for Molecular Property Prediction

Integrated Architectures and Emerging Trends

Hybrid Architecture Design Patterns

The most advanced foundation models for property prediction increasingly leverage hybrid architectures that combine the strengths of GNNs, Transformers, and VLMs. The EHDGT framework exemplifies this trend, enhancing both GNNs and Transformers while introducing sophisticated fusion mechanisms [31]. This approach addresses common deficiencies in local feature learning and edge information utilization inherent in pure Transformer architectures while mitigating the limited receptive field of traditional GNNs.

EHDGT incorporates several key innovations: edge-level positional encoding superimposed on node-level random walk encodings, subgraph encoding strategies to enhance local information processing, edge incorporation into attention calculations, and a gate-based fusion mechanism for dynamically integrating GNN and Transformer outputs [31]. The linear attention mechanism reduces computational complexity from quadratic to linear, enabling application to larger molecular systems. This hybrid design demonstrates superior performance across multiple benchmarks compared to traditional message-passing networks and standalone Graph Transformers.
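A gate-based fusion of GNN and Transformer outputs can be sketched as a learned convex combination of the two feature vectors. The version below uses an element-wise gate with per-dimension weights, which is a deliberate simplification. The exact gate used in EHDGT is not specified here, and a real implementation would typically compute the gate with a linear layer over the concatenated features. All names and numbers are hypothetical.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def gated_fusion(h_gnn: List[float], h_trans: List[float],
                 w_gnn: List[float], w_trans: List[float],
                 bias: List[float]) -> List[float]:
    """Fuse two feature vectors as g * h_gnn + (1 - g) * h_trans, element-wise.

    The gate g = sigmoid(w_gnn * h_gnn + w_trans * h_trans + bias) lies in (0, 1)
    and decides, per dimension, how much local (GNN) versus global
    (Transformer) information is kept.
    """
    fused = []
    for hg, ht, wg, wt, b in zip(h_gnn, h_trans, w_gnn, w_trans, bias):
        g = sigmoid(wg * hg + wt * ht + b)
        fused.append(g * hg + (1.0 - g) * ht)
    return fused


h_local = [1.0, 2.0]     # stand-in for GNN features
h_global = [3.0, 0.0]    # stand-in for Transformer features
zeros = [0.0, 0.0]

# With zero weights and bias the gate is 0.5, so the result is a plain average.
fused_neutral = gated_fusion(h_local, h_global, zeros, zeros, zeros)
# A large positive bias pushes the gate toward 1, favoring the GNN branch.
fused_local = gated_fusion(h_local, h_global, zeros, zeros, [20.0, 20.0])
```

Because the gate is computed from the features themselves, the balance between branches can differ from node to node, which is what makes the integration dynamic.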

Multimodal Foundation Models

The MultiMat framework represents another significant advancement, enabling self-supervised multimodal training of foundation models for materials science [3]. This approach moves beyond single-modality tasks to leverage rich multimodal data available in materials databases. MultiMat achieves state-of-the-art performance for challenging material property prediction tasks while enabling novel material discovery through latent-space similarity searching.

The framework demonstrates that learned representations correlate well with material properties, indicating effective capture of essential materials information. This capability enables screening for stable materials with desired properties and provides emergent features that may offer novel scientific insights [3]. The success of MultiMat highlights the growing importance of multimodal pre-training in scientific domains where diverse data types contain complementary information.

Research Reagent Solutions: Integrated Frameworks

Table 3: Research Reagents for Hybrid Architecture Implementation

| Reagent/Tool | Function | Implementation Example |

|---|---|---|

| EHDGT Framework | Enhanced GNN-Transformer hybrid | Gate-based fusion of local and global features |

| MultiMat | Multimodal foundation model | Self-supervised pre-training on diverse material data |

| Graph Transformer Libraries | Hybrid architecture components | GraphGPS, GraphTrans implementations |

| Line Graph Tools | Higher-order graph constructions | Dihedral angle and four-body interaction graphs |

| Latent Space Analysis | Representation quality assessment | t-SNE projections and similarity metrics |

Comparative Analysis and Implementation Guidelines

Architecture Selection Framework

Selecting the appropriate architecture for property prediction requires careful consideration of data characteristics and research objectives. GNN-based approaches excel when molecular structure directly determines properties and interpretability is prioritized. Transformer hybrids demonstrate superior performance for complex materials where long-range interactions and periodicity are significant. Vision-Language Models offer advantages when multimodal data is available and human-interpretable reasoning is valuable.

Table 4: Architecture Selection Guidelines for Property Prediction

| Architecture | Optimal Use Cases | Data Requirements | Interpretability | Implementation Complexity |
|---|---|---|---|---|
| GNN (KA-GNN) | Molecular properties determined by local structure | Molecular graphs with atom/bond features | High (substructure highlighting) | Medium |
| Transformer-Graph (CrysCo) | Crystalline materials with long-range interactions | Crystal structures & composition data | Medium (attention visualization) | High |
| VLM (MolVision) | Multimodal molecular data with textual descriptions | Image-text pairs of molecules | Medium (cross-modal attention) | Medium-High |
| Hybrid (EHDGT) | Complex systems requiring both local and global context | Large graphs with rich edge features | Medium (gate activation analysis) | High |

Performance Benchmarks

Across multiple studies, hybrid architectures consistently outperform single-modality approaches. KA-GNNs demonstrate 5-15% accuracy improvements over conventional GNNs on molecular benchmarks [17]. The CrysCo framework achieves 15-30% reduction in mean absolute error for materials property prediction compared to state-of-the-art baselines [28]. Vision-Language Models show particular advantages in low-data regimes, with few-shot performance gains of 10-20% over text-only approaches [30].

The computational efficiency of these architectures varies significantly, with KA-GNNs offering parameter reductions of 20-30% while maintaining superior accuracy [17]. Transformer-based models typically require more computational resources but capture more complex relationships. The integration of linear attention mechanisms in hybrid models like EHDGT helps mitigate computational complexity while preserving performance [31].

The field of foundation models for property prediction is rapidly evolving toward more integrated, multimodal approaches. Future developments will likely focus on unified architectures that seamlessly combine geometric, topological, and textual information while improving computational efficiency. Self-supervised pre-training strategies will continue to advance, reducing dependency on labeled data for specialized domains. Interpretability enhancements will remain a critical research direction, enabling scientific discovery alongside prediction accuracy.

For researchers implementing these architectures, the protocols and frameworks presented provide practical starting points while emphasizing modular design to accommodate rapid algorithmic advances. As these technologies mature, they promise to significantly accelerate discovery cycles in drug development and materials science, bridging the gap between data-driven prediction and fundamental scientific understanding.

The pretrain-finetune paradigm has emerged as a powerful framework in machine learning to overcome data scarcity and enhance model performance on specialized scientific tasks. This approach involves first pretraining a model on a large, broad dataset to learn general-purpose representations, followed by finetuning on a smaller, task-specific dataset to adapt this knowledge to a particular domain [32]. In fields such as chemistry and materials science, where acquiring large, labeled experimental datasets is a major bottleneck, this strategy decouples feature extraction from property prediction, enabling robust models even in low-data regimes [32].

Foundation models—large models pretrained on diverse datasets—are particularly effective starting points for this workflow. Their extensive initial training allows them to capture a wide range of underlying patterns, making them exceptionally adaptable to downstream tasks with limited data through finetuning [32]. This paradigm is revolutionizing property prediction, from small molecule drug discovery to polymer design and protein engineering, by providing a structured pathway to develop accurate, data-efficient models.

Core Workflow and Key Methodological Variations

The foundational pretrain-finetune workflow consists of several key stages, from data preparation through to final model deployment. The diagram below illustrates this generalized, high-level process.

Several methodological variations exist within this core workflow, each suited to different data availability and task requirements. Pair-wise Pretrain-Finetune involves transferring knowledge from a single, often large, source property to a target property. Systematic exploration has shown this approach consistently outperforms models trained from scratch on the target dataset alone [33]. Multi-task Pretraining (MPT) extends this concept by pretraining a single model on multiple source properties simultaneously. This strategy creates more robust and generalizable foundation models, which have demonstrated superior performance on novel, out-of-domain target tasks compared to pair-wise models [33].

Multi-task Finetuning occurs when a pretrained model is subsequently finetuned on multiple target tasks at once. This approach can be particularly powerful, as it leverages potential synergies between related properties [34]. However, it introduces the risk of negative transfer (NT), where performance on one task is degraded by updates from another due to task imbalance or low relatedness [20]. Techniques like Adaptive Checkpointing with Specialization (ACS) have been developed to mitigate this by monitoring validation loss for each task individually and checkpointing the best backbone-head pair for each task when it reaches a new minimum, thus preserving task-specific knowledge [20].

Quantitative Performance of Pretrain-Finetune Strategies

The effectiveness of the pretrain-finetune paradigm is demonstrated by measurable improvements in predictive accuracy across diverse scientific domains. The following tables summarize key performance metrics from recent studies.

Table 1: Performance of Pretrain-Finetune vs. Training from Scratch on Material Property Prediction (ALIGNN Model) [33]

| Target Property | Pretraining Property | FT Dataset Size | Scratch Model R² | PT-FT Model R² | Relative Improvement |
|---|---|---|---|---|---|
| Formation Energy (FE) | Band Gap (BG) | 800 | 0.920 | 0.936 | +1.7% |
| Band Gap (BG) | Formation Energy (FE) | 800 | 0.572 | 0.609 | +6.5% |
| Band Gap (BG) | Dielectric Constant (DC) | 800 | 0.572 | 0.598 | +4.5% |
| Dielectric Constant (DC) | Band Gap (BG) | 800 | 0.895 | 0.909 | +1.6% |
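
The Relative Improvement column in Table 1 is consistent with the change in R² expressed as a percentage of the scratch-model R². The short check below recomputes it from the tabulated values; the function name is illustrative, and the formula is inferred from the numbers rather than stated by the source.

```python
def relative_improvement(scratch_r2: float, ptft_r2: float) -> float:
    """Percentage change in R² of the finetuned model relative to the scratch model."""
    return 100.0 * (ptft_r2 - scratch_r2) / scratch_r2


# (target, pretraining property, scratch R², PT-FT R²) as listed in Table 1.
rows = [
    ("FE", "BG", 0.920, 0.936),
    ("BG", "FE", 0.572, 0.609),
    ("BG", "DC", 0.572, 0.598),
    ("DC", "BG", 0.895, 0.909),
]
improvements = [round(relative_improvement(s, p), 1) for _, _, s, p in rows]
for (target, source, _, _), gain in zip(rows, improvements):
    print(f"{source} -> {target}: +{gain}%")
```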

Table 2: Mitigating Negative Transfer with ACS on Molecular Property Benchmarks (Average ROC-AUC) [20]

| Training Scheme | ClinTox | SIDER | Tox21 | Average |
|---|---|---|---|---|
| Single-Task Learning (STL) | 0.835 | 0.645 | 0.801 | 0.760 |
| Multi-Task Learning (MTL) | 0.842 | 0.658 | 0.815 | 0.772 |
| MTL with Global Checkpointing | 0.844 | 0.661 | 0.817 | 0.774 |
| ACS (Proposed) | 0.887 | 0.667 | 0.820 | 0.791 |

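These results indicate that pretrain-finetune strategies outperform models trained from scratch for every property pair in Table 1, with relative R² gains of 1.6% to 6.5% at a finetuning set size of 800, an advantage reported to be most pronounced on smaller target datasets [33]. Furthermore, ACS raises the average ROC-AUC from 0.772 for plain multi-task learning to 0.791, with the largest gain on ClinTox (0.842 to 0.887), by managing the negative transfer that arises in multi-task learning [20].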

Experimental Protocols and Detailed Methodologies

Protocol 1: Pair-wise Pretraining and Finetuning for Material Properties

This protocol details the steps for transferring knowledge from one material property to another using a GNN architecture like ALIGNN [33].

Data Sourcing and Curation:

- Source Data: Select a large dataset for pretraining (e.g., formation energies from the Materials Project). The dataset should ideally have >10^4 data points for effective pretraining.

- Target Data: Curate a smaller, labeled dataset for the target property (e.g., piezoelectric modulus). Perform standard cleaning: remove duplicates, handle missing values, and standardize structure representations (for molecular or polymer inputs, canonicalize SMILES strings) [35].
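
The cleaning steps listed above can be sketched as follows. This is a minimal illustration, not the cited pipeline: `clean_records` is a hypothetical helper, and the default canonicalizer only strips whitespace. For SMILES inputs with RDKit installed, a function such as `lambda s: Chem.MolToSmiles(Chem.MolFromSmiles(s))` could be passed instead, with separate handling for strings that fail to parse.

```python
def clean_records(records, canonicalize=str.strip):
    """Drop rows with missing fields and duplicate structures.

    records: iterable of (structure_string, target_value) pairs.
    canonicalize: maps a structure string to its canonical form. The default
        only strips whitespace; it is a placeholder for a real canonicalizer.
    """
    seen = set()
    cleaned = []
    for structure, value in records:
        if structure is None or value is None:
            continue  # missing structure or label
        key = canonicalize(structure)
        if not key or key in seen:
            continue  # empty string or duplicate structure
        seen.add(key)
        cleaned.append((key, float(value)))
    return cleaned


raw = [("CCO", 1.2), (" CCO ", 1.2), ("c1ccccc1", None), (None, 0.4), ("CC", "0.7")]
cleaned = clean_records(raw)
print(cleaned)  # [('CCO', 1.2), ('CC', 0.7)]
```

This sketch keeps the first occurrence of each duplicate. When duplicates carry conflicting labels, averaging or discarding them may be more appropriate; that choice depends on the dataset.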

Model Pretraining (Source Task):

- Initialize a GNN model (e.g., ALIGNN) with random weights.

- Train the model on the source property until convergence using a mean absolute error (MAE) or mean squared error (MSE) loss. Standard hyperparameters include an initial learning rate of 0.001, AdamW optimizer, and a batch size suitable for the dataset size and GPU memory.

Model Finetuning (Target Task):

- Strategy 1 (Full Finetuning): Take the pretrained model, replace the output regression head, and finetune all layers on the target dataset.

- Strategy 2 (Differentiated Learning Rates): To prevent overfitting, use a lower learning rate for the model's backbone (e.g., one order of magnitude lower) and a higher rate for the new regression head [35].

- Train the model on the target property. A one-cycle learning rate schedule with linear annealing is often effective [35].
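
Strategy 2 and the one-cycle schedule can be combined as in the sketch below, which computes per-step learning rates for the backbone and the head in plain Python. The function name and the 101-step run are illustrative assumptions. The defaults for `pct_start`, `div_factor`, and `final_div_factor` mirror those of PyTorch's `OneCycleLR`, which offers the same behavior through `anneal_strategy="linear"` and accepts a list of `max_lr` values, one per parameter group.

```python
def one_cycle_lr(step, total_steps, max_lr, pct_start=0.3,
                 div_factor=25.0, final_div_factor=1e4):
    """One-cycle schedule with linear warm-up and linear annealing."""
    initial_lr = max_lr / div_factor
    min_lr = initial_lr / final_div_factor
    peak_step = pct_start * (total_steps - 1)
    if step <= peak_step:
        # Warm-up phase: rise linearly from initial_lr to max_lr.
        frac = step / peak_step
        return initial_lr + frac * (max_lr - initial_lr)
    # Annealing phase: fall linearly from max_lr to min_lr.
    frac = (step - peak_step) / (total_steps - 1 - peak_step)
    return max_lr + frac * (min_lr - max_lr)


HEAD_MAX_LR = 1e-3                   # new regression head
BACKBONE_MAX_LR = HEAD_MAX_LR / 10   # pretrained backbone, one order of magnitude lower
TOTAL_STEPS = 101

# One (backbone_lr, head_lr) pair per optimizer step.
schedule = [
    (one_cycle_lr(s, TOTAL_STEPS, BACKBONE_MAX_LR),
     one_cycle_lr(s, TOTAL_STEPS, HEAD_MAX_LR))
    for s in range(TOTAL_STEPS)
]
peak = max(range(TOTAL_STEPS), key=lambda s: schedule[s][1])
print(peak, schedule[peak])
```

Both groups follow the same shape, so the backbone rate stays ten times lower than the head rate at every step.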

Validation and Evaluation:

- Use 5-fold cross-validation on the target dataset to ensure reliable performance estimates [35].

- Report key metrics (R², MAE) on a held-out test set and compare against a scratch model baseline.
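
The evaluation steps can be sketched with standard-library Python as shown below. The fold construction, the toy targets, and the mean-predicting baseline are illustrative assumptions; in practice a library routine such as scikit-learn's `KFold` and the actual finetuned model would take their place.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs for shuffled k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, folds[i]


def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r2_score(y_true, y_pred):
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Toy targets; the "model" predicts the training-fold mean (a trivial baseline).
y = [0.1 * i for i in range(20)]
fold_mae = []
for train_idx, val_idx in k_fold_indices(len(y), k=5):
    baseline = sum(y[i] for i in train_idx) / len(train_idx)
    fold_mae.append(mae([y[i] for i in val_idx], [baseline] * len(val_idx)))
print(f"mean CV MAE of baseline: {sum(fold_mae) / len(fold_mae):.3f}")
```

Running the same folds for the scratch model and the pretrained-finetuned model gives a paired comparison of R² and MAE.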

Protocol 2: Multi-task Finetuning of a Pretrained Chemical Model with ACS

This protocol describes how to finetune a chemically pretrained model on multiple ADMET properties simultaneously while using ACS to mitigate negative transfer [20] [34].

Model and Data Preparation:

ACS Model Architecture Setup:

- Employ a shared GNN backbone based on message passing for task-agnostic representation learning.

- Attach separate, task-specific Multi-Layer Perceptron (MLP) heads for each property to be predicted [20].

Training with Adaptive Checkpointing:

- Train the entire model (shared backbone + task heads) on all tasks simultaneously.

- Monitor the validation loss for each individual task throughout the training process.

- Implement the checkpointing logic: whenever the validation loss for a specific task reaches a new minimum, save the state of the shared backbone and its corresponding task-specific head as the specialized model for that task [20].

Inference:

- For prediction on a given task, use the specialized backbone-head pair that was checkpointed for that specific task.
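
The checkpointing and inference steps above can be sketched as follows. This is a simplified illustration under stated assumptions: `ACSCheckpointer` is a hypothetical helper rather than the reference implementation from [20], the model states are stand-in dictionaries, and the validation losses are made-up numbers. With a framework such as PyTorch, the snapshots would typically be copies of each module's `state_dict()`.

```python
import copy


class ACSCheckpointer:
    """Keep, per task, the backbone-head pair seen at that task's best validation loss."""

    def __init__(self, task_names):
        self.best_loss = {task: float("inf") for task in task_names}
        self.best_state = {}

    def update(self, val_losses, backbone_state, head_states):
        """Call after each validation pass; returns the tasks that improved."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                # Snapshot the shared backbone together with this task's head.
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved

    def specialized_model(self, task):
        """Backbone-head pair to use at inference time for the given task."""
        return self.best_state[task]


# Simulated run: "epoch" stands in for real weights.
tasks = ["ClinTox", "SIDER"]
history = [
    {"ClinTox": 0.60, "SIDER": 0.70},
    {"ClinTox": 0.50, "SIDER": 0.65},
    {"ClinTox": 0.55, "SIDER": 0.62},  # ClinTox degrades while SIDER improves
]
ckpt = ACSCheckpointer(tasks)
for epoch, val_losses in enumerate(history):
    backbone = {"epoch": epoch}
    heads = {task: {"epoch": epoch} for task in tasks}
    ckpt.update(val_losses, backbone, heads)

print(ckpt.specialized_model("ClinTox")["backbone"])  # {'epoch': 1}
print(ckpt.specialized_model("SIDER")["backbone"])    # {'epoch': 2}
```

In the simulated run, ClinTox keeps the backbone from epoch 1 even though training continues, which is how per-task checkpointing shields a task from later updates that only help other tasks.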

The logical architecture and data flow of the ACS method are detailed in the diagram below.

The Scientist's Toolkit: Essential Research Reagents

Implementing the pretrain-finetune workflow requires a suite of software tools, datasets, and model architectures. The table below catalogs key resources referenced in recent literature.

Table 3: Essential Tools and Resources for Pretrain-Finetune Research

| Category | Resource Name | Description & Function |
|---|---|---|
| Model Architectures | ALIGNN [33] | A GNN architecture that incorporates both atomic and bond information for accurate material property prediction. |
| Model Architectures | D-MPNN [35] [20] | (Directed Message Passing Neural Network) A graph model effective for molecular property prediction, robust on small datasets. |
| Model Architectures | Uni-Mol-2-84M [35] | A 3D molecular model used for capturing spatial structure in tasks like polymer property prediction. |
| Software & Frameworks | AutoGluon [35] | An automated machine learning framework, effective for tabular data and ensemble creation. |
| Software & Frameworks | RDKit [35] [32] | A core cheminformatics toolkit for processing SMILES, generating molecular descriptors, fingerprints, and images. |
| Software & Frameworks | Optuna [35] | A hyperparameter optimization framework for automating the search for optimal model settings. |
| Datasets & Benchmarks | MoleculeNet [20] [32] | A benchmark collection of datasets for molecular property prediction (e.g., ClinTox, SIDER, Tox21). |
| Datasets & Benchmarks | Matminer Libraries [33] | Curated collections of datasets for materials science property prediction. |
| Datasets & Benchmarks | PI1M [35] | A large-scale dataset of 1 million hypothetical polymers, used for pretraining in the polymer challenge. |
| Pretrained Models | ModernBERT [35] | A general-purpose foundation model (BERT variant) that can be adapted for chemical sequence tasks. |
| Pretrained Models | CLIP [32] | A vision foundation model used as a backbone for molecular image representation in MoleCLIP. |
| Pretrained Models | Chemically Pretrained Models (KERMT, KGPT) [34] | Graph neural networks pretrained on large chemical corpora using self-supervised tasks. |