Exploratory Research Design for Novel Materials: A Foundational Guide for Scientists and Developers

Abstract

This article provides a comprehensive guide to exploratory research design tailored for researchers, scientists, and drug development professionals working with novel materials. It covers the foundational principles of exploratory studies, applicable methodological approaches, strategies for troubleshooting common challenges, and frameworks for validating findings and transitioning to confirmatory research. By addressing the unique uncertainties of new material development, this guide aims to equip professionals with the tools to effectively navigate initial discovery phases, generate robust hypotheses, and lay a solid groundwork for future applied research and clinical translation.

Laying the Groundwork: Foundational Principles of Exploratory Research for Novel Materials

Exploratory research is an investigative method used in the early stages of a research project to delve into a topic where little to no existing knowledge or information is available [1] [2]. It is a dynamic and flexible approach aimed at gaining insights, uncovering trends, and generating initial hypotheses rather than providing definitive answers [1]. In the context of materials science, this approach is particularly valuable when investigating novel materials, unknown material properties, or unexplored synthesis pathways where established frameworks are lacking.

The primary purposes of exploratory research in materials science include understanding complexity, idea generation, problem identification, and decision support [2]. This methodology helps researchers understand the intricate and multifaceted nature of materials behavior, serves as fertile ground for generating new hypotheses, identifies research problems or gaps in existing knowledge, and provides valuable information for making informed decisions about research direction [2]. Exploratory research holds immense significance as it reduces the risk of pursuing unproductive research paths, lays the groundwork for subsequent research phases, encourages creative exploration of novel material systems, and offers adaptable methods tailored to unique research questions [2].

Methodological Approaches in Exploratory Research

Exploratory research encompasses several distinct methodological approaches, each with specific applications in materials science investigation. The table below summarizes the primary methods employed:

Table 1: Methodological Approaches in Exploratory Materials Research

| Method | Primary Purpose | Applications in Materials Science | Key Characteristics |
|---|---|---|---|
| Literature Review [2] | Identify existing theories, concepts, and research gaps | Understanding current state of knowledge on material systems | Systematic examination of academic papers, books, and scholarly sources |
| Pilot Studies [2] | Test research procedures and instruments | Assessing feasibility of synthesis methods or characterization techniques | Small-scale research projects conducted before full-scale study |
| Case Studies [2] | Explore real-life contexts and understand complex situations | In-depth examination of specific material failures or exceptional performances | Holistic view of particular phenomena through interviews, observations, and document analysis |
| Focus Groups [2] | Explore group dynamics and collective opinions | Gathering expert perspectives on material applications or research priorities | Structured discussions with small groups of domain experts |
| Observational Research [2] | Understand behavior or phenomena in natural settings | Monitoring material degradation or performance under actual operating conditions | Systematic observation and recording in natural environments |

These methodologies are not mutually exclusive and are often combined in materials science research to provide complementary insights. The flexibility of exploratory research design allows investigators to adjust their approach as new information emerges during the investigation [1].

Key Experimental Protocols in Materials Exploration

Operational Bounds Testing

Operational bounds testing is a fundamental exploratory approach in materials science that investigates the boundary conditions and performance limits of materials under extreme conditions [3]. This protocol consists of several specialized testing methodologies:

- Stress Testing: Evaluates how materials perform at extremes beyond normal operating parameters. For example, researchers might test a new ceramic composite's thermal stability by progressively increasing temperature until structural failure occurs, determining the absolute upper temperature limit [3].

- Performance Testing: Assesses how well material behavior conforms to theoretical expectations under specified conditions. A researcher might measure the electrical conductivity of a novel semiconductor material across a range of temperatures to verify alignment with predicted semiconductor behavior [3].

- Load Testing: Exercises materials at maximum expected load conditions. This might involve applying progressively increasing mechanical stress to a newly developed metal alloy until it reaches its yield strength to understand behavior under anticipated service conditions [3].

Table 2: Data Collection Parameters for Operational Bounds Testing

| Test Type | Primary Variables | Measurement Techniques | Data Output |
|---|---|---|---|
| Stress Testing [3] | Temperature, Pressure, Mechanical Load, Environmental Exposure | Thermal analysis, Mechanical testing, Structural characterization | Maximum tolerance limits, Failure modes |
| Performance Testing [3] | Functional properties under standard conditions | Electrical characterization, Mechanical testing, Optical measurements | Performance metrics, Property validation |
| Load Testing [3] | Incrementally increasing operational demands | Fatigue testing, Creep testing, Durability assessment | Service life prediction, Degradation patterns |
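The stress-testing idea described above (raise one variable step by step until the material fails) can be sketched as a simple loop. This is a minimal illustration rather than a laboratory protocol: `survives` is a hypothetical stand-in for a real measurement, and the start level, step size, ceiling, and the toy failure point are all made-up values.

```python
from typing import Callable, Optional


def find_failure_threshold(
    survives: Callable[[float], bool],
    start: float,
    step: float,
    ceiling: float,
) -> Optional[float]:
    """Raise the stress level in fixed steps; return the first level that fails.

    Returns None if the sample survives every level up to `ceiling`.
    """
    level = start
    while level <= ceiling:
        if not survives(level):
            return level
        level += step
    return None


# Hypothetical sample that survives up to 1250 degrees C (illustrative only).
def toy_sample(temp_c: float) -> bool:
    return temp_c <= 1250.0


threshold = find_failure_threshold(toy_sample, start=1000.0, step=50.0, ceiling=1600.0)
print(threshold)
```

The reported threshold is only as fine as the step size; in this sketch the true limit lies somewhere between the last surviving level and the first failing one.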

Sensitivity Analysis

Sensitivity analysis formally investigates how strongly the outputs of a material system depend on its inputs and processing parameters [3]. This approach is particularly valuable for understanding the relationship between synthesis conditions and resulting material properties:

- Parameter Variation: Systematically altering one synthesis variable while holding others constant. For instance, a researcher might vary the sintering temperature of a ceramic material while maintaining consistent powder composition, pressing pressure, and sintering atmosphere.

- Response Measurement: Quantifying how specific material properties change in response to parameter variations. This might include measuring changes in density, grain size, hardness, or fracture toughness as functions of processing parameters.

- Correlation Analysis: Identifying which processing parameters have the greatest influence on final material properties, enabling optimization of synthesis protocols for desired characteristics.
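The three steps above can be sketched as a one-at-a-time sweep: vary a single parameter, record the response, and rank parameters by how much the response moved. The sketch below is illustrative only. The `toy_density` response function, its coefficients, and the parameter names are assumptions invented for the example, not measured behavior.

```python
from typing import Callable, Dict, List


def one_at_a_time_sensitivity(
    model: Callable[[Dict[str, float]], float],
    baseline: Dict[str, float],
    sweeps: Dict[str, List[float]],
) -> Dict[str, float]:
    """For each parameter, vary it alone and record the range of the response."""
    effect = {}
    for name, values in sweeps.items():
        responses = []
        for v in values:
            settings = dict(baseline)  # copy so the other parameters stay at baseline
            settings[name] = v
            responses.append(model(settings))
        effect[name] = max(responses) - min(responses)
    return effect


# Hypothetical response: relative density of a sintered ceramic (made-up coefficients).
def toy_density(p: Dict[str, float]) -> float:
    return 0.60 + 0.0002 * (p["sinter_temp_c"] - 1200) + 0.0005 * (p["press_mpa"] - 100)


baseline = {"sinter_temp_c": 1200.0, "press_mpa": 100.0}
sweeps = {
    "sinter_temp_c": [1200.0, 1300.0, 1400.0],
    "press_mpa": [100.0, 150.0, 200.0],
}
effects = one_at_a_time_sensitivity(toy_density, baseline, sweeps)
ranking = sorted(effects, key=effects.get, reverse=True)
print(ranking)
```

A one-at-a-time sweep does not reveal interactions between parameters, so it is best read as a first screening step before a fuller experimental design.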

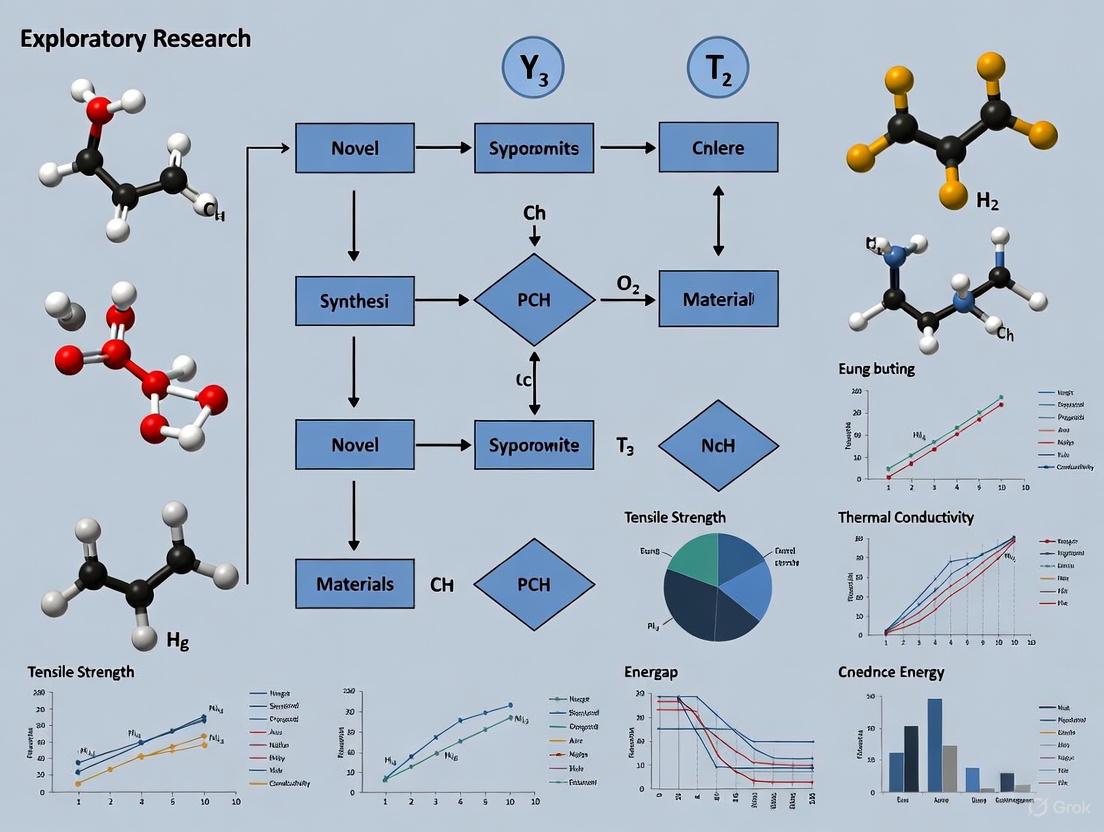

The following diagram illustrates the generalized workflow for exploratory materials research:

Case Control Methodology

Case control methodology represents another valuable exploratory approach in materials science, particularly for investigating material failures or exceptional performance [3]. This observational approach involves:

- Case Group Identification: Selecting materials that exhibit specific characteristics of interest (e.g., premature failure, exceptional corrosion resistance, unexpected superconducting behavior).

- Control Group Establishment: Identifying comparable materials that lack the characteristic of interest but share similar baseline properties or processing histories.

- Comparative Analysis: Systematically investigating differences in processing parameters, compositional variations, microstructural features, or environmental exposures between case and control groups to identify potential causal factors.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful exploratory research in materials science requires specific reagents, instrumentation, and analytical tools. The following table details essential components of the materials researcher's toolkit:

Table 3: Essential Research Reagents and Materials for Exploratory Materials Science

| Tool/Reagent | Function | Exploratory Application |
|---|---|---|
| Precursor Materials | Base components for material synthesis | Systematic variation of stoichiometry to discover new phases |
| Characterization Standards | Reference materials for instrument calibration | Ensuring measurement accuracy during preliminary investigations |
| Analytical Solvents | Medium for chemical reactions and processing | Exploring solution-based synthesis routes |
| Structural Probes | Techniques for atomic and molecular structure analysis | Determining crystal structure of newly synthesized compounds |
| Property Testing Apparatus | Equipment for measuring functional properties | Initial assessment of mechanical, electrical, or thermal behavior |

The specific reagents and tools vary significantly based on the material class under investigation. For ceramic materials, this might include high-purity oxide powders, sintering aids, and binder systems; for metallic systems, master alloys, refining agents, and mold materials; for polymeric materials, monomers, initiators, catalysts, and stabilizers.

Data Visualization and Analysis in Exploratory Research

Effective data visualization is critical in exploratory materials research for identifying patterns, trends, and relationships within complex datasets. The following diagram illustrates the interplay between different data types and analytical approaches:

The selection of appropriate visualization methods depends on the nature of the data and the research questions being explored. For compositional data, ternary diagrams might be most appropriate; for processing-structure-property relationships, multi-axis plots with linked parameters are valuable; for microstructural analysis, image-based data representation is essential.

Exploratory research employs both qualitative and quantitative data analysis approaches [2]. For qualitative data, approaches like thematic analysis, content analysis, or narrative analysis help identify patterns, themes, and trends within the data. For quantitative data, statistical analysis and data visualization techniques reveal trends, correlations, and significant findings. When both qualitative and quantitative data are collected, mixed-methods analysis provides a more comprehensive understanding [2].

Advantages and Limitations in Materials Science Context

Exploratory research offers several distinct advantages for materials science investigations, along with important limitations that researchers must consider:

Table 4: Advantages and Limitations of Exploratory Research in Materials Science

| Advantages | Limitations |
|---|---|
| Flexibility in research design allows adaptation to unexpected findings [1] | Limited generalizability due to small samples or specific conditions [1] |
| Generation of new insights and hypotheses for novel materials [1] | Difficulty establishing cause-effect relationships without controlled testing [1] |
| Foundation for future research in emerging materials domains [1] | Potential for researcher bias in interpretation of unstructured data [1] |
| Risk reduction by exploring feasibility before major investment [2] | Resource intensive without guaranteed outcomes or discoveries |

These characteristics make exploratory research particularly well-suited for nascent areas of materials science such as quantum materials, bio-inspired materials, and high-entropy alloys, where fundamental understanding is still developing and research pathways are not yet established.

Exploratory research serves as a critical foundation for advancing materials science by enabling investigation into unknown material systems, processes, and phenomena. Through its flexible methodological approaches, including literature reviews, pilot studies, case-control comparisons, and operational bounds testing, researchers can navigate complex, uncharted territories in materials development. The systematic application of sensitivity analysis, combined with rigorous data collection and visualization, allows for the generation of hypotheses and insights that drive subsequent targeted research.

While exploratory research has limitations in establishing definitive cause-effect relationships and producing generalizable conclusions, its value in mapping the unknown realms of materials behavior, discovering new material phenomena, and identifying promising research directions is undeniable. As materials science continues to expand into increasingly complex and multifunctional material systems, the strategic application of exploratory research methodologies will remain essential for pioneering breakthroughs and building the foundational knowledge required for more definitive experimental and descriptive studies.

This technical guide delineates the core purposes of exploratory research within the context of novel materials research. Framed for an audience of researchers, scientists, and drug development professionals, it provides a structured examination of how exploratory methodologies drive the initial phases of scientific inquiry. The document details systematic approaches for generating hypotheses, identifying critical variables, and comprehending systemic complexity, supported by structured data presentation, experimental protocols, and visual workflows. The objective is to offer a formalized framework that enhances the rigor and productivity of early-stage investigative processes in the field of advanced materials.

Exploratory research constitutes the initial investigation that forms the foundation for more conclusive studies [4]. It is fundamentally an inductive process, emphasizing discovery through detailed observation and moving from particular data points to general theories and models [4]. In the high-stakes field of novel materials research, where understanding multifaceted interactions is paramount, this approach is not merely a preliminary step but a critical phase for mapping the problem space. It helps in determining viable research designs, sampling methodologies, and data collection methods before committing to large-scale, confirmatory studies [4]. This guide articulates the three core purposes of this approach—generating hypotheses, identifying variables, and understanding complexity—providing a structured toolkit for navigating the inherent uncertainties of developing new materials.

Core Purpose One: Generating Hypotheses

The first core purpose of exploratory research is the generation of testable hypotheses. This process is described as a "logic of discovery," where researchers construct theories from observing the environment, effectively functioning as a theory-generation approach [4].

Methodological Workflow for Hypothesis Generation

The following diagram illustrates the systematic, iterative workflow for generating hypotheses in an exploratory context:

Data Collection Strategies

Effective hypothesis generation relies on diverse data collection strategies, chosen for their ability to reveal underlying patterns without preconceived structures.

Table 1: Data Collection Methods for Hypothesis Generation

| Method | Primary Function | Application in Novel Materials Research |
|---|---|---|
| Unstructured Observations [4] | To obtain data in an open-ended format without predetermined categories. | Observing material behavior under stress (e.g., via video recordings) to identify unexpected failure modes or properties. |
| Surveys & Focus Groups [4] | To efficiently gather a wide variety of self-reported perspectives and experiences. | Collecting expert opinions from scientists on the processing challenges of a new polymer blend. |
| Literature Review [4] | To synthesize existing knowledge and identify gaps or contradictions. | Analyzing published studies on perovskite crystal structures to propose a new synthesis variable. |

The generalizations formed from this data are not typically applicable to broader populations but are instead used to construct hypotheses for future testing [4]. Researchers achieve this by meticulously recording similarities, differences, and relationships within the collected data to develop these general, testable statements [4].

Core Purpose Two: Identifying Variables

A second critical purpose is the identification and categorization of all relevant variables. This process is essential for structuring subsequent research phases. The conceptualization of data into fields (columns) and records (rows) is fundamental to this task [5].

Data Structure and Variable Typology

Understanding what a single row in a dataset represents (its granularity) is crucial for correctly identifying variables [5]. Each variable, or field, should hold items of the same kind and is characterized by its domain: the set of values that could or should be present [5]. Variables in a dataset are categorized along two axes, qualitative versus quantitative and discrete versus continuous, which guide their subsequent analysis and visualization:

Table 2: Variable Categorization Framework for Data Analysis

| Category | Definition | Role in Analysis | Example from Materials Research |
|---|---|---|---|
| Dimension [5] | Qualitative data that cannot be measured but is described. Usually discrete. | Defines groups, creates panes in visualizations. | Material batch ID, synthesis method, catalyst type. |
| Measure [5] | Quantitative, numerical data that can be aggregated. Usually continuous. | Values are aggregated (summed, averaged) and plotted on axes. | Tensile strength (MPa), conductivity (S/m), reaction yield (%). |
| Discrete [5] | Individually separate and distinct values. Coded as blue in some analysis software. | Treated as labels in visualizations. | Material type (e.g., "Ceramic A", "Polymer B"). |
| Continuous [5] | Forming an unbroken spectrum of numerical values. Coded as green in some analysis software. | Treated as axes in visualizations. | Temperature (°C), pressure (Pa), concentration (mol/L). |

Experimental Protocol for Variable Identification

Objective: To systematically identify and document all potential factors (variables) influencing the properties of a novel material during initial synthesis and testing.

Procedure:

- Define the Unit of Analysis: Clearly articulate what a single record (row) represents in the study (e.g., "one synthesis reaction," "one tested sample," "one measurement from a specific location on a substrate") [5].
- Document Input Factors (Independent Variables): For each unit, record all plausible input parameters. Structure these as dimensions in a data table.
  - Synthesis Conditions: Precursor concentrations, temperature profiles, pressure, time, pH.
  - Processing Conditions: Mixing speed, annealing time and temperature, coating thickness.
  - Environmental Conditions: Humidity, ambient light exposure.
- Document Output Metrics (Dependent Variables): For each unit, measure all relevant output properties. Structure these as measures in a data table.
  - Physical Properties: Density, hardness, viscosity.
  - Functional Properties: Electrical conductivity, catalytic activity, photon emission efficiency.
  - Structural Properties: Crystal phase (from XRD), surface morphology (from SEM).
- Data Structuring: Organize all documented variables into a single table with rows representing the unit of analysis and columns representing each identified variable, ensuring correct data types (numeric, text, date) [5].
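The data-structuring step above can be sketched with a typed record, where one instance corresponds to one row (one unit of analysis) and a small check flags values stored with the wrong data type. The field names, units, and sample values below are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class SynthesisRecord:
    """One row = one synthesis reaction (the unit of analysis)."""
    sample_uid: str         # unique identifier for tracking the sample
    synthesis_method: str   # input factor, text
    anneal_temp_c: float    # input factor, numeric
    anneal_time_h: float    # input factor, numeric
    density_g_cm3: float    # output metric, numeric
    hardness_hv: float      # output metric, numeric


def type_errors(record: SynthesisRecord) -> list:
    """Return the names of fields whose value does not match the declared type."""
    return [
        f.name
        for f in fields(record)
        if not isinstance(getattr(record, f.name), f.type)
    ]


rows = [
    SynthesisRecord("S-001", "sol-gel", 450.0, 2.0, 3.91, 1210.0),
    # The temperature was entered as text here, a common spreadsheet import problem.
    SynthesisRecord("S-002", "sol-gel", "500", 2.0, 3.95, 1250.0),
]

bad = {r.sample_uid: type_errors(r) for r in rows if type_errors(r)}
print(bad)
```

Catching type problems at this stage keeps later aggregation and plotting from silently treating numbers as labels.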

Core Purpose Three: Understanding Complexity

The third core purpose is to understand the complexity of the system under study, particularly the relationships and interactions between the identified variables.

Visualizing Relationships and Distributions

Comparing quantitative data across different groups is a primary method for uncovering relationships. Appropriate graphical representations are selected based on the data structure and the nature of the comparison [6].

Table 3: Graphical Methods for Analyzing Complex Relationships

| Graph Type | Best Use Case | Key Strength | Illustrative Example |
|---|---|---|---|
| Back-to-Back Stemplot [6] | Comparing two groups with small amounts of data. | Retains the original data values. | Comparing the particle size distribution of two synthesis batches. |
| 2-D Dot Chart [6] | Comparing any number of groups with small/moderate data. | Shows individual data points; effective for jittered or stacked points to avoid overplotting. | Plotting the yield strength of multiple material composites against a baseline. |
| Boxplots [6] | Comparing any number of groups (except very small datasets). | Summarizes distribution using the five-number summary (min, Q1, median, Q3, max) and identifies outliers. | Comparing the thermal stability of three different polymer formulations. |
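The five-number summary behind a boxplot can be computed directly, which is a useful check before plotting. The sketch below uses Python's standard `statistics` module and the common 1.5 × IQR rule for flagging outliers. The rule and the quartile convention (`method="inclusive"`) are choices made for this example, and the temperature values are made up.

```python
import statistics


def five_number_summary(values):
    """Return (min, Q1, median, Q3, max) for a list of numbers."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return min(values), q1, median, q3, max(values)


def iqr_outliers(values):
    """Flag points more than 1.5 * IQR beyond the quartiles (a common boxplot rule)."""
    _, q1, _, q3, _ = five_number_summary(values)
    spread = q3 - q1
    low, high = q1 - 1.5 * spread, q3 + 1.5 * spread
    return [v for v in values if v < low or v > high]


# Made-up decomposition temperatures (degrees C) for one polymer formulation.
temps = [310, 312, 315, 316, 318, 320, 321, 323, 360]
print(five_number_summary(temps))
print(iqr_outliers(temps))
```

Different software packages use slightly different quartile conventions, so small disagreements in Q1 and Q3 between tools are expected.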

The following workflow outlines the process for moving from structured data to an understanding of complex relationships:

Numerical Summaries for Comparison

When a quantitative variable (a measure) is observed across different groups defined by a qualitative variable (a dimension), the data must be summarized for each group. A key numerical summary is the difference between means (or medians) [6]. For example, a study might summarize the corrosion resistance of a new alloy with and without a protective coating by presenting the mean, standard deviation, and sample size for each group, and explicitly calculating the difference between the two means [6]. This direct comparison is fundamental to quantifying the effect of a variable and understanding its role within the complex system.
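As a rough sketch of the summary just described, the snippet below computes the mean, sample standard deviation, and sample size for each group and then the difference between the two means. The corrosion-rate values are invented for illustration and do not come from any study.

```python
import statistics


def summarize(values):
    """Return mean, sample standard deviation, and sample size for one group."""
    return {
        "mean": statistics.mean(values),
        "sd": statistics.stdev(values),
        "n": len(values),
    }


# Made-up corrosion rates (mm/year), for illustration only.
uncoated = [0.42, 0.45, 0.40, 0.47, 0.41]
coated = [0.21, 0.19, 0.24, 0.20, 0.22]

groups = {"uncoated": summarize(uncoated), "coated": summarize(coated)}
difference = groups["uncoated"]["mean"] - groups["coated"]["mean"]

for name, stats in groups.items():
    print(f"{name}: mean={stats['mean']:.3f}, sd={stats['sd']:.3f}, n={stats['n']}")
print(f"difference between means: {difference:.3f}")
```

In an exploratory setting this difference is a descriptive quantity that suggests where to look next; it is not by itself evidence of a causal effect.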

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and tools commonly employed in the exploratory research phase for novel materials.

Table 4: Essential Research Reagents and Materials for Exploratory Studies

| Item / Solution | Function in Exploratory Research |
|---|---|
| Biological Specimens [7] | Used in biomaterials research to assess biocompatibility, cytotoxicity, and cell-material interactions in early-stage testing. |
| Residential Environmental Samples [7] | Includes air, dust, drinking water, and soil samples; used to test the environmental degradation and stability of new materials. |
| Data Profiling and Visualization Software [5] | Tools that provide summary and detail views (e.g., histograms) to understand data distributions and detect outliers early in the analysis. |
| Unique Identifier (UID) [5] | A value (like a serial number or URL) that uniquely identifies each sample or data record, crucial for tracking in complex, multi-stage experiments. |

Exploratory research, with its core purposes of hypothesis generation, variable identification, and understanding complexity, is an indispensable methodology in the development of novel materials. By adhering to the structured workflows, data presentation standards, and experimental protocols outlined in this guide, researchers can transform initial observations into a robust foundation for conclusive research. This systematic approach ensures that the inherent uncertainty of exploration is navigated with rigor, ultimately accelerating the discovery and optimization of next-generation materials.

Within the rigorous domain of novel materials research, the pressure to deliver definitive, quantitative results can often overshadow a critical preliminary stage: exploratory research design. This technical guide delineates the precise scenarios in which an exploratory design is not merely useful but essential for pioneering scientific advancement. Framed within a broader thesis on research methodology for novel materials, this paper provides a structured framework for researchers and scientists to identify situations characterized by new phenomena, uncharted material properties, or fundamental feasibility questions. We detail specific methodological protocols—including literature reviews, pilot studies, and qualitative techniques—and integrate these with visualized workflows and reagent solutions to equip professionals with a practical toolkit for navigating the initial, ambiguous phases of discovery, thereby laying a robust foundation for subsequent explanatory and experimental studies.

In the fast-paced fields of materials science and drug development, the scientific method is often synonymous with rigorous hypothesis testing. However, this approach presupposes a sufficient foundation of existing knowledge. Exploratory research design serves as the critical antecedent to this process, providing a methodological framework for investigating topics or questions that lack established paradigms or extensive prior study [1] [8]. Its primary goal is not to provide definitive answers but to gain initial insights, generate formal hypotheses, and identify key variables and relationships for future research [1].

When applied to novel materials research, an exploratory design is indispensable for navigating uncertainty. It offers the flexibility and adaptability needed when researchers are confronted with unprecedented behaviors, unknown property profiles, or unvalidated synthetic pathways [2]. This guide establishes a tailored framework, arguing that the decision to employ an exploratory design is paramount when research is directed at (1) New Phenomena, (2) Uncharted Properties, or (3) Feasibility Testing. By adopting this structured approach, researchers can systematically convert ambiguity into a directed research trajectory, optimizing resources and maximizing the impact of subsequent investigative cycles.

A Framework for Applying Exploratory Research Design

The following framework synthesizes core principles to guide researchers in determining when an exploratory design is the most appropriate scientific choice. The decision matrix is based on the state of existing evidence and the specific objectives of the inquiry.

Core Decision Matrix

The table below outlines the three primary scenarios that necessitate an exploratory design, contrasting them with situations where other research designs are more suitable.

Table 1: Framework for Selecting an Exploratory Research Design

| Scenario | State of Existing Evidence | Primary Research Objective | Suitable Alternative Design |
|---|---|---|---|
| Investigating New Phenomena | Little to no prior data or theoretical frameworks exist [1] [9]. | To discover initial patterns, generate fundamental questions, and develop preliminary conceptual models. | N/A (Exploratory is the only viable starting point) |
| Mapping Uncharted Properties | A material is known, but its characteristics or behaviors under specific conditions are unrecorded [1]. | To identify, describe, and categorize key variables and their potential interrelationships. | Descriptive (once key variables are identified) |
| Conducting Feasibility Testing | A process or synthesis method is conceptual but untried in a specific context. | To assess practicality, refine methodologies, and identify potential obstacles before large-scale investment [2]. | Experimental (once feasibility is established and a hypothesis is formed) |
| Explaining Causal Relationships | Variables are well-defined, and their baseline behavior is understood. | To test a specific hypothesis and establish cause-and-effect relationships. | Explanatory (e.g., Experimental) [10] |
| Describing Defined Characteristics | The phenomenon is known, and key variables are identified. | To accurately and systematically measure and describe the characteristics of a population or phenomenon. | Descriptive [1] [10] |
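One way to read the decision matrix is as a small rule set. The sketch below is a deliberate simplification that reduces the table to three yes/no questions. The function name, the `Design` enum, and the three flags are assumptions made for this example and are not part of any cited framework.

```python
from enum import Enum


class Design(Enum):
    EXPLORATORY = "exploratory"
    DESCRIPTIVE = "descriptive"
    EXPLANATORY = "explanatory"


def suggest_design(
    key_variables_identified: bool,
    feasibility_established: bool,
    testable_hypothesis: bool,
) -> Design:
    """Map the state of existing evidence to a starting research design."""
    # New phenomena, uncharted properties, or untried methods: explore first.
    if not key_variables_identified or not feasibility_established:
        return Design.EXPLORATORY
    # Well-defined variables plus a specific hypothesis: test cause and effect.
    if testable_hypothesis:
        return Design.EXPLANATORY
    # Known phenomenon and known variables, but no causal claim yet: describe it.
    return Design.DESCRIPTIVE


print(suggest_design(False, True, False).value)
```

Real projects rarely reduce to three flags, so the sketch is best treated as a checklist prompt rather than a selection procedure.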

Visualizing the Exploratory Research Workflow

The following diagram maps the iterative workflow of a typical exploratory research project, from problem identification through to the transition into more definitive research phases.

Key Application Scenarios in Detail

Investigating New Phenomena

The most straightforward application of exploratory research is in the face of entirely new or emerging phenomena. This scenario is common with the discovery of new material classes (e.g., graphene in its early days) or the observation of unexpected behaviors in known materials.

- Defining Characteristic: The research initiative begins with a broad, open-ended question rather than a focused hypothesis. Examples include: "What are the fundamental electronic properties of this newly synthesized quantum material?" or "What happens to a polymer's structure when subjected to an unprecedented stimulus?" [1].

- Methodological Approach: Research in this phase is highly flexible and relies heavily on qualitative data and open-ended inquiry [1] [8]. Techniques include in-depth interviews with domain experts, observation of material behavior under various conditions, and analysis of insightful case studies from analogous material systems.

- Outcome: The goal is to move from complete uncertainty to a point where specific, researchable questions can be formulated. This process often involves generating initial hypotheses and identifying which variables are critical to measure in subsequent, more structured studies [1].

Mapping Uncharted Properties

Often, a material itself is known, but its full spectrum of properties or its behavior in a specific application context remains uncharted. Exploratory research is used to map this terrain.

- Defining Characteristic: The research focuses on a known subject but with the aim of uncovering and categorizing its unknown attributes. For instance, a researcher might be familiar with a ceramic's thermal stability but not its response to prolonged radiation exposure.

- Methodological Approach: This stage often employs a mix of pilot studies and focused case studies [2]. A pilot study might involve testing a small batch of material under a range of conditions to see which parameters most significantly impact its performance. This helps in refining the experimental setup and data collection instruments for a larger, more definitive study.

- Outcome: The result is a clearer understanding of the material's key characteristics, leading to a well-defined set of variables for future research. It establishes the "what" and "where" before later studies attempt to explain the "why" [10].

Conducting Feasibility Testing

Before committing significant resources to a large-scale experimental study, researchers must assess the practicality of their proposed methods, synthesis pathways, or measurement techniques.

- Defining Characteristic: The central question is one of practicality: "Can this novel nanomaterial be synthesized reliably at a scale sufficient for property testing?" or "Is this experimental protocol suitable for measuring the intended property without damaging the sample?" [2].

- Methodological Approach: Feasibility studies, a form of pilot study, are the primary tool here [2]. This involves executing a small-scale version of the proposed research process to identify logistical problems, estimate time and resource requirements, and test the effectiveness of data collection instruments.

- Outcome: A feasibility assessment determines whether a full study is viable and, if so, provides a refined and validated methodology. It mitigates the risk of pursuing unproductive or flawed research paths, saving time, money, and effort [2].

Methodological Protocols for Exploratory Research

This section details specific experimental protocols and methodologies tailored for exploratory research in a technical context.

The Systematic Literature Review

A systematic literature review is more than a casual reading; it is a methodical process to identify gaps and build foundational knowledge [1] [11].

- Protocol:

- Define Scope: Clearly articulate the broad topic and set boundaries for the search (e.g., materials of a specific class, applications within a certain industry).

- Source Identification: Utilize academic databases (e.g., Scopus, Web of Science), patent repositories, and technical reports.

- Search & Filter: Execute structured keyword searches, followed by application of inclusion/exclusion criteria to filter irrelevant works.

- Synthesize & Analyze: Systematically extract data on material compositions, methods, properties, and reported findings. Identify consistent results and, more importantly, contradictions and areas with a complete lack of data.

- Outcome: A detailed report summarizing the current state of knowledge, explicitly highlighting research gaps and opportunities for novel inquiry, which directly informs the generation of exploratory research questions.
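The "Search & Filter" and "Synthesize & Analyze" steps can be sketched in a few lines of Python. This is a minimal illustration only: the `Record` fields, the inclusion criteria, and the sample entries are hypothetical placeholders, not part of any cited protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """One screened publication (all fields are illustrative assumptions)."""
    title: str
    year: int
    material_class: str
    properties: frozenset  # properties for which the work reports data


def screen(records, *, material_class, min_year):
    """Apply simple inclusion criteria; return (included, excluded)."""
    included, excluded = [], []
    for rec in records:
        keep = rec.material_class == material_class and rec.year >= min_year
        (included if keep else excluded).append(rec)
    return included, excluded


def uncovered_properties(included, target_properties):
    """Properties of interest that no included record reports data for."""
    covered = set()
    for rec in included:
        covered |= rec.properties
    return set(target_properties) - covered


# Hypothetical records for demonstration.
records = [
    Record("Ceramic A thermal study", 2019, "ceramic", frozenset({"thermal stability"})),
    Record("Ceramic B fracture study", 2021, "ceramic", frozenset({"fracture toughness"})),
    Record("Polymer film review", 2020, "polymer", frozenset({"thermal stability"})),
    Record("Early ceramic survey", 1995, "ceramic", frozenset({"radiation response"})),
]

included, excluded = screen(records, material_class="ceramic", min_year=2010)
gaps = uncovered_properties(
    included, {"thermal stability", "fracture toughness", "radiation response"}
)
print(f"{len(included)} included, {len(excluded)} excluded, gaps: {sorted(gaps)}")
```

Recording the excluded works alongside the included ones keeps the screening decisions auditable, which is what distinguishes a systematic review from casual reading.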

The Pilot Study

A pilot study is a small-scale, preliminary run of a planned experiment or research process [2].

- Protocol:

- Objective Setting: Define what the pilot aims to test (e.g., feasibility of a synthesis procedure, functionality of a new characterization tool).

- Miniature Experimentation: Conduct the proposed methods on a small sample size or limited batch.

- Data Collection & Instrument Validation: Collect initial data and assess whether the chosen analytical instruments (e.g., SEM, XRD) yield the expected quality and type of data.

- Procedure Refinement: Note any practical difficulties, safety issues, or procedural ambiguities. Use these observations to refine the standard operating procedure (SOP) for the main study.

- Outcome: A validated and refined research protocol, estimates of variability for power analysis, and a go/no-go decision for a larger-scale study.
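To show how pilot variability can feed a power analysis, the sketch below estimates the number of samples per group for a two-group comparison using the standard normal approximation, n = 2((z₁₋α/₂ + z_power)·σ/δ)². The pilot values, the minimum effect of interest, and the default `alpha` and `power` are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist, stdev


def samples_per_group(pilot_values, min_effect, alpha=0.05, power=0.80):
    """Normal-approximation sample size for comparing two group means.

    pilot_values: measurements from the pilot batch (used to estimate sigma)
    min_effect:   smallest difference in means worth detecting (same units)
    """
    sigma = stdev(pilot_values)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    n = 2 * ((z_alpha + z_power) * sigma / min_effect) ** 2
    return math.ceil(n)


# Hypothetical pilot hardness readings (HV) from a small batch.
pilot_hardness = [412.0, 398.0, 405.0, 420.0, 401.0]
n = samples_per_group(pilot_hardness, min_effect=10.0)
print(f"Estimated samples per group: {n}")
```

With only a handful of pilot measurements the standard deviation is itself uncertain, so the result is better read as an order-of-magnitude guide for the go/no-go decision than as a fixed requirement.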

Experience Surveys and Focus Groups

Leveraging expert knowledge is a rapid way to gain insights into complex or novel material systems [11].

- Protocol:

- Participant Selection: Identify and recruit a diverse group of experts (e.g., synthetic chemists, spectroscopists, process engineers).

- Guide Development: Create a semi-structured discussion guide with open-ended questions designed to stimulate conversation and uncover tacit knowledge [1].

- Facilitation: Conduct the session, encouraging dynamic discussion and probing into underlying reasoning and experiences.

- Thematic Analysis: Transcribe and analyze the discussion to identify recurring themes, unique viewpoints, and consensus areas on challenges and opportunities.

- Outcome: Rich, qualitative data on potential applications, perceived challenges, and novel hypotheses grounded in collective expert experience.
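Once a transcript has been coded by hand, the tallying part of the thematic analysis is straightforward to automate. The sketch below assumes each coded segment is an (expert role, theme) pair; the roles, themes, and the "two or more roles" consensus rule are hypothetical.

```python
from collections import Counter

# Hypothetical coded segments: (expert role, theme assigned by the analyst).
coded_segments = [
    ("synthetic chemist", "scale-up difficulty"),
    ("process engineer", "scale-up difficulty"),
    ("spectroscopist", "sample damage during measurement"),
    ("process engineer", "precursor cost"),
    ("synthetic chemist", "scale-up difficulty"),
    ("spectroscopist", "precursor cost"),
]

# How often each theme was raised overall.
theme_counts = Counter(theme for _, theme in coded_segments)

# Which distinct roles raised each theme.
roles_per_theme = {}
for role, theme in coded_segments:
    roles_per_theme.setdefault(theme, set()).add(role)

# Assumed rule: a theme counts as a consensus area if two or more roles raised it.
consensus = sorted(t for t, roles in roles_per_theme.items() if len(roles) >= 2)
unique_views = sorted(t for t, roles in roles_per_theme.items() if len(roles) == 1)

print(theme_counts.most_common())
print("Consensus:", consensus)
print("Single-role viewpoints:", unique_views)
```

Counting distinct roles rather than raw mentions avoids treating one talkative participant as a consensus.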

The Scientist's Toolkit: Reagents and Methods for Exploration

The following table details key research reagents and methodological solutions commonly employed during the exploratory phase of novel materials research.

Table 2: Key Research Reagent Solutions for Exploratory Materials Research

| Item / Solution | Primary Function in Exploration | Example in Novel Materials Research |
|---|---|---|
| Combinatorial Chemistry Kits | High-throughput synthesis of diverse molecular or material libraries to rapidly screen for desired properties. | Screening hundreds of catalyst compositions simultaneously for activity in a new reaction. |
| High-Throughput Screening (HTS) Platforms | Automated, rapid testing of material libraries against specific assays or property measurements. | Testing a library of polymer thin films for photovoltaic efficiency. |
| Modular Synthesis Apparatus | Flexible lab equipment that can be reconfigured for various synthetic pathways or process conditions. | A glassware system that can be easily adapted for reflux, distillation, or Schlenk line techniques. |
| Broad-Spectrum Characterization Tools | Instruments that provide a wide range of data from a single sample to uncover unexpected properties. | Using Scanning Electron Microscopy (SEM) with EDS for simultaneous morphological and elemental analysis. |
| Computational Simulation Software | Modeling material behavior in silico to generate hypotheses and identify promising candidates for synthesis. | Using Density Functional Theory (DFT) to predict the band gap of a proposed novel semiconductor. |
| Structured Interview Guides | A protocol for consistently gathering qualitative insights from domain experts [1]. | Interviewing battery researchers about failure modes observed in early-stage solid-state electrolyte prototypes. |

Visualizing the Method Selection Logic

The logic for choosing a specific exploratory method based on the research scenario can be visualized as a decision pathway.

In the rigorous, outcome-driven environment of materials science and drug development, the deliberate application of an exploratory research design is a mark of methodological sophistication. It provides the essential scaffolding for breakthrough discoveries by offering a structured yet flexible approach to investigating the unknown. This guide has established that an exploratory paradigm is most critical when confronting new phenomena, mapping uncharted properties, or assessing project feasibility. By employing the detailed protocols, visual workflows, and toolkit resources outlined herein, researchers can confidently navigate the initial ambiguity of pioneering work. This approach ensures that foundational knowledge is securely built, thereby de-risking subsequent investments and paving the way for robust, hypothesis-driven research that can truly exploit the potential of novel materials.

Formulating Effective Exploratory Research Questions for Material Behavior

Exploratory research serves as a critical foundation in materials science, enabling researchers to investigate uncharted territories and generate insights into complex material behaviors. This approach is particularly valuable when studying novel materials where existing theories and models may be inadequate or nonexistent. Unlike confirmatory research that tests specific hypotheses, exploratory inquiry seeks to understand the "what," "how," and "why" behind material phenomena, allowing for the discovery of patterns and relationships that may not be immediately apparent [12]. This methodology fosters creativity and open-mindedness, encouraging researchers to develop a nuanced understanding of material characteristics, performance limitations, and potential applications without the constraints of predetermined outcomes.

Within the broader context of exploratory research design for novel materials, this approach enables scientists to map the landscape of unknown material behaviors before committing to more structured, resource-intensive investigations. The flexible nature of exploratory research makes it ideally suited for initial investigations where parameters are not well-defined and the research domain lacks established frameworks [12]. For materials researchers and drug development professionals, this methodology provides a systematic approach to probe fundamental questions about material-cell interactions, degradation profiles, structural dynamics, and functional performance under various conditions, ultimately paving the way for more targeted studies and hypothesis-driven experimentation.

Theoretical Framework for Exploratory Research Questions

Fundamental Characteristics of Exploratory Questions

Exploratory research questions in materials science possess distinct characteristics that differentiate them from other research approaches. These questions are inherently open-ended, allowing for a wide range of responses and facilitating a deeper understanding of material phenomena [12]. This open-ended nature provides the flexibility needed to adapt research focus as new insights emerge during experimentation, which is particularly valuable when investigating novel materials with unknown properties. Exploratory questions often originate from vague areas of interest rather than precise hypotheses, which frequently leads to unexpected findings that can inspire entirely new research directions [12].

Another critical characteristic is the emphasis on contextual understanding rather than concrete validation. Instead of aiming for definitive answers, exploratory inquiries prioritize comprehending the complexities of material behaviors and interactions [12]. This approach typically employs qualitative methods in initial stages, such as systematic observations and pattern recognition, which promote thorough exploration of material phenomena. The primary objective is to uncover underlying patterns, relationships, and themes that can inform future research directions and hypothesis development, making exploratory research an indispensable preliminary step in the materials research process [12].

Contrasting Exploratory with Other Research Approaches

Exploratory research questions differ significantly from other methodological approaches in materials science. While traditional hypothesis-driven research typically tests specific predictions through controlled experimentation, exploratory inquiry seeks to uncover new insights and generate questions in domains with limited existing knowledge [12]. This distinction is particularly important for researchers investigating novel materials, where established theoretical frameworks may be insufficient or nonexistent.

The table below summarizes key differences between exploratory research and other common methodological approaches in materials science:

Table 1: Comparison of Research Approaches in Materials Science

| Aspect | Exploratory Research | Descriptive Research | Hypothesis-Testing Research |
|---|---|---|---|
| Primary Goal | Discover insights and generate ideas | Describe characteristics of materials | Test specific predictions |
| Structure | Flexible and adaptable | Fixed and structured | Rigorously controlled |
| Data Collection | Emerges during research | Determined beforehand | Precisely defined protocols |
| Theoretical Foundation | Often minimal or emerging | Established framework | Well-developed theoretical base |
| Outcome | New questions and patterns | Comprehensive descriptions | Causal inferences |

Exploratory research emphasizes qualitative data and subjective interpretations, which can lead to rich, nuanced understandings of material behaviors [12]. In contrast, conventional methods often rely on quantitative metrics and structured frameworks. By focusing on exploration rather than confirmation, researchers can identify patterns or themes that may not emerge through more rigid methodologies, enabling deeper investigation of complex material phenomena and paving the way for future specialized studies.

Constructing Effective Exploratory Research Questions

Adapting Frameworks for Materials Research

The construction of effective exploratory research questions in material behavior requires strategic adaptation of established frameworks to address the unique challenges of materials science. The PICO framework (Patient/Population, Intervention, Comparison, Outcome), while originally developed for clinical research, can be effectively modified for materials investigations [13]. In this adapted context, "Population" refers to the specific material system or class being studied; "Intervention" represents processing techniques, environmental exposures, or experimental treatments; "Comparison" entails alternative material compositions or processing conditions; and "Outcome" focuses on observed material properties, behaviors, or performance metrics [13].

For exploratory research in novel materials, the SPICE framework (Setting, Perspective, Intervention/Interest/Exposure, Comparison, Evaluation) offers another valuable approach, particularly for investigations of material processing, service conditions, or policy implications [13]. The "Setting" component defines the environmental or operational context; "Perspective" considers stakeholder viewpoints (e.g., manufacturers, end-users); "Intervention" examines processing treatments or environmental exposures; "Comparison" explores alternative material systems or conditions; and "Evaluation" assesses material performance or behavior metrics. These frameworks provide systematic approaches for researchers to define the fundamental domains of their investigative focus, ensuring comprehensive coverage of relevant aspects in material behavior studies.
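As a rough illustration of the adapted PICO mapping described above, the following sketch stores the four components and renders them as an open-ended question. The class name, the question template, and the example values are hypothetical; a SPICE variant would simply carry five fields instead of four.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MaterialsPICO:
    """PICO components re-interpreted for a materials study (illustrative)."""
    population: str    # material system or class under study
    intervention: str  # processing technique, exposure, or treatment
    comparison: str    # alternative composition or processing condition
    outcome: str       # observed property, behavior, or performance metric

    def as_question(self) -> str:
        # Assumed phrasing; adjust the template to suit the study.
        return (
            f"How does {self.intervention} affect the {self.outcome} of "
            f"{self.population}, compared with {self.comparison}?"
        )


# Hypothetical example values.
question = MaterialsPICO(
    population="a zirconia-based ceramic",
    intervention="prolonged radiation exposure",
    comparison="unexposed reference samples",
    outcome="thermal stability",
).as_question()
print(question)
```

Forcing every component to be filled in is a quick way to notice when a draft question has no comparison condition or no observable outcome.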

Practical Application of Question Development Techniques

Developing impactful exploratory research questions requires methodical techniques tailored to the unique characteristics of material behavior investigation. Researchers should begin by identifying knowledge gaps through comprehensive literature review and anecdotal observations from preliminary experiments [12]. This process helps illuminate areas where existing knowledge is insufficient, sparking curiosity about unexplained material phenomena. Utilizing brainstorming sessions and collaborative workshops with interdisciplinary teams can enhance creativity, resulting in a rich pool of potential exploratory inquiry topics [12].

The following systematic approach facilitates effective question development:

- Define target material systems with precise specifications regarding composition, structure, processing history, and relevant characteristics

- Formulate open-ended questions that encourage expansive thinking and exploration of multiple dimensions of material behavior

- Group related ideas and find common threads to surface insights that may have otherwise remained hidden [12]

- Iteratively refine questions through self-evaluation and peer feedback to incrementally improve specificity and focus

This technique emphasizes creating questions that promote curiosity and exploration while ensuring they encourage dialogue among research team members, allowing participants to share rich insights and varied viewpoints [12]. The ultimate aim is to establish a foundation for further investigation, guiding materials research toward meaningful outcomes with potential for scientific advancement and practical application.

Methodological Protocols for Exploratory Material Research

Essential Elements of Experimental Protocols

Comprehensive experimental protocols are fundamental for ensuring reproducibility and reliability in exploratory materials research. Effective protocols should contain sufficient detail to enable other researchers to replicate experiments precisely and obtain consistent results [14]. Based on analysis of reporting guidelines across major scientific journals and protocol repositories, 17 key data elements have been identified as essential for facilitating proper protocol execution [14].

The table below outlines these critical elements organized by category:

Table 2: Essential Data Elements for Experimental Protocols in Materials Research

| Category | Data Elements | Description and Examples |
|---|---|---|
| Study Context | Objectives, Hypothesis/Rationale, Research Questions | Clear statement of purpose and scientific basis |
| Materials Specification | Sample Description, Reagents, Equipment, Software | Complete details with unique identifiers where available |
| Experimental Setup | Pre-experiment Preparation, Safety Considerations, Environmental Conditions | Setup parameters, safety protocols, ambient conditions |
| Process Documentation | Step-by-Step Instructions, Timing, Parameter Values | Sequential description with precise measurements and durations |
| Quality Assurance | Controls, Troubleshooting, Calibration Procedures | Quality control measures and problem-solving guidance |
| Data Management | Data Recording, Analysis Methods, Deliverables | Data collection standards and analytical approaches |

These elements should be reported with precision and completeness to address common deficiencies in protocol reporting, such as ambiguous parameter descriptions, insufficient reagent specification, and omitted troubleshooting guidance [14]. For instance, when reporting reagents and equipment, researchers should include catalog numbers, manufacturers, and relevant experimental parameters rather than generic descriptions [14]. Similarly, environmental conditions should be specified numerically (e.g., "store samples at 22°C" rather than "room temperature") to eliminate ambiguity [14].
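A draft protocol can be checked against the categories in Table 2 before it is circulated. The sketch below is one possible audit, assuming the protocol is held as a plain dictionary; the key names are hypothetical, and the vague-term list goes beyond the "room temperature" example given above ("overnight" and "briefly" are added assumptions).

```python
import re

# Element keys derived from the categories in Table 2 (key names are assumptions).
REQUIRED_ELEMENTS = {
    "Study Context": ["objectives", "rationale", "research_questions"],
    "Materials Specification": ["sample_description", "reagents", "equipment", "software"],
    "Experimental Setup": ["preparation", "safety", "environmental_conditions"],
    "Process Documentation": ["steps", "timing", "parameter_values"],
    "Quality Assurance": ["controls", "troubleshooting", "calibration"],
    "Data Management": ["data_recording", "analysis_methods", "deliverables"],
}

# Phrases that usually signal an unquantified condition (illustrative list).
VAGUE_TERMS = re.compile(r"\b(room temperature|overnight|briefly)\b", re.IGNORECASE)


def audit_protocol(protocol: dict) -> dict:
    """Report missing elements and entries that contain vague wording."""
    missing = [
        f"{category}: {key}"
        for category, keys in REQUIRED_ELEMENTS.items()
        for key in keys
        if not protocol.get(key)
    ]
    vague = [
        key for key, value in protocol.items()
        if isinstance(value, str) and VAGUE_TERMS.search(value)
    ]
    return {"missing": missing, "vague": vague}


# Hypothetical, deliberately incomplete draft.
draft = {
    "objectives": "Assess sintering feasibility for a new oxide powder",
    "reagents": "Oxide powder, supplier catalog number and lot recorded",
    "environmental_conditions": "Store samples at room temperature",
}
report = audit_protocol(draft)
print(len(report["missing"]), "elements missing; vague entries:", report["vague"])
```

A check like this cannot judge whether an entry is adequate, only whether it exists and avoids a few known ambiguous phrases, so it complements rather than replaces peer review of the protocol.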

Materials researchers have access to numerous specialized resources for developing and locating protocols. Major licensed repositories include Springer Nature Experiments (combining Nature Protocols, Nature Methods, and Springer Protocols), the Current Protocols series covering multiple specialized domains, and JoVE (Journal of Visualized Experiments), which provides video-based protocol demonstrations [15]. These resources offer peer-reviewed, detailed methodologies that can be adapted for exploratory research on material behavior.

The growing emphasis on open science has expanded the availability of open-access protocol resources, including Bio-Protocol, a peer-reviewed collection organized by field of study and organism, and protocols.io, a platform for creating, organizing, and publishing reproducible research protocols [15]. Additionally, the Global Unique Device Identification Database (GUDID) provides key identification information for medical devices, while the Antibody Registry offers universal identification for antibodies used in research involving biological materials [14]. These resources facilitate accurate reporting of key research resources through unique identifiers, enhancing reproducibility in exploratory materials research.

Data Visualization and Analysis in Exploratory Research

Strategic Selection of Comparison Charts

Effective data visualization is essential for identifying patterns and relationships in exploratory materials research. The strategic selection of comparison charts depends on data characteristics and analytical objectives. Bar charts provide the most straightforward approach for comparing categorical data across different groups or conditions, making them ideal for presenting measured material properties across multiple experimental conditions [16]. Line charts effectively display trends over continuous variables such as time, temperature, or stress levels, revealing patterns in material behavior under varying parameters [16].

For composition analysis, pie charts or doughnut charts visualize part-to-whole relationships, showing proportional distributions of phases, elements, or constituents within materials [16]. When examining distributions of measured properties across value ranges, histograms present frequency distributions of quantitative data, revealing patterns in material characteristics, defect distributions, or measurement variations [16]. For complex datasets with multiple variable types, combo charts (hybrid visualizations combining bars and lines) can illustrate different data dimensions simultaneously, such as displaying both absolute measurements and percentage changes in material properties [16].

The chart selection guidance above, based on data characteristics and research objectives, is summarized as a flowchart in Diagram 1:

Diagram 1: Guide for Selecting Comparison Charts
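The same selection logic can also be written as a small lookup. The sketch below encodes only the pairings stated in the text above; the goal labels used as dictionary keys are hypothetical names introduced for illustration.

```python
# Goal labels are illustrative; chart pairings follow the guidance in the text.
CHART_FOR_GOAL = {
    "compare_categories": "bar chart",
    "trend_over_continuous_variable": "line chart",
    "part_to_whole": "pie or doughnut chart",
    "distribution_of_values": "histogram",
    "multiple_measure_types": "combo chart (bars plus lines)",
}


def suggest_chart(goal: str) -> str:
    """Return the chart type suggested for an analytical goal."""
    try:
        return CHART_FOR_GOAL[goal]
    except KeyError:
        known = ", ".join(sorted(CHART_FOR_GOAL))
        raise ValueError(f"Unknown goal {goal!r}; expected one of: {known}") from None


# Example: hardness measured across several heat-treatment conditions.
print(suggest_chart("compare_categories"))
# Example: phase fractions within one alloy.
print(suggest_chart("part_to_whole"))
```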

Principles of Effective Data Presentation in Tables

Tables play an essential role in materials research for presenting detailed numerical data, specifications, and comparative measurements. Unlike charts that emphasize trends and patterns, tables accommodate extensive detailed information while enabling precise value comparisons [17]. Effective table design follows specific formatting principles to enhance readability, clarity, and comprehension of complex materials data.

The structural components of well-formatted tables include a clear title concisely summarizing content, informative column headers identifying data categories, logical row headers labeling each entry, properly aligned data cells, and appropriate summary statistics where applicable [17]. Numerical data should be right-aligned for easy comparison, while text descriptions should be left-aligned [17]. Large numbers should include thousand separators to improve readability, and units of measurement should be clearly indicated in column headers or separate rows [17].

Additional formatting considerations significantly enhance table utility in materials research documentation. Alternating row shading improves readability by visually distinguishing between consecutive data rows [17]. Consistent decimal precision, with decimal places limited according to measurement precision, avoids unnecessary clutter [17]. Strategic highlighting using bold, italics, or color draws attention to critical results or outliers, while grouping related data visually connects similar materials or conditions through spacing or background variations [17]. These practices collectively transform raw materials data into structured, interpretable information that supports the exploratory research process.
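Several of these rules (right-aligned numbers, left-aligned text, thousand separators, consistent decimal places, units in the column headers) can be applied programmatically. The following is a minimal plain-text sketch; the materials and values are invented for demonstration.

```python
# Hypothetical data: (material, yield strength in MPa, density in g/cm³).
headers = ("Material", "Yield strength (MPa)", "Density (g/cm³)")
rows = [
    ("Alloy A", 1250.0, 7.85),
    ("Alloy B", 980.5, 4.43),
    ("Composite C", 12500.0, 1.6),
]


def format_table(headers, rows):
    """Render rows as aligned plain text with consistent number formatting."""
    # Thousand separators and one decimal for strength; two decimals for density.
    cells = [
        (name, f"{strength:,.1f}", f"{density:.2f}")
        for name, strength, density in rows
    ]
    widths = [
        max(len(headers[i]), *(len(row[i]) for row in cells))
        for i in range(len(headers))
    ]

    def render(row):
        first = row[0].ljust(widths[0])  # text column: left-aligned
        rest = [value.rjust(widths[i]) for i, value in enumerate(row[1:], start=1)]
        return "  ".join([first, *rest])  # numeric columns: right-aligned

    rule = "-" * (sum(widths) + 2 * (len(widths) - 1))
    return "\n".join([render(headers), rule, *(render(r) for r in cells)])


table = format_table(headers, rows)
print(table)
```

The number of decimal places is fixed per column here; in practice it should follow the precision of the instrument that produced each column.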

The Scientist's Toolkit: Essential Research Reagents and Materials

The selection of appropriate reagents and materials is fundamental to exploratory research on material behavior. Precise specification of these components ensures experimental reproducibility and reliability of research outcomes. The following table details essential categories of research reagents and materials commonly employed in investigations of material behavior, along with their specific functions in experimental protocols:

Table 3: Essential Research Reagents and Materials for Material Behavior Studies

| Reagent/Material Category | Specific Examples | Primary Functions and Applications |
|---|---|---|
| Structural Characterization | X-ray diffractometers, Electron microscopes (SEM/TEM), Atomic force microscopes | Crystallographic analysis, microstructural imaging, surface topography characterization |
| Spectroscopic Analysis | FTIR spectrometers, Raman spectrometers, XPS equipment | Molecular structure identification, chemical bonding analysis, surface composition determination |
| Thermal Analysis | Differential scanning calorimeters (DSC), Thermogravimetric analyzers (TGA) | Phase transition temperatures, thermal stability, decomposition behavior |
| Mechanical Testing | Universal testing machines, Nanoindenters, Dynamic mechanical analyzers | Stress-strain behavior, hardness, viscoelastic properties, fracture toughness |
| Chemical Reagents | Etchants, Solvents, Functionalization compounds | Surface preparation, dissolution processes, chemical modification |
| Sample Preparation | Polishing systems, Microtomes, Sputter coaters | Specimen surface finishing, thin section preparation, conductive coating |
| Reference Materials | Certified reference materials, Calibration standards | Instrument calibration, method validation, measurement uncertainty quantification |

Each reagent and material should be precisely identified using unique identifiers where available, such as catalog numbers, manufacturer specifications, and lot numbers when relevant [14]. This precise documentation addresses the critical need for adequate reporting identified in studies showing that a significant percentage of biomedical research resources lack unique identification in the scientific literature [14]. The Resource Identification Portal provides a centralized platform for locating appropriate identifiers across multiple resources, facilitating accurate reporting practices [14].

For specialized subfields within materials research, additional specific reagents and materials may be required. Researchers should consult domain-specific resources such as the Current Protocols series [15], which provides detailed methodologies across multiple specialized domains, or Cold Spring Harbor Protocols [15], which offers definitive sources for research techniques across biological and materials science disciplines. These resources provide comprehensive guidance on reagent selection, preparation, and application standards essential for conducting rigorous exploratory research on material behavior.

Exploratory research represents a fundamental approach for investigating material behavior, particularly when navigating uncharted territories of novel material systems. The formulation of effective exploratory research questions serves as the critical foundation upon which successful materials research is built, guiding experimental design, methodology selection, and data interpretation. By employing adapted frameworks such as PICO and SPICE, researchers can ensure comprehensive coverage of relevant investigative domains, while adherence to detailed protocol reporting standards enhances reproducibility and scientific rigor.

The dynamic and flexible nature of exploratory research fosters critical thinking and encourages dialogue among researchers, enhancing collective knowledge while stimulating further questions that propel future research endeavors [12]. For materials scientists and drug development professionals, this approach provides a systematic methodology for probing unknown aspects of material behavior, ultimately contributing to advanced material development, optimized processing techniques, and innovative applications across scientific and industrial domains.

The development of novel materials is a complex endeavor that rarely follows a linear path. Instead, it thrives on an iterative process of discovery characterized by flexibility and open-ended inquiry. This approach is particularly valuable when investigating poorly understood phenomena or pioneering unprecedented material systems. Exploratory research serves as the critical first step in this journey, investigating problems that are "not clearly defined, have been under-investigated, or are otherwise poorly understood" [18]. Unlike research designed to derive conclusive results, exploratory research gleans insights that form the foundation for more specific, hypothesis-driven investigation [18].

In the context of materials science, this iterative exploration enables researchers to develop processing-microstructure-property relationships without predetermined constraints. The "Farbige Zustände" (Colored States) method exemplifies this approach, utilizing high-temperature droplet generation to produce thousands of spherical micro-samples for rapid experimentation [19]. This methodology embraces the core characteristics of exploratory research: it is unstructured in nature, focuses on "what" rather than "why," and allows researchers to be flexible, pragmatic, and open-minded throughout the investigation [18]. By adopting this mindset, researchers can navigate the inherent uncertainties of developing novel materials while maximizing opportunities for discovery.

Theoretical Framework: Iterative Principles in Research Design

Characteristics of Iterative, Exploratory Research

Iterative research in materials science embodies several distinct characteristics that differentiate it from conventional linear approaches. It is fundamentally unstructured in nature, avoiding highly standardized data collection protocols that might restrict the type of data obtained [18]. This flexibility enables investigators to explore different dimensions of interest and discover novel information they might not have anticipated. The approach is highly interactive, facilitating dynamic information exchange between researchers and their experiments or data, often through continuous refinement of testing parameters based on preliminary results [18].

Another critical characteristic is its focus on discovery rather than verification. Exploratory research aims to answer questions like "what is the problem?" and "what is the purpose?" rather than explaining why phenomena occur [18]. This perspective is essential for materials researchers investigating unprecedented material systems or processing techniques. While often qualitative in initial stages, iterative materials research frequently progresses to quantitative analysis as patterns emerge, utilizing both observational and statistical methods to build understanding progressively [18]. The entire process operates without strict procedural rules, allowing researchers to adapt methods and directions based on emerging findings rather than predetermined protocols [18].

The Role of Iteration in Hypothesis Development

The iterative process fundamentally transforms how hypotheses emerge and evolve in materials research. In traditional deductive research, hypotheses precede experimentation, but in iterative exploration, hypotheses often emerge from initial data collection [18]. This grounded theory approach allows material scientists to develop nuanced understanding through successive cycles of experimentation and analysis.

For example, when investigating new alloy systems, researchers might begin with broad compositional variations, then iteratively refine their focus based on initial characterization results. This process enables gradual refinement of research questions from broad inquiries to specific, testable hypotheses [18]. Each iteration helps narrow the data requirements for subsequent cycles, ensuring research efforts become increasingly focused and efficient over time [18]. This approach is particularly valuable when studying complex material behaviors such as fracture mechanics in quasi-brittle materials, where "conventional iterative methods" for modeling crack localization may struggle with convergence, necessitating specialized approaches like the Total Iterative Approach [20].
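The broad-to-narrow refinement described above can be illustrated with a simple coarse-to-fine search over a single compositional variable. This is a toy sketch, not the procedure of any cited study: `mock_hardness` is a made-up stand-in for a real measurement, and real data would be noisy and multi-dimensional.

```python
def refine(measure, low, high, rounds=3, points=5):
    """Repeatedly sample a range, then narrow it around the best candidate."""
    history = []
    best = low
    for _ in range(rounds):
        step = (high - low) / (points - 1)
        candidates = [low + i * step for i in range(points)]
        best = max(candidates, key=measure)
        history.append((low, high, best))
        # Next iteration: one grid step either side of the current best.
        low, high = max(low, best - step), min(high, best + step)
    return best, history


def mock_hardness(alloying_wt_percent):
    """Hypothetical response with an optimum at 12 wt% (illustration only)."""
    return -(alloying_wt_percent - 12.0) ** 2


best, history = refine(mock_hardness, low=0.0, high=30.0)
for i, (lo, hi, candidate) in enumerate(history, start=1):
    print(f"Iteration {i}: range {lo:.2f}-{hi:.2f} wt%, best candidate {candidate:.2f}")
```

Each pass halves the explored range while keeping the number of samples per iteration constant, which mirrors how successive cycles narrow the data requirements.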

Experimental Methodologies for Iterative Materials Investigation

High-Throughput Experimental Approaches

High-throughput experimentation represents a powerful implementation of iterative principles in materials research. The "Farbige Zustände" method demonstrates how rapid sample generation enables comprehensive exploration of processing parameters. Using a high-temperature droplet generator, researchers can produce "several thousand samples per experiment at a droplet frequency of 20 Hz," creating spherical micro-samples between 300 and 2000 µm in diameter [19]. This approach achieves remarkable throughput, with researchers generating "more than 6000 individual samples from different steels, heat-treated and characterized within 1 week" [19].
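A quick calculation shows what a 20 Hz droplet frequency implies for generation time. The sketch below assumes every droplet yields a usable sample and ignores melting, setup, and alloy changeover, so it gives a lower bound only; the sample counts are chosen for illustration.

```python
DROPLET_FREQUENCY_HZ = 20  # droplet frequency reported for the method [19]


def generation_time_s(n_samples, frequency_hz=DROPLET_FREQUENCY_HZ):
    """Idealized droplet generation time in seconds (no losses, no setup)."""
    return n_samples / frequency_hz


for n in (1000, 6000):
    seconds = generation_time_s(n)
    print(f"{n} droplets: {seconds:.0f} s ({seconds / 60:.1f} min)")
```

Under these idealized assumptions, droplet generation itself takes minutes, which suggests that heat treatment and characterization, rather than sample synthesis, are likely to set the pace of each iteration.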

The methodology extends to efficient post-synthesis processing through batch heat treatments. Samples undergo collective austenitization in specialized furnaces followed by quenching, with subsequent tempering operations performed either in conventional furnaces or automated DSC systems with sample changers [19]. This parallel processing capability is essential for maintaining iteration velocity. The approach generates extensive datasets, with researchers determining "more than 90,000 descriptors to specify the material profiles of the different alloys" during intensive characterization weeks [19]. These descriptors provide the foundation for subsequent iterations and more focused investigations.

Table 1: High-Throughput Characterization Techniques for Iterative Materials Research

| Characterization Method | Sample Requirements | Data Output | Throughput Potential |
|---|---|---|---|
| Micro-compression testing | Spherical micro-samples | Force-displacement curves, mechanical work (Wt) | Medium (10 samples per data point) |
| Nano-indentation | Flat, polished surfaces (embedded & sectioned) | Hardness, modulus | High |
| Differential Scanning Calorimetry (DSC) | As-produced spheres | Thermal stability, precipitation behavior | Medium |
| X-ray Diffraction (XRD) | Embedded & polished surfaces | Phase identification, crystal structure | Medium |
| Particle-oriented peening | Spherical micro-samples | Deformation response from impact | High |

Adaptive Characterization Techniques

Iterative materials research requires characterization methods that provide rapid feedback to inform subsequent experimentation. Micro-compression testing exemplifies this approach by performing classic compression tests on spherical micro-samples using miniaturized pressure units that apply defined force while continuously measuring displacement [19]. The resulting force-displacement curves yield mechanical properties that guide further alloy development. Similarly, nano-indentation provides localized mechanical property data, though it requires sample preparation including embedding, grinding, and polishing to create flat surfaces [19].
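As an illustration of how a force-displacement curve can be reduced to a single descriptor, the sketch below integrates force over displacement with the trapezoidal rule. It assumes that the mechanical work Wt corresponds to the area under the curve; the exact definition used in [19] is not reproduced here, and the function name and sample data are hypothetical.

```python
from typing import Sequence


def mechanical_work(displacement_mm: Sequence[float], force_n: Sequence[float]) -> float:
    """Area under a force-displacement curve (trapezoidal rule).

    Returns work in N·mm, which is numerically equal to mJ.
    Assumption: work is taken as the integral of force over displacement.
    """
    if len(displacement_mm) != len(force_n):
        raise ValueError("displacement and force must have the same length")
    if len(displacement_mm) < 2:
        raise ValueError("at least two data points are required")

    work = 0.0
    for i in range(1, len(force_n)):
        step = displacement_mm[i] - displacement_mm[i - 1]
        work += 0.5 * (force_n[i] + force_n[i - 1]) * step
    return work


if __name__ == "__main__":
    # Hypothetical, perfectly linear loading curve used only as a sanity check.
    displacement = [0.0, 0.1, 0.2]
    force = [0.0, 10.0, 20.0]
    print(f"work = {mechanical_work(displacement, force):.3f} N·mm")
```

A scalar of this kind is convenient in iterative work because it can be compared across alloys and heat treatments without fitting a material model to each curve.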

The iterative paradigm also inspires novel characterization methods specifically designed for high-throughput formats. Particle-oriented peening introduces deformation through fast impact, allowing researchers to assess material response without extensive sample preparation [19]. These adaptive characterization techniques operate within a framework that represents "a paradigm change in materials science," where "the sample defines possible test procedures" rather than conforming to standardized geometries [19]. This fundamental shift enables the rapid iteration cycles essential for exploratory materials research.

Essential Research Tools and Reagents

Table 2: Essential Research Reagent Solutions for High-Throughput Materials Investigation

| Reagent/Equipment | Function in Iterative Research | Key Specifications |
|---|---|---|
| High-temperature droplet generator | Sample synthesis | Temperature up to 1600°C, droplet frequency of 20 Hz, droplet size 300-2000 µm |
| Batch heat treatment equipment | Parallel thermal processing | Vacuum capability (~5×10⁻² mbar), rapid heating (30 K/s), agitated gas quenching |
| Automated DSC with sample changer | Simultaneous heat treatment & thermal analysis | Non-equilibrium heating rates, high sample throughput |
| Micro-compression tester | Mechanical characterization of micro-samples | Miniaturized pressure unit, continuous displacement measurement |
| Embedding resins & polishing systems | Sample preparation for specific analyses | Creates flat, polished surfaces for nano-indentation, XRD |
| Inert gas atmosphere systems | Control of sample oxidation during processing | Maintains inert environment during 6.5 m falling distance |

The experimental toolbox for iterative materials research combines specialized equipment adapted from traditional materials science with novel instruments designed specifically for high-throughput investigation. Sample synthesis systems like the high-temperature droplet generator enable rapid alloy prototyping with high reproducibility [19]. Thermal processing equipment must accommodate unusual sample geometries and enable parallel processing, with custom batching equipment capable of handling spherical samples [19].

Characterization instruments increasingly prioritize speed and miniaturization without sacrificing data quality. The integration of multiple characterization modalities within unified workflows is essential for maintaining iteration velocity. As researchers pursue increasingly complex material systems, these toolkits continue to evolve, with special issues dedicated to "Novel Techniques for Materials Characterization" highlighting emerging methods in microscopy, spectroscopy, and diffraction-based analysis [21].

Workflow Visualization of Iterative Research Processes

Figure: Iterative Research Workflow for Novel Materials

The visualization above captures the essential cyclic nature of iterative materials research. The process begins with broad research area definition rather than specific hypotheses, followed by high-throughput sample synthesis using methods like droplet generation [19]. Researchers then employ multi-modal characterization to generate diverse descriptors of material behavior [19], leading to data analysis and pattern identification that informs hypothesis development.

This workflow highlights how iterative refinement guides the research direction based on emerging findings rather than predetermined endpoints [18]. The loop continues through multiple cycles until sufficient understanding is achieved to transition to conclusive research phases. This nonlinear approach stands in contrast to traditional linear methodologies and enables more responsive investigation of complex material systems.
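The cycle described here can be sketched in code as a loop that synthesizes a batch, characterizes it, and narrows the next batch around the most promising result. The example below is a deliberately simplified, single-variable illustration: the `measure` callable stands in for synthesis plus characterization, the quadratic response is invented for demonstration, and real exploratory work would track many descriptors rather than one score.

```python
from typing import Callable, List, Tuple


def explore(
    measure: Callable[[float], float],
    low: float,
    high: float,
    batch_size: int = 5,
    min_width: float = 10.0,
    max_cycles: int = 10,
) -> Tuple[float, List[Tuple[float, float]]]:
    """Iteratively refine a processing window around the best observed response.

    Each cycle samples `batch_size` evenly spaced settings, records the results,
    and then shrinks the window to the neighbours of the best setting.
    """
    if batch_size < 2:
        raise ValueError("batch_size must be at least 2")

    history: List[Tuple[float, float]] = []
    best_setting = low
    for _ in range(max_cycles):
        step = (high - low) / (batch_size - 1)
        batch = [low + i * step for i in range(batch_size)]
        results = [(x, measure(x)) for x in batch]  # "synthesize and characterize"
        history.extend(results)                     # keep context across cycles
        best_setting = max(results, key=lambda r: r[1])[0]
        if high - low <= min_width:                 # enough resolution: stop iterating
            break
        low, high = max(low, best_setting - step), min(high, best_setting + step)
    return best_setting, history


if __name__ == "__main__":
    # Hypothetical response with an optimum at 420 (arbitrary units).
    best, log = explore(lambda t: -(t - 420.0) ** 2, low=100.0, high=700.0)
    print(f"best setting ~ {best}, after {len(log)} measurements")
```

The stopping rule plays the role of the transition to conclusive research: once the window is narrow enough, further exploratory cycles add little.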

Data Management and Analysis in Iterative Research

Handling Multi-dimensional Datasets

Iterative, high-throughput materials research generates complex, multi-dimensional datasets that require specialized management and analysis approaches. The "Farbige Zustände" method demonstrates this scale, producing "more than 90,000 descriptors" from thousands of individual samples in a single week [19]. These datasets encompass diverse data types including physical, mechanical, technological, and electrochemical properties that collectively define material profiles [19].
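One way to keep such descriptors tied to their experimental context is to store each sample as a small structured record. The following is a minimal sketch using only the Python standard library; the field names, descriptor keys, and numeric values are hypothetical placeholders, not the schema or data used in [19].

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class SampleRecord:
    """One micro-sample with its processing context and measured descriptors."""
    sample_id: str
    alloy: str
    heat_treatment: str
    iteration: int
    descriptors: Dict[str, float] = field(default_factory=dict)


def group_descriptor(
    records: List[SampleRecord], name: str
) -> Dict[Tuple[str, str], List[float]]:
    """Collect one descriptor across samples, grouped by (alloy, heat treatment)."""
    grouped: Dict[Tuple[str, str], List[float]] = {}
    for record in records:
        if name in record.descriptors:
            key = (record.alloy, record.heat_treatment)
            grouped.setdefault(key, []).append(record.descriptors[name])
    return grouped


if __name__ == "__main__":
    # Placeholder values for illustration only.
    records = [
        SampleRecord("s001", "alloy-A", "Q3T1", 1, {"work_mJ": 1.0}),
        SampleRecord("s002", "alloy-A", "Q3T1", 1, {"work_mJ": 3.0}),
        SampleRecord("s003", "alloy-B", "initial", 1, {"hardness": 2.0}),
    ]
    print(group_descriptor(records, "work_mJ"))
```

Carrying the iteration number in every record makes it possible to trace which cycle produced a given observation when later batches are compared with earlier ones.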

Effective iteration requires rapid data reduction techniques that transform raw experimental measurements into actionable insights. For composite materials, this might involve determining fundamental elastic constants and strengths from multilayer specimen tests, then using "appropriate laminate theory to reduce the results in terms of lamina properties" [22]. The volume fraction of constituents represents "the single most important parameter influencing the composite's properties" and serves as a critical variable for iteration [22]. Managing these complex relationships demands structured data frameworks that maintain context across iterative cycles.
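To show why volume fraction is such an influential variable, the sketch below evaluates the classical rule-of-mixtures estimates for a unidirectional lamina. These are standard first-order micromechanics formulas, not the laminate-theory reduction referred to in [22], and the fiber and matrix moduli are illustrative values chosen for the example.

```python
def longitudinal_modulus(v_fiber: float, e_fiber: float, e_matrix: float) -> float:
    """Rule of mixtures (Voigt estimate): E1 = Vf*Ef + (1 - Vf)*Em."""
    _check_fraction(v_fiber)
    return v_fiber * e_fiber + (1.0 - v_fiber) * e_matrix


def transverse_modulus(v_fiber: float, e_fiber: float, e_matrix: float) -> float:
    """Inverse rule of mixtures (Reuss estimate): 1/E2 = Vf/Ef + (1 - Vf)/Em."""
    _check_fraction(v_fiber)
    return 1.0 / (v_fiber / e_fiber + (1.0 - v_fiber) / e_matrix)


def _check_fraction(v_fiber: float) -> None:
    if not 0.0 <= v_fiber <= 1.0:
        raise ValueError("fiber volume fraction must lie in [0, 1]")


if __name__ == "__main__":
    # Illustrative moduli in GPa (assumed values, not taken from the source).
    E_FIBER, E_MATRIX = 230.0, 3.5
    for vf in (0.4, 0.5, 0.6):
        e1 = longitudinal_modulus(vf, E_FIBER, E_MATRIX)
        e2 = transverse_modulus(vf, E_FIBER, E_MATRIX)
        print(f"Vf={vf:.1f}: E1={e1:.1f} GPa, E2={e2:.2f} GPa")
```

In these estimates the longitudinal modulus scales almost linearly with fiber volume fraction while the transverse modulus stays matrix-dominated, which is one reason the parameter is a natural axis for iteration.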

Knowledge Systems for Iterative Research

Advanced knowledge management systems play an increasingly important role in iterative materials research. Knowledge graph tools enable researchers to "interlink diverse data sources for a holistic view," creating "interconnected data systems" that make information visible and connected [23]. These systems provide "coherent and searchable frameworks for enhanced analytics and insights" that accelerate the iteration process [23].

The implementation of these systems typically involves graph databases like Neo4j, Amazon Neptune, and Microsoft Azure Cosmos DB, which are "optimized for storing and managing relationships" inherent in materials research data [23]. By making relationships first-class data, these tools help researchers "reveal how different data sets are linked," providing "contextual search, suggestions, or visualizations of the relationships between data" [23]. This capability is particularly valuable for identifying non-obvious correlations across iterative experimentation cycles.
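The underlying idea of storing relationships explicitly can be illustrated without any database. The sketch below is a tiny in-memory graph of subject-relation-object triples written in plain Python; it does not use or imitate the API of Neo4j, Neptune, or Cosmos DB, and the node and relation names are hypothetical.

```python
from collections import defaultdict
from typing import DefaultDict, List, Optional, Set, Tuple


class TinyGraph:
    """Minimal directed, labelled graph for linking research entities."""

    def __init__(self) -> None:
        self._out: DefaultDict[str, List[Tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str) -> None:
        """Store one (subject, relation, object) triple."""
        self._out[subject].append((relation, obj))

    def neighbors(self, node: str, relation: Optional[str] = None) -> List[str]:
        """Objects directly linked from `node`, optionally filtered by relation."""
        return [o for r, o in self._out.get(node, []) if relation is None or r == relation]

    def reachable(self, start: str) -> Set[str]:
        """All nodes reachable from `start` by following links (depth-first)."""
        seen: Set[str] = set()
        stack = [start]
        while stack:
            node = stack.pop()
            for _, obj in self._out.get(node, []):
                if obj not in seen:
                    seen.add(obj)
                    stack.append(obj)
        return seen


if __name__ == "__main__":
    graph = TinyGraph()
    graph.add("alloy-A", "treated_as", "Q3T1")
    graph.add("Q3T1", "characterized_by", "micro-compression")
    graph.add("micro-compression", "yields", "force-displacement curve")
    print(sorted(graph.reachable("alloy-A")))
```

A traversal such as `reachable` is the in-memory analogue of a contextual query: starting from an alloy, it surfaces every treatment, method, and data set connected to it.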

Case Study: Iterative Development of Novel Steel Alloys

A concrete example of iterative materials research can be found in the investigation of Ni-modified X210Cr12 steel variants [19]. Researchers began with broad compositional exploration, producing multiple alloy variants including X210Cr12NiX with X = 0, 2, 4 mass% [19]. This initial iteration established baseline properties in the "initial condition after being quenched directly after droplet solidification in oil" [19].

Subsequent iterations introduced systematic heat treatment variations, including Q3T1 (tempered at 180°C for 2 h) and Q3T5 (tempered at 580°C for 2 h) conditions [19]. Each iteration generated characterization data from multiple techniques, including micro-compression testing that provided "force-displacement curves" from which "the mechanical work, Wt, can be determined independent of the loading" [19]. This iterative approach enabled researchers to efficiently map the complex relationships between composition, processing, microstructure, and properties without predetermined constraints.
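The composition and heat-treatment variations named in this case study can be laid out as a small design matrix. The sketch below enumerates only the three Ni contents and three conditions mentioned in the text; the full experimental matrix in [19] may contain further conditions, and the alloy label format is an assumed naming convention.

```python
from itertools import product
from typing import Dict, List

NI_CONTENTS_MASS_PCT = (0, 2, 4)  # X in X210Cr12NiX, as listed in the text
CONDITIONS = {
    "initial": "oil-quenched directly after droplet solidification",
    "Q3T1": "tempered at 180 °C for 2 h",
    "Q3T5": "tempered at 580 °C for 2 h",
}


def design_matrix() -> List[Dict[str, object]]:
    """Every combination of Ni content and condition named in the case study."""
    rows: List[Dict[str, object]] = []
    for ni, condition in product(NI_CONTENTS_MASS_PCT, CONDITIONS):
        rows.append(
            {
                "alloy": f"X210Cr12Ni{ni}",  # assumed label format
                "ni_mass_pct": ni,
                "condition": condition,
                "description": CONDITIONS[condition],
            }
        )
    return rows


if __name__ == "__main__":
    for row in design_matrix():
        print(f'{row["alloy"]:<14}{row["condition"]:<9}{row["description"]}')
```

Enumerating the matrix up front makes gaps visible: any combination without characterization data is a candidate for the next iteration.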

The case study demonstrates how iterative methods enable resource-efficient materials development. The spherical micro-samples used in this approach have a mass approximately 1/1300th of a conventional tensile test sample, representing significant efficiency in material usage during the exploratory phase [19]. This efficiency enables researchers to investigate a broader experimental space with the same resources, accelerating the discovery of novel material systems with tailored properties.

The iterative process is more than a methodological choice: it embodies a fundamental shift in how researchers approach complex problems in materials science. By embracing flexibility and open-ended inquiry, materials researchers can navigate the inherent uncertainties of developing novel material systems while maximizing opportunities for discovery. The high-throughput methodologies and adaptive characterization techniques described in this work provide practical frameworks for implementing iterative approaches across diverse materials classes.

As the field advances, the integration of machine learning algorithms with knowledge graphs promises to further enhance iterative research by systematically "improving the accuracy of machine learning systems" and extending "their range of capabilities" [23]. These developments will continue to transform exploratory materials research from art to science, enabling more efficient translation of fundamental discoveries to practical applications. By institutionalizing these iterative approaches, research organizations can accelerate innovation while maintaining the rigor necessary for scientific advancement.

A Practical Toolkit: Methodological Approaches and Real-World Applications in Materials Science

Within the rigorous domain of novel materials research, the path from conceptualization to application is fraught with complex, non-quantifiable challenges. While quantitative data reveals what works, qualitative research uncovers the critical how and why behind these outcomes [24]. This guide details the application of two foundational qualitative methods, In-Depth Interviews (IDIs) and Focus Groups, within the context of exploratory research design for novel materials. These methods are uniquely capable of capturing the deep, experiential knowledge of domain experts, thereby illuminating latent barriers, unanticipated application pathways, and nuanced decision-making processes that remain invisible to purely quantitative approaches [9]. By systematically employing these techniques, researchers can deconstruct the complexities of materials development, optimizing interventions and accelerating the translation of laboratory innovation into tangible solutions.

Foundational Concepts and Research Objectives

Qualitative research is defined as “the study of the nature of phenomena,” focusing on their quality, different manifestations, and the context in which they appear [24]. In applied research, including materials science, qualitative studies can be categorized by three primary objectives, each demanding distinct methodological considerations [9].

- Exploratory Studies: These are initiated when little to no prior data exists on a specific topic. The research aims are broad, seeking to identify key issues, generate hypotheses, and map the conceptual terrain. In novel materials research, this might involve investigating expert perceptions of a material's potential applications or the initial challenges in scaling up a new synthesis process.

- Descriptive Studies: When exploratory data exists, descriptive studies aim to provide a detailed and accurate account of a phenomenon's characteristics within a specific context. For example, a descriptive study might detail the standard operating procedures and decision-making criteria used by different research groups when characterizing a specific class of polymers.

- Comparative Studies: These studies build upon existing exploratory and descriptive knowledge to examine differences between groups or conditions. A comparative approach might be used to understand why one research consortium successfully commercialized a material while another, working on a similar technology, failed, explicitly comparing leadership, resource allocation, and collaboration dynamics.

Selecting between In-Depth Interviews and Focus Groups must align with the overarching research objective, as each method offers distinct advantages for generating specific types of evidence within this framework [9] [25].

Core Methodologies: A Detailed Examination

In-Depth Interviews (IDIs) with Experts

In-depth interviews are one-on-one conversations between a researcher and an expert participant, structured around open-ended questions to obtain rich, detailed insights into individual perspectives, experiences, and reasoning [25]. The flexible, adaptive nature of IDIs makes them particularly valuable for probing complex, specialist knowledge.

Key Benefits:

- Depth of Insight: IDIs excel at uncovering nuanced opinions, personal experiences, and sophisticated technical reasoning that may not emerge in a group setting [25].

- Confidentiality: The private nature of the interview fosters trust, making it the preferred method for discussing sensitive topics such as proprietary research methods, funding challenges, or critical opinions of prevailing scientific paradigms [26].

- Flexibility: Researchers can adapt questions in real-time based on the participant's responses, allowing for personalized exploration of topics and the organic emergence of unanticipated lines of inquiry [25].

- Complexity Management: The one-on-one format enables a thorough exploration of complex, multifaceted technical topics that require extended discussion and deep reflection [25].

Table 1: Key Applications and Advantages of In-Depth Interviews

| Application Scenario | Primary Advantage | Expert Type |

|---|---|---|

| Investigating proprietary or sensitive R&D processes | Confidentiality and trust enable candid discussion | Senior Principal Investigator, CTO |

| Understanding complex, individual decision-making | Depth of insight into personal reasoning and heuristics | Materials Synthesis Specialist, Process Engineer |