Empirical vs. In Silico Off-Target Prediction: A Guide for Safer Therapeutics and Genome Editing


Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on the critical task of predicting off-target effects, a major challenge in drug discovery and CRISPR-based genome editing. We explore the foundational principles of both empirical (experimental) and in silico (computational) prediction methods, detailing their specific applications and workflows. The content further offers strategies for troubleshooting and optimizing these approaches, and concludes with a rigorous framework for the validation and comparative assessment of predictions. By synthesizing insights from both methodologies, this guide aims to equip scientists with the knowledge to build safer, more reliable development pipelines for novel therapeutics and gene therapies.

Understanding Off-Target Effects: Why Prediction is Crucial for Safety and Efficacy

In both small-molecule drug discovery and CRISPR-Cas9 genome editing, off-target effects represent a fundamental challenge that can compromise therapeutic efficacy and safety. While these fields operate through distinct mechanisms—small molecules modulating protein function versus CRISPR enzymes cleaving DNA—they share the common vulnerability of unintended interactions. In pharmacology, off-target effects occur when a drug interacts with proteins or pathways other than its primary intended target, potentially causing adverse reactions or revealing new therapeutic applications through drug repurposing [1]. In genome editing, off-target effects refer to unintended cleavage at genomic sites with sequence similarity to the intended target, which could lead to detrimental mutations and carcinogenic potential [2]. Understanding these parallel phenomena is critical for advancing therapeutic development, necessitating a comprehensive comparison of the empirical and computational methods used to predict and characterize these effects across disciplines.

Off-Target Effects in Small-Molecule Drugs

Mechanisms and Implications

Small-molecule drugs typically exert their effects by binding to specific protein targets, but their polypharmacology—interaction with multiple targets—can lead to both detrimental side effects and beneficial repurposing opportunities. For instance, nonsteroidal anti-inflammatory drugs (NSAIDs) primarily target cyclooxygenase (COX) enzymes to alleviate pain and inflammation but can cause gastrointestinal damage due to COX-1 inhibition [1]. Conversely, positive off-target effects have enabled successful drug repurposing, as demonstrated by Gleevec (originally for leukemia) being redeployed for gastrointestinal stromal tumors, and Viagra (originally for hypertension) finding application for erectile dysfunction [1]. These examples underscore the dual nature of off-target effects in pharmacology, where unintended interactions can simultaneously represent significant clinical risks and opportunities for therapeutic innovation.

Prediction Methods for Small-Molecule Off-Target Effects

Computational prediction of small-molecule off-target effects relies primarily on two approaches: target-centric and ligand-centric methods. Target-centric methods build predictive models for specific protein targets using Quantitative Structure-Activity Relationship (QSAR) models with machine learning algorithms like random forest or Naïve Bayes classifiers, or through molecular docking simulations that leverage 3D protein structures [1]. Ligand-centric methods focus on similarity between query molecules and known ligands annotated with their targets, assuming that structurally similar molecules share biological targets [1].
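The ligand-centric idea can be sketched in a few lines of Python. This is a minimal illustration, not the implementation of any tool named here: fingerprints are represented as plain sets of on-bit indices, and the ligand names, bit sets, and target annotations in `LIBRARY` are hypothetical. Tanimoto and Dice are shown as two common similarity metrics.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity between two sets of fingerprint on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def dice(a: frozenset, b: frozenset) -> float:
    """Dice similarity between two sets of fingerprint on-bits."""
    if not a and not b:
        return 0.0
    return 2 * len(a & b) / (len(a) + len(b))


def predict_targets(query, library, top_k=2, metric=tanimoto):
    """Rank targets by the best similarity among the top_k most similar known ligands."""
    ranked = sorted(library, key=lambda entry: metric(query, entry[1]), reverse=True)
    scores = {}
    for _name, bits, target in ranked[:top_k]:
        scores[target] = max(scores.get(target, 0.0), metric(query, bits))
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical annotated ligands: (name, fingerprint on-bits, known target).
LIBRARY = [
    ("ligand_A", frozenset({1, 4, 7, 9}), "COX-1"),
    ("ligand_B", frozenset({1, 4, 12, 20}), "COX-2"),
    ("ligand_C", frozenset({2, 3, 15}), "KIT"),
]
QUERY = frozenset({1, 4, 7, 9, 12})

predicted = predict_targets(QUERY, LIBRARY, top_k=2)
```

In practice the bit sets would come from a cheminformatics toolkit and the library from a bioactivity database; the ranking logic stays the same.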

A 2025 systematic comparison of seven target prediction methods using a shared benchmark dataset of FDA-approved drugs revealed significant performance variations [1]. The study evaluated stand-alone codes and web servers including MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred, with MolTarPred emerging as the most effective method [1]. The research also explored optimization strategies, finding that high-confidence filtering reduces recall, making it less ideal for drug repurposing applications where broader target identification is valuable [1].
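The effect of high-confidence filtering on recall can be made concrete with a small sketch. The prediction scores and the set of true targets below are invented for illustration and are not taken from the benchmark in [1].

```python
def precision_recall(predictions, true_targets, min_score):
    """Precision and recall after keeping only predictions scoring >= min_score."""
    kept = {target for target, score in predictions if score >= min_score}
    true_positives = len(kept & true_targets)
    precision = true_positives / len(kept) if kept else 0.0
    recall = true_positives / len(true_targets) if true_targets else 0.0
    return precision, recall


# Hypothetical scored predictions for one drug, and its known targets.
PREDICTIONS = [("T1", 0.9), ("T2", 0.7), ("T3", 0.4), ("T4", 0.3)]
TRUE_TARGETS = {"T1", "T2", "T4"}

unfiltered = precision_recall(PREDICTIONS, TRUE_TARGETS, min_score=0.0)
filtered = precision_recall(PREDICTIONS, TRUE_TARGETS, min_score=0.6)
```

With these toy numbers, filtering raises precision from 0.75 to 1.0 but drops recall from 1.0 to about 0.67, which is the trade-off that matters for repurposing, where missing a true target is costly.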

Table 1: Comparison of Small-Molecule Target Prediction Methods [1]

| Method | Type | Algorithm | Key Features | Database Source |
|---|---|---|---|---|
| MolTarPred | Ligand-centric | 2D similarity | MACCS fingerprints; Top 1, 5, 10, 15 similar ligands | ChEMBL 20 |
| PPB2 | Ligand-centric | Nearest neighbor/Naïve Bayes/deep neural network | MQN, Xfp, ECFP4 fingerprints; Top 2000 similar ligands | ChEMBL 22 |
| RF-QSAR | Target-centric | Random forest | ECFP4 fingerprints; Top 4, 7, 11, 33, 66, 88, 110 similar ligands | ChEMBL 20 & 21 |
| TargetNet | Target-centric | Naïve Bayes | FP2, Daylight-like, MACCS, E-state, ECFP2/4/6 fingerprints | BindingDB |
| ChEMBL | Target-centric | Random forest | Morgan fingerprints | ChEMBL 24 |
| CMTNN | Target-centric | ONNX runtime | Morgan fingerprints | ChEMBL 34 |
| SuperPred | Ligand-centric | 2D/fragment/3D similarity | ECFP4 fingerprints | ChEMBL & BindingDB |

Experimental Validation for Small-Molecule Off-Target Effects

Binding affinity assays serve as the gold standard for experimentally validating predicted drug-target interactions. These assays quantify the strength of interaction between a small molecule and its protein target, yielding dissociation constants (Kd), half-maximal inhibitory concentrations (IC50), or half-maximal effective concentrations (EC50) [1]. The experimental protocol typically involves:

- Target Preparation: Purifying the recombinant protein of interest and confirming its structural integrity and functionality.

- Compound Preparation: Serially diluting the small molecule compound in appropriate buffers to create a concentration gradient.

- Binding Measurement: Utilizing techniques such as surface plasmon resonance (SPR), isothermal titration calorimetry (ITC), or fluorescence polarization to detect and quantify molecular interactions.

- Data Analysis: Calculating binding parameters from the measured data using appropriate mathematical models.
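As an illustration of the data-analysis step, the sketch below fits a dissociation constant to a dilution series. It assumes the simplest one-site binding model, fraction bound = [L] / (Kd + [L]), and uses a log-spaced grid search instead of a proper nonlinear regression routine. The concentrations (taken to be in µM), the "true" Kd of 2.0, and the function names are all hypothetical.

```python
def fraction_bound(conc: float, kd: float) -> float:
    """One-site binding model: fraction of target occupied at ligand concentration conc."""
    return conc / (kd + conc)


def fit_kd(concs, responses, kd_min=1e-3, kd_max=1e3, steps=600):
    """Estimate Kd by least squares over a log-spaced grid of candidate values."""
    best_kd, best_sse = None, float("inf")
    for i in range(steps + 1):
        kd = kd_min * (kd_max / kd_min) ** (i / steps)
        sse = sum((fraction_bound(c, kd) - r) ** 2 for c, r in zip(concs, responses))
        if sse < best_sse:
            best_kd, best_sse = kd, sse
    return best_kd


# Two-fold serial dilution (assumed µM) and noise-free synthetic responses.
CONCS = [0.1 * 2 ** i for i in range(10)]
RESPONSES = [fraction_bound(c, 2.0) for c in CONCS]

estimated_kd = fit_kd(CONCS, RESPONSES)
```

The grid resolution limits accuracy to a few percent here; real analyses would normally use a dedicated curve-fitting routine and account for measurement noise and replicate variability.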

For comprehensive off-target profiling, high-throughput screening approaches using protein arrays or fragment-based screening methods can systematically evaluate compound interactions across hundreds of potential targets simultaneously [1].

Off-Target Effects in CRISPR-Cas9 Genome Editing

Mechanisms and Consequences

CRISPR-Cas9 genome editing uses a programmable single guide RNA (sgRNA) to direct the Cas9 nuclease to specific DNA sequences, where it introduces double-strand breaks. Off-target effects occur when Cas9 cleaves DNA at sites that resemble the intended target, typically loci that carry a small number of mismatches (which are tolerated best in the PAM-distal region) or DNA bulges [2]. Reported off-target frequencies can reach 50% or more in some applications, raising significant concerns for therapeutic use, where unintended mutations could disrupt tumor suppressor genes, activate oncogenes, or cause other detrimental genetic alterations [2]. The core challenge stems from the inherent flexibility of the Cas9-sgRNA complex, which can tolerate a degree of sequence mismatch while retaining catalytic activity.

Prediction Methods for CRISPR-Cas9 Off-Target Effects

Computational prediction of CRISPR off-target effects has evolved from simple sequence similarity algorithms to sophisticated machine learning and deep learning models that incorporate multiple genomic and molecular features. Traditional methods relied primarily on sequence alignment techniques to identify genomic sites with homology to the sgRNA, but these approaches often lacked comprehensive understanding of the cellular context and Cas9 behavior [3].
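The traditional sequence-similarity approach can be sketched as a simple scan. The example below is a deliberately reduced illustration: it searches only the forward strand, assumes an SpCas9-style NGG PAM immediately 3' of a 20-nt protospacer, ignores bulges, and uses an invented guide and toy "genome" string. The function names are hypothetical.

```python
def count_mismatches(a: str, b: str) -> int:
    """Number of positions at which two equal-length sequences differ."""
    return sum(1 for x, y in zip(a, b) if x != y)


def find_candidate_sites(genome: str, guide: str, max_mismatches: int = 3):
    """Return (start, protospacer, PAM, mismatches) for forward-strand NGG sites."""
    n = len(guide)
    hits = []
    # The last valid start leaves room for the protospacer plus a 3-nt PAM.
    for i in range(len(genome) - n - 2):
        protospacer = genome[i:i + n]
        pam = genome[i + n:i + n + 3]
        if pam[1:] != "GG":
            continue
        mismatches = count_mismatches(guide, protospacer)
        if mismatches <= max_mismatches:
            hits.append((i, protospacer, pam, mismatches))
    return hits


GUIDE = "GACGTTACCGGATCAAGCTT"
OFF_SITE = "AACGTCACCGGATCAAGCTT"  # differs from GUIDE at two positions
GENOME = "TTT" + GUIDE + "AGG" + "CCCC" + OFF_SITE + "TGG" + "AAA"

hits = find_candidate_sites(GENOME, GUIDE)
```

Here the scan reports the perfect on-target match and the two-mismatch site. Genome-scale tools additionally search the reverse strand, allow bulges, and use indexed search rather than a linear scan.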

Modern deep learning tools analyze diverse features including chromatin accessibility, DNA methylation status, sgRNA sequence composition, and Cas9 version-specific characteristics to predict cleavage probabilities at potential off-target sites [3]. These models are trained on large datasets generated from experimental methods such as CIRCLE-seq, GUIDE-seq, and BLESS, which comprehensively map Cas9 cleavage sites across the genome [3]. However, the prediction accuracy of these models remains limited by the amount and quality of available training data, and as more sequence and cellular features are incorporated, predictions are expected to better align with experimental results [3].

Table 2: Comparison of CRISPR-Cas9 Off-Target Prediction and Mitigation Approaches

| Method Category | Examples | Key Principles | Strengths | Limitations |
|---|---|---|---|---|
| Computational Prediction | Deep learning models, Sequence alignment tools | Identification of genomic sites with sequence similarity to target | Scalability, pre-experimental guidance | Accuracy limited by training data quality |
| Experimental Detection | GUIDE-seq, CIRCLE-seq, BLESS | Genome-wide mapping of Cas9 cleavage sites | Comprehensive, empirical data | Technical variability, cost |
| Cas9 Engineering | High-fidelity variants, Nickases | Structural modifications to reduce off-target binding | Reduced off-target activity, often with on-target efficiency largely retained | On-target efficiency can drop for some variants or loci |
| sgRNA Optimization | Specificity scoring, Modified sgRNAs | Design improvements to enhance target discrimination | Easily implementable, cost-effective | Limited efficacy as standalone approach |

Experimental Detection of CRISPR Off-Target Effects

GUIDE-seq (Genome-wide Unbiased Identification of DSBs Enabled by Sequencing) represents one of the most comprehensive methods for empirically detecting CRISPR off-target effects. The detailed experimental protocol includes:

- Transfection: Co-deliver Cas9-sgRNA ribonucleoprotein complexes with double-stranded oligodeoxynucleotides (dsODNs) into target cells.

- Integration: The dsODNs integrate into double-strand break sites through non-homologous end joining, tagging both on-target and off-target cleavage locations.

- Genomic DNA Extraction: Harvest cells 48-72 hours post-transfection and isolate genomic DNA.

- Library Preparation and Sequencing: Fragment DNA, enrich for dsODN-integrated fragments via PCR, and perform high-throughput sequencing.

- Bioinformatic Analysis: Map sequencing reads to the reference genome to identify all dsODN integration sites, representing Cas9 cleavage events.
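The final bioinformatic step can be illustrated with a toy site caller. This sketch assumes reads have already been mapped and reduced to (chromosome, position) pairs; it simply groups nearby positions and keeps clusters supported by a minimum number of reads. The `window` and `min_reads` parameters, the function name, and the read data are hypothetical, and published pipelines typically apply further filters (for example, comparison against control samples) that are not modeled here.

```python
from collections import defaultdict


def call_integration_sites(reads, window=10, min_reads=3):
    """Cluster mapped tag positions and keep clusters with at least min_reads reads.

    Returns a list of (chromosome, first_position, last_position, read_count).
    """
    by_chrom = defaultdict(list)
    for chrom, pos in reads:
        by_chrom[chrom].append(pos)

    sites = []
    for chrom in sorted(by_chrom):
        positions = sorted(by_chrom[chrom])
        cluster = [positions[0]]
        for pos in positions[1:]:
            if pos - cluster[-1] <= window:
                cluster.append(pos)
            else:
                if len(cluster) >= min_reads:
                    sites.append((chrom, cluster[0], cluster[-1], len(cluster)))
                cluster = [pos]
        # Flush the final cluster on this chromosome.
        if len(cluster) >= min_reads:
            sites.append((chrom, cluster[0], cluster[-1], len(cluster)))
    return sites


# Hypothetical mapped dsODN-tagged read positions.
READS = [
    ("chr1", 100), ("chr1", 103), ("chr1", 105), ("chr1", 5000),
    ("chr2", 42), ("chr2", 44), ("chr2", 47), ("chr2", 49),
]

sites = call_integration_sites(READS)
```

The isolated read at chr1:5000 is discarded as unsupported, while the two dense clusters are reported as candidate cleavage sites.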

This method typically detects off-target sites with high sensitivity, though it may miss off-target events occurring in low-abundance cell populations or difficult-to-sequence genomic regions [2].

Comparative Analysis: Empirical vs. In Silico Prediction Methods

Small-Molecule Drug Discovery

The comparison between empirical and computational approaches for predicting small-molecule off-target effects reveals complementary strengths and limitations. Empirical methods such as binding affinity assays and experimental high-throughput screening provide direct evidence of drug-target interactions but are resource-intensive, far lower in throughput than computational screening, and may miss interactions that occur only under specific cellular conditions [1]. In silico methods offer high-throughput capabilities and can predict interactions for novel compounds without synthesizing them, but their accuracy depends heavily on the quality and comprehensiveness of training data, and they may generate false positives that require experimental validation [1].

A key finding from recent research is that no single computational method outperforms all others across all scenarios, with different tools exhibiting specialized strengths depending on the specific application [1]. For instance, methods optimized for high-confidence predictions may sacrifice sensitivity, making them less suitable for drug repurposing where broader target identification is valuable [1]. Furthermore, the choice of molecular fingerprints and similarity metrics significantly impacts prediction performance, with Morgan fingerprints with Tanimoto scores outperforming MACCS fingerprints with Dice scores in the MolTarPred platform [1].

CRISPR-Cas9 Genome Editing

In CRISPR-Cas9 applications, empirical off-target detection methods provide the most comprehensive and reliable identification of unintended cleavage events but require significant experimental effort and may not detect off-targets occurring in rare cell populations [2] [3]. Computational prediction tools offer the advantage of guiding sgRNA design before any experimental work, potentially saving time and resources, but current models still show limited accuracy and must continually evolve as more training data becomes available [3].

The most effective approach is a hybrid strategy that combines computational prediction with empirical validation. Selecting sgRNAs with multiple prediction tools and then assessing off-targets with sensitive experimental methods such as GUIDE-seq balances on-target efficiency against off-target risk [2] [3]. Additionally, the development of high-fidelity Cas9 variants with reduced off-target propensity represents a complementary engineering approach that addresses the problem at the molecular level [2].

Integrated Workflow and Research Toolkit

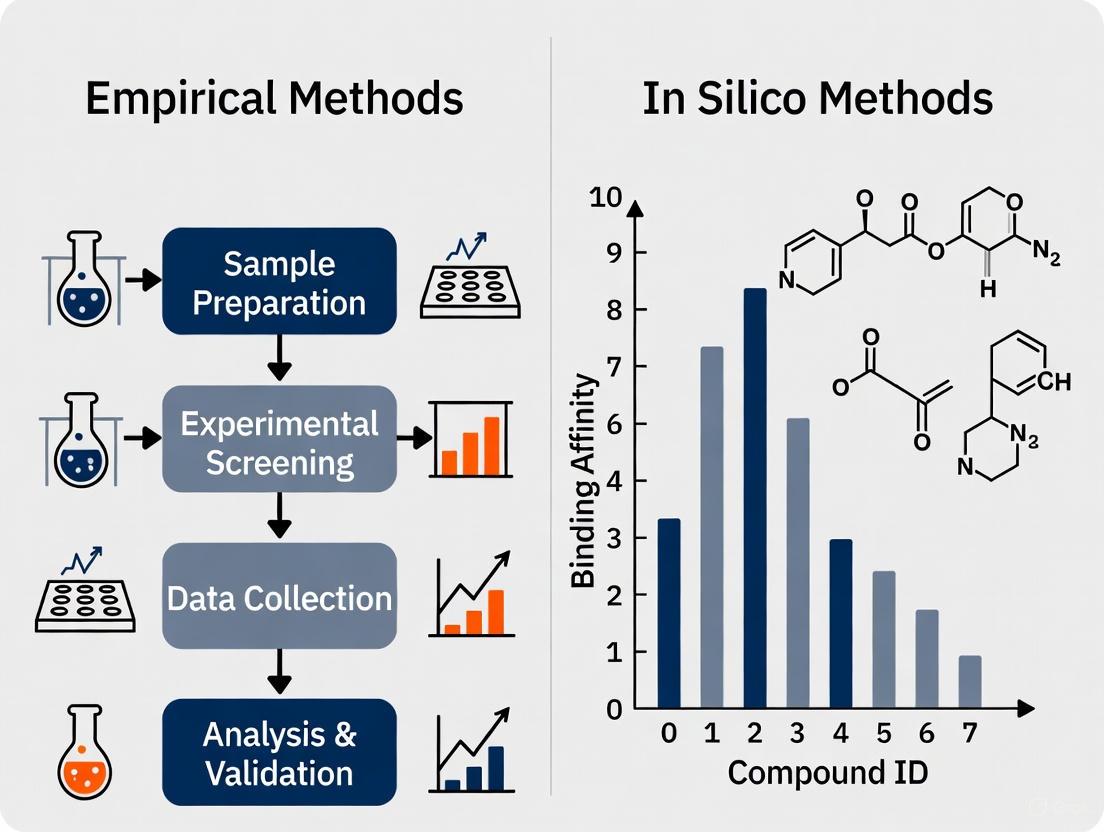

Experimental Workflow Diagram

The following diagram illustrates an integrated approach for off-target assessment that combines computational prediction with experimental validation, applicable to both small-molecule and CRISPR-Cas9 development pipelines:

Research Reagent Solutions

Table 3: Essential Research Reagents for Off-Target Assessment

| Reagent/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Bioactivity Databases | ChEMBL, BindingDB, DrugBank | Source of annotated compound-target interactions | Small-molecule target prediction |
| Genome Editing Databases | CRISPR-specific databases (multiple) | Repository of sgRNA sequences and off-target data | CRISPR off-target prediction |
| Target Prediction Servers | MolTarPred, PPB2, RF-QSAR, TargetNet | Ligand- and target-centric prediction algorithms | Small-molecule off-target screening |
| CRISPR Prediction Tools | Deep learning models (various) | sgRNA specificity scoring and off-target site prediction | CRISPR experimental design |
| Detection Kits | GUIDE-seq, CIRCLE-seq kits | Experimental detection of DNA cleavage sites | CRISPR off-target validation |
| Binding Assay Reagents | SPR chips, fluorescence polarization kits | Quantitative measurement of molecular interactions | Small-molecule binding validation |
| Cas9 Variants | High-fidelity Cas9, Nickases | Engineered nucleases with reduced off-target activity | CRISPR genome editing |
| Control Compounds | Known promiscuous binders, reference standards | Assay validation and quality control | Small-molecule screening |

The systematic comparison of off-target effects across small-molecule drugs and CRISPR-Cas9 genome editing reveals both domain-specific challenges and common themes in prediction and mitigation strategies. While the mechanisms fundamentally differ—protein-ligand interactions versus DNA-enzyme recognition—both fields face similar limitations in purely computational or exclusively empirical approaches. The most effective frameworks integrate multiple prediction methods with orthogonal experimental validation, acknowledging that our understanding of off-target effects remains incomplete despite significant advances.

For small-molecule drug discovery, the evolution of target prediction methods continues to improve our ability to anticipate polypharmacology, though the trade-off between sensitivity and specificity requires careful consideration based on application context [1]. In CRISPR-Cas9 genome editing, the development of more sophisticated deep learning models and sensitive detection methods has enhanced our capacity to identify potential off-target sites, though accuracy limitations persist [3]. Across both domains, the integration of computational and empirical approaches provides the most robust strategy for characterizing off-target effects, ultimately supporting the development of safer, more precise therapeutic interventions.

The advent of CRISPR-based gene editing has revolutionized biomedical research and therapeutic development, culminating in the recent approval of the first CRISPR medicines for sickle cell disease and beta-thalassemia. However, this breakthrough technology carries an inherent risk: off-target effects, where unintended edits occur at genomic locations beyond the intended target. These unintended mutations pose significant challenges for clinical translation, potentially compromising both therapeutic efficacy and patient safety. The precise evaluation of off-target activity has become a critical bottleneck in the development pathway, sparking an ongoing debate between proponents of empirical methods (laboratory-based detection) and in silico approaches (computational prediction) for comprehensive off-target assessment [4] [5] [6].

This guide provides an objective comparison of the current methodologies for CRISPR off-target prediction and detection, focusing on their application in preclinical safety assessment. We examine the performance characteristics, experimental requirements, and practical considerations for both computational and empirical approaches, providing drug development professionals with the data needed to inform their safety evaluation strategies.

Methodological Frameworks: Empirical vs. In Silico Approaches

Off-target assessment methodologies fall into two broad categories: empirical detection through laboratory experiments and computational prediction via bioinformatic tools. The table below summarizes the core characteristics of each approach.

Table 1: Fundamental Characteristics of Off-Target Assessment Methods

| Feature | Empirical Methods | In Silico Methods |
|---|---|---|
| Basic Principle | Direct detection of DNA breaks or repair outcomes in laboratory settings | Computational prediction of potential off-target sites based on sequence similarity and algorithms |
| Data Requirements | Isolated genomic DNA or edited cells; sequencing infrastructure | Reference genome and guide RNA sequence |
| Key Examples | GUIDE-seq, CIRCLE-seq, DISCOVER-seq, Digenome-seq | Cas-OFFinder, CCTop, CRISOT, CCLMoff, DNABERT-Epi |
| Throughput | Lower; requires experimental work for each guide RNA | Higher; rapid screening of multiple guide designs |
| Cost Considerations | Higher due to reagents and sequencing | Lower; primarily computational resources |
| Regulatory Acceptance | Often expected for clinical applications [7] [6] | Used for initial screening and guide selection |

The Empirical Toolkit: Wet-Lab Detection Methods

Empirical methods directly detect the molecular consequences of CRISPR activity through various laboratory techniques. The methodology varies significantly based on whether the analysis occurs in controlled cell-free systems or within the complex environment of living cells.

Table 2: Experimental Methods for Off-Target Detection

| Method | Type | Core Principle | Key Strengths | Key Limitations |
|---|---|---|---|---|
| GUIDE-seq [4] [8] | In cellula | Tags double-strand breaks with oligonucleotides for sequencing | Genome-wide, works in living cells | Lower sensitivity for rare events, requires oligonucleotide delivery |
| CIRCLE-seq [4] [9] [8] | In vitro | Circularizes DNA for ultra-sensitive detection of cleavage in genomic DNA | Extremely sensitive, cell-free system | Lacks cellular context (chromatin, DNA repair) |
| DISCOVER-seq [4] [8] | In cellula | Detects DNA repair factors recruited to break sites | Captures editing in relevant cellular contexts | Limited to active repair sites, moderate sensitivity |
| Digenome-seq [9] [8] | In vitro | In vitro digestion of genomic DNA followed by sequencing | Sensitive, works with low input DNA | Lacks cellular context, computationally intensive |
| BLESS [9] [8] | In cellula | Direct labeling of DNA breaks in fixed cells | Captures transient breaks, multiple nuclease types | Requires fixation, not all breaks may be captured |
| CHANGE-seq [8] | In vitro | High-throughput sequencing of cleaved DNA fragments | Quantitative, highly sensitive | Lacks cellular context |

[Diagram: fundamental workflow differences between the major empirical detection methods]

Computational Prediction: The In Silico Landscape

In silico methods predict potential off-target sites using algorithms that identify genomic locations with sequence similarity to the guide RNA target. These tools have evolved from simple sequence alignment to sophisticated machine learning models incorporating various biological features.
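One step up from plain mismatch counting is to weight mismatches by position, the principle behind formula-based scoring. The sketch below uses an invented linear weighting in which mismatches closer to the PAM are penalized more heavily. It is not the formula used by CCTop or any other published tool, and it assumes the guide is written 5' to 3' with the PAM following the last base.

```python
def weighted_mismatch_penalty(guide: str, site: str) -> float:
    """Sum of position-dependent penalties; larger means a less likely off-target.

    Position 1 is the PAM-distal end and position len(guide) is PAM-proximal.
    The linear weight (position / length) is an illustrative assumption.
    """
    if len(guide) != len(site):
        raise ValueError("guide and site must have the same length")
    n = len(guide)
    penalty = 0.0
    for index, (g, s) in enumerate(zip(guide, site)):
        if g != s:
            penalty += (index + 1) / n
    return penalty


GUIDE = "GACGTTACCGGATCAAGCTT"
DISTAL_MISMATCH = "AACGTTACCGGATCAAGCTT"    # mismatch at the PAM-distal end
PROXIMAL_MISMATCH = "GACGTTACCGGATCAAGCTA"  # mismatch next to the PAM

distal_penalty = weighted_mismatch_penalty(GUIDE, DISTAL_MISMATCH)
proximal_penalty = weighted_mismatch_penalty(GUIDE, PROXIMAL_MISMATCH)
```

Both candidate sites carry a single mismatch, yet the PAM-proximal one receives a much larger penalty, so a ranking based on this score would flag the PAM-distal site as the more plausible off-target.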

Table 3: Computational Tools for Off-Target Prediction

| Tool | Algorithm Type | Key Features | Strengths | Limitations |
|---|---|---|---|---|
| Cas-OFFinder [8] [6] | Alignment-based | Finds potential off-target sites with bulges and mismatches | Comprehensive search, user-friendly | Limited to sequence features only |
| CCTop [4] [8] | Formula-based | Weighting of mismatch positions (PAM-distal vs PAM-proximal) | Position-specific scoring, web interface | Limited validation in primary cells |
| CRISOT [10] | Learning-based (MD-informed) | Molecular dynamics simulations for interaction fingerprints | Incorporates biophysical properties | Computationally intensive |
| CCLMoff [8] | Learning-based (Transformer) | RNA language model pretrained on diverse datasets | Strong generalization across data types | Complex implementation |
| DNABERT-Epi [11] | Learning-based (Foundation model) | DNABERT pretrained on human genome + epigenetic features | State-of-the-art performance, multi-modal | Requires epigenetic data input |

Recent advances incorporate deeper biological understanding. CRISOT uses molecular dynamics simulations to derive RNA-DNA interaction fingerprints that capture the biophysical properties of Cas9 binding [10]. Meanwhile, DNABERT-Epi leverages a foundation model pretrained on the human genome and integrates epigenetic features (H3K4me3, H3K27ac, ATAC-seq) that significantly enhance prediction accuracy by accounting for chromatin context [11].

[Diagram: how modern computational tools integrate multiple data types for improved off-target prediction]

Head-to-Head Comparison: Performance in Clinically Relevant Models

A critical 2023 study directly compared prediction and detection methods in primary human hematopoietic stem and progenitor cells (HSPCs), a clinically relevant model for ex vivo gene therapies [4]. Researchers evaluated 11 different gRNAs with both high-fidelity (HiFi) Cas9 and wild-type Cas9, then performed targeted sequencing of the nominated off-target sites.

Table 4: Experimental Performance Comparison in Primary Human HSPCs

| Method | Type | Sensitivity | Positive Predictive Value (PPV) | Key Findings |
|---|---|---|---|---|
| COSMID [4] | In silico | High | High | Among highest PPV, effective for HiFi Cas9 |
| CCTop [4] | In silico | High | Moderate | More permissive mismatch criteria (5 vs 3) |
| Cas-OFFinder [4] | In silico | High | Moderate | Comprehensive search including bulges |
| GUIDE-seq [4] | Empirical | High | High | High PPV in cellular context |
| DISCOVER-seq [4] | Empirical | High | High | High PPV, detects active repair |
| CIRCLE-seq [4] | Empirical | High | Moderate | Ultra-sensitive but may overpredict |
| SITE-seq [4] | Empirical | Lower | Moderate | Missed some validated sites |

This comparative analysis revealed several critical insights for therapeutic development:

- Off-target editing in primary HSPCs is rare, with an average of less than one off-target site per gRNA when using HiFi Cas9 [4].

- High-fidelity Cas9 variants dramatically reduce off-target activity without completely eliminating it [4] [6].

- Empirical methods did not identify off-target sites that were not also identified by bioinformatic methods in this clinically relevant system [4].

- Refined bioinformatic algorithms can maintain both high sensitivity and PPV, potentially enabling efficient identification without comprehensive empirical screening for every gRNA [4].
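The two metrics used in this comparison are straightforward to compute once each method's nominated sites have been checked by targeted sequencing. The sketch below uses invented site identifiers and method labels, not data from [4]. It also shows how to test whether an empirical method found any validated site that the in silico method missed.

```python
def sensitivity_and_ppv(nominated: set, validated: set):
    """Sensitivity = validated sites recovered; PPV = nominated sites that validate."""
    true_positives = len(nominated & validated)
    sensitivity = true_positives / len(validated) if validated else 0.0
    ppv = true_positives / len(nominated) if nominated else 0.0
    return sensitivity, ppv


# Hypothetical validated off-target sites and per-method nominations.
VALIDATED = {"OT1", "OT2"}
NOMINATIONS = {
    "in_silico_tool": {"OT1", "OT2", "X1", "X2"},
    "empirical_assay": {"OT1", "OT2", "X3"},
}

metrics = {name: sensitivity_and_ppv(sites, VALIDATED) for name, sites in NOMINATIONS.items()}

# Validated sites found by the empirical assay but missed by the in silico tool.
empirical_only = (NOMINATIONS["empirical_assay"] & VALIDATED) - NOMINATIONS["in_silico_tool"]
```

In this toy case both methods reach full sensitivity, the empirical assay has the higher PPV, and `empirical_only` is empty, mirroring the pattern in which empirical screening adds no validated sites beyond the bioinformatic nominations.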

Successful off-target assessment requires careful selection of reagents and methodologies. The following table outlines key solutions for comprehensive off-target evaluation.

Table 5: Research Reagent Solutions for Off-Target Assessment

| Reagent/Resource | Function | Application Notes |
|---|---|---|
| High-Fidelity Cas9 [4] [6] | Engineered nuclease with reduced off-target activity | HiFi Cas9, eSpCas9, SpCas9-HF1; significantly reduces but doesn't eliminate off-targets |
| Chemically Modified gRNAs [7] [6] | Enhanced stability and specificity | 2'-O-methyl analogs (2'-O-Me), phosphorothioate bonds reduce off-target editing |
| Truncated gRNAs (tru-gRNAs) [9] [6] | Shorter guides with reduced off-target potential | 17-18 nt spacers instead of 20 nt; reduce off-target while maintaining on-target activity |
| Cas9 Nickase [9] [6] | Single-strand cutting enzyme requiring paired gRNAs | Dramatically reduces off-target effects; requires two closely spaced target sites |
| Specificity-Enhanced Base Editors [6] | DNA base editing without double-strand breaks | Reduced off-target compared to nuclease editing; but still require careful assessment |
| Ribonucleoprotein (RNP) Delivery [6] | Direct delivery of precomplexed Cas9-gRNA | Transient activity reduces off-target potential compared to plasmid delivery |

Regulatory Considerations and Strategic Implementation

Regulatory agencies including the FDA and EMA now expect thorough off-target assessment for CRISPR-based therapeutics [7] [6]. The recent approval of Casgevy (exa-cel) involved extensive evaluation of potential off-target effects, with particular attention to patients carrying rare genetic variants that might create novel off-target sites [7].

A strategic approach to off-target assessment should include:

- Initial computational screening of guide RNA designs using multiple algorithms to select candidates with minimal predicted off-targets [4] [6].

- Combinatorial testing approaches using both cell-free methods (CIRCLE-seq, Digenome-seq) for sensitivity and cell-based methods (GUIDE-seq, DISCOVER-seq) for biological relevance [4] [6].

- Final validation in therapeutically relevant cell types using targeted sequencing of nominated sites, as chromatin structure and DNA repair mechanisms can vary between cell types [4] [6].

[Diagram: decision framework for systematic off-target assessment in therapeutic development]

The comprehensive comparison of off-target assessment methods reveals that both empirical and in silico approaches offer complementary strengths for therapeutic development. While empirical methods provide direct experimental evidence of nuclease activity, advanced computational tools now achieve comparable performance in predicting clinically relevant off-target sites [4].

For therapeutic developers, the strategic integration of both approaches provides the most robust safety assessment. Initial computational screening enables efficient guide RNA selection, followed by empirical validation in therapeutically relevant models. The field is evolving toward refined bioinformatic algorithms that maintain both high sensitivity and positive predictive value, potentially reducing the need for exhaustive empirical screening for every candidate [4].

As CRISPR therapeutics expand to treat more genetic diseases, the rigorous assessment of off-target effects remains essential for ensuring patient safety and regulatory approval. The continuing refinement of both prediction and detection methodologies will further enhance the safety profile of these transformative medicines, ultimately fulfilling their potential to treat previously incurable genetic diseases.

In the realm of CRISPR-Cas9 genome editing, the precision of therapeutic and research applications is fundamentally governed by understanding core concepts like Protospacer Adjacent Motif (PAM) requirements, single guide RNA (sgRNA) mismatch tolerance, and the emerging field of polypharmacology. The PAM sequence, a short DNA motif adjacent to the target site, is essential for initiating Cas9 binding and cleavage, thereby defining the editable genomic space [12]. Meanwhile, sgRNA mismatches—particularly those distal to the PAM—can lead to off-target editing, where unintended genomic loci are cleaved, posing significant safety risks in therapeutic contexts [13]. Polypharmacology, which involves predicting a drug's interaction with multiple targets, shares a conceptual parallel with off-target prediction: both require robust models to anticipate unintended interactions, whether for small-molecule drugs or CRISPR guide RNAs [1].

The central thesis driving methodological innovation is a critical trade-off between empirical approaches, which rely on experimental measurement of editing outcomes, and in silico methods, which use computational models to predict off-target effects. Empirical methods provide direct biological evidence but are often low-throughput and resource-intensive. In silico predictions offer scalability but have historically struggled with accuracy and generalizability. This guide objectively compares the performance of these methodological paradigms, providing a structured analysis of their capabilities, limitations, and the experimental data that underpin current best practices in the field.

Empirical Methods for Off-Target Assessment

Empirical methods directly measure CRISPR-Cas9 editing outcomes in experimental systems, providing tangible data on on-target efficiency and off-target activity. These approaches are indispensable for validating the safety and specificity of editing systems, as they capture the complex biological reality of cellular environments.

Key Experimental Protocols and Workflows

Several high-throughput experimental methods have been developed to profile CRISPR-Cas9 activity genome-wide:

Primer-Extension-Mediated Sequencing (PEM-seq): This method comprehensively captures various editing outcomes, including small insertions/deletions (indels), large deletions, and off-target translocations [14]. The workflow begins by transfecting cells with Cas9 and sgRNA plasmids, followed by fluorescence-activated cell sorting (FACS) to isolate successfully transfected cells. Genomic DNA is then extracted, and a biotinylated primer is used for primer extension near the Cas9 target site. After extension, the DNA is pulled down, and a nested PCR is performed to create sequencing libraries, which are then analyzed to identify off-target sites and structural variations.

High-Throughput Robotic Isolation of Clones: For fragile cell types like human induced pluripotent stem cells (iPS cells), a clump-picking method is employed [15]. Genome-edited iPS cells are dissociated and cultured as single cells in extracellular matrices (e.g., Matrigel) to form cell clumps. A cell-handling robot then isolates these clumps, which are expanded into clones. The genotypes of these clones are subsequently determined via amplicon sequencing, allowing for systematic profiling of editing outcomes at the single-cell level.

Molecular Dynamics (MD) Simulations: While computational, MD simulations provide mechanistic, structural insights into empirical observations. For instance, simulations of the Cas9-sgRNA-DNA complex can reveal how specific mismatches induce conformational instability in the RNA-DNA duplex, leading to elevated root mean square deviation (RMSD) values that correlate with reduced catalytic activity [13].
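For reference, the RMSD of a structure with N atoms relative to a reference is sqrt((1/N) * sum_i |r_i - r_i_ref|^2). The sketch below computes it for plain coordinate lists. It assumes the frames are already superimposed on the reference (no alignment step is performed), and the coordinates are toy values rather than simulation output.

```python
import math


def rmsd(coords, reference):
    """Root mean square deviation between two equally sized lists of (x, y, z) points."""
    if len(coords) != len(reference) or not coords:
        raise ValueError("coordinate sets must be non-empty and of equal length")
    squared = sum(
        (x - xr) ** 2 + (y - yr) ** 2 + (z - zr) ** 2
        for (x, y, z), (xr, yr, zr) in zip(coords, reference)
    )
    return math.sqrt(squared / len(coords))


# Toy example: every atom displaced by 1 unit along z relative to the reference.
REFERENCE = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
FRAME = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]

frame_rmsd = rmsd(FRAME, REFERENCE)  # 1.0
```

Tracking this value over the frames of a trajectory is what allows a mismatched RNA-DNA duplex to be compared with the matched one; dedicated trajectory-analysis packages handle the superposition and atom selection omitted here.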

The following diagram illustrates a generalized workflow for empirical off-target assessment, integrating both cellular and computational methods:

Performance Comparison of Cas9 Variants

Empirical studies have systematically compared the performance of various high-fidelity and PAM-flexible Cas9 variants. The data below, derived from PEM-seq analysis at multiple genomic loci, highlights the critical trade-off between editing efficiency and specificity [14].

Table 1: Performance Comparison of High-Fidelity SpCas9 Variants at NGG PAM Sites

| Cas9 Variant | Editing Efficiency (Relative to Wild-Type) | Off-Target Activity (Relative to Wild-Type) | Key Engineering Strategy |

|---|---|---|---|

| Wild-Type SpCas9 | 100% (Baseline) | 100% (Baseline) | N/A |

| eSpCas9(1.1) | Comparable | Significantly Lower | Weakened sgRNA-DNA binding affinity |

| HypaCas9 | Comparable | Significantly Lower | Enhanced proofreading capacity |

| evoCas9 | Very Low (at some loci) | Significantly Lower | High-throughput screening |

| Sniper-Cas9 | Comparable | Lower (but less than others) | High-throughput screening |

Table 2: Performance Comparison of PAM-Flexible SpCas9 Variants

| Cas9 Variant | PAM Requirement | Editing Efficiency (Relative to SpCas9 at NGG) | Off-Target Activity |

|---|---|---|---|

| SpCas9 | NGG | 100% (Baseline at NGG) | Baseline |

| xCas9(3.7) | NGN | Lower at NGG sites | Increased |

| SpG | NGN | Varies by locus | Increased |

| SpRY | NRN > NYN | Moderate at NRN PAMs | Significantly Increased |

The data reveal a clear trade-off. Engineering Cas9 for higher fidelity (reduced off-target activity) can come at the cost of on-target efficiency, most visibly for evoCas9, whose efficiency was very low at some loci, whereas eSpCas9(1.1), HypaCas9, and Sniper-Cas9 retained efficiency comparable to wild-type (Table 1) [14]. Conversely, engineering for PAM flexibility (e.g., xCas9(3.7), SpG, SpRY) to expand the targeting range increased off-target activity in every variant tested (Table 2), a trade-off that must be carefully managed for therapeutic applications.

In Silico Methods for Off-Target Prediction

In silico methods use computational models to predict CRISPR off-target effects or small-molecule polypharmacology based on sequence similarity, structural features, and machine learning algorithms.

Computational Workflows and Model Training

The predictive workflow for off-target sites or drug-target interactions relies on feature extraction and model training, as illustrated below:

Two primary computational approaches exist:

Ligand-Centric (Similarity-Based) Methods: These methods, such as MolTarPred, operate by calculating the similarity between a query molecule (or sgRNA) and a database of known molecules (or genomic sequences) with annotated targets [1]. For small molecules, molecular fingerprints like Morgan fingerprints are used. For sgRNAs, sequence homology is the primary metric. The underlying assumption is that structurally similar molecules or sequence-similar genomic loci will have similar interaction profiles.

Target-Centric (Model-Based) Methods: These methods build predictive models for specific targets. They include:

- QSAR Models: Use machine learning (e.g., random forest) on chemical structures to predict bioactivity [1].

- Structure-Based Docking: Simulate molecular binding using 3D protein structures, though this is limited by the availability of high-quality structures [1].

- Deep Learning Models: Newer frameworks like CCLMoff use deep learning and RNA language models to predict CRISPR-Cas9 off-target effects with improved accuracy across diverse datasets [16].

Benchmarking In Silico Prediction Tools

Systematic comparisons of target prediction methods reveal significant performance variations. A 2025 benchmark of seven target prediction methods for small-molecule drugs using an FDA-approved drug dataset found that MolTarPred was the most effective method, particularly when using Morgan fingerprints with Tanimoto scores [1].
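The similarity-based idea can be sketched in a few lines. The example below is a simplified illustration, not MolTarPred's implementation: it represents each fingerprint as a set of on-bit indices, computes the Tanimoto coefficient, and reports the targets of the most similar reference ligands. The fingerprints, target names, and the function `predict_targets` are hypothetical. In practice the bit sets would come from a cheminformatics toolkit that generates Morgan fingerprints.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def predict_targets(query_fp, reference, top_k=1):
    """Return (target, similarity) for the top_k reference ligands most similar to the query."""
    ranked = sorted(reference, key=lambda entry: tanimoto(query_fp, entry[0]), reverse=True)
    return [(target, round(tanimoto(query_fp, fp), 3)) for fp, target in ranked[:top_k]]


# Hypothetical fingerprints: each set holds the indices of bits that are switched on.
reference_ligands = [
    ({1, 4, 7, 9}, "kinase A"),
    ({2, 3, 5, 8}, "GPCR B"),
    ({1, 4, 6, 9}, "kinase C"),
]
query = {1, 4, 7, 8}

print(predict_targets(query, reference_ligands, top_k=2))
```

The query shares three of five distinct bits with the first ligand (similarity 0.6), so "kinase A" is ranked first. A real tool would apply a similarity threshold and aggregate evidence across many neighbors.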

In CRISPR guide RNA design, the Vienna Bioactivity CRISPR (VBC) score has been shown to be a strong predictor of sgRNA efficacy. A benchmark study comparing six public genome-wide libraries demonstrated that a minimal library composed of the top three guides per gene selected by VBC scores performed as well as or better than larger libraries in essentiality and drug-gene interaction screens [17].
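Selecting the top-scoring guides per gene is straightforward to express in code. The sketch below assumes each guide already carries an efficacy score where higher is better. The gene names, guide identifiers, and scores are hypothetical and are not real VBC values.

```python
from collections import defaultdict


def top_guides_per_gene(scored_guides, n=3):
    """Keep the n highest-scoring guides for each gene.

    scored_guides: iterable of (gene, guide_id, score) tuples.
    """
    by_gene = defaultdict(list)
    for gene, guide_id, score in scored_guides:
        by_gene[gene].append((score, guide_id))
    return {
        gene: [guide_id for _, guide_id in sorted(guides, reverse=True)[:n]]
        for gene, guides in by_gene.items()
    }


# Hypothetical efficacy scores (higher is better).
guides = [
    ("GENE1", "g1", 0.91), ("GENE1", "g2", 0.42), ("GENE1", "g3", 0.77),
    ("GENE1", "g4", 0.65), ("GENE2", "g5", 0.58), ("GENE2", "g6", 0.83),
]
print(top_guides_per_gene(guides))
```

Genes with fewer than `n` scored guides simply keep all of them, as GENE2 does here.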

Table 3: Benchmarking of Target Prediction Methods

| Prediction Method | Algorithm Type | Primary Database | Key Finding from Benchmark |

|---|---|---|---|

| MolTarPred | 2D similarity | ChEMBL 20 | Most effective method; optimized with Morgan fingerprints. |

| PPB2 | Nearest neighbor/Naïve Bayes | ChEMBL 22 | Performance depends on fingerprint type (MQN, Xfp, ECFP4). |

| SuperPred | 2D/fragment/3D similarity | ChEMBL & BindingDB | Wide target coverage but algorithm details less clear. |

| RF-QSAR | Random forest | ChEMBL 20 & 21 | Performance varies with fingerprint and model parameters. |

A critical limitation of many early in silico off-target predictors is their poor performance on previously unseen guide RNA sequences [16]. This highlights a generalizability problem, where models trained on one dataset fail to maintain accuracy when applied to new genomic contexts, a challenge that newer deep learning models are attempting to address.

Integrated Comparison: Empirical vs. In Silico Approaches

The following table provides a direct, data-driven comparison of the two methodological paradigms, synthesizing insights from the analyzed research.

Table 4: Core Paradigm Comparison - Empirical vs. In Silico Methods

| Aspect | Empirical Methods | In Silico Methods |

|---|---|---|

| Fundamental Basis | Direct experimental measurement in biological systems (e.g., PEM-seq, clone sequencing) [15] [14]. | Computational modeling of interactions using algorithms and existing datasets [1] [18]. |

| Key Strengths | Captures biological complexity (e.g., chromatin effects, DNA repair); Provides direct, empirical evidence for validation. | High throughput and scalability; Lower cost and faster turnaround; Predicts outcomes for unobserved variants [18]. |

| Key Limitations | Resource-intensive (time, cost, labor); Lower throughput; Difficult to scale for thousands of targets. | Accuracy and generalizability are data-dependent; Struggles with complex biological context; Cannot discover completely unknown off-targets. |

| Reported Accuracy | High accuracy for detected sites (direct observation); PEM-seq identifies translocations and large deletions [14]. | Variable; MolTarPred led benchmark [1]; Deep learning models (CCLMoff) show improved accuracy [16]. |

| Therapeutic Context | Considered gold standard for pre-clinical safety validation; e.g., used to profile high-fidelity Cas9 variants [14]. | Used for initial sgRNA selection and prioritization; critical for library design in high-throughput screens [17]. |

| Data Output | Quantitative editing efficiencies, lists of validated off-target sites, structural variations. | Predictive scores (e.g., off-target potential, fitness effects, interaction likelihood). |

The Scientist's Toolkit: Research Reagent Solutions

Successful off-target profiling and editing optimization rely on a suite of specialized reagents and tools. The following table details key solutions used in the experiments cited throughout this guide.

Table 5: Essential Research Reagents and Tools for Off-Target Analysis

| Reagent / Tool | Function / Description | Example Use Case |

|---|---|---|

| High-Fidelity Cas9 Variants (e.g., HypaCas9, eSpCas9(1.1)) | Engineered proteins with reduced off-target activity via enhanced proofreading or weakened DNA binding [14]. | Improving specificity in therapeutic editing protocols. |

| PAM-Flexible Variants (e.g., SpG, SpRY) | Engineered proteins with relaxed PAM requirements (e.g., NGN or NRN) to expand targeting range [14]. | Targeting disease loci inaccessible to wild-type SpCas9. |

| Lipid Nanoparticles (LNPs) | Delivery vehicles for in vivo CRISPR components; tend to accumulate in the liver [19]. | Systemic administration for liver-targeted therapies (e.g., for hATTR amyloidosis). |

| Primer-Extension-Mediated Sequencing (PEM-seq) | High-throughput sequencing method to comprehensively detect off-target effects and structural variants [14]. | Gold-standard empirical off-target profiling for pre-clinical safety studies. |

| Genome-Wide sgRNA Libraries (e.g., Vienna library, Yusa v3) | Pooled libraries of sgRNAs for systematic loss-of-function screens [17]. | Functional genomics screens to identify essential genes and drug targets. |

| VBC (Vienna Bioactivity CRISPR) Score | A principled algorithm for predicting sgRNA on-target efficacy [17]. | Designing minimal, highly effective sgRNA libraries for pooled screens. |

| Molecular Dynamics Simulation Software | Computational modeling of biomolecular structures and dynamics over time [13]. | Mechanistic study of how mismatches affect RNA-DNA duplex stability and Cas9 function. |

The journey toward perfectly precise genome editing is navigated with two distinct maps: the empirically charted terrain of experimental biology and the computationally projected landscape of in silico prediction. Empirical methods like PEM-seq provide the ground truth, revealing the complex biological reality of off-target effects and enabling the validation of high-fidelity editors like HypaCas9 [14]. Conversely, in silico tools, from similarity-based methods like MolTarPred to modern deep learning models, offer the scalability necessary to navigate the vastness of genomic and chemical space [1] [16].

The prevailing thesis, strongly supported by current data, is not that one paradigm supersedes the other, but that they are fundamentally synergistic. The future of safe and effective therapeutic design, both in CRISPR and polypharmacology, lies in a hybrid workflow. In this integrated approach, computational models are used for initial, high-throughput prioritization of guides or drug candidates, the outputs of which are then rigorously validated by focused empirical methods. This combined strategy leverages the scalability of computation with the reliability of experimental evidence, creating a more efficient and robust path for translating precision biological tools into clinical realities.

In the field of CRISPR-Cas9 genome editing, off-target effects present a significant challenge for both basic research and clinical therapy development. Accurately identifying these unintended editing events is crucial, and the scientific community primarily relies on two distinct paradigms: empirical (experimental) methods and in silico (computational) prediction tools. This guide provides an objective comparison of these approaches, detailing their principles, performance, and practical applications in modern research.

Core Principles and Methodologies

The empirical and in silico approaches are founded on fundamentally different philosophies for discovering CRISPR off-target sites.

In Silico (Computational) Prediction

In silico methods rely on algorithms to computationally nominate potential off-target sites based on sequence similarity to the guide RNA (gRNA).

- Principle: These tools scan a reference genome to identify loci that bear sequence homology to the gRNA spacer sequence, allowing for a limited number of mismatches and/or DNA/RNA bulges. The underlying assumption is that the likelihood of off-target cleavage is primarily determined by the degree of sequence complementarity between the gRNA and the genomic DNA [4] [8].

- Evolution of Methods: Early tools were alignment-based (e.g., Cas-OFFinder, CHOPCHOP) and focused on efficient genome-wide scanning for homologous sequences [8]. Formula-based methods (e.g., CCTop) introduced weighted scoring schemes that assign greater importance to mismatches in the PAM-proximal "seed" region [4] [8]. The current state of the art is deep learning-based models (e.g., CCLMoff, DeepCRISPR), which learn complex sequence features and genomic contexts automatically rather than relying on hand-crafted rules. CCLMoff in particular builds on a language model pretrained on large RNA sequence databases, which supports more accurate prediction of off-target activity, including for gRNA sequences not seen during training [8].
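The alignment-based principle can be illustrated with a toy scanner. This is a minimal sketch, not the algorithm of Cas-OFFinder or any other named tool: it checks only the forward strand, requires an NGG PAM, counts mismatches without any position weighting, and ignores bulges. The spacer, the toy "genome", and the function names are hypothetical.

```python
def count_mismatches(spacer, site):
    """Number of positions at which the spacer and a same-length DNA site differ."""
    return sum(1 for a, b in zip(spacer, site) if a != b)


def nominate_sites(genome, spacer, max_mismatches=3):
    """Scan the forward strand for protospacers followed by an NGG PAM.

    Returns (start, site, pam, mismatches) tuples with at most max_mismatches.
    """
    hits = []
    n = len(spacer)
    for start in range(len(genome) - n - 2):
        site = genome[start:start + n]
        pam = genome[start + n:start + n + 3]
        if pam[1:] != "GG":
            continue  # no NGG PAM immediately 3' of this window
        mm = count_mismatches(spacer, site)
        if mm <= max_mismatches:
            hits.append((start, site, pam, mm))
    return hits


# Hypothetical 20-nt spacer and toy "genome"; real tools scan a full reference genome.
spacer = "GACGTTACCGGATCAAGCTT"
near_match = "TACGTAACCGGATCAAGCTT"  # differs from the spacer at two positions
genome = "TTT" + spacer + "TGG" + "AAAA" + near_match + "AGG" + "CC"

for hit in nominate_sites(genome, spacer):
    print(hit)
```

A formula-based scorer would extend this by weighting each mismatch by its distance from the PAM, so that mismatches in the seed region count more heavily than PAM-distal ones.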

Empirical (Experimental) Discovery

Empirical methods use laboratory experiments to directly detect the biological consequences of Cas9 activity—such as DNA binding, double-strand breaks (DSBs), or repair products—across the genome without prior reliance on sequence homology.

- Principle: These techniques are data-driven, capturing off-target events by direct observation in the laboratory rather than by prediction [20]. They are designed to be unbiased by sequence homology, so they can potentially discover off-target sites with unexpected genomic contexts or higher numbers of mismatches [4].

- Categories of Methods: Empirical techniques can be classified based on what they detect [8]:

- Cas9 Binding Detection: Methods like Extru-seq and SITE-seq identify genomic regions where the Cas9 nuclease binds, regardless of cleavage [8].

- DSB Detection: Techniques such as CIRCLE-seq (in vitro) and DISCOVER-seq (in vivo) directly enrich and sequence DNA fragments that have undergone Cas9-induced double-strand breaks [4] [8].

- Repair Product Detection: Approaches like GUIDE-seq integrate a short, double-stranded oligodeoxynucleotide tag into DSB sites during repair in living cells, allowing for the high-sensitivity identification of off-target cleavage events [4] [8].

The following diagram illustrates the foundational workflows that distinguish these two approaches.

Head-to-Head Performance Comparison

A direct comparison in primary human hematopoietic stem and progenitor cells (HSPCs)—a clinically relevant model for ex vivo gene therapy—reveals the relative strengths and limitations of each method [4].

Quantitative Performance Metrics

The table below summarizes the performance of various tools from a comparative study that used targeted next-generation sequencing to validate nominated off-target sites [4].

| Method | Type | Key Principle | Sensitivity | Positive Predictive Value (PPV) |

|---|---|---|---|---|

| COSMID | In Silico | Bioinformatics algorithm | High | High |

| CCTop | In Silico | Bioinformatics algorithm | High | Not Specified |

| Cas-OFFinder | In Silico | Alignment-based search | High | Not Specified |

| GUIDE-seq | Empirical | Tags DSB repair products | High | High |

| DISCOVER-Seq | Empirical | Detects DSBs in vivo | High | High |

| CIRCLE-seq | Empirical | Detects DSBs in vitro | High | Moderate |

| SITE-Seq | Empirical | Detects Cas9 binding in vitro | Lower | Moderate |

Key Findings from Comparative Data [4]:

- Overall Off-Target Rate: When using High-Fidelity (HiFi) Cas9 with a standard 20-nt gRNA in primary HSPCs, off-target editing was found to be "exceedingly rare," with an average of less than one off-target site per gRNA.

- Sensitivity: The majority of off-target nomination tools demonstrated high sensitivity. Notably, all true off-target sites generated by HiFi Cas9 were identified by all methods except SITE-seq.

- Positive Predictive Value (PPV): Among the tested methods, COSMID (in silico), DISCOVER-Seq, and GUIDE-seq (both empirical) attained the highest PPV, meaning a high proportion of their nominated sites were validated as true off-targets.

- Overlap in Discovery: A critical finding was that empirical methods did not identify any unique, validated off-target sites that were not also identified by bioinformatic methods in this primary cell system.

Detailed Experimental Protocols

To ensure reproducibility, here are the detailed methodologies for key experiments cited in the performance comparison.

This protocol outlines the head-to-head comparison performed in primary cells.

- Cell Preparation: Isolate and purify human CD34+ hematopoietic stem and progenitor cells (HSPCs).

- CRISPR Editing: Deliver CRISPR-Cas9 ribonucleoprotein (RNP) complexes into HSPCs ex vivo. The study compared:

- Cas9 Variants: Wild-type (WT) Cas9 vs. High-Fidelity (HiFi) Cas9.

- gRNA Design: 20-nucleotide (nt) vs. 18-nt spacer lengths.

- Off-Target Nomination: A panel of 11 disease-relevant gRNAs was used. For each gRNA, potential off-target sites were nominated by:

- In Silico Tools: COSMID, CCTop, Cas-OFFinder.

- Empirical Methods: CHANGE-seq, CIRCLE-seq, DISCOVER-Seq, GUIDE-seq, SITE-Seq.

- Targeted Deep Sequencing: Design amplicons for all nominated off-target sites. Perform next-generation sequencing (NGS) on the edited HSPC samples.

- Data Analysis: Analyze NGS data for insertion/deletion (indel) frequencies at each nominated site. Classify sites as true positives (validated off-target) or false positives (no editing detected). Calculate sensitivity and PPV for each nomination method.
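The final analysis step reduces to two ratios per nomination method. The sketch below assumes that the set of validated sites is the pool of all sites confirmed by targeted sequencing, and that sensitivity is measured against that pool. The site labels and method names are hypothetical.

```python
def sensitivity_and_ppv(nominated, validated):
    """Compute sensitivity and positive predictive value for one nomination method.

    nominated: set of sites the method proposed.
    validated: set of sites confirmed as true off-targets by targeted sequencing.
    """
    true_positives = len(nominated & validated)
    sensitivity = true_positives / len(validated) if validated else float("nan")
    ppv = true_positives / len(nominated) if nominated else float("nan")
    return sensitivity, ppv


# Hypothetical site labels for illustration only.
validated_sites = {"chr1:1000", "chr5:2500"}
method_a = {"chr1:1000", "chr5:2500", "chr7:300", "chr9:42"}  # broad nomination
method_b = {"chr1:1000"}                                       # narrow nomination

print(sensitivity_and_ppv(method_a, validated_sites))
print(sensitivity_and_ppv(method_b, validated_sites))
```

The two hypothetical methods show the usual tension: the broad method finds every true site but half of its nominations are false positives, while the narrow method is always right but misses one true site.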

This protocol describes the development of a state-of-the-art deep learning prediction tool.

- Data Curation: Compile a comprehensive off-target dataset from 21 publications, encompassing 13 genome-wide deep sequencing techniques (e.g., GUIDE-seq, CIRCLE-seq, DISCOVER-seq).

- Negative Sample Construction: Use Cas-OFFinder to generate negative samples (non off-target sites) by scanning the genome with constraints on mismatches and bulges.

- Model Architecture:

- Input: The sgRNA sequence and a candidate DNA target site (converted to pseudo-RNA).

- Encoder: A transformer-based language model (initialized with RNA-FM, a model pretrained on 23 million RNA sequences).

- Output: A multilayer perceptron (MLP) predicts the probability of the candidate site being an off-target.

- Model Training: Train the model using binary cross-entropy loss and the AdamW optimizer, employing a learning rate warm-up strategy.

- Model Interpretation: Use interpretation techniques to confirm the model successfully captures the biological importance of the seed region.
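Two of the training ingredients named above, binary cross-entropy loss and learning rate warm-up, can be written out directly. The sketch below is plain Python rather than the deep learning framework code a real model would use, and it is not taken from the CCLMoff implementation. The linear shape of the warm-up, the base learning rate, and the number of warm-up steps are all assumptions chosen for illustration.

```python
import math


def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean binary cross-entropy between 0/1 labels and predicted probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


def warmup_learning_rate(step, base_lr=1e-4, warmup_steps=1000):
    """Linear warm-up (an assumed schedule): ramp up to base_lr, then hold it constant."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    return base_lr


# Labels: 1 = off-target site, 0 = negative sample; probabilities are hypothetical model outputs.
labels = [1, 0, 1, 0]
confident = [0.9, 0.1, 0.8, 0.2]
uninformative = [0.5, 0.5, 0.5, 0.5]

print(binary_cross_entropy(labels, confident))
print(binary_cross_entropy(labels, uninformative))
print([warmup_learning_rate(s) for s in (0, 499, 999, 5000)])
```

The loss for the uninformative predictions equals ln 2, and it falls as predictions move toward the correct labels. The warm-up keeps early updates small while the freshly initialized output layer is still producing noisy gradients.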

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful off-target assessment requires a combination of computational tools, laboratory reagents, and experimental models. The table below lists key solutions for designing and executing these studies.

| Item | Function & Application |

|---|---|

| High-Fidelity Cas9 | Engineered Cas9 variant (e.g., HiFi Cas9) with reduced off-target cleavage activity while maintaining robust on-target editing; crucial for therapeutic development [4] [7]. |

| Synthetic gRNA with Chemical Modifications | Chemically modified guide RNAs (e.g., with 2'-O-methyl analogs and phosphorothioate bonds) enhance stability and reduce off-target effects while potentially increasing on-target efficiency [7]. |

| Primary Cell Models (e.g., CD34+ HSPCs) | Physiologically relevant human cells, such as hematopoietic stem and progenitor cells, are critical for evaluating editing and off-target effects in a clinically meaningful context [4]. |

| In Silico gRNA Design Tools (e.g., CRISPOR) | Software that ranks multiple potential gRNAs based on predicted on-target efficiency and off-target risk, guiding the selection of the optimal guide for experiments [7]. |

| NGS Library Prep Kits for Targeted Sequencing | Reagents for preparing sequencing libraries from specific nominated off-target sites or from genome-wide DSB enrichment protocols (e.g., GUIDE-seq, CIRCLE-seq) [4] [8]. |

| Deep Learning Prediction Tools (e.g., CCLMoff) | State-of-the-art computational frameworks that use pretrained language models to achieve high accuracy and strong generalization for off-target prediction across diverse datasets [8]. |

The comparative data reveals that the traditional dichotomy between empirical and in silico methods is evolving. In primary cell systems, refined bioinformatic algorithms can achieve high sensitivity and PPV, identifying the same true off-target sites as empirical methods [4]. The emergence of deep learning models trained on comprehensive empirical datasets further blurs the lines, creating powerful in silico tools with robust generalization capabilities [8].

For researchers and drug developers, this suggests that an integrated, hierarchical approach is optimal: begin with advanced in silico screening (using modern deep learning tools) to select the safest gRNAs and nominate high-risk candidate sites, then use targeted empirical validation in physiologically relevant models to confirm the absence of off-target editing before proceeding to the clinic. This strategy maximizes efficiency and thoroughness, streamlining the development of safer CRISPR-based therapies.

A Deep Dive into Methodologies: From Benchtop Assays to AI Models

The therapeutic application of CRISPR-Cas9 gene editing hinges on precisely characterizing its unintended, off-target effects. While in silico prediction tools offer computational efficiency for initial sgRNA screening, they are inherently limited by their dependence on existing sequence databases and their inability to fully capture the complex biological factors influencing nuclease activity [21] [22]. Consequently, empirical, genome-wide methods have become the cornerstone for comprehensive off-target profiling. These experimental techniques can be broadly categorized by their fundamental approach: biochemical methods (using purified genomic DNA) and cell-based methods (using living cells) [21]. Among the numerous assays developed, three have emerged as foundational workhorses: the biochemical methods CIRCLE-seq and Digenome-seq, and the cell-based method GUIDE-seq. This guide provides a detailed objective comparison of these three pivotal techniques, framing them within the critical research thesis that robust off-target assessment requires a multi-modal strategy integrating both empirical and computational approaches.

Core Technologies and Workflows

GUIDE-seq (Genome-wide, Unbiased Identification of DSBs Enabled by Sequencing)

GUIDE-seq is a cell-based method that directly captures the biological reality of double-strand breaks (DSBs) within the native cellular environment, including the influences of chromatin structure and DNA repair pathways [21] [22]. Its core innovation involves introducing a short, double-stranded oligodeoxynucleotide (dsODN) tag into DSBs generated by the CRISPR-Cas9 nuclease in living cells [23]. These incorporated tags then serve as primers for amplification and sequencing, allowing for the genome-wide mapping of off-target sites [22].

Table 1: Key Research Reagents for GUIDE-seq

| Reagent/Material | Function in the Protocol |

|---|---|

| dsODN Tag | A short, double-stranded oligonucleotide that is incorporated into CRISPR-induced DSBs by cellular repair machinery; essential for later enrichment and sequencing [22]. |

| Transfection Reagent | Enables efficient co-delivery of the CRISPR-Cas9 components (sgRNA and Cas9) along with the dsODN tag into the target cells [21]. |

| PCR Primers Specific to dsODN | Used to selectively amplify the genomic regions that have successfully incorporated the dsODN tag, enriching the sequencing library for true off-target sites [22]. |

CIRCLE-seq (Circularization for In Vitro Reporting of Cleavage Effects by Sequencing)

CIRCLE-seq is a highly sensitive biochemical method performed in vitro using purified genomic DNA [24] [25]. Its key differentiator is a circularization step that dramatically reduces background noise, enabling the detection of very rare off-target events.

Table 2: Key Research Reagents for CIRCLE-seq

| Reagent/Material | Function in the Protocol |

|---|---|

| Purified Genomic DNA | The substrate for the assay; sheared and circularized. Isolation requires a commercial kit for high-quality, high-molecular-weight DNA [25]. |

| T4 DNA Ligase | Enzymatically catalyzes the circularization of sheared genomic DNA fragments, a critical step for background reduction [24]. |

| Exonuclease | Digests any remaining linear DNA fragments post-circularization, thereby enriching the final library for circularized molecules [24] [25]. |

| Cas9-gRNA RNP Complex | The active editing complex; incubated with the circularized DNA to cleave at sites complementary to the gRNA [25]. |

Digenome-seq (Digested Genome Sequencing)

Digenome-seq is another biochemical, in vitro method that relies on the direct sequencing of genomic DNA digested by the CRISPR-Cas9 ribonucleoprotein (RNP) complex [22]. Identification of off-target sites is achieved bioinformatically by searching for genomic locations with a cluster of sequencing reads that have uniform start and end positions, which is the signature of a Cas9-induced DSB [24].
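The bioinformatic signature can be illustrated with a simple pileup of read start positions. This is a deliberately reduced sketch, not the published Digenome-seq scoring scheme: it tracks one coordinate per read, ignores strand, and uses an arbitrary threshold. The coordinates and the function name are hypothetical.

```python
from collections import Counter


def find_cleavage_candidates(read_starts, min_reads=5):
    """Flag positions where many reads begin at exactly the same coordinate.

    read_starts: iterable of (chromosome, position) for each read's 5' end.
    """
    counts = Counter(read_starts)
    return sorted(pos for pos, n in counts.items() if n >= min_reads)


# Hypothetical read starts: random shearing scatters starts across positions,
# whereas a nuclease cut produces a stack of reads beginning at one position.
starts = (
    [("chr2", 5000)] * 8
    + [("chr2", p) for p in (4990, 4995, 5003, 5010)]
    + [("chr4", 120)] * 2
)
print(find_cleavage_candidates(starts))
```

Only the position with eight identical read starts passes the threshold. Because background fragments also begin at random positions, distinguishing a true stack from noise is what drives the deep sequencing requirement noted for this method.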

Table 3: Key Research Reagents for Digenome-seq

| Reagent/Material | Function in the Protocol |

|---|---|

| Purified Genomic DNA | The substrate for the assay; incubated directly with the Cas9 RNP complex. |

| Cas9 RNP Complex | The active editing complex; digests the genomic DNA at both on-target and off-target sites in vitro [22]. |

| Whole-Genome Sequencing Kit | Standard kits for library preparation and sequencing are used, as there is no specific enrichment step for cleaved fragments [21]. |

Objective Comparison of Performance and Practical Application

Direct Comparison of Key Characteristics

Table 4: Comprehensive Comparison of GUIDE-seq, CIRCLE-seq, and Digenome-seq

| Feature | GUIDE-seq | CIRCLE-seq | Digenome-seq |

|---|---|---|---|

| Fundamental Approach | Cellular (in cells) | Biochemical (in vitro) | Biochemical (in vitro) |

| Detection Principle | Tagging of DSBs in living cells [22] | Cleavage of circularized genomic DNA [24] | Direct WGS of Cas9-digested DNA [22] |

| Input Material | Living cells [21] | Purified genomic DNA (nanogram amounts) [21] | Purified genomic DNA (microgram amounts) [21] |

| Sensitivity | High sensitivity for cellularly relevant sites [24] | Very high sensitivity; can detect extremely rare cleavage events [24] [21] | Moderate sensitivity; requires deep sequencing [24] [21] |

| Biological Context | Yes - includes chromatin effects, cellular repair [21] | No - uses naked DNA, lacks cellular context [21] | No - uses naked DNA, lacks cellular context [21] |

| Relative Cost & Throughput | Moderate cost; lower throughput due to cell culture and transfection [21] | Moderate to high cost; suitable for moderate throughput [25] | High cost due to very deep sequencing requirements; lower throughput [24] [21] |

| Key Strengths | Identifies biologically relevant off-targets; lower false positive rate from biological filtering [24] [21] | Ultra-sensitive; comprehensive; standardized; does not require a reference genome [24] | Conceptually simple; no complex enrichment steps [21] |

| Key Limitations | Requires efficient delivery into cells; may miss rare sites or sites in hard-to-transfect cells [21] [22] | May overestimate cleavage due to lack of biological context (higher false positives) [21] [25] | High background noise; requires a reference genome; lower signal-to-noise ratio [24] |

Performance and Validation Data

Direct comparative studies have demonstrated that CIRCLE-seq possesses a higher signal-to-noise ratio compared to Digenome-seq, requiring approximately 100-fold fewer sequencing reads to achieve greater sensitivity [24]. In one evaluation, CIRCLE-seq identified 26 out of 29 off-target sites previously found by Digenome-seq for a specific gRNA, plus 156 new sites [24]. When compared to the cell-based method GUIDE-seq, CIRCLE-seq performed remarkably well, detecting all or all but one off-target sites found by GUIDE-seq for multiple gRNAs, while also identifying many additional sites not detected in the cellular assay [24]. This pattern underscores a critical trade-off: highly sensitive in vitro methods like CIRCLE-seq can reveal a broader spectrum of potential off-target sites, but validation in a cellular context is often necessary to determine their biological relevance [21].

The selection of an off-target detection method is not a choice of one "best" technology, but a strategic decision based on the research or development phase. GUIDE-seq is unparalleled for identifying which off-target sites are actually edited in a specific cellular context, providing critical data for preclinical safety assessment. In contrast, CIRCLE-seq offers a powerful, hyper-sensitive first-pass screen to nominate a comprehensive list of potential off-target sites for further investigation. Digenome-seq, while historically important, is now often superseded by more sensitive and efficient biochemical methods like CIRCLE-seq and CHANGE-seq [21].

The future of off-target analysis lies in the intelligent integration of these empirical workhorses with the next generation of in silico tools. Newer deep learning models, such as CCLMoff and CRISOT, are beginning to incorporate features from multiple biochemical and cellular datasets, and some even integrate epigenetic information to better predict activity in specific cell types [8] [26] [27]. As the field moves toward clinical applications, a multi-tiered strategy—using sensitive in vitro methods for broad discovery, followed by cell-based validation and supplemented by sophisticated computational predictions—will provide the most robust and defensible assessment of CRISPR off-target effects, ensuring the safety of future gene therapies.

In silico methods have become indispensable tools in modern drug discovery, offering a computational strategy to predict interactions between small molecules and biological targets. These approaches directly address the immense costs, extended timelines, and high failure rates associated with traditional drug development [28]. By leveraging computational power, researchers can rapidly screen thousands of compounds, prioritize the most promising candidates for experimental validation, and generate crucial hypotheses about mechanisms of action and potential off-target effects [29] [28]. Molecular docking, one of the earliest and most established in silico techniques, specifically predicts how small molecules (ligands) bind to receptor proteins, simulating the binding conformation and estimating the binding affinity that determines the stability of the ligand-receptor complex [30]. This foundational method, alongside newer machine learning approaches, provides a critical framework for understanding molecular interactions before committing to laborious wet-lab experiments, thereby accelerating the entire drug discovery pipeline [28] [30].

Molecular Docking: Core Algorithms and Workflows

Search Algorithms: Exploring Conformational Space

The process of molecular docking involves two fundamental steps: sampling ligand conformations within the protein's binding site and ranking these conformations using a scoring function [30]. The sampling algorithms are designed to systematically explore the vast conformational space of the ligand relative to the receptor. These methods can be broadly classified into systematic and stochastic approaches [31] [30].

Systematic Methods: These algorithms exhaustively explore conformational space by incrementally varying the ligand's torsional, translational, and rotational degrees of freedom.

- Conformational Search: Gradually changes structural parameters like dihedral angles [30].

- Fragmentation: Breaks the molecule into rigid fragments, docks them separately into suitable sub-pockets, and then connects them with flexible linkers. Tools like FlexX and DOCK employ this method [31] [30].

- Database Search: Utilizes pre-generated conformations from molecular databases for rigid-body docking [30].

Stochastic Methods: These techniques use probabilistic approaches to sample the conformational space more efficiently, particularly for ligands with high flexibility.

- Monte Carlo: Makes random changes to ligand conformation and accepts or rejects them based on energy criteria and probabilistic rules [31] [30].

- Genetic Algorithm (GA): Mimics natural evolution by generating a population of ligand poses, using the docking score as a "fitness" function, and creating new generations through cross-over and mutation. AutoDock and GOLD are prominent examples [31] [30].

- Tabu Search: Explores new configurations while avoiding previously sampled regions of the conformational space [30].
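The Monte Carlo accept/reject rule described above can be sketched in a few lines. This is a minimal illustration rather than the sampler of any particular docking program: the one-dimensional `toy_energy` function, the single torsion angle, and the `kT`, `step_size`, and `steps` values are all assumptions chosen for demonstration.

```python
import math
import random


def toy_energy(angle_deg):
    """Hypothetical 1-D 'docking score': lower is better, minimum at 60 degrees."""
    return 1.0 - math.cos(math.radians(angle_deg - 60.0))


def metropolis_search(energy, start, steps=5000, step_size=15.0, kT=0.1, seed=0):
    """Sample one torsion angle with the Metropolis acceptance criterion."""
    rng = random.Random(seed)
    current, current_e = start, energy(start)
    best, best_e = current, current_e
    for _ in range(steps):
        # Propose a random perturbation of the current conformation.
        candidate = (current + rng.uniform(-step_size, step_size)) % 360.0
        candidate_e = energy(candidate)
        delta = candidate_e - current_e
        # Always accept downhill moves; accept uphill moves with
        # probability exp(-delta / kT).
        if delta <= 0 or rng.random() < math.exp(-delta / kT):
            current, current_e = candidate, candidate_e
            if current_e < best_e:
                best, best_e = current, current_e
    return best, best_e


best_angle, best_energy = metropolis_search(toy_energy, start=200.0)
print(f"best torsion ~ {best_angle:.1f} deg, energy {best_energy:.4f}")
```

Real docking engines perturb many translational, rotational, and torsional degrees of freedom at once and typically add local minimization, but the acceptance logic follows the same pattern.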

Scoring Functions: Predicting Binding Affinity

Scoring functions are mathematical models used to predict the binding affinity of a ligand pose generated by the search algorithm. They are crucial for ranking different poses and identifying the most biologically relevant binding mode [31] [30]. The four primary types of scoring functions are:

- Force Field-Based: Calculate binding energy by summing contributions from non-bonded interactions like van der Waals forces, electrostatic interactions, and sometimes bond stretching and angle bending. Examples include the scoring functions in AutoDock and DOCK [30].

- Empirical: Use linear regression on training sets of protein-ligand complexes with known binding affinities. They parameterize different interaction types (e.g., hydrogen bonds, hydrophobic contacts). ChemScore and LUDI are empirical functions [30].

- Knowledge-Based: Derive potentials of mean force from statistical analyses of atom pair frequencies in known protein-ligand structures. PMF and DrugScore are knowledge-based functions [30].

- Consensus Scoring: Combines multiple scoring functions to improve reliability and reduce the limitations of any single method [30].
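One simple way to combine scoring functions is to average the rank each function assigns to a pose. The sketch below illustrates that idea only; the pose names, score values, and the choice of rank averaging (rather than, say, voting or score normalization) are assumptions, and lower scores are assumed to be better for every function.

```python
def rank_poses(scores):
    """Map pose id -> rank (1 = best), assuming lower scores are better."""
    ordered = sorted(scores, key=scores.get)
    return {pose: rank for rank, pose in enumerate(ordered, start=1)}


def consensus_ranking(score_tables):
    """Average the per-function ranks of every pose scored by all functions."""
    poses = set.intersection(*(set(table) for table in score_tables.values()))
    ranks = {name: rank_poses(table) for name, table in score_tables.items()}
    mean_rank = {
        pose: sum(ranks[name][pose] for name in ranks) / len(ranks)
        for pose in poses
    }
    # Sort by mean rank, breaking ties alphabetically for reproducibility.
    return sorted(mean_rank.items(), key=lambda item: (item[1], item[0]))


# Hypothetical scores for three poses from three scoring-function families.
score_tables = {
    "force_field": {"pose_a": -7.2, "pose_b": -8.1, "pose_c": -5.0},
    "empirical": {"pose_a": -6.9, "pose_b": -6.5, "pose_c": -4.1},
    "knowledge_based": {"pose_a": -110.0, "pose_b": -125.0, "pose_c": -90.0},
}
ranking = consensus_ranking(score_tables)
for pose, mean in ranking:
    print(pose, round(mean, 2))
```

Working on ranks rather than raw scores sidesteps the fact that different scoring functions report values on incompatible scales.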

Comparative Performance of In Silico Prediction Methods

Molecular Docking Software Landscape

Numerous molecular docking programs have been developed, each with unique algorithms and capabilities. The table below summarizes some widely used software and their key features.

Table 1: Comparison of Popular Molecular Docking Software

| Software | Search Algorithm | Scoring Function | Key Features | Applications |
|---|---|---|---|---|
| AutoDock/Vina | Genetic Algorithm, Monte Carlo | Empirical, Force Field | Fast, open-source; good for flexible docking | Virtual screening, binding mode prediction [30] |
| GOLD | Genetic Algorithm | Empirical (GoldScore, ChemScore) | Handles ligand and protein flexibility | High-accuracy pose prediction [31] [30] |
| Glide | Systematic search, Monte Carlo refinement | Empirical (GlideScore) | Hierarchical filtering; accurate for rigid receptors | Database screening, lead optimization [31] [30] |
| DOCK | Incremental construction, Fragmentation | Force Field, Empirical | One of the earliest docking programs | Binding site detection, molecular matching [31] [30] |
| FlexX | Incremental construction | Empirical | Efficient fragment-based docking | De novo design, virtual screening [31] |

Performance Comparison of Target Prediction Methods

Beyond traditional docking, various target prediction methods have been developed and systematically evaluated. A 2025 benchmark study compared seven target prediction methods using a shared dataset of FDA-approved drugs, providing valuable performance insights [29].

Table 2: Performance Comparison of Molecular Target Prediction Methods [29]

| Method | Type | Key Algorithm/Approach | Performance Notes | Best Use Cases |
|---|---|---|---|---|
| MolTarPred | Stand-alone code | Morgan fingerprints with Tanimoto scores | Most effective method in benchmark | General target prediction, drug repurposing [29] |
| PPB2 | Web server | Not specified | Evaluated in benchmark | Target identification [29] |
| RF-QSAR | Machine Learning | Random Forest, QSAR | Evaluated in benchmark | Activity prediction based on chemical structure [29] |
| TargetNet | Web server | Not specified | Evaluated in benchmark | Target prediction [29] |
| CMTNN | Deep Learning | Convolutional Neural Network | Evaluated in benchmark | Pattern recognition in molecular structures [29] |
| High-confidence Filtering | Strategy | Confidence thresholding | Reduces recall | When precision is prioritized over comprehensive screening [29] |

The study found that model optimization strategies like high-confidence filtering can reduce recall, making them less ideal for drug repurposing where broad screening is desired [29]. For MolTarPred, the use of Morgan fingerprints with Tanimoto scores outperformed MACCS fingerprints with Dice scores [29].
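To make the fingerprint comparison concrete, the sketch below computes Tanimoto and Dice similarity on fingerprints represented as sets of "on" bit indices, then ranks annotated reference ligands against a query. It is not MolTarPred's code: the bit sets, ligand names, and target labels are hypothetical, and in practice the bits would come from a cheminformatics toolkit rather than being typed by hand.

```python
def tanimoto(a, b):
    """Shared bits divided by the union of bits."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def dice(a, b):
    """Twice the shared bits divided by the total number of on-bits."""
    a, b = set(a), set(b)
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 0.0


def predict_targets(query_bits, reference, similarity=tanimoto, top_k=2):
    """Rank reference ligands by similarity and return their annotated targets."""
    scored = sorted(
        ((similarity(query_bits, bits), name, target)
         for name, (bits, target) in reference.items()),
        reverse=True,
    )
    return [(target, name, round(score, 3)) for score, name, target in scored[:top_k]]


# Hypothetical reference set: ligand -> (fingerprint on-bits, known target).
reference = {
    "ligand_1": ({1, 4, 9, 16, 25}, "TARGET_A"),
    "ligand_2": ({2, 4, 8, 16, 32}, "TARGET_B"),
    "ligand_3": ({1, 4, 36, 49, 64}, "TARGET_A"),
}
query = {1, 4, 9, 16, 32}
hits = predict_targets(query, reference)
print(hits)
```

Passing `similarity=dice` swaps the metric without touching the ranking logic, which is the kind of substitution the benchmark comparison describes.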

Advanced In Silico Methodologies and Experimental Protocols

Machine Learning and AI-Enhanced Approaches

Recent advances have integrated machine learning and artificial intelligence to overcome limitations of traditional docking, particularly in scoring function accuracy and handling protein flexibility [28].

- Deep Learning Models: Frameworks like DeepAffinity use unsupervised pretraining to model nonlinear dependencies between protein residues and compound atoms, including the "long-distance" interactions crucial for binding [28].

- Hybrid Approaches: Models such as BridgeDPI integrate "guilt-by-association" principles from network-based methods with learning-based approaches to enhance prediction accuracy [28].

- Language Models: Newer approaches like MMDG-DTI leverage pretrained large language models (LLMs) to capture generalized text features across biological vocabulary, improving generalization [28].

- AI-Enhanced Sampling: Methods like AI-Bind combine network science with unsupervised learning to mitigate over-fitting and annotation imbalance, using node embeddings from extensive chemical and protein structure collections [31].

Experimental Validation Protocols for In Silico Predictions

Rigorous experimental validation is crucial for verifying computational predictions. For target prediction and off-target assessment, several methodological approaches have been developed.

Table 3: Experimental Methods for Validating In Silico Predictions

| Method Category | Example Techniques | Key Principle | Application in Validation |
|---|---|---|---|
| Biochemical (Cell-free) | Digenome-seq, CIRCLE-seq, CHANGE-seq | Uses purified genomic DNA + nuclease; maps cleavage sites in vitro | High-sensitivity off-target discovery; identifies potential cleavage sites [21] |
| Cellular | GUIDE-seq, DISCOVER-seq, HTGTS | Tags or sequences double-strand breaks (DSBs) in living cells | Validates biologically relevant off-target effects in physiological conditions [22] [21] |
| In Situ | BLISS, BLESS, END-seq | Captures DSBs in fixed cells, preserving genomic architecture | Maps breaks in native chromatin context [22] [21] |
| Binding Detection | ChIP-seq, DISCOVER-seq | Uses catalytically inactive Cas9 (dCas9) or repair proteins to map binding | Identifies binding sites genome-wide, including non-cleaving interactions [22] |

Case Study: Protocol for Validating Off-Target Predictions

A typical experimental workflow for validating in silico off-target predictions involves:

In Silico Prediction Phase: Use computational tools (e.g., Cas-OFFinder, CCTop) to nominate potential off-target sites based on sequence similarity to the intended target [22] [8].

Biochemical Verification: Perform CIRCLE-seq or Digenome-seq on purified genomic DNA to identify potential cleavage sites without cellular context [21].

Cellular Context Validation: Conduct GUIDE-seq or DISCOVER-seq in relevant cell lines to confirm which predicted sites are actually edited in a cellular environment [21].

Functional Assessment: Validate biologically significant off-target edits through targeted sequencing of predicted sites and assessment of functional consequences [22].
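The in silico prediction phase of this workflow rests on sequence similarity, which can be sketched as a mismatch-counting scan. The example below is a deliberately simplified illustration and not the algorithm used by Cas-OFFinder or CCTop: it assumes an SpCas9-style NGG PAM, scans only the forward strand, ignores bulges, and uses a made-up toy sequence.

```python
def hamming(a, b):
    """Count mismatched positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def nominate_off_targets(genome, protospacer, max_mismatches=3):
    """Return (position, site, PAM, mismatches) for sites followed by NGG."""
    n = len(protospacer)
    hits = []
    for i in range(len(genome) - n - 2):
        site = genome[i:i + n]
        pam = genome[i + n:i + n + 3]
        if pam[1:] != "GG":  # require an NGG PAM directly 3' of the site
            continue
        mismatches = hamming(site, protospacer)
        if mismatches <= max_mismatches:
            hits.append((i, site, pam, mismatches))
    return sorted(hits, key=lambda hit: hit[3])


# Hypothetical 20-nt protospacer and a toy "genome" containing the on-target
# site plus one site with two mismatches.
protospacer = "GACGTTACCGGATCAAGCTT"
off_site = "TACGTTACCGAATCAAGCTT"
genome = "TTT" + protospacer + "TGG" + "ACACAC" + off_site + "AGG" + "TTTT"

hits = nominate_off_targets(genome, protospacer)
for position, site, pam, mismatches in hits:
    print(position, site, pam, mismatches)
```

Candidates nominated this way are hypotheses only; the biochemical and cellular steps of the workflow determine which of them are actually cleaved.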

Research Reagent Solutions for In Silico Experiments

The implementation and validation of in silico predictions require specific computational tools and experimental reagents. The following table outlines key resources for conducting molecular docking studies and related experimental validations.

Table 4: Essential Research Reagents and Tools for In Silico Experiments

| Category | Resource | Specification/Function | Application Context |
|---|---|---|---|
| Docking Software | AutoDock Vina, GOLD, Glide | Molecular docking algorithms with scoring functions | Predicting ligand-receptor binding poses and affinities [30] |
| Target Prediction Tools | MolTarPred, PPB2, RF-QSAR | Machine learning models for identifying potential protein targets | Drug repurposing, mechanism of action studies [29] |
| Off-Target Prediction | CCLMoff, Cas-OFFinder, DeepCRISPR | Algorithms that nominate or score CRISPR off-target sites | CRISPR guide RNA design, safety profiling [22] [8] |
| Structure Resources | PDB (Protein Data Bank), AlphaFold DB | Repository of experimental and predicted protein 3D structures | Source of receptor structures for docking studies [28] [30] |
| Validation Kits | GUIDE-seq, CIRCLE-seq kits | Commercial kits for experimental off-target detection | Validating computational predictions in biological systems [21] |
| Compound Libraries | ZINC, ChEMBL | Databases of commercially available or bioactive compounds | Virtual screening for hit identification [29] [28] |

Molecular docking remains a foundational in silico method with proven utility in drug discovery, particularly for understanding binding modes and initial screening [30]. However, its limitations in scoring accuracy and handling full system flexibility have driven the development of complementary machine learning approaches that show superior performance in specific applications like target prediction [29] [28]. The most effective drug discovery pipelines integrate multiple computational methods—leveraging the mechanistic insights from traditional docking with the pattern recognition capabilities of modern AI—while maintaining rigorous experimental validation using biochemical, cellular, and in situ assays [21] [28]. This integrated framework accelerates the identification of promising therapeutic candidates and provides a more comprehensive assessment of their on-target efficacy and off-target risks, ultimately contributing to more efficient and successful drug development.

The application of artificial intelligence in biological sciences represents a fundamental shift from empirical laboratory methods to sophisticated in silico prediction systems. Traditional experimental approaches for identifying biological interactions—from drug-target binding to CRISPR-Cas9 off-target effects—face significant challenges of scale, cost, and time intensity. Empirical methods, while providing direct experimental evidence, often require extensive laboratory work spanning months or years, with costs frequently reaching millions of dollars per investigated target [28]. In contrast, computational approaches leverage deep learning and large language models to analyze complex biological data patterns, offering rapid predictions that prioritize experimental efforts and reduce resource expenditures [28] [26]. This comparison guide objectively evaluates the performance of leading AI models against traditional methods, focusing specifically on their application in drug-target interaction (DTI) prediction and CRISPR off-target effect identification—two domains where AI has demonstrated particularly transformative potential.

Performance Comparison: AI Models Versus Traditional Methods

Quantitative Performance Metrics Across Prediction Domains

Table 1: Performance comparison of AI models versus traditional methods for off-target prediction

| Model/Method | Prediction Domain | AUROC | AUPRC | Accuracy | Key Advantage |
|---|---|---|---|---|---|
| DNABERT-Epi [26] | CRISPR Off-target | 0.989 | 0.812 | N/A | Integrates epigenetic features with pre-trained genomic knowledge |
| CRISPR-BERT [26] | CRISPR Off-target | 0.978 | 0.721 | N/A | Transformer architecture optimized for sequence analysis |
| CRISTA [26] | CRISPR Off-target | 0.961 | 0.612 | N/A | Traditional deep learning approach |
| DrugGPT [32] | Drug Recommendation | N/A | N/A | 86.5% | Clinical decision support with evidence tracing |
| Molecular Docking [28] | Drug-Target Interaction | Variable (structure-dependent) | N/A | N/A | Physical simulation of binding interactions |
| GUIDE-seq (Empirical) [26] | CRISPR Off-target Detection | N/A | N/A | High (but limited coverage) | Experimental validation gold standard |
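The AUROC and AUPRC columns can diverge sharply when true off-target sites are rare, which a small calculation makes visible. The sketch below computes both metrics from scratch on invented labels and scores; the data are hypothetical and unrelated to the benchmark values in the table, and the AUPRC is computed as average precision without tie handling.

```python
def auroc(labels, scores):
    """Probability that a random positive outranks a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    # Ties between a positive and a negative count as half a win.
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Mean of the precision values at the rank of each true positive."""
    ranked = sorted(zip(scores, labels), reverse=True)
    n_pos = sum(labels)
    true_pos, total = 0, 0.0
    for k, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            true_pos += 1
            total += true_pos / k
    return total / n_pos


# Hypothetical imbalanced example: 2 true off-target sites among 10 candidates.
labels = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.7, 0.2, 0.1, 0.4, 0.35, 0.15, 0.05]

roc = auroc(labels, scores)
ap = average_precision(labels, scores)
print(f"AUROC = {roc:.4f}, AUPRC (average precision) = {ap:.4f}")
```

A single high-scoring negative barely moves AUROC here but costs noticeably more in AUPRC, which is why both metrics are usually reported together for imbalanced off-target datasets.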

Table 2: Performance comparison of AI models across different biological languages

| Model | Application Domain | Architecture | Pre-training Data | Key Performance Metric |
|---|---|---|---|---|
| DNABERT [26] | Genomic Sequence Analysis | BERT-based | Human Genome | AUROC: 0.989 on off-target prediction |
| BioBERT [33] | Biomedical Text Mining | BERT-based | PubMed articles | Improved named entity recognition (F1: 0.887) |
| BioGPT [33] | Biomedical Literature | GPT-based | PubMed articles | State-of-the-art on relation extraction tasks |
| ESMFold [33] | Protein Structure Prediction | Transformer | Protein Sequences | High-accuracy 3D structure prediction |

Performance Analysis and Interpretation