Direct vs. Indirect Methods in Health Research: A Strategic Guide for Evidence Synthesis and HTA

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on selecting and applying direct and indirect comparison methods for health technology assessment (HTA). It covers foundational concepts, methodological execution, and troubleshooting, aligned with the latest EU HTA 2025 guidelines. Readers will gain practical insights into methods like Network Meta-Analysis (NMA), MAIC, and STC, learn to navigate common challenges like effect modifiers and population heterogeneity, and understand the criteria for robust methodological validation and acceptance by HTA bodies.

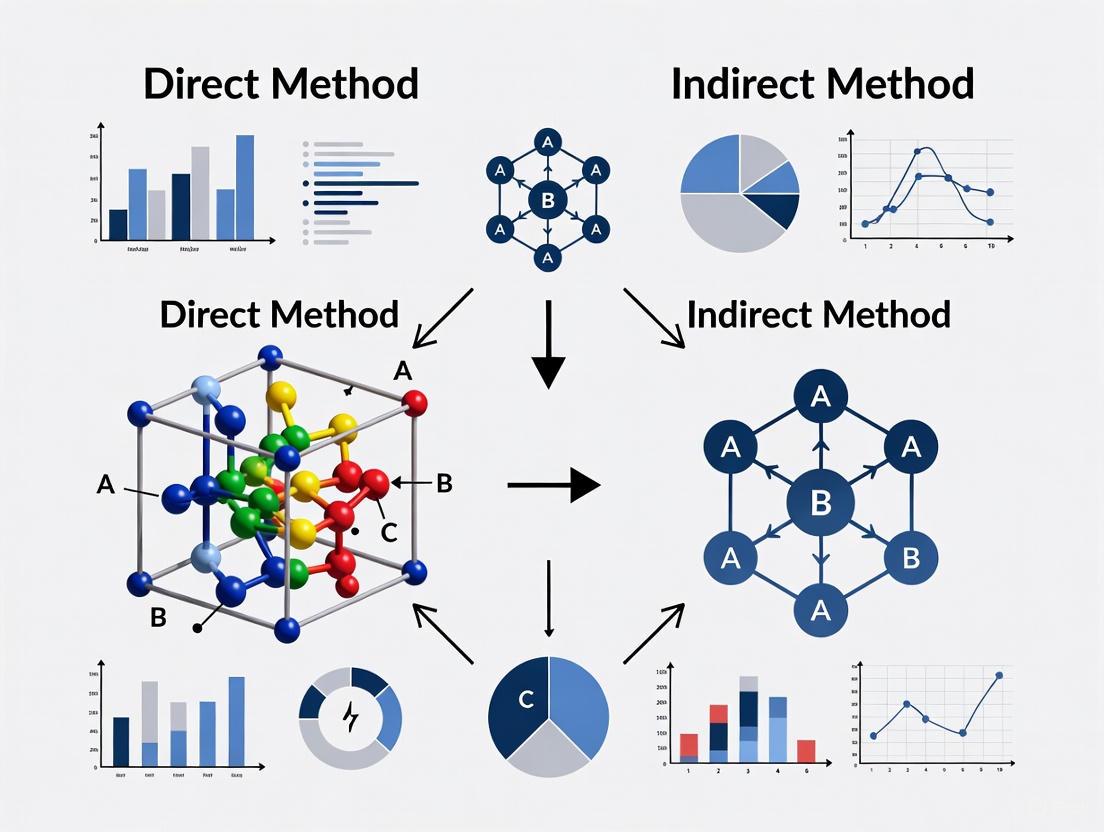

Understanding the Basics: What Are Direct and Indirect Comparison Methods?

Welcome to the HTA Research Support Center

This support center provides troubleshooting guides and FAQs for researchers, scientists, and drug development professionals conducting treatment comparisons for Health Technology Assessment (HTA). The content is framed around the choice between direct and indirect comparison methods and helps you navigate common methodological challenges.

Troubleshooting Guides

Guide 1: Selecting the Appropriate Treatment Comparison Method

Problem Statement: A researcher is unsure whether to use a direct or indirect method for comparing a new intervention against relevant comparators.

Diagnosis and Resolution:

- Step 1: Assess Available Evidence - Determine if head-to-head Randomized Controlled Trials (RCTs) exist for all treatments of interest. If they do, a direct comparison is feasible [1].

- Step 2: Evaluate Feasibility of Direct Comparison - If head-to-head RCTs are unavailable, unethical, or impractical (e.g., in rare diseases), an indirect treatment comparison (ITC) is necessary [1].

- Step 3: Select ITC Technique - Based on the evidence network and data availability, select an appropriate ITC method. The flowchart below outlines this decision pathway.
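The decision pathway in Steps 1 to 3 can be approximated in code. The sketch below is a deliberate simplification: the function name, its boolean inputs, and the returned labels are hypothetical, and the branching follows the method descriptions in this guide rather than any official HTA algorithm.

```python
def select_comparison_method(
    head_to_head_rcts: bool,
    connected_network: bool,
    n_treatments: int,
    ipd_available: bool,
    effect_modifier_imbalance: bool,
) -> str:
    """Suggest a comparison method for a simplified evidence scenario.

    All inputs are illustrative assumptions, not a validated checklist.
    """
    # Step 1: direct evidence takes priority when it exists.
    if head_to_head_rcts:
        return "direct comparison"
    # Steps 2 and 3: trials linked through a common comparator.
    if connected_network:
        if effect_modifier_imbalance and ipd_available:
            return "anchored MAIC or STC"
        if n_treatments > 3:
            return "network meta-analysis"
        return "Bucher method"
    # No common comparator (e.g., single-arm studies).
    if ipd_available:
        return "unanchored MAIC or STC"
    return "no robust ITC feasible"


# Example: two trials sharing a placebo arm, aggregate data only.
print(select_comparison_method(False, True, 3, False, False))
```

A real method choice also depends on outcome type, the plausibility of the similarity and consistency assumptions, and the expectations of the HTA body receiving the submission.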

Guide 2: Addressing Heterogeneity Between Studies in an ITC

Problem Statement: Significant clinical or methodological differences exist between studies included in an indirect treatment comparison, potentially biasing results.

Diagnosis and Resolution:

- Step 1: Identify Sources of Heterogeneity - Systematically document differences in patient populations, study designs, interventions, and outcomes [1].

- Step 2: Assess Impact - Use statistical measures (e.g., Cochran's Q test and the I² statistic) and visual inspection (e.g., forest plots) to quantify heterogeneity [1].

- Step 3: Apply Adjustment Techniques - If heterogeneity is confirmed, consider population-adjusted methods like MAIC or Network Meta-Regression [1].
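To make Step 2 concrete, the following sketch computes Cochran's Q and the I² statistic from study-level effect estimates and their variances. The function name and the input values are hypothetical; effects are assumed to be on an additive scale such as log hazard ratios.

```python
def cochran_q_and_i2(effects, variances):
    """Return (Q, I2 in percent) using inverse-variance weights."""
    weights = [1.0 / v for v in variances]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Q: weighted squared deviations from the fixed-effect pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I2: share of total variation attributed to between-study heterogeneity.
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return q, i2


# Hypothetical log hazard ratios and variances from three trials.
q, i2 = cochran_q_and_i2([-0.35, -0.10, -0.60], [0.02, 0.03, 0.05])
print(f"Q = {q:.2f}, I2 = {i2:.1f}%")
```

With few studies, Q has low power and I² is imprecise, so these numbers are best read alongside the documented clinical differences from Step 1.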

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between direct and indirect treatment comparisons? A: Direct comparisons estimate treatment effects from studies that randomly assign patients to the interventions being compared (e.g., RCTs). Indirect comparisons estimate relative effects between treatments that have not been compared in head-to-head trials, using a common comparator to link them [1].

Q2: When is an indirect treatment comparison necessary? A: ITCs are necessary when a direct comparison is unavailable, unethical, unfeasible, or impractical. This often occurs in oncology, rare diseases, or when multiple comparators are relevant and comparing all directly in trials is not feasible [1].

Q3: Which ITC method should I use if I only have single-arm studies? A: When only single-arm studies are available (common in oncology), unanchored forms of Matching-Adjusted Indirect Comparison (MAIC) or Simulated Treatment Comparison (STC) can be used, as they adjust for differences in patient characteristics between studies [1]. Without a common comparator, these analyses rest on the strong assumption that all prognostic factors and effect modifiers have been measured and adjusted for.

Q4: How do HTA bodies view indirect treatment comparisons? A: HTA agencies prefer direct evidence from RCTs but recognize the necessity of ITCs when direct evidence is lacking. They consider ITCs on a case-by-case basis, with acceptability depending on the methodology's rigor and the validity of underlying assumptions [1].

Q5: What are the main limitations of indirect treatment comparisons? A: Key limitations include the need for stronger assumptions (e.g., similarity assumption), potential for unmeasured confounding, sensitivity to between-study heterogeneity, and generally lower certainty of evidence compared to well-conducted direct comparisons [1].

Methodological Comparison Tables

| Method Type | Key Features | Data Requirements | Key Assumptions | Common Applications |
|---|---|---|---|---|
| Direct Comparison | Head-to-head comparison in randomized setting | RCTs directly comparing treatments of interest | Randomization ensures balance of known and unknown confounders | Gold standard when feasible; regulatory submissions |
| Indirect Comparison (Bucher Method) | Uses common comparator to link treatments | Aggregate data for A vs C and B vs C | Similarity between studies (no imbalance in effect modifiers) | Simple connected networks; early technology assessments |
| Network Meta-Analysis | Simultaneous comparison of multiple treatments | Network of RCTs (connected evidence) | Consistency assumption (direct & indirect evidence agree) | HTA submissions comparing multiple treatments; clinical guidelines |
| Matching-Adjusted Indirect Comparison | Adjusts for population differences | Individual Patient Data (IPD) for one trial, aggregate data for another | All effect modifiers are measured and included | Oncology; single-arm trials vs. comparator from RCT |

| ITC Technique | Frequency in Literature | IPD Requirement | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Network Meta-Analysis (NMA) | 79.5% | No | Simultaneous multiple treatment comparisons; uses all available evidence | Requires connected network; relies on consistency assumption |
| Matching-Adjusted Indirect Comparison (MAIC) | 30.1% | Yes (partial) | Adjusts for cross-trial differences; handles single-arm studies | Depends on measured effect modifiers; reduced effective sample size |
| Simulated Treatment Comparison (STC) | 21.9% | Yes (partial) | Models outcomes for common comparator; flexible framework | Model-dependent; requires strong assumptions |
| Bucher Method | 23.3% | No | Simple to implement; transparent calculations | Limited to three treatments; no heterogeneity adjustment |

The Scientist's Toolkit: Research Reagent Solutions

Essential Methodological Approaches for Treatment Comparisons

| Item | Function/Application |
|---|---|
| Network Meta-Analysis | Statistical technique for comparing multiple treatments simultaneously using direct and indirect evidence within a connected network of trials [1]. |
| Matching-Adjusted Indirect Comparison | Population-adjusted method that re-weights individual patient data from one study to match the baseline characteristics of another study when IPD is available for only one trial [1]. |
| Bucher Method | Simple adjusted indirect comparison method for comparing two treatments via a common comparator in a connected network of three treatments [1]. |
| Simulated Treatment Comparison | Method that uses individual patient data to develop a model of the outcome for the common comparator and applies it to the target population when only aggregate data is available for the comparator [1]. |
| Systematic Literature Review | Foundation of any treatment comparison that ensures all relevant evidence is identified, selected, and synthesized in a comprehensive and reproducible manner [1]. |

Experimental Protocols

Protocol 1: Conducting a Network Meta-Analysis

Objective: To compare multiple interventions simultaneously by combining direct and indirect evidence.

Methodology:

- Develop Systematic Review Protocol - Define PICOS (Population, Intervention, Comparator, Outcomes, Study design) criteria [1].

- Conduct Comprehensive Literature Search - Search multiple databases (e.g., PubMed, Embase) and grey literature sources [1].

- Study Selection and Data Extraction - Use dual independent review for study selection and extract data using standardized forms [2].

- Assess Risk of Bias and Similarity - Evaluate study quality and assess transitivity assumption (that studies are sufficiently similar to be combined) [1].

- Perform Statistical Analysis - Use frequentist or Bayesian approaches; check for inconsistency between direct and indirect evidence; present results with league tables and rank probabilities.

Protocol 2: Implementing Matching-Adjusted Indirect Comparison

Objective: To compare treatments when individual patient data is available for only one study.

Methodology:

- Identify Effect Modifiers - Identify and select prognostic variables and effect modifiers based on clinical knowledge [1].

- Estimate Weights - Calculate weights for each patient in the IPD study so that the weighted baseline characteristics match those reported in the aggregate data study [1].

- Assess Effective Sample Size - Evaluate the loss of effective sample size after weighting and its implications for precision [1].

- Compare Outcomes - Compare the outcomes of the re-weighted IPD population with the aggregate data from the comparator study [1].

- Conduct Sensitivity Analyses - Assess robustness of findings to different selections of effect modifiers and weighting approaches [1].
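The effective sample size (ESS) check in this protocol is commonly computed as the squared sum of the weights divided by the sum of squared weights. The sketch below assumes the weights have already been estimated; the function name and example weights are hypothetical.

```python
def effective_sample_size(weights):
    """Approximate ESS after weighting: (sum of w)^2 / (sum of w^2)."""
    total = sum(weights)
    total_sq = sum(w * w for w in weights)
    return total * total / total_sq


# Hypothetical MAIC weights for six patients in the IPD study.
weights = [0.2, 0.5, 1.0, 1.4, 2.1, 0.8]
ess = effective_sample_size(weights)
print(f"ESS = {ess:.1f} of {len(weights)} patients")
```

An ESS far below the original sample size signals that a few patients dominate the weighted analysis, which widens confidence intervals and suggests limited overlap between the two trial populations.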

Evidence Network Visualization

In scientific research and drug development, a direct comparison is a head-to-head evaluation in which two or more interventions are tested against each other within the same study under controlled conditions. This approach provides the highest quality evidence for determining the relative effectiveness, safety, and value of competing therapies [3]. Unlike indirect methods, which rely on proxy measures or on comparisons across different study populations, direct comparisons yield the least ambiguous evidence about which intervention performs better on specific clinical outcomes.

In its general sense, indirect assessment relies on proxy measures that require reflection on or self-reporting of outcomes rather than direct demonstration of the measured phenomenon [4]. In clinical research, indirect comparison typically means comparing interventions through historical controls, network meta-analyses, or real-world evidence studies that bridge data from separate clinical trials. While sometimes necessary when head-to-head trials are unavailable, indirect methods are more limited in their ability to establish causal relationships between interventions and outcomes.

The distinction between these approaches mirrors methodologies in other fields. In financial accounting, the direct method of cash flow statement preparation shows actual cash receipts and payments, providing clear visibility into transactions, while the indirect method starts with net income and adjusts for non-cash items, offering less transparency into specific cash movements [5] [6]. Similarly, in educational assessment, direct methods require students to demonstrate knowledge or skills, while indirect methods rely on self-reported perceptions of learning [4] [7].

The Scientific and Regulatory Case for Direct Comparisons

Why Head-to-Head Trials Represent the Gold Standard

Head-to-head randomized controlled trials (RCTs) constitute the most rigorous scientific approach for comparing competing interventions because their design minimizes biases that plague indirect comparisons. By randomly assigning participants to different treatment groups within the same study protocol, head-to-head trials ensure that population characteristics, measurement techniques, and study procedures remain consistent across comparison groups. This controlled environment enables researchers to attribute outcome differences directly to the interventions being studied rather than to confounding variables.

The regulatory and evidence-based medicine communities consistently prioritize head-to-head trial data when making formulary and treatment recommendations. As noted in gastroenterology research, "Head-to-head clinical trials are the highest quality of evidence to support comparative effectiveness" for positioning biologic therapies [8]. This preference stems from the superior internal validity of direct comparative studies, which provide the most reliable foundation for clinical decision-making and health technology assessments.

Documented Limitations of Indirect Comparison Methods

Indirect comparisons and real-world evidence (RWE), while valuable in certain contexts, present significant methodological challenges that limit their reliability for establishing comparative effectiveness:

Susceptibility to Unmeasured Confounding: Indirect comparisons struggle to account for variations in patient populations, treatment strategies, and endpoint assessments across different studies and time periods [8].

Inconsistent Correlation with Direct Measures: Research comparing indirect and direct assessment methods in pediatric physical activity found only "low-to-moderate correlations (range: -0.56 to 0.89)" between the two approaches, with indirect measures typically overestimating directly measured values by 72% [9].

Limited Strength Without Anchor Trials: Network meta-analyses rely heavily on the presence of at least one head-to-head comparison to inform the overall network. When no direct trials exist, "the strength of the network is somewhat limited" [8].

Table: Documented Limitations of Indirect Comparisons in Clinical Research

| Limitation | Impact on Evidence Quality | Empirical Support |
|---|---|---|
| Population Heterogeneity | Reduces generalizability and introduces selection bias | Clinical trial patients often don't represent real-world populations [8] |
| Measurement Inconsistency | Compromises validity of cross-trial comparisons | Indirect measures overestimate direct measurements by 72% [9] |
| Temporal Confounding | Fails to account for evolving standards of care | Trials spanning decades show different outcomes due to practice changes [8] |
| Analytical Complexity | Increases risk of methodological errors | Requires sophisticated statistical adjustments with inherent assumptions [8] |

Practical Challenges in Implementing Direct Comparisons

Barriers to Conducting Head-to-Head Trials

Despite their methodological superiority, several significant obstacles limit the widespread implementation of head-to-head trials in clinical research:

Dominance of Industry Sponsorship: A comprehensive analysis of head-to-head randomized trials revealed that "the literature of head-to-head RCTs is dominated by the industry," with 82.3% of randomized subjects included in industry-sponsored trials [10]. This sponsorship creates inherent conflicts of interest that can influence trial design and interpretation.

Systematic Favorability toward Sponsors: Industry-funded head-to-head comparisons "systematically yield favorable results for the sponsors," with sponsored trials having 2.8 times the odds of reporting "favorable" findings (OR 2.8; 95% CI: 1.6, 4.7) [10]. This bias is particularly pronounced in noninferiority trials, where 96.5% of industry-funded studies reported "favorable" results for the sponsor's product.

Strategic Use of Noninferiority Designs: Industry-sponsored trials "used more frequently noninferiority/equivalence designs," which were strongly associated with "favorable" findings (OR 3.2; 95% CI: 1.5, 6.6) [10]. These designs potentially allow sponsors to demonstrate that their product is "not worse than" rather than superior to competitors, facilitating market entry without proving added clinical benefit.

Resource Intensiveness and Complexity: Head-to-head trials require larger sample sizes, longer durations, and more complex statistical designs than placebo-controlled studies, creating significant financial and logistical barriers for non-commercial researchers.

Therapeutic Areas with Limited Direct Evidence

The absence of head-to-head trials is particularly problematic in certain therapeutic areas. In Crohn's disease, for example, "there are currently no head-to-head phase 3 clinical trials of biologics," forcing clinicians to rely on potentially misleading indirect comparisons [8]. This evidence gap creates significant uncertainty for treatment positioning and clinical decision-making in routine practice.

Diagram Title: Industry Sponsorship Influence on Head-to-Head Trial Outcomes

Methodological Framework for Direct Comparisons

Essential Components of Valid Head-to-Head Designs

Implementing methodologically sound direct comparisons requires careful attention to several key design elements:

Appropriate Sample Sizing: Industry-sponsored head-to-head trials are typically "larger" than non-industry trials, enhancing their statistical power to detect true differences between interventions [10]. Adequate sample sizing ensures that trials can detect clinically meaningful differences with sufficient precision.

Proper Endpoint Selection: Direct comparisons should utilize clinically relevant, objectively measurable endpoints that reflect meaningful patient outcomes rather than surrogate markers. The choice between primary endpoints significantly influences trial interpretation and clinical applicability.

Randomization and Blinding Procedures: Maintaining rigorous randomization and blinding procedures remains essential for minimizing bias in treatment allocation and outcome assessment, even in comparative effectiveness research.

Predefined Statistical Analysis Plans: Given the heightened risk of sponsorship bias, pre-registered statistical analysis plans with clearly defined primary outcomes and analysis methods are crucial for maintaining methodological integrity.

Emerging Methodological Innovations

Recent methodological advances aim to enhance the feasibility and applicability of direct comparison evidence:

Real-World Data Emulation: Researchers are pioneering "Head-to-Head Comparisons using Real World Data" through emulation of target trials, which can "successfully deal with most of the biases that used to plague the use of observational data" [3]. This approach leverages high-quality real-world data to approximate head-to-head comparisons when randomized trials are impractical.

Adaptive Trial Designs: Bayesian adaptive designs and platform trials allow for more efficient evaluation of multiple interventions within a single master protocol, reducing the resources required for comprehensive direct comparisons.

Standardized Methodological Frameworks: Organizations like ISPOR have developed "a framework for consideration when relying on evidence generated from RWD (real world evidence, RWE)" to improve the methodological rigor of comparative effectiveness research [8].

Table: Key Research Reagent Solutions for Comparative Studies

| Reagent/Resource | Function in Comparative Research | Implementation Example |
|---|---|---|
| Real-World Data (RWD) Repositories | Provides regulatory-grade data for comparative analyses | Emulation of target trials for head-to-head comparisons [3] |
| Propensity Score Matching | Balances covariates in non-randomized comparisons | Used in nationwide registry-based cohort studies [8] |
| Network Meta-Analysis | Enables indirect treatment comparisons | Informs relative positioning when direct data is absent [8] |
| Standardized Outcome Measures | Ensures consistent endpoint assessment | Facilitates cross-trial comparisons and evidence synthesis |

Troubleshooting Guide: Addressing Common Direct Comparison Challenges

Frequently Asked Questions on Implementation

Q: How can researchers mitigate sponsorship bias when designing head-to-head trials?

A: Implementation of several safeguards can reduce sponsorship influence: (1) Establish independent steering committees with final authority over trial design and interpretation; (2) Pre-register statistical analysis plans before trial initiation; (3) Utilize independent endpoint adjudication committees; (4) Ensure data analysis is conducted by independent statisticians; (5) Secure contractual agreements guaranteeing publication rights regardless of outcome. These measures are particularly important given that "industry-sponsored comparative assessments systematically yield favorable results for the sponsors" [10].

Q: What methodological approaches can enhance the validity of real-world head-to-head comparisons?

A: When randomized trials are not feasible, researchers can improve real-world evidence through: (1) Emulation of target trials using real-world data, which helps "deal with most of the biases that used to plague the use of observational data" [3]; (2) Comprehensive propensity score matching that balances measured confounders, complemented by sensitivity analyses for unmeasured confounding, which matching cannot balance; (3) Utilization of active comparator designs that compare new interventions against current standard of care rather than placebo; (4) Implementation of new-user designs to avoid prevalent user bias; (5) Validation of outcome definitions within specific data sources.

Q: How should clinicians interpret head-to-head trials with noninferiority designs?

A: Noninferiority trials require careful scrutiny of several elements: (1) The predefined noninferiority margin must be clinically justified and methodologically sound; (2) The analysis should follow both per-protocol and intention-to-treat principles; (3) Readers should assess whether the comparator drug was administered optimally and whether the trial population reflects real-world practice; (4) Consider that "industry-funded noninferiority/equivalence trials" have exceptionally high rates (96.5%) of favorable results for sponsors [10]. When possible, consult independent methodological reviews of noninferiority trials.

Q: What strategies can address the absence of head-to-head trials in therapeutic areas like Crohn's disease?

A: In the absence of direct trials, clinicians and researchers can: (1) Critically evaluate real-world comparative effectiveness studies, paying particular attention to methodological quality and potential confounding; (2) Consider network meta-analyses while recognizing their limitations when no head-to-head trials anchor the network; (3) Support the development of clinician-initiated trials and registry-based comparative studies; (4) Advocate for funding mechanisms that enable independent head-to-head comparisons of established therapies; (5) Implement systematic data collection within clinical practice to support future comparative analyses [8].

Troubleshooting Experimental Design Issues

Diagram Title: Solutions for Missing Head-to-Head Evidence

The scientific community must prioritize direct comparisons through head-to-head trials as the gold standard for establishing comparative therapeutic effectiveness. While indirect methods and real-world evidence provide valuable complementary information, they cannot replace the methodological rigor of properly conducted direct comparisons. The current landscape, dominated by industry-sponsored trials with systematic favorability toward sponsors, necessitates increased investment in independent comparative effectiveness research.

Moving forward, researchers should leverage emerging methodologies like real-world data emulation and adaptive trial designs to make head-to-head comparisons more feasible and efficient. Simultaneously, the field must develop enhanced safeguards against sponsorship bias and promote transparency in trial design and reporting. Only through these concerted efforts can we ensure that clinicians, patients, and policymakers have access to the reliable comparative evidence needed to make informed treatment decisions.

In the evidence-based landscape of healthcare decision-making, direct head-to-head randomized controlled trials (RCTs) represent the gold standard for comparing the efficacy and safety of two or more treatments [1] [11]. However, ethical considerations, practical constraints, and the dynamic nature of treatment landscapes often make such direct comparisons unfeasible or unavailable [1] [12]. This evidence gap is particularly pronounced in oncology and rare diseases, where patient numbers may be low and comparing against inferior treatments or placebo is ethically problematic [1] [12].

Indirect Treatment Comparisons (ITCs) have emerged as a critical methodological framework that enables researchers and health technology assessment (HTA) bodies to compare interventions when direct evidence is lacking [13]. These statistical techniques allow for the estimation of relative treatment effects by leveraging a network of evidence across different studies, preserving the randomization of the originally assigned patient groups where possible [11] [14]. The use of ITCs has increased significantly in recent years, with numerous oncology and orphan drug submissions incorporating ITCs to support regulatory decisions and HTA recommendations [12].

Understanding ITC Fundamentals: FAQs

What justifies the use of an ITC instead of waiting for direct evidence?

ITCs are primarily justified when direct head-to-head evidence between treatments of interest is not available, would be unethical to collect, or is impractical to obtain within relevant decision-making timelines [1] [13]. This frequently occurs in situations where a new treatment has only been compared against placebo rather than active comparators, when multiple relevant comparators exist across different jurisdictions, or when patient populations are too small for adequately powered direct trials (as in rare diseases) [1] [12].

What are the main categories of ITC methods?

ITC methods can broadly be categorized into unadjusted and adjusted approaches:

- Naïve Comparisons: Directly compare study arms from different trials without adjustment (generally discouraged due to susceptibility to bias) [11]

- Adjusted Indirect Comparisons: Preserve randomization by comparing treatments via a common comparator [11] [14]

- Network Meta-Analysis (NMA): Simultaneously incorporates multiple treatments and comparisons in a connected evidence network [1]

- Population-Adjusted Methods: Adjust for differences in patient characteristics across studies (e.g., MAIC, STC) [1]

How do HTA agencies and regulators view ITC evidence?

ITCs are currently considered by HTA agencies on a case-by-case basis, with acceptability remaining variable [1]. However, their use in submissions does not appear to negatively impact recommendation outcomes compared to head-to-head trial evidence [15]. Authorities more frequently favor anchored or population-adjusted ITC techniques for their effectiveness in data adjustment and bias mitigation, while naïve comparisons are generally considered insufficiently robust for decision-making [15] [12].

What are the most common critiques of submitted ITCs?

Common critiques include unresolved heterogeneity in study designs included in the ITCs and failure to adjust for all potential prognostic or effect-modifying factors in population-adjusted methods [15]. The limited strength of inference from indirect comparisons compared to direct evidence is also a fundamental consideration [14].

ITC Methodologies: Technical Guide

Adjusted Indirect Comparison (Bucher Method)

The Bucher method, one of the foundational ITC techniques, enables comparison of two treatments (A and B) through a common comparator (C) [11]. This approach preserves the randomization of the original trials by comparing the treatment effect of A versus C with that of B versus C [11] [14].

Experimental Protocol:

- Identify two randomized trials with a common comparator

- Extract relative treatment effects for A vs. C and B vs. C

- Calculate the indirect effect of A vs. B as the difference between the two direct effects, working on the log scale for ratio measures such as odds ratios or hazard ratios

- Compute variance of the indirect effect as the sum of variances of the two direct effects
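The four protocol steps reduce to a short calculation. The sketch below assumes both direct effects are log hazard ratios from independent trials; the function name and the input values are hypothetical.

```python
import math


def bucher_indirect(effect_ac, se_ac, effect_bc, se_bc, z=1.959964):
    """Indirect A vs. B estimate via common comparator C.

    Effects must be on an additive scale (e.g., log HR or log OR).
    Returns (estimate, standard error, (lower, upper) 95% CI).
    """
    effect_ab = effect_ac - effect_bc
    # Variances add because the two trials are assumed independent.
    se_ab = math.sqrt(se_ac ** 2 + se_bc ** 2)
    ci = (effect_ab - z * se_ab, effect_ab + z * se_ab)
    return effect_ab, se_ab, ci


# Hypothetical inputs: HR 0.70 for A vs. C and HR 0.85 for B vs. C.
est, se, (lo, hi) = bucher_indirect(math.log(0.70), 0.10, math.log(0.85), 0.12)
print(f"HR A vs. B = {math.exp(est):.2f} "
      f"(95% CI {math.exp(lo):.2f} to {math.exp(hi):.2f})")
```

Because the variances add, the indirect estimate is always less precise than either direct estimate it is built from.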

Network Meta-Analysis

Network Meta-Analysis extends the principles of indirect comparison to multiple treatments simultaneously, forming connected networks of evidence [1]. NMA can incorporate both direct and indirect evidence, reducing uncertainty through more efficient use of all available data [1] [11].

Experimental Protocol:

- Conduct systematic literature review to identify all relevant RCTs

- Map treatments and comparators to establish network connectivity

- Extract outcome data and assess trial characteristics and quality

- Choose statistical model (frequentist or Bayesian)

- Evaluate network consistency and heterogeneity

- Estimate relative treatment effects for all possible pairwise comparisons

Matching-Adjusted Indirect Comparison (MAIC)

MAIC is a population-adjusted method used when patient-level data (IPD) is available for one study but only aggregate data is available for the other [1]. This method weights patients from the IPD study to match the aggregate baseline characteristics of the comparator study.

Experimental Protocol:

- Obtain IPD for the index treatment trial

- Extract aggregate baseline characteristics from the comparator trial

- Estimate weights using method of moments or maximum entropy

- Apply weights to the IPD to create a balanced population

- Compare outcomes between the weighted IPD population and aggregate comparator
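The weight-estimation step can be illustrated with a method-of-moments sketch for a single covariate. This is a simplification under stated assumptions: real analyses usually match several covariates at once with a multivariate optimizer, and the function name, the Newton iteration, and all data values here are hypothetical.

```python
import math


def maic_weights(ipd_values, target_mean, tol=1e-10, max_iter=100):
    """Method-of-moments MAIC weights for one covariate.

    Finds alpha so that weights exp(alpha * (x - target_mean)) make the
    weighted IPD mean equal to the aggregate target mean.
    """
    centered = [x - target_mean for x in ipd_values]
    if not (min(centered) < 0 < max(centered)):
        raise ValueError("target mean lies outside the IPD range")
    alpha = 0.0
    for _ in range(max_iter):
        expo = [math.exp(alpha * c) for c in centered]
        grad = sum(c * e for c, e in zip(centered, expo))
        hess = sum(c * c * e for c, e in zip(centered, expo))
        step = grad / hess  # Newton step on the convex objective
        alpha -= step
        if abs(step) < tol:
            break
    return [math.exp(alpha * c) for c in centered]


# Hypothetical IPD: patient ages and a binary response outcome.
ages = [50, 55, 60, 65, 70]
responses = [1, 1, 0, 1, 0]
w = maic_weights(ages, target_mean=62.0)  # comparator trial mean age
weighted_age = sum(wi * a for wi, a in zip(w, ages)) / sum(w)
weighted_rate = sum(wi * r for wi, r in zip(w, responses)) / sum(w)
print(f"weighted mean age = {weighted_age:.2f}")
print(f"weighted response rate = {weighted_rate:.3f}")
```

The weighted response rate would then be compared with the rate reported for the comparator trial, with uncertainty typically estimated by a robust sandwich variance or bootstrap.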

Troubleshooting Common ITC Challenges

How should I handle disconnected evidence networks?

Problem: Treatments of interest cannot be connected through common comparators in the available evidence base. Solution:

- Extend literature search to identify additional linking studies

- Consider multiple comparators that create connecting pathways

- Explore population-adjusted methods if studies have different comparators but similar populations

- If connection remains impossible, acknowledge this fundamental limitation

What if studies show significant clinical or methodological heterogeneity?

Problem: Included studies differ substantially in population characteristics, outcomes measurement, or methodological quality. Solution:

- Document sources of heterogeneity systematically

- Use random-effects models to account for between-study heterogeneity

- Conduct subgroup analysis or meta-regression to explore heterogeneity sources

- Consider population-adjusted methods (MAIC, STC) for addressing cross-trial differences

- Evaluate whether indirect comparison remains appropriate given the heterogeneity level
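As an illustration of the random-effects step, the sketch below pools study effects with the DerSimonian-Laird estimator of between-study variance. DerSimonian-Laird is one common choice among several; the function name and the example inputs are hypothetical.

```python
import math


def dersimonian_laird(effects, variances):
    """Random-effects pooled estimate using DerSimonian-Laird tau^2.

    Returns (pooled effect, standard error, tau^2).
    """
    w = [1.0 / v for v in variances]
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sum_w - sum(wi * wi for wi in w) / sum_w
    # Between-study variance, truncated at zero.
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return pooled, se, tau2


# Hypothetical log odds ratios and variances from four trials.
pooled, se, tau2 = dersimonian_laird(
    [-0.40, -0.15, -0.55, 0.05], [0.04, 0.03, 0.06, 0.05]
)
print(f"pooled = {pooled:.3f}, SE = {se:.3f}, tau^2 = {tau2:.3f}")
```

A random-effects model widens the uncertainty to reflect heterogeneity but does not explain it, which is why the subgroup, meta-regression, and population-adjustment steps above still matter.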

How can I validate ITC results when direct evidence becomes available?

Problem: Assessing reliability of ITC findings after direct head-to-head evidence emerges. Solution:

- Compare ITC results with subsequent direct evidence

- Evaluate whether confidence intervals overlap and point estimates are similar

- Document and analyze reasons for any discrepancies

- Use this validation to refine future ITC methodology selection
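One way to formalize the comparison of ITC and direct results is a z-test on their difference, sketched below. It assumes the two estimates are on the same additive scale and come from independent evidence; the function name and the example numbers are hypothetical.

```python
import math


def compare_direct_indirect(direct, se_direct, indirect, se_indirect):
    """Test the discrepancy between a direct and an indirect estimate.

    Returns (difference, z statistic, two-sided p-value).
    """
    diff = direct - indirect
    se_diff = math.sqrt(se_direct ** 2 + se_indirect ** 2)
    z = diff / se_diff
    # Two-sided normal p-value via the complementary error function.
    p = math.erfc(abs(z) / math.sqrt(2.0))
    return diff, z, p


# Hypothetical log hazard ratios: later head-to-head trial vs. earlier ITC.
diff, z, p = compare_direct_indirect(-0.20, 0.11, -0.32, 0.16)
print(f"difference = {diff:.2f}, z = {z:.2f}, p = {p:.3f}")
```

A non-significant difference is weak reassurance when either estimate is imprecise, so the size of the discrepancy and its clinical relevance deserve as much attention as the p-value.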

ITC Method Selection Guide

Table 1: ITC Method Applications and Data Requirements

| Method | Best Application Context | Data Requirements | Key Assumptions |
|---|---|---|---|
| Bucher Method [11] | Two treatments with a common comparator | Aggregate data for both trials | Similarity between trials in effect modifiers |
| Network Meta-Analysis [1] | Multiple treatments with connected evidence network | Aggregate data for all trials | Consistency between direct and indirect evidence |
| Matching-Adjusted Indirect Comparison (MAIC) [1] | Single-arm trials or different comparators with IPD for one study | IPD for index treatment, aggregate for comparator | All effect modifiers are measured and included |
| Simulated Treatment Comparison (STC) [1] | Different comparators with IPD for one study | IPD for index treatment, aggregate for comparator | Appropriate model specification for outcome prediction |

Table 2: Prevalence of ITC Methods in Recent Submissions

| ITC Method | Frequency in Literature [1] | Use in HTA Submissions [15] | Regulatory Acceptance Level |

|---|---|---|---|

| Network Meta-Analysis | 79.5% | 51% | High |

| Matching-Adjusted Indirect Comparison | 30.1% | 27% | Moderate to High |

| Naïve Comparison | Not reported | 17% | Low |

| Bucher Method | 23.3% | Not reported | Moderate |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Methodological Components for ITC Analysis

| Component | Function | Implementation Examples |

|---|---|---|

| Systematic Review Protocol [1] | Ensures comprehensive, unbiased evidence identification | PRISMA guidelines, predefined search strategy and inclusion criteria |

| Statistical Software Packages | Enables implementation of complex ITC methods | R (gemtc, pcnetmeta), SAS, WinBUGS/OpenBUGS |

| Quality Assessment Tools | Evaluates risk of bias in included studies | Cochrane Risk of Bias tool, ISPOR checklist for ITC quality |

| Consistency Evaluation Methods | Assesses agreement between direct and indirect evidence | Side-splitting approach, node-splitting, inconsistency factors |

Indirect Treatment Comparisons represent a sophisticated methodological framework that continues to evolve in response to the complex evidence needs of healthcare decision-making [1] [13]. While not replacing direct evidence, ITCs provide valuable insights for comparative effectiveness assessment when head-to-head trials are unavailable [12]. The appropriate application of these methods requires careful consideration of the evidence structure, potential effect modifiers, and underlying assumptions [1] [11].

As treatment landscapes grow increasingly complex, particularly in oncology and rare diseases, the strategic use of ITCs will remain essential for informing reimbursement decisions and clinical understanding [15] [12]. Future methodological developments will likely focus on enhancing population adjustment methods, standardizing quality assessment, and improving the transparency and interpretation of ITC results for healthcare decision-makers [1] [13].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between the direct and indirect methods in research?

The core difference lies in how comparisons are made. A direct method involves a head-to-head comparison, such as a clinical trial that directly compares two interventions (Drug A vs. Drug B) or a keyword recommendation system that suggests terms based on a direct analysis of a target dataset's abstract text against keyword definitions [16] [11]. In contrast, an indirect method compares two items through a common link. For example, it compares the efficacy of Drug A vs. Drug B by analyzing their independent comparisons against a common control (like a placebo) [11] [17]. Similarly, in keyword recommendation, an indirect method would suggest keywords for a target dataset based on the keywords assigned to other, similar existing datasets [16].

Q2: Why are the assumptions of Similarity, Homogeneity, and Consistency critical for indirect comparisons?

These assumptions are the foundation for ensuring the validity of indirect comparisons. If they are not met, the results can be misleading or biased [18].

- Similarity ensures that the studies being linked are comparable enough for the comparison to be meaningful [18].

- Homogeneity confirms that the data within each comparison group is consistent, reducing the risk that internal variability is skewing the result [19] [18].

- Consistency validates the indirect approach by checking that its results align with any available direct evidence for the same comparison [18].

Q3: I have both direct and indirect evidence for a comparison. Should I combine them?

Combining direct and indirect evidence should be done with extreme caution and only after formally assessing the consistency between the two [18]. Combining evidence that is in conflict can lead to an invalid and misleading overall result. It is essential to investigate the causes of any inconsistency before proceeding [18].

Q4: What is a "naïve" indirect comparison, and why is it not recommended?

A naïve indirect comparison directly compares results from two different studies without adjusting for the fact that they were conducted separately with different populations and conditions. This approach "breaks" the original randomization of the individual studies and is considered as unreliable as a simple observational comparison, as it is highly susceptible to confounding and bias [11] [18]. The accepted approach is an adjusted indirect comparison, which preserves the within-trial randomization by comparing the relative effects of each intervention against a common comparator [11] [17].

Troubleshooting Guides

Issue 1: Suspected Violation of the Similarity Assumption

Problem: The trials or datasets you are attempting to link indirectly have fundamental differences that may invalidate the comparison.

Solution: Investigate and Test for Similarity

Compare Trial/Dataset Characteristics: Create a table to systematically compare key characteristics across all studies. This is a primary method for assessing the similarity assumption [18].

Table: Key Characteristics for Similarity Assessment

| Characteristic | Trial Set A vs. C | Trial Set B vs. C |

|---|---|---|

| Patient Demographics (e.g., mean age) | | |

| Disease Severity | | |

| Concomitant Medications | | |

| Trial Duration | | |

| Outcome Definitions | | |

Use Statistical Techniques: If differences are found, employ sensitivity analysis, subgroup analysis, or meta-regression to investigate how these characteristics influence the indirect comparison result [18].

Issue 2: Handling Heterogeneity in Your Data

Problem: The data within a single group (e.g., all trials comparing Drug A and Placebo) shows high variability, violating the homogeneity assumption.

Solution: Assess and Address Heterogeneity

- Visual Inspection: Use forest plots to visually assess the variability (heterogeneity) in effect sizes across studies [20].

- Statistical Tests: Use formal statistical tests to quantify heterogeneity, such as the I² statistic or Cochran's Q test [18].

- Investigate Sources: If significant heterogeneity is found, do not ignore it. Use subgroup analysis or meta-regression to identify its potential causes, such as differences in drug dosage or patient subgroups [18].

- Model Selection: Consider using a random-effects model for your meta-analysis, which inherently accounts for some degree of heterogeneity between studies, as opposed to a fixed-effect model which assumes a single true effect size [18].
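The heterogeneity checks listed above can be sketched in a few lines. This is a minimal illustration, assuming each study contributes an effect estimate and its variance; the function name `heterogeneity_summary`, the DerSimonian-Laird estimator for the between-study variance, and the input numbers are illustrative choices, not taken from the cited sources.

```python
import math

def heterogeneity_summary(effects, variances):
    """Cochran's Q, I^2 (%), DerSimonian-Laird tau^2, and pooled estimates."""
    k = len(effects)
    w = [1.0 / v for v in variances]                       # inverse-variance weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0    # share of variability beyond chance
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0        # between-study variance (DL)
    w_re = [1.0 / (v + tau2) for v in variances]           # random-effects weights
    random_effect = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    return {
        "q": q, "df": df, "i2": i2, "tau2": tau2,
        "fixed": fixed, "random": random_effect,
        "se_random": math.sqrt(1.0 / sum(w_re)),
    }

# Hypothetical log odds ratios and variances from four A-vs-placebo trials.
summary = heterogeneity_summary([-0.5, -0.1, -0.8, -0.3], [0.04, 0.05, 0.06, 0.03])
print({name: round(value, 3) for name, value in summary.items()})
```

When the estimated between-study variance is zero, the random-effects and fixed-effect estimates coincide; as it grows, the random-effects weights become more equal across studies and the pooled standard error widens.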

Issue 3: Inconsistency Between Direct and Indirect Evidence

Problem: The result from your indirect comparison conflicts with the result from a head-to-head (direct) comparison of the same two interventions.

Solution: Assess and Reconcile Inconsistency

- Formal Testing: Use proposed statistical methods to test for consistency between direct and indirect evidence [18].

- Prioritize and Investigate: Do not combine conflicting evidence. If a direct comparison is available from high-quality, well-designed randomized trials, it is generally considered the highest standard of evidence [18]. Scrutinize the direct evidence for potential biases (e.g., inadequate blinding, allocation concealment) that may exaggerate treatment effects [17]. Also, re-check the similarity and homogeneity assumptions in your indirect comparison.

- Report with Caution: Clearly report the inconsistency and urge caution in interpreting the results. State whether a result is derived from indirect evidence [18].
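One simple form of the formal test mentioned above compares the direct and indirect estimates with a z-test. The sketch below assumes both estimates are on the same additive scale (for example, log odds ratios) and come from non-overlapping sets of trials, so their variances can be added; the function name and inputs are hypothetical.

```python
import math
from statistics import NormalDist

def inconsistency_test(direct, se_direct, indirect, se_indirect):
    """z-test for the difference between a direct and an indirect estimate.

    Assumes the two estimates are statistically independent.
    """
    diff = direct - indirect
    se_diff = math.sqrt(se_direct ** 2 + se_indirect ** 2)
    z = diff / se_diff
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))           # two-sided
    return diff, se_diff, z, p_value

# Hypothetical log odds ratios for A vs. B.
diff, se_diff, z, p = inconsistency_test(direct=-0.40, se_direct=0.15,
                                         indirect=-0.10, se_indirect=0.25)
print(f"difference={diff:.2f}, z={z:.2f}, p={p:.3f}")
```

A non-significant result does not prove consistency, because such tests often have low power; the size of the difference and its clinical plausibility should be weighed alongside the p-value.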

Protocol 1: Conducting an Adjusted Indirect Comparison

This protocol outlines the steps for comparing two interventions, A and B, via a common comparator C [11].

Objective: To estimate the relative efficacy of Intervention A versus Intervention B using adjusted indirect comparison.

Methodology:

- Identify Trial Sets: Systematically identify two sets of randomized controlled trials (RCTs):

- Set 1: RCTs comparing Intervention A vs. Common Comparator C.

- Set 2: RCTs comparing Intervention B vs. Common Comparator C.

- Calculate Relative Effects: For each trial set, calculate the pooled relative treatment effect (e.g., risk ratio, mean difference) and its variance against the common comparator C.

- Perform Indirect Comparison: The indirect estimate of A vs. B is obtained by contrasting the two pooled effects, each measured against C.

- For continuous data (e.g., blood glucose), take the difference of the mean differences: (A - C) - (B - C)

- For binary data (e.g., response rate), take the ratio of the relative effects: (A / C) / (B / C), which is a difference on the logarithmic scale

- Calculate Variance: The variance of the indirect estimate (A vs. B) is the sum of the variances of the two direct comparisons (A vs. C and B vs. C). For ratio measures, these are the variances of the log-transformed effects. This leads to greater uncertainty than a direct head-to-head trial [11].

Table: Hypothetical Example of Adjusted Indirect Comparison (Continuous Data)

| Trial Set | Observed Change (vs. C) | Variance |

|---|---|---|

| A vs. C | -3.0 mmol/L | 1.0 |

| B vs. C | -2.0 mmol/L | 1.0 |

| Adjusted Indirect Comparison (A vs. B) | -1.0 mmol/L | 2.0 |
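The arithmetic in the hypothetical table can be reproduced with a short sketch. The function name `adjusted_indirect` and the normal-approximation confidence interval are illustrative assumptions; the inputs are the values from the table.

```python
import math
from statistics import NormalDist

def adjusted_indirect(effect_ac, var_ac, effect_bc, var_bc, level=0.95):
    """Adjusted indirect comparison of A vs. B through common comparator C."""
    estimate = effect_ac - effect_bc          # (A - C) - (B - C)
    variance = var_ac + var_bc                # variances of the two comparisons add
    z = NormalDist().inv_cdf(0.5 + level / 2)
    half_width = z * math.sqrt(variance)
    return estimate, variance, (estimate - half_width, estimate + half_width)

estimate, variance, ci = adjusted_indirect(-3.0, 1.0, -2.0, 1.0)
# estimate is -1.0 mmol/L and variance is 2.0, matching the table
print(estimate, variance, tuple(round(bound, 2) for bound in ci))
```

Because the variance doubles relative to either input comparison, the interval around the indirect estimate is wide enough here to include zero even though each drug differs from C by 2 to 3 mmol/L.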

Protocol 2: Assessing Homogeneity of Variance

Objective: To test the assumption that variability is equal across groups, a requirement for many statistical tests like ANOVA [20] [21].

Methodology:

- Formulate Hypotheses:

- Null Hypothesis (H₀): Variances across all groups are equal (homogeneous).

- Alternative Hypothesis (H₁): At least one group's variance is different.

- Select a Statistical Test:

- Interpret Results: A resulting p-value below your significance level (e.g., 0.05) provides evidence to reject the null hypothesis, indicating heterogeneous variances. In this case, the standard ANOVA may not be appropriate, and alternative procedures (e.g., Welch's ANOVA, data transformation) should be considered.
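The protocol does not name a specific test. As one possible choice, and purely as an assumption here, the sketch below computes the statistic of Levene's test with median centering (the Brown-Forsythe variant) using only the standard library. The function name and the data are hypothetical.

```python
from statistics import mean, median

def brown_forsythe_w(*groups):
    """Levene-type W statistic using absolute deviations from each group median."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    # Absolute deviation of every observation from its own group median.
    z = [[abs(y - median(g)) for y in g] for g in groups]
    z_means = [mean(zi) for zi in z]
    z_grand = sum(sum(zi) for zi in z) / n_total
    between = sum(len(zi) * (zm - z_grand) ** 2 for zi, zm in zip(z, z_means))
    within = sum((v - zm) ** 2 for zi, zm in zip(z, z_means) for v in zi)
    return (n_total - k) / (k - 1) * between / within

# Hypothetical outcome measurements in three groups.
w_stat = brown_forsythe_w([4.1, 5.0, 5.9, 5.2], [3.0, 7.5, 1.2, 9.8], [5.1, 4.9, 5.3, 5.0])
print(round(w_stat, 3))
```

Under the null hypothesis, W is referred to an F distribution with (k - 1, N - k) degrees of freedom. The standard library has no F distribution, so a p-value would come from tables or from a statistics package; for example, `scipy.stats.levene(*groups, center="median")` returns both the statistic and the p-value.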

Method Comparison & Visualization

Table: Comparison of Direct and Indirect Method Characteristics

| Feature | Direct Method | Indirect Method |

|---|---|---|

| Core Principle | Head-to-head comparison of A vs. B [11] | Comparison of A vs. B via a common link C [11] |

| Key Assumption | Proper randomization and blinding to minimize bias | Similarity, Homogeneity, Consistency [18] |

| Primary Advantage | Considered the highest quality evidence; avoids similarity assumption [18] | Can be performed when head-to-head trials are unavailable [11] |

| Primary Disadvantage | Expensive and time-consuming to conduct [11] | Increased statistical uncertainty; relies on untestable assumptions [11] [18] |

| Application in Keyword Research | Recommends keywords by matching a dataset's abstract directly to keyword definitions [16] | Recommends keywords based on terms assigned to similar existing datasets [16] |

Indirect Comparison Logic

Core Assumptions for Validity

The Scientist's Toolkit: Key Reagents & Materials

Table: Essential "Reagents" for Robust Research Comparisons

| Item | Function |

|---|---|

| Systematic Review Protocol | Ensures a comprehensive and unbiased identification of all relevant studies (trial sets), forming a reliable foundation for any comparison [18]. |

| Common Comparator (C) | The crucial "reagent" that links two interventions (A and B) in an indirect comparison. It must be a relevant and consistent standard (e.g., placebo, standard therapy) across trial sets [11]. |

| Statistical Software (e.g., R, Python) | Used to perform pooled meta-analyses, calculate indirect estimates and their confidence intervals, and run critical tests for homogeneity and consistency [18]. |

| Quality Assessment Tool (e.g., Cochrane RoB Tool) | A "calibration" tool to assess the methodological rigor of included trials, helping to identify potential biases that could skew results [18]. |

| Pre-specified Analysis Plan | A detailed protocol that defines all assumptions, statistical methods, and subgroup analyses before conducting the comparison, guarding against data dredging and spurious findings [18]. |

What is an Indirect Treatment Comparison and when is it necessary?

Answer: An Indirect Treatment Comparison (ITC) is a statistical methodology used to compare the efficacy or safety of multiple treatments when direct, head-to-head evidence from randomized controlled trials (RCTs) is unavailable or impractical to obtain [1] [22] [23]. These methods are essential in several scenarios:

- Lack of Direct Evidence: When two treatments of interest have never been directly compared in an RCT [1] [13].

- Ethical or Practical Constraints: In situations where conducting a direct RCT would be unethical (e.g., for life-threatening diseases) or impractical (e.g., for rare diseases with low patient numbers) [1] [23].

- Multiple Comparators: When multiple treatment options exist, and comparing all of them directly in trials would be prohibitively expensive or time-consuming [1] [22].

HTA agencies express a clear preference for RCTs, but ITCs provide valuable alternative evidence where direct comparative evidence is missing [1] [13] [23].

What is the fundamental difference between a 'naïve' comparison and an 'adjusted' ITC?

Answer: A 'naïve' comparison (or unadjusted comparison) directly compares study arms from different trials as if they were from the same RCT. This approach is generally avoided because it is highly susceptible to bias from confounding factors, particularly imbalances in effect-modifying patient characteristics between trials. The treatment effect may be significantly over- or under-estimated [1] [13].

In contrast, 'adjusted' ITC techniques are statistically rigorous methods designed to account for the lack of randomization between trials. They respect the randomization that occurred within each trial and aim to minimize bias by adjusting for differences in study populations and characteristics. All modern ITC techniques, including those discussed in this guide, are forms of adjusted indirect comparisons [1].

Core ITC Methods: From Foundational to Advanced

This section details the key ITC methodologies, ordered from foundational to more complex, population-adjusted techniques.

The Bucher Method

Answer: The Bucher method is a foundational adjusted indirect comparison technique for a simple three-treatment network where two interventions (B and C) have been compared to a common comparator (A) but not to each other [24] [22].

- Methodology: The indirect effect estimate for B vs. C is calculated as the difference between the direct effect of B vs. A and the direct effect of C vs. A. The variance of the indirect estimate is the sum of the variances of the two direct comparisons. For ratio measures like Odds Ratios or Hazard Ratios, calculations are performed on a logarithmic scale [24].

- Key Assumption: It relies on the transitivity assumption, meaning that the trials used for the direct comparisons (A vs. B and A vs. C) are sufficiently similar with respect to potential effect modifiers (e.g., patient characteristics, trial design) [24].

- Applicability: It is recommended for simple networks and produces results identical to a frequentist Network Meta-Analysis for the same three-treatment network [24].

Table: Summary of the Bucher Method

| Aspect | Description |

|---|---|

| Network Structure | Three treatments (A, B, C) with a common comparator A. |

| Input Data | Aggregate data (e.g., effect estimates and confidence intervals) from the direct comparisons A vs. B and A vs. C. |

| Output | An indirect effect estimate and confidence interval for the comparison B vs. C. |

| Key Strength | Simplicity and ease of use; provides a valid indirect estimate for a common scenario [24]. |

| Key Limitation | Limited to a simple three-treatment network and cannot incorporate direct evidence on B vs. C if it becomes available [22]. |
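For ratio measures, the Bucher calculation can be sketched from published aggregate data alone. The example assumes each trial reports a hazard ratio with a 95% confidence interval that is symmetric on the log scale, so the standard error can be recovered from the interval width; the function names and input values are hypothetical.

```python
import math
from statistics import NormalDist

Z95 = NormalDist().inv_cdf(0.975)

def log_effect_and_se(ratio, ci_low, ci_high):
    """Recover the log effect and its standard error from a reported 95% CI."""
    return math.log(ratio), (math.log(ci_high) - math.log(ci_low)) / (2 * Z95)

def bucher_ratio(ratio_ba, ci_ba, ratio_ca, ci_ca):
    """Indirect B vs. C ratio from B vs. A and C vs. A (common comparator A)."""
    log_ba, se_ba = log_effect_and_se(ratio_ba, *ci_ba)
    log_ca, se_ca = log_effect_and_se(ratio_ca, *ci_ca)
    log_bc = log_ba - log_ca                        # difference on the log scale
    se_bc = math.sqrt(se_ba ** 2 + se_ca ** 2)      # variances add
    ci = (math.exp(log_bc - Z95 * se_bc), math.exp(log_bc + Z95 * se_bc))
    return math.exp(log_bc), ci

# Hypothetical hazard ratios: B vs. A = 0.60 (0.45-0.80), C vs. A = 0.80 (0.62-1.03).
hr_bc, ci_bc = bucher_ratio(0.60, (0.45, 0.80), 0.80, (0.62, 1.03))
print(round(hr_bc, 2), tuple(round(bound, 2) for bound in ci_bc))
```

Working on the log scale keeps the interval symmetric around the log estimate and guarantees that the back-transformed bounds stay positive.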

Network Meta-Analysis (NMA)

Answer: Network Meta-Analysis is an extension of the Bucher method that allows for the simultaneous comparison of multiple treatments (typically more than three) within a single, coherent statistical model. It integrates both direct and indirect evidence across an entire network of treatments [22] [23].

- Methodology: NMA uses all available direct and indirect evidence to estimate the relative effects between all treatments in the network. It can be conducted within frequentist or Bayesian statistical frameworks. A key output is the relative ranking of treatments for a given outcome [22].

- Key Assumption: NMA requires the consistency assumption, which is an extension of transitivity to more complex networks. This means that direct and indirect evidence on the same comparison are in agreement [22].

- Applicability: NMA is the most frequently described ITC technique and is suitable for connected networks of evidence where multiple treatments have been compared in various combinations [1] [23].

Table: Summary of Network Meta-Analysis (NMA)

| Aspect | Description |

|---|---|

| Network Structure | Complex networks with multiple treatments and both direct and indirect connections. |

| Input Data | Aggregate data from all available trials in the network. |

| Output | Relative effect estimates for all possible treatment pairings and often treatment rankings. |

| Key Strength | Maximizes the use of all available evidence; provides a comprehensive overview of a treatment landscape [1] [22]. |

| Key Limitation | Increased complexity; requires careful assessment of consistency and network geometry [22]. |

Population-Adjusted Indirect Comparisons (MAIC and STC)

Answer: When the transitivity assumption is violated due to differences in patient characteristics (effect modifiers) between trials, standard ITCs may be biased. Population-adjusted methods use Individual Patient Data (IPD) from one trial and aggregate data from another to adjust for these imbalances [25].

Matching-Adjusted Indirect Comparison (MAIC)

- Methodology: MAIC uses a method similar to propensity score weighting. IPD from one trial is re-weighted so that the distribution of its selected baseline characteristics matches the published summary characteristics of the other trial. The analysis is then performed on the weighted population [25] [23].

- Applicability: Particularly common in submissions for oncology and rare diseases, where single-arm studies are frequent [1] [23].
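The re-weighting step can be illustrated with a deliberately small sketch. It assumes a single baseline covariate and method-of-moments weights of the form exp(alpha * (x - target mean)), solved with Newton's method; real analyses typically balance several effect modifiers at once with a numerical optimizer. All names and data below are hypothetical.

```python
import math

def maic_weights(x, target_mean, tol=1e-10, max_iter=100):
    """Weights that make the weighted mean of x equal the published target mean."""
    if not (min(x) < target_mean < max(x)):
        raise ValueError("target mean lies outside the IPD range: no overlap")
    centered = [xi - target_mean for xi in x]
    alpha = 0.0
    for _ in range(max_iter):
        w = [math.exp(alpha * c) for c in centered]
        grad = sum(c * wi for c, wi in zip(centered, w))       # zero when balanced
        hess = sum(c * c * wi for c, wi in zip(centered, w))
        step = grad / hess
        alpha -= step                                          # Newton update
        if abs(step) < tol:
            break
    return [math.exp(alpha * c) for c in centered]

def weighted_mean(values, weights):
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)

# Hypothetical IPD: age and a continuous outcome; comparator trial reports mean age 58.
ages = [50, 55, 60, 65, 70]
outcomes = [1.2, 1.0, 0.9, 0.7, 0.4]
weights = maic_weights(ages, target_mean=58.0)
print(round(weighted_mean(ages, weights), 4))      # re-weighted mean age
print(round(weighted_mean(outcomes, weights), 3))  # outcome in the matched population
```

The re-weighted outcome would then be contrasted with the comparator trial's published result, ideally relative to a common comparator arm, with standard errors that account for the weighting.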

Simulated Treatment Comparison (STC)

- Methodology: STC uses regression modeling on the IPD to model the relationship between patient characteristics and the outcome. This model is then applied to the aggregate data population of the other trial to predict the treatment outcome [25].

- Applicability: Like MAIC, it is used when IPD is available for only one of the studies being compared [25].

A critical distinction is between anchored and unanchored comparisons. An anchored comparison uses a common comparator arm and is always preferred. An unanchored comparison, which lacks a common comparator, requires much stronger, often infeasible, assumptions and should be used with extreme caution [25].

Table: Comparison of MAIC and STC

| Aspect | Matching-Adjusted Indirect Comparison (MAIC) | Simulated Treatment Comparison (STC) |

|---|---|---|

| Core Methodology | Propensity score re-weighting of IPD. | Regression modeling on IPD, then prediction. |

| Data Requirement | IPD from one trial; aggregate data from the other. | IPD from one trial; aggregate data from the other. |

| Adjustment Mechanism | Creates a pseudo-population from the IPD that matches the aggregate trial's covariates. | Models the outcome conditional on covariates in the IPD and applies it to the aggregate population. |

| Key Strength | Does not require specifying an outcome model; focuses on balancing covariates. | Can potentially adjust for a wider range of effect modifiers if correctly specified. |

| Key Limitation | Can lead to reduced effective sample size and precision after weighting [25]. | Relies on correct specification of the outcome model, risking ecological bias [25]. |

The Researcher's Toolkit: ITC Method Selection and Reagents

How do I select the appropriate ITC method for my research?

Answer: The choice of ITC technique is critical and should be guided by the available evidence and the structure of your research question [1]. The following workflow provides a logical path for selecting the most appropriate method.

What are the essential "research reagents" for conducting a robust ITC?

Answer: Just as a laboratory experiment requires specific reagents, conducting a valid ITC depends on key methodological components.

Table: Essential "Research Reagents" for ITCs

| Research Reagent | Function and Importance |

|---|---|

| Systematic Literature Review | Forms the foundation by identifying all relevant evidence. Ensures the analysis is comprehensive and minimizes selection bias [1]. |

| Connected Evidence Network | The structure of available comparisons. A connected network (e.g., via a common comparator) is essential for anchored, bias-reduced comparisons [24] [25]. |

| Individual Patient Data (IPD) | The "gold standard" data for population-adjusted methods like MAIC and STC. Allows for detailed adjustment of patient-level covariates [25]. |

| Risk of Bias Assessment Tool | Critical for evaluating the internal validity of the included trials. The certainty of an ITC cannot exceed that of the input studies [24]. |

| Statistical Software (R, Stata, SAS) | Platforms with specialized packages (e.g., gemtc, BUGSnet for R) are necessary for performing complex analyses like NMA and MAIC [24]. |

Troubleshooting Common ITC Experimental Challenges

How do I assess the validity of the transitivity assumption?

Answer: Assessing transitivity is a qualitative process performed before the statistical analysis. It involves comparing the clinical and methodological characteristics of the included trials to ensure they are sufficiently similar [24].

- Protocol: Follow these steps:

- List Potential Effect Modifiers: Identify patient or trial characteristics (e.g., disease severity, age, prior lines of therapy, outcome definitions) that are known to influence the treatment effect.

- Compare Study Populations: Create a table comparing the summary statistics (means, proportions) for these characteristics across all trials.

- Compare Trial Designs: Assess differences in dosage, follow-up time, and randomization procedures.

- Expected Outcome: No major, systematic differences in key effect modifiers between the trials. The presence of statistical heterogeneity in pairwise meta-analyses may signal a violation of transitivity [24].

What should I do if my network has a closed loop and I suspect inconsistency?

Answer: Inconsistency occurs when direct and indirect evidence for the same treatment comparison disagree. It violates the key assumption of NMA.

- Protocol:

- Local Approaches: Use statistical tests, such as the Bucher method for a single closed loop, to check if the direct and indirect estimates are in agreement.

- Global Approaches: Employ statistical models that can detect inconsistency across the entire network (e.g., inconsistency models in NMA software).

- Investigate Sources: If inconsistency is found, explore clinical or methodological differences between the studies in the direct and indirect pathways that might explain the disagreement.

- Solution: If inconsistency is detected and explained, consider using network meta-regression or subgroup analysis to account for the effect modifier causing the inconsistency [22].

My MAIC analysis resulted in a very small effective sample size. What went wrong?

Answer: A large reduction in effective sample size (ESS) after weighting is a common issue in MAIC, indicating poor "population overlap."

- Problem: This occurs when the patient population in the IPD trial is very different from the aggregate trial's population. The weighting model assigns very high weights to a small number of patients who resemble the target population, making the estimate unstable and imprecise [25].

- Solutions:

- Re-evaluate Covariates: Ensure you are only weighting on true effect modifiers, not all available covariates.

- Check Overlap: Graphically explore the distribution of the estimated weights, and of key covariates before and after weighting, to assess overlap.

- Consider Alternatives: If ESS is too low, the results may be unreliable. Consider using STC or clearly acknowledging the high uncertainty in your conclusions.
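A quick diagnostic for the problem described above is the effective sample size, commonly approximated as the squared sum of the weights divided by the sum of squared weights. The sketch below assumes the MAIC weights have already been estimated; the function names and the weight vectors are hypothetical.

```python
def effective_sample_size(weights):
    """Approximate ESS: (sum of weights)^2 / (sum of squared weights)."""
    total = sum(weights)
    return total * total / sum(w * w for w in weights)

def max_weight_share(weights):
    """Fraction of the total weight carried by the single most influential patient."""
    return max(weights) / sum(weights)

# Hypothetical weights: one well-balanced analysis and one dominated by a few patients.
balanced = [0.9, 1.1, 1.0, 0.8, 1.2, 1.0]
dominated = [0.01, 0.02, 0.01, 5.0, 0.03, 4.0]
for label, w in (("balanced", balanced), ("dominated", dominated)):
    print(label, round(effective_sample_size(w), 2), round(max_weight_share(w), 2))
```

An ESS close to the original sample size suggests good overlap, while an ESS that collapses to a handful of patients means the estimate rests on very few individuals and should be reported with that caveat.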

How is the certainty of evidence from an ITC evaluated?

Answer: The certainty of evidence from an ITC should be formally graded using approaches like GRADE (Grading of Recommendations, Assessment, Development, and Evaluations). Key considerations specific to ITCs include [24]:

- Indirectness: The evidence is by definition indirect, which typically lowers the certainty rating.

- Intransitivity: If there are concerns about violations of the transitivity assumption, the certainty should be downgraded.

- Incoherence/Inconsistency: The presence of statistical inconsistency between direct and indirect evidence lowers certainty.

- Imprecision: The confidence intervals of the ITC estimate are often wider than those from direct comparisons, which may lead to downgrading for imprecision.

The final certainty of the indirect evidence cannot be higher than the lowest certainty of the two direct comparisons that contributed to it [24].

Executing the Analysis: A Practical Guide to Key Indirect Comparison Methodologies

Frequently Asked Questions (FAQs)

1. What is the core difference between Frequentist and Bayesian statistics?

The core difference lies in how they interpret "probability." Frequentist statistics view probability as the long-term frequency of an event occurring. For example, a Frequentist would say that if you flip a fair coin countless times, the probability of heads is 50% because it lands heads half the time. Bayesian statistics, however, treat probability as a measure of belief or plausibility in a proposition. A Bayesian would be comfortable stating there is a 50% chance a coin will land on heads on the next toss based on their current state of knowledge [26].

2. How do the approaches differ in incorporating prior knowledge or beliefs?

This is a major point of divergence. The Bayesian approach formally incorporates prior knowledge or existing beliefs into the analysis. You start with a "prior" probability, which is then updated with new experimental data to form a "posterior" probability [26]. The Frequentist approach typically does not formally incorporate prior beliefs. It relies solely on the data from the current experiment, operating under an initial assumption of no effect (the null hypothesis) [26].

3. In plain English, how does the reasoning differ?

Imagine you've misplaced your phone somewhere in your home and you press a button to make it beep [27].

- A Frequentist hears the beep and uses that data (the sound) to infer the area they should search.

- A Bayesian also hears the beep, but they combine this information with their prior knowledge of where they have misplaced their phone in the past to identify the best area to search [27].

4. When should I use a Frequentist approach for my experiments?

A Frequentist approach is often suitable when [26]:

- You have no strong prior knowledge or historical data on the subject.

- You need a straightforward, objective measure of statistical significance (like a p-value).

- You can collect a large sample size to ensure strong statistical power.

- Your research field or regulatory context traditionally relies on p-values and confidence intervals.

5. When is a Bayesian approach more beneficial?

A Bayesian approach is particularly powerful when [26]:

- You have valuable prior information from previous experiments, pilot studies, or literature that you want to incorporate.

- You are working with limited data, as the prior information can lead to more informed estimates.

- You want to make direct probability statements about your hypotheses (e.g., "There is an 85% probability that the new drug is better").

- Your experiment is part of an iterative process, and you want to continuously update your understanding as new data streams in.

6. Can you give an example of how both methods would work in an A/B test?

Suppose you are A/B testing a new website feature to improve user engagement [26].

- Frequentist: You would start with a null hypothesis (the new feature has no effect). After collecting data, you calculate a p-value. If the p-value is below a threshold (e.g., 0.05), you reject the null hypothesis and conclude there is a statistically significant difference.

- Bayesian: You would start with a prior belief about the feature's effectiveness (e.g., a 60% chance it improves engagement). As user interaction data comes in, you update this belief to a posterior probability (e.g., "Given the new data, there is now a 78% chance the feature improves engagement").
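The Bayesian side of this example can be sketched with a Beta-Binomial model. This is one common formulation and an assumption here: it places a prior on each variant's engagement rate (uniform Beta(1, 1) by default) rather than directly on the hypothesis, and estimates the posterior probability that B beats A by simulation. The function name and counts are hypothetical.

```python
import random

def prob_b_beats_a(conv_a, n_a, conv_b, n_b, prior=(1.0, 1.0), draws=20000, seed=0):
    """Monte Carlo estimate of P(rate_B > rate_A | data) under Beta priors."""
    rng = random.Random(seed)                 # fixed seed for reproducibility
    a0, b0 = prior
    wins = 0
    for _ in range(draws):
        # Posterior of each rate is Beta(prior successes + conversions, prior failures + misses).
        theta_a = rng.betavariate(a0 + conv_a, b0 + n_a - conv_a)
        theta_b = rng.betavariate(a0 + conv_b, b0 + n_b - conv_b)
        wins += theta_b > theta_a
    return wins / draws

# Hypothetical data: 120/1000 engaged users on A, 140/1000 on B.
print(round(prob_b_beats_a(120, 1000, 140, 1000), 3))
```

An informative prior, for example one built from earlier experiments, can be passed through `prior`; with little data the prior has a visible influence on the posterior, and with large samples the data dominate.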

Troubleshooting Guide: Common Issues in Statistical Analysis

Problem 1: Inconclusive or Borderline Significant Results

Symptoms: Your p-value is hovering around the 0.05 significance level, making it difficult to draw a firm conclusion. Alternatively, your Bayesian posterior probability is around 50-60%, indicating high uncertainty.

Diagnosis and Solutions:

- Check Your Sample Size:

- Issue: The experiment may be underpowered.

- Action: Conduct a power analysis to determine if your sample size was sufficient to detect a meaningful effect. Plan to increase your sample size if necessary [26].

- Review Your Experimental Design:

- Issue: High variability in your measurements could be obscuring the true effect.

- Action: Use blocking, randomization, and controlled conditions to reduce unexplained variance. Ensure your measurement tools are precise [28].

- Consider a Different Approach:

- Action: If you have prior data, consider switching to or incorporating a Bayesian framework. This allows you to formally use the prior information to reduce uncertainty in your estimates [26].
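The power analysis mentioned under "Check Your Sample Size" can be sketched for the common case of comparing two proportions. The formula below is the standard normal-approximation sample size for a two-sided test with equal allocation; the function name and the example rates are hypothetical.

```python
import math
from statistics import NormalDist

def sample_size_per_arm(p1, p2, alpha=0.05, power=0.80):
    """Approximate subjects per arm to detect p1 vs. p2 with a two-sided z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p1 - p2) ** 2)

# Hypothetical response rates of 10% vs. 12%.
print(sample_size_per_arm(0.10, 0.12))
print(sample_size_per_arm(0.10, 0.12, power=0.90))
```

Comparing the required size with the sample actually collected gives a quick indication of whether a borderline result is more likely to reflect an underpowered experiment than a true absence of effect.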

Problem 2: Results Contradict Prior Knowledge or Established Theory

Symptoms: Your experiment shows a surprising effect that seems to defy logical explanation or previous findings.

Diagnosis and Solutions:

- Troubleshoot the Experiment Itself:

- Action: Systematically check your experimental setup. Were the appropriate controls in place and did they behave as expected? Could there be contamination, instrument miscalibration, or a software bug? Propose new control experiments to isolate the source of the unexpected result [28].

- Re-evaluate Your Assumptions:

- Check for Confounding Variables:

- Action: Identify factors you couldn't control but could measure. In your analysis, treat these factors as covariates to account for their potential influence [29].

Problem 3: Deciding Between Methods for a New, Complex Experiment

Symptoms: You are designing a novel experiment and are unsure whether a Frequentist or Bayesian framework is more appropriate.

Diagnosis and Solutions:

- Define Your Goal:

- If your goal is to test a specific null hypothesis and provide a strict "yes/no" answer about an effect's existence, a Frequentist approach is straightforward.

- If your goal is to quantify the evidence for a hypothesis and update your beliefs iteratively, a Bayesian approach is more natural [26].

- Assess Your Available Information:

- Action: Use the decision table in the next section to evaluate your context, data availability, and need for incorporating prior knowledge.

Comparison of Frameworks

The table below provides a structured comparison to help you choose the right statistical framework for your experimental analysis [26].

| Aspect | Frequentist Approach | Bayesian Approach |
|---|---|---|
| Definition of Probability | Long-term frequency of an event | Degree of belief or plausibility in a proposition |
| Incorporation of Prior Knowledge | Not formalized; relies only on current data | Formalized via "prior" probabilities |
| Output of Analysis | Point estimates, Confidence Intervals, p-values | Posterior distributions, Credible Intervals |
| Interpretation of Results | "If the null hypothesis were true, the probability of observing data this extreme is X (p-value)." | "The probability of our hypothesis being true, given the collected data, is X." |
| Ideal Use Case Context | Novel research with no prior data, traditional hypothesis testing, regulatory settings | Iterative optimization, incorporating historical data, making direct probability statements |
| Sample Size | Often requires larger sample sizes | Can provide insights with smaller sample sizes when prior information is strong |

Experimental Protocols and Workflows

Protocol 1: Conducting a Frequentist A/B Test

Aim: To determine if there is a statistically significant difference between two variants (A and B) using a Frequentist hypothesis test.

Methodology:

- Formulate Hypotheses:

- Null Hypothesis (H₀): There is no difference in the performance metric between variant A and B.

- Alternative Hypothesis (H₁): There is a difference in the performance metric between variant A and B.

- Design Experiment: Determine the primary metric (e.g., conversion rate), significance level (α, typically 0.05), and desired statistical power. Calculate the required sample size.

- Randomize and Execute: Randomly assign subjects to either variant A or B. Run the experiment until the required sample size is reached.

- Collect Data: Record the outcome metric for all subjects.

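- Perform Statistical Test: Calculate a test statistic suited to the metric (e.g., a t-statistic for a continuous outcome, or a z-statistic for a difference in proportions such as conversion rates) and the corresponding p-value.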

- Draw Conclusion: If p-value ≤ α, reject the null hypothesis and conclude a significant difference exists. If p-value > α, fail to reject the null hypothesis.
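The steps above can be sketched for a conversion-rate metric as follows. This is a minimal illustration using only the Python standard library: it assumes a two-sided two-proportion z-test with a pooled standard error and a normal-approximation sample size formula, and the function names and example counts are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist

STANDARD_NORMAL = NormalDist()


def sample_size_per_arm(p_a, p_b, alpha=0.05, power=0.80):
    """Approximate subjects needed per arm to detect p_a vs. p_b (two-sided)."""
    z_alpha = STANDARD_NORMAL.inv_cdf(1 - alpha / 2)
    z_beta = STANDARD_NORMAL.inv_cdf(power)
    variance = p_a * (1 - p_a) + p_b * (1 - p_b)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_a - p_b) ** 2)


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for H0: both conversion rates are equal."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # rate under the null hypothesis
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - STANDARD_NORMAL.cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 100/1000 conversions for A, 130/1000 for B.
alpha = 0.05
z, p_value = two_proportion_z_test(100, 1000, 130, 1000)
decision = "reject H0" if p_value <= alpha else "fail to reject H0"
print(f"z = {z:.3f}, p = {p_value:.4f}, decision: {decision}")
print("planned size per arm:", sample_size_per_arm(0.10, 0.13))
```

The sample size should be fixed before the experiment starts; checking the p-value repeatedly and stopping as soon as it drops below α inflates the false-positive rate.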

Protocol 2: Implementing a Bayesian Analysis

Aim: To update the belief about a parameter (e.g., conversion rate) by combining prior knowledge with new experimental data.

Methodology:

- Define Prior Probability: Quantify existing knowledge or belief about the parameter before the experiment as a probability distribution (the "Prior").

- Collect Data: Run the experiment and collect new data, just as in the Frequentist protocol.

- Apply Bayes' Theorem: Use the theorem to combine the prior distribution with the new data (the "Likelihood").

- Compute Posterior Probability: The result of Bayes' Theorem is the "Posterior" distribution, which represents your updated belief about the parameter.

- Interpret Results: Analyze the posterior distribution. You can calculate credible intervals (e.g., "There is a 95% probability the true value lies within this interval") and make direct probability statements.
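For a conversion rate, the protocol above has a convenient closed form: a Beta prior combined with binomial data gives a Beta posterior. The sketch below assumes that conjugate Beta-Binomial model and approximates the credible interval by sampling from the posterior with the standard library; the function names, the uniform Beta(1, 1) prior, and the example counts are illustrative assumptions.

```python
import random


def beta_binomial_update(prior_alpha, prior_beta, successes, failures):
    """Combine a Beta(prior_alpha, prior_beta) prior with binomial data."""
    return prior_alpha + successes, prior_beta + failures


def posterior_summary(alpha, beta, level=0.95, draws=20000, seed=0):
    """Posterior mean (exact) and equal-tailed credible interval (sampled)."""
    rng = random.Random(seed)
    samples = sorted(rng.betavariate(alpha, beta) for _ in range(draws))
    lower = samples[int((1 - level) / 2 * draws)]
    upper = samples[int((1 + level) / 2 * draws) - 1]
    return {"mean": alpha / (alpha + beta), "interval": (lower, upper)}


# Hypothetical experiment: 30 conversions out of 100 subjects, uniform prior.
post_alpha, post_beta = beta_binomial_update(1, 1, successes=30, failures=70)
summary = posterior_summary(post_alpha, post_beta)
print(f"posterior: Beta({post_alpha}, {post_beta})")
print(f"posterior mean: {summary['mean']:.3f}")
print("95% credible interval: ({:.3f}, {:.3f})".format(*summary["interval"]))
```

An informative prior would simply use larger starting values, for example Beta(20, 80) to encode historical data suggesting a rate near 20%.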

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Tool | Function in Analysis |
|---|---|
| P-value | A Frequentist metric measuring the probability of observing the collected data (or more extreme) if the null hypothesis is true. Used as a criterion for statistical significance [26]. |
| Confidence Interval (CI) | A Frequentist range of values, derived from the sample data, that is likely to contain the true population parameter. A 95% CI means that if the experiment were repeated many times, 95% of such intervals would contain the true value. |
| Prior Distribution | The cornerstone of Bayesian analysis. It represents the researcher's belief about a parameter before observing the current data. It can be informative (based on past data) or uninformative (neutral) [26]. |
| Likelihood Function | Represents the probability of observing the collected data given different possible values of the parameter being estimated. It is a key component in both statistical frameworks. |
| Posterior Distribution | The Bayesian output representing the updated belief about the parameter after combining the prior distribution with the new data via Bayes' Theorem. It is the basis for all Bayesian inference [26]. |
| Credible Interval | The Bayesian counterpart to a confidence interval. It is a range of values from the posterior distribution within which the parameter has a specified probability (e.g., 95%) of lying. Its interpretation is more intuitive than a CI. |

Foundational Concepts: FAQs on Network Meta-Analysis

What is a Network Meta-Analysis and how does it differ from a standard meta-analysis?

A Network Meta-Analysis is an advanced statistical technique that allows for the simultaneous comparison of multiple treatments in a single, unified analysis by combining both direct and indirect evidence from a network of randomized controlled trials (RCTs). Direct evidence comes from head-to-head trials comparing two interventions directly (e.g., A vs. B). Indirect evidence is estimated for a treatment comparison (e.g., A vs. C) through a common comparator (e.g., trials of A vs. B and B vs. C). NMA synthesizes these to produce mixed treatment effect estimates for all comparisons within the network [30] [31]. This differs from a standard pairwise meta-analysis, which is limited to synthesizing evidence for only two interventions at a time [30].

What are the key assumptions underlying a valid NMA?

Two critical assumptions must be evaluated to ensure the validity of an NMA [30]:

- Transitivity: This is the core assumption that the available comparisons are similar in all important aspects other than the treatments being compared. Essentially, in a hypothetical RCT that included all treatments in the network, participants could be randomized to any of them. Violation (intransitivity) occurs if there are systematic differences in effect modifiers—clinical or methodological characteristics that can influence the treatment effect—between different sets of comparisons [30].

- Consistency (or Coherence): This refers to the statistical agreement between direct and indirect evidence for the same treatment comparison. When both direct and indirect evidence exist for a specific comparison (e.g., A vs. C), their effect estimates should be statistically compatible. Inconsistency arises when these estimates disagree beyond chance [32].

What are direct and indirect methods in the context of NMA evidence synthesis?

In NMA, the terms "direct" and "indirect" refer to types of evidence rather than to separate analytical methods.

- Direct Evidence: The treatment effect estimate obtained exclusively from studies that make a head-to-head comparison of the interventions in question (e.g., trials directly comparing Treatment A to Treatment B) [30] [31].

- Indirect Evidence: The treatment effect estimate for a pair of treatments derived through one or more common comparators. For example, if A has been compared to C, and B has been compared to C, then an indirect estimate for A vs. B can be obtained by combining these two pieces of evidence [30] [31]. The NMA methodology itself is a framework for integrating these two types of evidence.
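The indirect estimate in the example above can be sketched numerically. The code assumes effects on an additive scale (such as log odds ratios), independent sets of trials, and the anchored approach commonly attributed to Bucher, in which the indirect effect is the difference of the two effects against the common comparator and the variances add. The function name and the input values are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def indirect_estimate(d_ac, se_ac, d_bc, se_bc, level=0.95):
    """Indirect A vs. B effect via common comparator C.

    d_ac, d_bc: effects of A vs. C and B vs. C on an additive scale
    (e.g., log odds ratios); se_ac, se_bc: their standard errors.
    Returns (estimate, standard error, confidence interval).
    """
    d_ab = d_ac - d_bc
    se_ab = sqrt(se_ac ** 2 + se_bc ** 2)  # variances of independent estimates add
    z = NormalDist().inv_cdf((1 + level) / 2)
    return d_ab, se_ab, (d_ab - z * se_ab, d_ab + z * se_ab)


# Hypothetical log odds ratios from A vs. C and B vs. C trials.
estimate, se, (lower, upper) = indirect_estimate(-0.5, 0.3, -0.2, 0.4)
print(f"indirect A vs. B: {estimate:.2f} (SE {se:.2f}), "
      f"95% CI {lower:.2f} to {upper:.2f}")
```

Because the variances add, an indirect estimate is always less precise than either of the comparisons it is built from.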

The NMA Troubleshooting Guide

This guide addresses common challenges encountered during the conduct and interpretation of Network Meta-Analyses.

Issue 1: Violation of the Transitivity Assumption

Problem: The transitivity assumption is suspected to be violated due to systematic differences in effect modifiers (e.g., patient age, disease severity, study design) across different treatment comparisons [30].

Diagnosis:

- Clinical Reasoning: Actively identify potential effect modifiers a priori during the protocol stage based on clinical knowledge and a review of the literature [30].

- Qualitative Synthesis: During study selection and data extraction, abstract data on potential effect modifiers. Then, compare the distribution of these modifiers across the different treatment comparisons. If substantial imbalances are found (e.g., all studies for comparison A vs. B are in a severe population, while all studies for B vs. C are in a mild population), intransitivity is suspected [30].

Solution: If intransitivity is suspected, the following actions can be taken:

- Refine the Network: Reconsider the scope of the review question or the treatments included. It may be necessary to exclude certain treatments or populations to create a more homogeneous network [30].

- Subgroup Analysis or Meta-Regression: Conduct these analyses to investigate whether the identified effect modifiers explain the observed differences in treatment effects. This can help adjust for the intransitivity [30].

Issue 2: Detection and Handling of Inconsistency

Problem: The direct and indirect evidence for a particular treatment comparison are in statistical disagreement, threatening the validity of the network estimates [32].

Diagnosis: Several statistical methods can be used to detect inconsistency [32] [33]:

- Global Methods: Such as the design-by-treatment interaction model, which provides an overall test for inconsistency anywhere in the network. This is a comprehensive approach that handles multi-arm trials effectively [32].

- Local Methods: Such as node-splitting, which separately estimates the treatment effect for a specific comparison using only direct evidence and only indirect evidence, and then tests for a difference between them [33].

Solution:

- Investigate Sources: If inconsistency is detected, explore clinical or methodological differences that might explain the disagreement. Check for differences in effect modifiers specific to the loops or comparisons where inconsistency was found [32].

- Model Choice: Use inconsistency models that account for the disagreement, or consider presenting direct and indirect estimates separately if the inconsistency cannot be resolved. Note that different parameterizations of models like node-splitting can yield different results, so the choice of method should be justified [33].
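The idea behind a local inconsistency check can be illustrated by comparing a direct and an indirect estimate for the same contrast. The sketch below is a simplified z-test that assumes the two estimates are independent and approximately normal on an additive scale; it is not a full node-splitting or design-by-treatment model, and the function name and input values are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def inconsistency_check(d_direct, se_direct, d_indirect, se_indirect):
    """Compare direct and indirect estimates of the same treatment contrast.

    Returns (difference, z statistic, two-sided p-value). A small p-value
    suggests the two sources disagree beyond what chance would explain.
    """
    difference = d_direct - d_indirect
    se_difference = sqrt(se_direct ** 2 + se_indirect ** 2)
    z = difference / se_difference
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return difference, z, p_value


# Hypothetical log odds ratios for the same comparison.
difference, z, p_value = inconsistency_check(-0.3, 0.3, 0.5, 0.4)
print(f"difference = {difference:.2f}, z = {z:.2f}, p = {p_value:.3f}")
```

Such tests typically have low power, so a non-significant result does not demonstrate consistency; the clinical plausibility of the disagreement still needs to be examined.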

Issue 3: Poor Network Connectivity

Problem: The network of evidence is sparsely connected, with many treatments only connected through long indirect pathways or with isolated treatment "islands." This weakens the reliability of indirect estimates and mixed treatment effects.

Diagnosis:

- Visual Inspection: Create a network graph. A poorly connected network will have few connections between nodes, or some nodes will be far apart from others [30].

- Quantify Connectivity: Describe the number of studies and patients informing each direct comparison. Note which comparisons have no direct evidence and rely entirely on indirect estimation.

Solution:

- Broaden Literature Search: Ensure the search strategy was comprehensive enough to capture all relevant studies for the treatments of interest [30].

- Incorporate Common Comparators: The inclusion of placebo or standard care, if clinically relevant, can often improve network connectivity by serving as a common link between treatments [30].

- Interpret with Caution: Acknowledge the limitations of the evidence base and interpret indirect estimates from long pathways or sparse networks with great caution.
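Connectivity can also be checked programmatically before any model is fitted. The sketch below treats the evidence base as an undirected graph, lists disconnected "islands", and counts the number of links on the shortest path between two treatments. The list of direct comparisons and the function names are hypothetical.

```python
from collections import defaultdict, deque


def build_adjacency(comparisons):
    """Map each treatment to the set of treatments it was directly compared with."""
    adjacency = defaultdict(set)
    for first, second in comparisons:
        adjacency[first].add(second)
        adjacency[second].add(first)
    return dict(adjacency)


def connected_components(comparisons):
    """Return a list of treatment sets; more than one set means isolated islands."""
    adjacency = build_adjacency(comparisons)
    seen, components = set(), []
    for start in adjacency:
        if start in seen:
            continue
        seen.add(start)
        queue, component = deque([start]), set()
        while queue:
            node = queue.popleft()
            component.add(node)
            for neighbour in adjacency[node] - seen:
                seen.add(neighbour)
                queue.append(neighbour)
        components.append(component)
    return components


def path_length(comparisons, source, target):
    """Number of direct comparisons on the shortest path, or None if unconnected."""
    adjacency = build_adjacency(comparisons)
    if source not in adjacency or target not in adjacency:
        return None
    distances, queue = {source: 0}, deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            return distances[node]
        for neighbour in adjacency[node]:
            if neighbour not in distances:
                distances[neighbour] = distances[node] + 1
                queue.append(neighbour)
    return None


# Hypothetical network: A and B are linked via placebo; C and D form an island.
trials = [("A", "Placebo"), ("B", "Placebo"), ("C", "D")]
print(connected_components(trials))
print(path_length(trials, "A", "B"), path_length(trials, "A", "C"))
```

A path length of 1 means direct evidence exists; longer paths indicate that the comparison rests on a chain of indirect links, and `None` means no comparison is possible within the current network.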

Experimental Protocols for Key NMA Workflows

Protocol 1: Evaluating Transitivity

Objective: To systematically assess whether the assumption of transitivity is likely to hold in the evidence network.

Materials:

- Included study data

- Table of study characteristics

Methodology:

- A Priori Identification: Before data analysis, based on clinical expertise and literature review, compile a list of potential effect modifiers (e.g., baseline risk, age, disease subtype, dose, study duration, risk of bias items) [30].

- Data Extraction: Systematically extract data on these potential effect modifiers from each included study.

- Structured Comparison: Create a table summarizing the distribution of each effect modifier across the different direct comparisons in the network (e.g., mean age of participants in A vs. B trials, A vs. C trials, and B vs. C trials).

- Clinical Judgment: Evaluate whether there are any important systematic differences in the distributions of these modifiers between the comparisons. The clinical relevance of any observed differences should be judged.
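The structured comparison step can be sketched as a small summary routine. The example assumes each study is recorded with a comparison label and a study-level value for one effect modifier; the field names (`comparison`, `mean_age`) and the study values are hypothetical, and the output is only an aid to clinical judgment, not a formal test.

```python
from collections import defaultdict
from statistics import mean


def modifier_summary(studies, modifier):
    """Summarize one effect modifier across the direct comparisons in a network.

    studies: list of dicts with a "comparison" key and a numeric value
    stored under the key named by `modifier`.
    """
    grouped = defaultdict(list)
    for study in studies:
        grouped[study["comparison"]].append(study[modifier])
    return {
        comparison: {
            "n_studies": len(values),
            "mean": mean(values),
            "min": min(values),
            "max": max(values),
        }
        for comparison, values in grouped.items()
    }


# Hypothetical study-level data.
studies = [
    {"comparison": "A vs. B", "mean_age": 62},
    {"comparison": "A vs. B", "mean_age": 66},
    {"comparison": "B vs. C", "mean_age": 45},
    {"comparison": "B vs. C", "mean_age": 47},
]
for comparison, stats in modifier_summary(studies, "mean_age").items():
    print(comparison, stats)
```

Here the A vs. B trials enrolled markedly older participants than the B vs. C trials, which would prompt a closer look at whether age modifies the treatment effect.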

Protocol 2: Assessing Inconsistency using Node-Splitting

Objective: To evaluate local inconsistency between direct and indirect evidence for a specific treatment comparison.

Materials:

- Dataset prepared for NMA

- Statistical software capable of running node-splitting models (e.g., R, WinBUGS/OpenBUGS)

Methodology:

- Select Comparison: Identify a treatment comparison of interest for which both direct and indirect evidence exist.

- Model Specification: Implement a node-splitting model. This model effectively "splits" the evidence for the chosen treatment contrast into two parts:

- The direct estimate from studies that directly compare the two treatments.

- The indirect estimate derived from the rest of the network.

- Parameterization Choice: Choose a model parameterization. Note that different assumptions exist (e.g., inconsistency parameter assigned to one treatment, or split symmetrically), which can lead to slightly different results, particularly with multi-arm trials [33].