De Novo Materials Design: From AI-Driven Discovery to Functional Proteins and Therapeutics

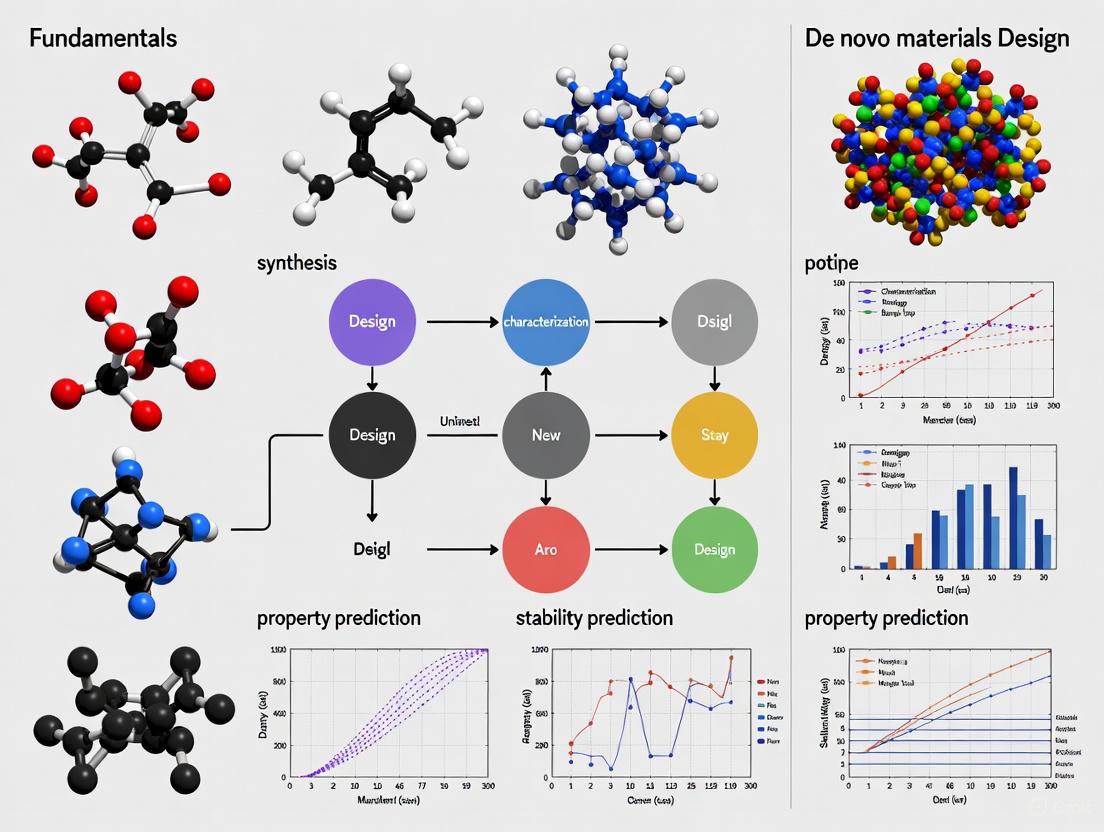



Abstract

This article provides a comprehensive overview of the fundamentals of de novo materials design, a transformative approach that creates new materials and molecules from scratch rather than modifying existing ones. Tailored for researchers, scientists, and drug development professionals, it explores the core principles that distinguish design from serendipitous discovery. The scope spans the latest computational methodologies, including generative AI, active learning frameworks, and deep learning models like RFdiffusion and DRAGONFLY, highlighting their application in designing small-molecule drugs and functional proteins. It also addresses critical challenges in troubleshooting and optimization, such as ensuring synthetic accessibility and overcoming low success rates, and details rigorous in silico and experimental validation techniques. By synthesizing insights from foundational concepts to cutting-edge applications, this article serves as a guide to the current state and future potential of this rapidly advancing field.

What is De Novo Design? Core Principles and the Shift from Discovery to Creation

De novo design represents a fundamental shift in how novel molecules and materials are created, moving from reliance on chance discovery or modification of existing structures to intentional construction from first principles. The term "de novo," Latin for "anew" or "from the beginning," signifies in scientific contexts generation from scratch, guided by computational prediction and fundamental physical laws rather than evolutionary templates or accidental discovery [1] [2]. This methodology stands in stark contrast to traditional discovery processes, where breakthroughs often emerged serendipitously from experimental observation. A famous example of such chance discovery is the polymer Teflon, which emerged unexpectedly from a refrigerant research program [3].

The paradigm of de novo design is revolutionizing fields ranging from therapeutic antibody development to functional materials science by enabling the creation of structures with atom-level precision for user-specified functions [4] [3]. This technical guide examines the core principles, methodologies, and applications of de novo design within materials science research, giving researchers a framework for distinguishing first-principles design from traditional discovery methods and for applying it in practice.

Core Principles: First Principles vs. Chance Discovery

Fundamental Distinctions

Table 1: Comparative Analysis of De Novo Design versus Chance Discovery

| Aspect | De Novo Design (First Principles) | Chance Discovery |
|---|---|---|
| Foundation | Computational prediction, physical laws | Experimental observation, serendipity |
| Process | Rational, targeted, iterative | Unplanned, opportunistic |
| Control | Atom-level precision [4] [2] | Limited, post-discovery optimization |
| Speed | Accelerated through computational screening | Unpredictable timeline |
| Examples | Designed antibodies via RFdiffusion [4] | Teflon from refrigerant research [3] |

The Materials Design Challenge

A central challenge in de novo materials design lies in the astronomical number of possible atomic combinations, most of which are not thermodynamically stable or synthetically accessible [3]. As Professor Andy Cooper notes, "You can sketch out just about anything as a conceptual material but you're not free to just make anything you'd like" [3]. This reality constrains purely computational approaches and necessitates robust validation frameworks.

Computational Frameworks for First-Principles Design

Protein Design Architectures

Recent advances in artificial intelligence have produced sophisticated computational frameworks capable of designing novel proteins with atomic accuracy:

RFdiffusion: A fine-tuned network for de novo antibody design that generates variable heavy chains (VHHs), single-chain variable fragments (scFvs), and full antibodies binding to user-specified epitopes with atomic-level precision [4]. The system employs a denoising process that iteratively refines random residue distributions into novel protein structures while maintaining framework integrity.

AlphaDesign: A hallucination-based framework combining AlphaFold with autoregressive diffusion models to generate proteins with controllable interactions, conformations, and oligomeric states without class-dependent model retraining [5].

LUCS: A physics-based method for generating geometrically diverse protein folds that more closely mimics natural structural variation compared to deep learning approaches [6].

These frameworks demonstrate the power of first-principles approaches to create functional proteins unprecedented in nature, such as inhibitors of bacterial phage defense systems [5].

Materials Design Approaches

In materials science, de novo design employs computational methods to predict molecular assembly and properties before synthesis:

Structure-property mapping: Computational prediction of how molecules assemble into crystals and the resulting material properties, enabling targeted searches for optimal molecules for specific applications [3].

Chemical knowledge encoding: Incorporating existing chemical knowledge into predicted structures to guide experimental discovery of materials with novel compositions and complexity [3].

These approaches significantly narrow the experimental search space, though high failure rates persist due to thermodynamic constraints [3].

Methodologies and Experimental Protocols

Computational Workflow for De Novo Protein Design

The standard workflow for computational de novo protein design involves multiple stages of in silico modeling and filtering:

Backbone Sampling: Generation of protein backbone structures using generative methods like RFdiffusion [7] or AF2-based hallucination [6].

Sequence Design: Prediction of optimal sequences for these backbones using graph neural networks (ProteinMPNN) or structure-conditioned language models (Frame2seq) [6].

In Silico Validation: Assessment of whether designed sequences fold into intended structures using prediction models like AlphaFold2 or ESMFold [6].

Experimental Testing: Expression and biophysical characterization of designed proteins to validate computational predictions [6].
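The in silico validation stage is commonly implemented as a self-consistency filter: a design advances to experimental testing only if the predicted structure agrees with the designed backbone and the predictor is confident. The following is a minimal sketch of that filtering step, assuming the metrics have already been computed elsewhere. The `DesignRecord` type, the function names, and the thresholds (2.0 Å backbone RMSD, mean pLDDT of 80) are illustrative assumptions, not values taken from the cited studies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignRecord:
    """Hypothetical container for one design's in silico metrics."""
    name: str
    backbone_rmsd: float  # Angstrom, designed vs. predicted backbone after superposition
    mean_plddt: float     # 0-100 confidence reported by the structure predictor


def passes_filter(design, max_rmsd=2.0, min_plddt=80.0):
    """Return True when the design is both self-consistent and confidently predicted.

    The default thresholds are assumptions chosen for illustration.
    """
    return design.backbone_rmsd <= max_rmsd and design.mean_plddt >= min_plddt


def select_for_testing(designs, max_rmsd=2.0, min_plddt=80.0):
    """Keep designs that pass the filter, best (lowest RMSD) first."""
    kept = [d for d in designs if passes_filter(d, max_rmsd, min_plddt)]
    return sorted(kept, key=lambda d: d.backbone_rmsd)
```

In practice the thresholds would be tuned per project, since overly strict cutoffs discard viable designs and loose ones inflate the experimental workload.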

Diagram 1: De Novo Protein Design Workflow

Materials Discovery and Validation

For de novo materials design, the process integrates computational prediction with experimental synthesis:

Computational Mapping: Generation of energy maps and structure-property relationships to identify promising molecular configurations [3].

Robotic Synthesis: Automated platforms like the Formulation Engine perform high-throughput processing, mixing, heating, and cooling to synthesize predicted materials [3].

Property Characterization: Evaluation of synthesized materials for target applications (e.g., gas storage, catalysis) [3].

Iterative Refinement: Computational models are refined based on experimental results to improve prediction accuracy [3].

Key Experimental Applications and Results

Antibody Design with Atomic Accuracy

A landmark application of de novo design is the creation of novel antibodies targeting disease-relevant epitopes. As reported in a 2025 Nature study, researchers combined RFdiffusion with yeast display screening to generate antibody variable heavy chains (VHHs) binding to four disease-relevant targets: influenza haemagglutinin, Clostridium difficile toxin B (TcdB), respiratory syncytial virus, and SARS-CoV-2 receptor-binding domain [4].

Cryo-electron microscopy confirmed the binding pose of the designed VHHs, with high-resolution data verifying the atomic accuracy of the designed complementarity-determining regions (CDRs) [4]. While initial computational designs exhibited modest affinity (tens to hundreds of nanomolar Kd), affinity maturation produced single-digit nanomolar binders that maintained the intended epitope selectivity [4].
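To give a feel for what the change in Kd means, the sketch below evaluates the standard 1:1 equilibrium binding isotherm, in which the fraction of target bound equals [L] / (Kd + [L]). It assumes simple single-site binding with the binder in excess. The function name and the example concentrations are illustrative and are not taken from the cited study.

```python
def fraction_bound(ligand_nM, kd_nM):
    """Equilibrium fraction of target occupied for 1:1 binding.

    Assumes the free binder concentration is approximately the total
    concentration (binder in excess of target).
    """
    if ligand_nM < 0 or kd_nM <= 0:
        raise ValueError("concentration must be >= 0 and Kd must be > 0")
    return ligand_nM / (kd_nM + ligand_nM)


# Illustrative comparison at 10 nM binder (values are assumptions):
initial_design = fraction_bound(10.0, 100.0)  # Kd in the hundred-nanomolar range
matured_design = fraction_bound(10.0, 5.0)    # single-digit nanomolar Kd
```

Under these assumed values, occupancy rises from roughly 9% to roughly 67% at the same binder concentration.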

Functional Materials Design

In materials science, de novo design has enabled the creation of porous materials for sustainable energy applications. Researchers at the Universities of Liverpool and Southampton applied computational mapping to identify a highly porous solid that stores more than 150 times its own volume of methane, addressing the challenge of methane storage for gas-powered vehicles [3].

Similarly, organic materials designed through de novo approaches have demonstrated effectiveness in capturing formaldehyde, a known carcinogen released from materials in newly constructed buildings, enabling development of prototype air filters [3].

Table 2: Experimentally Validated De Novo Designs

| Designed System | Target Application | Validation Method | Key Result |
|---|---|---|---|
| VHH antibodies [4] | Influenza, C. difficile targeting | Cryo-EM, SPR | Atomic-level precision in CDR loops |
| Porous organic material [3] | Methane storage | Gas adsorption measurements | Stores more than 150 times its own volume of methane |
| Formaldehyde capture material [3] | Air purification | Filtration efficiency testing | Effective carcinogen capture |
| Geometrically diverse Rossmann folds [6] | Protein scaffold diversification | Yeast display protease assay | 38% folding success rate |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for De Novo Design Experiments

| Reagent/Resource | Function | Example Use |
|---|---|---|
| RFdiffusion [4] | Protein backbone generation | De novo antibody design |
| ProteinMPNN [6] | Protein sequence design | Optimizing sequences for generated backbones |
| AlphaFold2 [5] | Structure validation | Predicting folded state of designs |
| Yeast display system [4] [6] | High-throughput screening | Identifying stable binders from designed libraries |
| Formulation Engine [3] | Robotic materials synthesis | Automated processing of predicted materials |

Integration of First Principles and Discovery

While de novo design emphasizes rational creation from first principles, the most effective research strategies often integrate systematic design with the potential for unexpected discovery. This hybrid approach acknowledges that computational methods, while powerful, cannot yet capture all relevant physics and chemistry [6].

Deep learning models like AlphaFold2 show systematic bias toward idealized geometries, failing to capture the full diversity of natural protein structures [6]. This limitation necessitates experimental validation and creates opportunities for discovering unexpected properties in computationally designed molecules.

Diagram 2: Integrated Design-Discovery Research Cycle

De novo design represents a transformative approach to creating novel molecules and materials by leveraging computational power to build from first principles rather than relying solely on chance discovery. While the potential for serendipitous discovery remains valuable, the targeted, rational approach of de novo design enables unprecedented precision in developing antibodies with atomic-level accuracy [4] and functional materials with tailored properties [3].

The continued advancement of de novo design methodologies depends on addressing current limitations, including the geometric biases in deep learning models [6] and the thermodynamic constraints on synthesizable materials [3]. As computational power increases and algorithms become more sophisticated, the integration of first-principles design with high-throughput experimental validation promises to accelerate the development of novel solutions to pressing challenges in medicine, energy, and environmental sustainability.

For researchers in drug development and materials science, mastering de novo design principles provides a powerful framework for systematic innovation, complementing traditional discovery-based approaches and expanding the boundaries of what can be created.

The concept of compositional space represents a fundamental framework for understanding and navigating the universe of possible molecules in de novo materials design. In environmental chemistry, this approach has proven invaluable for identifying persistent organic pollutants (POPs), where the first step in identifying a contaminant molecule is determining the type and number of its constituent elements—its elemental composition—from mass-to-charge (m/z) measurements and ratios of isotopic peaks [8]. Not every combination of elements is possible; boundaries exist in compositional space that divide feasible and improbable compositions as well as different chemical classes [8]. For researchers pursuing de novo design of enzymes and functional materials, mastering the navigation of this expansive combinatorial space is the critical first step toward innovation.

The challenge of compositional space navigation is magnified in de novo protein design, where the number of possible sequences is astronomically large. De novo genes (protein-coding genes arising from previously noncoding DNA) have been identified across all domains of life, fundamentally challenging the view that genetic novelty must originate solely from preexisting gene templates [9]. Plants, with their expansive genomes, abundant non-coding regions, and high transposable element content, provide a rich substrate for the birth of novel genes [9]. Similarly, in computational enzyme design, the vast combinatorial space of possible amino acid sequences and structural configurations presents a formidable challenge that requires sophisticated computational strategies to navigate efficiently.
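A short calculation makes the scale concrete. With 20 canonical amino acids, a protein of length N has 20^N possible sequences. The sketch below computes the order of magnitude; the chain length of 100 residues is an arbitrary illustrative choice and the function name is hypothetical.

```python
import math


def log10_sequence_space(length, alphabet_size=20):
    """Return log10 of the number of distinct sequences of a given length."""
    return length * math.log10(alphabet_size)


# For a 100-residue protein the space holds 20**100 sequences,
# which is on the order of 10**130.
exponent = log10_sequence_space(100)
n_digits = len(str(20 ** 100))  # Python integers are exact at this size
```

Even testing a billion sequences per second would not make a measurable dent in a space of this size, which is why the search has to be constrained before it begins.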

Table: Key Concepts in Compositional Space Navigation

| Concept | Definition | Application in De Novo Design |
|---|---|---|
| Elemental Composition | The type and number of constituent elements in a molecule [8] | Determined from mass-to-charge (m/z) measurements and isotopic peak ratios [8] |
| Compositional Space Boundaries | Boundaries that divide feasible and improbable chemical compositions [8] | Constrain the search space for potential functional molecules [8] |
| De Novo Genes | Protein-coding genes arising from previously noncoding DNA [9] | Provide insights into evolutionary innovation and adaptive evolution [9] |
| Halogenation Constraints | Regions of compositional space characterized by higher degrees of halogenation [8] | Identify persistent bioaccumulative organics with specific properties [8] |

Computational Frameworks for Navigating Compositional Space

Defining and Constraining the Search Space

The initial phase of navigating compositional space involves establishing boundaries to make the search for functional molecules tractable. Research on persistent bioaccumulative organics has demonstrated that these compounds reside in constrained regions of compositional space characterized by a higher degree of halogenation, while boundaries surrounding non-halogenated chemicals are more difficult to define [8]. This principle of constraint is equally applicable to de novo enzyme design, where the combinatorial explosion of possible sequences necessitates intelligent boundary definitions. Through analysis of 305,134 compounds from PubChem, researchers have visualized the compositional space occupied by fluorine, chlorine, and bromine compounds as defined by m/z and isotope ratios [8].

In de novo protein design, the architectural features of natural genomes provide guidance for establishing these constraints. Plant genomes, for instance, reveal that transposable elements (TEs) play a crucial role as catalysts for de novo gene birth, actively facilitating gene origination through multiple mechanisms [9]. TEs constitute 45-85% of many plant genomes and contribute to approximately 30-40% of recently originated de novo genes through direct sequence contribution or regulatory element donation [9]. This biological insight informs computational approaches by highlighting the importance of specific genomic features that constrain the vast compositional space of possible functional sequences.

Machine Learning and Modeling Approaches

Advanced computational strategies that combine machine learning with atomistic modeling have emerged as powerful tools for navigating compositional space in de novo enzyme design. The rotamer inverted fragment finder–diffusion (Riff-Diff) methodology represents a hybrid machine learning and atomistic modeling strategy for scaffolding catalytic arrays in de novo proteins [10]. This approach has demonstrated general applicability by designing enzymes for two mechanistically distinct chemical transformations: the retro-aldol reaction and the Morita-Baylis-Hillman reaction [10].

The success of Riff-Diff highlights several key principles for effective navigation of compositional space. First, the integration of multiple computational approaches—combining machine learning with physical modeling—enables more effective exploration of the combinatorial landscape. Second, focusing on catalytically competent amino acid constellations provides anchor points within the vast sequence space [10]. Third, high-resolution structural validation confirms the achievement of Angstrom-level active site design precision, demonstrating that computational navigation can yield experimentally verifiable results [10]. These computational frameworks produce catalysts exhibiting activities rivaling those optimized by in-vitro evolution, along with exquisite stereoselectivity, bringing de novo protein catalysts closer to practical applications in synthesis [10].

Table: Computational Methods for Compositional Space Navigation

| Method | Approach | Advantages |
|---|---|---|
| Riff-Diff [10] | Hybrid machine learning and atomistic modeling for scaffolding catalytic arrays | Generates catalysts with activities rivaling in-vitro evolution; applicable to distinct chemical transformations [10] |
| Phylostratigraphy | Gene age dating based on sequence homology | Identifies evolutionarily young genes; reveals de novo gene origination patterns [9] |
| Multi-species Genomic Comparison | Comparative analysis across related species | Identifies lineage-specific genes lacking detectable homologs [9] |
| Weighted Gene Co-expression Network Analysis (WGCNA) [9] | Network-based analysis of gene expression patterns | Demonstrates how de novo genes integrate into existing regulatory networks [9] |
| Cactus Whole-Genome Alignment [9] | Progressive whole-genome alignment across divergent species | Enables high-confidence synteny-based identification surpassing BLAST-based approaches [9] |

Experimental Methodologies and Validation

Mass Spectrometry-Based Screening Protocols

High-resolution mass spectrometry (MS) provides one of the most powerful experimental methodologies for exploring compositional space and validating computational predictions. The standard workflow begins with the determination of elemental composition from mass-to-charge (m/z) measurements and ratios of isotopic peaks (M+1, M+2, etc.) [8]. This approach has been formalized through the development of script tools (R code) to select potential POPs from high-resolution MS data [8]. When applied to household dust (SRM 2585), this methodology resulted in the discovery of previously unknown chlorofluoro flame retardants, demonstrating its practical utility for identifying novel compounds within constrained regions of compositional space [8].

The experimental protocol for mass spectrometry-based screening involves several critical steps:

Sample Preparation: Extraction and purification of compounds from the target source material, followed by appropriate dilution to avoid instrument saturation.

Mass Spectrometry Analysis: Acquisition of high-resolution mass spectra using instruments capable of precise m/z measurements (typically FT-ICR, Orbitrap, or TOF mass spectrometers).

Elemental Composition Determination: Calculation of possible elemental formulas based on exact mass measurements and isotope pattern matching, typically using software tools that incorporate heuristic rules for formula assignment.

Compositional Space Filtering: Application of script-based tools to filter potential candidates based on their position in constrained regions of compositional space, particularly for halogenated compounds [8].

Structural Elucidation: Further characterization using tandem MS and comparison with spectral libraries or synthetic standards when available.

This methodology enables researchers to efficiently navigate compositional space by experimentally verifying computational predictions and discovering novel compounds with desired properties.
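The isotope-ratio part of the filtering step can be illustrated with a small calculation. Chlorine and bromine each have a heavy isotope two mass units above the light one, so the ratio of the M+2 peak to the M peak rises sharply with the degree of halogenation. The sketch below computes the halogen contribution to the isotope pattern from binomial statistics. It is not the R tool described in [8]; the function names are hypothetical, the abundances are approximate natural values, and contributions from other elements (for example 13C) are deliberately ignored.

```python
from math import comb

# Approximate natural abundance (fraction) of the heavy isotope: 37Cl and 81Br.
HEAVY_FRACTION = {"Cl": 0.2422, "Br": 0.4931}


def halogen_pattern(n_cl=0, n_br=0):
    """Relative intensities of the M, M+2, M+4, ... peaks from Cl and Br only."""
    pattern = [1.0]
    for element, count in (("Cl", n_cl), ("Br", n_br)):
        p = HEAVY_FRACTION[element]
        # Binomial distribution over the number of heavy atoms of this element.
        dist = [comb(count, k) * p**k * (1 - p) ** (count - k)
                for k in range(count + 1)]
        # Convolve with the pattern accumulated so far.
        combined = [0.0] * (len(pattern) + len(dist) - 1)
        for i, a in enumerate(pattern):
            for j, b in enumerate(dist):
                combined[i + j] += a * b
        pattern = combined
    return pattern


def m2_to_m_ratio(n_cl=0, n_br=0):
    """Ratio of the M+2 peak to the M peak (0.0 when no Cl or Br is present)."""
    pattern = halogen_pattern(n_cl, n_br)
    return pattern[1] / pattern[0] if len(pattern) > 1 else 0.0
```

Under these assumptions a single chlorine gives an M+2/M ratio near 0.32 and a single bromine a ratio near 0.97, which is why halogenated compounds occupy a distinctive region when m/z is plotted against isotope ratio.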

Functional Validation of De Novo Designs

For de novo enzymes and functional materials, validation requires rigorous experimental assessment of activity, specificity, and structure. The validation protocol for computationally designed enzymes typically includes:

Heterologous Expression: Cloning of designed gene sequences into appropriate expression vectors and transformation into expression hosts (typically E. coli or yeast).

Protein Purification: Affinity chromatography followed by size-exclusion chromatography to obtain pure, monodisperse protein samples.

Activity Assays: Implementation of enzyme-specific activity measurements using spectrophotometric, fluorometric, or chromatographic methods to determine kinetic parameters (kcat, KM).

Stereoselectivity Assessment: Evaluation of enantiomeric excess for reactions producing chiral centers using chiral chromatography or polarimetry.

Structural Characterization: Determination of high-resolution structures through X-ray crystallography or cryo-electron microscopy to verify design accuracy.

This validation pipeline has been applied to computationally designed de novo enzymes, confirming Angstrom-level active site design precision and activities rivaling those optimized by in-vitro evolution [10]. High-resolution structures of six de novo designs revealed atomic-level precision, providing crucial feedback for refining computational navigation strategies [10].
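The quantities reported by the activity and stereoselectivity steps follow from standard definitions: the Michaelis-Menten rate law v = kcat·[E]·[S] / (KM + [S]), the catalytic efficiency kcat/KM, and the enantiomeric excess ee = 100·(major − minor) / (major + minor). A minimal sketch with hypothetical function names follows; units are whatever the caller supplies consistently.

```python
def michaelis_menten_rate(substrate, enzyme, kcat, km):
    """Initial reaction rate under the Michaelis-Menten model."""
    if km <= 0 or substrate < 0:
        raise ValueError("KM must be > 0 and substrate concentration >= 0")
    return kcat * enzyme * substrate / (km + substrate)


def catalytic_efficiency(kcat, km):
    """Second-order rate constant kcat/KM used to compare enzymes."""
    return kcat / km


def enantiomeric_excess(major, minor):
    """Enantiomeric excess in percent from the amounts of the two enantiomers."""
    total = major + minor
    if total <= 0:
        raise ValueError("total amount must be positive")
    return 100.0 * (major - minor) / total
```

For instance, a product mixture of 95 parts of one enantiomer to 5 parts of the other corresponds to 90% ee, and the rate at [S] = KM is half of kcat·[E].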

Diagram: Experimental workflow for validating de novo enzyme designs

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful navigation of compositional space requires specialized reagents and materials that enable both computational and experimental approaches. The following toolkit outlines essential resources for researchers in de novo materials design.

Table: Essential Research Reagent Solutions for Compositional Space Navigation

| Reagent/Material | Function | Application Example |
|---|---|---|
| High-Resolution Mass Spectrometer | Precise m/z measurement and isotope ratio analysis [8] | Elemental composition determination for novel compounds [8] |
| PubChem Compound Database (305,134 compounds) [8] | Reference dataset for visualizing compositional space | Defining boundaries for feasible chemical compositions [8] |
| R Script Tool for MS Data Filtering [8] | Computational selection of potential POPs from HRMS data | Identifying novel halogenated compounds in environmental samples [8] |
| Heterologous Expression System (E. coli, yeast) | Production of designed protein sequences | Testing computational designs of de novo enzymes [10] |
| Crystallization Reagents | Formation of protein crystals for structural analysis | Verification of Angstrom-level design precision [10] |
| Chromatography Materials (Affinity, Size Exclusion) | Protein purification | Isolation of pure de novo enzymes for functional characterization [10] |
| Multi-well Plate Assay Systems | High-throughput activity screening | Rapid assessment of multiple design variants [10] |
| Stable Isotope-labeled Compounds | Isotopic tracing and quantification | Precise measurement of metabolic fluxes in engineered systems |

Future Directions and Implications for De Novo Materials Design

The continued advancement of compositional space navigation holds profound implications for the future of de novo materials design. Several emerging trends are particularly promising. First, the integration of multi-omics data—combining RNA-seq, Ribo-seq, proteomics, and metabolomics—provides convergent evidence for molecular functionality, addressing the challenge of distinguishing genuine de novo designs from non-functional variants [9]. Second, advanced computational frameworks incorporating deep learning (such as AlphaFold2) show increasing capability for predicting protein structures, revealing that some de novo proteins can achieve well-folded conformations despite lacking conserved domains [9].

Population genomic approaches using dN/dS ratios and selection signatures reveal patterns of adaptive evolution that can inform design strategies [9]. These integrative pipelines, combining phylostratigraphy, expression profiling, and functional validation through CRISPR/Cas9, are establishing robust standards for de novo gene annotation and functional characterization [9]. As these methodologies mature, they will enable more efficient navigation of compositional space, accelerating the design of novel enzymes and functional materials with tailored properties for specific applications in synthesis, medicine, and biotechnology.

The fundamental principles of compositional space navigation—defining constrained search regions, leveraging computational screening, and implementing rigorous experimental validation—provide a robust framework for overcoming the critical challenge of vast combinatorial complexity. By adopting these strategies, researchers can systematically explore the universe of possible molecules to identify those with desired functions, bringing the promise of de novo materials design closer to practical reality.

The Role of Thermodynamics and Stability in Feasible Material Design

The design of new materials with specific properties represents a significant challenge in materials science, primarily due to the vastness of compositional space. The number of compounds that can be feasibly synthesized in a laboratory is only a minute fraction of the total possible combinations, a predicament often likened to finding a needle in a haystack [11]. In this context, thermodynamic stability serves as a fundamental screening criterion that enables researchers to winnow out materials that are arduous to synthesize or unable to endure under operational conditions, thereby dramatically enhancing the efficiency of materials development [11]. Thermodynamic stability, typically represented by decomposition energy (ΔHd), provides the essential foundation for predicting phase equilibrium—the stable phases, their fractions, and compositions as functions of overall composition, temperature, and pressure (X-T-P) [12]. These correlations constitute the principal requirements for designing new materials and improving existing ones, forming the cornerstone of the materials design paradigm [13] [12].

The CALculation of PHAse Diagrams (CALPHAD) approach has emerged as the preferred method for predicting phase stability due to its powerful capability to extrapolate into regions of X-T-P space with limited direct experimental or simulated information [12]. By calibrating Gibbs energies using modeled polynomials within the compound energy formalism, CALPHAD enables physically reasonable predictions even in multi-component systems where exhaustive experimental sampling would be prohibitively expensive [12]. However, the accuracy of these predictions varies considerably across X-T-P space due to experimental error, model inadequacy, and unequal data coverage, introducing uncertainty that must be quantified for reliable materials design [13] [12].

Fundamental Thermodynamic Principles of Material Stability

Stability Metrics and Phase Equilibrium

At its core, thermodynamic stability determines whether a material will remain in its current form or transform into more stable configurations under given conditions. The decomposition energy is defined as the total energy difference between a given compound and its competing compounds in a specific chemical space [11]. This metric is ascertained by constructing a convex hull using the formation energies of compounds and all pertinent materials within the same phase diagram [11]. Materials lying on the convex hull are considered thermodynamically stable, while those above it are metastable or unstable.
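The convex hull construction can be sketched for the simplest case, a binary A-B system. Each compound is a point (fraction of B, formation energy per atom), the elemental end members sit at zero energy, and a compound's energy above the hull is its vertical distance to the lower convex hull. The code below is an illustrative, dependency-free sketch with hypothetical names and made-up energies. It reports the energy above the hull, which is a simplification of the decomposition energy described above, and production work would normally rely on an established materials library.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points via a monotone-chain scan."""
    best = {}
    for x, e in points:  # keep only the lowest-energy entry at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # last hull point lies on or above the new segment
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the range 0..1")


def energy_above_hull(entries):
    """Map each (name, x_B, formation_energy) entry to its energy above the hull."""
    points = [(0.0, 0.0), (1.0, 0.0)] + [(x, e) for _, x, e in entries]
    hull = lower_hull(points)
    return {name: e - hull_energy(hull, x) for name, x, e in entries}


# Hypothetical formation energies in eV/atom, chosen only for illustration.
example = energy_above_hull([
    ("A3B", 0.25, -0.10),
    ("AB", 0.50, -0.40),
    ("AB3", 0.75, -0.25),
])
```

In this made-up example AB and AB3 lie on the hull (zero energy above it), while A3B sits 0.10 eV/atom above the tie line between A and AB and would be predicted to decompose into those two phases.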

The determination of phase fractions, compositions, and energies of stable phases as functions of macroscopic composition, temperature, and pressure (X-T-P) represents the primary correlation needed for rational materials design [13] [12]. These parameters enable prediction of:

- Equilibrium phase stability across compositional and temperature ranges

- Susceptibility to formation of deleterious phases during processing or service

- Optimal processing routes to achieve desired microstructures

- Thermodynamic driving forces for phase transformations

The CALPHAD Methodology

The CALPHAD approach employs sophisticated models for the Gibbs energies of individual phases, typically using Redlich-Kister polynomials within the compound energy formalism [12]. This methodology enables:

- Extrapolation capability into multi-component systems and regions without direct measurements

- Integration of diverse data types including thermodynamic properties, phase equilibria, and crystal structure information

- Quantitative prediction of phase stability in complex, multi-component systems

However, CALPHAD predictions inherit uncertainties from multiple sources, including random and systematic errors in experimental measurements used for calibration, as well as the choice of specific model forms utilized to describe thermodynamic properties [12].
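For a binary solution phase, the Redlich-Kister form of the excess Gibbs energy is x_A·x_B·Σ_k L_k·(x_A − x_B)^k, added to the ideal mixing term R·T·(x_A ln x_A + x_B ln x_B). The sketch below evaluates both. The function names are hypothetical and the interaction parameters are treated as plain constants for brevity, whereas in CALPHAD assessments each L_k is usually itself a function of temperature.

```python
import math

R = 8.314462618  # gas constant, J/(mol K)


def redlich_kister_excess(x_b, coeffs):
    """Excess Gibbs energy (J/mol) of a binary A-B solution.

    coeffs[k] is the k-th interaction parameter L_k, assumed constant here.
    """
    x_a = 1.0 - x_b
    return x_a * x_b * sum(L * (x_a - x_b) ** k for k, L in enumerate(coeffs))


def gibbs_energy_of_mixing(x_b, temperature, coeffs):
    """Ideal mixing term plus Redlich-Kister excess term (J/mol)."""
    x_a = 1.0 - x_b
    # x ln x tends to 0 as x tends to 0, so skip vanishing fractions.
    ideal = R * temperature * sum(x * math.log(x) for x in (x_a, x_b) if x > 0.0)
    return ideal + redlich_kister_excess(x_b, coeffs)
```

At the equimolar composition only L_0 contributes, because every higher-order term carries a factor of (x_A − x_B), which is zero there.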

Computational Frameworks for Stability Prediction

Machine Learning Approaches

Machine learning offers a promising avenue for expediting the discovery of new compounds by accurately predicting their thermodynamic stability, providing significant advantages in time and resource efficiency compared to traditional experimental and computational methods [11]. Composition-based models are particularly valuable in materials discovery as compositional information can be known a priori, unlike structural information which requires complex experimental techniques or computationally expensive simulations [11].

Table 1: Machine Learning Approaches for Thermodynamic Stability Prediction

| Model Name | Input Features | Algorithm | Advantages | Limitations |
|---|---|---|---|---|
| ElemNet [11] | Elemental composition | Deep Learning | Direct composition-property mapping | Large inductive bias from composition-only assumption |
| Magpie [11] | Statistical features of elemental properties | Gradient Boosted Regression Trees (XGBoost) | Captures atomic diversity across elements | Relies on manually crafted features |
| Roost [11] | Chemical formula as complete graph | Graph Neural Networks with attention mechanism | Captures interatomic interactions | Assumes all nodes in unit cell have strong interactions |
| ECCNN [11] | Electron configuration matrices | Convolutional Neural Networks | Incorporates intrinsic electronic structure | Requires specialized encoding of electron configurations |
| ECSG [11] | Combines multiple knowledge domains | Stacked Generalization | Mitigates individual model biases; highest accuracy | Increased computational complexity |

Recent advances include ensemble frameworks based on stacked generalization (SG) that combine models rooted in distinct domains of knowledge [11]. For instance, the Electron Configuration models with Stacked Generalization (ECSG) framework integrates three base models (Magpie, Roost, and ECCNN) into a super learner, so that the inductive bias of each individual model is offset by the others [11]. This approach achieved an Area Under the Curve score of 0.988 in predicting compound stability within the Joint Automated Repository for Various Integrated Simulations (JARVIS) database, while requiring only one-seventh of the data used by existing models to reach equivalent accuracy [11].
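The mechanics of stacked generalization and of the AUC metric can be shown with a toy example. Each row below holds the stability probabilities that three base models assign to one compound, and a logistic-regression meta-learner is fitted on those predictions. This is a sketch of the general technique, not the ECSG implementation: the data are invented, the function names are hypothetical, and the fitted model is scored on its own training rows only to show the mechanics, whereas real stacking fits the meta-learner on held-out predictions and evaluates on separate data.

```python
import math


def auc(labels, scores):
    """Rank-based AUC: chance that a random positive outscores a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def stacked_predict(row, weights, bias):
    """Meta-learner output: logistic function of the weighted base predictions."""
    z = bias + sum(w * x for w, x in zip(weights, row))
    return 1.0 / (1.0 + math.exp(-z))


def fit_meta_learner(base_preds, labels, lr=0.5, epochs=2000):
    """Fit logistic-regression weights by batch gradient descent on the log loss."""
    n, m = len(base_preds), len(base_preds[0])
    weights, bias = [0.0] * m, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * m, 0.0
        for row, y in zip(base_preds, labels):
            err = stacked_predict(row, weights, bias) - y
            grad_b += err
            for j, x in enumerate(row):
                grad_w[j] += err * x
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


# Invented predictions from three hypothetical base models (columns).
base_preds = [[0.9, 0.6, 0.4], [0.8, 0.3, 0.7], [0.2, 0.5, 0.6], [0.1, 0.4, 0.3]]
labels = [1, 1, 0, 0]  # 1 = stable, 0 = unstable
weights, bias = fit_meta_learner(base_preds, labels)
stacked = [stacked_predict(row, weights, bias) for row in base_preds]
```

The meta-learner learns how much to trust each base model, which is the mechanism by which stacking can offset the bias of any single model.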

Uncertainty Quantification in Thermodynamic Modeling

Uncertainty quantification (UQ) has emerged as a critical component of reliable materials design, addressing the varying accuracy of CALPHAD predictions across composition-temperature-pressure (X-T-P) space [13] [12]. Traditional representations of uncertainty as intervals on phase boundaries have limitations: they cannot represent uncertainty in invariant reactions or in the stability of phase regions, and they are difficult to extend to systems of three or more components [12].

Novel UQ approaches leverage Monte Carlo samples from the distribution of CALPHAD model parameters to represent uncertainty in forms particularly suited to materials design [12]. These include:

- Distribution of phase diagrams and their features through superposition of phase boundaries from multiple parameter sets

- Uncertainty of invariant points by calculating their locations across parameter samples

- Probability of phase stability at specific X-T-P points, irrespective of component count

- Distributions of phase fractions, compositions, activities, and Gibbs energies across X-T-P space

These methodologies enable materials designers to interrogate composition and temperature domains and obtain probabilities for different phases to be stable, significantly enhancing design decision-making [13] [12].
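As a rough illustration of how a stability probability follows from parameter samples, the sketch below uses two hypothetical phases with linear Gibbs energy models, G(T) = offset + slope · T. The parameter values, their spreads, and the use of independent Gaussian draws in place of real MCMC samples are all assumptions made for the example, not values from any CALPHAD assessment.

```python
import random

def gibbs(offset, slope, temperature):
    """Toy linear Gibbs energy model G(T) = offset + slope * T, in J/mol."""
    return offset + slope * temperature

def draw_parameter_samples(n, rng):
    """Stand-in for posterior samples; a real workflow would take them from MCMC."""
    samples = []
    for _ in range(n):
        alpha = (rng.gauss(-1000.0, 300.0), rng.gauss(-10.0, 0.1))
        beta = (rng.gauss(4000.0, 300.0), rng.gauss(-15.0, 0.1))
        samples.append((alpha, beta))
    return samples

def probability_alpha_stable(samples, temperature):
    """Fraction of parameter sets for which alpha has the lower Gibbs energy."""
    wins = sum(1 for alpha, beta in samples
               if gibbs(*alpha, temperature) < gibbs(*beta, temperature))
    return wins / len(samples)

rng = random.Random(42)
samples = draw_parameter_samples(2000, rng)
for T in (500.0, 1000.0, 1500.0):
    print(f"T = {T:6.0f} K  P(alpha stable) = {probability_alpha_stable(samples, T):.3f}")
```

With these assumed parameters the mean transition temperature is 1000 K, so the probability is close to one well below it, close to zero well above it, and near one half at the transition, where the parameter uncertainty matters most.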

Diagram 1: UQ workflow for thermodynamic modeling

Experimental Protocols and Validation

High-Throughput Computational Validation

Validation of predicted stable compounds typically proceeds through first-principles calculations, primarily Density Functional Theory (DFT). The standard protocol involves:

- Structure Prediction: Generating candidate crystal structures for compositions identified as stable by machine learning models.

- DFT Optimization: Performing geometry optimization to find ground-state structures and energies.

- Convex Hull Construction: Calculating formation energies and constructing phase diagrams to verify thermodynamic stability.

- Property Prediction: Computing relevant functional properties (band gaps, elastic constants, etc.) for promising candidates.

This approach has been successfully applied to explore new materials classes, including two-dimensional wide bandgap semiconductors and double perovskite oxides, with validation results from first-principles calculations demonstrating remarkable accuracy in correctly identifying stable compounds [11].
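The convex hull step in the protocol above can be sketched for a binary A-B system as follows. The formation energies are invented for illustration, the phase labels are hypothetical, and the code assumes one entry per composition. A production workflow would normally rely on an established phase-diagram library rather than this hand-rolled hull.

```python
def lower_hull(points):
    """Lower convex hull of (composition, formation energy) points, left to right."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # hull[-1] lies on or above the chord, so drop it
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def energy_above_hull(x, energy, hull):
    """Vertical distance from a phase to the hull at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            hull_energy = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return energy - hull_energy
    raise ValueError("composition outside the hull range")

# Hypothetical phases: label -> (fraction of B, formation energy in eV/atom).
phases = {
    "A": (0.00, 0.00),
    "A3B": (0.25, -0.10),
    "AB": (0.50, -0.40),
    "AB3": (0.75, -0.35),
    "B": (1.00, 0.00),
}
hull = lower_hull(phases.values())
e_hull = {name: energy_above_hull(x, e, hull) for name, (x, e) in phases.items()}
for name, value in e_hull.items():
    print(f"{name:4s} energy above hull = {value:.3f} eV/atom")
```

Phases with an energy above the hull of zero (within numerical tolerance) are predicted to be thermodynamically stable. In this toy data set the A3B entry sits above the A-AB tie line and would be expected to decompose into those two phases.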

Bayesian CALPHAD Parameter Estimation

The ESPEI (Extensible Self-optimizing Phase Equilibria Infrastructure) package implements Bayesian inference for CALPHAD model parameters using Markov Chain Monte Carlo (MCMC) sampling [12]. The methodology involves:

- Prior Definition: Establishing prior distributions for model parameters based on existing knowledge.

- Likelihood Calculation: Evaluating how well parameter sets reproduce experimental data.

- MCMC Sampling: Generating samples from the posterior distribution of parameters.

- Convergence Assessment: Ensuring adequate sampling of the parameter space.

- Uncertainty Propagation: Using parameter samples to quantify uncertainty in all predicted properties.

This approach has been demonstrated for binary systems such as Cu-Mg, revealing varying uncertainty across different phase regions and enabling quantitative assessment of stability probabilities [12].
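The prior, likelihood, and sampling steps listed above can be illustrated with a one-parameter Metropolis sampler. This sketch does not use ESPEI or its API. The linear model y = θ·x, the Gaussian prior and noise level, the proposal width, and the burn-in length are assumptions chosen to keep the example small.

```python
import math
import random

def log_posterior(theta, xs, ys, sigma=1.0, prior_sd=10.0):
    """Gaussian prior on theta plus Gaussian likelihood for y = theta * x."""
    log_prior = -0.5 * (theta / prior_sd) ** 2
    log_like = -0.5 * sum(((y - theta * x) / sigma) ** 2 for x, y in zip(xs, ys))
    return log_prior + log_like

def metropolis(xs, ys, n_steps=5000, step_sd=0.05, start=1.0, seed=0):
    """Random-walk Metropolis sampling of the posterior over theta."""
    rng = random.Random(seed)
    theta, logp = start, log_posterior(start, xs, ys)
    chain = []
    for _ in range(n_steps):
        proposal = theta + rng.gauss(0.0, step_sd)
        logp_new = log_posterior(proposal, xs, ys)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, logp_new - logp)):
            theta, logp = proposal, logp_new
        chain.append(theta)
    return chain

# Synthetic "experimental" data generated with a true parameter of 2.0.
data_rng = random.Random(1)
xs = [float(i) for i in range(1, 11)]
ys = [2.0 * x + data_rng.gauss(0.0, 1.0) for x in xs]

chain = metropolis(xs, ys)
posterior = chain[1000:]  # discard burn-in before summarizing
mean = sum(posterior) / len(posterior)
sd = math.sqrt(sum((t - mean) ** 2 for t in posterior) / len(posterior))
print(f"posterior mean = {mean:.3f}, posterior sd = {sd:.3f}")
```

Uncertainty propagation then amounts to evaluating any quantity of interest once per retained sample in `posterior` and examining the resulting distribution.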

Advanced Applications and Case Studies

Exploration of Unexplored Composition Spaces

Machine learning frameworks based on electron configuration and stacked generalization have demonstrated exceptional capability in navigating unexplored composition spaces [11]. Case studies include:

- Two-dimensional wide bandgap semiconductors: Identification of novel stable compounds with potential electronic applications.

- Double perovskite oxides: Discovery of new perovskite structures with tailored functional properties.

In these applications, the ECSG framework successfully identified promising compositions, with subsequent DFT validation confirming thermodynamic stability and revealing promising functional properties [11].

Metastable Materials and Non-Conventional Thermodynamics

Recent research has revealed materials exhibiting seemingly anomalous thermodynamic behavior, such as negative thermal expansion (shrinking when heated) and negative compressibility (expanding when crushed) in metastable oxygen-redox active materials [14]. In a metastable state, these "thermodynamics-defying" materials respond to external stimuli in the opposite direction from what is normally expected, which suggests several potential applications:

- Zero thermal expansion materials could benefit construction by keeping building components dimensionally stable as temperature changes.

- Structural batteries could allow the walls of an electric aircraft to double as battery components, yielding lighter, more efficient aircraft.

- Electrochemical regeneration could restore aged EV batteries to factory-fresh performance through voltage activation, returning metastable materials to their stable states [14].

These discoveries not only enable novel technologies but also represent advances in fundamental science, challenging and expanding our understanding of thermodynamic principles [14].

Diagram 2: Integrated materials design workflow

Research Reagent Solutions: Computational Tools for Thermodynamic Materials Design

Table 2: Essential Computational Tools for Thermodynamic Materials Design

| Tool/Resource | Type | Primary Function | Application in Materials Design |

|---|---|---|---|

| ESPEI [12] | Software Package | Bayesian parameter estimation for CALPHAD models | Quantifies uncertainty in thermodynamic model parameters through MCMC sampling |

| pycalphad [12] | Python Library | Thermodynamic calculations for multi-component systems | Performs equilibrium calculations using CALPHAD databases |

| Materials Project [11] | Database | Repository of calculated materials properties | Provides training data for machine learning models and validation references |

| JARVIS [11] | Database | Joint Automated Repository for Various Integrated Simulations | Benchmark database for evaluating prediction accuracy |

| ECCNN [11] | Machine Learning Model | Electron Configuration Convolutional Neural Network | Predicts stability from fundamental electronic structure information |

| Stacked Generalization Framework [11] | ML Methodology | Ensemble model combining diverse knowledge domains | Enhances prediction accuracy and reduces inductive bias |

Thermodynamic stability remains the foundational criterion for feasible materials design, serving as the critical filter that enables efficient navigation of vast compositional spaces. The integration of computational approaches—from CALPHAD modeling with comprehensive uncertainty quantification to advanced machine learning frameworks—has created a powerful paradigm for accelerating materials discovery and development. The emerging capabilities to quantify stability probabilities across composition-temperature-pressure space and to identify materials with non-conventional thermodynamic behavior represent significant advances in the field. As these methodologies continue to mature, incorporating increasingly sophisticated physical insights and computational approaches, they promise to further enhance our ability to design novel materials with tailored properties and performance characteristics, ultimately transforming the landscape of materials research and development.

The ability to engineer proteins with desired functions is a cornerstone of modern biotechnology, with profound implications for therapeutic development, enzyme engineering, and synthetic biology. The field is primarily governed by three distinct methodological paradigms: traditional optimization, rational design, and de novo design. Each approach operates on fundamentally different principles, with varying requirements for pre-existing knowledge, technological infrastructure, and design philosophy.

Traditional optimization methods, such as directed evolution, mimic natural evolutionary processes through iterative cycles of randomization and selection. Rational design employs structural knowledge and computational analysis to make specific, informed changes to protein sequences. In contrast, de novo design represents the most ambitious paradigm, generating entirely novel protein structures and sequences not found in nature, based solely on first principles and computational predictions [15] [16].

This technical guide provides an in-depth comparison of these three methodologies, examining their theoretical foundations, workflow differences, technical requirements, and practical applications within materials design research. By elucidating the key distinctions between these approaches, we aim to provide researchers with a framework for selecting appropriate strategies for specific protein engineering challenges.

Conceptual Foundations and Definitions

Traditional Optimization

Traditional optimization methods, particularly directed evolution, rely on introducing genetic diversity into existing protein sequences followed by high-throughput screening or selection for desired traits. This approach requires no prior structural knowledge of the protein and operates through iterative "design-make-test-analyze" cycles. While powerful, it is constrained by its reliance on existing protein scaffolds and the limitations of screening methodologies [15] [16].

Rational Design

Rational design employs structural biology, biophysical principles, and computational analysis to make specific, targeted modifications to protein sequences. This approach requires detailed knowledge of the protein's three-dimensional structure and the relationship between structure and function. Key techniques include site-directed mutagenesis, molecular docking, and structure-based virtual screening [17] [18]. Rational design has been successfully applied to optimize protein stability, affinity, and specificity, with prominent applications in the development of therapeutic antibodies and enzymes.

De Novo Design

De novo design represents the most advanced paradigm, generating entirely novel protein structures and sequences from scratch without relying on natural templates. This approach leverages fundamental biophysical principles and advanced computational models, including deep learning and diffusion networks, to create proteins with customized functions [15] [16] [2]. Recent breakthroughs, such as RFdiffusion for antibody design, demonstrate the capability to generate proteins with atomic-level precision, enabling targeting of specific epitopes with novel complementarity-determining regions [4].

Table 1: Core Conceptual Differences Between Design Paradigms

| Feature | Traditional Optimization | Rational Design | De Novo Design |

|---|---|---|---|

| Starting Point | Existing natural protein | Existing natural protein with structural data | First principles; no natural template required |

| Knowledge Requirement | No structural knowledge needed | High-resolution structure and mechanism | Fundamental biophysical principles |

| Evolutionary Constraint | Limited to variations of natural sequences | Limited to modifications of natural structures | Unconstrained by natural evolution |

| Theoretical Basis | Empirical selection | Structure-function relationships | Physical chemistry & deep learning |

| Technological Era | 1990s-present | 1980s-present | 2010s-present |

Methodological Comparisons and Workflows

Traditional Optimization Workflow

Traditional optimization follows an iterative experimental cycle:

- Diversity Generation: Random mutagenesis of parent gene(s) through error-prone PCR or DNA shuffling

- Library Construction: Generation of variant libraries (typically >10⁴ members)

- Screening/Selection: Application of high-throughput assays to identify improved variants

- Lead Identification: Isolation of top-performing variants for subsequent cycles

This process continues until the desired performance metrics are achieved, often requiring multiple iterations and significant experimental resources [15].
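The iterative cycle above can be sketched as a toy simulation. Everything here is a stand-in for the wet-lab steps: the `assay` function replaces an experimental screen by counting matches to a hypothetical hidden optimum, and the mutation rate, library size, and sequences are arbitrary assumptions.

```python
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"
HIDDEN_OPTIMUM = "MKTAYIAKQR"  # hypothetical sequence standing in for the assay's ideal

def assay(seq):
    """Toy screening readout: number of positions matching the hidden optimum."""
    return sum(a == b for a, b in zip(seq, HIDDEN_OPTIMUM))

def mutate(seq, rate, rng):
    """Diversity generation: resample each position with the given probability."""
    return "".join(rng.choice(ALPHABET) if rng.random() < rate else aa for aa in seq)

def directed_evolution(parent, rounds=8, library_size=200, rate=0.1, seed=0):
    rng = random.Random(seed)
    history = [assay(parent)]
    for _ in range(rounds):
        library = [mutate(parent, rate, rng) for _ in range(library_size)]
        library.append(parent)            # keep the parent so fitness never decreases
        parent = max(library, key=assay)  # screening and lead identification
        history.append(assay(parent))
    return parent, history

best, history = directed_evolution("AAAAAAAAAA")
print(best, history)
```

The simulation needs no structural model of the "protein", only a measurable readout. That mirrors why directed evolution works without prior structural knowledge but depends on a good screen.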

Rational Design Workflow

Rational design employs a more targeted, computational approach:

- Structural Analysis: Examination of protein structure to identify key residues for modification

- Computational Modeling: Prediction of how specific mutations will affect structure and function

- In Silico Evaluation: Assessment of predicted variants using molecular dynamics, docking, or energy calculations

- Experimental Validation: Synthesis and testing of a limited set of designed variants

This approach benefits from requiring smaller variant libraries but depends heavily on accurate structural models and computational predictions [17] [18].

De Novo Design Workflow

De novo design implements a computational pipeline that may include:

- Specification: Definition of target structure or function (e.g., binding epitope, catalytic site)

- Computational Generation: Use of deep learning models (e.g., RFdiffusion, ProteinMPNN) to create novel protein structures and sequences

- In Silico Validation: Prediction of folding and stability using tools like AlphaFold or RoseTTAFold

- Experimental Characterization: Synthesis and structural/functional validation of designed proteins [4] [16]

Recent advances have demonstrated the remarkable precision of de novo design, with cryo-electron microscopy confirming atomic-level accuracy of designed antibody complementarity-determining regions [4].

Technical Requirements and Resource Considerations

The implementation of each design paradigm demands distinct technical resources, computational infrastructure, and experimental capabilities. These requirements significantly influence methodology selection based on available resources and project constraints.

Table 2: Technical Requirements Across Design Paradigms

| Resource | Traditional Optimization | Rational Design | De Novo Design |

|---|---|---|---|

| Computational Needs | Minimal | Moderate to high (molecular dynamics, docking) | Very high (deep learning, diffusion models) |

| Experimental Throughput | Very high (10⁴-10⁸ variants) | Low to moderate (10¹-10³ variants) | Low to moderate (10¹-10⁴ variants) |

| Specialized Expertise | Molecular biology, screening development | Structural biology, computational chemistry | Machine learning, biophysics, programming |

| Key Software/Tools | Laboratory automation systems | Rosetta, AutoDock, GROMACS | RFdiffusion, ProteinMPNN, AlphaFold |

| Infrastructure Cost | High (automation, screening) | Moderate (computing, structural biology) | High (HPC, AI/ML infrastructure) |

| Time Investment | Months to years | Weeks to months | Days to months |

Data Dependencies and Knowledge Requirements

Traditional optimization requires no prior structural knowledge, making it applicable to proteins with unknown structures. However, it demands robust high-throughput screening assays and potentially large laboratory operations [15].

Rational design depends critically on high-resolution structural information (from crystallography, cryo-EM, or NMR) and understanding of structure-function relationships. The quality of structural data directly correlates with success rates [17].

De novo design has the most complex data requirements, typically needing both structural principles and training data for machine learning models. However, once trained, models like RFdiffusion can generate designs with only functional specifications [4] [16].

Case Studies and Experimental Protocols

De Novo Antibody Design with RFdiffusion

A landmark 2025 study demonstrated the de novo generation of antibody variable heavy chains (VHHs), single-chain variable fragments (scFvs), and full antibodies targeting user-specified epitopes with atomic-level precision [4].

Experimental Protocol:

Computational Design:

- Fine-tuned RFdiffusion network was conditioned on framework structure and target epitope

- Network designed CDR loops and rigid-body placement simultaneously

- ProteinMPNN generated sequences for designed backbones

In Silico Validation:

- Fine-tuned RoseTTAFold2 predicted structure of designed complexes

- Designs with high self-consistency scores were selected for experimental testing

Experimental Screening:

- Designed VHHs screened via yeast surface display (9,000 designs/target)

- Alternatively, E. coli expression with SPR screening (95 designs/target)

Affinity Maturation:

- OrthoRep system employed for affinity maturation of initial binders

- Achieved single-digit nanomolar binders maintaining epitope specificity

Structural Validation:

- Cryo-EM confirmed binding pose of designed VHHs targeting influenza haemagglutinin and Clostridium difficile toxin B

- High-resolution structure verified atomic accuracy of designed CDRs [4]

Protein Stability Optimization

A comparative study illustrates different approaches to enhancing protein stability:

Traditional Optimization Approach:

- Error-prone PCR applied to gene of interest

- Library screened for thermal stability using thermal shift assays

- Multiple rounds (4-6) of mutation and screening typically required

- Achieved 5-15°C improvement in thermal stability [15]

Rational Design Approach:

- Analysis of crystal structure to identify flexible regions

- Introduction of disulfide bonds or proline residues to restrict flexibility

- Computational calculation of folding free energy changes (ΔΔG)

- Typically achieves 3-10°C improvement with fewer than 10 variants tested [15]

De Novo Stability Design:

- Computational methods like RFdiffusion generate entirely new protein scaffolds

- Sequences optimized for native-state stability while maintaining function

- Can achieve >15°C improvement in thermal stability

- Enables expression of previously challenging proteins (e.g., malaria vaccine candidate RH5) [15]

The Scientist's Toolkit: Research Reagent Solutions

Implementing these design paradigms requires specialized reagents and computational resources. The following table outlines key components of the research toolkit for protein design.

Table 3: Essential Research Reagents and Resources for Protein Design

| Resource | Function/Purpose | Design Paradigm |

|---|---|---|

| RFdiffusion | Deep learning model for protein structure generation | De novo design |

| ProteinMPNN | Neural network for protein sequence design | De novo & rational design |

| AlphaFold2/3 | Protein structure prediction from sequence | All paradigms |

| RoseTTAFold | Protein structure prediction with co-evolutionary data | All paradigms |

| OrthoRep | In vivo mutagenesis system for directed evolution | Traditional optimization |

| Yeast Surface Display | High-throughput screening of protein libraries | Traditional optimization & de novo validation |

| CETSA | Cellular target engagement validation | All paradigms (validation) |

| AutoDock | Molecular docking for binding pose prediction | Rational design |

| Rosetta | Suite for protein structure prediction and design | Rational & de novo design |

| DNA-Encoded Libraries (DELs) | High-throughput screening technology | Traditional optimization |

Performance Metrics and Comparative Outcomes

Quantitative assessment of each paradigm's performance reveals distinct strengths and limitations across various metrics.

Table 4: Performance Comparison Across Design Paradigms

| Metric | Traditional Optimization | Rational Design | De Novo Design |

|---|---|---|---|

| Success Rate | Moderate (high throughput compensates) | Variable (structure-dependent) | Improving rapidly with AI advances |

| Timeline | 6-24 months | 3-12 months | 1-6 months (computational phase) |

| Development Cost | High (screening intensive) | Moderate | Variable (high computational cost) |

| Innovation Potential | Incremental improvements | Moderate improvements | High (novel scaffolds/functions) |

| Atomic-Level Precision | Limited | Achievable with high-quality structures | Demonstrated (e.g., antibody CDRs) |

| Throughput | 10⁴-10⁸ variants | 10¹-10³ variants | 10¹-10⁴ variants |

| Epitope-Specific Targeting | Not directly possible | Possible with structural knowledge | Directly programmable [4] |

Key Performance Insights

Traditional optimization excels at property improvement (affinity, stability) when high-throughput screening is feasible but struggles with multi-property optimization and epitope-specific targeting [15].

Rational design enables more targeted interventions but remains constrained by the quality of structural data and computational predictions. Success rates improve significantly with higher-resolution structures and better energy functions [17].

De novo design represents the most transformative approach, enabling creation of proteins with precisely specified functions. Recent demonstrations of atomically accurate antibody design highlight the paradigm's potential to generate therapeutics targeting previously inaccessible epitopes [4]. The integration of deep learning methods has dramatically improved success rates, with RFdiffusion able to generate candidate designs computationally in seconds [16]; stability and function must still be confirmed through in silico and experimental validation.

Implementation Considerations and Future Directions

Hybrid Approaches

The most successful protein engineering strategies often combine elements from multiple paradigms. For example:

- De novo + Traditional: Computational designs subjected to limited directed evolution for fine-tuning

- Rational + De novo: Structure-based insights informing de novo design parameters

- All Three: RFdiffusion-generated antibodies affinity-matured using directed evolution [4] [15]

Emerging Trends and Future Outlook

The field is rapidly evolving toward increased integration of artificial intelligence and machine learning across all design paradigms. Key trends include:

- Explainable AI: Addressing the "black-box" nature of deep learning models to enhance trust and interpretability [17]

- Federated Learning: Enabling collaborative model training across institutions while preserving data privacy [17]

- Closed-Loop Design: Integrating design, synthesis, and testing into automated workflows [19] [20]

- Multi-Objective Optimization: Simultaneous optimization of multiple protein properties (stability, activity, specificity) [15]

As de novo design methodologies mature, they are expected to become mainstream approaches in protein science and engineering, potentially reducing reliance on traditional methods for many applications. However, traditional optimization will likely remain valuable for applications where high-throughput screening is feasible and structural information is limited [15].

The convergence of these paradigms, powered by advances in artificial intelligence and high-throughput experimentation, is poised to accelerate the development of novel proteins for therapeutic applications, industrial enzymes, and biomaterials, ultimately expanding the functional protein space beyond natural evolutionary boundaries [16] [2].

AI and Computational Methodologies: From Generative Models to Real-World Applications

The field of de novo materials design is undergoing a paradigm shift, moving away from traditional, inefficient discovery methods toward a future guided by generative artificial intelligence (AI). Traditional approaches to molecular discovery, such as high-throughput screening and natural product isolation, are often costly, time-consuming, and limited in their ability to explore the vastness of chemical space, which is estimated to contain up to 10⁶⁰ drug-like molecules [21] [22] [23]. Generative AI offers a powerful alternative by enabling the data-driven creation of novel molecular structures tailored to specific physicochemical and biological requirements [21] [24]. This technical guide examines three foundational pillars of generative AI for molecular design: Variational Autoencoders (VAEs), Chemical Language Models (CLMs), and Diffusion Models. It frames them as essential components of a modern, computationally driven research pipeline for de novo materials design. These technologies collectively provide the means to efficiently navigate the immense molecular universe and accelerate the development of new therapeutics and functional materials [22] [25].

Molecular Representations: The Substrate for AI

Before a generative model can process a molecule, the chemical structure must be converted into a machine-readable format. The choice of representation profoundly influences the model's ability to learn and generate valid and novel structures [23].

- String-Based Representations: These treat a molecule as a sequence of characters.

  - SMILES (Simplified Molecular Input Line Entry System): A compact string notation obtained by traversing a molecular graph. While human-readable, SMILES can be syntactically fragile, often leading to invalid string generation [26] [23].

  - SELFIES (Self-referencing embedded strings): A robust alternative where every string, by design, corresponds to a valid molecular structure. This enforced validity makes SELFIES particularly attractive for generative modeling, as it eliminates the problem of invalid outputs [26] [23].

- Graph-Based Representations: These offer a more intuitive description of molecular structure.

  - 2D Molecular Graphs: Represent a molecule as a set of nodes (atoms) and edges (bonds), capturing topological connectivity [23].

  - 3D Molecular Graphs: Incorporate spatial atomic coordinates, which are critical for modeling stereochemistry and ligand-protein interactions in structure-based drug design [26] [27].

- Surface and Point Cloud Representations: Used in more advanced applications, these represent the molecular surface or 3D structure as a set of points (point clouds) or polygons (meshes), which can be characterized by chemical and geometric features for shape-based matching [23].
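To make the string and graph views concrete, the sketch below writes ethanol both as its SMILES string, `CCO`, and as a hand-built 2D graph of nodes and edges. The graph is typed in manually rather than parsed from the string, hydrogens are left implicit, and the variable and function names are illustrative assumptions.

```python
from collections import defaultdict

smiles = "CCO"  # ethanol as a string representation

# The same molecule as a 2D graph (heavy atoms only, hydrogens implicit).
atoms = {0: "C", 1: "C", 2: "O"}   # node index -> element
bonds = [(0, 1, 1), (1, 2, 1)]     # (atom i, atom j, bond order)

def adjacency(bonds):
    """Build an undirected adjacency list: node -> [(neighbor, bond order), ...]."""
    adj = defaultdict(list)
    for i, j, order in bonds:
        adj[i].append((j, order))
        adj[j].append((i, order))
    return dict(adj)

graph = adjacency(bonds)
degrees = {i: len(graph[i]) for i in atoms}
print(graph)
print(degrees)
```

A 3D graph would attach a coordinate triple to each node in addition to the element label. The connectivity structure stays the same.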

Table 1: Key Molecular Representations in Generative AI

| Representation Type | Format | Key Features | Common Applications |

|---|---|---|---|

| SMILES | String | Compact, human-readable; prone to syntactic invalidity [26] [23] | Ligand-based design, early generative models |

| SELFIES | String | Guaranteed chemical validity; robust for generation [26] [23] | De novo molecular generation, complex macromolecules |

| 2D Graph | Graph (Nodes & Edges) | Intuitively represents atomic connectivity [23] | Property prediction, topology-focused generation |

| 3D Graph | Graph with Coordinates | Encodes spatial and stereochemical information [26] [27] | Structure-based drug design, molecular docking |

Generative Model Architectures

Variational Autoencoders (VAEs)

VAEs are a class of generative neural networks that learn a compressed, continuous latent representation of input data. The model consists of an encoder that maps an input molecule (e.g., a SMILES string or graph) into a probability distribution in a lower-dimensional latent space, and a decoder that reconstructs the molecule from a point sampled from this distribution [24]. This architecture ensures a smooth and structured latent space, enabling the generation of novel, realistic molecular structures by sampling and decoding new latent points [24].

Optimization Strategies for VAEs: A primary application of VAEs in molecular design is goal-directed optimization, which is often achieved by coupling the VAE with an optimization strategy that operates on the latent space.

- Property-Guided Generation: A predictive model (e.g., a neural network) is trained to map latent vectors to specific molecular properties. This allows researchers to perform optimization in the latent space, moving in directions that increase the predicted property before decoding the optimized vector into a new molecule [24].

- Bayesian Optimization (BO): In scenarios where property evaluation is computationally expensive (e.g., docking simulations), BO is used as a sample-efficient optimizer. It builds a probabilistic model of the objective function and uses it to select the most promising latent vectors to decode and evaluate next [24].
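For reference, the training objective of a standard VAE and the latent-space update used in property-guided generation can be written compactly. The notation is an assumption introduced here rather than taken from the cited works: $q_\phi(z \mid x)$ is the encoder, $p_\theta(x \mid z)$ the decoder, $p(z)$ the prior over latent vectors, $f_\psi$ a property predictor defined on the latent space, and $\eta$ a step size.

$$
\mathcal{L}(\theta, \phi; x) = \mathbb{E}_{q_\phi(z \mid x)}\left[\log p_\theta(x \mid z)\right] - D_{\mathrm{KL}}\left(q_\phi(z \mid x) \,\|\, p(z)\right)
$$

$$
z_{k+1} = z_k + \eta \, \nabla_z f_\psi(z_k)
$$

The first term of the objective rewards faithful reconstruction, while the KL term keeps the encoded distributions close to the prior, which is what makes the latent space smooth enough to optimize in. The second expression is plain gradient ascent on the predicted property. After a few steps, the resulting latent vector is decoded into a candidate molecule.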

Chemical Language Models (CLMs)

Chemical Language Models leverage architectures from natural language processing, such as Transformers, by treating molecular strings (primarily SMILES or SELFIES) as sentences and their tokens (atom, bond, ring, and branch symbols) as words [24]. These models learn the statistical "grammar" and "syntax" of chemical structures, allowing them to generate novel, valid molecular strings autoregressively, predicting each next token from all previous tokens [28]. The transformer's self-attention mechanism is particularly adept at capturing long-range dependencies in the molecular string, which is crucial for learning complex structural patterns [24].

Training and Optimization Protocols:

- Pre-training: CLMs are often first pre-trained on large, unlabeled corpora of chemical structures (e.g., from public databases like ChEMBL or ZINC) in a self-supervised manner. This step teaches the model the general rules of chemical validity and the distribution of chemical space [28].

- Fine-Tuning for Goal-Directed Design: To steer generation toward molecules with desired properties, CLMs are fine-tuned using reinforcement learning (RL). A reward function is defined that incorporates multiple objectives, such as target affinity, drug-likeness (QED), and synthetic accessibility (SAscore). The RL algorithm then updates the model's parameters to maximize the expected reward, effectively guiding the generation process [26] [29].
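A composite reward of the kind described above might look like the following sketch. The weights, the assumption that affinity has already been normalized to [0, 1], and the linear rescaling of the synthetic accessibility score (which conventionally runs from 1 for easy to 10 for hard) are illustrative choices, not a prescription from the cited works.

```python
def composite_reward(affinity, qed, sa_score, weights=(0.5, 0.3, 0.2)):
    """Weighted sum of three objectives, each scaled to [0, 1].

    affinity : predicted target affinity, assumed already normalized to [0, 1]
    qed      : quantitative estimate of drug-likeness, in [0, 1]
    sa_score : synthetic accessibility score, 1 (easy) to 10 (hard)
    """
    if not (0.0 <= affinity <= 1.0 and 0.0 <= qed <= 1.0 and 1.0 <= sa_score <= 10.0):
        raise ValueError("inputs outside their expected ranges")
    sa_term = (10.0 - sa_score) / 9.0  # invert so that easier synthesis scores higher
    w_aff, w_qed, w_sa = weights
    return w_aff * affinity + w_qed * qed + w_sa * sa_term

reward = composite_reward(affinity=0.8, qed=0.6, sa_score=3.0)
print(round(reward, 4))
```

In an RL fine-tuning loop, a scalar like this would be computed for each generated molecule and used as the signal that the policy update tries to maximize. Changing the weights shifts the trade-off between potency, drug-likeness, and ease of synthesis.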

Diffusion Models

Diffusion models have recently emerged as state-of-the-art generative models, demonstrating exceptional performance in generating high-quality and diverse molecular structures [25]. Their operation is based on a two-step probabilistic process:

- Forward Diffusion Process: The original molecular data (e.g., a 3D graph or structure) is progressively corrupted by adding Gaussian noise over a series of steps until it becomes pure noise.

- Reverse Denoising Process: A neural network is trained to learn to reverse this noising process. Starting from pure noise, the model iteratively denoises the data to reconstruct a novel molecular sample from the original data distribution [25].
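The forward process can be sampled in closed form, which the sketch below illustrates for a DDPM-style linear noise schedule. The schedule endpoints (1e-4 to 0.02 over 1000 steps) are commonly used defaults assumed here, and `x0` is a hypothetical flattened coordinate vector. The reverse process is not shown because it requires a trained denoising network.

```python
import math
import random

def linear_beta_schedule(n_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_t increasing linearly from beta_start to beta_end."""
    step = (beta_end - beta_start) / (n_steps - 1)
    return [beta_start + i * step for i in range(n_steps)]

def cumulative_alpha(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s): the remaining signal fraction."""
    alpha_bar, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar

def noise_sample(x0, t, alpha_bar, rng):
    """Draw x_t ~ N(sqrt(alpha_bar_t) * x0, (1 - alpha_bar_t) * I) in one shot."""
    signal = math.sqrt(alpha_bar[t])
    noise = math.sqrt(1.0 - alpha_bar[t])
    return [signal * xi + noise * rng.gauss(0.0, 1.0) for xi in x0]

betas = linear_beta_schedule(1000)
alpha_bar = cumulative_alpha(betas)
rng = random.Random(0)
x0 = [1.2, -0.7, 0.3]  # hypothetical flattened atomic coordinates
for t in (0, 499, 999):
    sample = [round(v, 3) for v in noise_sample(x0, t, alpha_bar, rng)]
    print(t, round(alpha_bar[t], 5), sample)
```

As `t` grows, `alpha_bar[t]` decays toward zero, so the sample retains almost nothing of `x0` and approaches a standard Gaussian. That is the "pure noise" starting point for the reverse process.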

These models are particularly powerful for generating molecules directly in 3D, capturing crucial geometric and stereochemical information necessary for predicting biological activity and binding modes [27] [25]. Frameworks like Equivariant Diffusion Models use denoising networks that are equivariant to rotations and translations, so the probability assigned to a generated 3D structure does not depend on its orientation or position in space, a critical property for meaningful geometric data [25].

Key Formulations:

- Denoising Diffusion Probabilistic Models (DDPMs): A foundational formulation in which a fixed Markov chain defines the forward noising process and a learned Markov chain approximates the reverse denoising process [25].

- Score-Based Generative Models (SGMs): These models learn to estimate the gradient of the log-probability of the data (the "score") and use Langevin dynamics to sample from the data distribution [24].

Table 2: Comparative Analysis of Core Generative Architectures

| Architecture | Core Principle | Molecular Representation | Strengths | Weaknesses |

|---|---|---|---|---|

| Variational Autoencoders (VAEs) | Learns compressed latent space for encoding/decoding [24] | SMILES, Graphs [24] [23] | Smooth latent space enables interpolation and optimization [24] | Can generate invalid structures; prone to posterior collapse [23] |

| Chemical Language Models (CLMs) | Autoregressive generation of molecular sequences [28] | SMILES, SELFIES [26] [23] | Captures complex, long-range dependencies; leverages NLP advances [24] | Sequential generation is slow; error propagation in sequences |

| Diffusion Models | Iterative denoising from noise to data [25] | 3D Graphs, Point Clouds, Surfaces [23] [25] | State-of-the-art sample quality; excels at 3D structure generation [27] [25] | Computationally intensive due to iterative steps [25] |

Advanced Optimization and Multi-Target Design

Generating chemically valid structures is only the first step. For practical applications, molecules must be optimized for multiple, often competing, properties simultaneously.

- Reinforcement Learning (RL): RL frameworks train an agent (the generative model) to take actions (generating molecular structures) that maximize a cumulative reward. In molecular design, the reward function is a composite score integrating multiple objectives, such as binding affinity for one or more targets, drug-likeness (QED), low toxicity, and high synthetic accessibility (SAscore) [24] [26]. Models like the Graph Convolutional Policy Network (GCPN) use RL to sequentially add atoms and bonds, directly constructing molecular graphs with targeted properties [24].

- Multi-Objective Optimization: Designing drugs for complex diseases like cancer often requires modulating multiple biological targets simultaneously to overcome redundancy and resistance mechanisms [26]. Deep generative models, empowered by RL, provide scalable platforms for the de novo generation of small molecules with polypharmacological profiles. The reward function in such systems is carefully shaped to balance potency, selectivity, and safety across several targets [26].

- Self-Improving Discovery Frameworks: The integration of generative models, predictive oracles, and RL within a Design-Make-Test-Analyze (DMTA) cycle creates a closed-loop, self-improving system [26]. The generative model produces candidates, which are evaluated by predictive models (or experimentally). The results form a reward signal that refines the generative model via RL. Active learning can be incorporated to select the most informative candidates for the next "Test" cycle, leading to continuous, autonomous improvement of molecular candidates [26].
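A composite reward of the kind described above can be sketched as a weighted sum of normalized terms. This is a minimal illustration under stated assumptions: the `MoleculeScores` container, the weights, and the worst-case aggregation over targets are hypothetical choices, and the property values are assumed to come from external predictors. The only conventions taken as given are that QED lies in [0, 1] and that SAscore runs from 1 (easy to synthesize) to 10 (hard).

```python
from dataclasses import dataclass

@dataclass
class MoleculeScores:
    """Pre-computed property predictions for one molecule (hypothetical container)."""
    affinities: dict   # target name -> predicted affinity, already scaled to [0, 1]
    qed: float         # drug-likeness in [0, 1]
    toxicity: float    # predicted probability of toxicity in [0, 1]
    sa_score: float    # synthetic accessibility, 1 (easy) to 10 (hard)

def composite_reward(s, weights=None):
    """Weighted sum of normalized objectives; higher is better."""
    w = weights or {"affinity": 0.4, "qed": 0.2, "toxicity": 0.2, "sa": 0.2}
    # Worst-case affinity rewards molecules that hit every target, not just one.
    affinity_term = min(s.affinities.values())
    tox_term = 1.0 - s.toxicity              # low toxicity -> high reward
    sa_term = (10.0 - s.sa_score) / 9.0      # map 1..10 onto 1..0
    return (w["affinity"] * affinity_term + w["qed"] * s.qed
            + w["toxicity"] * tox_term + w["sa"] * sa_term)

example = MoleculeScores(affinities={"target_A": 0.8, "target_B": 0.6},
                         qed=0.7, toxicity=0.1, sa_score=2.8)
reward = composite_reward(example)
print(round(reward, 3))
```

Taking the minimum over targets is one way to encode a polypharmacological objective; a mean or a product would trade off the targets differently, and the weights themselves are a design decision that shapes what the agent learns.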

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Generative Molecular AI

| Tool / Resource | Type | Primary Function | Relevance to Research |
|---|---|---|---|
| SMILES/SELFIES | Molecular Representation | String-based encoding of molecular structure [26] [23] | Standardized input format for CLMs and VAEs; SELFIES ensures validity. |
| Molecular Graphs (2D/3D) | Molecular Representation | Graph-based description of atomic connectivity and geometry [26] [23] | Input for graph neural networks in VAEs and diffusion models; essential for 3D property prediction. |
| QED (Quantitative Estimate of Drug-likeness) | Computational Metric | Calculates the drug-likeness of a molecule [28] | Key objective in reward functions for RL-driven optimization. |
| SAscore (Synthetic Accessibility Score) | Computational Metric | Estimates the ease of synthesizing a molecule [28] | Crucial reward component to ensure generated molecules are practical to make. |
| Benchmarking Datasets (e.g., ZINC, ChEMBL) | Data Resource | Large, publicly available libraries of chemical compounds [21] [28] | Standardized data for pre-training and benchmarking generative models. |
| Docking Simulations (e.g., AutoDock Vina) | Software | Predicts the binding pose and affinity of a ligand to a protein target [24] | High-fidelity evaluation method used in BO loops or as a reward signal in RL. |

Experimental Protocols for Model Evaluation

Robust evaluation is critical for validating the performance of generative models in a preclinical research setting. The following protocol outlines a standard workflow for benchmarking and prospective testing.

Protocol 1: Benchmarking Generative Models for De Novo Molecular Design

Objective: To quantitatively evaluate and compare the performance of VAE, CLM, and diffusion models on a set of standardized tasks related to goal-directed molecular generation.

Materials:

- Software: Python, deep learning frameworks (PyTorch/TensorFlow), cheminformatics libraries (RDKit).

- Data: Standardized benchmarking dataset (e.g., ZINC250k [23] or MOSES [28]).

- Hardware: GPU-enabled computing cluster.

Method:

- Training and Validation:

  - Partition the dataset into training, validation, and test sets (e.g., 80/10/10 split).

  - Train each generative model (VAE, CLM, diffusion) on the training set.

  - Tune hyperparameters using the validation set to maximize performance.

- Unconditional Generation and Metric Calculation:

  - Generate 10,000-50,000 molecules from each trained model.

  - Calculate the following key metrics using the test set as a reference:

    - Validity: The proportion of generated strings that correspond to a valid chemical structure.

    - Uniqueness: The proportion of unique molecules among the valid generated ones.

    - Novelty: The fraction of generated molecules not present in the training set.

    - Internal Diversity: Measures the structural diversity within the set of generated molecules.

- Goal-Directed Optimization Benchmark:

  - Define a target property for optimization (e.g., penalized logP, or a specific biological activity).

  - For each model, run a defined optimization procedure (e.g., latent space optimization for VAEs, RL fine-tuning for CLMs, guided diffusion).

  - Generate a set of optimized molecules and record the property improvement and success rate (e.g., the number of molecules exceeding a property threshold).

Reporting: Results should be reported in a table format for clear comparison, including all calculated metrics and computational costs.
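The unconditional-generation metrics in the protocol can be computed with a few lines of code. The sketch below is dependency-free and makes several assumptions: `toy_canonicalize` is a stand-in that only checks parenthesis balance (in practice a cheminformatics toolkit such as RDKit would parse and canonicalize each SMILES string), novelty is computed over the unique valid molecules (denominator conventions vary between benchmarks), and internal diversity is taken as one minus the mean pairwise Tanimoto similarity over fingerprints represented as sets of feature indices.

```python
from itertools import combinations

def generation_metrics(generated, training_set, canonicalize):
    """canonicalize(s) must return a canonical string, or None if s is invalid."""
    canon = [canonicalize(s) for s in generated]
    valid = [c for c in canon if c is not None]
    unique = set(valid)
    known = {canonicalize(s) for s in training_set}
    novel = unique - known
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }

def internal_diversity(fingerprints):
    """1 - mean pairwise Tanimoto similarity; each fingerprint is a set of 'on' features."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    sims = [len(a & b) / len(a | b) if (a | b) else 1.0 for a, b in pairs]
    return 1.0 - sum(sims) / len(sims)

def toy_canonicalize(smiles):
    """Hypothetical stand-in: rejects unbalanced parentheses, otherwise returns the input."""
    return None if smiles.count("(") != smiles.count(")") else smiles

generated = ["CCO", "CCO", "CCN", "C(C", "CCCl"]
training = ["CCO", "CCC"]
metrics = generation_metrics(generated, training, toy_canonicalize)
diversity = internal_diversity([{1, 2, 3}, {2, 3, 4}, {5, 6}])
print(metrics, round(diversity, 3))
```

For the toy inputs, four of the five strings are valid, three of those four are distinct, and two of the three distinct molecules are absent from the training list.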

Generative AI, with its core architectures of VAEs, CLMs, and diffusion models, has fundamentally reshaped the landscape of de novo materials design research. By enabling the direct, data-driven creation of novel molecules optimized for complex, multi-objective criteria, these technologies are accelerating the journey from hypothesis to candidate. While challenges remain—including the need for better integration of physicochemical priors, improved data efficiency, and enhanced model interpretability—the trajectory is clear [21]. The convergence of these generative paradigms with automated closed-loop experimentation and increasingly powerful predictive oracles points toward a future of autonomous, self-improving molecular discovery systems, poised to tackle some of the most pressing challenges in drug development and materials science [26] [27].

In the field of de novo materials design, researchers face a fundamental challenge: the vastness of possible material compositions and processing routes makes exhaustive experimental investigation prohibitively expensive and time-consuming. Active Learning (AL) has emerged as a powerful computational framework to address this by strategically guiding the discovery process. AL is a machine learning paradigm in which a model intelligently selects the most informative data points on which to learn, thereby achieving high performance with minimal labeled data [30] [31]. By iteratively cycling between computational predictions and targeted experimental validation, AL prioritizes experiments or simulations that are most likely to yield valuable information, dramatically accelerating the optimization of materials and molecules [30] [32]. This guide details the core principles, methodologies, and applications of AL frameworks for the iterative refinement of materials and molecules, positioning it as a cornerstone of modern, data-driven design research.

Core Components and Workflow of an AL Framework

A typical AL framework functions as a closed-loop system, integrating several key components to enable intelligent exploration of a design space. The core cycle involves an initial model trained on a small dataset, which is then used to evaluate a larger pool of unlabeled candidates. The most promising candidates are selected via a specific acquisition function, after which they are experimentally synthesized and tested. The results from these experiments are fed back into the model for retraining, refining its predictive power for the next cycle [30] [32] [33].

Key Workflow Stages

The logical flow of a generalized AL framework for materials and molecule design is illustrated below.

Diagram Title: Active Learning Iterative Cycle

- Dataset Construction and Initial Model Training: The process begins with the assembly of an initial dataset, which can combine historical experimental data, literature results, and computational data [32]. This dataset, though small, is used to train a preliminary surrogate model (or an ensemble of models) to predict material or molecular properties [32].

- Candidate Evaluation and Selection via Acquisition Functions: The trained model is used to screen a vast pool of virtual candidates. The selection of which candidates to test experimentally is governed by an acquisition function, which balances two key objectives [32] [31]:

  - Exploitation: Prioritizing candidates predicted to have high performance (e.g., superior strength or binding affinity).

  - Exploration: Prioritizing candidates where the model's predictions are most uncertain, thereby gaining knowledge about underrepresented regions of the design space. A common ranking criterion combines the mean predicted property (e.g., strength) and the standard deviation (uncertainty) to systematically balance this trade-off [32].

- Experimental Validation and Iterative Feedback: The top-ranked candidates are synthesized and characterized in the laboratory. The results of these "closed-loop" experiments are then added to the training dataset. The model is retrained on this enlarged and improved dataset, ready to begin a new, more informed cycle of discovery [32] [33]. This iterative process continues until a performance target is met or resources are exhausted.
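The three stages above can be sketched as a short closed loop. Everything in this example is an illustrative assumption rather than a method from the cited work: `run_experiment` is a hypothetical analytic function standing in for synthesis and testing, the surrogate is a small ensemble of ridge regressions on random Fourier features, and candidates are ranked by mean prediction plus `kappa` times the ensemble standard deviation.

```python
import numpy as np

def run_experiment(x):
    """Hypothetical stand-in for synthesizing and testing a candidate."""
    return np.sin(3.0 * x) + 0.5 * x

def fit_member(X, y, rng, n_features=30, ridge=1e-2):
    """One ensemble member: ridge regression on random Fourier features."""
    freq = rng.normal(scale=3.0, size=n_features)
    phase = rng.uniform(0.0, 2.0 * np.pi, size=n_features)

    def features(x):
        return np.cos(np.outer(x, freq) + phase)

    P = features(X)
    coef = np.linalg.solve(P.T @ P + ridge * np.eye(n_features), P.T @ y)
    return lambda x: features(x) @ coef

def active_learning(n_cycles=10, n_members=10, kappa=1.0, seed=0):
    rng = np.random.default_rng(seed)
    pool = np.linspace(0.0, 2.0, 201)             # virtual candidate pool
    labeled = [int(i) for i in rng.choice(len(pool), size=4, replace=False)]
    X, y = pool[labeled], run_experiment(pool[labeled])
    for _ in range(n_cycles):
        # 1. Retrain the surrogate ensemble on all data gathered so far.
        members = [fit_member(X, y, rng) for _ in range(n_members)]
        preds = np.stack([m(pool) for m in members])
        # 2. Acquisition: exploitation (mean) plus exploration (ensemble spread).
        score = preds.mean(axis=0) + kappa * preds.std(axis=0)
        score[labeled] = -np.inf                  # never re-test a candidate
        pick = int(np.argmax(score))
        # 3. "Experiment" on the pick and feed the result back.
        labeled.append(pick)
        X = np.append(X, pool[pick])
        y = np.append(y, run_experiment(pool[pick]))
    return X, y

X, y = active_learning()
print(f"tested {len(X)} candidates, best observed value = {y.max():.3f}")
```

Setting `kappa` to zero makes the loop purely exploitative, while larger values push it toward regions where the ensemble members disagree.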

Acquisition Functions: The "Brain" of Active Learning

The acquisition function is the intelligence engine of the AL cycle, determining its efficiency. Several core strategies have been developed, each with distinct advantages.

Table: Core Acquisition Functions in Active Learning

| Strategy | Core Principle | Typical Use Case | Key Advantage |
|---|---|---|---|
| Uncertainty Sampling [31] | Selects data points for which the model's prediction is least confident. | Rapidly reduces model confusion on ambiguous cases. | Simple to implement and computationally efficient. |
| Query-by-Committee (QBC) [31] | Uses a committee of models; selects points where committee disagreement is highest. | Complex spaces where model uncertainty is high. | More robust than single-model uncertainty; captures model uncertainty. |
| Expected Model Change [31] | Selects samples that would cause the largest change to the model parameters if labeled. | Maximizing learning progress per experiment. | Directly targets data that will most improve the model. |
| Diversity Sampling [31] | Selects a diverse set of points to broadly cover the input data space. | Initial stages or to prevent sampling bias. | Ensures a representative dataset and improves generalization. |
| Bayesian Optimization [30] | Uses a probabilistic model to balance exploration and exploitation for global optimization. | Optimizing black-box functions with expensive evaluations. | Formally balances exploration and exploitation. |

In practice, hybrid strategies that combine, for example, uncertainty and diversity are often used to prevent the selection of a batch of very similar, albeit uncertain, candidates [31].
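Three of the strategies in the table reduce to short scoring functions, sketched here with NumPy. These are common textbook forms chosen for illustration, not the specific variants used in the cited studies: least-confidence scoring for uncertainty sampling, prediction variance across members for Query-by-Committee in a regression setting, and expected improvement (for maximization, under a Gaussian predictive distribution) as a typical Bayesian optimization acquisition.

```python
from math import erf

import numpy as np

def least_confidence(class_probs):
    """Uncertainty sampling: 1 - max class probability, per candidate.
    class_probs has shape (n_candidates, n_classes)."""
    return 1.0 - np.asarray(class_probs, dtype=float).max(axis=1)

def committee_disagreement(committee_preds):
    """Query-by-Committee (regression): variance across committee members.
    committee_preds has shape (n_models, n_candidates)."""
    return np.asarray(committee_preds, dtype=float).var(axis=0)

def expected_improvement(mean, std, best_so_far):
    """Expected improvement over the best observed value (maximization)."""
    mean = np.asarray(mean, dtype=float)
    std = np.maximum(np.asarray(std, dtype=float), 1e-12)  # avoid division by zero
    z = (mean - best_so_far) / std
    cdf = 0.5 * (1.0 + np.vectorize(erf)(z / np.sqrt(2.0)))  # standard normal CDF
    pdf = np.exp(-0.5 * z**2) / np.sqrt(2.0 * np.pi)         # standard normal PDF
    return (mean - best_so_far) * cdf + std * pdf

lc = least_confidence([[0.9, 0.1], [0.5, 0.5]])
qbc = committee_disagreement([[1.0, 2.0], [3.0, 2.0]])
ei = expected_improvement(mean=[1.0, 0.0], std=[1.0, 1.0], best_so_far=1.0)
print(lc, qbc, ei)
```

In each case the candidate with the highest score is queried next; in the example, the second candidate is the most uncertain under least confidence, while the first shows the most committee disagreement.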

Case Studies in Materials and Drug Design

The following case studies demonstrate the practical implementation and efficacy of AL frameworks in real-world research scenarios.

Case Study 1: Process-Synergistic Active Learning (PSAL) for High-Strength Al-Si Alloys

Challenge: Designing high-strength Al-Si alloys is difficult due to complex composition-processing-property relationships and severely imbalanced data across different processing routes (PRs). Simple gravity casting (GC) has abundant data, while complex routes like hot extrusion (GC+HE) have very little [32].

Solution: The PSAL framework was developed to synergistically use data from multiple PRs. It employs a conditional Wasserstein Autoencoder (c-WAE) to generate compositions conditioned on the PR. An ensemble surrogate model (Neural Network and XGBoost) predicts ultimate tensile strength (UTS). Candidates are selected using a ranking criterion balancing predicted UTS (exploitation) and uncertainty (exploration) [32].

Experimental Protocol & Workflow:

- Initial Dataset: 140 composition-process-property entries for 4 PRs (GC, GC+T6, GC+HE, GC+HE+T6).

- Generation & Prediction: The c-WAE generates novel alloy compositions. The ensemble model predicts their UTS.

- Candidate Selection: Top candidates are selected based on a high Predicted UTS + λ * Standard Deviation score, with a diversity constraint ensuring selected compositions differ by ≥0.5% in at least one element's mass percent [32].

- Validation & Feedback: The top 3 ranked candidates are experimentally fabricated and tested. The new UTS data is added to the dataset, and the model is retrained.
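The candidate selection step can be illustrated with a greedy ranking sketch. It is an interpretation of the criterion as stated above, not the PSAL authors' code: the value of `lam`, the greedy filtering order, and the toy compositions and predictions are all assumptions made for the example.

```python
import numpy as np

def select_candidates(compositions, pred_mean, pred_std, lam=1.0, top_k=3, min_diff=0.5):
    """Rank by predicted UTS + lam * std, then greedily keep candidates that
    differ from every already-selected composition by at least min_diff
    (mass percent) in at least one element.

    compositions: array of shape (n_candidates, n_elements), in mass percent.
    Returns the indices of the selected candidates, best first.
    """
    compositions = np.asarray(compositions, dtype=float)
    score = np.asarray(pred_mean, dtype=float) + lam * np.asarray(pred_std, dtype=float)
    chosen = []
    for i in np.argsort(-score):          # highest score first
        diverse = all(
            np.max(np.abs(compositions[i] - compositions[j])) >= min_diff
            for j in chosen
        )
        if diverse:
            chosen.append(int(i))
        if len(chosen) == top_k:
            break
    return chosen

# Toy data: two-element compositions (mass percent), predicted UTS in MPa.
compositions = [[7.0, 0.3], [7.1, 0.3], [9.0, 0.5], [7.0, 1.0], [12.0, 0.2]]
pred_mean = [300.0, 299.0, 280.0, 290.0, 250.0]
pred_std = [5.0, 5.0, 20.0, 5.0, 10.0]
selected = select_candidates(compositions, pred_mean, pred_std)
print(selected)
```

In the toy data, the second candidate scores almost as high as the first but is skipped because it differs by only 0.1% in one element, so the selection moves on to more distinct compositions.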