Data Veracity and Quality in Biomedical Research: Foundational Principles and Practical Solutions for Drug Development

Abstract

This article provides a comprehensive guide to data quality and veracity challenges in drug discovery and development. Tailored for researchers, scientists, and drug development professionals, it synthesizes foundational concepts, methodological frameworks, practical troubleshooting strategies, and validation techniques. The content explores the severe implications of poor data quality, from costly delays to regulatory rejections, and offers actionable insights for building robust data management practices that ensure reliability, compliance, and ultimately, the success of biomedical innovations.

The High Stakes of Data Quality: Defining Veracity and Its Impact on Drug Development

In the data-driven disciplines of materials science and drug development, the integrity of data is not a singular concept. It is a multi-faceted imperative where data veracity and data quality play distinct yet complementary roles. Data veracity concerns the inherent truthfulness and trustworthiness of the data source and its contextual accuracy, while data quality is a measurable state defined by specific, intrinsic characteristics like accuracy and completeness. For researchers dealing with complex datasets from high-throughput experiments or real-world evidence, understanding this distinction is not academic—it is critical for ensuring that groundbreaking discoveries in the lab translate into safe and effective real-world applications.

Defining the Concepts: Beyond the Basics

Data Veracity: The Truthfulness of Data

Data veracity, in the context of big data, extends beyond simple accuracy. It refers to how accurate or truthful a data set may be, and more broadly, how trustworthy the data source, type, and processing are within a specific context [1]. It is the dimension that asks, "Can I trust this data for my specific purpose?" and involves filtering out what is important from the noise to generate a deeper, more contextualized understanding [1].

Key challenges to veracity include:

- Bias, abnormalities, and inconsistencies in data collection.

- Duplication across disparate data sources.

- Data Volatility: The rate of change and lifetime of the data. For instance, social media sentiment data is highly volatile, while weather trends are less so [1].

Data Quality: The Condition of Data

Data quality, in contrast, is a measure of the condition of data based on factors such as accuracy, completeness, consistency, and reliability [2]. It is an outcome—a state of being that can be defined, measured, and managed against a set of standards. Industry literature often breaks down data quality into intrinsic and extrinsic dimensions [2].

A Comparative Analysis: Veracity vs. Quality

The table below synthesizes the core distinctions between these two critical concepts.

Table 1: A Comparative Framework of Data Veracity and Data Quality

| Aspect | Data Veracity | Data Quality |
|---|---|---|
| Core Focus | Truthfulness, credibility, and contextual reliability of the data and its sources [1]. | Intrinsic and extrinsic characteristics that determine the data's fitness for use [2]. |
| Primary Concern | "Can I trust this data in this specific context?" | "Is this data accurate, complete, and timely?" |
| Scope | Broader, encompassing the origin, processing method, and applicability of the data [1]. | Narrower, focusing on the technical condition and characteristics of the data itself [2]. |
| Nature | Contextual and often qualitative. | Measurable and quantifiable through defined dimensions. |
| Key Challenges | Bias, volatility, relevance of data processing to business needs, trust in source [1]. | Inaccuracy, missing values, inconsistency, lack of timeliness [3] [2]. |

Assessment Methodologies and Experimental Protocols

Establishing robust protocols is essential for managing both veracity and quality in research settings.

Assessing Data Veracity

Veracity assessment is a holistic process that evaluates the data's entire lifecycle. The following workflow outlines a systematic protocol for establishing data veracity.

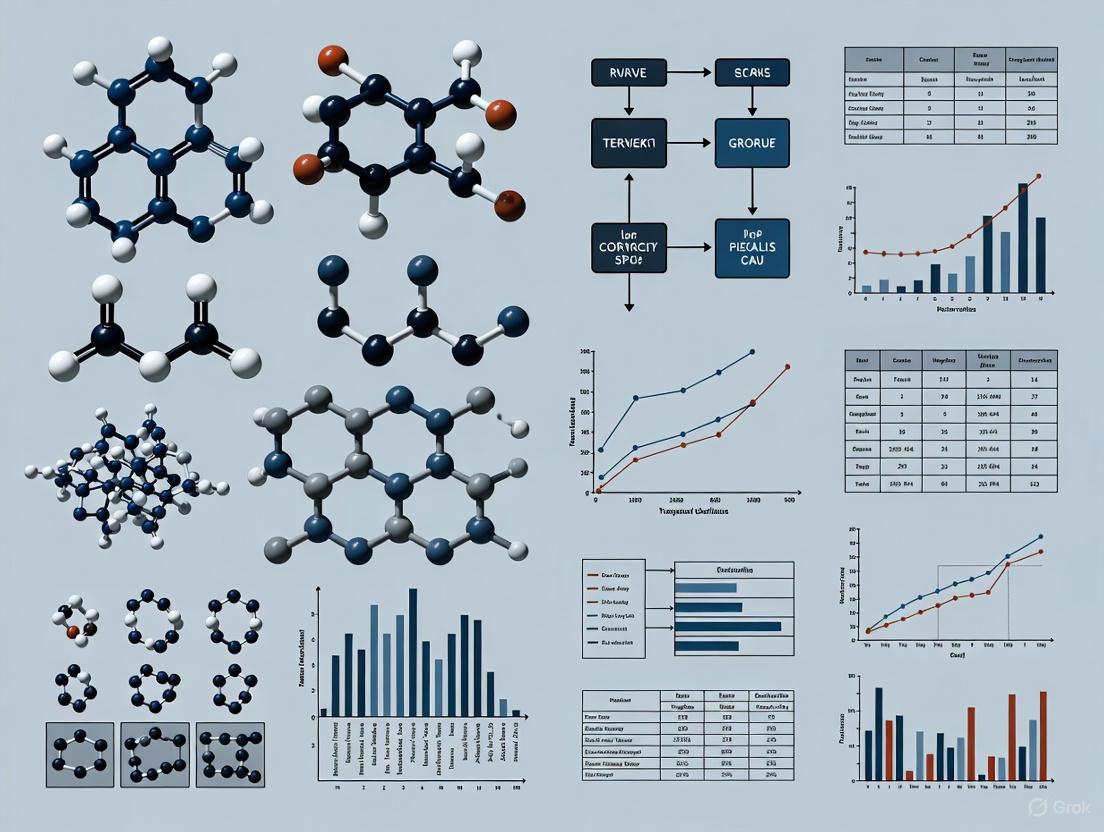

Diagram 1: Veracity Assessment Workflow

The corresponding experimental protocol for this workflow is detailed below.

Table 2: Experimental Protocol for Data Veracity Assessment

| Step | Methodology | Objective | Tools & Techniques |
|---|---|---|---|
| 1. Source Trustworthiness Audit | Evaluate the provenance and historical reliability of the data source. | Establish foundational credibility of the data origin. | Provenance tracking metadata, source certification records. |
| 2. Context & Relevance Check | Verify that the data and its processing logic align with the specific research objectives. | Ensure the data is meaningful and applicable to the problem. | Consultation with domain experts, review of data dictionaries. |
| 3. Bias & Anomaly Detection | Employ statistical and ML techniques to identify outliers, duplicates, and systematic biases. | Remove abnormalities that distort the data's truthfulness. | Statistical process control (SPC), clustering algorithms (e.g., DBSCAN). |
| 4. Processing Method Review | Scrutinize the ETL/ELT logic and transformations for contextual sense. | Ensure the processing amplifies the signal, not the noise. | Code review, data lineage tools (e.g., Datafold, OpenLineage). |
| 5. Generate Veracity Score | Synthesize findings from steps 1-4 into a quantifiable metric or a qualitative trust tier. | Provide a summary indicator of the dataset's overall veracity. | Multi-criteria decision analysis (MCDA), weighted scoring models. |
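Step 5 can be approximated with a weighted-sum model, one of the simplest MCDA techniques. The sketch below is illustrative only: it assumes steps 1-4 have each already been reduced to a score between 0 and 1, and the criterion names, weights, and tier cut-offs are hypothetical placeholders for values a research team would set itself.

```python
from typing import Dict

# Hypothetical criteria mirroring steps 1-4 of the protocol; the weights are illustrative only.
DEFAULT_WEIGHTS: Dict[str, float] = {
    "source_trust": 0.35,
    "context_relevance": 0.25,
    "bias_anomaly": 0.25,
    "processing_review": 0.15,
}

# Hypothetical tier cut-offs, checked from highest to lowest.
TIERS = [(0.85, "high"), (0.60, "moderate"), (0.0, "low")]


def veracity_score(scores: Dict[str, float], weights: Dict[str, float] = DEFAULT_WEIGHTS) -> float:
    """Weighted-sum score in [0, 1] from per-criterion scores in [0, 1]."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing criterion scores: {sorted(missing)}")
    for name in weights:
        if not 0.0 <= scores[name] <= 1.0:
            raise ValueError(f"{name} score must be in [0, 1], got {scores[name]}")
    # Dividing by the total weight keeps the result in [0, 1] even if the weights do not sum to 1.
    return sum(weights[name] * scores[name] for name in weights) / sum(weights.values())


def trust_tier(score: float) -> str:
    """Map a numeric veracity score to a qualitative trust tier."""
    for cutoff, label in TIERS:
        if score >= cutoff:
            return label
    return "low"


example = {
    "source_trust": 0.9,       # step 1: provenance audit
    "context_relevance": 0.8,  # step 2: context and relevance check
    "bias_anomaly": 0.7,       # step 3: bias and anomaly detection
    "processing_review": 1.0,  # step 4: processing method review
}
score = veracity_score(example)
print(f"{score:.2f}", trust_tier(score))
```

For the example scores this prints `0.84 moderate`. A weighted sum is easy to audit, but it lets a strong score on one criterion offset a weak one; a team that wants a hard floor (for example, rejecting any source that fails the provenance audit) would need to add that rule explicitly.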

Ensuring Data Quality

Data quality is managed through continuous monitoring and validation against predefined dimensions. Data observability platforms serve as the technological means to this end, providing real-time visibility into the health of data systems [2].

Table 3: Data Quality Dimensions and Observability Metrics

| Data Quality Dimension | Definition | Observability Metrics & Checks |
|---|---|---|
| Accuracy (Intrinsic) | Does the data correctly represent the real-world object or event? [2] | Record-level validation, rule-based checks (e.g., value in allowed set). |
| Completeness (Intrinsic) | Are the data model and values complete? Are required fields populated? [2] | Percentage of non-null values, monitoring for sudden drops in row count. |
| Consistency (Intrinsic) | Is the data internally consistent across its ecosystem? [2] | Cross-table validation, checks for contradictory facts, freshness deviation. |
| Freshness (Intrinsic) | Is the data up-to-date and available when needed? [2] [3] | Data timestamp monitoring, alerting on pipeline execution failures/delays. |
| Timeliness (Extrinsic) | Is the data available when needed for the use cases at hand? [2] | End-to-end pipeline latency measurement against service level agreements (SLAs). |
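Several of the checks listed above are simple enough to prototype before adopting an observability platform. The sketch below assumes records arrive as a list of Python dictionaries and that the previous load's row count is available; the field names, allowed values, and 50% drop threshold are hypothetical, and none of this reflects the API of any particular platform.

```python
from typing import Any, Dict, Iterable, List

Record = Dict[str, Any]


def non_null_pct(records: List[Record], field: str) -> float:
    """Completeness: percentage of records where `field` is present and not None or an empty string."""
    if not records:
        return 0.0
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return 100.0 * filled / len(records)


def invalid_values(records: List[Record], field: str, allowed: Iterable[Any]) -> List[int]:
    """Accuracy (rule-based): indices of records whose `field` value is outside the allowed set."""
    allowed_set = set(allowed)
    return [i for i, r in enumerate(records) if r.get(field) not in allowed_set]


def row_count_dropped(current: int, previous: int, max_drop: float = 0.5) -> bool:
    """Completeness: flag a sudden drop in row count relative to the previous load."""
    if previous <= 0:
        return False
    return (previous - current) / previous > max_drop


# Hypothetical assay batch.
batch = [
    {"sample_id": "S1", "assay": "IC50", "value": 1.2},
    {"sample_id": "S2", "assay": "EC50", "value": None},
    {"sample_id": "S3", "assay": "ic50", "value": 0.8},
]
print(non_null_pct(batch, "value"))                      # two of three values populated
print(invalid_values(batch, "assay", {"IC50", "EC50"}))  # lowercase "ic50" fails the rule
print(row_count_dropped(current=len(batch), previous=10))
```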

The Scientist's Toolkit: Research Reagent Solutions

Implementing a framework for veracity and quality requires a suite of methodological and technical tools. The following table catalogs essential "reagents" for this endeavor.

Table 4: Essential Tools for Managing Data Veracity and Quality

| Tool Category | Specific Technology/Method | Function in Research |
|---|---|---|
| Causal Machine Learning (CML) | Doubly Robust Estimation, Targeted Maximum Likelihood Estimation (TMLE) [4] | Mitigates confounding in observational data (e.g., RWD), strengthening causal validity for veracity. |
| Data Observability Platforms | Metaplane, Monte Carlo [2] | Provides continuous monitoring of data pipelines, automatically detecting quality anomalies. |
| High-Performance Computing (HPC) | High-Throughput Screening Simulations [5] | Enables rapid, large-scale validation of data and hypotheses across vast material or chemical spaces. |
| Data Validation Frameworks | dbt Tests, Great Expectations | Codifies business rules and data quality tests directly into data transformation workflows. |
| Color Palette Tools | ColorBrewer, Viz Palette [6] [7] | Ensures accessible and accurate data visualization, critical for correct interpretation of complex results. |

Applications in Materials and Drug Development Research

The distinction between veracity and quality is acutely relevant in high-stakes research fields.

Case Study: Accelerating CO2 Capture Catalyst Discovery

In a project led by NTT DATA, a Materials Informatics (MI) approach was used to discover novel molecules for CO2 capture and conversion [5]. The veracity of the endeavor was established by drawing on trusted, high-quality data from peer-reviewed sources and university partners. The quality of the computational output was ensured through high-performance computing (HPC) and rigorous machine learning (ML) models. The project integrated Generative AI to propose new molecular structures, but the final candidates were evaluated by chemistry experts, a critical veracity step that placed the output in its scientific context [5]. This workflow demonstrates how high-quality computational data and expert scientific judgment must converge for successful innovation.

The Role of Real-World Data (RWD) and Causal Machine Learning (CML) in Drug Development

The integration of RWD (e.g., from electronic health records, wearables) into drug development presents a prime example of the veracity/quality interplay [4]. While RWD can be of high quality (complete, accurate), its veracity for causal inference is inherently challenged by confounding and biases due to the lack of randomization [4]. Here, Causal Machine Learning (CML) methods are employed not to improve data quality, but to bolster data veracity. Techniques like advanced propensity score modeling and doubly robust inference are used to mitigate confounding, making the data more truthful for estimating real-world treatment effects [4]. This allows for more robust trial emulation and identification of patient subgroups, enhancing the drug development pipeline.
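To make the doubly robust idea concrete, the sketch below computes the augmented inverse probability weighting (AIPW) estimate of an average treatment effect, one widely used doubly robust estimator. It assumes the propensity scores and the outcome-model predictions under treatment and control have already been fitted elsewhere; model fitting, overlap diagnostics, and variance estimation are left out. Under the usual assumptions of no unmeasured confounding and positivity, the estimate is consistent if either the propensity model or the outcome model is correctly specified.

```python
from typing import Sequence


def aipw_ate(
    treated: Sequence[int],
    outcome: Sequence[float],
    propensity: Sequence[float],
    mu1: Sequence[float],
    mu0: Sequence[float],
) -> float:
    """Augmented inverse probability weighting (AIPW) estimate of the average treatment effect.

    treated[i]     -- 1 if unit i received treatment, else 0
    outcome[i]     -- observed outcome Y_i
    propensity[i]  -- estimated P(T = 1 | X_i), strictly between 0 and 1
    mu1[i], mu0[i] -- outcome-model predictions for unit i under treatment / control
    """
    n = len(treated)
    if n == 0:
        raise ValueError("no units supplied")
    if not all(len(s) == n for s in (outcome, propensity, mu1, mu0)):
        raise ValueError("all inputs must have the same length")
    total = 0.0
    for t, y, e, m1, m0 in zip(treated, outcome, propensity, mu1, mu0):
        if not 0.0 < e < 1.0:
            raise ValueError("propensity scores must lie strictly between 0 and 1")
        # Outcome-model contrast plus inverse-probability-weighted residual corrections.
        total += (m1 - m0) + t * (y - m1) / e - (1 - t) * (y - m0) / (1 - e)
    return total / n


# Hypothetical four-patient toy example with a propensity of 0.5 for everyone.
print(aipw_ate([1, 0, 1, 0], [3.0, 1.0, 3.0, 1.0], [0.5] * 4, [3.0] * 4, [1.0] * 4))
```

Setting both outcome predictions to zero reduces the formula to plain inverse probability weighting, which makes a quick sanity check.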

For researchers and scientists at the forefront of materials science and pharmaceutical development, a nuanced understanding of data veracity and data quality is non-negotiable. Data quality is the foundation—the measurable hygiene of the data. Data veracity is the overarching principle of trust and contextual truth that ensures this quality data leads to valid, reliable, and impactful conclusions. By implementing distinct yet integrated protocols for both, as outlined in this guide, research teams can significantly de-risk their innovation pipelines and accelerate the journey from raw data to transformative real-world solutions.

In the context of materials science and drug development, the veracity of data is not merely an operational concern but a foundational pillar of scientific integrity and innovation. High-quality data powers accurate analysis, which in turn drives trusted business decisions and groundbreaking research [8]. Poor data quality, however, carries steep financial and strategic costs: one Gartner estimate puts the average annual cost of poor data quality to an organization at $15M [8]. For researchers and scientists, the multidimensional nature of data quality is both a challenge and an imperative, because the "rule of ten" holds that completing a unit of work with flawed data costs ten times as much as completing it with perfect data [8].
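The rule of ten lends itself to a quick back-of-the-envelope calculation. If a clean unit of work costs c and a flawed one costs 10c, then n units with a flawed fraction p cost n × c × (1 + 9p) in expectation. The sketch below encodes that arithmetic; the record count and unit cost are hypothetical.

```python
def rule_of_ten_cost(units: int, unit_cost: float, flawed_fraction: float, multiplier: float = 10.0) -> float:
    """Expected total cost when each flawed unit costs `multiplier` times a clean unit."""
    if not 0.0 <= flawed_fraction <= 1.0:
        raise ValueError("flawed_fraction must be between 0 and 1")
    clean_cost = units * (1 - flawed_fraction) * unit_cost
    flawed_cost = units * flawed_fraction * unit_cost * multiplier
    return clean_cost + flawed_cost


# Hypothetical workload: 1,000 assay records costing 50 (in any currency) each to process cleanly.
for p in (0.0, 0.05, 0.2):
    print(f"flawed fraction {p:.0%}: total cost {rule_of_ten_cost(1000, 50.0, p):,.0f}")
```

At a 20% flaw rate the same workload costs 2.8 times as much as with perfect data; under the rule of ten, each additional percentage point of flawed records adds 9% to the baseline cost.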

This technical guide examines the four core dimensions of data quality (Accuracy, Completeness, Consistency, and Timeliness) through the lens of data veracity and quality issues in materials and drug development research. These dimensions serve as measurement attributes that can be individually assessed, interpreted, and improved to ensure data fitness for purpose in high-stakes research environments [8]. Aggregated scores across these dimensions provide a comprehensive picture of data quality and its fitness for use in scientific applications ranging from pharmaceutical development to materials characterization [8].

Core Dimensions of Data Quality

Data quality dimensions are a framework for effective data quality management, serving as a practical way to measure current data quality and set realistic improvement goals [9]. Instead of vaguely aiming for "better data," research teams can target specific problems like reducing duplicate experimental records by 50% or ensuring all critical material property fields are populated 99% of the time [9]. When data meets standards across all dimensions, downstream analytics and scientific intelligence actually work: research reports reflect reality, machine learning models train on clean inputs, and experimental dashboards show numbers people can trust [9].

Accuracy

Definition and Scientific Importance

Data accuracy is the degree to which data correctly represents the real-world scenario and conforms to a verifiable source [8]. In materials science and drug development, accuracy ensures that the samples, compounds, and measurements recorded in a dataset correspond to the real-world entities they describe, so those entities can be used as planned in research workflows. Accurate data ensures that experimental results reflect true phenomena rather than measurement artifacts or systematic errors, making it fundamental for reproducible research [10].

The consequences of inaccurate data in scientific contexts can be severe. In healthcare research, inaccurate patient medication dosage data could literally threaten lives if acted upon incorrectly [9]. In materials research, inaccurate characterization data could lead to faulty structure-property relationships and invalid scientific conclusions. Accuracy is highly impacted by how data is preserved through its entire journey, and successful data governance can promote this data quality dimension [8].

Measurement and Metrics

Measuring data accuracy requires verification with authentic references or through testing against known standards [8]. The following table summarizes key accuracy metrics and their application in research contexts:

Table 1: Data Accuracy Metrics and Measurement Approaches

| Metric | Definition | Research Application Example | Measurement Technique |
|---|---|---|---|
| Precision | The fraction of retrieved data points that are relevant (correct) | Measuring accuracy of automated material property extraction from literature | Statistical analysis of retrieved versus relevant data points [9] |
| Recall | Sensitivity; the fraction of all relevant data points that were retrieved | Comprehensive identification of all relevant drug compound interactions | Sampling techniques to estimate coverage of known interactions [9] |
| F1 Score | The harmonic mean of precision and recall | Evaluating performance of automated experimental data classification systems | Calculated from the precision and recall metrics [9] |
| Error Rate | Percentage of data values failing verification against authoritative sources | Quality control of experimental measurements against certified reference materials | Automated validation processes comparing values to known standards [9] |
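The metrics in the accuracy table can be computed by comparing automatically extracted items against a manually curated ground truth. The sketch below assumes both are represented as sets of hashable `(compound, property, value)` tuples and that error rate is judged against a fixed absolute tolerance; the compound names, values, and tolerance are hypothetical.

```python
from typing import Hashable, Sequence, Set, Tuple


def precision_recall_f1(retrieved: Set[Hashable], relevant: Set[Hashable]) -> Tuple[float, float, float]:
    """Precision = TP / |retrieved|, recall = TP / |relevant|, F1 = their harmonic mean."""
    true_positives = len(retrieved & relevant)
    precision = true_positives / len(retrieved) if retrieved else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


def error_rate(values: Sequence[float], references: Sequence[float], tolerance: float) -> float:
    """Percentage of values deviating from their paired reference by more than `tolerance`."""
    if len(values) != len(references):
        raise ValueError("values and references must be paired")
    if not values:
        return 0.0
    failures = sum(1 for v, ref in zip(values, references) if abs(v - ref) > tolerance)
    return 100.0 * failures / len(values)


# Hypothetical glass-transition temperatures (K) extracted from literature vs. a curated set.
extracted = {("cmpd-A", "Tg_K", 350), ("cmpd-B", "Tg_K", 410), ("cmpd-C", "Tg_K", 290), ("cmpd-D", "Tg_K", 500)}
curated = {("cmpd-A", "Tg_K", 350), ("cmpd-B", "Tg_K", 410), ("cmpd-E", "Tg_K", 375)}
print(precision_recall_f1(extracted, curated))
print(error_rate([1.00, 2.05, 3.30], [1.0, 2.0, 3.0], tolerance=0.1))
```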

Experimental Protocol for Assessing Accuracy

Title: Reference Material Verification Protocol for Experimental Data Accuracy Assessment

Purpose: To verify the accuracy of experimental measurements through comparison with certified reference materials (CRMs) or authoritative data sources.

Materials and Reagents:

- Certified Reference Materials relevant to the experimental domain

- Control samples with known properties

- Measurement instrumentation with recent calibration records

- Data recording system with audit trail capabilities

Procedure:

- Select appropriate reference materials that span the expected range of experimental measurements.

- Perform triplicate measurements of each reference material using standard experimental protocols.

- Record all measurement data along with environmental conditions (temperature, humidity, etc.) and instrument settings.

- Calculate measurement accuracy using the formula:

$$\text{Accuracy}\ (\%) = \left(1 - \frac{\lvert \text{Measured Value} - \text{Reference Value} \rvert}{\text{Reference Value}}\right) \times 100$$

- Establish accuracy thresholds based on research requirements (e.g., >95% for critical material properties).

- Document all deviations from reference values and investigate systematic errors.

- Implement corrective actions for accuracy values falling below established thresholds.

Validation: Repeat the verification protocol following any significant change to measurement systems or procedures.
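The calculation and threshold steps of this protocol can be scripted as follows. The sketch averages each material's triplicate measurements before applying the accuracy formula (scoring replicates individually is an equally valid choice), and it assumes a positive reference value, as the formula's denominator implies. The CRM identifiers, certified values, and 95% threshold are illustrative.

```python
from statistics import mean, stdev
from typing import Dict, List


def accuracy_pct(measured: float, reference: float) -> float:
    """Accuracy (%) = (1 - |measured - reference| / reference) x 100, as in the protocol."""
    if reference <= 0:
        raise ValueError("this form of the formula assumes a positive reference value")
    return (1 - abs(measured - reference) / reference) * 100


def assess_crms(
    measurements: Dict[str, List[float]],
    references: Dict[str, float],
    threshold: float = 95.0,
) -> Dict[str, dict]:
    """Score each reference material from its replicate measurements."""
    report = {}
    for crm_id, replicates in measurements.items():
        if len(replicates) < 3:
            raise ValueError(f"{crm_id}: the protocol calls for at least triplicate measurements")
        avg = mean(replicates)
        acc = accuracy_pct(avg, references[crm_id])
        report[crm_id] = {
            "mean": avg,
            "sd": stdev(replicates),     # replicate spread, useful when investigating deviations
            "accuracy_pct": acc,
            "passes": acc >= threshold,  # values below the threshold need corrective action
        }
    return report


# Hypothetical CRM identifiers, certified values, and triplicate readings.
certified = {"CRM-A": 10.0, "CRM-B": 5.0}
runs = {"CRM-A": [10.1, 9.9, 10.0], "CRM-B": [4.6, 4.5, 4.7]}
for crm, result in assess_crms(runs, certified).items():
    print(crm, round(result["accuracy_pct"], 1), result["passes"])
```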

Completeness

Definition and Scientific Importance

Data completeness describes whether the data collected reasonably covers the full scope of the research question being investigated, assessing whether there are gaps, missing values, or biases that will affect results [9]. In materials research, completeness ensures that all data points needed to draw valid scientific conclusions are available, without gaps that might compromise analytical integrity [10].

Incomplete data can skew results and lead to wrong conclusions in scientific research [9]. Missing entries or fields can cause phenomena to be undercounted or misrepresented. If 10% of experimental trials lack critical environmental-condition data, any analysis of process-property relationships risks bias, particularly when the missing values are concentrated in certain instruments or time periods rather than scattered at random. In drug development, missing data points in high-throughput screening can lead to false negatives in compound activity assessment, potentially overlooking promising therapeutic candidates [10].

Measurement and Metrics

Completeness is typically measured by assessing the presence of required data elements across datasets. The following table outlines key completeness metrics relevant to research contexts:

Table 2: Data Completeness Dimensions and Assessment Methods

| Completeness Level | Definition | Assessment Method | Research Impact |
|---|---|---|---|
| Attribute-level | Evaluates how many individual attributes or fields are missing within a dataset | Null check analysis for mandatory fields [9] | Impacts granularity of analysis and modeling capabilities |
| Record-level | Evaluates the completeness of entire records or entries in a dataset | Record count checks against expected volumes [9] | Affects statistical power and representativeness of samples |
| Referential Completeness | Ensures that dataset references resolve correctly | Verification of foreign key relationships and cross-references [9] | Critical for integrating data from multiple experimental techniques |
| Temporal Completeness | Assesses whether data covers the required time period | Analysis of timestamps and experimental sequence gaps | Essential for time-dependent phenomena and kinetic studies |
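Referential completeness reduces to checking that every cross-reference resolves. The sketch below assumes characterization records carry a `sample_id` that should exist in a sample registry; both collections and the field names are hypothetical stand-ins for, say, LIMS exports.

```python
from typing import Any, Dict, List, Set

Record = Dict[str, Any]


def unresolved_references(children: List[Record], key: str, parent_ids: Set[Any]) -> List[Record]:
    """Child records whose reference is missing or points to no known parent."""
    return [r for r in children if r.get(key) not in parent_ids]


def referential_completeness_pct(children: List[Record], key: str, parent_ids: Set[Any]) -> float:
    """Percentage of child records whose reference resolves to a known parent."""
    if not children:
        return 100.0  # nothing to resolve
    orphans = unresolved_references(children, key, parent_ids)
    return 100.0 * (len(children) - len(orphans)) / len(children)


# Hypothetical sample registry and X-ray diffraction runs that should point into it.
samples = {"S1", "S2", "S3"}
xrd_runs = [
    {"run": 1, "sample_id": "S1"},
    {"run": 2, "sample_id": "S2"},
    {"run": 3, "sample_id": "S9"},  # no such sample in the registry
    {"run": 4, "sample_id": None},  # reference never recorded
]
print(referential_completeness_pct(xrd_runs, "sample_id", samples))
```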

Experimental Protocol for Assessing Completeness

Title: Systematic Completeness Assessment for Experimental Datasets

Purpose: To quantitatively evaluate and document data completeness across experimental datasets to identify and address gaps that may compromise research validity.

Materials and Reagents:

- Complete dataset specification defining all required data elements

- Data validation framework or tooling

- Statistical analysis software

- Data curation and imputation tools

Procedure:

- Define completeness requirements for each data entity, specifying mandatory versus optional fields based on research objectives.

- Execute attribute-level completeness checks by scanning each field in the dataset for null or blank values.

- Perform record-level completeness assessment by comparing actual record counts against expected volumes based on experimental design.

- Conduct referential completeness verification by ensuring all data relationships (e.g., sample ID to characterization data) resolve correctly.

- Calculate completeness metrics using the formula:

$$\text{Completeness}\ (\%) = \frac{\text{Number of Complete Records}}{\text{Total Number of Records}} \times 100$$

- Analyze patterns in missing data to identify systematic data collection issues (e.g., specific instruments, time periods, or researchers associated with missing data).

- Implement data imputation strategies where appropriate, using methods such as mean/median substitution, predictive modeling, or business rule application [11].

- Document completeness results in research metadata to inform downstream analysis.

Validation: Periodically re-assess completeness throughout the data lifecycle, particularly after data transformations or integrations.
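The attribute-level, record-level, and pattern-analysis steps of this protocol might be scripted as below. The sketch assumes records are Python dictionaries, treats `None` and blank strings as missing, and groups incomplete records by instrument; the field names, instruments, and expected count of five trials are hypothetical.

```python
from collections import Counter
from typing import Any, Dict, List, Sequence

Record = Dict[str, Any]


def is_missing(value: Any) -> bool:
    """Treat None and empty or whitespace-only strings as missing."""
    return value is None or (isinstance(value, str) and not value.strip())


def attribute_completeness(records: List[Record], fields: Sequence[str]) -> Dict[str, float]:
    """Attribute level: percentage of records with a value for each mandatory field."""
    n = len(records)
    return {f: 100.0 * sum(not is_missing(r.get(f)) for r in records) / n if n else 0.0 for f in fields}


def record_completeness(records: List[Record], fields: Sequence[str]) -> float:
    """Completeness (%) = complete records / total records x 100."""
    if not records:
        return 0.0
    complete = sum(all(not is_missing(r.get(f)) for f in fields) for r in records)
    return 100.0 * complete / len(records)


def record_volume_pct(records: List[Record], expected: int) -> float:
    """Record level: actual record count as a percentage of the count the design calls for."""
    if expected <= 0:
        raise ValueError("expected record count must be positive")
    return 100.0 * len(records) / expected


def missing_by_group(records: List[Record], fields: Sequence[str], group_field: str) -> Dict[Any, int]:
    """Count incomplete records per group (e.g. instrument) to expose systematic gaps."""
    return dict(Counter(r.get(group_field) for r in records if any(is_missing(r.get(f)) for f in fields)))


# Hypothetical calorimetry trials; the experimental design called for five.
trials = [
    {"trial": 1, "instrument": "DSC-1", "temp_C": 25.0, "humidity_pct": 40.0},
    {"trial": 2, "instrument": "DSC-2", "temp_C": None, "humidity_pct": 41.0},
    {"trial": 3, "instrument": "DSC-2", "temp_C": 24.8, "humidity_pct": ""},
    {"trial": 4, "instrument": "DSC-1", "temp_C": 25.1, "humidity_pct": 39.5},
]
required = ["temp_C", "humidity_pct"]
print(attribute_completeness(trials, required))
print(record_completeness(trials, required), record_volume_pct(trials, expected=5))
print(missing_by_group(trials, required, "instrument"))
```

Here both incomplete trials come from the same instrument, the kind of pattern the protocol asks analysts to investigate before choosing an imputation strategy.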

Consistency

Definition and Scientific Importance

Data consistency means that data does not conflict between systems or within a dataset, ensuring that all copies or instances of a data point agree across representations [9]. Consistency also covers format and unit consistency, ensuring data is represented uniformly throughout research datasets [10]. In scientific contexts, consistency ensures that experimental data collected across different instruments, time periods, or research groups can be meaningfully compared and integrated.

Inconsistencies create confusion and errors in research interpretation [9]. If one analytical instrument reports concentration in molar units while another uses millimolar, direct comparison becomes problematic without conversion. Such conflicts erode confidence in data and can lead to "multiple versions of the truth," causing misreporting or faulty scientific conclusions [9]. Consistency becomes especially critical in integrated research environments when multiple databases or data lakes consolidate information from various experimental sources.

Measurement and Metrics

Consistency assessment involves identifying contradictions or format discrepancies across datasets and systems. The following table outlines key consistency metrics:

Table 3: Data Consistency Dimensions and Verification Methods

| Consistency Type | Definition | Verification Method | Research Application |
|---|---|---|---|
| Cross-system Consistency | Agreement of data values across different systems | Cross-system reconciliation and checksum validation [9] | Ensuring analytical instruments and the LIMS report matching values |
| Temporal Consistency | Maintenance of logical order and sequencing over time | Timestamp validation and sequence analysis [11] | Verification of experimental procedure sequencing and time-series data integrity |
| Format Consistency | Uniformity of data representation and units | Format standardization checks and pattern validation [11] | Standardization of measurement units and data formats across research groups |
| Semantic Consistency | Consistent meaning of data elements across contexts | Business rule confirmation and ontology alignment [11] | Alignment of terminology across multidisciplinary research teams |
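Temporal consistency can be screened by confirming that recorded timestamps respect the expected order of procedural steps. The sketch below assumes each sample's events are available as a mapping from step name to timestamp and that the required order is known in advance; the three step names are hypothetical.

```python
from datetime import datetime
from typing import Dict, List, Sequence, Tuple

# Hypothetical required order of procedural steps for each sample.
EXPECTED_ORDER = ("synthesis", "annealing", "characterization")


def sequence_violations(
    events: Dict[str, datetime], order: Sequence[str] = EXPECTED_ORDER
) -> List[Tuple[str, str]]:
    """Return (earlier_step, later_step) pairs whose timestamps run backwards.

    Steps absent from `events` are skipped: a missing step is a completeness
    issue, whereas this check only tests the ordering of recorded steps.
    """
    recorded = [step for step in order if step in events]
    return [
        (first, second)
        for first, second in zip(recorded, recorded[1:])
        if events[second] < events[first]
    ]


sample_log = {
    "synthesis": datetime(2024, 3, 1, 9, 0),
    "annealing": datetime(2024, 3, 1, 8, 30),  # logged before synthesis: suspicious
    "characterization": datetime(2024, 3, 2, 10, 0),
}
print(sequence_violations(sample_log))
```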

Experimental Protocol for Assessing Consistency

Title: Cross-System Consistency Validation for Research Data

Purpose: To identify and resolve inconsistencies in research data across multiple systems, instruments, or datasets to ensure reliable integration and comparison.

Materials and Reagents:

- Multiple data sources containing related research data

- Data comparison and profiling tools

- Unit conversion libraries and standardization rules

- Reference standards for measurement normalization

Procedure:

- Identify systems and datasets containing related research data that should be consistent.

- Select key data points for comparison that are common across systems and critical to research outcomes.

- Establish a baseline system that will serve as the standard for comparison based on data quality assessment.

- Extract comparable data from each system using consistent query parameters and timeframes.

- Execute comparison logic to match equivalent data points across systems, flagging discrepancies beyond established thresholds.

- Quantify consistency using the formula:

$$\text{Consistency}\ (\%) = \frac{\text{Number of Consistent Values}}{\text{Total Number of Comparisons}} \times 100$$

- Analyze inconsistency patterns to identify root causes (e.g., different measurement principles, calibration schedules, or unit conventions).

- Implement resolution strategies such as format standardization, unit conversion, or measurement protocol alignment.

- Establish ongoing monitoring through automated consistency checks in data pipelines.

Validation: Re-test consistency following resolution actions and after system or procedural changes.
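The comparison and quantification steps of this protocol, including the molar versus millimolar case described earlier, could look like this. The sketch treats one system as the baseline, normalizes concentrations to mol/L before comparing, and uses `math.isclose` with a relative tolerance; the system names, unit table, and 1% tolerance are assumptions.

```python
import math
from typing import Dict, List, Tuple

# Conversion factors to molar (mol/L); an assumed, deliberately small unit table.
TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9}

Measurement = Tuple[float, str]  # (value, unit)


def to_molar(value: float, unit: str) -> float:
    """Normalize a concentration to mol/L so systems using different units can be compared."""
    if unit not in TO_MOLAR:
        raise ValueError(f"unknown concentration unit: {unit!r}")
    return value * TO_MOLAR[unit]


def compare_systems(
    baseline: Dict[str, Measurement],
    other: Dict[str, Measurement],
    rel_tol: float = 0.01,
) -> Tuple[float, List[str]]:
    """Return (consistency %, discrepant sample IDs) over the sample IDs both systems share."""
    shared = sorted(baseline.keys() & other.keys())
    if not shared:
        raise ValueError("no shared sample IDs to compare")
    discrepant = [
        sample_id
        for sample_id in shared
        if not math.isclose(to_molar(*baseline[sample_id]), to_molar(*other[sample_id]), rel_tol=rel_tol)
    ]
    return 100.0 * (len(shared) - len(discrepant)) / len(shared), discrepant


# Hypothetical exports: the LIMS (baseline) stores molar, the plate reader millimolar.
lims = {"S1": (0.005, "M"), "S2": (0.010, "M"), "S3": (0.020, "M")}
plate_reader = {"S1": (5.0, "mM"), "S2": (10.4, "mM"), "S3": (20.1, "mM")}
pct, flagged = compare_systems(lims, plate_reader)
print(round(pct, 1), flagged)
```

With these values the script prints `66.7 ['S2']`. A relative tolerance scales with the magnitude of each value, so one setting can cover several concentration ranges; choosing the tolerance itself remains a research decision.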

Timeliness

Definition and Scientific Importance

Data timeliness is the degree to which data is up-to-date and available at the required time for its intended use [9]. Also referred to as data freshness, this dimension is crucial for enabling researchers to make accurate decisions based on the most current information available [10]. In fast-moving research domains such as high-throughput screening or dynamic material synthesis, having the most recent data is critical for experimental direction and resource allocation.

Many research decisions are time-sensitive [9]. In drug discovery, using last week's compound screening data for today's synthesis decisions becomes problematic when new results continuously emerge. A lack of timeliness results in decisions based on old information, which proves especially dangerous in competitive research environments where being first to discovery carries significant advantage [10]. Timeliness also affects collaborative research, where delayed data sharing can impede project progress across multiple teams.

Measurement and Metrics

Timeliness assessment focuses on the age of data and its availability relative to need. The following table outlines key timeliness metrics:

Table 4: Data Timeliness Dimensions and Monitoring Approaches

| Timeliness Metric | Definition | Monitoring Approach | Research Significance |
|---|---|---|---|
| Data Freshness | Age of data and refresh frequency | Timestamp analysis and update frequency tracking [9] | Determines relevance of experimental data to current research decisions |
| Data Latency | Delay between data generation and availability | Pipeline monitoring and processing time measurement [9] | Impacts speed of research iteration and experimental adjustment |
| Time-to-Insight | Total time from data generation to actionable insights | End-to-end process timing from experiment completion to analysis availability [9] | Measures overall research efficiency and agility |
| SLA Compliance | Adherence to scheduled data availability targets | Monitoring of data delivery against service level agreements [9] | Ensures reliable data flow for time-sensitive research activities |

Experimental Protocol for Assessing Timeliness

Title: Data Timeliness and Freshness Evaluation for Research Pipelines

Purpose: To measure and optimize the timeliness of research data availability to ensure experimental decisions are based on current information.

Materials and Reagents:

- Data generation timestamps from instruments and systems

- Data pipeline monitoring tools

- Processing time tracking framework

- Alerting system for timeliness threshold violations

Procedure:

- Establish timeliness requirements for each data type based on research urgency and decision cycles.

- Implement timestamp capture at each stage of data generation, processing, and availability.

- Measure data latency by calculating the time difference between data generation and availability for analysis using the formula:

$$\text{Latency} = \text{Data Available Timestamp} - \text{Data Generated Timestamp}$$

- Calculate data freshness by assessing the age of data when accessed for analysis:

$$\text{Freshness} = \text{Analysis Timestamp} - \text{Data Generated Timestamp}$$

- Monitor pipeline processing times to identify bottlenecks in data availability.

- Set timeliness thresholds based on research requirements (e.g., "experimental results within 4 hours of assay completion").

- Implement alerting mechanisms for when timeliness thresholds are violated.

- Optimize data workflows to reduce latency through process improvements or technical enhancements.

Validation: Continuously monitor timeliness metrics and re-assess requirements as research priorities evolve.
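The latency and freshness formulas and the threshold alert in this protocol translate directly into timestamp arithmetic. The sketch below assumes every record carries timezone-aware generation and availability timestamps and reuses the 4-hour example threshold from the procedure; the record structure and IDs are hypothetical, and printing stands in for a real alerting system.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List


def latency(generated: datetime, available: datetime) -> timedelta:
    """Latency = data-available timestamp - data-generated timestamp."""
    return available - generated


def freshness(generated: datetime, analysed: datetime) -> timedelta:
    """Freshness (age of data at use) = analysis timestamp - data-generated timestamp."""
    return analysed - generated


def latency_violations(
    records: List[Dict[str, Any]], max_latency: timedelta = timedelta(hours=4)
) -> List[Dict[str, Any]]:
    """Records whose latency exceeds the threshold, i.e. candidates for an alert."""
    return [r for r in records if latency(r["generated"], r["available"]) > max_latency]


# Hypothetical assay runs; timezone-aware timestamps avoid errors when instruments log in different zones.
t0 = datetime(2024, 5, 6, 9, 0, tzinfo=timezone.utc)
assay_runs = [
    {"id": "A1", "generated": t0, "available": t0 + timedelta(hours=1, minutes=30)},
    {"id": "A2", "generated": t0, "available": t0 + timedelta(hours=6)},
]
for run in latency_violations(assay_runs):
    print(f"ALERT {run['id']}: latency {latency(run['generated'], run['available'])}")
```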

Integrated Data Quality Assessment Framework

Interdimensional Relationships

The four core dimensions of data quality do not operate in isolation; they interact in ways that affect overall data veracity. Understanding these interdependencies is crucial for effective data quality management in research environments. For instance, consistency is often associated with accuracy, and a dataset that scores high on both is likely to be of high quality [8]. Similarly, invalid data reduces completeness, because records that fail validity checks may be excluded from analysis [8].

The relationship between timeliness and accuracy presents a particular challenge in research settings. There is often a trade-off between delivering data quickly and ensuring its accuracy, requiring careful balance based on the specific research context. Experimental data used for real-time process control may prioritize timeliness with slightly reduced accuracy, while data for publication must prioritize accuracy even at the cost of longer processing times.

Data Quality Assessment Workflow

Diagram 1: Data Quality Assessment Workflow for Research Data

Research Reagent Solutions for Data Quality Assessment

The following table outlines essential tools and approaches for implementing data quality assessment in research environments:

Table 5: Research Reagent Solutions for Data Quality Management

| Solution Category | Specific Tools/Techniques | Primary Function | Application Context |
|---|---|---|---|
| Data Profiling Tools | OvalEdge, custom Python/R scripts, SQL analysis queries | Automated discovery of data patterns, anomalies, and quality issues [10] | Initial data assessment and ongoing quality monitoring |
| Reference Materials | Certified Reference Materials (CRMs), control samples, standard datasets | Providing ground truth for accuracy verification [10] | Instrument calibration and measurement validation |
| Validation Frameworks | Great Expectations, Deequ, custom business rule engines | Implementing and executing data validation rules [11] | Automated quality checks in data pipelines |
| Metadata Management | Electronic Lab Notebooks (ELNs), Laboratory Information Management Systems (LIMS) | Capturing contextual information and provenance [10] | Ensuring data completeness and lineage tracking |
| Standardization Tools | Unit conversion libraries, ontology management systems, format validators | Enforcing consistency across data sources [11] | Data integration and cross-study comparison |

The multidimensional nature of data quality—encompassing accuracy, completeness, consistency, and timeliness—represents a critical framework for ensuring data veracity in materials science and drug development research. As the volume and complexity of research data continue to grow, systematic approaches to data quality assessment become increasingly essential for maintaining scientific integrity and accelerating discovery.

By implementing the experimental protocols and assessment methodologies outlined in this technical guide, research organizations can establish a robust foundation for data quality management. This foundation enables not only more reliable research outcomes but also more efficient research processes, as high-quality data reduces the need for rework and clarification. In an era where data-driven discovery dominates scientific progress, excellence in data quality management provides a significant competitive advantage and accelerates the translation of research insights into practical applications.

The interconnected nature of these quality dimensions necessitates an integrated approach to assessment and improvement. Research organizations that successfully master these dimensions will be better positioned to leverage emerging technologies such as artificial intelligence and machine learning, which depend critically on high-quality input data to generate valid insights. As research continues to evolve toward more data-intensive methodologies, the principles and practices outlined in this guide will become increasingly central to scientific advancement.

In the high-risk landscape of clinical development, data quality has evolved from a technical concern to a fundamental determinant of financial return on investment (ROI). The pharmaceutical industry invests an average of $2.6 billion to bring a single drug to market, with R&D cycles stretching over 15 years and a success rate of just 6.1% from first-in-human trials to approval [12]. Within this context, poor data quality introduces catastrophic risks that extend beyond scientific validity to directly undermine economic viability. A staggering 67% of organizations across the healthcare landscape report they do not completely trust their data for decision-making, creating a foundation of uncertainty upon which critical, high-value decisions are made [13].

This whitepaper examines the direct and indirect pathways through which deficient data quality derails clinical trials and erodes ROI. By quantifying these impacts and presenting structured frameworks for mitigation, we provide researchers, scientists, and drug development professionals with the evidence and methodologies necessary to safeguard their investments and enhance the probability of technical and regulatory success.

Quantifying the Impact: Data Quality and Trial Outcomes

The financial consequences of poor data quality manifest across the entire clinical trial lifecycle. The following table summarizes the primary cost drivers and their quantitative impacts.

Table 1: Quantitative Impact of Data Quality Issues on Clinical Trials

| Impact Area | Key Statistic | Financial/Business Consequence |
|---|---|---|
| Overall Trial Cost & Efficiency | The average cost to bring a drug to market is about $2.6 billion [12]. | Poor data quality contributes to this high cost by causing delays and inefficiencies. |
| Trial Timelines | 80% of clinical trials are delayed [12]. | Data issues are a significant contributor to these delays, increasing operational costs. |
| Trial Success Rates | Only 6.1% of drugs succeed from first-in-human trials to approval [12]. | Unreliable data undermines go/no-go decisions, leading to the pursuit of doomed candidates. |
| Operational Trust | 67% of organizations don't completely trust their data for decision-making [13]. | Leads to duplicated efforts, rework, and an inability to make confident, timely decisions. |
| External Data Integration | 82% of healthcare professionals are concerned about the quality of data from external sources [14]. | Hinders collaboration and integration of real-world data, limiting trial insights. |

The Cascade of Data Quality Deficiencies

The relationship between data quality failures and the erosion of ROI is a cascading process, where initial data defects trigger a series of compounding problems that ultimately impact the trial's financial outcome. The following diagram visualizes this critical pathway.

This cascade demonstrates how foundational data issues propagate through the trial lifecycle. For instance, inconsistent data definitions and formats are a top challenge for 45% of organizations, preventing effective data integration and leading to flawed trial design [13]. Furthermore, inadequate tools for automating data quality processes, cited by 49% of organizations as their primary barrier to high-quality data, allow these initial flaws to persist and amplify [13].

Defining Data Quality: A Framework for Clinical Research

For clinical development data to be considered "good" and reliable for high-stakes decision-making, it must exhibit six core attributes. These characteristics align closely with the FAIR (Findable, Accessible, Interoperable, Reusable) principles for scientific data management [15].

Table 2: The Six Core Attributes of High-Quality Clinical Development Data

| Attribute | Definition | FAIR Principle Alignment | Impact of Deficiency |
|---|---|---|---|
| Completeness | Captures the full picture with all relevant variables (e.g., trial design, endpoints, drug modality) [15]. | Findable, Reusable | Missing patient population details or biomarker data can skew AI predictions and derail analysis. |
| Granularity | Provides a detailed, multi-dimensional view at the level of cohorts, endpoints, and patient subgroups [15]. | Interoperable, Reusable | Superficial data masks critical differences between programs, impacting risk assessment. |
| Traceability | Every data point is linked to its source with metadata for validation and compliance [15]. | Findable | Leads to regulatory compliance failures and prevents internal validation of results. |
| Timeliness | Data is updated continuously to reflect new trial results and regulatory changes [15]. | Accessible | Outdated data leads to decisions based on an obsolete landscape. |
| Consistency | Uniform terminology, harmonized ontologies (MeSH, EFO), and standard data formats are used [15]. | Interoperable | A leading cause of poor AI model performance; also prevents datasets from being combined. |
| Contextual Richness | Data is linked to its clinical and regulatory background (e.g., biomarker usage, endpoint rationale) [15]. | Reusable | Without context, analysis may predict technical success but cannot explain why a program may succeed or fail. |

The Data Reliability Framework

Achieving reliable data in a clinical trial environment requires a systematic approach that extends beyond point-in-time checks to encompass the entire data lifecycle. The following framework outlines the key pillars for building and maintaining data reliability.

This framework is operationalized through specific methodologies. The architectural foundation should be built on principles of modularity, idempotency, and fault tolerance [16]. Multi-stage validation must be implemented across ingestion, transformation, and pre-production stages to catch issues early [16]. Furthermore, establishing clear Service Level Agreements (SLAs) and Objectives (SLOs) for data availability, freshness, and accuracy aligns data system performance with business requirements [16].
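Idempotency is the easiest of these principles to show concretely: replaying the same batch, for example when a failed ingestion job is retried, should leave the target unchanged. The following is a minimal Python sketch, not the design of any particular platform. It assumes records arrive as dictionaries identified by a hypothetical natural key (subject, visit, test code) and uses an in-memory dictionary in place of a real database.

```python
import hashlib
import json

# Hypothetical natural key for a lab-result record; a real pipeline would use
# whatever unique key the source system actually guarantees.
KEY_FIELDS = ("subject_id", "visit", "test_code")


def record_key(record: dict) -> tuple:
    return tuple(record[f] for f in KEY_FIELDS)


def content_hash(record: dict) -> str:
    # Canonical JSON (sorted keys) so logically equal records hash identically.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def idempotent_load(store: dict, batch: list[dict]) -> dict:
    """Upsert a batch by natural key; replaying the same batch changes nothing."""
    counts = {"inserted": 0, "updated": 0, "unchanged": 0}
    for record in batch:
        key, digest = record_key(record), content_hash(record)
        existing = store.get(key)
        if existing is None:
            counts["inserted"] += 1
        elif existing[0] != digest:
            # In a regulated system this change would also be written to an audit trail.
            counts["updated"] += 1
        else:
            counts["unchanged"] += 1
            continue
        store[key] = (digest, record)
    return counts


if __name__ == "__main__":
    batch = [
        {"subject_id": "S001", "visit": 1, "test_code": "ALT", "value": 32},
        {"subject_id": "S002", "visit": 1, "test_code": "ALT", "value": 41},
    ]
    store: dict = {}
    print(idempotent_load(store, batch))  # first run inserts both records
    print(idempotent_load(store, batch))  # a retry of the same batch is a no-op
```

Keying on a natural key plus a content hash, rather than appending every incoming row, is what makes retries safe. The `updated` count also gives a cheap signal that a source system has revised data it delivered earlier.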

The Scientist's Toolkit: Essential Solutions for Data Quality

Implementing the data reliability framework requires a suite of methodological and technological tools. The following table details key research reagent solutions essential for ensuring data veracity in clinical trials.

Table 3: Research Reagent Solutions for Clinical Trial Data Quality

| Solution Category | Specific Tools & Standards | Primary Function |
|---|---|---|
| Data Collection & Management | Electronic Data Capture (EDC) Systems, Clinical Data Management Systems (CDMS) [12]. | Digital backbone for accurate data collection, validation, and query resolution, replacing error-prone paper forms. |
| Data Standards & Ontologies | CDISC (SDTM, ADaM), MeSH (Medical Subject Headings), EFO (Experimental Factor Ontology) [15] [12]. | Provide standardized vocabularies and data structures to ensure consistency and interoperability across datasets. |
| Quality Validation & Testing | Rule-based frameworks (e.g., Great Expectations, Soda) [16]. | Allow teams to define, test, and automate explicit data quality rules against predefined benchmarks. |
| Monitoring & Observability | End-to-end platforms (e.g., Monte Carlo, Anomalo) and Risk-Based Monitoring (RBM) solutions [16] [12]. | Provide real-time pipeline health tracking, anomaly detection, and focus monitoring efforts on key risks. |
| Advanced Analytics & AI | Artificial Intelligence (AI) & Machine Learning (ML) Models, Natural Language Processing (NLP) [12]. | Predict patient responses, identify safety signals, and extract data from unstructured text like physician notes. |

Experimental Protocols for Data Quality Assurance

Protocol: Multi-Stage Data Validation

This protocol provides a detailed methodology for implementing the layered validation critical to catching data quality issues at their source.

- Objective: To systematically identify and remediate data defects at each stage of the clinical data lifecycle, preventing the propagation of errors and ensuring the integrity of analysis-ready datasets.

- Materials: Source data (e.g., EHR, lab systems), EDC/CDMS, rule-based validation software, statistical analysis software (e.g., R, SAS).

- Procedure:

  - Ingestion-Level Validation:

    - Perform schema validation to detect and flag structural changes from source systems.

    - Implement freshness checks to identify delayed or missing data deliveries.

    - Run volume anomaly detection to spot statistically significant increases or decreases in data flow.

    - Apply format consistency validation to ensure uniformity across similar data sources [16].

  - Transformation-Level Validation:

    - Execute business rule validation to ensure logical consistency (e.g., a patient's death date cannot precede their birth date).

    - Conduct cross-reference checks to verify accuracy against related datasets (e.g., lab values against normal ranges).

    - Utilize statistical profiling to identify outliers and anomalies in key variables.

    - Perform historical comparisons to detect gradual data drift over time that may indicate a systemic issue [16].

  - Pre-Production Validation:

    - Conduct comprehensive data quality testing on the final, locked dataset before it is used for analysis or reporting.

    - Perform impact analysis to understand the effects of the dataset on all downstream systems, reports, and analytics.

    - Engage in user acceptance testing (UAT) with actual business stakeholders (e.g., biostatisticians, clinical scientists) to guarantee the data meets real-world needs.

    - Ensure rollback procedures are documented and tested to revert to a previous valid dataset state if any validation fails [16].

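Several of the ingestion- and transformation-level checks in this protocol can be sketched in plain Python. This is an illustration of the checks themselves, not the API of Great Expectations, Soda, or any other framework. The expected schema, the 24-hour freshness window, the 3-SD volume threshold, and the ALT reference range are hypothetical values chosen for the example; a production pipeline would load them from governed configuration.

```python
from datetime import date, datetime, timedelta
from statistics import fmean, stdev

# Hypothetical expected schema for an incoming lab-results feed.
EXPECTED_SCHEMA = {
    "subject_id": str,
    "birth_date": date,
    "death_date": (date, type(None)),  # optional field: a date or None
    "test_code": str,
    "value": float,
}
# Hypothetical reference range used for the cross-reference check.
NORMAL_RANGES = {"ALT": (7.0, 56.0)}


def check_schema(record: dict) -> list[str]:
    """Ingestion: flag missing, unexpected, or mistyped fields."""
    issues = [f"missing field {f}" for f in EXPECTED_SCHEMA if f not in record]
    issues += [f"unexpected field {f}" for f in record if f not in EXPECTED_SCHEMA]
    issues += [f"bad type for {f}" for f, t in EXPECTED_SCHEMA.items()
               if f in record and not isinstance(record[f], t)]
    return issues


def is_fresh(delivered_at: datetime, now: datetime,
             max_lag: timedelta = timedelta(hours=24)) -> bool:
    """Ingestion: did the latest delivery arrive within the agreed window?"""
    return now - delivered_at <= max_lag


def volume_anomaly(history: list[int], today: int, z_limit: float = 3.0) -> bool:
    """Ingestion: is today's row count more than z_limit SDs from the historical mean?"""
    mu, sd = fmean(history), stdev(history)
    return sd > 0 and abs(today - mu) / sd > z_limit


def business_rules(record: dict) -> list[str]:
    """Transformation: logical-consistency and cross-reference checks."""
    issues = []
    death = record.get("death_date")
    if death is not None and death < record["birth_date"]:
        issues.append("death_date precedes birth_date")
    limits = NORMAL_RANGES.get(record["test_code"])
    if limits and not limits[0] <= record["value"] <= limits[1]:
        # Out-of-range values are flagged for clinical review, not treated as errors.
        issues.append(f"{record['test_code']} outside normal range")
    return issues


if __name__ == "__main__":
    rec = {"subject_id": "S001", "birth_date": date(1960, 5, 1),
           "death_date": date(1959, 1, 1), "test_code": "ALT", "value": 88.0}
    print(check_schema(rec))    # -> []
    print(business_rules(rec))  # -> ['death_date precedes birth_date', 'ALT outside normal range']
    print(volume_anomaly([1000, 1020, 985, 1010, 995], 400))  # -> True
```

Each check returns findings rather than raising, so one batch can be profiled in full and the results routed to review. This fits the protocol's intent of catching defects at each stage instead of stopping at the first failure.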

Protocol: Predictive Analytics for Patient Recruitment Optimization

This protocol leverages AI to address one of the most persistent and costly challenges in clinical trials: patient recruitment and retention.

- Objective: To accelerate patient enrollment, improve cohort quality, and reduce recruitment costs by using predictive models to identify eligible patients with high precision from complex, unstructured data sources.

- Materials: Electronic Health Records (EHRs), clinical trial protocol with eligibility criteria, Natural Language Processing (NLP) engine, machine learning platform, data anonymization or federated learning software.

- Procedure:

  - Data Harmonization: Structure the trial's eligibility criteria into a computable format. Map these criteria to standardized ontologies (e.g., MeSH, SNOMED CT) to ensure consistent interpretation across different data sources [15].

  - Model Training: Train an NLP algorithm on a labeled dataset of clinical notes. The model learns to identify mentions of specific conditions, medications, procedures, and genetic markers within unstructured physician notes and pathology reports [12].

  - Candidate Identification: Deploy the trained model to scan millions of de-identified EHR records. The model extracts patient phenotypes from the text, which are then compared against the computable eligibility criteria to generate a list of potentially eligible patients [12].

  - Risk Stratification (Optional): Integrate additional data, such as genomic profiles, to identify patients at high risk for specific adverse events, enabling proactive safety monitoring [12].

  - Validation & Output: In a federated learning setup, the model travels to different hospital data centers, trains locally without data leaving the institution, and only aggregated insights are shared. The final output is a prioritized list of candidate patients for site investigators to review and contact, dramatically reducing manual screening efforts [12].
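The step of comparing extracted phenotypes against computable eligibility criteria can be illustrated with a short Python sketch. It assumes the NLP model has already produced, for each de-identified patient, a set of normalized concept identifiers and a confidence score. The concept names below are hypothetical placeholders rather than real SNOMED CT or MeSH codes, and the sketch leaves out the NLP model and federated training entirely.

```python
from dataclasses import dataclass, field


@dataclass
class Criteria:
    """Hypothetical computable form of a protocol's eligibility criteria."""
    min_age: int
    max_age: int
    required: set[str] = field(default_factory=set)  # inclusion concepts
    excluded: set[str] = field(default_factory=set)  # exclusion concepts


@dataclass
class Patient:
    patient_id: str     # de-identified identifier
    age: int
    concepts: set[str]  # normalized concepts extracted from clinical notes
    match_score: float  # hypothetical model confidence in the extracted phenotype


def screen(patient: Patient, c: Criteria) -> tuple[bool, list[str]]:
    """Return (eligible, reasons) so each decision can be reviewed by site staff."""
    reasons = []
    if not c.min_age <= patient.age <= c.max_age:
        reasons.append(f"age {patient.age} outside {c.min_age}-{c.max_age}")
    missing = c.required - patient.concepts
    if missing:
        reasons.append(f"missing inclusion concepts: {sorted(missing)}")
    present = c.excluded & patient.concepts
    if present:
        reasons.append(f"exclusion concepts present: {sorted(present)}")
    return not reasons, reasons


def prioritize(patients: list[Patient], c: Criteria) -> list[str]:
    """Eligible candidates only, highest model confidence first."""
    eligible = [p for p in patients if screen(p, c)[0]]
    return [p.patient_id for p in sorted(eligible, key=lambda p: p.match_score, reverse=True)]


if __name__ == "__main__":
    criteria = Criteria(18, 75, {"CONCEPT_T2D"}, {"CONCEPT_CKD_STAGE_5"})
    cohort = [
        Patient("P1", 54, {"CONCEPT_T2D"}, 0.91),
        Patient("P2", 81, {"CONCEPT_T2D"}, 0.88),                         # too old
        Patient("P3", 47, {"CONCEPT_T2D", "CONCEPT_CKD_STAGE_5"}, 0.95),  # excluded
        Patient("P4", 63, {"CONCEPT_T2D"}, 0.97),
    ]
    print(prioritize(cohort, criteria))  # -> ['P4', 'P1']
```

Returning the reasons along with the verdict keeps the screening step auditable. Investigators can see why a patient was excluded, which matters when the extracted phenotypes come from an imperfect NLP model.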

Regulatory Implications and the Path Forward

Global regulators are increasingly emphasizing the importance of data quality and reliability, not just as a component of submission integrity but as a matter of algorithmic accountability. The message from regulators is clear: "It's not enough for data to be accurate, actors must also prove it is reliable" [16]. Initiatives like the FDA's Quality Metrics Reporting Program aim to use manufacturing quality data to develop more risk-based inspection schedules and predict drug shortages [17]. Similarly, the FDA Sentinel initiative demonstrates the power of integrating disparate, high-quality data sources for safety monitoring and risk assessment [15]. This regulatory trajectory suggests that the cost of non-compliance and poor data governance increasingly exceeds the investment required for a strong data infrastructure.

The figures above point in one direction: poor data quality derails clinical trials by inflating costs, prolonging timelines, and undermining success rates, thereby eroding ROI. In an industry where the cost of a wrong decision is measured in years and millions of dollars, investing in robust data quality frameworks is not optional technical overhead but a strategic business imperative [15]. For drug development professionals, the path forward requires a cultural and operational shift toward prioritizing data veracity from the ground up: embedding reliability into pipeline architecture, implementing rigorous multi-stage validation, and leveraging advanced analytics. By doing so, the industry can transform data from a latent liability into its most powerful asset for de-risking development and delivering life-saving therapies to patients.

For drug development researchers and scientists, regulatory rejection represents the culmination of complex technical and data quality failures rather than simple administrative decisions. The U.S. Food and Drug Administration's (FDA) recent initiative toward "radical transparency" in publishing Complete Response Letters (CRLs) provides unprecedented insight into the systematic barriers preventing new therapies from reaching patients [18] [19]. These documents reveal that manufacturing, data integrity, and clinical trial design deficiencies—not lack of efficacy alone—account for the majority of regulatory setbacks.

This whitepaper analyzes recent FDA rejection data and case studies within the critical framework of materials data veracity and quality issues. For technical professionals engaged in therapeutic development, understanding these failure patterns provides a strategic roadmap for building more robust development programs anchored in data integrity, rigorous quality systems, and predictive experimental design.

Quantitative Analysis of FDA Rejection Data

Recent FDA transparency initiatives have yielded quantitative data on the most frequent deficiencies cited in CRLs. Analysis of 89 recently released letters reveals a consistent pattern of issues across applications [18].

Table 1: Primary Deficiencies Cited in FDA Complete Response Letters (CRLs)

| Deficiency Category | Frequency | Common Subcategories | Typical Impact Timeline |
|---|---|---|---|
| Facility/Manufacturing Issues | 56% (50 of 89 CRLs) [18] | CGMP non-compliance; inadequate quality systems; facility inspection failures | 12-18 month delays for re-inspection [18] |
| Product Quality (CMC) | 47% (42 of 89 CRLs) [18] | Analytical method validation; stability data gaps; unjustified specifications; process validation flaws | Varies; often requires major re-validation efforts [18] |
| Clinical/Statistical Deficiencies | 33% (29 of 89 CRLs) [18] | Inadequate efficacy evidence; safety concerns; trial design flaws | Potentially multi-year delays for new trials [20] |
| Safety and Efficacy (Combined) | 48% of CRLs (broader dataset) [19] | Insufficient risk-benefit profile; inadequate safety characterization | Significant delays; may require additional clinical studies [19] [21] |

Historical data covering 2000-2012 for New Molecular Entities (NMEs) provides additional context, showing that only 50% of applications were approved on the first cycle, with 73.5% eventually achieving approval after resubmissions that incurred a median delay of 435 days [21].

Case Studies of Technical and Data Quality Failures

Manufacturing and Facility Deficiencies

Case: Manufacturing Process and Data Integrity (Theoretical Reconstruction)

Following a CRL citing "inadequate analytical method validation," a subsequent internal investigation revealed that 7 analytical methods required complete revalidation, invalidating 18 months of stability data [18]. The consequence was a 2-year approval delay and several million dollars in remediation costs, stemming from a fundamental failure in initial method validation protocols.

Experimental Protocol: Analytical Method Validation

- Objective: To establish and document that an analytical procedure is suitable for its intended use, ensuring the veracity and reliability of generated stability and potency data.

- Methodology:

  - Specificity: Demonstrate the ability to unequivocally assess the analyte in the presence of expected components (e.g., impurities, excipients).

  - Linearity & Range: Prepare analyte at a minimum of 5 concentrations across a specified range. The correlation coefficient, y-intercept, and slope of the regression line should meet pre-defined criteria.

  - Accuracy: Spike placebo with known analyte quantities (e.g., 50%, 100%, 150% of target) and demonstrate recovery within acceptable limits (e.g., 98-102%).

  - Precision:

    - Repeatability: Minimum of 6 determinations at 100% of test concentration.

    - Intermediate Precision: Repeat the determinations on different days, by different analysts, and on different equipment.

  - Quantitation Limit (QL) & Detection Limit (DL): Establish via the signal-to-noise ratio or from the standard deviation of the response and the slope.

  - Robustness: Deliberately vary method parameters (e.g., pH, temperature, flow rate) to evaluate reliability.
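The statistics behind the linearity, accuracy, precision, and DL/QL steps are simple enough to sketch with the Python standard library. The concentrations, detector responses, and recoveries below are hypothetical, and the acceptance limits mirror the example limits above. Sigma is taken as the residual standard deviation of the calibration line, which is one accepted option under the standard-deviation-of-response approach, where DL = 3.3σ/S and QL = 10σ/S, with S the slope.

```python
from math import sqrt
from statistics import fmean, stdev


def linear_fit(x: list[float], y: list[float]) -> dict:
    """Least-squares calibration line y = slope * x + intercept, with r and residual SD."""
    mx, my = fmean(x), fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    residuals = [yi - (slope * xi + intercept) for xi, yi in zip(x, y)]
    # Residual standard deviation with n - 2 degrees of freedom, used as sigma below.
    s_res = sqrt(sum(e * e for e in residuals) / (len(x) - 2))
    return {"slope": slope, "intercept": intercept,
            "r": sxy / sqrt(sxx * syy), "s_res": s_res}


def recovery_pct(measured: float, spiked: float) -> float:
    return 100.0 * measured / spiked


def rsd_pct(values: list[float]) -> float:
    """Relative standard deviation (%), the usual repeatability statistic."""
    return 100.0 * stdev(values) / fmean(values)


def detection_limits(sigma: float, slope: float) -> tuple[float, float]:
    """DL = 3.3 * sigma / S and QL = 10 * sigma / S."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


if __name__ == "__main__":
    conc = [50, 75, 100, 125, 150]          # % of target concentration, 5 levels
    area = [1002, 1498, 2005, 2497, 3003]   # hypothetical detector responses
    fit = linear_fit(conc, area)
    print(f"r = {fit['r']:.5f}, slope = {fit['slope']:.3f}")
    recoveries = [recovery_pct(m, s) for m, s in [(49.6, 50), (100.3, 100), (150.9, 150)]]
    print("accuracy within 98-102%:", all(98.0 <= r <= 102.0 for r in recoveries))
    repeats = [99.8, 100.4, 100.1, 99.6, 100.2, 99.9]  # six determinations at 100%
    print(f"repeatability RSD = {rsd_pct(repeats):.2f}%")
    print("DL, QL =", detection_limits(fit["s_res"], fit["slope"]))
```

In practice these numbers would be computed in validated software and compared with criteria fixed in the validation protocol before the data are generated. Deciding the criteria afterward would undermine the veracity this protocol is meant to establish.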

Clinical Data and Trial Execution Failures

Case: Zealand Pharma's Glepaglutide

The FDA rejected Zealand's application for glepaglutide, a GLP-2 analog for short bowel syndrome, which relied on a single Phase 3 trial (EASE-1) [20]. While the trial met its primary endpoint for one dosing regimen, the CRL cited "numerous uncertainties that limit the interpretability and/or persuasiveness of the results" [20]. Critical data veracity issues included:

- Protocol Deviations: Parenteral support adjustments made outside the trial protocol, potentially confounding efficacy results.

- Incomplete/Missing Data: Urinary output documentation was missing for up to 12% of patients in EASE-1 and 68% in the follow-on EASE-2 study.

- Unreported Adverse Events: An FDA inspection found "numerous unreported adverse events" at one site, including two serious hospitalizations (acute kidney injury, hypomagnesemia) [20].

Case: Lykos Therapeutics' MDMA-Assisted Therapy

The FDA rejected the application for midomafetamine for PTSD, citing fundamental trial design and data capture flaws [20]. Key issues included:

- Inability to Maintain Blinding: The psychedelic's profound effects made blinding practically impossible, introducing significant expectancy bias.

- Incomplete Safety Data: Because "positive" or "favorable" drug experiences were not systematically recorded as adverse events, the application did not adequately characterize the drug's safety profile, abuse potential, or duration of impairment [20].

Experimental Protocol: Ensuring Data Integrity in Clinical Trials

- Objective: To ensure the reliability, accuracy, and completeness of all clinical trial data, adhering to ALCOA+ principles (Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, Available).

- Methodology:

  - Audit Trails: Ensure all computerized systems (e.g., EDC, CTMS) have secure, computer-generated, time-stamped audit trails that independently record user actions. Audit trails must be enabled and reviewed regularly [22].

  - User Access Controls: Implement role-based access to prevent unauthorized data creation, modification, or deletion.

  - Source Data Verification (SDV): Compare data entered in the Case Report Form (CRF) against original source documents (e.g., medical records, lab reports) to ensure accuracy.

  - Centralized Monitoring: Use statistical and analytical methods on aggregated data to identify atypical patterns, inconsistencies, or protocol deviations across sites.

  - Training and Delegation Logs: Maintain documentation showing that all site personnel are trained on the protocol and procedures.
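Two of these controls, source data verification and centralized monitoring, reduce to simple comparisons that a short Python sketch can illustrate. The field names and site data are hypothetical. Missingness is used as the monitored signal because incomplete documentation was one of the issues in the glepaglutide case above. The leave-one-out z-score, the 3-SD limit, and the 0.01 floor on the standard deviation are assumed heuristics, not a regulatory standard.

```python
from statistics import fmean, stdev


def sdv_discrepancies(crf: dict, source: dict) -> dict:
    """SDV: fields whose CRF value differs from the source document, as (crf, source)."""
    return {f: (crf.get(f), v) for f, v in source.items() if crf.get(f) != v}


def missing_rate(records: list[dict], field: str) -> float:
    """Share of records in which the field is absent or None."""
    return sum(1 for r in records if r.get(field) is None) / len(records)


def flag_atypical_sites(by_site: dict[str, list[dict]], field: str,
                        z_limit: float = 3.0, sd_floor: float = 0.01) -> list[str]:
    """Centralized monitoring: flag sites whose missing-data rate is far above their peers.

    Each site is compared with the mean and SD of the other sites (leave-one-out),
    so a single extreme site does not inflate the SD it is judged against.
    sd_floor avoids dividing by zero when the peer sites agree exactly.
    """
    rates = {site: missing_rate(recs, field) for site, recs in by_site.items()}
    if len(rates) < 2:
        return []  # nothing to compare against
    flagged = []
    for site, rate in rates.items():
        peers = [r for s, r in rates.items() if s != site]
        mu = fmean(peers)
        sd = max(stdev(peers), sd_floor) if len(peers) > 1 else sd_floor
        if (rate - mu) / sd > z_limit:
            flagged.append(site)
    return flagged


if __name__ == "__main__":
    print(sdv_discrepancies({"sbp": 128, "hr": 72}, {"sbp": 138, "hr": 72}))
    # -> {'sbp': (128, 138)}

    def make_site(n: int, n_missing: int) -> list[dict]:
        # Hypothetical site data: urine output missing in the first n_missing records.
        return [{"urine_output_ml": None if i < n_missing else 1500.0} for i in range(n)]

    sites = {"A": make_site(100, 2), "B": make_site(100, 3), "C": make_site(100, 1),
             "D": make_site(100, 2), "E": make_site(100, 40)}
    print(flag_atypical_sites(sites, "urine_output_ml"))  # -> ['E']
```

A leave-one-out comparison is used because, with only a handful of sites, an ordinary z-score computed over all sites includes the outlier in its own SD. That can keep even an extreme site below the threshold.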

Computer System Validation (CSV) and Software Deficiencies

Case: Laboratory Information Management System (LIMS) Failure

A major pharmaceutical company received an FDA Warning Letter after inspectors found critical data integrity flaws in a newly implemented LIMS [22]. Deficiencies included:

- Disabled Audit Trails: The system was configured with audit trails turned off, making it impossible to track data changes.

- Data Manipulation: Analysts could overwrite test results without valid, documented justification.

- Inadequate Backup Procedures: Inconsistent data backup practices threatened the integrity of critical test data.

The company was forced to undertake a full, costly revalidation of the LIMS [22].
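The behavior this LIMS lacked can be sketched in Python: every change recorded with who, when, old value, and new value, and no overwrite of an existing result without a documented reason. This is an illustrative in-memory sketch under those assumptions only. It is not a Part 11-compliant design, and the record and deviation identifiers in the usage example are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    timestamp: datetime
    user: str
    record_id: str
    field: str
    old_value: object
    new_value: object
    reason: str


class AuditedResultStore:
    """Minimal result store in which every change is attributable and justified."""

    def __init__(self) -> None:
        self._values: dict[tuple[str, str], object] = {}
        self._trail: list[AuditEntry] = []  # append-only: no method removes entries

    def set_value(self, user: str, record_id: str, field: str, value,
                  reason: str = "") -> None:
        key = (record_id, field)
        if key in self._values and not reason.strip():
            # Changing an existing result requires a documented justification.
            raise PermissionError(f"change to {record_id}.{field} requires a reason")
        self._trail.append(AuditEntry(datetime.now(timezone.utc), user, record_id, field,
                                      self._values.get(key), value,
                                      reason or "initial entry"))
        self._values[key] = value

    def get(self, record_id: str, field: str):
        return self._values[(record_id, field)]

    def history(self, record_id: str, field: str) -> list[AuditEntry]:
        return [e for e in self._trail if (e.record_id, e.field) == (record_id, field)]


if __name__ == "__main__":
    store = AuditedResultStore()
    store.set_value("analyst1", "LOT42-ASSAY", "result", 98.7)
    try:
        store.set_value("analyst1", "LOT42-ASSAY", "result", 101.2)  # silent overwrite
    except PermissionError as err:
        print("blocked:", err)
    store.set_value("analyst2", "LOT42-ASSAY", "result", 99.1,
                    reason="transcription error, see deviation DV-001")
    for e in store.history("LOT42-ASSAY", "result"):
        print(e.timestamp.isoformat(), e.user, e.old_value, "->", e.new_value, e.reason)
```

The current value and its history are kept separately, so a correction never erases the original entry. This is the property an auditor checks when reviewing audit trails.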

Case: Electronic Batch Record (EBR) System Validation

A medium-sized manufacturer received non-compliance findings from the EMA after hastily implementing an EBR system [22]. The failure was rooted in poor upfront specification and testing:

- Incomplete User Requirement Specifications (URS).

- Inadequate testing of high-risk system functionalities.

- Non-compliance with 21 CFR Part 11 for electronic signatures.

- Poor Change Control: Patches were applied to the system without proper impact assessment or revalidation.

Table 2: Common Root Causes of Computer System Validation (CSV) Failures

| Root Cause | Technical Manifestation | Regulatory Consequence |
|---|---|---|
| Lack of Risk-Based Approach | Generic testing that misses high-risk functionalities (e.g., batch disposition, data calculation). | FDA Form 483 observations; requirement for extensive remediation [22]. |
| Poor Documentation | Missing or incomplete validation protocols, test scripts, and summary reports. | Inability to demonstrate system reliability to auditors [22] [23]. |
| Weak Change Control | Software patches, updates, or configuration changes implemented without impact assessment or revalidation. | System deemed out of compliance, potentially invalidating all data generated post-change [22]. |
| Inadequate Data Integrity Controls | Disabled audit trails, lack of user access controls, no backup/recovery procedures. | Warning Letters, clinical holds, or import bans due to unreliable data [22] [23]. |

Visualizing the Pathway from Data Quality to Regulatory Outcome

The relationship between foundational data quality, experimental execution, and regulatory consequences follows a logical pathway that can be systematically mapped.

Diagram 1: Data quality to regulatory outcome pathway

The Scientist's Toolkit: Essential Research Reagent Solutions

Ensuring data veracity requires leveraging specific technical tools and methodologies throughout the drug development lifecycle. The following table details key solutions for maintaining data integrity and quality.

Table 3: Essential Tools and Reagents for Ensuring Data Veracity

| Tool/Reagent Category | Specific Example | Primary Function in Ensuring Data Quality |
|---|---|---|
| Validated Analytical Methods | HPLC with UV/Vis Detection, Mass Spectrometry | Provides accurate, precise, and reliable quantification of drug substance and product, forming the basis for stability and potency claims. Must be fully validated. |
| Certified Reference Standards | USP Reference Standards, Characterized Drug Substance | Serves as an absolute benchmark for calibrating analytical instruments and methods, ensuring the accuracy of all generated analytical data. |
| Quality Management Software | Electronic Quality Management System (eQMS) | Digitally manages deviations, CAPA, change control, and training records, ensuring robust quality system oversight and data integrity. |
| Clinical Data Management System | Electronic Data Capture (EDC) System with Audit Trail | Securely captures clinical trial data from sites with a full audit trail, ensuring data is attributable, legible, contemporaneous, original, and accurate (ALCOA). |
| Computerized System Validation Package | Installation/Operational/Performance Qualification (IQ/OQ/PQ) Protocols | Documentary evidence that a computerized system (e.g., LIMS, EDC) is properly installed, works as expected, and performs correctly in its operating environment. |
| Stability Testing Chambers | GMP Stability Chambers (Controlled Temp/Humidity) | Generates reliable stability data for establishing retest dates/shelf life. Requires calibrated monitoring and controlled conditions per ICH guidelines. |

The case studies and data presented demonstrate a clear and consistent narrative: regulatory rejection is predominantly a consequence of preventable failures in data quality, manufacturing control, and systematic planning. The FDA's published CRLs consistently point to issues with facility readiness, product quality, and clinical trial execution—all of which are underpinned by the veracity of the data generated to support claims [18] [20].

For researchers and drug development professionals, the path forward requires a foundational commitment to data integrity and quality-by-design. This involves implementing robust, validated computerized systems, establishing rigorous analytical methods early in development, designing clinically meaningful trials with minimal bias, and maintaining manufacturing systems under a state of control. Proactive investment in these areas, guided by the real-world failure patterns now visible through FDA transparency, is the most effective strategy for navigating the complex regulatory landscape and delivering needed therapies to patients without unnecessary delay.

In pharmaceutical research and development, data is the fundamental asset that guides decisions from initial discovery to regulatory approval. The data lifecycle in drug development encompasses the generation, collection, processing, analysis, and submission of data, with its veracity—accuracy, consistency, and reliability—being paramount. Poor data quality jeopardizes patient safety, undermines research validity, and can lead to significant regulatory and financial consequences [24]. Within the context of materials data veracity research, this whitepaper examines the systematic processes that ensure data quality throughout the drug development pipeline, addressing critical challenges and presenting methodologies to maintain integrity across complex, data-intensive workflows.

The Drug Development Pipeline and Associated Data

The journey of a new drug from concept to market is a long, complex, and costly endeavor, typically taking 10 to 15 years and costing over $2 billion [25]. This process is segmented into distinct stages, each generating and relying upon specific types of data with stringent quality requirements.

- Discovery and Preclinical Research: This initial phase involves identifying a promising biological target and compound through laboratory tests and animal studies. Key data outputs include in vitro assay results, pharmacokinetics, and toxicology profiles [25]. The goal is to gather sufficient evidence of biological activity and initial safety to justify human testing.

- Clinical Development: This phase tests the investigational product in human subjects through three main trial phases, each with expanding scope [25]:

  - Phase I: Focuses on safety and dosage in small groups of healthy volunteers.

  - Phase II: Assesses efficacy and further evaluates safety in small groups of patients with the target disease.

  - Phase III: Large-scale studies to confirm efficacy, monitor side effects, and compare to standard treatments.

- Regulatory Review and Approval: All data generated during discovery, preclinical, and clinical stages are compiled and submitted to regulatory bodies like the FDA for review. The submitted data must robustly demonstrate the drug's safety, efficacy, and quality [26].

- Post-Marketing Surveillance (Phase IV): After approval, studies continue to monitor long-term safety and effectiveness in the general population [25].

Table 1: Quantifying the Drug Development Pipeline

| Development Stage | Primary Objective | Typical Duration | Key Data Types Generated |
|---|---|---|---|
| Discovery & Preclinical | Target ID, Compound Optimization, Safety & PK/PD in animals | 3-6 Years | Assay Data, Genomic/Protein Data, Toxicology Reports, Chemical Compound Libraries |
| Clinical Phase I | Initial Safety & Tolerability | 1-2 Years | Safety Endpoints (AEs), Pharmacokinetic Data, Dosage Findings |
| Clinical Phase II | Therapeutic Efficacy & Side Effects | 2-3 Years | Preliminary Efficacy Endpoints, Short-Term Safety Data, Biomarker Data |
| Clinical Phase III | Confirmatory Efficacy & Safety Monitoring | 3-4 Years | Primary & Secondary Efficacy Endpoints, Long-Term Safety Data, Comparative Effectiveness Data |
| Regulatory Review | Approval for Market | 1-2 Years | Integrated Summary of Safety, Integrated Summary of Efficacy, Clinical Study Reports |
| Phase IV (Post-Marketing) | Long-Term Safety & Additional Uses | Ongoing | Real-World Evidence (RWE), Pharmacovigilance Reports, Patient-Reported Outcomes |

A critical trend impacting this pipeline is the adoption of Artificial Intelligence (AI). AI is being leveraged to analyze massive datasets for faster target identification, improved drug design, and better safety predictions, potentially reducing development timelines from decades to years and costs by up to 45% [27]. Furthermore, there is a growing regulatory acceptance of Real-World Evidence (RWE), which is increasingly used to support label expansions and enhance safety monitoring [28].

The Clinical Data Lifecycle: A Detailed Workflow

The clinical phase represents the most data-intensive and rigorously managed part of drug development. The lifecycle of clinical data is a multi-step process designed to ensure its quality, integrity, and traceability from the patient to the regulatory submission.

Diagram 1: Clinical Data Management Workflow

The workflow, governed by Good Clinical Practice (GCP) and 21 CFR Part 11 for electronic records, involves the following critical stages [29]:

- Protocol and Case Report Form (CRF) Design: The clinical trial protocol serves as the "bible," detailing every aspect of how the trial will be conducted. The CRF, whether electronic (eCRF) or paper, is the specific tool designed to capture all patient data required by the protocol [29]. A meticulous design at this stage is the first control for ensuring data quality.

- Data Capture and Entry: Subject data is transcribed from source documents (e.g., hospital records) into the CRFs. Increasingly, this involves electronic source (eSource) data and direct entry into Clinical Data Management Systems (CDMS) like Oracle Clinical or Rave to minimize transcription errors [29].

- Source Data Verification (SDV): Clinical Research Associates (CRAs) monitor the study sites and perform SDV, comparing the data entered in the CRF against the original source records to ensure accuracy and completeness. This can be a 100% check or a targeted (tSDV) approach focusing on critical data elements [29].

- Query Management: Discrepancies, inconsistencies, or missing data identified during monitoring or automated checks trigger "queries." The data management team resolves these by communicating with the clinical site investigators to confirm or correct the data [29].

- Medical Coding: Adverse events (AEs) and medications are coded using standardized medical dictionaries like MedDRA (Medical Dictionary for Regulatory Activities) to ensure consistency for analysis and regulatory review [29].

- Database Lock: After all data are cleaned and queries resolved, the database is formally locked ("hard lock") to prevent any further changes. This creates the final, frozen dataset for statistical analysis [29].

- Analysis, Reporting, and Submission: The locked data are analyzed according to a pre-specified statistical analysis plan. The results are compiled into Clinical Study Reports (CSRs) and submitted to regulatory agencies in standardized formats (e.g., CDISC SDTM/ADaM) [29].
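To make the audit-trail and database-lock stages more concrete, the following Python sketch models a single CRF record. It is illustrative only and does not describe how any particular CDMS works: the class names, field names, and the rule that every change must carry a reason are assumptions of this sketch. The underlying idea is that edits append to an audit trail rather than overwriting earlier values, in the spirit of the 21 CFR Part 11 requirement that record changes not obscure previously recorded information, and that a hard lock refuses all further changes.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One change to a record: who made it, when, what changed, and why."""
    timestamp: str
    user: str
    field_name: str
    old_value: object
    new_value: object
    reason: str


@dataclass
class ClinicalRecord:
    """Hypothetical CRF record with an append-only audit trail and a hard-lock flag."""
    subject_id: str
    values: dict = field(default_factory=dict)
    audit_trail: list = field(default_factory=list)
    locked: bool = False

    def update(self, user: str, field_name: str, new_value, reason: str) -> None:
        if self.locked:
            raise PermissionError(f"{self.subject_id} is hard-locked; changes are not allowed")
        if not reason:
            raise ValueError("A reason for change is required")  # assumption of this sketch
        old_value = self.values.get(field_name)
        self.values[field_name] = new_value
        # Append a new entry instead of rewriting history, so earlier values stay visible.
        self.audit_trail.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            user=user,
            field_name=field_name,
            old_value=old_value,
            new_value=new_value,
            reason=reason,
        ))

    def hard_lock(self) -> None:
        self.locked = True


rec = ClinicalRecord("SUBJ-001")
rec.update("site_coordinator", "systolic_bp", 210, "initial entry")
rec.update("site_coordinator", "systolic_bp", 120, "query response: transcription error")
rec.hard_lock()
try:
    rec.update("monitor", "systolic_bp", 130, "late change")
    lock_enforced = False
except PermissionError:
    lock_enforced = True
```

In a production system, the lock applies to the whole study database and audit entries live in secured, tamper-evident storage; a per-record flag is used here only to keep the example short.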

Critical Data Veracity Challenges and Mitigation

Despite a structured lifecycle, several persistent challenges threaten data quality and integrity.

Prevalent Data Management Challenges

- Data Silos: Information isolated within departments, legacy systems, or external partners is a major obstacle. Data silos hinder aggregation and analysis, lead to resource redundancy, and slow down innovation [30] [31]. Solutions include implementing advanced data integration platforms and fostering a collaborative culture [30].

- Data Security and Compliance: Pharmaceutical data, especially patient information, is highly sensitive. Breaches or non-compliance with regulations like HIPAA and GDPR can result in severe legal and financial penalties and erode trust. Robust cybersecurity measures (encryption, access controls) and regular audits are essential [30].

- Volume and Computational Demands: The rise of biomedical imaging, genomics, and other complex data types has led to petabyte-scale datasets. Managing the computational demands for processing and analyzing this data requires modern, scalable cloud infrastructure [31].

- Ensuring Quality for AI/ML: AI and machine learning models require vast amounts of normalized, high-quality training data. Preparing historical and diverse data for AI is a "Herculean" task, and inconsistencies can lead to biased models and missed insights [31] [27].

The High Cost of Poor Data Quality

Compromised data veracity has direct and severe consequences:

- Regulatory Rejection: Regulatory agencies can refuse to review or approve applications when the submitted data are inadequate. For example, in 2019 Zogenix received an FDA refusal-to-file letter because its application lacked certain nonclinical toxicology studies, and its share price fell by 23% [24].

- Patient Safety Risks: Inaccurate data on drug interactions, allergies, or dosage can lead to adverse reactions, directly endangering patient lives [24].

- Resource Drain: Poor data quality leads to erroneous conclusions, wasted resources on invalid research paths, and costly drug recalls [32] [24].

Table 2: Data Quality Issues and Corresponding Mitigation Strategies

| Data Quality Challenge | Impact on Drug Development | Recommended Mitigation Strategy |
|---|---|---|
| Data Silos & Disorganization | Hinders collaborative research; slows discovery; duplicates effort | Implement advanced data integration platforms; adopt interoperable standards [30] |
| Inconsistent Data Modalities | Manual curation is error-prone; inefficient for large-scale data | Create automated, modality-specific workflows; use comprehensive data architectures [31] |
| Insufficient Data for AI/ML | Leads to biased models; inaccurate predictions; failed trials | Invest in consistent data curation & provenance tracking; use federated learning [31] [27] |
| Fragmented Security & Compliance | Regulatory penalties; data breaches; loss of trust | Conduct regular security audits; deploy encryption & multi-factor authentication [30] |
| Inaccurate/Incomplete Records | Misdiagnoses; manufacturing errors; jeopardized patient safety | Deploy ML-powered data validation tools; implement robust data governance [24] |

Experimental Protocols for Ensuring Data Quality

To combat these challenges, the industry relies on rigorous, standardized experimental and quality control methodologies.

Protocol for Clinical Data Quality Control

This protocol outlines the core process for maintaining data veracity during a clinical trial.

- Objective: To ensure that all data collected during a clinical trial are accurate, complete, verifiable, and compliant with GCP and regulatory standards.

- Materials:

- Clinical trial protocol and annotated Case Report Form (CRF).

- Clinical Data Management System (CDMS) (e.g., Oracle Clinical, Medidata Rave).

- Medical coding dictionaries (MedDRA, WHODrug).

- Query management system within the CDMS.

- Methodology:

- Validation Rule Programming: Pre-program automated validation checks into the CDMS to flag discrepancies (e.g., values outside expected range, inconsistent dates) upon data entry [29].

- Source Data Verification (SDV): CRAs perform periodic on-site or remote monitoring visits to verify that the data recorded on the CRF match the source documents at the clinical site. Full (100%) verification of primary efficacy and safety endpoints is standard practice, though targeted approaches are increasingly used [29].

- Centralized Monitoring: Utilize statistical and analytical methods to review aggregated data from all sites to identify unusual patterns, outliers, or potential data integrity issues that may not be apparent at the individual site level.

- Query Resolution Workflow: For each discrepancy found:

- A query is automatically generated in the CDMS and assigned to the investigator at the clinical site.

- The investigator reviews the query and provides a clarification or correction.

- The data management team reviews the response and closes the query once resolved.

- Quality Control (QC) Audit: Before database lock, a separate quality assurance team may perform a sample audit of the data to ensure the quality control processes have been effective [26] [32].
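The validation-rule and query-resolution steps of this protocol can be sketched in Python as follows. The ranges in `RANGE_CHECKS`, the field names, and the `Query` class are hypothetical; in practice, edit checks are typically specified in a study's data validation plan and configured inside the CDMS rather than written as standalone code. The sketch shows only the essential pattern: each failed check opens a query, and a query moves from open, to answered (by the site), to closed (by data management).

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class QueryStatus(Enum):
    OPEN = "open"          # raised by an edit check, awaiting the site
    ANSWERED = "answered"  # site investigator has responded
    CLOSED = "closed"      # data management accepted the response


@dataclass
class Query:
    subject_id: str
    field_name: str
    message: str
    status: QueryStatus = QueryStatus.OPEN
    response: str = ""

    def answer(self, response: str) -> None:
        if self.status is not QueryStatus.OPEN:
            raise ValueError("Only open queries can be answered")
        self.response = response
        self.status = QueryStatus.ANSWERED

    def close(self) -> None:
        if self.status is not QueryStatus.ANSWERED:
            raise ValueError("Only answered queries can be closed")
        self.status = QueryStatus.CLOSED


# Hypothetical expected ranges; real limits come from the protocol and validation plan.
RANGE_CHECKS = {"systolic_bp": (70, 200), "heart_rate": (30, 180)}


def run_edit_checks(record: dict) -> list:
    """Apply missing-value, range, and date-consistency checks; return the queries raised."""
    queries = []
    sid = record["subject_id"]
    for field_name, (low, high) in RANGE_CHECKS.items():
        value = record.get(field_name)
        if value is None:
            queries.append(Query(sid, field_name, "Value is missing"))
        elif not low <= value <= high:
            queries.append(Query(sid, field_name, f"Value {value} outside expected range {low}-{high}"))
    consent, visit = record.get("consent_date"), record.get("visit_date")
    if consent and visit and visit < consent:
        queries.append(Query(sid, "visit_date", "Visit date precedes informed consent date"))
    return queries


record = {
    "subject_id": "SUBJ-002",
    "systolic_bp": 250,
    "heart_rate": None,
    "consent_date": date(2024, 3, 10),
    "visit_date": date(2024, 3, 1),
}
queries = run_edit_checks(record)
queries[0].answer("Confirmed transcription error; corrected to 125")
queries[0].close()
```

This record raises three queries (out-of-range blood pressure, missing heart rate, visit before consent); only the first has been resolved and closed, while the other two remain open until the site responds.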
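The centralized monitoring step can be illustrated with a simple site-level outlier screen. This sketch assumes a modified z-score computed from the median and median absolute deviation (MAD) of per-site means, with a cutoff of 3.5, a common rule of thumb for robust outlier detection; it is one of many possible statistical methods, not a prescribed one. Robust statistics are used because, with only a handful of sites, a single aberrant site can inflate an ordinary standard deviation enough to mask itself. The site names and readings are invented for illustration.

```python
from statistics import mean, median


def flag_outlier_sites(site_values: dict, threshold: float = 3.5) -> list:
    """Return sites whose mean value is a robust outlier relative to the other sites."""
    site_means = {site: mean(values) for site, values in site_values.items()}
    center = median(site_means.values())
    # Median absolute deviation (MAD) of the site means from their median.
    mad = median(abs(m - center) for m in site_means.values())
    if mad == 0:
        return []  # no spread between sites, so no basis for a robust score
    # 0.6745 scales the MAD so the score is comparable to a z-score for normal data.
    return sorted(
        site for site, m in site_means.items()
        if 0.6745 * abs(m - center) / mad > threshold
    )


# Hypothetical systolic blood pressure readings (mmHg) per site.
site_data = {
    "site_A": [120, 122, 124],
    "site_B": [118, 120, 122],
    "site_C": [121, 123, 125],
    "site_D": [119, 121, 123],
    "site_E": [122, 124, 126],
    "site_F": [140, 140, 140],
}
flagged = flag_outlier_sites(site_data)
```

Here only `site_F` is flagged: its mean of 140 mmHg lies far from the other sites, and its identical readings would also merit a closer look during monitoring.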

Protocol for AI-Ready Data Curation

With the growing role of AI, a specialized protocol for preparing data is critical.

- Objective: To transform raw, disparate biomedical data (e.g., medical images, genomic sequences) into a normalized, annotated, and analysis-ready format suitable for training and validating AI/ML models.

- Materials:

- Diverse datasets (e.g., MR/CT scans, genomic data, EHR extracts).

- High-performance computing (HPC) or cloud-scale computational resources.

- Data curation and workflow automation platform (e.g., Flywheel, Lifebit).

- Standardized ontologies and data models (e.g., CDISC, FHIR).

- Methodology:

- Data Ingestion and De-identification: Securely transfer data from source systems. Automatically remove or encrypt the 18 Protected Health Information (PHI) identifiers specified by the HIPAA Safe Harbor method to protect patient privacy [31] [27].

- Modality-Specific Processing: Apply automated, algorithm-driven workflows tailored to each data type. For imaging, this may include format conversion (DICOM to NIfTI), re-orientation to standard coordinate space, and voxel intensity normalization [31].

- Metadata Annotation and Tagging: Automatically extract and standardize key metadata (e.g., pixel dimensions for images, sequencing platform for genomic data). Manually or semi-automatically annotate data with ground-truth labels required for supervised learning.

- Data Harmonization: Normalize data across different sources, scanners, or protocols to reduce batch effects and technical variability that could bias AI models.

- Provenance Tracking: Automatically and comprehensively log all data processing steps, including software versions, parameters, and user actions, to ensure full reproducibility—a necessity for regulatory approval [31].

- Federated Learning Implementation: Where data cannot be centralized, deploy the AI model to the data locations (e.g., different hospitals). Train the model locally and send only the resulting model parameters (e.g., weights) back to a central server for aggregation, so that raw patient data never leave the participating site [27].
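The provenance-tracking step can be approximated with an append-only, hash-chained log. In this minimal sketch (an assumption, not a description of any specific platform), each entry records the step name, software and version, parameters, and SHA-256 digests of the input and output, and each entry's hash also covers the previous entry's hash, so any later edit to the log becomes detectable. The tool names and version strings in the usage example are placeholders.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class ProvenanceLog:
    """Append-only, hash-chained log of processing steps (hypothetical design)."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    def record(self, step, software, version, params, input_data: bytes, output_data: bytes):
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        entry = {
            "step": step,
            "software": software,
            "version": version,
            "params": params,
            "input_sha256": sha256_hex(input_data),
            "output_sha256": sha256_hex(output_data),
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        # The entry hash covers every field, including the previous entry's hash.
        entry["entry_hash"] = sha256_hex(json.dumps(entry, sort_keys=True).encode())
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; return False if any entry or link was altered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if body["prev_hash"] != prev_hash:
                return False
            if sha256_hex(json.dumps(body, sort_keys=True).encode()) != entry["entry_hash"]:
                return False
            prev_hash = entry["entry_hash"]
        return True


log = ProvenanceLog()
raw, converted, normalized = b"raw scanner export", b"converted volume", b"normalized volume"
log.record("format_conversion", "converter-tool", "1.0.0", {"target": "NIfTI"}, raw, converted)
log.record("intensity_normalization", "norm-tool", "0.3.2", {"method": "z-score"}, converted, normalized)
intact = log.verify()
log.entries[0]["params"]["target"] = "tampered"  # simulate an after-the-fact edit
tampered_detected = not log.verify()
```

Because each step's input digest matches the previous step's output digest, the log also shows that the steps were applied to the same data in sequence.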
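The federated learning step can be sketched with federated averaging (FedAvg), one widely used aggregation scheme; the text does not name an algorithm, so this choice is an assumption. Each hypothetical site fits a simple linear model y = w*x + b to its own synthetic data, starting from the current global weights, and the server averages the returned weights, weighting each site by its sample count. Only the weight tuples cross site boundaries; the lists of (x, y) pairs stay local.

```python
import random


def local_train(weights, data, lr=0.05, epochs=200):
    """Gradient descent on mean squared error for y = w*x + b, starting from the global weights."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(site_results):
    """Server step: average (w, b) across sites, weighted by each site's sample count."""
    total = sum(n for _, n in site_results)
    w = sum(weights[0] * n for weights, n in site_results) / total
    b = sum(weights[1] * n for weights, n in site_results) / total
    return (w, b)


def make_site_data(n, rng):
    """Synthetic data drawn from y = 2x + 1 plus Gaussian noise (purely illustrative)."""
    xs = [rng.uniform(0, 1) for _ in range(n)]
    return [(x, 2 * x + 1 + rng.gauss(0, 0.1)) for x in xs]


rng = random.Random(0)
sites = [make_site_data(n, rng) for n in (30, 50, 20)]  # each list stays at its own site
global_weights = (0.0, 0.0)
for _ in range(10):  # communication rounds
    # Only the trained weights and sample counts are sent to the server.
    results = [(local_train(global_weights, data), len(data)) for data in sites]
    global_weights = federated_average(results)
```

After ten rounds, `global_weights` should lie close to the generating values (2, 1). Real multi-site data are far more heterogeneous than these synthetic samples, which is where harmonization and careful validation of the aggregated model matter most.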

The Scientist's Toolkit: Essential Research Reagent Solutions

The following reagents and materials are fundamental to conducting the experiments that generate high-quality data in drug development.

Table 3: Key Research Reagent Solutions for Data-Generating Experiments

| Reagent/Material | Function in Drug Development | Specific Application Example |
|---|---|---|
| Cell-Based Assays | To evaluate the biological activity of a compound on a cellular target. | High-throughput screening of compound libraries for hit identification. |
| Animal Disease Models | To study the efficacy, pharmacokinetics, and toxicity of a drug candidate in vivo. | Testing a novel oncology drug in a mouse xenograft model. |
| Clinical Trial Kits | Standardized materials for consistent sample collection and processing across sites. | Phlebotomy kits for centralized pharmacokinetic analysis in a global trial. |
| Assay Development Reagents | Antibodies, enzymes, and probes used to create robust biochemical tests. | Developing an ELISA to measure a target-engagement biomarker in patient serum. |
| Isotope-Labeled Compounds (stable or radioactive) | To track and quantify the absorption, distribution, metabolism, and excretion (ADME) of a drug. | Using a ¹⁴C-radiolabeled drug in a human mass balance study. |
| GMP-Grade Chemicals | Raw materials produced under strict quality controls for manufacturing clinical trial supplies. | Producing the active pharmaceutical ingredient (API) for Phase III trials. |

The data lifecycle in drug development is a meticulously managed process in which veracity is non-negotiable. From the initial design of a clinical trial protocol to the final regulatory submission, every step is governed by frameworks designed to ensure data accuracy, integrity, and traceability. While data silos, security threats, and the demands of AI pose significant hurdles, the industry is responding with technologies and practices such as automated data validation tools, federated learning, and robust data governance frameworks. In an era of increasingly complex, data-driven science, a relentless focus on data quality is not merely a regulatory requirement but the foundation for delivering safe and effective medicines to patients.

Building a Robust Data Foundation: Standards, FAIR Principles, and Management Protocols

Implementing FAIR Data Principles for Findable, Accessible, Interoperable, and Reusable Data

The research data landscape is undergoing a fundamental transformation. In materials science and pharmaceutical research, large volumes of complex, inconsistently annotated data are frequently inaccessible, creating significant barriers to scientific progress [33]. The FAIR principles (Findable, Accessible, Interoperable, and Reusable) provide a systematic framework for tackling these challenges, enabling advanced analyses, including the machine learning (ML) and artificial intelligence (AI) techniques that are rapidly becoming essential for innovation [33] [34]. For materials researchers in particular, implementing the FAIR principles addresses critical data veracity and quality issues by helping ensure data completeness, accuracy, and contextual integrity throughout the research lifecycle.

The economic imperative for FAIR implementation is substantial. Bringing safe and effective medicines to market is costly, with R&D expenses estimated at $900 million to $2.8 billion per new drug [35]. Meanwhile, poor data quality is estimated to cost organizations an average of $15 million per year through inefficiencies, compliance risks, and flawed analytics [36]. Implementing FAIR offers a path to reducing these costs by maximizing the value of scientific data assets and minimizing redundant research efforts [35].

The FAIR Principles: A Technical Deep Dive

Core Principles and Definitions

FAIR represents a continuum of increasing reusability with 15 facets that make data not only human- but also machine-actionable [37]. The core principles are:

- Findable: Data and metadata should be easily discoverable by humans and machines. This requires persistent identifiers, rich metadata, and registration in searchable resources [38].

- Accessible: Data should be retrievable using standard protocols, with authentication and authorization where necessary. Metadata should remain accessible even when the data is no longer available [38].

- Interoperable: Data should integrate with other datasets and workflows through use of formal, accessible, shared languages and vocabularies [38].

- Reusable: Data should be richly described with multiple attributes to enable replication and integration across diverse applications while preserving research integrity [38].
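To show what machine-actionable checks against these principles might look like, the following sketch audits a dataset's metadata record. The field names (`identifier`, `access_url`, `variables`, and so on), the DOI pattern, and the specific tests are hypothetical simplifications: the FAIR principles do not prescribe a schema, and production systems typically rely on community metadata standards and dedicated FAIR assessment tools. Each test loosely mirrors one facet: a persistent identifier (F1) and rich descriptive metadata (F2), retrieval over a standard protocol (A1), shared vocabularies (I1, I2), and a usage license and provenance (R1.1, R1.2).

```python
import re

# Simplified DOI shape ("10.<registrant>/<suffix>"); an assumption for illustration.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def fair_gaps(metadata: dict) -> dict:
    """Return, per FAIR principle, the checks this hypothetical metadata record fails."""
    gaps = {"Findable": [], "Accessible": [], "Interoperable": [], "Reusable": []}
    if not DOI_PATTERN.match(metadata.get("identifier") or ""):
        gaps["Findable"].append("no persistent identifier (DOI)")
    if not metadata.get("title") or not metadata.get("keywords"):
        gaps["Findable"].append("missing title or keywords")
    if not (metadata.get("access_url") or "").startswith("https://"):
        gaps["Accessible"].append("no retrieval URL over a standard protocol (HTTPS)")
    terms = metadata.get("variables") or {}
    # Every variable should point to a term URI in a shared vocabulary.
    if not terms or not all(str(uri).startswith("http") for uri in terms.values()):
        gaps["Interoperable"].append("variables not mapped to shared vocabulary URIs")
    if not metadata.get("license"):
        gaps["Reusable"].append("no usage license")
    if not metadata.get("provenance"):
        gaps["Reusable"].append("no provenance description")
    return gaps


record = {
    "identifier": "10.1234/example-dataset",  # placeholder DOI
    "title": "Hypothetical kinase assay results",
    "keywords": ["IC50", "kinase"],
    "access_url": "https://repository.example.org/datasets/42",
    "variables": {"ic50": "https://vocab.example.org/terms/ic50"},  # placeholder term URI
    "license": "CC-BY-4.0",
    "provenance": "",
}
gaps = fair_gaps(record)
```

For this record, the only gap reported is the missing provenance description under Reusable; an empty record fails every check.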

Quantitative Impact of FAIR Implementation

Table 1: Economic and Operational Impact of FAIR Data Principles

| Metric Area | Current Status/Impact | Data Source |
|---|---|---|
| Data Quality Challenges | 64% of organizations cite data quality as their top data integrity challenge | Precisely's 2025 Data Integrity Trends Report [39] |
| Economic Impact of Poor Data | Poor data quality costs businesses over $15 million annually | Gartner's Data Quality Benchmark Report [36] |
| Data Reuse Potential | High-quality data could reduce capitalised R&D costs by ~$200M per new drug | Industry analysis [35] |
| System Integration | Organizations average 897 applications but only 29% are integrated | MuleSoft's 2025 Connectivity Benchmark [39] |