Data Analysis for High-Throughput Experimentation: A 2025 Guide for Accelerating Scientific Discovery

Abstract

This article provides a comprehensive guide to data analysis for high-throughput experimentation (HTE), tailored for researchers, scientists, and drug development professionals. It covers the foundational principles of HTE and its role in accelerating drug discovery and materials science. The scope extends to modern methodologies, including AI-driven software platforms and automated workflows, followed by practical strategies for troubleshooting and optimizing data management. Finally, it explores validation techniques and comparative benchmarking of analytical platforms, synthesizing key takeaways to highlight future directions and implications for biomedical and clinical research.

Understanding HTE: Core Concepts and Its Revolutionary Role in Modern Science

Defining High-Throughput Experimentation vs. High-Throughput Screening

In the landscape of modern scientific discovery, the ability to rapidly conduct and analyze vast arrays of experiments has become transformative across multiple disciplines. While often used interchangeably, High-Throughput Screening (HTS) and High-Throughput Experimentation (HTE) represent distinct methodologies with different applications, implementations, and philosophical approaches. HTS primarily serves as a tool for biological discovery and drug development, enabling researchers to quickly test millions of chemical or biological compounds for activity against specific targets [1] [2]. In contrast, HTE represents a broader methodology applied mainly in chemical research to systematically explore experimental parameters and optimize reactions using rationally designed arrays [3]. Within the context of data analysis for high-throughput research, understanding this distinction is crucial for selecting appropriate experimental designs, analytical frameworks, and computational tools tailored to each approach's unique data structures and challenges.

Defining the Concepts: Core Principles and Philosophical Approaches

High-Throughput Screening (HTS)

High-Throughput Screening is defined as a method for scientific discovery that uses robotics, data processing software, liquid handling devices, and sensitive detectors to quickly conduct millions of chemical, genetic, or pharmacological tests [2]. The primary goal of HTS is the rapid identification of active compounds, antibodies, or genes that modulate specific biomolecular pathways, providing crucial starting points for drug design and understanding biological mechanisms [2] [4]. In practice, HTS functions as a high-volume filtering process where large compound libraries are tested against defined biological targets to identify initial "hits" worthy of further investigation [4] [5].

The philosophical approach of HTS is one of comprehensive interrogation of available chemical space, where the emphasis lies on testing as many compounds as possible with relatively simple, automation-compatible assay designs [4]. This methodology prioritizes breadth over depth in initial stages, with the understanding that promising hits will undergo more rigorous secondary testing.

High-Throughput Experimentation (HTE)

High-Throughput Experimentation represents a more recent adaptation of high-throughput principles to chemical synthesis and reaction optimization. Conceptually, HTE enables the execution of large numbers of rationally designed experiments conducted in parallel while requiring less effort per experiment compared to traditional sequential approaches [3]. Rather than simply screening for activity, HTE employs systematic arrays of reaction conditions to explore chemical space, optimize transformations, and understand fundamental reaction parameters [3].

The philosophical foundation of HTE is hypothesis-driven exploration of chemical space, where researchers compose arrays of experiments consisting of permutations of literature conditions augmented with scientific intuition [3]. This approach emphasizes rational design and explicit examination of parameter combinations to develop a detailed understanding of chemical behavior across multiple variables simultaneously.

Table 1: Conceptual Comparison Between HTS and HTE

| Aspect | High-Throughput Screening (HTS) | High-Throughput Experimentation (HTE) |
|---|---|---|
| Primary Focus | Identifying active compounds from large libraries | Understanding and optimizing chemical reactions |
| Experimental Approach | Standardized assays across many samples | Systematic variation of reaction parameters |
| Typical Output | Qualitative "hits" or quantitative activity measures | Reaction optimization data, structure-activity relationships |
| Philosophical Basis | Comprehensive interrogation | Hypothesis-driven exploration |
| Domain Prevalence | Predominantly biological sciences | Primarily chemical synthesis and optimization |

Technical Implementation: Methodologies and Workflows

HTS Workflow and Infrastructure

The HTS process relies on specialized laboratory infrastructure and standardized workflows designed for maximum throughput. The core technical elements include:

Assay Plate Preparation: HTS utilizes microtiter plates with dense well arrays (96, 384, 1536, or even 3456 wells) as primary testing vessels [2]. These plates contain test compounds, often dissolved in DMSO, along with biological entities such as cells, enzymes, or proteins. A screening facility typically maintains a library of stock plates whose contents are carefully catalogued, with assay plates created as needed by pipetting small liquid amounts (often nanoliters) from stock to empty plates [2].

Automation Systems: Automation is an essential element in HTS effectiveness [2]. Integrated robot systems transport assay microplates between stations for sample/reagent addition, mixing, incubation, and detection. Modern HTS systems can prepare, incubate, and analyze many plates simultaneously, and advanced systems can test up to 100,000 compounds per day [2]. Screens that exceed 100,000 compounds per day are classed as ultra-high-throughput screening (uHTS) [2].

Detection and Reaction Observation: After an incubation period that allows the biological material to react with the compounds, measurements are taken across all wells using specialized automated analysis machines [2]. These systems output experimental data as numeric value grids in which each value corresponds to an individual well, generating thousands of data points rapidly. Follow-up assays then "cherrypick" liquid from source wells that gave interesting results ("hits") into new assay plates to refine observations [2].
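To make the grid-to-hit step concrete, here is a minimal Python sketch that maps a reader's row-major grid of values onto well IDs and returns the wells above a threshold as a cherry-pick list. The helper names, the tiny 2 × 3 grid, and the fixed threshold are illustrative assumptions; real plate readers export vendor-specific files, and hit thresholds normally come from the statistical methods discussed below.

```python
import string

def grid_to_wells(grid):
    """Map a row-major grid of reader values to well IDs such as 'A1' or 'H12'."""
    wells = {}
    for r, row in enumerate(grid):
        for c, value in enumerate(row):
            wells[f"{string.ascii_uppercase[r]}{c + 1}"] = value
    return wells

def cherry_pick(wells, threshold):
    """Return well IDs (in row-major order) whose signal meets the hit threshold."""
    return [well for well, value in wells.items() if value >= threshold]

# Hypothetical 2 x 3 excerpt of a reader grid (arbitrary signal units)
grid = [[0.10, 0.12, 0.95],
        [0.11, 0.88, 0.09]]
wells = grid_to_wells(grid)
print(cherry_pick(wells, threshold=0.5))  # ['A3', 'B2']
```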

Diagram: HTS Process Flow

HTE Workflow and Infrastructure

HTE employs a distinct technical approach focused on experimental design and parameter optimization:

Rational Array Design: HTE begins with carefully composed experimental arrays that systematically examine combinations of reaction components [3]. Unlike traditional experimentation that tests small numbers of conditions sequentially, HTE explicitly tests permutations of parameters including catalysts, ligands, solvents, reagents, and substrates. This approach allows researchers to ask how each reaction component affects the outcome and to build a comprehensive understanding within a single experimental cycle [3].
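A full-factorial array of the kind described above can be sketched in a few lines of Python: every combination of the chosen components is enumerated and assigned to a well of a 96-well plate. The component lists are placeholders, and the row-major well assignment is an assumption made for illustration; HTE design software may distribute reagents in other layouts.

```python
import itertools
import string

ROWS, COLS = 8, 12  # standard 96-well plate geometry

# Placeholder component lists (hypothetical): 4 x 3 x 2 x 4 = 96 combinations
catalysts = ["cat1", "cat2", "cat3", "cat4"]
ligands = ["L1", "L2", "L3"]
solvents = ["MeCN", "DMF"]
bases = ["K2CO3", "Cs2CO3", "Et3N", "DBU"]

def design_array(*factors):
    """Return {well_id: condition tuple} covering every combination of the factors."""
    combos = list(itertools.product(*factors))
    if len(combos) > ROWS * COLS:
        raise ValueError("design does not fit on a single 96-well plate")
    layout = {}
    for i, combo in enumerate(combos):
        row, col = divmod(i, COLS)  # fill the plate row by row
        layout[f"{string.ascii_uppercase[row]}{col + 1}"] = combo
    return layout

plate = design_array(catalysts, ligands, solvents, bases)
print(len(plate), plate["A1"], plate["H12"])
```

Because `itertools.product` varies the last factor fastest, the base changes from well to well along a row here; listing the factors in a different order changes which variables share a row or column.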

Miniaturization and Parallel Processing: Chemical HTE is conducted in miniature reaction vessels, frequently in 96-well format, allowing small amounts of precious materials to support numerous experiments [3]. Fast quantitative analytical techniques like HPLC and UPLC with MS detection generate results quickly with minimal workup. This miniaturization enables researchers to "go small" when material is limited while still executing diverse experimental arrays [3].

Data-Rich Experimentation: A key differentiator of HTE is the focus on generating rich datasets that illuminate structure-activity relationships and reaction mechanisms [3]. By including negative controls and examining parameter combinations that test theoretical boundaries, HTE can reveal unexpected insights that redirect research directions productively.

Diagram: HTE Process Flow

Data Analysis Frameworks: Statistical Approaches and Computational Challenges

HTS Data Analysis

The massive data generation capability of HTS presents unique statistical challenges that require specialized analytical approaches:

Quality Control Metrics: High-quality HTS assays require sophisticated quality control methods to identify systematic errors and measure assay robustness [6]. Key QC metrics include the Z-factor, which measures the separation between positive and negative controls relative to their variability; signal-to-background ratio; signal-to-noise ratio; and strictly standardized mean difference (SSMD) [2] [6]. Effective plate design helps reveal positional effects (for example, edge or row/column artifacts) and guides the choice of normalization strategy for removing systematic errors [6].
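The two control-based metrics can be computed directly from control wells. The sketch below uses the standard control-only form of the Z-factor (often written Z'), Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|, and the SSMD for two independent groups, (μ_pos - μ_neg) / √(σ_pos² + σ_neg²), estimated with sample means and standard deviations. The control values are invented; a widely used rule of thumb treats Z' ≥ 0.5 as an excellent assay.

```python
import statistics

def z_prime(pos, neg):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|, from control wells only."""
    spread = 3 * (statistics.stdev(pos) + statistics.stdev(neg))
    return 1 - spread / abs(statistics.mean(pos) - statistics.mean(neg))

def ssmd(pos, neg):
    """SSMD for two independent groups: mean difference over sqrt of summed variances."""
    diff = statistics.mean(pos) - statistics.mean(neg)
    return diff / (statistics.variance(pos) + statistics.variance(neg)) ** 0.5

# Hypothetical positive- and negative-control signals from one plate
pos = [100.0, 98.0, 102.0, 101.0, 99.0]
neg = [10.0, 12.0, 8.0, 11.0, 9.0]
print(round(z_prime(pos, neg), 3), round(ssmd(pos, neg), 1))  # 0.895 40.2
```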

Hit Selection Methods: The process of identifying active compounds ("hits") employs statistical methods matched to whether the screen includes replicates [2]. For primary screens without replicates, methods include the z-score, its outlier-robust counterpart the z*-score, and SSMD, all of which assume that each compound has the same variability as the negative controls [2]. For confirmatory screens with replicates, the t-statistic and SSMD can estimate variability directly for each compound, so they do not rely on that shared-variability assumption [2]. SSMD is particularly valuable because it directly assesses effect size, whereas the t-statistic and its p-value also depend on sample size [2].
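For a primary screen without replicates, the difference between the classical z-score and the robust z*-score is easy to see in code. This sketch scores a test well against a reference population (negative controls, or all sample wells on the assumption that most compounds are inactive); the robust version replaces the mean and standard deviation with the median and the median absolute deviation (MAD) scaled by 1.4826. The plate values and the |z| ≥ 3 cut-off are illustrative assumptions.

```python
import statistics

def z_scores(values, reference):
    """Classical z-score of each value against the reference mean and SD."""
    mu, sd = statistics.mean(reference), statistics.stdev(reference)
    return [(v - mu) / sd for v in values]

def robust_z_scores(values, reference):
    """Robust z*-score: median and scaled MAD replace the mean and SD."""
    med = statistics.median(reference)
    mad = statistics.median([abs(r - med) for r in reference])
    scale = 1.4826 * mad  # consistent with the SD for normally distributed data
    return [(v - med) / scale for v in values]

# Hypothetical reference wells containing one extreme outlier (95)
reference = [10, 11, 9, 10, 12, 8, 10, 95]
test_well = [20]
classical = z_scores(test_well, reference)[0]
robust = robust_z_scores(test_well, reference)[0]
print(abs(classical) >= 3, abs(robust) >= 3)  # False True
```

The single outlier inflates the classical standard deviation enough to hide the test well, while the median-based scale is barely affected.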

False Discovery Control: A fundamental challenge in HTS is minimizing both false positives and false negatives [6]. Replicate measurements are increasingly recognized as essential for verifying methodological assumptions and developing appropriate data analysis strategies [6]. The integration of replicates with robust statistical methods improves screening sensitivity and specificity, facilitating discovery of reliable hits [6].
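One widely used robust normalization for positional artifacts is the B-score, which removes additive row and column effects from each plate with Tukey's median polish and scales the residuals by their median absolute deviation. The sketch below is a plain-Python version under simplifying assumptions (a fixed number of polish iterations, no 1.4826 scaling, an invented 4 × 4 plate); production pipelines typically rely on tested statistical libraries.

```python
import statistics

def median_polish(plate, n_iter=10):
    """Tukey median polish: repeatedly subtract row and column medians; return residuals."""
    resid = [list(row) for row in plate]
    n_rows, n_cols = len(resid), len(resid[0])
    for _ in range(n_iter):
        for r in range(n_rows):  # remove row effects
            m = statistics.median(resid[r])
            resid[r] = [v - m for v in resid[r]]
        for c in range(n_cols):  # remove column effects
            m = statistics.median([resid[r][c] for r in range(n_rows)])
            for r in range(n_rows):
                resid[r][c] -= m
    return resid

def b_scores(plate):
    """B-score: median-polish residuals divided by their median absolute deviation."""
    resid = median_polish(plate)
    flat = [v for row in resid for v in row]
    center = statistics.median(flat)
    mad = statistics.median([abs(v - center) for v in flat])
    if mad == 0:
        raise ValueError("residual MAD is zero; B-scores are undefined")
    return [[v / mad for v in row] for row in resid]

# Hypothetical 4 x 4 plate; the bottom-right well is a strong outlier
plate = [[1, 3, 2, 4],
         [2, 1, 4, 3],
         [3, 4, 1, 2],
         [4, 2, 3, 30]]
scores = b_scores(plate)
print(round(scores[3][3], 1))
```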

Table 2: Statistical Methods for HTS Data Analysis

| Analytical Stage | Methods | Application Context |
|---|---|---|
| Quality Control | Z-factor, SSMD, Signal-to-Noise | Assay validation and plate quality assessment |
| Hit Identification (without replicates) | z-score, z*-score, SSMD | Primary screening campaigns |
| Hit Identification (with replicates) | t-statistic, SSMD, ANOVA | Confirmatory screening and dose-response studies |
| False Discovery Control | Replicate measurement, robust normalization, outlier detection | All screening stages |

HTE Data Analysis

HTE data analysis focuses on extracting meaningful patterns from multidimensional parameter spaces and building predictive models:

Multivariate Analysis: HTE datasets naturally lend themselves to multivariate statistical approaches that can identify correlations between reaction parameters and outcomes [3]. By examining all combinations of experimental factors, HTE reveals patterns that would remain hidden with traditional one-variable-at-a-time approaches [3]. This enables researchers to understand interaction effects between variables such as catalysts, solvents, and reagents.
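A two-level, two-factor example shows why the interaction term matters. In this sketch the factors are coded -1/+1, each effect is the mean yield where the contrast is +1 minus the mean where it is -1, and the interaction contrast is the product of the two codes. The yields are invented for illustration.

```python
from statistics import mean

# Hypothetical assay yields (%) keyed by coded levels (catalyst, solvent)
yields = {(-1, -1): 20.0, (+1, -1): 30.0, (-1, +1): 25.0, (+1, +1): 75.0}

def effect(yields, contrast):
    """Mean yield where the contrast is +1 minus mean yield where it is -1."""
    plus = [y for levels, y in yields.items() if contrast(levels) > 0]
    minus = [y for levels, y in yields.items() if contrast(levels) < 0]
    return mean(plus) - mean(minus)

catalyst_effect = effect(yields, lambda k: k[0])     # main effect of catalyst
solvent_effect = effect(yields, lambda k: k[1])      # main effect of solvent
interaction = effect(yields, lambda k: k[0] * k[1])  # catalyst x solvent
print(catalyst_effect, solvent_effect, interaction)  # 30.0 25.0 20.0
```

Changing only the catalyst in the first solvent suggests a 10-point gain, whereas in the second solvent the gain is 50 points; that dependence is exactly what a one-variable-at-a-time study can miss.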

Response Surface Modeling: A powerful application of HTE data involves building mathematical models that describe how reaction components influence outcomes [3]. These models can predict optimal conditions for desired results and inform understanding of reaction mechanisms. The inclusion of negative controls and experimental conditions that test theoretical boundaries provides crucial data points for robust model building [3].
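A minimal sketch of response-surface modeling: a full quadratic model in two coded factors is fitted by ordinary least squares with NumPy, then evaluated on a finer grid to locate the best predicted condition. The factor names and the "true" surface that generates the yields are invented for illustration, and the 3 × 3 factorial used here is a simple stand-in for a formal response-surface design.

```python
import numpy as np

def design_matrix(x1, x2):
    """Columns for a full quadratic model in two coded factors."""
    return np.column_stack([np.ones_like(x1), x1, x2, x1 * x2, x1**2, x2**2])

# Hypothetical coded levels (-1, 0, +1) for, e.g., temperature and catalyst loading
g1, g2 = np.meshgrid([-1.0, 0.0, 1.0], [-1.0, 0.0, 1.0])
x1, x2 = g1.ravel(), g2.ravel()
# Synthetic yields from an assumed surface, used only to illustrate the fit
y = 60 + 8 * x1 + 5 * x2 - 3 * x1 * x2 - 10 * x1**2 - 6 * x2**2

coef, *_ = np.linalg.lstsq(design_matrix(x1, x2), y, rcond=None)

# Predict on a finer grid and report the best predicted condition
f1, f2 = np.meshgrid(np.linspace(-1, 1, 41), np.linspace(-1, 1, 41))
pred = design_matrix(f1.ravel(), f2.ravel()) @ coef
best = np.argmax(pred)
print(np.round(coef, 2), f1.ravel()[best], f2.ravel()[best])
```

With real, noisy HTE data one would also inspect residuals before trusting the predicted optimum; solving for the stationary point of the fitted quadratic is an alternative to the grid search used here.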

Data-Driven Discovery: The rich datasets generated by HTE can reveal unexpected reactivity and guide discovery of new synthetic methodologies [3]. For example, the discovery that PdSO₄·2H₂O—included as a presumed negative control due to its low solubility—could confer high reactivity in Pd-catalyzed cyanation led to fundamentally new catalyst systems [3]. Such discoveries emerge from rationally designed arrays that include diverse chemical space exploration.

Applications and Case Studies

HTS in Drug Discovery and Toxicology

HTS has become a cornerstone of modern drug discovery, with several well-established applications:

Lead Compound Identification: HTS is extensively used in pharmaceutical companies to identify compounds with pharmacological activity as starting points for medicinal chemistry optimization [4] [7]. The typical HTS process tests compound libraries at single concentrations (often 10 μM) in targeted assays against specific biological mechanisms [4]. Quantitative HTS (qHTS), which tests compounds at multiple concentrations to generate concentration-response curves, has gained popularity as it more fully characterizes biological effects and reduces false positive/negative rates [4] [7].

Toxicology and Safety Assessment: HTS approaches are increasingly applied in toxicology to evaluate compound effects on drug-metabolizing enzymes, assess genotoxicity, and perform broad pharmacological profiling [5]. Cellular microarrays in 96- or 384-well microtiter plates with 2D cell monolayer cultures enable high-throughput assessment of cytotoxicity [5]. These systems can model human liver metabolism while simultaneously evaluating small molecule cytotoxicity, providing early safety assessment in drug development [5].

HTE in Reaction Optimization and Discovery

HTE has proven particularly valuable in solving complex synthetic challenges:

Reaction Optimization: A case study applying HTE to a key synthetic step in drug discovery showed how large arrays of experiments can identify optimal conditions for challenging transformations [3]. For the Heck coupling of methyl vinyl ketone with an aryl bromide, the array allocated 12 conditions to the ligand (the most important factor), 4 to the base, and 2 to the solvent, giving 12 × 4 × 2 = 96 combinations that systematically mapped the optimization space [3]. This approach revealed that a weak base was essential for high yield because of the product's sensitivity, a finding that might have been missed with traditional approaches.

Chemical Probe Development: HTE enables the rapid exploration of structure-activity relationships for medicinal chemistry optimization [3]. By testing arrays of analogous compounds under standardized conditions, researchers can quickly establish preliminary SAR and focus synthetic efforts on promising structural motifs. This application of HTE is particularly valuable in academic settings where material resources may be limited [3].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for High-Throughput Methods

| Reagent/Material | Function | Application Context |
|---|---|---|
| Microtiter Plates (96-3456 wells) | Miniaturized reaction vessels | Both HTS and HTE |
| Robotic Liquid Handlers | Automated sample/reagent transfer | Both HTS and HTE |
| Compound Libraries | Diverse chemical space representation | Primarily HTS |
| Cellular Assay Systems | Biological target representation | Primarily HTS |
| Catalyst/Ligand Libraries | Systematic reaction space exploration | Primarily HTE |
| Solvent Arrays | Dielectric and coordination property variation | Primarily HTE |
| Fluorescent Detection Reagents | Quantitative signal generation | Primarily HTS |
| High-Speed LC/MS Systems | Rapid reaction outcome analysis | Primarily HTE |

Future Directions and Integrative Approaches

The evolving landscape of high-throughput research points toward several emerging trends:

Quantitative High-Throughput Screening (qHTS): The integration of complete concentration-response testing in primary screens represents a significant advancement in HTS methodology [2]. By generating EC₅₀, maximal response, and Hill coefficient data for entire libraries, qHTS enables assessment of nascent structure-activity relationships immediately from primary screening data [2]. This approach decreases false positive rates and provides richer datasets for chemical biology.
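The curve-fitting step behind these qHTS parameters can be sketched with SciPy's `curve_fit` and a four-parameter logistic (Hill) model; fitting on log10 concentration keeps the problem well scaled. The dilution series, the simulated "true" parameters, and the noise level are assumptions for illustration, and qHTS pipelines typically add curve-class assignment and quality filters that are not shown.

```python
import numpy as np
from scipy.optimize import curve_fit

def hill(log_conc, bottom, top, log_ec50, n):
    """Four-parameter logistic (Hill) model on log10 concentration."""
    return bottom + (top - bottom) / (1 + 10 ** ((log_ec50 - log_conc) * n))

# Hypothetical 8-point, 1:3 dilution series starting at 10 uM (molar units)
log_conc = np.log10(1e-5 / 3.0 ** np.arange(8))
rng = np.random.default_rng(0)
response = hill(log_conc, 0.0, 100.0, np.log10(2e-7), 1.2) + rng.normal(0, 1.0, log_conc.size)

# Rough starting guesses taken from the data
p0 = [response.min(), response.max(), np.median(log_conc), 1.0]
params, _ = curve_fit(hill, log_conc, response, p0=p0, maxfev=10000)
bottom, top, log_ec50, n = params
print(f"EC50 ~ {10 ** log_ec50:.2e} M, Hill n ~ {n:.2f}, max response ~ {top:.1f}")
```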

Automation and Miniaturization: Ongoing trends toward further miniaturization continue to push the boundaries of both HTS and HTE [5]. Microfluidic approaches using drop-based fluid handling enable dramatically increased throughput (100 million reactions in 10 hours) at significantly reduced cost and reagent consumption [2]. These systems replace microplate wells with drops of fluid separated by oil, allowing analysis and hit sorting during continuous flow through channels [2].

Data Integration and Machine Learning: The generation of massive datasets from both HTS and HTE campaigns has stimulated development of sophisticated computational analysis methods [8]. Artificial intelligence and machine learning approaches are being integrated into high-throughput research pipelines to analyze samples and direct subsequent experimental decisions automatically, creating closed-loop discovery systems [8]. This integration helps address the bottleneck that traditional experimentation poses relative to computational prediction capabilities.

High-Throughput Screening and High-Throughput Experimentation represent complementary but distinct methodologies within the modern research arsenal. HTS serves as a powerful tool for biological interrogation and compound discovery, employing standardized assays and automated systems to rapidly evaluate vast chemical libraries. In contrast, HTE functions as a chemical optimization platform, using rationally designed experimental arrays to systematically explore reaction parameters and develop fundamental understanding of chemical behavior. Both approaches generate complex datasets that require specialized statistical analysis and computational infrastructure, presenting rich opportunities for advancing data science methodologies in scientific research. As high-throughput technologies continue to evolve toward greater automation, miniaturization, and integration with artificial intelligence, the distinction between these approaches may blur, giving rise to even more powerful paradigms for scientific discovery across biological and chemical domains.

The journey of drug discovery has progressively shifted from serendipitous discoveries to meticulously planned, data-driven strategic operations. High-Throughput Experimentation (HTE) stands at the forefront of this transformation, enabling researchers to systematically explore vast chemical and biological spaces with unprecedented speed and precision. Within the context of data analysis research, HTE has evolved from a simple tool for increasing experimental volume to a sophisticated platform for generating high-quality, machine-readable data that fuels artificial intelligence (AI) and machine learning (ML) models. This evolution is critical in an industry where the development of a new medicine typically takes 12-15 years and costs approximately $2.8 billion from inception to launch, with only a small fraction of investigational compounds ultimately receiving approval [9].

The strategic implementation of HTE allows research organizations to navigate this challenging landscape by accelerating one of the most costly and difficult phases: initial candidate selection and optimization. While high-throughput screening (HTS) allows for the rapid assessment of hundreds of thousands of compounds to identify potential hits, HTE encompasses a broader paradigm that aims to massively increase throughput across all processes employed in drug discovery and development [9]. This section examines the technical evolution of HTE workflows from their rudimentary beginnings to their current state as integrated, data-generating engines, with particular emphasis on methodology, data infrastructure, and their indispensable role in modern analytical research frameworks.

The Hardware Evolution: Enabling Precision at Scale

From Manual Operations to Automated Workstations

The physical execution of HTE has undergone a revolutionary transformation, moving from manual manipulations in traditional glassware to fully automated systems operating at microgram scales. Early HTE implementations, such as the initial system at AstraZeneca (AZ), relied on foundational equipment like the Minimapper robot for liquid handling and the Flexiweigh robot (Mettler Toledo) for powder dosing. Although imperfect, these systems established the core principle that automation is essential for performing experiments in potentially hazardous conditions and for achieving the reproducibility required for meaningful data analysis [9].

The collaboration between industry and instrumentation vendors has been a key driver in this evolution. For instance, the team at AstraZeneca helped develop user-friendly software for Quantos Weighing technology around 2010, which later culminated in the creation of the CHRONECT XPR workstation through a collaboration between Trajan and Mettler [9]. This system exemplifies the modern hardware platform, capable of handling a wide range of solids—from free-flowing to fluffy, granular, or electrostatically charged powders—with a dispensing range of 1 mg to several grams. This technological progression has been critical for data quality, as it enables precise and reproducible reagent dosing, which is the foundation of reliable experimental outcomes and subsequent analysis.

Integrated Workflow Design

Modern HTE facilities are designed with compartmentalized, integrated workflows to maximize efficiency and data integrity. A case study from AstraZeneca's Gothenburg site illustrates this strategic approach, featuring three specialized gloveboxes [9]:

- Glovebox A: Dedicated to automated processing of solids using a CHRONECT XPR system, providing a secure environment for storing catalysts and other sensitive materials.

- Glovebox B: Focused on conducting automated reactions and validating HTE conditions at gram scales, bridging the gap between miniaturized screening and practical synthesis.

- Glovebox C: Equipped with standard global HTE equipment for reaction screening using liquid reagents, allowing teams to continue advancing miniaturization expertise.

This compartmentalization reflects a mature understanding that workflow design must align with both experimental objectives and data quality requirements. By separating solid handling, reaction execution, and liquid dispensing, laboratories can maintain specialized conditions for each process step while generating consistent, high-fidelity data across all operations.

Quantitative Impact of Hardware Automation

The implementation of advanced automation systems has yielded measurable improvements in throughput and data quality. The following table summarizes key performance metrics from documented case studies:

Table 1: Performance Metrics of Automated HTE Systems

| Metric | Pre-Automation Baseline | Post-Automation Performance | Data Source |
|---|---|---|---|
| Screening Throughput | 20-30 screens per quarter | 50-85 screens per quarter | [9] |
| Conditions Evaluated | <500 per quarter | ~2000 per quarter | [9] |
| Weighing Time | 5-10 minutes per vial (manual) | <30 minutes for an entire experiment, including planning and preparation | [9] |
| Dosing Accuracy (low mass, sub-mg to low single-mg) | N/A | <10% deviation from target mass | [9] |
| Dosing Accuracy (high mass, >50 mg) | N/A | <1% deviation from target mass | [9] |

These quantitative improvements are not merely about doing more experiments faster; they represent a fundamental enhancement in data quality and experimental reliability. The significant reduction in human error, particularly when weighing powders at small scales, directly translates to more trustworthy datasets for subsequent analysis [9].
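As a small illustration of how the reported tolerances could be applied as an automated QC check on dispense logs, the function below flags each dispense against the two accuracy bands in the table. The 5 mg upper limit for the low-mass band is an assumption (the source says only "sub-mg to low single-mg"), and masses between the two bands return `None` because no tolerance is stated for them.

```python
LOW_MASS_MAX_MG = 5.0     # assumed upper edge of the "sub-mg to low single-mg" band
HIGH_MASS_MIN_MG = 50.0   # high-mass band from the table (>50 mg)

def within_tolerance(target_mg, dispensed_mg):
    """True/False against the reported tolerance band, or None if no band applies."""
    deviation = abs(dispensed_mg - target_mg) / target_mg
    if target_mg > HIGH_MASS_MIN_MG:
        return deviation < 0.01   # <1% deviation reported for high masses
    if target_mg <= LOW_MASS_MAX_MG:
        return deviation < 0.10   # <10% deviation reported for low masses
    return None                   # intermediate masses: no tolerance stated

# Hypothetical (target, dispensed) pairs in mg
log = [(0.8, 0.85), (2.0, 2.3), (100.0, 100.5), (20.0, 21.0)]
print([within_tolerance(t, d) for t, d in log])  # [True, False, True, None]
```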

The Software Revolution: Managing Complexity and Enabling Analysis

The Data Management Challenge

As HTE capacity expanded, the limitation shifted from physical execution to data management. The organizational load of processing multiple reaction arrays, some encompassing 1,536 wells, became overwhelming for traditional lab notebooks or spreadsheets [10]. Furthermore, standard electronic lab notebooks (ELNs) often proved inadequate for storing HTE details in a tractable manner or for providing simple interfaces to extract and compare data from multiple experiments simultaneously [10]. This created a critical bottleneck where the value of high-throughput experimentation was constrained by low-throughput data management and analysis capabilities.

Integrated Software Platforms

The development of specialized HTE software platforms has been pivotal in transitioning from disconnected experiments to analyzable data streams. Tools like phactor exemplify this evolution, providing an integrated environment for designing reaction arrays, generating robotic instructions, and analyzing results [10]. The software enables researchers to rapidly design arrays of chemical reactions or direct-to-biology experiments in standardized wellplate formats (24, 96, 384, or 1,536 wells), then access online reagent data to virtually populate wells and produce execution instructions [10].
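Software that supports several plate formats needs a consistent way to name wells. A minimal sketch, assuming row-major numbering and the common convention that rows run A to Z and then AA, AB, and so on (so a 1536-well plate ends at row AF); individual instruments and programs may use other conventions.

```python
import string

# Rows x columns for the wellplate formats mentioned above
PLATE_FORMATS = {24: (4, 6), 96: (8, 12), 384: (16, 24), 1536: (32, 48)}

def row_label(index):
    """Spreadsheet-style row letters: 0 -> 'A', 25 -> 'Z', 26 -> 'AA'."""
    label = ""
    index += 1
    while index:
        index, rem = divmod(index - 1, 26)
        label = string.ascii_uppercase[rem] + label
    return label

def well_ids(n_wells):
    """All well IDs for a plate format, in row-major order."""
    rows, cols = PLATE_FORMATS[n_wells]
    return [f"{row_label(r)}{c + 1}" for r in range(rows) for c in range(cols)]

print(well_ids(24)[:3], well_ids(1536)[-1])  # ['A1', 'A2', 'A3'] AF48
```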

A critical feature of modern HTE platforms is their focus on machine-readable data formats that facilitate analysis. As the developers of phactor noted, their philosophy was to "record experimental procedures and results in a machine-readable yet simple, robust, and abstractable format to naturally translate to other system languages" [10]. This interoperability is essential for connecting HTE data with downstream AI/ML analysis, creating a seamless pipeline from experiment to insight.
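The idea of a simple, abstractable record can be illustrated with one flat row per well that serializes cleanly to JSON or CSV. The field names below are hypothetical and do not reproduce phactor's actual format; they only show how conditions and outcomes can sit side by side in a machine-readable structure that other tools can parse.

```python
import csv
import io
import json
from dataclasses import asdict, dataclass, fields
from typing import Optional

@dataclass
class WellRecord:
    """One reaction well: inputs, conditions, and the measured outcome."""
    plate_id: str
    well: str
    substrate: str
    catalyst: str
    catalyst_mol_pct: float
    solvent: str
    temperature_c: float
    assay_yield_pct: Optional[float] = None  # None until analysis is complete

# Hypothetical records; all identifiers and values are placeholders
records = [
    WellRecord("P001", "A1", "substrate-1", "cat-1", 10.0, "MeCN", 60.0, 42.0),
    WellRecord("P001", "A2", "substrate-1", "cat-2", 10.0, "MeCN", 60.0),
]

as_json = json.dumps([asdict(r) for r in records], indent=2)

buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(WellRecord)])
writer.writeheader()
writer.writerows(asdict(r) for r in records)
as_csv = buffer.getvalue()

# Round trip: JSON back into typed records
restored = [WellRecord(**d) for d in json.loads(as_json)]
print(restored == records)  # True
```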

The Closed-Loop Workflow

Advanced software enables a fundamental shift in experimental approach: the creation of closed-loop workflows where experimental results directly inform subsequent experimental designs. This creates a virtuous cycle of hypothesis generation, testing, and refinement that dramatically accelerates the research process. The phactor implementation demonstrates this principle by interconnecting experimental results with online chemical inventories through a shared data format, creating a continuous feedback loop for HTE-driven chemical research [10].

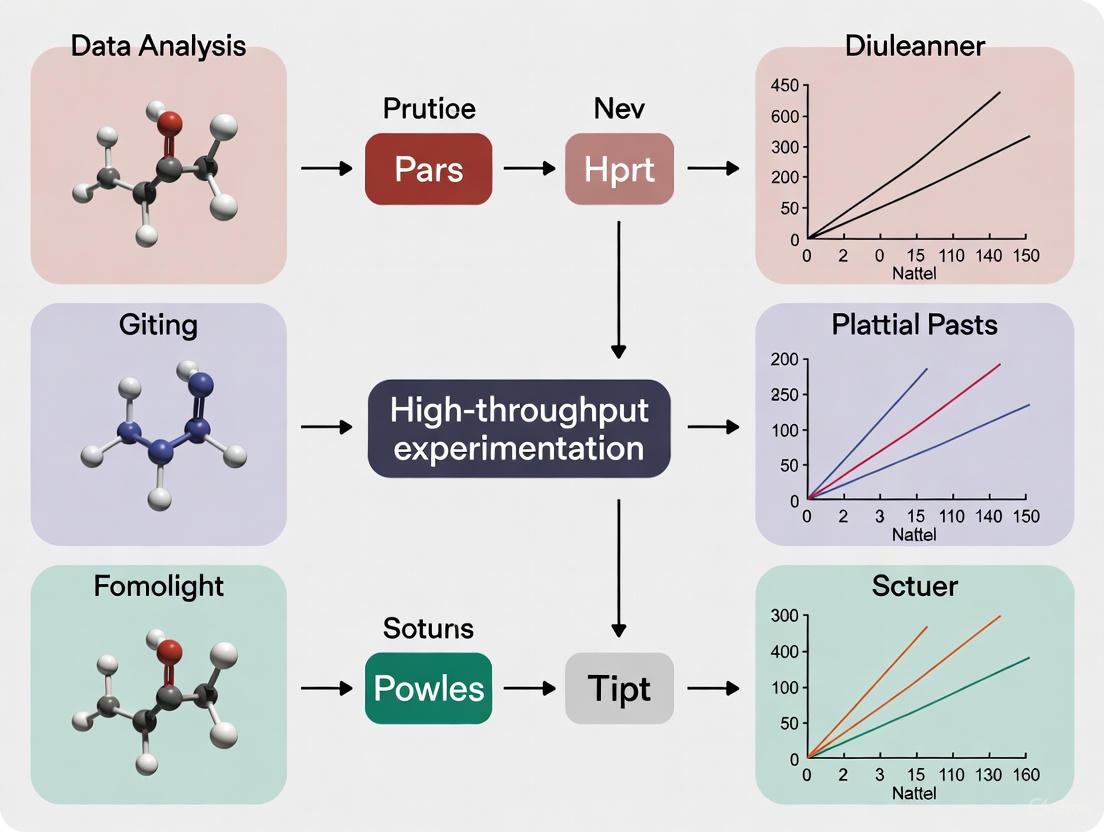

Diagram: The HTE Closed-Loop Research Cycle

This diagram illustrates the continuous, data-driven workflow that modern HTE platforms enable, where each cycle generates richer datasets for analysis and progressively more refined experimental designs.
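A toy version of this cycle fits in a few lines. In the sketch below, `run_batch` is a hypothetical stand-in that simulates yields in place of the robot and analytics, and `propose` uses a deliberately simple heuristic (untried neighbours of the current best condition plus random exploration) rather than the Bayesian optimization or active-learning strategies a real platform might use.

```python
import itertools
import random

# Hypothetical discrete condition space: catalyst x ligand x temperature (deg C)
CONDITIONS = list(itertools.product(["cat1", "cat2", "cat3"],
                                    ["L1", "L2", "L3", "L4"],
                                    [40, 60, 80]))

def run_batch(batch):
    """Stand-in for executing and analysing a plate: returns simulated yields."""
    def simulated_yield(condition):
        catalyst, ligand, temp = condition
        return 40 * (catalyst == "cat2") + 30 * (ligand == "L3") + 20 * (temp == 60)
    return {c: simulated_yield(c) for c in batch}

def propose(results, batch_size, rng, explore=0.5):
    """Pick untested conditions: neighbours of the current best plus random exploration."""
    untested = [c for c in CONDITIONS if c not in results]
    if not results:
        return rng.sample(untested, batch_size)
    best = max(results, key=results.get)
    # Neighbours differ from the best condition in exactly one factor
    neighbours = [c for c in untested if sum(a != b for a, b in zip(c, best)) == 1]
    n_exploit = min(len(neighbours), int(batch_size * (1 - explore)))
    batch = rng.sample(neighbours, n_exploit)
    remaining = [c for c in untested if c not in batch]
    batch += rng.sample(remaining, min(len(remaining), batch_size - n_exploit))
    return batch

rng = random.Random(0)
results = {}
for cycle in range(4):  # design -> execute -> analyse -> redesign
    batch = propose(results, batch_size=6, rng=rng)
    results.update(run_batch(batch))
    best = max(results, key=results.get)
    print(cycle, best, results[best])
```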

Experimental Protocols: Methodologies for Data-Rich Experimentation

Reaction Discovery and Optimization Protocols

Modern HTE methodologies are characterized by standardized yet flexible protocols that maximize information gain while minimizing resource consumption. A representative example is the deaminative aryl esterification discovery protocol implemented using phactor [10]:

- Reagent Preparation: An amine (activated as its diazonium salt) and a carboxylic acid are selected as core substrates.

- Condition Variation: Three transition metal catalysts and four ligands are selected, with presence/absence variation of a silver nitrate additive.

- Array Design: The software automatically designs a reagent distribution recipe, splitting a 24-well plate into a four-row by six-column multiplexed array.

- Execution: Reactions are dosed in acetonitrile and stirred at 60°C for 18 hours.

- Analysis: Caffeine is added as an internal standard, with UPLC-MS analysis performed using peak integration.

- Data Processing: Chromatographic data is processed to yield a heatmap visualization showing assay yields across conditions.

This methodology identified a hit condition (30 mol% CuI, pyridine, and AgNO₃) that gave the desired ester in 18.5% assay yield; this hit was then triaged for further investigation [10].
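The analysis and data-processing steps above can be sketched as two small functions: one converts a product-to-internal-standard peak-area ratio into an assay yield, and one arranges per-well yields into the 4 × 6 grid that a heatmap would display (for example with matplotlib's `imshow`). The response factor, amounts, and peak areas are hypothetical, and this is not the calculation implemented in phactor or in any vendor software.

```python
import string

def assay_yield(area_product, area_is, mol_is, response_factor, mol_limiting):
    """Percent assay yield from a product/internal-standard peak-area ratio.

    response_factor = (area_product / area_is) / (mol_product / mol_is),
    assumed to have been measured beforehand with authentic product.
    """
    mol_product = (area_product / area_is) * mol_is / response_factor
    return 100.0 * mol_product / mol_limiting

def to_grid(yields_by_well, rows=4, cols=6):
    """Arrange {well_id: yield} as a rows x cols matrix for a heatmap (None if missing)."""
    return [[yields_by_well.get(f"{string.ascii_uppercase[r]}{c + 1}")
             for c in range(cols)] for r in range(rows)]

# Hypothetical integrated peak areas (product, internal standard) for two wells
areas = {"A1": (3.0, 2.5), "B4": (0.6, 2.4)}
yields = {well: assay_yield(p, s, mol_is=5e-6, response_factor=1.2, mol_limiting=10e-6)
          for well, (p, s) in areas.items()}
grid = to_grid(yields)
print(round(grid[0][0], 1), round(grid[1][3], 1), grid[3][5])  # 50.0 10.4 None
```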

Direct-to-Biology Screening Protocols

HTE has expanded beyond traditional chemistry to encompass direct-to-biology approaches, where compounds are synthesized and screened without purification. A demonstrated protocol for identifying a SARS-CoV-2 main protease inhibitor exemplifies this methodology [10]:

- Library Design: Reaction arrays are designed to generate diverse compound libraries directly in assay plates.

- Miniaturized Synthesis: Reactions are performed in 96- or 384-well plates at micromole scale.

- In-situ Screening: Crude reaction mixtures are directly screened for biological activity.

- Hit Identification: Active wells are identified through activity measurements.

- Rapid Follow-up: Hit conditions are rapidly resynthesized at slightly larger scales for confirmation and preliminary SAR.

This approach collapses the traditional sequential workflow of synthesis, purification, and screening into a single streamlined process, dramatically accelerating the identification of bioactive compounds.
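Hit identification in crude-mixture screens usually starts by normalizing each well to on-plate controls. A minimal sketch, assuming an assay readout that decreases as the target is inhibited, vehicle-only wells as the negative control, a known inhibitor as the positive control, and an illustrative 50% inhibition cut-off; none of these specifics come from the cited protocol.

```python
def percent_inhibition(signal, neg_mean, pos_mean):
    """Scale a raw signal so the negative control is 0% and the positive control is 100%."""
    return 100.0 * (neg_mean - signal) / (neg_mean - pos_mean)

# Hypothetical plate values: signal falls as enzyme activity is inhibited
neg_mean = 1000.0   # uninhibited (vehicle-only) control wells
pos_mean = 100.0    # reference-inhibitor control wells
crude_wells = {"A1": 950.0, "A2": 400.0, "A3": 150.0}

inhibition = {w: percent_inhibition(s, neg_mean, pos_mean) for w, s in crude_wells.items()}
hits = [w for w, pct in inhibition.items() if pct >= 50.0]  # illustrative cut-off
print({w: round(p, 1) for w, p in inhibition.items()}, hits)
```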

Automated Biochemical Assay Protocols

In the biopharmaceutical domain, HTE protocols have been developed for challenging targets such as membrane proteins and kinases. The Nuclera eProtein Discovery System exemplifies this with a standardized protocol [11]:

- DNA Template Design: Construct design for expression screening.

- Parallelized Expression: Simultaneous expression of up to 192 construct and condition combinations.

- Automated Purification: High-throughput purification of soluble, active protein.

- Quality Control: Automated characterization of protein function and stability.

- Data Integration: Cloud-based software management of experimental design and results analysis.

This integrated protocol reduces the timeline from DNA to purified protein from weeks to under 48 hours, enabling rapid iteration and optimization—a crucial capability given the growing importance of biologics, which constituted two-thirds of FDA-approved drugs in 2024 [9] [11].

The Scientist's Toolkit: Essential Research Reagent Solutions

The effective implementation of HTE workflows relies on a suite of specialized tools and reagents designed for miniaturization, automation, and data traceability. The following table catalogs key solutions referenced in contemporary HTE implementations:

Table 2: Essential Research Reagent Solutions for HTE Workflows

| Tool/Reagent | Function | Application Example | Source |
|---|---|---|---|
| CHRONECT XPR | Automated powder dispensing | Handling solids in inert environments for reaction screening | [9] |
| phactor Software | HTE experiment design & analysis | Designing reaction arrays and analyzing UPLC-MS results | [10] |
| Mettler Toledo Quantos | Automated weighing technology | Precise powder dosing for library synthesis | [9] |
| Opentrons OT-2 | Liquid handling robot | Automated reagent distribution for 384-well plates | [10] |
| SPT Labtech mosquito | Liquid handling robot | Reagent dosing for 1536-well ultraHTE | [10] |
| Virscidian Analytical Studio | Analytical data processing | Conversion of UPLC-MS output to structured CSV files | [10] |
| Library Validation Experiment (LVE) | Reaction validation | Evaluating building block chemical space in 96-well format | [9] |
| Nuclera eProtein Discovery | Protein expression screening | High-throughput expression of challenging proteins | [11] |
| Agilent SureSelect Kits | Target enrichment | Automated library preparation for genomic sequencing | [11] |
| 3D Cell Culture Systems | Biologically relevant screening | Production of consistent organoids for efficacy testing | [11] |

This toolkit continues to evolve, with emerging technologies focusing on integration and data generation capabilities. As noted at ELRIG's Drug Discovery 2025 conference, the emphasis has shifted toward "technology that integrates easily, delivers reliable data and saves time" [11].

Data Analysis Frameworks: From Experimental Results to Predictive Models

Structured Data Generation

The transformation of HTE from a screening tool to a strategic asset hinges on its ability to generate consistently structured, analyzable data. Modern HTE platforms address this requirement through standardized data schemas that capture both experimental parameters and outcomes. The phactor implementation, for example, uses a standardized reaction template that classifies substrates, reagents, and products in a consistent format, enabling the interconnection of experimental results with chemical inventories [10]. This structured approach is fundamental for building datasets suitable for computational analysis.
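To make the idea of a standardized reaction template concrete, the sketch below models one well of an array as a Python dataclass that separates substrates, reagents, and product, records the outcome, and then flattens the array to CSV for downstream tools. It is a minimal illustration under assumed conventions: the class name `ReactionRecord`, its fields, the placeholder component names, and the `;`-joined list encoding are hypothetical and do not reproduce phactor's actual schema.

```python
import csv
import io
from dataclasses import dataclass, field, asdict
from typing import List, Optional

@dataclass
class ReactionRecord:
    """One well of a reaction array (hypothetical schema, for illustration only)."""
    well: str                                             # plate position, e.g. "A1"
    substrates: List[str] = field(default_factory=list)   # e.g. SMILES or inventory IDs
    reagents: List[str] = field(default_factory=list)     # catalysts, ligands, bases, additives
    product: str = ""                                     # expected product identifier
    yield_pct: Optional[float] = None                     # outcome; None until analyzed

FIELDNAMES = ["well", "substrates", "reagents", "product", "yield_pct"]

def records_to_csv(records: List[ReactionRecord]) -> str:
    """Flatten records to CSV text; list fields are joined with ';'."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDNAMES)
    writer.writeheader()
    for rec in records:
        row = asdict(rec)
        row["substrates"] = ";".join(rec.substrates)
        row["reagents"] = ";".join(rec.reagents)
        writer.writerow(row)  # None is written as an empty field
    return buf.getvalue()

# Hypothetical two-well array; the second well has not been analyzed yet.
records = [
    ReactionRecord("A1", ["aryl_bromide_1", "amine_1"], ["Pd_cat_1", "base_1"], "product_1", 42.0),
    ReactionRecord("A2", ["aryl_bromide_1", "amine_1"], ["Pd_cat_2", "base_1"], "product_1"),
]
print(records_to_csv(records))
```

Keeping roles (substrate, reagent, product) as separate fields, rather than one free-text column, is what allows results to be joined back to a chemical inventory or pooled across campaigns.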

The critical importance of metadata and traceability in HTE data generation was emphasized at the ELRIG Drug Discovery 2025 conference: "If AI is to mean anything, we need to capture more than results. Every condition and state must be recorded, so models have quality data to learn from" [11]. This represents a maturation in understanding—that the value of HTE extends beyond immediate experimental outcomes to encompass the creation of foundational datasets for predictive modeling.

Integration with AI/ML Pipelines

The ultimate strategic application of HTE data lies in its integration with artificial intelligence and machine learning pipelines. The 2025 Gordon Research Conference on High-Throughput Chemistry and Chemical Biology highlights this progression, focusing on the theme of "Harnessing Chemical and Biological Data at Scale in Pursuit of Generative AI for Drug Discovery" [12]. This reflects the field's transition from using HTE primarily for empirical screening to employing it as a data generation engine for AI training.

Successful implementation requires not just data volume, but data quality and structure. As noted in a review of biomanufacturing applications, "Automated and high-throughput workflows also generate robust data for AI-ML approaches" [13]. This is particularly valuable for optimizing complex multi-parameter systems such as microbial conversions, where the parametric space is too vast for traditional experimental approaches. The creation of accurate models through HTE data can significantly expedite the development and scale-up of engineered biological systems [13].

Visualization and Interpretation Tools

Advanced visualization capabilities have become essential for interpreting complex, multi-dimensional HTE datasets. Modern platforms incorporate tools for generating heatmaps, multiplexed pie charts, and other visual representations that enable researchers to rapidly identify patterns and outliers across hundreds of experimental conditions [10]. These visualization tools transform raw analytical data into intelligible representations that support hypothesis generation and decision-making.

Diagram: HTE Data Analysis Pipeline

This diagram outlines the flow from raw experimental data to research decisions, highlighting how structured data enables both visual analysis and predictive modeling in an iterative framework.

Future Perspectives: Autonomous Experimentation and Advanced Integration

The evolution of HTE workflows continues toward increasingly autonomous systems. As observed at AstraZeneca, while much of the necessary hardware has reached maturity, significant development is still needed in software to enable "full closed loop autonomous chemistry" [9]. Current systems still require substantial human involvement in experimentation, analysis, and planning, presenting an opportunity for more advanced integration and decision-making algorithms.

The convergence of HTE with other technological trends points toward several key developments:

- Self-Driving Laboratories: The integration of HTE with AI-controlled robotic systems will enable fully autonomous hypothesis testing and optimization cycles.

- Cloud Laboratories: Remote execution of HTE campaigns through cloud-based interfaces will democratize access to advanced experimental capabilities.

- Enhanced Data Standards: Continued development of shared data standards will facilitate broader integration of HTE data into AI training sets across organizations.

- Cross-Domain Data Integration: The combination of chemical HTE data with biological, genomic, and clinical datasets will enable more comprehensive predictive models of compound behavior.

These advancements will further transform HTE from a specialized tool for reaction screening into a central platform for generating the high-quality, diverse datasets needed to power the next generation of AI-driven discovery research.

The journey of High-Throughput Experimentation from serendipity to strategy represents one of the most significant transformations in modern drug discovery research. Through the systematic implementation of automated hardware platforms, sophisticated software solutions, and data-aware methodologies, HTE has evolved into an indispensable source of structured, machine-readable data for analytical research. The integration of these capabilities with AI and ML pipelines creates a powerful framework for accelerating discovery across chemical and biological domains.

As the field continues to mature, the strategic value of HTE will increasingly derive not merely from its capacity to conduct experiments at scale, but from its role as a knowledge-generating engine that systematically explores chemical space and builds predictive models of molecular behavior. This evolution positions HTE as a cornerstone of data-driven research strategy, enabling a more efficient, predictive, and insightful approach to solving the most challenging problems in drug discovery and development.

High-Throughput Experimentation (HTE) has revolutionized the field of drug discovery by enabling the rapid testing and synthesis of vast chemical libraries. This methodology leverages automation, robotics, and sophisticated data processing to conduct millions of chemical, genetic, or pharmacological tests efficiently [2]. The core principle involves preparing assay plates—often microtiter plates with 96, 384, or even 1536 wells—using robotic liquid handling systems [2]. These platforms allow researchers to quickly identify active compounds, antibodies, or genes that modulate specific biomolecular pathways, providing crucial starting points for drug design [2]. The evolution of HTE has been marked by significant trends toward miniaturization and automation, with modern systems capable of testing over 100,000 compounds per day, a process often referred to as ultra-high-throughput screening (uHTS) [2] [5].

The integration of HTE into the drug discovery pipeline addresses the critical need to accelerate hit-to-lead progression and optimize lead compounds in a cost-effective manner. Recent advances have demonstrated the power of combining miniaturized HTE with deep learning and multi-dimensional optimization to significantly reduce cycle times [14]. This synergistic approach not only expedites the identification of promising drug candidates but also enriches the scientific understanding of structure-activity relationships, ultimately enhancing the quality of drug development campaigns.

Reaction Discovery

Fundamental Principles and Methodologies

Reaction discovery in HTE focuses on identifying novel chemical transformations and evaluating their potential for constructing diverse molecular architectures. The process typically begins with target identification and reagent preparation, followed by assay development in which chemical reactions are conducted in high-density microtiter plates [5]. Contemporary approaches use HTE to generate comprehensive datasets that serve as foundations for predictive modeling [14]. For instance, researchers have used HTE to generate datasets encompassing thousands of novel reactions, such as Minisci-type C-H alkylation reactions, which provide valuable insights into reaction scope and limitations [14].

The critical innovation in modern reaction discovery lies in the marriage of experimental data generation with computational prediction. By employing high-throughput experimentation, researchers can rapidly explore chemical space and generate robust datasets that train deep learning models to accurately predict reaction outcomes [14]. This integrated workflow enables the effective diversification of hit and lead structures, significantly accelerating the early stages of drug discovery where novel bioactive compound synthesis remains a substantial hurdle.

Experimental Protocol: Minisci-Type Reaction Screening

Objective: To identify novel Minisci-type C-H alkylation reactions for diversifying lead structures in drug discovery [14].

Materials and Equipment:

- Microtiter plates (96-well or 384-well format)

- Robotic liquid handling systems for reagent addition

- Dimethyl sulfoxide (DMSO) for compound dissolution

- Stock solutions of substrates and reagents

- Automated incubation and monitoring systems

Procedure:

- Library Design: Design a virtual library of potential reaction products through scaffold-based enumeration of core structures.

- Plate Preparation: Using automated liquid handlers, prepare assay plates by transferring nanoliter volumes of stock solutions from stock plates to corresponding wells of empty microtiter plates [2].

- Reaction Setup: In each well, combine moderate inhibitors of the target enzyme (e.g., monoacylglycerol lipase) with appropriate alkylating agents and reaction catalysts.

- Reaction Execution: Incubate the plates under controlled temperature and atmospheric conditions to facilitate the Minisci-type C-H alkylation reactions.

- Data Collection: After incubation, measure reaction outcomes using appropriate detection methods. For the Minisci reactions, this may involve liquid chromatography-mass spectrometry (LC-MS) analysis to quantify product formation [14].

- Hit Identification: Process the experimental data to identify successful reactions based on conversion rates and product yield.
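The hit-identification step can be sketched as follows, assuming each well reports integrated UV peak areas for the starting material and the product and that an uncalibrated area ratio is acceptable as a conversion proxy. This is a simplification, since response factors differ between compounds. The 20% threshold, the well names, and the peak areas are hypothetical.

```python
def area_percent(product_area: float, sm_area: float) -> float:
    """Uncalibrated UV area ratio: product / (product + starting material), in percent."""
    total = product_area + sm_area
    return 0.0 if total == 0 else 100.0 * product_area / total

def flag_hits(wells: dict, threshold: float = 20.0) -> list:
    """Return wells whose area-percent conversion meets the threshold, best first."""
    scored = {w: area_percent(p, s) for w, (p, s) in wells.items()}
    hits = [w for w, v in scored.items() if v >= threshold]
    return sorted(hits, key=lambda w: scored[w], reverse=True)

# Hypothetical integrated peak areas: well -> (product area, starting-material area)
wells = {"A1": (150.0, 850.0), "A2": (600.0, 400.0), "A3": (0.0, 0.0), "B1": (300.0, 700.0)}
print(flag_hits(wells))  # A2 (60%) and B1 (30%) pass; A1 (15%) and the empty well A3 do not
```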

Data Analysis:

- Compile reaction outcome data into a structured database.

- Use the dataset to train deep graph neural networks for reaction prediction.

- Apply physicochemical property assessment and structure-based scoring to prioritize the most promising reactions for further investigation [14].

Key Research Reagent Solutions

Table 1: Essential Reagents for High-Throughput Reaction Discovery

| Reagent Category | Specific Examples | Function in Experiments |
|---|---|---|
| Chemical Libraries | Diverse compound collections | Provide structural variety for screening novel reactions and bioactivities [5] |
| Enzymes/Target Proteins | Tyrosine kinase, monoacylglycerol lipase (MAGL) | Serve as biological targets for evaluating compound efficacy [14] [15] |
| Fluorescent Probes | FRET pairs, fluorescence anisotropy markers | Enable sensitive detection of molecular interactions and enzymatic activities [15] |
| Assay Reagents | Detergents (e.g., Triton X-100), buffer components | Maintain assay integrity and prevent compound aggregation [15] |
| Reaction Components | Alkylating agents, catalysts, substrates | Facilitate specific chemical transformations under investigation [14] |

Reaction Optimization

Advanced Strategies for Parameter Enhancement

Reaction optimization in HTE employs systematic approaches to refine chemical processes for maximum efficiency, yield, and selectivity. Traditional optimization methods often rely on Design of Experiments (DoE), but recent advances have introduced more sophisticated machine learning-driven approaches [16]. These methods leverage algorithms that can process and analyze vast amounts of data, identifying complex, non-linear relationships between chemical descriptors and catalytic performance that might be overlooked by traditional methods [16]. The implementation of Bayesian Optimization strategies enables researchers to maximize desired outcomes, such as reaction yield or selectivity, while minimizing the number of experiments required [16].
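The Bayesian optimization loop described above can be sketched in plain Python: a Gaussian-process surrogate with a squared-exponential kernel is fitted to the yields measured so far, and the next ligand to test is the untested candidate with the highest expected improvement. This is a minimal sketch under stated assumptions, not the cited platform's method: each ligand is reduced to a single hypothetical descriptor, the yield surface `toy_yield` is invented, and the kernel length scale and noise level are fixed rather than fitted.

```python
import math

def rbf(a, b, length=1.0):
    """Squared-exponential kernel between two descriptor vectors."""
    d2 = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-0.5 * d2 / length ** 2)

def cholesky(K):
    """Lower-triangular L with K = L L^T (K symmetric positive definite)."""
    n = len(K)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(K[i][i] - s) if i == j else (K[i][j] - s) / L[j][j]
    return L

def forward_solve(L, b):
    """Solve L x = b for lower-triangular L."""
    x = []
    for i, bi in enumerate(b):
        x.append((bi - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x

def gp_posterior(X, y, x_new, length=1.0, noise=1e-4):
    """GP posterior mean and std at x_new, using the sample mean of y as prior mean."""
    K = [[rbf(a, b, length) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    y_mean = sum(y) / len(y)
    alpha = forward_solve(L, [v - y_mean for v in y])         # L^-1 (y - mean)
    v = forward_solve(L, [rbf(a, x_new, length) for a in X])  # L^-1 k*
    mu = y_mean + sum(vi * ai for vi, ai in zip(v, alpha))
    var = max(1.0 - sum(vi * vi for vi in v), 1e-12)          # k(x*, x*) = 1 for this kernel
    return mu, math.sqrt(var)

def expected_improvement(mu, sd, best, xi=0.0):
    """Expected improvement over `best` for maximization, Gaussian predictive."""
    z = (mu - best - xi) / sd
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mu - best - xi) * cdf + sd * pdf

def bayes_opt(candidates, objective, n_init=2, n_iter=5, length=1.0):
    """Sequentially test the untested candidate that maximizes EI."""
    tried = list(range(n_init))
    ys = [objective(candidates[i]) for i in tried]
    for _ in range(n_iter):
        X = [candidates[i] for i in tried]
        best = max(ys)
        scores = {}
        for i, c in enumerate(candidates):
            if i not in tried:
                mu, sd = gp_posterior(X, ys, c, length)
                scores[i] = expected_improvement(mu, sd, best)
        if not scores:
            break
        nxt = max(scores, key=scores.get)
        tried.append(nxt)
        ys.append(objective(candidates[nxt]))
    return tried, ys

# Hypothetical pool: one descriptor per ligand; hypothetical yield surface peaking near 3.5.
CANDIDATES = [[0.5 * k] for k in range(11)]

def toy_yield(x):
    return 0.9 * math.exp(-((x[0] - 3.5) ** 2))

tried, ys = bayes_opt(CANDIDATES, toy_yield)
print(tried, [round(v, 3) for v in ys])
```

In practice the descriptors would be multi-dimensional and scaled, kernel hyperparameters would be fitted, and a library such as scikit-learn or BoTorch would normally replace the hand-written linear algebra; the pure-Python version only keeps the example self-contained.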

A key application of reaction optimization involves ligand screening from large chemical libraries where each compound possesses unique chemical descriptors such as molecular weight, polarizability, and electronic properties [16]. The challenge lies in effectively leveraging all descriptors to find significant correlations that meet specific optimization goals. Modern platforms address this by mapping and classifying descriptors based on their importance to the objective, then selecting the best-performing ligands through predictive modeling [16]. This approach has demonstrated significant success in real-world applications, with some implementations identifying optimal ligands that maximize yield in less than two months of testing [16].

Quantitative Analysis of Optimization Outcomes

Table 2: Performance Metrics in Reaction Optimization Studies

| Study Focus | Library Size | Key Optimization Parameters | Results Achieved |
|---|---|---|---|
| Ligand Screening [16] | Large chemical library | Conversion, selectivity | Identified optimal ligands maximizing yield while minimizing experiments and cost |
| Minisci Reaction Optimization [14] | Virtual library of 26,375 molecules | Reaction outcome prediction, physicochemical properties, structure-based scoring | 14 synthesized compounds exhibited subnanomolar activity, representing up to 4500-fold potency improvement |
| HTS for AMACR Inhibitors [15] | 20,387 drug-like compounds | Inhibition potency, specificity | Identified two novel inhibitor families (pyrazoloquinolines and pyrazolopyrimidines) with mixed competitive or uncompetitive inhibition |

Workflow Diagram for AI-Driven Reaction Optimization

Library Synthesis

Strategic Approaches for Diverse Compound Collections

Library synthesis represents a critical application of HTE in constructing diverse sets of compounds for biological evaluation. The process involves systematic assembly of related chemical structures to explore structure-activity relationships and identify promising lead compounds. Modern approaches to library synthesis emphasize automated chemistry platforms that enable large-scale organic synthesis campaigns with minimal human intervention [17]. The efficiency of such platforms depends significantly on the schedule according to which synthesis operations are executed, leading to the development of sophisticated scheduling algorithms that can reduce total synthesis campaign duration by up to 58% compared to baseline approaches [17] [18].

A key innovation in this domain is the formalization of library synthesis as a flexible job-shop scheduling problem (FJSP) with chemistry-relevant constraints [17]. This formulation considers the interdependent nature of synthetic routes, where reactions can have arbitrary dependencies originating from shared intermediate products for multiple downstream reactions [17]. The scheduling optimization must account for various laboratory constraints, including temporal limitations imposed by materials, hardware, and operators, such as time lags between solution preparation and usage, hardware capacity limitations, and operator shift patterns [17]. This comprehensive approach ensures that library synthesis campaigns proceed with maximum efficiency while respecting the practical constraints of laboratory environments.

Schedule Optimization Methodology

Objective: To minimize makespan (total duration) of chemical library synthesis campaigns through optimized scheduling of operations [17].

Prerequisites:

- Pre-defined synthetic routes for all target chemicals

- Known or conservatively estimated reaction yields

- Specifications of available hardware units and their capabilities

Procedure:

- Reaction Network Definition: Represent synthesis routes to all target chemicals as a bipartite directed acyclic graph in which nodes are either chemicals or chemical reactions [17].

- Quantity Calculation: Given desired quantities of target chemicals, traverse the reaction network in reverse to determine quantities of all intermediate chemicals [17].

- Operation Network Definition: Decompose each reaction into a set of operations represented as a directed acyclic graph, where nodes represent operations and edges represent required precedence relations [17].

- Problem Formulation: Formalize the scheduling problem as a mixed integer linear program (MILP) with the objective of minimizing makespan [17].

- Constraint Incorporation: Include relevant laboratory constraints:

- Module capacity (e.g., multi-position heaters)

- Minimum/maximum time lags between operations

- Work shifts defining available time slots for operations [17]

- Schedule Generation: Solve the MILP to produce an optimal schedule that defines:

- Assignment of operations to specific hardware modules

- Start times for all operations

- Overall sequence of execution [17]

- Schedule Execution: Implement the optimized schedule on the automated chemistry platform.
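The quantity-calculation step can be illustrated with a short Python sketch. It assumes each reaction yields a single product, stoichiometric coefficients are given per mole of product, and yields are fixed fractions; the network, names, and numbers are hypothetical, and this is not the cited work's implementation. The key point is the reverse order: a shared intermediate is expanded only after every downstream consumer has added its demand.

```python
from collections import defaultdict
from graphlib import TopologicalSorter

# Hypothetical network: each reaction makes one product from reactants (mol per mol product).
REACTIONS = {
    "r_I":  {"product": "I",  "reactants": {"A": 1.0, "B": 1.0}, "yield": 0.5},
    "r_T1": {"product": "T1", "reactants": {"I": 1.0, "C": 1.0}, "yield": 0.8},
    "r_T2": {"product": "T2", "reactants": {"I": 1.0, "D": 1.0}, "yield": 0.5},
}

def required_quantities(reactions, targets):
    """Back-calculate the amount (e.g. mmol) of every chemical needed for the targets."""
    made_by = {r["product"]: r for r in reactions.values()}
    # Each product depends on its reactants, so static_order() lists precursors first.
    graph = {p: set(r["reactants"]) for p, r in made_by.items()}
    order = list(TopologicalSorter(graph).static_order())
    demand = defaultdict(float, targets)
    # Walk products before precursors so shared intermediates accumulate demand
    # from all downstream reactions before their own reaction is expanded.
    for chem in reversed(order):
        rxn = made_by.get(chem)
        if rxn is None:
            continue  # purchasable starting material: nothing to expand
        scale = demand[chem] / rxn["yield"]  # product amount corrected for yield
        for reactant, coeff in rxn["reactants"].items():
            demand[reactant] += coeff * scale
    return dict(demand)

print(required_quantities(REACTIONS, {"T1": 1.0, "T2": 2.0}))
```

Here intermediate I must cover both targets (1/0.8 + 2/0.5 = 5.25 mmol), so each of A and B is needed at 5.25/0.5 = 10.5 mmol.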

Validation:

- Test the scheduling approach on simulated instances for realistically accessible chemical libraries.

- Compare makespan against baseline scheduling approaches to quantify improvements [17].
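A comparison like this needs a baseline schedule. One simple baseline of the kind such comparisons might use is greedy list scheduling: operations are taken in topological order and each is placed at the earliest time its predecessors are finished and a position on the required module is free. The sketch below implements that heuristic and the critical-path length, which is a lower bound on any makespan. It is not the MILP from the cited work and ignores time lags and work shifts; operation names, durations, and capacities are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical operations: name -> (module type, duration in minutes, predecessors)
OPS = {
    "dispense_1": ("liquid_handler", 10, []),
    "dispense_2": ("liquid_handler", 10, []),
    "heat_1":     ("heater", 60, ["dispense_1"]),
    "heat_2":     ("heater", 60, ["dispense_2"]),
    "analyze_1":  ("lcms", 15, ["heat_1"]),
    "analyze_2":  ("lcms", 15, ["heat_2"]),
}
CAPACITY = {"liquid_handler": 1, "heater": 2, "lcms": 1}  # parallel positions per module

def greedy_schedule(ops, capacity):
    """Serial list scheduling; returns (start times, makespan)."""
    order = TopologicalSorter({k: set(v[2]) for k, v in ops.items()}).static_order()
    slot_free = {m: [0] * n for m, n in capacity.items()}  # next free time of each position
    start, finish = {}, {}
    for op in order:
        module, duration, preds = ops[op]
        ready = max((finish[p] for p in preds), default=0)
        slots = slot_free[module]
        # choose the position that lets this operation start soonest
        k = min(range(len(slots)), key=lambda i: max(slots[i], ready))
        start[op] = max(slots[k], ready)
        finish[op] = start[op] + duration
        slots[k] = finish[op]
    return start, max(finish.values())

def critical_path(ops):
    """Longest precedence chain ignoring capacity: a lower bound on the makespan."""
    order = TopologicalSorter({k: set(v[2]) for k, v in ops.items()}).static_order()
    end = {}
    for op in order:
        _, duration, preds = ops[op]
        end[op] = max((end[p] for p in preds), default=0) + duration
    return max(end.values())

start, makespan = greedy_schedule(OPS, CAPACITY)
print(makespan, critical_path(OPS))
```

On this toy instance the greedy makespan is 100 min against an 85 min critical-path bound, because the single liquid handler and single LC-MS position serialize work. With shared intermediates, time lags, and shifts, heuristics like this can leave room for an exact MILP to improve.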

Library Synthesis Scheduling Framework

Integrated Data Analysis and Visualization

Data Management and Analytical Frameworks

The implementation of HTE across reaction discovery, optimization, and library synthesis generates enormous datasets that require sophisticated analysis frameworks. Effective data management begins with quality control measures including proper plate design, selection of effective positive and negative controls, and development of quality assessment metrics [2]. Common quality assessment measures include signal-to-background ratio, signal-to-noise ratio, signal window, assay variability ratio, and Z-factor [2]. More recently, strictly standardized mean difference (SSMD) has been proposed as a robust statistical measure for assessing data quality in HTS assays, offering advantages over traditional metrics [2].
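Two of the quality metrics named above reduce to short formulas on control wells: the Z'-factor (the Z-factor computed from positive and negative controls only), 1 - 3(σp + σn)/|μp - μn|, and SSMD for two independent groups, (μp - μn)/sqrt(σp² + σn²). The sketch below computes both with Python's `statistics` module; the control values are hypothetical. A Z'-factor above 0.5 is conventionally read as an excellent assay window.

```python
import statistics

def z_prime(pos, neg):
    """Z'-factor from control wells: 1 - 3 (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    mp, mn = statistics.mean(pos), statistics.mean(neg)
    sp, sn = statistics.stdev(pos), statistics.stdev(neg)
    return 1.0 - 3.0 * (sp + sn) / abs(mp - mn)

def ssmd(pos, neg):
    """SSMD for independent groups: (mean_pos - mean_neg) / sqrt(var_pos + var_neg)."""
    mp, mn = statistics.mean(pos), statistics.mean(neg)
    return (mp - mn) / (statistics.variance(pos) + statistics.variance(neg)) ** 0.5

# Hypothetical control signals from one plate
pos = [100.0, 98.0, 102.0, 101.0, 99.0]
neg = [10.0, 12.0, 8.0, 11.0, 9.0]
print(round(z_prime(pos, neg), 3), round(ssmd(pos, neg), 1))
```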

Hit selection represents a critical analytical step, and the appropriate method depends on whether the screen includes replicates. For primary screens without replicates, approaches such as the z-score, the robust z*-score, SSMD, the B-score, and quantile-based methods are employed [2]. In screens with replicates, SSMD or t-statistics are preferred because variability can be estimated directly for each compound, without the strong distributional assumptions on which the z-score and z*-score rely [2]. Applying these analytical frameworks helps identify true hits while limiting false positives that could lead research in unproductive directions.
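For a primary screen without replicates, the robust z*-score can be sketched as below: the median and the median absolute deviation (MAD) replace the mean and standard deviation, so a few strong actives do not inflate the scale and mask themselves. The factor 1.4826 makes the MAD consistent with the standard deviation for normally distributed data; the cutoff of 3 and the plate values are hypothetical choices for illustration.

```python
import statistics

def robust_z(values):
    """z*-score: (x - median) / (1.4826 * MAD)."""
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        raise ValueError("MAD is zero; robust z-scores are undefined")
    scale = 1.4826 * mad
    return [(v - med) / scale for v in values]

def select_hits(values, cutoff=3.0):
    """Indices of wells whose robust z-score magnitude reaches the cutoff."""
    return [i for i, z in enumerate(robust_z(values)) if abs(z) >= cutoff]

# Hypothetical percent-activity readings from one plate; well 7 is a strong active.
plate_signal = [2.0, -1.0, 0.5, 1.5, -0.5, 0.0, 1.0, 45.0]
print(select_hits(plate_signal))
```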

Visualization Principles for Complex Datasets

Effective data visualization is essential for interpreting the complex datasets generated by HTE applications. The fundamental objective of any graphic in scientific publications is to effectively convey information without overwhelming the reader [19]. Key guidelines for effective visualization include:

- Know Your Audience: Tailor visualizations to the background and expertise of the intended audience [19].

- Choose the Appropriate Visual: Select visualization types that accurately represent the nature of the data and relationships being highlighted [19].

- Avoid Chartjunk: Eliminate unnecessary non-data elements that do not contribute to understanding [19].

- Use Log Scales When Appropriate: Employ logarithmic scales when data spans several orders of magnitude [19].

- Use Color Effectively: Utilize color to enhance comprehension rather than for decorative purposes [19].

Accessibility considerations are equally important when creating visualizations. This includes ensuring sufficient color contrast (at least 4.5:1 for text and 3:1 for graphical elements), not relying on color alone to convey meaning, and providing alternative text descriptions for complex graphics [20]. Furthermore, providing supplemental formats such as data tables alongside visualizations accommodates different learning preferences and enhances overall comprehension [20].
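The 4.5:1 and 3:1 thresholds come from the WCAG 2.x contrast ratio, which is computed from the relative luminance of the two colors. The sketch below follows that definition for 8-bit sRGB colors so a plot palette can be checked programmatically; the gray example color is arbitrary.

```python
def _linear(c8):
    """8-bit sRGB channel -> linear value, per the WCAG 2.x relative-luminance definition."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    r, g, b = (_linear(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(fg, bg):
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1 to 21."""
    l1, l2 = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (l1 + 0.05) / (l2 + 0.05)

def passes(fg, bg, graphical=False):
    """True if the pair meets 4.5:1 (text) or 3:1 (graphical elements)."""
    return contrast_ratio(fg, bg) >= (3.0 if graphical else 4.5)

gray, white = (119, 119, 119), (255, 255, 255)
print(round(contrast_ratio(gray, white), 2), passes(gray, white), passes(gray, white, graphical=True))
```

Mid-gray #777777 on white comes out just under 4.5:1, so it would fail for text labels while still being acceptable for graphical elements such as gridlines or markers.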

Data Visualization and Analysis Tools

Table 3: Analytical Approaches for High-Throughput Experimentation Data

| Analysis Type | Primary Methods | Application Context |
|---|---|---|
| Quality Control | Z-factor, SSMD, signal-to-noise ratio | Assessing assay performance and data reliability [2] |
| Hit Selection | z-score, t-statistic, SSMD | Identifying active compounds from primary and confirmatory screens [2] |
| Reaction Prediction | Deep graph neural networks, geometric deep learning | Predicting reaction outcomes and optimizing synthetic routes [14] |
| Scheduling Optimization | Mixed integer linear programming (MILP) | Minimizing makespan for chemical library synthesis [17] |
| Ligand Performance Prediction | Bayesian optimization, machine learning classification | Identifying optimal ligands from chemical libraries [16] |

High-Throughput Experimentation (HTE) has revolutionized fields like drug discovery by enabling the rapid testing of thousands of chemical reactions or compounds. However, the immense volume and complexity of data generated pose significant analytical challenges. This guide explores why robust statistical analysis is essential for navigating this data deluge and deriving reliable, actionable insights from HTE campaigns.

Quantitative High Throughput Screening (qHTS) assays can test thousands of compounds using cells or tissues in a very short period, generating complex dose-response data for each one [21]. The scale is staggering; for example, a single recent study on acid-amine coupling reactions conducted 11,669 distinct reactions in just 156 instrument working hours [22]. This volume makes it practically infeasible for an investigator to manually inspect each result or determine the appropriate statistical model for each compound, necessitating automated, robust, and sophisticated analysis methodologies to avoid both false discoveries and missed opportunities [21].

Statistical Foundations for HTE Data Analysis

The core of HTE data analysis often involves fitting mathematical models to the data to quantify a compound's effect. A frequently used model for dose-response data is the Hill model (or Hill function):

$$f(x,\theta) = \theta_0 + \frac{\theta_1\,\theta_3^{\theta_2}}{x^{\theta_2} + \theta_3^{\theta_2}}$$

Where:

- x is the dose of the chemical.

- θ₀ is the lower asymptote.

- θ₁ is the efficacy (the maximum change from baseline).

- θ₂ is the slope parameter.

- θ₃ is the ED50 (the dose producing 50% of the maximum effect) [21].
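A direct transcription of the Hill model makes the parameter roles easy to check: at x = θ₃ the response sits halfway between θ₀ and θ₀ + θ₁, which is why θ₃ is the ED50, and with θ₂ > 0 the response moves from θ₀ + θ₁ at zero dose toward θ₀ at high dose. The parameter values below are arbitrary illustrations, not fitted estimates.

```python
def hill(x, theta0, theta1, theta2, theta3):
    """Hill model: theta0 + theta1 * theta3**theta2 / (x**theta2 + theta3**theta2)."""
    return theta0 + theta1 * theta3 ** theta2 / (x ** theta2 + theta3 ** theta2)

# Arbitrary illustrative parameters: baseline 5, efficacy 80, slope 1.5, ED50 = 2 (dose units)
params = (5.0, 80.0, 1.5, 2.0)
for dose in (0.0, 0.5, 2.0, 8.0, 1e6):
    print(dose, round(hill(dose, *params), 2))
```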

Two critical challenges in fitting these models are:

- Heteroscedasticity: The variability in the observed response may not be constant across all dose groups. Ignoring this can lead to biased parameter estimates and incorrect conclusions [21].

- Outliers: Given the number of chemicals investigated, outliers and influential observations are common and can disproportionately skew model fitting [21].

Robust Methodologies

To address these issues, standard Ordinary Least Squares (OLS) estimation is often insufficient. Robust alternatives include:

- M-Estimation: Using M-estimators (e.g., with a Huber-score function) in place of least squares provides robustness against outliers [21].

- Preliminary Test Estimation (PTE): This methodology is designed to be robust to both uncertain variance structures (homoscedasticity vs. heteroscedasticity) and potential outliers. Simulation studies have shown that PTE can achieve better control of the False Discovery Rate (FDR) while maintaining good statistical power compared to more conservative or liberal existing methods [21].

- Weighted M-Estimation (WME): When heteroscedasticity is detected, a weighted approach can be used to model the variance as a function of dose, improving the reliability of the parameter estimates [21].
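M-estimation is easiest to see in its simplest setting: estimating the location of replicate responses at a single dose. The sketch below computes a Huber M-estimate by iteratively reweighted least squares, with the scale fixed at the normalized MAD; residuals beyond k robust standard deviations receive weight k/|r| instead of full weight. This is a simplified illustration, not the full Hill-model M-estimation or PTE procedure of the cited work; k = 1.345 is a conventional tuning constant, and the replicate values are hypothetical.

```python
import statistics

def huber_location(values, k=1.345, tol=1e-8, max_iter=100):
    """Huber M-estimate of location via IRLS, with the scale held at 1.4826 * MAD."""
    mu = statistics.median(values)
    scale = 1.4826 * statistics.median(abs(v - mu) for v in values)
    if scale == 0:
        return mu
    for _ in range(max_iter):
        # Huber weights: 1 inside the threshold, k/|r| outside it
        weights = []
        for v in values:
            r = abs(v - mu) / scale
            weights.append(1.0 if r <= k else k / r)
        new_mu = sum(w * v for w, v in zip(weights, values)) / sum(weights)
        if abs(new_mu - mu) < tol:
            return new_mu
        mu = new_mu
    return mu

# Hypothetical replicate responses at one dose, with one gross outlier
replicates = [50.2, 49.8, 50.5, 49.9, 50.1, 95.0]
print(round(statistics.mean(replicates), 2), round(huber_location(replicates), 2))
```

The ordinary mean is pulled above 57 by the single outlier, whereas the Huber estimate stays near 50.2.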

The following diagram illustrates a robust analytical workflow that can adapt to data characteristics.

Advanced Modeling: Integrating Bayesian Deep Learning

Moving beyond classic regression, cutting-edge approaches are integrating Bayesian Deep Learning with HTE to tackle even more complex challenges like predicting global reaction feasibility and robustness.

- Bayesian Neural Networks (BNNs): These models can achieve high prediction accuracy for reaction feasibility (e.g., 89.48% in a recent study) while also providing uncertainty estimates for their predictions [22].

- Uncertainty Disentanglement: BNNs can disentangle uncertainty into different types, such as model uncertainty (related to the model's parameters) and data uncertainty (intrinsic stochasticity of the reaction itself). This allows for:

- Active Learning: The model can identify which experiments would be most informative to run next, potentially reducing data requirements by up to 80% [22].

- Robustness Prediction: The intrinsic data uncertainty can be correlated with reaction robustness, helping to identify reactions that are sensitive to subtle environmental changes and may be difficult to scale up [22].
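One common way to disentangle these uncertainties, sketched here under the assumption that the model yields several posterior samples of P(feasible) for a reaction (for example from Monte Carlo dropout or an ensemble), is the entropy decomposition: total uncertainty is the entropy of the mean prediction, the data (aleatoric) part is the average entropy of the individual samples, and the model (epistemic) part is their difference. This is a standard decomposition, not necessarily the exact procedure of the cited study, and the sample values are hypothetical.

```python
import math

def binary_entropy(p):
    """Entropy, in nats, of a Bernoulli(p) outcome."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1 - p) * math.log(1 - p))

def decompose(prob_samples):
    """total = H[mean p]; aleatoric = mean H[p]; epistemic = total - aleatoric."""
    mean_p = sum(prob_samples) / len(prob_samples)
    total = binary_entropy(mean_p)
    aleatoric = sum(binary_entropy(p) for p in prob_samples) / len(prob_samples)
    return {"p": mean_p, "total": total, "aleatoric": aleatoric, "epistemic": total - aleatoric}

# Hypothetical posterior samples of P(feasible) for two reactions
disagree = decompose([0.05, 0.95, 0.1, 0.9])  # samples disagree: mostly model uncertainty
noisy = decompose([0.5, 0.52, 0.48, 0.5])     # samples agree on ~0.5: mostly data uncertainty
print({k: round(v, 3) for k, v in disagree.items()})
print({k: round(v, 3) for k, v in noisy.items()})
```

In an active-learning loop, candidates with high epistemic uncertainty are the ones worth testing next, while reactions with high aleatoric uncertainty are the ones flagged as potentially non-robust.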

Practical Implementation and Reagent Solutions

For researchers implementing an HTE platform, having the right tools and reagents is fundamental. The following table details key components of a robust HTE system, drawing from platforms used in recent high-impact studies [22] [23].

| Component Category | Specific Item / Solution | Function & Importance in HTE |
|---|---|---|
| Reaction Components | Carboxylic Acids & Amines | The core building blocks for the reactions being studied (e.g., coupling reactions). Diversity-guided sampling is critical for exploring broad chemical space [22]. |
| Reaction Components | Condensation Reagents | Facilitate the formation of the desired bond (e.g., amide bond). Multiple reagents are tested in parallel to find optimal conditions [22]. |
| Reaction Components | Bases & Solvents | Critical for controlling reaction kinetics and yield. A limited set is often used to create a standardized yet informative condition space [22]. |
| Automation & Hardware | Automated Synthesis Platform (e.g., CASL-V1.1) | Robotic liquid handling systems that enable the precise, rapid, and parallel setup of thousands of reactions in microtiter plates (e.g., at 200–300 μL scale) [22]. |
| Analysis & Data Generation | Liquid Chromatography-Mass Spectrometry (LC-MS) | The primary analytical tool for high-throughput determination of reaction outcomes, such as yield, often using uncalibrated UV absorbance ratios [22]. |

Validation and Interpretation: From Data to Decisions

Robust analysis is not an end in itself; it must feed into a clear decision-making framework. Different analytical methodologies lead to different classification rules for designating a compound as "active" or a reaction as "feasible."

The table below summarizes and compares the decision criteria of two established methods with the proposed robust approach, highlighting how they handle parameter uncertainty.

| Method / Criteria | NCGC Method [21] | Parham Methodology [21] | Proposed Robust PTE Method [21] |
|---|---|---|---|
| Basis of Decision | Ordinary Least Squares (OLS) estimates and R². | Likelihood Ratio Test (LRT) on θ₁, with additional rules. | Preliminary Test Estimation (PTE) robust to variance and outliers. |
| Key Activity Thresholds | Class 1: θ̂₁ > 30, θ̂₃ ∈ (xmin, xmax), R² > 0.9; Class 2: θ̂₁ > 30, θ̂₃ ∈ (xmin, xmax), R² > 0.9, θ̂₁ > 80. | H₀: θ₁ = 0 rejected (α = 0.05, Bonferroni-corrected), θ̂₂ > 0, θ̂₃ < xmax, \|y_xmax\| > 10. | Formal statistical inference that accounts for uncertainty in all parameters and is robust to data anomalies. |
| Handling of Uncertainty | Ignores uncertainty in parameter estimates (θ̂). | Uses formal test for θ₁ but ignores uncertainty in other parameters (θ₂, θ₃). | Comprehensively accounts for uncertainty in all parameters and model structure. |
| Reported Performance | Can be either overly conservative or liberal, leading to suboptimal FDR control [21]. | Tends to be very conservative, resulting in low statistical power [21]. | Achieves a better balance, controlling FDR while maintaining good power [21]. |

The success of High-Throughput Experimentation is fundamentally dependent on the robustness of its data analysis. As HTE platforms generate ever-larger and more complex datasets, reliance on simple, assumption-laden statistical methods becomes a critical liability. The integration of robust statistics, including M-estimation and Preliminary Test Estimation, with advanced Bayesian modeling provides a powerful framework to navigate this data deluge. This approach ensures that the conclusions drawn about compound activity or reaction feasibility are not merely artifacts of noisy data or flawed models, but reliable insights that can truly accelerate scientific discovery and process development.

Modern HTE Workflows: From Automated Platforms to AI-Powered Analysis

High-Throughput Experimentation (HTE) has revolutionized chemical synthesis and drug discovery by enabling the rapid execution and analysis of vast arrays of chemical reactions. However, the immense data volumes generated by HTE campaigns present significant challenges in data management, processing, and interpretation. This whitepaper examines three specialized software platforms—phactor, Virscidian's Analytical Studio, and ACD/Labs' Katalyst D2D—that have been developed to navigate these data-rich environments. Within the broader thesis of data analysis for HTE research, we explore how these tools facilitate the entire Design-Make-Test-Analyze (DMTA) cycle, enhance decision-making, and ensure data integrity and FAIR (Findable, Accessible, Interoperable, and Reusable) principles in scientific research.

Core Platform Capabilities

The HTE software landscape comprises solutions addressing specific workflow stages, from experimental design to data analysis and decision support.

phactor is an HTE management system designed to streamline the setup and data collection of reaction arrays in standardized wellplate formats (24, 96, 384, or 1,536 wells). It focuses on facilitating rapid experiment design, interfacing with laboratory inventories and liquid handling robots, and storing all chemical data and results in a machine-readable format for downstream analysis [24] [10]. A key advantage is its availability for free academic use in 24- and 96-well formats [10].

Virscidian's Analytical Studio Professional (AS-Pro) is a centralized data processing and review platform, particularly powerful for chromatography and mass spectrometry data. It enables scientists to visualize, review, and report results from multiple vendors and experiments within a single interface. Its core strength lies in automating data interpretation, employing a "review-by-exception" workflow where samples generating errors are flagged for manual inspection, thereby reducing false positives and accelerating analysis [25] [26].

Katalyst D2D (Design-to-Decide) provides an integrated, browser-based platform that spans the entire experimental workflow, from design and planning to execution and analysis for HTE, process chemistry, and material studies. It automatically assembles all data from entire studies, providing contextualized, structured data that is readily exportable for AI/ML modeling, thereby accelerating the journey from experimental design to decisive decision-making [27] [28].

Quantitative Platform Comparison

The following table summarizes the key quantitative and functional characteristics of the three platforms for direct comparison.

Table 1: Key Software Features for High-Throughput Experimentation

| Feature | phactor | Virscidian Analytical Studio | Katalyst D2D |
|---|---|---|---|
| Primary Function | HTE Management & Workflow [10] | Data Processing & Automated Analysis [25] [26] | End-to-End Workflow Management (DMTA Cycle) [27] |
| Supported Wellplate Formats | 24, 96, 384, 1,536 [10] | Not Explicitly Stated | Wide range of plate-based and single-vessel reactors [27] |
| Key Workflow Stage | Design & Make [24] | Test & Analyze [25] | Design-Make-Test-Analyze (Full Cycle) [27] |
| Automation & Robotics Integration | Opentrons OT-2, SPT Labtech mosquito [10] | Not Explicitly Stated | Integration with networked hardware, automation equipment, and informatics systems [27] |
| Data Analysis Capabilities | Basic heatmap visualization; relies on external tools (e.g., Virscidian) for chromatographic analysis [10] | Advanced, automated data processing for LC/MS, Boolean logic for decision-making, cross-hit correlation analysis [25] | Automated targeted processing for LC/MS, HPLC, UHPLC, NMR; supports >150 vendor data formats [27] |
| Data Structure & AI/ML Readiness | Machine-readable data storage [10] | Actionable intelligence and insights [26] | Structured, contextualized, and normalized data for AI/ML [27] |

Experimental Protocols and Workflows

Workflow Diagram of an Integrated HTE Campaign

The following diagram illustrates the logical flow and integration points between the three software platforms in a typical, sophisticated HTE campaign.

Detailed Experimental Methodology

This section outlines a real-world experiment from the literature to demonstrate the practical application of these tools.

Protocol: Discovery of a Deaminative Aryl Esterification using phactor and Virscidian Analytical Studio [10]

1. Experimental Design (phactor):

- Objective: Discover optimal catalysts and additives for the deaminative aryl esterification between a diazonium salt (1) and a carboxylic acid (2) to form ester (3).

- Array Design: A 24-well plate array was designed to test three transition metal catalysts and four ligands in the presence or absence of a silver nitrate additive.

- Procedure in phactor: Reagents were selected from an integrated lab inventory. phactor automatically generated a reagent distribution recipe, splitting the plate into a multiplexed four-row by six-column array. Instructions for manual or robotic (e.g., Opentrons OT-2) liquid handling were produced.

2. Execution:

- Reaction Setup: Stock solutions were prepared and dosed into the reaction wellplate per phactor's instructions. The plate was stirred at 60°C for 18 hours.

- Quenching & Analysis: Reactions were quenched with a solution containing caffeine as an internal standard. An aliquot from each well was transferred to an analysis plate, diluted with acetonitrile, and analyzed by UPLC-MS.

3. Data Processing (Virscidian Analytical Studio):

- UPLC-MS output files were automatically processed by Virscidian Analytical Studio.

- The software generated a CSV file containing peak integration values for the desired product in all 24 chromatographic traces, calculating metrics like percent conversion or assay yield.

4. Data Analysis and Decision (phactor / Katalyst D2D):

- The results CSV file was uploaded back into phactor, which produced a heatmap visualization of reaction outcomes.

- Analysis identified the optimal condition: the well containing 30 mol% CuI, pyridine, and AgNO₃, which gave an 18.5% assay yield [10].

- In a Katalyst D2D-centric workflow, these results would be automatically assembled with all other experimental parameters, allowing for advanced visualization (e.g., custom charts, plots) and seamless export of the structured dataset for further AI/ML-driven analysis to guide the next experimental series [27].
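To make the data flow of this protocol concrete, the following minimal Python sketch (standard library only) lays the 3 × 4 × 2 condition array onto a 24-well plate (wells A1 to D6), converts product and internal-standard peak areas into an assay yield, and ranks the wells. It is not phactor, Virscidian, or Katalyst code. The reagent names other than CuI, pyridine, and AgNO₃, the row/column assignment, the CSV column names (`well`, `area_product`, `area_is`), the response factor, and all numbers are hypothetical placeholders.

```python
import csv
import io
import string
from itertools import product

# Hypothetical names: only CuI, pyridine and AgNO3 are named in the protocol.
CATALYSTS = ["CuI", "catalyst_2", "catalyst_3"]
LIGANDS = ["pyridine", "ligand_2", "ligand_3", "ligand_4"]
ADDITIVES = [None, "AgNO3"]  # silver nitrate absent / present


def layout_24_well():
    """Map wells A1-D6 to (catalyst, ligand, additive): 3 x 4 x 2 = 24 conditions.

    Assumed arrangement: one ligand per row (A-D); columns 1-6 cycle through
    catalyst x additive. phactor's actual recipe may differ.
    """
    layout = {}
    for r, ligand in enumerate(LIGANDS):
        for c, (cat, add) in enumerate(product(CATALYSTS, ADDITIVES), start=1):
            layout[f"{string.ascii_uppercase[r]}{c}"] = (cat, ligand, add)
    return layout


def assay_yield(area_product, area_is, mol_is, mol_limiting, response_factor):
    """Percent assay yield against an internal standard (IS) such as caffeine.

    response_factor = (area_product / area_is) / (mol_product / mol_is),
    taken from a prior calibration with authentic product.
    """
    if area_is <= 0:
        raise ValueError("internal-standard peak missing")
    mol_product = (area_product / area_is) * mol_is / response_factor
    return 100.0 * mol_product / mol_limiting


def rank_conditions(csv_text, layout, **calib):
    """Join a results CSV (columns: well, area_product, area_is) to the layout
    and return (yield, well, condition) tuples, highest yield first."""
    ranked = []
    for rec in csv.DictReader(io.StringIO(csv_text)):
        y = assay_yield(float(rec["area_product"]), float(rec["area_is"]), **calib)
        ranked.append((y, rec["well"], layout[rec["well"]]))
    return sorted(ranked, key=lambda t: t[0], reverse=True)


plate = layout_24_well()
# Made-up peak areas for illustration only; not data from the study.
demo_csv = "well,area_product,area_is\nA1,300,2400\nA2,1200,2400\nB3,600,2400\n"
calib = dict(mol_is=1.0e-6, mol_limiting=2.5e-6, response_factor=0.8)
for y, well, cond in rank_conditions(demo_csv, plate, **calib):
    print(f"{well}: {y:.1f}% {cond}")
```

In practice the response factor comes from a calibration with authentic product; without one, the product/internal-standard area ratio supports only a relative ranking of wells, not absolute yields.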

Research Reagent Solutions

The following table details key materials and their functions in the featured deaminative aryl esterification experiment.

Table 2: Essential Research Reagents for Deaminative Aryl Esterification Screening

| Reagent / Material | Function in the Experiment |
|---|---|
| Diazonium Salt (1) | Electrophilic coupling partner; provides the aryl group under mild conditions [10]. |
| Carboxylic Acid (2) | Nucleophilic coupling partner; provides the carboxylate (acyloxy) portion of the ester [10]. |
| Transition Metal Catalysts (e.g., CuI) | Primary catalyst; facilitates the key bond-forming cross-coupling reaction [10]. |
| Ligands (e.g., Pyridine) | Coordinates with the metal catalyst to modulate its reactivity and selectivity [10]. |
| Silver Nitrate (AgNO₃) | Additive; can act as a halide scavenger or co-catalyst to improve reaction yield [10]. |
| Caffeine | Internal Standard; added post-reaction to enable quantitative analysis by UPLC-MS [10]. |
| Acetonitrile (Solvent) | Reaction medium; chosen for its ability to dissolve reactants and compatibility with reaction conditions [10]. |

The modern HTE software landscape, as represented by phactor, Virscidian Analytical Studio, and Katalyst D2D, offers robust, complementary solutions to the data challenges in chemical research. phactor lowers the barrier to HTE setup and data capture, Virscidian automates the processing and review of analytical data, and Katalyst D2D delivers a fully integrated, enterprise-level platform for the entire experimental lifecycle. The choice of tool(s) depends on the specific workflow needs, scale, and resources of the research team. Critically, all three platforms emphasize the generation of machine-readable, structured data, which positions HTE research to take full advantage of artificial intelligence and machine learning for accelerated scientific discovery.

High-Throughput Experimentation (HTE) has become a cornerstone of modern scientific discovery, particularly in fields like drug development and materials science, by enabling the rapid testing of thousands of reactions or conditions in parallel [29]. The power of HTE, however, is only fully realized when the resulting data is robust, interpretable, and statistically sound. This places immense importance on the initial design of the experiment array—specifically, the plate layouts and reagent selection. A well-designed array is the critical first step in a data analysis pipeline, generating high-quality data that enables reliable conclusions and effective downstream modeling. This guide details the methodologies for constructing these foundational experiment arrays within the broader context of a data-centric research thesis.

Core Principles for Designing Experiment Arrays

Before detailing specific protocols, it is essential to establish the core principles that guide effective experimental design. These principles ensure that the data generated is fit for purpose and can withstand rigorous statistical analysis.

- Define the Objective and Message First: The most critical step occurs before any plate is designed. You must precisely determine the scientific question—whether it is to compare performance, optimize a reaction, or screen for activity—and envision the story the data will tell [30]. This clarity dictates the structure of the entire array.

- Prioritize a High Data-Ink Ratio: A concept from data visualization, the data-ink ratio is the proportion of a graphic's ink devoted to displaying actual data rather than non-data elements; good design maximizes it [30]. In experimental design, the analogue is to eliminate unnecessary experimental conditions and ensure every well in a plate is purposefully designed to contribute meaningful information, increasing the efficiency and data density of your screen.

- Account for Batch Effects and Spatial Variability: No plate is perfectly uniform. Factors like edge effects from uneven evaporation or temperature gradients can introduce bias [31]. A robust design incorporates controls throughout the plate (not just in a single column or row) and uses randomization or blocking to ensure that systematic noise does not confound the experimental results.
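As a minimal sketch of combining randomization with blocking, the Python function below treats each row of a 96-well plate as a block that always receives one positive and one negative control in randomly chosen columns, while the 80 sample conditions are shuffled across the remaining wells. The function name, the control labels, and the one-pair-per-row rule are illustrative assumptions rather than a prescribed standard; the fixed seed makes the layout reproducible so it can be stored alongside the results.

```python
import random
import string

ROWS, COLS = 8, 12  # 96-well plate


def blocked_random_layout(samples, seed=0, pos="POS", neg="NEG"):
    """Return {well_id: content} with controls blocked by row.

    Every row (block) gets one positive and one negative control in randomly
    chosen columns, so controls are spread over the plate instead of sitting
    in a single column; samples are fully randomized over the other wells.
    """
    if len(samples) != ROWS * (COLS - 2):
        raise ValueError(f"expected {ROWS * (COLS - 2)} samples")
    rng = random.Random(seed)
    order = list(samples)
    rng.shuffle(order)  # random sample-to-well assignment
    layout, k = {}, 0
    for r in range(ROWS):
        row = string.ascii_uppercase[r]
        pos_col, neg_col = rng.sample(range(1, COLS + 1), 2)  # distinct columns
        for c in range(1, COLS + 1):
            well = f"{row}{c}"
            if c == pos_col:
                layout[well] = pos
            elif c == neg_col:
                layout[well] = neg
            else:
                layout[well] = order[k]
                k += 1
    return layout


samples = [f"S{i:02d}" for i in range(80)]  # hypothetical condition IDs
layout = blocked_random_layout(samples, seed=42)
print({w: layout[w] for w in ("A1", "A2", "A3")})
```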

Designing Effective Plate Layouts

The physical arrangement of samples and controls on a microtiter plate is a fundamental determinant of data quality. The choice of layout is driven by the specific experimental goal.

Common Plate Layout Strategies

The table below summarizes key layout strategies and their applications.

Table 1: Common plate layout strategies for high-throughput experimentation.

| Layout Type | Description | Best Use Cases | Key Advantages | Considerations |
|---|---|---|---|---|
| Checkerboard | Samples and controls are alternated in a grid pattern. | Controlling for spatial gradients (e.g., in cell-based assays). | Effective at identifying and mitigating positional biases. | Reduces the total number of experimental samples per plate. |
| Systematic Variation | A single parameter (e.g., concentration) is varied systematically across rows or columns. | Dose-response studies, concentration gradients. | Intuitive to set up and interpret. | Highly susceptible to spatial biases; requires robust validation. |
| Randomized | The assignment of experimental conditions to wells is fully randomized. | Any screen where spatial bias is a concern. | The gold standard for eliminating confounding spatial effects. | Logistically more complex to set up; requires meticulous tracking. |
| Predrugged Assay Ready Plates (ARPs) | Compounds are pre-dispensed into plates, to which cells and reagents are added later [31]. | Large-scale compound library screens. | Streamlines workflow, improves assay reliability, and minimizes plate handling. | Requires upfront investment in plate preparation and storage. |
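Because systematic layouts are the most exposed to spatial bias, one simple validation is a uniformity plate in which every well receives the same solution. The sketch below (plain Python; the function name is hypothetical) compares the mean signal of the outer ring of wells with that of the interior; a ratio far from 1.0 points to edge effects such as uneven evaporation. It deliberately sets no pass/fail threshold, which should be chosen for the specific assay.

```python
from statistics import mean


def edge_interior_ratio(grid):
    """Ratio of mean edge-well signal to mean interior-well signal.

    grid: list of rows (e.g. 8 x 12) read from a uniformity plate where every
    well received the same solution.
    """
    n_rows, n_cols = len(grid), len(grid[0])
    edge, interior = [], []
    for r, row in enumerate(grid):
        for c, v in enumerate(row):
            on_edge = r in (0, n_rows - 1) or c in (0, n_cols - 1)
            (edge if on_edge else interior).append(v)
    return mean(edge) / mean(interior)


# Synthetic 96-well example: outer wells read 10 % low.
grid = [[90.0 if r in (0, 7) or c in (0, 11) else 100.0 for c in range(12)]
        for r in range(8)]
print(round(edge_interior_ratio(grid), 3))  # 0.9
```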

Practical Protocol: Implementing a Checkerboard Control Layout

The following workflow details the steps for creating a checkerboard layout for a 96-well plate cell-based assay.

Materials:

- 96-well microtiter plate

- Positive control solution (e.g., 100% cell viability control)

- Negative control solution (e.g., 0% viability/background control)

- Experimental samples

- Cell suspension

- Assay reagents

- Multichannel pipette or automated liquid handler

Methodology:

- Pattern Definition: Designate control wells in a checkerboard pattern across the whole plate. For instance, wells where the sum of the row and column indices is even (e.g., A1, A3, B2, B4) serve as controls, and wells where the sum is odd (e.g., A2, A4, B1, B3) are reserved for experimental samples. Alternate positive and negative controls among the control wells so that both are distributed across every region of the plate.

- Dispense Controls: Using a multichannel pipette, dispense the predetermined volume of negative and positive control solutions into their respective wells according to the checkerboard pattern.

- Dispense Samples: Dispense the experimental samples into all remaining wells not occupied by controls.

- Add Cells and Reagents: Add a uniform volume of cell suspension to every well on the plate, ensuring consistent cell density across the entire array.

- Assay Execution: Add assay reagents as required by the specific protocol (e.g., single-step "add and read" reagents to minimize handling) [31]. Incubate the plate under the required conditions.

- Data Acquisition and Analysis: Read the assay signal using a plate reader. During data analysis, use the signal from the interspersed control wells to model and correct for spatial gradients across the plate, normalizing the experimental sample data.
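The gradient-correction step can be sketched in Python with NumPy. This is one possible implementation under stated assumptions, not a standard protocol: controls occupy the wells whose 1-based row + column sum is even, negatives sit in rows A/C/E/G and positives in rows B/D/F/H, and the spatial gradient is modeled as a plane (linear in row and column) fitted separately to each control type by least squares. Each well is then expressed as a percentage of its local control window. The demo data are synthetic and exist only to show that a linear drift is removed.

```python
import numpy as np

N_ROWS, N_COLS = 8, 12  # 96-well plate


def checkerboard_roles():
    """Return an 8 x 12 array of well roles: 'NEG', 'POS' or 'SAMPLE'.

    Assumed rule: even (row + column) sum -> control, with negatives in rows
    A/C/E/G and positives in rows B/D/F/H; odd sum -> sample.
    """
    roles = np.full((N_ROWS, N_COLS), "SAMPLE")
    for r in range(1, N_ROWS + 1):
        for c in range(1, N_COLS + 1):
            if (r + c) % 2 == 0:
                roles[r - 1, c - 1] = "NEG" if r % 2 == 1 else "POS"
    return roles


def fit_plane(signal, mask):
    """Least-squares fit of signal ~ b0 + b1*row + b2*col over the masked
    wells, evaluated at every well position."""
    rows, cols = np.nonzero(mask)
    design = np.column_stack([np.ones(rows.size), rows, cols])
    coef, *_ = np.linalg.lstsq(design, signal[mask], rcond=None)
    rr, cc = np.indices(signal.shape)
    return coef[0] + coef[1] * rr + coef[2] * cc


def percent_of_control(signal, roles):
    """Scale each well to 0 % (local negative) .. 100 % (local positive)."""
    neg = fit_plane(signal, roles == "NEG")
    pos = fit_plane(signal, roles == "POS")
    return 100.0 * (signal - neg) / (pos - neg)


# Synthetic plate: both controls drift linearly; samples sit halfway between.
rr, cc = np.indices((N_ROWS, N_COLS))
neg_true = 100 + 5 * rr + 2 * cc
pos_true = 1000 + 20 * rr - 10 * cc
roles = checkerboard_roles()
signal = np.where(roles == "NEG", neg_true,
                  np.where(roles == "POS", pos_true, (neg_true + pos_true) / 2))
pct = percent_of_control(signal, roles)
print(pct[roles == "SAMPLE"].round(1))  # every sample at 50.0 despite the drift
```

A plane cannot capture curved or edge-only artifacts; adding quadratic terms or using a median-polish (B-score) correction are common alternatives when the control wells show such patterns.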

Strategic Reagent Selection for High-Throughput Experimentation

Reagent selection is not merely a logistical task; it is an experimental design choice that directly impacts data quality, interpretability, and the feasibility of scale-up.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential materials and reagents for high-throughput experimentation, with their primary functions.

| Reagent / Material | Function in HTE | Key Considerations |
|---|---|---|
| Assay Ready Plates (ARPs) | Microplates pre-dispensed with compounds, enabling high-throughput screening of large chemical libraries [31]. | Streamlines workflow, reduces plate-handling errors, and improves assay robustness. |
| Process Analytical Technology (PAT) | Inline or real-time analytical tools (e.g., flow NMR, IR) integrated into flow chemistry systems [29]. | Provides immediate feedback on reaction progress, enabling rapid optimization and high-throughput kinetic studies. |
| Positive & Negative Controls | Benchmarks for defining the upper and lower limits of the assay signal, enabling data normalization and quality control. | Must be biologically and chemically relevant to the experimental system. Should be distributed throughout the plate. |
| Design of Experiments (DoE) Reagents | A curated set of reagents (catalysts, bases, ligands) selected to systematically explore a chemical reaction space [29]. | Moves beyond "one-variable-at-a-time" screening to efficiently model interactions and identify optimal conditions. |
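To illustrate how a designed subset replaces exhaustive screening, the Python sketch below builds a two-level half-fraction factorial, 2^(4-1), for four hypothetical reagent factors: a full factorial in the first three factors, with the fourth set by the generator D = ABC. This halves 16 runs to 8 while keeping every main-effect column balanced and mutually orthogonal. Real campaigns with multi-level categorical choices, such as the 4 photocatalysts × 3 bases × 3 solvents example in the protocol that follows, need mixed-level or optimal (e.g., D-optimal) designs generated by DoE software; this two-level case only shows the principle.

```python
from itertools import product


def fractional_factorial_2_4_1():
    """Half-fraction 2^(4-1) design with generator D = A*B*C.

    Levels are coded -1 / +1. The defining relation I = ABCD gives
    resolution IV: main effects are not aliased with each other or with
    two-factor interactions, but two-factor interactions are aliased in pairs.
    """
    return [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]


# Hypothetical two-level choices, e.g. catalyst X vs Y, base P vs Q, ...
FACTORS = ("catalyst", "base", "ligand", "solvent")
design = fractional_factorial_2_4_1()
for run, levels in enumerate(design, start=1):
    print(run, dict(zip(FACTORS, levels)))
```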

Protocol: Reagent Screening for Reaction Optimization using a DoE Approach

This protocol outlines a systematic approach to reagent selection for optimizing a chemical reaction, moving beyond simple one-variable screening.

Materials:

- Automated liquid handling system

- Source plates containing stock solutions of candidate reagents (e.g., catalysts, bases, solvents)

- Assay Ready Plates (for batch) or a flow chemistry system with automated reagent injection [29]

- Design of Experiments (DoE) software

Methodology:

- Define Objective and Variables: Clearly state the optimization goal (e.g., maximize yield, minimize impurities). Identify the critical reagent variables to test (e.g., 4 photocatalysts, 3 bases, 3 solvents) [29].

- Reagent Selection: Choose a diverse set of candidate reagents for each variable category based on literature and chemical intuition.

- Design Experimental Matrix: Instead of testing all possible combinations (a full factorial design; 4 × 3 × 3 = 36 runs in the example above), use DoE software to generate a fractional factorial or response surface methodology design. This approach strategically selects a subset of conditions to efficiently probe the experimental space and model variable interactions.