Combinatorial Materials Science: A High-Throughput Methodology for Accelerating Discovery in Biomedicine and Beyond

Abstract

This article provides a comprehensive overview of combinatorial materials science (CMS), a high-throughput research paradigm that accelerates the discovery and optimization of new materials. Initially pioneered by the pharmaceutical industry, CMS utilizes parallel synthesis and rapid screening of large materials libraries to efficiently navigate vast compositional and processing spaces. We explore the foundational principles of CMS, detailing key methodological approaches like thin-film materials libraries and codeposited composition spreads. The article further examines its transformative applications in energy, electronics, and the critical development of new catalysts and biomaterials. Finally, we discuss the integration of CMS with advanced data science, machine learning, and AI to overcome combinatorial challenges and outline its future implications for driving innovation in biomedical and clinical research.

From Pharmaceuticals to Materials: The Origins and Core Principles of Combinatorial Science

Combinatorial technology, a paradigm that has fundamentally reshaped modern research and development, did not emerge from a vacuum. Its origins are deeply rooted in the pressing needs of the pharmaceutical industry of the late 20th century. Confronted with the painstakingly slow and labor-intensive process of traditional step-by-step compound synthesis, the industry required a radical approach to accelerate drug discovery [1]. The core idea was to shift from synthesizing and testing single compounds to systematically creating and screening immense molecular libraries containing thousands to millions of organic compounds in a single process [2] [3]. This paradigm change was pioneered by researchers like Bruce Merrifield, who investigated solid-phase synthesis of peptides in the 1960s, and later by Árpád Furka, who devised the seminal "split and mix" approach in the 1980s [2] [3]. The subsequent development of parallel synthesis techniques by scientists such as Mario Geysen, and the groundbreaking work on peptide arrays by Fodor et al., laid the foundational methodology that would not only revolutionize pharmaceutical research but also seed a technological revolution that would eventually permeate materials science [3]. This article traces the journey of combinatorial technology from its pharmaceutical origins to its current status as a cross-disciplinary powerhouse.

Core Pharmaceutical Combinatorial Methodologies

The power of combinatorial chemistry in drug discovery stems from its innovative synthetic and screening methodologies, which were specifically designed to navigate the vast landscape of potential drug molecules with unprecedented efficiency.

The Split and Mix (Split-Pool) Synthesis

Developed as a highly efficient method for generating vast libraries of compounds, the split and mix synthesis is a cornerstone of combinatorial technology [3]. This solid-phase technique involves a cyclic process of dividing solid support beads into equal portions, coupling a different amino acid or building block to each portion, and then recombining and mixing all portions before the next cycle.

- Exponential Library Growth: The number of compounds formed increases exponentially with each synthetic cycle. For instance, using 20 amino acids, a single cycle produces 20 bead-bound amino acids, two cycles yield 400 (20²) dipeptides, three cycles generate 8,000 (20³) tripeptides, and four cycles create 160,000 (20⁴) tetrapeptides [3].

- Key Feature - One-Bead-One-Compound: A critical feature of this method is that only a single peptide sequence forms on each bead of the solid support. This isolation is a consequence of using only one amino acid per coupling step, making individual beads discrete, assayable entities [3].

- Advantages and Limitations: While this method is exceptionally efficient at generating diverse libraries, a significant limitation is that the identity of the compound on any given bead is initially unknown. This necessitated the development of encoding and deconvolution strategies to identify active compounds.
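The split-and-mix cycle and its exponential library growth can be sketched in a few lines of Python. This is a minimal illustration, not a model of real resin chemistry: the function names `library_size` and `split_and_mix`, the round-robin way of splitting beads into equal portions, and the single-letter building blocks are all assumptions made for the example.

```python
import random


def library_size(n_building_blocks: int, n_cycles: int) -> int:
    """Number of distinct sequences possible after n_cycles of split-and-mix."""
    return n_building_blocks ** n_cycles


def split_and_mix(building_blocks, n_cycles, n_beads, seed=0):
    """Simulate split-and-mix synthesis (hypothetical helper).

    Each bead is a list holding the one sequence growing on it, so every
    bead ends up carrying exactly one compound.
    """
    rng = random.Random(seed)
    beads = [[] for _ in range(n_beads)]
    for _ in range(n_cycles):
        rng.shuffle(beads)  # mix: pool all beads and randomize their order
        for i, bead in enumerate(beads):
            # split: round-robin assignment gives (nearly) equal portions;
            # couple: each portion receives exactly one building block
            bead.append(building_blocks[i % len(building_blocks)])
    return ["".join(bead) for bead in beads]


if __name__ == "__main__":
    for cycles in range(1, 5):
        print(cycles, library_size(20, cycles))  # 20, 400, 8000, 160000
    print(split_and_mix("ACD", n_cycles=2, n_beads=6))
```

Because each bead sees only one building block per cycle, the simulation reproduces the one-bead-one-compound property. It also shows the limitation noted above: the function returns sequences only because the simulation tracks them, whereas on a real bead the identity must be recovered by sequencing or decoding.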

Parallel Synthesis and Automation

In contrast to the mix-and-split method, parallel synthesis was developed to generate arrays of compounds where the identity of each compound is known and tracked throughout the process [3]. Mario Geysen and his colleagues pioneered this approach by synthesizing 96 peptides simultaneously on plastic rods (pins) coated with solid support, which were immersed into solutions of reagents placed in the wells of a microtiter plate [3]. Although slower than the true combinatorial split-and-mix method, its principal advantage is the exact knowledge of which compound forms at each discrete location. The drive for efficiency in parallel synthesis led to early automation, notably at Parke-Davis Pharmaceutical Research, where scientist Anthony Czarnik directed research that produced the first use of automation in synthesizing compound libraries and the first commercially available equipment for combinatorial chemistry (the Diversomer synthesizer) [3]. This integration of robotics and liquid handling marked a critical step in industrializing the discovery process, enabling companies to routinely produce over 100,000 new and unique compounds per year [3].

DNA-Encoded Libraries

A more recent and powerful innovation that revitalized combinatorial technology is the development of DNA-encoded libraries (DELs) [2]. This approach merges combinatorial synthetic chemistry with molecular biology. In DELs, each small molecule in a library is covalently tagged with a unique DNA oligonucleotide that serves as a barcode recording its synthetic history. The immense power of this technology lies in the ability to use affinity-based selection against a protein target to pull out active compounds from a pool of billions, and then identify them through amplification (e.g., PCR) and decoding of their DNA barcodes via next-generation sequencing [2]. This innovation makes it possible to screen billions of compounds in a single process, a scale that was unimaginable with traditional high-throughput screening.
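The decoding step can be illustrated with a short sketch that counts barcodes in sequencing reads and maps them back to library members. It assumes, purely for illustration, that the barcode sits at the start of each read, that matching is exact (no error correction), and that a lookup table from barcode to compound label exists; the function name `decode_selection`, the barcodes, and the compound labels are hypothetical.

```python
from collections import Counter


def decode_selection(reads, barcode_to_compound, barcode_length):
    """Count how often each library member appears among sequencing reads.

    reads: iterable of DNA strings recovered after affinity selection.
    barcode_to_compound: dict mapping barcode string -> compound label.
    Reads whose barcode is not in the table are ignored.
    """
    counts = Counter()
    for read in reads:
        barcode = read[:barcode_length]  # assumption: barcode is the read prefix
        compound = barcode_to_compound.get(barcode)
        if compound is not None:
            counts[compound] += 1
    # Most frequently recovered compounds first
    return counts.most_common()


if __name__ == "__main__":
    table = {"ACGT": "compound-1", "TTTT": "compound-2"}  # hypothetical labels
    reads = ["ACGTAAAA", "ACGTCCCC", "TTTTGGGG", "GGGGAAAA"]
    print(decode_selection(reads, table, barcode_length=4))
```

In practice, read counts after selection are usually compared against counts in the unselected library to estimate enrichment; that normalization is omitted here to keep the sketch short.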

Table 1: Evolution of Key Combinatorial Synthesis Methodologies in Pharmaceuticals

| Methodology | Key Innovator(s)/Pioneers | Time Period | Key Advantage | Typical Library Scale |
|---|---|---|---|---|
| Solid-Phase Synthesis | Bruce Merrifield | 1960s | Simplified purification; reactions driven to completion | Single compounds |
| Split and Mix Synthesis | Árpád Furka | 1980s | Exponential compound generation; one-bead-one-compound | Millions of compounds |
| Parallel Synthesis | Mario Geysen | 1980s | Known compound identity at each location | 100s - 10,000s of compounds |
| DNA-Encoding | Multiple groups | 2000s+ | Ultra-high-throughput screening via barcode sequencing | Billions of compounds |

The Paradigm Shift: Migration to Materials Science

The remarkable success of combinatorial methodologies in accelerating pharmaceutical discovery did not go unnoticed in other scientific fields. By the 1990s, the paradigm began a deliberate migration to materials science, a field facing a similar challenge of exploring an almost limitless compositional space for new functional materials [4]. This transition required adapting solution-based molecular synthesis techniques to suit the synthesis of solid-state electronic, magnetic, optical, and structural materials [5].

The core principles remained identical: the high-speed synthesis of "libraries" containing numerous different material compositions, followed by high-throughput screening to identify candidates with desirable properties [4]. In materials science, a "library" often takes the form of a thin film with continuous composition gradients, fabricated using techniques like co-sputtering or multilayer deposition from multiple sources [6]. This allows a single sample to encompass an entire binary or ternary phase diagram. The subsequent high-throughput characterization employs automated, rapid measurement schemes, often using spatially resolved techniques like scanning probe microscopy, to generate massive, uniform datasets mapping composition to properties [5] [7]. This systematic exploration of composition-property relationships directly targets the "extremely high cost and long development times for new materials" [5]. The migration of this paradigm has since enabled discoveries in areas ranging from luminescent materials and catalysts to lead-free ferroelectrics and energy-related materials [4] [8].

Experimental Protocols: From Molecules to Materials

The following workflows illustrate the core experimental processes in both the pharmaceutical and materials science domains, highlighting their conceptual similarities.

Protocol 1: Pharmaceutical Drug Candidate Screening

This protocol details the classic split-and-pool method for identifying a bioactive peptide lead, a foundational workflow in early combinatorial drug discovery.

Library Synthesis (Split-and-Pool Cycle)

- Step 1 - Divide: The solid support resin (e.g., polystyrene beads) is divided into equal portions corresponding to the number of building blocks (e.g., 20 amino acids) for the first coupling cycle.

- Step 2 - Couple: Each portion of resin is reacted with a single, unique amino acid. Reactions use standard solid-phase peptide coupling reagents.

- Step 3 - Mix and Wash: All portions of the resin are combined, thoroughly mixed, and washed to remove excess reagents.

- Step 4 - Repeat: The divide-couple-mix cycle is repeated for the desired number of iterations to achieve the target peptide chain length. After n cycles, the library contains 20^n unique peptides.

High-Throughput Screening

- The library of resin beads is exposed to a solution of a purified, labeled protein target (e.g., an enzyme with a fluorescent tag).

- The mixture is incubated, allowing the binding interaction between the protein and bioactive peptides on specific beads.

- Unbound protein is washed away. Beads that display binding (e.g., through fluorescence) are physically isolated under a microscope.

Lead Identification

- Method A (Edman Degradation): The peptide sequence on a single active bead is determined sequentially using Edman degradation.

- Method B (Tag Decoding): For libraries synthesized with chemical or radiofrequency tags, the tag is chemically cleaved and decoded to reveal the peptide's identity.

- Method C (DNA Sequencing): For DNA-encoded libraries, the DNA barcode is amplified via PCR and sequenced to determine the structure of the attached small molecule [2].

Protocol 2: Combinatorial Materials Library Investigation

This protocol describes the synthesis and screening of a thin-film materials library for discovering a novel electronic material, such as a lead-free ferroelectric [8].

Combinatorial Library Fabrication

- Method A (Co-sputtering): Multiple magnetron sputtering targets of different compositions (e.g., targets supplying the BCT and BZT end members) are operated simultaneously. Computer-controlled movable shutters placed in front of the substrate can be used to create well-defined thickness and composition gradients across it [6].

- Method B (Wedge-Type Multilayer Deposition): Nanoscale wedge-shaped layers of each precursor material are deposited sequentially, oriented at angles (e.g., 120° for ternaries). The resulting precursor structure is then subjected to a post-deposition annealing step at a precisely controlled temperature to induce interdiffusion and phase formation [6].

High-Throughput Characterization

- Structural Analysis: The library is scanned using automated X-ray diffraction (XRD) to rapidly determine the crystal structure and phase composition at hundreds of discrete points.

- Functional Property Mapping: Automated scanning probe microscopy techniques, such as Piezoresponse Force Microscopy (PFM) for ferroelectrics, are used to map functional properties like piezoelectric response with high spatial resolution across the library [7].

- Compositional Verification: Techniques like X-ray fluorescence (XRF) or electron microprobe analysis are used to correlate the measured properties with the exact chemical composition at each characterized point.

Data Analysis & Lead Identification

- The structural, functional, and compositional data are integrated into a multidimensional database.

- Data analysis and visualization tools are used to generate "property phase diagrams" that reveal the composition(s) with optimal target properties (e.g., highest ferroelectric polarization) [6].

- These "hit" compositions are then selected for larger-scale synthesis and more detailed validation.
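The data integration and hit selection steps above can be sketched as a join of per-point measurements followed by a ranking. This is an illustrative sketch only: the function name `find_hits`, the dictionary-based data layout keyed by measurement-point id, and the optional phase filter are assumptions, not the workflow of any specific laboratory.

```python
def find_hits(composition, structure, response, top_n=3, required_phase=None):
    """Merge per-point datasets and return the top-ranked measurement points.

    composition, structure, response: dicts keyed by measurement-point id,
    holding the local composition, the identified phase label, and the
    measured functional response (higher is assumed to be better).
    Only points present in all three datasets are considered.
    """
    shared = composition.keys() & structure.keys() & response.keys()
    records = [
        {
            "point": p,
            "composition": composition[p],
            "phase": structure[p],
            "response": response[p],
        }
        for p in shared
        if required_phase is None or structure[p] == required_phase
    ]
    # Rank by functional response, best first
    records.sort(key=lambda r: r["response"], reverse=True)
    return records[:top_n]


if __name__ == "__main__":
    # Placeholder values for illustration only, not measured data
    composition = {1: {"A": 0.2}, 2: {"A": 0.5}, 3: {"A": 0.8}}
    structure = {1: "alpha", 2: "beta", 3: "beta"}
    response = {1: 9.0, 2: 5.0, 3: 7.0}
    print(find_hits(composition, structure, response, top_n=2))
```

Restricting the ranking to a single phase, via `required_phase`, is one simple way to separate composition effects from phase effects before committing to larger-scale synthesis.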

The Scientist's Toolkit: Essential Reagents and Materials

The implementation of combinatorial technology across disciplines relies on a specialized set of tools and materials.

Table 2: Key Research Reagent Solutions in Combinatorial Technology

| Item | Function/Description | Pharmaceutical Application | Materials Science Application |
|---|---|---|---|
| Solid Support (Resin Beads) | Insoluble polymer (e.g., polystyrene) for anchoring molecules during synthesis, enabling easy filtration and washing. | Peptide and small molecule synthesis via split-and-pool and parallel methods [3]. | Not typically used. |
| Building Blocks | Diverse sets of molecular or atomic precursors that form the core structure of the library members. | Amino acids, nucleotides, and small organic molecules for creating chemical diversity [1]. | Pure elemental targets (e.g., Mg, Ca, Ti, Zr) or pre-alloyed sputtering targets for thin-film deposition [6]. |
| DNA Oligonucleotides | Short DNA sequences used as unique, amplifiable barcodes attached to each molecule in a library. | Encoding and deconvoluting ultra-large small-molecule libraries (DNA-encoded libraries) [2]. | Not typically used. |
| Sputtering Targets | High-purity solid materials used as sources for deposition in physical vapor deposition systems. | Not typically used. | Source of atoms for creating composition-spread thin-film libraries via co-sputtering [6] [8]. |
| Microtiter Plates | Plastic plates with an array of wells (e.g., 96, 384) used as reaction vessels. | Parallel synthesis and high-throughput biological screening [3]. | Used in some solution-based nanoparticle synthesis libraries. |
| Encoding Tags (RFID/Chemical) | Tags that record a compound's synthetic history without interfering with screening. | Radiofrequency tags or chemical molecular tags used in encoded synthesis to track identity on a single bead [3]. | Not typically used. |

Quantitative Impact: A Tale of Two Fields

The quantitative impact of combinatorial technology is profound, dramatically accelerating the exploration of chemical and compositional space in both pharmaceuticals and materials science.

Table 3: Quantitative Impact of Combinatorial Technology Across Disciplines

| Metric | Pre-Combinatorial Paradigm | Combinatorial Paradigm | Key Enabling Technologies |
|---|---|---|---|
| Library/Sample Throughput | Single compounds synthesized sequentially [1]. | Millions of compounds in a single process (pharma); complete ternary systems in one library (materials) [2] [6]. | Split-and-pool synthesis; DNA-encoding; magnetron co-sputtering. |
| Screening Throughput | Assaying 10s-100s of compounds per week. | Screening billions of DNA-encoded compounds in a single affinity selection [2]. | Next-generation sequencing; automated high-throughput screening robotics; spatially resolved characterization (e.g., PFM). |
| Discovery Timeline | Years to decades for new drug leads or materials. | Rapid discovery and optimization cycles, e.g., expedited synthesis of lead-free ferroelectric systems [8]. | Integrated workflows combining combinatorial synthesis, high-throughput characterization, and data informatics. |
| Data Generation | Limited, manually curated datasets. | Massive, multidimensional datasets linking composition, structure, and properties [6]. | Laboratory Information Management Systems (LIMS); automated data analysis pipelines. |

The journey of combinatorial technology from a pharmaceutical-specific solution to a general research methodology represents a genuine paradigm shift. What began with the synthesis of peptide libraries on solid supports has evolved into a suite of technologies capable of navigating the immense complexity of both molecular and materials space. The core principles of creating diversity, parallel processing, and high-throughput screening, first developed for drug discovery, have proven broadly applicable. This migration has not only accelerated the development of new functional materials for electronics, energy, and catalysis but has also created a feedback loop in which advances in one field, such as DNA-encoding in biology, inspire new directions in others. As combinatorial technology matures and becomes more tightly integrated with computational prediction and artificial intelligence, its pharmaceutical origin remains a clear example of how tools developed for one scientific challenge can transform discovery in other disciplines.

Combinatorial Materials Science (CMS) represents a fundamental paradigm shift in the discovery and development of new materials, moving away from traditional one-sample-at-a-time approaches toward the parallel synthesis and high-throughput characterization of large, systematically varied materials libraries [9] [10]. This methodology, pioneered by the pharmaceutical industry for drug discovery, has been widely adopted across materials science to compress research cycles that traditionally spanned decades into months or weeks [11] [10]. At its core, CMS involves creating "materials libraries" (well-defined sets of materials synthesized under identical conditions but with systematic variations in composition or processing parameters), followed by rapid, automated characterization to establish composition-structure-property relationships across vast multidimensional search spaces [6] [5].

The historical context of materials discovery reveals a transition from serendipitous findings, such as the accidental discovery of shape memory alloy NiTi, toward increasingly systematic, data-guided approaches [6]. This shift is driven by the recognition that the possible combinations of chemical elements in multinary systems are immense – with more than two million possible combinations for quinaries alone when starting from 50 earth-abundant elements [6]. Faced with this nearly unlimited search space, CMS offers a structured methodology to efficiently explore composition spaces that would be practically inaccessible through traditional methods, thereby increasing the probability of discovering breakthrough materials with unprecedented properties [12] [6].
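The size of this search space follows from a simple binomial count: the number of ways to choose k elements from a pool of n, ignoring composition ratios within each system. The short sketch below reproduces the figure quoted for quinaries; the function name `count_systems` is an assumption made for the example.

```python
import math


def count_systems(n_elements: int, k: int) -> int:
    """Number of distinct k-element systems drawn from n_elements (order ignored)."""
    return math.comb(n_elements, k)


if __name__ == "__main__":
    labels = {2: "binary", 3: "ternary", 4: "quaternary", 5: "quinary"}
    for k, label in labels.items():
        print(f"{label}: {count_systems(50, k):,}")
    # binary: 1,225
    # ternary: 19,600
    # quaternary: 230,300
    # quinary: 2,118,760
```

These counts cover only which elements are combined. Each system additionally spans a continuous range of compositions and processing conditions, which is the part of the space that a composition-spread library samples in a single experiment.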

Core Methodology and Workflow

The combinatorial approach fundamentally restructures the materials research pipeline from a linear, sequential process to an integrated, cyclical workflow centered on materials libraries. This comprehensive framework enables researchers to explore immense compositional landscapes with unprecedented efficiency.

Combinatorial Synthesis of Materials Libraries

Combinatorial synthesis techniques enable the efficient fabrication of materials libraries containing hundreds to thousands of discrete compositions in a single experiment. The two primary approaches for creating thin-film materials libraries are codeposited composition spreads and wedge-type multilayer deposition:

Codeposited Composition Spread (CCS): This versatile method utilizes physical vapor deposition from multiple spatially separated sources to create thin films with inherent composition gradients across a substrate [12]. In a single experiment with three sources, an entire ternary phase diagram can be produced with composition resolution often approaching 1 atomic percent per millimeter [12]. The CCS approach allows preparation of materials with minimal subsequent processing, making it suitable for discovering low-temperature or metastable phases [12].

Wedge-Type Multilayer Deposition: This alternative method employs computer-controlled movable shutters to deposit nanoscale layers oriented at specific angles (180° for binaries, 120° for ternaries) [6]. Subsequent annealing at optimized temperatures enables interdiffusion and phase formation through solid-state reactions, transforming the multilayer precursor into functional materials phases [6].

Sputtering has emerged as a particularly versatile technique for combinatorial synthesis due to its constant deposition rates, minimal source interactions, and ability to deposit diverse material classes including metals, oxides, nitrides, and carbides [12]. However, researchers must consider limitations including difficulty in adjusting composition gradients and challenges with highly reactive target materials [12].
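To make the idea of an inherent composition gradient concrete, the sketch below estimates the local composition of a codeposited spread from three spatially separated sources. It rests on strong simplifying assumptions that are not taken from the cited work: each source is treated as a point emitter whose flux falls off with the inverse square of the distance to the substrate point, fluxes are converted directly into atomic fractions, and the element labels, source positions, and the function name `composition_at` are hypothetical.

```python
def composition_at(x, y, sources, height=100.0):
    """Estimate atomic fractions at substrate position (x, y), in mm.

    sources: dict mapping element label -> (source_x, source_y, relative_rate).
    height: assumed vertical source-to-substrate distance in mm.
    Simplified model: flux from each source ~ rate / distance**2.
    """
    flux = {}
    for element, (sx, sy, rate) in sources.items():
        distance_sq = (x - sx) ** 2 + (y - sy) ** 2 + height ** 2
        flux[element] = rate / distance_sq
    total = sum(flux.values())
    # Normalize so that the fractions sum to 1 at every point
    return {element: f / total for element, f in flux.items()}


if __name__ == "__main__":
    # Three hypothetical sources arranged at 120 degree intervals
    sources = {
        "A": (0.0, 50.0, 1.0),
        "B": (-43.3, -25.0, 1.0),
        "C": (43.3, -25.0, 1.0),
    }
    for point in [(0.0, 0.0), (0.0, 40.0), (-30.0, -20.0)]:
        fractions = composition_at(*point, sources)
        print(point, {el: round(f, 3) for el, f in fractions.items()})
```

Real deposition profiles depend on target geometry, emission angle, pressure, and the sputter yield of each material, so measured compositions (for example by XRF) remain the reference; a model of this kind is mainly useful for planning where on the substrate a composition of interest is likely to fall.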

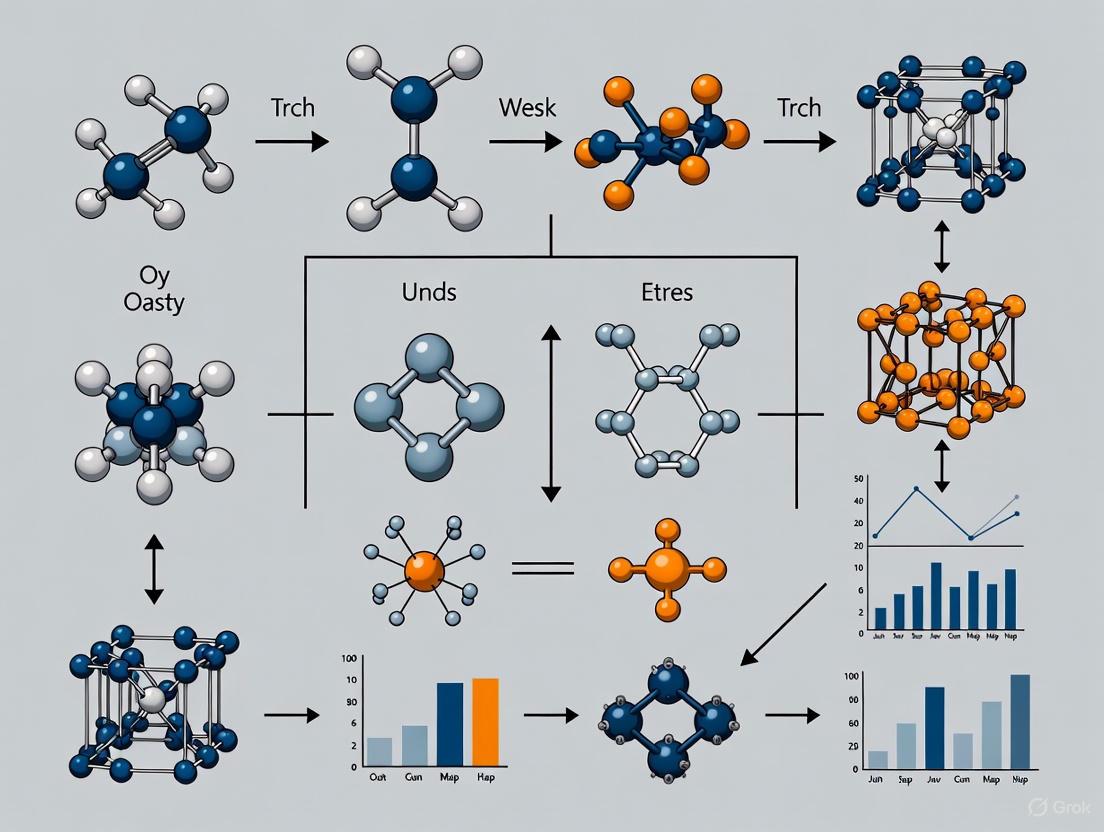

Figure 1: Combinatorial Materials Science Workflow. This integrated, cyclical process enables rapid iteration between synthesis, characterization, and analysis for accelerated materials discovery.

High-Throughput Characterization Techniques

The value of combinatorial synthesis is fully realized only when paired with equally sophisticated high-throughput characterization methods capable of rapidly determining structural and functional properties across materials libraries. Scanning probe microscopy (SPM) techniques have emerged as particularly powerful tools in this context, offering nanoscale to atomic-scale resolution in various environments [7]. These methods include:

- Piezoresponse Force Microscopy (PFM): Characterizes piezoelectric and ferroelectric materials by detecting electromechanical coupling [7].

- Electrochemical Strain Microscopy (ESM): Probes ionic diffusion and electrochemical processes at the nanoscale [7].

- Conductive Atomic Force Microscopy (C-AFM): Measures electrical transport properties with spatial resolution [7].

- Surface Photovoltage Measurements: Characterizes electronic properties and charge separation in photovoltaic materials [7].

For structural analysis, automated X-ray diffraction systems – particularly synchrotron-based approaches – can acquire hundreds of diffraction patterns across a single composition-spread substrate, enabling rapid phase identification and mapping of phase fields [12]. The integration of these characterization techniques into automated workflows represents a crucial advancement, with SPM positioned to play an increasingly important role in closing the loop from material prediction and synthesis to characterization [7].

Data Management and Analysis

The combinatorial approach generates multidimensional datasets that require sophisticated data management and analysis strategies. The transition to data-driven materials science represents what many consider the fourth scientific paradigm, following experimentally, theoretically, and computationally propelled eras [13]. This new paradigm is characterized by:

- Materials Informatics: Systematic extraction of knowledge from materials datasets, frequently employing machine learning to identify hidden correlations and patterns [13].

- Database Development: Creation of structured repositories for compositional, structural, and functional properties data [6] [13].

- Visualization Tools: Development of multifunctional existence diagrams that correlate composition, processing, structure, and properties [6].

The emergence of the Open Science movement has significantly influenced data-driven materials science, with increasing mandates for open access to publicly funded research data accelerating the development of materials data infrastructures [13]. However, significant challenges remain in data veracity, integration of experimental and computational data, standardization, and data longevity [13].

Experimental Protocols and Implementation

Representative Protocol: Codeposited Composition Spread for Electrocatalyst Discovery

The discovery of improved electrocatalysts for polymer electrolyte membrane (PEM) fuel cells exemplifies the power of combinatorial methodologies. The following protocol details the identification of Pt-Ta catalysts with enhanced activity for methanol oxidation:

Library Fabrication: Create a binary Pt-Ta composition spread by cosputtering from separate Pt and Ta targets in an ultra-high vacuum system (base pressure: 10⁻⁹ to 10⁻⁸ Torr) [12] [14]. Deposit onto an appropriate substrate (e.g., silicon with native oxide) at room temperature to form an atomic mixture.

Structural Characterization: Perform high-throughput X-ray diffraction mapping across the composition spread using an automated diffractometer or synchrotron beamline. Acquire diffraction patterns at 1-2 mm intervals (roughly 1-2 at% in composition, assuming a gradient of about 1 at% per millimeter) to identify phase fields [12].

Functional Screening: Implement optical fluorescence-based screening for catalytic activity toward methanol oxidation. Measure the half-wave potential (E₁/₂) across the library, where lower values indicate greater catalytic activity [12].

Data Correlation: Correlate catalytic performance with structural data to identify composition-structure-property relationships. In the Pt-Ta system, this analysis revealed that optimal catalytic activity was strongly associated with the orthorhombic Pt₂Ta phase and was maximized at the composition Pt₀.₇₁Ta₀.₂₉ [12].

This integrated approach enabled researchers to efficiently map the relationship between composition and catalytic performance with high resolution, identifying an optimal composition that might have been overlooked in discrete sampling strategies [12].
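The data correlation step can be sketched as two small operations on the screening map: finding the point with the lowest half-wave potential and averaging the potential per identified phase. The function names, the tuple layout, the phase labels, and all numerical values below are placeholders for illustration; they are not the measured Pt-Ta data from [12].

```python
from collections import defaultdict
from statistics import mean


def best_point(points):
    """Return the (ta_fraction, phase, e_half) tuple with the lowest E1/2.

    Lower half-wave potential is taken to indicate higher catalytic activity.
    """
    return min(points, key=lambda p: p[2])


def mean_e_half_by_phase(points):
    """Average half-wave potential for each phase label found by XRD mapping."""
    by_phase = defaultdict(list)
    for _, phase, e_half in points:
        by_phase[phase].append(e_half)
    return {phase: mean(values) for phase, values in by_phase.items()}


if __name__ == "__main__":
    # Placeholder screening map: (Ta fraction, phase label, E1/2 in volts)
    points = [
        (0.10, "phase_X", 0.50),
        (0.20, "phase_X", 0.75),
        (0.30, "phase_Y", 0.25),
        (0.40, "phase_Y", 0.50),
    ]
    print(best_point(points))
    print(mean_e_half_by_phase(points))
```

Comparing the per-phase averages with the single best point helps distinguish an activity trend tied to a phase field from an isolated outlier, which is the kind of relationship the continuous composition spread is designed to expose.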

High-Throughput Electrochemical Characterization

Electrochemical methods are particularly well-suited for high-throughput characterization due to the ability to precisely control and automate voltage and current application [15]. Key implementations include:

- Parallel Electrode Arrangements: Multiple working electrode configurations enabling simultaneous electrochemical characterization of different compositions [15].

- Scanning Electrochemical Microscopy: Spatial mapping of electrochemical activity across materials libraries with microscopic resolution [15].

- Optical Screening Methods: Fluorescence-based assays for catalytic activity that provide semiquantitative data across composition spreads [12].

These high-throughput electrochemical methods have been successfully applied to diverse areas including battery development, electrocatalysis, corrosion protection, and sensor development [15].

Key Tools and Reagents for Combinatorial Materials Science

Table 4: Essential Research Reagent Solutions for Combinatorial Materials Science

| Category | Specific Examples | Function/Application | Technical Considerations |
|---|---|---|---|
| Sputtering Targets | Metals (Pt, Ta), Oxides (In₂O₃, ZnO), Nitrides | Source materials for thin-film deposition by physical vapor deposition | Purity (>99.9%), density, uniformity; reactive targets (alkali metals) require special handling [12] [14] |
| Process Gases | Ar (sputtering), O₂ (oxide formation), N₂ (nitrides), N₂/H₂ mixtures | Sputtering medium and reactive gas for compound formation | High purity (>99.999%), precise flow control for reactive sputtering [14] |
| Substrates | Silicon wafers, glass, specialized single crystals | Support for thin-film materials libraries | Thermal stability, surface finish, chemical compatibility; heating capabilities (<1000°C) often required [14] |
| Characterization Reagents | Fluorescent indicators for electrochemical screening | Functional assessment of catalytic activity and other properties | Chemical compatibility, sensitivity, stability under measurement conditions [12] |

Integration with Computational Methods and Future Perspectives

The full potential of combinatorial materials science is realized through integration with computational methods and emerging data science approaches. This convergence enables a more targeted exploration of the immense compositional space, moving from purely empirical screening toward predictive materials design.

Synergy with High-Throughput Computation

The combination of combinatorial experiments with computational screening creates a powerful feedback loop for accelerated materials discovery:

Hypothesis Generation: Computational methods (e.g., density functional theory) screen thousands of potential compositions to identify promising candidates for experimental investigation [6]. For example, researchers might start with 68,860 materials and computationally identify 43 promising photocathodes for CO₂ reduction [6].

Experimental Validation: Combinatorial synthesis rapidly tests computational predictions across focused composition ranges, providing experimental validation and identifying discrepancies [6].

Model Refinement: Experimental results from materials libraries provide high-quality data for refining computational models and improving their predictive accuracy [6].

This integrated approach was demonstrated in the discovery of novel nitrides, where DFT calculations predicted 21 promising ternary nitride semiconductors, with CaZn₂N₂ subsequently realized through high-pressure synthesis [6].

Emerging Frontiers and Challenges

As combinatorial materials science matures, several emerging frontiers and persistent challenges will shape its future development:

- Self-Driving Laboratories: The integration of automated synthesis, robotic characterization, and artificial intelligence decision-making promises to create fully autonomous materials discovery systems [7].

- Multifunctional Materials Optimization: Increasing focus on materials that perform multiple functions simultaneously, requiring characterization of diverse properties across composition spreads [15].

- Bridging the Industry-Academy Gap: While combinatorial methodologies have proven effective in informing commercial practice, they remain underutilized in industrial research and development, partly due to equipment costs and proprietary research barriers [5].

- Data Standardization and Longevity: Developing community standards for materials data representation and ensuring long-term accessibility of combinatorial datasets remain significant challenges [13].

Figure 2: Integration of Combinatorial and Computational Methods. This synergistic framework creates a closed-loop materials discovery ecosystem that leverages both experimental and computational approaches.

Combinatorial Materials Science has fundamentally transformed the approach to materials discovery and optimization, representing a definitive shift from serendipity-driven findings to systematic, data-guided exploration. By integrating high-throughput synthesis, automated characterization, and computational methods, CMS enables researchers to navigate the immense multidimensional search space of potential materials with unprecedented efficiency. This paradigm has already demonstrated significant successes across diverse applications including energy storage, electronic materials, and catalysis.

The future development of CMS will be shaped by increasing integration with artificial intelligence and machine learning, the emergence of self-driving laboratories, and ongoing efforts to address challenges in data standardization and industry adoption. As these trends converge, combinatorial methodologies will play an increasingly crucial role in accelerating the materials innovation pipeline from discovery to deployment, ultimately enabling the timely development of advanced materials needed to address pressing global challenges in sustainable energy and advanced technologies.

The discovery and development of next-generation functional materials are pivotal for addressing pressing global challenges in sustainable energy, microelectronics, and biomedical applications. The conventional Edisonian approach, characterized by sequential trial-and-error, is significantly outpaced by the combinatorial explosion of possible material compositions and structures. This whitepaper delineates a systematic framework for navigating this vast, multi-dimensional design space by integrating data-driven and physics-based methodologies. We detail a tripartite strategy encompassing knowledge extraction from dispersed literature, machine learning-enabled virtual screening, and adaptive design optimization to efficiently identify promising material candidates. The framework is contextualized within combinatorial materials science, providing researchers and drug development professionals with robust experimental protocols and analytical tools to accelerate the transition from materials discovery to commercial application.

The growing societal needs for sustainable energy and advanced computing technologies necessitate the development of functional materials with unprecedented properties. The design space for such materials, defined by chemical composition and atomic structure, is inherently high-dimensional and combinatorial. For instance, even among defect-free crystalline materials, the various configurations of different atoms within crystal structures can lead to a design space encompassing thousands to millions of possible candidates [16]. This vastness makes exhaustive exploration through traditional experimental or computational methods prohibitively expensive and time-consuming. Combinatorial materials science has emerged as a research paradigm to combat the high cost and long development times associated with new materials [5]. This methodology involves the synthesis of "library" samples containing vast materials variations and employs rapid, localized measurement schemes to generate massive, uniform data sets [5]. The core objective is to develop systematic strategies to navigate this complex search space efficiently, moving beyond serendipity toward rational materials design [16].

A Framework for Navigating the Design Space

To manage the complexity of the combinatorial design space, an integrated framework that couples data-driven and physics-based methods is essential. The following workflow outlines a systematic approach for materials design, from problem formulation to the identification of optimal candidates.

Figure 1: A systematic framework for combinatorial materials design, integrating data-driven and physics-based methods to efficiently navigate the high-dimensional search space [16].

Component 1: Knowledge Extraction from Dispersed Literature

The initial challenge in materials design is the scarcity and dispersity of relevant data. Prior findings are often reported across numerous publishers and scientific fields, creating a significant data acquisition bottleneck [16].

Text Mining Pipeline: Natural language processing (NLP) techniques are employed to automatically extract and organize critical information from the scientific literature. This information includes investigated material systems, key material descriptors, measured properties, and synthesis procedures [16]. This process transforms unstructured text into a structured, machine-readable database that serves as the foundation for all subsequent data-driven modeling.

Application to Metal-Insulator Transition (MIT) Materials: MIT materials are a promising class for next-generation memory devices. Applied to this class, the approach consolidated data on the fewer than 70 known materials, spread across the perovskite, spinel, and rutile families, into a unified knowledge base [16].

Component 2: Data-Driven Virtual Screening

Once an initial database is established, machine learning (ML) models are trained to predict the target properties of unseen materials, enabling rapid virtual screening of vast candidate spaces.

Model Training and Prediction: ML models, such as graph neural networks (e.g., CHGNet) or other surrogate models, learn the complex relationships between material descriptors (input) and material properties (output) from the extracted data [16]. Once trained, these models can inexpensively predict properties for millions of virtual candidates, bypassing costly simulations.

Design Space Reduction: The primary goal of virtual screening is to decompose the intractably large design space (often >10⁶ candidates) into smaller, promising material families comprising thousands or hundreds of candidates for further investigation [16]. This step is crucial for focusing resources on the most likely candidates for success.

Component 3: Adaptive Design Optimization

Within the identified promising material families, adaptive design optimization techniques are used to pinpoint the best-performing candidates with high efficiency.

Bayesian Optimization (BO): BO is a powerful strategy for global optimization of expensive black-box functions. It builds a probabilistic surrogate model of the property landscape and uses an acquisition function to strategically select the next most informative sample, balancing exploration and exploitation [16]. This approach significantly reduces the number of samples requiring computationally expensive evaluation.

Handling Mixed Variables: Materials design often involves a mix of categorical variables (e.g., element type, crystal system) and numerical variables (e.g., elemental fraction, temperature). Advanced, uncertainty-aware ML methods have been developed to extend BO's capability to handle these mixed-variable, disjoint design spaces effectively [16].
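The Bayesian optimization loop described above can be illustrated with a minimal sketch. It is not the method of [16]: it assumes a single numerical design variable, a unit-variance Gaussian-process surrogate with a squared-exponential kernel, and an expected-improvement acquisition function. The function `toy_property` is a hypothetical stand-in for an expensive measurement or simulation, and mixed categorical/numerical variables are not handled.

```python
import math
import numpy as np

def rbf_kernel(a, b, length_scale=0.1):
    """Squared-exponential kernel between two 1-D arrays of points."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def gp_posterior(x_train, y_train, x_query, jitter=1e-6):
    """Posterior mean and standard deviation of a unit-variance GP surrogate."""
    y_mean = y_train.mean()
    K = rbf_kernel(x_train, x_train) + jitter * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_query)
    solved = np.linalg.solve(K, K_s)  # K^-1 K_s
    mean = solved.T @ (y_train - y_mean) + y_mean
    var = 1.0 - np.sum(K_s * solved, axis=0)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best):
    """Expected improvement over the best value observed so far (maximization)."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def toy_property(x):
    """Hypothetical property landscape with one global and one local maximum."""
    return np.exp(-((x - 0.63) ** 2) / 0.02) + 0.3 * np.exp(-((x - 0.2) ** 2) / 0.01)

def bayesian_optimize(objective, candidates, initial_idx, n_iter=10):
    """Pick the next candidate by maximizing expected improvement, n_iter times."""
    sampled = list(initial_idx)
    values = [float(objective(candidates[i])) for i in sampled]
    for _ in range(n_iter):
        y_train = np.array(values)
        mean, std = gp_posterior(candidates[sampled], y_train, candidates)
        ei = expected_improvement(mean, std, y_train.max())
        ei[sampled] = -np.inf  # never re-evaluate a candidate
        nxt = int(np.argmax(ei))
        sampled.append(nxt)
        values.append(float(objective(candidates[nxt])))
    return candidates[sampled], np.array(values)

candidates = np.linspace(0.0, 1.0, 101)  # e.g., elemental fraction x
x_sampled, y_sampled = bayesian_optimize(toy_property, candidates, initial_idx=[0, 50, 100])
best = int(np.argmax(y_sampled))
print(f"best x = {x_sampled[best]:.2f}, property = {y_sampled[best]:.3f}, "
      f"evaluations = {len(x_sampled)} of {len(candidates)} candidates")
```

The point of the sketch is the bookkeeping: only 13 of the 101 candidates are ever evaluated, and each new evaluation is chosen where the surrogate predicts either a high value or high uncertainty.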

Experimental Protocols in Combinatorial Materials Science

The theoretical framework must be coupled with robust experimental protocols. The Codeposited Composition Spread (CCS) technique is a versatile method for high-throughput synthesis.

Codeposited Composition Spread (CCS) Synthesis

This method enables the creation of a continuous composition gradient of a thin-film material on a single substrate, allowing for the investigation of thousands of compositions in one experiment [17].

- Process: Thin films are deposited via physical vapor deposition (e.g., sputtering) onto a substrate simultaneously from two or more spatially separated sources. This produces a film with an inherent composition gradient and intimate mixing of the constituents. With three sources, an entire ternary phase diagram can be produced [17].

- Composition Resolution: The CCS approach allows properties to be determined with very fine composition resolution, often at 1 mol% intervals, limited only by the resolution of the property measurement technique itself [17].
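To make the "thousands of compositions" figure concrete, the short sketch below enumerates every ternary composition on a regular grid. The 1% step is taken from the resolution quoted above; the function name `ternary_grid` and the use of integer percentages are illustrative assumptions.

```python
def ternary_grid(step_percent=1):
    """All (a, b, c) compositions in percent, on a regular grid, with a + b + c = 100."""
    if 100 % step_percent != 0:
        raise ValueError("step_percent must divide 100")
    n = 100 // step_percent
    return [
        (i * step_percent, j * step_percent, (n - i - j) * step_percent)
        for i in range(n + 1)
        for j in range(n + 1 - i)
    ]

grid = ternary_grid(1)
print(len(grid))  # number of distinct compositions at 1% resolution
```

At a 1% step the grid holds (101 × 102) / 2 = 5,151 compositions, which is consistent with a single three-source spread covering thousands of compositions.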

Table 1: High-Throughput Synthesis Techniques in Combinatorial Materials Science

| Technique | Description | Key Advantage | Common Deposition Methods |

|---|---|---|---|

| Codeposited Composition Spread (CCS) | Simultaneous deposition from multiple sources creating a continuous gradient [17]. | Prepares materials with no subsequent processing; fine composition resolution [17]. | Sputtering, Evaporation, Pulsed-Laser Deposition (PLD) [17]. |

| Discrete Combinatorial Synthesis (DCS) | Sequential deposition of discrete precursor layers followed by diffusion/reaction [17]. | Can prepare arbitrary compositions with a large number of constituents [17]. | Various physical vapor deposition techniques. |

High-Throughput Characterization and Screening

Parallel synthesis must be matched with parallel characterization to realize the benefits of the combinatorial approach.

- Structural Analysis: Thin films from CCS are well-suited for high-throughput X-ray diffraction. Using automated data acquisition, hundreds of diffraction patterns can be collected on a single substrate to identify phase fields and crystal structures [17]. A key unsolved challenge is the automated clustering of diffraction patterns into contiguous phase fields.

- Functional Property Screening: For properties like electrocatalytic activity, optical screening methods have been developed. For instance, catalytic activity for methanol oxidation can be semi-quantitatively assessed using optical fluorescence, allowing for the rapid identification of active compositions [17].
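As a rough illustration of the phase-field problem mentioned under Structural Analysis, the sketch below flags candidate phase boundaries along a one-dimensional spread by comparing each diffraction pattern with its neighbor. This is a simple heuristic on synthetic data, not a solution to the clustering challenge: the peak positions, the peak shift per measurement point, and the 0.8 similarity threshold are all assumed values.

```python
import numpy as np

two_theta = np.linspace(20.0, 80.0, 601)  # diffraction angle axis, degrees

def synthetic_pattern(peak_positions, width=0.3):
    """Sum of Gaussian peaks; a toy stand-in for a measured diffraction pattern."""
    return sum(np.exp(-0.5 * ((two_theta - p) / width) ** 2) for p in peak_positions)

# Ten measurement points along a spread: hypothetical phase 1, then phase 2.
patterns = []
for i in range(10):
    shift = 0.02 * i  # small peak shift with composition (lattice expansion)
    peaks = (30.0 + shift, 50.0 + shift) if i < 5 else (35.0 + shift, 60.0 + shift)
    patterns.append(synthetic_pattern(peaks))
patterns = np.array(patterns)

def neighbor_similarity(patterns):
    """Cosine similarity between each pattern and the next one along the spread."""
    unit = patterns / np.linalg.norm(patterns, axis=1, keepdims=True)
    return np.sum(unit[:-1] * unit[1:], axis=1)

similarity = neighbor_similarity(patterns)
boundaries = np.where(similarity < 0.8)[0]  # index i marks a boundary between i and i+1
print(boundaries)
```

Real libraries are two-dimensional and contain mixed-phase regions, texture, and noise, so a neighbor comparison of this kind is at best a first pass before a proper clustering method is applied.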

The logical flow of a full combinatorial study, from library design to lead candidate validation, is outlined below.

Figure 2: The workflow for a high-throughput combinatorial materials study, from library synthesis to lead candidate identification [17].

The Scientist's Toolkit: Research Reagent Solutions

The successful execution of combinatorial experiments relies on a suite of essential materials and instruments.

Table 2: Essential Reagents and Materials for Combinatorial Research

| Item / Solution | Function / Purpose | Example Application |

|---|---|---|

| Sputtering Targets | High-purity sources for physical vapor deposition of thin films. | Creating composition spread libraries of metals, alloys, oxides (e.g., Pt-Ta system) [17]. |

| Structural Analogs | Well-characterized materials for instrument calibration & validation. | Ga₂O₃, SnO₂, ZnO, CdO, In₂O₃ for transparent conductivity studies [17]. |

| Precursor Inks/Solutions | For solution-based synthesis of material libraries. | Optimization of catalysts (polymerization, oxidation) & other functional materials [17]. |

| High-Throughput Characterization Tools | Automated systems for rapid property measurement. | Synchrotron XRD for phase identification; fluorescence for catalytic activity [17]. |

The framework presented provides a structured methodology for navigating the multi-dimensional search space of materials, significantly accelerating the discovery and optimization process. By integrating text mining, machine learning-based virtual screening, and adaptive design optimization, researchers can effectively decompose vast combinatorial problems into tractable tasks. The application of this approach to metal-insulator transition materials demonstrates its power in identifying promising new candidates for microelectronic devices [16]. Despite these advances, outstanding challenges remain, including materials data quality issues, the property-performance mismatch in real-world applications, and the need for robust algorithms for autonomous data analysis [16] [17]. Future progress in combinatorial materials science will be driven by the effective coupling of synthesis, characterization, and theory, as well as the ability to manage large, multi-format data sets—a core challenge highlighted by the Materials Genome Initiative [5]. As these methodologies mature and become more accessible, they are poised to become an indispensable tool for researchers and developers aiming to bring innovative materials to market.

Combinatorial materials science accelerates the discovery and optimization of novel materials by integrating three core methodologies: the construction of systematic Materials Libraries, their efficient production via High-Throughput Synthesis, and subsequent evaluation through Rapid Screening. This paradigm shift from traditional sequential experimentation enables the mapping of complex composition-structure-property relationships at an unprecedented pace, which is critical for applications in catalysis, energy storage, and pharmaceutical development.

Materials Libraries: The Foundation of Exploration

A materials library is a deliberately designed collection of samples where composition, processing parameters, or structure are systematically varied. Their design is governed by the experimental goal, such as identifying a novel catalyst or optimizing a polymer blend.

Table 1: Common Materials Library Design Strategies

| Library Type | Description | Key Variables | Typical Application |

|---|---|---|---|

| Discrete Composition Spread | Individual samples with distinct, pre-defined compositions. | Elemental ratios (e.g., A_xB_yC_z). | Alloy hardening, catalyst discovery. |

| Continuous Gradient | A single sample with a continuous variation in a property (e.g., composition, thickness). | Composition, thickness, annealing temperature. | Phase diagram mapping, thin-film optimization. |

| Polymer Microarray | Thousands of polymer spots printed on a functionalized slide. | Monomer combinations, chain length, side groups. | Biomaterial screening for cell response. |

| Zeolitic Imidazolate Framework (ZIF) Library | A suite of Metal-Organic Frameworks (MOFs) synthesized combinatorially. | Metal ion (Zn²⁺, Co²⁺), organic linker. | Gas adsorption, drug delivery vectors. |

Experimental Protocol: Fabrication of a Thin-Film Composition Spread Library via Co-Sputtering

- Objective: To create a continuous gradient of two metals (A and B) across a substrate.

- Materials: High-purity metal targets (A & B), inert gas (Ar), substrate wafer (e.g., Si/SiO₂).

- Procedure:

  - Load Substrate: Place the substrate in a high-vacuum sputtering chamber.

  - Positioning: Orient the substrate such that one half is directly facing Target A and the other half is directly facing Target B.

  - Sputter Deposition:

    - Evacuate the chamber to a base pressure of <1 × 10⁻⁶ Torr.

    - Introduce Ar gas to a working pressure of 3 mTorr.

    - Initiate plasma and sputter from both targets simultaneously for a fixed duration (e.g., 30 minutes).

- Result: A thin film is deposited where the composition transitions from pure A on one edge, through a gradient of A_xB_(1-x), to pure B on the opposite edge.

High-Throughput Synthesis: Scalable Generation

High-Throughput Synthesis (HTS) encompasses automated and parallelized techniques for the physical creation of materials libraries.

Table 2: High-Throughput Synthesis Techniques and Metrics

| Technique | Throughput (Samples/Batch) | Typical Sample Size | Key Advantage | Limitation |

|---|---|---|---|---|

| Inkjet Printing | 1,000 - 10,000+ | Picoliters to nanoliters | Extreme miniaturization, low waste. | Clogging, formulation complexity. |

| Combinatorial Sputtering | 1 (gradient library) | 100 mm wafer | High-quality thin films, continuous gradients. | Limited to compatible materials. |

| Parallel Microreactor | 96 - 384 | 1 - 100 µL | Precise control over reaction conditions. | High cost per reactor. |

| Sol-Gel Dip-Coating | 10 - 100 | ~1 cm² | Simple, applicable to oxides. | Film uniformity challenges. |

HTS Methodology Flow

Rapid Screening: High-Speed Evaluation

Rapid Screening involves the automated characterization of a materials library's properties. The technique must be fast, non-destructive (or minimally destructive), and correlate with the property of interest.

Experimental Protocol: High-Throughput Photocatalytic Screening via Fluorescence Imaging

- Objective: To identify compositions in a materials library with high photocatalytic activity for dye degradation.

- Materials: Materials library, fluorescent probe (e.g., Resazurin), UV light source, fluorescence scanner.

- Procedure:

  - Incubation: Immerse the entire library in a solution of Resazurin.

  - Irradiation: Expose the library to uniform UV light for a fixed time (e.g., 10 minutes). Active photocatalysts will reduce Resazurin (blue, weakly fluorescent) to Resorufin (pink, highly fluorescent).

  - Scanning: Image the library using a fluorescence scanner with appropriate excitation/emission filters.

  - Analysis: Quantify the fluorescence intensity of each library member. Higher fluorescence correlates with higher photocatalytic activity. The data are processed automatically to generate an activity map of the library.
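The analysis step can be sketched as follows. The sketch assumes, beyond what the protocol states, that a reference scan is taken before irradiation and that a constant scanner background can be subtracted; the spot labels and intensity values are invented for illustration.

```python
def activity_map(before, after, background=0.0):
    """Fluorescence gain of each library spot: signal after irradiation / signal before."""
    gains = {}
    for spot, intensity_before in before.items():
        reference = max(intensity_before - background, 1e-9)  # guard against division by zero
        gains[spot] = (after[spot] - background) / reference
    return gains

# Hypothetical scanner readings (arbitrary units) for a 2 x 2 corner of a library.
before = {("A", 1): 120.0, ("A", 2): 118.0, ("B", 1): 125.0, ("B", 2): 121.0}
after = {("A", 1): 130.0, ("A", 2): 410.0, ("B", 1): 260.0, ("B", 2): 119.0}

gains = activity_map(before, after, background=100.0)
ranked = sorted(gains, key=gains.get, reverse=True)  # most active spot first
for spot in ranked:
    print(spot, round(gains[spot], 2))
```

Ranking by relative gain rather than raw intensity reduces the influence of uneven dye loading across spots, at the cost of amplifying noise where the reference signal is close to the background.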

Table 3: Rapid Screening Techniques and Key Performance Indicators (KPIs)

| Screening Technique | Property Measured | Throughput (Samples/Hour) | Detection Limit | Key Metric |

|---|---|---|---|---|

| 4-Point Probe | Electrical Conductivity | 10,000 | 10 µΩ·cm | Sheet Resistance (Ω/sq) |

| X-ray Diffraction (XRD) Mapping | Crystalline Phase | 1,000 | 5% phase fraction | Phase Identification |

| Photoluminescence Imaging | Band Gap, Defects | 50,000 | 0.01% quantum yield | Emission Wavelength/Intensity |

| Mass Spectrometry (MS) Imaging | Catalytic Activity | 100 | 1 pmol | Turnover Frequency (TOF) |

Screening Data Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |

|---|---|

| Resazurin Sodium Salt | Redox-sensitive fluorescent dye used for high-throughput screening of catalytic and electrochemical activity. |

| Poly(DL-lactide-co-glycolide) (PLGA) | A biodegradable polymer used in inkjet printing to create combinatorial polymer libraries for drug delivery studies. |

| Precision Sputtering Targets (4N-5N Purity) | High-purity metal or ceramic targets used in physical vapor deposition to ensure reproducible thin-film library synthesis. |

| Functionalized Glass Slides (e.g., NH₂, SiO₂) | Provide a uniform, chemically reactive surface for printing and immobilizing polymer or biomaterial libraries. |

| High-Throughput Microreactor Blocks (96-well) | Enable parallel synthesis under controlled temperature and pressure, typically used for catalyst testing and nanomaterial synthesis. |

Synthesis, Screening, and Success Stories: How Combinatorial Methods Are Applied

Combinatorial materials science represents a paradigm shift in research methodology, designed to dramatically accelerate the discovery and optimization of new compounds. In contrast to the conventional 'one-by-one' synthesis approach, which has been a major rate-limiting factor in exploring complex materials, combinatorial methods enable the parallel synthesis and screening of hundreds to thousands of different compositions in a single experiment [18] [19]. This approach initially revolutionized the pharmaceutical and biochemical industries and has since been successfully extended to solid-state and inorganic materials research [18] [19]. The core of this methodology involves creating combinatorial libraries—individual samples containing a vast array of compositionally varying specimens—which are then rapidly characterized using high-throughput screening techniques to map desired physical properties across the compositional space [18].

The scope of combinatorial materials science is far-reaching, addressing issues across a wide spectrum of topics ranging from catalytic powders and polymers to electronic, magnetic, and bio-functional materials [18]. This guide focuses on two principal synthesis techniques for creating these libraries: codeposited composition spreads and discrete combinatorial synthesis. Understanding the distinctions, advantages, and limitations of these two core techniques is fundamental for researchers aiming to leverage combinatorial methods for materials discovery and optimization, particularly in fields such as drug development where efficient screening is critical [18].

Core Principles and Definitions

Codeposited Composition Spreads

The codeposited continuous composition spread approach involves the simultaneous deposition of multiple elemental components onto a substrate to create a thin film with a continuous gradient of compositions. This technique results in an atomic mixture in the as-deposited film, making it particularly suitable for fabricating metastable materials when performed at room temperature [6]. The primary objective is to generate a library where every possible composition in a multinary system is represented in a single sample, enabling the continuous mapping of properties across the entire compositional phase diagram [20].

Discrete Combinatorial Synthesis

Discrete combinatorial synthesis involves creating a library of distinct, separate samples arranged in an array format on a single substrate. Unlike the continuous gradient of codeposited spreads, this approach yields individual, addressable samples, each with a specific, predefined composition [19]. A pioneering example of this method involved the fabrication of a 128-member library of copper oxide superconductors on a single substrate, demonstrating the power of discrete synthesis for rapidly screening large numbers of compounds [19].

Table 1: Fundamental Characteristics of Core Combinatorial Techniques

| Feature | Codeposited Composition Spreads | Discrete Combinatorial Synthesis |

|---|---|---|

| Spatial Structure | Continuous gradient | Array of discrete spots |

| Composition Control | Continuous variation | Predefined, specific compositions |

| Library Density | Very high (virtually infinite points) | High (dozens to hundreds of members) |

| Typical Fabrication | Co-sputtering, co-evaporation | Sequential deposition, inkjet printing |

| Informed by | [6] [20] | [19] |

Synthesis Methodologies and Experimental Protocols

Fabrication of Codeposited Composition Spreads

The creation of codeposited composition spreads typically relies on physical vapor deposition (PVD) techniques, with magnetron sputtering being one of the most versatile and widely used methods [6]. The experimental protocol can be broken down into several key steps:

- Substrate Preparation: A polished, clean substrate (e.g., sapphire, silicon) is placed in a high-vacuum chamber. The substrate's position and orientation are critical for defining the resulting composition gradient.

- Strategic Target Placement: Multiple elemental or compound targets are arranged around the substrate. The spatial arrangement of these sources relative to the substrate is the primary factor determining the compositional spread [18].

- Simultaneous Co-deposition: The targets are activated simultaneously (e.g., via sputtering or laser pulse in pulsed laser deposition). Atoms from each target are ejected and travel to the substrate, where they co-deposit. The thickness profile of the film from each source varies across the substrate, creating a continuous composition gradient that covers a large fraction of a ternary or higher-order system in a single experiment [18] [6].

- Post-Deposition Annealing (if required): For libraries requiring crystallization or phase formation, a post-deposition annealing step is performed at suitable temperatures to enable interdiffusion and reaction, transforming the atomic mixture into stable or metastable phases [6].
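The link between per-source thickness profiles and the resulting composition gradient can be sketched numerically. The example below assumes two opposing linear thickness wedges and bulk densities for Fe and Ni; real sputter profiles are not linear and thin-film densities can deviate from bulk values, so the numbers are indicative only. The function name `atomic_fraction_a` is hypothetical.

```python
def atomic_fraction_a(thickness_a, thickness_b, density_a, molar_mass_a, density_b, molar_mass_b):
    """Atomic fraction of A from the locally deposited thickness of each component.

    Moles per unit area scale as thickness * density / molar mass, so any
    consistent thickness unit cancels in the ratio.
    """
    moles_a = thickness_a * density_a / molar_mass_a
    moles_b = thickness_b * density_b / molar_mass_b
    return moles_a / (moles_a + moles_b)

# Bulk values: density in g/cm^3, molar mass in g/mol.
FE = {"density": 7.874, "molar_mass": 55.845}
NI = {"density": 8.908, "molar_mass": 58.693}

total_nm = 100.0
positions = [i / 10 for i in range(11)]  # 0 = edge nearest Fe source, 1 = edge nearest Ni source
profile = [
    atomic_fraction_a(
        total_nm * (1 - p), total_nm * p,
        FE["density"], FE["molar_mass"], NI["density"], NI["molar_mass"],
    )
    for p in positions
]
for p, x_fe in zip(positions, profile):
    print(f"position {p:.1f}: Fe atomic fraction {x_fe:.3f}")
```

Equal thicknesses at the substrate center do not give a 50:50 atomic ratio, because the two metals differ in molar volume; this is why composition is usually verified by a direct measurement rather than inferred from thickness alone.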

Fabrication of Discrete Combinatorial Libraries

Discrete libraries involve the synthesis of distinct materials in a predefined array. One common method is the wedge-type multilayer deposition technique [6]:

- Library Design: A mask or shutter system is programmed to deposit each material component in a specific sequence and spatial pattern on the substrate.

- Sequential Layer Deposition: Using computer-controlled moveable shutters, nanoscale layers of different materials are deposited in a sequential manner. The thickness of each layer is varied across the substrate to create a precursor stack with controlled compositional variations.

- Thermal Processing for Interdiffusion: The multilayer precursor structure is subjected to a post-deposition annealing process at elevated temperatures. This allows the layers to diffuse into each other rapidly, forming the desired phases at each discrete location on the library [6].

An alternative approach for creating discrete libraries involves using solution-based methods or inkjet printing to deposit tiny droplets of precursor solutions in a predefined array, followed by thermal treatment to form the final compounds [19].

Synthesis Workflow

Characterization and High-Throughput Screening

Rapid and localized characterization is the cornerstone that enables the combinatorial approach to be effective. Making quick and accurate measurements of specific physical properties from the small volumes of materials in libraries often requires specialized instrumentation and, in some cases, has led to the invention of new measurement tools [18].

Techniques for Codeposited Spreads

For continuous composition spreads, characterization techniques must be capable of spatially resolved mapping.

- Structural Analysis: X-ray diffraction (XRD) mapping is used to identify crystallographic phases across the library. By scanning the substrate and collecting diffraction patterns at numerous points, researchers can construct structural phase diagrams that correlate directly with composition [18].

- Magnetic Properties: The magneto-optical Kerr effect (MOKE) is used for rapid mapping of magnetic hysteresis loops, providing information on saturation magnetization and coercive fields [18]. Scanning SQUID microscopy and scanning Hall probe techniques can also map magnetic field distributions, from which magnetization values can be extracted using inversion algorithms [18].

- Electrical and Optical Properties: Four-point probe measurements can map sheet resistance, while optical spectroscopy techniques can map reflectivity and transmission across the spread.
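For the four-point probe measurement, the conversion from a raw voltage and current reading to sheet resistance and resistivity can be sketched as below. It uses the standard geometric factor π / ln 2 for a collinear, equally spaced probe on a film that is thin and laterally large compared with the probe spacing; near library edges or on small spots a correction factor would be needed. The readings and the 100 nm thickness are invented example values.

```python
import math

def sheet_resistance(voltage_v, current_a):
    """Sheet resistance in ohm/sq for a collinear four-point probe on a thin, wide film."""
    return (math.pi / math.log(2)) * voltage_v / current_a

def resistivity_ohm_cm(sheet_resistance_ohm_sq, thickness_nm):
    """Resistivity = sheet resistance * film thickness (nm converted to cm)."""
    return sheet_resistance_ohm_sq * thickness_nm * 1e-7

rs = sheet_resistance(voltage_v=1e-3, current_a=1e-3)  # 1 mV measured at 1 mA
rho = resistivity_ohm_cm(rs, thickness_nm=100.0)
print(f"R_s = {rs:.3f} ohm/sq, resistivity = {rho * 1e6:.1f} micro-ohm cm")
```

On a composition spread the film thickness usually varies with position, so a resistivity map requires a thickness map measured at the same points as the sheet resistance.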

Techniques for Discrete Libraries

Discrete libraries, with their array of separate samples, are amenable to parallel measurement techniques and automated serial screening.

- Parallel Imaging: Techniques like infrared imaging or fluorescence microscopy can simultaneously assess properties across many discrete spots.

- Automated Robotic Probes: Computer-controlled multi-axis systems with specialized probes can sequentially measure electrical, magnetic, or electrochemical properties of each discrete sample in the array.

- High-Throughput Synchrotron XRD: By using dedicated beamlines with automated sample stages, the crystal structure of hundreds of samples in a discrete library can be rapidly determined.

Table 2: High-Throughput Characterization Techniques for Different Library Types

| Property | Characterization Technique | Applicable Library Type | Key Advantage |

|---|---|---|---|

| Crystal Structure | Spatially Resolved X-ray Diffraction | Codeposited Spreads | Continuous phase mapping |

| Crystal Structure | Automated X-ray Diffraction | Discrete Libraries | High-quality data for each spot |

| Magnetism | Scanning SQUID Microscopy | Codeposited Spreads | High sensitivity, quantitative |

| Magnetism | Magneto-Optical Kerr Effect (MOKE) | Both | Rapid hysteresis loop mapping |

| Electrical Conductivity | 4-Point Probe Mapping | Codeposited Spreads | Continuous property correlation |

| Electrical Conductivity | Automated 4-Point Probe | Discrete Libraries | Precise measurement per sample |

| Optical Properties | Photoluminescence/UV-Vis Mapping | Codeposited Spreads | Identify optical trends |

| Catalytic Activity | Fluorescence-based Screening | Discrete Libraries | Parallel activity assessment |

| Informed by | [18] [6] [19] | | |

Comparative Analysis and Applications

Advantages and Limitations

Each synthesis technique offers distinct benefits and faces specific challenges, making them suitable for different stages of the materials discovery pipeline.

Codeposited Composition Spreads are exceptionally powerful for exploratory research and phase diagram mapping. Their primary strength lies in the seamless, continuous coverage of compositional space, which eliminates the risk of missing promising compositions that might fall between discrete data points [20]. They are particularly valuable for identifying narrow regions of optimal performance or phase boundaries that might be overlooked with discrete sampling. However, a significant challenge is that properties measured from thin-film spreads may sometimes differ from bulk material behavior, creating what can be considered "thin-film phase diagrams" [18]. While these are directly relevant for thin-film applications, care must be taken when extrapolating to bulk materials.

Discrete Combinatorial Synthesis offers superior compositional control and is often more straightforward for property optimization once a promising region of compositional space has been identified. Because each sample is distinct, it is easier to ensure that measurements are not affected by cross-contamination or interference from adjacent compositions. Discrete libraries also more readily allow for different processing conditions (e.g., annealing temperature gradients) to be applied across a single library, enabling the simultaneous exploration of both composition and processing parameters [19]. The main limitation is the discrete nature of the sampling, which could potentially miss fine features in the composition-property landscape.

Application Case Studies

The effectiveness of both methodologies has been demonstrated through numerous successful discoveries across various technological domains.

- Magnetic Materials: Comprehensive mapping of structural and magnetic properties was demonstrated for the Fe-Ni-Co system. A thin-film library was fabricated and annealed to allow complete interdiffusion. X-ray diffraction mapping revealed the distribution of fcc and bcc phases, while MOKE measurements provided corresponding magnetization maps, showing a clear correlation between phase distribution and magnetic properties [18].

- Dielectric Materials: The codeposited composition spread approach has been extensively applied to the discovery and optimization of complex oxide dielectrics, enabling researchers to efficiently navigate multicomponent systems to find materials with high dielectric constants and other desirable properties [20].

- Noble-Metal-Free Catalysts: A notable discovery is the nanoparticulate electrocatalyst CrMnFeCoNi, which shows catalytic activity for the oxygen reduction reaction. This unexpected finding in a multinary system was made possible by applying new combinatorial synthesis and characterization methods to test a property for which no prior results existed [6].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of combinatorial synthesis requires specific materials and instrumentation. The following table details key components essential for establishing a combinatorial workflow.

Table 3: Essential Research Reagents and Materials for Combinatorial Synthesis

| Item/Reagent | Function/Purpose | Technical Specifications |

|---|---|---|

| High-Purity Metal Targets | Source materials for deposition | 99.95%-99.999% purity; various diameters for sputter guns |

| Single-Crystal Substrates | Support for thin-film libraries | Sapphire, Si, MgO, STO; polished, epi-ready surfaces |

| Magnetron Sputter Sources | For physical vapor deposition | Ultra-high vacuum compatible; multiple guns for co-deposition |

| Computer-Controlled Shutters | Precise deposition control | Motorized, programmable for wedge/multilayer deposition |

| Rapid Thermal Annealer | Post-deposition processing | Capable of 200-1000°C in controlled atmospheres |

| X-ray Diffraction System | Structural characterization | Mapping stage, 2D detector for high-throughput |

| Automated Probe Station | Electrical property mapping | 4-point probe, temperature stage, automated x-y-z control |

| Informed by | [18] [21] [6] |

Future Outlook and Integration with Computational Methods

The future of combinatorial materials science lies in its deeper integration with computational methods and materials informatics. The immense, multidimensional search space of possible multinary materials necessitates a down-selection of candidate systems, which can be effectively guided by high-throughput computational screening [6]. Computational methods can screen thousands of virtual compounds, predicting stability and properties, thereby identifying the most promising candidates for experimental synthesis in "focused" combinatorial libraries [6].

This synergistic approach creates a powerful discovery cycle: computational predictions guide the experimental exploration of combinatorial libraries, and the high-quality, multidimensional data generated from these libraries, in turn, validates and refines the computational models [6]. This data-driven paradigm is central to initiatives like the Materials Genome Initiative and is transforming materials discovery from a serendipitous process into a more efficient, engineered endeavor [5] [6]. As these methodologies mature, they are poised to significantly accelerate the development of new materials for demanding applications in sustainable energy, electronics, and medicine.

Combinatorial materials science represents a paradigm shift in the discovery and development of new materials. Instead of synthesizing and testing individual samples one at a time, this approach enables the efficient fabrication and high-throughput characterization of materials libraries containing hundreds or thousands of unique compositions on a single substrate [6]. The methodology is particularly powerful for exploring multinary materials systems, where the number of possible combinations becomes immense: just 50 starting elements yield more than two million possible quinary systems [6]. The potential for materials discovery in the largely unexplored search space of the periodic table is therefore tremendous.
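The figure of more than two million quinaries follows directly from the binomial coefficient: it is the number of ways to choose 5 elements out of 50 without regard to order. A short check, using only the Python standard library (the helper name `count_systems` is chosen here for illustration):

```python
from math import comb


def count_systems(n_elements: int, order: int) -> int:
    """Number of distinct element combinations of a given order (n choose k)."""
    return comb(n_elements, order)


for order, name in [(2, "binary"), (3, "ternary"), (4, "quaternary"), (5, "quinary")]:
    print(f"{name:>10}: {count_systems(50, order):>9,d}")
```

For 50 elements this gives 1,225 binaries, 19,600 ternaries, 230,300 quaternaries, and 2,118,760 quinaries. These numbers count element combinations only; each system additionally spans a continuous range of compositions and processing conditions.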

Thin-film materials libraries stand as a cornerstone of this combinatorial approach, allowing researchers to create complete ternary systems or substantial fractions of higher-order systems in a single experiment [6]. These libraries are essential for verifying or falsifying hypotheses and computational predictions while providing the multidimensional datasets necessary for data-driven materials discovery. The technology is particularly relevant for sustainable energy technologies and energy-efficient processes, where new materials discoveries can enable advancements in areas such as solar water splitting, hydrogen storage, and noble-metal-free catalysts [6].
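To give a sense of how many distinct compositions a complete ternary library can contain, the sketch below enumerates all compositions of a ternary system on a regular grid. The grid step is an assumption chosen for illustration; a real library has a continuous gradient that is sampled at whatever spacing the measurement grid provides.

```python
def ternary_grid(step_percent: int):
    """All (a, b, c) compositions in at.% with a + b + c = 100 on a regular grid."""
    if step_percent <= 0 or 100 % step_percent != 0:
        raise ValueError("step_percent must be a positive divisor of 100")
    n = 100 // step_percent
    points = []
    for i in range(n + 1):
        for j in range(n + 1 - i):
            k = n - i - j  # remainder goes to the third element
            points.append((i * step_percent, j * step_percent, k * step_percent))
    return points


grid_5 = ternary_grid(5)
print(len(grid_5), "compositions at 5 at.% spacing")
```

With n intervals per edge the grid holds (n + 1)(n + 2)/2 points, so a 5 at.% spacing already corresponds to 231 individual samples in a one-at-a-time workflow.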

Fabrication of Thin-Film Materials Libraries

Synthesis Techniques

The creation of thin-film materials libraries relies on sophisticated deposition techniques that generate controlled composition gradients across a substrate. Two primary methods have emerged as particularly effective for this purpose:

Combinatorial Magnetron Sputtering: This versatile process uses multiple sputter sources and computer-controlled movable shutters to deposit nanoscale wedge-shaped layers, with the wedges oriented at fixed angles to one another (180° for binaries, 120° for ternaries) [6]. The resulting wedge-type multilayer structure serves as a precursor that transforms into the final phases during post-deposition annealing at temperatures high enough for rapid interdiffusion.

Co-sputtering Deposition: This alternative approach creates an atomic mixture during deposition by simultaneously co-depositing from multiple sources [6]. When performed at room temperature, this method is particularly suitable for fabricating metastable materials that might not form under equilibrium conditions.

A specific implementation for studying Cu-Cr-Co systems employed high-throughput ion beam sputtering to create combinatorial multilayer thin films [22]. By carefully controlling the thickness ratio among the individual nanoscale monolayers (Cu, Cr, Co), researchers achieved stoichiometries covering the entire ternary phase diagram, enabling comprehensive investigation of structural evolution during solid-state reactions.
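The link between layer thickness ratio and stoichiometry can be made explicit: the number of atoms per unit area in a layer is proportional to thickness times density divided by molar mass. The sketch below applies this to Cu, Cr, and Co. It is an illustration under stated assumptions, not the calibration used in [22]: it uses approximate bulk densities and molar masses, whereas sputtered films are often less dense than the bulk, so a real workflow would verify compositions by measurement.

```python
# Approximate bulk values: (density in g/cm^3, molar mass in g/mol).
# Assumption: films are treated as fully dense, which real films may not be.
BULK = {
    "Cu": (8.96, 63.546),
    "Cr": (7.19, 51.996),
    "Co": (8.90, 58.933),
}


def atomic_fractions(thickness_nm):
    """Convert per-element layer thicknesses (nm) into atomic fractions."""
    # Moles per unit area are proportional to thickness * density / molar mass.
    moles = {el: t * BULK[el][0] / BULK[el][1] for el, t in thickness_nm.items()}
    total = sum(moles.values())
    if total <= 0:
        raise ValueError("at least one layer must have non-zero thickness")
    return {el: m / total for el, m in moles.items()}


equal_layers = atomic_fractions({"Cu": 2.0, "Cr": 2.0, "Co": 2.0})
for el, x in equal_layers.items():
    print(f"{el}: {100 * x:.1f} at.%")
```

Equal thicknesses do not give an equiatomic film, because the elements differ in atomic density; the thickness ratio at each position on the library has to be chosen with this conversion in mind.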

Advanced Manufacturing and Automation

Recent advancements have introduced autonomous experimentation to thin-film synthesis, combining robotics with artificial intelligence to create self-driving laboratory systems. Researchers at the University of Chicago Pritzker School of Molecular Engineering have developed a system that automates the entire materials development loop: running experiments, measuring results, and feeding those results back into a machine-learning model that guides subsequent attempts [23]. The system hit desired targets for silver films with specific optical properties in an average of just 2.3 attempts, and it explored the full range of experimental conditions in a few dozen runs, a task that would normally require weeks of human effort [23].

Concurrent developments at Pacific Northwest National Laboratory focus on machine learning applications for real-time monitoring of film growth. Their RHAAPsody system can identify subtle changes in growing films that are imperceptible to human observers, flagging emerging differences in film growth data faster than human experts [24]. This capability represents a crucial step toward fully autonomous film growth systems that can adapt growth conditions to counteract problems as they emerge.
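The idea of flagging an emerging deviation in growth data can be illustrated with a deliberately simple detector. This is not the RHAAPsody algorithm, whose internals are not described here; it is a generic baseline-deviation check on a single scalar signal, and the signal values, window length, and thresholds are invented for the example.

```python
from statistics import mean, stdev


def first_deviation(signal, baseline_len=10, threshold=4.0, consecutive=3):
    """Index where the signal first leaves the baseline band and stays out.

    A sample counts as deviating when it lies more than `threshold` baseline
    standard deviations from the baseline mean; `consecutive` such samples in
    a row are required so that a single noisy point does not raise a flag.
    Returns None if no sustained deviation is found.
    """
    base = signal[:baseline_len]
    mu, sigma = mean(base), stdev(base)
    run = 0
    for i in range(baseline_len, len(signal)):
        if abs(signal[i] - mu) > threshold * sigma:
            run += 1
            if run == consecutive:
                return i - consecutive + 1
        else:
            run = 0
    return None


# Made-up monitoring signal: stable growth followed by a slow drift.
signal = [1.00, 1.02, 0.98, 1.01, 0.99, 1.00, 1.02, 0.98, 1.01, 0.99,
          1.01, 0.99, 1.00, 1.08, 1.10, 1.12, 1.15]
print("deviation starts at sample", first_deviation(signal))
```

Requiring several consecutive out-of-band samples trades a short detection delay for fewer false alarms, a balance that matters when a flag is meant to trigger a change in growth conditions.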

Table 1: Thin-Film Library Fabrication Methods

| Method | Key Features | Advantages | Representative Systems |
|---|---|---|---|
| Wedge-Type Multilayer Deposition | Computer-controlled shutters; nanoscale layers; post-deposition annealing | Well-defined composition gradients; suitable for phase formation studies | Cu-Cr-Co combinatorial chips [22] |
| Co-sputtering Deposition | Simultaneous deposition from multiple sources; atomic mixture | Suitable for metastable materials; room temperature processing | Metastable materials libraries [6] |
| Physical Vapor Deposition (PVD) | Material vaporized then condensed as ultra-thin layer; AI-guided parameters | Autonomous optimization; handles sensitive variables | Self-driving PVD for silver films [23] |

High-Throughput Characterization and Analysis

The value of thin-film materials libraries is fully realized only when coupled with efficient, high-quality characterization methods that can rapidly determine compositional, structural, and functional properties across the library. Automated characterization techniques are essential for extracting meaningful data from these complex samples.

For compositional analysis, techniques such as micro-X-ray fluorescence (μ-XRF) provide rapid, non-destructive mapping of element distributions across the materials library [22]. This method enables researchers to verify composition gradients and correlate specific positions on the library with exact chemical compositions.

Structural characterization heavily utilizes high-throughput X-ray diffraction (XRD), with synchrotron sources offering particularly rapid data collection for comprehensive phase analysis [22]. The resulting diffraction patterns are amenable to automated analysis employing hierarchical clustering techniques to identify structural relationships and phase distributions across composition space [22].
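Hierarchical clustering of diffraction patterns can be sketched without any external library. The example below is a toy illustration rather than the analysis pipeline of [22]: the choice of cosine distance and average linkage, the function names, and the six-bin intensity vectors are all assumptions made to keep the example small.

```python
from math import sqrt


def cosine_distance(a, b):
    """1 - cosine similarity between two intensity vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def agglomerate(patterns, n_clusters):
    """Average-linkage agglomerative clustering; returns lists of pattern indices."""
    clusters = [[i] for i in range(len(patterns))]

    def linkage(c1, c2):
        dists = [cosine_distance(patterns[i], patterns[j]) for i in c1 for j in c2]
        return sum(dists) / len(dists)

    while len(clusters) > n_clusters:
        # Find and merge the two clusters with the smallest average distance.
        _, a, b = min(
            (linkage(clusters[a], clusters[b]), a, b)
            for a in range(len(clusters))
            for b in range(a + 1, len(clusters))
        )
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return [sorted(c) for c in clusters]


# Made-up intensities on six 2-theta bins at five library positions.
patterns = [
    [0, 10, 0, 0, 5, 0],  # peaks in bins 1 and 4
    [0, 9, 1, 0, 5, 0],
    [0, 1, 8, 0, 0, 6],   # peaks in bins 2 and 5
    [0, 0, 9, 0, 1, 5],
    [0, 10, 1, 0, 4, 0],
]
groups = agglomerate(patterns, n_clusters=2)
print(groups)
```

Positions whose patterns share peak locations end up in the same cluster, which is the basis for mapping phase regions across composition space. For real datasets, an established implementation such as the `linkage` and `fcluster` functions in `scipy.cluster.hierarchy` would be the more practical choice.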

Functional characterization depends on the target application and may include optical spectroscopy for photovoltaic materials, electrical measurements for conductive compounds, or catalytic testing for energy applications. The discovery of noble-metal-free nanoparticulate electrocatalysts such as CrMnFeCoNi for the oxygen reduction reaction exemplifies how testing multinary systems for previously unexplored functionalities can lead to unexpected discoveries [6].

Data Management and Informatics

The combinatorial approach generates multidimensional datasets that require sophisticated informatics tools for analysis, visualization, and interpretation. These datasets form the basis for multifunctional existence diagrams that correlate composition, processing, structure, and properties—essential resources for the design of future materials [6].

Materials informatics leverages prior knowledge stored in databases or extracted from literature through computational means to guide exploration strategies [6]. The emergence of the AI4Materials framework represents a structured approach to integrating artificial intelligence into materials science and engineering, built around three core elements: materials data infrastructure, AI4Mater techniques, and applications [25]. This integration aims to foster open access to AI resources and enhance collective advancement in materials science.

Machine learning algorithms play increasingly important roles in analyzing combinatorial data. For the Cu-Cr-Co system, hierarchical clustering techniques enabled automated identification of structural relationships across the composition spread [22]. In self-driving laboratories, machine learning models predict parameters needed for specific thin-film properties, then synthesize and analyze the resulting product, iteratively tweaking parameters until desired specifications are met [23].

Experimental Protocols and Methodologies

Fabrication of Cu-Cr-Co Combinatorial Libraries

The investigation of Cu-Cr-Co combinatorial multilayer thin films exemplifies a rigorous approach to ternary-system exploration [22]. The protocol begins with the preparation of combinatorial chips using a high-throughput ion beam sputtering system. Individual nanoscale monolayers of Cu, Cr, and Co are deposited with precisely controlled thickness ratios to ensure coverage of the complete ternary composition range. The samples are then subjected to systematic heat treatments that vary temperature, time, and modulation period to study solid-state reaction kinetics and phase evolution.

Critical to this methodology is the observation that reducing the modulation period affects phase evolution in the same way as increasing the temperature, providing multiple pathways to a desired structural outcome [22]. The elemental distribution in the depth direction must also be carefully characterized to gain insight into the phase transformation mechanisms.
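One simple way to rationalize this equivalence is a diffusion-limited picture: the time needed to intermix a multilayer scales roughly as the square of the modulation period divided by an Arrhenius-type diffusivity. The sketch below works through that scaling. It is a back-of-the-envelope model, not the analysis of [22]; the prefactor `d0` and activation energy `ea` are hypothetical values, and order-one geometric factors are omitted.

```python
from math import exp, log

R_GAS = 8.314  # gas constant, J/(mol*K)


def diffusivity(temp_k, d0=1e-5, ea=150e3):
    """Arrhenius diffusivity in m^2/s; d0 and ea are hypothetical values."""
    return d0 * exp(-ea / (R_GAS * temp_k))


def mixing_time(period_nm, temp_k, d0=1e-5, ea=150e3):
    """Characteristic interdiffusion time ~ period^2 / D (prefactors omitted)."""
    period_m = period_nm * 1e-9
    return period_m ** 2 / diffusivity(temp_k, d0, ea)


def equivalent_temperature(temp_k, period_ratio, ea=150e3):
    """Temperature at which the original period mixes as fast as a period
    reduced by `period_ratio` does at `temp_k`.

    Setting period^2 / D(T') equal to (period / r)^2 / D(T) gives
    1/T' = 1/T - 2 R ln(r) / Ea.
    """
    inv_t = 1.0 / temp_k - 2.0 * R_GAS * log(period_ratio) / ea
    if inv_t <= 0:
        raise ValueError("no finite equivalent temperature for these inputs")
    return 1.0 / inv_t


t_ref = 673.0  # K, arbitrary reference annealing temperature
t_equiv = equivalent_temperature(t_ref, period_ratio=2.0)
print(f"halving the period at {t_ref:.0f} K ~ annealing at {t_equiv:.0f} K")
```

Under these assumptions, halving the period shortens the mixing time fourfold, which the full-period multilayer can only match at a higher annealing temperature. The size of the shift depends strongly on the assumed activation energy.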

Autonomous Synthesis of Functional Films

The self-driving physical vapor deposition system developed at UChicago represents a transformative experimental protocol [23]. The process begins with the system creating a very thin "calibration layer" of film that helps the algorithm read the unique conditions of each run, accounting for unpredictable quirks such as subtle differences between substrates or trace amounts of gases in the vacuum chamber.

The autonomous system then executes a continuous loop of synthesis, characterization, and machine-learning-guided parameter adjustment. A researcher specifies desired film properties, and the machine learning model guides the system through a sequence of experiments to achieve the target, making sample-specific decisions in real-time to optimize conditions [23]. This approach has demonstrated particular effectiveness in addressing the irreproducibility challenges that have long plagued physical vapor deposition, where tiny variations in hidden variables make consistent results difficult to achieve.
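The calibrate-then-iterate structure of such a loop can be sketched with a simulated instrument. This is not the algorithm used in [23], which relies on a machine-learning model; here a one-parameter linear response with a hidden run-specific gain and offset stands in for the chamber, and a secant-style slope update stands in for the model. All names and numbers are hypothetical.

```python
def make_simulated_chamber(gain=1.8, offset=0.35):
    """Hypothetical stand-in for one deposition run with hidden quirks."""
    def deposit_and_measure(setting):
        return gain * setting + offset
    return deposit_and_measure


def closed_loop(measure, target, nominal_gain=2.0, tol=0.01, max_attempts=10):
    """Return (attempts, setting, value) once the target is met, else None."""
    # Calibration layer: one measurement at a reference setting reveals
    # where this particular run sits before the first real attempt.
    cal_setting = 0.1
    history = [(cal_setting, measure(cal_setting))]
    gain = nominal_gain  # prior belief about the response slope

    for attempt in range(1, max_attempts + 1):
        last_x, last_y = history[-1]
        setting = last_x + (target - last_y) / gain  # model-guided proposal
        value = measure(setting)
        history.append((setting, value))
        if abs(value - target) <= tol:
            return attempt, setting, value
        # Update the slope estimate from the two most recent observations.
        (x0, y0), (x1, y1) = history[-2], history[-1]
        if x1 != x0:
            gain = (y1 - y0) / (x1 - x0)
    return None


result = closed_loop(make_simulated_chamber(), target=2.0)
print(result)
```

In this idealized linear case the loop converges on the second attempt, because the calibration point and the first attempt together pin down the hidden gain and offset. A real system faces noisy, nonlinear responses, which is where a learned model becomes necessary.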

Table 2: Key Experimental Parameters and Their Effects in Thin-Film Library Synthesis

| Parameter | Influence on Material Properties | Characterization Methods | Optimization Approaches |
|---|---|---|---|
| Composition Spread | Determines phase formation; affects functional properties | μ-XRF; EDX | Wedge multilayer design; co-sputtering power control |
| Annealing Temperature | Controls interdiffusion; phase transformations | High-throughput XRD; TEM | Ramp studies; combinatorial heating stages |
| Deposition Rate | Affects microstructure; defect density | Quartz crystal monitoring; SEM | Source power calibration; shutter programming |
| Modulation Period | Influences reaction kinetics; equivalent to temperature effects | XRD; cross-sectional SEM | Multilayer thickness design [22] |
| Substrate Effects | Impacts strain; epitaxial relationships | XRD pole figures; AFM | Multiple substrate libraries; buffer layers |

Research Reagent Solutions and Essential Materials

The successful implementation of combinatorial thin-film research requires specialized materials and instrumentation. The following table details key research reagents and equipment essential for exploring complete ternary systems through thin-film materials libraries.

Table 3: Essential Research Reagents and Equipment for Combinatorial Thin-Film Studies

| Item | Function/Purpose | Technical Specifications | Application Examples |
|---|---|---|---|
| High-Purity Metal Targets | Source materials for deposition; determines final film purity | 99.95%-99.999% purity; various diameters | Cu, Cr, Co for ternary systems [22]; Ag for optical films [23] |
| Specialized Substrates | Support for thin-film growth; influences microstructure and properties | Silicon wafers; glass; oriented single crystals | Temperature-resistant substrates for annealing studies |
| Sputtering Systems | Combinatorial deposition of thin-film libraries | Multiple sources; computer-controlled shutters; UHV capability | Wedge-type multilayer deposition [6]; ion beam sputtering [22] |
| Post-Deposition Annealing Equipment | Phase formation through solid-state reactions | Programmable temperature profiles; controlled atmospheres | Studying structural evolution in Cu-Cr-Co [22] |
| Characterization Tools | High-throughput materials property assessment | μ-XRF; automated XRD; SEM/EDS | Composition-structure mapping [22] |
| Machine Learning Platforms | Data analysis; experimental guidance; autonomous decision-making | Python-based frameworks; real-time processing | RHAAPsody for growth monitoring [24]; self-driving PVD [23] |

Integration with Computational Methods

The power of thin-film materials libraries is greatly enhanced through integration with computational materials science. High-throughput computations can screen thousands of potential systems, predicting stable compounds and promising properties to guide experimental exploration [6]. This synergistic approach enables researchers to focus experimental efforts on the most promising regions of composition space.