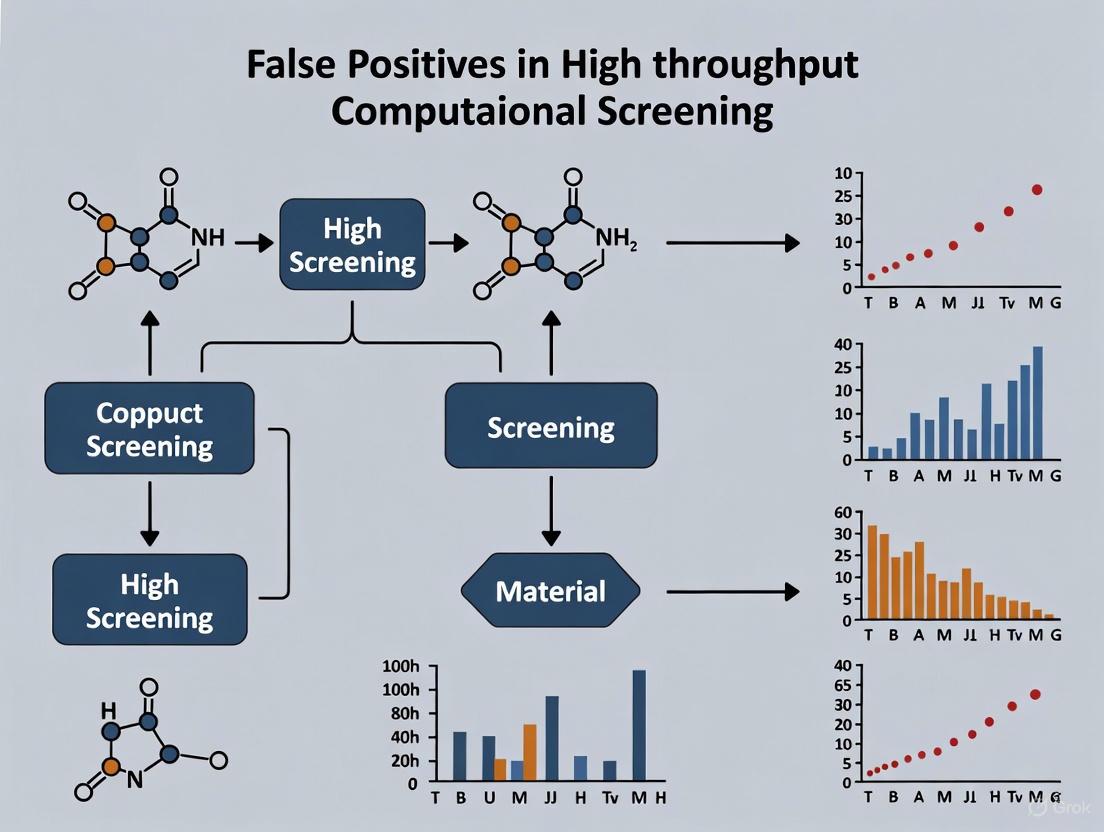

Combating False Positives in High-Throughput Screening: A Strategic Guide for Drug Discovery



Abstract

False positives present a formidable challenge in high-throughput screening (HTS), leading to significant resource waste and delays in drug discovery. This article provides a comprehensive framework for researchers and scientists to understand, identify, and mitigate false positives in computational and experimental screening. Drawing on the latest advancements, we explore the foundational mechanisms of assay interference, from colloidal aggregation and chemical reactivity to metal impurities and luciferase inhibition. We then detail modern methodological approaches, including integrated computational platforms like ChemFH and Liability Predictor, which leverage advanced machine learning for robust prediction. The article further offers practical troubleshooting strategies for optimizing assay conditions and validates these approaches through comparative analysis of next-generation tools versus traditional methods like PAINS filters. By synthesizing insights across these four areas (mechanisms, methods, troubleshooting, and validation), this guide aims to equip drug development professionals with the knowledge to enhance screening efficiency, improve hit validation, and accelerate the path to viable lead compounds.

Understanding the Scope and Mechanisms of HTS False Positives

Frequently Asked Questions (FAQs)

Q1: What constitutes a false positive in high-throughput computational screening? A false positive (or assay artifact) is a compound that appears active in a primary screen but does not actually interact with the biological target of interest. These compounds interfere with the assay detection technology itself through mechanisms like chemical reactivity, inhibition of reporter enzymes (e.g., luciferase), or formation of colloidal aggregates that non-specifically perturb biomolecules [1].

Q2: What is the real-world impact of false positives on a research project? False positives consume significant time and financial resources. As an illustration from a related screening field, one modeling study comparing two blood-based cancer screening approaches found that the system more prone to false positives incurred about 3.4 times the diagnostic cost ($329 million vs. $98 million) and a 150-fold higher cumulative burden of false positives per screening round than the more specific method [2]. In drug discovery, false positives waste investigator time on fruitless follow-up experiments and can delay projects for months [3].

Q3: Are some types of assays more susceptible to false positives than others? Yes, certain assay technologies are more vulnerable. Luciferase reporter assays are often inhibited by some compounds, generating false positives. Fluorescence- and absorbance-based readouts can be interfered with by compounds that are themselves fluorescent or colored. Homogeneous proximity assays (e.g., ALPHA, FRET, HTRF) are also susceptible to various compound-mediated interferences [1].

Q4: Can't we just use computational filters like PAINS to remove false positives? While popular, Pan-Assay INterference compoundS (PAINS) filters are known to be oversensitive. They disproportionately flag compounds as potential false positives while failing to identify a majority of truly interfering compounds. More modern, reliable Quantitative Structure-Interference Relationship (QSIR) models are being developed to replace them [1].

Q5: What is the single most important step to avoid failure in virtual screening? The most critical step is redocking validation. Before screening thousands of compounds, researchers should test their computational docking protocol by removing a known ligand from its crystal structure and attempting to re-dock it. A successful re-docking, with a Root-Mean-Square Deviation (RMSD) of less than 2 Å from the original pose, validates the protocol. Skipping this step is like using a broken ruler for all your measurements [3].

Troubleshooting Guides

Guide 1: Identifying and Triaging Apparent Hits

Problem: A primary high-throughput screen (HTS) has yielded an unusually high number of hits, many of which are suspected false positives.

Solution: Follow this systematic triage workflow to identify and eliminate false positives.

Steps:

- Computational Triage: First, subject the hit list to computational filters. Use modern QSIR models, such as the "Liability Predictor" webtool, to flag compounds with known interference behaviors like thiol reactivity, redox activity, or luciferase inhibition [1]. This is more reliable than older PAINS filters.

- Orthogonal Assay Confirmation: Test the remaining compounds in a secondary, orthogonal assay that uses a completely different detection technology. For example, if the primary screen was a luminescence-based assay, use a fluorescence polarization or NMR-based assay for confirmation. This step helps rule out technology-specific interference [1].

- Confirm Dose-Response and Identity: For compounds that pass the orthogonal assay, confirm activity by generating a dose-response curve (e.g., IC50). Re-synthesize or re-purchase the compound to confirm its identity and purity, as impurities can sometimes be the source of activity [4].

- Test for Aggregation: A common source of false positives is colloidal aggregation. To test for this, repeat the activity assay in the presence of a non-ionic detergent like Triton X-100 (e.g., 0.01%). If the compound's activity is significantly reduced or abolished, it is likely a colloidal aggregator, or a "Small, Colloidally Aggregating Molecule (SCAM)" [1].

Guide 2: Validating a Computational Docking Protocol

Problem: Virtual screening of a compound library fails to yield any confirmed active compounds in subsequent experimental testing.

Solution: This is often due to an unvalidated docking protocol. Before any virtual screening, perform a redocking validation to ensure your computational method can accurately reproduce known experimental results [3].

Steps:

- Source a Crystal Structure: Obtain a high-resolution crystal structure of your target protein with a known active ligand bound (from the Protein Data Bank, PDB).

- Prepare the System: Separate the ligand from the protein structure. Prepare both the protein and ligand coordinate files for docking (e.g., generating PDBQT files with tools like AutoDockTools), ensuring correct protonation states and atom types [5].

- Perform Redocking: Dock the ligand back into the protein's binding site using your chosen docking software and parameters.

- Analyze the Result: Calculate the Root-Mean-Square Deviation (RMSD) between the docked ligand's pose and its original position in the crystal structure.

  - Success: An RMSD of less than 2.0 Å typically indicates your docking protocol is reliable and can be used for virtual screening [3].

  - Failure: An RMSD greater than 2.0 Å means your protocol needs optimization. Revisit parameters such as the size and location of the docking search box, treatment of protein flexibility (e.g., specifying flexible side chains), and the scoring function used [5].
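The RMSD check in the last step can be sketched in a few lines of Python. This is a minimal illustration, not the method of any particular docking package: it assumes both poses are already in the same coordinate frame (as they are after redocking into the original receptor), that atoms are listed in the same order, and that ligand symmetry can be ignored. The function names and the example coordinates are hypothetical.

```python
import math

def pose_rmsd(reference, docked):
    """In-place RMSD (in Å) between two poses given as matched (x, y, z) tuples.

    No superposition is performed, because a redocked pose shares the
    receptor frame of the crystal pose.
    """
    if len(reference) != len(docked) or not reference:
        raise ValueError("poses must contain the same, non-zero number of atoms")
    squared_sum = 0.0
    for (x1, y1, z1), (x2, y2, z2) in zip(reference, docked):
        squared_sum += (x1 - x2) ** 2 + (y1 - y2) ** 2 + (z1 - z2) ** 2
    return math.sqrt(squared_sum / len(reference))

def redocking_passes(reference, docked, cutoff=2.0):
    """Apply the 2.0 Å success criterion described above."""
    return pose_rmsd(reference, docked) < cutoff

# Hypothetical three-atom example (coordinates in Å).
crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
redocked = [(0.3, 0.0, 0.0), (1.5, 0.4, 0.0), (1.5, 1.5, 0.0)]
print(f"RMSD = {pose_rmsd(crystal, redocked):.2f} Å, passes = {redocking_passes(crystal, redocked)}")
```

For symmetric ligands, a symmetry-aware RMSD from a cheminformatics toolkit would be a safer choice than this plain atom-by-atom comparison.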

Quantitative Data on Screening Efficiency

The table below summarizes a direct comparison between two blood-based cancer screening approaches, highlighting the dramatic resource impact of false positives [2].

| Performance Metric | Single-Cancer Early Detection (SCED-10) System | Multi-Cancer Early Detection (MCED-10) System |

|---|---|---|

| Cancers Detected | 412 | 298 |

| False Positives | 93,289 | 497 |

| Positive Predictive Value | 0.44% | 38% |

| Number Needed to Screen | 2,062 | 334 |

| Diagnostic Cost | $329 Million | $98 Million |

| Cumulative Burden of False Positives | 18 | 0.12 |

Data modeled for a population of 100,000 adults, incremental to existing recommended screening [2].
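The headline ratios can be re-derived from the table with simple arithmetic. The sketch below assumes that positive predictive value is computed as detected cancers divided by detected cancers plus false positives, treating the "Cancers Detected" row as true positives; the variable names are illustrative only.

```python
# Values copied from the table above.
sced = {"cancers": 412, "false_positives": 93_289, "cost_musd": 329.0, "burden": 18.0}
mced = {"cancers": 298, "false_positives": 497, "cost_musd": 98.0, "burden": 0.12}

def positive_predictive_value(true_positives, false_positives):
    """Fraction of positive calls that are real."""
    return true_positives / (true_positives + false_positives)

sced_ppv = positive_predictive_value(sced["cancers"], sced["false_positives"])
mced_ppv = positive_predictive_value(mced["cancers"], mced["false_positives"])
cost_ratio = sced["cost_musd"] / mced["cost_musd"]
burden_ratio = sced["burden"] / mced["burden"]

print(f"SCED-10 PPV: {sced_ppv:.2%}")
print(f"MCED-10 PPV: {mced_ppv:.1%}")
print(f"Cost ratio: {cost_ratio:.1f}x, false-positive burden ratio: {burden_ratio:.0f}x")
```

Under this assumption the SCED-10 value reproduces the tabulated 0.44%, while the MCED-10 value comes out near 37.5%, slightly below the reported 38%; the difference presumably reflects rounding in the published counts.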

The Scientist's Toolkit: Key Research Reagents & Solutions

The following table lists essential tools and reagents used to combat false positives in HTS and virtual screening.

| Tool or Reagent | Function/Brief Explanation |

|---|---|

| Liability Predictor | A freely available webtool that predicts HTS artifacts by applying QSIR models for thiol reactivity, redox activity, and luciferase interference [1]. |

| Orthogonal Assay Reagents | Kits or reagents for a secondary assay with a different detection principle (e.g., NMR, fluorescence polarization, SPR) to confirm primary screen hits [1]. |

| Triton X-100 | A non-ionic detergent used to test for colloidal aggregation. Loss of activity in its presence suggests a false positive SCAM [1]. |

| AutoDock Suite / Vina | Open-source software for computational docking and virtual screening. Used for redocking validation and virtual screening campaigns [5]. |

| Redox/Fluorescent Assay Kits | Specific assays (e.g., MSTI for thiol reactivity) used to experimentally profile the interference potential of compound hits [1]. |

| Stem Cell-Derived Models | Human stem cell-derived cell lines (hESC, iPSC) used in HTS for more physiologically relevant and predictive toxicity and efficacy testing [6]. |

| Content Disarm and Reconstruction (CDR) | A cybersecurity-inspired file sanitization technology that proactively removes potential threats from files, achieving near-zero false positives [7]. |

Troubleshooting Guides & FAQs

Colloidal Aggregation

Q: My high-throughput screening (HTS) hit shows potent inhibition, but the structure-activity relationship is flat and the Hill coefficient is steep. What could be the cause?

A: These characteristics are classic signs of colloidal aggregation [8]. At a compound-specific critical aggregation concentration (CAC), small molecules can self-assemble into nano-sized colloidal particles (typically 50-1000 nm) [8] [9]. These aggregates can non-specifically inhibit enzymes by binding to and partially unfolding proteins on their surface, leading to a loss of catalytic activity [8]. The high apparent potency and steep Hill slopes arise because the aggregates bind enzyme so tightly that the enzyme concentration in the assay far exceeds the apparent dissociation constant, so inhibition behaves like a stoichiometric titration rather than a simple equilibrium [8].
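To make "steep Hill coefficient" concrete, the sketch below uses the standard Hill equation for fractional inhibition and a quick slope estimate from the concentrations giving 10% and 90% inhibition. For a Hill curve the ratio IC90/IC10 equals 81 raised to the power 1/n, so a well-behaved 1:1 inhibitor (n = 1) spans an 81-fold concentration range, while a much narrower span points to a steeper slope. The function names are hypothetical, and what counts as "steep" for a given assay is left to the reader.

```python
import math

def fractional_inhibition(conc, ic50, hill):
    """Hill equation: fraction of activity inhibited at a given concentration."""
    return conc ** hill / (ic50 ** hill + conc ** hill)

def hill_from_ic10_ic90(ic10, ic90):
    """Estimate the Hill coefficient n from the relation IC90 / IC10 = 81 ** (1 / n)."""
    if ic10 <= 0 or ic90 <= ic10:
        raise ValueError("expected 0 < IC10 < IC90")
    return math.log(81.0) / math.log(ic90 / ic10)

# A curve that goes from 10% to 90% inhibition over only a 3-fold range
# has a Hill coefficient of about 4, which would merit an aggregation check.
print(f"n = {hill_from_ic10_ic90(1.0, 3.0):.1f}")
```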

Experimental Protocol to Confirm Aggregation:

- Detergent Sensitivity Test: Repeat the assay in the presence of a non-ionic detergent like Triton X-100 (start at 0.01% v/v). A significant reduction or abolition of inhibitory activity strongly suggests aggregation-based interference [9].

- Critical Aggregation Concentration (CAC) Measurement: Use a fluorescent dye like pyrene, which changes its emission properties in a hydrophobic environment. As the compound concentration increases, a shift in the emission spectrum will indicate the CAC, the point at which aggregates form [8].

- Direct Visualization: Techniques like dynamic light scattering (DLS) or transmission electron microscopy (TEM) can be used to visualize and measure the size of the colloidal particles [9].

Q: How can I prevent colloidal aggregation from derailing my screening campaign?

A: Proactive steps can significantly mitigate the impact of aggregators.

- Modify Assay Buffers: Include non-ionic detergents (e.g., Triton X-100, Tween-20) in your assay buffer. This is one of the most effective strategies to disrupt colloid formation [9].

- Use Decoy Proteins: Adding a carrier protein like bovine serum albumin (BSA) at ~0.1 mg/mL before adding the test compound can pre-saturate the aggregates, protecting the target enzyme. Note that BSA may not reverse inhibition once it has occurred [9].

- Adjust Enzyme Concentration: Increasing the concentration of the target enzyme can sometimes mitigate the effects of non-stoichiometric inhibitors like aggregators [9].

Reporter Inhibition (Firefly Luciferase)

Q: In my firefly luciferase (FLuc) reporter gene assay, some compounds cause an unexpected increase in luminescence. How is this possible?

A: This counterintuitive result is a well-documented interference mechanism. Some compounds inhibit FLuc but, in doing so, bind to and stabilize the enzyme, protecting it from cellular degradation. This extends its cellular half-life, leading to a net increase in the luminescence signal over time. This effect can cause false positives in assays where an increase in signal is the desired readout [10].

Q: Are FLuc inhibitors common, and how do they affect HTS data?

A: Yes, FLuc inhibitors are frequently encountered. One analysis of public screening data identified over 24,000 FLuc inhibitors [10]. These inhibitors exhibit a general tendency to cause false positives across many different types of assays with FLuc-dependent readouts, regardless of whether the assay is designed to detect an increase or decrease in signal [10]. They can act through various mechanisms, including competitive inhibition with respect to the substrate luciferin [11].

Experimental Protocol to Identify FLuc Interference:

- Counter-Screening: Test active compounds in a cell-free, biochemical FLuc inhibition assay. Inhibition in this counter-screen suggests the compound is directly interfering with the reporter rather than acting on the biological target of interest [10] [12].

- Analyze Kinetics: A mechanism-of-action study, such as varying the concentration of the luciferin substrate, can help determine if the inhibitor is competitive [11].

- Use Computational Predictors: Tools like InterPred leverage machine learning models trained on large HTS datasets to predict the likelihood that a new chemical structure will interfere with FLuc or fluorescent assays [12].

Chemical Reactivity & Autofluorescence

Q: My compound is active in a fluorescence-based assay but shows no activity in an orthogonal, non-fluorescent assay. What should I suspect?

A: This discrepancy points to assay interference, likely through compound autofluorescence or fluorescence quenching [13] [12]. Autofluorescent compounds emit light that overlaps with the assay's detection spectrum, creating a false positive signal. Conversely, compounds that quench fluorescence can absorb the emitted light, leading to false negatives.

Experimental Protocol to Identify Fluorescence Interference:

- Measure Compound Alone: In a plate reader, measure the signal from the compound in assay buffer (without other reagents) at the same wavelengths used in your assay. A high signal indicates autofluorescence [12].

- Test in Cell-Free Systems: Run the assay in a cell-free format that retains the fluorescent readout. Activity in this context, without the biological target, indicates direct interference with the detection system [12].

- Use Orthogonal Assays: The most robust strategy is to confirm activity using an assay with a different detection technology (e.g., radiometric, absorbance, or luminescence) [13].

Quantitative Data on Assay Interference

The table below summarizes quantitative data on the prevalence of different interference mechanisms from large-scale screening efforts, highlighting that a significant portion of apparent "actives" in HTS can be attributed to these artifacts.

Table 1: Prevalence of Common Interference Mechanisms in HTS

| Interference Mechanism | Typical Prevalence in Screening Libraries | Key Characteristics | Reference Assay |

|---|---|---|---|

| Colloidal Aggregation | ~1.7% - 1.9% of a library; can comprise >90% of initial actives in susceptible biochemical assays [9]. | Detergent-sensitive inhibition, steep Hill slopes, flat SAR [8] [9]. | AmpC β-lactamase inhibition [9]. |

| Firefly Luciferase (FLuc) Inhibition | 9.9% of the Tox21 library (8,305 chemicals) were active in a cell-free luciferase inhibition assay [12]. | Can cause either an increase or decrease in signal in cell-based reporter assays; often concentration-dependent [10]. | Cell-free biochemical luciferase assay [12]. |

| Compound Autofluorescence | Varies by wavelength: ~0.5% (red) to 4.6% (green) of the Tox21 library in cell-based conditions [12]. | Signal is generated in the absence of the biological target; activity is not replicable in orthogonal assays [13] [12]. | Fluorescence measurement in cell-based and cell-free conditions [12]. |

Experimental Workflows for Interference Identification

The following diagram illustrates a general decision workflow for triaging HTS hits and systematically identifying the common interference mechanisms discussed.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Reagents for Mitigating and Identifying Assay Interference

| Item | Function/Benefit | Example Use Case |

|---|---|---|

| Non-ionic Detergents (Triton X-100, Tween-20) | Disrupts the structure of colloidal aggregates, raising the Critical Aggregation Concentration (CAC). Mitigates nonspecific binding to container walls [9]. | Add to biochemical assay buffers at 0.01% (v/v) to test if inhibitory activity is abolished [9]. |

| Bovine Serum Albumin (BSA) | Acts as a "decoy" protein that can pre-saturate aggregates, preventing them from inhibiting the target enzyme [9]. | Include at ~0.1 mg/mL in the assay buffer before adding the test compound [9]. |

| Control Aggregator Compounds (e.g., Cinnarizine, Ritonavir) | Provide a positive control for aggregation behavior. Their known CAC and detergent-sensitive profile help validate counter-screens [8]. | Use as a technical control when developing new biochemical assays to ensure the buffer conditions can suppress aggregation interference [8]. |

| Fluorescent Dyes (e.g., Pyrene) | Used to measure the Critical Aggregation Concentration (CAC). The dye's emission spectrum shifts as it partitions into the hydrophobic environment of aggregates [8]. | Titrate the test compound and monitor pyrene fluorescence to determine the concentration at which aggregates begin to form [8]. |

| Firefly Luciferase Inhibitors (e.g., PTC124) | Serve as positive controls for luciferase-based counter-screens and for studying signal stabilization effects [10] [12]. | Use in a cell-free luciferase enzyme assay to validate the counter-screen and as a control in cell-based reporter assays [12]. |

Frequently Asked Questions

What are inorganic metal impurities, and how do they cause false positives? Inorganic metal impurities are residual metal ions, such as zinc, palladium, or nickel, that can remain in compound libraries after synthesis. These metals can directly inhibit biological targets or interfere with assay detection systems, leading to signals that mimic genuine bioactive compounds. Unlike organic impurities, they are not detected by standard purity checks like NMR or mass spectrometry [14].

Why are these false positives particularly problematic in HTS? False positives caused by metal impurities can appear potent (often in the low micromolar range), making them attractive for follow-up. They can produce consistent results across various orthogonal assays, including biochemical and biosensor-based binding assays, leading project teams to waste significant time and resources before the true cause is identified [14].

Which metals are most commonly involved? A study investigating one specific project found that zinc (Zn²⁺) was a particularly potent source of interference, with an IC₅₀ of 1 μM against the target enzyme Pad4. Other metals like iron, palladium, nickel, and copper also showed inhibitory effects, though with lower potency [14].

| Metal | IC₅₀ against Pad4 (μM) [14] |

|---|---|

| Zinc (Zn²⁺) | 1 |

| Iron (Fe³⁺) | 192 |

| Palladium (Pd²⁺) | 231 |

| Nickel (Ni²⁺) | 242 |

| Copper (Cu²⁺) | 279 |

| Barium (Ba²⁺) | >1000 |

| Calcium (Ca²⁺) | >1000 |

| Magnesium (Mg²⁺) | >1000 |

How prevalent is this issue in real-world HTS campaigns? A retrospective analysis of 175 historical HTS screens at Roche found that 41 campaigns showed a dramatically elevated hit rate (≥25%) for compounds suspected of zinc contamination. This suggests that metal impurities can affect a wide variety of targets and assay systems [14].

Are certain types of screens more vulnerable? Fragment-based screens (FBS), which typically test compounds at much higher concentrations (e.g., 250 μM), are particularly prone to false positives from metal-contaminated compounds. In one noted case, all 36 zinc-contaminated compounds in a Ras fragment screen produced positive signals [14].

Troubleshooting Guide: Identifying and Mitigating Metal-Based False Positives

This section provides a step-by-step protocol to diagnose and eliminate false positives caused by metal impurities in your screening results.

Step 1: Recognize the Warning Signs Be suspicious of your HTS hit series if you observe any of the following:

- Lack of Conclusive Structure-Activity Relationships (SAR): Activity is not consistently linked to the compound's core structure.

- Inconsistent Batch-to-Batch Activity: Different syntheses or batches of the same compound show vastly different potencies (e.g., IC₅₀ from low micromolar to completely inactive) [14].

- Unexplained Activity in Orthogonal Assays: The "activity" is confirmed across different assay formats (e.g., functional ELISA and biosensor binding), suggesting a real inhibition that may, in fact, be caused by a metal contaminant [14].

Step 2: Perform a Targeted Counter-Screen The most straightforward method to confirm zinc-related interference is to use the specific chelator TPEN (N,N,N',N'-tetrakis(2-pyridylmethyl)ethylenediamine).

- Procedure: Re-test your hit compounds in the presence and absence of TPEN.

- Interpretation: A significant right-shift in the dose-response curve (e.g., a greater than 7-fold potency shift in the presence of TPEN) strongly suggests that the observed activity is due to zinc contamination [14].

Step 3: Conduct a Direct Metal Screen If available, use elemental analysis (e.g., ICP-MS) to quantify metal content in your solid compound samples. Active batches of compounds have been found to contain zinc impurities of up to 20% by mass, whereas inactive batches of the same compound contained only trace amounts [14].

Step 4: Test the Metal Itself Determine the IC₅₀ of the suspected metal salt (e.g., ZnCl₂) in your assay. If the metal alone is a potent inhibitor of your target, it confirms a pathway for interference [14].
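A quick back-of-the-envelope calculation shows why a contaminated batch can look potent. The sketch below assumes the stock was prepared by weight as if the solid were pure compound, and that the reported mass fraction refers to elemental zinc. The compound molecular weight and test concentration are hypothetical inputs; the 20% mass fraction and the 1 μM zinc IC₅₀ come from the text above.

```python
ZINC_ATOMIC_MASS = 65.38  # g/mol

def zinc_concentration_um(nominal_compound_um, compound_mw, zinc_mass_fraction):
    """Zinc concentration (μM) carried along with a nominal compound concentration.

    Derivation: a weighed mass m gives a nominal concentration m / (MW * V);
    the zinc it contains is (fraction * m) / (65.38 * V).
    """
    if not 0.0 <= zinc_mass_fraction <= 1.0:
        raise ValueError("mass fraction must be between 0 and 1")
    return nominal_compound_um * compound_mw * zinc_mass_fraction / ZINC_ATOMIC_MASS

# Hypothetical compound (MW 300 g/mol) tested at a nominal 10 μM
# from a batch containing 20% zinc by mass.
zinc_um = zinc_concentration_um(10.0, 300.0, 0.20)
print(f"Zinc delivered: {zinc_um:.1f} μM")
```

Under these assumptions the well contains roughly 9 μM zinc, well above the 1 μM IC₅₀ reported for zinc against Pad4, so the apparent compound activity could be explained by the metal alone.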

Experimental Protocol: TPEN Counter-Screen for Zinc-Dependent False Positives

Objective: To determine if the biological activity of a screening hit is due to zinc contamination by using the selective zinc chelator TPEN.

Materials:

- Hit compound(s) in solution (from DMSO stock)

- TPEN stock solution (e.g., 10-100 mM in DMSO)

- Assay buffer and components

- Zinc chloride (ZnCl₂) solution for a positive control

Method:

- Prepare Assay Plates: Set up two identical plates for your standard activity assay (e.g., an ELISA-based enzyme assay).

- Add Chelator: To the experimental plate, add TPEN to a final concentration of 10-100 μM. To the control plate, add an equivalent volume of solvent (DMSO) [14].

- Dose-Response Curves: Perform a serial dilution of your hit compound(s) on both plates.

- Run Assay: Complete the assay according to your standard protocol and calculate the IC₅₀ values for each compound in the presence and absence of TPEN.

- Include Controls:

  - Negative Control: A known active compound that is free of zinc should show no significant IC₅₀ shift in the presence of TPEN.

  - Positive Control: Inhibition by ZnCl₂ should be abolished in the presence of TPEN.

Data Analysis: Calculate the fold-change in IC₅₀. A fold-change greater than 7 is a conservative indicator that the compound's activity is likely mediated by zinc contamination [14].
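The data-analysis step reduces to a ratio and a threshold, sketched below. The 7-fold cutoff is the one quoted above; the function names and the convention of passing `float("inf")` for a compound that is inactive up to the top tested concentration are assumptions made for this illustration.

```python
def tpen_fold_shift(ic50_without_tpen, ic50_with_tpen):
    """Fold change in IC50 caused by adding TPEN (values in the same units)."""
    if ic50_without_tpen <= 0:
        raise ValueError("IC50 without TPEN must be positive")
    return ic50_with_tpen / ic50_without_tpen

def likely_zinc_artifact(ic50_without_tpen, ic50_with_tpen, threshold=7.0):
    """Flag a hit whose potency drops by more than the threshold in the presence of TPEN."""
    return tpen_fold_shift(ic50_without_tpen, ic50_with_tpen) > threshold

# Hypothetical hits: (IC50 without TPEN, IC50 with TPEN), both in μM.
hits = {
    "hit_A": (1.5, 45.0),          # 30-fold right-shift
    "hit_B": (2.0, 3.0),           # 1.5-fold, within normal variation
    "hit_C": (0.8, float("inf")),  # inactive once zinc is chelated
}
for name, (without, with_tpen) in hits.items():
    print(name, likely_zinc_artifact(without, with_tpen))
```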

Diagnostic Workflow for Metal Impurities

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function / Purpose |

|---|---|

| TPEN (N,N,N',N'-tetrakis(2-pyridylmethyl)ethylenediamine) | A potent and selective membrane-permeable zinc chelator. Used in counter-screens to chelate zinc impurities and abolish their activity, confirming a zinc-based false positive [14]. |

| EDTA (Ethylenediaminetetraacetic acid) | A broad-spectrum metal chelator. Can be used to test for interference from various divalent metal cations, though it is less specific than TPEN [14]. |

| Zinc Chloride (ZnCl₂) | Used as a positive control to determine the intrinsic sensitivity of a target or assay system to zinc ions [14]. |

| Elemental Analysis (e.g., ICP-MS) | Analytical techniques used to directly quantify the metal content (e.g., zinc, palladium, nickel) in solid compound samples [14]. |

Mechanism of Zinc Interference and TPEN Rescue

Troubleshooting Guides

Luciferase Reporter Assay Interference

Problem: Unexpected inhibition or amplification of luminescence signal in luciferase-based assays.

| Interference Type | Common Causes | Characteristic Symptoms |

|---|---|---|

| Enzyme Inhibition [15] [1] | Direct inhibition of luciferase enzyme by compounds resembling substrates (e.g., benzothiazoles, aryl sulfonamides). | Inhibition with potency as low as the nanomolar range in concentration-response curves; signal suppression in cell-based and biochemical assays. |

| Redox Interference [1] | Redox-active compounds generating hydrogen peroxide (H₂O₂) in assay buffers. | Oxidation of luciferase residues; confounding activity in cell-based phenotypic screens involving signaling pathways. |

| Signal Quenching [15] | Light-absorbing compounds attenuating emitted luminescence signal via "inner-filter" effects. | Signal attenuation follows Beer-Lambert law (exponential decay with increasing absorber concentration). |

Diagnosis and Resolution:

- Step 1: Counterscreen – Run a dedicated luciferase enzyme inhibition assay (e.g., cell-free format with D-luciferin and ATP) to identify direct inhibitors [15] [12].

- Step 2: Orthogonal Validation – Confirm true biological activity using a non-luciferase based assay (e.g., ELISA, RT-qPCR, mass spectrometry) [15].

- Step 3: In-silico Prediction – Use tools like Liability Predictor or Luciferase Advisor to flag potential luciferase inhibitors in your compound library before screening [1].
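The signal-quenching row in the table above refers to Beer-Lambert attenuation, which can be estimated before running any counterscreen. The sketch below computes the fraction of emitted light that survives passage through an absorbing compound; the extinction coefficient, concentration, and path length in the example are hypothetical, and the model ignores geometry effects in real plate wells.

```python
def transmitted_fraction(epsilon_per_m_cm, conc_molar, path_cm):
    """Beer-Lambert law: T = 10 ** (-A), with absorbance A = epsilon * c * l."""
    absorbance = epsilon_per_m_cm * conc_molar * path_cm
    return 10.0 ** (-absorbance)

# Hypothetical colored compound: epsilon = 20,000 M^-1 cm^-1 at the emission
# wavelength, tested at 50 μM with a 0.5 cm light path.
fraction = transmitted_fraction(20_000.0, 50e-6, 0.5)
print(f"Transmitted: {fraction:.0%} of the luminescence signal")
```

In this example about a third of the light reaches the detector, which would read as strong apparent inhibition even though the enzyme is untouched.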

Fluorescence and Absorbance Assay Interference

Problem: High background, signal quenching, or false-positive signals in fluorescence/absorbance-based assays.

| Interference Type | Common Causes | Characteristic Symptoms |

|---|---|---|

| Autofluorescence [12] | Test compounds emitting light within the detection spectrum of the fluorophore. | High signal in negative controls; non-saturable, linear concentration-response; signal persists in cell-free conditions. |

| Inner-Filter Effect [15] | Colored or light-absorbing compounds attenuating excitation or emission light. | Signal quenching that correlates with compound absorbance at the excitation or emission wavelengths; fluorescence no longer scales linearly with fluorophore concentration. |

| Compound Fluorescence [1] | Fluorescent compounds in screening libraries. | Varies with fluorophore and filter settings; can cause both false positives and negatives. |

Diagnosis and Resolution:

- Step 1: Control Experiments – Test compounds in a cell-free assay containing only buffer and detection reagents. Also, test in wells without cells or biochemical target [12].

- Step 2: Spectral Profiling – Shift assay readouts to longer, red-shifted wavelengths (far-red spectrum) to dramatically reduce interference from compound autofluorescence [1].

- Step 3: Alternative Detection – Where possible, switch to a non-optical detection method, such as mass spectrometry-based readouts (e.g., RapidFire MS), which are immune to these interferences [16].

General Chemical Reactivity Interference

Problem: Non-specific compound activity caused by undesirable chemical reactions.

| Interference Type | Common Causes | Characteristic Symptoms |

|---|---|---|

| Thiol Reactivity [1] | Compounds (e.g., alkyl halides, isothiocyanates) covalently modifying cysteine residues. | Irreversible activity; non-specific inhibition across multiple unrelated protein targets. |

| Colloidal Aggregation [1] | Compounds forming sub-micrometer aggregates that non-specifically sequester proteins. | Loss of potency with addition of non-ionic detergents (e.g., Triton X-100, Tween-20); sharp, steep inhibition curves. |

Diagnosis and Resolution:

- Step 1: Detergent Challenge – Add low concentrations (e.g., 0.01-0.1%) of non-ionic detergent to the assay buffer. Activity lost upon detergent addition indicates colloidal aggregation [1].

- Step 2: Thiol-Reactivity Assay – Use a dedicated biochemical assay (e.g., using MSTI or DTNB) to identify thiol-reactive compounds [1].

- Step 3: Cytotoxicity Check – For cell-based assays, rule out general cytotoxicity as the cause of signal reduction using a viability assay (e.g., ATP content).

Immunoassay Interference

Problem: Falsely elevated or decreased analyte concentration in antibody-based assays.

| Interference Type | Common Causes | Characteristic Symptoms |

|---|---|---|

| Heterophilic Antibodies [17] [18] | Human antibodies that bind animal-derived assay antibodies. | Falsely elevated results in sandwich immunoassays; non-linear dilution; discordant results between different assay platforms. |

| Cross-reactivity [17] [18] | Metabolites or structurally similar molecules binding the assay antibody. | Falsely elevated analyte readings; known issues with steroid hormones, digoxin, and cyclosporine A assays. |

| Hook Effect [18] | Extremely high analyte concentration saturating capture and detection antibodies. | Falsely low measurement at high analyte concentrations; resolved upon sample dilution. |

Diagnosis and Resolution:

- Step 1: Sample Dilution – Dilute the sample and re-assay. A non-linear response suggests interference [18].

- Step 2: Blocking Reagents – Re-test the sample after addition of blocking agents (e.g., heterophile blocking tubes) that bind interfering antibodies [17].

- Step 3: Alternative Platform – Measure the analyte using an immunoassay from a different manufacturer or a different methodology (e.g., LC-MS/MS) [17].
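The dilution check in Step 1 can be expressed as a recovery calculation. In the sketch below, each diluted result is multiplied by its dilution factor and compared with the undiluted result. The 80-120% acceptance window is an assumption chosen for illustration, not a value from the cited sources; laboratories should apply their own validated limits.

```python
def dilution_recovery_percent(neat_result, diluted_result, dilution_factor):
    """Dilution-corrected result as a percentage of the undiluted (neat) result."""
    if neat_result <= 0:
        raise ValueError("neat result must be positive")
    return 100.0 * diluted_result * dilution_factor / neat_result

def dilution_is_linear(neat_result, diluted_results, low=80.0, high=120.0):
    """diluted_results maps dilution factor -> measured value.

    The low/high limits are assumed defaults for this sketch.
    """
    return all(
        low <= dilution_recovery_percent(neat_result, value, factor) <= high
        for factor, value in diluted_results.items()
    )

# Hypothetical sample: neat result 100 units, then 1:2 and 1:4 dilutions.
suspect = {2: 30.0, 4: 10.0}   # recoveries of 60% and 40%
clean = {2: 49.0, 4: 26.0}     # recoveries of 98% and 104%
print(dilution_is_linear(100.0, suspect), dilution_is_linear(100.0, clean))
```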

Quantitative Data on Assay Interference

Table 1: Prevalence of Assay Artifacts in Compound Screening

| Interference Mechanism | Typical Hit Rate in HTS | Potency Range of Common Artifacts | Key Structural Alerts / Compound Classes |

|---|---|---|---|

| Firefly Luciferase Inhibition [15] [1] | ~5% at 10-11 µM | Single-digit nM to µM | Benzothiazoles, benzoxazoles, benzimidazoles, diaryl structures, aryl carboxylates (e.g., PTC124) [15]. |

| Nano Luciferase Inhibition [1] | Data from dedicated screens | Data from dedicated screens | Curated datasets and QSIR models available via "Liability Predictor" [1]. |

| Autofluorescence [12] | ~0.5% (red) to 4.6% (green); varies by wavelength | N/A | Varies by fluorophore; rule-based alerts on ring structures/properties [12]. |

| Thiol Reactivity [1] | Data from dedicated screens | Data from dedicated screens | Electrophilic substructures (e.g., alkyl halides, isothiocyanates, Michael acceptors, quinones) [1]. |

| Redox Activity [1] | Data from dedicated screens | Data from dedicated screens | Quinones, catechols, hydroxylamines [1]. |

Experimental Protocols

Protocol: Cell-Free Luciferase Inhibition Counterscreen

Purpose: To identify compounds that directly inhibit the firefly luciferase enzyme, a common source of false positives in reporter gene assays [15] [12].

Reagents:

- Firefly Luciferase enzyme (commercially available)

- D-Luciferin substrate

- ATP

- Assay Buffer: 50 mM Tris-acetate pH 7.6, 13.3 mM magnesium acetate, 0.01% Tween-20, 0.05% BSA [12].

- Test compounds and control inhibitor (e.g., PTC124) [12].

Procedure:

- Prepare Substrate Mix: In assay buffer, create a substrate mixture containing 0.01 mM D-luciferin and 0.01 mM ATP [12].

- Dispense: Add 3 µL of substrate mix to each well of a white 1536-well plate.

- Transfer Compounds: Pin-transfer 23 nL of test compounds, control inhibitor (PTC124, 0.035 nM - 1.15 µM), and DMSO control to the assay plate [12].

- Initiate Reaction: Add 1 µL of a 10 nM firefly luciferase solution in assay buffer to all wells.

- Incubate and Read: Incubate the plate for 5 minutes at room temperature. Measure luminescence intensity using a plate reader [12].

- Data Analysis: Normalize raw luminescence data relative to DMSO (0% inhibition) and high-concentration PTC124 (100% inhibition) controls. Fit concentration-response curves to determine IC₅₀ values [12].
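
The normalization in the Data Analysis step can be sketched as follows. This is a minimal illustration, not the published analysis pipeline: the plate values are made up, the function names are hypothetical, and the IC₅₀ is estimated by log-linear interpolation between the two points that bracket 50% inhibition, which is a rough stand-in for a full concentration-response curve fit.

```python
import math

def percent_inhibition(raw, dmso_mean, full_inhibition_mean):
    """Scale raw luminescence so DMSO = 0% and the full-inhibition control = 100%."""
    return 100.0 * (dmso_mean - raw) / (dmso_mean - full_inhibition_mean)

def interpolate_ic50(concs, inhibitions):
    """Estimate IC50 by log-linear interpolation around 50% inhibition.

    Returns None if no adjacent pair of points brackets 50% inhibition.
    """
    points = sorted(zip(concs, inhibitions))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(points, points[1:]):
        if i_lo < 50.0 <= i_hi:
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None

# Illustrative (made-up) plate data: control means and one compound titration.
dmso_mean = 10000.0            # 0% inhibition control
full_inhibition_mean = 500.0   # 100% inhibition control (high-concentration PTC124)
concs_um = [0.01, 0.1, 1.0, 10.0]
raw_signal = [9800.0, 8100.0, 3350.0, 900.0]

inhibition = [percent_inhibition(r, dmso_mean, full_inhibition_mean) for r in raw_signal]
ic50_um = interpolate_ic50(concs_um, inhibition)
```

For real screening data, a four-parameter logistic fit over all points is the more robust choice; the interpolation above is only useful as a quick sanity check.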

Protocol: Autofluorescence Testing Assay

Purpose: To characterize compound autofluorescence at different wavelengths to troubleshoot fluorescence-based assays [12].

Reagents:

- Cell culture medium (with and without cells)

- Assay buffer (cell-free)

- White and black clear-bottom assay plates

Procedure:

- Plate Preparation:

  - For cell-based conditions: Seed cells (e.g., HEK-293 or HepG2) in culture medium.

  - For cell-free conditions: Use culture medium or assay buffer only [12].

- Compound Addition: Add a dilution series of the test compound to both cell-based and cell-free wells. Include DMSO controls.

- Incubation: Incubate plates under standard assay conditions (e.g., 37°C, 5% CO₂ for cell-based).

- Signal Detection: Read the plates using the same filter settings/wavelengths (e.g., blue, green, red) as your primary assay without adding any fluorescent reagents [12].

- Data Analysis: A concentration-dependent signal in the cell-free wells confirms compound autofluorescence. Compare the signal intensity to that of your primary assay to assess potential interference [12].
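
One way to make the "concentration-dependent signal" check concrete is sketched below. It assumes background-level DMSO wells are available and uses an illustrative 2-fold cutoff; the function name, the cutoff, and the example readings are assumptions for this sketch and do not come from the cited protocol.

```python
def autofluorescence_flag(concs, signals, dmso_signal, min_fold=2.0):
    """Return (is_flagged, fold_changes) for a cell-free titration.

    The compound is flagged when the signal never decreases as concentration
    rises and the top concentration reaches `min_fold` times the DMSO
    background. `min_fold` is an illustrative cutoff, not a published one.
    """
    ordered = [s for _, s in sorted(zip(concs, signals))]
    folds = [s / dmso_signal for s in ordered]
    non_decreasing = all(b >= a for a, b in zip(folds, folds[1:]))
    return (non_decreasing and folds[-1] >= min_fold), folds

# Made-up readings (arbitrary fluorescence units) at 1, 3, 10 and 30 µM.
fluorescent_flag, fluorescent_folds = autofluorescence_flag(
    [1, 3, 10, 30], [105.0, 160.0, 420.0, 1300.0], dmso_signal=100.0
)
quiet_flag, _ = autofluorescence_flag(
    [1, 3, 10, 30], [98.0, 102.0, 101.0, 99.0], dmso_signal=100.0
)
```

A strict monotonicity test is sensitive to well-to-well noise, so replicate averaging before the check is advisable.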

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Identifying and Mitigating Assay Interference

| Tool / Reagent | Function | Example Use Case |

|---|---|---|

| Dual-Luciferase Assay Systems [19] | Measures two spectrally resolved luciferases in one sample, using one as a normalizing control. | Correcting for variations in cell viability and transfection efficiency; identifying specific vs. general signal effects. |

| Liability Predictor (Webtool) [1] | Free QSIR models predicting luciferase inhibition, thiol reactivity, and redox activity. | Triage of HTS hits; design of screening libraries to pre-filter potential interferents. |

| InterPred (Webtool) [12] [20] | Machine learning models predicting autofluorescence and luciferase interference. | Assessing risk of assay interference for new chemical structures prior to screening. |

| Heterophile Blocking Reagents [17] [18] | Solutions of animal immunoglobulins that bind interfering human antibodies. | Added to patient samples to eliminate false positives/negatives in clinical immunoassays. |

| Non-ionic Detergents [1] | Disrupts colloidal aggregates formed by small molecules. | Added to assay buffers (e.g., 0.01% Triton X-100) to confirm/rule out aggregation-based inhibition. |

| CETSA (Cellular Thermal Shift Assay) [21] | Measures target engagement in intact cells by detecting ligand-induced thermal stabilization. | Orthogonal validation of direct target binding, independent of reporter enzyme systems. |

Frequently Asked Questions (FAQs)

Q1: My HTS hit is potent in my luciferase reporter assay but inactive in follow-up orthogonal assays. What is the most likely cause? A1: The most probable cause is direct inhibition of the firefly luciferase enzyme. Potent, nanomolar-range inhibitors are common, with hit rates of ~5% in typical screening libraries. These compounds often contain benzothiazole or other planar, heterocyclic structures that mimic the D-luciferin substrate [15] [1]. Immediately run a luciferase enzyme counterscreen to confirm this interference.

Q2: Are PAINS filters sufficient for identifying all types of assay interference? A2: No. While popular, PAINS filters are known to be oversensitive (flagging too many compounds) and can miss a majority of true interferents. More reliable, mechanism-specific computational tools are now available, such as Liability Predictor for luciferase inhibition and reactivity, and InterPred for fluorescence interference [1] [12] [20].

Q3: How can I definitively prove that my compound's activity is not due to assay interference? A3: Confirmation requires a combination of strategies:

- Counterscreens: Run targeted assays for specific interference mechanisms (luciferase inhibition, autofluorescence, aggregation).

- Orthogonal Assays: Confirm activity in an assay with a fundamentally different detection technology (e.g., MS-based, CETSA, SPR) that is not susceptible to the same artifacts [15] [21] [16].

- Dose-Response: Ensure clean, saturable concentration-response curves consistent with a specific biological interaction.

Q4: What is the single most effective strategy to reduce false positives in my screening workflow? A4: Proactive design is key. Use orthogonal assay formats from the start. For a luciferase-based primary screen, plan a secondary, orthogonal assay (e.g., ELISA, high-content imaging) during the experimental design phase. Additionally, use in-silico prediction tools to profile compound libraries before screening to flag and test potential interferents early [15] [1].

Leveraging Advanced Computational Tools and Experimental Counterscreens

In high-throughput screening (HTS) for drug discovery, false positives are a significant obstacle, often accounting for over 95% of positive results and leading to costly resource waste [22]. These false positives, or frequent hitters (FHs), arise from various assay interference mechanisms. This guide provides technical support for two computational platforms, ChemFH and Liability Predictor, designed to identify these problematic compounds and improve the efficiency of your screening workflows.

FAQ: Understanding the Platforms and False Positives

What are the primary types of assay interference these platforms address?

Between them, the two platforms address several key types of assay interference [1] [23] [22]:

- Colloidal Aggregators: Compounds that form aggregates in screening assays, leading to non-specific binding and denaturation of target proteins.

- Chemical Reactive Compounds: Substances that chemically modify protein residues or assay reagents, such as thiol-reactive compounds (TRCs) and redox-cycling compounds (RCCs) [1].

- Luciferase Inhibitors: Molecules that inhibit reporter enzymes like firefly luciferase (FLuc), causing false signals in bioluminescence-based assays.

- Promiscuous Compounds: Compounds that bind non-specifically to multiple unrelated biological targets.

- Spectroscopic Interference: Compounds that interfere with detection methods, such as fluorescent or colored molecules that absorb light in assay spectral windows [1] [23].

How do ChemFH and Liability Predictor differ from older methods like PAINS filters?

Traditional PAINS (Pan-Assay INterference compoundS) filters use substructural alerts but are known to be oversensitive and often fail to identify a majority of truly interfering compounds [1]. In contrast:

- ChemFH employs robust multi-task Directed Message Passing Neural Network (DMPNN) models trained on a high-quality dataset of over 810,000 compounds, providing more reliable and accurate predictions [23] [22].

- Liability Predictor uses Quantitative Structure-Interference Relationship (QSIR) models specifically developed and validated for endpoints like thiol reactivity, redox activity, and luciferase inhibition, showing 58–78% external balanced accuracy [1].
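
Balanced accuracy, the metric quoted above, is the mean of sensitivity and specificity, which makes it less flattering than plain accuracy when interferers are a minority class. The sketch below uses made-up confusion-matrix counts purely to illustrate the arithmetic; they are not results from either platform.

```python
def balanced_accuracy(tp, fn, tn, fp):
    """Mean of sensitivity (true positive rate) and specificity (true negative rate)."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2.0

def plain_accuracy(tp, fn, tn, fp):
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + fn + tn + fp)

# Hypothetical external set: 50 true interferers, 200 clean compounds.
example_counts = dict(tp=30, fn=20, tn=170, fp=30)
example_balanced = balanced_accuracy(**example_counts)   # (0.60 + 0.85) / 2
example_plain = plain_accuracy(**example_counts)         # 200 / 250
```

In this example the plain accuracy (0.80) is higher than the balanced accuracy (0.725) because the majority class dominates the former.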

What quantitative performance can I expect from these tools?

The table below summarizes the key performance metrics and features of each platform.

| Feature | ChemFH | Liability Predictor |

|---|---|---|

| Core Technology | Multi-task DMPNN models & substructure alerts [23] [22] | Quantitative Structure-Interference Relationship (QSIR) models [1] |

| Dataset Size | >810,000 compounds [23] | 5,098 compounds from the NPACT library (per assay) [1] |

| Reported Performance | Average AUC of 0.91 [22] | 58-78% external balanced accuracy [1] |

| Key Add-on Features | 10+ FH screening rules & 1441 alert substructures; API for batch screening [23] [22] | Can integrate lab/field data to refine predictions [1] |

| Validated Use Case | Successfully screened 2575 FDA-approved drugs; identified 6.44% as colloidal aggregators [22] | 256 external compounds experimentally tested per assay [1] |

Troubleshooting Common User Issues

Issue 1: Interpreting Low-Confidence Predictions

Problem: The platform returns a prediction labeled as "Low-Confidence."

Solution:

- For ChemFH Users: The label comes from the platform's built-in uncertainty estimation, which flags predictions the model is less certain about. Treat these results with caution and verify them with an orthogonal experimental assay [23].

- General Guidance: A low-confidence result often means the queried molecule is structurally distinct from the compounds in the model's training set. Use this information to highlight areas where your chemical library may be exploring novel space.

Issue 2: Handling a Compound Flagged for Multiple Liabilities

Problem: A single compound is predicted to be a colloidal aggregator, a luciferase inhibitor, and chemically reactive.

Solution:

- Prioritize by Assay Context: If you are running a luciferase-based assay, prioritize the luciferase inhibitor flag. For a biochemical assay with reducing agents, the redox-activity (chemical reactivity) flag may be most critical [1].

- Consult Structural Alerts: Use the substructure alerts provided by ChemFH to understand which specific molecular features are triggering the flags. This can provide a rational starting point for medicinal chemistry optimization [23] [22].

- Triage Experimentally: Design a simple counter-screen specific to the highest-priority liability (e.g., a detergent-based assay to test for aggregation) to confirm the computational prediction before discarding the compound [1].

Issue 3: Integrating Platform Output into a High-Throughput Workflow

Problem: The need to screen large virtual libraries efficiently.

Solution:

- Utilize the API: ChemFH offers flexible API interfaces designed specifically for batch calculations on extensive datasets. This allows you to integrate its screening capability directly into your automated virtual screening pipeline without manual intervention [23].

- Standardize Input/Output: Ensure your compound library is in a format accepted by the platform (e.g., SMILES strings) and write a script to parse the output (e.g., CSV files with prediction scores and flags) for seamless integration into your downstream workflow.
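
A parsing script of the kind described above might look like the sketch below. The CSV layout, the column names (`smiles`, `aggregator_score`, `fluc_inhibitor_score`), the scores, and the 0.5 threshold are all hypothetical; the actual export schema depends on the platform and version, so check it before adapting this.

```python
import csv
import io

# Hypothetical export; real column names depend on the platform and version.
EXAMPLE_CSV = """smiles,aggregator_score,fluc_inhibitor_score
CCO,0.05,0.02
c1ccc2sc(-c3ccccc3)nc2c1,0.31,0.88
O=C1C=CC(=O)C=C1,0.12,0.10
"""

def flag_compounds(csv_text, score_columns, threshold=0.5):
    """Return {smiles: [score columns at or above threshold]} for flagged rows."""
    flagged = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        hits = [col for col in score_columns if float(row[col]) >= threshold]
        if hits:
            flagged[row["smiles"]] = hits
    return flagged

flags = flag_compounds(EXAMPLE_CSV, ["aggregator_score", "fluc_inhibitor_score"])
```

Returning the triggering columns rather than a single yes/no keeps the mechanism visible for downstream triage.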

Experimental Protocols for Validation

Protocol 1: Experimental Validation of a Predicted Luciferase Inhibitor

This protocol is adapted from the experimental validation procedures used to develop and test the Liability Predictor models [1].

Principle: Confirm computational predictions by testing the compound's activity in a luciferase-based reporter assay under controlled conditions.

Materials:

- Recombinant firefly or nano luciferase.

- Luciferase assay reagent (substrate, e.g., D-luciferin).

- Assay buffer.

- White, opaque-walled multiwell plates.

- Plate reader capable of measuring luminescence.

- Test compound(s) and a DMSO control.

Method:

- Dilution Series: Prepare a dilution series of the test compound in DMSO, then further dilute in assay buffer to the desired final concentrations (e.g., 0.1 nM to 100 µM).

- Enzyme Reaction: In a well plate, mix the luciferase enzyme with the test compound or vehicle control and pre-incubate for a set time (e.g., 15-30 minutes).

- Signal Measurement: Initiate the reaction by adding the luciferase substrate. Measure the luminescence signal immediately using the plate reader.

- Data Analysis: Plot the luminescence signal against the compound concentration. A concentration-dependent decrease in luminescence signal, compared to the DMSO control, confirms luciferase inhibition.

Protocol 2: Counter-Screen for Predicted Colloidal Aggregation

This protocol outlines a general method to confirm if a hit compound acts via colloidal aggregation.

Principle: Inhibition caused by colloidal aggregates is often reversed in the presence of non-ionic detergents or at increased enzyme concentration.

Materials:

- Target enzyme and its substrate.

- Assay buffer.

- Test compound.

- Non-ionic detergent (e.g., 0.01% Triton X-100).

- Standard assay equipment (pipettes, plates, plate reader).

Method:

- Standard Assay: Run your primary activity assay with the test compound under standard conditions.

- Detergent Assay: Run the same activity assay, but include a low concentration of a non-ionic detergent (like 0.01% Triton X-100) in the reaction buffer.

- Analysis: A significant reduction or complete loss of the compound's inhibitory activity in the presence of detergent is a strong indicator that the inhibition was caused by colloidal aggregation.

Research Reagent Solutions

The table below lists key reagents and their functions for experimentally validating common assay interferences.

| Reagent / Assay | Function in Validation |

|---|---|

| Non-ionic Detergent (Triton X-100) | Disrupts colloidal aggregates; loss of inhibition in its presence confirms aggregation-based interference [1]. |

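| Thiol-based Reagent (e.g., DTT, β-mercaptoethanol) | Acts as a thiol scavenger that can quench thiol-reactive compounds (TRCs). Strong reducing agents such as DTT can also drive redox cycling, so comparing activity with and without the reagent helps expose redox-cycling compounds (RCCs) [1]. |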

| Luciferase Reporter Assay | Directly tests for compounds that inhibit the firefly or nano luciferase enzymes, a common source of false positives in HTS [1]. |

| MSTI Fluorescence Assay | A specific assay used to detect and characterize thiol-reactive compounds (TRCs) by monitoring fluorescence changes [1]. |

Platform Workflow and Assay Interference Pathways

Screening and Triage Workflow

The following diagram illustrates the logical workflow for using these platforms in a drug discovery pipeline, from virtual screening to experimental triage.

Mechanisms of Assay Interference

This diagram maps the core mechanisms of assay interference that ChemFH and Liability Predictor are designed to detect, showing how they lead to false positive signals.

Frequently Asked Questions (FAQs)

Q1: Our high-throughput screening (HTS) hit list is overwhelmed with false positives. How can a multi-task DMPNN model help where traditional filters like PAINS fail?

Traditional substructure filters (e.g., PAINS) are often oversensitive and fail to account for the full chemical context, leading to many valid compounds being flagged incorrectly [1]. A multi-task Directed Message Passing Neural Network (DMPNN) architecture addresses this by simultaneously learning multiple interference mechanisms—such as colloidal aggregation, luciferase inhibition, and chemical reactivity—from a large, high-quality dataset [24] [23]. This holistic approach evaluates a compound's risk based on its overall structure and predicted behaviors across multiple tasks, resulting in a more reliable and nuanced assessment than single-task or rule-based methods [24].

Q2: What does a "low-confidence" prediction mean, and how should we handle these results in our analysis?

A "low-confidence" prediction indicates that the model's uncertainty for a given compound is high, often because the compound's structural features are under-represented in the training data [23]. When this occurs:

- Do not automatically discard the compound. Treat it as an uncertain result.

- Perform manual inspection by checking for known alert substructures provided by the tool.

- Prioritize experimental validation using confirmatory assays (e.g., dose-response curves in the presence of detergents for aggregators) to make a final determination [1] [23].

Q3: The model performed well on our initial dataset but is generating unexpected results on new compound classes. What could be the cause?

This is typically a data drift issue. Machine learning models are trained on specific chemical spaces. If your new compounds possess scaffolds or functional groups not well-represented in the model's original training data, its predictions become less reliable. To troubleshoot:

- Audit your chemical library: Compare the new compounds' descriptors (e.g., molecular weight, logP) against the training set's chemical space.

- Re-train or fine-tune the model: If possible, incorporate new, labeled data from your specific compound classes to adapt the model.

- Use the model as a prioritization tool, not an absolute filter, and always confirm findings with orthogonal experimental assays [24].
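
The library audit in the first step can be approximated with a simple descriptor range check, sketched below. It assumes descriptors such as molecular weight and logP have already been computed with a cheminformatics toolkit; the descriptor values and function names are illustrative, and a range check is only a coarse proxy for a proper applicability-domain analysis.

```python
def descriptor_ranges(training_rows):
    """Compute (min, max) per descriptor across the training set."""
    names = training_rows[0].keys()
    return {
        name: (min(row[name] for row in training_rows),
               max(row[name] for row in training_rows))
        for name in names
    }

def out_of_range(compound, ranges):
    """List the descriptors of `compound` that fall outside the training ranges."""
    return [name for name, (lo, hi) in ranges.items()
            if not lo <= compound[name] <= hi]

# Made-up descriptor values standing in for a training set and two new compounds.
training = [
    {"mol_weight": 250.0, "logp": 1.2},
    {"mol_weight": 480.0, "logp": 4.9},
    {"mol_weight": 310.0, "logp": 2.7},
]
ranges = descriptor_ranges(training)
novel = out_of_range({"mol_weight": 720.0, "logp": 3.1}, ranges)
familiar = out_of_range({"mol_weight": 300.0, "logp": 2.0}, ranges)
```

Compounds with a non-empty result are the ones whose predictions deserve the most skepticism.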

Q4: What are the critical experimental parameters for validating a prediction of colloidal aggregation?

If the model flags a compound as a potential colloidal aggregator, confirmation requires a detergent-based assay [23]. The key parameters are:

- Critical Aggregation Concentration (CAC): Determine this using dynamic light scattering (DLS) or by measuring enzymatic inhibition in the presence of increasing compound concentrations.

- Detergent Reversal: The primary confirmatory test. Run your activity assay in the presence and absence of a non-ionic detergent like Triton X-100 (0.01%). A significant reduction or loss of activity in the presence of the detergent strongly supports the aggregation hypothesis [1] [23].

Troubleshooting Guide

| Problem | Possible Cause | Solution |

|---|---|---|

| High false negative rate in model predictions. | Model was trained on data that doesn't fully capture the chemical diversity of your library. | Curate a set of confirmed interferers from your lab and use them to test the model; consider fine-tuning if possible. |

| Inconsistent results between similar compounds. | The model is sensitive to specific substructures and their chemical environment, which is a strength, not an error. | Manually inspect the structures and the model's uncertainty estimates; run confirmatory assays for the specific compounds in question. |

| Cannot distinguish between specific interference mechanisms. | The compound may exhibit multiple interference behaviors, or the model's task-specific features are not discriminative enough. | Consult the tool's alert substructure library to see if a specific rule is triggered [23]. Design experiments that isolate a single mechanism (e.g., a counterscreen). |

Experimental Protocols & Methodologies

1. Protocol for Confirmatory Assay: Detergent-Based Reversal for Colloidal Aggregators

Purpose: To experimentally confirm that a hit compound's apparent activity is due to nonspecific colloidal aggregation [23].

Key Reagents:

- Purified target protein/enzyme.

- Hit compounds and a known inactive control.

- Assay buffer.

- Triton X-100 detergent.

Methodology:

- Prepare a dilution series of the hit compound in assay buffer.

- For each concentration, set up two parallel reactions:

  - Standard Condition: Compound + Buffer + Target.

  - Detergent Condition: Compound + Buffer + 0.01% Triton X-100 + Target.

- Initiate the reaction with the appropriate substrate and measure activity (e.g., fluorescence, absorbance).

- Include control wells with a known aggregator and a specific inhibitor.

Interpretation: A significant right-shift or complete loss of the dose-response curve in the detergent condition confirms the compound is a colloidal aggregator. Activity that persists in detergent suggests specific, target-related inhibition [1] [23].
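
The interpretation rule above can be expressed as a small helper that compares the two IC₅₀ values. This is a sketch under stated assumptions: the 3-fold cutoff for a "significant" right-shift and the function name are illustrative choices, not values from the cited sources.

```python
def detergent_shift(ic50_standard, ic50_detergent, min_fold=3.0):
    """Classify a detergent-reversal result from two IC50 values (same units).

    `ic50_detergent=None` means no measurable inhibition with detergent.
    `min_fold` is an illustrative cutoff, not a value from the protocol.
    """
    if ic50_detergent is None:
        return "likely aggregator (inhibition lost)"
    fold = ic50_detergent / ic50_standard
    if fold >= min_fold:
        return f"likely aggregator ({fold:.1f}-fold right-shift)"
    return f"detergent-insensitive ({fold:.1f}-fold shift)"

# Made-up IC50 values in µM.
lost = detergent_shift(2.0, None)
shifted = detergent_shift(2.0, 50.0)
stable = detergent_shift(2.0, 2.4)
```

Whatever cutoff is chosen, it should be calibrated against the known aggregator and specific inhibitor controls run on the same plate.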

2. Protocol for Confirmatory Assay: Luciferase Inhibitor Counterscreen

Purpose: To determine if a compound's activity in a luciferase reporter assay is due to target engagement or direct inhibition of the luciferase enzyme [1] [24].

Key Reagents:

- Firefly luciferase enzyme.

- D-luciferin substrate.

- Assay buffer with ATP.

- Hit compounds and a known luciferase inhibitor control.

Methodology:

- In a white, opaque plate, mix luciferase enzyme with assay buffer.

- Add the hit compound at the concentration where activity was observed in the primary screen.

- Initiate the reaction by injecting the D-luciferin substrate.

- Measure luminescence immediately using a plate reader.

- Normalize luminescence to control wells (enzyme + substrate without compound).

Interpretation: A significant reduction in luminescence compared to the control indicates direct inhibition of the luciferase enzyme, marking the compound as an assay artifact [1].

The following table summarizes reported performance for predicting various types of assay interferers. It combines two sources: the balanced accuracy range is the one reported for the Liability Predictor QSIR models [1], and the AUC is the average reported across tasks for the multi-task DMPNN model implemented in the ChemFH platform [24].

Table 1: Reported Performance Metrics for Interference Prediction Models

| Interference Mechanism | Balanced Accuracy (External Test Set) | Area Under the Curve (AUC) | What the Model Predicts |

|---|---|---|---|

| Thiol Reactivity | 58-78% [1] | ~0.91 (Average across tasks) [24] | Predicts covalent modification of cysteine residues. |

| Redox Activity | 58-78% [1] | ~0.91 (Average across tasks) [24] | Identifies compounds that produce hydrogen peroxide. |

| Luciferase Inhibition | 58-78% [1] | ~0.91 (Average across tasks) [24] | Flags inhibitors of firefly or nano luciferase reporters. |

| Colloidal Aggregation | N/A (See ChemFH) | ~0.91 (Average across tasks) [24] | Detects compounds that form aggregates, denaturing proteins. |

Table 2: Essential Research Reagents for Experimental Validation

| Reagent / Material | Function in Validation |

|---|---|

| Triton X-100 | Non-ionic detergent used to disrupt colloidal aggregates in confirmation assays. |

| D-Luciferin | Substrate for firefly luciferase, used in counterscreens for luciferase inhibitors. |

| β-lactamase | A model enzyme often used in aggregation and promiscuity inhibition studies. |

| (E)-2-(4-mercaptostyryl)-1,3,3-trimethyl-3H-indol-1-ium (MSTI) | A fluorescent thiol-containing probe used in experimental assays to detect thiol-reactive compounds [1]. |

Workflow and Architecture Visualizations

DMPNN Multi-Task Architecture

HTS Hit Triage Workflow

High-throughput virtual screening is a cornerstone of modern drug discovery, enabling researchers to evaluate millions of compounds for potential biological activity. However, this approach is significantly hampered by false positives—compounds identified as active that subsequently prove inactive in experimental validation. These false positives consume substantial computational, temporal, and financial resources, ultimately slowing drug discovery pipelines.

The concept of Pan-Assay Interference Compounds (PAINS) represents an initial effort to address this challenge by identifying molecular substructures prone to promiscuous behavior across multiple assay types. While valuable, PAINS filters alone are insufficient for comprehensive false positive mitigation. This technical support center provides implementation guidance for two advanced frameworks: Quantitative Structure-Interference Relationship (QSIR) models and Representative Substructure Rules, which together offer a more sophisticated, data-driven approach to this persistent challenge.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between PAINS filters and a QSIR model?

PAINS filters operate as a binary classification system based on predefined structural alerts, whereas QSIR models are quantitative, probabilistic predictors [25]. A QSIR model uses machine learning algorithms trained on historical screening data to assign interference likelihood scores, enabling more nuanced risk assessment compared to the simple pass/fail outcome of PAINS filters [25].

Q2: Why are "Representative Substructure Rules" considered an advancement over traditional substructure filters?

Traditional substructure filters often rely on overly broad structural patterns, which can lead to the inappropriate elimination of genuinely promising compounds [25]. Representative Substructure Rules are derived from systematic analysis of confirmed interference mechanisms and incorporate contextual chemical environments, significantly improving their specificity while maintaining sensitivity [25].

Q3: What are the most common technical issues when implementing a QSIR model, and how can they be resolved?

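Common implementation challenges and their usual remedies include:

- Data Quality Issues: Models trained on small, uncurated, or biased datasets produce unreliable predictions [26]. Curate the data and expand it with diverse, experimentally confirmed compounds.

- Feature Representation Problems: Inadequate molecular descriptors fail to capture essential interference characteristics [26]. Add descriptors targeted at the mechanisms the model misses.

- Overfitting: Complex models memorize training data artifacts rather than learning generalizable interference patterns [26]. Apply regularization or simplify the model, and check for a gap between training and test performance.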

Q4: How can researchers validate that their QSIR model is performing effectively before full deployment?

Effective validation requires a multi-faceted approach [26]:

- Internal Validation: Use k-fold cross-validation with stratified sampling to ensure performance consistency across diverse chemical structural classes.

- External Validation: Test the model against a completely held-out dataset not used in any training or parameter optimization steps.

- Prospective Validation: Apply the model to a new screening campaign and track its accuracy in predicting experimentally confirmed interference.
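
The internal validation step can be sketched with a small stratified k-fold splitter. This version uses only the standard library and stratifies on the class label; the same approach works with any stratum key, such as a scaffold class. The function name and the example label counts are illustrative.

```python
import random
from collections import defaultdict

def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs with class ratios preserved per fold."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)   # deal each class round-robin
    for i in range(k):
        test = sorted(folds[i])
        train = sorted(j for f_i, fold in enumerate(folds) if f_i != i for j in fold)
        yield train, test

# Hypothetical dataset: 10 interferers (1) and 40 non-interferers (0).
example_labels = [1] * 10 + [0] * 40
example_splits = list(stratified_kfold(example_labels, k=5, seed=1))
```

Each of the five test folds here contains two interferers and eight non-interferers, so every fold sees the same class ratio as the full set.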

Q5: What specific metadata should be documented when applying substructure rules to ensure reproducibility?

Critical metadata for reproducibility includes [25]:

- Rule Set Version: The specific version of the rules applied.

- Chemical Environment Parameters: Any defined atomic neighborhoods or steric constraints.

- Software and Fingerprint Types: The cheminformatics toolkit and specific fingerprint algorithms used.

- Threshold Settings: Any similarity cutoffs or probability thresholds employed.
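
One lightweight way to capture this metadata is a small record serialized alongside the results. The field names and every value below are placeholders chosen for illustration; adapt them to the rule set and toolkit actually in use.

```python
import json
from dataclasses import dataclass, asdict, field

@dataclass
class RuleRunMetadata:
    """Reproducibility record for one application of a substructure rule set."""
    rule_set_version: str
    environment_parameters: dict
    toolkit: str
    toolkit_version: str
    fingerprint: str
    thresholds: dict = field(default_factory=dict)

    def to_json(self):
        # sort_keys gives a stable layout that diffs cleanly between runs
        return json.dumps(asdict(self), indent=2, sort_keys=True)

# All values below are placeholders, not recommendations.
record = RuleRunMetadata(
    rule_set_version="rules-v1.2.0",
    environment_parameters={"neighborhood_radius": 2},
    toolkit="RDKit",
    toolkit_version="2023.09.1",
    fingerprint="Morgan, radius 2, 2048 bits",
    thresholds={"tanimoto_cutoff": 0.7, "probability_cutoff": 0.5},
)
record_json = record.to_json()
```

Storing the JSON next to the screening output means a later rerun can be compared field by field.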

Troubleshooting Guides

QSIR Model Performance Issues

| Problem Symptom | Possible Causes | Diagnostic Steps | Resolution Steps |

|---|---|---|---|

| Poor predictive accuracy on new compound sets | Training data not representative of new chemical space; overfitting to training set. | 1. Analyze chemical space coverage via PCA [26]. 2. Check performance disparity between training/test sets. | 1. Expand training data with diverse analogs. 2. Apply regularization techniques or simplify model complexity. |

| High false negative rate for known interferers | Model is overly conservative; key interference features are underrepresented. | 1. Analyze misclassification patterns [26]. 2. Review feature importance rankings. | 1. Adjust classification threshold. 2. Add specialized molecular descriptors for missed mechanisms. |

| Inconsistent predictions across similar compounds | Unstable model; high sensitivity to small structural changes. | 1. Test predictions on structural analogs. 2. Assess model certainty estimates. | 1. Ensemble modeling with multiple algorithms. 2. Implement consensus prediction approach. |

Escalation Path: If performance issues persist after implementing these resolutions, consult with a computational chemistry specialist to review feature engineering and model architecture. Systematic performance validation against an external benchmark dataset is recommended [26].

Substructure Rule Application Errors

| Problem Symptom | Possible Causes | Diagnostic Steps | Resolution Steps |

|---|---|---|---|

| Valid compounds incorrectly flagged as interferers | Overly broad rule definitions; inappropriate threshold settings. | 1. Manually review false positives [25]. 2. Check rule match specificity. | 1. Refine rules with contextual constraints. 2. Implement rule confidence scoring. |

| Known interferers not being captured | Rules lack necessary coverage; emerging interference mechanisms. | 1. Test against known interference compound set. 2. Analyze structural features of missed interferers. | 1. Expand rule set with new patterns. 2. Implement periodic rule set updates. |

| Inconsistent results across computing platforms | Differing cheminformatics toolkits; algorithm implementation variations. | 1. Run standardized test set on all platforms. 2. Compare fingerprint implementations. | 1. Standardize software environment. 2. Implement platform-specific validation tests. |

Validation Step: After implementing resolutions, verify system performance against a standardized validation set of 50-100 compounds with confirmed interference status [25].

Experimental Protocols & Data

QSIR Model Development Protocol

Purpose: To construct a validated Quantitative Structure-Interference Relationship model for predicting compound interference likelihood in high-throughput screening assays.

Materials:

- Confirmed interference compound database (minimum 500 compounds)

- Confirmed non-interference compound database (minimum 500 compounds)

- Cheminformatics software (RDKit, OpenBabel, or similar)

- Machine learning environment (Python/R with appropriate libraries)

Methodology:

- Data Curation: Compile and curate a dataset of compounds with experimentally confirmed interference status. Ensure balanced representation across major interference mechanisms (aggregation, reactivity, fluorescence, etc.) [27].

- Descriptor Calculation: Compute comprehensive molecular descriptors including (but not limited to): topological indices, electronic parameters, constitutional descriptors, and 3D molecular fields.

- Feature Selection: Apply feature selection algorithms (genetic algorithms, recursive feature elimination) to identify the most predictive descriptor subset.

- Model Training: Implement multiple machine learning algorithms (Random Forest, Support Vector Machines, Neural Networks) using k-fold cross-validation.

- Model Validation: Assess model performance using external validation sets and prospective testing [26].

Representative Substructure Rule Derivation Protocol

Purpose: To develop context-aware substructure rules for identifying compounds with high interference potential.

Materials:

- Structured database of confirmed interference compounds

- Matched non-interference compounds with similar scaffolds

- Cheminformatics toolkit with substructure mining capabilities

Methodology:

- Pattern Mining: Apply frequent subgraph mining algorithms to identify substructures enriched in interference compounds.

- Context Definition: For each candidate substructure, define the essential chemical environment that confers interference potential.

- Specificity Validation: Test each proposed rule against non-interference compounds to minimize false positives.

- Mechanistic Alignment: Where possible, correlate structural rules with established interference mechanisms.

- Performance Benchmarking: Compare derived rules against existing filters (PAINS, etc.) for sensitivity and specificity [25].
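
The enrichment idea behind the Pattern Mining and Specificity Validation steps can be illustrated with an odds ratio computed from match counts. The sketch assumes substructure matching has already been done elsewhere; the counts are made up, and the 0.5 pseudocount (the Haldane-Anscombe correction) is one common way to avoid division by zero, not a choice taken from the source.

```python
def enrichment_odds_ratio(hits_interferers, n_interferers, hits_clean, n_clean,
                          pseudocount=0.5):
    """Odds ratio of a substructure matching interferers versus clean compounds.

    A pseudocount is added to every cell of the 2x2 table so that a
    substructure absent from one group does not cause a division by zero.
    """
    a = hits_interferers + pseudocount                   # interferers with match
    b = n_interferers - hits_interferers + pseudocount   # interferers without match
    c = hits_clean + pseudocount                         # clean compounds with match
    d = n_clean - hits_clean + pseudocount               # clean compounds without match
    return (a * d) / (b * c)

# Made-up counts: the pattern matches 40 of 500 interferers and 5 of 500 clean compounds.
enriched = enrichment_odds_ratio(40, 500, 5, 500)
neutral = enrichment_odds_ratio(10, 500, 10, 500)
```

An odds ratio well above 1 marks a candidate rule; a value near 1 means the substructure does not discriminate between the two groups.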

Table 1: Comparative Performance of Interference Detection Methods

| Method | Sensitivity (%) | Specificity (%) | False Positive Rate (%) | Coverage of Known Mechanisms |

|---|---|---|---|---|

| PAINS Filters | 72 | 85 | 15 | 6/10 |

| QSIR Model (Basic) | 88 | 82 | 18 | 8/10 |

| QSIR Model (Advanced) | 91 | 89 | 11 | 9/10 |

| Representative Substructure Rules | 85 | 93 | 7 | 7/10 |

| Combined Approach | 94 | 91 | 9 | 10/10 |

Table 2: Computational Resource Requirements

| Method | Setup Time (Person-Weeks) | Runtime per 10K Compounds | Required Expertise Level |

|---|---|---|---|

| PAINS Filters | <1 | <1 minute | Beginner |

| QSIR Model (Basic) | 4-6 | 5-10 minutes | Intermediate |

| QSIR Model (Advanced) | 8-12 | 15-30 minutes | Advanced |

| Representative Substructure Rules | 2-3 | 2-5 minutes | Intermediate |

| Combined Approach | 10-14 | 20-35 minutes | Advanced |

Workflow Visualization

QSIR Implementation Workflow

Substructure Rule Application Logic

Research Reagent Solutions

| Resource Name | Type | Function | Source/Implementation |
|---|---|---|---|
| Interference Compound Database | Data Resource | Curated collection of confirmed interference compounds with mechanisms | Internal compilation from published literature + proprietary data |
| Molecular Descriptor Toolkit | Software | Computes comprehensive molecular features for model development | RDKit, OpenBabel, or commercial alternatives |
| Rule-Based Filtering Engine | Software | Applies substructure rules with configurable parameters | KNIME, Pipeline Pilot, or custom Python scripts |
| Model Validation Framework | Methodology | Standardized protocols for performance assessment | Custom implementation following cross-validation standards |
| Performance Benchmark Suite | Testing Resource | Standardized compound sets for method comparison | Publicly available datasets + internally validated compounds |

In high-throughput screening (HTS), the reliable identification of true bioactive compounds is paramount. However, false positives arising from compound-mediated assay interference can easily outnumber and obscure genuine activity, because true active compounds are rare (~0.01–0.1% of a typical library) [28]. This technical guide provides troubleshooting advice and detailed protocols for implementing essential counterscreens to identify and eliminate these artifacts, thereby ensuring the selection of high-quality hits for further development.

FAQs: Addressing Common Counterscreening Challenges

1. My hit compound shows beautiful dose-response curves in my primary biochemical assay, but I suspect it is a promiscuous aggregator. How can I confirm this?

Aggregation-based inhibition is a leading cause of promiscuous enzyme inhibition and false positives in HTS [28]. To test for this:

- Add Detergent: Include a detergent such as Triton X-100 (non-ionic) or CHAPS (zwitterionic) in your assay buffer at a final concentration of 0.01-0.1% [28]. A genuine inhibitor's potency will be largely unaffected, while an aggregator's activity will often be significantly reduced or abolished.

- Check for Steep Hill Slopes: Analyze the Hill slope of your dose-response curve. Aggregators often produce curves with unusually steep slopes (e.g., >1.5) [28].

- Test for Reversibility: Dilute the pre-formed compound-enzyme mixture significantly. With a genuine, reversible inhibitor, inhibition is lost and enzyme activity recovers upon dilution, whereas the inhibition caused by aggregates is often not immediately reversible [28].
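
The Hill-slope check above can be approximated without a curve-fitting package. The sketch below uses a linearized Hill plot (log-odds of fractional inhibition against log concentration). This is a simplification swapped in for the nonlinear four-parameter fit that dose-response software typically performs. The function names are hypothetical, and the 1.5 cutoff is the example value quoted in the text rather than a universal threshold.

```python
import math


def estimate_hill_slope(concentrations, fractional_inhibition):
    """Estimate Hill slope and IC50 by least squares on a linearized Hill plot.

    Uses log10(f / (1 - f)) = h * log10(c) - h * log10(IC50), so only points
    with 0 < f < 1 and c > 0 can be included.
    """
    xs, ys = [], []
    for c, f in zip(concentrations, fractional_inhibition):
        if c > 0 and 0.0 < f < 1.0:
            xs.append(math.log10(c))
            ys.append(math.log10(f / (1.0 - f)))
    if len(xs) < 2:
        raise ValueError("need at least two usable points")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("concentrations must differ")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    if slope == 0:
        raise ValueError("flat response: IC50 is undefined")
    intercept = mean_y - slope * mean_x
    ic50 = 10 ** (-intercept / slope)
    return slope, ic50


def has_steep_slope(hill_slope, cutoff=1.5):
    """Flag curves steeper than the cutoff (1.5 is the example from the text)."""
    return hill_slope > cutoff


# Synthetic curve with a Hill slope of 2.5 and an IC50 of 1.0 (arbitrary units).
concs = [0.1, 0.3, 1.0, 3.0, 10.0]
inhib = [c ** 2.5 / (1.0 + c ** 2.5) for c in concs]
slope, ic50 = estimate_hill_slope(concs, inhib)
```

A steep slope on its own is only suggestive, so it is best read together with the detergent and reversibility tests.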

2. My primary screen uses a fluorescence-based readout. How do I rule out compound autofluorescence or signal quenching?

Compound fluorescence is a major source of interference in assays using light-based detection [28].

- Run a Pre-Read: Perform a fluorescence measurement of the compound in the assay buffer before initiating the reaction with the target or substrate. A high signal indicates autofluorescence.

- Use Orthogonal Detection: Confirm the activity using an assay with a fundamentally different readout technology, such as luminescence or absorbance [29]. A compound that is active only in the fluorescence-based assay but not in the orthogonal format is likely interfering with the detection system.
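
One way to act on the pre-read is to compare each compound well against buffer-only wells. The sketch below flags a compound as autofluorescent when its pre-read signal exceeds the buffer mean by more than three standard deviations. The three-SD cutoff, the function name, and the example readings are assumptions for illustration. A pre-read of this kind does not detect quenching, which still requires the orthogonal assay.

```python
from statistics import mean, stdev


def flag_autofluorescent(pre_read, buffer_wells, n_sd=3.0):
    """Return {compound_id: True/False} for pre-read signals above the cutoff.

    pre_read: mapping of compound ID -> fluorescence measured before the
              reaction is started
    buffer_wells: fluorescence of buffer-only (no compound) wells
    n_sd: number of standard deviations above the buffer mean (assumed value)
    """
    if len(buffer_wells) < 2:
        raise ValueError("need at least two buffer wells to estimate spread")
    cutoff = mean(buffer_wells) + n_sd * stdev(buffer_wells)
    return {cid: signal > cutoff for cid, signal in pre_read.items()}


# Hypothetical plate-reader values in relative fluorescence units.
buffer_signal = [100, 102, 98, 101, 99]
flags = flag_autofluorescent({"cmpd_A": 103, "cmpd_B": 450}, buffer_signal)
```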

3. I have a hit from a cell-based reporter assay using firefly luciferase (FLuc). How can I be sure it's not just inhibiting the reporter enzyme?

Direct inhibition of common reporter enzymes like FLuc is a frequent cause of false positives in cell-based assays [28] [13].

- Perform a Counterscreen: Test your hit compound in a cell-free assay against the purified reporter enzyme (e.g., FLuc) under the same substrate conditions (at KM) used in your primary assay [28]. Concentration-dependent inhibition in this counterscreen confirms the compound is interfering with the reporter.

- Employ an Orthogonal Reporter: Engineer your cellular system to use a different reporter gene (e.g., Renilla luciferase, β-lactamase) for the same biological pathway. A true target-specific hit will modulate both reporters, while a FLuc-specific inhibitor will not [28].

4. My compound appears to react non-specifically. How can I test for redox activity or metal chelation?

- For Redox Cyclers: If your assay buffer contains strong reducing agents like DTT or TCEP, redox-cycling compounds (e.g., some quinones) can generate hydrogen peroxide, leading to apparent inhibition [28]. Add catalase to the reaction; a reduction in compound activity suggests the generation of H₂O₂ was responsible for the signal.

- For Chelators: If your target enzyme requires a metal cofactor (e.g., Mg²⁺, Zn²⁺), chelation of that metal can cause inhibition. Supplement the reaction with an excess of the required metal ion. If the compound's potency is significantly reduced, it may be acting as a chelator [13].

Troubleshooting Guides

Problem: High Rate of False Positives in a Biochemical HTS

Potential Causes and Solutions:

| Cause of Interference | Characteristic Signs | Recommended Counterscreens & Solutions |
|---|---|---|
| Compound Aggregation | Steep Hill slope; inhibition sensitive to enzyme concentration; reversible by detergent [28]. | Add 0.01-0.1% Triton X-100 to assay buffer [28]. |
| Compound Fluorescence | High signal in pre-read; activity not confirmed in orthogonal (e.g., luminescent) assays [29]. | Use red-shifted fluorophores; implement pre-read step; confirm with non-fluorescence assay [28]. |
| Redox Cycling | Activity is dependent on presence of reducing agent (DTT/TCEP); effect diminished by catalase [28]. | Replace DTT/TCEP with weaker agents (e.g., glutathione); include catalase control [28]. |
| Enzyme Reporter Inhibition | Active in cell-based reporter assays but inactive in orthogonal formats; inhibits purified reporter enzyme [28]. | Counter-screen against purified reporter enzyme (e.g., FLuc); use orthogonal cellular reporter [28]. |

Problem: Confirming Target Engagement in a Phenotypic Cell-Based Screen

Recommended Validation Workflow:

- Counterscreen for Cytotoxicity: Use a viability assay (e.g., CellTiter-Glo, MTT) to ensure the phenotype is not due to general cell death [29].

- Orthogonal Assay with Different Readout: If the primary screen was high-content imaging, use a biochemical assay on cell lysates, or vice versa [29].

- Use Relevant Disease Models: Confirm activity in more physiologically relevant models, such as primary cells or 3D cell cultures [29].

- Biophysical Target Engagement: Where possible, use techniques like Cellular Thermal Shift Assay (CETSA) or Surface Plasmon Resonance (SPR) to demonstrate direct binding to the intended target [30] [29].

Key Experimental Protocols

Protocol 1: Counterscreen for Compound Aggregation

Principle: Distinguish specific inhibitors from non-specific aggregators by exploiting the sensitivity of aggregates to detergents.

Materials:

- Assay buffer (e.g., PBS or Tris-based)

- 10% (v/v) Triton X-100 stock solution

- Hit compound(s) in DMSO

- Positive control (known aggregator)

- Negative control for aggregation (known specific inhibitor)

Method:

- Prepare two sets of identical reaction mixtures containing your target enzyme and substrate.

- To the experimental set, add Triton X-100 to a final concentration of 0.01%. To the control set, add an equivalent volume of buffer.

- Dispense the hit compounds and controls into both assay sets.

- Run the assay and generate dose-response curves for all compounds under both conditions.

- Interpretation: A significant right-shift (loss of potency) in the presence of detergent is indicative of aggregation-based inhibition. The activity of a specific inhibitor should remain relatively unchanged [28].
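
The interpretation step comes down to comparing IC50 values measured with and without detergent. The sketch below expresses the right-shift as a fold change and applies a cutoff. The 3-fold cutoff and the function names are illustrative assumptions, since the protocol only asks for a "significant" shift, and any real threshold should be set from the aggregator and specific-inhibitor controls run on the same plate.

```python
import math


def detergent_fold_shift(ic50_without, ic50_with):
    """Fold change in IC50 on adding detergent (values > 1 mean loss of potency).

    Pass math.inf for ic50_with when inhibition is abolished by detergent.
    """
    if ic50_without <= 0 or ic50_with <= 0:
        raise ValueError("IC50 values must be positive")
    return ic50_with / ic50_without


def classify_detergent_response(ic50_without, ic50_with, min_fold_shift=3.0):
    """Label a compound by its detergent sensitivity (cutoff is an assumption)."""
    fold = detergent_fold_shift(ic50_without, ic50_with)
    if fold >= min_fold_shift:
        return "detergent-sensitive: possible aggregator"
    return "detergent-insensitive: consistent with specific inhibition"


# Hypothetical IC50 values in micromolar.
suspect = classify_detergent_response(1.2, 30.0)      # 25-fold right-shift
reference = classify_detergent_response(0.5, 0.6)     # 1.2-fold, unchanged
abolished = classify_detergent_response(2.0, math.inf)
```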

Protocol 2: Implementing an Orthogonal Assay for Hit Confirmation

Principle: Confirm biological activity using a detection method fundamentally different from the primary screen to rule out technology-specific interference.

Materials:

- Cell line or enzyme system for the same biological target.

- Reagents for orthogonal detection (e.g., if primary was fluorescence, use luminescence or absorbance).

Method:

- Assay Design: Develop a secondary assay that measures the same biological endpoint but uses a different physical principle for detection. Example pairings:

  - Primary: Fluorescence Polarization (FP) → Orthogonal: Time-Resolved Fluorescence Resonance Energy Transfer (TR-FRET) or Luminescence [29].

  - Primary: Reporter Gene (FLuc) → Orthogonal: Reporter Gene (Renilla Luciferase) or HT-SPR [31].

  - Primary: Biochemical Activity → Orthogonal: Cellular Thermal Shift Assay (CETSA) [30].

- Test all primary hit compounds in the orthogonal assay.

- Interpretation: Compounds that show congruent activity in both the primary and orthogonal assays are high-confidence hits. Those active in only one assay are likely artifacts of that specific detection system [29] [31].
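
The interpretation rule can be written as a simple partition of the primary hits by their orthogonal-assay result. The sketch below assumes each primary hit has already been called active or inactive in the orthogonal assay. The function name and compound IDs are hypothetical.

```python
def triage_by_orthogonal_assay(orthogonal_results):
    """Split primary hits into confirmed hits and likely artifacts.

    orthogonal_results: mapping of primary-hit ID -> True if the compound was
    also active in the orthogonal assay, False otherwise.
    Returns (confirmed, likely_artifacts) as sorted lists of IDs.
    """
    confirmed = sorted(cid for cid, active in orthogonal_results.items() if active)
    likely_artifacts = sorted(
        cid for cid, active in orthogonal_results.items() if not active
    )
    return confirmed, likely_artifacts


# Hypothetical results for four primary hits retested in the orthogonal format.
confirmed, artifacts = triage_by_orthogonal_assay(
    {"hit_003": True, "hit_001": False, "hit_002": True, "hit_004": False}
)
```

Compounds in the second list are candidates for the mechanism-specific counterscreens described earlier, not automatic discards.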

Research Reagent Solutions

A toolkit of common reagents is essential for diagnosing and preventing assay interference.

| Reagent | Function in Counterscreening | Example Use Case |
|---|---|---|
| Triton X-100 (Detergent) | Disrupts compound aggregates, eliminating non-specific inhibition [28]. | Added to biochemical assay buffer at 0.01-0.1% to identify aggregators. |
| Catalase | Degrades hydrogen peroxide (H₂O₂), identifying redox-cycling compounds [28]. | Added to assay buffer to determine if H₂O₂ generation is causing apparent inhibition. |
| Dithiothreitol (DTT) | Reducing agent; its presence can promote redox cycling. Used diagnostically [28]. | Comparing compound activity in buffers with and without DTT (or with weaker agents like glutathione). |
| Bovine Serum Albumin (BSA) | Binds to and sequesters promiscuous, hydrophobic compounds, reducing non-specific binding [29]. | Added to assay buffers to reduce false positives from sticky compounds. |
| Purified Reporter Enzyme (e.g., FLuc) | Directly test if a compound inhibits the assay's detection enzyme rather than the biological target [28]. | Used in a counter-screen for cell-based assays employing a reporter gene system. |

Experimental Workflows and Pathways

[Figure: Hit Triage and Validation Workflow]

[Figure: Orthogonal Assay Selection Logic]

Practical Strategies for Assay Design and Hit Triage

Frequently Asked Questions (FAQs)

FAQ 1: What are PAINS, and why are they a critical concern in High-Throughput Screening (HTS)?

Pan-Assay Interference Compounds (PAINS) are chemical compounds or classes of compounds that appear as "hits" in a wide variety of biological assays through non-specific, undesirable mechanisms rather than through genuine, target-specific interactions [32]. These mechanisms can include chemical reactivity, compound aggregation, fluorescence, quenching, or redox cycling [33] [32]. PAINS are a critical concern because they are a major source of false positives in HTS campaigns. Pursuing these false leads wastes time and money in a process that, by some estimates, already takes 10-15 years and costs over $2.5 billion per new drug [33]. Early identification and removal of PAINS during library design are therefore essential for protecting the integrity and efficiency of the drug discovery pipeline [34].

FAQ 2: At what stage should PAINS filters be applied in the drug discovery workflow?

Computational PAINS filters should be applied proactively, ideally during the library design and preparation stage, before any screening occurs [34]. This pre-screening application ensures that valuable resources are not wasted on acquiring, plating, and screening compounds with a high propensity for interference. Furthermore, applying these filters during the hit validation process, immediately after a primary screen, helps triage results and prioritize compounds with a higher likelihood of genuine activity for follow-up [34]. A multi-stage filtering strategy is considered a best practice.

FAQ 3: My HTS campaign generated a high hit rate. How can I determine if PAINS are the cause?

A high hit rate (e.g., significantly above 1-2%) is a classic red flag for potential PAINS contamination [32]. To investigate, you can:

- Analyze Chemical Patterns: Check if the hit compounds are enriched with known PAINS substructures using computational filters [32].

- Profile with a Robustness Set: Run your assay against a bespoke "Robustness Set", a defined library of known bad actors (e.g., aggregators, redox cyclers, fluorescent compounds) [32]. If a large percentage (>25%) of this set appears active in your assay, the assay is highly susceptible to interference [32].

- Examine Dose-Response Curves: PAINS often produce atypical dose-response curves, with Hill slopes that are unusually steep or shallow or a non-sigmoidal shape, indicating a non-specific mechanism of action [32].

- Implement Orthogonal Assays: Confirm activity using a secondary assay with a different detection technology (e.g., switching from fluorescence to mass spectrometry) to rule out technology-specific interference [33].
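
The two numeric red flags in this list, the primary hit rate and the fraction of the Robustness Set that scores as active, can be checked together. The sketch below uses the example cutoffs from the text (a hit rate above 2% and more than 25% of the Robustness Set active). The function name and the counts are hypothetical, and both cutoffs should be treated as adjustable defaults.

```python
def assess_interference_risk(
    n_hits,
    n_screened,
    robustness_active,
    robustness_total,
    hit_rate_cutoff=2.0,
    robustness_cutoff=25.0,
):
    """Summarize hit rate and Robustness Set activity as percentages with flags."""
    if n_screened <= 0 or robustness_total <= 0:
        raise ValueError("library and Robustness Set sizes must be positive")
    hit_rate = 100.0 * n_hits / n_screened
    robustness_rate = 100.0 * robustness_active / robustness_total
    return {
        "hit_rate_percent": hit_rate,
        "robustness_active_percent": robustness_rate,
        "high_hit_rate": hit_rate > hit_rate_cutoff,
        "assay_susceptible": robustness_rate > robustness_cutoff,
    }


# Hypothetical campaign: 450 hits from 10,000 compounds, and 30 of 100
# Robustness Set compounds scoring as active.
report = assess_interference_risk(450, 10_000, 30, 100)
```

If both flags are raised, optimizing the assay conditions is likely to pay off more than following up individual hits.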

FAQ 4: Are there limitations to relying solely on computational PAINS filters?

Yes, while computational filters are invaluable, they are not infallible. Their limitations include:

- Context Dependence: A compound's interfering behavior can depend on specific assay conditions (e.g., buffer composition, protein concentration, detection method) [32]. A compound flagged as PAINS might be a genuine hit in a well-designed, robust assay.