Building Trust in Science: A 2025 Guide to Research Integrity in Materials Science & Engineering


Abstract

This article provides a comprehensive guide for researchers and professionals on upholding research integrity in materials science. It covers foundational principles, including the latest 2025 ORI definitions of misconduct and the materials science research cycle. The guide explores methodological applications of AI-powered image checking tools like Proofig, troubleshooting for common issues like image duplication and self-plagiarism, and validation techniques through robust training and electronic oversight systems. By synthesizing these areas, the article offers an actionable framework to prevent misconduct, enhance data credibility, and accelerate the reliable translation of materials research into real-world applications.

Understanding Research Integrity: Core Principles and the 2025 Landscape

Research misconduct represents a fundamental breach of the ethical principles that underpin the scientific enterprise. In materials science, where findings directly influence technological advancement and product development, maintaining rigorous standards of integrity is paramount. The Office of Research Integrity (ORI) provides the foundational definition that has guided research integrity policy for decades: research misconduct is strictly defined as fabrication, falsification, or plagiarism (FFP) in proposing, performing, reviewing, or reporting research [1]. It is crucial to note that this definition explicitly excludes honest error or differences of opinion [2]. This technical guide examines the current state of FFP within the context of materials science research, incorporating 2025 regulatory updates, detection methodologies, and preventative frameworks essential for researchers, scientists, and drug development professionals dedicated to upholding the highest standards of scientific integrity.

Core Definitions and Regulatory Framework

The FFP Triad: Official Definitions

The U.S. Office of Research Integrity precisely defines the three core elements of research misconduct [2]:

- Fabrication: Making up data or results and recording or reporting them as actual findings. In materials science, this could involve inventing characterization data, such as spectroscopic readings or mechanical property measurements.

- Falsification: Manipulating research materials, equipment, or processes, or changing or omitting data or results such that the research is not accurately represented in the research record. Examples include improperly manipulating microscopy images or selectively omitting inconsistent experimental results.

- Plagiarism: The appropriation of another person's ideas, processes, results, or words without giving appropriate credit. This encompasses copying another researcher's synthesis procedures, theoretical models, or descriptive text without attribution.

The 2025 ORI Final Rule: Key Updates

On January 1, 2025, the ORI implemented its long-awaited Final Rule revising the Public Health Service (PHS) Policies on Research Misconduct, marking the first major overhaul since 2005 [1]. Key enhancements particularly relevant to materials science include:

- Clarified Terminology: The Final Rule provides clearer legal and operational definitions for terms like "recklessness," "honest error," and notably, "self-plagiarism." While self-plagiarism and authorship disputes are now explicitly excluded from the federal definition of misconduct, they remain subject to institutional policies or publishing standards [1].

- Procedural Efficiency: Institutions can now add new respondents or allegations to an ongoing investigation without restarting the entire process, significantly improving the efficiency of addressing complex cases that may involve multiple researchers or projects [1].

- Modern Research Structures: The rule introduces streamlined procedures for handling data confidentiality, record sequestration, and international collaborations, addressing common challenges in large, multi-institutional materials science research consortia [1].

Table 1: Key Provisions of the 2025 ORI Final Rule with Implications for Materials Science

| Provision | Key Change | Significance for Materials Science |
|---|---|---|
| Definition Clarification | Explicit exclusion of self-plagiarism from federal misconduct definition | Clarifies boundaries for reusing methodological descriptions in multiple papers |
| Investigation Flexibility | Ability to add respondents/allegations without restarting process | Efficient handling of multi-project, multi-researcher misconduct cases |
| International Collaboration | Streamlined procedures for cross-border investigations | Addresses complexities in global materials research partnerships |
| Implementation Timeline | Full compliance required by January 1, 2026 | Allows institutions time to adapt policies and training programs |

Quantitative Landscape of Research Misconduct

Global Disparities in Research Integrity

Recent data reveals significant patterns in research misconduct and integrity training across the global research ecosystem. A 2025 Springer Nature white paper analyzing surveys from seven countries demonstrated substantial variations in research integrity training access, with China (79%) and Japan (73%) reporting the highest access rates, followed by the United States (56%), and Brazil showing the lowest at 27% [3]. Despite these disparities, an overwhelming majority of researchers (84-94%) across all surveyed countries support mandatory research integrity training at some point in their careers [3].

Analysis of exclusion patterns from Clarivate's Highly Cited Researchers list reveals field-specific integrity challenges. In 2024, 2,045 unique individuals were excluded from the list due to behaviors indicative of research misconduct or metric manipulation, a dramatic increase from approximately 300 researchers (4.5% of candidates) excluded in 2021 [4]. Engineering had the highest exclusion rate at 8.9%, highlighting particular challenges in fields adjacent to materials science [4].

Table 2: Research Integrity Training Access and Outcomes by Country (2025)

| Country | Access to Training | Support for Mandatory Training | Retraction Rate Context |
|---|---|---|---|
| China | 79% | 84-94% (range across all countries) | Higher retraction rates despite high training access |
| Japan | 73% | 84-94% | Moderate retraction rates |
| United States | 56% | 84-94% | Moderate retraction rates |
| United Kingdom | 51% | 84-94% | Lower retraction rates despite lower training access |
| Brazil | 27% | 84-94% | Lower retraction rates despite lowest training access |

The persistence of citations to retracted research represents a significant integrity challenge across scientific disciplines, including materials science. Multiple studies confirm that retracted papers continue to be cited extensively after retraction, with most citations failing to acknowledge the retraction status [5]:

- In radiation oncology, 34 retracted papers were cited 576 times after retraction, with 92% of citing studies treating the work as legitimate [5].

- Exercise physiology saw 9 retracted papers cited 469 times after retraction, with no citations in a 20% sample acknowledging the retraction [5].

- COVID-19 literature demonstrated similar patterns, with 212 retracted papers cited approximately 650 times after retraction, and 80% of citations treating the retracted work as valid [5].

These citation patterns highlight the critical need for improved notification systems and researcher awareness regarding retracted literature, particularly in fast-moving fields like materials science where prior work heavily influences subsequent research directions.
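One way to act on this at the manuscript level is to screen a reference list against a list of known retractions before submission. The following is a minimal sketch under stated assumptions: the function names and the example DOIs are hypothetical, and the retraction list is assumed to have been obtained separately (for example, exported from a retraction database) as plain DOI strings.

```python
def normalize_doi(doi: str) -> str:
    """Lower-case a DOI and strip common URL or 'doi:' prefixes."""
    doi = doi.strip().lower()
    prefixes = (
        "https://doi.org/",
        "http://doi.org/",
        "https://dx.doi.org/",
        "http://dx.doi.org/",
        "doi:",
    )
    for prefix in prefixes:
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
            break
    return doi.strip()


def flag_retracted(reference_dois, retracted_dois):
    """Return the references whose DOI appears in the retraction list."""
    retracted = {normalize_doi(d) for d in retracted_dois}
    return [d for d in reference_dois if normalize_doi(d) in retracted]


# Hypothetical DOIs, for illustration only.
references = ["https://doi.org/10.1234/Example.001", "10.1234/example.002"]
retractions = ["doi:10.1234/example.001"]
print(flag_retracted(references, retractions))
```

A flagged reference is a prompt for manual review rather than an automatic deletion, since citing a retracted paper can be legitimate when the retraction is acknowledged.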

Detection Methodologies and Experimental Protocols

Image Manipulation Detection in Materials Characterization

Image manipulation represents a prevalent form of falsification in materials science, particularly involving characterization techniques such as electron microscopy and spectroscopy. The following experimental protocol outlines a standardized approach for detecting image manipulation:

Protocol: Forensic Analysis of Scanning Electron Microscopy (SEM) Images

- Metadata Analysis: Extract and examine EXIF metadata from digital image files, verifying consistency between reported instrument settings and image characteristics [5].

- Error Level Analysis (ELA): Identify regions of inconsistent compression levels that may indicate copy-paste manipulation or alteration.

- Clone Detection Algorithm: Apply pixel-based duplication detection algorithms (e.g., using ImageTwin, Proofig) to identify copied and pasted image elements within or between figures.

- Background Consistency Analysis: Examine background noise patterns across different image regions for inconsistencies suggesting manipulation.

- Instrument Verification: Cross-reference reported magnification scales with known feature sizes and confirm consistency of instrumental signatures.

A 2025 forensic scan of 11,314 materials-science papers containing SEM images found that 2% had mismatched instrument metadata, and these papers were significantly more likely to contain analytic errors, which suggests a link between poor reporting and unreliable or potentially fraudulent results [5].
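The clone-detection step of the protocol can be illustrated with a deliberately simple sketch. It assumes the image has already been loaded as a grid of integer pixel intensities and only finds exact, unrotated copies; commercial tools such as ImageTwin or Proofig are not described in enough detail here to reproduce, and they presumably tolerate compression noise, scaling, and rotation. The function name and block size are illustrative choices.

```python
from collections import defaultdict


def find_duplicate_blocks(image, block=4):
    """Find exact repeated square patches in a grayscale image.

    image: list of equal-length rows of integer pixel intensities.
    Returns a list of location lists; each inner list holds the (row, col)
    top-left corners where one identical patch occurs more than once.
    """
    height, width = len(image), len(image[0])
    seen = defaultdict(list)
    for r in range(height - block + 1):
        for c in range(width - block + 1):
            patch = tuple(
                tuple(image[r + i][c:c + block]) for i in range(block)
            )
            # Skip flat patches: uniform background matches itself trivially.
            if len({pixel for row in patch for pixel in row}) == 1:
                continue
            seen[patch].append((r, c))
    return [locations for locations in seen.values() if len(locations) > 1]
```

Any match reported by a sketch like this is only a candidate for human inspection: periodic microstructures and legitimately repeated scale bars or labels will also produce identical patches.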

Data Fabrication Detection Through Statistical Forensics

Statistical analysis provides powerful tools for identifying potentially fabricated data in materials science research:

Protocol: Benford's Law Analysis for Experimental Data

- Data Extraction: Compile numerical data from reported measurements (e.g., material properties, synthesis yields, performance metrics).

- First-Digit Distribution: Apply Benford's Law to analyze the frequency distribution of leading digits in reported numerical values.

- Deviation Analysis: Calculate the chi-square statistic to quantify conformity between observed digit frequencies and expected Benford distribution.

- Contextual Interpretation: Consider field-specific contextual factors that may legitimately influence digit distributions before concluding potential fabrication.

This methodological approach is particularly valuable for identifying anomalies in large datasets reporting material properties or performance metrics that may indicate selective reporting or outright fabrication.
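The first-digit and deviation steps above can be sketched in a few lines. Benford's Law gives the expected frequency of leading digit $d$ as $\log_{10}(1 + 1/d)$, and the chi-square statistic sums the squared deviations of observed counts from expected counts, each divided by the expected count. The function names are illustrative, and the sketch assumes the input is a flat list of nonzero measurements spanning several orders of magnitude.

```python
import math
from collections import Counter


def leading_digit(value: float) -> int:
    """Return the first significant digit (1-9) of a nonzero number."""
    value = abs(value)
    if value == 0:
        raise ValueError("zero has no leading digit")
    # In scientific notation the first character is the leading digit.
    return int(f"{value:.15e}"[0])


def benford_expected(digit: int) -> float:
    """Expected relative frequency of a leading digit under Benford's Law."""
    return math.log10(1 + 1 / digit)


def benford_chi_square(values) -> float:
    """Chi-square statistic comparing leading digits to Benford's Law."""
    digits = [leading_digit(v) for v in values if v != 0]
    n = len(digits)
    if n == 0:
        raise ValueError("no nonzero values to analyse")
    counts = Counter(digits)
    chi2 = 0.0
    for d in range(1, 10):
        expected = n * benford_expected(d)
        chi2 += (counts.get(d, 0) - expected) ** 2 / expected
    return chi2


# With nine digit classes there are 8 degrees of freedom; the 5% critical
# value of the chi-square distribution is about 15.51.
CRITICAL_VALUE_8_DOF = 15.507
```

A statistic above the critical value signals nonconformity, not fabrication. Measurements confined to a narrow range (for example, densities of a single alloy family) do not follow Benford's Law even when entirely genuine, which is why the contextual interpretation step matters.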

Emerging Threats: Paper Mills and AI in Materials Science

The Paper Mill Threat to Materials Science

Paper mills—fraudulent organizations that produce and sell fabricated research papers—represent an increasingly sophisticated threat to research integrity. A 2025 study detailed the scope of just one paper mill, Tanu.pro, which was linked to 1,517 fraudulent papers across 380 journals, involving more than 4,500 scholars from 46 countries [5]. Springer Nature reported receiving 8,432 submissions tied to this single paper mill, with nearly 80 making it into print despite detection efforts [5].

Paper mills targeting materials science research often exhibit specific characteristics:

- Citation Networks: Fabricated author identities cite each other's work, creating closed networks of validation that can artificially inflate perceived impact [5].

- Methodological Templates: Reuse of similar methodological approaches with minor variations across multiple papers.

- Image Recombination: Repurposing of legitimate characterization images with manipulated labels or contexts.

Artificial Intelligence: Dual-Use Potential

AI technologies present both challenges and solutions for research integrity in materials science:

Threats:

- AI-generated text can produce plausible-sounding but scientifically meaningless manuscripts [6].

- AI-powered image generation and manipulation tools can create convincing but fabricated characterization data [7].

- Automated paraphrasing tools can obscure plagiarized content through "tortured phrases"—unusual word substitutions that evade traditional plagiarism detection [7].

Solutions:

- AI-driven screening tools can flag suspicious phrasing patterns indicative of automated paraphrasing [7].

- Network analysis algorithms can identify anomalous citation patterns and authorship networks characteristic of paper mills [6].

- Automated image forensics can detect manipulation patterns across large publication volumes that would be impractical for human reviewers [5].
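The simplest form of tortured-phrase screening is a lexicon lookup. The sketch below is an illustration rather than a description of how the Problematic Paper Screener or any publisher tool works; the three phrase pairs are examples of the kind reported in the tortured-phrases literature, and a usable lexicon would be far larger and curated per discipline.

```python
import re

# Illustrative entries only: tortured phrase -> expected established term.
TORTURED_PHRASES = {
    "counterfeit consciousness": "artificial intelligence",
    "profound learning": "deep learning",
    "colossal information": "big data",
}


def flag_tortured_phrases(text, lexicon=TORTURED_PHRASES):
    """Return (tortured phrase, expected term) pairs found in the text."""
    # Normalise case and whitespace so line breaks do not hide a match.
    normalized = re.sub(r"\s+", " ", text.lower())
    hits = []
    for phrase, expected in lexicon.items():
        if re.search(r"\b" + re.escape(phrase) + r"\b", normalized):
            hits.append((phrase, expected))
    return hits
```

A hit indicates that a passage deserves a closer look; it does not by itself establish plagiarism or automated paraphrasing.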

Table 3: Tools for Integrity Verification

| Tool/Category | Specific Examples | Function in Integrity Verification |
|---|---|---|
| Image Analysis Tools | ImageTwin, Proofig | Detect image duplication and manipulation in microscopy data |
| Plagiarism Detection | iThenticate, Turnitin | Identify textual plagiarism and inappropriate duplication |
| AI-Anomaly Detection | Problematic Paper Screener, Springer Nature's tortured phrase detector | Flag AI-generated text and manipulated phrasing |
| Data Forensics | STM Integrity Hub, Benford's Law analysis | Identify statistical anomalies in reported data |
| Citation Analysis | Clarivate analytics, Citation network mapping | Detect citation cartels and anomalous citation patterns |

Prevention Frameworks for Materials Science Research

Institutional Responsibility and Culture

Creating a culture of research integrity requires committed engagement from research institutions. The Association for Practical and Professional Ethics (APPE) recommends several evidence-based strategies for institutions [8]:

- Periodic Program Inventory: Conduct regular internal, institution-wide inventories of Responsible Conduct of Research (RCR) programs to assess coverage and effectiveness.

- Adequate Resource Allocation: Assess the cost of effective RCR education and commit appropriate funding and resources to integrity initiatives.

- Tailored Training: Provide and encourage participation in appropriate, engaging RCR training tailored to specific researcher roles and career stages.

- Climate Assessment: Leverage existing tools to conduct periodic assessments of both the research integrity climate on campus and the effectiveness of RCR training programs.

Effective Training Methodologies

The 2025 Springer Nature white paper on research integrity training revealed that few researchers (7-29%) in any surveyed country are required to demonstrate understanding via mandatory testing; assessments often rely instead on simpler measures of self-awareness or participation in training discussions [3]. This highlights a critical gap in training effectiveness that materials science institutions should address through:

- Competency-Based Assessment: Implement mandatory testing with passing thresholds to verify comprehension of key integrity concepts.

- Case-Based Learning: Utilize real-world scenarios relevant to materials science research practices.

- Hybrid Delivery Models: Combine consistent core curriculum delivered online with practical, discipline-specific components delivered in person.

- Career-Stage Appropriateness: Tailor training content to researcher experience levels, from graduate students to principal investigators.

Addressing research misconduct in materials science requires a multi-faceted approach that combines clear definitions, robust detection methodologies, and preventative institutional cultures. The 2025 regulatory updates provide a more flexible framework for addressing misconduct, while technological advances offer both new challenges and powerful detection capabilities. For materials science researchers and drug development professionals, maintaining vigilance against FFP is not merely about compliance, but about preserving the foundational trust that enables scientific progress and the translation of research into practical applications that benefit society. As research practices continue to evolve, particularly with the integration of AI tools, the materials science community must remain proactive in developing and implementing integrity safeguards that match the sophistication of both legitimate research practices and emerging forms of misconduct.

In the field of materials science and engineering, the complex journey from hypothesis to validated knowledge requires a structured framework to ensure both scientific rigor and societal impact. Transitioning to independent research can be a culture shock for students and early-career professionals who may only understand research through the simple framework of the scientific method [9]. A comprehensive research cycle extends far beyond experimentation to include the dissemination, discussion, and further refinement of results, allowing them to become part of the collective body of knowledge [9]. Research integrity—guided by principles of honesty, transparency, and respect for ethical standards—serves as the foundational pillar supporting this entire process, upholding society's trust in science and fostering genuine scientific progress [10] [11]. This whitepaper outlines an explicit model for the materials science research cycle, integrating research integrity as a core component to advance the field's reliability and impact.

The Research+ Cycle: A Structured Framework for Knowledge Creation

To address the challenges of modern materials research, a refined model known as the Research+ cycle has been proposed. This model explicitly outlines the steps researchers can use to advance their field's collective knowledge [9]. It is based on an idealized six-step process but incorporates critical enhancements to reflect the real-world complexities of scientific inquiry.

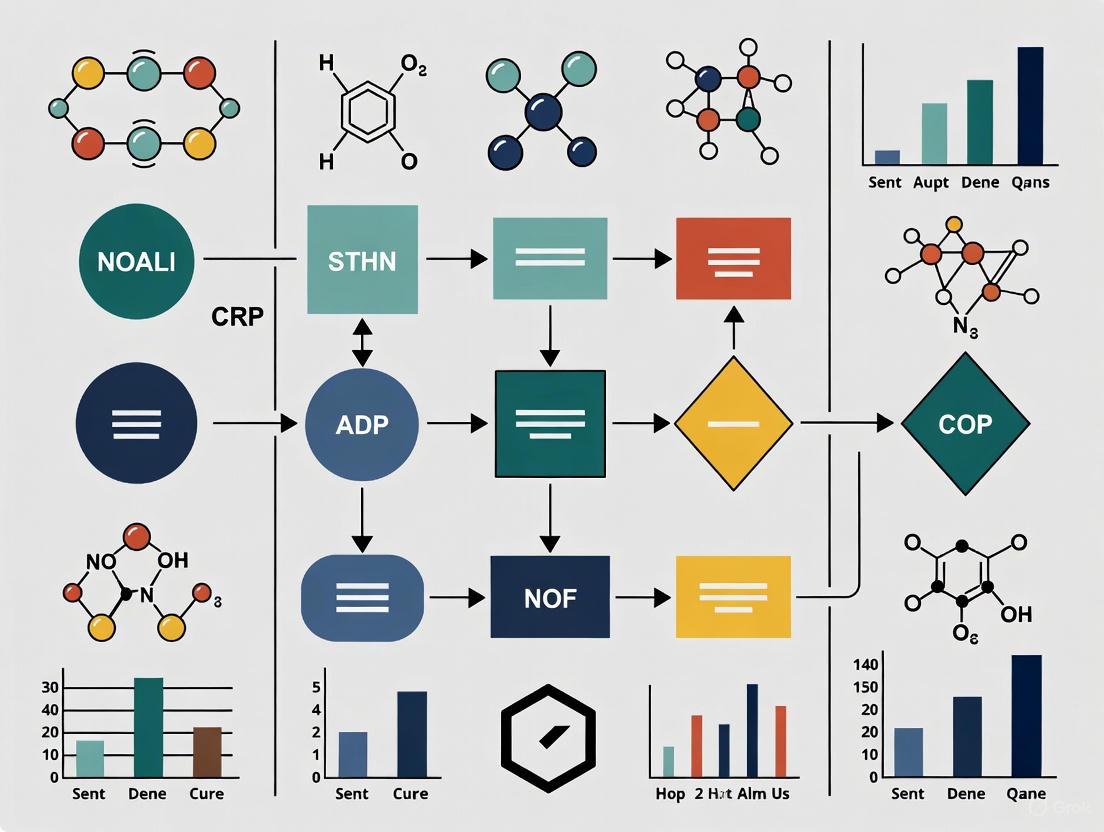

The following diagram illustrates the integrated Research+ Cycle, which places the understanding of the existing knowledge base at its core.

This model enhances the traditional research process with three critical, often overlooked steps [9]:

- Understand the existing body of knowledge: This foundational activity is placed at the center of the methodology, informing all aspects of being a researcher.

- Explicitly state how research questions align with societal goals: Research agendas often shift with societal focus, making this alignment crucial for relevance and funding.

- Refine methodologies and replicate results: Tacit knowledge is often used to iteratively refine methods; making this explicit helps early-career researchers develop critical evaluation skills.

A key strength of this framework is its inclusive definition of a researcher as "one who engages with any part of the research cycle with the intent of developing new structure–properties–performance–processing knowledge," regardless of whether they participate in all aspects [9]. This acknowledges the collaborative and specialized nature of modern materials science.

Quantitative Data Collection and Analysis in Materials Science

Methods for Quantitative Data Collection

Quantitative research in materials science relies on objective measurements and the statistical analysis of numerical data to quantify variables of interest and uncover patterns [12]. The table below summarizes the primary quantitative data collection methods relevant to materials science research.

Table 1: Quantitative Data Collection Methods for Materials Science

| Method | Description | Application in Materials Science |
|---|---|---|
| Online Surveys | Closed-ended questions distributed digitally to gather comparable data from large audiences [13]. | Collecting standardized performance data on new materials from multiple research institutions. |
| Structured Observations | Systematic recording of behaviors or processes using set parameters, focusing on numerical counts and measurements [13]. | Documenting the number of times a material fails under specific stress conditions in a controlled test. |
| Document Review & Secondary Data | Analysis of existing research, public records, company databases, and published literature [13]. | Leveraging existing material property databases to establish baseline performance metrics. |
| Structured Interviews | Verbal administration of surveys with mainly closed-ended questions (yes/no, multiple choice, rating scales) [13]. | Gathering standardized feedback from experts on the practical applicability of a new material synthesis technique. |

Statistical Analysis Techniques

Once collected, quantitative data undergoes statistical analysis to draw meaningful conclusions. The choice of technique depends on the research questions and the nature of the data.

Table 2: Statistical Techniques for Materials Science Data Analysis

| Technique | Purpose | Materials Science Application Example |
|---|---|---|
| Descriptive Statistics | Summarize and describe data features through measures of central tendency and dispersion [12]. | Calculating mean tensile strength, median fatigue cycles, and standard deviation of ceramic hardness measurements. |
| Inferential Statistics | Make predictions about a population based on a sample using hypothesis testing and confidence intervals [12]. | Determining if observed differences in alloy corrosion resistance are statistically significant between treatment groups. |
| Multivariate Analysis | Explore complex relationships between multiple variables simultaneously [12]. | Understanding how processing temperature, pressure, and cooling rate collectively affect polymer crystallinity and strength. |
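As a concrete illustration of the first two rows of the table, the sketch below computes descriptive statistics for a sample and a Welch's t statistic for comparing two treatment groups with possibly unequal variances. It uses only the Python standard library; the function names and the measurement values are hypothetical, and the choice of Welch's test is an assumption made for the example rather than a method prescribed by the cited sources.

```python
import math
import statistics


def describe(sample):
    """Central tendency and dispersion for one sample."""
    return {
        "mean": statistics.mean(sample),
        "median": statistics.median(sample),
        "stdev": statistics.stdev(sample),  # sample standard deviation
    }


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = statistics.variance(a) / len(a)
    vb = statistics.variance(b) / len(b)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va + vb)
    dof = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, dof


# Hypothetical corrosion-rate measurements (arbitrary units) for two
# alloy treatments; the numbers are invented for illustration.
untreated = [4.1, 3.9, 4.4, 4.0, 4.2]
treated = [3.2, 3.5, 3.1, 3.4, 3.3]
print(describe(untreated))
print(welch_t(untreated, treated))
```

The t statistic still has to be compared against a t distribution with the computed degrees of freedom to obtain a p-value, which is where a package such as R or a Python scientific library would normally take over.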

Integrating Research Integrity Throughout the Research Cycle

Defining Research Integrity and Misconduct

Research integrity refers to a set of moral and ethical standards that serve as the foundation for executing research activities. It incorporates principles of honesty, transparency, and respect for ethical standards and norms throughout all research stages, from design and data collection to analysis, reporting, and publishing [11] [14]. The core of research misconduct is traditionally defined by three primary violations [11] [14]:

- Fabrication: Making up data or results and recording or reporting them.

- Falsification: Manipulating research materials, equipment, processes, or changing/omitting data or results such that the research is not accurately represented.

- Plagiarism: Appropriating another person's ideas, processes, results, or words without giving appropriate credit.

The following diagram illustrates how integrity principles are integrated into each stage of the Research+ Cycle to create a self-correcting, ethical research ecosystem.

Institutional Frameworks Supporting Research Integrity

Multiple stakeholders share responsibility for maintaining research integrity throughout the research cycle [11]:

- Researchers must adhere to the highest ethical standards, self-regulate, and assume responsibility for promoting scientific knowledge with integrity.

- Research Supervisors and Mentors should arrange comprehensive discussions about scientific misconduct and guide students through challenges, serving as role models and treating errors as teaching opportunities [11].

- Research Institutions play a crucial role in establishing an atmosphere that supports integrity ideals, providing guidance, instruction, and assistance to researchers. This includes establishing RI departments, creating mechanisms for preventing misconduct, and protecting whistleblowers [11].

- Journals and Editors act as protectors of quality and ethical standards in the dissemination of research results through enhanced peer review processes [11].

Experimental Protocols and Methodologies in Materials Science

Quantitative Research Workflow

The following diagram outlines a generalized experimental workflow for quantitative research in materials science, highlighting key stages from hypothesis development through data analysis and validation.

Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for Materials Science Experimentation

| Item | Function in Research | Application Example |
|---|---|---|
| Validated Measurement Tools | Instruments with proven reliability and accuracy for quantifying material properties. | Nanoindenters for hardness testing, spectrophotometers for optical properties, SEM for microstructural analysis. |
| Standard Reference Materials | Certified materials with known properties used for instrument calibration and method validation. | NIST standard reference materials for calibrating thermal analysis equipment. |
| Data Collection Instruments | Structured tools for gathering quantitative data according to research design. | Standardized survey instruments for collecting lab performance data across multiple research sites. |
| Statistical Analysis Software | Tools for performing descriptive, inferential, and multivariate analysis on research data. | Software like R, Python with scientific libraries, or specialized packages for analyzing structure-property relationships. |
| Laboratory Notebooks | Detailed, chronological records of experimental procedures, observations, and results. | Maintaining rigorous documentation for replication studies and intellectual property protection. |

Effective Data Visualization and Communication

Principles for Tables and Figures

Effective communication of research findings requires careful consideration of data presentation. Tables and figures should be used to present complicated information in ways that are accessible and understandable to the reader [15].

Table 4: Guidelines for Effective Data Presentation in Materials Science

| Element | Tables | Figures |
|---|---|---|
| Primary Purpose | Present lists of numbers or text in columns; synthesize literature; explain variables; present raw data [15]. | Visual presentations of results; show trends and patterns; communicate processes; display complicated data simply [15]. |
| Key Considerations | Organize so like elements read down, not across; ensure decimal points align; use clear column titles with units [15]. | Choose the simplest effective visualization; ensure sufficient size and resolution; consider color blindness [15] [16]. |
| Title/Caption Placement | Above table, left-justified [15]. | Below figure, left-justified [15]. |
| Accessibility Requirements | Use clear demarcation between parts; avoid gridlines in printed versions [15]. | Maintain minimum color contrast ratio of 3:1 for graphical objects and 4.5:1 for text [17] [16]. |

Color Contrast Requirements for Accessibility

When creating figures for publication, adherence to Web Content Accessibility Guidelines (WCAG) ensures that visual materials are accessible to all readers, including those with visual impairments [17] [16]:

- Normal text should have a contrast ratio of at least 4.5:1 (AA rating) or 7:1 (AAA rating)

- Large-scale text (at least 18 pt, or 14 pt bold; roughly 120-150% of typical body text size) should have a contrast ratio of at least 3:1 (AA) or 4.5:1 (AAA)

- Graphical objects and user interface components (such as graphs and icons) require a contrast ratio of at least 3:1 (AA)

These guidelines help ensure that research findings are communicated effectively to the broadest possible audience, a key component of research integrity and transparency.
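The ratios listed above can be checked programmatically before a figure is submitted. WCAG 2.x defines the contrast ratio as $(L_1 + 0.05)/(L_2 + 0.05)$, where $L_1$ and $L_2$ are the relative luminances of the lighter and darker colors. The sketch below follows that definition for 8-bit sRGB colors; the function names and the `kind` labels are illustrative.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    """Relative luminance of an (R, G, B) color with channels in 0-255."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a, color_b) -> float:
    """WCAG contrast ratio between two colors, from 1.0 up to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background, kind="text") -> bool:
    """Check the AA thresholds listed above for text, large text, graphics."""
    threshold = {"text": 4.5, "large_text": 3.0, "graphic": 3.0}[kind]
    return contrast_ratio(foreground, background) >= threshold
```

For example, a mid-gray data line of RGB (119, 119, 119) on a white background meets the 3:1 requirement for graphical objects but falls just short of 4.5:1, so the same gray should not be used for axis labels in normal-size text.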

The Materials Science Research Cycle, particularly the Research+ model, provides a comprehensive framework for robust knowledge creation when integrated with strong research integrity principles. This structured approach—encompassing understanding existing knowledge, identifying gaps, constructing hypotheses, designing methodologies, applying methods, evaluating results, and communicating findings—creates a self-correcting system that advances reliable scientific knowledge. By embedding integrity throughout this cycle and employing rigorous quantitative methods and transparent communication, materials science researchers can enhance the reliability and impact of their work, ultimately contributing to scientific progress that earns and maintains public trust. The collective responsibility of researchers, mentors, institutions, and publishers in upholding these standards ensures that the materials science field continues to develop knowledge that is both scientifically sound and socially beneficial.

The reliability of scientific research, particularly in fields with direct human impact like materials science and drug development, is the cornerstone of progress. However, the ecosystem is increasingly threatened by research misconduct, which encompasses fabrication, falsification, and plagiarism [18]. A 2025 analysis of the integrity landscape reveals that industrial-scale fraud operations, known as "paper mills," now pose a significant threat: a single operation has been linked to over 1,500 fraudulent papers across hundreds of journals [5]. This whitepaper delineates the severe, multi-faceted consequences of misconduct, from staggering financial costs to irreparable reputational harm, and frames them within a broader thesis on building a more resilient and ethical research culture in materials science. The stakes extend beyond individual careers to the very credibility of the scientific enterprise and the safety of the public that relies on its findings.

Quantifying the Impact: Financial and Career Consequences

The consequences of research misconduct are not merely theoretical; they can be measured in millions of wasted dollars and truncated careers. Understanding this quantitative impact is crucial for appreciating the full scope of the problem.

Direct Financial Costs

Public research funds are wasted when misconduct leads to retraction. An analysis of papers retracted due to misconduct between 1992 and 2012 found they accounted for approximately $58 million in direct funding from the National Institutes of Health (NIH) [19] [18]. The financial burden per retracted paper is substantial, with a mean attributable cost of $392,582 and a median of $239,381 [19]. Furthermore, the total funding for all NIH grants that contributed in any way to retracted papers was estimated at approximately $2.3 billion when adjusted for inflation [19]. These figures represent resources that could otherwise have supported valid, transformative research.

Table 1: Financial Costs of Research Misconduct (NIH, 1992-2012)

| Metric | Value | Details |
|---|---|---|
| Total Direct NIH Funding for Retracted Articles | $58 million | Accounts for articles retracted due to misconduct [19] [18] |
| Mean Attributable Cost per Article | $392,582 | Standard Deviation: $423,256 [19] |
| Median Attributable Cost per Article | $239,381 | More representative of a "typical" case due to skewed distribution [19] |
| Total Funding for Grants Contributing to Retracted Papers | $2.32 billion | Value in 2012 dollars, accounting for inflation [19] |

Consequences for Research Careers

A finding of misconduct profoundly impacts the productivity and funding of the researchers involved. Analysis of senior authors named in Office of Research Integrity (ORI) findings shows a median 91.8% decrease in publication output after censure [19]. A stark 55% of these authors ceased publishing entirely in the three years following the ORI report [19]. This decline is also reflected in research funding, as censure often includes debarment from contracts with public health services for a period of time [19]. This data indicates that misconduct is typically, though not always, a career-ending event.

Table 2: Impact of Misconduct Finding on Researcher Productivity (ORI Data)

| Analysis Period | Pre-Misconduct Finding Publications | Post-Misconduct Finding Publications | Percentage Change |
|---|---|---|---|
| 3-Year Interval | 256 (Median 1.0/year) | 78 (Median 0/year) | -69.5% [19] |
| 6-Year Interval | 552 (Median 1.2/year) | 140 (Median 0/year) | -74.6% [19] |
| Career-Long Analysis | Median 2.9/year | Median 0.25/year | Median -91.8% [19] |
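The percentage changes in the first two rows follow directly from the publication totals, as this short check shows (the function name is illustrative):

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    if before == 0:
        raise ValueError("percent change is undefined for a zero baseline")
    return (after - before) / before * 100


print(round(percent_change(256, 78), 1))   # -69.5 (3-year interval)
print(round(percent_change(552, 140), 1))  # -74.6 (6-year interval)
```

The career-long figure cannot be reproduced the same way: it is reported as a median, so it need not equal the change between the two median publication rates in that row.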

Detection and Analysis: Methodologies for Upholding Integrity

Combating research fraud requires sophisticated detection protocols. The following methodologies, drawn from current publisher practices, form a frontline defense.

Integrity Screening and Image Forensics

Protocol 1: Scalable Integrity Screening (e.g., PLOS)

This multi-layered approach is designed to filter submissions at scale before peer review [5].

- Cross-Publisher Duplicate Submission Check: Utilize the STM Integrity Hub to detect manuscripts submitted simultaneously to multiple publishers.

- Automated Digital Forensics: Employ specialized software to conduct:

  - Plagiarism Analysis: Identify textual duplication.

  - Image Analysis: Flag potential manipulation in gels, blots, and micrographs (e.g., copy-paste duplication, spliced lanes). A 2025 forensic scan of over 11,000 materials-science papers with SEM images used metadata mismatches to identify papers with a higher likelihood of analytic errors [5].

- Contributor Behavior Audit: Scrutinize requests to add multiple authors post-submission, a known red flag.

- Targeted Screening: Apply pre-review checks to study types prone to misuse, such as systematic reviews and Mendelian randomization studies.

- Outcome: Implementation of this protocol raised desk rejection rates from 13% to 40%, conserving valuable peer-review resources [5].
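To make the plagiarism-analysis step concrete, the sketch below measures textual overlap between two passages using word shingles and Jaccard similarity. This is a generic textbook technique chosen for illustration, not the method used by PLOS or any commercial screening product; the function names and the sample sentences are hypothetical.

```python
import re

def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word sequences in `text`."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}

def jaccard(a: str, b: str, k: int = 3) -> float:
    """Shared shingles divided by all distinct shingles (0.0 to 1.0)."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)

# Hypothetical sentences, for illustration only.
submitted = "The films were annealed at 450 C for two hours in argon."
published = "The films were annealed at 450 C for two hours in nitrogen."
unrelated = "Citation networks were mapped across thousands of papers."

print(f"near-duplicate: {jaccard(submitted, published):.2f}")
print(f"unrelated:      {jaccard(submitted, unrelated):.2f}")
```

A high score only flags a passage for human review. Shared boilerplate such as standard methods descriptions can also score highly without indicating plagiarism.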

Protocol 2: Network Analysis for Industrial-Scale Fraud

This method identifies coordinated misconduct by mapping digital fingerprints across the literature [6].

- Data Collection: Aggregate data on authors, affiliations, citations, and methodological patterns from thousands of papers.

- Cluster Identification: Use AI and data analytics to map connections and identify closed citation networks, suspicious author clusters, and recurrent methodological templates.

- Pattern Recognition: Identify signals such as "tortured phrases" (awkward, synonym-substituted language to avoid plagiarism detectors) and abnormal citation concentrations around a specific author or journal cluster [5].

- Human Oversight: Expert investigators interpret the flagged patterns to confirm fraud, as AI excels at flagging anomalies but human judgment is required for final determination [5] [6].
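One of the signals named above, abnormal citation concentration within a cluster, can be expressed as a simple ratio. The sketch below is a minimal illustration under stated assumptions: the citation pairs, the cluster membership, and the 0.8 review threshold are all hypothetical, and real investigations combine many more signals before a human makes any determination.

```python
def intra_cluster_share(citations, cluster):
    """Fraction of citations made by cluster members that point to other members."""
    members = set(cluster)
    outgoing = [(src, dst) for src, dst in citations if src in members]
    if not outgoing:
        return 0.0
    internal = sum(1 for _, dst in outgoing if dst in members)
    return internal / len(outgoing)

# Hypothetical (citing author, cited author) pairs.
citations = [
    ("A", "B"), ("A", "C"), ("B", "A"), ("B", "C"),
    ("C", "A"), ("C", "X"), ("X", "Y"), ("Y", "A"),
]
suspect_cluster = {"A", "B", "C"}

share = intra_cluster_share(citations, suspect_cluster)
REVIEW_THRESHOLD = 0.8  # assumed cut-off, not taken from the source
needs_review = share > REVIEW_THRESHOLD

print(f"intra-cluster citation share: {share:.2f}, flag for review: {needs_review}")
```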

Workflow Visualization: Research Integrity Pipeline

The following diagram illustrates the multi-stage defense system for maintaining research integrity, from submission to post-publication, integrating both technological tools and human judgment.

Diagram 1: Research integrity defense workflow.

Research Reagent Solutions for Integrity

Beyond detection, maintaining integrity involves using specific tools and frameworks to ensure transparency and accountability.

Table 3: Essential Tools and Frameworks for Research Integrity

| Tool/Framework | Primary Function | Application in Materials Science |
|---|---|---|
| ORCID ID | Provides a unique, persistent digital identifier for researchers. | Disambiguates author identity, ensures proper attribution of work, and links researchers to their affiliations and publications [20]. |
| CRediT (Contributor Roles Taxonomy) | Standardized taxonomy to clarify the specific contributions of each author. | Eliminates ghost authorship and clarifies roles in complex, multi-disciplinary materials science projects [5]. |
| STM Integrity Hub | A cross-publisher collaboration platform. | Allows journals to detect duplicate submissions across a wide portfolio of publications, a common tactic of paper mills [5]. |
| Image Forensics Software | Automated tools to detect image manipulation. | Scans SEM images, XRD patterns, and other graphical data for duplication, splicing, or inappropriate manipulation [5]. |
| DataSeer & Open Science Platforms | Tools to promote and monitor data sharing. | Encourages deposition of raw data and code for materials characterization and modeling, enabling reproducibility and validation [6]. |

Systemic Consequences and the Path to Improvement

The ripple effects of misconduct extend far beyond the immediate parties involved, damaging the entire scientific ecosystem and public trust.

Erosion of Trust and Persistence of Invalidated Work

Fraud in research undermines the public's trust in science and can lead to real-world harms, such as the release of ineffective drugs or unsafe medical devices [18]. A persistent problem is that even when fraud is uncovered, the scientific record is not always corrected. Fewer than 25% of known paper-mill articles are formally retracted [5]. Consequently, retracted papers continue to be cited as valid evidence. Studies across multiple disciplines (e.g., radiation oncology, dentistry, COVID-19 literature) show that a vast majority of post-retraction citations—often 80-90%—fail to acknowledge the retraction, meaning flawed or fraudulent data continues to pollute the literature and mislead future research [5].

Strategies for a Resilient Research Integrity Framework

Building a more robust system requires coordinated action from all stakeholders in the research enterprise. The following strategies, drawn from recent national dialogues and policy reports, provide a roadmap for improvement [21] [8].

Harmonize and Tier Federal Regulations: Inconsistencies across agencies create confusion and administrative burden. A 2025 National Academies report recommends creating a centralized role in the White House Office of Management and Budget to coordinate requirements and adopting a risk-based approach where oversight is "tiered to the nature, likelihood, and potential consequences of risks" [21]. For materials science, this could mean streamlining oversight for low-risk computational studies while maintaining rigorous oversight for research involving hazardous materials.

Foster Institutional Accountability and Culture: Research institutions must move beyond compliance-based training. The Association for Practical and Professional Ethics (APPE) recommends that institutions conduct periodic internal inventories of their Responsible Conduct of Research (RCR) programs, assess their cost-effectiveness, and leverage tools to evaluate the research integrity climate on campus [8]. Leadership must demonstrate an unwavering commitment to ethics.

Implement a Single Federal Misconduct Policy: Differing standards for research misconduct proceedings across agencies lead to confusion. A key policy option is to establish a single, flexible federal misconduct policy that all agencies adhere to, ensuring clarity in definitions and investigative processes [21].

Accelerate the Adoption of Open Science Practices: Transparency is a powerful antidote to fraud. When researchers openly share data, code, and methodologies, it becomes substantially more difficult to sustain deception [6]. Funders and institutions should create stronger incentives for data sharing and provide the tools to make sharing frictionless.

Reimagine Research Assessment: The current emphasis on publication quantity and journal impact factor perversely incentivizes misconduct. The research community must shift toward multifaceted metrics that consider transparency, reproducibility, and meaningful contribution over mere output [6]. This reduces the pressure to "publish or perish" that drives unethical behavior.

The stakes of research misconduct are unacceptably high, encompassing the massive waste of public funds, devastation of individual careers, erosion of public trust, and persistent contamination of the scientific record. For the fields of materials science and drug development, where progress directly impacts human health and safety, the cost of inaction is intolerable. Addressing this crisis requires a concerted shift from reactive detection to proactive prevention. By implementing harmonized policies, fostering accountable institutional cultures, mandating transparency, and re-evaluating the incentives that drive research, the scientific community can fortify its integrity. The path forward demands collaboration across disciplines, open dialogue between stakeholders, and a collective commitment to an ecosystem where reliability is demonstrated, quality is paramount, and ethical progress is the ultimate measure of success.

In the evolving landscape of academic publishing, retractions serve as a critical mechanism for maintaining the integrity of the scientific record. The year 2025 has provided significant case studies that highlight both persistent challenges and emerging trends in research integrity, particularly relevant for researchers in materials science and drug development. Analysis of the most highly cited retracted papers reveals a troubling pattern: many continue to accumulate citations years after their retraction, perpetuating the dissemination of unreliable science [22]. This comprehensive review examines these recent cases to extract actionable lessons for improving research practices, data integrity, and institutional responses within the materials science community.

Recent data from Retraction Watch reveals several highly cited papers retracted in 2023-2024, demonstrating the significant impact these publications continue to have despite their retracted status [22]. The scale of the problem is substantial; while retractions were once rare (1 in 5,000 papers in 2002), they have increased dramatically to approximately 1 in 500 papers by 2023 [23].

Table 1: Most Highly Cited Retracted Papers (Retracted 2023-2024)

| Article Title | Journal | Year of Retraction | Citing Articles Before Retraction | Citing Articles After Retraction | Total Cites |
|---|---|---|---|---|---|
| Pluripotency of mesenchymal stem cells derived from adult | Nature | 2024 | 4,491 | 29 | 4,520 |
| Hydroxychloroquine and azithromycin as a treatment of COVID-19 | International Journal of Antimicrobial Agents | 2024 | 3,171 | 27 | 3,198 |
| A specific amyloid-β protein assembly in the brain impairs memory | Nature | 2024 | 2,359 | 31 | 2,390 |
| Predictive Validity of a Medication Adherence Measure | The Journal of Clinical Hypertension | 2023 | 1,931 | 271 | 2,202 |
| MicroRNA signatures of tumor-derived exosomes as diagnostic biomarkers | Gynecologic Oncology | 2023 | 1,868 | 79 | 1,947 |

The concerning trend of post-retraction citation is particularly evident in cases like the 2005 Science paper on visfatin, which received 1,340 citations after its 2007 retraction [22]. This persistent citation of retracted literature represents a significant contamination of the scientific ecosystem that researchers must actively guard against.
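The share of each paper's citations that arrived after retraction can be computed directly from the table of highly cited retracted papers above. The sketch below does so; the helper name `post_retraction_share` and the shortened titles are illustrative. Papers retracted earlier have had more time to accumulate post-retraction citations, so the shares are not directly comparable across retraction years.

```python
# (shortened title, citing articles before retraction, citing articles after)
rows = [
    ("Pluripotency of mesenchymal stem cells", 4491, 29),
    ("Hydroxychloroquine and azithromycin", 3171, 27),
    ("A specific amyloid-β protein assembly", 2359, 31),
    ("Predictive Validity of a Medication Adherence Measure", 1931, 271),
    ("MicroRNA signatures of tumor-derived exosomes", 1868, 79),
]

def post_retraction_share(before: int, after: int) -> float:
    """Percentage of all citing articles that appeared after the retraction."""
    return after / (before + after) * 100.0

shares = {title: post_retraction_share(b, a) for title, b, a in rows}
for title, share in shares.items():
    print(f"{title}: {share:.1f}% of citing articles came after retraction")
```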

Analysis of Notable 2025 Retraction Cases

The "Arsenic Life" Controversy: A 15-Year Journey to Retraction

After nearly 15 years of controversy, Science formally retracted the influential "arsenic life" paper in 2025 [24]. The original 2010 publication claimed the discovery of a microbe, GFAJ-1, capable of using arsenic instead of phosphorus in its biochemical processes—a finding with potential implications for understanding life on Earth and beyond.

Experimental Methodology and Flaws: The researchers employed extreme environment sampling from Mono Lake, California, culturing the bacterium GFAJ-1 in increasingly phosphorus-depleted conditions with high arsenic concentrations. They reported incorporation of arsenic into DNA backbones using:

- Radioactive arsenic-74 tracing experiments

- Mass spectrometry analysis of extracted biomolecules

- Elemental composition analysis of nucleic acids

- Growth measurements under arsenic stress

The fundamental methodological flaw was the inability to completely eliminate trace phosphorus from growth media, creating ambiguity about whether observed growth resulted from arsenic incorporation or phosphorus scavenging. Independent replication attempts in 2012 by two separate research teams failed to reproduce the key findings when using more rigorous purification protocols [24].

Retraction Compromise: The 2025 retraction occurred without a finding of misconduct, with the journal citing experimental error as the reason. The retraction notice states that the "reported experiments do not support its key conclusions" [24]. Notably, the authors maintained their dissent in an accompanying letter, stating: "While our work could have been written and discussed more carefully, we stand by the data as reported" [24]. This case represents a compromise approach to retraction where fundamental methodological limitations undermine confidence in conclusions without evidence of deliberate misconduct.

Stem Cell Pluripotency and Image Manipulation Concerns

The most highly cited retracted paper of 2024, "Pluripotency of mesenchymal stem cells derived from adult" published in Nature, has accumulated 4,520 citing articles, 29 of them after its retraction [22]. While specific reasons for retraction are not detailed in the available sources, this case aligns with a broader pattern of image manipulation concerns in high-impact biology and materials science research.

Methodological Considerations for Materials Science: The experimental protocols typically involved in such stem cell research include:

- Isolation and culture of mesenchymal stem cells (MSCs) from adult tissues

- Differentiation assays into multiple cell lineages (osteogenic, adipogenic, chondrogenic)

- Flow cytometry for surface marker characterization

- Gene expression analysis using RT-PCR and RNA sequencing

- Teratoma formation assays in immunodeficient mice

- Immunofluorescence and histochemical staining

Continued citation after retraction (29 citing articles) highlights the ongoing challenge of ensuring the scientific community acknowledges and respects retraction status, particularly for influential papers [22].

The Growing Retraction Crisis: Systemic Challenges

Paper Mills and AI-Enabled Fraud

The retraction landscape is increasingly complicated by "paper mills": for-profit organizations that systematically falsify the scientific record [23]. These operations have evolved into sophisticated businesses that produce papers complete with fabricated data, charts, and manipulated images, which are often difficult to distinguish from legitimate research.

Paper Mill Operations: Paper mills typically offer:

- Complete fabricated manuscripts on demand

- Authorship slots on seemingly legitimate papers

- Falsified experimental data and images

- Fabricated peer review reports through suggested reviewer networks

- Guaranteed publication in indexed journals [23]

The emergence of AI tools has further exacerbated this problem by enabling more sophisticated fabrication while simultaneously providing journals with better detection capabilities, creating an "arms race" in research fraud [23].

Impact on Research Careers and Collaboration Networks

Recent research published in Nature Human Behaviour demonstrates that retractions have profound effects on scientific careers, particularly for early-career researchers [25]. The study analyzed 4,578 retracted papers involving 14,579 authors, revealing that retracted authors often leave scientific publishing, especially when retractions attract significant attention.

Collaboration Network Analysis: The research found that retracted authors who remain active in science maintain and establish more collaborations compared with similar non-retracted counterparts. However, these networks are qualitatively different – retracted authors generally retain less senior and less productive co-authors, though they gain more impactful co-authors post-retraction [25]. This suggests a complex restructuring of professional relationships following retractions.

Research Integrity Framework and Practical Solutions

The Research Integrity Process

The pathway from publication to retraction involves multiple stakeholders and decision points, as illustrated below:

Table 2: Research Integrity Resources for Materials Scientists

| Tool/Resource | Type | Primary Function | Access |
|---|---|---|---|
| Retraction Watch Database | Database | Tracking retracted papers and reasons | Public |
| INSPECT-SR (Available 2025) | Checklist | Identifying problematic randomized trials | Public |
| Problematic Paper Screener | AI Tool | Detecting paper mill products | Journal use |
| Papermill Alarm | AI Tool | Identifying manipulated images/text | Journal use |
| LibKey Nomad | Browser Extension | Retraction alerts during research | Public |
| Edifix | Citation Tool | Identifying retracted references | Subscription |
| Zotero with Retraction Watch | Reference Manager | Flagging retracted papers | Public |
| Committee on Publication Ethics (COPE) | Guidelines | Retraction and ethics standards | Public |

Experimental Protocol Verification Framework

For materials scientists seeking to ensure the integrity of their experimental approaches, the following verification framework provides essential safeguards:

Materials Characterization Protocol:

- Independent Replication Planning: Design experiments with built-in checkpoints for key findings using different instrumentation or methodologies.

- Raw Data Preservation: Maintain complete, unprocessed instrument outputs (XRD patterns, SEM/TEM micrographs, spectroscopic data) with metadata.

- Control Experiment Validation: Include positive and negative controls that can detect reagent contamination or methodological flaws (e.g., trace element contamination).

- Image Integrity Documentation: Maintain original, uncropped microscopy images with consistent processing parameters across compared samples.

- Data Transparency: Share complete datasets through repositories to enable independent verification of analyses.
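The raw data preservation item can be supported with a checksum manifest, which makes later modification of instrument outputs detectable. The sketch below is one possible approach, not a prescribed standard: the function names are hypothetical, and it uses only Python's standard `hashlib` and `pathlib` modules.

```python
import hashlib
from pathlib import Path

def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large micrographs do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def build_manifest(raw_dir: Path) -> dict[str, str]:
    """Map each file under `raw_dir` (by relative path) to its SHA-256 digest."""
    return {
        p.relative_to(raw_dir).as_posix(): sha256_of(p)
        for p in sorted(raw_dir.rglob("*"))
        if p.is_file()
    }

def changed_files(raw_dir: Path, manifest: dict[str, str]) -> list[str]:
    """List manifest entries whose file is now missing or has different content."""
    current = build_manifest(raw_dir)
    return sorted(name for name, digest in manifest.items() if current.get(name) != digest)
```

In practice the manifest would be built once, immediately after acquisition, and stored separately from the data (for example in an electronic lab notebook entry). Running `changed_files` before submission then confirms that the archived raw files are still the ones originally recorded.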

Recommendations for Strengthening Research Integrity in Materials Science

Institutional and Cultural Reforms

The "publish or perish" research culture remains a significant driver of research misconduct, placing unsustainable pressure on researchers [23] [26]. Addressing this requires systemic changes:

- Revised Incentive Structures: Academic institutions should prioritize research quality over quantity in promotion and tenure decisions, valuing reproducible methodologies and data transparency alongside publication metrics.

- Enhanced Research Integrity Training: Implement evidence-based training programs at multiple career stages, from undergraduate students to senior investigators [27]. The INTEGRITY Project provides scaffolded learning materials tailored to different experience levels.

- Protected Whistleblower Mechanisms: Establish clear, confidential channels for reporting concerns without fear of reprisal, particularly for early-career researchers.

- Dedicated Research Integrity Officers: Empower institutional officials with authority and resources to conduct thorough investigations.

Practical Strategies for Individual Researchers

- Pre-Publication Verification: Utilize tools like the INSPECT-SR checklist (available 2025) to identify potential issues before manuscript submission [23].

- Citation Vigilance: Implement reference manager tools with retraction alerts (Zotero with Retraction Watch integration, LibKey Nomad) to avoid citing retracted literature [23].

- Data Sharing Practices: Embrace open science frameworks by sharing raw data and analytical code through trusted repositories to enable verification.

- Image Management: Maintain original, unprocessed images with detailed metadata and processing documentation for all publications.

- Collaboration Transparency: Clearly define roles, responsibilities, and authorship criteria at project inception using established guidelines like CRediT.
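The citation vigilance step amounts to comparing a manuscript's reference DOIs against a list of retracted DOIs. The sketch below shows that comparison on in-memory lists. It does not call Zotero, LibKey Nomad, or the Retraction Watch Database; the function names and the `10.0000/example...` DOIs are placeholders, and a real check would load the retracted list from an export of a maintained database.

```python
DOI_PREFIXES = (
    "https://doi.org/", "http://doi.org/",
    "https://dx.doi.org/", "http://dx.doi.org/", "doi:",
)

def normalize_doi(doi: str) -> str:
    """Lower-case a DOI and strip common URL or 'doi:' prefixes (DOIs are case-insensitive)."""
    doi = doi.strip().lower()
    for prefix in DOI_PREFIXES:
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
            break
    return doi.strip()

def retracted_references(reference_dois, retracted_dois):
    """Return the references, as originally written, that match a retracted DOI."""
    retracted = {normalize_doi(d) for d in retracted_dois}
    return [d for d in reference_dois if normalize_doi(d) in retracted]

# Placeholder DOIs, for illustration only.
references = [
    "https://doi.org/10.0000/EXAMPLE.001",
    "doi:10.0000/example.002",
    "10.0000/example.003",
]
known_retracted = ["10.0000/example.001", "10.0000/example.003"]

flagged = retracted_references(references, known_retracted)
print(flagged)
```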

The high-profile retractions of 2025 underscore both the vulnerabilities and resilience of the scientific enterprise. As materials science continues to advance with increasing complexity and interdisciplinary connections, maintaining research integrity requires proactive, multi-level approaches. By learning from these cases, implementing robust verification protocols, and fostering a culture that prioritizes transparency over mere publication metrics, the materials science community can strengthen the foundation upon which scientific progress depends. The tools and frameworks outlined here provide a practical starting point for researchers committed to these principles.

Practical Tools and Techniques for Ensuring Data Integrity

The integrity of scientific imagery forms a cornerstone of credible research, particularly in fields like materials science and drug development where visual data often constitutes primary evidence. The advent of sophisticated digital editing tools and generative artificial intelligence (AI) has introduced profound challenges to upholding this integrity. Studies indicate that approximately one in three life sciences manuscripts submitted for publication are flagged for image-related issues, which are frequently unintentional yet difficult to detect with the naked eye [28] [29]. These issues can lead to misinterpretation of data, flawed conclusions, and an erosion of trust in scientific findings. In response, the research community is increasingly turning to automated tools designed to safeguard image authenticity. This whitepaper provides an in-depth examination of Proofig AI, an AI-powered platform developed to address image duplication, manipulation, and plagiarism in scientific publications. Framed within a broader thesis on enhancing research integrity, this analysis details Proofig's technical capabilities, operational workflow, and specific value for researchers committed to ensuring the highest standards of data veracity.

Understanding Image Integrity Challenges

Image integrity in scientific research is threatened by a spectrum of issues, ranging from unintentional oversights to deliberate misconduct. The risks are particularly acute in data-intensive fields like materials science, where image-based evidence is paramount for validating experimental results, such as characterizing nanomaterial structures or documenting cell-drug interactions.

Common types of image integrity breaches include [30]:

- Image Duplication: Reusing the same image, or parts of it, to represent different experimental conditions or results.

- Image Manipulation: Altering an image through cloning, editing, deletion, or splicing to misrepresent the original data.

- Image Fabrication: Creating entirely non-existent images or data.

- Image Plagiarism: Using another researcher's images without proper permission or citation.

- AI-Generated Images: Substituting synthetic images created by AI models for genuine experimental data.

The consequences of these breaches are severe. A post-publication retraction due to image issues is estimated to cost over $1 million per article when accounting for investigations and associated legal costs [31]. Beyond financial damage, such events inflict lasting reputational harm on researchers and their institutions, potentially jeopardizing future funding and career advancement [28]. Furthermore, they undermine the collective trust in scientific literature and can mislead other researchers, who may waste valuable resources attempting to build upon invalidated findings [30].

Proofig AI: Core Capabilities and Technological Framework

Proofig AI is an AI-powered Software-as-a-Service (SaaS) platform designed to automate the detection of image integrity issues in scientific manuscripts. Its technology is built upon a foundation of advanced machine learning, pattern recognition, and statistical analysis [30] [32]. The system is trained on a vast, ethically sourced dataset comprising material developed in-house and open-source content designated for commercial use, ensuring it does not leverage user-uploaded data for model training [31] [28].

The platform's core detection capabilities are comprehensive, addressing both traditional and emerging threats to image integrity:

Comprehensive Image Integrity Detection

Table 1: Overview of Proofig AI's Primary Detection Capabilities

| Detection Type | Key Functionality | Supported Image Variants |
|---|---|---|
| Duplication & Reuse within a Manuscript | Identifies duplicate sub-images, even when scaled, rotated, flipped, or partially overlapped [31] [28]. | Microscopy, Western blots, FACS, histology slides, cell culture, in-vivo/in-vitro images [31] [33]. |
| Alteration or Manipulation | Detects cloning, editing, deletion, and splicing within a single sub-image [31] [28]. | Western blot bands, gel electrophoresis, microscopy images [31] [34]. |
| Plagiarism from Published Works | Cross-references tens of millions of images in the PubMed Source database to identify reused sub-images [31] [28]. | All supported image types. |
| AI-Generated Image Detection | Identifies synthetic images created by the most widely used AI models [31] [34]. | Microscopy, Western blots & gels, histology, cell plates, animal imaging, medical scans [34]. |
| Self-Plagiarism | Compares images against a personalized repository of a researcher's prior work to prevent reuse of their own published images [31] [29]. | All supported image types. |

Performance and Accuracy Metrics

Proofig AI demonstrates high accuracy in its detection tasks. The platform reports a 99.4% success rate in core processing of sub-images and a 96.8% precision in text detection [31]. Its performance in detecting AI-generated images is particularly notable, as shown in the table below.

Table 2: Proofig AI's AI-Generated Image Detection Accuracy

| Image Category | True Positive Rate | False Positive Rate | Validation Basis |
|---|---|---|---|
| Multi-Modal Imaging (Microscopy, Histology, etc.) | 95.41% [34] | 0.0093% [34] | Proprietary benchmark testing [34]. |
| Western Blots | 97.68% [34] | 0.002% [34] | Proprietary curated test dataset [34]. |
| Real-World Validation | Data Not Specified | 0.01% [34] | 250,000+ published research images [34]. |
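The reported rates translate into expected counts with simple arithmetic. The sketch below uses the multi-modal and real-world figures quoted above, plus one loud assumption: a prevalence of 0.1% AI-generated images, which is purely hypothetical and chosen only to show how a flag's reliability depends on how common synthetic images are. It also treats all 250,000 real-world images as genuine.

```python
def expected_false_positives(n_genuine_images: int, false_positive_rate: float) -> float:
    """Expected number of genuine images wrongly flagged."""
    return n_genuine_images * false_positive_rate

def positive_predictive_value(tpr: float, fpr: float, prevalence: float) -> float:
    """Probability that a flagged image is truly AI-generated (Bayes' rule)."""
    true_flags = tpr * prevalence
    false_flags = fpr * (1.0 - prevalence)
    return true_flags / (true_flags + false_flags)

# Real-world validation row: 0.01% false positive rate over 250,000 images.
false_flags = expected_false_positives(250_000, 0.01 / 100)

# Multi-modal row: 95.41% TPR, 0.0093% FPR; prevalence of 0.1% is an assumption.
ppv = positive_predictive_value(tpr=0.9541, fpr=0.0093 / 100, prevalence=0.001)

print(f"expected false flags in 250,000 genuine images: {false_flags:.0f}")
print(f"share of flags that are true positives at 0.1% prevalence: {ppv:.1%}")
```

Even a very low false positive rate produces some false flags at scale, which is why human review of each flag remains part of the workflow.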

Workflow and Experimental Protocols

Integrating Proofig AI into a researcher's pre-submission process is a streamlined, four-step operation that ensures thorough image checking without significant time investment [28] [29]. The entire workflow is designed for confidentiality, with all analyses conducted on private, secure servers [31].

Step-by-Step Operational Protocol

- Manuscript Upload: The researcher begins by uploading the complete manuscript in PDF format to the Proofig platform. The system then automatically extracts all images and sub-images contained within the document for analysis [28] [29].

- Automated Image Analysis: Proofig AI initiates a multi-faceted analysis of the extracted images. This process involves several concurrent methodologies [30] [28]:

  - Pattern Recognition and Comparison: The core of the duplication detection. The system creates digital fingerprints of each sub-image and compares them against each other within the manuscript. Its algorithms are robust against transformations like rotation, scaling, and flipping [31] [30].

  - Forensic Analysis for Manipulation: To detect alterations within a single sub-image (e.g., in a Western blot), the software uses statistical analysis to identify inconsistencies in noise patterns, compression artifacts, and cloning traces that are invisible to the human eye [31] [32].

  - AI-Generated Image Detection: This feature leverages machine learning models specifically trained on a vast dataset of known AI-generated scientific images. It identifies subtle, synthetic patterns and anomalies that are characteristic of the most widely used generative AI models [34] [32].

  - Plagiarism Check: The system cross-references all extracted images against a database of tens of millions of images from published articles in PubMed, calculating similarity scores to flag potential reuse from existing literature [31] [28].

- Validation and Review of Results: The system generates a report highlighting all suspected integrity issues, each with a similarity score and details of any transformations detected. A crucial step involves human oversight: the researcher or an integrity officer manually reviews each flagged match using Proofig's advanced investigation tools (e.g., filters, image alteration tools) to confirm whether the finding represents a genuine issue [30] [28]. This human-in-the-loop protocol ensures that the final judgment is informed by scientific context.

- Report Generation: After validation, the user selects the confirmed findings, and Proofig AI compiles them into a comprehensive PDF report. This report includes both a page view for context and a detailed view of each specific image issue, providing clear documentation for the researcher's records or for communication with journals [30] [28].
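The idea of a fingerprint that survives rotation and flipping can be illustrated with a simple average hash taken over all eight rotated and mirrored variants of an image. This is a generic teaching sketch, not Proofig's proprietary algorithm: it works on small grayscale grids given as nested lists, it does not handle scaling or partial overlap, and all function names and pixel values are hypothetical.

```python
def rotate90(grid):
    """Rotate a grid 90 degrees clockwise."""
    return [list(row) for row in zip(*grid[::-1])]

def flip_horizontal(grid):
    """Mirror a grid left to right."""
    return [row[::-1] for row in grid]

def average_hash(grid):
    """One bit per pixel: 1 if the pixel is brighter than the image mean."""
    pixels = [value for row in grid for value in row]
    mean = sum(pixels) / len(pixels)
    return tuple(1 if value > mean else 0 for value in pixels)

def canonical_fingerprint(grid):
    """Smallest hash over the 8 rotations/mirrors, so all variants share one fingerprint."""
    variants = []
    current = [list(row) for row in grid]
    for _ in range(4):
        variants.append(average_hash(current))
        variants.append(average_hash(flip_horizontal(current)))
        current = rotate90(current)
    return min(variants)

# Hypothetical 4x4 grayscale sub-images.
original = [[10, 200, 30, 40], [15, 220, 35, 45], [20, 240, 25, 50], [12, 210, 33, 48]]
different = [[200, 10, 10, 10], [10, 200, 10, 10], [10, 10, 200, 10], [10, 10, 10, 200]]

same_after_rotation = canonical_fingerprint(original) == canonical_fingerprint(rotate90(original))
same_after_flip = canonical_fingerprint(original) == canonical_fingerprint(flip_horizontal(original))
distinct_images_differ = canonical_fingerprint(original) != canonical_fingerprint(different)
print(same_after_rotation, same_after_flip, distinct_images_differ)
```

Production systems typically tolerate noise, rescaling, and cropping by comparing fingerprints with a distance threshold rather than requiring exact equality.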

The Scientist's Toolkit: Key Reagents and Materials for Image Integrity

Upholding image integrity is not solely a computational task; it requires a combination of digital tools and rigorous laboratory practices. The following table outlines essential "research reagents" and protocols for maintaining image integrity from data acquisition to publication.

Table 3: Essential Materials and Protocols for Upholding Image Integrity

| Item / Protocol | Function / Purpose in Image Integrity |
|---|---|
| Original, Unprocessed Image Files | Serve as the definitive raw data for verification. Must be retained with all metadata to prove authenticity and provide a baseline for any allowable adjustments [30]. |
| Electronic Lab Notebook (ELN) | Provides a secure, timestamped record of experimental procedures, instrument settings, and the direct linkage between raw image data and specific experiments, ensuring replicability [35]. |
| Journal Guidelines on AI Use | A critical reference document. Researchers must strictly adhere to publisher policies regarding the use of AI-generated images, which often prohibit their use for representing research results [30]. |
| Pre-Submission Image Check Protocol | The standardized operating procedure for using a tool like Proofig AI to scan all figures in a manuscript prior to submission, catching unintentional errors early [28] [36]. |
| Metadata-Rich Image Formats | File formats (e.g., TIFF with metadata) that preserve information about acquisition date, time, and instrument parameters, facilitating traceability and auditability [30]. |

Application in Materials Science and Research Integrity

For the materials science and drug development communities, Proofig AI offers targeted capabilities that align with the field's specific integrity needs. The platform's proficiency in analyzing microscopy images (including confocal, light, and electron) and material characterization data is directly applicable to common workflows in nanomaterials research, metallurgy, and polymer science [31] [33]. The ability to detect duplicated or manipulated microstructural images, for instance, prevents the publication of non-representative data that could mislead the entire community about a material's properties.

Furthermore, the emerging threat of AI-generated microscopy images is a significant concern. A recent article in Nature Nanotechnology highlighted that generative AI can now create nanomaterial images virtually indistinguishable from real ones, raising the risk of sophisticated fabrication [35]. Proofig's dedicated detection module for such synthetic images provides a critical defense, allowing journals and institutions to maintain trust in published data.

Adopting Proofig AI proactively aligns with the broader thesis of improving research integrity. Institutions like The Ohio State University and Stanford University now provide campus-wide access to Proofig, framing it as a resource to support researchers in producing ethical, publication-ready work and to avoid costly post-publication investigations [37] [36]. By integrating such tools into the pre-submission workflow, the materials science community can collectively enhance the credibility, reproducibility, and overall trustworthiness of its scientific output.

For researchers in materials science and drug development, maintaining research integrity is paramount to ensuring the credibility and reproducibility of scientific advancements. Proactive manuscript screening represents a critical step in this process, allowing scientists to identify and address potential integrity concerns before submission to journals. This practice is increasingly vital as publishers employ sophisticated tools to check all incoming manuscripts, and issues discovered post-submission can lead to delays, corrections, or even retractions that damage professional reputations [38].

The scholarly publishing landscape has witnessed growing vigilance concerning research integrity, with journals across disciplines implementing more rigorous checks. By one estimate, up to one-sixth of manuscripts submitted to journals may be affected by plagiarism, which wastes peer reviewers' time and risks loss of intellectual property [39]. For materials scientists developing novel compounds, characterization methods, or therapeutic agents, ensuring the originality and proper documentation of their work is particularly crucial given the competitive nature and high stakes of the field.

This guide provides comprehensive methodologies for implementing proactive screening protocols within research workflows, detailing specific tools and approaches that can help identify potential issues early in the manuscript preparation process. By adopting these practices, researchers can better uphold the highest standards of academic integrity while streamlining their path to publication.

Essential Screening Tools and Their Functions

| Tool Name | Primary Function | Key Features | Applicability to Materials Science |
|---|---|---|---|
| Proofig [38] | Image duplication and manipulation detection | AI-powered analysis of various image types; handles microscopy, Western blots, in-vivo and in-vitro images | Essential for characterizing material structures, drug formulations, and experimental results |
| iThenticate [38] [39] | Plagiarism and AI detection | Compares manuscripts against academic literature database; generates similarity reports | Critical for literature reviews, methodology descriptions, and ensuring original content |
| Crossref Similarity Check [39] | Plagiarism detection | Powered by iThenticate; specifically designed for scholarly publishing | Useful for verifying originality of experimental procedures and results discussions |

Quantitative Comparison of Screening Tools

| Tool | Detection Capabilities | Output Metrics | Limitations & Considerations |
|---|---|---|---|
| Proofig [38] | Image duplication; image manipulation; various scientific image types | Visual report of detected issues; location of potential problems | Requires clear image quality; may flag acceptable image adjustments |
| iThenticate/Similarity Check [39] | Text similarity; potential plagiarism; AI-generated content | Overall Similarity Score (percentage); source-by-source breakdown | High scores don't automatically indicate plagiarism; requires contextual interpretation by subject experts |

Implementation Protocols for Proactive Screening

Comprehensive Screening Workflow

[Figure: Pre-Submission Screening Workflow]

Protocol 1: Image Integrity Screening with Proofig

Purpose: To detect unintentional image duplications or manipulations in materials science microscopy, characterization data, and experimental results.

Materials and Equipment:

- Proofig software access (via institutional subscription) [38]

- Complete manuscript draft with all figures

- Original image files from experiments

Procedure:

- Prepare Image Files: Compile all figures included in the manuscript in their intended publication format, ensuring resolution meets journal requirements.

- Configure Analysis Settings:
  - Select appropriate image categories (e.g., electron microscopy, spectroscopy, chromatography)
  - Enable cross-figure comparison to detect duplication across different figure panels

- Run Analysis: Upload the complete manuscript PDF or image set to Proofig for AI-powered analysis.

- Interpret Results:
  - Review flagged areas for potential duplication or manipulation
  - Assess whether flagged issues represent:
    - Honest errors (e.g., accidental duplicate placement)
    - Standard image adjustments (e.g., brightness/contrast optimization)
    - Potentially problematic manipulations (e.g., selective removal of artifacts)

- Document Review Process: Maintain records of analysis results and corrective actions taken.

Troubleshooting:

- If numerous false positives occur, verify that image compression hasn't introduced artifacts

- For unclear flags, consult original raw images to verify authenticity

- If uncertain about classification of findings, contact your institution's research integrity office [38]
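The cross-figure comparison step in Protocol 1 can be illustrated with a minimal sketch. It is not Proofig's algorithm, which the source does not describe; it is a simple "average hash" comparison, assuming each panel has already been converted to grayscale and downsampled to the same small grid. The names `average_hash`, `find_similar_panels`, and `max_distance` are hypothetical and chosen only for illustration.

```python
from itertools import combinations

def average_hash(pixels):
    """Return one bit per pixel: 1 if the pixel is brighter than the panel mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    return tuple(1 if value > mean else 0 for value in flat)

def hamming(a, b):
    """Count the positions at which two equal-length hashes differ."""
    return sum(x != y for x, y in zip(a, b))

def find_similar_panels(panels, max_distance=2):
    """Flag panel pairs whose hashes differ in at most max_distance bits.

    panels: dict mapping a panel label to a 2D list of grayscale values.
    All panels are assumed to share the same (downsampled) dimensions.
    """
    hashes = {name: average_hash(px) for name, px in panels.items()}
    flagged = []
    for (n1, h1), (n2, h2) in combinations(sorted(hashes.items()), 2):
        distance = hamming(h1, h2)
        if distance <= max_distance:
            flagged.append((n1, n2, distance))
    return flagged

# Toy 3x3 "panels"; Fig4c is Fig2a with every pixel brightened by 20.
panels = {
    "Fig2a": [[10, 200, 30], [220, 15, 210], [25, 230, 20]],
    "Fig4c": [[30, 220, 50], [240, 35, 230], [45, 250, 40]],
    "Fig3b": [[200, 10, 220], [15, 210, 30], [230, 20, 25]],
}
flagged = find_similar_panels(panels)
print(flagged)
```

Because the hash compares each pixel to the panel's own mean, a uniform brightness shift leaves it unchanged, so the brightened copy is still flagged. A flag from a check like this is only a prompt to inspect the raw images; rotated, cropped, or rescaled duplicates would need a more robust method.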

Protocol 2: Text Originality Screening with iThenticate

Purpose: To identify potential text similarity issues, improper citation, or inadvertent plagiarism in manuscript text.

Materials and Equipment:

- iThenticate software access (institutional subscription) [38] [39]

- Complete manuscript text (including references, figure legends, and supplemental information)

- Journal-specific plagiarism policies

Procedure:

- Prepare Manuscript Text: Compile the complete manuscript text, excluding author identifiers if desired.

- Configure Exclusion Settings:
  - Exclude quotes and references (if journal policies permit) [39]
  - Exclude bibliographic elements
  - Include/exclude preprint repositories based on journal policy

- Run Similarity Analysis: Submit the manuscript to iThenticate for comparison against its academic database.

- Interpret Similarity Report:
  - Review Overall Similarity Score as initial indicator
  - Examine source-by-source matches for context
  - Differentiate between acceptable overlap (e.g., properly quoted and cited text) and matches that need correction

- Address Identified Issues:
  - Properly paraphrase matched text with appropriate citation
  - Use quotation marks for directly copied text with citation
  - Obtain permissions for extensive reproductions

Troubleshooting:

- For high similarity with author's own work, determine if journal requires specific disclosure

- For discipline-specific terminology causing false matches, verify whether rewriting is possible

- Consult journal guidelines if uncertain about acceptable similarity thresholds

Research Reagent Solutions for Integrity Screening

Essential Digital Materials for Screening

| Research Reagent Solution | Function | Application Notes |
|---|---|---|
| Proofig Software [38] | AI-powered image integrity verification | Critical for materials characterization images; ensures microscopy and spectroscopy data authenticity |
| iThenticate Software [38] [39] | Text similarity and plagiarism detection | Essential for literature reviews and methodology sections; helps maintain textual originality |
| Reference Management Software | Citation organization and formatting | Reduces inadvertent citation errors; facilitates proper attribution |
| Institutional Research Integrity Office [38] | Guidance on ambiguous screening results | Consult for cases where error vs. misconduct is uncertain; provides protocol clarification |

Analysis and Interpretation of Screening Results

Evaluating Image Analysis Findings

When reviewing Proofig results, materials scientists must distinguish between acceptable image processing and problematic manipulation. For microscopic characterization of materials, certain adjustments like uniform brightness/contrast enhancement may be acceptable if applied to the entire image and properly disclosed. However, selective modification that misrepresents material structures or properties constitutes serious misconduct [38].
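The distinction between a uniform adjustment and a selective edit can be made concrete with a small sketch. It assumes the original and processed images are available as equally sized grids of grayscale values with no resampling or lossy compression in between, and it rests on one observation: a global brightness/contrast change sends every pixel of a given original intensity to the same output intensity, without reversing brightness order. The function name `is_global_adjustment` is hypothetical, and this is an illustrative check rather than a method used by any named tool.

```python
def is_global_adjustment(original, processed):
    """Return True if processed looks like one intensity mapping applied everywhere.

    Checks that (1) both grids have the same shape, (2) each original intensity
    maps to exactly one processed intensity, and (3) the mapping never reverses
    the brightness order of the original intensities.
    """
    if len(original) != len(processed):
        return False
    mapping = {}
    for row_o, row_p in zip(original, processed):
        if len(row_o) != len(row_p):
            return False
        for o, p in zip(row_o, row_p):
            if mapping.setdefault(o, p) != p:
                return False  # same input intensity produced two different outputs
    ordered = [mapping[key] for key in sorted(mapping)]
    return all(a <= b for a, b in zip(ordered, ordered[1:]))

original = [[10, 50, 50], [120, 10, 200]]
brightened = [[30, 70, 70], [140, 30, 220]]  # +20 applied to every pixel
retouched = [[30, 70, 10], [140, 30, 220]]   # one pixel altered on its own

print(is_global_adjustment(original, brightened))
print(is_global_adjustment(original, retouched))
```

In practice, clipping, noise, and file compression mean an exact test like this would need a tolerance, and passing it does not by itself make an adjustment acceptable; disclosure requirements still apply.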

Common image issues in materials science manuscripts include:

- Inadvertent duplication of similar-looking material morphology images

- Inconsistent processing within figure panels showing comparative materials

- Cropping that eliminates important contextual scale information

Researchers should maintain original, unprocessed images for all published figures to verify authenticity if questioned. The screening process should be documented, including how flagged issues were addressed.
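One way to support this record-keeping is a checksum manifest that ties each published figure to its raw file, so that the original can later be shown to be unmodified. The sketch below is a minimal illustration under stated assumptions: the raw files are represented as in-memory bytes, and the function names, file names, and manifest layout are hypothetical rather than part of any tool or policy cited here.

```python
import hashlib
import json

def build_manifest(raw_images, figure_map):
    """Record a SHA-256 checksum of the raw file behind each figure.

    raw_images: dict mapping a raw file name to its bytes.
    figure_map: dict mapping a figure label to the raw file it was made from.
    """
    checksums = {name: hashlib.sha256(data).hexdigest()
                 for name, data in raw_images.items()}
    return {figure: {"raw_file": raw, "sha256": checksums[raw]}
            for figure, raw in sorted(figure_map.items())}

def verify(manifest, raw_images):
    """Return the figure labels whose raw file no longer matches the manifest."""
    return [figure for figure, entry in manifest.items()
            if hashlib.sha256(raw_images[entry["raw_file"]]).hexdigest()
            != entry["sha256"]]

# Placeholder bytes stand in for real image files.
raw_images = {
    "sem_001.tif": b"\x00\x01\x02raw-sem-data",
    "tem_007.tif": b"\x10\x11raw-tem-data",
}
figure_map = {"Fig2a": "sem_001.tif", "Fig3b": "tem_007.tif"}

manifest = build_manifest(raw_images, figure_map)
manifest_json = json.dumps(manifest, indent=2)  # store alongside the screening record

unchanged = verify(manifest, raw_images)
changed = verify(manifest, {**raw_images, "sem_001.tif": b"edited"})
print(unchanged, changed)
```

With real data, the bytes would come from reading each raw file from disk, and the JSON manifest would be archived with the analysis report and the notes on how flagged issues were resolved.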

Interpreting Text Similarity Reports

iThenticate's Similarity Score requires careful contextual interpretation by subject-matter experts. For materials science manuscripts, certain technical descriptions of standard methodologies may naturally exhibit similarity without indicating plagiarism [39].

Consider these thresholds as guidelines for further investigation:

- <5% similarity: Typically minimal concern unless concentrated in a single source

- 5-15% similarity: Requires source-by-source review for proper attribution

- >15% similarity: Warrants careful analysis and, where needed, comprehensive revision
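These bands can be expressed as a small triage helper. The sketch below encodes only the guideline thresholds listed above; the rule for what counts as "concentrated in a single source" is an assumption introduced for illustration (here, one source accounting for at least 80% of the overall score), and the names `triage_similarity` and `concentration_ratio` are hypothetical.

```python
def triage_similarity(overall_pct, largest_source_pct=0.0, concentration_ratio=0.8):
    """Map a similarity score to a suggested next step.

    overall_pct: overall similarity score, in percent.
    largest_source_pct: share of the manuscript matched by the single largest source.
    concentration_ratio: assumed cutoff for "concentrated in a single source".
    """
    if not 0 <= overall_pct <= 100:
        raise ValueError("overall_pct must be between 0 and 100")
    if overall_pct > 15:
        return "careful analysis and possible revision"
    if overall_pct >= 5:
        return "source-by-source review"
    if overall_pct > 0 and largest_source_pct / overall_pct >= concentration_ratio:
        return "low score, but check the dominant source"
    return "minimal concern"

for score, largest in [(3.0, 1.0), (4.0, 3.5), (12.0, 2.0), (22.0, 6.0)]:
    print(score, largest, "->", triage_similarity(score, largest))
```

The output of such a helper is a starting point for the human review described above, not a verdict; journal-specific thresholds take precedence where they exist.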

Materials scientists should pay particular attention to:

- Methodology sections describing standard synthesis protocols

- Literature reviews citing established foundational knowledge

- Descriptions of common characterization techniques (XRD, SEM, TEM, etc.)

When similarity is identified with the author's own previous publications, journals may have specific policies regarding acceptable text reuse, particularly for methods sections [39].

Integration with Broader Research Integrity Framework

Proactive manuscript screening represents one essential component of a comprehensive research integrity strategy for materials science and drug development. This practice aligns with broader initiatives such as the STM Integrity Hub, which provides a modular platform for identifying manuscripts that violate research integrity norms before they enter the publication cycle [40].

Implementing systematic screening protocols demonstrates institutional commitment to research quality and ethical scholarship. When integrated with proper mentorship, documentation practices, and reproducibility measures, pre-submission screening significantly strengthens the credibility of published materials science research.

By adopting these proactive approaches, researchers contribute to safeguarding the scholarly record while accelerating the dissemination of robust, reliable scientific knowledge in the materials science and drug development fields.

In the modern research landscape, particularly in fields like materials science and drug development, upholding research integrity has become increasingly complex. The fundamental principles of research integrity—reliability, honesty, respect, and accountability—form the bedrock of scientific progress [41]. However, these principles now face unprecedented challenges from digital threats, including sophisticated plagiarism and the rapid emergence of AI-generated content. The scientific community publishes over 2.5 million manuscripts annually, with studies indicating that a significant portion may contain integrity issues, including image duplication, manipulation, or textual plagiarism [31]. Furthermore, generative AI tools have introduced new ethical dilemmas, enabling the creation of seemingly original text that may obscure true authorship and originality.

Software solutions like iThenticate have emerged as critical tools for journals, universities, and research institutions to screen for potential misconduct. These tools help maintain the credibility of the scientific record by identifying textual overlaps and, increasingly, AI-generated content. For materials science researchers, whose work often involves substantial public funding and significant implications for technology development, ensuring originality is not merely an administrative requirement but a fundamental ethical obligation. This technical guide examines the capabilities, implementation, and limitations of plagiarism detection software, with a specific focus on iThenticate, within the broader context of research integrity frameworks.

Understanding Plagiarism Detection Software

Core Functionality and Databases

Plagiarism detection software like iThenticate operates by comparing submitted documents against an extensive database of scholarly content to identify textual overlaps. iThenticate's database includes premium scholarly journals, books, law reviews, patents, dissertations and theses, pre-prints, conference proceedings, and internet pages [42]. This comprehensive coverage is crucial for materials science research, where information spans journal articles, conference proceedings, patent literature, and technical reports. The system generates a "Similarity Report" that highlights matching text and provides an overall "Similarity Index" (percentage of overlapping text) while carefully using the term "similarity" rather than "plagiarism" to emphasize that human judgment is required for final assessment [43].
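To show what a percentage-of-overlapping-text figure means in practice, the following sketch computes a toy similarity index from overlapping three-word sequences (shingles). It is not iThenticate's matching algorithm, which the source does not describe; the function names, the shingle length `n`, and the sample sentences are all assumptions made for illustration.

```python
import re

def shingles(text, n=3):
    """Split text into lowercase words and return every run of n consecutive words."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]

def similarity_index(manuscript, sources, n=3):
    """Return (overall percent, per-source percent) of manuscript shingles matched.

    A manuscript shingle counts once toward the overall figure even if it
    appears in several sources.
    """
    ms = shingles(manuscript, n)
    if not ms:
        return 0.0, {}
    per_source = {}
    matched = set()
    for name, text in sources.items():
        source_shingles = set(shingles(text, n))
        hits = {i for i, s in enumerate(ms) if s in source_shingles}
        per_source[name] = 100.0 * len(hits) / len(ms)
        matched |= hits
    return 100.0 * len(matched) / len(ms), per_source

manuscript = "Samples were annealed at 500 C for two hours in argon"
sources = {
    "A": "The films were annealed at 500 C for two hours in air",
    "B": "Argon was used as the carrier gas",
}
overall, per_source = similarity_index(manuscript, sources)
print(round(overall, 1), per_source)
```

The example also shows why the report speaks of similarity rather than plagiarism: a routine annealing description matches a prior paper heavily even though nothing improper has occurred, which is exactly the case that calls for human judgment.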

The technical workflow involves document submission in supported formats (including DOC, DOCX, PDF, TXT, and others), after which the system performs automated text extraction and comparison against its database [42]. For research institutions, iThenticate offers the option to establish a private repository to store internal documents, enabling detection of text similarity across submissions within the same organization [42]. This feature is particularly valuable for identifying undisclosed text reuse, including self-plagiarism, within large research institutions or corporate R&D departments.

Evolution into AI Detection