Breaking Through the Kinetic Barrier: AI-Driven Strategies to Overcome Sluggish Reactions in Autonomous Synthesis


Abstract

Sluggish reaction kinetics present a critical bottleneck in autonomous synthesis, hindering the discovery and manufacturing of novel materials and pharmaceuticals. This article synthesizes the latest advances in artificial intelligence and robotic laboratories that are overcoming these kinetic limitations. We explore the foundational causes of kinetic barriers, detail cutting-edge methodological solutions from Bayesian optimization to active learning, provide actionable troubleshooting frameworks for experimental optimization, and validate these approaches through comparative analysis of real-world case studies. Tailored for researchers and drug development professionals, this resource provides a comprehensive roadmap for accelerating discovery timelines and improving the success rates of autonomous synthesis platforms in biomedical research.

Understanding the Kinetic Bottleneck: Why Sluggish Reactions Halt Autonomous Discovery

Defining Sluggish Kinetics in Solid-State and Solution-Phase Synthesis

FAQ: Troubleshooting Sluggish Kinetics

What are sluggish kinetics and how do I identify them in my synthesis?

Sluggish kinetics refer to reaction rates that are impractically slow, often halting the formation of a target material or significantly extending the synthesis time. This is a common barrier in both solid-state and solution-phase synthesis.

| Synthesis Type | Key Indicator of Sluggish Kinetics | Common Experimental Observation |

|---|---|---|

| Solid-State Synthesis | Reaction steps with low thermodynamic driving forces [1]. | A target material is not obtained even after extensive heating, or the reaction yield remains low despite seemingly optimal conditions. |

| Solution-Phase Synthesis | A slow rate of crystallization or phase separation [2] [3]. | A supersaturated solution remains for a long period without precipitating the desired crystalline product, or a polymer solution forms a gel-like network that separates slowly over many hours [3]. |

What are the primary causes of sluggish kinetics in solid-state synthesis?

In solid-state synthesis, the main cause is often a very small thermodynamic driving force to form the target material from its intermediates (e.g., less than 50 meV per atom) [1]. With so little driving force, solid-state diffusion and reaction proceed extremely slowly, preventing the system from reaching thermodynamic equilibrium within a practical timeframe.

How can sluggish kinetics be overcome in solid-state synthesis?

Advanced research platforms like the A-Lab address this with active learning algorithms grounded in thermodynamics. The system identifies and avoids synthesis pathways that lead to intermediate compounds with a small driving force to form the final target. Instead, it prioritizes alternative precursor sets or reaction routes that retain a much larger driving force (e.g., 77 meV per atom vs. 8 meV per atom), which in one reported case increased target yield by approximately 70% [1].

What solution-phase strategies can mitigate sluggish kinetics?

A key strategy is engineering material morphology to enhance transport and reaction pathways. For example, synthesizing nanoporous metal structures creates a high surface area and a percolating network that facilitates atomic or molecular diffusion. This has been shown to enhance sorption kinetics, as opposed to the sluggish kinetics observed in bulk or core-shell nanoparticle materials [4]. Furthermore, defect engineering, such as introducing oxygen vacancies into a catalyst, can improve electron transfer ability and accelerate key redox cycles, leading to a fast and deep degradation of contaminants within minutes [5].

Are there experimental techniques to better understand fast initial kinetics?

Yes. Traditional solid-state synthesis is often slow and limited by transport, but recent approaches using custom-designed reactors with in-situ X-ray scattering can capture the earliest stages of a reaction. These studies have revealed that significant product formation can occur within seconds to minutes under high temperatures, a period with fast initial kinetics that was previously overlooked. Analyzing these regimes with models like Avrami kinetics provides characteristic dimensionalities for each transformation step [6].

Research Reagent Solutions

The following table details key materials and their functions for experiments focused on overcoming sluggish kinetics.

| Reagent/Material | Function in Experiment |

|---|---|

| Lithium Naphthalenide Solution | A strongly reducing organic solution (a reagent rather than a solvent) used in the synthesis of nanoporous Mg via reduction-induced decomposition, avoiding harsh corrosive environments [4]. |

| Oxygen Vacancies Enriched Biochar Catalyst (e.g., Mo-Co-ECM) | A heterogeneous catalyst where oxygen vacancies enhance electron transfer ability and accelerate the Co³⁺/Co²⁺ cycle, enabling rapid activation of oxidants like peroxymonosulfate for deep contaminant degradation [5]. |

| Precursor Powders (Various Oxides, Phosphates) | Starting materials for solid-state synthesis. Their selection is critical and can be optimized by machine learning models to avoid low-driving-force intermediates [1]. |

| In-situ X-ray Scattering Reactor | A custom reactor that enables real-time analysis of the earliest stages of a solid-state reaction, allowing researchers to capture and model fast initial kinetics [6]. |

Experimental Protocol: Overcoming Sluggish Kinetics via Active Learning

This methodology is adapted from the workflow of the A-Lab for the solid-state synthesis of novel inorganic powders [1].

1. Problem Identification and Initial Recipe Generation

- Input: A thermodynamically stable target material identified from computational screening (e.g., from the Materials Project database).

- Action: Generate up to five initial solid-state synthesis recipes using a natural-language model trained on historical literature. This model assesses "target similarity" to propose effective precursors and heating temperatures based on analogous known materials.

2. Robotic Execution and Analysis

- Action: The lab's robotic system automatically dispenses and mixes precursor powders, loads them into a furnace for heating, and then grinds and characterizes the resulting product via X-ray diffraction (XRD).

- Analysis: Machine learning models analyze the XRD patterns to identify phases and quantify the yield (weight fraction) of the target material.

3. Active Learning Optimization Cycle

- Condition: If the initial recipes fail to produce >50% yield of the target, an active learning algorithm (e.g., ARROWS³) is activated.

- Action: The algorithm uses two key principles:

  - It leverages a growing database of observed pairwise solid-state reactions to infer pathways and prune the search space of ineffective recipes.

  - It uses thermodynamic data to propose new synthesis routes that avoid intermediates with a small driving force to form the target, instead favoring pathways with larger driving forces.

- Iteration: The lab performs these new, optimized recipes robotically, repeating the cycle until the target is successfully synthesized or all options are exhausted.

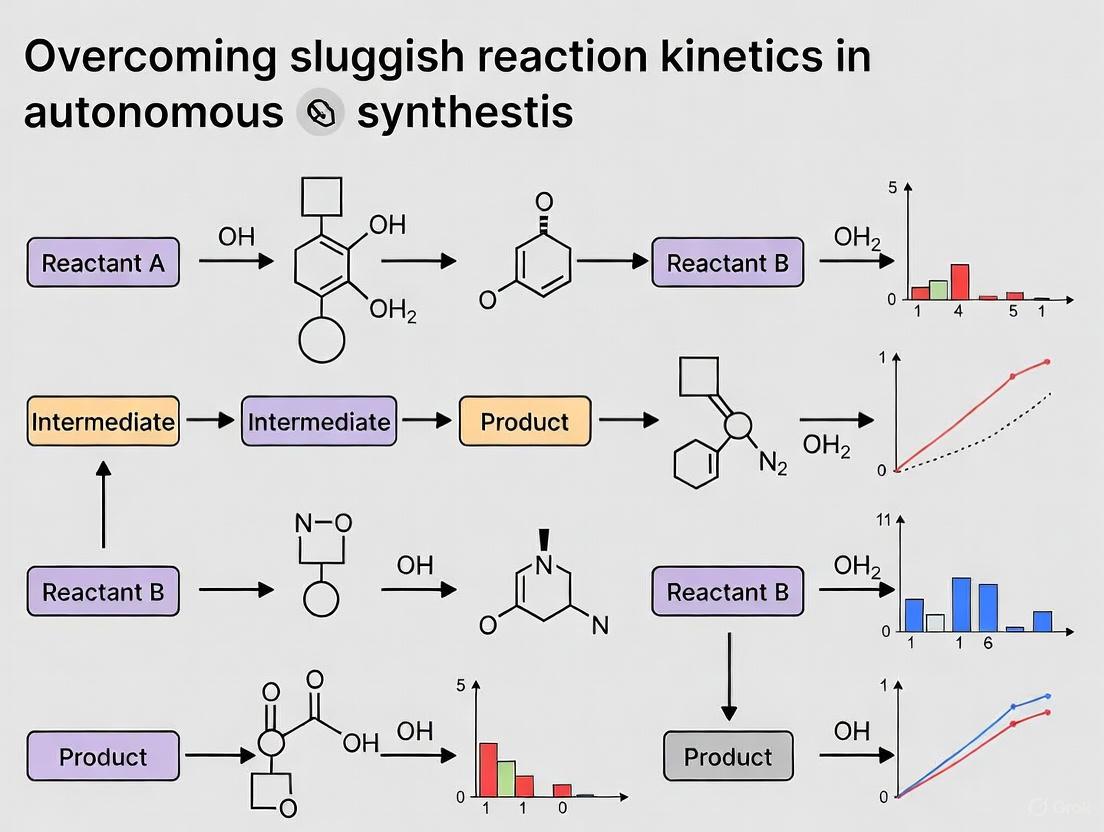

Workflow Diagram

Experimental Protocol: Fast Kinetics Analysis with In-Situ Scattering

This protocol outlines how to study the fast initial kinetics of solid-state reactions, often missed by traditional methods [6].

1. Reactor Setup and Calibration

- Equipment: Utilize a custom-designed reactor that allows for rapid heating and is integrated with an in-situ X-ray scattering (or diffraction) setup.

- Calibration: Ensure precise calibration of temperature control and the X-ray detector to accurately correlate time, temperature, and scattering signal.

2. Reaction Initiation and Data Collection

- Loading: Load a well-mixed precursor powder (e.g., TiO₂ and Li₂CO₃) into the reactor.

- Initiation: Rapidly heat the sample to the desired reaction temperature (e.g., 700-750°C for fast kinetics or a lower temperature like 482°C for comparison).

- Data Collection: Immediately begin collecting time-resolved X-ray scattering patterns with a high acquisition rate (on the order of seconds) to capture the earliest stages of the reaction.

3. Data Analysis and Kinetic Modeling

- Phase Identification: Analyze the sequential scattering patterns to identify the emergence and evolution of crystalline phases, including intermediates and the final product.

- Kinetic Modeling: Fit the time-dependent phase fraction data to a kinetic model, such as the Avrami model, to extract characteristic parameters (e.g., Avrami exponents) that provide insight into the reaction mechanism and dimensionality.
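The kinetic modeling step can be sketched as follows. This is a minimal illustration, assuming the common Avrami form X(t) = 1 − exp(−k·tⁿ) and a simple linearized least-squares fit of ln(−ln(1 − X)) against ln(t). The function names and the synthetic data are hypothetical and are not taken from the cited study; real phase fractions would come from refinement of the scattering patterns.

```python
import math


def avrami_fraction(t, n, k):
    """Transformed fraction X(t) = 1 - exp(-k * t**n) (assumed Avrami form)."""
    return 1.0 - math.exp(-k * t ** n)


def fit_avrami(times, fractions):
    """Fit ln(-ln(1 - X)) = ln(k) + n * ln(t) by ordinary least squares.

    Returns (n, k). Points with t <= 0 or X outside (0, 1) are skipped
    because the linearization is undefined there.
    """
    xs, ys = [], []
    for t, x in zip(times, fractions):
        if t > 0 and 0.0 < x < 1.0:
            xs.append(math.log(t))
            ys.append(math.log(-math.log(1.0 - x)))
    if len(xs) < 2:
        raise ValueError("need at least two usable data points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    n = sxy / sxx                       # slope = Avrami exponent
    k = math.exp(mean_y - n * mean_x)   # intercept = ln(k)
    return n, k


# Synthetic, noise-free data (seconds) generated from known parameters.
times = [2, 5, 10, 20, 40, 80]
fractions = [avrami_fraction(t, n=1.5, k=0.01) for t in times]
n_fit, k_fit = fit_avrami(times, fractions)
```

With noisy experimental data, a nonlinear fit with uncertainty estimates is usually preferable to the linearized form, which over-weights points near X = 0 and X = 1.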

Kinetics Analysis Diagram

Economic and Temporal Costs of Kinetic Barriers in Drug Development Pipelines

Frequently Asked Questions (FAQs)

Q1: What are the most significant economic impacts of kinetic barriers in drug development? The primary economic impact is the cost of clinical failure. Developing a new drug takes 10–15 years and costs $1–2 billion on average [7]. Roughly 90% of drug candidates that enter clinical trials fail, and approximately 40–50% of those failures stem from a lack of clinical efficacy, often a direct consequence of poor pharmacokinetics and insufficient drug exposure at the target site [7]. Each day a drug spends in development costs approximately $37,000 in direct out-of-pocket expenses, plus an estimated $1.1 million in lost opportunity [8].

Q2: Why do kinetic barriers cause failures late in the pipeline rather than early? Many kinetic barriers are not detected in standard preclinical models. Compounds are often optimized for high in vitro potency and specificity, but without equal emphasis on their structure–tissue exposure/selectivity relationship (STR) [7]. Discrepancies in biology between animal models and human disease, as well as poor prediction of human efficacy from animal models, mean that problems with tissue exposure and selectivity often only become apparent in costly Phase II clinical trials, the stage where lack of efficacy is most frequently revealed [7] [9].

Q3: How can I optimize a reaction to improve the drug-like properties of a lead compound? Reaction optimization is the systematic process of adjusting experimental conditions to improve outcomes like yield, selectivity, and rate [10]. Key variables to optimize include solvent, temperature, catalyst, time, and stoichiometry [10]. A step-by-step approach is:

- Choose a target metric (e.g., yield).

- Select 2–3 key variables to test based on literature.

- Design a small matrix of experiments.

- Run experiments and record results.

- Analyze trends and iterate [10].

Tools like Bayesian optimization algorithms can help guide this process more efficiently by learning from each experimental result [10] [11].
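The "small matrix of experiments" and "analyze trends" steps can be sketched as a full-factorial design followed by a per-variable average. This is an illustrative sketch only: the variables, levels, and yields below are placeholders invented for the example, not data from the cited sources.

```python
from itertools import product
from statistics import mean


def full_factorial(levels):
    """Return one dict of conditions per combination of variable levels."""
    names = list(levels)
    return [dict(zip(names, combo))
            for combo in product(*(levels[name] for name in names))]


def main_effect(runs, variable, metric="yield_pct"):
    """Average the metric at each level of one variable to expose trends."""
    grouped = {}
    for run in runs:
        grouped.setdefault(run[variable], []).append(run[metric])
    return {level: mean(values) for level, values in grouped.items()}


# Hypothetical variables and levels, chosen only for illustration.
levels = {
    "solvent": ["MeCN", "DMF"],
    "temperature_C": [25, 60],
    "catalyst_mol_pct": [1, 5],
}
design = full_factorial(levels)  # 2 x 2 x 2 = 8 experiments

# Placeholder yields standing in for recorded results (not real data).
placeholder_yields = [31, 38, 52, 61, 35, 40, 58, 70]
runs = [dict(cond, yield_pct=y) for cond, y in zip(design, placeholder_yields)]

temperature_trend = main_effect(runs, "temperature_C")
best = max(runs, key=lambda run: run["yield_pct"])
```

A full factorial grows quickly with the number of variables, which is one reason to limit the first round to two or three of them.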

Q4: What is the STAR framework and how can it guide candidate selection? The Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) is a framework proposed to improve drug optimization by classifying candidates into four categories, balancing potency, tissue exposure, and clinical dose [7]. The following table summarizes the STAR classification system for drug candidates:

| Class | Specificity/Potency | Tissue Exposure/Selectivity | Clinical Dose & Outcome | Recommendation |

|---|---|---|---|---|

| Class I | High | High | Low dose; superior efficacy/safety | High success rate; prioritize [7]. |

| Class II | High | Low | High dose; high toxicity | High risk; cautious evaluation [7]. |

| Class III | Adequate | High | Low dose; manageable toxicity | Often overlooked; promising [7]. |

| Class IV | Low | Low | Inadequate efficacy/safety | Terminate early [7]. |
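The classification table above can be expressed as a simple lookup. This is a minimal sketch, assuming potency and tissue exposure have already been binned into the qualitative levels used in the table; the function name and the "Unclassified" fallback are hypothetical, and how to bin real measurements is left open because the source does not give numeric cutoffs.

```python
# Mapping taken directly from the STAR table: (potency, tissue exposure) -> class.
STAR_TABLE = {
    ("high", "high"): ("Class I", "High success rate; prioritize"),
    ("high", "low"): ("Class II", "High risk; cautious evaluation"),
    ("adequate", "high"): ("Class III", "Often overlooked; promising"),
    ("low", "low"): ("Class IV", "Terminate early"),
}


def star_class(potency, tissue_exposure):
    """Return (class, recommendation) for qualitative potency/exposure levels.

    Combinations that the table does not list are reported as
    'Unclassified' rather than guessed.
    """
    key = (potency.strip().lower(), tissue_exposure.strip().lower())
    return STAR_TABLE.get(key, ("Unclassified", "Not covered by the table"))


# Hypothetical candidates used only to exercise the lookup.
candidates = {
    "cmpd-A": ("High", "High"),
    "cmpd-B": ("High", "Low"),
    "cmpd-C": ("Adequate", "High"),
}
classified = {name: star_class(*levels)[0] for name, levels in candidates.items()}
```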

Troubleshooting Guides

Problem: Lead candidate shows high in vitro potency but fails in vivo due to poor tissue exposure.

Potential Causes and Solutions:

- Cause 1: Over-reliance on Structure-Activity Relationship (SAR) alone.

- Solution: Integrate Structure–Tissue Exposure/Selectivity Relationship (STR) into the early optimization process. Use the STAR framework to classify compounds and select those with balanced properties (e.g., Class I or III) rather than just high potency (Class II) [7].

- Cause 2: Inadequate blood-brain barrier (BBB) penetration for CNS targets.

- Solution: Implement high-throughput in vitro BBB permeability models early in discovery to identify compounds that cannot reach the CNS target. This allows for early structural modification to improve intrinsic permeability or reduce interaction with efflux pumps like P-glycoprotein [8].

- Cause 3: Poor drug-like properties leading to unfavorable pharmacokinetics.

- Solution: Rigorously apply early Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) screening. Adhere to guidelines like Lipinski's Rule of 5 (Molecular Weight <500, cLogP <5, H-bond donors ≤5, H-bond acceptors ≤10) to prioritize compounds with a higher probability of oral bioavailability [8].
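As a rough illustration, the Rule of 5 thresholds quoted above can be turned into a screening check. The sketch assumes the four descriptors have already been computed by a cheminformatics toolkit; the `Descriptors` class, the function name, and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    """Precomputed molecular descriptors (values supplied by the user)."""
    mol_weight: float
    clogp: float
    h_bond_donors: int
    h_bond_acceptors: int


def lipinski_violations(d):
    """List the Rule of 5 criteria a compound fails, using the thresholds in the text."""
    violations = []
    if d.mol_weight >= 500:
        violations.append("molecular weight >= 500")
    if d.clogp >= 5:
        violations.append("cLogP >= 5")
    if d.h_bond_donors > 5:
        violations.append("H-bond donors > 5")
    if d.h_bond_acceptors > 10:
        violations.append("H-bond acceptors > 10")
    return violations


# Hypothetical descriptor values, for illustration only.
compliant = Descriptors(mol_weight=350.4, clogp=2.1, h_bond_donors=2, h_bond_acceptors=5)
problematic = Descriptors(mol_weight=720.0, clogp=6.3, h_bond_donors=6, h_bond_acceptors=12)

compliant_issues = lipinski_violations(compliant)
problematic_issues = lipinski_violations(problematic)
```

Returning the list of failed criteria, rather than a single pass/fail flag, makes it easier to see which structural property to modify.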

Problem: Translational failure—efficacy in animal models does not predict efficacy in human clinical trials.

Potential Causes and Solutions:

- Cause 1: The wrong animal model was used for the human disease.

- Solution: Critically evaluate the predictive validity of animal models. A failure rate of 60-70% in Phase II trials is consistent across therapeutic areas, including those where animal models are considered predictive, like cardiovascular disease [9]. Invest in developing better humanized models or human cell-based systems.

- Cause 2: The pharmacodynamic (PD) endpoint measured in animals does not correlate with the clinical endpoint.

- Solution: Ensure that the endpoints used in preclinical studies are as close as possible to the true clinical outcome. For example, a drug designed to protect the heart from ischemia-reperfusion damage must show improvement in hard endpoints like infarct size, not just surrogate markers [9].

- Cause 3: Species-specific differences in drug metabolism or target biology.

- Solution: Conduct thorough in vitro studies using human enzymes and cells (e.g., hepatocytes, recombinant enzymes) to identify significant metabolic differences early. This was a key lesson from the failure of the vasopressin V1 receptor antagonist, which was highly effective in rats but not in humans due to species differences in the receptor [9].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table lists essential materials and their functions for overcoming kinetic barriers in drug development.

| Reagent/Material | Function |

|---|---|

| Immortalized Cell Lines (e.g., brain capillary endothelial cells) | Form the basis of high-throughput in vitro models to study kinetic parameters like blood-brain barrier penetration [8]. |

| Primary Cultured Cells (e.g., bovine, porcine, or rat brain capillary endothelial cells) | Used in co-culture with astrocytes to create more physiologically relevant models for predicting tissue distribution and toxicity [8]. |

| P-glycoprotein (P-gp) Inhibitors | Used in assays to determine if a drug candidate is a substrate for efflux pumps, which can limit its tissue penetration and efficacy [8]. |

| hERG Assay Kits | Early in vitro assessment of a compound's potential to cause cardiotoxicity, a common reason for failure due to toxicity [7] [8]. |

| CYP450 Enzyme Assays | Determine the metabolic stability of a drug candidate and its potential for drug-drug interactions, key ADMET properties [8]. |

| Experiment-Planning and Orchestration Software (e.g., Phoenics, ChemOS) | Phoenics is a Bayesian optimization algorithm that proposes the next conditions to test; ChemOS orchestrates the autonomous workflow around such planners. Together they can substantially accelerate reaction and formulation optimization [11]. |

Experimental Protocols & Workflow Visualization

Protocol 1: Autonomous Workflow for Optimizing Reaction Kinetics and Drug-Like Properties

This protocol outlines a closed-loop workflow for autonomous experimentation, which can be applied to optimize synthetic routes for key drug intermediates or to formulate compounds for improved solubility and bioavailability [11].

- Design: An experiment planning algorithm (e.g., a Bayesian optimizer) suggests a set of initial experimental conditions based on pre-defined objectives (e.g., maximize yield, minimize byproducts) and prior knowledge [11].

- Make: An automated synthesis platform (e.g., a robotic fluid-handling system) executes the suggested experiments, handling liquid reagents and performing reactions [11].

- Test: The reaction products are automatically transferred to an analysis platform (e.g., HPLC, MS) for characterization. The results (yield, purity) are recorded in a standardized database [11].

- Analyze: The algorithm learns from the new results, updating its internal model. It then uses this refined model to design the next, more optimal set of experiments, closing the loop [11].

The entire process is orchestrated by software like ChemOS, which is hardware-agnostic and manages scheduling, machine learning, and data storage [11]. This approach increases throughput, reproducibility, and the quality of data collected, while freeing researchers for higher-level tasks [11].
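The Design, Make, Test, Analyze loop can be sketched as a small control loop. This is a structural illustration only: the planner below is a simple perturb-the-best heuristic standing in for a Bayesian optimizer, `simulated_yield` is an invented response surface standing in for the robotic platform and analytics, and none of the names correspond to the ChemOS API.

```python
import random


def simulated_yield(temp_c):
    """Stand-in for Make + Test: a hypothetical yield curve peaking at 80 C."""
    return max(0.0, 90.0 - 0.02 * (temp_c - 80.0) ** 2)


def propose(history, rng, low=20.0, high=140.0, step=10.0):
    """Placeholder planner (Design): perturb the best condition seen so far.

    A real platform would use a surrogate-model-based optimizer here.
    """
    if not history:
        return rng.uniform(low, high)
    best_temp, _ = max(history, key=lambda record: record[1])
    candidate = best_temp + rng.uniform(-step, step)
    return min(high, max(low, candidate))


def closed_loop(n_rounds=30, seed=0):
    """Run the loop and return (best_record, full_history)."""
    rng = random.Random(seed)
    history = []
    for _ in range(n_rounds):
        temp = propose(history, rng)           # Design
        observed = simulated_yield(temp)       # Make + Test
        history.append((temp, observed))       # Analyze: update the record
    best = max(history, key=lambda record: record[1])
    return best, history


best, history = closed_loop()
```

Keeping the planner behind a single `propose` function mirrors the hardware-agnostic idea described above: the optimizer can be swapped without touching the execution and analysis steps.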

Diagram: Autonomous Optimization Cycle

Protocol 2: Early-Stage ADMET and Tissue Exposure Screening

This protocol is designed for the lead optimization stage to eliminate candidates with poor kinetic properties before they enter costly development phases [8].

- In Vitro Permeability Assessment:

- Use Caco-2 cell monolayers or artificial membranes (PAMPA) to model human intestinal absorption.

- For CNS targets, use a validated in vitro BBB model (e.g., a co-culture of brain endothelial cells and astrocytes).

- Test compounds with and without specific inhibitors of efflux transporters like P-gp to identify substrates.

- Metabolic Stability Assay:

- Incubate the drug candidate with liver microsomes (human and preclinical species) or hepatocytes.

- Measure the half-life (t₁/₂) of the parent compound over time. A t₁/₂ > 45–60 minutes in human microsomes is generally preferred [7].

- Tissue Binding Assessment:

- Determine the compound's plasma protein binding and tissue homogenate binding using methods like equilibrium dialysis.

- This data is critical for understanding the volume of distribution and the fraction of free, pharmacologically active drug.

- Data Integration and STAR Classification:

- Integrate the data on permeability, metabolic stability, and tissue binding with potency (IC₅₀, Ki) data.

- Classify the lead series according to the STAR framework to select the best candidates for in vivo studies [7].
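For the metabolic stability step above, the half-life is commonly estimated by assuming first-order depletion of the parent compound and fitting ln(% remaining) against time. The sketch below makes that assumption explicit; the function name and the synthetic time course are hypothetical.

```python
import math


def half_life_minutes(times_min, percent_remaining):
    """Estimate t1/2 from a first-order fit of ln(% remaining) vs. time.

    Assumes ln(C) = ln(C0) - k * t, so t1/2 = ln(2) / k.
    """
    if len(times_min) != len(percent_remaining) or len(times_min) < 2:
        raise ValueError("need matching lists with at least two time points")
    ys = [math.log(p) for p in percent_remaining]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ys) / n
    stt = sum((t - mean_t) ** 2 for t in times_min)
    sty = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys))
    k = -sty / stt  # depletion rate constant, 1/min
    if k <= 0:
        raise ValueError("no measurable depletion; half-life is undefined")
    return math.log(2) / k


# Synthetic time course generated for a 30-minute half-life (not real data).
times_min = [0, 5, 15, 30, 45]
true_k = math.log(2) / 30.0
percent_remaining = [100.0 * math.exp(-true_k * t) for t in times_min]

t_half = half_life_minutes(times_min, percent_remaining)
```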

Diagram: Drug Development Pipeline with Kinetic Barrier Checkpoints

Quantitative Data on Clinical Attrition

The high cost of drug development is driven predominantly by failure in clinical stages. The table below summarizes the primary reasons for clinical failure of drug candidates, based on an analysis of data from 2010–2017 [7].

| Reason for Clinical Failure | Attribution Rate |

|---|---|

| Lack of Clinical Efficacy | 40% - 50% [7] |

| Unmanageable Toxicity | ~30% [7] |

| Poor Drug-Like Properties (PK, Bioavailability) | 10% - 15% [7] |

| Lack of Commercial Needs / Poor Strategic Planning | ~10% [7] |

Fundamental Concepts FAQ

What are thermodynamic and kinetic control? In chemical synthesis, thermodynamic control and kinetic control describe which reaction pathway is favored under given conditions, determining the final product mixture when competing pathways lead to different products [12].

- Kinetic Product: Forms faster due to a lower activation energy barrier. It is favored under kinetic control at lower temperatures and shorter reaction times.

- Thermodynamic Product: Is more stable and has a lower overall free energy. It is favored under thermodynamic control when the reaction is allowed to reach equilibrium, typically at higher temperatures and with longer reaction times [12] [13].

Why is the distinction important for autonomous synthesis? Autonomous laboratories, like the A-Lab, use computation and active learning to plan and execute experiments. Understanding whether a reaction is under kinetic or thermodynamic control is crucial for the AI to:

- Propose effective synthesis recipes by correctly prioritizing precursor sets and reaction conditions.

- Diagnose and overcome failures, such as sluggish reaction kinetics, which are a major cause of unsuccessful synthesis attempts [1].

- Optimize pathways by leveraging knowledge of observed reaction intermediates and their driving forces to avoid low-yield traps [1].

How can I visually distinguish between the two? The following energy profile diagram illustrates the key differences. The kinetic product forms via a pathway with a lower activation energy (Ea), while the thermodynamic product is more stable (lower ΔG).

How do temperature and time influence the product? The table below summarizes how reaction conditions determine the dominant product [12] [13].

| Condition | Favored Control Type | Favored Product | Rationale |

|---|---|---|---|

| Low Temperature, Short Time | Kinetic Control | Kinetic Product | Insufficient thermal energy to overcome the higher barrier to the thermodynamic product; system is trapped by reaction speed. |

| High Temperature, Long Time | Thermodynamic Control | Thermodynamic Product | Sufficient thermal energy and time for reaction reversal and equilibration; system reaches the most stable state. |

A classic example is the electrophilic addition to 1,3-butadiene. At low temperatures, the kinetic 1,2-adduct dominates. At high temperatures, the thermodynamic 1,4-adduct prevails [12] [13].
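The two regimes can be made quantitative with a short calculation. Under kinetic control the product ratio follows the ratio of rate constants, which (assuming equal Arrhenius pre-exponential factors) depends on the difference in activation energies; at equilibrium it depends on the difference in product free energies. The energy values below are hypothetical and are not measured values for butadiene.

```python
import math

R_KJ = 8.314462618e-3  # gas constant in kJ/(mol*K)


def kinetic_ratio(ea_kinetic, ea_thermo, temp_k):
    """[kinetic]/[thermodynamic] product ratio under kinetic control.

    Assumes equal pre-exponential factors, so the ratio is
    exp((Ea_thermo - Ea_kinetic) / RT). Energies in kJ/mol.
    """
    return math.exp((ea_thermo - ea_kinetic) / (R_KJ * temp_k))


def equilibrium_ratio(g_kinetic, g_thermo, temp_k):
    """[thermodynamic]/[kinetic] product ratio at equilibrium.

    Uses exp((G_kinetic - G_thermo) / RT). Energies in kJ/mol.
    """
    return math.exp((g_kinetic - g_thermo) / (R_KJ * temp_k))


# Hypothetical energies: the kinetic product has the lower barrier,
# the thermodynamic product has the lower free energy.
EA_KINETIC, EA_THERMO = 50.0, 55.0   # kJ/mol
G_KINETIC, G_THERMO = -20.0, -28.0   # kJ/mol

cold_kinetic_ratio = kinetic_ratio(EA_KINETIC, EA_THERMO, temp_k=195.0)
warm_kinetic_ratio = kinetic_ratio(EA_KINETIC, EA_THERMO, temp_k=313.0)
warm_equilibrium_ratio = equilibrium_ratio(G_KINETIC, G_THERMO, temp_k=313.0)
```

The kinetic preference shrinks as temperature rises, while the equilibrium ratio favors the more stable product once the reaction becomes reversible on the experimental timescale.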

Troubleshooting Guide: Overcoming Sluggish Kinetics

Sluggish reaction kinetics was identified as the primary failure mode for 11 out of 17 unobtained targets in a recent large-scale autonomous synthesis campaign [1]. This section provides a diagnostic workflow.

Diagnostic Workflow for Kinetic Failures

The following flowchart outlines a step-by-step troubleshooting process for an autonomous system that fails to synthesize a target material.

Common Failure Modes in Autonomous Synthesis

Analysis from the A-Lab operation categorized reasons for synthesis failures, providing actionable diagnostics [1].

| Failure Mode | Description | Evidence | Potential Solution |

|---|---|---|---|

| Sluggish Kinetics | Reaction steps have low driving force (<50 meV/atom). | Target absent; reaction intermediates persist even at high temperature. | Use active learning to find alternative precursor sets that form intermediates with a larger driving force to the target [1]. |

| Precursor Volatility | Key precursor is lost during heating before it can react. | Non-stoichiometric product mixture; deficiency of a specific element. | Use sealed ampoules or alternative precursor salts with lower volatility. |

| Amorphization | Product or key intermediate does not crystallize. | Broad, featureless XRD pattern despite reaction signatures. | Anneal at different cooling rates; use alternative grinding protocols. |

| Computational Inaccuracy | Target material is not actually thermodynamically stable. | No known synthesis route succeeds; contradictory computational data. | Re-evaluate computational predictions of phase stability. |

Protocol: Active Learning for Route Optimization (ARROWS³)

When initial recipes fail, the A-Lab uses an active learning cycle to overcome kinetic barriers [1].

- Input Failed Data: Feed the unsuccessful recipe and the identified intermediates into the active learning algorithm.

- Query Observed Reaction DB: Check a growing database of pairwise solid-state reactions to infer known pathways and avoid retesting.

- Compute Driving Forces: Use formation energies from ab initio databases (e.g., Materials Project) to calculate the driving force (ΔG) from observed intermediates to the target.

- Propose New Recipe: Prioritize precursor sets that avoid intermediates with a very low driving force (<50 meV/atom) to the target, as these are kinetic traps.

- Iterate: The new recipe is tested robotically, and the cycle continues until the target is obtained or all options are exhausted.
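The query, compute, and propose steps above can be sketched as a ranking over candidate precursor sets. This is a simplified illustration of the idea, not the ARROWS³ implementation: the data structures, names, and example values are hypothetical, and the 50 meV/atom cutoff is the threshold quoted in this section.

```python
from itertools import combinations

LOW_DRIVING_FORCE_MEV = 50.0  # threshold quoted in the text (meV/atom)


def predicted_intermediates(precursors, pairwise_db):
    """Intermediates expected from previously observed pairwise reactions."""
    return {
        pairwise_db[frozenset(pair)]
        for pair in combinations(precursors, 2)
        if frozenset(pair) in pairwise_db
    }


def rank_precursor_sets(candidates, pairwise_db, driving_force_mev):
    """Drop sets predicted to hit a kinetic trap; rank the rest.

    Returns (precursors, bottleneck) tuples, largest bottleneck first.
    Sets with no observed pairwise data have bottleneck None and go last.
    """
    ranked = []
    for precursors in candidates:
        intermediates = predicted_intermediates(precursors, pairwise_db)
        bottleneck = min(
            (driving_force_mev[name] for name in intermediates), default=None
        )
        if bottleneck is not None and bottleneck < LOW_DRIVING_FORCE_MEV:
            continue  # predicted kinetic trap: skip this precursor set
        ranked.append((precursors, bottleneck))
    ranked.sort(key=lambda item: (item[1] is None, -(item[1] or 0.0)))
    return ranked


# Hypothetical precursor labels, intermediates, and driving forces.
pairwise_db = {
    frozenset({"A", "B"}): "I1",
    frozenset({"A", "D"}): "I2",
}
driving_force_mev = {"I1": 8.0, "I2": 77.0}
candidates = [("A", "B", "C"), ("A", "D", "C"), ("E", "F")]

ranking = rank_precursor_sets(candidates, pairwise_db, driving_force_mev)
```

Here the set that forms the 8 meV/atom intermediate is pruned, the 77 meV/atom route is tried first, and the set with no observed pairwise data remains available as an exploratory option.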

The Scientist's Toolkit

Key Research Reagents and Materials

The following table lists essential components for conducting and analyzing experiments in autonomous synthesis research.

| Item | Function in Experiment |

|---|---|

| Precursor Powders | High-purity metal oxides, carbonates, phosphates, etc., that serve as reactants for solid-state synthesis of inorganic powders [1]. |

| Alumina Crucibles | Chemically inert containers that hold powder samples during high-temperature heating in box furnaces [1]. |

| X-ray Diffractometer (XRD) | The primary characterization tool used to identify crystalline phases and determine the weight fraction of the target product in the synthesis output [1]. |

| Ab Initio Database (e.g., Materials Project) | A computational database providing pre-calculated formation energies and phase stability data, which are essential for predicting stability and calculating reaction driving forces [1]. |

| Probabilistic ML Model for XRD | A machine learning model trained on experimental structures to identify phases and their weight fractions from XRD patterns, even for previously unreported compounds [1]. |

Experimental Workflow of an Autonomous Laboratory

The A-Lab integrates computation, robotics, and active learning into a closed-loop workflow for materials discovery [1].

Frequently Asked Questions (FAQs)

Q1: What does "sluggish reaction kinetics" mean in the context of solid-state synthesis? Sluggish reaction kinetics refers to solid-state reactions that proceed extremely slowly, often due to low thermodynamic driving forces (typically below 50 meV per atom) or slow diffusion rates in solid materials. This prevents reactions from reaching completion within practical experimental timeframes, causing synthesis attempts to fail even for thermodynamically stable compounds [1].

Q2: Why are kinetic limitations particularly problematic for autonomous laboratories? Autonomous labs operate with predefined experimental cycles and time constraints. Reactions with slow kinetics may not produce detectable amounts of target material within these cycles, leading the system to incorrectly classify viable syntheses as failures and abandon promising reaction pathways [1] [14].

Q3: What experimental strategies can help overcome slow kinetics? Key strategies include: (1) increasing reaction temperatures to accelerate reaction rates, (2) selecting precursor combinations that avoid intermediate phases with low driving forces, (3) extending reaction times for promising pathways, and (4) using finer precursor powders to reduce diffusion path lengths [1] [14].

Q4: How can I determine if my failed synthesis is due to kinetic limitations? Monitor for these indicators: (1) target formation begins but plateaus at low yield, (2) intermediate phases persist throughout the reaction, (3) calculations show low driving forces (<50 meV/atom) for critical reaction steps, or (4) extended reaction time at higher temperature increases target yield [1].

Troubleshooting Guide: Kinetic Limitations in Solid-State Synthesis

Problem Diagnosis Table

| Observation | Possible Causes | Diagnostic Tests | Suggested Solutions |

|---|---|---|---|

| Low target yield with persistent intermediate phases | Slow solid-state diffusion; Low driving force for final reaction step | Calculate decomposition energy of intermediates; Analyze reaction pathway driving forces | Increase reaction temperature; Modify precursor selection to avoid low-driving-force intermediates |

| Partial reaction with unreacted starting materials | Slow nucleation kinetics; Insufficient reaction energy | Perform stepwise heat treatments; Test with finer precursor powders | Introduce seeding crystals; Use mechanical activation; Employ multi-stage heating profiles |

| Inconsistent results between similar precursor sets | Varying kinetic pathways with different activation energies | Compare reaction pathways for different precursors; Analyze intermediate phases | Prioritize precursor combinations with simpler reaction pathways; Use combinatorial screening |

| Variable performance across temperature ranges | Temperature-dependent kinetic barriers | Conduct temperature-gradient experiments; Determine activation energy | Optimize temperature profile; Extend reaction time at critical temperature ranges |

Quantitative Analysis of A-Lab Synthesis Failures

Table: Root Causes for 17 Failed Syntheses in A-Lab Experiments [1]

| Failure Category | Number of Targets | Percentage of Total Failures | Characteristic Kinetic Issues |

|---|---|---|---|

| Sluggish reaction kinetics | 11 | 65% | Reaction steps with driving forces <50 meV/atom |

| Precursor volatility | 3 | 18% | Loss of reactive components before reaction completion |

| Amorphization | 2 | 12% | Failure to crystallize despite reaction occurrence |

| Computational inaccuracy | 1 | 6% | Incorrect stability predictions affecting precursor selection |

Experimental Protocols for Kinetic Analysis

Protocol 1: Driving Force Calculation for Reaction Steps

Purpose: Identify kinetic bottlenecks in proposed synthesis routes by quantifying thermodynamic driving forces [1].

Materials:

- Computational access to materials database (e.g., Materials Project)

- Formation energy data for target and potential intermediate phases

- Statistical analysis software

Procedure:

- Identify all possible intermediate phases that may form between precursors

- Retrieve or calculate formation energies (ΔGf) for all relevant phases

- Compute the reaction energy for each step, normalized per atom: ΔGrxn = ΣΔGf(products) − ΣΔGf(reactants). The driving force is −ΔGrxn, so a more negative ΔGrxn means a larger driving force

- Flag any reaction steps with driving forces <50 meV/atom as potential kinetic bottlenecks

- Prioritize synthesis routes that avoid low-driving-force steps

Expected Output: Quantitative assessment of reaction pathway viability with identification of specific kinetic barriers.
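The calculation in this protocol can be sketched as follows. The example assumes formation energies are given in eV/atom (as is common in ab initio databases) and that the reaction is balanced with no gaseous species leaving the system; the function names and the compounds and energies in the example are hypothetical.

```python
import math

BOTTLENECK_MEV = 50.0  # flagging threshold quoted in the protocol (meV/atom)


def reaction_energy_per_atom(reactants, products):
    """Reaction energy in eV/atom.

    Each side is a list of (coefficient, atoms_per_formula_unit,
    formation_energy_eV_per_atom) tuples.
    """
    def total_energy(side):
        return sum(coeff * n_atoms * e_form for coeff, n_atoms, e_form in side)

    def total_atoms(side):
        return sum(coeff * n_atoms for coeff, n_atoms, _ in side)

    atoms = total_atoms(products)
    if not math.isclose(atoms, total_atoms(reactants)):
        raise ValueError("reaction is not balanced in total atom count")
    return (total_energy(products) - total_energy(reactants)) / atoms


def driving_force_mev(reactants, products):
    """Driving force in meV/atom (positive means downhill)."""
    return -1000.0 * reaction_energy_per_atom(reactants, products)


# Hypothetical step: X (2 atoms) + Y (3 atoms) -> XY (5 atoms).
reactants = [(1, 2, -1.00), (1, 3, -2.00)]
products = [(1, 5, -1.63)]

force = driving_force_mev(reactants, products)
is_bottleneck = force < BOTTLENECK_MEV
```

In this made-up case the step releases only about 30 meV/atom, so it would be flagged as a potential kinetic bottleneck.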

Protocol 2: Precursor Selection Optimization

Purpose: Select precursor combinations that maximize driving forces and minimize kinetic barriers [1].

Materials:

- Multiple precursor options for target composition

- Historical reaction database

- Pairwise reaction data

Procedure:

- Generate all chemically plausible precursor combinations for target material

- Consult historical data on similar systems to identify successful precursor patterns

- Evaluate predicted reaction pathways for each precursor set

- Calculate pairwise reaction energies between potential intermediates

- Select precursors whose intermediates retain a high driving force to the target (>75 meV/atom)

- Validate selection with small-scale test reactions before full synthesis

Expected Output: Optimized precursor set with minimized kinetic barriers to target formation.

Research Reagent Solutions

Table: Essential Materials for Kinetic Studies in Solid-State Synthesis

| Reagent Category | Specific Examples | Function in Kinetic Analysis | Application Notes |

|---|---|---|---|

| Computational Databases | Materials Project, Google DeepMind phase-stability data | Provide formation energies for driving force calculations | Essential for predicting reaction pathways before experimentation |

| Precursor Libraries | Metal oxides, phosphates, carbonates | Enable screening of multiple reaction pathways | Maintain diverse selection to maximize finding kinetically favorable routes |

| Historical Reaction Databases | ICSD, literature mining datasets | Identify successful precursor patterns for analogous materials | Train ML models for improved precursor selection |

| In Situ Characterization | High-temperature XRD, Raman spectroscopy | Monitor phase evolution in real time | Critical for identifying rate-limiting steps in reaction pathways |

Workflow Diagrams

Diagram 1: Kinetic Failure Analysis Pathway

Diagram 2: Kinetic Optimization Workflow

The Role of Driving Force Calculations in Predicting Kinetic Traps

Frequently Asked Questions (FAQs)

1. What is a kinetic trap in self-assembly or synthesis reactions? A kinetic trap is a metastable state that hinders the formation of the thermodynamically stable, ordered product. Even when the final ordered state is energetically favorable, the system becomes trapped in a disordered structure due to dynamics that prevent the components from rearranging into the correct configuration [15].

2. How do driving force calculations help predict kinetic traps? Driving force calculations, rooted in thermodynamic free energy landscapes, help identify the energetic favorability of the desired product versus off-pathway intermediates. By quantifying this, researchers can predict if proposed reaction conditions provide sufficient thermodynamic driving force to overcome activation barriers or if they risk populating stable, but undesired, trapped states [15].

3. What are the common experimental signatures of a kinetic trap? Common signs include:

- The reaction stalls at a high yield of incomplete or disordered clusters instead of forming the target structure [15].

- The final yield of the desired product is highly dependent on the initial conditions, such as concentration or temperature, rather than converging to a thermodynamically predicted value.

- Experiments show the formation of amorphous aggregates or gels instead of crystalline or other ordered phases [15].

4. My autonomous synthesis platform is producing inconsistent yields. Could kinetic trapping be the cause? Yes. In autonomous synthesis, if the AI proposes reaction conditions with overly strong interparticle bonds or excessively high concentrations to maximize yield, it can inadvertently push the system into a kinetically trapped regime. This results in high yield in some experiments but low yield in others due to the formation of off-pathway aggregates. Implementing driving force estimates as a constraint in the AI's decision-making process can help avoid these regions of parameter space [15].

5. What is the relationship between bond strength and kinetic trapping? Strong interparticle bonds are a primary cause of kinetic trapping. While strong bonds stabilize the final ordered state, they also make it difficult for incorrectly bonded subunits to break apart and re-arrange properly. Effective self-assembly often relies on a balance of many relatively weak, transient interactions, which allow for error correction through frequent bond-breaking and re-formation [15].

Troubleshooting Guides

Problem: Low Yield of Desired Ordered Product in Self-Assembly

Description: The reaction predominantly forms disordered, polydisperse clusters or aggregates instead of the target monodisperse structure (e.g., a viral capsid or a specific metal-organic framework).

Diagnosis: This is a classic symptom of kinetic trapping, often caused by an interaction energy that is too strong, preventing molecular reorganization [15].

Solution: Weaken the effective interparticle interactions to allow for error correction.

Step-by-Step Protocol:

- Monitor Reaction Progress: Use an in-situ analytical technique (e.g., NMR, DLS, or UV-Vis) to track the formation of the target product versus aggregates over time [16].

- Modify Interaction Strength:

- For molecular systems, adjust the solvent composition to reduce binding affinity (e.g., increase polarity).

- For colloidal systems, modify the surface chemistry or electrolyte concentration.

- Consider using a protecting group or a reversibly binding ligand to temporarily moderate interaction strength.

- Optimize Thermodynamic Driving Force: Systematically vary the concentration and temperature. The optimal yield often occurs at an intermediate concentration and a specific temperature range that provides sufficient driving force without inducing trapping [15].

- Implement a Ramp-and-Hold Protocol: Start the reaction at an elevated temperature where bonds are weak and rearrange easily, then slowly cool (ramp) to the final temperature to anneal the correct structure.

Problem: Slow or Incomplete Phase Transformation in Solid-State Synthesis

Description: The synthesis fails to convert a starting material into a desired metastable phase, or the transformation is impractically slow.

Diagnosis: The kinetic pathway to the metastable phase is hindered by a large energy barrier or competition with the formation of the stable phase.

Solution: Use an autonomous experimentation platform to rapidly explore ultrafast annealing conditions that can kinetically trap the metastable phase [17].

Step-by-Step Protocol:

- Sample Preparation: Deposit an amorphous thin-film library of the target material on a substrate via reactive sputtering [17].

- Autonomous Exploration with lg-LSA: Employ a system like the Scientific Autonomous Reasoning Agent (SARA) integrated with lateral gradient laser spike annealing (lg-LSA). This setup creates a spatial gradient of temperature and dwell time across the sample [17].

- Hierarchical Active Learning:

- The AI agent proposes the next set of lg-LSA synthesis parameters (e.g., laser power, scan speed).

- An inner autonomous loop performs rapid optical characterization on the processed stripe.

- The AI uses the data to update its model of the synthesis phase diagram and proposes the next most informative experiment [17].

- Identify Conditions: The autonomous loop will efficiently map the synthesis phase boundaries, identifying the specific time-temperature conditions (e.g., high quench rates of 10⁴ to 10⁷ K/s) required to stabilize the metastable phase, such as δ-Bi₂O₃, at room temperature [17].

Problem: Autonomous Optimization Algorithm Suggests Impractical Conditions

Description: The AI guiding your self-driving lab consistently suggests reaction parameters that lead to gelling, precipitation, or inconsistent results.

Diagnosis: The AI's objective function is likely focused only on maximizing the yield of the final product, ignoring the stability of intermediate states.

Solution: Reformulate the AI's optimization problem to incorporate constraints based on driving force calculations and real-time diagnostics.

Step-by-Step Protocol:

- Define a Multi-Objective Reward Function: Instead of rewarding only high final yield, also penalize the formation of aggregates. This can be done by incorporating in-situ light scattering data or viscosity measurements as negative terms in the reward function [16].

- Incorporate Physical Models: Integrate coarse-grained physical models that estimate the driving force for aggregation into the AI's decision-making process. The AI should be designed to avoid regions of parameter space predicted to have an excessively high driving force for disordered aggregation [15].

- Implement Bayesian Optimization with Constraints: Use advanced optimization algorithms that can handle "no-go" constraints. Define a constraint based on a real-time diagnostic signal (e.g., turbidity) and instruct the AI to avoid conditions that trigger it [18].

- Validate with Orthogonal Analytics: After the AI identifies an optimal condition, run a final validation experiment using a high-information technique like NMR or LC-MS to confirm the identity and purity of the product [16].
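The first and third steps can be illustrated with a penalized reward and a hard "no-go" filter. This sketch does not implement Bayesian optimization itself. It only shows how the objective and constraint might be expressed before being handed to an optimizer. The weights, the turbidity limit, and the condition records are all hypothetical.

```python
def reward(yield_fraction, scattering, viscosity_ratio,
           w_scatter=0.5, w_viscosity=0.5):
    """Yield minus penalties for light scattering and for viscosity above baseline."""
    excess_viscosity = max(0.0, viscosity_ratio - 1.0)
    return yield_fraction - w_scatter * scattering - w_viscosity * excess_viscosity


def feasible(conditions, turbidity_limit):
    """Drop conditions whose diagnostic signal crosses the no-go limit."""
    return [c for c in conditions if c["turbidity"] <= turbidity_limit]


def best_condition(conditions, turbidity_limit):
    """Return the feasible condition with the highest penalized reward, or None."""
    allowed = feasible(conditions, turbidity_limit)
    if not allowed:
        return None
    return max(allowed, key=lambda c: reward(c["yield"], c["scattering"],
                                             c["viscosity_ratio"]))


# Hypothetical measurements, normalized to the range 0-1 where applicable.
conditions = [
    {"name": "high_conc", "yield": 0.95, "scattering": 0.6,
     "viscosity_ratio": 1.8, "turbidity": 0.9},
    {"name": "mid_conc", "yield": 0.80, "scattering": 0.1,
     "viscosity_ratio": 1.0, "turbidity": 0.2},
    {"name": "low_conc", "yield": 0.50, "scattering": 0.0,
     "viscosity_ratio": 1.0, "turbidity": 0.1},
]
best = best_condition(conditions, turbidity_limit=0.5)
print(best["name"])
```

Note that the highest-yield condition loses twice here: it violates the turbidity constraint, and even without the constraint its penalized reward is lower than that of the intermediate condition.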

Quantitative Data and Experimental Parameters

The following table summarizes key parameters from documented studies on kinetic trapping and autonomous synthesis, providing a reference for your experimental design.

Table 1: Experimental Parameters in Kinetic Trap and Autonomous Synthesis Studies

| System / Platform | Key Parameter | Value / Range | Role in Kinetic Trapping & Synthesis |

|---|---|---|---|

| Viral Capsid Model [15] | Bond Strength (εb/T) | ~4.5 (Optimal) | Intermediate strength maximizes yield. |

| Viral Capsid Model [15] | Bond Strength (εb/T) | >5 (Trapping) | Stronger bonds cause trapping via disordered clusters. |

| Lattice Gas Model [15] | Bond Energy (εb) | Variable | Strong bonds frustrate phase separation dynamics, leading to gelation and trapping. |

| SARA (Bi₂O₃ System) [17] | Quench Rate | 10⁴ - 10⁷ K/s | High quench rates enable kinetic trapping of metastable phases (e.g., δ-Bi₂O₃) at room temperature. |

| SARA (Bi₂O₃ System) [17] | Peak Temperature (Tp) | Up to 1400°C | Explored to find non-equilibrium conditions for metastable phase formation. |

| Autonomous NMR Platform [16] | Analysis Cycle | Continuous / On-the-fly | Provides real-time feedback on reaction composition, allowing the AI to adjust parameters before traps dominate. |

Table 2: The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Lateral Gradient Laser Spike Annealing (lg-LSA) [17] | Enables ultra-fast thermal processing with spatial gradients, allowing high-throughput mapping of time-temperature transformation diagrams for metastable materials. |

| Ising Lattice Gas Model [15] | A computational model used to study generic mechanisms of kinetic trapping during phase separation, providing insights into how strong bonds frustrate ordering. |

| Advanced Chemical Profiling (ACP) Software [16] | Automates the analysis and quantification of NMR data, providing machine-readable output for real-time feedback and control in autonomous workflows. |

| Bayesian Optimization [18] | An AI-driven approach used to guide experiments, efficiently navigating complex parameter spaces to find optimal conditions while potentially avoiding kinetic traps. |

| Thiosulfate Ion & Starch Indicator [19] | A classic chemical clock reaction system used to indirectly measure the initial rate of slow redox reactions by monitoring the time until a color change occurs. |

Workflow and Relationship Diagrams

Autonomous Synthesis Workflow

Kinetic Trap Relationship

AI-Powered Methodologies to Accelerate Reaction Kinetics

Frequently Asked Questions (FAQs)

Q1: What makes Bayesian Optimization (BO) particularly suitable for optimizing composition-spread films?

BO is ideal for this application because it is designed to optimize black-box functions that are expensive to evaluate, which perfectly describes the time-consuming and resource-intensive nature of fabricating and testing composition-spread films [20] [21] [22]. Its ability to balance exploration (testing uncertain regions of the composition space) and exploitation (refining areas known to yield good results) allows it to find optimal material compositions with a minimal number of experimental cycles [23] [24]. Furthermore, specialized BO methods have been developed specifically to select which elements should be compositionally graded in a spread film, a capability not offered by conventional optimization packages [20].

Q2: My autonomous loop is taking too long per cycle. Where are the common bottlenecks in a high-throughput workflow for Hall effect materials?

The primary bottlenecks in conventional workflows are often device fabrication (using multi-step lithography requiring photoresists, taking ~5.5 hours) and measurement setup (wire-bonding for individual devices, taking ~0.5 hours) [25]. A modern high-throughput system overcomes this by implementing:

- Photoresist-free laser patterning for device fabrication (~1.5 hours for 13 devices) [20] [25].

- Custom multichannel probes with pogo-pins for simultaneous measurement of multiple devices, eliminating wire-bonding (~0.2 hours for 13 devices) [20] [25].

- Combinatorial sputtering for depositing composition-spread films (~1-2 hours) [20].

Q3: How do I handle noisy measurements of anomalous Hall resistivity (e.g., due to film inhomogeneity) in my Bayesian Optimization model?

Gaussian Processes (GPs), the common surrogate model in BO, can directly incorporate measurement noise [21] [26]. When configuring your GP model, you can set a noise variance parameter (often called alpha or noise). This informs the model to treat deviations in the data below a certain threshold as noise, preventing it from overfitting to spurious measurements and leading to more robust optimization [21].

Q4: Our experimental results are not matching the model's predictions. How can we improve the performance of the Bayesian Optimization process?

Performance issues can often be traced to the initial samples or the acquisition function.

- Initialization: The BO process is sensitive to the initial set of random samples. Ensure you run an adequate number of random trials (e.g., 5-10) before the optimization begins to build a reasonable initial surrogate model [22].

- Acquisition Function Tuning: The parameter xi in the Expected Improvement (EI) function controls the balance between exploration and exploitation. A value that is too high leads to excessive exploration, while a value that is too low causes the algorithm to get stuck in local optima. Experiment with different values of xi (a common default is 0.01) to improve convergence [21] [22].
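To make the role of xi concrete, the sketch below evaluates the standard closed-form Expected Improvement for a maximization problem at two hypothetical candidates: one predicted slightly above the current best with low uncertainty, and one predicted below the best with high uncertainty. The candidate names and numbers are illustrative only, and library implementations may differ in sign conventions.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.01):
    """EI for maximization; a larger xi demands a bigger predicted gain."""
    improvement = mu - best - xi
    if sigma <= 0.0:
        # No predictive uncertainty: the improvement is deterministic.
        return max(improvement, 0.0)
    z = improvement / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return improvement * cdf + sigma * pdf


def pick(candidates, best, xi):
    """Return the candidate name with the highest EI."""
    return max(candidates,
               key=lambda name: expected_improvement(*candidates[name], best, xi))


# name -> (predicted mean, predicted standard deviation); hypothetical values.
candidates = {"near_best": (1.05, 0.02), "uncertain": (0.80, 0.20)}

exploit_choice = pick(candidates, best=1.0, xi=0.0)
explore_choice = pick(candidates, best=1.0, xi=0.1)
print(exploit_choice, explore_choice)
```

With xi = 0 the low-uncertainty candidate wins (exploitation). Raising xi to 0.1 wipes out its small predicted gain, so the uncertain candidate wins instead (exploration).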

Troubleshooting Guides

Issue: Poor Convergence or Suboptimal Material Proposal

This occurs when the BO algorithm fails to find a composition that significantly improves the target property (e.g., anomalous Hall resistivity) within a reasonable number of cycles.

| Potential Cause | Diagnostic Steps | Resolution |

|---|---|---|

| Inadequate initial sampling | Check if the initial random samples cover the entire composition space evenly. A clustered initial dataset limits the model's global understanding. | Increase the number of random initial trials. Use space-filling designs like Latin Hypercube Sampling for initial data collection if possible. |

| Misconfigured acquisition function | Plot the acquisition function over the composition space. It may show a flat profile or maxima only in known regions. | Adjust the xi parameter in the Expected Improvement function. Increase xi to encourage more exploration of unknown compositions [23] [21]. |

| Inappropriate kernel for the Gaussian Process | Review the model's predictions; they may be overly smooth or too "wiggly," failing to capture the true landscape. | Change the GP kernel. The Matérn kernel is a good default for modeling physical properties. Experiment with different kernel length scales [21]. |
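For the space-filling initialization suggested in the first row of the table, a Latin Hypercube design can be generated with the standard library alone. The sketch below is a generic illustration: the two-dimensional unit bounds and the sample count are assumptions, and real composition spaces usually need an additional normalization step so that fractions sum to one.

```python
import random


def latin_hypercube(n_samples, bounds, seed=None):
    """Return n_samples points with exactly one point per stratum in each dimension."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # Split the range into n_samples equal strata and jitter inside each one.
        points = [low + (high - low) * (i + rng.random()) / n_samples
                  for i in range(n_samples)]
        # Shuffle so that strata are paired randomly across dimensions.
        rng.shuffle(points)
        columns.append(points)
    return [tuple(column[i] for column in columns) for i in range(n_samples)]


samples = latin_hypercube(8, [(0.0, 1.0), (0.0, 1.0)], seed=0)
for point in samples:
    print(point)
```

Unlike purely random initial trials, every eighth of each axis is guaranteed to contain exactly one sample, which avoids the clustered initial dataset described above.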

Issue: Integration Failure Between BO and Automated Hardware

The software fails to control the combinatorial sputtering system or parse data from the multichannel Hall probe.

| Potential Cause | Diagnostic Steps | Resolution |

|---|---|---|

| Incorrect input file format for deposition system | Manually check the generated recipe file against the system's required format. | Develop or use a dedicated Python program (e.g., nimo.preparation_input function) that automatically generates a correctly formatted input file from the BO proposal [20]. |

| Data structure mismatch after combinatorial experiment | Confirm that the analyzed experimental data is correctly mapped back to the candidate compositions in the database (candidates.csv). | Implement an automated analysis function (e.g., nimo.analysis_output in "COMBAT" mode) that removes tested composition ranges from the candidate list and adds the new results with the correct composition labels [20]. |

Experimental Protocols

High-Throughput Anomalous Hall Effect (AHE) Workflow

The following protocol describes the integrated, high-throughput method for discovering materials with a large Anomalous Hall Effect [20] [25].

Title: Autonomous Closed-Loop AHE Experiment

Procedure:

- Composition-Spread Film Deposition:

- Use a combinatorial sputtering system equipped with a linear moving mask and substrate rotation.

- Co-sputter from multiple targets to create a thin film with a continuous composition gradient in one direction on a substrate (e.g., SiO₂/Si).

- Duration: ~1.3 - 2 hours [20] [25].

- Key Parameters: The BO algorithm selects which two elements (e.g., two 3d-3d or 5d-5d pairs) to grade compositionally.

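- Photoresist-Free Device Fabrication:

- Pattern the composition-spread film into multiple Hall bar devices by laser ablation, without photoresist.

- Duration: ~1.5 hours for 13 devices [20] [25].

- Simultaneous AHE Measurement: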

- Use a custom multichannel probe with spring-loaded pogo-pins that contact all device terminals simultaneously.

- Install the probe in a Physical Property Measurement System (PPMS) with a superconducting magnet.

- Measure the Hall voltage of all devices while sweeping a perpendicular magnetic field to saturation.

- Duration: ~0.2 hours for 13 devices [20] [25].

- Automatic Data Analysis and Bayesian Optimization:

- A Python program automatically analyzes the raw voltage data to calculate the anomalous Hall resistivity ($\rho_{yx}^{A}$) for each composition.

- The results are fed into the specialized BO algorithm (e.g., via the NIMO orchestration system).

- The algorithm updates its surrogate model and uses the acquisition function to propose the next composition-spread film to fabricate, specifying the elements to grade.

- The loop returns to Step 1.

Bayesian Optimization Algorithm for Composition-Spread Films

This protocol details the specific BO method used for composition-spread films, which extends standard BO to select which elements to grade [20].

Input: Initial candidate composition list (candidates.csv), prior Gaussian Process model.

Output: Proposal for the next composition-spread film (proposals.csv).

Procedure:

- Select a Promising Base Composition:

- Find the composition with the highest value from the acquisition function (e.g., Expected Improvement) using the current GP model. This is the same as conventional BO [20].

- Score All Possible Element Pairs for Grading:

- For each possible pair of elements (e.g., Ni/Co, Ta/Ir):

- Generate L compositions by creating a linear gradient between the two elements, while keeping the other elements fixed at the values from Step 1.

- Evaluate the acquisition function for each of these L compositions.

- Calculate the score for this element pair by averaging the acquisition function values across the L compositions [20].

- Propose the Next Experiment:

- Select the element pair with the highest score to be compositionally graded in the next film.

- The proposal (proposals.csv) will include the L specific compositions for this gradient [20].
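The pair-scoring procedure can be sketched as follows. This is not the NIMO implementation. It assumes that the gradient redistributes the combined fraction of the two graded elements linearly between them, and it takes the acquisition function as an arbitrary callable. The base composition and the toy acquisition function are hypothetical.

```python
from itertools import combinations


def gradient_compositions(base, pair, n_points):
    """Return n_points compositions grading `pair` while other elements stay fixed."""
    if n_points < 2:
        raise ValueError("a gradient needs at least two compositions")
    first, second = pair
    shared = base[first] + base[second]  # fraction split between the graded pair
    compositions = []
    for i in range(n_points):
        comp = dict(base)
        comp[first] = shared * i / (n_points - 1)
        comp[second] = shared - comp[first]
        compositions.append(comp)
    return compositions


def propose_pair(base, acquisition, n_points):
    """Score each element pair by its mean acquisition value and return the best."""
    best_pair, best_score, best_comps = None, float("-inf"), []
    for pair in combinations(base, 2):
        comps = gradient_compositions(base, pair, n_points)
        score = sum(acquisition(c) for c in comps) / len(comps)
        if score > best_score:
            best_pair, best_score, best_comps = pair, score, comps
    return best_pair, best_score, best_comps


# Hypothetical base composition from Step 1 and a toy acquisition function.
base = {"Fe": 0.5, "Co": 0.3, "Ir": 0.2}
pair, score, comps = propose_pair(base, acquisition=lambda c: c["Ir"], n_points=5)
print(pair, round(score, 3), len(comps))
```

In a real loop the callable would wrap the GP surrogate and the acquisition function, and the returned compositions would be written out as the proposal.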

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential materials and software for autonomous AHE materials discovery.

| Item | Function/Description | Example/Reference |

|---|---|---|

| Combinatorial Sputtering System | Deposits thin films with a continuous composition gradient on a single substrate by co-sputtering from multiple targets. | Systems with linear moving masks and substrate rotation [20] [25]. |

| Laser Patterning System | Enables photoresist-free fabrication of multiple Hall bar devices by ablating the film, drastically increasing throughput. | Direct-write laser systems [20] [25]. |

| Custom Multichannel Probe | Allows simultaneous measurement of Hall voltage from multiple devices using pogo-pins, eliminating slow wire-bonding. | Probes with 28+ pogo-pins designed for PPMS [20] [25]. |

| Bayesian Optimization Software | Orchestrates the closed-loop experiment. Selects next compositions to test by modeling the composition-property landscape. | NIMO, PHYSBO, GPyOpt [20]. |

| Ferromagnetic 3d Elements | Base elements providing ferromagnetism, essential for the Anomalous Hall Effect. | Fe, Co, Ni [20] [25]. |

| 5d Heavy Metals | Dopant elements with strong spin-orbit coupling, used to enhance the Anomalous Hall Effect. | Ta, W, Ir, Pt [20] [25]. |

| SiO₂/Si Substrate | Common, thermally oxidized silicon substrate for depositing amorphous magnetic thin films at room temperature. | Readily available and suitable for device integration [20]. |

Troubleshooting Guides

Q: The autonomous vessel becomes unstable and oscillates violently when trying to hold position (loiter) at a waypoint. How can this be resolved?

A: This is a known issue related to autopilot gain settings. The solution involves adjusting the specific parameter that controls the angular velocity gain for steering.

- Investigation Steps:

- Confirm the issue occurs specifically in LOITER mode, even if waypoint navigation is stable.

- Check the autopilot's tuning parameters for steering gains.

- Solution:

- Locate the parameter ATC_STR_ANG_P (or its equivalent in your autopilot system).

- Reduce the value of this gain. For example, if the default value is 5, try reducing it to 1 to dampen the aggressive steering response.

- Test the new parameter in a safe environment. The vessel should be stable in loiter mode, though it may still spin rapidly to acquire its heading [27].

Q: The system fails to accurately measure drift velocity, which is critical for the ARROWS3 algorithm's route optimization. What should I check?

A: Drift measurement relies on precise GPS data and correct script execution.

- Investigation Steps:

- Verify the Lua script is actively running on the autopilot and that the correct command ID (e.g., 86) is triggered by your mission waypoints [27].

- Check the data logging functionality. Ensure the autopilot's SD card has space and that the script correctly writes the drift data (starting latitude, longitude, timestamp, drift speed, and direction) to a CSV file [27].

- Monitor the telemetry link in real-time to see if drift data is being transmitted. A value of -1 typically indicates the system is not in drift mode, while a positive number shows the live drift distance [27].

- Solution:

- Review and validate the mission plan. Ensure waypoints are set to loiter_time to stabilize the vessel before drift, and are followed by a SCRIPT_TIME command to activate the drift script [27].

- If wind is a significant source of error, consider using a vessel with a minimal above-waterline profile or implementing a drogue system to better couple the vessel with water currents [27].

Q: The calculated optimal route does not yield the expected improvement in travel time or efficiency. What could be the cause?

A: This can stem from issues with the input data or the optimization constraints.

- Investigation Steps:

- Review Data Currency: The ARROWS3 algorithm uses a measured velocity field. If there is a significant time delay between measuring the currents and the vessel traversing the route, the flow conditions may have changed, making the data obsolete [27].

- Check Mission Definition: Verify that the mission's goals and constraints (e.g., patrol zone boundaries, no-go areas) are correctly programmed into the algorithm. An "optimal" route is only optimal for the defined mission [27].

- Validate the Velocity Field: Examine the raw drift measurements and the subsequent spatial interpolation. Ensure the survey points form a sensible grid (like a rectangle) to maximize the area where the algorithm performs reliable interpolation instead of less accurate extrapolation [27].

- Solution:

- Use a faster survey vessel to minimize the time between measurement and route execution.

- Re-run the current measurement survey immediately before the optimized mission.

- Visually inspect the interpolated velocity field for anomalies or inconsistencies with observed conditions [27].

Frequently Asked Questions (FAQs)

Q: What is the core principle behind the ARROWS3 algorithm for route optimization?

A: The ARROWS3 algorithm uses real-time, on-site measurements of surface currents (and other drift forces) to build a velocity field map. It then calculates a vessel's path through this dynamic field to minimize travel time or energy consumption by leveraging favorable currents and avoiding adverse ones [27].

Q: Why is a Lua script used in the data collection phase?

A: A Lua script is integrated into the autopilot to create a custom "drift mode" that is not a standard function. This script automates the process of stopping the propulsion, logging high-frequency GPS data to calculate drift velocity and direction, and resuming the mission—all essential for gathering the data the ARROWS3 algorithm needs to function [27].

Q: For scientific current measurement, what is a limitation of using a standard autonomous surface vessel (ASV)?

A: A standard ASV's drift is influenced by wind and waves in addition to current. For pure oceanographic data, this adds noise. Scientific drift buoys use a drogue (sea anchor) to minimize wind drift and better measure water movement. An ASV like n3m02 was observed to be noticeably susceptible to drifting with the wind [27].

Q: How does the algorithm handle the inherent delay between measuring currents and executing an optimized route?

A: This is a recognized source of uncertainty. The algorithm itself cannot compensate for changing conditions between the survey and the mission. The primary strategy is to minimize this delay by using a fast survey vessel and conducting measurements as close in time to the main mission as possible [27].

The Scientist's Toolkit: Research Reagent Solutions

The following materials are essential for implementing the ARROWS3-based autonomous measurement and optimization system.

| Item Name | Function |

|---|---|

| Autonomous Vessel Platform | A reliable, robotic boat that serves as the physical platform for transporting sensors, an autopilot, and a propulsion system. |

| GPS Receiver | Provides high-precision, real-time positional data essential for calculating speed, direction, and drift velocity [27]. |

| Programmable Autopilot | The central control unit (e.g., Matek F765-WING) that executes navigation commands, runs custom scripts, and manages sensor data [27]. |

| Lua Scripting Environment | Allows for the creation and execution of custom automation scripts on the autopilot, such as the one used to initiate and log drift measurements [27]. |

| Telemetry System | Enables real-time, wireless communication between the autonomous vessel and a ground control station for monitoring and intervention [27]. |

Experimental Protocol: Drift Measurement for Velocity Field Mapping

Objective: To autonomously collect surface drift data at predefined points within a survey area to construct a velocity field for the ARROWS3 route optimization algorithm.

Methodology:

- Mission Planning:

- Define a rectangular grid of waypoints within the target survey area to maximize the zone for reliable spatial interpolation [27].

- Program each waypoint in the autopilot mission as a loiter_time point with a short hold time (e.g., 10 seconds) to allow the vessel to stabilize [27].

- Immediately after each loiter_time waypoint, program a SCRIPT_TIME command (e.g., ID 86) with arguments to initiate drifting for a set duration (e.g., 50 seconds) and a safety radius (e.g., 10 meters) [27].

- Autonomous Execution:

- Deploy the vessel to execute the mission.

- The vessel will navigate to each waypoint, loiter, and then the Lua script will disable the motors and begin logging GPS data to calculate drift [27].

- Data Collection:

- Data is recorded in real-time via telemetry and stored in a CSV file on the autopilot's SD card. Key data points include [27]:

- Start Latitude and Longitude

- Timestamp

- Drift Speed

- Drift Direction
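For offline checks of the logged values, drift speed and direction can be recomputed from the start and end GPS fixes of a drift interval. The sketch below is a post-processing illustration in Python, not the Lua script running on the autopilot. It assumes a flat-earth (equirectangular) approximation, which is reasonable only over the short distances involved, and reports direction in degrees clockwise from north.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def drift_from_fixes(lat1, lon1, lat2, lon2, seconds):
    """Return (speed in m/s, direction in degrees clockwise from north)."""
    mean_lat = math.radians((lat1 + lat2) / 2.0)
    north = EARTH_RADIUS_M * math.radians(lat2 - lat1)
    # East-west distances shrink with the cosine of latitude.
    east = EARTH_RADIUS_M * math.radians(lon2 - lon1) * math.cos(mean_lat)
    speed = math.hypot(east, north) / seconds
    direction = math.degrees(math.atan2(east, north)) % 360.0
    return speed, direction


# Hypothetical fixes: 0.0001 degrees of northward drift over a 50 s window.
speed, direction = drift_from_fixes(0.0, 0.0, 0.0001, 0.0, 50.0)
print(round(speed, 3), direction)
```

A mismatch between values recomputed this way and the logged drift speed or direction would point to a logging or scripting problem rather than to the currents themselves.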


ARROWS3 System Workflow

The following diagram illustrates the core operational workflow of the ARROWS3 autonomous measurement and optimization system.

Data Flow for Route Optimization

This diagram details the data processing pipeline, from raw measurement to optimized route command.

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why is my multimodal model failing to outperform my best unimodal model? This common issue often stems from inadequate fusion techniques or poor modality alignment. The heterogeneity of data sources (e.g., spectral, imaging) means they may contain complementary but differently structured information. Evaluate different fusion strategies: late fusion (combining model decisions), early fusion (combining raw features), or advanced methods like MultConcat multimodal fusion, which achieved 89.3% accuracy in recognizing dangerous actions by effectively capturing cross-modal interactions [28] [29]. Ensure your modality encoders are robust enough to extract useful features before fusion.

Q2: How can I handle missing spectroscopic data in my kinetic analysis? Implement fusion techniques robust to missing modalities. Some advanced algorithms can compensate for information loss by using available modalities to infer missing data, which is particularly valuable in experimental settings where certain measurements might fail [29]. Consider coordinated representations that maintain relationships between modalities even when some are absent [28].

Q3: What's the optimal approach for fusing time-resolved spectroscopic and imaging data for kinetic modeling? For temporal data, consider techniques that preserve timing relationships across modalities. Alignment becomes crucial—explicit alignment for directly corresponding sub-components or implicit alignment using latent representations for loosely connected temporal sequences [28]. Ensure sufficient temporal resolution in your fastest modality to capture critical kinetic events.

Q4: How can I validate that my fused model truly leverages complementary information across modalities? Ablation studies are essential. Systematically remove each modality and observe performance degradation. Additionally, analyze whether the model captures expected complementary relationships; for instance, in spectroscopic data fusion, ensure the model leverages both MIR and Raman complementarities rather than relying predominantly on one modality [30].

Q5: What computational resources are typically required for complex multimodal fusion? Memory requirements vary significantly by fusion technique. Late fusion typically uses more memory during prediction as it maintains multiple models, while early fusion consumes more memory during training due to concatenated high-dimensional features [29]. For spectroscopic data, Complex-level Ensemble Fusion (CLF) adds computational overhead but provides superior predictive accuracy for complex regression tasks [30].
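The ablation check described in Q4 can be expressed as a small leave-one-modality-out loop. The sketch below assumes you already have some way to train and score a model on a chosen subset of modalities. Here that is stood in for by a hypothetical lookup table of scores, so the numbers carry no experimental meaning.

```python
def ablation_report(evaluate, modalities):
    """Return the full-model score and the score drop when each modality is removed."""
    full_score = evaluate(tuple(modalities))
    drops = {}
    for removed in modalities:
        reduced = tuple(m for m in modalities if m != removed)
        drops[removed] = full_score - evaluate(reduced)
    return full_score, drops


# Hypothetical scores (higher is better) standing in for real train/evaluate runs.
scores = {
    ("MIR", "Raman"): 0.90,
    ("Raman",): 0.70,  # MIR removed
    ("MIR",): 0.88,    # Raman removed
}
full_score, drops = ablation_report(lambda subset: scores[subset], ["MIR", "Raman"])
print(full_score, drops)
```

In this made-up case, removing MIR costs about 0.20 while removing Raman costs only about 0.02, which would suggest the fused model relies predominantly on one modality.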

Troubleshooting Common Experimental Issues

Problem: Sluggish reaction kinetics hindering material synthesis Background: This parallels issues encountered in autonomous materials synthesis, where 19% of failed targets faced kinetic hurdles, particularly reactions with low driving forces (<50 meV per atom) [1]. Solution: Implement active learning cycles that identify and avoid kinetic traps. The A-Lab system successfully optimized synthesis routes by prioritizing intermediates with larger driving forces (e.g., increasing from 8 meV to 77 meV per atom) to overcome sluggish kinetics [1]. Consider designing alternative reaction pathways that bypass slow-reacting intermediates.

Problem: Discrepancies between different spectroscopic techniques during kinetic measurements Background: Each spectroscopic method (UV-visible, IR, fluorescence, Raman) has unique advantages and limitations for kinetic studies [31]. Solution: Systematically compare kinetic parameters from multiple techniques to validate results and gain comprehensive mechanistic understanding. For example, UV-visible and fluorescence excel at monitoring electronic transitions, while IR and Raman are better for vibrational transitions [31]. Use discrepancies to identify complex reaction mechanisms rather than treating them as experimental error.

Problem: Ineffective fusion of complementary spectroscopic data

Background: Traditional data fusion methods often fall short with disparate spectroscopic data, limiting predictive performance [30].

Solution: Implement Complex-level Ensemble Fusion (CLF), which jointly selects variables from concatenated spectra (e.g., MIR and Raman), projects them with partial least squares, and stacks latent variables into a boosted regressor. This approach has demonstrated significantly improved predictive accuracy by capturing feature- and model-level complementarities in a single workflow [30].

Problem: Insufficient data for training robust multimodal kinetics models

Background: Many real-world applications have limited samples (e.g., fewer than one hundred), making conventional ML approaches challenging [30].

Solution: Leverage co-learning techniques that transfer knowledge from data-rich modalities to data-poor ones [28]. Additionally, employ data augmentation specific to each modality and consider coordinated representation learning that creates a shared space across modalities even with limited data.
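As one possible form of modality-specific augmentation, a spectrum can be perturbed with a small channel shift, an intensity scaling, and additive noise. The source does not specify an augmentation scheme, so the function name, the perturbation types, and all magnitudes below are assumptions chosen for illustration.

```python
import random
from typing import List


def augment_spectrum(
    spectrum: List[float],
    rng: random.Random,
    noise_sd: float = 0.01,
    max_shift: int = 2,
    scale_range: float = 0.05,
) -> List[float]:
    """Return a perturbed copy: random channel shift, intensity scaling, Gaussian noise."""
    shift = rng.randint(-max_shift, max_shift)
    scale = 1.0 + rng.uniform(-scale_range, scale_range)
    n = len(spectrum)
    out = []
    for i in range(n):
        j = min(max(i - shift, 0), n - 1)  # clamp at the edges instead of wrapping
        out.append(scale * spectrum[j] + rng.gauss(0.0, noise_sd))
    return out


rng = random.Random(0)  # fixed seed so the augmented set is reproducible
original = [0.0, 0.1, 0.8, 1.0, 0.7, 0.2, 0.0, 0.0]
augmented = [augment_spectrum(original, rng) for _ in range(5)]
```

The perturbation sizes should stay within the variation actually seen between repeat measurements on the instrument; larger values risk teaching the model artefacts.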

Comparison of Multimodal Fusion Techniques

Table 1: Performance comparison of fusion techniques across different applications

| Fusion Technique | Application Domain | Key Performance Metric | Result | Reference |
|---|---|---|---|---|
| MultConcat Fusion | Dangerous action recognition | Accuracy | 89.3% | [28] |
| Complex-level Ensemble Fusion (CLF) | Spectroscopic data (MIR+Raman) | Predictive accuracy | Significantly improved vs. established methods | [30] |
| Late Fusion | General classification | Model performance | Varies by modality impact | [29] |
| Early Fusion | General classification | Model performance | Effective for interconnected modalities | [29] |
| Sketch | General classification | Model performance | Creates common representation space | [29] |
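The difference between early and late fusion in Table 1 can be shown with a minimal sketch. The per-modality predictors and weights below are hypothetical placeholders for trained models, not part of any cited method.

```python
from typing import Callable, Dict, List

Features = Dict[str, List[float]]  # modality name -> feature vector


def early_fusion(sample: Features, order: List[str]) -> List[float]:
    """Concatenate per-modality feature vectors into one input for a single model."""
    fused: List[float] = []
    for name in order:
        fused.extend(sample[name])
    return fused


def late_fusion(
    sample: Features,
    models: Dict[str, Callable[[List[float]], float]],
    weights: Dict[str, float],
) -> float:
    """Weighted average of the predictions from one model per modality."""
    total = sum(weights[name] for name in models)
    return sum(weights[n] * models[n](sample[n]) for n in models) / total


sample = {"mir": [0.2, 0.4], "raman": [1.0, 0.0, 0.5]}
fused_vector = early_fusion(sample, ["mir", "raman"])

# Hypothetical per-modality predictors standing in for trained models.
models = {"mir": lambda x: sum(x), "raman": lambda x: max(x)}
late_prediction = late_fusion(sample, models, {"mir": 1.0, "raman": 3.0})
```

The sketch also makes the memory trade-off visible: early fusion feeds one longer vector to a single model, whereas late fusion has to keep every per-modality model available at prediction time.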

Spectroscopic Methods for Kinetic Measurements

Table 2: Characteristics of spectroscopic methods for kinetic analysis

| Method | Timescale | Key Applications | Advantages | Limitations |
|---|---|---|---|---|
| UV-visible spectroscopy | Seconds to minutes | Concentration changes, electronic transitions | Broad applicability, follows Beer-Lambert law | Requires chromophore |
| Infrared spectroscopy | Seconds to minutes | Vibrational transitions, functional groups | Specific molecular information | Affected by solvent absorption |
| Fluorescence spectroscopy | Nanoseconds to microseconds | Fast reactions, aromatic compounds | High sensitivity, fast temporal resolution | Requires fluorescent species |
| Raman spectroscopy | Seconds to minutes | Aqueous solutions, inorganic compounds | Minimal water interference | Weak signals, specialized equipment needed |
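Because absorbance is proportional to concentration under the Beer-Lambert law, a first-order rate constant can be estimated from a UV-visible time series by fitting ln(A) against time. The sketch below assumes that only the decaying reactant absorbs at the monitored wavelength and that the reaction is first order; the synthetic values are illustrative and not taken from the source.

```python
import math
from typing import List, Tuple


def first_order_rate_constant(
    times: List[float], absorbances: List[float]
) -> Tuple[float, float]:
    """Least-squares fit of ln(A) = ln(A0) - k*t; returns (k, A0)."""
    ys = [math.log(a) for a in absorbances]
    n = len(times)
    t_mean = sum(times) / n
    y_mean = sum(ys) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    return -slope, math.exp(intercept)


# Synthetic, noise-free data generated with A0 = 1.2 and k = 0.05 s^-1.
times = [0.0, 10.0, 20.0, 30.0, 40.0]
absorbances = [1.2 * math.exp(-0.05 * t) for t in times]
k, a0 = first_order_rate_constant(times, absorbances)
```

Running the same fit on a second technique (for example, a Raman band intensity) gives an independent estimate of k, which is one simple way to compare kinetic parameters across methods.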

Experimental Protocols

Protocol 1: Complex-level Ensemble Fusion for Spectroscopic Data

This protocol outlines the CLF method for fusing mid-infrared (MIR) and Raman spectroscopic data to enhance kinetic modeling [30].

Materials Required:

- Paired MIR and Raman spectra datasets

- Computational environment for machine learning

- Genetic algorithm implementation

- Partial Least Squares (PLS) regression capability

- XGBoost regressor

Procedure:

- Data Preprocessing: Normalize both MIR and Raman spectra to account for instrumental variations.

- Variable Selection: Jointly select informative variables from concatenated MIR and Raman spectra using a genetic algorithm.

- Projection: Project the selected variables using Partial Least Squares to create latent variables that capture covariance between spectroscopic features and target kinetic parameters.

- Ensemble Stacking: Stack the latent variables from both modalities into an XGBoost regressor.

- Model Validation: Validate using cross-validation against single-modality models and traditional fusion approaches.

Expected Outcomes: CLF consistently demonstrates significantly improved predictive accuracy compared to single-source models and classical fusion schemes by effectively leveraging complementary spectral information [30].
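The variable selection step of this protocol can be illustrated with a small genetic algorithm over binary masks. This is a toy sketch under stated assumptions, not the implementation from [30]: the fitness function here is a simple correlation score with a size penalty (a real workflow would more plausibly use a cross-validated PLS error), the data are synthetic, and the PLS projection and XGBoost stacking steps are omitted.

```python
import random
from typing import List, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation; returns 0.0 if either input is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / (sxx * syy) ** 0.5


def fitness(mask: List[int], X: List[List[float]], y: List[float],
            penalty: float = 0.01) -> float:
    """Toy fitness: |correlation| of the summed selected variables with y, minus a size penalty."""
    if not any(mask):
        return -1.0
    scores = [sum(v for v, keep in zip(row, mask) if keep) for row in X]
    return abs(pearson(scores, y)) - penalty * sum(mask)


def select_variables(X: List[List[float]], y: List[float], rng: random.Random,
                     pop_size: int = 20, generations: int = 30,
                     mutation_rate: float = 0.1) -> List[int]:
    n_vars = len(X[0])
    # Seed with the all-variables mask so the result is never worse than using everything.
    population = [[1] * n_vars] + [
        [rng.randint(0, 1) for _ in range(n_vars)] for _ in range(pop_size - 1)
    ]

    def score(mask: List[int]) -> float:
        return fitness(mask, X, y)

    for _ in range(generations):
        next_gen = [max(population, key=score)[:]]  # elitism: keep the best mask
        while len(next_gen) < pop_size:
            parent_a = max(rng.sample(population, 3), key=score)  # tournament selection
            parent_b = max(rng.sample(population, 3), key=score)
            cut = rng.randint(1, n_vars - 1)  # one-point crossover
            child = parent_a[:cut] + parent_b[cut:]
            child = [bit ^ 1 if rng.random() < mutation_rate else bit for bit in child]
            next_gen.append(child)
        population = next_gen
    return max(population, key=score)


# Synthetic "concatenated spectra": 3 MIR + 3 Raman variables, 12 samples.
rng = random.Random(42)
mir = [[rng.random() for _ in range(3)] for _ in range(12)]
raman = [[rng.random() for _ in range(3)] for _ in range(12)]
X = [m + r for m, r in zip(mir, raman)]
y = [row[0] + row[4] for row in X]  # target depends on one variable from each modality
best_mask = select_variables(X, y, rng)
```

Selecting over the concatenated matrix, rather than per modality, is what lets the mask pick complementary variables from both spectra at once.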

Protocol 2: Active Learning for Overcoming Sluggish Kinetics

Adapted from autonomous materials synthesis research, this protocol addresses kinetic barriers in reactions [1].

Materials Required:

- Robotic synthesis platform (optional but beneficial)

- In-situ characterization (e.g., XRD for materials, spectroscopy for molecular systems)

- Computational thermodynamics database

- Active learning implementation

Procedure:

- Initial Synthesis Proposal: Generate initial conditions using literature-inspired models or analogy to known systems.

- Reaction Execution: Perform synthesis under proposed conditions.

- Product Characterization: Quantify target yield using appropriate analytical techniques.

- Pathway Analysis: Identify intermediate phases or species and compute driving forces to final product using thermodynamic data.

- Recipe Optimization: Prioritize pathways with larger driving forces (>50 meV per atom) and avoid intermediates with small driving forces.

- Iterative Refinement: Continue the active learning cycle until the target is obtained or all possibilities are exhausted.

Expected Outcomes: This approach successfully identified synthesis routes with improved yield for multiple targets, including a ~70% yield increase for CaFe₂P₂O₉ by avoiding low-driving-force intermediates [1].
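The cycle above can be sketched as a loop that learns from each failed attempt which precursor pair leads to a low-driving-force intermediate, then skips later recipes containing that pair. This is a simplified illustration loosely modelled on the procedure, not the A-Lab implementation: the recipe names, precursor labels, and simulated results are hypothetical, and the 8 and 77 meV per atom values are reused from the text purely as example numbers.

```python
from itertools import combinations
from typing import Callable, Dict, FrozenSet, List, Optional, Set, Tuple

THRESHOLD_MEV = 50.0  # driving-force cutoff quoted in the protocol (meV per atom)

# (target yield, precursor pair that reacted first, driving force left to the target)
Observation = Tuple[float, FrozenSet[str], float]


def active_learning_loop(
    recipes: Dict[str, Set[str]],
    run_experiment: Callable[[str], Observation],
    target_yield: float = 0.5,
) -> Tuple[Optional[str], List[Tuple[str, float]]]:
    """Run recipes in order, skipping those that contain a known kinetic-trap pair."""
    bad_pairs: Set[FrozenSet[str]] = set()
    history: List[Tuple[str, float]] = []
    for name, precursors in recipes.items():
        pairs = {frozenset(p) for p in combinations(sorted(precursors), 2)}
        if pairs & bad_pairs:
            continue  # expected to form a known low-driving-force intermediate
        achieved, pair, remaining = run_experiment(name)
        history.append((name, achieved))
        if achieved >= target_yield:
            return name, history
        if remaining < THRESHOLD_MEV:
            bad_pairs.add(pair)  # remember the pair that caused the kinetic trap
    return None, history


# Hypothetical recipes and simulated outcomes standing in for robot experiments.
recipes = {"r1": {"A", "B", "C"}, "r2": {"A", "B", "D"}, "r3": {"A", "C", "D"}}
simulated: Dict[str, Observation] = {
    "r1": (0.10, frozenset({"A", "B"}), 8.0),
    "r2": (0.15, frozenset({"A", "B"}), 8.0),
    "r3": (0.80, frozenset({"A", "C"}), 77.0),
}
best, history = active_learning_loop(recipes, lambda name: simulated[name])
```

In this toy run the second recipe is never executed, because the first experiment already showed that the A + B pair leaves too little driving force to reach the target.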

Workflow Visualization

[Figure: Multimodal Kinetics Analysis Workflow]

[Figure: Active Learning for Kinetics Optimization]

Research Reagent Solutions

Table 3: Essential materials and computational tools for multimodal kinetics research

| Item | Function | Application Context |
|---|---|---|
| MIR and Raman spectrometers | Complementary vibrational spectroscopy | Capturing different aspects of molecular structure and changes [30] |
| Time-resolved transient absorption spectrometer | Studying fast reaction kinetics (subpicosecond) | Monitoring short-lived intermediates in photochemical reactions [32] |
| Fluorescence lifetime spectrometer | Tracking emission decay kinetics | Studying energy transfer processes and molecular interactions [32] |
| Genetic algorithm optimization | Variable selection from multimodal data | Identifying most informative features across spectroscopic modalities [30] |
| XGBoost regressor | Ensemble modeling for fused data | Integrating latent variables from multiple modalities for improved prediction [30] |
| Ab initio computational databases | Thermodynamic driving force calculations | Predicting reaction pathways and identifying kinetic barriers [1] |

Large Language Models for Precursor Selection and Reaction Condition Prediction

Core Concepts and Troubleshooting

What are the primary causes of sluggish reaction kinetics, and how can LLMs help diagnose them?

Sluggish reaction kinetics, a major failure mode in autonomous synthesis, often stem from reaction steps with low driving forces (typically <50 meV per atom), which offer little thermodynamic incentive for the reaction to proceed at a practical rate [1]. Related obstacles include slow solid-state diffusion, precursor volatility, and unwanted amorphization of materials [1].

LLMs, particularly when operating in an "active" environment with access to computational tools, can diagnose these issues by calculating and analyzing reaction energies and identifying potential kinetic traps [33] [34]. For instance, an LLM agent can be prompted to calculate the driving force for each proposed reaction step. If the driving force is below the 50 meV/atom threshold, it can flag this step as high-risk for kinetic failure and proactively suggest an alternative pathway [1].
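A tool that an agent could call for this check might look like the sketch below. It is an assumption-laden illustration: the `Step` structure, the step labels, and the formation energies are hypothetical, and the driving force is computed simply as the energy released per atom from per-atom formation energies of a balanced reaction.

```python
from dataclasses import dataclass
from typing import List, Tuple

THRESHOLD_MEV_PER_ATOM = 50.0  # cutoff quoted in the text

# (formation energy in eV/atom, number of atoms contributed to the balanced reaction)
Phase = Tuple[float, int]


@dataclass
class Step:
    label: str
    reactants: List[Phase]
    products: List[Phase]


def driving_force_mev(step: Step) -> float:
    """Energy released per atom in meV; positive means thermodynamically downhill."""
    e_react = sum(e * n for e, n in step.reactants)
    e_prod = sum(e * n for e, n in step.products)
    n_atoms = sum(n for _, n in step.reactants)
    return -(e_prod - e_react) / n_atoms * 1000.0


def flag_kinetic_risks(steps: List[Step]) -> List[str]:
    """Return labels of steps whose driving force falls below the threshold."""
    return [s.label for s in steps if driving_force_mev(s) < THRESHOLD_MEV_PER_ATOM]


# Hypothetical two-step pathway with made-up energies.
steps = [
    Step("precursors -> intermediate", [(-2.000, 10), (-1.500, 10)], [(-1.900, 20)]),
    Step("intermediate -> target", [(-1.900, 20)], [(-1.908, 20)]),
]
risky = flag_kinetic_risks(steps)
```

Here the first step releases about 150 meV per atom and passes, while the second releases only about 8 meV per atom and is flagged, which is the cue for the agent to propose a different intermediate.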

My LLM keeps "hallucinating" implausible precursors or reaction conditions. How can I mitigate this?

Hallucination is a critical failure mode where the LLM generates information not grounded in chemical reality, which can be dangerous in an experimental context [33] [34]. This occurs most frequently when the LLM is used in a "passive" mode, relying solely on its training data without access to external, grounding tools [33].

Mitigation Strategies:

- Integrate External Tools: Ground the LLM's responses by connecting it to external knowledge bases and software, such as chemical databases (e.g., PubChem), retrosynthesis prediction tools, and literature search tools.

- Employ Fine-Tuning: Fine-tune a base LLM (e.g., LLaMA, Qwen) on high-quality, domain-specific datasets such as USPTO-50k (~50k reactions) or Reaxys (over 1 million experimental reactions) [35] [36] [37]. This teaches the model the precise "grammar" of chemistry.

- Implement Agent Frameworks: Use frameworks like ReAct (Reason + Act) that force the LLM to cycle through a process of reasoning about the task, taking an action (e.g., querying a database), and observing the result before proceeding. This breaks down complex tasks and grounds each step in real data [34].
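The reason-act-observe cycle can be sketched without any real model or database. In the example below the "LLM" is a scripted stub and the single tool is a hypothetical inventory lookup with a made-up entry; both are placeholders to show the control flow, not a real agent framework API.

```python
from typing import Callable, Dict, List, Optional, Tuple

# An LLM step returns (thought, action name, action argument).
LLMStep = Tuple[str, str, str]


def react_loop(
    question: str,
    llm: Callable[[List[str]], LLMStep],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> Tuple[Optional[str], List[str]]:
    """Alternate reasoning and tool calls until the model issues a 'finish' action."""
    transcript = [f"Question: {question}"]
    for _ in range(max_steps):
        thought, action, arg = llm(transcript)
        transcript.append(f"Thought: {thought}")
        if action == "finish":
            transcript.append(f"Answer: {arg}")
            return arg, transcript
        if action in tools:
            observation = tools[action](arg)
        else:
            observation = f"unknown tool {action!r}"
        transcript.append(f"Action: {action}[{arg}]")
        transcript.append(f"Observation: {observation}")
    return None, transcript  # step budget exhausted without an answer


def scripted_llm(transcript: List[str]) -> LLMStep:
    """Stub policy: look something up first, then answer from the observation."""
    prefix = "Observation: "
    observations = [line for line in transcript if line.startswith(prefix)]
    if not observations:
        return ("I should check the inventory before answering.",
                "check_inventory", "precursor_A")
    return ("The tool result answers the question.", "finish",
            observations[-1][len(prefix):])


# Hypothetical tool with a made-up inventory entry.
tools = {"check_inventory": lambda name: {"precursor_A": "in stock"}.get(name, "not found")}
answer, transcript = react_loop("Is precursor_A available?", scripted_llm, tools)
```

Because the final answer is copied from a tool observation rather than generated freely, every claim in it can be traced back to a specific lookup in the transcript.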

Handling several data types at once is a fundamental challenge because most LLMs are primarily text-based, while chemical research is inherently multimodal [33]. An agent that struggles here likely lacks an architecture designed to process and cross-reference different data types.

Solution: Implement a multi-agent or tool-based architecture. A well-designed system like ChemCrow uses a single LLM as a "reasoning engine" that orchestrates multiple specialized tools [34]. For example:

- One specialized tool (or a separately fine-tuned LLM) can be dedicated to interpreting spectral data [35].

- Another tool can search synthesis literature using natural language queries [36].

- The main LLM agent then takes the outputs from these specialized tools and synthesizes them into a coherent answer or plan [34] [37].

How reliable are current LLMs at predicting synthesizability compared to traditional methods?

Recent specialized LLMs have demonstrated superior performance in predicting the synthesizability of inorganic crystals compared to traditional thermodynamic or kinetic stability measures. The Crystal Synthesis LLM (CSLLM) framework, for instance, reports 98.6% accuracy on its test data, versus 74.1% for an energy-above-hull criterion and 82.2% for a phonon-frequency criterion [38].

Table 1: Comparison of Synthesizability Prediction Methods

| Prediction Method | Metric | Reported Accuracy | Key Limitation |
|---|---|---|---|
| Synthesizability LLM (CSLLM) [38] | Accuracy on testing data | 98.6% | Requires large, high-quality datasets for fine-tuning |
| Thermodynamic Stability [38] | Energy above hull ≥ 0.1 eV/atom | 74.1% | Many metastable phases are synthesizable |
| Kinetic Stability [38] | Lowest phonon frequency ≥ -0.1 THz | 82.2% | Computationally expensive; structures with imaginary frequencies can be synthesized |

What are the key safety considerations when using LLMs to guide actual laboratory synthesis?

Safety is paramount, as LLM errors can lead to hazardous situations [33].

- Grounding in Reality: Always use the LLM in an "active" environment with tools that can check for chemical incompatibilities (e.g., reactive functional groups, strong oxidizers/reducers) [34].

- Human-in-the-Loop: Implement mandatory human review and approval for all synthesis procedures, especially those involving high-energy reactions or toxic precursors, before any physical execution [33] [34].

- Explicit Safety Checks: Program the agent to explicitly run safety checks before finalizing a procedure. For example, ChemCrow can be instructed to check the safety data sheets (SDS) of all proposed chemicals [34].

- Fail-Safes for Robotics: When connected to automated labs, ensure robotic platforms have built-in physical safety protocols (e.g., pressure release valves, inert atmosphere capabilities) that are independent of the LLM's control [1].
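The first three safeguards can be combined into a simple gate that runs before any procedure reaches the robot. The sketch below is illustrative only: the hazard classes, the incompatibility rules, and the reagent names are hypothetical, and a real deployment would draw them from SDS data or a curated database.

```python
from typing import Callable, Dict, FrozenSet, List, Set, Tuple

# Hypothetical incompatibility rules between hazard classes, for illustration only.
INCOMPATIBLE: Set[FrozenSet[str]] = {
    frozenset({"strong_oxidizer", "strong_reducer"}),
    frozenset({"strong_oxidizer", "flammable_solvent"}),
}


def incompatibilities(chemicals: Dict[str, Set[str]]) -> List[Tuple[str, str]]:
    """Return every pair of chemicals whose hazard classes clash."""
    names = sorted(chemicals)
    clashes = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if any(
                frozenset({ha, hb}) in INCOMPATIBLE
                for ha in chemicals[a]
                for hb in chemicals[b]
            ):
                clashes.append((a, b))
    return clashes


def approve_procedure(
    chemicals: Dict[str, Set[str]],
    human_approves: Callable[[Dict[str, Set[str]]], bool],
) -> bool:
    """Reject automatically on any clash; otherwise require explicit human sign-off."""
    if incompatibilities(chemicals):
        return False  # never forwarded to the robotic platform
    return human_approves(chemicals)


# Hypothetical reagent lists tagged with hazard classes.
unsafe = {"reagent_1": {"strong_oxidizer"}, "reagent_2": {"strong_reducer"}}
safe = {"reagent_3": {"flammable_solvent"}, "reagent_4": set()}

unsafe_ok = approve_procedure(unsafe, lambda chems: True)
safe_ok = approve_procedure(safe, lambda chems: True)
```

The automatic check only filters out known clashes; it does not replace the human reviewer, who still has to approve every procedure that passes.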

Advanced Optimization and Workflows

Can you provide a detailed protocol for using an LLM agent to plan and optimize a synthesis?

The following protocol is adapted from the workflows of autonomous systems like Coscientist [33], A-Lab [1], and ChemCrow [34].

Objective: Plan and execute the synthesis of a target molecule (e.g., an organocatalyst) via an LLM-powered autonomous agent.

Experimental Protocol:

Task Formulation:

- Provide the agent with a clear, natural language prompt. Example: "Find a thiourea organocatalyst that accelerates the Diels-Alder reaction. Plan and execute its synthesis." [34].

Molecular Identification and Validation:

- The agent uses its integrated tools to search scientific literature for suitable candidate molecules.

- It then verifies the molecular structures and properties by cross-referencing chemical databases (e.g., PubChem via an API call).

Retrosynthesis Planning:

- The agent calls a retrosynthesis prediction tool (e.g., AiZynthFinder) with the validated molecule's SMILES string as input.

- The tool returns several possible retrosynthetic pathways. The LLM reasons about the most feasible route based on precursor complexity and cost.

Precursor and Condition Selection:

- The agent checks the availability and safety of the proposed precursors.